Validating Pharmacophore Models: A Comprehensive Guide to Confirming Predictive Power with Experimental Data

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the critical process of pharmacophore model validation. It covers the foundational importance of validation in computational drug discovery, explores established and emerging methodological frameworks for assessing model quality, and details practical troubleshooting strategies to overcome common challenges. A strong emphasis is placed on quantitative validation metrics and comparative analysis against experimental biological data, providing a clear pathway to build confidence in model predictions, de-risk projects, and accelerate the identification of viable lead compounds.

The Critical Role of Validation in Pharmacophore Modeling

In computer-aided drug discovery, pharmacophore models serve as abstract representations of the steric and electronic features essential for molecular recognition and biological activity. These models enable researchers to identify potential drug candidates through virtual screening of compound databases. However, the utility of any pharmacophore model depends entirely on its predictive power and reliability, making rigorous validation an indispensable step in model development. Validation ensures that computational models can accurately distinguish true active compounds from inactive ones, ultimately saving time and resources in downstream experimental testing. This guide examines the key validation methodologies, compares their performance metrics, and provides experimental protocols to help researchers establish confidence in their pharmacophore models.

Core Validation Methodologies and Performance Metrics

Pharmacophore model validation employs multiple complementary approaches to assess model quality, predictive capability, and robustness. The table below summarizes the primary validation methods and their key performance indicators.

Table 1: Comprehensive Overview of Pharmacophore Validation Methods

| Validation Method | Key Performance Indicators | Interpretation Guidelines | Strengths | Limitations |
|---|---|---|---|---|
| Decoy-Based Validation | AUC (Area Under Curve), EF (Enrichment Factor) | AUC > 0.9 (excellent), EF1% = 10 indicates 10-fold enrichment of actives in top 1% of screened compounds [1] [2] | Measures model's ability to distinguish active from inactive compounds | Quality depends on decoy set composition; may not reflect real-world screening |
| Test Set Validation | R²pred, rmse (root mean square error) | R²pred > 0.5 indicates acceptable predictive robustness [2] | Evaluates model performance on unseen compounds | Requires carefully curated external test set with diverse chemical structures |
| Cost Analysis | Δ cost (null cost - total cost) | Δ > 60 indicates model does not reflect chance correlation; configuration cost < 17 is satisfactory [2] | Statistical assessment of model significance | Does not directly measure predictive accuracy for new compounds |
| Fisher's Randomization | Statistical significance (p-value) | p < 0.05 indicates model is statistically significant and not result of chance correlation [2] | Robust statistical validation of model significance | Computationally intensive for large datasets |
| Internal Validation | Q² (LOO cross-validation coefficient), rmse | High Q² and low rmse indicate better predictive ability [2] | Uses training set data efficiently without requiring separate test set | May overestimate model performance compared to external validation |

Experimental Protocols for Key Validation Methods

Decoy Set Validation Protocol

Objective: To evaluate the model's ability to distinguish active compounds from inactive molecules (decoys) [1] [2].

Procedure:

- Decoy Generation: Generate decoy molecules using the DUD-E decoy generator (https://dude.docking.org/generate), matching five key properties of the active compounds: molecular weight, number of rotatable bonds, hydrogen bond donor count, hydrogen bond acceptor count, and octanol-water partition coefficient. This ensures that the decoys are physically similar to, but chemically distinct from, the active compounds [2].

- Compound Categorization: Screen both active compounds and decoys using the pharmacophore model. Categorize results as:

- True Positive (TP): Active compounds correctly identified

- True Negative (TN): Decoys correctly rejected

- False Positive (FP): Decoys incorrectly identified as actives

- False Negative (FN): Active compounds incorrectly rejected [2]

- Performance Calculation: Generate Receiver Operating Characteristic (ROC) curve and calculate Area Under Curve (AUC). Calculate Enrichment Factor (EF) using the formula:

- EF = (TP / (TP + FN)) / (Selected compounds / Total compounds) [1]

Quality Control: A valid pharmacophore model should achieve AUC > 0.9 and EF1% (enrichment in top 1% of screened compounds) of at least 10 [1].
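
The enrichment calculation in this protocol can be sketched in a few lines of Python. This is a minimal illustration, not the implementation used in the cited studies: the function names and the example screen (1000 compounds, 20 actives, 2 of them ranked in the top 10) are hypothetical, and the ranked list is assumed to be ordered from best to worst pharmacophore match.

```python
def enrichment_factor(tp, fn, n_selected, n_total):
    """EF = (TP / (TP + FN)) / (selected compounds / total compounds)."""
    if tp + fn == 0 or n_selected == 0 or n_total == 0:
        raise ValueError("need at least one active and one selected compound")
    recall = tp / (tp + fn)                    # fraction of all actives recovered
    fraction_selected = n_selected / n_total   # fraction of the library selected
    return recall / fraction_selected


def ef_at_fraction(ranked_labels, fraction=0.01):
    """EF in the top `fraction` of a ranked list (1 = active, 0 = decoy)."""
    n_total = len(ranked_labels)
    n_selected = max(1, round(n_total * fraction))
    tp = sum(ranked_labels[:n_selected])       # actives inside the selection
    fn = sum(ranked_labels) - tp               # actives left outside it
    return enrichment_factor(tp, fn, n_selected, n_total)


# Hypothetical screen: 2 actives in the top 10, 18 further down the list.
ranked = [1, 1] + [0] * 8 + [1] * 18 + [0] * 972
ef1 = ef_at_fraction(ranked, fraction=0.01)
print(f"EF1% = {ef1:.1f}")
```

In this made-up example the top 1% holds 10% of the actives, so the selection is roughly ten times richer in actives than a random pick of the same size.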

Test Set Validation Protocol

Objective: To assess model robustness and predictive performance on an independent compound set [2].

Procedure:

- Test Set Selection: Curate a dedicated test set with diversity in chemical structures and bioactivities, ensuring it represents compounds not used in model development.

- Activity Prediction: Apply the pharmacophore model to predict biological activities of test set compounds based on their pharmacophoric features.

- Accuracy Evaluation: Calculate the predictive squared correlation coefficient (R²pred) and the root mean square error (rmse) from the observed and predicted activities of the test set compounds.

- Interpretation: An R²pred value > 0.5 indicates acceptable robustness and predictive capability of the model.
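
As a rough sketch of the accuracy evaluation step, the two statistics can be computed as follows. The definitions used here are common conventions and are an assumption on our part, since the source does not spell them out: R²pred = 1 - PRESS / SS, where PRESS sums the squared test set prediction errors and SS sums the squared deviations of the observed test set activities from the training set mean. The activity values are invented for illustration.

```python
import math


def rmse(observed, predicted):
    """Root mean square error between observed and predicted activities."""
    squared_errors = [(o - p) ** 2 for o, p in zip(observed, predicted)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


def r2_pred(observed, predicted, train_mean):
    """Predictive R^2 for an external test set (assumed convention, see lead-in)."""
    press = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_total = sum((o - train_mean) ** 2 for o in observed)
    return 1.0 - press / ss_total


# Hypothetical pIC50 values for four test set compounds.
observed = [5.0, 6.0, 7.0, 8.0]
predicted = [5.5, 6.0, 6.5, 8.0]
train_mean = 6.5  # mean observed activity of the (hypothetical) training set

score = r2_pred(observed, predicted, train_mean)
error = rmse(observed, predicted)
print(f"R2pred = {score:.2f}, rmse = {error:.3f}")
print("acceptable" if score > 0.5 else "not acceptable")
```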

Cost Function Analysis and Fisher's Randomization Protocol

Objective: To verify that the model represents a statistically significant correlation rather than a chance occurrence [2].

Procedure:

- Cost Calculation: Determine three cost components:

- Weight cost: Penalty that increases as the feature weights in the hypothesis deviate from their ideal value

- Error cost: Difference between predicted and observed activity of training set

- Configuration cost: Complexity of hypothesis space (should be < 17) [2]

- Significance Assessment: Calculate Δ cost as the difference between null hypothesis cost and total cost. A Δ > 60 indicates the model does not reflect chance correlation.

- Randomization Test:

- Randomly shuffle biological activity values of dataset compounds

- Reapply pharmacophore modeling to randomized datasets

- Generate distribution of correlation coefficients from randomized sets

- Compare original model correlation with randomization distribution

- A p-value < 0.05 indicates statistical significance [2]
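
The logic of the randomization test can be illustrated with a simple permutation test. This sketch is a deliberate simplification: a full Fisher's randomization run regenerates pharmacophore hypotheses for every shuffled dataset, whereas here the model's estimated activities are held fixed and only the experimental activities are shuffled. All names and data values are hypothetical.

```python
import random
import statistics


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def randomization_p_value(estimated, activities, n_shuffles=999, seed=0):
    """Fraction of shuffled datasets that correlate at least as well as the real one."""
    rng = random.Random(seed)
    r_original = pearson_r(estimated, activities)
    shuffled = list(activities)
    as_good = 0
    for _ in range(n_shuffles):
        rng.shuffle(shuffled)                  # break the structure-activity link
        if pearson_r(estimated, shuffled) >= r_original:
            as_good += 1
    # Add-one correction so the p-value is never exactly zero.
    return r_original, (as_good + 1) / (n_shuffles + 1)


# Hypothetical training set: model-estimated vs. experimental activities.
estimated = [4.9, 5.4, 6.1, 6.4, 7.0, 7.3, 7.9, 8.2, 8.8, 9.1]
activities = [5.0, 5.2, 6.0, 6.6, 6.9, 7.5, 7.8, 8.4, 8.6, 9.3]

r_original, p_value = randomization_p_value(estimated, activities)
print(f"r = {r_original:.3f}, p = {p_value:.3f}")
```

With 999 shuffles the smallest reachable p-value is 0.001, so the number of randomized datasets sets the resolution of the test.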

Comparative Performance Analysis of Validation Methods

The table below presents quantitative validation data from recent research studies, enabling direct comparison of validation outcomes across different targets and model types.

Table 2: Experimental Validation Data from Recent Studies

| Study Target | Validation Method | Performance Results | Model Type | Reference |
|---|---|---|---|---|
| XIAP Protein (Cancer) | Decoy Set Validation | AUC = 0.98, EF1% = 10.0 | Structure-based pharmacophore | [1] |
| SARS-CoV-2 PLpro | Structure-based with docking concordance | Identified aspergillipeptide F as best inhibitor | Hybrid pharmacophore-docking approach | [3] [4] |
| Acetylcholinesterase (Alzheimer's) | Experimental testing of computational hits | 6 of 9 tested molecules showed strong inhibition (IC₅₀ ≤ control) | Machine learning-enhanced dyphAI protocol | [5] |
| Anti-HBV Flavonols | Specificity testing | 71% sensitivity, 100% specificity against FDA-approved compounds | Ligand-based pharmacophore | [6] |

Research Reagent Solutions for Validation Experiments

Table 3: Essential Research Tools and Resources for Pharmacophore Validation

| Resource/Tool | Function in Validation | Access Information |
|---|---|---|
| DUD-E Database | Generates property-matched decoy molecules for enrichment calculations | https://dude.docking.org/generate [2] |
| LigandScout | Creates and validates structure-based pharmacophore models; performs virtual screening | Commercial software (Inte:Ligand) [3] [1] [6] |
| ZINC Database | Provides commercially available compounds for virtual screening and test set creation | https://zinc.docking.org [5] [7] [8] |
| ChEMBL Database | Source of bioactive compounds with experimental data for model training and testing | https://www.ebi.ac.uk/chembl [6] |
| Protein Data Bank (PDB) | Source of 3D protein structures for structure-based pharmacophore modeling | https://www.rcsb.org [3] |

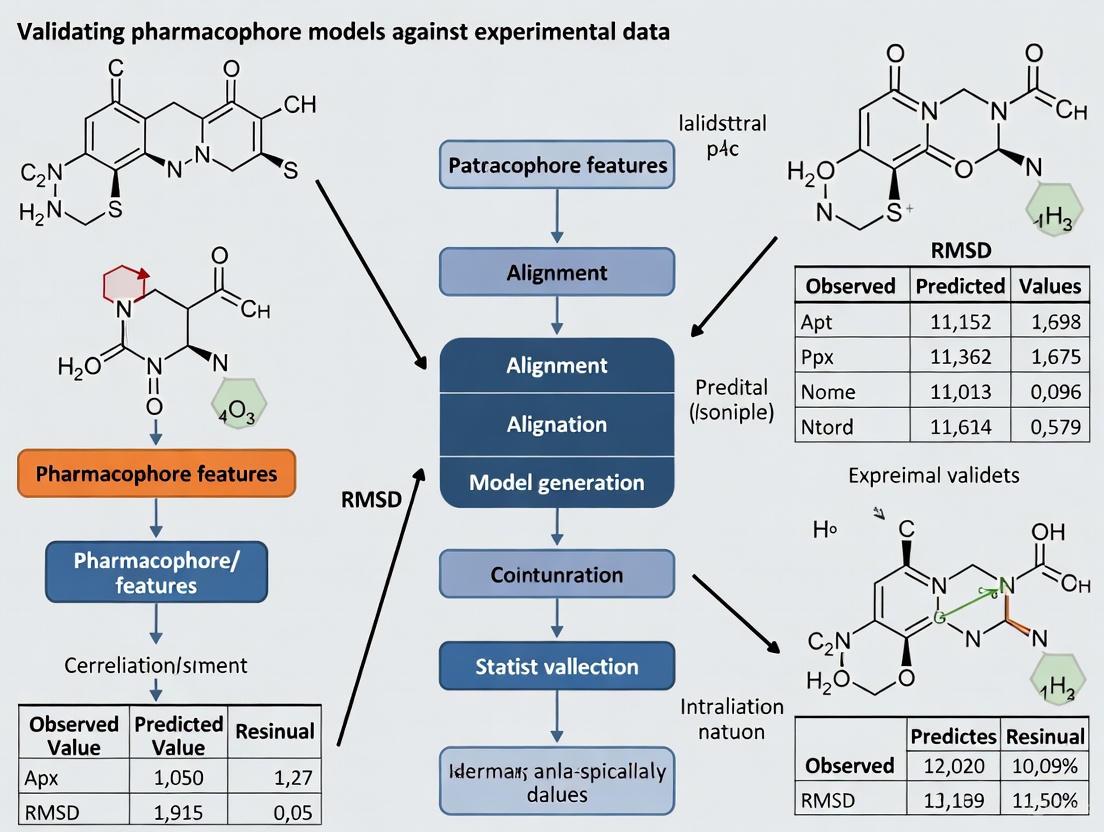

Workflow Diagram of Comprehensive Validation Strategy

Comprehensive Pharmacophore Validation Workflow

Robust validation is the cornerstone of reliable pharmacophore modeling in drug discovery. Combining multiple validation methods (decoy set validation, test set prediction, cost analysis, and statistical testing) provides a comprehensive framework for establishing a model's predictive power. In the studies summarized above, rigorously validated pharmacophore models performed well in virtual screening and yielded hits that held up in follow-up computational or experimental testing. The protocols and metrics presented in this guide offer researchers a standardized approach to pharmacophore validation, ultimately improving the efficiency and success of pharmacophore-guided drug design.

In modern computer-aided drug design (CADD), pharmacophore modeling has emerged as a powerful tool for identifying potential drug candidates by representing the essential three-dimensional arrangement of molecular features necessary for biological activity [9]. These models serve as virtual filters to screen millions of compounds, dramatically reducing the time and resources needed for early drug discovery [10]. However, the predictive power of any pharmacophore model hinges on a crucial, non-negotiable step: rigorous validation. Validation transforms an abstract computational hypothesis into a reliable tool that can effectively bridge the gap between in-silico predictions and experimental reality, ensuring that virtual hits have a genuine probability of demonstrating biological activity in the laboratory [9] [10].

Without proper validation, pharmacophore models risk generating false positives and misleading results, potentially wasting significant research resources on dead-end compounds [10]. This comparison guide examines the methodologies, metrics, and real-world applications of pharmacophore model validation, providing researchers with a framework for evaluating the predictive power of their computational models before committing to costly experimental work.

Methodologies for Pharmacophore Model Validation

Theoretical Validation: Assessing Predictive Power Before Laboratory Testing

Theoretical validation represents the first critical assessment of a pharmacophore model's quality before any wet-lab experimentation [10]. This process evaluates whether a model can successfully distinguish known active compounds from inactive molecules using several established computational approaches:

Decoy-based Testing: This method employs the Directory of Useful Decoys, Enhanced (DUD-E), which generates decoys that match the physicochemical properties of the actives but differ in topology and are presumed inactive, to test the model's discrimination capability [11] [1]. The model's ability to retrieve true actives while excluding these decoys provides a crucial measure of its selectivity [1].

Receiver Operating Characteristic (ROC) Analysis: ROC curves graphically represent a model's ability to balance sensitivity (identifying true actives) against specificity (rejecting inactives) [12]. The Area Under the Curve (AUC) quantifies this performance, where values closer to 1.0 indicate superior discriminatory power [12] [1].

Enrichment Factor (EF) Calculation: The EF measures how effectively a model concentrates active compounds early in the screening process compared to random selection [11] [13]. Higher EF values indicate better performance for practical virtual screening applications where resources are limited [13].

Table 1: Key Metrics for Theoretical Validation of Pharmacophore Models

| Validation Metric | Calculation/Definition | Optimal Values | Interpretation |
|---|---|---|---|
| AUC (Area Under ROC Curve) | Area under sensitivity vs. 1-specificity plot | 0.7-0.8 (Good), 0.8-1.0 (Excellent) [12] | Overall discrimination capability between actives and inactives |
| Enrichment Factor (EF) | (Hits_sampled / N_sampled) ÷ (Hits_total / N_total) | >1 indicates enrichment over random [13] | Ability to concentrate actives in early screening stages |
| Goodness of Hit (GH) Score | Composite measure of recall and precision | 0-1 (Higher values indicate better performance) [14] | Overall quality of virtual screening results |
| Early Enrichment (EF1%) | EF at the top 1% of screened database | 10-100+ (Context dependent) [1] | Early recognition capability valuable for large libraries |
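
To make the EF and GH entries in the table concrete, the sketch below computes both from hit-list counts. The GH expression used is the widely cited Güner-Henry form, GH = [Ha(3A + Ht) / (4·Ht·A)] × [1 - (Ht - Ha) / (D - A)], which is an assumption here because the table only describes GH as a composite of recall and precision. The symbols (D, A, Ht, Ha) and the example counts are illustrative.

```python
def enrichment_factor(active_hits, total_hits, actives, database_size):
    """EF = (Hits_sampled / N_sampled) / (Hits_total / N_total)."""
    return (active_hits / total_hits) / (actives / database_size)


def goodness_of_hit(active_hits, total_hits, actives, database_size):
    """Guner-Henry GH score (assumed form, see lead-in).

    active_hits   (Ha): actives retrieved in the hit list
    total_hits    (Ht): all compounds in the hit list
    actives        (A): actives in the whole database
    database_size  (D): all compounds in the database
    """
    ha, ht, a, d = active_hits, total_hits, actives, database_size
    recall_precision_term = ha * (3 * a + ht) / (4 * ht * a)
    false_positive_penalty = 1 - (ht - ha) / (d - a)
    return recall_precision_term * false_positive_penalty


# Hypothetical screen: 1000 compounds, 20 actives, 30 hits of which 15 are active.
ef = enrichment_factor(15, 30, 20, 1000)
gh = goodness_of_hit(15, 30, 20, 1000)
print(f"EF = {ef:.1f}, GH = {gh:.3f}")
```

A hit list that contains every active and nothing else gives GH = 1, which is a quick sanity check on any implementation.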

Experimental Workflow for Pharmacophore Validation

The following diagram illustrates the comprehensive validation workflow that bridges in-silico predictions with experimental confirmation:

This workflow demonstrates the iterative nature of pharmacophore validation, where models are refined based on both theoretical metrics and experimental feedback.

Comparative Performance: Validated vs. Non-Validated Models

Case Studies in Validation Success

Recent studies across diverse therapeutic targets demonstrate how rigorous validation creates reliable bridges to experimental success:

Neuroblastoma Treatment Targeting BRD4: Researchers developed a structure-based pharmacophore model to identify natural compounds inhibiting the BRD4 protein [11]. The model was validated with an exceptional AUC of 1.0 and enrichment factors ranging from 11.4 to 13.1, indicating outstanding discriminatory power [11]. This theoretical validation preceded the identification of four natural compounds (ZINC2509501, ZINC2566088, ZINC1615112, and ZINC4104882) that showed promising binding affinity and were further validated through molecular dynamics simulations [11].

Cancer Immunotherapy Targeting PD-L1: In developing inhibitors for the PD-1/PD-L1 immune checkpoint pathway, scientists created a structure-based pharmacophore model from the crystal structure 6R3K [12]. Validation with ROC analysis yielded an AUC of 0.819, confirming the model's ability to distinguish active from inactive compounds [12]. This validation enabled the identification of marine natural compound 51320 as a promising PD-L1 inhibitor, which was subsequently confirmed through molecular docking and dynamics simulations to maintain stable conformation with the target protein [12].

Hepatocellular Carcinoma Targeting XIAP: A structure-based pharmacophore model aimed at identifying natural anti-cancer agents targeting XIAP protein achieved excellent validation metrics with an AUC of 0.98 and early enrichment (EF1%) of 10.0 [1]. This robust theoretical validation preceded the identification of three natural compounds (Caucasicoside A, Polygalaxanthone III, and MCULE-9896837409) that demonstrated stability in molecular dynamics simulations, suggesting their potential as lead compounds for XIAP-related cancers [1].

Benchmark Comparison: Pharmacophore vs. Docking-Based Virtual Screening

A comprehensive benchmark study comparing pharmacophore-based virtual screening (PBVS) against docking-based virtual screening (DBVS) across eight diverse protein targets revealed significant performance differences:

Table 2: Performance Comparison of Virtual Screening Methods Across Eight Targets [13]

| Screening Method | Average Hit Rate at 2% Database | Average Hit Rate at 5% Database | Screening Cases with Superior Enrichment | Key Advantage |
|---|---|---|---|---|
| Pharmacophore-Based (PBVS) | Significantly Higher [13] | Significantly Higher [13] | 14 out of 16 cases [13] | Better early enrichment |
| Docking-Based (DBVS) | Lower [13] | Lower [13] | 2 out of 16 cases [13] | Detailed binding mode analysis |
| Combined Approach | Highest [10] | Highest [10] | N/A | Complementary strengths |

The study concluded that "the PBVS method outperformed DBVS methods in retrieving actives from the databases in our tested targets" [13]. This performance advantage highlights the importance of proper pharmacophore model validation, as well-validated pharmacophore models can significantly enhance virtual screening efficiency.

Experimental Protocols for Comprehensive Validation

ROC Curve Generation Protocol

The Receiver Operating Characteristic (ROC) analysis serves as a fundamental validation method for assessing a pharmacophore model's discrimination ability:

- Prepare Test Set: Compile a set of known active compounds (20-50 molecules) and generate decoy molecules using the DUD-E server or similar tools [11] [1]

- Screen Database: Perform virtual screening using the pharmacophore model against the combined active and decoy compound set

- Calculate Metrics: For each scoring threshold, calculate the following, where TP = True Positives, FP = False Positives, TN = True Negatives, and FN = False Negatives [12]:

  - True Positive Rate (Sensitivity) = TP/(TP+FN)

  - False Positive Rate (1-Specificity) = FP/(FP+TN)

- Plot ROC Curve: Graph TPR against FPR across all possible thresholds [12]

- Calculate AUC: Determine the Area Under the Curve using numerical integration methods [12] [1]

- Interpret Results: An AUC of 0.5 suggests random performance, 0.7-0.8 indicates good discrimination, and 0.8-1.0 represents excellent discriminatory power [12]
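
The threshold sweep and numerical integration described in this protocol can be sketched as follows. The sketch assumes that a higher score means a better pharmacophore match; the scores, labels, and function names are hypothetical, and the trapezoidal rule stands in for whichever integration method a given software package uses.

```python
def roc_points(scores, labels):
    """Return (FPR, TPR) points, lowering the score threshold step by step.

    labels: 1 for a known active, 0 for a decoy.
    """
    n_actives = sum(labels)
    n_decoys = len(labels) - n_actives
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    points = [(0.0, 0.0)]
    tp = fp = 0
    i = 0
    while i < len(ranked):
        threshold = ranked[i][0]
        # Compounds with identical scores cross the threshold together.
        while i < len(ranked) and ranked[i][0] == threshold:
            if ranked[i][1] == 1:
                tp += 1
            else:
                fp += 1
            i += 1
        points.append((fp / n_decoys, tp / n_actives))
    return points


def auc_trapezoid(points):
    """Area under the ROC curve by the trapezoidal rule."""
    return sum((x1 - x0) * (y0 + y1) / 2
               for (x0, y0), (x1, y1) in zip(points, points[1:]))


# Hypothetical fit scores for three actives and three decoys.
scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4]
labels = [1, 1, 0, 1, 0, 0]

curve = roc_points(scores, labels)
auc = auc_trapezoid(curve)
print(f"AUC = {auc:.3f}")
```

Grouping tied scores before emitting a point matters for pharmacophore screens, where many compounds can share the same fit value; treating ties one at a time would make the AUC depend on an arbitrary sort order.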

Experimental Validation Workflow

After theoretical validation, comprehensive experimental confirmation follows this established protocol:

- Virtual Screening: Apply the validated pharmacophore model to screen large compound databases (e.g., ZINC, containing over 230 million purchasable compounds) [11] [1]

- Molecular Docking: Subject virtual hits to molecular docking studies to evaluate binding modes and affinities with the target protein [11] [12]

- ADMET Profiling: Predict absorption, distribution, metabolism, excretion, and toxicity properties using tools like SwissADME or admetSAR [11] [12]

- Molecular Dynamics Simulations: Conduct MD simulations (typically 50-200 ns) to assess the stability of protein-ligand complexes [11] [1]

- Binding Free Energy Calculations: Perform MM-GBSA or MM-PBSA calculations to quantify binding affinities [11]

- In Vitro Testing: Experimentally validate top candidates using biological assays to determine IC50 values and dose-response relationships [15]

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Essential Resources for Pharmacophore Modeling and Validation

| Resource/Solution | Function in Validation | Specific Examples | Key Features |
|---|---|---|---|
| Decoy Database | Provides inactive molecules for selectivity testing | DUD-E (Directory of Useful Decoys, Enhanced) [11] [1] | Decoys match the physicochemical properties of the actives but differ in topology |
| Compound Database | Source of molecules for virtual screening | ZINC database [11] [1] | 230+ million purchasable compounds, ready for docking |
| Validation Software | Calculate enrichment metrics and ROC curves | LigandScout [11] [1] | Automated pharmacophore creation and validation |
| Docking Tools | Confirm binding modes of virtual hits | AutoDock [12], GOLD [13], Glide [13] | Multiple algorithms for consensus docking |
| Dynamics Software | Assess complex stability | GROMACS, AMBER, Desmond | Nanosecond-scale simulations for stability validation |
| ADMET Prediction | Evaluate drug-like properties | SwissADME, admetSAR, PreADMET | Early toxicity and pharmacokinetics assessment |

The evidence from comparative studies and real-world applications consistently demonstrates that comprehensive validation is not an optional extra but an essential requirement for successful pharmacophore modeling. Proper validation through ROC analysis, enrichment calculations, and experimental confirmation transforms computational hypotheses into reliable tools that effectively bridge the in-silico and experimental realms [11] [12] [1].

The benchmark studies revealing pharmacophore-based screening's superiority over docking-based approaches in many scenarios further underscore the importance of rigorous validation practices [13]. As pharmacophore modeling continues to evolve toward addressing more complex challenges like protein-protein interactions and polypharmacology, robust validation methodologies will remain the critical foundation ensuring these computational approaches generate biologically relevant results worthy of experimental investigation [9] [10].

In computational drug discovery, pharmacophore models serve as essential abstract representations of the molecular features necessary for a ligand to interact with a biological target. However, the predictive power and real-world applicability of these models hinge entirely on rigorous validation, grounded in the core statistical principles of sensitivity and specificity, and the overarching imperative to avoid overfitting. Overfitting creates models that perform exceptionally well on training data but fail to generalize to real-world scenarios, ultimately compromising their predictive reliability [16]. This guide provides a comparative analysis of validation methodologies and performance metrics, drawing on recent research to outline robust experimental protocols for ensuring that pharmacophore models are both accurate and trustworthy for drug development professionals.

Quantitative Performance Comparison of Pharmacophore Models

The evaluation of a pharmacophore model's performance requires a multifaceted approach, examining its ability to correctly identify active compounds (sensitivity) while rejecting inactive ones (specificity). The following table summarizes key metrics and their reported values from recent studies.

Table 1: Key Performance Metrics for Pharmacophore Model Validation

| Metric | Definition | Interpretation | Reported Value (Example) |
|---|---|---|---|
| Sensitivity | Proportion of true actives correctly identified by the model [17]. | High sensitivity indicates a low false negative rate; the model misses few potential hits. | 71% for an anti-HBV flavonol model [6]. |
| Specificity | Proportion of true decoys (inactives) correctly rejected by the model [17]. | High specificity indicates a low false positive rate; the model filters out irrelevant compounds well. | 100% for an anti-HBV flavonol model [6]. |
| Enrichment Factor (EF) | Measures how much more concentrated actives are in the hit list compared to a random selection [17]. | An EF >1 indicates the model enriches for active compounds. | Calculated from screening libraries [17]. |
| Goodness of Hit (GH) | A composite score balancing the recall of actives and the false positive rate [17]. | A score closer to 1.0 indicates a high-quality, balanced model. | Calculated from sensitivity and specificity data [17]. |

The performance of a model can vary significantly based on its design and application. For instance, a structure-based pharmacophore model for Focal Adhesion Kinase 1 (FAK1) inhibitors was validated using 114 active compounds and 571 decoys from the DUD-E database, with its sensitivity and specificity calculated using standard formulas [17]. In a separate study, a flavonol-based pharmacophore model targeting Hepatitis B Virus (HBV) demonstrated a sensitivity of 71% and a perfect specificity of 100% when validated against a set of FDA-approved chemicals, highlighting its exceptional ability to avoid false positives [6].

Detailed Experimental Protocols for Model Validation

A robust validation protocol is critical for generating reliable performance metrics. The following sections detail common methodologies used in pharmacophore modeling and the subsequent steps to avoid overfitting.

Structure-Based Pharmacophore Modeling and Validation

This protocol uses a known protein-ligand complex to derive critical interaction features.

- Step 1: Structure Preparation. Obtain the co-crystal structure of the target protein with a bound ligand from the Protein Data Bank (PDB). Model any missing residues using software like MODELLER, and select the model with the lowest normalized Discrete Optimized Protein Energy (zDOPE) score for further analysis [17].

- Step 2: Pharmacophore Generation. Upload the protein-ligand complex to a tool such as Pharmit. The software will identify critical pharmacophoric features (e.g., hydrogen bond donors/acceptors, hydrophobic regions, aromatic rings). Generate multiple candidate models, each containing a different set of 5-6 features [17].

- Step 3: Model Validation with Actives and Decoys. This is the critical step for calculating sensitivity and specificity.

- Library Curation: Download a set of known active compounds and a set of decoy molecules for your target from a database like DUD-E (Directory of Useful Decoys - Enhanced) [17].

- Virtual Screening: Screen both the active and decoy libraries against each of your candidate pharmacophore models.

- Statistical Analysis: Calculate sensitivity (True Positive Rate), specificity (True Negative Rate), Enrichment Factor (EF), and Goodness of Hit (GH) for each model. The model with the highest validation performance (balancing high sensitivity and specificity) is selected for further virtual screening [17].
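
The statistical analysis in Step 3 can be sketched as a comparison of candidate models on the same active and decoy libraries. The library sizes below (114 actives, 571 decoys) follow the FAK1 example cited in this guide, but the per-model hit counts are invented, and ranking by Youden's J (sensitivity + specificity - 1) is only one possible way to "balance" the two rates; the source does not name a specific criterion.

```python
from dataclasses import dataclass


@dataclass
class ScreenResult:
    name: str
    actives_hit: int   # true positives: actives matched by the model
    decoys_hit: int    # false positives: decoys matched by the model


def sensitivity(result, n_actives):
    """True positive rate: TP / (TP + FN)."""
    return result.actives_hit / n_actives


def specificity(result, n_decoys):
    """True negative rate: TN / (TN + FP)."""
    return (n_decoys - result.decoys_hit) / n_decoys


def youden_j(result, n_actives, n_decoys):
    """Assumed balancing criterion: sensitivity + specificity - 1."""
    return sensitivity(result, n_actives) + specificity(result, n_decoys) - 1


N_ACTIVES, N_DECOYS = 114, 571

# Hypothetical screening outcomes for three candidate pharmacophore models.
candidates = [
    ScreenResult("model_A", actives_hit=90, decoys_hit=200),
    ScreenResult("model_B", actives_hit=70, decoys_hit=40),
    ScreenResult("model_C", actives_hit=100, decoys_hit=300),
]

best = max(candidates, key=lambda r: youden_j(r, N_ACTIVES, N_DECOYS))
for r in candidates:
    print(f"{r.name}: sensitivity={sensitivity(r, N_ACTIVES):.2f}, "
          f"specificity={specificity(r, N_DECOYS):.2f}")
print("selected:", best.name)
```

In this made-up comparison the model that retrieves the most actives is not selected, because it also lets through more than half of the decoys.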

Addressing Overfitting Through Data Handling and Validation Strategies

Overfitting occurs when a model learns the noise in the training data rather than the underlying pattern. The following practices are essential for mitigation.

- Proper Data Splitting: Data splitting strategies are a primary defense against overfitting. Using a split based on the Butina algorithm or scaffold splitting provides a more challenging and realistic benchmark than a simple random split, as it tests the model's ability to generalize to entirely new chemical scaffolds [18].

- Cautious Hyperparameter Tuning: Extensive optimization of model hyperparameters (e.g., for a Graph Neural Network like ChemProp) can lead to overfitting, particularly on small datasets. Using a preselected set of hyperparameters can sometimes produce models with similar or even better generalizability than those obtained through exhaustive grid optimization [18].

- External Validation: The most critical step is to evaluate the final model on a completely external dataset that was not used in any part of the model building or training process. This provides the best estimate of how the model will perform in a real-world setting [16].

- Adopting Robust Validation Frameworks: Tools like ML-AMPSIT leverage multiple machine learning methods to build surrogate models for sensitivity analysis, helping to identify the most important parameters and reduce over-reliance on a single model's output [19].
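
The idea behind scaffold-based splitting can be shown without any cheminformatics dependency. In this minimal sketch the scaffold key of each compound is assumed to have been computed beforehand (for example, as a Murcko scaffold string from a cheminformatics toolkit); the compound IDs, scaffold labels, and the greedy assignment rule are all illustrative rather than taken from the cited work.

```python
from collections import defaultdict


def scaffold_split(compound_ids, scaffold_keys, test_fraction=0.2):
    """Split compounds so that no scaffold appears in both training and test sets."""
    groups = defaultdict(list)
    for compound_id, key in zip(compound_ids, scaffold_keys):
        groups[key].append(compound_id)

    n_test_target = round(len(compound_ids) * test_fraction)
    n_train_target = len(compound_ids) - n_test_target

    train, test = [], []
    # Fill the training set with the most populated scaffolds first, so the
    # test set ends up holding whole scaffold groups unseen in training.
    for members in sorted(groups.values(), key=len, reverse=True):
        if len(train) + len(members) <= n_train_target:
            train.extend(members)
        else:
            test.extend(members)
    return train, test


# Hypothetical dataset: ten compounds spread over four scaffolds.
ids = [f"cpd{i}" for i in range(1, 11)]
scaffolds = ["A", "A", "A", "A", "B", "B", "B", "C", "C", "D"]

train_ids, test_ids = scaffold_split(ids, scaffolds, test_fraction=0.2)
print("train:", train_ids)
print("test: ", test_ids)
```

Because whole scaffold groups are assigned together, the achieved test fraction can deviate from the requested one when groups are large; that trade-off is what makes the benchmark harder than a random split.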

Workflow Visualization: Pharmacophore Validation and Overfitting Avoidance

The following diagram illustrates the integrated workflow for developing and validating a pharmacophore model, highlighting key steps to prevent overfitting.

Diagram 1: Pharmacophore model development and validation workflow, showing key overfitting avoidance checkpoints.

Successful pharmacophore modeling relies on a suite of computational tools and databases. The table below lists key resources mentioned in recent literature.

Table 2: Essential Reagents and Resources for Pharmacophore Research

| Resource Name | Type | Primary Function in Validation | Example Use Case |
|---|---|---|---|
| DUD-E Database [17] | Online Database | Provides curated sets of active compounds and decoys for a wide range of biological targets. | Used for calculating the sensitivity and specificity of a FAK1 pharmacophore model [17]. |
| ZINC Database [5] [20] | Compound Database | A large, publicly available database of purchasable compounds for virtual screening to identify novel hits. | Screened to discover new acetylcholinesterase inhibitors [5] and MAO inhibitors [20]. |
| Pharmit [17] | Web Tool | Performs structure-based pharmacophore generation and provides a platform for virtual screening and model validation. | Used to create and screen pharmacophore models for FAK1 [17]. |
| LigandScout [6] | Software | Enables the development of both ligand-based and structure-based pharmacophore models from molecular data. | Utilized to establish a flavonol-based pharmacophore model for anti-HBV activity [6]. |
| ML-AMPSIT [19] | Computational Tool | A machine learning-based tool for parameter sensitivity and importance analysis, aiding in robust model calibration. | Helps quantify the impact of input parameter variations on model output, reducing overfitting risk. |

The journey from a computational pharmacophore model to a reliable tool for drug discovery is paved with rigorous validation. As this guide has detailed, this process is non-negotiable and must be anchored by the quantitative assessment of sensitivity and specificity, and a relentless focus on strategies to avoid overfitting. By adhering to robust experimental protocols—including proper data splitting, cautious hyperparameter tuning, and, most importantly, external validation—researchers can ensure their models possess not just apparent accuracy on training data, but genuine predictive power for identifying novel therapeutic candidates. In an era of increasingly complex models and algorithms, these core principles remain the bedrock of trustworthy computational science.

In the rigorous field of computer-aided drug design, pharmacophore models serve as abstract blueprints defining the essential steric and electronic features a molecule must possess to interact with a biological target [21]. However, the predictive power of these models is entirely contingent on the quality of their validation. When validation against robust experimental data is inadequate, the consequences cascade through the entire drug discovery pipeline, leading to significant resource depletion and the pursuit of non-viable chemical leads.

The Critical Role of Validation in Pharmacophore Modeling

A pharmacophore model reduces complex molecular interactions to a set of critical features—such as hydrogen bond donors (HBD), hydrogen bond acceptors (HBA), hydrophobic areas (H), and ionizable groups (PI/NI)—that are necessary for biological activity [21]. These models can be built using either a structure-based approach (relying on the 3D structure of the target protein) or a ligand-based approach (derived from a set of known active ligands) [21].

The principle of "Garbage In, Garbage Out" (GIGO) is acutely relevant here. The quality of the model's output is fundamentally dependent on the quality of the input data and the rigor of the validation process [22]. Poor data quality at the input stage, including inaccurate, incomplete, or non-representative structural or activity data, inevitably produces a flawed model. Subsequent decisions based on such a model are built on a shaky foundation, compromising the entire project.

The financial and operational toll of basing research on poorly validated models is substantial. The following table summarizes the key areas of waste identified in scientific and industry analyses:

Table 1: Consequences of Poor Pharmacophore Model Validation

| Impact Area | Specific Consequences | Supporting Data |
|---|---|---|
| Financial Costs | Wasted resources on synthesizing and testing non-viable leads; missed business opportunities. | Poor data quality costs organizations an average of $12.9 - $13.3 million annually [22] [23]. |
| Time & Productivity | Scientists and managers spend excessive time hunting for data, validating accuracy, or cleaning up errors. | Data-intensive businesses waste 50% of time on data-related tasks instead of research [22]. Data scientists spend 80% of their time finding and cleaning data [22]. |
| Operational Efficiency | Delayed project timelines; need for extensive data re-validation and manual correction of screening results. | Labor productivity can drop by up to 20% due to data issues [23]. Up to 40% of companies fail to meet business goals due to flawed data [23]. |
| Strategic Missteps | Misallocation of resources to unpromising chemical series; compromised competitive positioning. | Only 3% of companies' data meets basic quality standards, undermining strategic planning [22]. |

Case Studies: Misguided Lead Optimization in Practice

The Challenge of Demanding Targets

Benchmarking studies on pharmaceutically relevant targets like the A2A adenosine receptor (AA2AR) and heat shock protein 90 (HSP90) have shown that default molecular docking scoring functions often perform poorly, failing to enrich active ligands at the top of virtual screening lists [24]. If a pharmacophore model used for lead optimization is validated solely against these flawed docking poses without experimental correlation, it will perpetuate the same errors. This directs medicinal chemists to optimize compounds based on incorrect interaction hypotheses, wasting months of synthetic effort [24].

The Pitfalls of Incomplete Feature Selection

In a study focused on optimizing Estrogen Receptor beta binders, researchers highlighted that a robust Quantitative Structure-Activity Relationship (QSAR) model must balance predictive accuracy with mechanistic interpretation [25]. A poorly validated model might miss critical synergisms between features, such as the role of specific sp2-hybridized carbon and nitrogen atoms alongside lipophilic features [25]. Lead optimization guided by such a model would focus on the wrong molecular features, leading to costly cycles of analog synthesis with diminishing returns.

Best Practices for Rigorous Experimental Validation

To avoid the pitfalls of poor validation, the following methodologies and protocols are essential for integrating experimental data into the pharmacophore modeling workflow.

Structure-Based Validation Protocols

Methodology: When a protein-ligand co-crystal structure is available, it provides the most direct source for validation [21].

- Protein Preparation: The 3D protein structure from the PDB must be critically prepared, evaluating protonation states, adding hydrogen atoms, and correcting for any missing residues or atoms [21].

- Binding Site Analysis: The ligand-binding site must be defined, preferably using the coordinates of a co-crystallized ligand. Tools like GRID or LUDI can be used to map interaction hotspots [21].

- Experimental Cross-Checking: The generated pharmacophore features must be cross-referenced with the actual interactions observed in the experimental structure (e.g., hydrogen bonds with key residues, hydrophobic contacts). Features that do not correspond to energetically favorable or conserved interactions should be rejected [21].

Enrichment-Driven Optimization and Benchmarking

Methodology: This powerful technique uses known active and inactive/decoy compounds to quantitatively test a model's performance [24].

- Dataset Curation: A high-quality benchmark set, such as those from the DUDE-Z database, is required. This set contains confirmed active ligands and property-matched decoy compounds that resemble the actives in physicochemical properties but are presumed to be inactive [24].

- Validation Workflow: The pharmacophore model is used to screen the benchmark set. Its ability to correctly rank active compounds highly while discarding decoys is measured.

- Model Refinement: The model's features and spatial tolerances are iteratively refined to maximize the enrichment of known actives. Advanced methods like the O-LAP algorithm use this approach to optimize shape-focused models by clustering docked active ligands [24].

The logical workflow for rigorous validation is outlined below:

Integration with Molecular Dynamics and Water Mapping

Methodology: Moving beyond static structures, molecular dynamics (MD) simulations provide a dynamic validation framework.

- Simulation Setup: Run MD simulations (e.g., using AMBER or GROMACS) of the apo (ligand-free) protein or protein-ligand complexes in an explicit solvent [26].

- Dynamic Pharmacophore (Dynophore) Generation: Analyze the simulation trajectory to extract interaction points and their frequency, creating a dynamic pharmacophore model [26].

- Water-Based Feature Identification: In apo simulations, the behavior of explicit water molecules in the binding site can be converted into pharmacophore features using tools like PyRod. This "water pharmacophore" maps interaction hotspots and can validate the relevance of features in a model intended for a solvated pocket [26].

The Scientist's Toolkit: Essential Research Reagents and Solutions

The following tools and databases are critical for conducting the rigorous validation protocols described above.

Table 2: Essential Research Tools for Pharmacophore Validation

| Tool / Resource | Type | Primary Function in Validation |
|---|---|---|
| RCSB Protein Data Bank (PDB) | Database | Provides experimental 3D structures of proteins and protein-ligand complexes for structure-based model building and cross-validation [21]. |
| DUDE-Z / DUD-E Database | Database | Supplies benchmark sets of known active and decoy molecules for quantitative performance testing and enrichment calculations [24]. |
| Molecular Dynamics Software (e.g., AMBER, GROMACS) | Software Suite | Simulates protein and ligand dynamics in a solvated environment to validate model stability and identify dynamic interaction features [26]. |
| PyRod | Software Tool | Converts data from MD simulations of apo proteins into water-based pharmacophore models, offering an alternative validation perspective [26]. |
| O-LAP Algorithm | Software Tool | Generates and optimizes shape-focused pharmacophore models through graph clustering and enrichment-driven benchmarking [24]. |
| PLANTS | Software Tool | Performs flexible molecular docking to generate ligand poses which can serve as input for model building and as a negative control for validation [24]. |
| GRID / LUDI | Software Tool | Analyses protein binding sites to map molecular interaction fields, helping to validate the chemical relevance of hypothesized pharmacophore features [21]. |

In pharmacophore-based drug discovery, the line between a successful lead optimization campaign and a costly failure is often drawn by the rigor of validation. The consequences of poor validation are not merely theoretical; they are quantifiable in millions of dollars wasted, months of lost productivity, and ultimately, misguided scientific efforts. By adopting a multi-faceted validation strategy that integrates experimental structures, rigorous benchmark sets, and dynamic simulations, researchers can transform their pharmacophore models from potential liabilities into reliable, strategic assets that genuinely accelerate the journey to a clinical candidate.

A Practical Framework for Pharmacophore Model Validation

In computational drug design, a pharmacophore model abstractly represents the spatial and electronic features of a ligand that are crucial for its biological interaction [27]. The predictive accuracy and reliability of these models are paramount, as they are employed in virtual screening to identify potential drug candidates from extensive chemical databases [4]. Validation separates useful models from those that may lead researchers astray, ensuring that computational predictions translate to real-world biological activity. Without rigorous validation, pharmacophore models risk high false-positive rates, misallocating valuable experimental resources [27] [17].

Among the various statistical methods available, Receiver Operating Characteristic (ROC) curves and Area Under the Curve (AUC) analysis have emerged as the gold standard for evaluating the discriminatory power of pharmacophore models [12] [1]. These techniques provide a robust, quantitative framework for assessing a model's ability to distinguish between truly active compounds and inactive decoys, offering a critical benchmark before proceeding to costly experimental stages [17].

Theoretical Foundations of ROC and AUC Analysis

The ROC Curve and Its Interpretation

The ROC curve is a graphical plot that illustrates the diagnostic ability of a binary classifier system, such as a pharmacophore model used for virtual screening. It is created by plotting the True Positive Rate (TPR), or Sensitivity, against the False Positive Rate (FPR), or (1 - Specificity), across a series of classification thresholds [12] [27].

- Sensitivity (True Positive Rate): This measures the model's ability to correctly identify active compounds. It is calculated as the number of true positives divided by the sum of true positives and false negatives (Sensitivity = (Ha / A) * 100) [17]. A model with high sensitivity successfully retrieves most of the known active molecules from a database.

- Specificity (True Negative Rate): This measures the model's ability to correctly reject inactive compounds or decoys. It is calculated as the number of true negatives divided by the sum of true negatives and false positives [17]. High specificity indicates that the model generates few false alarms.

As the threshold for considering a compound a "hit" is varied, the resulting pairs of TPR and FPR values generate the ROC curve. A model with no discriminatory power, equivalent to random selection, will produce a diagonal line from the bottom-left to the top-right corner. Conversely, a model with perfect discrimination will curve sharply towards the top-left corner [12] [28].
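The threshold sweep described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the procedure of any particular modeling package: the function name `roc_points`, the convention that a higher score means a better pharmacophore fit, and the toy scores are all assumptions made for the example.

```python
def roc_points(scores, labels):
    """Return (FPR, TPR) pairs obtained by sweeping the hit threshold.

    scores: fit values, assumed here to be "higher is better".
    labels: 1 for a known active, 0 for a decoy.
    """
    n_act = sum(labels)
    n_dec = len(labels) - n_act
    if n_act == 0 or n_dec == 0:
        raise ValueError("need at least one active and one decoy")

    # Rank compounds from best to worst score.
    ranked = sorted(zip(scores, labels), key=lambda p: p[0], reverse=True)

    points = [(0.0, 0.0)]  # strictest threshold: nothing is called a hit
    tp = fp = 0
    i = 0
    while i < len(ranked):
        threshold = ranked[i][0]
        # Consume every compound tied at this score before emitting a point.
        while i < len(ranked) and ranked[i][0] == threshold:
            if ranked[i][1] == 1:
                tp += 1
            else:
                fp += 1
            i += 1
        points.append((fp / n_dec, tp / n_act))
    return points


# Hypothetical toy data: three actives and three decoys.
scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4]
labels = [1, 1, 0, 1, 0, 0]
curve = roc_points(scores, labels)
for fpr, tpr in curve:
    print(f"FPR={fpr:.2f}  TPR={tpr:.2f}")
```

Each lowering of the threshold adds compounds to the hit list, so the curve always runs from (0, 0) to (1, 1); a model that ranks actives early climbs vertically before it moves to the right.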

AUC as a Performance Metric

The Area Under the ROC Curve (AUC) provides a single, scalar value to summarize the overall performance of the model. The AUC value ranges from 0 to 1, offering a threshold-independent measure of quality [28] [1].

- AUC = 1.0: Represents a perfect classifier. All active compounds are ranked above all inactive decoys.

- AUC = 0.5: Indicates a classifier with no discriminatory power, equivalent to random guessing.

- AUC > 0.7: Generally considered to indicate acceptable discrimination [29].

- AUC > 0.8: Suggests good model performance [12].

- AUC ≥ 0.98: Reflects excellent or outstanding performance, closely approximating an ideal classifier [1].

The AUC is particularly valuable in virtual screening because it evaluates the model's ranking capability, which is often more important than a binary classification at a fixed threshold. A higher AUC signifies a greater probability that a randomly chosen active compound will be ranked higher than a randomly chosen inactive compound by the model [27].
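The probabilistic reading of the AUC can be checked directly by comparing every active with every decoy. The sketch below assumes higher scores are better and counts a tie as half a win; the function name `auc_by_pairs` and the toy data are illustrative only. Dedicated software typically integrates the ROC curve instead, which gives the same value.

```python
def auc_by_pairs(scores, labels):
    """AUC as the fraction of (active, decoy) pairs ranked correctly."""
    actives = [s for s, y in zip(scores, labels) if y == 1]
    decoys = [s for s, y in zip(scores, labels) if y == 0]
    if not actives or not decoys:
        raise ValueError("need at least one active and one decoy")

    wins = 0.0
    for a in actives:
        for d in decoys:
            if a > d:
                wins += 1.0   # active ranked above decoy
            elif a == d:
                wins += 0.5   # tie counts as half
    return wins / (len(actives) * len(decoys))


# Hypothetical toy data: one decoy (0.7) outranks one active (0.6).
scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4]
labels = [1, 1, 0, 1, 0, 0]
auc_value = auc_by_pairs(scores, labels)
print(round(auc_value, 3))
```

In this toy set, eight of the nine active-decoy pairs are ordered correctly, so the AUC is 8/9.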

Experimental Protocols for ROC and AUC Validation

Workflow for Model Validation

The validation of a pharmacophore model using ROC curves follows a systematic protocol to ensure unbiased and reproducible results. The following diagram illustrates the key stages of this process.

Key Experimental Steps

Preparation of Active and Decoy Compound Sets: The first critical step involves curating a reliable validation dataset.

- Active Compounds: A set of known active compounds against the target protein is collected from literature and biochemical databases like ChEMBL [11] [1]. These compounds serve as true positives that the model should ideally identify. For example, a study targeting XIAP protein used 10 known active antagonists for validation [1].

- Decoy Compounds: A corresponding set of decoy molecules, which match the actives in physicochemical properties but are presumed to be inactive, is generated from specialized databases such as the Directory of Useful Decoys - Enhanced (DUD-E) [11] [17]. Decoys act as presumed true negatives, testing the model's ability to avoid false positives.

Virtual Screening and Performance Calculation: The pharmacophore model is used to screen the combined set of active and decoy compounds. The results are tabulated into a confusion matrix, and key metrics are calculated using the following formulas [17]:

- Sensitivity (True Positive Rate): Sensitivity = (Ha / A) * 100

- Specificity (True Negative Rate): Specificity = (Hd / D) * 100

Where Ha is the number of active compounds retrieved, A is the total number of active compounds, Hd is the number of decoys not retrieved, and D is the total number of decoys.
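As a quick arithmetic check, the two percentages can be computed from the four counts used in this section (Ha, A, Hd, D). The function name and the example counts below are hypothetical and serve only to illustrate the formulas.

```python
def sensitivity_specificity(ha, a, hd, d):
    """Return (sensitivity %, specificity %) from screening counts.

    ha: actives retrieved        a: total actives
    hd: decoys NOT retrieved     d: total decoys
    """
    if a <= 0 or d <= 0:
        raise ValueError("total actives and total decoys must be positive")
    sensitivity = 100.0 * ha / a
    specificity = 100.0 * hd / d
    return sensitivity, specificity


# Hypothetical screen: 8 of 10 actives retrieved, 450 of 500 decoys rejected.
sens, spec = sensitivity_specificity(ha=8, a=10, hd=450, d=500)
print(f"Sensitivity = {sens:.1f}%, Specificity = {spec:.1f}%")
```

Here the model recovers 80% of the actives while rejecting 90% of the decoys, which corresponds to a single point on the ROC curve at FPR = 0.10 and TPR = 0.80.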

ROC Curve Generation and AUC Calculation: The screening results are analyzed across all possible thresholds to generate the ROC curve. The AUC is then computed, often using tools integrated within molecular modeling software like LigandScout or Maestro [28] [29]. The calculated AUC and the shape of the ROC curve provide a direct visual and quantitative assessment of the model's quality.

Comparative Performance Data from Case Studies

ROC and AUC analysis has been successfully implemented across diverse drug discovery projects. The table below summarizes quantitative validation data from recent studies, demonstrating the application of this gold-standard technique.

Table 1: Comparative AUC and Enrichment Factors from Recent Pharmacophore Studies

| Target Protein | Research Objective | AUC Value | Enrichment Factor (EF) | Key Outcome |
|---|---|---|---|---|
| Brd4 [11] | Identify neuroblastoma inhibitors | 1.0 | 11.4 - 13.1 | Excellent performance; identified 4 natural compounds |
| XIAP [1] | Identify anti-cancer agents | 0.98 | 10.0 (at 1% threshold) | Excellent performance; identified 3 stable compounds |
| PD-L1 [12] | Identify immune-oncology inhibitors | 0.819 | Not reported | Good performance; identified marine natural compound 51320 |
| FGFR1 [28] | Identify kinase inhibitors for cancer | Not reported (model described as having "high discriminatory power") | Not reported | Successful identification of novel inhibitors |

Analysis of Comparative Data

The data illustrate how AUC values are used to gauge model confidence ahead of screening. The Brd4 study reported an AUC of 1.0, indicating that every active in its validation set was ranked above every decoy, and went on to identify four promising natural compounds with low predicted side effects [11]. Similarly, the XIAP model, with an AUC of 0.98, demonstrated near-perfect classification, resulting in three stable lead compounds supported by molecular dynamics simulation [1]. The PD-L1 model, with an AUC of 0.819, provided good discriminatory power, enabling the discovery of a marine natural product as a potential small-molecule inhibitor [12]. In each of these case studies, a high AUC on the validation set preceded a productive screening campaign, which supports its use as a practical indicator of a pharmacophore model's utility in a drug discovery pipeline.

Advanced Metrics and Complementary Validation Techniques

Beyond AUC: Enrichment Factors and GH Score

While AUC provides an overall measure of performance, other metrics offer additional insights, particularly in the early stages of virtual screening where identifying a small number of top-ranked actives is crucial.

- Enrichment Factor (EF): The EF measures how much more likely a model is to find active compounds compared to random selection in a given top fraction of the screened database (e.g., the top 1%). It is calculated as EF = (Ha / N) / (A / T), where N is the number of compounds selected from the top of the list, and T is the total number of compounds in the database [11] [17]. A study on Brd4 inhibitors reported excellent EF values ranging from 11.4 to 13.1, indicating high enrichment of active compounds in the top ranks [11].

- Goodness of Hit (GH) Score: The GH score is a composite metric that combines recall (sensitivity) and precision to evaluate the early enrichment capability of a model. A score of 1 represents ideal enrichment, while a score of 0 indicates no enrichment [30] [17].

Integration with Other Validation Methods

ROC/AUC analysis is often used in conjunction with other computational techniques to form a comprehensive validation framework.

- Molecular Docking: Validated pharmacophore models are frequently used to generate a focused compound library, which is then subjected to molecular docking to predict binding poses and affinities, as seen in studies targeting FAK1 and SARS-CoV-2 PLpro [4] [17].

- Molecular Dynamics (MD) Simulations: Top-ranking hits from docking can be further analyzed using MD simulations. This technique assesses the stability of the protein-ligand complex over time and provides more accurate binding free energy calculations via methods like MM-GBSA and MM-PBSA [11] [17].

Essential Research Reagents and Computational Tools

The experimental validation of pharmacophore models relies on a suite of specialized software tools and databases. The following table details key "research reagents" essential for conducting ROC and AUC analysis.

Table 2: Key Computational Tools and Databases for Pharmacophore Validation

| Tool / Database Name | Type | Primary Function in Validation |
|---|---|---|
| DUD-E [1] [17] | Database | Provides benchmark sets of known active compounds and matched decoys for unbiased validation. |
| ZINC Database [11] [1] | Database | A large, commercially available compound library used for virtual screening after model validation. |
| LigandScout [11] [29] | Software | Used for structure-based and ligand-based pharmacophore modeling, and includes ROC analysis for validation. |
| Schrödinger Suite [28] | Software | Integrated drug discovery platform used for pharmacophore modeling, molecular docking, and simulation. |
| Pharmit [17] [31] | Online Tool | A web-based resource for structure-based pharmacophore modeling and high-throughput virtual screening. |
| AutoDock Vina [4] | Software | A widely used molecular docking program for predicting binding modes and affinities of hit compounds. |
| GROMACS [17] | Software | A molecular dynamics simulation package used to study the stability and dynamics of protein-ligand complexes. |

ROC curve analysis and AUC quantification represent the gold standard for validating pharmacophore models in computer-aided drug design. As demonstrated by numerous case studies across various therapeutic targets, these metrics provide an objective, quantitative, and reliable measure of a model's ability to distinguish active from inactive compounds. The consistent correlation between high AUC values and successful downstream identification of novel bioactive agents underscores the critical importance of this validation step. Integrating ROC/AUC analysis with molecular docking, dynamics simulations, and experimental assays creates a powerful, multi-tiered validation framework that enhances the efficiency and success rate of modern drug discovery pipelines.

In modern computational drug discovery, pharmacophore modeling serves as a crucial framework for identifying and optimizing novel therapeutic compounds. A pharmacophore represents an abstract description of molecular features essential for biological recognition, comprising hydrogen bond donors/acceptors, hydrophobic regions, and charged groups spatially arranged to complement a biological target [27]. As these models transition from theoretical constructs to practical screening tools, rigorous validation becomes paramount to ensure their predictive capability and reliability. This validation process quantitatively assesses how effectively a pharmacophore hypothesis can distinguish active compounds from inactive molecules in virtual screening campaigns, with Enrichment Factor (EF) and Goodness-of-Hit (GH) score emerging as the two cornerstone metrics for this evaluation [32] [33].

The critical importance of EF and GH scores extends beyond mere model validation—they provide crucial insights into the cost-effectiveness and probable success of subsequent experimental phases. In a typical virtual screening workflow, thousands to millions of compounds are evaluated computationally before selecting a handful for experimental testing. Without robust validation metrics, researchers risk squandering significant resources on compounds unlikely to display activity. The EF quantitatively measures how much better a pharmacophore model performs compared to random selection, while the GH score provides a balanced assessment that considers both the yield of actives and the false-negative rate [34] [35]. Together, these metrics form a statistical foundation for prioritizing which pharmacophore models to trust and which to refine or discard, ultimately accelerating the identification of novel drug candidates across therapeutic areas including cancer, metabolic disorders, and inflammatory diseases [32] [36] [33].

Theoretical Foundations of Key Metrics

The Enrichment Factor (EF)

The Enrichment Factor (EF) quantifies the performance of a virtual screening method by measuring how effectively it concentrates active compounds early in the screening rank list compared to random selection. The calculation measures the ratio of found actives in a selected top fraction of the screened database to the number of actives expected in that same fraction by random chance [37] [34]. The mathematical expression for EF is:

EF = (Hitₛₐₘₚₗₑ / Nₛₐₘₚₗₑ) / (Hitₜₒₜₐₗ / Nₜₒₜₐₗ)

Where:

- Hitₛₐₘₚₗₑ = Number of active compounds in the top subset of the ranked database

- Nₛₐₘₚₗₑ = Total number of compounds in the top subset screened

- Hitₜₒₜₐₗ = Total number of active compounds in the entire database

- Nₜₒₜₐₗ = Total number of compounds in the entire database

An EF value of 1.0 indicates random performance, while values exceeding 1.0 demonstrate increasingly superior enrichment. For example, in a study identifying cyclooxygenase-2 (COX-2) inhibitors, researchers achieved EF values significantly greater than 1, confirming their model's ability to prioritize bioactive compounds efficiently [32]. The EF is particularly valuable because it directly translates to practical screening efficiency—a high EF means fewer compounds need to be experimentally tested to identify the same number of hits, substantially reducing resource expenditure in early drug discovery [37] [34].
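To make the EF equation concrete, the sketch below evaluates it for a hypothetical screen. The function name, argument names, and counts are assumptions chosen for illustration; only the formula itself comes from the text above.

```python
def enrichment_factor(hit_sample, n_sample, hit_total, n_total):
    """EF = (Hit_sample / N_sample) / (Hit_total / N_total)."""
    if n_sample <= 0 or n_total <= 0 or hit_total <= 0:
        raise ValueError("sample size, database size and total actives must be positive")
    if hit_sample > n_sample or hit_sample > hit_total:
        raise ValueError("hit_sample cannot exceed n_sample or hit_total")
    return (hit_sample / n_sample) / (hit_total / n_total)


# Hypothetical screen: 1000 compounds, 20 of them active.
# The top 1% (10 compounds) of the ranked list contains 4 actives.
ef_top1 = enrichment_factor(hit_sample=4, n_sample=10, hit_total=20, n_total=1000)
print(f"EF(1%) = {ef_top1:.1f}")

# Upper bound for this cutoff: all 10 selected compounds are active.
ef_max = enrichment_factor(hit_sample=10, n_sample=10, hit_total=20, n_total=1000)
print(f"Maximum possible EF(1%) = {ef_max:.1f}")
```

The hit rate in the top subset is 40% against a background rate of 2%, so the EF is about 20. Comparing it with the ceiling of about 50 for this cutoff is useful because the maximum attainable EF depends on the ratio of actives to decoys in the benchmark set.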

The Goodness-of-Hit (GH) Score

The Goodness-of-Hit (GH) score provides a more nuanced assessment by incorporating the yield of actives in the hit list, the recovery of actives from the database (which penalizes missed actives, i.e., false negatives), and a correction for inactive compounds retrieved in the hit list. This metric, introduced by Güner and Henry, ranges from 0 to 1, where higher values indicate better overall performance [35]. The GH score is calculated from four counts:

GH = [Ha × (3A + Ht) / (4 × Ht × A)] × [1 − (Ht − Ha) / (D − A)]

Where the components are derived from:

- Ha = Number of active compounds in the hit list

- Ht = Total number of compounds in the hit list

- A = Total number of active compounds in the database

- D = Total number of compounds in the database

The GH score effectively balances sensitivity and specificity by rewarding models that retrieve a high proportion of available actives while maintaining a reasonable hit list size. This prevents misleadingly high EF values that can occur with extremely small hit lists containing only a few actives. In the context of TGR5 agonist identification, researchers utilized the GH score alongside EF to validate their pharmacophore model, ensuring it identified genuine actives without excessive false positives [35]. The incorporation of both metrics provides a more comprehensive validation framework than either metric alone.
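A short numerical sketch shows how the GH score responds to hit list composition. It assumes the commonly cited Güner-Henry form, GH = [Ha(3A + Ht) / (4·Ht·A)] × [1 − (Ht − Ha) / (D − A)], with Ha, Ht, A, and D as defined in the list above; the function name and the example counts are hypothetical.

```python
def gh_score(ha, ht, a, d):
    """Goodness-of-Hit score (Guner-Henry form, assumed here).

    ha: actives in the hit list      ht: compounds in the hit list
    a:  actives in the database      d:  compounds in the database
    """
    if ht <= 0 or a <= 0 or d <= a:
        raise ValueError("need ht > 0, a > 0 and d > a")
    if ha > ht or ha > a:
        raise ValueError("ha cannot exceed ht or a")
    # Weighted combination: 3/4 yield (ha/ht) plus 1/4 recall (ha/a).
    yield_recall = ha * (3 * a + ht) / (4 * ht * a)
    # Penalty for inactive compounds that ended up in the hit list.
    false_positive_term = 1 - (ht - ha) / (d - a)
    return yield_recall * false_positive_term


# Hypothetical screen: 1000 compounds, 20 actives, 30 hits of which 15 are active.
gh = gh_score(ha=15, ht=30, a=20, d=1000)
print(f"GH = {gh:.3f}")

# An ideal hit list retrieves all actives and nothing else.
print(gh_score(ha=20, ht=20, a=20, d=1000))
```

In the first case the yield is 50% and the recall 75%, giving a GH of roughly 0.55. A tiny hit list containing one active would score a very high EF but a low GH, because the recall term drops sharply.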

Table 1: Core Equations for Key Validation Metrics

| Metric | Formula | Interpretation | Optimal Range |
|---|---|---|---|
| Enrichment Factor (EF) | EF = (Hitₛₐₘₚₗₑ/Nₛₐₘₚₗₑ) / (Hitₜₒₜₐₗ/Nₜₒₜₐₗ) | Measures concentration of actives in top fraction | >1 (Higher is better) |
| Goodness-of-Hit (GH) | GH = [Ha×(3A + Ht) / (4×Ht×A)] × [1 − (Ht − Ha)/(D − A)] | Balances yield and recovery of actives, with a penalty for retrieved inactives | 0-1 (Closer to 1 is better) |
| Yield of Actives (%A) | %A = (Ha/Ht) × 100 | Percentage of actives in hit list | Higher percentage preferred |
| Enrichment Factor (Alternate) | EF = (Ha/Ht) / (A/D) | Simpler form for quick calculation | >1 (Higher is better) |

Experimental Protocols for Metric Calculation

Establishing a Proper Decoy Set

The foundation of reliable EF and GH calculation lies in the careful construction of a decoy set—a collection of presumed inactive molecules used to assess the pharmacophore model's discriminatory power. The Directory of Useful Decoys (DUD) exemplifies this approach by providing decoys that match the physical properties of active compounds (molecular weight, logP, hydrogen bonding characteristics) while differing in molecular topology to ensure they are unlikely binders [37]. This careful matching prevents artificial inflation of enrichment metrics that can occur when decoys differ substantially from actives in trivial physical properties. For example, in a GPCR-focused study, researchers emphasized that decoys must "resemble the physical properties of the annotated ligands well enough so that enrichment is not simply a separation of gross features, yet be chemically distinct from them" [34]. Proper decoy set construction typically involves selecting 20-50 decoy molecules per active compound, ensuring sufficient statistical power while maintaining chemical diversity [37] [34].

Implementation Workflow

The standard protocol for calculating EF and GH scores follows a systematic workflow that begins with database preparation and proceeds through sequential screening stages. First, the prepared database containing both known actives and decoys is screened using the pharmacophore model as a query. The resulting hits are ranked based on their pharmacophore fit value or complementary scoring metric. Following this ranking, researchers select a threshold cutoff (typically 1-10% of the total database) to define the "enriched subset" for analysis [32] [33]. The specific values for Ha, Ht, A, and D are then extracted from this top fraction and applied to the EF and GH equations. This process is often repeated at multiple cutoff points (1%, 5%, 10%) to generate enrichment curves that visualize performance across the entire ranking spectrum [38]. In recent implementations, this workflow has been automated within software platforms like Discovery Studio and Schrödinger's Maestro, though manual calculation remains straightforward using spreadsheet tools once the essential hit counts are obtained [33] [35].
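The multi-cutoff step of this workflow can be sketched as follows. The example assumes the screening output has already been reduced to a list of activity labels ordered from best to worst fit; the function name, the rounding rule for the subset size, and the synthetic ranking are assumptions for illustration and do not reproduce the behavior of Discovery Studio or Maestro.

```python
def ef_at_fractions(ranked_labels, fractions=(0.01, 0.05, 0.10)):
    """EF at several top fractions of a ranked list.

    ranked_labels: 1 (active) or 0 (decoy), best-ranked compound first.
    Returns a dict mapping each fraction to its enrichment factor.
    """
    n_total = len(ranked_labels)
    hit_total = sum(ranked_labels)
    if n_total == 0 or hit_total == 0:
        raise ValueError("need a non-empty list with at least one active")

    results = {}
    for f in fractions:
        # Size of the top subset, rounded to the nearest compound (at least 1).
        n_sample = max(1, round(f * n_total))
        hit_sample = sum(ranked_labels[:n_sample])
        results[f] = (hit_sample / n_sample) / (hit_total / n_total)
    return results


# Hypothetical ranking of 200 compounds containing 10 actives.
ranked = (
    [1, 1]                              # top 1%: 2 actives
    + [1, 0, 1, 0, 1, 0, 0, 0]          # rest of top 5%: 3 more actives
    + [0, 1, 0, 0, 0, 1, 0, 0, 0, 0]    # rest of top 10%: 2 more actives
    + [0] * 177 + [1] * 3               # remainder of the database
)
ef_table = ef_at_fractions(ranked)
for fraction, ef in ef_table.items():
    print(f"EF({fraction:.0%}) = {ef:.1f}")
```

For this synthetic ranking the EF falls from about 20 at the 1% cutoff to about 10 at 5% and about 7 at 10%, the typical shape of an enrichment curve for a model that places its best hits first.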

Comparative Analysis of Validation Approaches

Statistical Validation Frameworks

Beyond EF and GH scores, comprehensive pharmacophore validation incorporates additional statistical measures that provide complementary insights. The receiver operating characteristic (ROC) curve analysis plots the true positive rate against the false positive rate across all possible classification thresholds, with the area under the curve (AUC) providing a threshold-independent assessment of model performance [32] [28]. Meanwhile, Fisher's randomization test (Cat-Scramble) validates the statistical significance of the pharmacophore model by randomly shuffling activity data and confirming that the original model performs significantly better than those generated from randomized datasets [33] [39]. These approaches address different aspects of validation—ROC curves evaluate overall discriminatory power, while Fisher's test assesses the likelihood that the observed correlation occurred by chance. When applied to Akt2 inhibitors, this multi-faceted validation approach confirmed that the developed pharmacophore model genuinely captured structure-activity relationships rather than benefiting from fortuitous correlations [33].
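The idea behind randomization testing can be illustrated with a generic label-permutation test on the AUC. This is a simplified stand-in and not the Cat-Scramble procedure itself, which rebuilds pharmacophore hypotheses from scrambled activity data; here only the active/decoy labels are shuffled against fixed scores. All names and data are hypothetical.

```python
import random


def auc(scores, labels):
    """Pairwise AUC: fraction of (active, decoy) pairs ranked correctly."""
    actives = [s for s, y in zip(scores, labels) if y == 1]
    decoys = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if a > d else 0.5 if a == d else 0.0
               for a in actives for d in decoys)
    return wins / (len(actives) * len(decoys))


def permutation_p_value(scores, labels, n_perm=999, seed=0):
    """Estimate how often shuffled labels match or beat the observed AUC."""
    rng = random.Random(seed)
    observed = auc(scores, labels)
    shuffled = list(labels)
    at_least_as_good = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)  # break any link between score and activity
        if auc(scores, shuffled) >= observed:
            at_least_as_good += 1
    # Add-one correction so the estimated p-value is never exactly zero.
    return observed, (at_least_as_good + 1) / (n_perm + 1)


# Hypothetical screen: 5 actives scored above 15 decoys.
scores = [20 - i for i in range(20)]
labels = [1] * 5 + [0] * 15
observed_auc, p_value = permutation_p_value(scores, labels)
print(f"AUC = {observed_auc:.2f}, permutation p = {p_value:.3f}")
```

A small p-value indicates that the observed separation is unlikely to arise from a chance assignment of activity labels, which is the same question the randomization test asks of the model's correlation.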

Case Studies in Different Target Classes

The application of EF and GH metrics across diverse target classes demonstrates their universal utility in pharmacophore validation while revealing target-specific performance patterns. In kinase targets like FGFR1, researchers achieved outstanding enrichment (EF > 20) through consensus pharmacophore models that integrated multiple ligand conformations [28]. For GPCR targets such as TGR5, the validation process emphasized GH scores to balance sensitivity and specificity, recognizing the challenges of identifying selective compounds for this target class [35]. In enzyme targets including COX-2, comprehensive validation incorporating both EF and GH scores successfully identified novel chemotypes beyond the original training set [32]. These case studies collectively demonstrate that while optimal threshold values may vary by target class, the consistent application of EF and GH metrics enables meaningful comparison across different target types and therapeutic areas.

Table 2: Performance Benchmarks Across Different Target Classes

| Target Class | Example Target | Reported EF Range | Reported GH Range | Special Considerations |
|---|---|---|---|---|
| Kinases | FGFR1, Akt2 | 10-60 | 0.6-0.8 | High specificity requirements due to conserved ATP-binding site |
| GPCRs | TGR5, Glucagon Receptor | 5-30 | 0.5-0.75 | Membrane environment effects on ligand binding |
| Enzymes | COX-2 | 15-40 | 0.65-0.85 | Often have well-defined active sites with diverse chemical features |
| Nuclear Hormone Receptors | PPARγ | 1-25 | 0.4-0.7 | Ligand flexibility requires comprehensive conformational analysis |

Advanced Considerations and Methodological Refinements

Addressing Statistical Uncertainty

Recent research has highlighted the importance of quantifying statistical uncertainty in enrichment metrics, particularly when evaluating virtual screening performance. As noted in one study, "researchers almost never consider the uncertainty associated with estimating such curves before declaring differences between performance of competing algorithms" despite the fact that "uncertainty is often large because the testing fractions of interest to researchers are small" [38]. This uncertainty stems from two often-overlooked sources: correlation across different testing fractions within a single algorithm, and correlation between competing algorithms being compared. To address these challenges, researchers have developed advanced statistical approaches including confidence bands for hit enrichment curves and EmProc-based hypothesis testing, which provide a more rigorous foundation for claiming significant differences between screening methods [38]. These refined approaches are particularly valuable when evaluating marginal improvements in enrichment that might otherwise be misinterpreted as statistically significant.
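One simple way to see how large this uncertainty can be is a percentile bootstrap on the EF at a fixed cutoff. The sketch below is a plain compound-level bootstrap, not the confidence-band or EmProc methodology of [38], and it does not address correlation between competing algorithms. The function names, the synthetic Gaussian scores, and the choice of 500 resamples are assumptions for illustration.

```python
import random


def ef_top_fraction(pairs, fraction):
    """EF for (score, label) pairs at a top fraction; None if no actives."""
    ranked = sorted(pairs, key=lambda p: p[0], reverse=True)
    n_total = len(ranked)
    hit_total = sum(label for _, label in ranked)
    if hit_total == 0:
        return None
    n_sample = max(1, round(fraction * n_total))
    hit_sample = sum(label for _, label in ranked[:n_sample])
    return (hit_sample / n_sample) / (hit_total / n_total)


def bootstrap_ef_interval(pairs, fraction=0.05, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap interval for the EF (resampling compounds)."""
    rng = random.Random(seed)
    estimates = []
    for _ in range(n_boot):
        resample = [rng.choice(pairs) for _ in pairs]
        ef = ef_top_fraction(resample, fraction)
        if ef is not None:  # skip the rare resample without any active
            estimates.append(ef)
    if not estimates:
        raise ValueError("no usable bootstrap resamples")
    estimates.sort()
    low = estimates[int((alpha / 2) * len(estimates))]
    high = estimates[min(len(estimates) - 1, int((1 - alpha / 2) * len(estimates)))]
    return low, high


# Synthetic benchmark: 20 actives scoring higher on average than 380 decoys.
rng = random.Random(1)
pairs = ([(rng.gauss(1.5, 1.0), 1) for _ in range(20)]
         + [(rng.gauss(0.0, 1.0), 0) for _ in range(380)])
point = ef_top_fraction(pairs, 0.05)
low, high = bootstrap_ef_interval(pairs, fraction=0.05, n_boot=500, seed=2)
print(f"EF(5%) = {point:.1f}, 95% bootstrap interval = [{low:.1f}, {high:.1f}]")
```

With only 20 actives, the interval is typically wide relative to the point estimate, which illustrates why small differences in EF between two models should not be over-interpreted.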

Machine Learning-Enhanced Model Selection

The integration of machine learning techniques with traditional pharmacophore validation represents a cutting-edge advancement in the field. Researchers have developed cluster-then-predict workflows that first group pharmacophore models using K-means clustering based on their feature composition and geometric arrangements, then apply logistic regression classifiers to identify models likely to achieve higher enrichment factors [34]. This approach has demonstrated impressive predictive performance, with "positive predictive values (PPV) of 0.88 and 0.76 for selecting high enrichment pharmacophore models from among those generated in experimentally determined and modeled structures, respectively" [34]. Such machine learning-enhanced selection is particularly valuable for targets with limited known activators, where traditional validation using known actives is challenging. Furthermore, these approaches facilitate the identification of high-performing pharmacophore models for orphan targets with neither known ligands nor experimental structures, significantly expanding the applicability of structure-based pharmacophore modeling.

Essential Research Reagent Solutions

Table 3: Key Computational Tools for Pharmacophore Validation

| Tool Category | Specific Software/Resources | Primary Function in Validation | Application Example |
|---|---|---|---|
| Pharmacophore Modeling | Discovery Studio, Schrödinger Maestro, LigandScout | Model generation, feature mapping, hypothesis testing | 3D-QSAR pharmacophore generation for Akt2 inhibitors [33] |
| Decoy Set Databases | DUD (Directory of Useful Decoys), ZINC database | Provides property-matched decoys for unbiased validation | Benchmarking sets for molecular docking [37] |
| Statistical Analysis | R/caret package, SAS Enterprise Miner, JMP | Calculation of EF, GH, ROC curves, confidence estimation | Confidence bands for hit enrichment curves [38] |
| Molecular Docking | GOLD, Glide, AutoDock | Binding mode analysis, complementary scoring | Hierarchical docking (HTVS/SP/XP) for FGFR1 inhibitors [28] |
| Dynamics & Simulation | GROMACS, AMBER, CHARMM | Assessment of binding stability, conformational analysis | MD simulations for HER2 inhibitors [36] |

The rigorous validation of pharmacophore models through Enrichment Factors and Goodness-of-Hit scores provides an essential statistical foundation for reliable virtual screening in drug discovery. These metrics transform qualitative pharmacophore hypotheses into quantitatively validated tools capable of prioritizing chemical matter with increased probability of biological activity. As computational methods continue to evolve, incorporating advanced statistical treatments of uncertainty and machine learning-enhanced selection approaches will further strengthen the validation paradigm. The consistent application of these metrics across diverse target classes, complemented by auxiliary validation methods including ROC analysis and Fisher's randomization, enables researchers to make informed decisions about which pharmacophore models warrant experimental follow-up. Through this rigorous quantitative framework, computational chemists can maximize the value of virtual screening campaigns, significantly accelerating the identification of novel therapeutic agents across disease areas.

Retrospective screening is a cornerstone computational method in early drug discovery, used to validate the predictive power of molecular models before committing to costly experimental screens. The process tests a model's ability to recover known active compounds hidden within a large database of decoy molecules, which are designed to be physicochemically similar to the actives but topologically dissimilar. The DUD-E (Directory of Useful Decoys: Enhanced) database is a widely adopted benchmark for this purpose, providing a rigorous framework for evaluation [40]. For pharmacophore models, which are abstract 3D representations of the steric and electronic features a molecule needs to bind a target protein, retrospective screening against DUD-E offers a critical validation step [7] [21]. This guide objectively compares the performance of modern, automated pharmacophore generation methods in this specific validation context, providing researchers with experimental data to inform their tool selection.

Experimental Protocols for Retrospective Screening

A standardized experimental protocol is essential for a fair comparison of different pharmacophore methods. The following workflow outlines the key steps for conducting a retrospective screening validation using the DUD-E dataset.

Core Workflow and Methodology

The general process for a DUD-E-based retrospective screening experiment involves several critical stages, from database preparation to performance calculation [7] [40].

Detailed Methodological Considerations

Database Preparation: The DUD-E dataset provides known actives and decoys for multiple protein targets. Decoys are property-matched to actives (similar molecular weight, logP) but are topologically dissimilar to ensure a realistic screening challenge [40]. For screening, multiple low-energy molecular conformers must be generated for all database molecules; tools like RDKit are typically used to produce 20-25 energy-minimized conformers per molecule [7] [40].

Pharmacophore Screening: Screening is performed using specialized software like Pharmit, which efficiently identifies molecules with conformers that match the spatial constraints of the pharmacophore query. A typical tolerance radius of 1 Å is used for feature matching, and receptor exclusion is applied to filter out molecules that sterically clash with the protein [40].
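The matching criterion can be illustrated with a deliberately simplified sketch. It assumes each conformer is already aligned to the query's coordinate frame and only checks distances, whereas real search tools such as Pharmit also search over alignments; it is not Pharmit's algorithm. The feature labels, data layout, and exclusion-sphere representation are hypothetical.

```python
import math

TOLERANCE = 1.0  # feature-matching radius in angstroms, as described in the text


def conformer_matches(query, conformer_features, tolerance=TOLERANCE):
    """True if every query feature has a same-type conformer feature within tolerance.

    Simplification: a conformer feature may satisfy more than one query feature.
    """
    for q_type, q_xyz in query:
        if not any(
            f_type == q_type and math.dist(q_xyz, f_xyz) <= tolerance
            for f_type, f_xyz in conformer_features
        ):
            return False
    return True


def clashes_with_receptor(atom_coords, exclusion_spheres):
    """True if any ligand atom lies inside any (center, radius) exclusion sphere."""
    return any(
        math.dist(atom, center) < radius
        for atom in atom_coords
        for center, radius in exclusion_spheres
    )


def molecule_is_hit(query, conformers, exclusion_spheres):
    """A molecule is a hit if at least one conformer matches and does not clash."""
    return any(
        conformer_matches(query, conf["features"])
        and not clashes_with_receptor(conf["atoms"], exclusion_spheres)
        for conf in conformers
    )


# Made-up two-feature query and a single conformer.
query = [("HBA", (0.0, 0.0, 0.0)), ("AR", (3.0, 0.0, 0.0))]
conformer = {
    "features": [("HBA", (0.5, 0.0, 0.0)), ("AR", (3.0, 0.8, 0.0))],
    "atoms": [(0.5, 0.0, 0.0), (3.0, 0.8, 0.0)],
}
print(molecule_is_hit(query, [conformer], exclusion_spheres=[((1.5, 3.0, 0.0), 1.0)]))
```

Because a molecule counts as a hit when any one of its conformers passes, the number of conformers generated per molecule directly affects how many hits a query returns.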

Performance Metrics Calculation: Key metrics include the Enrichment Factor (EF), which measures how much a method enriches the top-ranked results with true actives compared to random selection, and the F1 Score, the harmonic mean of precision (the fraction of retrieved molecules that are true actives) and recall (the fraction of all true actives that are retrieved) [40].
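Both metrics follow directly from four counts: the molecules retrieved by the query, the actives among them, the total number of actives, and the size of the database. The sketch below uses hypothetical function names and made-up counts, not results from any cited benchmark.

```python
def ef_from_counts(hits_active, hits_total, actives_total, database_total):
    """Enrichment factor: active rate among retrieved hits divided by the database active rate."""
    if hits_total == 0:
        return 0.0  # nothing retrieved, so no enrichment to report
    return (hits_active / hits_total) / (actives_total / database_total)


def f1_from_counts(hits_active, hits_total, actives_total):
    """F1: harmonic mean of precision and recall."""
    if hits_total == 0 or hits_active == 0:
        return 0.0
    precision = hits_active / hits_total
    recall = hits_active / actives_total
    return 2 * precision * recall / (precision + recall)


# Made-up screen: 10,000 molecules with 100 actives; the query returns 200 hits, 40 of them active.
print(ef_from_counts(40, 200, 100, 10_000))
print(f1_from_counts(40, 200, 100))
```

In this made-up case the query enriches actives twentyfold over random selection, yet the F1 score is modest because 60 of the 100 actives are missed, which shows why the two metrics are reported together.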

Performance Comparison of Pharmacophore Generation Methods

Different computational approaches can generate pharmacophores for retrospective screening. The table below compares the performance of several modern methods on the DUD-E benchmark.

Table 1: Performance Comparison of Pharmacophore Methods on DUD-E

| Method | Core Approach | Key Performance Metric | Reported Result on DUD-E | Relative Strength |
|---|---|---|---|---|
| PharmacoForge | Diffusion model conditioned on protein pocket [7] | Ligand docking score & strain energy | Similar docking scores to de novo ligands, but with lower strain energies [7] | Generates commercially available, synthetically accessible ligands [7] |
| PharmRL | CNN + Geometric Q-learning to select interaction features [40] | F1 Score | Better F1 scores than random selection of co-crystal structure features [40] | Effective even without a cognate ligand structure [40] |
| Apo2ph4 | Fragment docking & clustering [7] | Performance in retrospective screening | Proven performance, but requires intensive manual checks [7] | Relies on established docking protocols |
| PGMG | Pharmacophore-Guided deep learning for Molecule Generation [41] | Docking affinity & molecular properties | Generates molecules with strong docking affinities and high validity [41] | Flexible; useful for both ligand- and structure-based design [41] |

Analysis of Comparative Data

The comparative data reveal a trend toward machine learning-driven methods that reduce manual intervention. PharmRL demonstrates that a reinforcement learning approach can automatically select feature combinations that lead to functional pharmacophores, outperforming a strategy of randomly selecting features from a co-crystal structure [40]. PharmacoForge addresses a different bottleneck: screening with its generated pharmacophores retrieves commercially available molecules whose docking scores are similar to those of de novo generated ligands but whose strain energies are lower, which sidesteps the poor synthetic accessibility that is a common failure mode of de novo molecular generation models [7].

The Scientist's Toolkit: Essential Research Reagents & Databases

Successful retrospective screening relies on a suite of computational tools and databases. The following table details the key "research reagents" for these experiments.

Table 2: Essential Computational Reagents for Retrospective Screening

| Tool/Resource | Type | Primary Function in Validation | Key Characteristic |
|---|---|---|---|
| DUD-E Database | Benchmark Database | Provides known actives and property-matched decoys for multiple targets [40] | Standardized benchmark for fair method comparison [40] |
| LIT-PCBA | Benchmark Database | Provides another large-scale benchmark for validation, often used alongside DUD-E [7] [40] | Contains a prohibitively large number of molecules for screening [40] |
| Pharmit | Open-source Software | Performs high-speed pharmacophore search of large molecular libraries [7] [40] | Implements sub-linear time search algorithms for efficiency [7] |
| RDKit | Cheminformatics Library | Generates and energy-minimizes multiple molecular conformers [40] | Critical for preparing a screening database where molecules are flexible [40] |
| PDBbind | Curated Database | Provides a curated set of protein-ligand complexes for training and testing [40] | Used to train models like the CNN in PharmRL on "ground truth" interactions [40] |

Retrospective screening using the DUD-E dataset remains a vital practice for validating the quality and utility of pharmacophore models before their deployment in prospective drug discovery campaigns. The experimental data demonstrates that modern automated methods, particularly those leveraging deep learning and reinforcement learning like PharmRL and PharmacoForge, offer robust performance. These tools help to overcome the traditional reliance on expert intuition and co-crystal structures, making powerful pharmacophore-based screening accessible for a broader range of targets, including those with little prior ligand information. Integrating these validated models into virtual screening workflows significantly increases the likelihood of identifying novel, potent, and synthetically tractable chemical matter for further development.

This case study objectively compares the performance of a structure-based pharmacophore model against traditional docking methods for identifying novel Bromodomain-containing protein 4 (BRD4) inhibitors for neuroblastoma treatment. The validation framework integrates computational predictions with experimental confirmation, demonstrating how pharmacophore models serve as efficient filters for enriching hit rates in virtual screening campaigns. Quantitative data from multiple studies show that pharmacophore-guided approaches identified natural compounds with predicted binding affinities (docking scores) ranging from -9.623 to -8.894 kcal/mol, and subsequent experimental validation confirmed cytotoxic effects in neuroblastoma cell lines [42] [43].

Neuroblastoma is the most common extracranial solid tumor in children, with high-risk cases exhibiting a 5-year survival rate of only 51-60% despite intensive multimodal therapy [44] [45]. BRD4 has emerged as a promising therapeutic target as it functions as an epigenetic reader that regulates the expression of critical oncogenes like MYCN, which is amplified in approximately 20% of high-risk neuroblastoma cases [42] [46]. BRD4 belongs to the bromodomain and extraterminal (BET) family of proteins and contains two bromodomains (BD1 and BD2) that recognize acetylated lysine residues on histones, facilitating the recruitment of transcriptional machinery to promoter and enhancer regions [42]. Pharmacological inhibition of BRD4 potently depletes MYCN in neuroblastoma cells, making it an attractive target for therapeutic development [43].

Experimental Design and Methodologies

Pharmacophore Model Development

The foundational step involved creating a structure-based pharmacophore model using the BRD4 crystal structure (PDB ID: 4BJX) in complex with its co-crystal ligand (73B). The protein structure has a resolution of 1.59 Å, providing a high-quality template for model generation [42] [43]. Researchers used LigandScout 4.4 and the Pharmit web server to identify critical interaction features between the ligand and the BRD4 binding pocket, deriving the following pharmacophore features [42] [43]:

- Hydrogen bond acceptors (HA)

- Hydrogen bond donors (HD)

- Hydrophobic interactions (HY)

- Aromatic rings (AR)

- Exclusion volumes (EX) - representing steric constraints

The generated model incorporated six hydrophobic contacts, two hydrophilic interactions, one negative ionizable feature, and fifteen exclusion volumes to define the essential chemical space for BRD4 inhibition [43].

Virtual Screening Workflow

The pharmacophore model served as a query to screen large compound databases using a structured virtual screening workflow:

- Database Preparation: Five natural compound databases (ChEMBL, Enamine, Mcule, Chemspace, and ChemDiv) were prepared for screening [42]

- Pharmacophore-based Screening: Compounds retrieved by the pharmacophore query were filtered using Lipinski's Rule of Five criteria (H-bond acceptors ≤10, H-bond donors ≤5, molecular weight ≤500 Da, logP ≤5) to ensure drug-likeness [42]

- Molecular Docking: Retrieved hits underwent molecular docking using Schrödinger Maestro and Glide with Standard Precision (SP) mode to assess binding orientations and affinities [42]

- ADMET Profiling: Promising compounds were evaluated for absorption, distribution, metabolism, excretion, and toxicity properties using QikProp and SwissADME tools [42] [43]

- Molecular Dynamics Simulations: The stability of top protein-ligand complexes was validated through 10-100 ns MD simulations using NAMD 2.14 with CHARMM36 force field [42] [47]

- Binding Free Energy Calculations: The Molecular Mechanics with Generalized Born and Surface Area Solvation (MM-GBSA) method was employed to calculate binding free energies [47] [43]
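The drug-likeness filter in the screening step of the workflow above can be sketched as a simple threshold check. The sketch assumes the descriptors have already been computed by a cheminformatics tool; the dictionary keys, compound names, and property values are hypothetical, and whether each limit is treated as strict or inclusive is an assumption (here a violation is counted only when a limit is exceeded).

```python
# Rule of Five limits; a property counts as a violation only when it exceeds its limit.
LIMITS = {"hba": 10, "hbd": 5, "mw": 500.0, "logp": 5.0}


def count_violations(props, limits=LIMITS):
    """Number of Rule of Five limits exceeded by one compound's precomputed descriptors."""
    return sum(1 for key, limit in limits.items() if props[key] > limit)


def passes_rule_of_five(props, max_violations=0):
    """True if the compound exceeds at most max_violations limits."""
    return count_violations(props) <= max_violations


# Made-up descriptor values for two hypothetical pharmacophore hits.
hits = {
    "cmpd_A": {"hba": 4, "hbd": 1, "mw": 312.4, "logp": 2.7},
    "cmpd_B": {"hba": 12, "hbd": 6, "mw": 721.9, "logp": 5.8},
}
drug_like = [name for name, props in hits.items() if passes_rule_of_five(props)]
print(drug_like)
```

The `max_violations` parameter is included because some workflows tolerate a single violation; the default of zero is the stricter choice and is an assumption, not a setting reported in [42].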

Experimental Validation Protocols

Computational predictions underwent rigorous experimental validation using the following protocols:

- In Vitro Cytotoxicity Assay: SK-N-AS neuroblastoma cells were treated with identified compounds, and cell viability was measured using WST-8 assay after 24-72 hours [47]

- Western Blot Analysis: Protein expression levels of caspase-1, caspase-3, procaspase-7, and procaspase-8 were analyzed to evaluate apoptosis and pyroptosis induction [47]

- Immunofluorescence: Caspase-1 activation was detected using fluorescent-labelled inhibitors [47]

- Co-crystallographic Analysis: Binding modes of inhibitors were confirmed through X-ray crystallography [8]
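For the cytotoxicity readout, colorimetric assays such as WST-8 are commonly summarized as percent viability relative to untreated control wells after subtracting the blank. The sketch below shows that arithmetic under the usual blank-subtraction convention; the function name and absorbance values are illustrative and are not taken from [47].

```python
from statistics import mean


def percent_viability(treated_wells, control_wells, blank_wells):
    """Viability (%) = (A_treated - A_blank) / (A_control - A_blank) * 100, using well means."""
    blank = mean(blank_wells)
    control_signal = mean(control_wells) - blank
    if control_signal <= 0:
        raise ValueError("control absorbance must exceed the blank")
    return 100.0 * (mean(treated_wells) - blank) / control_signal


# Made-up absorbance readings for triplicate wells.
treated = [0.58, 0.61, 0.61]
control = [1.08, 1.10, 1.12]
blank = [0.10, 0.10, 0.10]
print(f"{percent_viability(treated, control, blank):.1f}% viable")
```

Repeating the calculation across a concentration series and the 24-72 hour time points gives the dose- and time-response data against which the computational ranking can be compared.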

The diagram below illustrates the complete validation workflow from pharmacophore development to experimental confirmation:

Performance Comparison: Pharmacophore Models vs. Traditional Docking

Virtual Screening Efficiency

The table below compares the virtual screening efficiency of pharmacophore-based approaches versus traditional molecular docking: