Validating Ligand-Based ADMET Predictions: A Practical Guide to Robust ML Models for Drug Discovery

Abstract

Accurate prediction of Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties is crucial for reducing late-stage drug attrition. This article provides a comprehensive framework for validating ligand-based ADMET models, addressing key challenges from foundational principles to real-world application. We explore the impact of feature representation and data quality, evaluate state-of-the-art methodologies including graph neural networks and ensemble learning, and present systematic approaches for model optimization and troubleshooting. Emphasizing rigorous validation through cross-validation with statistical testing and community blind challenges, this guide equips researchers with practical strategies to enhance the reliability and translational relevance of ADMET predictions in preclinical decision-making.

The Critical Foundation: Understanding ADMET Properties and Data Challenges

The journey of a new drug from concept to clinic is a high-stakes endeavor characterized by immense costs and a sobering likelihood of failure. A critical determinant of this outcome lies in a compound's Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties. Despite technological advances, drug development remains a highly complex, resource-intensive endeavor with substantial attrition rates [1]. Analyses indicate that approximately 40–45% of clinical attrition is attributed to ADMET liabilities, with poor bioavailability and unforeseen toxicity being major contributors [2] [1]. This reality underscores that efficacy and safety, which are directly related to ADMET properties, are fundamental challenges in pharmaceutical R&D [3].

Understanding and predicting these properties early is no longer a luxury but a strategic imperative. The integration of machine learning (ML) and artificial intelligence (AI) has begun to transform this landscape, offering rapid, cost-effective, and reproducible alternatives that integrate seamlessly with existing drug discovery pipelines [4]. This guide objectively compares the performance of various computational approaches for ligand-based ADMET prediction, providing researchers with validated methodologies and data to inform their model selection.

Comparative Analysis of ADMET Prediction Approaches

Performance Benchmarking of Models and Features

Rigorous benchmarking studies provide critical insights into the practical impact of feature representations and algorithm choice in ligand-based ADMET models. A structured approach that moves beyond simply concatenating different molecular representations is essential for building reliable models [5]. The following table summarizes the key findings from recent comparative studies.

Table 1: Performance Comparison of Machine Learning Models and Feature Representations for ADMET Prediction

| Model Category | Example Algorithms | Typical Feature Representations | Reported Advantages | Key Limitations |
|---|---|---|---|---|
| Tree-Based Ensembles | Random Forests (RF), LightGBM, CatBoost [5] | RDKit descriptors, Morgan fingerprints [5] | Generally strong performance; handles diverse feature types; good interpretability [5] | Performance can be dataset-dependent; may struggle with highly complex structure-property relationships [6] |
| Deep Learning (Graph-Based) | Message Passing Neural Networks (MPNNs) like Chemprop [5] | Learned graph representations from molecular structure [1] | Automatically extracts relevant features; state-of-the-art on many tasks [1] [7] | High computational cost; requires large datasets; "black box" nature complicates interpretability [1] |
| Deep Learning (Other) | Multitask Deep Neural Networks [2] | Learned representations from molecular SMILES or fingerprints [2] | Improved generalization by learning from correlated tasks; efficient data utilization [2] | Complex training; risk of negative transfer if tasks are not related [1] |
| Federated Learning | Cross-pharma collaborative models (e.g., MELLODDY) [2] | Various (e.g., fingerprints, graph features) from multiple private datasets [2] | Systematically expands model's effective domain; improves robustness without sharing proprietary data [2] | Complex infrastructure and coordination required; model interpretability challenges remain [2] |

Quantitative Benchmark Results on Public Datasets

Performance evaluations on public benchmarks such as the Therapeutics Data Commons (TDC) offer a standardized way to compare model efficacy. These benchmarks reveal that optimal model and feature choices can be highly dataset-dependent.

Table 2: Illustrative Benchmark Results from Public ADMET Datasets (e.g., TDC)

| ADMET Endpoint | Best Performing Model | Best Feature Representation | Key Performance Metric | Comparative Note |
|---|---|---|---|---|
| Solubility | Random Forest / LightGBM [5] | Combined descriptors and fingerprints [5] | ~0.85 R² (dataset dependent) [5] | Classical models with curated features can compete with or outperform deep learning on some datasets [5]. |
| Metabolic Stability | Multitask Deep Neural Network [2] | Federated learning across diverse datasets [2] | Up to 40-60% reduction in prediction error [2] | Data diversity and representativeness, rather than model architecture alone, are dominant factors [2]. |
| hERG Inhibition | Graph Neural Network (GNN) [1] | Learned graph representations [1] | High AUC-ROC (dataset dependent) [1] [7] | GNNs excel at capturing complex structural relationships relevant to toxicity endpoints [7]. |
| Bioavailability | Ensemble Methods [1] | Multimodal data integration (structure, physicochemical) [1] | Outperforms single-model approaches [1] | Ensemble methods reduce variance and improve generalization [1]. |

Experimental Protocols for Model Validation

A Rigorous Workflow for Benchmarking Ligand-Based Models

To ensure the reliability and practical significance of ADMET models, a rigorous and structured experimental protocol is essential. The following workflow, derived from benchmarking studies, outlines key steps from data preparation to final validation.

Detailed Methodological Breakdown

Data Cleaning and Curation

- Inorganic Salt Removal: Eliminate organometallic compounds and inorganic salts from datasets [5].

- Parent Compound Standardization: Extract the organic parent compound from salt forms to ensure consistency [5].

- Tautomer and SMILES Standardization: Adjust tautomers to consistent functional group representations and canonicalize SMILES strings using tools like the standardisation tool by Atkinson et al. [5].

- De-duplication: Remove duplicate entries, keeping the first entry if target values are consistent, or removing the entire group if inconsistent. For regression tasks, consistency is defined as within 20% of the inter-quartile range [5].
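
The salt-removal and parent-extraction steps above can be approximated at the string level. The sketch below is a heuristic and not the procedure used in [5]: it assumes each dot-separated SMILES fragment is one component, keeps the largest carbon-containing fragment, and rejects parents that contain elements outside a whitelist. The names `ORGANIC_ELEMENTS`, `elements_in`, and `organic_parent`, as well as the whitelist itself, are illustrative assumptions.

```python
import re
from typing import List, Optional

# Assumed whitelist of "organic" elements; adjust to the project's own filter.
ORGANIC_ELEMENTS = {"H", "B", "C", "N", "O", "F", "Si", "P", "S", "Cl", "Br", "I"}

# Matches bracket atoms such as [Na+], [nH], [13CH3] or bare atoms such as Cl, c, N.
ATOM_PATTERN = re.compile(
    r"\[\d*(?P<bracket>[A-Z][a-z]?|se|as|[bcnops])[^\]]*\]"
    r"|(?P<bare>Cl|Br|[BCNOPSFI]|[bcnops])"
)


def elements_in(smiles: str) -> List[str]:
    """Return the element symbol of every heavy-atom token in a SMILES string."""
    symbols = []
    for match in ATOM_PATTERN.finditer(smiles):
        symbol = match.group("bracket") or match.group("bare")
        symbols.append(symbol.capitalize())  # aromatic 'c' -> 'C', 'se' -> 'Se'
    return symbols


def organic_parent(smiles: str) -> Optional[str]:
    """Return the largest carbon-containing fragment, or None if the entry is rejected."""
    candidates = []
    for fragment in smiles.split("."):
        symbols = elements_in(fragment)
        if "C" in symbols:
            candidates.append((len(symbols), fragment, symbols))
    if not candidates:
        return None  # purely inorganic entry, e.g. a simple salt
    _, parent, symbols = max(candidates, key=lambda item: item[0])
    if any(symbol not in ORGANIC_ELEMENTS for symbol in symbols):
        return None  # parent contains a metal or another excluded element
    return parent


print(organic_parent("CC(=O)[O-].[Na+]"))  # counter-ion removed, parent kept
print(organic_parent("C[Hg]C"))            # organometallic, rejected
```

The returned parent may still carry a formal charge, and tautomer adjustment and canonicalization are not attempted here; those steps need a cheminformatics toolkit rather than string handling.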

Model Training and Evaluation

- Baseline Model Establishment: Select an initial model architecture (e.g., Random Forest) as a baseline for further optimization [5].

- Iterative Feature Combination: Systematically combine different molecular representations (e.g., RDKit descriptors, Morgan fingerprints, neural network embeddings) to identify high-performing combinations, rather than using arbitrary concatenation [5].

- Scaffold-Based Splitting: Use scaffold-based data splits to evaluate the model's ability to generalize to novel chemical structures, providing a more challenging and realistic assessment than random splits [5].

- Hyperparameter Tuning: Perform dataset-specific hyperparameter optimization to maximize model performance [5].
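
To make the scaffold-based splitting step concrete, the following is a minimal sketch of one common grouping strategy: molecules that share a scaffold are kept together, the largest scaffold groups fill the training set, and the rarer scaffolds fall into the test set. It assumes a scaffold key has already been computed for each molecule (for example, a Bemis-Murcko scaffold SMILES from a cheminformatics toolkit); the function name `scaffold_split` and the 80/20 default are illustrative, not taken from [5].

```python
from collections import defaultdict


def scaffold_split(scaffolds, test_fraction=0.2):
    """Split molecule indices so that no scaffold occurs in both train and test.

    `scaffolds` holds one precomputed scaffold key per molecule (an assumption
    of this sketch; computing the scaffolds themselves is out of scope here).
    """
    groups = defaultdict(list)
    for index, scaffold in enumerate(scaffolds):
        groups[scaffold].append(index)

    # Largest scaffold groups are assigned first, so rare scaffolds end up in test.
    ordered = sorted(groups.values(), key=len, reverse=True)
    train_target = len(scaffolds) - round(len(scaffolds) * test_fraction)

    train, test = [], []
    for members in ordered:
        if len(train) + len(members) <= train_target:
            train.extend(members)
        else:
            test.extend(members)
    return train, test


scaffolds = ["benzene"] * 5 + ["pyridine"] * 3 + ["indole", "furan"]
train_idx, test_idx = scaffold_split(scaffolds)
print(train_idx, test_idx)
```

Because whole groups are assigned at once, the realized test fraction can deviate from the requested one when a few scaffolds dominate the dataset.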

Statistical Validation and Practical Testing

- Cross-Validation with Hypothesis Testing: Integrate cross-validation with statistical hypothesis testing (e.g., t-tests) to compare model performances and ensure that observed improvements are statistically significant, not due to random chance [5].

- Hold-Out Set Evaluation: Assess the final model performance on a held-out test set that was not used during training or validation [5].

- External Validation on Different Data Sources: Evaluate models trained on one data source (e.g., public data) using a test set from a completely different source (e.g., in-house corporate data). This "practical scenario" test is crucial for assessing real-world applicability [5].
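
As an illustration of pairing cross-validation with a hypothesis test, the sketch below computes a paired t statistic from per-fold scores of two models evaluated on the same folds and compares it with a tabulated critical value. The fold scores are made-up numbers for demonstration only, and the helper name `paired_t_statistic` and the small critical-value table are assumptions of this example rather than part of the cited protocol.

```python
import math
import statistics

# Two-sided critical values of Student's t at alpha = 0.05, keyed by degrees of freedom
# (df = 4 for 5-fold CV, df = 9 for 10-fold CV).
T_CRITICAL_05 = {4: 2.776, 9: 2.262}


def paired_t_statistic(scores_a, scores_b):
    """Return (t, degrees_of_freedom) for per-fold scores of two models on identical folds."""
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    std_diff = statistics.stdev(diffs)  # sample standard deviation (n - 1 denominator)
    return mean_diff / (std_diff / math.sqrt(n)), n - 1


# Illustrative 5-fold scores (not real benchmark results).
model_a = [0.82, 0.85, 0.80, 0.84, 0.83]
model_b = [0.78, 0.80, 0.79, 0.79, 0.80]

t_value, dof = paired_t_statistic(model_a, model_b)
significant = abs(t_value) > T_CRITICAL_05[dof]
print(f"t = {t_value:.2f}, df = {dof}, significant at 0.05: {significant}")
```

Cross-validation folds share training data, so the independence assumption behind the plain paired t-test holds only approximately; the result is best read as a guide rather than an exact p-value.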

The Scientist's Toolkit: Essential Research Reagents and Platforms

A range of public databases and software platforms are indispensable for developing and validating ligand-based ADMET models. The following table catalogs key resources.

Table 3: Essential Research Reagents, Databases, and Platforms for ADMET Modeling

| Resource Name | Type | Primary Function in ADMET Research | Key Features / Use Cases |
|---|---|---|---|
| Therapeutics Data Commons (TDC) [5] | Curated Database | Provides standardized, public datasets and benchmarks for ADMET-associated properties. | Facilitates fair model comparison; includes scaffold splits for training/validation [5]. |
| RDKit [7] | Cheminformatics Toolkit | Calculates molecular descriptors and fingerprints for use as model features. | Generates RDKit descriptors, Morgan fingerprints; fundamental for feature engineering [5] [7]. |
| Chemprop [5] | Deep Learning Software | Implements Message Passing Neural Networks (MPNNs) for molecular property prediction. | Specialized for graph-based learning; uses molecular structure as direct input [5]. |
| kMoL [2] | Machine Learning Library | Open-source and federated learning library designed for drug discovery tasks. | Supports development of models across distributed datasets without centralizing data [2]. |
| ADMETlab 2.0 [4] | Integrated Online Platform | Provides comprehensive predictions for a wide array of ADMET properties via a web interface. | Useful for rapid, single-compound profiling and validation of internal model results [4]. |
| Biogen In Vitro ADME Dataset [5] | Experimental Dataset | Publicly available in vitro ADME data for non-proprietary small-molecule compounds. | Serves as a valuable external validation set to test model transferability [5]. |

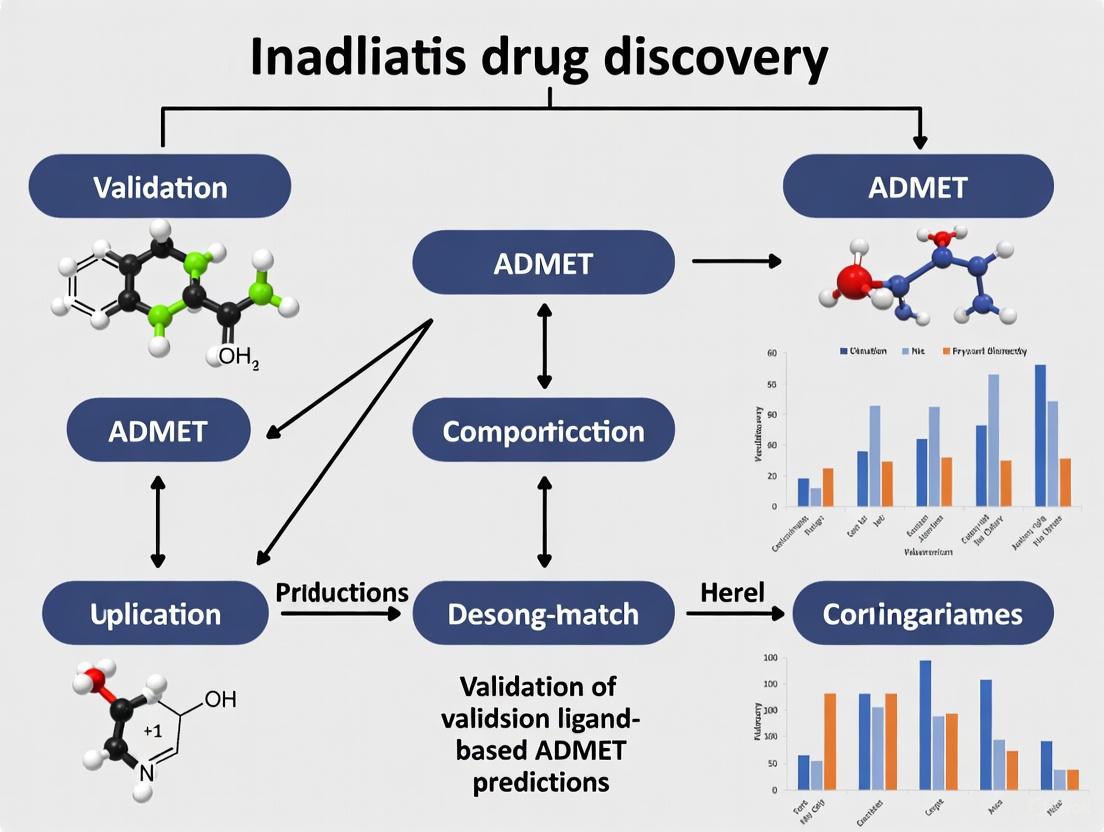

Visualizing the Integrated ADMET Prediction Framework

Modern ADMET prediction platforms are sophisticated, multi-layered systems. The following diagram illustrates the core framework that integrates data, computational methods, and predictive output, which is foundational to many contemporary tools.

The high stakes of ADMET properties in clinical success and attrition are clear. This comparison guide demonstrates that while no single model dominates all ADMET endpoints, rigorous methodologies—careful feature selection, scaffold-based splitting, statistical validation, and external testing—are paramount for building trustworthy predictive models. The field is moving beyond isolated model benchmarks towards integrated frameworks that leverage diverse data, often through federated learning, and prioritize generalizability to novel chemical space and real-world industrial data. By adopting these rigorous protocols and understanding the comparative landscape of available tools, researchers can significantly bolster the confidence in their ligand-based ADMET predictions, thereby de-risking the drug development pipeline and increasing the likelihood of clinical success.

Accurate prediction of Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties is fundamental to reducing the approximately 40-45% of clinical attrition attributed to pharmacokinetics and safety liabilities [2]. While machine learning (ML) and deep learning (DL) methodologies have revolutionized ADMET prediction, their performance is fundamentally constrained by the quality of the underlying training data. Recent studies consistently demonstrate that data diversity and representativeness, rather than model architecture alone, are the dominant factors driving predictive accuracy and generalization [5] [2]. Public ADMET datasets, while invaluable resources, present significant challenges including inconsistent experimental results, duplicate measurements with varying values, heterogeneous assay conditions, and insufficient representation of drug-like chemical space. This comprehensive analysis examines the critical data quality issues plaguing public ADMET datasets, evaluates current mitigation methodologies, and provides objective comparisons of emerging solutions and platforms.

Critical Data Quality Challenges in Public ADMET Datasets

Fundamental Limitations in Current Benchmark Datasets

Table 1: Key Limitations of Existing Public ADMET Datasets

| Limitation Category | Specific Issue | Impact on Model Performance |
|---|---|---|
| Dataset Scale | Small fraction of publicly available data utilized (e.g., ESOL: 1,128 compounds vs. >14,000 in PubChem) [8] | Limited chemical diversity reduces model generalizability |
| Chemical Representativeness | Mean molecular weight in ESOL: 203.9 Da vs. drug discovery range: 300-800 Da [8] | Poor performance on real-world drug discovery compounds |
| Experimental Variability | Same compound showing different values under different conditions (e.g., solubility varying with pH, buffer) [8] | Introduces noise and contradictions in training data |
| Data Consistency | Inconsistent SMILES representations, fragmented strings, duplicate measurements with varying values [5] | Compromises data integrity and model reliability |
| Annotation Quality | Different binary labels for same SMILES across train/test sets [5] | Fundamental flaws in evaluation benchmarks |

Specific Data Quality Issues and Their Origins

The variability in experimental conditions presents a particularly challenging aspect of ADMET data curation. For aqueous solubility alone, values for identical compounds can vary significantly with buffer composition, pH, and experimental procedure [8]. This assay heterogeneity compounds more fundamental data cleanliness issues, which studies describe as ranging from "inconsistent SMILES representations and multiple organic compounds found in a single fragmented SMILES string, to duplicate measurements with varying values and inconsistent binary labels" [5]. The Therapeutics Data Commons (TDC), while valuable, exhibits these limitations, prompting researchers to implement extensive data cleaning procedures that typically remove substantial portions of the original data [5].

Standardized Protocols for ADMET Data Curation

Comprehensive Data Cleaning Workflow

Experimental Protocol: Data Cleaning and Standardization

Based on benchmarking research by [5]

Objective: To generate consistent, high-quality ADMET datasets from raw public sources by eliminating noise and contradictions.

Methodology Steps:

- Remove inorganic salts and organometallic compounds from all datasets using predefined elemental filters.

- Extract organic parent compounds from their salt forms using a standardized salt-splitting protocol.

- Adjust tautomers to achieve consistent functional group representation across all molecular entries.

- Canonicalize SMILES strings using standardized algorithms to ensure uniform molecular representation.

- De-duplication procedure: For duplicate entries, keep the first entry if target values are consistent (identical for binary tasks, within 20% of inter-quartile range for regression tasks); remove entire duplicate groups if values are inconsistent [5].
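
The de-duplication rule can be sketched in a few lines. This example assumes that records are already keyed by canonical SMILES and interprets "within 20% of the inter-quartile range" as: the spread of a duplicate group (maximum minus minimum) must not exceed 0.2 times the inter-quartile range of all target values in the dataset. That interpretation, the function name `deduplicate`, and the toy records are assumptions of this sketch.

```python
import statistics
from collections import defaultdict


def deduplicate(records, task="regression", tolerance=0.20):
    """Clean a list of (canonical_smiles, value) pairs according to the rule above."""
    groups = defaultdict(list)
    for smiles, value in records:
        groups[smiles].append(value)

    if task == "regression":
        # Quartiles of all target values; the allowed spread is a fraction of the IQR.
        q1, _, q3 = statistics.quantiles([value for _, value in records], n=4)
        max_spread = tolerance * (q3 - q1)
    else:
        max_spread = 0  # binary labels must agree exactly

    kept = []
    for smiles, values in groups.items():
        if max(values) - min(values) <= max_spread:
            kept.append((smiles, values[0]))  # consistent group: keep the first entry
        # inconsistent groups are dropped entirely
    return kept


records = [("A", 1.0), ("A", 1.1), ("B", 2.0), ("C", 3.0), ("C", 5.0), ("D", 4.0), ("E", 6.0)]
print(deduplicate(records))
```

In this toy input the two measurements for "A" agree closely and collapse to one entry, while the conflicting measurements for "C" cause the whole group to be removed.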

The following workflow diagram illustrates this comprehensive data cleaning process:

Advanced Data Mining with Multi-Agent LLM Systems

Experimental Protocol: LLM-Powered Experimental Condition Extraction

Based on PharmaBench development methodology [8]

Objective: To systematically extract and standardize experimental conditions from unstructured assay descriptions in public databases.

Methodology:

The protocol employs a sophisticated multi-agent LLM system consisting of three specialized components:

- Keyword Extraction Agent (KEA): Analyzes assay descriptions to identify and summarize key experimental conditions specific to each ADMET endpoint.

- Example Forming Agent (EFA): Generates structured examples of experimental condition extraction based on KEA output for few-shot learning.

- Data Mining Agent (DMA): Processes all assay descriptions to systematically identify and extract experimental conditions using the generated examples [8].

This system enabled the processing of 14,401 bioassays from ChEMBL, extracting critical experimental parameters that are essential for normalizing results across different studies [8].
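
The division of labor between the three agents can be illustrated with a small pipeline skeleton. This is a schematic sketch only: the `LLM` callable stands in for whatever model client is used, and the prompt wording, function names, and `n_samples` parameter are hypothetical and do not reproduce the PharmaBench implementation [8].

```python
from typing import Callable, Dict, List

# Hypothetical interface: any function that maps a prompt to a text completion.
LLM = Callable[[str], str]


def keyword_extraction_agent(llm: LLM, endpoint: str, samples: List[str]) -> str:
    """KEA: summarize the experimental conditions that matter for one endpoint."""
    prompt = f"Summarize the key experimental conditions for {endpoint} assays:\n"
    return llm(prompt + "\n".join(samples))


def example_forming_agent(llm: LLM, conditions: str, samples: List[str]) -> str:
    """EFA: build few-shot extraction examples from the KEA summary."""
    prompt = f"Conditions of interest: {conditions}\nWrite extraction examples for:\n"
    return llm(prompt + "\n".join(samples))


def data_mining_agent(llm: LLM, examples: str, descriptions: List[str]) -> Dict[str, str]:
    """DMA: extract conditions from every assay description using the examples."""
    return {text: llm(f"{examples}\n\nAssay: {text}\nConditions:") for text in descriptions}


def mine_conditions(llm: LLM, endpoint: str, descriptions: List[str],
                    n_samples: int = 3) -> Dict[str, str]:
    """Chain the three agents: KEA -> EFA -> DMA."""
    samples = descriptions[:n_samples]
    conditions = keyword_extraction_agent(llm, endpoint, samples)
    examples = example_forming_agent(llm, conditions, samples)
    return data_mining_agent(llm, examples, descriptions)
```

Passing the model as a plain callable keeps the skeleton independent of any particular LLM provider and makes it easy to substitute a stub function when testing the pipeline logic.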

Emerging Solutions and Platform Comparisons

Next-Generation Benchmark Datasets

Table 2: Comparison of ADMET Benchmark Datasets

| Dataset | Size (Entries) | Key Features | Data Quality Innovations |
|---|---|---|---|
| PharmaBench [8] | 52,482 | Eleven ADMET properties | Multi-agent LLM system for experimental condition extraction; rigorous standardization |
| Therapeutics Data Commons (TDC) [5] | ~100,000+ | 28 ADMET-related datasets | Integrated multiple curated sources; benchmark group leaderboard |
| admetSAR 2.0 [9] | 18 endpoints | Comprehensive web server with scoring function | Manually curated models with accuracy metrics for each endpoint |
| Benchmark-ADMET-2025 [10] | Multiple integrated sources | Focus on foundation model era evaluation | Advanced splitting strategies (scaffold, perimeter) for OOD testing |

PharmaBench represents a significant advancement in scale and quality. It addresses key limitations of previous benchmarks by processing 156,618 raw entries through a rigorous workflow that specifically targets experimental condition variability [8]. The dataset's development involved an extensive data mining process in which GPT-4-based agents analyzed 14,401 different bioassays to extract critical experimental parameters [8].

Platform Performance and Capability Comparison

Table 3: ADMET Prediction Platform Capabilities

| Platform | Core Technology | Data Foundation | Key Differentiators | Limitations |
|---|---|---|---|---|
| ADMET-AI [11] | Chemprop-RDKit graph neural network | 41 ADMET datasets from TDC | Highest average rank on TDC leaderboard; fastest web-based predictor; DrugBank reference comparison (2,579 drugs) | Web interface limited to 1,000 molecules per batch |
| admetSAR 2.0 [9] | SVM, RF, kNN with molecular fingerprints | 18 curated ADMET endpoints | ADMET-score integrating multiple properties; extensive validation against DrugBank, ChEMBL, withdrawn drugs | Limited to pre-defined endpoints; less flexible than GNN approaches |
| Federated ADMET Network [2] | Cross-pharma federated learning | Distributed proprietary datasets | Expands chemical space coverage without data sharing; 40-60% error reduction in Polaris Challenge | Requires participation in consortium; complex implementation |

ADMET-AI currently demonstrates leading performance metrics, achieving the highest average rank on the TDC ADMET Benchmark Group leaderboard while maintaining the fastest prediction times among web-based tools [11]. Its graph neural network architecture, specifically Chemprop-RDKit, was trained on 41 ADMET datasets from TDC and provides both regression predictions (with appropriate units) and classification outputs (as probabilities) [11].

Advanced Modeling Approaches Addressing Data Limitations

Federated learning approaches have emerged as a promising solution to data scarcity and diversity challenges. The MELLODDY project demonstrated that cross-pharma federated learning at unprecedented scale unlocks benefits in QSAR without compromising proprietary information [2]. Key findings indicate that federation systematically extends the model's effective domain, with models demonstrating increased robustness when predicting across unseen scaffolds and assay modalities [2].

For cytochrome P450 metabolism prediction specifically, graph neural networks (GNNs), including Graph Convolutional Networks (GCNs) and Graph Attention Networks (GATs), have shown particular promise in addressing data quality challenges by better capturing complex molecular interactions [12].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 4: Essential Research Reagents and Computational Tools

| Tool/Resource | Type | Function | Application in ADMET Research |
|---|---|---|---|
| RDKit [5] | Cheminformatics toolkit | Molecular descriptor calculation and fingerprint generation | Fundamental for molecular representation and feature engineering |
| Chemprop [11] | Graph Neural Network | Message Passing Neural Networks for molecular property prediction | Core architecture of ADMET-AI; state-of-the-art on TDC benchmarks |
| GPT-4 [8] | Large Language Model | Extraction of experimental conditions from unstructured text | Powers multi-agent data mining system in PharmaBench development |
| TDC [5] | Data Commons | Curated benchmark datasets and evaluation framework | Standardized evaluation and comparison of ADMET prediction models |
| Scaffold Split Methods [10] | Data partitioning algorithm | Separate molecules based on core chemical structure | Tests model generalizability to novel chemical scaffolds |
| Federated Learning Framework [2] | Privacy-preserving ML | Collaborative training across distributed datasets | Expands chemical space coverage without data centralization |

The advancement of reliable ADMET prediction models remains intrinsically linked to resolving fundamental data quality challenges in public datasets. Current research demonstrates that systematic data cleaning protocols, LLM-powered curation pipelines, sophisticated benchmarking datasets like PharmaBench, and innovative approaches such as federated learning are collectively addressing these limitations. The objective comparison of platforms presented herein reveals that while tools like ADMET-AI currently lead in performance metrics, the field is rapidly evolving toward more data-centric approaches that prioritize chemical diversity, experimental consistency, and real-world relevance. Future progress will likely depend on continued collaboration across the research community to expand high-quality dataset coverage while developing more sophisticated methods for addressing the inherent noise and variability in experimental ADMET measurements.

In the field of computational drug discovery, the reliability of ligand-based Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) predictions is fundamentally constrained by the quality of the underlying chemical data. Dirty data, characterized by inconsistent molecular representations and duplicate entries, directly undermines model performance and generalizability, leading to unreliable predictions in critical preclinical assessments [5]. As machine learning (ML) approaches become increasingly central to ADMET modeling, establishing rigorous, systematic data cleaning protocols has emerged as an essential prerequisite for building trustworthy predictive systems.

This guide provides a comprehensive comparison of data cleaning methodologies, with a specific focus on SMILES standardization and duplicate removal within the context of ADMET prediction validation. We objectively evaluate the performance of various approaches, supported by experimental data, to offer drug development professionals a clear framework for implementing robust data cleaning protocols that enhance the reliability of their computational models.

The Critical Role of Data Cleaning in ADMET Prediction

Data cleaning is not merely a preliminary step but a foundational component that significantly influences every subsequent stage in the ADMET modeling pipeline. Public ADMET datasets are frequently criticized for data cleanliness issues, including inconsistent SMILES representations, fragmented molecular strings, duplicate measurements with conflicting values, and inconsistent binary labels across training and test sets [5]. These errors introduce noise that directly compromises model performance.

The impact of dirty data extends beyond technical metrics to practical research outcomes. Inconsistent data leads to flawed analysis, erodes trust in the data, wastes computational resources, and ultimately undermines strategic decision-making in drug development pipelines [13]. As recent benchmarking studies highlight, the choice of compound representation in ADMET models is often either left unjustified or analyzed only within a limited scope, and many approaches concatenate multiple representations without systematic reasoning [5]. This practice underscores the need for standardized preprocessing protocols that ensure data quality before model training begins.

SMILES Standardization Techniques

The Challenge of SMILES Redundancy

The Simplified Molecular-Input Line-Entry System (SMILES) remains a widely used molecular representation in cheminformatics, but it suffers from inherent redundancy: multiple distinct strings can describe the same molecule [14]. This variability arises from permissible syntactic variations within the language, including Kekulé vs. aromatic syntax, differing branch ordering, and alternative ring numbering conventions. For example, 2-(aminomethyl)benzoic acid can be represented by multiple valid SMILES strings, including "NCC1=CC=CC=C1C(=O)O" (Kekulé syntax) and "NCc1ccccc1C(=O)O" (aromatic syntax) [14].

This redundancy presents significant challenges for ML models, which may treat these equivalent representations as distinct entities, thereby learning inconsistent structure-property relationships. The problem is particularly acute in large-scale virtual screening and machine learning applications where consistent featurization is essential for model performance.

Standardization Approaches

TokenSMILES: A Grammatical Framework

TokenSMILES addresses SMILES redundancy through a grammatical framework that standardizes SMILES into structured sentences composed of context-free words. The approach applies five key syntactic constraints to minimize redundant enumerations while maintaining valence and octet compliance through semantic parsing rules [14]:

- Branch limitations that control the depth and complexity of nested branches

- Balanced parentheses ensuring proper closure of branch notations

- Aromaticity exclusion that standardizes aromatic system representation

- Canonical atom ordering that follows consistent traversal paths

- Ring closure standardization that applies consistent numbering schemes

The TokenSMILES methodology transforms the Kekulé syntax into a standardized form that equalizes string lengths and isolates chemical information by assigning individual tokens to each atom and symbol. This tokenization follows two sequential rules: first, parsing the original string into individual characters enclosed in square brackets, and second, categorizing tokens according to their syntactic context (left-context vs. right-context symbols) [14].

Practical Implementation and Comparison

An implementation of TokenSMILES is available through SmilX, an open-source tool that generates valid SMILES with accuracy comparable to existing computational implementations for molecules with a low hydrogen deficiency index (HDI ≤ 4) [14]. The system has demonstrated applicability beyond alkanes through stoichiometric modifications including bond insertion, cyclization, and heteroatom substitution.

Table 1: Comparison of SMILES Standardization Approaches

| Method | Core Principle | Reduction in Redundancy | Limitations |
|---|---|---|---|
| TokenSMILES | Grammatical constraints and tokenization | Substantial for alkanes and moderate HDI systems | Challenges with highly unsaturated systems |
| DeepSMILES | Simplified parenthesis handling | Moderate | Altered syntax requires specialized parsers |
| SELFIES | Guaranteed validity through grammatical constraints | High through guaranteed valid structures | Less human-readable representation |
| Traditional Canonicalization | Unique traversal algorithms | Varies by implementation | Does not address all syntactic variations |

Duplicate Removal Methodologies

The Duplicate Detection Challenge in Chemical Data

Duplicate records in chemical databases manifest in various forms, from exact molecular duplicates to more challenging cases where the same compound appears with different salt components, tautomeric forms, or stereochemical representations. In ADMET datasets, this problem is compounded by duplicate measurements with varying experimental values, creating inconsistencies that directly impact model training and evaluation [5].

The duplicate removal challenge is particularly acute in clinical trials registry records, where the same study can appear across multiple registries with different formatting, field mappings, and identifier systems. While this problem originates in clinical research, it presents analogous challenges to chemical database management, where the same compound may be represented with different SMILES strings, naming conventions, or identifier systems [15].

Deduplication Techniques

Structured Multi-Stage Deduplication

A robust deduplication strategy for chemical data requires a multi-stage approach that progresses from simple exact matching to sophisticated fuzzy matching algorithms:

- Step 1: Exact Matching - Identify and merge records that are identical across key fields such as canonical SMILES, InChI keys, or compound identifiers. This serves as the simplest and safest first step [13] [16].

- Step 2: Structural Standardization - Apply standardization protocols to normalize representations, including salt removal, tautomer normalization, and stereochemistry specification [5].

- Step 3: Fuzzy Matching - Implement algorithms that can identify non-exact matches based on structural similarities, accounting for variations in salt components, tautomeric forms, or stereochemical representations [13].

- Step 4: Confidence Scoring - Assign confidence scores to potential duplicates, allowing high-confidence matches to be merged automatically while flagging lower-confidence ones for manual review [13].

- Step 5: Validation - Test deduplication rules on sample datasets before full implementation to avoid unintended data loss [13].
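
Steps 1, 3, and 4 can be combined into a simple pairwise decision function. The sketch below assumes each record carries a canonical key (such as an InChIKey or canonical SMILES) and a precomputed set of fingerprint on-bits, and it uses Tanimoto similarity as the fuzzy-matching score. The thresholds 0.95 and 0.80 and the names `tanimoto` and `classify_pair` are placeholders chosen for illustration, not values from [13].

```python
def tanimoto(bits_a: set, bits_b: set) -> float:
    """Tanimoto (Jaccard) similarity between two sets of fingerprint on-bits."""
    if not bits_a and not bits_b:
        return 1.0
    return len(bits_a & bits_b) / len(bits_a | bits_b)


def classify_pair(record_a, record_b, auto_merge=0.95, review=0.80):
    """Return 'merge', 'review', or 'distinct' for two (key, on_bits) records."""
    key_a, bits_a = record_a
    key_b, bits_b = record_b
    if key_a == key_b:
        return "merge"  # Step 1: exact match on the canonical key
    score = tanimoto(bits_a, bits_b)  # Step 3: fuzzy structural match
    if score >= auto_merge:
        return "merge"  # Step 4: high confidence, merge automatically
    if score >= review:
        return "review"  # Step 4: lower confidence, flag for manual review
    return "distinct"


print(classify_pair(("K2", {1, 2, 3, 4}), ("K3", {1, 2, 3, 4, 5})))  # similarity 0.8
```

High fingerprint similarity indicates close analogs as well as true duplicates, so the automatic-merge threshold should be validated on a sample (Step 5) before being applied to a full dataset.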

Unique Identifier-Based Deduplication

For scenarios where unique identifiers are available, such as ClinicalTrials.gov NCT numbers or registry IDs in the WHO International Clinical Trials Registry Platform (ICTRP), a separate deduplication process can yield significantly better results than generic automated approaches [15]. This method is particularly valuable when records lack consistent metadata across sources but share unique study identifiers.

In a recent evaluation, this identifier-focused approach demonstrated 100% precision and 100% recall in identifying duplicates between the ClinicalTrials.gov (CTG) and ICTRP databases, outperforming automated systems, which achieved only 76.8% recall in the same task [15]. The process can be implemented using reference management software such as EndNote, which allows batch editing and manipulation of deduplication parameters [15].
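
The identifier-based approach amounts to grouping records by a shared ID. A minimal sketch follows, assuming records are available as plain text and relying on the ClinicalTrials.gov identifier format ("NCT" followed by eight digits); the function names and sample records, including the NCT numbers, are invented for illustration and do not reproduce the EndNote workflow in [15].

```python
import re
from collections import defaultdict

# ClinicalTrials.gov identifiers: "NCT" followed by eight digits.
NCT_PATTERN = re.compile(r"\bNCT\d{8}\b")


def group_by_nct(records):
    """Map each NCT number to the indices of the records that mention it."""
    groups = defaultdict(list)
    for index, text in enumerate(records):
        # Upper-casing tolerates registries that store the ID in lower case.
        for nct_id in sorted(set(NCT_PATTERN.findall(text.upper()))):
            groups[nct_id].append(index)
    return dict(groups)


def duplicate_groups(records):
    """Keep only identifiers shared by more than one record."""
    return {nct: idx for nct, idx in group_by_nct(records).items() if len(idx) > 1}


records = [
    "ClinicalTrials.gov record NCT01234567: Drug X in adults",
    "ICTRP entry, secondary ID nct01234567",
    "Registry record NCT07654321",
]
print(duplicate_groups(records))
```

Records that cite several identifiers appear in several groups, which is why a review step for multi-ID records is usually still worthwhile.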

Table 2: Performance Comparison of Deduplication Methods

| Method | Precision | Recall | Best Application Context |
|---|---|---|---|
| Identifier-Based Deduplication | 100% [15] | 100% [15] | Records with unique IDs across sources |
| Automated Systematic Review Tools | 100% [15] | 76.8% [15] | Bibliographic records with consistent metadata |
| Multi-Stage Chemical Deduplication | Not explicitly quantified | Not explicitly quantified | Chemical databases with structural variations |
| Manual Review | High (varies) | High (varies) | Small datasets or high-value records |

Experimental Protocols and Workflows

Comprehensive Data Cleaning Protocol for ADMET Datasets

Based on recent benchmarking studies, the following step-by-step protocol has been developed specifically for preparing ADMET datasets for machine learning applications:

Step 1: SMILES Standardization

- Remove inorganic salts and organometallic compounds from the datasets

- Extract organic parent compounds from their salt forms

- Adjust tautomers to have consistent functional group representation

- Canonicalize SMILES strings using standardized algorithms [5]

Step 2: Duplicate Identification and Resolution

- Identify duplicate records based on canonical SMILES

- For duplicates with consistent target values, keep the first entry

- For duplicates with inconsistent target values, remove the entire group to maintain data integrity

- Define "consistent" as exactly the same for binary tasks, and within 20% of the inter-quartile range for regression tasks [5]
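The duplicate-resolution rules in Step 2 can be sketched as follows. This is one possible reading of the rule, stated as an assumption: a regression group counts as "consistent" when the spread (max minus min) of its values is at most 20% of the inter-quartile range of all target values in the dataset. The function name and data layout are illustrative.

```python
import statistics
from collections import defaultdict


def deduplicate(records, task="regression", tolerance=0.20):
    """records: list of (canonical_smiles, target). Returns the cleaned list."""
    groups = defaultdict(list)
    for smiles, y in records:
        groups[smiles].append(y)

    max_spread = None
    if task == "regression":
        # Assumption: the IQR is taken over every target value in the dataset.
        q1, _, q3 = statistics.quantiles([y for _, y in records], n=4)
        max_spread = tolerance * (q3 - q1)

    cleaned = []
    for smiles, values in groups.items():
        if task == "binary":
            consistent = len(set(values)) == 1
        else:
            consistent = (max(values) - min(values)) <= max_spread
        if consistent:
            cleaned.append((smiles, values[0]))  # keep the first entry
        # Inconsistent groups are dropped entirely.
    return cleaned


# Hypothetical measurements keyed by canonical SMILES.
data = [("CCO", 1.0), ("CCO", 1.05), ("c1ccccc1", 2.0),
        ("c1ccccc1", 3.5), ("CC(=O)O", 0.5), ("CCN", 4.0)]
print(deduplicate(data))
```

In this toy set the two ethanol values agree closely and collapse to the first entry, while the two benzene values disagree and the whole group is removed.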

Step 3: Data Transformation

- Apply log-transformation to highly skewed distributions for specific ADMET endpoints

- For TDC datasets including clearance_microsome_az, half_life_obach and vdss_lombardo, compute metrics on log-transformed values instead of original ones [5]
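To see why the choice of scale matters, the sketch below compares an error metric on raw and log-transformed values. The metric (mean absolute error), the log base (10), and the `eps` guard are assumptions made for illustration; the source does not specify them.

```python
import math


def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def log_mae(y_true, y_pred, eps=1e-9):
    """MAE on log10-transformed values; eps guards against log(0)."""
    log_true = [math.log10(max(t, eps)) for t in y_true]
    log_pred = [math.log10(max(p, eps)) for p in y_pred]
    return mae(log_true, log_pred)


# Hypothetical values spanning three orders of magnitude; every prediction is off by 2x.
y_true = [1.0, 10.0, 1000.0]
y_pred = [2.0, 20.0, 500.0]

raw_error = mae(y_true, y_pred)
log_error = log_mae(y_true, y_pred)
print(raw_error, log_error)
```

On the raw scale the largest compound dominates the error, whereas on the log scale each two-fold miss contributes equally, which is usually the behavior wanted for skewed pharmacokinetic endpoints.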

Step 4: Visual Inspection

- For relatively small datasets, conduct visual inspection of resultant clean datasets using tools like DataWarrior [5]

Workflow Visualization

Data Cleaning Workflow for ADMET Datasets

Impact on Model Performance and Practical Applications

Empirical Evidence from ADMET Benchmarking

Recent systematic evaluations demonstrate the tangible impact of data cleaning on model performance in ADMET prediction tasks. In one comprehensive study, researchers applied rigorous data cleaning procedures resulting in the removal of a number of compounds across datasets due to inconsistencies, duplicates, and representation issues [5]. This cleaning process enabled more reliable feature selection and model evaluation, ultimately supporting more dependable model assessments through integrated cross-validation with statistical hypothesis testing.

The benchmarking revealed that the optimal combination of machine learning algorithms and compound representations is highly dataset-dependent for ADMET prediction tasks, reinforcing the importance of clean, consistent data for identifying these optimal configurations [5]. Without systematic cleaning, the noise introduced by representation inconsistencies and duplicates obscures the true relationship between model architecture and performance.

Case Study: Deduplication in Clinical Trials Data

While not directly from ADMET research, a recent evaluation of deduplication methods in clinical trials registry data provides compelling evidence for the importance of specialized deduplication approaches. The study found that:

- Automated systematic review tools like Covidence demonstrated 100% precision but only 76.8% recall when processing registry records from ClinicalTrials.gov and WHO ICTRP [15]

- A specialized identifier-based deduplication method achieved both 100% precision and 100% recall for the same dataset [15]

- Automated tools missed a substantial number of true duplicates (false negatives), even though every record they did flag was a genuine duplicate, consistent with the reported 100% precision [15]

These findings highlight the limitations of generic deduplication approaches when applied to specialized scientific data and underscore the need for domain-specific solutions.
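For readers who want to run the same kind of evaluation on their own data, precision and recall for a deduplication tool can be computed from the pairs it flags and a reference set of true duplicate pairs. The sketch below uses hypothetical record ids and is not taken from [15].

```python
def precision_recall(predicted_pairs, true_pairs):
    """Precision and recall of flagged duplicate pairs against a reference set."""
    predicted = {frozenset(pair) for pair in predicted_pairs}  # order-insensitive
    truth = {frozenset(pair) for pair in true_pairs}
    true_positives = len(predicted & truth)
    precision = true_positives / len(predicted) if predicted else 1.0
    recall = true_positives / len(truth) if truth else 1.0
    return precision, recall


# Hypothetical reference duplicates and tool output.
true_pairs = [("a", "b"), ("c", "d"), ("e", "f"), ("g", "h")]
flagged = [("b", "a"), ("c", "d"), ("e", "f")]

precision, recall = precision_recall(flagged, true_pairs)
print(precision, recall)
```

A tool can reach a precision of 1.0 (nothing it flags is wrong) while still having low recall, as in this toy case where one true duplicate pair is never flagged.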

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Tools for Chemical Data Cleaning

| Tool/Category | Specific Examples | Primary Function | Application Context |
|---|---|---|---|
| SMILES Standardization | SmilX (TokenSMILES) [14], RDKit [5], Standardisation tool by Atkinson et al. [5] | Canonicalization and grammatical standardization of molecular representations | Preparing consistent input features for ML models |
| Deduplication Platforms | EndNote (desktop) [15], Covidence [15], SRA deduplicator [15] | Identification and merging of duplicate records | Maintaining unique molecular entries in databases |
| Cheminformatics Toolkits | RDKit [5], DeepChem [5] | Molecular manipulation, featurization, and analysis | General chemical data preprocessing and transformation |
| Data Visualization & Inspection | DataWarrior [5] | Visual data quality assessment | Identifying patterns, outliers, and anomalies in chemical datasets |
| Data Validation | Great Expectations [13], AWS Glue DataBrew [13] | Automated validation against business rules | Ensuring data quality standards pre- and post-cleaning |

Systematic data cleaning protocols, particularly SMILES standardization and duplicate removal, are not merely preliminary steps but foundational components for validating ligand-based ADMET predictions. The evidence reviewed here indicates that specialized approaches, such as TokenSMILES for molecular standardization and identifier-based methods for duplicate removal, can outperform generic solutions. In the clinical trials registry comparison, the gain was in recall (100% versus 76.8%), while precision was 100% for both methods [15].

As the field moves toward more complex model architectures and representations, the principles of grammatical standardization, structured deduplication, and systematic validation will become increasingly critical. By implementing the protocols and methodologies compared in this guide, researchers can establish a robust foundation for ADMET prediction models that are both accurate and reliable, ultimately accelerating the drug discovery process while reducing late-stage attrition due to poor pharmacokinetic or toxicity profiles.

In the field of computational drug discovery, the reliable prediction of a compound's Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties is a critical determinant of its viability as a drug candidate [5]. The foundation of any ligand-based predictive model lies in its molecular representation—the method of translating chemical structures into a computer-readable format that algorithms can process [17]. These representations bridge the gap between chemical structures and their biological, chemical, or physical properties, serving as the essential input for machine learning (ML) and deep learning (DL) models [17]. The choice between classical, rule-based descriptors and modern, deep-learned features significantly influences model performance, interpretability, and generalizability. This guide objectively compares these two paradigms within the context of validating ligand-based ADMET predictions, providing researchers with experimental data and methodologies to inform their model selection.

Classical Molecular Representations: Rule-Based Feature Engineering

Classical molecular representation methods rely on explicit, rule-based feature extraction derived from chemical and physical properties [17]. They are the product of decades of cheminformatics research and are highly valued for their interpretability and computational efficiency.

Key Types and Examples

- Molecular Descriptors: These are numerical values that quantify specific physical or chemical properties of a molecule. Examples include molecular weight, hydrophobicity (LogP), topological indices, and counts of hydrogen bond donors and acceptors. They are often calculated using toolkits like RDKit [5].

- Molecular Fingerprints: These are typically binary strings (bits) that encode the presence or absence of specific substructures or patterns within a molecule. A prime example is the Extended-Connectivity Fingerprint (ECFP), which captures local atomic environments and is invaluable for representing complex molecules [17]. Other types include functional-class fingerprints such as FCFP4 [5].

Applications in ADMET Prediction

Classical representations have been successfully applied to various ADMET tasks. For instance, the FP-ADMET and MapLight frameworks combined different molecular fingerprints with ML models to establish robust prediction frameworks for a wide range of ADMET-related properties [17]. Similarly, BoostSweet leveraged a soft-vote ensemble model based on LightGBM, combining layered fingerprints with alvaDesc molecular descriptors to predict molecular sweetness [17].

Deep-Learned Molecular Representations: Data-Driven Feature Learning

Modern AI-driven approaches have shifted the paradigm from predefined rules to data-driven learning [17]. These methods employ deep learning models to automatically learn continuous, high-dimensional feature embeddings directly from raw molecular data.

Key Architectures and Methods

- Graph Neural Networks (GNNs): Models such as Message Passing Neural Networks (MPNNs) directly operate on a molecule's graph structure, where atoms are represented as nodes and bonds as edges. This naturally captures the topological information of the molecule [5].

- Language Model-Based Representations: Inspired by natural language processing (NLP), models like Transformers treat molecular sequences (e.g., SMILES or SELFIES) as a specialized chemical language. They tokenize these strings and learn contextual embeddings for each token [17].

- Other Advanced Methods: The field also includes approaches based on high-dimensional features, multimodal inputs, and contrastive learning, which can capture complex, non-linear relationships in the data that are difficult to predefine with rules [17].
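The graph view used by GNNs can be made concrete with a toy example. The sketch below performs one round of message passing on the heavy-atom graph of ethanol and then pools the node states into a molecule-level vector. It is a deliberately simplified illustration: the two-dimensional node features are hypothetical, and a real MPNN such as the one in Chemprop also uses bond features and learned weight matrices, which are omitted here.

```python
# Ethanol heavy-atom graph: C0 - C1 - O2
atoms = ["C", "C", "O"]
bonds = [(0, 1), (1, 2)]

# Hypothetical node features: [is_carbon, is_oxygen]
features = [[1.0, 0.0] if atom == "C" else [0.0, 1.0] for atom in atoms]


def neighbors(n_atoms, bonds):
    """Adjacency list built from an undirected bond list."""
    adj = [[] for _ in range(n_atoms)]
    for i, j in bonds:
        adj[i].append(j)
        adj[j].append(i)
    return adj


def message_passing_step(h, adj):
    """New state = own state + sum of neighbor states (no learned weights)."""
    new_h = []
    for i, own in enumerate(h):
        message = [sum(h[j][k] for j in adj[i]) for k in range(len(own))]
        new_h.append([o + m for o, m in zip(own, message)])
    return new_h


def readout(h):
    """Sum-pool node states into one molecule-level vector."""
    return [sum(column) for column in zip(*h)]


adj = neighbors(len(atoms), bonds)
h1 = message_passing_step(features, adj)
mol_vec = readout(h1)
print(h1, mol_vec)
```

After one step, the middle carbon already "knows" it is bonded to an oxygen, which is the kind of local chemical context that stacked message-passing layers build up over larger neighborhoods.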

Experimental Comparison and Benchmarking

Independent benchmarking studies provide critical, empirical data for comparing the performance of classical and deep-learned representations across practical ADMET prediction tasks.

Performance Across ADMET Datasets

The following table summarizes key findings from a comprehensive benchmarking study that evaluated various algorithms and compound representations across multiple public ADMET datasets [5].

| Representation Type | Example Algorithms | Key Strengths | Typical Application Context |
|---|---|---|---|
| Classical Descriptors & Fingerprints | Random Forests (RF), Support Vector Machines (SVM), LightGBM | High interpretability, computational efficiency, performs well on smaller datasets [5] [17] | Initial screening, resource-constrained environments, when model explainability is critical |
| Deep-Learned Representations | Message Passing Neural Networks (MPNN), Transformer-based Models | Superior performance on complex endpoints, automatic feature extraction, reduced need for expert knowledge [5] [17] | Large, complex datasets (e.g., metabolic stability, toxicity), when exploring broad chemical space |

Table 1: A high-level comparison of classical and deep-learned molecular representation approaches.

Insights from a Computational Blind Challenge

The 2025 ASAP-Polaris-OpenADMET Antiviral Challenge provided a unique opportunity for a rigorous, blind test of modeling strategies. A key insight from this challenge was that the superiority of a method is often task-dependent [18]:

- Classical Methods remain highly competitive for predicting compound potency (e.g., pIC50 for SARS-CoV-2 Mpro) [18].

- Modern Deep Learning algorithms, however, significantly outperformed traditional machine learning in ADME prediction [18].

This underscores the importance of selecting a representation type based on the specific prediction target.

Experimental Protocols for Model Validation

For researchers seeking to validate these findings or benchmark their own models, the following methodological details are essential.

Data Curation and Cleaning

The reliability of any model is contingent on data quality. A robust cleaning protocol includes [5]:

- Removing inorganic salts and organometallic compounds.

- Extracting the organic parent compound from salt forms.

- Adjusting tautomers to achieve consistent functional group representation.

- Canonicalizing SMILES strings.

- De-duplication: Keeping the first entry if target values are consistent, or removing the entire group if they are inconsistent. Consistency is defined as identical for binary tasks and within 20% of the inter-quartile range for regression tasks.

Model Training and Evaluation Methodology

A structured approach to model evaluation, as used in benchmarking studies, involves [5]:

- Iterative Feature Selection: Systematically testing and combining different molecular representations (e.g., RDKit descriptors, Morgan fingerprints, and deep-learned embeddings) rather than arbitrary concatenation.

- Hyperparameter Tuning: Conducting dataset-specific optimization of model architectures.

- Robust Model Comparison: Integrating cross-validation with statistical hypothesis testing to assess the statistical significance of performance differences, adding a layer of reliability beyond a simple hold-out test set [5].

- Practical Validation: Evaluating models trained on one data source (e.g., public data) on a test set from a different source (e.g., in-house data) to simulate real-world application.
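The "Robust Model Comparison" step pairs cross-validation with a hypothesis test. The source does not state which test was used, so the sketch below substitutes one simple option as an assumption: an exact paired sign-flip permutation test on per-fold score differences. The fold scores are hypothetical.

```python
from itertools import product
from statistics import mean


def paired_sign_flip_test(scores_a, scores_b):
    """Exact two-sided sign-flip permutation test on per-fold differences.

    Returns (mean difference, p-value). Under the null hypothesis that the two
    models perform equally, each fold difference is equally likely to be + or -.
    """
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    observed = abs(mean(diffs))
    flips = list(product((1, -1), repeat=len(diffs)))
    extreme = sum(
        abs(mean([s * d for s, d in zip(signs, diffs)])) >= observed - 1e-12
        for signs in flips
    )
    return mean(diffs), extreme / len(flips)


# Hypothetical per-fold scores for two models evaluated on the same five folds.
model_a = [0.81, 0.79, 0.83, 0.80, 0.82]
model_b = [0.78, 0.77, 0.80, 0.79, 0.80]

mean_diff, p_value = paired_sign_flip_test(model_a, model_b)
print(mean_diff, p_value)
```

Even though model A wins on every fold here, the smallest p-value achievable with five folds is 2/32 = 0.0625, so a single 5-fold run cannot reach the conventional 0.05 level with this test. Repeated or higher-fold cross-validation is needed before a difference can be called significant.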

Figure 1: A generalized workflow for benchmarking molecular representation approaches in ADMET prediction, highlighting key steps from data curation to model validation.

The table below details key software tools, datasets, and resources essential for conducting research in molecular representation and ADMET prediction.

| Resource Name | Type | Primary Function | Relevance to ADMET |
|---|---|---|---|
| RDKit | Software Toolkit | Calculates classical molecular descriptors and fingerprints [5] | Generates interpretable, rule-based features for model training |
| Chemprop | Software Framework | Implements Message Passing Neural Networks (MPNNs) for molecules [5] | Provides state-of-the-art deep learning models for molecular property prediction |
| Therapeutics Data Commons (TDC) | Data Resource | Provides curated public datasets and benchmarks for ADMET-associated properties [5] | Serves as a standard source for training and benchmarking data |
| Deep-PK | Predictive Platform | Predicts pharmacokinetics using graph-based descriptors and multitask learning [19] | Specialized platform for key ADMET endpoints |
| AlvaDesc | Software Toolkit | Calculates a comprehensive set of molecular descriptors [17] | Used to generate a wide array of features for QSAR/ADMET models |

Table 2: A selection of key resources for computational researchers working on molecular representation and ADMET prediction.

The comparison between classical descriptors and deep-learned features reveals a nuanced landscape. Classical methods, with their computational efficiency and interpretability, remain a robust choice for many tasks, particularly with smaller datasets or when predicting compound potency [18] [5]. Conversely, deep-learned representations offer a powerful, data-driven alternative that can automatically extract complex features and has demonstrated significant advantages in certain ADME prediction challenges [18] [19].

The choice is not necessarily mutually exclusive. Hybrid approaches that combine the interpretability of classical descriptors with the predictive power of deep learning are an active area of research. Furthermore, the field is moving towards addressing challenges such as data quality, model interpretability, and generalizability. Future directions include the integration of structure-guided modeling, hybrid AI-quantum frameworks, and multi-omics integration, all poised to further accelerate the discovery of safer and more effective therapeutics [19] [17]. For now, the optimal molecular representation depends critically on the specific endpoint, data availability, and the required balance between performance and interpretability.

Advanced Methodologies: Implementing State-of-the-Art ML Approaches

The selection of appropriate machine learning algorithms is a critical determinant of success in computational drug discovery, particularly for predicting the Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties of candidate molecules. Accurately forecasting these pharmacokinetic and safety profiles early in the development pipeline significantly reduces late-stage attrition rates and accelerates the delivery of viable therapeutics [5] [20]. While numerous machine learning approaches exist, three algorithm families consistently demonstrate superior performance for structured molecular data: Random Forests (RF), Gradient Boosting Machines (GBM), and Deep Neural Networks (DNN). This guide provides an objective comparison of these algorithms within the specific context of validating ligand-based ADMET predictions, enabling researchers to make informed selections based on empirical evidence, dataset characteristics, and practical constraints.

The challenge of algorithm selection extends beyond raw predictive accuracy to encompass considerations of data volume, feature representation, computational resources, and interpretability needs. As noted in benchmarking studies, the optimal model and feature choices can be highly dataset-dependent for ADMET endpoints, necessitating a nuanced understanding of each algorithm's strengths and limitations [5]. This review synthesizes evidence from recent ADMET-focused studies and broader machine learning comparisons to establish a framework for algorithm selection grounded in both theoretical principles and empirical results.

Random Forests

Random Forests constitute an ensemble learning method that operates by constructing a multitude of decision trees during training. The algorithm introduces randomness through two primary mechanisms: bootstrap sampling of the training data (bagging) and random subset selection of features at each split point. This randomness ensures individual trees remain diverse, with the final prediction typically determined by majority voting (classification) or averaging (regression) across all trees in the forest [21] [22].

The key advantage of this approach lies in its inherent variance reduction compared to single decision trees, while simultaneously mitigating overfitting through the collective decision-making process. For ligand-based ADMET prediction, where datasets may contain substantial noise from experimental measurements, this robustness proves particularly valuable [23]. Additionally, Random Forests naturally provide feature importance metrics by tracking how much each feature decreases impurity across all trees, offering valuable insights into which molecular descriptors most significantly influence ADMET properties.

Gradient Boosting Machines

Gradient Boosting Machines represent a different ensemble philosophy based on sequential model building rather than parallel tree construction. Unlike Random Forests, which build trees independently, GBM constructs trees one at a time, with each new tree trained to correct the residual errors made by the previous ensemble [21] [22]. The algorithm operates by optimizing an arbitrary differentiable loss function using gradient descent, where each new tree approximates the negative gradient (direction of steepest descent) of the loss function.

Formally, at iteration \( m \), GBM updates the model as follows: \[ F_m(x) = F_{m-1}(x) + \beta_m h_m(x) \] where \( F_{m-1}(x) \) represents the existing ensemble, \( h_m(x) \) is the new weak learner (typically a decision tree), and \( \beta_m \) controls the learning rate [21]. This sequential error-correction mechanism enables GBMs to capture complex, non-linear relationships in data through an additive model structure, often achieving state-of-the-art performance on tabular datasets common in cheminformatics [24]. Modern implementations like LightGBM, XGBoost, and CatBoost have further enhanced performance through optimized computing architectures and specialized handling of categorical features.
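The additive update can be traced in a few lines of code. The sketch below is a from-scratch illustration under stated assumptions, not how LightGBM or XGBoost are implemented: it uses squared-error loss (so the negative gradient is simply the residual), a single 1-D feature, depth-one trees (stumps) as weak learners, and a constant step size `beta` in place of a per-iteration value. The data are hypothetical, and the feature must take at least two distinct values.

```python
def fit_stump(x, residuals):
    """Least-squares decision stump on a 1-D feature."""
    best = None
    for threshold in sorted(set(x))[:-1]:  # every split leaves both sides non-empty
        left = [r for xi, r in zip(x, residuals) if xi <= threshold]
        right = [r for xi, r in zip(x, residuals) if xi > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = (sum((r - left_mean) ** 2 for r in left)
               + sum((r - right_mean) ** 2 for r in right))
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    _, threshold, left_mean, right_mean = best
    return lambda xi: left_mean if xi <= threshold else right_mean


def gradient_boost(x, y, n_rounds=20, beta=0.1):
    """Returns (model, training MSE after each round)."""
    f0 = sum(y) / len(y)  # initial constant prediction
    learners = []
    pred = [f0] * len(y)
    history = []
    for _ in range(n_rounds):
        # Negative gradient of 0.5 * (y - F)^2 with respect to F is the residual.
        residuals = [yi - pi for yi, pi in zip(y, pred)]
        h = fit_stump(x, residuals)
        learners.append(h)
        # Additive update: new ensemble = previous ensemble + beta * new learner.
        pred = [pi + beta * h(xi) for pi, xi in zip(pred, x)]
        history.append(sum((yi - pi) ** 2 for yi, pi in zip(y, pred)) / len(y))

    def model(xi):
        return f0 + beta * sum(h(xi) for h in learners)

    return model, history


# Hypothetical data with a step between x = 3 and x = 4.
x = [1, 2, 3, 4, 5, 6]
y = [1.0, 1.2, 0.9, 3.1, 2.9, 3.0]
model, history = gradient_boost(x, y)
print(history[0], history[-1], model(1), model(6))
```

Each round shrinks the training error a little; a small `beta` trades more rounds for a smoother fit, which is the main regularization lever in practical GBM libraries.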

Deep Neural Networks

Deep Neural Networks comprise interconnected layers of artificial neurons that learn hierarchical representations of input data through multiple transformations. In drug discovery contexts, DNNs can process various molecular representations—including molecular descriptors, fingerprints, and more recently, learned representations from SMILES strings or molecular graphs [25] [20]. Unlike tree-based methods that require predefined feature representations, certain DNN architectures can automatically extract relevant features from raw molecular representations.

The transformative potential of DNNs lies in their capacity to model extremely complex functions and discover intricate patterns without explicit feature engineering [21] [26]. For ADMET prediction, specialized architectures such as Message Passing Neural Networks (as implemented in Chemprop) and Transformer-based models (like MSformer-ADMET) have demonstrated remarkable performance by directly learning from molecular structure [5] [20]. However, this flexibility comes with substantial data requirements and computational costs, making them most suitable for scenarios with large, high-quality datasets and sufficient computational resources.

Performance Comparison in ADMET Prediction

Quantitative Performance Metrics

Recent benchmarking studies provide empirical evidence of algorithm performance across diverse ADMET prediction tasks. The following table summarizes key findings from comparative evaluations:

Table 1: Performance comparison of algorithms across ADMET prediction tasks

| Algorithm | ADMET Task | Performance Metrics | Key Findings | Source |
|---|---|---|---|---|
| LightGBM (Gradient Boosting) | Anticancer ligand prediction | 90.33% accuracy, AUROC: 97.31% | Superior prediction accuracy with good generalizability | [24] |
| Random Forest | Various ADMET benchmarks | Highly variable across endpoints | Optimal model choice highly dataset-dependent | [5] |
| Gradient Boosting | ADMET feature representation studies | Competitive performance | Often outperforms RF on complex, structured datasets | [21] [5] |
| Deep Neural Networks (MSformer-ADMET) | 22 TDC ADMET tasks | Superior performance across multiple endpoints | Outperformed conventional SMILES-based and graph-based models | [20] |
| Random Forest | Small dataset ADMET prediction | More stable performance | Advantageous for smaller or noisier datasets | [5] [23] |

Analysis of Performance Patterns

The quantitative evidence reveals several important patterns for algorithm selection in ADMET contexts. Gradient Boosting implementations, particularly LightGBM, have demonstrated exceptional performance in specific prediction tasks such as anticancer ligand identification, achieving 90.33% accuracy with 97.31% AUROC in independent testing [24]. This aligns with the broader pattern that well-tuned GBMs often achieve the highest accuracy on structured datasets with complex feature interactions [21].

However, Random Forests maintain important advantages in certain scenarios, particularly with smaller or noisier datasets commonly encountered in early-stage drug discovery [23]. Studies note that while Gradient Boosting may achieve higher peak performance, Random Forests provide more consistent results across diverse ADMET endpoints where the optimal algorithm appears highly dataset-dependent [5].

Deep Neural Networks, especially specialized architectures like MSformer-ADMET, have shown breakthrough performance on comprehensive ADMET benchmarks, outperforming conventional approaches across multiple endpoints [20]. This superior capability comes from their ability to learn directly from molecular structure without relying on pre-engineered features, though this advantage typically materializes only with sufficient training data and computational investment.

Experimental Protocols and Methodologies

Standardized Benchmarking Frameworks

Robust algorithm evaluation in ADMET prediction requires carefully designed experimental protocols. Recent benchmarking studies have implemented rigorous methodologies to ensure fair comparisons:

Table 2: Key components of experimental protocols for algorithm evaluation in ADMET prediction

| Protocol Component | Implementation Details | Purpose | Example Source |
|---|---|---|---|
| Data Cleaning | Standardization of SMILES, removal of duplicates and salts, handling of missing values | Ensure data quality and consistency | [5] |
| Feature Representation | RDKit descriptors, Morgan fingerprints, learned representations | Compare impact of different molecular encodings | [5] [24] |
| Data Splitting | Scaffold split method (via DeepChem) | Assess generalization to novel chemical structures | [5] |
| Model Validation | Cross-validation with statistical hypothesis testing | Ensure statistical significance of performance differences | [5] |
| External Validation | Training on one data source, testing on another | Evaluate practical applicability | [5] |

The Therapeutics Data Commons (TDC) has emerged as a valuable resource for standardized ADMET benchmarking, providing curated datasets and evaluation protocols that facilitate direct algorithm comparisons [5] [20]. Studies leveraging TDC typically employ scaffold splitting, which groups molecules based on their Bemis-Murcko scaffolds and assigns entire scaffolds to training or test sets. This approach more realistically simulates real-world performance when predicting properties for novel chemical scaffolds not represented in the training data [5].
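The grouping logic behind a scaffold split can be sketched without any cheminformatics dependency if the scaffold of each molecule is already known. In the example below the scaffold strings are supplied by hand as hypothetical inputs; in practice they would be computed with a toolkit such as RDKit. The greedy largest-group-first assignment is a common pattern but is an assumption here, not DeepChem's exact code.

```python
from collections import defaultdict


def scaffold_split(scaffolds, train_frac=0.8):
    """scaffolds[i] is the scaffold string of molecule i.

    Returns (train_indices, test_indices) with no scaffold shared between them.
    """
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)

    # Largest scaffold groups first; ties broken by scaffold string for determinism.
    ordered = sorted(groups.items(), key=lambda item: (-len(item[1]), item[0]))

    train_budget = train_frac * len(scaffolds)
    train, test = [], []
    for _, members in ordered:
        if len(train) + len(members) <= train_budget:
            train.extend(members)  # whole scaffold goes to training
        else:
            test.extend(members)   # whole scaffold goes to test
    return train, test


# Hypothetical precomputed scaffolds for ten molecules.
scaffolds = (["c1ccccc1"] * 4 + ["c1ccncc1"] * 3 + ["C1CCCCC1"] * 2 + ["c1ccoc1"])
train_idx, test_idx = scaffold_split(scaffolds)
print(train_idx, test_idx)
```

Because whole scaffold groups are assigned together, the realized split ratio only approximates `train_frac`, and the test set contains chemotypes the model has never seen during training.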

Feature Selection and Representation

A critical methodological consideration in ligand-based ADMET prediction is the selection and engineering of molecular representations. Studies consistently show that feature representation significantly impacts model performance, sometimes more than the choice of algorithm itself [5]. Common approaches include:

- Traditional descriptors and fingerprints: RDKit molecular descriptors, Morgan fingerprints, and other hand-crafted features that encode specific molecular properties and substructures.

- Deep-learned representations: Features automatically extracted by neural networks from raw molecular representations like SMILES strings or molecular graphs.

- Hybrid approaches: Concatenation of multiple representation types to capture complementary information about molecular structure and properties.

Recent research indicates that structured approaches to feature selection—such as variance thresholding, correlation filters, and algorithms like Boruta—can significantly improve model performance and interpretability while reducing overfitting [24]. The Boruta algorithm, which uses a Random Forest classifier to identify statistically important features by comparing original features to shadow features, has proven particularly effective for high-dimensional molecular descriptor sets [24].
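The two simplest filters mentioned above, variance thresholding and correlation filtering, can be sketched in plain Python. The thresholds and descriptor values are hypothetical, and Boruta is not implemented here because it requires a Random Forest with shadow features.

```python
from statistics import mean, pvariance


def pearson(a, b):
    """Pearson correlation of two equal-length, non-constant columns."""
    mean_a, mean_b = mean(a), mean(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / (var_a * var_b) ** 0.5


def filter_features(columns, min_variance=1e-8, max_abs_corr=0.95):
    """columns: dict of feature name -> list of values. Returns retained names."""
    # Step 1: drop (near-)constant features.
    kept = [name for name, col in columns.items() if pvariance(col) > min_variance]
    # Step 2: greedily drop features highly correlated with one already selected.
    selected = []
    for name in kept:
        if all(abs(pearson(columns[name], columns[s])) <= max_abs_corr for s in selected):
            selected.append(name)
    return selected


# Hypothetical descriptor table for four molecules.
columns = {
    "mol_wt": [180.2, 151.2, 206.3, 194.2],
    "heavy_atoms": [13, 11, 15, 14],   # nearly collinear with mol_wt
    "logp": [1.2, 0.5, 3.5, -0.1],
    "n_boron": [0, 0, 0, 0],           # constant, carries no information
}
selected = filter_features(columns)
print(selected)
```

The greedy correlation filter keeps whichever of two collinear features appears first, so column order should reflect which descriptor is preferred for interpretation.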

Figure 1: Comprehensive workflow for algorithm validation in ADMET prediction, incorporating data cleaning, feature engineering, model training, and rigorous validation stages.

Successful implementation of machine learning algorithms for ADMET prediction requires both computational tools and curated data resources. The following table details essential components of the research toolkit:

Table 3: Essential research reagents and computational tools for ADMET prediction research

| Tool/Resource | Type | Function | Example Applications |
|---|---|---|---|
| Therapeutics Data Commons (TDC) | Data Benchmark | Curated ADMET datasets with standardized splits | Algorithm benchmarking across multiple endpoints [5] [20] |
| RDKit | Cheminformatics Library | Molecular descriptor calculation, fingerprint generation, SMILES processing | Feature engineering for traditional ML algorithms [5] [24] |
| LightGBM/XGBoost | Gradient Boosting Implementation | Efficient gradient boosting with optimized training algorithms | High-performance prediction on structured molecular data [5] [24] |
| Chemprop | Deep Learning Library | Message Passing Neural Networks for molecular property prediction | Graph-based molecular representation learning [5] |
| MSformer-ADMET | Specialized DL Framework | Transformer-based architecture for ADMET prediction | State-of-the-art performance on multiple ADMET endpoints [20] |
| PaDELPy | Descriptor Calculation Tool | Automated computation of molecular descriptors and fingerprints | Feature generation for QSAR modeling [24] |
| Boruta | Feature Selection Algorithm | Random Forest-based feature importance identification | Dimensionality reduction for high-dimensional descriptor sets [24] |

Beyond these computational tools, effective ADMET modeling requires careful data curation and preprocessing. Public ADMET datasets often contain inconsistencies ranging from duplicate measurements with varying values to inconsistent binary labels across training and test sets [5]. Implementing standardized data cleaning protocols—including SMILES standardization, salt removal, tautomer adjustment, and deduplication—is essential for building reliable predictive models [5].

Practical Guidelines for Algorithm Selection

Decision Framework

Based on the comparative analysis of algorithmic performance, computational requirements, and implementation complexity, the following decision framework provides practical guidance for algorithm selection in ligand-based ADMET prediction:

Figure 2: Decision framework for selecting machine learning algorithms in ADMET prediction based on dataset size and interpretability requirements.

Implementation Considerations

Beyond the core decision framework, several practical considerations should guide algorithm selection and implementation:

Computational Resources: Random Forests can be trained in parallel, offering faster training on multi-core systems. Gradient Boosting requires sequential training but often achieves better performance with careful tuning. Deep Neural Networks typically demand significant computational resources, especially for hyperparameter optimization [21] [22].

Hyperparameter Sensitivity: Gradient Boosting generally requires more extensive hyperparameter tuning than Random Forests to prevent overfitting and achieve optimal performance. Deep Neural Networks involve numerous hyperparameters related to architecture design, optimization, and regularization [21].

Data Quality Tolerance: Random Forests typically demonstrate greater robustness to noisy data and outliers commonly found in experimental ADMET measurements. Gradient Boosting may overfit to noise without proper regularization, while Deep Neural Networks require large volumes of clean data to achieve their full potential [21] [23].

Feature Representation Flexibility: Deep Neural Networks can learn directly from raw molecular representations (SMILES, graphs), potentially reducing reliance on manual feature engineering. Tree-based methods typically require precomputed molecular descriptors or fingerprints but often achieve excellent performance with these representations [25] [20].

The selection between Random Forests, Gradient Boosting Machines, and Deep Neural Networks for ligand-based ADMET prediction involves nuanced trade-offs across multiple dimensions of performance, efficiency, and practicality. Evidence from recent benchmarking studies indicates that while Gradient Boosting implementations frequently achieve superior predictive accuracy on structured molecular data, Random Forests offer advantages in stability, interpretability, and performance on smaller datasets. Deep Neural Networks, particularly specialized architectures like MSformer-ADMET, represent the cutting edge for large-scale comprehensive ADMET profiling but demand substantial computational resources and technical expertise.

The optimal algorithm choice ultimately depends on specific research constraints and objectives, including dataset size and quality, interpretability requirements, computational resources, and performance priorities. Rather than seeking a universally superior algorithm, researchers should consider these factors within their specific context, potentially employing the structured decision framework presented herein. As the field advances, hybrid approaches that leverage the complementary strengths of multiple algorithm families may offer the most promising path forward for robust, interpretable, and highly accurate ADMET prediction in drug discovery pipelines.

In modern drug discovery, unfavorable Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties remain a major cause of candidate failure and contribute substantially to late-stage attrition [1]. Accurate computational prediction of these properties has therefore become a critical research focus, and molecular representation is the foundation of any predictive model. For decades, molecular fingerprints (handcrafted, fixed representations based on predefined structural patterns) have been the standard tool for ligand-based ADMET prediction [27]. Graph Neural Networks (GNNs) offer an alternative: data-driven representations learned directly from molecular graph structures. This section compares the two approaches, evaluating their performance, interpretability, and practical utility for validating ligand-based ADMET predictions.

Molecular Representation Fundamentals: From Handcrafted Features to Learned Embeddings

Traditional Molecular Fingerprints

Traditional molecular fingerprints are expert-designed representations that encode molecular structures into fixed-length bit vectors. They operate on predefined rules to capture specific structural patterns or fragments:

- Extended-Connectivity Fingerprints (ECFP): Circular fingerprints that capture atomic environments at different radii, widely used for similarity searching and structure-activity modeling [28] [29].

- PubChem Fingerprints: Encode molecular structures based on predefined chemical substructures derived from the PubChem database [30].

- MACCS Keys: A set of 166 structural keys representing specific atom environments or functional groups [28].

- RDKit Fingerprints: Daylight-like topological fingerprints implemented in the RDKit cheminformatics package, which hash paths and subgraphs of the molecule into a bit vector rather than matching a fixed dictionary of substructures [30].

These representations are inherently interpretable and computationally efficient, making them suitable for use with traditional machine learning models like Random Forest and XGBoost [27]. However, because the encoded patterns are fixed in advance, they can struggle with high-dimensional, heterogeneous chemical data, which may limit generalization and leave relevant structural information unrepresented [28].
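
To make the circular-fingerprint idea concrete, the following is a minimal toy sketch in plain Python. It is not the ECFP algorithm as implemented in RDKit or described in [28] [29]: the atom invariants (element and degree only), the hashing scheme, and the function name `toy_circular_fingerprint` are all simplifying assumptions chosen for illustration. It shows only the core mechanism, in which atom identifiers are repeatedly re-hashed together with their neighbors' identifiers and then folded into a fixed-length bit vector.

```python
import hashlib


def _stable_hash(value: str) -> int:
    """Deterministic hash (Python's built-in hash() of str is salted per process)."""
    return int.from_bytes(hashlib.sha256(value.encode()).digest()[:8], "big")


def toy_circular_fingerprint(atoms, bonds, radius=2, n_bits=64):
    """Return the set of 'on' bit positions for a molecule (toy illustration).

    atoms: list of element symbols, e.g. ["C", "C", "O"]
    bonds: list of (i, j) atom-index pairs; bond order is ignored in this toy
    """
    neighbors = {i: [] for i in range(len(atoms))}
    for i, j in bonds:
        neighbors[i].append(j)
        neighbors[j].append(i)

    # Radius 0: each atom is identified by its element and degree.
    ids = [_stable_hash(f"{sym}|{len(neighbors[i])}") for i, sym in enumerate(atoms)]
    features = set(ids)

    # Each iteration widens every atom's environment by one bond.
    for _ in range(radius):
        ids = [
            _stable_hash(f"{ids[i]}|{sorted(ids[j] for j in neighbors[i])}")
            for i in range(len(atoms))
        ]
        features.update(ids)

    # Fold the identifiers into a fixed-length bit vector.
    return {f % n_bits for f in features}


# Heavy-atom graphs only; hydrogens are left implicit.
ethanol = toy_circular_fingerprint(["C", "C", "O"], [(0, 1), (1, 2)])
propane = toy_circular_fingerprint(["C", "C", "C"], [(0, 1), (1, 2)])
```

Because neighbor identifiers are sorted before hashing, the result does not depend on the order in which atoms or bonds are listed. In practice a maintained cheminformatics library should be used instead of a hand-rolled scheme like this one.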

Graph Neural Networks (GNNs)

GNNs constitute a deep learning approach specifically designed for graph-structured data, making them naturally suited for molecular representation where atoms correspond to nodes and bonds to edges [28]. Unlike fixed fingerprints, GNNs learn task-specific representations through multiple layers of message passing, where each atom's representation is iteratively updated by aggregating information from its neighboring atoms [29]. This approach automatically captures complex structure-property relationships without relying on pre-defined feature engineering.
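
The message-passing update described above can be sketched in a few lines of plain Python. This is a deliberately stripped-down illustration: it uses unweighted sum aggregation with no learned parameters or nonlinearities, which real GNN layers add, and the function names and the two-element one-hot atom features are assumptions made for the example.

```python
def message_passing_step(node_features, edges):
    """One round of sum-aggregation message passing (no learned weights).

    node_features: list of feature vectors (lists of floats), one per atom
    edges: list of undirected (i, j) bonds
    Returns updated features: each atom's own vector plus the sum of its
    neighbors' vectors.
    """
    n = len(node_features)
    dim = len(node_features[0])
    aggregated = [[0.0] * dim for _ in range(n)]
    for i, j in edges:
        for k in range(dim):
            aggregated[i][k] += node_features[j][k]  # message j -> i
            aggregated[j][k] += node_features[i][k]  # message i -> j
    # Update: combine each atom's own state with its aggregated messages.
    return [
        [h + m for h, m in zip(node_features[a], aggregated[a])]
        for a in range(n)
    ]


def readout(node_features):
    """Sum-pool atom vectors into a single molecule-level vector."""
    return [sum(column) for column in zip(*node_features)]


# Ethanol heavy atoms (C, C, O) with one-hot element features [is_C, is_O].
atoms = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
bonds = [(0, 1), (1, 2)]

h1 = message_passing_step(atoms, bonds)  # each atom sees its 1-bond neighbors
h2 = message_passing_step(h1, bonds)     # information has now travelled 2 bonds
molecule_vector = readout(h2)
```

After one round the terminal carbon (atom 0) carries no oxygen signal (`h1[0][1]` is `0.0`); after the second round it does (`h2[0][1]` is `1.0`), which illustrates how stacking layers enlarges each atom's receptive field.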

Key GNN architectures for molecular representation include:

- Graph Convolutional Networks (GCNs): Apply convolutional operations to graph data by aggregating neighbor information using normalized sums [29].

- Graph Attention Networks (GATs): Incorporate attention mechanisms to weigh the importance of different neighboring atoms during message passing [29].

- Message Passing Neural Networks (MPNNs): A general framework that unifies various GNN approaches through message functions and update functions [29].

- Attentive FP: Utilizes a graph attention mechanism to learn context-dependent representations for both atoms and molecules [29].

- Kolmogorov-Arnold GNNs (KA-GNNs): Recently proposed architectures that integrate Kolmogorov-Arnold networks into GNN components, showing enhanced expressivity and interpretability [31].

Performance Comparison: Experimental Evidence Across Multiple Benchmarks

Quantitative Performance Metrics Across Diverse Property Endpoints

Table 1: Comparative Performance of GNNs vs. Fingerprint-Based Models on Molecular Property Prediction

| Dataset Category | Top-Performing Approach | Key Metrics | Notable Models |
|---|---|---|---|
| ADMET Parameters | Mixed Performance | GNNs with multitask learning achieved highest performance for 7/10 ADME parameters [32] | GNN-MT+FT (Multitask Fine-Tuning) [32] |
| Taste Prediction | GNNs & Hybrids | GNNs outperformed other approaches; fingerprints + GNN consensus model was top performer [30] | Molecular fingerprints + GNN consensus model [30] |
| Molecular Property Benchmarks | Descriptor-Based Models | Descriptor-based models generally outperformed graph-based models in prediction accuracy and computational efficiency [29] | SVM, XGBoost, Random Forest [29] |
| Drug Discovery Applications | GNN Foundation Models | MolGPS (GNN foundation model) established SOTA on 26/38 downstream tasks [33] | MolGPS, Graph Transformers [33] |

Critical Analysis of Performance Trends

The experimental evidence reveals a nuanced performance landscape. While some studies indicate that traditional descriptor-based models can match or even exceed GNN performance on certain benchmarks [29], more recent and specialized applications demonstrate clear advantages for GNN approaches:

- Multitask Learning Advantage: GNNs demonstrate particular strength in multitask settings, where knowledge sharing across related tasks improves generalization, especially for ADMET parameters with limited data [32]. This addresses a key challenge in drug discovery where experimental data for certain endpoints is scarce.

- Hybrid Approach Superiority: Consensus models that combine GNNs with molecular fingerprints often achieve state-of-the-art performance, suggesting these representations capture complementary information [34] [30]. The Fingerprint-Enhanced Hierarchical GNN (FH-GNN), which integrates hierarchical molecular graphs with fingerprint features, has demonstrated superior performance on multiple benchmarks [34].

- Foundation Model Scaling: Recent research on GNN scaling laws demonstrates that increasing model size, dataset size, and label diversity consistently improves performance, enabling foundation models like MolGPS to achieve new state-of-the-art results across numerous tasks [33].
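
One simple way to build the kind of consensus model mentioned above is to average the predicted probabilities of a fingerprint-based model and a GNN. The sketch below assumes both models have already produced per-compound probabilities. The function names, the equal default weighting, and all numbers are hypothetical, constructed only to show how complementary errors can cancel; they are not results from [30] or [34], and those studies may combine models differently.

```python
def consensus_predict(prob_fingerprint, prob_gnn, weight_gnn=0.5):
    """Weighted average of two models' predicted probabilities per compound."""
    if len(prob_fingerprint) != len(prob_gnn):
        raise ValueError("Both models must score the same compounds")
    return [
        (1.0 - weight_gnn) * pf + weight_gnn * pg
        for pf, pg in zip(prob_fingerprint, prob_gnn)
    ]


def accuracy(probabilities, labels, threshold=0.5):
    """Fraction of compounds whose thresholded prediction matches the label."""
    hits = sum((p >= threshold) == bool(y) for p, y in zip(probabilities, labels))
    return hits / len(labels)


# Hypothetical held-out predictions for five compounds (illustrative only).
labels = [1, 0, 1, 1, 0]
p_fingerprint = [0.9, 0.2, 0.4, 0.7, 0.6]  # wrong on compounds 3 and 5
p_gnn = [0.8, 0.45, 0.7, 0.4, 0.2]         # wrong on compound 4

p_consensus = consensus_predict(p_fingerprint, p_gnn)

acc_fingerprint = accuracy(p_fingerprint, labels)
acc_gnn = accuracy(p_gnn, labels)
acc_consensus = accuracy(p_consensus, labels)
```

On this constructed data the individual accuracies are 0.6 and 0.8 while the consensus reaches 1.0, because the two models err on different compounds. On real data the gain depends on how correlated the models' errors are, and the weight should be chosen on a validation set rather than the test set.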

Methodological Comparison: Experimental Protocols and Workflows

Traditional Fingerprint-Based Workflow

Table 2: Key Research Reagents and Computational Tools

| Resource Name | Type | Primary Function | Application Context |
|---|---|---|---|
| RDKit | Cheminformatics Library | Fingerprint generation, molecular descriptors, cheminformatics | Fingerprint calculation, structural manipulation [29] [8] |
| DruMAP | ADME Database | Source of experimental ADME values and compound structures | Training data for predictive models [32] |
| PharmaBench | Benchmark Dataset | Comprehensive ADMET dataset with standardized experimental conditions | Model evaluation and benchmarking [8] |
| XGBoost/Random Forest | Machine Learning Algorithm | Predictive modeling using fingerprint features | Baseline performance comparison [29] [27] |
| SHAP | Interpretation Framework | Model interpretation and feature importance analysis | Explaining fingerprint-based model predictions [29] |

The standard workflow for fingerprint-based approaches involves:

- Molecular Featurization: Generating fingerprint vectors (e.g., ECFP, PubChem fingerprints) or molecular descriptors using tools like RDKit [29].

- Model Training: Applying traditional machine learning algorithms (Random Forest, XGBoost, SVM) to the fingerprint features.

- Interpretation: Using methods like SHAP (Shapley Additive Explanations) to identify which structural features contribute most to predictions [29].

Graph Neural Network Workflow

GNN methodologies employ significantly different experimental protocols:

- Graph Representation: Molecules are represented as graphs with node features (atom type, hybridization, etc.) and edge features (bond type, conjugation, etc.) [29].

- Architecture Selection: Choosing appropriate GNN architectures (GCN, GAT, MPNN, Attentive FP) based on the task requirements.

- Training Strategy: Often employing pretraining on large molecular datasets followed by fine-tuning on specific ADMET endpoints [32] [33].

- Interpretation: Using GNN-specific interpretation techniques such as integrated gradients to highlight atoms and substructures important for predictions [32].

Diagram 1: Comparative workflow for fingerprint-based and GNN-based molecular property prediction. The hybrid approach leverages strengths from both methodologies.

Functional Advantages and Limitations in ADMET Context

Representation Capabilities

- Expressiveness: GNNs demonstrate superior expressiveness by automatically capturing information about atoms, chemical bonds, multi-order adjacencies, and topologies without manual feature engineering [28]. Traditional fingerprints, while capturing explicit structural patterns, may miss complex, non-obvious relationships.

- Adaptability: GNN representations can be dynamically adjusted based on downstream tasks through fine-tuning, whereas fingerprint-based representations remain static once generated [28]. This is particularly valuable for multi-task ADMET prediction where shared learning across endpoints improves efficiency.

- Smooth Latent Spaces: GNNs create continuous, high-dimensional embedding spaces in which molecular similarity can be measured with metrics such as Euclidean distance or cosine similarity [27]. This supports similarity searching and molecular optimization in a continuous space, whereas fingerprints are discrete bit vectors that are typically compared with set-based measures such as the Tanimoto coefficient.
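
The difference between comparing discrete fingerprints and comparing continuous embeddings can be illustrated with the standard similarity measures for each. In this sketch, fingerprints are represented as sets of on-bit positions and embeddings as short float vectors; the example values are hypothetical and far smaller than real fingerprints or learned embeddings.

```python
import math


def tanimoto(bits_a, bits_b):
    """Tanimoto (Jaccard) similarity between two sets of 'on' bit positions."""
    union = len(bits_a | bits_b)
    return len(bits_a & bits_b) / union if union else 0.0


def cosine_similarity(u, v):
    """Cosine of the angle between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def euclidean_distance(u, v):
    """Straight-line distance between two embedding vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


# Hypothetical fingerprints, stored as the positions of their on-bits.
fp_a, fp_b = {1, 4, 7, 9}, {1, 4, 8}
# Hypothetical two-dimensional learned embeddings.
emb_a, emb_b = [3.0, 4.0], [4.0, 3.0]

fp_similarity = tanimoto(fp_a, fp_b)             # 2 shared bits / 5 total bits
emb_similarity = cosine_similarity(emb_a, emb_b)
emb_distance = euclidean_distance(emb_a, emb_b)
```

Tanimoto similarity changes in discrete jumps as bits flip, while the embedding metrics vary smoothly with the vector components, which is the property that makes continuous spaces convenient for optimization.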

Practical Implementation Considerations

- Computational Efficiency: Fingerprint-based models with traditional ML algorithms (XGBoost, Random Forest) generally offer superior computational efficiency, requiring only seconds to train on large datasets [29]. GNNs typically demand more computational resources and training time, though this gap may narrow with specialized architectures and hardware optimization.

- Data Requirements: GNNs generally benefit from larger datasets to reach their full potential and may underperform on small datasets where traditional fingerprints excel [29] [27]. However, techniques like multitask learning [32] and foundation model pretraining [33] can mitigate data scarcity issues.

- Interpretability: Fingerprint-based models traditionally hold an advantage in interpretability, with clear mapping between activated bits and chemical substructures [29]. However, recent GNN interpretation methods like integrated gradients [32] and inherently interpretable architectures like KA-GNNs [31] are rapidly closing this gap by highlighting chemically meaningful substructures.
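
Multitask learning, cited above as a way to mitigate data scarcity, usually has to cope with compounds that were measured for only some endpoints. One common way to handle this, shown here as a minimal sketch and not as the specific method of [32], is to mask missing labels out of the loss so that each compound contributes only to the endpoints it was measured on. The function name, the use of `None` for missing values, and the example numbers are assumptions for illustration.

```python
def masked_multitask_mse(predictions, targets):
    """Mean squared error computed over observed labels only.

    predictions: list of per-compound lists, one predicted value per endpoint
    targets: same shape, with None where an endpoint was not measured
    Returns (overall_loss, per_endpoint_losses); an endpoint with no observed
    labels gets a per-endpoint loss of None.
    """
    n_tasks = len(predictions[0])
    squared_error = [0.0] * n_tasks
    counts = [0] * n_tasks
    for pred_row, target_row in zip(predictions, targets):
        for t, (p, y) in enumerate(zip(pred_row, target_row)):
            if y is None:  # unmeasured endpoint: contributes nothing to the loss
                continue
            squared_error[t] += (p - y) ** 2
            counts[t] += 1
    per_task = [s / c if c else None for s, c in zip(squared_error, counts)]
    n_observed = sum(counts)
    overall = sum(squared_error) / n_observed if n_observed else 0.0
    return overall, per_task


# Three compounds, two hypothetical endpoints (e.g. solubility, clearance).
targets = [[1.0, None], [2.0, 0.5], [None, 1.5]]
predictions = [[1.5, 0.0], [2.0, 1.0], [0.0, 1.0]]

overall_loss, per_endpoint = masked_multitask_mse(predictions, targets)
```

Here four of the six possible labels are observed, so the overall loss is averaged over four terms instead of six. Without the mask, the arbitrary predictions for unmeasured endpoints would be penalized against placeholder values and distort training.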

Future Directions and Research Opportunities

The field of molecular representation continues to evolve rapidly, with several promising research directions emerging:

- Geometric and 3D-Aware GNNs: Traditional GNNs operating on 2D molecular graphs are being supplemented by architectures that incorporate 3D molecular geometry and conformational information, better capturing stereochemistry and molecular shape properties critical for binding and toxicity [27].

- Foundation Models for Molecules: Following the success in natural language processing, large-scale pretrained GNN models like MolGPS [33] demonstrate remarkable transfer learning capabilities across diverse molecular tasks, potentially reducing the data requirements for specific ADMET endpoints.

- Multimodal Integration: Combining molecular graph information with other data modalities such as bioassay results, protein structures, and genomic data represents a frontier for improving predictive accuracy and clinical relevance [1].

- Algorithmic Advancements: Novel architectures like KA-GNNs that integrate Kolmogorov-Arnold networks with graph learning show promise for enhancing both predictive performance and interpretability [31].

The comparison between GNNs and traditional fingerprints for molecular representation reveals a complex landscape where neither approach universally dominates. For researchers validating ligand-based ADMET predictions, the strategic selection depends on specific project constraints and objectives:

- Fingerprint-based approaches remain compelling for projects with limited data, requiring rapid prototyping, or prioritizing model interpretability using established cheminformatics tools.

- GNN approaches offer advantages for complex multitask prediction, for projects that can leverage large-scale molecular datasets, and for cases where capturing subtle 3D structural relationships is essential for accurate ADMET forecasting.

- Hybrid methodologies that combine the strengths of both representations increasingly deliver state-of-the-art performance, suggesting that the future of molecular representation lies not in choosing between these paradigms but in effectively integrating them.

As GNN methodologies mature and computational resources expand, the shift toward learned, data-driven representations is likely to continue. Traditional fingerprints will nonetheless remain relevant as interpretable, computationally efficient alternatives, particularly in resource-constrained environments or for well-established structure-activity relationships. For the ADMET researcher, maintaining expertise in both paradigms is the most strategic way to navigate the evolving landscape of molecular property prediction.