Validating In Silico Lipophilicity Predictions: A Comprehensive Guide for Drug Discovery Scientists

Abstract

Accurate prediction of lipophilicity, commonly measured as logP and logD, is crucial in drug discovery as it directly influences a compound's absorption, distribution, metabolism, and excretion (ADME). This article provides a comprehensive resource for researchers and drug development professionals on the validation of computational lipophilicity models. It explores the fundamental role of lipophilicity in pharmacokinetics, details the spectrum of available in silico methods from QSAR to advanced machine learning, addresses common pitfalls and optimization strategies, and establishes robust frameworks for experimental validation. By synthesizing current methodologies and validation protocols, this guide aims to enhance the reliability and application of in silico predictions to streamline lead optimization and reduce late-stage attrition in drug development.

Lipophilicity Fundamentals: Why logP and logD are Cornerstones of ADME Profiling

Lipophilicity is a fundamental physical property that significantly influences a drug candidate's behavior, including its solubility, permeability, metabolism, distribution, protein binding, and toxicity. [1] In pharmaceutical development, the balance between lipophilicity and hydrophilicity is crucial for determining the absorption, distribution, metabolism, excretion, and toxicity (ADMET) profile of potential therapeutics. [2] For decades, Lipinski's Rule of Five has served as a key guideline for identifying orally active drugs, specifying that the calculated octanol-water partition coefficient (logP) should be less than 5, among other criteria. [2] However, this rule has limitations, particularly for compounds with ionizable groups, which constitute approximately 95% of drugs. [1] This recognition has led to the increased importance of the distribution coefficient (logD), which accounts for a compound's ionization state at physiologically relevant pH levels, with logD7.4 being of particular interest for its relevance to physiological conditions. [1]

Theoretical Foundations: logP and logD7.4

Partition Coefficient (logP)

The partition coefficient, logP, quantifies the distribution of a compound between two immiscible liquids, typically n-octanol and water. [2] It is defined as the logarithm of the ratio of the concentration of the unionized compound in octanol to its concentration in water. [3] [4] LogP represents the intrinsic lipophilicity of a compound in its neutral state and is a pH-independent value. [2]

Mathematical Definition:

logP = log₁₀([Drug_unionized]_octanol / [Drug_unionized]_water)

where [Drug_unionized] represents the concentration of the unionized drug molecule in each phase. [3]

Distribution Coefficient (logD7.4)

The distribution coefficient, logD, describes the distribution of all species of a compound (ionized, partially ionized, and unionized) between octanol and water at a specific pH. [2] LogD7.4 refers specifically to this distribution at physiological pH (7.4), making it particularly relevant for predicting drug behavior in the body. [1] Unlike logP, logD is pH-dependent and provides a more accurate representation of a compound's lipophilicity under biological conditions. [2]

Mathematical Definition:

logD = log₁₀([Total_Drug]_octanol / [Total_Drug]_water)

where [Total_Drug] includes all forms of the drug (ionized and unionized) in each phase. [3] [4]

Theoretical Relationship Between logD, logP, and pKa: For monoprotic acids and bases, logD can be calculated from logP and pKa:

- For acids: LogD = LogP - log₁₀(1 + 10^(pH - pKa)) [3]

- For bases: LogD = LogP - log₁₀(1 + 10^(pKa - pH)) [3]
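
The two relationships above can be sketched in a few lines of Python. This is a minimal illustration, not a validated tool: the function name `log_d`, its parameters, and the example compounds are hypothetical, and the calculation assumes a monoprotic compound whose neutral form is the only species that partitions into octanol.

```python
import math


def log_d(log_p: float, pka: float, ph: float = 7.4, kind: str = "acid") -> float:
    """Estimate logD of a monoprotic compound from logP and pKa.

    Assumption: only the neutral species partitions into octanol.
    """
    if kind == "acid":
        exponent = ph - pka  # acids ionize as pH rises above pKa
    elif kind == "base":
        exponent = pka - ph  # bases ionize as pH falls below pKa
    else:
        raise ValueError("kind must be 'acid' or 'base'")
    return log_p - math.log10(1.0 + 10.0 ** exponent)


# Hypothetical compounds, for illustration only.
acid_logd = log_d(log_p=3.5, pka=4.4, kind="acid")
base_logd = log_d(log_p=2.0, pka=9.4, kind="base")
print(f"acid logD7.4 = {acid_logd:.2f}")
print(f"base logD7.4 = {base_logd:.2f}")
```

In this sketch the acid sits three pH units above its pKa, so its logD7.4 falls roughly three log units below its logP; the base sits two units below its pKa and loses roughly two.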

Table 1: Fundamental Differences Between logP and logD7.4

| Parameter | logP | logD7.4 |
|---|---|---|
| Species Measured | Unionized compound only | All species (ionized + unionized) |
| pH Dependence | pH-independent | pH-dependent (specific to pH 7.4) |
| Physiological Relevance | Limited | High (matches physiological pH) |
| Ionizable Compounds | Incomplete picture | Comprehensive picture |
| Typical Drug Discovery Use | Early screening | ADMET prediction, lead optimization |

Experimental Determination: Methodologies and Protocols

Shake-Flask Method

The shake-flask method is considered the standard technique for direct measurement of both logP and logD7.4. [1]

Protocol:

- Preparation: Saturate n-octanol with water and water with n-octanol prior to use.

- Partitioning: Dissolve the compound in a mixture of n-octanol and aqueous buffer (pH 7.4 for logD7.4) in a flask.

- Equilibration: Shake the mixture vigorously to allow partitioning between the two phases.

- Separation: Allow phases to separate completely via centrifugation or standing.

- Analysis: Measure the concentration of the compound in each phase using appropriate analytical methods (e.g., UV spectroscopy, HPLC).

- Calculation: Calculate logD7.4 as log₁₀([Total compound]_octanol / [Total compound]_water).

This method is labor-intensive and requires relatively large amounts of compound but provides direct measurement. [1]

Chromatographic Techniques

Chromatographic methods, particularly reversed-phase High-Performance Liquid Chromatography (HPLC), offer an indirect approach for logD7.4 estimation. [1]

Protocol:

- Column Equilibration: Equilibrate a reversed-phase HPLC column with an appropriate mobile phase.

- Standard Calibration: Measure retention times for compounds with known logD7.4 values to establish a calibration curve.

- Sample Analysis:

  - Inject the test compound dissolved in a suitable solvent.

  - Use isocratic or gradient elution with buffered mobile phase (pH 7.4).

  - Record the retention time or capacity factor.

- Calculation: Determine logD7.4 value by comparing the compound's chromatographic behavior to the calibration curve.

Chromatographic techniques are simpler and more high-throughput but provide indirect assessment of logD7.4. [1]
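
The calibration step of this protocol can be sketched as a least-squares fit of known logD7.4 values against log k. The sketch below assumes isocratic runs, a known dead time, and a linear calibration; the function names, the dead time, and all retention times and logD7.4 values are hypothetical.

```python
import math


def fit_line(xs, ys):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def log_capacity_factor(t_r, t_0):
    """log10 of the capacity factor k = (t_R - t_0) / t_0."""
    return math.log10((t_r - t_0) / t_0)


def calibrate(standards, t_0):
    """Fit known logD7.4 against log k for (retention_time, logD7.4) standards."""
    xs = [log_capacity_factor(t_r, t_0) for t_r, _ in standards]
    ys = [known_log_d for _, known_log_d in standards]
    return fit_line(xs, ys)


def estimate_log_d(t_r, t_0, slope, intercept):
    """Read a test compound's logD7.4 off the calibration line."""
    return slope * log_capacity_factor(t_r, t_0) + intercept


# Hypothetical standards: (retention time in min, literature logD7.4).
T0 = 1.0  # dead time in min (assumed)
STANDARDS = [(2.1, 0.4), (3.8, 1.3), (7.5, 2.2), (14.9, 3.1)]
slope, intercept = calibrate(STANDARDS, T0)
print(f"logD7.4 = {slope:.2f} * log k + {intercept:.2f}")
print(f"test compound: {estimate_log_d(5.2, T0, slope, intercept):.2f}")
```

Estimates are only as reliable as the calibration set, so test compounds should fall within the retention range covered by the standards.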

Potentiometric Titration

Potentiometric methods determine logD7.4 by monitoring pH changes during titration. [1]

Protocol:

- Sample Preparation: Dissolve samples in a water-octanol mixture.

- Titration: Titrate with standard solutions of potassium hydroxide or hydrochloric acid.

- Monitoring: Record pH changes throughout the titration.

- Analysis: Analyze the titration curve to determine the distribution coefficient.

This approach is limited to compounds with acid-base properties and requires high sample purity. [1]

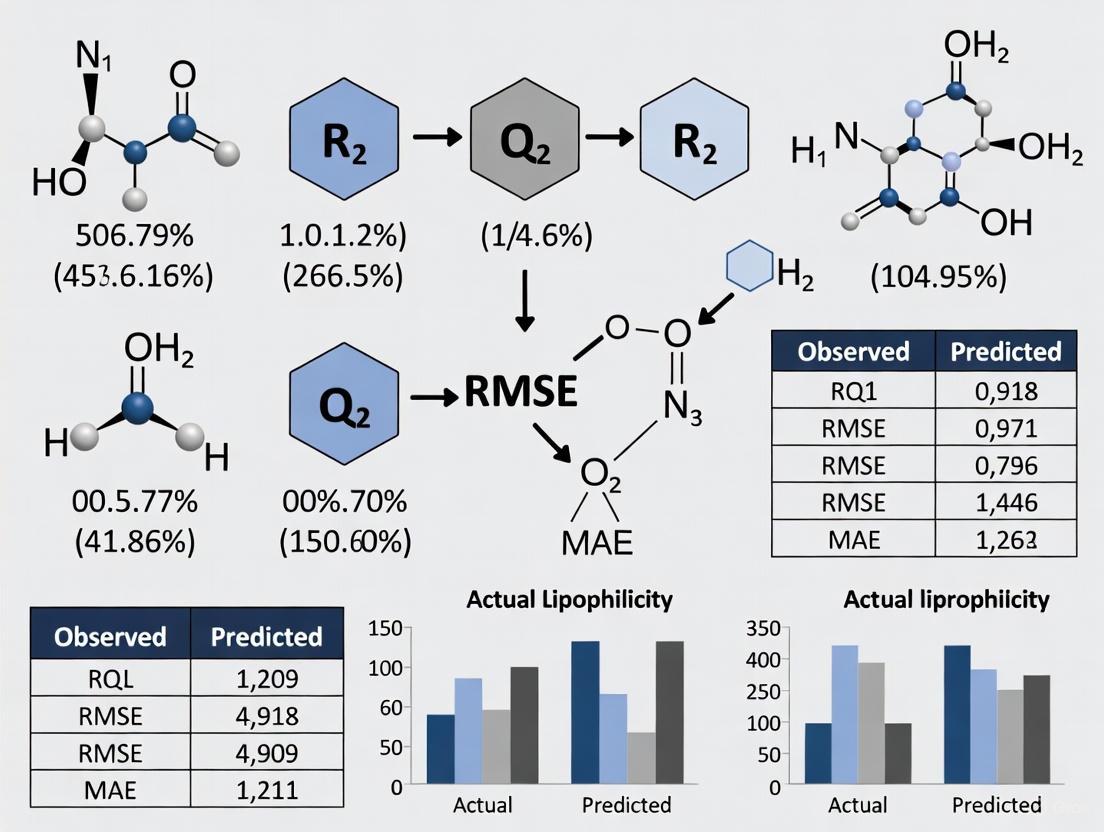

Figure 1: Experimental Workflow for logD7.4 Determination

Computational Prediction: In Silico Approaches

Traditional QSPR Modeling

Quantitative Structure-Property Relationship (QSPR) modeling correlates molecular descriptors with logD7.4 values. [5]

Protocol:

- Dataset Curation: Compile experimental logD7.4 values for diverse compounds.

- Descriptor Generation: Calculate molecular descriptors (e.g., sub-structural molecular fragments, topological indices, electronic parameters).

- Model Training: Apply multiple linear regression (MLR) or other statistical methods to build predictive models.

- Validation: Validate models using cross-validation and external test sets.

Khaledian and Saaidpour developed a QSPR model using sub-structural molecular fragments (SMF) that demonstrated good predictive power for logD7.4 of 300 diverse drugs. [5]

Advanced Machine Learning and Graph Neural Networks

Recent approaches leverage graph neural networks (GNNs) and transfer learning for improved logD7.4 prediction. [1]

RTlogD Protocol:

- Multi-Source Knowledge Integration:

  - Pre-training: Use chromatographic retention time (RT) datasets (~80,000 molecules) as a source task.

  - Microscopic pKa Integration: Incorporate atomic-level pKa values as features for ionizable sites.

  - Multi-task Learning: Include logP as a parallel learning task.

- Model Architecture: Implement graph neural networks that directly learn from molecular structures.

- Training: Fine-tune the pre-trained model on experimental logD7.4 data.

- Validation: Evaluate performance on time-split test sets to assess predictive capability.

The RTlogD model demonstrated superior performance compared to commonly used algorithms and prediction tools. [1]
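
The time-split evaluation mentioned in the validation step can be sketched as follows. This is not the RTlogD code: the record layout, the cutoff date, and every value are hypothetical, and `logd_pred` stands in for predictions from a model trained only on the earlier compounds.

```python
import math
from datetime import date


def time_split(records, cutoff):
    """Train on measurements before `cutoff`, test on those made on or after it."""
    train = [r for r in records if r["measured_on"] < cutoff]
    test = [r for r in records if r["measured_on"] >= cutoff]
    return train, test


def rmse(predicted, observed):
    """Root-mean-square error between paired predictions and measurements."""
    squared = [(p - o) ** 2 for p, o in zip(predicted, observed)]
    return math.sqrt(sum(squared) / len(squared))


# Hypothetical records, for illustration only.
RECORDS = [
    {"id": "cpd-1", "measured_on": date(2021, 3, 1), "logd_exp": 1.2, "logd_pred": 1.0},
    {"id": "cpd-2", "measured_on": date(2021, 9, 15), "logd_exp": 2.8, "logd_pred": 3.1},
    {"id": "cpd-3", "measured_on": date(2022, 6, 30), "logd_exp": 0.4, "logd_pred": 0.6},
    {"id": "cpd-4", "measured_on": date(2023, 2, 10), "logd_exp": 3.5, "logd_pred": 2.9},
    {"id": "cpd-5", "measured_on": date(2023, 8, 5), "logd_exp": 1.9, "logd_pred": 2.3},
]

train, test = time_split(RECORDS, cutoff=date(2023, 1, 1))
test_rmse = rmse([r["logd_pred"] for r in test], [r["logd_exp"] for r in test])
print(f"{len(train)} training / {len(test)} test compounds, RMSE = {test_rmse:.2f}")
```

Splitting by date rather than at random mimics prospective use, where the model must predict compounds made after it was trained.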

Table 2: Comparison of Computational Prediction Methods for logD7.4

| Method | Approach | Data Requirements | Advantages | Limitations |
|---|---|---|---|---|
| Traditional QSPR | Linear regression with molecular descriptors | Experimental logD7.4 values | Interpretable, fast computation | Limited to chemical space of training data |
| Fragment-Based | Summation of fragment contributions | Fragment libraries with known contributions | High interpretability, requires less data | Misses complex intramolecular interactions |
| Graph Neural Networks (GNN) | Direct learning from molecular graphs | Large datasets of molecular structures | Captures complex patterns, high accuracy | Black box, requires substantial data |
| RTlogD (Transfer Learning) | Knowledge transfer from RT, pKa, logP | Multiple data sources (RT, pKa, logP, logD) | Addresses data scarcity, high performance | Complex implementation |

Comparative Analysis: Performance and Applications

Performance in Drug Discovery

LogD7.4 provides significant advantages over logP for predicting biological behavior:

Membrane Permeability: LogD7.4 more accurately predicts passive diffusion through lipid membranes as it accounts for the ionization state at physiological pH. [2]

ADMET Prediction: Compounds with moderate logD7.4 values (typically 1-3) exhibit optimal pharmacokinetic and safety profiles. [1] High lipophilicity (logD7.4 > 3) correlates with increased risk of toxic events and poor solubility, while low lipophilicity limits membrane permeability. [1]

Beyond Rule of 5 (bRo5) Space: As drug discovery moves into beyond-Rule-of-5 space (molecular weight < 1000 Da, logP between -2 and 10), where larger compounds reside, logD7.4 becomes increasingly valuable for understanding the properties of macrocycles, protein-based agents, and multispecific drugs. [2]

Limitations and Challenges

Experimental Variability: Measured distribution coefficients can vary depending on the measurement method, with shake-flask and pH-metric methods potentially yielding different results for the same compound. [4]

Ionic Species Partitioning: Theoretical calculations assuming only neutral species partition into octanol can introduce error, as octanol can dissolve significant water, allowing ionic species to partition into the organic phase. [1]

Data Limitations: Limited availability of high-quality experimental logD7.4 data restricts the generalization capability of computational models. [1]

Research Reagents and Tools

Table 3: Essential Research Reagents and Computational Tools for Lipophilicity Assessment

| Reagent/Tool | Function/Application | Specifications |
|---|---|---|
| n-Octanol | Organic phase for partition/distribution studies | HPLC grade, pre-saturated with aqueous buffer |
| Buffer Solutions (pH 7.4) | Aqueous phase for logD7.4 determination | Phosphate buffer (10-100 mM), ionic strength control |
| HPLC System with UV Detector | Chromatographic logD determination | Reversed-phase C18 column, buffered mobile phase |
| ACD/Percepta | Commercial software for logP/logD prediction | Includes fragmental and QSPR-based methods |
| ISIDA/QSPR | Open-source software for descriptor calculation | Generates sub-structural molecular fragments |
| RTlogD Model | Advanced GNN for logD7.4 prediction | Incorporates retention time, pKa, and logP knowledge |

The comparison between logP and logD7.4 reveals critical insights for modern drug discovery. While logP remains valuable for assessing intrinsic lipophilicity, logD7.4 provides a more physiologically relevant parameter that accounts for ionization at biological pH. Experimental methods like shake-flask provide direct measurement but are resource-intensive, while chromatographic methods offer higher throughput at the cost of indirect measurement, and potentiometric titration is restricted to high-purity compounds with acid-base properties. Computational approaches have evolved from traditional QSPR to advanced machine learning models like RTlogD that leverage transfer learning from multiple data sources to address the challenge of limited experimental data. For drug discovery professionals, the selection between logP and logD7.4 should be guided by the specific application: logP for initial compound screening and intrinsic property assessment, and logD7.4 for ADMET prediction, lead optimization, and compounds with significant ionization at physiological pH. The continued advancement of predictive models that integrate multiple physicochemical parameters promises to enhance our ability to design compounds with optimal drug-like properties.

The Critical Impact of Lipophilicity on Solubility, Permeability, and Metabolic Stability

In medicinal chemistry, lipophilicity is one of the most critical physicochemical properties determining a compound's behavior in biological systems. Defined as the affinity of a molecule for a lipid environment, lipophilicity is most commonly quantified by its partition coefficient (log P) or distribution coefficient (log D) in an n-octanol/water system [6]. This property serves as a primary underlying structural characteristic that influences higher-level physicochemical and biochemical properties, ultimately governing a drug's solubility, permeability, and metabolic stability [7]. The balance of these properties directly impacts a compound's absorption, distribution, metabolism, and excretion (ADME) profile, making lipophilicity optimization a crucial aspect of rational drug design [8] [9].

Pharmaceutical researchers increasingly rely on in silico predictions to estimate lipophilicity during early discovery phases, but these computational approaches require rigorous experimental validation to ensure their reliability in forecasting biological behavior [6] [10]. This guide provides a comparative analysis of how lipophilicity impacts key pharmaceutical properties, supported by experimental methodologies essential for validating computational predictions.

Lipophilicity Measurements: Experimental Protocols for Validation

Key Experimental Methodologies

Validating in silico lipophilicity predictions requires robust experimental methodologies that generate reliable, reproducible data. The following table summarizes core experimental approaches used in pharmaceutical research.

Table 1: Core Experimental Methods for Lipophilicity and Property Assessment

| Method | Measured Parameter | Protocol Overview | Key Applications |
|---|---|---|---|
| Shake-Flask Method [11] | Log P (unionized compounds), Log D (ionizable compounds) | Compound partitioned between n-octanol and buffer (typically pH 7.4); concentrations measured in both phases via HPLC/UV. | Gold-standard for experimental lipophilicity measurement; validates computational log P/log D predictions. |
| Reverse-Phase TLC [6] | R_M0 | Compound spotted on C18-coated TLC plates; mobile phase of water-organic modifier; R_M = log(1/R_f - 1), extrapolated to 0% organic modifier to give R_M0. | High-throughput lipophilicity screening; supports QSAR studies. |
| Chromatographic Log D [9] | ChromLogD | HPLC with reverse-phase C18 column; retention time correlated with log D using calibration standards. | High-throughput profiling for early discovery; assesses metabolic stability. |
| Equilibrium Solubility [11] [9] | Thermodynamic solubility | Saturation of compound in solvent (e.g., buffer) with agitation until equilibrium; concentration of supernatant measured. | Gold-standard for solubility; informs formulation development. |
| Kinetic Solubility [9] | Kinetic solubility | DMSO stock solution added to aqueous buffer; concentration measured after fixed time (non-equilibrium). | Early-stage screening to triage compounds and interpret assay data. |
| Caco-2 Permeability [9] | Apparent permeability (Papp) | Human colorectal adenocarcinoma cell monolayer; compound transport across monolayer measured. | Predicts intestinal absorption and efflux liability. |
| PAMPA [9] | Passive permeability | Artificial membrane between donor and acceptor compartments; compound passage measured. | High-throughput assessment of passive transcellular permeability. |
| Microsomal Stability [9] | Half-life, Clint | Compound incubated with liver microsomes; depletion over time measured to estimate metabolic clearance. | Predicts in vivo metabolic stability and hepatic clearance. |

Experimental Workflow for Integrated Profiling

The following diagram illustrates a standardized experimental workflow for comprehensively evaluating how lipophilicity impacts critical drug properties, serving to validate computational predictions.

Lipophilicity Thresholds and Property Impacts: Comparative Data Analysis

Extensive pharmaceutical research has established optimal lipophilicity ranges that balance solubility, permeability, and metabolic stability. The following table synthesizes findings from multiple studies correlating log D values with specific property impacts.

Table 2: Correlation Between Lipophilicity and Drug Properties Based on Experimental Data

| Log D₇.₄ Range | Impact on Solubility | Impact on Permeability | Impact on Metabolic Stability | Overall ADME Profile |
|---|---|---|---|---|
| < 1 [7] | High solubility | Low permeability | Low metabolism (potential renal clearance) | Low volume of distribution; variable absorption and bioavailability |
| 1 - 3 [7] [6] | Moderate solubility | Moderate to good permeability | Lower metabolic clearance | Balanced properties; optimal for oral drugs and CNS penetration |
| 3 - 5 [7] | Low solubility | High permeability | Moderate to high metabolism | High volume of distribution; variable oral absorption |
| > 5 [7] | Poor solubility | High permeability (but may be offset by efflux) | High metabolic clearance | Very high volume of distribution; tissue accumulation; poor oral absorption |
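
When triaging predicted values, the bands in the table above can be encoded as a simple lookup. The sketch below is illustrative only: the function name is hypothetical, and because the table's ranges share their endpoints, treating each upper bound as inclusive is an assumption made here.

```python
def lipophilicity_band(log_d74):
    """Map a logD7.4 value onto the qualitative bands of the table above.

    Assumption: boundary values (1, 3, 5) are assigned as coded below,
    since the table does not say which band owns each endpoint.
    """
    if log_d74 < 1:
        return {"range": "< 1", "solubility": "high", "permeability": "low"}
    if log_d74 <= 3:
        return {"range": "1 - 3", "solubility": "moderate",
                "permeability": "moderate to good"}
    if log_d74 <= 5:
        return {"range": "3 - 5", "solubility": "low", "permeability": "high"}
    return {"range": "> 5", "solubility": "poor",
            "permeability": "high (may be offset by efflux)"}


# Hypothetical predicted values, for illustration only.
for name, value in [("cpd-A", 0.3), ("cpd-B", 2.4), ("cpd-C", 5.6)]:
    band = lipophilicity_band(value)
    print(f"{name}: logD7.4 {value} falls in band {band['range']}")
```

Such a lookup is only a coarse flag; the bands summarize trends across compound sets and do not replace measurement.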

Case Study: Lipophilicity-Efficiency Relationships in CYP450 Metabolism

Research on Cytochrome P450 (CYP450) enzymes reveals a crucial relationship between lipophilicity and metabolic stability. Studies indicate that CYP450 enzymes have an inherent affinity for lipophilic substrates due to their lipophilic binding pockets [12]. Analysis of marketed drugs shows that most model substrates of CYP450 isoforms exhibit log D₇.₄ values of approximately 2.5, with Lipophilic Metabolic Efficiency (LipMetE) values in the range of 0-2.5 [12].

The Lipophilic Metabolic Efficiency (LipMetE) parameter has been developed to depict the relationship between lipophilicity and metabolic clearance, similar to how LipE describes the relationship between lipophilicity and potency [12]. For a given range of LipMetE, compounds with higher log D values tend to bind more avidly to CYP450 enzymes and show greater intrinsic clearance [12]. This relationship is particularly important for compounds intended for central nervous system targets, which require careful balancing of lipophilicity for sufficient blood-brain barrier penetration without excessive metabolic clearance [13].
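
The text above does not state the LipMetE formula. A commonly cited form is LipMetE = log D₇.₄ - log₁₀(CLint,u), where CLint,u is the unbound intrinsic clearance; the sketch below uses that form as an assumption, and the function name, units, and example values are hypothetical.

```python
import math


def lipmete(log_d74, clint_u):
    """Lipophilic metabolic efficiency, assuming LipMetE = logD7.4 - log10(CLint,u).

    `clint_u` is the unbound intrinsic clearance; its units must be kept
    consistent across the compounds being compared.
    """
    if clint_u <= 0:
        raise ValueError("unbound intrinsic clearance must be positive")
    return log_d74 - math.log10(clint_u)


# Hypothetical compounds with the same logD7.4 but different clearance.
for name, clint_u in [("cpd-A", 10.0), ("cpd-B", 100.0)]:
    print(f"{name}: LipMetE = {lipmete(2.5, clint_u):.1f}")
```

Under this definition, at a fixed log D a tenfold increase in unbound clearance lowers LipMetE by one unit, which is what makes the parameter useful for comparing compounds of similar lipophilicity.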

Property-Specific Impacts and Experimental Evidence

Lipophilicity and Solubility

The inverse relationship between lipophilicity and aqueous solubility represents one of the most fundamental trade-offs in drug design. Experimental studies consistently demonstrate that increasing lipophilicity decreases aqueous solubility [8] [7]. Research on novel hybrid compounds shows they are frequently more soluble in buffer pH 2.0 (simulating the gastrointestinal tract environment) than in buffer pH 7.4 (modeling blood plasma), with solubility in 1-octanol being significantly higher due to specific compound-solvent interactions [11].

For instance, kinetic solubility studies of hybrid antifungal compounds revealed that solution saturation occurs more rapidly in buffer pH 7.4 (~300 minutes) than in buffer pH 2.0 (1000-2200 minutes), highlighting how both lipophilicity and environmental pH influence dissolution kinetics [11]. This has direct implications for oral drug absorption, where compounds must dissolve in gastrointestinal fluids before permeating intestinal membranes.

Lipophilicity and Permeability

Lipophilicity directly influences a drug's ability to cross biological membranes via passive diffusion. Cell membranes composed of lipid bilayers preferentially allow passage of lipophilic compounds [8]. Studies on JNK inhibitors demonstrate that when lipophilicity was in the range of 3.7 < log D < 4.5, compounds showed good cell membrane penetration, as evidenced by the ratio of cell-based assay IC₅₀ over enzyme assay IC₅₀ [7].

However, excessively high lipophilicity (log D > 5) can reduce permeability despite favorable partitioning into membranes, as such compounds may exhibit poor desorption from the membrane or become substrates for efflux transporters [7]. Research on blood-brain barrier penetration indicates that moderate lipophilicity around log D ≈ 2 provides optimal balance for CNS drugs, sufficient for membrane partitioning without excessive plasma protein binding or metabolic clearance [6] [13].

Lipophilicity and Metabolic Stability

The relationship between lipophilicity and metabolic stability presents a particularly complex challenge in drug design. CYP450 enzymes, responsible for metabolizing approximately 75% of pharmaceuticals, demonstrate a propensity to metabolize lipophilic compounds to increase aqueous solubility for excretion [12] [7]. Experimental data show a strong correlation between -log Kₘ (where Kₘ is the Michaelis constant) and log P_ow values of structurally diverse CYP2B6 substrates, with metabolic rate increasing with lipophilicity [7].

Highly lipophilic compounds (log D > 3) present greater risks for rapid metabolic turnover, leading to high clearance, poor bioavailability, and potential toxic metabolite formation [12]. The LipMetE parameter has been developed specifically to ensure adequate metabolic stability at required lipophilicity levels, helping medicinal chemists identify compounds with favorable metabolic profiles even when high lipophilicity is necessary for target potency [12].

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Essential Research Tools for Lipophilicity and ADME Profiling

| Tool/Platform | Type | Primary Function | Application Context |
|---|---|---|---|
| SwissADME [6] [10] | Computational Platform | Free web tool for calculating log P, log D, and other physicochemical/ADME parameters | Rapid in silico screening of compound libraries; academic research |
| VCCLAB [6] | Computational Platform | Online platform with multiple log P calculation algorithms (ALOGPS, etc.) | Comparing different calculation methods; consensus predictions |
| EPI Suite [14] | Software Suite | EPA's suite for predicting physicochemical properties and environmental fate | Environmental risk assessment; regulatory submissions |
| n-Octanol/Buffer Systems [6] [11] | Laboratory Reagent | Gold-standard solvent system for experimental log P/log D measurement | Validating computational predictions; QSAR studies |
| Caco-2 Cell Line [9] | Biological Reagent | Human epithelial colorectal adenocarcinoma cells for permeability studies | Predicting intestinal absorption; efflux transporter studies |
| Liver Microsomes [9] | Biological Reagent | Subcellular fractions containing CYP450 enzymes for metabolic stability | Predicting in vivo metabolic clearance; metabolite identification |
| RP-TLC Plates [6] | Laboratory Supply | Reverse-phase C18-coated TLC plates for chromatographic lipophilicity | High-throughput lipophilicity screening; method development |

The comprehensive analysis of lipophilicity impacts reveals that successful drug development requires careful balancing of this fundamental property. The optimal lipophilicity range for oral drugs typically falls between log D 1-3, providing the best compromise between solubility, permeability, and metabolic stability [7] [6]. For CNS-targeted therapeutics, this range may be slightly shifted toward higher lipophilicity (log D ~2-4), but must be carefully controlled to avoid excessive metabolic clearance or plasma protein binding [13].

The relationship between lipophilicity and metabolic efficiency underscores the importance of the LipMetE parameter in lead optimization, helping researchers identify compounds with favorable metabolic profiles despite the inherent affinity of CYP450 enzymes for lipophilic substrates [12]. Experimental data consistently show that moderately lipophilic compounds (log D ~2.5) represent the optimal starting point for further optimization, as exemplified by numerous marketed drugs [12].

Validating in silico predictions with robust experimental methodologies remains crucial for accurate ADME profiling. The integrated experimental workflow presented herein provides a standardized approach for confirming computational forecasts and ensuring that lead compounds possess balanced physicochemical properties suitable for successful drug development.

Lipophilicity, a compound's affinity for a lipophilic environment relative to an aqueous one, is a fundamental physicochemical property that profoundly influences the behavior of drug molecules within biological systems [15]. Commonly expressed as the logarithm of the n-octanol/water partition coefficient (log P) for unionized compounds or the distribution coefficient at physiological pH 7.4 (log D7.4) for ionizable substances, this parameter serves as a critical determinant in the absorption, distribution, metabolism, excretion, and toxicity (ADMET) profile of potential drug candidates [16] [17] [15]. The delicate balance lies in achieving an optimal lipophilicity range: sufficient to cross biological membranes yet moderate enough to avoid poor solubility, promiscuous binding, and increased toxicity risks [17] [15]. This guide objectively compares experimental and computational approaches for lipophilicity assessment, providing supporting data and detailed methodologies to aid researchers in navigating this crucial property during drug development.

Experimental Measurement of Lipophilicity: A Comparative Guide

Accurate determination of lipophilicity is foundational for establishing robust structure-property relationships. The following table summarizes the primary experimental techniques used, their core principles, advantages, and limitations.

Table 1: Comparison of Key Experimental Methods for Lipophilicity Determination

| Method | Core Principle | Reported Lipophilicity Range | Key Advantages | Key Limitations |
|---|---|---|---|---|
| Shake-Flask [18] [17] | Direct partitioning of a compound between n-octanol and an aqueous buffer phase. | Wide range, method-dependent | Considered the gold standard; measures equilibrium directly. | Labor-intensive; requires high compound purity and large amounts; low throughput. [17] |
| Chromatographic Techniques (RP-HPLC/RP-TLC) [19] [17] | Measures retention time/behavior correlated with lipophilicity using a non-polar stationary phase and polar mobile phase. | \( \log k_w \): 1.35 - 5.63 (for thiazol-4(5H)-one derivatives) [19] | High-throughput; requires minimal compound quantity; insensitive to impurities. [17] | Provides indirect measurement; requires calibration with standards; results can be method-specific. [17] |
| Potentiometric Titration [17] | Determines logD from the shift in acid dissociation constant (pKa) when the compound is partitioned between water and octanol. | Limited to compounds with acid-base properties [17] | Can provide both pKa and logD data from a single experiment. | Requires high sample purity; not applicable to all compound classes. [17] |

A high-throughput variant of the shake-flask method enables simultaneous measurement of distribution coefficients for mixtures of up to 10 compounds using liquid chromatography with tandem mass spectrometry (LC-MS/MS), significantly improving efficiency within the drug discovery process [18]. Reverse-phase thin-layer chromatography (RP-TLC) and reverse-phase high-performance liquid chromatography (RP-HPLC) have been successfully applied to determine the lipophilicity (parameters \( R_M^0 \) and \( \log k_w \), respectively) of 2-aminothiazol-4(5H)-one derivatives, demonstrating a clear relationship between structural modifications and lipophilicity changes [19].

In Silico Prediction of Lipophilicity: Validating Computational Tools

The limitations of experimental methods have spurred the development of in silico models for logP and logD7.4 prediction. These computational tools offer high speed and low cost, but their accuracy must be rigorously validated. The following table compares several established prediction tools and strategies.

Table 2: Comparison of In Silico Lipophilicity Prediction Approaches

| Tool/Strategy | Description | Key Features / Validation Metrics | Reported Performance / Applicability |
|---|---|---|---|
| RTlogD Model [17] | A novel graph neural network model leveraging knowledge transfer from chromatographic retention time (RT), microscopic pKa, and logP. | Multitask learning framework; pre-trained on ~80,000 molecule RT dataset; incorporates pKa as atomic features. | Superior performance vs. common algorithms/tools on a time-split test set; improved generalization with limited logD data. [17] |
| Peptide-Specific ML Model [20] | A data-driven machine learning QSPR model developed specifically for short linear peptides and peptide mimetics. | Applicable to peptides and derivatives; uses molecular descriptors and machine learning (LASSO, SVR). | Accurate predictions for linear tri- to hexapeptides; applicable in a log D7.4 range of ~-3 to 5; superior to small-molecule models for peptides. [20] |
| AZlogD74 (AstraZeneca) [17] | A proprietary model trained on a massive in-house dataset of over 160,000 molecules. | Continuously updated with new experimental measurements; exemplifies the power of large, high-quality private datasets. | Represents industrial-state-of-the-art; performance fueled by scale and quality of proprietary data. [17] |
| Classical Methods (ALOGPS, etc.) [17] | Established algorithms, often based on quantitative structure-property relationships (QSPR) or fragment contributions. | Vary in their underlying algorithms and descriptor sets; widely accessible. | Often lack accuracy for complex molecules like peptides and mimetics outside their training domain. [20] [17] |

A critical study demonstrates the necessity of bespoke models for specific molecular classes. A machine learning model developed for peptides and peptide derivatives showed superior accuracy in predicting logD7.4 for these compounds compared to established models designed for traditional small molecules, which often lack accuracy outside their training domain [20]. This highlights the importance of selecting or developing domain-specific models for reliable predictions.
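
One way to check whether a general-purpose model holds up for a given molecular class is to break prediction error down by class. The sketch below is a minimal illustration: the class labels, the data, and the ±0.5 log unit tolerance are assumptions, not values from the cited studies.

```python
import math
from collections import defaultdict


def error_summary(pairs, tolerance=0.5):
    """Summarize prediction error for (predicted, experimental) logD7.4 pairs."""
    errors = [predicted - experimental for predicted, experimental in pairs]
    n = len(errors)
    return {
        "n": n,
        "mae": sum(abs(e) for e in errors) / n,
        "rmse": math.sqrt(sum(e * e for e in errors) / n),
        # Fraction of predictions within `tolerance` log units of experiment.
        "within_tol": sum(abs(e) <= tolerance for e in errors) / n,
    }


def summarize_by_class(rows, tolerance=0.5):
    """Group (class, predicted, experimental) rows and summarize each class."""
    grouped = defaultdict(list)
    for compound_class, predicted, experimental in rows:
        grouped[compound_class].append((predicted, experimental))
    return {name: error_summary(pairs, tolerance) for name, pairs in grouped.items()}


# Hypothetical validation set, for illustration only.
ROWS = [
    ("small molecule", 2.1, 2.3),
    ("small molecule", 0.8, 0.6),
    ("small molecule", 3.4, 3.1),
    ("peptide", 1.5, -0.2),
    ("peptide", 0.9, -1.1),
]

for name, stats in summarize_by_class(ROWS).items():
    print(f"{name}: n={stats['n']}, MAE={stats['mae']:.2f}, "
          f"RMSE={stats['rmse']:.2f}, within tolerance={stats['within_tol']:.0%}")
```

A pooled error figure can hide a class on which the model fails, so reporting per-class statistics alongside the overall value makes applicability limits visible.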

Experimental Protocols for Key Lipophilicity Assays

High-Throughput Shake-Flask for Compound Mixtures

This protocol enables the measurement of distribution coefficients for mixtures of up to 10 compounds simultaneously [18].

- Solution Preparation: Prepare n-octanol saturated with phosphate buffer (pH 7.4) and buffer saturated with n-octanol. Dissolve the mixture of up to 10 compounds in a suitable solvent to create a stock solution.

- Partitioning: Combine equal volumes (e.g., 0.5 mL each) of the buffer-saturated n-octanol and the octanol-saturated buffer in a vial and add the compound mixture stock solution. Vortex vigorously for a defined period (e.g., 1 hour) to ensure thorough mixing and partitioning.

- Phase Separation: Centrifuge the vial to achieve complete separation of the octanol and aqueous buffer phases.

- Analysis via LC-MS/MS: Carefully separate the two phases. Dilute aliquots from each phase as necessary and analyze using Liquid Chromatography with tandem Mass Spectrometry (LC-MS/MS).

- Data Calculation: Quantify the concentration of each compound in the octanol phase (\(C_{octanol}\)) and the aqueous phase (\(C_{aqueous}\)). The logD7.4 is calculated as: \( \log D_{7.4} = \log\left(\frac{C_{octanol}}{C_{aqueous}}\right) \). Critical Consideration: The potential for ion pair partitioning, which could cause interactions between compounds in the mixture leading to erroneous results, must be evaluated [18].
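As a minimal sketch of the data-calculation step above, the following Python snippet converts per-compound LC-MS/MS readings from the two phases into logD7.4 values. The function names, the dilution-factor parameters, and the example readings are illustrative assumptions and are not taken from the published protocol [18].

```python
import math


def log_d(c_octanol: float, c_aqueous: float) -> float:
    """Return log10 of the octanol/aqueous concentration ratio."""
    if c_octanol <= 0 or c_aqueous <= 0:
        raise ValueError("both concentrations must be positive")
    return math.log10(c_octanol / c_aqueous)


def mixture_log_d(readings, dilution_octanol=1.0, dilution_aqueous=1.0):
    """Compute logD for every compound in a pooled sample.

    readings maps a compound name to (octanol reading, aqueous reading),
    both in the same concentration unit. The dilution factors (assumed
    here to be shared by all compounds) undo any dilution applied to
    each phase before injection.
    """
    return {
        name: log_d(oct_value * dilution_octanol, aq_value * dilution_aqueous)
        for name, (oct_value, aq_value) in readings.items()
    }


# Hypothetical readings for two compounds; octanol aliquots diluted 10-fold.
readings = {"cmpd_A": (50.0, 0.5), "cmpd_B": (2.0, 2.0)}
values = mixture_log_d(readings, dilution_octanol=10.0, dilution_aqueous=1.0)
for name, value in values.items():
    print(f"{name}: logD7.4 = {value:.2f}")
```

Rejecting non-positive readings makes values below the quantification limit fail loudly instead of producing an undefined logarithm.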

Determination of Lipophilicity by RP-HPLC

Chromatographic methods provide an efficient, indirect measurement of lipophilicity [19] [17].

- Column and Mobile Phase: Use a Reverse-Phase C18 column. Prepare the mobile phase as a binary mixture of methanol (or acetonitrile) and a buffer (e.g., phosphate buffer, pH 7.4).

- Gradient Elution: Run a linear gradient of the organic modifier (e.g., from 50% to 100% methanol) at a constant flow rate and temperature. The dead time (\(t_0\)) must be determined using an unretained compound.

- Retention Time Measurement: Inject the compound of interest and record its retention time (\(t_R\)).

- Lipophilicity Parameter Calculation: The capacity factor (\(k\)) is calculated as \( k = (t_R - t_0)/t_0 \). The value of \( \log k \) is then extrapolated to 0% organic modifier (pure aqueous buffer) to obtain the lipophilicity index \( \log k_w \) [19]. This is typically done by measuring \( \log k \) at several different organic modifier concentrations and extrapolating linearly.

Visualizing the Impact and Optimization of Lipophilicity

The following diagrams illustrate the critical relationships between lipophilicity and drug properties, as well as the modern workflow for its optimization.

Diagram 1: Lipophilicity Impact on Drug Properties

Diagram 2: Lipophilicity Optimization Workflow

A concrete example of this workflow in action comes from a study on targeted alpha-particle therapy (TAT) for metastatic melanoma. Researchers synthesized a library of DOTA-linker-MC1RL peptides with varying linkers to achieve a range of logD7.4 values [16]. They observed that higher logD7.4 values were associated with decreased kidney uptake, decreased absorbed radiation dose, and decreased acute kidney toxicity. In contrast, conjugates with lower lipophilicities exhibited acute nephropathy and death in animal models, pointing to a direct link between lipophilicity, biodistribution, and target organ toxicity [16].

Table 3: Key Research Reagent Solutions for Lipophilicity Studies

| Reagent / Resource | Function / Application | Specific Examples / Notes |
|---|---|---|
| n-Octanol & Aqueous Buffers | The standard solvent system for shake-flask logP/logD determination. | Must be mutually saturated prior to use. Phosphate buffer (pH 7.4) is standard for logD7.4. [18] [17] |
| Reverse-Phase Chromatography Columns | Stationary phase for HPLC-based lipophilicity measurement (\( \log k_w \)). | C18 columns are most common. The choice of organic modifier (MeOH, ACN) can influence results. [19] |
| LC-MS/MS Systems | Enables high-throughput, sensitive quantification of compounds in mixture shake-flask assays. | Critical for analyzing concentration in both phases without the need for individual compound assays. [18] |
| Validated Compound Libraries | Used for training and benchmarking predictive in silico models. | Public (e.g., ChEMBL) and large proprietary (e.g., AstraZeneca's 160k+ dataset) libraries are key to model accuracy. [20] [17] |
| In Silico Prediction Platforms | Software and algorithms for computational logP/logD estimation. | Range from commercial (e.g., ACD/Labs, Instant JChem) to academic (e.g., ALOGPS) and bespoke models (e.g., RTlogD). [20] [17] |

Navigating the optimal range of lipophilicity is a critical and non-trivial endeavor in modern drug discovery. As evidenced by the data, both excessively low and high lipophilicity can lead to project failure through poor bioavailability or elevated toxicity, respectively [16] [15]. A strategic, integrated approach is essential for success. This involves leveraging modern in silico tools, particularly bespoke machine learning models trained on relevant chemical spaces like peptides, for initial design and triaging [20] [17]. These predictions must be rapidly validated by robust, medium- to high-throughput experimental methods like RP-HPLC or mixture shake-flask assays [18] [19]. Most importantly, lipophilicity optimization must be conducted with a constant feedback loop to in vitro and in vivo ADMET and efficacy end-points, as the ultimate goal is not a perfect logD value, but a molecule with a balanced therapeutic profile. The case study on TAT conjugates powerfully illustrates how a deliberate strategy to "tune" lipophilicity can successfully modulate biodistribution to reduce morbidity and improve both the safety and efficacy of a drug candidate [16].

Lipophilicity is a fundamental physicochemical property defined as the affinity of a molecule or a moiety for a lipophilic environment [21]. It is most commonly expressed as the logarithm of the partition coefficient (log P) for neutral compounds or the distribution coefficient at a specific pH (log D), which accounts for all ionized and unionized species present in solution [22]. This parameter represents a balance between two major contributions: hydrophobicity, which is the tendency of non-polar compounds to prefer a non-aqueous environment, and polarity, which encompasses electrostatic interactions and hydrogen bonding [21].
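For a compound with a single ionizable group, the link between log P and log D can be made concrete under the common simplifying assumption that only the neutral species partitions into octanol. The sketch below implements that textbook approximation. It is not a method taken from the cited sources, it ignores ion-pair partitioning, and the function name and example values are illustrative.

```python
import math


def log_d_monoprotic(log_p: float, pka: float, ph: float = 7.4, acid: bool = True) -> float:
    """Estimate log D at a given pH for a monoprotic acid or base.

    Assumes only the neutral form enters the octanol phase, so
    log D = log P - log10(1 + 10**(pH - pKa)) for an acid and
    log D = log P - log10(1 + 10**(pKa - pH)) for a base.
    """
    exponent = (ph - pka) if acid else (pka - ph)
    return log_p - math.log10(1.0 + 10.0 ** exponent)


# Hypothetical acid (pKa 4.4): mostly ionized at pH 7.4, so log D << log P.
acid_log_d = log_d_monoprotic(log_p=3.0, pka=4.4, acid=True)

# Hypothetical weak base (pKa 2.4): essentially neutral at pH 7.4, so log D ~ log P.
base_log_d = log_d_monoprotic(log_p=3.0, pka=2.4, acid=False)

print(f"acid: log D7.4 = {acid_log_d:.2f}")
print(f"base: log D7.4 = {base_log_d:.2f}")
```

The example shows why a single log P value can be misleading for ionizable compounds: two molecules with the same log P can differ by about three log units in log D at pH 7.4.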

In modern drug discovery and development, lipophilicity serves as a pivotal descriptor that profoundly influences a compound's pharmacokinetic and pharmacodynamic behavior. It affects every component of the ADMET profile—Absorption, Distribution, Metabolism, Excretion, and Toxicity [22]. For instance, lipophilicity modulates passive permeation across biomembranes, a crucial step for drug absorption [22]. It also influences drug distribution, including the volume of distribution and plasma protein binding, and affects a compound's ability to cross physiological barriers such as the blood-brain barrier [22]. Furthermore, lipophilicity is implicated in metabolic rate and potential toxicity, including interaction with cardiac ion channels like hERG [22]. Beyond pharmacokinetics, lipophilicity contributes significantly to understanding ligand-target interactions and is a key parameter in quantitative structure-activity relationship (QSAR) studies [22]. Given these widespread implications, accurate determination of lipophilicity through reliable experimental methods is essential for rational drug design and optimization.

The Shake-Flask Method: The Established Gold Standard

Fundamental Principles and Protocol

The shake-flask method is widely regarded as the reference technique against which other methods are validated [23]. This direct method involves partitioning a compound between an organic solvent, typically water-saturated n-octanol, and an aqueous phase, usually a buffer solution such as phosphate buffer at pH 7.4 [23] [24]. The fundamental principle relies on determining the concentration ratio of the compound between these two immiscible phases at equilibrium.

A typical experimental workflow involves the following key steps [23] [21]:

- Phase Preparation and Saturation: The n-octanol is pre-saturated with the aqueous buffer, and conversely, the buffer is saturated with n-octanol to prevent phase volume changes during partitioning.

- Equilibration: The compound of interest is introduced into the biphasic system, which is then vigorously shaken or agitated to facilitate partitioning between the phases.

- Phase Separation: After equilibration, the mixture is allowed to stand or is centrifuged to achieve complete phase separation.

- Concentration Analysis: The concentration of the analyte in each phase is quantified, typically using high-performance liquid chromatography (HPLC) or ultra-performance liquid chromatography (UPLC) [23].

- Calculation: The distribution coefficient (log D) is calculated using the formula: log D = log (C~octanol~/C~water~), where C~octanol~ and C~water~ represent the equilibrium concentrations in the octanol and aqueous phases, respectively [23].

To extend the measurable lipophilicity range and minimize the consumption of often scarce drug candidates, modern adaptations employ multiple phase volume ratios. For instance, one optimized protocol proposes four different procedures and eight volume ratios specifically designed for compounds with low, regular, or high lipophilicity, and high or low aqueous solubility [23] [24]. A significant advantage of this approach is the ability to analyze only one phase (typically the aqueous phase) and calculate the concentration in the other by difference, which enhances accuracy, especially when drug adsorption to glass vessels might occur [23].
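The by-difference calculation is a simple mass balance, sketched below. It assumes the compound is introduced in the aqueous phase and that everything missing from the aqueous phase at equilibrium has moved into the octanol. The function name, parameter names, and numbers are illustrative and do not reproduce the cited procedures [23] [24].

```python
import math


def log_d_by_difference(c_aq_initial: float, c_aq_equilibrium: float,
                        v_aqueous: float, v_octanol: float) -> float:
    """Compute log D from aqueous-phase measurements only.

    The amount transferred to octanol is (C_initial - C_equilibrium) * V_aq,
    which is divided by V_oct to give the octanol concentration.
    Concentrations share one unit and volumes share one unit.
    """
    if not 0 < c_aq_equilibrium < c_aq_initial:
        raise ValueError("need 0 < C_equilibrium < C_initial")
    if v_aqueous <= 0 or v_octanol <= 0:
        raise ValueError("phase volumes must be positive")
    c_octanol = (c_aq_initial - c_aq_equilibrium) * v_aqueous / v_octanol
    return math.log10(c_octanol / c_aq_equilibrium)


# Hypothetical run: 100 uM before partitioning, 10 uM after,
# with 1.0 mL buffer and 0.1 mL octanol.
value = log_d_by_difference(100.0, 10.0, v_aqueous=1.0, v_octanol=0.1)
print(f"log D = {value:.2f}")
```

The volume ratio is the tuning knob. A small octanol volume keeps a measurable aqueous concentration for lipophilic compounds, while a large one produces a measurable depletion for hydrophilic compounds.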

Key Applications and Performance Data

The shake-flask method is validated for determining log D~7.4~ values across a lipophilicity range of approximately -2.0 to 4.5 [23] [24]. When properly executed with optimized phase volume ratios, the method yields highly reproducible results with a standard deviation generally lower than 0.3 log units [23] [24]. This robust performance and its direct conceptual relationship to the partitioning phenomenon solidify its status as the gold standard.
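A replicate set can be screened against these two figures with a few lines of code. The sketch below treats the -2.0 to 4.5 window and the 0.3 log-unit standard deviation as acceptance thresholds. That use is an assumption made for illustration, as are the function name and the example values.

```python
from statistics import mean, stdev

VALIDATED_RANGE = (-2.0, 4.5)  # log D7.4 window reported for the method
MAX_SD = 0.3                   # reported upper bound on the standard deviation


def summarize_replicates(log_d_values):
    """Return mean, sample SD, and two pass/fail flags for replicate log D values."""
    if len(log_d_values) < 2:
        raise ValueError("at least two replicates are needed for a standard deviation")
    average = mean(log_d_values)
    spread = stdev(log_d_values)
    low, high = VALIDATED_RANGE
    return {
        "mean": average,
        "sd": spread,
        "in_validated_range": low <= average <= high,
        "reproducible": spread < MAX_SD,
    }


# Hypothetical triplicate measurement.
summary = summarize_replicates([2.1, 2.3, 2.2])
print(summary)
```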

The method's reliability is evidenced by its application in validating other techniques. For example, in a study investigating the phytochemicals aloin A and aloe-emodin from Aloe vera, an optimized shake-flask method was successfully employed to determine log P values, confirming that aloin A is more hydrophilic than aloe-emodin due to the presence of a β-D-glucopyranosyl unit [25]. Furthermore, while the classical shake-flask method is sometimes considered lower throughput, innovative approaches have been developed to increase efficiency. One such advancement enables the simultaneous measurement of distribution coefficients for mixtures of up to 10 compounds using HPLC with tandem mass spectrometry (LC-MS/MS) detection, significantly boosting capacity for early drug discovery screening [18].

Table 1: Key Characteristics of the Shake-Flask Method

| Feature | Description | Experimental Consideration |
|---|---|---|
| Principle | Direct measurement of concentration in both phases of a biphasic system [23] [21] | Conceptually simple and directly related to the partition phenomenon |
| Standard System | n-Octanol / Aqueous Buffer (e.g., pH 7.4) [23] | Both phases must be mutually saturated before use |
| Analytical Technique | Primarily HPLC or UPLC for quantification [23] | Enables specific quantification even with impurities; low detection limits |
| Optimal log D Range | -2.0 to 4.5 [23] [24] | Beyond this range, accuracy decreases due to detection limit issues |
| Throughput | Low to Medium (can be improved by pooling compounds into mixtures) [18] | More labor-intensive and time-consuming than chromatographic methods |
| Key Advantage | Considered the gold standard; high accuracy for a wide range of compounds [23] [21] | Results are used to validate other indirect methods |
| Main Limitation | Potential for emulsion formation; requires relatively pure compounds [23] | Labor-intensive and requires careful phase separation |

Chromatographic Methods: High-Throughput Alternatives

Reversed-Phase Thin-Layer Chromatography (RP-TLC)

Fundamental Principles and Protocol

Reversed-phase thin-layer chromatography (RP-TLC) is a simple, cost-effective, and robust chromatographic technique widely used for lipophilicity estimation. In this method, the stationary phase is non-polar (e.g., silica gel modified with alkyl chains, as in RP-2, RP-8, or RP-18 plates), and the mobile phase is a polar mixture, typically consisting of water and an organic modifier such as methanol, acetonitrile, acetone, or 1,4-dioxane [26] [27].

The lipophilicity is determined from the retention behavior of the compound. The primary measured parameter is the R~M~ value, which is calculated from the retardation factor (R~f~) using the formula: R~M~ = log (1/R~f~ - 1) [27]. To obtain a lipophilicity index independent of the organic modifier concentration, R~M~ values are determined in several mobile phases with varying concentrations of the organic modifier. These values are then extrapolated to zero organic modifier content, yielding the R~MW~ parameter, which correlates well with the log P value from the shake-flask method [26] [27]. The extrapolation can be performed using different mathematical models, such as the Soczewiński-Wachtmeister's equation or Ościk's equation, with studies suggesting that the former may be better suited for compounds with very high or low lipophilicity, while the latter is more suitable for medium-lipophilicity compounds [27].

Key Applications and Performance Data

RP-TLC has been successfully applied to determine the lipophilicity of diverse drug classes, including antiparasitics (e.g., metronidazole, ornidazole), antihypertensives (e.g., nilvadipine, felodipine), and non-steroidal anti-inflammatory drugs (NSAIDs) like ibuprofen and ketoprofen [27]. The technique offers excellent reproducibility and can be a good alternative for characterizing both highly and weakly lipophilic compounds [27]. Its advantages include the ability to analyze several samples simultaneously, minimal sample preparation, and no requirement for sophisticated instrumentation.

A study on neuroleptics (fluphenazine, triflupromazine, etc.) demonstrated the utility of RP-TLC using three different stationary phases (RP-2, RP-8, RP-18) and various organic modifiers. The resulting R~MW~ values showed a consistent pattern across the compounds and aligned well with trends predicted by in silico methods, highlighting the technique's reliability for rapid lipophilicity assessment in drug discovery [26].

Reversed-Phase High-Performance Liquid Chromatography (RP-HPLC)

Fundamental Principles and Protocol

Reversed-phase high-performance liquid chromatography (RP-HPLC) is one of the most prevalent techniques for indirect lipophilicity determination due to its accuracy, reproducibility, and high-throughput capabilities. In this method, the stationary phase typically consists of C18 (ODS) chains chemically bonded to silica particles, creating a hydrophobic environment. The mobile phase is an aqueous-organic mixture (e.g., water-acetonitrile or water-methanol) [22] [28].

The primary measured parameter is the retention time, from which the capacity factor (k') is calculated. To estimate the partition coefficient, the log k' values are measured under several isocratic conditions or a single gradient run, and the log k' at 0% organic modifier (log k~w~) is derived through extrapolation or calculation. This log k~w~ value correlates linearly with the log P from the shake-flask method [22] [28]. The relationship is based on the similarity between the partitioning of a solute in the octanol-water system and its distribution between the hydrophobic stationary phase and the hydrophilic mobile phase.

More advanced applications use specialized stationary phases to model specific biological interactions. Immobilized Artificial Membrane (IAM) HPLC utilizes columns that contain phospholipids covalently bonded to silica, mimicking cell membranes [25]. Additionally, biomimetic HPLC with human serum albumin (HSA) or α1-acid glycoprotein (AGP) stationary phases can provide insights into plasma protein binding, a critical distribution parameter [25].

Key Applications and Performance Data

RP-HPLC is exceptionally versatile and can be applied to a vast spectrum of compounds, from small molecules to complex "beyond Rule of 5" (bRo5) compounds like macrocyclic peptides and PROTACs [28]. Its dynamic range is broad, often exceeding that of the shake-flask method.

A significant advancement is the use of chromatographic retention to predict hydrocarbon-water partition coefficients (e.g., using 1,9-decadiene), which are more relevant to membrane permeability than traditional octanol-water systems. For instance, a study on cyclic peptides established a robust nonlinear regression model (R² = 0.97) between chromatographically determined capacity factors and shake-flask Log D~dd/w~ values. This model allows for high-throughput estimation of membrane-relevant lipophilicity and the derivation of a lipophilic permeability efficiency (LPE) metric, which is highly predictive of passive cell permeability [28].

Table 2: Comparison of Chromatographic Methods for Lipophilicity Determination

| Feature | RP-TLC | RP-HPLC |
|---|---|---|
| Principle | Measurement of R~M~ value on a non-polar stationary phase [27] | Measurement of retention time (or capacity factor k') on a non-polar column [22] |
| Throughput | High (multiple samples per plate) [27] | Medium to High (serial analysis, but automated) [28] |
| Cost | Low | High (instrumentation and solvents) |
| Data Output | R~MW~ (extrapolated to zero organic modifier) [26] [27] | log k~w~ (extrapolated capacity factor) or ChromLogD [28] |
| Optimal Range | Wide, suitable for very high and low lipophilicity [27] | Very wide, including complex molecules (e.g., macrocycles) [28] |
| Key Advantage | Simplicity, low cost, ability to analyze impure samples [27] | High accuracy, reproducibility, automation, and suitability for complex mixtures [22] [28] |
| Main Limitation | Lower precision compared to HPLC, less automated [27] | Higher cost, requires specialized equipment and method development [22] |

Comparative Analysis: Selecting the Right Method

The choice between shake-flask and chromatographic methods depends on the project's stage, available resources, and the required information. The following diagram illustrates the decision-making workflow for method selection based on common research scenarios.

Diagram 1: A workflow for selecting the appropriate lipophilicity determination method based on project requirements.

Integrated Approaches and Orthogonal Validation

A powerful strategy in modern drug development is the combined use of these techniques to leverage their respective strengths. Chromatographic methods (RP-TLC and RP-HPLC) are ideal for high-throughput screening during early discovery due to their speed, minimal compound consumption, and ability to handle impure samples or mixtures [27] [28]. As compounds progress to the lead optimization stage, RP-HPLC provides an excellent balance of throughput and accuracy, especially when using biomimetic stationary phases (IAM, HSA) to gain deeper insights into membrane partitioning and protein binding [25]. Finally, the shake-flask method remains the benchmark for definitive, high-accuracy measurement of critical candidates, and it is essential for validating other methods or providing data for regulatory submissions [23] [21].
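The staged strategy described above can be written down as a small decision helper. This is only one possible reading of the paragraph: the stage labels, the function name, and the rule that impure samples always go to chromatography are assumptions made for the sketch, and the helper does not replace project-specific judgment.

```python
def suggest_method(stage: str, sample_is_pure: bool = True) -> str:
    """Suggest a lipophilicity assay for a project stage.

    stage is one of "early_discovery", "lead_optimization", "candidate"
    (hypothetical labels chosen for this example).
    """
    stage = stage.lower()
    if stage not in {"early_discovery", "lead_optimization", "candidate"}:
        raise ValueError(f"unknown stage: {stage}")
    # Chromatography tolerates impure samples and mixtures; shake-flask does not.
    if stage == "early_discovery" or not sample_is_pure:
        return "RP-TLC or RP-HPLC screening"
    if stage == "lead_optimization":
        return "RP-HPLC, optionally with biomimetic IAM/HSA phases"
    return "shake-flask"


for stage in ("early_discovery", "lead_optimization", "candidate"):
    print(stage, "->", suggest_method(stage))
```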

This integrated approach is exemplified in a study on aloin A and aloe-emodin, where researchers combined shake-flask, RP-HPLC, IAM-HPLC, and in silico predictions to comprehensively evaluate the compounds' physicochemical and ADME properties [25]. Such a multi-faceted strategy provides a more robust and reliable dataset than any single method alone.

Essential Research Reagent Solutions

Successful implementation of these experimental methods relies on specific, high-quality reagents and materials. The following table details key solutions used in the featured protocols.

Table 3: Key Research Reagent Solutions for Lipophilicity Determination

| Reagent/Material | Function and Role in Experiments | Example from Protocols |
|---|---|---|
| n-Octanol (water-saturated) | Organic phase in shake-flask method; models hydrophobic environments [23] [21] | Used as the standard non-polar solvent in partition coefficient determinations [23] [24] |
| Phosphate Buffer (pH 7.4) | Aqueous phase in shake-flask; models physiological pH [23] | Used for log D~7.4~ determination, physiologically relevant for drug ADMET profiling [23] [24] |
| C18 Stationary Phases | Hydrophobic stationary phase for RP-HPLC and RP-TLC; mimics lipid interactions [26] [28] | Silica-based or polymer-based (e.g., PRP-C18) columns for chromatographic lipophilicity measurement [26] [28] |
| Immobilized Artificial Membrane (IAM) | Chromatographic stationary phase that mimics cell membranes [25] | IAM.HPLC columns used to assess phospholipid binding and membrane permeability potential [25] |
| Organic Modifiers (Acetonitrile, Methanol) | Component of the mobile phase in chromatography; modulates retention [26] [27] | Acetone, acetonitrile, and methanol used in RP-TLC and RP-HPLC mobile phases to elute compounds [26] [27] |
| Human Serum Albumin (HSA) | Stationary phase for biomimetic chromatography [25] | HSA-HPLC columns used to evaluate compound binding to plasma proteins [25] |

In Silico Toolbox: From Traditional QSAR to Advanced AI for Lipophilicity Prediction

In modern drug discovery, computational methods are indispensable for predicting molecular properties, optimizing candidate compounds, and elucidating complex biological interactions. This guide provides an objective comparison of three foundational approaches: Quantitative Structure-Property Relationship (QSPR), Molecular Dynamics (MD), and Quantum Mechanics (QM). Within the critical context of validating in silico lipophilicity predictions, these methodologies offer complementary strengths. Lipophilicity, commonly measured as the octanol-water partition coefficient (LogP), is a fundamental property influencing drug solubility, membrane permeability, and ultimately, bioavailability [29] [30]. The performance of these computational strategies is evaluated based on predictive accuracy, interpretability, computational demand, and applicability to diverse molecular classes, including challenging modalities like targeted protein degraders [30].

Core Principles and Applications

QSPR/QSAR models establish statistical relationships between numerical descriptors of molecular structures and a target property or biological activity. Machine Learning (ML) has dramatically enhanced QSPR, enabling the modeling of complex, non-linear relationships [31] [29]. A key application is the prediction of absorption, distribution, metabolism, and excretion (ADME) properties, such as lipophilicity (LogP/LogD), solubility, and permeability, which are crucial for prioritizing lead compounds [32] [30].

Molecular Dynamics (MD) simulations model the time-dependent physical movements of atoms and molecules based on classical mechanics. By simulating interactions with explicit solvents, MD provides deep insights into solvation dynamics, molecular conformation, and stability—factors directly influencing properties like solubility. For instance, MD-derived properties such as the Solvent Accessible Surface Area (SASA) and Coulombic interaction energies have been successfully used as features in ML models to predict aqueous solubility [29].

Quantum Mechanics (QM) methods solve the Schrödinger equation to describe the electronic structure of molecules. They offer the most fundamental description, capturing phenomena like bond formation/breaking and electronic polarization. QM is particularly valuable for studying chemical reactions and protein-ligand interactions where electronic effects are critical. Advanced approaches like QM/MM combine QM accuracy for a reaction core with MM efficiency for the surrounding environment [33] [34]. QM-driven descriptors, such as molecular orbital energies, are increasingly integrated into QSPR models to improve the prediction of physicochemical and biological endpoints, including toxicity and lipophilicity [35].

Quantitative Performance Comparison

The following table summarizes the documented predictive performance of these approaches for key physicochemical properties relevant to drug discovery.

Table 1: Documented Performance of Computational Approaches for Property Prediction

| Computational Approach | Target Property | Reported Performance | Key Algorithms/Features Used |
|---|---|---|---|
| ML-Driven QSPR (global model) | ADME Properties [30] | Low misclassification errors (0.8%-8.1%) for various ADME endpoints across diverse modalities. | Message-Passing Neural Network (MPNN), Deep Neural Network (DNN) |
| ML-Driven QSPR (heterobifunctional TPDs) | ADME Properties [30] | Misclassification errors <15% for key ADME risks (e.g., permeability, CYP3A4 inhibition). | Multitask Learning, Transfer Learning |
| ML-Driven QSPR (molecular glues) | ADME Properties [30] | Excellent performance with misclassification errors <4% for key ADME risks. | Multitask Learning |
| QSPR | Blood-Brain Barrier Transport (Kp,uu,BBB) [36] | Test set R² = 0.61; 61% of predictions within twofold error. | Random Forest, 2D/3D Physicochemical Descriptors |
| QSPR | Water Solubility of Pt Complexes [32] | RMSE of 0.62 on training set; RMSE of 0.86 on a prospective test set of novel scaffolds. | Consensus Model, Neural Networks, Random Forest |
| MD with ML | Aqueous Solubility (LogS) [29] | R² = 0.87, RMSE = 0.537 on test set using MD-derived descriptors. | Gradient Boosting, Features: LogP, SASA, Coulombic/LJ energies, DGSolv, RMSD |
| QM-Enhanced QSPR | Toxicity & Lipophilicity [35] | Enhanced predictive accuracy and model interpretability for these biological endpoints. | Kernel Ridge Regression, XGBoost, QUantum Electronic Descriptor (QUED) |
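The metrics quoted in the table (R², RMSE, fraction of predictions within twofold error, misclassification rate) can be computed as in the sketch below. The exact definitions used in the cited studies may differ. Here R² is taken as the coefficient of determination and "twofold" is evaluated on linear-scale values, both of which are assumptions, and all example numbers are synthetic.

```python
import math


def rmse(y_true, y_pred):
    """Root-mean-square error."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def fraction_within_fold(observed, predicted, fold=2.0):
    """Share of positive, linear-scale predictions within a fold-error bound."""
    hits = sum(1 for o, p in zip(observed, predicted) if max(o / p, p / o) <= fold)
    return hits / len(observed)


def misclassification_rate(labels_true, labels_pred):
    """Share of categorical predictions that disagree with the assay label."""
    errors = sum(1 for t, p in zip(labels_true, labels_pred) if t != p)
    return errors / len(labels_true)


# Synthetic log-scale values (e.g., logD or logS).
y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.1, 1.9, 3.2, 3.8]
print(f"RMSE = {rmse(y_true, y_pred):.3f}, R2 = {r_squared(y_true, y_pred):.2f}")

# Synthetic linear-scale values (e.g., a partition ratio).
within = fraction_within_fold([1.0, 10.0, 4.0, 0.5], [1.5, 30.0, 2.5, 0.9])
missed = misclassification_rate(["high", "low", "low", "high"],
                                ["high", "low", "high", "high"])
print(f"within twofold = {within:.2f}, misclassified = {missed:.2f}")
```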

Comparative Analysis of Strengths and Limitations

Table 2: Comparative Analysis of Computational Approaches

| Criterion | QSPR | Molecular Dynamics (MD) | Quantum Mechanics (QM) |
|---|---|---|---|
| Computational Cost | Low to Moderate | High (for configurational sampling) | Very High to Prohibitive |
| Handling of Large Systems | Excellent (via descriptors) | Good (system size limited by simulation time) | Poor (limited to small molecules or QM/MM) |
| Interpretability | High (descriptor importance) [32] [35] | High (direct visualization of dynamics) | High (fundamental electronic insights) |
| Predictive Accuracy | High for ADME, but can falter on novel chemical space [32] [30] | High when combined with ML for specific properties [29] | High for electronic properties, but scaling is a challenge |
| Key Applications | High-throughput ADME prediction, virtual screening [32] [30] [36] | Solubility prediction, conformational analysis, protein-ligand interactions [37] [29] | Chemical reactivity, protein-ligand interaction energy decomposition, advanced descriptors [33] [35] |
| Data Dependency | High (requires large, high-quality datasets) [31] | Moderate (needs force field parameters and simulation time) | Low for fundamental calculations, high for ML-based force fields |
| Handling of Novel Chemistries | Requires retraining/transfer learning for out-of-domain molecules [32] [30] | Force field dependent; can be simulated if parameters exist | Inherently accurate, but computationally expensive |

Experimental Protocols for Key Studies

Protocol 1: Developing a Global ML-QSPR Model for ADME Prediction

This protocol outlines the methodology for developing robust, global QSPR models for ADME properties, as validated on diverse modalities including targeted protein degraders [30].

- Data Curation: Compile a large, historical dataset of experimental results for the target ADME properties (e.g., permeability, metabolic stability, lipophilicity). Ensure consistent assay protocols and data quality.

- Descriptor Calculation & Chemical Space Analysis: Generate molecular descriptors or fingerprints (e.g., MACCS keys) for all compounds. Use techniques like Uniform Manifold Approximation and Projection (UMAP) to visualize and confirm that the chemical space of the test set (e.g., TPDs) is reasonably covered by the training set [30].

- Model Training with Multitask Learning: Employ an ensemble of a Message-Passing Neural Network (MPNN) and a Feed-Forward Deep Neural Network (DNN). The MPNN learns from molecular graph structures, while the DNN processes additional features. Training multiple related properties (tasks) simultaneously helps the model learn generalizable patterns [30].

- Temporal Validation: Split the data temporally, using older compounds for training and more recently tested compounds for validation. This assesses the model's real-world predictive power for new chemical entities [30].

- Performance Evaluation and Transfer Learning: Calculate standard metrics (e.g., Mean Absolute Error - MAE) on the test set. For sub-modalities with higher errors (e.g., heterobifunctional TPDs), apply transfer learning techniques to fine-tune the global model using a smaller, project-specific dataset to improve performance [30].
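The temporal validation and MAE steps above can be illustrated with a small, self-contained sketch. The record fields, the cutoff date, and the mean-value baseline are assumptions made for the example. The baseline merely stands in for the MPNN/DNN ensemble of the cited study [30], which is not reproduced here.

```python
from datetime import date


def temporal_split(records, cutoff: date):
    """Train on compounds measured before the cutoff, test on the rest."""
    train = [r for r in records if r["measured_on"] < cutoff]
    test = [r for r in records if r["measured_on"] >= cutoff]
    return train, test


def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between measured and predicted values."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical assay records with measurement dates.
records = [
    {"id": "cmpd_1", "measured_on": date(2021, 3, 1), "logD": 1.0},
    {"id": "cmpd_2", "measured_on": date(2022, 6, 15), "logD": 3.0},
    {"id": "cmpd_3", "measured_on": date(2023, 2, 10), "logD": 2.5},
    {"id": "cmpd_4", "measured_on": date(2024, 1, 5), "logD": 1.0},
]

train, test = temporal_split(records, cutoff=date(2023, 1, 1))

# Placeholder model: always predict the mean logD of the training set.
baseline = sum(r["logD"] for r in train) / len(train)
mae = mean_absolute_error([r["logD"] for r in test], [baseline] * len(test))
print(f"train={len(train)}, test={len(test)}, baseline MAE={mae:.2f}")
```

A trivial baseline like this is a useful reference point: a trained model that cannot beat it on the most recent compounds has learned little that transfers to new chemistry.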

Protocol 2: Integrating MD Simulations with ML for Solubility Prediction

This protocol details how to use MD-derived properties as features in ML models to predict aqueous solubility (LogS) [29].

- Data and System Setup: Curate a dataset of compounds with experimental LogS values. For each compound, prepare its 3D structure and generate topology files using a force field (e.g., GROMOS 54a7) [29].

- MD Simulation Execution: Solvate each molecule in a box of explicit water molecules. Run MD simulations in the isothermal-isobaric (NPT) ensemble using software like GROMACS to mimic physiological conditions. Ensure simulations are long enough to achieve stable sampling [29].

- Property Trajectory Analysis: From the simulation trajectories, extract key dynamic properties for each compound. Critical properties include [29]:

  - Solvent Accessible Surface Area (SASA)

  - Coulombic and Lennard-Jones (LJ) interaction energies between the solute and solvent

  - Estimated Solvation Free Energy (DGSolv)

  - Root Mean Square Deviation (RMSD) of the solute

  - Average number of solvents in the solvation shell (AvgShell)

- Feature Integration and Model Building: Combine the calculated MD properties with the experimentally known LogP. Use feature selection to identify the most impactful descriptors. Train ensemble ML algorithms (e.g., Random Forest, Gradient Boosting) using these features to predict LogS [29].

- Model Validation: Validate the model on a held-out test set of compounds. The performance (e.g., R² and RMSE) can be comparable to models based solely on structural fingerprints, demonstrating the predictive power of MD-derived features [29].
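The trajectory-analysis and feature-integration steps amount to reducing per-frame time series to one feature vector per compound. A minimal sketch is shown below, assuming the per-frame values have already been exported from the simulation package's analysis tools. The dictionary keys, the 20% equilibration cut, and the numbers are hypothetical, and no ML model is trained here.

```python
from statistics import mean


def trajectory_features(frames, log_p: float, skip_fraction: float = 0.2):
    """Average per-frame MD properties and append the experimental LogP.

    frames is a list of dicts, one per saved frame, sharing the same keys
    (e.g., "sasa", "coulomb", "lj", "rmsd"). The first skip_fraction of the
    frames is discarded as equilibration, which is an assumption of this sketch.
    """
    if not frames:
        raise ValueError("no frames supplied")
    start = int(len(frames) * skip_fraction)
    production = frames[start:]
    features = {key: mean(frame[key] for frame in production) for key in production[0]}
    features["logP"] = log_p
    return features


# Hypothetical five-frame trajectory for one compound.
frames = [
    {"sasa": 9.0, "coulomb": -80.0, "rmsd": 0.30},
    {"sasa": 4.0, "coulomb": -100.0, "rmsd": 0.10},
    {"sasa": 5.0, "coulomb": -110.0, "rmsd": 0.12},
    {"sasa": 6.0, "coulomb": -90.0, "rmsd": 0.08},
    {"sasa": 5.0, "coulomb": -100.0, "rmsd": 0.10},
]

features = trajectory_features(frames, log_p=2.3)
print(features)
```

Each compound then contributes one such row to the table used for feature selection and model training.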

Protocol 3: QM-Driven Structure-Activity Relationship Study

This protocol describes using QM calculations for an in-depth analysis of protein-protein interaction inhibitors, providing insights beyond traditional methods [33].

- Compound Design and Synthesis: Redesign the central scaffold of known inhibitors to explore new chemical space. Utilize efficient synthesis strategies like multicomponent reactions (e.g., Kabachnik-Fields reaction) to generate diverse compounds [33].

- Biophysical Activity Assay: Measure the activity (e.g., IC₅₀) of the newly synthesized compounds using relevant biophysical assays to determine their experimental potency [33].

- Quantum Mechanical Energy Analysis: Perform QM calculations (e.g., using Density Functional Theory) on the protein-ligand complexes. Employ Energy Decomposition and Deconvolution Analysis (EDDA) to break down the total binding energy into contributions from specific molecular fragments and interactions [33].

- SAR Rationalization: Correlate the calculated QM binding energies and decomposed energy terms with the experimentally measured activities. This allows for the rationalization of the Structure-Activity Relationship (SAR), identifying which structural features and atomic interactions contribute most to binding affinity and potency [33].

Workflow Visualization

The following diagram illustrates a synergistic workflow that integrates QSPR, MD, and QM for comprehensive property prediction in drug discovery.

Figure 1: Integrated Computational Workflow for Property Prediction.

Research Reagent Solutions

Table 3: Essential Computational Tools and Resources

| Tool/Resource Name | Type | Primary Function | Key Application in Research |
|---|---|---|---|
| GROMACS [29] | Software Suite | Molecular Dynamics Simulation | Simulating solute-solvent interactions to extract dynamic properties for solubility prediction. |
| MOE (Molecular Operating Environment) [36] | Software Suite | Molecular Modeling and QSPR | Calculating 2D and 3D physicochemical descriptors for machine learning model development. |
| OCHEM [32] | Online Platform | QSPR Model Development & Hosting | Building, validating, and hosting public consensus models for properties like solubility and lipophilicity. |
| AutoDock Vina [37] | Docking Software | Molecular Docking | Virtual screening of compound libraries to predict protein-ligand binding poses and affinities. |
| QUED Framework [35] | Descriptor Tool | Quantum-Mechanical Descriptor Generation | Generating QM-driven molecular descriptors that integrate electronic and structural information for ML models. |
| MPNN + DNN Ensemble [30] | Machine Learning Algorithm | Multitask Property Prediction | Simultaneously predicting multiple ADME endpoints by learning from molecular graphs and features. |

Lipophilicity, a key physicochemical parameter, significantly influences the absorption, distribution, metabolism, and excretion (ADME) of potential drug candidates [38]. It is most commonly expressed as the logarithm of the octanol/water partition coefficient (logP), which measures the equilibrium distribution of a compound between a lipophilic phase (typically octanol) and an aqueous phase [38] [3]. For ionizable compounds, the distribution coefficient (logD) provides a more meaningful descriptor as it accounts for pH-dependent ionization [38] [3]. Accurate prediction of these parameters is crucial in early drug discovery to optimize bioavailability and minimize costly late-stage failures [38].
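The relationship between logP and logD can be made concrete with the standard textbook expression for a monoprotic compound. The sketch below assumes that only the neutral species partitions into octanol, which is a simplification (ion pairs can partition to some extent), and the function name `logd_monoprotic` is a hypothetical helper rather than part of any cited tool.

```python
import math

def logd_monoprotic(logp, pka, ph=7.4, kind="acid"):
    """Estimate logD at a given pH for a monoprotic acid or base.

    Assumes only the neutral form partitions into octanol:
      acid: logD = logP - log10(1 + 10**(pH - pKa))
      base: logD = logP - log10(1 + 10**(pKa - pH))
    """
    if kind == "acid":
        exponent = ph - pka
    elif kind == "base":
        exponent = pka - ph
    else:
        raise ValueError("kind must be 'acid' or 'base'")
    return logp - math.log10(1.0 + 10.0 ** exponent)

# Hypothetical compounds with the same logP but different ionization behavior.
print(round(logd_monoprotic(3.0, pka=4.4, kind="acid"), 2))  # acid, mostly ionized at pH 7.4
print(round(logd_monoprotic(3.0, pka=9.4, kind="base"), 2))  # base, mostly ionized at pH 7.4
print(round(logd_monoprotic(3.0, pka=7.4, kind="acid"), 2))  # half ionized at pH 7.4
```

Under these assumptions, two compounds with identical logP can differ by several log units in logD7.4, which is why logD is the more informative descriptor for ionizable molecules.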

Computational methods for predicting lipophilicity have evolved into several distinct approaches, primarily categorized as fragment-based (or substructure-based) and atom-based methods [39]. Fragment-based algorithms, such as ClogP and ACD/logP, operate by dividing molecules into smaller chemical fragments or functional groups with predetermined lipophilicity values, then summing these contributions while applying structural correction factors [39]. In contrast, atom-based approaches, including AlogP and XLOGP variants, decompose molecules to the atomic level, assigning contributions based on atom types and their environments [39]. A third category of property-based methods utilizes molecular descriptors or advanced machine learning techniques that consider the entire molecule's properties rather than relying on additive contributions [39] [40]. Understanding the fundamental differences, strengths, and limitations of these approaches enables researchers to select appropriate tools for specific chemical spaces and applications.

Algorithm Fundamentals: Deconstruction Methods and Theoretical Foundations

Fragment-Based (Substructure-Based) Methods

Fragment-based algorithms rely on the principle that molecular properties can be approximated by the sum of the contributions of their constituent parts. These methods employ predefined libraries of chemical substructures or fragments whose lipophilicity contributions have been determined experimentally [39]. When predicting logP for a new compound, the algorithm identifies all relevant fragments within the molecule, sums their contributions, and applies correction factors to account for intramolecular interactions such as hydrogen bonding or electronic effects that simple addition might miss [39].
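The additive scheme described above can be illustrated with a toy calculation. The fragment values and the correction factor below are invented for illustration and are not taken from ClogP or any real parameter set; fragment identification is also assumed to have been done already, so the function only receives fragment counts.

```python
# Hypothetical fragment contributions and corrections (illustrative numbers only).
FRAGMENT_VALUES = {
    "aromatic_CH": 0.35,
    "aromatic_C": 0.15,
    "CH3": 0.55,
    "OH": -1.10,
    "COOH": -0.90,
}
CORRECTIONS = {
    "intramolecular_hbond": 0.60,
}

def fragment_logp(fragment_counts, corrections=()):
    """Sum fragment contributions, then add the named correction factors.

    Raises KeyError when a fragment has no predefined value, mirroring the
    failure mode of fragment methods on scaffolds outside their library.
    """
    missing = [name for name in fragment_counts if name not in FRAGMENT_VALUES]
    if missing:
        raise KeyError(f"no parameters for fragments: {missing}")
    total = sum(FRAGMENT_VALUES[name] * count for name, count in fragment_counts.items())
    total += sum(CORRECTIONS[name] for name in corrections)
    return total

# A toluene-like decomposition: five aromatic CH, one substituted aromatic C, one methyl.
toluene_like = {"aromatic_CH": 5, "aromatic_C": 1, "CH3": 1}
print(round(fragment_logp(toluene_like), 2))
```

The explicit `KeyError` is a deliberate design choice in this sketch: it makes visible the case where a molecule contains a fragment the library has never seen.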

The ClogP (Hansch-Leo) method represents one of the most established fragment-based approaches, utilizing a large database of fragment values and numerous correction factors [39]. Its development involved careful experimental validation across diverse chemical structures, making it particularly valuable for drug-like molecules. Similarly, AB/LogP employs an advanced algorithm that uses a comprehensive set of fragments and correction rules to calculate logP values [39]. Fragment methods generally perform well for compounds containing substructures well-represented in their training data but may struggle with novel scaffolds or molecules featuring unusual fragment combinations that lack predefined parameters.

Atom-Based Methods

Atom-based approaches represent a more granular decomposition strategy, where molecules are broken down to the atomic level rather than functional groups. These methods assign contributions based on atom types, considering their hybridization states and neighboring atoms [39]. AlogP exemplifies this approach by utilizing atomic contributions and correction factors based on molecular topology [39]. The XLOGP series represents another prominent atom-based methodology, with XLOGP3 incorporating atomic contributions and cross-terms to better capture intramolecular interactions [39].

Atom-based methods offer advantages for novel chemical structures where predefined fragments may be unavailable, as the atomic decomposition provides more comprehensive coverage of chemical space. However, they may oversimplify complex electronic effects that extend beyond immediate atomic environments. The performance of atom-based methods can vary significantly depending on the chemical class, with some implementations struggling with specific functional groups or complex heterocyclic systems [39].

Emerging Machine Learning Approaches

Recent advances have introduced property-based and machine learning approaches that represent a paradigm shift from traditional decomposition methods. These include Directed-Message Passing Neural Networks (D-MPNNs), which learn molecular representations by passing information along bonds and thereby capture complex structural patterns without explicit fragment libraries [40]. Methods like Chemprop leverage multitask learning, incorporating predictions from established software such as Simulations Plus logP and logD as helper tasks to improve accuracy and generalization [40]. Another innovative approach, FREL, employs dual-channel transfer learning based on molecular fragments, combining masked autoencoder and contrastive learning to capture both intra- and inter-molecular relationships [41]. These ML-based methods have demonstrated competitive performance in blind prediction challenges like SAMPL, often outperforming traditional fragment-based and atom-based approaches, particularly for structurally novel compounds [40].

Comparative Performance Analysis: Benchmarking Studies and Quantitative Evaluations

Methodology of Benchmarking Studies

Rigorous benchmarking of logP prediction algorithms requires carefully designed validation protocols using high-quality experimental data. The "shake-flask" method represents the gold standard for experimental logP determination, where the distribution of a compound between octanol and water phases is measured directly [42]. However, this approach is tedious, time-consuming, and requires large amounts of pure material, making it unsuitable for high-throughput applications [42]. Ultra-High Performance Liquid Chromatography (UHPLC) methods have emerged as efficient alternatives, correlating retention times with known standards to determine logP values for hundreds of compounds with good reproducibility [43] [42].

Critical to meaningful validation is the use of chemically diverse datasets that adequately represent the chemical space of interest. A large, chemically diverse dataset of 707 validated logP values, ranging from 0.30 to 7.50, was developed specifically for benchmarking and addressed a significant limitation of earlier comparative studies [43]. This dataset includes non-ionizable (46%), basic (30%), acidic (17%), zwitterionic (0.5%), and ampholytic compounds (6.5%), providing a robust foundation for method evaluation [43]. Benchmarking protocols must also account for molecular complexity, as accuracy typically declines as the number of non-hydrogen atoms increases [39]. Proper dataset splitting strategies, such as scaffold-based splits that separate structurally distinct compounds, provide more realistic performance estimates than random splits [40].
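A scaffold-based split can be sketched as a group-wise partition. The example assumes that a scaffold key has already been computed for each compound (for instance, a Bemis-Murcko scaffold from a cheminformatics toolkit); the keys and compound IDs below are hypothetical, and the greedy assignment is one common heuristic rather than the specific procedure used in the cited work.

```python
from collections import defaultdict

def scaffold_split(records, test_fraction=0.2):
    """Split (compound_id, scaffold_key) records so no scaffold spans both sets.

    Scaffold groups are visited from largest to smallest. A group goes to the
    training set if it still fits within the training budget; otherwise the
    whole group goes to the test set.
    """
    groups = defaultdict(list)
    for compound_id, scaffold_key in records:
        groups[scaffold_key].append(compound_id)

    ordered = sorted(groups.items(), key=lambda item: (-len(item[1]), item[0]))
    train_budget = len(records) * (1.0 - test_fraction)

    train, test = [], []
    for _scaffold, members in ordered:
        if len(train) + len(members) <= train_budget:
            train.extend(members)
        else:
            test.extend(members)
    return train, test

# Hypothetical records: ten compounds spread over four scaffolds.
records = [
    ("cpd01", "A"), ("cpd02", "A"), ("cpd03", "A"), ("cpd04", "A"),
    ("cpd05", "B"), ("cpd06", "B"), ("cpd07", "B"),
    ("cpd08", "C"), ("cpd09", "C"),
    ("cpd10", "D"),
]
train_ids, test_ids = scaffold_split(records, test_fraction=0.2)
print("train:", train_ids)
print("test:", test_ids)
```

Because entire scaffold groups are held out, test-set error reflects performance on chemotypes the model has not seen, which is usually higher than the error from a random split.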

Quantitative Performance Comparison

Comprehensive comparisons of logP prediction methods reveal substantial variation in performance across different chemical classes and datasets. One extensive evaluation of 18 methods on industrial datasets containing over 96,000 compounds found that only seven methods performed acceptably on both public and proprietary datasets [39]. The arithmetic average model (AAM), which predicts the mean logP of the dataset for every compound, served as a baseline; methods that performed worse than this baseline were considered unacceptable [39].
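The AAM baseline comparison is straightforward to reproduce. The sketch below uses invented logP values and takes the mean of the evaluated set as the AAM prediction, which is an assumption about how the baseline is computed; `is_acceptable` is a hypothetical helper name.

```python
import math

def rmse(predicted, observed):
    """Root-mean-square error between predictions and measurements."""
    squared = [(p - o) ** 2 for p, o in zip(predicted, observed)]
    return math.sqrt(sum(squared) / len(observed))

def aam_rmse(observed):
    """RMSE of the arithmetic average model: the mean value predicted for every compound."""
    mean_value = sum(observed) / len(observed)
    return rmse([mean_value] * len(observed), observed)

def is_acceptable(predicted, observed):
    """A method is acceptable only if it beats the AAM baseline."""
    return rmse(predicted, observed) < aam_rmse(observed)

# Hypothetical measured logP values and two hypothetical prediction methods.
measured = [1.0, 2.0, 3.0, 4.0, 5.0]
method_a = [1.2, 1.9, 3.4, 3.8, 5.1]
method_b = [4.0, 0.5, 5.5, 1.0, 3.0]

print(f"AAM baseline RMSE: {aam_rmse(measured):.2f}")
for name, predictions in (("method_a", method_a), ("method_b", method_b)):
    verdict = "acceptable" if is_acceptable(predictions, measured) else "unacceptable"
    print(f"{name}: RMSE {rmse(predictions, measured):.2f} ({verdict})")
```

Reporting the baseline next to every RMSE guards against misleadingly low errors on datasets that span a narrow logP range.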

Table 1: Performance Comparison of logP Prediction Methods on Different Datasets

| Method | Type | Public Dataset (N=266) RMSE | Industrial Dataset (N=95,809) RMSE | Notable Characteristics |
|---|---|---|---|---|
| ClogP | Fragment-based | ~0.6 | ~1.0 | Systematic errors for chemically related molecules |
| XLOGP3 | Atom-based | ~0.6 | ~0.8 | Good performance across diverse structures |
| ALOGP | Atom-based | ~0.7 | ~1.2 | Limitations with complex molecules |
| S+logP | Property-based | ~0.4 | ~0.7 | Uses molecular descriptors and statistical methods |
| Chemprop | Machine Learning | - | 0.66 (SAMPL7) | D-MPNN architecture with multitask learning |
| Simple Equation* | Atom-counting | - | ~0.8 | logP = 1.46 + 0.11NC - 0.11NHET |

*Simple equation based on carbon (NC) and heteroatom (NHET) counts [39]

Notably, a simple equation based solely on the numbers of carbon atoms and heteroatoms (logP = 1.46 + 0.11NC - 0.11NHET) outperformed many established programs in large-scale benchmarking, highlighting the continued challenge of accurate prediction [39]. For context, the average difference between calculated and measured logP values for 70 commercial drugs was approximately 1.05 log units according to investigators at Wyeth Research [42].
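The atom-counting equation is simple enough to evaluate directly from a molecular formula. The sketch below assumes that NHET counts every atom that is neither carbon nor hydrogen and that the formula is a plain string without brackets or charges; `count_atoms` and `simple_logp` are hypothetical helper names.

```python
import re

def count_atoms(formula):
    """Count atoms per element in a plain formula such as 'C9H8O4'."""
    counts = {}
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] = counts.get(element, 0) + (int(number) if number else 1)
    return counts

def simple_logp(formula):
    """Evaluate logP = 1.46 + 0.11*NC - 0.11*NHET from a molecular formula."""
    counts = count_atoms(formula)
    n_carbon = counts.get("C", 0)
    # Assumption: every non-carbon, non-hydrogen atom counts as a heteroatom.
    n_hetero = sum(n for element, n in counts.items() if element not in ("C", "H"))
    return 1.46 + 0.11 * n_carbon - 0.11 * n_hetero

# Aspirin, C9H8O4: NC = 9, NHET = 4.
print(round(simple_logp("C9H8O4"), 2))
```

An estimate like this is useful as a sanity check: a sophisticated model that cannot beat it on a given dataset deserves scrutiny.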

Machine learning approaches have shown particular promise in blind prediction challenges. The Chemprop model, which employs directed-message passing neural networks (D-MPNNs) with additional datasets from ChEMBL and predictions from commercial software as helper tasks, achieved an RMSE of 0.66 in the SAMPL7 challenge, ranking second out of 17 submissions [40]. Similarly, the FREL model, which incorporates molecular fragments through dual-channel pretraining, demonstrated state-of-the-art performance on benchmark datasets including Lipophilicity from MoleculeNet [41].

Experimental Protocols for Validation

Traditional Shake-Flask Method

The shake-flask method remains the reference standard for experimental logP determination, despite its limitations for high-throughput applications. The conventional protocol involves the following steps [42]:

- Phase Preparation: High-purity water and n-octanol are mutually saturated by stirring together for 24 hours before separation. This ensures each phase is equilibrated with the other.

- Compound Distribution: The test compound is dissolved in either the aqueous or organic phase, and equal volumes of both phases are combined in a sealed container.

- Equilibration: The mixture is shaken vigorously at constant temperature (typically 25°C) for a predetermined period to establish partitioning equilibrium.

- Phase Separation: After shaking, the mixture is allowed to settle or is centrifuged to achieve complete phase separation.

- Concentration Analysis: The compound concentration in each phase is quantified using analytical techniques such as UV spectroscopy, HPLC, or LC-MS.

- Calculation: The partition coefficient P is calculated as the ratio of concentrations in the octanol and aqueous phases, with logP representing the decimal logarithm of this ratio.

This method is reliable for logP values between -2 and 4, but becomes challenging for highly lipophilic compounds due to emulsion formation and analytical limitations in detecting low aqueous concentrations [42].
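The calculation step of the protocol reduces to a single logarithm. The sketch below uses invented concentrations and flags results outside the -2 to 4 window quoted above; the function names are hypothetical, and both concentrations are assumed to be expressed in the same units.

```python
import math

RELIABLE_RANGE = (-2.0, 4.0)  # range quoted in the text for the shake-flask method

def shake_flask_logp(conc_octanol, conc_water):
    """Return logP = log10(C_octanol / C_water) for concentrations in the same units."""
    if conc_octanol <= 0 or conc_water <= 0:
        raise ValueError("both concentrations must be positive")
    return math.log10(conc_octanol / conc_water)

def in_reliable_range(logp):
    """Check whether a measured logP falls inside the method's reliable window."""
    low, high = RELIABLE_RANGE
    return low <= logp <= high

# Hypothetical measurement: 250 uM in octanol and 2.5 uM in water after equilibration.
logp = shake_flask_logp(250.0, 2.5)
print(f"logP = {logp:.2f}, within reliable range: {in_reliable_range(logp)}")
```

For a highly lipophilic compound the aqueous concentration approaches the detection limit, so small absolute errors in `conc_water` translate into large errors in logP, which is the analytical limitation the text refers to.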

High-Throughput Experimental Methods

To address the throughput limitations of shake-flask methods, several automated approaches have been developed:

96-Well Polymer-Water Partitioning [42]:

- Utilizes polyvinyl chloride (PVC) plasticized with dioctyl sebacate (DOS) as the lipid phase in a 96-well format

- Demonstrates excellent correlation with octanol/water partitioning (slope of 0.933)

- Enables rapid determination of distribution coefficients at multiple pH values

- Requires minimal compound amounts (microgram scale)

- Allows simultaneous measurement of pKa and logP values through pH variation

Reversed-Phase Chromatographic (HPLC/UHPLC) Retention Methods:

- Employs reversed-phase chromatographic retention times correlated with reference compounds of known logP

- Suitable for logP range of 0-6 with high reproducibility

- Amenable to full automation and high throughput

- Requires appropriate reference standards with similar structural features

- May struggle with strong acids/bases and surface-active compounds
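The retention-time approach in the bullets above amounts to a calibration line fitted to reference compounds. The sketch below assumes the usual capacity factor k = (tR - t0) / t0 and a linear relationship between logP and log k; the retention times and reference logP values are invented, and the helper names are hypothetical.

```python
import math

def log_capacity_factor(retention_time, dead_time):
    """Return log10 k, where k = (tR - t0) / t0."""
    return math.log10((retention_time - dead_time) / dead_time)

def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

# Hypothetical reference standards: (retention time in min, known logP).
dead_time = 1.0
references = [(2.0, 1.0), (11.0, 3.0), (101.0, 5.0)]

log_k = [log_capacity_factor(t_r, dead_time) for t_r, _ in references]
known_logp = [value for _, value in references]
slope, intercept = fit_line(log_k, known_logp)

def predict_logp(retention_time):
    """Estimate logP of an unknown from its retention time via the calibration line."""
    return slope * log_capacity_factor(retention_time, dead_time) + intercept

unknown_retention_time = 1.0 + 10 ** 1.5  # hypothetical analyte
print(f"calibration: logP = {slope:.2f} * log k + {intercept:.2f}")
print(f"estimated logP of unknown: {predict_logp(unknown_retention_time):.2f}")
```

The estimate is only as good as the calibration set, which is why reference standards with structural features similar to the analytes are required.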

Values measured with these high-throughput methods can differ substantially from calculated values, which underscores the continued need for experimental verification, particularly for novel chemical series [42].

Research Reagent Solutions: Essential Tools for Lipophilicity Studies

Table 2: Key Reagents and Resources for Experimental logP Determination

| Reagent/Resource | Function/Application | Key Characteristics |

|---|---|---|

| n-Octanol | Reference lipid phase in shake-flask method | Must be high-purity; pre-saturated with water [42] |

| DOS-Plasticized PVC | Polymer phase in high-throughput partitioning | Lipophilicity similar to octanol; enables 96-well format [42] |