Structure-Based vs Ligand-Based Virtual Screening: A Comprehensive Guide to Methods, Validation, and AI-Driven Integration

Abstract

This article provides a comprehensive examination of structure-based and ligand-based virtual screening (SBVS and LBVS) for researchers and drug development professionals. It covers the foundational principles of both approaches, detailing their respective methodologies, from molecular docking and pharmacophore modeling to machine learning-enhanced similarity searches. The content explores advanced troubleshooting and optimization strategies to mitigate common pitfalls, and delivers a critical comparative analysis of their validation performance based on real-world benchmarks and case studies. Finally, it synthesizes key takeaways and outlines the future trajectory of virtual screening, emphasizing the growing power of integrated, AI-accelerated platforms to navigate ultra-large chemical spaces in modern drug discovery.

Virtual Screening 101: Core Principles of Structure-Based and Ligand-Based Approaches

Virtual screening (VS) has become an indispensable component of modern drug discovery, serving as a computational counterpart to experimental high-throughput screening [1]. By leveraging sophisticated algorithms and computational power, VS enables researchers to sift through vast chemical libraries containing millions or even billions of compounds to identify promising candidates with a high probability of biological activity against a specific therapeutic target [2] [3]. This in silico approach dramatically reduces the time and cost associated with the early stages of drug development by prioritizing a manageable number of compounds for experimental validation [1]. The foundation of VS rests on understanding the physicochemical properties of molecules, including their three-dimensional shapes, electrostatic potentials, hydrophobic characteristics, and the spatial distribution of functional groups—all critical determinants of drug-target interactions [1].

Within the VS paradigm, two principal strategies have emerged: structure-based virtual screening (SBVS) and ligand-based virtual screening (LBVS). These approaches differ fundamentally in their underlying principles and information requirements, yet share the common goal of efficiently identifying bioactive compounds [4] [5]. SBVS relies on knowledge of the three-dimensional structure of the biological target, typically obtained through experimental methods such as X-ray crystallography, NMR spectroscopy, or cryo-electron microscopy, or increasingly through computational predictions like AlphaFold2 [4] [1]. In contrast, LBVS operates without target structural information, instead leveraging the chemical and biological properties of known active compounds to identify novel hits through similarity principles [3] [1]. The complementary nature of these approaches has spurred continued innovation in hybrid strategies that seek to harness their combined strengths while mitigating their individual limitations [6] [4] [5].

Core Principles and Methodologies

Structure-Based Virtual Screening (SBVS): A Target-Centric Approach

SBVS methodologies center on predicting the molecular interaction between a compound and its target binding site. The most widely employed SBVS technique is molecular docking, which computationally simulates the binding of small molecule ligands to a protein target [3] [1]. The docking process involves two key components: pose generation, which explores possible orientations and conformations of the ligand within the binding site, and scoring, which ranks these poses based on estimated binding affinity using scoring functions [1]. These scoring functions employ various computational approaches, including force-field based methods that calculate energy terms, empirical functions that parameterize experimental data, knowledge-based potentials derived from structural databases, and increasingly, machine learning-based models that learn complex patterns from large datasets [4] [1].

The SBVS workflow typically begins with target preparation, which involves processing the protein structure, defining the binding site, and potentially accounting for flexibility in the receptor [1]. In parallel, compound libraries are prepared through chemical standardization and generation of plausible three-dimensional conformations. The docking process then screens each compound against the target, generating predicted binding modes and associated scores that prioritize candidates for experimental testing [1]. A significant advantage of SBVS is its ability to identify novel chemotypes that may be structurally distinct from known actives, as it focuses on complementarity to the binding site rather than similarity to existing ligands [1] [5]. However, SBVS faces challenges including the accurate prediction of binding affinities, accounting for full protein flexibility and solvation effects, and reliance on the quality and relevance of the available target structure [1] [5].

Ligand-Based Virtual Screening (LBVS): A Chemical-Centric Approach

LBVS methodologies operate under the similarity property principle, which states that structurally similar molecules are likely to exhibit similar biological activities [3] [5]. This approach requires one or more known active compounds as reference templates, from which various molecular descriptors are computed to represent key chemical features and properties [1]. These descriptors can be categorized by dimensionality: 1D descriptors encode bulk properties like molecular weight and lipophilicity; 2D descriptors represent topological features such as structural fingerprints and molecular graphs; and 3D descriptors capture spatial characteristics including molecular shape, volume, and pharmacophoric features [5].

Common LBVS techniques include similarity searching, which quantifies the resemblance between molecules using metrics like the Tanimoto coefficient applied to structural fingerprints [3] [7]; pharmacophore modeling, which identifies essential steric and electronic features necessary for molecular recognition [1] [7]; and quantitative structure-activity relationship (QSAR) modeling, which establishes statistical correlations between molecular descriptors and biological activity through machine learning algorithms [4] [7]. The primary strength of LBVS lies in its computational efficiency, enabling the rapid screening of extremely large compound collections without requiring target structural information [1]. However, LBVS is constrained by its dependence on the quality and diversity of known actives, potential bias toward familiar chemotypes, and limited ability to identify novel scaffolds that diverge significantly from established templates [4] [5].
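The similarity search described above can be illustrated with a minimal sketch. It assumes each fingerprint is already available as a set of "on" bit indices; the compound names and bit values are made up for illustration, and a real campaign would compute fingerprints with a cheminformatics toolkit rather than by hand.

```python
from typing import Dict, List, Set, Tuple


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Tanimoto coefficient: shared on-bits divided by the union of on-bits."""
    if not a and not b:
        return 0.0  # convention chosen here for two empty fingerprints
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


def rank_by_similarity(query: Set[int],
                       library: Dict[str, Set[int]]) -> List[Tuple[str, float]]:
    """Score every library compound against the query, most similar first."""
    scored = [(name, tanimoto(query, fp)) for name, fp in library.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Hypothetical fingerprints (sets of on-bit indices), for illustration only.
query_fp = {1, 4, 7, 9}
library_fps = {
    "cmpd_A": {1, 4, 7, 9},   # identical to the query
    "cmpd_B": {1, 4, 8},      # shares two bits with the query
    "cmpd_C": {2, 3, 5},      # shares no bits with the query
}
ranking = rank_by_similarity(query_fp, library_fps)
```

Here `cmpd_B` shares 2 bits with the query out of 5 bits in the union, giving a coefficient of 0.4, so it ranks between the identical and the unrelated compound.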

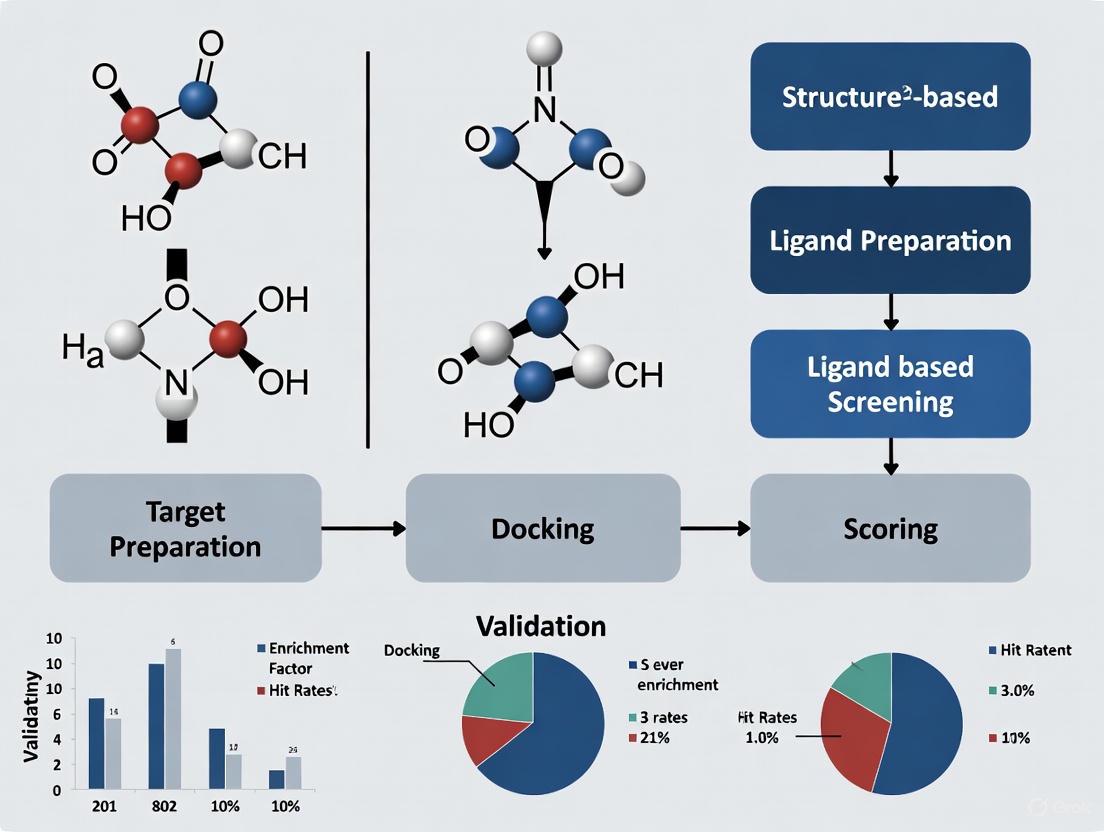

Figure 1: Virtual Screening Workflow Strategies. This diagram illustrates the fundamental workflows for structure-based (SBVS) and ligand-based (LBVS) virtual screening approaches, as well as their combination in hybrid methods [4] [1] [5].

Comparative Analysis: SBVS vs. LBVS

Table 1: Fundamental Characteristics of SBVS and LBVS

| Feature | Structure-Based Virtual Screening (SBVS) | Ligand-Based Virtual Screening (LBVS) |
|---|---|---|
| Information Requirement | 3D structure of target protein | Known active compounds |
| Core Principle | Molecular complementarity to binding site | Chemical similarity to known actives |
| Primary Methodology | Molecular docking and scoring | Similarity searching, pharmacophores, QSAR |
| Chemical Novelty | High potential for novel scaffold identification | Limited by similarity to known chemotypes |
| Computational Cost | Higher (docking computationally intensive) | Lower (rapid similarity calculations) |
| Target Flexibility | Challenging to account for fully | Not applicable (no target structure used) |
| Key Strengths | Identifies novel scaffolds; provides structural insights | High efficiency; no target structure needed |
| Major Limitations | Dependent on quality of target structure; scoring inaccuracies | Limited by knowledge of existing actives; scaffold bias |

Information Requirements and Applicability Domains

The fundamental distinction between SBVS and LBVS lies in their information prerequisites, which directly influences their applicability to different drug discovery scenarios. SBVS requires detailed three-dimensional structural information of the biological target, typically derived from experimental methods such as X-ray crystallography, NMR spectroscopy, or cryo-electron microscopy [1]. With the recent breakthroughs in protein structure prediction via AlphaFold2, SBVS is becoming applicable to a broader range of targets previously lacking experimental structures [4]. This structural foundation enables atomic-level insights into binding interactions and facilitates the identification of completely novel chemotypes that share no obvious structural similarity to known ligands [1] [5].

In contrast, LBVS relies exclusively on knowledge of compounds with confirmed activity against the target of interest, making it particularly valuable for targets with poorly characterized or unknown structures [3] [1]. The performance of LBVS is heavily dependent on the quantity, quality, and structural diversity of known actives, with robust QSAR models typically requiring substantial datasets spanning multiple chemical series and potency ranges [7]. While LBVS excels at finding analogs similar to established chemotypes, it may struggle to identify structurally distinct compounds that interact with the target through novel binding modes [4] [5].

Performance Considerations and Methodological Challenges

Both SBVS and LBVS face distinct methodological challenges that impact their performance and reliability. SBVS methodologies, particularly molecular docking, contend with the accurate prediction of binding affinities—a persistent challenge due to simplifications in scoring functions and the complexities of molecular recognition [1]. The treatment of protein flexibility represents another significant hurdle, as conventional docking often treats receptors as rigid entities despite the dynamic conformational changes that frequently accompany ligand binding [1] [5]. Additionally, the handling of solvent effects, particularly the role of water molecules in mediating protein-ligand interactions, remains computationally demanding and can significantly impact pose prediction and scoring accuracy [5].

LBVS faces its own set of limitations, primarily centered around the "analog bias" or "ligand bias," where overreliance on similar chemical templates may limit structural diversity in screening outputs [7]. The molecular representations and similarity metrics used in LBVS may not fully capture the complex physicochemical properties governing biological activity, potentially leading to false positives or missed opportunities [5] [7]. Furthermore, LBVS models require careful validation to avoid overfitting, particularly with complex machine learning approaches applied to limited training data [7] [8]. The target-dependent performance of both approaches necessitates careful method selection and validation for each specific application [5].

Table 2: Performance Metrics from Comparative Studies

| Evaluation Metric | SBVS Performance | LBVS Performance | Hybrid Methods | Notes |
|---|---|---|---|---|
| Enrichment Factor (EF1%) | Variable (target-dependent) | Variable (target-dependent) | 29.73-52.77 (ENS-VS) [8] | Higher values indicate better early enrichment |
| Area Under Curve (AUC) | ~0.7-0.9 (typical range) | ~0.7-0.9 (typical range) | 0.793-0.982 (ENS-VS) [8] | Measure of overall classification performance |
| Scaffold Novelty | Higher | Lower | Intermediate | SBVS better for identifying novel chemotypes |
| False Positive Rate | Median ~83% in docking [1] | Varies with similarity threshold | Reduced compared to single methods | Significant challenge in SBVS scoring |
| Computational Efficiency | Lower (docking intensive) | Higher (rapid similarity) | Intermediate | LBVS enables larger library screening |

Validation Frameworks and Benchmarking

Benchmarking Datasets and Validation Protocols

Robust validation is essential for assessing the performance of virtual screening methods and guiding their application in prospective drug discovery campaigns. The development of standardized benchmarking datasets has been crucial for objective comparison of SBVS and LBVS approaches [9] [7]. These datasets typically consist of known active compounds paired with "decoys", carefully selected molecules presumed to be inactive that serve as negative controls [9] [7]. The Directory of Useful Decoys (DUD) and its enhanced version DUD-E have emerged as widely adopted benchmarks; DUD-E contains 102 targets with over 20,000 active compounds and approximately 50 property-matched decoys per active [7] [8]. Other notable resources include DEKOIS, MUV, and target-specific databases designed to minimize biases in performance evaluation [7].

Standard validation protocols involve screening benchmarking datasets and calculating enrichment metrics that quantify the ability to prioritize active compounds over decoys [9] [7]. Common metrics include enrichment factors (EF), which measure the concentration of actives in the top-ranked fraction compared to random selection; receiver operating characteristic (ROC) curves, which plot the true positive rate against the false positive rate across all ranking thresholds; and area under the ROC curve (AUC), which provides an aggregate measure of classification performance [9] [7]. These quantitative assessments enable direct comparison of different screening methods and inform selection of the optimal approach for specific targets or discovery contexts.
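As a rough illustration of these metrics, the sketch below computes an enrichment factor and a ROC AUC from a ranked list. It assumes higher scores mean "more likely active" (docking energies, where lower is better, would need to be negated first) and labels of 1 for actives and 0 for decoys; the scores and labels are invented for the example.

```python
from typing import Sequence


def enrichment_factor(scores: Sequence[float], labels: Sequence[int],
                      fraction: float = 0.01) -> float:
    """Hit rate in the top-ranked fraction divided by the overall hit rate."""
    n = len(scores)
    n_actives = sum(labels)
    if n == 0 or n_actives == 0:
        raise ValueError("need at least one compound and one active")
    n_top = max(1, int(round(n * fraction)))
    order = sorted(range(n), key=lambda i: scores[i], reverse=True)
    hits_top = sum(labels[i] for i in order[:n_top])
    return (hits_top / n_top) / (n_actives / n)


def roc_auc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """AUC as the probability that a random active outscores a random decoy.

    Ties count as half a win. This pairwise form is quadratic in the number
    of compounds, which is fine for a sketch but slow for large benchmarks.
    """
    actives = [s for s, y in zip(scores, labels) if y == 1]
    decoys = [s for s, y in zip(scores, labels) if y == 0]
    if not actives or not decoys:
        raise ValueError("need at least one active and one decoy")
    wins = 0.0
    for a in actives:
        for d in decoys:
            if a > d:
                wins += 1.0
            elif a == d:
                wins += 0.5
    return wins / (len(actives) * len(decoys))


# Toy ranking: 2 actives among 10 compounds (illustrative values only).
scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1, 0.05]
labels = [1, 0, 1, 0, 0, 0, 0, 0, 0, 0]
ef_20 = enrichment_factor(scores, labels, fraction=0.2)
auc = roc_auc(scores, labels)
```

In this toy case the top 20% holds one of the two actives, so the top-fraction hit rate (0.5) is 2.5 times the overall hit rate (0.2), and the actives win 15 of the 16 active-decoy comparisons.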

Addressing Biases in Benchmarking

The construction of unbiased benchmarking datasets presents significant challenges, as identified in methodological research [9] [7]. Early benchmarking efforts suffered from "artificial enrichment," where decoys differed substantially from actives in simple physicochemical properties, enabling trivial discrimination based on properties like molecular weight rather than specific complementarity [9] [7]. The "analog bias" occurs when actives within a benchmark share high structural similarity, potentially inflating LBVS performance through over-representation of certain chemotypes [7]. Additionally, the potential inclusion of undiscovered active compounds within decoy sets ("false negatives") can lead to underestimated performance metrics [7].

Modern benchmarking databases address these issues through sophisticated decoy selection strategies that match physicochemical properties between actives and decoys while ensuring structural dissimilarity [9] [7]. Tools like DecoyFinder and best practices guidelines enable researchers to generate target-specific benchmarking sets that minimize biases and provide realistic assessment of virtual screening performance [9] [7]. These advances support more reliable method evaluation and translation of retrospective performance to prospective screening success.
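The decoy selection idea can be sketched as a filter that keeps molecules whose bulk properties resemble an active while their fingerprints do not. This is a simplified illustration, not the procedure of any specific benchmark: only two properties are matched for brevity, and the tolerances, the similarity cutoff, and all molecule records are assumptions chosen for the example.

```python
from typing import Dict, List, Set


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Tanimoto coefficient on sets of on-bit indices."""
    if not a and not b:
        return 0.0
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


def select_decoys(active: Dict, candidates: List[Dict],
                  mw_tol: float = 25.0, logp_tol: float = 1.0,
                  max_similarity: float = 0.35, n_decoys: int = 50) -> List[str]:
    """Keep candidates that match the active in properties but not in structure."""
    selected: List[str] = []
    for mol in candidates:
        property_match = (abs(mol["mw"] - active["mw"]) <= mw_tol
                          and abs(mol["logp"] - active["logp"]) <= logp_tol)
        dissimilar = tanimoto(mol["fp"], active["fp"]) <= max_similarity
        if property_match and dissimilar:
            selected.append(mol["name"])
        if len(selected) == n_decoys:
            break
    return selected


# Hypothetical records: molecular weight, logP, and fingerprint on-bits.
active = {"name": "act", "mw": 300.0, "logp": 2.5, "fp": {1, 2, 3, 4}}
candidates = [
    {"name": "c1", "mw": 310.0, "logp": 2.8, "fp": {10, 11, 12}},    # kept
    {"name": "c2", "mw": 305.0, "logp": 2.4, "fp": {1, 2, 3, 5}},    # too similar
    {"name": "c3", "mw": 450.0, "logp": 2.5, "fp": {20, 21}},        # MW mismatch
    {"name": "c4", "mw": 295.0, "logp": 3.0, "fp": {1, 30, 31, 32}}, # kept
]
decoys = select_decoys(active, candidates)
```

Rejecting `c2` guards against a likely undiscovered active sitting in the decoy set, while rejecting `c3` guards against trivial discrimination by molecular weight.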

Hybrid Approaches: Integrating SBVS and LBVS

Combination Strategies and Workflows

Recognizing the complementary strengths and limitations of SBVS and LBVS, researchers have developed hybrid strategies that integrate both approaches to enhance screening performance [4] [5]. These hybrid methods can be categorized into three primary architectures: sequential, parallel, and fully integrated approaches [4] [5]. Sequential strategies apply LBVS and SBVS in consecutive steps, typically using faster ligand-based methods for initial filtering followed by more computationally intensive structure-based techniques for refined assessment [5]. This funnel-based approach optimizes the trade-off between computational efficiency and screening accuracy, though it may discard true positives that perform poorly in the initial filtering stage [4] [5].
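A sequential funnel of this kind might look like the sketch below. The two scoring callables are placeholders for a fast ligand-based score (higher is better) and a slow docking score (lower is better); the function name, the `keep_fraction` parameter, and the lookup tables are all hypothetical.

```python
from typing import Callable, List


def sequential_screen(library: List[str],
                      cheap_score: Callable[[str], float],
                      expensive_score: Callable[[str], float],
                      keep_fraction: float = 0.1) -> List[str]:
    """Filter with the cheap score, then rank the survivors with the expensive one."""
    ranked = sorted(library, key=cheap_score, reverse=True)
    n_keep = max(1, int(round(len(ranked) * keep_fraction)))
    shortlist = ranked[:n_keep]
    # Only the shortlist is passed to the expensive scorer.
    return sorted(shortlist, key=expensive_score)


# Precomputed toy values standing in for real similarity and docking runs.
similarity = {"m1": 0.9, "m2": 0.2, "m3": 0.7, "m4": 0.4, "m5": 0.1}
docking = {"m1": -7.0, "m2": -6.0, "m3": -9.5, "m4": -8.0, "m5": -11.0}

hits = sequential_screen(list(similarity), similarity.get, docking.get,
                         keep_fraction=0.4)
```

The toy data also shows the drawback noted above: `m5` has the best docking score but the lowest similarity, so it is discarded before docking ever sees it.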

Parallel strategies execute LBVS and SBVS independently and subsequently combine their results through data fusion algorithms that reconcile rankings from both approaches [4] [5]. These methods require careful normalization of heterogeneous scores from different techniques but preserve the individual strengths of each approach [4]. Integrated hybrid methods merge ligand- and structure-based information into a unified framework, such as interaction fingerprint techniques that encode protein-ligand interaction patterns while incorporating ligand structural features [6] [4]. For example, the Fragmented Interaction Fingerprint (FIFI) combines extended connectivity fingerprints of ligands with spatial proximity to binding site residues, retaining sequence order information that distinguishes similar interactions with different residues [6].
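One simple way to reconcile heterogeneous scores in a parallel workflow is to convert each score list to z-scores before combining them. The sketch below assumes similarity scores where higher is better and docking scores where lower is better; the equal weighting and all values are illustrative assumptions, and other fusion rules (rank-based or maximum-based) are equally plausible.

```python
from statistics import mean, pstdev
from typing import List, Sequence, Tuple


def zscores(values: Sequence[float], higher_is_better: bool) -> List[float]:
    """Standardize scores and orient them so that larger always means better."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return [0.0] * len(values)
    sign = 1.0 if higher_is_better else -1.0
    return [sign * (v - mu) / sigma for v in values]


def fuse(names: Sequence[str], sim_scores: Sequence[float],
         dock_scores: Sequence[float],
         weight_lbvs: float = 0.5) -> List[Tuple[str, float]]:
    """Weighted sum of normalized LBVS and SBVS scores, best compound first."""
    z_sim = zscores(sim_scores, higher_is_better=True)
    z_dock = zscores(dock_scores, higher_is_better=False)
    fused = [(name, weight_lbvs * zs + (1.0 - weight_lbvs) * zd)
             for name, zs, zd in zip(names, z_sim, z_dock)]
    return sorted(fused, key=lambda item: item[1], reverse=True)


# Toy scores for three compounds (illustrative only).
names = ["m1", "m2", "m3"]
sim_scores = [0.8, 0.3, 0.5]        # similarity to a known active
dock_scores = [-9.0, -7.0, -10.0]   # docking score, more negative is better
fused_ranking = fuse(names, sim_scores, dock_scores)
```

Here `m3` docks best and `m1` is most similar to the reference; with equal weights `m1` comes out first because it is strong on both scales.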

Machine Learning-Enhanced Hybrid Methods

The integration of machine learning has significantly advanced hybrid virtual screening approaches, enabling more effective leveraging of both ligand and structure information [4] [8]. ML-based methods can learn complex relationships between molecular features and bioactivity from training data, often outperforming traditional scoring functions [4] [8]. Ensemble learning approaches, such as the ENS-VS method, integrate multiple classifiers including support vector machines, decision trees, and Fisher linear discriminant analysis to improve prediction accuracy and robustness across diverse targets [8]. These methods typically use combined descriptors incorporating both protein-ligand interaction energy terms and ligand structural features to capture complementary information [8].

Interaction fingerprint-based approaches represent another promising direction for hybrid screening, encoding protein-ligand interaction patterns as bit vectors that can be used with machine learning models [6] [4]. These fingerprints, such as PLEC, EIFP, and the recently developed FIFI, facilitate hybrid virtual screening by simultaneously representing ligand structural characteristics and their interactions with the binding site [6]. Retrospective evaluations demonstrate that these hybrid methods can achieve superior performance compared to individual LBVS or SBVS approaches, particularly when limited active compounds are available for training [6].

Figure 2: Hybrid Virtual Screening Strategies. Three primary architectures for combining SBVS and LBVS: sequential, parallel, and integrated approaches [4] [5].

Experimental Protocols and Case Studies

Representative Experimental Protocols

Protocol 1: FIFI (Fragmented Interaction Fingerprint) Implementation

The FIFI method represents a recent advancement in hybrid virtual screening that integrates ligand-based and structure-based information through interaction fingerprints [6]. The protocol begins with preparation of protein-ligand complexes, typically through docking of known active compounds into the target binding site. For each complex, FIFI is constructed by identifying extended connectivity fingerprint (ECFP) atom environments of the ligand that are proximal to protein residues in the binding site [6]. Each unique ligand substructure within each amino acid residue is encoded as a bit while retaining the sequence order of residues, distinguishing it from previous interaction fingerprints like PLEC that do not preserve sequence information [6]. The resulting FIFI vectors are then used with machine learning classifiers (such as Random Forest or Support Vector Machines) trained on known active and inactive compounds. In retrospective validation across six biological targets, FIFI demonstrated consistently higher prediction accuracy compared to existing interaction fingerprints, particularly when limited active compounds were available for training [6].
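The encoding idea, one bit per (residue, ligand substructure) pair with residues kept in sequence order, can be illustrated with a toy sketch. This is not the published FIFI implementation: the substructure labels below are hypothetical stand-ins for ECFP atom environments, the proximity detection is assumed to have happened already, and the vocabulary-based encoding is a simplification chosen for readability.

```python
from typing import Dict, Iterable, List, Tuple

Contact = Tuple[int, str]  # (residue sequence index, ligand substructure label)


def build_vocabulary(complexes: Iterable[Iterable[Contact]]) -> Dict[Contact, int]:
    """Assign one bit position to every observed (residue, substructure) pair.

    Sorting the pairs keeps bits ordered by residue sequence index.
    """
    pairs = sorted({pair for contacts in complexes for pair in contacts})
    return {pair: idx for idx, pair in enumerate(pairs)}


def encode(contacts: Iterable[Contact], vocabulary: Dict[Contact, int]) -> List[int]:
    """Turn the contacts of one docked complex into a fixed-length bit vector."""
    vector = [0] * len(vocabulary)
    for pair in contacts:
        idx = vocabulary.get(pair)
        if idx is not None:  # pairs unseen when building the vocabulary are ignored
            vector[idx] = 1
    return vector


# Two hypothetical complexes: the same ring contacts different residues.
ligand_1 = [(45, "aromatic_ring"), (102, "carbonyl")]
ligand_2 = [(102, "aromatic_ring"), (102, "carbonyl")]

vocab = build_vocabulary([ligand_1, ligand_2])
vec_1 = encode(ligand_1, vocab)
vec_2 = encode(ligand_2, vocab)
```

Because the residue is part of each key, the aromatic ring near residue 45 and the same ring near residue 102 set different bits, which is the distinction the protocol relies on. The resulting vectors could then be passed to any standard classifier.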

Protocol 2: ENS-VS (Ensemble Learning Virtual Screening) Workflow

The ENS-VS method employs ensemble learning to improve virtual screening performance through the following steps [8]. First, all active and decoy compounds from benchmarking datasets like DUD-E are docked into the target binding site using AutoDock Vina, with the best pose selected for each ligand based on docking score. Next, five protein-ligand interaction energy terms are calculated alongside structure vectors of the ligands to create combined descriptors that capture both interaction energetics and ligand structural features [8]. To address class imbalance between active and decoy compounds, ENS-VS implements a sampling ensemble approach that generates multiple balanced training subsets. Finally, an ensemble classifier integrating Support Vector Machine, Decision Tree, and Fisher Linear Discriminant algorithms predicts compound activity, with majority voting determining the final classification [8]. This approach demonstrated significant improvements in early enrichment (EF1% = 29.73-52.77) compared to traditional docking or single-classifier methods across multiple benchmarking datasets [8].
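Two of these steps, building balanced training subsets and combining classifier outputs by majority vote, can be sketched in a few lines. The source does not specify how ENS-VS samples its subsets, so random undersampling of the decoys is an assumption here, as are the function names and the toy predictions; the classifiers themselves are omitted.

```python
import random
from typing import List, Sequence, Tuple


def balanced_subsets(actives: Sequence[str], decoys: Sequence[str],
                     n_subsets: int, seed: int = 0) -> List[Tuple[List[str], List[str]]]:
    """Pair all actives with an equally sized random draw of decoys, n_subsets times."""
    rng = random.Random(seed)
    k = min(len(actives), len(decoys))
    return [(list(actives), rng.sample(list(decoys), k)) for _ in range(n_subsets)]


def majority_vote(predictions: Sequence[Sequence[int]]) -> List[int]:
    """Combine per-classifier 0/1 predictions; a strict majority is needed for 1."""
    n_models = len(predictions)
    return [1 if 2 * sum(votes) > n_models else 0 for votes in zip(*predictions)]


# Hypothetical compound identifiers and classifier outputs.
subsets = balanced_subsets(["a1", "a2"], ["d1", "d2", "d3", "d4", "d5", "d6"],
                           n_subsets=3)

svm_pred = [1, 0, 1]
tree_pred = [1, 1, 0]
fld_pred = [0, 0, 1]
final = majority_vote([svm_pred, tree_pred, fld_pred])
```

Each subset gives a classifier a 1:1 class ratio, and using an odd number of classifiers avoids tied votes.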

Application in Real-World Discovery Campaigns

Virtual screening approaches have demonstrated substantial impact in prospective drug discovery campaigns across diverse therapeutic areas. In antiviral drug discovery, SBVS has been successfully employed to identify potential inhibitors against targets including SARS coronavirus protease, leading to the recognition of existing drugs like cinanserin that could be repurposed for antiviral treatment [1]. The integration of virtual screening with ultra-large compound libraries has proven particularly valuable, with recent campaigns screening billions of commercially available compounds through efficient computational workflows [4] [1].

The CACHE (Critical Assessment of Computational Hit-finding Experiments) competition provides objective assessment of virtual screening performance in real-world scenarios [4]. In Challenge #1 focused on finding ligands for the LRRK2-WDR domain, participating teams employed diverse strategies with most incorporating molecular docking alongside various filtering approaches [4]. The results demonstrated that successful virtual screening campaigns typically combine multiple approaches—integrating SBVS for binding mode prediction with LBVS for chemical similarity assessment and additional filters for drug-like properties and synthetic feasibility [4]. These real-world applications underscore the complementary value of both structure-based and ligand-based approaches in addressing the complex challenge of hit identification in drug discovery.

Essential Research Reagents and Computational Tools

Table 3: Key Resources for Virtual Screening Implementation

| Resource Category | Specific Tools/Solutions | Application Function |
|---|---|---|
| SBVS Software | AutoDock Vina, GOLD, Glide, DOCK | Molecular docking and pose prediction |
| LBVS Software | OpenBabel, RDKit, ChemAxon | Molecular descriptor calculation and similarity searching |
| Benchmarking Datasets | DUD-E, DEKOIS 2.0, MUV | Performance validation and method comparison |
| Compound Libraries | ZINC, Enamine REAL, PubChem | Sources of screening compounds |
| Protein Structure Resources | PDB, AlphaFold Protein Structure Database | Source of target structures for SBVS |
| Hybrid Methods | FIFI, PLEC, ENS-VS | Integrated LBVS+SBVS implementations |
| Machine Learning Libraries | scikit-learn, TensorFlow, PyTorch | Implementation of ML-based scoring classifiers |

SBVS and LBVS represent complementary paradigms in computer-aided drug design, each with distinct strengths, limitations, and application domains. SBVS offers the advantage of identifying novel chemotypes through direct modeling of target-ligand interactions but requires high-quality structural information and faces challenges in scoring accuracy [1] [5]. LBVS provides computational efficiency and independence from target structure but may be constrained by chemical bias toward known scaffolds [4] [5]. The integration of these approaches through hybrid methods has emerged as a powerful strategy that leverages their complementary strengths while mitigating individual limitations [6] [4] [5].

Future developments in virtual screening will likely be shaped by several converging trends. The rapid advancement of machine learning and artificial intelligence is transforming both SBVS and LBVS through improved scoring functions, molecular representations, and activity prediction models [4] [8]. The availability of ultra-large chemical libraries encompassing billions of synthesizable compounds necessitates continued optimization of screening efficiency and accuracy [4] [1]. Furthermore, the integration of experimental structural biology with computational predictions creates iterative cycles of model refinement and validation [1]. As these technologies mature, the distinction between SBVS and LBVS may increasingly blur in favor of holistic approaches that seamlessly integrate diverse data types to accelerate therapeutic discovery.

Structure-Based Virtual Screening (SBVS) has become a cornerstone technique in modern drug discovery, providing a computational pipeline to identify novel bioactive molecules by leveraging the three-dimensional (3D) structure of a biological target [10] [11]. This approach serves as a rational and cost-effective alternative or complement to experimental high-throughput screening (HTS), allowing researchers to prioritize the most promising compounds from libraries containing millions to billions of molecules before committing to costly laboratory tests [1]. The fundamental principle of SBVS is the prediction of how small molecule ligands interact with a specific binding site on a target protein, enabling the identification of hits with a high likelihood of biological activity [12].

The primary advantage of SBVS over its counterpart, Ligand-Based Virtual Screening (LBVS), is its ability to discover structurally novel compounds without reliance on known active molecules [8] [13]. While LBVS uses similarity to known actives to find new candidates, SBVS relies on the physical and chemical principles of molecular recognition, making it indispensable for targets with few known modulators or when scaffold hopping is desired [5]. The success of SBVS is evident from its contribution to several marketed drugs, including captopril, saquinavir, and dorzolamide, demonstrating its tangible impact on pharmaceutical development [11].

The Core SBVS Workflow: A Step-by-Step Guide

The typical SBVS workflow is a multi-stage process that transforms a target structure and a compound library into a shortlist of candidates for experimental testing. The general protocol involves careful preparation of both the receptor and the ligands, followed by docking and scoring, and culminates in post-processing to select the final hits [10].

Target Protein Preparation

The first critical step involves preparing the 3D structure of the target protein. The success of the entire SBVS campaign hinges on the quality and biological relevance of this structure [10].

- Source of Structure: The target structure is typically obtained from experimental methods like X-ray crystallography, NMR spectroscopy, or cryo-electron microscopy. When an experimental structure is unavailable, homology modeling can be used to predict the structure from its amino acid sequence [10] [11].

- Preparation Tasks:

  - Adding Hydrogens and Assigning Protonation States: Determining the correct protonation states of amino acid residues at a given pH is crucial for accurate electrostatics. Tools like PROPKA and H++ are commonly used for this purpose [10].

  - Treating Water Molecules: A decision must be made on which, if any, crystallographic water molecules to retain. These can be crucial for mediating ligand binding but can also lead to false positives if handled incorrectly. Methods like WaterMap and 3D-RISM help evaluate the thermodynamic stability of water molecules [10].

  - Filling Missing Loops and Side Chains: Low-resolution structures may have missing segments that need to be modeled.

  - Energy Minimization: The structure is gently minimized to relieve steric clashes introduced during the addition of hydrogen atoms [10].

Compound Library Preparation

The virtual chemical library, which can range from thousands to billions of compounds, must also be preprocessed to ensure chemical correctness and relevance [10] [12].

- Format Standardization: Converting 2D structural representations (e.g., SMILES strings) into 3D coordinate models.

- Tautomer and Stereoisomer Enumeration: Generating all possible tautomers and stereoisomers for each compound, as the correct form is unknown prior to docking.

- Protonation State Assignment: Similar to the protein, generating plausible protonation states for ligands at physiological pH.

- Conformer Generation: For flexible docking, multiple low-energy 3D conformations may be generated for each molecule to account for ligand flexibility. Tools like GINGER enable rapid GPU-accelerated conformer generation for ultra-large libraries [12].

Molecular Docking and Scoring

This is the computational heart of SBVS, where each prepared molecule is "docked" into the binding site of the prepared protein.

- Docking Algorithm: The docking program performs a conformational search, exploring possible orientations (poses) and conformations of the ligand within the binding site. The goal is to find the most favorable binding mode [10] [1].

- Scoring Function: Each generated pose is evaluated and ranked using a scoring function, which is a mathematical model that approximates the binding affinity. These functions can be physics-based, empirical, knowledge-based, or, increasingly, machine learning-based [11]. Despite their utility, the inaccurate prediction of true binding affinity remains a key challenge for classical scoring functions [1].

Post-Docking Analysis and Hit Selection

The top-ranking compounds from the docking simulation are not guaranteed hits and require careful post-processing.

- Pose Inspection: Researchers visually inspect the predicted binding modes of top-scoring compounds to check for sensible interactions, such as hydrogen bonds, hydrophobic contacts, and pi-stacking.

- Interaction Fingerprints: Tools like FIFI (Fragmented Interaction Fingerprint) and PLEC (Protein-Ligand Extended Connectivity) encode the pattern of interactions into a bit vector, which can be used with machine learning models to prioritize compounds with interaction patterns similar to known actives [6].

- ADMET Filtering: Compounds are filtered based on predicted Absorption, Distribution, Metabolism, Excretion, and Toxicity properties to increase the likelihood of drug-like candidates.

- Consensus Scoring: Using multiple scoring functions or methods to rank compounds can reduce false positives and improve hit rates. Compounds that rank highly across different methods are considered more reliable [11].
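Consensus scoring from the list above can be sketched as rank averaging: each scoring function ranks the compounds independently, and the mean rank decides the final order. This is one common consensus rule among several; the scorer names and values are invented, and each table carries a flag saying whether higher or lower scores are better.

```python
from typing import Dict, List, Sequence, Tuple


def ranks(scores: Dict[str, float], higher_is_better: bool) -> Dict[str, int]:
    """Rank compounds from 1 (best) to n (worst) under one scoring function."""
    ordered = sorted(scores, key=scores.get, reverse=higher_is_better)
    return {name: position for position, name in enumerate(ordered, start=1)}


def consensus_rank(tables: Sequence[Tuple[Dict[str, float], bool]]) -> List[Tuple[str, float]]:
    """Average each compound's rank over all scoring functions, best first."""
    names = list(tables[0][0])
    totals = {name: 0 for name in names}
    for scores, higher_is_better in tables:
        table_ranks = ranks(scores, higher_is_better)
        for name in names:
            totals[name] += table_ranks[name]
    mean_rank = {name: totals[name] / len(tables) for name in names}
    return sorted(mean_rank.items(), key=lambda item: item[1])


# Three hypothetical scoring functions applied to the same three compounds.
physics_like = {"a": -9.1, "b": -7.5, "c": -8.3}     # lower is better
ml_like = {"a": 0.6, "b": 0.4, "c": 0.9}             # higher is better
empirical_like = {"a": -40.0, "b": -35.0, "c": -45.0}  # lower is better

consensus = consensus_rank([(physics_like, False), (ml_like, True),
                            (empirical_like, False)])
```

Compound `a` wins under the first scorer only, while `c` is first under the other two, so `c` ends up with the best mean rank. Working on ranks rather than raw scores sidesteps the different units and scales of the scoring functions.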

The following diagram illustrates the logical flow and decision points within this core SBVS workflow.

Advanced SBVS Protocols and Machine Learning Enhancements

To address the limitations of standard docking and scoring, several advanced protocols have been developed, with machine learning (ML) playing an increasingly transformative role.

Accounting for Flexibility: Ensemble and 4D Docking

A major limitation of classical docking is treating the protein as a rigid body. In reality, proteins are dynamic, and their binding sites can adopt multiple conformations [5].

- Ensemble Docking: This involves docking compound libraries against an ensemble of multiple protein structures, which can be derived from different experimental crystal structures, NMR models, or molecular dynamics (MD) simulation snapshots. This approach accounts for protein flexibility and increases the chance of finding compounds that bind to different conformational states [10] [12].

- Induced Fit Docking: More computationally intensive than ensemble docking, these protocols allow for both ligand and protein side-chain (and sometimes backbone) flexibility during the docking simulation.
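One simple way to combine ensemble docking results is to keep, for each ligand, the most favourable score obtained against any receptor conformation. The sketch below assumes that lower scores are better and uses made-up conformer names and scores. Best-score aggregation is an assumption here; averaging over conformers is another option.

```python
def ensemble_best_scores(scores_by_conformer):
    """For each ligand, keep the lowest score and the conformer that produced it."""
    best = {}
    for conformer, ligand_scores in scores_by_conformer.items():
        for ligand, score in ligand_scores.items():
            if ligand not in best or score < best[ligand][0]:
                best[ligand] = (score, conformer)
    return best

# Hypothetical docking scores (lower is better) against three receptor conformations.
scores_by_conformer = {
    "xray_apo": {"ligA": -6.2, "ligB": -7.9, "ligC": -5.1},
    "xray_holo": {"ligA": -8.8, "ligB": -7.5, "ligC": -5.4},
    "md_snapshot_1": {"ligA": -7.0, "ligB": -8.3, "ligC": -6.9},
}

best = ensemble_best_scores(scores_by_conformer)
ranked = sorted(best, key=lambda ligand: best[ligand][0])
for ligand in ranked:
    score, conformer = best[ligand]
    print(f"{ligand}: {score:.1f} (best against {conformer})")
```

Recording which conformer gave the best score is useful during pose inspection, because it shows which conformational state each candidate is predicted to prefer.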

Machine Learning-Augmented Scoring and Workflows

Traditional scoring functions are often a bottleneck in SBVS. ML-based approaches are now being used to overcome this challenge [4] [8].

- Target-Specific ML Scoring: Methods like ENS-VS use ensemble learning models (e.g., combining Support Vector Machines, Decision Trees, and Fisher linear discriminant) trained on target-specific active and decoy compounds. These models use a combination of protein-ligand interaction energy terms and ligand structure vectors as descriptors, significantly improving enrichment over classical scoring functions [8].

- Interaction Fingerprints with ML: Fingerprints like FIFI and PLEC encode the specific interaction patterns between a ligand and its target. These fingerprints can be used as features to train ML classifiers that distinguish active from inactive compounds, combining the strengths of structure-based and ligand-based approaches [6].

- Deep Learning for Binding Affinity Prediction: Advanced deep learning architectures, particularly graph neural networks that model the protein-ligand complex as a graph of atoms, are being developed to predict binding affinity with higher accuracy than classical scoring functions [4] [13].

The diagram below maps the evolution of these advanced SBVS methodologies, from foundational concepts to AI-integrated techniques.

Performance Comparison: SBVS and Its Hybrid Variants

The performance of virtual screening methods is typically measured by metrics such as the Enrichment Factor (EF), which indicates how much better a method is at identifying true active compounds compared to random selection, and the Area Under the ROC Curve (AUC), which measures the overall ability to distinguish actives from inactives [8].
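Both metrics can be computed directly from a ranked list of activity labels. The sketch below is a minimal illustration, assuming a list ordered from best to worst score with 1 for actives and 0 for decoys and no tied scores. The rounding used to size the top slice is a convention chosen here, and published studies may differ.

```python
def enrichment_factor(ranked_labels, fraction=0.01):
    """Hit rate in the top slice divided by the hit rate of the whole list."""
    n_total = len(ranked_labels)
    n_top = max(1, int(round(n_total * fraction)))
    hit_rate_top = sum(ranked_labels[:n_top]) / n_top
    hit_rate_all = sum(ranked_labels) / n_total
    return hit_rate_top / hit_rate_all

def roc_auc(ranked_labels):
    """Fraction of (active, inactive) pairs in which the active is ranked higher."""
    n_actives = sum(ranked_labels)
    n_inactives = len(ranked_labels) - n_actives
    actives_seen = 0
    pairs_won = 0
    for label in ranked_labels:
        if label == 1:
            actives_seen += 1
        else:
            # Every active already seen outranks this inactive.
            pairs_won += actives_seen
    return pairs_won / (n_actives * n_inactives)

# Toy ranking: 100 compounds, 5 actives, most of them near the top.
ranked_labels = [1, 1, 0, 1, 1, 0, 0, 1] + [0] * 92

ef1 = enrichment_factor(ranked_labels, 0.01)
ef5 = enrichment_factor(ranked_labels, 0.05)
auc = roc_auc(ranked_labels)
print(f"EF1% = {ef1:.1f}, EF5% = {ef5:.1f}, AUC = {auc:.3f}")
```

EF depends only on the top of the list, whereas AUC summarizes the whole ranking, which is why the two can disagree about which method is better.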

The following table summarizes quantitative performance data from retrospective studies, comparing classical SBVS with advanced and hybrid methods.

Table 1: Performance Comparison of Virtual Screening Methods on Benchmark Datasets

| Method Category | Specific Method / Protocol | Performance Metric | Result (Mean) | Benchmark Dataset |

|---|---|---|---|---|

| Classical SBVS | AutoDock Vina (Standard Docking) | Enrichment Factor at 1% (EF1%) | Baseline (e.g., 8.80) | DUD-E [8] |

| Advanced ML-SBVS | ENS-VS (Ensemble Learning) | EF1% | 52.77 | DUD-E [8] |

| Advanced ML-SBVS | ENS-VS (Ensemble Learning) | AUC | 0.982 | DUD-E [8] |

| Hybrid VS | FIFI (IFP with ML) | Prediction Accuracy | Consistently higher than other IFPs | Six Diverse Targets [6] |

| Sequential LB→SB | LBVS followed by SBVS | Hit Rate | Competitive, widely used standard [5] | Various Case Studies [5] |

The data indicate that machine learning-augmented methods such as ENS-VS can substantially improve early enrichment: the reported EF1% of 52.77 is roughly six times the Vina baseline of about 8.80 on DUD-E, so far more of the true actives land in the small top fraction of the library that is actually tested [8]. Hybrid interaction fingerprints such as FIFI also show stable, high prediction accuracy across six diverse targets, supporting the strategy of merging ligand-based and structure-based information [6].

The Scientist's Toolkit: Essential Reagents and Software for SBVS

A successful SBVS campaign relies on a suite of specialized computational tools and databases. The following table details key resources and their functions in the workflow.

Table 2: Essential Research Reagent Solutions for SBVS

| Tool / Resource Name | Type | Primary Function in SBVS | Key Features / Notes |

|---|---|---|---|

| Protein Data Bank (PDB) | Database | Repository for experimental 3D structures of proteins and nucleic acids. | The primary source for target protein structures [11]. |

| DUD-E / DEKOIS 2.0 | Database | Benchmarking sets containing known active compounds and property-matched decoys. | Used for developing and validating new SBVS methods [8]. |

| AutoDock Vina, GOLD, Glide | Software | Molecular docking programs for pose prediction and scoring. | Vina is widely used for its speed and accuracy; Glide and GOLD offer advanced scoring [8] [1] [11]. |

| ICM-Pro | Software | Commercial software suite for molecular modeling, docking, and VS. | Used in professional VS services for docking and pharmacophore modeling [12]. |

| PROPKA, H++ | Software | Tools for predicting pKa values and protonation states of protein residues. | Critical for accurate protein preparation and electrostatic calculations [10]. |

| PLEC, FIFI | Descriptor | Interaction Fingerprints that encode protein-ligand interaction patterns. | Used for post-docking analysis and training ML models for hybrid VS [6]. |

| GINGER | Software | GPU-based tool for high-quality, rapid conformer generation. | Enables processing of ultra-large compound libraries (e.g., 10M compounds/day) [12]. |

| ZINC, Enamine REAL | Database | Public and commercial databases of purchasable and virtual compounds for screening. | Enamine REAL contains billions of make-on-demand compounds for ultra-large VS [12] [4]. |

The SBVS workflow, centered on leveraging target 3D structure for molecular docking, is a powerful and evolving pillar of computer-aided drug design. While the core steps of protein and ligand preparation, docking, and post-processing remain fundamental, the field is being rapidly advanced by protocols that account for system flexibility and, most notably, by the integration of machine learning. The quantitative data shows that these advanced methods, particularly those using ensemble learning and hybrid fingerprints, offer significant performance gains over classical docking. As computational power increases and AI models become more sophisticated, SBVS is poised to become even more accurate and integral to the drug discovery process, enabling the efficient exploration of vast chemical spaces to identify novel therapeutics for untreated diseases.

Ligand-Based Virtual Screening (LBVS) is a foundational computational technique in modern drug discovery, employed to efficiently identify novel bioactive compounds from extensive chemical libraries. This approach is predicated on the chemical similarity principle, which posits that structurally similar molecules are likely to exhibit similar biological activities [14] [15]. LBVS is particularly invaluable in scenarios where the three-dimensional structure of the target protein is unavailable, as it relies exclusively on the structural and physicochemical information of known active ligands [16] [17]. The core objective of a typical LBVS workflow is to enrich a subset of a virtual compound library with molecules that share key characteristics with a set of known actives, thereby increasing the probability of identifying new hit compounds while conserving the resources required for synthesis and biological testing [18] [17].

The versatility of LBVS allows it to be used as a rapid pre-screening filter for ultra-large libraries containing billions of compounds before applying more computationally intensive structure-based methods, or as a standalone approach for lead identification and optimization [16] [18]. Advances in computational power and algorithm design have significantly enhanced the performance and adoption of LBVS, making it a cost-effective and fast alternative to high-throughput screening for discovering new drugs [19].

Core Components of the LBVS Workflow

A robust LBVS workflow integrates several key components, each critical for ensuring the successful identification of novel active compounds.

Query and Ligand Preparation

The initial and crucial step in LBVS is the careful selection and preparation of known active compounds, which serve as the query templates for the screening process. The quality and representativeness of these query ligands directly influence the success of the entire campaign [17]. This stage involves:

- Data Collection: Active ligands and their experimental bioactivity data (e.g., Ki, IC50) are retrieved from public databases such as ChEMBL, PubChem, or BindingDB [14] [17].

- Ligand Standardization: Molecular structures must be standardized to ensure consistency. This includes tautomer canonicalization, charge neutralization, and salt removal using tools like MolVS or commercial software [16] [17].

- Conformational Sampling: For 3D LBVS methods, generating a representative set of low-energy conformers for each query ligand is essential. Algorithms such as RDKit's ETKDGv3 are widely used for this purpose, as they efficiently explore the conformational space [16] [17]. The failure to include the bioactive conformation can severely limit the success of subsequent screening steps [17].
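For the data collection step, potencies reported in nanomolar units are commonly converted to a negative log scale (pKi or pIC50) before actives are labeled. The sketch below assumes a list of hypothetical (compound ID, potency in nM) records, aggregates replicate measurements by their median, and uses an illustrative activity threshold of 6.0 (1 µM). Neither the aggregation rule nor the threshold comes from the cited studies.

```python
import math
from collections import defaultdict
from statistics import median

def to_p_value(potency_nm):
    """Convert a Ki or IC50 in nM to -log10 of the molar value."""
    if potency_nm <= 0:
        raise ValueError("potency must be positive")
    return 9.0 - math.log10(potency_nm)

def curate_actives(measurements, threshold=6.0):
    """Take the median p-value per compound and flag it as active or not."""
    grouped = defaultdict(list)
    for compound_id, potency_nm in measurements:
        grouped[compound_id].append(to_p_value(potency_nm))
    curated = {}
    for compound_id, p_values in grouped.items():
        p_median = median(p_values)
        curated[compound_id] = (p_median, p_median >= threshold)
    return curated

# Hypothetical records; cpdA has two replicate measurements.
measurements = [
    ("cpdA", 10.0),
    ("cpdA", 100.0),
    ("cpdB", 5000.0),
    ("cpdC", 500.0),
]

curated = curate_actives(measurements)
for compound_id, (p_value, is_active) in curated.items():
    print(f"{compound_id}: p = {p_value:.2f}, active = {is_active}")
```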

Library Preparation

The virtual screening library, which could be an in-house collection or a public database like ZINC, must undergo a similar preparation process [17]. This involves standardizing structures, generating relevant tautomers and protonation states at physiological pH, and, for 3D methods, generating multiple conformers to ensure the bioactive pose is represented [16] [17]. Proper library preparation ensures that the screened molecules are chemically reasonable and that the calculated similarities are meaningful.

Molecular Descriptors and Similarity Searching

The heart of LBVS lies in quantifying the similarity between query and database molecules using molecular descriptors. These can be broadly categorized into 2D and 3D methods.

- 2D Fingerprints: These encode the molecular structure as a bit string based on the presence or absence of specific substructures or topological patterns. Common examples include Morgan fingerprints (also known as ECFP/FCFP), RDKit topological fingerprints, and MACCS keys [16] [14]. Similarity is typically calculated using the Tanimoto coefficient, with values closer to 1.0 indicating higher similarity [14] [15].

- 3D Methods: These approaches compare molecules based on their three-dimensional shape and pharmacophoric features.

  - Shape-Based Screening: This method maximizes the overlap of molecular volumes between a query and a database compound. Tools like ROCS are industry standards for this approach [16] [19].

  - Pharmacophore-Based Screening: This technique identifies compounds that share a common set of critical functional features (e.g., hydrogen bond donors/acceptors, hydrophobic regions) in a specific spatial arrangement [15] [20].
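The fingerprint similarity metrics mentioned above are simple set operations once each molecule is represented by the indices of its "on" bits. The sketch below uses small hand-written bit sets as stand-ins for real fingerprints (which a toolkit such as RDKit would normally generate); the molecule names and bit indices are hypothetical.

```python
def tanimoto(a, b):
    """Shared on-bits divided by the total number of distinct on-bits."""
    shared = len(a & b)
    union = len(a) + len(b) - shared
    return shared / union if union else 0.0

def dice(a, b):
    """Twice the shared on-bits divided by the summed on-bit counts."""
    total = len(a) + len(b)
    return 2 * len(a & b) / total if total else 0.0

def tversky(a, b, alpha, beta):
    """Asymmetric similarity; alpha weights query-only bits, beta candidate-only bits."""
    shared = len(a & b)
    denom = shared + alpha * len(a - b) + beta * len(b - a)
    return shared / denom if denom else 0.0

# Hypothetical on-bit indices for a query and a small library.
query = {1, 4, 7, 9, 12, 15}
library = {
    "mol_1": {1, 4, 7, 10, 12, 20, 22},
    "mol_2": {1, 4, 7, 9, 12, 15, 30},
    "mol_3": {40, 41, 42},
}

ranked = sorted(library, key=lambda name: tanimoto(query, library[name]), reverse=True)
for name in ranked:
    print(f"{name}: Tanimoto = {tanimoto(query, library[name]):.3f}")
```

With alpha = beta = 1 the Tversky index reduces to Tanimoto, and with alpha = beta = 0.5 it reduces to Dice, so the three metrics differ only in how they penalize unshared bits.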

Table 1: Key Molecular Descriptors and Similarity Metrics in LBVS

| Descriptor Type | Examples | Similarity Metrics | Key Applications |

|---|---|---|---|

| 2D Fingerprints | Morgan (ECFP4), RDKit, MACCS keys | Tanimoto, Tversky, Dice | Rapid screening of large libraries, scaffold hopping [16] [14] |

| 3D Shape | ROCS, VSFlow's shape mode | TanimotoCombo, ShapeTanimoto | Identifying isofunctional molecules with different scaffolds [16] [15] |

| 3D Pharmacophore | Phase, Ligand-Based Pharmacophores | Fit score, RMSD | Filtering for essential interaction features [21] [20] |

Post-Screening Analysis and Hit Selection

The final stage involves analyzing and prioritizing the top-ranking compounds from the similarity search. This is not merely about selecting the highest similarity scores. Researchers must employ chemical diversity analysis to select a set of hits representing distinct scaffolds, thereby reducing redundancy and mitigating the risk of attrition in later stages [17]. Furthermore, manual inspection is critical to verify that key pharmacophoric features are conserved and that the proposed hits are synthetically accessible and possess drug-like properties, often evaluated using rules like Lipinski's Rule of Five or more advanced Multi-Parameter Optimization (MPO) tools [18] [17].
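The diversity step can be illustrated with a greedy selection that walks down the ranked hit list and skips any compound too similar to one already chosen. This is a minimal sketch of one possible approach, not the procedure of any cited study. The fingerprints are hypothetical sets of on-bit indices and the 0.6 Tanimoto cutoff is an arbitrary illustrative value.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two sets of on-bit indices."""
    shared = len(a & b)
    union = len(a) + len(b) - shared
    return shared / union if union else 0.0

def pick_diverse_hits(ranked_hits, fingerprints, max_hits=3, max_similarity=0.6):
    """Keep a hit only if it is dissimilar to every hit already selected."""
    selected = []
    for compound_id in ranked_hits:
        fp = fingerprints[compound_id]
        if all(tanimoto(fp, fingerprints[s]) < max_similarity for s in selected):
            selected.append(compound_id)
        if len(selected) == max_hits:
            break
    return selected

# Hypothetical hits, already ordered from best to worst screening score.
fingerprints = {
    "hit1": {1, 2, 3, 4, 5},
    "hit2": {1, 2, 3, 4, 6},         # close analog of hit1
    "hit3": {10, 11, 12, 13},
    "hit4": {10, 11, 12, 13, 14},    # close analog of hit3
    "hit5": {1, 20, 21},
}
ranked_hits = ["hit1", "hit2", "hit3", "hit4", "hit5"]

selected = pick_diverse_hits(ranked_hits, fingerprints)
print(selected)
```

Because the list is processed in score order, the best-scoring member of each cluster of analogs is the one retained.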

Experimental Protocols and Validation

Validating the performance of an LBVS workflow is essential to establish its reliability and predictive power before prospective application.

Benchmarking Datasets and Protocols

A standard validation protocol uses benchmark datasets in which known active compounds are mixed with presumed inactives (decoys). The Directory of Useful Decoys (DUD) is a widely used dataset for this purpose, containing 40 protein targets with active ligands and property-matched decoys [19] [15]. The typical protocol is as follows:

- Define a Query Set: One or more known active compounds are selected from the benchmark dataset to serve as queries.

- Screen the Benchmark Library: The LBVS method is used to rank all molecules (actives and decoys) in the dataset based on their similarity to the query.

- Evaluate Performance: The ranking is analyzed to determine how effectively the method prioritizes known active compounds over decoys.

Key Performance Metrics

The performance of LBVS methods is quantitatively assessed using several standard metrics:

- Area Under the ROC Curve (AUC): Measures the overall ability of the method to distinguish actives from inactives. A perfect classifier has an AUC of 1.0, while random selection yields 0.5 [19] [15].

- Enrichment Factor (EF): Quantifies the concentration of active compounds found within a specific top percentage of the ranked list compared to a random selection. For example, EF1% measures the enrichment in the top 1% of the list [22] [19].

- Hit Rate (HR): The percentage of active compounds recovered within a given top percentage of the ranked list [19].
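Under the hit rate definition given above (the share of all actives recovered in the top slice), HR and EF are directly related: when the top slice contains exactly the stated fraction of the library, EF equals HR divided by that fraction expressed in percent. The sketch below checks this on a toy ranked list; the labels are invented, and the rounding of the slice size is an assumed convention.

```python
def top_slice_size(n_total, fraction):
    """Number of compounds in the top slice (at least one)."""
    return max(1, int(round(n_total * fraction)))

def hit_rate(ranked_labels, fraction=0.01):
    """Percentage of all actives that fall within the top slice."""
    n_top = top_slice_size(len(ranked_labels), fraction)
    return 100.0 * sum(ranked_labels[:n_top]) / sum(ranked_labels)

def enrichment_factor(ranked_labels, fraction=0.01):
    """Active concentration in the top slice relative to the whole list."""
    n_total = len(ranked_labels)
    n_top = top_slice_size(n_total, fraction)
    return (sum(ranked_labels[:n_top]) / n_top) / (sum(ranked_labels) / n_total)

# Toy ranking: 200 compounds, 10 actives (1 = active, 0 = decoy).
ranked_labels = [1, 1, 0, 1, 1] + [0] * 189 + [1] * 6

hr1 = hit_rate(ranked_labels, 0.01)
hr5 = hit_rate(ranked_labels, 0.05)
ef1 = enrichment_factor(ranked_labels, 0.01)
ef1_from_hr = hr1 / (100.0 * 0.01)
print(f"HR1% = {hr1:.1f}%, HR5% = {hr5:.1f}%, EF1% = {ef1:.1f}")
```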

Table 2: Performance Comparison of Representative LBVS Methods on Benchmark Datasets

| Method / Tool | Descriptor Type | Key Feature | Reported Performance |

|---|---|---|---|

| VSFlow [16] | 2D Fing., Substructure, 3D Shape | Open-source, command-line tool | High speed; enables quick visualization of results. |

| MOST [14] | 2D Fingerprints (Morgan) | Uses explicit bioactivity of the most-similar ligand | Avg. Accuracy: 0.95 (pKi ≥5, cross-validation) |

| HWZ Score [19] | 3D Shape | Novel scoring function for shape overlap | Avg. AUC: 0.84; Avg. HR (top 1%): 46.3% (DUD) |

| CSNAP3D [15] | Hybrid 3D (Shape + Pharmacophore) | Chemical similarity network analysis | High true positive rate (up to 95%) for target prediction |

| ROCS [15] | 3D Shape & Pharmacophore | Industry-standard shape-based screening | ComboScore AUC: 0.59 (Scaffold Hopping benchmark) |

LBVS in the Broader VS Context: Hybrid Approaches

While powerful, LBVS has limitations, including a potential bias towards the chemical space of the query ligands, which can restrict the identification of structurally novel scaffolds (the "dark side" of VS) [17]. To mitigate this and leverage the strengths of different methodologies, hybrid approaches that combine LBVS with Structure-Based Virtual Screening (SBVS) like molecular docking are increasingly adopted [21] [18].

These hybrid strategies can be implemented in several ways:

- Sequential Workflow: A rapid LBVS method (e.g., 2D similarity) is first used to filter a multi-billion compound library down to a manageable size (e.g., millions). This enriched subset is then subjected to more computationally expensive SBVS methods like docking for further refinement [21] [18]. This approach optimizes the trade-off between computational cost and model complexity.

- Parallel Workflow: LBVS and SBVS are run independently on the same library. The final hit list is compiled by either taking the union of top-ranked compounds from both methods or by creating a consensus ranking that integrates scores from both approaches [21] [18]. Evidence suggests that a hybrid model averaging predictions from both LB and SB methods can perform better than either method alone, as errors from one approach can be canceled out by the other [18].
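The two ways of combining a parallel run can be sketched as follows. Because similarity scores and docking scores live on different scales, the consensus variant below standardizes each set to z-scores (flipping the sign of docking scores so that larger is always better) before averaging. This normalization is one reasonable choice among several, and all compound IDs and scores are hypothetical.

```python
from statistics import mean, pstdev

def zscores(scores, higher_is_better=True):
    """Standardize scores so that a larger z-score is always more favourable."""
    values = list(scores.values())
    mu, sigma = mean(values), pstdev(values)
    sign = 1.0 if higher_is_better else -1.0
    if sigma == 0:
        return {cid: 0.0 for cid in scores}
    return {cid: sign * (value - mu) / sigma for cid, value in scores.items()}

def consensus_rank(lb_similarity, sb_docking):
    """Average LB and SB z-scores and rank compounds from best to worst."""
    lb = zscores(lb_similarity, higher_is_better=True)
    sb = zscores(sb_docking, higher_is_better=False)
    fused = {cid: (lb[cid] + sb[cid]) / 2 for cid in lb.keys() & sb.keys()}
    return sorted(fused, key=fused.get, reverse=True)

def union_of_top(lb_similarity, sb_docking, k):
    """Union of the top-k compounds from each method."""
    top_lb = sorted(lb_similarity, key=lb_similarity.get, reverse=True)[:k]
    top_sb = sorted(sb_docking, key=sb_docking.get)[:k]
    return set(top_lb) | set(top_sb)

# Hypothetical results for the same four-compound library.
lb_similarity = {"c1": 0.82, "c2": 0.35, "c3": 0.60, "c4": 0.20}  # higher is better
sb_docking = {"c1": -7.0, "c2": -9.5, "c3": -8.8, "c4": -5.2}     # lower is better

ranking = consensus_rank(lb_similarity, sb_docking)
top_union = union_of_top(lb_similarity, sb_docking, k=2)
print(ranking, sorted(top_union))
```

In this toy case the compound ranked second by both methods comes out first in the consensus, which illustrates how averaging rewards agreement between the two approaches.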

The following diagram illustrates how these methods can be integrated into a single, powerful drug discovery pipeline.

Diagram 1: Integration of LBVS and SBVS in a virtual screening workflow.

Successful implementation of an LBVS workflow relies on a suite of software tools and data resources.

Table 3: Essential Resources for the LBVS Workflow

| Resource Category | Name | Description | Access |

|---|---|---|---|

| Cheminformatics Toolkit | RDKit | Open-source toolkit for cheminformatics; core engine for many custom LBVS tools (e.g., VSFlow) and fingerprint generation [16] [14]. | Open-Source |

| 3D Shape Screening | ROCS | Industry-standard software for rapid 3D shape similarity screening and pharmacophore comparison [19] [15]. | Commercial |

| Bioactivity Databases | ChEMBL | Manually curated database of bioactive, drug-like molecules with binding, functional and ADMET data [14] [17]. | Public |

| Compound Libraries | ZINC | Freely available database of commercially available compounds for virtual screening, containing over 230 million molecules [16] [17]. | Public |

| Workflow & GUI Tools | VSFlow | Open-source command-line tool that integrates substructure, fingerprint, and shape-based screening in one package [16]. | Open-Source |

Ligand-Based Virtual Screening remains a powerful, efficient, and indispensable method in the drug discovery arsenal. Its core strength lies in leveraging the principle of chemical similarity to rapidly identify potential hit compounds from vast chemical spaces, especially when structural data for the target is lacking. As demonstrated by tools like VSFlow and methodologies like the HWZ score and MOST, continued development in similarity algorithms and scoring functions is yielding consistently high performance in benchmark studies, with some achieving average AUC values over 0.8 and hit rates above 45% in the top 1% of ranked lists [14] [19].

However, the full potential of LBVS is often realized when it is used not in isolation, but as part of a strategically integrated workflow that includes structure-based methods. The emerging paradigm of hybrid LB/SB screening, whether sequential or parallel, offers a more robust framework by combining the pattern recognition strength of LBVS with the atomic-level insights of SBVS. This synergistic approach helps overcome the individual limitations of each method, reduces false positives, and increases confidence in the final selection of hits for experimental validation, ultimately accelerating the journey toward discovering novel therapeutic agents [21] [18].

Key Advantages and Inherent Limitations of Each Method

Virtual screening (VS) is a cornerstone of modern computational drug discovery, providing a powerful and cost-effective strategy for identifying bioactive molecules from vast chemical libraries. The two primary computational strategies are structure-based virtual screening (SBVS), which relies on the three-dimensional structure of a target protein, and ligand-based virtual screening (LBVS), which leverages the known properties of active ligands [4] [1]. In the contemporary research landscape, the choice between these methods—or their intelligent integration—is critical for the success of hit-finding campaigns. This guide provides an objective comparison of SBVS and LBVS, detailing their respective advantages, limitations, and performance data to inform their application and validation within drug discovery pipelines.

Core Principles and Methodologies

Structure-Based Virtual Screening (SBVS)

SBVS requires a known or modeled three-dimensional structure of the target protein, typically derived from X-ray crystallography, cryo-electron microscopy (cryo-EM), or computational prediction tools like AlphaFold [18] [1]. The core of SBVS is molecular docking, a computational process that predicts how a small molecule (ligand) binds to a protein's binding site. The workflow generally involves several key steps [23] [1]:

- Target Preparation: The protein structure is processed by removing water molecules, adding hydrogen atoms, and optimizing side-chain conformations.

- Ligand Preparation: Small molecules from a chemical library are converted into a format suitable for docking, often generating multiple 3D conformations for each.

- Docking and Scoring: Algorithms sample possible binding poses for each ligand in the binding site and rank them using a scoring function. This function estimates the binding affinity based on complementary factors like shape, electrostatics, and hydrogen bonding [22].

Ligand-Based Virtual Screening (LBVS)

LBVS is employed when a high-quality protein structure is unavailable, but data on known active compounds exists. It operates on the "similarity-property principle," which states that structurally similar molecules are likely to have similar biological activities [4] [18]. Key LBVS methodologies include:

- Pharmacophore Modeling: This approach identifies and maps the essential 3D features responsible for a ligand's biological activity (e.g., hydrogen bond donors/acceptors, hydrophobic regions). New compounds are screened for their ability to match this spatial arrangement [18].

- Quantitative Structure-Activity Relationship (QSAR): These models use statistical learning to correlate quantitative molecular descriptors of a set of ligands with their biological activity, enabling the prediction of activity for new compounds [4].

- Molecular Similarity Searching: This method uses molecular fingerprints or other descriptors to computationally screen large libraries for compounds that are structurally similar to known actives [18].

Table 1: Overview of Fundamental Methodologies

| Method | Core Requirement | Key Techniques | Underlying Principle |

|---|---|---|---|

| Structure-Based (SBVS) | 3D Protein Structure | Molecular Docking, Scoring Functions | Physical simulation of molecular recognition and binding complementarity. |

| Ligand-Based (LBVS) | Known Active Ligands | Pharmacophore Modeling, QSAR, Similarity Search | Similarity-Property Principle: structurally similar molecules have similar biological activity. |

Direct Comparative Analysis: Advantages and Limitations

SBVS and LBVS offer distinct strengths and face different challenges. A head-to-head comparison reveals their complementary nature.

Key Advantages

Table 2: Key Advantages of SBVS and LBVS

| Aspect | Structure-Based (SBVS) | Ligand-Based (LBVS) |

|---|---|---|

| Scaffold Discovery | High potential for identifying novel and diverse chemotypes that are structurally distinct from known ligands [24]. | Limited by known ligand templates, leading to a tendency to find analogs and similar scaffolds [4]. |

| Mechanistic Insight | Provides atomic-level interaction details (e.g., hydrogen bonds, hydrophobic contacts), offering a hypothesis for the binding mode [18] [1]. | Provides little to no direct information on the binding mode or protein-ligand interactions [4]. |

| Requirement Flexibility | Dependent on a high-quality protein structure, which can be a limitation for some targets. | Can be applied when no protein structure is available, using only ligand information [4] [18]. |

| Computational Efficiency | Computationally intensive, especially for flexible docking and large libraries. | Generally faster and less costly, enabling rapid screening of ultra-large libraries [4] [18]. |

Inherent Limitations and Challenges

Table 3: Inherent Limitations of SBVS and LBVS

| Challenge | Structure-Based (SBVS) | Ligand-Based (LBVS) |

|---|---|---|

| Scoring Accuracy | Scoring functions are a major limitation, often struggling to predict true binding affinity accurately, leading to high false positive rates [22] [1]. | Accuracy depends heavily on the quality and diversity of the known active ligand set used to build the model [4]. |

| Protein Flexibility | Treating the protein as rigid can neglect conformational changes upon binding, though ensemble docking and flexible side-chain methods are emerging solutions [23] [22]. | Not applicable, as the method does not use protein structure. |

| Structural Dependency | Performance is highly sensitive to the quality and resolution of the input protein structure. AlphaFold models may require refinement for reliable docking [18]. | Not applicable. |

| Chemical Novelty | Not applicable. | Strong bias towards known chemical series, potentially missing novel scaffolds that do not match the 2D or 3D similarity queries [4]. |

Experimental Performance and Benchmarking Data

Quantitative benchmarks are essential for validating the performance of virtual screening methods. The following data, drawn from recent studies, highlights the performance of various SBVS tools and the significant impact of machine learning (ML) enhancements.

Table 4: Virtual Screening Performance on Benchmark Datasets

| Study & Method | Target / Dataset | Key Performance Metric | Reported Result |

|---|---|---|---|

| SBVS Benchmarking [23] | PfDHFR (Malaria enzyme) | Enrichment Factor at 1% (EF1%) | PLANTS + CNN-Score: EF1% = 28; FRED + CNN-Score: EF1% = 31 |

| RosettaVS [22] | CASF-2016 (285 complexes) | Enrichment Factor at 1% (EF1%) | RosettaGenFF-VS: EF1% = 16.72 |

| HelixVS [25] | DUD-E (102 targets) | Enrichment Factor at 1% (EF1%) | HelixVS: EF1% = 26.968; AutoDock Vina: EF1% = 10.022 |

| Ultra-Large Library Screen [24] | CB2 Receptor (GPCR) | Experimental Hit Rate | 55% (6 out of 11 synthesized compounds were active) |

Detailed Experimental Protocol: SBVS Benchmarking

To illustrate a standard validation protocol, the following workflow is adapted from a benchmarking study on the malaria target Plasmodium falciparum Dihydrofolate Reductase (PfDHFR) [23]:

- Protein Structure Preparation: Crystal structures for wild-type (PDB ID: 6A2M) and quadruple-mutant (PDB ID: 6KP2) PfDHFR were obtained from the Protein Data Bank. Structures were prepared using OpenEye's "Make Receptor" by removing water molecules and ions, adding hydrogens, and optimizing hydrogen bonding networks.

- Benchmark Set Curation: The DEKOIS 2.0 protocol was used to create a benchmark set containing 40 known bioactive molecules and 1,200 structurally similar but presumed inactive decoy molecules for each PfDHFR variant (a 1:30 active-to-decoy ratio).

- Docking Experiments: Three docking programs—AutoDock Vina, PLANTS, and FRED—were used to screen the benchmark sets. Grid boxes were defined to encompass the binding site of each variant.

- Machine Learning Re-scoring: The top poses generated by each docking tool were re-scored by two pretrained ML scoring functions: CNN-Score and RF-Score-VS v2.

- Performance Evaluation: Screening performance was quantified using:

  - Enrichment Factor (EF1%): Measures the concentration of active compounds in the top 1% of the ranked list.

  - pROC-AUC: The area under the semi-log ROC curve, assessing the overall ability to distinguish actives from decoys.

  - pROC-Chemotype Plots: Analyzes the chemical diversity of the enriched actives.

This study concluded that re-scoring docking outcomes with ML-based functions, particularly CNN-Score, consistently enhanced performance and enriched diverse, high-affinity binders for both wild-type and drug-resistant PfDHFR variants [23].
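The composition of a benchmark set caps the enrichment factor that any method can reach. The short calculation below works this out for the set described in the protocol above (40 actives and 1,200 decoys per variant). It assumes that the top 1% slice is obtained by truncating to a whole number of compounds, which may not match the convention used in the original study.

```python
import math

def max_enrichment_factor(n_actives, n_decoys, fraction=0.01):
    """Largest EF achievable when the top slice is filled with actives first."""
    n_total = n_actives + n_decoys
    n_top = max(1, math.floor(n_total * fraction))
    best_hits = min(n_top, n_actives)
    # EF = (best_hits / n_top) / (n_actives / n_total), rearranged to one division.
    return (best_hits * n_total) / (n_top * n_actives)

ceiling = max_enrichment_factor(n_actives=40, n_decoys=1200)
print(f"Maximum possible EF1% = {ceiling:.1f}")
```

With 1,240 compounds the top 1% holds 12 molecules, so a perfect ranking fills the slice with actives and the ceiling works out to 1,240 / 40 = 31. Under these assumptions, an EF1% of 31 on such a set would correspond to a top slice made up entirely of actives.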

Integrated Workflows and Visualization

Given their complementary strengths, the most effective strategies often combine LBVS and SBVS. Integrated workflows can be sequential, parallel, or hybrid [4] [18].

Virtual Screening Strategy Selection

Hybrid Screening Strategies

The Scientist's Toolkit: Key Research Reagents and Solutions

Table 5: Essential Software and Resources for Virtual Screening

| Category | Tool / Resource | Primary Function | Key Application |

|---|---|---|---|

| SBVS Software | AutoDock Vina [23] [22] | Molecular docking with a fast scoring function. | Widely used open-source tool for standard docking tasks. |

| SBVS Software | RosettaVS [22] | Physics-based docking with receptor flexibility. | High-precision docking and screening of challenging targets. |

| SBVS Software | FRED, PLANTS [23] | Rigid-body and flexible-ligand docking algorithms. | Benchmarking and structure-based screening campaigns. |

| LBVS Software | ROCS, eSim [18] | 3D shape- and electrostatic-based similarity searching. | Rapid ligand-based screening and scaffold hopping. |

| LBVS Software | QuanSA [18] | 3D-QSAR model building and affinity prediction. | Quantitative affinity prediction from ligand structures. |

| ML & AI Platforms | HelixVS [25] | Multi-stage VS integrating docking and deep learning scoring. | High-throughput, high-accuracy screening with improved enrichment. |

| ML & AI Platforms | CNN-Score, RF-Score [23] | Re-scoring docking poses with machine learning models. | Improving ranking and active enrichment after initial docking. |

| Chemical Libraries | Enamine REAL, ZINC [24] | Ultra-large libraries of commercially available compounds. | Providing synthetically accessible chemical space for screening. |

| Benchmarking Sets | DEKOIS 2.0, DUD-E [23] [25] | Curated datasets with known actives and decoys. | Objective performance evaluation and validation of VS methods. |

Virtual screening (VS) is a cornerstone of modern computational drug discovery, employed to efficiently identify promising hit compounds from vast chemical libraries. The two primary computational strategies are ligand-based virtual screening (LBVS) and structure-based virtual screening (SBVS). The choice between these methods is not a matter of superiority but is fundamentally dictated by the nature and quantity of available data for the biological target of interest. LBVS relies on the knowledge of known active ligands to find similar compounds, whereas SBVS requires the three-dimensional structure of the target protein to computationally dock and score small molecules [26]. With the advent of machine learning (ML) and artificial intelligence (AI), the capabilities of both approaches have been significantly augmented, leading to the development of sophisticated hybrid strategies [4] [22]. This guide provides an objective, data-driven framework to help researchers select the optimal virtual screening path, validated by performance data from benchmark studies and real-world applications.

Core Methodologies and Their Applications

Ligand-Based Virtual Screening (LBVS)

LBVS methods are used when the structure of the target protein is unknown or uncertain, but information about molecules that bind to it is available.

- Principle: These methods operate on the "similarity-property principle," which states that structurally similar molecules are likely to have similar biological activities [4].

- Key Techniques:

  - 2D Similarity Search: This is one of the simplest and most widely used LBVS tools. It encodes molecules into fingerprints (bit strings representing molecular features) and uses metrics like the Tanimoto coefficient to rank compounds in a database by their similarity to known active ligands [26] [27].

  - Pharmacophore Modeling: A pharmacophore represents the essential molecular features (e.g., hydrogen bond donors/acceptors, hydrophobic regions) responsible for a ligand's biological activity. Pharmacophore models can be derived from a set of active ligands and used as a 3D query to screen compound libraries [27].

  - Quantitative Structure-Activity Relationship (QSAR): This machine learning approach builds a statistical model that correlates numerical descriptors of a set of molecules with their measured biological activity. The model can then predict the activity of new, untested compounds [4] [27].

Structure-Based Virtual Screening (SBVS)

SBVS comes into play when a reliable 3D structure of the target protein (e.g., from X-ray crystallography, NMR, or high-quality models like AlphaFold2) is available [4] [28].

- Principle: SBVS, primarily molecular docking, computationally simulates how a small molecule (ligand) binds to the target's binding site. It aims to predict the optimal binding pose and estimate the binding affinity using a scoring function [28] [26].

- Key Considerations:

- Target Flexibility: Proteins are dynamic. Accounting for side-chain or even backbone flexibility through methods like ensemble docking can improve screening accuracy [28].

- Scoring Functions: These are mathematical functions used to rank docked compounds. They can be physics-based, empirical, or knowledge-based. Recent advances include machine learning-based scoring functions (ML SFs), which have outperformed classical scoring functions at distinguishing active from inactive compounds in benchmark studies [23] [22].
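One simple way to use ensemble docking results is to keep, for each ligand, its most favorable score across all receptor conformations. The sketch below assumes that lower scores are better (as with energy-like docking scores) and uses made-up conformer names and values; averaging across conformers is an equally plausible alternative that is not shown.

```python
def ensemble_best_scores(scores_by_conformer):
    """Keep, for each ligand, the lowest score and the receptor conformer that produced it."""
    best = {}
    for conformer, ligand_scores in scores_by_conformer.items():
        for ligand, score in ligand_scores.items():
            if ligand not in best or score < best[ligand][0]:
                best[ligand] = (score, conformer)
    return best


# Hypothetical docking scores (lower = more favorable) for two receptor conformers.
scores = {
    "conf_1": {"lig_A": -7.2, "lig_B": -6.1},
    "conf_2": {"lig_A": -6.8, "lig_B": -8.4},
}
best = ensemble_best_scores(scores)

# Rank ligands by their best score across the ensemble.
ranked = sorted(best, key=lambda lig: best[lig][0])
```

In this toy case lig_B would rank last against conf_1 alone but first once conf_2 is included, which is the kind of effect receptor flexibility is meant to capture.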

Decision Framework: Choosing Your Path

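The decision-making process for selecting a virtual screening strategy, integrating both classical and ML-augmented approaches, turns on two questions: is a reliable 3D structure of the target available, and are known active ligands available? A reliable structure points to SBVS, known actives without a structure point to LBVS, and having both supports a combined strategy.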

Performance Benchmarking: Quantitative Comparisons

The effectiveness of virtual screening strategies is measured on benchmark datasets such as DEKOIS and DUD, which contain known active compounds and presumed-inactive "decoys" [23]. Key metrics include the Enrichment Factor (EF), the ratio of the fraction of actives found in the top x% of a ranked list to the fraction of actives in the whole library (so EF = 1 corresponds to random selection), and the area under the ROC curve (AUC).
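Both metrics can be computed directly from a ranked list of active/decoy labels. The following is a minimal sketch, assuming a strict ranking with no tied scores; the 200-compound toy ranking is invented for illustration and does not come from any benchmark.

```python
def enrichment_factor(ranked_labels, fraction=0.01):
    """EF at `fraction`: active rate in the top slice divided by the active rate overall.

    ranked_labels holds 1 (active) or 0 (decoy), ordered best-scored first.
    """
    n_total = len(ranked_labels)
    n_actives = sum(ranked_labels)
    if n_actives == 0:
        raise ValueError("need at least one active")
    n_top = max(1, round(n_total * fraction))
    top_rate = sum(ranked_labels[:n_top]) / n_top
    return top_rate / (n_actives / n_total)


def roc_auc(ranked_labels):
    """ROC AUC as the fraction of (active, decoy) pairs in which the active ranks higher."""
    n_actives = sum(ranked_labels)
    n_decoys = len(ranked_labels) - n_actives
    if n_actives == 0 or n_decoys == 0:
        raise ValueError("need at least one active and one decoy")
    decoys_seen = 0
    correct_pairs = 0
    for label in ranked_labels:
        if label == 1:
            correct_pairs += n_decoys - decoys_seen  # decoys ranked below this active
        else:
            decoys_seen += 1
    return correct_pairs / (n_actives * n_decoys)


# Toy ranking: 200 compounds, 10 actives, 2 of them in the top 1% (hypothetical).
ranking = [1, 1] + [0] * 90 + [1] * 8 + [0] * 100
ef1 = enrichment_factor(ranking, fraction=0.01)
auc = roc_auc(ranking)
```

Here the top 1% contains only actives while the library as a whole is 5% active, so EF1% is 20, even though the AUC stays modest because most actives sit mid-list. This is why early-enrichment metrics and AUC are usually reported together.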

Benchmarking of Docking and ML Re-scoring

The table below summarizes data from a benchmarking study on Plasmodium falciparum Dihydrofolate Reductase (PfDHFR), comparing three docking tools and their performance when enhanced with ML re-scoring [23].

Table 1: Performance of Docking and ML Re-scoring for Wild-Type (WT) and Quadruple-Mutant (Q) PfDHFR

| Target Variant | Docking Tool | ML Re-scoring Function | Performance (EF1%) | Key Finding |
|---|---|---|---|---|
| Wild-Type (WT) | AutoDock Vina | None (Standard Scoring) | Worse-than-random | Standard scoring performed poorly. |
| Wild-Type (WT) | AutoDock Vina | RF-Score-VS v2 / CNN-Score | Better-than-random | ML re-scoring significantly improved performance from worse-than-random to better-than-random. |
| Wild-Type (WT) | PLANTS | CNN-Score | 28 | This combination yielded the best enrichment for the WT variant. |
| Quadruple-Mutant (Q) | FRED | CNN-Score | 31 | This combination yielded the best enrichment for the resistant Q variant. |

Abbreviation: EF1%: Enrichment Factor at the top 1% of the screened library.

Conclusion: The study demonstrates that re-scoring docking outputs with ML-based functions like CNN-Score consistently augments SBVS performance and is crucial for identifying diverse, high-affinity binders, especially against resistant mutant variants [23].

Comparison of State-of-the-Art SBVS Tools

The following table compares the performance of various SBVS tools on standard benchmarks, highlighting the impact of advanced force fields and flexibility.

Table 2: Performance Comparison of Advanced SBVS Methods on Public Benchmarks

| Method / Platform | Key Feature | Reported Performance | Reference / Benchmark |
|---|---|---|---|
| RosettaVS (RosettaGenFF-VS) | Physics-based force field with receptor flexibility (side-chains, partial backbone). | EF1% = 16.72; Superior docking & screening power. | CASF-2016 Benchmark [22] |
| CNN-Score | Deep learning-based scoring function. | Hit rate ~3x higher than Vina at top 1%. | Independent Validation [23] |
| RF-Score-VS | Random forest-based scoring function for virtual screening. | Hit rate >3x higher than DOCK3.7 at top 1%. | Independent Validation [23] |
| AutoDock Vina | Widely used, traditional docking program. | Baseline performance (lower than ML-augmented methods). | Multiple Benchmarks [23] [22] |

Experimental Protocols for Key Methodologies

Protocol for a Typical SBVS Workflow with ML Re-scoring

This protocol is adapted from benchmarking studies and the development of the OpenVS platform [23] [22].

Target Preparation:

- Obtain the 3D structure of the target protein (PDB ID: e.g., 6A2M for WT PfDHFR).

- Using a tool like OpenEye's "Make Receptor," remove water molecules, unnecessary ions, and redundant chains.

- Add and optimize hydrogen atoms. Define the binding site coordinates for docking.

Compound Library Preparation:

- Select a database (e.g., ZINC, Enamine REAL). For benchmarking, use a dedicated set like DEKOIS 2.0, which contains active compounds and matched decoys.

- Prepare ligands: generate multiple low-energy 3D conformations for each compound using a tool like OMEGA.

- Convert ligand files into the required format for docking (e.g., PDBQT, mol2).

Molecular Docking:

- Perform docking with a selected tool (e.g., AutoDock Vina, PLANTS, FRED) into the prepared binding site.

- Critical Step: Ensure consistent and appropriate grid box dimensions to encompass the entire binding site.

Machine Learning Re-scoring:

- Extract the top poses (e.g., top 1-10) generated by the docking program for each compound.

- Submit these poses to a pre-trained ML scoring function such as CNN-Score or RF-Score-VS v2.

- Re-rank the entire library of compounds based on the new scores provided by the ML model.

Hit Identification and Validation:

- Select the top-ranked compounds (e.g., top 100-500) from the ML-re-scored list for experimental testing (e.g., biochemical assays, SPR).
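The re-scoring and selection steps above can be sketched as follows. This is an illustrative outline only: it assumes each compound already has ML scores for its retained poses, that higher ML scores indicate stronger predicted binding (this convention depends on the scoring function), and that compounds with no valid poses are dropped. The compound IDs and scores are hypothetical.

```python
def rerank_by_ml_score(pose_scores, top_n):
    """Re-rank compounds by the best ML score among their retained docking poses.

    pose_scores maps compound_id -> list of ML scores, one per retained pose.
    Assumes higher ML scores mean stronger predicted binding.
    """
    # Compounds without any scored pose are skipped rather than given a default.
    best = {cid: max(scores) for cid, scores in pose_scores.items() if scores}
    ranked = sorted(best.items(), key=lambda item: item[1], reverse=True)
    return ranked[:top_n]


# Hypothetical ML scores for the top poses of four compounds.
pose_scores = {
    "cmpd_1": [0.31, 0.72, 0.55],
    "cmpd_2": [0.90],
    "cmpd_3": [0.12, 0.08],
    "cmpd_4": [],  # docking produced no usable pose
}
selected = rerank_by_ml_score(pose_scores, top_n=2)
```

Taking the maximum over poses lets a compound benefit from a pose that the docking program ranked second or third, which is the main reason to re-score more than one pose per compound.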

Protocol for a Hybrid LBVS/SBVS Screening Strategy

This sequential protocol, informed by successful campaigns in competitions like CACHE, uses LBVS to filter an ultra-large library before more costly SBVS [4].

Ligand-Based Filtering:

- If known active ligands are available, use a fast 2D similarity search (e.g., Tanimoto similarity with a high threshold) or a pharmacophore model to screen a multi-billion compound library.

- Goal: Rapidly reduce the library size from billions to a few hundred thousand diverse compounds that are likely active.

Structure-Based Docking:

- Take the filtered compound set from the ligand-based filtering step and subject it to molecular docking against the target structure.

- This step adds a structure-based constraint, prioritizing compounds that not only look like actives but also fit well into the binding pocket.

Post-Processing and Analysis:

- Apply additional filters (e.g., drug-likeness, synthetic accessibility).

- Visually inspect the top-ranked docking poses to check for sensible binding interactions.

- Select a final, manageable number of compounds for experimental validation.
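The sequential logic of this hybrid protocol can be expressed as a two-stage funnel. In the sketch below, `similarity` and `dock` are placeholder callables standing in for a real similarity search and a real docking run; the cutoff, compound names, and scores are all hypothetical, and lower docking scores are assumed to be more favorable.

```python
def hybrid_screen(library, similarity, dock, sim_cutoff, n_final):
    """Two-stage funnel: cheap similarity filter first, docking only on survivors.

    similarity(compound) -> float in [0, 1]; higher means closer to known actives.
    dock(compound) -> float; lower means more favorable (assumed convention).
    Returns the selected (compound, docking_score) pairs and the number docked.
    """
    survivors = [c for c in library if similarity(c) >= sim_cutoff]
    docked = sorted((dock(c), c) for c in survivors)
    hits = [(c, score) for score, c in docked[:n_final]]
    return hits, len(survivors)


# Hypothetical precomputed values standing in for real similarity and docking runs.
toy_similarity = {"c1": 0.82, "c2": 0.35, "c3": 0.74, "c4": 0.10}
toy_docking = {"c1": -7.9, "c2": -9.5, "c3": -8.6, "c4": -5.0}

hits, n_docked = hybrid_screen(
    library=list(toy_similarity),
    similarity=toy_similarity.get,
    dock=toy_docking.get,
    sim_cutoff=0.7,
    n_final=2,
)
```

Only two of the four toy compounds reach the docking stage. Note that c2 has the best toy docking score but is never docked because it fails the similarity cutoff, which illustrates the trade-off of a strict ligand-based pre-filter: it saves compute at the risk of discarding novel chemotypes.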

Essential Research Reagent Solutions

The table below lists key computational tools and databases that form the essential "reagent kit" for conducting virtual screening.

Table 3: Key Virtual Screening Tools and Databases

| Category | Name | Function / Description |
|---|---|---|
| Public Compound Databases | ZINC | A free database of commercially available compounds for virtual screening. |
| Public Compound Databases | ChEMBL | A manually curated database of bioactive molecules with drug-like properties. |
| Public Compound Databases | PubChem | A public database with information on biological activities of small molecules. |
| SBVS Software | AutoDock Vina | A widely used, open-source molecular docking program. |
| SBVS Software | RosettaVS | An open-source SBVS method with receptor flexibility and advanced scoring. |
| SBVS Software | Schrödinger Glide | A high-performance docking software suite (commercial). |
| LBVS & ML Tools | RDKit | An open-source toolkit for cheminformatics and machine learning. |
| LBVS & ML Tools | CNN-Score / RF-Score-VS | Pre-trained ML scoring functions for re-scoring docking poses. |
| Benchmarking Sets | DEKOIS | Provides benchmark sets with actives and decoys to evaluate VS methods. |
| Benchmarking Sets | DUD (Directory of Useful Decoys) | A classic benchmark set for virtual screening evaluation. |

The decision framework presented here underscores that the choice between LBVS and SBVS is driven by the data available. SBVS is generally the stronger option when a reliable protein structure is available, especially when combined with ML re-scoring and some treatment of target flexibility. LBVS is the go-to method in the absence of structural information, provided a set of known active ligands exists. The most powerful strategies, as validated by benchmark studies and real-world applications, combine both approaches sequentially or in parallel to exploit their complementary strengths and mitigate their individual limitations [4] [23].

The future of virtual screening is inextricably linked to AI and machine learning. We are witnessing a trend away from traditional, rigid scoring frameworks toward physics-informed, interaction-based models that promise greater generalizability and interpretability [4]. The successful application of open-source, AI-accelerated platforms like OpenVS to screen billion-member libraries in a matter of days signals a new era of efficiency and scale in drug discovery [22]. As these technologies mature, the decision framework will evolve, but the foundational principle will remain: the optimal virtual screening strategy is dictated by a clear-eyed assessment of the available data.

From Theory to Practice: Implementing SBVS and LBVS in Drug Discovery Campaigns

Structure-based virtual screening (SBVS) is a powerful computational approach in modern drug discovery, enabling the rapid identification of hit compounds by leveraging the three-dimensional structure of a biological target. By systematically evaluating large chemical libraries, SBVS predicts how strongly small molecules bind to a target, prioritizing those with the highest potential for further development. This guide details the essential steps of the SBVS workflow (target preparation, library design, and docking protocols) and provides an objective performance comparison with ligand-based virtual screening (LBVS) approaches, supported by experimental data from recent studies.

Target Protein Selection and Preparation

The foundation of a successful SBVS campaign is a high-quality, well-prepared protein structure.

Target Selection and Assessment

The process begins with selecting a suitable protein target, typically one with a known or homology-modeled 3D structure whose modulation is expected to produce a therapeutic effect. The reliability of the entire screening process depends heavily on the quality and resolution of this structure.

- Source and Validation: Obtain the target structure from the Protein Data Bank (PDB) or through comparative homology modeling if an experimental structure is unavailable. The structure should be validated, paying close attention to the resolution (for crystal structures) and the completeness of key regions, especially the binding site.

- Binding Site Identification: Define the binding site coordinates. This can be done by locating the co-crystallized ligand, referencing known mutagenesis data, or using computational tools to predict cryptic or allosteric pockets.
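When the binding site is defined from a co-crystallized ligand, a common starting point is an axis-aligned box around the ligand's atoms with some padding. The sketch below assumes atom coordinates have already been read from the structure file; the coordinates and the 4 Å padding are illustrative assumptions, not recommended values.

```python
def box_from_ligand(coords, padding=4.0):
    """Axis-aligned box enclosing the ligand atoms plus `padding` (in Å) on every side.

    coords is a list of (x, y, z) tuples. Returns (center, size), each an (x, y, z) tuple.
    """
    axes = list(zip(*coords))  # regroup into all x values, all y values, all z values
    center = tuple((max(a) + min(a)) / 2 for a in axes)
    size = tuple((max(a) - min(a)) + 2 * padding for a in axes)
    return center, size


# Hypothetical heavy-atom coordinates of a co-crystallized ligand (Å).
ligand_atoms = [(10.0, 2.0, -3.0), (14.0, 4.0, -1.0), (12.0, 6.0, 1.0)]
center, size = box_from_ligand(ligand_atoms)
```

The resulting center and size are the kind of values most docking programs expect for their search space, although the exact parameter names and units should be checked against the chosen program's documentation.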

Pre-processing Steps

Before docking, the protein structure must be processed to correct for inconsistencies and optimize its physicochemical state.

- Structure Cleaning: Remove extraneous components like water molecules, ions, and non-relevant co-crystallized ligands. However, structurally important water molecules that mediate protein-ligand interactions should be retained.

- Hydrogen Addition and Protonation States: Add hydrogen atoms and assign correct protonation states to amino acid residues (e.g., Asp, Glu, His, Lys) at the intended physiological pH. This ensures accurate hydrogen bonding and electrostatic interactions.

- Energy Minimization: Perform a limited energy minimization to relieve steric clashes introduced during the addition of hydrogens and assignment of charges, resulting in a more stable and physically realistic structure.
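To see why protonation states depend on pH, the Henderson-Hasselbalch relationship gives the fraction of a titratable group that is protonated as 1 / (1 + 10^(pH − pKa)). The sketch below applies it with approximate textbook pKa values for isolated side chains; these values are assumptions for illustration, and pKa values inside a folded protein can shift substantially, which is why dedicated preparation tools are used in practice.

```python
def fraction_protonated(pka: float, ph: float) -> float:
    """Fraction of a titratable group in its protonated form (Henderson-Hasselbalch)."""
    return 1.0 / (1.0 + 10 ** (ph - pka))


# Approximate side-chain pKa values for free amino acids (illustrative assumptions).
MODEL_PKA = {"Asp": 3.9, "Glu": 4.2, "His": 6.0, "Lys": 10.5}

PH = 7.4
protonated = {res: fraction_protonated(pka, PH) for res, pka in MODEL_PKA.items()}

# A residue is treated as protonated here if more than half the population carries the proton.
assigned = {res: frac > 0.5 for res, frac in protonated.items()}
```

Under these model values, Asp and Glu come out deprotonated and Lys protonated at pH 7.4. His is mostly neutral but sits close enough to its pKa that its state is the one most often inspected manually.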

Table 1: Key Steps in Target Preparation

| Step | Description | Common Tools/Functions |
|---|---|---|
| Structure Sourcing | Acquiring 3D structure from PDB or via homology modeling | PDB, MODELLER, SWISS-MODEL |
| Binding Site Definition | Identifying the pocket where ligands will bind | Co-crystallized ligand location, site prediction algorithms |
| Structure Cleaning | Removing non-essential water molecules, ions, and ligands | Molecular visualization software (PyMOL, UCSF Chimera) |
| Hydrogen Addition | Adding H atoms and setting correct protonation states | Molecular docking suites (AutoDock Tools, Schrödinger Maestro) |
| Energy Minimization | Relaxing the structure to remove atomic clashes | Molecular dynamics or docking software force fields |

Chemical Library Design and Preparation

The chemical library is the source of potential hits, and its composition directly influences screening outcomes.

Library Selection and Curation

- Database Sources: Public databases of commercially available compounds, such as ZINC, are widely used; ZINC contains millions of purchasable compounds in ready-to-dock 3D formats [29] [30]. Such databases often include specialized subsets, such as natural product libraries or lead-like compounds.

- Chemical Space and Drug-Likeness: Apply filters such as Lipinski's Rule of Five and other criteria to enrich the library with molecules that have desirable pharmacokinetic properties, thereby increasing the likelihood of identifying viable lead compounds.
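A Rule of Five filter is straightforward once the four descriptors are known. The sketch below assumes molecular weight, logP, and hydrogen-bond donor/acceptor counts have been precomputed (for example with a cheminformatics toolkit); the compound names and descriptor values are hypothetical, and tolerating one violation is a common convention rather than a fixed requirement.

```python
from typing import NamedTuple


class Descriptors(NamedTuple):
    mol_weight: float  # molecular weight in Da
    logp: float        # octanol-water partition coefficient
    h_donors: int      # hydrogen-bond donors
    h_acceptors: int   # hydrogen-bond acceptors


def ro5_violations(d: Descriptors) -> int:
    """Count how many of Lipinski's four criteria a compound violates."""
    return sum((d.mol_weight > 500, d.logp > 5, d.h_donors > 5, d.h_acceptors > 10))


def filter_drug_like(library, max_violations=1):
    """Keep the names of compounds with at most `max_violations` Rule of Five violations."""
    return [name for name, d in library.items() if ro5_violations(d) <= max_violations]


# Hypothetical precomputed descriptors.
library = {
    "small_polar": Descriptors(320.4, 2.1, 2, 5),
    "one_violation": Descriptors(540.0, 4.2, 3, 8),
    "greasy_giant": Descriptors(712.9, 6.8, 1, 12),
}
kept = filter_drug_like(library)
```

Setting `max_violations=0` gives the strict form of the filter; which setting is appropriate depends on the target class and on how much chemical space the campaign can afford to discard.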

Ligand Preparation

- Tautomer and Stereoisomer Generation: Generate plausible tautomers and stereoisomers for each compound to ensure the correct bioactive form is represented during docking.

- Energy Minimization: Optimize the 3D geometry of each ligand using molecular mechanics force fields to achieve a low-energy conformation, which provides a more realistic starting point for docking simulations.

Diagram 1: Chemical library preparation workflow for SBVS.

Molecular Docking Protocols and Validation

Docking involves predicting the binding pose and affinity of each small molecule within the target's binding site.

Docking Methodology and Execution

- Algorithm Selection: Choose a docking program (e.g., AutoDock Vina, Glide, GOLD) based on the target and project requirements. Each program uses different search algorithms and scoring functions to sample ligand conformations and rank them.