Structure-Based vs. Ligand-Based Drug Design: A Strategic Guide for Effective Virtual Screening

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on strategically choosing between structure-based and ligand-based virtual screening approaches. It covers the foundational principles of both methods, detailing their respective applications, strengths, and limitations. Readers will find practical guidance on implementing these techniques, optimizing workflows through hybrid strategies, and validating results with real-world case studies, including insights from the CACHE challenge and the impact of AI tools like AlphaFold.

Core Principles: Understanding the Basis of Structure-Based and Ligand-Based Design

Structure-Based Drug Design (SBDD) is a rational drug discovery approach that utilizes the three-dimensional structure of a biological target to guide the design and optimization of therapeutic molecules [1] [2]. This methodology stands in contrast to ligand-based approaches, which rely on knowledge of known active molecules rather than the target structure itself [2] [3]. The foundational principle of SBDD is that detailed structural knowledge of the target's binding site enables the precise design of molecules for optimal interaction, thereby improving drug efficacy and selectivity [4]. This technical guide explores the core principles, methodologies, and applications of SBDD, framing its utility within the broader context of modern drug discovery pipelines and clarifying when it should be prioritized over alternative strategies.

Core Principles and Definitions

Structure-Based Drug Design is a paradigm in medicinal chemistry that leverages the atomic-resolution three-dimensional structure of a biological target—typically a protein—to discover and optimize drug candidates [1] [5]. The central tenet of SBDD is molecular recognition; the designed small molecule (ligand) must complement the target's binding site both geometrically and chemically, forming favorable interactions such as hydrogen bonds, ionic interactions, and hydrophobic contacts [2] [4]. This process is inherently rational and target-centric, moving beyond the trial-and-error approach of traditional screening.

The success of SBDD is fundamentally dependent on the availability and quality of the target's 3D structure [6]. This structural information allows researchers to visually analyze the binding pocket, understand key interaction residues, and computationally simulate how potential drug molecules might bind [4]. The entire SBDD process is iterative, involving multiple cycles of molecular design, synthesis, biological testing, and structural validation, each time using the accumulated structural insights to refine the drug candidate further [5].

SBDD within the Broader Drug Discovery Context

In the landscape of computational drug discovery, SBDD serves a distinct and complementary role to Ligand-Based Drug Design (LBDD). The decision to employ SBDD is primarily contingent on the availability of a reliable 3D structure of the target protein, obtained through experimental methods like X-ray crystallography or Cryo-EM, or increasingly, via high-confidence computational models like AlphaFold2 [6] [7]. When such structural data is unavailable or of poor quality, LBDD approaches, which deduce requirements for binding from the physicochemical properties of known active ligands, become the necessary alternative [2] [8].

The integration of SBDD into drug discovery projects offers several compelling advantages. It enables direct targeting of specific residues in a binding pocket, potentially leading to higher potency and selectivity, which in turn can reduce off-target effects and associated side effects [2]. Furthermore, by providing an atomic-level rationale for binding, SBDD can significantly accelerate the lead optimization process, reducing the number of compounds that need to be synthesized and tested experimentally [6] [5].

Key Methodologies and Experimental Protocols in SBDD

The SBDD workflow employs a suite of sophisticated computational and experimental techniques, each providing critical insights for the drug design process.

Target Structure Determination

The initial and most critical step in SBDD is obtaining a high-quality 3D structure of the target protein.

- X-ray Crystallography: This is the most common method for providing high-resolution protein structures for SBDD [2]. The protocol involves purifying the target protein, growing a well-ordered crystal, and then exposing it to an X-ray beam. The resulting diffraction pattern is analyzed using mathematical algorithms like the Fourier transform to reconstruct the electron density map and, subsequently, the atomic coordinates of the protein [2]. Structures obtained through crystallography are particularly valuable for identifying precise drug binding sites.

- Cryo-Electron Microscopy (Cryo-EM): This technique is rapidly gaining prominence, especially for large protein complexes or membrane proteins that are difficult to crystallize [2]. The protocol involves flash-freezing the protein sample in vitreous ice and then using an electron microscope to collect thousands of 2D images. These images are computationally combined to generate a 3D reconstruction at near-atomic resolution [2]. Cryo-EM has proven invaluable for studying targets like G protein-coupled receptors (GPCRs).

- Nuclear Magnetic Resonance (NMR) Spectroscopy: NMR provides structural and dynamic information about proteins in solution, which can be more physiologically relevant than the crystalline state [2]. It works by measuring how atomic nuclei in a strong magnetic field respond to radiofrequency pulses, yielding restraints on inter-atomic distances and torsion angles that are used to calculate the protein's 3D structure. NMR is particularly suited for studying flexible proteins and conformational changes [2].

- Computational Homology Modeling and AI-Based Prediction: When an experimental structure is unavailable, the 3D structure can be predicted computationally. Homology modeling creates a model based on the known structure of a related protein (a template) [5]. More recently, AI-based tools like AlphaFold2 and AlphaFold3 have revolutionized the field by providing highly accurate protein structure predictions from amino acid sequences alone, dramatically expanding the scope of targets accessible to SBDD [7].

Computational Docking and Virtual Screening

Once a target structure is available, computational docking is used to predict how small molecules from vast virtual libraries bind to the target.

- Molecular Docking Protocol: Docking involves two main components: conformational sampling of the ligand within the binding site and scoring the predicted poses based on estimated binding affinity [6]. The standard workflow is as follows:

  - System Preparation: The protein structure is prepared by adding hydrogen atoms, assigning partial charges, and defining the search space (the binding site).

  - Ligand Preparation: Small molecules from a virtual library are energy-minimized and their conformational flexibility is considered.

  - Pose Generation and Scoring: The algorithm generates millions of potential binding poses and ranks them using a scoring function. This function approximates the binding energy by considering terms like van der Waals forces, electrostatics, hydrogen bonding, and desolvation penalties [6] [5].

- Addressing Limitations: A key limitation of standard docking is treating the protein as rigid. Ensemble docking, which uses multiple protein conformations, and molecular dynamics (MD) simulations are advanced techniques used to account for protein flexibility and provide a more dynamic view of binding [4] [6].
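To make the pose scoring step concrete, the following is a minimal sketch of a physics-style scoring function that sums a Lennard-Jones (van der Waals) term and a Coulomb (electrostatic) term over ligand-protein atom pairs and then ranks poses. The parameter values (`epsilon`, `sigma`, `dielectric`), the atom tuples, and the pose names are illustrative assumptions and are not taken from any particular docking program; hydrogen bonding and desolvation terms are omitted.

```python
import math

COULOMB_CONSTANT = 332.06  # kcal*angstrom/(mol*e^2)

def pair_energy(r, q1, q2, epsilon=0.2, sigma=3.5, dielectric=4.0):
    """Toy interaction energy (kcal/mol) for one atom pair at distance r (angstrom)."""
    lj = 4.0 * epsilon * ((sigma / r) ** 12 - (sigma / r) ** 6)  # van der Waals term
    coulomb = COULOMB_CONSTANT * q1 * q2 / (dielectric * r)      # electrostatic term
    return lj + coulomb

def score_pose(ligand_atoms, protein_atoms, cutoff=8.0):
    """Sum pair energies for all ligand-protein atom pairs within the cutoff.

    Each atom is a tuple (x, y, z, partial_charge). Lower scores are more favorable.
    """
    total = 0.0
    for lx, ly, lz, lq in ligand_atoms:
        for px, py, pz, pq in protein_atoms:
            r = math.dist((lx, ly, lz), (px, py, pz))
            if r <= cutoff:
                total += pair_energy(r, lq, pq)
    return total

def rank_poses(poses, protein_atoms):
    """Return (score, pose_name) pairs sorted from most to least favorable."""
    return sorted((score_pose(atoms, protein_atoms), name) for name, atoms in poses.items())

# Hypothetical two-atom "pocket" and three single-atom ligand poses.
protein = [(0.0, 0.0, 0.0, -0.5), (4.0, 0.0, 0.0, 0.0)]
poses = {
    "pose_a": [(0.0, 4.0, 0.0, 0.4)],  # good contact with the charged atom
    "pose_b": [(0.0, 7.5, 0.0, 0.4)],  # far from the pocket
    "pose_c": [(0.0, 1.5, 0.0, 0.4)],  # steric clash
}
ranking = rank_poses(poses, protein)
```

In this toy setup the clashing pose is penalized heavily by the repulsive r^-12 term, which is the same qualitative behavior that lets real scoring functions discard overlapping poses. Production scoring functions are considerably more elaborate and are usually calibrated against experimental data.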

Molecular Dynamics Simulations

MD simulations provide a dynamic view of the ligand-protein complex, going beyond the static picture offered by crystallography or docking.

- Protocol and Workflow:

  - A starting structure (e.g., a docked complex) is placed in a simulated solvated box with ions to mimic physiological conditions.

  - Newton's equations of motion are numerically integrated for all atoms over time, typically for nanoseconds to microseconds, using software like GROMACS [7].

  - The resulting trajectory is analyzed to assess pose stability, identify transient binding pockets, quantify interaction frequencies (e.g., hydrogen bonds), and calculate binding free energies [4] [7].

- Advanced MD Techniques: Methods like steered MD and umbrella sampling can be used to study the unbinding pathways of ligands and to calculate the thermodynamics and kinetics of binding, providing deeper insights for optimizing drug residence time [4].
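The numerical integration of Newton's equations mentioned above can be illustrated with the velocity Verlet scheme, a time-reversible integrator widely used in MD. The sketch below applies it to a single particle on a harmonic spring rather than a solvated protein; the force constant, mass, and time step are arbitrary illustrative values, and real MD engines add force fields, thermostats, and constraints on top of this core loop.

```python
def velocity_verlet(x, v, force, mass, dt, n_steps):
    """Integrate Newton's equations for one particle in one dimension.

    Returns the trajectory as a list of (position, velocity) tuples.
    """
    trajectory = [(x, v)]
    f = force(x)
    for _ in range(n_steps):
        v_half = v + 0.5 * dt * f / mass   # half-step velocity update
        x = x + dt * v_half                # full-step position update
        f = force(x)                       # force at the new position
        v = v_half + 0.5 * dt * f / mass   # complete the velocity update
        trajectory.append((x, v))
    return trajectory

SPRING_CONSTANT = 1.0  # illustrative value

def spring_force(x):
    """Restoring force of a harmonic spring centered at x = 0."""
    return -SPRING_CONSTANT * x

def total_energy(x, v, mass=1.0):
    """Kinetic plus potential energy of the oscillator."""
    return 0.5 * mass * v * v + 0.5 * SPRING_CONSTANT * x * x

traj = velocity_verlet(x=1.0, v=0.0, force=spring_force, mass=1.0, dt=0.01, n_steps=1000)
energy_drift = abs(total_energy(*traj[-1]) - total_energy(*traj[0]))
```

Checking that `energy_drift` stays small is a simple sanity test of the integrator and time step; analogous energy-conservation checks are commonly applied to real simulations.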

Table 1: Core Techniques in Structure-Based Drug Design

| Technique | Primary Function | Key Outputs | Common Tools/Examples |
|---|---|---|---|
| X-ray Crystallography | Determine atomic 3D structure of crystallized protein | High-resolution static structure, ligand binding mode | X-ray diffractometers |
| Cryo-EM | Determine 3D structure of large/complex proteins | Near-atomic resolution structure, conformational states | Cryo-electron microscopes |
| Molecular Docking | Predict binding pose and affinity of a ligand | Ranked list of compounds, predicted binding orientation | AutoDock, GOLD, Glide |
| Molecular Dynamics (MD) | Simulate dynamic behavior of ligand-protein complex | Trajectory of atomic motions, binding stability, cryptic pockets | GROMACS, AMBER, NAMD |
| Free Energy Perturbation (FEP) | Calculate relative binding free energies with high accuracy | ΔΔG for congeneric series | Schrödinger FEP+, OpenFE |

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 2: Key Research Reagents and Materials for SBDD

| Item | Function/Description | Application in SBDD Workflow |
|---|---|---|
| Purified Target Protein | A high-purity, functional, and stable preparation of the recombinant protein. | Essential for experimental structure determination (Crystallography, Cryo-EM, NMR) and biochemical assays. |
| Crystallization Screening Kits | Sparse-matrix kits containing various buffers, salts, and precipitants. | To identify initial conditions for growing diffraction-quality protein crystals. |
| Virtual Compound Libraries | Large, annotated databases of purchasable or virtual small molecules (e.g., ZINC, Enamine REAL). | Serves as the source of candidates for virtual screening and molecular docking. |
| Homology Modeling Software | Software that models a protein's 3D structure based on a related template (e.g., MODELLER, SWISS-MODEL). | Generates a working structural model when no experimental structure is available. |
| Cloud Computing/HPC Resources | Scalable computational power for running docking, MD, and other resource-intensive calculations. | Enables high-throughput virtual screening and long-timescale molecular dynamics simulations. |

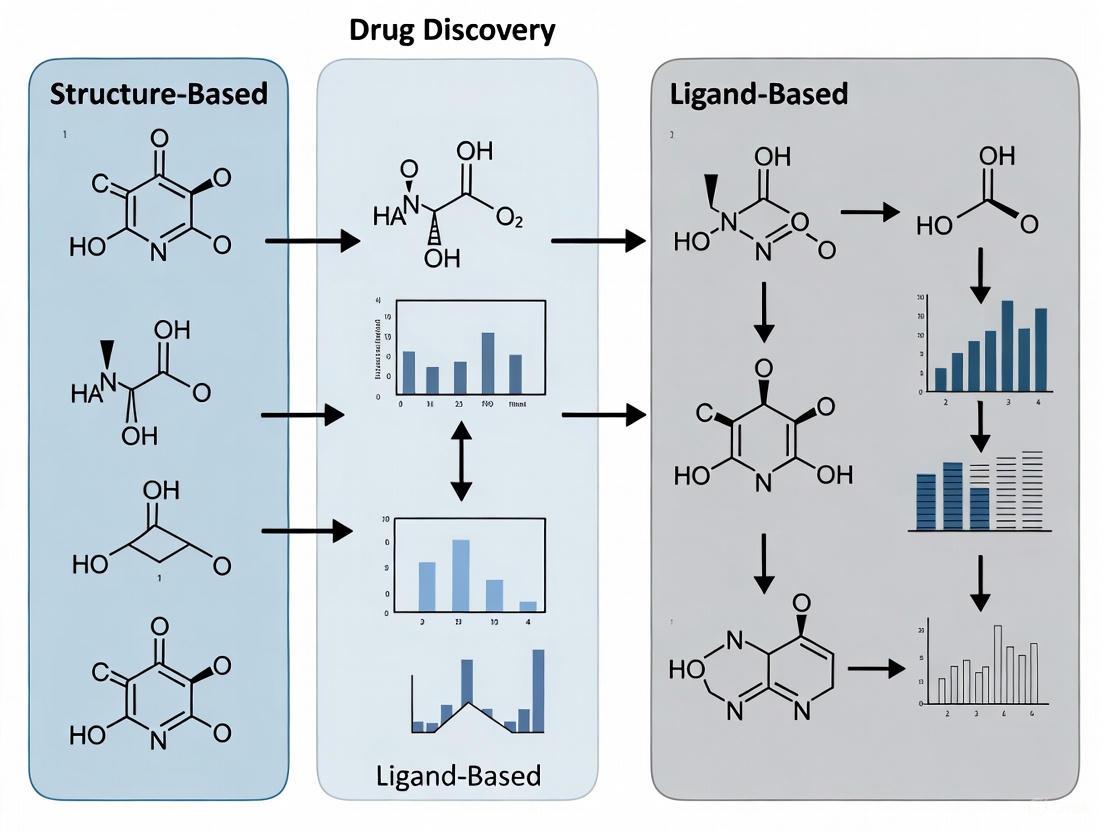

Diagram 1: The Iterative SBDD Workflow. The process is cyclical, with insights from complex structures and dynamics simulations directly informing the next round of chemical optimization.

SBDD vs. LBDD: A Comparative Analysis for Strategic Decision-Making

Choosing between SBDD and LBDD is a critical strategic decision in a drug discovery project. The two approaches are not mutually exclusive and are often combined for greater effectiveness [6] [9].

Foundational Data and Core Techniques

The most fundamental distinction lies in the primary source of information.

- SBDD is predicated on the target's 3D structure. Its core techniques, as detailed in the methodology section above, include molecular docking, structure-based pharmacophore modeling, and MD simulations, all of which directly analyze the ligand's interaction with the target protein [2] [4].

- LBDD is driven by knowledge of known active ligands. When the target structure is unknown, LBDD infers the characteristics of a binding site indirectly by analyzing a set of active and inactive compounds [2] [3]. Key techniques include:

  - Quantitative Structure-Activity Relationship (QSAR): Builds a mathematical model correlating molecular descriptors (e.g., hydrophobicity, electronic properties) with biological activity to predict the activity of new compounds [2] [8].

  - Pharmacophore Modeling: Identifies the essential steric and electronic features (e.g., hydrogen bond donors/acceptors, hydrophobic regions) necessary for molecular recognition [8].

  - Similarity Searching: Screens compound libraries based on 2D or 3D structural similarity to a known active molecule [6].

Advantages, Limitations, and Decision Framework

Table 3: Strategic Comparison: SBDD vs. LBDD

| Aspect | Structure-Based Drug Design (SBDD) | Ligand-Based Drug Design (LBDD) |
|---|---|---|
| Primary Requirement | 3D structure of the target protein [2] [6] | Set of known active (and inactive) ligands [3] [8] |
| Key Advantage | Enables rational design of novel scaffolds; high potential for selectivity and novelty [2] [5] | Can be applied without target structure; fast and resource-efficient for screening [2] [6] |
| Main Limitation | Dependent on availability/quality of protein structure; can be computationally expensive [2] [6] | Limited by chemical bias of known ligands; difficult to design truly novel scaffolds [6] |
| Ideal Use Case | Target with a known, high-resolution structure; designing for a specific binding pocket or allosteric site [4] | Target structure unknown; project has many known actives for training models; initial fast screening [2] [9] |

The decision framework for a medicinal chemist is therefore straightforward:

- Is a reliable 3D structure of the target available? If yes, SBDD becomes immediately applicable and should be leveraged.

- Is there a sufficient amount of high-quality ligand activity data? If a structure is unavailable, but many active compounds are known, LBDD is the primary path forward.

- Can both data types be accessed? An integrated approach, using LBDD for rapid initial filtering and SBDD for detailed analysis of top candidates, is often the most powerful strategy [6] [9]. This hybrid approach mitigates the limitations of each individual method.
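The three questions above can be written down as a small decision function. This is only a sketch of the logic as stated in the text: the `min_actives` threshold of 20 is an arbitrary placeholder for "a sufficient amount" of ligand data, and the final fallback branch (neither data type available) is an assumption added for completeness rather than part of the framework.

```python
def choose_strategy(has_reliable_structure, n_known_actives, min_actives=20):
    """Suggest a virtual screening strategy from the available data.

    min_actives is a hypothetical cutoff; a real project would set it
    based on the modeling method and the quality of the activity data.
    """
    has_ligand_data = n_known_actives >= min_actives
    if has_reliable_structure and has_ligand_data:
        return "hybrid: LBDD filtering, then SBDD on top candidates"
    if has_reliable_structure:
        return "SBDD"
    if has_ligand_data:
        return "LBDD"
    # Assumed fallback, not covered by the framework in the text.
    return "insufficient data: obtain a structure or activity data first"

examples = {
    "kinase with crystal structure and 300 actives": choose_strategy(True, 300),
    "novel target with predicted structure only": choose_strategy(True, 0),
    "membrane protein without structure, 150 actives": choose_strategy(False, 150),
}
```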

Structure-Based Drug Design shifts drug discovery from empirical screening toward informed molecular design. By relying on the detailed 3D structure of biological targets, SBDD provides an atomic-level basis for optimizing drug candidates, which can improve affinity and selectivity. Challenges remain in handling highly flexible targets and in accurately predicting binding energetics, but continuing advances in structural biology techniques such as Cryo-EM and in computational methods such as AI-based structure prediction and machine learning-enhanced scoring are expanding the range of targets that SBDD can address [7] [5] [9].

For the modern drug development professional, the choice between SBDD and LBDD is not a binary one but a strategic decision based on available data. SBDD is the method of choice when a high-quality target structure is available, enabling direct and rational intervention in the design process. When structural data is lacking, LBDD provides a powerful alternative. However, the most effective drug discovery pipelines will strategically integrate both approaches, harnessing their complementary strengths to navigate the complex journey from target identification to clinical candidate with greater speed, precision, and confidence.

Ligand-Based Drug Design (LBDD) constitutes a fundamental pillar of computer-aided drug discovery, employed specifically when the three-dimensional structure of the biological target is unknown or unavailable [3] [2]. This approach operates on the central principle that similar molecules tend to exhibit similar biological activities—a concept that allows researchers to infer the structural requirements for bioactivity by analyzing a set of known active compounds [8]. Unlike structure-based methods that rely on target protein structures, LBDD leverages the chemical information of active and inactive compounds to correlate biological activity with chemical structure, establishing Structure-Activity Relationships (SAR) to guide the optimization process [3] [2]. This methodology has proven particularly valuable for targets resistant to structural characterization, such as certain membrane proteins and complex multi-component systems, making it an indispensable tool in the medicinal chemist's arsenal.

Within the broader context of structure-based versus ligand-based approaches, LBDD offers a complementary strategy that accelerates early drug discovery when structural information is limited [6]. While structure-based drug design (SBDD) provides atomic-level insights into binding interactions when target structures are available, LBDD enables progress even when such detailed structural knowledge is lacking [2] [10]. The integration of both approaches has become increasingly common in modern drug discovery, with ligand-based methods often providing initial leads that are subsequently refined using structural insights as they become available [6] [10]. This synergistic relationship maximizes the utility of available chemical and biological data, ultimately enhancing the efficiency of the drug discovery pipeline.

Theoretical Foundations of LBDD

Core Principles and Key Assumptions

The conceptual framework of LBDD rests upon several foundational principles that guide its application and methodology. The most fundamental of these is the similarity principle, which posits that structurally similar molecules are likely to share similar biological properties and activities [8] [11]. This principle enables researchers to extrapolate from known active compounds to predict the activity of untested molecules, providing a rational basis for compound selection and optimization. A second critical assumption is the existence of a pharmacophore—an abstract representation of the steric and electronic features necessary for molecular recognition at a biological target [8]. This pharmacophore concept allows researchers to transcend specific chemical scaffolds and identify common patterns responsible for biological activity across diverse chemical classes.

The theoretical underpinnings of LBDD also acknowledge that biological activity correlates with physicochemical properties such as lipophilicity, electronic characteristics, and steric bulk [8] [11]. These properties can be quantified as molecular descriptors, enabling the development of mathematical models that predict activity based on chemical structure. Furthermore, LBDD operates on the principle that molecular similarity can be quantified using various metrics and representations, from simple 2D fingerprints to complex 3D shape descriptors [11]. Each of these principles contributes to a cohesive framework that supports the diverse methodologies employed in ligand-based design, from quantitative modeling to similarity searching and pharmacophore elucidation.

Comparative Analysis: LBDD vs. Structure-Based Approaches

Table 1: Comparison of Ligand-Based and Structure-Based Drug Design Approaches

| Feature | Ligand-Based Drug Design (LBDD) | Structure-Based Drug Design (SBDD) |
|---|---|---|
| Required Information | Known active ligands (agonists/antagonists) | 3D structure of the target protein |
| Key Methodologies | QSAR, pharmacophore modeling, similarity searching | Molecular docking, de novo design, structure-based virtual screening |
| Target Flexibility | Implicitly accounted for through diverse ligand structures | Explicit modeling often limited without advanced MD simulations |
| Data Requirements | Set of compounds with measured activity | High-resolution protein structure (X-ray, Cryo-EM, NMR, or AlphaFold) |
| Primary Applications | Lead optimization, scaffold hopping, virtual screening | Binding mode prediction, structure-based optimization |
| Computational Demand | Generally lower, suitable for high-throughput screening | Higher, especially with flexible receptor treatments |
| Key Limitations | Dependent on quality and diversity of known actives | Limited by accuracy and relevance of protein structures |

The distinction between LBDD and SBDD represents a fundamental dichotomy in computational drug discovery [2] [6]. While SBDD requires explicit knowledge of the target protein's three-dimensional structure, LBDD operates indirectly through the information embedded in known ligand molecules [2] [12]. This fundamental difference in required inputs leads to divergent applications throughout the drug discovery pipeline. SBDD excels when detailed structural information is available, enabling precise optimization of ligand-receptor interactions [2]. In contrast, LBDD provides a powerful alternative when structural data is lacking or incomplete, allowing research to progress based on chemical information alone [3] [6].

Each approach presents distinct advantages and limitations. SBDD offers atomic-level insights into binding interactions but requires high-quality structural data that may not always be available or biologically relevant [2] [12]. LBDD leverages existing structure-activity relationship data but is constrained by the chemical diversity and quality of known actives [8] [11]. The selection between these approaches often depends on available resources and information, though increasingly, integrated strategies that combine both methodologies are proving most effective [6] [10].

Key Methodologies and Techniques in LBDD

Quantitative Structure-Activity Relationships (QSAR)

QSAR represents one of the most established and widely used methodologies in LBDD, employing mathematical models to correlate quantitative measures of chemical structure with biological activity [8] [11]. The fundamental premise of QSAR is that variations in biological activity can be correlated with changes in quantitative molecular descriptors representing structural or physicochemical properties [8]. This approach transforms qualitative chemical intuition into predictive quantitative models, enabling more efficient lead optimization.

The QSAR modeling process follows a well-defined workflow comprising several critical stages [8]. First, a congeneric series of compounds with experimentally measured biological activities is assembled. Next, molecular descriptors capturing relevant structural and physicochemical properties are calculated for each compound. Statistical or machine learning methods are then employed to derive a mathematical relationship between the descriptors and biological activity. Finally, the resulting model must be rigorously validated to assess its predictive power and domain of applicability [8].
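As a rough illustration of the descriptor-to-activity step, the sketch below fits a one-descriptor linear QSAR model by ordinary least squares and uses it to predict an untested analogue. The logP and pIC50 values are invented for demonstration and do not come from any real compound series; practical models use many descriptors and dedicated statistics or machine learning libraries.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def r_squared(xs, ys, slope, intercept):
    """Coefficient of determination of the fitted line on the training data."""
    mean_y = sum(ys) / len(ys)
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1.0 - ss_res / ss_tot

# Hypothetical congeneric series: one descriptor (logP) and measured pIC50.
logp = [1.0, 1.5, 2.0, 2.5, 3.0, 3.5]
pic50 = [5.1, 5.4, 6.0, 6.4, 7.1, 7.4]

slope, intercept = fit_line(logp, pic50)
r2 = r_squared(logp, pic50, slope, intercept)

# Predict the activity of an untested analogue with logP = 2.2.
predicted_pic50 = slope * 2.2 + intercept
```

A high training R² alone says little about predictive power, which is why the workflow ends with a separate validation stage.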

Table 2: Key QSAR Methodologies and Their Applications

| Method Type | Key Descriptors | Representative Techniques | Primary Applications |
|---|---|---|---|
| 2D QSAR | Substituent constants, topological indices, electronic parameters | Hansch analysis, Free-Wilson analysis | Lead optimization, property prediction |
| 3D QSAR | Steric and electrostatic fields, molecular shape | CoMFA (Comparative Molecular Field Analysis), CoMSIA (Comparative Molecular Similarity Indices Analysis) | Binding mode prediction, scaffold hopping |
| Machine Learning QSAR | Diverse descriptor sets including fingerprints, graph-based features | Random Forest, Support Vector Machines, Neural Networks | High-throughput virtual screening, multi-parameter optimization |

Recent advances in QSAR methodology have expanded beyond traditional linear regression approaches to incorporate more sophisticated machine learning techniques [13] [11]. These include support vector machines, random forests, and neural networks capable of capturing complex nonlinear relationships between structure and activity [13]. Additionally, the integration of molecular dynamics simulations has led to the development of conformationally sampled pharmacophore approaches that account for ligand flexibility, enhancing model robustness and predictive accuracy [8].

Pharmacophore Modeling

Pharmacophore modeling represents another cornerstone methodology in LBDD, focusing on the identification of essential molecular features necessary for biological activity [8] [11]. A pharmacophore is defined as an abstract representation of steric and electronic features that a molecule must possess to interact effectively with a biological target [8]. This approach distills complex molecular structures into their functionally critical components, enabling researchers to transcend specific chemical scaffolds and identify novel active compounds through scaffold hopping.

The pharmacophore development process typically involves analyzing a set of known active compounds to identify common structural features and their spatial arrangement [11]. These features may include hydrogen bond donors and acceptors, charged or ionizable groups, hydrophobic regions, and aromatic rings. The resulting pharmacophore model serves as a three-dimensional query for virtual screening, allowing researchers to identify potential hits from large compound libraries based on feature complementarity rather than structural similarity [8] [11].
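One simple way to picture a pharmacophore used as a 3D query is as a set of typed feature points whose pairwise distances must be reproduced by a candidate molecule. The sketch below checks this by brute force for a single conformer. The feature names, coordinates, and 1.0 Å tolerance are illustrative assumptions; distance-only matching cannot distinguish mirror-image arrangements, and dedicated pharmacophore software uses more refined alignment and scoring.

```python
import itertools
import math

def matches_query(query, molecule, tolerance=1.0):
    """Return True if the molecule's features can be mapped onto every query feature.

    Both arguments are lists of (feature_type, (x, y, z)). A mapping is accepted
    when feature types agree and all pairwise distances differ by at most
    `tolerance` angstrom from the corresponding query distances.
    """
    pairs = list(itertools.combinations(range(len(query)), 2))
    for candidate in itertools.permutations(molecule, len(query)):
        if any(q[0] != m[0] for q, m in zip(query, candidate)):
            continue  # feature types do not line up for this assignment
        if all(
            abs(math.dist(query[i][1], query[j][1])
                - math.dist(candidate[i][1], candidate[j][1])) <= tolerance
            for i, j in pairs
        ):
            return True
    return False

# Hypothetical three-point query: donor, acceptor, hydrophobic center.
query = [
    ("donor", (0.0, 0.0, 0.0)),
    ("acceptor", (5.0, 0.0, 0.0)),
    ("hydrophobe", (2.5, 4.0, 0.0)),
]

# A different scaffold, translated in space, with one extra feature.
hit = [
    ("aromatic", (20.0, 20.0, 0.0)),
    ("donor", (10.0, 10.0, 0.0)),
    ("acceptor", (15.3, 10.0, 0.0)),
    ("hydrophobe", (12.5, 14.2, 0.0)),
]

# Same feature types, but donor and acceptor are too far apart.
miss = [
    ("donor", (0.0, 0.0, 0.0)),
    ("acceptor", (9.0, 0.0, 0.0)),
    ("hydrophobe", (2.5, 4.0, 0.0)),
]

hit_matches = matches_query(query, hit)
miss_matches = matches_query(query, miss)
```

Because only feature types and geometry are compared, molecules with unrelated scaffolds can satisfy the same query, which is the basis of scaffold hopping.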

Figure 1: Pharmacophore Modeling Workflow

Pharmacophore models can be developed through various approaches depending on available information [11]. Ligand-based pharmacophore models are derived exclusively from a set of known active compounds, while structure-based pharmacophores incorporate information from target-ligand complex structures when available [11]. Consensus approaches that combine multiple models often demonstrate enhanced robustness and predictive power. Successful applications of pharmacophore-based virtual screening have led to the discovery of novel bioactive compounds for various therapeutic targets, including HIV protease inhibitors and kinase inhibitors [11].

Molecular Similarity Analysis and Machine Learning Approaches

Molecular similarity analysis represents a more recent but increasingly important methodology in LBDD, leveraging the concept that structurally similar molecules tend to exhibit similar biological activities [11]. This approach employs computational techniques to quantify molecular resemblance, enabling efficient screening of large compound libraries based on similarity to known actives [6] [11]. Similarity can be assessed using various representations, including 2D fingerprints that encode molecular substructures, 3D shape descriptors that capture molecular volume and topography, and pharmacophore fingerprints that represent feature distributions [11].
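A common way to quantify 2D fingerprint similarity is the Tanimoto coefficient: the number of bits set in both fingerprints divided by the number set in either. The sketch below represents each fingerprint as a set of on-bit indices; the compound names and bit sets are made up for illustration, whereas in practice the fingerprints would be generated by a cheminformatics toolkit.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    a, b = set(fp_a), set(fp_b)
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def rank_by_similarity(query_fp, library):
    """Return (similarity, name) pairs sorted from most to least similar to the query."""
    scored = [(tanimoto(query_fp, fp), name) for name, fp in library.items()]
    return sorted(scored, reverse=True)

# Hypothetical fingerprints for a known active and a small screening library.
known_active = {1, 4, 7, 9, 12, 15}
library = {
    "cmpd_close_analogue": {1, 4, 7, 9, 12, 18},
    "cmpd_partial_overlap": {1, 4, 20, 21, 22},
    "cmpd_unrelated": {30, 31, 32},
}

ranking = rank_by_similarity(known_active, library)
```

Compounds at the top of such a ranking are the natural first candidates for testing under the similarity principle, although the choice of fingerprint strongly influences which molecules appear similar.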

Machine learning has dramatically transformed LBDD methodologies in recent years, enhancing both predictive accuracy and applicability [13] [11]. Supervised learning algorithms such as random forests and support vector machines can identify complex patterns in structure-activity data that may elude traditional statistical approaches [13]. Deep learning architectures, including graph neural networks that operate directly on molecular graph representations, have shown remarkable performance in activity prediction and molecular generation tasks [13] [14]. These methods can automatically learn relevant features from raw molecular data, reducing reliance on manual descriptor selection and potentially capturing previously overlooked structure-activity relationships [13].

The integration of machine learning with traditional LBDD approaches has expanded the scope and power of ligand-based methods [13] [11]. For instance, deep learning models can now generate novel molecular structures with desired activity profiles using chemical language models trained on known bioactive compounds [14]. These models learn the "grammar" of bioactive molecules and can propose new compounds that satisfy multiple constraints, including predicted activity, synthesizability, and desirable physicochemical properties [14]. Such advances are progressively blurring the boundaries between ligand-based and structure-based approaches, enabling more efficient exploration of chemical space.

Experimental Protocols and Methodological Details

QSAR Model Development and Validation Protocol

The development of robust QSAR models requires careful attention to each step of the modeling process, from data collection to validation [8]. Below is a detailed protocol for QSAR model development:

Data Curation and Preparation

- Collect a series of compounds with consistent, reliably measured biological activity data (e.g., IC50, Ki values)

- Ensure chemical structures are accurately represented and standardized

- Apply appropriate criteria for chemical diversity and activity range

- Divide compounds into training (~70-80%) and test (~20-30%) sets using rational methods such as the Kennard-Stone or sphere exclusion algorithms
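The Kennard-Stone split mentioned in the last step can be sketched as follows: start from the two most distant compounds in descriptor space, then repeatedly add the compound that is farthest from everything already selected, so the training set covers the descriptor space evenly. The two-dimensional descriptor points are invented for illustration, and Euclidean distance on unscaled descriptors is an assumption; descriptors are normally scaled first.

```python
import math

def kennard_stone(points, n_train):
    """Split point indices into (train, test) using the Kennard-Stone algorithm.

    points is a list of descriptor vectors; n_train must be at least 2.
    """
    n = len(points)
    dist = [[math.dist(p, q) for q in points] for p in points]

    # Seed the training set with the two most distant points.
    first, second = max(
        ((i, j) for i in range(n) for j in range(i + 1, n)),
        key=lambda ij: dist[ij[0]][ij[1]],
    )
    selected = [first, second]
    remaining = [k for k in range(n) if k not in selected]

    # Add the point whose nearest selected neighbor is farthest away.
    while len(selected) < n_train:
        nxt = max(remaining, key=lambda k: min(dist[k][s] for s in selected))
        selected.append(nxt)
        remaining.remove(nxt)
    return selected, remaining

# Hypothetical 2D descriptor vectors for ten compounds.
descriptors = [
    (0.0, 0.0), (0.1, 0.0), (1.0, 1.0), (5.0, 5.0), (5.1, 5.0),
    (10.0, 10.0), (9.9, 10.0), (3.0, 3.0), (7.0, 7.0), (2.0, 8.0),
]
train_idx, test_idx = kennard_stone(descriptors, n_train=7)
```

Because near-duplicate points are selected last, they tend to end up in the test set, which keeps test compounds inside the region covered by the training data.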

Molecular Descriptor Calculation and Selection

- Compute relevant molecular descriptors using software such as DRAGON, PaDEL, or RDKit

- Perform descriptor preprocessing to remove constant or near-constant variables

- Apply feature selection techniques (genetic algorithms, recursive feature elimination) to identify the most relevant descriptors

- Address multicollinearity through variance inflation factor analysis or principal component analysis
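The preprocessing steps above can be approximated with two simple filters: drop descriptors whose variance is close to zero, then drop any descriptor that is highly correlated with one already kept. Note that the pairwise correlation filter used here is a simpler stand-in for the variance inflation factor or principal component analysis named in the protocol. The descriptor names, values, and thresholds are illustrative assumptions.

```python
import math

def variance(values):
    """Population variance of a list of numbers."""
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)

def pearson(xs, ys):
    """Pearson correlation coefficient between two equally long lists."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

def filter_descriptors(table, min_variance=1e-6, max_abs_corr=0.95):
    """Return the names of descriptors that survive both filters.

    table maps descriptor name to a list of values, one per compound.
    Descriptors are considered in insertion order, so earlier ones win ties.
    """
    kept = []
    for name, values in table.items():
        if variance(values) < min_variance:
            continue  # constant or near-constant descriptor
        if any(abs(pearson(values, table[k])) > max_abs_corr for k in kept):
            continue  # redundant with a descriptor that was already kept
        kept.append(name)
    return kept

# Hypothetical descriptor table for five compounds.
descriptor_table = {
    "mol_weight": [180.0, 250.0, 310.0, 400.0, 150.0],
    "heavy_atoms": [13.0, 18.0, 22.0, 29.0, 11.0],   # tracks mol_weight closely
    "logp": [1.2, 3.4, 0.5, 2.8, 2.0],
    "ring_count": [2.0, 2.0, 2.0, 2.0, 2.0],         # constant
}
kept_descriptors = filter_descriptors(descriptor_table)
```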

Model Building and Optimization

- Select appropriate machine learning algorithms based on dataset size and characteristics

- Optimize model hyperparameters using cross-validation or grid search approaches

- Build multiple models using different algorithms or descriptor sets for comparison

- Apply techniques to address overfitting, such as regularization or ensemble methods

Model Validation and Applicability Domain Assessment

- Perform internal validation using cross-validation (leave-one-out or k-fold) to calculate Q²

- Conduct external validation using the test set to assess predictive performance

- Calculate relevant metrics: R², Q², RMSE, MAE

- Define the applicability domain to identify where models can reliably predict
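The internal and external validation metrics listed above can be computed as in the sketch below for a one-descriptor linear model. Q² is taken here as 1 - PRESS / SS_tot with SS_tot computed around the mean of the full training set, which is one common convention; other definitions exist. All data values are invented for illustration.

```python
import math

def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def loo_q2(xs, ys):
    """Leave-one-out cross-validated Q2: refit without each compound, then predict it."""
    press = 0.0
    for i in range(len(xs)):
        slope, intercept = fit_line(xs[:i] + xs[i + 1:], ys[:i] + ys[i + 1:])
        press += (ys[i] - (slope * xs[i] + intercept)) ** 2
    mean_y = sum(ys) / len(ys)
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1.0 - press / ss_tot

def rmse(y_true, y_pred):
    """Root mean squared error."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

# Hypothetical training set (descriptor, pIC50) and held-out external test set.
train_x = [1.0, 1.5, 2.0, 2.5, 3.0, 3.5]
train_y = [5.1, 5.4, 6.0, 6.4, 7.1, 7.4]
test_x = [1.2, 2.8]
test_y = [5.3, 6.8]

q2 = loo_q2(train_x, train_y)                        # internal validation
slope, intercept = fit_line(train_x, train_y)
test_pred = [slope * x + intercept for x in test_x]  # external validation
test_rmse = rmse(test_y, test_pred)
test_mae = mae(test_y, test_pred)
```

Q² is typically lower than the training R² because each compound is predicted by a model that never saw it; a large gap between the two is a warning sign of overfitting.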

This protocol emphasizes the critical importance of validation in QSAR modeling [8]. Without rigorous validation, QSAR models may appear deceptively accurate while lacking true predictive power for novel compounds. The applicability domain definition is particularly crucial, as it establishes the boundaries within which the model can be reliably applied [8] [11].

Pharmacophore Model Generation Protocol

The generation of pharmacophore models follows a systematic process that varies slightly depending on whether ligand-based or structure-based approaches are employed [11]. The following protocol outlines the key steps for ligand-based pharmacophore generation:

Compound Selection and Preparation

- Select a training set of known active compounds with diverse chemical structures but common mechanism of action

- Include known inactive compounds if available to improve model selectivity

- Generate representative conformational ensembles for each compound using methods such as molecular dynamics or systematic searching

- Optimize molecular geometries using appropriate force fields or semi-empirical methods

Pharmacophore Feature Identification and Model Generation

- Define relevant pharmacophore features: hydrogen bond donors/acceptors, hydrophobic regions, aromatic rings, charged groups

- Perform molecular alignment based on pharmacophore features or molecular fields

- Identify common features present across active compounds using algorithms such as HipHop or HypoGen

- Generate multiple pharmacophore hypotheses with varying feature compositions and configurations
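
One way to picture a pharmacophore hypothesis is as a set of typed feature points whose pairwise distances must be reproduced by a candidate conformer. The sketch below is a simplified illustration under that assumption, not the algorithm used by HipHop, HypoGen, or any commercial package; the feature names, coordinates, and the 1.0 Å tolerance are hypothetical. Because it compares distances only, it cannot distinguish mirror-image arrangements.

```python
import math
from itertools import permutations

def matches_hypothesis(hypothesis, conformer, tolerance=1.0):
    """Return True if some subset of conformer features reproduces the hypothesis.

    Both arguments are lists of (feature_type, (x, y, z)) tuples. A match needs
    identical feature types and all pairwise distances within `tolerance` (in Å).
    """
    k = len(hypothesis)
    for candidate in permutations(conformer, k):
        # Feature types must agree position by position.
        if any(h[0] != c[0] for h, c in zip(hypothesis, candidate)):
            continue
        distances_agree = all(
            abs(math.dist(hypothesis[i][1], hypothesis[j][1])
                - math.dist(candidate[i][1], candidate[j][1])) <= tolerance
            for i in range(k) for j in range(i + 1, k)
        )
        if distances_agree:
            return True
    return False

# Hypothetical three-point hypothesis.
hypothesis = [
    ("donor", (0.0, 0.0, 0.0)),
    ("acceptor", (3.0, 0.0, 0.0)),
    ("aromatic", (0.0, 4.0, 0.0)),
]

# A translated conformer with one extra feature and a small geometric deviation.
active_like = [
    ("hydrophobe", (9.0, 9.0, 9.0)),
    ("aromatic", (10.0, 14.0, 10.0)),
    ("donor", (10.0, 10.0, 10.0)),
    ("acceptor", (13.2, 10.0, 10.0)),
]

# Same feature types, but the donor-acceptor distance is far too long.
inactive_like = [
    ("donor", (0.0, 0.0, 0.0)),
    ("acceptor", (8.0, 0.0, 0.0)),
    ("aromatic", (0.0, 4.0, 0.0)),
]

print(matches_hypothesis(hypothesis, active_like))
print(matches_hypothesis(hypothesis, inactive_like))
```

The brute-force search over permutations is only practical for a handful of features; production tools use far more efficient matching and handle conformational ensembles.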

Model Validation and Refinement

- Validate models using test set compounds with known activity

- Assess model ability to discriminate between active and inactive compounds

- Optimize model parameters based on validation results

- Select the best-performing model for virtual screening applications
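
The ability of a model to separate actives from inactives is commonly summarized with an enrichment factor and a ROC AUC. The following sketch assumes that higher scores mean "more likely active" and uses an invented ranking of ten compounds; both functions are plain implementations of the standard definitions.

```python
def enrichment_factor(scores, labels, fraction=0.1):
    """Hit rate in the top `fraction` of the ranked list divided by the overall hit rate."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    n_top = max(1, int(round(fraction * len(ranked))))
    hits_in_top = sum(label for _, label in ranked[:n_top])
    return (hits_in_top / n_top) / (sum(labels) / len(labels))

def roc_auc(scores, labels):
    """Probability that a random active outscores a random inactive (ties count 0.5)."""
    actives = [s for s, l in zip(scores, labels) if l == 1]
    inactives = [s for s, l in zip(scores, labels) if l == 0]
    wins = sum(
        1.0 if a > i else 0.5 if a == i else 0.0
        for a in actives for i in inactives
    )
    return wins / (len(actives) * len(inactives))

# Hypothetical screening result: 1 = known active, 0 = known inactive or decoy.
scores = [0.95, 0.90, 0.80, 0.70, 0.60, 0.50, 0.40, 0.30, 0.20, 0.10]
labels = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]

print(f"EF(20%) = {enrichment_factor(scores, labels, fraction=0.2):.2f}")
print(f"ROC AUC = {roc_auc(scores, labels):.3f}")
```

An enrichment factor of 1 and an AUC of 0.5 both correspond to random selection, which gives a baseline against which competing hypotheses can be compared.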

For structure-based pharmacophore generation, the process begins with analysis of target-ligand complex structures [11]. Key interactions are identified from the complex, translated into pharmacophore features, and the spatial relationships between these features are defined based on the binding site geometry. This approach benefits from direct structural insights but is limited to targets with available structural information.

Table 3: Key Research Reagents and Computational Tools for LBDD

| Category | Specific Tools/Reagents | Function/Application | Key Features |
|---|---|---|---|
| Chemical Databases | ChEMBL, PubChem, ZINC | Source of chemical structures and bioactivity data | Annotated bioactivities, commercial availability, structural diversity |
| Descriptor Calculation | RDKit, PaDEL, Dragon | Compute molecular descriptors for QSAR | Comprehensive descriptor sets, open-source options, batch processing |
| Pharmacophore Modeling | Catalyst, Phase, MOE | Develop and validate pharmacophore models | Feature identification, conformational analysis, virtual screening |
| QSAR Modeling | WEKA, KNIME, Orange | Build and validate machine learning QSAR models | Multiple algorithms, user-friendly interfaces, model interpretation |
| Similarity Searching | OpenBabel, ChemAxon | Calculate molecular similarity | Multiple fingerprint types, similarity metrics, high-throughput screening |
| Cheminformatics Libraries | RDKit, CDK, ChemPy | Programmatic chemical informatics | Open-source, Python/R interfaces, integration with machine learning |

Successful implementation of LBDD methodologies requires access to specialized computational tools and chemical databases [8] [11]. The resources listed in Table 3 represent essential components of the LBDD toolkit, enabling each stage of the ligand-based design process from data collection to model application. Open-source tools such as RDKit and CDK provide programmable platforms for custom workflow development, while commercial software like Catalyst and MOE offer integrated environments with user-friendly interfaces [11].

Beyond software tools, chemical databases represent critical resources for LBDD [11]. Publicly available databases such as ChEMBL and PubChem provide vast repositories of chemical structures and associated bioactivity data, enabling researchers to access structure-activity relationships for diverse targets [11]. Commercial compound libraries complement these public resources, offering physically available compounds for experimental testing. The careful selection and curation of these data sources significantly impacts the quality and success of LBDD efforts.

Advanced Applications and Future Directions

Integration with Structure-Based Methods

The distinction between ligand-based and structure-based approaches is increasingly blurring as integrated methodologies emerge that leverage the strengths of both paradigms [6] [10]. Sequential workflows that apply ligand-based methods for initial filtering followed by structure-based analysis represent a powerful strategy for efficient virtual screening [6] [10]. This approach uses fast ligand-based techniques such as similarity searching or pharmacophore screening to reduce large compound libraries to manageable sizes, after which more computationally intensive structure-based methods like molecular docking can be applied to the pre-filtered sets [10].
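
The sequential idea can be sketched as a two-stage funnel in which the expensive scoring function only ever sees the survivors of the cheap one. Everything here is illustrative: `ligand_similarity` and `docking_score` are hypothetical stand-ins that look up invented values, whereas a real workflow would call a fingerprint comparison and a docking program.

```python
def sequential_screen(library, cheap_score, expensive_score, keep_fraction=0.1, top_n=10):
    """Pre-filter with a fast ligand-based score, then re-rank survivors with a slow score.

    cheap_score: higher is better (e.g. similarity to a known active).
    expensive_score: lower is better (e.g. a docking score in kcal/mol).
    """
    ranked_cheap = sorted(library, key=cheap_score, reverse=True)
    n_keep = max(1, int(len(ranked_cheap) * keep_fraction))
    shortlist = ranked_cheap[:n_keep]
    ranked_expensive = sorted(shortlist, key=expensive_score)
    return ranked_expensive[:top_n]

# Hypothetical library of 20 compounds with invented scores.
library = [f"cpd{i}" for i in range(20)]
similarity = {name: i / 20 for i, name in enumerate(library)}
docking = {name: -5.0 - (i % 7) for i, name in enumerate(library)}
docking_calls = []  # records which compounds reached the expensive stage

def ligand_similarity(name):
    return similarity[name]

def docking_score(name):
    docking_calls.append(name)
    return docking[name]

hits = sequential_screen(library, ligand_similarity, docking_score,
                         keep_fraction=0.25, top_n=3)
print("final hits:", hits)
print("compounds docked:", len(docking_calls), "of", len(library))
```

The trade-off is visible in the design: any compound discarded by the ligand-based stage can never be rescued by docking, so the `keep_fraction` should be chosen generously when the known ligands cover little chemical space.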

Parallel screening approaches represent another integration strategy, where both ligand-based and structure-based methods are applied independently to the same compound library [10]. The results are then combined using consensus scoring techniques, either by selecting compounds ranked highly by both methods or by multiplying scores to create a unified ranking [10]. This strategy helps mitigate the limitations inherent in each approach—if docking scores are compromised by inaccurate pose prediction, ligand-based similarity methods may still identify active compounds based on known ligand features [10].
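
Both consensus options described here, the overlap of top-ranked compounds and the product of scores, can be sketched in a few lines. The example assumes that the ligand-based score is a similarity (higher is better), that the structure-based score is a docking score (lower is better), and that scores are min-max normalized before multiplication; the compound names and values are hypothetical.

```python
def top_k(scores, k, higher_is_better=True):
    """Return the identifiers of the k best-scoring compounds."""
    ranked = sorted(scores, key=scores.get, reverse=higher_is_better)
    return set(ranked[:k])

def normalize(scores, higher_is_better=True):
    """Min-max scale scores to [0, 1] so that 1 is always the best value."""
    low, high = min(scores.values()), max(scores.values())
    if high == low:
        return {name: 1.0 for name in scores}
    if higher_is_better:
        return {name: (s - low) / (high - low) for name, s in scores.items()}
    return {name: (high - s) / (high - low) for name, s in scores.items()}

def consensus_intersection(lb_scores, sb_scores, k):
    """Compounds that are in the top k of both methods."""
    return top_k(lb_scores, k, True) & top_k(sb_scores, k, False)

def consensus_product(lb_scores, sb_scores):
    """Rank compounds by the product of their normalized scores (best first)."""
    lb, sb = normalize(lb_scores, True), normalize(sb_scores, False)
    fused = {name: lb[name] * sb[name] for name in lb_scores}
    return sorted(fused, key=fused.get, reverse=True)

# Hypothetical results for four compounds.
lb_similarity = {"A": 0.9, "B": 0.8, "C": 0.3, "D": 0.6}
sb_docking = {"A": -7.0, "B": -9.5, "C": -10.0, "D": -6.0}

print(consensus_intersection(lb_similarity, sb_docking, k=2))
print(consensus_product(lb_similarity, sb_docking))
```

Multiplication penalizes any compound that one method scores poorly, so compound C, the best docker but the least similar to known actives, drops behind B, which both methods rate well.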

The DRAGONFLY framework exemplifies the advanced integration of ligand- and structure-based approaches through deep learning [14]. This method utilizes a drug-target interactome—a graph representation capturing connections between ligands and their targets—to enable both ligand-based and structure-based molecular design within a unified architecture [14]. By leveraging graph neural networks and chemical language models, DRAGONFLY can generate novel molecules conditioned on either known ligand templates or 3D protein binding site information, effectively bridging the gap between ligand-based and structure-based paradigms [14].

AI-Driven De Novo Molecular Design

Recent advances in artificial intelligence have transformed LBDD, particularly in the area of de novo molecular design [13] [14]. Deep learning models can now generate novel molecular structures with desired properties, moving beyond simple similarity searching to truly innovative design [14]. Chemical language models trained on SMILES representations of known bioactive compounds can learn the "grammar" and "syntax" of drug-like molecules, enabling them to generate novel structures that satisfy multiple constraints including predicted activity, synthesizability, and favorable physicochemical properties [14].

Interaction-aware generative models represent another significant advancement, particularly for structure-based design applications [15]. These models incorporate explicit information about protein-ligand interactions—such as hydrogen bonds, hydrophobic interactions, and π-stacking—as conditional constraints during molecular generation [15]. For example, the DeepICL framework sequentially generates ligand atoms based on both the 3D context of a binding pocket and specific interaction conditions, enabling the design of ligands that form predetermined interactions with key residues [15]. This approach demonstrates how prior knowledge of interaction patterns can guide molecular generation even for targets with limited experimental data.

Figure 2: AI-Driven Molecular Design Workflow

These AI-driven approaches are particularly valuable for addressing targets with limited chemical data, where traditional QSAR methods struggle due to insufficient training examples [14] [15]. By leveraging transfer learning and pre-training on large-scale bioactivity datasets, these models can extract generalizable patterns of bioactivity that extend to novel targets with limited data [14]. The continued development of these methodologies promises to further enhance the power and applicability of LBDD, potentially reducing the dependency on extensive structure-activity data for effective molecular design.

Ligand-Based Drug Design represents a sophisticated and evolving discipline that leverages known active compounds to guide the discovery and optimization of novel therapeutic agents [3] [8]. Through methodologies such as QSAR, pharmacophore modeling, and molecular similarity analysis, LBDD enables progress even when structural information about the biological target is limited or unavailable [2] [6]. The fundamental principles underlying these approaches—particularly the similarity principle and the pharmacophore concept—provide a rational foundation for extracting structure-activity relationships from chemical data alone [8] [11].

The ongoing integration of machine learning and artificial intelligence is significantly expanding the capabilities of LBDD [13] [14]. Advanced deep learning models can now generate novel molecular structures with desired activity profiles, while interaction-aware generative approaches incorporate explicit constraints derived from protein-ligand interactions [14] [15]. These developments are progressively blurring the historical distinction between ligand-based and structure-based approaches, enabling more sophisticated and effective drug design strategies that leverage all available chemical and structural information [6] [10].

Within the broader context of structure-based versus ligand-based approaches, LBDD remains an essential component of the drug discovery toolkit [2] [12]. Its particular strength lies in situations where structural information is limited, during early stages of project development, or when pursuing scaffold-hopping strategies to identify novel chemotypes [11]. As computational methodologies continue to advance, the integration of ligand-based and structure-based approaches will likely become increasingly seamless, ultimately accelerating the discovery of novel therapeutic agents through more efficient exploration of chemical space.

The choice between structure-based drug design (SBDD) and ligand-based drug design (LBDD) represents a fundamental strategic decision in computational drug discovery. This decision is primarily constrained by one critical factor: the type and volume of data available to researchers [2]. SBDD relies on the three-dimensional structural information of the target protein, typically obtained through methods such as X-ray crystallography, NMR spectroscopy, or cryo-electron microscopy [1] [2]. In contrast, LBDD utilizes information from known active small molecules (ligands) that interact with the target, employing techniques such as quantitative structure-activity relationship (QSAR) modeling and pharmacophore mapping [2] [16]. The implications of this choice are significant, affecting the novelty of resulting compounds, resource allocation, and ultimate project success [17]. This technical guide provides a comprehensive decision framework based on data availability, enabling researchers to systematically select the optimal computational approach for their specific drug discovery context.

Theoretical Foundations: SBDD and LBDD

Structure-Based Drug Design (SBDD)

SBDD is a computational approach that leverages the three-dimensional structure of biological targets, typically proteins, to design therapeutic molecules [1]. The core principle of SBDD is molecular recognition: designing compounds that exhibit structural and chemical complementarity to the target's binding site [2]. This approach requires high-resolution structural data, which can originate from experimental methods like X-ray crystallography, NMR spectroscopy, or cryo-electron microscopy (cryo-EM), or from computational predictions such as homology modeling [18] [2].

Key Techniques in SBDD:

- Molecular Docking: Simulates the interaction between potential drug candidates and the target protein, predicting binding poses and affinity scores [17] [1].

- Molecular Dynamics (MD) Simulations: Models the dynamic behavior of protein-ligand complexes over time, providing insights into binding stability and conformational changes [18] [19].

- Virtual Screening: Rapidly evaluates large compound libraries against a target structure to identify potential hits [18] [1].

Ligand-Based Drug Design (LBDD)

LBDD approaches are employed when the three-dimensional structure of the target protein is unavailable [2] [16]. Instead, these methods rely on the chemical information from known active ligands to infer requirements for biological activity and design new compounds [20]. The fundamental principle underlying LBDD is the "molecular similarity principle," which states that structurally similar molecules are likely to exhibit similar biological activities [20].

Key Techniques in LBDD:

- Quantitative Structure-Activity Relationship (QSAR): Mathematical models that correlate quantitative molecular descriptors with biological activity [2] [16].

- Pharmacophore Modeling: Identifies and maps the essential steric and electronic features necessary for molecular recognition [2].

- Similarity Searching: Screens compound databases using molecular fingerprints or descriptors to identify structurally similar compounds to known actives [20].
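
Similarity searching with fingerprints usually relies on the Tanimoto coefficient, the number of shared on-bits divided by the number of bits set in either molecule. The sketch below represents each fingerprint as a Python set of on-bit indices; the bit values and compound names are hypothetical, and in practice the fingerprints would be computed by a cheminformatics toolkit.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)

def similarity_search(query_fp, database, threshold=0.5):
    """Return (name, similarity) pairs at or above the threshold, most similar first."""
    scored = ((name, tanimoto(query_fp, fp)) for name, fp in database.items())
    return sorted((hit for hit in scored if hit[1] >= threshold),
                  key=lambda hit: hit[1], reverse=True)

# Hypothetical fingerprints of a known active and three database compounds.
query = {1, 2, 3, 4, 5, 6}
database = {
    "analog_1": {1, 2, 3, 4, 5, 7},   # 5 shared bits out of 7 in the union
    "analog_2": {1, 2, 3, 8, 9, 10},  # 3 shared bits out of 9 in the union
    "decoy": {20, 21, 22},            # no shared bits
}

for name, score in similarity_search(query, database, threshold=0.5):
    print(f"{name}: {score:.3f}")
```

The threshold is a project-specific choice: a high cutoff returns close analogs, while a lower one admits more diverse compounds at the cost of more false positives.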

Decision Framework: Selecting Approaches Based on Data Availability

The following framework provides a systematic approach for selecting between SBDD, LBDD, or hybrid methods based on available data resources. This decision matrix enables researchers to optimize their computational strategy according to their specific context.

Table 1: Decision Framework for Selecting Between SBDD and LBDD Approaches

| Data Availability Scenario | Recommended Primary Approach | Key Techniques | Advantages | Limitations |
|---|---|---|---|---|
| High-resolution protein structure available (e.g., from X-ray crystallography, cryo-EM, or high-quality homology models) [2] | Structure-Based Drug Design (SBDD) | Molecular docking [17], Structure-based virtual screening [18], Molecular dynamics simulations [19] | Direct visualization of binding interactions [2]; Potential for novel chemotype discovery beyond known ligand space [17]; Identification of key residue interactions [17] | Dependency on structure quality and resolution [2]; Limited by protein flexibility and solvent effects in simulations [2]; Computational intensity of methods like MD [1] |
| Adequate known active ligands (typically 20+ compounds with activity data) [16] | Ligand-Based Drug Design (LBDD) | QSAR modeling [2] [16], Pharmacophore modeling [2], Similarity searching [20] | No requirement for protein structural data [2]; Generally faster and less computationally demanding [2]; Excellent for optimizing within established chemical series [17] | Limited ability to discover novel chemotypes beyond training data [17]; Bias toward existing chemical space [17]; Model applicability domain restrictions [17] |
| Both protein structure and ligand data available | Hybrid SBDD/LBDD Approaches [20] | Sequential filtering (e.g., LB pre-screening followed by SB docking) [20], Parallel screening with rank fusion [20], Integrated scoring functions [20] | Complementary strengths mitigate individual limitations [20]; Enhanced enrichment and reduced false positives [20]; Increased robustness across diverse chemical classes [20] | Increased computational complexity [20]; Implementation challenges in workflow integration [20]; Requires expertise in both methodologies [20] |
| Limited structural and ligand data ("data-poor" targets) | Fragment-Based Methods or Generative AI with transfer learning | Fragment-based screening [21], Generative models with physics-based scoring [17], Protein-ligand interaction fingerprints [22] | Maximizes information from limited data [17]; Focus on fundamental molecular interactions [21]; Potential for novel scaffold discovery [17] | High uncertainty in predictions; Requires experimental validation; Limited guidance for optimization |

Framework Application Guidance

The decision framework above provides a foundational starting point, but real-world application requires additional considerations:

1. Assessing Data Quality and Quantity:

- For SBDD, a structural resolution better than 2.5 Å (that is, a numerically lower value) is generally preferred, with careful attention to binding site completeness and residue resolution [2].

- For LBDD, the quality and diversity of active ligands are as important as quantity. A dataset of 20 highly similar compounds provides less information than 10 structurally diverse actives with measured potencies [17].

2. Target Flexibility Considerations:

- For highly flexible targets with multiple conformational states, SBDD approaches may require ensemble docking or extensive molecular dynamics simulations, increasing computational demands [20] [2].

- In such cases, LBDD approaches may provide more consistent results despite their limitations in novel chemotype discovery [17].

3. Project Objectives Alignment:

- For projects prioritizing novelty and intellectual property generation, SBDD offers advantages in exploring unprecedented chemotypes beyond known ligand space [17].

- For lead optimization projects within established chemical series, LBDD often provides more efficient guidance for potency and property refinement [2].
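
The data-availability logic of Table 1 can be condensed into a small helper. This is only a first-pass sketch: the cutoffs of 20 actives and 2.5 Å are taken from the guidance above and treated as defaults, the function name and return strings are hypothetical, and a structure without a resolution value (for example a homology model) is assumed to be acceptable. Target flexibility and project objectives still need a separate judgment.

```python
def recommend_approach(has_structure, n_actives, resolution_angstrom=None,
                       min_actives=20, max_resolution=2.5):
    """Map data availability to the primary approach suggested by the framework.

    resolution_angstrom=None means no resolution is defined (e.g. a predicted
    model); this sketch assumes such a structure is usable.
    """
    structure_usable = has_structure and (
        resolution_angstrom is None or resolution_angstrom < max_resolution
    )
    ligands_sufficient = n_actives >= min_actives

    if structure_usable and ligands_sufficient:
        return "Hybrid SBDD/LBDD"
    if structure_usable:
        return "SBDD"
    if ligands_sufficient:
        return "LBDD"
    return "Fragment-based methods or generative AI with transfer learning"

# Hypothetical project scenarios.
print(recommend_approach(has_structure=True, n_actives=50, resolution_angstrom=2.0))
print(recommend_approach(has_structure=True, n_actives=3, resolution_angstrom=1.8))
print(recommend_approach(has_structure=False, n_actives=40))
print(recommend_approach(has_structure=True, n_actives=5, resolution_angstrom=3.4))
```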

The following workflow diagram illustrates the decision process based on data availability:

Experimental Protocols and Methodologies

Structure-Based Protocol: Molecular Docking with Glide

The following protocol outlines the methodology used in the GPCR case study for structure-based scoring with generative models [17]:

1. Protein Preparation:

- Obtain the target protein structure (e.g., DRD2 with Risperidone, PDB ID: 6CM4) [17].

- Remove crystallographic water molecules, except those involved in key bridging interactions.

- Add hydrogen atoms and optimize protonation states of residues at physiological pH.

- Perform restrained energy minimization to relieve steric clashes while maintaining the overall structure.

2. Binding Site Definition:

- Define the binding site using the co-crystallized ligand or through binding site detection algorithms.

- Create a receptor grid with coordinates centered on the binding site (typically 10-20Å box size).

- Set up constraints based on key protein-ligand interactions observed in the crystal structure.

3. Ligand Preparation:

- Generate 3D structures of query ligands from SMILES strings.

- Assign proper bond orders and formal charges.

- Generate stereoisomers and tautomers where applicable.

- Perform conformational sampling to generate multiple low-energy conformers.

4. Docking Execution:

- Use Glide SP (standard precision) or XP (extra precision) mode, depending on the required balance between accuracy and computational cost [17].

- Apply post-docking minimization to refine poses.

- Score poses using the GlideScore function, which combines empirical and force-field-based terms.

5. Result Analysis:

- Cluster poses based on RMSD to identify consensus binding modes.

- Analyze key protein-ligand interactions (hydrogen bonds, hydrophobic contacts, π-stacking).

- Use docking scores for comparative assessment and ranking of compounds.
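
The pose-clustering step in the result analysis can be sketched with a simple greedy scheme. The example assumes that all poses list the same atoms in the same order and already share the receptor coordinate frame, so no superposition is performed; it also ignores molecular symmetry. The 2.0 Å cutoff and the three-atom poses are hypothetical, and this is not the clustering procedure built into Glide.

```python
import math

def rmsd(pose_a, pose_b):
    """Root-mean-square deviation between two poses with identical atom ordering."""
    squared = sum(
        (a - b) ** 2
        for atom_a, atom_b in zip(pose_a, pose_b)
        for a, b in zip(atom_a, atom_b)
    )
    return math.sqrt(squared / len(pose_a))

def cluster_poses(poses, cutoff=2.0):
    """Greedy leader clustering.

    Poses are assumed to be sorted best score first, so the first member of
    each cluster is its best-scoring representative. Returns lists of indices.
    """
    clusters = []
    for index, pose in enumerate(poses):
        for cluster in clusters:
            if rmsd(pose, poses[cluster[0]]) <= cutoff:
                cluster.append(index)
                break
        else:
            clusters.append([index])
    return clusters

# Hypothetical three-atom poses (coordinates in Å), ordered by docking score.
pose0 = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (3.0, 0.0, 0.0)]
pose1 = [(0.0, 0.5, 0.0), (1.5, 0.5, 0.0), (3.0, 0.5, 0.0)]  # shifted 0.5 Å
pose2 = [(6.0, 0.0, 0.0), (7.5, 0.0, 0.0), (9.0, 0.0, 0.0)]  # shifted 6 Å
pose3 = [(6.0, 0.0, 0.3), (7.5, 0.0, 0.3), (9.0, 0.0, 0.3)]  # close to pose2

clusters = cluster_poses([pose0, pose1, pose2, pose3], cutoff=2.0)
print(clusters)
```

A heavily populated cluster led by a well-scoring pose is often taken as a more trustworthy binding mode than an isolated pose with a marginally better score.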

Ligand-Based Protocol: QSAR Modeling Workflow

This protocol details the development of a QSAR model for ligand-based screening, as referenced in the machine learning applications [16]:

1. Dataset Curation:

- Collect a diverse set of compounds with consistent biological activity measurements (e.g., IC50, Ki).

- Apply rigorous curation: remove duplicates, correct structures, and standardize activity values.

- Divide data into training (70-80%), validation (10-15%), and test sets (10-15%) using rational splitting methods.

2. Molecular Descriptor Calculation:

- Generate comprehensive molecular descriptors using software like PaDEL-Descriptor [18].

- Calculate 1D, 2D, and 3D descriptors encoding structural, topological, and physicochemical properties.

- Apply feature selection techniques (e.g., correlation analysis, random forest importance) to reduce dimensionality.

3. Model Building:

- Train multiple machine learning algorithms (SVM, Random Forest, Neural Networks) [16].

- Optimize hyperparameters using cross-validation on the training set.

- Apply ensemble methods to combine predictions from multiple models for improved robustness.

4. Model Validation:

- Assess internal performance using cross-validation metrics (Q², RMSE).

- Evaluate external predictive power on the test set (R²pred, RMSEext).

- Apply strict applicability domain definition to identify reliable prediction boundaries.

5. Model Application:

- Use the validated model to screen virtual compound libraries.

- Apply applicability domain filters to flag unreliable predictions.

- Interpret descriptor contributions to guide structural optimization.
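
An applicability domain filter can take many forms; one common distance-based variant flags a query compound when its mean distance to the k nearest training compounds exceeds a threshold derived from the training set itself. The sketch below illustrates that variant with hypothetical two-dimensional descriptor vectors, which are assumed to be already scaled; the choices of k = 3 and z = 0.5 are illustrative defaults, not recommendations from the cited work.

```python
import math
import statistics

def knn_distance(point, reference, k=3):
    """Mean Euclidean distance from `point` to its k nearest reference compounds."""
    distances = sorted(math.dist(point, other) for other in reference)
    return sum(distances[:k]) / k

def fit_domain_threshold(train, k=3, z=0.5):
    """Threshold = mean + z * std of each training compound's own k-NN distance."""
    per_compound = [
        knn_distance(x, train[:i] + train[i + 1:], k)
        for i, x in enumerate(train)
    ]
    return statistics.mean(per_compound) + z * statistics.pstdev(per_compound)

def in_domain(point, train, threshold, k=3):
    """True if the query lies close enough to the training data to trust a prediction."""
    return knn_distance(point, train, k) <= threshold

# Hypothetical, pre-scaled descriptor vectors of the training compounds.
train = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0), (1.0, 1.0),
         (0.5, 0.5), (2.0, 1.0), (1.0, 2.0), (2.0, 2.0)]
threshold = fit_domain_threshold(train)

print(in_domain((1.0, 0.9), train, threshold))  # query surrounded by training data
print(in_domain((6.0, 6.0), train, threshold))  # query far from any training compound
```

Predictions for compounds outside the domain are not necessarily wrong, but they are extrapolations and are best flagged rather than silently ranked alongside in-domain predictions.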

Table 2: Research Reagent Solutions for Computational Drug Design

| Tool/Category | Specific Examples | Primary Function | Application Context |
|---|---|---|---|
| Molecular Docking Software | Glide [17], AutoDock Vina [18], GOLD | Predict protein-ligand binding geometry and affinity | SBDD when protein structure is available |
| Molecular Dynamics Engines | GROMACS [19], AMBER, CHARMM | Simulate dynamic behavior of biomolecular systems | Refining docking poses; studying protein flexibility |
| Cheminformatics Toolkits | RDKit, PaDEL-Descriptor [18], Open Babel [18] | Calculate molecular descriptors and fingerprints | LBDD for QSAR and similarity searching |
| QSAR Modeling Platforms | KNIME, Orange, DataWarrior | Build and validate machine learning QSAR models | LBDD when ligand data is available |
| Structure Preparation Tools | PyMOL [18], Schrödinger Protein Prep Wizard, MOE | Process and optimize protein structures for computation | Essential preprocessing for SBDD |
| Virtual Screening Suites | Schrödinger Suite, OpenEye ROCS, SeeSAR | High-throughput screening of compound libraries | Both SBDD and LBDD for hit identification |
| Generative AI Platforms | REINVENT [17], DeepChem, GuacaMol | De novo molecular generation with objective guidance | Both approaches (structure- or ligand-based scoring) |

Case Studies and Applications

Structure-Based Generative Design for DRD2

A compelling case study demonstrates the application of SBDD in generative molecular design for the dopamine receptor DRD2 [17]. Researchers used the REINVENT algorithm with molecular docking scores from Glide as the optimization objective, rather than traditional ligand-based predictors. This structure-based approach generated molecules with predicted affinity beyond known DRD2 active compounds while exploring novel physicochemical space not represented in existing ligand data [17]. Critically, the model learned to satisfy key residue interactions visible only from the protein structure, demonstrating the unique advantage of SBDD in capturing structural determinants of binding that are inaccessible to ligand-based methods [17].

Hybrid Virtual Screening for Tubulin Inhibitors

A recent study on identifying natural inhibitors of the human αβIII tubulin isotype exemplifies the power of hybrid approaches [18]. Researchers began with structure-based virtual screening of 89,399 natural compounds using AutoDock Vina, selecting the top 1,000 hits based on binding energy. These candidates were then refined using machine learning classifiers trained on known Taxol-site binders versus non-binders [18]. This sequential hybrid strategy identified four promising natural compounds with exceptional binding properties and ADME-T profiles, demonstrating how SBDD and LBDD can be integrated to leverage their complementary strengths while mitigating individual limitations [18].

Implementation Roadmap and Future Directions

Practical Implementation Considerations

Successfully implementing the decision framework requires attention to several practical aspects:

Data Quality Assessment:

- For protein structures, evaluate resolution, completeness of binding sites, and conformational relevance to the biological context.

- For ligand data, assess data consistency, measurement accuracy, and structural diversity of actives and inactives.

Computational Resource Planning:

- SBDD approaches typically require greater computational resources, especially for molecular dynamics or high-precision docking.

- LBDD methods are generally less computationally intensive but require careful model validation and maintenance.

Validation Strategies:

- Always include experimental validation cycles where possible, using biochemical or cellular assays.

- Implement rigorous computational validation, including blinded test sets and prospective prediction tracking.

Emerging Trends and Future Outlook

The field of computational drug design is rapidly evolving, with several trends shaping future applications:

Integration of Artificial Intelligence:

- Deep learning models are increasingly being applied to both protein structure prediction (e.g., AlphaFold) and molecular generation [19] [16].

- AI approaches are bridging SBDD and LBDD through unified architectures that simultaneously leverage structural and ligand data [22] [16].

Data as Strategic Asset:

- Organizations are increasingly treating curated structural and chemical data as valuable products rather than research byproducts [21].

- High-quality, integrated datasets are becoming competitive advantages, particularly for training machine learning models [21].

Federated Data Ecosystems:

- Collaborative platforms are emerging that enable organizations to share structural information while protecting proprietary interests [21].

- These ecosystems accelerate discovery while preserving competitive differentiation.

The decision framework presented in this guide provides a systematic approach for selecting between structure-based and ligand-based drug design strategies based on data availability. By aligning computational approaches with available data resources and project objectives, researchers can optimize their drug discovery efficiency and success rates in this rapidly evolving landscape.

Key Strengths and Inherent Limitations of Each Approach

Structure-Based Drug Design (SBDD) and Ligand-Based Drug Design (LBDD) represent the two foundational computational approaches in modern drug discovery. SBDD relies on the three-dimensional structural information of the target protein, typically obtained through experimental methods like X-ray crystallography, nuclear magnetic resonance (NMR), or cryo-electron microscopy (cryo-EM), or predicted using AI methods such as AlphaFold [6] [2]. Conversely, LBDD strategies are employed when the target structure is unknown, instead leveraging information from known active molecules that bind and modulate the target's function [6]. Both methodologies aim to identify and optimize promising drug candidates while reducing the number of compounds requiring synthesis and biological testing, thereby saving substantial time and resources [6]. This technical guide provides an in-depth examination of both approaches, framing their application within the critical decision framework of when to use SBDD versus LBDD in research projects.

Core Principles and Methodologies

Structure-Based Drug Design (SBDD)

SBDD operates on the principle of "structure-centric" rational design, where a detailed understanding of protein-ligand interactions guides molecular modifications [6]. The core process involves analyzing the spatial configuration and physicochemical properties of the target's binding site to design or optimize small molecules that can bind with high affinity and specificity [2].

Key Techniques:

- Molecular Docking: Predicts the bound poses (orientation and conformation) of ligand molecules within the target's binding pocket and ranks their binding potential based on a scoring function that incorporates various interaction energies [6].

- Free Energy Perturbation (FEP): A highly accurate but computationally expensive method that estimates binding free energies using thermodynamic cycles, primarily used during lead optimization to quantitatively evaluate small structural changes [6].

- Molecular Dynamics (MD) Simulations: Used to refine docking predictions by exploring the dynamic behavior of protein-ligand complexes, accounting for flexibility in both molecules and providing insights into binding stability [6].
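
The core of FEP can be illustrated with the exponential-averaging (Zwanzig) relation, ΔG(A→B) = −kT ln⟨exp(−ΔU/kT)⟩ sampled in state A, combined with a thermodynamic cycle that subtracts the transformation in solvent from the transformation in the complex. The sketch below is a single-step toy version with invented energy differences; production calculations split the transformation into many intermediate windows and typically use more robust estimators.

```python
import math

KT_298K = 0.593  # k_B * T in kcal/mol at about 298 K

def zwanzig_delta_g(delta_u_samples, kt=KT_298K):
    """Free energy difference from energy gaps U_B - U_A sampled in state A.

    Implements dG = -kT * ln( mean( exp(-dU / kT) ) ), shifting by the minimum
    sample first so the exponentials stay numerically well behaved.
    """
    shift = min(delta_u_samples)
    average = sum(math.exp(-(du - shift) / kt) for du in delta_u_samples) / len(delta_u_samples)
    return shift - kt * math.log(average)

def relative_binding_free_energy(dg_in_complex, dg_in_solvent):
    """Thermodynamic cycle: ddG_bind = dG(A->B, bound) - dG(A->B, unbound)."""
    return dg_in_complex - dg_in_solvent

# Hypothetical energy gaps (kcal/mol) for mutating ligand A into ligand B.
gaps_in_complex = [-1.9, -2.3, -2.1, -1.7, -2.4]
gaps_in_solvent = [-0.8, -1.1, -0.9, -1.2, -1.0]

dg_complex = zwanzig_delta_g(gaps_in_complex)
dg_solvent = zwanzig_delta_g(gaps_in_solvent)
ddg = relative_binding_free_energy(dg_complex, dg_solvent)
print(f"ddG_bind(A->B) = {ddg:.2f} kcal/mol")
```

A negative value would indicate that ligand B is predicted to bind more tightly than ligand A. Because the exponential average is dominated by rare low-energy samples, the estimate converges slowly when the two states differ substantially, which is one reason small structural changes are the method's natural domain.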

Ligand-Based Drug Design (LBDD)

LBDD is grounded in the "similarity-property principle," which states that structurally similar molecules are likely to exhibit similar biological activities [6] [9]. This approach infers critical binding features indirectly from the chemical characteristics of known active molecules.

Key Techniques:

- Similarity-Based Virtual Screening: Identifies new hits from large compound libraries by comparing candidate molecules against known actives using 2D (molecular fingerprints) or 3D (shape, electrostatic properties) descriptors [6].

- Quantitative Structure-Activity Relationship (QSAR) Modeling: Uses statistical and machine learning methods to relate molecular descriptors to biological activity, enabling prediction of new compounds' activity [6].

- Pharmacophore Modeling: Identifies the essential geometric and chemical features responsible for biological activity by analyzing a set of active compounds, creating a template for screening new molecules [23] [2].

Experimental Workflows

Protocol 1: Structure-Based Virtual Screening (SBVS)

- Target Preparation: Obtain high-resolution 3D protein structure through X-ray crystallography, NMR, cryo-EM, or computational prediction [2] [18].

- Binding Site Analysis: Identify and characterize the binding pocket using spatial and physicochemical descriptors [2].

- Library Preparation: Prepare compound libraries in suitable formats (e.g., PDBQT), generating relevant tautomers, stereoisomers, and protonation states [18].

- Molecular Docking: Perform flexible ligand docking against the binding site using programs like AutoDock Vina or DOCK [23] [18].

- Pose Scoring and Ranking: Evaluate and rank compounds based on docking scores and interaction analysis [6].

- Post-Processing: Refine top hits using MD simulations or FEP calculations [6].

- Experimental Validation: Synthesize and test top-ranked compounds in biological assays [18].

Protocol 2: Ligand-Based Virtual Screening (LBVS)

- Active Ligand Compilation: Curate a set of known active compounds with robust biological data [6].

- Molecular Description: Calculate molecular descriptors or fingerprints for all active and database compounds [18].

- Model Development:

- Database Screening: Screen compound libraries using similarity searches or pharmacophore mapping [6].

- Hit Prioritization: Rank compounds based on similarity scores or predicted activity [6].

- Experimental Validation: Select top candidates for synthesis and biological testing [6].

Table 1: Key Research Reagent Solutions in Computational Drug Design

| Reagent/Resource | Function/Application | Examples/Tools |
|---|---|---|
| Protein Structure Databases | Source of experimental structures for SBDD | Protein Data Bank (PDB) [23] |
| Compound Libraries | Collections of molecules for virtual screening | ZINC database [18], Enamine REAL [9] |
| Docking Software | Predict ligand binding poses and affinities | AutoDock Vina [18], DOCK [23], PLANTS [24] |
| Pharmacophore Modeling Tools | Create and screen pharmacophore models | LigandScout [23], PHASE [23], O-LAP [24] |
| Molecular Descriptor Packages | Calculate chemical features for QSAR/LBVS | PaDEL-Descriptor [18], RDKit [25] |
| Benchmarking Sets | Validate virtual screening methods | DUD-E [23], DUDE-Z [24] |

Diagram 1: Decision workflow for selecting between SBDD and LBDD approaches

Comparative Analysis: Strengths and Limitations

Structure-Based Drug Design

Table 2: Strengths and Limitations of Structure-Based Drug Design

| Aspect | Strengths | Limitations |
|---|---|---|
| Data Requirements | Provides atomic-level insight into specific protein-ligand interactions [6] | Dependent on availability and quality of target structures [6] |
| Chemical Space Exploration | Enables scaffold hopping and novel chemotype identification through rational design [6] | Limited by accuracy of scoring functions and conformational sampling [26] |
| Target Specificity | Direct optimization for selectivity possible through explicit interaction design [27] | Challenging for highly conserved binding sites across target families [26] |
| Computational Resources | High-throughput docking possible for library screening [6] | Advanced methods (FEP, MD) require substantial computational resources [6] |
| Accuracy & Prediction | Physically grounded in molecular recognition principles [6] | Protein flexibility and solvent effects often inadequately captured [26] |

Ligand-Based Drug Design

Table 3: Strengths and Limitations of Ligand-Based Drug Design

| Aspect | Strengths | Limitations |
|---|---|---|
| Data Requirements | Applicable when target structure is unknown [6] | Requires sufficient known active compounds with robust activity data [6] |
| Chemical Space Exploration | Excellent at finding analogs and exploring local chemical space [6] | Limited ability to identify novel scaffolds distant from known chemotypes [6] |
| Target Specificity | Implicitly captures selectivity through known ligand profiles [6] | Difficult to rationally design for selectivity without structural context [6] |
| Computational Resources | Generally faster and more scalable than structure-based methods [6] | 3D methods and machine learning approaches can be computationally intensive [6] |
| Accuracy & Prediction | Strong predictive power within applicability domain of training data [6] | Struggles with extrapolation to novel chemical space [6] |

Integrated Approaches and Advanced Applications

Combined Methodologies

Recognizing the complementary nature of SBDD and LBDD, researchers increasingly employ integrated approaches that leverage the strengths of both methodologies [6] [9]. These integrated strategies can be implemented in sequential, parallel, or hybrid configurations.

Sequential Integration applies different techniques in a consecutive fashion, typically using faster ligand-based methods to narrow the chemical space before applying more computationally intensive structure-based techniques [6] [9]. A common workflow involves rapidly filtering large compound libraries with ligand-based screening (similarity searching or QSAR models), then subjecting the most promising subset to structure-based techniques like molecular docking [6]. This approach improves overall efficiency by applying resource-intensive methods only to a pre-filtered set of candidates.
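The sequential funnel described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production workflow: fingerprints are represented as plain sets of "on" bit indices, the compound names and scores are invented, and `dock_score` is a hypothetical stand-in for a call to whatever docking program is actually used.

```python
from typing import Callable, Dict, FrozenSet, Iterable, List, Tuple

Fingerprint = FrozenSet[int]  # assumption: a fingerprint is a set of "on" bit indices


def tanimoto(a: Fingerprint, b: Fingerprint) -> float:
    """Tanimoto (Jaccard) similarity between two bit sets."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def sequential_screen(
    library: Dict[str, Fingerprint],
    known_actives: Iterable[Fingerprint],
    dock_score: Callable[[str], float],
    sim_cutoff: float = 0.5,
    top_n: int = 10,
) -> List[Tuple[str, float]]:
    """Cheap ligand-based filter first, expensive structure-based scoring second."""
    actives = list(known_actives)
    # Stage 1: keep compounds similar enough to at least one known active.
    survivors = [
        cid
        for cid, fp in library.items()
        if max((tanimoto(fp, ref) for ref in actives), default=0.0) >= sim_cutoff
    ]
    # Stage 2: dock only the survivors; more negative score = better (assumed convention).
    scored = [(cid, dock_score(cid)) for cid in survivors]
    scored.sort(key=lambda pair: pair[1])
    return scored[:top_n]


# Invented toy data for illustration only.
library = {
    "cpd_A": frozenset({1, 2, 3, 4}),
    "cpd_B": frozenset({1, 2, 9}),
    "cpd_C": frozenset({7, 8, 9}),
    "cpd_D": frozenset({1, 2, 3, 5}),
}
known_actives = [frozenset({1, 2, 3, 4, 5})]
mock_scores = {"cpd_A": -7.2, "cpd_B": -9.9, "cpd_C": -5.0, "cpd_D": -8.1}
docked: List[str] = []  # records which compounds reached the expensive stage


def mock_dock(cid: str) -> float:
    docked.append(cid)
    return mock_scores[cid]


hits = sequential_screen(library, known_actives, mock_dock)
print(hits)
```

In this toy run only two of the four compounds reach the docking stage. The compound with the best mock docking score is never docked because it fails the similarity filter, which illustrates both the efficiency gain and the main risk of a sequential funnel: the first filter bounds what the second can find.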

Parallel or Hybrid Screening employs both structure-based and ligand-based methods simultaneously on the same compound library, then compares or combines results in a consensus scoring framework [6]. Advanced implementations may use hybrid scoring that multiplies compound ranks from each method to yield a unified rank order, favoring compounds ranked highly by both approaches [6]. This strategy helps mitigate the limitations inherent in each individual method: when docking scores are compromised by inaccurate pose prediction, for instance, similarity-based methods may still recover true actives based on known ligand features [6].
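The rank-multiplication idea can be made concrete with a short sketch. The function names, compound IDs, and scores below are illustrative assumptions; the sketch also assumes that lower docking scores and higher similarity scores are better, and it breaks ties alphabetically rather than using any published tie-handling scheme.

```python
from typing import Dict, List, Tuple


def rank_positions(scores: Dict[str, float], higher_is_better: bool) -> Dict[str, int]:
    """Map each compound to its 1-based rank (1 = best); ties broken by compound ID."""
    sign = -1.0 if higher_is_better else 1.0
    ordered = sorted(scores, key=lambda cid: (sign * scores[cid], cid))
    return {cid: position + 1 for position, cid in enumerate(ordered)}


def rank_product(
    dock_scores: Dict[str, float], sim_scores: Dict[str, float]
) -> List[Tuple[str, int]]:
    """Consensus order from the product of docking rank and similarity rank."""
    shared = dock_scores.keys() & sim_scores.keys()  # only compounds scored by both
    dock_rank = rank_positions({c: dock_scores[c] for c in shared}, higher_is_better=False)
    sim_rank = rank_positions({c: sim_scores[c] for c in shared}, higher_is_better=True)
    products = {c: dock_rank[c] * sim_rank[c] for c in shared}
    # A small product means the compound ranked well in both methods.
    return sorted(products.items(), key=lambda item: (item[1], item[0]))


# Invented scores for illustration only.
dock = {"A": -9.0, "B": -8.0, "C": -7.0, "D": -6.0}
sim = {"A": 0.30, "B": 0.90, "C": 0.80, "D": 0.10}
consensus = rank_product(dock, sim)
print(consensus)
```

Here compound A has the best docking score but only the third-best similarity, so compound B (second by docking, first by similarity) takes the top consensus position.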

Diagram 2: Integrated SBDD and LBDD screening strategies

Emerging Trends and Machine Learning Integration

The field of computational drug discovery is being transformed by the integration of machine learning (ML) and artificial intelligence (AI), which enhances both SBDD and LBDD approaches [9] [25].

ML-Enhanced SBDD has seen developments including deep learning-based scoring functions that more accurately predict binding affinities, generative models for de novo molecular design within binding pockets, and improved handling of protein flexibility through conformational ensemble generation [9] [27]. For instance, deep generative models like CMD-GEN utilize coarse-grained pharmacophore points sampled from diffusion models to bridge ligand-protein complexes with drug-like molecules, effectively addressing data scarcity issues [27].

Advanced LBDD benefits from chemical language models that learn meaningful molecular representations, graph neural networks that capture complex structure-activity relationships, and reinforcement learning approaches for multi-parameter optimization [9] [25]. The PGMG (Pharmacophore-Guided deep learning approach for bioactive Molecule Generation) model uses pharmacophore hypotheses as a bridge to connect different types of activity data, enabling flexible generation without further fine-tuning across different drug design scenarios [25].

AI-Based Structure Prediction tools like AlphaFold have dramatically expanded the structural information available for drug targets, even those without experimental structures [6] [26]. However, caution must be exercised as inaccuracies in predicted structures can impact the reliability of subsequent SBDD methods [6]. Recent evaluations suggest that while AlphaFold structures may be sufficient for initial screening, experimental structures generally yield better results for detailed optimization work [26].

Strategic Selection Guidelines

The choice between SBDD and LBDD depends on multiple factors, including data availability, project stage, resource constraints, and specific project goals. The following decision framework provides guidance for selecting the most appropriate approach:

When to Prefer Structure-Based Approaches:

- High-quality experimental or predicted structures of the target are available [6] [2]

- Designing for selectivity against closely related targets is required [26]

- Scaffold hopping to novel chemotypes is desired [6]

- Structural insights are needed to rationalize structure-activity relationships [6]

- Computational resources for docking and molecular dynamics are available [6]

When to Prefer Ligand-Based Approaches:

- No experimental target structure is available and the structure is difficult to predict accurately [6] [2]

- Substantial structure-activity data exists for known active compounds [6]

- Rapid screening of large compound libraries is needed [6]

- Analog searching and lead optimization within established series is the goal [6]

- Computational resources are limited [6]

When Integrated Approaches Are Recommended:

- Both structural information and ligand activity data are available [6] [9]

- Maximizing confidence in virtual screening hits is critical [6]

- Balancing novelty (SBDD) with drug-likeness (LBDD) is important [9]

- Resources permit a multi-stage screening approach [6]
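As a rough sketch, the three lists above reduce to a lookup on two questions: is a usable structure available, and is there enough ligand activity data? The function below is a deliberate simplification. Its name, its two boolean inputs, and the "insufficient data" fallback are assumptions made for illustration; the guidelines above also weigh project goals and computational resources, which this sketch ignores.

```python
def recommend_approach(has_reliable_structure: bool, has_activity_data: bool) -> str:
    """Coarse first-pass recommendation based only on data availability."""
    if has_reliable_structure and has_activity_data:
        return "integrated"
    if has_reliable_structure:
        return "structure-based"
    if has_activity_data:
        return "ligand-based"
    # Not covered by the guidelines above; assumed fallback for completeness.
    return "insufficient data"


for structure, ligands in [(True, True), (True, False), (False, True), (False, False)]:
    print(structure, ligands, "->", recommend_approach(structure, ligands))
```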

SBDD and LBDD represent complementary paradigms in computational drug discovery, each with distinct strengths and limitations. SBDD provides atomic-level insights into binding interactions and enables rational design of novel chemotypes, but depends heavily on the availability and quality of structural information. LBDD offers speed, scalability, and applicability when structural data is lacking, but is constrained by the chemical diversity of known actives and limited ability to design truly novel scaffolds.

The most effective modern drug discovery pipelines increasingly leverage integrated approaches that combine the strengths of both methodologies, often enhanced by machine learning and AI technologies. By understanding the specific capabilities and limitations of each approach, drug discovery researchers can make informed decisions about methodology selection and implementation, ultimately accelerating the identification and optimization of novel therapeutic agents.

Practical Applications: Techniques and Workflows for Effective Implementation

Structure-Based Drug Design (SBDD) represents a foundational pillar of modern computational drug discovery, enabling researchers to rationally design and optimize therapeutic compounds based on the three-dimensional structure of biological targets. This approach complements Ligand-Based Drug Design (LBDD), which relies on knowledge of known active compounds when target structural information is unavailable [2]. The completion of the Human Genome Project and subsequent advances in structural biology techniques, including X-ray crystallography, cryo-electron microscopy (cryo-EM), and nuclear magnetic resonance (NMR), have dramatically expanded the library of available protein structures [28] [12]. More recently, artificial intelligence-based prediction tools like AlphaFold have further revolutionized the field by providing protein structural models for targets that lack experimental structures, making SBDD applicable to an unprecedented range of therapeutic targets [29] [12].

SBDD techniques permeate all aspects of drug discovery today, from initial hit identification to lead optimization [28]. Compared to traditional experimental high-throughput screening (HTS), virtual screening using SBDD methods offers a more direct, rational, and cost-effective approach to identifying promising drug candidates [28]. This technical guide provides an in-depth examination of three core SBDD techniques—molecular docking, Free Energy Perturbation (FEP), and molecular dynamics (MD) simulations—detailing their theoretical foundations, methodological considerations, implementation protocols, and strategic applications within the broader context of drug discovery workflows.

Molecular Docking: Predicting Ligand-Receptor Interactions

Theoretical Foundations and Methodological Approaches

Molecular docking serves as a cornerstone SBDD technique for predicting the optimal binding conformation and orientation of small molecule ligands within a protein's binding site [28] [6]. The docking process addresses two fundamental questions: what is the preferred binding pose of the ligand within the target site, and how strongly does it bind? These questions correspond to the two fundamental components of any docking algorithm: sampling methods (conformational search) and scoring functions [28].

The earliest understanding of ligand-receptor binding followed Fischer's "lock-and-key" theory, which treated both partners as rigid bodies [28]. This was subsequently refined by Koshland's "induced-fit" theory, which recognizes that both the ligand and receptor adjust their conformations to achieve optimal binding [28]. Modern docking methods attempt to balance computational efficiency with this biological reality, typically treating the ligand as flexible while often keeping the receptor rigid, though advanced methods can incorporate limited receptor flexibility [28] [6].

Table 1: Key Sampling Algorithms in Molecular Docking

| Algorithm | Key Characteristics | Representative Software |
|---|---|---|
| Matching Algorithms | Geometry-based; high speed; uses pharmacophore features | DOCK, FLOG, LibDock [28] |
| Incremental Construction | Fragment-based; docks incrementally; reduces complexity | FlexX, DOCK 4.0 [28] [30] |
| Monte Carlo Methods | Stochastic search; random modifications; can cross energy barriers | AutoDock, ICM, QXP [28] [30] |
| Genetic Algorithms | Evolution-inspired; mutation and crossover operations | AutoDock, GOLD, DIVALI [28] [30] |
| Systematic Search | Exhaustive exploration of torsional space; computationally demanding | Glide, FRED [30] |
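To illustrate what "random modifications" that "can cross energy barriers" means for the Monte Carlo entry in the table, the sketch below runs a Metropolis search on a toy one-dimensional energy function. Everything here is an assumption for illustration: a real docking program samples translations, rotations, and torsions against a scoring function, whereas this example uses a single coordinate `x` and an invented double-well `energy` with a shallow minimum near x = +1 and a deeper one near x = -1.

```python
import math
import random
from typing import Tuple


def energy(x: float) -> float:
    """Toy double-well 'score': local minimum near x = +1, deeper minimum near x = -1."""
    return (x * x - 1.0) ** 2 + 0.3 * x


def metropolis_search(
    start: float,
    n_steps: int = 20000,
    kT: float = 0.5,
    max_step: float = 0.5,
    seed: int = 0,
) -> Tuple[float, float]:
    """Metropolis Monte Carlo search; returns the best (x, energy) visited."""
    rng = random.Random(seed)
    x, e = start, energy(start)
    best_x, best_e = x, e
    for _ in range(n_steps):
        trial = x + rng.uniform(-max_step, max_step)  # random modification
        e_trial = energy(trial)
        # Always accept downhill moves; accept uphill moves with Boltzmann probability.
        if e_trial <= e or rng.random() < math.exp(-(e_trial - e) / kT):
            x, e = trial, e_trial
            if e < best_e:
                best_x, best_e = x, e
    return best_x, best_e


# Start in the shallower well; uphill acceptance lets the search reach the deeper one.
best_x, best_e = metropolis_search(start=1.0)
print(best_x, best_e)
```

A purely greedy search started at x = +1 would stay in the shallower well. The occasional acceptance of uphill moves is what allows the walker to cross the barrier near x = 0.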

Scoring Functions and Accuracy Considerations

Scoring functions are designed to reproduce binding thermodynamics by estimating the enthalpy (ΔH) and entropy (ΔS) components of binding free energy (ΔG) [30]. These functions typically employ physics-based, empirical, or knowledge-based approaches to rank predicted poses and prioritize compounds during virtual screening. Despite advances, accurately predicting absolute binding affinities remains challenging, though docking excels at relative ranking of similar compounds [28] [6].
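For reference, the thermodynamic quantities named above are related by two standard expressions. The symbols T (absolute temperature), R (gas constant), K_d (dissociation constant), and c° (standard concentration) do not appear in the original text and are introduced here for illustration.

```latex
\Delta G_{\text{bind}} = \Delta H - T\,\Delta S
\qquad
\Delta G^{\circ}_{\text{bind}} = RT \ln\!\left(\frac{K_d}{c^{\circ}}\right),
\quad c^{\circ} = 1\ \text{M}
```

At 298 K, RT is approximately 0.593 kcal/mol, so a ligand with K_d = 1 nM has a standard binding free energy of about -12.3 kcal/mol, and each tenfold change in K_d shifts it by about 1.36 kcal/mol. A scoring error of roughly 1 kcal/mol therefore corresponds to nearly an order of magnitude in predicted affinity, which helps explain why absolute affinity prediction is so demanding.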

A critical methodological consideration is validation through "non-cognate" docking, where ligands structurally different from those used in experimental structure determination are docked, as this better represents real-world docking applications than simple re-docking experiments [6]. Docking performance can be compromised when proteins undergo significant conformational changes upon ligand binding, highlighting the need for incorporating flexibility in receptor structures [28] [12].

Diagram 1: Molecular Docking Workflow

Practical Implementation and Protocol Guidelines

Successful molecular docking requires careful attention to multiple preparatory steps. Protein structures must be properly prepared by adding hydrogen atoms, correcting residue protonation states, and optimizing hydrogen bonding networks [31]. Water molecules known to be structurally important should be retained in the structure, as they may mediate key ligand-protein interactions [31].

For virtual screening applications, library diversity is critical for identifying novel chemical scaffolds [12]. Screens of ultra-large virtual libraries such as Enamine's REAL database (containing billions of compounds) have identified nanomolar and sub-nanomolar binders in recent campaigns [12]. The dramatic expansion of accessible chemical space through such libraries represents a key advancement driving modern SBDD.

Table 2: Molecular Docking Software and Key Features

| Software | Sampling Algorithm | Scoring Function Type | Key Features/Applications |
|---|---|---|---|
| AutoDock | Genetic Algorithm, Monte Carlo | Empirical, Force Field | Flexible ligand docking; user-selectable algorithms [28] [30] |
| GOLD | Genetic Algorithm | Empirical, Knowledge-based | Protein flexibility; high accuracy for pose prediction [28] [30] |
| Glide | Systematic Search, Monte Carlo | Empirical | Hierarchical filtering; accurate for diverse compound classes [30] [31] |
| DOCK | Matching, Incremental Construction | Force Field | Spherical site points; early docking program with continuous development [28] |
| FlexX | Incremental Construction | Empirical | Fragment-based; efficient for medium-sized libraries [28] [30] |

Free Energy Perturbation (FEP): Quantitative Binding Affinity Prediction

Theoretical Basis and Methodological Framework

Free Energy Perturbation (FEP) is a more advanced SBDD technique that provides quantitative predictions of relative binding affinity and is typically used during lead optimization [29] [32]. FEP calculations are based on statistical mechanics and thermodynamic cycles that compute the free energy difference between related ligands by gradually "morphing" one molecule into another through a series of non-physical, alchemical transformations [32]. These transformations occur in discrete steps called lambda windows, with sufficient overlap between adjacent windows to ensure proper convergence [32].
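The lambda-window idea can be demonstrated on a toy system whose exact answer is known. The sketch below is not a protein-ligand calculation: it "morphs" a one-dimensional harmonic potential with force constant `k_a` into one with force constant `k_b`, sums the free energy change over a ladder of lambda windows, and compares the total with the analytic result (kT/2) ln(k_b/k_a). The exponential-averaging (Zwanzig) estimator, the linear lambda schedule, and all names and parameters are assumptions chosen for illustration; the source does not specify an estimator.

```python
import math
import random


def fep_harmonic(
    k_a: float,
    k_b: float,
    n_windows: int = 10,
    n_samples: int = 20000,
    kT: float = 1.0,
    seed: int = 0,
) -> float:
    """Free energy change for U_A = 0.5*k_a*x^2 -> U_B = 0.5*k_b*x^2 via lambda windows."""
    rng = random.Random(seed)
    lambdas = [i / n_windows for i in range(n_windows + 1)]
    total = 0.0
    for lam0, lam1 in zip(lambdas[:-1], lambdas[1:]):
        # Linear mixing of the two potentials gives a harmonic potential at every lambda.
        k0 = (1.0 - lam0) * k_a + lam0 * k_b
        k1 = (1.0 - lam1) * k_a + lam1 * k_b
        sigma = math.sqrt(kT / k0)  # Boltzmann distribution at lam0 is Gaussian
        acc = 0.0
        for _ in range(n_samples):
            x = rng.gauss(0.0, sigma)           # sample the lam0 ensemble exactly
            delta_u = 0.5 * (k1 - k0) * x * x   # energy change when stepping to lam1
            acc += math.exp(-delta_u / kT)
        # Exponential averaging over the lam0 ensemble gives this window's contribution.
        total += -kT * math.log(acc / n_samples)
    return total


estimate = fep_harmonic(k_a=1.0, k_b=4.0)
exact = 0.5 * math.log(4.0)  # analytic result (kT/2) * ln(k_b / k_a) with kT = 1
print(f"FEP estimate: {estimate:.3f}  exact: {exact:.3f}")
```

In a relative binding free energy calculation, the same kind of ladder is run twice, once for the ligand in the protein complex and once for the ligand in solvent, and the difference between the two totals closes the thermodynamic cycle. In real systems the ensembles are sampled by molecular dynamics rather than drawn exactly as they are here.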