Strategies to Reduce False Positives in Virtual Screening: A Guide for Drug Discovery Scientists


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on addressing the pervasive challenge of false positives in structure-based virtual screening. It explores the fundamental causes and impacts of false positives, reviews cutting-edge methodological advances including machine learning classifiers and consensus scoring models, and offers practical troubleshooting and optimization protocols. The content is validated through comparative analysis of state-of-the-art tools and prospective case studies, delivering actionable strategies to significantly improve hit rates and screening efficiency in early drug discovery.

Understanding the False Positive Problem: Why Most Virtual Screening Hits Fail

Frequently Asked Questions

What is a false positive in the context of virtual screening? In virtual screening, a false positive is a compound that is computationally predicted to be active against a biological target but fails to show activity in subsequent experimental validation [1] [2]. These compounds consume significant time and resources, as they must be synthesized or acquired and then tested experimentally, only to be invalidated.

Why are false positives such a persistent problem? False positives persist because of fundamental limitations in computational models. Scoring functions, which predict binding affinity, can be inaccurate and sometimes fail to account for critical factors such as the role of water molecules in the binding site, ligand strain energy, and unfavorable desolvation penalties [2]. Even advanced rescoring techniques, including those based on quantum mechanics, have not yet solved this problem in general [2].

Can machine learning completely eliminate false positives? While machine learning shows significant promise, it has not eliminated the false positive problem. Its performance depends heavily on the quality and rigor of the training data. A model trained on decoy compounds that are too easy to distinguish from actives will perform poorly in real-world prospective screens, where the challenge is to separate actives from truly compelling decoys [3]. When trained effectively, however, machine learning can substantially improve hit rates [3].

What is the most effective strategy to manage false positives? A powerful and robust strategy is to combine virtual screening with a highly accurate experimental validation technique such as Surface Plasmon Resonance (SPR). Virtual screening rapidly narrows a library of millions of compounds down to a few dozen or a few hundred promising candidates. SPR then acts as a "rigorous practical exam," providing label-free, quantitative data on which compounds truly bind to the target protein and filtering out false positives before more costly cellular or functional assays are run [1].

Troubleshooting Guides

Guide 1: Diagnosing and Addressing High False Positive Rates in Your Virtual Screening Workflow

A high false positive rate indicates a disconnect between your computational predictions and biological reality. Use this guide to identify and correct common pitfalls.

| Problem Area | Symptoms | Diagnostic Checks | Corrective Actions |
|---|---|---|---|
| Scoring Function Limitations | Strong docking scores (or good retrospective enrichment) but no activity in assays; poor correlation between score and binding affinity in validation. | Benchmark multiple scoring functions on known actives/inactives for your target; check whether hit compounds have strained conformations or unsatisfied polar groups. | Use consensus scoring from multiple functions [2]; apply post-docking filters for undesirable chemical features [2]. |
| Inadequate Pose Prediction | Putative hits have unrealistic binding geometries; key interactions seen in crystal structures of known actives are missing. | Visually inspect top-ranked poses against a known reference structure; check for clashes and incorrect binding modes. | Use a docking method with high pose-prediction accuracy (e.g., >90% [4]); include binding-site water molecules when they are critical for binding [4]. |
| Training Data for ML Models | Your ML classifier performs well retrospectively but fails prospectively. | Audit your training set: are the decoys too easy to distinguish from actives? [3] | Retrain your model on a dataset of "compelling decoys" that closely mimic true binders [3]. |
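The diagnostic check "benchmark multiple scoring functions on known actives/inactives" can be quantified with standard retrospective metrics. The sketch below computes an enrichment factor (EF) at a chosen fraction of the ranked list and a ROC AUC from scores and activity labels. It assumes lower (more negative) scores are better, as with most docking energies; the toy data are hypothetical.

```python
from typing import Sequence


def enrichment_factor(scores: Sequence[float], labels: Sequence[int],
                      fraction: float = 0.01) -> float:
    """EF at `fraction`: active rate in the top slice divided by the overall active rate.

    Lower scores are treated as better (typical for docking energies).
    """
    n = len(scores)
    n_actives = sum(labels)
    if n == 0 or n_actives == 0:
        raise ValueError("need at least one compound and one active")
    n_top = max(1, int(round(n * fraction)))
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0])
    actives_in_top = sum(label for _, label in ranked[:n_top])
    return (actives_in_top / n_top) / (n_actives / n)


def roc_auc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Probability that a random active scores better (lower) than a random inactive."""
    actives = [s for s, y in zip(scores, labels) if y == 1]
    inactives = [s for s, y in zip(scores, labels) if y == 0]
    if not actives or not inactives:
        raise ValueError("need both actives and inactives")
    wins = 0.0
    for a in actives:
        for d in inactives:
            if a < d:
                wins += 1.0
            elif a == d:
                wins += 0.5  # ties count as half a win
    return wins / (len(actives) * len(inactives))


if __name__ == "__main__":
    # Hypothetical docking scores for 10 compounds; label 1 = known active.
    scores = [-11.2, -10.8, -10.1, -9.9, -9.5, -9.0, -8.7, -8.1, -7.6, -7.0]
    labels = [1, 0, 1, 0, 0, 1, 0, 0, 0, 0]
    print(f"EF@20%: {enrichment_factor(scores, labels, 0.2):.2f}")
    print(f"ROC AUC: {roc_auc(scores, labels):.3f}")
```

Running the same benchmark for each candidate scoring function on the same target makes it easier to see which one (if any) separates known actives from inactives before committing to a prospective screen.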

Guide 2: Implementing a Tiered Experimental Validation Protocol to Filter False Positives

This protocol outlines a step-wise experimental strategy to efficiently triage computational hits and focus resources on the most promising leads.

| Validation Stage | Primary Objective | Key Technique(s) | Key Outcome & Decision Point |
|---|---|---|---|
| Primary Biophysical Validation | Confirm direct, specific binding to the target protein. | Surface Plasmon Resonance (SPR) [1] | Output: quantitative binding affinity (KD) and kinetics (kon, koff). Decision: proceed with compounds that show direct, measurable binding. |
| Secondary Functional Validation | Assess biological activity in a target-specific assay. | Biochemical activity assay (e.g., enzyme inhibition) [3] | Output: half-maximal inhibitory concentration (IC50). Decision: prioritize compounds with potent activity for further testing. |
| Tertiary Cellular & Phenotypic Validation | Evaluate the effect in a cellular context and check for cytotoxicity. | Cell-based assay (e.g., reporter gene, phenotypic readout) [1] | Output: efficacy in cells and therapeutic index. Decision: advance compounds with the desired cellular activity and low toxicity. |
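The decision points in this table can be expressed as a simple sequential filter. In the sketch below, each compound carries results from the three tiers; the cut-offs (KD and IC50 at or below 50 µM, a therapeutic index of at least 10, approximated here as CC50 / EC50) are hypothetical placeholders to be replaced with project-specific criteria, not values from the cited studies.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

# Hypothetical decision thresholds; replace with project-specific criteria.
MAX_KD_UM = 50.0
MAX_IC50_UM = 50.0
MIN_THERAPEUTIC_INDEX = 10.0  # approximated here as CC50 / EC50


@dataclass
class Compound:
    name: str
    spr_kd_um: Optional[float] = None        # SPR KD in µM; None = no measurable binding
    ic50_um: Optional[float] = None          # biochemical IC50 in µM; None = inactive or untested
    cell_ec50_um: Optional[float] = None     # cellular EC50 in µM
    cytotox_cc50_um: Optional[float] = None  # 50% cytotoxic concentration in µM


def triage(compounds: List[Compound]) -> Dict[str, List[str]]:
    """Return the names of compounds surviving each validation tier, in input order."""
    binders = [c for c in compounds if c.spr_kd_um is not None and c.spr_kd_um <= MAX_KD_UM]
    actives = [c for c in binders if c.ic50_um is not None and c.ic50_um <= MAX_IC50_UM]
    advanced = [
        c for c in actives
        if c.cell_ec50_um is not None and c.cytotox_cc50_um is not None
        and c.cytotox_cc50_um / c.cell_ec50_um >= MIN_THERAPEUTIC_INDEX
    ]
    return {
        "spr_confirmed": [c.name for c in binders],
        "biochemically_active": [c.name for c in actives],
        "advance": [c.name for c in advanced],
    }


if __name__ == "__main__":
    hits = [
        Compound("VS-01", spr_kd_um=2.0, ic50_um=5.0, cell_ec50_um=1.0, cytotox_cc50_um=80.0),
        Compound("VS-02"),                                  # no SPR binding: dropped at tier 1
        Compound("VS-03", spr_kd_um=30.0, ic50_um=120.0),  # binds, but weak in the assay
        Compound("VS-04", spr_kd_um=8.0, ic50_um=10.0, cell_ec50_um=5.0, cytotox_cc50_um=20.0),
    ]
    print(triage(hits))
```

With these hypothetical values only VS-01 reaches the "advance" list; VS-04 is dropped at the last tier because its cytotoxicity window (20 / 5 = 4) is below the cut-off.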

Experimental Data & Protocols

Quantitative Performance of Screening Methods

The following table summarizes the effectiveness of different approaches as reported in recent studies, providing benchmarks for your own work.

| Method / Strategy | Key Metric | Performance Outcome | Context & Notes |
|---|---|---|---|
| Traditional VS + Expert Picking [3] | Hit rate (active compounds) | ~12% | Median across 54 successful campaigns; a "baseline" for the field. |
| vScreenML Classifier (Prospective) [3] | Hit rate (active compounds) | ~43% (10 of 23 compounds with IC50 < 50 μM) | Machine learning model trained on "compelling decoys"; a substantial improvement over the baseline. |
| VS + SPR Workflow [1] | Experimental validation rate | 32.5% (13 of 40 VS hits confirmed by SPR) | SPR filtered out 27 of the 40 virtual screening hits (67.5%), sharply focusing downstream effort. |
| Glide WS (Docking Method) [4] | Self-docking accuracy | 92% (vs. 85% for Glide SP) | Improved pose prediction can lead to better virtual screening enrichment and fewer false positives. |

Detailed Protocol: Integrated Virtual Screening and SPR Validation

This protocol is adapted from a successful case study that identified a potent PPARγ inhibitor [1].

Objective: To rapidly and reliably identify true small-molecule binders for a protein target from a large compound library.

Workflow Overview:

Step-by-Step Methodology:

Virtual Screening Pre-screening:

- Target Preparation: Obtain a high-resolution crystal structure of your target protein (e.g., from the PDB). Clean the structure, add hydrogen atoms, and define the binding-site residues.

- Library Preparation: Prepare a database of compounds in a suitable format for docking. This could be a commercially available library (e.g., ~23,000 compounds [1]) or an ultra-large virtual library.

- Multi-Stage Docking: Perform a structured virtual screening to balance computational cost and accuracy.

- Stage 1 (HTVS): Dock the entire library using a fast, high-throughput method. Select the top 10% of compounds.

- Stage 2 (SP): Redock the selected compounds using a standard precision method. Select the top 10% from this stage.

- Stage 3 (XP): Redock the final subset using an extra precision method. From this, select the top-ranked compounds (e.g., 40 compounds) for experimental testing [1].
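The three-stage protocol is a funnel in which each more expensive scoring method sees only the survivors of the previous one. The sketch below illustrates that control flow in plain Python. `fast_score`-style stage functions are hypothetical stand-ins for HTVS, SP, and XP runs (in practice those are launched through the docking software, not called like this); lower scores are assumed to be better, and the "true energies" are random numbers used only to exercise the code.

```python
import random
from typing import Callable, Dict, List, Sequence, Tuple, Union

# (stage name, scoring function, keep): keep < 1 is a fraction, keep >= 1 is an absolute count
Stage = Tuple[str, Callable[[str], float], Union[float, int]]


def run_funnel(library: Sequence[str], stages: Sequence[Stage]) -> Tuple[List[str], Dict[str, int]]:
    """Rescore the survivors of each stage with the next (costlier) method; lower score = better."""
    survivors = list(library)
    sizes = {"input": len(survivors)}
    for name, score_fn, keep in stages:
        n_keep = max(1, round(len(survivors) * keep)) if keep < 1 else int(keep)
        survivors = sorted(survivors, key=score_fn)[:n_keep]
        sizes[name] = len(survivors)
    return survivors, sizes


if __name__ == "__main__":
    rng = random.Random(0)
    library = [f"cpd{i:05d}" for i in range(23_000)]
    # Hypothetical "true" binding energies; each stage sees them through stage-specific noise.
    true_energy = {c: rng.gauss(-7.0, 1.5) for c in library}

    def noisy(sigma: float) -> Callable[[str], float]:
        return lambda c: true_energy[c] + rng.gauss(0.0, sigma)

    stages: List[Stage] = [("HTVS", noisy(2.0), 0.10), ("SP", noisy(1.0), 0.10), ("XP", noisy(0.5), 40)]
    picks, sizes = run_funnel(library, stages)
    print(sizes)  # {'input': 23000, 'HTVS': 2300, 'SP': 230, 'XP': 40}
```

With a library of about 23,000 compounds, two 10% cuts leave roughly 230 compounds for the most expensive stage, from which 40 are picked, which matches the scale of the cited case study.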

SPR Experimental Validation:

- Sample Preparation: Purify the target protein to homogeneity. Prepare DMSO stocks of the shortlisted compounds.

- Single-Concentration Screening: Immobilize the target protein on an SPR sensor chip. Test each compound from the virtual screening shortlist at a single concentration to obtain a binding response. Compare the response to a known positive control [1].

- Kinetic Analysis: For compounds that show binding above the control threshold, run a concentration series: inject the compound at a range of concentrations to obtain binding curves, then fit the data to a binding model to determine the association rate constant (kon), the dissociation rate constant (koff), and the equilibrium dissociation constant (KD, which equals koff/kon for a 1:1 interaction) [1].
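For a simple 1:1 (Langmuir) interaction, the equilibrium constant follows from the rate constants, KD = koff / kon, and the plateau responses of the concentration series follow Req = Rmax·C / (KD + C). The sketch below fits that steady-state model by scanning KD on a log-spaced grid and solving for Rmax by least squares at each grid point. It is a dependency-free illustration under the 1:1 assumption; dedicated SPR analysis software typically fits the full sensorgrams and should be preferred for real data.

```python
import math
from typing import Sequence, Tuple


def kd_from_rates(kon_per_m_s: float, koff_per_s: float) -> float:
    """KD (M) = koff / kon for a 1:1 interaction."""
    return koff_per_s / kon_per_m_s


def fit_steady_state(conc_m: Sequence[float], req: Sequence[float],
                     kd_min: float = 1e-9, kd_max: float = 1e-2,
                     steps: int = 2000) -> Tuple[float, float]:
    """Fit Req = Rmax*C/(KD + C) by grid search over KD; returns (KD in M, Rmax in RU)."""
    best = (math.inf, 0.0, 0.0)  # (sum of squared residuals, KD, Rmax)
    for i in range(steps + 1):
        kd = kd_min * (kd_max / kd_min) ** (i / steps)  # log-spaced KD grid
        x = [c / (kd + c) for c in conc_m]              # fractional occupancy at each C
        # For a fixed KD the model is linear in Rmax: Rmax = sum(x*y) / sum(x*x)
        rmax = sum(xi * yi for xi, yi in zip(x, req)) / sum(xi * xi for xi in x)
        sse = sum((yi - rmax * xi) ** 2 for xi, yi in zip(x, req))
        if sse < best[0]:
            best = (sse, kd, rmax)
    return best[1], best[2]


if __name__ == "__main__":
    print(f"KD from kinetics: {kd_from_rates(1e5, 1e-2) * 1e9:.0f} nM")  # 1e-2 / 1e5 M = 100 nM
    # Synthetic, noise-free plateau responses generated with KD = 2 µM and Rmax = 50 RU.
    conc = [0.25e-6, 0.5e-6, 1e-6, 2e-6, 4e-6, 8e-6, 16e-6]
    resp = [50.0 * c / (2e-6 + c) for c in conc]
    kd, rmax = fit_steady_state(conc, resp)
    print(f"Fitted KD = {kd * 1e6:.2f} µM, Rmax = {rmax:.1f} RU")
```

Spanning concentrations from well below to well above the expected KD, as in the synthetic series here, is what makes both parameters identifiable from the plateau values.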

Downstream Functional Assays:

- Activity Validation: Test the SPR-confirmed binders in a biochemical assay relevant to the target's function (e.g., an enzyme inhibition assay) to determine IC50 values [3].

- Cellular Validation: Finally, validate the most potent compounds in a cell-based model to confirm the biological activity translates to a more complex, physiological environment [1].

The Scientist's Toolkit: Research Reagent Solutions

| Essential Material / Tool | Function in the Context of False Positive Reduction |
|---|---|
| Structure-Based Docking Software (e.g., Glide from Schrödinger) [1] [4] | Predicts the binding mode and affinity of small molecules to a protein target, enabling the rapid screening of ultra-large virtual libraries. |
| Structured Compound Libraries (e.g., MCE Bioactive Library) [1] | Provides a curated, diverse, and often drug-like set of compounds for screening, improving the odds of finding genuine hits. |
| Surface Plasmon Resonance (SPR) Instrument [1] | A gold-standard biophysical technique that provides label-free, quantitative data on binding affinity and kinetics, serving as a critical filter for false positives. |
| Machine Learning Classifiers (e.g., vScreenML) [3] | Trained on challenging datasets, these tools can distinguish true binders from "compelling decoys" with higher accuracy than traditional scoring functions. |
| Crystallographic Structures (PDB) [3] | Provides the experimental 3D structure of the protein target, which is essential for structure-based screening and for understanding true binding interactions. |

Quantitative Data on Virtual Screening Performance

Understanding the typical hit rates and the factors that influence them is crucial for setting realistic expectations and quantifying the problem of false positives in virtual screening (VS). The table below summarizes key quantitative findings from large-scale VS studies.

Table 1: Virtual Screening Hit Rates and Impact of Library Scale

| Metric | 99 Million-Molecule Library | 1.7 Billion-Molecule Library | Implication |
|---|---|---|---|
| Molecules Tested | 44 molecules [5] | 1,521 molecules [6] [5] | Larger scales enable more reliable statistics. |
| Observed Hit Rate | 11.4% (5 hits from 44 tested) [5] | 22.4% (290 hits from 1,294 tested) [5] | Hit rate can improve substantially with larger libraries. |
| Potency of Hits | Activities from 1.3 to 400 µM [5] | 168 inhibitors with Ki < 166 µM [6] | Larger libraries can yield more potent inhibitors. |
| Recommended Minimum Tested | Not specified | >100 molecules [5] | Testing several hundred molecules is needed for hit rates to converge and for reliable results [6]. |

The economic impact of false positives extends beyond virtual screening. The table below outlines costs associated with false positives in a different, but related, context: clinical cancer screening. These figures illustrate the broader economic burden of false-positive results in life sciences.

Table 2: Economic Impact of False-Positive Cancer Screens

| Cost Category | Findings from the Prostate, Lung, Colorectal, and Ovarian (PLCO) Cancer Screening Trial |
|---|---|
| Frequency of False Positives | 43% of the study sample incurred at least one false-positive screen [7]. |
| Follow-up Care Rate | 83% of patients with a false-positive screen received follow-up care [7]. |
| Additional Medical Costs | Adjusted mean difference in medical care expenditures in the year following a false-positive screen was $1,024 for women and $1,171 for men [7]. |
| Non-Medical Costs (Time) | For a false-positive lung cancer screen, patients spent a mean of 1.5 hours receiving care [7]. |

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: Our virtual screening campaign produced a high number of false positives. What are the primary reasons for this? False positives in VS arise from several well-documented challenges in scoring and pose prediction [8]. Common reasons include:

- Inaccurate Scoring Functions: Scoring functions are widely known to be inaccurate and often struggle to correctly calculate binding affinities, failing to distinguish true binders from decoys [2].

- Incorrect Ligand Poses: The docking process may generate poses that are not physiologically relevant, featuring strained conformations or unsatisfied hydrogen bonds [2].

- High Ligand Strain Energy: The predicted binding pose might require the ligand to adopt a high-energy conformation, which is unfavorable for actual binding.

- Inadequate Treatment of Solvation: The models may not properly account for the favorable or unfavorable contributions of water molecules being displaced upon ligand binding [2].

- Presence of Artifacts: Top-ranking molecules can sometimes be compounds that act as aggregation-based inhibitors or interfere with the assay, rather than true binders [9].

Q2: We have limited resources and can only test a small number of compounds. How many should we test to have confidence in our results? Based on large-scale validation studies, it is recommended to test at least 100 molecules from a virtual screen to achieve reliable results and identify high-affinity hits [5]. Testing only a few dozen compounds leads to high variability and unreliable hit rate estimates. Simulations show that confidence in the true hit rate increases significantly when several hundred molecules are tested [6] [5].
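The effect of sample size on confidence can be made concrete with a binomial confidence interval on the observed hit rate. The sketch below uses the Wilson score interval (one standard choice; the cited studies' own statistical methods may differ) to compare a hypothetical 12% hit rate observed among 25, 100, and 400 tested compounds.

```python
import math
from typing import Tuple


def wilson_interval(hits: int, tested: int, z: float = 1.96) -> Tuple[float, float]:
    """Approximate 95% Wilson score interval (z = 1.96) for a binomial proportion."""
    if tested <= 0 or not 0 <= hits <= tested:
        raise ValueError("require tested > 0 and 0 <= hits <= tested")
    p = hits / tested
    denom = 1 + z ** 2 / tested
    center = (p + z ** 2 / (2 * tested)) / denom
    half = z * math.sqrt(p * (1 - p) / tested + z ** 2 / (4 * tested ** 2)) / denom
    return max(0.0, center - half), min(1.0, center + half)


if __name__ == "__main__":
    for tested in (25, 100, 400):
        hits = round(0.12 * tested)  # hypothetical 12% observed hit rate
        lo, hi = wilson_interval(hits, tested)
        print(f"{hits:>3}/{tested:<3} hits: 95% CI {lo:.1%} to {hi:.1%} (width {hi - lo:.1%})")
```

The interval narrows roughly with the square root of the number tested, which is why a few dozen compounds give a hit-rate estimate too wide to compare campaigns or methods meaningfully.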

Q3: Does using a larger virtual library automatically lead to better results? While larger libraries (billions of molecules) have been shown to improve hit rates, discover more scaffolds, and yield more potent inhibitors [6] [5], they also amplify the challenge of false positives. As libraries grow, the absolute number of false positives also increases, and they can come to dominate the top-scoring lists if not properly managed [9]. Therefore, larger libraries are beneficial but must be paired with careful analysis and filtering.

Q4: We've tried multiple scoring functions and found they don't agree. What is the value of consensus scoring? While consensus scoring is a popular strategy, evidence suggests it may not be a silver bullet. A systematic study found that neither semi-empirical quantum mechanics potentials, force fields with implicit solvation, nor empirical machine-learning scoring functions could reliably discriminate true positives from false positives [2]. Refining poses with molecular mechanics also provided only marginal improvement. This underscores that the problem is complex and no single rescoring method has yet proven globally effective.
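When scoring functions disagree, one common way to combine them is rank-based consensus: rank the compounds under each function separately, then order them by mean rank, which sidesteps the incompatible units and scales of different scores. The sketch below assumes lower scores are better for every function and uses hypothetical values. Averaging cannot cancel an error that all component functions share, so consensus rankings should still be checked against known actives.

```python
from typing import Dict, List


def ranks(scores: Dict[str, float]) -> Dict[str, float]:
    """Rank compounds (1 = best, lower score is better); tied scores share the average rank."""
    ordered = sorted(scores, key=scores.get)
    result: Dict[str, float] = {}
    i = 0
    while i < len(ordered):
        j = i
        while j + 1 < len(ordered) and scores[ordered[j + 1]] == scores[ordered[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1  # positions i..j share one rank
        for k in range(i, j + 1):
            result[ordered[k]] = avg_rank
        i = j + 1
    return result


def consensus_rank(score_tables: List[Dict[str, float]]) -> List[str]:
    """Order compounds scored by every function by their mean rank across functions."""
    common = set.intersection(*(set(t) for t in score_tables))
    per_function = [ranks({c: t[c] for c in common}) for t in score_tables]
    mean_rank = {c: sum(r[c] for r in per_function) / len(per_function) for c in common}
    return sorted(mean_rank, key=lambda c: (mean_rank[c], c))


if __name__ == "__main__":
    # Hypothetical outputs of two scoring functions on different scales.
    func_a = {"A": -10.0, "B": -9.0, "C": -8.0, "D": -7.0}
    func_b = {"A": -50.0, "B": -70.0, "C": -60.0, "D": -40.0}
    print(consensus_rank([func_a, func_b]))
```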

Troubleshooting Guide: Mitigating False Positives

Problem: An unmanageably large number of top-ranking hits from docking, many of which are likely false positives.

Solution: Implement a hierarchical workflow that filters results based on multiple criteria beyond the docking score.

- Step 1: Apply Property and Pan-Assay Interference Compound (PAINS) Filters. Remove compounds with undesirable chemical properties or functional groups known to cause assay interference.

- Step 2: Visually Inspect Top-Ranked Poses. An experienced computational chemist should visually examine the predicted protein-ligand complexes. Look for:

- Physiologically reasonable ligand conformations.

- Satisfaction of key hydrogen bonds.

- Appropriate placement of hydrophobic groups in apolar pockets.

- Absence of severe steric clashes [2].

- Step 3: Prioritize Diverse Chemotypes. Instead of selecting the top 100 hits, choose the top 20-30 from several distinct chemical scaffolds to diversify your chances of success and avoid testing multiple analogs of the same false positive.

- Step 4: Use Expert Knowledge. Incorporate any existing structure-activity relationship (SAR) data or knowledge about the target's binding site to select compounds that have features known to be important for activity [8].
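Steps 1 and 3 lend themselves to automation, while steps 2 and 4 remain expert judgment. The sketch below assumes each docked compound already has its properties, a PAINS flag, and a scaffold identifier computed upstream (for example with a cheminformatics toolkit such as RDKit, which provides PAINS filter catalogs and Bemis-Murcko scaffolds). The property limits, the per-scaffold cap, and the record field names are hypothetical and not the output format of any particular tool.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class DockedCompound:
    cid: str
    score: float        # docking score, lower = better
    mol_weight: float
    clogp: float
    pains_alert: bool   # precomputed PAINS match
    scaffold: str       # precomputed scaffold identifier (e.g., a Murcko scaffold SMILES)


def passes_property_filter(c: DockedCompound, max_mw: float = 500.0, max_clogp: float = 5.0) -> bool:
    # Step 1: hypothetical drug-likeness limits plus removal of PAINS-flagged compounds.
    return c.mol_weight <= max_mw and c.clogp <= max_clogp and not c.pains_alert


def diverse_selection(compounds: List[DockedCompound], n_pick: int, per_scaffold: int = 2) -> List[str]:
    """Step 3: pick up to `n_pick` compounds, at most `per_scaffold` per scaffold, best first."""
    by_scaffold: Dict[str, List[DockedCompound]] = defaultdict(list)
    for c in sorted(filter(passes_property_filter, compounds), key=lambda c: c.score):
        if len(by_scaffold[c.scaffold]) < per_scaffold:
            by_scaffold[c.scaffold].append(c)
    # Round-robin across scaffolds (ordered by their best member) so no chemotype dominates.
    groups = sorted(by_scaffold.values(), key=lambda g: g[0].score)
    picks: List[str] = []
    for depth in range(per_scaffold):
        for group in groups:
            if depth < len(group) and len(picks) < n_pick:
                picks.append(group[depth].cid)
    return picks
```

For example, if three analogs of one scaffold top the list, this selection takes the best of them, then the best member of each other scaffold, and only returns to the second analog once every chemotype is represented.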

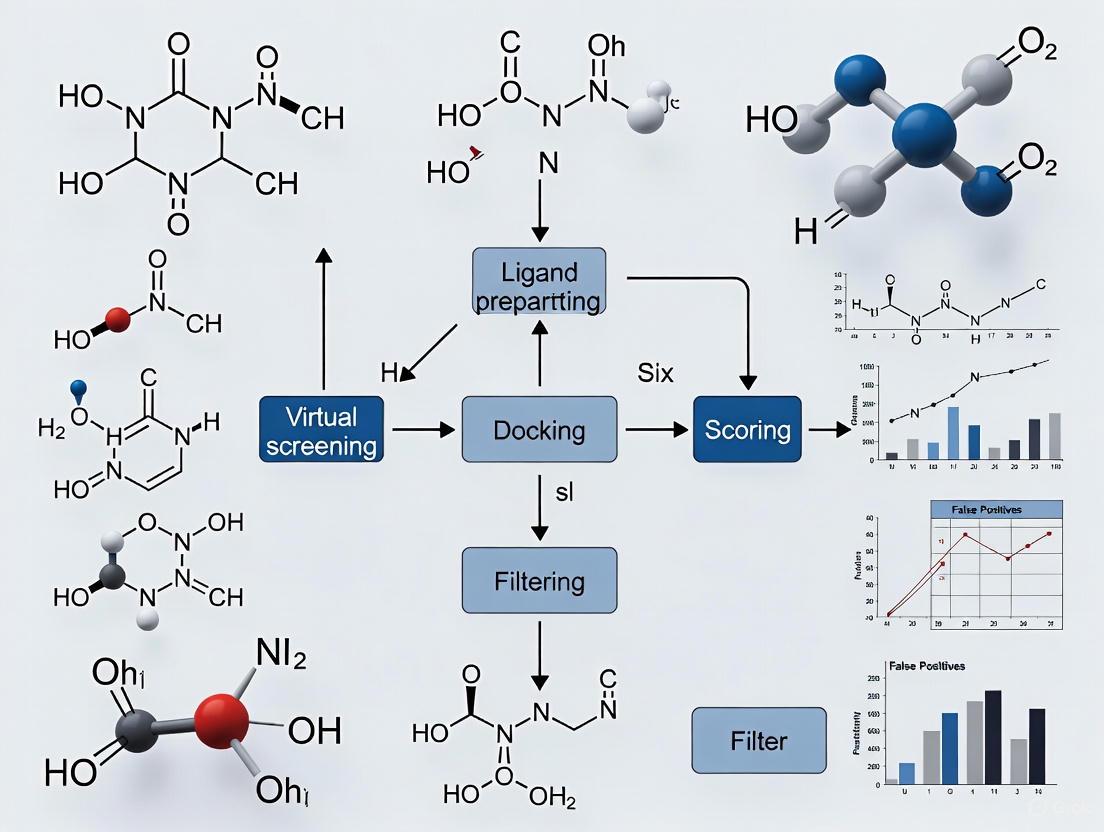

Workflow Visualization

The following diagram illustrates a recommended virtual screening workflow designed to mitigate false positives, from initial preparation to experimental testing.

The Scientist's Toolkit: Key Research Reagents & Materials

Table 3: Essential Reagents and Software for Virtual Screening

| Item / Resource | Function / Description | Example Tools / Vendors |
|---|---|---|
| Virtual Compound Libraries | Large collections of purchasable and readily synthesizable compounds for screening. | ZINC [8], Enamine "make-on-demand" [5] |
| Protein Structure Files | Experimentally determined 3D structures of the target protein. | Protein Data Bank (PDB) [8] |
| Structure Validation Software | Tools to check the reliability of crystallographic models, especially in the binding site. | VHELIBS [8] |
| Conformer Generator | Software to predict the low-energy 3D conformations of molecules for docking. | OMEGA, ConfGen, RDKit (ETKDG) [8] |
| Molecule Standardizer | Tools to prepare 2D structures, defining correct protonation states, charges, and removing salts. | Standardizer, LigPrep, MolVS [8] |
| Docking & Scoring Software | The core computational engine for predicting how ligands bind to the protein and ranking them. | DOCK [6] [5] |
| Visualization Software | Essential for expert inspection of docking poses and rational decision-making. | Flare, Maestro, VIDA [8] |

Troubleshooting Guides

Why does my virtual screening yield a high rate of false positives?

A high false-positive rate is often the result of fundamental limitations in traditional scoring functions and the generation of unrealistic ligand poses [3].

- Problem: Traditional scoring functions struggle to accurately predict binding affinities. They can be overly simplistic, may be overtrained on limited datasets, or fail to consider critical non-linear interactions between energy terms [3].

- Symptoms: Many top-scoring compounds from a virtual screen show no activity in subsequent biochemical assays. The literature indicates a median hit rate of only about 12% when these functions are used [3].

- Diagnosis: The primary issue is that standard scoring functions are not trained to distinguish truly "active" complexes from "compelling decoys"—inactive compounds that nonetheless appear to be good binders in docking poses. If a model is trained on decoys that are easily distinguishable from actives (e.g., due to steric clashes or poor packing), it will perform poorly in real-world applications where decoys are more sophisticated [3].

Resolution

- Implement Advanced Classifiers: Move beyond regression models that predict affinity. Use a machine learning classifier, such as vScreenML, that is specifically trained to discriminate between active complexes and challenging decoy complexes. In a prospective screen against acetylcholinesterase, nearly all of the candidate inhibitors selected this way showed detectable activity, and the most potent reached an IC50 of 280 nM [3].

- Use a Rigorous Training Set: Employ a dedicated training strategy such as the D-COID dataset. This strategy involves matching available active complexes from the PDB with highly compelling, individually matched decoy complexes that mimic the types of poses encountered in real virtual screens [3].

- Validate Prospectively: Always validate a new scoring method or classifier in a prospective experimental screen within the same study to avoid the common pitfall of overfitting and ensure the model's performance is transferable [3].

How can I generate more convincing decoy complexes for machine learning?

The quality of your decoy set is paramount for training an effective classifier [3].

- Problem: If decoy complexes are not "compelling," a machine learning model will learn to exploit trivial differences (e.g., the presence of steric clashes or systematic under-packing in decoys) rather than the nuanced physics of binding. This leads to a model that performs well on benchmarks but fails in real-world applications [3].

- Symptoms: Your classifier achieves high accuracy during retrospective benchmarking but fails to identify active compounds in a prospective screen.

Resolution

- Source Actives from Reliable Structures: Compile your set of active complexes from high-quality crystal structures in the Protein Data Bank (PDB). This ensures the model learns from correct protein-ligand interactions. Filter these ligands to adhere to the same physicochemical properties required for your actual screening library [3].

- Create Matched Decoys: For each active complex, generate decoys that are individually matched and highly compelling. The decoys should be structurally similar to actives but lack the specific interactions required for true binding. The D-COID dataset construction strategy is designed for this purpose [3].

- Minimize Complexes: Subject both active and decoy complexes to energy minimization before training. This prevents the model from simply distinguishing between crystal structures and docked models, forcing it to learn more generalizable features of binding [3].
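One ingredient of matched decoys is similarity in bulk physicochemical properties, so that a model cannot separate actives from decoys on size or polarity alone. The sketch below shows only that property-matching step, a DUD-E-style idea rather than the full D-COID procedure (which additionally builds compelling docked decoy complexes); DUD-E's topological-dissimilarity criterion is also omitted. For each active it picks the unused candidate decoys closest in z-scored property space. Property choices and values are hypothetical.

```python
import math
import statistics
from typing import Dict, List, Sequence


def match_decoys(actives: Dict[str, Sequence[float]], candidates: Dict[str, Sequence[float]],
                 per_active: int = 2) -> Dict[str, List[str]]:
    """Assign each active its `per_active` nearest unused candidates after z-scoring each property."""
    all_vectors = list(actives.values()) + list(candidates.values())
    n_props = len(all_vectors[0])
    means = [statistics.fmean(v[j] for v in all_vectors) for j in range(n_props)]
    stdevs = [statistics.pstdev([v[j] for v in all_vectors]) or 1.0 for j in range(n_props)]

    def z(v: Sequence[float]) -> List[float]:
        return [(v[j] - means[j]) / stdevs[j] for j in range(n_props)]

    z_candidates = {cid: z(v) for cid, v in candidates.items()}
    used = set()
    matches: Dict[str, List[str]] = {}
    for aid, vec in actives.items():
        za = z(vec)
        ranked = sorted((cid for cid in z_candidates if cid not in used),
                        key=lambda cid: math.dist(za, z_candidates[cid]))
        matches[aid] = ranked[:per_active]
        used.update(matches[aid])  # each decoy is matched to at most one active
    return matches


if __name__ == "__main__":
    # Hypothetical (molecular weight, cLogP, H-bond donors) vectors.
    actives = {"act1": (350, 2.5, 2), "act2": (480, 4.0, 1)}
    candidates = {"d1": (355, 2.4, 2), "d2": (340, 2.7, 2), "d3": (470, 3.9, 1),
                  "d4": (490, 4.2, 1), "d5": (250, 0.5, 4)}
    print(match_decoys(actives, candidates))
```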

Frequently Asked Questions (FAQs)

What is the typical hit rate I should expect from a structure-based virtual screen?

A review of 54 successful virtual screening campaigns revealed a median hit rate of approximately 12%. In other words, in a typical campaign only about 12% of the top-scoring compounds selected for experimental testing show confirmable activity in biochemical assays. The most potent initial hit from these campaigns typically had a Kd or Ki of around 3 µM [3].

What is the key difference between a regression model and a classifier for virtual screening?

- Regression Models: These are trained to recapitulate the known binding affinities (e.g., Kd, Ki) of protein-ligand complexes. A significant limitation is that they are never explicitly trained on inactive (decoy) complexes [3].

- Binary Classifiers: These are trained to distinguish between two categories: "active" complexes and "inactive" or "decoy" complexes. This approach is often more appropriate for virtual screening, where the primary task is to sift through a large library of mostly inactive compounds to find the few true actives [3].

What are common reasons for the disappointing performance of machine learning scoring functions?

Two major artifacts can inflate benchmark performance and lead to poor real-world results [3]:

- Information Leakage: This occurs when the validation or testing data are not truly independent of the training data, leading to overfitting. The model memorizes the training data rather than learning generalizable rules.

- Detecting Chemical Bias: Some models may inadvertently learn systematic differences in the chemical properties of the active versus decoy compound sets, rather than learning the structural determinants of binding from the protein-ligand complex structures.
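A common safeguard against information leakage is to split data by group (for example by protein target or ligand scaffold) rather than by individual complex, so that near-duplicates never straddle the training and test sets. The sketch below implements a deterministic group split in plain Python; the grouping key is an assumption to be chosen per dataset, and scikit-learn's `GroupShuffleSplit` offers a more complete equivalent.

```python
import random
from typing import Dict, List, Sequence, Tuple


def group_split(items: Sequence[str], groups: Dict[str, str], test_fraction: float = 0.2,
                seed: int = 0) -> Tuple[List[str], List[str]]:
    """Split items so that all members of a group land on the same side of the split."""
    unique_groups = sorted(set(groups[i] for i in items))
    random.Random(seed).shuffle(unique_groups)
    n_test_groups = max(1, round(len(unique_groups) * test_fraction))
    test_groups = set(unique_groups[:n_test_groups])
    train = [i for i in items if groups[i] not in test_groups]
    test = [i for i in items if groups[i] in test_groups]
    return train, test


if __name__ == "__main__":
    # Hypothetical: 20 complexes drawn from 5 scaffolds.
    items = [f"cplx{i}" for i in range(20)]
    groups = {f"cplx{i}": f"scaffold{i % 5}" for i in range(20)}
    train, test = group_split(items, groups)
    print(len(train), len(test), sorted({groups[i] for i in test}))
```

Comparing a model's accuracy under this split with its accuracy under a random per-complex split gives a rough measure of how much the benchmark was inflated by leakage.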

Experimental Data & Protocols

Performance Comparison: Traditional vs. Machine Learning Approaches

The table below summarizes key performance metrics from a prospective virtual screening study using the vScreenML classifier against the acetylcholinesterase (AChE) target [3].

| Metric | Traditional Scoring (Typical Median) | vScreenML (Prospective AChE Screen) |
|---|---|---|
| Hit Rate | ~12% of tested compounds show activity [3] | Nearly all candidates showed detectable activity [3] |
| Compounds Tested | Not specified | 23 compounds [3] |
| Potency of Most Active Hit | ~3 µM (Kd/Ki) [3] | 280 nM (IC50), Ki = 173 nM [3] |
| Number of Potent Hits | Not specified | 10 compounds with IC50 < 50 µM [3] |

Experimental Protocol: Prospective Validation on AChE

This protocol details the key experimental steps from the vScreenML prospective validation study [3].

1. Virtual Screening with vScreenML

- Objective: Identify candidate inhibitors of acetylcholinesterase (AChE) from a chemical library.

- Method: The compound library was docked against the 3D structure of AChE. The resulting protein-ligand complexes were then scored using the pre-trained vScreenML classifier. The top-ranking compounds based on the classifier's score were selected for experimental testing.

2. Experimental Validation via Biochemical Assay

- Objective: Experimentally measure the inhibitory activity of the top-scoring virtual hits.

- Methodology:

- Assay Type: Biochemical inhibition assay.

- Target: Acetylcholinesterase (AChE).

- Measurement: Determine the half-maximal inhibitory concentration (IC50) for each compound.

- Calculation: For the most potent inhibitor, the inhibition constant (Ki) was calculated from the IC50 value.
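The source does not state how Ki was derived from IC50. For a competitive inhibitor the usual conversion is the Cheng-Prusoff equation, Ki = IC50 / (1 + [S]/Km), where [S] is the substrate concentration in the assay and Km is the substrate's Michaelis constant. The sketch below applies it with hypothetical assay values; other inhibition modes require a different relation.

```python
def cheng_prusoff_ki(ic50: float, substrate_conc: float, km: float) -> float:
    """Ki for a competitive inhibitor: Ki = IC50 / (1 + [S]/Km). Ki has the units of IC50."""
    if km <= 0 or substrate_conc < 0:
        raise ValueError("Km must be positive and [S] non-negative")
    return ic50 / (1.0 + substrate_conc / km)


if __name__ == "__main__":
    # Hypothetical assay: IC50 = 500 nM measured at [S] = Km, so Ki = IC50 / 2.
    print(f"Ki = {cheng_prusoff_ki(500.0, substrate_conc=100.0, km=100.0):.0f} nM")  # Ki = 250 nM
```

Because Ki depends on [S] and Km, reporting the assay's substrate concentration alongside IC50 is what makes potencies comparable across studies.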

Workflow and Pathway Diagrams

Virtual Screening Workflow: Traditional vs ML Approach

D-COID Training Strategy for Robust ML Models

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Virtual Screening Research |
|---|---|
| D-COID Dataset | A specialized training dataset designed to build effective machine learning classifiers. It provides a set of active protein-ligand complexes paired with highly compelling, individually matched decoy complexes to teach models to distinguish true binders from sophisticated non-binders [3]. |
| vScreenML Classifier | A general-purpose machine learning classifier for virtual screening, built on the XGBoost framework. It is trained using the D-COID strategy to evaluate docked protein-ligand complexes and score them based on their likelihood of being active [3]. |
| Protein Data Bank (PDB) | A critical source for high-quality, experimentally determined 3D structures of protein-ligand complexes. These structures are used as reliable examples of "active" complexes for training and benchmarking scoring functions and classifiers [3]. |
| Acetylcholinesterase (AChE) Biochemical Assay | A standard experimental method used for the prospective validation of virtual screening hits. It measures the half-maximal inhibitory concentration (IC50) of candidate compounds to confirm and quantify their biological activity against the AChE target [3]. |

FAQs: Understanding Data Quality in Virtual Screening

What is the "Decoy Dilemma" in virtual screening? The "Decoy Dilemma" refers to the significant challenge where the use of poorly designed or biased decoy molecules (presumed inactive compounds) in training machine learning models for virtual screening leads to high false positive rates and an over-optimistic estimation of a model's predictive performance. This occurs because models learn to recognize superficial patterns in the decoys rather than true binding interactions, compromising their ability to identify real hits in experimental validation [10].

How do false positives impact the drug discovery process? False positives in virtual screening have substantial practical consequences. They consume significant wet-lab time and reagents, as most compounds selected in ultra-large virtual screening campaigns turn out to be inactive when characterized in biochemical assays. While false negatives represent missed opportunities, false positives incur very real expenses. For example, hit rates for non-GPCR targets are typically low, with one screen of 235 million compounds against SARS-CoV-2 main protease yielding only 3 hits from 100 compounds tested (3% hit rate) [11].

What are the main causes of data quality issues in training datasets? Data quality issues stem from several sources: inconsistent data collection leading to biases, hidden biases in commonly used decoy sets like DUD-E, mislabeled compounds, and datasets that fail to adequately represent the vast chemical space of real-world compounds. These imperfections mean models learn from noisy or erroneous patterns rather than true structure-activity relationships [12] [10].

Can more sophisticated AI algorithms compensate for poor-quality data? No. Evidence shows that superior performance comes from better data quality and representation rather than more complex algorithms. One study achieved 99% accuracy with a conventional support vector machine (SVM) algorithm using optimized data, far surpassing performances of virtual screening platforms using sophisticated deep learning methods. This highlights that poor understanding and erroneous use of chemical data—not deficiencies in AI algorithms—typically leads to poor predictive performance [10].

Troubleshooting Guides

Problem: High False Positive Rates in Virtual Screening

Symptoms

- Computationally selected compounds fail to show activity in experimental assays

- High initial hit rates in silico that don't translate to wet-lab validation

- Models perform well on benchmark tests but poorly in real-world applications

Diagnostic Steps

| Step | Action | Expected Outcome |
|---|---|---|
| 1 | Audit decoy set composition | Identify hidden biases or inappropriate properties in decoys |
| 2 | Analyze chemical space coverage | Verify adequate representation of relevant chemical space |
| 3 | Check for label consistency | Identify mislabeled active/inactive compounds |
| 4 | Test model on external validation sets | Assess generalizability beyond training data |
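Step 1 (auditing decoy set composition) can start with a simple check for chemical bias: if actives and decoys differ systematically in basic properties, a model may learn those differences instead of binding. The sketch below computes the standardized mean difference (SMD) between actives and decoys for each property and flags any with an absolute SMD above 0.5. Both the property set and the threshold are hypothetical choices, not criteria from the cited studies.

```python
import math
import statistics
from typing import Dict, List


def standardized_mean_difference(a: List[float], b: List[float]) -> float:
    """(mean_a - mean_b) / pooled standard deviation (sample variances, equal weighting)."""
    diff = statistics.fmean(a) - statistics.fmean(b)
    pooled = math.sqrt((statistics.variance(a) + statistics.variance(b)) / 2)
    if pooled == 0:
        return 0.0 if diff == 0 else math.copysign(math.inf, diff)
    return diff / pooled


def audit_bias(actives: Dict[str, List[float]], decoys: Dict[str, List[float]],
               threshold: float = 0.5) -> Dict[str, float]:
    """Return properties whose |SMD| between actives and decoys exceeds `threshold`."""
    flagged = {}
    for prop in actives:
        smd = standardized_mean_difference(actives[prop], decoys[prop])
        if abs(smd) > threshold:
            flagged[prop] = round(smd, 2)
    return flagged


if __name__ == "__main__":
    # Hypothetical property values: decoys are systematically lighter than actives.
    actives = {"mol_weight": [420.0, 450.0, 480.0, 510.0, 530.0], "clogp": [3.1, 2.8, 3.5, 3.0, 3.3]}
    decoys = {"mol_weight": [300.0, 320.0, 340.0, 360.0, 380.0], "clogp": [3.0, 3.2, 2.9, 3.4, 3.1]}
    print(audit_bias(actives, decoys))
```

A flagged property (here molecular weight) means a classifier could score well on this benchmark by learning size alone, so the decoys should be re-matched before the benchmark is trusted.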

Solutions

- Implement Data-Centric Validation: Systematically assess the properties of chemical data, including representation, quality, quantity, and composition [10].

- Use Multiple Receptor Conformations: Account for receptor flexibility by docking to multiple distinct receptor structures, then select only intersection ligands that rank highly across all conformations [13].

- Apply Advanced Machine Learning Classifiers: Use tools like vScreenML 2.0, which is specifically trained to distinguish active complexes from carefully curated decoys that would otherwise represent false positives [11].

- Improve Data Representation: Employ merged molecular representations (e.g., Extended+ECFP6 fingerprints) rather than single representations for better molecular description [10].

Problem: Model Performance Doesn't Generalize to New Targets

Symptoms

- Excellent performance on training and validation data

- Poor performance when applied to novel target classes

- Inability to identify true binders for structurally diverse proteins

Solutions

- Expand Training Diversity: Incorporate newly released structures from the Protein Data Bank and add features with greater discriminative power [11].

- Implement Robust Feature Selection: Identify and use the most important structural and interaction features rather than all possible features to prevent overfitting and improve generalization [11].

- Utilize Physics-Based Methods: Combine machine learning with physics-based docking approaches like RosettaVS that model receptor flexibility and incorporate both enthalpy and entropy calculations [14].

Experimental Protocols & Methodologies

Protocol 1: Multiple Receptor Conformation (MRC) Screening for False Positive Reduction

Purpose: To reduce false positives in structure-based virtual screening by accounting for receptor plasticity through the use of multiple distinctive receptor structures.

Materials

- Receptor structures (crystal structures or MD simulation outputs)

- Chemical compound library for screening

- Docking software (e.g., GOLD)

- Control molecules (high-affinity and low-affinity controls)

Procedure

- Generate Multiple Receptor Conformations: Explore structural flexibility using molecular dynamics simulations. Extract distinctive structures from trajectory data [13].

- Prepare Receptor Models: Use resultant distinctive structures and crystal structure as receptor models for docking exercises [13].

- Dock Compound Library: Separately dock the entire molecule library (including control molecules) to each conformation of the receptor using appropriate docking software [13].

- Rank and Select Intersection Ligands: For each receptor model, generate a top-ranked molecule list. Select only the common members that appear in all top-ranked lists from all receptor models [13].

- Experimental Validation: Test selected intersection molecules for binding affinity to verify true positive identification.

Expected Results: This selection strategy successfully distinguishes high-affinity and low-affinity control molecules and identifies true binders while excluding false positives that only rank highly in some receptor conformations [13].
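The intersection rule in the procedure above can be sketched in a few lines of Python. This is a minimal illustration, not code from the cited study. It assumes docking scores have already been collected per receptor model into plain dictionaries and treats higher scores as better (as with GOLD fitness scores; set `higher_is_better=False` for energy-like scores). The receptor names, ligand IDs, and scores are hypothetical. The `min_fraction` parameter is an assumption added here to relax the strict all-models rule to "most models".

```python
from typing import Dict, Set


def intersection_ligands(
    scores_by_receptor: Dict[str, Dict[str, float]],
    top_n: int,
    min_fraction: float = 1.0,
    higher_is_better: bool = True,
) -> Set[str]:
    """Return ligands ranked in the top_n of at least min_fraction of receptor models.

    min_fraction=1.0 gives the strict intersection (top-ranked in every model).
    """
    n_models = len(scores_by_receptor)
    if n_models == 0:
        return set()
    votes: Dict[str, int] = {}
    for scores in scores_by_receptor.values():
        # Rank ligand IDs for this receptor model by docking score.
        ranked = sorted(scores, key=scores.get, reverse=higher_is_better)
        for ligand in ranked[:top_n]:
            votes[ligand] = votes.get(ligand, 0) + 1
    required = min_fraction * n_models
    return {ligand for ligand, count in votes.items() if count >= required}


# Hypothetical scores for three receptor models (crystal structure + two MD snapshots).
scores = {
    "crystal": {"A": 72.1, "B": 65.0, "C": 80.3, "D": 40.2},
    "md_snap1": {"A": 70.5, "B": 30.1, "C": 78.0, "D": 69.9},
    "md_snap2": {"A": 68.0, "B": 69.0, "C": 75.4, "D": 35.0},
}
print(sorted(intersection_ligands(scores, top_n=2)))                    # ['C']
print(sorted(intersection_ligands(scores, top_n=2, min_fraction=0.6)))  # ['A', 'C']
```

Ligand A is dropped under the strict rule because it falls out of the top two in one snapshot; whether that is desirable depends on how representative each receptor model is.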

Workflow: MRC Screening for False Positive Reduction

Protocol 2: Machine Learning Classification with vScreenML 2.0

Purpose: To implement an improved machine learning classifier that reduces false positives in structure-based virtual screening by distinguishing active complexes from decoys.

Materials

- Protein-ligand complex structures

- vScreenML 2.0 software (Python implementation)

- Curated training dataset with active compounds and decoys

- Feature calculation tools

Procedure

- Feature Calculation: Compute key molecular features including ligand potential energy, buried unsatisfied atoms for select polar groups in ligand, additional 2D structural features of ligands, complete characterization of interface interactions in protein-ligand complexes, and pocket-shape features [11].

- Feature Selection: Identify and use the 49 most important features to ensure model generalization and avoid overtraining, rather than using all 165 available features [11].

- Model Training: Train the classifier on carefully curated datasets of active complexes and decoys, incorporating newly released structures from PDB [11].

- Model Application: Apply the trained model to score protein-ligand complexes from virtual screening, with active complexes receiving scores close to 1 and decoy complexes receiving scores close to 0 [11].

- Hit Selection: Prioritize compounds with high scores for experimental validation.

Expected Results: vScreenML 2.0 performs markedly better than the original vScreenML, with higher recall (0.89 vs. 0.67) and Matthews correlation coefficient (0.89 vs. 0.69), and is reported to substantially reduce false positive rates [11].
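Both metrics can be computed from a confusion matrix using their standard definitions. The sketch below uses hypothetical counts, not the vScreenML benchmark data. It also shows why MCC is more sensitive to false positives than recall: adding false positives leaves recall unchanged but lowers MCC.

```python
import math


def recall(tp: int, fn: int) -> float:
    """Fraction of true actives that the classifier recovers."""
    return tp / (tp + fn)


def mcc(tp: int, tn: int, fp: int, fn: int) -> float:
    """Matthews correlation coefficient; returns 0.0 when any marginal total is zero."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denom == 0:
        return 0.0
    return (tp * tn - fp * fn) / denom


# Hypothetical validation set: 10 actives, 90 decoys.
print(round(recall(8, 2), 3), round(mcc(tp=8, tn=89, fp=1, fn=2), 3))   # 0.8 0.827
# Same actives recovered, but 20 decoys now misclassified as actives.
print(round(recall(8, 2), 3), round(mcc(tp=8, tn=70, fp=20, fn=2), 3))  # 0.8 0.386
```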

Workflow: vScreenML 2.0 Classification Process

Data Presentation

Virtual Screening Performance Comparison Across Methods

Table 1: Performance metrics of various virtual screening approaches for reducing false positives

| Method | Key Principle | Performance Metrics | Advantages | Limitations |
|---|---|---|---|---|
| Multiple Receptor Conformations [13] | Selects intersection ligands ranking highly across all receptor conformations | Successfully identified 14/14 high-affinity controls for T-loop pocket; 7/7 for RNA binding site | Accounts for receptor plasticity; Reduces conformation-specific false positives | Computationally intensive; Requires multiple quality structures |
| vScreenML 2.0 [11] | Machine learning classifier trained to distinguish actives from decoys | MCC: 0.89; Recall: 0.89; Significant improvement over original vScreenML (MCC: 0.69) | Dramatically reduces false positives; Improved generalizability | Requires careful feature selection and curation |
| RosettaVS with Active Learning [14] | Physics-based docking with active learning for ultra-large libraries | EF1%: 16.72 (vs. 11.9 for second-best method); High accuracy in pose prediction | Models full receptor flexibility; Efficient for billion-compound libraries | Complex implementation; Computational resource demands |
| Data-Centric AI with Conventional ML [10] | Focus on data quality over algorithm complexity | 99% accuracy with a conventional SVM, compared against more complex deep learning methods | Emphasizes interpretability; Challenges assumption that complex AI is always better | Requires significant data curation expertise |

Research Reagent Solutions for False Positive Reduction

Table 2: Essential tools and resources for implementing effective false positive reduction strategies

| Resource | Type | Function in False Positive Reduction | Access |
|---|---|---|---|
| GOLD Software [13] | Docking software | Used for molecular docking exercises with multiple receptor conformations | Commercial |
| RosettaVS [14] | Virtual screening platform | Implements high-speed docking modes (VSX, VSH) and active learning for ultra-large libraries | Open-source |
| vScreenML 2.0 [11] | Machine learning classifier | Distinguishes active complexes from decoys using optimized feature set | Open-source |
| DUD-E Dataset [10] | Benchmark decoy set | Provides decoy molecules for training and testing (with caution for hidden biases) | Public |
| Otava PrimScreen1 Library [13] | Diversity molecule library | Used for validation of screening approaches with known controls | Commercial |
| BALL Framework [15] | Computational library | Provides tools for ligand/receptor preparation, scoring, docking, and QSAR analysis | Open-source |

In the field of drug discovery, virtual screening (VS) serves as a critical computational technique to identify potential hit compounds from extensive molecular libraries by predicting their binding affinity to a biological target [8] [16]. While this approach significantly reduces the time and resources needed for initial screening phases compared to traditional high-throughput methods, it faces a substantial challenge: high false positive rates [13]. False positives occur when compounds are incorrectly predicted to be active, leading researchers down unproductive experimental pathways. The repercussions include wasted synthetic efforts, misallocated assay resources, and delayed project timelines, presenting a major bottleneck in early drug discovery [17] [13]. This case study examines the root causes of false positives in virtual screening campaigns and presents proven strategies to mitigate them, enabling more efficient and cost-effective drug discovery.

False positives in virtual screening can originate from various methodological and technical limitations. Understanding these sources is the first step toward developing effective countermeasures.

Receptor Rigidity and Plasticity: Traditional structure-based virtual screening (SBVS) often treats the protein receptor as a rigid structure. However, this simplification can lead to inaccurate ligand binding energy estimations and poor binding mode predictions, as it fails to account for the natural flexibility and dynamics of the protein [13]. Conversely, while using multiple receptor conformations (MRCs) addresses this issue, each distinct conformation introduced can bring its own set of false positives, complicating the selection of true ligands [13].

Compound Interference in Indirect Assays: Many biochemical assays used for experimental validation rely on indirect detection methods, particularly coupled enzyme systems. In these systems, a test compound might inhibit or interfere with the coupling enzymes rather than the target enzyme itself, generating a false signal of activity [17]. For example, in common kinase assays that use luciferase-based detection, compounds that inhibit luciferase can appear as false positives for kinase inhibition.

Inadequate Compound Preparation and Conformational Sampling: The preparation of compound libraries for virtual screening requires careful attention to molecular details. If the bioactive conformation of a compound is not included among the generated conformers, it may be incorrectly dismissed. Conversely, generating high-energy conformations that have a low probability of being accessed at room temperature can be misleading and cause false positive results [8]. Proper definition of protonation states, tautomers, and stereochemistry is equally crucial.

Limitations of Specific VS Methodologies: Certain virtual screening approaches are inherently more prone to false positives. Pharmacophore modeling, for instance, has been noted to often suffer from a high false positive rate, meaning a low proportion of virtual hits hold up under experimental verification [16]. Similarly, oversimplified similarity methods may extrapolate poorly beyond a very short distance in chemical space [16].

Quantitative Impact: The Real Cost of False Positives

The impact of false positives is not merely theoretical; it has direct, measurable consequences on screening efficiency and resource allocation. The following table summarizes the potential resource impact of false positives in a typical high-throughput screening (HTS) campaign.

Table 1: Resource Impact of False Positives in a Hypothetical HTS Campaign of 250,000 Compounds

| Metric | Coupled Enzyme Assay (1.5% FPR) | Direct Detection Assay (0.1% FPR) | Impact Reduction |
|---|---|---|---|
| False Positive Leads | 3,750 | 250 | 15-fold (93%) |
| Re-screening Costs | High | Low | Significant savings |
| Project Timeline | Delayed (weeks) | Accelerated | Improved efficiency |
| SAR Confusion | High | Low | More reliable optimization |

FPR: False Positive Rate. Data adapted from Bellbrook Labs [17].
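The counts in the table follow from multiplying library size by each assay's false positive rate. A quick check in Python, treating every screened compound as a potential false positive (a simplification that is reasonable when true actives are rare):

```python
def expected_false_positives(n_compounds: int, false_positive_rate: float) -> int:
    """Approximate number of false positive leads for a given library size and FPR."""
    return round(n_compounds * false_positive_rate)


library_size = 250_000
coupled = expected_false_positives(library_size, 0.015)  # coupled enzyme assay, 1.5% FPR
direct = expected_false_positives(library_size, 0.001)   # direct detection assay, 0.1% FPR
fold = coupled / direct
reduction = 1 - direct / coupled
print(coupled, direct, fold, f"{reduction:.0%}")  # 3750 250 15.0 93%
```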

Beyond the immediate resource drain, false positives can obscure legitimate structure-activity relationships (SAR), complicating the critical lead optimization process and potentially steering medicinal chemistry efforts in the wrong direction [17].

Troubleshooting Guide: Mitigating False Positives

This section provides a practical, actionable guide for researchers to diagnose and address common causes of false positives in their virtual screening workflows.

FAQ 1: Why do my virtual screening hits consistently fail experimental validation?

Potential Causes and Solutions:

Cause: Inadequate Treatment of Receptor Flexibility.

- Solution: Implement a multiple receptor conformation (MRC) strategy. Use molecular dynamics (MD) simulations [13] or experimental structures from the Protein Data Bank (PDB) [8] to generate an ensemble of representative receptor structures for docking.

- Protocol: Run MD simulations of the apo (unliganded) receptor. Extract snapshots from the trajectory that capture distinct conformational states. Use these structures, alongside any available crystal structures, as separate receptor models in your docking workflow [13].

Cause: Library Preparation Artifacts.

- Solution: Meticulously prepare your screening library. Ensure comprehensive conformational sampling and correct protonation states.

- Protocol: Use specialized software like OMEGA [8] or RDKit's ETKDG method [8] for 3D conformer generation. Employ tools like LigPrep [8] or MolVS [8] for standardizing structures, generating tautomers, and setting correct protonation states at physiological pH.

Cause: Over-reliance on a Single VS Method.

- Solution: Adopt a hierarchical or consensus screening strategy that combines multiple, orthogonal VS methods to filter out false positives.

- Protocol: Start with a fast, ligand-based method like similarity searching to enrich the library. Follow with a more computationally intensive structure-based method like docking. Finally, apply strict drug-likeness filters (e.g., Lipinski's Rule of 5) and ADME property prediction using tools like SwissADME [8] or QikProp [8].
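The final drug-likeness step can be as simple as counting Rule of 5 violations. The sketch below assumes the four properties have already been computed upstream (for example by SwissADME or QikProp). The `CompoundProps` container and compound names are hypothetical, and allowing at most one violation follows the common reading of Lipinski's rule rather than a setting from the cited work.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CompoundProps:
    """Precomputed properties for one compound (hypothetical container)."""
    name: str
    mol_weight: float  # Da
    logp: float        # calculated octanol/water logP
    h_donors: int
    h_acceptors: int


def ro5_violations(p: CompoundProps) -> int:
    """Count how many of Lipinski's four thresholds the compound exceeds."""
    return sum([
        p.mol_weight > 500,
        p.logp > 5,
        p.h_donors > 5,
        p.h_acceptors > 10,
    ])


def ro5_filter(compounds: List[CompoundProps], max_violations: int = 1) -> List[str]:
    """Keep compounds with no more than max_violations Rule of 5 violations."""
    return [c.name for c in compounds if ro5_violations(c) <= max_violations]


hits = [
    CompoundProps("cpd_a", 342.4, 2.1, 2, 5),    # 0 violations
    CompoundProps("cpd_b", 520.6, 4.3, 1, 8),    # 1 violation (MW)
    CompoundProps("cpd_c", 715.9, 6.2, 4, 12),   # 3 violations
]
print(ro5_filter(hits))  # ['cpd_a', 'cpd_b']
```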

FAQ 2: How can I reduce false positives arising from my validation assays?

Potential Causes and Solutions:

Cause: Interference in Coupled Enzyme Assays.

- Solution: Transition to a direct detection assay method.

- Protocol: Replace indirect, coupled assays (e.g., luciferase-based ADP detection) with a direct, homogeneous immunoassay like the Transcreener ADP² assay [17]. This method directly measures ADP formation using a fluorescent tracer and an antibody, eliminating the coupling enzymes that are a common source of interference.

Cause: Compound-Based Optical Interference.

- Solution: Use detection modes less susceptible to interference from test compounds.

- Protocol: In assay development, opt for fluorescence polarization (FP) or time-resolved FRET (TR-FRET) readouts, especially those utilizing far-red tracers, as they minimize compound-related fluorescence and quenching artifacts [17].

Diagram 1: A robust VS workflow integrating multiple strategies to minimize false positives.

Key Experimental Protocols for False Positive Reduction

Protocol 1: Consensus Docking with Multiple Receptor Conformations (MRCs)

This protocol, demonstrated effectively for influenza A nucleoprotein, leverages receptor flexibility to distinguish true binders [13].

- Generate Receptor Conformations: Use molecular dynamics (MD) simulations of the target protein (either apo or holo form) to sample its flexible landscape. Extract several (e.g., 5-6) structurally distinct snapshots from the trajectory [13].

- Docking Execution: Dock the entire virtual library against each generated receptor conformation separately, using standard docking software (e.g., GOLD [13]).

- Consensus Hit Selection: For each receptor conformation, generate a ranked list of top-scoring compounds. The final list of true ligand candidates is determined by selecting the intersection molecules—those that appear in the top-ranked lists across all or most of the different receptor models [13]. This strategy identifies compounds that bind favorably to multiple conformations of the binding site, a characteristic of true inhibitors, while discarding conformation-specific false positives.
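The protocol does not specify how the structurally distinct snapshots are chosen. One simple option, shown here as a sketch under that assumption, is greedy max-min selection on a precomputed pairwise RMSD matrix (for instance, binding-site RMSD between aligned MD frames): each new pick is the frame farthest from every frame already chosen. Clustering the trajectory is a common alternative. The matrix below is made up for illustration.

```python
from typing import List, Sequence


def pick_distinct_snapshots(rmsd: Sequence[Sequence[float]], k: int, start: int = 0) -> List[int]:
    """Greedy max-min selection of k mutually distant frames from a symmetric RMSD matrix."""
    n = len(rmsd)
    if k <= 0 or n == 0:
        return []
    chosen = [start]
    # Distance from every frame to its nearest already-chosen frame.
    min_dist = list(rmsd[start])
    while len(chosen) < min(k, n):
        candidate = max(
            (i for i in range(n) if i not in chosen),
            key=lambda i: min_dist[i],
        )
        chosen.append(candidate)
        for i in range(n):
            min_dist[i] = min(min_dist[i], rmsd[candidate][i])
    return chosen


# Hypothetical binding-site RMSD (in angstroms) between five MD frames.
rmsd = [
    [0.0, 0.5, 3.0, 2.0, 2.1],
    [0.5, 0.0, 2.8, 2.2, 2.0],
    [3.0, 2.8, 0.0, 2.5, 2.6],
    [2.0, 2.2, 2.5, 0.0, 0.4],
    [2.1, 2.0, 2.6, 0.4, 0.0],
]
print(pick_distinct_snapshots(rmsd, k=3))  # [0, 2, 4]
```

Frames 0 and 1 are nearly identical, so only frame 0 is kept; likewise frame 4 stands in for the near-duplicate pair 3 and 4.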

Protocol 2: Implementing a Direct Biochemical Assay for Validation

This protocol focuses on validating hits from kinases, ATPases, or other ATP-dependent enzymes with minimal artifact interference [17].

- Assay Principle: Use a homogeneous, "mix-and-read" immunoassay that directly detects the reaction product (e.g., ADP) without coupling enzymes. The assay is based on competitive immunodetection: ADP produced by the enzyme displaces a fluorescent tracer from an antibody, resulting in a measurable change in signal (e.g., fluorescence polarization) [17].

- Assay Setup: In a low-volume microplate (384- or 1536-well), incubate the target enzyme with ATP and the test compound.

- Detection: After a suitable reaction time, add the detection mixture containing the anti-ADP antibody and the fluorescent tracer. Incubate to allow for competitive binding and measure the signal.

- Hit Confirmation: Compounds that genuinely inhibit the enzyme reduce ADP production and therefore produce a smaller signal change than the uninhibited (active) controls. Because no coupling enzymes are involved, the measured signal reflects the activity of the target enzyme far more directly than in coupled formats.
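Hit calling in this format usually normalizes each well to on-plate controls. A minimal sketch, assuming a competitive FP readout in which the uninhibited-enzyme (active) control gives the lowest polarization, because ADP has displaced the tracer, and a no-enzyme control gives the highest. It also assumes the signal is close to linear in ADP over the range used; otherwise, convert signals to ADP with a standard curve first. The millipolarization (mP) values are hypothetical.

```python
def percent_inhibition(signal: float, uninhibited_ctrl: float, no_enzyme_ctrl: float) -> float:
    """Scale a well's signal so 0% = uninhibited enzyme control and 100% = no-enzyme control."""
    window = no_enzyme_ctrl - uninhibited_ctrl
    if window == 0:
        raise ValueError("Controls give identical signals; the assay window is zero.")
    return 100.0 * (signal - uninhibited_ctrl) / window


# Hypothetical mP readings: uninhibited enzyme = 100 mP, no enzyme = 250 mP.
wells = {"cpd_1": 175.0, "cpd_2": 110.0, "cpd_3": 240.0}
for cpd, mp in wells.items():
    print(cpd, round(percent_inhibition(mp, 100.0, 250.0), 1))  # cpd_1 50.0, cpd_2 6.7, cpd_3 93.3
```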

Diagram 2: A diagnostic decision tree for troubleshooting high false positive rates.

The Scientist's Toolkit: Essential Reagents & Software

Table 2: Key Resources for Robust Virtual Screening and Validation

| Resource Name | Type | Primary Function | Role in Reducing False Positives |
|---|---|---|---|
| GOLD [13] | Docking Software | Molecular docking and scoring. | Used in consensus docking with MRCs to identify consistent binders. |
| OMEGA [8] | Conformer Generator | Predicts 3D conformations of small molecules. | Ensures bioactive conformation is represented in the screening library. |
| RDKit [8] | Cheminformatics Toolkit | Open-source library for descriptor calculation and conformer generation. | Provides tools for molecular standardization and preparation. |
| Transcreener ADP² Assay [17] | Biochemical Assay Kit | Direct, homogeneous detection of ADP formation. | Eliminates interference from coupling enzymes in kinase/ATPase screens. |
| VHELIBS [8] | Validation Software | Validates reliability of PDB coordinates and electron density maps. | Ensures quality of receptor structures used in SBVS. |
| SwissADME [8] | Web Tool | Predicts ADME properties and drug-likeness. | Filters out compounds with undesirable properties early in the workflow. |

False positives represent a significant and costly challenge in virtual screening, but they are not insurmountable. A proactive approach that combines computational rigor—such as careful library and receptor preparation, and consensus strategies—with robust, direct experimental assays for validation can dramatically reduce the false positive rate. By integrating the troubleshooting guides and protocols outlined in this case study, researchers can refine their screening campaigns, save valuable resources, and accelerate the journey toward discovering genuine lead compounds.

Advanced Screening Methodologies: From Machine Learning to Consensus Approaches

Core Concepts & FAQ

What is the primary innovation of the vScreenML approach? vScreenML introduces a machine learning classifier trained to distinguish true active compounds from carefully curated "compelling decoys" in structure-based virtual screening. Its key innovation is the D-COID training strategy, which uses decoy complexes that are individually matched to active complexes and are difficult to distinguish, forcing the model to learn more robust and generalizable features for reducing false positives [3].

Why is vScreenML 2.0 a significant improvement over the original? vScreenML 2.0 provides a streamlined Python implementation that resolves the usability problems of the original version and removes its dependencies on obsolete or proprietary software. It also incorporates new features and an updated model trained on newly released protein structures, leading to dramatically improved performance [11].

How does vScreenML 2.0 perform compared to other methods? In benchmarks, vScreenML 2.0 significantly outperforms other widely used virtual screening tools. It shows a substantial improvement in the Matthews Correlation Coefficient (MCC), increasing from 0.69 in the original model to 0.89 in vScreenML 2.0. It also demonstrates superior performance in Receiver Operating Characteristic (ROC) curve analysis [11].

Troubleshooting Common Experimental Issues

Issue: Poor real-world performance despite good benchmark scores.

- Potential Cause: Information leakage or overfitting during training, often because the validation/test data are not truly independent of (non-redundant with) the training data [3].

- Solution: Ensure a rigorous data separation strategy. The vScreenML methodology uses held-out test sets involving protein targets entirely dissimilar to those in the training set for validation [11].
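One way to enforce that separation is to split by target group rather than by individual complex, so that no target family contributes to both training and test data. The sketch below assumes each complex has already been assigned a target-cluster label (for example from sequence-identity clustering). The labels and complex IDs are hypothetical, and this is not the vScreenML code.

```python
import random
from typing import Dict, List, Tuple


def split_by_target_cluster(
    complex_to_cluster: Dict[str, str],
    test_fraction: float = 0.2,
    seed: int = 0,
) -> Tuple[List[str], List[str]]:
    """Assign whole target clusters to train or test so no cluster spans both sets."""
    clusters = sorted(set(complex_to_cluster.values()))
    random.Random(seed).shuffle(clusters)
    n_test = max(1, round(test_fraction * len(clusters)))
    test_clusters = set(clusters[:n_test])
    train = sorted(c for c, cl in complex_to_cluster.items() if cl not in test_clusters)
    test = sorted(c for c, cl in complex_to_cluster.items() if cl in test_clusters)
    return train, test


labels = {
    "cplx1": "kinase", "cplx2": "kinase", "cplx3": "protease",
    "cplx4": "gpcr", "cplx5": "gpcr", "cplx6": "nuclear_receptor", "cplx7": "esterase",
}
train_ids, test_ids = split_by_target_cluster(labels, test_fraction=0.4, seed=1)
print("train:", train_ids)
print("test:", test_ids)
```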

Issue: Low hit rates in prospective virtual screens for non-GPCR targets.

- Potential Cause: This is a common challenge in the field. Traditional virtual screening methods often yield low hit rates for non-GPCR targets, in some cases only 3-11% [11].

- Solution: Implement a robust machine learning classifier like vScreenML. In a prospective screen against acetylcholinesterase (a non-GPCR target), most compounds purchased on the basis of vScreenML scores showed detectable activity, and over half had IC50 values better than 50 μM [3].

Issue: Difficulty installing or using the original vScreenML tool.

- Potential Cause: The original vScreenML had complicated dependencies, including outdated or expensive software [11].

- Solution: Use vScreenML 2.0, which is designed for ease of installation and use. It is available as a streamlined Python package from its GitHub repository (https://github.com/gandrianov/vScreenML2) [11].

Experimental Protocol: Key Methodology of vScreenML

The core workflow for training and applying the vScreenML classifier is outlined below:

1. Curate Active Complexes:

- Source high-quality protein-ligand complexes from the Protein Data Bank (PDB).

- Filter ligands to adhere to desired physicochemical properties (e.g., drug-like properties) relevant to your virtual screening library [3].

- Subject the crystal structures to energy minimization to better resemble the docked poses encountered in virtual screening [3].

2. Generate Compelling Decoys (D-COID Dataset):

- Create a set of decoy complexes that are individually matched to each active complex.

- Ensure these decoys are "compelling" and difficult to distinguish from actives based on simple rules (e.g., they should not be systematically underpacked or lack intermolecular hydrogen bonds). This prevents the classifier from learning on trivial differences [3].

3. Feature Calculation and Selection:

- Calculate a comprehensive set of features that describe each protein-ligand complex. For vScreenML 2.0, this includes 165 features covering areas like [11]:

- Ligand potential energy.

- Buried unsatisfied polar atoms.

- 2D structural features of the ligand.

- Complete characterization of protein-ligand interface interactions.

- Pocket-shape features.

- Apply feature selection to identify the 49 most important features to prevent overfitting and ensure model generalization [11].
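The selection step can be illustrated with a simple importance ranking. This sketch assumes per-feature importance scores are already available (for example, from a gradient-boosted model fitted on training data only). The feature names and values are hypothetical, and the procedure vScreenML 2.0 actually used to arrive at its 49 features may differ.

```python
from typing import Dict, List


def top_k_features(importance: Dict[str, float], k: int) -> List[str]:
    """Return the k feature names with the highest importance scores."""
    return sorted(importance, key=importance.get, reverse=True)[:k]


def project(rows: List[Dict[str, float]], keep: List[str]) -> List[List[float]]:
    """Reduce each complex's feature dictionary to the selected features, in a fixed order."""
    return [[row[name] for name in keep] for row in rows]


importance = {
    "ligand_potential_energy": 0.30,
    "buried_unsat_polar_atoms": 0.25,
    "interface_hbonds": 0.20,
    "pocket_volume": 0.05,
    "ring_count": 0.01,
}
selected = top_k_features(importance, k=3)
print(selected)  # ['ligand_potential_energy', 'buried_unsat_polar_atoms', 'interface_hbonds']

complexes = [
    {"ligand_potential_energy": -12.3, "buried_unsat_polar_atoms": 1, "interface_hbonds": 4,
     "pocket_volume": 410.0, "ring_count": 3},
]
print(project(complexes, selected))  # [[-12.3, 1, 4]]
```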

4. Model Training:

- Train a binary classifier using the XGBoost framework on the labeled dataset of active and decoy complexes [3].

- The model's objective is to output a score close to 1 for active complexes and close to 0 for decoy complexes.

5. Prospective Screening:

- Apply the trained vScreenML model to score and rank compounds from a large, docked virtual library (e.g., multi-million compound Enamine libraries).

- Select the top-scoring compounds for experimental purchase and validation in biochemical assays [11] [3].
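Turning classifier outputs into a purchase list is mostly ranking and cutting. A minimal sketch, assuming vScreenML-style scores between 0 (decoy-like) and 1 (active-like) have already been produced for each docked compound. The 0.5 cutoff, the list size, and the compound IDs are illustrative choices, not recommendations from the cited work.

```python
from typing import Dict, List


def select_for_purchase(
    scores: Dict[str, float], min_score: float = 0.5, max_compounds: int = 50
) -> List[str]:
    """Return compound IDs scoring at least min_score, best first, capped at max_compounds."""
    passing = [cid for cid, s in scores.items() if s >= min_score]
    passing.sort(key=lambda cid: scores[cid], reverse=True)
    return passing[:max_compounds]


vs_scores = {"Z101": 0.97, "Z102": 0.12, "Z103": 0.81, "Z104": 0.55, "Z105": 0.49}
print(select_for_purchase(vs_scores, max_compounds=2))  # ['Z101', 'Z103']
```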

Performance Data & Key Materials

Table 1: Prospective Virtual Screening Hit Rates (Comparative Summary) [11]

| Target Protein | Library Size Screened | Traditional VS Hit Rate | vScreenML Hit Rate |
|---|---|---|---|
| Acetylcholinesterase (AChE) | Not Specified | ~12% (Typical for non-GPCR) | >50% (IC50 < 50 μM) |
| Serotonin 5-HT2A Receptor | 75 million | 24% | Not Applicable |
| SARS-CoV-2 Main Protease | 235 million | 3% | Not Applicable |

Table 2: Key Research Reagent Solutions [11] [3]

| Reagent / Resource | Function in the vScreenML Workflow |
|---|---|
| D-COID Dataset | A custom dataset of active and "compelling decoy" complexes for training robust classifiers. |
| XGBoost Framework | The machine learning library used to train the vScreenML classifier. |
| Enamine "Make-on-Demand" Library | An ultra-large chemical library (~29 billion compounds) used for prospective virtual screening. |
| vScreenML 2.0 Software | The improved, user-friendly Python implementation for reducing false positives in virtual screening. |

Table 3: vScreenML 2.0 Retrospective Benchmarking Results [11]

| Evaluation Metric | Original vScreenML | vScreenML 2.0 |
|---|---|---|
| Matthews Correlation Coefficient (MCC) | 0.69 | 0.89 |
| Recall (True Positive Rate) | 0.67 | 0.89 |
| Precision | Not Explicitly Stated | Improved |

Core Concepts and FAQs

What is consensus scoring in virtual screening?

Consensus scoring is a computational strategy in drug discovery that combines the results from multiple, independent virtual screening methods to produce a single, more robust ranking of potential hit compounds. Instead of relying on a single scoring function or method, it amalgamates various conventional screening approaches—such as QSAR, pharmacophore modeling, molecular docking, and 2D shape similarity—into a unified consensus score [18]. The core principle is that by integrating multiple sources of evidence, the consensus approach mitigates the individual weaknesses and biases of any single method, leading to better discrimination between true active compounds and false positives [18] [19].

Why should I use a consensus strategy instead of a single, well-validated method?

Even well-validated single methods have specific limitations and can produce false positives due to their particular scoring algorithms. A consensus strategy enhances data set enrichment over single scoring functions by approximating the true value more closely through repeated samplings, which improves active compound clustering and recovers more actives than decoys [18]. Evidence shows that consensus scoring consistently outperforms separate screening methods, achieving higher performance metrics and prioritizing compounds with higher experimental activity values [18] [19].

What are the most common ways to implement consensus scoring?

There are two primary approaches to implementing consensus scoring: sequential and parallel [18].

- Sequential Approach: This is a hierarchical workflow where different methods are applied as sequential filters to a progressively smaller number of compounds. For example, a workflow might encompass stages such as pharmacophore screening, application of property filters, followed by docking, and culminating in manual selection [18].

- Parallel Approach: This involves running multiple screening methods independently on the same compound library. The results are then combined using a consensus framework [19]. This can be done by:

- Parallel Scoring: Selecting top candidates from the rankings of each independent method without combining them into a single score. This increases the likelihood of recovering potential actives [19].

- Hybrid (Consensus) Scoring: Creating a single unified ranking through multiplicative or averaging strategies, such as calculating a weighted average Z-score across the different methodologies. This favors compounds that rank highly across multiple methods, thereby increasing confidence in the selections [18] [19].
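The difference between the two parallel variants is easiest to see in code. Below is a sketch of parallel scoring only: each method's ranked list (assumed to be already sorted, best first) contributes its top `top_k` compounds, and the union is kept along with the methods that nominated each compound. Method names and compound IDs are hypothetical.

```python
from typing import Dict, List, Set


def parallel_selection(rankings: Dict[str, List[str]], top_k: int) -> Dict[str, Set[str]]:
    """Union of each method's top_k compounds, mapped to the methods that nominated them."""
    nominated: Dict[str, Set[str]] = {}
    for method, ranked in rankings.items():
        for cpd in ranked[:top_k]:
            nominated.setdefault(cpd, set()).add(method)
    return nominated


rankings = {
    "docking": ["c1", "c4", "c2", "c3"],
    "pharmacophore": ["c5", "c1", "c3", "c2"],
    "shape": ["c6", "c1", "c4", "c2"],
}
for cpd, methods in sorted(parallel_selection(rankings, top_k=2).items()):
    print(cpd, sorted(methods))
```

Compound c1 is nominated by all three methods, making it a candidate a hybrid score would also likely favor, while c4, c5, and c6 survive only because parallel selection preserves each method's own favorites.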

My docking protocol works well in redocking experiments. Why does it still produce many false positives in a virtual screen?

A successful redocking validation, typically measured by a Root-Mean-Square Deviation (RMSD) of less than 2Å from the experimental pose, confirms that your docking software can reproduce a known ligand binding mode [20]. However, this does not fully validate the scoring function's ability to correctly rank novel, diverse compounds from a large library. Scoring functions often struggle with accurate affinity prediction and can be misled by specific chemical features, leading to false positives [8] [21]. Virtual screening deals with highly biased databases containing millions of low-affinity compounds and very few true actives. In such a scenario, even a scoring function with good overall performance can generate a large number of false positives, overwhelming the true hits [21]. Consensus scoring helps cancel out these method-specific errors.
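The base-rate effect behind that last point is easy to quantify. The numbers below are hypothetical: a one-million-compound library containing 100 true actives, screened by a method that recovers 90% of actives (sensitivity) and correctly rejects 99% of inactives (specificity).

```python
def screen_outcome(n_library: int, n_actives: int, sensitivity: float, specificity: float):
    """Expected true positives, false positives, and precision (hit rate) of a virtual screen."""
    n_inactives = n_library - n_actives
    tp = sensitivity * n_actives
    fp = (1.0 - specificity) * n_inactives
    precision = tp / (tp + fp)
    return tp, fp, precision


tp, fp, precision = screen_outcome(1_000_000, 100, sensitivity=0.90, specificity=0.99)
# Roughly 90 true positives against about 9,999 false positives; precision below 1%.
print(f"true positives ~{tp:.0f}, false positives ~{fp:.0f}, precision {precision:.1%}")
```

Even at 99% specificity, false positives outnumber true hits by roughly 100 to 1, which is why additional, orthogonal filtering such as consensus scoring is attractive.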

Troubleshooting Common Issues

Problem: Consensus strategy fails to improve enrichment over the best single method.

Potential Causes and Solutions:

- Cause: High Correlation Between Methods: If the methods in your consensus (e.g., two different docking programs with similar scoring functions) are highly correlated, they will make the same mistakes. The strength of consensus scoring lies in combining diverse and complementary methods.

- Cause: Poor Performance of Individual Methods: A consensus of poorly performing methods is unlikely to yield good results. The phrase "garbage in, garbage out" applies.

- Cause: Ineffective Consensus Rule: Using a simplistic or inappropriate rule for combining scores (e.g., a simple mean) might not optimally leverage the available information.

- Solution: Implement a weighted consensus scoring system. Weights can be assigned based on the individual performance of each method. For example, a novel metric like "w_new" can be used to refine machine learning model rankings by weighing various model-specific parameters, thereby creating a more powerful consensus [18].

Problem: The final hit list lacks chemical diversity, containing only analogues of known actives.

Potential Causes and Solutions:

- Cause: Analogue Bias in Training Data: If the known active compounds used to build ligand-based models or train targeted functions are from a narrow chemotype, the screening output will be biased towards similar compounds [18].

- Solution: During dataset preparation, assess and mitigate "analogue bias." Employ a rigorous workflow to validate datasets, using physicochemical property analysis and fragment fingerprints to ensure diversity. Enhance structural diversity within the training sets to yield more robust and generalizable models [18].

- Cause: Over-Reliance on a Single High-Performing Method: If one method in the consensus (e.g., a specific fingerprint similarity) strongly dominates the final score, it can suppress novel chemotypes identified by other methods.

- Solution: Use a parallel screening approach instead of a strict consensus for the final selection. By examining the top-ranked compounds from each method independently, you can manually select diverse hits from each list, ensuring novel scaffolds are not missed [19].

Problem: The computational workflow is too slow for screening ultra-large libraries.

Potential Causes and Solutions:

- Cause: Applying All Methods to Entire Library: Running several computationally expensive methods (e.g., molecular docking, 3D pharmacophore) on millions of compounds is impractical.

- Solution: Implement a sequential filtering workflow. First, use fast ligand-based methods (e.g., 2D similarity or pharmacophore) to quickly filter the library to a manageable size (e.g., 1-5%). Then, apply more computationally intensive structure-based methods like docking only to this pre-filtered subset [18] [19]. This conserves expensive calculations for compounds most likely to succeed.
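A sequential funnel can be sketched with two scoring callables: a cheap one applied to the whole library and an expensive one applied only to the survivors. Both scoring functions here are toy placeholders standing in for, say, 2D similarity and docking, and higher is assumed to be better for both. The 2% keep fraction is one point within the 1-5% range given above.

```python
from typing import Callable, List, Sequence


def sequential_screen(
    library: Sequence[str],
    fast_score: Callable[[str], float],
    slow_score: Callable[[str], float],
    keep_fraction: float = 0.02,
    final_n: int = 100,
) -> List[str]:
    """Pre-filter with fast_score, then rank only the survivors with slow_score."""
    n_keep = max(1, int(len(library) * keep_fraction))
    survivors = sorted(library, key=fast_score, reverse=True)[:n_keep]
    return sorted(survivors, key=slow_score, reverse=True)[:final_n]


slow_calls = 0


def toy_similarity(cpd_id: str) -> float:
    # Placeholder for a fast ligand-based score: prefers IDs close to 500.
    return -abs(int(cpd_id[4:]) - 500)


def toy_docking(cpd_id: str) -> float:
    # Placeholder for an expensive structure-based score; counts how often it runs.
    global slow_calls
    slow_calls += 1
    return int(cpd_id[4:]) % 7


library = [f"cpd_{i}" for i in range(1000)]
top = sequential_screen(library, toy_similarity, toy_docking, keep_fraction=0.02, final_n=5)
print(top, "docking calls:", slow_calls)  # 20 docking calls instead of 1000
```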

Experimental Protocols and Workflows

Protocol: Implementing a Basic Weighted Consensus Scoring Workflow

This protocol outlines the steps to integrate multiple virtual screening scores into a weighted consensus score.

1. Method Selection and Individual Scoring:

- Select at least two diverse virtual screening methods (e.g., Docking, Pharmacophore, 2D QSAR).

- Run each method on your target compound library.

- For each method i, convert the raw score $s_{ij}$ of every compound j into a normalized Z-score, $z_{ij} = (s_{ij} - \mu_i) / \sigma_i$, where $\mu_i$ and $\sigma_i$ are the mean and standard deviation of all scores produced by method i. This places all scores on a comparable scale.

2. Weight Assignment:

- Assign a performance-based weight to each method. A reliable weight can be derived from the method's performance in a validation study (e.g., its AUC value from an enrichment study or R² value from a predictive model) [18].

- The weight for method i can be calculated as $w_i = P_i / \sum_{k=1}^{n} P_k$, where $P_i$ is the performance metric of method i and n is the number of methods. This ensures the weights sum to 1.

3. Consensus Score Calculation:

- For each compound j, calculate the weighted consensus score (CS):

- $CS_j = \sum_{i=1}^{n} w_i z_{ij} = w_1 z_{1j} + w_2 z_{2j} + \dots + w_n z_{nj}$, where $w_i$ is the weight of method i and $z_{ij}$ is the Z-score of compound j under method i.

- Rank all compounds in the library based on their consensus score (CS_j) in descending order.

4. Validation:

- Validate the consensus ranking using an external test set or through retrospective enrichment studies, comparing the area under the ROC curve (AUC) for the consensus approach against each individual method [18].
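The protocol maps directly onto a short Python function. This sketch follows the steps above (Z-score normalization, performance-based weights that sum to 1, weighted sum, descending rank), with one addition that is an assumption rather than part of the protocol: methods whose raw scores are better when lower (for example docking energies in kcal/mol) have their Z-scores sign-flipped so that higher is always better before combining. All scores and AUC weights are hypothetical.

```python
from statistics import mean, pstdev
from typing import Dict, Iterable, List, Tuple


def z_scores(raw: Dict[str, float], higher_is_better: bool = True) -> Dict[str, float]:
    """Z-score each compound within one method; flip the sign if lower raw scores are better."""
    values = list(raw.values())
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return {cpd: 0.0 for cpd in raw}
    sign = 1.0 if higher_is_better else -1.0
    return {cpd: sign * (value - mu) / sigma for cpd, value in raw.items()}


def weighted_consensus(
    method_scores: Dict[str, Dict[str, float]],
    performance: Dict[str, float],
    lower_is_better: Iterable[str] = (),
) -> List[Tuple[str, float]]:
    """Rank compounds by CS_j = sum_i w_i * z_ij, with w_i = P_i / sum_k P_k."""
    flip = set(lower_is_better)
    total = sum(performance[m] for m in method_scores)
    weights = {m: performance[m] / total for m in method_scores}
    z = {m: z_scores(s, higher_is_better=m not in flip) for m, s in method_scores.items()}
    # Only compounds scored by every method receive a consensus score.
    common = set.intersection(*(set(s) for s in method_scores.values()))
    cs = {cpd: sum(weights[m] * z[m][cpd] for m in method_scores) for cpd in common}
    return sorted(cs.items(), key=lambda item: item[1], reverse=True)


method_scores = {
    "docking": {"c1": -9.5, "c2": -7.0, "c3": -8.0},     # kcal/mol, lower is better
    "pharmacophore": {"c1": 0.6, "c2": 0.9, "c3": 0.3},  # fit score, higher is better
}
auc = {"docking": 0.75, "pharmacophore": 0.78}           # weights from a validation study
for cpd, cs in weighted_consensus(method_scores, auc, lower_is_better=["docking"]):
    print(cpd, round(cs, 3))
```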

Protocol: Validation via Retrospective Enrichment Study

This is a critical experiment to demonstrate the effectiveness of your consensus strategy before applying it to a novel screen.

1. Dataset Preparation:

- Prepare a validated dataset for a protein target with known active compounds and a large number of decoy molecules. Repositories like DUD-E are commonly used for this purpose [18].

- To ensure robustness, adopt a stringent active-to-decoy ratio (e.g., 1:125) and rigorously assess the dataset for biases in the distribution of physicochemical properties between actives and decoys [18].

2. Screening and Ranking:

- Run all individual screening methods and the consensus protocol on the combined set of actives and decoys.

- For each method, generate an ordered list of compounds from most to least promising.

3. Enrichment Calculation:

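- Plot the enrichment curve by calculating the fraction of true active compounds found (y-axis) as a function of the fraction of the total database screened (x-axis).

- Calculate the area under the receiver operating characteristic (ROC) curve (AUC). The ROC curve plots the fraction of actives recovered (true positive rate) against the fraction of decoys recovered (false positive rate) as the score threshold is relaxed. An AUC of 0.5 corresponds to random ranking and 1.0 to perfect separation of actives from decoys, so a higher AUC indicates better performance in prioritizing actives over decoys. For the consensus strategy to be worthwhile, it should achieve a higher AUC than any single method [18].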
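The dataset check and metrics in this protocol can be sketched in plain Python. Several choices here are assumptions, not prescriptions of the protocol:

- The property-bias check uses a standardized mean difference (SMD), with an arbitrary |SMD| > 0.5 flag threshold.
- Property values are assumed to be precomputed (e.g., with RDKit).
- The AUC is computed as the probability that a randomly chosen active is ranked above a randomly chosen decoy. This equals the ROC AUC when there are no tied scores.
- All function names are hypothetical.

```python
from statistics import mean, stdev
from typing import Dict, List, Tuple

Props = Dict[str, float]  # property name -> value for one molecule


def biased_properties(actives: List[Props], decoys: List[Props],
                      threshold: float = 0.5) -> Dict[str, float]:
    """Flag properties whose standardized mean difference between
    actives and decoys exceeds the (assumed) threshold."""
    flagged = {}
    for prop in actives[0]:
        a = [m[prop] for m in actives]
        d = [m[prop] for m in decoys]
        pooled_sd = ((stdev(a) ** 2 + stdev(d) ** 2) / 2) ** 0.5
        if pooled_sd > 0:
            smd = (mean(a) - mean(d)) / pooled_sd
            if abs(smd) > threshold:
                flagged[prop] = smd
    return flagged


def roc_auc(ranked_labels: List[bool]) -> float:
    """ranked_labels[k] is True if the compound at rank k (0 = best) is active."""
    n_act = sum(ranked_labels)
    n_dec = len(ranked_labels) - n_act
    if n_act == 0 or n_dec == 0:
        raise ValueError("need at least one active and one decoy")
    decoys_above, correct_pairs = 0, 0
    for is_active in ranked_labels:
        if is_active:
            correct_pairs += n_dec - decoys_above  # decoys ranked below this active
        else:
            decoys_above += 1
    return correct_pairs / (n_act * n_dec)


def enrichment_curve(ranked_labels: List[bool]) -> List[Tuple[float, float]]:
    """(fraction of database screened, fraction of actives found) at each rank."""
    n, n_act = len(ranked_labels), sum(ranked_labels)
    found, curve = 0, []
    for k, is_active in enumerate(ranked_labels, start=1):
        found += is_active
        curve.append((k / n, found / n_act))
    return curve


labels = [True, False, True, False, False]  # toy ranked list, best first
print(roc_auc(labels))  # 5 of the 6 active/decoy pairs are ordered correctly
print(enrichment_curve(labels)[0])  # (0.2, 0.5)
```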

Table 1: Example Enrichment Results for Individual and Consensus Scoring Methods on Protein Target PPARG

| Scoring Method | AUC Value | Key Strength / Note |
|---|---|---|
| Docking (Vina) | 0.75 | Good pose prediction, weaker affinity ranking |
| Pharmacophore | 0.78 | Excellent chemical feature matching |
| 2D QSAR | 0.71 | Fast, good for analogues |
| Consensus (Mean) | 0.83 | Improved over any single method |
| Consensus (Weighted) | 0.90 | Superior performance using performance-based weights [18] |

Essential Research Reagent Solutions

Table 2: Key Software and Tools for Consensus Virtual Screening

| Item Name | Function / Application | Brief Description |
|---|---|---|
| ROCS (OpenEye) | 3D Ligand-Based Screening | Rapid overlay of structures based on 3D molecular shape and chemical features [19]. |
| QuanSA (Optibrium) | 3D QSAR & Affinity Prediction | Constructs interpretable binding-site models from ligand data to predict both pose and quantitative affinity [19]. |
| OMEGA (OpenEye) | Conformer Generation | Systematic conformer generator used to create a broad set of low-energy 3D conformations for each compound [8]. |
| RDKit (Open-Source) | Cheminformatics & Descriptors | Open-source toolkit for calculating molecular fingerprints, descriptors, and generating conformers (e.g., ETKDG method) [18] [8]. |
| Schrödinger Suite | Integrated Modeling | Comprehensive platform offering tools for docking (Glide), conformer generation (ConfGen), and ligand preparation (LigPrep) [8]. |
| Flare (Cresset) | Structure-Based Design | Software for molecular visualization, docking, and calculating electrostatic and hydrophobic fields for ligand alignment [8] [19]. |
| AutoDock Vina (Open-Source) | Molecular Docking | Widely used open-source program for protein-ligand docking and scoring [18]. |


Troubleshooting Guide: Addressing False Positives

Why is my virtual screen yielding a high rate of false positives?

A high false-positive rate, where many top-ranked compounds show no activity in experimental assays, is a common challenge. This is often due to limitations in the scoring functions used in molecular docking [3]. The table below summarizes the primary causes and their solutions.

| Cause of False Positives | Description | Solution |
|---|---|---|
| Scoring Function Limitations | Traditional scoring functions can be misled by certain molecular features, prioritizing compounds that do not bind well in reality [3]. | Use a machine learning classifier like vScreenML to re-score docking outputs and filter out compelling decoys [11]. |
| Inadequate Receptor Flexibility | Rigid receptor models cannot account for induced fit upon ligand binding, leading to inaccurate pose and affinity predictions for many compounds [14]. | Employ docking protocols that allow for side-chain and limited backbone flexibility, such as the RosettaVS VSH mode [14]. |
| Systematic Experimental Error | Artifacts in HTS assays, such as those from pipetting errors or plate effects, can make inactive compounds appear active [22]. | Apply statistical tests and normalization methods (e.g., B-score) to raw HTS data to detect and correct for systematic error before hit selection [22]. |

How can I improve the hit rate from my billion-compound screen?

Improving hit rates involves making the virtual screening process more intelligent and efficient. The following table outlines key strategies.

| Strategy | Description | Key Implementation |
|---|---|---|
| Active Learning | Use machine learning to iteratively select the most promising compounds for expensive docking calculations, avoiding a full-library screen [14]. | Integrate a target-specific neural network that trains concurrently with the docking process to triage compounds [14]. |
| Multi-Parameter Optimization | Screen for multiple properties beyond simple potency, such as selectivity and ADMET, from the beginning [23]. | Use generative AI models designed to jointly optimize for potency, selectivity, and pharmacokinetic properties [23]. |
| Tiered Screening Protocols | Combine fast initial screening with high-precision follow-up. | Use a fast docking mode (e.g., RosettaVS VSX) for initial triage, followed by a high-precision mode (VSH) with full receptor flexibility for final ranking [14]. |

Frequently Asked Questions (FAQs)

What are the typical hit rates I should expect from an ultra-large virtual screen?

Hit rates can vary significantly based on the target protein class and the screening methodology. The table below provides a benchmark from published campaigns.

| Target Class / Context | Typical Hit Rate | Potency Range | Citation |
|---|---|---|---|
| GPCR Targets | High (14%-63%) | Low nanomolar to low micromolar | [11] |
| Non-GPCR Enzymes | Lower (~3%-12%) | Mid-nanomolar to high micromolar | [11] |
| Challenging Targets (CACHE Benchmark) | Very Low (~3%) | Mostly inactive | [11] |
| AI-Generated Molecules | Claimed to be equivalent to a 1M HTS | N/A | [23] |

My HTS data is noisy. How can I determine if systematic error is affecting my hit selection?

Systematic errors in HTS are often location-based (e.g., affecting specific rows, columns, or wells across plates) and can be identified statistically [22].

- Visual Inspection: Create a hit distribution surface map. In an error-free assay, hits should be evenly distributed across the plate. Clusters in specific rows, columns, or well locations indicate systematic error [22].

- Statistical Testing: Apply a Student's t-test to the raw HTS measurements to formally assess the presence of systematic error before applying any correction method [22].

- Data Correction: If error is detected, apply robust normalization methods like the B-score, which uses a two-way median polish to remove row and column effects, followed by normalization using the Median Absolute Deviation (MAD) [22].
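Below is a minimal sketch of the B-score correction described above, for a single plate held as a list of rows. It applies Tukey's two-way median polish to remove row and column effects. It then divides the residuals by their median absolute deviation (MAD). The iteration cap and tolerance are arbitrary assumptions. The MAD is left unscaled; some implementations multiply it by 1.4826 so that it estimates a standard deviation. The toy plate is synthetic.

```python
from statistics import median
from typing import List

Plate = List[List[float]]


def median_polish(plate: Plate, max_iter: int = 10, tol: float = 1e-6) -> Plate:
    """Tukey's two-way median polish: repeatedly subtract row and column
    medians and return the residuals (row/column effects removed)."""
    resid = [list(row) for row in plate]
    n_rows, n_cols = len(resid), len(resid[0])
    for _ in range(max_iter):
        change = 0.0
        for i in range(n_rows):  # sweep out row medians
            m = median(resid[i])
            resid[i] = [v - m for v in resid[i]]
            change += abs(m)
        for j in range(n_cols):  # sweep out column medians
            m = median([resid[i][j] for i in range(n_rows)])
            for i in range(n_rows):
                resid[i][j] -= m
            change += abs(m)
        if change < tol:  # converged: all medians are (near) zero
            break
    return resid


def b_scores(plate: Plate) -> Plate:
    """B-score = median-polish residual / MAD of all residuals on the plate."""
    resid = median_polish(plate)
    flat = [v for row in resid for v in row]
    center = median(flat)
    mad = median([abs(v - center) for v in flat])
    if mad == 0:
        raise ValueError("MAD is zero; B-scores are undefined for this plate")
    return [[v / mad for v in row] for row in resid]


# Synthetic 4 x 6 plate: baseline 100, a +50 artifact on row 1,
# small non-additive noise, and one genuine hit at row 2, column 3.
plate = [
    [100 + (50 if i == 1 else 0) + ((i * j) % 3 - 1) + (200 if (i, j) == (2, 3) else 0)
     for j in range(6)]
    for i in range(4)
]
scores = b_scores(plate)
```

The +50 artifact on row 1 is absorbed as a row effect, so none of its wells stands out. The genuine hit well receives by far the largest B-score.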

What is the most effective way to use machine learning to reduce false positives?

The most effective strategy is to train a binary classifier on a challenging dataset that teaches the model to distinguish true active complexes from "compelling decoys"—inactive compounds that scoring functions typically rank highly [3].

- Implementation: The vScreenML tool uses the XGBoost framework. It is trained on crystal structures of active complexes from the PDB and carefully matched decoy complexes that are difficult to distinguish, ensuring the model learns non-trivial differences [3] [11].

- Result: In a prospective screen against acetylcholinesterase, this approach led to a dramatic improvement, with nearly all candidate inhibitors showing detectable activity and 10 out of 23 compounds having an IC50 better than 50 µM [3].

Experimental Protocols for Validated Workflows

Protocol: Structure-Based Virtual Screening with RosettaVS

This protocol describes how to use the RosettaVS platform for a high-accuracy, AI-accelerated virtual screen of a billion-compound library [14].

1. Protein Structure Preparation

- Define your protein target and binding pockets by providing its amino acid sequence or a 3D structure.

- Perform protein structure pre-processing and optimization, including the addition of hydrogens and assignment of partial charges [24].

2. Ligand Library Preparation

- Start with a commercial library (e.g., Enamine's "make-on-demand" library) or a proprietary collection. Pre-filter compounds using a set of ~32 physicochemical and drug-like properties [24].

- Perform chemical data augmentation and 2D/3D similarity filtering to create an optimized screening map [24].

3. Tiered Virtual Screening

- Initial Triage (VSX Mode): Use the RosettaVS Express mode for rapid initial screening. This mode is designed for speed and uses a simplified energy function [14].

- Active Learning: Employ the OpenVS platform to train a target-specific neural network concurrently with the docking process. This model actively selects the most promising compounds for further docking, drastically reducing the number of compounds that require full computational treatment [14].

- High-Precision Docking (VSH Mode): Take the top hits from the initial triage and re-dock them using the RosettaVS High-precision mode. This mode incorporates full receptor flexibility (side-chains and limited backbone) for more accurate pose and affinity prediction [14].

4. Post-Docking Analysis

- Re-scoring with ML Classifier: To mitigate false positives, re-score the top-ranked docking poses from VSH with a machine learning classifier like vScreenML 2.0 [11].

- Selectivity and Safety Profiling: Perform advanced ligand- and structure-based multi-target profiling against a panel of thousands of human proteins. Use an AI-powered ADME-Tox prediction system to assess pharmacokinetic endpoints [24].
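The active-learning idea in step 3 can be illustrated with a deliberately simple greedy loop. This is not the OpenVS implementation. A k-nearest-neighbour surrogate stands in for the target-specific neural network. `dock` is a hypothetical stand-in for a VSX docking call that returns an energy, where lower is better. The 1-D descriptors and the batch sizes are arbitrary assumptions.

```python
import random
from typing import Callable, Dict, List, Sequence

Vector = Sequence[float]


def knn_predict(x: Vector, docked: Dict[int, float], feats: List[Vector], k: int = 3) -> float:
    """Surrogate: mean docking score of the k already-docked compounds nearest to x."""
    dists = sorted(
        (sum((a - b) ** 2 for a, b in zip(x, feats[i])), score)
        for i, score in docked.items()
    )
    nearest = dists[:k]
    return sum(score for _, score in nearest) / len(nearest)


def active_learning_screen(feats: List[Vector], dock: Callable[[int], float],
                           n_init: int = 10, batch: int = 10, rounds: int = 5,
                           seed: int = 0) -> Dict[int, float]:
    """Dock a random seed set, then repeatedly dock the batch of undocked
    compounds that the surrogate predicts to score best (lowest)."""
    rng = random.Random(seed)
    pool = set(range(len(feats)))
    init = rng.sample(sorted(pool), n_init)
    docked = {i: dock(i) for i in init}
    pool -= set(init)
    for _ in range(rounds):
        if not pool:
            break
        preds = {i: knn_predict(feats[i], docked, feats) for i in pool}
        chosen = sorted(pool, key=lambda i: preds[i])[:batch]
        for i in chosen:
            docked[i] = dock(i)  # the expensive call, made only for chosen compounds
        pool -= set(chosen)
    return docked


# Toy usage: 1-D "descriptors" and a synthetic docking energy minimized at 0.8.
feats = [[i / 200] for i in range(200)]


def dock(i: int) -> float:
    return (feats[i][0] - 0.8) ** 2


docked = active_learning_screen(feats, dock)
best = min(docked, key=docked.get)
print(len(docked), best, docked[best])
```

With these settings, only 60 of the 200 toy compounds are docked. The surrogate decides which ones, which is the saving that active learning aims for at billion-compound scale.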

Protocol: Machine Learning-Based False Positive Reduction with vScreenML 2.0

This protocol uses the vScreenML 2.0 classifier to filter out false positives from a list of docked protein-ligand complexes [11].

1. Input Generation

- Requirement: A set of docked protein-ligand complexes in PDB format.

- Feature Calculation: vScreenML 2.0 calculates 165 features describing the protein-ligand interface. These include ligand potential energy, buried unsatisfied polar atoms, 2D structural features of the ligand, a complete characterization of interface interactions (e.g., hydrogen bonds, salt bridges), and pocket-shape features [11].

2. Model Application

- The streamlined Python implementation of vScreenML 2.0 is used to score each complex.

- The model uses only the 49 most important features, which improves generalization and reduces the risk of overfitting.

- Each complex receives a score between 0 (likely false positive/decoy) and 1 (likely true active) [11].

3. Hit Selection

- Rank the docked compounds based on their vScreenML 2.0 score.

- Prioritize compounds with scores closest to 1 for experimental testing.

- In a benchmark study, this method achieved a Matthews Correlation Coefficient (MCC) of 0.89, significantly outperforming standard scoring functions [11].
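The hit-selection step and the MCC metric can be sketched as below. The classifier scores and true labels are made-up toy values. In practice, the scores would come from vScreenML 2.0 and the labels from a benchmark set. The 0.5 cutoff used to turn scores into active/inactive calls is an assumption, not a documented vScreenML 2.0 default.

```python
from math import sqrt
from typing import Dict, Sequence


def mcc(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Matthews correlation coefficient for binary labels (1 = active)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    denom = sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return 0.0 if denom == 0 else (tp * tn - fp * fn) / denom


# Toy classifier scores in [0, 1] and benchmark labels (hypothetical values).
scores: Dict[str, float] = {"c1": 0.97, "c2": 0.91, "c3": 0.40, "c4": 0.72, "c5": 0.08, "c6": 0.55}
truth: Dict[str, int] = {"c1": 1, "c2": 1, "c3": 0, "c4": 1, "c5": 0, "c6": 0}

# Hit selection: rank by score, highest (most likely active) first.
ranked = sorted(scores, key=scores.get, reverse=True)

# Benchmarking: apply an assumed 0.5 cutoff and compute MCC.
cutoff = 0.5
ids = sorted(scores)
y_pred = [int(scores[c] >= cutoff) for c in ids]
y_true = [truth[c] for c in ids]
print(ranked[:3], mcc(y_true, y_pred))
```

MCC ranges from -1 to 1. Unlike plain accuracy, it stays informative when actives are rare, which is the usual situation in virtual screening.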

The Scientist's Toolkit: Essential Research Reagents & Software

The table below lists key software tools and computational methods essential for conducting robust, AI-accelerated virtual screening.

| Tool/Solution | Function | Key Feature |
|---|---|---|
| RosettaVS / OpenVS | An open-source, physics-based virtual screening platform. | Models receptor flexibility and uses active learning for efficient screening of billion-compound libraries [14]. |
| vScreenML 2.0 | A machine learning classifier for reducing false positives. | Trained on challenging decoys to distinguish true actives; outperforms standard scoring functions [11]. |
| B-score Normalization | A statistical method for correcting systematic error in HTS data. | Uses a two-way median polish to remove row and column effects from assay plates [22]. |
| Generative AI Models (e.g., Enki) | AI for designing novel molecules optimized for multiple properties. | Jointly optimizes for potency, selectivity, and ADMET, exploring vast regions of chemical space [23]. |
| FAIR Data Principles | A framework for data management. | Ensures data is Findable, Accessible, Interoperable, and Reusable, which is critical for training reliable AI models [25]. |
| Specialized Biologics LIMS | A Laboratory Information Management System for biologics. | Centralizes complex drug discovery data, making it AI-ready and reducing errors in downstream analysis [25]. |

Troubleshooting Guides & FAQs

Common Problem 1: High False Positive Rate in Virtual Screening

User Question: "My virtual screening campaign is identifying a large number of hits, but most turn out to be inactive when tested experimentally. What structure-based strategies can I use to reduce these false positives?"

Expert Answer: High false positive rates are a common challenge, often resulting from over-reliance on single docking scores and insufficient filtering. The integration of machine learning classifiers and advanced motif analysis has proven highly effective.

Solution: Implement a multi-stage filtering workflow that goes beyond traditional docking scores.