Strategic Balance: Optimizing Computational Cost and Accuracy in Modern Drug Design

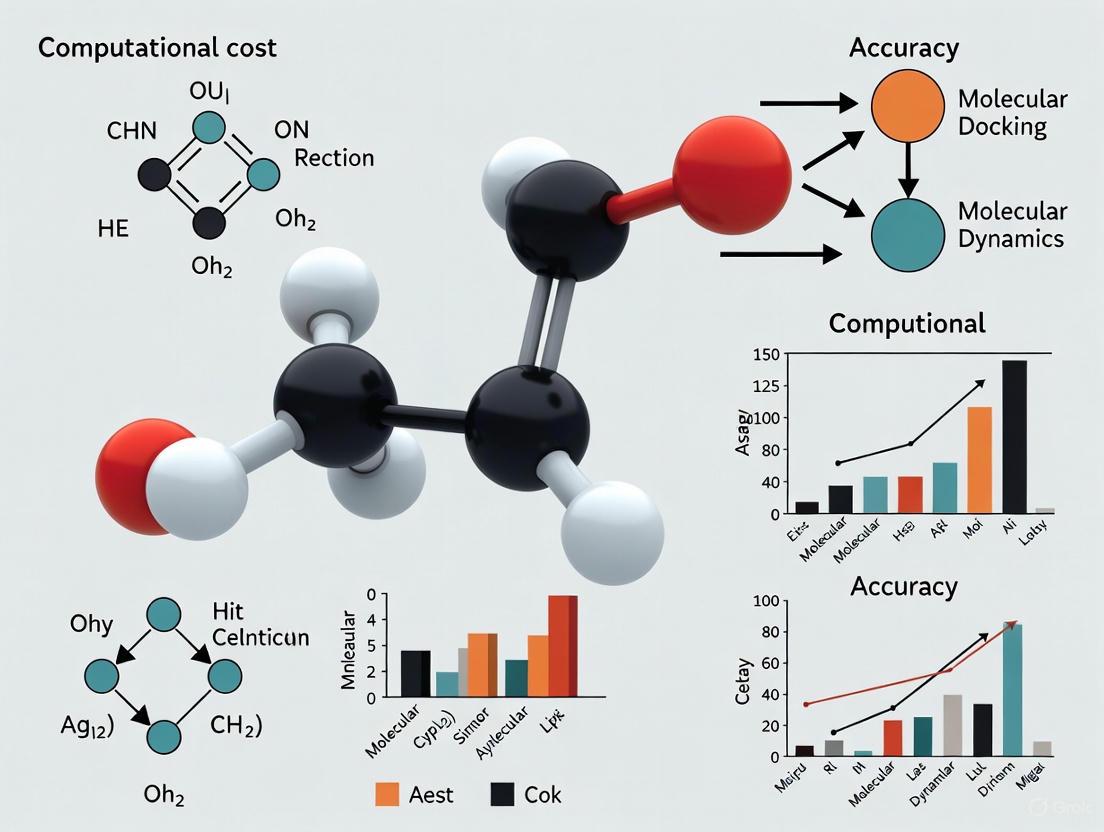

This article explores the critical challenge of balancing computational cost and predictive accuracy in contemporary drug discovery.

Abstract

This article explores the critical challenge of balancing computational cost and predictive accuracy in contemporary drug discovery. Aimed at researchers and development professionals, it examines the foundational trade-offs between resource-intensive high-fidelity simulations and rapid, scalable screening methods. The discussion spans methodological advances in AI-driven generative models, active learning frameworks, and hybrid quantum-mechanical/machine-learning approaches. It further provides practical strategies for troubleshooting and optimizing computational workflows, and concludes with a comparative analysis of validation protocols that ensure computational predictions translate into successful experimental outcomes, ultimately guiding the development of more efficient and reliable drug discovery pipelines.

The Fundamental Trade-Off: Understanding the Cost-Accuracy Paradigm in Drug Discovery

Frequently Asked Questions (FAQs)

Q1: What are the key differences between traditional and contemporary computational drug discovery methods?

Traditional methods, such as molecular docking and Quantitative Structure-Activity Relationship (QSAR) modeling, are well-established foundations of computer-aided drug design (CADD). They provide reliable physics-based (docking) and statistical (QSAR) frameworks for predicting how a small molecule might interact with a biological target [1]. Contemporary methods are defined by the integration of Artificial Intelligence (AI) and machine learning (ML), enabling rapid de novo molecular generation, ultra-large-scale virtual screening, and predictive modeling of complex properties [2]. The core difference lies in approach and scale: traditional methods often rely on predefined rules and smaller datasets, while AI-driven methods learn complex patterns from massive datasets, which often allows faster exploration of a much broader chemical space [1].

Q2: My high-throughput screening (HTS) assay shows no activity window. What could be wrong?

A lack of an assay window, where there is no difference between positive and negative controls, is a common issue. The most frequent causes are related to instrument setup or reagent problems [3].

- Instrument Configuration: For assays using technologies like TR-FRET, an incorrect choice of emission filters is a primary culprit. The instrument must be set up exactly according to the manufacturer's recommendations [3].

- Reagent and Protocol Issues: For enzymatic assays like the Z'-LYTE, the problem could lie in the development reaction. Testing with over-developed and under-developed controls can help diagnose if the issue is with the reagents rather than the instrument [3].

- Compound Preparation: Differences in how stock solutions are prepared between labs can lead to significant variations in measured potency (EC50/IC50) [3].
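When diagnosing a missing assay window, it can help to put a number on the separation between controls. One widely used statistic is the Z'-factor, which is not named in the text above and is introduced here as an assumption about how a lab might quantify the window. The sketch below computes it from hypothetical control readings; the signal values are invented for illustration.

```python
from statistics import mean, stdev

def z_prime(pos_controls, neg_controls):
    """Z'-factor: 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    window = abs(mean(pos_controls) - mean(neg_controls))
    if window == 0:
        # Controls are indistinguishable: there is no assay window at all.
        return float("-inf")
    spread = stdev(pos_controls) + stdev(neg_controls)
    return 1.0 - 3.0 * spread / window

# Hypothetical plate-reader signals in arbitrary units (not real data)
pos = [100.0, 102.0, 98.0, 101.0, 99.0]
neg = [10.0, 11.0, 9.0, 10.5, 9.5]
print(round(z_prime(pos, neg), 3))
```

Values close to 1 indicate well-separated controls; values near or below 0 indicate that control distributions overlap, which is consistent with the "no assay window" symptom described above.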

Q3: What are common sources of false positives in HTS, and how can I mitigate them?

False positives, or compounds that appear active but are not, are a major challenge in HTS. They often arise from compound interference with the assay system itself [4]. Common types and their mitigations are summarized in the table below.

Table: Common Types of Compound Interference in High-Throughput Screening

| Type of Interference | Effect on Assay | Characteristics | Prevention Strategies |

|---|---|---|---|

| Compound Aggregation | Non-specific enzyme inhibition; protein sequestration [4]. | Concentration-dependent; steep Hill slopes; inhibition is sensitive to detergent concentration [4]. | Include 0.01–0.1% non-ionic detergent (e.g., Triton X-100) in the assay buffer [4]. |

| Compound Fluorescence | Increase or decrease in detected light, affecting apparent potency [4]. | Reproducible and concentration-dependent [4]. | Use red-shifted fluorophores; implement time-resolved fluorescence (TRF) detection [4]. |

| Firefly Luciferase Inhibition | Inhibition of the reporter enzyme, mimicking target activity [4]. | Concentration-dependent inhibition of luciferase activity [4]. | Use an orthogonal assay with a different reporter; test actives against purified luciferase [4]. |

| Redox Cycling | Generation of hydrogen peroxide, leading to non-specific oxidation [4]. | Potency depends on the concentration of the compound and reducing reagents [4]. | Replace strong reducing agents (e.g., DTT) with weaker ones (e.g., glutathione) in buffers [4]. |

Q4: How can I balance computational cost and accuracy when setting up a virtual screening workflow?

Balancing the computational expense of high-accuracy methods with the need to screen billions of molecules is a central challenge. A tiered or iterative approach is often the most efficient strategy.

- Rapid Pre-screening: Use fast ligand-based methods like pharmacophore models or 2D similarity searches to quickly reduce a multi-billion compound library to a more manageable size (e.g., millions) [5].

- Structure-Based Screening: Apply molecular docking, which balances speed and structural insight, to further narrow the list to thousands or hundreds of candidates [6] [5].

- High-Accuracy Refinement: For the top hits, use computationally expensive but highly accurate methods like molecular dynamics (MD) simulations or quantum mechanics/molecular mechanics (QM/MM) to calculate binding free energies and validate interaction stability [5] [1]. This layered approach ensures that costly resources are only spent on the most promising molecules.
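The benefit of the tiered approach can be sketched with simple cost accounting. The example below is a minimal illustration, not a benchmark: the per-compound costs and the keep ratios are assumed values chosen only to show how the total compute is dominated by whichever tier sees the most compounds at the highest unit cost.

```python
def funnel_cost(library_size, tiers):
    """Return (survivors, total_cpu_seconds) for a tiered screen.

    tiers: list of (name, seconds_per_compound, keep_one_in) tuples,
    ordered from the cheapest method to the most expensive one.
    keep_one_in=1000 means one compound in 1000 advances to the next tier.
    """
    remaining = library_size
    total_seconds = 0.0
    for _name, seconds_per_compound, keep_one_in in tiers:
        total_seconds += remaining * seconds_per_compound
        remaining = remaining // keep_one_in
    return remaining, total_seconds

# All costs and keep ratios are illustrative assumptions, not measurements.
tiers = [
    ("2D similarity", 0.001, 1000),      # billions -> millions
    ("docking", 10.0, 1000),             # millions -> thousands
    ("MD / free energy", 36000.0, 10),   # thousands -> hundreds
]
survivors, tiered_seconds = funnel_cost(1_000_000_000, tiers)
_, brute_seconds = funnel_cost(1_000_000_000, [("docking", 10.0, 1)])
print(survivors, tiered_seconds, brute_seconds)
```

Under these assumed numbers, docking the whole library costs far more than the entire three-tier funnel, even though the funnel ends with the most expensive method.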

Troubleshooting Guides

Guide 1: Troubleshooting Computational Workflows

Problem: Inability to handle ultra-large chemical libraries (billions of compounds) due to computational limitations.

- Solution A: Iterative Screening: Do not dock every molecule in the library. Use an iterative process where a fast method (e.g., machine learning model) filters the library, and a slower, more accurate method (e.g., docking) is applied only to the top candidates from the previous round. This can dramatically reduce computing time [6].

- Solution B: Leverage Specialized Hardware and Software: Use GPU (Graphics Processing Unit) computing to accelerate docking and deep learning calculations [6]. Utilize open-source platforms like VirtualFlow that are specifically designed for ultra-large virtual screens on high-performance computing clusters [6].

- Solution C: Synthon-Based Screening: For combinatorial on-demand libraries, avoid enumerating and docking every full molecule. Screen the much smaller set of modular building blocks (synthons) first, then combine only the best-scoring synthons into full molecules, reducing the combinatorial complexity [6].

Problem: AI-generated molecules are not synthetically accessible or have poor drug-like properties.

- Solution A: Apply Expert-System Rules: Use AI models that are trained with rules derived from robust organic synthesis reactions. This biases the generation towards molecules that are easier to synthesize [6].

- Solution B: Integrate Predictive Filters: Incorporate ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) prediction models and drug-likeness filters, such as Lipinski's Rule of Five, directly into the generative AI workflow. This ensures generated molecules are filtered for drug-likeness in real time [1].
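A drug-likeness filter of this kind can be sketched in a few lines. The example assumes that descriptors (molecular weight, logP, hydrogen-bond donors and acceptors) have already been computed by a cheminformatics toolkit; the dictionary keys, the function names, and the tolerance of one violation are illustrative choices, and the descriptor values are approximate.

```python
def lipinski_violations(desc):
    """Count Rule of Five violations from precomputed descriptors."""
    checks = [
        desc["mol_weight"] > 500,        # molecular weight above 500 Da
        desc["logp"] > 5,                # octanol-water logP above 5
        desc["h_bond_donors"] > 5,       # more than 5 H-bond donors
        desc["h_bond_acceptors"] > 10,   # more than 10 H-bond acceptors
    ]
    return sum(checks)

def passes_rule_of_five(desc, max_violations=1):
    """Allowing one violation is a common convention, assumed here."""
    return lipinski_violations(desc) <= max_violations

# Approximate descriptors for a small aspirin-like molecule
small = {"mol_weight": 180.2, "logp": 1.2, "h_bond_donors": 1, "h_bond_acceptors": 4}
# Hypothetical large, greasy generated molecule
large = {"mol_weight": 820.0, "logp": 7.1, "h_bond_donors": 6, "h_bond_acceptors": 12}

generated = [small, large]
kept = [d for d in generated if passes_rule_of_five(d)]
print(len(kept))
```

In a generative workflow, a check like this would typically run on each batch of generated structures before any expensive scoring step.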

Guide 2: Troubleshooting Experimental Assay Validation

Problem: Inconsistent potency (IC50/EC50) values for the same compound between different labs or assay runs.

- Root Cause: The most common reason is differences in the preparation of compound stock solutions [3].

- Troubleshooting Steps:

- Standardize Protocol: Ensure all labs use the same solvent, dilution method, and storage conditions for stock solutions.

- Verify Solubility: Confirm the compound is fully soluble in the assay buffer at the tested concentrations. Precipitation can lead to inaccurate readings.

- Use Controls: Include a standard reference compound with a known potency in every assay run to monitor inter-assay variability.

Problem: A biochemical assay shows activity, but the compound is inactive in a subsequent cell-based assay.

- Root Cause: The compound may be unable to cross the cell membrane, or it may be actively pumped out by efflux transporters [3]. Alternatively, it could be metabolically unstable within the cell.

- Troubleshooting Steps:

- Check Membrane Permeability: Use computational tools to predict logP and other permeability descriptors. Experimentally, run a parallel artificial membrane permeability assay (PAMPA).

- Assess Efflux Liability: Test the compound in the presence of an efflux transporter inhibitor (e.g., verapamil for P-gp). If activity is restored, efflux is likely the cause.

- Evaluate Metabolic Stability: Incubate the compound with liver microsomes or hepatocytes to determine its half-life.

Workflow and Pathway Visualizations

HTS Hit Triage and Validation Workflow

Computational Cost vs. Accuracy Workflow

Research Reagent Solutions

Table: Essential Tools and Reagents for Modern Drug Discovery

| Item | Function | Application Context |

|---|---|---|

| TR-FRET Kits (e.g., LanthaScreen) | Time-Resolved Förster Resonance Energy Transfer assays measure molecular interactions (e.g., kinase binding) with high sensitivity and reduced fluorescence interference [3]. | Target engagement studies in high-throughput screening [3]. |

| DNA-Encoded Libraries (DELs) | Vast collections of small molecules (billions) where each compound is tagged with a unique DNA barcode, enabling efficient screening via affinity selection and PCR amplification [6]. | Hit identification for a wide range of protein targets [6]. |

| Molecular Glue Assay Kits | Biochemical kits (e.g., using FRET) designed to quantify the affinity of a molecular glue for its target and the resulting enhancement of protein-protein interaction in a single workflow [7]. | Identification and characterization of molecular glues, an emerging therapeutic modality [7]. |

| On-Demand Chemical Libraries (e.g., ZINC, GDB) | Ultra-large, virtual catalogs of readily synthesizable compounds, often containing billions of molecules, which can be screened computationally before synthesis [6]. | Virtual screening for hit and lead discovery against known protein structures [6]. |

| AI/ML ADMET Prediction Platforms | Software tools that use machine learning models to predict absorption, distribution, metabolism, excretion, and toxicity properties of compounds in silico [1]. | Early-stage prioritization of drug candidates with favorable pharmacokinetic and safety profiles [1]. |

Technical Support Center: FAQs & Troubleshooting Guides

This technical support center addresses common computational challenges in drug design, providing actionable guidance for researchers balancing simulation accuracy with resource constraints.

Frequently Asked Questions (FAQs)

Q1: Why do my all-atom molecular dynamics (MD) simulations consume so much computational power and time?

All-atom MD simulations model every atom in a molecular system, explicitly calculating all forces and interactions over time. The computational demand stems from the need to solve equations of motion for thousands of atoms over millions of time steps to capture biologically relevant timescales. For example, simulating a protein-ligand complex at high fidelity can require tracking ~50,000-100,000 atoms [8]. High-performance computing (HPC) platforms, particularly those with Graphics Processing Units (GPUs), are often mandatory to handle this load [9] [10]. The computational requirements can easily exceed the capabilities of a single desktop machine, necessitating cluster-level resources [9].
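The scale of this demand follows from simple arithmetic. The sketch below assumes a 2 fs integration timestep (a typical choice for all-atom MD with constrained bonds) and a throughput of 100 ns/day; both numbers are assumptions for illustration and will vary with system size, hardware, and software.

```python
def md_steps(sim_time_ns, timestep_fs=2.0):
    """Number of integration steps for a given simulated time.

    1 ns = 1e6 fs, so steps = sim_time_ns * 1e6 / timestep_fs.
    """
    return int(round(sim_time_ns * 1e6 / timestep_fs))

def wall_clock_days(sim_time_ns, ns_per_day):
    """Wall-clock days needed at an assumed throughput in ns/day."""
    return sim_time_ns / ns_per_day

# One microsecond of simulated time (1000 ns)
steps = md_steps(1000.0)
days = wall_clock_days(1000.0, ns_per_day=100.0)
print(steps, days)
```

Under these assumptions, a single microsecond already requires hundreds of millions of force evaluations over the full system, which is why throughput in ns/day is the usual planning metric.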

Q2: What are the primary cost drivers in large-scale virtual screening campaigns?

The cost is driven by the scale of the chemical library and the complexity of the scoring function. Ultra-large libraries containing billions of compounds require massive parallelization [2]. Techniques like "blind virtual screening" that screen large ligand databases against entire protein surfaces simultaneously are computationally intensive but can be accelerated using GPU architectures [9]. The choice between simpler, faster docking and more accurate, slower free-energy perturbation (FEP) calculations creates a direct trade-off between cost and predictive quality [10].

Q3: My GPU-based cluster's power consumption is very high. Are there more efficient alternatives?

High-end GPUs can increase a cluster node's power consumption by up to 30%, significantly impacting the total cost of ownership (TCO) [9]. Volunteer computing paradigms (e.g., BOINC/Ibercivis) offer a valid alternative for non-real-time bioinformatics applications, distributing tasks across donated desktop GPUs and saving on energy, colocation, and administration costs [9]. For specific workflows, shifting to coarse-grained (CG) simulations can reduce resource demands, enabling the study of longer biological timescales at a significantly reduced computational cost [11].

Q4: How can I predict key drug properties without running expensive simulations for every candidate?

Machine learning (ML) and deep learning models can predict Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties and other key pharmacological profiles directly from molecular structure [2] [12]. Once trained on high-fidelity simulation or experimental data, these models can screen thousands of candidates in minutes on standard hardware. Quantitative Structure-Property Relationship (QSPR) models, particularly using graph neural networks, have shown robust transferability to experimental datasets, accurately predicting properties across energy, pharmaceutical, and petroleum applications [12].

Troubleshooting Common Experimental Issues

Issue 1: Molecular Dynamics Simulation Fails Due to System Instability

- Problem: Simulation crashes or produces unrealistic results shortly after initiation.

- Diagnosis: Often caused by incorrect system parameterization, steric clashes, or improper initial conditions.

- Solution:

- Parameterization Check: Verify the topology for both protein and ligand. Use tools like acpype with the GAFF (General AMBER Force Field) for small molecules and ensure compatibility with your protein force field (e.g., AMBER99SB) [8].

- Energy Minimization: Always run an energy minimization step before starting the production simulation to relieve any steric clashes or inappropriate geometry. The GROMACS initial setup tool typically handles this [8].

- Equilibration Protocol: Implement a stepped equilibration. First, equilibrate the system with positional restraints on the protein and ligand heavy atoms (using an ITP file), allowing the solvent to relax. Then, perform a full system equilibration without restraints [8].
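The minimize, restrained-equilibrate, free-equilibrate sequence can be sketched as an ordered list of GROMACS commands. The snippet only builds the command lines and does not run them. The file names (`solvated.gro`, `topol.top`, and the per-stage `.mdp` files) are hypothetical, and it is assumed that the restrained stage's `.mdp` file enables the position restraints defined in the topology.

```python
def staged_commands(start_gro="solvated.gro", top="topol.top"):
    """Build gmx grompp/mdrun command lists for a staged setup.

    Each stage starts from the final coordinates of the previous one.
    """
    stages = [
        ("em", False),               # energy minimization
        ("equil_restrained", True),  # solvent relaxes, solute restrained
        ("equil_free", False),       # full system, no restraints
    ]
    commands = []
    coords = start_gro
    for name, restrained in stages:
        grompp = ["gmx", "grompp", "-f", f"{name}.mdp", "-c", coords,
                  "-p", top, "-o", f"{name}.tpr"]
        if restrained:
            # -r supplies the reference coordinates for position restraints
            grompp += ["-r", coords]
        commands.append(grompp)
        commands.append(["gmx", "mdrun", "-deffnm", name])
        coords = f"{name}.gro"  # mdrun -deffnm writes <name>.gro at the end
    return commands

for cmd in staged_commands():
    print(" ".join(cmd))
```

Each list could be passed to `subprocess.run(cmd, check=True)` so that a failure in minimization stops the pipeline before equilibration starts.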

Issue 2: High-Throughput Virtual Screening is Taking Too Long

- Problem: Screening a large compound library is prohibitively slow, delaying project timelines.

- Diagnosis: The screening methodology may not be optimized for scale.

- Solution:

- Hybrid AI Screening: Combine traditional docking with deep learning models to pre-filter compound libraries or prioritize candidates, which can boost hit rates and scaffold diversity more efficiently than either method alone [2].

- Multi-GPU Parallelization: Leverage GPU-accelerated docking software (e.g., BINDSURF) that can divide the protein surface into independent regions (spots) and screen ligands against them simultaneously [9].

- Workflow Breakdown: Split the screening workflow into smaller, manageable jobs that can be run in parallel on an HPC cluster or a volunteer computing infrastructure [9].
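Splitting a library into independent jobs can be as simple as batching an iterator. The helper below is a minimal sketch; `chunked` is a hypothetical name, and the compound identifiers are placeholders. Because it consumes the input lazily, the full library never has to be held in memory.

```python
from itertools import islice

def chunked(iterable, size):
    """Yield successive lists of at most `size` items from any iterable."""
    iterator = iter(iterable)
    while True:
        batch = list(islice(iterator, size))
        if not batch:
            return
        yield batch

# Placeholder compound IDs; in practice these might be lines of a SMILES file.
library = (f"CPD{i:04d}" for i in range(7))
jobs = list(chunked(library, 3))
print(jobs)
```

Each batch can then be submitted as one cluster or volunteer-computing task, with results merged after all jobs finish.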

Issue 3: Machine Learning Model for Property Prediction Performs Poorly on New Data

- Problem: A QSPR model trained on simulation data fails to generalize to experimental results.

- Diagnosis: The model may suffer from overfitting or a distribution shift between simulation data and real-world conditions.

- Solution:

- Data Quality and Augmentation: Ensure the training dataset from MD simulations is large and diverse. High-throughput MD generating over 30,000 data points, as in one formulation study, can provide a robust foundation [12].

- Advanced Model Architecture: Use models designed for formulation systems, such as the Set2Set-based method (FDS2S), which have been shown to outperform simpler approaches by better handling aggregated chemical information from multiple ingredients and varying compositions [12].

- Transfer Learning: Fine-tune a pre-trained model on a smaller set of high-quality experimental data specific to your target domain to bridge the gap between simulation and reality [12].

Quantitative Data on Computational Methods

The table below summarizes the performance and cost characteristics of different computational techniques used in drug discovery.

Table 1: Comparison of Computational Methods in Drug Discovery

| Method | Key Application | Typical Hardware | Computational Cost / Time | Key Fidelity Trade-off |

|---|---|---|---|---|

| Classical MD (All-Atom) [11] [8] | Protein-ligand dynamics, binding site analysis | GPU clusters, HPC | Very High (Nanoseconds/day for large systems) | High spatial and temporal detail vs. extremely high cost and short simulation timescales. |

| Coarse-Grained (CG) MD [11] | Long-timescale processes (e.g., ligand residence time) | GPU clusters | Medium (Microseconds to milliseconds achievable) | Loss of atomic detail enables longer timescales at reduced cost; good for ranking congeneric series. |

| GPU-Accelerated Virtual Screening [9] | Ultra-large library docking | Single GPU to Multi-GPU | Medium-High (Depends on library size and protein spots) | High throughput and speed vs. potential approximations in binding energy calculations. |

| Free Energy Perturbation (FEP) [10] | Accurate binding affinity prediction | High-end GPU clusters | Very High (Days per calculation) | Considered a high-accuracy standard for affinity; computationally intensive, limiting throughput. |

| AI/ML for QSPR [2] [12] | ADMET, property prediction | Standard GPU Workstation | Low (After model training) | Fast prediction vs. dependency on quality and size of training data; potential generalization errors. |

| Volunteer Computing [9] | Non-real-time screening (e.g., BINDSURF) | Distributed Desktop GPUs | Low (Cost), High (Elapsed Time) | Very low hardware cost and energy consumption vs. slower turnaround time due to distributed nature. |

Experimental Protocols for Key Techniques

This protocol uses high-throughput MD and ML to predict properties of chemical mixtures (formulations).

1. System Setup and Simulation:

- Component Selection: Identify miscible solvent combinations using experimental miscibility tables (e.g., from CRC Handbook).

- Composition Variation: For binary mixtures, vary component ratios (e.g., 20%, 40%, 50%, 60%, 80%). For ternary+, use ratios like 60/20/20 or equal ratios.

- Force Field and Solvation: Employ a force field such as OPLS4 and solvate the system with a water model such as TIP3P.

- Simulation Run: Run production MD simulation for a sufficient duration (e.g., >10 ns) to ensure equilibrium and proper sampling.

2. Data Extraction:

- From the production trajectory, extract ensemble-averaged properties:

- Packing Density: Measures how tightly packed the molecules are.

- Heat of Vaporization (ΔHvap): Correlates with cohesion energy and viscosity.

- Enthalpy of Mixing (ΔHm): Fundamental thermodynamic property for solubility and phase stability.

3. Machine Learning Model Training:

- Input Features: Use molecular structure and composition data.

- Model Architectures: Benchmark methods like Formulation Descriptor Aggregation (FDA), Formulation Graph (FG), and the Set2Set-based method (FDS2S). Studies show FDS2S often outperforms others.

- Validation: Validate simulation-derived properties (density, ΔHvap, ΔHm) against experimental data to ensure correlation (e.g., R² ≥ 0.84).
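The validation step can be illustrated with a coefficient of determination between simulated and experimental values. This sketch assumes that the R² threshold quoted above refers to the standard definition, 1 - SS_res / SS_tot, with the simulated values treated as predictions; the source does not state which variant was used, and the density values below are invented.

```python
def r_squared(experimental, simulated):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_exp = sum(experimental) / len(experimental)
    ss_res = sum((e - s) ** 2 for e, s in zip(experimental, simulated))
    ss_tot = sum((e - mean_exp) ** 2 for e in experimental)
    return 1.0 - ss_res / ss_tot

# Hypothetical densities in g/cm^3 (not real measurements)
exp_density = [0.79, 0.87, 1.00, 1.11, 1.26]
sim_density = [0.80, 0.86, 0.99, 1.13, 1.24]
score = r_squared(exp_density, sim_density)
print(score >= 0.84)
```

A property that falls below the chosen threshold would be a signal to revisit the force field or the sampling before training a model on the simulated values.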

Ligand Residence Time is critical for drug efficacy and can be estimated via multi-scale simulations.

1. Enhanced Sampling Simulation:

- Choice of Scale: Decide between All-Atom (AA) for high accuracy or Coarse-Grained (CG) for higher throughput and ranking.

- Reaction Coordinate Learning: Use a deep-learning protocol like Deep-LDA to extract meaningful coordinates from metastable state information.

- Simulation Technique: Apply an infrequent metadynamics strategy, such as Frequency Adaptive Metadynamics, to accelerate unbinding events and observe rare transitions.

2. Data Analysis:

- Pathway Classification: Use a dynamic time-warping algorithm to cluster and identify multiple unbinding pathways.

- RT Calculation: Compute the residence time corresponding to each pathway cluster. This workflow enables RT estimation across a wide range, from nanoseconds to thousands of seconds.
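The pathway-classification step relies on a distance between trajectories of different lengths. The function below is a textbook dynamic time warping (DTW) distance, given only as a sketch: it assumes each unbinding trajectory has been reduced to a one-dimensional series (for example, a ligand-pocket distance, which is a hypothetical choice of coordinate), and it is not the specific implementation used in the cited workflow.

```python
def dtw_distance(a, b):
    """Classic O(len(a) * len(b)) dynamic time warping distance."""
    inf = float("inf")
    n, m = len(a), len(b)
    # cost[i][j] = best alignment cost of a[:i] with b[:j]
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            step = abs(a[i - 1] - b[j - 1])
            cost[i][j] = step + min(
                cost[i - 1][j],      # a advances, b stays
                cost[i][j - 1],      # b advances, a stays
                cost[i - 1][j - 1],  # both advance
            )
    return cost[n][m]

# Two hypothetical unbinding traces that differ only in timing
fast = [1.0, 2.0, 3.0]
slow = [1.0, 2.0, 2.0, 3.0]
print(dtw_distance(fast, slow))
```

A pairwise matrix of such distances can then be fed to any standard clustering method to group trajectories into pathways.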

Workflow and Pathway Visualizations

MD Simulation Workflow

Method Selection Guide

The Scientist's Toolkit: Essential Research Reagents & Software

Table 2: Key Computational Tools for Drug Discovery

| Tool Name | Type | Primary Function | Relevance to Cost/Accuracy Balance |

|---|---|---|---|

| GROMACS [9] [8] | MD Software | High-performance molecular dynamics simulations. | Open-source; highly optimized for CPU/GPU, reducing time-to-solution and enabling larger/faster simulations. |

| AMBER99SB / GAFF [8] | Force Field | Provides parameters for potential energy calculations. | AMBER99SB for proteins; GAFF for small molecules. Accuracy of force field directly impacts reliability of results. |

| BINDSURF [9] | Screening App | High-throughput parallel blind virtual screening on GPUs. | Democratizes access to large-scale screening by running on consumer GPUs or volunteer grids. |

| BOINC/Ibercivis [9] | Computing Platform | Volunteer computing middleware. | Offers a low-cost alternative to owning large GPU clusters for non-real-time problems. |

| TorchMD [10] | Deep Learning Framework | Neural network potentials for molecular simulations. | Represents next-generation potentials that could dramatically speed up accurate simulations. |

| FDS2S Model [12] | ML Model | Predicts formulation properties from structure/composition. | Reduces need for extensive MD simulations for every new formulation candidate after initial training. |

| ANI Neural Network [10] | ML Potential | Accelerated quantum chemistry calculations. | Provides quantum-mechanical accuracy at a fraction of the computational cost of traditional methods. |

In the field of computational drug discovery, predictive models are only as reliable as the data upon which they are built. High-stakes AI applications magnify the importance of data quality due to its significant downstream impact on prediction accuracy [13]. A "domino effect" exists where errors in data can easily propagate, creating a compounding negative impact that results in increased technical debt over time [13]. As the industry increasingly adopts AI and machine learning (ML) to reduce development costs and improve success rates, researchers face the fundamental challenge of balancing computational expenses with predictive accuracy [14] [1]. This technical support center provides practical guidance for navigating data-related challenges, ensuring your predictive models deliver reliable, actionable results.

Troubleshooting Guides: Addressing Common Data Challenges

Data Quantity and Quality Assessment

Problem: Researchers cannot determine if their dataset has sufficient quantity and quality for robust predictive modeling.

Diagnosis:

- Check for common data quality issues: incomplete data, data bias, noise, and insufficient domain expertise in data curation [13].

- Evaluate if the dataset size is commensurate with model complexity. Overfitting occurs when models with many parameters are trained on limited data, causing excellent performance on training data but failure to predict unseen data [15].

- Assess data coverage to ensure it adequately represents the chemical space relevant to your research question [16].

Solution: Follow this systematic assessment protocol:

- Define Data Requirements: Clearly establish the intended purpose of your model, as this dictates data selection criteria [16].

- Evaluate Three Key Characteristics: Ensure your data demonstrates:

- Quantity: Sufficient data points for the specific modeling task. While diverse compound classification requires large volumes, refined quantitative models may need fewer, highly-specific data points [16].

- Quality: Implement rigorous quality control measures. For biomedical data, this includes standardized processing protocols, quality control metrics assessing integrity and usability, and ontology-backed metadata for uniformity [13].

- Coverage: The dataset must span the relevant chemical or biological space for your application to ensure model generalizability [16].

- Quantitative Assessment: Use the following metrics to evaluate your dataset's readiness:

Table 1: Data Quality and Quantity Assessment Metrics

| Assessment Dimension | Key Metrics | Target Threshold |

|---|---|---|

| Data Quantity | Number of unique compounds | Project-dependent: 1,000s for classification, 100s for QSAR [16] |

| Data Quantity | Number of data points per compound | Minimum 3-5 technical replicates [13] |

| Intrinsic Data Quality | Metadata completeness | All essential metadata fields populated (e.g., organism, cell line, disease) [13] |

| Intrinsic Data Quality | Standardization | Consistent field names and ontology-backed values [13] |

| Intrinsic Data Quality | Measurement reliability | Use of appropriate technology platforms with stringent quality controls [13] |

| Extrinsic Data Quality | Data integrity | No accidental/malicious modification; all eligible data from source available [13] |

| Extrinsic Data Quality | Accuracy | Correctness of values in metadata fields and measurements [13] |

Handling Missing or Censored Data

Problem: Experimental datasets often contain missing values or censored data (e.g., activity values recorded as "<" or ">"), which can skew model performance.

Diagnosis:

- Identify missing data patterns: check if values are missing completely at random, at random, or not at random.

- Locate censored data in activity measurements (e.g., IC50, EC50 values reported as <0.001 nM or >100 μM) [16].

Solution:

- Data Audit: Inspect activity prefixes and remarks fields to identify inconsistencies documented in primary sources [16].

- Strategic Removal: For initial models, remove rows with critical null values or censored data, particularly for continuous models which are more sensitive to these issues than categorical models [16].

- Advanced Imputation: For more nuanced modeling, apply multiple imputation to missing values, or retain censored values and model them with methods designed for censored observations, such as those used in survival analysis.
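The audit and strategic-removal steps can be sketched as a small parser that separates exact from censored activity values. The input format (a qualifier prefix followed by a number) and the function names are assumptions for illustration; real databases often store the qualifier in a separate column.

```python
def parse_activity(raw):
    """Split a raw activity string into (qualifier, value).

    Values without a prefix are treated as exact ("=").
    """
    text = raw.strip()
    qualifier = "="
    # Check two-character prefixes before one-character ones.
    for prefix in ("<=", ">=", "<", ">", "="):
        if text.startswith(prefix):
            qualifier = prefix
            text = text[len(prefix):]
            break
    return qualifier, float(text)

def split_censored(records):
    """Return (exact, censored) lists of (qualifier, value) tuples."""
    exact, censored = [], []
    for raw in records:
        qualifier, value = parse_activity(raw)
        (exact if qualifier == "=" else censored).append((qualifier, value))
    return exact, censored

# Hypothetical IC50 entries, all in the same unit
exact, censored = split_censored(["12.5", ">100", "<0.001", "=3.2"])
print(len(exact), len(censored))
```

For an initial continuous model only `exact` would be used, while `censored` is kept aside for categorical labels or later censored-data modeling.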

Managing Computational Costs During Data Processing

Problem: Data preparation consumes approximately 80% of the time in machine learning projects, creating a significant bottleneck and computational expense [16].

Diagnosis:

- The process of data cleaning, standardization, and transformation is computationally intensive and time-consuming.

- Inefficient data pipelines lead to redundant processing and increased cloud computing costs.

Solution:

- Leverage Curated Databases: Utilize pre-curated, high-quality databases like GOSTAR, ChEMBL, or DrugBank to reduce initial cleaning overhead [16] [1].

- Implement Progressive Data Loading: Process data in batches rather than loading entire datasets into memory.

- Automate Standardization Pipelines: Develop automated scripts for repetitive tasks like chemical structure standardization, salt stripping, and tautomer generation [16].

- Cost-Benefit Analysis: Balance the computational cost of data preparation against potential model improvement. Use the following workflow to optimize resources:

Data Preparation Cost Optimization Workflow

Experimental Protocols for Data Quality Assurance

Protocol: Standardized Data Processing for Predictive Modeling

This protocol ensures consistent, high-quality data preparation for robust predictive modeling, based on industry best practices [13] [16].

I. Data Selection and Retrieval

- Target Definition: Clearly define molecular targets using standardized identifiers (UniProt ID, Common Name).

- Structure-Activity Relationship (SAR) Focus: Ensure retrieved data has chemical structures associated with bioactivity results.

- Experimental Conditions Audit: Document experimental conditions (assay type, measurement parameters) to identify comparable data.

II. Data Pre-processing and Transformation

- Endpoint Consistency: Identify the most prevalent endpoint (IC50, EC50, %Inhibition) and maintain consistency.

- Unit Standardization: Convert all activity measurements to standardized units (nM, μM).

- Structure Standardization:

- Strip salts and remove duplicates

- Generate canonical tautomers

- Filter extreme outliers (polymers, mixtures)
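Unit standardization is easy to get wrong by a factor of a thousand, so a small lookup table helps. The sketch below converts concentrations to nM and then to the negative log scale (pIC50 = 9 - log10 of the IC50 in nM), which is a common modeling convention assumed here rather than something prescribed by the protocol above. The unit spellings accepted are illustrative.

```python
import math

# Multipliers to convert each unit to nanomolar
TO_NM = {"pM": 1e-3, "nM": 1.0, "uM": 1e3, "μM": 1e3, "mM": 1e6, "M": 1e9}

def to_nanomolar(value, unit):
    """Convert a concentration to nM; raises KeyError on unknown units."""
    return value * TO_NM[unit]

def pic50(value, unit):
    """pIC50 = -log10(IC50 in mol/L) = 9 - log10(IC50 in nM)."""
    return 9.0 - math.log10(to_nanomolar(value, unit))

print(to_nanomolar(1.5, "uM"))
print(pic50(1.0, "uM"))
```

Failing loudly on an unknown unit is deliberate: silently passing through an unrecognized unit is a common source of corrupted training data.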

III. Data Quality Validation

- Null Value Check: Identify and address rows with critical missing values.

- Chemical Diversity Assessment: Evaluate whether the dataset adequately covers the chemical space relevant to your prediction goals.

- Bias Evaluation: Check for overrepresentation of certain compound classes or structural motifs.

Protocol: Internal Validation of Model Performance

To obtain an honest assessment of prediction model performance and correct for optimism, use this internal validation protocol [15].

I. Performance Metric Selection

- Discrimination: Evaluate the model's ability to distinguish between different outcome classes (e.g., active vs. inactive compounds).

- Calibration: Assess the agreement between predicted probabilities and observed outcomes.

II. Validation Method Selection

- Bootstrapping: Create multiple bootstrap samples by drawing with replacement from the original dataset; develop the model in each bootstrap sample and test it in the original sample.

- k-fold Cross-Validation: Split data into k folds (typically k=5 or 10); develop the model in k-1 folds and test it in the left-out fold.

- Temporal Validation: For time-series data, develop the model on earlier time points and validate it on later time points.
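As an illustration of the k-fold option, the generator below produces train/test index splits using only the standard library. It is a minimal sketch with hypothetical names; the fixed seed is an assumption made for reproducibility, and in practice a library routine with stratification support may be preferable.

```python
import random

def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_indices, test_indices) for each of k folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Deal shuffled indices round-robin into k folds of near-equal size.
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        test = folds[i]
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test

splits = list(k_fold_indices(10, k=5))
for train, test in splits:
    print(len(train), len(test))
```

Every sample appears in exactly one test fold, so averaging the per-fold metric gives a performance estimate that uses all the data without testing on training samples.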

Frequently Asked Questions (FAQs)

Q1: What are the most common data quality issues that undermine predictive models in drug discovery?

The most prevalent issues include: (1) incomplete data where critical metadata is missing; (2) data bias from overrepresentation of certain compound classes; (3) noise in experimental measurements that obscures true signals; and (4) insufficient domain expertise in data curation, leading to misinterpretation of experimental nuances [13]. These issues create a "domino effect" where errors propagate through the entire modeling pipeline [13].

Q2: How much data is sufficient for building a reliable predictive model?

The required data volume depends on your specific research question. For classifying compounds as active/inactive across diverse chemical spaces, thousands of compounds are typically needed. For refined quantitative models optimizing molecular interactions (e.g., based on x-ray crystallography), fewer but highly precise data points may suffice [16]. The key is ensuring your data has adequate coverage of the relevant chemical space for your prediction goals [16].

Q3: What is the difference between intrinsic and extrinsic data quality?

Intrinsic data quality refers to qualities inherent to the data itself, established during data generation (experiment design, metadata annotations, measurement quality) [13]. Extrinsic data quality refers to aspects influenced by systems and procedures that engage with the data post-creation (standardization, accuracy, integrity, breadth, and completeness) [13]. Intrinsic quality is typically fixed once data is collected, while extrinsic quality can be enhanced through curation.

Q4: How can we balance the trade-off between model complexity and data availability? This balance represents the bias-variance trade-off. Simple models with limited data have high bias but low variance, while complex models may overfit (high variance) [15]. Use techniques like penalization (regularization) to reduce model complexity and bring the model to the "sweet spot" of this trade-off curve [15]. Cross-validation helps identify the optimal complexity for your available data [15].

Q5: What are the risks of overhyping AI capabilities in drug discovery? Overhyping AI creates several problems: (1) clouded decision-making driven by fear of missing out (FOMO) rather than scientific merit; (2) unrealistic expectations that lead to disillusionment when results aren't immediate; (3) unsustainable AI development cycles; and (4) downplaying human creativity and insight [17]. Researchers emphasize that "the output of a model is only as good as the input of the data" [17].

Table 2: Key Data Resources for Predictive Modeling in Drug Discovery

| Resource Category | Specific Examples | Primary Function | Key Features |

|---|---|---|---|

| Commercial SAR Databases | GOSTAR [16] | Provides structure-activity relationship data | Millions of compounds with associated bioactivity endpoints; curated by domain experts |

| Public Compound Databases | ChEMBL [18], DrugBank [1], ZINC [1], LOTUS [18], COCONUT [18] | Annotated bioactivity data for diverse compounds | Open-access; extensive compound libraries with target and activity information |

| Natural Product Databases | NPASS [18], SuperNatural II [18] | Specialized in natural product compounds | Structural and activity data for natural products and their sources |

| Traditional Medicine Databases | TCMSP [18], TCMID [18], SymMap [18] | Bridges traditional medicine with modern research | Connects herbal formulations, chemical compounds, and target information |

| Protein Databases | UniProt [1], Protein Data Bank (PDB) [1] | Protein sequence and structural information | Essential for target identification and structure-based drug design |

| AI/ML Platforms | DeepChem [1], OpenEye [1] | Machine learning for drug discovery | Open-source and commercial platforms for building predictive models |

| ADMET Prediction Tools | ADMET Predictor [1], SwissADME [1] | Predicts pharmacokinetic and toxicity profiles | Critical for evaluating drug-likeness and prioritizing compounds |

Data Integration and Molecular Representation Workflow

Successfully integrating diverse data sources and selecting appropriate molecular representations are critical steps in preparing data for AI-driven natural product drug discovery [18]. The following workflow illustrates this process:

Data Integration and Molecular Representation Workflow

Frequently Asked Questions

FAQ 1: Why is high 'accuracy' on my training data a red flag for binding affinity prediction models?

A high accuracy on your training set, followed by a significant performance drop on a new, independent test set, is a classic symptom of data leakage or overfitting. In drug discovery, public benchmarks often contain hidden similarities between training and test complexes. If a model encounters test proteins or ligands that are highly similar to those in its training data, it can achieve high scores by "memorizing" rather than genuinely learning the underlying physics of binding. To ensure true generalization, you must use rigorously curated data splits that remove proteins and ligands with high sequence or structural similarity from the training set [19].

FAQ 2: My dataset has thousands of inactive compounds for every active one. Which metrics should I use to evaluate my virtual screening model?

In this scenario of extreme class imbalance, generic metrics like Accuracy are entirely misleading. You should instead rely on metrics designed for early recognition and ranking:

- Precision-at-K (P@K): Measures the proportion of true active compounds in your top K ranked predictions. This is crucial for assessing the quality of your candidate shortlist [20].

- Enrichment Factor (EF): Quantifies how much your model enriches active compounds in the top fraction of the ranked list compared to a random selection.

- Recall/Sensitivity: Ensures you are not missing a large number of potential active compounds. The trade-off between Precision and Recall is captured by the F1 score, but for hit discovery, P@K is often the most operational metric [20].
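As a rough illustration of the three metrics listed above, the sketch below computes Precision-at-K, the Enrichment Factor, and recall from a list of model scores and binary labels (1 = active). The function names and the convention that higher scores mean "more likely active" are assumptions made for this example.

```python
def precision_at_k(scores, labels, k):
    """Fraction of true actives among the k highest-scoring compounds."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    return sum(label for _, label in ranked[:k]) / k

def enrichment_factor(scores, labels, fraction=0.01):
    """Hit rate in the top fraction divided by the hit rate of the whole library."""
    n = len(labels)
    k = max(1, round(n * fraction))
    base_rate = sum(labels) / n
    return precision_at_k(scores, labels, k) / base_rate

def recall_at_threshold(scores, labels, threshold):
    """Fraction of all actives that score at or above the threshold."""
    found = sum(1 for s, y in zip(scores, labels) if y == 1 and s >= threshold)
    return found / sum(labels)
```

An Enrichment Factor of 1 corresponds to random selection; values well above 1 indicate that actives are concentrated at the top of the ranking.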

FAQ 3: How can I validate that my model is learning real protein-ligand interactions and not just ligand chemistry?

Perform a simple but powerful ablation study. Train and test your model in two conditions:

- With full protein-ligand complex information.

- With protein information completely removed, using only ligand features.

If the model performance does not drop significantly in the second condition, it indicates the model is largely ignoring protein context and basing its predictions on ligand memorization. A robust model should show a clear performance decline when protein data is absent, proving it learns the interaction [19].

FAQ 4: What is the best data partitioning strategy to ensure my model generalizes to novel drug targets?

Avoid random splitting based solely on ligands, as it often leads to data leakage. Instead, use structure-based partitioning:

- UniProt-based Splitting: Ensure all complexes of a given protein (or protein family) are entirely contained within either the training or test set. This tests the model's ability to predict for genuinely novel targets [21].

- Anchor-Query Frameworks: For limited data, this method leverages a small set of reference "anchor" complexes to predict the behavior of new "query" complexes, improving generalization even with sparse data [21].

Troubleshooting Guides

Problem: Inflated Performance on Benchmarks but Poor Real-World Screening

Diagnosis: This is typically caused by train-test data leakage, where the data used to test the model is not independent from the data used to train it. This creates an over-optimistic view of model performance [19].

Solution: Adopt a strict data curation and splitting protocol.

- Curate Your Dataset: Use tools like PDBbind CleanSplit [19] or create your own splits based on protein similarity.

- Apply Multi-level Filtering: Remove from your training set any complexes that meet the following criteria with any test complex [19]:

- Protein Similarity: TM-score > 0.7

- Ligand Similarity: Tanimoto coefficient > 0.9

- Binding Conformation Similarity: Pocket-aligned ligand RMSD < 2.0 Å

- Validate Externally: Always test the final model on a completely external dataset from a different source (e.g., Astex Diverse Set [22]) to confirm its real-world applicability.
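The multi-level filter above can be sketched as follows, assuming the three similarity values have already been computed by external tools. The text does not state whether the three criteria must all hold or whether any one suffices, so the sketch exposes that choice as a `require_all` flag. The function names and the fingerprint-as-set representation are assumptions for this example.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0

def is_leaky(tm_score, ligand_tanimoto, ligand_rmsd, require_all=True,
             tm_cut=0.7, tanimoto_cut=0.9, rmsd_cut=2.0):
    """Flag a train/test pair using the thresholds quoted in the text."""
    flags = (tm_score > tm_cut,
             ligand_tanimoto > tanimoto_cut,
             ligand_rmsd < rmsd_cut)
    return all(flags) if require_all else any(flags)

def filter_training_set(train_ids, test_ids, similarity, require_all=True):
    """Drop training complexes flagged as similar to any test complex.

    `similarity(train_id, test_id)` is a caller-supplied function returning
    (tm_score, ligand_tanimoto, ligand_rmsd) for that pair.
    """
    return [t for t in train_ids
            if not any(is_leaky(*similarity(t, q), require_all=require_all)
                       for q in test_ids)]
```

Setting `require_all=False` is the stricter reading: a training complex is removed as soon as any single criterion matches a test complex.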

Experimental Protocol: Implementing a Clean Data Split

- Objective: To create training and test sets with no significant protein, ligand, or binding mode similarity.

- Materials: A dataset of protein-ligand complexes with affinity labels (e.g., PDBbind [19]).

- Software: Clustering algorithms, tools for calculating TM-score (protein structure alignment) and Tanimoto coefficient (ligand similarity).

- Procedure: [19]

- Cluster by Protein: Group complexes by their protein UniProt ID or by protein fold similarity (e.g., TM-score > 0.7).

- Assign Whole Clusters: Move entire protein clusters into either the training or test set. Do not split clusters.

- Filter Ligands: Within the training set, remove any ligands that are highly similar (Tanimoto > 0.9) to any ligand in the test set.

- Verify: Re-calculate similarity metrics between the final training and test sets to ensure separation.
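The "Cluster by Protein" and "Assign Whole Clusters" steps of the procedure above might look like the sketch below, which groups complexes by UniProt ID and never splits a group. The function name, the input format, and the greedy fill rule for the test set are assumptions; the ligand filtering and verification steps are not included.

```python
import random
from collections import defaultdict

def split_by_protein(complexes, test_fraction=0.2, seed=0):
    """Split (complex_id, uniprot_id) pairs so each protein is on one side only."""
    groups = defaultdict(list)
    for complex_id, uniprot_id in complexes:
        groups[uniprot_id].append(complex_id)

    proteins = sorted(groups)
    random.Random(seed).shuffle(proteins)

    target = test_fraction * len(complexes)
    train, test = [], []
    for protein in proteins:
        # Fill the test set first, one whole protein group at a time.
        bucket = test if len(test) < target else train
        bucket.extend(groups[protein])
    return train, test
```

Because whole groups are moved, the realized test fraction can overshoot the requested one when a single protein has many complexes.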

Clean Data Splitting Workflow

Problem: Model Fails to Identify Critical but Rare Toxicological Signals

Diagnosis: Standard metrics like Accuracy and ROC-AUC are biased by the majority class (non-toxic compounds), making them insensitive to rare events. Your model is not being evaluated on its ability to find what matters most [20].

Solution: Implement rare-event-sensitive metrics and adjust your loss function to penalize missing these events.

- Use Targeted Metrics:

- Rare Event Sensitivity: Calculate recall specifically for the rare class (e.g., toxic compounds).

- Precision-Weighted Scoring: Combine high precision (to minimize false alarms) with high recall for the rare class.

- Incorporate Domain Knowledge: Use Pathway Impact Metrics to evaluate if the model's predictions for rare events align with known biological pathways (e.g., toxicological pathways), adding a layer of biological interpretability [20].

Experimental Protocol: Evaluating Rare Event Detection

- Objective: To quantitatively assess an ML model's performance in detecting a rare adverse event or toxicological signal.

- Materials: A labeled dataset (e.g., transcriptomics data) with rare event annotations.

- Software: Standard ML libraries (e.g., scikit-learn) and pathway analysis tools (e.g., GO enrichment).

- Procedure: [20]

- Define the Rare Class: Clearly identify the positive class (e.g., "toxic").

- Calculate Class-Specific Recall: Compute Recall (True Positives / All Actual Positives) for the rare class. This is your Rare Event Sensitivity.

- Calculate Precision-at-K: For the K samples the model is most confident are "toxic," calculate the proportion that are true positives.

- Pathway Enrichment Analysis: For the compounds predicted as "toxic," perform a pathway over-representation analysis. A good model will show significant enrichment in biologically relevant pathways.
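A minimal sketch of the class-specific recall and pathway over-representation steps follows. The over-representation test here is a one-sided hypergeometric test, a common choice for this kind of analysis but an assumption on our part, since the protocol does not name a specific test. Function and argument names are illustrative.

```python
from math import comb

def rare_class_recall(y_true, y_pred, rare_label=1):
    """Recall for the rare class: true positives / all actual positives."""
    tp = sum(1 for t, p in zip(y_true, y_pred)
             if t == rare_label and p == rare_label)
    positives = sum(1 for t in y_true if t == rare_label)
    return tp / positives if positives else float("nan")

def overrepresentation_p_value(n_universe, n_pathway, n_selected, n_overlap):
    """One-sided hypergeometric P(X >= n_overlap).

    n_universe: all annotated items; n_pathway: items in the pathway;
    n_selected: items flagged by the model; n_overlap: flagged items
    that belong to the pathway.
    """
    upper = min(n_pathway, n_selected)
    total = comb(n_universe, n_selected)
    tail = sum(comb(n_pathway, k) * comb(n_universe - n_pathway, n_selected - k)
               for k in range(n_overlap, upper + 1))
    return tail / total
```

A small p-value suggests the flagged set overlaps the pathway more than chance would explain; when many pathways are tested, a multiple-testing correction is still needed.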

Metric Selection Tables

Table 1: Choosing the Right Metric for Your Drug Discovery Task

| Research Task | Recommended Primary Metrics | Metrics to Avoid or Supplement | Rationale |

|---|---|---|---|

| Virtual Screening & Hit ID | Precision-at-K (P@K), Enrichment Factor (EF) | Accuracy, ROC-AUC | Focuses evaluation on the top of the ranking list, which is most critical for selecting compounds for experimental testing [20]. |

| Binding Affinity Prediction | Pearson's R, RMSE, MAE | R² (in isolation) | Pearson's R measures the linear correlation between predicted and experimental values, while RMSE/MAE quantify error magnitude. Always report with confidence intervals [22]. |

| Toxicity & Rare Event Prediction | Rare Event Sensitivity, Precision-Weighted Score | Accuracy, F1 Score (with imbalance) | Directly measures the model's ability to find the "needle in the haystack." F1 can be misleading if the positive class is extremely rare [20]. |

| Lead Optimization | RMSE, MAE | Not specified | During optimization, the absolute error in affinity prediction is key to prioritizing the best candidates [22]. |
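To make the distinction between correlation and error metrics concrete, the sketch below implements Pearson's R, RMSE, and MAE in plain Python. The example values are hypothetical pKd-like numbers chosen so that every prediction is offset by exactly one unit.

```python
from math import sqrt

def pearson_r(x, y):
    """Linear correlation between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (spread_x * spread_y)

def rmse(predicted, observed):
    """Root mean squared error."""
    return sqrt(sum((p - o) ** 2 for p, o in zip(predicted, observed)) / len(observed))

def mae(predicted, observed):
    """Mean absolute error."""
    return sum(abs(p - o) for p, o in zip(predicted, observed)) / len(observed)

# Hypothetical affinities: predictions are systematically one unit too high.
observed = [5.0, 6.0, 7.0, 8.0]
predicted = [6.0, 7.0, 8.0, 9.0]
```

On this toy data the correlation is essentially perfect while RMSE and MAE both equal one unit, which is why the correlation and the error magnitude are worth reporting together.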

Table 2: Quantitative Performance Comparison of Affinity Prediction Models on PDBbind v.2016 Core Set

| Model | Reported Pearson's R | Pearson's R (Trained on CleanSplit) | Key Strength / Weakness |

|---|---|---|---|

| DeepAtom (3D-CNN) [22] | 0.83 | Not reported | Light-weight model; minimal feature engineering. Performance on clean data split not reported. |

| GEMS (GNN) [19] | Not applicable | ~0.82 (State-of-the-art) | Designed and validated on a cleaned dataset (PDBbind CleanSplit), ensuring robust generalization [19]. |

| GenScore [19] | High (~0.8 range) | Marked Drop | Performance heavily inflated by data leakage; drops significantly when trained on a clean dataset [19]. |

| Pafnucy [19] | High (~0.8 range) | Marked Drop | Performance heavily inflated by data leakage; drops significantly when trained on a clean dataset [19]. |

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Computational Evaluation

| Tool / Resource | Function | Relevance to Metric Evaluation |

|---|---|---|

| PDBbind Database [19] [22] | A curated database of protein-ligand complexes with experimental binding affinity data. | The primary benchmark for training and testing binding affinity prediction models. |

| PDBbind CleanSplit [19] | A curated version of PDBbind with minimized data leakage between training and test sets. | Essential for obtaining a genuine estimate of your model's generalization ability to unseen complexes [19]. |

| CASF Benchmark [19] | The Comparative Assessment of Scoring Functions benchmark. | A standard set for evaluating scoring functions; use with caution and in conjunction with CleanSplit to avoid overestimation [19]. |

| Astex Diverse Set [22] | A small, high-quality set of protein-ligand complexes selected for diversity. | Useful as a compact, external validation set to confirm model performance on diverse targets [22]. |

| Normalized Drug Response (NDR) [23] | A drug scoring metric that accounts for cell growth rates and experimental noise using positive and negative controls. | Improves consistency and accuracy in cell-based drug sensitivity screening, leading to more reliable experimental validation data [23]. |

Model Validation and Evaluation Logic

Methodological Arsenal: From AI Generators to Physics-Based Simulations

Generative AI and Active Learning for Cost-Effective Molecule Design

Frequently Asked Questions (FAQs)

Q1: What are the most common reasons a generative model produces invalid or non-synthesizable molecules? This typically stems from issues with the model's training data or its molecular representation. If the training data contains hard-to-synthesize structures or errors, the model will learn to reproduce them. Using a robust molecular representation like SELFIES, which is designed to always produce valid molecular structures, can mitigate invalidity. For synthesizability, integrating a synthetic accessibility (SA) score as a filter within an active learning cycle ensures only realistically makeable molecules are promoted for further optimization [24] [25] [26].

Q2: How can I address the "sparse reward" problem when optimizing for multi-target affinity? The sparse reward problem, where very few generated molecules meet all desired targets, is common in multi-objective optimization. A structured active learning (AL) paradigm is effective here. Instead of a single reward function, use a tiered filtering approach. First, use fast, coarse filters (e.g., for drug-likeness). Then, apply more computationally expensive affinity oracles (e.g., docking) only to molecules that pass the initial chemical filters. This progressively refines the search space and makes learning more efficient [27].

Q3: My model's performance has degraded after several active learning cycles. What could be causing this? This "performance drift" can occur if the model becomes over-specialized on a narrow region of chemical space, losing its ability to generate diverse structures. To combat this, ensure your AL workflow includes explicit diversity checks. Incorporate metrics like molecular similarity to the training set or within the generated batch. Periodically fine-tuning the model not just on the newly selected "hits," but also on a subset of the original, broader training data can help maintain generalizability and prevent catastrophic forgetting [25] [26].

Q4: What is the most computationally expensive part of an AI-driven molecule design workflow, and how can its cost be managed? Physics-based molecular simulations, such as molecular dynamics (MD) for estimating binding residence times or absolute binding free energy (ABFE) calculations, are often the most computationally intensive steps [11] [26]. To manage this cost, use them strategically. Employ a multi-stage workflow where these expensive methods are used only for final candidate validation. Use faster methods like molecular docking for initial, high-volume screening within the AL loops. Emerging methods that use coarse-grained (CG) simulations can also provide a favorable balance between cost and accuracy for ranking compounds [11].

Q5: How can human expertise be integrated into an automated generative AI workflow? Human feedback is irreplaceable for assessing nuanced qualities like "molecular beauty," a holistic view of synthetic practicality, therapeutic potential, and clinical translatability. Technically, this can be implemented via Reinforcement Learning from Human Feedback (RLHF). In this setup, a drug-hunting expert reviews a subset of generated molecules and provides feedback (e.g., rankings or scores), which is then used to fine-tune the generative model's objective function, aligning its outputs more closely with human expert judgment [26].

Troubleshooting Guides

Issue 1: Generative Model Produces Chemically Invalid or Repetitive Structures

Problem: Your generative model outputs a high percentage of chemically impossible molecules, or it is stuck generating very similar structures (mode collapse).

Diagnosis and Solution Steps:

Check Molecular Representation:

- Diagnosis: If you are using SMILES strings, their strict syntactic rules can easily lead to invalid outputs.

- Solution: Switch from SMILES to a SELFIES (Self-Referencing Embedded Strings) representation. SELFIES is designed so that every string corresponds to a valid molecule, drastically reducing invalidity rates [24].

Assess Training Data Diversity:

- Diagnosis: The training dataset may be too small or not diverse enough, leading the model to simply memorize and reproduce its inputs.

- Solution: Curate a larger, more diverse training set. During generation, monitor the internal diversity of the output batch. If diversity drops, adjust the sampling temperature (if available) to encourage exploration or incorporate an explicit diversity penalty into the sampling algorithm [26].

Inspect the Reward Function:

- Diagnosis: In reinforcement learning (RL) setups, an overly narrow reward function can cause mode collapse.

- Solution: Redesign the reward function to be multi-objective. Instead of just optimizing for affinity, include terms for structural diversity, synthetic accessibility, and drug-likeness. This encourages the model to explore a wider area of chemical space [25] [27].
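Two of the remedies above, monitoring batch diversity and using a multi-objective reward, can be sketched as follows. Fingerprints are represented as sets of on-bits, internal diversity is taken as the mean pairwise Tanimoto distance, and the reward is a simple weighted sum. The weights and the requirement that each objective be pre-scaled to [0, 1] are assumptions for illustration, not values from the cited studies.

```python
from itertools import combinations

def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0

def internal_diversity(fingerprints):
    """Mean pairwise Tanimoto distance (1 - similarity) within a batch."""
    pairs = list(combinations(fingerprints, 2))
    if not pairs:
        return 0.0
    return sum(1.0 - tanimoto(a, b) for a, b in pairs) / len(pairs)

def multi_objective_reward(affinity, diversity, synthesizability, drug_likeness,
                           weights=(0.4, 0.2, 0.2, 0.2)):
    """Weighted sum of objectives, each pre-scaled to [0, 1], higher is better."""
    terms = (affinity, diversity, synthesizability, drug_likeness)
    return sum(w * t for w, t in zip(weights, terms))
```

A batch of identical molecules scores 0.0 on internal diversity, so a falling value across generation rounds is an early warning sign of mode collapse.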

Issue 2: Active Learning Workflow is Too Computationally Expensive

Problem: The iterative cycle of generation, evaluation, and model retraining is taking too long or consuming prohibitive computational resources.

Diagnosis and Solution Steps:

Implement a Multi-Fidelity Evaluation Strategy:

- Diagnosis: Using a high-cost, high-accuracy evaluation method (like FEP or MD) on every generated molecule is not scalable.

- Solution: Adopt a tiered (nested) active learning framework. The following workflow illustrates this cost-effective strategy [25]:

Nested active learning workflow for cost efficiency.

Optimize Expensive Simulations:

- Diagnosis: Physics-based simulations are the primary bottleneck.

- Solution: For residence time (RT) estimation, consider using coarse-grained (CG) simulations instead of all-atom (AA) where appropriate. CG simulations can correctly rank congeneric ligand series at a significantly reduced computational cost [11]. Reserve the most accurate (and expensive) methods for the final validation of a handful of top candidates.
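A back-of-the-envelope calculation shows why a tiered strategy pays off. In the sketch below, every stage has a per-molecule cost and a pass fraction; the numbers in the example are hypothetical cost units chosen only to illustrate the arithmetic, not measured timings.

```python
def funnel_cost(n_molecules, stages):
    """Total cost of a tiered evaluation funnel.

    stages: list of (cost_per_molecule, pass_fraction), applied in order.
    Returns (total_cost, number_of_survivors).
    """
    total, remaining = 0.0, n_molecules
    for cost_per_molecule, pass_fraction in stages:
        total += remaining * cost_per_molecule
        remaining = round(remaining * pass_fraction)
    return total, remaining

# Hypothetical costs: cheap filters, medium-cost docking, expensive simulation.
stages = [
    (0.001, 0.10),   # chemoinformatic filters keep 10%
    (1.0, 0.01),     # docking keeps the top 1% of what remains
    (1000.0, 1.0),   # physics-based simulation on the final shortlist
]
tiered_total, survivors = funnel_cost(100_000, stages)
brute_force_total = 100_000 * 1000.0  # simulating every molecule
```

With these illustrative numbers the funnel costs about 110,100 units against 100,000,000 for simulating everything, because the expensive stage only ever sees 100 molecules.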

Issue 3: Generated Molecules Have Good Predicted Affinity but Poor Experimental Performance

Problem: There is a significant disconnect between your in silico predictions (e.g., docking scores) and experimental results in the lab.

Diagnosis and Solution Steps:

Audit Your Affinity Oracle:

- Diagnosis: Molecular docking scores are a coarse proxy for affinity and can be "hacked" by generative AI to produce molecules that score well but are not truly drug-like [26].

- Solution: Do not rely on docking alone. For molecules that pass initial docking, apply more rigorous physics-based validation. This can include shorter MD simulations to check for complex stability or more advanced methods like free energy perturbation (FEP) to calculate binding affinities more accurately [25] [26].

Evaluate Broader Drug-like Properties:

- Diagnosis: The molecules may be binding to the target but have poor ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) properties, causing them to fail in biological assays.

- Solution: Integrate ADMET prediction models early in the active learning cycle. Use fast, predictive models for solubility, permeability, and metabolic stability as filters before molecules even reach the affinity oracle stage. This ensures that optimized compounds have a better overall profile [26] [28].

Experimental Protocols

Protocol 1: Nested Active Learning with a Variational Autoencoder (VAE)

This protocol details a method reported to generate novel, synthesizable molecules with high predicted affinity for targets like CDK2 and KRAS [25].

1. Data Preparation and Model Initialization

- Molecular Representation: Represent all molecules as canonical SMILES strings.

- Tokenization: Tokenize the SMILES strings and convert them into one-hot encoded vectors.

- Initial Training: Train a Seq2Seq VAE on a large, general dataset of drug-like molecules (e.g., ZINC). This teaches the model the "grammar" of chemistry.

- Target-Specific Fine-tuning: Fine-tune the pre-trained VAE on a smaller, target-specific dataset of known actives.

2. Nested Active Learning Cycles

The core of the protocol involves two nested feedback loops: an "Inner" chemical cycle and an "Outer" affinity cycle [25].

Nested active learning cycles for balanced exploration and optimization.

Inner AL Cycle (Chemical Optimization):

- Step 1: Sample the VAE to generate a large batch of new molecules.

- Step 2: Filter these molecules using fast chemoinformatic oracles:

- Remove molecules with undesired structural motifs.

- Apply thresholds for drug-likeness (e.g., Lipinski's Rule of Five).

- Apply a synthetic accessibility (SA) score threshold.

- Step 3: Promote molecules that are dissimilar from those already selected to maintain diversity.

- Step 4: Add the molecules that pass all filters to a "temporal-specific set."

- Step 5: Fine-tune the VAE on this temporal set. Repeat for a fixed number of iterations (e.g., 3).
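The filtering in Step 2 of the inner cycle can be sketched on precomputed descriptors. The Rule-of-Five limits (molecular weight 500 Da, logP 5, 5 hydrogen-bond donors, 10 acceptors) are the standard ones; the dictionary keys, the tolerance of one violation, and the SA-score cutoff of 4.0 (on the usual 1 = easy to 10 = hard scale) are assumptions for this example rather than values from the protocol.

```python
def lipinski_violations(desc):
    """Count Rule-of-Five violations from precomputed descriptors."""
    return sum((
        desc["mol_weight"] > 500,   # molecular weight in Da
        desc["logp"] > 5,           # octanol-water partition coefficient
        desc["h_donors"] > 5,       # hydrogen-bond donors
        desc["h_acceptors"] > 10,   # hydrogen-bond acceptors
    ))

def passes_inner_filters(desc, banned_motifs=frozenset(),
                         max_violations=1, max_sa_score=4.0):
    """Apply the three fast filters: motifs, drug-likeness, synthesizability."""
    if banned_motifs & set(desc.get("motifs", ())):
        return False
    if lipinski_violations(desc) > max_violations:
        return False
    return desc["sa_score"] <= max_sa_score
```

In practice the descriptors would come from a cheminformatics toolkit; keeping the filter independent of any particular toolkit makes the thresholds easy to audit and adjust.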

Outer AL Cycle (Affinity Optimization):

- Step 1: After the inner cycles, take the accumulated molecules from the temporal set.

- Step 2: Evaluate them using a more expensive affinity oracle, such as molecular docking.

- Step 3: Promote the top-scoring molecules that meet a predefined docking score threshold.

- Step 4: Transfer these molecules to a "permanent-specific set."

- Step 5: Fine-tune the VAE on this permanent set. This macro cycle then repeats, returning to the inner cycles for further exploration.
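The control flow of the two nested cycles can be sketched as below. Only the loop structure follows the protocol; the generator, filters, docking oracle, and fine-tuning step are passed in as callables, and the toy stand-ins that treat molecules as integers are purely hypothetical. The diversity promotion step is simplified here to exact-duplicate removal.

```python
import random

def nested_active_learning(generate, chem_filter, affinity_oracle, fine_tune,
                           n_outer=2, n_inner=3, batch_size=200,
                           dock_threshold=-8.0):
    """Control flow of the nested cycles; every callable is caller-supplied."""
    permanent_set = []
    for _ in range(n_outer):
        temporal_set = []
        for _ in range(n_inner):
            # Inner cycle: cheap chemoinformatic filters only.
            for mol in generate(batch_size):
                if chem_filter(mol) and mol not in temporal_set:
                    temporal_set.append(mol)
            fine_tune(temporal_set)
        # Outer cycle: the expensive oracle sees only pre-filtered molecules.
        for mol in temporal_set:
            if affinity_oracle(mol) <= dock_threshold and mol not in permanent_set:
                permanent_set.append(mol)
        fine_tune(permanent_set)
    return permanent_set

# Toy stand-ins (hypothetical): molecules are integers, not real structures.
rng = random.Random(0)
oracle_calls = []
fine_tune_calls = []

def toy_generate(n):
    return [rng.randrange(1000) for _ in range(n)]

def toy_chem_filter(mol):
    return mol % 2 == 0              # pretend even numbers are drug-like

def toy_docking(mol):
    oracle_calls.append(mol)         # record how often the oracle is used
    return -float(mol % 10) - 1.0    # pretend docking score, lower is better

hits = nested_active_learning(toy_generate, toy_chem_filter, toy_docking,
                              fine_tune_calls.append)
```

Recording `oracle_calls` makes the cost argument visible: the docking stand-in is never invoked on a molecule that failed the chemical filters.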

3. Candidate Selection and Validation

- After completing the AL cycles, select top candidates from the permanent set.

- Subject these to more rigorous physics-based validation, such as binding free energy calculations (e.g., FEP, ABFE) or advanced sampling simulations (e.g., Monte Carlo-based PELE or molecular dynamics) to refine poses and assess stability [25].

- Select the final molecules for synthesis and experimental testing.

Protocol 2: Multi-Target Inhibitor Design with Structured AL

This protocol extends the nested AL concept to design molecules that inhibit multiple related targets (e.g., pan-inhibitors for viral proteases) [27].

1. Workflow Setup

- Model: Use a Sequence-to-Sequence (Seq2Seq) VAE.

- Training: Pre-train on a general molecule dataset. Fine-tune on a "fixed specific dataset" containing molecules with known affinity for any of the multiple targets (does not require affinity for all simultaneously).

2. Two-Level Active Learning Workflow

- Level 1: Chemical AL Cycle

- Run for n iterations (e.g., 2-3).

- Generate molecules and filter based on physicochemical properties and structural alerts.

- Fine-tune the VAE on the accumulated molecules to bias generation toward drug-like chemical space.

- Level 2: Affinity AL Cycle

- Run after Chemical AL.

- Evaluate all accumulated molecules for simultaneous predicted affinity to all targets (e.g., multi-target docking).

- Filter and keep only molecules that meet the affinity threshold for all targets.

- Fine-tune the VAE on this multi-target active set.

This two-level approach sequentially tackles the problem, first ensuring chemical quality and then layering on the complex multi-target constraint, making the sparse reward problem more tractable [27].
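The affinity-level filter, which keeps only molecules that meet the threshold for every target, reduces to a logical AND over per-target scores. The sketch below assumes docking-style scores where lower is better; the target names, scores, and thresholds used to exercise it are hypothetical.

```python
def passes_all_targets(scores_by_target, thresholds):
    """True only if the score meets the threshold for every target.

    Lower (more negative) scores are assumed to be better.
    """
    return all(scores_by_target[target] <= cutoff
               for target, cutoff in thresholds.items())

def select_multi_target(candidates, thresholds):
    """Return the names of candidates that satisfy all target thresholds."""
    return [name for name, scores in candidates.items()
            if passes_all_targets(scores, thresholds)]
```

Because a single weak target disqualifies a molecule, the surviving set shrinks quickly as targets are added, which is the sparse reward problem in miniature.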

Performance Data and Metrics

The tables below summarize key quantitative findings from recent studies, providing benchmarks for success and computational cost.

Table 1: Experimental Validation Results of AI-Designed Molecules

| Target | Generative Platform / Workflow | Key Experimental Outcome | Reported Timeline/Efficiency |

|---|---|---|---|

| CDK2 & KRAS | VAE with Nested Active Learning [25] | For CDK2: 9 molecules synthesized, 8 showed in vitro activity, 1 with nanomolar potency. | Workflow successfully generated novel, synthesizable scaffolds. |

| Idiopathic Pulmonary Fibrosis | Insilico Medicine's Generative AI Platform [29] | AI-designed molecule (ISM001-055) reached Phase IIa trials with positive results. | Target to Phase I trials achieved in ~18 months (versus ~5 years traditional). |

| Multiple (e.g., Oncology) | Exscientia's Centaur Chemist [29] | Multiple AI-designed molecules entered clinical trials. | In silico design cycles ~70% faster, requiring 10x fewer synthesized compounds. |

Table 2: Computational Cost and Efficiency of Different Methods

| Computational Method | Typical Application | Relative Computational Cost | Key Consideration |

|---|---|---|---|

| Molecular Docking | High-throughput affinity screening | Low | Fast but can be inaccurate; prone to exploitation by AI [26]. |

| Free Energy Perturbation (FEP) | Accurate binding affinity prediction | Very High | High accuracy but prohibitive for screening large libraries; best for final validation [26]. |

| All-Atom (AA) Molecular Dynamics | Residence time estimation, stability | Very High | Can bridge scales from nanoseconds to seconds, but computationally intensive [11]. |

| Coarse-Grained (CG) Simulations | Relative ranking of ligand series | Medium | Correctly ranks ligands at significantly reduced cost vs. AA [11]. |

| Active Learning (AL) Workflow | Full molecule design cycle | Variable | Total cost depends on oracle expense; a nested strategy can reduce cost by 30-40% [30] [25]. |

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Software and Computational Tools for Generative Molecule Design

| Tool / Reagent | Function / Purpose | Relevance to Cost-Accuracy Balance |

|---|---|---|

| VAE (Variational Autoencoder) | Generative model that learns a continuous, interpretable latent space of molecules. | Enables smooth exploration and interpolation; faster sampling than some other models, suitable for integration with AL [25]. |

| SELFIES | Molecular string representation where every string is guaranteed to be a valid molecule. | Reduces computational waste on invalid structures, improving overall workflow efficiency [24]. |

| Synthetic Accessibility (SA) Score | A predictive score estimating the ease of synthesizing a given molecule. | A critical filter to avoid generating molecules that are impractical or too expensive to make, guiding AI toward realistic designs [25] [26]. |

| Molecular Docking Software | Predicts the binding pose and score of a small molecule within a protein's binding site. | A medium-cost oracle for affinity used in intermediate AL stages to screen large libraries before applying more expensive methods [25] [27]. |

| Free Energy Perturbation (FEP) | A physics-based method for calculating relative binding free energies with high accuracy. | A high-cost, high-accuracy validation tool. Used sparingly on final candidates to ensure predictive success before synthesis [26]. |

| Coarse-Grained (CG) Simulation | A simplified simulation model that reduces computational cost by grouping atoms. | Provides a middle-ground for tasks like residence time estimation, offering better accuracy than docking at lower cost than all-atom MD [11]. |

Troubleshooting Guides and FAQs

This section addresses common technical challenges researchers face when performing ultra-large virtual screening (ULVS) and provides practical solutions grounded in current methodologies.

FAQ 1: My virtual screening hits are not showing activity in experimental validation. How can I improve the selection of true binders?

- Problem: The primary challenge is the accuracy of the scoring function to distinguish true binders from non-binders, which is a key factor for the success of virtual screening [31].

- Solutions:

- Implement a Multi-Stage Docking Protocol: Use a two-tiered approach. Start with a fast, less accurate docking mode (e.g., VSX in RosettaVS) to screen the entire library, then re-dock the top hits with a high-precision mode (e.g., VSH) that incorporates full receptor flexibility for final ranking [31].

- Incorporate Receptor Flexibility: Many docking programs fail to model protein flexibility, leading to inaccurate pose and affinity predictions. Employ methods like RosettaVS that allow for flexible side chains and limited backbone movement to better model induced fit upon ligand binding [31].

- Use Advanced Scoring Functions: Move beyond standard scoring functions. For instance, the RosettaGenFF-VS force field combines enthalpy calculations with a model estimating entropy changes (∆S) upon ligand binding, which has demonstrated superior performance in benchmarks for identifying native binding poses and early enrichment of true positives [31].

- Apply Post-Docking Filters: Use additional criteria like chemical property filters, similarity to known active compounds, or synthetic accessibility to further refine the hit list after docking [32].
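The multi-stage idea above (a fast pass over everything, then a precise pass over the best) can be expressed generically. The sketch does not call RosettaVS or any docking program: `fast_score` and `precise_score` are caller-supplied functions standing in for modes such as VSX and VSH, and lower scores are assumed to be better.

```python
def two_stage_screen(library, fast_score, precise_score,
                     keep_top=1000, final_top=10):
    """Rank everything with the cheap scorer, then re-score only the best.

    Returns the `final_top` compounds according to the expensive scorer,
    which is evaluated on at most `keep_top` compounds.
    """
    shortlist = sorted(library, key=fast_score)[:keep_top]
    return sorted(shortlist, key=precise_score)[:final_top]
```

The choice of `keep_top` is the main trade-off: too small and true binders that the fast scorer misranks are lost before the precise stage, too large and the cost savings disappear.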

FAQ 2: The computational cost of screening a multi-billion compound library is prohibitive. What strategies can reduce this burden?

- Problem: Performing physics-based docking on billions of compounds is extremely time-consuming and resource-intensive [31].

- Solutions:

- Leverage Active Learning: Integrate target-specific neural networks that learn during the docking process. These models can triage and select the most promising compounds for expensive docking calculations, drastically reducing the number of molecules that need full docking simulation [31].

- Utilize High-Performance Computing (HPC) and Cloud Resources: Platforms like VirtualFlow are designed for highly parallelized screening on large computer clusters, offering perfect scaling behavior to efficiently handle ultra-large libraries [33].

- Adopt a Hierarchical Screening Workflow: Do not dock every molecule. Use fast ligand-based similarity searches (e.g., using ROCS) or pharmacophore models to create a focused subset of the library before proceeding to more computationally expensive structure-based docking [34] [32].

- Employ GPU Acceleration: Use docking programs and platforms optimized for graphics processing units (GPUs) to significantly speed up calculations [31].

FAQ 3: How can I ensure my virtual screening campaign explores novel chemical space and does not just rediscover known chemotypes?

- Problem: Over-reliance on known ligand scaffolds can limit the structural diversity of discovered hits [35].

- Solutions:

- Screen Ultra-Large and Diverse Libraries: Use commercially accessible libraries that contain billions of synthesizable compounds, such as the Enamine REAL space, which provide access to vast and unprecedented regions of chemical space [35] [33] [32].

- Apply Generative AI for Library Expansion: Use generative deep learning models or algorithms like STONED to create novel molecular structures from known active compounds. This imposes random structural mutations to generate a diverse library of "child" molecules for screening, balancing randomness with domain knowledge [24] [36].

- Combine Ligand- and Structure-Based Methods: If known active ligands are available, use 3D ligand-based screening (e.g., with Blaze or ROCS) to find bioisosteric replacements that are chemically distinct but share similar three-dimensional shape and electrostatics, helping to escape IP-congested chemical space [32].

FAQ 4: What are the best practices for preparing a protein target structure for an ultra-large virtual screen?

- Problem: The quality of the input protein structure is critical for the success of structure-based virtual screening.

- Solutions:

- Select the Right Structure: Prefer high-resolution X-ray crystallographic structures. If the target has multiple conformational states, choose one that is relevant for ligand binding or consider using multiple structures for screening.

- Model Missing Data: Use computational modeling to fill gaps in the experimental structure, such as missing loops or side chains, and to build a greater understanding of the target system. Tools within platforms like Cresset's Flare can assist with this [32].

- Define the Binding Site Carefully: Accurately identify the active site region for docking. If the binding site is unknown, "blind docking" approaches can be used, though they are more challenging [31].
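When a co-crystallized ligand is available, a common way to define the docking region is a box around the ligand's atoms plus a margin. The sketch below shows that arithmetic only. The 5 Å padding and the coordinates are illustrative assumptions, and `binding_box` is a hypothetical helper rather than part of any docking package.

```python
def binding_box(ligand_coords, padding=5.0):
    """Return (center, size) of an axis-aligned box around ligand atoms.

    ligand_coords: iterable of (x, y, z) tuples in angstroms.
    padding: margin added on every side of the ligand (assumed value).
    """
    xs, ys, zs = zip(*ligand_coords)
    mins = (min(xs), min(ys), min(zs))
    maxs = (max(xs), max(ys), max(zs))
    center = tuple((lo + hi) / 2 for lo, hi in zip(mins, maxs))
    size = tuple((hi - lo) + 2 * padding for lo, hi in zip(mins, maxs))
    return center, size


# Made-up coordinates for three ligand atoms.
coords = [(10.0, 4.0, -2.0), (14.0, 6.0, 0.0), (12.0, 8.0, 2.0)]
center, size = binding_box(coords)
print(center, size)  # (12.0, 6.0, 0.0) (14.0, 14.0, 14.0)
```

The resulting center and edge lengths are the kind of values most docking programs expect when a search region is specified. Check the exact parameter names in the documentation of the program in use.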

Key Experimental Protocols and Workflows

This section outlines detailed methodologies for setting up and executing an ultra-large virtual screening campaign, summarizing key quantitative data for comparison.

Protocol: An AI-Accelerated Virtual Screening Workflow (OpenVS)

This protocol, adapted from a study in Nature Communications, describes a workflow for screening multi-billion compound libraries against a defined protein target in under seven days [31].

Table 1: Key Steps in the AI-Accelerated ULVS Workflow

| Step | Description | Key Parameters & Considerations |
|---|---|---|
| 1. Library Preparation | Obtain a ready-to-dock library (e.g., ZINC, Enamine REAL). Pre-process compounds: generate 3D conformations, assign protonation states, and apply energy minimization. | Library size can exceed 1 billion compounds. Pre-processing ensures structural correctness for docking [33]. |
| 2. Target Preparation | Prepare the protein structure: add hydrogens, assign partial charges, and optimize side-chain conformations. Define the binding site coordinates. | Use a high-resolution structure. Modeling receptor flexibility at this stage is crucial for accuracy [31] [32]. |
| 3. Active Learning Screening | Use the OpenVS platform. A target-specific neural network is trained on-the-fly to select promising compounds for docking with RosettaVS. The process starts with a fast VSX mode. | This step drastically reduces the number of compounds requiring full docking, saving computational resources [31]. |
| 4. High-Precision Docking | The top-ranked compounds from the initial screen (e.g., 0.1-1%) are re-docked using the high-precision VSH mode of RosettaVS, which includes full receptor flexibility. | VSH provides more accurate pose and affinity predictions but is computationally more expensive [31]. |
| 5. Hit Identification & Analysis | Rank the final compounds using the improved RosettaGenFF-VS scoring function. Apply post-filtering based on chemical properties, diversity, and synthesizability. | The final output is a manageable list of top candidates (tens to hundreds) for experimental validation [31] [32]. |

AI-Accelerated ULVS Workflow Diagram: This workflow uses active learning to efficiently triage a large library before more computationally intensive docking stages.
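The active-learning triage in step 3 can be illustrated with a deliberately simple loop: dock a random starting batch, fit a surrogate on the docked compounds, and dock only the compounds the surrogate ranks best. This is a sketch under stated assumptions, not the OpenVS implementation. A 1-nearest-neighbour predictor stands in for the target-specific neural network, `toy_dock` stands in for a docking run, and the feature vectors are random.

```python
import random


def sq_dist(a, b):
    """Squared Euclidean distance between two feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def active_learning_screen(library, dock, n_init=20, batch=20, rounds=5, seed=0):
    """Dock a small, surrogate-selected subset of `library` (lower score = better)."""
    rng = random.Random(seed)
    undocked = set(range(len(library)))
    docked = {}  # library index -> docking score

    def dock_batch(indices):
        for i in indices:
            docked[i] = dock(library[i])
            undocked.discard(i)

    def predict(i):
        # Surrogate (assumption): score of the nearest already-docked compound.
        nearest = min(docked, key=lambda j: sq_dist(library[i], library[j]))
        return docked[nearest]

    # Round 0: random batch to seed the surrogate.
    dock_batch(rng.sample(sorted(undocked), min(n_init, len(library))))
    for _ in range(rounds):
        if not undocked:
            break
        ranked = sorted(undocked, key=predict)  # best predicted first
        dock_batch(ranked[:batch])
    return docked


# Toy problem (assumption): 2-D features with a hidden optimum at (0.7, 0.2).
dock_calls = {"n": 0}


def toy_dock(features):
    dock_calls["n"] += 1
    return (features[0] - 0.7) ** 2 + (features[1] - 0.2) ** 2


_rng = random.Random(1)
toy_library = [(_rng.random(), _rng.random()) for _ in range(500)]
results = active_learning_screen(toy_library, toy_dock)
best = min(results, key=results.get)
print(f"docked {len(results)} of {len(toy_library)} compounds; best score {results[best]:.4f}")
```

Only the docked subset incurs the expensive calculation. In a real campaign the surrogate would be retrained each round on learned molecular representations, and the batch size would be tuned to the available compute.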

Protocol: A Generative HTVS Workflow for Novel Emitter Design

This protocol, derived from a Journal of Materials Chemistry C paper, uses a generative approach to create a screening library, which is also highly applicable to drug discovery [36].

Table 2: Key Steps in the Generative HTVS Workflow

| Step | Description | Key Parameters & Filters |
|---|---|---|
| 1. Library Generation | Apply the STONED algorithm to known active "parent" molecules. This performs random point mutations on SELFIES strings to generate thousands of novel "child" molecules. | 2000 child molecules per parent. SELFIES representation guarantees 100% molecular validity [36]. |
| 2. Initial Filtering | Apply rudimentary filters to remove undesirable structures. | Remove open-shell molecules, molecules with ring sizes other than 5 or 6, molecules with <30 atoms, and molecules with low structural similarity (Tanimoto <0.25) to parents [36]. |
| 3. Synthesizability Screening | Evaluate the synthetic accessibility of the remaining candidates. | Use scores like RAscore to filter out molecules that are likely very difficult to synthesize [24] [36]. |
| 4. Geometry Optimization | Perform initial molecular mechanics geometry optimizations, followed by more accurate DFT geometry optimizations. | This step ensures the molecules are in a stable, low-energy conformation for property calculation [36]. |
| 5. Property Prediction | Use Time-Dependent DFT (TDDFT) calculations to predict key electronic properties relevant to the target (e.g., ΔEST for TADF emitters). | This is the most computationally intensive step and acts as the primary filter for identifying promising hits [36]. |

Generative HTVS Workflow Diagram: This workflow starts by generating a novel chemical library from known actives before applying a funnel of successive filters.
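Steps 1 and 2 of this funnel (random point mutations followed by a similarity cut-off) can be illustrated on a toy token sequence. The sketch below is not the STONED implementation and does not use the SELFIES library. The five-token alphabet is invented, and the similarity measure is a Tanimoto coefficient over token bigrams, a crude stand-in for a fingerprint-based Tanimoto. Only the 0.25 threshold is taken from the table.

```python
import random

ALPHABET = ["[C]", "[N]", "[O]", "[=C]", "[F]"]  # hypothetical toy alphabet


def mutate(tokens, rng):
    """Apply one random point mutation: replace, insert, or delete a token."""
    child = list(tokens)
    op = rng.choice(["replace", "insert", "delete"])
    pos = rng.randrange(len(child))
    if op == "replace":
        child[pos] = rng.choice(ALPHABET)
    elif op == "insert":
        child.insert(pos, rng.choice(ALPHABET))
    elif len(child) > 1:  # never delete the last remaining token
        del child[pos]
    return child


def bigrams(tokens):
    return {(a, b) for a, b in zip(tokens, tokens[1:])}


def similarity(a, b):
    """Tanimoto coefficient over token bigrams (stand-in for fingerprint similarity)."""
    x, y = bigrams(a), bigrams(b)
    union = len(x | y)
    return len(x & y) / union if union else 0.0


def generate_children(parent, n_children=200, n_mutations=2, min_similarity=0.25, seed=0):
    """Mutate the parent repeatedly; keep unique children above the similarity cut-off."""
    rng = random.Random(seed)
    parent = tuple(parent)
    children = set()
    for _ in range(n_children):
        child = list(parent)
        for _ in range(n_mutations):
            child = mutate(child, rng)
        child = tuple(child)
        if child != parent and similarity(child, parent) >= min_similarity:
            children.add(child)
    return sorted(children)


parent_tokens = ["[C]", "[C]", "[=C]", "[N]", "[C]", "[O]", "[C]", "[F]"]
children = generate_children(parent_tokens)
print(len(children), "unique children kept;", "".join(children[0]))
```

With real SELFIES strings every mutated string decodes to a valid molecule, which is what makes this kind of blind mutation practical. The toy tokens here carry no such guarantee.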

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Software and Library Solutions for Ultra-Large Virtual Screening

| Tool / Resource Name | Type | Function in ULVS |
|---|---|---|
| VirtualFlow [33] | Open-Source Platform | A highly automated, open-source platform for preparing and screening ultra-large ligand libraries on computing clusters, reported to scale approximately linearly with the number of CPU cores. It can use various docking programs. |
| OpenVS / RosettaVS [31] | Open-Source Docking Method & Platform | A state-of-the-art, physics-based virtual screening method (RosettaVS) within an open-source platform (OpenVS). It uses an improved force field (RosettaGenFF-VS) and models receptor flexibility. |
| Orion & Gigadock [34] | Commercial Software Suite | Provides scalable solutions for billion-compound-scale docking (Gigadock) and fast ligand-based screening (ROCS), along with access to vast, ready-to-screen commercial compound libraries. |
| Cresset Blaze & Flare [32] | Commercial Software Suite | Offers ligand-based virtual screening (Blaze) for finding bioisosteric replacements and structure-based screening (Flare Docking), including solutions for ultra-large libraries (Ignite). |
| Enamine REAL Space [33] [32] | Commercially Accessible Compound Library | A make-on-demand chemical space containing billions of synthesizable molecules; prepared ready-to-dock versions are among the largest freely available ligand libraries for screening. |
| STONED Algorithm [36] | Generative Algorithm | Generates a diverse library of novel molecular structures by applying random mutations to the SELFIES strings of known parent molecules. |

Technical Support Center: Troubleshooting Hybrid AI-Physics Methods in Drug Design

This support center provides practical guidance for researchers integrating artificial intelligence (AI) with physics-based models in drug discovery. The following FAQs address common experimental challenges, focusing on balancing computational cost and accuracy.

Frequently Asked Questions

FAQ 1: How can we improve the target engagement and synthetic accessibility of molecules generated by AI models?

Answer: This is a common challenge where generative models (GMs) produce molecules with high predicted affinity but low practical utility. Implement a nested active learning (AL) framework to iteratively refine the AI's output.

- Recommended Protocol: Integrate a Variational Autoencoder (VAE) with two AL cycles [25].

- Inner AL Cycle: Use chemoinformatics oracles (filters) to evaluate generated molecules for drug-likeness and synthetic accessibility (SA). Retrain the VAE with molecules that pass these filters.

- Outer AL Cycle: Use physics-based molecular modeling oracles (e.g., molecular docking scores) to evaluate binding affinity. Retrain the VAE with molecules that show high predicted affinity.

- Expected Outcome: This workflow guides the GM to explore novel chemical spaces while ensuring generated molecules are synthesizable and have high target engagement. One study reported the synthesis of 9 CDK2-targeted molecules using this method, with 8 showing in vitro activity [25].
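The control flow of the nested cycles can be sketched as two loops around a generator. This skeleton is illustrative only. `ToyGenerator` samples a single number in place of a VAE decoding molecules, `passes_chem_filters` stands in for the drug-likeness and SA oracles, and `docking_score` stands in for a docking run. All names, thresholds, and cycle counts are assumptions.

```python
import random
import statistics


class ToyGenerator:
    """Stand-in for a VAE: samples one number per 'molecule' around a learned mean."""

    def __init__(self, mean=2.0, spread=2.0, seed=0):
        self.mean = mean
        self.spread = spread
        self.rng = random.Random(seed)

    def sample(self, n):
        return [self.rng.gauss(self.mean, self.spread) for _ in range(n)]

    def retrain(self, molecules):
        # 'Retraining' here just re-centres the sampler on the retained molecules.
        if molecules:
            self.mean = statistics.fmean(molecules)


def passes_chem_filters(x):
    """Inner-cycle oracle (assumption): cheap drug-likeness / SA check."""
    return 0.0 <= x <= 10.0


def docking_score(x):
    """Outer-cycle oracle (assumption): lower is better, optimum at 8.0."""
    return abs(x - 8.0)


def nested_active_learning(generator, n_outer=5, n_inner=3, n_samples=200, cutoff=1.0):
    hits = []
    for _ in range(n_outer):
        pool = []
        for _ in range(n_inner):
            # Inner cycle: generate, apply cheap filters, retrain on what passes.
            passed = [c for c in generator.sample(n_samples) if passes_chem_filters(c)]
            generator.retrain(passed)
            pool.extend(passed)
        # Outer cycle: expensive oracle on the accumulated pool, retrain on the best.
        good = [c for c in pool if docking_score(c) <= cutoff]
        generator.retrain(good)
        hits.extend(good)
    return hits


generator = ToyGenerator()
hits = nested_active_learning(generator)
print(f"{len(hits)} hits; generator mean drifted from 2.0 to {generator.mean:.2f}")
```

The point of the nesting is cost: the cheap oracle runs on every generated batch, while the expensive oracle runs once per outer cycle on molecules that have already passed the cheap filters.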

FAQ 2: Our AI model performs well on training data but generalizes poorly to novel chemical scaffolds. What strategies can help?

Answer: This "applicability domain" problem often stems from over-reliance on a single type of model or data. A hybrid approach improves generalization.

- Recommended Protocol: Combine data-driven AI with physics-based simulation for validation [37] [25].

- Use generative AI or other ML models for initial, high-throughput screening of vast chemical spaces.

- Apply physics-based methods like molecular dynamics (MD) simulations or free energy perturbation (FEP) to a shortlist of candidates for a more reliable, mechanistic evaluation of binding.

- Rationale: AI models excel at rapid interpolation within known data spaces, while physics-based methods are superior for extrapolating to novel structures because they are based on fundamental physical principles [38] [37]. This balances speed with accuracy.
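A simple way to act on this rationale is an applicability-domain check: if a query molecule has no sufficiently similar neighbour in the training set, route it to the physics-based method instead of trusting the ML prediction. The sketch below assumes fingerprints are given as sets of on-bit indices. The 0.4 threshold, the bit sets, and the `route` helper are illustrative assumptions.

```python
def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto similarity between two sets of fingerprint on-bits."""
    inter = len(a & b)
    union = len(a) + len(b) - inter
    return inter / union if union else 0.0


def route(query_fp, training_fps, min_similarity=0.4):
    """Return 'ml' if the query is inside the applicability domain, else 'physics'."""
    nearest = max((tanimoto(query_fp, fp) for fp in training_fps), default=0.0)
    return "ml" if nearest >= min_similarity else "physics"


# Toy fingerprints (assumption): small integer sets instead of real bit vectors.
training_fps = [frozenset({1, 2, 3, 4}), frozenset({2, 3, 4, 5})]
queries = {
    "analog": frozenset({1, 2, 3, 5}),      # nearest-neighbour similarity 0.6
    "novel_scaffold": frozenset({7, 8, 9}),  # nearest-neighbour similarity 0.0
}
decisions = {name: route(fp, training_fps) for name, fp in queries.items()}
print(decisions)  # {'analog': 'ml', 'novel_scaffold': 'physics'}
```

Nearest-neighbour similarity is only one of several possible applicability-domain measures. The threshold should be calibrated against held-out scaffolds rather than taken from this example.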

FAQ 3: What is the most computationally efficient way to leverage AI for predicting molecular properties during early-stage screening?

Answer: For early-stage screening where throughput is critical, traditional machine learning (ML) models offer a favorable balance of performance and computational cost.

- Recommended Protocol:

- Model Selection: Use traditional models like XGBoost or Random Forest for property prediction tasks (e.g., logP, solubility) [39].

- Data Representation: Employ standard molecular descriptors or fingerprints as model inputs.

- Justification: While deep learning models may achieve slightly higher accuracy, traditional ML models deliver strong performance with significantly lower inference latency and computational resource requirements, making them ideal for large-scale virtual screening [39].

FAQ 4: How can we address the 'black box' nature of complex AI models to ensure regulatory acceptance in drug development?

Answer: Model interpretability is crucial for regulatory trust and scientific insight. A multi-faceted strategy is required.

- Recommended Protocol:

- Use Inherently Interpretable Models: For specific tasks, use models like Logistic Regression that provide clear feature importance (e.g., molecular descriptors) [39].

- Implement Explainable AI (XAI) Techniques: Apply post-hoc interpretation methods to complex models like deep neural networks to highlight which structural features drove a prediction.

- Maintain a Human-in-the-Loop: Ensure that medicinal chemists and domain experts interpret AI outputs and guide the discovery process [37] [40]. Regulatory guidance, such as the FDA's 2025 draft guidance, emphasizes the need to understand AI model behavior in the context of final product safety [37].

Performance and Cost Benchmarking of AI Models

The table below summarizes the trade-offs between different AI model types to help you select the right tool for your project's needs [39].

Table 1: Model Performance and Computational Cost Benchmark for a Regulatory Classification Task

| Model Category | Example Models | Key Strength | Computational Cost & Speed |
|---|---|---|---|
| Traditional ML | XGBoost, Random Forest, Logistic Regression | Strong accuracy with high interpretability (especially Logistic Regression) | Low computational cost; fast inference latency |
| Deep Learning | CNNs (Convolutional Neural Networks) | High classification accuracy | Modest computational resources required |
| Large Language Models (LLMs) | Transformer-based Models (e.g., GPT) | Natural language explanations for decisions | High computational cost; significantly slower inference |
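Per-molecule inference latency matters because it is multiplied by the library size. The arithmetic below makes that explicit. The latencies and worker count are placeholder values chosen for illustration, not measurements from the benchmark in [39], and `wall_clock_hours` is a hypothetical helper that assumes ideal parallel scaling.

```python
def wall_clock_hours(n_molecules, seconds_per_molecule, n_workers):
    """Idealised wall-clock time, assuming perfectly parallel, equal-cost predictions."""
    return n_molecules * seconds_per_molecule / n_workers / 3600.0


LIBRARY_SIZE = 1_000_000_000  # one billion compounds
WORKERS = 100                 # placeholder value

# Placeholder latencies (assumptions): 1 ms vs 1 s per molecule.
fast_hours = wall_clock_hours(LIBRARY_SIZE, 0.001, WORKERS)
slow_hours = wall_clock_hours(LIBRARY_SIZE, 1.0, WORKERS)

print(f"fast model: {fast_hours:.1f} h")
print(f"slow model: {slow_hours:.0f} h (about {slow_hours / 24:.0f} days)")
```

Under these assumed numbers a thousand-fold difference in latency turns a screen of a few hours into one of several months, which is why low-latency models are usually preferred for the first pass over a very large library.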

Experimental Protocol: Implementing a Hybrid VAE-Active Learning Workflow

This protocol details the methodology for integrating a generative AI model with physics-based active learning, as referenced in FAQ 1 [25].

Objective: To generate novel, drug-like, and synthesizable molecules with high predicted affinity for a specific protein target.

Required Research Reagent Solutions:

Table 2: Essential Tools and Materials for the Hybrid Workflow

| Item Name | Function / Explanation |

|---|---|

| Variational Autoencoder (VAE) | A generative AI model that learns a continuous latent space of molecular structures, enabling the generation of novel molecules. |

| Chemotion ELN | An electronic lab notebook for managing and curating the initial target-specific and generated compound datasets. |

| RDKit | An open-source chemoinformatics toolkit used to calculate drug-likeness (e.g., Lipinski's Rule of 5) and synthetic accessibility scores. |

| Molecular Docking Software (e.g., AutoDock Vina, GOLD) | A physics-based oracle used in the Outer AL cycle to predict the binding pose and affinity of generated molecules to the target protein. |