Selecting Molecular Descriptors for QSAR: A Strategic Guide from Foundations to AI-Enhanced Validation

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the critical process of selecting molecular descriptors for Quantitative Structure-Activity Relationship (QSAR) modeling. It covers the foundational principles of molecular descriptors, from 1D physicochemical properties to 4D conformational ensembles and AI-generated deep descriptors. The piece delves into methodological strategies, including variable selection techniques and the impact of descriptor choice on model interpretability. It further addresses common troubleshooting scenarios, such as managing high-dimensional data and defining the model's applicability domain. Finally, the article synthesizes modern validation paradigms, comparing classical and machine learning approaches, and emphasizes the necessity of rigorous external validation and adherence to OECD principles for developing robust, predictive QSAR models in drug discovery.

The Building Blocks of QSAR: Understanding Molecular Descriptor Types and Their Fundamental Roles

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between a 1D and a 4D molecular descriptor? The core difference lies in the complexity of the molecular representation they capture. A 1D descriptor typically represents global, whole-molecule properties that do not require structural or connectivity information, such as molecular weight or atom counts [1] [2]. In contrast, a 4D descriptor incorporates the dimension of time and interaction fields, often derived from molecular dynamics simulations or the placement of a molecule within a 3D grid to probe its interactions with a receptor site, providing information on specific, conformation-dependent interactions [1] [2].

Q2: My QSAR model is overfitting. How can my choice of descriptors contribute to this, and how can I address it? Overfitting often occurs when the number of descriptors is too large relative to the number of compounds in your dataset, or when descriptors are highly correlated [3]. To address this:

- Apply Feature Selection: Use techniques like genetic algorithms (wrapper methods) or LASSO regression (embedded methods) to identify and retain only the most relevant descriptors [3].

- Start Simpler: Consider beginning your modeling process with simpler, more interpretable 1D or 2D descriptors, which can be just as predictive as 3D/4D descriptors for many endpoints and are less computationally intensive [2].

- Validate Rigorously: Always use robust validation methods, such as k-fold cross-validation and an external test set, to ensure your model's performance is genuine [4] [3].
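A simple filter-style complement to the wrapper and embedded methods listed above is to drop one descriptor from each highly correlated pair before modeling. The sketch below is a minimal illustration in plain Python; the function names, the 0.9 cutoff, and the toy descriptor values are assumptions made for this example and are not taken from the cited references.

```python
from math import sqrt

def pearson(a, b):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0  # constant column: treated as uncorrelated in this sketch
    return cov / sqrt(var_a * var_b)

def drop_correlated(columns, cutoff=0.9):
    """Greedily keep descriptors whose |r| with every kept descriptor is <= cutoff."""
    kept = []
    for name in columns:
        if all(abs(pearson(columns[name], columns[k])) <= cutoff for k in kept):
            kept.append(name)
    return kept

# Hypothetical descriptor table: one list of values per descriptor.
descriptors = {
    "mol_weight": [180.2, 206.3, 151.2, 230.3, 194.2],
    "heavy_atoms": [13, 15, 11, 17, 14],
    "h_bond_donors": [1, 1, 2, 1, 0],
}
selected = drop_correlated(descriptors)
```

Because the filter is greedy, the result depends on column order: the first descriptor of a correlated pair is the one retained. In practice the filter would be fitted on the training set only, so that the hold-out set does not influence which descriptors survive.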

Q3: I've identified an important "bulk" property descriptor like molecular weight in my model. Should I use this to guide chemical modifications? Not necessarily in isolation. Recent research highlights that high-dimensional descriptor spaces are often confounded, meaning a "bulk" property may be a proxy for a true, specific pharmacophore [5]. Before guiding synthesis, it is crucial to perform deconfounding analysis to determine if the descriptor has a causal link to the activity, or merely a correlational one. Advanced statistical frameworks, such as Double Machine Learning (DML), can help distinguish true causal features from spurious ones [5].
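To make the deconfounding idea concrete, here is a deliberately simplified sketch of the partialling-out form of DML with two-fold cross-fitting. It assumes a single confounder and uses ordinary least squares as the nuisance model where a real analysis would use flexible ML learners; the data are synthetic and noise-free, and all names (`dml_effect`, `bulk_proxy`, `pharmacophore`) are hypothetical. It is not the implementation from [5].

```python
def fit_line(x, y):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    slope = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y)) / sxx
    return slope, mean_y - slope * mean_x

def dml_effect(treatment, confounder, activity, n_folds=2):
    """Cross-fitted partialling-out estimate of the treatment descriptor's effect."""
    n = len(activity)
    res_t, res_y = [0.0] * n, [0.0] * n
    for fold in range(n_folds):
        train = [i for i in range(n) if i % n_folds != fold]
        held_out = [i for i in range(n) if i % n_folds == fold]
        x_train = [confounder[i] for i in train]
        # Nuisance models are fitted only on the other folds (cross-fitting).
        slope_y, icpt_y = fit_line(x_train, [activity[i] for i in train])
        slope_t, icpt_t = fit_line(x_train, [treatment[i] for i in train])
        for i in held_out:
            res_y[i] = activity[i] - (slope_y * confounder[i] + icpt_y)
            res_t[i] = treatment[i] - (slope_t * confounder[i] + icpt_t)
    # Final stage: regress activity residuals on treatment residuals.
    return sum(t * y for t, y in zip(res_t, res_y)) / sum(t * t for t in res_t)

# Synthetic toy data: activity depends only on the pharmacophore feature.
pharmacophore = [float(i) for i in range(12)]
wiggle = [0.4, -0.3, 0.1, -0.5, 0.2, 0.3, -0.1, -0.4, 0.5, -0.2, 0.0, 0.1]
bulk_proxy = [3.0 * p + w for p, w in zip(pharmacophore, wiggle)]  # size-like proxy
activity = [2.0 * p for p in pharmacophore]

naive_slope, _ = fit_line(bulk_proxy, activity)                      # looks "important"
causal_estimate = dml_effect(bulk_proxy, pharmacophore, activity)    # close to zero
```

In this toy setting the naive regression assigns the bulk proxy a clearly non-zero slope, while the residual-on-residual estimate is essentially zero once the pharmacophore feature is accounted for. That is the pattern expected for a descriptor that is only a proxy.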

Q4: How do I know which level of descriptor (1D-4D) to start with for my QSAR project? A hierarchical approach is often most efficient [6]:

- Start with 1D/2D Descriptors: These are fast to compute and often provide a strong baseline model. If performance is satisfactory, this can save significant time and resources [2].

- Progress to 3D Descriptors: If the biological endpoint is known to be highly dependent on stereochemistry or 3D shape (e.g., receptor binding), move to 3D descriptors. Ensure you have a reliable method for conformational sampling [2].

- Reserve 4D for Complex Problems: Use 4D descriptors for the most challenging cases where explicit modeling of ligand-receptor interactions or dynamics is necessary [6].

Q5: What are the minimal criteria for a molecular descriptor to be considered well-defined and useful for QSAR? A robust molecular descriptor should meet several key criteria [1]:

- Invariance: Its value must be invariant to molecular manipulations that don't change the underlying structure, such as atom numbering, rotation, or translation.

- Unambiguous Algorithm: It must be defined by a clear and unambiguous mathematical procedure.

- Good Correlation: It should correlate with at least one experimental property.

- Low Degeneracy: It should, ideally, have a low probability of producing the same value for different molecules.

- Structural Interpretation: The descriptor should have a meaningful chemical or structural interpretation to provide insights for chemists [7].
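The invariance criterion can be checked directly for a simple topological descriptor. The sketch below computes the Wiener index (the sum of shortest-path bond counts over all atom pairs) on hydrogen-suppressed graphs; the bond lists and function name are illustrative assumptions, not output from any of the tools discussed here.

```python
from collections import deque
from itertools import combinations

def wiener_index(n_atoms, bonds):
    """Sum of shortest-path bond counts over all pairs of heavy atoms."""
    neighbors = {i: [] for i in range(n_atoms)}
    for a, b in bonds:
        neighbors[a].append(b)
        neighbors[b].append(a)

    def distances_from(start):
        # Breadth-first search gives topological distances in an unweighted graph.
        dist = {start: 0}
        queue = deque([start])
        while queue:
            node = queue.popleft()
            for nxt in neighbors[node]:
                if nxt not in dist:
                    dist[nxt] = dist[node] + 1
                    queue.append(nxt)
        return dist

    all_dist = [distances_from(i) for i in range(n_atoms)]
    return sum(all_dist[i][j] for i, j in combinations(range(n_atoms), 2))

# Hydrogen-suppressed carbon skeletons; the atom numbering is arbitrary.
n_butane = [(0, 1), (1, 2), (2, 3)]
n_butane_renumbered = [(2, 0), (0, 3), (3, 1)]  # same chain, atoms relabeled
isobutane = [(0, 1), (0, 2), (0, 3)]
```

Renumbering the atoms of n-butane leaves the value unchanged (10), while the branched isomer isobutane gives a different value (9). The plain Wiener index ignores atom types, so n-butane and 1-propanol share the same value, which illustrates the degeneracy criterion.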

Troubleshooting Common Experimental Issues

Problem: Poor Predictive Performance on External Test Set

Your model performs well on training data but poorly on new, unseen compounds.

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Incorrect Applicability Domain | Check if the new compounds are structurally dissimilar to your training set. | Define the applicability domain of your model. Use similarity metrics to ensure new compounds fall within the chemical space the model was trained on [3]. |

| Data Quality Issues | Re-inspect the original experimental data for the training set. Look for errors, outliers, or inconsistent measurement conditions. | Perform rigorous data cleaning and curation: standardize structures, remove duplicates, and handle missing values appropriately [3]. |

| Overfitting | Compare performance metrics between the training set and cross-validation. A large gap indicates overfitting. | Apply feature selection to reduce the number of descriptors and simplify the model. Use regularization techniques [3]. |

Problem: Model Lacks Chemical Interpretability

The model is predictive, but you cannot extract meaningful chemical insights to guide design.

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Use of "Black Box" Models/Descriptors | Evaluate the model's inherent interpretability. Models like Random Forest can provide feature importance. | Use descriptor types that are chemically intuitive. Implement model interpretation techniques like the Gini index for Random Forest to identify which structural features (e.g., aromatic moieties, specific atoms) are most influential [4] [7]. |

| High Correlation Among Descriptors | Calculate the correlation matrix of your descriptors. | Apply a descriptor whitening technique or select a subset of uncorrelated descriptors to isolate the individual effect of each feature. Consider causal inference methods to deconfound descriptors [5]. |

The Scientist's Toolkit: Essential Software for Descriptor Calculation

The following table lists key software tools for computing molecular descriptors, along with their capabilities and key characteristics.

| Software Name | 0D/1D | 2D Fingerprints | 3D/4D Descriptors | Key Characteristics | License |

|---|---|---|---|---|---|

| alvaDesc [1] | Yes | Yes | Yes | Comprehensive descriptor calculation; available for Windows, Linux, macOS; updated in 2025. | Proprietary, Commercial |

| Dragon [1] | Yes | Yes | Yes | Historically an industry standard; now discontinued. | Proprietary, Commercial |

| Mordred [1] | Yes | No | Yes | Based on RDKit; open-source; a community-maintained fork is available. | Free, Open Source |

| PaDEL-Descriptor [1] [3] | Yes | Yes | Yes | Based on the Chemistry Development Kit (CDK); discontinued but widely used. | Free |

| RDKit [1] [3] | Yes | Yes | Yes | Versatile cheminformatics toolkit; includes descriptor calculation; actively updated (2024). | Free, Open Source |

| scikit-fingerprints [1] | Yes | Yes | Yes | A Python library specifically for calculating molecular fingerprints; updated in 2025. | Free, Open Source |

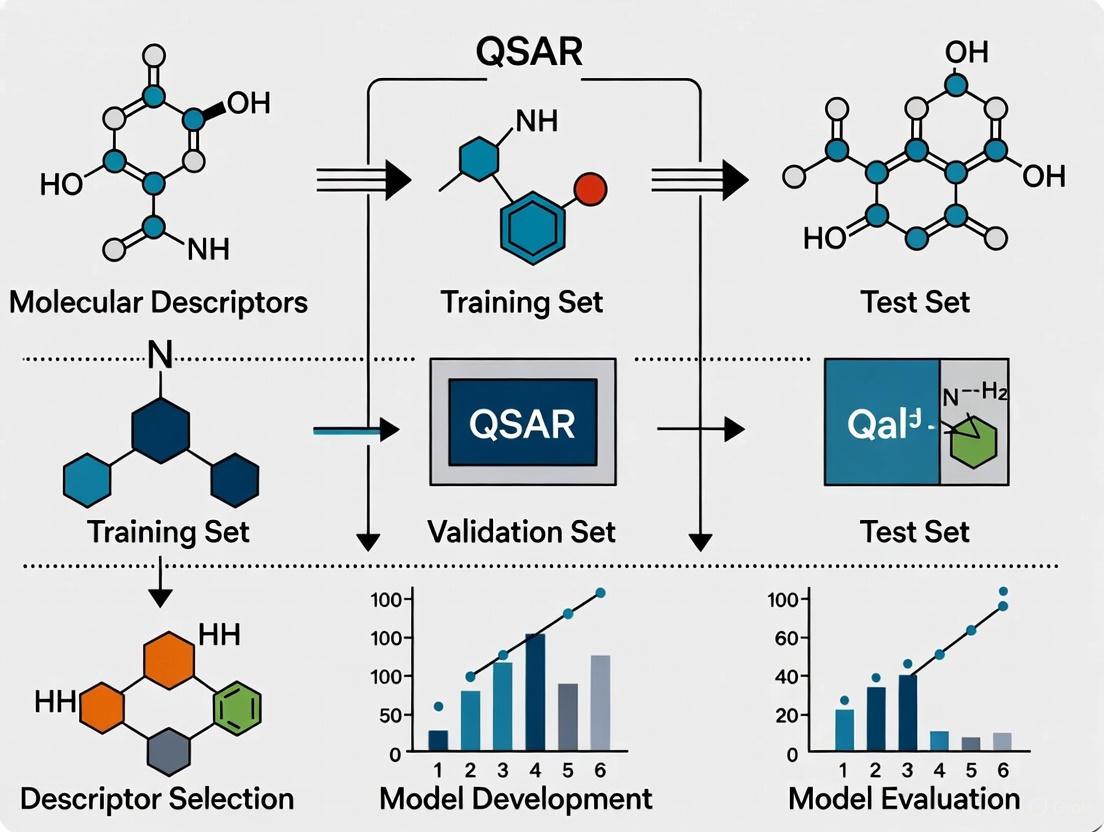

Standard Experimental Protocol: A Hierarchical Workflow for Descriptor Selection and Model Building

This protocol outlines a systematic, hierarchical approach for selecting molecular descriptors and developing a validated QSAR model, based on established best practices [3] [6].

Objective: To build a robust and interpretable QSAR model by sequentially progressing through levels of molecular complexity, using the information from each step to inform the next.

Materials:

- Dataset: A curated set of chemical structures (e.g., as SMILES strings) and their corresponding biological activity values (e.g., IC50, pIC50).

- Software: A descriptor calculation tool (see Toolkit table above), a data analysis environment (e.g., Python with scikit-learn, R), and a molecular modeling platform if 3D/4D descriptors are required.

Methodology:

Step 1: Data Curation and Preparation

- Collect and Clean: Compile the dataset from reliable sources (e.g., ChEMBL, PubChem). Standardize chemical structures: remove salts, normalize tautomers, and define stereochemistry clearly [3].

- Handle Activity Data: Convert all activity values to a consistent scale (e.g., log-transform IC50 to pIC50).

- Split Data: Divide the dataset into a training set (~80%) and a hold-out test set (~20%). The test set must be kept completely separate until the final model validation [3].
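The activity conversion and the hold-out split in Step 1 can be sketched as follows. This is a minimal example in plain Python: it assumes IC50 values in nanomolar and a simple seeded random split, and the SMILES/IC50 records are made up for illustration.

```python
import math
import random

def pic50_from_nm(ic50_nm):
    """pIC50 = -log10(IC50 in mol/L); the input is given in nanomolar."""
    if ic50_nm <= 0:
        raise ValueError("IC50 must be positive")
    return 9.0 - math.log10(ic50_nm)

def train_test_split(records, test_fraction=0.2, seed=42):
    """Shuffle a copy with a fixed seed, then cut off the hold-out set."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]

# Toy records: (SMILES, IC50 in nM); the values are invented for this example.
raw = [("CCO", 1000.0), ("c1ccccc1", 50.0), ("CC(=O)O", 25000.0),
       ("CCN", 10.0), ("CCCC", 300.0)]
dataset = [(smiles, pic50_from_nm(ic50)) for smiles, ic50 in raw]
train, test = train_test_split(dataset)
```

Fixing the seed makes the split reproducible, which matters because the hold-out set must remain the same compounds from the first model to the final validation.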

Step 2: Hierarchical Descriptor Calculation and Modeling

The essence of this hierarchical system is that the QSAR problem is solved sequentially. At each subsequent stage, the problem is not solved from scratch; instead, the information obtained from the previous step is reused [6].

1D/2D Model:

- Calculate Descriptors: Compute a large set of 1D (constitutional) and 2D (topological) descriptors using software like RDKit or Mordred.

- Feature Selection: Apply a feature selection method (e.g., genetic algorithm, LASSO) to the training set to reduce dimensionality and avoid overfitting.

- Model Building & Validation: Train a model (e.g., Random Forest, PLS) using the selected descriptors. Evaluate performance using 5-fold cross-validation on the training set. Record key metrics (e.g., R², Q², MCC).
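The cross-validated Q² mentioned above can be illustrated with a small sketch. It assumes a one-descriptor linear model and deterministic fold assignment, and it uses the overall mean of the observed activities in the denominator (conventions for Q² differ between papers). The data are invented, and a real workflow would typically use a library such as scikit-learn with a multivariate model.

```python
def fit_line(x, y):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    slope = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y)) / sxx
    return slope, mean_y - slope * mean_x

def q2_kfold(x, y, k=5):
    """Cross-validated Q2 = 1 - PRESS / SS_total for a one-descriptor linear model."""
    n = len(y)
    press = 0.0
    for fold in range(k):
        test_idx = [i for i in range(n) if i % k == fold]
        train_idx = [i for i in range(n) if i % k != fold]
        # Fit on the training folds only, then predict the held-out compounds.
        slope, intercept = fit_line([x[i] for i in train_idx],
                                    [y[i] for i in train_idx])
        press += sum((y[i] - (slope * x[i] + intercept)) ** 2 for i in test_idx)
    mean_y = sum(y) / n
    ss_total = sum((v - mean_y) ** 2 for v in y)
    return 1.0 - press / ss_total

# Invented example: one descriptor (e.g. a logP-like value) against pIC50.
descriptor = [1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0, 4.5, 5.0, 5.5]
pic50 = [5.1, 5.4, 6.1, 6.4, 7.0, 7.6, 7.9, 8.6, 9.0, 9.4]
q2 = q2_kfold(descriptor, pic50)
```

A Q² well below the training-set R² is the same warning sign as the training-versus-cross-validation gap described in the troubleshooting table.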

3D Model:

- Generate 3D Conformers: For each molecule, generate a low-energy 3D conformation.

- Calculate 3D Descriptors: Compute 3D descriptors (e.g., WHIM, GETAWAY, quantum-chemical descriptors).

- Feature Selection & Modeling: Perform feature selection on the 3D descriptor pool. Build and validate a new model using the same protocol as for the 1D/2D model.

- Compare and Learn: Compare the performance of the 3D model with the 1D/2D model. If performance does not improve significantly, the simpler model may be sufficient. Analyze the important 3D descriptors for structural insights [2].

4D Model (If Required):

- Define Interaction Probes: Place the molecules in a 3D grid and use probes (e.g., water, methyl group) to calculate interaction energy fields (as in 4D descriptors or CoMFA) [2].

- Calculate 4D Descriptors: Derive descriptors from these interaction fields or from molecular dynamics trajectories.

- Build Final Model: Build and validate a model using these 4D descriptors. This step is typically reserved for cases where 3D shape and interaction specifics are critical and simpler models are inadequate.

Step 3: Final Model Validation and Reporting

- External Validation: Apply the final chosen model (whether it be from 1D/2D, 3D, or 4D) to the hold-out test set that was set aside in Step 1. This provides an unbiased estimate of the model's predictive power [3].

- Define Applicability Domain: Characterize the chemical space of the training set to establish the range of structures for which the model can make reliable predictions [3].

- Report Results: Document the model's algorithm, key descriptors, performance statistics, and applicability domain according to regulatory standards like the OECD QSAR Model Reporting Format (QMRF) where applicable [8] [9].
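One common way to characterize the applicability domain is a nearest-neighbor similarity check against the training set. The sketch below assumes fingerprints are available as sets of "on" bit indices and uses a Tanimoto cutoff of 0.3. Both the cutoff and the toy fingerprints are illustrative assumptions; an appropriate threshold depends on the fingerprint type and dataset.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)

def in_domain(query_fp, training_fps, min_similarity=0.3):
    """Return (inside_domain, nearest_similarity) for a query compound."""
    nearest = max(tanimoto(query_fp, fp) for fp in training_fps)
    return nearest >= min_similarity, nearest

# Toy fingerprints: each set lists the indices of bits that are switched on.
training_fps = [{1, 2, 3, 4}, {2, 3, 5, 8}, {1, 4, 6, 7}]
close_query = {1, 2, 3, 9}     # shares three bits with the first training compound
far_query = {1, 10, 11, 12}    # shares at most one bit with any training compound

close_ok, close_sim = in_domain(close_query, training_fps)
far_ok, far_sim = in_domain(far_query, training_fps)
```

Predictions for compounds flagged as outside the domain are best reported with an explicit warning rather than silently discarded.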

Hierarchical Descriptor Selection Workflow

Data Presentation: Key Performance Metrics from a Modern QSAR Study

The following table summarizes the robust performance of a Random Forest QSAR model using SubstructureCount fingerprints, developed to predict the activity of Plasmodium falciparum dihydroorotate dehydrogenase (PfDHODH) inhibitors for anti-malarial drug discovery [4]. The model was validated on balanced data using an oversampling technique.

| Model Stage | Matthews Correlation Coefficient (MCC) | Accuracy | Sensitivity (Recall) | Specificity |

|---|---|---|---|---|

| Training Set | 0.97 | > 80% | > 80% | > 80% |

| Cross-Validation | 0.78 | > 80% | > 80% | > 80% |

| External Test Set | 0.76 | > 80% | > 80% | > 80% |

Interpretation: The high MCC values across all stages, particularly the strong external test set MCC of 0.76, indicate a model with excellent predictive power and robustness, minimizing false positives and false negatives. The high sensitivity and specificity confirm its balanced ability to identify both active and inactive compounds [4].
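For reference, the metrics in the table can all be derived from a binary confusion matrix. The sketch below uses the standard definitions; the counts are hypothetical and do not come from the cited PfDHODH study.

```python
from math import sqrt

def classification_metrics(tp, tn, fp, fn):
    """MCC, accuracy, sensitivity, and specificity from confusion-matrix counts."""
    total = tp + tn + fp + fn
    denom = sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return {
        # MCC is conventionally set to 0 when any marginal total is zero.
        "mcc": (tp * tn - fp * fn) / denom if denom else 0.0,
        "accuracy": (tp + tn) / total,
        "sensitivity": tp / (tp + fn),   # recall on actives
        "specificity": tn / (tn + fp),   # recall on inactives
    }

# Hypothetical counts for illustration only.
metrics = classification_metrics(tp=40, tn=30, fp=10, fn=20)
```

Unlike accuracy, MCC uses all four cells of the confusion matrix, which is why it is often preferred for imbalanced active/inactive datasets.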

Core Concepts: The Dimensionality of Molecular Descriptors

Hierarchy of Molecular Descriptor Dimensions

Frequently Asked Questions (FAQs) on Descriptor Selection and Application

Q1: What is the fundamental difference between classical Hansch analysis and modern descriptor-based QSAR?

A1: Classical Hansch Analysis is a Linear Free Energy Relationship (LFER) approach that uses a limited set of interpretable physicochemical parameters—namely hydrophobicity (Log P), electronic effects (Hammett σ constants), and steric effects (Taft Es constants)—to correlate structure with biological activity via a linear equation [10]. In contrast, modern QSAR utilizes high-dimensional molecular descriptors (often hundreds or thousands) and advanced machine learning (ML) algorithms. The key challenge with modern approaches is that standard ML models can be misled by high correlations between these descriptors, incorrectly identifying proxy "bulk" properties (e.g., molecular weight) as important, instead of the true causal pharmacophoric features [5].
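For orientation, the linear Hansch relationship described above is commonly written in the following general form. The coefficients a, b, c, and d are regression constants fitted to the data, and C is the molar concentration producing a defined biological response; some formulations add a quadratic term in log P, which is omitted here.

```latex
\log\frac{1}{C} = a \,\log P + b\,\sigma + c\,E_s + d
```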

Q2: My QSAR model has good internal validation statistics but fails in external prediction. What could be the cause?

A2: This is a common issue often rooted in experimental errors within the training data and overfitting. Studies show that even a small ratio of experimental errors in the modeling set can significantly deteriorate external prediction performance [11]. While consensus predictions can help identify compounds with potential experimental errors, simply removing compounds with large cross-validation errors does not reliably improve external predictivity and may lead to overfitting [11]. Furthermore, models trained on confounded correlations rather than true causal effects are likely to fail when applied to new chemical spaces [5].

Q3: How can I identify and mitigate the effect of experimental errors in my dataset?

A3: You can use the QSAR modeling process itself to help prioritize potential outliers. The methodology is as follows [11]:

- Develop a QSAR model and perform a cross-validation (e.g., fivefold).

- Sort all compounds in the dataset by the magnitude of their prediction errors from the cross-validation.

- Analyze the top compounds with the largest errors; these are likely to contain experimental inaccuracies.

Research indicates that this method can successfully prioritize compounds with simulated experimental errors, with performance quantified by ROC enrichment factors [11].

Table: Experimental Error Prioritization Performance

| Dataset Type | Top 1% Enrichment | Top 20% Enrichment | Notes |

|---|---|---|---|

| Categorical (MDR1) | 12.9x | 4.7x | Compared to random selection |

| Continuous (LD50) | 4.2x - 5.3x | 2.3x | Varies by error simulation strategy |
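The ranking step of this procedure can be sketched in a few lines. The example assumes cross-validated predictions are already available and computes a plain enrichment factor (hit rate among flagged compounds divided by the base rate), which is a simplification and not necessarily the ROC enrichment metric used in [11]. All values and the set of "known" simulated errors are invented.

```python
def prioritize_by_cv_error(observed, cv_predicted, top_fraction=0.2):
    """Return indices of the compounds with the largest absolute CV errors."""
    errors = [abs(o - p) for o, p in zip(observed, cv_predicted)]
    ranked = sorted(range(len(errors)), key=lambda i: errors[i], reverse=True)
    n_top = max(1, round(len(ranked) * top_fraction))
    return ranked[:n_top]

def enrichment(flagged, known_errors, n_total):
    """Hit rate among flagged compounds divided by the rate expected at random."""
    hit_rate = len(set(flagged) & set(known_errors)) / len(flagged)
    base_rate = len(known_errors) / n_total
    return hit_rate / base_rate

# Invented activities; compounds 2 and 6 carry simulated experimental errors.
observed = [6.1, 5.4, 7.8, 4.9, 6.6, 8.2, 5.0, 7.1, 6.3, 5.7]
cv_predicted = [6.0, 5.6, 5.9, 5.0, 6.5, 8.0, 6.5, 7.0, 6.4, 5.5]
simulated_errors = [2, 6]

flagged = prioritize_by_cv_error(observed, cv_predicted)
factor = enrichment(flagged, simulated_errors, len(observed))
```

Consistent with the caution in A2, the flagged compounds are candidates for expert review rather than automatic removal.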

Q4: What are "causal descriptors," and how can they be identified?

A4: Causal descriptors are molecular features that have a statistically significant and unconfounded causal effect on the biological activity, rather than just a correlational link. A framework using Double/Debiased Machine Learning (DML) has been proposed to identify them [5]. The experimental protocol involves:

- DML Estimation: Use DML to estimate the causal effect of each individual molecular descriptor on the activity, while treating all other p-1 descriptors as potential confounders.

- Hypothesis Testing: Apply statistical testing (e.g., the Benjamini-Hochberg procedure) to the p causal estimates to control the False Discovery Rate (FDR).

This framework has been shown in validation studies to successfully reject spurious, confounded descriptors and correctly identify the true causal features [5].
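The Benjamini-Hochberg step can be sketched as below. The function is a minimal, standard implementation of the procedure; the p-values are invented and are assumed to come from per-descriptor tests such as the DML estimates described above.

```python
def benjamini_hochberg(p_values, fdr=0.05):
    """Return a list of booleans: True where the null hypothesis is rejected."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    cutoff_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * fdr:
            cutoff_rank = rank  # remember the largest rank that passes
    rejected = [False] * m
    for idx in order[:cutoff_rank]:
        rejected[idx] = True
    return rejected

# Invented p-values, one per descriptor, in the original descriptor order.
p_values = [0.039, 0.001, 0.205, 0.008, 0.041, 0.06, 0.074, 0.042]
significant = benjamini_hochberg(p_values)
```

With these inputs only the descriptors with p = 0.001 and p = 0.008 are retained at a 5% FDR; several others below 0.05 are rejected as candidates, which shows how the correction guards against spurious hits when many descriptors are tested at once.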

Troubleshooting Guides for Common Technical Issues

Issue 1: Poor Model Interpretability and Spurious Correlations

Problem: The model highlights "bulk" properties like molecular weight as key drivers, which are likely proxies, not true mechanistic features.

Solution: Implement a causal inference framework.

- Apply Deconfounding Techniques: Use the DML and FDR control framework to deconfound the descriptor space [5].

- Workflow: The diagram below outlines the process for identifying causal descriptors.

Issue 2: Handling Structural and Experimental Data Errors

Problem: Underlying data quality issues, such as structural misrepresentation or experimental variability, lead to poor and unreliable models.

Solution: Establish a rigorous data curation and validation protocol.

- Chemical Structure Curation: Follow a standardized workflow to remove and correct structural errors, which is a major source of model inaccuracy [11].

- Experimental Error Identification: Use the cross-validation prioritization method described in FAQ A3 to flag potential outliers for expert review [11].

- Leverage Consensus: Employ consensus predictions from multiple models, as they are more robust in identifying compounds with potential experimental errors [11].

Issue 3: Integrating Read-Across with QSAR Modeling

Problem: Simple read-across predictions are subjective and hard to quantify, while QSAR models may lack sufficient data.

Solution: Combine the strengths of both approaches using novel methodologies.

- Use RASAR Frameworks: Implement Read-Across Structure-Activity Relationship (RASAR) models. These use similarity-based descriptors derived from read-across, combined with traditional molecular descriptors, to build more predictive ML models [12].

- Adopt Advanced Platforms: Utilize platforms like OrbiTox, which integrate chemistry-based similarity searching, molecular descriptors, and QSAR models into a unified read-across workflow [13]. Frameworks like Generalized Read-Across (GenRA) also provide a more quantitative and automated approach [12].

- Workflow: The following diagram illustrates the integrated RASAR approach.

Table: Key Resources for Evolving QSAR Practices

| Tool/Resource | Category | Function & Explanation |

|---|---|---|

| Hansch Equation | Foundational Model | The original framework relating biological activity (log 1/C) to hydrophobicity (log P), electronic (σ), and steric (Es) parameters [10]. |

| Double Machine Learning (DML) | Statistical Method | A causal inference method used to deconfound molecular descriptors and estimate true causal effects on activity [5]. |

| Benjamini-Hochberg Procedure | Statistical Method | A hypothesis testing procedure used to control the False Discovery Rate (FDR) when testing hundreds of molecular descriptors simultaneously [5]. |

| Read-Across Structure-Activity Relationship (RASAR) | Modeling Approach | A hybrid technique that uses similarity descriptors from read-across to build more predictive QSAR-like models [12]. |

| OrbiTox Platform | Software Platform | A read-across platform featuring similarity searching, Saagar molecular descriptors, and built-in QSAR models for regulatory submissions [13]. |

| OECD QSAR Toolbox | Software Platform | A widely used software for profiling chemicals, filling data gaps via read-across, and grouping chemicals into categories [8]. |

| Consensus Modeling | Modeling Strategy | Averaging predictions from multiple individual QSAR models to improve robustness and identify potential experimental errors [11]. |

Fundamental Concepts FAQ

What are lipophilicity (Log P), electronic, and steric effects and why are they crucial for QSAR?

Lipophilicity, commonly measured as the partition coefficient Log P, quantifies how a compound distributes itself between a lipophilic phase (like octanol) and an aqueous phase (like water). It is a key determinant in a drug's absorption, distribution, membrane permeability, and overall pharmacokinetics [14] [15]. According to Lipinski's "rule of five," an orally active drug candidate should typically have a Log P value of less than 5 [14]. For ionizable compounds, the distribution coefficient Log D (which accounts for all ionized and unionized species) is used instead, as it provides a more accurate picture at physiological pH values [14] [15].

Electronic effects describe how the electron distribution within a molecule influences its interactions. This includes the influence of lone-pair electrons, atomic charges, and molecular orbital energies (like HOMO and LUMO), which affect a molecule's polarity, polarizability, and its ability to form hydrogen bonds [16] [17]. These factors are critical for understanding binding interactions with a biological target.

Steric effects relate to the spatial arrangement and bulkiness of atoms within a molecule, which can physically impede interactions with a biological target. Steric parameters help quantify molecular volume and shape, which are vital for understanding how a drug fits into its binding site [16] [18].

In QSAR, these properties are translated into molecular descriptors. They form the foundation of models that connect a molecule's physical structure to its biological activity, enabling the prediction and optimization of new drug candidates [14] [16].

What is the difference between Log P and Log D?

| Property | Definition | Best Used For |

|---|---|---|

| Log P | The logarithm of the partition coefficient for the uncharged, neutral form of a molecule between octanol and water [14]. | Non-ionic compounds; a pure measure of intrinsic lipophilicity. |

| Log D | The logarithm of the apparent distribution coefficient, which accounts for all forms of the compound (both ionized and unionized) in the two phases at a specific pH [14] [15]. | Ionizable compounds; provides a more relevant measure of lipophilicity at a given physiological pH (e.g., 7.4 for blood). |

The relationship between Log D and Log P for ionizable compounds is given by: Log D = Log P - log(1 + 10^(pH-pKa)) for acids, and with a corresponding adjustment for bases [14]. This highlights that Log D is pH-dependent, making it essential for modeling activity across different physiological environments like the stomach (pH ~2) or intestine (pH 5-6.8) [14].
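The pH dependence can be made concrete with a short calculation. The sketch below assumes a monoprotic acid or base and that only the neutral species partitions into octanol; for bases it uses the mirrored exponent (pKa - pH). The compound values are hypothetical.

```python
import math

def log_d(log_p, pka, ph, kind):
    """Log D for a monoprotic acid or base, assuming only the neutral form enters octanol."""
    if kind == "acid":
        exponent = ph - pka
    elif kind == "base":
        exponent = pka - ph
    else:
        raise ValueError("kind must be 'acid' or 'base'")
    return log_p - math.log10(1.0 + 10.0 ** exponent)

# Hypothetical carboxylic acid: Log P = 3.0, pKa = 4.4.
acid_blood = log_d(3.0, 4.4, 7.4, "acid")     # mostly ionized at pH 7.4
acid_stomach = log_d(3.0, 4.4, 2.0, "acid")   # mostly neutral at pH 2
# Hypothetical amine: Log P = 2.0, pKa = 9.4.
base_blood = log_d(2.0, 9.4, 7.4, "base")
```

For the hypothetical acid, Log D drops by about three log units between stomach and blood pH, even though Log P is unchanged.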

Troubleshooting Guides

How do I select the right molecular descriptors for my QSAR model?

Problem: Model shows poor predictive power, potentially due to inappropriate descriptor selection.

Solution: Follow this systematic workflow to choose descriptors based on your molecular property and available resources.

Why does my calculated Log P differ from experimental values, and how can I improve accuracy?

Problem: Significant discrepancy between computational Log P predictions and experimental shake-flask results.

Solution:

Identify the Source of Error:

- Ionizable Compounds: Ensure you are calculating Log D, not Log P, if your compound ionizes within the physiological pH range. This is a common oversight [14] [15].

- Molecular Complexity: Fragment-based calculation methods can be inaccurate for complex molecules with intramolecular interactions (e.g., hydrogen bonding) that are not fully captured by simple group contributions [15].

- Experimental Error: The experimental data in your training set may itself contain errors, which can skew your model [11].

Troubleshooting Steps:

| Step | Action | Rationale |

|---|---|---|

| 1 | Verify the ionization state (pKa) of your compound and calculate Log D at the relevant pH. | Corrects for the most common error in lipophilicity assessment for ionizable drugs [14]. |

| 2 | Use a consensus prediction by averaging results from multiple computational methods (fragment-based, whole-molecule, etc.). | Mitigates the inherent limitations and biases of any single calculation method [11]. |

| 3 | For critical compounds, validate computational predictions with a high-throughput experimental measure like HPLC retention time comparison. | Provides an experimental anchor point and helps identify outliers in computational predictions [15]. |

| 4 | Cross-validate your QSAR model and check if the compound is within the model's Applicability Domain (AD). | Flags predictions for molecules that are too structurally dissimilar from the training set, which are likely to be unreliable [11]. |

How can I computationally quantify electronic and steric effects for my QSAR study?

Problem: Need robust, calculable descriptors for electronic and steric properties.

Solution: Utilize the following descriptors, selectable based on your computational resources and need for accuracy.

Table: Computational Descriptors for Electronic and Steric Effects

| Effect Type | Descriptor | Description | Calculation Method & Notes |

|---|---|---|---|

| Electronic | HOMO/LUMO Energies | Energy of the Highest Occupied and Lowest Unoccupied Molecular Orbitals. Indicates nucleophilicity/electrophilicity [17]. | Quantum Chemical Calculation (DFT, Semi-empirical). A fundamental QM descriptor. |

| Electronic | Atomic Partial Charges | The calculated electron density on individual atoms. | Semi-empirical or DFT. Can be used in regression equations for Log P [14]. |

| Electronic | Lone-Pair Electron Index (LEI) | A topological index that quantifies the electrostatic effect of heteroatoms' lone-pair electrons [16]. | Topological/Fragment-based. Simple to calculate and highly effective in QSAR models [16]. |

| Electronic | Dipole Moment | Measure of the overall molecular polarity. | Quantum Chemical Calculation. Influenced by both molecular symmetry and atomic charges. |

| Steric | Molecular Volume Index (MVI) | A topological index based on van der Waals volumes of atoms [16]. | Topological. Easy to compute from molecular structure. |

| Steric | Taft's Steric Parameter (Eₛ) | A classic parameter defining the bulk of a substituent [16] [18]. | Empirical/Fragment-based. Derived from experimental kinetics; available from lookup tables. |

| Steric | van der Waals Volume | The 3D volume occupied by the molecule. | Quantum Chemical or Molecular Mechanics. Provides a direct 3D measure of molecular bulk. |

Experimental Protocols & Methodologies

Protocol: Predicting Log P via Direct Solvation Free Energy Calculation

This method uses quantum chemical calculations combined with continuum solvation models to predict Log P based on first principles [14].

Principle: Log P is calculated from the transfer free energy (ΔG_transfer) of a molecule from water to octanol, using the formula: log P = -ΔG_transfer / (RT ln 10), where ΔG_transfer = ΔG_solvation(octanol) - ΔG_solvation(water), R is the gas constant, and T is the absolute temperature [14].

Workflow:

- Geometry Optimization: Perform a full geometry optimization of the molecule in the gas phase using a quantum chemical method (e.g., Density Functional Theory with the B3LYP functional and 6-31G* basis set) [19] [17].

- Solvation Free Energy Calculation: Using the optimized geometry, calculate the solvation free energy (ΔG_solvation) in two separate single-point energy calculations:

  - ΔG_solvation(water): In a continuum solvation model representing water (e.g., IEF-PCM or SMD).

  - ΔG_solvation(octanol): In a continuum solvation model representing octanol.

- Compute Log P: Calculate ΔG_transfer and apply the formula above to obtain the Log P value.
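The final arithmetic step of this workflow is small enough to show directly. The sketch assumes solvation free energies in kcal/mol at 298.15 K; the two energy values are hypothetical and are not output from any quantum chemistry package.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)

def log_p_from_solvation(dg_octanol, dg_water, temperature=298.15):
    """log P = -dG_transfer / (RT ln 10), with energies in kcal/mol."""
    dg_transfer = dg_octanol - dg_water
    return -dg_transfer / (R_KCAL * temperature * math.log(10))

# Hypothetical solvation free energies (kcal/mol) for a neutral molecule.
log_p = log_p_from_solvation(dg_octanol=-6.0, dg_water=-4.0)
```

At room temperature RT ln 10 is about 1.36 kcal/mol, so an error of roughly 1.4 kcal/mol in the transfer free energy shifts the predicted Log P by a full log unit. This is why the choice of solvation model matters so much for this method.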

Troubleshooting Tips:

- High Computational Cost: For large datasets, use faster semi-empirical quantum methods (e.g., PM6/MOPAC) as a trade-off between speed and accuracy [17].

- Inaccurate for Ions: This direct method is best suited for neutral molecules. For ions, use the Log D relationship [14].

Protocol: Calculating Quantum Chemical Electronic Descriptors

This protocol outlines the steps to compute key electronic descriptors like HOMO/LUMO energies and polarizability [17].

Software: Use quantum chemical software packages like Gaussian, GAMESS, or MOPAC, often with a graphical interface like MOLDEN.

Step-by-Step Guide (e.g., for HOMO Energy):

- Build the Molecule: Construct a 3D model using a molecular builder.

- Submit Geometry Optimization: Run a geometry optimization job to find the molecule's most stable structure. A common method is DFT with the B3LYP functional and the 6-31G* basis set [19].

- Analyze Output:

  - Once optimized, the output file contains the orbital energies.

  - Open the output in a program like MOLDEN to visualize the HOMO and LUMO orbitals to confirm their character.

  - The HOMO and LUMO energies are directly listed in the output file [17].
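Once the orbital energies have been read from the output, the frontier-orbital descriptors follow from simple arithmetic. The sketch below assumes a closed-shell molecule and a list of orbital energies that has already been extracted (output formats differ between programs and are not parsed here). Hardness is taken as half the HOMO-LUMO gap, a common Koopmans-type approximation. The energies are invented.

```python
def frontier_descriptors(orbital_energies, n_electrons):
    """HOMO, LUMO, gap, and approximate hardness for a closed-shell molecule."""
    if n_electrons % 2:
        raise ValueError("this sketch assumes a closed-shell (even-electron) system")
    energies = sorted(orbital_energies)
    n_occupied = n_electrons // 2          # two electrons per occupied orbital
    homo = energies[n_occupied - 1]
    lumo = energies[n_occupied]
    gap = lumo - homo
    return {"homo": homo, "lumo": lumo, "gap": gap, "hardness": gap / 2.0}

# Invented orbital energies (same units as reported by the QM program).
result = frontier_descriptors([-20.0, -1.3, -0.7, -0.5, 0.2, 0.9], n_electrons=8)
```

The returned values keep whatever energy unit the quantum chemistry program reports, so units should be made consistent across the dataset before the descriptors enter a QSAR model.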

Step-by-Step Guide for Polarizability:

- Start with Optimized Geometry: Use the geometry from Step 2 above.

- Submit a Single-Point Calculation: Run a calculation with the POLAR keyword (in MOPAC) or a similar function to request polarizability calculation.

- Extract Result: The polarizability volume (in ų) is reported in the output file [17].

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational and Experimental Resources

| Item Name | Function in Research | Application Context |

|---|---|---|

| clogP Software | Fragment-based calculation of Log P for high-throughput virtual screening [14]. | Rapid prediction of lipophilicity in early-stage drug discovery. |

| Continuum Solvation Model (e.g., IEF-PCM) | A computational model that treats the solvent as a continuous dielectric to calculate solvation free energies [14]. | Used for direct, QM-based Log P prediction and solvation energy calculations. |

| Quantum Chemical Software (Gaussian, GAMESS) | Performs ab initio or DFT calculations to compute electronic descriptors (HOMO/LUMO, charges, polarizability) [17]. | Generating highly accurate electronic structure descriptors for QSAR. |

| Semi-empirical Software (MOPAC) | Uses parameterized quantum methods for faster calculation of properties for large molecules [17]. | A balance of speed and accuracy for larger datasets or molecules. |

| n-Octanol/Water System | The experimental gold-standard system for measuring Log P via the shake-flask method [15]. | Generating experimental lipophilicity data for validation. |

| Immobilized Artificial Membrane (IAM) | Chromatographic surface that mimics a cell membrane to measure drug-membrane partitioning [15]. | Provides a more biologically relevant measure of lipophilicity than octanol/water. |

FAQs: Core Definitions and Selection

1. What are the fundamental differences between topological, quantum chemical, and 3D surface descriptors?

Topological, quantum chemical, and 3D surface descriptors encode different aspects of molecular structure, making them suitable for various applications in Quantitative Structure-Activity Relationship (QSAR) modeling. The table below summarizes their core characteristics.

Table 1: Fundamental Comparison of Molecular Descriptor Types

| Descriptor Type | Definition & Basis | Key Examples | Primary Applications in QSAR |

|---|---|---|---|

| Topological Descriptors | 2D numerical indices encoding molecular connectivity and atomic arrangement from the molecular graph. [20] | Wiener index, Zagreb indices, Connectivity index [21] | Modeling molecular size, shape, branching; high-throughput virtual screening of large databases. [20] [21] |

| Quantum Chemical Descriptors | Descriptors derived from quantum mechanical calculations, representing electronic structure and energetic properties. [22] | HOMO/LUMO energies, Hardness (η), Electrostatic Potential (ESP), Polarizability (α) [22] | Predicting chemical reactivity, reaction mechanisms, and interactions involving electron transfer. [22] [23] |

| 3D Surface Descriptors | Descriptors based on the molecule's 3D structure, representing steric and electrostatic fields around it. [24] | Comparative Molecular Field Analysis (CoMFA), Comparative Molecular Similarity Indices Analysis (CoMSIA) fields [24] | Understanding steric and electrostatic requirements for ligand-receptor binding; lead optimization. [24] |
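To make the topological row of Table 1 concrete, the following minimal sketch computes the Wiener index and the first Zagreb index from a hydrogen-suppressed molecular graph. The adjacency-dictionary representation and the function names are assumptions made for illustration; a cheminformatics toolkit would normally derive the graph from a structure file.

```python
from collections import deque

def wiener_index(adjacency):
    """Sum of shortest-path lengths (in bonds) over all unordered atom pairs."""
    total = 0
    for start in adjacency:
        # Breadth-first search gives topological distances from `start`.
        dist = {start: 0}
        queue = deque([start])
        while queue:
            atom = queue.popleft()
            for nbr in adjacency[atom]:
                if nbr not in dist:
                    dist[nbr] = dist[atom] + 1
                    queue.append(nbr)
        total += sum(dist.values())
    return total // 2  # every pair was counted from both ends

def first_zagreb_index(adjacency):
    """Sum of squared vertex degrees."""
    return sum(len(nbrs) ** 2 for nbrs in adjacency.values())

# Carbon skeletons only (hydrogens suppressed); atom labels are arbitrary.
n_butane = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
isobutane = {0: [1, 2, 3], 1: [0], 2: [0], 3: [0]}

print(wiener_index(n_butane), first_zagreb_index(n_butane))    # 10 10
print(wiener_index(isobutane), first_zagreb_index(isobutane))  # 9 12
```

The two isomers share a molecular formula but differ in both indices, which is how such descriptors encode branching.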

2. When should I prioritize quantum chemical descriptors over topological descriptors?

Prioritize quantum chemical descriptors when your research involves predicting or interpreting phenomena directly related to a molecule's electronic structure, such as [22] [23]:

- Chemical reactivity and reaction rate constants.

- Specific ligand-target interactions governed by orbital-controlled mechanisms (e.g., nucleophilic or electrophilic attack).

- Investigations where the electronic energy or the distribution of electron density is a critical determinant of activity.

Prioritize topological descriptors when [20] [21]:

- Screening very large chemical databases for rapid similarity assessment or initial activity profiling.

- The biological activity is primarily influenced by molecular size, shape, or branching patterns rather than precise electronic effects.

- Computational resources or time are limited, as topological descriptors are fast and inexpensive to compute.

3. My 3D-QSAR model performance is poor. Could the molecular alignment be the issue?

Yes, the alignment of molecules is a critical step in 3D-QSAR methods like CoMFA and CoMSIA and is a common source of poor model performance. [24] To troubleshoot:

- Verify the Pharmacophore Hypothesis: Ensure alignment is based on a robust pharmacophore model that reflects the key functional groups responsible for biological activity.

- Check Conformational Selection: Confirm that all molecules are in their biologically active conformation. Using a low-energy conformation that is not the bioactive one can mislead the model.

- Use Multiple Alignment Rules: Test different alignment rules (e.g., based on a common scaffold, field fit, or receptor site) to see which yields the most predictive and interpretable model.

Troubleshooting Guides

Guide 1: Addressing Overfitting in QSAR Models During Descriptor Selection

Overfitting occurs when a model is too complex and learns noise from the training data instead of the underlying structure-activity relationship, leading to poor predictive performance on new compounds.

Protocol:

- Initial Descriptor Pool Calculation: Calculate a wide range of descriptors (e.g., using software like PaDEL-Descriptor, Dragon, or Mordred). [21] [3]

- Data Reduction: Remove descriptors with low variance or high correlation to others to reduce redundancy. [21]

- Feature Selection: Apply robust feature selection methods to identify the most relevant descriptors.

  - Filter Methods: Select descriptors based on univariate statistical tests (e.g., correlation with the activity). [3]

  - Wrapper Methods: Use algorithms like Genetic Algorithms to find the descriptor subset that optimizes model performance. [21]

  - Embedded Methods: Utilize techniques like LASSO regression, which performs variable selection as part of the model building process. [3]

- Apply the "5:1 Rule": As a rule of thumb, have no more than one descriptor for every five compounds in the training set to maintain a good data-point-to-descriptor ratio. [21]

- Validate Rigorously:

  - Internal Validation: Use k-fold cross-validation (e.g., 5-fold or 10-fold) on the training set. [3] A high cross-validated R² (Q²) is a good indicator of robustness.

  - External Validation: Test the final model on a completely independent set of compounds that were not used in model building or selection. This is the gold standard for assessing predictive ability. [3]

Flowchart: A rigorous workflow to prevent overfitting during descriptor selection.
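The internal-validation step of the protocol above can be sketched as follows. This is a minimal illustration, assuming a single descriptor, an ordinary least-squares line, and leave-one-out cross-validation in place of k-fold; the data values and function names are hypothetical. Q² is computed here as 1 − PRESS/SS_tot using the overall mean of the activities, which is one of several conventions in use.

```python
def fit_line(x, y):
    """Ordinary least-squares slope and intercept for one descriptor."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx

def r_squared(x, y):
    """Fitted R² on the full training set."""
    slope, intercept = fit_line(x, y)
    my = sum(y) / len(y)
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return 1.0 - ss_res / ss_tot

def loo_q2(x, y):
    """Leave-one-out cross-validated R² (Q²) = 1 - PRESS / SS_tot."""
    press = 0.0
    for i in range(len(x)):
        # Refit without compound i, then predict it.
        slope, intercept = fit_line(x[:i] + x[i + 1:], y[:i] + y[i + 1:])
        press += (y[i] - (slope * x[i] + intercept)) ** 2
    my = sum(y) / len(y)
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return 1.0 - press / ss_tot

# Hypothetical training data: one descriptor (e.g., logP) vs. pIC50.
logp = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
pic50 = [5.1, 5.9, 7.2, 7.8, 9.1, 9.9]

r2 = r_squared(logp, pic50)
q2 = loo_q2(logp, pic50)
print(f"R2 = {r2:.3f}, Q2 = {q2:.3f}")
```

For least-squares models, Q² computed this way cannot exceed the fitted R²; a large gap between the two is a warning sign of overfitting.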

Guide 2: Selecting an Appropriate Level of Theory for Quantum Chemical Descriptors

The accuracy of quantum chemical (QC) descriptors depends on the computational method (level of theory) used. An inappropriate choice can lead to inaccurate descriptors and flawed models.

Protocol:

- Define the Requirement for Accuracy vs. Cost: Balance computational cost with the required accuracy. Density Functional Theory (DFT) is often the best compromise, offering good accuracy for reasonable cost for many systems. [22]

- Select a Functional and Basis Set: For DFT, popular general-purpose functionals include B3LYP and ωB97X-D. Pair with a basis set like 6-31G* for a good starting point. [22]

- Validate with a Test Set: For a small, representative subset of your molecules, calculate the QC descriptors using a higher level of theory (e.g., a larger basis set or a correlated ab initio method) and compare with your chosen method. If the trends are consistent, the chosen method is likely sufficient. [22]

- Consider the System: For systems with significant dispersion forces (e.g., π-π stacking), use a functional that accounts for these (e.g., ωB97X-D). For open-shell systems, use an unrestricted method. [22]

- Ensure Geometry Optimization: Always use fully optimized molecular geometries at the same level of theory used for descriptor calculation. Using non-optimized or poorly optimized structures is a common error. [22]
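Once frontier orbital energies are available from the chosen level of theory, several of the electronic descriptors listed in Table 1 follow from simple arithmetic. The sketch below assumes the common frontier-orbital (Koopmans-type) approximation and the convention η = (E_LUMO − E_HOMO)/2; other sources define hardness without the factor of 1/2, so the convention should be checked before mixing values from different tools. The function name and the input energies are hypothetical.

```python
def reactivity_descriptors(e_homo, e_lumo):
    """Global reactivity descriptors from frontier orbital energies (same units in and out)."""
    gap = e_lumo - e_homo                      # HOMO-LUMO gap
    hardness = gap / 2.0                       # eta, assumed convention (see lead-in)
    chemical_potential = (e_homo + e_lumo) / 2.0   # mu
    electrophilicity = chemical_potential ** 2 / (2.0 * hardness)  # omega
    return {
        "gap": gap,
        "hardness": hardness,
        "chemical_potential": chemical_potential,
        "electrophilicity": electrophilicity,
    }

# Hypothetical orbital energies in eV, e.g. parsed from a quantum chemistry output file.
descriptors = reactivity_descriptors(e_homo=-6.0, e_lumo=-2.0)
print(descriptors)
```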

Table 2: Troubleshooting Quantum Chemical Descriptor Calculations

| Problem | Potential Cause | Solution |

|---|---|---|

| Unphysically high energy values | Incorrect electronic state specification (e.g., singlet vs. triplet). | Re-check the multiplicity and charge of the molecule. |

| Descriptors fail to correlate with activity | Level of theory is inadequate; descriptors are inaccurate. | Re-calculate with a higher level of theory (e.g., larger basis set, different functional). |

| Calculation fails to converge | Molecular geometry is unstable or has symmetry issues. | Tweak the initial geometry or use a computational software's built-in stability analysis. |

| Long computation times for large molecules | Using high-level ab initio methods on large, flexible molecules. | Switch to DFT or a well-parameterized semi-empirical method (e.g., PM7). [22] |

Guide 3: Implementing a Robust 3D-QSAR Workflow with CoMFA/CoMSIA

A systematic workflow is essential for building interpretable and predictive 3D-QSAR models.

Protocol:

- Data Set Preparation: Curate a set of molecules with known biological activities and defined stereochemistry. [3]

- Molecular Construction and Optimization: Build 3D structures and optimize their geometry using molecular mechanics (e.g., with MMFF94) or quantum chemical methods. [24]

- Conformational Analysis: For flexible molecules, determine the likely bioactive conformation, often the global energy minimum or a conformation aligned with a known rigid inhibitor. [24]

- Molecular Alignment: This is the most critical step. Align molecules based on a common scaffold or a pharmacophore hypothesis. This defines their relative orientation in the 3D grid. [24]

- Descriptor Generation (Field Calculation):

  - Place the aligned molecules into a 3D grid.

  - For CoMFA, calculate steric (Lennard-Jones) and electrostatic (Coulombic) interaction energies between a probe atom and each molecule at every grid point. [24]

  - For CoMSIA, similar fields are calculated but with a Gaussian function, avoiding singularities and making results less sensitive to molecular alignment. [24]

- Partial Least Squares (PLS) Analysis: Use PLS regression to correlate the field values (descriptors) with the biological activity. [24] [3]

- Model Validation and Visualization: Validate the model via cross-validation and an external test set. Visualize the results as 3D coefficient contour maps, showing regions where steric or electrostatic changes are favorable or unfavorable for activity. [24]

Flowchart: Key steps for building a 3D-QSAR model with CoMFA/CoMSIA.
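The field-calculation step can be illustrated with a small sketch that evaluates CoMFA-style and CoMSIA-style fields at a single grid point. Everything numeric here is an assumption for illustration: a single generic Lennard-Jones parameter pair for all atoms, a +1 probe charge, a 30 kcal/mol energy cutoff, and a Gaussian attenuation factor of 0.3. It is not the implementation used by any particular 3D-QSAR package.

```python
import math

COULOMB_CONSTANT = 332.06  # kcal*Angstrom/(mol*e^2), vacuum dielectric assumed

def comfa_fields(grid_point, atoms, probe_charge=1.0,
                 epsilon=0.1, r_min=3.4, cutoff=30.0):
    """Lennard-Jones (steric) and Coulomb (electrostatic) energies, truncated at a cutoff."""
    steric = 0.0
    electrostatic = 0.0
    for x, y, z, charge, _radius in atoms:
        # Guard against r = 0; the energies still diverge, hence the cutoff below.
        r = max(math.dist(grid_point, (x, y, z)), 1e-6)
        ratio = r_min / r
        steric += epsilon * (ratio ** 12 - 2.0 * ratio ** 6)
        electrostatic += COULOMB_CONSTANT * probe_charge * charge / r
    steric = min(steric, cutoff)
    electrostatic = max(-cutoff, min(electrostatic, cutoff))
    return steric, electrostatic

def comsia_fields(grid_point, atoms, probe_radius=1.0, probe_charge=1.0, alpha=0.3):
    """Gaussian-weighted similarity indices; finite everywhere, so no cutoff is needed."""
    steric = 0.0
    electrostatic = 0.0
    for x, y, z, charge, radius in atoms:
        r2 = sum((g - a) ** 2 for g, a in zip(grid_point, (x, y, z)))
        weight = math.exp(-alpha * r2)
        steric -= probe_radius * radius ** 3 * weight
        electrostatic -= probe_charge * charge * weight
    return steric, electrostatic

# Hypothetical two-atom fragment: (x, y, z, partial charge, atomic radius in Angstrom).
atoms = [(0.0, 0.0, 0.0, -0.4, 1.7), (1.5, 0.0, 0.0, 0.4, 1.7)]

on_atom = (0.0, 0.0, 0.0)
comfa_on_atom = comfa_fields(on_atom, atoms)
comsia_on_atom = comsia_fields(on_atom, atoms)
comfa_far = comfa_fields((50.0, 0.0, 0.0), atoms)
print(comfa_on_atom, comsia_on_atom, comfa_far)
```

At a grid point that coincides with an atom, the CoMFA energies hit the cutoff while the CoMSIA indices stay finite, which is the practical meaning of "avoiding singularities" in the protocol.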

The Scientist's Toolkit: Essential Research Reagents & Software

Table 3: Essential Software Tools for Descriptor Calculation and QSAR Modeling

| Tool Name | Type/Function | Key Utility |

|---|---|---|

| Dragon | Software | Calculates thousands of molecular descriptors (2D/3D). Industry standard for comprehensive descriptor profiles. [21] |

| PaDEL-Descriptor | Software | An open-source alternative for calculating 1D and 2D molecular descriptors; it also provides 3D descriptors and fingerprints. [3] |

| Gaussian, GAMESS | Software | Perform quantum chemical calculations to derive accurate quantum chemical descriptors (HOMO, LUMO, etc.). [22] |

| Multiwfn | Software | A powerful wavefunction analyzer for calculating and analyzing a wide range of quantum chemical descriptors from computed wavefunctions. [22] |

| Sybyl (Tripos) | Software Suite | The commercial platform historically containing the CoMFA and CoMSIA routines for 3D-QSAR. [24] |

| RDKit | Open-Source Toolkit | A collection of cheminformatics and machine-learning software; can calculate descriptors and integrate with Python-based modeling workflows. [3] |

The Critical Link Between Descriptor Selection and Model Interpretability

Troubleshooting Guide: Common Descriptor Selection Issues

This guide addresses frequent challenges researchers face when selecting molecular descriptors for QSAR studies, impacting model interpretability and performance.

1. Problem: The "Black Box" Model

- Symptoms: Your machine learning model has good predictive power, but you cannot explain which molecular features drive the activity predictions.

- Root Cause: Over-reliance on high-dimensional, correlated descriptors that lack clear chemical meaning [25] [5].

- Solution: Implement descriptor importance adjustment methods, such as the modified Counter-Propagation Artificial Neural Network (CPANN) that dynamically weights descriptor importance during training [25]. Combine this with interpretability techniques like SHAP (SHapley Additive exPlanations) analysis to quantify each descriptor's contribution to predictions [26].

2. Problem: Model Fails to Generalize

- Symptoms: High accuracy on training data but poor performance on new compounds.

- Root Cause: Descriptor selection does not account for the model's Applicability Domain (AD), or includes redundant, highly correlated descriptors [26].

- Solution:

- Preprocessing: Remove highly correlated descriptors (e.g., Pearson’s |r| > 0.95) to reduce multicollinearity [26].

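- Domain Assessment: Calculate the Mahalanobis Distance for new compounds to verify they fall within the training set's chemical space [26]. If the descriptors are approximately multivariate normal, the squared distance follows a χ² distribution with degrees of freedom equal to the number of descriptors, so a χ² quantile (e.g., the 95th percentile) gives a statistically grounded threshold.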
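A minimal sketch of the Mahalanobis-based domain check follows, restricted to two descriptors so that the covariance inverse and the χ² quantile have closed forms and no numerical library is needed. The descriptor values, function names, and the 95% confidence level are assumptions for illustration; with more descriptors, a linear-algebra library and a general χ² quantile function would be used instead.

```python
import math

def mean_and_cov_2d(points):
    """Sample mean and covariance (sxx, sxy, syy) of 2-descriptor training data."""
    n = len(points)
    mx = sum(p[0] for p in points) / n
    my = sum(p[1] for p in points) / n
    sxx = sum((p[0] - mx) ** 2 for p in points) / (n - 1)
    syy = sum((p[1] - my) ** 2 for p in points) / (n - 1)
    sxy = sum((p[0] - mx) * (p[1] - my) for p in points) / (n - 1)
    return (mx, my), (sxx, sxy, syy)

def squared_mahalanobis_2d(point, mean, cov):
    """d^2 = (p - mean)^T Sigma^-1 (p - mean), using the closed-form 2x2 inverse."""
    sxx, sxy, syy = cov
    det = sxx * syy - sxy ** 2
    dx, dy = point[0] - mean[0], point[1] - mean[1]
    return (syy * dx * dx - 2.0 * sxy * dx * dy + sxx * dy * dy) / det

def chi2_threshold_2dof(confidence=0.95):
    """Chi-square quantile for 2 degrees of freedom: CDF(x) = 1 - exp(-x / 2)."""
    return -2.0 * math.log(1.0 - confidence)

def in_domain(point, training, confidence=0.95):
    mean, cov = mean_and_cov_2d(training)
    return squared_mahalanobis_2d(point, mean, cov) <= chi2_threshold_2dof(confidence)

# Hypothetical training compounds described by two scaled descriptors.
training = [(1.0, 2.0), (2.0, 3.5), (3.0, 3.0), (4.0, 5.5),
            (5.0, 5.0), (2.5, 4.0), (3.5, 3.8)]

print(in_domain((3.0, 4.0), training))   # close to the training centroid
print(in_domain((10.0, 0.0), training))  # far outside the training cloud
```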

3. Problem: Spurious Correlations Mislead Design

- Symptoms: The model highlights "bulk" properties (e.g., molecular weight) as important, which are likely proxies for true causal features like specific pharmacophores [5].

- Root Cause: Standard machine learning models identify correlations but cannot distinguish causal relationships from confounding factors [5].

- Solution: Employ causal inference frameworks like Double/Debiased Machine Learning (DML). This method estimates the unconfounded causal effect of each descriptor by treating all others as potential confounders, providing more reliable guidance for synthesis [5].

4. Problem: Mechanistic Interpretation is Impossible

- Symptoms: Unable to relate model-selected descriptors to known toxicological mechanisms or structural alerts.

- Root Cause: Descriptors are purely statistical constructs without established links to physicochemical properties or biological mechanisms [25].

- Solution: Prioritize descriptors that can be mapped to known mechanistic features. For example, in carcinogenicity models, descriptors like `nRNNOx` (number of N-nitroso groups) can be directly linked to the structural alert "alkyl and aryl–N-nitroso groups" known to form DNA adducts [25].

Frequently Asked Questions (FAQs)

Q1: What are the key criteria for selecting interpretable molecular descriptors? A descriptor should ideally be:

- Computationally Feasible: Reasonable to calculate for large compound libraries [27].

- Distinct Chemical Meaning: Linked to an understandable molecular or physicochemical property (e.g., logP, polar surface area, hydrogen bond count) [27].

- Mechanistically Relevant: Correlated with the known or proposed biological mechanism of action [25].

- Non-Redundant: Provides unique information not captured by other descriptors in the set [26].

Q2: How can I balance model complexity with interpretability? Use genetic algorithms (GA) for feature selection. The GA optimizes a fitness function (e.g., adjusted R²) that rewards model performance while penalizing complexity (number of descriptors) [26]. This inherently leads to simpler, more interpretable models without sacrificing excessive predictive power.

Q3: My model is interpretable, but my peers question its mechanistic validity. How can I address this? Adhere to the OECD's fifth principle for QSAR validation, which recommends "a mechanistic interpretation, if possible" [25]. Strengthen your interpretation by:

- Explicitly linking key descriptors from your model to established structural alerts or pharmacophores from literature [25].

- Using hypothesis testing frameworks like the Benjamini-Hochberg procedure on causal descriptor effects to control the False Discovery Rate (FDR) and provide statistical rigor [5].
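The Benjamini-Hochberg procedure mentioned above is short enough to sketch directly. The p-values below are hypothetical; in practice they would come from the significance tests on each descriptor's estimated effect.

```python
def benjamini_hochberg(p_values, fdr=0.05):
    """Return a list of booleans: True where the hypothesis is rejected at the given FDR."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Step-up rule: find the largest rank k with p_(k) <= (k / m) * fdr.
    cutoff_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * fdr:
            cutoff_rank = rank
    # Reject every hypothesis ranked at or below the cutoff.
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= cutoff_rank:
            rejected[idx] = True
    return rejected

# Hypothetical p-values for five candidate descriptors.
p_values = [0.001, 0.20, 0.025, 0.5, 0.029]
significant = benjamini_hochberg(p_values, fdr=0.05)
print(significant)  # [True, False, True, False, True]
```

Note that the descriptor with p = 0.025 exceeds its own rank threshold (0.02) but is still retained, because a larger p-value (0.029) passes its threshold (0.03); this step-up behavior distinguishes the procedure from a simple per-test cutoff.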

Q4: What are the best practices for validating that my descriptor selection is sound?

- Internal Validation: Use rigorous cross-validation and metrics like Q² and RMSE to ensure the model is robust [26].

- External Validation: Test the model on a completely held-out test set [26].

- Domain of Applicability: Always define and report the model's applicability domain using methods like Mahalanobis Distance to clarify for which compounds the interpretations are valid [26].

Experimental Protocols for Robust Descriptor Selection

Protocol 1: Genetic Algorithm for Optimal Descriptor Selection

This methodology is used to identify a compact, optimal subset of descriptors that maximizes model performance and interpretability [26].

- Descriptor Calculation & Preprocessing: Compute a diverse set of molecular descriptors (constitutional, topological, electronic, geometrical) using software like ChemoPy or PaDEL. Standardize the resulting matrix by centering to the mean and scaling to unit variance. Remove descriptors with zero variance or excessive missing values [26].

- Correlation Filtering: Reduce multicollinearity by calculating the Pearson correlation matrix and removing one descriptor from any pair with |r| > 0.95 [26].

- Genetic Algorithm Setup:

- Representation: Use a binary chromosome where each gene represents the presence (1) or absence (0) of a specific descriptor.

- Fitness Function: Use a function like `Fitness = R²_adj - (k/n)`, where `k` is the number of selected descriptors and `n` is the number of training samples. This penalizes overly complex models [26].

- Evolution: Run the GA for a set number of generations (e.g., 50) or until performance plateaus.

- Model Construction: Build a Multiple Linear Regression (MLR) model using the final subset of GA-selected descriptors. The model takes the form `pIC50 = β₀ + β₁x₁ + β₂x₂ + … + βₖxₖ`, where each `x` is one of the `k` selected descriptors and its coefficient `β` indicates the magnitude and direction of its effect on activity [26].
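The fitness function in the protocol above can be sketched as follows, assuming the binary chromosome representation described there. The R² values are hypothetical stand-ins for what an MLR fit on each descriptor subset would return, and the function names are illustrative.

```python
def adjusted_r2(r2, n_samples, n_descriptors):
    """Adjusted R² for a linear model with an intercept."""
    return 1.0 - (1.0 - r2) * (n_samples - 1) / (n_samples - n_descriptors - 1)

def ga_fitness(r2, n_samples, chromosome):
    """Fitness = R²_adj - k/n, where k is the number of genes set to 1."""
    k = sum(chromosome)
    if k == 0 or n_samples - k - 1 <= 0:
        return float("-inf")  # empty or under-determined models are never selected
    return adjusted_r2(r2, n_samples, k) - k / n_samples

n_train = 40
# Two hypothetical chromosomes over a pool of 10 descriptors.
compact = [1, 0, 0, 1, 0, 0, 0, 1, 0, 0]   # 3 descriptors, assumed R² = 0.80
complex_ = [1, 1, 1, 1, 0, 1, 1, 1, 1, 0]  # 8 descriptors, assumed R² = 0.82

fit_compact = ga_fitness(0.80, n_train, compact)
fit_complex = ga_fitness(0.82, n_train, complex_)
print(round(fit_compact, 4), round(fit_complex, 4))  # 0.7083 0.5735
```

Although the eight-descriptor model has the slightly higher raw R², the complexity penalty ranks the three-descriptor model higher, which is the behavior the protocol relies on to keep the final equation interpretable.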

Protocol 2: Causal Descriptor Identification with Double Machine Learning

This advanced protocol helps distinguish causally influential descriptors from spurious correlates [5].

- Problem Formulation: Treat the assessment of each descriptor's effect as a causal inference problem, where all other descriptors are potential confounders.

- Double Machine Learning Workflow:

- For each candidate descriptor `x_i`, use a machine learning model (e.g., Random Forest) to predict the biological activity `y` using all other descriptors (the confounder set, `z`).

- Simultaneously, use another ML model to predict the candidate descriptor `x_i` using the same confounder set `z`.

- The causal effect of `x_i` is estimated from the residuals of these two models, effectively "deconfounding" the relationship [5].

- Hypothesis Testing: Apply the Benjamini-Hochberg procedure to the p-values of all causal effect estimates to control the False Discovery Rate (FDR). This provides a statistically sound list of descriptors with significant causal links to the activity [5].
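The residual-on-residual idea behind the workflow above can be sketched in a few lines. This simplified illustration swaps in ordinary linear regressions for the Random Forest nuisance models, uses a single confounder, and omits the cross-fitting (sample splitting) that a full Double Machine Learning analysis would include. All data and names are hypothetical.

```python
def fit_line(x, y):
    """Ordinary least-squares slope and intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx

def residuals(target, confounder):
    """Part of `target` that the confounder cannot explain (linear nuisance model)."""
    slope, intercept = fit_line(confounder, target)
    return [t - (slope * z + intercept) for t, z in zip(target, confounder)]

def partialling_out_effect(y, x, z):
    """Regress the y-residuals on the x-residuals to estimate the effect of x on y."""
    y_res = residuals(y, z)
    x_res = residuals(x, z)
    return sum(a * b for a, b in zip(x_res, y_res)) / sum(a * a for a in x_res)

# Hypothetical data: activity depends on descriptor x (true effect 2) and on a
# confounder z (effect 3), and x itself is strongly correlated with z.
z = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
x = [1.5, 1.8, 3.4, 3.9, 5.2, 5.7]
y = [2.0 * xi + 3.0 * zi for xi, zi in zip(x, z)]

naive_slope, _ = fit_line(x, y)
deconfounded = partialling_out_effect(y, x, z)
print(round(naive_slope, 2), round(deconfounded, 2))
```

The naive regression of activity on `x` absorbs the confounder's contribution and overstates the effect, whereas the residual-based estimate recovers the value used to generate the data.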

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Reagent | Function in Descriptor Selection & QSAR |

|---|---|

| ChemoPy | A Python package for calculating a comprehensive set of molecular descriptors (topological, constitutional, etc.) from chemical structures [26]. |

| Genetic Algorithm (GA) | An optimization technique used to select an optimal, minimal subset of descriptors by balancing model performance and complexity [26]. |

| SHAP (SHapley Additive exPlanations) | A game-theoretic method to interpret model predictions by quantifying the marginal contribution of each descriptor to the final prediction for any given compound [26]. |

| Double Machine Learning (DML) | A causal inference framework used to estimate the unconfounded causal effect of a descriptor on biological activity, filtering out spurious correlations [5]. |

| Mahalanobis Distance | A statistical measure used to define the Applicability Domain of a QSAR model, identifying compounds that are too dissimilar from the training set for reliable prediction [26]. |

Workflow Visualization

The diagram below illustrates a robust workflow for selecting interpretable molecular descriptors, integrating key steps from the troubleshooting guides and experimental protocols.

Comparative Analysis of QSAR Modeling Algorithms

The table below summarizes the performance and interpretability of different machine learning algorithms used in QSAR modeling, as demonstrated in a study on KRAS inhibitors [26].

| Modeling Algorithm | Key Advantage | Interpretability Strength | Example Performance (R²) [26] |

|---|---|---|---|

| Partial Least Squares (PLS) | Handles multicollinearity well via latent variables. | Good; variable importance in projection (VIP) scores indicate descriptor relevance. | 0.851 |

| Genetic Algorithm-MLR (GA-MLR) | Optimally balances model size and predictive power. | High; produces a simple, transparent linear equation with defined coefficients. | 0.677 |

| Random Forest (RF) | Robust to overfitting and noise. | Moderate; provides permutation-based importance and is compatible with SHAP analysis. | 0.796 |

| XGBoost | High predictive accuracy with complex data. | Moderate; compatible with SHAP for non-linear effect interpretation. | Not Specified |

From Theory to Practice: Strategic Descriptor Selection and Implementation in Modern QSAR

Frequently Asked Questions

Q1: My QSAR model is sensitive to outliers in the biological activity data. Which variable selection method should I use? The LAD-LASSO (Least Absolute Deviation-Least Absolute Shrinkage and Selection Operator) is specifically designed to handle this issue. Unlike standard LS-LASSO, which uses a least squares criterion sensitive to outliers, LAD-LASSO employs a least absolute deviation criterion that is robust against heavy-tailed errors and severe outliers [28]. This method provides low bias in estimating large coefficients and maintains good prediction performance even when outlier observations are present in your dataset [28].

Q2: How does the choice of mutual information estimator impact the performance of the mRMR feature selection method? The performance of the Maximum Relevance Minimum Redundancy (mRMR) method is highly dependent on the mutual information estimator chosen. Different estimators, such as the Parzen window, equidistant partitioning (cells method), or bias-corrected versions, can yield varying results [29]. The estimator must be carefully selected based on your dataset characteristics, as an inappropriate choice can lead to unreliable feature selection. A bias-corrected estimator often improves mRMR performance by providing more stable mutual information assessments [29].

Q3: When should I prefer mutual information-based methods over Genetic Algorithms for variable selection in QSAR studies? Mutual information methods are generally preferred when you need computational efficiency and want to capture both linear and nonlinear dependencies between descriptors and biological activity [29] [30]. Genetic Algorithms are more appropriate when you're exploring a complex feature space and want to avoid local minima, though they can be computationally intensive [31]. For high-dimensional descriptor spaces, mutual information methods like mRMR or DMIM often provide better computational performance [29] [30].

Q4: What are the key differences between filter methods (like mutual information) and wrapper methods (like Genetic Algorithms)? Filter methods (e.g., mutual information) evaluate features based on intrinsic data properties, independent of a specific classifier, making them computationally efficient and model-agnostic [29]. Wrapper methods (e.g., Genetic Algorithms) use the performance of a specific predictive model to evaluate feature subsets, potentially yielding better performance but at higher computational cost and with potential overfitting risks [31]. Embedded methods like LASSO incorporate feature selection directly into the model training process, providing a balance between both approaches [28].

Q5: Why does my LASSO-selected model show high bias in estimating large coefficients? This is a known limitation of standard LS-LASSO (Least Squares-LASSO), which can produce high bias when estimating large coefficients [28]. Consider using robust variants like LAD-LASSO, which demonstrates lower bias for large coefficient estimation while maintaining the sparsity and variable selection capabilities of traditional LASSO [28]. The bias arises from the simultaneous variable selection and parameter estimation in LS-LASSO, which LAD-LASSO mitigates through its robust objective function [28].

Troubleshooting Guides

Issue 1: Poor Generalization Performance Despite High Training Accuracy

Problem: Your QSAR model performs well on training data but poorly on external test sets after variable selection.

Solution:

- Apply stricter validation protocols: Use external test sets that were completely excluded from the variable selection process [32].

- Evaluate applicability domain: Ensure test compounds fall within the chemical space of your training set [28] [27].

- Check for overfitting in selection: Use cross-validation during variable selection, not just during model building [32].

- Consider simpler models: Genetic Algorithms can overfit when run for too many generations; if this is suspected, reduce the number of generations or the population size [31].

Performance metrics to check:

- Concordance Correlation Coefficient (CCC) should be >0.8 for external validation [32]

- rm² metric should meet established thresholds [32]

- R² and MSE for both training and test sets should be comparable [28]
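The Concordance Correlation Coefficient in the list above can be computed directly from observed and predicted activities. The sketch below uses Lin's definition with population (divide-by-n) variances; the activity values are hypothetical.

```python
def concordance_correlation(observed, predicted):
    """Lin's CCC: 2*cov / (var_obs + var_pred + (mean_obs - mean_pred)^2)."""
    n = len(observed)
    mean_o = sum(observed) / n
    mean_p = sum(predicted) / n
    var_o = sum((o - mean_o) ** 2 for o in observed) / n
    var_p = sum((p - mean_p) ** 2 for p in predicted) / n
    cov = sum((o - mean_o) * (p - mean_p) for o, p in zip(observed, predicted)) / n
    return 2.0 * cov / (var_o + var_p + (mean_o - mean_p) ** 2)

# Hypothetical external test set: predictions are perfectly correlated with the
# observations but systematically one log unit too high.
observed = [5.0, 6.0, 7.0, 8.0, 9.0]
biased_predictions = [6.0, 7.0, 8.0, 9.0, 10.0]

ccc_biased = concordance_correlation(observed, biased_predictions)
ccc_perfect = concordance_correlation(observed, observed)
print(ccc_biased, ccc_perfect)
```

A correlation-based R² would score the biased predictions as perfect, whereas CCC drops below 1 because it also penalizes the systematic offset; this is one reason it is recommended alongside R² for external validation.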

Issue 2: Inconsistent Variable Selection Across Different Algorithms

Problem: Different variable selection methods (GA, LASSO, Mutual Information) yield different descriptor subsets for the same dataset.

Solution:

- Analyze descriptor redundancy: High correlation between descriptors can cause this issue. Pre-filter strongly correlated descriptors (e.g., |r| > 0.95) [28].

- Check statistical significance: Use multiple methods and select descriptors consistently identified across methods [31].

- Evaluate stability: Use bootstrap resampling to assess selection frequency of each descriptor [28].

- Prioritize interpretability: Select the descriptor set that aligns best with known structure-activity relationships in your chemical domain [27].
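The correlation pre-filter recommended in the first step above can be sketched as a greedy pass over the descriptor pool. Which member of a correlated pair is dropped is a design choice; this sketch keeps whichever descriptor appears first, and it assumes constant descriptors have already been removed. The descriptor names and values are hypothetical.

```python
import math

def pearson_r(a, b):
    """Pearson correlation; returns 0.0 if either vector has zero variance."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    norm = math.sqrt(sum((x - ma) ** 2 for x in a) * sum((y - mb) ** 2 for y in b))
    return cov / norm if norm > 0 else 0.0

def correlation_filter(descriptors, threshold=0.95):
    """Keep a descriptor only if |r| <= threshold against every descriptor kept so far."""
    kept = []
    for name, values in descriptors.items():
        if all(abs(pearson_r(values, descriptors[k])) <= threshold for k in kept):
            kept.append(name)
    return kept

# Hypothetical descriptor table for five compounds.
descriptors = {
    "MW": [100.0, 150.0, 200.0, 250.0, 300.0],
    "heavy_atoms": [7.0, 11.0, 14.0, 18.0, 21.0],  # nearly collinear with MW
    "logP": [1.2, 3.1, 0.5, 2.8, 1.9],
}

retained = correlation_filter(descriptors, threshold=0.95)
print(retained)  # ['MW', 'logP']
```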

Issue 3: Computational Limitations with High-Dimensional Descriptor Spaces

Problem: Variable selection becomes computationally prohibitive with thousands of molecular descriptors.

Solution:

- Implement pre-filtering: Use simple correlation measures or variance thresholds to reduce descriptor space before applying advanced methods [28].

- Choose efficient algorithms: For high-dimensional spaces, mRMR with efficient mutual information estimators often outperforms Genetic Algorithms computationally [29].

- Use embedded methods: LASSO variants perform selection during modeling, reducing computational overhead compared to wrapper methods [28].

- Leverage optimized software: Use specialized packages like DRAGON with built-in selection tools rather than custom implementations [28].

Performance Comparison of Variable Selection Methods

Table 1: Key Characteristics of Variable Selection Methods in QSAR

| Method | Key Strengths | Limitations | Optimal Use Cases |

|---|---|---|---|

| Genetic Algorithms | Effective for complex feature spaces; Avoid local minima [31] | Computationally intensive; Risk of overfitting [31] | Moderate-dimensional data (<500 descriptors); Complex nonlinear relationships [31] |

| LASSO/LAD-LASSO | Simultaneous selection & estimation; Robust to outliers (LAD-LASSO) [28] | High bias for large coefficients (standard LASSO) [28] | High-dimensional data; When interpretability is important [28] |

| Mutual Information (mRMR) | Captures nonlinear dependencies; Computationally efficient [29] | Performance depends on estimator choice [29] | Large datasets; When both linear & nonlinear relationships exist [29] |

| Decomposed Mutual Information (DMIM) | Overcomes complementarity penalization [30] | Less established in QSAR literature [30] | Classification tasks; When complementary features are important [30] |

Table 2: Typical Performance Metrics for Validated QSAR Models

| Validation Type | Metric | Acceptable Threshold | Notes |

|---|---|---|---|

| Internal | Q² (LOO-CV) | >0.5 | May be optimistic [32] |

| External | R²test | >0.6 | Should be close to R²training [28] [32] |

| External | CCC | >0.8 | More reliable than R² alone [32] |

| External | rm² | >0.5 | Specific for QSAR validation [32] |

| Overall | MSEtest | As low as possible | Should be comparable to MSEtraining [28] |

Experimental Protocols

Protocol 1: Implementing LAD-LASSO for Robust Variable Selection

Purpose: Select molecular descriptors while maintaining robustness to outliers in biological activity data.

Materials:

- Dataset with molecular structures and biological activities

- DRAGON software or equivalent for descriptor calculation [28]

- MATLAB, R, or Python with optimization packages

- Preprocessing tools for descriptor filtering

Procedure:

- Calculate descriptors: Compute molecular descriptors using DRAGON (≈3224 descriptors) [28]

- Preprocess descriptors:

- Remove constant and near-constant variance descriptors

- Eliminate highly correlated descriptors (|r| > 0.95) [28]

- Standardize remaining descriptors to zero mean and unit variance

- Implement LAD-LASSO optimization:

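- Use the objective function: min_β ( Σᵢ |yᵢ − xᵢᵀβ| + λ Σⱼ |βⱼ| ), where yᵢ is the activity of compound i, xᵢ is its descriptor vector, and λ controls the strength of the penalty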

- Apply cross-validation to select optimal λ parameter

- Use alternating direction method of multipliers (ADMM) for efficient optimization [33]

- Select final descriptors: Choose descriptors with non-zero coefficients in the optimal model

- Validate selection: Build QSAR model with selected descriptors and evaluate using external test set

Troubleshooting Notes:

- If convergence is slow, adjust optimization tolerance parameters

- If too many/few descriptors are selected, adjust λ range in cross-validation

- Verify robustness by artificially introducing outliers and comparing with standard LASSO
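The robustness check in the last note can be illustrated on a one-descriptor toy problem. With a single coefficient and no intercept, the LAD-LASSO objective is piecewise linear and convex, so its minimum lies at one of the breakpoints (the ratios yᵢ/xᵢ, or zero); the sketch below exploits that instead of using ADMM, which would be needed for the multi-descriptor case. The data and function names are hypothetical.

```python
def lad_lasso_objective(beta, x, y, lam):
    """Sum of absolute residuals plus the L1 penalty, for a single coefficient."""
    return sum(abs(yi - xi * beta) for xi, yi in zip(x, y)) + lam * abs(beta)

def lad_lasso_1d(x, y, lam):
    """Exact minimizer for one descriptor: check every breakpoint of the objective."""
    candidates = {0.0} | {yi / xi for xi, yi in zip(x, y) if xi != 0}
    return min(sorted(candidates), key=lambda b: lad_lasso_objective(b, x, y, lam))

def least_squares_1d(x, y):
    """Ordinary least-squares slope through the origin, for comparison."""
    return sum(xi * yi for xi, yi in zip(x, y)) / sum(xi * xi for xi in x)

# Hypothetical data following y = 2x, except for one severe outlier in the last value.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.0, 4.0, 6.0, 8.0, 100.0]

beta_lad = lad_lasso_1d(x, y, lam=1.0)
beta_ls = least_squares_1d(x, y)
beta_sparse = lad_lasso_1d(x, y, lam=100.0)
print(beta_lad, round(beta_ls, 2), beta_sparse)  # 2.0 10.18 0.0
```

The absolute-deviation fit stays at the slope supported by the four clean points, the least-squares slope is dragged toward the outlier, and a large λ shrinks the coefficient to exactly zero, which is the variable-selection behavior of the penalty.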

Protocol 2: mRMR with Bias-Corrected Mutual Information Estimation

Purpose: Select features with maximum relevance to activity and minimum redundancy among themselves using improved mutual information estimation.

Materials:

- Dataset with continuous molecular descriptors and biological activity

- Programming environment with mutual information estimation capabilities

- mRMR implementation with customizable estimator options

Procedure:

- Prepare data: Preprocess descriptors and ensure continuous format

- Select mutual information estimator: Choose from:

- Parzen window estimation

- Equidistant partitioning (cells method)

- Bias-corrected estimator (recommended) [29]

- Implement mRMR algorithm:

  - Initialize selected feature set S = ∅

  - For each iteration:

    - Calculate relevance: rel(f) = I(f; C) for all features f ∉ S, where C is the activity (class or response) variable

    - Calculate redundancy: red(f) = (1/|S|) ∑ I(f; s) for all s ∈ S (taken as 0 while S is empty)

    - Select feature maximizing: rel(f) - red(f)

    - Add to S [29]

- Add regularization (optional): If the quotient criterion rel(f) / red(f) is used instead of the difference above, include a small regularization term in the denominator for numerical stability [29]

- Determine stopping criterion: Use predefined number of features or performance plateau
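The procedure above can be sketched with the simplest of the listed estimators, equidistant partitioning (the cells method), without bias correction. The difference criterion rel(f) − red(f) is used, redundancy is taken as zero while no feature has been selected, and ties are broken in favor of the feature listed first. The descriptor names, data, and bin count are hypothetical.

```python
import math
from collections import Counter

def discretize(values, n_bins):
    """Equal-width binning (cells method)."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0] * len(values)
    width = (hi - lo) / n_bins
    return [min(int((v - lo) / width), n_bins - 1) for v in values]

def mutual_information(a, b):
    """Plug-in mutual information (in nats) between two discretized variables."""
    n = len(a)
    count_a, count_b, count_ab = Counter(a), Counter(b), Counter(zip(a, b))
    return sum((c / n) * math.log(c * n / (count_a[i] * count_b[j]))
               for (i, j), c in count_ab.items())

def mrmr(features, target, n_select, n_bins=2):
    """Greedy mRMR selection with the difference criterion rel(f) - red(f)."""
    binned = {name: discretize(vals, n_bins) for name, vals in features.items()}
    binned_target = discretize(target, n_bins)
    relevance = {name: mutual_information(b, binned_target) for name, b in binned.items()}
    selected = []
    while len(selected) < min(n_select, len(features)):
        best_name, best_score = None, float("-inf")
        for name in binned:
            if name in selected:
                continue
            redundancy = 0.0
            if selected:
                redundancy = sum(mutual_information(binned[name], binned[s])
                                 for s in selected) / len(selected)
            score = relevance[name] - redundancy
            if score > best_score:
                best_name, best_score = name, score
        selected.append(best_name)
    return selected

# Hypothetical data: desc_a is informative, desc_a_copy duplicates it, and
# desc_b is weakly informative but nearly independent of desc_a.
activity = [5.0, 5.0, 5.0, 5.0, 8.0, 8.0, 8.0, 8.0]
features = {
    "desc_a": [0.0, 0.0, 0.0, 0.0, 1.0, 1.0, 1.0, 0.0],
    "desc_a_copy": [0.0, 0.0, 0.0, 0.0, 1.0, 1.0, 1.0, 0.0],
    "desc_b": [0.0, 1.0, 0.0, 1.0, 0.0, 1.0, 1.0, 1.0],
}

chosen = mrmr(features, activity, n_select=2)
print(chosen)  # ['desc_a', 'desc_b']
```

A relevance-only ranking would pick the duplicate second; the redundancy term instead favors the weaker but complementary descriptor.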

Validation:

- Compare with other estimators to assess stability

- Evaluate final feature set using classification/regression performance

- Use Y-randomization to confirm significance [28]

The Scientist's Toolkit

Table 3: Essential Computational Tools for Variable Selection in QSAR

| Tool/Software | Primary Function | Application in Variable Selection |

|---|---|---|

| DRAGON | Molecular descriptor calculation | Calculates 3224+ molecular descriptors for QSAR [28] |

| Mordred | Molecular descriptor calculation | Python package for calculating 1600+ molecular descriptors [34] |

| MATLAB | Numerical computing | Implementation of LAD-LASSO and correlation filtering [28] |

| PaDEL-Descriptor | Molecular descriptor calculation | Generates molecular descriptors for cheminformatics [3] |

| Cerius2 | Molecular modeling | Includes Genetic Function Approximation (GFA), a genetic-algorithm-based method for variable selection [31] |

| MOE | Molecular modeling | QuaSAR-Evolution module for GA-based selection [31] |

Workflow Visualization

In Quantitative Structure-Activity Relationship (QSAR) research, the initial set of calculated molecular descriptors is often vast and high-dimensional. Datasets can contain hundreds to thousands of descriptors, many of which are redundant, noisy, or irrelevant for predicting biological activity. This high dimensionality poses significant challenges, including overfitting, increased computational costs, and difficulty in model interpretation, which together are often referred to as the "curse of dimensionality" [35] [36]. Dimensionality reduction techniques are therefore not merely optional pre-processing steps but are fundamental to developing robust, interpretable, and predictive QSAR models. This technical support guide focuses on two powerful, complementary methods: Principal Component Analysis (PCA), a feature extraction technique, and Recursive Feature Elimination (RFE), a feature selection method. We provide detailed troubleshooting and FAQs to help researchers effectively implement these techniques within their QSAR workflows.

The table below summarizes the key characteristics of PCA and RFE to help you select the appropriate strategy.

| Feature | Principal Component Analysis (PCA) | Recursive Feature Elimination (RFE) |
|---|---|---|
| Category | Feature Extraction [35] | Feature Selection [35] [37] |
| Core Principle | Projects data to a new, lower-dimensional space of orthogonal Principal Components (PCs) that maximize variance [35] [36]. | Iteratively removes the least important features based on a model's feature importance scores [37]. |
| Output | Principal Components (PCs): linear combinations of all original features [35]. | A subset of the original, interpretable molecular descriptors [37]. |
| Interpretability | Low; PCs are mathematical constructs and often lack direct chemical meaning [35]. | High; retains the original descriptors, allowing for direct structure-activity interpretation [37]. |
| Primary Use Case | Dealing with multicollinearity; reducing noise; visualizing high-dimensional data [38] [39]. | Identifying the most impactful molecular descriptors to guide lead optimization [37]. |

Experimental Protocols and Workflows

Protocol 1: Implementing PCA for Descriptor Reduction

PCA is an unsupervised technique from linear algebra used to project a dataset into a lower-dimensional space while preserving its essential variance [35] [36].

Detailed Methodology:

- Data Standardization: Before applying PCA, standardize your descriptor matrix so that each descriptor has a mean of 0 and a standard deviation of 1. This ensures all descriptors contribute equally to the variance, regardless of their original units [35].

- Covariance Matrix Computation: Calculate the covariance matrix of the standardized data to understand how the descriptors vary with one another.

- Eigendecomposition: Perform eigendecomposition on the covariance matrix to obtain eigenvalues and eigenvectors. The eigenvectors represent the principal components (PCs), and the eigenvalues indicate the amount of variance captured by each PC [35].

- Projection: Project the original data onto the selected principal components to create a new, reduced dataset [36].
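
The four steps above can be traced by hand with NumPy. The following is a minimal sketch on a synthetic descriptor matrix, not a reference implementation: the data, the column scales, and the choice of `k = 2` retained components are all assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical descriptor matrix: 50 compounds x 6 descriptors,
# with correlated columns and very different numeric scales.
base = rng.normal(size=(50, 3))
X = np.hstack([base, base @ rng.normal(size=(3, 3)) + 0.1 * rng.normal(size=(50, 3))])
X = X * np.array([1.0, 10.0, 100.0, 0.1, 5.0, 50.0])

# Step 1: standardize each descriptor to mean 0 and standard deviation 1.
X_std = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)

# Step 2: covariance matrix of the standardized descriptors.
cov = np.cov(X_std, rowvar=False)

# Step 3: eigendecomposition (eigh is appropriate for symmetric matrices),
# then sort the components by decreasing eigenvalue.
eigvals, eigvecs = np.linalg.eigh(cov)
order = np.argsort(eigvals)[::-1]
eigvals, eigvecs = eigvals[order], eigvecs[:, order]
explained_ratio = eigvals / eigvals.sum()

# Step 4: project the standardized data onto the first k principal components.
k = 2  # illustrative choice
scores = X_std @ eigvecs[:, :k]

print(explained_ratio.round(3))
print(scores.shape)
```

In practice a library routine is usually preferable; the manual version is mainly useful for checking that each eigenvalue equals the variance of the corresponding score column.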

Key Considerations:

- Number of Components: The choice of how many PCs to retain is critical. A common approach is to retain the smallest number of PCs whose cumulative explained variance reaches a pre-defined threshold (e.g., 90-95% of the total variance). A scree plot, which shows the eigenvalues in descending order, helps to identify the point beyond which additional PCs contribute little [36].

- Variance Threshold: Retain components that contribute significantly to the total variance and discard those with negligible contribution.
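
One way to apply a variance threshold is to let scikit-learn choose the number of components. The sketch below assumes a synthetic 40-descriptor matrix driven by five latent factors and an illustrative 95% threshold; passing a fraction as `n_components` asks `PCA` to keep the smallest number of components that reaches that share of the variance.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(42)

# Hypothetical data: 100 compounds, 40 descriptors generated from 5 latent factors.
latent = rng.normal(size=(100, 5))
mixing = rng.normal(size=(5, 40))
X = latent @ mixing + 0.05 * rng.normal(size=(100, 40))

# Standardize, then keep enough PCs to explain at least 95% of the variance.
pca_pipeline = make_pipeline(
    StandardScaler(),
    PCA(n_components=0.95, svd_solver="full"),
)
X_reduced = pca_pipeline.fit_transform(X)

pca = pca_pipeline.named_steps["pca"]
cumulative = np.cumsum(pca.explained_variance_ratio_)
print("components kept:", pca.n_components_)
print("cumulative explained variance:", cumulative.round(3))
```

Plotting `pca.explained_variance_` against the component index gives the scree plot described above.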

Protocol 2: Implementing RFE for Feature Selection

RFE is a supervised wrapper feature selection method that recursively prunes the least important features from a model to find the optimal subset that maximizes predictive performance [37].

Detailed Methodology:

- Model Training: Train a supervised learning algorithm (e.g., Random Forest or Support Vector Machine) capable of outputting feature importance scores on the entire set of descriptors.

- Feature Ranking: Rank all molecular descriptors based on the model's feature importance scores (e.g., feature_importances_ in scikit-learn) [37].

- Feature Pruning: Remove the least important feature(s) from the current set.

- Recursion: Repeat the training, ranking, and pruning steps on the progressively smaller subset of descriptors until the desired number of features is reached.

- Performance Evaluation: At each iteration, evaluate the model's performance (e.g., via cross-validated accuracy or R²) to identify the subset of features that yields the best performance [37].
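
The loop described above can be written out explicitly to make each step visible. This is a minimal sketch under stated assumptions: the data come from `make_regression`, the base model is a small Random Forest, one descriptor is removed per iteration, and the target of three descriptors is arbitrary.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import cross_val_score

# Hypothetical dataset: 100 compounds, 12 descriptors, 4 of them informative.
X, y = make_regression(n_samples=100, n_features=12, n_informative=4,
                       noise=5.0, random_state=0)

remaining = list(range(X.shape[1]))  # indices of descriptors still in play
history = []                         # (descriptor subset, mean cross-validated R^2)
n_target = 3                         # illustrative stopping point

while True:
    model = RandomForestRegressor(n_estimators=30, random_state=0)

    # Performance evaluation for the current subset.
    cv_r2 = cross_val_score(model, X[:, remaining], y, cv=3, scoring="r2").mean()
    history.append((list(remaining), cv_r2))
    if len(remaining) <= n_target:
        break

    # Model training and feature ranking.
    model.fit(X[:, remaining], y)
    weakest = int(np.argmin(model.feature_importances_))

    # Feature pruning: drop the least important descriptor, then recurse.
    remaining.pop(weakest)

best_subset, best_score = max(history, key=lambda item: item[1])
print(len(best_subset), "descriptors, mean CV R^2 =", round(best_score, 3))
```

Because the ranking here uses all of the data, the cross-validated scores are somewhat optimistic; nesting the elimination inside each fold gives a fairer estimate.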

Key Considerations:

- Base Model: The choice of the underlying model (e.g., Random Forest) is crucial as it determines how feature importance is calculated.

- Optimal Feature Count: The final number of features to select is a hyperparameter. It is determined by identifying the point where model performance is maximized before it begins to degrade due to the removal of important features [37].

The Scientist's Toolkit: Essential Research Reagents & Software

The table below lists key computational tools and resources essential for implementing PCA and RFE in QSAR studies.

| Tool/Resource | Function | Application in QSAR |
|---|---|---|
| PaDEL-Descriptor [40] [3] | Calculates molecular descriptors and fingerprints. | Generates the initial high-dimensional feature set from chemical structures. |
| scikit-learn (Python) [36] | Machine learning library containing PCA, RFE, and various estimators. | Provides the primary API for implementing the dimensionality reduction protocols. |
| R Statistical Environment [40] | Platform for statistical computing and graphics. | Used for model building, validation, and generating dynamic analysis reports. |
| KNIME / RapidMiner [40] [41] | Graphical workflow platforms for data analytics. | Enables the construction of reproducible, visual pipelines for QSAR modeling. |
| Dragon [41] [3] | Commercial software for calculating a wide range of molecular descriptors. | An alternative to PaDEL for comprehensive descriptor calculation. |

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: My QSAR model's performance dropped after applying PCA. What could be the cause? This often occurs when biologically relevant variance is not the primary variance captured by the first few principal components. PCA is unsupervised and selects components that maximize total variance in the descriptor space, which may not always align with variance predictive of the biological activity. Consider using supervised dimensionality reduction methods or applying feature selection (like RFE) instead.

Q2: How do I determine the optimal number of features to select with RFE? The optimal number is not predetermined. You must perform RFE iteratively, evaluating model performance (e.g., using cross-validated accuracy or R²) at each step. Plot the performance metric against the number of features. The optimal number is typically at or near the point of peak performance before it starts to decline [37].

Q3: Can PCA and RFE be used together in a QSAR workflow? Yes, this is a powerful and common strategy. You can use PCA initially to reduce noise and handle multicollinearity among a large number of descriptors. The resulting PCs can then be used in an RFE process to further refine the most predictive components, although this sacrifices some interpretability.

Q4: Why are my selected molecular descriptors from RFE chemically unintelligible or difficult to interpret? Some powerful molecular descriptors (e.g., certain topological or quantum chemical indices) are inherently complex. Focus on identifying the physicochemical properties these descriptors represent (e.g., lipophilicity, polarity, molecular size). This abstraction can provide the chemical insight needed to guide molecular design [39].

Common Issues and Solutions

| Problem | Potential Cause | Solution |
|---|---|---|
| PCA results are dominated by a few descriptors. | Descriptors were not standardized before applying PCA, so those with larger scales dominate the variance. | Always standardize data (mean=0, std=1) before performing PCA [35]. |
| RFE is computationally slow for a large descriptor set. | The base model is being retrained a very large number of times. | Increase the number of features removed per step. Use a faster base model or perform an initial filter-based feature selection to reduce the starting set. |
| Model performance is unstable after RFE. | The selected feature subset is too small or sensitive to small changes in the training data. | Use a more robust model like Random Forest as the RFE estimator. Use repeated cross-validation to get a more stable estimate of performance for each subset. |
| Poor external validation performance after dimensionality reduction. | The applicability domain of the model has been violated, or the reduction was overfitted to the training set. | Ensure the PCA transformation or RFE feature set is derived only from the training data and then applied to the test set. Define and check the applicability domain of your final model [3]. |
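
The last row of the table is the easiest to get wrong in code. Wrapping the scaler, the PCA step, and the model in a single scikit-learn `Pipeline` is one way to make sure every transformation is fitted on the training compounds only. The sketch below assumes synthetic data, an 80/20 split, ten retained components, and a Ridge model; all of these are illustrative choices.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.decomposition import PCA
from sklearn.linear_model import Ridge
from sklearn.model_selection import train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Hypothetical dataset: 200 compounds, 50 descriptors.
X, y = make_regression(n_samples=200, n_features=50, n_informative=10,
                       noise=10.0, random_state=1)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=1
)

pipeline = Pipeline([
    ("scale", StandardScaler()),      # means and standard deviations from the training set
    ("pca", PCA(n_components=10)),    # loadings from the training set
    ("model", Ridge(alpha=1.0)),
])

# fit() sees only the training compounds; score() reuses the fitted
# scaler and PCA loadings to transform the test compounds.
pipeline.fit(X_train, y_train)
r2_external = pipeline.score(X_test, y_test)
print("external R^2:", round(r2_external, 3))
```

The same pattern applies to RFE: placing the selector inside the pipeline keeps the selected descriptor set tied to the training data.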

Troubleshooting Guide: Descriptor Selection in QSAR Modeling

FAQ: My QSAR model performs well on the training data but poorly on new compounds. What is the issue? This is a classic sign of overfitting: the model has fitted noise in the training data instead of learning a generalizable structure-activity relationship. To resolve this:

- Apply Rigorous Feature Selection: Reduce the number of descriptors. In a study on NF-κB inhibitors, researchers started with 17,967 descriptors. They applied a Pearson correlation-based filter (cutoff of 0.6) to remove highly correlated features, followed by univariate analysis and SVC-L1 regularization to select the most statistically significant descriptors, ultimately reducing the feature set to a more robust size [42].

- Define the Applicability Domain (AD): Use methods like the leverage method to define the chemical space your model is valid for. Predictions for compounds outside this domain are unreliable [43].

- Simplify the Model: If your dataset is limited, a simpler linear model (e.g., MLR or PLS) may generalize better than a complex non-linear model (e.g., ANN) [3].
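
The leverage method mentioned above can be sketched in a few lines of NumPy. The example assumes the commonly used warning threshold h* = 3(p + 1)/n, where p is the number of descriptors and n the number of training compounds; the helper name `leverages` and the data are hypothetical.

```python
import numpy as np

def leverages(X_train, X_query):
    """Leverage h = x^T (X^T X)^-1 x for each query row, including an intercept term."""
    Xt = np.column_stack([np.ones(len(X_train)), X_train])
    Xq = np.column_stack([np.ones(len(X_query)), X_query])
    xtx_inv = np.linalg.pinv(Xt.T @ Xt)
    return np.einsum("ij,jk,ik->i", Xq, xtx_inv, Xq)

rng = np.random.default_rng(0)

# Hypothetical training set: 80 compounds described by 4 selected descriptors.
X_train = rng.normal(size=(80, 4))

# Two query compounds: one near the center of the training space, one far outside it.
X_new = np.vstack([0.1 * rng.normal(size=(1, 4)), np.full((1, 4), 8.0)])

n, p = X_train.shape
h_star = 3 * (p + 1) / n            # warning leverage (assumed convention)
h_new = leverages(X_train, X_new)
inside_domain = h_new <= h_star

print("warning leverage:", h_star)
print("query leverages:", h_new.round(3))
print("inside applicability domain:", inside_domain)
```

Predictions for compounds whose leverage exceeds h* are extrapolations and should be flagged as unreliable rather than silently reported.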

FAQ: How many molecular descriptors should I use for my model? There is no fixed number, but the ratio of compounds to descriptors should be sufficient to avoid chance correlations. Best practices involve:

- Use Feature Selection Algorithms: Do not rely on an arbitrary number. Employ filter methods (like correlation analysis), wrapper methods (like genetic algorithms), or embedded methods (like LASSO) to identify the optimal descriptor subset [3].

- Prioritize Interpretability: A model with fewer, chemically meaningful descriptors is often more valuable than a "black box" with thousands. The case study on 121 NF-κB inhibitors successfully developed a simplified MLR model with a reduced number of terms that maintained accuracy [43].

FAQ: What types of descriptors are most informative for modeling NF-κB inhibition? Research indicates that a combination of descriptor types is effective.

- 2D and Fingerprint Descriptors: For classifying TNF-α induced NF-κB inhibitors, models built using 2D descriptors and molecular fingerprints achieved higher predictive accuracy (AUC up to 0.66) than those using 3D descriptors alone (AUC 0.56) [42].

- Steric and Hydrophobic Properties: QSAR models for a different target (histamine H3R) consistently showed that the steric and hydrophobic properties of ligands are critical for binding affinity [44]. Although this evidence does not come from NF-κB studies, it highlights the general importance of these fundamental physicochemical properties in drug-target interactions.

FAQ: How can I validate that my descriptor selection process is sound? Robust validation is key to a reliable QSAR model.

- Internal Validation: Use cross-validation techniques (e.g., 5-fold or 10-fold) on your training set to assess model stability [3].

- External Validation: The gold standard is to test the model on a completely independent set of compounds that were not used in model building or feature selection. In the NF-κB case study, the dataset was split into a training set (~80%) and an independent test set (~20%) for final evaluation [43] [42].

- Use of Multiple Algorithms: Compare models built using different algorithms (e.g., MLR vs. ANN) on the same selected descriptors. In one study, an ANN model demonstrated superior reliability and prediction for NF-κB inhibitors compared to an MLR model [43].

Experimental Protocol: QSAR Modeling for NF-κB Inhibitors

The following workflow is compiled from successful case studies on NF-κB inhibitor prediction [43] [42].

1. Dataset Curation

- Source: Collect a dataset of chemical compounds with experimentally determined NF-κB inhibitory activities (e.g., IC50 values). Public repositories like PubChem BioAssay (e.g., AID 1852) are common sources [42].

- Preparation: Standardize chemical structures (e.g., remove salts, normalize tautomers). Divide the dataset randomly into a training set (~80%) for model development and a test set (~20%) for external validation. Ensure both sets cover a diverse chemical space.

2. Molecular Descriptor Calculation and Preprocessing

- Calculation: Use software like PaDEL-Descriptor or Dragon to calculate a comprehensive set of 1D, 2D, and 3D molecular descriptors from the compounds' SMILES representations. This can generate thousands of initial descriptors [42].

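- Preprocessing: Remove descriptors with a high percentage (e.g., >80%) of null values or with low variance across the dataset [42]. Then normalize the remaining descriptor values (e.g., z-score normalization with StandardScaler in scikit-learn). Apply the variance filter before z-score normalization, because normalization rescales every descriptor to unit variance.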
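
The preprocessing step can be sketched with NumPy alone. The example below assumes a small synthetic matrix in which one descriptor is mostly missing and one is constant; the 80% null cutoff follows the text, while the variance cutoff of 1e-8 is an arbitrary illustrative value.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical descriptor matrix: 100 compounds x 4 descriptors.
X = rng.normal(loc=5.0, scale=2.0, size=(100, 4))
X[:90, 1] = np.nan   # descriptor 1: 90% missing values
X[:, 2] = 1.0        # descriptor 2: constant, so zero variance

# Filter first: drop descriptors with >80% nulls or near-zero variance.
null_fraction = np.isnan(X).mean(axis=0)
variance = np.nanvar(X, axis=0)
keep = (null_fraction <= 0.80) & (variance > 1e-8)
X_kept = X[:, keep]

# Then z-score normalize the surviving descriptors (NaN-aware statistics).
mean = np.nanmean(X_kept, axis=0)
std = np.nanstd(X_kept, axis=0)
X_scaled = (X_kept - mean) / std

print("descriptors kept:", keep)
print("scaled shape:", X_scaled.shape)
```

In a full workflow the filter mask, means, and standard deviations would be computed on the training set and then reused unchanged on the test set.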

3. Descriptor Selection

- Correlation Filtering: Apply a Pearson correlation filter (e.g., threshold of 0.6) to remove highly correlated descriptors and reduce multicollinearity [42].

- Advanced Selection: Use statistical and machine learning-based methods to identify the most significant descriptors. In the NF-κB study, the correlation filter was followed by univariate analysis and SVC-L1 regularization [42].
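
The correlation filtering step can be sketched as a simple greedy pass over the descriptor columns. This is one possible implementation, not necessarily the procedure used in [42]: the helper name `correlation_filter`, the descriptor names in the comments, and the data are hypothetical, and the 0.6 cutoff follows the example in the text.

```python
import numpy as np

def correlation_filter(X, threshold=0.6):
    """Return indices of columns to keep, scanning left to right.

    A column is kept only if its absolute Pearson correlation with every
    column already kept is at or below the threshold.
    """
    corr = np.abs(np.corrcoef(X, rowvar=False))
    keep = []
    for j in range(X.shape[1]):
        if all(corr[j, i] <= threshold for i in keep):
            keep.append(j)
    return keep

rng = np.random.default_rng(0)
a = rng.normal(size=200)

# Hypothetical descriptors: column 1 is a near-duplicate of column 0.
X = np.column_stack([
    a,                                   # e.g., a lipophilicity descriptor
    a + 0.01 * rng.normal(size=200),     # highly correlated with column 0
    rng.normal(size=200),                # independent descriptor
    rng.normal(size=200),                # independent descriptor
])

kept = correlation_filter(X, threshold=0.6)
print("columns kept:", kept)
```

Which member of a correlated pair survives depends on column order, so it can be worth ordering the columns so that the more interpretable descriptors come first.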

4. Model Building and Validation

- Algorithm Selection: Build models using both linear (e.g., Multiple Linear Regression - MLR) and non-linear (e.g., Artificial Neural Networks - ANN, Support Vector Machines - SVM) algorithms.

- Internal Validation: Perform k-fold cross-validation (e.g., 5-fold) on the training set to tune model parameters and prevent overfitting.

- External Validation: Use the held-out test set for the final evaluation of the model's predictive performance. Key metrics include R², Mean Absolute Error (MAE), and for classification, Area Under the Curve (AUC).
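
Internal and external validation for a regression model can be sketched as follows. The example assumes synthetic data in place of real descriptors and activities, an MLR model via scikit-learn's `LinearRegression`, 5-fold cross-validation on the training set, and a 20% held-out test set.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import LinearRegression
from sklearn.metrics import mean_absolute_error, r2_score
from sklearn.model_selection import KFold, cross_val_score, train_test_split

# Hypothetical dataset: 150 compounds, 8 selected descriptors.
X, y = make_regression(n_samples=150, n_features=8, n_informative=5,
                       noise=10.0, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

model = LinearRegression()

# Internal validation: 5-fold cross-validation on the training set only.
cv = KFold(n_splits=5, shuffle=True, random_state=0)
cv_r2 = cross_val_score(model, X_train, y_train, cv=cv, scoring="r2")

# External validation: fit once on the training set, predict the held-out set.
model.fit(X_train, y_train)
y_pred = model.predict(X_test)
r2_ext = r2_score(y_test, y_pred)
mae_ext = mean_absolute_error(y_test, y_pred)

print("CV R^2 per fold:", cv_r2.round(3))
print("external R^2:", round(r2_ext, 3), "external MAE:", round(mae_ext, 3))
```

For a classification model, `roc_auc_score` from `sklearn.metrics` applied to predicted probabilities on the test set gives the AUC in the same way.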

The following diagram summarizes the experimental workflow for building a validated QSAR model.