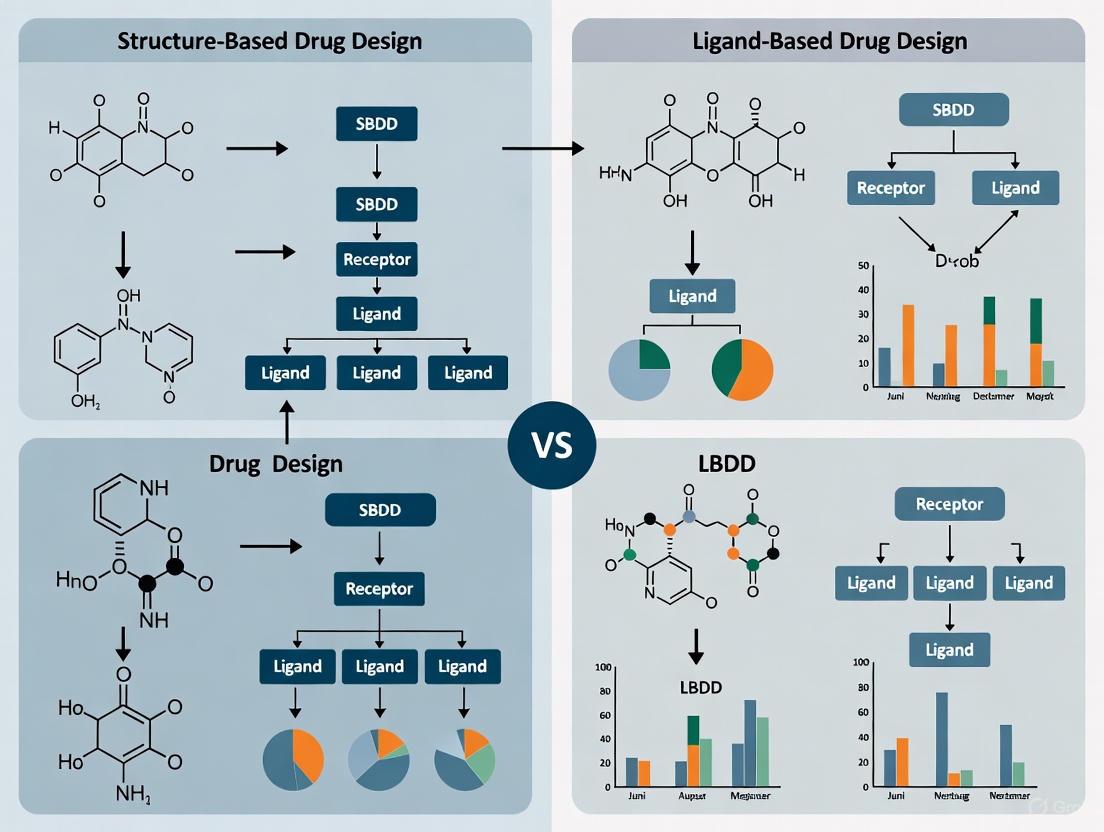

SBDD vs LBDD: A Comprehensive Guide to Structure-Based and Ligand-Based Drug Design

Abstract

This article provides researchers, scientists, and drug development professionals with a detailed comparison of Structure-Based Drug Design (SBDD) and Ligand-Based Drug Design (LBDD). It explores the foundational principles, core methodologies, and practical applications of both approaches. The content addresses common challenges and optimization strategies, offers comparative analysis for method selection, and examines the growing impact of AI and integrated workflows on modern computational drug discovery.

SBDD and LBDD Explained: Core Principles and When to Use Each Approach

Structure-Based Drug Design (SBDD) is a foundational computational methodology in modern drug discovery that relies on the three-dimensional structural information of biological targets to guide the design and optimization of small-molecule therapeutics. This approach operates on the fundamental principle that a drug's biological activity stems from its precise molecular interaction with a specific target, typically a protein, nucleic acid, or other macromolecule involved in a disease pathway. By analyzing the atomic-level structure of the target's binding site—including its geometry, electrostatic properties, and hydrophobicity—researchers can rationally design molecules with complementary features to achieve high binding affinity and specificity [1] [2].

The pivotal distinction between SBDD and Ligand-Based Drug Design (LBDD) lies in their foundational information sources. SBDD directly utilizes the 3D structure of the target protein itself, while LBDD infers design principles from the known properties and structures of active small molecules (ligands) that bind to the target, without requiring direct knowledge of the protein's structure [1] [3]. This makes SBDD a target-centric approach, suitable when high-quality structural data is available, whereas LBDD serves as a powerful alternative when structural information is absent or limited. The sequential or parallel integration of both approaches often provides complementary insights that enhance the efficiency of early-stage drug discovery [3].

Core Methodologies and Experimental Protocols in SBDD

The successful application of SBDD relies on a multi-step, cyclical process that integrates structural biology, computational modeling, and experimental validation. The core workflow begins with obtaining a high-resolution structure of the target and proceeds through binding site analysis, molecular design, and optimization [1].

Target Structure Determination

The initial and most critical step in SBDD is acquiring an accurate, high-resolution three-dimensional structure of the target macromolecule. Several experimental and computational techniques are employed for this purpose, each with distinct strengths and applications.

Table 1: Key Techniques for Protein Structure Determination in SBDD

| Technique | Basic Principle | Resolution & Applicability | Key Advantages | Common Use in SBDD |
|---|---|---|---|---|
| X-ray Crystallography | Analyzes X-ray diffraction patterns from protein crystals to determine atomic positions. | High (often <2.5 Å); requires stable, crystallizable proteins. | Provides highly detailed, atomic-resolution structures. | Historically the most common source for SBDD target structures [1]. |
| Cryo-Electron Microscopy (Cryo-EM) | Images protein complexes flash-frozen in vitreous ice using electron beams. | High to Medium (now often <3 Å); suitable for large complexes and membrane proteins. | No crystallization needed; ideal for large, flexible complexes like membrane proteins [4]. | Growing use for targets difficult to crystallize (e.g., GPCRs, ion channels) [1] [4]. |
| Nuclear Magnetic Resonance (NMR) | Measures magnetic properties of atomic nuclei in solution to deduce interatomic distances and angles. | Medium; suitable for smaller proteins and studying dynamics. | Provides information on protein dynamics and flexibility in a solution state. | Used to study ligand interactions and conformational changes [1]. |
| Computational Prediction (e.g., AlphaFold) | Uses machine learning to predict protein 3D structure from its amino acid sequence. | Varies; can be very high for some targets. | Rapid generation of models for targets with no experimental structure [4]. | Unprecedented access to models for previously inaccessible targets; requires validation [4] [3]. |

Molecular Docking and Virtual Screening

Once a reliable target structure is obtained, molecular docking is used to predict the preferred orientation and conformation (the "pose") of a small molecule when bound to the target. Docking also provides a score estimating the binding affinity, enabling the virtual screening of large compound libraries to identify potential hits [2] [3].

Detailed Protocol for Molecular Docking and Virtual Screening:

- Preparation of Structures: The protein structure is prepared by adding hydrogen atoms, assigning partial charges, and defining the protonation states of amino acid residues. The binding site is explicitly defined, often based on the location of a co-crystallized ligand or known functional residues. Small molecules from virtual libraries are also prepared by generating their 3D conformations and assigning appropriate charges [2].

- Conformational Search: The docking algorithm performs a search to explore possible binding modes for the ligand within the defined binding site. This involves sampling the ligand's translational, rotational, and torsional degrees of freedom [2]. Common search algorithms include:

  - Systematic Search (Incremental Construction): The ligand is fragmented and rebuilt piece-by-piece within the binding site, as implemented in programs like FlexX and Surflex [2].

  - Stochastic Search (Genetic Algorithms): The ligand's conformation and orientation are randomly varied, and "populations" of poses are evolved over generations to find optimal solutions. This is used by programs like AutoDock and GOLD [2].

- Scoring and Ranking: Each generated pose is evaluated using a scoring function. These functions are mathematical approximations that estimate the binding free energy by considering various terms such as hydrogen bonding, van der Waals forces, electrostatic interactions, and desolvation penalties [2]. The top-ranked compounds based on these scores are selected for further experimental testing.
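
The scoring and ranking step can be illustrated with a minimal Python sketch. It assumes the per-pose energy terms have already been computed by some docking engine; the `PoseTerms` container, the weights in `WEIGHTS`, and all numeric values are hypothetical and chosen only for illustration. Real scoring functions calibrate their terms and weights against experimental binding data.

```python
from dataclasses import dataclass


@dataclass
class PoseTerms:
    """Energy-like terms for one docked pose (illustrative values, not real output)."""
    hbond: float          # hydrogen-bonding term, more negative = more favorable
    vdw: float            # van der Waals term
    electrostatic: float  # electrostatic interaction term
    desolvation: float    # desolvation penalty, usually positive


# Hypothetical weights; an actual scoring function would fit these to data.
WEIGHTS = {"hbond": 1.0, "vdw": 0.5, "electrostatic": 0.3, "desolvation": 1.0}


def score_pose(terms):
    """Weighted sum of the terms; lower scores mean better predicted binding."""
    return (WEIGHTS["hbond"] * terms.hbond
            + WEIGHTS["vdw"] * terms.vdw
            + WEIGHTS["electrostatic"] * terms.electrostatic
            + WEIGHTS["desolvation"] * terms.desolvation)


def rank_compounds(poses_by_compound, top_n):
    """Keep each compound's best-scoring pose, then return the top_n compounds."""
    best = {name: min(score_pose(p) for p in poses)
            for name, poses in poses_by_compound.items() if poses}
    return sorted(best.items(), key=lambda item: item[1])[:top_n]


poses = {
    "cmpd_A": [PoseTerms(-2.0, -6.0, -1.0, 1.5), PoseTerms(-1.0, -4.0, -0.5, 1.0)],
    "cmpd_B": [PoseTerms(-0.5, -3.0, -0.2, 0.8)],
    "cmpd_C": [PoseTerms(-3.0, -5.0, -2.0, 2.5)],
}
ranked = rank_compounds(poses, top_n=2)
print(ranked)
```

Keeping only the best pose per compound before ranking mirrors the usual virtual-screening practice of carrying one representative pose forward, although other aggregation choices are possible.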

Accounting for Flexibility: Molecular Dynamics Simulations

A significant limitation of standard docking is that it typically treats the protein as a rigid, or nearly rigid, body. In reality, proteins are dynamic, and their conformations change upon ligand binding. Molecular dynamics (MD) simulations address this by simulating the physical movements of atoms over time, providing insights into the dynamic behavior of the drug-target complex [4].

Detailed Protocol for the Relaxed Complex Method:

This method combines MD simulations with docking to account for target flexibility [4].

- MD Simulation of the Target: An extensive MD simulation is run on the apo (unliganded) protein or an existing ligand-protein complex.

- Conformational Sampling: The simulation trajectory is analyzed to capture a diverse set of protein conformations, including those that may reveal cryptic pockets not observed in the initial crystal structure [4].

- Ensemble Docking: Representative "snapshot" structures from the MD trajectory are used as individual targets for molecular docking. This allows screening against multiple, physiologically relevant conformations of the target, increasing the chances of identifying hits that might be missed using a single, static structure [4].
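
The ensemble docking step can be sketched as a simple aggregation over snapshots. The sketch below assumes docking has already been run separately against each snapshot and that lower scores are better; the snapshot names, ligand names, and scores are invented for illustration, and taking the best score across the ensemble is only one of several possible aggregation rules.

```python
def ensemble_best_scores(scores_by_snapshot):
    """Return {ligand: (best_score, snapshot)} across all snapshots.

    scores_by_snapshot maps snapshot id -> {ligand id -> docking score},
    with lower scores meaning better predicted binding (assumed convention).
    """
    best = {}
    for snapshot, ligand_scores in scores_by_snapshot.items():
        for ligand, score in ligand_scores.items():
            if ligand not in best or score < best[ligand][0]:
                best[ligand] = (score, snapshot)
    return best


# Invented scores: lig_2 docks poorly to the crystal structure but well to a
# later MD snapshot, as might happen when a cryptic pocket opens.
scores = {
    "xtal":    {"lig_1": -8.4, "lig_2": -5.1, "lig_3": -6.0},
    "md_10ns": {"lig_1": -7.9, "lig_2": -6.3, "lig_3": -6.2},
    "md_50ns": {"lig_1": -7.2, "lig_2": -9.2, "lig_3": -5.8},
}
best = ensemble_best_scores(scores)
ranking = sorted(best, key=lambda ligand: best[ligand][0])
print(ranking, best["lig_2"])
```

In this toy data set, a screen against the crystal structure alone would rank lig_2 last, whereas the ensemble ranks it first.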

The Scientist's Toolkit: Essential Reagents and Computational Solutions

Table 2: Key Research Reagent Solutions for SBDD

| Category / Tool Name | Function / Application | Key Features |
|---|---|---|
| Protein Production & Crystallization | | |
| Cloning Vectors (e.g., pET series) | High-yield recombinant protein expression in host systems (e.g., E. coli, insect cells). | Essential for producing milligram quantities of pure, stable protein for structural studies. |
| Crystallization Screening Kits (e.g., from Hampton Research) | Identify initial conditions for growing diffraction-quality protein crystals. | Pre-formulated solutions streamline the often labor-intensive crystallization process. |
| Structure Determination & Analysis | | |
| Cryo-EM Grids | Support samples for flash-freezing and imaging in the electron microscope. | Enable high-resolution structure determination without crystallization. |
| Molecular Graphics Software (e.g., PyMOL, ChimeraX) | Visualization, analysis, and manipulation of 3D structural data. | Critical for analyzing binding sites, protein-ligand interactions, and preparing figures. |
| Computational Screening & Design | | |
| Ultra-Large Virtual Libraries (e.g., ZINC, Enamine REAL) | Source of billions of synthesizable small molecules for virtual screening. | Dramatically expands the explorable chemical space beyond physical compound collections [4] [5]. |
| Molecular Docking Software (e.g., AutoDock Vina, Glide, GOLD) | Predict binding poses and affinities of ligands to a target structure. | Core tool for structure-based virtual screening and pose prediction [2] [5]. |
| Molecular Dynamics Software (e.g., GROMACS, NAMD, AMBER) | Simulate the time-dependent dynamic behavior of proteins and complexes. | Used for refining models, studying stability, and sampling conformations (e.g., Relaxed Complex Method) [4]. |

[Figure: SBDD cyclical workflow]

SBDD vs. LBDD: A Comparative Analysis

SBDD and LBDD represent two complementary paradigms in computational drug discovery. The choice between them depends primarily on the availability of structural or ligand information.

Table 3: Comparative Analysis: SBDD vs. LBDD

| Parameter | Structure-Based Drug Design (SBDD) | Ligand-Based Drug Design (LBDD) |
|---|---|---|
| Fundamental Basis | 3D structure of the biological target (receptor). | Known active ligands that bind to the target. |
| Primary Objective | Design molecules complementary to the target's binding site. | Design molecules similar to known active ligands. |
| Key Techniques | Molecular docking, structure-based virtual screening (SBVS), molecular dynamics (MD), free-energy perturbation (FEP). | Quantitative Structure-Activity Relationship (QSAR), pharmacophore modeling, ligand-based virtual screening (LBVS), similarity searching [1] [2] [3]. |
| Data Requirements | High-resolution protein structure (experimental or predicted). | A set of known active and inactive compounds with associated bioactivity data. |
| Major Advantages | Rational design: allows for direct optimization of interactions. Scaffold hopping: can identify novel chemotypes that fit the binding site. High specificity and potential to reduce off-target effects [1]. | No protein structure needed. Fast and computationally efficient for screening. Excellent for establishing initial Structure-Activity Relationships (SAR) [1] [3]. |
| Key Limitations | Dependent on the availability and quality of the target structure. Limited by inherent protein flexibility. Scoring functions can be inaccurate [1] [4] [3]. | Limited to the chemical space defined by known actives. Similarity-driven methods are biased toward known chemotypes, so truly novel scaffolds are harder to find. Cannot directly visualize target interactions [1] [3]. |

Case Studies and Clinical Impact

SBDD has been instrumental in developing numerous approved drugs across therapeutic areas, validating its power and practicality.

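- Captopril (Capoten): One of the earliest successes of structure-guided design. Because no structure of the target enzyme itself was available at the time, this antihypertensive drug was designed from a hypothetical model of the active site built by analogy with the known structure of carboxypeptidase A, leading to the first orally active Angiotensin-Converting Enzyme (ACE) inhibitor [4] [6].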

- HIV-1 Protease Inhibitors (e.g., Saquinavir, Ritonavir): The design of these antiviral agents relied heavily on the 3D structures of HIV protease and its complexes, which allowed for the creation of potent inhibitors that fit precisely into the enzyme's active site, revolutionizing AIDS treatment [6].

- Oseltamivir (Tamiflu): This neuraminidase inhibitor, used for treating influenza, was developed using the crystal structure of influenza neuraminidase, enabling the design of a transition-state analogue that effectively blocks the enzyme's function [6].

The field of SBDD is being transformed by several converging technological advances. The integration of machine learning (ML) is enhancing predictive accuracy in virtual screening and binding affinity prediction, as demonstrated by studies identifying natural inhibitors against specific tubulin isotypes [5]. The explosion of structural data, driven by the AlphaFold database of predicted structures and advances in Cryo-EM, is providing unprecedented access to previously intractable targets [4]. Furthermore, the ability to screen ultra-large chemical libraries containing billions of molecules is expanding the horizons of discoverable chemical space [4] [3].

In conclusion, Structure-Based Drug Design stands as a powerful, target-centric pillar of modern drug discovery. By leveraging atomic-level structural information, it enables the rational and precise design of therapeutic molecules, differentiating it fundamentally from ligand-based approaches. As computational power, algorithms, and structural data continue to grow, SBDD is poised to become even more integral to the efficient and innovative development of new medicines.

In the field of computer-aided drug discovery (CADD), Ligand-Based Drug Design (LBDD) represents a fundamental paradigm that leverages chemical information from known active compounds to guide the development of new therapeutic candidates. This approach stands in contrast to Structure-Based Drug Design (SBDD), which relies on three-dimensional structural information of the biological target [1] [4]. LBDD emerges as a particularly valuable strategy when the three-dimensional structure of the target protein is unavailable or difficult to obtain, allowing researchers to proceed with drug discovery efforts based solely on knowledge of compounds that effectively modulate the target of interest [7]. The core premise of LBDD is that structurally similar molecules often exhibit similar biological activities—a principle that enables the prediction and design of new chemical entities with desired pharmacological properties [8].

The strategic position of LBDD within the drug discovery toolkit becomes especially important for targets that resist structural characterization through methods like X-ray crystallography, NMR, or cryo-EM, particularly membrane proteins and large complexes [1] [4]. Furthermore, even when structural information is available, LBDD offers complementary approaches that can accelerate early-stage hit identification and optimization through efficient analysis of chemical space and structure-activity relationships [9]. This technical guide explores the core principles, methodologies, and applications of LBDD, framing it within the broader context of SBDD versus LBDD research paradigms for drug development professionals seeking to maximize the value of chemical information in their discovery campaigns.

Core Principles of Ligand-Based Drug Design

LBDD operates on several fundamental principles that distinguish it from structure-based approaches. The most central of these is the similarity principle, which posits that molecules with similar structural features are likely to exhibit similar biological activities and properties [8] [9]. This principle enables researchers to extrapolate from known active compounds to predict the activity of new chemical entities, forming the basis for many LBDD techniques. The similarity principle is mathematically operationalized through various molecular descriptors and similarity metrics that quantify the degree of structural or property resemblance between compounds.

A second key principle is the pharmacophore concept, which abstracts specific molecular features from active compounds that are essential for their biological activity [1]. A pharmacophore model captures the spatial arrangement of critical functional groups—such as hydrogen bond donors, hydrogen bond acceptors, hydrophobic regions, and charged groups—that facilitate molecular recognition between a ligand and its biological target. This abstraction allows researchers to design novel compounds that maintain these essential features while exploring diverse chemical scaffolds.

Third, LBDD relies on the principle of cheminformatic pattern recognition, where statistical relationships between chemical structures and biological activities are derived from experimental data [1] [7]. Through Quantitative Structure-Activity Relationship (QSAR) modeling and machine learning approaches, these patterns can be formalized into predictive models that guide compound optimization and prioritization. This data-driven approach becomes increasingly powerful as the volume and diversity of compound activity data grow, enabling more accurate predictions of potency, selectivity, and ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) properties.

Table 4: Core Principles of Ligand-Based Drug Design

| Principle | Key Concept | Methodological Implementation |
|---|---|---|
| Similarity Principle | Structurally similar compounds have similar biological activities | Molecular similarity searching, molecular fingerprints, shape-based alignment |
| Pharmacophore Concept | Essential structural features required for biological activity | Pharmacophore modeling, feature alignment, 3D database screening |
| Cheminformatic Pattern Recognition | Statistical relationships between structure and activity can be modeled | QSAR, machine learning, classification models |

Key Methodologies and Techniques in LBDD

Quantitative Structure-Activity Relationship (QSAR)

Quantitative Structure-Activity Relationship (QSAR) represents one of the most established methodologies in LBDD, employing mathematical models to correlate quantitative molecular descriptors with biological activity [1] [9]. The fundamental premise of QSAR is that variations in biological activity can be correlated with changes in measurable or calculable molecular properties through statistical methods. The standard QSAR workflow begins with molecular descriptor calculation, where numerical representations of chemical structures are generated, encompassing physicochemical properties (e.g., logP, molecular weight, polar surface area), electronic features, and topological indices [1]. These descriptors serve as independent variables in mathematical models that predict biological activity as the dependent variable.

The second critical phase involves model building and validation, where statistical techniques—ranging from traditional regression methods to modern machine learning algorithms—identify relationships between molecular descriptors and biological activity [7] [9]. Model validation is essential to ensure predictive capability and avoid overfitting, typically employing techniques such as cross-validation, external test sets, and y-scrambling. A properly validated QSAR model can significantly accelerate lead optimization by predicting the activity of unsynthesized compounds, prioritizing chemical series with the highest potential, and identifying key structural features that drive potency.
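
The model building and validation phase can be illustrated with a deliberately small Python sketch: a one-descriptor least-squares model with leave-one-out cross-validation. The descriptor values (logP) and activities (pIC50) are invented for illustration, and the cross-validated q² here is computed against the overall mean activity, which is one common convention among several. A real QSAR study would use many descriptors, a dedicated statistics library, and an external test set.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("descriptor has no variance")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def loo_q2(xs, ys):
    """Leave-one-out cross-validated q2 = 1 - PRESS / total sum of squares."""
    press = 0.0
    for i in range(len(xs)):
        # Refit without compound i, then predict compound i.
        slope, intercept = fit_line(xs[:i] + xs[i + 1:], ys[:i] + ys[i + 1:])
        press += (ys[i] - (slope * xs[i] + intercept)) ** 2
    mean_y = sum(ys) / len(ys)
    ss_total = sum((y - mean_y) ** 2 for y in ys)
    return 1.0 - press / ss_total


# Invented training data: one descriptor (logP) and one activity (pIC50).
logp = [1.0, 1.5, 2.0, 2.5, 3.0, 3.5]
pic50 = [5.1, 5.6, 5.9, 6.6, 6.9, 7.5]

slope, intercept = fit_line(logp, pic50)
q2 = loo_q2(logp, pic50)
print(f"slope={slope:.2f} intercept={intercept:.2f} q2={q2:.2f}")
```

Because every prediction in `loo_q2` is made by a model that never saw the compound being predicted, a high q² is harder to obtain by overfitting than a high fitted R².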

More advanced implementations include 3D-QSAR approaches, which incorporate spatial molecular fields and alignment information to create more sophisticated models that capture stereoelectronic requirements for biological activity [9]. These techniques, including Comparative Molecular Field Analysis (CoMFA) and Comparative Molecular Similarity Indices Analysis (CoMSIA), provide visual representations of structure-activity relationships that guide medicinal chemists in rational compound design. The experimental protocol for QSAR modeling requires careful curation of biological data, appropriate descriptor selection, rigorous validation procedures, and application within the model's defined applicability domain to ensure reliable predictions.
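
The idea of an applicability domain can be sketched with the simplest possible definition: a bounding box over the training-set descriptors. This is an assumption made for illustration; the source does not specify a method, and published approaches often use distance- or leverage-based definitions instead. The descriptor names and values below are hypothetical.

```python
def descriptor_ranges(training_set):
    """Return {descriptor: (min, max)} over a list of descriptor dicts."""
    names = training_set[0].keys()
    return {name: (min(mol[name] for mol in training_set),
                   max(mol[name] for mol in training_set))
            for name in names}


def in_domain(molecule, ranges):
    """True if every descriptor lies inside the range seen during training."""
    return all(low <= molecule[name] <= high
               for name, (low, high) in ranges.items())


# Hypothetical training descriptors (logP and molecular weight).
training = [
    {"logp": 1.0, "mw": 250.0},
    {"logp": 3.5, "mw": 420.0},
    {"logp": 2.2, "mw": 310.0},
]
ranges = descriptor_ranges(training)
print(in_domain({"logp": 2.0, "mw": 300.0}, ranges))
print(in_domain({"logp": 5.1, "mw": 300.0}, ranges))
```

Predictions for molecules that fall outside the box would be flagged as extrapolations rather than trusted at face value.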

Pharmacophore Modeling

Pharmacophore modeling is a powerful LBDD technique that identifies the essential steric and electronic features necessary for molecular recognition at a biological target [1]. A pharmacophore model abstractly represents these critical features and their spatial relationships without explicit reference to specific molecular scaffolds, enabling scaffold hopping and identification of structurally diverse compounds that maintain the necessary elements for binding. The methodology typically begins with conformational analysis of known active compounds to explore their accessible three-dimensional shapes, followed by common feature identification that extracts shared structural elements across multiple active molecules.

The construction of a pharmacophore model can follow either a ligand-based or structure-based approach, with ligand-based methods relying exclusively on the structural features and alignment of known active compounds [1]. These ligand-based approaches include common feature pharmacophore generation, which identifies shared elements among actives, and quantitative pharmacophore modeling, which incorporates activity data to weight feature importance. Once developed, pharmacophore models serve as virtual screening queries to identify potential hits from compound databases, as design templates for novel compound synthesis, and as analytical tools to understand key interactions driving biological activity [1] [9].

The experimental protocol for pharmacophore modeling requires a carefully curated set of active compounds with diverse structural features, conformational analysis to represent molecular flexibility, feature definition and spatial alignment, model validation using known actives and inactives, and application to database screening or compound design. Successful pharmacophore models can significantly accelerate early drug discovery by enabling efficient exploration of chemical space and identification of novel chemotypes that would not be discovered through simple similarity searching.
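
Screening a conformer against a pharmacophore query can be sketched as a distance-matching problem. The sketch below assumes features have already been perceived and placed in 3D; the feature types, coordinates, and 1 Å tolerance are hypothetical. It compares only pairwise distances, so it cannot distinguish mirror-image arrangements, and its brute-force search is practical only for small feature sets.

```python
from itertools import permutations
from math import dist


def matches_pharmacophore(model, features, tol=1.0):
    """Check whether a conformer's features can be mapped onto the model.

    model, features: lists of (feature_type, (x, y, z)) tuples.
    A match requires identical feature types and all pairwise distances
    agreeing within tol (in the same units as the coordinates).
    """
    k = len(model)
    for combo in permutations(range(len(features)), k):
        # Feature types must agree position by position.
        if any(features[j][0] != model[i][0] for i, j in enumerate(combo)):
            continue
        if all(abs(dist(model[a][1], model[b][1])
                   - dist(features[combo[a]][1], features[combo[b]][1])) <= tol
               for a in range(k) for b in range(a + 1, k)):
            return True
    return False


# Hypothetical three-point query: donor, acceptor, hydrophobe.
model = [("donor", (0, 0, 0)), ("acceptor", (3, 0, 0)), ("hydrophobe", (0, 4, 0))]

# Same geometry translated in space, plus one extra donor that is ignored.
hit = [("hydrophobe", (10, 14, 10)), ("donor", (10, 10, 10)),
       ("acceptor", (13, 10, 10)), ("donor", (20, 20, 20))]

# Acceptor is too far from the donor to satisfy the query.
miss = [("donor", (0, 0, 0)), ("acceptor", (7, 0, 0)), ("hydrophobe", (0, 4, 0))]

print(matches_pharmacophore(model, hit), matches_pharmacophore(model, miss))
```

Because the query is defined by feature types and geometry rather than by atoms or bonds, two molecules with unrelated scaffolds can both match it, which is what makes scaffold hopping possible.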

Similarity-Based Virtual Screening

Similarity-based virtual screening leverages the similarity principle to identify potential active compounds from large chemical libraries based on their resemblance to known active molecules [8] [9]. This methodology employs various molecular representation schemes to quantify chemical similarity, with molecular fingerprints representing one of the most common approaches for rapid similarity searching in massive compound collections. These binary bit strings encode the presence or absence of specific structural patterns or chemical features within a molecule, enabling efficient calculation of similarity metrics such as Tanimoto coefficients.
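
The Tanimoto coefficient for two binary fingerprints is the number of bits set in both divided by the number of bits set in either. A minimal Python sketch follows, representing each fingerprint as the set of its "on" bit positions. The bit positions, library names, and threshold are invented for illustration, and returning 0.0 for two empty fingerprints is a convention chosen here, not a standard.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    if not fp_a and not fp_b:
        return 0.0  # convention chosen for this sketch
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def similarity_search(query, library, threshold):
    """Return (name, similarity) pairs at or above threshold, most similar first."""
    scored = [(name, tanimoto(query, fp)) for name, fp in library.items()]
    hits = [pair for pair in scored if pair[1] >= threshold]
    return sorted(hits, key=lambda pair: pair[1], reverse=True)


# Invented fingerprints: each set lists the bit positions that are switched on.
query = {1, 4, 7, 9, 12}
library = {
    "lib_a": {1, 4, 7, 9, 15},
    "lib_b": {2, 3, 5},
    "lib_c": {1, 4, 7, 9, 12},
}
hits = similarity_search(query, library, threshold=0.5)
print(hits)
```

In practice the fingerprints would come from a cheminformatics toolkit, and the similarity threshold would be tuned to the fingerprint type, since different fingerprints produce different score distributions.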

Advanced similarity methods extend into three-dimensional space, comparing molecules based on shape similarity and electrostatic complementarity rather than two-dimensional structural features [8] [9]. These 3D similarity approaches can identify compounds that share similar spatial arrangements of key functional groups despite having different molecular scaffolds, potentially revealing structurally novel active compounds. The BioSolveIT platform, for example, offers tools for both 2D similarity searching in trillion-sized chemical spaces and 3D molecule superpositioning to match shape and chemical features of template ligands [8].

The implementation of similarity-based virtual screening involves selection of appropriate query compounds, choice of molecular representation and similarity metric, definition of similarity thresholds, efficient searching of chemical databases, and experimental validation of prioritized compounds. When properly executed, this approach provides an efficient method for hit identification that complements other virtual screening techniques, particularly in the early stages of drug discovery when target structural information may be limited.

Comparative Analysis: LBDD vs. SBDD

Understanding the distinctions and complementary strengths between Ligand-Based and Structure-Based Drug Design is essential for deploying the most effective strategy for a given drug discovery scenario. While SBDD requires detailed three-dimensional structural information of the target protein—obtained through experimental methods like X-ray crystallography, NMR, or cryo-EM, or predicted through AI systems like AlphaFold—LBDD operates independently of target structure, relying instead on chemical information from known active compounds [1] [4] [9]. This fundamental difference in required input information dictates the applicability of each approach and influences their respective advantages and limitations.

SBDD provides atomic-level insights into protein-ligand interactions, enabling rational design of compounds with optimized binding geometries and specific molecular interactions [1] [4]. Techniques such as molecular docking and free-energy perturbation (FEP) calculations allow researchers to predict binding modes and affinities, guiding structure-based optimization with high precision. However, SBDD faces challenges including target flexibility, difficulties in modeling induced fit and allosteric effects, and computational demands when handling large compound libraries [4]. Additionally, the quality of SBDD predictions is highly dependent on the accuracy and relevance of the protein structure used, with potential errors propagating through the design process [9].

In contrast, LBDD excels in its ability to rapidly screen vast chemical spaces using efficient similarity-based methods, making it particularly valuable during early hit identification when structural information may be limited [9]. By leveraging patterns in existing chemical and biological data, LBDD can identify novel chemotypes through scaffold hopping and guide optimization through quantitative structure-activity relationships. The limitations of LBDD include its reliance on existing active compounds, potential bias toward known chemical space, and lack of explicit structural context for understanding binding interactions [9]. The complementary nature of these approaches has led to increased integration in modern drug discovery, with hybrid workflows that leverage the strengths of both paradigms.

Table 5: Comparison of Ligand-Based and Structure-Based Drug Design Approaches

| Parameter | Ligand-Based Drug Design (LBDD) | Structure-Based Drug Design (SBDD) |
|---|---|---|
| Required Information | Known active compounds and their activities | 3D structure of the target protein |
| Key Techniques | QSAR, pharmacophore modeling, similarity searching | Molecular docking, molecular dynamics, FEP |
| Applicability Domain | Targets without structural information | Targets with known or predictable structures |
| Computational Efficiency | High-throughput screening of large libraries | More computationally intensive, especially for flexible docking |
| Strengths | Scaffold hopping, rapid screening, patentability | Rational design, specificity optimization, binding mode prediction |
| Limitations | Limited to known chemical space, no structural context | Dependent on structure quality, challenges with flexibility |

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of LBDD methodologies requires both computational tools and chemical resources that enable effective exploration of chemical space and validation of computational predictions. The research reagent solutions outlined below represent essential components of a modern LBDD workflow, facilitating everything from initial model development to experimental confirmation of predicted activities.

Table 6: Essential Research Reagents and Solutions for LBDD

| Tool Category | Representative Solutions | Function in LBDD |
|---|---|---|
| Chemical Databases | REAL Database, SAVI, Commercial Screening Libraries | Sources of compounds for virtual screening and purchasing candidates for experimental validation [4] |
| Cheminformatics Platforms | BioSolveIT's infiniSee, SeeSAR, Scaffold Hopper | Navigation of chemical spaces, similarity searching, and compound prioritization [8] |
| Molecular Modeling Software | Schrödinger Suite, Cresset's Spark | Conformational analysis, pharmacophore modeling, and 3D-QSAR studies [7] |
| Building Block Collections | Enamine BUILDING BLOCK Database, Key Organics | Sources for virtual compound libraries and custom synthesis of designed molecules [4] |
| Screening Compounds | Fragment Libraries, Diverse Compound Sets | Experimental validation of computational predictions and structure-activity relationship exploration |

| Screening Compounds | Fragment Libraries, Diverse Compound Sets | Experimental validation of computational predictions and structure-activity relationship exploration |

Chemical databases and virtual libraries form the foundation of LBDD efforts, providing the structural data necessary for similarity searching, pharmacophore mapping, and QSAR modeling. The dramatic expansion of accessible chemical space—with virtual libraries now containing billions of readily synthesizable compounds—has significantly enhanced the potential of LBDD to identify novel active chemotypes [4]. These databases include commercially available compounds, virtual compounds accessible through on-demand synthesis, and specialized collections targeting specific protein families or therapeutic areas.

Computational platforms for chemical space navigation represent another critical component, enabling researchers to efficiently search trillion-sized molecular collections for compounds similar to query structures [8]. Tools such as BioSolveIT's infiniSee platform provide specialized search modes including Scaffold Hopper for discovering new chemical scaffolds that maintain core features of active molecules, Analog Hunter for locating and evaluating similar compounds, and Motif Matcher for identifying compounds containing specific molecular substructures [8]. These platforms often incorporate both 2D similarity methods for rapid screening and 3D approaches for shape-based alignment and functional overlap assessment.

Specialized software for molecular modeling and analysis enables the implementation of specific LBDD techniques including pharmacophore modeling, 3D-QSAR, and molecular alignment. Platforms such as the Schrödinger software suite and Cresset's Spark provide tools for ligand-based design that complement structure-based approaches, allowing researchers to generate design hypotheses based on known active compounds [7] [9]. These tools facilitate the transition from computational models to practical design suggestions that medicinal chemists can implement through compound synthesis or procurement.

Integrated Approaches: Combining LBDD and SBDD

While LBDD and SBDD represent distinct approaches with different information requirements, their integration offers powerful synergies that can enhance the efficiency and success of drug discovery campaigns [9]. Integrated workflows typically follow either sequential or parallel implementation patterns, with each strategy offering distinct advantages depending on the available data and project objectives. In sequential approaches, ligand-based methods often provide an initial filtering of chemical space, followed by structure-based refinement of the most promising candidates [9]. This strategy leverages the computational efficiency of LBDD for handling large compound libraries while employing more resource-intensive SBDD methods on a focused subset.
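
The sequential pattern can be sketched as a two-stage funnel: a cheap ligand-based score ranks the whole library, and the expensive structure-based step runs only on the top fraction. In the sketch below, `ligand_score` and `dock` are hypothetical callables standing in for a similarity method and a docking program, `fake_dock` returns arbitrary placeholder values, and the 25% cutoff is arbitrary.

```python
def sequential_screen(library, ligand_score, dock, keep_fraction=0.1):
    """Rank by a cheap ligand-based score, then dock only the top fraction.

    ligand_score: callable, higher = more similar to known actives (assumed).
    dock: callable returning a docking score, lower = better (assumed).
    """
    by_similarity = sorted(library, key=ligand_score, reverse=True)
    n_keep = max(1, int(len(by_similarity) * keep_fraction))
    shortlist = by_similarity[:n_keep]
    docked = [(compound, dock(compound)) for compound in shortlist]
    return sorted(docked, key=lambda pair: pair[1])


# Stand-in scores so the sketch runs without any docking software.
similarity = {f"c{i}": i / 20 for i in range(20)}
dock_calls = []


def fake_dock(compound):
    dock_calls.append(compound)   # record how often the costly step runs
    return -float(compound[1:])   # arbitrary placeholder score


results = sequential_screen(list(similarity), similarity.get, fake_dock,
                            keep_fraction=0.25)
print(results[0], len(dock_calls))
```

The `dock_calls` counter makes the cost saving explicit: only the shortlisted compounds ever reach the docking step.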

Parallel implementation involves independent application of both LBDD and SBDD methods to the same compound library, with results combined through consensus scoring or hybrid ranking schemes [9]. This approach helps mitigate the limitations inherent in each method—for instance, when docking scores are compromised by inaccurate pose prediction, similarity-based methods may still recover active compounds based on known ligand features. The complementary nature of these approaches extends to their fundamental perspectives: structure-based methods provide atomic-level insights into specific protein-ligand interactions, while ligand-based methods infer critical binding features from patterns across known active molecules [9].

Advanced implementations of integrated drug discovery include the use of protein conformational ensembles derived from molecular dynamics simulations to capture binding site flexibility, with accompanying sets of diverse ligands that provide complementary information for both structure-based and ligand-based screening [4] [9]. Similarly, combining 3D-QSAR-based binding affinity predictions with free-energy perturbation calculations has demonstrated complementarity in both prediction error and applicability domains [9]. These integrated strategies represent the cutting edge of computational drug discovery, leveraging the complementary strengths of LBDD and SBDD to maximize the probability of identifying high-quality lead compounds with optimal properties.

The field of Ligand-Based Drug Design continues to evolve, driven by advancements in computational power, algorithmic innovation, and the growing availability of chemical and biological data. Machine learning and artificial intelligence are revolutionizing LBDD approaches, enabling more accurate predictions of activity, selectivity, and ADMET properties from chemical structure alone [10] [11]. Deep learning architectures can now identify complex patterns in chemical data that transcend traditional molecular descriptors, potentially uncovering novel structure-activity relationships that would remain hidden to conventional methods. The integration of these AI approaches with physics-based modeling represents a promising direction for next-generation drug design [11].

The exponential growth of accessible chemical space—with virtual libraries now encompassing billions to trillions of synthesizable compounds—presents both opportunities and challenges for LBDD [4]. While this expansion dramatically increases the potential for discovering novel chemotypes, it also demands more efficient methods for navigating this vast chemical territory. Future developments will likely focus on intelligent exploration strategies that balance diversity with predicted activity, leveraging both ligand-based and structure-based insights to prioritize the most promising regions of chemical space for synthesis and testing.

In conclusion, Ligand-Based Drug Design remains an essential component of the modern drug discovery toolkit, particularly when structural information about the biological target is limited or unavailable. By leveraging chemical information from known active compounds, LBDD enables efficient exploration of chemical space, identification of novel chemotypes through scaffold hopping, and optimization of potency and properties through quantitative structure-activity relationships. When combined with structure-based approaches in integrated workflows, LBDD contributes to a comprehensive drug discovery strategy that maximizes the value of available information to accelerate the development of new therapeutic agents. As computational methods continue to advance, the role of LBDD is likely to expand further, solidifying its position as a cornerstone of efficient, data-driven drug discovery.

In modern computational drug discovery, Structure-Based Drug Design (SBDD) and Ligand-Based Drug Design (LBDD) represent two fundamental paradigms for identifying and optimizing therapeutic compounds [12] [9]. SBDD utilizes the three-dimensional structure of a biological target to guide drug design, whereas LBDD infers drug-target interactions from the known properties of active ligands when structural information is unavailable [9] [13]. The selection between these approaches carries significant implications for project feasibility, resource allocation, and ultimate success. This technical guide provides researchers and drug development professionals with a comprehensive comparison of these methodologies, enabling data-driven decision-making within pharmaceutical research programs.

Fundamental Principles and Data Requirements

Core Methodological Differences

The foundational distinction between SBDD and LBDD lies in their starting information and underlying philosophy.

Structure-Based Drug Design (SBDD) requires knowledge of the target's 3D molecular structure, typically obtained through experimental methods like X-ray crystallography, cryo-electron microscopy (cryo-EM), or computational predictions from tools like AlphaFold [4] [9]. This structural knowledge enables researchers to visualize the target's binding sites and directly model how potential drug molecules might interact with it. SBDD focuses on designing compounds that form complementary steric and electronic interactions with the target, utilizing techniques such as molecular docking to predict binding orientation and affinity [4] [14]. The SBDD approach is particularly powerful for targeting novel binding sites and achieving high specificity.

Ligand-Based Drug Design (LBDD) is employed when the 3D structure of the target protein is unknown or unavailable. Instead, this approach leverages information from known active compounds that bind to the target of interest [9] [13]. The core assumption is that structurally similar molecules tend to exhibit similar biological activities—the "similarity principle" [9]. LBDD methods include similarity searching, pharmacophore modeling, and Quantitative Structure-Activity Relationship (QSAR) modeling, which establishes mathematical relationships between molecular descriptors and biological activity [13]. This approach is especially valuable for optimizing existing drug classes and exploring chemical analogs.
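The similarity principle is usually made operational by comparing molecular fingerprints. The following is a minimal sketch, assuming fingerprints are already available as sets of "on" bit indices (in practice they would come from a cheminformatics toolkit); the compound names and bit values are hypothetical and serve only to illustrate the Tanimoto coefficient.

```python
def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 0.0  # convention chosen here for two empty fingerprints
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def rank_by_similarity(query_fp: set, library: dict) -> list:
    """Return (name, similarity) pairs sorted from most to least similar to the query."""
    scored = [(name, tanimoto(query_fp, fp)) for name, fp in library.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Hypothetical fingerprints: bit indices are illustrative, not real descriptors.
query_fp = {1, 4, 7, 9}
library = {
    "cpd_A": {1, 4, 7, 9},
    "cpd_B": {1, 4, 8},
    "cpd_C": {2, 3, 5},
}

ranked = rank_by_similarity(query_fp, library)
for name, score in ranked:
    print(f"{name}: {score:.2f}")
```

Compounds near the top of such a ranking are the ones the similarity principle predicts are most likely to share the query's activity; any similarity cutoff would need to be chosen per project.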

The data requirements and sources for these approaches differ significantly, influencing their applicability in various research scenarios.

Table 1: Data Requirements for SBDD and LBDD

| Aspect | Structure-Based Drug Design (SBDD) | Ligand-Based Drug Design (LBDD) |
|---|---|---|
| Primary Data | 3D protein structure from PDB, AlphaFold, or experimental methods | Chemical structures and biological activity data of known ligands |
| Data Sources | Protein Data Bank (PDB), AlphaFold Database, experimental structural biology | DrugBank, ChEMBL, in-house corporate databases, published IC50/Ki values |
| Key Inputs | Atomic coordinates of target binding site, co-crystallized ligands | Molecular descriptors, fingerprints, bioactivity measurements (IC50, Ki) |
| Data Challenges | Structure quality, resolution, conformational flexibility, solvation effects | Data quality, consistency of activity measurements, molecular diversity |

For SBDD, the Protein Data Bank (PDB) remains the primary repository for experimentally determined structures, while the AlphaFold Database now provides over 214 million predicted protein structures, dramatically expanding structural coverage of the proteome [4]. These resources enable SBDD for targets previously inaccessible to structural methods. However, challenges persist regarding structure quality, conformational dynamics, and the biological relevance of certain structural states [4] [15].

LBDD relies on chemical and bioactivity databases such as DrugBank and ChEMBL, which contain curated information on known active compounds and their measured effects [16] [13]. The quality and diversity of this ligand data directly impact model reliability, with limitations including activity measurement inconsistencies, insufficient chemical diversity, and potential biases in reported compounds [9] [13].

Technical Methodologies and Experimental Protocols

Structure-Based Drug Design Workflow

SBDD employs a suite of computational techniques that leverage structural information to predict and optimize drug-target interactions.

SBDD Methodology Workflow

Molecular docking is a cornerstone SBDD technique for predicting how small molecules bind to a protein target [4] [9]. A standardized docking protocol involves:

Protein Preparation: Obtain the 3D structure from PDB or AlphaFold. Remove water molecules and cofactors unless functionally relevant. Add hydrogen atoms, assign partial charges, and define protonation states of residues using tools like PDB2PQR or protein preparation modules in molecular modeling suites.

Binding Site Definition: Identify the binding cavity using computational methods such as FPocket or SiteMap. For targets with known active sites, define the search space using a grid box centered on the key residues.

Ligand Preparation: Generate 3D structures of candidate molecules. Assign proper bond orders, add hydrogen atoms, and generate possible tautomers and protonation states at physiological pH using tools like LigPrep or MOE.

Docking Execution: Perform flexible ligand docking against a rigid or semi-flexible protein using software like AutoDock Vina, GLIDE, or GOLD. Use standardized parameters with appropriate search exhaustiveness.

Pose Scoring and Ranking: Evaluate binding poses using scoring functions (e.g., ChemScore, PLP). Select top-ranked compounds based on docking scores and visual inspection of key interactions.
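The binding site definition step above can be illustrated with a short sketch that derives a docking search box from the coordinates of key binding-site atoms. The coordinates and the 4 Å padding are assumptions made for illustration; the resulting center and size correspond to the kind of box parameters that docking programs such as AutoDock Vina take as input.

```python
def grid_box(coords, padding=4.0):
    """Return (center, size) of an axis-aligned box enclosing coords.

    coords  -- list of (x, y, z) tuples in angstroms
    padding -- extra margin added on every side of the box (assumed value)
    """
    if not coords:
        raise ValueError("at least one coordinate is required")
    mins = [min(c[i] for c in coords) for i in range(3)]
    maxs = [max(c[i] for c in coords) for i in range(3)]
    center = tuple((lo + hi) / 2 for lo, hi in zip(mins, maxs))
    size = tuple((hi - lo) + 2 * padding for lo, hi in zip(mins, maxs))
    return center, size


# Hypothetical binding-site atom positions (angstroms).
site_atoms = [(10.0, 2.0, -3.0), (14.0, 6.0, 1.0), (12.0, 4.0, -1.0)]
center, size = grid_box(site_atoms, padding=4.0)
print("center:", center)
print("size:  ", size)
```

A box that is too small can exclude valid poses, while an oversized box increases search cost, so the padding is typically tuned to the expected ligand size.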

Molecular Dynamics (MD) Simulation provides insights beyond static docking by modeling the dynamic behavior of protein-ligand complexes [4]. A typical MD protocol includes:

System Setup: Solvate the protein-ligand complex in a water box (e.g., TIP3P water model). Add ions to neutralize the system and achieve physiological salt concentration.

Energy Minimization: Perform steepest descent and conjugate gradient minimization to remove steric clashes and bad contacts.

Equilibration: Run simulations with position restraints on heavy atoms of the protein and ligand, gradually releasing restraints while maintaining constant temperature (300 K) and pressure (1 bar).

Production Run: Conduct unrestrained MD simulation for timescales relevant to the biological process (typically 100 ns to 1 μs). Use packages like AMBER, GROMACS, or NAMD.

Trajectory Analysis: Calculate root-mean-square deviation (RMSD), radius of gyration (Rg), and hydrogen bonding patterns. Identify stable binding modes and conformational changes using tools like VMD and MDTraj.
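Two of the trajectory metrics named above, RMSD and radius of gyration, reduce to short formulas. The sketch below is a simplified illustration in plain Python: it assumes the frames have already been superimposed on the reference (dedicated analysis tools normally handle that fitting step) and uses unit masses unless masses are supplied. The two-atom coordinates are hypothetical.

```python
import math


def rmsd(frame_a, frame_b):
    """Root-mean-square deviation between two pre-aligned frames of (x, y, z) tuples."""
    if len(frame_a) != len(frame_b):
        raise ValueError("frames must contain the same number of atoms")
    squared = sum(
        (a - b) ** 2
        for atom_a, atom_b in zip(frame_a, frame_b)
        for a, b in zip(atom_a, atom_b)
    )
    return math.sqrt(squared / len(frame_a))


def radius_of_gyration(frame, masses=None):
    """Mass-weighted radius of gyration; unit masses are assumed if none are given."""
    masses = masses or [1.0] * len(frame)
    total = sum(masses)
    com = [sum(m * p[i] for m, p in zip(masses, frame)) / total for i in range(3)]
    squared = sum(
        m * sum((p[i] - com[i]) ** 2 for i in range(3))
        for m, p in zip(masses, frame)
    )
    return math.sqrt(squared / total)


# Hypothetical two-atom system: the second frame is shifted by 1 angstrom along z.
reference = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
frame = [(0.0, 0.0, 1.0), (2.0, 0.0, 1.0)]

print("RMSD:", rmsd(reference, frame))
print("Rg:  ", radius_of_gyration(reference))
```

Plotting these values over all frames is a common way to judge whether a binding mode stays stable during the production run.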

Ligand-Based Drug Design Workflow

LBDD methodologies extract information from chemical structures to predict activity without requiring target structural data.

LBDD Methodology Workflow

QSAR Modeling Protocol establishes quantitative relationships between molecular structure and biological activity [13]. A robust QSAR development process includes:

Dataset Curation: Collect a minimum of 20-30 compounds with consistent, reliable activity data (e.g., IC50, Ki values). Divide into training (∼80%) and test sets (∼20%) using either a rational division method such as Kennard-Stone or random sampling.

Molecular Descriptor Calculation: Compute thousands of molecular descriptors capturing structural, electronic, and topological features using tools like Dragon, RDKit, or PaDEL-Descriptor. Include constitutional, topological, geometrical, and charge-related descriptors.

Descriptor Selection and Reduction: Apply feature selection techniques like genetic algorithms, stepwise regression, or VIP scores to identify the most relevant descriptors and avoid overfitting.

Model Building: Employ machine learning algorithms including Multiple Linear Regression (MLR), Partial Least Squares (PLS), Support Vector Machines (SVM), or Artificial Neural Networks (ANN). For ANN, optimize architecture (e.g., [8.11.11.1] topology) and training parameters [13].

Model Validation: Perform internal validation (cross-validation, leave-one-out) and external validation using the test set. Calculate statistical metrics: R², Q², RMSE. Define the applicability domain using the leverage approach to identify reliable prediction boundaries [13].
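The validation metrics in this protocol can be made concrete with a deliberately small example. The sketch below assumes a single-descriptor linear model fitted by ordinary least squares, which is far simpler than a real QSAR model, and uses hypothetical descriptor and activity values. It computes R², leave-one-out Q², RMSE, and a leverage-based applicability domain check using the commonly used warning leverage of 3(p+1)/n, where p is the number of descriptors and n the number of training compounds.

```python
import math


def fit_line(x, y):
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def r_squared(observed, predicted):
    """Coefficient of determination relative to the mean of the observed values."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot


def rmse(observed, predicted):
    """Root-mean-square error of the predictions."""
    return math.sqrt(sum((o - p) ** 2 for o, p in zip(observed, predicted)) / len(observed))


def q2_leave_one_out(x, y):
    """Cross-validated Q2: each compound is predicted by a model fitted without it."""
    predictions = []
    for i in range(len(x)):
        slope, intercept = fit_line(x[:i] + x[i + 1:], y[:i] + y[i + 1:])
        predictions.append(slope * x[i] + intercept)
    return r_squared(y, predictions)


def leverages(x_train, x_query):
    """Leverage of each query compound for a one-descriptor model with intercept."""
    n = len(x_train)
    mean_x = sum(x_train) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x_train)
    return [1.0 / n + (xq - mean_x) ** 2 / sxx for xq in x_query]


# Hypothetical training data: one descriptor versus pIC50 (values are illustrative).
descriptor = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
activity = [5.1, 5.8, 6.4, 7.1, 7.4, 8.2]

slope, intercept = fit_line(descriptor, activity)
fitted = [slope * d + intercept for d in descriptor]
r2 = r_squared(activity, fitted)
q2 = q2_leave_one_out(descriptor, activity)
error = rmse(activity, fitted)

# Warning leverage 3(p+1)/n with p = 1 descriptor.
warning_leverage = 3 * (1 + 1) / len(descriptor)
query_leverage = leverages(descriptor, [3.5, 12.0])
in_domain = [h <= warning_leverage for h in query_leverage]

print(f"R2={r2:.3f}  Q2={q2:.3f}  RMSE={error:.3f}")
print("inside applicability domain:", in_domain)
```

A Q² that falls well below R² is a typical sign of overfitting, and query compounds whose leverage exceeds the warning value lie outside the region where the model's predictions can be trusted.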

Pharmacophore Modeling Protocol identifies the spatial arrangement of chemical features essential for biological activity:

Active Ligand Selection: Choose 3-10 structurally diverse compounds with confirmed high activity against the target.

Conformational Analysis: Generate representative conformational ensembles for each compound using algorithms like Monte Carlo Multiple Minimum or systematic torsion driving.

Feature Mapping: Identify common chemical features (hydrogen bond donors/acceptors, hydrophobic regions, aromatic rings, charged groups) across active conformations.

Model Generation: Use software like HypoGen, Phase, or MOE Pharmacophore to generate pharmacophore hypotheses with optimal spatial alignment of features.

Model Validation: Test the model against a set of known active and inactive compounds. Calculate enrichment factors and use ROC curves to evaluate predictive performance.
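The enrichment factor and ROC analysis mentioned in the validation step can be computed directly from a ranked list of actives and inactives. The following sketch uses hypothetical screening scores and labels (1 for active, 0 for inactive); the 20% cutoff is an arbitrary choice for illustration.

```python
def enrichment_factor(scores, labels, fraction=0.1):
    """Hit rate in the top-scoring fraction divided by the hit rate of the whole set."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    n_top = max(1, round(len(ranked) * fraction))
    hits_top = sum(label for _, label in ranked[:n_top])
    return (hits_top / n_top) / (sum(labels) / len(labels))


def roc_auc(scores, labels):
    """Area under the ROC curve: probability an active outscores an inactive (ties count half)."""
    actives = [s for s, label in zip(scores, labels) if label == 1]
    inactives = [s for s, label in zip(scores, labels) if label == 0]
    wins = sum(
        1.0 if a > i else 0.5 if a == i else 0.0
        for a in actives
        for i in inactives
    )
    return wins / (len(actives) * len(inactives))


# Hypothetical pharmacophore fit scores and activity labels for ten compounds.
scores = [0.90, 0.80, 0.70, 0.60, 0.50, 0.40, 0.30, 0.20, 0.10, 0.05]
labels = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]

ef_20 = enrichment_factor(scores, labels, fraction=0.2)
auc = roc_auc(scores, labels)
print(f"EF(20%) = {ef_20:.2f}, ROC AUC = {auc:.3f}")
```

An enrichment factor above 1 and an AUC above 0.5 indicate that the model ranks actives ahead of inactives better than random selection would.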

Comparative Analysis: Key Differentiators

Performance and Application Metrics

Table 2: Quantitative Comparison of SBDD and LBDD Approaches

| Parameter | Structure-Based Drug Design (SBDD) | Ligand-Based Drug Design (LBDD) |
|---|---|---|
| Success Rate | Hit rates of 10-40% in experimental testing [4] | Varies with data quality and model applicability domain |
| Computational Cost | High (large-scale docking and MD simulations typically need HPC or GPU resources) | Moderate (descriptor calculation, similarity searches) |
| Time Requirements | Days to weeks for screening billion-compound libraries [4] | Hours to days for screening comparable libraries |
| Data Requirements | Single protein structure sufficient to begin | Dozens of active compounds recommended for reliable models |
| Novel Scaffold Identification | Excellent for discovering novel chemotypes | Limited by similarity to known actives (scaffold hopping possible) |
| Market Adoption | ~55% revenue share in CADD market [17] | Growing at fastest CAGR in CADD market [17] |

Strengths and Limitations Analysis

SBDD Advantages include the ability to design entirely novel chemotypes not limited by existing chemical knowledge, high potential for rational optimization of binding interactions, and direct visualization of binding modes that facilitates mechanistic understanding [4] [14]. The approach is particularly powerful for targets with deep, well-defined binding pockets and when pursuing allosteric modulators targeting novel sites.

SBDD Limitations involve significant dependency on structure quality and resolution, computational intensity especially for flexible systems, challenges with accurately scoring binding affinities, and limited consideration of pharmacokinetic properties without additional modeling [4] [9]. Membrane proteins and highly flexible targets remain particularly challenging despite advances in structural biology.

LBDD Advantages include applicability when no structural information is available, faster screening of ultra-large chemical libraries, proven effectiveness for lead optimization series, and established success in predicting ADMET properties [9] [13]. The methodology demonstrates particular strength in scaffold hopping and rapid analog optimization.

LBDD Limitations encompass requirement for sufficient known active compounds, potential bias toward existing chemical scaffolds, inability to directly visualize binding interactions, and challenges extrapolating beyond the chemical space of training data [9] [13]. Model interpretability remains a concern with complex machine learning approaches.

Decision Framework and Integrated Approaches

Strategic Selection Guidelines

Choosing between SBDD and LBDD depends on multiple project-specific factors. The following decision framework supports systematic approach selection:

Drug Design Approach Decision Framework

Prioritize SBDD When:

- High-resolution experimental or predicted structures are available (e.g., from PDB or AlphaFold)

- Novel chemical scaffolds are desired, distinct from known actives

- Target has well-defined, druggable binding pockets

- Project goals include structure-based rational optimization

- Adequate computational resources are available for docking and simulations

Prioritize LBDD When:

- No reliable structural information exists for the target

- Substantial SAR data is available for similar compounds

- Rapid screening of large compound libraries is required

- Limited computational resources are available

- Project focuses on analog optimization and scaffold hopping

Integrated Workflows and Hybrid Approaches

Combining SBDD and LBDD creates synergistic workflows that leverage the strengths of both approaches [9]. Effective integration strategies include:

Sequential Integration: Large compound libraries are first filtered using fast ligand-based methods (similarity searching, QSAR), followed by structure-based docking of the prioritized subset [9]. This approach balances computational efficiency with structural insights, particularly useful when screening billion-compound libraries.

Parallel Screening: Both SBDD and LBDD methods are applied independently to the same compound library, with results combined using consensus scoring [9]. This strategy mitigates method-specific limitations and increases confidence in selected hits.

Hybrid Scoring: Combines ranks from both approaches through multiplication or weighted averaging, favoring compounds ranked highly by both methods [9]. This approach increases specificity and reduces false positives in virtual screening campaigns.
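The rank-combination schemes described above can be sketched in a few lines. The example below assumes docking scores where lower is better and similarity scores where higher is better; all compound names and values are hypothetical, and the equal weighting is only one possible choice.

```python
def to_ranks(scores, higher_is_better=True):
    """Convert a {compound: score} mapping into {compound: rank}, with rank 1 as best."""
    ordered = sorted(scores, key=scores.get, reverse=higher_is_better)
    return {name: position + 1 for position, name in enumerate(ordered)}


def rank_product(ranks_a, ranks_b):
    """Hybrid score by multiplying ranks; small products mean both methods agree."""
    return {name: ranks_a[name] * ranks_b[name] for name in ranks_a}


def weighted_rank(ranks_a, ranks_b, weight_a=0.5):
    """Hybrid score as a weighted average of the two ranks."""
    return {
        name: weight_a * ranks_a[name] + (1.0 - weight_a) * ranks_b[name]
        for name in ranks_a
    }


# Hypothetical results for the same four compounds from both approaches.
docking = {"cpd_A": -9.5, "cpd_B": -7.0, "cpd_C": -8.2, "cpd_D": -6.1}   # lower is better
similarity = {"cpd_A": 0.20, "cpd_B": 0.75, "cpd_C": 0.80, "cpd_D": 0.40}  # higher is better

sbdd_ranks = to_ranks(docking, higher_is_better=False)
lbdd_ranks = to_ranks(similarity, higher_is_better=True)

product = rank_product(sbdd_ranks, lbdd_ranks)
average = weighted_rank(sbdd_ranks, lbdd_ranks, weight_a=0.5)

consensus = sorted(product, key=product.get)
print("rank product order:", consensus)
print("weighted ranks:    ", average)
```

In this toy data set the compound ranked near the top by both methods moves to first place under the rank product, ahead of the compound that only one method favored, which is the behavior the hybrid scheme is meant to produce.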

Essential Research Reagents and Computational Tools

Research Reagent Solutions

Table 3: Essential Research Materials for SBDD and LBDD

| Reagent/Tool | Function | Application Context |
|---|---|---|
| REAL Database | Commercially available on-demand compound library (>6.7B compounds) | Virtual screening for both SBDD and LBDD [4] |
| SAVI Library | Synthetically accessible virtual inventory by NIH | Access to synthesizable chemical space for screening [4] |
| Selective Side-Chain Labeling Kits | NMR-driven SBDD for protein-ligand complexes | Enables characterization of molecular interactions in solution [15] |
| DNA-Encoded Libraries (DELs) | High-throughput screening of millions of compounds | Hit discovery for both approaches [18] |
| Click Chemistry Toolkits | Rapid synthesis of diverse compound libraries | Generating analogs for SAR expansion [18] |
| QSAR Model Development Software | Build predictive activity models | LBDD optimization and activity prediction [13] |

SBDD and LBDD represent complementary paradigms in modern drug discovery, each with distinct strengths, limitations, and optimal application domains. SBDD provides atomic-level insights for rational design when structural information is available, while LBDD offers efficient screening and optimization capabilities based on chemical similarity principles. The most successful drug discovery programs strategically integrate both approaches, leveraging their complementary strengths to accelerate the identification and optimization of therapeutic candidates. As both methodologies continue to advance—through improved AI-driven structure prediction in SBDD and more sophisticated machine learning in LBDD—their synergistic application will remain fundamental to addressing the increasing complexity of drug discovery challenges.

In modern computational drug discovery, Structure-Based Drug Design (SBDD) and Ligand-Based Drug Design (LBDD) represent the two foundational pillars that researchers employ to identify and optimize therapeutic compounds [19]. The fundamental distinction between these approaches lies in their starting point: SBDD requires detailed three-dimensional structural information of the biological target, while LBDD leverages knowledge from existing active molecules that bind to the target [1]. This distinction creates a clear divergence in their application domains, methodological frameworks, and implementation prerequisites.

Choosing between these methodologies is not merely a technical decision but a strategic one that significantly influences the trajectory of a drug discovery campaign. The right choice depends critically on the available structural and ligand information, resource constraints, and the specific biological target under investigation [9]. This guide examines the essential prerequisites for both approaches, providing researchers with a structured framework for selecting the optimal path based on their specific project context and available resources.

Core Principles and Technical Foundations

Structure-Based Drug Design (SBDD)

SBDD is a methodology that designs or optimizes small molecule compounds by analyzing the spatial configuration and physicochemical properties of a target protein's binding site [1]. This approach operates on the principle of molecular recognition: designing molecules that are stereochemically and electrostatically complementary to a specific binding site on a target protein [2]. The availability of a high-resolution three-dimensional structure enables researchers to visually inspect binding site topology, including clefts, cavities, sub-pockets, and electrostatic properties [2].

The core process of SBDD involves a cyclic workflow of knowledge acquisition that begins with obtaining a reliable target structure, followed by in silico studies to identify potential ligands, synthesis of promising compounds, and experimental evaluation of biological properties [2]. When active compounds are identified, the three-dimensional structure of the ligand-receptor complex can be determined, providing critical insights into binding conformations, key intermolecular interactions, and ligand-induced conformational changes that inform the next design cycle [2].

Ligand-Based Drug Design (LBDD)

LBDD employs information from known active small molecules (ligands) to design new compounds when the three-dimensional structure of the target protein is unavailable or poorly characterized [1]. This approach is grounded in the similarity principle, which posits that structurally similar molecules are likely to exhibit similar biological activities [9]. By analyzing the chemical properties, substructure patterns, and mechanism of action of existing ligands, researchers can predict and design compounds with comparable or improved activity [1].

LBDD methods infer critical binding features indirectly by identifying patterns within sets of known active and inactive compounds [9]. These approaches excel at pattern recognition and generalization across chemically diverse ligands for a given target, even with limited structure-activity data [9]. The effectiveness of LBDD increases with the number and diversity of known active compounds available for analysis, as this provides a more comprehensive basis for identifying the essential features required for biological activity.

Decision Framework: Choosing Between SBDD and LBDD

The choice between SBDD and LBDD hinges on several critical factors, primarily the availability of structural information about the target protein and known active compounds. The following table summarizes the key decision criteria and optimal use cases for each approach.

Table 1: Decision Framework for Selecting Between SBDD and LBDD

| Factor | Structure-Based Drug Design (SBDD) | Ligand-Based Drug Design (LBDD) |
|---|---|---|
| Primary Requirement | 3D structure of target protein (experimental or predicted) [19] [9] | Known active ligands with measured activity [1] |
| Structural Information | Essential - from X-ray crystallography, cryo-EM, NMR, or AI prediction (AlphaFold) [4] [1] | Not required - applied when structure is unknown [19] |
| Ligand Information | Beneficial but not mandatory | Essential - requires sufficient known actives for pattern recognition [9] |
| Target Flexibility Handling | Requires specialized methods (MD simulations, ensemble docking) [4] [2] | Naturally accounts for flexibility through diverse ligand structures |
| Optimal Use Cases | Target-focused screening, rational design, optimizing binding interactions [2] [9] | Scaffold hopping, early hit identification, QSAR modeling [9] [1] |
| Computational Intensity | Generally higher, especially with dynamics simulations [4] [9] | Generally lower, more scalable for large libraries [9] |

The decision workflow for selecting the appropriate approach can be visualized as follows:

Key Methodologies and Experimental Protocols

SBDD Methodologies

Molecular Docking

Molecular docking is a cornerstone SBDD technique that predicts the bound conformation (pose) of small molecule ligands within a target binding site and provides a ranking of their binding potential based on scoring functions [2] [9]. The process involves two critical steps: (1) exploration of conformational space representing various potential binding modes, and (2) accurate prediction of interaction energy for each predicted binding conformation [2].

Docking algorithms employ different conformational search strategies. Systematic search methods incrementally modify structural parameters through techniques like incremental construction, where ligands are gradually built within the binding site [2]. Stochastic methods randomly modify structural parameters using algorithms such as Genetic Algorithms (GA), which apply concepts of natural selection to efficiently explore conformational space [2].

Table 2: Common Molecular Docking Software and Their Methodologies

| Software | Search Algorithm | Key Features | Applications |
|---|---|---|---|
| AutoDock [2] | Genetic Algorithm | Efficient conformational sampling, free energy calculation | Virtual screening, binding mode prediction |
| GOLD [2] | Genetic Algorithm | Protein flexibility, chemical accuracy | Lead optimization, pose prediction |
| GLIDE [2] | Systematic search | Hierarchical filters, precision docking | High-throughput virtual screening |
| Surflex-Dock [2] | Incremental construction | Molecular similarity, protomol generation | Fragment-based design, lead discovery |
| DOCK [2] | Incremental construction | Sphere matching, chemical matching | Geometry-based docking, library screening |

Molecular Dynamics Simulations

Molecular dynamics (MD) simulations address a significant limitation of conventional docking: target flexibility [4]. By simulating the physical movements of atoms and molecules over time, MD can model conformational changes within a ligand-target complex upon binding [4]. The Relaxed Complex Method is a systematic approach that selects representative target conformations from MD simulations for use in docking studies, often revealing novel, cryptic binding sites not apparent in static crystal structures [4].

Advanced MD methods like accelerated molecular dynamics (aMD) address the timescale limitation of conventional MD by adding a boost potential to smooth the system's potential energy surface, thereby decreasing energy barriers and accelerating transitions between different low-energy states [4]. This enables more efficient sampling of distinct biomolecular conformations and helps address receptor flexibility and cryptic pocket problems [4].
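The boost potential idea can be illustrated numerically. The sketch below uses the commonly cited aMD functional form, in which a boost of (E - V)² / (α + E - V) is added whenever the potential energy V falls below a threshold E, and no boost is applied otherwise. The energy values and the tuning parameter α are hypothetical, with kcal/mol assumed as the unit.

```python
def amd_boost(potential, threshold, alpha):
    """Boost energy added to the potential when it lies below the threshold.

    potential -- instantaneous potential energy V
    threshold -- boost threshold energy E
    alpha     -- tuning parameter; larger values give a gentler boost
    """
    if alpha <= 0:
        raise ValueError("alpha must be positive")
    if potential >= threshold:
        return 0.0  # above the threshold the original surface is left untouched
    gap = threshold - potential
    return gap * gap / (alpha + gap)


# Hypothetical energies in kcal/mol.
threshold, alpha = -100.0, 20.0
for potential in (-120.0, -105.0, -95.0):
    boost = amd_boost(potential, threshold, alpha)
    print(f"V={potential:7.1f}  boost={boost:5.2f}  modified V={potential + boost:7.1f}")
```

Deep minima receive the largest boost, which raises the bottom of each energy well, lowers the effective barriers between wells, and lets the simulation cross between low-energy states more often.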

LBDD Methodologies

Quantitative Structure-Activity Relationship (QSAR)

QSAR modeling establishes a mathematical relationship between chemical structure descriptors and biological activity using statistical and machine learning methods [2] [1]. The fundamental protocol involves: (1) calculating molecular descriptors (physicochemical properties, 2D fingerprints, substructure patterns, 3D shape), (2) selecting appropriate descriptors correlated with activity, (3) model training using known active compounds, and (4) model validation and activity prediction for new compounds [1].

Recent advances in 3D QSAR methods, particularly those grounded in physics-based representations of molecular interactions, have improved their ability to predict activity even with limited structural data [9]. While SBDD methods like free energy perturbation are often limited to small structural changes around a known reference compound, 3D QSAR models can generalize well across chemically diverse ligands for a given target [9].

Pharmacophore Modeling

Pharmacophore modeling identifies the essential molecular features responsible for biological activity by extracting common characteristics from a set of known active compounds [1]. A pharmacophore model typically includes features such as hydrogen bond donors, hydrogen bond acceptors, hydrophobic regions, aromatic rings, and charged groups, along with their spatial relationships [1].

The experimental protocol involves: (1) selecting a diverse set of known active compounds, (2) conformational analysis to explore flexible geometries, (3) molecular alignment to identify common features, (4) model generation capturing critical interactions, and (5) virtual screening using the validated model [1]. Pharmacophore models are particularly valuable for scaffold hopping, that is, identifying novel chemical structures that maintain the essential features required for binding [9].

Integrated Approaches and Workflow Design

While SBDD and LBDD are powerful independently, integrating these approaches creates synergistic workflows that leverage their complementary strengths [9]. Integrated strategies can follow sequential, parallel, or hybrid screening frameworks to maximize efficiency and effectiveness in early-stage drug discovery.

Sequential Workflow Integration

A common integrated approach employs a sequential workflow where large compound libraries are first filtered using rapid ligand-based screening based on 2D/3D similarity to known actives or QSAR models [9]. The most promising subset of compounds then undergoes more computationally intensive structure-based techniques like molecular docking and binding affinity predictions [9]. This sequential integration narrows the chemical space, enabling structure-guided approaches to focus on the most viable candidates and significantly improving overall computational efficiency [9].
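The sequential funnel can be sketched as a two-stage function. In the example below the two scoring callables are stand-ins: the similarity values and docking scores are hypothetical lookups, whereas a real pipeline would call a fingerprint comparison and a docking program. The point of the sketch is that the expensive structure-based step only runs on the shortlist.

```python
def sequential_screen(library, ligand_score, structure_score, keep_top):
    """Two-stage screen: cheap ligand-based filter, then structure-based ranking.

    ligand_score    -- callable, higher means more similar to known actives
    structure_score -- callable, lower means better predicted binding
    keep_top        -- number of compounds passed on to the second stage
    """
    shortlist = sorted(library, key=ligand_score, reverse=True)[:keep_top]
    return sorted(shortlist, key=structure_score)


# Hypothetical stage 1 results: similarity to a known active.
similarity_to_active = {
    "cpd_1": 0.82, "cpd_2": 0.15, "cpd_3": 0.67,
    "cpd_4": 0.40, "cpd_5": 0.91, "cpd_6": 0.08,
}
# Hypothetical stage 2 results: only shortlisted compounds ever need a docking score.
mock_docking_score = {"cpd_1": -7.9, "cpd_3": -9.1, "cpd_5": -8.4}

docked = []  # records which compounds reached the expensive step


def dock(name):
    docked.append(name)
    return mock_docking_score[name]


hits = sequential_screen(list(similarity_to_active), similarity_to_active.get, dock, keep_top=3)
print("docked compounds:", sorted(docked))
print("final ranking:   ", hits)
```

Only three of the six compounds are docked here; at the scale of billion-compound libraries the same pattern is what keeps structure-based refinement computationally tractable.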

Parallel and Hybrid Screening Approaches

Advanced discovery pipelines employ parallel screening, running SBDD and LBDD methods independently but simultaneously on the same compound library [9]. Each method generates its own ranking, with results compared or combined in a consensus framework. In hybrid scoring, compound ranks from each method are multiplied to yield a unified rank order, favoring compounds ranked highly by both approaches and thus prioritizing specificity [9]. This parallelism helps mitigate limitations inherent in each approach: when docking scores are compromised by inaccurate pose prediction, similarity-based methods may still recover actives based on known ligand features [9].

The following diagram illustrates the complementary information captured by SBDD and LBDD approaches:

Successful implementation of SBDD and LBDD approaches requires access to specialized databases, software tools, and computational resources. The following table catalogues essential resources for designing and executing effective drug discovery campaigns.

Table 3: Essential Research Toolkit for SBDD and LBDD

| Resource Category | Specific Tools/Databases | Key Application | Access |
|---|---|---|---|
| Protein Structure Databases | PDB (Protein Data Bank) [4], AlphaFold Database [4] | Experimental & predicted structures for SBDD | Public |
| Ultra-Large Compound Libraries | Enamine REAL [4], NIH SAVI [4] | Billions of synthesizable compounds for screening | Commercial/Public |
| Molecular Docking Software | AutoDock [2], GOLD [2], GLIDE [2] | Binding pose prediction and virtual screening | Commercial/Academic |
| QSAR & Modeling Platforms | Open3DQSAR [1], Schrödinger QSAR [2] | Ligand-based activity prediction | Commercial/Academic |
| MD Simulation Packages | GROMACS, AMBER, NAMD [4] | Sampling flexibility and binding dynamics | Academic/Commercial |
| Structural Biology Techniques | X-ray Crystallography [1], Cryo-EM [1], NMR [1] | Experimental structure determination | Specialized Facilities |

The choice between Structure-Based Drug Design and Ligand-Based Drug Design represents a critical early decision in drug discovery that significantly influences project trajectory and resource allocation. SBDD offers atomic-level precision for rational design when reliable target structures are available, while LBDD provides powerful pattern recognition capabilities when ligand information is abundant but structural data is limited. Rather than viewing these approaches as mutually exclusive, modern drug discovery increasingly leverages their complementary strengths through integrated workflows that maximize the utility of both target-specific information and known ligand activity data.

As structural biology advances through methods like Cryo-EM and AI-based structure prediction, and chemical libraries expand to billions of accessible compounds, the strategic integration of SBDD and LBDD will continue to enhance prediction accuracy, accelerate hit identification, and ultimately improve the efficiency of early-stage drug discovery. Researchers who thoughtfully combine these approaches while understanding their respective prerequisites and limitations will be best positioned to navigate the complex landscape of modern pharmaceutical development.

Techniques in Action: Core Methodologies and Real-World Applications of SBDD and LBDD

Structure-Based Drug Design (SBDD) and Ligand-Based Drug Design (LBDD) represent two fundamental paradigms in modern drug discovery. While LBDD infers drug-target interactions indirectly by analyzing known active molecules, SBDD utilizes the three-dimensional structural information of the biological target to directly design or optimize compounds [19] [1]. This distinction is analogous to designing a key by studying the lock itself (SBDD) versus copying patterns from existing keys (LBDD) [20]. The SBDD approach is uniquely powerful for generating novel chemical scaffolds and optimizing binding interactions when a reliable protein structure is available [20] [1].

The core SBDD toolkit comprises computational techniques that leverage structural information, with molecular docking, molecular dynamics (MD), and free energy perturbation (FEP) forming a hierarchy of increasing rigor and computational cost. These techniques enable researchers to predict how small molecules interact with target proteins, study the dynamic behavior of these complexes, and quantitatively calculate binding affinities [2] [4] [9]. Integrating these methods has become increasingly important for addressing the high costs and failure rates of drug discovery; computational approaches may reduce discovery costs by up to 50% [4].

This technical guide examines the principles, methodologies, and applications of these three cornerstone SBDD techniques, providing researchers with a comprehensive framework for their implementation in modern drug discovery pipelines.

Molecular Docking: Predicting Ligand-Receptor Interactions

Fundamental Principles and Applications

Molecular docking is a fundamental SBDD technique that predicts the preferred orientation and conformation of a small-molecule ligand when bound to a protein target. By simulating this molecular recognition process, docking algorithms generate binding poses and score them based on interaction energetics, enabling virtual screening of compound libraries and analysis of binding modes [2] [9]. The method rests on the molecular recognition principle that optimal binding occurs when steric, electrostatic, and hydrophobic complementarity is achieved between ligand and receptor [2].

The primary applications of molecular docking include:

- Virtual screening: Rapid evaluation of large chemical libraries to identify potential hit compounds [2] [4]

- Binding mode analysis: Prediction of molecular interactions stabilizing ligand-receptor complexes [2]

- Lead optimization: Guidance for structural modifications to improve binding affinity and selectivity [9]

Key Methodological Components

Docking methodologies incorporate two essential components: conformational search algorithms and scoring functions [2].

Table 1: Molecular Docking Conformational Search Algorithms

| Algorithm Type | Representative Software | Key Characteristics | Limitations |
|---|---|---|---|
| Systematic Search | FRED, Surflex-Dock, DOCK | Incremental ligand construction in binding site; avoids combinatorial explosion | May converge to local energy minima |
| Stochastic Search | AutoDock, GOLD | Genetic algorithms explore energy landscape broadly; better global minimum identification | Higher computational cost |

Scoring functions estimate binding affinity using various approaches:

- Force field-based: Calculate energies using molecular mechanics force fields

- Empirical: Parameterized using experimental binding data

- Knowledge-based: Derived from statistical analysis of atom pair frequencies in known structures [2]
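
As a rough illustration of the knowledge-based idea, the sketch below converts atom-pair contact counts into an energy-like score with the inverse Boltzmann relation, a common way to turn observed frequencies into a statistical potential. The function name, the kT value (about 0.593 kcal/mol near 298 K), and the counts are illustrative assumptions, not the scheme of any particular docking program.

```python
import math

KT_KCAL_PER_MOL = 0.593  # approximate kT near 298 K (assumed)


def pair_potential(observed_count, expected_count, kt=KT_KCAL_PER_MOL):
    """Inverse-Boltzmann score for one atom-pair type in one distance bin.

    Pairs seen more often than the reference expectation get a negative
    (favorable) score; pairs seen less often get a positive one.
    """
    if observed_count <= 0 or expected_count <= 0:
        raise ValueError("counts must be positive")
    return -kt * math.log(observed_count / expected_count)


# Hypothetical counts for two atom-pair types at one distance bin.
enriched = pair_potential(observed_count=200, expected_count=100)
depleted = pair_potential(observed_count=50, expected_count=100)
```

A full knowledge-based scoring function would sum such terms over all ligand-protein atom pairs in a pose, using tables derived from many known structures.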

Experimental Protocol for Molecular Docking

A robust molecular docking protocol involves these critical steps:

Protein Preparation

Ligand Preparation

- Generate 3D structures from 1D/2D representations

- Assign proper bond orders and formal charges

- Generate possible tautomers and stereoisomers

Docking Execution

- Select appropriate search algorithm based on ligand flexibility

- Define search space encompassing binding pocket

- Perform multiple docking runs to ensure reproducibility

Pose Analysis and Validation

- Cluster resulting poses by spatial similarity

- Analyze key molecular interactions (H-bonds, hydrophobic contacts, π-stacking)

- Validate protocol through redocking known crystallographic ligands [9]
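
The redocking check can be made concrete with a heavy-atom RMSD between the docked pose and the crystallographic pose. The sketch below assumes both poses share the receptor's coordinate frame and list atoms in the same order, so no alignment or symmetry correction is applied; the 2.0 Å success cutoff is a commonly used convention assumed here, and the coordinates are invented.

```python
import math


def heavy_atom_rmsd(pose, reference):
    """RMSD in Angstrom between two poses given as lists of (x, y, z) tuples."""
    if len(pose) != len(reference) or not pose:
        raise ValueError("poses must be non-empty and have the same atom count")
    squared = sum(
        (p - q) ** 2
        for atom_a, atom_b in zip(pose, reference)
        for p, q in zip(atom_a, atom_b)
    )
    return math.sqrt(squared / len(pose))


def redocking_succeeded(pose, reference, cutoff=2.0):
    """True if the redocked pose reproduces the crystal pose within the cutoff."""
    return heavy_atom_rmsd(pose, reference) <= cutoff


# Hypothetical three-atom ligand: the redocked pose is shifted by (0.3, 0.4, 0.0).
crystal_pose = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0)]
redocked_pose = [(x + 0.3, y + 0.4, z) for x, y, z in crystal_pose]

rmsd = heavy_atom_rmsd(redocked_pose, crystal_pose)
passed = redocking_succeeded(redocked_pose, crystal_pose)
```

For ligands with symmetric groups, a symmetry-aware RMSD from a cheminformatics toolkit would be more appropriate than this direct atom-by-atom comparison.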

For challenging flexible molecules like macrocycles, enhanced sampling or multi-conformer approaches are recommended [9].

Molecular Dynamics: Accounting for Flexibility and Dynamics

Principles and Significance

Molecular dynamics simulations address a critical limitation of molecular docking: the inherent flexibility of both ligands and protein targets. By simulating the time-dependent evolution of a molecular system, MD captures conformational changes, binding/unbinding events, and allosteric transitions that static docking cannot [4]. This capability is particularly valuable for studying membrane proteins, which constitute over 50% of drug targets but represent only a small fraction of structures in the PDB [20].

The implementation of MD in SBDD has been transformative, enabling:

- Dynamic behavior assessment: Evaluation of binding stability and residence times

- Flexible docking: Utilization of multiple receptor conformations

- Cryptic pocket identification: Detection of transient binding sites not apparent in crystal structures [4]

Accelerated Molecular Dynamics Methods

Traditional MD simulations face timescale limitations in observing rare events like complete ligand unbinding. Accelerated MD (aMD) addresses this by applying a boost potential to smooth energy barriers, enhancing conformational sampling [4]. The core principle involves modifying the potential energy surface according to:

\[ V'(r) = V(r) + \Delta V(r) \]

where \(V(r)\) is the original potential and \(\Delta V(r)\) is the boost potential, applied only when \(V(r)\) falls below a chosen threshold energy \(E\), that is, when \(V(r) < E\). The result is a flattened effective surface that facilitates transitions between low-energy states.
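
To make the boost concrete, the sketch below uses one widely used functional form from the aMD literature, ΔV = (E − V)² / (α + E − V) for V < E and zero otherwise, where α is a tuning parameter that controls how strongly the wells are flattened. The source does not specify the form of the boost, so this choice, the parameter values, and the function names are assumptions for illustration.

```python
def boost_potential(v, threshold_e, alpha):
    """Boost energy added to the potential; zero at or above the threshold."""
    if alpha <= 0:
        raise ValueError("alpha must be positive")
    if v >= threshold_e:
        return 0.0
    gap = threshold_e - v
    return gap * gap / (alpha + gap)


def modified_potential(v, threshold_e, alpha):
    """V'(r) = V(r) + dV(r): wells below the threshold are raised."""
    return v + boost_potential(v, threshold_e, alpha)


# Hypothetical energies (kcal/mol): a deep well at -10 and a barrier top at +5.
well = modified_potential(-10.0, threshold_e=0.0, alpha=10.0)
barrier = modified_potential(5.0, threshold_e=0.0, alpha=10.0)
barrier_height_before = 5.0 - (-10.0)
barrier_height_after = barrier - well
```

In this toy case the well is raised from -10 to -5 while the barrier top is untouched, so the effective barrier drops from 15 to 10 kcal/mol. Smaller values of α flatten the surface more aggressively.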

The Relaxed Complex Method

The Relaxed Complex Method (RCM) represents a powerful integration of MD and docking that explicitly accounts for receptor flexibility [4]. This approach involves:

- Conformational sampling: Running extended MD simulations of the target protein

- Representative structure selection: Clustering trajectories to identify distinct conformational states

- Ensemble docking: Screening compounds against multiple protein conformations

RCM significantly improves virtual screening hit rates compared to single-structure docking, as it accounts for the dynamic nature of binding sites and enables identification of compounds that target transient pockets [4].
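
The ensemble docking step can be summarized by how per-conformation scores are combined. The sketch below keeps each compound's best (most negative) docking score across the receptor conformations; this best-score rule is one simple choice among several (averaging is another), and the cluster names and scores are hypothetical.

```python
def ensemble_best_scores(scores_by_conformer):
    """Return compound -> (best score, conformer that produced it)."""
    best = {}
    for conformer, scores in scores_by_conformer.items():
        for compound, score in scores.items():
            # Lower (more negative) docking scores are treated as better.
            if compound not in best or score < best[compound][0]:
                best[compound] = (score, conformer)
    return best


# Hypothetical docking scores against three MD-derived cluster representatives.
scores_by_conformer = {
    "cluster_1": {"lig_X": -7.2, "lig_Y": -8.0},
    "cluster_2": {"lig_X": -9.4, "lig_Y": -6.5},
    "cluster_3": {"lig_X": -6.8, "lig_Y": -7.9},
}

best = ensemble_best_scores(scores_by_conformer)
ranked = sorted(best, key=lambda name: best[name][0])
```

In this toy data, lig_X would rank below lig_Y if only cluster_1 were docked, but it ranks first once the conformation in cluster_2 is included, which is the kind of hit that single-structure docking can miss.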

Table 2: Molecular Dynamics Simulation Parameters and Applications

| Parameter | Typical Values/Range | Application Context |
|---|---|---|
| Simulation Time | Nanoseconds to milliseconds | Dependent on process kinetics and sampling method |
| Force Field | CHARMM, AMBER, OPLS | Determines accuracy of physical interactions |
| Enhanced Sampling | aMD, Metadynamics | Rare event sampling and barrier crossing |
| Solvation Model | Explicit, Implicit | Balance between accuracy and computational cost |

Free Energy Perturbation: Quantitative Binding Affinity Prediction

Theoretical Foundations

Free Energy Perturbation represents the most computationally intensive yet theoretically rigorous approach in the SBDD toolkit for predicting binding affinities. FEP applies statistical mechanics principles to calculate free energy differences between related systems, typically comparing protein-ligand complexes with slight structural modifications [9]. The method operates through thermodynamic cycles that transform one ligand into another in both bound and unbound states, enabling calculation of relative binding free energies without directly simulating the physical binding process.

The FEP approach is particularly valuable in lead optimization stages, where it can quantitatively predict the impact of small chemical modifications on binding affinity, potentially distinguishing between favorable changes of ~1 kcal/mol (approximately 5-fold affinity improvement) and unfavorable modifications [9].
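
The thermodynamic cycle and the "1 kcal/mol is roughly 5-fold" rule of thumb can be checked with a short calculation. The sketch below assumes the standard relations ΔΔG_bind = ΔG_bound(A→B) − ΔG_unbound(A→B) and fold change = exp(−ΔΔG_bind / RT) at 298.15 K; the two transformation free energies are hypothetical inputs, not results from the cited work.

```python
import math

GAS_CONSTANT = 0.0019872041  # kcal/(mol*K)


def relative_binding_free_energy(dg_bound, dg_unbound):
    """Close the thermodynamic cycle: ddG_bind = dG_bound(A->B) - dG_unbound(A->B)."""
    return dg_bound - dg_unbound


def affinity_fold_change(ddg_bind, temperature=298.15):
    """Ratio K_d(A) / K_d(B); values above 1 mean ligand B binds more tightly."""
    return math.exp(-ddg_bind / (GAS_CONSTANT * temperature))


# Hypothetical alchemical results (kcal/mol) for transforming ligand A into B.
ddg = relative_binding_free_energy(dg_bound=2.5, dg_unbound=3.5)
fold = affinity_fold_change(ddg)  # roughly 5.4 for ddg = -1.0 kcal/mol
```

Because the relationship is exponential, a 2 kcal/mol improvement corresponds to roughly a 29-fold gain, which is why sub-kcal/mol accuracy matters in lead optimization.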

FEP Implementation Protocol

A standard FEP calculation involves these methodological stages:

System Preparation

- Create initial structures for both bound and unbound states

- Solvate systems in explicit water molecules with appropriate ion concentrations

- Ensure consistent atom mapping between initial and final states

λ-Window Setup

- Divide the transformation pathway into discrete λ windows (typically 12-24)

- Each λ value represents a different hybrid potential combining initial and final states

Simulation Execution

- Run equilibration phases at each λ window

- Perform production simulations with sufficient sampling

- Employ Hamiltonian replica exchange to enhance phase space overlap

Free Energy Calculation

- Analyze energy differences using Bennett Acceptance Ratio (BAR) or Multistate BAR (MBAR)

- Estimate statistical uncertainties through block averaging or bootstrapping
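
The λ-window and free energy stages can be illustrated with a deliberately simplified sketch. It assumes evenly spaced λ values and a linear hybrid potential U(λ) = (1 − λ)·U_A + λ·U_B, and it uses the simpler one-directional exponential averaging (Zwanzig) estimator rather than the BAR or MBAR estimators named in the protocol; production codes typically use soft-core potentials and BAR/MBAR instead. All names and energy samples are hypothetical.

```python
import math

KT = 0.0019872041 * 298.15  # kcal/mol at 298.15 K


def lambda_schedule(n_windows):
    """Evenly spaced lambda values from 0 (initial state) to 1 (final state)."""
    if n_windows < 2:
        raise ValueError("need at least two lambda windows")
    return [i / (n_windows - 1) for i in range(n_windows)]


def hybrid_energy(u_initial, u_final, lam):
    """Linear mixing of the end-state energies (an assumed, simple choice)."""
    return (1.0 - lam) * u_initial + lam * u_final


def zwanzig_delta_f(delta_u_samples, kt=KT):
    """Exponential averaging: dF = -kT * ln< exp(-dU / kT) >."""
    scaled = [-du / kt for du in delta_u_samples]
    peak = max(scaled)  # log-sum-exp shift for numerical stability
    mean_exp = sum(math.exp(s - peak) for s in scaled) / len(scaled)
    return -kt * (peak + math.log(mean_exp))


def total_delta_f(samples_per_window, kt=KT):
    """Sum the free energy steps between neighboring lambda windows."""
    return sum(zwanzig_delta_f(samples, kt) for samples in samples_per_window)


lambdas = lambda_schedule(12)
# Hypothetical energy differences U(next lambda) - U(current lambda), kcal/mol.
samples_per_window = [[0.1, 0.1, 0.1], [0.3, 0.3, 0.3]]
delta_f = total_delta_f(samples_per_window)
```

With noisy samples the exponential average is dominated by rare low-energy values, which is one reason overlap between neighboring windows matters and why bidirectional estimators such as BAR are generally preferred.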

The computational expense of FEP limits its application to relatively small chemical perturbations, typically involving changes of a few heavy atoms [9].

Integrated Workflows and Complementary Approaches

Synergistic Method Integration

The most effective SBDD strategies combine docking, MD, and FEP in complementary workflows that leverage the respective strengths of each technique [4] [9]. A typical integrated approach might include:

- Initial screening: Molecular docking of large virtual libraries

- Pose refinement and validation: MD simulations of top-ranked complexes

- Affinity optimization: FEP calculations on closely related analogs

This hierarchical strategy maximizes efficiency by applying increasingly accurate but computationally expensive methods to progressively smaller compound sets [9].

SBDD and LBDD Complementarity

While this guide focuses on SBDD methodologies, the most robust drug discovery pipelines often integrate both structure-based and ligand-based approaches [19] [9]. LBDD techniques like Quantitative Structure-Activity Relationship (QSAR) modeling and pharmacophore mapping provide valuable complementary information, particularly when structural data is limited or to validate SBDD predictions [1] [9]. Hybrid approaches can leverage ligand-based screening to narrow chemical space before applying more resource-intensive structure-based methods [9].

Table 3: Comparison of SBDD Computational Techniques

| Method | Typical Application | Computational Cost | Key Limitations |
|---|---|---|---|
| Molecular Docking | Virtual screening, binding mode prediction | Low to moderate | Fixed receptor conformation, approximate scoring |
| Molecular Dynamics | Binding stability, conformational sampling | Moderate to high | Timescale limitations, force field accuracy |
| Free Energy Perturbation | Lead optimization, affinity prediction | Very high | Limited to small perturbations, system setup sensitivity |

Research Reagent Solutions

Table 4: Essential Computational Tools for SBDD Methodologies

| Tool Category | Representative Software | Primary Function |
|---|---|---|
| Docking Software | AutoDock, Glide, GOLD, FRED | Ligand pose prediction and scoring |
| MD Simulation Packages | AMBER, CHARMM, GROMACS, NAMD | Biomolecular dynamics simulation |
| FEP Platforms | Schrödinger FEP+, OpenFE | Binding free energy calculations |
| Structure Preparation | MOE, Chimera, Maestro | Protein and ligand preprocessing |
| Visualization & Analysis | VMD, PyMOL, MDTraj | Simulation trajectory analysis |

Visualization of SBDD Workflows

[Figure: Integrated SBDD methodology framework]

[Figure: Relaxed Complex Method workflow]