RTlogD Model Performance: A Comprehensive Benchmarking Against Commercial LogD Prediction Tools

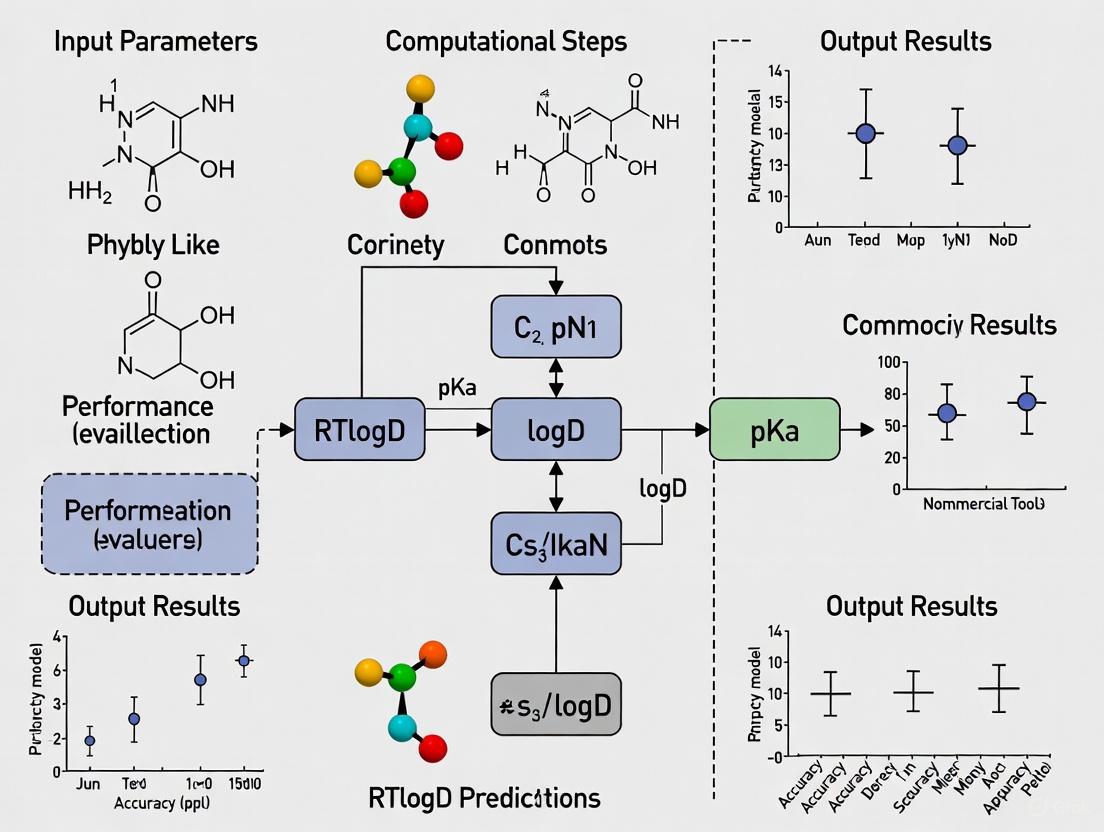

Abstract

This article provides a rigorous performance evaluation of the novel RTlogD model, which enhances logD7.4 prediction by transferring knowledge from chromatographic retention time, microscopic pKa, and logP data. Aimed at researchers and drug development professionals, we explore the foundational principles addressing data scarcity in logD modeling, detail the multi-source knowledge integration methodology, analyze strategies for model optimization and troubleshooting, and present a comparative validation against established commercial tools. The analysis demonstrates RTlogD's superior performance in accuracy and generalizability, highlighting its potential to improve efficiency in drug discovery and design workflows.

The LogD Prediction Challenge: Overcoming Data Scarcity with Novel Approaches

The Critical Role of logD7.4 in Drug Discovery and Development

Lipophilicity is a fundamental physical property that exerts a significant influence on various aspects of drug behavior, including solubility, permeability, metabolism, distribution, protein binding, and toxicity [1]. In drug-like molecules, lipophilicity affects physicochemical properties that directly impact a compound's absorption, distribution, metabolism, elimination, and toxicological profile. The lipophilicity of a potential drug is typically quantified through two key parameters: the partition coefficient (logP), which describes the differential solubility of a neutral compound in n-octanol and water, and the distribution coefficient (logD), which measures the lipophilicity of an ionizable compound across a mixture of ionic species at a specific pH [1]. Of particular importance in drug discovery is logD at physiological pH 7.4 (logD7.4), as this value more accurately represents the partitioning behavior of ionizable compounds under biological conditions [1].
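The link between logP and logD can be made concrete for the simplest case. The sketch below is an illustration rather than part of the RTlogD method: it uses the standard textbook relationship for a compound with a single ionizable group, under the assumption that only the neutral species partitions into n-octanol. The function name and arguments are hypothetical.

```python
import math

def logd_monoprotic(log_p: float, pka: float, ph: float = 7.4, kind: str = "acid") -> float:
    """Estimate logD from logP for a compound with one ionizable group.

    Assumption: only the neutral species enters the octanol phase.
    """
    if kind == "acid":
        exponent = ph - pka   # acids are increasingly ionized above their pKa
    elif kind == "base":
        exponent = pka - ph   # bases are increasingly ionized below their pKa
    else:
        raise ValueError("kind must be 'acid' or 'base'")
    # The ionized fraction lowers the apparent lipophilicity.
    return log_p - math.log10(1.0 + 10.0 ** exponent)

# A carboxylic acid with logP 3.0 and pKa 4.4 is almost fully ionized at pH 7.4,
# so its logD7.4 is roughly three log units below its logP.
print(round(logd_monoprotic(3.0, 4.4, kind="acid"), 2))
```

Real drug-like molecules often carry several ionizable sites and ion pairs can partition to some extent, which is one reason data-driven models are used instead of this closed-form estimate.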

The critical nature of logD7.4 optimization stems from its direct relationship to drug efficacy and safety. High lipophilicity has been associated with an increased risk of toxic events, while excessively low lipophilicity may limit drug absorption and metabolism [1]. Compounds with moderate logD7.4 values typically exhibit optimal pharmacokinetic and safety profiles, leading to improved therapeutic effectiveness [1]. According to Bhal's studies, logD should be considered in the "Rule of 5" instead of logP, highlighting its heightened relevance in modern drug discovery [1]. Furthermore, Yang et al. demonstrated that logD7.4 values help distinguish aggregators from non-aggregators, addressing a critical challenge in early drug development [1].

Experimental logD7.4 Determination: Methods and Challenges

Several experimental techniques have been developed to measure logD7.4, each with distinct advantages and limitations. The shake-flask method, where n-octanol serves as the organic phase and buffer acts as the aqueous phase, remains the most commonly used approach [1]. However, this method is labor-intensive and requires large amounts of synthesized compounds, making it unsuitable for high-throughput applications. Chromatographic techniques, particularly high-performance liquid chromatography (HPLC) systems, rely on the distribution behavior between mobile and stationary phases [1]. While simpler and more robust to impurities than the shake-flask method, HPLC provides only an indirect assessment of logD7.4 and is generally less accurate. Potentiometric titration approaches involve dissolving samples in n-octanol and titrating them with potassium hydroxide or hydrochloric acid [1]. These methods are limited to compounds with acid-base properties and require high sample purity, restricting their general applicability.

The challenges associated with experimental logD7.4 determination have driven the development of computational prediction tools. The limited availability of high-quality experimental data poses a significant challenge for building robust prediction models [1]. Pharmaceutical companies like Bayer, AstraZeneca, and Merck & Co. have leveraged their extensive proprietary datasets to develop internal models with superior performance [1]. For instance, AstraZeneca's AZlogD74 model is trained on a dataset of over 160,000 molecules that is continuously updated with new measurements [1]. This disparity between proprietary and publicly available data has created a performance gap between commercial and academic models, emphasizing the need for innovative approaches that maximize learning from limited data.

The RTlogD Model: An Innovative Multi-Source Knowledge Approach

Model Architecture and Theoretical Foundation

The RTlogD model represents a novel computational framework that addresses the data limitation challenge in logD7.4 prediction by leveraging knowledge from multiple related domains [1]. This innovative approach combines three key elements: (1) pre-training on chromatographic retention time (RT) datasets, (2) incorporation of microscopic pKa values as atomic features, and (3) integration of logP as an auxiliary task within a multitask learning framework [1]. The theoretical foundation of RTlogD rests on the strong correlation between these physicochemical properties and lipophilicity, enabling the model to extract and transfer relevant patterns from larger, more readily available datasets.

The relationship between chromatographic retention time and lipophilicity provides a particularly valuable knowledge source for the model. Chromatographic techniques generate far more retention time data than is available for logP or pKa [1]. Previous research has established that retention time is influenced by lipophilicity, with Parinet et al. using calculated logD and logP as descriptors to predict retention time [1]. RTlogD reverses this relationship, using retention time patterns to inform logD predictions. The model was pre-trained on a dataset of nearly 80,000 molecules with chromatographic retention time data, broadening the chemical space covered by its learned molecular representations before fine-tuning on the more limited logD dataset [1].

Experimental Protocols and Implementation

The development and validation of the RTlogD model followed a rigorous experimental protocol. The researchers curated the DB29 dataset consisting of experimental logD values gathered from ChEMBLdb29 [1]. To ensure data quality, the dataset exclusively included experimental logD values obtained from shake-flask, chromatographic, and potentiometric titration approaches, with specific pretreatment steps: (1) records with pH values outside the range of 7.2-7.6 were removed; (2) records with solvents other than octanol were eliminated; and (3) all data was manually verified with errors corrected [1]. This meticulous curation process addressed common data quality issues, including partition coefficients not logarithmically transformed and transcription errors where values recorded in ChEMBLdb29 did not align with primary literature sources.
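The two automatable pretreatment steps, the pH window and the solvent filter, can be sketched as a simple record filter. This is a minimal illustration, not the authors' curation code: the record fields, the accepted solvent spellings, the inclusive pH bounds, and the example values are all assumptions made here. The third step, manual verification against the primary literature, cannot be reproduced in code.

```python
# Hypothetical solvent spellings treated as equivalent to octanol.
OCTANOL_NAMES = {"octanol", "n-octanol", "1-octanol"}

def curate(records, ph_min=7.2, ph_max=7.6):
    """Keep records measured in octanol within the pH window (bounds inclusive)."""
    kept = []
    for rec in records:
        ph_ok = ph_min <= rec["ph"] <= ph_max
        solvent_ok = rec["solvent"].strip().lower() in OCTANOL_NAMES
        if ph_ok and solvent_ok:
            kept.append(rec)
    return kept

# Made-up example records, for illustration only.
records = [
    {"id": "A", "ph": 7.4, "solvent": "n-octanol", "logd": 1.2},
    {"id": "B", "ph": 6.5, "solvent": "n-octanol", "logd": 0.8},    # pH outside window
    {"id": "C", "ph": 7.4, "solvent": "cyclohexane", "logd": 2.1},  # wrong solvent
]
print([rec["id"] for rec in curate(records)])
```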

For model implementation, the RTlogD framework utilized graph neural networks (GNNs) for molecular representation learning [1]. The incorporation of microscopic pKa values as atomic features provided valuable insights into ionizable sites and ionization capacity at the atomic level, offering more specific ionization information than macroscopic pKa values. The multitask learning framework simultaneously learned logD and logP tasks, with domain information from the logP task serving as an inductive bias that improved learning efficiency and prediction accuracy for logD [1]. The model is publicly available through a GitHub repository, which provides installation instructions recommending Mamba to create the environment for RTlogD [2].

Comparative Performance: RTlogD vs. Commercial Tools

Quantitative Benchmarking Results

The RTlogD model was benchmarked against commonly used algorithms and prediction tools. As summarized in Table 1, RTlogD outperformed widely used tools such as ADMETlab2.0, PCFE, ALOGPS, FP-ADMET, and the commercial software Instant JChem [1]. Leveraging multiple knowledge sources translated into measurable improvements in prediction accuracy and generalization capability, particularly for novel chemical structures.

Table 1: Performance Comparison of logD7.4 Prediction Tools

| Tool Name | Type | Key Features | Performance Notes |
|---|---|---|---|
| RTlogD | Academic Model | Transfer learning from RT; microscopic pKa features; logP multitask learning | Superior performance vs. commonly used tools [1] |
| Instant JChem | Commercial Software | Comprehensive chemical data management and prediction | Outperformed by RTlogD in comparative analysis [1] |
| ADMETlab2.0 | Web Platform | Integrated ADMET property prediction | Outperformed by RTlogD in benchmarking [1] |
| PCFE | Algorithm | Fragment-based estimation | Outperformed by RTlogD [1] |
| ALOGPS | Web Tool | Virtual Computational Chemistry Laboratory | Outperformed by RTlogD [1] |
| FP-ADMET | Model | Fingerprint-based ADMET prediction | Outperformed by RTlogD [1] |
| Canvas | Commercial Software | Licensed, dedicated prediction software | More accurate than free tools in SCRA study [3] |
| ChemDraw | Commercial Software | Structure-based property prediction | Provided competitive estimates in SCRA study [3] |

Independent validation studies on specialized chemical families further confirmed the performance advantages of sophisticated prediction approaches. In an evaluation of synthetic cannabinoid receptor agonists (SCRAs), licensed, dedicated software packages such as Canvas and ChemDraw provided more accurate lipophilicity predictions than free tools or those with prediction as a secondary function [3]. Nevertheless, the latter still provided competitive estimates in most cases, with experimental logD7.4 values for tested SCRAs ranging from 2.48 (AB-FUBINACA) to 4.95 (4F-ABUTINACA) [3].

Analysis of Prediction Approaches Across Tools

The benchmarking results reveal important patterns in logD7.4 prediction accuracy across different methodological approaches. Tools that incorporate multiple physicochemical properties and leverage larger, more diverse datasets consistently outperform those relying on single-parameter estimations or limited training data. The success of RTlogD's multi-source knowledge approach highlights the value of integrating related physicochemical properties to enhance prediction capabilities. Similarly, the superior performance of licensed software tools like Canvas and ChemDraw in independent evaluations suggests that dedicated development resources and comprehensive algorithm optimization contribute significantly to prediction accuracy [3].

Another critical factor in prediction performance is the applicability domain of each tool, that is, the region of chemical space in which the model can make reliable predictions. A comprehensive benchmarking study of computational tools for predicting toxicokinetic (TK) and physicochemical (PC) properties found that models for PC properties (average R² = 0.717) generally outperformed those for TK properties (average R² = 0.639 for regression) [4]. This differential suggests that physicochemical endpoints such as logD7.4 are, on average, more tractable to model than toxicokinetic endpoints. The study further emphasized the importance of evaluating model performance inside the applicability domain, as prediction reliability decreases significantly for compounds structurally dissimilar to those in the training set [4].

Research Applications and Practical Implementation

Experimental Workflow for logD7.4 Evaluation

The practical implementation of logD7.4 evaluation in drug discovery follows a structured workflow that integrates both experimental and computational approaches. As illustrated in Figure 2, this process typically begins with compound design and synthesis, proceeds through experimental assessment or computational prediction, and culminates in data interpretation that informs subsequent compound optimization cycles.

Essential Research Reagents and Tools

Successful logD7.4 evaluation requires specific research reagents and computational tools. Table 2 summarizes key solutions and their functions in lipophilicity assessment, providing researchers with practical resources for implementation.

Table 2: Essential Research Reagent Solutions for logD7.4 Assessment

| Reagent/Tool | Function/Application | Implementation Context |
|---|---|---|
| n-Octanol/Buffer System | Standard solvent system for shake-flask logD7.4 determination | Experimental measurement [1] |
| HPLC Systems with C18 Columns | Chromatographic hydrophobicity index (CHI) logD7.4 determination | High-throughput experimental assessment [3] |
| Potentiometric Titration Setup | logD7.4 determination for ionizable compounds with high purity | Experimental measurement for compounds with acid-base properties [1] |
| ACD/ChromGenius | Commercial chromatography software for retention time prediction | Retention time modeling and logD estimation [5] |
| OPERA-RT | Open-source QSRR model for retention time prediction | Retention time modeling in non-targeted analysis [5] |
| RDKit | Open-source cheminformatics toolkit | SMILES standardization and molecular descriptor calculation [4] |
| CompTox Chemistry Dashboard | Chemical database with property data | Candidate structure generation and property filtering [5] |

The integration of these tools into a cohesive workflow enables comprehensive lipophilicity assessment. For instance, chromatographic techniques can be combined with computational predictions to enhance confidence in results. Research has demonstrated that both OPERA-RT and ACD/ChromGenius can predict 95% of retention times within a ±15% chromatographic time window of experimental retention times [5]. This level of accuracy makes retention time prediction a valuable filtering tool in identification workflows, with OPERA-RT screening out a greater percentage of candidate structures within a 3-minute RT window (60% vs. 40%) compared to ACD/ChromGenius, though retaining fewer known chemicals (42% vs. 83%) [5].

The critical role of logD7.4 in drug discovery and development continues to drive methodological innovations in both experimental assessment and computational prediction. The RTlogD model represents a significant advancement in the field, demonstrating how knowledge transfer from related physicochemical properties can overcome the limitations imposed by scarce experimental data. By leveraging chromatographic retention time, microscopic pKa values, and logP within a unified framework, RTlogD achieves superior performance compared to commonly used prediction tools [1].

Future developments in logD7.4 prediction will likely focus on expanding high-quality experimental datasets and developing more sophisticated knowledge transfer methodologies. Pharmaceutical companies will continue to leverage their proprietary data advantages, while academic researchers will innovate in algorithmic approaches that maximize learning from public data [1]. The integration of emerging machine learning techniques, particularly deep learning architectures that can automatically learn relevant molecular features from raw structural data, holds particular promise for enhancing prediction accuracy and generalizability. As these computational tools continue to evolve, their integration into streamlined drug discovery workflows will play an increasingly vital role in accelerating the development of therapeutics with optimal pharmacokinetic and safety profiles.

Limitations of Experimental logD Determination Methods

The distribution coefficient (logD) is a critical physicochemical parameter in drug discovery, quantifying a compound's lipophilicity at a specific pH (typically pH 7.4) and profoundly influencing its absorption, distribution, metabolism, excretion, and toxicity (ADMET) profile [1] [6]. Accurate logD determination is therefore essential for selecting drug candidates with optimal pharmacokinetics and minimal toxicity. The experimental methods for measuring logD, while considered foundational, are fraught with significant limitations that affect their application in modern, high-throughput discovery settings. This guide objectively details these constraints, providing a structured comparison and the experimental data necessary to understand the trade-offs involved in logD determination.

The primary experimental techniques for logD determination include the shake-flask method, chromatographic methods, and potentiometric titration. The following sections detail their protocols and inherent limitations.

The Shake-Flask Method

Experimental Protocol: The shake-flask method is widely regarded as the gold standard for logD measurement [1]. The standard protocol involves the following steps [7]:

- Preparation: The test compound is dissolved in a mixture of pre-saturated n-octanol and aqueous buffer (e.g., phosphate-buffered saline at pH 7.4). The presence of a co-solvent like Dimethyl Sulfoxide (DMSO) is common, though its concentration must be controlled (typically ≤0.5% v/v) to avoid impacting the measured logD value [7].

- Equilibration: The mixture is vigorously agitated (shaken) for a predetermined period to allow the compound to distribute between the two immiscible phases.

- Separation: After shaking, the mixture is allowed to settle or is centrifuged to achieve complete phase separation.

- Quantification: The concentration of the compound in each phase is determined using a quantitative analytical technique, most often Liquid Chromatography coupled with tandem Mass Spectrometry (LC-MS/MS) [7]. The logD is calculated as the logarithm of the ratio of the compound's concentration in the octanol phase to its concentration in the aqueous phase.
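The final calculation in the quantification step is a single logarithm. A minimal sketch follows, assuming both concentrations are reported in the same units; the function name is hypothetical.

```python
import math

def shake_flask_logd(conc_octanol: float, conc_aqueous: float) -> float:
    """logD = log10(concentration in octanol / concentration in aqueous buffer)."""
    if conc_octanol <= 0 or conc_aqueous <= 0:
        raise ValueError("both concentrations must be positive")
    return math.log10(conc_octanol / conc_aqueous)

# A compound that is 100-fold more concentrated in octanol has logD = 2.
print(shake_flask_logd(250.0, 2.5))
```

In practice, very lipophilic or very hydrophilic compounds leave one phase near the quantification limit, so the ratio is only as reliable as the weaker of the two measurements.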

Core Limitations: Despite its status as a reference method, the conventional shake-flask approach faces several challenges [7] [1]:

- Low Throughput: The process is inherently slow, labor-intensive, and requires manual intervention for phase separation and analysis, making it unsuitable for screening large compound libraries in early discovery [7].

- Substantial Compound Requirement: This method requires relatively large amounts of purified compound, which is often a scarce resource during the early stages of drug discovery [7] [1].

- Analytical Burden: Analyzing each compound individually leads to a large number of bioanalytical samples, consuming significant instrument time and resources [7].

High-Throughput Modifications: To address the throughput limitation, a sample pooling approach has been developed. This method pools multiple compounds together in a single shake-flask experiment, leveraging LC-MS/MS for multiplexed quantification [7].

- Validation Data: This approach was validated using 37 structurally diverse compounds with logD values ranging from -0.04 to 6.01. A comparison between single and pooled compound measurements showed an excellent correlation (R² = 0.9879) with a Root Mean Square Error (RMSE) of 0.21, demonstrating that at least 37 compounds can be measured simultaneously with acceptable accuracy [7].

- Key Limitation of Pooling: While it dramatically increases throughput and reduces the number of samples for analysis, the sample pooling method is technically more complex and relies heavily on advanced, rapid generic LC-MS/MS bioanalysis for accurate quantification of multiple analytes in a single run [7].
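The two agreement statistics quoted for the pooling validation can be computed as sketched below. This is an illustration with made-up numbers, not the study's data, and it assumes that the reported R² is the squared Pearson correlation between single-compound and pooled measurements; the source does not state which definition was used.

```python
import math

def rmse(reference, measured):
    """Root mean square error between paired measurements."""
    squared = [(r - m) ** 2 for r, m in zip(reference, measured)]
    return math.sqrt(sum(squared) / len(squared))

def r_squared(reference, measured):
    """Squared Pearson correlation between paired measurements."""
    n = len(reference)
    mean_r = sum(reference) / n
    mean_m = sum(measured) / n
    cov = sum((r - mean_r) * (m - mean_m) for r, m in zip(reference, measured))
    var_r = sum((r - mean_r) ** 2 for r in reference)
    var_m = sum((m - mean_m) ** 2 for m in measured)
    return cov * cov / (var_r * var_m)

# Hypothetical logD values: measured one compound at a time vs. in a pool.
single = [0.0, 1.0, 2.0, 3.0]
pooled = [0.1, 1.1, 2.1, 3.1]
print(r_squared(single, pooled), rmse(single, pooled))
```

The example also shows why both numbers are reported together: a constant offset between the two protocols leaves the correlation perfect while still producing a nonzero RMSE.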

Chromatographic Methods

Experimental Protocol: Techniques such as High-Performance Liquid Chromatography (HPLC) estimate logD by measuring the retention time of a compound on a chromatographic column, which correlates with its lipophilicity [1]. The logD value is inferred by comparing its retention behavior to a set of standards with known logD values.
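The inference step described above amounts to fitting a calibration line on the standards and reading unknowns off it. The sketch below uses ordinary least squares on raw retention time with made-up values. Practical methods often calibrate against a derived quantity such as a retention factor or a chromatographic hydrophobicity index, so treat the choice of variable and all names here as assumptions.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

# Hypothetical calibration standards: retention time (min) and known logD.
standard_rt = [1.0, 2.0, 3.0]
standard_logd = [0.0, 1.0, 2.0]
slope, intercept = fit_line(standard_rt, standard_logd)

def logd_from_rt(retention_time):
    """Infer logD for an unknown compound from the calibration line."""
    return slope * retention_time + intercept

print(logd_from_rt(2.5))
```

Because the line is only as good as the standards, the calibration has to be rerun whenever column behavior drifts.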

Core Limitations:

- Indirect Measurement: This method does not measure a partition coefficient directly but relies on a correlation model, which can introduce inaccuracies [1].

- Accuracy and Reproducibility Issues: The correlation between retention time and logD can deviate significantly for acidic and basic compounds. Furthermore, variations in column performance over time (e.g., column aging) necessitate frequent recalibration and reanalysis of standards to maintain accuracy, impacting reproducibility [7] [1].

- Limited Thermodynamic Insight: As a non-equilibrium method, it may not fully capture the thermodynamic aspects of partitioning [1].

Potentiometric Titration

Experimental Protocol: This method involves dissolving the sample in a water-saturated n-octanol medium and titrating with an acid or base while monitoring the pH potentiometrically. The logD is determined from the shift in the titration curve compared to an aqueous reference titration [1].

Core Limitations:

- Limited Applicability: It is primarily suitable for compounds with acid-base properties (ionizable groups) and requires a high degree of sample purity, limiting its general use [1].

- Complex Data Interpretation: The analysis of titration curves can be complex, especially for molecules with multiple ionizable groups [1].

Comparative Analysis of Experimental Limitations

The table below summarizes the key limitations and associated experimental data for the primary logD determination methods.

Table 1: Comparative Limitations of Experimental logD Determination Methods

| Method | Throughput | Compound Consumption | Key Experimental Limitations & Associated Data | Applicability |
|---|---|---|---|---|
| Shake-Flask (Traditional) | Low (Manual, slow) [7] [1] | High (Microgram to milligram) [7] [1] | DMSO Sensitivity: LogD measurement is affected by DMSO content; >0.5% DMSO can distort results [7]. Analytical Burden: Generates a high number of bioanalytical samples [7]. | Broad; considered the gold standard [1]. |
| Shake-Flask (Sample Pooling) | High (37+ compounds per run) [7] | Reduced per compound [7] | Technical Complexity: Requires advanced LC-MS/MS for multiplexed quantification [7]. Validation Data: RMSE of 0.21 vs. traditional method [7]. | Broad, but requires specialized instrumentation [7]. |
| Chromatographic (e.g., HPLC) | Moderate to High [1] | Low [1] | Accuracy Deviation: Acidic and basic substances can show significant errors [7]. Reproducibility Data: Requires maintenance and reanalysis of standards due to column performance variations [7]. | Broad, but correlations may fail for ionizable compounds [7] [1]. |
| Potentiometric Titration | Low [1] | Moderate [1] | Limited Compound Scope: Restricted to ionizable compounds and requires high purity [1]. | Narrow (Ionizable compounds only) [1]. |

The Impact of Experimental Variability

A significant, often overlooked challenge in experimental logD determination is the substantial variability in measured values. This variability represents the aleatoric limit or irreducible error for any predictive model trained on such data [8].

- Evidence from Inter-laboratory Studies: Investigations into inter-laboratory measurements of solubility (a related property) have found standard deviations typically ranging between 0.5 and 1.0 log units [8]. One study of 411 compounds reported an average inter-laboratory standard deviation of 0.58 [8], while another, which standardized materials and methods across 12 labs, still found variations resulting in a standard deviation as high as 0.74 log units due to differences in data analysis alone [8].

- Implication for logD: While this data specifically concerns solubility, it highlights the profound impact of experimental protocols, conditions, and data analysis on physicochemical measurements. This level of inherent noise in training and benchmark data poses a fundamental challenge to developing and validating highly accurate in silico logD models [8] [9].
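An average inter-laboratory standard deviation of the kind quoted above can be computed from replicate measurements as sketched here. The data layout and the values are invented for illustration, and the cited studies may have aggregated their data differently.

```python
from statistics import mean, stdev

def mean_interlab_sd(measurements):
    """Average the per-compound sample standard deviation across laboratories.

    `measurements` maps a compound identifier to the values reported by
    different labs; compounds with a single value are skipped.
    """
    sds = [stdev(values) for values in measurements.values() if len(values) >= 2]
    return mean(sds)

# Made-up log-unit measurements of the same compounds in different labs.
example = {
    "compound_a": [1.0, 2.0, 3.0],
    "compound_b": [0.5, 0.5, 0.5],
    "compound_c": [4.2],  # only one lab, ignored
}
print(mean_interlab_sd(example))
```

A model evaluated against single measurements drawn from such data cannot be expected to show an error much below this spread, regardless of architecture.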

Workflow Diagram of logD Determination

The following diagram illustrates the decision pathways and limitations involved in selecting an experimental method for logD determination.

The Scientist's Toolkit: Key Research Reagents and Materials

The following table details essential materials and reagents used in experimental logD determination, particularly the shake-flask method.

Table 2: Essential Research Reagents for logD Determination

| Reagent/Material | Function in logD Determination |
|---|---|
| n-Octanol | Represents the lipid phase in the partitioning system, mimicking biological membranes [7] [1]. |
| Aqueous Buffer (e.g., PBS at pH 7.4) | Represents the aqueous physiological environment; the pH is critical for measuring the distribution of ionizable compounds [7] [1]. |
| Dimethyl Sulfoxide (DMSO) | A common co-solvent used to dissolve compounds with poor aqueous solubility. Concentration must be kept low (≤0.5%) to avoid altering the true partition coefficient [7]. |
| LC-MS/MS System | The core analytical instrument for quantifying compound concentrations in each phase. Essential for sensitivity, specificity, and for the multiplexed analysis used in high-throughput pooling methods [7]. |
| Reference Drug Standards | Compounds with known, reliably measured logD values (e.g., Propranolol, Warfarin) used for method validation and quality control [7]. |

Lipophilicity, quantified as the distribution coefficient between n-octanol and buffer at physiological pH 7.4 (logD7.4), is a fundamental physical property with profound influence on drug behavior. It affects critical processes including solubility, permeability, metabolism, distribution, protein binding, and toxicity [1] [10]. Accurate prediction of logD7.4 is therefore crucial for successful drug discovery and design, enabling researchers to optimize compounds for better bioavailability and safety profiles [1].

However, computational models for predicting logD7.4 face a significant challenge: the limited availability of high-quality experimental data [1]. This data scarcity stems from the labor-intensive and compound-intensive nature of experimental methods like the shake-flask technique, leading to restricted dataset sizes that impede the development of models with satisfactory generalization capability [1]. This article explores how data scarcity shapes the landscape of computational logD prediction and provides a comparative evaluation of current approaches, with a specific focus on the innovative strategies employed by the RTlogD model to overcome this fundamental limitation.

The Data Scarcity Challenge in logD Modeling

Origins and Consequences of Limited Data

The core challenge in logD modeling is a straightforward but formidable one: logD experimental datasets are severely limited [1]. This scarcity is not incidental but rooted in the complexities of experimental determination. The shake-flask method, while considered a standard, is labor-intensive and requires large amounts of synthesized compounds, naturally restricting the volume of data that can be generated [1]. Chromatographic and potentiometric techniques, while offering alternatives, introduce their own limitations in accuracy or applicability [1].

The consequence of this data scarcity is a direct restriction on the generalization capability of predictive models. Machine learning and deep learning architectures, particularly graph neural networks (GNNs), typically demand substantial data volumes to learn robust structure-property relationships [1] [11]. Without access to large, diverse training sets, models struggle to accurately predict properties for novel chemical scaffolds outside their training distribution.

Industry vs. Academic Disparity

The impact of data scarcity is most evident in the performance disparity between proprietary industrial models and publicly available academic tools. Pharmaceutical companies like AstraZeneca have developed models, such as AZlogD74, trained on datasets of over 160,000 molecules [1]. These companies continuously update their models with new measurements, creating a data advantage that translates to superior predictive performance [1]. This disparity highlights how data access, rather than algorithmic sophistication alone, often determines practical utility in real-world drug discovery applications.

Computational Strategies to Overcome Data Scarcity

Knowledge Transfer and Multi-Task Learning

Innovative approaches that leverage related chemical properties have emerged as powerful strategies to circumvent data limitations. The RTlogD model exemplifies this paradigm by integrating knowledge from multiple sources through several key mechanisms [1] [2]:

- Transfer Learning from Chromatographic Retention Time (RT): By pre-training on a large dataset of nearly 80,000 chromatographic retention time measurements, the model learns generalizable molecular representations influenced by lipophilicity before fine-tuning on the limited logD data [1].

- Multi-Task Learning with logP: Incorporating logP (the partition coefficient for neutral compounds) as an auxiliary task creates a shared representation that benefits the primary logD prediction task [1].

- Incorporation of Microscopic pKa Values: Using atomic-level pKa features provides the model with crucial information about ionizable sites and ionization capacity, directly informing the pH-dependent distribution behavior captured by logD [1].

These approaches align with established methodologies for addressing data scarcity in deep learning, including transfer learning and leveraging domain knowledge [11].
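To make the multi-task idea concrete, the sketch below shows one common way to combine a primary and an auxiliary regression objective when not every molecule has both labels. It is not the RTlogD implementation: the masking of missing labels, the fixed `logp_weight`, and the use of mean squared error are all assumptions chosen for illustration.

```python
def masked_mse(predictions, targets):
    """Mean squared error over the entries whose target is not None."""
    pairs = [(p, t) for p, t in zip(predictions, targets) if t is not None]
    if not pairs:
        return 0.0
    return sum((p - t) ** 2 for p, t in pairs) / len(pairs)

def multitask_loss(pred_logd, true_logd, pred_logp, true_logp, logp_weight=0.5):
    """Primary logD loss plus a down-weighted auxiliary logP loss."""
    return masked_mse(pred_logd, true_logd) + logp_weight * masked_mse(pred_logp, true_logp)

# Two molecules: the first has only a logD label, the second only a logP label.
loss = multitask_loss(
    pred_logd=[1.0, 2.0], true_logd=[1.5, None],
    pred_logp=[0.0, 0.0], true_logp=[None, 2.0],
)
print(loss)
```

Masking lets molecules with only a logP measurement still contribute gradient signal to the shared representation, which is the practical benefit of adding the auxiliary task when logD labels are scarce.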

Correction-Based and Hybrid Models

Another prevalent strategy involves building correction models based on existing computational predictions. For instance, some researchers have proposed QSAR models that use calculated logP and pKa values from commercial software as descriptors, then training on available experimental logD data to correct systematic errors in the initial predictions [6]. This approach effectively uses established algorithms as feature generators while applying machine learning to refine their outputs based on limited experimental evidence.

Comparative Performance Evaluation

Benchmarking Methodology and Experimental Protocols

To objectively evaluate the performance of logD prediction tools, researchers typically employ carefully curated test sets with experimentally determined logD7.4 values. The following workflow outlines a standard benchmarking approach derived from recent comprehensive evaluations [4]:

Fig. 1: Workflow for benchmark studies depicting key stages from data preparation to performance assessment [4].

The experimental protocol for validating the RTlogD model specifically involved [1] [2]:

- Data Sourcing: Experimental logD values were gathered from ChEMBLdb29, exclusively using values obtained via shake-flask, chromatographic, or potentiometric methods at pH 7.2-7.6.

- Data Curation: Rigorous quality control was implemented, including manual verification against primary literature, correction of transcription errors, and removal of records with inconsistent pH values or solvents other than octanol.

- Model Training: The RTlogD framework combined pre-training on chromatographic retention data, multi-task learning with logP, and incorporation of microscopic pKa atomic features.

- Time-Split Validation: A temporally separated test set containing molecules reported within the past two years was used to simulate real-world predictive performance on novel compounds.

- Comparative Analysis: Performance was benchmarked against commonly used tools including ADMETlab2.0, PCFE, ALOGPS, FP-ADMET, and the commercial software Instant JChem.
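The time-split step in the protocol above can be sketched as follows. The record layout (`smiles`, `logd`, `reported`) and the cutoff date are hypothetical and chosen only to make the example runnable; the source states only that the test set contains molecules reported within the past two years.

```python
from datetime import date


def time_split(records, cutoff):
    """Split records into (train, test) by the date a molecule was reported.

    Everything reported before `cutoff` is used for training; everything
    reported on or after it is held out as the test set.
    """
    train = [r for r in records if r["reported"] < cutoff]
    test = [r for r in records if r["reported"] >= cutoff]
    return train, test


# Hypothetical records; the values are illustrative, not real measurements.
records = [
    {"smiles": "CCO", "logd": -0.3, "reported": date(2015, 6, 1)},
    {"smiles": "c1ccccc1", "logd": 2.1, "reported": date(2018, 3, 12)},
    {"smiles": "CC(=O)O", "logd": -2.9, "reported": date(2020, 9, 30)},
    {"smiles": "CCN", "logd": -2.0, "reported": date(2021, 1, 15)},
]
train, test = time_split(records, cutoff=date(2020, 1, 1))
print(len(train), len(test))
```

Unlike a random split, this keeps every test molecule strictly newer than the training data, which is what makes the evaluation resemble prospective use.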

Quantitative Performance Comparison

The table below summarizes key performance metrics for various logD prediction tools, illustrating how different approaches to the data scarcity challenge yield different levels of predictive accuracy:

Table 1: Performance comparison of logD prediction tools

| Tool/Model | Approach | Key Features | Reported Performance | Reference |
|---|---|---|---|---|
| RTlogD | Transfer Learning, Multi-Task | Pre-training on RT data, logP auxiliary task, microscopic pKa features | Superior performance vs. common algorithms & commercial tools | [1] |
| AZlogD (AstraZeneca) | Proprietary Model | Trained on >160,000 in-house molecules | High performance (leverages large proprietary data) | [1] |
| ALogP | Empirical/Fragment-Based | Additive atomic contributions | Linear correlation with experimental logD for specific macrocycles (R² > 0.98) | [12] |
| XlogP | Empirical/Fragment-Based | Atom-based with correction factors | Overestimates lipophilicity for macrocycles (avg. dev. 2.8 log units) | [12] |
| ChemAxon | Empirical | Based on molecular structure | Underestimates lipophilicity for macrocycles (avg. dev. 3.9 log units) | [12] |

Case Study: Performance on Challenging Chemotypes

The performance gap between different approaches becomes particularly evident when predicting logD for complex molecular architectures. A recent study on triazine macrocycles revealed significant deviations between predicted and experimental values for common algorithms [12]. While absolute predictions showed substantial errors (e.g., average deviations of 0.9, 2.8, and 3.9 log units for ALogP, XlogP, and ChemAxon, respectively), a strong linear relationship (R² > 0.98) was observed between ALogP predictions and experimental values for aliphatic macrocycles [12]. This suggests that even when algorithms fail to predict absolute values accurately, they may capture relative trends within chemical series, enabling useful applications in lead optimization through linear correction.

Table 2: Performance on triazine macrocycles (deviation from experimental logD)

| Algorithm | Average Deviation (log units) | Trend | Application Potential |
|---|---|---|---|
| ALogP | 0.9 | Underestimation | High (after linear correction) |
| XlogP | 2.8 | Overestimation | Moderate (after linear correction) |
| ChemAxon | 3.9 | Underestimation | Moderate (after linear correction) |
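The linear correction mentioned for the macrocycle series can be illustrated with a simple one-variable least-squares fit. This is a sketch under stated assumptions: the function names are hypothetical and the predicted and experimental values are invented to show the mechanics, not taken from the cited study.

```python
def fit_line(predicted, experimental):
    """Ordinary least-squares fit of experimental = slope * predicted + intercept."""
    n = len(predicted)
    mean_x = sum(predicted) / n
    mean_y = sum(experimental) / n
    sxx = sum((x - mean_x) ** 2 for x in predicted)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(predicted, experimental))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def correct(prediction, slope, intercept):
    """Map a raw algorithm output onto the experimental scale."""
    return slope * prediction + intercept


# Invented series: the raw predictions are systematically off but track the trend.
raw_predictions = [1.0, 2.0, 3.0, 4.0]
measured_logd = [1.7, 2.2, 2.7, 3.2]
slope, intercept = fit_line(raw_predictions, measured_logd)
print(correct(5.0, slope, intercept))
```

Such a correction is only meaningful within the chemical series used to fit it; the slope and intercept should not be expected to transfer to unrelated chemotypes.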

Essential Research Reagents for logD Modeling

Successful computational logD prediction relies on both algorithmic innovation and access to critical data resources and software tools. The following table details key "research reagents" in this field:

Table 3: Essential resources for computational logD research

| Resource Name | Type | Function/Role | Access |
|---|---|---|---|
| ChEMBL Database | Chemical Database | Source of public domain bioactivity data, including experimental logD values | Public |
| RDKit | Cheminformatics Toolkit | Open-source platform for cheminformatics, descriptor calculation, and machine learning | Public |
| Chromatographic Retention Time Data | Experimental Data | Large-scale dataset for transfer learning approaches to enhance logD models | Public [1] |
| pKa Prediction Tools | Computational Tool | Provides ionization state information critical for understanding pH-dependent distribution | Both commercial and public |
| logP Prediction Algorithms | Computational Tool | Provides partition coefficient data for neutral species as input for logD models or multi-task learning | Both commercial and public |

The critical challenge of data scarcity continues to shape the development and application of computational logD models. While traditional fragment-based and empirical methods provide reasonable baseline performance, their accuracy limitations for novel or complex chemotypes remain significant. The most promising approaches, exemplified by RTlogD and proprietary industry models, directly address the data bottleneck through innovative knowledge transfer from related properties and massive, often proprietary, training sets.

Future progress in the field will likely depend on several key developments: (1) increased sharing of high-quality experimental data through public databases; (2) more sophisticated transfer learning frameworks that can integrate information from multiple complementary chemical properties; and (3) community-wide benchmarking efforts using standardized, temporally separated test sets to ensure realistic performance assessment. As these trends converge, computational logD prediction will continue to evolve from a screening tool to a reliable decision-making aid in drug discovery pipelines.

Lipophilicity is a fundamental physical property that profoundly influences a drug candidate's behavior, impacting solubility, permeability, metabolism, distribution, protein binding, and toxicity [13]. For decades, the partition coefficient, logP, has served as a standard measure of lipophilicity. LogP quantifies the distribution of a neutral, unionized compound between two immiscible liquids, typically octanol and water [14]. Its historical importance is canonized in Lipinski's Rule of Five, which suggests that for good oral bioavailability, a compound's calculated logP should be less than 5 [14].

However, a significant limitation plagues logP: it assumes the compound exists only in its unionized form [14]. This is problematic because approximately 95% of drugs contain ionizable groups [13]. For these molecules, logP provides an incomplete picture, as it fails to account for the changing ionization states that occur at different physiological pH levels [14] [15]. Consequently, the distribution coefficient, logD, has emerged as a more relevant and accurate metric for drug discovery. Unlike logP, logD is pH-dependent and measures the lipophilicity of a compound, accounting for all species present in solution—ionized, partially ionized, and unionized—at a specified pH, most commonly the physiological pH of 7.4 (logD7.4) [14] [13] [16]. This distinction is crucial for understanding a drug's real-world behavior in the varying environments of the human body.

logP vs. logD: A Fundamental Distinction

Definitions and Theoretical Foundations

The core difference between logP and logD lies in their treatment of ionization.

- logP (Partition Coefficient): Defined as the logarithm of the ratio of the concentration of a solute in octanol to its concentration in water, for the neutral species only [14] [17]. It is a constant for a given compound in a given solvent system at a given temperature.

- logD (Distribution Coefficient): Defined as the logarithm of the ratio of the sum of the concentrations of all species of the compound (ionized and unionized) in octanol to the sum of the concentrations of all species in water [17]. LogD is a function of pH.

Mathematically, for a monoprotic acid, the relationship is often expressed as [15]:

$$\log D = \log P - \log_{10}\left(1 + 10^{\,\mathrm{pH} - \mathrm{p}K_a}\right)$$

This equation highlights how logD depends on both the intrinsic lipophilicity (logP) and the ionization state (governed by pH and pKa).
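The monoprotic-acid relationship above can be evaluated directly. The sketch below also includes the standard analogue for a monoprotic base (with the exponent sign reversed), which is not given in the source and is added here as an assumption; both forms assume that only the neutral species partitions into octanol.

```python
import math


def logd_monoprotic_acid(logp, pka, ph=7.4):
    """logD = logP - log10(1 + 10^(pH - pKa)) for a monoprotic acid."""
    return logp - math.log10(1 + 10 ** (ph - pka))


def logd_monoprotic_base(logp, pka, ph=7.4):
    """Analogous form for a monoprotic base: the exponent becomes (pKa - pH)."""
    return logp - math.log10(1 + 10 ** (pka - ph))


# Illustrative inputs: an acid with logP 3.0 and pKa 4.4 at pH 7.4.
# The acid is about 99.9% ionized, so logD falls roughly 3 units below logP.
print(round(logd_monoprotic_acid(3.0, 4.4), 3))
```

When pH equals pKa, half of the compound is ionized and logD sits log10(2), about 0.3 units, below logP.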

The Physiological Imperative: Why logD Matters More

The human body presents a mosaic of pH environments. An orally administered drug encounters a pH of 1.5–3.5 in the stomach, progressively higher values along the intestine, and ~7.4 once it reaches the blood [14] [15]. A compound's ionization state, and therefore its lipophilicity and ability to cross membranes, changes dramatically across this pH gradient.

Table 1: Changing pH Environment of the Gastrointestinal Tract and Blood

| Physiological Compartment | Approximate pH Range |
|---|---|
| Stomach | 1.5 – 3.5 |
| Duodenum | 4.0 – 6.0 |
| Jejunum and Ileum | 6.0 – 7.4 |
| Blood | 7.4 |

Consider a compound with a basic amine (pKa ~10.9) and a pyridine (pKa ~4.8). Its logP might suggest high lipophilicity and good membrane permeability. However, its logD profile reveals that at physiologically relevant pH (1–8), the neutral form is virtually non-existent. The logD prediction correctly indicates high aqueous solubility and low lipophilicity in these regions, contradicting the prediction from logP alone [14]. Relying solely on logP could therefore lead to severe miscalculations of a drug's absorption and distribution.
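To make the two-site example concrete, the following sketch estimates the fraction of the fully neutral species as a function of pH. It treats the two quoted pKa values as macroscopic constants of a simple dibasic compound, which is a simplifying assumption; the function name is hypothetical.

```python
def neutral_fraction_dibasic(ph, pka_high=10.9, pka_low=4.8):
    """Fraction of a dibasic compound present as the uncharged species.

    Relative to the neutral form B (weight 1):
      singly protonated BH+    has weight 10^(pKa_high - pH)
      doubly protonated BH2++  has weight 10^(pKa_high + pKa_low - 2*pH)
    """
    singly = 10 ** (pka_high - ph)
    doubly = 10 ** (pka_high + pka_low - 2 * ph)
    return 1.0 / (1.0 + singly + doubly)


for ph in (1.0, 4.0, 7.4, 8.0, 12.0):
    print(ph, f"{neutral_fraction_dibasic(ph):.2e}")
```

Under these assumptions the neutral fraction stays below about 0.2% anywhere between pH 1 and pH 8, and only becomes dominant well above the higher pKa.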

This has direct consequences for a compound's ADMET profile (Absorption, Distribution, Metabolism, Excretion, and Toxicity). Optimal logD7.4 values are associated with better safety and pharmacokinetic profiles [13]. High lipophilicity (high logD) is correlated with increased risk of toxicity and poor solubility, while low lipophilicity can limit membrane permeability and absorption [13] [18]. Moderating logD is thus a key objective in lead optimization.

The logD Prediction Challenge and the Rise of the RTlogD Model

The Hurdles in Experimental logD Determination

Experimental determination of logD7.4 is often a bottleneck in drug discovery. The most common method is the shake-flask method, where a compound is partitioned between n-octanol and a buffer at pH 7.4 [13]. While considered a gold standard, this method is labor-intensive, requires substantial amounts of pure compound, and is difficult to automate for high-throughput screening [13]. Other techniques, such as chromatographic (HPLC) and potentiometric approaches, offer alternatives but come with their own limitations in accuracy, scope, or sample purity requirements [13].

In Silico Predictions and the Data Scarcity Problem

The challenges of experimental measurement have driven the development of in silico prediction tools. Traditional quantitative structure-property relationship (QSPR) models and newer artificial intelligence (AI) methods, particularly graph neural networks (GNNs), have been employed [13]. However, the central limitation for all computational models is the scarce availability of high-quality, experimental logD data for training. This data scarcity restricts the generalization capability and predictive accuracy of publicly available models [13]. While large pharmaceutical companies like AstraZeneca have built superior internal models using proprietary datasets of over 160,000 molecules, these are not accessible to the broader research community [13].

The RTlogD Model: A Novel Multi-Source Knowledge Framework

To address the fundamental challenge of data scarcity, a novel logD7.4 prediction model named RTlogD was developed. This model enhances prediction accuracy by transferring knowledge from multiple related domains through a sophisticated machine-learning framework [13].

The RTlogD model integrates three key sources of information:

- Chromatographic Retention Time (RT): Liquid chromatography retention time is strongly influenced by a compound's lipophilicity. The model is first pre-trained on a large dataset of nearly 80,000 chromatographic RT measurements, allowing it to learn general patterns of molecular lipophilicity from a much larger dataset than is available for logD itself [13].

- Microscopic pKa Values: The model incorporates predicted microscopic pKa values as atomic features. Unlike macroscopic pKa, which describes the molecule as a whole, microscopic pKa provides valuable insights into the ionization capacity of specific ionizable sites, offering a more granular view of the molecule's ionization state [13].

- logP as an Auxiliary Task: The model is trained using a multi-task learning framework where logP prediction is learned in parallel with logD. The domain knowledge embedded in logP acts as an inductive bias, guiding the model to learn more robust and accurate features for the primary logD task [13].
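One common way to express a multi-task objective of the kind described in the list above is a weighted sum of per-task losses, with missing labels masked out. The sketch below is an assumption about the general shape of such a loss: the source does not state the loss function, the weighting, or how molecules lacking a logP label are handled, and the names `masked_mse` and `logp_weight` are hypothetical.

```python
def masked_mse(predictions, targets):
    """Mean squared error over entries whose target is not None."""
    pairs = [(p, t) for p, t in zip(predictions, targets) if t is not None]
    if not pairs:
        return 0.0
    return sum((p - t) ** 2 for p, t in pairs) / len(pairs)


def multitask_loss(logd_pred, logd_true, logp_pred, logp_true, logp_weight=0.5):
    """Primary logD loss plus a down-weighted auxiliary logP loss."""
    primary = masked_mse(logd_pred, logd_true)
    auxiliary = masked_mse(logp_pred, logp_true)
    return primary + logp_weight * auxiliary


# Two molecules; the first has no experimental logP, so it is masked out
# of the auxiliary term.
loss = multitask_loss(
    logd_pred=[1.0, 2.0], logd_true=[1.0, 4.0],
    logp_pred=[0.0, 1.0], logp_true=[None, 3.0],
)
print(loss)  # 2.0 (logD term) + 0.5 * 4.0 (logP term) = 4.0
```

Masking lets molecules with only one of the two labels still contribute to training, which matters when the auxiliary and primary datasets overlap only partially.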

The following diagram illustrates the integrated architecture of the RTlogD framework.

Performance Benchmark: RTlogD vs. Commercial Tools

A rigorous evaluation of the RTlogD model was conducted against several commonly used prediction algorithms and commercial software tools. The model was tested on a time-split dataset containing molecules reported within the past two years, a method that better simulates real-world predictive performance on new chemical entities [13].

Table 2: Performance Comparison of logD7.4 Prediction Tools

| Prediction Tool / Model | Key Methodology | Reported Performance Notes |
|---|---|---|
| RTlogD | GNN with transfer learning from RT, microscopic pKa, and multi-task learning with logP. | Superior performance compared to commonly used algorithms. |
| ADMETlab 2.0 | Web platform for ADMET property prediction. | Commonly used benchmark. |
| ALOGPS | Associative Neural Network trained on public data. | Widely used; performance superseded by newer models. |
| PCFE | Graph-based model for property prediction. | Outperformed by RTlogD. |
| FP-ADMET | Fingerprint-based random forest models for ADMET properties. | Provides comparable performance for some properties. |
| Instant JChem | Commercial software for property prediction and data management. | Commercial tool; outperformed by RTlogD. |

The RTlogD model outperformed the other tools, including the commercial software Instant JChem [13]. This advantage is attributed to its knowledge-transfer approach, which mitigates the shortage of logD training data.

Experimental Protocols for logD Modeling and Evaluation

Data Curation and Preprocessing

The foundation of any robust predictive model is a high-quality dataset. For the RTlogD model and other benchmarks, experimental logD values are often curated from public databases like ChEMBL. A typical data preprocessing protocol involves several critical steps to ensure data integrity [13]:

- Source Data: Extract experimental logD values from a trusted source (e.g., ChEMBLdb29).

- pH Filtration: Retain only records measured at or near pH 7.4 (e.g., within the range of 7.2–7.6).

- Solvent Filtration: Remove records where solvents other than n-octanol were used.

- Method Filtration: Include only data from reliable methods like shake-flask, chromatographic techniques, or potentiometric titration.

- Manual Verification: Correct common errors, such as values not logarithmically transformed or transcription mismatches with original literature.
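The filtering steps above can be sketched as a small record filter. The field names (`ph`, `solvent`, `method`, `logd`), the method labels, and the plausibility window used to flag values for manual review are all assumptions made for illustration; they are not the schema or thresholds used in the cited work.

```python
ALLOWED_METHODS = {"shake-flask", "chromatographic", "potentiometric"}


def passes_filters(rec, ph_low=7.2, ph_high=7.6):
    """Return True if a record meets the pH, solvent, and method criteria."""
    ph = rec.get("ph")
    return (
        ph is not None
        and ph_low <= ph <= ph_high
        and rec.get("solvent") == "n-octanol"
        and rec.get("method") in ALLOWED_METHODS
    )


def needs_manual_check(rec, plausible_range=(-5.0, 10.0)):
    """Flag values outside an assumed plausible logD window.

    Such values may be untransformed D values or transcription errors.
    """
    low, high = plausible_range
    return not (low <= rec["logd"] <= high)


def curate(records):
    """Apply the automatic filters, then list survivors needing review."""
    kept = [r for r in records if passes_filters(r)]
    flagged = [r for r in kept if needs_manual_check(r)]
    return kept, flagged


# Hypothetical records illustrating each rejection path.
records = [
    {"logd": 1.8, "ph": 7.4, "solvent": "n-octanol", "method": "shake-flask"},
    {"logd": 2.3, "ph": 6.5, "solvent": "n-octanol", "method": "shake-flask"},
    {"logd": 0.4, "ph": 7.4, "solvent": "cyclohexane", "method": "shake-flask"},
    {"logd": 1.1, "ph": 7.4, "solvent": "n-octanol", "method": "calculated"},
    {"logd": 1250.0, "ph": 7.4, "solvent": "n-octanol", "method": "chromatographic"},
]
kept, flagged = curate(records)
print(len(kept), len(flagged))
```

The automatic filters only narrow the set; the final step in the protocol still requires a person to compare flagged values against the original literature.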

The Matched Molecular Pair (MMP) Analysis for Functional Group Contributions

Beyond global logD prediction, understanding the lipophilic contribution of individual functional groups is vital for medicinal chemists. This is often achieved through Matched Molecular Pair (MMP) analysis [18].

Table 3: Example Lipophilicity Contributions (ΔlogD₇.₄) of Common Functional Groups from MMP Analysis

| Functional Group | Radius = 0 (Median ΔlogD₇.₄) | Radius = 3 (Median ΔlogD₇.₄) | Notes |
|---|---|---|---|
| -F | +0.22 (n=2845) | +0.08 (n=412) | |
| -Cl | +0.76 (n=3493) | +0.89 (n=583) | |
| -CF₃ | +1.08 (n=2367) | +1.17 (n=388) | |
| -OH | -0.40 (n=2559) | -0.57 (n=424) | |
| -COOH | -1.36 (n=1294) | -1.29 (n=179) | Ionizable |
| -NH₂ | -1.34 (n=1683) | -1.41 (n=258) | Ionizable |

Protocol for MMP Analysis:

- Generate MMPs: Use an in-house algorithm to fragment a large database of compounds with known logD7.4 values, creating pairs of molecules that differ only by a single, defined functional group.

- Define Radius: The "radius" defines the extent of the shared molecular structure around the point of substitution. A radius of 0 includes all possible substitutions, while a radius of 3 restricts the analysis to a specific, shared substructure (e.g., a 1,4-disubstituted phenyl ring) [18].

- Calculate ΔlogD: For each MMP, calculate the difference in logD7.4 (ΔlogD7.4).

- Statistical Analysis: Calculate the median ΔlogD7.4 value for each functional group across all its occurrences. The median is preferred to limit the effect of experimental outliers [18].
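The last two steps of the protocol above (computing ΔlogD per pair and taking the median per functional group) can be sketched as follows. Pair generation itself is out of scope here; the input format and the transformation labels are hypothetical, and the logD values are invented.

```python
from collections import defaultdict
from statistics import median


def median_delta_logd(pairs):
    """Summarize matched pairs as {transformation: (median delta, count)}.

    `pairs` is an iterable of (transformation, logd_parent, logd_substituted).
    """
    deltas = defaultdict(list)
    for transformation, logd_parent, logd_substituted in pairs:
        deltas[transformation].append(logd_substituted - logd_parent)
    return {t: (median(values), len(values)) for t, values in deltas.items()}


# Invented pairs; the third H>>Cl pair mimics an experimental outlier.
pairs = [
    ("H>>Cl", 1.0, 1.7),
    ("H>>Cl", 2.0, 2.8),
    ("H>>Cl", 0.5, 3.5),
    ("H>>OH", 2.0, 1.5),
    ("H>>OH", 3.0, 2.6),
]
summary = median_delta_logd(pairs)
for transformation, (delta, n) in summary.items():
    print(f"{transformation}: {delta:+.2f} (n={n})")
```

In this toy set the outlier pulls the mean ΔlogD for the chlorine substitution to 1.5, while the median stays at about 0.8, which is the robustness argument made for using the median.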

Table 4: Essential Research Reagents and Tools for logD Studies

| Item / Resource | Function / Description |
|---|---|
| n-Octanol & Buffer (pH 7.4) | The standard solvent system for shake-flask logD7.4 determination. |
| High-Performance Liquid Chromatography (HPLC) | Instrumentation for chromatographic logD estimation and retention time measurement. |
| ACD/Percepta | Commercial software suite providing predictors for logP, logD, pKa, and other physicochemical properties. |
| ChEMBL Database | A large, open-source bioactivity database containing curated experimental logD data for model training and validation. |
| Matched Molecular Pair (MMP) Algorithm | Computational tool to identify and analyze closely related compound pairs, critical for understanding structure-property relationships. |

The distinction between logP and logD is not merely academic; it is a fundamental consideration for the successful design and development of modern therapeutics, especially for ionizable molecules which constitute the vast majority of drugs. While logP describes the lipophilicity of an idealized, neutral compound, logD provides a pH-dependent, physiologically relevant measure that directly impacts a compound's solubility, permeability, and overall ADMET profile.

The RTlogD model represents a significant advancement in the accurate in silico prediction of logD7.4. By innovatively leveraging knowledge from chromatographic retention time, microscopic pKa, and logP prediction within a multi-task learning framework, it overcomes the critical challenge of limited experimental data. Benchmarking studies confirm that this approach delivers superior performance compared to commonly used algorithms and commercial tools, offering the research community a powerful and promising method to guide the optimization of drug candidates. As drug discovery continues to venture into more complex chemical space beyond the Rule of Five, the precise understanding and prediction of logD will only grow in importance.

Lipophilicity, quantified as the distribution coefficient between n-octanol and buffer at physiological pH 7.4 (logD7.4), is a fundamental physical property in drug discovery. It significantly influences a compound's solubility, permeability, metabolism, distribution, protein binding, and toxicity [1]. Accurate logD prediction is therefore crucial for optimizing the pharmacokinetic and safety profiles of drug candidates, thereby increasing their likelihood of clinical success [1] [4].

Pharmaceutical companies employ a range of in silico strategies to predict logD, leveraging their extensive proprietary data and advanced computational models. This guide objectively compares the performance of various industrial and academic approaches, with a specific focus on the novel RTlogD model and its evaluation against established commercial tools.

Comparative Analysis of logD Prediction Approaches

The following table summarizes the core methodologies and key characteristics of different logD prediction approaches used in the industry and academia.

Table 1: Comparison of logD Prediction Methodologies

| Methodology / Tool | Core Approach | Key Features | Data Foundation |
|---|---|---|---|
| RTlogD Model [1] | Graph Neural Network (GNN) with Transfer & Multi-Task Learning | Pre-training on chromatographic retention time (RT); integration of microscopic pKa and logP as an auxiliary task. | Public data (ChEMBL); ~80,000 RT molecules. |
| Industrial Proprietary Models (e.g., AstraZeneca's AZlogD74) [1] | Likely QSAR/Machine Learning | Continuously updated models trained on vast in-house experimental databases. | Proprietary data (e.g., >160,000 molecules for AZlogD74). |
| QSAR/Machine Learning Correction Models [6] | Machine Learning (e.g., QSAR) | Uses predicted ClogP and pKa from commercial software as descriptors to build a correction model based on experimental logD data. | Public and proprietary data sets. |
| Molecular Dynamics (MD) Simulations [19] | Physics-Based Simulation | Calculates logP from solvation free energy; derives logD using predicted pKa and ionization states. | Molecular mechanics force fields (e.g., OPLS-AA, CHARMM). |
| Commercial Software (e.g., Instant JChem, ACD/Percepta) [1] [6] | Typically fragment- or property-based | Often relies on calculated logP and pKa to estimate the distribution of species at a given pH. | Varies by software; often large, curated databases. |

Experimental Protocols for logD Prediction

The RTlogD Model Methodology

The RTlogD framework employs a multi-faceted knowledge transfer strategy to overcome the challenge of limited logD experimental data [1].

- Pre-training on Chromatographic Retention Time (RT): A graph neural network is first pre-trained on a large dataset of nearly 80,000 molecules with chromatographic retention time data. Since RT is influenced by lipophilicity, this step allows the model to learn relevant molecular representations from a larger data source [1].

- Integration of Microscopic pKa Values: The model incorporates predicted acidic and basic microscopic pKa values as atomic features. This provides granular information on the ionization states of specific atoms within the molecule, offering valuable insights into ionization capacity that directly impacts logD [1].

- Multitask Learning with logP: The model is fine-tuned on the logD7.4 task using a smaller set of experimental data. During this phase, logP prediction is included as a parallel auxiliary task. This shared learning process provides an inductive bias that improves the model's efficiency and accuracy for the primary logD task [1].

Diagram 1: RTlogD model workflow.

Industrial Machine Learning Correction Models

Companies like Roche have developed sophisticated machine learning workflows that integrate commercial software predictions with experimental data [6]. The general protocol involves:

- Descriptor Generation: Using commercial software (e.g., for calculating ClogP and pKa) to generate initial property predictions, which are used as model descriptors [6].

- Model Training: Training a machine learning model (e.g., a QSAR model) with available experimental logD data. The model learns to correct the systematic errors present in the initial software predictions [6].

- Uncertainty Quantification: Implementing robust uncertainty quantification methods to discriminate among predictions. This allows scientists to identify reliable predictions and exclude compounds with low-confidence predictions from assay submission, leading to significant cost and time savings [20].

Molecular Dynamics-Based Prediction

For specific applications like cyclic peptides, molecular dynamics simulations offer a physics-based alternative [19].

- Solvation Free Energy Calculation: The partition coefficient (LogP) is obtained from the solvation free energy of the molecule in n-octanol and water, calculated using molecular dynamics simulations under a specific forcefield (e.g., OPLS-AA or CHARMM) [19].

- pKa and Ionization State Consideration: The distribution coefficient (LogD) at a desired pH is then calculated from the obtained LogP by considering the molecule's predicted pKa values and the ionization states of each residue at that pH [19].
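The first step above rests on the standard thermodynamic relation between the water-to-octanol transfer free energy and logP. The sketch below assumes solvation free energies in kJ/mol, a temperature of 298.15 K, and the sign convention that a more negative solvation free energy in octanol gives a positive logP; the function name and example inputs are hypothetical.

```python
import math

R_KJ_PER_MOL_K = 8.314462618e-3  # gas constant in kJ/(mol*K)


def logp_from_solvation(dg_water, dg_octanol, temperature=298.15):
    """logP = (dG_solv,water - dG_solv,octanol) / (R * T * ln 10).

    Both free energies are in kJ/mol. A solute that is solvated more
    favorably in octanol than in water yields a positive logP.
    """
    return (dg_water - dg_octanol) / (R_KJ_PER_MOL_K * temperature * math.log(10))


# Illustrative values only: octanol is favored by 11.4 kJ/mol.
print(round(logp_from_solvation(dg_water=-20.0, dg_octanol=-31.4), 2))
```

At room temperature one log unit corresponds to roughly 5.7 kJ/mol, so an error of about 1.4 kcal/mol in the free-energy difference already shifts the predicted logP by a full unit.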

Performance Benchmarking

A critical performance evaluation of the RTlogD model was conducted against several commonly used algorithms and prediction tools on a time-split test set containing recently reported molecules [1].

Table 2: Performance Comparison of RTlogD vs. Other Tools [1]

| Prediction Tool / Model | RMSE | MAE | R² |
|---|---|---|---|
| RTlogD | 0.455 | 0.326 | 0.825 |
| ADMETlab2.0 | 0.596 | 0.438 | 0.712 |
| ALOGPS | 0.621 | 0.461 | 0.680 |
| FP-ADMET | 0.578 | 0.427 | 0.692 |
| PCFE | 0.534 | 0.397 | 0.735 |
| Instant JChem | 0.615 | 0.455 | 0.658 |

Abbreviations: RMSE (Root Mean Square Error), MAE (Mean Absolute Error), R² (Coefficient of Determination).

The data demonstrates that the RTlogD model achieved superior performance, with the lowest error metrics (RMSE and MAE) and the highest coefficient of determination (R²), indicating a better fit and more accurate predictions compared to the other tools [1].
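For readers reproducing such a comparison on their own test set, the three metrics can be computed as in the sketch below. It uses the common definition R² = 1 − SS_res / SS_tot; some studies instead report the squared Pearson correlation, so which definition underlies the table is an assumption. The example values are invented.

```python
import math


def regression_metrics(y_true, y_pred):
    """Return (RMSE, MAE, R2) for paired experimental and predicted values."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    ss_res = sum(e * e for e in errors)
    rmse = math.sqrt(ss_res / n)
    mae = sum(abs(e) for e in errors) / n
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    r2 = 1.0 - ss_res / ss_tot
    return rmse, mae, r2


# Invented experimental and predicted logD values.
rmse, mae, r2 = regression_metrics(
    y_true=[1.0, 2.0, 3.0, 4.0],
    y_pred=[1.5, 2.0, 2.5, 4.0],
)
print(round(rmse, 3), round(mae, 3), round(r2, 3))
```

RMSE penalizes large individual errors more heavily than MAE, so reporting both, as the table does, gives a sense of whether a tool's error is spread evenly or driven by a few poorly predicted compounds.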

For MD-based approaches, a study on cyclic peptides reported that predictions using the OPLS-AA forcefield agreed with experimental LogD values with an average deviation of 1.39 ± 0.86 log units across multiple pH values, which was noted to be better than predictions using the CHARMM forcefield or a commercial software package [19].

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Item / Resource | Function / Description |
|---|---|
| Chromatographic Retention Time Database [1] | A large dataset of small molecule retention times used for pre-training models to learn lipophilicity-related features. |
| Microscopic pKa Predictor [1] | Software or model that predicts pKa values for specific ionizable atoms, providing detailed ionization information. |
| Commercial logP/pKa Software [6] | Tools that provide baseline predictions for logP and pKa, which can be used as descriptors in correction models. |
| Molecular Dynamics Software (e.g., GROMACS) [19] [21] | Software packages used to run simulations for calculating solvation free energies and other dynamics-derived properties. |
| Curated Experimental logD Database (e.g., from ChEMBL) [1] [6] | High-quality, experimentally determined logD values, essential for training and validating data-driven models. |
| Graph Neural Network (GNN) Framework [1] | A deep learning architecture capable of directly learning from molecular graph structures. |

The landscape of logD prediction in the pharmaceutical industry is diverse, encompassing approaches ranging from proprietary models built on massive in-house data to innovative academic models like RTlogD that creatively leverage transfer learning. Benchmarking studies demonstrate that the RTlogD model, which integrates knowledge from chromatographic retention time, microscopic pKa, and logP, exhibits superior predictive performance compared to several commonly used tools. Meanwhile, industry practices highlight a trend towards using machine learning to refine existing software predictions and a growing emphasis on uncertainty quantification to guide efficient experimental testing. The choice of methodology ultimately depends on the specific project needs, available data, and desired balance between computational cost and predictive accuracy.

Inside RTlogD: Architectural Innovation Through Multi-Source Knowledge Transfer

In drug discovery, the lipophilicity of a compound, quantified by the distribution coefficient at physiological pH (logD7.4), is a fundamental property that significantly influences solubility, permeability, metabolism, and toxicity [1]. Accurate logD7.4 prediction is therefore crucial for optimizing the pharmacokinetic and safety profiles of drug candidates. However, the development of robust predictive models has been hampered by the limited availability of experimental logD data, as traditional measurement methods are labor-intensive and require large amounts of synthesized compounds [1].

To address this data scarcity, a novel architecture has emerged that leverages chromatographic retention time (RT) as a rich source of information for model pre-training. Chromatographic behavior is intrinsically influenced by a compound's lipophilicity, creating a strong correlation between retention time and logD7.4 [1]. This relationship provides a foundation for transfer learning, where knowledge gained from predicting retention time on large, available datasets can be transferred to improve logD prediction on smaller, more specialized datasets. The RTlogD model exemplifies this approach, combining pre-training on chromatographic retention time with other physicochemical features to enhance logD7.4 prediction accuracy and generalization [1] [10]. This guide provides a detailed comparison of this core architecture against other commercial and academic prediction tools.

Core Architectural Framework of RTlogD

Foundational Principles and Multi-Source Knowledge Transfer

The RTlogD model is built on a multi-faceted knowledge transfer framework designed to overcome the limitation of small logD datasets. Its architecture integrates three key sources of information [1]:

- Chromatographic Retention Time (RT) Pre-training: The model is first pre-trained on a large dataset of nearly 80,000 chromatographic retention time measurements. This initial step allows the model to learn generalizable features related to molecular interaction and separation, which are influenced by the same hydrophobic forces that govern lipophilicity. This pre-trained model is subsequently fine-tuned on the specific logD7.4 task, significantly enhancing its generalization capability [1].

- Integration of Microscopic pKa Values: Unlike macroscopic pKa, which describes the molecule as a whole, microscopic pKa values are incorporated as atomic features. This provides the model with granular, site-specific information about the ionization states of ionizable atoms, offering valuable insights into the ionization capacity that directly affects a compound's distribution coefficient [1].

- logP as a Multitask Learning Objective: The partition coefficient for the neutral species (logP) is integrated as an auxiliary task within a multitask learning framework. This forces the model to learn the underlying domain information shared between logP and logD, acting as a beneficial inductive bias that improves learning efficiency and final prediction accuracy for logD7.4 [1].

Experimental and Data Methodology

The performance of the core architecture was validated through a rigorous experimental protocol.

Data Curation (DB29-data): The primary modeling dataset was constructed from ChEMBLdb29, containing experimental logD values measured at pH 7.4 (± 0.2) via shake-flask, chromatographic, or potentiometric methods. Stringent data pretreatment was applied, including the removal of records with incorrect pH or solvents, and manual correction of logarithmic transformation and transcription errors by cross-referencing primary literature [1].

Model Training and Ablation Studies: The RTlogD model was built using a Graph Neural Network (GNN). Its performance was benchmarked against commonly used tools, and a series of ablation studies were conducted to isolate the contribution of each architectural component (RT pre-training, pKa features, logP multitask learning) to the overall predictive power [1].

Evaluation Protocol: Model performance was assessed on a time-split dataset containing molecules reported within the past two years, simulating a real-world scenario for predicting novel compounds. Standard metrics for regression tasks, such as Root Mean Square Error (RMSE) and Coefficient of Determination (R²), were likely used, consistent with practices in the field [1].

Table 1: Key Research Reagent Solutions in the RTlogD Framework

| Solution / Resource | Function in the Research Context |
|---|---|
| ChEMBLdb29 Database | Provided the core dataset of experimental logD7.4 values for model training and validation [1]. |
| Chromatographic RT Dataset | A large-scale dataset (~80,000 molecules) used for pre-training, enabling knowledge transfer for lipophilicity [1]. |
| Graph Neural Network (GNN) | The core machine learning algorithm for graph representation learning of entire molecules and property prediction [1]. |
| Microscopic pKa Predictor | A computational tool (implied) to generate atomic-level pKa features, providing site-specific ionization information [1]. |

The following diagram illustrates the complete RTlogD workflow, from data sources to final prediction.

Performance Comparison: RTlogD vs. Alternative Tools

The RTlogD model was objectively compared against a range of widely used predictive tools. The results demonstrate the clear advantage of its multi-source architecture.

Table 2: Quantitative Performance Comparison of logD7.4 Prediction Tools

| Tool / Model | Reported Performance | Key Methodology | Notable Strengths & Limitations |
|---|---|---|---|
| RTlogD (Proposed) | Superior performance vs. common algorithms and tools [1] | GNN with RT pre-training, microscopic pKa, and logP multitask learning [1] | Strengths: High accuracy, addresses data scarcity via transfer learning. Limitations: Relies on quality of source task data. |
| ADMETlab2.0 | Compared in study [1] | Comprehensive platform for ADMET property prediction, likely using various QSAR/QSPR methods [1] | Strengths: Wide range of predicted properties. Limitations: Performance on logD7.4 surpassed by specialized RTlogD model. |
| ALOGPS | Compared in study [1] | Online prediction system for logP and logS, based on associative neural networks [1] | Strengths: Established, widely used tool. Limitations: May not incorporate modern multi-task or transfer learning. |
| Commercial Software (e.g., Instant JChem) | Compared in study [1] | Commercial package with property prediction capabilities [1] | Strengths: Integrated chemical data management. Limitations: Predictive performance may lag behind specialized AI models. |

Complementary Advances in Chromatographic Data Prediction

The principle of using chromatographic behavior to inform molecular properties is actively evolving. Recent studies have developed sophisticated models that predict retention parameters directly, which could further enhance frameworks like RTlogD.

Intelligent Column Chromatography Prediction: A 2025 study introduced a Quantum Geometry-informed Graph Neural Network (QGeoGNN) that predicts chromatographic retention volume by encoding molecular 3D conformations, physicochemical descriptors, and operational parameters. A key feature is its use of transfer learning to adapt the model to diverse column specifications, overcoming the "one-size-fits-all" limitation. It also introduces a quantitative Separation Probability (Sp) metric to guide experimental optimization [22].

RT-Pred Web Server: This tool allows for accurate, customized liquid chromatography retention time prediction. It enables users to train custom prediction models using their own chromatographic method data, achieving high correlation coefficients (R² of 0.95 on training and 0.91 on validation) [23]. The ability to create method-specific models underscores the importance of contextual data for achieving high prediction accuracy.

Table 3: Comparison of Advanced Chromatographic Prediction Features

| Feature / Model | RTlogD | QGeoGNN for CC [22] | RT-Pred Server [23] |
|---|---|---|---|
| Primary Prediction Target | logD7.4 | Retention Volume & Separation Probability | Retention Time |
| Use of Transfer Learning | Pre-training on RT for logD | Adaptation to column specifications | Custom model training per CM |
| Key Innovation | Multi-source knowledge transfer | 3D molecular features & operational parameters | User-friendly, customizable models |
| Application in Workflow | Early drug design for lipophilicity | Purification process optimization | Compound identification in LC-MS |

The Broader Ecosystem: Alignment and Data Handling

For data-driven approaches in chromatography to be successful, consistent and accurate data processing is a prerequisite. Advances in retention time alignment ensure that the data used for training and prediction is reliable, particularly in large-cohort studies.

Deep Learning for Alignment: DeepRTAlign is a deep learning-based tool that addresses both monotonic and non-monotonic RT shifts in large cohort LC-MS studies. It combines a coarse alignment (pseudo warping function) with a deep neural network for direct matching, outperforming existing popular tools and improving identification sensitivity without compromising quantitative accuracy [24].

Open-Source Frameworks: Tools like AlphaPept represent a move towards modern, open-source frameworks for MS data processing. Built in Python, it leverages high-performance computing and community-driven development to achieve rapid processing of large datasets, facilitating the ecosystem in which predictive models operate [25].

Data Workflow Challenges: A key industry article highlights that disjointed data files, scattered metadata, and manual reporting are major barriers to applying AI/ML in chromatography. Centralized, vendor-agnostic data systems are identified as a critical need to overcome these hurdles and fully leverage historical data for predictive modeling [26].

Pre-training on chromatographic retention time data, the core of the RTlogD architecture, measurably improves the prediction of logD7.4. The experimental data confirm that this approach, which systematically transfers knowledge from RT, microscopic pKa, and logP, delivers lower error than the commonly used alternatives it was compared against.

The future of this architectural paradigm is promising. It can be extended by integrating more advanced chromatographic predictors, such as the QGeoGNN for 3D-aware feature extraction or customizable models from servers like RT-Pred. Furthermore, as the underlying data ecosystem matures through improved alignment algorithms and centralized data management, the quality and volume of training data will increase, leading to even more robust and generalizable models. For researchers and drug development professionals, adopting and building upon this multi-source, transfer learning architecture offers a powerful strategy to optimize critical physicochemical properties early in the drug discovery pipeline.

Integrating Microscopic pKa as Atomic-Level Features

Performance Comparison of the RTlogD Model vs. Commercial Tools

This guide provides an objective performance evaluation of the RTlogD model, a novel in silico framework for predicting lipophilicity (logD~7.4~), against established commercial and open-source tools. Accurate logD~7.4~ prediction is crucial in drug discovery as it significantly influences a compound's solubility, permeability, metabolism, and toxicity. [1]

The RTlogD model's innovative integration of microscopic pKa values as atomic-level features is a key differentiator. Unlike macroscopic pKa, which describes the dissociation constant for the entire molecule, microscopic pKa provides the acid dissociation constant for a specific proton at a specific site, holding the rest of the bonding pattern fixed. [27] This offers more granular insights into ionizable sites and ionization capacity, which is critical for predicting the distribution of different ionic species at physiological pH. [1]

Quantitative Performance Comparison

The following table summarizes the predictive performance, measured by Root Mean Square Error (RMSE), of the RTlogD model compared to other commonly used tools on a time-split test set. A lower RMSE indicates superior accuracy.

Table 1: Performance comparison of logD~7.4~ prediction tools on a time-split test set.

| Prediction Tool | Type | Reported RMSE |
|---|---|---|
| RTlogD | Novel Research Model | 0.360 |
| Instant JChem | Commercial Software | 0.585 |
| ADMETlab 2.0 | Open-source Platform | 0.629 |
| PCFE | Computational Model | 0.634 |
| ALOGPS | Online Tool | 0.716 |
| FP-ADMET | Open-source Platform | 0.730 |

As the data shows, the RTlogD model demonstrated superior performance, achieving a significantly lower RMSE than the compared tools. [1] Ablation studies within the original research confirmed that the inclusion of microscopic pKa, logP as an auxiliary task, and pre-training on chromatographic retention time data all contributed to this enhanced performance. [1]
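To put the gap in the table into relative terms, the short calculation below expresses each tool's RMSE against RTlogD's as a percentage reduction. The RMSE values are copied from the table above; the percentage framing is added here for illustration and is not a statistic reported by the study, nor a test of statistical significance.

```python
# RMSE values as listed in the comparison table above.
reported_rmse = {
    "RTlogD": 0.360,
    "Instant JChem": 0.585,
    "ADMETlab 2.0": 0.629,
    "PCFE": 0.634,
    "ALOGPS": 0.716,
    "FP-ADMET": 0.730,
}

baseline = reported_rmse["RTlogD"]

# Relative reduction: how much lower RTlogD's RMSE is than each tool's.
reductions = {
    tool: 100.0 * (value - baseline) / value
    for tool, value in reported_rmse.items()
    if tool != "RTlogD"
}

for tool, pct in reductions.items():
    print(f"{tool}: RTlogD RMSE is {pct:.1f}% lower")
```

On these figures the reduction ranges from roughly 38% (against Instant JChem) to roughly 51% (against FP-ADMET).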

Experimental Protocols and Methodologies

RTlogD Model Workflow

The development of the RTlogD model involved a multi-stage, knowledge-transfer approach. The diagram below illustrates the integrated workflow.

This workflow integrates three key strategies:

- Pre-training on Chromatographic Retention Time (RT): A model was first pre-trained on a large dataset of nearly 80,000 chromatographic retention times. [1] Since RT is influenced by lipophilicity, this step allows the model to learn generalizable features from a much larger dataset than is available for logD, enhancing its generalization capability. [1]

- Multi-task Learning with logP: The model was fine-tuned to simultaneously predict logD~7.4~ and logP (the partition coefficient for the neutral species). [1] Using logP as an auxiliary task provides an inductive bias that improves learning efficiency and accuracy for the primary logD task. [1]

- Integration of Microscopic pKa as Atomic Features: Predicted acidic and basic microscopic pKa values were incorporated directly as features at the atomic level. [1] This provides the model with valuable, site-specific information about the ionization capacity of individual atoms, which is crucial for distinguishing the lipophilicity of different ionization forms of a molecule. [1]
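The third strategy, attaching microscopic pKa values to individual atoms, can be pictured with the sketch below. The source does not specify the encoding, so everything here is an assumption made for illustration: the four appended slots, the `NO_SITE` placeholder for non-ionizable atoms, the toy two-element atom features, and the pKa values themselves.

```python
NO_SITE = 0.0  # assumed placeholder value for atoms without an ionizable site


def augment_atom_features(atom_features, acidic_pka, basic_pka):
    """Append [has_acidic, acidic_pKa, has_basic, basic_pKa] to each atom.

    acidic_pka and basic_pka map an atom index to a predicted microscopic
    pKa; atoms absent from a map get a 0.0 flag and the NO_SITE value.
    """
    augmented = []
    for idx, feats in enumerate(atom_features):
        acid = acidic_pka.get(idx)
        base = basic_pka.get(idx)
        augmented.append(
            list(feats)
            + [
                1.0 if acid is not None else 0.0,
                acid if acid is not None else NO_SITE,
                1.0 if base is not None else 0.0,
                base if base is not None else NO_SITE,
            ]
        )
    return augmented


# Toy molecule with three atoms and made-up base features.
atom_features = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0]]
acidic_pka = {2: 4.5}  # hypothetical: atom 2 carries an acidic site
basic_pka = {0: 9.8}   # hypothetical: atom 0 carries a basic site

augmented = augment_atom_features(atom_features, acidic_pka, basic_pka)
for row in augmented:
    print(row)
```

The explicit presence flags let a downstream model distinguish "no ionizable site" from a genuine pKa that happens to equal the placeholder value.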

Data Curation and Model Training

The experimental logD~7.4~ data (DB29-data) for model training and evaluation was meticulously curated from ChEMBL database version 29. [1] Key steps included:

- Source and Method Filtering: Only experimental values obtained via the shake-flask method, chromatographic techniques, or potentiometric titration were included. [1]

- pH Criterion: Records were restricted to a physiological pH range of 7.2 to 7.6. [1]

- Solvent Criterion: Only data using octanol as the organic phase was retained. [1]

- Manual Verification: Data was manually checked for errors, such as values not being logarithmically transformed or transcription errors against primary literature. [1]
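The automated parts of the curation steps above can be sketched as a record filter. The field names, the method labels, and the `REVIEW_THRESHOLD` heuristic for spotting values that may not have been log-transformed are hypothetical; in the study, that last check was done manually against the primary literature.

```python
ALLOWED_METHODS = {"shake-flask", "chromatographic", "potentiometric"}
PH_MIN, PH_MAX = 7.2, 7.6
REVIEW_THRESHOLD = 10.0  # hypothetical: |logD| above this looks untransformed


def passes_filters(rec):
    """Apply the method, pH, and solvent criteria to one record."""
    return (
        rec["method"] in ALLOWED_METHODS
        and PH_MIN <= rec["ph"] <= PH_MAX
        and rec["solvent"] == "octanol"
    )


def needs_manual_review(rec):
    """Flag implausible magnitudes for a human to check against the paper."""
    return abs(rec["logd"]) > REVIEW_THRESHOLD


# Made-up records for illustration only.
raw = [
    {"id": "A", "logd": 1.2, "ph": 7.4, "solvent": "octanol", "method": "shake-flask"},
    {"id": "B", "logd": 0.5, "ph": 6.5, "solvent": "octanol", "method": "shake-flask"},
    {"id": "C", "logd": 2.0, "ph": 7.2, "solvent": "cyclohexane", "method": "shake-flask"},
    {"id": "D", "logd": 150.0, "ph": 7.6, "solvent": "octanol", "method": "chromatographic"},
]

kept = [r for r in raw if passes_filters(r)]
flagged = [r["id"] for r in kept if needs_manual_review(r)]
print([r["id"] for r in kept], flagged)
```

Comparing against explicit bounds (7.2 and 7.6) rather than computing `abs(ph - 7.4) <= 0.2` avoids floating-point rounding excluding records that sit exactly on the boundary.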

The model's architecture is based on a Graph Neural Network (GNN), which operates directly on the molecular graph structure, making it well-suited for incorporating atom-level features like microscopic pKa. [1]
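To illustrate what "operating directly on the molecular graph" means, the sketch below runs one round of sum-aggregation message passing followed by a sum readout. It is a generic teaching example, not the RTlogD architecture: the source does not state which GNN variant, update rule, or readout the authors used, and real models apply learned weights at each step.

```python
def message_passing_step(node_feats, edges):
    """One round: each atom's vector becomes itself plus its neighbors' sum."""
    neighbors = {i: [] for i in range(len(node_feats))}
    for a, b in edges:  # bonds are undirected
        neighbors[a].append(b)
        neighbors[b].append(a)

    updated = []
    for i, feats in enumerate(node_feats):
        aggregated = list(feats)
        for j in neighbors[i]:
            aggregated = [x + y for x, y in zip(aggregated, node_feats[j])]
        updated.append(aggregated)
    return updated


def readout(node_feats):
    """Sum-pool atom vectors into a single molecule-level vector."""
    return [sum(column) for column in zip(*node_feats)]


# Toy three-atom chain 0-1-2 with made-up two-element atom features.
node_feats = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
edges = [(0, 1), (1, 2)]

updated = message_passing_step(node_feats, edges)
molecule_vector = readout(updated)
print(updated, molecule_vector)
```

Because each atom carries its own feature vector through the update, per-atom inputs such as microscopic pKa fit naturally into this kind of model.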

The following table details key computational tools and data resources relevant to this field of research.

Table 2: Key research reagents and computational solutions for logD and pKa prediction.

| Tool / Resource | Type | Primary Function in Research |
|---|---|---|
| Chromatographic Retention Time Data | Experimental Dataset | Used for pre-training models to learn general lipophilicity-related features, expanding the chemical space covered. [1] |
| Microscopic pKa Predictor | Computational Model | Provides atom-level ionization constants, which serve as critical features for predicting the distribution of ionic species. [1] |
| Graph Neural Network (GNN) | Modeling Architecture | Enables direct learning from molecular structures and the integration of atomic-level features like microscopic pKa. [1] |
| ChEMBL Database | Public Bioactivity Database | A primary source for curated experimental physicochemical and ADMET data for model training and validation. [1] |
| ACD/Percepta | Commercial Software | Used in related studies to generate predicted pKa and logP values as descriptors for machine learning models. [6] |
| Shake-Flask Assay | Experimental Method | The "gold-standard" experimental technique for measuring logD values used to build reliable training datasets. [1] |

Lipophilicity is a fundamental physicochemical property that significantly influences the absorption, distribution, metabolism, excretion, and toxicity (ADMET) of drug candidates [13] [14]. Traditionally, lipophilicity is quantified via two key metrics: the partition coefficient (logP), which describes the distribution of a compound's neutral form between octanol and water, and the distribution coefficient (logD), which accounts for all ionized and unionized species at a specific pH, most commonly physiological pH 7.4 (logD7.4) [14]. As logD provides a more accurate representation of a compound's behavior under physiological conditions, its reliable prediction is crucial for successful drug discovery and design [13].
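The relationship between logP, pKa, and logD can be made concrete with the textbook expression for a compound with a single ionizable group, under the common simplifying assumption that only the neutral species partitions into octanol. The sketch below implements that expression; it is a classical approximation included for intuition, not the RTlogD model, and the example values are hypothetical.

```python
import math


def logd_monoprotic(logp, pka, ph=7.4, kind="acid"):
    """Estimate logD for one ionizable group, neutral species partitioning only.

    acid: logD = logP - log10(1 + 10**(pH - pKa))
    base: logD = logP - log10(1 + 10**(pKa - pH))
    """
    if kind == "acid":
        exponent = ph - pka
    elif kind == "base":
        exponent = pka - ph
    else:
        raise ValueError("kind must be 'acid' or 'base'")
    return logp - math.log10(1.0 + 10.0 ** exponent)


# Hypothetical acid with logP 3.0 and pKa 4.4: about 99.9% ionized at pH 7.4.
acid_logd = logd_monoprotic(3.0, 4.4, kind="acid")

# Hypothetical weak base with pKa 5.4: mostly neutral at pH 7.4.
base_logd = logd_monoprotic(3.0, 5.4, kind="base")

print(f"acid logD7.4 = {acid_logd:.3f}, base logD7.4 = {base_logd:.3f}")
```

The acid's logD falls about three log units below its logP because it is almost fully ionized, while the weak base stays close to its logP. Real molecules often have several interacting ionizable sites and partially partitioning ions, which is part of why data-driven models are used instead.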

However, predicting logD7.4 accurately presents significant challenges, primarily due to the limited availability of high-quality experimental data for model training [13]. To address this data scarcity, innovative machine learning approaches that leverage related chemical properties have emerged. Among these, multitask learning (MTL) frameworks, which jointly learn logD7.4 and the related logP property, have demonstrated considerable promise by enhancing model generalization and prediction accuracy [13]. This guide objectively evaluates the performance of one such model, RTlogD, which incorporates logP as an auxiliary task, against other commonly used commercial and academic logD prediction tools.

The RTlogD Framework: Methodology and Workflow

The RTlogD model represents a sophisticated computational framework designed to overcome data limitations in logD7.4 prediction by transferring knowledge from multiple related tasks and data sources [13]. Its architecture integrates several innovative components, as illustrated in the experimental workflow below.

Core Architectural Components

Chromatographic Retention Time (RT) Pre-training: The model is first pre-trained on a large dataset of nearly 80,000 chromatographic retention time measurements [13]. Since retention time is influenced by lipophilicity, this pre-training on a substantially larger dataset allows the model to learn generalized molecular representations that are beneficial for the subsequent logD prediction task.

Multitask Learning with logP: A central feature of the RTlogD framework is its multitask learning architecture that simultaneously learns to predict both logD7.4 and logP [13]. By treating logP as an auxiliary task, the model leverages the domain information and structural relationships between these two related properties, which serves as an inductive bias that improves learning efficiency and final prediction accuracy for logD7.4.
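One common way to realize an auxiliary-task setup like this is a weighted sum of per-task losses, skipping labels that are missing for a given molecule. The sketch below shows that pattern under stated assumptions: the mean-squared-error form, the `logp_weight` value, and the use of `None` for missing labels are illustrative choices, not details confirmed by the source.

```python
def masked_mse(preds, targets):
    """Mean squared error over entries whose target is not None."""
    pairs = [(p, t) for p, t in zip(preds, targets) if t is not None]
    if not pairs:
        return 0.0
    return sum((p - t) ** 2 for p, t in pairs) / len(pairs)


def multitask_loss(pred_logd, pred_logp, true_logd, true_logp, logp_weight=0.5):
    """Primary logD loss plus a down-weighted auxiliary logP loss."""
    return masked_mse(pred_logd, true_logd) + logp_weight * masked_mse(
        pred_logp, true_logp
    )


# Two hypothetical molecules: the first has only a logD label,
# the second has only a logP label.
pred_logd = [1.0, 2.0]
true_logd = [1.5, None]
pred_logp = [2.0, 3.0]
true_logp = [None, 2.0]

loss = multitask_loss(pred_logd, pred_logp, true_logd, true_logp)
print(loss)
```

Masking lets molecules with only one of the two measurements still contribute to training, which matters when the logP and logD datasets overlap only partially.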