QSAR Modeling: From Foundational Principles to AI-Driven Applications in Drug Discovery


Abstract

This article provides a comprehensive exploration of Quantitative Structure-Activity Relationship (QSAR) modeling, a cornerstone computational technique in modern drug discovery and development. Tailored for researchers and pharmaceutical professionals, it begins by demystifying the core principles that link molecular structure to biological activity. The discussion then progresses to a detailed examination of the QSAR workflow—from data preparation and descriptor calculation to building models with both classical and advanced machine learning algorithms. A dedicated troubleshooting section addresses common challenges like data quality and model overfitting, while a rigorous comparative analysis outlines best practices for model validation and interpretation. By synthesizing foundational knowledge with current advancements in AI and deep learning, this guide serves as a vital resource for leveraging QSAR to accelerate the efficient design of novel therapeutic agents.

The Foundation of QSAR: Unlocking the Link Between Chemical Structure and Biological Activity

Quantitative Structure-Activity Relationship (QSAR) is a computational modeling method that uses mathematical and statistical approaches to establish a quantitative connection between the chemical structure of a molecule and its biological activity or physicochemical properties [1]. First pioneered in the 1960s by Hansch and Fujita, QSAR has evolved into an indispensable tool in organic chemistry and drug discovery, enabling researchers to predict the behavior of compounds before they are synthesized or tested experimentally [1]. The fundamental premise underlying QSAR is that molecular structure determines all physicochemical and biological properties—a principle that allows scientists to modify structures systematically to enhance desired activities or minimize undesirable ones.

The importance of QSAR extends across multiple scientific disciplines, including drug discovery, environmental chemistry, and materials science [1]. In pharmaceutical research specifically, QSAR methodologies help identify potential lead compounds, optimize their potency and selectivity, and predict pharmacokinetic and toxicological properties, thereby accelerating the development of new therapeutics while reducing reliance on animal testing [1] [2]. As regulatory frameworks increasingly promote New Approach Methodologies (NAMs), QSAR models have gained formal recognition for chemical hazard assessment, particularly in identifying endocrine disrupting chemicals [2].

Molecular Descriptors: Quantifying Structural Features

Definition and Classification

Molecular descriptors are numerical representations that encode specific aspects of molecular structure and properties [1]. These quantitative metrics serve as the independent variables in QSAR models, enabling the correlation of structural features with biological endpoints. Descriptors can capture information ranging from simple atomic composition to complex three-dimensional electronic distributions.

Table 1: Classification of Major Molecular Descriptor Types

| Descriptor Category | Description | Examples | Biological Correlations |
|---|---|---|---|
| Topological Descriptors | Derived from 2D molecular graph representation | Wiener index, molecular connectivity indices [1], reducible Zagreb indices [3] | Molecular branching, size; correlates with bioavailability [3] |
| Geometric Descriptors | Based on 3D molecular geometry | Molecular surface area, volume [1] | Steric effects, binding pocket compatibility |
| Electronic Descriptors | Capture electronic distribution | Atomic charges, dipole moment [1] | Intermolecular interactions, binding affinity |
| Physicochemical Descriptors | Represent bulk properties | logP (hydrophobicity), solubility [1] | Membrane permeability, solubility, toxicity |

Key Descriptors and Their Significance

Topological indices have proven particularly valuable in QSAR studies due to their computational efficiency and strong predictive power. These graph-theoretical descriptors are calculated from the hydrogen-suppressed molecular structure, where atoms represent vertices and bonds represent edges [3]. For example, the reducible first and second Zagreb indices have demonstrated excellent correlations with physicochemical properties of pharmaceutical compounds [3]. The reducible first Zagreb index is defined as:

$$RM_{1}(G)=\sum\limits_{uv\in E(G)} \left(\frac{n}{d_{u}}+\frac{n}{d_{v}}\right)$$

where $n$ represents the total number of vertices in graph $G$, and $d_u$ and $d_v$ represent the degrees of vertices $u$ and $v$, respectively [3].

Similarly, the reducible reciprocal Randic index has shown significant utility in predicting biological activity:

$$RR(G)=\sum\limits_{uv\in E(G)} \sqrt{\frac{n}{d_{u}}\times \frac{n}{d_{v}}}$$

These topological descriptors often exhibit strong correlations with critical physicochemical properties including boiling point, molar refractivity, lipophilicity (LogP), and molar volume, making them invaluable for predicting absorption, distribution, metabolism, and excretion (ADME) properties of drug candidates [3].
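As a minimal sketch of how the two indices defined above can be evaluated, the following Python code computes them from an edge list of a hydrogen-suppressed graph. The function names and the isobutane example are illustrative assumptions, not taken from the cited study, and n is taken as the number of vertices that appear in at least one edge.

```python
import math
from collections import Counter


def vertex_degrees(edges):
    """Count how many edges touch each vertex of an undirected graph."""
    degrees = Counter()
    for u, v in edges:
        degrees[u] += 1
        degrees[v] += 1
    return degrees


def reducible_first_zagreb(edges):
    """RM1(G): sum over edges uv of (n/d_u + n/d_v)."""
    degrees = vertex_degrees(edges)
    n = len(degrees)  # assumption: every vertex appears in at least one edge
    return sum(n / degrees[u] + n / degrees[v] for u, v in edges)


def reducible_reciprocal_randic(edges):
    """RR(G): sum over edges uv of sqrt((n/d_u) * (n/d_v))."""
    degrees = vertex_degrees(edges)
    n = len(degrees)
    return sum(math.sqrt((n / degrees[u]) * (n / degrees[v])) for u, v in edges)


# Hydrogen-suppressed graph of isobutane: central carbon 0 bonded to carbons 1, 2, 3.
ISOBUTANE_EDGES = [(0, 1), (0, 2), (0, 3)]

rm1 = reducible_first_zagreb(ISOBUTANE_EDGES)
rr = reducible_reciprocal_randic(ISOBUTANE_EDGES)
print(f"RM1 = {rm1:.4f}, RR = {rr:.4f}")
```

For isobutane, n = 4, the central carbon has degree 3, and each terminal carbon has degree 1, so every edge contributes 4/3 + 4 to the first index and √(16/3) to the second.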

QSAR Modeling Methodologies

Model Development Workflow

The development of robust QSAR models follows a systematic workflow that ensures predictive accuracy and reliability. The process begins with molecular structure input and progresses through descriptor calculation, statistical modeling, and validation [1].

Statistical and Machine Learning Approaches

QSAR modeling employs diverse statistical and machine learning techniques to establish correlations between molecular descriptors and biological activity. Traditional methods include Multiple Linear Regression (MLR) and Partial Least Squares (PLS) regression, which work well for linear relationships [1]. However, with increasing computational power and complex datasets, machine learning algorithms have become predominant.

Random Forest (RF) has emerged as a particularly effective algorithm due to its capacity to identify relevant features and its relative ease of interpretation [4]. In a recent study on Plasmodium falciparum dihydroorotate dehydrogenase inhibitors for anti-malarial drug discovery, Random Forest outperformed 11 other machine learning models when using SubstructureCount fingerprints, achieving Matthews Correlation Coefficient (MCC) values exceeding 0.65 in cross-validation and test sets [4].

Artificial Neural Networks (ANNs) have also demonstrated excellent predictive ability in QSAR modeling. A study on profen-class nonsteroidal anti-inflammatory drugs (NSAIDs) utilized ANNs with topological indices as inputs, resulting in a coefficient of determination (R²) of 0.94 and a mean squared error of 0.0087 on the test set [5].
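The metrics quoted in these studies can be computed directly from predictions. The sketch below uses the standard textbook definitions of the Matthews Correlation Coefficient, the coefficient of determination, and the mean squared error; the function names are illustrative, and the code does not reproduce the evaluation pipelines of the cited studies.

```python
import math


def matthews_corrcoef(y_true, y_pred):
    """MCC for binary labels coded as 1 (active) and 0 (inactive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    # Convention: return 0 when any marginal count is zero.
    return 0.0 if denominator == 0 else (tp * tn - fp * fn) / denominator


def mean_squared_error(y_true, y_pred):
    """Average squared difference between observed and predicted values."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_observed = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_observed) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Hypothetical predictions for six compounds (not data from the cited work).
print(matthews_corrcoef([1, 1, 1, 0, 0, 0], [1, 1, 0, 0, 0, 1]))
```

MCC is often preferred over accuracy for QSAR classification because it uses all four cells of the confusion matrix and therefore stays informative when actives and inactives are imbalanced.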

Table 2: Machine Learning Algorithms in QSAR Modeling

| Algorithm | Mechanism | Advantages | Application Examples |
|---|---|---|---|
| Random Forest (RF) | Ensemble of decision trees | Handles non-linearity, identifies feature importance [4] | PfDHODH inhibitors for malaria [4] |
| Artificial Neural Networks (ANN) | Multi-layer perceptron | Captures complex relationships, high predictive accuracy [1] [5] | NSAID property prediction [5] |
| Support Vector Machines (SVM) | Maximum margin hyperplane | Effective in high-dimensional spaces [1] | Thyroid hormone disruption prediction [2] |
| Extreme Gradient Boosting (XGBoost) | Gradient boosted decision trees | Handles missing values, regularization prevents overfitting [3] | Asthma drug property prediction [3] |

Experimental Protocols for QSAR Model Development

Protocol 1: Standard QSAR Modeling Pipeline

Data Curation and Preprocessing: Collect biological activity data (e.g., IC₅₀, Ki) from reliable databases such as ChEMBL [4]. For a study on PfDHODH inhibitors, 465 inhibitors were curated from ChEMBL (ID CHEMBL3486) [4].

Chemical Structure Standardization: Convert chemical representations to standardized formats using tools like RDKit [6].

Molecular Descriptor Calculation: Compute topological, electronic, geometric, and physicochemical descriptors using appropriate software [1] [3].

Dataset Division: Split data into training (∼80%), cross-validation (∼10%), and test sets (∼10%) using techniques like stratified sampling to maintain activity distribution [4].

Feature Selection: Apply feature importance metrics (e.g., Gini index in Random Forest) to identify most relevant descriptors [4]. For PfDHODH inhibitors, analysis revealed that nitrogenous groups, fluorine atoms, oxygenation features, aromatic moieties, and chirality significantly influenced inhibitory activity [4].

Model Training and Validation: Train multiple algorithms and validate using rigorous statistical metrics including accuracy, sensitivity, specificity, and Matthews Correlation Coefficient (MCC) [4].
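The dataset division step of this protocol can be sketched in a few lines of Python. The example below is an illustrative stratified 80/10/10 split over class labels. The function name, the rounding rule, and the 30/70 toy dataset are assumptions rather than details from the cited study.

```python
import random
from collections import defaultdict


def stratified_split(labels, fractions=(0.8, 0.1, 0.1), seed=0):
    """Return (train, validation, test) index lists that preserve class ratios."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    train, valid, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = round(fractions[0] * len(indices))
        n_valid = round(fractions[1] * len(indices))
        train.extend(indices[:n_train])
        valid.extend(indices[n_train:n_train + n_valid])
        test.extend(indices[n_train + n_valid:])  # remainder goes to the test set
    return train, valid, test


# Hypothetical dataset: 30 actives (1) and 70 inactives (0).
labels = [1] * 30 + [0] * 70
train_idx, valid_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(valid_idx), len(test_idx))
```

Splitting within each class keeps the proportion of actives roughly equal across the three subsets, which matters most when one class is rare.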

Protocol 2: Advanced Machine Learning QSAR with Handling of Imbalanced Data

Data Balancing: Address class imbalance using either undersampling or oversampling techniques [4].

Chemical Fingerprint Calculation: Generate molecular fingerprints such as SubstructureCount fingerprints, which have shown superior performance in classification tasks [4].

Model Optimization with Ensemble Methods: Implement ensemble methods like Balanced Random Forest with optimized hyperparameters.

Comprehensive Validation: Employ both internal (cross-validation) and external validation with completely separate test sets [4].

Applicability Domain Assessment: Define the chemical space where the model provides reliable predictions [2].
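The data balancing step that opens this protocol can be illustrated with random undersampling, one of the two options named above. This is a minimal sketch: the helper name `random_undersample` and the toy records are hypothetical, and the cited study may have balanced its data differently.

```python
import random
from collections import defaultdict


def random_undersample(records, label_of, seed=0):
    """Randomly discard records until every class is as small as the rarest one."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for record in records:
        by_class[label_of(record)].append(record)

    minority_size = min(len(group) for group in by_class.values())
    balanced = []
    for group in by_class.values():
        balanced.extend(rng.sample(group, minority_size))
    rng.shuffle(balanced)  # avoid leaving the classes in contiguous blocks
    return balanced


# Hypothetical records: (compound identifier, class label).
records = [(f"active_{i}", 1) for i in range(5)] + [(f"inactive_{i}", 0) for i in range(20)]
balanced = random_undersample(records, label_of=lambda record: record[1])
print(len(balanced))
```

Undersampling discards information from the majority class, so it is usually applied to the training set only, with validation and test sets left at their natural class ratio.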

Applications in Drug Discovery and Development

Lead Optimization and Activity Prediction

QSAR approaches have revolutionized lead optimization in drug discovery by providing quantitative insights into how specific structural modifications affect biological activity. In anti-malarial drug development, QSAR models successfully identified key molecular features contributing to PfDHODH inhibition, including aromatic moieties, chiral centers, and specific heteroatom patterns (nitrogen, oxygen, and fluorine) [4]. This information guides medicinal chemists in prioritizing synthetic efforts toward compounds with higher predicted activity.

The application of QSAR extends to predicting diverse biological endpoints beyond primary pharmacology, including toxicological properties and environmental impact. For thyroid hormone system disruption, QSAR models have been developed to predict molecular initiating events in adverse outcome pathways, such as inhibition of thyroperoxidase (TPO) or binding to thyroid hormone receptors [2]. This capability is particularly valuable for regulatory assessments under initiatives like the European Chemicals Strategy for Sustainability [2].

Emerging Applications in Materials Science and Energetic Compounds

While traditionally focused on pharmaceutical applications, QSAR methodologies are increasingly applied in materials science, particularly for the design and optimization of energetic molecules [7]. Machine-learning-driven QSPR models can predict critical safety characteristics (impact sensitivity, thermal stability) and energetic properties (enthalpy of formation, detonation velocity) of energetic compounds, significantly reducing the need for hazardous experimental testing [7].

Integration with Complementary Computational Approaches

Pharmacophore Modeling

Pharmacophore modeling represents a complementary approach to QSAR that identifies the essential structural features responsible for biological activity [8]. A pharmacophore is defined as "a set of common chemical features that describe the specific ways a ligand interacts with a macromolecule's active site in three dimensions" [8]. These features include hydrogen bond donors/acceptors, charge interactions, hydrophobic regions, and aromatic interactions.

Pharmacophore models can be derived either from protein-ligand complex structures (structure-based) or from a set of active ligands (ligand-based) [8]. The integration of pharmacophore modeling with QSAR enhances virtual screening efforts by incorporating three-dimensional molecular recognition patterns into the predictive framework. This combined approach has been successfully applied to identify novel inhibitors for various targets, including phytocompounds active against Waddlia chondrophila, an emerging pathogen associated with human miscarriages [9].

Molecular Dynamics and Advanced Sampling Techniques

Molecular dynamics (MD) simulations provide dynamic insights that complement static QSAR models by capturing the temporal evolution of protein-ligand interactions [8] [9]. MD simulations "determine coordinates of a protein-ligand with respect to time" and incorporate "solvent effects, dynamic features and the free energy associated with protein/ligand binding" [8].

In a study on Waddlia chondrophila, 100 ns molecular dynamics simulations validated the stability of phytocompound-target complexes initially identified through docking studies [9]. Binding free energy calculations using MMGBSA further corroborated the significant binding affinity between the phytocompounds and their target proteins [9]. The integration of MD with QSAR enables more reliable prediction of binding affinities and residence times, which are critical parameters for drug efficacy.

Table 3: Essential Computational Tools and Databases for QSAR Research

| Resource Category | Specific Tools/Databases | Function | Application Example |
|---|---|---|---|
| Chemical Databases | ChEMBL [4], PubChem [9], ChemSpider [5], ZINC [9] | Source of chemical structures and bioactivity data | PfDHODH inhibitors IC₅₀ data from ChEMBL (ID CHEMBL3486) [4] |
| Descriptor Calculation | RDKit [6], Dragon, MOE [9] | Compute molecular descriptors and fingerprints | SubstructureCount fingerprint calculation [4] |
| Machine Learning Platforms | MATLAB [3], Python scikit-learn, TensorFlow | Implement ML algorithms for QSAR modeling | Random Forest implementation for PfDHODH inhibitors [4] |
| Molecular Modeling | MOE (Molecular Operating Environment) [9], GROMACS [8], LAMMPS [8] | Molecular docking, dynamics simulations, and structure analysis | Docking and dynamics of phytocompounds against bacterial targets [9] |
| Validation Tools | AlphaFold [9], ProCheck [9], Verify3D [9] | Protein structure prediction and model validation | Target protein structure evaluation for Waddlia chondrophila [9] |

Future Directions and Emerging Trends

The field of QSAR modeling continues to evolve with several emerging trends shaping its future trajectory. Machine learning and deep learning approaches are being increasingly adopted to improve model accuracy and handle complex, high-dimensional datasets [1] [6]. Graph neural networks represent a particularly promising direction, with methods like GraphGIM enhancing molecular representation learning through contrastive learning between 2D graphs and multi-view 3D geometry images [6].

Another significant trend involves the integration of QSAR with other modeling techniques, such as molecular dynamics and docking, to provide more comprehensive understanding of molecular interactions [1]. This multi-scale modeling approach captures phenomena ranging from atomic-level interactions to system-level responses, bridging gaps between short-term molecular events and longer-term biological outcomes.

The growing emphasis on interpretable artificial intelligence and explainable QSAR models addresses the critical need for mechanistic understanding alongside predictive accuracy [7]. Future developments will likely focus on multi-objective optimization frameworks that simultaneously balance potency, selectivity, and ADMET properties while providing transparent insights into structural determinants of activity [7].

Quantitative Structure-Activity Relationship (QSAR) modeling represents a cornerstone of computational medicinal chemistry, operating on the fundamental principle that a direct, quantifiable relationship exists between a chemical compound's molecular structure and its biological activity [10] [11]. The development of these mathematical models has transitioned from traditional, physics-based methodologies to contemporary strategies powered by artificial intelligence (AI), machine learning (ML), and big data analytics [12]. This evolution has transformed QSAR from a conceptual framework into an indispensable tool for in silico drug discovery, environmental toxicology, and compound optimization, enabling the prediction of biological activity, physicochemical properties, and toxicity profiles for novel substances without the immediate need for extensive laboratory experimentation [10] [11] [13]. This review details the key historical milestones, methodological advancements, and future directions of QSAR modeling, providing scientists with a comprehensive technical guide framed within the context of modern computational workflows.

Historical Development and Key Milestones

The conceptual journey of QSAR began over a century ago, rooted in the systematic observation that the biological effects of molecules are determined by their physicochemical characteristics [14].

The Foundational Era (1868-1950s)

The earliest recognized quantitative work was published in 1868 by Crum-Brown and Fraser, who proposed the first general equation relating biological activity to chemical structure, expressed simply as φ = f(C), where φ represents physiological activity and C denotes chemical constitution [14]. Subsequent work by Richet (late 19th century) demonstrated an inverse relationship between the toxicity of simple organic compounds and their water solubility [14]. Shortly thereafter, Meyer and Overton, working independently, established crucial linear relationships between lipophilicity (measured as oil-water partition coefficients) and the narcotic activity of various substances [14]. The early 20th century saw further refinements, including Fuhner's evidence of group additivity in homologous series and Ferguson's application of thermodynamic principles to drug activity [14].

The Formative Modern Era (1960s-1980s)

The 1960s marked a critical turning point with the pioneering work of Corwin Hansch, who introduced a revolutionary multi-parameter approach [14]. The Hansch equation incorporated lipophilicity (log P), electronic (σ), and steric (Eₛ) parameters to create a more robust model for biological activity [14]. The general forms of the Hansch equation are:

- Linear: Log BA = a log P + b σ + c Eₛ + constant

- Non-linear: Log BA = a log P + b (log P)² + c σ + d Eₛ + constant [14]
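To make the two forms concrete, the sketch below evaluates them for given coefficients and locates the stationary point of the parabolic log P dependence, which is a maximum only when the coefficient of (log P)² is negative. The function names and the numeric coefficients are hypothetical and are not fitted to any dataset.

```python
def hansch_linear(log_p, sigma, e_s, a, b, c, constant):
    """Log BA = a*logP + b*sigma + c*Es + constant."""
    return a * log_p + b * sigma + c * e_s + constant


def hansch_parabolic(log_p, sigma, e_s, a, b, c, d, constant):
    """Log BA = a*logP + b*(logP)^2 + c*sigma + d*Es + constant."""
    return a * log_p + b * log_p ** 2 + c * sigma + d * e_s + constant


def optimal_log_p(a, b):
    """Setting d(Log BA)/d(logP) = a + 2*b*logP = 0 gives logP = -a / (2*b)."""
    if b >= 0:
        raise ValueError("an activity maximum requires a negative (logP)^2 coefficient")
    return -a / (2 * b)


# Hypothetical coefficients chosen only to illustrate the shape of the curve.
a, b, c, d, constant = 1.0, -0.25, 0.5, 0.1, 3.0
best_log_p = optimal_log_p(a, b)
print(best_log_p, hansch_parabolic(best_log_p, 0.0, 0.0, a, b, c, d, constant))
```

With a negative quadratic coefficient the model predicts that activity rises with lipophilicity up to an optimum and then falls, behaviour the linear form cannot represent.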

Concurrently, the Free-Wilson model was developed, employing a de novo approach based on the additive contributions of specific substituents to biological potency [14]. A mixed approach, combining the strengths of both Hansch and Free-Wilson methodologies, was later proposed by Kubinyi, further enhancing the modeling flexibility [14].

The Computational Revolution (1990s-Present)

The advent of increased computational power and the availability of large-scale chemical databases catalyzed the next major leap. Traditional QSAR, reliant on manual descriptor calculation and linear regression, began to be supplemented, and sometimes replaced, by machine learning algorithms such as support vector machines (SVM) and random forests [12] [13]. The most recent shift is characterized by the integration of deep learning, graph neural networks (GNNs), and generative models, which can automatically learn complex representations from raw molecular representations such as graphs and SMILES strings [12] [15] [13]. The transition of key QSAR methodologies is summarized in Table 1.

Table 1: Historical Evolution of Key QSAR Methodologies and Representations

| Time Period | Dominant Methodologies | Molecular Representations | Key Innovations |
|---|---|---|---|
| 1868-1950s | Crum-Brown Equation, Richet's Solubility, Meyer-Overton Rule [14] | Qualitative Structural Formulae | Linking structure to activity; concept of lipophilicity [14] |
| 1960s-1980s | Hansch Analysis, Free-Wilson Analysis, Mixed Approach [14] | 1D/2D Physicochemical Descriptors (log P, σ, Eₛ) [14] | Multi-parameter regression; substituent contribution models [14] |
| 1990s-2010s | MLR, PLS, SVM, Random Forest [10] [16] | 2D Molecular Descriptors & Fingerprints (e.g., ECFP) [17] | Machine learning; high-throughput virtual screening [12] |
| 2010s-Present | Deep Neural Networks, Graph Neural Networks (GNNs), Transformers [12] [15] [13] | Molecular Graphs, SMILES (as sequences), 3D Conformations [15] [17] | Representation learning; end-to-end prediction; integration with multimodal data [12] [13] |

Core Methodologies and Workflows

The development of a robust, predictive QSAR model follows a systematic workflow, from data curation to final validation. Adherence to this workflow is critical for regulatory acceptance, particularly under frameworks like the OECD principles [11].

The Standard QSAR Modeling Workflow

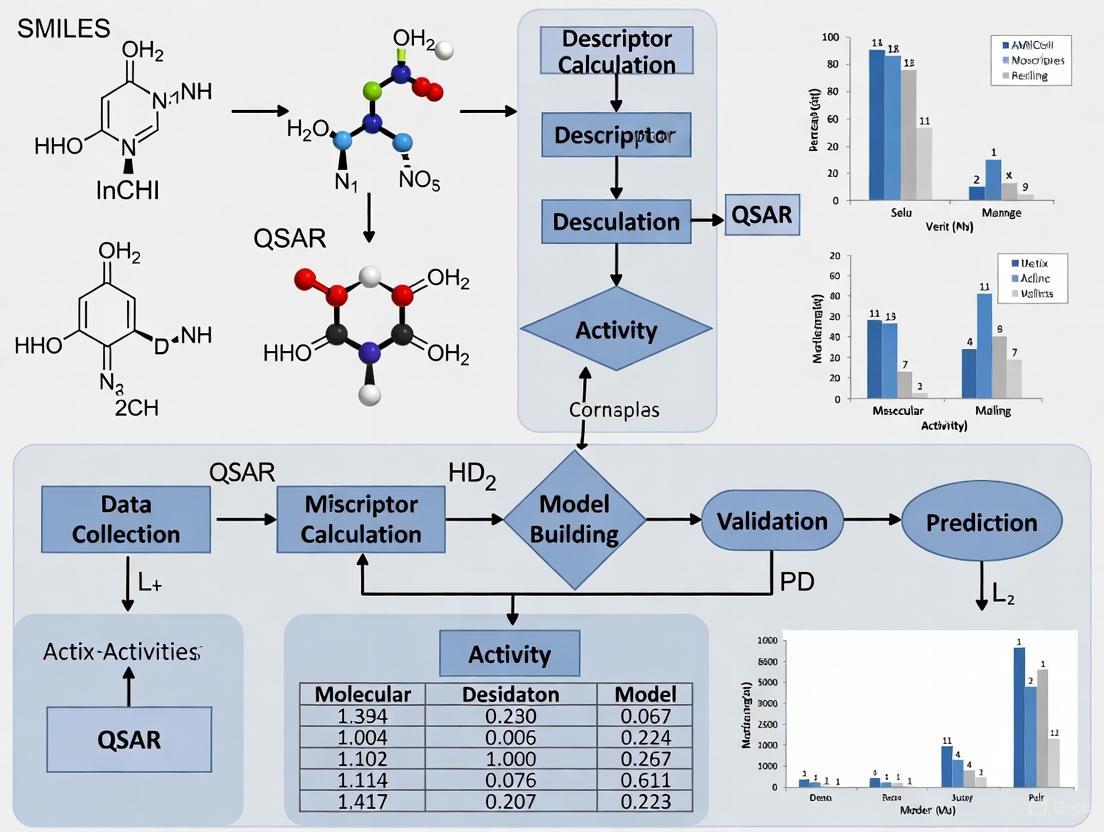

The following diagram illustrates the standard workflow for building a validated QSAR model.

Data Preparation and Molecular Representations

The initial and most critical phase involves the careful preparation of input data.

- Dataset Collection: A dataset of chemical structures and associated biological activities (e.g., IC₅₀, Ki) is compiled from reliable sources like ChEMBL or DrugBank [10] [16]. The dataset must be of high quality, with standardized biological values and curated structures.

- Data Cleaning and Preprocessing: This involves removing duplicates, standardizing structures (e.g., neutralizing charges, removing salts), handling missing values, and normalizing biological activity data (e.g., log-transformation) [10].

- Molecular Descriptor Calculation: Molecules are translated into numerical representations using software tools such as RDKit, Dragon, or PaDEL-Descriptor [17] [10]. Descriptors can be constitutional, topological, geometric, or electronic in nature [10].

- Feature Selection: Given the high dimensionality of descriptor spaces (often thousands of descriptors), techniques like random forest feature importance, LASSO regression, or mutual information filtering are employed to select the most relevant features and mitigate overfitting [17].
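The cleaning steps listed above can be sketched with the standard library alone. The example assumes that structures arrive as already-canonical SMILES strings (real pipelines would standardize them first with a cheminformatics toolkit), that IC₅₀ values are in nM, and that replicate measurements are merged by averaging their pIC₅₀ values. The constant-descriptor filter is a deliberately simple stand-in for the feature selection methods named in the text, not an implementation of them.

```python
import math
from collections import defaultdict
from statistics import mean, pvariance


def clean_activity_records(records):
    """Drop missing or non-positive IC50 values, log-transform, merge duplicates."""
    grouped = defaultdict(list)
    for structure_key, ic50_nm in records:
        if ic50_nm is None or ic50_nm <= 0:
            continue  # unusable measurement
        grouped[structure_key].append(9.0 - math.log10(ic50_nm))  # pIC50 from nM
    return {key: mean(values) for key, values in grouped.items()}


def drop_constant_descriptors(descriptor_columns, min_variance=1e-8):
    """Remove descriptor columns whose variance across compounds is negligible."""
    return {
        name: values
        for name, values in descriptor_columns.items()
        if pvariance(values) > min_variance
    }


# Hypothetical raw records: (canonical SMILES, IC50 in nM or None).
raw_records = [("CCO", 1000.0), ("CCO", 10.0), ("c1ccccc1", None), ("CCN", 100.0)]
cleaned = clean_activity_records(raw_records)

# Hypothetical descriptor columns for the two retained compounds.
columns = {"mol_weight": [46.07, 45.08], "n_rings": [0, 0]}
kept = drop_constant_descriptors(columns)
print(cleaned, list(kept))
```

Averaging in log space rather than on the raw IC₅₀ scale prevents a single weak measurement from dominating the merged value.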

Model Building and Validation Protocols

This phase involves selecting algorithms, training models, and rigorously assessing their predictive power.

- Algorithm Selection: A spectrum of algorithms is used, ranging from interpretable linear models like Multiple Linear Regression (MLR) and Partial Least Squares (PLS) to non-linear methods like Support Vector Machines (SVM), Random Forests, and advanced deep learning architectures like Graph Neural Networks (GNNs) [15] [10] [16].

- Training and Validation: The dataset is split into training, validation, and external test sets. The model is built on the training set, and its hyperparameters are tuned using the validation set, often via k-fold cross-validation [10].

- External Validation and Applicability Domain: The final model's performance is assessed on a completely held-out external test set. Furthermore, the applicability domain (AD) must be defined to identify the chemical space within which the model can make reliable predictions [11] [16]. This is a key requirement for regulatory acceptance [11].
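One of the simplest ways to express an applicability domain is a descriptor range check: a query compound is considered in-domain only if each of its descriptor values lies within the range spanned by the training set. The sketch below illustrates that idea with hypothetical descriptor values. It is an assumption chosen for clarity, not the method of any cited study, and leverage-based or distance-based definitions are common alternatives.

```python
def fit_descriptor_ranges(training_rows):
    """Record the (min, max) of every descriptor column in the training set."""
    columns = list(zip(*training_rows))
    return [(min(column), max(column)) for column in columns]


def in_domain(row, ranges):
    """True if every descriptor value lies inside the training range."""
    return all(low <= value <= high for value, (low, high) in zip(row, ranges))


# Hypothetical training descriptors: (logP, molecular weight).
training_rows = [(1.2, 180.0), (3.4, 320.0), (2.1, 250.0)]
ranges = fit_descriptor_ranges(training_rows)

print(in_domain((2.0, 200.0), ranges))  # inside both ranges
print(in_domain((4.0, 200.0), ranges))  # logP above the training maximum
```

A prediction for an out-of-domain compound is an extrapolation, so it is typically reported with a warning or withheld altogether.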

Table 2: Summary of Common QSAR Modeling Algorithms and Their Applications

| Algorithm Category | Specific Examples | Typical Applications | Key Advantages & Limitations |
|---|---|---|---|
| Linear Methods | Multiple Linear Regression (MLR), Partial Least Squares (PLS) [10] [16] | Establishing baseline models; interpretable relationships [16] | Advantages: High interpretability, simple to implement. Limitations: Assumes linearity, cannot capture complex interactions. |
| Non-Linear Machine Learning | Support Vector Machines (SVM), Random Forest (RF), Gradient Boosting [17] [10] [13] | Predictive toxicology, activity classification in complex datasets [13] | Advantages: Captures non-linear relationships, generally good performance. Limitations: Can be prone to overfitting; less interpretable than linear models. |
| Deep Learning | Graph Neural Networks (GNNs), Multi-Layer Perceptrons (MLPs), Transformers [15] [17] [13] | State-of-the-art activity prediction; direct learning from molecular graphs or SMILES [15] | Advantages: State-of-the-art accuracy; automatic feature learning. Limitations: "Black-box" nature; requires large datasets and computational resources. |

Contemporary Innovations and Future Directions

The field of QSAR is undergoing a rapid transformation driven by AI, leading to novel modeling paradigms that enhance both predictive power and integrative capacity.

Graph Neural Networks and Multi-Modal Learning

A significant advancement is the application of Graph Neural Networks (GNNs), which natively operate on molecular graphs where atoms are nodes and bonds are edges [15]. This representation allows GNNs to learn rich, hierarchical features directly from the molecular structure, often outperforming traditional models that rely on pre-defined fingerprints [15] [13]. Furthermore, multi-modal learning frameworks (e.g., Uni-QSAR) are being developed to integrate diverse data types, such as 1D SMILES sequences, 2D molecular graphs, and 3D spatial conformations, within a single model, leading to more robust predictions [17].
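The atoms-as-nodes, bonds-as-edges representation can be made concrete with a single round of neighbour aggregation, the basic operation that GNN layers build on. The sketch below is intentionally stripped down: it has no learned weights and no non-linearity, so it shows only the data flow, not a trainable model. The function names and the one-hot atom features are illustrative assumptions.

```python
def message_passing_step(features, bonds):
    """Replace each atom's feature vector with itself plus the sum of its neighbours'."""
    neighbours = {atom: [] for atom in features}
    for i, j in bonds:
        neighbours[i].append(j)
        neighbours[j].append(i)

    updated = {}
    for atom, own in features.items():
        aggregated = list(own)
        for neighbour in neighbours[atom]:
            aggregated = [a + x for a, x in zip(aggregated, features[neighbour])]
        updated[atom] = aggregated
    return updated


def readout(features):
    """Sum atom vectors into one molecule-level vector."""
    return [sum(column) for column in zip(*features.values())]


# Ethanol heavy atoms C-C-O with one-hot features [is_carbon, is_oxygen].
atom_features = {0: [1, 0], 1: [1, 0], 2: [0, 1]}
bonds = [(0, 1), (1, 2)]

updated = message_passing_step(atom_features, bonds)
print(updated, readout(updated))
```

After one step the middle carbon's vector already records that it is attached to one carbon and one oxygen. Stacking further steps lets information propagate across longer paths in the molecule.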

Explainable AI and Federated Learning

To address the "black-box" nature of complex AI models, Explainable AI (XAI) techniques are being incorporated to provide insights into the model's decision-making process, enhancing trust and interpretability for experimental validation teams [12]. Simultaneously, federated learning frameworks are emerging as a solution to data privacy challenges, allowing for the decentralized training of models across multiple institutions without sharing proprietary data [12].
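The source does not specify which federated algorithm is used. As an illustration only, the sketch below shows the aggregation step of federated averaging, a widely used scheme in which each institution trains locally and shares only model parameters, which a coordinator then averages with weights proportional to local dataset size. All names and numbers are hypothetical.

```python
def federated_average(site_updates):
    """Combine per-site parameter vectors, weighting each by its sample count."""
    total_samples = sum(n_samples for _, n_samples in site_updates)
    n_parameters = len(site_updates[0][0])
    return [
        sum(weights[k] * n_samples for weights, n_samples in site_updates) / total_samples
        for k in range(n_parameters)
    ]


# Hypothetical updates from two institutions: (model parameters, number of compounds).
site_updates = [
    ([1.0, 0.0], 100),
    ([3.0, 2.0], 300),
]
global_weights = federated_average(site_updates)
print(global_weights)
```

Only the parameter vectors and sample counts leave each site. The compound structures and assay values used for local training are never exchanged.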

Emerging Quantum and Hybrid Approaches

On the horizon, quantum machine learning (QML) is being explored for QSAR. Early studies suggest that quantum-enhanced kernel methods can outperform classical counterparts in limited-data settings, potentially opening new avenues for modeling complex structure-activity landscapes [17].

The following diagram illustrates how these modern approaches create an integrated, AI-driven QSAR workflow.

For researchers embarking on QSAR modeling, a suite of software tools and data resources is essential. The following table details key components of the modern QSAR toolkit.

Table 3: Essential Research Reagents and Resources for QSAR Modeling

| Resource Category | Specific Tools / Databases | Primary Function in QSAR Workflow |
|---|---|---|
| Descriptor Calculation | RDKit, Dragon, PaDEL-Descriptor, Mordred [17] [10] | Generates numerical molecular descriptors from chemical structures for model training. |
| Chemical Databases | ChEMBL, ZINC, PubChem, DrugBank [12] [10] | Provides access to millions of compounds with annotated bioactivity data for dataset building. |
| Machine Learning Libraries | Scikit-learn, DeepChem, Keras, PyTorch, DGL [15] [17] [13] | Offers implementations of classic and deep learning algorithms for model construction. |
| Toxicology Data | Tox21 Challenge Data [15] [13] | Supplies standardized experimental screening results for training predictive toxicology models. |
| Validation & Compliance | OECD QSAR Toolbox [11] | Aids in following OECD validation principles for regulatory submission. |

The journey of QSAR from the foundational equation of Crum-Brown and Fraser to the contemporary AI-powered models illustrates a remarkable evolution in computational chemistry. The field has matured from establishing simple linear relationships based on a handful of physicochemical parameters to leveraging deep learning on complex molecular graphs. The future of QSAR lies not in the replacement of traditional methods, but in their intelligent integration with contemporary AI, creating hybrid models that are both powerful and interpretable [12]. As these models become more sophisticated through the incorporation of explainable AI, federated learning, and multi-modal data, they are poised to further accelerate drug discovery, refine toxicity assessments, and contribute significantly to the development of safer, more effective therapeutics. For the scientific community, mastering both the historical foundations and the modern innovations outlined in this guide is essential for advancing research in computational medicinal chemistry.

Quantitative Structure-Activity Relationship (QSAR) models are regression or classification models used in chemical and biological sciences to relate a set of "predictor" variables to the potency of a response variable, which is typically a biological activity of chemicals [18]. The fundamental assumption underlying all QSAR approaches is that similar molecules have similar activities, a principle formally known as the Structure-Activity Relationship (SAR) [18]. In practice, QSAR modeling translates this principle into a mathematical framework where biological activity is expressed as a function of physicochemical properties and/or structural properties plus an error term: Activity = f(physicochemical properties and/or structural properties) + error [18].
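The simplest instance of Activity = f(properties) + error is a straight line fitted to a single descriptor by ordinary least squares, with the residuals playing the role of the error term. The sketch below uses invented logP and activity values purely for illustration.

```python
from statistics import mean


def fit_line(x, y):
    """Ordinary least squares for y = slope * x + intercept."""
    x_bar, y_bar = mean(x), mean(y)
    s_xx = sum((xi - x_bar) ** 2 for xi in x)
    s_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = s_xy / s_xx
    intercept = y_bar - slope * x_bar
    return slope, intercept


# Hypothetical data: logP as the single descriptor, pIC50 as the activity.
log_p = [1.0, 2.0, 3.0, 4.0]
activity = [5.1, 5.9, 7.2, 7.8]

slope, intercept = fit_line(log_p, activity)
residuals = [yi - (slope * xi + intercept) for xi, yi in zip(log_p, activity)]
print(f"activity = {slope:.2f} * logP + {intercept:.2f}")
```

Multi-descriptor and non-linear QSAR models generalize the same idea: the function f becomes more flexible, while the residuals still measure what the chosen descriptors fail to explain.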

The development of reliable QSAR models depends critically on three essential components: high-quality datasets, precisely calculated molecular descriptors, and appropriate mathematical models [19]. Molecular descriptors serve as the fundamental bridge between chemical structure and predicted activity—they are mathematical representations that quantify various electronic, geometric, or steric properties of molecules [20] [18]. By converting structural information into numerical values, descriptors enable the application of statistical and machine learning methods to find correlations between molecular structure and biological response. The accuracy and relevance of these descriptors directly determine the predictive power and interpretability of the resulting QSAR models [19].

Classification of Molecular Descriptors

Molecular descriptors can be categorized based on the dimensionality of the structural information they encode and the specific properties they represent. The diagram below illustrates the hierarchical classification of major descriptor types and the QSAR modeling approaches they enable.

Figure 1: Classification of molecular descriptors and their associated QSAR approaches.

One-Dimensional (1D) Descriptors

1D descriptors, also known as bulk properties, represent whole-molecule characteristics without considering atomic connectivity or three-dimensional geometry. These include fundamental physicochemical properties such as the octanol-water partition coefficient (logP), which measures lipophilicity; molar refractivity (MR), which combines molecular size and polarizability; and various other thermodynamic parameters [18]. In classical QSAR approaches like Hansch analysis, these global properties are correlated with biological activity under the assumption that absorption, distribution, and binding interactions can be captured through such macroscopic properties [19].

Two-Dimensional (2D) Descriptors

2D descriptors are derived from the molecular graph structure, considering atomic connectivity but ignoring three-dimensional conformation. This category includes topological indices that encode information about molecular branching, size, and shape based on graph theory representations [18]. Also belonging to this category are electronic descriptors that quantify charge distribution, polarizability, and orbital characteristics. These descriptors can be computed directly from molecular connection tables and are particularly valuable for high-throughput screening and initial structure-activity analyses [19].
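A classic topological index of this kind is the Wiener index, defined as the sum of shortest-path distances (counted in bonds) over all pairs of heavy atoms. The following sketch computes it with a breadth-first search from every atom. The function name and the two butane isomers are illustrative, and a connected molecular graph is assumed.

```python
from collections import deque


def wiener_index(n_atoms, bonds):
    """Sum of shortest-path distances over all unordered atom pairs."""
    adjacency = [[] for _ in range(n_atoms)]
    for i, j in bonds:
        adjacency[i].append(j)
        adjacency[j].append(i)

    total = 0
    for source in range(n_atoms):
        distance = {source: 0}
        queue = deque([source])
        while queue:  # breadth-first search from this atom
            node = queue.popleft()
            for neighbour in adjacency[node]:
                if neighbour not in distance:
                    distance[neighbour] = distance[node] + 1
                    queue.append(neighbour)
        total += sum(distance.values())
    return total // 2  # every pair was counted from both ends


# Hydrogen-suppressed graphs of the two C4H10 isomers.
n_butane = wiener_index(4, [(0, 1), (1, 2), (2, 3)])
isobutane = wiener_index(4, [(0, 1), (0, 2), (0, 3)])
print(n_butane, isobutane)
```

The branched isomer has the smaller index because its atoms are, on average, closer together, which is how the index encodes branching from connectivity alone.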

Three-Dimensional (3D) Descriptors

3D descriptors capture the spatial arrangement of atoms in a molecule, recognizing that molecular binding occurs in 3D and that biological receptors perceive ligands as shapes carrying complex force fields [21]. The most significant 3D descriptors are Molecular Interaction Fields (MIFs), which map steric, electrostatic, and other interaction energies around molecules using various chemical probes [21]. These fields form the foundation of 3D-QSAR techniques like CoMFA (Comparative Molecular Field Analysis) and CoMSIA (Comparative Molecular Similarity Indices Analysis), which statistically correlate spatial field variations with biological activity differences across compound series [18] [21].

Fragment-Based Descriptors

Fragment-based descriptors operate on the principle of group contribution methods, where molecular properties are estimated as the sum of contributions from constituent structural fragments [18]. For example, the partition coefficient (logP) can be predicted with fragment-based methods such as CLogP, which have generally been shown to give better predictions than atom-based methods [18]. This approach, formalized as group-based QSAR (GQSAR), offers the flexibility to study how various molecular fragments of interest relate to variation in biological response, and it can include cross-term fragment descriptors to identify key fragment interactions [18].

Mathematical Foundations of QSAR

Fundamental Mathematical Relationships

The mathematical core of QSAR modeling establishes a quantitative relationship between molecular descriptors (X) and biological activity (Y). This relationship can be expressed in two primary forms:

- Regression Models: For continuous activity values (e.g., IC₅₀, Ki), where Y = f(X) + ε

- Classification Models: For categorical activity outcomes (e.g., active/inactive, toxic/non-toxic) [18]

The transformation of biological activity data into appropriate mathematical representations is crucial. For binding affinities, values are typically converted to pIC₅₀ = -log₁₀(IC₅₀(M)) or pKi = -log₁₀(Ki(M)) to create linear relationships with free energy changes [22]. Activity thresholds are often applied for classification models, such as using 1 μM as a cutoff between active and inactive compounds [22].
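The conversion and thresholding described above can be sketched in a few lines of Python. This is a minimal illustration, not code from the cited study: the function names, the example compounds, and the choice to treat a value exactly at the 1 μM cutoff as active are all assumptions.

```python
import math

def to_p_activity(value_nm: float) -> float:
    """Convert an IC50 or Ki given in nM to pIC50 / pKi (-log10 of the molar value)."""
    if value_nm <= 0:
        raise ValueError("activity value must be positive")
    return -math.log10(value_nm * 1e-9)  # nM -> M, then negative log10

def is_active(value_nm: float, threshold_nm: float = 1000.0) -> bool:
    """Label a compound active when its IC50/Ki is at or below the cutoff (1 uM by default)."""
    return value_nm <= threshold_nm

# Hypothetical IC50 values in nM, for illustration only
ic50_nm = {"cmpd_A": 10.0, "cmpd_B": 1000.0, "cmpd_C": 25000.0}
for name, value in ic50_nm.items():
    print(name, round(to_p_activity(value), 2), is_active(value))
```

On this scale a tenfold gain in potency raises the p-value by one unit, which is why the 1 μM cutoff corresponds to a pIC₅₀ of 6.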

Statistical and Machine Learning Methods

QSAR modeling employs diverse mathematical techniques, ranging from traditional statistical methods to advanced machine learning algorithms:

- Partial Least Squares (PLS): Particularly dominant in 3D-QSAR for handling the high dimensionality and multicollinearity of field descriptors [18] [21]

- Artificial Neural Networks (ANNs): Including specialized architectures like Counter-Propagation Artificial Neural Networks (CPANN) that can adaptively weight molecular descriptor importance during training [20]

- Support Vector Machines (SVM): Effective for both regression and classification tasks in chemical descriptor-based QSAR [18]

- Deep Learning Models: Emerging approaches that automatically learn relevant features from molecular structures without relying exclusively on pre-defined descriptors [19]

The mathematical model serves as the bridge between molecular structure and activity, with more flexible models capable of capturing complex, non-linear relationships but often at the cost of interpretability [19].

Quantitative Analysis of Descriptor Performance

Table 1: Performance comparison of qualitative vs. quantitative (Q)SAR models for antitarget prediction

| Model Type | Balanced Accuracy | Sensitivity | Specificity | Compounds within Applicability Domain |
|---|---|---|---|---|
| Qualitative SAR (Ki values) | 0.80 | Generally higher | Lower | Higher |
| Quantitative QSAR (Ki values) | 0.73 | Generally lower | Higher | Lower |
| Qualitative SAR (IC₅₀ values) | 0.81 | Generally higher | Lower | Higher |
| Quantitative QSAR (IC₅₀ values) | 0.76 | Generally lower | Higher | Lower |

Data derived from a study creating (Q)SAR models for 30 antitargets using GUSAR software and ChEMBL 20 database [22].

Table 2: Recent trends in QSAR research based on bibliometric analysis (2014-2023)

| Research Aspect | Evolutionary Trend | Implications |
|---|---|---|
| Dataset Sizes | Steady increase | Enables more robust and generalizable models |
| Descriptor Types | Growing diversity with emphasis on 3D descriptors | Improved representation of molecular interactions |
| Model Complexity | Shift toward deep learning methods | Enhanced predictive ability but reduced interpretability |
| Model Validation | Increased focus on applicability domain assessment | Improved reliability for practical applications |

Analysis based on publications in the Journal of Chemical Information and Modeling [19].

Experimental Protocols in QSAR Development

Data Set Preparation and Curation Protocol

Data Extraction: Collect structures and experimental values (Ki, IC₅₀) from reliable databases like ChEMBL, ensuring consistent measurement units (nM) and relationship types (use only records with "=" in the "Relation" field) [22].

Data Transformation: Convert activity values to pIC₅₀ = -log₁₀(IC₅₀(M)) or pKi = -log₁₀(Ki(M)) to establish linear relationships with free energy changes [22].

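Value Consolidation: For compounds with multiple experimental values, use the median as the representative value. The median is robust to the strongly skewed distributions such repeated measurements often show, and keeping one value per compound, rather than discarding compounds with conflicting measurements, retains chemical space coverage [22].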

Activity Thresholding: For classification models, establish thresholds between active and inactive compounds (e.g., 1 μM) [22].

Data Splitting: Implement fivefold cross-validation by sorting sets by ascending activity values, assigning numbers 1-5 sequentially to structures, and dividing into five unique parts for training and testing [22].
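The consolidation and splitting steps above might look like the following sketch. It assumes that each of the five parts serves once as the test set while the other four are used for training; the record layout, compound identifiers, and pKi values are hypothetical.

```python
from collections import defaultdict
from statistics import median

def consolidate(records):
    """Collapse repeated measurements per compound to their median value."""
    grouped = defaultdict(list)
    for compound_id, p_value in records:
        grouped[compound_id].append(p_value)
    return {cid: median(vals) for cid, vals in grouped.items()}

def assign_folds(activities, n_folds=5):
    """Sort compounds by ascending activity and label them 1..n_folds in turn."""
    # Ties are broken by compound id so the assignment is deterministic
    ordered = sorted(activities, key=lambda cid: (activities[cid], cid))
    return {cid: (rank % n_folds) + 1 for rank, cid in enumerate(ordered)}

# Hypothetical (compound, pKi) records; "c1" was measured three times
records = [("c1", 6.0), ("c1", 6.4), ("c1", 9.0), ("c2", 5.1), ("c3", 7.7),
           ("c4", 8.2), ("c5", 4.9), ("c6", 6.9), ("c7", 7.0)]
activities = consolidate(records)
folds = assign_folds(activities)
for k in range(1, 6):
    test_ids = [cid for cid, f in folds.items() if f == k]
    train_ids = [cid for cid, f in folds.items() if f != k]
    print(f"fold {k}: test={test_ids} train_size={len(train_ids)}")
```

Numbering the sorted compounds sequentially spreads low, medium, and high activities across all five parts, so each fold sees a similar activity range.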

Molecular Descriptor Calculation Methodology

Descriptor Selection: Choose appropriate descriptors based on the QSAR approach (1D, 2D, 3D, or fragment-based) and the specific biological endpoint [19].

3D Structure Preparation: For 3D-QSAR, generate low-energy conformations and ensure proper alignment of training set compounds using crystallographic data or molecular superimposition software [21].

Molecular Interaction Field Calculation:

- Grid Generation: Superimpose a 3D lattice defining regularly distributed grid points around the molecules [21]

- Probe Selection: Choose appropriate probes (carbon sp³ for steric fields, charged carbon sp³ for electrostatic fields, or multi-atom probes for specific interactions) [21]

- Field Computation: Calculate interaction energies at each grid point using potential functions (Coulomb's law for electrostatic fields, Lennard-Jones potential for steric fields) [21]
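As a rough illustration of the grid, probe, and field steps above, the sketch below evaluates a Lennard-Jones (steric) and a Coulomb (electrostatic) energy at every lattice point. The two-atom "molecule", its partial charges, the pairwise epsilon and sigma values, the 2 Å spacing, and the minimum-distance clamp are all assumed for illustration; production tools such as GRID or CoMFA use their own parameter sets and energy handling.

```python
import math
from itertools import product

COULOMB_K = 332.06  # kcal*Angstrom/(mol*e^2), a common force-field conversion factor

def lennard_jones(r, epsilon, sigma):
    """12-6 Lennard-Jones energy for one probe-atom pair."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)

def coulomb(r, q_probe, q_atom, dielectric=1.0):
    """Coulomb energy for one probe-atom pair."""
    return COULOMB_K * q_probe * q_atom / (dielectric * r)

def interaction_fields(atoms, grid_points, probe_charge=1.0, min_r=0.5):
    """Return a (steric, electrostatic) energy pair for every grid point."""
    fields = []
    for point in grid_points:
        steric = electrostatic = 0.0
        for position, charge, epsilon, sigma in atoms:
            # Clamp the distance so points on top of an atom do not divide by zero
            r = max(math.dist(point, position), min_r)
            steric += lennard_jones(r, epsilon, sigma)
            electrostatic += coulomb(r, probe_charge, charge)
        fields.append((steric, electrostatic))
    return fields

# Hypothetical atoms: (xyz in Angstrom, partial charge, pair epsilon, pair sigma)
atoms = [((0.0, 0.0, 0.0), -0.4, 0.10, 3.4), ((1.2, 0.0, 0.0), 0.4, 0.10, 3.4)]
# Regular lattice with 2 Angstrom spacing around the molecule
axis = [-4.0, -2.0, 0.0, 2.0, 4.0]
grid = list(product(axis, repeat=3))
fields = interaction_fields(atoms, grid)
print(len(grid), "grid points; first point:", fields[0])
```

Each molecule in an aligned series yields one such vector of field values, and those vectors become the descriptor columns that a method like PLS correlates with activity.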

Descriptor Optimization: Apply feature selection techniques to reduce dimensionality while retaining chemically relevant information [19].

Model Validation and Applicability Domain Assessment

Internal Validation: Perform cross-validation (e.g., fivefold CV) to assess model robustness [18].

External Validation: Split data into training and prediction sets, or use blind external validation on new data [18].

Statistical Analysis: Calculate the coefficient of determination (R²) and root mean square error (RMSE) for regression models, and balanced accuracy, sensitivity, and specificity for classification models [22] [18].

Applicability Domain Definition: Establish the chemical space region where reliable predictions can be expected using approaches such as visual validation with tools like MolCompass, which employs parametric t-SNE models to visualize chemical space and identify model cliffs [23].

Chance Correlation Testing: Perform Y-scrambling to verify absence of fortuitous correlations [18].
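The regression and classification statistics named in this protocol follow standard definitions, sketched below in plain Python. The function names and the small example arrays are illustrative assumptions rather than values from any cited study.

```python
import math

def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

def rmse(y_true, y_pred):
    """Root mean square error of the predictions."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def classification_metrics(labels, predictions):
    """Sensitivity, specificity, and balanced accuracy for boolean labels."""
    pairs = list(zip(labels, predictions))
    tp = sum(1 for actual, pred in pairs if actual and pred)
    tn = sum(1 for actual, pred in pairs if not actual and not pred)
    fp = sum(1 for actual, pred in pairs if not actual and pred)
    fn = sum(1 for actual, pred in pairs if actual and not pred)
    sensitivity = tp / (tp + fn)  # fraction of true actives recovered
    specificity = tn / (tn + fp)  # fraction of true inactives recovered
    return {"sensitivity": sensitivity, "specificity": specificity,
            "balanced_accuracy": (sensitivity + specificity) / 2}

# Hypothetical observed vs. predicted pIC50 values
y_true = [5.0, 6.0, 7.0, 8.0]
y_pred = [5.5, 5.5, 7.5, 7.5]
print(r_squared(y_true, y_pred), rmse(y_true, y_pred))

# Hypothetical active/inactive labels and predictions
labels = [True, True, True, True, False, False, False, False]
preds = [True, True, True, False, False, False, True, True]
print(classification_metrics(labels, preds))
```

Balanced accuracy averages sensitivity and specificity, which keeps the score meaningful when actives and inactives are present in very different numbers.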

Table 3: Essential computational tools and resources for QSAR modeling

| Tool/Resource | Type | Primary Function | Key Features |
|---|---|---|---|
| GUSAR Software | Software Platform | QSAR Model Development | Uses QNA and MNA descriptors; self-consistent regression [22] |
| ChEMBL Database | Chemical Database | Bioactivity Data Source | Manually curated data on drug-like molecules and their biological effects [22] |
| GRID Program | Computational Tool | Molecular Interaction Field Calculation | Multiple probes for mapping interaction energies in active sites [21] |
| VEGA Platform | QSAR Tool | Environmental Fate Prediction | Multiple models for persistence, bioaccumulation, and mobility [24] |
| MolCompass | Visualization Framework | Chemical Space Navigation | Parametric t-SNE for visual validation of QSAR models [23] |
| CPANN Algorithms | Modeling Algorithm | Neural Network QSAR | Adaptive descriptor importance weighting; interpretable models [20] |

Advanced Concepts and Future Directions

Interpretability and Mechanistic Insight

A significant challenge in modern QSAR modeling lies in balancing predictive accuracy with interpretability. The Organisation for Economic Co-operation and Development (OECD) emphasizes the importance of "a mechanistic interpretation, if possible" as one of its key principles for QSAR validation [20]. Advanced approaches like the modified Counter-Propagation Artificial Neural Networks (CPANN) dynamically adjust molecular descriptor importance during training, allowing identification of key molecular features responsible for classifying molecules into specific endpoint classes [20]. This capability bridges the gap between "black box" predictions and chemically meaningful insights, potentially revealing relationships between selected molecular descriptors and known structural alerts for toxicity or other endpoints [20].

Expanding the Applicability Domain

The applicability domain (AD) represents the chemical space region where a QSAR model can reliably predict activity, and its proper definition remains crucial for trustworthy predictions [23]. Recent approaches focus on visual validation of QSAR models, enabling researchers to identify compounds or regions of chemical space where model predictions are unsatisfactory [23]. Tools like MolCompass implement parametric t-SNE models to create deterministic projections of chemical space, allowing consistent mapping of new compounds and facilitating identification of "model cliffs" where small structural changes lead to large prediction errors [23]. This visualization approach complements numerical AD metrics and enhances understanding of model limitations.

Emerging Trends and Universal QSAR Goals

The pursuit of universally applicable QSAR models capable of reliably predicting properties/activities across diverse chemical spaces continues to drive methodological innovations. Bibliometric analyses reveal several emerging trends, including the development of larger and higher-quality datasets, more accurate molecular descriptors, and the integration of deep learning methods that automatically learn relevant features from molecular structures [19]. The ongoing challenge lies in addressing three fundamental requirements: (1) sufficient training data to cope with molecular complexity and diversity, (2) precise molecular descriptors that balance dimensionality and computational cost, and (3) powerful yet flexible mathematical models capable of learning complex structure-activity relationships [19]. As these elements continue to evolve, the predictive ability, interpretability, and application domain of QSAR models will continue to expand, solidifying their role as indispensable tools in molecular design and drug discovery.

The Crucial Role of QSAR in Modern Drug Discovery and Development

Quantitative Structure-Activity Relationship (QSAR) modeling represents a cornerstone of modern computational drug discovery, enabling researchers to mathematically correlate the chemical structures of compounds with their biological activities. The foundational principle of QSAR—that a molecule's biological activity is determined by its molecular structure—has transformed pharmaceutical development from a largely empirical process to a rational, data-driven science [25]. Over the past six decades, QSAR has evolved from simple linear models based on a few physicochemical parameters to sophisticated artificial intelligence (AI)-driven approaches capable of navigating complex chemical spaces [19]. This evolution has positioned QSAR as an indispensable tool for addressing the formidable challenges of contemporary drug development, where escalating costs (exceeding $2.8 billion per approved drug), extended timelines (10-15 years), and high failure rates necessitate more efficient and predictive approaches [16].

The integration of QSAR methodologies into drug discovery pipelines provides a strategic framework for prioritizing chemical synthesis and experimental testing, significantly reducing resource burdens while increasing the probability of success. By enabling the virtual screening of large compound libraries, QSAR models allow researchers to focus experimental efforts on the most promising candidates, thereby compressing discovery timelines and reducing reliance on extensive animal testing [26]. In today's era of AI-enabled drug discovery, QSAR has emerged as a platform technology that synergizes with structural biology, cheminformatics, and machine learning to accelerate the identification and optimization of therapeutic compounds across diverse disease areas [27] [28].

Theoretical Foundations and Methodological Evolution

Historical Development and Basic Principles

The conceptual origins of QSAR trace back to the 19th century when Crum-Brown and Fraser first proposed that the physiological activity of molecules depends on their chemical structure [16]. The field formally began in the early 1960s with the pioneering work of Hansch and Fujita, who developed a method for predicting biological activity using physicochemical parameters such as lipophilicity (log P), electronic properties (Hammett constants), and steric effects [25]. This approach, known as Hansch analysis, established the fundamental QSAR paradigm of expressing biological activity as a mathematical function of molecular descriptors:

Activity = f(D₁, D₂, D₃...) where D₁, D₂, D₃ represent molecular descriptors [16].
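For concreteness, one commonly quoted form of the Hansch equation is shown below. The specific terms are given here as a textbook illustration and are not taken from the cited sources: C is the molar concentration producing a defined biological effect, log P the lipophilicity, σ the Hammett electronic constant, E_s a steric parameter (the Taft constant is assumed), and k₁ to k₅ are coefficients fitted by regression.

```latex
\log\frac{1}{C} = k_1 \log P - k_2 (\log P)^2 + k_3\,\sigma + k_4\,E_s + k_5
```

The negative quadratic term in log P reflects the frequent observation that activity rises with lipophilicity up to an optimum and then falls.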

The underlying principle of similarity states that compounds with similar structures tend to exhibit similar biological activities, forming the basis for predicting properties of novel compounds based on their position in chemical space [25]. This principle enables QSAR models to generalize from known structure-activity relationships to new chemical entities, providing a powerful framework for molecular design.
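A minimal sketch of the similarity principle follows. It assumes molecules are represented as sets of "on" fingerprint bits and uses the Tanimoto coefficient as the similarity measure; the text above does not name a specific measure, so that choice, the bit sets, and the activities are all illustrative assumptions.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient between two sets of fingerprint bit indices."""
    a, b = set(bits_a), set(bits_b)
    union = a | b
    if not union:
        return 0.0  # two empty fingerprints: treat as dissimilar
    return len(a & b) / len(union)

def nearest_neighbor_prediction(query_bits, training_set, k=2):
    """Average the activities of the k training compounds most similar to the query."""
    ranked = sorted(training_set,
                    key=lambda item: tanimoto(query_bits, item[0]),
                    reverse=True)
    top = ranked[:k]
    return sum(activity for _, activity in top) / len(top)

# Hypothetical (fingerprint bits, pIC50) pairs
training_set = [({1, 2, 3, 4}, 7.0), ({1, 2, 3, 9}, 6.6), ({10, 11, 12}, 4.2)]
query = {1, 2, 3, 5}
print(tanimoto(query, training_set[0][0]))
print(nearest_neighbor_prediction(query, training_set, k=2))
```

The query shares three of five distinct bits with each of the first two compounds, so the prediction is driven by them and the structurally unrelated third compound is ignored.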

The QSAR Workflow: From Data Curation to Model Validation

The development of reliable QSAR models follows a systematic workflow comprising several critical stages, each requiring rigorous execution to ensure predictive accuracy and relevance.

Figure 1: Comprehensive QSAR modeling workflow illustrating the sequential stages from data collection to predictive application.

The process begins with the collection and curation of high-quality datasets containing chemical structures and corresponding biological activities (e.g., IC₅₀, EC₅₀ values) obtained through standardized experimental protocols [16] [19]. Data curation is particularly critical, as chemical structure errors directly propagate to model inaccuracies [27]. The next stage involves molecular descriptor calculation, where chemical structures are translated into numerical representations encoding various physicochemical, topological, or quantum-chemical properties [19]. With thousands of potential descriptors available, feature selection and dimensionality reduction techniques such as Principal Component Analysis (PCA), Recursive Feature Elimination (RFE), or LASSO regularization are employed to identify the most relevant descriptors and mitigate overfitting [28] [19].

Model development applies statistical or machine learning algorithms to establish mathematical relationships between selected descriptors and biological activity. This stage typically utilizes a training set of compounds (approximately 75-80% of available data) to build the model [29]. Finally, rigorous validation assesses model performance on external test sets and defines the applicability domain—the chemical space within which the model provides reliable predictions [16] [19]. The leverage method is commonly used to determine this domain, ensuring that predictions are only made for compounds structurally similar to those in the training set [16].
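The leverage method mentioned above is usually written as follows. The notation is an assumption for illustration: X is the training-set descriptor matrix with n compounds and p descriptors, x_i is the descriptor vector of compound i, and the warning threshold h* shown is a widely used convention rather than a value stated in the cited sources.

```latex
h_i = \mathbf{x}_i^{\top}\left(\mathbf{X}^{\top}\mathbf{X}\right)^{-1}\mathbf{x}_i,
\qquad
h^{*} = \frac{3\,(p+1)}{n}
```

Under this convention, a compound with h_i > h* lies far from the centroid of the training descriptors, and its prediction is treated as an extrapolation outside the applicability domain.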

Molecular Descriptors: Encoding Chemical Information

Molecular descriptors serve as the fundamental building blocks of QSAR models, quantitatively representing specific aspects of molecular structure and properties. These descriptors are typically categorized based on the complexity of structural information they encode:

Table 1: Classification of Molecular Descriptors in QSAR Modeling

| Descriptor Type | Description | Examples | Applications |
|---|---|---|---|
| 1D Descriptors | Global molecular properties | Molecular weight, log P (partition coefficient), pKa | Preliminary screening, physicochemical property prediction |
| 2D Descriptors | Topological descriptors based on molecular connectivity | Molecular fingerprints, topological indices, graph-based descriptors | Similarity searching, large-scale virtual screening |
| 3D Descriptors | Spatial molecular features | Molecular surface area, volume, steric parameters, electrostatic potentials | Structure-based design, conformational analysis |
| 4D Descriptors | Conformational ensembles accounting for flexibility | Multiple molecular conformations, interaction fields | Pharmacophore modeling, receptor-based design |
| Quantum Chemical Descriptors | Electronic structure properties | HOMO-LUMO energies, dipole moment, electrostatic potential surfaces | Mechanism analysis, reactivity prediction |

Recent advances include "deep descriptors" derived from neural networks that automatically learn relevant molecular features from raw structural data such as SMILES strings or molecular graphs, potentially capturing more complex structure-activity relationships than traditional engineered descriptors [28].

Classical to Modern: The Evolution of QSAR Modeling Techniques

Classical Statistical Approaches

Classical QSAR methodologies rely on statistical techniques to establish linear relationships between molecular descriptors and biological activity. These methods remain valuable for their interpretability and robustness, particularly with limited datasets.

Multiple Linear Regression (MLR) represents one of the most widely used classical approaches, generating models in the form of simple linear equations that are easily interpretable [16] [28]. Partial Least Squares (PLS) regression excels in handling datasets with numerous correlated descriptors by projecting variables into a lower-dimensional space of latent factors [28]. Principal Component Regression (PCR) combines PCA with regression, using principal components as independent variables to address multicollinearity issues [28].

The primary limitation of classical approaches lies in their assumption of linear relationships between descriptors and activity, which often fails to capture the complex, nonlinear interactions prevalent in biological systems. Additionally, these methods typically require careful feature selection to avoid overfitting and maintain model interpretability [28].

Machine Learning and Ensemble Methods

Machine learning has dramatically expanded the capabilities of QSAR modeling by enabling the detection of complex, nonlinear patterns in high-dimensional chemical data. These algorithms automatically learn the relationship between molecular structure and biological activity without pre-specified assumptions about the underlying functional form.

Table 2: Machine Learning Algorithms in QSAR Modeling

| Algorithm | Principles | Advantages | Limitations |
|---|---|---|---|
| Random Forest (RF) | Ensemble of decision trees using bagging | Handles noisy data, built-in feature selection, robust to outliers | Limited extrapolation beyond training data |
| Support Vector Machines (SVM) | Finds optimal hyperplane to separate classes | Effective in high-dimensional spaces, memory efficient | Performance depends on kernel selection |
| k-Nearest Neighbors (kNN) | Predicts based on similar compounds in descriptor space | Simple implementation, no training phase | Computationally intensive for large datasets |
| Artificial Neural Networks (ANN) | Network of interconnected nodes mimicking neural processing | Captures complex nonlinear relationships, handles diverse data types | Requires large datasets, prone to overfitting |

Ensemble methods have emerged as particularly powerful approaches, combining multiple models to produce more accurate and stable predictions than any single constituent model. Comprehensive ensemble techniques that diversify across multiple subjects (different algorithms, descriptor types, and data splits) have demonstrated superior performance compared to individual models or limited ensembles [29]. For example, a comprehensive ensemble method applied to 19 bioassay datasets achieved an average AUC of 0.814, outperforming individual models like ECFP-RF (AUC 0.798) and PubChem-RF (AUC 0.794) [29].

Deep Learning and the Emergence of Deep QSAR

The integration of deep learning represents the cutting edge of QSAR modeling, giving rise to the subfield of "deep QSAR" [27]. Deep neural networks with sophisticated architectures can automatically learn hierarchical molecular representations directly from structural data, eliminating the need for manual descriptor engineering.

Graph Neural Networks (GNNs) operate directly on molecular graphs, treating atoms as nodes and bonds as edges, thereby naturally representing molecular topology [27] [28]. SMILES-based transformers adapt natural language processing techniques to process simplified molecular input line entry system strings as chemical "sentences" [27]. Convolutional Neural Networks (CNNs) applied to molecular structures can detect spatially localized structural patterns relevant to biological activity [29].

These deep learning approaches demonstrate particular strength in scenarios with large, diverse chemical datasets, where they can uncover complex structure-activity relationships that elude traditional methods. The ANN [8.11.11.1] architecture applied to NF-κB inhibitors, for instance, demonstrated superior reliability and predictive power compared to MLR models [16].

Experimental Protocols and Implementation Frameworks

Protocol: Development and Validation of a QSAR Model for NF-κB Inhibitors

The following detailed protocol exemplifies a robust QSAR modeling approach, as applied to Nuclear Factor-κB (NF-κB) inhibitors, a promising therapeutic target for immunoinflammatory diseases and cancer [16]:

Dataset Compilation:

- Curate 121 compounds with reported experimental IC₅₀ values against NF-κB from literature sources

- Ensure consistent activity measurements obtained through standardized bioassays

- Apply chemical structure standardization and curation to eliminate errors

Data Division:

- Randomly partition compounds into training (~66%) and test sets (~34%)

- Maintain similar activity distributions across both sets

- Apply the leverage method to define the applicability domain

Descriptor Calculation and Selection:

- Compute molecular descriptors using cheminformatics software (e.g., DRAGON, PaDEL, RDKit)

- Apply ANOVA for initial descriptor screening based on statistical significance

- Utilize correlation analysis and stepwise selection to identify optimal descriptor subsets
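The correlation-analysis part of this step can be illustrated with a simple redundancy filter. The sketch assumes a greedy rule (keep a descriptor only if its absolute Pearson correlation with every descriptor already kept is below 0.95); the threshold, the descriptor names, and the values are hypothetical, and the ANOVA screening and stepwise selection from the protocol are not reproduced here.

```python
import math

def pearson(a, b):
    """Pearson correlation coefficient; returns 0.0 if either input is constant."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0
    return cov / math.sqrt(var_a * var_b)

def drop_correlated(descriptors, threshold=0.95):
    """Keep a descriptor only if |r| with every already-kept one is below threshold."""
    kept = []
    for name, values in descriptors.items():
        if all(abs(pearson(values, descriptors[k])) < threshold for k in kept):
            kept.append(name)
    return kept

# Hypothetical descriptor columns for five compounds
descriptors = {
    "logP": [1.2, 2.3, 3.1, 0.8, 2.9],
    "MolWt": [180.0, 250.0, 310.0, 150.0, 295.0],
    "TPSA": [90.0, 40.0, 75.0, 60.0, 20.0],
}
print(drop_correlated(descriptors))
```

Because the filter is greedy, the result depends on descriptor order; in practice the more interpretable member of a correlated pair is often placed first so that it is the one retained.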

Model Development:

- Implement MLR with feature selection to generate linear models

- Train ANN architectures (e.g., [8.11.11.1] topology) using backpropagation

- Apply regularization techniques (e.g., weight decay, dropout) to prevent overfitting

Model Validation:

- Perform internal validation using 5-fold cross-validation on training set

- Conduct external validation using held-out test set

- Calculate statistical metrics: R² (coefficient of determination), Q² (cross-validated R²), RMSE (root mean square error)

- Apply Y-randomization to confirm model robustness
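Y-randomization can be sketched as follows: activities are shuffled against fixed descriptors, the model is refit, and the resulting scores are compared with the score on the true labels. For brevity the sketch swaps in a single-descriptor least-squares line in place of the MLR and ANN models of the protocol; the data, the number of rounds, and the seed are hypothetical.

```python
import random

def fit_r2(x, y):
    """R^2 of an ordinary least-squares line y = a + b*x (equals the squared correlation)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    return (sxy * sxy) / (sxx * syy)

def y_randomization(x, y, n_rounds=200, seed=0):
    """Refit the model on shuffled activities and collect the resulting R^2 values."""
    rng = random.Random(seed)
    scores = []
    for _ in range(n_rounds):
        shuffled = y[:]          # copy so the original activities stay intact
        rng.shuffle(shuffled)    # break the structure-activity pairing
        scores.append(fit_r2(x, shuffled))
    return scores

# Hypothetical single descriptor and pIC50 values
x = [1.0, 1.5, 2.1, 2.8, 3.3, 3.9, 4.6, 5.2, 5.8, 6.5]
y = [4.1, 4.6, 4.9, 5.6, 5.8, 6.5, 6.8, 7.4, 7.7, 8.3]
r2_true = fit_r2(x, y)
scrambled = y_randomization(x, y)
print(f"R2 (true labels): {r2_true:.3f}")
print(f"R2 (scrambled): mean={sum(scrambled) / len(scrambled):.3f} max={max(scrambled):.3f}")
```

A model that reflects a real structure-activity relationship should score far higher on the true labels than on the scrambled ones; comparable scores would point to chance correlation.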

Model Interpretation and Application:

- Analyze descriptor contributions to identify structural features influencing activity

- Utilize the validated model to predict activities of novel compounds

- Synthesize and test highest-ranking candidates for experimental verification

Successful QSAR modeling relies on specialized software tools, databases, and computational resources that collectively enable the construction and application of predictive models.

Table 3: Essential Resources for QSAR Modeling

| Resource Category | Specific Tools | Function | Availability |
|---|---|---|---|
| Cheminformatics Software | RDKit, PaDEL-Descriptor, DRAGON | Molecular descriptor calculation, structural analysis | Open-source / Commercial |
| QSAR Modeling Platforms | QSARINS, KNIME, Scikit-learn | Model development, validation, and visualization | Open-source / Commercial |
| Chemical Databases | PubChem, ChEMBL, ZINC | Source of chemical structures and bioactivity data | Publicly accessible |
| Molecular Visualization | PyMOL, Chimera, MarvinView | Structure manipulation and analysis | Freemium / Commercial |
| Programming Environments | Python, R, Julia | Custom model implementation and analysis | Open-source |

Applications in Contemporary Drug Discovery

Target-Based Drug Discovery

QSAR models have become integral to targeted drug discovery campaigns against specific therapeutic targets. In anti-breast cancer drug development, QSAR has been extensively applied to optimize compounds targeting estrogen receptors, HER2, and various kinase pathways [25]. Similarly, for Alzheimer's disease, researchers have developed 2D-QSAR models to design blood-brain barrier permeable BACE-1 inhibitors, successfully optimizing key molecular properties while maintaining potency [28].

In antiviral discovery, QSAR approaches have been deployed against SARS-CoV-2 targets, with machine learning models developed to screen potential main protease (Mᴾʳᵒ) inhibitors, rapidly identifying candidate compounds for experimental validation [28]. These target-specific applications demonstrate how QSAR accelerates lead optimization by providing clear design rules that mediate the trade-offs between potency, selectivity, and physicochemical properties.

ADMET Prediction and Toxicity Assessment

Beyond primary pharmacology, QSAR models have become indispensable tools for predicting absorption, distribution, metabolism, excretion, and toxicity (ADMET) properties early in the drug discovery process. These applications directly address the high attrition rates in drug development, where pharmacokinetic and safety issues remain leading causes of failure [26] [28].

Environmental toxicology represents another significant application area, where QSAR models predict the ecotoxicological effects of chemicals on various species, supporting regulatory decisions and green chemistry initiatives [26]. The implementation of QSAR in regulatory contexts, such as the REACH framework in Europe, highlights the maturity and reliability of well-validated models for specific endpoints [28].

Emerging Applications: PROTACs and Targeted Protein Degradation

QSAR modeling is expanding into novel therapeutic modalities, most notably proteolysis-targeting chimeras (PROTACs) and other targeted protein degradation approaches [28]. These heterobifunctional molecules present unique modeling challenges due to their larger size, complex physicochemical properties, and dual-target engagement requirements. QSAR approaches adapted for these degraders must account for ternary complex formation, cellular permeability challenges, and hook effect dynamics—representing an exciting frontier for methodological innovation [28].

Current Trends and Future Perspectives

AI Integration and Multidisciplinary Convergence

The integration of artificial intelligence with QSAR modeling continues to advance, with several emerging trends shaping the field's trajectory. Explainable AI approaches, including SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations), are addressing the "black box" problem of complex models by providing mechanistic insights into predictions [28]. Multi-task learning frameworks simultaneously model multiple biological endpoints, leveraging shared information to improve generalization, particularly for datasets with limited compounds [27] [29].

The field is also witnessing increased multidisciplinary integration, with QSAR serving as a bridge between computational chemistry, structural biology, and systems pharmacology [30]. This convergence enables the development of more physiologically relevant models that incorporate target engagement data from technologies like Cellular Thermal Shift Assay (CETSA) to validate computational predictions in biologically complex systems [30].

Quantum Computing and Next-Generation QSAR

Quantum computing represents a frontier technology with potential applications in QSAR modeling, particularly through Quantum Support Vector Machines (QSVMs) that leverage quantum mechanical principles to process information in Hilbert spaces [31] [32]. These approaches theoretically offer advantages for handling high-dimensional data and capturing complex molecular interactions, though they remain in early developmental stages [31].

Challenges and Implementation Considerations

Despite substantial advances, QSAR modeling faces several persistent challenges. Data quality and standardization remain critical, as model performance is fundamentally limited by the quality of training data [27] [19]. Model interpretability becomes increasingly difficult with complex deep learning architectures, creating barriers to chemical intuition and design [28]. Applicability domain characterization requires careful attention to ensure models are not applied beyond their validated chemical spaces [16] [19].

Successful implementation requires rigorous validation protocols, domain awareness, and integration with experimental verification in iterative design-make-test-analyze cycles. As the field progresses, the development of universal QSAR models capable of accurate predictions across diverse chemical spaces remains an aspirational goal—one that will require advances in dataset size and quality, molecular representation, and algorithm development [19].

QSAR modeling has evolved from its origins in linear regression to become an indispensable component of modern drug discovery, integrated throughout the value chain from target validation to lead optimization. The convergence of QSAR with artificial intelligence, structural biology, and experimental pharmacology has created a powerful ecosystem for accelerated therapeutic development. As methodological innovations continue to emerge—particularly in deep learning, explainable AI, and quantum-inspired algorithms—QSAR's predictive power and domain of applicability will continue to expand. For researchers and drug development professionals, mastery of QSAR principles and applications represents not merely a technical skill but a strategic imperative in the quest to develop novel therapeutics with greater efficiency and success.

Quantitative Structure-Activity Relationship (QSAR) modeling represents a cornerstone of modern computational chemistry and drug discovery, providing mathematical frameworks that correlate chemical structure with biological activity or physicochemical properties [18]. These models are founded on the fundamental principle that the structure of a molecule determines its properties, enabling researchers to predict the activity of new compounds without costly and time-consuming synthetic effort and biological testing [33]. The general form of a QSAR model is expressed as Activity = f(physicochemical properties and/or structural properties) + error, where the function relates molecular descriptors to a quantitative measure of biological response [18]. The evolution of QSAR methodologies has progressed through distinct generations characterized by increasing sophistication in molecular representation—from simple atomic counts to complex conformational ensembles—each building upon the limitations of its predecessor to offer more accurate and mechanistically insightful predictions [34] [28].

The predictive power of QSAR models has made them indispensable across multiple scientific disciplines, including drug discovery, toxicology, environmental science, and materials science [18] [33]. In pharmaceutical research specifically, QSAR approaches have transitioned from traditional statistical models to advanced machine learning and deep learning frameworks that can capture complex nonlinear relationships across expansive chemical spaces [28]. This technical guide examines the fundamental descriptor types that form the foundation of all QSAR modeling, categorized by their dimensional representation, and provides researchers with a comprehensive framework for selecting appropriate descriptors based on their specific research objectives.

Molecular Descriptors: The Foundation of QSAR

Molecular descriptors are quantifiable numerical representations that capture the structural, physicochemical, and biological properties of chemical compounds [34] [33]. These descriptors serve as the independent variables in QSAR models, encoding chemical information into a mathematical form suitable for statistical analysis and machine learning algorithms [28]. The process of transforming molecular structures into numerical descriptors enables the application of pattern recognition, regression techniques, and classification algorithms to predict biological activities and properties of untested compounds [34].

The concept of dimensionality in molecular descriptors refers to the level of structural representation used to compute them, ranging from simple atomic counts to complex representations that account for molecular flexibility and dynamics [33]. Higher-dimensional descriptors typically capture more complex structural information but require greater computational resources and more sophisticated modeling approaches [35]. The appropriate selection of descriptors is crucial for developing robust QSAR models, as it directly influences model accuracy, interpretability, and applicability domain [18] [33].

Table 1: Classification of Molecular Descriptors by Dimension

| Descriptor Dimension | Structural Information Encoded | Example Descriptors | Common Applications |
|---|---|---|---|
| 1D Descriptors | Global molecular properties derived from chemical formula | Molecular weight, atom counts, bond counts, logP [34] [33] | Preliminary screening, high-throughput profiling [33] |
| 2D Descriptors | Structural connectivity and topology | Topological indices, connectivity indices, 2D fingerprints, molecular graphs [34] [33] | Virtual screening, similarity searching, toxicity prediction [34] [33] |
| 3D Descriptors | Spatial molecular geometry and shape | Molecular surface area, volume, electrostatic potentials, steric fields [18] [36] [37] | Lead optimization, structure-based design [36] [37] |
| 4D Descriptors | Conformational ensembles and dynamics | Interaction energy descriptors (Lennard-Jones, Coulomb), occupancy profiles [35] [38] | Modeling flexible molecules, protein-ligand interactions [35] [38] |

The selection of appropriate descriptors must balance computational efficiency with representational completeness, while always considering the domain of applicability and the specific biological endpoint being modeled [18] [33]. As the pharmaceutical industry increasingly embraces AI-driven approaches, molecular descriptors continue to evolve, with graph-based representations and learned embeddings offering new opportunities for capturing complex structure-activity relationships [28].

1D Descriptors: One-Dimensional Representations

1D descriptors represent the most fundamental level of molecular representation, encoding global molecular properties that can be derived directly from the chemical formula or connection table without consideration of molecular geometry or topology [33]. These descriptors provide a coarse-grained characterization of molecules and are computationally efficient to calculate, making them suitable for initial screening and profiling of large chemical libraries [34]. Common 1D descriptors include molecular weight, element counts, ring counts, and the partition coefficient (LogP), which provides information about a compound's hydrophobicity [34] [33].
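To make the idea of formula-derived descriptors concrete, the following is a minimal sketch that computes molecular weight and atom counts from a molecular formula string. The function names and the small atomic-mass table are illustrative assumptions rather than part of any specific QSAR package, and the parser handles only simple formulas without brackets, charges, or isotopes.

```python
import re
from collections import Counter

# Approximate standard atomic masses (g/mol) for a few common elements.
# Illustrative subset only; a real tool would cover the full periodic table.
ATOMIC_MASS = {"H": 1.008, "C": 12.011, "N": 14.007, "O": 15.999,
               "F": 18.998, "S": 32.06, "Cl": 35.45, "Br": 79.904}

def parse_formula(formula):
    """Count atoms in a simple formula such as 'C9H8O4' (no brackets or charges)."""
    counts = Counter()
    for symbol, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        if symbol not in ATOMIC_MASS:
            raise ValueError(f"unsupported element: {symbol}")
        counts[symbol] += int(number) if number else 1
    return counts

def descriptors_1d(formula):
    """Return a few 1D descriptors that need nothing beyond the formula."""
    counts = parse_formula(formula)
    mol_weight = sum(ATOMIC_MASS[el] * n for el, n in counts.items())
    return {
        "mol_weight": round(mol_weight, 3),
        "n_atoms": sum(counts.values()),
        "n_heavy_atoms": sum(n for el, n in counts.items() if el != "H"),
        "n_carbon": counts["C"],
        "n_heteroatoms": sum(n for el, n in counts.items() if el not in ("C", "H")),
    }

aspirin = descriptors_1d("C9H8O4")
```

Because the input is only a formula, structural isomers receive identical descriptor values, which is the limited structural resolution that motivates the higher-dimensional descriptors.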

The primary advantage of 1D descriptors lies in their computational simplicity and ease of interpretation [33]. Models based on 1D descriptors typically train quickly and can provide initial structure-activity trends with minimal computational investment. However, this simplicity comes at the cost of limited structural resolution, as 1D descriptors contain no information about atomic connectivity or spatial arrangement [34]. Consequently, QSAR models based solely on 1D descriptors often lack the granularity needed for lead optimization stages in drug discovery, though they remain valuable for preliminary property profiling and high-throughput prioritization [33].

2D Descriptors: Two-Dimensional Representations

2D descriptors incorporate information about the connectivity of atoms within a molecule, representing the molecular structure as a graph where atoms correspond to vertices and bonds to edges [33]. This topological representation enables the calculation of descriptors that capture more nuanced structural patterns than is possible with 1D descriptors alone [34]. The most commonly used 2D descriptors include constitutional descriptors (representing molecular composition), electrostatic descriptors (reflecting electronic distribution), topological descriptors (derived from graph theory), and fragment-based descriptors that encode the presence of specific functional groups or substructures [33].
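As an illustration of descriptors derived from the molecular graph, the sketch below computes two classic topological indices from a hydrogen-suppressed adjacency list: the Wiener index (sum of shortest-path distances over all atom pairs) and the first Zagreb index (sum of squared vertex degrees). The dictionary-based graph encoding and the function names are assumptions made for this example.

```python
from collections import deque

def bfs_distances(graph, start):
    """Shortest path length (in bonds) from start to every reachable atom."""
    dist = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in graph[node]:
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    return dist

def wiener_index(graph):
    """Sum of shortest-path distances over all unordered atom pairs."""
    total = sum(sum(bfs_distances(graph, atom).values()) for atom in graph)
    return total // 2  # each pair was counted from both ends

def first_zagreb_index(graph):
    """Sum of squared vertex degrees."""
    return sum(len(neighbors) ** 2 for neighbors in graph.values())

# Hydrogen-suppressed carbon skeletons of two C4H10 isomers.
n_butane = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
isobutane = {0: [1, 2, 3], 1: [0], 2: [0], 3: [0]}

print(wiener_index(n_butane), wiener_index(isobutane))              # 10 9
print(first_zagreb_index(n_butane), first_zagreb_index(isobutane))  # 10 12
```

The two isomers share a formula, so they are indistinguishable to 1D descriptors, yet both indices separate them because branching changes path lengths and vertex degrees.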

Topological descriptors, such as connectivity indices and molecular fingerprints, are particularly valuable for similarity searching and virtual screening [34]. Molecular fingerprints, including MDL keys and PubChem fingerprints, represent molecules as bit strings that indicate the presence or absence of specific structural features [33]. These descriptors enable rapid comparison of chemical structures across large databases and have become fundamental tools in chemoinformatics [34]. The widespread adoption of 2D descriptors stems from their favorable balance between computational efficiency and structural information content, making them the most commonly used descriptor type in QSAR modeling [33].
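A fingerprint-based similarity search can be sketched in a few lines. The example below represents each fingerprint as the set of its "on" bit positions and ranks a small library by the Tanimoto coefficient, a widely used similarity measure for bit-string fingerprints. The bit positions and compound names are invented for illustration and do not correspond to real MDL or PubChem keys.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient: shared on-bits divided by total distinct on-bits."""
    a, b = set(fp_a), set(fp_b)
    union = len(a | b)
    # Convention chosen here: two empty fingerprints have similarity 0.0.
    return len(a & b) / union if union else 0.0

def rank_by_similarity(query, library):
    """Return (name, score) pairs sorted from most to least similar."""
    scores = {name: tanimoto(query, fp) for name, fp in library.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

# Hypothetical fingerprints: each integer is the index of a set bit.
query = {1, 4, 7, 9}
library = {
    "cmpd_A": {1, 4, 7, 9, 12},
    "cmpd_B": {1, 4, 20},
    "cmpd_C": {30, 31},
}

ranking = rank_by_similarity(query, library)
print(ranking)  # [('cmpd_A', 0.8), ('cmpd_B', 0.4), ('cmpd_C', 0.0)]
```

Storing only the set bits keeps the comparison cheap for sparse fingerprints; production toolkits typically use packed bit vectors for the same calculation.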

Table 2: Categories and Examples of 2D Molecular Descriptors

| Descriptor Category | Description | Specific Examples |
|---|---|---|
| Constitutional Descriptors | Properties related to molecular composition | Molecular weight, total number of atoms, number of aromatic rings [33] |
| Topological Descriptors | Properties derived from molecular graph representation | Connectivity indices, Wiener index, Zagreb index [33] |
| Electrostatic Descriptors | Properties related to electronic distribution | Partial atomic charges, dipole moment, polarizability [33] |
| Geometrical Descriptors | Properties related to atomic spatial arrangement (calculated from 2D coordinates) | Van der Waals surface area, shadow indices [33] |
| Fragment-Based Descriptors | Presence or absence of specific structural motifs | Molecular fingerprints, MDL keys, functional group counts [33] |

Despite their utility, 2D descriptors share a significant limitation with their 1D counterparts: they contain no explicit information about the three-dimensional conformation of molecules, which is often critical for biological recognition and activity [35]. This limitation becomes particularly important when modeling interactions with structurally defined biological targets, necessitating the use of higher-dimensional descriptors for more accurate activity prediction [36] [37].

3D Descriptors: Three-Dimensional Representations

3D descriptors encode information about the spatial arrangement of atoms in a molecule, providing a representation of molecular shape, steric bulk, and electronic distribution in three-dimensional space [18] [36]. These descriptors are typically derived from a single, low-energy conformation of a molecule or from an alignment of multiple molecules based on their putative binding mode [37]. The development of 3D-QSAR approaches, such as Comparative Molecular Field Analysis (CoMFA) and Comparative Molecular Similarity Indices Analysis (CoMSIA), represents a significant advancement in QSAR methodology by explicitly relating biological activity to interaction fields surrounding molecules [18] [36].

In 3D-QSAR studies, molecules are first aligned in three-dimensional space based on either experimental data (e.g., protein-ligand crystal structures) or molecular superimposition algorithms [18]. Interaction fields, including steric (shape) and electrostatic potentials, are then calculated at grid points surrounding the aligned molecules [36]. These interaction potentials serve as the 3D descriptors in the QSAR model, which is typically constructed using partial least squares (PLS) regression to handle the high dimensionality of the descriptor space [18] [36]. The resulting models provide visual representations of regions in space where specific molecular properties enhance or diminish biological activity, offering medicinal chemists intuitive guidance for structural modification [36].
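The field-calculation step can be sketched as follows: a charged probe is placed at each point of a regular grid, and its steric (12-6 Lennard-Jones) and electrostatic (Coulomb) interaction energies with the aligned molecule are recorded and truncated at a cutoff. This is a simplified illustration, not the CoMFA or CoMSIA implementation. The grid extent, spacing, cutoff, Lennard-Jones parameters, and the two-atom "molecule" are all placeholder assumptions.

```python
import itertools
import math

COULOMB_K = 332.06  # Coulomb constant in kcal*Angstrom/(mol*e^2)
CUTOFF = 30.0       # illustrative truncation of field energies, kcal/mol

def grid_points(lo, hi, spacing):
    """Regular cubic lattice spanning [lo, hi] on each axis."""
    n = int(round((hi - lo) / spacing)) + 1
    axis = [lo + i * spacing for i in range(n)]
    return list(itertools.product(axis, repeat=3))

def clip(value, limit=CUTOFF):
    """Truncate an energy to the range [-limit, +limit]."""
    return max(-limit, min(limit, value))

def probe_fields(atoms, point, probe_charge=1.0, epsilon=0.1, r_min=3.4):
    """Steric and electrostatic energy of a probe at one grid point.

    atoms: iterable of (x, y, z, partial_charge). epsilon and r_min are
    placeholder values shared by all atoms, not a real force field.
    """
    steric = 0.0
    electrostatic = 0.0
    for x, y, z, charge in atoms:
        r = max(math.dist(point, (x, y, z)), 1e-3)  # avoid division by zero
        ratio6 = (r_min / r) ** 6
        steric += epsilon * (ratio6 ** 2 - 2.0 * ratio6)
        electrostatic += COULOMB_K * probe_charge * charge / r
    return clip(steric), clip(electrostatic)

def field_descriptors(atoms, points):
    """One (steric, electrostatic) pair per grid point."""
    return [probe_fields(atoms, p) for p in points]

# Hypothetical two-atom "molecule" already placed in the common alignment frame.
atoms = [(0.0, 0.0, 0.0, -0.4), (1.2, 0.0, 0.0, 0.4)]
points = grid_points(-4.0, 4.0, 2.0)
fields = field_descriptors(atoms, points)
```

Even this coarse 5 x 5 x 5 grid yields 250 field values per molecule, far more than the number of compounds in a typical series, which is why a latent-variable method such as PLS is used rather than ordinary least squares.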

A recent application of 3D-QSAR modeling demonstrated its continued relevance in modern drug discovery. In a study on 6-hydroxybenzothiazole-2-carboxamide derivatives as monoamine oxidase B (MAO-B) inhibitors, researchers developed a CoMSIA model with a strong fit to the training data (r² = 0.915) and acceptable cross-validated predictivity (q² = 0.569, above the commonly used 0.5 threshold) [36]. The model identified key structural features influencing MAO-B inhibition and guided the design of novel derivatives with predicted nanomolar activity, which were subsequently evaluated through molecular docking and dynamics simulations [36]. Similarly, a 3D-QSAR study on indole derivatives as aromatase inhibitors for breast cancer treatment employed a Self-Organizing Molecular Field Analysis (SOMFA) approach, effectively predicting activity using shape and electrostatic potential fields [37].
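The two statistics quoted for such models measure different things: r² describes how well the model fits the compounds it was trained on, whereas q² is usually obtained by leave-one-out cross-validation and describes how well each compound is predicted when it is held out. The sketch below computes both for a one-descriptor linear model. The data are invented, and the q² definition used here (PRESS divided by the total sum of squares about the full-set mean) is one common convention, stated as an assumption.

```python
def fit_line(xs, ys):
    """Ordinary least-squares slope and intercept for one descriptor."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def total_sum_of_squares(ys):
    mean_y = sum(ys) / len(ys)
    return sum((y - mean_y) ** 2 for y in ys)

def r_squared(xs, ys):
    """Goodness of fit on the training data."""
    slope, intercept = fit_line(xs, ys)
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))
    return 1.0 - ss_res / total_sum_of_squares(ys)

def q_squared_loo(xs, ys):
    """Leave-one-out cross-validated q2 = 1 - PRESS / SS_tot."""
    press = 0.0
    for i in range(len(xs)):
        # Refit without compound i, then predict it.
        slope, intercept = fit_line(xs[:i] + xs[i + 1:], ys[:i] + ys[i + 1:])
        press += (ys[i] - (slope * xs[i] + intercept)) ** 2
    return 1.0 - press / total_sum_of_squares(ys)

# Hypothetical data: one descriptor (e.g. logP) against pIC50.
descriptor = [1.0, 1.5, 2.0, 2.5, 3.0, 3.5]
activity = [5.1, 5.4, 6.2, 6.3, 7.1, 7.2]

r2 = r_squared(descriptor, activity)
q2 = q_squared_loo(descriptor, activity)
print(f"r2 = {r2:.3f}, q2 = {q2:.3f}")
```

For a least-squares model under this convention q² cannot exceed r², so a large gap between the two is a common warning sign of overfitting.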

Figure 1: The typical workflow for 3D-QSAR model development, involving conformation generation, molecular alignment, field calculation, and model validation.

Despite their advantages in capturing spatial properties, 3D-QSAR methods have limitations, particularly their dependence on molecular alignment and their treatment of molecules as rigid entities with single, bioactive conformations [35]. This simplification fails to account for the dynamic nature of ligand-receptor interactions, where both partners exhibit conformational flexibility [35] [38]. This limitation has motivated the development of more advanced four-dimensional QSAR approaches that explicitly incorporate molecular flexibility.

4D Descriptors: Four-Dimensional Representations

4D descriptors extend the concept of 3D-QSAR by incorporating molecular flexibility as the fourth dimension, representing molecules as ensembles of conformations, orientations, tautomers, or protonation states rather than single static structures [35] [38]. This approach acknowledges that molecules exist as dynamic ensembles under physiological conditions and that biological recognition often involves induced-fit mechanisms [38]. In 4D-QSAR, descriptors are computed as averages over multiple molecular states, providing a more realistic representation of the conformational space sampled by flexible molecules [35].
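Ensemble averaging itself is simple to express. In the sketch below each molecular state (for example, an MD frame) contributes a descriptor vector, and the 4D descriptor is the weighted mean over states. Frames from an equilibrated trajectory are averaged uniformly; the optional Boltzmann weighting is included as an assumption for conformer sets that come with relative energies. All numbers and function names are illustrative.

```python
import math

KT_298 = 0.593  # approximate kT in kcal/mol near 298 K

def boltzmann_weights(energies, kt=KT_298):
    """Normalized Boltzmann weights from relative energies (kcal/mol)."""
    e_min = min(energies)  # shift by the minimum for numerical stability
    factors = [math.exp(-(e - e_min) / kt) for e in energies]
    total = sum(factors)
    return [f / total for f in factors]

def ensemble_average(frames, weights=None):
    """Weighted mean of per-state descriptor vectors (uniform if no weights)."""
    if weights is None:
        weights = [1.0 / len(frames)] * len(frames)
    n_descriptors = len(frames[0])
    return [sum(w * frame[j] for w, frame in zip(weights, frames))
            for j in range(n_descriptors)]

# Hypothetical per-frame descriptors: (LJ energy, Coulomb energy) at one grid point.
frames = [[-1.0, 0.2], [-0.6, 0.4], [-0.2, 0.6]]

uniform = ensemble_average(frames)  # approximately [-0.6, 0.4]
weights = boltzmann_weights([0.0, 0.5, 2.0])
weighted = ensemble_average(frames, weights)
```

The weighted result is pulled toward the lowest-energy state, which shows how the choice of ensemble and weighting scheme directly shapes the descriptor values passed to the model.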

The fourth dimension in these descriptors typically refers to ensemble averaging of molecular states, which addresses the conformational-flexibility and alignment problems that limit traditional 3D-QSAR methods [38]. Modern implementations of 4D-QSAR, such as the LQTA-QSAR method, use molecular dynamics (MD) simulations to generate conformational ensemble profiles (CEP) for each compound [38]. Interaction energy descriptors, including Lennard-Jones (LJ) and Coulomb (C) potentials, are computed from these ensembles and serve as the basis for model construction [38]. This MD-QSAR approach leverages GPU-accelerated computing and modern machine learning techniques to handle the computational cost of conformational sampling [35].

A recent application of 4D-QSAR to N-substituted urea/thioureas as human glutaminyl cyclase (hQC) inhibitors for Alzheimer's disease illustrates this approach [38]. The developed model fit the training data well (R² = 0.933), showed acceptable cross-validated predictivity (Q² = 0.521), and guided the design of new compounds with predicted enhanced activity [38]. Molecular dynamics simulations confirmed the stability of designed compounds in the hQC binding pocket, with several showing higher binding free energies than the reference compound [38]. This study exemplifies how 4D-QSAR can provide valuable insights for optimizing flexible molecules with complex structure-activity relationships.

Figure 2: 4D-QSAR workflow incorporating molecular dynamics simulations to account for conformational flexibility in descriptor calculation.