QSAR Model Validation and Applicability Domain: A Comprehensive Guide for Reliable Predictions in Drug Discovery

Abstract

This article provides a comprehensive overview of the critical principles and practices for validating Quantitative Structure-Activity Relationship (QSAR) models and defining their Applicability Domain (AD). Tailored for researchers, scientists, and drug development professionals, it covers the foundational importance of validation, detailed methodologies for internal and external validation, strategies for troubleshooting common pitfalls, and a comparative analysis of established validation criteria. With the increasing reliance on computational models for virtual screening and regulatory decisions, this guide synthesizes current knowledge to empower scientists in building, assessing, and deploying robust, reliable, and predictive QSAR models, ultimately enhancing the efficiency and success rate of drug discovery pipelines.

The Critical Pillars of QSAR: Understanding Validation and Applicability Domain

Why Validation is Non-Negotiable in QSAR Modeling

Frequently Asked Questions (FAQs)

1. Why is the coefficient of determination (r²) alone insufficient to prove my model is valid? A high r² value for your test set does not guarantee a predictive or reliable model. Statistical analyses of numerous published QSAR models reveal that a model can have an r² > 0.6 yet fail other, more rigorous validation criteria. Relying solely on r² can lead to models that are overfitted or have significant prediction errors for new compounds [1] [2].

2. What is the Applicability Domain (AD) and why is it mandatory? The Applicability Domain (AD) defines the chemical, structural, or biological space covered by the training data used to build the model [3]. It is a critical principle for assessing model reliability because a QSAR model is primarily valid for interpolation within the training data space, rather than extrapolation [3]. The OECD states that defining the AD is a fundamental principle for having a valid QSAR model for regulatory purposes [3]. Predictions for compounds outside the AD are considered unreliable.

3. My model performs well internally but fails on new data. What went wrong? This is a classic sign of overfitting, where your model has memorized the training data instead of learning the underlying structure-activity relationship. To avoid this, ensure you have used a proper external validation protocol. This involves splitting your data into a training set (for model development) and a test set (for final predictive assessment) before modeling begins [1] [4] [2]. Furthermore, verify that your model's Applicability Domain is well-defined, as high error can occur when predicting compounds structurally different from your training set [3] [5].

4. What are the key statistical parameters I should report for model validation? You should report a suite of parameters that evaluate different aspects of model performance. The table below summarizes essential metrics for regression models, many of which go beyond simple r² [1] [2].

Table 1: Key Statistical Parameters for QSAR Model Validation

| Parameter | Description | Acceptance Threshold |
|---|---|---|
| Q² (from LOO or LMO-CV) | Internal robustness/predictivity (from cross-validation) | Typically > 0.5 [6] |
| r² (test set) | Goodness-of-fit for external test set | > 0.6 is common, but not sufficient alone [2] |
| Concordance Correlation Coefficient (CCC) | Measures how well predictions mirror experiments (line of unity) | > 0.8 - 0.9 [2] |
| rₘ² | A metric incorporating r² and r₀² | A high value is desired; check specific literature [2] |
| Slope (K or K') | Slope of regression lines through origin | Should be close to 1 (e.g., 0.85-1.15) [2] |
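
As a rough illustration of why r² alone can mislead, the sketch below computes several of the Table 1 statistics in plain Python. The function name `external_metrics` is hypothetical, and the formulas for r₀² and rₘ² follow one common convention (observed regressed on predicted through the origin); other conventions exist, so treat this as an assumption rather than the definitive calculation.

```python
from math import sqrt

def external_metrics(y_obs, y_pred):
    """External-validation statistics for observed vs. predicted activities."""
    n = len(y_obs)
    mean_o = sum(y_obs) / n
    mean_p = sum(y_pred) / n
    s_oo = sum((o - mean_o) ** 2 for o in y_obs)
    s_pp = sum((p - mean_p) ** 2 for p in y_pred)
    s_op = sum((o - mean_o) * (p - mean_p) for o, p in zip(y_obs, y_pred))
    sum_op = sum(o * p for o, p in zip(y_obs, y_pred))

    r2 = s_op ** 2 / (s_oo * s_pp)                      # squared Pearson correlation
    # Lin's concordance correlation coefficient (agreement with the line of unity)
    ccc = 2 * s_op / (s_oo + s_pp + n * (mean_o - mean_p) ** 2)
    k = sum_op / sum(p * p for p in y_pred)             # slope through origin, obs vs. pred
    k_prime = sum_op / sum(o * o for o in y_obs)        # slope through origin, pred vs. obs
    # r0^2 under the assumed convention: fit obs = k * pred through the origin
    r0_sq = 1 - sum((o - k * p) ** 2 for o, p in zip(y_obs, y_pred)) / s_oo
    rm2 = r2 * (1 - sqrt(abs(r2 - r0_sq)))
    return {"r2": r2, "ccc": ccc, "k": k, "k_prime": k_prime,
            "r0_sq": r0_sq, "rm2": rm2}

# Predictions with a constant bias: perfectly correlated, but off the line of unity.
obs = [1.0, 2.0, 3.0, 4.0, 5.0]
biased = [o + 2.0 for o in obs]
metrics = external_metrics(obs, biased)
print({name: round(value, 3) for name, value in metrics.items()})
```

In this toy case r² stays at 1 while CCC drops to 0.5 and the slope k falls below 0.85, which is the kind of disagreement the table is meant to expose.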

5. Are there simple methods to define the Applicability Domain for my model? Yes, several common methods exist, ranging from simple range-based checks to more complex distance-based calculations. The choice of method depends on your model's complexity and descriptors. The table below outlines some standard approaches [3] [7].

Table 2: Common Methods for Defining the Applicability Domain (AD)

| Method | Brief Description | Considerations |
|---|---|---|
| Range-based (Bounding Box) | Checks if a new compound's descriptors fall within the min/max range of the training set descriptors. | Simple but can include large, empty regions with no training data [7]. |
| Leverage (Hat Matrix) | Identifies influential compounds in the model's descriptor space. A high leverage for a new compound indicates extrapolation [3]. | A common and statistically sound approach for regression models [3]. |
| Distance-Based (e.g., Euclidean, Mahalanobis) | Measures the distance from the new compound to the training set in descriptor space. | Requires defining a distance threshold. Mahalanobis distance accounts for correlation between descriptors [3] [7]. |
| Similarity-Based (e.g., Tanimoto on Fingerprints) | Calculates the structural similarity (e.g., using ECFP fingerprints) to the nearest neighbor in the training set [5]. | Intuitive and directly tied to the chemical similarity principle. |
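
The range-based check is simple enough to sketch in a few lines. The helper names `bounding_box` and `in_bounding_box` are hypothetical, and descriptors are assumed to be plain lists of numbers with one row per compound.

```python
def bounding_box(train_rows):
    """Per-descriptor (min, max) ranges over the training set."""
    return [(min(col), max(col)) for col in zip(*train_rows)]

def in_bounding_box(query, box):
    """True if every descriptor of the query lies within the training range."""
    return all(lo <= value <= hi for value, (lo, hi) in zip(query, box))

# Toy training set lying along a diagonal in a two-descriptor space.
train = [[0.0, 0.0], [0.1, 0.1], [0.9, 0.9], [1.0, 1.0]]
box = bounding_box(train)

print(in_bounding_box([0.5, 0.5], box))  # near the training data
print(in_bounding_box([0.0, 1.0], box))  # inside the box, but in an empty corner
print(in_bounding_box([1.5, 0.5], box))  # outside the range of the first descriptor
```

The second query illustrates the caveat in the table: it passes the range check even though no training compound is nearby, which is why range checks are often paired with a distance-based method.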

Troubleshooting Guides

Problem: Inconsistent External Validation Results Across Different Criteria

Issue: Your model passes some external validation checks but fails others, creating uncertainty about its predictive power.

Solution:

- Audit Your Validation Metrics: Systematically calculate all recommended validation parameters from Table 1. Do not cherry-pick only the successful ones.

- Check for Regression Through Origin (RTO) Artifacts: Some validation criteria (e.g., r₀²) use regression through the origin. Be aware that different software packages may calculate this parameter differently, leading to inconsistent results. Ensure you are using the correct statistical formulas [2].

- Adopt a Consensus Approach: A model should be considered predictive only if it satisfies a majority or a defined set of these criteria simultaneously. For example, a model might be required to have r² > 0.6, CCC > 0.8, and a slope K between 0.85 and 1.15 [2].
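
One way to make the consensus rule explicit is to encode the thresholds once and report every check rather than a single pass/fail flag. The function below is a minimal sketch; its name and default thresholds are assumptions taken from the example values quoted above.

```python
def passes_consensus(r2, ccc, k, r2_min=0.6, ccc_min=0.8, k_range=(0.85, 1.15)):
    """Return (overall verdict, per-criterion results) for an external test set."""
    checks = {
        "r2": r2 > r2_min,
        "ccc": ccc > ccc_min,
        "slope_k": k_range[0] <= k <= k_range[1],
    }
    return all(checks.values()), checks

# A model with acceptable correlation but poor agreement with the line of unity.
verdict, detail = passes_consensus(r2=0.72, ccc=0.65, k=0.97)
print(verdict, detail)
```

Reporting the per-criterion dictionary alongside the verdict makes it harder to cherry-pick only the criteria that passed.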

Problem: High Prediction Error for New Compounds

Issue: Your model has satisfactory internal validation statistics, but its predictions for new, external compounds are inaccurate.

Solution:

- Determine if the Compound is Within the Applicability Domain: This is the first and most critical step. Use one of the methods from Table 2 (e.g., leverage or distance-based) to check if the new compound falls within your model's AD. If it is outside, the prediction should be flagged as unreliable [3] [8].

- Analyze the Training Set Diversity: High external error often indicates that your training set does not adequately represent the chemical space you are trying to predict. Consider adding more diverse compounds to your training set to broaden the AD [5].

- Investigate Model Overfitting: Re-examine your model development process. Did you use too many descriptors relative to the number of compounds? Apply feature selection techniques to build a simpler, more robust model [6].

Experimental Protocols

Protocol 1: Standard Workflow for QSAR Model Validation

This protocol outlines the essential steps for developing and validating a robust QSAR model, integrating both statistical and applicability domain checks [1] [4] [2].

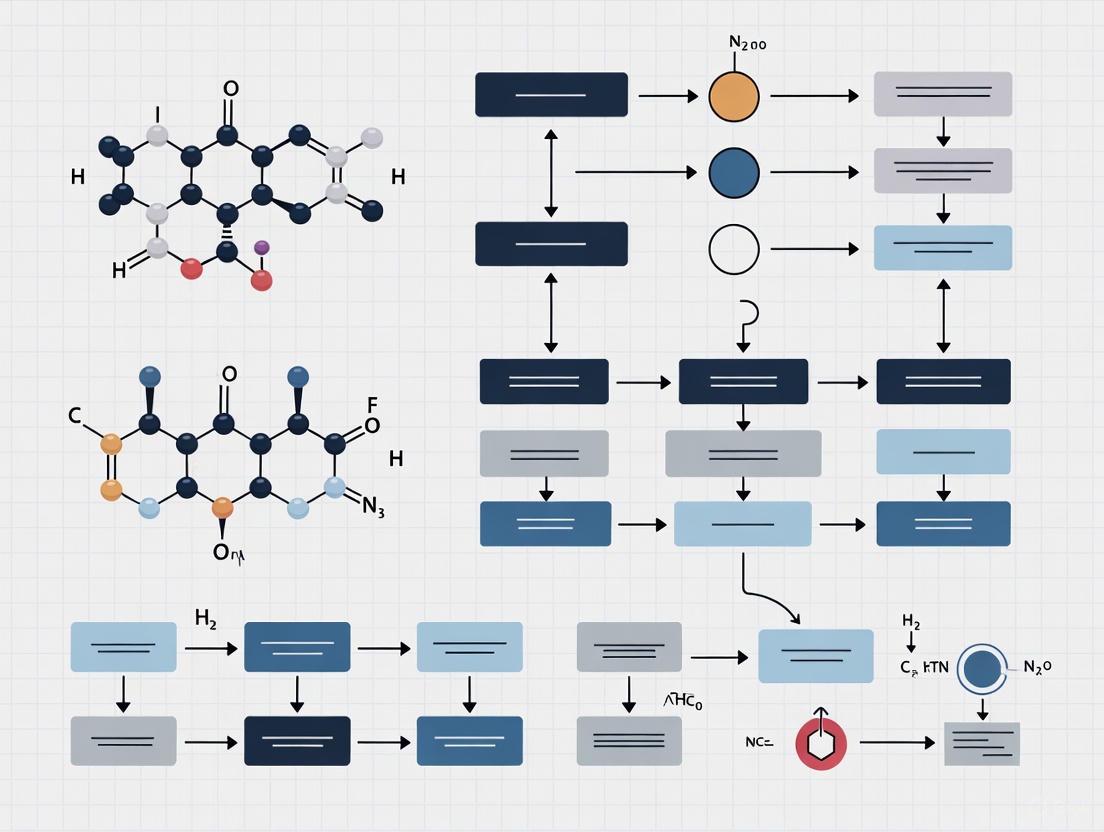

Diagram 1: QSAR Validation Workflow

Steps:

- Data Collection and Curation: Collect a high-quality dataset of compounds with known biological activities. Clean the data and remove duplicates.

- Data Splitting: Randomly split the dataset into a training set (typically ~70-80%) for model development and a test set (~20-30%) for external validation. This split must be done prior to descriptor calculation or model building to avoid data leakage.

- Descriptor Calculation and Selection: Calculate molecular descriptors or fingerprints for all compounds. Use feature selection methods on the training set only to reduce dimensionality and avoid overfitting.

- Model Development: Build the model (e.g., using Multiple Linear Regression, Random Forests, etc.) using only the training set data.

- Internal Validation: Assess model robustness using techniques like Leave-One-Out (LOO) or Leave-Many-Out (LMO) cross-validation on the training set. The cross-validated coefficient of determination (Q²) is a key metric here.

- Define Applicability Domain (AD): Using the training set data, calculate the boundaries of your model's AD using a chosen method (e.g., leverage, Euclidean distance).

- External Validation and AD Check:

- Use the finalized model to predict the activities of the test set compounds.

- Calculate the external validation statistics from Table 1 (e.g., r², CCC, rₘ²).

- Simultaneously, check if each test set compound falls within the defined AD.

- Final Model Assessment: A model is deemed predictive if the test set compounds are primarily within the AD and the external validation statistics meet the accepted thresholds.
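
The core of this workflow (split first, fit on the training set only, then compute Q² internally and r² externally) can be sketched with NumPy. Everything here is illustrative: the data are synthetic, ordinary least squares stands in for whatever modeling method is actually used, and the helper names are hypothetical.

```python
import numpy as np

def fit_ols(X, y):
    """Least-squares coefficients with an intercept column prepended."""
    A = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef

def predict(coef, X):
    return np.column_stack([np.ones(len(X)), X]) @ coef

def loo_q2(X, y):
    """Leave-one-out Q2 = 1 - PRESS / SS_total, computed on the training set."""
    press = 0.0
    for i in range(len(y)):
        keep = np.arange(len(y)) != i           # drop compound i, refit, predict it
        coef = fit_ols(X[keep], y[keep])
        press += (y[i] - predict(coef, X[i:i + 1])[0]) ** 2
    return 1.0 - press / np.sum((y - y.mean()) ** 2)

rng = np.random.default_rng(0)
X = rng.normal(size=(50, 3))                    # synthetic descriptors
y = X @ np.array([1.0, -2.0, 0.5]) + rng.normal(scale=0.1, size=50)

idx = rng.permutation(len(y))                   # random split before any fitting
train, test = idx[:40], idx[40:]

coef = fit_ols(X[train], y[train])              # model sees training data only
q2 = loo_q2(X[train], y[train])                 # internal validation
y_hat = predict(coef, X[test])                  # external prediction
r2_ext = np.corrcoef(y[test], y_hat)[0, 1] ** 2

print(f"Q2 (LOO) = {q2:.3f}, external r2 = {r2_ext:.3f}")
```

An AD check on each test compound would follow the external prediction step; it is omitted here to keep the sketch short.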

Protocol 2: Defining an Applicability Domain Using Leverage and Hat Values

This method is particularly useful for regression-based QSAR models [3].

Methodology:

- From your finalized model and training set data, construct the descriptor matrix (X).

- Calculate the hat matrix (H): H = X(XᵀX)⁻¹Xᵀ.

- The leverage of a compound is given by the diagonal elements of the hat matrix, hᵢᵢ.

- The critical leverage value (h*) is typically defined as h* = 3p/n, where p is the number of model descriptors + 1, and n is the number of training compounds.

- To check a new compound:

- Calculate its descriptor vector (xₙₑw).

- Calculate its leverage: hₙₑw = xₙₑw(XᵀX)⁻¹xₙₑwᵀ.

- If hₙₑw > h*, the compound is considered to have high leverage and is outside the AD, meaning the prediction is an extrapolation and should be used with caution.
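
The leverage check above translates fairly directly into NumPy. In this sketch the function name `leverage_ad` is hypothetical, and an intercept column is added to the descriptor matrix so that p equals the number of descriptors plus one; that choice is an assumption about how the model was fitted.

```python
import numpy as np

def leverage_ad(X_train, X_query):
    """Return (leverage of each query compound, critical leverage h* = 3p/n)."""
    A = np.column_stack([np.ones(len(X_train)), X_train])   # training model matrix
    Q = np.column_stack([np.ones(len(X_query)), X_query])   # query rows, same layout
    xtx_inv = np.linalg.pinv(A.T @ A)                        # (X^T X)^-1
    h = np.einsum("ij,jk,ik->i", Q, xtx_inv, Q)              # x (X^T X)^-1 x^T per row
    n, p = A.shape                                           # p = descriptors + 1
    return h, 3.0 * p / n

# One descriptor, ten evenly spaced training compounds.
X_train = np.arange(10, dtype=float).reshape(-1, 1)
X_query = np.array([[4.5], [100.0]])

h_new, h_star = leverage_ad(X_train, X_query)
for x, h in zip(X_query[:, 0], h_new):
    status = "outside AD" if h > h_star else "inside AD"
    print(f"x = {x:6.1f}  leverage = {h:8.3f}  ({status}, h* = {h_star:.2f})")
```

The pseudo-inverse is used instead of a plain inverse so the sketch does not fail on collinear descriptors; with a well-conditioned matrix the two give the same result.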

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for QSAR Modeling and Validation

| Tool / Reagent | Type | Function in QSAR Validation |
|---|---|---|
| Molecular Descriptor Software (e.g., Dragon) | Software | Calculates thousands of theoretical molecular descriptors from chemical structures, which form the independent variables (X) in the model [1]. |
| Morgan Fingerprints (ECFPs) | Molecular Representation | Encodes molecular structure as circular atom environments. Used for structural similarity searches and as descriptors for machine learning models, crucial for defining the AD [5]. |
| Tanimoto Distance/Similarity | Metric | A standard measure for quantifying structural similarity based on fingerprints. Used to find a compound's nearest neighbors in the training set for AD assessment [5] [9]. |
| Hat Matrix & Leverage | Statistical Metric | Identifies influential points in the model's descriptor space and is a core method for defining the Applicability Domain [3]. |
| Concordance Correlation Coefficient (CCC) | Statistical Metric | Measures the agreement between predicted and observed values, more rigorous than r² for confirming a model lines up with the line of unity [2]. |
| Kernel Density Estimation (KDE) | Statistical Method | A modern, advanced method for determining the Applicability Domain by estimating the probability density of the training data in feature space, effectively identifying sparse regions [7]. |

Frequently Asked Questions (FAQs)

1. What is an Applicability Domain (AD) and why is it crucial for QSAR models?

The Applicability Domain (AD) defines the boundaries within which a QSAR model's predictions are considered reliable. It represents the chemical, structural, or biological space covered by the training data used to build the model. The AD determines if a new compound falls within the model's scope, ensuring the model's underlying assumptions are met. Predictions for compounds within the AD are generally more reliable than those outside, as models are primarily valid for interpolation within the training data space rather than extrapolation. According to OECD principles, defining the AD is a mandatory requirement for a valid QSAR model for regulatory purposes [3] [10].

2. What are the common methods for defining the Applicability Domain?

No single, universally accepted algorithm exists, but several methods are commonly employed [3]:

- Range-based and Geometric methods: Such as Bounding Box (based on min/max descriptor values) and Convex Hull (smallest convex area containing the training set)

- Distance-based methods: Using Euclidean, Mahalanobis, or leverage values to measure similarity to training compounds

- Probability-density distribution-based strategies: Estimating the probability density distribution of the training data

Recent benchmarking suggests the standard deviation of model predictions may offer the most reliable AD approach [3].

3. How can I identify if my query compound is outside the model's Applicability Domain?

A compound may be outside the AD if [3] [10]:

- Its molecular descriptors fall outside the range of the training set descriptors (for Bounding Box method)

- It lies outside the convex hull of the training set in descriptor space

- Its distance (Euclidean, Mahalanobis, or leverage) from the training set centroid exceeds a defined threshold

- Its structural features differ significantly from training compounds when using fingerprint-based methods like Tanimoto distance on Morgan fingerprints
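
For the distance-to-centroid criterion, a Mahalanobis check might look like the sketch below. The function name is hypothetical, and using the 95th percentile of the training-set distances as the cutoff is an illustrative assumption, not a recommended standard.

```python
import numpy as np

def mahalanobis_to_centroid(X_train, X_query):
    """Mahalanobis distance of each query row from the training-set centroid."""
    mu = X_train.mean(axis=0)
    cov = np.atleast_2d(np.cov(X_train, rowvar=False))
    cov_inv = np.linalg.pinv(cov)               # pseudo-inverse tolerates collinearity
    d = X_query - mu
    return np.sqrt(np.einsum("ij,jk,ik->i", d, cov_inv, d))

rng = np.random.default_rng(0)
x = rng.normal(size=200)
# Two strongly correlated synthetic descriptors.
X_train = np.column_stack([x, x + 0.1 * rng.normal(size=200)])

# Both queries are roughly equally far from the centroid in Euclidean terms,
# but only the first one follows the correlation seen in the training data.
X_query = np.array([[2.0, 2.0], [2.0, -2.0]])

threshold = np.percentile(mahalanobis_to_centroid(X_train, X_train), 95)
distances = mahalanobis_to_centroid(X_train, X_query)
print(distances, threshold)
```

The second query breaks the correlation structure of the training set, so its Mahalanobis distance is far larger even though a Euclidean check would treat the two queries alike.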

4. What should I do if my compound falls outside the Applicability Domain?

Predictions for compounds outside the AD should be treated with extreme caution. Consider [3]:

- Using an alternative QSAR model with a different training set that includes compounds similar to your query

- Employing read-across approaches to identify similar compounds with experimental data

- Conducting experimental testing to verify predictions

- Using consensus predictions from multiple models

- Clearly documenting the AD limitation when reporting results for regulatory submissions

5. How does data quality in the training set affect the Applicability Domain?

Experimental errors in training data significantly impact model reliability and AD definition. Studies show that as the ratio of questionable data in modeling sets increases, QSAR model performance deteriorates. Compounds with large prediction errors in cross-validation are often those with potential experimental errors. However, simply removing these compounds doesn't necessarily improve external predictions due to overfitting risks [11].

Troubleshooting Guides

Problem: Inconsistent predictions when using different Applicability Domain methods

Symptoms: A compound is considered within AD by one method but outside by another; predictions vary significantly based on AD method used.

Solution:

- Understand the strengths and limitations of each AD method (see Table 1: Comparison of Major Applicability Domain Approaches)

- Use multiple complementary AD methods to make an informed decision

- For regulatory purposes, choose conservative AD methods that minimize false inclusions

- Consider the specific endpoint and chemical space when selecting AD methods

Prevention: Document all AD methods used and their criteria when reporting QSAR results. Use standardized AD approaches recommended for your specific application domain.

Problem: Model performs poorly even for compounds within the defined Applicability Domain

Symptoms: High prediction errors for compounds theoretically within AD; poor external validation metrics even when AD criteria are met.

Solution:

- Verify training data quality - experimental errors can undermine model reliability [11]

- Check for overfitting through rigorous cross-validation

- Re-evaluate descriptor selection - ensure they are relevant to the endpoint

- Assess whether the training set adequately represents the chemical space

- Consider consensus modeling with multiple algorithms

Prevention: Implement thorough data curation protocols before model development. Use multiple validation techniques throughout model development.

Problem: Defining Applicability Domain for complex machine learning models

Symptoms: Traditional AD methods don't align well with deep learning model behavior; uncertainty quantification is challenging.

Solution:

- For neural networks, consider using dropout-based uncertainty estimation or deep ensembles

- Implement SHAP (SHapley Additive exPlanations) or similar methods to interpret feature contributions [12]

- Use Bayesian neural networks for inherent uncertainty quantification

- Consider the model's performance on different chemical scaffolds, not just descriptor ranges

Prevention: Select modeling approaches with built-in uncertainty quantification when possible. Develop AD strategies during model training, not as an afterthought.
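
The idea behind ensemble-based uncertainty is that models trained on resampled data disagree more where training data are sparse. The sketch below uses a bootstrap ensemble of linear models as a lightweight stand-in for deep ensembles; it is not a neural network, and the helper names and synthetic data are assumptions for illustration.

```python
import numpy as np

def bootstrap_ensemble(X, y, n_models=50, seed=0):
    """Fit one least-squares model per bootstrap resample; return all coefficients."""
    rng = np.random.default_rng(seed)
    A = np.column_stack([np.ones(len(X)), X])
    coefs = []
    for _ in range(n_models):
        idx = rng.integers(0, len(y), size=len(y))   # sample with replacement
        c, *_ = np.linalg.lstsq(A[idx], y[idx], rcond=None)
        coefs.append(c)
    return np.array(coefs)

def predict_with_spread(coefs, X_query):
    """Ensemble mean and standard deviation for each query compound."""
    Q = np.column_stack([np.ones(len(X_query)), X_query])
    preds = Q @ coefs.T                               # shape (n_query, n_models)
    return preds.mean(axis=1), preds.std(axis=1)

rng = np.random.default_rng(1)
X = rng.uniform(0.0, 1.0, size=(30, 1))               # training descriptor in [0, 1]
y = 3.0 * X[:, 0] + rng.normal(scale=0.2, size=30)

coefs = bootstrap_ensemble(X, y)
mean, spread = predict_with_spread(coefs, np.array([[0.5], [10.0]]))
print(spread)   # disagreement is larger for the query far from the training range
```

A threshold on the spread (for example, a multiple of the spread observed on held-out training-like compounds) can then act as the AD criterion.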

Method Comparison Tables

Table 1: Comparison of Major Applicability Domain Approaches

| Method Type | Examples | Key Features | Limitations | Best Use Cases |
|---|---|---|---|---|
| Range-based | Bounding Box, PCA Bounding Box | Simple implementation; Easy interpretation | Cannot identify empty regions; Ignores descriptor correlations | Initial screening; High-throughput applications |
| Geometric | Convex Hull | Defines explicit boundaries | Computationally complex for high dimensions; Cannot detect internal empty regions | Low-dimensional descriptor spaces (2-3 dimensions) |
| Distance-based | Euclidean, Mahalanobis, Leverage | Handles correlated descriptors (Mahalanobis); Provides continuous measure of similarity | Threshold definition is arbitrary; Performance depends on distance metric chosen | Regression models (leverage); Correlated descriptor spaces |
| Probability Density-based | Kernel-weighted sampling | Accounts for data distribution; Identifies dense and sparse regions | Computationally intensive; Complex implementation | When training set distribution is non-uniform |

Table 2: Research Reagent Solutions for Applicability Domain Assessment

| Tool/Software | Function | Key Features | Access |
|---|---|---|---|
| OECD QSAR Toolbox | Integrated QSAR development and AD assessment | Regulatory-focused; Includes multiple AD methods; Read-across capability | Free download [13] |
| VEGA | QSAR platform with AD evaluation | Specifically designed for regulatory use; Multiple validated models | Freeware [14] |
| Dragon | Molecular descriptor calculation | Calculates 5000+ molecular descriptors; Essential for descriptor-based AD | Commercial |
| RDKit | Cheminformatics toolkit | Open-source; Descriptor calculation and similarity metrics | Open source |
| PaDEL-Descriptor | Molecular descriptor generation | Calculates 1875 descriptors and 12 types of fingerprints | Freeware |

Experimental Protocols

Protocol 1: Standardized Workflow for Assessing Applicability Domain

Purpose: To systematically evaluate whether new query compounds fall within a QSAR model's Applicability Domain.

Materials:

- Curated training set with experimental data

- Calculated molecular descriptors for training and query compounds

- QSAR model implementation

- Software for statistical analysis (R, Python, or specialized QSAR platforms)

Procedure:

- Descriptor Calculation: Calculate the same molecular descriptors used in model development for both training and query compounds

- Range Check: Verify all query compound descriptors fall within min-max range of training descriptors

- Leverage Calculation: Compute leverage values for query compounds using the hat matrix: h = x(XᵀX)⁻¹xᵀ, where X is the model matrix of training data [3]

- Distance Measurement: Calculate Mahalanobis or Euclidean distance to training set centroid

- Similarity Assessment: Compute structural similarity (e.g., Tanimoto distance on Morgan fingerprints) to nearest training compound

- Consensus Evaluation: Integrate results from multiple methods to make final AD determination

Troubleshooting: If different methods give conflicting results, consider the query compound "outside AD" for conservative regulatory applications.
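
The consensus step can be written down explicitly so the decision rule is documented alongside the results. In this sketch the function name and the method labels are hypothetical; the conservative mode implements the rule above (any method flagging the compound puts it outside the AD), while the alternative is a simple majority vote.

```python
def consensus_ad(flags, conservative=True):
    """Combine per-method AD verdicts.

    flags maps a method name to True when that method places the compound
    inside the AD. Conservative mode requires every method to agree.
    """
    inside = list(flags.values())
    if conservative:
        return all(inside)
    return sum(inside) > len(inside) / 2

query_flags = {
    "range": True,
    "leverage": True,
    "mahalanobis": False,
    "tanimoto_nn": True,
}

print(consensus_ad(query_flags))                      # conservative rule
print(consensus_ad(query_flags, conservative=False))  # majority vote
```

Keeping the per-method flags in the report, not just the final verdict, makes it clear which method raised the concern.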

Protocol 2: Evaluating Model Performance Across Applicability Domain

Purpose: To assess how prediction error changes with distance from training set.

Materials:

- Validated QSAR model

- Test set compounds with known experimental values

- Software for distance calculations and statistical analysis

Procedure:

- Distance Calculation: For each test compound, calculate distance to nearest training compound using Tanimoto distance on Morgan fingerprints

- Stratification: Bin test compounds based on their distance to training set (e.g., 0-0.2, 0.2-0.4, 0.4-0.6, 0.6-1.0)

- Error Calculation: Compute prediction errors (e.g., MSE, MAE) for each distance bin

- Trend Analysis: Evaluate how error changes with increasing distance from training set

- Threshold Definition: Establish practical AD boundaries based on acceptable error levels for your application

Expected Results: Prediction error typically increases with distance from training set [5]. For regulatory applications, conservative thresholds (e.g., Tanimoto distance < 0.4-0.6) are often appropriate.
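
A minimal sketch of the stratification procedure follows. Fingerprints are represented as Python sets of on-bit indices, which is an assumption made to keep the example dependency-free; in practice they would come from a cheminformatics toolkit. The helper names and toy data are hypothetical.

```python
def tanimoto_distance(a, b):
    """1 - |a & b| / |a | b| for fingerprints given as sets of on-bit indices."""
    union = len(a | b)
    return 0.0 if union == 0 else 1.0 - len(a & b) / union

def nearest_train_distance(fp, train_fps):
    return min(tanimoto_distance(fp, t) for t in train_fps)

def mae_by_distance_bin(test_fps, abs_errors, train_fps,
                        edges=(0.0, 0.2, 0.4, 0.6, 1.0)):
    """Mean absolute error of test compounds, binned by distance to the training set."""
    bins = {(lo, hi): [] for lo, hi in zip(edges, edges[1:])}
    for fp, err in zip(test_fps, abs_errors):
        d = nearest_train_distance(fp, train_fps)
        for lo, hi in bins:
            # Half-open bins, except that the last bin also includes its upper edge.
            if lo <= d < hi or (hi == edges[-1] and d == hi):
                bins[(lo, hi)].append(err)
                break
    return {b: (sum(e) / len(e) if e else None) for b, e in bins.items()}

train_fps = [{1, 2, 3, 4}]
test_fps = [{1, 2, 3, 4}, {1, 2, 3}, {7, 8}]   # distances 0.0, 0.25 and 1.0
abs_errors = [0.1, 0.3, 1.2]                    # |observed - predicted| per compound

for (lo, hi), mae in mae_by_distance_bin(test_fps, abs_errors, train_fps).items():
    print(f"distance {lo:.1f}-{hi:.1f}: MAE = {mae}")
```

Bins that contain no test compounds return `None`, which is a useful signal that the test set does not probe that part of the distance range.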

Workflow and Relationship Diagrams

Diagram: AD Assessment Workflow

Diagram: AD Method Classification

Frequently Asked Questions (FAQs)

FAQ 1: What are the OECD Principles for QSAR Validation and why are they critical for regulatory acceptance?

The OECD Principles for QSAR Validation are a set of five criteria that must be fulfilled for the results of (Q)SAR models to be accepted for regulatory purposes. They provide a scientific foundation to build trust in predictions and ensure consistency. One core requirement is that a model must be associated with a defined Applicability Domain (AD) [15]. The principles are [15] [16]:

- A defined endpoint: The biological effect or property being predicted must be transparently defined, as models can be constructed using data from different experimental conditions and protocols.

- An unambiguous algorithm: The algorithm used to construct the model must be clearly described.

- A defined domain of applicability: The region in chemical space where the model's predictions are reliable must be established.

- Appropriate measures of goodness-of-fit, robustness, and predictivity: The model must be statistically evaluated, preferably through both internal and external validation.

- A mechanistic interpretation, if possible: The model should be interpreted in the context of a biological or toxicological mechanism, where such knowledge exists.

FAQ 2: How is the Molecular Similarity Principle applied in modern predictive toxicology?

The Molecular Similarity Principle, often summarized as "similar compounds should behave similarly," is the foundation of many non-testing methods [17]. While originally focused on structural similarity, its application has broadened to include [17]:

- Physicochemical property similarity

- Similarity in metabolic fate (ADME)

- Biological similarity based on high-throughput screening data (e.g., from ToxCast)

This principle is directly applied in techniques like Read-Across (RA), where data gaps for a target chemical are filled by using data from similar source compounds [17]. More recently, the principle has been integrated with QSAR to create hybrid models known as read-across structure–activity relationships (RASAR), which use similarity descriptors to build models with enhanced predictivity [17].

FAQ 3: My QSAR model has high statistical accuracy, but its predictions are rejected for regulatory use. What is the most likely cause?

The most probable cause is a poorly defined or undocumented Applicability Domain (AD). A model with high accuracy for its training set may still make unreliable predictions for chemicals that are structurally or property-wise different from those it was built on [3]. Regulatory frameworks like REACH require that the applicability domain is clearly defined to understand the boundaries within which a model's predictions are reliable [15]. Predictions for compounds outside the AD are considered extrapolations and are treated with much lower confidence [3].

FAQ 4: What are the best practices for defining the Applicability Domain of a classification QSAR model?

Defining the AD is crucial for identifying reliable predictions. A benchmark study compared various AD measures and found that the best approach depends on whether you are performing novelty detection or confidence estimation [18].

Table: Efficiency of Applicability Domain Measures for Classification Models [18]

| Category | Purpose | Best Performing Measure | Key Finding |
|---|---|---|---|
| Novelty Detection | Flags compounds structurally dissimilar to the training set. Independent of the classifier. | Distance-based methods (e.g., Euclidean distance in descriptor space). | Identifies remote objects but is generally less powerful than confidence estimation. |
| Confidence Estimation | Estimates the reliability of a specific prediction using the classifier's information. | Class probability estimates (e.g., from Random Forest). | Consistently performs best for differentiating reliable from unreliable predictions. |

The study concluded that classification Random Forests in combination with their class probability estimates are a highly effective starting point for predictive classifiers with a well-defined AD [18].

Troubleshooting Guides

Problem: Read-Across (RA) predictions are deemed too subjective and lack reproducibility.

Background: Traditional, expert-driven RA can be difficult to reproduce and to get accepted by regulators [17].

Solution: Implement a more quantitative and systematic RA workflow.

Table: Troubleshooting Read-Across Predictions

| Symptom | Possible Cause | Solution |
|---|---|---|
| Regulatory pushback on RA justification. | Reliance solely on structural similarity for complex endpoints. | Provide further evidence of biological and toxicokinetic similarity, which the EU's REACH regulation requires, especially for human health effects [17]. |
| High uncertainty in RA predictions. | Lack of a framework to characterize and quantify uncertainty. | Adopt established frameworks like those from Schultz et al. or Patlewicz et al. to systematically document uncertainty [17]. |
| The RA prediction is an isolated, non-quantified estimate. | The approach is purely qualitative. | Use quantitative RA methods such as: (1) Generalized Read-Across (GenRA), a similarity-weighted average prediction based on multiple features [17]; (2) Quantitative RASAR (q-RASAR), which integrates RA with QSAR by using similarity descriptors in a machine learning model, enhancing objectivity and predictivity [17]. |
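
To make the idea of a similarity-weighted average concrete, here is a simplified sketch. It is not the GenRA implementation: the function names are hypothetical, fingerprints are assumed to be sets of on-bit indices, and only the k most similar source compounds are used.

```python
def tanimoto_similarity(a, b):
    """|a & b| / |a | b| for fingerprints given as sets of on-bit indices."""
    union = len(a | b)
    return 0.0 if union == 0 else len(a & b) / union

def similarity_weighted_prediction(query_fp, source_fps, source_values, k=3):
    """Weighted mean of the k most similar source compounds' measured values."""
    scored = [(tanimoto_similarity(query_fp, fp), value)
              for fp, value in zip(source_fps, source_values)]
    nearest = sorted(scored, key=lambda pair: pair[0], reverse=True)[:k]
    total = sum(sim for sim, _ in nearest)
    if total == 0:
        return None            # no similar analogue: no read-across estimate
    return sum(sim * value for sim, value in nearest) / total

source_fps = [{1, 2, 3}, {1, 2}, {9}]
source_values = [1.0, 4.0, 100.0]     # measured endpoint values of the analogues

# Uses the two closest analogues (similarities 1.0 and 2/3); the third is ignored.
print(similarity_weighted_prediction({1, 2, 3}, source_fps, source_values, k=2))
```

Returning `None` when no analogue shares any feature with the target keeps the method from producing an estimate that has no supporting evidence.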

Problem: Defining a scientifically sound Applicability Domain (AD) for a regression-based QSAR model.

Background: The OECD requires a defined AD, but no single algorithm is universally mandated [3]. The choice depends on the model and data.

Solution: Select and implement an appropriate AD method.

Table: Common Methods for Defining the Applicability Domain [3]

| Method Type | Description | Common Techniques |
|---|---|---|
| Range-Based | Defines the AD based on the range of descriptor values in the training set. | Bounding Box. |
| Geometric | Defines the geometric space occupied by the training data. | Convex Hull. |
| Distance-Based | Measures the distance of a new compound from the training set. | Euclidean or Mahalanobis distance. |
| Leverage-Based | A widely used method for regression models; calculates the leverage of a new compound based on the model's descriptor matrix. | The leverage value is compared to a critical threshold to determine if the compound is influential or outside the AD [3]. |

Experimental Protocol: Benchmarking Applicability Domain Measures

This protocol is based on a published benchmark study [18].

- Data Preparation: Curate a data set with known endpoint activities. Ensure it is representative of the chemical space of interest.

- Classifier Selection: Select a diverse set of classification techniques (e.g., Random Forest, Support Vector Machines, k-Nearest Neighbors).

- Model Training & Validation: Train each classifier on the training set. Use cross-validation (e.g., 5-fold CV) to estimate the general prediction error.

- Calculate AD Measures: For an independent test set, calculate various AD measures for each classifier. This includes:

- Novelty Detection Measures: Distance to training set in descriptor space.

- Confidence Estimation Measures: Class probability estimates from the classifiers themselves.

- Benchmarking: For each AD measure, compute a Receiver Operating Characteristic (ROC) curve to assess how well the measure differentiates between correct and incorrect predictions. Use the Area Under the ROC Curve (AUC ROC) as the primary criterion to rank the performance of the AD measures.

- Conclusion: Identify the AD measure that best characterizes the probability of misclassification for a given classifier.
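
The benchmarking step reduces to asking how well an AD measure ranks correct predictions above incorrect ones. The sketch below computes the AUC through the rank-sum identity in plain Python; the function name and the toy confidence values are assumptions for illustration.

```python
def auc_roc(scores, labels):
    """AUC via pairwise comparison: P(score of a positive > score of a negative).

    labels are 1 for positives and 0 for negatives; ties count as one half.
    """
    pos = [s for s, l in zip(scores, labels) if l == 1]
    neg = [s for s, l in zip(scores, labels) if l == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# AD measure: the classifier's maximum class probability for each test compound.
confidence = [0.95, 0.90, 0.80, 0.60, 0.55]
# Label: 1 if the class prediction was correct, 0 if it was wrong.
correct = [1, 1, 1, 0, 1]

print(auc_roc(confidence, correct))
```

For a novelty-detection measure such as distance to the training set, larger values indicate less reliable predictions, so the negated distance would be passed as the score.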

Diagram: QSAR Model Validation Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key Computational Tools for QSAR and Molecular Similarity

| Item | Function / Purpose | Example / Note |
|---|---|---|
| Molecular Descriptors | Quantitative representations of molecular structure and properties used as model inputs. | Topological indices, physicochemical properties (logP, polar surface area), quantum mechanical descriptors [17]. |
| Molecular Fingerprints | Binary vectors that encode the presence or absence of specific structural features. | Used for rapid similarity searching and as descriptors in machine learning models [17] [19]. |
| OECD QSAR Toolbox | Software designed to fill data gaps for chemicals by grouping them into categories and applying read-across and trend analysis. | A key tool for regulatory assessment, it helps identify profilers and structural alerts [15]. |
| Electrotopographic State Index (Sstate₃D) | A 3D atomic descriptor that encodes structural and electrostatic information. | Used in advanced similarity methods like Maximum Common Property (MCPhd) to go beyond pure topology [20]. |
| Class Probability Estimates | The probability of class membership output by a classifier (e.g., Random Forest). | The benchmarked best measure for defining the Applicability Domain of a classification model [18]. |

Diagram: Molecular Similarity Applications

The Consequences of Poor Validation and an Ill-Defined AD

Troubleshooting Guides

Guide 1: Diagnosing an Ill-Defined Applicability Domain

Problem: Your QSAR model performs well during internal tests but generates unreliable and erroneous predictions for new compounds.

| Symptom | Potential Cause | Corrective Action |
|---|---|---|
| High prediction errors for compounds structurally different from the training set. | The model's Applicability Domain (AD) is not defined, leading to uncontrolled extrapolation [3] [21]. | Formally define the AD using a suitable method (e.g., leverage, distance-based) and use it to screen prediction compounds [22]. |
| Inability to determine when a prediction is an interpolation vs. an extrapolation. | Lack of a defined boundary for the chemical/response space of the training set [22]. | Characterize the interpolation space of your training data using range-based, geometrical, or distance-based methods [3]. |
| The model frequently predicts compounds later verified to be outliers. | No method is in place to identify test compounds that are outside the model's structural or response space [21]. | Implement an outlier detection criterion, such as checking if a compound's descriptors fall outside the training set's threshold ranges [21]. |

Guide 2: Addressing Poor Model Validation

Problem: Your QSAR model has a high goodness-of-fit (r²) but fails to predict the activity of an external test set accurately.

| Symptom | Potential Cause | Corrective Action |

|---|---|---|

| High r² but low predictive r² (q² or external r²). | Overfitting and chance correlation; reliance on internal validation (e.g., LOO q²) alone is insufficient [22] [23]. | Adopt a rigorous validation protocol: split data into training/test sets and use external validation as the gold standard [24] [22]. |

| Model performance degrades significantly when applied to a new, external dataset. | The test set compounds are outside the model's Applicability Domain [3]. | Before external prediction, check that all external test compounds fall within the defined AD of your model [22]. |

| Unstable models that change drastically with minor changes in the training data. | The model lacks robustness, potentially due to irrelevant descriptors or overfitting [22]. | Perform Y-randomization (scrambling) to test for chance correlation and use ensemble methods to improve stability [22] [18]. |

Guide 3: Resolving Unreliable Predictions and Error Analysis

Problem: You are unable to trust individual predictions or estimate their associated error.

| Symptom | Potential Cause | Corrective Action |

|---|---|---|

| No confidence metric is provided with individual predictions. | The model uses no confidence estimation technique [18]. | Use classifiers that provide class probability estimates, which are natural confidence indicators [18]. |

| Predictions for similar compounds have highly variable and large errors. | The model is being applied in a region of chemical space with little or no training data [5]. | Employ a distance-based AD measure (e.g., Tanimoto distance) and reject predictions for compounds beyond a set threshold [5]. |

| The model's uncertainty estimates do not correlate with actual prediction errors. | The method for estimating uncertainty is unreliable for the given data distribution [7]. | Explore advanced domain classification techniques, such as those based on kernel density estimation, to better identify unreliable regions [7]. |
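The distance-based corrective action in the table above can be illustrated with a minimal sketch. It assumes fingerprints are stored as Python sets of "on" bit indices and uses a nearest-neighbour Tanimoto distance with an illustrative threshold of 0.6. The function names, the toy fingerprints, and the threshold are hypothetical choices for this example, not values taken from the cited studies.

```python
def tanimoto_distance(fp_a, fp_b):
    """Return 1 - |A n B| / |A u B| for fingerprints given as sets of bit indices."""
    union = len(fp_a | fp_b)
    if union == 0:
        return 0.0  # assumption: two empty fingerprints are treated as identical
    return 1.0 - len(fp_a & fp_b) / union


def nearest_training_distance(query_fp, training_fps):
    """Distance from the query to its closest training compound."""
    return min(tanimoto_distance(query_fp, fp) for fp in training_fps)


def in_domain(query_fp, training_fps, threshold=0.6):
    """Flag a query as inside the AD if its nearest neighbour is close enough."""
    return nearest_training_distance(query_fp, training_fps) <= threshold


# Toy fingerprints (hypothetical bit indices, for illustration only).
training_fps = [{1, 2, 3, 4}, {2, 3, 4, 5}, {10, 11, 12}]
similar_query = {1, 2, 3, 5}
distant_query = {20, 21, 22}

print(in_domain(similar_query, training_fps))
print(in_domain(distant_query, training_fps))
```

In practice the threshold would be tuned against held-out prediction errors rather than fixed in advance.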

Frequently Asked Questions (FAQs)

FAQ Category: Applicability Domain (AD)

Q1: What exactly is the Applicability Domain of a QSAR model, and why is it mandatory according to OECD Principle 3?

The Applicability Domain (AD) defines the chemical, structural, and biological space represented by the training data used to build a QSAR model. It establishes the boundaries within which the model's predictions are considered reliable [3] [21]. OECD Principle 3 mandates its definition because QSAR models are fundamentally based on interpolation. Predicting a compound outside the AD is an extrapolation, which carries higher uncertainty and risk. A defined AD helps users identify these situations, ensuring the model is used reliably for regulatory purposes [3] [22].

Q2: What are the common methods for defining the Applicability Domain?

There is no single universal method, but several approaches are commonly used [3] [21]:

- Range-based: Checking if a new compound's descriptor values fall within the range of the training set descriptors.

- Distance-based: Calculating the Euclidean, Mahalanobis, or Tanimoto distance of a new compound from the training set molecules in the descriptor space [3] [5].

- Geometrical Methods: Using a bounding box or convex hull to define the region containing the training set [3].

- Leverage Approach: For regression models, using the hat matrix to identify influential compounds and define the AD [3].

- Class Probability Estimates: For classification models, the estimated probability of class membership is a natural and powerful measure to define the AD and estimate prediction confidence [18].
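As a rough illustration of the simplest entry in the list above, the range-based check can be sketched in a few lines. The descriptor values below are invented, and the helper names are hypothetical; the sketch only shows the idea of comparing each query descriptor against the training minimum and maximum.

```python
def descriptor_ranges(training_rows):
    """Return a (min, max) pair for every descriptor column of the training set."""
    return [(min(column), max(column)) for column in zip(*training_rows)]


def out_of_range_descriptors(query, ranges):
    """Return the indices of descriptors whose value lies outside the training range."""
    return [
        index
        for index, (value, (low, high)) in enumerate(zip(query, ranges))
        if not low <= value <= high
    ]


# Toy training set: two descriptors per compound (values are illustrative only).
training_rows = [[1.0, 200.0], [2.5, 350.0], [3.0, 410.0]]
ranges = descriptor_ranges(training_rows)

print(out_of_range_descriptors([2.0, 300.0], ranges))  # empty list: inside the box
print(out_of_range_descriptors([4.2, 300.0], ranges))  # first descriptor is too high
```

A compound is treated as inside the bounding box only when the returned list is empty. Because each descriptor is checked independently, this approach ignores correlations between descriptors.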

Q3: My model has a well-defined AD, but it flags too many potentially interesting compounds as "outside the domain." What can I do?

A very conservative AD can limit the exploration of chemical space. Consider these options:

- Use More Powerful Algorithms: Recent evidence suggests that advanced machine learning models, particularly deep learning, may have a wider effective AD and a better ability to extrapolate than conventional QSAR algorithms [5].

- Benchmark AD Measures: Some AD measures are more efficient than others. Research indicates that for classification models, class probability estimates often outperform other measures in identifying unreliable predictions [18].

- Adjust the Threshold: The threshold for the AD is often a balance between reliability and coverage. You may adjust it based on the level of risk acceptable for your project, but this should be done transparently.

FAQ Category: Model Validation

Q4: What is the critical difference between internal and external validation, and why are both necessary?

- Internal Validation (e.g., cross-validation) assesses the model's robustness and goodness-of-fit using only the training set data. It checks how stable the model is to perturbations in its own data [22].

- External Validation assesses the model's true predictive power by testing it on a set of compounds that were not used in any part of the model development process [22] [23].

Both are necessary because a model can have an excellent fit and seem robust internally (high r² and q²) but still fail to predict new data if it is overfitted or has a narrow AD. External validation is the most definitive proof of a model's practical utility [22] [25].

Q5: What are the best practices for performing external validation?

- Proper Data Splitting: The full dataset should be split into a training set (for model building) and a test set (for external validation). Ideally, this should be done in a way that ensures the test set is within the AD of the training set [21] [22].

- Use of an External Test Set: The external test set must be completely excluded from the model training, variable selection, and parameter optimization phases [23].

- Report Predictive Metrics: Calculate and report predictive metrics such as r²(pred) for regression models or sensitivity/specificity for classification models on the external test set [22] [25].

- Apply the AD: Use the defined AD to assess whether the external test compounds are within the model's reliable prediction space [3].

FAQ Category: Data & Methodology

Q6: What are the OECD principles for QSAR validation?

The OECD established five principles to ensure the scientific validity and regulatory acceptance of QSAR models [22]:

- A defined endpoint: The biological activity being predicted must be clear and unambiguous.

- An unambiguous algorithm: The method used to generate the model must be transparent.

- A defined domain of applicability: The scope of the model must be clearly stated.

- Appropriate measures of goodness-of-fit, robustness, and predictivity: The model must be validated both internally and externally.

- A mechanistic interpretation, if possible: Providing a biological or chemical rationale for the model is encouraged, though not always required.

Q7: What are some common "research reagents" or essential components for building a reliable QSAR model?

The table below details key "research reagents" for robust QSAR modeling.

| Item / Solution | Function in the QSAR Experiment | Key Consideration |

|---|---|---|

| Curated Chemical Dataset | The foundational material containing chemical structures and associated biological activity data. | Data quality is paramount. Requires rigorous curation to remove errors and duplicates [24]. |

| Molecular Descriptors | Quantitative representations of chemical structure and properties (e.g., logP, molar refractivity, Verloop parameters [25]). | Descriptors should be meaningful and relevant to the endpoint. Variable selection helps avoid overfitting [23]. |

| Validation Framework | The protocol (internal & external) for testing model robustness and predictivity. | External validation is the most critical step for establishing trust in the model's predictions [22] [23]. |

| Applicability Domain (AD) Method | The tool to define the boundaries of reliable prediction (e.g., leverage, distance-to-model [3]). | Not a single universal method. The choice depends on the model and data. Class probability is effective for classification [18]. |

Experimental Protocols & Workflows

Protocol 1: Standard Workflow for Developing a Validated QSAR Model

This protocol outlines the critical steps for building a QSAR model that adheres to OECD principles and incorporates a defined Applicability Domain [24] [22].

Protocol 2: Methodology for Benchmarking Applicability Domain Measures

This protocol is based on benchmark studies that evaluate different AD measures to identify the most effective one for a given classification task [18].

1. Objective: To determine the best AD measure for differentiating between reliable and unreliable predictions from a QSAR classification model.

2. Materials & Software:

- Several binary classification techniques (e.g., Random Forests, Support Vector Machines).

- Multiple chemical datasets with categorical activity data.

- Computable AD measures (e.g., class probability, leverage, distance to training set).

3. Experimental Procedure:

- Step 1: Model Training. Train each classifier on the training set of each dataset.

- Step 2: Prediction. Use the trained models to predict an independent test set.

- Step 3: Calculate AD Measures. For each prediction in the test set, calculate the value of each AD measure.

- Step 4: Determine Reliability. Compare the model's prediction to the true activity value. A prediction is "reliable" if it is correct, and "unreliable" if it is incorrect.

- Step 5: Generate ROC Curves. For each AD measure, create a Receiver Operating Characteristic (ROC) curve by treating it as a classifier for prediction reliability.

- Step 6: Benchmark. Calculate the Area Under the ROC Curve (AUC ROC) for each AD measure. A higher AUC ROC indicates a better measure for identifying unreliable predictions.

4. Expected Outcome: Benchmarking studies have shown that class probability estimates provided by the classifier itself consistently perform well as an AD measure for classification models [18]. The results can be summarized in a comparative table:

| AD Measure | Classifier | Avg. AUC ROC (from benchmark studies) | Efficiency for AD |

|---|---|---|---|

| Class Probability | Random Forest | High (~0.85) | Best [18] |

| Leverage | PLS / MLR | Variable | Moderate |

| Euclidean Distance | Any | Variable | Moderate |

| Tanimoto Distance | Any | Variable | Moderate |
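Steps 4 to 6 of the procedure above can be sketched as follows. The example assumes the AD measure is the classifier's own confidence (the larger of the two class probabilities) and computes the AUC ROC as the fraction of (correct, incorrect) prediction pairs in which the correct prediction has the higher confidence. The probabilities and labels are invented for illustration, and `reliability_auc` is a hypothetical helper name.

```python
def reliability_auc(confidence, is_correct):
    """AUC ROC of an AD measure treated as a classifier of prediction reliability.

    Equals the probability that a randomly chosen correct prediction has a
    higher confidence than a randomly chosen incorrect one (ties count 0.5).
    """
    reliable = [c for c, ok in zip(confidence, is_correct) if ok]
    unreliable = [c for c, ok in zip(confidence, is_correct) if not ok]
    if not reliable or not unreliable:
        raise ValueError("need at least one correct and one incorrect prediction")
    wins = 0.0
    for r in reliable:
        for u in unreliable:
            if r > u:
                wins += 1.0
            elif r == u:
                wins += 0.5
    return wins / (len(reliable) * len(unreliable))


# Toy test-set output: predicted probability of the "active" class and true labels.
prob_active = [0.95, 0.80, 0.55, 0.40, 0.10, 0.65]
true_labels = [1, 1, 0, 0, 0, 0]

predicted = [1 if p >= 0.5 else 0 for p in prob_active]
confidence = [max(p, 1.0 - p) for p in prob_active]  # class probability as AD measure
is_correct = [pred == truth for pred, truth in zip(predicted, true_labels)]

auc = reliability_auc(confidence, is_correct)
print(round(auc, 3))
```

The same function can score any other AD measure, provided it is oriented so that larger values mean "more reliable" (a distance would be negated first).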

Visualization: The Relationship Between Model Error and Applicability Domain

The following diagram illustrates the core logical relationship that underpins the need for an Applicability Domain: prediction error generally increases as a compound becomes less similar to the training set data [5] [7].

A Practical Toolkit for QSAR Validation and Applicability Domain Implementation

Frequently Asked Questions

Q1: The external validation criteria for my QSAR model give conflicting results. One metric says the model is predictive, but another does not. Which one should I trust?

This is a common challenge. Relying on a single metric is not sufficient. The coefficient of determination (r²) alone, for instance, is not a reliable indicator of model validity [1] [2]. A model should be judged based on a consensus of multiple validation parameters.

- Recommended Action: Do not rely on a single criterion. Apply a set of complementary metrics, such as the Golbraikh & Tropsha criteria, the Concordance Correlation Coefficient (CCC), and the rm² metric together to get a holistic view of your model's predictivity [26] [2]. The CCC is noted for being particularly restrictive and prudent, often helping to make decisions when other measures conflict [26].

Q2: What is the most stringent validation metric to guard against over-optimistic model performance?

Based on comparative studies, the Concordance Correlation Coefficient (CCC) is shown to be the most restrictive and precautionary validation measure [26]. It evaluates not just the correlation, but also the agreement between observed and predicted data, ensuring that the predictions are both precise and accurate relative to the line of perfect concordance (the 45-degree line).

- Threshold: A CCC value greater than 0.8 is generally indicative of a predictive model [2].
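To make the distinction between correlation and agreement concrete, here is a minimal sketch of the CCC using Lin's formula, CCC = 2·s_xy / (s_x² + s_y² + (x̄ − ȳ)²), with population (1/n) variances. The data are invented; the example is chosen so that predictions are perfectly correlated with the observations but shifted by a constant offset.

```python
def concordance_correlation(observed, predicted):
    """Lin's concordance correlation coefficient with 1/n variances."""
    n = len(observed)
    mean_obs = sum(observed) / n
    mean_pred = sum(predicted) / n
    var_obs = sum((y - mean_obs) ** 2 for y in observed) / n
    var_pred = sum((p - mean_pred) ** 2 for p in predicted) / n
    covariance = sum(
        (y - mean_obs) * (p - mean_pred) for y, p in zip(observed, predicted)
    ) / n
    denominator = var_obs + var_pred + (mean_obs - mean_pred) ** 2
    if denominator == 0:
        raise ValueError("CCC is undefined when all values are identical")
    return 2.0 * covariance / denominator


observed = [1.0, 2.0, 3.0, 4.0]
shifted_predictions = [2.0, 3.0, 4.0, 5.0]  # perfectly correlated, offset by +1

print(round(concordance_correlation(observed, observed), 3))
print(round(concordance_correlation(observed, shifted_predictions), 3))
```

The shifted predictions have a Pearson correlation of 1 with the observations, yet their CCC falls below the 0.8 threshold because every prediction lies off the 45-degree line.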

Q3: How do I implement the rm² metric for my model validation?

The rm² metric is a stringent measure developed to assess a model's true predictive power by considering the actual difference between observed and predicted values without using the training set mean as a reference [27]. It has three variants:

- rm²(LOO): For internal validation using Leave-One-Out cross-validation.

- rm²(test): For external validation of the test set.

- rm²(overall): For analyzing the overall performance considering both internal and external sets [27]. This metric is widely used by QSAR experts to select the most robust and predictive models [2].

Comparison of Key External Validation Metrics

The table below summarizes the core principles, advantages, and challenges of the three major validation criteria discussed in the FAQs.

| Criterion | Key Principle | Key Statistical Thresholds | Reported Advantages | Common Challenges |

|---|---|---|---|---|

| Golbraikh & Tropsha [28] [24] [2] | A multi-faceted approach testing correlation and slope of regressions. | 1. r² > 0.6; 2. Slopes (K or K') between 0.85 and 1.15; 3. (r² - r₀²)/r² < 0.1 | Comprehensive, checks for consistency from multiple angles. | Calculations for r₀² can be a source of controversy and statistical debate [2]. |

| Concordance Correlation Coefficient (CCC) [26] [2] | Measures both precision and accuracy relative to the line of perfect agreement. | CCC > 0.8 | Considered the most restrictive and stable metric; helps resolve conflicts between other methods. | A conceptually simple but very stringent measure. |

| rm² Metric [27] [2] | Assesses predictivity based on direct differences between observed and predicted values. | A higher rm² value indicates better predictivity. | A stringent measure that is popular for model selection; has variants for different validation types. | Like the Golbraikh & Tropsha criteria, its calculation can be affected by the formula used for r₀² [2]. |

Experimental Protocol: Implementing a Multi-Metric Validation Strategy

This protocol provides a step-by-step methodology for rigorously validating a QSAR model using a consensus of the criteria outlined above, as recommended in best practices reviews [28] [24].

1. Data Preparation and Splitting

- Curate Your Dataset: Ensure chemical structures and biological data are accurate and standardized.

- Split Data: Divide the full dataset into a training set (for model development) and a test set (for external validation). The test set should never be used during model building.

2. Model Development

- Develop your QSAR model using the training set only, employing your chosen descriptor sets and statistical modeling techniques (e.g., MLR, PLS, ANN).

3. External Validation and Calculation

- Use the developed model to predict the activities of the compounds in the test set.

- Calculate the following statistical parameters by comparing the experimental versus predicted values for the test set:

- The coefficient of determination (r²).

- The slopes of the regression lines through the origin (K and K').

- The coefficients of determination for regression through the origin (r₀² and r₀'²).

- The rm² metric.

- The Concordance Correlation Coefficient (CCC).

4. Interpretation and Decision

- Compare your calculated values against the accepted thresholds for each criterion (as shown in the table above).

- A model should ideally satisfy all or a strong consensus of these criteria to be deemed predictive. The CCC can serve as a tie-breaker in cases of conflict [26].

- Always define the Applicability Domain of your model to understand for which new compounds reliable predictions can be made [28] [24].
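Step 3 of this protocol can be sketched in plain Python. Because the formula for r₀² is debated (as noted in the comparison table), the sketch fixes one convention and labels it as an assumption: r₀² is computed for observed values regressed on predicted values through the origin, r₀'² for the reverse regression, and rm² = r² · (1 − √|r² − r₀²|). The thresholds follow the comparison table (r² > 0.6, a slope between 0.85 and 1.15, (r² − r₀²)/r² < 0.1). The data and function names are illustrative only.

```python
from math import sqrt


def external_validation_stats(observed, predicted):
    """Return r2, k, k', r0_2, r0'_2 and rm2 for an external test set."""
    n = len(observed)
    mean_obs = sum(observed) / n
    mean_pred = sum(predicted) / n
    ss_obs = sum((y - mean_obs) ** 2 for y in observed)
    ss_pred = sum((p - mean_pred) ** 2 for p in predicted)
    cross = sum((y - mean_obs) * (p - mean_pred) for y, p in zip(observed, predicted))
    r2 = cross ** 2 / (ss_obs * ss_pred)

    sum_yp = sum(y * p for y, p in zip(observed, predicted))
    k = sum_yp / sum(p * p for p in predicted)        # observed = k * predicted
    k_prime = sum_yp / sum(y * y for y in observed)   # predicted = k' * observed

    # Assumed convention for the through-origin coefficients of determination.
    r0_2 = 1.0 - sum((y - k * p) ** 2 for y, p in zip(observed, predicted)) / ss_obs
    r0_prime_2 = 1.0 - sum(
        (p - k_prime * y) ** 2 for y, p in zip(observed, predicted)
    ) / ss_pred

    rm2 = r2 * (1.0 - sqrt(abs(r2 - r0_2)))
    return {"r2": r2, "k": k, "k_prime": k_prime,
            "r0_2": r0_2, "r0_prime_2": r0_prime_2, "rm2": rm2}


def passes_golbraikh_tropsha(stats):
    """Apply the three thresholds listed in the comparison table."""
    if stats["r2"] <= 0.6:
        return False
    slope_ok = 0.85 <= stats["k"] <= 1.15 or 0.85 <= stats["k_prime"] <= 1.15
    r0_ok = (stats["r2"] - stats["r0_2"]) / stats["r2"] < 0.1
    return slope_ok and r0_ok


observed = [5.1, 6.0, 6.8, 7.9, 9.2]
predicted = [5.0, 6.2, 6.9, 7.6, 9.0]
stats = external_validation_stats(observed, predicted)
for name, value in stats.items():
    print(f"{name}: {value:.4f}")
print("passes criteria:", passes_golbraikh_tropsha(stats))
```

Reporting which r₀² convention was used alongside the values makes results easier to compare across software packages.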

QSAR Model Validation Workflow

The following diagram illustrates the logical workflow for developing and validating a predictive QSAR model, incorporating the key validation stages.

The Scientist's Toolkit: Essential Reagents for QSAR Modeling

This table lists key software tools and resources essential for conducting rigorous QSAR model development and validation.

| Tool/Resource | Function in QSAR Modeling |

|---|---|

| OECD QSAR Toolbox [13] [29] | A comprehensive software platform for profiling chemicals, grouping into categories, and filling data gaps via read-across and QSAR models. Essential for regulatory application. |

| DRAGON Software [1] [2] | A widely used application for calculating thousands of molecular descriptors from chemical structures, which are the independent variables in a QSAR model. |

| Statistical Software (e.g., SPSS, R) [2] | Used for the core steps of model development (e.g., Multiple Linear Regression) and for calculating complex validation metrics (r², CCC, etc.). |

| Custom Calculators [13] | The OECD QSAR Toolbox allows for the building of custom calculators for specific data gap-filling needs, enhancing the flexibility of predictions. |

Frequently Asked Questions (FAQs) on Internal Validation for QSAR Models

1. What is the primary goal of internal validation? The goal of internal validation is to estimate how well your QSAR model will perform on new, unseen data drawn from the same population used for model development. It provides an estimate of the model's generalization error or prediction error [30] [31].

2. When should I use cross-validation over bootstrap validation, and vice versa?

Simulation studies suggest there is no single best method for all cases, but general guidelines exist [32]. Repeated cross-validation (e.g., 10-fold CV repeated 50-100 times) is an excellent competitor and is particularly recommended for extreme cases, such as when you have more predictors (p) than samples (N) [33] [32]. The bootstrap (especially the Efron-Gong optimism bootstrap) is often faster and is recommended for non-extreme cases (N > p), as it validates model building with the full sample size N [33] [32]. The .632+ bootstrap estimator is particularly useful for small sample sizes or when using discontinuous accuracy scoring rules [30] [32].

3. I've seen large discrepancies between my cross-validation and bootstrap results. What does this mean? A significant difference (e.g., 20+ points in a performance metric) can indicate issues with your validation setup or model stability. First, ensure you are using a sufficient number of repetitions. For cross-validation, a single 10-fold CV may be imprecise; it should be repeated 50-100 times for stable estimates [33] [32]. For bootstrap, 200-400 repetitions are typically used [33]. Second, and most critically, you must ensure that every step of the supervised learning process (including any feature selection based on the outcome variable Y) is repeated afresh within each resample of the validation. Failure to do this rigorously introduces bias and invalidates the validation [33].

4. What is "model selection bias" and how can I avoid it? Model selection bias occurs when the same data is used to both select a model (e.g., choose which features to include) and report its final performance. This leads to overoptimistic and untrustworthy error estimates because the validation data is not independent of the model selection process [31]. The solution is to use a method like double (nested) cross-validation, where an outer loop handles model assessment and an inner loop handles model selection and tuning. This ensures that the test set in the outer loop is completely blind to the model selection process [31].

5. How do I define the Applicability Domain (AD) for my QSAR model? The Applicability Domain is the region in chemical and response space where the model's predictions are reliable [21] [18]. Defining the AD is a fundamental principle for OECD-approved QSARs. Methods include:

- Range-based methods: Defining boundaries based on the descriptor ranges in the training set.

- Distance-based methods: Such as the leverage approach or Euclidean distance, to measure how similar a new compound is to the training set.

- Probability density estimation: Using techniques like kernel density estimation (KDE) to model the distribution of training data in the feature space [7].

- Leveraging the model itself: For classification models, the class probability estimate is often the most powerful measure for defining the AD, as it directly reflects an object's distance to the decision boundary and its likelihood of misclassification [18].

Troubleshooting Guide: Common Internal Validation Issues

Problem 1: Over-optimistic Model Performance

Symptoms:

- Performance during internal validation is much lower than the performance on the original training data.

- The model fails to predict new compounds accurately.

Possible Causes and Solutions:

- Cause: Non-rigorous Validation. The most common cause is that the model development process (especially feature selection) was not repeated within each validation resample [33].

- Solution: Implement a rigorous validation protocol where the entire model building workflow, from start to finish, is repeated for every training set partition in cross-validation or every bootstrap sample. Use double cross-validation if you are performing model selection [33] [31].

- Cause: Overfitting. The model is too complex and has learned the noise in the training data.

- Solution: Apply regularization techniques, simplify the model, or use a validation method like the .632+ bootstrap estimator, which is designed to correct for overfitting bias [30] [32].

Problem 2: High Variation in Performance Estimates

Symptoms:

- Each time you run the validation, you get a significantly different performance estimate.

Possible Causes and Solutions:

- Cause: Insufficient Repeats. A single run of k-fold cross-validation can have high variance [32].

- Solution: Use repeated cross-validation (e.g., 10-fold CV repeated 100 times) to average out the variability and obtain a more precise estimate [33] [32]. For bootstrap, using 400 or more resamples can stabilize the estimate.

Problem 3: Unreliable Predictions for New Compounds

Symptoms:

- The model performs well on the training and test sets but fails for external compounds.

Possible Causes and Solutions:

- Cause: Compound is Outside the Applicability Domain. The new compound is structurally or chemically too different from the compounds used to train the model [21] [18].

- Solution: Always define and report the Applicability Domain of your QSAR model. Before predicting a new compound, check if it falls within the AD using a suitable method like leverage, distance to training set, or class probability. Reject or flag predictions for compounds outside the AD [18].

Comparison of Key Internal Validation Methods

The table below summarizes the core characteristics of the most common internal validation techniques.

Table 1: Summary of Internal Validation Methods for QSAR Models

| Method | Key Principle | Key Formula / Output | Typical Number of Repetitions | Advantages | Disadvantages |

|---|---|---|---|---|---|

| k-Fold Cross-Validation | Data split into k folds; model trained on k-1 folds and tested on the left-out fold [30]. | Average performance across all k folds. | k=5 or k=10; repeated 50-100 times for precision [33] [32]. | Makes efficient use of all data; good balance of bias and variance. | Can be computationally expensive with repeats; training sets are correlated. |

| Bootstrap (Optimism) | Resample with replacement to create many datasets of size N; estimate & subtract optimism from apparent performance [33] [30]. | Optimism-adjusted performance: Apparent Performance - Optimism | 200-400 [33]. | Uses full sample size N for model building; often faster than repeated CV. | Poor performance in extreme N < p situations [33]. |

| Bootstrap (.632+) | Weighted average of the apparent performance and the out-of-bag (OOB) performance, corrected for overfitting [30] [32]. | θ.632+ = (1-ω)*θ_orig + ω*θ_OOB where weight ω accounts for overfitting rate [30]. | 200-400 | Corrects for the bias of the simple bootstrap; good for small samples and discontinuous scores [30] [32]. | Can be downwardly biased in small samples with high signal-to-noise [32]. |

| Double Cross-Validation | Two nested loops: outer loop for model assessment, inner loop for model selection/tuning [31]. | Unbiased performance estimate of the model selection process. | Varies (e.g., 10-fold outer, 5-fold inner) | Gold standard for unbiased error estimation when model selection is involved [31]. | Computationally very intensive. |
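The double cross-validation row above can be illustrated with a small, self-contained sketch. It assumes a toy one-descriptor dataset and a k-nearest-neighbour regressor whose only tunable setting is the number of neighbours; the inner loop picks that setting, and the outer loop scores the whole selection procedure on folds it never saw. All names, fold counts, and data are hypothetical choices for this example.

```python
import random


def knn_predict(train, x, k):
    """Average the responses of the k training points closest to x."""
    nearest = sorted(train, key=lambda row: abs(row[0] - x))[:k]
    return sum(y for _, y in nearest) / len(nearest)


def mse(train, test, k):
    return sum((knn_predict(train, x, k) - y) ** 2 for x, y in test) / len(test)


def make_folds(rows, n_folds):
    return [rows[i::n_folds] for i in range(n_folds)]


def cross_validated_mse(rows, k, n_folds):
    """Plain k-fold CV error, used here as the inner (model selection) loop."""
    folds = make_folds(rows, n_folds)
    scores = []
    for i, test in enumerate(folds):
        train = [row for j, fold in enumerate(folds) if j != i for row in fold]
        scores.append(mse(train, test, k))
    return sum(scores) / len(scores)


def nested_cv(rows, candidate_ks, outer_folds=5, inner_folds=4, seed=0):
    rows = rows[:]
    random.Random(seed).shuffle(rows)
    folds = make_folds(rows, outer_folds)
    outer_scores, chosen = [], []
    for i, test in enumerate(folds):
        train = [row for j, fold in enumerate(folds) if j != i for row in fold]
        # Model selection sees only the outer training part.
        best_k = min(candidate_ks, key=lambda k: cross_validated_mse(train, k, inner_folds))
        outer_scores.append(mse(train, test, best_k))
        chosen.append(best_k)
    return sum(outer_scores) / len(outer_scores), chosen


# Toy data: response is roughly linear in a single descriptor.
noise = random.Random(1)
data = [(0.25 * i, 2.0 * 0.25 * i + noise.gauss(0.0, 0.5)) for i in range(40)]

estimate, chosen_ks = nested_cv(data, candidate_ks=[1, 3, 5, 7])
print("outer-loop MSE estimate:", round(estimate, 3))
print("k chosen in each outer fold:", chosen_ks)
```

The setting chosen can differ between outer folds; the reported error describes the selection procedure as a whole rather than any single final model.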

Experimental Protocols for Key Validation Techniques

Protocol 1: Repeated k-Fold Cross-Validation

Objective: To obtain a robust and precise estimate of model prediction error.

1. Shuffle the dataset randomly.

2. Split the dataset into k folds of approximately equal size.

3. For each fold: a. Treat the current fold as the test set. b. Use the remaining k-1 folds as the training set. c. From the training set, repeat the entire model building process (including feature selection, parameter tuning, etc.). d. Fit the model and evaluate its performance on the test set.

4. Calculate the average performance across all k folds. This is one "repeat".

5. Repeat steps 1-4 a large number of times (e.g., 100 times).

6. Final Estimate: The overall average performance across all repeats and all folds is your estimate of prediction error.
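The protocol above can be sketched as follows. To show why the whole model-building process belongs inside each fold, the toy "model" here includes a feature selection step: it picks the single descriptor that fits the training fold best and then fits a one-variable linear regression. The data, the model, and the function names are assumptions made for illustration.

```python
import random


def fit_best_single_descriptor(train_X, train_y):
    """Feature selection plus fitting, using the training rows only."""
    n = len(train_y)
    mean_y = sum(train_y) / n
    best = None
    for j in range(len(train_X[0])):
        column = [row[j] for row in train_X]
        mean_x = sum(column) / n
        sxx = sum((x - mean_x) ** 2 for x in column)
        if sxx == 0:
            continue  # a constant descriptor carries no information
        sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(column, train_y))
        slope = sxy / sxx
        intercept = mean_y - slope * mean_x
        sse = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(column, train_y))
        if best is None or sse < best[0]:
            best = (sse, j, slope, intercept)
    _, j, slope, intercept = best
    return lambda row: intercept + slope * row[j]


def repeated_kfold_rmse(X, y, k=5, repeats=20, seed=0):
    rng = random.Random(seed)
    indices = list(range(len(y)))
    repeat_scores = []
    for _ in range(repeats):
        rng.shuffle(indices)                      # step 1: reshuffle
        fold_scores = []
        for f in range(k):                        # steps 2 and 3: loop over folds
            test_idx = indices[f::k]
            held_out = set(test_idx)
            train_idx = [i for i in indices if i not in held_out]
            model = fit_best_single_descriptor(
                [X[i] for i in train_idx], [y[i] for i in train_idx]
            )
            squared = [(model(X[i]) - y[i]) ** 2 for i in test_idx]
            fold_scores.append((sum(squared) / len(squared)) ** 0.5)
        repeat_scores.append(sum(fold_scores) / k)    # step 4: one "repeat"
    return sum(repeat_scores) / repeats               # final estimate


# Toy data: three descriptors, only the first one drives the response.
rng = random.Random(7)
X = [[rng.random() for _ in range(3)] for _ in range(30)]
y = [3.0 * row[0] + rng.gauss(0.0, 0.2) for row in X]

rmse_estimate = repeated_kfold_rmse(X, y, k=5, repeats=20, seed=0)
print("repeated 5-fold RMSE:", round(rmse_estimate, 3))
```

Selecting the descriptor once on the full dataset and only refitting the coefficients inside each fold would leak information from the test folds into the model.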

Protocol 2: Bootstrap .632+ Validation

Objective: To estimate prediction error while correcting for overfitting bias.

- Define the apparent performance (θ_orig) by training and testing the model on the entire original dataset.

- Define a non-informative model performance (γ), e.g., 0.5 for AUC.

- For b = 1 to B (B = 200-400): a. Take a bootstrap sample (random sample with replacement) of size N from the original data. This is the training set. b. The instances not in the bootstrap sample form the out-of-bag (OOB) test set. c. Train a model on the bootstrap sample, repeating the entire model building process. d. Calculate the performance (θ_OOB^b) of this model on the OOB sample.

- Calculate the average OOB performance: ⟨θ_OOB⟩ = average(θ_OOB^b)

- Calculate the relative overfitting rate: R = (⟨θ_OOB⟩ - θ_orig) / (γ - θ_orig)

- Calculate the weight: ω = 0.632 / (1 - 0.368*R)

- Final Estimate: Compute the .632+ estimate: θ.632+ = (1-ω)*θ_orig + ω*⟨θ_OOB⟩ [30].
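A minimal sketch of this protocol follows. It uses the formulas exactly as written above, with two additions that are assumptions of the sketch rather than part of the protocol: R is clamped to the interval [0, 1], and R is set to 0 when γ equals θ_orig. The demo uses a 1-nearest-neighbour classifier scored by accuracy, with γ = 0.5 taken as the no-information accuracy for a roughly balanced binary problem. All names and data are illustrative.

```python
import random


def combine_632_plus(theta_orig, theta_oob, gamma):
    """Combine apparent and out-of-bag performance into the .632+ estimate."""
    denominator = gamma - theta_orig
    rate = 0.0 if denominator == 0 else (theta_oob - theta_orig) / denominator
    rate = min(1.0, max(0.0, rate))           # assumption: clamp R to [0, 1]
    weight = 0.632 / (1.0 - 0.368 * rate)
    return (1.0 - weight) * theta_orig + weight * theta_oob


def bootstrap_632_plus(rows, fit, score, gamma, n_boot=200, seed=0):
    rng = random.Random(seed)
    n = len(rows)
    theta_orig = score(fit(rows), rows)       # apparent performance
    oob_scores = []
    for _ in range(n_boot):
        picked = [rng.randrange(n) for _ in range(n)]
        in_sample = set(picked)
        oob = [rows[i] for i in range(n) if i not in in_sample]
        if not oob:
            continue                          # extremely rare: nothing left out
        model = fit([rows[i] for i in picked])  # full model building on the resample
        oob_scores.append(score(model, oob))
    theta_oob = sum(oob_scores) / len(oob_scores)
    return combine_632_plus(theta_orig, theta_oob, gamma)


def fit_1nn(train_rows):
    """A 1-nearest-neighbour 'model' is just the stored training rows."""
    return list(train_rows)


def accuracy(model, rows):
    hits = 0
    for x, label in rows:
        nearest = min(model, key=lambda row: abs(row[0] - x))
        hits += nearest[1] == label
    return hits / len(rows)


# Toy binary data: the label depends on a noisy single descriptor.
rng = random.Random(42)
rows = []
for _ in range(40):
    x = rng.uniform(-2.0, 2.0)
    rows.append((x, 1 if x + rng.gauss(0.0, 0.7) > 0 else 0))

estimate = bootstrap_632_plus(rows, fit_1nn, accuracy, gamma=0.5)
print("apparent accuracy:", accuracy(fit_1nn(rows), rows))
print(".632+ accuracy estimate:", round(estimate, 3))
```

A 1-nearest-neighbour model reproduces its own training labels, so the apparent accuracy is maximal here and the .632+ estimate leans heavily on the out-of-bag performance.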

Workflow Visualizations

Bootstrap Validation Process

Double Cross-Validation Process

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Software and Methodological "Reagents" for QSAR Validation

| Tool / Solution | Category | Primary Function in Validation |

|---|---|---|

| Efron-Gong Optimism Bootstrap | Statistical Method | Estimates overfitting (optimism) directly and subtracts it from apparent performance for bias correction [33] [32]. |

| .632+ Bootstrap Estimator | Statistical Method | Provides a weighted performance estimate that balances apparent and out-of-bag performance, robust to overfitting [30] [32]. |

| Double (Nested) Cross-Validation | Validation Protocol | Prevents model selection bias by strictly separating data used for model selection from data used for performance assessment [31]. |

| Kernel Density Estimation (KDE) | Applicability Domain | Estimates the probability density of training data in feature space to define the Applicability Domain and identify outlier compounds [7]. |

| Class Probability Estimates | Applicability Domain / Confidence Measure | For classifiers, this provides a natural confidence score for each prediction, directly related to the likelihood of misclassification [18]. |

| Descriptor Calculation Software (e.g., RDKit, Dragon) | Cheminformatics Tool | Generates numerical molecular descriptors from chemical structures, which form the feature space for modeling and AD definition [34]. |

Frequently Asked Questions (FAQs)

Q1: What is the Applicability Domain (AD) of a QSAR model and why is it critical for regulatory acceptance? The Applicability Domain (AD) defines the boundaries within which a Quantitative Structure-Activity Relationship (QSAR) model's predictions are considered reliable. It represents the chemical, structural, and response space covered by the training data used to build the model [10] [3]. According to the OECD principles for QSAR validation, a defined AD is a mandatory requirement for models intended for regulatory use, such as under the EU's REACH legislation [10] [35]. It ensures that predictions are made for chemicals structurally similar to those in the training set, thereby minimizing unreliable extrapolations [10] [3]. Using a model outside its AD can lead to incorrect predictions and faulty decision-making, which is a significant risk in areas like drug development or environmental risk assessment [36].

Q2: I have built a regression QSAR model. Which method is most straightforward to implement for defining its AD? For a straightforward implementation, the leverage method is often recommended, particularly for regression-based models [10] [3]. It is computationally simple and is closely related to the Mahalanobis distance of a compound from the centroid of the training data in the descriptor space [10] [36]. The leverage for a compound is calculated from the "hat" matrix, H = X(XᵀX)⁻¹Xᵀ, where X is the model matrix of training set descriptors [10] [36]. The leverage of training compound i is the diagonal element hᵢᵢ of H; for a query compound with descriptor vector x, it is h = xᵀ(XᵀX)⁻¹x. A common rule is to set a warning threshold at a leverage value of 3p/n, where p is the number of model descriptors and n is the number of training compounds [10]. Compounds with a leverage higher than this threshold are considered influential and may be outside the AD.
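The leverage calculation can be sketched without any external libraries. This example assumes the descriptors are mean-centred on the training set and that X has no intercept column, so that p is simply the number of descriptors; other implementations include an intercept, which changes the values slightly. The data and helper names are hypothetical.

```python
def column_means(rows):
    return [sum(column) / len(column) for column in zip(*rows)]


def centered(row, means):
    return [value - mean for value, mean in zip(row, means)]


def solve_linear_system(a, b):
    """Solve a z = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    aug = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("X^T X is singular; remove collinear descriptors")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(n):
            if r != col:
                factor = aug[r][col] / aug[col][col]
                aug[r] = [v - factor * p for v, p in zip(aug[r], aug[col])]
    return [aug[i][n] / aug[i][i] for i in range(n)]


def leverage(query, training_rows):
    """h = x^T (X^T X)^-1 x, with x and X centred on the training means."""
    means = column_means(training_rows)
    X = [centered(row, means) for row in training_rows]
    x = centered(query, means)
    p = len(x)
    xtx = [[sum(r[i] * r[j] for r in X) for j in range(p)] for i in range(p)]
    z = solve_linear_system(xtx, x)
    return sum(xi * zi for xi, zi in zip(x, z))


# Toy training set: six compounds, two descriptors (illustrative values).
training_rows = [[1.0, 2.0], [2.0, 1.5], [3.0, 3.5],
                 [4.0, 3.0], [5.0, 5.5], [6.0, 5.0]]
p, n = len(training_rows[0]), len(training_rows)
warning_threshold = 3 * p / n

for query in ([3.5, 3.4], [10.0, 0.0]):
    h = leverage(query, training_rows)
    status = "inside AD" if h <= warning_threshold else "outside AD (high leverage)"
    print(query, round(h, 3), status)
```

Solving the linear system instead of explicitly inverting XᵀX is a design choice that keeps the sketch short; it yields the same leverage value.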

Q3: My test compound falls outside the AD according to the bounding box method but inside according to the distance-based method. Which result should I trust? This discrepancy is common because different AD methods characterize the chemical space differently. The bounding box method only considers the range of individual descriptors and can include large, empty regions within the hyper-rectangle, ignoring correlations between descriptors [10]. Distance-based methods, like Euclidean or Mahalanobis distance, measure the proximity of a test compound to the center or density of the training set, which often provides a more refined estimate of similarity [10] [37]. In this case, the distance-based result is likely more reliable. For critical applications, a consensus approach using multiple methods is advisable to get a more robust assessment of the AD [38].

Q4: How can I define the AD for a non-linear machine learning model, such as an Artificial Neural Network (ANN)? For non-linear models like ANNs, traditional methods designed for linear models may not be optimal. A distance-based approach using the Minimum Euclidean Distance Space (MEDS) has been successfully applied to Counter-Propagation ANNs (CP-ANNs) [37]. This method leverages the internal architecture of the network: during training, the minimum Euclidean distance from each input compound to the "winning" neuron in the Kohonen layer is calculated. The domain of the model is then defined by the maximum of these distances found in the training set. A query compound is considered within the AD if its Euclidean distance to its nearest neuron is less than or equal to this threshold [37]. Kernel Density Estimation (KDE) is another powerful, model-agnostic method that can handle the complex geometries often associated with non-linear model spaces [7].

Q5: What is the role of probability density distributions in defining the AD, and when should I use this method? Probability density distribution-based methods, such as Kernel Density Estimation (KDE), define the AD by estimating the probability density of the training set in the feature space [7]. A query compound falls within the AD if it lies in a region of feature space with a probability density above a predefined threshold. KDE is particularly advantageous because it naturally accounts for data sparsity and can identify arbitrarily complex, non-convex, and even multiple disjoint regions where the model is reliable [7]. This gives it an advantage over simpler geometric methods such as the convex hull, which can include large empty spaces with no training data. KDE is a general approach suitable for various model types, especially when the training data has a complex, non-uniform distribution [7].

Troubleshooting Guides

Issue 1: High False Positive Rate in Out-of-Domain Detection

Problem: Your AD method is flagging too many compounds as outliers, even though their predictions seem reasonable.

Solution: Systematically check the following:

- Review the Threshold: The threshold for defining "outside" the AD is often arbitrary and user-defined [10]. A threshold that is too strict will increase false positives.

- Check for Redundant Descriptors: The presence of highly correlated descriptors can skew distance calculations (like Euclidean distance) and leverage values.

- Action: Apply Principal Component Analysis (PCA) to your descriptors and define the AD in the principal component space (PCA Bounding Box) [10]. This transforms the axes to be orthogonal, correcting for correlation.

- Evaluate the Method Itself: Simple range-based methods (e.g., Bounding Box) are prone to this issue.
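
The PCA bounding box mentioned above can be sketched with NumPy alone. The example assumes z-scored descriptors, PCA computed through a singular value decomposition, and a hypothetical training set with two strongly correlated descriptors. The function names `fit_pca_box`, `inside_pca_box`, and `inside_raw_box` are illustrative.

```python
import numpy as np

def fit_pca_box(X_train):
    """Z-score the training descriptors, rotate onto principal axes, store score ranges."""
    mean = X_train.mean(axis=0)
    std = X_train.std(axis=0, ddof=1)
    Z = (X_train - mean) / std
    # Z is column-centred, so the rows of Vt are the principal axes.
    _, _, Vt = np.linalg.svd(Z, full_matrices=False)
    scores = Z @ Vt.T
    return {"mean": mean, "std": std, "axes": Vt.T,
            "lo": scores.min(axis=0), "hi": scores.max(axis=0)}

def inside_pca_box(X, box):
    """True for rows of X whose principal-component scores lie within the training ranges."""
    scores = ((X - box["mean"]) / box["std"]) @ box["axes"]
    return np.all((scores >= box["lo"]) & (scores <= box["hi"]), axis=1)

def inside_raw_box(X, X_train):
    """Plain bounding box on the original descriptors, for comparison."""
    return np.all((X >= X_train.min(axis=0)) & (X <= X_train.max(axis=0)), axis=1)

# Hypothetical training set: descriptor 2 closely tracks descriptor 1.
x1 = np.linspace(-3.0, 3.0, 61)
X_train = np.column_stack([x1, x1 + 0.2 * np.sin(7.0 * x1)])
box = fit_pca_box(X_train)

# First query breaks the correlation pattern; second is a copy of a training compound.
queries = np.vstack([[2.0, -2.0], X_train[40]])
print("raw bounding box:", inside_raw_box(queries, X_train))
print("PCA bounding box:", inside_pca_box(queries, box))
```

The first query lies within the range of each descriptor taken separately, so the raw box accepts it, but it violates the correlation between the two descriptors and is rejected in principal component space.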

Issue 2: Inconsistent AD Results Across Different Software Tools

Problem: You get different AD classifications for the same compound when using different software packages.

Solution: This is typically caused by differences in the underlying algorithm implementation or model-specific parameters.

- Identify the Core Algorithm: Different tools may use different default algorithms (e.g., leverage, k-NN, convex hull) or variations of the same algorithm [35] [39].

- Action: Carefully review the documentation of each software to confirm which AD method is being applied. For instance, the OPERA tool uses a combination of leverage and vicinity of query chemicals [39].

- Verify Input Data Standardization: Many AD methods are sensitive to the scale and standardization of the input descriptors. Inconsistent pre-processing between tools will lead to different results.

- Action: Ensure that the same standardization method (e.g., Z-score) is applied to your descriptors before feeding them into different software. The standardization formula is ( S_{ki} = (X_{ki} - \bar{X}_i) / \sigma_{X_i} ), where ( X_{ki} ) is the original descriptor value, ( \bar{X}_i ) is the mean, and ( \sigma_{X_i} ) is the standard deviation [35].

- Adopt a Consensus Approach: No single AD method is universally ideal [35] [38].

- Action: Do not rely on a single tool. Use multiple methods and software to get a consensus view. A compound flagged by several different methods is more confidently outside the AD than one flagged by only a single method.
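
As a minimal sketch of the Z-score step above, the helpers below compute the mean and standard deviation on the training set only and reuse them for any other set. The names `fit_zscore` and `apply_zscore` and the descriptor values are hypothetical. The `ddof` argument is exposed because tools differ in whether they use the sample (`ddof=1`) or population (`ddof=0`) standard deviation, which is itself a possible source of inconsistent AD results.

```python
import numpy as np

def fit_zscore(X_train, ddof=1):
    """Return per-descriptor mean and standard deviation of the training set."""
    return X_train.mean(axis=0), X_train.std(axis=0, ddof=ddof)

def apply_zscore(X, mean, std):
    """S_ki = (X_ki - mean_i) / std_i, using training-set statistics."""
    return (X - mean) / std

# Hypothetical descriptor values: three training compounds, one query compound.
X_train = np.array([[1.0, 10.0], [2.0, 20.0], [3.0, 30.0]])
X_query = np.array([[4.0, 20.0]])

mean, std = fit_zscore(X_train)
S_train = apply_zscore(X_train, mean, std)
S_query = apply_zscore(X_query, mean, std)   # scaled with training statistics
print(S_query)
```

Exporting the already standardized matrix and feeding the same file to every tool removes one variable when comparing AD classifications.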

Issue 3: Defining AD for Complex Classification Models

Problem: Standard AD methods, designed for regression, are not performing well on your categorical QSAR classification model.

Solution: Employ AD measures specifically designed for classification or model-agnostic measures.

- Use the Rivality Index (RI): The RI is a measure of a molecule's capacity to be correctly classified. It assigns values in the interval [-1, +1] to each molecule [38].

- Action: Molecules with high positive RI values are determined to be outside the AD and are likely outliers. Molecules with strongly negative RI values are confidently inside the AD. Molecules with RI values near zero are "activity borders" and their predictions are less reliable [38]. This method has a low computational cost and does not require building the final model.

- Implement the CLASS-LAG Method: This method is designed for binary classification models where the machine learning algorithm provides a continuous prediction score.

- Action: For a molecule ( J ) with a prediction ( y(J) ) in the interval [-1, +1], the CLASS-LAG distance is calculated as ( d_{CLASS-LAG}(J) = \min\{|-1 - y(J)|, |1 - y(J)|\} ) [38]. This is the distance from the prediction to the nearest class label: values near 0 indicate a confident class assignment, whereas values near 1 mean the prediction lies close to the decision boundary and is less reliable.

- Leverage Ensemble-Based Uncertainty: For models built as an ensemble (e.g., Random Forest), the standard deviation of the predicted probabilities or class labels across the individual models in the ensemble can be a powerful AD measure [36] [38]. A high standard deviation indicates high uncertainty and that the compound may be outside the AD.
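
Two of the measures above reduce to a few lines of array arithmetic. The sketch below assumes prediction scores already scaled to [-1, +1] for CLASS-LAG, and a matrix of predicted probabilities with one row per ensemble member for the standard-deviation measure. All numbers are invented for illustration, and the function names are hypothetical. With scikit-learn's `RandomForestClassifier`, such a matrix could be collected by calling `predict_proba` on each fitted tree in `estimators_`.

```python
import numpy as np

def class_lag(y_pred):
    """CLASS-LAG distance: distance from each score in [-1, +1] to the nearest class label."""
    y = np.asarray(y_pred, dtype=float)
    return np.minimum(np.abs(-1.0 - y), np.abs(1.0 - y))

def ensemble_std(member_probs):
    """Standard deviation across ensemble members (rows = members, columns = compounds)."""
    return np.asarray(member_probs, dtype=float).std(axis=0)

# Hypothetical continuous scores for three compounds.
scores = [-0.95, 0.10, 0.80]
lag = class_lag(scores)          # small = confident, large = near the decision boundary

# Hypothetical active-class probabilities from three ensemble members for two compounds.
member_probs = [
    [0.90, 0.20],
    [0.80, 0.90],
    [0.85, 0.50],
]
spread = ensemble_std(member_probs)

print("CLASS-LAG:", lag)
print("ensemble std:", spread)
```

In this toy data the second compound is the least reliable by both measures: its score sits near the decision boundary and the ensemble members disagree strongly about it. Any cut-off applied to either measure remains a user choice.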

Experimental Protocols

Protocol 1: Defining AD using Leverage and the Hat Matrix

Objective: To identify test set compounds that are structurally extreme relative to the training set of a linear QSAR model.

Materials:

- Training set descriptor matrix (( X_{train} )), size ( n \times p ) (n compounds, p descriptors).

- Test set descriptor matrix (( X_{test} )), standardized using the mean and standard deviation of ( X_{train} ).

- Computational software (e.g., Python with NumPy, R, or a dedicated tool like the "Enalos Domain – Leverages" KNIME node [35]).

Methodology:

- Standardize Training Descriptors: Standardize each descriptor in the training set to have a mean of zero and a standard deviation of one.

- Calculate the Hat Matrix: Compute the hat matrix for the training set using the formula: ( H = X_{train} (X_{train}^T X_{train})^{-1} X_{train}^T ).

- Extract Training Leverages: The leverage for each training compound is the corresponding diagonal element of the hat matrix ( H ), ( h_{ii} ).

- Determine the Warning Threshold: Calculate the threshold as ( h^* = 3p/n ), where ( p ) is the number of descriptors and ( n ) is the number of training compounds [10].

- Standardize Test Set: Standardize the test set descriptors using the mean and standard deviation from the training set.

- Calculate Test Leverages: For each test compound ( x_{test} ), compute its leverage as ( h_{test} = x_{test}^T (X_{train}^T X_{train})^{-1} x_{test} ).

- Assign AD Status: A test compound is considered outside the AD if ( h_{test} > h^* ).

Interpretation: Compounds with leverages above ( h^* ) are structurally influential and far from the centroid of the training set. Predictions for these compounds should be treated with caution.
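
The methodology of Protocol 1 can be sketched in NumPy as follows. The function name `leverage_ad`, the synthetic descriptor matrix, and the two query compounds are assumptions made for illustration. The pseudo-inverse is used instead of a plain inverse so that the sketch does not fail on collinear descriptors, and the threshold follows the ( 3p/n ) rule stated in the protocol.

```python
import numpy as np

def leverage_ad(X_train, X_test):
    """Return training leverages, test leverages, and the 3p/n warning threshold."""
    n, p = X_train.shape
    mean = X_train.mean(axis=0)
    std = X_train.std(axis=0, ddof=1)
    Z_train = (X_train - mean) / std          # standardize training descriptors
    Z_test = (X_test - mean) / std            # reuse training statistics for the test set
    xtx_inv = np.linalg.pinv(Z_train.T @ Z_train)
    # Diagonal of the hat matrix, computed row by row without forming the n x n matrix.
    h_train = np.einsum("ij,jk,ik->i", Z_train, xtx_inv, Z_train)
    h_test = np.einsum("ij,jk,ik->i", Z_test, xtx_inv, Z_test)
    h_star = 3.0 * p / n
    return h_train, h_test, h_star

# Synthetic stand-in for a descriptor matrix: 50 compounds, 3 descriptors.
rng = np.random.default_rng(0)
X_train = rng.normal(size=(50, 3)) * np.array([1.0, 5.0, 0.1]) + np.array([0.0, 10.0, 2.0])

# Two hypothetical queries: the training centroid and a compound far from it.
centroid = X_train.mean(axis=0)
X_test = np.vstack([centroid, centroid + 10.0 * X_train.std(axis=0, ddof=1)])

h_train, h_test, h_star = leverage_ad(X_train, X_test)
outside_ad = h_test > h_star
print("h* =", h_star, "| test leverages:", h_test, "| outside AD:", outside_ad)
```

A quick sanity check on any implementation is that the training leverages sum to the number of descriptors when the descriptor matrix has full column rank.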

Protocol 2: Implementing a k-Nearest Neighbors (k-NN) Distance-Based AD

Objective: To define the AD based on the local similarity of a test compound to its nearest neighbors in the training set.

Materials:

- Training set descriptor matrix (( X_{train} )).

- Test set descriptor matrix (( X_{test} )).

- Software capable of calculating distance matrices and nearest neighbors (e.g., Python with scikit-learn).

Methodology:

- Pre-process Data: Standardize all descriptors based on the training set's mean and standard deviation.

- Choose Parameters: Select the number of neighbors, ( k ) (a common choice is k=5 [36]), and a distance metric (Euclidean distance is commonly used [36] [37]).

- Calculate the Threshold from Training Data: For each training compound, find the distance to its ( k^{th} )-nearest neighbor within the training set. The AD threshold (( d_{threshold} )) can be defined as the maximum of these ( k^{th} )-nearest neighbor distances across the entire training set, or a chosen percentile (e.g., 95th) [10] [37].

- Evaluate Test Compounds: For each test compound, calculate the Euclidean distance to its ( k^{th} )-nearest neighbor in the training set.

- Assign AD Status: A test compound is considered inside the AD if this distance is less than or equal to ( d_{threshold} ).

Interpretation: This method identifies test compounds that are in sparse regions of the training set's chemical space. A large distance to the k-NN indicates the compound is not well-represented by the model's training data.
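
Protocol 2 can be sketched without a nearest-neighbor library by computing the full distance matrix, which is adequate for small data sets. The function names, the synthetic data, and the query compounds below are hypothetical. The threshold is set to the maximum training distance here (`percentile=100.0`); passing `95.0` gives the stricter percentile variant described in the protocol.

```python
import numpy as np

def kth_neighbor_distance(X_query, X_ref, k, skip_self=False):
    """Euclidean distance from each query row to its k-th nearest row in X_ref."""
    dists = np.linalg.norm(X_query[:, None, :] - X_ref[None, :, :], axis=2)
    dists.sort(axis=1)
    # When the query set is the reference set, column 0 is the zero self-distance.
    return dists[:, k] if skip_self else dists[:, k - 1]

def fit_knn_ad(X_train, k=5, percentile=100.0):
    """Standardize the training set and derive the k-NN distance threshold."""
    mean = X_train.mean(axis=0)
    std = X_train.std(axis=0, ddof=1)
    Z_train = (X_train - mean) / std
    d_train = kth_neighbor_distance(Z_train, Z_train, k, skip_self=True)
    return {"mean": mean, "std": std, "Z_train": Z_train, "k": k,
            "threshold": np.percentile(d_train, percentile)}

def inside_knn_ad(X_test, ad):
    """True for test compounds whose k-th neighbor distance is within the threshold."""
    Z_test = (X_test - ad["mean"]) / ad["std"]
    d_test = kth_neighbor_distance(Z_test, ad["Z_train"], ad["k"])
    return d_test <= ad["threshold"]

# Synthetic stand-in for a descriptor matrix: 100 compounds, 4 descriptors.
rng = np.random.default_rng(1)
X_train = rng.normal(size=(100, 4))
ad = fit_knn_ad(X_train, k=5)

# Hypothetical queries: a copy of a training compound and a very distant compound.
far = X_train.mean(axis=0) + 50.0 * X_train.std(axis=0, ddof=1)
X_test = np.vstack([X_train[0], far])
inside = inside_knn_ad(X_test, ad)
print("threshold:", ad["threshold"], "| inside AD:", inside)
```

For larger training sets, a tree-based neighbor search such as scikit-learn's `NearestNeighbors` avoids building the full distance matrix.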

Protocol 3: Applying Kernel Density Estimation (KDE) for AD Determination

Objective: To define the AD based on the probability density of the training data in the feature space, suitable for complex and non-linear models.

Materials:

- Training set descriptor matrix.

- Test set descriptor matrix.

- Software for KDE (e.g., Python with SciPy or scikit-learn).

Methodology:

- Dimensionality Reduction (Optional but Recommended): To combat the "curse of dimensionality," reduce the descriptor space using PCA. Use the first few principal components that explain sufficient variance (e.g., >80-90%) [7].

- Fit KDE to Training Data: Fit a kernel density estimation model to the (reduced) training set data. A Gaussian kernel is typically used. The bandwidth of the kernel can be estimated using Scott's or Silverman's rule.

- Estimate Density Threshold: Calculate the log-likelihood for each training compound under the fitted KDE model. Set a density threshold, for instance, as the ( 5^{th} ) percentile of the training set log-likelihoods [7].

- Evaluate Test Compounds: Project test compounds into the same PCA space (using the loadings from the training set). Calculate their log-likelihood using the fitted KDE model.

- Assign AD Status: A test compound is considered inside the AD if its log-likelihood is greater than the predefined threshold.

Interpretation: KDE defines the AD as the high-density regions of the training set's feature space. Test compounds falling in low-density regions are considered outliers, as the model has not learned from similar examples.
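
A simplified version of Protocol 3 is sketched below. To stay self-contained it makes several assumptions that differ from a production setup: the optional PCA step is skipped, descriptors are z-scored instead, the Gaussian kernel is isotropic, and the bandwidth uses the Scott factor ( n^{-1/(d+4)} ) for unit-variance data. In practice `scipy.stats.gaussian_kde` or scikit-learn's `KernelDensity` would typically replace the hand-written density function. The data and names are hypothetical.

```python
import numpy as np

def gaussian_kde_logpdf(X_query, X_ref, bandwidth):
    """Log-density of each query row under an isotropic Gaussian KDE fitted on X_ref."""
    n, d = X_ref.shape
    sq_dist = ((X_query[:, None, :] - X_ref[None, :, :]) ** 2).sum(axis=2)
    log_kernel = -0.5 * sq_dist / bandwidth**2
    # Log-sum-exp with the row maximum factored out for numerical stability.
    row_max = log_kernel.max(axis=1, keepdims=True)
    log_sum = row_max[:, 0] + np.log(np.exp(log_kernel - row_max).sum(axis=1))
    log_norm = np.log(n) + d * np.log(bandwidth) + 0.5 * d * np.log(2.0 * np.pi)
    return log_sum - log_norm

# Synthetic stand-in for a (reduced) descriptor matrix: 200 compounds, 3 features.
rng = np.random.default_rng(2)
X_train = rng.normal(size=(200, 3))
mean, std = X_train.mean(axis=0), X_train.std(axis=0, ddof=1)
Z_train = (X_train - mean) / std

n, d = Z_train.shape
bandwidth = n ** (-1.0 / (d + 4))            # Scott factor, assuming unit variance
ll_train = gaussian_kde_logpdf(Z_train, Z_train, bandwidth)
threshold = np.percentile(ll_train, 5)       # 5th percentile of training log-likelihoods

# Hypothetical queries: the training centroid and a very distant compound.
X_test = np.vstack([mean, mean + 50.0 * std])
ll_test = gaussian_kde_logpdf((X_test - mean) / std, Z_train, bandwidth)
inside = ll_test > threshold
print("threshold:", threshold, "| test log-likelihoods:", ll_test, "| inside AD:", inside)
```

Note that each training compound contributes to its own density estimate, so training log-likelihoods are slightly optimistic; a leave-one-out estimate would give a more conservative threshold.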

Comparative Data Tables

Table 1: Comparison of Core Applicability Domain Methods

| Method Category | Specific Method | Key Principle | Advantages | Limitations | Best Suited For |
|---|---|---|---|---|---|
| Geometric | Bounding Box | Checks if descriptors fall within min-max range of training set. | Simple, intuitive, fast to compute [10]. | Ignores correlation between descriptors and empty regions within the box [10]. | Initial, rapid data screening. |
| Geometric | Convex Hull | Defines the smallest convex shape containing all training points. | Precisely defines the boundaries of the training set [10]. | Computationally complex for high dimensions; cannot identify internal empty regions [10]. | Low-dimensional descriptor spaces (2D-3D). |
| Distance-Based | Leverage | Measures distance to the centroid of training data, accounting for correlation. | Handles correlated descriptors; well-suited for linear regression models [10] [3]. | Assumes a unimodal, roughly normal distribution of data [10]. | Linear QSAR models. |
| Distance-Based | k-Nearest Neighbors (k-NN) | Measures distance to the k-th nearest training compound. | Accounts for local data density; simple to implement [36] [37]. | Requires choice of k and distance metric; performance depends on data distribution [10]. | Both linear and non-linear models. |
| Probability-Based | Kernel Density Estimation (KDE) | Estimates the probability density function of the training data. | Handles complex data distributions and multiple regions; accounts for sparsity [7]. | Computationally intensive; suffers from the "curse of dimensionality" [7]. | Complex, non-linear models (e.g., ANNs). |
| Ensemble-Based | Std. Dev. of Predictions | Uses the standard deviation of predictions from an ensemble of models. | Directly estimates prediction uncertainty; model-agnostic [36] [38]. | Requires building multiple models (e.g., via bagging). | Any ensemble model (e.g., Random Forest). |

Table 2: Troubleshooting Checklist for Common AD Challenges

| Symptom | Potential Cause | Recommended Action |
|---|---|---|
| Too many compounds flagged as outliers. | AD threshold is set too strictly. | Relax the threshold (e.g., for a distance-based AD, use the maximum training-set distance instead of the 95th percentile) [10]. |
| Inconsistent AD results between tools. | Different algorithms or data pre-processing. | Standardize input data; use a consensus of multiple methods [35] [38]. |
| Poor model performance even inside the AD. | The model itself may be of low quality or overfitted. | Re-evaluate the model's internal validation metrics (e.g., Q², cross-validation accuracy). |
| AD method fails for a non-linear model. | The method (e.g., leverage) assumes linearity. | Switch to a model-agnostic method like KDE or k-NN distance [7] [37]. |
| Difficulty interpreting the AD for a classification model. | Using a method designed for regression. | Apply a classification-specific method like the Rivality Index or CLASS-LAG [38]. |


Table 3: Essential Computational Tools for AD Research

| Tool / Resource Name | Type | Primary Function in AD Research | Access / Link |

|---|---|---|---|

| KNIME with Enalos Nodes | Software Node | Provides pre-built nodes for calculating AD using Euclidean distance and Leverage methods [35]. | https://www.knime.com/ |