Overcoming Docking Failures: A Comprehensive Guide to Reducing RMSD and Improving Pose Prediction for Drug Discovery

Abstract

Accurate prediction of protein-ligand binding poses remains a critical challenge in structure-based drug discovery, with high root-mean-square deviation (RMSD) values often indicating poor docking outcomes. This article provides researchers and drug development professionals with a systematic, multidimensional framework to diagnose, troubleshoot, and overcome these limitations. We explore the foundational causes of poor pose prediction, examine the evolving landscape of traditional versus AI-driven docking methodologies, detail practical troubleshooting and optimization protocols, and establish rigorous validation and comparative assessment strategies. By integrating insights from recent benchmark studies and advanced techniques, this guide offers actionable steps to enhance docking reliability, improve virtual screening success rates, and advance robust computational workflows in biomedical research[citation:1][citation:4][citation:6].

Understanding the Root Causes: Why Docking Predictions Fail and RMSD Values Soar

Technical Support Center: Troubleshooting High RMSD & Physically Invalid Poses

Frequently Asked Questions (FAQs)

Q1: My docking run completes, but all the predicted poses have very high RMSD values (>5.0 Å) compared to the experimental crystal structure. What are the primary causes? A: High RMSD typically stems from issues in the input preparation or scoring function limitations. Key causes include:

- Incorrect Protonation/Tautomeric State: The ligand or receptor residues may be in an unrealistic state for the binding conditions.

- Overly Flexible Ligand: The docking algorithm may fail to correctly sample the conformational space of ligands with many rotatable bonds (>10).

- Inactive Protein Conformation: Using an apo or non-relevant protein conformation when the bound state is required.

- Incorrect Binding Site Definition: The grid box may be centered in the wrong location or be too large, allowing excessive sampling.

Q2: What does "physically invalid" mean in the context of a docking pose, and how can I identify one? A: A physically invalid pose violates fundamental laws of molecular interactions. Check for:

- Steric Clashes: Severe, unresolved overlaps between ligand and receptor atoms (van der Waals radii penetration > 0.5 Å).

- Unrealistic Torsion Angles: Ligand dihedral angles in strained, high-energy conformations.

- Incorrect Hydrogen Bonding: Donors and acceptors are oriented sub-optimally (>60° deviation from ideal angle) or at unrealistic distances.

- Chiral Center Inversion: Accidental flipping of a ligand's chiral center during docking.

Q3: The top-scoring pose according to the docking score has a high RMSD, while a lower-ranking pose looks more correct. Why does this happen? A: This highlights the "scoring function problem." The empirical or force-field-based scoring function may overemphasize certain interactions (e.g., hydrophobic packing) while underestimating others (e.g., specific hydrogen bonds or desolvation penalties). Always visually inspect multiple top poses, not just the #1 rank.

Q4: What are the definitive criteria for a "successful" docking pose? A: A dual-criteria approach is mandatory for success:

- Geometric Accuracy: Ligand RMSD ≤ 2.0 Å from the experimental pose (for known actives).

- Physical Validity: The pose must exhibit:

  - No severe steric clashes.

  - Reasonable bond lengths and angles.

  - Favorable non-covalent interaction geometry.

  - A negative free energy of binding (ΔG) from more rigorous post-docking scoring.
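The dual criterion above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: it assumes both poses are already in the same receptor frame, that atoms are listed in the same order, and that no symmetry correction is needed. The helper names `heavy_atom_rmsd` and `is_successful_pose` are hypothetical.

```python
import math

def heavy_atom_rmsd(pred, ref):
    """RMSD (Å) between two equally ordered lists of (x, y, z) heavy-atom coordinates.

    Assumption: atom i in `pred` corresponds to atom i in `ref`, and both
    poses share the receptor frame (no fitting, no symmetry correction).
    """
    if len(pred) != len(ref) or not pred:
        raise ValueError("coordinate lists must be non-empty and of equal length")
    sq = sum((p[k] - r[k]) ** 2 for p, r in zip(pred, ref) for k in range(3))
    return math.sqrt(sq / len(pred))

def is_successful_pose(rmsd, n_severe_clashes, rmsd_cutoff=2.0):
    """Dual criterion: geometric accuracy AND absence of severe steric clashes."""
    return rmsd <= rmsd_cutoff and n_severe_clashes == 0

# Toy example: the predicted pose is the reference shifted by 1 Å along y.
ref = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (3.0, 0.0, 0.0)]
pred = [(0.0, 1.0, 0.0), (1.5, 1.0, 0.0), (3.0, 1.0, 0.0)]
value = heavy_atom_rmsd(pred, ref)
print(f"RMSD = {value:.2f} Å, success = {is_successful_pose(value, 0)}")
```

For symmetric ligands, a symmetry-aware RMSD tool is the safer choice, since a naive atom-order RMSD can overestimate the deviation.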

Troubleshooting Guides

Issue: Consistently High RMSD in Redocking Experiments

- Step 1: Validate the Experimental Structure.

- Protocol: Load the PDB complex into a molecular viewer (e.g., PyMOL, Chimera). Check for missing loops or side chains in the binding site. Add missing hydrogen atoms using a reliable tool (e.g., PDBFixer, PROPKA for protonation states at your target pH).

- Step 2: Standardize Ligand Preparation.

- Protocol: Extract the native ligand. Use Open Babel or LigPrep (Schrödinger) to generate correct 3D coordinates, assign consistent bond orders, and enumerate possible protonation/tautomer states at physiological pH (7.4 ± 0.5).

- Step 3: Optimize Docking Parameters.

- Protocol: Perform a control redocking with the native, crystallized ligand. Systematically increase the sampling effort (e.g., raise the number of GA runs to 100 in AutoDock 4, or raise the exhaustiveness setting in AutoDock Vina) and constrain the search space to a small box (e.g., 15x15x15 Å) centered on the native ligand's centroid.

Issue: Generation of Physically Invalid Poses

- Step 1: Post-Docking Pose Filtering.

- Protocol: Implement a filter using RDKit or a similar cheminformatics library. Script a filter to reject poses with:

  - Ligand internal energy exceeding a threshold (e.g., > 50 kcal/mol from UFF or MMFF).

  - Presence of severe clashes (interatomic distance < 0.8 * sum of vdW radii).

- Step 2: Apply Consensus Scoring.

- Protocol: Re-score the top poses from your primary docking engine using 2-3 alternative scoring functions (e.g., DSX, DrugScore, NNScore). Retain poses that are ranked favorably across multiple functions, as they are more likely to be physically valid.

- Step 3: Run Short Molecular Dynamics (MD) Minimization.

- Protocol: Subject the top poses to a short (50-100 ps) MD simulation in implicit solvent using AMBER or GROMACS. This "relaxation" step can resolve minor clashes and optimize interactions. A pose that collapses or becomes highly unstable during minimization is likely invalid.
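The clash criterion from Step 1 (interatomic distance below 0.8 times the sum of van der Waals radii) can be sketched without any cheminformatics dependency. The radii below are Bondi values for a few common elements; the atom tuple layout and the function name `count_clashes` are assumptions made for this illustration.

```python
import math

# Bondi van der Waals radii in Å for a few common heavy elements.
VDW_RADII = {"C": 1.70, "N": 1.55, "O": 1.52, "S": 1.80}

def count_clashes(ligand_atoms, receptor_atoms, scale=0.8):
    """Count ligand-receptor atom pairs closer than scale * (r_i + r_j).

    Each atom is a tuple (element, x, y, z). The scale factor leaves room
    for hydrogen-bonded pairs, which sit closer than the plain vdW sum.
    """
    clashes = 0
    for el_l, *xyz_l in ligand_atoms:
        for el_r, *xyz_r in receptor_atoms:
            limit = scale * (VDW_RADII[el_l] + VDW_RADII[el_r])
            if math.dist(xyz_l, xyz_r) < limit:
                clashes += 1
    return clashes

# Toy example: one ligand nitrogen sits 2.0 Å from a receptor oxygen.
receptor = [("O", 0.0, 0.0, 0.0), ("C", 5.0, 0.0, 0.0)]
ligand = [("N", 2.0, 0.0, 0.0), ("C", 9.0, 0.0, 0.0)]
print("severe clashes:", count_clashes(ligand, receptor))
```

The double loop is quadratic in atom count; for full receptors, restricting the receptor atoms to those near the binding site keeps it cheap.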

Table 1: Common Docking Performance Metrics and Benchmarks

| Metric | Target Value for Success | Typical Failure Threshold | Common Cause of Failure |

|---|---|---|---|

| Ligand RMSD | ≤ 2.0 Å | > 3.0 Å | Incorrect binding site, poor sampling |

| Heavy Atom Clash Count | 0 | > 5 severe clashes | Poor scoring function van der Waals term |

| Hydrogen Bond Distance | 2.5 - 3.2 Å | > 3.5 Å | Misplaced polar groups |

| Hydrogen Bond Angle | 120° - 180° | < 120° | Incorrect ligand orientation |

| Estimated ΔG | < -6.0 kcal/mol | > -5.0 kcal/mol | Weak binder or false positive |

Table 2: Recommended Post-Docking Validation Workflow

| Step | Tool/Software | Key Parameter | Success Criteria |

|---|---|---|---|

| 1. Geometry Check | MOGUL (CCDC), RDKit | Torsion angles, ring conformations | Within library distribution of observed values |

| 2. Interaction Analysis | PLIP, LigPlot+ | H-bonds, hydrophobic contacts, pi-stacking | Matches known interaction fingerprint of active |

| 3. Energy Minimization | OpenMM, UCSF Chimera | Implicit solvent, 500 steps | RMSD of pose after minimization < 1.5 Å |

| 4. Consensus Ranking | Vina, Glide, Gold | Rank-by-vote or rank-by-rank | Pose appears in top 3 of at least 2 methods |
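The rank-by-vote criterion in the last row of the table (a pose appears in the top 3 of at least 2 methods) can be sketched as follows. The method names and pose IDs are placeholders, and `consensus_poses` is a hypothetical helper rather than part of any docking package.

```python
def consensus_poses(rankings, top_n=3, min_votes=2):
    """Return pose IDs ranked within top_n by at least min_votes methods.

    `rankings` maps a method name to its pose IDs ordered best-first.
    """
    votes = {}
    for ordered in rankings.values():
        for pose_id in ordered[:top_n]:
            votes[pose_id] = votes.get(pose_id, 0) + 1
    kept = [p for p, v in votes.items() if v >= min_votes]
    # Most votes first; ties broken by pose ID for reproducibility.
    return sorted(kept, key=lambda p: (-votes[p], p))

# Placeholder rankings from three scoring methods.
rankings = {
    "method_a": ["p1", "p4", "p2", "p3"],
    "method_b": ["p2", "p1", "p5", "p4"],
    "method_c": ["p3", "p2", "p6", "p1"],
}
print(consensus_poses(rankings))
```

Voting on ranks rather than raw scores avoids having to put scoring functions with different units and scales on a common footing.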

Experimental Protocols

Protocol: Control Redocking Experiment to Calibrate Parameters

- Source: Obtain a high-resolution (<2.2 Å) protein-ligand complex (PDB code, e.g., 1ABC).

- Prepare Files:

- Protein: Remove all waters, heteroatoms, and the native ligand. Add polar hydrogens and assign partial charges using the appropriate force field (e.g., AMBER ff14SB).

- Ligand: Isolate the native co-crystallized ligand. Generate a canonical SMILES string and use it to create a 3D model with correct stereochemistry (tool: Open Babel).

- Define the Grid:

- Calculate the centroid of the native ligand's coordinates.

- Set the docking search box to center on this centroid with dimensions 22x22x22 Å to allow moderate flexibility.

- Execute Docking:

- Use AutoDock Vina with the command:

vina --receptor protein.pdbqt --ligand ligand.pdbqt --center_x X --center_y Y --center_z Z --size_x 22 --size_y 22 --size_z 22 --exhaustiveness 32 --out output.pdbqt

- Analyze Output:

- Align the top predicted pose to the native ligand using the protein's alpha carbons as a reference.

- Calculate the heavy-atom RMSD using obrms (Open Babel) or a custom PyMOL script.
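The grid-definition and execution steps above can be scripted so that the X, Y, Z placeholders are filled from the ligand coordinates. The sketch below only builds the argument list, using the flags and file names quoted in the protocol; the coordinates are toy values, and `centroid` and `vina_command` are hypothetical helpers.

```python
import shlex

def centroid(coords):
    """Arithmetic mean of a list of (x, y, z) coordinates."""
    n = len(coords)
    if n == 0:
        raise ValueError("no coordinates given")
    return tuple(sum(c[k] for c in coords) / n for k in range(3))

def vina_command(center, receptor="protein.pdbqt", ligand="ligand.pdbqt",
                 size=22.0, exhaustiveness=32, out="output.pdbqt"):
    """Build the argument list for the Vina call quoted in the protocol."""
    cmd = ["vina", "--receptor", receptor, "--ligand", ligand]
    for axis, value in zip("xyz", center):
        cmd += [f"--center_{axis}", f"{value:.3f}"]
    for axis in "xyz":
        cmd += [f"--size_{axis}", f"{size:g}"]
    cmd += ["--exhaustiveness", str(exhaustiveness), "--out", out]
    return cmd

# Toy heavy-atom coordinates standing in for the native ligand.
ligand_xyz = [(10.0, 20.0, 30.0), (12.0, 22.0, 30.0), (14.0, 24.0, 36.0)]
center = centroid(ligand_xyz)
cmd = vina_command(center)
print(shlex.join(cmd))
```

Passing `cmd` to `subprocess.run(cmd, check=True)` would launch the run, assuming a `vina` executable is on the PATH.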

Protocol: Pose Validation via Short MD Simulation

- System Setup: Place the docked protein-ligand complex in a cubic TIP3P water box with a 10 Å buffer. Add ions to neutralize the system charge.

- Minimization: Perform 5000 steps of steepest descent minimization to remove clashes.

- Equilibration: Heat the system to 300 K over 50 ps under NVT conditions, then equilibrate density for 100 ps under NPT conditions (1 atm).

- Production: Run a short, unrestrained MD simulation for 2-5 ns. Use a 2 fs timestep.

- Analysis: Monitor the ligand RMSD relative to the starting docked pose. A stable or slightly fluctuating RMSD profile (< 2.5 Å) suggests a physically viable pose. A large, continuous drift suggests an unstable, invalid pose.
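The analysis step can be reduced to a simple check on the ligand RMSD trace. In this sketch the 2.5 Å ceiling comes from the protocol, while the drift tolerance of 1.0 Å (late-trajectory mean minus early-trajectory mean) is an assumed value chosen only for illustration.

```python
def assess_pose_stability(rmsd_series, max_rmsd=2.5, max_drift=1.0):
    """Classify a ligand RMSD trace (Å, relative to the starting docked pose).

    Stable: every frame stays below max_rmsd AND the mean of the last
    quarter exceeds the mean of the first quarter by less than max_drift.
    """
    if len(rmsd_series) < 4:
        raise ValueError("need at least four frames")
    q = len(rmsd_series) // 4
    early = sum(rmsd_series[:q]) / q
    late = sum(rmsd_series[-q:]) / q
    drift = late - early
    peak = max(rmsd_series)
    return {"stable": peak < max_rmsd and drift < max_drift,
            "peak": peak, "drift": drift}

# Toy traces: one fluctuating around 1 Å, one drifting away continuously.
steady = [0.8, 1.0, 1.1, 0.9, 1.2, 1.0, 1.1, 1.0]
drifting = [0.8, 1.5, 2.2, 3.0, 3.9, 4.5, 5.2, 6.0]
print(assess_pose_stability(steady))
print(assess_pose_stability(drifting))
```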

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Docking & Validation |

|---|---|

| PDBFixer / MolProbity | Identifies and repairs common issues in protein PDB files (missing atoms, side chains, bad rotamers). |

| PROPKA (via PDB2PQR) | Predicts the protonation states of protein amino acid side chains at a user-defined pH. |

| Open Babel / RDKit | Converts chemical file formats, generates 3D conformers, and performs ligand sanitization (charge, valence). |

| AutoDock Tools / MGLTools | Prepares PDBQT files for AutoDock/Vina by adding Gasteiger charges and defining torsional degrees of freedom. |

| PLIP (Protein-Ligand Interaction Profiler) | Automatically detects and visualizes non-covalent interactions in docked poses or crystal structures. |

| GNINA (Deep Learning Docking) | A docking program (a fork of smina and AutoDock Vina) that uses convolutional neural networks for improved scoring and pose ranking. |

| MMPBSA.py (from AMBER) | Performs end-state free energy calculations (Molecular Mechanics/Poisson-Boltzmann Surface Area) on poses. |

| PyMOL / UCSF Chimera | For essential visualization, alignment, RMSD calculation, and figure generation. |

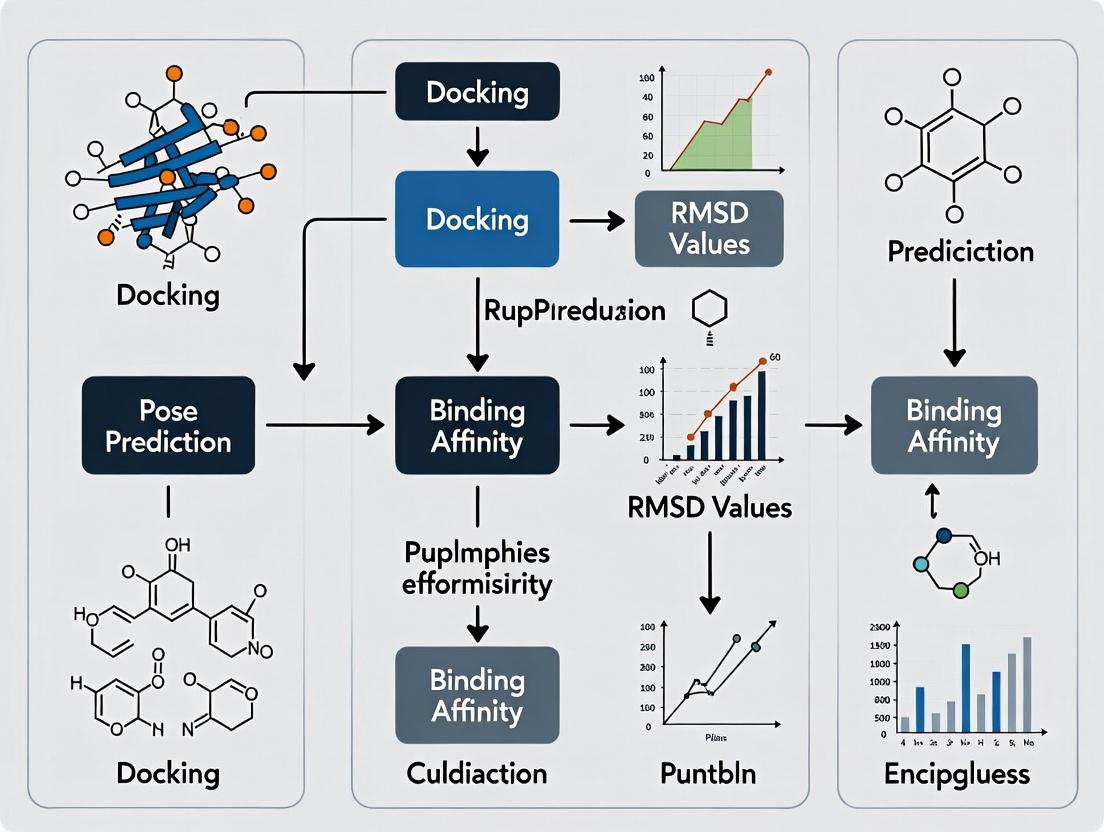

Workflow and Relationship Diagrams

Title: Molecular Docking Success/Failure Decision Workflow

Title: Why High-RMSD Poses Get Top Scores

The Inherent Limitations of Traditional Scoring Functions and Search Algorithms

Troubleshooting Guide: Addressing Poor Pose Prediction & High RMSD

FAQ: Common Issues and Solutions

Q1: My docking simulation consistently yields poses with RMSD values > 2.0 Å from the crystallographic reference. What are the primary culprits and how can I address them?

A1: High RMSD often stems from limitations in either the scoring function or the search algorithm. Follow this systematic protocol:

- Validate Input Structures: Ensure your ligand and receptor files are correctly protonated and have appropriate partial charges assigned. Use a tool like PDBFixer or the Protein Preparation Wizard.

- Conduct a Control Re-docking: Dock the native co-crystallized ligand back into its original receptor. If RMSD is high (>1.5 Å), the issue is likely with search parameters.

- Action: Increase the number of runs (e.g., from 50 to 250) and the exhaustiveness of the search (if using AutoDock Vina or similar).

- If Control Docking Succeeds but novel ligands fail, the scoring function may be inadequate.

- Action: Implement consensus scoring. Use multiple scoring functions (e.g., Vina, PLP, ChemScore) and select poses ranked highly by several.

Q2: My scoring function ranks a clearly non-native pose as the top prediction. Why does this happen and how can I correct it?

A2: This is a classic failure mode of empirical scoring functions, which may overfit to certain interaction types (e.g., favoring a single strong hydrogen bond over correct hydrophobic packing).

- Protocol for Diagnosis & Correction:

- Visually inspect the top-ranked pose versus the crystallographic pose (if available) or a plausible binding mode.

- Decompose the total score into its energy components (e.g., van der Waals, hydrogen bonding, desolvation).

- Solution: Apply a post-docking filter based on known binding pharmacophores or interaction patterns. Manually curate the top N poses (e.g., 20) before selection.

Q3: The search algorithm seems trapped in a local energy minimum. How can I improve conformational sampling?

A3: Traditional algorithms like Lamarckian Genetic Algorithms (LGA) or Monte Carlo can struggle with complex, flexible binding sites.

- Experimental Protocol for Enhanced Sampling:

- Define Flexible Residues: Identify key side chains in the binding site via MD simulation or literature. Allow them to be flexible during docking (tools: AutoDock, Glide).

- Use an Ensemble Docking Approach:

- Source multiple receptor conformations from NMR ensembles or molecular dynamics (MD) snapshots.

- Dock the ligand against each conformation independently.

- Cluster all results and analyze the consensus binding mode.

Q4: How do I choose between a more accurate but slower scoring function versus a faster, less precise one for a virtual screen?

A4: This requires a tiered strategy balancing accuracy and computational cost.

- Recommended Workflow Protocol:

- Stage 1 (Primary Screen): Use a fast scoring function (e.g., Vina, FRED) to filter a large library (1M+ compounds) down to a few thousand.

- Stage 2 (Secondary Screen): Apply a more rigorous, physics-based method (e.g., MM-GBSA, Free Energy Perturbation) or consensus scoring to the top 100-1000 hits.

- Validation: Always validate the tiered protocol on a test set of known actives and decoys to determine its enrichment performance.
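The enrichment performance mentioned in the validation step is commonly summarized as an enrichment factor: the active rate in the top-ranked fraction divided by the active rate in the whole library. The sketch below assumes Vina-style scores, where lower is better; the data are synthetic and `enrichment_factor` is a hypothetical helper.

```python
def enrichment_factor(scored, fraction=0.01):
    """Enrichment factor at a given top fraction of the ranked library.

    `scored` is a list of (score, is_active) pairs; lower score = better.
    """
    ranked = sorted(scored, key=lambda item: item[0])
    n_total = len(ranked)
    n_sel = max(1, round(n_total * fraction))
    actives_total = sum(1 for _, active in ranked if active)
    if actives_total == 0:
        raise ValueError("benchmark set contains no actives")
    actives_sel = sum(1 for _, active in ranked[:n_sel] if active)
    return (actives_sel / n_sel) / (actives_total / n_total)

# Synthetic library: 100 compounds, 10 actives, 5 of them in the top 10.
active_ranks = {0, 1, 2, 3, 4, 50, 60, 70, 80, 90}
library = [(float(i), i in active_ranks) for i in range(100)]
print("EF at 10%:", enrichment_factor(library, fraction=0.10))
```

A value of 1 corresponds to random selection, so a tiered protocol is only worth its cost if the early stages show enrichment clearly above that.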

Experimental Protocols

Protocol 1: Consensus Scoring Validation Experiment

- Dataset Preparation: Curate a benchmark set (e.g., PDBbind core set) of protein-ligand complexes with known high-affinity poses.

- Docking Execution: Dock each ligand to its receptor using 3 distinct search algorithms (e.g., AutoDock Vina's iterated local search, Glide SP's hierarchical search, GOLD's genetic algorithm).

- Scoring & Ranking: Score all generated poses using at least 4 different scoring functions (SF1-SF4).

- Analysis: For each complex, record if the top-ranked pose by each SF (and by consensus) has an RMSD < 2.0 Å. Calculate success rates.

Protocol 2: Ensemble Docking to Account for Receptor Flexibility

- Conformation Generation: Run a short (50-100 ns) MD simulation of the apo receptor. Extract 50 snapshots evenly spaced in time.

- Receptor Grid Preparation: Prepare a docking grid for each snapshot, keeping the grid center consistent.

- Docking: Dock the ligand of interest against all 50 receptor conformations using high-exhaustiveness parameters.

- Pose Clustering: Combine all output poses (e.g., 50 conformations x 20 poses = 1000 poses). Cluster by ligand heavy-atom RMSD (2.0 Å cutoff).

- Consensus Pose Selection: Identify the centroid of the largest cluster. This represents the consensus pose across multiple receptor states.
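The clustering and consensus-selection steps can be sketched with a simple leader algorithm, used here as a stand-in for whatever clustering method a given pipeline provides. Two assumptions are worth flagging: all poses share the same atom order and reference frame, and the "centroid" is taken to be the medoid, the member with the lowest summed RMSD to the rest of its cluster.

```python
import math

def pose_rmsd(a, b):
    """RMSD (Å) between two poses given as equally ordered (x, y, z) lists."""
    sq = sum((p[k] - q[k]) ** 2 for p, q in zip(a, b) for k in range(3))
    return math.sqrt(sq / len(a))

def leader_cluster(poses, cutoff=2.0):
    """Assign each pose to the first cluster whose leader is within cutoff."""
    clusters = []  # lists of pose indices; the first index is the leader
    for i, pose in enumerate(poses):
        for members in clusters:
            if pose_rmsd(pose, poses[members[0]]) <= cutoff:
                members.append(i)
                break
        else:
            clusters.append([i])
    return clusters

def consensus_pose_index(poses, cutoff=2.0):
    """Index of the medoid of the largest cluster."""
    largest = max(leader_cluster(poses, cutoff), key=len)
    return min(largest,
               key=lambda i: sum(pose_rmsd(poses[i], poses[j]) for j in largest))

# Toy two-atom poses: three near-identical poses and one outlier.
poses = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(0.5, 0.0, 0.0), (1.5, 0.0, 0.0)],
    [(1.0, 0.0, 0.0), (2.0, 0.0, 0.0)],
    [(10.0, 0.0, 0.0), (11.0, 0.0, 0.0)],
]
print(leader_cluster(poses), consensus_pose_index(poses))
```

Leader clustering depends on input order, so sorting poses by docking score first is a reasonable way to make the best-scored pose of each group its leader.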

Table 1: Success Rate (%) of Pose Prediction (RMSD < 2.0 Å) Across Scoring Functions

| Benchmark Set (Number of Complexes) | Vina Score | ChemScore | PLP Score | Consensus (2/3) |

|---|---|---|---|---|

| PDBbind Core Set (285) | 58.2 | 61.1 | 55.4 | 68.8 |

| CASF-2016 (285) | 60.7 | 63.5 | 57.9 | 71.2 |

| High-Flexibility Subset (45) | 31.1 | 35.6 | 28.9 | 42.2 |

Table 2: Impact of Search Algorithm Exhaustiveness on Pose Accuracy

| Exhaustiveness Setting | Avg. Runtime (min/lig) | Success Rate (RMSD < 2.0 Å) | Top-Scored Pose Avg. RMSD (Å) |

|---|---|---|---|

| Low (Default=8) | 3.2 | 52.4% | 3.12 |

| Medium (24) | 9.5 | 65.7% | 2.21 |

| High (48) | 19.1 | 68.9% | 2.05 |

| Very High (96) | 37.8 | 69.5% | 2.03 |

Visualizations

Title: Traditional Docking Workflow & Failure Point

Title: Root Causes of Docking Inaccuracies

The Scientist's Toolkit: Research Reagent Solutions

| Item/Reagent | Function in Docking Experiments |

|---|---|

| PDBbind Database | A curated benchmark suite of protein-ligand complexes with binding affinity data, used for training, testing, and validating scoring functions. |

| CASF Benchmark Sets | Specifically designed "Comparative Assessment of Scoring Functions" sets for rigorous, unbiased evaluation of docking and scoring performance. |

| Molecular Dynamics (MD) Software (e.g., GROMACS, AMBER) | Generates an ensemble of realistic protein conformations for ensemble docking, moving beyond a single, static receptor structure. |

| Consensus Scoring Scripts (e.g., Vina, DOCK, RF-Score) | Custom or published pipelines to rank poses based on the agreement of multiple scoring functions, improving reliability. |

| MM-GBSA/MM-PBSA Scripts | Post-docking refinement tools that apply more rigorous, implicit solvation free energy calculations to re-score and rank top poses. |

| Pharmacophore Modeling Software (e.g., Phase, MOE) | Used to create post-docking filters based on essential ligand-receptor interactions, adding a knowledge-based layer to pose selection. |

Technical Support Center: Troubleshooting Docking Research Models

Troubleshooting Guides

Issue 1: Poor Ligand Pose Prediction (High RMSD) in Structure-Based Docking

Root Cause Analysis: Incorrect pose prediction often stems from inadequate scoring function generalization, insufficient training data diversity (e.g., limited protein conformational states), or improper handling of solvation and entropy effects.

Step-by-Step Resolution:

- Validate Training Data: Ensure your training set includes diverse protein-ligand complexes with RMSD < 2.0 Å crystal structures. Use PDBbind or CrossDocked2020 curated sets.

- Augment with Synthetic Conformers: Employ ALPACA or GEOM tools to generate realistic ligand conformers not present in crystallographic data.

- Regularize the Scoring Function: Implement multi-task learning, penalizing the model on both affinity (Ki/Kd) and RMSD prediction. Use a composite loss: $L_{\text{total}} = L_{\text{affinity}} + \lambda \, L_{\text{rmsd}}$ (where λ = 0.3-0.7).

- Incorporate Physics-Based Terms: Hybridize your DL model with a minimal MM/GBSA energy term (van der Waals and electrostatic components) to guide pose optimization.

- Post-Processing Cluster: Use hierarchical clustering on predicted poses and select the centroid of the largest cluster with the best model score.
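The composite loss in the regularization step can be written out numerically. The sketch below assumes mean squared error for both terms, which the text does not specify, and uses λ = 0.5 from the middle of the suggested range. In a real training loop the same expression would be built from the tensors of the chosen deep learning framework.

```python
def mse(pred, target):
    """Mean squared error between two equal-length sequences."""
    if len(pred) != len(target) or not pred:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)

def composite_loss(aff_pred, aff_true, rmsd_pred, rmsd_true, lam=0.5):
    """L_total = L_affinity + lam * L_rmsd, with MSE assumed for both terms."""
    return mse(aff_pred, aff_true) + lam * mse(rmsd_pred, rmsd_true)

# Toy batch of two complexes: affinities in pK units, pose RMSD in Å.
aff_pred, aff_true = [6.0, 7.0], [6.5, 7.5]
rmsd_pred, rmsd_true = [1.0, 3.0], [2.0, 2.0]
print(composite_loss(aff_pred, aff_true, rmsd_pred, rmsd_true))
```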

Issue 2: High Variance in Model Performance Between Training and Validation Sets

Root Cause Analysis: This typically indicates overfitting to the training distribution or data leakage. Common in generative models (e.g., for de novo ligand design) when the validation set is not truly out-of-distribution.

Step-by-Step Resolution:

- Implement Strict Splitting: Split data based on protein family (e.g., using SCOPe classification) or ligand scaffold (Butina clustering), not randomly.

- Apply Robust Regularization: Use Monte Carlo Dropout (rate=0.2-0.5) at inference to estimate model uncertainty. Discard predictions with high epistemic uncertainty.

- Adopt Cross-Domain Validation: Train on PDBbind, validate on the CASF-2016 benchmark core set. A performance drop >20% indicates poor generalization.

- Utilize Performance Tiers: Profile your model's performance across predefined tiers:

  - Tier 1 (Easy): Similar to training distribution (RMSD < 1.5 Å).

  - Tier 2 (Medium): Novel scaffold, known protein (RMSD 1.5-3.0 Å).

  - Tier 3 (Hard): Novel protein class or binding site (RMSD > 3.0 Å).

  Calibrate expectations and decide on model applicability based on tier.
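The uncertainty filter described under robust regularization can be sketched independently of any deep learning framework. It assumes the repeated stochastic forward passes (dropout left active at inference) have already been collected per complex. The 0.5 threshold on the standard deviation is an illustrative value, not one given in the text.

```python
import statistics

def filter_by_uncertainty(samples_per_complex, max_std=0.5):
    """Keep predictions whose spread across stochastic passes is small.

    `samples_per_complex` maps a complex ID to the list of predictions
    obtained from repeated forward passes with dropout enabled.
    Returns {complex_id: (mean, std)} for the retained complexes.
    """
    kept = {}
    for cid, samples in samples_per_complex.items():
        mean = statistics.fmean(samples)
        std = statistics.stdev(samples)  # sample standard deviation
        if std <= max_std:
            kept[cid] = (mean, std)
    return kept

# Toy predictions from four stochastic passes per complex.
mc_samples = {
    "complex_a": [1.8, 2.0, 2.2, 2.0],   # consistent passes
    "complex_b": [1.0, 4.0, 2.5, 6.0],   # passes disagree strongly
}
kept = filter_by_uncertainty(mc_samples)
print(kept)
```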

Issue 3: Generative Model Produces Chemically Invalid or Unstable Ligands

Root Cause Analysis: The generative adversarial network (GAN) or variational autoencoder (VAE) has not properly learned chemical constraint rules (valency, bond lengths, stability).

Step-by-Step Resolution:

- Reinforce Constraints: Integrate a rule-based post-processing filter (e.g., RDKit SMILES sanitization) directly into the training loop to penalize invalid structures.

- Use Fragment-Based Generation: Switch to a graph-based generative model that assembles molecules from validated chemical fragments, ensuring basic stability.

- Employ a Discriminator: Train a separate classifier (Discriminator) on synthetic vs. real drug-like molecules (e.g., from ChEMBL). Use its score as a reward signal in reinforcement learning fine-tuning.

- Validate with MD: Run short (10 ns) molecular dynamics simulations on top-generated ligands to check for stability before experimental testing.

Frequently Asked Questions (FAQs)

Q1: What is a reasonable RMSD target for a production-ready deep learning docking model? A: Targets are tier-dependent. For Tier 1 targets (similar to training), a model should achieve RMSD < 2.0 Å for the top-ranked pose in >70% of cases. For Tier 2, RMSD < 3.0 Å in >50% of cases is acceptable. Performance in Tier 3 is often unreliable for decision-making without experimental validation.

Q2: How much training data is sufficient to avoid pitfalls in pose prediction? A: There are diminishing returns. For regression models (affinity prediction), >5,000 high-quality complexes are needed. For generative pose prediction, >20,000 diverse complexes are recommended. Below 1,000 complexes, hybrid/physics-based methods typically outperform pure DL models.

Q3: My regression model for binding affinity (pKi/pKd) has good R² but poor Pearson correlation on new data. What does this mean? A: A good R² on the training or validation split combined with a poor Pearson r on new data means the model has fit patterns specific to its original dataset rather than a transferable relationship. On any single dataset, R² cannot exceed r², so the two numbers must come from different sets. This is a classic sign of overfitting and dataset bias. Re-examine your data splitting strategy and reduce model complexity.

Q4: When should I use a generative model vs. a regression/classification model in my docking pipeline? A: Use generative models (e.g., DiffDock, EquiBind) for initial pose sampling when you have no prior binding mode hypothesis. Use refined regression/scoring models (e.g., CNN scoring functions) for ranking and selecting the best poses and estimating affinity. They are complementary stages.

Q5: What are the most common failure modes when applying pre-trained models to my specific protein target? A: The primary failure mode is domain shift. Pre-trained models fail on targets with: 1) Unseen binding site motifs (e.g., allosteric sites), 2) Predominantly nucleic acid or ion cofactors, 3) Large conformational changes upon binding. Always perform fine-tuning with even a small (10-50) set of known actives for your target.
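The two metrics contrasted in Q3 measure different things, which is easiest to see by computing both on the same predictions. Pearson r ignores any constant offset or rescaling, whereas R² (the coefficient of determination) penalizes them. The toy data below are assumptions chosen to make the gap obvious.

```python
import math

def pearson_r(y_true, y_pred):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(y_true)
    mt, mp = sum(y_true) / n, sum(y_pred) / n
    cov = sum((t - mt) * (p - mp) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mt) ** 2 for t in y_true)
    var_p = sum((p - mp) ** 2 for p in y_pred)
    return cov / math.sqrt(var_t * var_p)

def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_t = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_t) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

# Predictions rank the compounds perfectly but are offset by +2 pK units.
y_true = [5.0, 6.0, 7.0, 8.0]
y_pred = [7.0, 8.0, 9.0, 10.0]
print("Pearson r:", pearson_r(y_true, y_pred))
print("R^2:", r_squared(y_true, y_pred))
```

Reporting both numbers on the same held-out set, together with RMSE, makes it clear whether a model fails at ranking, at calibration, or at both.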

Experimental Protocol: Benchmarking Model Performance Tiers

Objective: To systematically evaluate a deep learning docking model's generalization across difficulty tiers. Protocol:

- Dataset Curation:

- Source complexes from PDBbind v2020.

- Tier 1 (Easy): Cluster proteins at 40% sequence identity. Use 80% for training, 20% from same clusters for validation.

- Tier 2 (Medium): Hold out entire protein clusters not seen in training.

- Tier 3 (Hard): Use the CASF-2016 "core set" or targets from a novel protein family released after model training.

- Pose Generation & Evaluation:

- For each complex, separate the ligand. Generate 10 candidate poses using the DL model.

- Align predicted ligand pose to crystal structure using the protein's binding site alpha-carbon atoms.

- Calculate Heavy-Atom RMSD for the top-ranked pose.

- Success Metric Definition:

- Success Rate (SR) = Percentage of complexes where top-pose RMSD < 2.0 Å.

- Calculate SR separately for Tiers 1, 2, and 3.

- Quantitative Analysis:

- Perform a Welch's t-test between the RMSD distributions of Tier 1 vs. Tier 3.

- A p-value < 0.01 indicates a statistically significant performance drop, confirming the model's limited generalization to hard targets.
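The success-rate and Welch's t-test steps can be sketched with the standard library alone. The RMSD values are toy numbers. The code computes the t statistic and the Welch-Satterthwaite degrees of freedom but not the p-value, which requires the t-distribution; `scipy.stats.ttest_ind(a, b, equal_var=False)` returns it directly if SciPy is available.

```python
import math
import statistics

def success_rate(rmsds, cutoff=2.0):
    """Percentage of complexes whose top-pose RMSD is below the cutoff."""
    return 100.0 * sum(1 for r in rmsds if r < cutoff) / len(rmsds)

def welch_t(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    na, nb = len(a), len(b)
    va, vb = statistics.variance(a), statistics.variance(b)
    se2 = va / na + vb / nb
    t = (statistics.fmean(a) - statistics.fmean(b)) / math.sqrt(se2)
    df = se2 ** 2 / ((va / na) ** 2 / (na - 1) + (vb / nb) ** 2 / (nb - 1))
    return t, df

# Toy top-pose RMSD values (Å) for two tiers.
tier1 = [1.0, 1.2, 0.8, 1.1, 0.9]
tier3 = [3.5, 4.2, 2.9, 5.0, 3.9]
t_stat, dof = welch_t(tier1, tier3)
print(f"SR tier 1 = {success_rate(tier1):.0f}%, SR tier 3 = {success_rate(tier3):.0f}%")
print(f"Welch t = {t_stat:.2f}, df = {dof:.2f}")
```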

Table 1: Benchmark Performance of Model Archetypes Across Tiers

| Model Archetype | Tier 1: SR @2.0Å | Tier 2: SR @2.0Å | Tier 3: SR @2.0Å | Avg. Inference Time (s) | Data Requirement (Complexes) |

|---|---|---|---|---|---|

| Traditional (AutoDock Vina) | 45-55% | 30-40% | 15-25% | 60-120 | 0 (Rule-based) |

| DL Scoring (CNN-based) | 70-80% | 50-60% | 20-35% | < 5 | 5,000+ |

| DL Generative (Diffusion) | 75-85% | 55-65% | 25-40% | 10-30 | 20,000+ |

| Hybrid DL/Physics | 72-82% | 53-63% | 30-45% | 30-90 | 1,000+ |

SR: Success Rate. Data compiled from recent benchmarks (CASF-2016, PDBbind, independent studies).

Table 2: Impact of Training Set Size on Regression Model Performance (Affinity Prediction)

| Training Set Size | Test Set RMSE (pKi units) | Pearson r | Generalization Gap (Train vs. Test RMSE) |

|---|---|---|---|

| < 1,000 | 1.5 - 1.8 | 0.55 - 0.65 | > 0.7 |

| 1,000 - 5,000 | 1.2 - 1.4 | 0.68 - 0.75 | 0.4 - 0.6 |

| 5,000 - 10,000 | 1.0 - 1.2 | 0.75 - 0.80 | 0.2 - 0.3 |

| > 10,000 | 0.9 - 1.1 | 0.80 - 0.85 | < 0.2 |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for DL-Enhanced Docking Experiments

| Item/Reagent | Function in Experiment | Key Consideration |

|---|---|---|

| Curated Dataset (PDBbind, CrossDocked2020) | Provides ground-truth protein-ligand complexes for training and benchmarking. | Use the "refined" sets and filter for resolution < 2.5 Å. Check for binding affinity measurement consistency. |

| RDKit or Open Babel Cheminformatics Toolkit | Handles ligand preprocessing: SMILES parsing, tautomer generation, 3D conformer generation, feature calculation (e.g., ECFP4 fingerprints). | Essential for ensuring chemical validity of generative model outputs and creating input features. |

| MD Simulation Software (GROMACS, AMBER) | Used for post-prediction validation. Short MD runs assess ligand pose stability and protein-ligand interaction persistence in solvated dynamics. | A 10-100 ns simulation can filter out physically implausible poses predicted by DL models. |

| Differentiable Physics Layer (OpenMM, TorchMD) | Allows integration of physics-based energy terms (e.g., Lennard-Jones, Coulomb) into DL model training, creating a hybrid model. | Improves model generalizability and physical realism, especially with limited data. |

| Uncertainty Quantification Library (e.g., laplace-torch) | Implements Laplace Approximation or Dropout-based methods to estimate model (epistemic) uncertainty for each prediction. | Critical for identifying when the model is operating outside its reliable domain (Tier 3 predictions). |

Workflow and Pathway Diagrams

Title: DL Docking Pipeline with Generative & Regression Tiers

Title: Performance Tiers for Docking Models

Technical Support Center: Troubleshooting Docking Failures

Troubleshooting Guides

Guide 1: Diagnosing and Resolving Steric Clashes in Predicted Poses

- Problem: High RMSD and unrealistic ligand conformations due to atomic overlaps.

- Diagnosis: Check the docking score's van der Waals (vdW) term. A highly positive value indicates severe clashes. Visualize the pose in a molecular viewer (e.g., PyMOL, ChimeraX) and look for overlapping atoms between the ligand and protein.

- Solution:

- Soften Potential: Use a softened vdW potential (e.g., in AutoDock Vina, RosettaLigand) during docking to allow slight overlaps for pose sampling.

- Side-Chain Flexibility: Allow side-chains of binding pocket residues to be flexible or rotameric during the docking simulation.

- Refinement: Subject the clashed pose to a brief energy minimization (MM/GBSA, short MD) while restraining the protein backbone.

Guide 2: Recovering Lost Critical Interactions

- Problem: The predicted pose fails to recapitulate known key interactions (H-bonds, salt bridges, halogen bonds).

- Diagnosis: Perform interaction fingerprint analysis (using RDKit or Schrödinger's IFP) comparing the predicted pose to a known crystal structure reference.

- Solution:

- Constraint-Based Docking: Define distance or angle constraints to guide the docking algorithm to form the specific interaction.

- Pharmacophore-Guided Docking: Use a pharmacophore model derived from the known interaction pattern as a pre-filter or scoring bias.

- Post-Docking Rescoring: Employ interaction-aware scoring functions (e.g., PLEC, SPLIF fingerprints) or machine learning scoring functions (e.g., RF-Score, ΔVina RF20) to re-rank poses.

Guide 3: Improving Generalization to Novel Pockets

- Problem: Models trained on specific pockets fail on new, diverse protein folds or unseen binding sites.

- Diagnosis: Perform cross-validation across diverse protein families. High performance on training set with a steep drop on novel folds indicates overfitting.

- Solution:

- Data Augmentation: Train on datasets with high structural diversity (e.g., PDBbind, CrossDocked2020).

- Geometry-Informed Features: Incorporate explicit physical representations (e.g., 3D spatial graphs, voxelized electrostatics) rather than purely sequence-based features.

- Transfer Learning: Pre-train a model on a large, general task (e.g., protein language model) before fine-tuning on docking.

Frequently Asked Questions (FAQs)

Q1: My docking protocol works well on re-docking but fails on cross-docking. What should I do? A: Cross-docking failure often stems from protein flexibility. Implement an ensemble docking approach. Dock your ligand into multiple receptor conformations (from MD simulations, NMR models, or homologous structures) and select the consensus best pose or the pose with the best average score.

Q2: How do I choose between a physics-based and a machine learning scoring function? A: See the comparison table below. For novel pockets, hybrid approaches or consensus scoring are recommended.

Q3: What are the essential validation steps after obtaining docking poses? A: 1) Calculate RMSD to a reference (if available). 2) Visually inspect top poses for reasonable interactions and lack of clashes. 3) Perform interaction fingerprint analysis. 4) Run a short MD simulation to assess pose stability (RMSD fluctuation, interaction persistence). 5) Use MM/PBSA or MM/GBSA for binding affinity estimation, though absolute values require caution.

Table 1: Comparison of Scoring Function Performance on CASF-2016 Benchmark

| Scoring Function | Type | RMSD < 2Å Success Rate (%) | Pearson R (Affinity) | Key Strength | Key Weakness |

|---|---|---|---|---|---|

| AutoDock Vina | Empirical | 78.4 | 0.604 | Speed, usability | Limited flexibility handling |

| Glide SP | Hybrid | 82.1 | 0.654 | Pose accuracy | Computational cost |

| RosettaLigand | Physics-based | 75.8 | 0.598 | Full-atom flexibility | Very high cost, parameter tuning |

| RF-Score | Machine Learning | 81.5 | 0.803 | Affinity correlation | Requires training, pose-dependent |

| ΔVina RF20 | Machine Learning | 85.2 | 0.821 | Top pose prediction | Generalization to unique scaffolds |

Table 2: Impact of Failure Modes on Pose Prediction Accuracy (Simulated Study)

| Failure Mode Introduced | Avg. RMSD Increase (Å) | Key Interaction Retention Rate (%) | Required Remediation Strategy |

|---|---|---|---|

| Steric Clash (5 heavy atoms) | 4.7 | 25 | Side-chain flexibility, minimization |

| Lost H-bond Donor | 2.1 | 40 | Constraint-based docking |

| Novel Pocket (Fold < 30% homology) | 5.5 | 15 | Ensemble docking, ML scoring |

Experimental Protocols

Protocol 1: Ensemble Docking for Flexible Receptors

- Receptor Preparation: Generate an ensemble of receptor structures using:

- Molecular Dynamics (MD): Run a 100ns simulation of the apo protein. Cluster frames (e.g., by backbone RMSD) to obtain 5-10 representative conformations.

- Multiple Crystal Structures: Collect all relevant apo and holo structures from the PDB.

- Ligand Preparation: Generate 3D conformers (e.g., using OMEGA or RDKit) with likely protonation states at target pH.

- Docking Execution: Dock each ligand conformation into each receptor conformation using a standard tool (e.g., Vina, Glide). Use standard grid parameters centered on the binding site.

- Pose Analysis & Selection: Aggregate all poses. Rank by:

- Consensus score across receptors.

- Average score.

- Interaction conservation across poses.
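The aggregation step in Protocol 1 can be sketched in a few lines. This is a minimal illustration, not a prescribed implementation: the function name `summarize_ensemble`, the receptor labels, and the scores are all hypothetical, and scores are assumed to follow the Vina-style convention where lower (more negative) is better.

```python
from statistics import mean

def summarize_ensemble(scores_by_receptor):
    """Summarize one ligand's best docking score per receptor conformation.

    Scores are assumed to be in kcal/mol, where lower means better.
    """
    best_receptor = min(scores_by_receptor, key=scores_by_receptor.get)
    return {
        "best_receptor": best_receptor,
        "best_score": scores_by_receptor[best_receptor],
        "average_score": mean(scores_by_receptor.values()),
    }

# Hypothetical scores for one ligand docked into three conformations.
scores = {"md_cluster_1": -7.2, "md_cluster_2": -8.9, "holo_xray": -8.1}
summary = summarize_ensemble(scores)
print(summary)
```

Reporting both the best and the average score makes it easier to spot a ligand that scores well in only one conformation, which may reflect an artifact of that structure rather than a robust binding mode.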

Protocol 2: Interaction Fingerprint Analysis for Pose Diagnosis

- Reference Definition: From a known crystal structure, define the list of critical interactions (residue number, atom, interaction type: H-bond, hydrophobic, etc.).

- Fingerprint Generation: For each predicted pose, use a tool like RDKit or the Schrödinger IFP module to generate a binary vector indicating the presence/absence of each interaction in the reference list.

- Similarity Calculation: Compute the Tanimoto similarity between the reference fingerprint and each pose's fingerprint.

- Rescoring: Re-rank poses based on a combined metric:

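[Docking Score] * w1 + [1 - Fingerprint Similarity] * w2. Because docking scores are lower (more negative) for better poses, the (1 - similarity) term acts as a penalty, so the pose with the lowest combined value ranks first. The weights (w1, w2) can be optimized.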
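As a rough sketch of the similarity and rescoring steps in Protocol 2, the snippet below computes a Tanimoto coefficient on binary interaction vectors and applies the combined metric. The bit vectors, scores, and default weights are invented for illustration, and the docking score is assumed to be lower-is-better.

```python
def tanimoto(ref_bits, pose_bits):
    """Tanimoto similarity between two equal-length binary fingerprints."""
    both = sum(1 for r, p in zip(ref_bits, pose_bits) if r and p)
    either = sum(1 for r, p in zip(ref_bits, pose_bits) if r or p)
    return both / either if either else 0.0

def combined_metric(docking_score, similarity, w1=1.0, w2=2.0):
    """Docking score plus a penalty for lost reference interactions.

    Lower values are better; w1 and w2 are placeholder weights.
    """
    return w1 * docking_score + w2 * (1.0 - similarity)

# Every bit is set in the reference because it lists the crystal interactions.
reference = [1, 1, 1, 1]
poses = {
    "pose_a": {"bits": [1, 1, 1, 0], "score": -8.0},
    "pose_b": {"bits": [1, 0, 0, 0], "score": -8.5},
}
reranked = sorted(
    poses,
    key=lambda name: combined_metric(
        poses[name]["score"], tanimoto(reference, poses[name]["bits"])
    ),
)
print(reranked)
```

In this toy case pose_b has the better raw docking score, but pose_a recovers three of the four reference interactions and moves to the top after rescoring.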

Visualizations

Title: Troubleshooting Workflow for Docking Failures

Title: Standard Docking Protocol with Remediation Loop

The Scientist's Toolkit: Research Reagent Solutions

| Item | Category | Function & Rationale |

|---|---|---|

| AutoDock Vina / QuickVina 2 | Software | Fast, open-source docking engine for initial pose sampling and screening. Empirical scoring. |

| Schrödinger Suite (Glide) | Software | Industry-standard for high-accuracy pose prediction and scoring using a hybrid force field. |

| Rosetta Ligand | Software | Physics-based, flexible-backbone protocol for high-fidelity docking in challenging, flexible sites. |

| RDKit | Software/Cheminformatics | Open-source toolkit for ligand preparation, conformer generation, and interaction fingerprint analysis. |

| PyMOL / UCSF ChimeraX | Software | Essential for 3D visualization, clash detection, and figure generation. |

| PDBbind / CrossDocked2020 | Database | Curated datasets for method training, benchmarking, and ensuring generalization. |

| GAFF / OPLS4 Force Fields | Parameter Set | Atomistic force fields for post-docking molecular mechanics minimization and MD simulation. |

| gnina | Software | Deep learning-based docking program (a fork of smina/AutoDock Vina) that adds CNN scoring for improved pose ranking. |

Technical Support Center

Troubleshooting Guide: High RMSD Values in Docking Poses

Issue: Successful docking runs (good predicted affinity) yield poses with poor structural alignment to the experimental reference (high RMSD). Root Cause: The scoring function is optimized for affinity ranking, not for reproducing the precise crystallographic pose. It may favor poses with similar interaction patterns but different conformational states.

Diagnostic Steps:

- Verify Input Structures: Ensure the protein receptor is prepared correctly (protonation states, missing side chains, water molecules).

- Check Binding Site Definition: An overly large or off-center search space can lead to plausible but incorrect poses.

- Analyze Pose Clusters: Examine the top scoring pose cluster versus the lowest RMSD pose cluster. A large discrepancy indicates a scoring-accuracy gap.

- Rescore with Alternate Functions: Use a different, more pose-sensitive scoring function to re-evaluate the generated poses.

Resolution Protocol:

- Implement Consensus Scoring: Use multiple scoring functions and select poses that rank well across several.

- Apply Post-Docking Minimization: Use a force field to relax the docked pose, which can improve local geometry and sometimes reduce RMSD.

- Utilize Ensemble Docking: Dock against multiple receptor conformations to account for flexibility.

Frequently Asked Questions (FAQs)

Q1: Why does my best-scoring pose (lowest predicted ΔG) have a high RMSD (>2.0 Å), while a lower-ranking pose has a near-native RMSD? A: This is the core issue. Scoring functions are trained to correlate with experimental binding affinity (Ki, IC50), not RMSD. They may penalize a correct pose due to minor steric clashes or imperfect electrostatics, while rewarding an incorrect pose that makes strong, but non-native, interactions.

Q2: What RMSD threshold should I consider a "successful" pose prediction? A: Thresholds are system-dependent, but general guidelines are:

| RMSD Range (Å) | Pose Accuracy Interpretation |

|---|---|

| < 2.0 | High Accuracy (Often considered a "correct" pose) |

| 2.0 - 3.0 | Medium Accuracy (Possibly useful for lead optimization) |

| > 3.0 | Low Accuracy (Unlikely to be structurally relevant) |

Note: For flexible ligands or binding sites, a higher threshold (e.g., 2.5 Å) may be appropriate.
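For orientation, here is a minimal sketch of how an RMSD value could be computed and mapped onto the bands in the table above. It assumes both poses list the same heavy atoms in the same order and already share the receptor's coordinate frame; it applies no symmetry correction, which dedicated tools handle and which matters for ligands with equivalent atoms. The function names and coordinates are illustrative only.

```python
import math

def heavy_atom_rmsd(ref_coords, pose_coords):
    """RMSD in Å between two equally ordered lists of (x, y, z) tuples."""
    if len(ref_coords) != len(pose_coords):
        raise ValueError("Atom counts differ between reference and pose")
    squared = sum(math.dist(r, p) ** 2 for r, p in zip(ref_coords, pose_coords))
    return math.sqrt(squared / len(ref_coords))

def classify_pose(rmsd, high_cutoff=2.0, medium_cutoff=3.0):
    """Map an RMSD value onto the accuracy bands from the table above."""
    if rmsd < high_cutoff:
        return "high"
    if rmsd <= medium_cutoff:
        return "medium"
    return "low"

# Toy two-atom ligand shifted by 1 Å along z.
reference = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0)]
pose = [(0.0, 0.0, 1.0), (1.5, 0.0, 1.0)]
value = heavy_atom_rmsd(reference, pose)
print(value, classify_pose(value))
```

The cutoffs are keyword arguments so that a looser threshold, such as the 2.5 Å mentioned in the note, can be passed for flexible systems.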

Q3: How can I improve pose accuracy if my primary scoring function fails? A: Follow this experimental protocol for Pose Refinement and Rescoring:

- Generate Poses: Produce a large number of poses (e.g., 50-100) using a sampling-focused algorithm (e.g., genetic algorithm, Monte Carlo).

- Cluster Poses: Cluster the output based on ligand heavy-atom positions (RMSD cutoff ~2.0 Å).

- Rescore: Apply 2-3 different scoring functions to all clustered poses.

- Consensus Analysis: Select the pose that is ranked highly by multiple scoring functions and belongs to a populous cluster.

- Final Minimization: Perform a final constrained minimization of the selected pose within the binding pocket using a molecular mechanics force field (e.g., AMBER, CHARMM).
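The rescoring and consensus steps above can be approximated with a simple mean-rank scheme. The sketch below is one of several possible aggregation rules (rank-by-vote and z-score averaging are alternatives); the scoring-function labels and values are made up, and every function is assumed to be lower-is-better, so fitness-style scores where higher is better would need their sign flipped first.

```python
def consensus_ranks(scores_by_function):
    """Return (mean_rank, pose_id) tuples, best consensus pose first.

    scores_by_function maps a scoring-function name to {pose_id: score},
    with lower scores assumed to be better for every function.
    """
    totals = {}
    for scores in scores_by_function.values():
        ordered = sorted(scores, key=scores.get)
        for rank, pose_id in enumerate(ordered, start=1):
            totals[pose_id] = totals.get(pose_id, 0) + rank
    n_functions = len(scores_by_function)
    return sorted((total / n_functions, pose_id) for pose_id, total in totals.items())

# Hypothetical scores for three cluster representatives.
scores_by_function = {
    "sf_a": {"p1": -9.0, "p2": -8.5, "p3": -7.0},
    "sf_b": {"p1": -40.0, "p2": -55.0, "p3": -30.0},
    "sf_c": {"p1": -6.1, "p2": -7.3, "p3": -6.5},
}
ranked = consensus_ranks(scores_by_function)
print(ranked)
```

Working on ranks rather than raw scores sidesteps the fact that different scoring functions report values on incompatible scales.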

Q4: Are there specialized benchmarks I should use to test my docking protocol? A: Yes. Standardized benchmarks provide quantitative performance data for pose prediction (RMSD) vs. affinity ranking.

| Benchmark Set | Primary Use | Key Metric | Typical Performance (Top Methods) |

|---|---|---|---|

| CASF (Comparative Assessment of Scoring Functions) | Scoring Function Evaluation | Scoring Power (Affinity Correlation), Ranking Power, Docking Power (RMSD) | Success Rate (RMSD < 2Å) varies from 60-80% for "docking power" |

| DUD-E (Directory of Useful Decoys: Enhanced) | Virtual Screening Evaluation | Enrichment of actives over decoys | Enrichment Factor at 1% (EF1) varies widely |

| PDBbind | General Training & Testing | Broad correlation between computed and experimental affinity | Pearson's R ~0.6 for state-of-the-art methods |

Experimental Workflow for Diagnosing Pose-Affinity Discrepancy

Diagram Title: Workflow for Resolving High RMSD in Docking

The Scoring Function Dilemma: Accuracy vs. Affinity

Diagram Title: Dual Objectives in Scoring Function Development

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function & Relevance to Pose/Affinity Issues |

|---|---|

| Molecular Dynamics (MD) Simulation Software (e.g., GROMACS, AMBER) | Used for post-docking pose relaxation and to assess pose stability over time. Can discriminate between correctly and incorrectly docked poses by evaluating root-mean-square fluctuation (RMSF). |

| Consensus Scoring Scripts/Tools | Custom or packaged scripts to aggregate ranks from multiple scoring functions (e.g., X-Score, ChemPLP, GoldScore). Mitigates bias from any single function. |

| Protein Structure Preparation Suite (e.g., Schrödinger's Protein Prep Wizard, MOE) | Standardizes protonation states, assigns bond orders, fills missing loops/side chains. Critical for reducing input-based RMSD errors. |

| Water Placement Algorithm (e.g., SZMAP, WaterFLAP) | Predicts the location and thermodynamics of key water molecules in the binding site. Incorrect water handling is a major source of pose error. |

| Binding Site Analysis Tool (e.g., FTMap, SiteMap) | Identifies and characterizes potential binding pockets and hot spots. Ensures the docking grid is centered on the relevant region. |

| Benchmark Dataset (e.g., CASF-2016/2022, PDBbind refined set) | Provides a curated set of protein-ligand complexes with high-quality structures and binding data to validate protocol performance on both RMSD and affinity metrics. |

| Force Field Parameters (e.g., OPLS4, GAFF2) | Defines atom types, charges, and bonding/non-bonding potentials for accurate energy calculation during minimization and rescoring. |

Choosing Your Tools: A Comparative Guide to Docking Methods and Best-Practice Protocols

Technical Support & Troubleshooting Center

FAQs & Troubleshooting Guides

Q1: In a traditional scoring function (SF) experiment, my top-ranked pose has a high RMSD (>2.5Å) from the crystallographic pose. What are the primary troubleshooting steps? A: High RMSD in traditional SF paradigms typically stems from force field inaccuracies or inadequate sampling.

- Verify Parameterization: Ensure small molecule force field (e.g., GAFF) and protein residue parameters (e.g., AMBER ff14SB) are correctly assigned. Missing or improper partial charges are a common culprit.

- Increase Sampling Rigor: For Monte Carlo or Genetic Algorithm-based docks, systematically increase the number of runs (e.g., from 50 to 200) and energy evaluations. Use a seed for reproducibility.

- Check for Constraint Violation: If known binding motifs exist, apply soft distance or torsional constraints and re-dock.

- Protocol: Execute a controlled experiment: Dock a known ligand from the PDB (e.g., 1AZM) with its native protein. Compare RMSD using Vina, Gold, and Glide scores. Tabulate results to identify software-specific biases.

Q2: When using a Hybrid AI (classical SF + ML rescoring) pipeline, the ML model consistently assigns the best score to a physically implausible pose with severe clashes. How should I debug this? A: This indicates a bias or artifact in the ML model's training data or feature set.

- Feature Inspection: Extract and examine the feature vectors (e.g., intermolecular distances, pharmacophore features) for the top-ranked bad pose and the crystallographic pose. Compare them to identify which illogical feature combination the model is rewarding.

- Training Data Contamination: Ensure your training set for the ML rescorer does not contain severely clashing poses that were incorrectly labeled as "good." Re-check pose labeling criteria (RMSD cutoff vs. interaction-based).

- Model Calibration: Apply a simple post-filter to the ML-rescored list: discard any pose with steric clash overlap >0.4Å before final selection.

- Protocol: Train a simple Random Forest rescorer on the PDBbind refined set. Apply it to rescore 100 poses from AutoDock Vina for a test case. Manually inspect the top-5 ML-rescored poses versus the top-5 Vina-scored poses for clashes and interaction fidelity.
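The post-filter described under Model Calibration could look roughly like this. It is a simplified sketch: heavy atoms only, a small hard-coded table of Bondi van der Waals radii, and a brute-force pair loop. The 0.4 Å overlap tolerance comes from the text above; the coordinates are invented, and short hydrogen-bonded contacts may need a more lenient allowance than this uniform rule gives them.

```python
import math

# Bondi van der Waals radii in Å for a few common heavy atoms.
VDW_RADII = {"C": 1.70, "N": 1.55, "O": 1.52, "S": 1.80}

def count_clashes(ligand_atoms, protein_atoms, max_overlap=0.4):
    """Count ligand-protein atom pairs whose vdW spheres overlap too much.

    Each atom is an (element, (x, y, z)) tuple with coordinates in Å.
    """
    clashes = 0
    for lig_element, lig_xyz in ligand_atoms:
        for prot_element, prot_xyz in protein_atoms:
            contact = VDW_RADII[lig_element] + VDW_RADII[prot_element]
            overlap = contact - math.dist(lig_xyz, prot_xyz)
            if overlap > max_overlap:
                clashes += 1
    return clashes

ligand = [("C", (0.0, 0.0, 0.0))]
protein = [("C", (2.5, 0.0, 0.0)), ("O", (0.0, 3.5, 0.0))]
n_clashes = count_clashes(ligand, protein)
print(n_clashes)
```

A pose with any flagged pair can then be dropped from the ML-rescored list before final selection, or the count can be used as a tie-breaker.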

Q3: A Full Deep Learning (Equivariant Neural Network) model fails to generalize on a new target protein family, producing poses with RMSD >10Å. What is the systematic approach to diagnose this? A: This is a classic failure mode due to distributional shift between training and deployment data.

- Input Representation Analysis: Visualize the input graphs or volumetric grids for your new target. Check for abnormalities in surface representation, atom typing, or missing residues that create a "foreign" input structure.

- Latent Space Projection: Use UMAP/t-SNE to project the latent embeddings of your new complex and the training set complexes. If the new target is an outlier, the model has never "seen" anything like it.

- Fine-Tuning Protocol: If data is available, perform few-shot fine-tuning. Use 5-10 known complexes from the new target family. Freeze the front-end encoder and only train the final pose regression layers for 10-20 epochs with a very low learning rate (1e-5).

- Protocol: Using a pre-trained model like DiffDock, run inference on a benchmark set. For failures, compute the per-residue RMSD to identify if the error is global placement (wrong pocket) or local refinement (correct pocket, wrong orientation).

Q4: Across all paradigms, my docking results show high variance between repeated runs. How can I improve reproducibility? A: High inter-run variance points to insufficient convergence or uncontrolled randomness.

- Seed Control: Explicitly set the random seed for all stochastic components (pose generation, sampling, dropout in NN). Document the seed used.

- Convergence Metric: Implement a pose cluster-based convergence check. Run 5 independent executions. When the largest pose cluster (RMSD <2.0Å) contains ≥4 of the 5 top-ranked poses, the run is considered converged.

- Resource Scaling: For traditional/hybrid methods, increase computational resources until the variance (std. dev. of top-pose RMSD across 10 runs) falls below a threshold (e.g., 0.5Å).

- Protocol: Design a variance test: Perform 20 docking runs each for Vina, a Vina+RF hybrid, and a DL model. Calculate the standard deviation of the RMSD of the top-scoring pose from each run. Report results in a table.
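A small helper along these lines can summarize the variance test. The RMSD lists are fabricated examples, and the 0.5 Å threshold is the illustrative value from the Resource Scaling item rather than a universal standard.

```python
from statistics import mean, stdev

def run_variability(top_pose_rmsds, threshold=0.5):
    """Summarize the spread of top-pose RMSD values (Å) across repeated runs."""
    spread = stdev(top_pose_rmsds)  # sample standard deviation
    return {
        "mean": mean(top_pose_rmsds),
        "stdev": spread,
        "reproducible": spread < threshold,
    }

# Hypothetical top-pose RMSDs from five repeated runs of two protocols.
stable = run_variability([1.2, 1.4, 1.3, 1.1, 1.5])
unstable = run_variability([1.2, 6.8, 1.3, 7.1, 1.4])
print(stable)
print(unstable)
```

The second example shows the typical signature of two competing binding modes: the mean looks mediocre, but the large spread is what reveals that individual runs are landing in different poses.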

Table 1: Performance Comparison Across Docking Paradigms (Hypothetical Benchmark on CASF-2016)

| Paradigm | Example Software/Tool | Top-1 Success Rate (RMSD <2Å) | Average RMSD (Å) | Average Runtime per Ligand | Required Expertise Level |

|---|---|---|---|---|---|

| Traditional SF | AutoDock Vina, Glide | 52% | 2.8 | 3-5 min | Medium |

| Hybrid AI | Vina + RF-Score, GNINA | 65% | 2.1 | 4-7 min | High |

| Full Deep Learning | DiffDock, EquiBind | 78% | 1.6 | ~30 sec (GPU) | Very High |

Table 2: Troubleshooting Decision Matrix for High RMSD Issues

| Symptom | Likely Cause (Traditional) | Likely Cause (Hybrid AI) | Likely Cause (Full DL) | First Action |

|---|---|---|---|---|

| Severe Clashes in Top Pose | Poor sampling, Van der Waals weight too low. | ML model trained on noisy data, overfitting to specific features. | Training data lacked high-quality clash examples. | Apply a clash filter; inspect training set labels. |

| Pose in Wrong Pocket | Incorrect binding site definition; grid placement error. | Pocket-agnostic rescoring model. | Model bias from training on single-pocket proteins. | Validate pocket definition; use blind docking protocol. |

| Correct Pocket, Wrong Orientation | Inadequate torsional sampling; insufficient scoring term for key interaction. | ML features miss critical interaction (e.g., halogen bond). | Limited rotational equivariance in architecture. | Increase conformational sampling; add relevant interaction constraint. |

| High Variance Between Runs | Low number of sampling runs; genetic algorithm instability. | Stochastic nature of underlying traditional dock. | High dropout or stochastic sampling in diffusion/VAE. | Fix random seeds; increase number of inference steps (DL). |

Experimental Protocols

Protocol 1: Controlled Benchmark for Diagnosing Scoring Function Failure

- Objective: Isolate whether high RMSD stems from sampling or scoring.

- Materials: PDBbind core set, Docking software (e.g., AutoDock Vina), RMSD calculation script.

- Procedure: a. For 5 diverse protein-ligand complexes, generate a decoy set: take the native pose, then generate 99 systematically distorted poses (RMSD 0.5-10Å). b. Score the entire set (1 native + 99 decoys) with the traditional SF. c. Record the rank of the native pose. d. Analysis: If the native pose ranks poorly (outside the top 20), the scoring function is the failure point. If it ranks well but standard docking fails, sampling is the issue.
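The analysis in step d reduces to finding where the native pose falls among the decoys. A minimal sketch follows, assuming lower scores are better; the function names, the evenly spaced decoy scores, and the return labels are placeholders.

```python
def native_rank(native_score, decoy_scores):
    """1-based rank of the native pose among decoys (lower score is better)."""
    return 1 + sum(1 for score in decoy_scores if score < native_score)

def diagnose(native_score, decoy_scores, top_n=20):
    """Apply the top-20 rule from the protocol to one complex."""
    rank = native_rank(native_score, decoy_scores)
    if rank > top_n:
        return rank, "scoring function suspect"
    return rank, "scoring adequate; check sampling if docking still fails"

# 99 hypothetical decoy scores spread between -4.0 and -13.8.
decoys = [-4.0 - 0.1 * i for i in range(99)]
print(diagnose(-9.05, decoys))
print(diagnose(-13.85, decoys))
```

Running this over all five complexes and tabulating the ranks gives a quick picture of whether the scoring function systematically misranks native poses or only fails on particular targets.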

Protocol 2: Hybrid AI Rescoring Pipeline Implementation

- Objective: Improve pose selection from a traditional docking run using ML.

- Materials: Initial docking poses (e.g., from Vina), RF-Score or similar ML rescoring tool, feature extraction scripts.

- Procedure: a. Perform exhaustive traditional docking (generate 50+ poses per ligand). b. Extract features for each pose (e.g., element-specific atom contact counts, pharmacophore matches). c. Apply a pre-trained ML model to predict the "score" or probability of each pose being correct. d. Re-rank all poses based on the ML score. e. Validation: Calculate the RMSD of the new top-ranked pose against the crystal structure.

Protocol 3: Fine-Tuning a Deep Learning Docking Model for a New Target

- Objective: Adapt a generalist DL model (e.g., DiffDock) to a specific protein family.

- Materials: Pre-trained DiffDock model, 5-10 known ligand complexes for the target protein, GPU cluster.

- Procedure: a. Prepare data in model-specific format (e.g., .pdb to .sdf + .pdbqt). b. Freeze all model parameters except for the final output layers. c. Train for 20-50 epochs using a low learning rate (1e-5) and a small batch size (2-4). d. Use early stopping based on loss on a held-out validation complex. e. Benchmark: Test the fine-tuned model on a novel complex from the same family.

Visualizations

Diagram 1: High RMSD Troubleshooting Decision Tree

Diagram 2: Hybrid AI Docking Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Tools for Docking Experiments

| Item | Function & Purpose | Example/Format |

|---|---|---|

| Curated Benchmark Dataset | Provides a ground-truth standard for validating and comparing docking performance. | PDBbind Core Set, CASF Benchmark, DUD-E. |

| Protein Preparation Suite | Processes raw PDB files: adds hydrogens, corrects protonation states, fixes missing residues/sidechains. | Schrödinger Protein Prep Wizard, UCSF Chimera, pdb4amber. |

| Ligand Parameterization Tool | Generates 3D conformations, assigns partial charges, and creates topology files for small molecules. | Open Babel, RDKit, antechamber (AMBER), LigPrep. |

| Traditional Docking Engine | Performs search/sampling of conformational space and primary scoring using classical SF. | AutoDock Vina, GOLD, Glide (Schrödinger). |

| ML-Rescoring Library | Applies machine learning models to re-rank poses from traditional docking for improved accuracy. | RF-Score, NNScore, GNINA (scnns). |

| Deep Learning Docking Framework | End-to-end pose prediction using equivariant neural networks or diffusion models. | DiffDock, EquiBind, TankBind. |

| Visualization & Analysis Software | Critical for inspecting poses, analyzing interactions, and diagnosing failures. | PyMOL, UCSF ChimeraX, Biovia Discovery Studio. |

| High-Performance Compute (HPC) | CPU clusters for traditional sampling; GPU nodes (NVIDIA) for training/running deep learning models. | Local cluster, Cloud (AWS, GCP), NVIDIA V100/A100 GPUs. |

Technical Support Center

FAQs & Troubleshooting Guides

Q1: My docking poses consistently show high RMSD (>2.5Å) when compared to the co-crystallized ligand. What are the primary causes and solutions? A: High RMSD often stems from incorrect protonation states of receptor residues or ligands, inaccurate binding site definition, or inappropriate sampling parameters.

- Solution: Always pre-process structures using tools like PDB2PQR or reduce to assign correct protonation states at the experimental pH. For the binding site, consider using a larger grid box if the ligand is flexible. Increase the exhaustiveness parameter in Vina or the num_poses in Glide. For GNINA, adjust the cnn_scoring and cnn_rotation parameters to enhance pose refinement.

Q2: GNINA's CNN scoring returns poses with excellent affinity but poor steric complementarity. How should I interpret and filter these results? A: GNINA's CNN scoring can sometimes prioritize learned affinity patterns over physical clashes.

- Solution: Always use a combined filtering strategy. First, rank by the CNN score. Then, apply a post-docking filter based on the Vina-style empirical score (reported alongside the CNN scores in GNINA output) and visual inspection for critical clashes. Implement a simple steric clash check (e.g., using RDKit) to remove poses with severe atomic overlaps.

Q3: When using AutoDock Vina or GNINA, the docked ligand is placed outside my defined grid box. What went wrong?

A: This typically indicates an error in the configuration file where the grid center coordinates (center_x, center_y, center_z) do not correspond to the intended binding site.

- Solution: Double-check the grid center coordinates using a visualization tool like PyMOL or UCSF Chimera. Ensure the size_x, size_y, size_z parameters are large enough to encompass the entire binding pocket and ligand rotational volume. The box size should be at least 20-25Å in each dimension for most targets.
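One way to avoid a mis-centered box is to derive it from the coordinates of a reference ligand rather than typing values by hand. The sketch below does that and emits the corresponding Vina-style configuration keys. The 8 Å padding and the example coordinates are assumptions chosen for illustration; the 20 Å minimum edge follows the guideline above.

```python
def box_from_ligand(coords, padding=8.0, min_size=20.0):
    """Return (center, size) of a box enclosing the ligand plus padding (Å)."""
    center, size = [], []
    for axis_values in zip(*coords):
        low, high = min(axis_values), max(axis_values)
        center.append((low + high) / 2)
        size.append(max(high - low + 2 * padding, min_size))
    return center, size

def vina_config(center, size):
    """Format the box as key = value lines for a Vina configuration file."""
    axes = ("x", "y", "z")
    lines = [f"center_{a} = {c:.3f}" for a, c in zip(axes, center)]
    lines += [f"size_{a} = {s:.3f}" for a, s in zip(axes, size)]
    return "\n".join(lines)

# Hypothetical heavy-atom coordinates of a co-crystallized ligand.
ligand_coords = [(10.0, 20.0, 30.0), (14.0, 22.0, 31.0), (12.0, 26.0, 33.0)]
center, size = box_from_ligand(ligand_coords)
config_text = vina_config(center, size)
print(config_text)
```

Loading the resulting box alongside the receptor in a viewer remains a worthwhile sanity check before launching a production run.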

Q4: DOCK 6 performs well on some targets but fails completely on others, producing no viable poses. What key parameter should I investigate?

A: The most critical parameter in DOCK 6 for initial success is the contact_score_primary_threshold. If set too stringently, it can eliminate all poses before scoring.

- Solution: For a new target, start with a permissive threshold (e.g., contact_score_primary_threshold = -100.0) to ensure pose generation. Once poses are generated, gradually increase the threshold to -5.0 or -1.0 in subsequent runs to filter for better contacts. Also, verify that your sphere_cluster file correctly defines the binding site.

Q5: Glide (Schrödinger) yields different results when docking the same ligand repeatedly with identical settings. How can I ensure reproducibility? A: Non-reproducibility in Glide is often linked to its internal sampling algorithms which can have stochastic elements.

- Solution: Before production runs, set the PREC keyword to SP (Standard Precision) and ensure NOEPRE is used to disregard initial ligand conformations. For absolute reproducibility in XP (Extra Precision) docking, you must set the POSE_FORCE_EVAL flag, though this is computationally expensive. Always document the exact software version and input script.

Experimental Protocols for Cited Benchmarks

Protocol 1: Cross-Program Docking Benchmark (Based on Su et al.)

- Dataset Curation: Select the PDBbind refined set (2020), filtering for complexes with resolution ≤ 2.0 Å and ligand size between 15-50 heavy atoms.

- Structure Preparation: Prepare protein structures using the prepare_receptor4.py script (MGLTools) for Vina/GNINA/DOCK, and Protein Preparation Wizard (Schrödinger) for Glide. Ligands are prepared using prepare_ligand4.py and LigPrep, ensuring generation of correct tautomers and protonation states at pH 7.4±0.5.

- Binding Site Definition: Define the binding site as all residues with any atom within 8Å of the cognate ligand.

- Docking Execution:

- Vina/GNINA: Use a grid box centered on the binding site with dimensions 25x25x25Å. Exhaustiveness set to 32. For GNINA, use the --cnn_scoring option.

- Glide: Run SP then XP docking with the default sampling density.

- DOCK 6: Generate spheres using sphgen, select the binding site cluster, and run docking with contact_score_primary_threshold = -5.0 and distance_tolerance = 1000.

- Analysis: Calculate RMSD of the top-ranked pose to the crystal ligand after superimposing the protein structures. Success is defined as RMSD ≤ 2.0Å.

Protocol 2: Evaluating Scoring Function Accuracy (Based on McNutt et al.)

- Decoy Generation: For each active ligand in the DUD-E set, generate 50 property-matched decoys using the decoys.py utility from DUD-E.

- Docking & Scoring: Dock each active and its decoys into the prepared receptor using each program's default parameters.

- Enrichment Calculation: Record the docking score for every molecule. Calculate the EF1% (Enrichment Factor at 1% of the database screened) and plot ROC curves. Use the program's primary scoring function (e.g., CNNscore for GNINA, grid score for DOCK 6, GlideScore for Glide).
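The EF1% calculation can be sketched as follows. The function assumes lower scores are better, so a higher-is-better score such as a CNN pose probability would need to be negated first; the 200-molecule library with four actives is synthetic.

```python
import math

def enrichment_factor(scored, fraction=0.01):
    """Enrichment factor for the top fraction of a ranked library.

    scored is a list of (score, is_active) pairs; lower scores rank first.
    """
    ranked = sorted(scored, key=lambda item: item[0])
    n_top = max(1, math.ceil(len(ranked) * fraction))
    actives_total = sum(1 for _, is_active in ranked if is_active)
    actives_top = sum(1 for _, is_active in ranked[:n_top] if is_active)
    hit_rate_top = actives_top / n_top
    hit_rate_all = actives_total / len(ranked)
    return hit_rate_top / hit_rate_all

# Synthetic library: 4 actives and 196 decoys.
actives = [(-12.0, True), (-9.0, True), (-8.0, True), (-7.0, True)]
decoys = [(-11.0 + 0.02 * i, False) for i in range(196)]
ef1 = enrichment_factor(actives + decoys)
print(ef1)
```

Here the top 1% holds two molecules, one of which is active, so the hit rate in that slice (50%) is 25 times the library-wide hit rate (2%).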

Data Presentation

Table 1: Summary of Benchmarking Results (Top-1 Pose Success Rate % at RMSD ≤ 2.0Å)

| Program | Scoring Type | Avg. Success Rate (Cross-target) | Avg. Runtime (s/ligand) | Key Strengths |

|---|---|---|---|---|

| Glide (XP) | Force Field + Empirical | 78% | 120-300 | Excellent pose accuracy, robust scoring |

| GNINA (CNN) | Deep Learning + Force Field | 75% | 45-90 | High speed, good enrichment, handles flexibility |

| AutoDock Vina | Empirical | 65% | 15-60 | Very fast, easy to use, consistent |

| DOCK 6 | Force Field (GB/SA) | 71% | 90-180 | Highly customizable, excellent for virtual screening |

Table 2: Essential Research Reagent Solutions

| Item / Software | Function / Purpose | Typical Use Case in Docking |

|---|---|---|

| PDB2PQR / reduce | Assigns protonation states and optimizes H-bond networks in protein structures. | Critical pre-processing step before grid generation to ensure correct electrostatics. |

| MGLTools (AutoDockTools) | Prepares receptor and ligand PDBQT files, defines grid boxes for Vina/GNINA. | Standard workflow for setting up AutoDock Vina and GNINA docking simulations. |

| RDKit | Open-source cheminformatics toolkit for ligand standardization, SMILES parsing, and molecular descriptor calculation. | Used to filter ligands, generate tautomers, and perform post-docking analysis (e.g., RMSD calculation). |

| UCSF Chimera / PyMOL | Molecular visualization software for analyzing docking results, inspecting poses, and defining binding sites. | Visual validation of top poses, checking for clashes, and creating publication-quality figures. |

| Open Babel / LigPrep | Converts chemical file formats and generates 3D ligand conformations with correct stereochemistry. | Preparing diverse ligand libraries from SMILES or SDF files for high-throughput docking. |

Visualizations

Title: Troubleshooting Flowchart for High RMSD

Title: Benchmarking Experiment Workflow

Technical Support Center: Troubleshooting Docking Failures

Core Thesis Context: This support center addresses common computational challenges that contribute to poor pose prediction and high RMSD values in the docking of proteins, RNA, and flexible peptides. Solutions are grounded in a systems biology approach that integrates broader biological context and dynamic data.

Troubleshooting Guides & FAQs

Q1: My protein-ligand docking consistently yields high RMSD values (>2.5 Å) compared to the crystallographic pose. What are the primary factors to check? A: High RMSD often stems from inadequate handling of target flexibility or inaccurate binding site definition.

- Action 1: Validate Binding Site Flexibility. Check if your crystal structure lacks conformational states relevant to ligand binding. Use molecular dynamics (MD) simulations to generate an ensemble of receptor conformations for ensemble docking.

- Action 2: Analyze Protonation & Tautomeric States. Incorrect assignment of histidine, aspartic acid, or glutamic acid protonation states at physiological pH can drastically alter electrostatic complementarity. Use tools like PROPKA to predict pKa values.

- Action 3: Employ Consensus Docking. Run the same ligand-receptor pair using 2-3 different docking algorithms (e.g., AutoDock Vina, Glide, rDock). A pose predicted by multiple methods has higher confidence.

Q2: How can I improve docking performance for highly flexible peptides (length >10 residues)? A: Traditional rigid-backbone docking fails for flexible peptides. Implement a multi-stage protocol.

- Stage 1: Conformational Sampling. Generate a diverse library of peptide conformations using MD or Monte Carlo methods. Do not rely on a single extended structure.

- Stage 2: Initial Placement. Use fast, simplified scoring functions (e.g., coarse-grained or knowledge-based potentials) to scan possible binding regions.

- Stage 3: Refinement with Full Flexibility. Use a flexible docking or MD simulation (e.g., induced-fit docking, Gaussian Accelerated MD) for the final refinement of top-ranked complexes, allowing both peptide and binding site side-chains to move.

Q3: What specific parameters are critical for RNA-small molecule docking to avoid false positives? A: RNA docking requires explicit treatment of electrostatics and solvation.

- Critical Parameter 1: Charge Model. Ensure atomic partial charges are correctly derived (e.g., AM1-BCC for ligands and RESP-based charges for RNA from a nucleic-acid force field such as OL3; ff19SB applies only to protein components). Neglecting magnesium ion interactions in the binding site is a common oversight.

- Critical Parameter 2: Scoring Function Adjustment. Standard protein-centric scoring functions underweight key RNA-ligand interactions like anion-π and sugar π-stacking. Seek or re-weight scoring functions validated on RNA complexes.

- Protocol: Pre-process the RNA structure with LePro to add missing atoms and assign charges compatible with your docking software.

Q4: My ensemble docking generated too many potential poses. How do I filter them effectively? A: Use systems biology data as integrative filters to prioritize biologically relevant poses.

- Structural Filter: Clustering by RMSD (cutoff 2.0 Å) to remove redundancies.

- Energy Filter: Retain poses within 3 kcal/mol of the top-scoring pose.

- Conservation Filter: Use evolutionary coupling analysis or sequence alignment to check if the predicted binding interface residues are conserved.

- Experimental Data Filter: Keep only poses that do not sterically block known protein-protein interaction interfaces or that are consistent with mutagenesis data (e.g., if alanine mutation of a residue is known to abolish binding, poses that make no contact with that residue are discarded).
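The structural and energy filters lend themselves to a short sketch. The version below uses greedy leader clustering (each pose is compared against already accepted representatives), which is one simple way to remove redundancy and not necessarily the clustering method a given docking package applies. Pose coordinates and scores are invented, and poses are assumed to share atom ordering and coordinate frame.

```python
import math

def rmsd(coords_a, coords_b):
    """RMSD in Å between two equally ordered coordinate lists."""
    squared = sum(math.dist(a, b) ** 2 for a, b in zip(coords_a, coords_b))
    return math.sqrt(squared / len(coords_a))

def filter_poses(poses, rmsd_cutoff=2.0, energy_window=3.0):
    """Keep non-redundant poses within energy_window kcal/mol of the best."""
    ranked = sorted(poses, key=lambda pose: pose["score"])
    best_score = ranked[0]["score"]
    representatives = []
    for pose in ranked:
        if pose["score"] - best_score > energy_window:
            break  # remaining poses score even worse
        if all(rmsd(pose["coords"], rep["coords"]) > rmsd_cutoff
               for rep in representatives):
            representatives.append(pose)
    return representatives

poses = [
    {"id": "a", "score": -9.0, "coords": [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]},
    {"id": "b", "score": -8.8, "coords": [(0.0, 0.0, 0.5), (1.0, 0.0, 0.5)]},
    {"id": "c", "score": -7.5, "coords": [(5.0, 0.0, 0.0), (6.0, 0.0, 0.0)]},
    {"id": "d", "score": -5.0, "coords": [(0.0, 9.0, 0.0), (1.0, 9.0, 0.0)]},
]
kept_ids = [pose["id"] for pose in filter_poses(poses)]
print(kept_ids)
```

Pose b is dropped as a near-duplicate of a, and pose d falls outside the 3 kcal/mol window, leaving two distinct candidates for the conservation and experimental filters.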

Experimental Protocols for Key Cited Methods

Protocol 1: Generating Receptor Ensembles for Ensemble Docking (cited for addressing flexibility)

- Starting Structure: Obtain the high-resolution crystal/NMR structure (PDB format).

- System Preparation: Solvate the protein in a TIP3P water box and add ions to neutralize the charge, using tleap (AmberTools).

- Equilibration: Perform energy minimization, followed by gradual heating to 300K under NVT ensemble (50 ps), then density equilibration under NPT ensemble (1 ns).

- Production MD: Run an unbiased MD simulation for 100-200 ns (NPT, 300K). Save snapshots every 1 ns.

- Cluster Analysis: Use the cpptraj module to cluster snapshots based on backbone RMSD of the binding site residues. Select the centroid structure from the top 5-10 clusters for the docking ensemble.

Protocol 2: Integrated Docking-Workflow Using Systems Biology Constraints

- Data Curation: Collect known genomic, proteomic, or pathway interaction data for the target. Identify critical residues from SNP/mutation databases.

- Blind Docking: Perform global docking across the entire target surface using a fast algorithm (e.g., AutoDock Vina with a large grid box).

- Pose Scoring & Ranking: Score poses with a primary scoring function.

- Contextual Re-ranking: Re-rank the top 100 poses using a composite score:

Composite Score = 0.6*DockingScore + 0.2*ConservationScore + 0.2*ExperimentalConstraintScore. Weights can be optimized.

- Validation: Test the top re-ranked poses by running a short (20 ns) MD simulation and calculating the binding free energy via MM/GBSA to assess stability.
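The composite score in the re-ranking step mixes terms on different scales and with different sign conventions, so some normalization is needed before the weights are meaningful. The sketch below uses min-max scaling, which is an assumption on our part since the protocol does not specify a normalization. The pose values are hypothetical, docking scores are treated as lower-is-better, and the other two terms as higher-is-better.

```python
def min_max(values, higher_is_better=True):
    """Scale values to [0, 1] so that 1 is always the most favorable."""
    low, high = min(values), max(values)
    if high == low:
        return [1.0] * len(values)
    scaled = [(value - low) / (high - low) for value in values]
    return scaled if higher_is_better else [1.0 - s for s in scaled]

def composite_rerank(poses, weights=(0.6, 0.2, 0.2)):
    """Return (composite, pose_id) tuples, best first."""
    docking = min_max([p["docking"] for p in poses], higher_is_better=False)
    conservation = min_max([p["conservation"] for p in poses])
    experimental = min_max([p["experimental"] for p in poses])
    w_dock, w_cons, w_expt = weights
    scored = [
        (w_dock * d + w_cons * c + w_expt * e, pose["id"])
        for pose, d, c, e in zip(poses, docking, conservation, experimental)
    ]
    return sorted(scored, reverse=True)

poses = [
    {"id": "p1", "docking": -9.0, "conservation": 0.2, "experimental": 0.0},
    {"id": "p2", "docking": -8.0, "conservation": 0.9, "experimental": 1.0},
    {"id": "p3", "docking": -6.0, "conservation": 0.5, "experimental": 0.5},
]
reranked = composite_rerank(poses)
print(reranked)
```

In this example p1 has the best raw docking score, but p2 overtakes it once conservation and experimental agreement are taken into account.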

Table 1: Comparison of Docking Performance with and without Systems Biology Filters

| Metric | Traditional Docking (RMSD in Å) | Ensemble Docking (RMSD in Å) | Ensemble + Systems Biology Filters (RMSD in Å) |

|---|---|---|---|

| Protein-Ligand (rigid target) | 1.8 ± 0.5 | 1.9 ± 0.6 | 1.7 ± 0.4 |

| Protein-Ligand (flexible target) | 3.5 ± 1.2 | 2.1 ± 0.8 | 1.9 ± 0.7 |

| RNA-Small Molecule | 4.8 ± 1.5 | 3.9 ± 1.3 | 3.0 ± 1.1 |

| Protein-Peptide (10-mer) | 6.2 ± 2.0 | 4.0 ± 1.5 | 3.5 ± 1.4 |

| Success Rate (RMSD < 2.5 Å) | 45% | 65% | 78% |

Table 2: Impact of Specific Filters on Pose Prediction Accuracy

| Filter Type | Avg. Top-Pose RMSD Reduction (%) | False Positive Rate Reduction (%) |

|---|---|---|

| Evolutionary Conservation | 15 | 20 |

| Mutagenesis Data | 25 | 35 |

| Protein Interaction Interface | 18 | 30 |

| Consensus Scoring (2 methods) | 10 | 15 |

Visualizations

Title: Systems Biology-Enhanced Docking Workflow

Title: Integrative Pose Filtering Pipeline

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Software & Data Resources for Improved Docking

| Item | Function | Example/Tool |
|---|---|---|
| Force Field for Biomolecules | Provides parameters for potential energy calculations; critical for MD and scoring. | ff19SB (Proteins), OL3 (RNA), GAFF2 (Ligands) |
| Conformational Sampling Engine | Generates an ensemble of flexible target or peptide conformations. | AMBER, GROMACS, Rosetta FlexPepDock |
| Conservation Analysis Tool | Maps evolutionarily conserved residues onto structures to identify functional sites. | ConSurf, HMMER |
| Biological Database API | Programmatic access to mutation, pathway, and interaction data for filtering. | UniProt API, PDBe-KB, STRING DB |
| Free Energy Calculation Suite | Validates and refines final docked poses by estimating binding affinity. | MM-PBSA/GBSA in AMBER/NAMD |
| Visualization & Analysis Platform | Critical for analyzing docking results, interactions, and trajectories. | PyMOL, VMD, ChimeraX |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: After docking a large library, I observe poor pose prediction when comparing my top hits to known experimental structures (e.g., from PDB). The RMSD values are consistently high (>3.0 Å). What are the primary causes and initial steps to diagnose this? A1: High RMSD post-docking typically indicates issues with receptor preparation, ligand parametrization, or scoring function mismatch.

- Diagnose Receptor State: Verify protonation states of key residues (e.g., His, Asp, Glu) in the binding site using a tool like PROPKA. An incorrect tautomer or protonation state can drastically alter electrostatics.

- Check Ligand Tautomers/Charges: Ensure the ligand's dominant protonation and tautomeric state at the target pH is correctly assigned. Use ligand preparation tools (e.g., LigPrep, Open Babel) to generate biologically relevant states.

- Validate the Grid: Confirm the docking grid is centered and sized appropriately to fully encompass the binding site with a margin of at least 10 Å. A misplaced grid is a common cause of poor pose retrieval.
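The grid check can be sketched as follows, assuming the binding site is defined by the heavy-atom coordinates of a reference (co-crystallized) ligand. The helper names and coordinates are illustrative; the 10 Å margin follows the guidance above.

```python
def grid_box(ligand_coords, margin=10.0):
    """Return (center, size) of an axis-aligned box enclosing the ligand
    plus `margin` Å on every side."""
    axes = list(zip(*ligand_coords))  # -> (xs, ys, zs)
    center = tuple((max(a) + min(a)) / 2.0 for a in axes)
    size = tuple((max(a) - min(a)) + 2.0 * margin for a in axes)
    return center, size


def fits_in_box(coords, center, size):
    """True if every atom lies inside the box given by its center and edge lengths."""
    return all(
        abs(value - c) <= s / 2.0
        for atom in coords
        for value, c, s in zip(atom, center, size)
    )


# Hypothetical reference-ligand coordinates (Å).
ligand = [(10.0, 20.0, 30.0), (14.0, 22.0, 31.0), (12.0, 18.0, 29.0)]
center, size = grid_box(ligand)
print("center:", center, "size:", size)

# A grid whose center was mistyped no longer contains the reference ligand.
print(fits_in_box(ligand, (30.0, 20.0, 30.0), size))
```

Running `fits_in_box` against the crystal ligand before launching a screen is a cheap way to catch a misplaced grid.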

Q2: My virtual screen yields thousands of hits, but subsequent experimental validation shows very low confirmation rates. How can I improve the enrichment of true actives? A2: Low enrichment often stems from over-reliance on a single docking score. Implement a consensus or post-docking filtering strategy.

- Consensus Scoring: Rank compounds using 2-3 different scoring functions (e.g., Vina, Glide SP, ChemPLP). Prioritize hits that rank well across multiple functions.

- Interaction Fingerprinting: Filter poses based on the formation of key interactions (e.g., hydrogen bonds with a catalytic residue, specific hydrophobic contacts) known from crystallographic data. Use tools like OpenCADD-KLIFS or PLIP.

- Pharmacophore Filter: Apply a structure-based pharmacophore model derived from a known active ligand or binding site to discard poses that do not match essential features.
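One simple way to implement consensus scoring is rank averaging, sketched below. This is an illustrative choice rather than a prescribed method. It assumes every score has been converted so that lower means better (fitness-style scores, where higher is better, would need their sign flipped first). The scoring-function and compound names are placeholders.

```python
def consensus_rank(score_tables):
    """Average each compound's rank across scoring functions.

    score_tables: {function name: {compound: score}}, lower score = better.
    Returns (compound, mean rank) pairs, strongest consensus first.
    """
    # Only compounds scored by every function can be compared.
    compounds = sorted(set.intersection(*(set(t) for t in score_tables.values())))
    mean_rank = {c: 0.0 for c in compounds}
    for table in score_tables.values():
        ordered = sorted(compounds, key=lambda c: table[c])
        for rank, compound in enumerate(ordered, start=1):
            mean_rank[compound] += rank / len(score_tables)
    return sorted(mean_rank.items(), key=lambda kv: (kv[1], kv[0]))


# Hypothetical scores from three scoring functions.
scores = {
    "func_a": {"cmpd_A": -9.1, "cmpd_B": -8.0, "cmpd_C": -7.5},
    "func_b": {"cmpd_A": -7.0, "cmpd_B": -8.5, "cmpd_C": -6.0},
    "func_c": {"cmpd_A": -60.0, "cmpd_B": -75.0, "cmpd_C": -50.0},
}
consensus = consensus_rank(scores)
for compound, rank in consensus:
    print(f"{compound}: mean rank {rank:.2f}")
```

Ranks are used instead of raw scores because different scoring functions report values on incompatible scales.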

Q3: During receptor preparation for a large screen, what are the critical steps to ensure the protein structure is suitable for docking? A3:

- Source Selection: Prefer high-resolution (<2.2 Å) crystal structures with a ligand bound in the desired site. Structures from the PDB require careful curation.

- Preprocessing: Remove all non-essential molecules (water, ions, original ligand, cofactors) except those structurally critical for binding.

- Add Missing Components: Add missing hydrogen atoms and, if necessary, model missing side chains (e.g., with PDBFixer or Modeller).

- Optimize Hydrogen Bonding: Perform a constrained energy minimization of added hydrogens to relieve steric clashes using software like AMBER or Schrödinger's Protein Preparation Wizard.

Q4: What computational resources and time should I anticipate for a screen of 1 million compounds? A4: Resource requirements vary by software and hardware. Below is a general estimate for a standard physics-based docking program (e.g., AutoDock Vina, Smina) on a CPU cluster.

Table 1: Estimated Resource Requirements for a 1M Compound Screen

| Parameter | Approximate Value/Time | Notes |
|---|---|---|
| CPU Cores | 500-1000 | Modern screening can leverage GPU acceleration (e.g., with Vina-GPU, DiffDock), reducing time by ~10-50x. |
| Wall Clock Time | 24-72 hours | Assumes efficient job distribution across a cluster. Single-core equivalent would be ~1-2 years. |
| Storage (Input/Output) | 50-100 GB | Depends on ligand library format and the amount of pose data saved per compound. |
| Memory per Core | 2-4 GB | Typically sufficient for most protein targets. |
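The arithmetic behind these estimates can be reproduced with a short calculation. The per-ligand docking time (45 s) and the parallel efficiency (0.9) are assumed values chosen for illustration; measure both on your own hardware before planning a screen.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600


def wall_clock_hours(n_compounds, seconds_per_ligand, n_cores, efficiency=0.9):
    """Estimate wall-clock hours for an embarrassingly parallel docking screen."""
    core_seconds = n_compounds * seconds_per_ligand
    return core_seconds / (n_cores * efficiency) / 3600.0


n_compounds = 1_000_000
seconds_per_ligand = 45  # assumption: average CPU time per ligand on one core

core_years = n_compounds * seconds_per_ligand / SECONDS_PER_YEAR
hours = wall_clock_hours(n_compounds, seconds_per_ligand, n_cores=500)

print(f"Single-core equivalent: {core_years:.2f} years")
print(f"Wall clock on 500 cores: {hours:.1f} hours")
```

With these assumptions the single-core cost falls inside the 1-2 year range and the 500-core run inside the 24-72 hour range quoted in the table.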

Q5: How do I handle water molecules in the binding site during preparation? Should I keep or remove them? A5: This is a nuanced decision. Follow this protocol:

- Analyze Conservation: Retain water molecules that are highly conserved in multiple co-crystal structures of the same receptor and that mediate ligand-protein interactions (bridging hydrogen bonds).

- Test Empirically: Perform a focused docking benchmark with known actives using two receptor models: one with the conserved water(s) included (treated as part of the receptor, often with "tethered" or "toggle" settings), and one without.

- Compare Performance: Evaluate which model better reproduces the native pose (lowest RMSD) and ranks known actives over decoys. Use the superior model for the full screen.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials & Software for Large-Scale Docking

| Item | Function & Rationale |
|---|---|
| High-Quality Protein Structure (from PDB or homology model) | The foundational input. Resolution, bound ligand, and lack of major gaps in the binding site are critical for success. |
| Curated Small Molecule Library (e.g., ZINC, Enamine REAL, MCULE) | The ligand source. Libraries must be pre-filtered by drug-likeness (e.g., Lipinski's Rule of 5), prepared with correct 3D geometries, tautomers, and charges. |
| Receptor Preparation Suite (e.g., Schrödinger Maestro, MOE, UCSF Chimera/AutoDockTools) | Used to add hydrogens, assign charges, optimize H-bond networks, and define the binding site grid. |
| Docking Software (e.g., AutoDock Vina, GLIDE, GOLD, rDock) | Performs the conformational search and scoring. Choice depends on target, speed, and accuracy needs. |
| Post-Processing Analysis Tools (e.g., RDKit, PyMOL, PoseView) | For clustering results, visualizing top poses, analyzing interaction fingerprints, and generating figures. |
| High-Performance Computing (HPC) Cluster | Essential for completing screens of >100k compounds in a reasonable timeframe. GPU resources significantly accelerate the process. |

Experimental Protocols

Protocol 1: Standardized Workflow for Preparing a Ligand Library from ZINC

- Download: Select and download a subset (e.g., "Drug-Like" or "Lead-Like") from the ZINC20 database in SDF format.

- Filter: Use RDKit in Python to filter molecules based on molecular weight (150-500 Da), logP (<5), and number of rotatable bonds (<10). Remove molecules with reactive functional groups.

- Prepare States: Generate probable protonation states and tautomers at pH 7.4 ± 0.5 using LigPrep (Schrödinger) or Open Babel (obabel -p 7.4).

- Minimize Energy: Perform a brief molecular mechanics minimization (e.g., with the MMFF94 force field) to relieve steric strain.

- Format Conversion: Convert the final library into the required input format for your docking software (e.g., .mol2 with partial charges, .pdbqt for Vina).
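The filtering thresholds in this protocol can be expressed as a small predicate. This sketch deliberately covers only the threshold logic: it assumes the descriptors (molecular weight, logP, rotatable bonds, reactive-group flag) were already computed with a cheminformatics toolkit, and the `Ligand` record and example entries are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Ligand:
    name: str
    mol_weight: float         # Da
    logp: float
    rotatable_bonds: int
    has_reactive_group: bool  # e.g., flagged by a substructure filter


def passes_filters(lig):
    """Apply the protocol's cutoffs: MW 150-500 Da, logP < 5, < 10 rotatable bonds."""
    return (
        150.0 <= lig.mol_weight <= 500.0
        and lig.logp < 5.0
        and lig.rotatable_bonds < 10
        and not lig.has_reactive_group
    )


# Hypothetical library entries with precomputed descriptors.
library = [
    Ligand("lig_a", 320.4, 2.1, 4, False),
    Ligand("lig_b", 612.7, 3.0, 8, False),  # too heavy
    Ligand("lig_c", 280.3, 5.6, 3, False),  # too lipophilic
    Ligand("lig_d", 410.5, 3.3, 6, True),   # reactive group
]
kept = [lig.name for lig in library if passes_filters(lig)]
print(kept)
```

Keeping the cutoffs in one function makes it easy to record exactly which filter settings produced a given screening library.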

Protocol 2: Benchmarking and Validating the Docking Setup

- Create a Benchmark Set: Compile 10-20 known active ligands with reliable co-crystal structures (from PDB). Generate 50-100 decoy molecules per active (e.g., from the DUD-E database) that match the actives in physicochemical properties but differ in chemical topology.

- Perform Docking: Dock the combined set of actives and decoys using your prepared receptor and protocol.

- Analyze Pose Accuracy: For each active, calculate the RMSD of the top-scoring docked pose to the experimental pose. A successful setup should produce RMSD < 2.0 Å for most actives.

- Analyze Enrichment: Calculate the enrichment factor (EF) at 1% of the screened database. Plot the Receiver Operating Characteristic (ROC) curve. A good protocol shows early enrichment and an area under the curve (AUC) > 0.7.
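The three benchmark metrics can be sketched in plain Python. The assumptions are: docked and reference poses share the same atom ordering and coordinate frame (no superposition or symmetry correction is applied), and lower docking scores are better. All data below are synthetic.

```python
import math


def pose_rmsd(docked, reference):
    """RMSD (Å) between two poses with identical atom ordering."""
    if len(docked) != len(reference):
        raise ValueError("poses must contain the same number of atoms")
    sq = sum((a - b) ** 2 for p, q in zip(docked, reference) for a, b in zip(p, q))
    return math.sqrt(sq / len(docked))


def enrichment_factor(scores, is_active, fraction=0.01):
    """(actives in top fraction / size of top fraction) / (all actives / all compounds)."""
    ranked = sorted(zip(scores, is_active), key=lambda t: t[0])  # best score first
    n_top = max(1, round(fraction * len(ranked)))
    hits_top = sum(1 for _, active in ranked[:n_top] if active)
    return (hits_top / n_top) / (sum(is_active) / len(is_active))


def roc_auc(scores, is_active):
    """Probability that a random active scores better than a random decoy."""
    actives = [s for s, a in zip(scores, is_active) if a]
    decoys = [s for s, a in zip(scores, is_active) if not a]
    wins = sum((a < d) + 0.5 * (a == d) for a in actives for d in decoys)
    return wins / (len(actives) * len(decoys))


# Pose accuracy: every atom displaced by 1 Å along z.
rmsd_value = pose_rmsd([(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
                       [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)])

# Enrichment: 2 actives among 198 synthetic decoys.
scores = [-10.0, -5.01] + [-8.0 + 0.02 * i for i in range(198)]
is_active = [True, True] + [False] * 198
ef1 = enrichment_factor(scores, is_active, fraction=0.01)
auc = roc_auc(scores, is_active)
print(f"RMSD = {rmsd_value:.2f} Å, EF1% = {ef1:.1f}, AUC = {auc:.3f}")
```

For symmetric ligands, a symmetry-aware RMSD from a cheminformatics toolkit is preferable to this plain coordinate comparison, which can overestimate the deviation.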

Visualization of Workflows

Diagram 1: High-Level Docking Screen Workflow

Diagram 2: Troubleshooting High RMSD Protocol

Integrating AI-Powered Design and Synthesis Platforms (e.g., AIDDISON) into the Workflow

AIDDISON Technical Support Center: Troubleshooting & FAQs

This support center addresses common issues encountered when integrating the AIDDISON platform into docking and synthesis workflows, with a focus on improving pose prediction accuracy and reducing RMSD values.

Frequently Asked Questions (FAQs)

Q1: After generating compounds with AIDDISON, my subsequent docking simulations still yield high RMSD values (>2.0 Å) against the crystal pose. What are the primary troubleshooting steps? A: High RMSD for AIDDISON-suggested compounds typically indicates ligand strain or a target flexibility issue. Follow this protocol:

- Validate Generated Conformers: Use the CONFCHECK module to analyze the torsional strain of the top suggested compounds. Compounds with high internal strain often dock poorly.

- Reconcile Protonation States: Ensure the protonation state of the ligand (generated for synthesis) matches the physiological pH conditions of your docking experiment. Use the -pH flag in the preparation step.

- Review Target Preparation: High RMSD may stem from an inaccurate binding site definition. Cross-verify the binding site coordinates used by AIDDISON with recent PDB entries and consider side-chain flexibility of key residues.

Q2: I am experiencing a "Synthesis Feasibility Score" below 0.5 for all high-scoring pose prediction hits. How can I improve this? A: A low synthesis score suggests the AI's suggested molecules are chemically complex or require unavailable precursors.

- Adjust Search Parameters: In the "Design" tab, increase the "Synthetic Accessibility Weight" slider from its default (0.5) to a higher value (0.7-0.8). This biases the generative model towards simpler, more synthesizable scaffolds.

- Curate Building Blocks: The platform's suggestions are limited by your provided or selected chemical libraries. Upload or select a custom building block library that reflects your lab's current available chemical inventory.

- Use the Retro-Synthesis Viewer: Analyze the proposed synthetic route for top hits. You can manually edit the route to use more accessible intermediates and resubmit for a new feasibility score.

Q3: The platform's pose prediction seems to ignore key water-mediated hydrogen bonds in the active site. How can I include solvent effects? A: AIDDISON’s default pose optimization uses a dehydrated binding site for speed.

- Explicit Water Toggle: Enable "Conserved Waters" in the Advanced Docking Parameters. You must provide a .pqr file for conserved crystallographic waters.

- Post-Processing Hydration: After generating the top 10 poses, run a short molecular dynamics (MD) simulation with explicit solvent (SPC water model) for 1-2 ns. Re-dock the averaged structure from the MD trajectory. This often improves RMSD by accounting for dynamic water networks.

Q4: When running batch jobs for virtual compound screening, the job fails with an "Unexpected Stereochemistry Error." What does this mean? A: This error arises when the SMILES notation for an input compound is ambiguous or contains undefined stereocenters.

- Pre-Filter Input Library: Always run your input compound library (.smi or .sdf) through a standardization tool (e.g., RDKit's Chem.SanitizeMol) before uploading.

- Check SMILES Flags: Ensure your SMILES strings explicitly define stereochemistry, using / and \ for double-bond geometry and @ or @@ for tetrahedral centers. The platform requires unambiguous input.

- Isolate the Faulty Molecule: The error log will list the compound ID causing the failure. Remove or correct this specific entry and resubmit the batch.

Q5: How do I reconcile differences between the "AI-Predicted Binding Affinity (pKi)" and my experimental enzymatic assay results? A: Discrepancies are common and can be used for model refinement. Follow this validation protocol:

- Create a Calibration Table: Synthesize and test a small, diverse set of 15-20 compounds spanning the predicted affinity range.

- Perform Linear Regression: Plot Experimental pKi vs. Predicted pKi. Use the slope and intercept to calibrate future predictions.

- Feedback Loop: Submit your experimental results through the "Model Feedback" portal. This continuously retrains the underlying AI models, improving accuracy for your specific target class.
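The linear-regression calibration step can be sketched with an ordinary least-squares fit. This is a generic illustration that does not use any AIDDISON API; the pKi values are invented, and a real calibration set should contain the 15-20 compounds recommended above.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def calibrate(predicted_pki, slope, intercept):
    """Map a raw predicted pKi onto the experimental scale."""
    return slope * predicted_pki + intercept


# Hypothetical calibration set: predicted vs. measured pKi.
predicted = [5.0, 6.0, 7.0, 8.0, 9.0]
experimental = [4.6, 5.4, 6.2, 7.0, 7.8]

slope, intercept = fit_line(predicted, experimental)
corrected = calibrate(7.5, slope, intercept)
print(f"slope = {slope:.2f}, intercept = {intercept:.2f}, calibrated pKi(7.5) = {corrected:.2f}")
```

A slope well below 1, as in this toy set, indicates that the model exaggerates affinity differences between compounds; the calibrated values compress them back onto the experimental range.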