Overcoming Data Quality Issues in LBDD: Strategies for Robust Drug Discovery

Abstract

This article addresses the critical challenge of data quality in Ligand-Based Drug Design (LBDD), a methodology essential for developing therapeutics when target protein structures are unavailable. Aimed at researchers, scientists, and drug development professionals, it provides a comprehensive framework covering the foundational understanding of common data pitfalls, practical methodological applications, advanced troubleshooting techniques, and rigorous validation protocols. By synthesizing current best practices, the content equips teams to enhance the reliability of their SAR models, accelerate lead optimization, and ultimately increase the success rate of drug discovery projects.

Understanding the Data Quality Landscape in Modern LBDD

The Critical Impact of Poor Data Quality on SAR and Predictive Models

In Ligand-Based Drug Design (LBDD), the primary goal is to discover novel therapeutics by analyzing the structural and physicochemical properties of biologically active compounds. The core assumption is that similar molecules exhibit similar biological activities—a principle formalized through the Structure-Activity Relationship (SAR). Predictive models in LBDD rely entirely on the quality of the chemical data and associated biological annotations from which they learn. Poor data quality directly compromises these models, leading to wasted resources and failed experiments. This guide addresses the critical data quality challenges in LBDD research and provides actionable solutions.

Frequently Asked Questions (FAQs)

Q1: What are the most common data quality issues in LBDD datasets? The most prevalent issues are incorrect or inconsistent biological activity labels (e.g., misreported IC₅₀ values), incorrect chemical structure representation (e.g., missing stereochemistry, inconsistent tautomeric forms), and imbalanced datasets where inactive compounds vastly outnumber active ones, causing models to be biased toward predicting inactivity [1] [2].

Q2: How can I quickly check my chemical dataset for major errors? Begin by running automated checks for structural integrity (e.g., valency, unusual atom types), standardizing structures (e.g., neutralizing charges, removing counterions), and verifying that biological activity data is consistent in both measurement type (e.g., all Ki or all IC₅₀, not a mix) and units (e.g., all in nM, or all converted to a logarithmic scale such as pIC₅₀). Toolkits like RDKit can automate many of these checks [1].
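The activity-consistency part of this check is easy to automate. The sketch below is a minimal, stdlib-only illustration that assumes each activity record is a dict with hypothetical `type`, `value`, and `unit` keys; it converts concentration-based values to a negative log molar scale (pIC₅₀ style) and sets aside records with a different measurement type or an unrecognized unit. Structure checks (valence, standardization) would normally be done with a cheminformatics toolkit such as RDKit and are not shown here.

```python
import math

# Conversion factors to nanomolar (assumed unit spellings); extend for your data sources.
TO_NM = {"M": 1e9, "mM": 1e6, "uM": 1e3, "µM": 1e3, "nM": 1.0, "pM": 1e-3}


def to_p_activity(value, unit):
    """Convert a concentration (e.g., an IC50) to -log10 of its molar value."""
    if unit not in TO_NM:
        raise ValueError(f"unrecognized unit: {unit!r}")
    if value <= 0:
        raise ValueError("activity values must be positive")
    molar = value * TO_NM[unit] * 1e-9
    return -math.log10(molar)


def normalize_records(records, expected_type="IC50"):
    """Return (clean, flagged): clean records gain a 'p_activity' field,
    flagged records are paired with the reason they were set aside."""
    clean, flagged = [], []
    for rec in records:
        if rec["type"] != expected_type:
            flagged.append((rec, f"type {rec['type']} is not {expected_type}"))
            continue
        try:
            p = to_p_activity(rec["value"], rec["unit"])
        except ValueError as err:
            flagged.append((rec, str(err)))
            continue
        clean.append({**rec, "p_activity": round(p, 2)})
    return clean, flagged


# Hypothetical records: two usable IC50s, one Ki, one with an unknown unit.
records = [
    {"id": "c1", "type": "IC50", "value": 100, "unit": "nM"},
    {"id": "c2", "type": "IC50", "value": 1, "unit": "uM"},
    {"id": "c3", "type": "Ki", "value": 5, "unit": "nM"},
    {"id": "c4", "type": "IC50", "value": 3, "unit": "ppm"},
]
clean, flagged = normalize_records(records)
print([r["p_activity"] for r in clean])  # [7.0, 6.0]
print([(r["id"], reason) for r, reason in flagged])
```

Flagging rather than silently dropping records keeps the decision about mixed measurement types with a scientist, which matches the review-oriented workflow described elsewhere in this guide.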

Q3: My model has high accuracy but poor predictive power. What's wrong? This is a classic symptom of an imbalanced dataset. When one class (e.g., inactive compounds) dominates, a model can achieve high accuracy by always predicting the majority class, while failing to identify the active compounds you're interested in. Focus on metrics like sensitivity (recall), specificity, and F1-score instead of accuracy alone [2].
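To see why accuracy alone misleads, the sketch below computes the recommended metrics for a hypothetical screen of 95 inactives and 5 actives, scored by a degenerate "model" that always predicts inactive. The function name and data are illustrative only.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, sensitivity (recall), specificity and F1 for binary labels (1 = active)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    accuracy = (tp + tn) / len(y_true)
    sensitivity = tp / (tp + fn) if tp + fn else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    f1 = (2 * precision * sensitivity / (precision + sensitivity)
          if precision + sensitivity else 0.0)
    return {"accuracy": accuracy, "sensitivity": sensitivity,
            "specificity": specificity, "f1": f1}


# 95 inactives, 5 actives, and a "model" that always predicts inactive.
y_true = [0] * 95 + [1] * 5
y_pred = [0] * 100
print(classification_metrics(y_true, y_pred))
# {'accuracy': 0.95, 'sensitivity': 0.0, 'specificity': 1.0, 'f1': 0.0}
```

The 95% accuracy looks respectable, yet sensitivity and F1 of zero show that not a single active compound was found.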

Q4: How much data is typically needed to build a reliable SAR model? There is no universal answer; the required amount depends on the complexity of the SAR and the diversity of the chemical space you are exploring. A practical approach is to start with a pilot model built on the available data, then use active learning to selectively label the most informative new data points and improve the model efficiently [3] [4].
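Uncertainty sampling is one common active learning strategy (the source does not specify which strategy to use): the next compounds sent for assay are those the current model is least sure about. A minimal sketch, assuming the model's predicted probabilities of activity are already available in a dict keyed by hypothetical compound IDs:

```python
def select_most_uncertain(predictions, batch_size):
    """Return up to batch_size compound IDs whose predicted probability of
    activity is closest to 0.5 (ties broken by ID for reproducibility)."""
    ranked = sorted(predictions, key=lambda cid: (abs(predictions[cid] - 0.5), cid))
    return ranked[:batch_size]


# Hypothetical model outputs for unlabeled compounds.
predictions = {"cpd-A": 0.97, "cpd-B": 0.52, "cpd-C": 0.10, "cpd-D": 0.45}
print(select_most_uncertain(predictions, batch_size=2))  # ['cpd-B', 'cpd-D']
```

After the selected compounds are assayed, they join the training set, the model is retrained, and the loop repeats.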

Troubleshooting Guides

Problem: Model Performance is Poor Due to Imbalanced Data

Issue: Your predictive model ignores the minority class (e.g., active compounds) because the dataset is imbalanced.

Solution: Apply data sampling techniques to rebalance the class distribution before training your model.

Methodology:

- Prepare Your Data: Compute molecular descriptors (e.g., MACCS keys, Morgan fingerprints) for all compounds and define the binary activity labels (active/inactive) [2].

- Choose a Sampling Method:

  - Random Under-Sampling (RandUS): Randomly removes instances from the majority class. Use when you have a very large dataset and can afford to lose information [2].

  - Synthetic Minority Over-sampling Technique (SMOTE): Creates new, synthetic examples for the minority class in the feature space. This is often the most effective method [2].

  - Augmented Random Under-Sampling (AugRandomUS): A more advanced under-sampling method that uses a "Most Common Features" (MCF) fingerprint to remove majority class instances that are less informative, preserving more variance [2].

- Retrain and Validate: Train your model on the resampled dataset and validate its performance on a held-out, originally imbalanced test set. Use metrics like sensitivity and specificity to evaluate success.
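The two simplest sampling methods can be sketched in plain Python. This is a minimal illustration under stated assumptions (feature vectors are lists of floats, Euclidean distance, fixed random seeds), not the implementation used in the cited study; in practice a maintained library such as imbalanced-learn, which provides a `SMOTE` class, is the safer choice.

```python
import math
import random


def random_undersample(majority, n_keep, seed=0):
    """RandUS: keep a random subset of n_keep majority-class samples."""
    return random.Random(seed).sample(majority, n_keep)


def smote(minority, n_new, k=5, seed=0):
    """Minimal SMOTE: each synthetic sample lies on the segment between a
    random minority sample and one of its k nearest minority neighbors."""
    if len(minority) < 2:
        raise ValueError("SMOTE needs at least two minority samples")
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        base = minority[i]
        # Indices of the k nearest other minority samples (Euclidean distance).
        neighbors = sorted(
            (j for j in range(len(minority)) if j != i),
            key=lambda j: math.dist(base, minority[j]),
        )[:k]
        other = minority[rng.choice(neighbors)]
        gap = rng.random()  # random position along the segment base -> other
        synthetic.append([b + gap * (o - b) for b, o in zip(base, other)])
    return synthetic


# Toy example: 4 actives and 100 inactives described by 2 features.
actives = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
inactives = [[random.Random(n).random() * 5, 3.0] for n in range(100)]
balanced_actives = actives + smote(actives, n_new=16, k=2, seed=42)
balanced_inactives = random_undersample(inactives, n_keep=20, seed=1)
print(len(balanced_actives), len(balanced_inactives))  # 20 20
```

Resampling should be applied to the training split only; the held-out test set keeps its original imbalance, as the step above requires. Note also that interpolating binary fingerprints such as MACCS keys produces fractional feature values, so whether plain SMOTE suits a given descriptor set is a design decision worth checking.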

Table 1. Comparison of Sampling Methods for Imbalanced Chemical Data

| Method | Description | Best For | Performance Note |
|---|---|---|---|
| No Sampling | Uses the original, imbalanced dataset. | Baseline comparison. | Often leads to high specificity but very low sensitivity [2]. |
| Random Under-Sampling (RandUS) | Randomly removes majority class examples. | Very large datasets. | Can improve sensitivity but risks losing important data [2]. |
| SMOTE | Generates synthetic minority class examples. | Most common scenarios. | Effectively reduces the sensitivity-specificity gap; achieved 96% sensitivity and 91% specificity in a drug-induced liver injury (DILI) study [2]. |
| Augmented Random Under-Sampling (AugRandomUS) | Removes majority examples based on feature commonality. | Datasets where information retention is critical. | Preserves more variance in the majority class compared to random under-sampling [2]. |

Problem: Inconsistent or Low-Quality Data Labeling

Issue: Biological activity data (labels) are inconsistent, noisy, or inaccurate, leading to an unreliable SAR.

Solution: Implement a rigorous data labeling and annotation workflow to ensure label quality and consistency.

Methodology:

- Define Clear Annotation Guidelines: Create a detailed document that defines labeling criteria (e.g., what constitutes an "active" compound), label definitions, and includes clear examples. This ensures consistency across different labelers [5] [3].

- Use Multiple Labelers & Quality Assurance: For critical datasets, have multiple experts (e.g., medicinal chemists) label the same compounds. Implement a quality control process where a senior scientist reviews the labels and resolves discrepancies [3].

- Continuous Monitoring and Improvement: As your model trains, it may reveal areas where the data is ambiguous. Use this feedback to refine your labeling guidelines and relabel problematic data points in an iterative process [3].

- Leverage Active Learning: Use the model itself to identify the most valuable data points for which to obtain new labels, optimizing the time and cost of labeling [3].
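Agreement between labelers can be quantified before senior review. Cohen's kappa is a standard inter-annotator agreement statistic that the source does not name, so treat its use here as an assumption; the sketch below computes it for two labelers and lists the compounds on which they disagree, which are the candidates for the senior scientist's adjudication. The labels and IDs are hypothetical.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa: agreement between two labelers, corrected for chance."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both labelers used one identical label throughout
    return (observed - expected) / (1 - expected)


def discrepancies(compound_ids, labels_a, labels_b):
    """Compound IDs on which the two labelers disagree (for senior review)."""
    return [cid for cid, a, b in zip(compound_ids, labels_a, labels_b) if a != b]


ids = ["c1", "c2", "c3", "c4", "c5", "c6"]
chemist_1 = ["active", "active", "inactive", "inactive", "active", "inactive"]
chemist_2 = ["active", "inactive", "inactive", "inactive", "active", "inactive"]
print(round(cohens_kappa(chemist_1, chemist_2), 3))  # 0.667
print(discrepancies(ids, chemist_1, chemist_2))      # ['c2']
```

A low kappa on a pilot batch is often a sign that the annotation guidelines, rather than the labelers, need revision.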

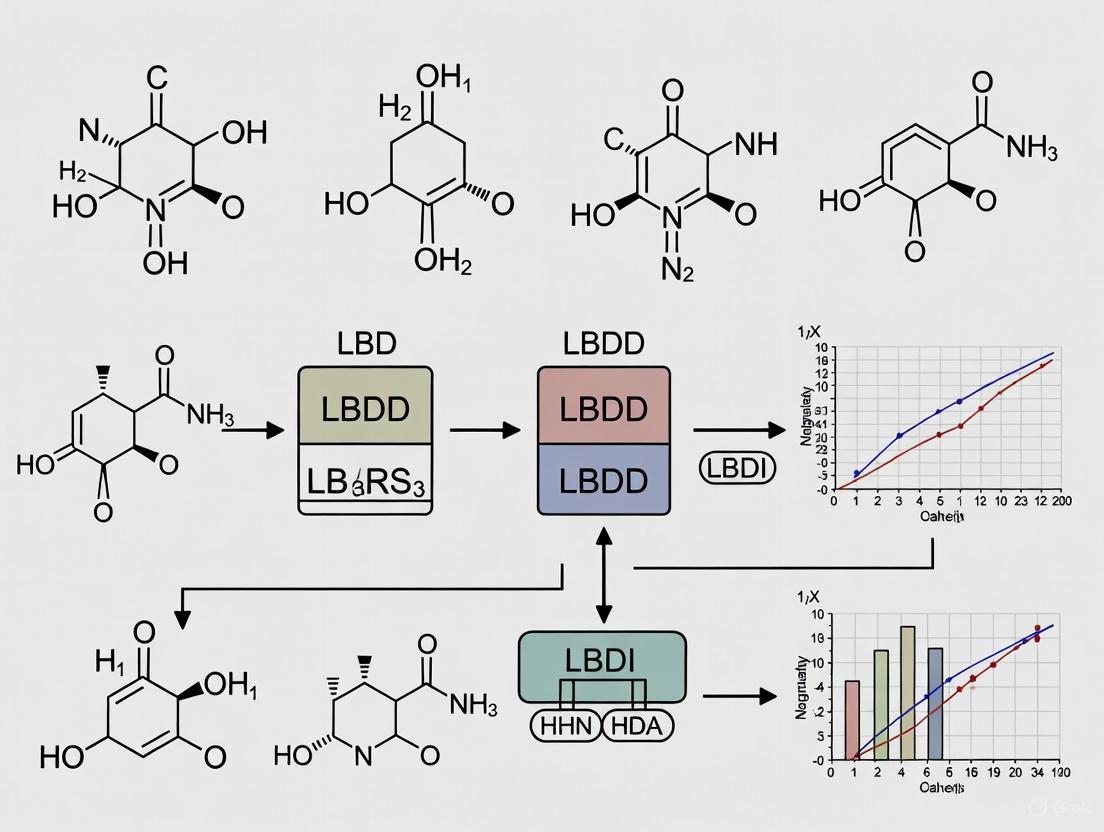

The following workflow diagram illustrates a robust process for creating high-quality labeled datasets for SAR modeling:

Problem: Model Fails to Generalize to New Compound Classes

Issue: The model performs well on its training data but fails to predict the activity of compounds from a different chemical series.

Solution: Ensure your training data is diverse and representative, and carefully select molecular descriptors.

Methodology:

- Audit Dataset Diversity: Analyze the chemical space coverage of your training set using techniques like Principal Component Analysis (PCA) or t-SNE. Ensure it includes multiple chemical scaffolds and core structures relevant to your target [1].

- Select Appropriate Descriptors: Move beyond simple 2D descriptors. For tasks where 3D conformation is critical (e.g., pharmacophore modeling), use molecular mechanics (MM) or quantum mechanics (QM) methods to generate accurate 3D conformations and derive 3D descriptors [1].

- Apply Robust Validation: Always use a rigorous external validation set containing compounds from a different chemical series that were not used in any part of the model building process. This is the best test of a model's generalizability [1].
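The external-validation advice can be approximated during development with a series-based (group) split, in which every compound from a held-out series is excluded from training. The sketch assumes each compound already carries a series label, for example from a Bemis-Murcko scaffold (RDKit offers a `MurckoScaffold` module) or from a medicinal chemist's assignment; the function name and data layout are hypothetical.

```python
import random


def series_split(compounds, test_fraction=0.2, seed=0):
    """Hold out whole chemical series so that no series appears in both sets.

    compounds: list of (compound_id, series_id) pairs.
    Returns (train_ids, test_ids).
    """
    series = sorted({s for _, s in compounds})
    rng = random.Random(seed)
    rng.shuffle(series)
    n_test = max(1, round(test_fraction * len(series)))
    test_series = set(series[:n_test])
    train = [cid for cid, s in compounds if s not in test_series]
    test = [cid for cid, s in compounds if s in test_series]
    return train, test


# Ten hypothetical compounds spread over five series S0..S4.
compounds = [(f"cpd{i}", f"S{i % 5}") for i in range(10)]
train, test = series_split(compounds, test_fraction=0.2, seed=3)
print(len(train), len(test))  # 8 2
```

Compared with a random split, this usually gives a lower but more honest estimate of how the model will perform on a new chemical series.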

The Scientist's Toolkit: Research Reagent Solutions

Table 2. Essential Computational Tools and Resources for LBDD

| Tool / Resource | Function | Relevance to Data Quality |
|---|---|---|
| RDKit | An open-source cheminformatics toolkit. | Used for standardizing chemical structures, calculating molecular descriptors (e.g., Morgan fingerprints), and handling data curation tasks [2]. |
| MACCS Keys / Morgan Fingerprints | Molecular fingerprinting systems. | Provide a numerical representation of molecular structure, essential for similarity searching and managing dataset diversity [2]. |
| SMOTE | A synthetic data generation algorithm. | Corrects for class imbalance in datasets by generating plausible new examples of the minority class, improving model sensitivity [2]. |
| Molecular Mechanics (MM) Force Fields | Empirical energy functions. | Generate accurate 3D conformational models of ligands, which are critical for creating high-quality 3D-QSAR and pharmacophore models [1]. |
| Quality Control (QC) Protocols | Defined procedures for data review. | Systematic checks by senior scientists to verify the accuracy and consistency of labeled biological data, preventing garbage-in-garbage-out outcomes [3]. |
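Fingerprint-based similarity is usually measured with the Tanimoto coefficient. The sketch represents each fingerprint as a Python set of on-bit indices, an assumption made to keep it self-contained; with RDKit bit vectors one would typically call `DataStructs.TanimotoSimilarity` instead. A low maximum similarity between a new compound and the training set suggests the compound lies outside the chemical space the model has learned.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto (Jaccard) similarity of two fingerprints given as sets of on-bits."""
    if not bits_a and not bits_b:
        return 0.0
    shared = len(bits_a & bits_b)
    return shared / (len(bits_a) + len(bits_b) - shared)


def nearest_training_similarity(query_bits, training_fps):
    """Highest Tanimoto similarity between a query and any training fingerprint."""
    return max(tanimoto(query_bits, fp) for fp in training_fps)


# Hypothetical fingerprints (sets of on-bit indices).
training = [{1, 2, 3, 4}, {3, 4, 5, 6}]
query = {1, 2, 3, 5}
print(round(nearest_training_similarity(query, training), 2))  # 0.6
```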

The following diagram maps the logical relationship between data quality issues, their impacts on SAR models, and the recommended solutions discussed in this guide:

For researchers in data-driven life sciences, the path from experimental data to a validated discovery is fraught with systemic pitfalls that can compromise data integrity, derail projects, and waste invaluable resources. This guide provides a practical troubleshooting framework for identifying, resolving, and preventing the most common data quality issues in life sciences data and analytics research. By addressing these challenges, scientists and drug development professionals can build a more robust foundation for innovation.

Frequently Asked Questions (FAQs)

Q1: What are the most critical data pitfalls in life sciences research? The most critical pitfalls can be categorized into issues with data integrity, infrastructure, and governance. These include inaccurate data entries, the proliferation of data silos, inadequate metadata management, poor data security, and insufficient user training. Addressing these is foundational to any successful data-driven research program [6] [7].

Q2: How do data silos specifically impact drug discovery timelines? Data silos force researchers to waste time locating and reconciling data from disparate, unconnected systems. This fragmentation delays cross-functional collaboration, leads to repeated experiments, and prevents the extraction of actionable insights from years of valuable research. Silos are a major contributor to the cost and duration of drug development, which now averages over $2.2 billion per successful asset and spans 7-9 years [8] [9].

Q3: Can automation alone solve our data quality and cataloging problems? No. While automation is excellent for scaling metadata management—such as with automated lineage tracking or AI-driven PII identification—it cannot provide the essential business context. Relying solely on automation results in metadata that lacks meaning, making it difficult for users to trust and derive value from the data. A balance of automation and structured human input is required [10].

Q4: What is the business case for investing in data cataloging and integration? The business case is powerful. Breaking down data silos and implementing effective data management leads to faster, evidence-based decisions, improved clinical trial efficiency, and reduced regulatory risks. Deloitte estimates that AI investments supported by enterprise-wide digital integration could boost pharma revenue by up to 11% and yield up to 12% in cost savings [8].

Troubleshooting Guides

The Problem of Data Silos

Issue: Critical research data is trapped in isolated systems across R&D, clinical trials, and regulatory departments, slowing innovation and collaboration [8] [9].

Symptoms:

- Inability to access or locate key datasets across different teams.

- Duplication of experiments and data collection efforts.

- Contradictory conclusions drawn from different parts of the organization.

- Difficulty achieving a unified view of patient or compound data.

Resolution Steps:

- Audit and Identify: Map all data sources and owners across the organization to identify existing silos [7].

- Implement a Centralized Platform: Adopt cloud-native platforms and unified data repositories (e.g., data lakes) to integrate legacy and real-time datasets into a single, secure environment [8] [11].

- Enforce Standards: Use pharma-specific data standards, such as the CDISC standards SDTM and ADaM, to ensure consistent structuring of clinical trial and other research datasets [8].

- Establish Governance: Create strong data governance frameworks with clear ownership to ensure ongoing data integrity, traceability, and secure sharing [8].

Prevention Plan:

- Foster a culture of data sharing over data hoarding.

- Designate data stewards for key data domains.

- Invest in interoperable systems with open APIs from the outset.

The Problem of Inadequate Metadata Management

Issue: Data lacks sufficient context (metadata), making it difficult for researchers to find, understand, and trust the data they need [10] [6].

Symptoms:

- Datasets are discovered but their meaning, provenance, or quality is unclear.

- Researchers spend excessive time manually investigating data origins.

- Low user adoption of data catalogs and other discovery tools.

- Misinterpretation of data leads to flawed analyses.

Resolution Steps:

- Create a Business Glossary: Define and maintain key terms (e.g., "active patient," "treatment response") consistently across the organization [10].

- Implement Structured Context: Use the data catalog to prompt business users for critical context, such as "What business function does this dataset support?" and "Are there any business rules users should know?" [10].

- Assign Data Owners: Link every critical dataset to a defined owner responsible for validating its business meaning and quality [10].

- Balance Automation & Human Input: Use automation for technical metadata extraction, but rely on domain experts (scientists, researchers) to provide the essential business context [10].
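One way to put structured context into practice is to make certain business-context fields mandatory before a dataset is published to the catalog. The record layout below is hypothetical and does not correspond to any particular catalog product's schema; it simply reports which required fields are still empty so the dataset owner can be prompted for them.

```python
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    """Hypothetical catalog record; field names are illustrative only."""
    name: str
    owner: str = ""
    business_function: str = ""
    business_rules: str = ""
    glossary_terms: list = field(default_factory=list)


REQUIRED_CONTEXT = ("owner", "business_function", "business_rules")


def missing_context(entry):
    """Return the required business-context fields that are still empty."""
    gaps = [name for name in REQUIRED_CONTEXT if not getattr(entry, name).strip()]
    if not entry.glossary_terms:
        gaps.append("glossary_terms")
    return gaps


entry = CatalogEntry(name="hts_screen_2023", owner="j.doe",
                     glossary_terms=["active compound"])
print(missing_context(entry))  # ['business_function', 'business_rules']
```

Automated extraction can fill technical fields such as schema and lineage, while a check like this keeps the human-supplied context from being skipped.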

Prevention Plan:

- Integrate metadata collection into standard research documentation workflows.

- Regularly review and update metadata as processes and experiments evolve.

The Problem of Poor Data Quality at Source

Issue: Data is migrated or ingested into analytical systems without proper validation, leading to analyses built on inaccurate or incomplete foundations [6] [7].

Symptoms:

- Unexplained discrepancies in analytical reports.

- Frequent need for data cleansing and correction post-hoc.

- A "whack-a-mole" approach to fixing data issues without addressing root causes [7].

- Erosion of trust in data-driven insights.

Resolution Steps:

- Conduct a Pre-Migration Audit: Before moving data to a new catalog or lake, conduct a full data audit to profile quality [6].

- Cleanse and Validate: Cleanse, standardize, and validate data before migration, rather than trying to fix issues afterward [6].

- Establish Quality Guidelines: Define and implement clear data quality standards and metrics (e.g., completeness, accuracy, freshness) [6].

- Implement Root Cause Analysis: When an issue is found, avoid superficial fixes. Instead, investigate the data pipeline to find and correct the origin of the problem [7].

Prevention Plan:

- Automate data quality checks within data pipelines where possible.

- Schedule regular, recurring data quality audits.

Data Pitfalls: Impact and Prevalence

Table 1: Common data pitfalls and their quantitative impact on research and development.

| Data Pitfall | Primary Impact | Estimated Financial/Business Impact |
|---|---|---|
| Data Silos [8] [9] | Slows drug development, causes redundant experiments | Contributes to an average drug development cost of >$2.2B; 7-9 year timelines |
| Poor Data Quality [6] [7] | Leads to incorrect insights and wasted R&D effort | Undermines AI/ML projects; creates continuous "firefighting" and rework |
| Inadequate Metadata [10] | Reduces data discoverability and trust | Renders data catalogs useless; high opportunity cost from unused data assets |
| Isolated Data Catalogs [10] | Low adoption across technical and business teams | Creates fragmented workflows; fails to support compliance and governance needs |
| Superficial Monitoring [7] | Creates false sense of security; misses early warning signs | Issues detected only when they become full-blown crises, requiring costly fixes |

Table 2: Technical root causes and recommended solutions for data pitfalls.

| Technical Root Cause | Resulting Pitfall | Recommended Solution |
|---|---|---|
| Fragmented sources & proprietary formats [8] | Data Silos | Implement cloud-based data lakes & enforce data standards (e.g., CDISC, FHIR) [8] [11] |
| Neglecting pre-migration data audits [6] | Poor Data Quality | Institute data quality guidelines and regular audit schedules [6] |
| Over-reliance on automation [10] | Inadequate Metadata | Blend automated metadata extraction with structured input from business users [10] |
| Limited connector support [10] | Isolated Data Catalogs | Select a data catalog with broad, scalable connectivity to existing and future tools [10] |
| Focusing on symptoms, not root causes [7] | Superficial Monitoring | Implement pipeline traceability metrics and a culture of root cause analysis [7] |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key tools and technologies for building a robust data foundation in life sciences research.

| Tool Category | Example Solutions | Function in Overcoming Data Pitfalls |
|---|---|---|
| Unified Data Platforms | AWS HealthLake, Azure for Life Sciences, Google Cloud Healthcare API [11] | Integrates EHRs, imaging, genomic, and clinical data into a single environment to break down silos. |
| AI-Powered Data Catalogs | Secoda [6], OvalEdge [10] | Provides a centralized inventory of all data assets, enabling discovery, governance, and lineage tracking. |
| Data Harmonization Tools | AI and NLP for data annotation [8] | Cleanses, standardizes, and enriches fragmented datasets from R&D, clinical, and regulatory streams. |
| Pipeline Monitoring Tools | Pantomath [7] | Offers deep monitoring and traceability to find the root cause of data issues, not just surface-level symptoms. |
| Interoperability Standards | CDISC (SDTM, ADaM), FHIR [8] [11] | Ensures consistent data structuring for clinical trials and healthcare data, enabling seamless exchange and analysis. |

Experimental Protocol: Data Quality Assessment for Research Datasets

Objective: To systematically assess the quality of a newly acquired or generated research dataset before it is used in analytical modeling or decision-making.

Background: High-quality input data is non-negotiable for reliable research outcomes. This protocol provides a standardized methodology to evaluate key data quality dimensions.

Materials:

- Source dataset (e.g., genomic data, clinical trial data, compound screening results)

- Data profiling tool (e.g., custom Python/R scripts, integrated data quality features in platforms like Secoda [6] or Pantomath [7])

- Access to defined data standards and business glossary

Methodology:

- Completeness Check:

  - Calculate the percentage of missing values for each critical field (column).

  - Action: If any field exceeds a predefined threshold (e.g., >5% missing), flag it for review and then for imputation or exclusion.

- Accuracy and Validity Check:

  - Validate data against known value ranges or predefined rules (e.g., patient age must be 18-100; raw gene expression counts must be non-negative).

  - Action: Document and investigate all records that fail validation checks.

- Consistency Check:

  - Check for internal consistency (e.g., a patient's "date of death" cannot be before "date of diagnosis") and cross-system consistency where applicable.

  - Action: Resolve inconsistencies by verifying against the system of record.

- Uniqueness Check:

  - Identify duplicate records based on a defined key (e.g., Patient ID, Compound ID).

  - Action: De-duplicate records while preserving the most complete and accurate information.

- Contextual Validation:

  - Engage a domain expert (e.g., a research scientist) to review a sample of the data to ensure it aligns with biological and experimental expectations.

  - Action: Annotate findings in the data catalog or business glossary [10].

Reporting: Document all findings, actions taken, and final quality metrics. This report should be stored with the dataset in the data catalog for future reference.
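The completeness, validity, consistency, and uniqueness checks in this protocol can be scripted as a first pass before the expert review. A minimal sketch, assuming records arrive as a list of dicts with hypothetical field names and ISO-formatted date strings (which compare correctly as text); the 5% threshold and the 18-100 age range mirror the examples in the protocol.

```python
from collections import Counter


def assess_quality(records, key, critical_fields, rules, record_rules=None,
                   max_missing=0.05):
    """First-pass data quality report for a list of dict records.

    rules: field -> predicate on a single non-missing value (validity).
    record_rules: name -> predicate on a whole record (consistency).
    """
    record_rules = record_rules or {}
    n = len(records)
    report = {"completeness": {}, "flag_missing": [], "invalid": [],
              "inconsistent": [], "duplicate_keys": []}
    # Completeness: fraction of non-missing values per critical field.
    for f in critical_fields:
        missing = sum(1 for r in records if r.get(f) in (None, ""))
        frac = missing / n if n else 0.0
        report["completeness"][f] = 1.0 - frac
        if frac > max_missing:
            report["flag_missing"].append(f)
    for idx, r in enumerate(records):
        # Validity: value-level range/format rules (missing values are skipped).
        for f, ok in rules.items():
            v = r.get(f)
            if v not in (None, "") and not ok(v):
                report["invalid"].append((idx, f, v))
        # Consistency: rules relating several fields of the same record.
        for name, ok in record_rules.items():
            if not ok(r):
                report["inconsistent"].append((idx, name))
    # Uniqueness: keys that occur more than once.
    counts = Counter(r.get(key) for r in records)
    report["duplicate_keys"] = sorted((k for k, c in counts.items() if c > 1), key=str)
    return report


records = [
    {"patient_id": "P1", "age": 45, "date_of_diagnosis": "2020-01-10", "date_of_death": None},
    {"patient_id": "P2", "age": 130, "date_of_diagnosis": "2019-05-02", "date_of_death": "2018-12-01"},
    {"patient_id": "P2", "age": 60, "date_of_diagnosis": "2021-03-15", "date_of_death": None},
    {"patient_id": "P3", "age": None, "date_of_diagnosis": "2022-07-01", "date_of_death": None},
]
report = assess_quality(
    records,
    key="patient_id",
    critical_fields=["patient_id", "age"],
    rules={"age": lambda v: 18 <= v <= 100},
    record_rules={"death_not_before_diagnosis": lambda r: r["date_of_death"] is None
                  or r["date_of_death"] >= r["date_of_diagnosis"]},
)
print(report)
```

The returned dict can be stored alongside the dataset as the starting point of the report described in the Reporting step; contextual validation by a domain expert still has to be done by hand.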

Visualizing the Data Pitfall Resolution Workflow

The diagram below outlines a logical workflow for diagnosing and resolving common data pitfalls, moving from symptom identification to a validated solution.

Data Pitfall Diagnosis and Resolution Workflow

How Biased and Mislabeled Data Compromise AI and Machine Learning Initiatives

Frequently Asked Questions (FAQs)

Q1: What are the most common types of data flaws that affect AI in research? The most common data flaws can be categorized as follows:

| Data Flaw Category | Description | Common Examples & Consequences |
|---|---|---|
| Labeling Bias [12] [13] [14] | Errors or human prejudices in the manually assigned labels used for training. | An AI hiring tool penalized resumes containing the word "women's" [13]; inconsistent labeling of medical images caused a model to learn hospital-specific artifacts instead of disease features [14]. |
| Selection & Sampling Bias [15] [12] | The collected data is not representative of the real-world population or environment. | Facial recognition systems trained predominantly on lighter-skinned males performed poorly on darker-skinned females [15]; a health risk algorithm trained on healthcare spending data favored white patients over Black patients [15]. |
| Measurement & Instrument Bias [12] [16] | Arises from errors in data collection instruments or procedures. | In healthcare AI, data heterogeneity across different institutions, equipment, and workflows can lead to biased models that do not generalize well [16]. |
| Data Quality Issues [14] | Fundamental problems with the data's structure and completeness. | Missing data, duplication, and inconsistent formats can derail models, leading to systemic bias and inaccurate outputs [14]. |

Q2: Why can't a technically sound algorithm overcome these data issues? Machine learning algorithms are designed to find and replicate patterns in the data they are given. If the training data contains biases or errors, the algorithm will learn them as ground truth. A Bar-Ilan University study emphasizes that most AI failures stem from flawed data, not flawed code [14]. An algorithm can be statistically sound yet conceptually wrong when it learns the wrong patterns from poor-quality data [14].

Q3: What is an AI hallucination, and how is it related to data quality? An AI hallucination occurs when a generative AI tool produces fabricated, inaccurate information that appears plausible [15]. This often happens because the model is designed to predict the next word or sequence based on patterns in its vast training data, which contains both accurate and inaccurate information, without an inherent ability to verify truth [15]. Mislabeled or biased training data can significantly contribute to these erroneous outputs.

Q4: What are some post-processing methods to mitigate bias in existing models? Post-processing methods are applied after a model is trained and are especially useful for "off-the-shelf" algorithms. An umbrella review identified several key methods [17]:

| Mitigation Method | How It Works | Effectiveness & Notes |
|---|---|---|
| Threshold Adjustment [17] | Adjusting the decision threshold for different demographic subgroups to ensure fairer outcomes. | Showed significant promise, reducing bias in 8 out of 9 trials [17]. |
| Reject Option Classification [17] | The model abstains from making automated decisions on cases where its predictions are most uncertain, flagging them for human review. | Reduced bias in approximately half of the trials [17]. |
| Calibration [17] | Adjusting the model's output probabilities to better reflect the true likelihood of outcomes across different groups. | Reduced bias in approximately half of the trials [17]. |
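Threshold adjustment and reject-option classification are simple enough to sketch directly. The version below assumes model scores in [0, 1], that every group contains at least one positive case, and one particular fairness target (equal true-positive rate across groups); the reviewed studies may have used other criteria, so treat these choices, and the `band` width, as illustrative.

```python
def true_positive_rate(scores, labels, threshold):
    """Fraction of positive cases (label 1) scored at or above the threshold."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    return sum(s >= threshold for s in positives) / len(positives)


def group_thresholds(scores, labels, groups, target_tpr):
    """Threshold adjustment: for each group, the highest threshold on a 0.01 grid
    whose true-positive rate reaches target_tpr."""
    grid = [i / 100 for i in range(100, -1, -1)]  # 1.00, 0.99, ..., 0.00
    thresholds = {}
    for g in sorted(set(groups)):
        s = [sc for sc, gg in zip(scores, groups) if gg == g]
        y = [lb for lb, gg in zip(labels, groups) if gg == g]
        thresholds[g] = next(t for t in grid if true_positive_rate(s, y, t) >= target_tpr)
    return thresholds


def predict_with_reject(score, threshold, band=0.1):
    """Reject-option classification: abstain (None) when the score is within
    `band` of the threshold, so the case can be routed to human review."""
    if abs(score - threshold) < band:
        return None
    return int(score >= threshold)


# Hypothetical scores for two subgroups, A and B.
scores = [0.9, 0.8, 0.4, 0.3, 0.6, 0.5, 0.2, 0.1]
labels = [1, 1, 0, 0, 1, 1, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
th = group_thresholds(scores, labels, groups, target_tpr=1.0)
print(th)  # {'A': 0.8, 'B': 0.5}
print([predict_with_reject(s, th["B"]) for s in (0.95, 0.55, 0.1)])  # [1, None, 0]
```

Neither technique retrains the model; both only change how its existing scores are turned into decisions, which is why they suit off-the-shelf models.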

Troubleshooting Guides

Guide 1: Diagnosing and Mitigating Data Bias

This guide outlines a lifecycle approach to managing data quality, from planning to utilization [18].

Stage 1: Planning

- Objective: Define data standards and a clear strategy for quality management [18].

- Actionable Steps:

  - Diversify Data and Teams: Proactively ensure your training data represents the full spectrum of the real-world population. Maintain diverse tech teams to help identify potential biases from multiple perspectives [12].

  - Establish Data Governance: Create a data management plan that describes how data will be handled throughout the project lifecycle, including stewardship, security, and accessibility for reuse [18].

Stage 2: Construction

- Objective: Collect data and manage the overall data construction process, reflecting clinical or research attributes [18].

- Actionable Steps:

  - Leverage Pre-processing Tools: Use advanced methods to clean data before model training. For example, the FAU CA-AI method uses L1-norm PCA to automatically detect and remove mislabeled data points (outliers) without manual tuning [19].

  - Implement Self-Supervised Standardization: In healthcare AI, use techniques like self-supervised image style conversion to enhance structural and style consistency across diverse datasets from different institutions, improving model generalizability [16].

Stage 3: Operation

- Objective: Conduct data quality assessments on the constructed data from various angles [18].

- Actionable Steps:

  - Continuously Monitor for Data Drift: Models can lose accuracy as the real world changes. Implement ongoing monitoring to detect performance degradation early [14].

  - Audit with Clear and Structured Prompts: When using generative AI, vague prompts can lead to inaccurate answers. Use specific prompts and techniques like Chain-of-Thought Prompting to expose logical gaps or unsupported claims [15].
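Drift monitoring needs a concrete statistic. The Population Stability Index (PSI) is one widely used choice that the source does not prescribe; the sketch compares the binned distribution of a feature or model score in new data against a reference sample. A commonly quoted rule of thumb treats a PSI above about 0.25 as a significant shift, but alert thresholds, bin edges, and the `eps` floor used here are assumptions to be set per project.

```python
import math


def population_stability_index(reference, current, edges, eps=1e-6):
    """PSI between a reference sample and a current sample of one feature/score.

    edges: sorted inner bin boundaries, giving len(edges) + 1 bins.
    eps replaces empty-bin proportions to avoid log(0).
    """
    def proportions(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[sum(v >= e for e in edges)] += 1  # bin index = edges passed
        return [max(c / len(values), eps) for c in counts]

    p_ref, p_cur = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(p_ref, p_cur))


reference = [0.1, 0.3, 0.6, 0.9] * 25
edges = [0.25, 0.5, 0.75]
print(population_stability_index(reference, reference, edges))  # 0.0
print(population_stability_index(reference, [0.9] * 100, edges) > 0.25)  # True
```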

Stage 4: Utilization

- Objective: Share quality validation outcomes, enhance data quality, and recalibrate [18].

- Actionable Steps:

  - Apply Post-processing Mitigation: For deployed models, use techniques like threshold adjustment to improve fairness without retraining the model [17].

  - Critically Evaluate Outputs and Diversify Sources: Always cross-reference AI-generated content with trusted, peer-reviewed publications or consult with domain experts [15].

Guide 2: A Protocol for Detecting and Correcting Mislabeled Data

This protocol is based on the FAU CA-AI method for robust pre-processing [19].

Objective: To automatically identify and remove incorrectly labeled data points from a training dataset before model training, thereby improving model accuracy and reliability [19].

Methodology Details:

- Technique: L1-norm Principal Component Analysis (PCA) [19].

- Process: This mathematical technique analyzes the training data within each class to identify data points that significantly deviate from the rest of the group. These outliers are often the result of label errors [19].

- Key Advantages:

  - Fully Automatic: The process requires no manual parameter tuning or user intervention [19].

  - Robust and Scalable: It can be applied to any AI model and handles the tricky task of rank selection without user input [19].

  - Effective: Testing on benchmarks like the Wisconsin Breast Cancer dataset showed consistent improvements in classification accuracy, even in datasets previously considered clean [19].
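The core idea (fit a subspace with the L1 norm so that a few mislabeled points cannot dominate it, then flag points that sit far from that subspace) can be illustrated on a small scale. This is not the FAU CA-AI implementation. It computes only an exact rank-1 L1-norm principal component by exhaustive search over sign vectors (a known result, feasible only for a handful of points), centers the data on the coordinate-wise median, and, unlike the fully automatic method described above, uses a hand-set cut-off (`factor` times the median residual) that is an assumption of this sketch. It would be run separately on the samples of each class.

```python
import itertools
import math
import statistics


def l1_principal_axis(points):
    """Exact rank-1 L1-norm principal component of already-centered points.

    Uses the known result that q = X b / ||X b||, with b in {-1, +1}^N chosen
    to maximize ||X b||, maximizes sum_n |q . x_n|. Costs 2^(N-1) steps.
    """
    n, dim = len(points), len(points[0])
    if n > 20:
        raise ValueError("exhaustive search is only practical for small N")
    best, best_norm = None, 0.0
    for tail in itertools.product((1, -1), repeat=n - 1):
        signs = (1,) + tail  # b and -b give the same norm, so fix the first sign
        v = [sum(s * p[d] for s, p in zip(signs, points)) for d in range(dim)]
        norm = math.hypot(*v)
        if norm > best_norm:
            best, best_norm = v, norm
    if best is None:
        raise ValueError("all points coincide with the center")
    return [c / best_norm for c in best]


def flag_label_outliers(points, factor=3.0):
    """Indices of points whose distance to the L1 principal axis exceeds
    factor x the median distance, plus all distances for inspection."""
    center = [statistics.median(col) for col in zip(*points)]
    centered = [[x - c for x, c in zip(p, center)] for p in points]
    q = l1_principal_axis(centered)
    residuals = []
    for p in centered:
        proj = sum(a * b for a, b in zip(p, q))
        residuals.append(math.sqrt(max(sum(a * a for a in p) - proj * proj, 0.0)))
    cutoff = factor * statistics.median(residuals)
    return [i for i, r in enumerate(residuals) if r > cutoff], residuals


# Toy "class": eight samples along a trend plus one sample that does not belong.
points = [(i, i) for i in range(-3, 5)] + [(0, 8)]
flagged, residuals = flag_label_outliers(points)
print(flagged)  # [8]
```

Flagged points are candidates for relabeling or removal; in a real pipeline they would be reviewed rather than deleted automatically.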

The Scientist's Toolkit: Research Reagent Solutions

Essential materials and methods for addressing data quality in research.

| Item / Solution | Function in Mitigating Data Issues |
|---|---|
| L1-norm PCA [19] | A robust pre-processing mathematical technique used to automatically detect and remove mislabeled data points (outliers) in a training dataset. |
| Retrieval-Augmented Generation (RAG) [15] | An architecture for generative AI tools that retrieves information from trusted sources (e.g., a private knowledge base or syllabus) before generating a response, improving factual accuracy. |
| Threshold Adjustment [17] | A post-processing bias mitigation method that changes the classification threshold for different demographic groups to ensure fairer outcomes; well suited to models that are already deployed. |
| Self-Supervised Standardization [16] | A method, particularly useful for medical images, that enhances consistency across diverse datasets from different institutions while preserving patient privacy via decentralized learning. |
| Chain-of-Thought Prompting [15] | A prompting technique that asks an AI model to explain its reasoning step-by-step, which helps expose logical gaps or unsupported claims, improving transparency and accuracy. |

Troubleshooting Guides for Common Data Challenges

This guide addresses frequent data quality issues in Literature-Based Discovery (LBD) for drug development. Use the tables below to diagnose problems and implement solutions.

Table 1: Troubleshooting Data Collection & Management Pitfalls

| Pitfall & Symptoms | Root Cause | Recommended Solution | Regulatory & Standards Context |
|---|---|---|---|
| Using general-purpose tools (e.g., spreadsheets); data authenticity errors, inability to prove consistent performance. | Tools lack validation for regulatory compliance. | Implement purpose-built, pre-validated clinical data management software [20]. | ISO 14155:2020 requires validation of electronic systems for authenticity, accuracy, reliability, and consistent intended performance [20]. |
| Using basic tools for complex studies; inability to manage protocol changes, obsolete forms in use, no real-time status. | Manual systems (e.g., paper binders) cannot handle complexity or change efficiently. | Transition to an Electronic Data Capture (EDC) system. Plan for maximum complexity and use tools that manage change easily [20]. | Modern GCP principles embrace technological innovation. EDC systems prevent use of outdated forms and ensure data integrity [21]. |
| Using closed systems; manual data export/merge required, high risk of human error. | Systems lack APIs, creating data silos and inefficient workflows. | Utilize open systems with Application Programming Interfaces (APIs) for seamless data transfer between EDC, CTMS, and other tools [20]. | FDA guidance encourages modern innovations in trial conduct. Automated data flow improves integrity and readiness for regulatory scrutiny [21] [20]. |
| Forgotten clinical workflow; site friction, protocol deviations, data entry errors. | Study design is idealized and does not account for real-world clinical practice variations. | Test study protocols extensively in simulated environments. Involve end-user clinicians in the testing process to fit their workflow [20]. | ICH E6(R3) GCP guidance introduces flexible, risk-based approaches. Understanding real-world workflow is key to practical trial design [21]. |
| Lax data access controls; compliance risks during audits, former employees retain system access. | Lack of documented procedures for user management and poor system permission controls. | Implement documented SOPs for adding/removing users. Use software with robust user role management and detailed audit logs [20]. | Regulatory authorities audit system access controls and permissions. Maintained audit logs are a fundamental requirement for data credibility [20]. |

Table 2: Troubleshooting Data Integrity & Analytical Challenges

| Challenge & Impact | Root Cause | Recommended Solution & Methodology |
|---|---|---|
| Data Decay in LBD models; outdated hypotheses, reduced prediction accuracy. | Static knowledge bases fail to incorporate newly published literature and data. | Protocol: Establishing a Continuous Model Validation Framework 1. Automated Literature Monitoring: Use APIs from PubMed and other databases to set up alerts for new publications in your target domain. 2. Scheduled Re-Runs: Integrate new literature into your LBD model quarterly (or more frequently for fast-moving fields). 3. Performance Benchmarking: Compare the novel predictions from your updated model against the previous version and a manually curated gold-standard set of known relationships. Track precision and recall metrics. |
| Flawed Integration of Multi-Scale Data; inability to connect molecular, clinical, and RWD insights. | Data silos and lack of a unifying framework to relate different types of biological and clinical information. | Protocol: Implementing a Multi-Scale Data Integration Pipeline 1. Data Harmonization: Map all data sources (e.g., genomic, patient records, adverse event reports) to common ontologies like SNOMED CT or MeSH. 2. Relationship Modeling: Employ semantic models or knowledge graphs to represent relationships between entities (e.g., 'Drug A inhibits Protein B, which is encoded by Gene C, associated with Disease D') [22]. 3. Hypothesis Generation: Use LBD techniques like "open discovery" (connecting A to C via B) to traverse the knowledge graph and generate testable hypotheses for drug repurposing or adverse event prediction [22]. |
| Uninformative Terms in LBD Results; noisy, irrelevant discoveries that waste validation resources. | LBD systems generate many connections, but not all are novel or biologically meaningful. | Protocol: Filtering for Semantic Soundness 1. Term Filtering: Pre-process the literature corpus to remove overly general, non-specific terms (e.g., "activity," "level") that contribute noise [22]. 2. Ranking Strategies: Implement ranking algorithms that prioritize potential discoveries based on metrics like co-occurrence frequency, semantic similarity, or graph-based centrality measures [22]. 3. Expert Review: The top-ranked discoveries must always undergo review by a domain expert to assess biological plausibility before initiating wet-lab experiments. |
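To make the open-discovery, term-filtering, and ranking steps in Table 2 concrete, here is a minimal Python sketch over a toy corpus in which each document has already been reduced to a set of extracted terms. The stop-term list `GENERAL_TERMS`, the function names, and the scoring rule (summing, over each linking B term, the smaller of the A-B and B-C co-occurrence counts) are illustrative assumptions, not the method of any particular LBD system.

```python
from collections import defaultdict
from itertools import combinations

# Hypothetical list of overly general terms removed before linking ("Term Filtering").
GENERAL_TERMS = {"activity", "level"}


def cooccurrence(docs):
    """Count, for every ordered pair of terms, how many documents mention both."""
    counts = defaultdict(int)
    for terms in docs:
        kept = sorted(set(terms) - GENERAL_TERMS)
        for a, b in combinations(kept, 2):
            counts[(a, b)] += 1
            counts[(b, a)] += 1
    return counts


def open_discovery(docs, start, min_count=1):
    """Rank C terms reachable from `start` (A) through intermediate B terms.

    C terms that already co-occur directly with A are excluded, since they are
    not hidden connections.
    """
    counts = cooccurrence(docs)
    neighbours = defaultdict(dict)
    for (x, y), n in counts.items():
        if n >= min_count:
            neighbours[x][y] = n
    direct = set(neighbours[start])
    scores = defaultdict(int)
    for b, n_ab in neighbours[start].items():
        for c, n_bc in neighbours[b].items():
            if c != start and c not in direct:
                # Illustrative score: the weaker of the two links limits each A-B-C path.
                scores[c] += min(n_ab, n_bc)
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
```

For example, given documents that link fish oil to blood viscosity and platelet aggregation, and those terms in turn to Raynaud's disease (Swanson's classic case), `open_discovery(docs, "fish oil")` ranks "raynaud" through both intermediates, while a term reachable only via "activity" is never proposed because the filter removes that link.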

Frequently Asked Questions (FAQs)

Q1: Our LBDD research is academic. Do we still need to worry about FDA guidance and ISO standards? Yes. While regulatory compliance may not be your immediate goal, these guidelines represent the industry's best practices for ensuring data quality, integrity, and reproducibility. Adhering to these principles, such as using validated data collection methods, will strengthen the credibility of your research and facilitate future translational partnerships.

Q2: What is the simplest first step we can take to improve data quality in our LBDD workflows? The most impactful first step is to transition from spreadsheets to a structured data capture system. This could be a simple electronic lab notebook (ELN) or a more advanced system with API capabilities. This single change reduces manual entry errors, enforces data structure, and creates a single source of truth for your experiments.

Q3: How can we better account for human factors in our data processes? Involve your team in the design of data workflows. Conduct dry-runs of experimental protocols and data entry procedures to identify points of friction or confusion. A process that is intuitive and fits seamlessly into the researcher's routine is less prone to error. Documenting these testing sessions also provides evidence of a quality-focused approach [20].

Q4: Are there computational models that can help us overcome data limitations? Absolutely. In silico models, including those used for digital twins, are increasingly used to complement in vitro studies. They can integrate multi-scale data, simulate experiments, and generate hypotheses about mechanisms that are difficult or expensive to probe experimentally [23] [24]. The FDA also encourages the use of AI/ML and innovative trial designs, especially for small populations, which can be informed by such models [21] [25].

Experimental Protocols for Data Quality Assurance

Protocol 1: Systematic Cross-Validation of LBD-Generated Hypotheses

Objective: To empirically validate novel drug repurposing hypotheses generated by an LBD system while minimizing resource waste on false positives.

Methodology:

- Hypothesis Generation: Using your LBD system (e.g., based on co-occurrence or semantic models), generate a ranked list of potential drug-disease connections (e.g., "Drug X may treat Disease Y") [22].

- In Silico Triage:

- Pathway Enrichment Analysis: For the top 20 hypotheses, input the drug and disease-associated genes into a pathway analysis tool (e.g., DAVID, Metascape). Prioritize hypotheses where the drug's known targets and the disease's genetic basis share significant biological pathways.

- Literature-Based Plausibility Scoring: Manually check the recent literature for any emerging, direct evidence that might confirm or contradict the hypothesized link.

- In Vitro Validation (Pilot Study):

- Cell Model: Select a clinically relevant cell line model for the target disease (Y).

- Dosing: Treat cells with a range of physiologically achievable concentrations of Drug X.

- Endpoint Assay: Perform a cell viability assay (e.g., the luminescent, ATP-based CellTiter-Glo assay). Include appropriate controls (vehicle, positive control).

- Analysis: Confirm a statistically significant (p < 0.05) dose-response effect compared to vehicle control.
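The pathway-overlap triage in Protocol 1 can be approximated with a hypergeometric test. The sketch below is a simplified stand-in for DAVID or Metascape, which apply their own statistics and multiple-testing corrections: it tests each pathway for over-representation among the drug's targets and, separately, among the disease genes, and keeps only pathways significant for both. The gene sets, the universe size, and `alpha` are hypothetical inputs, and no multiple-testing correction is applied here.

```python
from math import comb


def hypergeom_sf(k, N, K, n):
    """P(X >= k) for X ~ Hypergeometric(population N, K pathway genes, n genes drawn)."""
    upper = min(K, n)
    total = comb(N, n)
    # Integer sum first, then one division, to avoid accumulating float error.
    return sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1)) / total


def shared_enriched_pathways(targets, disease_genes, pathways, universe_size, alpha=0.05):
    """Return (pathway, p_drug, p_disease) for pathways enriched in BOTH gene lists."""
    shared = []
    for name, members in pathways.items():
        p_drug = hypergeom_sf(len(targets & members), universe_size, len(members), len(targets))
        p_dis = hypergeom_sf(len(disease_genes & members), universe_size, len(members), len(disease_genes))
        if p_drug < alpha and p_dis < alpha:
            shared.append((name, p_drug, p_dis))
    # Rank by the weaker of the two signals.
    return sorted(shared, key=lambda t: max(t[1], t[2]))
```

Hypotheses whose drug and disease converge on at least one shared, enriched pathway would move forward to the in vitro pilot; the rest would be deprioritized.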

Protocol 2: Benchmarking a New LBD System Performance

Objective: To quantitatively evaluate the performance of a new or updated LBD system against a known standard.

Methodology:

- Create a Gold-Standard Dataset: Curate a set of 50-100 known, previously discovered drug-disease relationships from reputable sources (e.g., FDA-approved drug labels, clinicaltrials.gov). For each relationship, restrict the literature corpus to publications that predate its discovery, so the system must infer the link rather than retrieve the published finding.

- Run Benchmark Test: For each known relationship (A-C) in your gold standard, task the LBD system with identifying the linking term (B).

- Calculate Metrics:

- Precision: Of all the proposed links (B terms) the system generates, what percentage are correct (based on expert judgment)?

- Recall: What percentage of the known relationships in your gold standard did the system successfully "re-discover"?

- Iterate and Improve: Use these metrics to fine-tune your system's parameters (e.g., filtering thresholds, ranking algorithms) before applying it to novel discovery tasks [22].
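The two metrics in Protocol 2 can be computed as below. This is a minimal sketch that assumes the expert-judged gold standard is stored as a mapping from each (A, C) pair to its set of accepted B terms, and the system output as a mapping from the same pairs to proposed B terms. Proposals for pairs outside the gold standard are ignored, because they cannot be scored without further expert review.

```python
def benchmark(proposed, gold):
    """Return (precision, recall) for B-term proposals.

    proposed: {(A, C): [B, ...]} produced by the LBD system.
    gold:     {(A, C): {accepted B, ...}} judged correct by experts.
    """
    n_proposed = n_correct = n_rediscovered = 0
    for pair, accepted in gold.items():
        terms = set(proposed.get(pair, []))
        hits = terms & accepted
        n_proposed += len(terms)
        n_correct += len(hits)
        if hits:  # at least one correct linking term "re-discovers" the relationship
            n_rediscovered += 1
    precision = n_correct / n_proposed if n_proposed else 0.0
    recall = n_rediscovered / len(gold) if gold else 0.0
    return precision, recall
```

Tracking both numbers across parameter changes shows the usual trade-off: looser filtering thresholds tend to raise recall while lowering precision.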

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Resources for Robust LBDD Research

| Item / Resource | Function in LBDD Research | Key Considerations |
|---|---|---|
| Validated Electronic Data Capture (EDC) System | Ensures reliable, auditable collection of experimental and clinical data, forming a foundation for high-quality analysis. | A system pre-validated for ISO 14155 / 21 CFR Part 11 compliance is ideal. API connectivity is crucial for integrating with other tools [20]. |
| Literature-Based Discovery (LBD) Platform | Systematically generates novel hypotheses by analyzing hidden connections across the vast biomedical literature. | Evaluate based on its underlying model (co-occurrence, semantic), filtering capabilities, and ranking algorithms [22]. |
| Standardized Biomedical Ontologies (e.g., MeSH, SNOMED CT) | Provides a controlled vocabulary for data annotation, enabling seamless data integration, sharing, and semantic reasoning. | Critical for overcoming flawed integration of data from disparate sources and ensuring computational tools "understand" the concepts. |
| In Silico Modeling & Digital Twin Platform | Creates a virtual representation of a biological system or patient to simulate experiments, predict outcomes, and optimize trial designs. | Particularly valuable for assessing mechanisms and designing trials for rare diseases with small patient populations [24]. |
| API-Enabled Data Warehousing | A centralized repository that connects via APIs to all data sources (lab instruments, EDC, literature databases) to break down data silos. | The technical backbone for solving the problem of flawed integration, enabling a unified view of all research data. |

Visualizing Data Quality Workflows

Figures (not reproduced): Data Quality Remediation Flow; LBD Hypothesis Generation.

Technical Support Center

Troubleshooting Guide: Common ALCOA+ Implementation Issues

Problem 1: Data is not Attributable

Symptoms: Cannot identify who created or modified data; system uses shared login credentials; audit trails are missing or incomplete.

Root Causes: Shared user accounts; lack of system authentication controls; inadequate audit trail configuration.

Solution: Implement unique user IDs with role-based access control. Configure systems to automatically capture user identity, date, and time in metadata. For manual records, require handwritten signatures with dates. Validate that audit trails are enabled and functioning correctly [26] [27] [28].

Verification Steps:

- Review system access logs for shared account usage

- Verify audit trails capture user ID, timestamp, and action for all data changes

- Check manual records for complete signature and date information
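Part of the Problem 1 verification can be automated. This sketch assumes audit-trail entries have been exported as dictionaries with `user_id`, `timestamp`, and `action` keys; the `SHARED_ACCOUNTS` set is hypothetical and should be replaced with your site's known generic or service logins.

```python
REQUIRED_FIELDS = ("user_id", "timestamp", "action")
# Hypothetical generic logins; replace with the shared/service accounts used at your site.
SHARED_ACCOUNTS = {"admin", "lab", "guest", "instrument"}


def attribution_findings(entries):
    """Return (entry_index, problem) tuples for entries that are not attributable."""
    findings = []
    for i, entry in enumerate(entries):
        # Every change must record who, when, and what.
        missing = [f for f in REQUIRED_FIELDS if not entry.get(f)]
        if missing:
            findings.append((i, "missing " + ", ".join(missing)))
        # A generic account identifies no individual person.
        user = str(entry.get("user_id", "")).strip().lower()
        if user in SHARED_ACCOUNTS:
            findings.append((i, f"shared account '{user}'"))
    return findings
```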

Problem 2: Failure to Maintain Contemporaneous Records

Symptoms: Data recorded significantly after observation; inconsistent timestamps; time zone confusion; back-dated entries.

Root Causes: Manual recording processes; system clocks not synchronized; lack of real-time data capture.

Solution: Use automated timestamping synchronized to external time standards (UTC/NTP). Implement electronic systems that capture time automatically. For manual recording, place dated logbooks at point of use and establish procedures for immediate documentation [26] [27].

Verification Steps:

- Audit system time synchronization with external standards

- Review audit trails for timestamp anomalies

- Verify procedures require recording at time of activity
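The timestamp review for Problem 2 can be sketched as follows. It assumes each exported entry carries ISO 8601 `observed` and `recorded` strings with explicit UTC offsets such as `+00:00` (`datetime.fromisoformat` accepts a trailing `Z` only from Python 3.11). The 30-minute tolerance for a "contemporaneous" entry is an arbitrary placeholder to be set in your SOP.

```python
from datetime import datetime, timedelta, timezone


def timestamp_findings(entries, max_delay=timedelta(minutes=30)):
    """Flag timestamp anomalies; `entries` must be in audit-trail order."""
    findings = []
    previous = None
    for i, e in enumerate(entries):
        observed = datetime.fromisoformat(e["observed"])
        recorded = datetime.fromisoformat(e["recorded"])
        # Without an offset, the instant in time is ambiguous (time zone confusion).
        if observed.tzinfo is None or recorded.tzinfo is None:
            findings.append((i, "naive timestamp (no UTC offset)"))
            continue
        observed = observed.astimezone(timezone.utc)
        recorded = recorded.astimezone(timezone.utc)
        if recorded < observed:
            findings.append((i, "recorded before observation"))  # possible back-dating
        elif recorded - observed > max_delay:
            findings.append((i, "late entry"))
        if previous is not None and recorded < previous:
            findings.append((i, "out of sequence"))
        previous = recorded
    return findings
```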

Problem 3: Original Data Not Preserved

Symptoms: Reliance on transcripts or copies; missing source data; inability to trace reports back to original records.

Root Causes: Use of scrap paper for initial recording; transcription practices; inadequate source data management.

Solution: Record directly to approved media (electronic systems or bound notebooks). Preserve dynamic source data (e.g., device waveforms, electronic event logs). Implement controlled procedures for certified copies that are distinguishable from originals [26] [27].

Verification Steps:

- Trace final reports back to source data

- Verify preservation of dynamic electronic records

- Review certified copy procedures

Problem 4: Incomplete Data or Audit Trails

Symptoms: Missing data points; deleted records without trace; incomplete metadata; inability to reconstruct events.

Root Causes: Data deletion capabilities; inadequate audit trail configuration; missing metadata retention.

Solution: Configure systems to prevent permanent data deletion. Implement comprehensive audit trails that record all data changes without obscuring originals. Retain all relevant metadata and contextual information needed for reconstruction [26] [27].

Verification Steps:

- Attempt data deletion to verify prevention controls

- Review audit trail completeness for critical data changes

- Verify metadata retention supports full event reconstruction
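The controls in Problems 3 and 4 (and the correction rule in Q2 below) come down to an append-only design: values can be corrected, every change keeps the old value, the reason, the user, and a UTC timestamp, and deletion is refused. The in-memory class below is a hypothetical illustration of that design only; a real system would enforce immutability at the database or platform level rather than in application code.

```python
from datetime import datetime, timezone


class AuditedStore:
    """Hypothetical append-only store: values may be corrected, never deleted."""

    def __init__(self):
        self._values = {}
        self.audit_trail = []  # entries are only ever appended

    def _log(self, record_id, user_id, old, new, reason):
        self.audit_trail.append({
            "record_id": record_id,
            "user_id": user_id,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "old": old,  # the original value stays visible after a correction
            "new": new,
            "reason": reason,
        })

    def create(self, record_id, value, user_id):
        if record_id in self._values:
            raise ValueError(f"{record_id} already exists; use correct()")
        self._values[record_id] = value
        self._log(record_id, user_id, None, value, "create")

    def correct(self, record_id, new_value, user_id, reason):
        if not reason:
            raise ValueError("a reason is required for every correction")
        old = self._values[record_id]
        self._values[record_id] = new_value
        self._log(record_id, user_id, old, new_value, reason)

    def delete(self, record_id, user_id):
        raise PermissionError("permanent deletion is disabled; record a correction instead")

    def get(self, record_id):
        return self._values[record_id]

    def history(self, record_id):
        return [e for e in self.audit_trail if e["record_id"] == record_id]
```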

Frequently Asked Questions (FAQs)

Q1: What is the difference between ALCOA and ALCOA+?

ALCOA represents the five core principles: Attributable, Legible, Contemporaneous, Original, and Accurate. ALCOA+ adds four enhanced principles: Complete, Consistent, Enduring, and Available. The "+" principles emphasize data lifecycle management and long-term integrity [26] [27].

Q2: How should we handle corrections to existing data?

Make corrections without obscuring the original entry. Document the reason for change, who made it, and when. Use single-line strikethroughs for manual records with initials and date. Electronic systems should preserve original data in audit trails [27] [29].

Q3: What are the FDA's expectations for audit trail review?

FDA expects risk-based, trial-specific, proactive, and ongoing audit trail review focused on critical data. Document the scope, frequency, responsibilities, and outcomes. Reviews may be manual or technology-assisted using patterns and triggers [26] [29].

Q4: How long must we retain GMP records and data?

Retention periods vary by application but often extend for the product's shelf life plus specified duration (typically 1-5 years). Data must remain enduring—intact, readable, and usable—throughout the retention period regardless of technology changes [26] [27].

Q5: Can we use electronic signatures instead of handwritten signatures?

Yes. Under 21 CFR Part 11, FDA accepts electronic signatures as legally binding equivalents of handwritten signatures. Each signature must be unique to one individual, and the organization must verify that individual's identity before assigning it. Implement controls to ensure signatures cannot be reused or reassigned [28].

ALCOA+ Principles Reference Table

The table below details all nine ALCOA+ principles with definitions and implementation examples.

| Principle | Definition | Implementation Examples | Common Pitfalls |
|---|---|---|---|
| Attributable | Link data to person/system creating it [26] | Unique user IDs; audit trails; signature protocols [27] | Shared logins; missing attribution [28] |
| Legible | Data remains readable and understandable [26] | Permanent recording; clear language; reversible encoding [26] [27] | Faded ink; obsolete file formats [27] |
| Contemporaneous | Recorded at time of activity [26] | Automated timestamps; real-time recording [26] | Delayed entries; post-dating [27] |
| Original | First capture or certified copy [26] | Source data preservation; certified copy procedures [26] [27] | Reliance on transcripts; lost source data [27] |
| Accurate | Error-free representation of truth [26] | Validation checks; calibration; amendment controls [26] [27] | Unverified data; uncalibrated instruments [27] |
| Complete | All data including metadata available [26] | No data deletion; full audit trails; metadata retention [26] | Deleted records; incomplete metadata [26] |
| Consistent | Chronological sequence maintained [27] | Sequential timestamps; time synchronization [26] | Conflicting dates; timezone errors [26] |
| Enduring | Lasting and intact for retention period [26] | Validated backups; archiving; migration planning [26] [27] | Media degradation; obsolete technology [27] |
| Available | Retrievable for review when needed [26] | Indexed storage; search capabilities; access controls [26] | Lost records; poor organization [27] |

Research Reagent Solutions

| Tool Category | Example Products | Primary Function | Application in LBDD |
|---|---|---|---|
| Data Integrity Platforms | Ataccama ONE, Informatica MDM [30] | Data quality management, profiling, and monitoring [30] | Ensure research data completeness and accuracy [31] |
| Metadata Management | Oracle OCI Data Catalog, Talend Data Catalog [31] [30] | Organize technical, business, and operational metadata [31] | Maintain data context and lineage for regulatory submissions [31] |
| Data Quality Tools | Precisely Trillium, IBM InfoSphere [30] | Data cleansing, standardization, deduplication [30] | Cleanse experimental data; remove inconsistencies [31] |
| Automated Validation | Custom Python scripts, JavaScript validation [32] | Real-time data validation during entry [32] | Implement format, range, and consistency checks [32] |
| Monitoring & Alerting | DataDog, Apache Superset, Talend Data Quality [32] | Continuous data quality monitoring [32] | Detect anomalies in experimental data streams [32] |

Figure (not reproduced): ALCOA+ Implementation Workflow.

Building a Robust LBDD Workflow: From Data Acquisition to Model Development

Implementing Data Governance and Establishing a Single Source of Truth

Troubleshooting Guides and FAQs

Frequently Asked Questions (FAQs)

1. What is a Single Source of Truth (SSOT) in the context of research? A Single Source of Truth (SSOT) is a structured data management practice where every critical data element is stored and maintained in one definitive location [33]. In LBDD research, this ensures all scientists base decisions on the same consistent, accurate data, eliminating discrepancies that can arise from multiple data versions across projects or departments [34] [33].

2. Why are data quality dimensions like 'consistency' so critical for LBDD? Data quality dimensions are measurable components of data quality. In a systematic review of digital health data, consistency was identified as the most influential dimension, impacting all others like accuracy, completeness, and accessibility [35]. Inconsistent data, such as a drug being referred to by different names (e.g., "Aspirin" vs. "Acetylsalicylic Acid") in different datasets, can skew high-throughput screening results and lead to the premature dismissal of promising drug candidates [36].

3. What are the most common root causes of poor data quality in a research environment? Common root causes include [36]:

- Siloed Data Systems: Data compartmentalized across departments and platforms.

- Lack of Standardization: Absence of uniform data formats and protocols.

- Manual Curation Errors: Human errors in handling high-dimensional data (e.g., genomics, proteomics).

- Inadequate Data Management: Lack of robust data governance frameworks.

- Lack of Quality Control: No systematic checks to identify inaccuracies or missing information.

4. How does poor data quality directly impact our research outcomes and costs? The hidden costs of poor data quality in biopharma R&D are extensive [36]:

| Cost Category | Impact on LBDD Research |
|---|---|
| Financial Costs | Wasted investment in failed drug candidates; costs of repeating experiments or trials due to unreliable data. |
| Time Costs | Significant delays in research pipelines and extended timelines for drug approval. |
| Missed Opportunities | Overlooked therapeutic targets due to inconsistent or fragmented data; wasted innovation potential. |
| Reputational Damage | Loss of trust from stakeholders, investors, and regulatory bodies. |

5. What is the role of data governance in establishing an SSOT? Data governance is the foundation of a successful SSOT [37]. It involves the policies, processes, and standards that ensure data is accurate, consistent, and trustworthy. Key components include establishing standardized definitions for key metrics, implementing data quality checks, and defining clear ownership and responsibility for data sources [38] [37].

Troubleshooting Common Data Issues

Issue 1: Inconsistent Data Formats and Naming Conventions Across Datasets

- Problem: The same entity (e.g., a gene, protein, or chemical compound) is represented differently across datasets, making integration and analysis impossible.

- Solution: Implement a data governance policy that mandates the use of standard operating procedures (SOPs) for data entry and formatting [38]. Leverage automated data validation and transformation tools (ETL pipelines) to streamline and enforce these standards [39] [38].
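A minimal sketch of the standardization step for Issue 1, assuming a hand-curated synonym table (`CANONICAL_NAMES` is illustrative; in practice it would be generated from a registry or controlled vocabulary). Names that cannot be mapped are returned for review instead of passing through silently.

```python
# Illustrative synonym table; generate the real one from a controlled vocabulary.
CANONICAL_NAMES = {
    "aspirin": "acetylsalicylic acid",
    "asa": "acetylsalicylic acid",
    "acetylsalicylic acid": "acetylsalicylic acid",
}


def normalize_name(raw):
    """Lower-case and collapse whitespace before looking up the canonical name."""
    key = " ".join(raw.strip().lower().split())
    return CANONICAL_NAMES.get(key)


def normalize_records(records, field="compound"):
    """Return (records with canonical names, raw names that need manual review)."""
    clean, unmapped = [], []
    for rec in records:
        canonical = normalize_name(rec[field])
        if canonical is None:
            unmapped.append(rec[field])
        else:
            clean.append({**rec, field: canonical})
    return clean, unmapped
```

With this in place, "Aspirin" and "Acetylsalicylic Acid" collapse to one entity before any screening analysis, so their results are no longer split across two apparent compounds.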

Issue 2: Data Silos Impeding Cross-Functional Research

- Problem: Critical data is trapped within specific departments (e.g., genomics, clinical trials), preventing a unified view of the research pipeline.

- Solution: Create a centralized data repository, such as a cloud data warehouse or data lake, to integrate disparate sources [39] [40]. Facilitate this integration with advanced platforms and APIs that pull data on a set cadence, ensuring the SSOT is comprehensive and up-to-date [34] [38].

Issue 3: Proliferation of Duplicate and Outdated Data Records

- Problem: Multiple entries for the same experimental subject or compound distort results and waste resources.

- Solution: Conduct regular data audits and use data cleansing (deduplication) tools to merge or remove duplicates [41] [40]. Establish processes for the continuous propagation of record updates and changes (data synchronization) to prevent data decay [41].
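For Issue 3, a deduplication pass can keep the most recently updated record per entity. This sketch assumes each record has a `compound_id` and an ISO-format `updated` date (both hypothetical field names); for chemical structures, a structure-derived key such as the InChIKey is usually a more reliable match key than a free-text ID.

```python
from datetime import date


def deduplicate(records, key="compound_id", stamp="updated"):
    """Keep the most recently updated record per key; return (kept, number_removed)."""
    latest = {}
    for rec in records:
        # Normalize the key so 'cpd-1 ' and 'CPD-1' are recognized as the same entity.
        k = rec[key].strip().upper()
        current = latest.get(k)
        if current is None or date.fromisoformat(rec[stamp]) > date.fromisoformat(current[stamp]):
            latest[k] = rec
    kept = list(latest.values())
    return kept, len(records) - len(kept)
```

Reporting the number of removed records on every run gives the regular data audit a simple trend to watch.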

Experimental Protocols for Data Quality Assessment

Protocol 1: Assessing Data Quality Dimensions in an Existing Dataset

This protocol provides a methodology to systematically evaluate the quality of a research dataset against core dimensions defined in the DQ-DO framework [35].

1. Objective: To quantitatively measure the adherence of a dataset to the six core dimensions of digital health data quality: Accessibility, Accuracy, Completeness, Consistency, Contextual Validity, and Currency.

2. Materials and Reagents

- Dataset for evaluation

- Data profiling and auditing software (e.g., IBM DataStage, Talend Data Catalog) [41]

- Access to source system documentation and defined business rules

3. Methodology

- Step 1: Dimension Definition. For each of the six dimensions, define specific, measurable rules for your dataset. For example:

- Completeness: Mandatory fields (e.g., Sample_ID) shall not contain null values.

- Accuracy: The Gene_Symbol field must match entries in an official database like HGNC.

- Consistency: The Concentration_Unit field must be uniformly expressed as "nM" across all records.

- Currency: The Last_Calibration_Date for instruments must be within the last 12 months.

- Step 2: Automated Profiling. Use data profiling tools to scan the dataset and identify rule violations, such as null counts, format anomalies, and value range exceptions [41].

- Step 3: Manual Sampling. Perform a random record review (e.g., 2% of the dataset) to validate automated findings and assess contextual validity—fitness for your specific research purpose [35].

- Step 4: Calculation. Compute a quality score for each dimension:

Dimension Score (%) = [(Total Records - Non-Conforming Records) / Total Records] × 100

- Step 5: Reporting. Document scores, identified issues, and their potential impact on research outcomes.

4. Expected Output: A data quality assessment report, summarized in a table for easy comparison (illustrative values for a 10,000-record dataset):

| Data Quality Dimension | Measurement Rule | Conforming Records | Non-Conforming Records | Quality Score |
|---|---|---|---|---|
| Completeness | Sample_ID is not null | 9,850 | 150 | 98.5% |
| Accuracy | Gene_Symbol is valid | 9,700 | 300 | 97.0% |
| Consistency | Concentration_Unit = 'nM' | 9,900 | 100 | 99.0% |
| Currency | Last_Calibration_Date is within last 12 months | 8,000 | 2,000 | 80.0% |
| Contextual Validity | IC50_Value is a positive number | 9,950 | 50 | 99.5% |
| Accessibility | Data is queryable via API | N/A | N/A | 100% |
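Steps 1 and 4 of this protocol can be sketched in a few lines of Python. The rule functions mirror the example rules in Step 1; `VALID_GENE_SYMBOLS` is a hypothetical stand-in for the official HGNC symbol list, and "12 months" is approximated as 365 days. Accessibility is omitted because it is a property of the system rather than of individual records.

```python
from datetime import date, timedelta

VALID_GENE_SYMBOLS = {"EGFR", "BRAF", "KRAS"}  # hypothetical stand-in for the HGNC list


def is_complete(r, today):
    return r.get("Sample_ID") not in (None, "")


def is_accurate(r, today):
    return r.get("Gene_Symbol") in VALID_GENE_SYMBOLS


def is_consistent(r, today):
    return r.get("Concentration_Unit") == "nM"


def is_current(r, today):
    d = r.get("Last_Calibration_Date")
    return d is not None and today - date.fromisoformat(d) <= timedelta(days=365)


def is_contextually_valid(r, today):
    v = r.get("IC50_Value")
    return isinstance(v, (int, float)) and not isinstance(v, bool) and v > 0


RULES = {
    "Completeness": is_complete,
    "Accuracy": is_accurate,
    "Consistency": is_consistent,
    "Currency": is_current,
    "Contextual Validity": is_contextually_valid,
}


def dimension_scores(records, today):
    """Score (%) = (total - non-conforming) / total * 100, per dimension."""
    total = len(records)
    scores = {}
    for dimension, rule in RULES.items():
        non_conforming = sum(1 for r in records if not rule(r, today))
        scores[dimension] = (total - non_conforming) / total * 100 if total else 0.0
    return scores
```

Passing `today` explicitly keeps the Currency score reproducible when the report is regenerated later.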

Protocol 2: Implementing a Pilot Single Source of Truth

This protocol outlines a step-by-step process for establishing a pilot SSOT for a specific research domain (e.g., a high-throughput screening campaign) [34].

1. Objective: To create a unified, authoritative source for all data related to a defined research project, enabling faster, more confident decision-making and eliminating data reconciliation efforts.

2. Materials and Reagents

- Identified critical data sources (e.g., ELN, LIMS, assay output files)

- A centralized data platform (e.g., cloud data warehouse like Snowflake) [34] [40]

- Data integration and transformation tools (e.g., Talend, Informatica) [34] [33]

- Data governance policy document

3. Methodology

- Step 1: Secure Buy-In. Collaborate with senior research leaders and key scientists to choose the best data sources and secure support for the pilot [34].

- Step 2: Define Governance. Establish clear data definitions (e.g., "What defines a 'hit' in this screen?") and assign data stewards responsible for quality and integrity [38] [37].

- Step 3: Integrate Data. Use ETL (Extract, Transform, Load) pipelines to pull data from source systems, transform it into a standardized format, and load it into the central platform [39] [38].

- Step 4: Control Access. Implement role-based access controls to ensure researchers can securely access the data they need [34] [38].

- Step 5: Validate and Roll Out. Verify data accuracy against the source systems and confirm that compliance requirements are met. Train the pilot group of users and officially launch the SSOT [34].

4. Expected Output: A fully functional, trusted data repository for the pilot project, leading to reduced time spent debating data integrity and accelerated analysis.
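Step 3 of this protocol can be illustrated with a small extract-transform-load sketch that uses Python's built-in `sqlite3` module as a stand-in for a cloud data warehouse. The source names (`ELN`, `LIMS`), field names, table schema, and unit table are assumptions for illustration only.

```python
import sqlite3

# Conversion factors to nM; an unknown unit raises KeyError instead of loading bad data.
TO_NM = {"nM": 1.0, "uM": 1000.0, "µM": 1000.0, "mM": 1_000_000.0}


def extract(sources):
    """Yield (source_system, raw_row) from each source's exported rows."""
    for system, rows in sources.items():
        for row in rows:
            yield system, row


def transform(system, row):
    """Standardize the compound ID and convert the value to nM."""
    value = float(row["value"]) * TO_NM[row["unit"]]
    return (row["compound"].strip().upper(), row["assay"], value, system)


def load(conn, records):
    conn.execute(
        """CREATE TABLE IF NOT EXISTS ic50 (
               compound_id TEXT, assay TEXT, ic50_nM REAL, source_system TEXT,
               PRIMARY KEY (compound_id, assay, source_system))"""
    )
    conn.executemany("INSERT OR REPLACE INTO ic50 VALUES (?, ?, ?, ?)", records)
    conn.commit()


def run_pipeline(conn, sources):
    load(conn, [transform(system, row) for system, row in extract(sources)])
```

Because the primary key includes the source system and rows are written with `INSERT OR REPLACE`, re-running the pipeline on the same extracts does not create duplicate rows.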

Data Management Workflow Visualization

Figures (not reproduced): SSOT Logical Architecture Diagram; Data Governance and Quality Control Workflow.

The Scientist's Toolkit: Research Reagent Solutions

This table details key solutions and their functions for establishing and maintaining high-quality data in LBDD research.

| Research Reagent / Solution | Function in Data Management |
|---|---|
| Cloud Data Warehouse (e.g., Snowflake) | Serves as the central, scalable repository for the SSOT, storing structured data for reporting and analytics [34] [40]. |
| Data Integration Platform (e.g., Talend) | Facilitates the consolidation of data from multiple sources (LIMS, ELN, etc.) into the SSOT through ETL processes, ensuring data is transformed and standardized [34] [33]. |
| Master Data Management (MDM) Solution | Provides a single point of reference for critical "master" data entities (e.g., compound, target, or patient information), ensuring accuracy and consistency across all systems [33]. |
| Data Catalog Tool | Organizes data assets at scale, making them discoverable and understandable for researchers by providing context, definitions, and lineage [34]. |
| Data Observability Platform | Enables automated monitoring of data health across its entire lifecycle, providing alerts for anomalies and facilitating root cause analysis of data issues [41]. |
| AI-Powered Analytics Platform | Allows researchers to query the SSOT using natural language, enabling self-service analytics and faster insight generation without constant IT support [38]. |

Advanced Data Profiling and Cleansing Techniques for Molecular Datasets

Within the context of ligand-based drug design (LBDD), the integrity of molecular datasets is paramount. Data quality issues such as inaccuracies, inconsistencies, and missing values can significantly compromise the reliability of computational models and experimental results, ultimately hindering drug discovery efforts. This technical support center provides targeted troubleshooting guides and FAQs to help researchers identify, diagnose, and rectify common data quality challenges in molecular datasets, thereby supporting the broader research goal of overcoming data quality issues in LBDD.

Troubleshooting Guides

Guide 1: Resolving Missing Data in Genomic Variant Call Format (VCF) Files

Problem: A significant number of missing genotype calls (encoded as "./.") in a VCF file from a genome-wide association study (GWAS), leading to a loss of statistical power.

| Observation | Possible Cause | Solution |
|---|---|---|
| High rate of missing genotypes per sample | Poor DNA sample quality or low sequencing depth. | Re-sequence low-coverage samples or apply a minimum depth filter (e.g., DP ≥ 10) during variant calling [42]. |
| High rate of missing genotypes per variant | Stringent variant calling filters or low-quality variants. | Re-call variants with adjusted filters or impute missing genotypes using a reference panel [43]. |
| Missing data in specific genomic regions | Repetitive or hard-to-sequence regions (e.g., centromeres). | Mask these regions from analysis or use specialized imputation tools designed for complex loci [44]. |
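The per-sample and per-variant missingness rates used to tell these observations apart can be computed directly from an uncompressed VCF. This is a simplified sketch that assumes every record has a `GT` field in FORMAT; for production data, dedicated tools such as vcftools (`--missing-indv`, `--missing-site`) produce equivalent reports.

```python
import re


def missingness(vcf_lines):
    """Return ({sample: missing_rate}, {variant_id: missing_rate}) from VCF text lines."""
    samples, per_sample, per_variant = [], {}, {}
    for line in vcf_lines:
        if line.startswith("##") or not line.strip():
            continue  # meta-information lines
        fields = line.rstrip("\n").split("\t")
        if line.startswith("#CHROM"):
            samples = fields[9:]
            per_sample = {s: [0, 0] for s in samples}  # [missing, total]
            continue
        gt_index = fields[8].split(":").index("GT")
        variant_id = fields[2] if fields[2] != "." else f"{fields[0]}:{fields[1]}"
        missing = 0
        for sample, call in zip(samples, fields[9:]):
            parts = call.split(":")
            gt = parts[gt_index] if gt_index < len(parts) else "."
            # './.', '.|.' and haploid '.' all contain a missing allele.
            is_missing = "." in re.split(r"[/|]", gt)
            per_sample[sample][0] += is_missing
            per_sample[sample][1] += 1
            missing += is_missing
        per_variant[variant_id] = missing / len(samples)
    sample_rates = {s: m / t for s, (m, t) in per_sample.items() if t}
    return sample_rates, per_variant
```

A sample whose rate stands well above the others points to the first row of the table (sample quality or depth); a variant missing across most samples points to the second.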

Experimental Protocol for Missing Data Imputation:

- Data Preparation: Extract the genotype matrix from your VCF file and identify the missing calls ("./.") to be imputed.

- Tool Selection: Choose an imputation tool (e.g., X-LDR for biobank-scale data or BEAGLE for smaller datasets) [43].

- Reference Panel: Select an appropriate reference panel (e.g., 1000 Genomes) that matches the population structure of your dataset [44].

- Execution: Run the imputation algorithm. For tools like X-LDR, this involves a stochastic process to estimate missing values at scale [43].

- Validation: Compare the imputed dataset with the original, checking for the restoration of expected linkage disequilibrium patterns and the absence of artifactual signals [44].

Guide 2: Correcting Inconsistent Data Formats in Metabolomic Feature Tables

Problem: Inconsistent formatting of metabolite identifiers and abundance values in a mass spectrometry-based metabolomics dataset, preventing comparative analysis.

| Observation | Possible Cause | Solution |
|---|---|---|
| Inconsistent metabolite naming (e.g., "L-Ascorbic acid", "ASCORBATE") | Lack of a controlled vocabulary during manual data entry from multiple analysts. | Implement a data standardization rule that maps all entries to a standard database identifier (e.g., HMDB or PubChem CID) [45] [46]. |
| Multiple date formats (e.g., "2025-01-28", "01/28/25") in sample metadata | Data aggregation from different instrument software with locale-specific settings. | Apply data transformation scripts to convert all dates to an ISO 8601 standard (YYYY-MM-DD) [47] [48]. |
| Concentration values in mixed units (e.g., µM, nM) | Merging datasets from different laboratories or experimental protocols. | Normalize all values to a single unit (e.g., µM) using a conversion factor during data pre-processing [46]. |

Experimental Protocol for Data Standardization:

- Profiling: Use a data profiling tool to identify all unique formats and inconsistencies in the feature table [46].

- Rule Definition: Create a set of business rules for standardization (e.g., "All metabolites must be referenced by HMDB ID").

- Transformation: Employ a data quality tool or script (e.g., Python's pandas library) to execute find-and-replace operations and unit conversions based on the defined rules [45].

- Validation: Use a rule engine to verify that all data now adheres to the predefined format, flagging any remaining outliers for manual review [46].
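The transformation and validation steps can be sketched with the standard library (pandas, mentioned above, would work equally well). The name-to-identifier table, the accepted date formats, and the unit factors are illustrative assumptions; check identifiers against HMDB before relying on them. Rows that fail any rule are flagged for manual review rather than dropped.

```python
from datetime import datetime

# Illustrative mappings; verify identifiers against HMDB before use.
NAME_TO_HMDB = {
    "l-ascorbic acid": "HMDB0000044",
    "ascorbic acid": "HMDB0000044",
    "ascorbate": "HMDB0000044",
}
TO_UM = {"uM": 1.0, "µM": 1.0, "nM": 0.001, "mM": 1000.0}
DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%y")  # assumed input formats; extend per instrument locale


def to_iso_date(text):
    """Convert any accepted date format to ISO 8601 (YYYY-MM-DD)."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognised date: {text!r}")


def standardize(row):
    return {
        "hmdb_id": NAME_TO_HMDB[row["metabolite"].strip().lower()],
        "date": to_iso_date(row["date"]),
        "conc_uM": float(row["value"]) * TO_UM[row["unit"]],
    }


def standardize_table(rows):
    """Return (standardized rows, [(original row, reason)] flagged for review)."""
    clean, flagged = [], []
    for row in rows:
        try:
            clean.append(standardize(row))
        except (KeyError, ValueError) as err:
            flagged.append((row, str(err)))
    return clean, flagged
```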

Guide 3: Eliminating Duplicate Spectra in Molecular Networking

Problem: Duplicate or highly similar mass spectra in a molecular networking analysis of natural products, skewing network topology and downstream interpretation.

| Observation | Possible Cause | Solution |
|---|---|---|
| Multiple spectra for the same compound from the same sample | Redundant data extraction from the same chromatographic peak. | Apply a deduplication algorithm that clusters MS2 spectra based on modified cosine similarity and retains only the most representative spectrum per cluster [49]. |
| The same compound detected in multiple fractions or samples | Expected biological or experimental replication. | Use record matching to identify these duplicates but retain the information, tagging them as coming from different samples rather than deleting them [48]. |
| Duplicate records of known standards | Repeated injections of the same standard compound. | Implement a laboratory information management system (LIMS) to track standards and flag duplicate entries automatically [47]. |

Experimental Protocol for Spectral Deduplication:

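- Similarity Calculation: Calculate the modified cosine similarity between all MS2 spectral pairs in the dataset. In addition to direct peak matches, this score matches fragment peaks shifted by the precursor mass difference, so spectra of structural analogs that differ by a modification (e.g., an added functional group) still score as similar [49].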

- Clustering: Group spectra into clusters where the similarity score exceeds a defined threshold (e.g., >0.7).

- Consensus Building: For each cluster, generate a consensus spectrum that averages the fragmentation patterns of its members.

- Data Consolidation: Replace the duplicate spectra in the feature table with a single entry for the consensus spectrum, preserving the links to all original sample sources [49].
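The clustering and consensus steps can be sketched as follows. Note the substitution: this uses plain cosine similarity on binned peaks, not the modified cosine described above, which additionally matches peaks shifted by the precursor mass difference. The bin width, the 0.7 threshold, and greedy assignment to the first matching cluster are simplifying assumptions; each consensus entry keeps the list of contributing samples.

```python
from collections import defaultdict
from math import sqrt


def binned(peaks, tol=0.01):
    """Map (m/z, intensity) peaks to m/z bins of width `tol`, L2-normalized."""
    vec = defaultdict(float)
    for mz, intensity in peaks:
        vec[round(mz / tol)] += intensity
    norm = sqrt(sum(v * v for v in vec.values()))
    return {k: v / norm for k, v in vec.items()} if norm else {}


def cosine(a, b):
    """Cosine similarity of two already-normalized binned spectra."""
    return sum(v * b.get(k, 0.0) for k, v in a.items())


def deduplicate_spectra(spectra, threshold=0.7, tol=0.01):
    """spectra: list of (sample_id, peaks). Returns consensus spectra with sample links."""
    clusters = []
    for sample_id, peaks in spectra:
        vec = binned(peaks, tol)
        # Greedy: join the first cluster whose representative is similar enough.
        for cl in clusters:
            if cosine(vec, cl["rep"]) > threshold:
                cl["members"].append(vec)
                cl["samples"].append(sample_id)
                break
        else:
            clusters.append({"rep": vec, "members": [vec], "samples": [sample_id]})
    consensus = []
    for cl in clusters:
        avg = defaultdict(float)
        for vec in cl["members"]:
            for k, v in vec.items():
                avg[k] += v / len(cl["members"])  # mean normalized intensity per bin
        spectrum = {round(k * tol, 6): v for k, v in sorted(avg.items())}
        consensus.append({"spectrum": spectrum, "samples": cl["samples"]})
    return consensus
```

Because greedy assignment depends on input order, sorting spectra (for example by total ion intensity) before clustering makes the result reproducible.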

Frequently Asked Questions (FAQs)

FAQ 1: What are the most critical data quality checks to perform on a new molecular dataset before beginning analysis? The most critical checks, often performed through data profiling, include:

- Completeness: Quantifying the percentage of missing values across samples and features [47] [48].

- Uniqueness: Identifying duplicate records, such as redundant spectra or genomic variants [45] [49].

- Validity: Ensuring data conforms to expected formats, value ranges, and controlled vocabularies [46].

- Consistency: Checking for logical contradictions, such as a sample's collection date being before the subject's birth date [47].
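These four checks can be scripted in a few lines of pandas. The sketch below uses hypothetical column names. The key columns, numeric ranges, controlled vocabularies, and date-ordering rules are passed in as parameters, so they can come from your own specification.

```python
import pandas as pd


def profile(df, key_cols, valid_ranges, allowed_values, date_order):
    """Return a small data-quality report for a new dataset."""
    report = {}
    # Completeness: % missing per column
    report["missing_pct"] = (df.isna().mean() * 100).round(1).to_dict()
    # Uniqueness: rows whose key appears more than once
    report["duplicate_rows"] = int(df.duplicated(subset=key_cols, keep=False).sum())
    # Validity: numeric ranges and controlled vocabularies (missing values skipped)
    invalid = {}
    for col, (low, high) in valid_ranges.items():
        invalid[col] = int((df[col].notna() & ~df[col].between(low, high)).sum())
    for col, allowed in allowed_values.items():
        invalid[col] = int((df[col].notna() & ~df[col].isin(allowed)).sum())
    report["invalid_counts"] = invalid
    # Consistency: each (earlier, later) pair of date columns must be ordered
    report["order_violations"] = {
        f"{later} < {earlier}": int((df[later] < df[earlier]).sum())
        for earlier, later in date_order
    }
    return report
```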

FAQ 2: How can we handle outliers in high-throughput screening data without introducing bias? Outlier treatment should be a reasoned, documented process:

- Detection: Use statistical methods like Z-scores or the Interquartile Range (IQR) to mathematically define outliers [45].

- Investigation: Before removal, consult experimental logs. An outlier may be a rare biological event or a technical artifact (e.g., a pipetting error) [45].

- Treatment: Based on context, you can cap the outlier to a maximum/minimum value, transform the data, or if confirmed to be an artifact, remove the data point. Always document the rationale [45].
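This sketch covers the detection step. It flags points rather than deleting them, so the investigation step still sees every value. The thresholds (3 for Z-scores, 1.5 × IQR) are common conventions, not requirements.

```python
import pandas as pd


def flag_outliers(values, z_thresh=3.0, iqr_k=1.5):
    """Flag (not delete) outliers by Z-score and by the IQR rule."""
    s = pd.Series(values, dtype=float)
    z = (s - s.mean()) / s.std(ddof=1)
    q1, q3 = s.quantile(0.25), s.quantile(0.75)
    low, high = q1 - iqr_k * (q3 - q1), q3 + iqr_k * (q3 - q1)
    return pd.DataFrame({
        "value": s,
        "z_outlier": z.abs() > z_thresh,
        "iqr_outlier": (s < low) | (s > high),
        # Capped copy for the "cap" treatment; the raw value stays in "value"
        "capped": s.clip(lower=low, upper=high),
    })


flags = flag_outliers([10, 11, 9, 10, 12, 10, 11, 9, 10, 80])
```

In this example the IQR rule flags 80, but the Z-score rule does not. With the sample standard deviation, the largest attainable |Z| among n points is (n - 1)/sqrt(n), about 2.85 for n = 10. A threshold of 3 can therefore never fire on a batch that small, which is one reason to prefer the IQR rule for small replicate groups.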

FAQ 3: Our multi-omics data from different platforms is inconsistently formatted. What is the best strategy for integration? Successful integration relies on robust standardization:

- Establish a Common Schema: Define a master data model with standard formats (e.g., ISO 8601 for dates, HGNC-approved symbols for gene names) for all incoming data [46].

- Use ETL/ELT Pipelines: Employ Extract, Transform, Load (ETL) tools to automatically map and convert source data into the common schema, applying validation rules at each step [45] [48].

- Leverage Metadata: Use detailed sample and experimental metadata to ensure accurate alignment of data points across different omics layers [50].
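The sketch below shows one transform step in such a pipeline. It maps two hypothetical platform exports (`platform_a`, `platform_b`) onto an assumed common schema. Dates are normalized to ISO 8601 (YYYY-MM-DD) and gene aliases to HGNC symbols, and a simple completeness rule is applied at the end. The column maps, date formats, and alias table are illustrative only.

```python
import pandas as pd

# Hypothetical master schema and per-platform mappings into it
COMMON_SCHEMA = ["sample_id", "gene_symbol", "measure", "collected_on"]
COLUMN_MAPS = {
    "platform_a": {"SampleID": "sample_id", "Gene": "gene_symbol",
                   "Value": "measure", "Date": "collected_on"},
    "platform_b": {"sid": "sample_id", "symbol": "gene_symbol",
                   "abundance": "measure", "date_collected": "collected_on"},
}
DATE_FORMATS = {"platform_a": "%Y-%m-%d", "platform_b": "%m/%d/%Y"}
SYMBOL_ALIASES = {"p53": "TP53"}  # illustrative alias -> HGNC approved symbol


def to_common(df, platform):
    """Map one platform's export onto the common schema and validate it."""
    out = df.rename(columns=COLUMN_MAPS[platform])[COMMON_SCHEMA].copy()
    out["gene_symbol"] = out["gene_symbol"].str.strip().replace(SYMBOL_ALIASES)
    out["measure"] = pd.to_numeric(out["measure"], errors="coerce")
    dates = pd.to_datetime(out["collected_on"], format=DATE_FORMATS[platform], errors="coerce")
    out["collected_on"] = dates.dt.strftime("%Y-%m-%d")  # ISO 8601 calendar date
    out["source"] = platform
    # Validation rule: every common-schema field must survive the transform
    out["valid"] = out[COMMON_SCHEMA].notna().all(axis=1)
    return out
```

Declaring an explicit date format per source avoids ambiguous inference (03/04 as March or April). Unparseable values become missing and are caught by the `valid` flag instead of silently shifting.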

FAQ 4: What techniques can we use to identify and manage "dark data" within our research group? Dark data—collected but unused information—can be managed by:

- Cataloging: Implement a data catalog tool to automatically scan and index all files in storage systems, making them discoverable [47].

- Profiling: Run data profiling on discovered datasets to assess their quality, structure, and potential value [46].

- Curation: Based on profiling, decide to either annotate and integrate valuable dark data into active projects or, if it is irrelevant or obsolete, securely archive or delete it to reduce storage costs and complexity [48].
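The cataloging step can start as a simple filesystem scan. This sketch uses only `pathlib` and pandas. The one-year staleness cutoff is an assumed default, and a real data catalog tool would also capture owners, schemas, and lineage.

```python
import time
from pathlib import Path

import pandas as pd


def build_catalog(root, stale_days=365):
    """Index every file under `root` with basic metadata for later profiling."""
    now = time.time()
    rows = []
    for path in Path(root).rglob("*"):
        if path.is_file():
            info = path.stat()
            rows.append({
                "path": str(path),
                "suffix": path.suffix.lower(),
                "size_bytes": info.st_size,
                "days_since_modified": (now - info.st_mtime) / 86400,
            })
    catalog = pd.DataFrame(rows, columns=["path", "suffix", "size_bytes", "days_since_modified"])
    # Candidates for the curation decision: untouched for longer than stale_days
    catalog["stale"] = catalog["days_since_modified"] > stale_days
    return catalog
```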

Workflow Visualizations

Diagram 1: Molecular Data Cleansing Workflow

Diagram 2: Spectral Data Deduplication Process

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function/Benefit |
|---|---|
| High-Fidelity DNA Polymerase (e.g., Q5) | Reduces sequence errors in PCR amplification during library preparation for sequencing, ensuring high data accuracy from the outset [42]. |
| PreCR Repair Mix | Repairs damaged DNA templates before amplification, helping to recover data from degraded samples and reduce missing values [42]. |
| PCR & DNA Cleanup Kits (e.g., Monarch) | Removes inhibitors and purifies DNA/RNA, preventing artifacts in downstream sequencing and ensuring more reliable variant calls [42]. |
| Reference Genomes (e.g., T2T-CHM13) | Provides a complete and accurate baseline for aligning sequencing reads, improving the validity and consistency of genomic data, especially in complex regions [43] [44]. |
| Spectral Libraries & Databases | Essential for annotating MS2 spectra in molecular networking. The lack of cosmetic-specific databases is a current challenge, highlighting the need for domain-specific resources [49]. |

FAQ: Understanding the Analytical Target Profile (ATP) in Data-Centric Research

What is an Analytical Target Profile (ATP) and why is it critical for data quality?

The Analytical Target Profile (ATP) is a foundational concept from Analytical Quality by Design (AQbD) that defines the intended purpose and required performance standards of an analytical method [51]. In the context of data, it outlines what you need to measure, the required quality of the measurement, and the data quality attributes necessary to ensure the data is fit for its purpose in research, such as supporting a critical decision in drug development [51] [52]. It is the formal agreement on what constitutes "quality" for your specific data asset.

How does an ATP differ from a simple data specification?

An ATP goes beyond a basic data specification by being explicitly tied to the business or research objective and defining the method performance requirements [51] [52]. While a specification might list expected data types, an ATP defines the Critical Data Quality Attributes (CDQAs)—such as accuracy, completeness, and timeliness—that are vital for the data to fulfill its intended role in a specific, high-impact context like a research publication or a regulatory submission [51].

What are the key components of a well-defined ATP?

A robust ATP for data should clearly articulate the following:

- Purpose of the Data: The specific business or research question the data is intended to answer [51].

- Critical Data Quality Attributes (CDQAs): The measurable characteristics of the data that must be controlled, such as the allowable missingness rate, the precision of numeric fields, or the maximum acceptable data age (freshness) [51] [53].

- Acceptance Criteria: The specific, quantifiable limits or ranges for each CDQA [52].
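One way to make an ATP executable is to encode each CDQA as a measurement function plus an acceptance limit. The class names, attributes, and limits below are hypothetical. They only illustrate how purpose, CDQAs, and acceptance criteria fit together.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

import pandas as pd


@dataclass(frozen=True)
class CDQA:
    """One critical data quality attribute and its acceptance criterion."""
    name: str
    measure: Callable[[pd.DataFrame], float]
    limit: float
    max_limit: bool = True  # True: value must be <= limit; False: >= limit


@dataclass
class AnalyticalTargetProfile:
    purpose: str
    attributes: List[CDQA] = field(default_factory=list)

    def evaluate(self, df: pd.DataFrame) -> Dict[str, Tuple[float, bool]]:
        results = {}
        for attr in self.attributes:
            value = float(attr.measure(df))
            ok = value <= attr.limit if attr.max_limit else value >= attr.limit
            results[attr.name] = (value, ok)
        return results

    def is_fit_for_purpose(self, df: pd.DataFrame) -> bool:
        return all(ok for _, ok in self.evaluate(df).values())


# Hypothetical ATP for a dataset that will train a potency model
atp = AnalyticalTargetProfile(
    purpose="Train a pIC50 regression model for lead optimization",
    attributes=[
        CDQA("missing_pIC50_fraction", lambda d: d["pIC50"].isna().mean(), 0.05),
        CDQA("duplicate_compound_fraction", lambda d: d["compound_id"].duplicated().mean(), 0.0),
        CDQA("row_count", lambda d: len(d), 3, max_limit=False),
    ],
)
```

Keeping the purpose string next to the criteria makes the "fit for what?" question explicit whenever a limit is changed.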

The relationship between these components and the overall data lifecycle can be visualized in the following workflow:

Troubleshooting Guide: Common Data Quality Issues and AQbD Solutions

Implementing an ATP and AQbD approach helps prevent and resolve common data quality issues. The following table summarizes these problems and their proactive solutions.

| Data Quality Issue | Impact on Research | Proactive AQbD Solution |
|---|---|---|
| Incomplete Data [54] [47] [48] | Creates blind spots and flawed analysis, leading to incorrect conclusions. | Define "completeness" for critical fields in the ATP and set up automated monitoring to alert on gaps [54]. |
| Duplicate Data [54] [47] [48] | Distorts aggregations and metrics (e.g., double-counting revenue), skewing ML models. | Use rule-based or probabilistic deduplication checks integrated into the data pipeline as part of the control strategy [47]. |
| Inaccurate Data [54] [47] [48] | Breeds mistrust in the entire data ecosystem; decisions are based on incorrect facts. | Establish data validation rules and outlier detection at the point of entry or during ETL processing, as defined by the ATP's accuracy requirements [54] [48]. |
| Inconsistent Data [54] [47] | Causes conflicting reports and broken integrations when data from multiple sources doesn't align. | Map data lineage to establish a single "source of truth" for each data element and implement automated sync processes [54]. |
| Outdated (Stale) Data [54] [47] [48] | Using old data for current analysis erodes business effectiveness and leads to misguided actions. | Set and monitor Service Level Agreements (SLAs) for data freshness based on the project's needs, as specified in the ATP [54]. |
| Schema Changes [54] | A simple column rename can cascade into dozens of broken dashboards and pipelines. | Implement a formal review process for schema changes and use automated testing to validate compatibility across the data ecosystem [54]. |
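For schema changes, an automated compatibility test can be as simple as comparing each table against a declared contract. The column names and type checks in this sketch are assumptions. It uses `pandas.api.types` predicates so that both object-backed and dedicated string columns pass.

```python
import pandas as pd
from pandas.api import types as ptypes

# Hypothetical contract that downstream dashboards and models rely on
EXPECTED_SCHEMA = {
    "compound_id": ptypes.is_string_dtype,
    "smiles": ptypes.is_string_dtype,
    "pIC50": ptypes.is_float_dtype,
}


def check_schema(df, expected=EXPECTED_SCHEMA):
    """Return a list of contract violations; an empty list means compatible."""
    problems = []
    for col, type_check in expected.items():
        if col not in df.columns:
            problems.append(f"missing column: {col}")
        elif not type_check(df[col]):
            problems.append(f"type change: {col} is now {df[col].dtype}")
    for col in sorted(set(df.columns) - set(expected)):
        problems.append(f"unexpected column: {col}")
    return problems
```

Run in CI before a pipeline change is merged, a rename such as `smiles` to `SMILES` surfaces as a missing column plus an unexpected column instead of a broken dashboard.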

Troubleshooting High Background "Noise" in Your Data

Problem: Your datasets suffer from high background "noise"—meaning irrelevant, invalid, or orphaned data that obscures the true signal and complicates analysis [54] [47] [48].

Investigation and Resolution Methodology:

- Define "Validity": Based on your ATP, establish clear, business-level validation rules for each data type. These rules go beyond format (e.g., "valid email") to include logical checks (e.g., "start date must precede end date") [54].

- Identify the Source: Use data profiling tools to scan datasets for invalid entries and orphaned records (records that have lost their parent relationship) [47] [48].

- Quarantine and Cleanse: Implement a "data quarantine" zone where invalid data is routed for review and correction before it is allowed into primary analytical datasets [54].

- Prevent Recurrence: Add referential integrity constraints in databases and data validation checks at the point of entry to prevent orphaned and invalid data from being introduced [54] [47].
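Here is a minimal sketch of the validity, orphan, and quarantine steps. It assumes hypothetical `assays` and `compounds` tables linked by `compound_id`. Failing rows are routed to a quarantine table with the reason attached instead of being deleted.

```python
import pandas as pd


def route_records(assays, compounds):
    """Split assay rows into clean rows and a quarantine table with reasons."""
    checks = pd.DataFrame({
        # Logical validity rule: start date must precede end date
        "start_not_before_end": assays["start_date"] >= assays["end_date"],
        # Referential integrity: each assay row must point at a known compound
        "orphaned_compound_id": ~assays["compound_id"].isin(compounds["compound_id"]),
    })
    failed = checks.any(axis=1)
    reasons = pd.Series(
        [";".join(name for name, hit in row.items() if hit) for row in checks.to_dict("records")],
        index=checks.index,
        dtype=object,
    )
    clean = assays[~failed]
    quarantined = assays[failed].assign(reason=reasons[failed])
    return clean, quarantined
```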

Troubleshooting Weak or No Signal from Data Pipelines

Problem: A critical data pipeline is not updating (no signal) or is delivering incomplete data (weak signal), impacting downstream dashboards and models [54] [53].

Investigation and Resolution Methodology:

- Check Pipeline Freshness & Volume: The first step is to use broad metadata monitoring to check if the table is updating on schedule (freshness) and if the row counts are within expected ranges (volume) [53].

- Assess Data Lineage: Use end-to-end lineage to trace the pipeline back to its source system. A failure or delay in an upstream source or process is a common root cause [55] [53].

- Inspect Logs: Analyze logs from pipeline components (e.g., Airflow, dbt) to identify errors, failures, or code changes that may have caused the interruption [53].

- Implement Layered Monitoring: Establish a control strategy with both "broad" metadata monitors for all production tables and "deep," field-level monitors for your most critical gold-tier datasets to ensure rapid detection of such issues [53].
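A "broad" metadata monitor for freshness and volume might look like the following. The 24-hour SLA and the 3-standard-deviation volume rule are placeholder assumptions. In practice these limits would come from the ATP, and the row-count history from your warehouse metadata.

```python
import statistics
from datetime import datetime, timedelta


def check_table_health(last_updated, row_counts, now,
                       max_age=timedelta(hours=24), z_limit=3.0):
    """Broad metadata monitor: freshness against an SLA, volume against history."""
    alerts = []
    age = now - last_updated
    if age > max_age:
        alerts.append(f"freshness: last update {age} ago exceeds {max_age}")
    # Last entry is the latest load; needs at least two historical counts before it
    *history, latest = row_counts
    mean, sd = statistics.mean(history), statistics.stdev(history)
    deviates = latest != mean if sd == 0 else abs(latest - mean) / sd > z_limit
    if deviates:
        alerts.append(f"volume: {latest} rows vs. typical {mean:.0f}")
    return alerts


now = datetime(2024, 6, 1, 12, 0)
alerts = check_table_health(now - timedelta(hours=30), [1000, 1020, 990, 1010, 300], now)
```

In this example both monitors fire: the table is 30 hours old, and the latest load is far below its recent volume. That is the "weak signal" pattern to trace upstream through lineage.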

The Scientist's Toolkit: Essential Components for a Data AQbD Framework

The following table details key "reagent solutions" or essential components needed to build a proactive data quality system based on AQbD principles.

| Tool / Component | Function in the Data AQbD Framework |
|---|---|
| Data Observability Platform | Provides the foundational ability to monitor data health, detect anomalies, and track lineage across the entire data stack [55] [53]. |
| Static Code Analysis for Data | Analyzes data transformation code (SQL, Python) before execution to identify potential issues like schema mismatches or incorrect logic, enabling "shifting left" of data quality [55]. |
| Automated Data Testing & Validation | Executes predefined tests (e.g., for uniqueness, nullness, accuracy) against data to ensure it meets the acceptance criteria defined in the ATP [53]. |
| Lineage Tracking Tool | Maps the flow of data from source to consumption, providing critical context for impact analysis and rapid root-cause investigation when issues occur [55] [53]. |
| CI/CD Integration for Data (DataOps) | Automates quality checks within version control and deployment pipelines, acting as a gatekeeper to prevent data-breaking changes from reaching production [55]. |

The logical relationship and data flow between these components in a preventative system is shown below:

Strategies for Handling Unstructured and Multi-format Chemical Data

Troubleshooting Guides and FAQs

FAQ: Data Collection and Standardization

How can we collaboratively collect and manage chemical research data without relying on commercial systems? An effective solution is the implementation of an open, community-driven platform. The Chemistry Knowledge Base (CKB) uses Semantic MediaWiki (SMW) enhanced with chemistry-specific tools. This system allows researchers to capture chemical structures in machine-readable formats and input data through standardized forms, ensuring consistent organization and effective data comparison. This approach provides a structured, collaboratively usable platform for research outcomes without dependency on commercial databases [56].

What are the foundational principles for ensuring data integrity in a regulated environment? Adherence to the ALCOA+ principles is crucial for regulatory compliance. This framework requires that all data be [57]:

- Attributable: Who generated the data and when?

- Legible: Can the data be read and understood?

- Contemporaneous: Was the data recorded at the time of the activity?

- Original: Is this the first record (or a certified copy)?

- Accurate: Is the data free from errors?

- Complete: Does the data include all relevant information?

- Consistent: Is the data recorded in the expected sequence (e.g., consistent, chronological time stamps)?

- Enduring: Is the data recorded for the long term?

- Available: Can the data be accessed throughout its lifetime?
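Several ALCOA+ attributes can be checked automatically on record content. The sketch below assumes hypothetical record fields and a 30-minute contemporaneity window. Legible, Original, Enduring, and Available depend on systems and storage rather than on record content, so they are not covered here.

```python
from datetime import datetime, timedelta

REQUIRED_FIELDS = ("sample_id", "result", "performed_by", "performed_at", "recorded_at")


def alcoa_findings(records, max_delay=timedelta(minutes=30)):
    """Flag records that break the checkable subset of ALCOA+ principles."""
    findings = []
    previous = None
    for i, rec in enumerate(records):
        missing = [f for f in REQUIRED_FIELDS if rec.get(f) in (None, "")]
        if missing:  # Attributable / Complete
            findings.append((i, "missing: " + ", ".join(missing)))
            continue
        delay = rec["recorded_at"] - rec["performed_at"]
        if delay < timedelta(0) or delay > max_delay:  # Contemporaneous
            findings.append((i, "not contemporaneous"))
        if previous is not None and rec["performed_at"] < previous:  # Consistent
            findings.append((i, "out of sequence"))
        previous = rec["performed_at"]
    return findings


# Example record layout (values are illustrative)
example = {"sample_id": "S1", "result": 6.2, "performed_by": "jdoe",
           "performed_at": datetime(2024, 1, 1, 9, 0),
           "recorded_at": datetime(2024, 1, 1, 9, 5)}
```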

FAQ: Data Quality and Validation