Optimizing Ligand-Based Virtual Screening: Strategies to Boost Performance and Hit Rates in Drug Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on optimizing ligand-based virtual screening (LBVS) performance. It covers the foundational principles of LBVS, explores advanced methodological approaches including machine learning and 3D shape-based screening, and offers practical troubleshooting strategies to overcome common pitfalls. By examining validation frameworks, performance metrics, and real-world case studies from sources like the DUD database and CACHE challenge, this resource delivers actionable insights for enhancing enrichment factors, hit rates, and computational efficiency in modern drug discovery pipelines.

Ligand-Based Virtual Screening Fundamentals: Core Principles and Similarity Methods

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between LBVS and SBVS? Ligand-Based Virtual Screening (LBVS) relies on known active ligands for a target to identify new hits based on similarity or quantitative structure-activity relationship (QSAR) models. In contrast, Structure-Based Virtual Screening (SBVS) uses the three-dimensional structure of the target protein to identify complementary compounds, primarily through molecular docking [1].

2. When should I prioritize LBVS over SBVS? Prioritize LBVS in the following scenarios [2] [3] [1]:

- No 3D Protein Structure: When the 3D structure of the target is unavailable or of low quality (e.g., a low-resolution experimental structure, a homology model built on a distant template, or a low-confidence AlphaFold prediction).

- Early-Stage Library Screening: For rapidly filtering very large, chemically diverse libraries (millions to billions of compounds) where computational speed is essential.

- Scaffold Hopping: When the goal is to identify novel chemical scaffolds that are structurally diverse from known actives but share similar pharmacophoric or field properties.

- Limited Computational Resources: LBVS methods are generally faster and less computationally expensive than SBVS.

3. What are the main limitations of LBVS? The primary limitations are [1]:

- Lack of Structural Novelty: It can be biased towards compounds similar to known actives, potentially missing structurally unique chemotypes.

- Dependence on Known Actives: The quality of the screen is directly dependent on the quantity and quality of known active compounds used to build the model.

- No Binding Mode Information: Unlike SBVS, LBVS does not provide insights into the atomic-level interactions or binding pose within the protein's active site.

4. Can LBVS and SBVS be used together? Yes, combining both methods is a powerful and recommended strategy [3] [1]. This hybrid approach can mitigate the limitations of each individual method. Common integration strategies include:

- Sequential Workflows: Using fast LBVS to reduce a large library to a manageable subset, which is then processed with more computationally intensive SBVS.

- Parallel Screening: Running LBVS and SBVS independently and then comparing or combining the results using consensus scoring to increase confidence in the selected hits.

5. Why might my LBVS campaign fail to identify viable hits? Common reasons for failure include [2]:

- Inadequate Conformer Sampling: The generated 3D conformations for each compound do not include the bioactive conformation.

- Poorly Prepared Ligand Library: Incorrect protonation states, tautomers, or stereochemistry can lead to inaccurate similarity calculations.

- Low Quality or Small Training Set: The set of known active ligands used for the model is too small, non-diverse, or contains inaccurate activity data.

- Over-reliance on a Single Method: Different LBVS methods have different strengths; using only one approach may miss valid hits.

Troubleshooting Guides

Issue 1: Low Enrichment of Active Compounds in Retrospective Screening

Problem: When testing your LBVS method on a benchmark dataset of known actives and presumed inactives ("decoys"), the method fails to prioritize (enrich) the active compounds near the top of the ranked list.

| Possible Cause | Description | Solution |
|---|---|---|
| Non-informative Pharmacophore | The pharmacophoric features or molecular fields derived from your known actives are too generic. | Analyze the key interactions of known actives with the target (if structural data exists). Use a set of diverse, high-quality actives to build a consensus model [4]. |
| Inadequate Molecular Representation | The 2D fingerprints or 3D descriptors used are not capturing the features critical for binding. | Switch to or combine with alternative methods. For scaffold hopping, 3D shape and electrostatic methods (e.g., ROCS) often outperform 2D fingerprints [4]. |
| Poor Conformational Sampling | The bioactive conformation of your query or library molecules is not being generated. | Use a robust conformer generator (e.g., OMEGA, ConfGen) that produces a broad, energetically reasonable set of conformers [2]. |

Issue 2: Failure in Scaffold Hopping

Problem: The LBVS method successfully retrieves active compounds, but they are all structurally very similar (analogues) to your known starting ligands, failing to identify novel chemotypes.

| Possible Cause | Description | Solution |
|---|---|---|
| Over-reliance on 2D Fingerprints | 2D fingerprints like ECFP are excellent at finding analogues but less effective at scaffold hopping. | Implement 3D field-based methods like OpenEye's Shape Tanimoto (ROCS) or Cresset FieldScreen, which are less dependent on underlying atom connectivity [4]. |
| Query Set is Too Homogeneous | The set of known actives used for the similarity search lacks chemical diversity. | Curate a query set that includes multiple, diverse chemotypes active against your target to create a more generalized pharmacophore or similarity model [4]. |

Issue 3: High False Positive Rate in Prospective Screening

Problem: Compounds ranked highly by your LBVS model are purchased or synthesized and tested, but show no biological activity.

| Possible Cause | Description | Solution |
|---|---|---|
| Ignoring Compound Filters | The virtual hits may have undesirable properties that make them promiscuous, toxic, or unlikely to be active (e.g., pan-assay interference compounds, or PAINS). | Apply stringent property and substructure filters during library preparation to remove compounds with unfavorable ADME/Tox profiles or problematic functional groups [2] [3]. |
| Lack of SBVS Cross-Check | The proposed hits may be chemically similar to actives but cannot actually fit into the binding site due to steric or electrostatic clashes. | If a protein structure is available, use a fast docking program to quickly verify that the LBVS hits can achieve a reasonable binding pose [3]. |

Experimental Protocols

Protocol 1: Standard Workflow for a 3D Shape-Based LBVS Campaign

This protocol outlines the steps for a typical LBVS using 3D shape and feature similarity, a method known for its scaffold-hopping potential [2] [4].

1. Library Preparation

- Input: Obtain structures of compounds to screen (e.g., from in-house collections, ZINC, or commercial suppliers).

- Standardization: Use software like Standardizer or MolVS to standardize structures, remove salts, and neutralize charges.

- Tautomer and Protonation States: Generate relevant tautomeric and protonation states at physiological pH (e.g., 7.4) using tools like LigPrep [2].

- Conformer Generation: For each compound, generate a representative ensemble of low-energy 3D conformations. Use a high-performance algorithm like OMEGA or RDKit's ETKDG to ensure broad coverage of conformational space [2].

2. Query Preparation

- Select Known Actives: Curate a set of known, potent, and diverse active compounds for your target.

- Generate Bioactive Conformations: For each active, generate a set of low-energy conformers. If a co-crystal structure of the active bound to the target is available, include the ligand's crystallographic conformation in the set.

3. Shape-Based Screening

- Method Selection: Use a tool like ROCS (Rapid Overlay of Chemical Structures).

- Alignment and Scoring: For each compound in the screening library, align its conformers to the conformers of the query molecule(s). Score each alignment with the Tanimoto Combo score, which is the sum of the 3D shape similarity (Shape Tanimoto) and the chemical feature similarity (Color Tanimoto) and therefore ranges from 0 to 2 [4].

4. Post-Processing and Hit Selection

- Ranking: Rank all screened compounds based on their best similarity score against the query set.

- Diversity Analysis: Inspect the top-ranked compounds to ensure chemical diversity and select a subset for further testing or for refinement with SBVS.
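The ranking step above ("best similarity score against the query set") can be sketched in a few lines. This is a minimal illustration, not the behavior of any particular tool: the function name `rank_by_best_score`, the compound IDs, and the scores are hypothetical, and the scores are assumed to have been exported from a shape-screening run beforehand.

```python
from typing import Dict, List, Tuple


def rank_by_best_score(
    scores_per_query: Dict[str, Dict[str, float]]
) -> List[Tuple[str, float]]:
    """Keep each library compound's best score over all queries, then rank."""
    best: Dict[str, float] = {}
    for query_scores in scores_per_query.values():
        for compound_id, score in query_scores.items():
            if score > best.get(compound_id, float("-inf")):
                best[compound_id] = score
    # Highest score first; ties are broken by compound ID for reproducibility.
    return sorted(best.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical Tanimoto Combo-like scores (0 to 2) for three library compounds.
example_scores = {
    "query_A": {"cmpd_1": 1.20, "cmpd_2": 0.85, "cmpd_3": 0.40},
    "query_B": {"cmpd_1": 0.70, "cmpd_2": 1.45, "cmpd_3": 0.55},
}
ranked = rank_by_best_score(example_scores)
print(ranked)
```

Taking the maximum over queries rewards a compound that matches any one chemotype well, which suits a diverse query set; averaging over queries would instead favor compounds that resemble all queries moderately.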

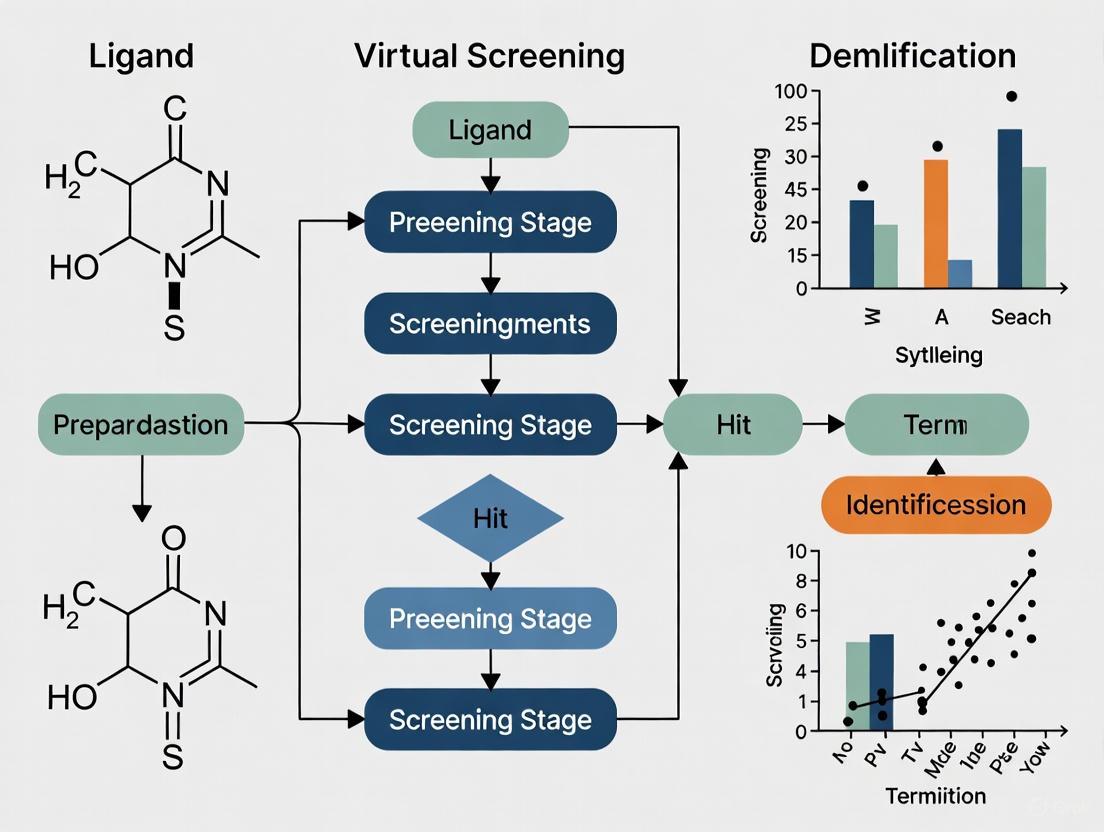

The logical flow of this protocol is summarized in the diagram below:

Protocol 2: Sequential LBVS-to-SBVS Hybrid Screening

This protocol leverages the speed of LBVS to filter a massive library, followed by the precision of SBVS on a focused subset [3] [1].

1. Ultra-Large Library Preparation

- Focus on preparing a library of billions of compounds, prioritizing efficient storage and retrieval. Full conformational sampling may be skipped initially.

2. Initial LBVS Filter

- Apply a fast LBVS method, such as 2D similarity searching (e.g., ECFP6 fingerprints) or a pre-computed 3D pharmacophore model.

- Goal: Reduce the library size from billions to a few hundred thousand or million compounds.

3. Refined LBVS or Direct Docking

- On the reduced library, perform a more computationally intensive LBVS (e.g., 3D shape similarity) to further reduce the set to tens of thousands of compounds.

- Alternatively, proceed directly to molecular docking.

4. Structure-Based Virtual Screening

- Receptor Preparation: Prepare the protein structure (add hydrogens, assign protonation states, optimize side-chains) [5].

- Molecular Docking: Dock the focused library (from step 3) into the target's binding site using a program like DOCK3.7, AutoDock Vina, or Glide [6].

- Pose Ranking: Rank the docked compounds by their predicted binding affinity (docking score).

5. Consensus Scoring and Hit Selection

- Combine the rankings from the LBVS and SBVS steps using a data fusion method like sum rank or reciprocal rank to create a final prioritized list [7] [1].

- Visually inspect the top-ranked compounds' predicted binding poses before selecting candidates for experimental testing.
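As a rough sketch of the data fusion in step 5, the snippet below converts an LBVS similarity score and a docking score into ranks and fuses them by sum rank and by reciprocal rank. The function names, compound IDs, and score values are hypothetical, and the convention that a more negative docking score is better is an assumption that should be checked against the docking program actually used.

```python
from typing import Dict, List


def to_ranks(scores: Dict[str, float], higher_is_better: bool = True) -> Dict[str, int]:
    """Convert raw scores to 1-based ranks (rank 1 = best)."""
    ordered = sorted(
        scores,
        key=lambda c: ((-scores[c] if higher_is_better else scores[c]), c),
    )
    return {compound: position + 1 for position, compound in enumerate(ordered)}


def sum_rank(rank_lists: List[Dict[str, int]]) -> List[str]:
    """Order compounds by the sum of their ranks (lower sum = better)."""
    shared = set.intersection(*(set(r) for r in rank_lists))
    totals = {c: sum(r[c] for r in rank_lists) for c in shared}
    return sorted(totals, key=lambda c: (totals[c], c))


def reciprocal_rank(rank_lists: List[Dict[str, int]]) -> List[str]:
    """Order compounds by the sum of 1/rank (higher sum = better)."""
    shared = set.intersection(*(set(r) for r in rank_lists))
    totals = {c: sum(1.0 / r[c] for r in rank_lists) for c in shared}
    return sorted(totals, key=lambda c: (-totals[c], c))


# Hypothetical scores: similarity (higher is better), docking (lower is better).
lbvs_similarity = {"A": 0.9, "B": 0.7, "C": 0.5, "D": 0.3}
docking_score = {"A": -7.0, "B": -9.5, "C": -6.0, "D": -10.0}

ranks = [to_ranks(lbvs_similarity), to_ranks(docking_score, higher_is_better=False)]
print(sum_rank(ranks))         # fused order by summed rank
print(reciprocal_rank(ranks))  # fused order by summed reciprocal rank
```

Reciprocal rank gives extra weight to a compound that is near the top of at least one list, whereas sum rank favors compounds that are consistently well ranked by both methods.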

The following workflow illustrates this sequential hybrid approach:

Performance Data and Method Comparison

The table below summarizes a systematic comparison of virtual screening methods applied to PARP1 inhibitors [7].

Table 1: Virtual Screening Method Performance on PARP1 Inhibitors

| Method Category | Specific Method | Key Performance Finding |
|---|---|---|
| Ligand-Based (LBVS) | 2D Similarity (Torsion Fingerprint) | Excellent screening performance |
| Ligand-Based (LBVS) | Structure-Activity Relationship (SAR) Models | Excellent screening performance (6 models tested) |
| Structure-Based (SBVS) | Glide Docking | Excellent screening performance |
| Structure-Based (SBVS) | Complex-Based Pharmacophore (Phase) | Excellent screening performance |
| Data Fusion | Reciprocal Rank | Best performing data fusion method |
| Data Fusion | Sum Score | Good performance in framework enrichment |

The table below compares the key characteristics of LBVS and SBVS to guide method selection [3] [1] [8].

Table 2: LBVS vs. SBVS: A Comparative Overview

| Feature | Ligand-Based Virtual Screening (LBVS) | Structure-Based Virtual Screening (SBVS) |
|---|---|---|
| Required Input | Known active ligands | 3D structure of the target protein |
| Computational Speed | Fast. Suitable for billion-compound libraries [3]. | Slow. Best for libraries of thousands to millions of compounds [1]. |
| Scaffold Hopping | Good to Excellent (especially 3D field-based methods) [4]. | Moderate. Can be constrained by the predefined binding site geometry. |
| Handles Receptor Flexibility | Implicitly, via diverse ligand conformations. | Explicit handling is computationally expensive and often limited [5]. |
| Provides Binding Mode | No | Yes |
| Key Limitation | Limited by existing ligand data; cannot discover novel mechanisms. | Relies on quality and relevance of the protein structure used [5]. |

The Scientist's Toolkit: Essential Research Reagents and Software

Table 3: Key Software Tools for LBVS

| Tool Name | Function | Brief Description |
|---|---|---|
| RDKit | Cheminformatics & Conformer Generation | Open-source toolkit for cheminformatics. Includes molecular standardization (MolVS) and conformer generation (ETKDG method) [2]. |
| OMEGA (OpenEye) | Conformer Generation | Commercial, high-performance system for rapidly generating small molecule conformers [2]. |
| ROCS (OpenEye) | 3D Shape Similarity | Tool for aligning molecules based on their 3D shape and chemical features (pharmacophores), central to scaffold-hopping [4]. |
| EON (OpenEye) | Electrostatic Comparison | Calculates the similarity of electrostatic potential between aligned molecules, complementing shape-based screening [4]. |
| Cresset FieldScreen | 3D Field-Based Screening | Uses molecular fields (electrostatics, sterics, hydrophobicity) to compare molecules and identify hits with similar interaction potential [4]. |
| Schrödinger LigPrep | Ligand Preparation | Prepares high-quality, energy-minimized 3D structures for large libraries, generating possible states at a specified pH [2]. |
| FTrees | 2D Similarity | Graph-based method for molecular similarity that is less dependent on the underlying 2D structure than fingerprints [4]. |

Frequently Asked Questions (FAQs)

1. What is the Similarity-Property Principle (SPP) and why is it foundational to LBVS? The Similarity-Property Principle is the assumption that structurally similar molecules are likely to have similar properties, with biological activity being the property of most interest in drug discovery [9] [10] [11]. This principle is the cornerstone of Ligand-Based Virtual Screening (LBVS), as it justifies the use of computational methods to search for new active compounds based on their resemblance to known active molecules [12] [10].

2. My similarity search is retrieving structurally similar compounds that are biologically inactive. Why does this happen? This occurrence, often referred to as an "activity cliff," represents a key limitation of the SPP [11]. It highlights that the relationship between structural similarity and bioactivity is not always linear or straightforward. Factors such as specific protein-ligand interactions, metabolic pathways, and cellular context can mean that minor structural changes sometimes lead to drastic changes in biological activity.

3. For a given target, which molecular fingerprint should I use to get the best results? The optimal fingerprint can depend on whether you are searching for close analogs or more diverse structures. Performance benchmarks indicate that no single fingerprint is universally best, but some generally perform well [11]. The table below summarizes the performance characteristics of several common fingerprints.

Table 1: Performance of Selected Molecular Fingerprints in Similarity Searching

| Fingerprint | Best Use Case | Reported Performance Notes |
|---|---|---|
| ECFP4 | Ranking diverse structures; general virtual screening | Among the best performers for virtual screening; good mean rank in large benchmarks [11]. |
| ECFP6 | Ranking diverse structures | Performance is among the best, alongside ECFP4 and topological torsions [11]. |
| Topological Torsions (TT) | Ranking diverse structures | Shows performance similar to ECFP4 and ECFP6 in virtual screening benchmarks [11]. |
| Atom Pairs (AP) | Ranking very close analogues | Outperforms other fingerprints when the goal is to identify the closest structural analogs [11]. |

4. How can I improve the enrichment of active compounds in my virtual screening results? Beyond selecting an appropriate fingerprint, consider these strategies:

- Data Fusion: Combine the similarity rankings from multiple different similarity measures or from multiple query molecules. This can often yield better results than relying on a single method or query [13].

- Use Larger Bit-Vector Lengths: When using circular fingerprints like ECFP, increasing the bit-vector length from 1,024 to 16,384 can significantly improve performance by reducing the number of hash collisions [11].

- Re-scoring with Machine Learning: For structure-based methods, using machine learning scoring functions to re-score initial docking results has been shown to substantially improve the identification of active compounds [14].
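To make the hash-collision point concrete, the toy model below folds a set of integer feature identifiers into a fixed-length bit vector and counts how many features end up sharing a bit. It is a simplified illustration only: the modulo folding, the function name `count_bit_collisions`, and the randomly drawn identifiers are assumptions and do not reproduce the hashing used by any specific ECFP implementation.

```python
import random
from typing import Iterable


def count_bit_collisions(feature_ids: Iterable[int], n_bits: int) -> int:
    """Number of distinct features lost when folding into n_bits positions."""
    distinct_features = set(feature_ids)
    occupied_bits = {feature % n_bits for feature in distinct_features}
    return len(distinct_features) - len(occupied_bits)


# Hypothetical molecule with 300 distinct 32-bit substructure identifiers.
rng = random.Random(0)
features = rng.sample(range(2**32), 300)

for n_bits in (1024, 16384):
    print(n_bits, count_bit_collisions(features, n_bits))
```

Because 1,024 divides 16,384, any two identifiers that collide at 16,384 bits also collide at 1,024 bits in this toy model, so the longer vector can never have more collisions than the shorter one.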

Troubleshooting Guide

Table 2: Common LBVS Issues and Solutions

| Problem | Potential Cause | Recommended Solution |
|---|---|---|
| Poor enrichment of known actives in a similarity search. | The chosen molecular fingerprint or similarity measure is not well-suited to the chemical space of the target. | 1. Benchmark alternative fingerprints (e.g., switch from MACCS to ECFP4). 2. Implement a data fusion approach to combine rankings from multiple methods [13]. |
| The Similarity-Property Principle appears to fail, with high structural similarity but low activity. | Encountering "activity cliffs" or the chosen descriptor ignores critical 3D structural or pharmacophoric features. | 1. Use pharmacophore-focused representations like Extended Reduced Graphs (ErG) combined with Graph Edit Distance, which can identify bioactivity similarities in structurally diverse molecules [12]. 2. Incorporate 3D descriptors or shape-based similarity methods if applicable. |
| Inconsistent or non-reproducible similarity rankings. | Lack of standardization in fingerprint generation parameters or molecular preprocessing. | 1. Document and standardize the tautomer and protonation states of molecules before fingerprint generation. 2. Use consistent and well-documented software tools (e.g., RDKit) with fixed parameters [10]. |
| Low hit-rate in experimental validation of top-ranked virtual hits. | The virtual screening protocol may be enriched with "docking artifacts" or may be prioritizing compounds that are not drug-like. | 1. Apply pre-filters for drug-likeness (e.g., Lipinski's Rule of Five) and desired physicochemical properties to the library before screening [15]. 2. Experimentally test molecules across a range of ranking scores to identify the true peak hit-rate for your model [15]. |

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Computational Tools for LBVS Experiments

| Item / Software | Function / Application | Key Features & Notes |
|---|---|---|
| RDKit | An open-source cheminformatics toolkit for performing molecular operations and computing descriptors [12] [10]. | Used to generate fingerprints (e.g., Morgan, MACCS), calculate molecular descriptors, and compute similarity measures. It is a fundamental tool for prototyping and building LBVS workflows [10]. |
| Extended Reduced Graphs (ErG) | A molecular representation that abstracts a structure into pharmacophore-type nodes [12]. | Useful for identifying bioactivity similarities across structurally diverse groups of molecules. Can be compared using Graph Edit Distance (GED) for a graph-only driven comparison [12]. |
| DEKOIS 2.0 Benchmark Sets | Publicly available benchmark sets for evaluating virtual screening performance [14]. | Provides known active molecules and carefully selected decoys for various protein targets, enabling rigorous benchmarking of screening protocols. |
| Machine Learning Scoring Functions (e.g., CNN-Score, RF-Score-VS) | Re-scoring the output of structure-based docking to improve the identification of true binders [14]. | Pretrained ML models can significantly improve enrichment over classical scoring functions, especially for resistant protein variants [14]. |
| AutoDock Vina, FRED, PLANTS | Common molecular docking software for Structure-Based Virtual Screening (SBVS) [14]. | Although these are SBVS tools, they are often used alongside LBVS. Their results can be enhanced by ML-based re-scoring [14]. |

Experimental Protocol: Benchmarking a Similarity Search Method

This protocol provides a methodology to evaluate the performance of a fingerprint or similarity measure using a dataset with known actives and inactives (decoys).

Objective: To determine the effectiveness of a molecular similarity method in enriching active compounds from a background of inactive decoys.

Materials:

- Software: A cheminformatics toolkit (e.g., RDKit [10]).

- Dataset: A benchmark set (e.g., from DEKOIS 2.0 [14]) containing a list of known active molecules and a list of decoy molecules for a specific target.

Methodology:

Data Preparation:

- Select one or more known active molecules to serve as the query (or "seed") for the similarity search.

- Prepare a screening library by combining the remaining active molecules (that were not used as the query) with all the decoy molecules.

Molecular Representation:

- For every molecule in the query set and the screening library, compute the molecular fingerprint or descriptor you wish to benchmark (e.g., ECFP4, MACCS, ErG) [10].

Similarity Calculation and Ranking:

- For each query molecule, calculate the molecular similarity (e.g., using the Tanimoto coefficient) between the query and every molecule in the screening library [10].

- Rank all molecules in the screening library in descending order of their similarity to the query.
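The similarity calculation and ranking step can be sketched without any cheminformatics dependency by treating each fingerprint as the set of its on-bit indices. This is a minimal sketch under that assumption; in practice the on-bits would come from a toolkit such as RDKit, and the compound IDs and bit sets below are hypothetical.

```python
from typing import Dict, List, Set, Tuple


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Tanimoto coefficient between two fingerprints given as on-bit sets."""
    if not a and not b:
        return 0.0  # convention chosen here for two empty fingerprints
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


def rank_library(query: Set[int], library: Dict[str, Set[int]]) -> List[Tuple[str, float]]:
    """Rank library molecules by descending similarity to the query."""
    scored = [(compound_id, tanimoto(query, fp)) for compound_id, fp in library.items()]
    return sorted(scored, key=lambda item: (-item[1], item[0]))


# Hypothetical on-bit sets for one query and three library molecules.
query_fp = {1, 2, 3, 4}
library_fps = {
    "active_2": {1, 2, 3, 5},
    "decoy_1": {7, 8, 9},
    "decoy_2": {1, 2, 8, 9, 10, 11},
}
ranking = rank_library(query_fp, library_fps)
print(ranking)
```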

Performance Evaluation:

- Plot an enrichment curve: the cumulative fraction of actives found (y-axis) against the fraction of the screened database (x-axis) [10].

- Calculate key metrics such as the Enrichment Factor (EF) at a specific threshold (e.g., EF1%), which is the hit rate among the top 1% of the ranked list divided by the hit rate expected from random selection, i.e., the proportion of actives in the whole library [14].
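The two evaluation outputs above can be computed from a ranked list of activity labels alone. The sketch below assumes the library has already been sorted by descending similarity and that labels are 1 for actives and 0 for decoys; the function names and the example library composition are illustrative.

```python
from typing import List, Tuple


def enrichment_factor(ranked_labels: List[int], fraction: float) -> float:
    """EF at a given fraction: hit rate in the top slice / hit rate overall."""
    n_total = len(ranked_labels)
    n_actives = sum(ranked_labels)
    if n_total == 0 or n_actives == 0:
        raise ValueError("need a non-empty list containing at least one active")
    n_top = max(1, int(round(fraction * n_total)))
    hits_top = sum(ranked_labels[:n_top])
    return (hits_top * n_total) / (n_top * n_actives)


def enrichment_curve(ranked_labels: List[int]) -> List[Tuple[float, float]]:
    """Points (fraction of library screened, fraction of actives recovered)."""
    n_total = len(ranked_labels)
    n_actives = sum(ranked_labels)
    points = [(0.0, 0.0)]
    found = 0
    for position, label in enumerate(ranked_labels, start=1):
        found += label
        points.append((position / n_total, found / n_actives))
    return points


# Hypothetical ranked library: 1,000 compounds, 10 actives, 5 of them in the top 10.
labels = [1] * 5 + [0] * 5 + [1] * 5 + [0] * 985
print(enrichment_factor(labels, 0.01))  # EF1%
print(enrichment_curve(labels)[-1])     # final point of the curve
```

In this example the top 1% contains 10 compounds of which 5 are active (hit rate 0.5), against a library-wide hit rate of 0.01, so EF1% is 50. The maximum possible EF1% for this library is 100, reached when all 10 top-ranked compounds are active.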

The following diagram illustrates the logical workflow and decision points for applying the SPP in a virtual screening campaign, integrating the troubleshooting and optimization strategies discussed.

Frequently Asked Questions (FAQs)

1. When should I choose a 2D fingerprint method over a 3D shape or pharmacophore approach for virtual screening? Use 2D fingerprints when you are working with large compound libraries and need fast, computationally efficient screening. In the benchmark reported in [16], 2D fingerprint models performed as well as state-of-the-art 3D structure-based models when predicting toxicity, solubility, partition coefficient, and protein-ligand binding affinity from ligand information alone. Choose 3D methods when you have reliable 3D structural information for the target or for known active ligands, and you need to account for spatial complementarity or pursue scaffold hopping.

2. Why does my 3D shape-based virtual screening yield a high rate of false negatives? A high false negative rate in shape-based screening often occurs because active ligands with shapes differing from your query structure are incorrectly discarded [17]. This can be mitigated by:

- Using multiple diverse query molecules instead of a single one.

- Ensuring your shape-overlapping procedure explores the entire 3D space with a sufficient number of iterations.

- Employing a more robust scoring function that goes beyond simple Tanimoto coefficients on shape-density overlap [17].

3. How can I improve the selectivity of my ligand-based pharmacophore model to avoid matching inactive compounds? Incorporate information about inactive compounds during the pharmacophore model development process. Actively search for 3D pharmacophores that are common to active compounds but are absent in known inactive ones. This approach helps create more selective models and reduces the chance of false positives [18].

4. My pharmacophore-based virtual screening is slow. What pre-filtering strategies can I implement? Implement multi-step filtering to quickly eliminate compounds that cannot fit the query:

- Feature-count matching: First, remove molecules that do not possess the minimum number of pharmacophoric features present in your query model.

- Pharmacophore keys: Use binary representations (fingerprints) of molecules that encode possible 2-point, 3-point, or 4-point pharmacophores. Screening these keys becomes a simple intersection test [19].

These lossless filters can significantly speed up the screening process by reducing the number of molecules that undergo the computationally expensive 3D alignment step [19].
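Both pre-filters reduce to cheap set and bit operations, as the sketch below illustrates. It assumes that feature counts and pharmacophore keys have been pre-computed for the query and for every library molecule; the function names, feature labels, and bit patterns are hypothetical.

```python
from collections import Counter


def passes_feature_count(query_features: Counter, mol_features: Counter) -> bool:
    """True if the molecule has at least as many features of each type as the query."""
    return all(mol_features[ftype] >= count for ftype, count in query_features.items())


def passes_key_screen(query_key: int, mol_key: int) -> bool:
    """True if every bit set in the query key is also set in the molecule key."""
    return (query_key & mol_key) == query_key


# Hypothetical query: one donor, two acceptors, one aromatic ring.
query = Counter({"donor": 1, "acceptor": 2, "aromatic": 1})
mol_a = Counter({"donor": 2, "acceptor": 2, "aromatic": 1, "hydrophobe": 3})
mol_b = Counter({"donor": 1, "acceptor": 1, "aromatic": 2})
print(passes_feature_count(query, mol_a), passes_feature_count(query, mol_b))

# Hypothetical 8-bit pharmacophore keys (real keys are much longer).
query_key = 0b01010010
print(passes_key_screen(query_key, 0b11010110), passes_key_screen(query_key, 0b00010110))
```

Only molecules that pass both tests need to go on to 3D alignment. The filters are lossless in the sense that a molecule missing a required feature or key bit could not have matched the full query anyway.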

5. Can AI and deep learning be integrated with traditional pharmacophore methods? Yes, deep learning can significantly enhance pharmacophore methods. For example:

- DiffPhore: A knowledge-guided diffusion framework that uses 3D ligand-pharmacophore mapping to generate ligand conformations that maximally map to a given pharmacophore model, improving binding conformation prediction [20].

- TransPharmer: A generative model that integrates ligand-based pharmacophore fingerprints with a transformer framework for de novo molecule generation, demonstrating strong performance in scaffold hopping and producing bioactive ligands [21].

These AI-driven approaches leverage large datasets to capture generalizable ligand-pharmacophore mapping patterns.

Troubleshooting Guides

Issue 1: Low Enrichment in 2D Fingerprint-Based Virtual Screening

Problem: Your 2D fingerprint similarity search fails to adequately enrich active compounds in the top ranks of your virtual screening results.

Solutions:

- Consensus Modeling: Combine predictions from multiple 2D fingerprints and advanced machine learning algorithms. Using a combination of Random Forest (RF), Gradient Boosted Decision Tree (GBDT), or Deep Neural Networks (DNNs) with different fingerprint types can significantly improve performance over single-method approaches [16].

- Fingerprint Selection: Choose the fingerprint type appropriate for your target. The table below summarizes common 2D fingerprint categories and their characteristics:

Table 1: Categories and Characteristics of Common 2D Fingerprints

| Fingerprint Category | Examples | Key Characteristics | Typical Use Cases |
|---|---|---|---|
| Substructure Key-Based | MACCS [16] | Predefined list of structural keys; 166 bits [16] | Fast preliminary screening |
| Topological/Path-Based | FP2, Daylight [16] | Encodes linear paths of atoms/bonds; 256-2048 bits [16] | General QSAR, similarity search |
| Circular | ECFP4 [16] | Encodes atom environments within a radius; hashed | Activity prediction, scaffold hopping |
| Pharmacophore Fingerprints | 2D Pharmacophore (Pharm2D), Extended Reduced Graph (ErG) [16] | Captures binding-related features and topological distances between them [22] | Ligand-based virtual screening |

Issue 2: Handling Conformational Flexibility in 3D Pharmacophore Screening

Problem: The performance of your 3D pharmacophore screening is highly sensitive to the input conformations of the database molecules, leading to inconsistent results.

Solutions:

- Pre-computed Conformational Databases: Instead of generating conformations on-the-fly, use dedicated screening databases that store multiple pre-computed low-energy conformations for each molecule. This approach allows for faster and more consistent screening [19].

- Multi-Conformer Pharmacophore Alignment: For more advanced users, tools like DiffPhore use a diffusion-based framework to generate ligand conformations that natively align with the pharmacophore model, effectively handling flexibility during the generation process itself [20].

The following workflow diagram illustrates a robust 3D pharmacophore-based virtual screening process that incorporates these solutions:

Issue 3: Poor Performance in Shape-Based Screening for Specific Targets

Problem: Your shape-based virtual screening performs poorly for certain protein targets (e.g., an AUC at or below 0.5, which is no better than random ranking), making it difficult to distinguish actives from inactives.

Solutions:

- Advanced Scoring Functions: Move beyond simple Tanimoto scoring. Implement more robust scoring functions like the HWZ score, which was developed to better discriminate active from inactive compounds. This score-based approach has demonstrated an average AUC value of 0.84 ± 0.02 across 40 diverse targets, showing less sensitivity to the choice of target compared to traditional methods [17].

- Hybrid Approach: Combine shape matching with pharmacophoric constraints. Tools like USRCAT perform shape recognition with added pharmacophoric constraints, which can improve selectivity [18].
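For reference, the AUC used to judge these methods is the probability that a randomly chosen active is scored above a randomly chosen inactive. The sketch below computes it directly from that definition; it is a plain pairwise implementation for small benchmark sets, not the HWZ scoring function itself, and the scores and labels are hypothetical.

```python
from typing import List


def roc_auc(scores: List[float], labels: List[int]) -> float:
    """ROC AUC as the fraction of active/inactive pairs ranked correctly."""
    actives = [s for s, y in zip(scores, labels) if y == 1]
    inactives = [s for s, y in zip(scores, labels) if y == 0]
    if not actives or not inactives:
        raise ValueError("need at least one active and one inactive")
    wins = 0.0
    for active_score in actives:
        for inactive_score in inactives:
            if active_score > inactive_score:
                wins += 1.0
            elif active_score == inactive_score:
                wins += 0.5  # ties count as half a correct ordering
    return wins / (len(actives) * len(inactives))


# Hypothetical similarity scores with labels (1 = active, 0 = inactive).
example_scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4]
example_labels = [1, 1, 0, 1, 0, 0]
print(roc_auc(example_scores, example_labels))
```

A value of 0.5 corresponds to random ranking and 1.0 to perfect separation. The pairwise loop scales with the number of actives times the number of inactives, so a rank-based implementation from a statistics library is preferable for large screens.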

Table 2: Performance Comparison of Virtual Screening Methods

| Method | Average AUC (95% CI) | Average Hit Rate at Top 1% | Key Advantage |
|---|---|---|---|
| HWZ Score (Shape-based) [17] | 0.84 ± 0.02 | 46.3% ± 6.7% | Robust across diverse targets |
| 2D Fingerprint Consensus Models [16] | Comparable to 3D models (ligand-based tasks) | Varies by fingerprint and ML algorithm | Computational efficiency |
| 3D Complex-Based Methods [16] | Superior for complex-based affinity prediction | N/A | Utilizes full target structure information |

Experimental Protocols & Validation

Protocol 1: Validating a Ligand-Based Pharmacophore Model

Purpose: To ensure your developed 3D pharmacophore model is valid and selective before proceeding to large-scale virtual screening.

Steps:

- Data Curation: Collect a dataset with known active and inactive compounds from reliable databases like ChEMBL. Categorize them based on their activity values (e.g., pIC50 ≥ 7 for actives, pIC50 ≤ 5 for inactives) [18].

- Model Generation: Use a tool like pmapper to generate 3D pharmacophore signatures. The algorithm identifies common pharmacophores among active compounds that are absent in inactives, using a canonical signature based on feature types and 3D geometry [18].

- Retrospective Screening: Screen your curated database using the generated model. A valid model should recall a high percentage of known actives while excluding most inactives.

- Comparison to 2D Similarity: Perform a standard 2D similarity search (e.g., using ECFP4 fingerprints) on the same dataset. A superior 3D pharmacophore model should demonstrate clear advantages, such as better scaffold hopping capability [18].

- Pose Validation (If possible): If X-ray structures of protein-ligand complexes are available, check if your model can match the binding pose of the co-crystallized ligand. This confirms the model's biological relevance [18].
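The activity thresholds in the data curation step can be applied with a small helper. The sketch below assumes IC50 values are available in nanomolar units and uses the example cutoffs from the protocol (pIC50 ≥ 7 active, pIC50 ≤ 5 inactive); the function names and the "intermediate" label for compounds between the cutoffs are illustrative choices.

```python
import math


def pic50_from_nm(ic50_nm: float) -> float:
    """Convert an IC50 in nanomolar to pIC50 = -log10(IC50 in molar)."""
    if ic50_nm <= 0:
        raise ValueError("IC50 must be positive")
    return 9.0 - math.log10(ic50_nm)


def label_activity(pic50: float, active_min: float = 7.0, inactive_max: float = 5.0) -> str:
    """Assign a class label using the protocol's example thresholds."""
    if pic50 >= active_min:
        return "active"
    if pic50 <= inactive_max:
        return "inactive"
    return "intermediate"  # typically excluded from model building


# Hypothetical measurements in nM.
for ic50 in (50.0, 2000.0, 50000.0):
    value = pic50_from_nm(ic50)
    print(ic50, round(value, 2), label_activity(value))
```

Leaving a gap between the two cutoffs keeps borderline compounds, whose class could flip with assay noise, out of both the active and inactive sets.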

Protocol 2: Implementing a 2D Fingerprint Consensus Model

Purpose: To maximize virtual screening performance by leveraging the strengths of multiple 2D fingerprints and machine learning algorithms.

Steps:

- Fingerprint Generation: Calculate multiple types of 2D fingerprints for your training set of compounds with known activity. Key types to include are:

  - ECFP4 (Circular)

  - MACCS (Substructure key)

  - Daylight (Path-based)

  - Pharmacophore Fingerprints (e.g., Pharm2D, ErG) [16]

- Model Training: Train separate predictive models (e.g., Random Forest, Gradient Boosted Decision Tree, or Deep Neural Networks) for each fingerprint type.

- Build Consensus: Combine the predictions from these individual models into a final consensus prediction. This can be done by averaging scores or using a meta-classifier.

- Validation: Evaluate the consensus model on a held-out test set. This approach has been shown to achieve performance comparable to 3D structure-based models for many ligand-based prediction tasks [16].
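The score-averaging variant of the consensus step can be sketched as follows. It assumes each per-fingerprint model has already produced a predicted probability of activity for every compound; the model names, compound IDs, and probabilities are hypothetical, and a meta-classifier would replace the simple mean used here.

```python
from statistics import mean
from typing import Dict


def consensus_scores(model_predictions: Dict[str, Dict[str, float]]) -> Dict[str, float]:
    """Average predicted activity probabilities over all models, per compound."""
    # Only compounds scored by every model are kept.
    shared = set.intersection(*(set(preds) for preds in model_predictions.values()))
    return {
        compound: mean(preds[compound] for preds in model_predictions.values())
        for compound in sorted(shared)
    }


# Hypothetical probabilities from three fingerprint/algorithm combinations.
predictions = {
    "rf_ecfp4": {"c1": 0.9, "c2": 0.2},
    "gbdt_maccs": {"c1": 0.7, "c2": 0.4},
    "dnn_pharm2d": {"c1": 0.8, "c2": 0.3},
}
consensus = consensus_scores(predictions)
print(consensus)
```

Averaging assumes the individual models output scores on a comparable scale. If they do not, converting each model's scores to ranks before combining is a common alternative.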

The Scientist's Toolkit: Essential Research Reagents & Software

Table 3: Key Resources for Ligand-Based Virtual Screening

| Resource Name | Type | Primary Function | Access |

|---|---|---|---|

| RDKit [16] | Software Library | Cheminformatics toolkit; generates 2D fingerprints (ECFP, MACCS, etc.) and handles molecular data. | Open-source |

| OpenBabel [16] | Software Library | Chemical file format conversion and descriptor calculation. | Open-source |

| pmapper [18] | Software Tool | Generates 3D pharmacophore signatures and performs ligand-based pharmacophore modeling. | Open-source |

| DiffPhore [20] | AI Software Framework | "On-the-fly" 3D ligand-pharmacophore mapping using a knowledge-guided diffusion model. | N/A |

| TransPharmer [21] | AI Generative Model | Pharmacophore-informed de novo molecule generation for scaffold hopping. | N/A |

| ZINC20 Database [23] [20] | Compound Library | Publicly accessible database of commercially available compounds for virtual screening. | Public |

| Database of Useful Decoys (DUD) [17] | Benchmarking Set | Contains active compounds and matched decoys for validating virtual screening methods. | Public |

Frequently Asked Questions (FAQs)

FAQ 1: What are the key differences between traditional and modern AI-driven molecular representations, and when should I use each?

Traditional molecular representations, such as SMILES strings and molecular fingerprints, are rule-based and rely on expert knowledge. SMILES provides a compact string encoding of a molecule's structure, while fingerprints (like ECFP) encode substructural information into fixed-length binary vectors for similarity searching [24] [25]. These are computationally efficient and excel in tasks like similarity search, clustering, and initial virtual screening [26] [25]. In contrast, modern AI-driven representations use deep learning models like Graph Neural Networks (GNNs) to automatically learn continuous, high-dimensional feature embeddings directly from data [24] [26]. These are better at capturing complex, non-linear relationships between structure and function and are superior for sophisticated tasks like predicting intricate molecular properties or generating novel scaffolds [24]. For a new virtual screening campaign, start with traditional fingerprints for high-throughput library filtering and use AI-driven graph representations for more accurate prediction of short-listed candidates.

FAQ 2: My graph-based model's predictions lack interpretability. How can I identify which substructures the model deems important?

This is a common challenge with atom-level GNNs, where interpretations can be scattered and may not align with chemically meaningful substructures [27]. To address this:

- Use Explainable AI (XAI) Techniques: Employ built-in attention mechanisms or post-hoc interpretation methods that highlight atoms or bonds contributing to the prediction.

- Leverage Reduced Molecular Graphs: Implement models that use higher-level graph representations, such as Functional Group or Junction Tree graphs [27]. In these representations, nodes correspond to entire chemical substructures, making the model's decision-making process more coherent and chemically intuitive. For instance, a model might highlight an entire "carboxylic acid group" as important instead of separate, disconnected oxygen and hydrogen atoms.

- Adopt Multi-Graph Models: Frameworks like MMGX use multiple graph representations simultaneously. The interpretation from these different views provides more comprehensive and chemically sound insights into the features and potential substructures the model uses [27].

FAQ 3: Can I combine different molecular representations to improve virtual screening performance?

Yes, combining representations is a powerful strategy. While some studies found that simply concatenating different feature vectors did not yield significant improvements [25], more sophisticated multi-modal or hybrid models have shown great promise. These models integrate different data types, such as molecular graphs, SMILES strings, and quantum mechanical properties, to generate more comprehensive molecular representations [26]. For example:

- MolFusion employs multi-modal fusion of different representations [26].

- MMGX leverages multiple molecular graphs (Atom, Pharmacophore, JunctionTree, FunctionalGroup) within a single model, which has been shown to improve performance and provide more robust interpretations [27].

The key is to use architectures designed to fuse information from these different modalities, rather than simply concatenating raw features.

FAQ 4: How can I incorporate fundamental chemical knowledge into a deep learning model for more accurate predictions?

Integrating external chemical knowledge can guide the model to learn more meaningful patterns and improve generalization. A leading method is to use a Knowledge Graph (KG) as a prior.

- Construct a Domain-Specific KG: Build a knowledge graph that encapsulates fundamental knowledge, such as the ElementKG, which contains information about chemical elements, their attributes, and their relationships with functional groups [28].

- Inject Knowledge During Pre-training: Use the KG to guide graph augmentation in contrastive learning. For example, create augmented molecular graphs by linking atoms based on their relationships in the ElementKG, which establishes chemically meaningful associations beyond direct bonds [28].

- Use Prompts During Fine-tuning: Employ "functional prompts" based on knowledge graph entities (like functional groups) to evoke task-specific knowledge in the pre-trained model during fine-tuning on downstream tasks like property prediction [28]. This approach, used in the KANO framework, has demonstrated superior performance and provides chemically sound explanations [28].

Troubleshooting Guides

Problem: Low Performance in Virtual Screening Accuracy

Your model fails to identify active compounds or has a high false positive rate.

- Potential Cause 1: Inadequate Molecular Representation. The chosen representation may not capture features critical for the specific target.

- Solution:

- Benchmark Representations: Test multiple representations on your data. MACCS fingerprints are a robust, simple baseline, while molecular descriptors (e.g., from PaDEL) excel for physical properties [25]. Graph-based models are better for complex activity prediction.

- Use a Multi-Graph Approach: Implement a model like MMGX that simultaneously learns from atom-level and reduced graphs (Pharmacophore, FunctionalGroup) to capture both atomic and substructural information [27].

- Potential Cause 2: Data Scarcity or Bias. The training set is too small or not representative of the chemical space being screened.

- Solution:

- Utilize Self-Supervised Learning (SSL): Pre-train a model on a large, unlabeled molecular dataset (e.g., from PubChem) using contrastive learning [28] or masked atom prediction [26]. Fine-tune the pre-trained model on your smaller, labeled dataset.

- Apply Data Augmentation: Use chemically valid augmentation techniques. The element-guided graph augmentation from the KANO framework is a good example, as it preserves molecular semantics while creating positive pairs for contrastive learning [28].

Problem: Model Predictions Are Not Chemically Interpretable

The model makes accurate predictions, but you cannot understand the reasoning behind them, which hinders trust and lead optimization.

- Potential Cause: Atom-Level Interpretations are Chemically Sparse. Standard interpretation on atom-level graphs may highlight isolated atoms that don't form a recognizable chemical motif [27].

- Solution:

- Switch to a Multi-Graph Explainable Model: Use the MMGX framework or similar. Analyze the attention weights from the FunctionalGroup or JunctionTree graph views, which provide explanations at the level of substructures that are more meaningful to chemists [27].

- Validate Interpretations: Use datasets with known ground-truth important substructures (synthetic binding logic datasets) or published structural alerts to quantitatively verify that the model is highlighting chemically relevant features [27].

Problem: Computational Bottlenecks in Processing Large Compound Libraries

Screening millions of compounds is prohibitively slow.

- Potential Cause: Use of Computationally Expensive Models. Complex deep learning models like large GNNs or transformers are slow for inference on massive libraries.

- Solution:

- Implement a Multi-Stage Screening Pipeline:

- Stage 1 (Coarse Filtering): Use fast similarity searches with molecular fingerprints (ECFP, MACCS) to quickly reduce the library size to a manageable number of candidates (e.g., top 1%) [25].

- Stage 2 (Fine Filtering): Apply more accurate but slower graph-based models or molecular docking to the shortlisted candidates for precise prediction.

- Optimize Feature Calculation: Pre-compute and store molecular features for your entire in-house library to avoid on-the-fly computation during screening runs.
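The two-stage pipeline above can be sketched in a few lines. This is a simplified illustration: fingerprints are represented as plain Python sets of on-bit indices, the library is synthetic, and `slow_scorer` is a hypothetical stand-in for a GNN or docking call.

```python
def tanimoto(a, b):
    """Tanimoto coefficient between two sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def two_stage_screen(query_fp, library, slow_scorer, keep_fraction=0.01):
    """Stage 1 keeps the most similar fraction; stage 2 rescores only those."""
    ranked = sorted(
        library, key=lambda cid: tanimoto(query_fp, library[cid]), reverse=True
    )
    n_keep = max(1, int(len(ranked) * keep_fraction))
    shortlist = ranked[:n_keep]
    rescored = {cid: slow_scorer(cid) for cid in shortlist}
    return sorted(rescored.items(), key=lambda item: item[1], reverse=True)


# Synthetic library: compound i has on-bits i .. i+9, so overlap with the query decays with i.
library = {f"cpd{i}": set(range(i, i + 10)) for i in range(200)}
query_fp = set(range(10))

calls = []  # records which compounds reach the expensive stage


def slow_scorer(cid):
    calls.append(cid)
    return -int(cid[3:])  # placeholder score, not a real model


hits = two_stage_screen(query_fp, library, slow_scorer, keep_fraction=0.02)
print(len(calls), hits[0])
```

Only the shortlisted compounds reach `slow_scorer`, so the cost of the expensive model scales with `keep_fraction` rather than with the full library size.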

Experimental Protocols & Data Presentation

Protocol 1: Benchmarking Molecular Representations for Property Prediction

This protocol outlines how to evaluate different molecular representations on a specific prediction task to select the best one for your virtual screening pipeline.

1. Objective: Systematically compare the performance of various molecular feature representations on a given molecular property prediction dataset.

2. Materials/Reagents:

- Dataset: A labeled dataset (e.g., BACE, BBBP, ESOL from MoleculeNet) [27].

- Software: RDKit (for fingerprint and descriptor calculation) [25], deep learning frameworks (PyTorch, TensorFlow).

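- Representations: Fingerprints (e.g., MACCS, ECFP), molecular descriptors (e.g., PaDEL), and molecular graphs (atom-level and multi-graph), as compared in Table 1.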

3. Methodology:

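- Step 1: Data Preprocessing. Split the data into training, validation, and test sets (e.g., 80/10/10). Apply standard scaling to continuous molecular descriptors, fitting the scaler on the training set only so that no information leaks from the validation or test sets.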

- Step 2: Feature Generation. For each molecule in the datasets, generate the different feature vectors (fingerprints, descriptors) or graph structures.

- Step 3: Model Training. Train a standard machine learning model (e.g., Random Forest) on the fingerprint and descriptor features. Separately, train a GNN on the graph data. Use the validation set for hyperparameter tuning.

- Step 4: Evaluation. Predict on the held-out test set and evaluate using relevant metrics (e.g., ROC-AUC for classification, RMSE for regression).

4. Expected Output: A performance table that allows for direct comparison to inform representation selection.

Table 1: Example Benchmarking Results on a Classification Task (e.g., BBBP)

| Molecular Representation | Model | ROC-AUC | Key Advantage |

|---|---|---|---|

| MACCS Fingerprint | Random Forest | 0.89 | Simplicity, speed [25] |

| ECFP Fingerprint | Random Forest | 0.91 | State-of-the-art fingerprint [25] |

| PaDEL Descriptors | Random Forest | 0.87 | Direct physicochemical properties [25] |

| Atom-Level Graph | GNN | 0.93 | Learns complex structural patterns [27] |

| Multi-Graph (MMGX) | GNN | 0.95 | Combines multiple views for superior performance [27] |
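The evaluation metrics named in Step 4 can be computed without any external library. The sketch below is a plain-Python illustration with made-up labels and scores; it uses the pairwise definition of ROC-AUC, which is quadratic in the number of compounds and is therefore only suitable for small test sets.

```python
import math


def roc_auc(labels, scores):
    """Probability that a random active outscores a random inactive (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("both classes must be present")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def rmse(y_true, y_pred):
    """Root-mean-square error for regression tasks."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


# Hypothetical test-set labels (1 = active) and model scores.
labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]
auc = roc_auc(labels, scores)
error = rmse([1.0, 2.0, 3.0], [1.0, 2.0, 5.0])
print(f"ROC-AUC={auc:.3f}, RMSE={error:.3f}")
```

Running the same functions on every representation's test-set predictions yields directly comparable rows for a table like the one above.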

Protocol 2: Knowledge-Guided Pre-training with ElementKG

This protocol details how to incorporate fundamental chemical knowledge via a knowledge graph to enhance a molecular representation model.

1. Objective: Pre-train a graph neural network using contrastive learning guided by a chemical element-oriented knowledge graph (ElementKG) to learn more meaningful molecular embeddings.

2. Materials/Reagents:

- Unlabeled Molecular Dataset: A large collection of molecules (e.g., from PubChem) in SMILES or graph format.

- ElementKG: A knowledge graph containing entities for chemical elements and functional groups, their properties, and relations [28].

- Software: KG embedding tool (e.g., OWL2Vec*), deep learning framework [28].

3. Methodology:

- Step 1: Knowledge Graph Embedding. Use OWL2Vec* to learn vector embeddings for all entities and relations in the ElementKG [28].

- Step 2: Element-Guided Graph Augmentation. For a given molecular graph, identify its constituent elements. Under the guidance of the ElementKG, create an augmented graph by linking atom nodes that share the same element type or have relations in the KG, even if they are not directly bonded. This forms a positive pair (Original Graph, Augmented Graph) for contrastive learning [28].

- Step 3: Contrastive Pre-training. Train a GNN encoder by feeding it the two views of the molecule. Use a contrastive loss function to maximize the agreement between the embeddings of the original and augmented graphs. This teaches the model to be invariant to semantically meaningful, knowledge-driven variations [28].

- Step 4: Downstream Fine-tuning. Use the pre-trained GNN as a starting point for fine-tuning on specific property prediction tasks, potentially using functional prompts to recall relevant knowledge [28].
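Steps 2 and 3 can be illustrated with a toy example. This is a heavily simplified sketch and not the KANO implementation: KG relations are reduced to "same element symbol", the embeddings are hand-written vectors rather than GNN outputs, and the function names are hypothetical.

```python
import math
from itertools import combinations


def element_guided_augment(atoms, bonds):
    """Return the original bonds plus edges between non-bonded atoms of the same element."""
    bonded = {frozenset(b) for b in bonds}
    extra = {
        frozenset((i, j))
        for i, j in combinations(range(len(atoms)), 2)
        if atoms[i] == atoms[j] and frozenset((i, j)) not in bonded
    }
    return bonded | extra


def cosine(u, v):
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm


def contrastive_loss(anchor, positive, negatives, temperature=0.1):
    """InfoNCE-style loss for one (original, augmented) pair against negatives."""
    pos = math.exp(cosine(anchor, positive) / temperature)
    neg = sum(math.exp(cosine(anchor, n) / temperature) for n in negatives)
    return -math.log(pos / (pos + neg))


# Heavy atoms of acetic acid: the two oxygens are not bonded, so they get linked.
atoms = ["C", "C", "O", "O"]
bonds = [(0, 1), (1, 2), (1, 3)]
augmented_edges = element_guided_augment(atoms, bonds)

# Made-up 2D embeddings standing in for encoder outputs.
original_emb = [1.0, 0.0]
negatives = [[0.0, 1.0], [-1.0, 0.0]]
loss_aligned = contrastive_loss(original_emb, [0.9, 0.1], negatives)
loss_misaligned = contrastive_loss(original_emb, [0.1, 0.9], negatives)
print(len(augmented_edges), loss_aligned < loss_misaligned)
```

The loss is lower when the augmented view's embedding points in the same direction as the original's, which is the agreement that pre-training maximizes.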

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Datasets for Molecular Representation Research

| Item Name | Function/Brief Explanation | Example/Reference |

|---|---|---|

| RDKit | Open-source cheminformatics software; used for generating fingerprints, descriptors, and molecular graphs from SMILES. | [25] |

| PaDEL-Descriptor | Software for calculating molecular descriptors and fingerprints. Useful for generating traditional feature vectors. | [25] |

| MoleculeNet | A benchmark collection of molecular datasets for various property prediction tasks. Used for standardized model evaluation. | [27] |

| ElementKG | A chemical element-oriented knowledge graph. Provides fundamental domain knowledge to enhance model semantics and interpretability. | [28] |

| MMGX Framework | A model supporting multiple molecular graph representations (Atom, Pharmacophore, etc.) for improved learning and interpretation. | [27] |

| KANO Framework | A method for knowledge graph-enhanced molecular contrastive learning with functional prompts for pre-training and fine-tuning. | [28] |

| OGB molecular datasets (e.g., ogbg-molhiv, ogbg-molpcba) | Molecular graph property-prediction datasets from the Open Graph Benchmark, suitable for pre-training and evaluating graph models. | - |

| DeepChem | An open-source toolkit for deep learning in drug discovery, life sciences, and quantum chemistry. Provides implementations of various models. | - |

The Critical Role of Data Preprocessing and Compound Library Standardization

Frequently Asked Questions (FAQs)

General Principles

Why is data preprocessing and library standardization critical for ligand-based virtual screening (LBVS) performance?

Standardization ensures that molecular comparisons are consistent and meaningful. Inconsistent representations of the same molecule (e.g., different salt forms, charges, or tautomeric states) can lead to invalid similarity calculations and missed hits. Standardizing a library creates a uniform basis for fingerprint generation, shape comparison, and substructure search, which are the foundations of LBVS. A well-prepared library significantly enhances the signal-to-noise ratio, leading to better enrichment of true active compounds [29].

What are the most common data issues that preprocessing aims to correct?

The most common issues include:

- Salts and Counterions: These can dominate molecular representations and skew similarity metrics if not removed.

- Charges: Inconsistent charge states can make identical molecules appear different.

- Tautomers: Different tautomeric forms of the same molecule can generate different fingerprints.

- Stereochemistry: Incorrect or unspecified stereochemistry can lead to improper 3D shape and pharmacophore alignment.

- File Formats and Integrity: Errors during format conversion can corrupt structural information.

Technical Implementation

Which tools can automate the library preparation and standardization process?

Several open-source tools are available:

- VSFlow: Includes a preparedb tool specifically for standardizing molecules, removing salts, neutralizing charges, and generating conformers and fingerprints, largely based on RDKit and MolVS rules [29].

- RDKit: A core cheminformatics framework used by tools like VSFlow for molecular standardization, descriptor calculation, and fingerprint generation [29].

- OpenBabel/MolVS: Used for converting file formats and standardizing molecules according to common rulesets, such as charge neutralization and salt stripping [30] [14].

- jamlib (from jamdock-suite): A script-based tool that automates the generation of energy-minimized, standardized compound libraries in ready-to-dock formats [30].

How should I handle tautomers and protonation states during standardization?

The general best practice is to generate a single, canonical representation for each molecule to avoid redundancy. Tools like VSFlow offer an optional canonicalize step that adds the canonical tautomer to the database [29]. For protonation states, standardizing to a neutral form is common for LBVS. However, the optimal state might be target-dependent. If information about the bioactive protonation state is available, it should be used.
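A standardization pass of this kind can be sketched with RDKit's `rdMolStandardize` module. This is an illustrative pipeline, not VSFlow's own code: the order of steps and the choice to neutralize every molecule are assumptions that may need adjusting for a given target.

```python
from rdkit import Chem
from rdkit.Chem.MolStandardize import rdMolStandardize


def standardize_smiles(smiles):
    """Return a canonical SMILES after salt stripping, neutralization, and tautomer canonicalization."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        return None  # unparsable input is reported, not silently fixed
    mol = rdMolStandardize.Cleanup(mol)  # general normalization
    mol = rdMolStandardize.LargestFragmentChooser().choose(mol)  # drop counterions
    mol = rdMolStandardize.Uncharger().uncharge(mol)  # neutralize where possible
    mol = rdMolStandardize.TautomerEnumerator().Canonicalize(mol)  # one tautomer per molecule
    return Chem.MolToSmiles(mol)


# The sodium salt and the free acid should collapse to the same representation.
salt = standardize_smiles("CC(=O)[O-].[Na+]")
free_acid = standardize_smiles("CC(=O)O")
print(salt, free_acid)
```

Comparing the output for a salt and its parent compound, as done here, is a quick sanity check that duplicates will be merged before fingerprints are generated.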

What are the key considerations for preparing a library for 3D shape-based screening?

For 3D methods, generating biologically relevant conformers is crucial. This typically involves:

- Using a Robust Algorithm: Methods like RDKit's ETKDGv3 are commonly used to generate diverse, reasonable 3D conformations [29].

- Energy Minimization: Optimizing generated conformers with a forcefield (e.g., MMFF94) ensures geometric stability [29].

- Multiple Conformers: Storing multiple conformers per molecule accounts for flexibility and increases the chance of matching a query's shape [29].
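The three points above map onto a short RDKit routine. The sketch below is a minimal example; the number of conformers, the random seed, and the iteration limit are arbitrary assumptions, and ibuprofen is used only as a convenient test molecule.

```python
from rdkit import Chem
from rdkit.Chem import AllChem


def generate_conformers(smiles, n_confs=10, seed=42):
    """Embed conformers with ETKDGv3 and relax them with MMFF94."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"could not parse SMILES: {smiles}")
    mol = Chem.AddHs(mol)  # explicit hydrogens are needed for sensible 3D geometry
    params = AllChem.ETKDGv3()
    params.randomSeed = seed  # makes the embedding reproducible
    conf_ids = list(AllChem.EmbedMultipleConfs(mol, n_confs, params))
    # Each result is a (not_converged, energy) tuple, one per conformer.
    results = AllChem.MMFFOptimizeMoleculeConfs(mol, maxIters=500)
    energies = {cid: energy for cid, (_, energy) in zip(conf_ids, results)}
    return mol, energies


mol, energies = generate_conformers("CC(C)Cc1ccc(cc1)C(C)C(=O)O", n_confs=5)
print(mol.GetNumConformers(), min(energies.values()))
```

Keeping the per-conformer energies allows later pruning, for example discarding conformers far above the lowest-energy one before storing the multi-conformer database.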

Troubleshooting Guides

Problem: Low Hit Enrichment and High False-Positive Rates

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Inconsistent Molecular Standardization | Check if the same molecule exists in multiple forms (e.g., salt vs. free base) in your library. | Re-process the entire library through a standardization pipeline (e.g., VSFlow's preparedb with standardize and canonicalize flags) to ensure a single, consistent representation per compound [29]. |

| Poor Quality or Absence of 3D Conformers | Visually inspect the 3D structures of top-ranking compounds for unrealistic geometries. | Regenerate conformers using a well-validated method like ETKDGv3 followed by forcefield minimization (e.g., MMFF94) [29]. |

| Inappropriate Fingerprint or Screen Type | Retrospectively benchmark different fingerprint types (e.g., ECFP4, FCFP4) and similarity measures (e.g., Tanimoto, Dice) on a dataset with known actives and decoys. | Switch the fingerprint type or screening method. For scaffold hopping, use a circular fingerprint like Morgan/ECFP. For finding close analogs, substructure or similarity searches with a topological fingerprint may be better [29] [31]. |

Problem: Performance and Scalability Issues with Large Libraries

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Inefficient Library Format | Time how long it takes to load your library file. Large SDF or SMILES files can be slow to parse. | Convert the library to a faster, binary format. VSFlow, for example, uses a custom .vsdb (pickle) format that significantly enhances loading speed for large databases [29]. |

| Lack of Parallelization | Check if the screening tool is using only one CPU core. | Utilize tools that support multiprocessing. VSFlow implements parallelization via Python's multiprocessing module, allowing it to run on multiple cores/threads [29]. |

| Oversized Library for the Task | Evaluate if the entire multi-billion compound library needs to be screened. | Apply pre-filtering. Use gross physicochemical properties (e.g., logP, molecular weight) or a very fast initial similarity filter to create a smaller, more focused library for the more computationally intensive screening step [15] [3]. |
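The pre-filtering suggestion in the table above can be sketched as a streaming filter over precomputed properties. The property windows and the record layout (`mw`, `logp` keys) are illustrative assumptions, not recommended cutoffs.

```python
def prefilter(records, mw_range=(150.0, 500.0), logp_range=(-1.0, 5.0)):
    """Yield only records whose precomputed properties fall inside both windows."""
    for rec in records:
        mw_ok = mw_range[0] <= rec["mw"] <= mw_range[1]
        logp_ok = logp_range[0] <= rec["logp"] <= logp_range[1]
        if mw_ok and logp_ok:
            yield rec


# Hypothetical library records with precomputed molecular weight and logP.
library = [
    {"id": "cpd1", "mw": 320.4, "logp": 2.1},
    {"id": "cpd2", "mw": 812.9, "logp": 6.3},  # too large and too lipophilic
    {"id": "cpd3", "mw": 98.1, "logp": 0.2},   # too small
    {"id": "cpd4", "mw": 455.0, "logp": 4.8},
]
focused = [rec["id"] for rec in prefilter(library)]
print(focused)
```

Because `prefilter` is a generator, it can be chained in front of a slower screening step without loading the whole library into memory.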

Problem: Errors During Library Preparation and Screening

| Error Message / Symptom | Likely Cause | Resolution |

|---|---|---|

| "Molecule could not be parsed" or "Invalid valence." | The molecular structure is invalid, or an atom has an impossible bonding pattern. This is common in data sourced from different databases. | Use a tool like MolVS or RDKit to validate and correct the valences. The preparedb tool in VSFlow can perform such standardization automatically [29]. |

| Fingerprint similarity results are nonsensical. | Molecular fingerprints were not pre-calculated and stored, or are being calculated on-the-fly with inconsistent parameters. | Pre-calculate and store fingerprints for the entire standardized database before screening, ensuring parameter consistency. VSFlow's preparedb does this with the fingerprint flag [29]. |

| 3D shape alignment fails or is poor. | The query or database molecules lack 3D conformers, or have only a single, low-energy conformer that is not bioactive-like. | Generate multiple, diverse 3D conformers for both query and database molecules. Use the preparedb tool with the conformers option to build a multi-conformer database [29]. |

Workflow Visualization

The diagram below illustrates a standardized workflow for preparing compound libraries for virtual screening, integrating best practices from the cited methodologies.

Research Reagent Solutions

The following table lists essential tools and resources for building a robust compound preprocessing and library standardization pipeline.

| Tool / Resource | Type | Primary Function in Preprocessing | Key Features |

|---|---|---|---|

| VSFlow [29] | Open-source Software Tool | End-to-end library preparation and screening. | Standardization via MolVS rules; 2D fingerprint & 3D multi-conformer generation; creates optimized .vsdb database files. |

| RDKit [29] | Cheminformatics Framework | Core chemistry operations. | Molecular I/O, sanitization, standardization, fingerprint calculation, conformer generation. |

| MolVS [29] | Library | Molecular Standardization. | Implements rules for charge neutralization, salt stripping, and tautomer canonicalization. |

| OpenBabel [30] [14] | Chemical Toolbox | Format conversion and command-line sanitization. | Converts between >100 chemical formats; performs basic charge correction and hydrogen adjustment. |

| jamlib [30] | Bash Script | Automated library generation for docking. | Downloads and prepares specific libraries (e.g., FDA-approved drugs); energy minimizes and converts to PDBQT. |

| ETKDGv3 [29] | Algorithm | 3D Conformer Generation. | RDKit's knowledge-based method for generating diverse conformers that resemble experimentally observed geometries. |

| MMFF94 [29] | Force Field | Energy Minimization. | Optimizes the geometry of generated 3D conformers to low-energy states. |

Advanced LBVS Methodologies: From Traditional Similarity to AI-Driven Screening

This technical support guide addresses common challenges in configuring and applying 2D fingerprint methods for ligand-based virtual screening (LBVS). Within the broader objective of optimizing LBVS performance, the selection of an appropriate molecular fingerprint and similarity coefficient is critical for successfully identifying novel active compounds. This document provides targeted troubleshooting and methodological guidance to enhance the reliability and effectiveness of your screening workflows.

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between ECFP and FCFP fingerprints?

- ECFP (Extended Connectivity Fingerprint) is a substructure-preserving circular fingerprint that captures atom environments in a molecule based on elemental atom types and connectivity. It is designed for general-purpose molecular similarity assessment [32].

- FCFP (Functional-Class Fingerprint) is a feature fingerprint where atoms are assigned to generalized functional classes (e.g., hydrogen bond donor, acceptor, aromatic ring). It is better suited for activity-based virtual screening, as it focuses on pharmacophoric features rather than specific atomic structures [32].

2. When should I use the Tversky similarity coefficient over Tanimoto?

The Tversky coefficient is advantageous when your virtual screening scenario is asymmetric [33]. This often occurs when using a small, potent reference molecule to search a large database. The Tversky measure introduces two parameters, α and β, which allow you to weight the importance of features in the reference and database molecules differently. Setting a higher weight for the reference molecule (e.g., α > β) can make the search more sensitive to the specific features of your lead compound [33].

3. My virtual screening results lack structural diversity. How can I improve this?

Relying solely on a single, high-similarity Tanimoto threshold can confine results to well-explored chemical areas. To enhance diversity:

- Utilize Multiple Reference Structures: Incorporate several structurally diverse known actives and use data fusion techniques to combine the similarity scores. This has been shown to be a highly effective and efficient screening approach [34].

- Combine LBVS with Structure-Based Methods: Implement a parallel or sequential workflow that integrates ligand-based similarity screening with structure-based methods like molecular docking. This hybrid approach can help identify novel scaffolds that possess the required binding characteristics [1].

4. Is a Tanimoto score of 0.5 always significant?

No, the statistical significance of a Tanimoto score is not absolute. It depends on factors such as the size of the database being searched and the complexity (number of bits set) in the query molecule's fingerprint [35]. A score of 0.5 may be highly significant in a large database search but less so in a smaller, more focused library. For robust results, statistical measures like p-values or Z-scores should be considered to assess significance against a random background model [35].
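One simple way to put a Tanimoto score in context is a Z-score against a background distribution. The sketch below assumes the background is a sample of scores between the query and presumed-inactive or randomly chosen compounds; this is only one possible background model, and the numbers are made up.

```python
from statistics import mean, stdev


def similarity_z_score(hit_score, background_scores):
    """Number of standard deviations by which a hit exceeds the background mean."""
    mu = mean(background_scores)
    sigma = stdev(background_scores)
    if sigma == 0:
        raise ValueError("background scores have zero variance")
    return (hit_score - mu) / sigma


# The same score of 0.5 against two hypothetical backgrounds.
diverse_background = [0.10, 0.15, 0.20, 0.25, 0.30]
focused_background = [0.35, 0.45, 0.50, 0.55, 0.65]
z_diverse = similarity_z_score(0.5, diverse_background)
z_focused = similarity_z_score(0.5, focused_background)
print(f"{z_diverse:.2f} vs {z_focused:.2f}")
```

Against the diverse background the score stands out clearly, while against the focused, analog-rich background it is indistinguishable from a typical library member.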

5. Why do I get different similarity rankings when using different fingerprint types?

Different fingerprints encode fundamentally different molecular information. For instance:

- MACCS keys, a dictionary-based fingerprint built from a small, fixed set of substructure keys, may rate a given set of structures as more similar [32].

- ECFP, encoding circular atom environments, often rates the same set of molecules as less similar [32].

The choice of fingerprint directly influences the definition of "similarity." Select a fingerprint type that aligns with the goal of your study: use substructure-preserving fingerprints (e.g., ECFP) if chemical scaffold features are important, and feature-based fingerprints (e.g., FCFP) if biological activity is the primary concern [32].

Troubleshooting Common Experimental Issues

Problem 1: Poor Enrichment of Active Compounds in Virtual Screening

Symptoms: The top-ranked compounds from a screen show high calculated similarity to the reference structure but are confirmed to be inactive in subsequent biological assays.

| Potential Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Suboptimal Fingerprint Choice | Compare the performance of ECFP vs. FCFP on a validation set with known actives and inactives. | Switch from ECFP to FCFP (or vice versa) or test a combination of different fingerprint types [32]. |

| Inadequate Similarity Coefficient | Check if the actives are systematically smaller or larger than the reference. | For a small reference molecule, try the Tversky similarity with a higher weight (α) on the reference features [33]. |

| Bias in the Reference Set | Analyze the structural diversity of your known active compounds used as references. | Use multiple reference structures and apply data fusion (e.g., sum of similarity scores) to get a more robust ranking [34]. |

Problem 2: Inconsistent Similarity Results with Different Software or Toolkits

Symptoms: The same pair of molecules yields a significantly different Tanimoto score when fingerprints are generated with different software libraries.

Resolution Steps:

- Verify Fingerprint Parameters: Ensure that critical generation parameters are identical across toolkits. For ECFP, the most important parameter is the diameter (or radius). ECFP4 has a diameter of 4 bonds, which corresponds to a radius of 2 bonds [32].

- Check Bit-Vector Length: The length of the final hashed bit-vector (e.g., 1024, 2048 bits) can lead to different rates of "bit collisions," slightly altering the final fingerprint. Use the same length for consistent comparisons [32].

- Confirm Atom Typing Scheme: Different toolkits may use slightly different rules for atom typing (e.g., in FCFP), which changes the features being encoded. Consult the software documentation to understand the exact methodology.
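The effect of bit-vector length on similarity can be demonstrated with a toy folding scheme. This sketch assumes features are integer identifiers folded by a simple modulo; real toolkits use their own hashing and folding, so only the qualitative behavior carries over.

```python
def fold(feature_ids, n_bits):
    """Fold integer feature identifiers into a fixed-length set of bit positions."""
    return {fid % n_bits for fid in feature_ids}


def tanimoto(a, b):
    union = len(a | b)
    return len(a & b) / union if union else 0.0


# Hypothetical feature identifiers for two molecules.
features_a = {3, 77, 1027, 2051}
features_b = {3, 77, 5000}

unfolded = tanimoto(features_a, features_b)
at_2048 = tanimoto(fold(features_a, 2048), fold(features_b, 2048))
at_1024 = tanimoto(fold(features_a, 1024), fold(features_b, 1024))
print(unfolded, at_2048, round(at_1024, 3))
```

In this example, shorter vectors merge distinct features of molecule A into the same bit, which inflates the score. This is why fingerprints compared across toolkits should share the same length.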

Performance Comparison and Experimental Data

Fingerprint Performance in Virtual Screening

The table below summarizes the average recall rates (at 1% of the database) for different fingerprint types across 11 activity classes from the MDL Drug Data Report (MDDR) database, demonstrating their effectiveness in identifying active compounds [34].

| Fingerprint Type | Key Characteristics | Mean Recall @ 1% (MDDR) |

|---|---|---|

| ECFP_4 | Circular fingerprint, diameter 4, atom-based | Up to 45.9% (depending on normalization) [34] |

| FCFP_4 | Circular fingerprint, diameter 4, feature-based | Up to 45.1% (depending on normalization) [34] |

| BCI | Dictionary-based structural keys | 36.0% [34] |

| Daylight | Linear path-based, hashed | 34.7% [34] |

| Unity | Dictionary- and pattern-based | 34.0% [34] |

| CATS | Topological pharmacophore | 19.4% [34] |
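Recall at 1% of the database, the metric reported in the table above, can be computed from any ranked list. The sketch below also derives the closely related enrichment factor; the ranked list and the set of actives are synthetic.

```python
def top_hits(ranked_ids, active_ids, fraction):
    """Return (actives found in the top fraction, size of the top fraction)."""
    actives = set(active_ids)
    n_top = max(1, int(len(ranked_ids) * fraction))
    found = sum(1 for cid in ranked_ids[:n_top] if cid in actives)
    return found, n_top


def recall_at_fraction(ranked_ids, active_ids, fraction=0.01):
    found, _ = top_hits(ranked_ids, active_ids, fraction)
    return found / len(set(active_ids))


def enrichment_factor(ranked_ids, active_ids, fraction=0.01):
    """Hit rate in the top fraction divided by the hit rate of the whole database."""
    found, n_top = top_hits(ranked_ids, active_ids, fraction)
    return (found / n_top) / (len(set(active_ids)) / len(ranked_ids))


# Synthetic screen: 1000 ranked compounds, 20 actives, 5 of them in the top 10.
ranked_ids = [f"c{i}" for i in range(1000)]
active_ids = {"c0", "c2", "c4", "c6", "c8"} | {f"c{i}" for i in range(500, 515)}
recall = recall_at_fraction(ranked_ids, active_ids)
ef = enrichment_factor(ranked_ids, active_ids)
print(recall, ef)
```

Recall depends on how many actives exist in the dataset, whereas the enrichment factor compares against random selection, so the two are best reported together.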

Common similarity coefficients are summarized below, where a and b are the numbers of bits set in the reference and database fingerprints, respectively, and c is the number of bits set in both.

| Coefficient | Formula | Best Use Case |

|---|---|---|

| Tanimoto | \( T = \frac{c}{a + b - c} \) | General-purpose similarity search, symmetric comparison [32] [33]. |

| Tversky | \( Tv = \frac{c}{\alpha(a - c) + \beta(b - c) + c} \) | Asymmetric search, e.g., when the reference molecule is much smaller than the database molecules [33]. |

| Dice | \( D = \frac{2c}{a + b} \) | Similar to Tanimoto but gives more weight to the common features. |
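The three coefficients in the table above can be written directly from their formulas, with a and b the numbers of on-bits in the reference and database fingerprints and c the number shared. The sketch below represents fingerprints as Python sets of on-bit indices, which is an assumption made for readability.

```python
def tanimoto(ref, db):
    c = len(ref & db)
    return c / (len(ref) + len(db) - c)


def dice(ref, db):
    return 2 * len(ref & db) / (len(ref) + len(db))


def tversky(ref, db, alpha, beta):
    """alpha weights features unique to the reference, beta those unique to the database molecule."""
    c = len(ref & db)
    return c / (alpha * (len(ref) - c) + beta * (len(db) - c) + c)


# A small reference that is fully contained in a larger database molecule.
ref = {1, 2, 3, 4}
db = {1, 2, 3, 4, 5, 6, 7, 8}

print(tanimoto(ref, db))           # 0.5
print(tversky(ref, db, 1.0, 0.0))  # 1.0: extra features in the database molecule are not penalized
print(tversky(ref, db, 1.0, 1.0) == tanimoto(ref, db))
print(tversky(ref, db, 0.5, 0.5) == dice(ref, db))
```

With alpha = beta = 1 the Tversky index reduces to Tanimoto, and with alpha = beta = 0.5 it reduces to Dice, so both can be seen as special cases of the asymmetric measure.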

Standard Experimental Protocols

Protocol 1: Conducting a Single-Reference Similarity Search with ECFP/FCFP

This is a core methodology for ligand-based virtual screening [34] [32].

- Fingerprint Generation:

- For each molecule (reference and database), generate a fingerprint vector. For ECFP4, use a radius of 2 and a bit-vector length of 2048.

- Similarity Calculation:

- Calculate the Tanimoto coefficient between the reference fingerprint and every fingerprint in the database.

- Ranking and Selection:

- Rank all database molecules in descending order of their similarity score.

- Select the top-ranking compounds for further analysis or experimental testing.
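The three steps of this protocol can be sketched with RDKit as follows. The reference and the three-compound "database" are illustrative choices, and the example assumes a reasonably recent RDKit release that provides the `rdFingerprintGenerator` module.

```python
from rdkit import Chem, DataStructs
from rdkit.Chem import rdFingerprintGenerator

# ECFP4 corresponds to a Morgan fingerprint with radius 2; 2048 bits as in the protocol.
ecfp4 = rdFingerprintGenerator.GetMorganGenerator(radius=2, fpSize=2048)


def fingerprint(smiles):
    """Return the ECFP4 bit vector for a SMILES string, or None if it cannot be parsed."""
    mol = Chem.MolFromSmiles(smiles)
    return ecfp4.GetFingerprint(mol) if mol is not None else None


# Step 1: fingerprint generation for the reference and the database.
reference_fp = fingerprint("CC(=O)Oc1ccccc1C(=O)O")  # aspirin as an example reference
database = {
    "salicylic acid": "O=C(O)c1ccccc1O",
    "benzene": "c1ccccc1",
    "aspirin": "CC(=O)Oc1ccccc1C(=O)O",
}
names, fps = [], []
for name, smiles in database.items():
    fp = fingerprint(smiles)
    if fp is not None:
        names.append(name)
        fps.append(fp)

# Step 2: Tanimoto similarity of the reference against every database fingerprint.
scores = DataStructs.BulkTanimotoSimilarity(reference_fp, fps)

# Step 3: rank in descending order of similarity.
ranking = sorted(zip(names, scores), key=lambda pair: pair[1], reverse=True)
for name, score in ranking:
    print(f"{name}: {score:.2f}")
```

For a feature-based (FCFP-like) variant, the same generator can reportedly be built with `atomInvariantsGenerator=rdFingerprintGenerator.GetMorganFeatureAtomInvGen()`; check this against the documentation for your RDKit version before relying on it.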

Protocol 2: Data Fusion for Multiple Reference Structures

Using multiple active reference structures can significantly improve screening performance [34].

- Individual Searches: Perform a separate similarity search for each known active reference structure against the database.

- Score Fusion: For each database molecule, combine the similarity scores obtained from all reference searches. A common and effective method is the sum of scores:

Fused_Score(M) = Similarity(M, Ref1) + Similarity(M, Ref2) + ... + Similarity(M, RefN)

- Final Ranking: Rank the database molecules based on their fused scores in descending order. This prioritizes compounds that are similar to several active reference structures.
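Sum-of-scores fusion takes only a few lines once the individual searches have been run. In the sketch below, the per-reference similarity scores are made-up values standing in for the output of the individual searches.

```python
def fuse_sum(scores_by_reference):
    """Sum each database molecule's similarity scores over all reference structures."""
    fused = {}
    for ref_scores in scores_by_reference.values():
        for mol_id, score in ref_scores.items():
            fused[mol_id] = fused.get(mol_id, 0.0) + score
    return fused


# Hypothetical Tanimoto scores of three database molecules against two references.
scores_by_reference = {
    "ref1": {"m1": 0.8, "m2": 0.3, "m3": 0.5},
    "ref2": {"m1": 0.2, "m2": 0.4, "m3": 0.6},
}
fused = fuse_sum(scores_by_reference)
ranking = sorted(fused, key=fused.get, reverse=True)
print(ranking)
```

Here m3 outranks m1 even though m1 has the single highest score, because m3 is moderately similar to both references. Replacing the sum with a maximum would instead favor molecules that closely match any one reference.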

Workflow and Signaling Diagrams

ECFP/FCFP Fingerprint Generation Logic

Virtual Screening Troubleshooting Pathway

Research Reagent Solutions

| Item | Function in Experiment |
|---|---|
| MDL Drug Data Report (MDDR) Database | A standard benchmark database containing compounds and their therapeutic activity classes, used for validating virtual screening methods [34]. |
| Database of Useful Decoys (DUD) | A public database designed for benchmarking virtual screening programs, containing active ligands and computationally matched decoys for multiple protein targets [17]. |
| Extended Connectivity Fingerprint (ECFP) | A circular fingerprint that captures atomic connectivity information, ideal for assessing general structural similarity and scaffold hopping [32]. |
| Functional-Class Fingerprint (FCFP) | A circular fingerprint that uses generalized pharmacophoric features, better suited for bioactivity prediction and identifying functionally similar compounds with different scaffolds [32]. |
| Tanimoto Coefficient | The most common symmetric similarity metric, ideal for general-purpose similarity searches where the reference and target molecules are considered equally [32] [33]. |
| Tversky Similarity | An asymmetric similarity measure that allows the researcher to bias the search towards the features of the reference molecule, useful for scaffold hopping or when using a small lead compound [33]. |
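To make the asymmetry of the Tversky measure concrete, here is a minimal sketch on set-based fingerprints. The Tversky index divides the number of shared features by the shared features plus alpha times the reference-only features plus beta times the candidate-only features; with alpha = beta = 1 it reduces to the Tanimoto coefficient. The default weights of 0.9 and 0.1 and the toy fingerprints are illustrative assumptions, not recommended settings.

```python
def tversky(reference, candidate, alpha=0.9, beta=0.1):
    """Tversky index for two fingerprints given as sets of on-bit indices.

    alpha weights features found only in the reference,
    beta weights features found only in the candidate.
    """
    shared = len(reference & candidate)
    only_ref = len(reference - candidate)
    only_cand = len(candidate - reference)
    denom = shared + alpha * only_ref + beta * only_cand
    return shared / denom if denom else 0.0


# Hypothetical case: a small lead fully contained in a larger analog.
small_lead = frozenset({1, 2, 3})
larger_analog = frozenset({1, 2, 3, 4, 5, 6, 7, 8, 9})

as_tanimoto = tversky(small_lead, larger_analog, alpha=1.0, beta=1.0)
lead_as_reference = tversky(small_lead, larger_analog)
analog_as_reference = tversky(larger_analog, small_lead)
print(as_tanimoto, lead_as_reference, analog_as_reference)
```

With the small lead as the reference, the extra features of the larger analog are barely penalized, so the score is much higher than the symmetric Tanimoto value; swapping the roles lowers it again.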

3D shape-based screening is a powerful ligand-based virtual screening (LBVS) method that operates on a fundamental principle: molecules with similar three-dimensional shapes are likely to exhibit similar biological activities [17]. This technique is particularly valuable for scaffold hopping, as it can identify potential hit molecules with activity even when they are topologically dissimilar to a known reference ligand [36]. This technical support center addresses the key questions and challenges researchers face when implementing these methods, from selecting the right tool to optimizing performance in contemporary drug discovery projects.

FAQ: Core Concepts and Method Selection

1. What is the core hypothesis behind 3D shape-based virtual screening?

The core hypothesis is the Similarity-Property Principle, which states that molecules with similar shapes and chemical feature distributions (their "pharmacophores") are likely to share similar binding properties with a biological target [17] [3]. These methods do not require the 3D structure of the target protein; instead, they use a known active ligand as a reference to find new compounds by maximizing the overlap of their molecular volumes and chemical features [37] [2].

2. When should I choose a shape-based method over a structure-based method like docking?

Consider shape-based screening in these scenarios [3] [2]:

- No Protein Structure: When a high-quality 3D structure of the target protein is unavailable or unreliable.

- Ultra-Large Libraries: For rapidly filtering billions of compounds in the early stages of a campaign. Ligand-based methods are generally faster and less computationally expensive than structure-based docking [36] [37].

- Scaffold Hopping: When you explicitly want to discover novel chemical scaffolds that are topologically different from your reference but share its overall shape and pharmacophore.

- As a Pre-Filter: To create a manageable subset of promising compounds for more rigorous and computationally expensive structure-based methods [37] [1].

3. What are the main differences between ROCS, USR, and newer open-source tools?

The table below summarizes the key characteristics of these methods.

Table 1: Comparison of 3D Shape-Based Screening Methods

| Method | Description | Key Features | Availability |
|---|---|---|---|
| ROCS (Rapid Overlay of Chemical Structures) | Industry-standard method that uses 3D Gaussian functions to describe molecular shape and a "color force field" for chemical features [17]. | High performance; widely used and cited; includes chemical feature matching. | Commercial (OpenEye) |
| USR (Ultrafast Shape Recognition) | Describes molecular shape by the distributions of atomic distances to a small set of reference points, summarized as statistical moments, without requiring alignment [17]. | Extremely fast; alignment-free; but may be less accurate than superposition-based methods. | Open Source |
| Open-Source Alternatives (e.g., Lig3DLens, VSFlow, ESPSim/rdMolAlign) | Modern toolkits that leverage open-source libraries (e.g., RDKit) for 3D conformer generation and alignment, often incorporating electrostatics [38]. | Customizable workflows; integrates electrostatics (ESPSim); leverages active developer communities. | Open Source (e.g., GitHub) |
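The alignment-free idea behind USR can be sketched in a few lines of plain Python. The example assumes the commonly described scheme of four reference points (centroid, atom closest to the centroid, atom farthest from the centroid, and atom farthest from that atom) with three moments per distance distribution. The moment convention used here (mean, standard deviation, cube root of the third central moment) is one of several in use, and the coordinates are hypothetical.

```python
import math


def _moments(distances):
    """Mean, standard deviation, and signed cube root of the third central moment."""
    n = len(distances)
    mean = sum(distances) / n
    var = sum((d - mean) ** 2 for d in distances) / n
    third = sum((d - mean) ** 3 for d in distances) / n
    return [mean, math.sqrt(var), math.copysign(abs(third) ** (1 / 3), third)]


def usr_descriptor(coords):
    """12-number USR-style descriptor from a list of (x, y, z) atom positions."""
    n = len(coords)
    ctd = tuple(sum(c[i] for c in coords) / n for i in range(3))  # centroid
    d_ctd = [math.dist(c, ctd) for c in coords]
    cst = coords[d_ctd.index(min(d_ctd))]  # atom closest to the centroid
    fct = coords[d_ctd.index(max(d_ctd))]  # atom farthest from the centroid
    d_fct = [math.dist(c, fct) for c in coords]
    ftf = coords[d_fct.index(max(d_fct))]  # atom farthest from fct
    descriptor = []
    for ref in (ctd, cst, fct, ftf):
        descriptor += _moments([math.dist(c, ref) for c in coords])
    return descriptor


def usr_similarity(desc_a, desc_b):
    """Inverse of (1 + mean absolute difference) between two descriptors."""
    diff = sum(abs(a - b) for a, b in zip(desc_a, desc_b)) / len(desc_a)
    return 1.0 / (1.0 + diff)


# Hypothetical coordinates (in angstroms) for a bent and a linear arrangement.
bent = [(0.0, 0.0, 0.0), (1.4, 0.0, 0.0), (2.0, 1.2, 0.0),
        (3.3, 1.5, 0.8), (0.2, -1.1, 0.4)]
bent_shifted = [(x + 10.0, y - 4.0, z + 2.0) for x, y, z in bent]
linear = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (3.0, 0.0, 0.0),
          (4.5, 0.0, 0.0), (6.0, 0.0, 0.0)]

same_shape = usr_similarity(usr_descriptor(bent), usr_descriptor(bent_shifted))
other_shape = usr_similarity(usr_descriptor(bent), usr_descriptor(linear))
print(same_shape, other_shape)
```

Because the descriptor depends only on interatomic distances, the translated copy scores essentially 1.0 without any superposition step, which is where the speed advantage over overlay-based methods comes from.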

4. My shape-based screen is yielding too many false positives. How can I improve precision?

A high false-positive rate often indicates an over-reliance on shape alone. Consider these strategies:

- Incorporate Chemical Features: Use tools like ROCS's "color force field" or add an electrostatics similarity score (e.g., with ESPSim) to ensure matches are chemically meaningful [17] [38].

- Apply Pre-Filters: Use physicochemical property filters (e.g., molecular weight, logP) or substructure alert filters (e.g., PAINS) to remove undesirable compounds before the shape screening [38] [2].

- Use a Hybrid Workflow: Follow up your shape screen with a more precise structure-based method like molecular docking on the top-ranked hits. This confirms the hits can plausibly bind to the target's active site [1] [3].
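The pre-filtering strategy above can be sketched as a simple predicate applied before any 3D work. This is only an illustration: the `Compound` record, the property values, and the cutoffs (molecular weight of at most 500 Da, logP of at most 5) are assumptions chosen for the example, and the alert flag is assumed to have been computed upstream by a substructure filter.

```python
from dataclasses import dataclass


@dataclass
class Compound:
    name: str
    mol_weight: float  # molecular weight in Da
    logp: float        # calculated logP
    has_alert: bool    # True if a substructure alert (e.g., PAINS) matched upstream


def passes_prefilter(c, max_mw=500.0, max_logp=5.0):
    """Keep compounds within the property limits and without substructure alerts."""
    return c.mol_weight <= max_mw and c.logp <= max_logp and not c.has_alert


# Hypothetical library entries with precomputed properties.
library = [
    Compound("cpd_A", 342.4, 2.1, False),
    Compound("cpd_B", 612.7, 3.0, False),  # too heavy
    Compound("cpd_C", 410.5, 6.2, False),  # too lipophilic
    Compound("cpd_D", 298.3, 1.4, True),   # flagged by an alert
]

kept = [c.name for c in library if passes_prefilter(c)]
print(kept)
```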

5. I am concerned about missing active compounds (false negatives). What can I do?

False negatives can occur if the bioactive conformation of your query ligand is not well-represented. To mitigate this:

- Conformational Sampling: Generate a comprehensive and diverse set of low-energy conformers for your reference ligand. Using a single, potentially irrelevant conformation is a major limitation [2].

- Multiple Query Ligands: If available, use several known active compounds with diverse scaffolds as separate queries. This accounts for the fact that different active molecules may present different shapes to the same binding site [2].

- Avoid Overly Restrictive Queries: Ensure your query's defined pharmacophore features are not too specific, which could exclude valid but slightly different actives.

Troubleshooting Common Experimental Issues

Issue 1: Poor enrichment in retrospective screening benchmarks.

- Potential Cause: The generated 3D conformation of your query ligand is not representative of its bioactive conformation.

- Solution: Revisit your conformer generation protocol. Use robust algorithms like ETKDG (in RDKit), OMEGA (OpenEye), or ConfGen (Schrödinger) that are designed to produce biologically relevant conformations [2]. For a critical query, consider using a conformation derived from a protein-ligand crystal structure if available.
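When comparing conformer protocols in a retrospective benchmark, it helps to compute enrichment the same way each time. The sketch below assumes the usual definition of the enrichment factor (hit rate in the top fraction divided by the hit rate in the whole library); the ranked label list is hypothetical.

```python
def enrichment_factor(ranked_labels, fraction=0.01):
    """Enrichment factor for a ranked list of labels (1 = active, 0 = decoy).

    ranked_labels must be ordered from best to worst screening score.
    """
    n_total = len(ranked_labels)
    n_actives = sum(ranked_labels)
    if n_total == 0 or n_actives == 0:
        return 0.0
    n_top = max(1, round(n_total * fraction))
    hits_top = sum(ranked_labels[:n_top])
    return (hits_top / n_top) / (n_actives / n_total)


# Hypothetical benchmark: 100 compounds, 10 actives, 4 of them ranked in the top 10.
labels = [1, 1, 0, 1, 0, 0, 1, 0, 0, 0] + [1] * 6 + [0] * 84

ef_10 = enrichment_factor(labels, fraction=0.10)
print(f"EF at 10%: {ef_10:.2f}")
```

Here the top 10% contains 40% actives against a background rate of 10%, giving an enrichment factor of about 4; a value near 1 would indicate performance no better than random selection.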

Issue 2: The screening process is too slow for my large compound library.

- Potential Cause: You are using a high-precision but computationally expensive method for the entire library.

- Solution: Implement a staged workflow.

- Prefiltering: Use a fast 1D or 2D similarity method (e.g., a 1D-SIM prefilter or ECFP fingerprints) or the ultrafast USR algorithm to quickly reduce the library size [36].

- Shape Screening: Apply a more accurate 3D shape tool (e.g., ROCS, Lig3DLens) to the pre-filtered subset.

- Refinement: Subject the top-ranked hits from shape screening to docking or other high-precision methods [37] [39].
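The staged workflow can be expressed as a generic funnel in which each stage rescores only the survivors of the previous one. The sketch below is an illustration under simplifying assumptions: the scores are precomputed toy values, the scoring functions are stand-ins for a fingerprint prefilter and a shape tool, and the call counter exists only to show how often the expensive step runs.

```python
def staged_screen(library, stages):
    """Run a list of (score_fn, keep_n) stages; higher scores are better."""
    survivors = list(library)
    for score_fn, keep_n in stages:
        survivors.sort(key=score_fn, reverse=True)
        survivors = survivors[:keep_n]
    return survivors


calls = {"cheap": 0, "expensive": 0}


def cheap_score(compound):
    """Stand-in for a fast fingerprint similarity."""
    calls["cheap"] += 1
    return compound["fp_sim"]


def expensive_score(compound):
    """Stand-in for a slower 3D shape similarity."""
    calls["expensive"] += 1
    return compound["shape_sim"]


# Hypothetical library with precomputed toy scores.
library = [
    {"name": "c1", "fp_sim": 0.9, "shape_sim": 0.40},
    {"name": "c2", "fp_sim": 0.8, "shape_sim": 0.70},
    {"name": "c3", "fp_sim": 0.2, "shape_sim": 0.95},
    {"name": "c4", "fp_sim": 0.7, "shape_sim": 0.60},
    {"name": "c5", "fp_sim": 0.1, "shape_sim": 0.30},
    {"name": "c6", "fp_sim": 0.3, "shape_sim": 0.20},
]

hits = staged_screen(library, [(cheap_score, 3), (expensive_score, 1)])
print([c["name"] for c in hits], calls)
```

The expensive scorer runs on three compounds instead of six. The toy data also shows the trade-off: c3 has the best shape score but is discarded by the prefilter, so the prefilter cutoff should be set generously enough to limit such false negatives.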

Table 2: Example of a Staged Workflow Performance (Quick Shape from Schrödinger)

| Workflow Stage | Technology | Library Size | Time to Screen 6.5B | Storage for 6.5B |
|---|---|---|---|---|
| Quick Shape | 1D-SIM prefilter + Shape CPU Screening | > 4.0 billion | ~5.5 days | 0.4 TB [36] |

Issue 3: Results are highly dependent on the choice of the single query molecule.

- Potential Cause: This is a known weakness of many query-dependent ligand-based methods [17].

- Solution:

- Multi-Query Screening: Run parallel screens with multiple known active molecules and combine the results [2].

- Create a Pharmacophore Model: Distill the essential shape and chemical features from multiple actives into a single pharmacophore query that is less biased toward one specific scaffold.

- Use a Hybrid Model: Consider methods like QuanSA, which build a binding-site model based on multiple ligands and their affinity data, reducing reliance on a single query [3].

Essential Experimental Protocols

Protocol 1: A Standard Open-Source 3D Shape Screening Workflow

This protocol outlines the steps for a typical screening campaign using open-source tools, as implemented in toolkits like Lig3DLens [38].

1. Library Preparation and Preprocessing

- Input: A compound library in SDF, CSV, or other common formats.

- Steps:

- Standardization: Standardize chemical structures (e.g., neutralize charges, remove duplicates) using tools like datamol or MolVS [38] [2].

- Filtering: Apply property filters (e.g., molecular weight, rotatable bonds, logP) to focus on drug-like chemical space and remove compounds with undesirable functional groups [38] [40].

- Output: A cleaned and filtered SD file.

2. 3D Conformer Generation & Alignment

- Input: The preprocessed library and a reference ligand (SMILES or SDF).

- Steps:

- Generate 3D Conformers: Use RDKit to generate multiple low-energy conformers for each library compound. For the reference molecule, a single, well-chosen conformation (e.g., from a crystal structure) is often used [38].

- Shape Alignment: Use rdMolAlign from RDKit to align each conformer of each library compound to the reference molecule, maximizing shape overlap.

- Scoring: Calculate similarity scores. The primary score is typically shape similarity (Tanimoto combo). The ESPSim package can be used to calculate an electrostatic similarity score for a more robust assessment [38].
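To illustrate what the scoring step computes, the sketch below implements a first-order Gaussian shape Tanimoto in plain Python. It is a simplified stand-in, not the scoring code of any particular toolkit: it assumes the conformers have already been aligned to the reference, treats every atom as an identical Gaussian with an illustrative width parameter `alpha`, and combines shape and electrostatic similarity with an equal weighting chosen only for the example. Coordinates and the electrostatic score are hypothetical.

```python
import math


def overlap(coords_a, coords_b, alpha=0.8):
    """First-order Gaussian overlap of two atom sets (constant prefactor dropped)."""
    return sum(
        math.exp(-0.5 * alpha * math.dist(a, b) ** 2)
        for a in coords_a
        for b in coords_b
    )


def shape_tanimoto(coords_a, coords_b, alpha=0.8):
    """Shape Tanimoto: V_AB / (V_AA + V_BB - V_AB) for pre-aligned coordinates."""
    v_ab = overlap(coords_a, coords_b, alpha)
    v_aa = overlap(coords_a, coords_a, alpha)
    v_bb = overlap(coords_b, coords_b, alpha)
    return v_ab / (v_aa + v_bb - v_ab)


def combined_score(shape_sim, esp_sim, weight=0.5):
    """Weighted mean of shape and electrostatic similarity (weight is an assumption)."""
    return weight * shape_sim + (1.0 - weight) * esp_sim


# Hypothetical pre-aligned coordinates in angstroms.
reference = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (3.0, 0.0, 0.0)]
conformer_near = [(0.1, 0.0, 0.0), (1.6, 0.0, 0.0), (3.1, 0.2, 0.0)]
conformer_far = [(0.0, 2.0, 0.0), (1.5, 2.0, 0.0), (3.0, 2.0, 0.0)]

# A library compound is scored by its best-matching conformer.
best_shape = max(shape_tanimoto(reference, c) for c in (conformer_near, conformer_far))
final = combined_score(best_shape, esp_sim=0.6)  # 0.6 is a placeholder ESP score
print(best_shape, final)
```

Taking the maximum over conformers mirrors the protocol's use of multiple conformers per library compound: only the best overlay needs to resemble the reference.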

3. Post-Screening Analysis & Hit Selection

- Input: The output file with similarity scores for all screened compounds.

- Steps:

- Ranking: Rank compounds based on their combined shape and electrostatic scores.

- Clustering: To ensure chemical diversity, cluster the top-ranked hits (e.g., using k-means clustering on ECFP fingerprints) and select representative compounds from each cluster [38].

- Visual Inspection: Manually inspect the top-ranked, diverse hits to verify the quality of the shape overlap and chemical feature alignment.
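For the diversity step, a simpler alternative to k-means is greedy leader picking (a form of sphere exclusion), sketched below. Note that this is a swapped-in technique rather than the k-means clustering mentioned above: it walks down the ranked list and keeps a hit only if it is sufficiently dissimilar to every hit already kept. The fingerprints are hypothetical sets of on-bit indices and the 0.6 threshold is an illustrative assumption.

```python
def tanimoto(a, b):
    """Tanimoto coefficient for two sets of on-bit indices."""
    shared = len(a & b)
    union = len(a) + len(b) - shared
    return shared / union if union else 0.0


def pick_diverse(ranked_hits, fingerprints, threshold=0.6):
    """Greedy leader picking over a ranked hit list.

    A hit is kept only if its Tanimoto similarity to every previously
    kept hit is below `threshold`, so near-duplicates of better-ranked
    compounds are skipped.
    """
    selected = []
    for name in ranked_hits:
        fp = fingerprints[name]
        if all(tanimoto(fp, fingerprints[s]) < threshold for s in selected):
            selected.append(name)
    return selected


# Hypothetical top-ranked hits (best first) and their toy fingerprints.
ranked_hits = ["h1", "h2", "h3", "h4"]
fingerprints = {
    "h1": frozenset({1, 2, 3, 4}),
    "h2": frozenset({1, 2, 3, 4, 5}),   # close analog of h1
    "h3": frozenset({10, 11, 12}),
    "h4": frozenset({1, 10, 11, 13}),
}

diverse_hits = pick_diverse(ranked_hits, fingerprints)
print(diverse_hits)
```

Because the list is processed in rank order, each retained compound is the best-scoring member of its neighborhood, which keeps the selection aligned with the screening scores.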

The following diagram visualizes the logical flow of this standard open-source screening workflow.

Diagram 1: Standard open-source 3D shape screening workflow.

Protocol 2: A Hybrid LBVS/SBVS Workflow for Ultra-Large Libraries

For the most effective screening of ultra-large libraries (billions of compounds), a hybrid approach that sequentially combines ligand- and structure-based methods is recommended [37] [1] [3]. The drugsniffer pipeline is an example of this philosophy [37].

1. Target and Library Setup

- Define the protein target and its binding pocket.

- Acquire a library of synthesizable small molecules (e.g., ZINC, Enamine REAL).

2. De Novo Ligand Design & Similarity Pre-screening

- Use de novo design software (e.g., AutoGrow4) to generate a diverse set of potential ligands tailored to the binding pocket [37].

- Use these de novo ligands as queries for a fast ligand-based similarity search (e.g., using 2D fingerprints or USR) to identify structurally similar molecules within the ultra-large library. This drastically reduces the library to a manageable size.

3. Structure-Based Refinement

- Perform in silico docking with a tool like RosettaVS or AutoDock Vina on the millions (not billions) of compounds identified in the previous step [37] [39].

- Apply a neural network model or other scoring function to predict and rank binding affinity based on the docked poses.

4. ADMET Filtering

- Finally, apply custom ADMET filters to remove compounds with potential toxicity or poor pharmacokinetic properties [37].

The workflow for this advanced, multi-stage pipeline is illustrated below.

Diagram 2: Hybrid LBVS/SBVS workflow for billion-molecule screening.

Table 3: Essential Software and Databases for 3D Shape-Based Screening

| Category | Resource | Description | Use Case |
|---|---|---|---|
| Open-Source Software | RDKit | A core cheminformatics library used for molecule manipulation, descriptor calculation, and conformer generation [38] [2]. | The foundation for building custom screening workflows. |
| Open-Source Software | Lig3DLens / VSFlow | Open-source toolkits that provide end-to-end pipelines for 3D shape and electrostatic similarity screening [38]. | Ready-to-use, open-source alternatives to commercial software. |
| Open-Source Software | ESPSim | A package for calculating electrostatic similarity scores for aligned molecules [38]. | Adding an electrostatics component to shape-based scoring. |
| Commercial Software | ROCS | Industry-standard for rapid 3D shape overlay with chemical feature matching [17]. | High-performance, production-ready shape screening. |
| Commercial Software | Schrödinger Shape Screening | Suite of workflows (Quick Shape, Shape GPU) for screening libraries from millions to billions of compounds [36]. | Screening ultra-large commercial libraries with high efficiency. |
| Compound Libraries | ZINC / Enamine | Databases of commercially available compounds, with "make-on-demand" libraries containing billions of molecules [36] [1]. | Source of virtual compounds for screening. |
| Preparation & Validation | DecoyFinder | Tool for selecting decoy molecules to benchmark virtual screening performance [2]. | Validating the enrichment power of a screening protocol. |