Next-Generation ADMET Prediction: Leveraging Machine Learning to Reduce Attrition and Accelerate Drug Discovery

Abstract

This article provides a comprehensive overview of the transformative impact of machine learning (ML) on ADMET prediction in early drug discovery. It explores the foundational challenges of traditional methods, details state-of-the-art ML methodologies like graph neural networks and federated learning, and offers practical strategies for overcoming data quality and model interpretability issues. By examining rigorous validation frameworks and real-world applications, the article equips researchers and drug development professionals with the knowledge to integrate advanced predictive models into their workflows, ultimately aiming to mitigate late-stage failures and streamline the development of safer, more effective therapeutics.

The ADMET Prediction Challenge: Why Traditional Methods Fail and Why It Matters

Technical Support Center

Troubleshooting Common ADMET Experimental Challenges

This section addresses frequent issues encountered during in vitro ADMET assays, helping researchers identify potential pitfalls and improve the translatability of their data.

Table: Common Experimental Challenges and Solutions

| Challenge Area | Common Symptom | Potential Root Cause | Recommended Action |

|---|---|---|---|

| Metabolic Stability | Consistent underestimation of human in vivo metabolic turnover [1] | Over-reliance on conventional microsomal assays; missing non-CYP enzymes | Supplement with assays using primary human hepatocytes or multi-organ gut/liver models [1]. |

| Permeability & Absorption | Poor correlation between animal and human bioavailability data [1] | Interspecies differences in physiology and metabolic capacity [1] | Use human-relevant advanced in vitro models (e.g., Caco-2, OOC gut/liver) to estimate human bioavailability [2] [1]. |

| Drug-Drug Interactions (DDIs) | Inaccurate DDI predictions, particularly for intestinal interactions | Models fail to fully account for intestinal Cytochrome P450 (CYP) metabolism [1] | Incorporate data on intestinal CYP activity and variability into DDI prediction models [1]. |

| Toxicity | Unexpected organ toxicity or genotoxicity in later stages | Over-reliance on single-endpoint assays; missing complex biological interactions | Implement a panel of in vitro toxicity assays (cytotoxicity, mitochondrial toxicity) and use in silico models with structural alerts [3]. |

| Data Variability | High intra- and inter-assay variability in cell-based models | Use of cell lines with low and variable expression levels of key proteins (e.g., CYPs) [1] | Transition to more consistent and physiologically relevant cell systems, such as primary human intestinal cells [1]. |

| Model Generalizability | Poor performance of machine learning models on novel compound scaffolds | Limited data diversity and coverage of chemical space in training sets [4] | Employ federated learning to train models on larger, more diverse datasets from multiple organizations without sharing proprietary data [4]. |

Frequently Asked Questions (FAQs)

Q1: Our team relies heavily on machine learning (ML) for early ADMET prediction, but model performance drops significantly on our newest chemical series. What could be causing this and how can we improve it?

A: This is a classic problem of model generalizability, often resulting from limited data diversity [4]. ML models trained on narrow chemical spaces fail to extrapolate to novel scaffolds.

- Solution: Consider federated learning approaches, which allow collaborative model training across multiple pharmaceutical companies' datasets without centralizing sensitive data. This significantly expands the learned chemical space and improves robustness for unseen compounds [4]. Internally, ensure your validation uses rigorous scaffold-based splitting, not random splits, to better simulate real-world performance on new chemotypes [4].

Q2: Our in vitro metabolic stability data from liver microsomes did not predict the high human in vivo clearance we observed in the clinic. Why did this happen?

A: Conventional in vitro systems like liver microsomes sometimes fail to capture the full complexity of human metabolism, especially for drugs with complex ADME profiles or those metabolized by non-CYP enzymes [1].

- Solution: Adopt a combination approach. Integrate data from more physiologically relevant systems, such as primary human hepatocytes or interconnected gut-liver organ-on-a-chip models, into Physiologically Based Pharmacokinetic (PBPK) modeling and simulation. This iterative, multi-faceted method provides a more comprehensive picture of a drug's ADME profile before first-in-human studies [1].

Q3: How can we better predict and account for population differences in intestinal metabolism and drug-drug interactions during early development?

A: Traditional Caco-2 cell models have limitations, including variable and low expression of key CYP enzymes compared to the human intestine, and they cannot model donor-to-donor variability [1].

- Solution: Incorporate advanced in vitro models that use primary human intestinal cells. These systems, especially when fluidically linked to liver models, offer a more accurate estimation of first-pass metabolism and bioavailability across different populations, thereby improving DDI predictions [1].

Q4: For advanced modalities like PROTACs, our standard ADME tools seem inadequate. How can we tackle the challenge of poor oral bioavailability for these large molecules?

A: You are correct that advanced drug modalities require a rethink of the traditional ADME toolbox. Their high molecular weight and poor permeability make oral delivery particularly challenging [1].

- Solution:

- Use advanced tools: Use organ-on-a-chip (OOC) technology to profile the oral bioavailability of these complex molecules in vitro.

- Explore chemical strategies: Test approaches like developing a prodrug form of the PROTAC or modifying the chemistry to improve cellular permeability [1].

- Iterate with models: Use the human-relevant data from OOC systems to rationally design and test strategies to improve oral bioavailability before committing to costly synthesis and in vivo studies [1].

Experimental Protocols & Methodologies

1. Protocol for a Tiered Metabolic Stability Assessment

Objective: To evaluate the metabolic stability of new chemical entities using a tiered approach for better human translation.

- Step 1: Primary High-Throughput Screen. Use human liver microsomes (HLM) or hepatocytes in a 96-well format. Incubate test compound (1 µM) with NADPH-generating system for 0, 15, and 45 minutes. Terminate reaction with cold acetonitrile. Analyze by LC-MS/MS to determine half-life and intrinsic clearance [2].

- Step 2: Confirmatory & Mechanistic Studies. For compounds with complex profiles from Step 1, use suspended primary human hepatocytes or sandwich-cultured human hepatocytes to capture both Phase I and Phase II metabolism and transporter effects [1].

- Step 3: Integrated System Modeling. Feed the in vitro data into a PBPK model. For compounds where conventional assays fail, use data from interconnected gut-liver MPS (Microphysiological Systems) to iteratively refine the model and improve human predictions [1].
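The half-life and intrinsic clearance calculation in Step 1 can be sketched as follows. This is a minimal illustration that assumes first-order substrate depletion in a microsomal incubation; the function names, the example time course, and the 0.5 mg/mL protein concentration are hypothetical values chosen for the sketch, not parameters from the protocol.

```python
import math

def fit_elimination_rate(times_min, pct_remaining):
    """Least-squares slope of ln(% remaining) vs. time, returned as k in 1/min."""
    ys = [math.log(p) for p in pct_remaining]
    n = len(times_min)
    mean_t = sum(times_min) / n
    mean_y = sum(ys) / n
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times_min, ys))
    sxx = sum((t - mean_t) ** 2 for t in times_min)
    return -sxy / sxx  # depletion gives a negative slope, so k is positive

def half_life_min(k):
    """In vitro half-life (min) for a first-order rate constant k (1/min)."""
    return math.log(2) / k

def intrinsic_clearance(k, protein_mg_per_ml):
    """In vitro CLint in uL/min/mg protein: k scaled by incubation volume per mg protein."""
    return k * 1000.0 / protein_mg_per_ml

# Hypothetical time course at the 0, 15, and 45 minute sampling points
times = [0.0, 15.0, 45.0]
remaining = [100.0 * math.exp(-0.02 * t) for t in times]  # synthetic data, k = 0.02 1/min

k = fit_elimination_rate(times, remaining)
print(f"k = {k:.4f} 1/min")
print(f"t1/2 = {half_life_min(k):.1f} min")
print(f"CLint = {intrinsic_clearance(k, protein_mg_per_ml=0.5):.1f} uL/min/mg")
```

With only three time points the fit is sensitive to a single noisy measurement, so the linearity of ln(% remaining) versus time is worth checking before a value is passed to a PBPK model.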

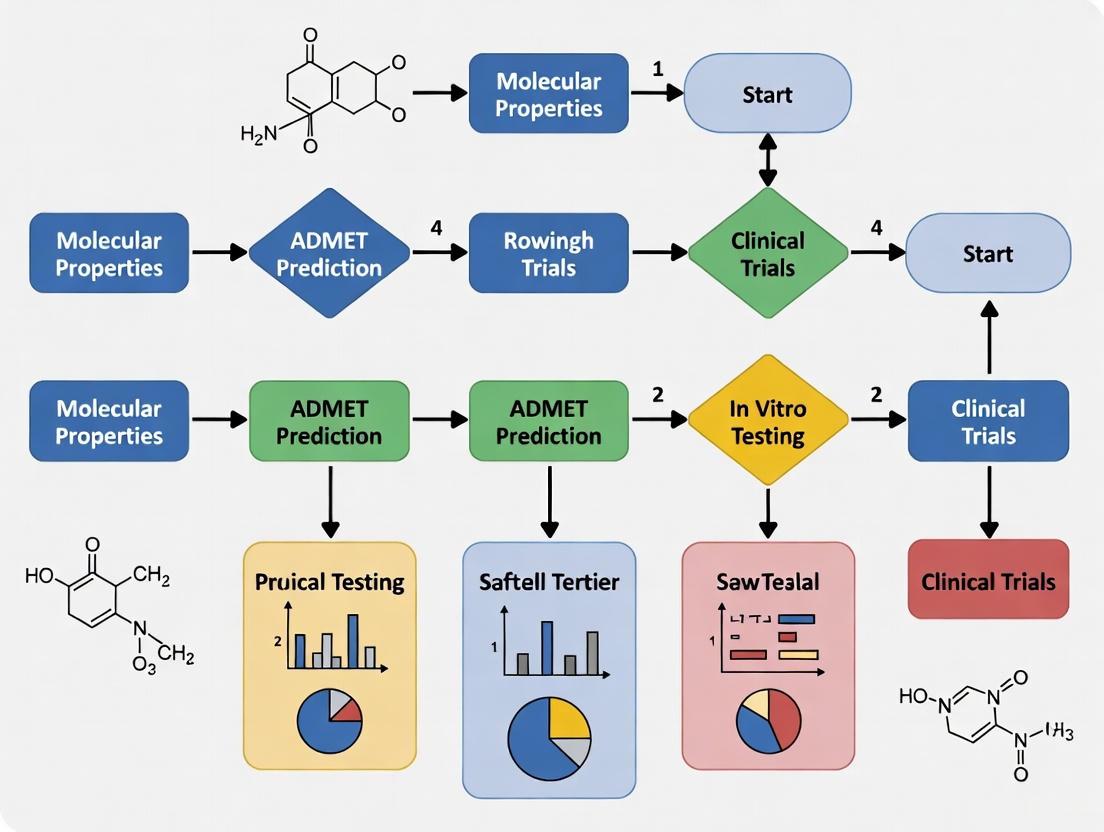

2. Workflow for Integrating In Silico and Experimental ADMET Data

The following workflow diagram illustrates a modern strategy for leveraging computational predictions to guide experimental testing, creating a more efficient discovery cycle.

The Scientist's Toolkit: Key Research Reagents & Materials

Table: Essential Materials for In Vitro DMPK and ADMET Assays

| Tool / Reagent | Function / Application | Key Consideration |

|---|---|---|

| Human Liver Microsomes (HLM) | A subcellular fraction used for high-throughput assessment of CYP450-mediated metabolic stability and metabolite identification [2]. | Does not capture non-microsomal enzymes or transporter effects. |

| Primary Human Hepatocytes | Gold-standard cell system for predicting hepatic clearance, enzyme induction, and metabolite profiling; contains full complement of hepatic enzymes and transporters [2] [1]. | Donor variability can be a factor; cryopreserved formats improve accessibility. |

| Caco-2 Cell Line | A human colon carcinoma cell line that, upon differentiation, forms a monolayer mimicking the intestinal epithelium. Used to predict passive transcellular absorption and efflux transporter effects (e.g., P-gp) [2] [3]. | Levels of expressed CYP enzymes are generally lower and more variable than in human intestine [1]. |

| Recombinant CYP Enzymes | Individually expressed human CYP isoforms (e.g., CYP3A4, CYP2D6). Used to identify which specific enzyme is responsible for metabolizing a drug candidate [3]. | Essential for reaction phenotyping and understanding the risk of drug-drug interactions. |

| Transporters (e.g., P-gp, OATP) | Cell-based or vesicle assays expressing specific uptake or efflux transporters. Used to evaluate a drug's potential for transporter-mediated DDIs, tissue distribution, and excretion [2]. | Critical for understanding complex pharmacokinetics beyond metabolism. |

| Organ-on-a-Chip (OOC) / MPS | Advanced microphysiological systems that culture primary human cells under perfused flow to recreate organ-level function (e.g., gut, liver). Used for complex ADME assays like integrated gut-liver bioavailability [1]. | Provides more physiologically relevant human data but can be more complex to operate than traditional assays. |

Important Note: The selection of the appropriate tool depends on the specific ADMET property being investigated, the stage of the drug discovery project, and the balance between throughput and physiological relevance.

In early drug discovery, the evaluation of Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties is fundamental for determining a drug candidate's clinical success. Conventional approaches, including traditional experimental assays and static Quantitative Structure-Activity Relationship (QSAR) models, have long been used for this purpose. However, these methods are fraught with limitations, from being resource-intensive to lacking robustness and generalizability. This technical support document outlines the common challenges faced with these conventional approaches and provides troubleshooting guidance to help scientists navigate and overcome these issues, thereby improving the efficiency and predictive power of ADMET evaluation in early research.

Frequently Asked Questions (FAQs)

1. Why do conventional ADMET assays contribute to high drug attrition rates? Conventional experimental ADMET assays are often conducted later in the drug design process and can struggle to accurately predict human in vivo outcomes. Suboptimal pharmacokinetic profiles and unforeseen toxicity, which are frequently not identified until these resource-intensive assays are run, remain major contributors to clinical failure. Their high cost and labor requirements often mean they are not used exhaustively early on, allowing molecules with poor ADMET properties to advance [5] [6].

2. What is the primary limitation of traditional QSAR and in silico ADMET models? The primary limitation is a lack of robustness and generalizability. Many conventional computational models are trained on limited or homogeneous datasets, causing their performance to degrade significantly when making predictions for novel molecular scaffolds or compounds outside the distribution of their training data. They often operate as "black boxes" with poor interpretability, hindering mechanistic understanding [4] [6].

3. How does data scarcity impact the development of reliable ADMET models? Data scarcity is a fundamental challenge. Experimental ADMET data is often heterogeneous and low-throughput. When models are trained on small or non-diverse datasets that capture only limited sections of the relevant chemical space, they fail to learn the broad structure-property relationships needed for accurate predictions on new compound classes. This data limitation is often a greater bottleneck than the model architecture itself [4].

4. What are the common technical pitfalls in running molecular assays for ADMET? Common pitfalls include insufficient sensitivity (leading to false negatives), insufficient specificity (leading to false positives), and cross-contamination, all of which are often exacerbated by inaccurate liquid handling. Manual workflows introduce human error and inconsistencies, compromising reproducibility. Furthermore, assays are often difficult to scale efficiently without compromising precision [7].

5. How can I improve the reliability of my in silico ADMET predictions? To improve reliability, ensure your model's Applicability Domain (AD) is well-defined and that predictions are interpreted with caution for compounds falling outside it. Leveraging models trained on larger and more diverse datasets, such as through federated learning, can significantly enhance generalizability. Additionally, employing multi-task architectures that learn from overlapping signals across multiple ADMET endpoints can boost overall performance and robustness [4] [8].

Troubleshooting Guides

Problem 1: Poor Generalizability of In-House QSAR Models

Symptoms: Your model performs well on your internal training set but shows significantly degraded accuracy when predicting properties for novel compound series or external datasets.

Possible Causes and Solutions:

| Cause | Solution |

|---|---|

| Limited Data Diversity: The training data covers too narrow a region of chemical space. | Utilize Federated Learning: Participate in or build models using federated learning networks. This approach allows for collaborative training on distributed proprietary datasets from multiple pharmaceutical partners, dramatically expanding the chemical space and diversity the model learns from without sharing raw data [4]. |

| Incorrect Applicability Domain (AD) Assessment: Predictions are made for compounds structurally distant from the training set. | Implement Rigorous AD Checks: Define and apply a strict applicability domain for your models. Use tools like scaffold-based cross-validation during model development to realistically estimate performance on new scaffolds. Always report the AD alongside predictions [8]. |

| Outdated or Simple Model Architecture: Reliance on single-task models or simple QSAR methods. | Adopt Advanced ML Frameworks: Transition to state-of-the-art methods like Graph Neural Networks (GNNs) and multi-task learning (MTL). GNNs better capture complex molecular structures, while MTL allows knowledge from related ADMET tasks to improve prediction accuracy [6]. |

Recommended Experimental Protocol: Model Validation with Scaffold Splitting

- Data Preparation: Curate your dataset and standardize molecular structures.

- Scaffold-Based Splitting: Use a tool like RDKit to identify molecular Bemis-Murcko scaffolds. Split the data into training and test sets such that compounds in the test set have scaffolds not present in the training set.

- Model Training: Train your model on the training set.

- Performance Assessment: Evaluate the model on the scaffold-held-out test set. This provides a more realistic estimate of its performance on truly novel chemotypes [4].
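The splitting step in this protocol can be sketched in a few lines. The example below is a minimal illustration that assumes the Bemis-Murcko scaffold of each compound has already been computed as a string (for instance with RDKit's `MurckoScaffold.MurckoScaffoldSmiles`); the function name `scaffold_split`, the greedy "largest scaffold groups go to training" heuristic, and the placeholder scaffold labels are assumptions made for illustration.

```python
from collections import defaultdict

def scaffold_split(scaffolds, test_fraction=0.2):
    """Split compound indices so that no scaffold appears in both sets.

    scaffolds: one scaffold identifier per compound (e.g., a scaffold SMILES).
    Returns (train_indices, test_indices).
    """
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)

    # Visit the largest scaffold groups first; rarer scaffolds end up in the test set.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    train_capacity = (1.0 - test_fraction) * len(scaffolds)

    train, test = [], []
    for group in ordered:
        if len(train) + len(group) <= train_capacity:
            train.extend(group)
        else:
            test.extend(group)
    return sorted(train), sorted(test)

# Placeholder scaffold labels standing in for real scaffold SMILES
scaffolds = ["A"] * 5 + ["B"] * 3 + ["C", "D"]
train_idx, test_idx = scaffold_split(scaffolds, test_fraction=0.2)
print("train:", train_idx)
print("test:", test_idx)
```

Because whole scaffold groups are assigned together, the realized test fraction only approximates the requested one. That trade-off is usually acceptable in exchange for a test set made up of unseen chemotypes.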

Problem 2: Resource Intensiveness of Experimental ADMET Assays

Symptoms: The ADMET screening process is creating a bottleneck due to high consumption of precious reagents, long timelines, and reliance on animal studies, making it expensive and slow for early-stage lead optimization.

Possible Causes and Solutions:

| Cause | Solution |

|---|---|

| Low-Throughput Experimental Designs: Manual workflows and large-volume assays. | Implement Assay Miniaturization: Use automated, non-contact liquid handlers capable of dispensing nanoliter volumes. This can reduce reagent consumption by up to 50%, conserve precious samples, and significantly lower costs while maintaining data quality [7]. |

| High Compound Requirements: Traditional assays require a non-negligible amount of synthetic material. | Shift to In Silico Triage: Integrate computational ADMET prediction tools at the very beginning of the drug design process. Use platforms for virtual screening to prioritize compounds with a higher probability of favorable ADMET properties before they are synthesized, reducing the wet-lab burden [9] [10]. |

| Lengthy Timelines for In Vivo Toxicity Studies: Animal studies are time-consuming and raise ethical concerns. | Adopt Advanced In Vitro Mechanistic Assays: Incorporate functionally relevant, human-based in vitro assays earlier. For example, use Cellular Thermal Shift Assays (CETSA) to confirm direct target engagement in a physiologically relevant cellular context, de-risking candidates before proceeding to animal studies [9]. |

Recommended Experimental Protocol: Automated High-Throughput Solubility Screening

- Sample Preparation: Use an acoustic or piezo-electric non-contact liquid handler (e.g., I.DOT) to transfer nanoliter volumes of compound DMSO stock solutions into assay plates.

- Buffer Addition: Use the same automated system to add aqueous buffer to induce precipitation.

- Incubation and Reading: Incubate the plates and use an integrated microplate reader to measure turbidity or UV absorption.

- Data Analysis: Automate data processing to classify compounds based on solubility. This miniaturized, automated workflow drastically increases throughput and reduces reagent use compared to manual, milliliter-scale methods [7].

Problem 3: Lack of Interpretability in Complex ML Models

Symptoms: Your deep learning model provides accurate ADMET predictions, but you cannot understand the reasoning behind them, making it difficult to gain scientific insight or guide medicinal chemistry efforts.

Possible Causes and Solutions:

| Cause | Solution |

|---|---|

| "Black-Box" Nature of Models: Complex models like deep neural networks lack inherent interpretability. | Employ Explainable AI (XAI) Techniques: Integrate post-hoc interpretation methods such as SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model-agnostic Explanations) to highlight which molecular substructures or features most influenced the model's prediction for a specific compound [6]. |

| Focus Solely on Prediction Accuracy: The model was developed and selected based only on its numerical accuracy, not its ability to provide insights. | Prioritize Mechanistic Interpretability: During model selection, favor architectures that offer a balance between performance and interpretability. When possible, use models that provide confidence scores or uncertainty estimates for their predictions to guide decision-making [6]. |
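To make the idea behind SHAP-style attribution concrete, the sketch below computes exact Shapley values for a toy model by averaging each feature's marginal contribution over every feature ordering. It does not use the SHAP library, which approximates these values for real models. The toy model, its coefficients, and the feature names are hypothetical and serve only to show how a prediction is decomposed.

```python
from itertools import permutations

def shapley_values(predict, features, baseline):
    """Exact Shapley attributions: mean marginal contribution over all feature orderings."""
    names = list(features)
    totals = {name: 0.0 for name in names}
    orderings = list(permutations(names))
    for order in orderings:
        current = dict(baseline)          # start from the reference compound
        previous = predict(current)
        for name in order:
            current[name] = features[name]  # switch one feature to its actual value
            updated = predict(current)
            totals[name] += updated - previous
            previous = updated
    return {name: totals[name] / len(orderings) for name in names}

def toy_model(x):
    """Hypothetical property model with one interaction term."""
    return (0.5 * x["aromatic_rings"]
            - 0.8 * x["h_bond_donors"]
            + 0.3 * x["aromatic_rings"] * x["halogens"])

compound = {"aromatic_rings": 2, "h_bond_donors": 1, "halogens": 1}
reference = {"aromatic_rings": 0, "h_bond_donors": 0, "halogens": 0}

attributions = shapley_values(toy_model, compound, reference)
for name, value in attributions.items():
    print(f"{name}: {value:+.2f}")
```

The attributions sum to the difference between the prediction for the compound and the prediction for the reference, which is the property that lets a chemist read them as a per-feature breakdown of a single prediction. Exact enumeration scales factorially with the number of features, which is why practical tools rely on approximations.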

Comparative Analysis of Conventional vs. Modern Approaches

The table below summarizes key limitations of conventional approaches and contrasts them with modern solutions.

| Aspect | Conventional Approach & Limitations | Modern Solution & Key Benefits |

|---|---|---|

| Data Foundation | Isolated, limited datasets leading to poor generalization [4]. | Federated Learning across multiple organizations. Expands chemical space coverage without centralizing data [4]. |

| Model Architecture | Static QSAR models and single-task learning [6]. | Graph Neural Networks (GNNs) & Multi-Task Learning (MTL). Captures complex structure and improves accuracy via shared learning [6]. |

| Experiment Throughput | Manual, low-throughput, high-volume assays [7]. | Automation & Miniaturization. Enables high-throughput screening with nanoliter volumes, saving reagents and time [7]. |

| Target Engagement | Indirect or biochemical measures lacking cellular context. | Cellular Thermal Shift Assay (CETSA). Confirms target engagement in a physiologically relevant cellular environment [9]. |

| Model Interpretability | "Black-box" models with little insight [6]. | Explainable AI (XAI) and Applicability Domain (AD). Provides reasoning for predictions and defines model boundaries [6] [8]. |

Workflow and Relationship Visualizations

ADMET Model Improvement Pathway

This diagram illustrates the strategic pathway for transitioning from limited, conventional ADMET models to robust, next-generation predictive tools.

Experimental ADMET Workflow Optimization

This workflow contrasts the traditional, resource-intensive ADMET screening process with an optimized, AI-integrated modern approach.

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential tools and technologies for implementing modernized ADMET prediction and screening workflows.

| Tool / Technology | Function in ADMET Research |

|---|---|

| Automated Non-Contact Liquid Handler (e.g., I.DOT) | Enables assay miniaturization by precisely dispensing nanoliter volumes, reducing reagent use and increasing throughput while minimizing cross-contamination [7]. |

| Cellular Thermal Shift Assay (CETSA) | Investigates target engagement by measuring the thermal stabilization of a protein target upon ligand binding in a physiologically relevant cellular or tissue context, bridging the gap between biochemical potency and cellular efficacy [9]. |

| Graph Neural Networks (GNNs) | A class of deep learning models that operate directly on molecular graph structures, capturing the complex relationships between atoms and bonds to improve ADMET property prediction [6]. |

| Federated Learning Platform (e.g., Apheris) | Provides a secure framework for multiple institutions to collaboratively train machine learning models on distributed private datasets without data sharing, overcoming data scarcity and improving model generalizability [4]. |

| Applicability Domain (AD) Assessment Tools | Methods and software (e.g., in VEGA, ADMETLab) that evaluate whether a new compound is within the chemical space a QSAR/ML model was trained on, crucial for assessing prediction reliability [8]. |

Frequently Asked Questions

Q1: Why do my ADMET models perform well in validation but fail on new compound series? This is a classic symptom of the data diversity problem. Models are often trained on public datasets that have limited structural diversity or are biased toward specific chemotypes. When you introduce a new scaffold that is not well-represented in the training data, the model operates outside its "applicability domain," and predictions become unreliable [11] [12]. The model has no nearby reference compounds from which to make a prediction.

Q2: How can I quickly check if a compound is within my model's applicability domain? A common and effective method is to calculate the Tanimoto similarity between your query compound and the nearest neighbor in the model's training set. The versatile Nearest Neighbor (vNN) method, for instance, uses a predefined similarity threshold (e.g., based on ECFP4 fingerprints). If no compound in the training set meets this similarity criterion, the model should refrain from making a prediction, thus alerting you to the coverage issue [11].

Q3: What are the main sources of data variability that harm model performance? The primary sources of variability that create a "noisy" dataset include [12]:

- Experimental Conditions: The same property (e.g., aqueous solubility) can yield different results under different buffer conditions, pH levels, or laboratory procedures.

- Data Origin: Merging data from different sources (e.g., ChEMBL, PubChem) without accounting for systematic differences in experimental protocols.

- Structural Bias: Historical datasets are often over-represented with certain successful drug-like scaffolds and lack diversity, providing poor coverage of novel chemical space.
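A simple screen for the first two sources of variability is to group measurements by compound, source, and condition before merging, and to flag compounds whose group means disagree. The sketch below assumes records arrive as `(compound_id, source, condition, value)` tuples; the record layout, the example log-solubility values, and the 0.5 log-unit tolerance are hypothetical choices for illustration.

```python
from collections import defaultdict
from statistics import mean

def find_conflicting_compounds(records, tolerance):
    """Return {compound_id: {(source, condition): mean_value}} for compounds
    whose per-group means differ by more than `tolerance`."""
    by_compound = defaultdict(lambda: defaultdict(list))
    for compound_id, source, condition, value in records:
        by_compound[compound_id][(source, condition)].append(value)

    conflicts = {}
    for compound_id, groups in by_compound.items():
        means = {key: mean(values) for key, values in groups.items()}
        spread = max(means.values()) - min(means.values())
        if len(means) > 1 and spread > tolerance:
            conflicts[compound_id] = means
    return conflicts

# Hypothetical log-solubility records merged from two sources
records = [
    ("cpd1", "ChEMBL", "pH 7.4", -3.0),
    ("cpd1", "PubChem", "pH 2.0", -1.5),
    ("cpd2", "ChEMBL", "pH 7.4", -4.0),
    ("cpd2", "PubChem", "pH 7.4", -4.2),
]

for compound_id, means in find_conflicting_compounds(records, tolerance=0.5).items():
    print(compound_id, means)
```

Flagged compounds are candidates for manual review or for being kept as separate, condition-specific data points instead of being averaged into one label.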

Q4: Are there public benchmarks that address the data diversity problem? Yes, next-generation benchmarks are being developed to tackle this. PharmaBench is one such effort, created by using a large-language-model (LLM) based system to meticulously extract and standardize experimental conditions from over 14,000 bioassays. This process results in a larger and more consistent dataset designed to be more representative of compounds used in real drug discovery projects [12].

Troubleshooting Guides

Problem: Inconsistent Predictions for Structurally Similar Compounds

| Potential Cause | Diagnostic Steps | Solution |

|---|---|---|

| Inconsistent training data due to merged results from different experimental assays [12]. | 1. Check the source of the experimental data for your compounds. 2. Trace back the original publications or assay descriptions for methodological details. | Use data curation pipelines, like the one used for PharmaBench, that identify and standardize experimental conditions before model training [12]. |

| Model operating at the edge of its applicability domain [11]. | Calculate the similarity distance of the problematic compounds to the model's training set. You will likely find they are on the periphery. | Use a model with a defined applicability domain that warns you when a prediction is not reliable. Consider generating new experimental data for these chemotypes to expand the training set [11]. |

Problem: Model Fails to Generalize to Novel Scaffolds

| Potential Cause | Diagnostic Steps | Solution |

|---|---|---|

| Training set lacks structural diversity and is clustered in specific regions of chemical space [13]. | Perform a principal component analysis (PCA) or t-SNE visualization of your training set versus the novel scaffolds you are testing. | Integrate data from multiple consolidated sources like PharmaBench or use the vNN platform to rapidly update your model with new assay data without full retraining [11] [12]. |

| Over-reliance on small, legacy benchmark datasets such as ESOL (n=1,128), whose compounds have lower molecular weights than those in modern drug discovery projects [12]. | Compare the molecular weight and other properties of your compounds to the training set's average. | Switch to larger, more modern benchmarks. For example, PharmaBench contains 52,482 entries with molecular weights more typical of drug discovery projects (300-800 Da) [12]. |

Data and Experimental Protocols

Table 1: Comparison of ADMET Dataset Scales and Properties

This table highlights the scale and scope of different data resources, underscoring the data diversity challenge.

| Dataset Name | Key ADMET Properties Covered | Number of Entries | Key Characteristics & Limitations |

|---|---|---|---|

| PharmaBench [12] | 11 key properties (e.g., Solubility, Permeability, CYP inhibition) | 52,482 | Created by processing 14,401 bioassays; designed for industrial drug discovery (MW 300-800). |

| MoleculeNet [12] | 17 properties across physical chemistry and physiology | >700,000 | A broad collection, but some specific datasets (e.g., ESOL) are small (n=1,128) and contain lighter compounds (avg. MW 203.9). |

| admetSAR 2.0 Models [14] | 18 binary and continuous endpoints (e.g., Ames, HIA, P-gp) | Varies by endpoint (e.g., 8,348 for Ames mutagenicity) | A widely used web server; the associated ADMET-score integrates these 18 properties into a single drug-likeness index. |

Table 2: The ADMET-Score Components and Weights

This scoring function helps evaluate the overall drug-likeness of a compound by integrating multiple ADMET predictions [14].

| Endpoint | Property Type | Dataset Size (Positive/Negative) | Model Accuracy |

|---|---|---|---|

| Ames mutagenicity | Toxicity | 4866 / 3482 | 0.843 |

| Human Intestinal Absorption (HIA) | Absorption | 500 / 78 | 0.965 |

| P-glycoprotein Inhibitor (P-gpi) | Distribution | 1172 / 771 | 0.861 |

| CYP2D6 Inhibitor | Metabolism | 3060 / 11681 | 0.855 |

| hERG Inhibitor | Toxicity | 717 / 261 | 0.804 |

| Caco-2 Permeability | Absorption | 303 / 371 | 0.768 |

| Acute Oral Toxicity | Toxicity | — | 0.832 |
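When reading the accuracies in Table 2, it can help to compare each one with the accuracy a trivial majority-class predictor would reach on the same dataset. The sketch below does that arithmetic using the positive/negative counts and accuracies listed in the table; the comparison itself is an illustrative addition and is not part of the ADMET-score definition.

```python
# (positives, negatives, reported accuracy) taken from Table 2
ENDPOINTS = {
    "Ames mutagenicity": (4866, 3482, 0.843),
    "Human Intestinal Absorption (HIA)": (500, 78, 0.965),
    "P-glycoprotein Inhibitor (P-gpi)": (1172, 771, 0.861),
    "CYP2D6 Inhibitor": (3060, 11681, 0.855),
    "hERG Inhibitor": (717, 261, 0.804),
    "Caco-2 Permeability": (303, 371, 0.768),
}

def majority_baseline(positives, negatives):
    """Accuracy obtained by always predicting the more frequent class."""
    return max(positives, negatives) / (positives + negatives)

for name, (pos, neg, accuracy) in ENDPOINTS.items():
    baseline = majority_baseline(pos, neg)
    print(f"{name}: baseline {baseline:.3f}, reported {accuracy:.3f}, "
          f"gain {accuracy - baseline:+.3f}")
```

On imbalanced endpoints a high raw accuracy can correspond to a smaller gain over the baseline than a lower accuracy on a balanced endpoint, so the two numbers are best read together.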

Experimental Protocol: Implementing a vNN-based ADMET Prediction

The following methodology details how to use the versatile Nearest Neighbor (vNN) approach for making reliable predictions within a defined applicability domain [11].

- Input Molecule Preparation: Provide the query molecule(s) by drawing the structure, entering the canonical SMILES string, or uploading a file in `.csv` or `.txt` format with columns labeled `NAME` and `SMILES` [11].

- Fingerprint Generation: The system will automatically compute the ECFP4 (Extended-Connectivity Fingerprints with a diameter of 4 bonds) for the query molecule. These fingerprints capture meaningful molecular features and are used for similarity calculations [11].

- Similarity Calculation & Neighbor Selection: For the query molecule, the system calculates the Tanimoto distance to every molecule in the model's training set. The Tanimoto distance is defined as:

$$d = 1 - \frac{n(P \cap Q)}{n(P) + n(Q) - n(P \cap Q)}$$

where $n(P \cap Q)$ is the number of features common to molecules $P$ and $Q$, and $n(P)$ and $n(Q)$ are the total numbers of features in each molecule. All neighbors with a distance $d_i$ less than or equal to a pre-optimized threshold $d_0$ are selected [11].

- Applicability Domain Check: If no neighbors are found within the $d_0$ threshold, the model returns no prediction, ensuring reliability. The proportion of test molecules that pass this check is the model's coverage [11].

- Weighted Prediction: For molecules within the applicability domain, a weighted average of the neighbors' experimental activities is computed. The weight for each neighbor $i$ is given by $e^{-(d_i/h)^2}$, where $h$ is a smoothing factor. The final predicted activity $y$ is [11]:

$$y = \frac{\sum_{i:\, d_i \le d_0} y_i \, e^{-(d_i/h)^2}}{\sum_{i:\, d_i \le d_0} e^{-(d_i/h)^2}}$$
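The distance, applicability domain check, and weighted prediction described in this protocol can be sketched as follows. The example represents each fingerprint as a Python set of feature identifiers instead of computing real ECFP4 fingerprints, and the threshold `d0`, smoothing factor `h`, and training data are hypothetical values chosen for illustration, not the optimized parameters of the vNN platform.

```python
import math

def tanimoto_distance(p, q):
    """d = 1 - n(P & Q) / (n(P) + n(Q) - n(P & Q)) for two feature sets."""
    common = len(p & q)
    union = len(p) + len(q) - common
    if union == 0:
        return 1.0  # assumption: two empty fingerprints are treated as maximally distant
    return 1.0 - common / union

def vnn_predict(query, training, d0, h):
    """Weighted average over neighbors with d_i <= d0; None if outside the applicability domain.

    training: list of (feature_set, experimental_activity) pairs.
    """
    neighbors = []
    for features, activity in training:
        d = tanimoto_distance(query, features)
        if d <= d0:
            neighbors.append((activity, math.exp(-((d / h) ** 2))))
    if not neighbors:
        return None  # no qualifying neighbor, so no prediction is returned
    weighted_sum = sum(activity * weight for activity, weight in neighbors)
    return weighted_sum / sum(weight for _, weight in neighbors)

# Hypothetical training set: feature sets with binary activities
training = [
    ({1, 2, 3, 4}, 1.0),
    ({1, 2, 3, 5}, 0.0),
    ({7, 8, 9}, 1.0),
]

print(vnn_predict({1, 2, 3, 4}, training, d0=0.5, h=0.4))  # inside the domain
print(vnn_predict({10, 11}, training, d0=0.5, h=0.4))      # outside the domain
```

Tightening `d0` raises the similarity required of a neighbor and therefore lowers coverage, while `h` controls how quickly a neighbor's influence decays with distance.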

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for ADMET Modeling

| Tool / Resource | Function in Addressing Data Diversity |

|---|---|

| ECFP4 Fingerprints | A method to convert molecular structure into a numerical fingerprint, enabling quantitative similarity searches and defining the applicability domain [11]. |

| Tanimoto Distance | A standard metric for quantifying the structural similarity between two molecules based on their fingerprints, crucial for the vNN method [11]. |

| Multi-Agent LLM System | An advanced data curation tool (e.g., using GPT-4) that automatically extracts and standardizes experimental conditions from thousands of assay descriptions, enabling the creation of robust datasets like PharmaBench [12]. |

| ADMET-Score | A comprehensive scoring function that integrates 18 predicted ADMET properties into a single value, providing a holistic view of a compound's drug-likeness and helping to triage candidates [14]. |

Workflow Diagrams

Diagram Title: Data Curation to Reliable Prediction Workflow

Diagram Title: vNN Applicability Domain Logic

Technical Support Center: Troubleshooting ML-Driven ADMET Prediction

Frequently Asked Questions (FAQs)

Q1: My ML model for toxicity prediction performs well on internal data but fails on novel chemical scaffolds. How can I improve its generalizability?

A: This is a common issue known as model degradation, often caused by limited chemical diversity in your training set. To address this:

- Utilize Federated Learning: Participate in or establish a federated learning network. This approach allows you to collaboratively train models with other institutions, expanding the chemical space your model learns from without sharing proprietary data. Federation has been shown to systematically improve model robustness and expand applicability domains [4].

- Implement Rigorous Data Curation: Before training, slice your data by scaffold and assay type to understand modelability. Use scaffold-based cross-validation, not random splits, to better simulate performance on truly novel compounds [4].

- Adopt Advanced Architectures: Move beyond simple QSAR models. Use graph neural networks (GNNs) or multi-task learning frameworks that can capture complex structure-property relationships more effectively, leading to better generalization [6] [15].
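
The scaffold-based splitting recommended above can be sketched as a grouped split: every molecule sharing a scaffold lands on the same side of the train/test boundary. This is a minimal sketch that assumes the scaffold identifiers (for example, Bemis-Murcko scaffold SMILES) were computed beforehand with a toolkit such as RDKit; the function name `scaffold_split` and the example scaffolds are hypothetical.

```python
import random
from collections import defaultdict


def scaffold_split(scaffolds, test_fraction=0.2, seed=0):
    """Split sample indices so that no scaffold appears in both train and test.

    `scaffolds` holds one precomputed scaffold identifier per molecule.
    """
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)

    # Shuffle scaffold groups reproducibly, then fill the test set group by group.
    keys = sorted(groups)
    random.Random(seed).shuffle(keys)
    target = test_fraction * len(scaffolds)
    train, test = [], []
    for key in keys:
        # Whole groups are assigned at once, so the test set may slightly exceed the target.
        (test if len(test) < target else train).extend(groups[key])
    return sorted(train), sorted(test)


scaffolds = ["c1ccccc1", "c1ccccc1", "c1ccncc1", "C1CCCCC1", "c1ccncc1", "c1ccoc1"]
train_idx, test_idx = scaffold_split(scaffolds, test_fraction=0.3)
print("train:", train_idx, "test:", test_idx)
```

Because the test scaffolds are never seen during training, the resulting score is a more honest estimate of performance on new chemotypes than a random split would give.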

Q2: How can I address the "black box" problem of deep learning models to gain insights for lead optimization?

A: Improving model interpretability is crucial for scientific validation and guiding chemistry efforts.

- Leverage Explainable AI (XAI) Techniques: Employ methods like SHAP or LIME to attribute predictions to specific molecular features or substructures. This helps you understand which chemical groups contribute to a favorable or unfavorable ADMET profile [6].

- Incorporate Interpretable Representations: Use models that combine learned representations (like Mol2Vec embeddings) with a curated set of known physicochemical descriptors (e.g., molecular weight, logP). This blends the power of deep learning with the intuitiveness of classical descriptors [15].

- Explore Symbolic Regression: Emerging methods like Deep Generative Symbolic Regression aim to discover concise, closed-form mathematical equations from data, offering inherent interpretability [16].

Q3: Our experimental ADMET data is heterogeneous and low-throughput. How can we build reliable models with such sparse data?

A: Sparse, heterogeneous data is a key challenge in pharmacology. Modern ML offers several strategies:

- Apply Multi-Task Learning (MTL): Train a single model to predict multiple ADMET endpoints simultaneously. MTL allows the model to learn shared representations across related tasks, which acts as a form of regularization and improves performance on tasks with limited data [6] [15].

- Use Pre-trained Models and Fine-Tuning: Start with a model pre-trained on a large, public chemical database. Fine-tune this model on your smaller, proprietary dataset. This transfer learning approach can significantly boost performance with limited data [4] [15].

- Integrate Expert Knowledge: Hybrid models that combine neural networks with expert-defined ordinary differential equations can perform well in small-sample regimes. This incorporates established pharmacological principles to guide the learning process [16].

Troubleshooting Guides

Issue: Model Performance is Poor or Unreliable

| Step | Action & Description | Key Tool/Code (if applicable) |

|---|---|---|

| 1 | Audit Data Quality & Diversity: Check for data imbalance, assay consistency, and sufficient coverage of the chemical space relevant to your project. | Use internal data sanity checks and chemical clustering tools. |

| 2 | Validate Model Generalization: Ensure you are not overfitting. Use scaffold-based splits for cross-validation, not random splits. | `from sklearn.model_selection import GroupKFold` (scaffold IDs as groups), `PredefinedSplit`, or similar. |

| 3 | Benchmark Against Null Models: Compare your model's performance against simple baselines (e.g., predicting the mean) to confirm it has learned meaningful patterns [4]. | Implement statistical significance tests (e.g., t-test) on performance distributions. |

| 4 | Check Feature Representation: Experiment with different molecular featurization methods (e.g., ECFP fingerprints, graph representations, Mordred descriptors) to find the most informative one for your endpoint [15]. | `from rdkit.Chem import AllChem`; `from mordred import Calculator, descriptors` |
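
As an illustration of the null-model benchmark in step 3, the sketch below compares a model's test RMSE with that of a baseline that always predicts the training-set mean. The helper names and the numeric values are hypothetical and serve only to show the comparison; a real benchmark would repeat this over several seeds and folds.

```python
import math
import statistics


def rmse(y_true, y_pred):
    """Root mean squared error between two equally long sequences."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def compare_to_mean_baseline(y_train, y_test, y_pred):
    """Return (model RMSE, null-model RMSE); the null model predicts the training mean."""
    baseline_value = statistics.fmean(y_train)
    null_rmse = rmse(y_test, [baseline_value] * len(y_test))
    return rmse(y_test, y_pred), null_rmse


# Hypothetical log-solubility values, for illustration only.
y_train = [-2.0, -3.0, -4.0, -3.0]
y_test = [-2.5, -3.5, -4.5]
y_pred = [-2.7, -3.4, -4.1]
model_rmse, null_rmse = compare_to_mean_baseline(y_train, y_test, y_pred)
print(f"model RMSE={model_rmse:.3f}, null RMSE={null_rmse:.3f}")
```

A model whose error is not clearly below the null-model error has not learned a useful structure-property relationship for that endpoint.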

Issue: Model is Not Accepted by Regulatory or Internal Safety Standards

| Step | Action & Description | Key Tool/Code (if applicable) |

|---|---|---|

| 1 | Enhance Interpretability: Integrate model explanation tools to provide mechanistic insights and justify predictions. | Use libraries like SHAP or LIME to generate feature importance plots. |

| 2 | Ensure Rigorous Validation: Follow regulatory-endorsed validation principles. Perform extensive external validation on held-out compounds that are structurally distinct from your training set. | Refer to FDA/EMA guidelines on computational model validation. |

| 3 | Document the Workflow Meticulously: Maintain a clear record of data provenance, model architecture, hyperparameters, and all validation results to build a compelling case for model credibility. | - |

Experimental Protocols & Data Presentation

Protocol 1: Implementing a Multi-Task Deep Learning Model for ADMET Prediction

This protocol outlines the steps for building a model that predicts multiple ADMET endpoints simultaneously, improving data efficiency and prediction consistency [6] [15].

- Data Collection & Curation:

- Gather datasets for the desired ADMET endpoints (e.g., solubility, CYP450 inhibition, hERG liability).

- Standardize molecular structures (e.g., using RDKit) and handle missing values.

- Crucially, slice data by molecular scaffold and perform a "modelability" analysis to assess the inherent predictability of each endpoint [4].

- Molecular Featurization:

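- Convert each molecule into a numerical representation. We recommend a hybrid approach that combines learned representations (e.g., Mol2Vec embeddings) with curated physicochemical descriptors (e.g., molecular weight, logP) [15].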

- Model Architecture & Training:

- Architecture: Design a neural network with shared hidden layers (for common feature learning) and task-specific output heads (for individual endpoint prediction).

- Training: Use a combined loss function (e.g., weighted sum of mean squared error for regression tasks and cross-entropy for classification tasks). Train with mini-batch stochastic gradient descent.

- Model Validation:

- Employ scaffold-based cross-validation: Split data so that molecules with the same Bemis-Murcko scaffold are in the same fold. This tests generalization to new chemotypes [4].

- Benchmarking: Compare your multi-task model against single-task models and established baselines. Use multiple random seeds and folds to report a distribution of results, not just a single score [4].
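
The combined loss described under Model Architecture & Training can be sketched without any deep learning framework. The example below computes a weighted sum of a regression loss (MSE) and a classification loss (binary cross-entropy). Skipping molecules whose label is missing for a given task (marked `None`) is an assumption added here to reflect the sparse label matrices typical of multi-task ADMET data; the task names and weights are hypothetical.

```python
import math


def mse(y_true, y_pred):
    """Mean squared error over the molecules that have a label for this task."""
    pairs = [(t, p) for t, p in zip(y_true, y_pred) if t is not None]
    return sum((t - p) ** 2 for t, p in pairs) / len(pairs)


def binary_cross_entropy(y_true, p_pred, eps=1e-7):
    """Binary cross-entropy over labelled molecules; probabilities are clipped for stability."""
    pairs = [(t, min(max(p, eps), 1 - eps)) for t, p in zip(y_true, p_pred) if t is not None]
    return -sum(t * math.log(p) + (1 - t) * math.log(1 - p) for t, p in pairs) / len(pairs)


def combined_loss(task_losses, task_weights):
    """Weighted sum of per-task losses."""
    return sum(task_weights[name] * loss for name, loss in task_losses.items())


# Three molecules; each task is missing one label (None).
losses = {
    "solubility": mse([-3.0, None, -4.0], [-2.5, -1.0, -4.5]),
    "herg": binary_cross_entropy([1, 0, None], [0.8, 0.1, 0.5]),
}
total = combined_loss(losses, {"solubility": 1.0, "herg": 0.5})
print(losses, total)
```

In a real training loop the same arithmetic would be expressed with differentiable tensor operations so that gradients flow back through the shared layers.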

Diagram: Multi-Task Learning Workflow for ADMET Prediction

Protocol 2: Setting Up a Federated Learning Cycle for Cross-Organizational Model Training

This protocol enables collaborative model improvement on distributed private datasets [4].

- Network Initialization:

- Each participant (e.g., a pharma company) is a node in the network with its own private ADMET dataset.

- A central coordinator initializes a global ML model (e.g., a GNN) and defines the training protocol.

- Federated Learning Cycle:

- Step 1 - Distribution: The coordinator sends the current global model to a subset of participants.

- Step 2 - Local Training: Each participant trains the model on their local data for a set number of epochs.

- Step 3 - Update Transmission: Participants send their model updates (e.g., weight deltas or gradients) back to the coordinator. Crucially, raw data never leaves the local site.

- Step 4 - Aggregation: The coordinator aggregates these updates (e.g., using Federated Averaging) to create an improved global model.

- Iteration and Validation:

- Repeat the cycle for multiple rounds.

- Periodically evaluate the performance of the updated global model on held-out validation sets provided by the participants.
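
One round of the cycle above can be sketched in plain Python. The sketch assumes that models are flat lists of parameters, that clients return full updated weights rather than gradients, and that aggregation is weighted by local dataset size. `toy_local_train` is a hypothetical stand-in for real local training.

```python
def federated_average(client_weights, client_sizes):
    """Sample-size-weighted average of client parameter vectors (FedAvg-style)."""
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(n_params)
    ]


def run_round(global_weights, clients, local_train):
    """One cycle: distribute, train locally, aggregate. Raw data stays with each client."""
    updates, sizes = [], []
    for data in clients:
        updates.append(local_train(list(global_weights), data))
        sizes.append(len(data))
    return federated_average(updates, sizes)


def toy_local_train(weights, data):
    # Hypothetical local training: move the single parameter halfway to the local mean.
    local_mean = sum(data) / len(data)
    return [weights[0] + 0.5 * (local_mean - weights[0])]


clients = [[1.0, 1.0, 1.0], [4.0]]  # two participants with private toy datasets
new_global = run_round([0.0], clients, toy_local_train)
print(new_global)
```

Only the parameter lists cross the organizational boundary in `run_round`; the coordinator never sees the contents of `clients`.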

Diagram: Federated Learning Process

Quantitative Performance Data

Table 1: Comparative Performance of ML Approaches on Key ADMET Endpoints [6] [4]

| ADMET Endpoint | Traditional QSAR | Single-Task Deep Learning | Multi-Task / Federated Deep Learning | Key Benefit |

|---|---|---|---|---|

| Human Liver Microsomal Clearance | Limited generalizability | Improved accuracy | 40-60% reduction in prediction error [4] | Better in vitro-in vivo extrapolation |

| Solubility (KSOL) | Struggles with complex scaffolds | Good with sufficient data | Higher accuracy on novel chemotypes [4] | Improved formulation guidance |

| hERG Cardiotoxicity | High false negative rate | More sensitive | Increased robustness & applicability domain [6] [4] | Reduced late-stage cardiac attrition |

| CYP450 Inhibition | Based on static descriptors | Captures complex patterns | Superior in predicting drug-drug interactions [15] | Enhanced clinical safety profile |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for ML-Driven ADMET Research

| Tool / Resource Name | Type | Primary Function |

|---|---|---|

| Therapeutics Data Commons (TDC) [17] | Software/Database | Provides curated, unified datasets and benchmarks for various ADMET and drug discovery tasks. |

| Chemprop [15] | Software | A message-passing neural network specifically designed for molecular property prediction, supporting multi-task learning. |

| RDKit [15] | Software | Open-source cheminformatics toolkit used for molecule standardization, descriptor calculation, and fingerprint generation. |

| Apheris Federated ADMET Network [4] | Platform | A commercial platform enabling pharmaceutical companies to collaboratively train ADMET models using federated learning. |

| Mol2Vec [15] | Algorithm | An unsupervised method for learning vector representations of molecular substructures, analogous to Word2Vec in NLP. |

| Receptor.AI ADMET Model [15] | Service/Model | A commercial ADMET prediction service using a multi-task model with Mol2Vec embeddings and curated descriptors. |

| SHAP (SHapley Additive exPlanations) | Library | A game-theoretic approach to explain the output of any machine learning model, crucial for interpreting "black box" models. |

| Federated Averaging Algorithm [4] | Algorithm | The core algorithm used in federated learning to aggregate model updates from distributed clients into a central model. |

A Practical Guide to Modern ML Techniques for ADMET Prediction

Frequently Asked Questions (FAQs)

Q1: Why should I use a Graph Neural Network over traditional descriptors for ADMET prediction? Traditional models rely on pre-calculated molecular descriptors, which can be a simplified representation and may not capture all features relevant to complex ADMET properties [18]. GNNs directly learn from the molecular graph structure (atoms as nodes, bonds as edges), inherently capturing important topological information that can lead to more accurate predictions and bypass the need for computationally expensive descriptor retrieval and selection [18].

Q2: My ensemble model is not performing better than my single best model. What could be wrong? Ensemble methods, including bagging and boosting, do not always guarantee better performance [19]. This can happen if the base models in your ensemble lack diversity and make correlated errors, if you are using the wrong ensemble method for your problem (e.g., using bagging with consistently biased models), or if the ensemble is overfitting the training data despite techniques like bootstrap sampling [20] [19]. Ensuring model diversity and selecting the appropriate ensemble strategy is crucial.

Q3: In Multi-Task Learning, how do I decide the weights for combining losses from different tasks? There is no one-size-fits-all answer. A simple start is a weighted sum of losses, where weights can be fixed based on domain knowledge or task importance [21]. More advanced, automated methods include uncertainty weighting, where the weight for each task's loss is dynamically learned based on the task's inherent uncertainty [22]. Another strategy is to adjust weights dynamically based on validation performance, reducing the weight for tasks where accuracy is high to focus the model on harder tasks [21].

Q4: What does "task relatedness" mean in Multi-Task Learning, and why is it important? Task relatedness means that the tasks trained together share common underlying factors or features that the model can learn and leverage [22]. For example, predictions of inhibition for different cytochrome P450 enzymes (CYP2C9, CYP2C19, etc.) are related tasks because they all relate to metabolic clearance [18]. Training on related tasks acts as a form of regularization, improving the model's generalization. Using unrelated tasks can lead to negative transfer, where performance on one or more tasks degrades due to interference from the others [22].

Troubleshooting Guides

Graph Neural Networks for Molecular Property Prediction

| Problem | Possible Cause | Solution |

|---|---|---|

| Poor generalization to new molecular scaffolds | Overfitting on small training datasets or over-smoothing where node features become too similar after many GNN layers. | Incorporate regularization like dropout (e.g., 50%) within GNN layers [18] [23]. Reduce the number of GNN layers to capture a more local neighborhood instead of the entire graph. |

| Model fails to capture key functional groups | The GNN's message-passing range is too limited, or node features lack crucial chemical information. | Increase the number of GNN layers to allow information to propagate from more distant atoms. Enrich node feature vectors with atomic properties like hybridization, formal charge, and whether the atom is in a ring [18]. |

| High computational cost and long training times | The molecular graphs are large or the GNN architecture is complex. | Utilize mini-batching of graphs during training. Consider simplifying the model architecture or sampling neighbor nodes during message passing. |

Ensemble Methods in ADMET Modeling

| Problem | Possible Cause | Solution |

|---|---|---|

| High computational and memory resources | Ensemble methods require training and storing multiple models. | Use weaker but faster base models (e.g., shallow decision trees). For inference, use model distillation to compress the ensemble into a single, smaller model. |

| No significant improvement over a single model | Lack of diversity among base models; they all make similar errors. | Introduce diversity by using different algorithms (e.g., SVM, RF, NNET), different subsets of features, or different subsets of training data (bagging) [20] [24]. |

| Ensemble performance is biased or unfair | Bias in the training data can be amplified and perpetuated by the ensemble. | Apply fairness-aware metrics and preprocessing techniques to the training data before building the ensemble models [20]. |

Multi-Task Learning for Joint ADMET Endpoint Prediction

| Problem | Possible Cause | Solution |

|---|---|---|

| One task dominates the training, hurting performance on others | The loss magnitude of one task is much larger than others, causing the optimizer to prioritize it. | Implement a dynamic loss balancing strategy, such as uncertainty weighting, to automatically scale the contribution of each task's loss [22] [25]. |

| Negative transfer: Performance is worse than single-task models | The tasks are not sufficiently related and are interfering with each other. | Conduct a pre-training analysis of task relationships. Architectures with soft parameter sharing (separate models with regularized parameters) can be more robust to unrelated tasks than hard parameter sharing [22]. |

| Difficulty in interpreting which features are important for which task | The shared layers in MTL make it non-trivial to attribute predictions to specific tasks. | Use model interpretability techniques like attention mechanisms to identify which molecular substructures the model deems important for each specific ADMET task [26]. |

Experimental Protocols & Data Presentation

Protocol 1: Implementing an Attention-based GNN for ADMET Prediction

This protocol is based on a study that used an attention-based GNN to predict properties like lipophilicity and CYP450 inhibition [18].

- Molecular Graph Construction: Convert SMILES strings into molecular graphs. Each atom is a node, and each bond is an edge.

- Node Features: Create a feature vector for each atom. Include atomic number, formal charge, hybridization, and whether it is in a ring (see Table 1) [18].

- Adjacency Matrices: Create multiple adjacency matrices to represent different bond types: single (A2), double (A3), triple (A4), and aromatic (A5), in addition to the total bond matrix (A1) [18].

- Model Architecture:

- Use Graph Attention (GAT) layers to update node representations. These layers allow a node to assign different importance to its neighbors [18].

- After several message-passing layers, perform a global pooling (e.g., sum or mean) to get a graph-level representation for the entire molecule.

- Pass this representation through fully connected layers to produce the final prediction (regression or classification).

- Training: Use a five-fold cross-validation strategy to robustly evaluate model performance. Use task-appropriate loss functions (Mean Squared Error for regression, Cross-Entropy for classification) [18].
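
The bond-type adjacency matrices from the graph construction step can be sketched as follows. The input format (a list of `(atom_i, atom_j, bond_type)` tuples) and the function name are assumptions made for illustration. In practice the bond list would be extracted from a SMILES string with a toolkit such as RDKit, and the matrices would be stored as tensors.

```python
BOND_TYPES = ["single", "double", "triple", "aromatic"]


def build_adjacency_matrices(n_atoms, bonds):
    """Return {"A1": total, "A2": single, "A3": double, "A4": triple, "A5": aromatic}.

    `bonds` is a list of (atom_i, atom_j, bond_type) tuples.
    """
    names = ["A1"] + [f"A{k + 2}" for k in range(len(BOND_TYPES))]
    matrices = {name: [[0] * n_atoms for _ in range(n_atoms)] for name in names}
    for i, j, bond_type in bonds:
        typed = matrices[f"A{BOND_TYPES.index(bond_type) + 2}"]
        for matrix in (matrices["A1"], typed):
            matrix[i][j] = matrix[j][i] = 1  # undirected graph: symmetric entries
    return matrices


# Acetaldehyde heavy atoms (CC=O): atom 0 (C), atom 1 (C), atom 2 (O).
adj = build_adjacency_matrices(3, [(0, 1, "single"), (1, 2, "double")])
for name, matrix in adj.items():
    print(name, matrix)
```

Keeping one matrix per bond type lets the attention layers weight messages passed along single, double, triple, and aromatic bonds differently.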

Protocol 2: Building an Adaptive Ensemble for ADMET Classification

This protocol is inspired by the Adaptive Ensemble Classification Framework (AECF) designed for unbalanced ADME data [24].

- Data Balancing: Given a dataset with a high imbalance ratio (IR), first apply a sampling method (e.g., SMOTE for oversampling, or random undersampling) to create multiple balanced training subsets [24].

- Generate Individual Models: On each balanced subset, train a diverse set of base classifiers (e.g., Support Vector Machine, Random Forest, Artificial Neural Networks). A Genetic Algorithm (GA) can be used to select optimal features for each model [24].

- Combine Models: Instead of simple majority voting, use an optimized ensemble rule. The AECF framework uses an adaptive procedure that selects individual models based on both their accuracy and diversity to create a final, robust ensemble model [24].
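
The data balancing step can be illustrated with random undersampling, one of the sampling options mentioned above. This is a minimal sketch, not the AECF implementation [24]: it assumes binary 0/1 labels, and the function name and toy labels are hypothetical.

```python
import random


def balanced_subsets(labels, n_subsets=3, seed=0):
    """Build balanced index subsets by randomly undersampling the majority class.

    Every subset keeps all minority-class samples and draws an equally sized
    random sample of the majority class (binary labels 0/1 assumed).
    """
    rng = random.Random(seed)
    positives = [i for i, y in enumerate(labels) if y == 1]
    negatives = [i for i, y in enumerate(labels) if y == 0]
    minority, majority = sorted((positives, negatives), key=len)
    return [
        sorted(minority + rng.sample(majority, len(minority)))
        for _ in range(n_subsets)
    ]


labels = [1, 0, 0, 0, 0, 1, 0, 0, 0, 0]  # toy data with a 4:1 imbalance ratio
for subset in balanced_subsets(labels):
    print(subset, [labels[i] for i in subset])
```

Each subset would then be used to train one or more base classifiers, so the majority class is covered across the ensemble even though each individual model sees only part of it.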

Table 1: Performance Comparison of Ensemble Methods on ADMET Datasets

Based on the evaluation of the AECF framework against bagging and boosting on five ADMET classification tasks [24].

| ADMET Property | Dataset Size (Compounds) | Single Best Model (Avg. AUC) | Bagging (Avg. AUC) | Boosting (Avg. AUC) | Adaptive Ensemble (AECF) (Avg. AUC) |

|---|---|---|---|---|---|

| Caco-2 Permeability (CacoP) | 1,387 | ~0.82 | ~0.83 | ~0.84 | 0.857 - 0.860 |

| Human Intestinal Absorption (HIA) | Information missing | ~0.86 | ~0.87 | ~0.88 | 0.897 - 0.918 |

| Oral Bioavailability (OB) | Information missing | ~0.75 | ~0.76 | ~0.77 | 0.782 - 0.798 |

| P-glycoprotein Substrates (PS) | Information missing | ~0.79 | ~0.80 | ~0.81 | 0.814 - 0.831 |

| P-glycoprotein Inhibitors (PI) | Information missing | ~0.86 | ~0.87 | ~0.88 | 0.887 - 0.890 |

Protocol 3: Setting Up a Multi-Task Learning Model with Dynamic Loss Weighting

- Model Architecture (Hard Parameter Sharing):

- Shared Encoder: A series of layers common to all tasks. For molecular data, this could be a GNN or a set of dense layers processing molecular descriptors.

- Task-Specific Heads: Separate output layers for each ADMET task (e.g., one for solubility, one for CYP2C9 inhibition) [22].

- Dynamic Loss Function: Implement a loss function that automatically balances the contribution of each task. A small PyTorch module (referred to here as `MultiTaskLossWrapper`) that holds one learnable weight per task can serve this purpose, following the approach described in research on quantum-enhanced MTL [25] and other guides [22].

- Training Loop: In each training step, compute the loss for each task, pass these losses to the `MultiTaskLossWrapper` to get the total loss, and then run the backward pass [21].
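
A minimal sketch of such a wrapper is shown below. It assumes a simplified form of uncertainty weighting in which each task has a learnable log-variance `s_i` and the total loss is the sum of `exp(-s_i) * L_i + s_i`. This is one common formulation, not necessarily the exact code used in [25] or [22], and the two task losses in the usage lines are placeholder values.

```python
import torch
import torch.nn as nn


class MultiTaskLossWrapper(nn.Module):
    """Combine per-task losses using learnable homoscedastic-uncertainty weights.

    total = sum_i exp(-s_i) * L_i + s_i, where s_i = log(sigma_i^2) is learned.
    """

    def __init__(self, num_tasks: int):
        super().__init__()
        # One log-variance per task, initialised to 0 (weight 1 for every task).
        self.log_vars = nn.Parameter(torch.zeros(num_tasks))

    def forward(self, task_losses):
        losses = torch.stack(list(task_losses))
        return (torch.exp(-self.log_vars) * losses + self.log_vars).sum()


# Usage sketch with two hypothetical task losses.
wrapper = MultiTaskLossWrapper(num_tasks=2)
loss_solubility = torch.tensor(0.8, requires_grad=True)
loss_cyp2c9 = torch.tensor(0.3, requires_grad=True)
total = wrapper([loss_solubility, loss_cyp2c9])
total.backward()  # gradients flow to the task losses and to wrapper.log_vars
print(total.item(), wrapper.log_vars.grad)
```

For the weights to be learned, the wrapper's parameters need to be passed to the optimizer together with the model's parameters, for example by concatenating both parameter lists when constructing the optimizer.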

Workflow Visualization

GNN, Ensemble, and MTL Relationship

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Advanced ADMET Modeling

| Item | Function | Example Use Case |

|---|---|---|

| Therapeutics Data Commons (TDC) | A platform providing curated benchmarks and datasets for drug discovery, including standardized ADMET tasks [18]. | For training and fairly evaluating GNN, MTL, and ensemble models on a level playing field [18] [25]. |

| PyTorch Geometric (PyG) | A library built upon PyTorch for deep learning on graphs and other irregular structures [23]. | Implementing GNN architectures like GCN or GAT for molecular graph processing [23]. |

| RDKit | An open-source cheminformatics toolkit that allows for the computation of molecular descriptors and conversion of SMILES to molecular graphs [25]. | Generating node and edge features from SMILES strings to feed into a GNN [18] [25]. |

| XGBoost | An optimized library for implementing gradient boosting, a powerful sequential ensemble method [20]. | Creating a high-performance ensemble model for ADMET classification or regression. |

| Chemprop | A message-passing neural network specifically designed for molecular property prediction, often used as a strong baseline [25]. | Serves as a backbone model for more advanced frameworks, such as those integrating quantum descriptors for MTL [25]. |

Performance Benchmarking and Quantitative Outcomes

Federated learning has demonstrated significant, quantifiable benefits for ADMET prediction, where model performance is often limited by the availability of diverse chemical data. The table below summarizes key performance metrics from recent large-scale implementations.

Table 1: Measured Performance Benefits of Federated Learning for ADMET Prediction

| Study / Implementation | Performance Improvement | Scope and Data Diversity | Key ADMET Endpoints Validated |

|---|---|---|---|

| MELLODDY Project [4] [27] | Consistent, systematic outperformance of local baseline models. | Unprecedented scale across multiple pharmaceutical companies. | Quantitative Structure-Activity Relationship (QSAR) models. |

| Polaris ADMET Challenge [4] | 40–60% reduction in prediction error. | Broad collaborative benchmarking initiative. | Human & mouse liver microsomal clearance, solubility (KSOL), permeability (MDR1-MDCKII). |

| Cross-Pharma Research [4] | Performance gains scaled with the number and diversity of participants. | Multiple participating organizations with heterogeneous data. | Expanded applicability domains and robustness across unseen molecular scaffolds. |

Frequently Asked Questions (FAQs) & Troubleshooting

General Concepts

Q1: What is federated learning in the context of drug discovery? Federated Learning (FL) is a decentralized machine learning approach that enables multiple parties (e.g., pharmaceutical companies, research institutions) to collaboratively train a model without sharing their raw data. Instead of centralizing datasets, each participant trains a model locally on their private data, and only the model updates (like gradients or weights) are sent to a central server for aggregation into an improved global model. This preserves data privacy and intellectual property [4] [28].

Q2: How does federated learning specifically help with ADMET prediction? Accurate ADMET prediction requires learning from a vast and diverse chemical space. Individual organizations possess limited data, causing models to perform poorly on novel compounds. Federated learning overcomes this by creating a global model that learns from the combined chemical diversity of all participants. This leads to models with broader applicability domains and significantly reduced prediction errors, especially for pharmacokinetic and safety endpoints [4].

Q3: Does federated learning guarantee data privacy? Federated learning significantly enhances privacy by keeping raw data localized. However, for robust privacy protection, it is typically combined with additional techniques like differential privacy (adding calibrated noise to model updates) and secure multi-party computation (encrypting updates during aggregation) to prevent potential reconstruction of raw data from the shared model parameters [28] [29].
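
The "calibrated noise" idea can be sketched as clipping each model update to a maximum L2 norm and then adding Gaussian noise scaled to that bound. The function name and the parameter values below are illustrative assumptions. A real deployment would also need privacy accounting to turn the noise level into a formal guarantee, which this sketch does not attempt.

```python
import math
import random


def privatize_update(update, clip_norm=1.0, noise_multiplier=1.0, rng=None):
    """Clip an update to an L2 norm bound, then add Gaussian noise to each coordinate."""
    rng = rng or random.Random()
    norm = math.sqrt(sum(x * x for x in update))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    sigma = noise_multiplier * clip_norm  # noise scale tied to the clipping bound
    return [x * scale + rng.gauss(0.0, sigma) for x in update]


raw_update = [3.0, 4.0]  # hypothetical update with L2 norm 5
clipped_only = privatize_update(raw_update, clip_norm=1.0, noise_multiplier=0.0)
noisy = privatize_update(raw_update, clip_norm=1.0, noise_multiplier=0.5, rng=random.Random(42))
print(clipped_only, noisy)
```

Clipping bounds how much any single participant can influence the aggregate, which is what makes the added noise meaningful as a privacy mechanism.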

Technical Implementation & Troubleshooting

Q4: We are experiencing slow convergence of the global model. What can we do? Slow convergence is a common challenge. Consider the following solutions:

- Increase Local Epochs: Allow more training passes on local datasets before aggregation [30].

- Adaptive Learning Rates: Implement learning rate schedules that decrease over time to stabilize training [30].

- Client Sampling: Instead of aggregating updates from all nodes every round, select a subset of nodes with more representative or higher-quality data [30].

- Advanced Algorithms: Use algorithms like FedProx, which handles statistical heterogeneity (non-IID data) more effectively by adding a proximal term to the local loss function, preventing local updates from drifting too far from the global model [29].
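
The proximal term mentioned for FedProx can be written out directly: the local objective becomes the ordinary local loss plus `(mu / 2) * ||w - w_global||^2`, and its gradient gains an extra `mu * (w - w_global)` term. The sketch below uses flat parameter lists; the function names and the value of `mu` are illustrative choices.

```python
def fedprox_objective(local_loss, local_weights, global_weights, mu=0.1):
    """Local loss plus the proximal penalty (mu / 2) * ||w - w_global||^2."""
    drift = sum((w - g) ** 2 for w, g in zip(local_weights, global_weights))
    return local_loss + 0.5 * mu * drift


def fedprox_gradient(local_grad, local_weights, global_weights, mu=0.1):
    """Gradient of the objective above: grad(local loss) + mu * (w - w_global)."""
    return [
        g + mu * (w - wg)
        for g, w, wg in zip(local_grad, local_weights, global_weights)
    ]


# Hypothetical local state after a few training steps.
w_local, w_global = [1.0, 2.0], [0.0, 0.0]
print(fedprox_objective(1.0, w_local, w_global, mu=0.1))
print(fedprox_gradient([0.5, -0.5], w_local, w_global, mu=0.1))
```

A larger `mu` keeps local models closer to the global model, which trades local fit for stability when participants' data distributions differ strongly.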

Q5: How do we handle participants with different data formats, assay protocols, or computational resources? This heterogeneity is a key technical barrier.

- Model Heterogeneity: Use frameworks like TensorFlow Federated or PySyft that support standardized protocols and can accommodate some level of heterogeneity [28].

- Data Heterogeneity: The federated averaging process is designed to learn from non-identically distributed data. Techniques like stratification and careful evaluation can mitigate bias [4].

- Resource Constraints: For participants with limited computational power, use techniques like gradient compression or allow for smaller model architectures. Asynchronous aggregation protocols can also prevent the system from waiting for the slowest node [30] [31].

Q6: What are the best practices for validating a federated model for ADMET prediction? Rigorous validation is critical for trust in the models. Best practices include:

- Scaffold-Based Splitting: Partition data by molecular scaffold during training and testing to evaluate performance on truly novel chemotypes, preventing over-optimistic results [4].

- Multiple Seed and Fold Evaluation: Run experiments across multiple random seeds and cross-validation folds to report a distribution of results, not just a single score [4].

- Benchmark Against Null Models: Compare the federated model's performance against simple baseline models and established noise ceilings to confirm that improvements are statistically significant and practically useful [4].

Q7: How can we protect the federated learning process from security threats like model poisoning? Malicious actors could submit bad updates to degrade the global model.

- Anomaly Detection: Implement statistical outlier detection to identify and reject suspicious model updates before aggregation. AI agents can be tasked with evaluating each update for anomalies [30].

- Byzantine-Robust Aggregation: Use aggregation algorithms that are inherently robust to a certain fraction of malicious clients, instead of a simple averaging (FedAvg) strategy [30].

- Secure Aggregation: Employ cryptographic protocols that allow the server to aggregate model updates without being able to decipher any single participant's update, enhancing privacy and security [29].
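
One simple example of a Byzantine-robust aggregation rule is the coordinate-wise median, which replaces the mean of each parameter with its median across clients. The sketch below contrasts it with plain averaging on toy updates; the numbers are hypothetical, and the median is only one of several robust rules that could be used.

```python
import statistics


def coordinate_median(client_updates):
    """Aggregate by taking the median of each parameter across clients."""
    return [statistics.median(values) for values in zip(*client_updates)]


def simple_mean(client_updates):
    """Plain unweighted averaging, shown for comparison."""
    return [statistics.fmean(values) for values in zip(*client_updates)]


honest = [[0.10, -0.20], [0.12, -0.18], [0.09, -0.22]]
poisoned = honest + [[50.0, 50.0]]  # one hypothetical malicious update
print(simple_mean(poisoned))        # pulled far away by the outlier
print(coordinate_median(poisoned))  # stays close to the honest updates
```

The median tolerates a minority of arbitrary updates per coordinate, at the cost of ignoring sample-size weighting and discarding some information from honest clients.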

Experimental Protocol: Implementing a Federated Learning Workflow for ADMET

The following workflow diagram and detailed protocol outline the key stages for setting up a federated learning experiment for ADMET property prediction.

Federated Learning Workflow for ADMET Prediction.

Protocol Steps:

Project Setup and Governance

- Define Objectives: Clearly state the ADMET endpoint to be predicted (e.g., metabolic clearance, hERG inhibition) [28].

- Form Consortium & Establish Agreement: Select participating organizations. A critical step is to establish a legal and technical framework covering data usage, intellectual property (IP) rights, and model ownership. Using a trusted third-party coordinator can streamline this [27].

- Implement Privacy Safeguards: Decide on and configure privacy-enhancing technologies (e.g., differential privacy parameters, secure aggregation protocols) [28] [29].

Technical Configuration and Initialization

- Select an FL Framework: Choose a framework like TensorFlow Federated or PySyft [28].

- Define Model Architecture: Agree upon a common neural network architecture (e.g., multi-task deep learning model) that all participants will use for local training [4].

- Initialize Global Model: The central server initializes a global model with random weights or pre-trained on public data [29].

Federated Training Loop

- Model Distribution: The central server sends the current global model to all or a sampled subset of participating clients [29].

- Local Training: Each client trains the model on its local, private ADMET dataset. The number of local epochs is a key hyperparameter [28].

- Update Transmission: Clients send their model updates (weight differences or gradients) back to the server. These updates are encrypted or noise-perturbed as per the agreed privacy protocol [29].

- Secure Aggregation: The server collects the updates. Using a secure aggregation protocol, it combines them—typically via a weighted average based on the sample size of each client—to produce a new, improved global model [4] [29].

Model Evaluation and Deployment

- Convergence Check: The federated training loop repeats from the Model Distribution step until the global model's performance on a held-out validation set plateaus or meets a predefined target [29].

- Final Validation: The final global model is rigorously evaluated using scaffold-based cross-validation and benchmarked against internal models to quantify performance gains [4].

- Deployment and Inference: The validated global model can be deployed for inference. Participants can use it internally or set up a private inference service that respects data privacy [30].

Research Reagent Solutions: Key Tools and Frameworks

The successful implementation of a federated learning system requires a stack of software tools and libraries. The table below lists essential "research reagents" for building an FL platform for drug discovery.

Table 2: Essential Tools and Frameworks for Federated Learning in Drug Discovery

| Tool/Framework Name | Type | Primary Function | Relevance to ADMET Research |

|---|---|---|---|

| TensorFlow Federated (TFF) [28] | Open-Source Framework | Provides libraries for implementing decentralized computation and federated learning on top of TensorFlow. | Ideal for building and simulating FL workflows for large-scale chemical data. |

| PySyft [28] | Open-Source Library | A library for secure and private deep learning that works with PyTorch and TensorFlow. | Enables advanced privacy-preserving techniques like secure multi-party computation. |

| kMoL [4] | Open-Source Library | A machine and federated learning library specifically designed for drug discovery. | Offers cheminformatics-specific functionalities tailored to molecular data. |

| Differential Privacy Libraries | Software Library | Libraries (e.g., TensorFlow Privacy) that implement algorithms for adding calibrated noise to data or model updates. | Critical for providing mathematical guarantees of data privacy in the FL pipeline. |

| Secure Aggregation Protocols [28] | Cryptographic Protocol | Protocols that allow a server to aggregate model updates from multiple clients without decrypting any individual update. | Protects participant confidentiality from the central coordinator itself. |

ADMET prediction platforms are categorized into open-source and commercial suites, each with distinct advantages for early drug discovery. These tools help scientists prioritize compounds by predicting Absorption, Distribution, Metabolism, Excretion, and Toxicity properties.

Open-source platforms like Admetica provide transparency and customization, allowing researchers to build and validate their own models [32]. Commercial suites such as ADMET Predictor offer extensively validated, enterprise-ready solutions with integrated workflows and support [33].

Comparative Platform Specifications

Table 1: Key Features of ADMET Prediction Platforms

| Platform | Type | Key Features | Primary Use Cases | Installation Method |

|---|---|---|---|---|

| Admetica [32] | Open-Source | Comprehensive pre-built models; CLI & REST APIs; Visual results exploration | Academic research; Proof-of-concept studies; Custom model development | pip install admetica==1.4.1 |

| ADMET Predictor [33] | Commercial | 175+ property predictions; AI/ML platform; Integrated HT-PBPK simulations | Industrial drug discovery; Regulatory decision support; Risk assessment | Enterprise installation on Windows systems [34] |

Troubleshooting Guides

Common Installation Issues

Problem: Dependency conflicts during Admetica installation.

- Solution: Create a clean Python virtual environment before installation. This isolates package dependencies and prevents version clashes with existing libraries [32].

Problem: License activation failure for ADMET Predictor.

- Solution: Verify that your system meets the requirements (Windows 10/11 64-bit, 16 GB RAM recommended). Contact your organization's license administrator to confirm the Reprise license server configuration or RLMCloud activation [34].

Problem: Docker container for Admetica web interface fails to start.

- Solution: Ensure the Docker daemon is running. Use the provided setup script from the admetica_web directory, which automates image building and container deployment [32].

Data Processing and Prediction Errors

Problem: SMILES string parsing errors.

- Solution: Validate SMILES format using a cheminformatics library like RDKit before submitting to the prediction pipeline. Check for invalid characters or syntax.
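As a rough illustration of this check, the sketch below pairs a cheap pure-Python syntax pre-screen with an RDKit parse. The function names are hypothetical. The pre-screen only catches unbalanced brackets, parentheses, and ring-closure labels, so it is a first filter rather than a substitute for RDKit; `Chem.MolFromSmiles` returns `None` when a string cannot be parsed.

```python
def prescreen_smiles(smiles):
    """Return a list of obvious syntax problems (empty list if none found)."""
    if not smiles:
        return ["empty string"]
    problems = []
    depth = 0            # current parenthesis nesting level
    in_bracket = False   # True while inside a [...] atom
    open_rings = set()   # ring-closure labels seen an odd number of times
    i = 0
    while i < len(smiles):
        ch = smiles[i]
        if in_bracket:
            # Digits inside brackets are isotopes, H counts, or charges.
            if ch == "]":
                in_bracket = False
            elif ch == "[":
                problems.append("nested '['")
        elif ch == "[":
            in_bracket = True
        elif ch == "]":
            problems.append("unmatched ']'")
        elif ch == "(":
            depth += 1
        elif ch == ")":
            if depth == 0:
                problems.append("unmatched ')'")
            else:
                depth -= 1
        elif ch == "%":
            label = smiles[i + 1:i + 3]
            if len(label) == 2 and label.isdigit():
                open_rings ^= {label}
                i += 2
            else:
                problems.append("malformed %nn ring label")
        elif ch.isdigit():
            open_rings ^= {ch}
        elif ch.isspace():
            problems.append("whitespace inside string")
        i += 1
    if in_bracket:
        problems.append("unclosed '['")
    if depth > 0:
        problems.append("unclosed '('")
    if open_rings:
        problems.append("unpaired ring closure: " + ", ".join(sorted(open_rings)))
    return problems


def parses_with_rdkit(smiles):
    """Full check; requires RDKit to be installed."""
    from rdkit import Chem
    return Chem.MolFromSmiles(smiles) is not None
```

Running the pre-screen first gives a readable error message for the most common typos, while the RDKit parse remains the authoritative test for valence and aromaticity problems.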

Problem: Low prediction confidence scores.

- Solution: Check if your query compound falls within the model's applicability domain. Predictions for structurally novel compounds outside the training data domain have higher uncertainty [33] [35].
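One common way to approximate an applicability domain check is nearest-neighbor Tanimoto similarity against the training set. The sketch below assumes fingerprints are represented as sets of on-bit indices; the 0.4 threshold and the toy fingerprints are illustrative assumptions, not values taken from [33] or [35].

```python
def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as on-bit sets."""
    a, b = set(a), set(b)
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def nearest_neighbor_similarity(query_bits, training_fps):
    """Similarity of the query to its closest training compound."""
    return max(tanimoto(query_bits, fp) for fp in training_fps)


def in_domain(query_bits, training_fps, threshold=0.4):
    """Flag a query as in-domain if any training compound is similar enough."""
    return nearest_neighbor_similarity(query_bits, training_fps) >= threshold


# Toy fingerprints (hypothetical on-bit indices).
training_fps = [{1, 2, 3, 4}, {2, 3, 5}]
familiar = {1, 2, 3}
novel = {9, 10}
```

Here `familiar` shares three of four bits with the first training compound and would be treated as in-domain, while `novel` shares none and would be flagged for cautious interpretation.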

Problem: Inconsistent results between different platforms.

- Solution: Expect some disagreement, because platforms train their models on different datasets with different algorithms. Benchmark each platform against consistent, high-quality experimental data for your endpoint; literature data from different sources often correlate poorly [35].

Experimental Protocols and Workflows

Standardized ADMET Prediction Workflow

The diagram below outlines a robust methodology for running and validating ADMET predictions, incorporating best practices from open and commercial platforms.

Core Experimental Methodology

Dataset Preparation and Curation

- Data Source Identification: Utilize high-quality, consistently generated experimental data. Public datasets like those in Admetica's Datasets folder provide starting points [32].

- Data Preprocessing: Apply rigorous curation: remove duplicates, standardize measurement units (e.g., convert IC50 to µM), and classify binary outcomes (e.g., inhibition >50% = 1) [32].

- Train-Test Splitting: Implement time-split or structural-cluster splits to mimic real-world predictive scenarios, avoiding random splits that overestimate performance [35].
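The curation and splitting steps above can be sketched in a few lines of plain Python. The record fields (`smiles`, `ic50`, `unit`, `measured`), the choice to keep the first record per SMILES, and the cutoff date are assumptions made for illustration; a real pipeline might instead average replicate measurements and standardize structures before deduplicating.

```python
from datetime import date

# (multiplier, divisor) pairs that convert a concentration to micromolar.
TO_MICROMOLAR = {"nM": (1, 1000), "uM": (1, 1), "mM": (1000, 1)}


def to_micromolar(value, unit):
    mult, div = TO_MICROMOLAR[unit]
    return value * mult / div


def curate(records):
    """Convert IC50 values to µM and keep the first record per SMILES."""
    seen, cleaned = set(), []
    for rec in records:
        if rec["smiles"] in seen:
            continue  # duplicate structure: skip
        seen.add(rec["smiles"])
        ic50_um = to_micromolar(rec["ic50"], rec["unit"])
        cleaned.append({**rec, "ic50_um": ic50_um})
    return cleaned


def label_inhibition(percent_inhibition, cutoff=50.0):
    """Binary outcome: 1 if inhibition exceeds the cutoff, else 0."""
    return int(percent_inhibition > cutoff)


def time_split(records, cutoff_date):
    """Train on measurements before the cutoff, test on those after."""
    train = [r for r in records if r["measured"] < cutoff_date]
    test = [r for r in records if r["measured"] >= cutoff_date]
    return train, test


# Hypothetical records for illustration only.
records = [
    {"smiles": "CCO", "ic50": 500, "unit": "nM", "measured": date(2021, 3, 1)},
    {"smiles": "CCO", "ic50": 0.5, "unit": "uM", "measured": date(2021, 4, 1)},
    {"smiles": "c1ccccc1", "ic50": 2, "unit": "mM", "measured": date(2023, 6, 1)},
]
cleaned = curate(records)
train, test = time_split(cleaned, date(2022, 1, 1))
```

Splitting on measurement date means the test set contains only compounds made after the training data was collected, which is closer to how a model is used prospectively than a random split.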

Model Training and Validation (Admetica)

- Model Selection: Choose the algorithm based on data size and endpoint type. Admetica uses Chemprop for multiple endpoints [32].

- Hyperparameter Optimization: Use cross-validation on training data to optimize learning rate, hidden layer size, and other architecture choices.

- Performance Assessment: Evaluate using multiple metrics: MAE, RMSE, R² for regression; accuracy, balanced accuracy, ROC AUC for classification [32].
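For readers who want to see exactly what these metrics compute, here is a dependency-free sketch of each one. In practice a library such as scikit-learn would normally be used; these hand-written versions are only meant to make the definitions explicit, and the ROC AUC is computed with the pairwise-ranking formulation (ties count as half).

```python
import math


def mae(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def r2(y_true, y_pred):
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot


def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def balanced_accuracy(y_true, y_pred):
    """Mean of sensitivity and specificity for binary labels 0/1."""
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    tn = sum(t == 0 and p == 0 for t, p in zip(y_true, y_pred))
    pos = sum(t == 1 for t in y_true)
    neg = sum(t == 0 for t in y_true)
    return (tp / pos + tn / neg) / 2


def roc_auc(y_true, scores):
    """Fraction of positive/negative pairs ranked correctly."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))
```

Reporting balanced accuracy and ROC AUC alongside plain accuracy matters for ADMET endpoints because the classes are often imbalanced, and a model that always predicts the majority class can still score a high accuracy.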

Prospective Validation Framework

- Blind Challenge Paradigm: Participate in community blind challenges to objectively assess model performance on unseen data [35].

- Experimental Correlation: Select key predictions for experimental verification to establish ground truth and iteratively improve models.

Frequently Asked Questions (FAQs)

Q: How do I choose between open-source and commercial ADMET platforms?

- A: Consider open-source for academic research, method development, and when customization is needed. Choose commercial solutions for regulated environments, enterprise integration, and when relying on extensively validated models with support [33] [32].

Q: What is the typical accuracy I can expect from ADMET predictions?

- A: Performance varies by endpoint. For example, Admetica reports ROC AUC of 0.87 for CYP3A4 inhibition and 0.885 for hERG inhibition [32]. Commercial tools may offer higher accuracy through proprietary datasets and ensemble methods.

Q: How can I assess if a prediction is reliable for my compound?

- A: Use the model's applicability domain assessment and confidence estimates. Be cautious with compounds structurally different from the training data. Consider consensus predictions from multiple models [33].
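A consensus check can be as simple as comparing the spread of predictions from several models. The sketch below is one possible approach; the model names, the probabilities, and the 0.15 spread cutoff are hypothetical and would need tuning per endpoint.

```python
from statistics import mean, pstdev


def consensus(predictions, max_spread=0.15):
    """Summarize predictions from several models for one compound.

    predictions: dict mapping a model name to its predicted probability.
    Returns the mean, the population standard deviation, and whether the
    models agree to within max_spread.
    """
    values = list(predictions.values())
    spread = pstdev(values)
    return {"mean": mean(values), "spread": spread, "agree": spread <= max_spread}


# Hypothetical hERG-blocker probabilities from three models.
agreeing = consensus({"model_a": 0.80, "model_b": 0.90, "model_c": 0.85})
conflicting = consensus({"model_a": 0.10, "model_b": 0.90, "model_c": 0.50})
```

A large spread does not say which model is right, but it is a useful signal that the compound deserves experimental follow-up before the prediction is trusted.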

Q: What are the most common pitfalls in ADMET prediction?

- A: Key issues include: overreliance on single predictions, ignoring uncertainty estimates, extrapolating beyond applicability domains, and using models trained on irrelevant chemical space [35].

Q: Can I integrate these tools into our existing drug discovery workflow?

- A: Yes. Admetica offers REST APIs and CLI integration [32]. ADMET Predictor provides enterprise-ready automation through REST APIs, Python wrappers, and connectors for platforms like Certara D360 and Schrödinger LiveDesign [33].

Essential Research Reagent Solutions

Table 2: Key Resources for ADMET Prediction Research

| Resource | Function | Example/Format |

|---|---|---|

| Chemical Databases | Provide structures & experimental data for training | ChEMBL, ZINC, PROTAC-DB [32] |

| Descriptor Calculation | Generates molecular features for ML | Molecular weight, logP, hydrogen bond donors/acceptors [33] |

| Validation Assays | Experimental verification of predictions | CYP inhibition, Caco-2 permeability, hERG binding [32] |

| Visualization Tools | Results interpretation & exploration | 2D/3D scatter plots, property distribution charts [33] [32] |

| Workflow Platforms | Pipeline orchestration & automation | KNIME, Datagrok, Python scripting environments [33] [32] |

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: Our ML model for solubility prediction performs well on the training set but fails on new chemical series. What could be the issue?

This is a classic applicability domain (AD) problem: models can fail when new compounds are structurally different from those in the training set [36]. To address this:

- Define Your Model's Applicability Domain: Use chemical similarity measures or descriptor ranges to establish the chemical space where your model is reliable [36].

- Use Diverse Training Data: Ensure your training set encompasses a broad chemical space, including various scaffolds and property ranges relevant to your project [36].

- Retrain with Local Data: Frequently retrain your model with new experimental data ("local data") from your project to improve its performance on relevant chemical series [36].
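The descriptor-range approach mentioned above can be sketched as a simple bounding-box check. The descriptor names and values are illustrative assumptions; this is one of the simplest AD definitions, and it ignores correlations between descriptors.

```python
def fit_ranges(training_descriptors):
    """Record the min and max of each descriptor over the training set."""
    names = training_descriptors[0].keys()
    return {
        name: (
            min(d[name] for d in training_descriptors),
            max(d[name] for d in training_descriptors),
        )
        for name in names
    }


def out_of_range(query, ranges):
    """List the descriptors for which the query falls outside the training range."""
    return [
        name for name, (low, high) in ranges.items()
        if not low <= query[name] <= high
    ]


# Hypothetical training descriptors (molecular weight, logP, TPSA).
training = [
    {"mw": 310.0, "logp": 2.1, "tpsa": 75.0},
    {"mw": 420.0, "logp": 3.4, "tpsa": 90.0},
    {"mw": 365.0, "logp": 1.2, "tpsa": 60.0},
]
ranges = fit_ranges(training)
flags = out_of_range({"mw": 521.0, "logp": 4.8, "tpsa": 80.0}, ranges)
```

An empty list means the query sits inside the training ranges for every descriptor; any returned names indicate where the model is extrapolating.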

Q2: What are the best practices for curating data to build a reliable ML model for ADMET prediction?

Data quality is the most critical factor. The principle of "garbage in, garbage out" applies fully here [37].

- Ensure Data Provenance: Know the origin of your data, the experimental protocols used to generate it, and how it has been cleaned and harmonized [37].

- Check for Consistency: For classification models, ensure consistent activity thresholds across all data sources. For regulatory endpoints like hERG inhibition, use a biologically relevant threshold (e.g., IC50 < 10 µM) [38].

- Embrace FAIR Principles: Curate data to be Findable, Accessible, Interoperable, and Reusable to enhance model reproducibility and utility [39].

Q3: How can we improve the interpretability of a "black box" ML model like a deep neural network for CYP inhibition?

Model interpretability is essential for building trust and guiding chemical design [36] [37].

- Use Interpretable Features: Employ molecular fingerprints (like ECFP_8) or descriptors (like logP, TPSA) that have a clear chemical meaning. Naïve Bayesian models, for instance, can highlight structural fragments favorable or unfavorable for activity [38].

- Implement Model Transparency: Document the model's strengths, limitations, specific purpose, and the assumptions inherent in its design. Provide context around its decision-making process [37].

- Leverage Domain Expertise: Collaborate with medicinal chemists and toxicologists to validate model interpretations and translate predictions into actionable chemical design strategies [36] [37].

Troubleshooting Common Experimental Issues

Problem: Low Cell Attachment Efficiency in Hepatocyte Assays

Hepatocytes are critical for experimental validation of metabolism and toxicity, but poor attachment can compromise assays [40].

| Possible Cause | Recommendation |

|---|---|

| Improper Thawing | Thaw cells rapidly (<2 mins at 37°C) and use recommended thawing medium (e.g., HTM Medium) [40]. |

| Rough Handling | Mix cells slowly and use wide-bore pipette tips to avoid shearing. Ensure a homogenous mixture before counting [40]. |

| Poor-Quality Substratum | Use high-quality coated plates (e.g., Gibco Collagen I-Coated Plates) to improve cell adhesion [40]. |

| Incorrect Seeding Density | Check the lot-specific specification sheet for the optimal seeding density and observe cells under a microscope after plating [40]. |

Case Studies & Experimental Protocols

Case Study 1: Predicting hERG Toxicity with Naïve Bayesian Classification

Objective: To develop a robust classification model to identify compounds with a high risk of inhibiting the hERG potassium channel, a major cause of drug-induced cardiotoxicity [38].

Experimental Protocol/Methodology:

- Data Set Curation: A diverse data set of 806 compounds with reliable hERG inhibition data (IC50) was assembled. Compounds were categorized as blockers or non-blockers using a threshold of IC50 < 10 µM [38].

- Data Splitting: The data set was split into a training set (620 molecules) and an external test set (120 molecules). Two additional external test sets from WOMBAT-PK and PubChem were used for validation [38].

- Descriptor Calculation: Fourteen molecular descriptors critical for ADMET prediction were calculated, including ALogP, molecular weight (MW), hydrogen bond donors/acceptors (nHBD/nHBA), topological polar surface area (TPSA), and number of rotatable bonds [38].

- Fingerprint Generation: Extended-connectivity fingerprints (ECFP_8) were generated to capture key structural features [38].

- Model Building & Validation: A Naïve Bayesian classifier was built using the molecular descriptors and fingerprints. The model was validated using leave-one-out cross-validation on the training set and, most importantly, on the held-out external test sets [38].
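To illustrate the model-building and leave-one-out steps, the sketch below implements a generic Bernoulli naive Bayes classifier with add-one (Laplace) smoothing over fingerprint bits. It is not the exact implementation used in [38]: the toy fingerprints, bit indices, and labels are invented for illustration, and the molecular descriptors are omitted for brevity.

```python
import math


def train_nb(fingerprints, labels, n_bits):
    """Fit per-class bit probabilities with add-one smoothing."""
    model = {}
    for cls in (0, 1):
        rows = [fp for fp, y in zip(fingerprints, labels) if y == cls]
        log_prior = math.log((len(rows) + 1) / (len(labels) + 2))
        p_on = [
            (sum(bit in fp for fp in rows) + 1) / (len(rows) + 2)
            for bit in range(n_bits)
        ]
        model[cls] = (log_prior, p_on)
    return model


def predict_nb(model, fp):
    """Return the class with the highest log posterior."""
    scores = {}
    for cls, (log_prior, p_on) in model.items():
        score = log_prior
        for bit, p in enumerate(p_on):
            score += math.log(p if bit in fp else 1 - p)
        scores[cls] = score
    return max(scores, key=scores.get)


def loo_accuracy(fingerprints, labels, n_bits):
    """Leave-one-out cross-validation: retrain without each compound in turn."""
    hits = 0
    for i in range(len(labels)):
        model = train_nb(
            fingerprints[:i] + fingerprints[i + 1:],
            labels[:i] + labels[i + 1:],
            n_bits,
        )
        hits += predict_nb(model, fingerprints[i]) == labels[i]
    return hits / len(labels)


# Toy data: fingerprints as sets of on-bit indices; 1 = blocker, 0 = non-blocker.
fps = [{0, 1}, {0, 1, 4}, {0, 4}, {1}, {2, 3}, {2, 3, 4}, {2}, {3, 4}]
labels = [1, 1, 1, 1, 0, 0, 0, 0]
loo = loo_accuracy(fps, labels, n_bits=5)
```

Because each bit's contribution to the score is an explicit log-probability, the fitted `p_on` values can be inspected directly to see which structural features push a compound toward the blocker class.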

Results Summary: The model demonstrated high and consistent predictive accuracy across all test sets, confirming its robustness and ability to generalize to new data [38].

| Model | Training Set Accuracy (LOO-CV) | Test Set I Accuracy | WOMBAT-PK Test Set Accuracy | PubChem Test Set Accuracy |

|---|---|---|---|---|

| Naïve Bayesian Classifier | 84.8% | 85.0% | 89.4% | 86.1% |

Case Study 2: Analyzing Property Trends of Protein-Protein Interaction (PPI) Inhibitors

Objective: To computationally analyze the physicochemical (PC) and ADMET properties of PPI inhibitors (iPPIs) compared to other drug target classes to guide the design of compounds with improved developability profiles [41].

Experimental Protocol/Methodology:

- Data Set Assembly: Eight distinct datasets were compiled: iPPIs, enzyme inhibitors, GPCR ligands, ion channel modulators, nuclear receptor ligands, allosteric modulators, oral marketed drugs (OMD), and oral natural product-derived drugs (NPD) [41].

- Property Calculation: A wide range of PC and ADMET properties were calculated for all compounds, including MW, logP, logD, TPSA, HBD, HBA, solubility, and predicted toxicity risks [41].

- Statistical Analysis: The mean, median, and 95th percentile values for each property were computed and compared across the different datasets using statistical methods to identify significant differences [41].
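The summary statistics in the last step are straightforward to compute. The sketch below uses a linear-interpolation percentile, which is one of several common conventions, and the molecular weight values are placeholders rather than data from [41].

```python
from statistics import mean, median


def percentile(values, q):
    """Percentile by linear interpolation between the closest ranks."""
    ordered = sorted(values)
    pos = (len(ordered) - 1) * q / 100
    low = int(pos)
    high = min(low + 1, len(ordered) - 1)
    return ordered[low] + (ordered[high] - ordered[low]) * (pos - low)


def summarize(datasets):
    """Compute mean, median, and 95th percentile per dataset for one property."""
    return {
        name: {
            "mean": mean(values),
            "median": median(values),
            "p95": percentile(values, 95),
        }
        for name, values in datasets.items()
    }


# Placeholder molecular weights (Da) for two hypothetical compound sets.
mw_by_dataset = {
    "set_a": [480.0, 510.0, 530.0, 560.0, 620.0],
    "set_b": [250.0, 310.0, 340.0, 390.0, 450.0],
}
summary = summarize(mw_by_dataset)
```

Comparing the 95th percentile as well as the mean helps show whether a target class merely shifts the average or also extends the upper tail of a property distribution.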

Results Summary: The analysis confirmed that iPPIs occupy a distinct and challenging chemical space, characterized by higher molecular weight and lipophilicity compared to many other target classes and marketed drugs [41].

| Property | iPPIs (Mean) | Oral Marketed Drugs (Mean) | Key Implication |

|---|---|---|---|

| Molecular Weight (MW) | 521 Da | ~ | Can impact absorption, bile elimination, and off-target interactions [41]. |

| logP (Lipophilicity) | 4.8 | ~ | High lipophilicity is linked to poor solubility, promiscuity, and toxicity risks (e.g., hERG, CYP inhibition) [41]. |

| Hydrogen Bond Donors (HBD) | 2.1 | 1.7 | A lower HBD count in OMD suggests this property is critical for good permeability and bioavailability [41]. |

| Topological Polar Surface Area (TPSA) | 101 Ų | ~ | Higher TPSA can be a limiting factor for passive permeability, especially for CNS targets [41]. |

General Workflow for Developing ML-based ADMET Models

The following diagram outlines a consensus workflow for building and deploying reliable ML models in drug discovery, integrating principles from multiple case studies.

The Scientist's Toolkit: Research Reagent Solutions

The following table lists key materials and tools referenced in the successful deployment of ADMET prediction models.

| Item | Function in Research | Example/Reference |

|---|---|---|

| Cryopreserved Hepatocytes | In vitro cell-based systems for experimental validation of metabolic stability, drug-drug interactions, and toxicity [40]. | Human hepatocytes, HepaRG cells [36] [40]. |

| Specialized Cell Culture Media | Supports the growth, plating, and maintenance of functional primary cells and cell lines in vitro. | Williams' Medium E with Plating and Incubation Supplement Packs [40]. |