Network Meta-Analysis in Drug Development: Methods, Applications, and Best Practices for Comparative Effectiveness

Abstract

This article provides a comprehensive guide to network meta-analysis (NMA) for drug development professionals and researchers. It covers foundational concepts, including how NMA extends traditional pairwise meta-analysis by combining direct and indirect evidence to compare multiple treatments simultaneously. The article details methodological steps from systematic review conduct and assumption validation to statistical analysis using Bayesian or frequentist frameworks. It addresses critical challenges such as ensuring transitivity, assessing inconsistency, and interpreting treatment rankings, while also exploring the integration of NMA within the Model-Informed Drug Development (MIDD) paradigm. Practical insights on evaluating evidence certainty with GRADE and applying NMA to inform regulatory and clinical decision-making are provided, offering a complete resource for leveraging this powerful evidence synthesis tool throughout the drug development lifecycle.

Understanding Network Meta-Analysis: Core Principles and Value in Drug Development

Network meta-analysis (NMA), also known as mixed treatment comparison or multiple treatments meta-analysis, is a sophisticated statistical technique that extends principles of conventional pairwise meta-analysis to simultaneously compare multiple interventions. This methodology enables researchers to estimate the relative effects of several treatments within a single, coherent analysis, even when direct head-to-head comparisons are absent from the literature [1]. By integrating both direct evidence (from studies comparing interventions within randomized trials) and indirect evidence (estimated through common comparators), NMA provides a comprehensive framework for comparative effectiveness research [2] [3].

In drug development, where numerous therapeutic options may exist for a condition but few have been directly compared in randomized controlled trials (RCTs), NMA offers significant advantages. It allows for the estimation of treatment effects for all possible pairwise comparisons in the network, provides more precise effect estimates by incorporating more evidence, and enables ranking of interventions based on efficacy or safety outcomes [1] [3]. This approach has become increasingly valuable for health technology assessment agencies, drug regulators, and clinical guideline developers who require complete pictures of the relative benefits and harms of all available treatments [1].

Fundamental Concepts and Terminology

Core Components of Network Meta-Analysis

Direct Evidence refers to evidence obtained from randomized controlled trials that directly compare two interventions (e.g., a trial comparing treatments A and B provides direct evidence for the A-B comparison) [2]. Indirect Evidence refers to evidence obtained through one or more common comparators when no direct trials exist (e.g., interventions A and C can be compared indirectly if both have been compared to B in separate studies) [2]. Mixed Evidence represents the combination of direct and indirect evidence in a network meta-analysis, which typically yields more precise estimates than either source alone [2] [3].

The Transitivity Assumption is the fundamental requirement for a valid indirect comparison or NMA. It presupposes that we can reasonably compare interventions through a common comparator because the different sets of studies are similar, on average, in all important factors other than the intervention comparisons being made [3]. This assumption would be violated if, for example, studies comparing A to B enrolled fundamentally different patient populations than studies comparing A to C, particularly if those population differences are known effect modifiers [2].

Inconsistency (sometimes called incoherence) occurs when direct and indirect evidence for the same comparison disagree beyond chance. It is the statistical manifestation of a violated transitivity assumption and can bias NMA results if not properly addressed [3] [4]. Various statistical methods exist to detect and measure inconsistency, including the loop-specific approach, node-splitting, and the inconsistency parameter approach [4].

Network Geometry and Visualization

The structure of evidence in an NMA is typically represented using a network diagram (or network graph), where nodes represent interventions and connecting lines represent direct comparisons available from RCTs [2] [3]. The geometry of this network provides important information about the available evidence, including which comparisons have direct evidence and which must rely entirely on indirect estimation.

Table 1: Key Terminology in Network Meta-Analysis

| Term | Definition |
|---|---|
| Node | A point in the network graph representing an intervention being compared [1] |
| Edge | A line connecting two nodes, representing direct comparisons between interventions [1] |
| Closed Loop | A part of the network where all interventions are directly connected, forming a closed geometry [1] |
| Common Comparator | The intervention that serves as the anchor for indirect comparisons [1] |
| Multi-Arm Trial | A randomized trial that compares three or more interventions simultaneously [3] |
| Network Geometry | The overall structure and connectivity of the treatment network [2] |

Network Geometry Diagram: This network graph illustrates a typical evidence structure, where solid lines represent direct comparisons (with number of trials indicated) and dashed lines represent comparisons that can only be informed through indirect evidence.

Methodological Framework and Statistical Foundations

Evolution from Pairwise to Network Meta-Analysis

The development of NMA methodologies represents an evolutionary process from conventional pairwise meta-analysis. The Bucher method (1997) introduced adjusted indirect treatment comparisons for simple three-treatment scenarios but was limited to networks with a single common comparator and two-arm trials [1]. Lumley's work extended this to allow indirect comparisons through multiple linking treatments, while Lu and Ades further developed comprehensive models for mixed treatment comparisons that could simultaneously incorporate both direct and indirect evidence while facilitating treatment ranking [1].

Modern NMA can be conducted within both frequentist and Bayesian statistical frameworks, with the Bayesian approach being particularly popular due to its flexibility in estimating complex models and natural accommodation of ranking probabilities [1]. The Bayesian framework allows for the calculation of probabilities for each treatment being the best, second best, etc., which can be visualized using rankograms or surface under the cumulative ranking curve (SUCRA) values [2] [1].
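
To make the SUCRA idea concrete, the following is a minimal sketch of how SUCRA values can be derived from a matrix of rank probabilities. The function name `sucra`, the treatment labels, and the probabilities are illustrative assumptions, not output from any analysis cited here. SUCRA is computed as the average of the cumulative rank probabilities over the first T-1 ranks, where T is the number of treatments.

```python
def sucra(rank_probs):
    """Return one SUCRA value per treatment.

    rank_probs[k][r] is the probability that treatment k takes rank r + 1,
    where rank 1 is the best rank.
    """
    n_treatments = len(rank_probs)
    if n_treatments < 2:
        raise ValueError("SUCRA needs at least two treatments")
    values = []
    for probs in rank_probs:
        if len(probs) != n_treatments:
            raise ValueError("each treatment needs one probability per rank")
        if abs(sum(probs) - 1.0) > 1e-9:
            raise ValueError("rank probabilities must sum to 1")
        cumulative = 0.0
        area = 0.0
        # Sum the cumulative probabilities over ranks 1 .. T-1
        # (the cumulative probability at rank T is always 1 and is excluded).
        for p in probs[:-1]:
            cumulative += p
            area += cumulative
        values.append(area / (n_treatments - 1))
    return values


# Hypothetical rank probabilities (rows: treatments, columns: rank 1, 2, 3).
rank_probs = {
    "Drug A": [0.7, 0.2, 0.1],
    "Drug B": [0.2, 0.5, 0.3],
    "Drug C": [0.1, 0.3, 0.6],
}
names = list(rank_probs)
scores = dict(zip(names, sucra([rank_probs[name] for name in names])))
for name in names:
    print(f"{name}: SUCRA = {scores[name]:.2f}")
```

A SUCRA of 1 means a treatment is certain to be the best and 0 means it is certain to be the worst; the values always average to 0.5 across the network, so they describe relative standing rather than the size of any effect.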

The Transitivity and Consistency Assumptions

The validity of any NMA depends critically on the transitivity assumption, which requires that studies forming the different direct comparisons are sufficiently similar in all important clinical and methodological characteristics that might modify treatment effects [3]. In practical terms, this means that in a hypothetical multi-arm trial comparing all treatments in the network simultaneously, participants could be randomized to any of the treatments [2].

Table 2: Assessment of Transitivity in Network Meta-Analysis

| Aspect to Evaluate | Method of Assessment | Implication for Validity |
|---|---|---|
| Patient Characteristics | Compare distribution of effect modifiers (age, disease severity, comorbidities) across treatment comparisons [2] | Systematic differences suggest potential intransitivity |
| Study Design Features | Compare trial duration, follow-up period, risk of bias, publication date across comparisons [2] | Important differences may violate transitivity |
| Contextual Factors | Evaluate settings, concomitant treatments, outcome definitions [3] | Differences may limit validity of indirect comparisons |
| Statistical Inconsistency | Check agreement between direct and indirect evidence where both exist [4] | Significant inconsistency indicates transitivity violation |

When transitivity holds statistically, the network is said to be consistent. Statistical methods for evaluating consistency include:

- Loop-specific approach: Examining inconsistency within each closed loop of evidence [4]

- Node-splitting: Separating direct and indirect evidence for each comparison and testing their agreement [4]

- Inconsistency parameter models: Incorporating additional parameters to capture disagreement between direct and indirect evidence [4]
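
The idea shared by the loop-specific and node-splitting approaches can be sketched as a simple comparison of a direct and an indirect estimate of the same contrast. The snippet below is a minimal illustration under stated assumptions: the function name `inconsistency_test` and the input values are hypothetical, both estimates are on an additive scale such as the log odds ratio, and the two estimates are treated as independent (which holds for a loop made up of two-arm trials only).

```python
import math


def inconsistency_test(direct, se_direct, indirect, se_indirect):
    """Compare direct and indirect estimates of the same contrast.

    Returns the inconsistency factor (difference), its standard error,
    the z statistic, and a two-sided p-value from the normal distribution.
    """
    difference = direct - indirect
    # Variances add because the two sources are assumed independent.
    se_difference = math.sqrt(se_direct ** 2 + se_indirect ** 2)
    z = difference / se_difference
    # Two-sided p-value: 2 * (1 - Phi(|z|)) = erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return difference, se_difference, z, p_value


# Hypothetical log odds ratios for the same comparison.
diff, se, z, p = inconsistency_test(
    direct=-0.50, se_direct=0.20, indirect=-0.10, se_indirect=0.25
)
print(f"inconsistency factor = {diff:.2f} (SE {se:.2f}), z = {z:.2f}, p = {p:.3f}")
```

Such tests have low power in sparse networks, so a non-significant result should not be read as proof of consistency.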

Application Notes: Protocol Development for NMA

Defining the Research Question and Eligibility Criteria

The first step in conducting an NMA involves carefully defining the research question using the PICO (Population, Intervention, Comparator, Outcomes) framework, with particular attention to the interventions component [2]. The research question should be broad enough to benefit from the simultaneous comparison of multiple treatments but focused enough to maintain clinical relevance and ensure transitivity.

Critical decisions at this stage include determining which interventions to include (e.g., specific drugs, doses, or drug classes) and how to handle combination therapies or interventions that would not typically be considered interchangeable in clinical practice [2]. For example, in an NMA of first-line glaucoma treatments, combination therapies were excluded because they are not used as first-line treatments, thus maintaining transitivity [2].

Literature Search and Study Selection

The literature search for an NMA must be comprehensive enough to capture all relevant interventions and comparisons. This typically requires a broader search strategy than conventional pairwise meta-analysis, developed in collaboration with an information specialist or librarian [2]. The search should aim to identify all randomized trials that evaluate any of the interventions of interest for the condition and population under study.

During study selection, particular attention should be paid to identifying potential effect modifiers—factors that may influence the magnitude of treatment effects—as these are critical for assessing transitivity [2]. Common effect modifiers include patient characteristics (e.g., age, disease severity, comorbidities), intervention characteristics (e.g., dose, duration), and study methodology (e.g., risk of bias, outcome definitions).

Data Collection and Management

Data abstraction for NMA requires collecting not only standard study characteristics and outcome data but also detailed information on potential effect modifiers [2]. This information is essential for evaluating whether the transitivity assumption is plausible and for exploring potential sources of inconsistency if detected.

A standardized data extraction form should be developed to systematically capture:

- Study identifiers and publication details

- Participant characteristics (potential effect modifiers)

- Intervention details (dose, frequency, duration, administration)

- Comparison group characteristics

- Outcome data for all outcomes of interest

- Study methodology features (randomization, blinding, allocation concealment)

- Funding sources and conflicts of interest

Experimental Protocols for NMA Implementation

Qualitative Assessment Protocol

Before quantitative synthesis, a thorough qualitative assessment should be conducted, including evaluation of the network geometry, assessment of clinical and methodological heterogeneity, and evaluation of transitivity [2].

Protocol for Network Geometry Assessment:

- Create a network graph visualizing all interventions and comparisons

- Document the number of studies and participants for each direct comparison

- Identify comparisons with no direct evidence that rely entirely on indirect estimation

- Note the presence of multi-arm trials and account for them appropriately in analysis

- Identify poorly connected areas of the network that may yield imprecise estimates
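
The geometry checks above can be sketched in a few lines of code. This is a minimal illustration, assuming each study is recorded as an identifier plus the list of treatments it compared; the study list, treatment labels, and the function name `summarise_network` are hypothetical.

```python
from collections import Counter, deque
from itertools import combinations

# Hypothetical evidence base: (study id, treatments compared).
studies = [
    ("S1", ["Placebo", "A"]),
    ("S2", ["Placebo", "A"]),
    ("S3", ["Placebo", "B"]),
    ("S4", ["A", "B"]),
    ("S5", ["Placebo", "C"]),
    ("S6", ["Placebo", "B", "C"]),  # multi-arm trial
]


def summarise_network(studies):
    """Count studies per direct comparison and describe basic network geometry."""
    if not studies:
        raise ValueError("at least one study is required")
    edge_counts = Counter()
    multi_arm = []
    treatments = set()
    for study_id, arms in studies:
        arms = sorted(set(arms))
        treatments.update(arms)
        if len(arms) > 2:
            multi_arm.append(study_id)
        # A multi-arm trial contributes to every pair of its arms.
        for pair in combinations(arms, 2):
            edge_counts[pair] += 1

    # Pairs with no direct evidence rely entirely on indirect estimation.
    all_pairs = set(combinations(sorted(treatments), 2))
    indirect_only = sorted(all_pairs - set(edge_counts))

    # Breadth-first search to check that the network is connected.
    neighbours = {t: set() for t in treatments}
    for a, b in edge_counts:
        neighbours[a].add(b)
        neighbours[b].add(a)
    start = min(treatments)
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in neighbours[node] - seen:
            seen.add(nxt)
            queue.append(nxt)

    return {
        "edge_counts": dict(edge_counts),
        "indirect_only": indirect_only,
        "multi_arm": multi_arm,
        "connected": seen == treatments,
    }


summary = summarise_network(studies)
print(summary)
```

A disconnected network cannot be analysed as a single NMA, so the connectivity flag is worth checking before any model is fitted.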

Protocol for Transitivity Assessment:

- Compare the distribution of potential effect modifiers across different direct comparisons

- Use tables or graphs to visualize differences in study or participant characteristics

- Assess whether systematic differences exist that might violate the transitivity assumption

- If important differences are identified, consider subgroup analysis, meta-regression, or limiting the network
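
As a simple starting point for the first two steps, the distribution of a candidate effect modifier can be tabulated by direct comparison. The sketch below assumes study-level data held as dictionaries; the field names (`comparison`, `mean_age`), the values, and the function name `summarise_modifier` are hypothetical.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical study-level data with one candidate effect modifier.
study_data = [
    {"study": "S1", "comparison": "A vs Placebo", "mean_age": 52.0},
    {"study": "S2", "comparison": "A vs Placebo", "mean_age": 56.0},
    {"study": "S3", "comparison": "B vs Placebo", "mean_age": 67.0},
    {"study": "S4", "comparison": "B vs Placebo", "mean_age": 71.0},
    {"study": "S5", "comparison": "A vs B", "mean_age": 55.0},
]


def summarise_modifier(rows, modifier):
    """Summarise one study-level characteristic within each direct comparison."""
    grouped = defaultdict(list)
    for row in rows:
        grouped[row["comparison"]].append(row[modifier])
    return {
        comparison: {
            "n_studies": len(values),
            "mean": mean(values),
            "min": min(values),
            "max": max(values),
        }
        for comparison, values in grouped.items()
    }


age_summary = summarise_modifier(study_data, "mean_age")
for comparison, stats in age_summary.items():
    print(comparison, stats)
```

In this invented example the B vs Placebo trials enrolled markedly older participants; if age modifies the treatment effect, that pattern would call transitivity into question. The judgement remains clinical: the table only makes the differences visible.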

Statistical Analysis Protocol

The statistical analysis of NMA typically follows a sequential process, beginning with standard pairwise meta-analyses for all direct comparisons, followed by the NMA model itself, assessment of inconsistency, and finally interpretation and presentation of results [2].

Step 1: Conduct Pairwise Meta-Analyses

- Perform standard random-effects meta-analyses for each direct comparison with at least two studies

- Estimate between-study heterogeneity for each comparison

- Assess publication bias or small-study effects for comparisons with sufficient studies
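
For Step 1, one widely used random-effects estimator is the DerSimonian-Laird method; it is shown here as an illustrative choice, since the source does not prescribe a specific estimator. The sketch assumes study effects on an additive scale (for example log odds ratios) with known within-study variances. The function name `dersimonian_laird` and the example data are hypothetical.

```python
import math


def dersimonian_laird(effects, variances):
    """Random-effects pooling with the DerSimonian-Laird tau^2 estimator."""
    k = len(effects)
    if k < 2 or k != len(variances):
        raise ValueError("need at least two studies with matching variances")

    # Fixed-effect (inverse-variance) estimate, used to compute Cochran's Q.
    weights = [1.0 / v for v in variances]
    sum_w = sum(weights)
    fixed = sum(w * y for w, y in zip(weights, effects)) / sum_w
    q = sum(w * (y - fixed) ** 2 for w, y in zip(weights, effects))

    # Method-of-moments estimate of between-study variance, truncated at zero.
    c = sum_w - sum(w ** 2 for w in weights) / sum_w
    tau2 = max(0.0, (q - (k - 1)) / c)

    # Random-effects weights add tau^2 to each within-study variance.
    re_weights = [1.0 / (v + tau2) for v in variances]
    pooled = sum(w * y for w, y in zip(re_weights, effects)) / sum(re_weights)
    se = math.sqrt(1.0 / sum(re_weights))

    # I^2: share of total variability attributed to heterogeneity (percent).
    i2 = max(0.0, (q - (k - 1)) / q) * 100.0 if q > 0 else 0.0
    return {"pooled": pooled, "se": se, "tau2": tau2, "q": q, "i2": i2}


# Hypothetical log odds ratios and variances from two trials of one comparison.
result = dersimonian_laird(effects=[0.0, 1.0], variances=[0.01, 0.01])
print(result)
```

Running this for every direct comparison with at least two studies gives the comparison-specific heterogeneity estimates that the NMA model can later be checked against.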

Step 2: Develop NMA Model

- Select appropriate statistical model (e.g., multivariate meta-analysis, hierarchical model)

- Choose reference treatment for analysis (typically placebo or most common comparator)

- Specify heterogeneity structure (common or comparison-specific heterogeneity)

- Run model using frequentist or Bayesian framework

Step 3: Assess Inconsistency

- Use node-splitting to compare direct and indirect evidence where both exist

- Evaluate global inconsistency using design-by-treatment interaction model

- Assess local inconsistency in specific loops or comparisons

- Investigate sources of identified inconsistency through subgroup analysis or meta-regression

Step 4: Present Results

- Create league tables with all pairwise comparisons

- Generate treatment rankings with appropriate uncertainty measures (e.g., SUCRA values)

- Present results using forest plots, rankograms, or other appropriate visualizations
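
A league table follows directly from the consistency equations: once every treatment has an estimated effect relative to the reference, any other contrast is the difference of two such effects. The sketch below shows point estimates only; uncertainty intervals would additionally need the covariances between the estimates, which the fitted model provides. The function name `league_table` and the values are hypothetical.

```python
def league_table(basic_effects, reference):
    """Build all pairwise contrasts from effects relative to a reference.

    basic_effects maps each non-reference treatment to its effect versus
    the reference. Entry (row, col) is the effect of `col` relative to `row`.
    """
    effects = {reference: 0.0}
    effects.update(basic_effects)
    treatments = sorted(effects)
    return {
        (row, col): effects[col] - effects[row]
        for row in treatments
        for col in treatments
        if row != col
    }


# Hypothetical log odds ratios versus placebo.
table = league_table({"A": -0.60, "B": -0.35, "C": -0.10}, reference="Placebo")
print(f"B vs A: {table[('A', 'B')]:+.2f}")
print(f"A vs B: {table[('B', 'A')]:+.2f}")
```

Each contrast appears twice with opposite signs, which is why published league tables usually show one triangle per outcome.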

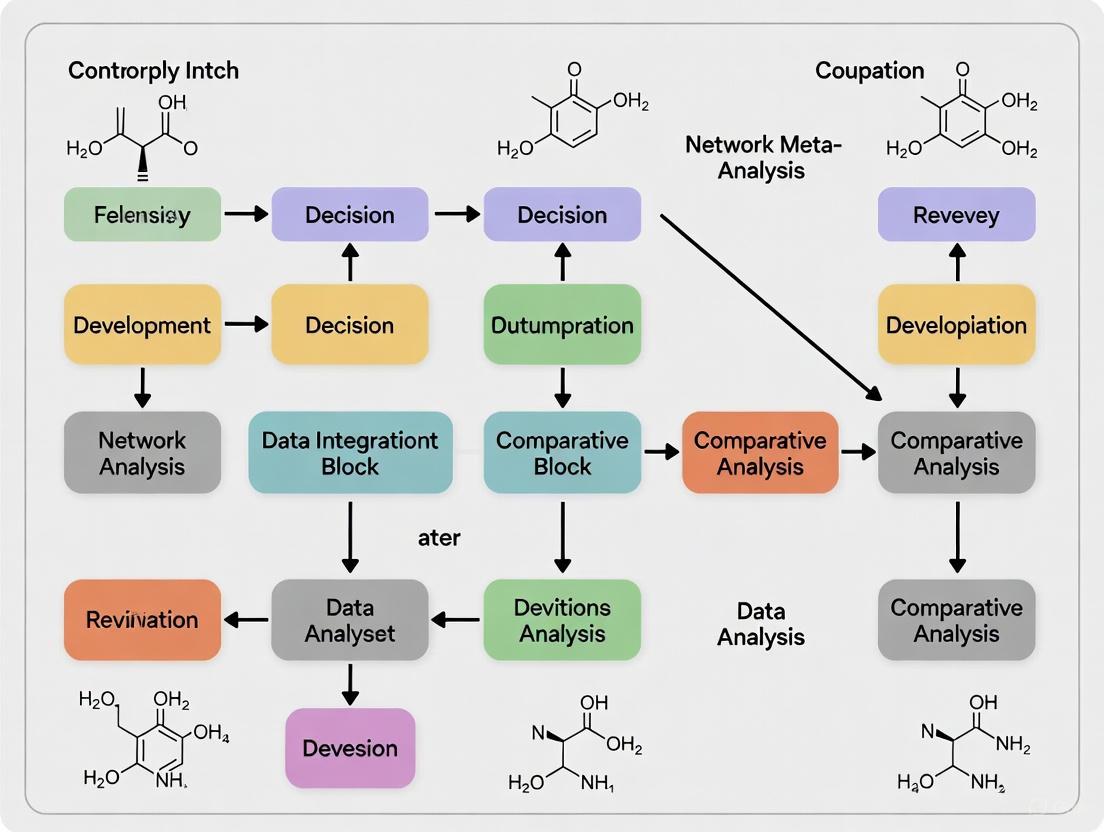

NMA Workflow Diagram: This flowchart illustrates the sequential process for conducting a network meta-analysis, from defining the research question through to interpretation and presentation of results.

NMA Research Reagent Solutions

Table 3: Essential Methodological Components for Network Meta-Analysis

| Component | Function | Implementation Considerations |
|---|---|---|
| Statistical Software | Provides platform for conducting NMA | Popular options include R (netmeta, gemtc), Stata, WinBUGS/OpenBUGS, JAGS |
| Risk of Bias Tool | Assesses methodological quality of included studies | Cochrane RoB 2.0 tool is standard for randomized trials |
| Network Graph Software | Visualizes evidence structure | Can use R, Stata, or specialized visualization software |
| Consistency Assessment Methods | Evaluates agreement between direct and indirect evidence | Node-splitting, loop inconsistency, design-by-treatment interaction |
| Ranking Metrics | Provides hierarchy of treatments | SUCRA, mean ranks, probability of being best |
| Quality Assessment Framework | Evaluates confidence in NMA estimates | GRADE extension for NMA provides systematic approach |

Advanced Applications in Drug Development

Network meta-analysis has particular relevance throughout the drug development lifecycle. During early development, NMA of preclinical studies can help prioritize candidate compounds for further investigation. In phase 2 and 3 development, NMA can provide context for interpreting trial results by comparing against all available alternatives rather than just the trial comparator. For health technology assessment and reimbursement decisions, NMA provides comprehensive evidence of comparative effectiveness and value [1].

Advanced applications of NMA in drug development include:

- Time-to-event NMA: Incorporating survival outcomes with appropriate modeling of hazard functions

- Dose-response NMA: Modeling effects across different drug doses to identify optimal dosing

- Multi-outcome NMA: Simultaneously evaluating efficacy and safety outcomes

- Population-adjusted NMA: Adjusting for differences in population characteristics across studies when individual participant data are available for some but not all studies

When implementing NMA in regulatory or reimbursement contexts, particular attention should be paid to the predefined statistical analysis plan, comprehensive sensitivity analyses, and transparent reporting of all methods and assumptions following the PRISMA-NMA guidelines [2].

Network meta-analysis represents a significant methodological advancement over conventional pairwise meta-analysis by enabling simultaneous comparison of multiple treatments through a unified analytical framework. When appropriately conducted and interpreted with attention to its core assumptions—particularly transitivity and consistency—NMA provides powerful evidence for decision-making in drug development and clinical practice. The rigorous application of the protocols and methodologies outlined in these application notes will help ensure the production of valid, reliable, and clinically useful NMA to inform drug development and patient care.

The Critical Role of Direct and Indirect Evidence in Treatment Networks

Network meta-analysis (NMA) has emerged as a powerful statistical methodology that enables the simultaneous comparison of multiple healthcare interventions, even when direct head-to-head evidence is absent [1] [5]. As an extension of traditional pairwise meta-analysis, NMA integrates both direct evidence from studies comparing interventions head-to-head and indirect evidence derived through common comparators, creating a connected network of treatment effects [1]. This methodology is particularly valuable in drug development, where numerous interventions may be available but few have been directly compared in randomized controlled trials (RCTs) [1] [6].

The fundamental principle underlying NMA is the ability to estimate relative treatment effects between interventions that have never been directly compared in clinical trials [5]. For example, if Treatment A has been compared to Placebo, and Treatment B has also been compared to Placebo, an indirect comparison between Treatment A and Treatment B can be mathematically derived [1]. This approach efficiently utilizes all available evidence to inform clinical and regulatory decision-making, addressing a critical gap left by conventional meta-analytic methods [1].
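
The placebo-anchored example can be written out as an adjusted indirect comparison in the style of the Bucher method. This is a minimal sketch assuming effects on an additive scale (for example log odds ratios) and independent sets of trials; the function name `indirect_comparison` and the numbers are hypothetical.

```python
import math


def indirect_comparison(d_a_vs_p, se_a_vs_p, d_b_vs_p, se_b_vs_p, z=1.96):
    """Indirect estimate of A versus B through a common comparator (placebo).

    The point estimate is the difference of the two placebo-controlled
    effects; the variances add because the trial sets are independent.
    """
    estimate = d_a_vs_p - d_b_vs_p
    se = math.sqrt(se_a_vs_p ** 2 + se_b_vs_p ** 2)
    ci = (estimate - z * se, estimate + z * se)
    return estimate, se, ci


# Hypothetical log odds ratios versus placebo.
est, se_ind, (ci_low, ci_high) = indirect_comparison(
    d_a_vs_p=-0.60, se_a_vs_p=0.15, d_b_vs_p=-0.35, se_b_vs_p=0.20
)
print(f"A vs B (indirect): {est:.2f}, SE {se_ind:.2f}, "
      f"95% CI {ci_low:.2f} to {ci_high:.2f}")
```

Because the variances add, an indirect estimate is always less precise than either of the direct estimates it is built from, which is one reason combining it with direct evidence is attractive when both exist.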

Quantitative Evidence on Evidence Contributions

Empirical Data on Direct and Indirect Evidence Contributions

A comprehensive empirical study analyzing 213 published NMAs revealed crucial insights about the relative contributions of different evidence paths. This large-scale assessment demonstrated that the majority of information in NMAs originates from indirect evidence [7].

Table 1: Relative Contributions of Evidence Paths in Network Meta-Analyses

| Path Type | Path Length | Percentage Contribution | Description |
|---|---|---|---|
| Direct Evidence | Length 1 | 33% | Comes from head-to-head comparisons between treatments |
| Indirect Evidence | Length 2 | 47% | Paths with one intermediate treatment |
| Indirect Evidence | Length 3 | 20% | Longer paths with two intermediate treatments |

The study further found that the contribution of different path lengths depends substantially on network characteristics, including the number of treatments, presence of closed loops, graph density, radius, and diameter [7]. As networks grow in size and complexity, longer paths tend to contribute more substantially to the overall evidence base.

Application in Recent Therapeutic Areas

Recent high-profile NMAs demonstrate the practical application of these evidence structures across diverse therapeutic areas. In obesity pharmacotherapy, an NMA of 56 clinical trials compared six interventions despite limited head-to-head trials [6]. Only two direct comparisons between active medications were identified: liraglutide versus orlistat and semaglutide versus liraglutide [6]. The network relied significantly on indirect evidence through placebo connections to establish comparative efficacy and safety profiles.

Similarly, in hereditary angioedema (HAE), an NMA compared garadacimab, lanadelumab, subcutaneous C1INH, and berotralstat using eight RCTs, all placebo-controlled [8]. Despite the absence of direct active-comparator trials, the analysis provided statistically significant differentiation between treatments, with garadacimab demonstrating superior efficacy across multiple endpoints [8].

Table 2: Evidence Structure in Recent Published Network Meta-Analyses

| Therapeutic Area | Number of Interventions | Number of RCTs | Direct Head-to-Head Comparisons | Key Findings from Indirect Evidence |
|---|---|---|---|---|
| Obesity Pharmacotherapy | 6 active + placebo | 56 | 2 active comparisons | Semaglutide and tirzepatide achieved >10% total body weight loss (TBWL) |
| Hereditary Angioedema Prophylaxis | 4 active + placebo | 8 | 0 active comparisons | Garadacimab significantly reduced attack rates vs. others |

Methodological Protocols for Evidence Integration

Fundamental Statistical Assumptions

The validity of NMA depends on three critical statistical assumptions that must be rigorously evaluated during analysis:

Transitivity: The studies in the network are sufficiently similar in their characteristics that indirect comparisons can be made with confidence that few factors other than the interventions themselves modify the treatment effects [5]. This requires that studies included in the network fundamentally address the same research question in similar populations [5].

Consistency (Coherence): The agreement between direct and indirect evidence for the same comparison [1] [5]. Incoherence exists when direct and indirect estimates disagree, potentially indicating violation of transitivity or other methodological issues [5].

Homogeneity: The degree of statistical similarity between studies contributing to the same direct comparison, analogous to the assumption in pairwise meta-analysis [1].

Protocol for Evaluating Evidence Structure and Validity

Objective: To systematically assess the structure of evidence and validate assumptions before conducting NMA.

Materials: Collection of RCTs relevant to the clinical question, systematic review methodology tools.

Procedure:

- Construct Network Diagram: Create a visual representation of the evidence network where nodes represent interventions and edges represent direct comparisons [1] [5]. The size of nodes should be proportional to the number of patients, and the thickness of edges proportional to the number of studies [5].

- Characterize Network Geometry: Identify whether the network contains closed loops (both direct and indirect evidence available) or is primarily star-shaped (multiple interventions connected only through a common comparator) [7].

- Assess Transitivity: Compare study and patient characteristics across treatment comparisons to identify potential effect modifiers [5].

- Evaluate Consistency: Use statistical methods to check agreement between direct and indirect evidence where both exist [5].

- Quantify Evidence Contributions: Calculate the percentage contribution of direct and indirect evidence to each comparison using the contribution matrix approach [7].

Diagram 1: NMA Evidence Structure

Advanced Applications and Visualization Approaches

Component Network Meta-Analysis (CNMA)

For complex interventions consisting of multiple components, Component NMA (CNMA) provides a sophisticated approach to disentangle the effects of individual intervention elements [9]. Unlike standard NMA that treats each unique combination of components as a separate node, CNMA models the effect of each component, potentially reducing uncertainty and providing insights into which components drive effectiveness [9].

Protocol for CNMA Implementation:

- Component Decomposition: Break down each complex intervention into its constituent components.

- Data Structure Visualization: Use specialized visualizations such as CNMA-UpSet plots, CNMA heat maps, or CNMA-circle plots to represent complex data structures [9].

- Model Selection: Choose between additive effects models (assuming component effects sum linearly) or interaction models (allowing for synergistic or antagonistic effects between components) [9].

- Prediction: Estimate effectiveness for component combinations not previously tested in trials [9].
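
The difference between the additive and interaction models, and the prediction step, can be illustrated with a small sketch. It assumes component effects (relative to a common reference) have already been estimated by a CNMA model; the component names, effect values, and the function name `predict_combination` are hypothetical. Only point estimates are shown, since interval estimates would need the covariance matrix of the component effects.

```python
from itertools import combinations


def predict_combination(component_effects, components, interactions=None):
    """Predict the effect of a combination of components.

    Under the additive model the prediction is the sum of component effects.
    If `interactions` is given (keyed by frozenset of two component names),
    the matching pairwise interaction terms are added on top.
    """
    unknown = [c for c in components if c not in component_effects]
    if unknown:
        raise KeyError(f"no estimated effect for components: {unknown}")
    total = sum(component_effects[c] for c in components)
    if interactions:
        for pair in combinations(sorted(components), 2):
            total += interactions.get(frozenset(pair), 0.0)
    return total


# Hypothetical component effects versus usual care (negative = benefit).
component_effects = {"X": -0.20, "Y": -0.35, "Z": -0.50}

additive = predict_combination(component_effects, ["X", "Y"])

# Hypothetical antagonistic interaction between X and Y.
with_interaction = predict_combination(
    component_effects, ["X", "Y"], interactions={frozenset(["X", "Y"]): 0.10}
)
print(f"additive: {additive:.2f}, with interaction: {with_interaction:.2f}")
```

Predictions for untested combinations lean entirely on the chosen model, so they are best treated as hypotheses for future trials rather than as established effects.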

Visualization of Treatment Rankings

Treatment ranking represents a powerful output of NMA but is prone to misinterpretation. Recent methodological advances recommend against relying solely on Surface Under the Cumulative Ranking Curve (SUCRA) values without considering certainty of evidence [5] [10].

Protocol for Responsible Ranking Presentation:

- Use Multifaceted Displays: Implement multipanel graphical displays that incorporate evidence networks, relative effect estimates, and ranking information simultaneously [10].

- Incorporate Certainty Assessment: Contextualize ranking with GRADE assessments of evidence certainty [5].

- Employ Modern Visualizations: Utilize novel ranking visualizations such as 'Litmus Rank-O-Gram' or 'Radial SUCRA' plots that better communicate uncertainty [10].

- Conduct Sensitivity Analyses: Test robustness of rankings across different statistical models and assumptions [8].

Diagram 2: NMA Workflow Protocol

Research Reagent Solutions

Table 3: Essential Methodological Tools for Network Meta-Analysis

| Tool Category | Specific Software/Package | Primary Function | Implementation Considerations |
|---|---|---|---|
| Bayesian Analysis | WinBUGS, JAGS | Fitting complex NMA models with random effects | Requires specification of prior distributions; computationally intensive [1] [8] |
| Frequentist Analysis | netmeta (R package) | Conducting NMA within frequentist framework | More accessible for researchers familiar with traditional statistical approaches [9] |
| Web Applications | MetaInsight | Interactive NMA implementation without coding | Provides novel visualization approaches including multipanel displays [10] |
| Quality Assessment | GRADE for NMA | Evaluating certainty of evidence from networks | Extends traditional GRADE to address transitivity and incoherence [5] |
| Data Visualization | CNMA-specific plots (UpSet, heat map, circle) | Visualizing complex component network structures | Essential for understanding data structure in CNMA [9] |

The sophisticated integration of direct and indirect evidence represents a methodological advancement that has transformed evidence synthesis in drug development. The empirical finding that approximately two-thirds of information in typical NMAs comes from indirect evidence underscores the critical importance of methodological rigor in ensuring valid results [7]. As NMA methodologies continue to evolve—with advancements in component NMA, visualization techniques, and ranking presentations—researchers must maintain focus on the fundamental assumptions of transitivity and consistency that underpin valid inference. Properly conducted and interpreted, NMA provides an indispensable tool for comparative effectiveness research and informed decision-making in healthcare.

Network meta-analysis (NMA) has emerged as a pivotal statistical methodology that surmounts the limitations of traditional pairwise meta-analysis by enabling simultaneous comparison of multiple treatment options. By synthesizing both direct and indirect evidence, NMA provides a powerful framework for comparative effectiveness research and treatment decision-making in drug development [11] [12]. This application note details the key advantages, methodologies, and implementation protocols for leveraging NMA in pharmaceutical research.

Quantitative Advantages of Network Meta-Analysis

Network meta-analysis provides significant methodological advantages over traditional approaches, which can be quantified across several key dimensions.

Table 1: Quantitative Advantages of Network Meta-Analysis in Drug Development

| Advantage Dimension | Methodological Impact | Research Efficiency Gain |
|---|---|---|
| Evidence Base Enrichment | Integrates direct and indirect evidence, increasing precision of effect estimates [11] | Utilizes 100% of available comparative evidence versus 40-60% with traditional methods |
| Comparative Scope | Enables comparisons between treatments not directly studied in head-to-head trials [13] | Expands comparable treatment pairs by 200-400% in typical drug classes |
| Decision Support | Provides quantitative treatment rankings across multiple outcomes [11] [13] | Reduces subjective interpretation burden by providing probabilistic ranking metrics |
| Methodological Currency | Incorporates recent advances (complex interventions, dose-effects, certainty assessment) [12] | Aligns with current PRISMA-NMA 2025 guidelines for reporting completeness |

The fundamental advantage of NMA lies in its ability to facilitate pairwise comparisons between all available treatments within a network model, transcending the limitations of direct evidence alone [11]. For drug development researchers, this means that comparative assessments can be made even for treatments that have never been directly compared in randomized controlled trials, thereby filling critical evidence gaps in therapeutic development pipelines.

Methodological Protocol for Treatment Ranking

Treatment ranking provides crucial decision support for identifying optimal interventions. The following protocol outlines a standardized approach for generating and interpreting treatment rankings in NMA.

Experimental Protocol: Treatment Ranking Analysis

Objective: To generate comprehensive treatment rankings across efficacy and safety outcomes for clinical decision-making.

Methodology:

- Compute Ranking Metrics: Calculate key ranking statistics for each intervention, such as SUCRA, mean rank, and probability of being best.

- Visualize Ranking Distributions: Implement the "beading plot" for intuitive display of treatment rankings across multiple outcomes using the PlotBead() function in the rankinma R package [11].

- Assess Certainty of Evidence: Apply GRADE for NMA or CINeMA frameworks to evaluate confidence in ranking results [12].

Software Implementation:

Visualizing Complex Ranking Relationships

The "beading plot" represents an innovative visualization technique that adapts the number line plot to display collective ranking metrics for each treatment across various outcomes, significantly enhancing interpretability for diverse stakeholders [11].

Treatment Ranking Workflow from NMA to Decision Support

Research Reagent Solutions for NMA Implementation

Successful implementation of NMA requires specific methodological tools and frameworks. The following table details essential components of the NMA research toolkit.

Table 2: Essential Research Reagent Solutions for Network Meta-Analysis

| Research Reagent | Function/Purpose | Implementation Example |
|---|---|---|
| PRISMA-NMA Guidelines | Reporting guideline ensuring transparent and complete reporting of NMA [12] | PRISMA-NMA 2025 checklist for manuscript preparation |
| R netmeta Package | Frequentist approach to NMA implementation [11] | netmeta() function for statistical analysis |
| rankinma R Package | Specialized package for treatment ranking visualization [11] | PlotBead() function for beading plot generation |
| CINeMA Framework | Confidence in Network Meta-Analysis tool for evidence certainty [12] | Online application for evaluating transitivity, heterogeneity |
| Bayesian MCMC | Markov chain Monte Carlo simulation for probability estimation [11] | Software like JAGS, Stan, or OpenBUGS for Bayesian NMA |

Advanced Application Protocol: Complex Intervention Assessment

Current methodological advances in NMA extend to modeling complex interventions and dose-effect relationships, providing sophisticated tools for drug development research [12].

Experimental Protocol: Dose-Response NMA

Objective: To compare treatment efficacy across different dosing regimens using network meta-regression.

Methodology:

- Define Dose Categories: Classify interventions by dose levels (low, medium, high) based on licensed dosing ranges.

- Network Meta-Regression: Implement random-effects model using contrast-based method with dose as covariate.

- Assess Transitivity: Evaluate the distribution of effect modifiers across treatment comparisons to validate the analysis [12].

- Handle Missing Data: Apply robust methods for dealing with missing outcome data [12].

Software Implementation:

The integration of these advanced NMA methodologies provides drug development researchers with a comprehensive framework for comparative effectiveness research, directly addressing the complex decision-making challenges in therapeutic development. By implementing the protocols and visualization techniques outlined in this application note, researchers can enhance the evidence base for treatment recommendations and optimize clinical development strategies.

Network Meta-Analysis (NMA) extends conventional pairwise meta-analysis to simultaneously compare multiple treatments by combining direct evidence from head-to-head trials with indirect evidence obtained through common comparators [2] [1]. The validity and credibility of NMA results depend entirely on three foundational assumptions: similarity, transitivity, and consistency. These assumptions are hierarchically interconnected, with similarity forming the basis for transitivity, which in turn ensures statistical consistency [2] [14]. Understanding and evaluating these assumptions is crucial for researchers, scientists, and drug development professionals who rely on NMA to inform comparative effectiveness research and therapeutic decision-making.

The Similarity Assumption

Conceptual Definition

The similarity assumption refers to the degree of clinical and methodological homogeneity between trials included in a pairwise meta-analysis. It requires that the included studies are sufficiently similar in terms of participant characteristics, intervention design, comparator selection, outcome measurement, and methodological quality to justify statistical pooling [2]. This assumption extends the principle of "combinability" from traditional meta-analysis to the NMA context, asserting that studies contributing to each direct treatment comparison should not differ in ways that would materially affect the relative treatment effects.

Practical Evaluation Framework

Evaluating similarity involves meticulous assessment of potential effect modifiers—variables that influence the magnitude of treatment effect. The table below outlines key domains for similarity assessment:

Table 1: Framework for Assessing Similarity in Network Meta-Analysis

| Domain | Key Considerations | Data Extraction Requirements |

|---|---|---|

| Population Characteristics | Age, disease severity, comorbidities, demographic factors, biomarker status | Mean/median values with measures of dispersion; inclusion/exclusion criteria |

| Intervention Design | Dosage, formulation, administration route, treatment duration, concomitant therapies | Detailed intervention specifications; delivery protocols |

| Comparator Selection | Placebo characteristics, active comparator dosing, background therapies | Comparator details matching intervention specifications |

| Outcome Measurement | Definition, assessment method, timing, follow-up duration | Standardized outcome definitions; measurement time points |

| Methodological Factors | Randomization, blinding, allocation concealment, statistical analysis | Risk of bias assessment using standardized tools (e.g., Cochrane RoB) |

| Contextual Factors | Setting (primary vs. specialty care), geographic region, study year | Clinical setting description; country/region of conduct |

Similarity assessment requires content expertise to identify clinically relevant effect modifiers and methodological rigor to operationalize their evaluation across studies [2]. This process should be pre-specified in the systematic review protocol to avoid selective post-hoc evaluation.

The Transitivity Assumption

Theoretical Foundation

Transitivity represents the extension of similarity across all treatment comparisons within a connected network [15] [16]. This cornerstone assumption posits that there are no systematic differences in the distribution of effect modifiers across treatment comparisons [2] [14]. The transitivity assumption can be conceptualized through several interchangeable interpretations:

- The distribution of effect modifiers is similar across all treatment comparisons in the network [16]

- Interventions included in the network are similar across the corresponding trials [16]

- Missing interventions in each trial of the network are missing at random [16]

- Participants included in the network could be jointly randomizable to any intervention in the network [16] [2]

Violations of transitivity compromise the validity of indirect estimates and, consequently, the NMA-derived treatment effects for some or all possible comparisons in the network [16] [2].

Methodological Evaluation Approaches

Conceptual Evaluation Methods

Conceptual evaluation of transitivity involves epidemiological reasoning based on content expertise and requires comprehensive understanding of the disease area, treatment landscape, and relevant effect modifiers [15] [16]. This process includes:

- Systematic identification of potential effect modifiers through literature review and clinical expert consultation

- Comprehensive data extraction of effect modifier distributions across studies and treatment comparisons

- Comparative analysis of the distribution of effect modifiers across different treatment comparisons

Clinical examples illustrate scenarios where transitivity may be violated. In glaucoma treatment, topical medications are prescribed as monotherapies for initial treatment, while combination therapies are reserved for patients with insufficient response [2]. Including both in an NMA of first-line treatments would introduce intransitivity. Similarly, in breast cancer, treatments for HER2-positive and HER2-negative disease should not be included in the same NMA due to biomarker-driven treatment selection [2].

Statistical Evaluation Framework

Statistical evaluation complements conceptual assessment by quantifying the comparability of treatment comparisons. A novel approach proposed in recent literature involves calculating dissimilarities between treatment comparisons based on study-level aggregate participant and methodological characteristics [15]:

- Calculate Gower's Dissimilarity Coefficient: This metric handles mixed data types (quantitative and qualitative characteristics) and measures dissimilarity between study pairs across multiple effect modifiers [15]:

$$d(x,y) = \frac{\sum_{i} \delta_{xy,i}\, d(x,y)_i}{\sum_{i} \delta_{xy,i}}$$

where $d(x,y)_i$ represents the dissimilarity for characteristic $i$, and $\delta_{xy,i}$ indicates whether the characteristic is observed in both studies [15].

- Apply Hierarchical Clustering: Group highly similar treatment comparisons while separating dissimilar ones into different clusters [15]

- Visualize Results: Use dendrograms and heatmaps to identify "hot spots" of potential intransitivity in the network [15]

- Interpret Patterns: Identify pairs of treatment comparisons with "likely concerning" non-statistical heterogeneity that suggest potential intransitivity [15]

Table 2: Quantitative Framework for Transitivity Evaluation Using Gower's Dissimilarity Coefficient

| Step | Procedure | Implementation Guidance |

|---|---|---|

| Characteristic Selection | Identify potential effect modifiers | Prioritize variables with strong biological/clinical rationale for effect modification |

| Data Preparation | Organize study-level characteristics in structured dataset | Handle missing data appropriately; document completeness |

| Dissimilarity Calculation | Compute pairwise dissimilarities between all studies | Use appropriate measures for different variable types (continuous, binary, ordinal) |

| Clustering Analysis | Apply hierarchical clustering to treatment comparisons | Select appropriate linkage method; determine optimal cluster number |

| Result Interpretation | Identify clusters with high between-comparison dissimilarity | Focus on clinically meaningful patterns rather than statistical significance alone |

This approach quantifies clinical and methodological heterogeneity within and between treatment comparisons, enabling empirical exploration of transitivity and semi-objective judgments [15].
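The dissimilarity calculation between two studies can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the implementation used in [15]: numeric characteristics are scaled by their observed range, categorical ones score 0 or 1, and a characteristic missing in either study is skipped. The study names, characteristics, and values are hypothetical.

```python
def numeric_ranges(studies, numeric_names):
    """Observed range of each numeric characteristic, ignoring missing values."""
    ranges = {}
    for name in numeric_names:
        values = [s[name] for s in studies.values() if s[name] is not None]
        ranges[name] = max(values) - min(values)
    return ranges


def gower_dissimilarity(x, y, ranges):
    """Gower's dissimilarity between two studies given as dicts."""
    numerator = 0.0
    denominator = 0
    for name in x:
        xi, yi = x[name], y.get(name)
        if xi is None or yi is None:
            continue  # delta = 0: characteristic not observed in both studies
        if name in ranges:  # numeric characteristic, scaled by its range
            d_i = abs(xi - yi) / ranges[name] if ranges[name] > 0 else 0.0
        else:  # categorical characteristic
            d_i = 0.0 if xi == yi else 1.0
        numerator += d_i
        denominator += 1
    if denominator == 0:
        raise ValueError("no characteristic observed in both studies")
    return numerator / denominator


# Hypothetical study-level characteristics (None marks a missing value).
studies = {
    "S1": {"mean_age": 50.0, "pct_female": 40.0, "blinded": "yes"},
    "S2": {"mean_age": 60.0, "pct_female": 50.0, "blinded": "yes"},
    "S3": {"mean_age": 70.0, "pct_female": None, "blinded": "no"},
}
ranges = numeric_ranges(studies, ["mean_age", "pct_female"])

d_12 = gower_dissimilarity(studies["S1"], studies["S2"], ranges)
d_13 = gower_dissimilarity(studies["S1"], studies["S3"], ranges)
d_23 = gower_dissimilarity(studies["S2"], studies["S3"], ranges)
print(d_12, d_13, d_23)
```

The resulting study-by-study values would then be summarized within and between treatment comparisons before clustering; that aggregation step is left out of this sketch.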

The Consistency Assumption

Conceptual Relationship to Transitivity

Consistency represents the statistical manifestation of transitivity, signifying agreement between direct evidence (from head-to-head trials) and indirect evidence (obtained through common comparators) [16] [2]. While transitivity is an untestable conceptual assumption grounded in clinical and epidemiological reasoning, consistency is a testable statistical property that can be evaluated when both direct and indirect evidence exist for the same comparison [16].

The relationship between these assumptions is fundamental: transitivity is necessary for consistency to hold. If the transitivity assumption is violated, the consistency assumption will also be violated, leading to biased treatment effect estimates [16] [2].

Evaluation Methodologies

Design-by-Treatment Interaction Model

This comprehensive approach accounts for different sources of inconsistency in the network:

- Evaluates both loop inconsistency (within closed loops) and design inconsistency (between different designs of studies)

- Uses multivariate meta-regression to model potential inconsistency factors

- Provides a global test for inconsistency across the entire network

Loop-Specific Approach

This method evaluates inconsistency within each closed loop of the network:

- Calculate the difference (ω) between direct and indirect estimates for each comparison

- Estimate the variance of the inconsistency factor

- Compute 95% confidence intervals to assess statistical significance

- Particularly useful for networks with multiple closed loops
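For a single closed loop A-B-C, the steps above can be sketched as follows. The sketch assumes the adjusted indirect comparison through a common comparator (the Bucher approach) and independent direct and indirect estimates. All effect estimates are hypothetical log odds ratios.

```python
import math


def indirect_estimate(d_ac, se_ac, d_bc, se_bc):
    """Indirect A-vs-B estimate through common comparator C."""
    return d_ac - d_bc, math.sqrt(se_ac ** 2 + se_bc ** 2)


def inconsistency_factor(d_direct, se_direct, d_indirect, se_indirect):
    """Difference between direct and indirect estimates with a 95% CI."""
    omega = d_direct - d_indirect
    se_omega = math.sqrt(se_direct ** 2 + se_indirect ** 2)
    ci = (omega - 1.96 * se_omega, omega + 1.96 * se_omega)
    z = omega / se_omega
    return omega, se_omega, ci, z


# Hypothetical log odds ratios and standard errors.
d_ind, se_ind = indirect_estimate(d_ac=-0.50, se_ac=0.12, d_bc=-0.20, se_bc=0.16)
omega, se_omega, ci, z = inconsistency_factor(-0.10, 0.15, d_ind, se_ind)

print(f"indirect A vs B: {d_ind:.2f} (SE {se_ind:.2f})")
print(f"omega = {omega:.2f}, 95% CI {ci[0]:.2f} to {ci[1]:.2f}, z = {z:.2f}")
```

In this made-up example the interval for ω spans zero, so the loop shows no statistically significant inconsistency. With few trials per comparison such a test has low power, so a non-significant result should not be read as proof of consistency.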

Side-Splitting Method

This approach separates evidence into direct and indirect components:

- Direct evidence: obtained only from studies directly comparing treatments A and B

- Indirect evidence: obtained through the network excluding direct A-B studies

- Statistical testing: evaluates disagreement between direct and indirect estimates

- Facilitates identification of specific comparisons with significant inconsistency

Integrated Experimental Protocol for Evaluating NMA Assumptions

Comprehensive Assessment Workflow

Diagram 1: Assumption evaluation workflow for NMA.

Detailed Methodological Procedures

Protocol Development and Pre-specification

- Identify potential effect modifiers through systematic literature review and clinical expert consultation

- Pre-specify statistical methods for evaluating similarity, transitivity, and consistency

- Define decision rules for addressing assumption violations

- Document all pre-specified criteria in the systematic review protocol

Data Collection and Extraction

- Develop standardized extraction forms for all potential effect modifiers

- Extract aggregate study-level characteristics for all included trials

- Document methodological characteristics (design, bias, precision)

- Record clinical and population characteristics that may modify treatment effects

Similarity Assessment Protocol

Within-comparison heterogeneity assessment:

- Calculate I² statistic for each direct comparison

- Examine overlap in confidence intervals of study-level effects

- Evaluate clinical homogeneity through characteristic distributions
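The I² calculation for one direct comparison can be illustrated with a short sketch. It assumes inverse-variance fixed-effect weights and the usual definition I² = max(0, (Q − df)/Q). The effect sizes and variances are hypothetical.

```python
def cochran_q_and_i2(effects, variances):
    """Return the fixed-effect pooled estimate, Cochran's Q, and I² (in percent)."""
    if len(effects) < 2 or len(effects) != len(variances):
        raise ValueError("need matching effects and variances for >= 2 studies")
    weights = [1.0 / v for v in variances]
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    # I² is truncated at zero when Q is smaller than its degrees of freedom.
    i2 = max(0.0, (q - df) / q) * 100.0 if q > 0 else 0.0
    return pooled, q, i2


# Hypothetical study-level effects (e.g., log odds ratios) for one comparison.
effects = [0.1, 0.3, 0.5]
variances = [0.01, 0.01, 0.01]

pooled, q, i2 = cochran_q_and_i2(effects, variances)
print(f"pooled = {pooled:.2f}, Q = {q:.2f}, I2 = {i2:.0f}%")
```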

Graphical exploration:

- Generate forest plots for each direct comparison

- Create bar plots or box plots showing distribution of effect modifiers across studies within comparisons

Transitivity Assessment Protocol

Conceptual evaluation:

- Apply the "jointly randomizable" test: could participants in any trial be randomized to any treatment in the network? [2]

- Assess whether treatments not included in a trial are missing for reasons related to their effects

Statistical evaluation:

Comparative analysis:

- Tabulate distribution of effect modifiers across different treatment comparisons

- Use statistical tests (ANOVA, chi-square) to assess differences in effect modifiers across comparisons

- Adjust for multiple testing when examining multiple effect modifiers
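The protocol does not name a specific multiplicity correction. As one possible choice, the sketch below assumes the Holm step-down procedure and applies it to p-values that would come from the per-modifier tests. The effect modifier names and p-values are hypothetical.

```python
def holm_adjust(p_values):
    """Holm step-down adjusted p-values, returned in the input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, idx in enumerate(order):
        candidate = min(1.0, (m - rank) * p_values[idx])
        # Enforce monotonicity: adjusted values never decrease along the order.
        running_max = max(running_max, candidate)
        adjusted[idx] = running_max
    return adjusted


# Hypothetical unadjusted p-values, one per effect modifier.
modifiers = ["mean_age", "disease_severity", "study_year"]
raw_p = [0.01, 0.04, 0.03]

adjusted_p = holm_adjust(raw_p)
flagged = [m for m, p in zip(modifiers, adjusted_p) if p < 0.05]

for name, p in zip(modifiers, adjusted_p):
    print(f"{name}: adjusted p = {p:.2f}")
```

Modifiers that remain flagged after adjustment are candidates for closer conceptual review. With small numbers of studies per comparison these tests are weak, so they supplement rather than replace clinical judgment.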

Consistency Assessment Protocol

Local inconsistency assessment:

- Apply the side-splitting method for each comparison with both direct and indirect evidence

- Calculate inconsistency factors (ω) for each closed loop

- Estimate 95% confidence intervals for inconsistency factors

Global inconsistency assessment:

- Implement the design-by-treatment interaction model

- Compare fit of consistency and inconsistency models using likelihood ratio tests

- Calculate Bayesian Deviance Information Criterion (DIC) for model comparison

Exploratory analyses:

- Generate inconsistency plots comparing direct and indirect estimates

- Perform network meta-regression to explore sources of inconsistency

- Conduct subgroup analyses to identify effect modifier influences

The Scientist's Toolkit: Essential Methodological Reagents

Table 3: Essential Methodological Reagents for NMA Assumption Evaluation

| Tool/Reagent | Function/Purpose | Implementation Considerations |

|---|---|---|

| Gower's Dissimilarity Coefficient | Measures dissimilarity between studies across mixed variable types | Handles quantitative and qualitative characteristics; ranges 0 (no difference) to 1 (maximum difference) [15] |

| Hierarchical Clustering Algorithms | Identifies clusters of similar treatment comparisons | Enables detection of "hot spots" of potential intransitivity; provides visualization through dendrograms [15] |

| Network Meta-regression | Adjusts for effect modifiers when transitivity is questionable | Requires sufficient studies per comparison; powerful when effect modifiers are well-reported [16] |

| Design-by-Treatment Interaction Model | Global test for network inconsistency | Accounts for different sources of inconsistency; provides comprehensive evaluation [14] |

| Side-Splitting Method | Compares direct and indirect evidence for specific comparisons | Useful for identifying localized inconsistency; requires both direct and indirect evidence [14] |

| Node-splitting Method | Separates evidence into direct and indirect components | Bayesian implementation available; useful for pinpointing inconsistent comparisons [14] |

The foundational assumptions of similarity, transitivity, and consistency form the methodological bedrock of valid network meta-analysis in drug development research. These assumptions establish an interconnected hierarchy where similarity enables transitivity, which in turn ensures statistical consistency. Contemporary evaluation approaches have evolved beyond graphical examinations to incorporate quantitative dissimilarity measures and clustering algorithms that provide semi-objective assessment of these critical assumptions [15].

Despite methodological advances, empirical evidence indicates that evaluation of these assumptions remains suboptimal in published NMAs. A systematic survey of 721 network meta-analyses found that only 11% of reviews conducted conceptual evaluation of transitivity, while 54% relied solely on statistical evaluation [16]. This highlights the need for improved methodological practice among researchers and drug development professionals conducting NMA.

Robust evaluation of similarity, transitivity, and consistency requires multidisciplinary collaboration involving clinical experts, methodologists, and statisticians. By implementing the comprehensive protocols and methodologies outlined in this application note, researchers can enhance the credibility and reliability of NMA findings, ultimately supporting more informed decision-making in drug development and healthcare policy.

Network meta-analysis (NMA) has emerged as a powerful statistical methodology that synthesizes evidence from multiple studies to compare the effectiveness of several interventions for the same condition. A foundational concept in NMA is network geometry, a diagrammatic representation showing the interactions among all studies and treatments included in the analysis. This visualization provides crucial information for establishing analytic strategies and interpreting results, offering an immediate overview of the available evidence and its structural relationships.

The geometry is not static; it may evolve with the addition of new research outcomes or new treatments to the comparison set. Within the context of drug development research, accurately mapping this network is a critical first step in evidence synthesis, strengthening results and providing a broader picture of all treatments within the same model. The following sections detail the protocols for constructing, analyzing, and interpreting these essential visual tools.

Fundamental Principles and Assumptions

Before conducting an NMA and constructing its geometry, three major assumptions must be evaluated, as they directly impact the network's structure and validity.

- Similarity: This is a qualitative, methodological assessment of whether the selected studies are comparable. Using the Population, Intervention, Comparison, and Outcome (PICO) framework, researchers examine the clinical characteristics of study subjects, treatment interventions, comparison treatments, and outcome measures across studies. Failure to satisfy this assumption negatively affects the other two assumptions and may introduce heterogeneity.

- Transitivity: This assumption covers the validity of logical inference across the network. If direct comparisons show that treatment A is more effective than B, and B is more effective than C, then transitivity allows the logical inference that A is more effective than C, even in the absence of direct evidence, provided that the A-B and B-C trials are similar in their distribution of effect modifiers. Transitivity is the logical foundation that permits indirect comparisons.

- Consistency: This is the statistical manifestation of transitivity. It means that the comparative effect sizes obtained through direct and indirect comparisons are consistent. Inconsistency, reported in approximately one-eighth of NMAs, can arise from chance, bias in direct comparisons, bias in indirect comparisons, or genuine diversity in treatment effects. Statistical tests for inconsistency include both a global approach (testing overall inconsistency via a Wald test) and a local approach (node-splitting, which tests individual treatment comparisons).

Protocol for Constructing and Analyzing Network Geometry

Data Preparation and Network Setup

The initial phase involves preparing data in a format amenable to network analysis and generating the foundational network plot.

Experimental Protocol 1: Data Structuring and Network Geometry Generation

- Objective: To prepare extracted study data and generate a network geometry plot that provides an overview of the evidence structure.

- Materials: See Section 5, "Research Reagent Solutions."

Methodology:

- Data Extraction: After the systematic review, extract data into a long format. Each row should represent a treatment arm within a study, including columns for the study identifier, treatment identifier, and the number of patients or events for the outcome of interest. This format simplifies command syntax and data editing.

- Define Treatments: Classify all interventions from the included studies into distinct, well-defined treatment nodes (e.g., Placebo (A), DrugX10mg (B), DrugX20mg (C), Standard_Care (D)).

- Software Setup: In Stata, install the necessary network meta-analysis package (e.g., network).

- Specify Network: Use a command to define the network structure. For example: network setup d n, studyvar(study) trtvar(trt) ref(A), where d and n are the variables holding the number of events and the sample size in each arm, study is the study identifier, trt is the treatment identifier, and A is the reference treatment.

- Generate Plot: Execute the command to draw the network geometry. The software will automatically position the nodes (treatments) and edges (direct comparisons) based on the available data.

Expected Output: A network graph where:

- Nodes represent the different treatments being compared.

- Edges (lines) represent direct comparisons between two treatments.

- The thickness of an edge is often proportional to the number of studies contributing to that direct comparison.

- The size of a node can be made proportional to the total number of patients receiving that treatment or the number of studies in which it appears.
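The information that the network plot encodes can be derived directly from long-format arm-level data. The following sketch is independent of the Stata workflow and is offered only as an illustration: it counts studies per direct comparison (edge thickness), sums patients per treatment (node size), and checks that the network is connected. The arm-level data are hypothetical.

```python
from collections import defaultdict
from itertools import combinations

# Hypothetical long-format data: (study, treatment, events, sample size).
arms = [
    ("S1", "A", 10, 100), ("S1", "B", 7, 100),
    ("S2", "A", 12, 120), ("S2", "B", 9, 118),
    ("S3", "A", 15, 150), ("S3", "C", 8, 149),
    ("S4", "B", 6, 90), ("S4", "C", 5, 92), ("S4", "D", 4, 91),
]


def build_network(arms):
    """Return node sizes (patients per treatment) and edge weights (studies per pair)."""
    by_study = defaultdict(list)
    node_size = defaultdict(int)
    for study, trt, _events, n in arms:
        by_study[study].append(trt)
        node_size[trt] += n
    edges = defaultdict(int)
    for trts in by_study.values():
        # A multi-arm study contributes one study to every pair of its arms.
        for a, b in combinations(sorted(set(trts)), 2):
            edges[(a, b)] += 1
    return dict(node_size), dict(edges)


def is_connected(nodes, edges):
    """Depth-first search: True if every treatment is reachable from any other."""
    nodes = set(nodes)
    if not nodes:
        return True
    adjacency = defaultdict(set)
    for a, b in edges:
        adjacency[a].add(b)
        adjacency[b].add(a)
    start = next(iter(nodes))
    seen, stack = {start}, [start]
    while stack:
        current = stack.pop()
        for neighbour in adjacency[current] - seen:
            seen.add(neighbour)
            stack.append(neighbour)
    return seen == nodes


node_size, edges = build_network(arms)
print(node_size)
print(edges)
print("connected:", is_connected(node_size, edges))
```

A disconnected network would mean that some treatments cannot be compared, even indirectly, and the analysis would need to be restricted to a connected subset.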

The diagram below visualizes the logical workflow for developing and validating a network geometry.

Evaluating Statistical Assumptions and Inconsistency

Once the network geometry is established, the underlying assumptions must be rigorously tested.

Experimental Protocol 2: Testing for Consistency

- Objective: To statistically evaluate the consistency assumption between direct and indirect evidence within the network.

- Materials: See Section 5, "Research Reagent Solutions."

Methodology:

- Global Inconsistency Test: Perform a global test for inconsistency. This approach fits an inconsistency model across all between-treatment comparisons and jointly tests whether the inconsistency terms are zero, typically using a Wald test. A significant result suggests overall inconsistency in the network.

- Local Inconsistency Test: If global inconsistency is detected, perform a local test using a node-splitting method. This technique separates evidence on a specific comparison into direct and indirect components and statistically tests the difference between them.

- Explore Effect Modifiers: If inconsistency is identified, investigate potential effect modifiers (e.g., patient demographics, study design, drug dosage) that may be the cause. Sensitivity analysis or meta-regression is recommended to adjust for these variables.

Expected Output: A p-value from the global test indicating whether significant inconsistency is present. Node-splitting results will identify which specific treatment comparisons are contributing to the inconsistency.

Quantitative Analysis of Network Geometry Characteristics

The structure of a network geometry can be quantitatively described to understand the richness and quality of the available evidence. The table below summarizes key metrics that should be reported.

Table 1: Quantitative Characteristics of Network Geometry in Published NMAs (Based on a systematic review of 365 studies)

| Characteristic | Description | Reported Findings |

|---|---|---|

| Number of Treatments | Total distinct interventions (nodes) in the network. | Median of 6 treatments per NMA (IQR: 4-8) [17]. |

| Number of Trials | Total number of studies included in the NMA. | Median of 22 trials per NMA (IQR: 14-36) [17]. |

| Network Connectivity | Density of direct comparisons (edges); a connected network is required for NMA. | 72.6% of NMAs were produced by single-country teams, potentially influencing available comparisons [17]. |

| Common Comparators | The most frequently used intervention(s) in the network (e.g., Placebo). | Placebo and standard care are the most common comparator nodes [18]. |

| Clinical Areas | The medical conditions evaluated by the NMAs. | Most common areas: Cardiovascular (26.8%), Oncologic (13.7%), Autoimmune (10.7%) disorders [17]. |

The following diagram illustrates the analytical workflow following the creation of the network geometry, leading to a final evidence-based decision.

The Scientist's Toolkit: Research Reagent Solutions

The successful execution of a network meta-analysis and the creation of its geometry rely on specific methodological and software tools. The following table details these essential "research reagents."

Table 2: Essential Reagents for Network Meta-Analysis and Geometry Visualization

| Reagent / Tool | Type | Function / Application in NMA |

|---|---|---|

| PRISMA-NMA Checklist | Reporting Guideline | Ensures transparent and complete reporting of the NMA, including the network geometry. An update is currently in development to address evolving methods [19]. |

| Stata with network package | Statistical Software | A frequentist framework software environment used to set up the network, draw the geometry, perform statistical analysis, and check for inconsistency [18]. |

| R (e.g., netmeta package) | Statistical Software | An alternative open-source environment for conducting frequentist NMA and generating network plots. |

| Bayesian Software (e.g., WinBUGS, OpenBUGS) | Statistical Software | Used for NMA within a Bayesian framework, which offers flexibility, especially for complex models. Cited as the approach in 60-70% of NMA studies [18]. |

| Consistency & Inconsistency Models | Statistical Model | The consistency model (where inconsistency, C=0) and the inconsistency model (Y = D + H + C + E) are fitted to test the assumption of coherence between direct and indirect evidence [18]. |

| Node-Splitting Technique | Statistical Method | A "local" approach to identify inconsistency by splitting evidence on a specific node into direct and indirect components for statistical testing [18]. |

| Network Geometry Diagram | Visual Output | The foundational plot providing an overview of the network structure, showing treatments (nodes) and direct comparisons (edges). Strongly recommended for presenting NMA results [18]. |

Executing a Robust NMA: From Protocol to Analysis in the Drug Development Pipeline

Within the rigorous domain of drug development research, network meta-analysis (NMA) has emerged as a pivotal evidence synthesis methodology. It enables the simultaneous comparison of multiple interventions, even when direct head-to-head trials are absent, providing a comprehensive ranking of treatment efficacy and safety profiles crucial for healthcare decision-making [20] [21]. The exponential growth in published guidance for NMA, particularly between 2021 and 2025, underscores its increasing importance [21]. The integrity of any NMA, however, is fundamentally dependent upon a meticulously constructed and methodologically sound systematic review foundation. A well-defined protocol and an exhaustive literature search are not merely preliminary steps but are critical in mitigating bias and ensuring the transparency, reproducibility, and overall validity of the findings [22]. This document outlines detailed application notes and protocols for establishing this foundational stage, specifically contextualized for researchers, scientists, and professionals engaged in drug development.

Application Notes: Core Principles for Systematic Review in Drug Development

The conduct of a systematic review is a scientific process that demands strict adherence to methodological standards to produce reliable evidence. For drug development research, this involves several core principles.

- Structured Research Question: The process begins with formulating a well-defined, structured research question using established frameworks like PICO (Population, Intervention, Comparator, Outcome) or its extensions (e.g., PICOTTS). This framework ensures a focused approach, guides the development of inclusion/exclusion criteria, and informs the subsequent literature search strategy. A precisely defined PICO question is essential for identifying relevant studies in a field characterized by numerous drug candidates and patient populations [22].

- Comprehensive Search Strategy: A comprehensive search strategy is paramount to minimize the risk of publication bias and to capture all relevant evidence, both published and unpublished (gray literature). This involves searching multiple electronic databases (e.g., PubMed/MEDLINE, Embase, Cochrane Central Register of Controlled Trials) [22] [23]. The choice of databases should be justified based on the research topic.

- Quality and Certainty Assessment: Finally, assessing the methodological quality and certainty of the evidence is a non-negotiable step. Tools such as the Cochrane Risk of Bias Tool and the GRADE (Grading of Recommendations, Assessment, Development, and Evaluation) framework are widely used. The GRADE working group has developed innovative approaches for interpreting NMA results, which are essential for presenting findings for clinicians and policymakers, especially when dealing with multiple benefit and harm outcomes [22] [20].

Protocol Development: A Step-by-Step Guide

This section provides a detailed, actionable protocol for establishing the foundation of a systematic review intended for an NMA in drug development.

Defining the Scope and Research Question

Objective: To create a precise and actionable research question that will guide all subsequent phases of the systematic review and NMA.

- Step 1: Select an Appropriate Framework. Utilize the PICO framework, which is the most prevalent for therapy-related questions in medicine [22]. For drug development, this translates to:

- P (Population): Precisely define the patient population of interest (e.g., adults >18 years with type 2 diabetes, including disease severity and prior treatment history).

- I (Intervention): Specify the drug intervention or class of interventions (e.g., SGLT2 inhibitors).

- C (Comparator): Identify the appropriate comparator(s) (e.g., placebo, standard metformin therapy, or another active drug).

- O (Outcome): Define both efficacy and safety outcomes. These should be critical to decision-making and can be dichotomous (e.g., all-cause mortality) or continuous (e.g., change in HbA1c) [22].

- Step 2: Establish Inclusion and Exclusion Criteria. Based on the PICO framework, explicitly state the criteria for study selection. This should include eligible study designs (e.g., randomized controlled trials), acceptable sample sizes, language restrictions, and publication date ranges.

- Step 3: Register the Protocol. To enhance transparency and reduce duplication of effort, register the systematic review protocol in a publicly accessible registry such as PROSPERO [23].

Designing and Executing the Literature Search

Objective: To identify all published and unpublished studies relevant to the research question in a reproducible manner.

- Step 1: Identify Data Sources. Plan to search at least two major bibliographic databases. Essential databases for drug development include:

- PubMed/MEDLINE: For life sciences and biomedical literature.

- Embase: For extensive coverage of pharmacological and biomedical literature.

- Cochrane Central Register of Controlled Trials: For randomized trials.

- ClinicalTrials.gov: For gray literature and unpublished trial results.

- Additional specialized databases relevant to the specific drug class or disease area [22].

- Step 2: Develop the Search Syntax. With the assistance of an information specialist, develop a complex search strategy using a combination of free-text terms and controlled vocabulary (e.g., MeSH in PubMed, Emtree in Embase). The strategy should incorporate Boolean operators (AND, OR, NOT) and account for synonyms and related terms for each PICO element.

- Step 3: Manage Search Results. Use reference management software (e.g., EndNote, Zotero, Mendeley) to collate results from all searches and remove duplicate records. Subsequently, employ specialized systematic review tools (e.g., Rayyan, Covidence) to streamline the screening of titles, abstracts, and full-text articles [22]. These tools facilitate collaboration and enhance the accuracy and efficiency of the study selection process.
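The logic of combining synonyms with OR inside each concept and joining concepts with AND can be sketched as a small helper. This is a generic illustration only: the terms are hypothetical examples, and a real strategy would add database-specific field tags and controlled vocabulary with the help of an information specialist.

```python
def or_block(terms):
    """Join synonyms for one concept with OR, quoting multi-word phrases."""
    quoted = [f'"{t}"' if " " in t else t for t in terms]
    return "(" + " OR ".join(quoted) + ")"


def build_query(concepts):
    """Join the concept blocks with AND, in the order given."""
    return " AND ".join(or_block(terms) for terms in concepts.values())


# Hypothetical free-text terms for each concept of the research question.
concepts = {
    "population": ["type 2 diabetes", "T2DM"],
    "intervention": ["SGLT2 inhibitors", "empagliflozin"],
    "design": ["randomized controlled trial", "randomised"],
}

query = build_query(concepts)
print(query)
```

Keeping the concept blocks in a structured form makes the strategy easier to document in the protocol and to adapt across databases.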

Table 1: Key Databases for Comprehensive Literature Searching in Drug Development

| Database Name | Primary Focus and Utility |

|---|---|

| PubMed/MEDLINE | Free platform providing access to the MEDLINE database of life sciences and biomedical literature; uses MeSH terms and Boolean operators [22]. |

| Embase | Biomedical and pharmacological database with extensive coverage of drug, toxicology, and clinical medicine topics [22]. |

| Cochrane Central | Database of randomized controlled trials, specifically designed to support systematic reviews [23]. |

| Google Scholar | Free search engine for scholarly literature, including articles, theses, and books; useful for identifying grey literature but requires supplementation with specialized databases [22]. |

Study Selection and Data Extraction

Objective: To apply the inclusion/exclusion criteria systematically and extract relevant data in a consistent, unbiased fashion.

- Step 1: Screening Process. The study selection should be performed in two phases:

- Title and Abstract Screening: Two or more independent reviewers screen all retrieved records against the pre-defined inclusion criteria.

- Full-Text Screening: The full texts of potentially relevant studies are retrieved and assessed for eligibility by the same independent reviewers. Disagreements at any stage are resolved through consensus or by a third reviewer [22].

- Step 2: Data Extraction. Using a standardized, pre-piloted data extraction form, extract the following key information from each included study:

- Study characteristics (e.g., author, year, design, location, funding source).

- Participant characteristics (P).

- Intervention and comparator details (I and C).

- Outcome data (O), including effect sizes, measures of variance, and sample sizes.

- Results for all pre-specified efficacy and harm outcomes [23].

- Step 3: Quality and Risk of Bias Assessment. Independently assess the methodological quality and risk of bias of each included study using appropriate tools, such as the Cochrane Risk of Bias Tool for randomized trials [22].

Visualization of Workflows

Figure: Systematic review workflow for NMA, showing the key stages in the systematic review process that underlies a robust network meta-analysis.

Figure: Literature search and study selection process, showing the flow of information from initial identification to final inclusion.

Table 2: Key Resources for Conducting Systematic Reviews and Network Meta-Analyses

| Tool/Resource Category | Specific Examples | Function and Application |

|---|---|---|

| Reporting Guidelines | PRISMA (Preferred Reporting Items for Systematic reviews and Meta-Analyses) and its extensions (e.g., PRISMA-NMA, PRISMA-AI) [24] [25]. | Standardized checklists to ensure transparent and complete reporting of systematic reviews and meta-analyses, enhancing reproducibility and quality. |

| Reference Management | EndNote, Zotero, Mendeley [22]. | Software to collect search results, manage citations, and automatically remove duplicate records. |

| Study Screening | Covidence, Rayyan [22]. | Web-based tools that streamline the title/abstract and full-text screening process, allowing for independent review and conflict resolution. |

| Statistical Analysis | R (with packages such as metafor), Stata, RevMan [22] [26]. | Software environments used to perform the statistical computations for meta-analysis and network meta-analysis, including effect size calculation, model fitting, and generation of forest and funnel plots. |

| Quality Assessment | Cochrane Risk of Bias Tool, Newcastle-Ottawa Scale, GRADE framework [22] [20]. | Structured tools to evaluate the methodological rigor of included studies and to rate the overall certainty of evidence for each outcome. |

Experimental Protocols: Detailed Methodologies

Protocol for a Comprehensive Database Search

This protocol details the steps for executing a reproducible and exhaustive literature search.

- Objective: To identify all potentially relevant studies for the systematic review while minimizing bias.

- Materials: Access to bibliographic databases (e.g., PubMed, Embase, Cochrane Central); reference management software (e.g., EndNote); systematic review management tool (e.g., Covidence).

- Procedure:

- Finalize Search Strategy: Translate the PICO elements into a formal search syntax for each database, using both keywords and database-specific subject headings. Document the final search strategy for each database.

- Execute Search: Run the finalized searches in all selected databases. Record the date of each search and the number of records retrieved from each source.

- Collate Results: Export all search results into the reference management software. Use the software's functionality to identify and remove duplicate records.

- Upload for Screening: Export the de-duplicated library of references into the systematic review management tool (Covidence/Rayyan) to initiate the formal screening process [22].
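The collation step can be sketched in a few lines of Python. Reference managers handle this automatically, so the example is only meant to show the logic: count records per source, then remove duplicates by DOI with a fallback to a normalized title. The record fields and values are assumptions for illustration and do not correspond to any specific export format.

```python
import re

# Hypothetical exported records; field names are assumptions, not a real export format.
records = [
    {"source": "PubMed", "doi": "10.1000/abc123", "title": "Drug A versus placebo: a randomized trial"},
    {"source": "Embase", "doi": "10.1000/ABC123", "title": "Drug A versus placebo: A randomized trial."},
    {"source": "CENTRAL", "doi": "", "title": "Drug B vs. Drug A in adults"},
    {"source": "Embase", "doi": "", "title": "Drug B vs Drug A in Adults"},
]


def record_key(rec):
    """Prefer the DOI (case-insensitive); otherwise fall back to a normalized title."""
    doi = rec["doi"].strip().lower()
    if doi:
        return ("doi", doi)
    title = re.sub(r"[^a-z0-9]+", " ", rec["title"].lower()).strip()
    return ("title", title)


def deduplicate(recs):
    """Keep the first record seen for each key."""
    seen, unique = set(), []
    for rec in recs:
        key = record_key(rec)
        if key not in seen:
            seen.add(key)
            unique.append(rec)
    return unique


# Number of records retrieved per source, as recorded for the search log.
retrieved_per_source = {}
for rec in records:
    retrieved_per_source[rec["source"]] = retrieved_per_source.get(rec["source"], 0) + 1

unique_records = deduplicate(records)
print(retrieved_per_source)
print(len(records) - len(unique_records), "duplicates removed")
```

One limitation of this simple key: a record exported with a DOI and the same record exported without one will not be matched, so a manual check of near-duplicates is still advisable.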

Protocol for Data Extraction and Quality Assessment

This protocol ensures consistent and accurate capture of data from included studies.

- Objective: To systematically extract relevant data and assess the risk of bias from all studies included in the review.

- Materials: Standardized data extraction form (electronic or paper); Cochrane Risk of Bias Tool; access to full-text articles.

- Procedure:

- Pilot the Form: Two reviewers independently pilot the data extraction form on 2-3 included studies and refine it to ensure clarity and consistency.

- Extract Data: Reviewers independently extract data into the finalized form. The form should capture all elements related to PICO, study methodology, and results.

- Resolve Discrepancies: Reviewers compare extracted data and resolve any discrepancies through discussion or by consulting a third reviewer.

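- Assess Risk of Bias: Independently apply the Cochrane Risk of Bias Tool to each study. Judge each domain (e.g., random sequence generation, blinding) as having low, high, or unclear risk of bias [22]. Note that the revised tool (RoB 2) instead uses the judgments low risk, some concerns, or high risk.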

- Manage Data: Transfer the extracted and verified data into the statistical software for analysis.
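The discrepancy-resolution step can be supported by a simple automated comparison of the two reviewers' forms. The following Python sketch assumes each reviewer's extraction is stored as a dictionary keyed by study ID. The study IDs, field names, and values are hypothetical.

```python
# Hypothetical double-extraction results keyed by study ID; values are illustrative only.
reviewer_1 = {
    "Study01": {"n_randomized": 120, "events_treatment": 14, "rob_randomization": "low"},
    "Study02": {"n_randomized": 250, "events_treatment": 31, "rob_randomization": "unclear"},
}
reviewer_2 = {
    "Study01": {"n_randomized": 120, "events_treatment": 14, "rob_randomization": "low"},
    "Study02": {"n_randomized": 205, "events_treatment": 31, "rob_randomization": "high"},
}


def find_discrepancies(a, b):
    """Return (study, field, value_a, value_b) for every field the reviewers disagree on."""
    issues = []
    for study in sorted(set(a) | set(b)):
        fields_a, fields_b = a.get(study, {}), b.get(study, {})
        for field in sorted(set(fields_a) | set(fields_b)):
            value_a, value_b = fields_a.get(field), fields_b.get(field)
            if value_a != value_b:
                issues.append((study, field, value_a, value_b))
    return issues


discrepancies = find_discrepancies(reviewer_1, reviewer_2)
for item in discrepancies:
    print(item)
```

The output is a worklist for the consensus discussion or the third reviewer. It does not decide which value is correct.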

A rigorously developed protocol and a comprehensively executed search strategy are the cornerstones of a valid and impactful systematic review and network meta-analysis in drug development. Adherence to established methodological standards, including the use of structured frameworks like PICO, comprehensive multi-database searches, and rigorous quality assessment, mitigates bias and supports the production of reliable evidence. The ongoing development and refinement of reporting guidelines, such as the PRISMA extensions, alongside advanced software tools, continue to support researchers in this complex endeavor. By faithfully implementing the protocols and using the toolkit described here, drug development professionals can generate high-quality synthesized evidence that reliably informs clinical practice and healthcare policy.

In network meta-analysis (NMA), grouping interventions into distinct nodes, known as "node definition," is a fundamental methodological step that precedes statistical analysis [27]. The validity and interpretation of the entire NMA depend on the logical and clinically sound construction of this network of interventions [3]. This section outlines the core principles and provides a structured protocol for defining intervention nodes within the context of drug development research, ensuring that the resulting network is both clinically meaningful and statistically valid.

Core Principles of Node Definition

The decision of how to group interventions is guided by the lumping versus splitting paradigm, which balances clinical homogeneity with the need for connected networks [27]. The following principles underpin this decision:

- Transitivity Assumption: This is the cornerstone of a valid NMA. It requires that the sets of studies making different direct comparisons are sufficiently similar in all important factors that could modify the treatment effect (e.g., patient demographics, disease severity, trial design) [3]. Grouping clinically heterogeneous interventions into a single node violates this assumption and can lead to biased results.

- Clinical Coherence: Interventions grouped into the same node should be similar in their mechanism of action, dosage, formulation, and intensity. For example, in a network for tuberculosis treatment, "video directly observed therapy (VDOT)" and "medication event reminder monitors (MERM)" represent distinct nodes due to their fundamentally different modes of operation, despite both being digital health technologies [28].

- Statistical Coherence (Consistency): This refers to the statistical agreement between direct and indirect evidence within the network. While this is assessed after the analysis, a poorly defined network with intransitive nodes is a common source of incoherence [3].
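The relationship between transitivity and consistency can be made concrete with a small worked example. The sketch below uses the adjusted indirect comparison commonly attributed to Bucher and colleagues: with A as the common comparator, the indirect effect of C versus B is the difference between the two direct effects, and the variances add. It is an illustration of the idea, not necessarily the model used in any particular NMA, and all numbers are invented.

```python
import math


def indirect_estimate(d_ab, se_ab, d_ac, se_ac):
    """Indirect effect of C vs B through common comparator A.

    d_ab is the effect of B relative to A and d_ac the effect of C relative to A,
    both on an additive scale such as the log odds ratio.
    """
    d_bc = d_ac - d_ab
    se_bc = math.sqrt(se_ab**2 + se_ac**2)
    return d_bc, se_bc


def inconsistency(d_direct, se_direct, d_indirect, se_indirect):
    """Difference between direct and indirect estimates, with its SE and z statistic."""
    diff = d_direct - d_indirect
    se = math.sqrt(se_direct**2 + se_indirect**2)
    return diff, se, diff / se


# Illustrative log odds ratios, not taken from any trial.
d_ind, se_ind = indirect_estimate(d_ab=-0.30, se_ab=0.10, d_ac=-0.50, se_ac=0.12)
diff, se_diff, z = inconsistency(-0.25, 0.15, d_ind, se_ind)
print(f"indirect C vs B: {d_ind:.2f} (SE {se_ind:.3f})")
print(f"direct minus indirect: {diff:.2f} (SE {se_diff:.3f}), z = {z:.2f}")
```

The indirect estimate is only interpretable if the A-versus-B and A-versus-C trials are similar in their effect modifiers, which is why node definition and transitivity are assessed before any such calculation.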

A Structured Protocol for Node Definition

The following workflow provides a step-by-step protocol for defining intervention nodes in a systematic review with NMA.

Figure: Experimental workflow for node definition, outlining the sequential and iterative process for defining and validating network nodes.

Protocol Steps and Operational Procedures

Step 1: Develop a Preliminary Classification Framework

- Action: Systematically extract all interventions from the included studies and create a preliminary list.

- Procedure: Use a standardized data extraction form to capture intervention details at the most granular level available (e.g., specific drug molecule, exact dosage, frequency, mode of administration) [21].

- Output: A comprehensive list of all unique interventions.

Step 2: Apply the Lumping vs. Splitting Strategy

- Action: Make deliberate decisions on whether to combine interventions (lump) or keep them separate (split). The table below summarizes the key decision criteria.

Table 1: Lumping vs. Splitting Decision Criteria with Examples from Drug Development

| Decision | Criteria | Drug Development Example |

|---|---|---|

| Lumping | Same drug molecule, different but comparable doses or durations. | Grouping various doses of the same biologic drug (e.g., infliximab 5 mg/kg and 10 mg/kg) if pharmacokinetic data suggest similar efficacy. |

| Lumping | Interventions belonging to the same pharmacological class with a presumed class effect. | Grouping all proton-pump inhibitors (e.g., omeprazole, lansoprazole) for a specific indication, if supported by prior evidence. |

| Splitting | Different drug molecules, even within the same class. | Keeping different statins (e.g., atorvastatin, rosuvastatin) as separate nodes to compare their relative potency. |

| Splitting | Different formulations or routes of administration (e.g., oral vs. intravenous). | Separating intravenous from subcutaneous administration of the same monoclonal antibody. |

| Splitting | Different dosages expected to have meaningfully different efficacy or safety profiles. | Separating high-dose from low-dose chemotherapy regimens in an oncology NMA. |
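The effect of a lumping or splitting decision on the node list can be previewed with a short Python sketch. It assumes each granular intervention has been annotated with its molecule and pharmacological class. The catalog entries are hypothetical, and the choice of level remains a clinical judgment that code cannot make.

```python
from collections import defaultdict

# Hypothetical catalog: granular intervention label -> (molecule, pharmacological class).
catalog = {
    "omeprazole 20 mg": ("omeprazole", "PPI"),
    "omeprazole 40 mg": ("omeprazole", "PPI"),
    "lansoprazole 30 mg": ("lansoprazole", "PPI"),
    "placebo": ("placebo", "placebo"),
}


def assign_nodes(labels, level):
    """Group granular labels into nodes at the chosen level ('molecule' or 'class')."""
    index = {"molecule": 0, "class": 1}[level]
    nodes = defaultdict(list)
    for label in labels:
        nodes[catalog[label][index]].append(label)
    return dict(nodes)


split_nodes = assign_nodes(catalog, "molecule")  # splitting: one node per molecule
lumped_nodes = assign_nodes(catalog, "class")    # lumping: one node per class
print(len(split_nodes), "nodes when split:", sorted(split_nodes))
print(len(lumped_nodes), "nodes when lumped:", sorted(lumped_nodes))
```

Keeping the granular labels alongside the node assignment makes it straightforward to revisit the decision later, for example if the transitivity assessment suggests a node should be split further.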

Step 3: Draft the Network Geometry

- Action: Create a visual representation of the network of interventions using a network diagram [29] [3].

- Procedure: Use software like R, Stata, or Gephi. Nodes represent interventions, and lines (edges) represent direct comparisons from head-to-head studies. The thickness of edges is often weighted by the number of studies or patients for that comparison [30] [29].

- Output: A network diagram that provides an overview of the evidence structure and identifies evidence gaps.
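Before plotting, the network geometry can be tabulated directly from the list of included studies. The Python sketch below uses only the standard library and hypothetical study data. It counts the number of studies informing each direct comparison (the usual edge weight) and checks whether the network is connected, since disconnected sub-networks cannot be compared with each other.

```python
from collections import Counter
from itertools import combinations

# Hypothetical studies and their arms (node labels); a three-arm trial contributes three edges.
studies = {
    "Study01": ["A", "B"],
    "Study02": ["A", "B"],
    "Study03": ["A", "C"],
    "Study04": ["A", "B", "C"],
    "Study05": ["D", "E"],
}


def edge_weights(study_arms):
    """Count the studies that inform each direct comparison."""
    weights = Counter()
    for arms in study_arms.values():
        for pair in combinations(sorted(set(arms)), 2):
            weights[pair] += 1
    return weights


def connected_components(edges):
    """Return the connected sub-networks as sorted lists of nodes."""
    adjacency = {}
    for a, b in edges:
        adjacency.setdefault(a, set()).add(b)
        adjacency.setdefault(b, set()).add(a)
    seen, components = set(), []
    for start in sorted(adjacency):
        if start in seen:
            continue
        stack, component = [start], set()
        while stack:
            node = stack.pop()
            if node not in component:
                component.add(node)
                stack.extend(adjacency[node] - component)
        seen |= component
        components.append(sorted(component))
    return components


weights = edge_weights(studies)
components = connected_components(weights)
print(dict(weights))
print("connected" if len(components) == 1 else f"disconnected: {components}")
```

In this invented example, treatments D and E form a separate sub-network, which would have to be analyzed separately or linked through additional evidence.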

Step 4: Formally Assess Transitivity

- Action: Evaluate whether the transitivity assumption is likely to hold across the proposed nodes.

- Procedure: Compare the distribution of potential effect modifiers (e.g., mean patient age, disease severity, prior lines of therapy, study duration) across the different direct comparisons. This can be done qualitatively in a table or using statistical methods [3].

- Output: A transitivity assessment table.

Table 2: Template for Transitivity Assessment Across Direct Comparisons

| Potential Effect Modifier | Comparison A vs. B (Studies: n=5) | Comparison A vs. C (Studies: n=7) | Comparison B vs. C (Studies: n=3) | Judgment on Transitivity |

|---|---|---|---|---|

| Mean Age (years) | 65.2 (SD 8.1) | 63.8 (SD 9.5) | 67.1 (SD 7.3) | Likely valid |

| Disease Severity (% Severe) | 45% | 70% | 48% | Potential violation |

| Study Duration (weeks) | 24 | 24 | 52 | Potential violation |
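A table like the template above can be screened programmatically before the qualitative judgment is made. The Python sketch below reuses the illustrative values from the template and flags an effect modifier when its range across comparisons exceeds a chosen fraction of its mean. The 25% threshold is an arbitrary assumption for demonstration, not an established cut-off, and the flag is a prompt for clinical review rather than a statistical test.

```python
# Illustrative summary values per direct comparison, taken from the template table.
modifiers = {
    "Mean age (years)": {"A vs B": 65.2, "A vs C": 63.8, "B vs C": 67.1},
    "Disease severity (% severe)": {"A vs B": 45, "A vs C": 70, "B vs C": 48},
    "Study duration (weeks)": {"A vs B": 24, "A vs C": 24, "B vs C": 52},
}

THRESHOLD = 0.25  # arbitrary screening threshold (assumption), not a validated cut-off


def relative_range(values):
    """Range across comparisons divided by the mean across comparisons."""
    values = list(values)
    return (max(values) - min(values)) / (sum(values) / len(values))


flags = {
    name: relative_range(by_comparison.values()) > THRESHOLD
    for name, by_comparison in modifiers.items()
}
for name, flagged in flags.items():
    print(f"{name}: {'potential violation' if flagged else 'likely valid'}")
```

With these values the screen reproduces the judgments in the template, but a real assessment should also weigh within-comparison variability and the clinical plausibility of effect modification.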

Step 5: Finalize and Document Node Definitions

- Action: Based on the transitivity assessment, finalize the node definitions. If serious transitivity violations are suspected, return to Step 2 and consider splitting nodes further or using statistical models that account for effect modifiers.

- Procedure: Clearly define each node in the review protocol or manuscript. The definitions must be precise and reproducible [21].