Navigating the Lipophilicity-Promiscuity Nexus: Strategies for Optimizing Drug Safety and Efficacy


Abstract

This article provides a comprehensive analysis of the critical relationship between high lipophilicity and target promiscuity in small-molecule drug discovery. It explores the foundational principles of how physicochemical properties influence pharmacokinetics and safety profiles, detailing computational and experimental methodologies for prediction and measurement. The content offers practical strategies for troubleshooting optimization challenges and validates approaches through comparative analysis of successful and discontinued drugs. Aimed at researchers and drug development professionals, this review synthesizes current knowledge to guide the design of compounds with improved therapeutic indices and reduced development attrition.

The Lipophilicity-Promiscuity Link: Understanding Fundamental Mechanisms and Impacts

FAQs on Lipophilicity and Promiscuity

Q1: What is the fundamental difference between LogP and LogD?

- LogP is the logarithm of the partition coefficient (P), which is the ratio of the concentration of a neutral (unionized) compound in an organic phase (typically n-octanol) to its concentration in an aqueous phase (water). It is a constant for a given compound [1] [2] [3].

- LogD is the logarithm of the distribution coefficient (D), which is the ratio of the sum of the concentrations of all species of a compound (both ionized and unionized) in the organic phase to the sum in the aqueous phase at a specified pH. LogD is therefore pH-dependent and provides a more accurate measure of lipophilicity for ionizable compounds under physiological conditions [1] [2] [4].
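The pH dependence of LogD can be illustrated with a minimal sketch. It assumes a monoprotic compound and that only the neutral species partitions into octanol, which is a common textbook simplification rather than a method taken from the cited references. The function name and example values are hypothetical.

```python
import math

def logd_from_logp(logp, pka, ph=7.4, kind="acid"):
    """Estimate LogD of a monoprotic compound from LogP and pKa.

    Assumption: the ionized form does not enter the octanol phase.
    """
    if kind == "acid":
        ionized_to_neutral = 10 ** (ph - pka)   # [A-]/[HA]
    elif kind == "base":
        ionized_to_neutral = 10 ** (pka - ph)   # [BH+]/[B]
    else:
        raise ValueError("kind must be 'acid' or 'base'")
    return logp - math.log10(1.0 + ionized_to_neutral)

# Hypothetical acid (LogP 3.0, pKa 4.4): at pH 7.4 it is ~99.9% ionized,
# so LogD falls roughly three log units below LogP.
print(logd_from_logp(3.0, 4.4, ph=7.4, kind="acid"))
```

For a compound that stays neutral across the pH range of interest, the correction term vanishes and LogD equals LogP.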

Q2: Why are LogP and LogD critical parameters in drug discovery?

Lipophilicity is a key physicochemical parameter that influences nearly all aspects of a drug's behavior, including [5] [4] [3]:

- Absorption and Permeability: Impacts a compound's ability to cross biological membranes.

- Distribution: Affects tissue penetration and volume of distribution.

- Metabolism and Clearance: Higher lipophilicity often correlates with increased metabolic clearance.

- Toxicity and Promiscuity: Increased lipophilicity is linked to target promiscuity, off-target effects (e.g., hERG inhibition), and other ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) liabilities.

- Solubility: Higher lipophilicity generally leads to lower aqueous solubility.

Q3: What is molecular promiscuity, and why is it significant?

- Definition: Molecular promiscuity denotes the ability of a ligand to specifically interact with multiple, sometimes distantly related, target proteins [6] [7].

- Significance: While promiscuity can lead to unwanted side effects, it is receiving increasing attention because it can enhance drug efficacy through polypharmacology (simultaneous modulation of multiple targets) and provides a molecular basis for drug repositioning [6] [8]. However, highly promiscuous compounds also carry a higher risk of toxicity [5].

Q4: What are common experimental methods for determining LogP and LogD?

The following table summarizes the key methodologies [4] [3]:

| Method | Description | Key Considerations |
|---|---|---|
| Shake-Flask | The compound is shaken in a mixture of octanol and water (or buffer); concentrations in each phase are measured at equilibrium. | Considered the "gold standard"; can be slow and requires a method for concentration analysis [4] [3]. |
| Chromatographic Methods | Using High-Performance Liquid Chromatography (HPLC) to determine retention time, which is correlated with known LogP values of standard compounds. | A faster, high-throughput alternative to the shake-flask method [3]. |
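The chromatographic approach can be sketched as a linear calibration of LogP against the logarithm of the retention factor, k = (tR - t0) / t0. The retention times and LogP values below are placeholders invented for illustration, not measured data, and the helper names are hypothetical.

```python
import math

def log_retention_factor(t_r_min, t_0_min):
    """log10 of the retention factor k = (tR - t0) / t0."""
    return math.log10((t_r_min - t_0_min) / t_0_min)

def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def estimate_logp(t_r_min, t_0_min, slope, intercept):
    """Apply the calibration line to an unknown compound."""
    return slope * log_retention_factor(t_r_min, t_0_min) + intercept

# Placeholder calibration set: (retention time in min, known LogP).
T0_MIN = 1.0  # assumed dead time of the column
STANDARDS = [(2.0, 1.0), (4.0, 2.1), (9.0, 3.2), (21.0, 4.1)]

log_ks = [log_retention_factor(t, T0_MIN) for t, _ in STANDARDS]
logps = [lp for _, lp in STANDARDS]
slope, intercept = fit_line(log_ks, logps)
print(estimate_logp(6.0, T0_MIN, slope, intercept))
```

The estimate is only as good as the calibration set, so the standards should bracket the expected LogP of the test compound.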

Q5: How can computational methods for LogP/LogD prediction fail, and how can this be mitigated?

Computational methods, while invaluable, have limitations:

- Fragment-Based Methods: These methods sum contributions from molecular fragments and correction factors. They can be inaccurate for novel scaffolds or functional groups not well-represented in their training data [1] [3].

- Mitigation: It is crucial to use a single, consistent computational tool for a series of compounds and to validate predictions with experimental data whenever possible. Some software allows the training set to be extended with in-house measured values for greater accuracy [3].

Troubleshooting Common Experimental and Data Interpretation Issues

Problem 1: In Vivo Half-Life Does Not Improve Despite Lowering Lipophilicity

- Background: A common strategy to improve pharmacokinetics is to reduce lipophilicity to lower clearance (CL). However, this often fails to extend the in vivo half-life (T~1/2~) [5].

- Root Cause: The volume of distribution (V~d,ss~) and clearance (CL) are often highly correlated and similarly affected by lipophilicity. Reducing lipophilicity can lower both V~d,ss~ and CL, resulting in little to no net improvement in T~1/2~, which is a function of both parameters (T~1/2~ = 0.693 • V~d,ss~ / CL) [5].

- Solution: Focus on identifying and addressing specific metabolic soft-spots in the molecule to improve metabolic stability (lower CL~int~) without necessarily reducing lipophilicity. Matched molecular pair analysis has shown that strategies improving metabolic stability without decreasing lipophilicity have an 82% probability of prolonging half-life, compared to only a 30% probability for strategies that rely solely on lowering lipophilicity [5].
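A short numerical sketch of the half-life relationship shows why lowering V~d,ss~ and CL together gives no benefit. The parameter values are hypothetical and chosen only to make the arithmetic easy to follow.

```python
def half_life_h(vd_ss_l_per_kg, cl_ml_per_min_per_kg):
    """T1/2 = 0.693 * Vd,ss / CL, returned in hours."""
    cl_l_per_h_per_kg = cl_ml_per_min_per_kg * 60.0 / 1000.0
    return 0.693 * vd_ss_l_per_kg / cl_l_per_h_per_kg

# Hypothetical parent compound.
parent = half_life_h(4.0, 40.0)

# Lower lipophilicity: Vd,ss and CL both halve, so T1/2 is unchanged.
less_lipophilic = half_life_h(2.0, 20.0)

# Soft-spot fix: CL halves while Vd,ss is retained, so T1/2 doubles.
soft_spot_fixed = half_life_h(4.0, 20.0)

print(parent, less_lipophilic, soft_spot_fixed)
```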

Problem 2: Suspecting Apparent Promiscuity Due to Assay Artifacts

- Background: Apparent multi-target activity (promiscuity) can be a false positive resulting from compound-mediated assay interference [7].

- Root Cause: Compounds can form aggregates, react under assay conditions, or engage in non-specific interactions with proteins, leading to artifactual activity readouts [7].

- Solution: Implement rigorous filtering of screening data to exclude compounds with chemical functionalities prone to artifacts. Use secondary, orthogonal assays to confirm true activity. Analysis of public screening data has shown that after applying such liability filters, a significant proportion of initially "promiscuous" compounds can be eliminated from consideration [7].

Problem 3: Difficulty in Rationalizing or Designing for Desired Promiscuity

- Background: Designing compounds with specific multi-target activity is challenging because the structural basis for promiscuity is often not generalizable [8].

- Root Cause: Machine learning studies reveal that structural features distinguishing promiscuous from non-promiscuous compounds are highly dependent on the specific target combination. Models trained to recognize promiscuity for one target pair typically fail to predict it for a different target pair [8].

- Solution: Instead of seeking universal "promiscuity features," focus on local structure-promiscuity relationships for your target of interest. Analyze structural data (e.g., X-ray complexes) of known promiscuous ligands bound to their targets to understand the specific interaction patterns and binding modes that enable multi-target activity [6] [8].

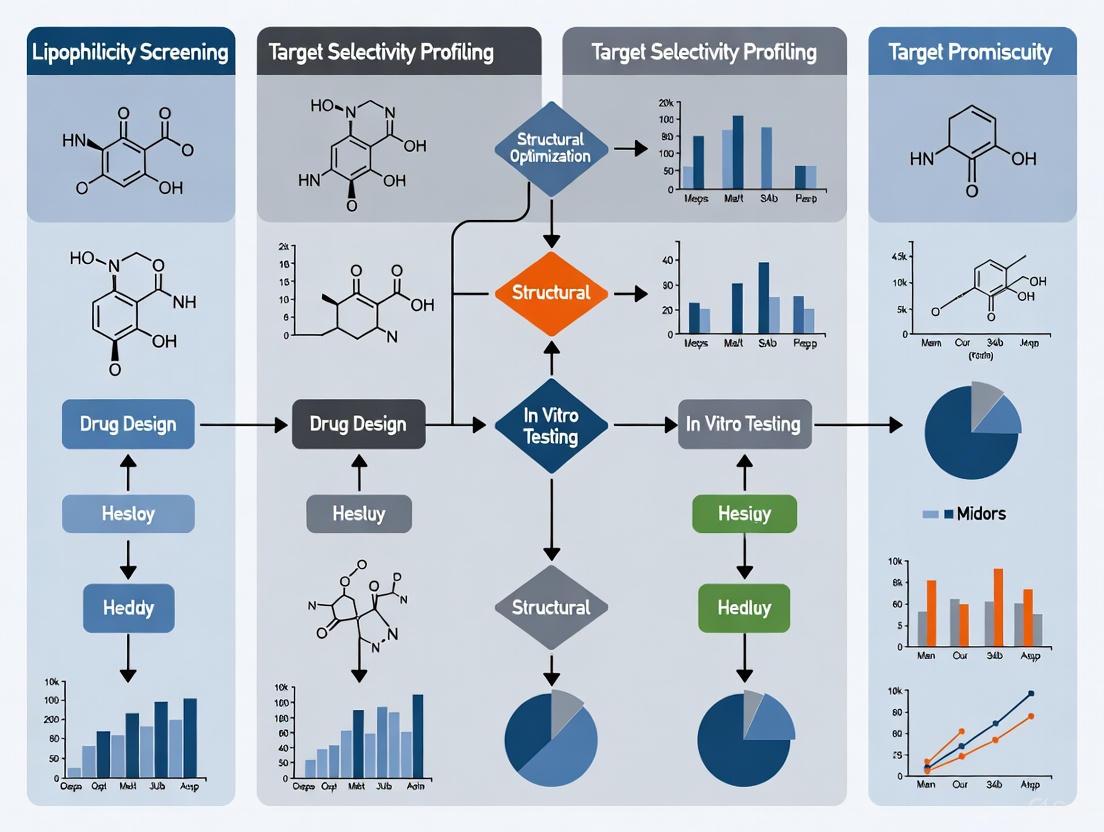

Diagram: Lipophilicity and Promiscuity in Drug Discovery

Lipophilicity and Promiscuity Relationships

Experimental Protocols & Workflows

Shake-Flask Method for LogD Determination

This is a standard protocol for experimentally measuring the distribution coefficient [4] [3].

Research Reagent Solutions & Materials:

| Reagent/Material | Function |
|---|---|
| n-Octanol | Represents the lipophilic/organic phase. |
| Aqueous Buffer (e.g., pH 7.4) | Represents the aqueous phase at physiological pH. |
| Test Compound | The molecule whose LogD is being characterized. |
| Analytical Instrument (e.g., HPLC-UV, LC-MS) | To accurately quantify the concentration of the compound in each phase after partitioning. |

Detailed Methodology:

- Preparation: Pre-saturate n-octanol with the aqueous buffer and vice-versa by mixing them thoroughly and allowing them to separate. This prevents volume changes in the phases during the experiment.

- Partitioning: Dissolve a known amount of the test compound in a known volume of one of the phases (e.g., the aqueous buffer). Combine this with an equal volume of the other pre-saturated phase (e.g., n-octanol) in a suitable container.

- Equilibration: Shake the mixture vigorously for a predetermined time at a constant temperature to allow the compound to distribute between the two phases.

- Separation: Allow the mixture to stand undisturbed until the two phases are completely separated.

- Analysis: Carefully sample from each phase and measure the concentration of the compound in each using a suitable analytical method (e.g., HPLC-UV).

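- Calculation: Calculate the distribution coefficient (D) using the formula \( D = \frac{C_{\text{octanol}}}{C_{\text{buffer}}} \), where \( C_{\text{octanol}} \) and \( C_{\text{buffer}} \) are the measured concentrations of the compound in each phase. LogD is then the base-10 logarithm of this value.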
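The calculation step can be sketched as follows. The mass-balance check is an added assumption (a common sanity check, not part of the protocol above), and all names and values are hypothetical.

```python
import math

def shake_flask_logd(c_octanol, c_buffer):
    """LogD = log10(C_octanol / C_buffer); both in the same units."""
    if c_octanol <= 0 or c_buffer <= 0:
        raise ValueError("concentrations must be positive (check the quantification limit)")
    return math.log10(c_octanol / c_buffer)

def mass_balance_recovery(c_octanol, v_octanol, c_buffer, v_buffer, amount_added):
    """Fraction of the dosed compound recovered across both phases.

    Low recovery may point to adsorption, precipitation or degradation.
    """
    return (c_octanol * v_octanol + c_buffer * v_buffer) / amount_added

# Hypothetical run: 100 nmol dosed, equal 1 mL phases, concentrations in nmol/mL.
print(shake_flask_logd(95.0, 5.0))
print(mass_balance_recovery(95.0, 1.0, 5.0, 1.0, 100.0))
```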

Workflow for Identifying True Multitarget Activity (Promiscuity)

This workflow helps distinguish true promiscuity from false positives [7] [8].

Promiscuity Confirmation Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table lists key resources used in the experiments and analyses cited in this guide.

| Research Reagent / Resource | Function / Explanation |
|---|---|
| n-Octanol / Water System | The standard solvent system for measuring LogP/LogD, serving as a model for a drug partitioning between lipid bilayers and aqueous body fluids [1] [4] [3]. |
| Rat Hepatocytes (RH CL~int~) | An in vitro system used to measure intrinsic metabolic clearance, helping to predict in vivo metabolic stability and half-life [5]. |
| Matched Molecular Pairs (MMPs) | A computational analysis technique that identifies pairs of compounds differing only by a small, well-defined structural change. Used to quantify the effect of specific chemical transformations on properties like LogD, metabolic stability, and promiscuity [5] [3]. |
| Structural Fingerprints (for ML) | Numerical representations of chemical structure used in machine learning models to diagnose and predict structure-promiscuity relationships for specific target combinations [8]. |
| CYP450 Inhibition Assays | Essential experimental panels to assess a compound's potential for drug-drug interactions, a common liability linked to high lipophilicity and promiscuity [5] [3]. |

Troubleshooting Guide: FAQs on Lipophilicity in Drug Discovery

FAQ 1: Why is my compound showing high membrane permeability in assays but also exhibiting promiscuous behavior and off-target toxicity?

This is a classic consequence of high lipophilicity. While lipophilicity is a key driver for passive diffusion across lipid membranes, it is also a major factor in off-target binding and certain toxicity mechanisms.

- Root Cause: High lipophilicity, often measured by LogP or LogD, increases the likelihood of a compound engaging in non-specific, hydrophobic interactions with a wide range of biological targets beyond its intended one [9]. Furthermore, specific structural features, such as a basic center with a pKa > 6, are strongly associated with promiscuous binding to aminergic GPCRs, ion channels, and transporters [9]. Certain amphiphilic compounds can also induce phospholipidosis, a toxicity driven by their physicochemical properties [10].

- Solution:

- Modify Physicochemical Properties: Aim to reduce lipophilicity by introducing polar groups or replacing lipophilic substituents with more polar bioisosteres [11]. However, this must be done carefully to avoid compromising permeability.

- Evaluate the Necessity of a Basic Center: If a basic amine is not part of the core pharmacophore, consider replacing it with a neutral group to reduce promiscuity [9].

- Adopt "Molecular Chameleonicity": Design larger molecules (e.g., beyond Rule of 5) that can adopt a polar, open conformation in aqueous environments to enhance solubility, and a non-polar, closed conformation in lipid membranes to maintain permeability [11].

FAQ 2: How can I accurately predict passive drug permeability early in the discovery process?

Predicting permeability is essential for estimating oral bioavailability. A combination of computational and experimental methods is recommended.

- Root Cause: Reliance on a single method or insufficient understanding of the relationship between molecular descriptors and permeability can lead to poor predictions.

- Solution:

- Utilize In Silico Tools: Services like the Permeability server can provide theoretical assessments of passive permeability [12].

- Employ High-Throughput Simulations: Physics-based coarse-grained models can efficiently explore chemical space and establish a permeability surface based on key molecular descriptors like bulk partitioning free energy and pKa [13].

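- Implement Standardized In Vitro Assays: Use PAMPA for high-throughput screening of passive permeability, and Caco-2 monolayers when active transport and efflux also need to be captured [12].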

FAQ 3: Our lead compound has excellent potency but a high logP (>5). What are the specific risks, and how can we mitigate them?

A high logP is a significant risk factor that requires careful management.

- Root Cause: High lipophilicity is correlated with increased promiscuity, poor aqueous solubility, and a higher risk of off-target toxicities [10] [9].

- Solution:

- Conduct Early Safety Profiling: Screen the compound against a small, representative panel of high-risk off-targets (e.g., aminergic GPCRs, hERG channel) to identify promiscuity early [9].

- Monitor for Phospholipidosis: Be alert for cytoplasmic vacuolation in in vitro or in vivo studies, a common toxicity of cationic amphiphilic drugs [10].

- Balance Properties: Use strategies like Aufheben to simultaneously improve aqueous solubility and membrane permeability through rational molecular design, rather than simply increasing hydrophilicity at the expense of permeability [11].

The following tables consolidate quantitative data and structural alerts related to lipophilicity, permeability, and promiscuity.

Table 1: Permeability and Promiscuity Relationships with Lipophilicity and Charge

| Property / Metric | Impact on Permeability | Impact on Promiscuity / Toxicity | Key Evidence |
|---|---|---|---|
| High Lipophilicity (High LogP) | Increases passive permeability by favoring partitioning into lipid membranes [13] [11]. | Markedly increases promiscuity and risk of off-target effects [9]. | Promiscuity rarely observed for compounds with cLogP < 3 [9]. |
| Basic Center (pKa > 6) | Can enhance permeability in some contexts. | The most important determinant of promiscuity in safety panels; high hit rates at aminergic targets [9]. | Positively charged compounds show ~15% average target hit rate at aminergic GPCRs [9]. |
| Molecular Weight (500-3000 Da, bRo5) | Challenging to achieve high permeability; often requires chameleonic properties [11]. | Can be associated with unique off-target risks, e.g., with oligonucleotides or lipid nanoparticles [10]. | Requires design strategies that go beyond the classic Rule of 5 [11]. |

Table 2: Structural Motifs and Property Alerts

| Structural Motif / Property | Associated Risk or Effect | Recommended Action |
|---|---|---|
| Tricyclic motif with basic amine | High-risk motif for broad promiscuity [9]. | Scrutinize necessity; consider structural modification early. |
| Cationic Amphiphilic Structure | Strong association with phospholipidosis and vacuolation [10]. | Incorporate specific in vitro or in vivo screening for this pathology. |
| High Hydrophobicity + Positive Charge | Increased risk of cytotoxicity and haemolysis [10]. | Monitor in cytotoxicity assays and inspect for haemolytic potential. |
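The property alerts in Tables 1 and 2 can be turned into a simple triage helper. This is a rough sketch: the thresholds (cLogP of 3, basic pKa above 6) come from the tables above, while the function name, the inputs and the idea of treating the thresholds as hard cut-offs are illustrative assumptions. Substructure alerts such as the tricyclic motif would need a cheminformatics toolkit and are not covered here.

```python
def promiscuity_alerts(clogp, most_basic_pka=None):
    """Return a list of property-based alerts for one compound.

    most_basic_pka is None for compounds without a basic center.
    """
    alerts = []
    if clogp >= 3.0:
        alerts.append("cLogP >= 3: promiscuity becomes more likely")
    has_basic_center = most_basic_pka is not None and most_basic_pka > 6.0
    if has_basic_center:
        alerts.append("basic center (pKa > 6): check aminergic GPCRs, ion channels, transporters")
    if clogp >= 3.0 and has_basic_center:
        alerts.append("lipophilic base: consider phospholipidosis and cytotoxicity screening")
    return alerts

# Hypothetical compounds.
print(promiscuity_alerts(4.2, most_basic_pka=9.1))
print(promiscuity_alerts(1.8))
```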

Experimental Protocols for Key Assays

Protocol 1: Determining Apparent Permeability (Papp) using PAMPA

Objective: To measure the passive, artificial membrane permeability of a compound in a high-throughput format [12].

Materials:

- PAMPA plate system (donor and acceptor plates with a membrane filter)

- Artificial lipid solution (e.g., lecithin in dodecane)

- Test compound solution in buffer (e.g., PBS)

- Acceptor sink buffer

- UV plate reader or LC-MS/MS for quantification

Method:

- Membrane Preparation: Coat the filter of the PAMPA donor plate with the artificial lipid solution and allow it to set.

- Plate Assembly: Fill the donor well with the test compound solution. Place the membrane on top and ensure contact. Fill the acceptor well with the sink buffer.

- Incubation: Assemble the plate and incubate at room temperature for a predetermined time (e.g., 4-16 hours) to reach steady state.

- Sampling and Analysis: After incubation, sample from both donor and acceptor compartments. Quantify the drug concentration in each compartment using a suitable analytical method (e.g., UV spectrometry or LC-MS/MS).

- Data Calculation:

- Calculate the drug flux (j): \( j = (dQ/dt) \times (1/A) \), where \( dQ/dt \) is the slope of the accumulated amount in the acceptor compartment over time, and \( A \) is the permeation area.

- Calculate the apparent permeability (Papp): \( P_{app} = j / C_0 \), where \( C_0 \) is the initial concentration in the donor compartment [12].

- Papp is typically classified as: poor (< 1.0 × 10⁻⁶ cm/s), moderate (1–10 × 10⁻⁶ cm/s), or good (>10 × 10⁻⁶ cm/s) [11].
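The data calculation can be sketched as below, using a least-squares slope for dQ/dt. The time course, well area and donor concentration are hypothetical. Units are chosen so that the result comes out in cm/s: amounts in nmol, time in s, area in cm², and C₀ in nmol/cm³ (numerically equal to µM).

```python
def slope(xs, ys):
    """Least-squares slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx

def papp_cm_per_s(times_s, acceptor_nmol, area_cm2, c0_nmol_per_cm3):
    """Papp = j / C0, with flux j = (dQ/dt) / A."""
    flux = slope(times_s, acceptor_nmol) / area_cm2
    return flux / c0_nmol_per_cm3

def classify_papp(papp):
    """Bins from the classification above (cm/s)."""
    if papp < 1.0e-6:
        return "poor"
    if papp <= 10.0e-6:
        return "moderate"
    return "good"

# Hypothetical time course: cumulative amount in the acceptor well.
times = [0.0, 3600.0, 7200.0, 10800.0]
amounts = [0.0, 0.27, 0.54, 0.81]
papp = papp_cm_per_s(times, amounts, area_cm2=0.3, c0_nmol_per_cm3=50.0)
print(papp, classify_papp(papp))
```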

Protocol 2: Early Safety Profiling with a Focused Target Panel

Objective: To identify pharmacological promiscuity early in drug discovery by screening against a minimal panel of high-risk off-targets.

Materials:

- Cell lines or protein preparations expressing high-risk targets (e.g., hERG, 5-HT2B, α1A-adrenergic receptor, dopamine D2 receptor) [9].

- Relevant assay kits (e.g., binding or functional assays).

- Test compounds and reference controls.

Method:

- Panel Selection: Select a panel of 10-20 targets known to attract high hit rates, particularly for compounds with specific properties (e.g., basic amines). Aminergic GPCRs, the hERG channel, and opioid receptors are prime candidates [9].

- Assay Execution: Run standardized binding or functional assays for each target in the panel according to established protocols.

- Data Analysis: Determine the percentage inhibition or activation at a single concentration of the test compound (e.g., 10 µM). A compound is considered a "hit" for a specific target if it shows significant activity (e.g., >50% inhibition/activation).

- Interpretation: Calculate a "hit rate" for the compound across the panel. A high hit rate indicates a promiscuous compound. This data can be used to prioritize compounds for further development and guide chemical redesign to mitigate off-target interactions [9].
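The data analysis and interpretation steps amount to counting hits above a threshold. A minimal sketch, with a hypothetical four-target panel and invented single-concentration readouts:

```python
def panel_hit_rate(percent_effect_by_target, threshold=50.0):
    """Return (hit rate, sorted list of hit targets).

    A target counts as a hit when the single-concentration readout
    (% inhibition or activation) exceeds the threshold.
    """
    if not percent_effect_by_target:
        raise ValueError("panel is empty")
    hits = sorted(t for t, v in percent_effect_by_target.items() if v > threshold)
    return len(hits) / len(percent_effect_by_target), hits

# Hypothetical readouts at 10 uM (% inhibition).
readouts = {"hERG": 72.0, "5-HT2B": 55.0, "alpha1A": 30.0, "D2": 61.0}
rate, hits = panel_hit_rate(readouts)
print(rate, hits)
```

Single-concentration hits are usually followed up with concentration-response curves before a compound is labeled promiscuous.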

Visualizing Key Concepts and Workflows

Diagram 1: Lipophilicity Impact on Permeability and Promiscuity

Diagram 2: Mechanistic Pathways of Off-Target Toxicity

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Permeability and Safety Assessment

| Research Reagent / Tool | Function in Research | Key Application Note |
|---|---|---|
| Caco-2 Cell Line | A human intestinal epithelial cell model used to predict drug absorption, incorporating both passive and active transport mechanisms [12]. | The gold standard for in vitro assessment of intestinal permeability; provides data on efflux and transporter effects. |
| PAMPA Plate | A high-throughput artificial membrane system designed to measure passive transcellular permeability [12]. | Ideal for early-stage screening due to its speed, low cost, and suitability for automation. |
| Selected Target Panels (e.g., aminergic GPCRs) | A curated set of recombinant proteins or cell lines for profiling compound activity against known high-risk off-targets [9]. | Enables early detection of pharmacological promiscuity. A panel of ~10 targets can effectively identify most promiscuous compounds. |
| Coarse-Grained (CG) Martini Model | A physics-based computational model that reduces molecular complexity, allowing for high-throughput simulation of membrane permeability across vast chemical spaces [13]. | Used to predict permeability coefficients and map structure-permeability relationships for thousands of compounds in silico. |

Structural and Physicochemical Drivers of Promiscuous Binding

Frequently Asked Questions

What is lipophilicity and why is it critical in drug discovery? Lipophilicity is most often expressed as LogP, the logarithm of the ratio of a compound's equilibrium concentrations in an oil phase (typically n-octanol) and an aqueous phase [14]. It is a fundamental physicochemical parameter that significantly influences pharmacokinetic properties, including absorption, distribution, membrane permeability, and routes of clearance [14]. A drug's affinity for biological membranes and its binding ability are heavily influenced by its lipophilicity [15].

How does lipophilicity relate to promiscuous binding and transporter activity? High lipophilicity is a key driver of promiscuous binding, as it can increase a drug's likelihood of interacting with off-target proteins and promiscuous transporters [5]. P-glycoprotein (P-gp), a highly flexible and promiscuous transporter that effluxes over 200 chemically diverse substrates from cells, is a prime example. Its conformational plasticity allows it to bind a wide array of structures, and lipophilicity is a key factor in determining whether a compound will be a substrate [16].

What is the primary experimental method for determining lipophilicity? Reverse-phase thin layer chromatography (RP-TLC) is a widely used, simple, and low-cost method for determining lipophilicity-related parameters like the isocratic retention factor (Rₘ) and chromatographic hydrophobic index (φ₀) [15]. Reverse-phase HPLC (RP-HPLC) is another excellent method valued for its accuracy and on-line detection capabilities [15].

My compound has high lipophilicity and shows high clearance. Will simply reducing lipophilicity always extend its half-life? Not necessarily. While lowering lipophilicity can decrease clearance, it often also reduces the volume of distribution. Since half-life depends on the balance between volume of distribution and clearance, this strategy can fail to improve half-life if it does not specifically address a metabolic soft-spot [5]. Transformations that improve metabolic stability without decreasing lipophilicity are often more successful for half-life extension [5].

What are some common strategies to mitigate high lipophilicity and reduce promiscuity? Common strategies include [5]:

- Introducing metabolically stable polar groups (e.g., replacing a methyl with a fluorine).

- Reducing overall hydrocarbon content and molecular planarity.

- Introducing hydrogen bond donors or acceptors.

- Addressing specific metabolic soft-spots identified in assays, rather than making global changes to lipophilicity.

Troubleshooting Guides

Problem: Poor Aqueous Solubility and High Non-Specific Binding

Background: Compounds with high lipophilicity often suffer from poor aqueous solubility, which can impede absorption and lead to high non-specific binding, confounding assay results [14].

| Investigation | Possible Cause | Suggested Action |
|---|---|---|
| Solubility Check | Poor dissolution in aqueous buffers. | Use solubilizing agents (e.g., DMSO, cyclodextrins); consider salt formation for ionizable compounds. |
| Assay Signal | High background signal due to compound aggregation or adhesion to labware. | Include control wells without biological target; use detergents (e.g., Tween-20) in buffers to reduce non-specific binding [17]. |
| Cellular Uptake | Low intracellular concentration despite good LogP. | Investigate if the compound is a substrate for efflux transporters like P-gp [16]. |

Experimental Protocol: Investigating P-gp Substrate Status

- Cell Line: Use polarized cell lines (e.g., MDCK, Caco-2) overexpressing human P-gp.

- Assay Setup: Seed cells on transwell filters and allow them to form tight monolayers. Check monolayer integrity by measuring transepithelial electrical resistance (TEER).

- Bidirectional Transport: Add your test compound to either the apical (A) or basolateral (B) chamber.

- A-to-B direction: Measures substrate permeation.

- B-to-A direction: Measures active efflux.

- Inhibition: Repeat the experiment in the presence of a known P-gp inhibitor (e.g., Verapamil, Elacridar).

- Data Analysis: Calculate the efflux ratio (B-to-A / A-to-B permeability). An efflux ratio >2 that is reduced by an inhibitor suggests the compound is a P-gp substrate [16].
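The efflux-ratio criterion can be sketched as follows. The efflux ratio threshold of 2 comes from the protocol above; the requirement that the inhibitor cut the ratio by at least half is an arbitrary illustrative choice, and the permeability values are hypothetical.

```python
def efflux_ratio(papp_b_to_a, papp_a_to_b):
    """Efflux ratio = Papp(B-to-A) / Papp(A-to-B)."""
    if papp_a_to_b <= 0:
        raise ValueError("A-to-B permeability must be positive")
    return papp_b_to_a / papp_a_to_b

def is_likely_pgp_substrate(er, er_with_inhibitor, er_threshold=2.0, min_reduction=0.5):
    """Flag a compound when ER > threshold and the inhibitor lowers it.

    min_reduction is the fractional drop in ER taken as meaningful;
    0.5 is an assumed cut-off, not a value from the cited work.
    """
    reduced = er_with_inhibitor <= er * (1.0 - min_reduction)
    return er > er_threshold and reduced

# Hypothetical Papp values in cm/s.
er = efflux_ratio(12.0e-6, 2.0e-6)            # without inhibitor
er_inhibited = efflux_ratio(6.0e-6, 5.0e-6)   # with a P-gp inhibitor
print(er, er_inhibited, is_likely_pgp_substrate(er, er_inhibited))
```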

Problem: Unpredictable Clearance and Short Half-Life

Background: High lipophilicity generally correlates with increased metabolic clearance, leading to a short in vivo half-life, which can be problematic for maintaining target coverage [5].

| Investigation | Possible Cause | Suggested Action |
|---|---|---|
| In Vitro Stability | High intrinsic clearance in hepatocyte or microsomal assays. | Identify metabolic soft-spots using metabolite identification (MetID) studies. |
| In Vivo PK | High in vivo clearance not predicted by in vitro assays. | Investigate extra-hepatic metabolism or other clearance pathways (e.g., biliary excretion). |
| Half-Life | Low volume of distribution (Vd,ss) counteracts reduced clearance. | Focus on structural modifications that lower clearance without drastically reducing Vd,ss, such as targeted blocking of metabolic soft-spots [5]. |

Experimental Protocol: Metabolic Soft-Spot Identification

- Incubation: Incubate the test compound with liver microsomes or hepatocytes (from rat, mouse, or human) in the presence of NADPH cofactor.

- Time Points: Remove aliquots at specified time points (e.g., 0, 15, 30, 60 minutes).

- Termination: Stop the reaction by adding an organic solvent (e.g., acetonitrile).

- Analysis: Centrifuge to precipitate proteins and analyze the supernatant using LC-MS/MS.

- Data Interpretation: Identify metabolites based on mass shifts. The structures of major metabolites reveal the labile parts of the molecule (soft-spots) for further modification [5].
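The mass-shift step can be sketched as a lookup against a short table of common biotransformations. The shifts are standard monoisotopic mass differences, but the table is deliberately incomplete, the tolerance is an assumed value, and the example m/z values are hypothetical. The sketch also assumes parent and metabolite are observed with the same charge state and adduct.

```python
# Monoisotopic mass differences (Da) for a few common biotransformations.
COMMON_SHIFTS = {
    "oxidation, e.g. hydroxylation (+O)": 15.9949,
    "demethylation (-CH2)": -14.0157,
    "dehydrogenation (-H2)": -2.0157,
    "glucuronidation (+C6H8O6)": 176.0321,
}

def annotate_metabolite(parent_mz, metabolite_mz, tol_da=0.005):
    """Return the biotransformations whose shift matches within tol_da."""
    delta = metabolite_mz - parent_mz
    return [name for name, shift in COMMON_SHIFTS.items()
            if abs(delta - shift) <= tol_da]

# Hypothetical parent ion and two observed metabolite ions.
parent = 300.1500
for observed in (316.1449, 286.1343):
    print(observed, annotate_metabolite(parent, observed))
```

A matching mass shift only suggests the type of transformation; locating the soft-spot on the molecule still requires fragment-ion (MS/MS) interpretation.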

Data Presentation

Table 1: Impact of Lipophilicity on Key Pharmacokinetic Parameters in Neutral Compounds [5]

| LogD₇.₄ Range | Typical Vd,ss,u (L/kg) | Typical CLu (mL/min/kg) | Impact on Half-Life |
|---|---|---|---|
| <1 | Low | Low | Variable; can be short due to renal clearance. |
| 1 - 2.5 | Moderate | Moderate | Most favorable balance for half-life. |
| >2.5 - 4 | High | High | Often short due to very high clearance. |
| >4 | Very High | Very High / Variable | Can be long if clearance is low, but solubility is a major issue. |

Table 2: Effect of Common Molecular Transformations on Half-Life and Lipophilicity [5]

| Transformation | Typical ΔLogD₇.₄ | Impact on Metabolic Stability | Probability of Prolonging Half-Life |
|---|---|---|---|
| H → F | Decrease | Increases | High |
| H → Cl | Increase | Increases | High |
| CH₃ → CF₃ | Increase | Increases | High |
| Lowering LogD without addressing soft-spot | Decrease | No change / slight increase | Low (~30%) |
| Improving metabolic stability without lowering LogD | Variable | Increases | High (~82%) |

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions

| Reagent / Assay | Function in Promiscuity & ADME Research |
|---|---|
| P-glycoprotein (P-gp) Assay Systems | To determine if a new chemical entity is a substrate or inhibitor of this key promiscuous efflux transporter, critically impacting its distribution, particularly to the brain [16]. |
| Rat Hepatocyte (RH) CLint Assay | An in vitro assay to measure intrinsic metabolic clearance, used to predict in vivo hepatic clearance and identify compounds with high metabolic lability [5]. |
| Octanol-Water Partitioning | The gold-standard experimental system for determining the partition coefficient (LogP) or distribution coefficient (LogD) of a compound, defining its lipophilicity [14] [5]. |
| LC-MS/MS Systems | Essential for conducting metabolite identification (MetID) studies to pinpoint metabolic soft-spots and for quantifying drug concentrations in bio-matrices during PK studies. |

Experimental Workflow Diagrams

Diagram 1: Troubleshooting high lipophilicity workflow.

Diagram 2: P-gp transport cycle and promiscuity.

Frequently Asked Questions (FAQs)

Q1: How does lipophilicity fundamentally affect a drug's journey in the body? Lipophilicity, often measured as LogP (partition coefficient) or LogD (distribution coefficient at a specific pH), is a primary physicochemical property that influences every aspect of a drug's pharmacokinetics (PK) [18] [19]. It underlies higher-level properties, affecting passive membrane permeability, solubility, metabolic stability, and the route of clearance [18] [20]. A drug's lipophilicity determines its ability to cross biological membranes for absorption, its distribution into tissues, and how it is ultimately cleared from the body, either via hepatic metabolism or renal excretion [20] [19].

Q2: What is the optimal lipophilicity range for an orally administered drug? While context-dependent, a LogD₇.₄ between 1 and 3 is generally considered desirable for oral drugs [18]. This range often provides a balanced profile:

- LogD₇.₄ < 1: Associated with high solubility but potentially low permeability and poor absorption [18].

- LogD₇.₄ 1–3: Typically offers a good balance of moderate solubility and permeability, with potential for good absorption and bioavailability [18].

- LogD₇.₄ > 3–5: Often leads to low solubility, high metabolism, and variable oral absorption [18].

Q3: I need to increase my drug's brain penetration. Is increasing lipophilicity a reliable strategy? Increasing lipophilicity can enhance blood-brain barrier (BBB) permeation, but it is a double-edged sword [18]. While higher lipophilicity can improve passive diffusion across the compact BBB, it can also increase binding to efflux pumps like P-glycoprotein and raise metabolic clearance [18] [19]. Therefore, simply increasing lipophilicity without considering these other factors may not improve overall brain exposure and could be counterproductive. The parameter Δlog P (a measure of the difference between lipophilicity in two solvent systems) has also been used as an indicator for blood-brain partitioning [18].

Q4: Why did reducing my compound's lipophilicity not extend its half-life as expected? This is a common pitfall. Reducing lipophilicity often decreases clearance (CL), but it can also reduce the volume of distribution (Vd,ss) because the drug is less likely to distribute into tissues [5]. Half-life (T₁/₂) is directly proportional to Vd,ss and inversely proportional to CL (T₁/₂ = 0.693 × Vd,ss / CL), so if both decrease by a similar factor, the net effect on half-life is negligible [5]. A more successful strategy is to directly address a metabolic soft-spot to improve metabolic stability, rather than relying solely on global lipophilicity reduction [5].

Q5: How does lipophilicity influence the clearance route of peptide-drug conjugates? For peptide-drug conjugates and other larger modalities, lipophilicity remains a key determinant of clearance route [20]. Higher lipophilicity (higher LogD) favors hepatic clearance and reduces kidney uptake and associated toxicity [20]. Conversely, lower lipophilicity favors renal clearance. Tuning lipophilicity is therefore a viable strategy to shift the clearance route and mitigate organ-specific toxicity, for example, in targeted radiotherapies [20].

Troubleshooting Guides

Issue 1: Poor Oral Absorption

Problem: Your drug candidate shows low oral bioavailability due to poor absorption.

Potential Causes and Solutions:

| Potential Cause | Diagnostic Experiments | Corrective Actions |

|---|---|---|

| Low Permeability (LogD too low) | - Measure apparent permeability in Caco-2 or MDCK cell assays. - Determine experimental LogD₇.₄. | - Increase lipophilicity within the optimal range (e.g., LogD 1-3) [18]. - Introduce non-polar groups (e.g., methyl) to enhance membrane penetration [18]. |

| Low Solubility (LogD too high) | - Measure equilibrium solubility in aqueous buffer. - Review in silico LogP/LogD predictions. | - Reduce lipophilicity by introducing polar groups (e.g., amine, hydroxyl) or ionizable moieties [21]. - Consider formulation strategies like nanoemulsions or lipid-based drug delivery systems (LBDDS) to enhance solubility [21]. |

| Efflux by P-gp | - Conduct bidirectional permeability assays with and without a P-gp inhibitor. | - Structural modification to reduce the compound's affinity for the P-gp efflux pump, which may involve reducing lipophilicity or specific structural features [19]. |

Issue 2: High Clearance and Short Half-Life

Problem: Your compound is cleared too quickly, leading to a short half-life that may require frequent dosing.

Potential Causes and Solutions:

| Potential Cause | Diagnostic Experiments | Corrective Actions |

|---|---|---|

| High Metabolic Lability | - Assess stability in liver microsomes or hepatocytes. - Identify metabolic soft-spots via metabolite ID studies. | - Targeted metabolism mitigation: Address the specific soft-spot (e.g., replace a labile methyl group with a cyclopropyl or fluorine) [5]. - General lipophilicity reduction: Lower LogD to reduce nonspecific binding to CYP450 enzymes, but be aware this may also reduce Vd,ss [18] [5]. |

| High Renal Clearance of Unbound Drug | - Determine fraction unbound in plasma (fᵤ).- Measure renal clearance in vivo. | - Increasing lipophilicity can reduce renal clearance by increasing plasma protein binding and tissue distribution, shifting clearance to hepatic metabolism [18] [20]. |

Issue 3: High Volume of Distribution Leading to Off-Target Tissue Accumulation

Problem: The drug distributes extensively into tissues, leading to a large volume of distribution and potential off-target toxicity.

Potential Causes and Solutions:

| Potential Cause | Diagnostic Experiments | Corrective Actions |

|---|---|---|

| Excessive Lipophilicity | - Determine tissue distribution in vivo. - Measure plasma protein binding and LogD₇.₄. | - Reduce overall lipophilicity to decrease tissue partitioning [18]. - Introduce polar or ionizable groups (at physiological pH) to increase solubility in plasma and extracellular fluid. |

| Target Promiscuity and Toxicity | - Conduct counter-screening against common off-targets (e.g., hERG). - Use panels like BioMAP for phenotypic toxicity profiling [22]. | - Reduce lipophilicity, as higher LogD is correlated with increased promiscuity and off-target interactions, including hERG inhibition [18] [19]. |

Experimental Protocols for Key Assays

Determination of Lipophilicity (LogD₇.₄) using the Shake-Flask Method

The shake-flask method is considered the gold standard for the direct experimental determination of LogP/LogD [19].

Principle: A compound is partitioned between n-octanol (non-polar phase) and an aqueous buffer (polar phase, typically pH 7.4). After equilibration and phase separation, the concentration of the solute in each phase is quantified, and the LogD is calculated [19].

Materials:

- Research Reagent Solutions:

- n-Octanol (HPLC grade)

- Phosphate Buffered Saline (PBS), pH 7.4

- Test compound solution in a suitable solvent (e.g., DMSO)

- HPLC vials and LC system with UV/Vis or MS detection

Procedure:

- Pre-saturation: Saturate the n-octanol with PBS and the PBS with n-octanol by vortexing the two phases together and allowing them to separate overnight. Use the pre-saturated phases for the experiment.

- Partitioning: Add a known amount of your test compound to a glass vial. Introduce equal volumes (e.g., 1 mL each) of pre-saturated n-octanol and PBS. Seal the vial tightly.

- Equilibration: Agitate the mixture vigorously for 1 hour at constant temperature (e.g., 25°C) using a mechanical shaker.

- Phase Separation: Allow the phases to separate completely for several hours, or use centrifugation to accelerate separation.

- Quantification: Carefully sample from each phase. Dilute the n-octanol phase with a water-miscible solvent (e.g., methanol) if necessary. Analyze the samples using a calibrated LC-UV or LC-MS method to determine the concentration of the compound in each phase.

- Calculation:

- LogD₇.₄ = Log₁₀ (Concentration in n-octanol / Concentration in buffer)
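As a minimal sketch of the calculation step, the function below applies the formula above and corrects for any dilution made before analysis. The function name, parameter names, and example concentrations are hypothetical; both concentrations are assumed to be in the same units.

```python
import math


def log_d(conc_octanol: float, conc_buffer: float,
          octanol_dilution: float = 1.0, buffer_dilution: float = 1.0) -> float:
    """LogD = log10(C_octanol / C_buffer), after undoing sample dilution.

    Dilution factors are the fold-dilution applied to each phase before
    LC analysis (1.0 means the phase was injected undiluted).
    """
    c_oct = conc_octanol * octanol_dilution
    c_aq = conc_buffer * buffer_dilution
    if c_oct <= 0 or c_aq <= 0:
        raise ValueError("Both phase concentrations must be positive")
    return math.log10(c_oct / c_aq)


# Hypothetical measurement: octanol phase diluted 10-fold in methanol.
example = log_d(conc_octanol=5.0, conc_buffer=0.5, octanol_dilution=10.0)
print(f"LogD7.4 = {example:.2f}")
```

A concentration that falls below the quantification limit in either phase should be treated as a failed measurement rather than passed to this function.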


In Vitro Metabolic Stability Assay in Rat Hepatocytes

This assay predicts in vivo metabolic clearance [5].

Principle: The test compound is incubated with metabolically active hepatocytes. The disappearance of the parent compound over time is monitored to calculate an intrinsic clearance (CLᵢₙₜ) value.

Materials:

- Research Reagent Solutions:

- Cryopreserved or fresh rat hepatocytes

- Williams' E Medium (with incubation supplements)

- Test compound (e.g., 1 mM stock in DMSO)

- Stopping solution (e.g., acetonitrile with internal standard)

- LC-MS/MS system for bioanalysis

Procedure:

- Thawing/Preparation: Thaw cryopreserved hepatocytes according to the vendor's protocol and determine viability (should be >80%). Adjust the cell density to a working suspension (e.g., 1 million viable cells/mL).

- Incubation: Pre-warm the hepatocyte suspension in a shaking incubator at 37°C. Initiate the reaction by adding the test compound (final concentration typically 0.5-1 µM, DMSO concentration ≤0.1%).

- Sampling: At predetermined time points (e.g., 0, 5, 15, 30, 45, 60 minutes), remove an aliquot of the incubation mixture and transfer it to a tube containing the stopping solution (acetonitrile) to precipitate proteins and stop the reaction.

- Analysis: Centrifuge the stopped samples to remove precipitated protein. Analyze the supernatant using LC-MS/MS to determine the peak area of the parent compound.

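- Calculation: Plot the natural logarithm of the percentage of parent compound remaining versus time. The negative of the slope of the linear phase is the elimination rate constant (k, min⁻¹). The in vitro intrinsic clearance is k divided by the cell density of the incubation (cells per mL). To scale to the whole animal (CLᵢₙₜ, mL/min/kg), multiply by the hepatocellularity (cells per g liver) and the liver weight (g liver per kg body weight): CLᵢₙₜ = (k / cells per mL) × (cells per g liver) × (g liver per kg body weight).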
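The calculation can be sketched as follows, using the scaling CLᵢₙₜ = (k / cell density) × hepatocellularity × liver weight per kg body weight. The time course is synthetic, and the hepatocellularity and liver-weight values are illustrative placeholders, not values from the source; substitute scaling factors validated for your species and laboratory.

```python
import math


def fit_slope(x, y):
    """Ordinary least-squares slope of y against x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    return sxy / sxx


def intrinsic_clearance(times_min, pct_remaining, million_cells_per_ml,
                        million_cells_per_g_liver, g_liver_per_kg):
    """Return (k in 1/min, CLint in uL/min/10^6 cells, CLint in mL/min/kg)."""
    # k is the negative slope of ln(% remaining) versus time.
    k = -fit_slope(times_min, [math.log(p) for p in pct_remaining])
    ml_per_min_per_million = k / million_cells_per_ml
    clint_in_vitro_ul = ml_per_min_per_million * 1000.0
    clint_scaled = ml_per_min_per_million * million_cells_per_g_liver * g_liver_per_kg
    return k, clint_in_vitro_ul, clint_scaled


# Synthetic time course generated with k = 0.02 1/min (illustration only).
times = [0, 5, 15, 30, 45, 60]
remaining = [100.0 * math.exp(-0.02 * t) for t in times]

k, clint_ul, clint_kg = intrinsic_clearance(
    times, remaining,
    million_cells_per_ml=1.0,         # incubation density from the protocol
    million_cells_per_g_liver=120.0,  # placeholder scaling factor (assumption)
    g_liver_per_kg=40.0,              # placeholder scaling factor (assumption)
)
print(f"k = {k:.3f} 1/min, CLint = {clint_ul:.1f} uL/min/10^6 cells, "
      f"scaled CLint = {clint_kg:.0f} mL/min/kg")
```

Only time points in the log-linear phase should be included in the fit; late points that plateau near the quantification limit will bias k downward.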


Table 1: Impact of Lipophilicity (LogD₇.₄) on Key Pharmacokinetic Parameters. This table synthesizes general relationships observed in drug discovery [18] [20].

| LogD₇.₄ Range | Solubility | Permeability | Primary Clearance Route | Volume of Distribution (Vd,ss) | Common PK Challenges |

|---|---|---|---|---|---|

| < 1 | High | Low | Renal | Low | Low absorption and bioavailability; Potential for renal clearance [18]. |

| 1 - 3 | Moderate | Moderate | Balanced | Moderate | Balanced profile; Potential for good oral absorption [18]. |

| 3 - 5 | Low | High | Hepatic Metabolism | High | Variable oral absorption; Nonlinear PK due to enzyme saturation [18]. |

| > 5 | Poor | High | Hepatic Metabolism | Very High | Poor oral absorption; High metabolic clearance; Promiscuity & toxicity risk [18] [19]. |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Reagents for Lipophilicity and PK Studies

| Reagent / Material | Function/Brief Explanation | Example Use Cases |

|---|---|---|

| n-Octanol & Buffer Systems | The standard solvent system for the direct measurement of partition/distribution coefficients (LogP/LogD) [19]. | Shake-flask LogD determination [19]. |

| Cryopreserved Hepatocytes | Metabolically competent cells used to assess in vitro metabolic stability and predict in vivo hepatic clearance [5]. | Intrinsic clearance (CLᵢₙₜ) assays; Metabolite identification studies. |

| Caco-2 Cell Line | A human colon adenocarcinoma cell line that, upon differentiation, forms a monolayer with properties of intestinal epithelium. Used to model oral drug absorption. | Apparent permeability (Pₐₚₚ) assays; Studies on efflux transport (e.g., P-gp). |

| LC-UV and LC-MS Systems | Essential analytical tools for quantifying compound concentration in complex matrices like buffers, biological fluids, and cell lysates. | LogD analysis; Bioanalysis from in vitro and in vivo samples; Metabolite profiling. |

| Specialized Lipid Excipients | Ionizable lipids, phospholipids, and cationic lipids used in formulations to overcome delivery challenges of highly lipophilic drugs [21]. | Formulating Lipid Nanoparticles (LNPs) for nucleic acids; Creating lipid-based drug delivery systems (LBDDS) for small molecules [21]. |

Frequently Asked Questions (FAQs)

FAQ 1: What is pharmacological promiscuity and why is it a major safety concern? Answer: Pharmacological promiscuity describes the activity of a single compound against multiple, unintended biological targets. This is a significant safety concern because engaging off-target receptors and enzymes can lead to a range of adverse side effects, often causing drug candidates to fail during clinical development or even leading to the withdrawal of approved drugs from the market. Undesired promiscuity is a primary source of safety attrition in the drug discovery process [9].

FAQ 2: Which molecular properties are most strongly associated with increased promiscuity? Answer: The most important molecular properties linked to increased promiscuity are high lipophilicity and the presence of a basic center.

- Lipophilicity: Promiscuity increases with lipophilicity (often measured as LogP or ClogP). Marked promiscuity is rarely observed for compounds with a ClogP < 3 [9].

- Basic Center: A basic center with a calculated pKa (cpKa(B)) greater than 6 is the most significant determinant of promiscuity in typical safety panels. This is because such compounds frequently interact with a small set of targets, such as aminergic GPCRs, which attract surprisingly high hit rates [9].

FAQ 3: How can colloidal aggregation lead to false-positive results in screening? Answer: Highly lipophilic molecules, like cannabidiol (CBD), have very poor aqueous solubility. Above a critical concentration (the Critical Aggregation Concentration, or CAC), these molecules can form colloidal dispersions or aggregates in assay media. These colloids can nonspecifically interfere with proteins and enzymes, leading to false-positive signals in in vitro assays that do not represent true, specific pharmacological activity. This phenomenon can misleadingly suggest a compound is broadly active [23].

FAQ 4: What are some experimental strategies to identify and eliminate false-positive hits? Answer: To prioritize high-quality hits and eliminate artifacts, a cascade of experimental approaches is recommended [24]:

- Counter Screens: Use assays designed solely to detect compound-mediated assay interference (e.g., autofluorescence, signal quenching).

- Orthogonal Assays: Confirm bioactivity using an entirely different readout technology (e.g., follow up a fluorescence-based readout with a luminescence- or absorbance-based assay).

- Biophysical Assays: Employ techniques like Surface Plasmon Resonance (SPR) or Isothermal Titration Calorimetry (ITC) to validate direct binding and measure affinity.

- Use of Detergents: Adding non-ionic detergents like Triton X-100 can disrupt colloidal aggregates and prevent this specific type of false-positive interference [23].

- Cellular Fitness Screens: Test for general cytotoxicity to ensure the observed activity is not simply due to cell death [24].

FAQ 5: Which target families are most frequently hit by promiscuous compounds? Answer: Analysis of large screening datasets reveals that a relatively small set of targets are responsible for the majority of promiscuity. The most frequently hit target classes include [9]:

- Aminergic GPCRs (e.g., serotonin, dopamine, and adrenergic receptors)

- Certain ion channels

- Opioid receptors

- Aminergic transporters (e.g., serotonin transporter)

Troubleshooting Guides

Issue 1: Investigating Promiscuity and Off-Target Activity

Problem: A lead compound shows excellent potency against its primary therapeutic target but hits a large number of targets in a broad safety pharmacology panel, indicating potential promiscuity.

Solution: Systematically investigate the physicochemical drivers and specific off-target interactions.

Step-by-Step Guide:

- Profile Physicochemical Properties: Calculate key properties, especially lipophilicity (LogP/ClogP) and the pKa of any basic centers. Compounds with ClogP > 3 and a basic center (pKa > 6) have a high risk of promiscuity [9].

- Screen Against a Representative Mini-Panel: To enable early recognition of promiscuity, screen the compound against a small, focused panel of high-risk targets. This should include key aminergic GPCRs (e.g., 5-HT2B, 5-HT2C, α1A, α1B, H1, M1, M2) and the hERG channel [9].

- Conduct Binding Assays with Detergents: If promiscuous inhibition is observed in enzymatic or binding assays, repeat the experiments in the presence of a non-ionic detergent such as Triton X-100 (e.g., at 0.01%) or a solubilizing vehicle such as Tocrisolve. A significant reduction in activity suggests the effect is due to nonspecific colloidal aggregation rather than specific target binding [23].

- Perform Orthogonal Binding Validation: Use a biophysical method like Surface Plasmon Resonance (SPR) to confirm direct binding to the primary target and to characterize binding kinetics (kon, koff). This helps distinguish specific from nonspecific interactions [25] [24].

- Analyze Dose-Response Curves: Examine the shape of the dose-response curve. Steep, shallow, or bell-shaped curves can indicate toxicity, poor solubility, or compound aggregation, signaling a potential false-positive result [24].
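The first step of this guide, the physicochemical risk check, lends itself to a simple automated filter. The sketch below applies the two thresholds quoted above (ClogP > 3 and basic pKa > 6). The function names and the three-level labels are assumptions made for illustration; the source only states that compounds meeting both criteria carry a high risk.

```python
def promiscuity_flags(clogp, basic_pka=None):
    """List which of the two risk criteria a compound meets.

    basic_pka is the calculated pKa of the most basic center,
    or None if the compound has no basic center.
    """
    flags = []
    if clogp > 3:
        flags.append("ClogP > 3")
    if basic_pka is not None and basic_pka > 6:
        flags.append("basic center pKa > 6")
    return flags


def risk_level(flags):
    """Map the number of flags to a coarse label (hypothetical scheme)."""
    return {0: "low", 1: "moderate", 2: "high"}[len(flags)]


# Hypothetical compounds: (ClogP, calculated basic pKa or None).
compounds = {"cmpd_A": (4.2, 8.5), "cmpd_B": (2.1, 9.0), "cmpd_C": (1.8, None)}
for name, (clogp, pka) in compounds.items():
    flags = promiscuity_flags(clogp, pka)
    print(name, risk_level(flags), flags)
```

A filter like this only prioritizes compounds for the experimental steps that follow; it does not replace panel screening.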


Issue 2: Managing High Lipophilicity in Lead Compounds

Problem: A compound series has high lipophilicity (LogP > 5), leading to poor aqueous solubility, assay promiscuity, and potential long-term toxicity risks.

Solution: Implement strategies to reduce lipophilicity and mitigate its negative effects.

Step-by-Step Guide:

- Quantify the Risk: Calculate the Fraction Lipophilicity Index (FLI). The drug-like FLI range is typically 0-8. Compounds falling outside this range, especially on the high end, present a significant risk for promiscuity and poor absorption [26].

- Apply Structural Modifications:

- Introduce Polar Groups: Add hydrogen bond donors/acceptors or slightly acidic/basic groups to increase solubility and reduce LogP.

- Reduce Aromatic Rings: Lower the number of aromatic rings and heavy atoms to decrease molecular "obesity" [26].

- Remove Halogens: Replace halogen atoms (which greatly increase lipophilicity) with more polar bioisosteres such as cyano, amide, or hydroxyl groups [9].

- Optimize Lipophilic Efficiency (LipE): Use metrics like Lipophilic Ligand Efficiency (LLE), where LLE = pIC50 (or pEC50) - LogP (or LogD). Focus on compound series that maintain high potency while achieving lower lipophilicity [26].

- Utilize Automation for Low-Volume Assays: To overcome solubility limitations in testing, use automated, non-contact liquid handlers that enable miniaturization (e.g., nanoliter-range dispensing). This reduces reagent consumption and allows for more reliable testing of compounds with limited solubility [27].
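The LLE metric from the guide above is simple enough to track in a short script. The sketch below computes LLE = pIC50 - LogD for two hypothetical analogs; the compound values and helper names are illustrative assumptions.

```python
import math


def pic50_from_nm(ic50_nm: float) -> float:
    """Convert an IC50 in nM to pIC50 (= -log10 of IC50 in mol/L)."""
    return 9.0 - math.log10(ic50_nm)


def lle(pic50: float, logd: float) -> float:
    """Lipophilic ligand efficiency: LLE = pIC50 - LogD (or LogP)."""
    return pic50 - logd


# Hypothetical lead: potent but lipophilic.
lle_lead = lle(pic50_from_nm(10.0), logd=4.5)

# Hypothetical analog: 3-fold less potent, two log units less lipophilic.
lle_analog = lle(pic50_from_nm(30.0), logd=2.5)

print(f"lead LLE = {lle_lead:.2f}, analog LLE = {lle_analog:.2f}")
```

Here the analog has the higher LLE despite its weaker potency, which is the kind of trade-off the metric is meant to reward.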

Relationship Diagram: High Lipophilicity and Clinical Consequences

Data Presentation

Table 1: Key Physicochemical Properties Linked to Promiscuity and Safety Attrition

| Property | High-Risk Threshold | Associated Consequence | Clinical Impact |

|---|---|---|---|

| Lipophilicity (LogP/ClogP) | > 3 - 5 [9] [26] | Increased nonspecific binding, poor solubility, metabolic instability [23] [26] | Higher risk of off-target toxicity, poor pharmacokinetics, and drug-drug interactions [9] |

| Presence of a Basic Center | pKa(B) > 6 [9] | High hit rates on aminergic GPCRs, ion channels, and transporters [9] | Cardiovascular side effects, central nervous system disturbances, and other off-target toxicities [9] |

| Fraction Lipophilicity Index (FLI) | Outside 0 - 8 range [26] | Suboptimal balance of permeability and solubility, predicting poor absorption [26] | Low oral bioavailability and increased risk of development failure [26] |

Table 2: Experimental Strategies to Mitigate Promiscuity-Related Artifacts

| Strategy | Method / Reagent | Function / Purpose | Key Outcome |

|---|---|---|---|

| Counter Assays | Signal-based control assays (e.g., fluorescence quenching test) | Identify technology-specific assay interference [24] | Flags compounds that interfere with the detection method, not the biology |

| Orthogonal Assays | SPR, ITC, MST, or a different readout technology (e.g., luminescence) [24] | Confirm bioactivity and binding via an independent method [24] | Validates true positive hits and provides reliable affinity data |

| Aggregation Control | Addition of Triton X-100 (0.01%) or other detergents [23] | Disrupts colloidal aggregates causing false positives [23] | Distinguishes specific target binding from nonspecific colloidal interference |

| Cellular Fitness Assays | CellTiter-Glo, Caspase assays, High-content imaging [24] | Monitor general cell health and viability [24] | Excludes compounds whose activity is due to general cytotoxicity |

The Scientist's Toolkit: Essential Research Reagents and Materials

| Item | Function / Explanation |

|---|---|

| Triton X-100 | A non-ionic detergent used to disrupt and prevent the formation of colloidal aggregates in biochemical assays. Its inclusion helps confirm that observed inhibitory activity is due to specific target binding and not nonspecific aggregation [23]. |

| Tocrisolve | A commercially available, water-soluble lipid emulsion often used as a vehicle for solubilizing highly lipophilic compounds in aqueous assay buffers, helping to mitigate solubility-related artifacts. |

| Bovine Serum Albumin (BSA) | Often added to assay buffers to reduce nonspecific binding of test compounds to plates and equipment, thereby lowering background signal and false-positive rates [24]. |

| I.DOT Liquid Handler | An automated, non-contact dispenser that enables miniaturization of assays to nanoliter volumes. This reduces reagent consumption and allows for more reliable testing of compounds with limited solubility or availability [27]. |

| SPR Chip (e.g., CM5) | The sensor surface used in Surface Plasmon Resonance instruments. It is coated with a dextran matrix that can be functionalized to immobilize a protein target, allowing for label-free analysis of binding kinetics and affinity [25] [24]. |

Computational and Experimental Tools for Prediction and Characterization

Frequently Asked Questions

Q1: What are the most common reasons for large discrepancies between predicted and experimental log P/log D values?

Large discrepancies often arise from several key issues:

- Inadequate Training Data: The chemical space of your compounds is not well-represented in the model's training set. This is particularly common for novel scaffolds, such as Pt(IV) derivatives in platinum complexes, where models trained on older data showed significantly increased Root Mean Squared Error (RMSE) from 0.62 to 0.86 when applied to newer compounds [28].

- Incorrect Descriptor Selection: Using models with descriptors unsuitable for your compound class. For instance, standard small-molecule models often fail for peptides and peptide mimetics, advocating for bespoke approaches [29].

- Improper Protonation State: log D is pH-dependent, and incorrect assignment of the major microspecies at physiological pH (7.4) is a common source of error [29].
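To illustrate how sensitive log D is to the assumed ionization state, the sketch below uses the textbook relationship for a monoprotic compound, which assumes that only the neutral species partitions into octanol. The function name and the example values are hypothetical.

```python
import math


def logd_monoprotic(logp: float, pka: float, ph: float = 7.4, kind: str = "base") -> float:
    """Estimate log D from log P and pKa for a monoprotic acid or base.

    Assumes only the neutral species enters the octanol phase:
      base: log D = log P - log10(1 + 10**(pKa - pH))
      acid: log D = log P - log10(1 + 10**(pH - pKa))
    """
    if kind == "base":
        exponent = pka - ph
    elif kind == "acid":
        exponent = ph - pka
    else:
        raise ValueError("kind must be 'base' or 'acid'")
    return logp - math.log10(1.0 + 10.0 ** exponent)


# Hypothetical amine with log P = 3.0: a two-unit error in the assigned pKa
# changes the estimated log D at pH 7.4 by well over one log unit.
print(f"pKa 9.4: log D = {logd_monoprotic(3.0, 9.4):.2f}")
print(f"pKa 7.4: log D = {logd_monoprotic(3.0, 7.4):.2f}")
```

Ion-pair partitioning and multiple ionizable centers break this simple model, so it is best used as a sanity check on a predictor's output rather than as a predictor itself.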

Q2: How can I improve prediction accuracy for complex molecules like peptides or metal complexes?

- Use Specialized Models: Employ models specifically developed for your compound class. For peptides and peptide derivatives, machine learning models using tailored molecular descriptors have demonstrated superior accuracy over general-purpose models [29].

- Leverage Consensus Modeling: Combine predictions from multiple models or algorithms. Consensus approaches have been shown to improve accuracy and robustness [30] [28].

- Expand Chemical Space Coverage: If generating new data, ensure it covers underrepresented chemical regions. Retraining a model with a combined dataset that included novel phenanthroline-containing Pt(IV) complexes drastically reduced RMSE from 1.3 to 0.34 [28].

Q3: What is the best workflow to validate computational lipophilicity predictions in a wet-lab setting?

A robust validation workflow integrates both in silico and experimental methods:

- Computational Triangulation: Obtain predictions from multiple algorithms (e.g., ALOGPS, XlogP3, ACD/logP) and establish a consensus [30].

- Experimental Benchmarking: Use Reverse-Phase Thin-Layer Chromatography (RP-TLC) as a rapid, cost-effective experimental method to determine the lipophilicity parameter (RₘW) for a representative subset of your compounds [30].

- Correlation Analysis: Establish a quantitative relationship between your experimental RₘW values and the computational predictions to validate and calibrate the in silico models for your specific compound series [30].
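The triangulation and correlation steps can be sketched as below. All numbers are hypothetical placeholders standing in for the output of three prediction tools and for measured RₘW values; the consensus here is a plain arithmetic mean, which is one simple choice among several.

```python
import math
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mean_x, mean_y = mean(x), mean(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical predicted log P values from three algorithms per compound.
predictions = {
    "cmpd_1": [2.1, 2.3, 2.0],
    "cmpd_2": [3.0, 3.4, 3.2],
    "cmpd_3": [4.1, 3.9, 4.3],
    "cmpd_4": [1.2, 1.0, 1.4],
}
# Hypothetical experimental RmW values for the same compounds.
rmw = {"cmpd_1": 1.9, "cmpd_2": 3.0, "cmpd_3": 3.8, "cmpd_4": 1.1}

consensus = {name: mean(values) for name, values in predictions.items()}
names = sorted(predictions)
r = pearson_r([consensus[n] for n in names], [rmw[n] for n in names])
print(f"Pearson r between consensus log P and RmW: {r:.3f}")
```

With a real series, a low correlation for one algorithm relative to the others is a useful signal to drop it from the consensus for that chemotype.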

Q4: How can I use lipophilicity predictions to address target promiscuity and toxicity risks in early drug discovery?

- Monitor the Lipophilicity Threshold: Compounds with very high log P/log D often have increased risk of promiscuity and toxicity. Implement a log D threshold (e.g., <4 or <5) as a critical filter during compound selection and lead optimization [30].

- Integrate with Other Predictors: Lipophilicity should not be viewed in isolation. Use it as one input into a broader ADMET profiling workflow that includes predictions for solubility, metabolic stability, and hERG binding to gain a more comprehensive safety assessment [31] [32].

Troubleshooting Guides

Issue 1: Poor Correlation Between Predicted and Chromatographic Lipophilicity (RₘW)

Problem: Values from in silico tools (e.g., ALOGPS, XlogP) do not align with experimental RP-TLC results.

Solution:

- Verify Chromatographic Conditions:

- Stationary Phase: Confirm the use of an appropriate reverse-phase plate (e.g., RP-18F₂₅₄ for standard small molecules).

- Mobile Phase: Ensure a binary solvent system with a well-characterized organic modifier (e.g., acetone, acetonitrile, 1,4-dioxane) and water is used. Prepare fresh mobile phases to avoid composition drift [30].

- Standardize Data Processing: Calculate the RₘW value as the intercept of the line obtained when Rₘ is plotted against the volume fraction of the organic modifier (that is, Rₘ extrapolated to zero organic modifier). Ensure a sufficient number of data points (≥5 concentrations) for a reliable linear fit [30].

- Audit Computational Inputs:

Issue 2: Machine Learning Model Fails on Novel Chemotypes

Problem: A pre-trained ML model for log D prediction performs poorly on your newly synthesized compounds.

Solution:

- Conformity Check: Analyze the new compounds using Principal Component Analysis (PCA) or similar techniques to visualize their position relative to the model's training set chemical space [29].

- Model Retraining/Fine-Tuning:

- If possible, provide experimental log D data for a few representative new chemotypes to fine-tune the existing model.

- For larger datasets, develop a new model using machine learning techniques like Support Vector Regression (SVR) with descriptors selected via methods like LASSO, which have proven effective for specific compound classes like peptides [29].

- Adopt a Multi-Task Learning Approach: Consider using newer models that predict solubility and lipophilicity simultaneously, as these endpoints are thermodynamically linked and can improve overall prediction robustness [28].

Issue 3: Integrating Lipophilicity Predictions into a PBPK Model

Problem: Translating a calculated log D value into reliable parameters for a Physiologically-Based Pharmacokinetic (PBPK) model.

Solution:

- Parameter Mapping: Understand that lipophilicity (log D) is a key input for estimating tissue-to-plasma partition coefficients (Kp) in PBPK models, often via mechanistic equations like those of Rodgers and Rowland.

- Quantify Uncertainty: Acknowledge the inherent uncertainty in in silico predictions. For critical PBPK applications, use a prediction confidence interval (e.g., log D ± 0.5) to perform sensitivity analysis and understand its impact on key PK outcomes like Cmax and AUC [33].

- Leverage AI-Enhanced Workflows: Explore emerging platforms that integrate AI and ML for improved parameter estimation and uncertainty quantification in PBPK modeling, which can help address challenges posed by complex drug formulations and drug-drug interactions [33].
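Before running a full sensitivity analysis, it helps to see what a log D ± 0.5 interval means on the linear scale that partitioning equations ultimately use. The short sketch below converts the interval to distribution coefficients; it does not implement any Kp model, and the function name is hypothetical.

```python
def d_interval(logd: float, half_width: float = 0.5):
    """Return (low, central, high) distribution coefficients for logD +/- half_width."""
    return 10 ** (logd - half_width), 10 ** logd, 10 ** (logd + half_width)


low, central, high = d_interval(2.0)
fold_range = high / low
print(f"D ranges from {low:.0f} to {high:.0f} around {central:.0f} "
      f"({fold_range:.0f}-fold span)")
```

A ± 0.5 log unit uncertainty therefore corresponds to a 10-fold span in D, which is why the low, central, and high values should each be propagated through the PBPK model rather than the central estimate alone.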

Comparative Data Tables

Table 1: Performance of Computational Log P/Log D Prediction Tools

| Tool / Algorithm | Typical Application Domain | Key Strengths | Reported Error (RMSE) / Accuracy | Key Considerations |

|---|---|---|---|---|

| Consensus Models [30] [28] | Broad small molecules, Pt complexes | Improved robustness by averaging multiple models | RMSE: ~0.62 (Solubility), ~0.44 (log D) [28] | Performance depends on constituent algorithms |

| SVR with Selected Descriptors (e.g., SVR(Lasso)) [29] | Peptides & peptide mimetics | Handles non-linear relationships; tailored descriptors | RMSE: 0.39-0.47 (LIPOPEP); ~90% within ±0.5 log units [29] | Requires feature selection; performance drops on dissimilar chemotypes (RMSE >1.3 on AZ set) [29] |

| ALOGPS (e.g., ALOGPS 2.1) [30] | Broad small molecules | Widely accessible; extensive training data | Accuracy compared to chromatographic results varies [30] | Part of a suite of algorithms (ilogP, XlogP3) for comparison [30] |

| Linear Models (e.g., LASSO) [29] | Peptides (linear, natural) | Interpretable; identifies key physicochemical descriptors | RMSE: ~0.60 (LIPOPEP); ~75% within ±0.5 log units [29] | Less accurate than non-linear methods like SVR for complex molecules [29] |

Table 2: Experimental vs. Computational Lipophilicity Determination

| Method | Throughput | Key Measured Parameter | Typical Cost | Primary Application in Workflow |

|---|---|---|---|---|

| Shake-Flask (Gold Standard) [29] | Low | log P / log D | High | Validation and calibration of computational methods |

| RP-TLC [30] | Medium to High | RₘW (correlates to log P) | Low | Rapid experimental profiling; validation of in silico predictions for neuroleptics and other compound series [30] |

| In Silico Prediction (e.g., QSPR/ML) [29] [28] | Very High | Calculated log P / log D | Very Low | Virtual screening of large libraries; early-stage lead prioritization |

| Multi-Task AI Models [28] [32] | Very High | Simultaneous prediction of solubility & log D | Very Low | Integrated property prediction for efficient candidate optimization [28] |

Experimental Protocols

Protocol 1: Determining Lipophilicity by Reverse-Phase Thin-Layer Chromatography (RP-TLC)

Methodology: This protocol is adapted from studies determining the lipophilicity of neuroleptics and other active substances [30].

Materials:

- Stationary Phase: Reverse-phase TLC plates (e.g., RP-2F₂₅₄, RP-8F₂₅₄, RP-18F₂₅₄) with different chain lengths.

- Mobile Phase: Binary mixtures of an organic modifier and water. Common modifiers include acetone, acetonitrile, and 1,4-dioxane. Prepare a series of at least 5-6 solutions with varying volume fractions of the organic modifier (e.g., 0.3, 0.4, 0.5, 0.6, 0.7, 0.8).

- Sample Preparation: Dissolve test compounds in a volatile, water-miscible solvent (e.g., methanol) to a concentration of ~1 mg/mL.

Procedure:

- Spot 1-2 µL of each sample solution onto the TLC plate.

- Develop the chromatogram in a pre-saturated twin-trough chamber at room temperature.

- After development, air-dry the plates and visualize the spots under UV light or using an appropriate detection method.

- Measure the retardation factor (Rf) for each spot and convert it to Rₘ using the formula: Rₘ = log(1/Rf - 1).

Data Analysis:

- For each compound, plot the Rₘ values against the volume fraction of the organic modifier in the mobile phase.

- The lipophilicity parameter RₘW is defined as the extrapolated Rₘ value for zero organic modifier (pure water as the mobile phase), which is the intercept of the obtained linear relationship. RₘW is interpreted as an experimental log P value [30].
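The data analysis above can be sketched as a short script: convert each Rf to Rₘ, fit a straight line against the organic modifier fraction, and read RₘW off the intercept. The Rf values are hypothetical, and the helper names are chosen for illustration.

```python
import math


def rm_from_rf(rf: float) -> float:
    """Rm = log10(1/Rf - 1); Rf must lie strictly between 0 and 1."""
    return math.log10(1.0 / rf - 1.0)


def linear_fit(x, y):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def estimate_rmw(fractions, rf_values):
    """Return (RmW, slope): the intercept at zero modifier and the fitted slope."""
    slope, intercept = linear_fit(fractions, [rm_from_rf(rf) for rf in rf_values])
    return intercept, slope


# Hypothetical Rf values for one compound at five modifier fractions.
fractions = [0.4, 0.5, 0.6, 0.7, 0.8]
rf_values = [0.08, 0.15, 0.27, 0.43, 0.60]

rmw, slope = estimate_rmw(fractions, rf_values)
print(f"RmW = {rmw:.2f}, slope = {slope:.2f}")
```

Spots with Rf very close to 0 or 1 give unstable Rₘ values and are usually better excluded from the fit.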

Protocol 2: Building a Support Vector Regression (SVR) Model for Peptide log D₇.₄

Methodology: This protocol outlines the workflow for developing a bespoke machine learning model for peptides, as described in [29].

Data Curation:

- Collect a dataset of peptides with experimentally determined log D₇.₄ values (e.g., the LIPOPEP set of 243 peptides).

- Standardize structures and curate data to remove duplicates and outliers.

Descriptor Calculation and Selection:

- Calculate a comprehensive set of 1D and 2D molecular descriptors (e.g., using software like MOE or Dragon).

- Apply a feature selection algorithm like LASSO (Least Absolute Shrinkage and Selection Operator) to identify the most relevant descriptors (e.g., 11 out of 120 related to charge and surface polarity) [29].

Model Training and Validation:

- Use the selected descriptors as input for a Support Vector Regression (SVR) model with a Gaussian kernel.

- Optimize the hyperparameters (e.g., C, γ) via cross-validation on the training set.

- Validate the final model on a held-out external test set. The reported model achieved an RMSE of 0.39, with 90.6% of predictions within ±0.5 log units, on an external test set [29].
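The workflow above can be sketched with scikit-learn as follows. This is not the published model from [29]: the descriptor matrix is random synthetic data standing in for MOE or Dragon descriptors, the LASSO penalty and hyperparameter grid are arbitrary assumptions, and the pipeline layout is one reasonable way to chain the steps.

```python
import numpy as np
from sklearn.feature_selection import SelectFromModel
from sklearn.linear_model import Lasso
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVR

# Synthetic stand-in data: 150 "peptides" x 20 "descriptors" (assumption).
rng = np.random.default_rng(0)
X = rng.normal(size=(150, 20))
y = 1.5 * X[:, 0] - 1.0 * X[:, 1] + rng.normal(scale=0.1, size=150)

# Hold out an external test set before any model fitting.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

pipeline = Pipeline([
    ("scale", StandardScaler()),
    # LASSO keeps only descriptors with non-zero coefficients.
    ("select", SelectFromModel(Lasso(alpha=0.05))),
    # SVR with a Gaussian (RBF) kernel.
    ("svr", SVR(kernel="rbf")),
])

# Tune C and gamma by 5-fold cross-validation on the training set only.
search = GridSearchCV(
    pipeline,
    param_grid={"svr__C": [1, 10, 100], "svr__gamma": ["scale", 0.01, 0.1]},
    cv=5,
    scoring="neg_root_mean_squared_error",
)
search.fit(X_train, y_train)

# Evaluate on the held-out set with the two metrics used in the protocol.
pred = search.predict(X_test)
rmse = float(np.sqrt(np.mean((pred - y_test) ** 2)))
within_half_log = float(np.mean(np.abs(pred - y_test) <= 0.5))
print(f"external RMSE = {rmse:.2f}, fraction within ±0.5 = {within_half_log:.2f}")
```

Placing the scaler and feature selector inside the pipeline means they are refit within each cross-validation fold, which avoids leaking information from the validation folds into descriptor selection.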

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Software for Lipophilicity Research

| Item | Function / Application | Example Use Case |

|---|---|---|

| RP-TLC Plates (RP-2, RP-8, RP-18) [30] | Experimental determination of chromatographic lipophilicity (RₘW) | Comparing lipophilicity trends across a series of neuroleptic drug analogs [30]. |

| Organic Modifiers (Acetone, Acetonitrile, 1,4-dioxane) [30] | Components of the mobile phase in RP-TLC | Optimizing separation and linearity of Rₘ vs. solvent composition plots [30]. |

| Molecular Descriptor Software (e.g., MOE, Dragon) [29] | Generation of numerical representations of chemical structures for QSPR/ML models | Calculating descriptors for input into a Support Vector Regression (SVR) model for peptide log D [29]. |

| Machine Learning Environments (e.g., Python/scikit-learn, OCHEM) [28] | Platform for building, training, and deploying predictive models | Developing a multi-task consensus model for solubility and lipophilicity of platinum complexes [28]. |

| Online Prediction Platforms (e.g., ALOGPS, OCHEM) [30] [28] | Quick, accessible log P/log D predictions for initial screening | Triangulating predictions from multiple algorithms to form an initial consensus for a new chemical entity [30]. |

Workflow and Relationship Visualizations

In Silico Lipophilicity Prediction Workflow

Evolution of Lipophilicity Prediction Methods

Lipophilicity-Driven Promiscuity and Risk Mitigation

Troubleshooting Guide: Common Experimental Challenges

This guide addresses frequent issues encountered in off-target prediction and validation workflows, providing targeted solutions for researchers.

Table 1: Troubleshooting Common Off-Target Prediction and Analysis Problems

| Problem | Possible Causes | Recommended Solutions |

|---|---|---|

| High false positive rate in computational predictions | Overly permissive similarity thresholds; inadequate training data; model overfitting [34]. | Adjust prediction score cut-off (e.g., use ≥0.6 pseudo-score in OTSA); employ ensemble methods; use updated, comprehensive training datasets [34] [35]. |

| Low editing efficiency (CRISPR-Cas9) | Poor gRNA design; inefficient delivery method; suboptimal Cas9 expression [36]. | Verify gRNA targets a unique sequence; optimize delivery (electroporation, lipofection); use a strong, cell-type-appropriate promoter; codon-optimize Cas9 [36]. |

| Cell toxicity | High concentrations of CRISPR components or small molecules; excessive nuclease activity [36]. | Titrate component concentrations to find balance between efficacy and viability; use high-fidelity Cas9 variants; employ Cas9 protein with nuclear localization signal [36] [37]. |

| Uncertainty in off-target validation | Insensitive detection methods; analyzing wrong candidate sites [37]. | Use orthogonal validation methods (e.g., GUIDE-seq, CIRCLE-seq); base candidate sites on robust in silico prediction tools [35] [38]. |

| Difficulty translating in silico predictions to in vivo results | Model trained only on limited in vitro data; lacking epigenetic or cellular context features [39] [35]. | Use models like CCLMoff that incorporate epigenetic data (e.g., chromatin accessibility, DNA methylation) and are trained on diverse datasets [35]. |

Frequently Asked Questions (FAQs)

What are the core components of an integrated off-target prediction framework like OTSA?

The Off-Target Safety Assessment (OTSA) framework employs a hierarchical, multi-method computational process. It integrates:

- Ligand-Centric Methods: 2-D chemical similarity searches and Quantitative Structure-Activity Relationship (QSAR) models [34].

- Target-Centric Methods: 3-D protein structure-based approaches, including binding site pocket similarity searches and automated molecular docking [34].

- Machine Learning Algorithms: Artificial Neural Networks (aNN), Support Vector Machines (SVM), and Random Forests (RF) to improve prediction accuracy [34].

- Score Normalization: Predictions from orthogonal methods are combined into a normalized pseudo-score, where a value ≥ 0.6 is typically considered significant [34].

This integrated approach, covering over 7,000 targets, allows for the prediction of safety-relevant off-target interactions that might be missed by experimental screens alone [34].
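To make the score normalization step concrete, the sketch below rescales raw scores from several methods to a common 0 to 1 range and averages them before applying the 0.6 cut-off quoted above. This is only an illustration: the source does not describe how OTSA actually combines its methods, so the unweighted mean, the method names, the score ranges, and the example values are all assumptions. It also assumes that a higher raw score always means a more likely interaction.

```python
SIGNIFICANCE_CUTOFF = 0.6  # pseudo-score cut-off quoted in the text

def min_max(value, low, high):
    """Rescale a raw score to [0, 1] given the method's score range."""
    if high == low:
        raise ValueError("score range must be non-zero")
    return min(1.0, max(0.0, (value - low) / (high - low)))

def pseudo_score(raw_scores, ranges):
    """Average the rescaled scores of all methods that returned a value.

    Assumption: an unweighted mean; a real framework may weight methods.
    """
    scaled = [min_max(raw_scores[m], *ranges[m]) for m in raw_scores]
    return sum(scaled) / len(scaled)

# Hypothetical method names, ranges, and scores for one compound-target pair.
ranges = {"similarity_2d": (0.0, 1.0), "qsar": (0.0, 1.0), "docking": (0.0, 12.0)}
raw = {"similarity_2d": 0.82, "qsar": 0.70, "docking": 6.0}

score = pseudo_score(raw, ranges)
print(round(score, 3), score >= SIGNIFICANCE_CUTOFF)
```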

How do the latest deep learning models improve CRISPR off-target prediction?

Newer models like CRISPR-M and CCLMoff address key limitations of earlier tools:

- Novel Encoding Schemes: They move beyond simple one-hot encoding, using more sophisticated representations that expand the feature space and better capture sequence relationships [39] [35].

- Advanced Architectures: They employ complex neural networks, such as multi-view models (combining CNNs and Bidirectional LSTMs) and transformer-based language models, which can learn generalizable patterns from sgRNA and DNA sequences [39] [35].

- Comprehensive Training: They are trained on large, diverse datasets compiled from multiple genome-wide detection technologies (e.g., GUIDE-seq, CIRCLE-seq), improving their generalization across different experimental conditions [35].

- Incorporation of Biological Context: Some models, like CCLMoff-Epi, can integrate epigenetic features such as chromatin state, which influences Cas9 accessibility and activity [35].
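For reference, the simple one-hot baseline that these newer encodings move beyond can be sketched as follows. This is not the encoding used by CRISPR-M or CCLMoff; it is a plain illustration that assumes the guide is written in the DNA alphabet and is already aligned to the candidate site without gaps. The sequences are hypothetical fragments.

```python
BASES = "ACGT"

def one_hot(base):
    """Return a 4-element indicator vector for one nucleotide."""
    return [1 if base == b else 0 for b in BASES]

def encode_pair(sgrna, target):
    """Encode an aligned sgRNA/target pair position by position.

    Each position becomes 8 values: 4 for the guide base, 4 for the target base.
    """
    if len(sgrna) != len(target):
        raise ValueError("sequences must be aligned to the same length")
    return [one_hot(g) + one_hot(t) for g, t in zip(sgrna.upper(), target.upper())]

# Hypothetical 6-nt fragments with one mismatch at position 3 (0-based).
guide, site = "GACGTA", "GACCTA"
matrix = encode_pair(guide, site)
mismatches = [i for i, row in enumerate(matrix) if row[:4] != row[4:]]
print(len(matrix), len(matrix[0]), mismatches)
```

Because each position is encoded independently, this representation carries no information about neighboring bases or bulges, which is the kind of limitation the richer schemes are meant to address.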

What is the connection between lipophilicity, molecular properties, and target promiscuity?

Analysis of approved and discontinued drugs reveals a clear link between physicochemical properties and promiscuity.

Table 2: Molecular Properties Correlation with Off-Target Promiscuity [34]

| Property | High Promiscuity Profile | Low Promiscuity Profile |

|---|---|---|

| Molecular Weight (MW) | 300 - 500 Da | > 700 Da or < 200 Da |

| Topological Polar Surface Area (TPSA) | ~200 Ų | Not specified |

| Calculated logP (clogP) | ≥ 7 | Not specified |

| Key Finding | Compounds within this property band average 9.3 off-target interactions per drug. | Higher MW compounds show "significantly lower promiscuity." |

Furthermore, the nature of the protein binding site itself is a major factor. Promiscuous binding sites are often large, hydrophobic, and have specific residue compositions, which facilitate interactions with a variety of ligands. In contrast, selective binding sites tend to be smaller and more polar, interacting with only one type of ligand [40].

What are the best practices for validating off-target effects in a clinical development setting?

A rigorous, multi-stage approach is recommended for therapeutic development:

- Prediction Phase: Use state-of-the-art in silico tools (e.g., CCLMoff, CRISPR-M) to nominate potential off-target sites during gRNA or compound design [39] [35] [37].

- Pre-Clinical Validation: Employ highly sensitive in vitro and cell-based methods to verify predictions.

- Definitive Analysis: Where necessary and feasible, use Whole Genome Sequencing (WGS) to perform an unbiased assessment of off-target effects, including large chromosomal rearrangements [37]. The FDA now expects characterization of CRISPR off-target editing in preclinical studies to minimize patient safety risks [37].

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents for Off-Target Prediction and Analysis

| Reagent / Material | Function / Application | Examples / Notes |

|---|---|---|

| High-Fidelity Cas9 Variants | Engineered nucleases with reduced off-target cleavage activity while maintaining on-target efficiency. | e.g., HiFi Cas9, SpCas9-HF1; crucial for therapeutic applications [36] [37]. |

| Chemically Modified gRNAs | Synthetic guide RNAs with modifications that enhance stability and reduce off-target editing. | Modifications like 2'-O-methyl (2'-O-Me) and phosphorothioate (PS) bonds [37]. |

| Curated Bioactivity Databases | Provide training data for ligand-based in silico prediction models. | MOAD (Mother Of All Databases), ChEMBL; source of >1 million compound SAR data points [34] [40]. |

| NGS-Based Detection Kits | Experimental kits for genome-wide identification of off-target sites. | GUIDE-seq, CIRCLE-seq, Digenome-seq; detect Cas9 binding, cleavage, or repair products [35] [38]. |

| Epigenetic Data Tracks | Information on chromatin state used to improve prediction accuracy in specific cell types. | CTCF binding, H3K4me3, DNA methylation (RRBS); can be integrated into models like CCLMoff [35]. |

Experimental Workflow Diagrams

OTSA Computational Prediction Workflow

CRISPR Off-Target Validation Workflow

FAQ: Core Concepts and Definitions

Q1: What are compound aggregators and why are they a problem in drug discovery?

Compound aggregators are small molecules that self-associate in aqueous solution to form colloidal particles. These aggregates can non-specifically inhibit target proteins, leading to false positive results in high-throughput screening (HTS) campaigns. This nonspecific inhibition mimics genuine target engagement, wasting significant resources on follow-up studies of invalid hits [42].

Q2: How does lipophilicity relate to aggregation and promiscuity?

High lipophilicity is a key physicochemical property strongly correlated with increased compound promiscuity and aggregation potential. Research shows that promiscuity rarely occurs for compounds with calculated log P (cLogP) < 3 and becomes markedly more frequent as lipophilicity increases. Furthermore, high lipophilicity often decreases solubility, directly promoting the formation of aggregates [43] [9].

Q3: What is the role of Machine Learning in identifying aggregators?

Machine Learning (ML) models, such as random forest classifiers, can predict compounds likely to cause assay interference based on their chemical structure. Trained on historical data from artefact or counter-screen assays, these models learn structural descriptors and patterns associated with aggregating behavior, allowing for the early flagging of such compounds before they enter costly experimental phases [44].

Q4: What is the difference between a false positive and a false negative in this context?

A false positive occurs when a benign compound is incorrectly flagged as an aggregator, potentially causing a genuine hit to be discarded. A false negative occurs when a true aggregator is not identified, allowing it to proceed and cause interference in subsequent assays. The goal of optimization is to minimize false positives without increasing false negatives [45] [46].

FAQ: Troubleshooting Experimental Issues

Q1: Our ML model has high accuracy but is missing known aggregators (high false negatives). What can we do?

A model missing aggregators often suffers from an unrepresentative training set or imbalanced data.

- Solution: Review your training data. Ensure it contains a sufficient number of confirmed, structurally diverse aggregators. Techniques like synthetic minority over-sampling (SMOTE) can help balance the dataset. You can also adjust the classification threshold to favor recall over precision, making the model more sensitive to potential aggregators [46].
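The threshold adjustment mentioned above can be sketched in a few lines. The example below assumes the model already outputs a probability-like score per compound and that confirmed labels are available (1 = aggregator). The labels, scores, and the 0.75 recall target are hypothetical values chosen only to show the mechanics.

```python
def precision_recall(labels, scores, threshold):
    """Precision and recall when scores >= threshold are called aggregators."""
    tp = sum(1 for y, s in zip(labels, scores) if y == 1 and s >= threshold)
    fp = sum(1 for y, s in zip(labels, scores) if y == 0 and s >= threshold)
    fn = sum(1 for y, s in zip(labels, scores) if y == 1 and s < threshold)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

def threshold_for_recall(labels, scores, target_recall):
    """Return the least permissive cut-off whose recall meets the target."""
    for t in sorted(set(scores), reverse=True):
        _, recall = precision_recall(labels, scores, t)
        if recall >= target_recall:
            return t
    return min(scores)

# Hypothetical validation data: 4 aggregators, 6 non-aggregators.
labels = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
scores = [0.9, 0.7, 0.45, 0.35, 0.6, 0.3, 0.2, 0.2, 0.1, 0.05]

default = precision_recall(labels, scores, 0.5)
tuned_t = threshold_for_recall(labels, scores, target_recall=0.75)
tuned = precision_recall(labels, scores, tuned_t)
print(default, tuned_t, tuned)
```

Lowering the cut-off recovers aggregators that score below 0.5. How much precision this costs depends on the data, so the trade-off should be checked on a held-out set.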

Q2: How can we validate a positive result from our ML predictor in the lab?

ML predictions should always be confirmed experimentally. Here are key methods:

- Photonic Crystal (PC) Biosensor Assay: A label-free method that quantifies the mass density of material adsorbed to a surface, providing a direct and quantitative measure of aggregation. It is compatible with high-throughput automated systems [42].

- Dynamic Light Scattering (DLS): Measures particle size distribution in solution, but can be inconsistent for non-spherical aggregates [42].

- Enzymatic Counter-Screens (e.g., α-chymotrypsin assay): A colorimetric assay that detects inhibition sensitive to enzyme concentration, a hallmark of aggregation-based inhibition [42].

- Scanning Electron Microscopy (SEM): Provides visual confirmation of aggregate formation [42].

Q3: Our model is flagging too many compounds as potential aggregators (high false positives). How can we refine it?

A high false positive rate can stem from overly broad structural alerts or a lack of contextual information.

- Solution: Fine-tune the model's detection rules. Analyze the structural features of the compounds being incorrectly flagged and adjust the model's parameters or feature weights. Incorporate additional filters, such as stringent lipophilicity cutoffs (e.g., cLogP > 5). Implementing a multi-layered approach where ML predictions are combined with results from a rapid, primary experimental screen (like a PC biosensor assay) can effectively triage and validate predictions [47] [45].
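One way to picture the multi-layered approach is a small triage function that combines the ML score, a lipophilicity filter, and the result of a rapid experimental screen when one is available. The sketch below is one possible reading of that strategy, not a prescribed workflow. The record fields, the 0.5 score cut-off, the category names, and the decision to downgrade ML-flagged compounds with cLogP at or below 5 are all assumptions.

```python
def triage(compound, ml_cutoff=0.5, clogp_cutoff=5.0):
    """Classify one compound record using ML score, cLogP, and an optional assay result.

    compound: dict with 'ml_score', 'clogp', and optionally 'pc_assay_positive'
              (True/False once the rapid experimental screen has been run).
    """
    if compound["ml_score"] < ml_cutoff:
        return "pass"                      # not flagged by the model
    if compound["clogp"] <= clogp_cutoff:
        return "low-priority flag"         # ML-positive but not highly lipophilic
    assay = compound.get("pc_assay_positive")
    if assay is None:
        return "send to assay"             # flagged by both filters, not yet tested
    return "confirmed aggregator" if assay else "cleared by assay"

# Hypothetical compound records.
library = [
    {"id": "cmpd_1", "ml_score": 0.20, "clogp": 6.1},
    {"id": "cmpd_2", "ml_score": 0.80, "clogp": 2.4},
    {"id": "cmpd_3", "ml_score": 0.90, "clogp": 6.3},
    {"id": "cmpd_4", "ml_score": 0.70, "clogp": 5.8, "pc_assay_positive": True},
    {"id": "cmpd_5", "ml_score": 0.70, "clogp": 5.8, "pc_assay_positive": False},
]
calls = {c["id"]: triage(c) for c in library}
print(calls)
```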

Experimental Protocols for Aggregator Identification

Protocol 1: Aggregation Detection Using a Photonic Crystal Biosensor Assay

This protocol provides a label-free, quantitative method for identifying small-molecule aggregators [42].

- Preparation: Dilute test compounds in an aqueous assay buffer (e.g., PBS). A known aggregator and non-aggregator should be included as controls.

- Baseline: Using an automated liquid handler, transfer buffer alone to the photonic crystal (PC) biosensor microplate wells and record the baseline signal.

- Sample Addition: Replace the buffer with the compound solutions.

- Incubation: Incubate the microplate to allow aggregates to form and adsorb to the biosensor surface.

- Measurement: Measure the peak wavelength value (PWV) shift of the PC biosensor. This shift is proportional to the mass density of material adsorbed.

- Analysis: A significant PWV shift relative to the negative control indicates compound aggregation. The results can be confirmed visually using Scanning Electron Microscopy (SEM).
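The analysis step can be sketched as a simple comparison against the negative controls. The protocol does not define what counts as a "significant" shift, so the example assumes a cut-off of the negative-control mean plus three standard deviations. The well names and PWV readings (in nm) are hypothetical.

```python
from statistics import mean, stdev

def pwv_shift(baseline_nm, sample_nm):
    """Peak wavelength value shift after replacing buffer with compound."""
    return sample_nm - baseline_nm

def call_aggregators(shifts, negative_control_shifts, n_sd=3.0):
    """Flag wells whose shift exceeds mean + n_sd * SD of the negative controls.

    Assumption: the n_sd = 3 rule is a common convention, not part of the protocol.
    """
    cutoff = mean(negative_control_shifts) + n_sd * stdev(negative_control_shifts)
    return cutoff, {name: s > cutoff for name, s in shifts.items()}

# Hypothetical readings: (baseline PWV, PWV after incubation) per well.
readings = {"cmpd_A": (850.00, 850.85), "cmpd_B": (850.10, 850.13)}
shifts = {name: pwv_shift(b, s) for name, (b, s) in readings.items()}
negative_controls = [0.02, 0.03, 0.01, 0.04]  # shifts for non-aggregator wells

cutoff, flagged = call_aggregators(shifts, negative_controls)
print(round(cutoff, 4), flagged)
```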


Protocol 2: Building an ML Model for Aggregator Prediction

This outlines the steps for creating a predictive ML model using historical screening data [44].

- Data Collection: Compile a dataset of compounds with known aggregation status (e.g., from artefact/counter-screen assays). Annotate each compound as a "Compound Interfering with an Assay Technology" (CIAT) or non-CIAT.

- Feature Generation: Calculate molecular descriptors (e.g., 2D fingerprints, molecular weight, logP, number of rotatable bonds) for all compounds.

- Model Training: Split the data into training and test sets. Train a machine learning algorithm, such as a Random Forest classifier, on the training set to distinguish between CIATs and non-CIATs based on their descriptors.

- Model Validation: Evaluate the model's performance on the held-out test set using metrics like ROC AUC, precision, and recall.

- Deployment: Use the trained model to predict the aggregation propensity of new, untested compounds early in the discovery pipeline.
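The training and validation steps above might look roughly like this in scikit-learn. The sketch uses randomly generated placeholder data in place of real molecular descriptors and CIAT labels, so the reported metrics have no chemical meaning. The hyperparameters (200 trees, balanced class weights, 25% test split) are illustrative assumptions rather than values from the cited work.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import precision_score, recall_score, roc_auc_score
from sklearn.model_selection import train_test_split

# Placeholder data: 500 "compounds" x 20 "descriptors", about 20% labelled CIAT (1).
X, y = make_classification(n_samples=500, n_features=20, n_informative=6,
                           weights=[0.8, 0.2], random_state=0)

# Stratified split keeps the CIAT fraction similar in both sets.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0)

# class_weight="balanced" compensates for the minority CIAT class.
model = RandomForestClassifier(n_estimators=200, class_weight="balanced",
                               random_state=0)
model.fit(X_train, y_train)

proba = model.predict_proba(X_test)[:, 1]   # predicted probability of CIAT
pred = model.predict(X_test)                # hard calls at the default 0.5 cut-off

metrics = {
    "roc_auc": roc_auc_score(y_test, proba),
    "precision": precision_score(y_test, pred, zero_division=0),
    "recall": recall_score(y_test, pred, zero_division=0),
}
print(metrics)
```

In practice, `X` would be replaced by the descriptor matrix from the feature generation step, and a scaffold-based or time-based split may give a more realistic estimate of performance on new chemistry than a random split.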


Table 1: Comparison of Experimental Methods for Aggregator Identification [42]

| Method | Principle | Throughput | Key Advantage | Key Limitation |

|---|---|---|---|---|

| Photonic Crystal Biosensor | Label-free mass density measurement | High | Quantitative, direct measurement of adsorption | Requires specialized equipment |

| Dynamic Light Scattering (DLS) | Measures particle size distribution | Medium | Provides size information | Inconsistent for non-spherical particles |

| α-chymotrypsin Assay | Enzymatic inhibition detection | Medium | Simple, colorimetric readout | Indirect measure of aggregation |

| Scanning Electron Microscopy (SEM) | High-resolution imaging | Low | Provides visual confirmation | Low throughput, qualitative |

Table 2: Key Research Reagent Solutions