Navigating Activity Cliffs in QSAR: Strategies for Robust Prediction and Drug Discovery

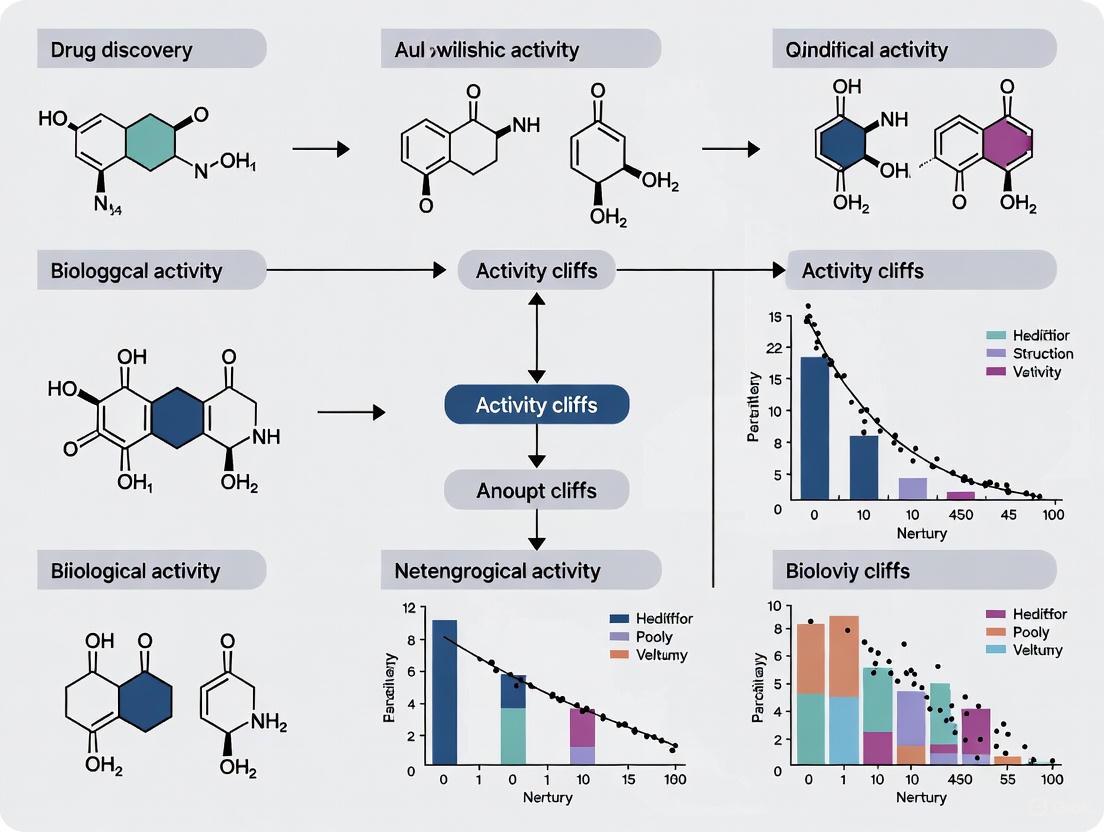

Activity cliffs, where small structural changes lead to large potency differences, present a significant challenge for Quantitative Structure-Activity Relationship (QSAR) models in drug discovery.

Abstract

Activity cliffs, where small structural changes lead to large potency differences, present a significant challenge for Quantitative Structure-Activity Relationship (QSAR) models in drug discovery. This article provides a comprehensive guide for researchers and development professionals on understanding, managing, and overcoming these challenges. We explore the foundational concepts of activity cliffs and their impact on predictive modeling, detail advanced methodological approaches including structure-based and machine learning techniques, and offer practical troubleshooting strategies for optimizing model performance. The article also covers rigorous validation protocols and comparative analyses of different modeling strategies, concluding with future directions for integrating these approaches into more reliable predictive frameworks for biomedical research.

Understanding Activity Cliffs: Defining the QSAR Prediction Challenge

What Are Activity Cliffs? Defining Structural Similarity and Potency Discontinuity

FAQ 1: What is the formal definition of an activity cliff?

An activity cliff (AC) is formally defined as a pair of chemically similar compounds that exhibit a large difference in potency against the same biological target [1]. This phenomenon directly challenges the fundamental principle in medicinal chemistry that structurally similar molecules should have similar biological activities [2]. Two key criteria are used to identify them [2]:

- Similarity Criterion: The compounds must be structurally similar. This is often assessed using molecular fingerprints (like ECFPs) and the Tanimoto similarity coefficient, with a typical threshold above 80-90% similarity [2] [3].

- Potency Difference Criterion: The difference in their binding affinity or inhibitory potency must be significant, usually defined as a difference of at least two orders of magnitude (e.g., a 100-fold change) [2].
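The two criteria can be sketched as a small check. This is a minimal illustration, not a reference implementation: fingerprints are assumed to be Python sets of on-bit indices (in practice they would come from a cheminformatics toolkit), potencies are assumed to be Ki values in nM, and the function names and default thresholds (0.9 similarity, 100-fold) are illustrative choices taken from the ranges quoted above.

```python
def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto coefficient for fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def is_activity_cliff(fp_a, fp_b, ki_a_nm, ki_b_nm,
                      sim_threshold=0.9, fold_threshold=100.0):
    """Return True if the pair meets both the similarity and potency criteria."""
    similar = tanimoto(fp_a, fp_b) >= sim_threshold
    # Fold change is the ratio of the weaker (larger Ki) to the stronger (smaller Ki) binder.
    fold_change = max(ki_a_nm, ki_b_nm) / min(ki_a_nm, ki_b_nm)
    return similar and fold_change >= fold_threshold


# Hypothetical pair: 19 of 21 distinct bits shared, 250-fold potency gap.
fp_1 = set(range(20))
fp_2 = set(range(19)) | {99}
pair_is_cliff = is_activity_cliff(fp_1, fp_2, ki_a_nm=1.0, ki_b_nm=250.0)
```

Both thresholds are deliberately exposed as parameters, since the appropriate values depend on the fingerprint and the target.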

FAQ 2: Why are activity cliffs a major problem in QSAR modeling?

Activity cliffs are a significant source of prediction error in Quantitative Structure-Activity Relationship (QSAR) models because they represent sharp discontinuities in the chemical landscape [1]. These models typically rely on the smoothness of the structure-activity relationship; when a tiny structural change causes a dramatic potency shift, it confounds standard machine learning algorithms [1] [4]. Consequently, models often incur a significant drop in performance when predicting compounds involved in activity cliffs [1].

FAQ 3: What are the common molecular representations used to quantify similarity for activity cliff identification?

The choice of molecular representation significantly influences activity cliff identification. Different fingerprints capture different aspects of molecular structure, leading to varying similarity assessments [5].

Table 1: Common Molecular Fingerprints for Similarity Assessment

| Fingerprint Category | Description | Common Examples | Best Use Case |

|---|---|---|---|

| Radial (Circular) Fingerprints | Iteratively capture information about the neighborhood of each atom up to a given diameter. | ECFP, FCFP [5] | Activity-based virtual screening and machine learning [5]. |

| Substructure-Preserving Fingerprints | Use a predefined library of structural patterns; a bit is turned "on" if the pattern is present. | MACCS, PubChem [5] | When substructure features are of primary importance [5]. |

| Topological Fingerprints | Encode the graph distance between atoms or features within the molecule. | Atom Pair, Topological Torsion (TT) [5] | Useful for larger molecular systems [5]. |

FAQ 4: My QSAR model's performance is poor. How can I troubleshoot if activity cliffs are the cause?

Follow this systematic troubleshooting guide to diagnose the impact of activity cliffs on your QSAR model.

Methodology for Each Step:

- Step 1: Calculate Activity Cliffs: For your dataset, calculate pairwise structural similarities (e.g., using ECFP4 fingerprints and Tanimoto coefficient). Then, identify all compound pairs that meet your defined similarity (e.g., >0.9) and potency difference (e.g., >100-fold) thresholds [3].

- Step 2: Identify 'Cliffy' Compounds: Create a list of all unique compounds that participate in at least one activity cliff. These are your "cliffy" compounds [3].

- Step 3: Perform a Model-Diagnostic Test: Split your test set into two groups: one containing the "cliffy" compounds and another containing the remaining "non-cliffy" compounds. Evaluate your QSAR model's prediction performance (e.g., R², RMSE) separately on these two groups. A significant performance drop on the "cliffy" set confirms that activity cliffs are a major source of error [1].

- Step 4: Conclusion & Next Steps: If activity cliffs are confirmed as a problem, consider using advanced modeling techniques specifically designed to handle them.
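Steps 1 to 3 can be sketched as follows. The sketch assumes fingerprints are sets of on-bit indices and activities are on a log scale (e.g., pKi), so a 100-fold difference corresponds to 2 log units. All function names and the toy data are hypothetical, and the exhaustive pairwise loop is only practical for small datasets.

```python
import math
from itertools import combinations


def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient for fingerprints given as sets of on-bit indices."""
    shared = len(fp_a & fp_b)
    union = len(fp_a) + len(fp_b) - shared
    return shared / union if union else 0.0


def find_cliffy_compounds(fingerprints, pactivity, sim_threshold=0.9, min_log_diff=2.0):
    """Steps 1-2: return the ids of compounds that take part in at least one cliff pair."""
    cliffy = set()
    for a, b in combinations(sorted(fingerprints), 2):
        similar = tanimoto(fingerprints[a], fingerprints[b]) >= sim_threshold
        big_gap = abs(pactivity[a] - pactivity[b]) >= min_log_diff
        if similar and big_gap:
            cliffy.update((a, b))
    return cliffy


def rmse(y_true, y_pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def rmse_by_group(test_ids, y_true, y_pred, cliffy):
    """Step 3: RMSE computed separately for cliffy and non-cliffy test compounds."""
    groups = {"cliffy": ([], []), "non_cliffy": ([], [])}
    for cid in test_ids:
        key = "cliffy" if cid in cliffy else "non_cliffy"
        groups[key][0].append(y_true[cid])
        groups[key][1].append(y_pred[cid])
    return {k: (rmse(t, p) if t else None) for k, (t, p) in groups.items()}


# Hypothetical toy data: A and B are near-identical but differ by 2.5 log units.
fps = {"A": set(range(20)), "B": set(range(19)) | {99}, "C": {200, 201, 202}}
measured = {"A": 9.0, "B": 6.5, "C": 7.0}
predicted = {"A": 8.0, "B": 7.5, "C": 7.0}
cliffy_ids = find_cliffy_compounds(fps, measured)
report = rmse_by_group(["A", "B", "C"], measured, predicted, cliffy_ids)
```

A clearly higher RMSE in the `cliffy` group than in the `non_cliffy` group is the pattern Step 3 looks for.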

FAQ 5: What advanced modeling techniques can better predict activity cliffs?

Traditional QSAR models and even some deep learning models struggle with activity cliffs. However, recent research has yielded more promising approaches:

Table 2: Advanced Techniques for Activity Cliff Prediction

| Technique | Core Idea | Reported Advantage |

|---|---|---|

| Structure-Based Methods (e.g., Docking) | Uses 3D protein structure to simulate ligand binding. Advanced protocols like ensemble docking can achieve significant accuracy by accounting for protein flexibility [2]. | Provides a structural rationale for the cliff by analyzing differences in binding interactions [2]. |

| Explanation-Supervised GNNs (e.g., ACES-GNN) | A graph neural network (GNN) trained with explanation supervision. It is forced to learn that potency differences arise from the uncommon substructures between a cliff pair [3]. | Simultaneously improves predictive accuracy and model interpretability by generating chemically meaningful explanations [3]. |

| Pre-training with Triplet Loss (e.g., ACtriplet) | Uses a pre-training strategy with triplet loss (from face recognition) to learn representations that better distinguish between highly similar molecules [4] [6]. | Makes better use of existing data and has been shown to significantly improve deep learning performance on AC prediction across multiple datasets [6]. |

The Scientist's Toolkit: Essential Reagents & Solutions for Activity Cliff Analysis

Table 3: Key Computational Tools for Activity Cliff Research

| Item / Resource | Function / Description | Typical Use in AC Analysis |

|---|---|---|

| ChEMBL / BindingDB | Public repositories of bioactive molecules with curated potency data (e.g., Ki, IC50) [2]. | Primary sources for extracting compound datasets and associated activity values for a target of interest [2] [3]. |

| RDKit / Chemaxon | Open-source and commercial cheminformatics toolkits. | Used for standardizing molecules, generating fingerprints (ECFPs), and calculating molecular similarities [5] [7]. |

| Matched Molecular Pair (MMP) Algorithm | A method to systematically identify all pairs of compounds that differ only by a single, well-defined structural transformation [8]. | Provides a chemically intuitive and consistent way to define the "similarity" criterion for activity cliffs, reducing arbitrariness [8]. |

| Docking Software (e.g., ICM) | Software to predict the 3D pose and binding affinity of a small molecule in a protein's binding site [2]. | Used in structure-based approaches to rationalize or predict activity cliffs by analyzing binding modes and interactions [2]. |

| Graph Neural Network (GNN) Framework | Deep learning frameworks (e.g., PyTorch, TensorFlow) with GNN libraries [3]. | Building and training advanced AC prediction models like ACES-GNN that operate directly on molecular graphs [3]. |

The Impact of Activity Cliffs on Drug Discovery and Lead Optimization

Frequently Asked Questions (FAQs)

1. What is an Activity Cliff (AC)? An Activity Cliff is a pair of structurally similar compounds that share a high degree of molecular similarity but exhibit a large, unexpected difference in their binding affinity (potency) for the same biological target [1] [9]. For example, a small chemical modification, such as the addition of a single hydroxyl group, can lead to a change in potency of almost three orders of magnitude [1].

2. Why are Activity Cliffs a significant problem in QSAR modeling? QSAR models are built on the principle that similar molecules have similar properties. Activity Cliffs directly violate this principle, creating sharp discontinuities in the Structure-Activity Relationship (SAR) landscape [1] [10]. They are a major source of prediction error: models often fail to predict the large potency difference between two similar compounds [1] [11] [10].

3. Do all machine learning models struggle with Activity Cliffs equally? Benchmarking studies have shown that while all models see a performance drop on AC-rich datasets, traditional machine learning methods based on molecular descriptors can sometimes outperform more complex deep learning models [11]. However, newer approaches that explicitly design models to address ACs, such as those using triplet loss or explanation supervision, are showing promise [6] [3].

4. How can I assess if my dataset has a significant number of Activity Cliffs? Several computational methods exist:

- SALI (Structure-Activity Landscape Index): A straightforward method that generates a matrix where the largest values indicate potential activity cliffs [12].

- eSALI (extended SALI): A more recent, computationally efficient method that quantifies the activity landscape roughness of an entire dataset without requiring exhaustive pairwise comparisons [12].

5. What strategies can I use to build better models in the presence of Activity Cliffs?

- Data Splitting: Use data splitting methods that ensure a uniform distribution of ACs between training and test sets, rather than simple random splits [12].

- Specialized Models: Employ models specifically designed for AC prediction, such as those using triplet loss or explanation-supervised learning [6] [3].

- Model Evaluation: Always include "activity-cliff-centered" metrics during model development and evaluation to specifically test performance on these critical cases [11].

Troubleshooting Guides

Problem: Poor Prediction Accuracy on Activity Cliff Compounds

Potential Causes and Solutions:

Cause 1: Inadequate Molecular Representation. The model's featurization method may not capture the subtle structural differences that cause the large potency shift.

- Solution: Benchmark different molecular representations.

- Protocol: Train and evaluate identical model architectures using different input features. In published benchmarks, Extended Connectivity Fingerprints (ECFPs) are a strong baseline for general QSAR prediction, but Graph Neural Networks (GNNs) can be competitive or superior for the specific task of AC classification [1] [13].

Cause 2: Data Leakage and Improper Dataset Splitting. If structurally similar compounds forming an AC pair are split between training and test sets, the model may appear to perform well by simply remembering the training data, rather than learning the underlying SAR.

- Solution: Implement rigorous, AC-aware data splitting protocols.

- Protocol: Use methods like "extended similarity (eSIM)" and "extended SALI (eSALI)" to create training and test sets with a uniform distribution of activity cliffs and chemical space [12]. Studies have found that these uniform splitting methods can lead to better models than random splits in AC-rich scenarios.

Cause 3: Standard Model Architecture is Not Sensitive to Fine-Grained Differences. Standard QSAR models may over-emphasize large, shared structural features between an AC pair and ignore the critical, minor modifications.

- Solution: Utilize advanced deep learning frameworks designed for comparative analysis.

- Protocol: Implement the ACES-GNN (Activity-Cliff-Explanation-Supervised GNN) framework [3]. This method supervises both the prediction and the model's explanation, forcing it to correctly attribute the potency difference to the specific uncommon substructures. The workflow is detailed in the diagram below.

Problem: Model is Unstable and Provides Inconsistent Explanations for Similar Compounds

Potential Causes and Solutions:

- Cause: The model's reasoning is not aligned with chemical intuition: it makes correct predictions for the wrong reasons (the "Clever Hans" effect).

- Solution: Integrate explanation supervision into the training process.

- Protocol: As implemented in the ACES-GNN framework [3], define ground-truth explanations for AC pairs in your training set. The ground truth is based on the uncommon substructures between the two molecules. The model is then trained to not only predict the activity difference correctly but also to produce atom-level attributions that highlight these specific uncommon substructures. This dual supervision significantly improves both predictive accuracy and the chemical reasonableness of the explanations.

Experimental Data & Protocols

Table 1: Benchmark Performance of Different Models on Activity Cliff Compounds

This table summarizes findings from a large-scale benchmark study on 30 molecular targets, illustrating how different model types perform on AC-rich test sets [11].

| Model Category | Example Algorithms | Typical Performance on ACs | Key Findings |

|---|---|---|---|

| Traditional Machine Learning | Random Forest, Support Vector Machines (using molecular descriptors) | Moderate to High | Often outperforms more complex deep learning models on AC compounds [11]. |

| Deep Learning (Graph-Based) | Graph Neural Networks (GNNs), Message Passing Neural Networks (MPNNs) | Variable | Can achieve good performance but often struggles with ACs; performance is highly dataset-dependent [11] [3]. |

| Advanced AC-Specific Models | ACtriplet [6], ACES-GNN [3] | High | Models integrating triplet loss or explanation supervision show significant improvements in both AC prediction and explanation quality. |

Table 2: Key Data Splitting Methods and Their Impact on Modeling Activity Cliffs

The method used to split data into training and test sets critically impacts a model's ability to generalize to activity cliffs [12].

| Splitting Method | Description | Implication for Activity Cliff Modeling |

|---|---|---|

| Random Split | Compounds are assigned to train/test sets randomly. | High risk of data leakage; can lead to overoptimistic performance estimates as AC pairs may be split across sets [12]. |

| Cluster-based Stratified Split | Molecules are clustered, and splits are stratified based on whether a molecule is part of an AC. | Reduces data leakage and provides a more realistic assessment of model performance on ACs [11]. |

| Extended Similarity (eSIM/eSALI) Methods | Splits are designed to achieve a uniform distribution of chemical space and activity landscape roughness between train and test sets. | Creates more robust training environments and fairer tests, often leading to better generalization than random splits [12]. |
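The stratification idea in the second row can be sketched in a few lines. This is a deliberately simplified version that only stratifies on cliff membership and omits the clustering step; it is not the eSIM/eSALI procedure. The function name, the 20% test fraction, and the compound ids are assumptions for illustration.

```python
import random


def stratified_split(compound_ids, cliffy, test_fraction=0.2, seed=0):
    """Split ids so cliffy and non-cliffy compounds are equally represented in the test set."""
    rng = random.Random(seed)
    train, test = [], []
    for in_cliff in (True, False):
        # Each stratum is shuffled and split independently.
        stratum = sorted(c for c in compound_ids if (c in cliffy) == in_cliff)
        rng.shuffle(stratum)
        n_test = round(len(stratum) * test_fraction)
        test.extend(stratum[:n_test])
        train.extend(stratum[n_test:])
    return train, test


# Hypothetical dataset: 20 compounds, the first 10 flagged as cliff members.
ids = [f"c{i}" for i in range(20)]
cliff_members = set(ids[:10])
train_ids, test_ids = stratified_split(ids, cliff_members)
```

With this split, the proportion of cliff-forming compounds is the same in both sets, so a performance gap between them cannot be attributed to an uneven share of cliffs.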

Protocol: Identifying Activity Cliffs in a Dataset using the SALI Index

This is a standard method for identifying activity cliffs from a dataset of compounds and their measured potencies [12].

- Calculate Molecular Similarity: For all possible pairs of compounds (i and j) in your dataset, compute a structural similarity value. The Tanimoto coefficient based on Extended Connectivity Fingerprints (ECFPs) is commonly used.

- Calculate Potency Difference: For each pair, calculate the absolute difference in their biological activity (e.g., pKi, pIC50).

- Compute SALI Value: For each pair, calculate the Structure-Activity Landscape Index (SALI) using the formula: SALI(i,j) = |Potency(i) - Potency(j)| / (1 - Similarity(i,j)) [12]

- Identify Cliffs: Rank all compound pairs by their SALI values. Pairs with the highest SALI values are the most significant activity cliffs, as they represent large potency differences arising from small structural changes (high similarity).
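The four steps can be sketched as below, assuming fingerprints are sets of on-bit indices and potencies are on a log scale (e.g., pKi). The handling of identical fingerprints (similarity of exactly 1, where the formula is undefined) is an illustrative choice, not part of the published definition, and the compound ids and values are hypothetical.

```python
import math
from itertools import combinations


def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient for fingerprints given as sets of on-bit indices."""
    shared = len(fp_a & fp_b)
    union = len(fp_a) + len(fp_b) - shared
    return shared / union if union else 0.0


def sali_ranking(fingerprints, potency):
    """Return (SALI, id_i, id_j) tuples for all pairs, largest SALI first."""
    rows = []
    for a, b in combinations(sorted(fingerprints), 2):
        sim = tanimoto(fingerprints[a], fingerprints[b])
        diff = abs(potency[a] - potency[b])
        if sim == 1.0:
            # SALI is undefined here; treat a non-zero gap as an infinite cliff (assumption).
            sali = math.inf if diff > 0 else 0.0
        else:
            sali = diff / (1.0 - sim)
        rows.append((sali, a, b))
    rows.sort(key=lambda row: row[0], reverse=True)
    return rows


# Hypothetical toy data.
fps = {"A": set(range(20)), "B": set(range(19)) | {99}, "C": {0, 1, 200, 201}}
pki = {"A": 9.0, "B": 6.5, "C": 7.0}
ranking = sali_ranking(fps, pki)
top_sali, top_i, top_j = ranking[0]
```

The pair A/B ranks first because it combines a high similarity (19/21) with a 2.5 log-unit potency gap.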

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Activity Cliff Research

| Tool / Reagent | Function / Purpose | Application Note |

|---|---|---|

| ECFPs (Extended Connectivity Fingerprints) | A molecular representation that encodes circular atom neighborhoods into a bit string (fingerprint). | The most widely used fingerprint for similarity search and QSAR. Serves as a strong baseline for many modeling tasks, including AC detection [1] [12]. |

| Graph Neural Networks (GNNs) | A class of deep learning models that operate directly on graph-structured data, such as molecular graphs. | Can autonomously learn optimal molecular representations. Frameworks like ACES-GNN leverage GNNs for improved AC prediction and interpretation [3]. |

| SALI / eSALI Indices | Quantitative metrics to identify and quantify the roughness of activity landscapes in a dataset. | SALI is used for pairwise cliff identification [12], while eSALI provides a faster, scalable assessment of an entire set's landscape [12]. |

| MoleculeACE Benchmark | An open-access benchmarking platform designed to evaluate model performance on activity cliffs [11]. | Allows researchers to rigorously test their QSAR models against standardized AC-centric metrics, ensuring robust evaluation [11]. |

| Triplet Loss (from ML) | A loss function that learns embeddings by pulling similar examples (non-AC pairs) closer and pushing dissimilar ones (AC pairs) apart. | Used in models like ACtriplet to improve the model's ability to discriminate between subtle structural changes that lead to large potency differences [6]. |
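For reference, the generic triplet margin loss named in the last row can be written in a few lines. This is the standard textbook form using Euclidean distance on plain Python sequences. How ACtriplet selects its triplets and which distance and margin it uses are not specified here, so the roles in the comments and the margin of 1.0 are assumptions.

```python
import math


def euclidean(u, v):
    """Euclidean distance between two equal-length embedding vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def triplet_loss(anchor, positive, negative, margin=1.0):
    """Zero once the negative is at least `margin` farther from the anchor than the positive."""
    return max(0.0, euclidean(anchor, positive) - euclidean(anchor, negative) + margin)


# Hypothetical 2-D embeddings: the anchor compound, a similar compound with
# similar potency (positive), and its activity-cliff partner (negative).
anchor = (0.0, 0.0)
positive = (0.0, 1.0)
well_separated = triplet_loss(anchor, positive, negative=(3.0, 4.0))
too_close = triplet_loss(anchor, positive, negative=(0.0, 0.5))
```

The loss is non-zero only while the cliff partner sits too close to the anchor in embedding space, which is what pushes structurally similar but functionally different molecules apart during training.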

Frequently Asked Questions

FAQ: What defines an Activity Cliff (AC) in practical terms? An Activity Cliff is defined by a pair of chemically similar compounds that show a large difference in potency against the same biological target. This "similarity" can be quantified using molecular fingerprints and the Tanimoto coefficient, while a "large" potency difference is often heuristically set at a 100-fold change [14].

FAQ: Why are Activity Cliffs a significant challenge for QSAR modeling? Activity Cliffs pose a major challenge because they directly contradict the fundamental similarity principle in cheminformatics, which states that similar molecules should have similar properties [15]. QSAR models frequently struggle to predict these abrupt changes in activity, making ACs a major source of prediction error [1]. Effectively identifying and handling them is crucial for building more robust predictive models.

FAQ: What are the main weaknesses of the Structure-Activity Landscape Index (SALI)? The standard SALI formula has three key limitations [15]:

- It is mathematically undefined when the molecular similarity (s_ij) is exactly 1.

- It is an unbounded value.

- Its calculation for a whole dataset has quadratic complexity (O(N²)), making it computationally expensive for large libraries.

FAQ: How can the limitations of SALI be overcome? Recent research proposes using a Taylor series expansion to reformulate SALI, creating a defined expression even at high similarity values [15]. Furthermore, new metrics like iCliff leverage the iSIM framework to quantify the overall roughness of an activity landscape with linear complexity (O(N)), which is much more efficient for large datasets [15].
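The idea behind the Taylor reformulation can be seen from the geometric series for the SALI denominator. The derivation below is a sketch: the truncation after the cubic term is chosen to match the polynomial that appears in the iCliff expression quoted in this article, and the exact form and normalization used in [15] should be taken from that reference.

```latex
\frac{1}{1 - s_{ij}} = \sum_{k=0}^{\infty} s_{ij}^{\,k}
  \approx 1 + s_{ij} + s_{ij}^{2} + s_{ij}^{3},
  \qquad 0 \le s_{ij} < 1

\mathrm{SALI}(i,j) = \frac{|P_i - P_j|}{1 - s_{ij}}
  \approx |P_i - P_j| \left( 1 + s_{ij} + s_{ij}^{2} + s_{ij}^{3} \right)
```

At s_ij = 1 the truncated expression evaluates to 4|P_i - P_j|, which is finite, whereas the original ratio is undefined. The truncated factor is also bounded between 1 and 4 for any similarity in [0, 1].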

Experimental Protocols & Methodologies

Protocol 1: Systematic Evaluation of QSAR Models for AC Prediction

This protocol outlines a method to assess the capability of various QSAR models to classify compound pairs as Activity Cliffs [1].

- Dataset Curation: Compile binding affinity data for a target of interest (e.g., dopamine receptor D2, factor Xa). Standardize structures (e.g., using the RDKit pipeline) and curate activity values to a consistent unit (e.g., Ki in nM) [1].

- Molecular Representation: Calculate multiple molecular representations for each compound. Standard representations include [1]:

- Extended-Connectivity Fingerprints (ECFP4): A circular topological fingerprint.

- Physicochemical-Descriptor Vectors (PDVs): A set of numerical descriptors capturing structural and physicochemical properties.

- Graph Isomorphism Networks (GINs): A type of graph neural network that learns molecular representations directly from the graph structure.

- Model Construction & Training: Build several QSAR models by combining the different representations with various regression algorithms, such as Random Forests (RFs), k-Nearest Neighbours (kNNs), or Multilayer Perceptrons (MLPs). Use the training set to develop models that predict the activity of individual compounds [1].

- AC Classification: Use the trained QSAR models to predict the activities of both compounds in a similar pair. Classify the pair as an AC if the predicted absolute activity difference exceeds a predefined threshold [1].

- Performance Evaluation: Evaluate the models on two tasks [1]:

- General QSAR Performance: The ability to predict the activity of individual compounds.

- AC-Sensitivity: The ability to correctly classify compound pairs as ACs or non-ACs.
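The AC classification and AC-sensitivity steps can be sketched as follows. The QSAR model itself is left out: `predicted` stands in for the output of any trained regression model, and the 2 log-unit threshold, function names, and toy values are assumptions for illustration.

```python
def classify_pairs(pairs, predicted, threshold=2.0):
    """Label each pair as an AC if the predicted activity gap exceeds the threshold."""
    return {pair: abs(predicted[pair[0]] - predicted[pair[1]]) > threshold for pair in pairs}


def sensitivity(true_labels, predicted_labels):
    """Fraction of true AC pairs that the model also labels as ACs."""
    true_cliffs = [pair for pair, is_ac in true_labels.items() if is_ac]
    if not true_cliffs:
        return None
    hits = sum(1 for pair in true_cliffs if predicted_labels[pair])
    return hits / len(true_cliffs)


# Hypothetical predicted activities (log units) for two pairs of similar compounds,
# both of which are activity cliffs experimentally.
predicted = {"a": 9.0, "b": 6.0, "c": 7.0, "d": 6.5}
pairs = [("a", "b"), ("c", "d")]
true_labels = {("a", "b"): True, ("c", "d"): True}
predicted_labels = classify_pairs(pairs, predicted)
ac_sensitivity = sensitivity(true_labels, predicted_labels)
```

Here the model recovers one of the two true cliffs, giving a sensitivity of 0.5. The second pair is missed because the predictions for its two members are too close together.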

Protocol 2: Identifying and Quantifying Activity Landscapes with iCliff

This protocol describes a modern, efficient method to quantify the prevalence of Activity Cliffs across an entire compound library [15].

- Data Preparation: Assemble a dataset of compounds with associated bioactivity values (e.g., IC50, Ki).

- Fingerprint Generation: Encode all molecules using a binary fingerprint representation (e.g., ECFP4).

- Calculate iCliff Metric: Compute the iCliff indicator using the following formula, which integrates average property differences and the global similarity of the set [15]:

iCliff = [ (ΣP_i²/N) - (ΣP_i/N)² ] * [ (1 + iT + iT² + iT³) / 2 ]

- P_i: The property (e.g., potency) of molecule i.

- N: The total number of molecules in the set.

- iT: The iSIM Tanimoto, representing the average pairwise similarity of the entire set, calculated from the fingerprint matrix.

- Interpretation: A higher iCliff value indicates a rougher activity landscape and a greater overall presence of Activity Cliffs within the analyzed compound set [15].
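The formula above can be evaluated with a short script. The `icliff` function transcribes the expression exactly as quoted in this protocol. The `isim_tanimoto` function reflects one reading of the iSIM framework, in which the set-level Tanimoto is obtained from per-bit column counts in a single pass; treat that part as an assumption to be checked against [15]. Fingerprints are assumed to be sets of on-bit indices and the data are hypothetical.

```python
def isim_tanimoto(fingerprints, n_bits):
    """Set-level Tanimoto from per-bit counts (assumed iSIM form), linear in the number of molecules."""
    n = len(fingerprints)
    counts = [0] * n_bits
    for fp in fingerprints:
        for bit in fp:
            counts[bit] += 1
    # Per bit: pairs where both molecules have the bit on, and pairs where they differ.
    both_on = sum(c * (c - 1) / 2 for c in counts)
    mismatched = sum(c * (n - c) for c in counts)
    total = both_on + mismatched
    return both_on / total if total else 0.0


def icliff(potencies, i_t):
    """iCliff as quoted above: property variance times the cubic similarity polynomial over 2."""
    n = len(potencies)
    mean_of_squares = sum(p * p for p in potencies) / n
    square_of_mean = (sum(potencies) / n) ** 2
    return (mean_of_squares - square_of_mean) * (1 + i_t + i_t ** 2 + i_t ** 3) / 2


# Hypothetical three-compound set with 3-bit fingerprints and pKi values.
fps = [{0, 1}, {0, 1}, {0, 2}]
pki = [5.0, 7.0, 9.0]
i_t = isim_tanimoto(fps, n_bits=3)
roughness = icliff(pki, i_t)
```

No pairwise loop over molecules is needed, which is the source of the linear scaling mentioned above.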

Data Presentation

Table 1: Common Molecular Representations for Similarity Assessment in AC Analysis

| Representation Type | Description | Key Characteristics | Applicability to ACs |

|---|---|---|---|

| ECFP4 (Extended-Connectivity Fingerprints) [1] [14] | A circular topological fingerprint that captures atom environments. | 2D representation; robust and widely used; a Tanimoto threshold of ~0.56 is often used to define similarity [14]. | Classical baseline; consistently delivers strong general QSAR performance [1]. |

| MACCS Keys [14] | A structural key fingerprint based on 166 predefined chemical fragments. | 2D representation; easily interpretable; a Tanimoto threshold of ~0.85 is commonly used [14]. | Provides a structurally intuitive similarity criterion. |

| Matched Molecular Pairs (MMPs) [14] | Defines similarity by a single-site structural transformation between two compounds. | Highly intuitive and chemically meaningful; does not rely on a similarity threshold. | Improves chemical interpretability of ACs; directly identifies the specific modification causing the cliff [14]. |

| Graph Isomorphism Networks (GINs) [1] | A graph neural network that learns molecular representations from the compound's graph structure. | Adaptive, learned representation; can capture complex sub-structural features. | Competitive with or superior to ECFPs for direct AC-classification tasks [1]. |

Table 2: Quantitative Criteria and Metrics for Activity Cliff Definition

| Criterion | Common Thresholds & Metrics | Notes and Considerations |

|---|---|---|

| Similarity Criterion [14] | ECFP4 Tc ≥ 0.56; MACCS Tc ≥ 0.85; or MMP-based | Thresholds are representation-dependent. MMPs provide a discrete, non-threshold-based criterion. |

| Potency Difference Criterion [14] | ≥ 100-fold (or 2 log units) | A common heuristic, but potency distributions can vary by target. Using statistically significant differences is an alternative. |

| Pairwise Cliff Metric (SALI) [15] | SALI(i,j) = \|P_i - P_j\| / (1 - s_ij) | Standard metric; undefined when s_ij=1. Prefer the Taylor Series reformulation for robustness. |

| Global Landscape Metric (iCliff) [15] | iCliff = [ (ΣP_i²/N) - (ΣP_i/N)² ] * [ (1 + iT + iT² + iT³) / 2 ] | Linear complexity (O(N)); provides a single value for the roughness of an entire dataset. |

The Scientist's Toolkit

Table 3: Essential Research Reagents & Software Solutions

| Item | Function in AC Analysis | Example Tools / Implementation |

|---|---|---|

| Cheminformatics Toolkit | For standardizing chemical structures, calculating descriptors, and handling molecular data. | RDKit [1], PaDEL-Descriptor [16] |

| Fingerprint & Similarity Calculator | To generate molecular representations (e.g., ECFP4, MACCS) and compute pairwise similarity. | RDKit, CDK (Chemistry Development Kit) |

| QSAR Modeling Environment | To build and validate predictive models using various algorithms and representations. | Python (with scikit-learn, Deep Graph Library), R |

| Activity Landscape Analyzer | To calculate AC metrics (SALI, iCliff) and visualize structure-activity relationships. | Custom scripts implementing iCliff [15], SAR analysis tools |

Workflow Visualization

[Diagram: Activity Cliff Analysis Workflow]

[Diagram: From SALI to iCliff: Metric Evolution]

Frequently Asked Questions (FAQs) and Troubleshooting

FAQ 1: What exactly is an "Activity Cliff" and why is it a significant problem in QSAR modeling? An activity cliff (AC) is a pair of structurally similar molecules that exhibit a large difference in potency toward the same biological target [2] [1]. This defies the central principle in medicinal chemistry that structurally similar compounds tend to have similar biological activities [2]. For QSAR modeling, these abrupt changes in the structure-activity relationship (SAR) landscape are a major source of prediction error, as machine learning models often struggle to predict these large potency discontinuities [1].

FAQ 2: My QSAR model performs well on most compounds but fails on specific pairs. Could activity cliffs be the cause? Yes, this is a common scenario. Research has consistently shown that the predictive performance of QSAR models, including modern deep learning approaches, drops significantly when tested on compounds involved in activity cliffs [1]. If your model's errors are concentrated around specific, similar compound pairs with large experimental potency gaps, activity cliffs are a likely cause.

FAQ 3: Which computational methods are most reliable for predicting activity cliffs? Advanced structure-based methods have shown significant accuracy. Ensemble-docking and template-docking, which use multiple receptor conformations, are particularly promising [2]. For ligand-based approaches, graph isomorphism networks (GINs) have been found to be competitive with or even superior to classical molecular representations like extended-connectivity fingerprints (ECFPs) for the specific task of AC classification [1].

FAQ 4: Should I remove activity cliffs from my training data to improve general QSAR model performance? This is not recommended. While activity cliffs can hinder predictability, simply removing them from training data results in a loss of precious SAR information [1]. A more robust strategy is to identify these cliffs and potentially use specialized modeling techniques that can better handle the discontinuities they represent.

Troubleshooting Guide: Addressing Common Activity Cliff Analysis Issues

| Problem | Possible Cause | Solution |

|---|---|---|

| Low AC-prediction sensitivity | Model cannot identify cliffs when activities of both compounds are unknown [1]. | Incorporate the known activity of one compound in the pair to boost sensitivity [1]. |

| Inconsistent cliff identification | Arbitrary thresholds for structural similarity and potency difference [2]. | Use a consistent definition like Matched Molecular Pairs (MMP) and public data repositories for standardization [2]. |

| Poor structure-based prediction | Using a single, rigid receptor conformation for docking [2]. | Switch to ensemble-docking using multiple receptor conformations to account for protein flexibility [2]. |

| Performance drop on "cliffy" compounds | Standard QSAR models are inherently challenged by SAR discontinuities [1]. | Benchmark models on cliff-forming compounds specifically; consider using GIN-based representations [1]. |

Experimental Protocols & Data Presentation

Key Methodology: Structure-Based Prediction via Ensemble Docking

This protocol, adapted from a study on structure-based predictions, outlines the use of ensemble docking to rationalize activity cliffs [2].

Detailed Workflow:

- Database Curation: Compile a database of cliff-forming pairs from public sources like the PDB. The criteria for a 3D activity cliff (3DAC) are typically:

- 3D Similarity: ≥80% using a function that accounts for positional, conformational, and chemical differences in binding modes.

- Potency Difference: ≥ two orders of magnitude (e.g., 100-fold difference in IC50 or Ki) [2].

- Receptor Preparation:

- Collect multiple crystallographic structures for the same protein target to represent receptor flexibility.

- The final dataset should encompass numerous unique protein-ligand complexes across multiple pharmaceutically relevant targets [2].

- Docking Simulations:

- Perform molecular docking of both the high-potency and low-potency partners of a cliff pair using an advanced docking engine.

- Conduct simulations starting from "ideal" scenarios (e.g., re-docking into the native structure) and progressively move to more realistic, prospective scenarios (e.g., cross-docking) [2].

- Analysis:

- Analyze the predicted binding modes and scores.

- Rationalize the potency difference by identifying key interactions compromised or gained due to the small structural change, such as loss of a hydrogen bond, ionic interaction, or disruption of a favorable binding site conformation [2].
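The scoring part of the analysis step can be sketched as follows. The docking runs themselves are out of scope: the sketch assumes each cliff partner has already been docked against every receptor conformation and that lower scores mean more favorable binding, which is a common convention but depends on the docking engine. Conformation labels and score values are hypothetical.

```python
def best_ensemble_score(scores_by_conformation):
    """Return (conformation, score) for the most favorable (lowest) docking score."""
    best_conf = min(scores_by_conformation, key=scores_by_conformation.get)
    return best_conf, scores_by_conformation[best_conf]


def ensemble_score_gap(high_potency_scores, low_potency_scores):
    """Best score of the low-potency partner minus that of the high-potency partner.

    A positive gap means docking ranks the high-potency partner as the better binder.
    """
    _, best_high = best_ensemble_score(high_potency_scores)
    _, best_low = best_ensemble_score(low_potency_scores)
    return best_low - best_high


# Hypothetical scores for one cliff pair docked into two receptor conformations.
high_partner = {"conf_1": -32.0, "conf_2": -41.5}
low_partner = {"conf_1": -30.0, "conf_2": -28.0}
gap = ensemble_score_gap(high_partner, low_partner)
best_conf_high, _ = best_ensemble_score(high_partner)
```

In this toy case a single rigid receptor (`conf_1`) would separate the partners by only 2 score units, whereas the ensemble finds a conformation that accommodates the high-potency partner much better. This is the kind of effect the protocol attributes to accounting for protein flexibility.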

Key Methodology: Ligand-Based AC Prediction using QSAR Models

This protocol describes how to repurpose standard QSAR models for activity cliff classification [1].

Detailed Workflow:

- Data Set Construction: Build binding affinity data sets for specific targets (e.g., dopamine receptor D2, factor Xa) from sources like ChEMBL. Standardize SMILES strings and remove errors.

- Molecular Representation: Choose one or more representation methods:

- Extended-Connectivity Fingerprints (ECFPs): Classical circular fingerprints.

- Physicochemical-Descriptor Vectors (PDVs): Numerical descriptors of molecular properties.

- Graph Isomorphism Networks (GINs): A type of graph neural network that learns features directly from the molecular graph structure [1].

- Model Training: Train QSAR regression models to predict the activity of individual compounds using various algorithms (e.g., Random Forests, k-Nearest Neighbors, Multilayer Perceptrons).

- AC Classification:

- For a pair of similar compounds, use the trained QSAR model to predict the activity of each molecule individually.

- Calculate the absolute difference between the two predicted activities.

- Classify the pair as an activity cliff if the predicted potency difference exceeds a predefined threshold [1].
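A variant of the classification step, suggested by the troubleshooting table earlier in this section, uses the measured activity of one partner when it is available. The sketch below contrasts the two modes. The function names, the 2 log-unit threshold, and all activity values are hypothetical.

```python
def is_cliff_both_predicted(pred_a, pred_b, threshold=2.0):
    """Classify using model predictions for both compounds."""
    return abs(pred_a - pred_b) > threshold


def is_cliff_one_known(measured_a, pred_b, threshold=2.0):
    """Classify using the measured activity of compound a and the prediction for b."""
    return abs(measured_a - pred_b) > threshold


# Hypothetical pair: compound a is measured at pKi 9.2, but the model predicts 7.9,
# a value pulled toward its similar, weaker partner b (predicted 6.8).
measured_a, pred_a, pred_b = 9.2, 7.9, 6.8
both_predicted = is_cliff_both_predicted(pred_a, pred_b)
one_known = is_cliff_one_known(measured_a, pred_b)
```

Here the cliff is missed when both activities are predicted (gap of 1.1 log units) and detected when the measured value is used (gap of 2.4), which illustrates how a known partner activity can raise sensitivity.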

Quantitative Data from Activity Cliff Studies

Table 1: Example 3D Activity Cliff (3DAC) Database Composition [2]

| UniProt ID | Protein Target | Number of 3DACs | Number of Unique Ligands | Number of Receptor Conformations |

|---|---|---|---|---|

| P24941 | Cyclin-dependent kinase 2 (CDK2) | 26 | 36 | 34 |

| P00734 | Prothrombin (THRB) | 24 | 28 | 28 |

| P07900 | Heat shock protein 90-alpha (HSP90A) | 17 | 17 | 17 |

| P00742 | Coagulation factor X (FA10) | 16 | 20 | 20 |

| P56817 | Beta-secretase 1 (BACE1) | 13 | 16 | 15 |

Table 2: Comparison of QSAR Model Performance on AC Prediction [1]

| Molecular Representation | Machine Learning Algorithm | AC Prediction Performance (Sensitivity) | General QSAR Prediction Performance |

|---|---|---|---|

| Extended-Connectivity Fingerprints (ECFPs) | Random Forest (RF) | Low to Moderate | Consistently strong |

| Graph Isomorphism Networks (GINs) | Multilayer Perceptron (MLP) | Competitive or Superior to ECFPs | Variable, can be lower than ECFPs |

| Physicochemical-Descriptor Vectors (PDVs) | k-Nearest Neighbors (kNN) | Low | Moderate |

Visualization of Workflows

Activity Cliff Analysis Workflow

Diagram Title: Activity Cliff Identification and Analysis Process

Structure-Based vs. Ligand-Based AC Prediction

Diagram Title: Structure-Based vs. Ligand-Based AC Prediction

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Research Reagent Solutions for Activity Cliff Analysis

| Item | Function / Application in AC Research |

|---|---|

| Public Structural Databases (PDB) | Source for experimentally determined 3D structures of protein-ligand complexes to build 3DAC datasets [2]. |

| Bioactivity Databases (ChEMBL, BindingDB) | Provide curated potency data (e.g., Ki, IC50) for molecules, essential for calculating experimental potency differences [2] [1]. |

| Molecular Similarity Tools (RDKit) | Calculate 2D (Tanimoto) and 3D similarity metrics to identify structurally similar compound pairs according to defined thresholds [2]. |

| Docking Software (ICM, AutoDock, etc.) | Perform ensemble-docking and template-docking simulations to predict binding modes and rationalize potency gaps structurally [2]. |

| Graph Neural Network Libraries (PyTorch Geometric, DGL) | Implement and train graph isomorphism networks (GINs) and other GNNs for advanced molecular representation and AC classification [1]. |

| Extended-Connectivity Fingerprints (ECFPs) | A classical yet powerful molecular representation for building baseline QSAR models for activity prediction [1]. |

Troubleshooting Guides

Q1: Why does my QSAR model perform well on most compounds but fail spectacularly on a few specific ones?

Problem: Your model shows high overall accuracy but produces significant prediction errors for certain compounds, often large over- or under-estimations of activity.

Diagnosis: This pattern strongly indicates the presence of activity cliffs (ACs) in your dataset. ACs are pairs or groups of structurally similar compounds that exhibit large differences in biological activity [10] [1]. Traditional QSAR models, which rely on the fundamental similarity principle ("similar compounds have similar properties"), struggle with these abrupt discontinuities in the structure-activity landscape [17].

Solution:

- Systematically Identify ACs: Calculate the Structure-Activity Landscape Index (SALI) for compound pairs in your dataset [12] [18]:

SALI(i,j) = |P_i - P_j| / (1 - s_ij)

where P_i and P_j are the potencies of compounds i and j, and s_ij is their structural similarity [18]. High SALI values indicate potential ACs.

- Analyze Model Performance Distribution: Check if prediction outliers correlate with identified AC compounds. Models tend to over-smooth predictions for AC pairs, underestimating the more active and overestimating the less active compound [1].

- Apply AC-Aware Modeling: Consider using specialized approaches like graph neural networks with explanation supervision (ACES-GNN) or activity cliff-aware reinforcement learning (ACARL) that explicitly handle SAR discontinuities [3] [19].
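As a rough sketch of the SALI calculation, the snippet below ranks all compound pairs by SALI. It assumes fingerprints are stored as sets of on-bit indices (in practice these would come from a toolkit such as RDKit); the toy fingerprints, the potencies, and the `eps` guard for identical fingerprints are illustrative assumptions, not part of the cited method.

```python
from itertools import combinations


def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto coefficient between two fingerprints stored as sets of on-bit indices."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 1.0


def sali_pairs(fingerprints, potencies, eps=1e-6):
    """Return (i, j, similarity, SALI) for every compound pair, steepest cliffs first."""
    rows = []
    for i, j in combinations(range(len(potencies)), 2):
        s_ij = tanimoto(fingerprints[i], fingerprints[j])
        # eps (an assumption) avoids division by zero when s_ij equals 1
        sali = abs(potencies[i] - potencies[j]) / max(1.0 - s_ij, eps)
        rows.append((i, j, s_ij, sali))
    return sorted(rows, key=lambda row: row[3], reverse=True)


# Toy data: hypothetical on-bit sets and pIC50-like potencies.
fps = [{1, 2, 3, 4, 5, 6, 7, 8, 9}, {1, 2, 3, 4, 5, 6, 7, 8, 10}, {20, 21, 22}]
pots = [8.5, 5.5, 6.0]
ranked = sali_pairs(fps, pots)
```

Here compounds 0 and 1 share 8 of 10 bits and differ by 3 log units, so they head the ranking; dissimilar pairs with comparable potency gaps fall far behind.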

Q2: How can I tell whether my dataset is suitable for QSAR modeling in the first place?

Problem: You need to evaluate whether your dataset contains inherent characteristics that might limit QSAR model development.

Diagnosis: The modelability of a dataset is significantly compromised by the presence of activity cliffs, among other factors [10]. A rough activity landscape makes it difficult for ML algorithms to learn consistent structure-activity relationships.

Solution:

- Calculate Modelability Index (MODI): This metric quantifies the smoothness of the SAR landscape for binary classification datasets [1]. Low MODI values indicate poor modelability.

- Use Global Roughness Metrics: For regression datasets, compute the iCliff index, which quantifies overall activity landscape roughness in linear O(N) time complexity [18]:

iCliff = [ΣP_i²/N - (ΣP_i/N)²] × (1 + iT + iT² + iT³)/4

where iT is the iSIM Tanimoto similarity [18]. Higher iCliff values suggest greater AC presence.

- Perform SALI Matrix Analysis: Generate a SALI matrix for all compound pairs and identify clusters of high values, which indicate AC-rich regions that will challenge QSAR models [10] [12].
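The iCliff formula can be illustrated with a short sketch. The potency-variance term and the cubic polynomial in iT follow the expression given in the text; the way iT is derived from column sums here (counting, per bit, the pairs that share a 1 versus the pairs that disagree) is an assumption about the iSIM formulation and should be checked against [18] before use.

```python
def isim_tanimoto(fingerprints):
    """Set-level Tanimoto from column sums of a binary fingerprint matrix (rows of 0/1)."""
    n = len(fingerprints)
    col_sums = [sum(col) for col in zip(*fingerprints)]
    # Per bit: pairs of molecules sharing a 1, and pairs that disagree on the bit.
    shared = sum(k * (k - 1) / 2 for k in col_sums)
    mismatched = sum(k * (n - k) for k in col_sums)
    total = shared + mismatched
    return shared / total if total else 0.0


def icliff(potencies, fingerprints):
    """iCliff = potency variance x (1 + iT + iT^2 + iT^3) / 4, computed in one pass."""
    n = len(potencies)
    mean_of_squares = sum(p * p for p in potencies) / n
    square_of_mean = (sum(potencies) / n) ** 2
    it = isim_tanimoto(fingerprints)
    return (mean_of_squares - square_of_mean) * (1 + it + it**2 + it**3) / 4


# Toy input: two hypothetical 3-bit fingerprints with pIC50-like potencies.
toy_fps = [[1, 1, 0], [1, 0, 1]]
toy_pots = [5.0, 7.0]
roughness = icliff(toy_pots, toy_fps)
```

Only column sums and two running potency sums are needed, which is where the linear scaling comes from: no pairwise similarity matrix is ever built.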

Q3: My deep learning QSAR model still fails on activity cliffs. Isn't deep learning supposed to handle complex patterns?

Problem: Despite using advanced deep learning architectures, your model still struggles with AC prediction.

Diagnosis: This is a common observation: neither enlarging training sets nor increasing model complexity reliably improves AC prediction [1] [19]. Deep neural networks often over-emphasize shared structural features between AC pairs, failing to capture the critical minor modifications that drive large potency changes [3].

Solution:

- Incorporate Explanation Supervision: Use frameworks like ACES-GNN that integrate explanation supervision into GNN training, forcing the model to focus on structurally relevant regions that explain AC behavior [3].

- Employ Contrastive Learning: Implement AC-aware reinforcement learning (ACARL) with contrastive loss that actively prioritizes learning from AC compounds [19].

- Leverage Multi-Representation Learning: Combine different molecular representations (descriptors, fingerprints, graph features), since each may capture different aspects of SAR discontinuity [1].

Q4: How should I split my data to properly evaluate QSAR model performance on activity cliffs?

Problem: Standard random splitting gives over-optimistic performance estimates because structurally similar AC compounds may appear in both training and test sets.

Diagnosis: Conventional data splitting methods can lead to data leakage for AC compounds, artificially inflating perceived model performance [12]. True generalization capability for ACs requires careful splitting strategies.

Solution:

- Apply Extended Similarity-Based Splitting: Use methods like diverse selection or uniform splitting based on complementary extended similarity (eSIM) to ensure proper representation of chemical space in both training and test sets [12].

- Implement AC-Conscious Splitting: For AC-focused studies, use clustering followed by stratified splitting based on whether molecules participate in ACs [12].

- Validate with Multiple Splits: Always evaluate performance using multiple splitting strategies and compare results between random and AC-conscious splits [12].
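A minimal sketch of the stratification part of an AC-conscious split is shown below. It covers only the stratified step and omits the clustering and eSIM-based selection described in [12]; the function name, the `test_fraction` default, and the fixed seed are illustrative assumptions.

```python
import random


def ac_stratified_split(compound_ids, is_ac, test_fraction=0.2, seed=0):
    """Split so AC and non-AC compounds appear in train and test in similar proportions."""
    rng = random.Random(seed)
    train, test = [], []
    for flag in (True, False):
        # Handle AC participants and non-participants as separate strata.
        group = [cid for cid, ac in zip(compound_ids, is_ac) if ac == flag]
        rng.shuffle(group)
        n_test = round(len(group) * test_fraction)
        test.extend(group[:n_test])
        train.extend(group[n_test:])
    return train, test


# Toy example: 20 compounds, the first 10 flagged as AC participants.
ids = list(range(20))
flags = [i < 10 for i in ids]
train_ids, test_ids = ac_stratified_split(ids, flags)
```

Running the same model on this split and on a plain random split gives the performance gap that the last bullet recommends monitoring.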

Experimental Protocols

Protocol 1: Comprehensive Activity Cliff Identification

Purpose: Systematically identify and characterize activity cliffs in molecular datasets.

Materials: Molecular structures (SMILES format), bioactivity data (Ki, IC50, or EC50 values), cheminformatics toolkit (e.g., RDKit).

Procedure:

- Data Standardization:

- Standardize SMILES strings using established pipelines (e.g., ChEMBL structure pipeline) [1].

- Remove salts, solvents, and isotopic information.

- Convert activity values to consistent units and transform to pKi/pIC50 (-log10 values).

- Molecular Representation:

- Similarity Calculation:

- Activity Cliff Identification:

- Apply multiple criteria for comprehensive AC detection [3]:

- Substructure similarity: ECFP Tanimoto > 0.9 AND potency difference ≥ 10-fold

- Scaffold similarity: Scaffold-based ECFP Tanimoto > 0.9 AND potency difference ≥ 10-fold

- SMILES similarity: Levenshtein distance-based similarity > 0.9 AND potency difference ≥ 10-fold

- Landscape Visualization:

Activity Cliff Identification Workflow
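Two of the Protocol 1 steps, the pIC50 transform and the SMILES-similarity cliff criterion, can be sketched with the standard library alone. The normalization of Levenshtein distance by the longer string length is an assumption (the source does not specify it), and the IC50 values used in the example are made up for illustration.

```python
import math


def to_pic50(ic50_nm: float) -> float:
    """Convert an IC50 given in nanomolar to pIC50 = -log10(IC50 in molar)."""
    return -math.log10(ic50_nm * 1e-9)


def levenshtein(a: str, b: str) -> int:
    """Edit distance between two strings (row-by-row dynamic programming)."""
    prev = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        curr = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return prev[-1]


def smiles_similarity(a: str, b: str) -> float:
    """Assumed normalization: 1 - edit distance / length of the longer SMILES."""
    longest = max(len(a), len(b))
    return 1.0 - levenshtein(a, b) / longest if longest else 1.0


def is_activity_cliff(smiles_a, ic50_a_nm, smiles_b, ic50_b_nm,
                      sim_cutoff=0.9, fold=10.0):
    """Apply the criterion: similarity > cutoff AND potency difference >= fold."""
    ratio = max(ic50_a_nm, ic50_b_nm) / min(ic50_a_nm, ic50_b_nm)
    return smiles_similarity(smiles_a, smiles_b) > sim_cutoff and ratio >= fold


# Two SMILES differing by one character; the IC50 values are hypothetical.
pair_is_cliff = is_activity_cliff("CC(=O)Nc1ccc(O)cc1", 5.0,
                                  "CC(=O)Nc1ccc(F)cc1", 500.0)
```

The fold test is done on the raw IC50 ratio rather than on a pIC50 difference to avoid floating-point rounding exactly at the 10-fold boundary; the two formulations are otherwise equivalent.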

Protocol 2: QSAR Modelability Assessment

Purpose: Quantitatively evaluate the suitability of a dataset for QSAR modeling, considering activity cliff prevalence.

Materials: Molecular dataset with structures and bioactivities, computational resources for pairwise comparisons.

Procedure:

- Calculate Modelability Index (MODI) for Classification Data:

- For each compound, find its nearest neighbor in descriptor or fingerprint space (e.g., highest Tanimoto similarity)

- For each activity class, compute the fraction of compounds whose nearest neighbor belongs to the same class

- MODI = mean of these per-class fractions, so values near 1 indicate a smooth, modelable landscape [1]

- Compute iCliff Index for Regression Data:

- Calculate mean squared potency: ΣP_i²/N

- Calculate squared mean potency: (ΣP_i/N)²

- Compute iSIM Tanimoto (iT) using column sums of fingerprint matrix [18]

- Apply formula: iCliff = [ΣP_i²/N - (ΣP_i/N)²] × (1 + iT + iT² + iT³)/4

- Perform ROGI Analysis:

- Cluster compounds using hierarchical clustering with varying similarity thresholds

- Calculate potency variance within each cluster at each threshold

- ROGI quantifies how potency variance changes with clustering threshold [18]

- Interpret Results:

- Low MODI (<0.7) indicates poor modelability

- High iCliff values indicate rough activity landscape

- Compare against benchmark datasets for context

Protocol 3: AC-Conscious QSAR Modeling with Explanation Supervision

Purpose: Develop QSAR models with enhanced capability to predict and explain activity cliffs.

Materials: Molecular dataset with identified ACs, deep learning framework with GNN support.

Procedure:

- Data Preparation:

- Model Architecture Setup:

- Implement Message Passing Neural Network (MPNN) backbone [3]

- Add explanation supervision branch

- Configure gradient-based attribution method

- ACES-GNN Training:

- Incorporate AC explanation supervision into loss function [3]

- Use multi-task learning for both prediction and explanation

- Apply regularization to prevent overfitting to AC examples

- Validation and Interpretation:

- Evaluate both predictive accuracy and explanation quality

- Compare against baseline models without explanation supervision

- Analyze model attention on AC compound pairs

AC-Conscious QSAR Modeling Workflow

Quantitative Data Tables

Table 1: Activity Cliff Quantification Metrics Comparison

| Metric | Mathematical Formula | Complexity | Key Advantages | Interpretation |

|---|---|---|---|---|

| SALI | SALI(i,j) = \|P_i - P_j\| / (1 - s_ij) | O(N²) | Simple, intuitive interpretation | Higher values = steeper cliffs [18] |

| Taylor-SALI | TS_SALI(i,j) = (P_i - P_j)² × (1 + s_ij + s_ij² + s_ij³)/4 | O(N²) | Defined when s_ij=1, better numerical stability | Higher values = steeper cliffs [18] |

| iCliff | iCliff = [ΣP_i²/N - (ΣP_i/N)²] × (1 + iT + iT² + iT³)/4 | O(N) | Linear scaling, no pairwise comparisons | Higher values = rougher landscape [18] |

| ROGI | Based on potency variance change with clustering threshold | O(N²) | Correlates with ML model error | Higher values = rougher landscape [18] |

| MODI | Mean over activity classes of the fraction of compounds whose nearest neighbor shares their class | O(N²) | Directly related to binary classification modelability | 0-1, >0.7 = modelable [1] |

Table 2: QSAR Model Performance on Activity Cliff Compounds

| Model Architecture | Molecular Representation | Overall RMSE | AC Compound RMSE | AC Sensitivity | Key Limitations |

|---|---|---|---|---|---|

| Random Forest | ECFP4 | 0.48 | 0.82 | 0.31 | Struggles with discontinuity, oversmooths ACs [1] |

| Graph Isomorphism Network | Graph Features | 0.52 | 0.79 | 0.35 | Competitive but computationally intensive [1] |

| Multilayer Perceptron | Physicochemical Descriptors | 0.55 | 0.88 | 0.28 | Poor generalization to AC compounds [1] |

| k-Nearest Neighbors | ECFP4 | 0.61 | 0.95 | 0.24 | Severely affected by ACs in chemical space [1] |

| ACES-GNN | Graph Features + Explanation | 0.45 | 0.68 | 0.52 | Requires ground-truth AC explanations [3] |

Table 3: Research Reagent Solutions for Activity Cliff Research

| Reagent/Resource | Type | Function in AC Research | Key Features | Availability |

|---|---|---|---|---|

| ECFP4 Fingerprints | Molecular Representation | Structural similarity calculation | Circular topology, capture radial substructures | RDKit, OpenBabel [3] |

| SALI Calculator | Analysis Tool | Quantifying AC magnitude | Simple implementation, pairwise analysis | Custom implementation [18] |

| iCliff Calculator | Analysis Tool | Global landscape roughness | O(N) complexity, no pairwise comparisons | Custom implementation [18] |

| ACES-GNN Framework | Modeling Framework | AC-aware QSAR modeling | Explanation supervision, improved AC prediction | Research implementation [3] |

| ACARL Framework | Generative Framework | AC-aware molecular design | Contrastive loss, RL-based optimization | Research implementation [19] |

| ChEMBL Database | Data Resource | Bioactivity data for AC analysis | Curated SAR data, multiple targets | Public repository [3] |

FAQs

Q5: Are activity cliffs necessarily "bad" for drug discovery, or can they be beneficial?

A: Activity cliffs represent both a challenge and an opportunity. While they complicate QSAR modeling, they provide extremely valuable information for medicinal chemists. ACs reveal which specific structural modifications have dramatic effects on potency, offering crucial insights for lead optimization. Understanding ACs can help design compounds with significantly improved activity through minimal structural changes [1] [19].

Q6: What percentage of activity cliffs in my dataset should concern me for QSAR modeling?

A: There's no universal threshold, but studies indicate that datasets with >30% AC compounds show significantly degraded QSAR performance [3]. However, the distribution matters as much as the percentage: clustered ACs cause more problems than uniformly distributed ones [12]. Compare iCliff values against benchmark datasets and monitor the performance gap between random and AC-conscious data splits [12] [18].

Q7: Can I simply remove activity cliffs from my dataset to improve QSAR performance?

A: This is generally not recommended. While removing ACs might improve apparent model performance, it eliminates crucial SAR information and creates artificially smooth activity landscapes that don't reflect reality [1]. This can lead to models that fail in real-world applications where AC behavior is important. Instead, use AC-aware modeling approaches or ensure your test set properly represents AC compounds to accurately evaluate model capabilities [12] [3].

Q8: How do I choose between different activity cliff identification methods?

A: The choice depends on your dataset size and research goals. For small datasets (<1000 compounds), comprehensive pairwise SALI analysis is feasible. For larger datasets, use iCliff for global assessment followed by targeted SALI analysis on suspect regions [18]. For MMP-focused studies, use matched molecular pair identification. Consider using multiple similarity measures (substructure, scaffold, SMILES) as they capture different types of ACs [3].

Q9: Are newer deep learning methods ultimately better for handling activity cliffs?

A: Not necessarily. Recent studies show that conventional descriptor-based methods sometimes outperform complex deep learning models on AC compounds [1]. The key advantage of newer approaches like ACES-GNN is their ability to provide explanations alongside predictions, helping understand why ACs occur [3]. Success depends more on proper AC-conscious training strategies than on model complexity alone. Ensemble approaches combining traditional and DL methods often work best.

Advanced Modeling Approaches for Predicting and Analyzing Activity Cliffs

Frequently Asked Questions (FAQs) and Troubleshooting

FAQ 1: What is the main advantage of using ensemble docking over single-receptor docking when studying activity cliffs?

- Answer: Ensemble docking accounts for inherent protein flexibility, which is often the underlying cause of activity cliffs. While single, rigid receptor structures may poorly accommodate structurally similar ligands, leading to inaccurate affinity predictions, docking against multiple receptor conformations (an ensemble) allows you to capture different binding site shapes. This significantly improves the ability to rationalize and predict cases where small structural changes in a ligand cause large potency differences [2] [20] [21].

FAQ 2: My ensemble docking results are overwhelmed by false positive poses. How can I mitigate this?

- Answer: The increase in false positive poses is a common pitfall when enlarging the receptor ensemble. To address this:

- Use a Composite Scoring Strategy: Implement a hybrid scoring function that combines traditional docking scores with ligand-based similarity measures, such as the Atomic Property Field (APF) method. This biases pose selection toward chemically reasonable solutions [20].

- Apply Machine Learning (ML): Use ensemble learning methods, like Random Forest, on the docking output to re-rank poses and affinities. ML can identify the most important receptor conformations and reduce the weight of false positives from less relevant structures [21].

- Optimize Ensemble Size: Avoid using all available conformations. Employ graph-based redundancy removal or feature importance analysis to select a smaller, non-redundant set of the most informative receptor structures [21].

FAQ 3: How do I select the optimal number and type of receptor conformations for my ensemble?

- Answer: The optimal ensemble is not simply the largest one. Follow these steps:

- Start with Experimentally Derived Conformations: Curate a set of structures from the PDB, ideally co-crystallized with various ligands [2] [21].

- Remove Redundancy: Use a graph-based method to eliminate highly similar conformations, which is more efficient than traditional clustering [21].

- Rank by Importance: If you have a set of ligands with known affinities, dock them into your initial ensemble. Then, use feature selection from an ML model to identify which receptor conformations contribute most to accurate affinity prediction. A few key structures are often sufficient [21].

FAQ 4: Can ensemble docking successfully predict activity cliffs prospectively?

- Answer: Yes, advanced structure-based methods have demonstrated significant accuracy in predicting activity cliffs. Studies on diverse datasets of cliff-forming pairs have shown that ensemble docking and template docking can correctly identify large potency differences between structurally similar compounds, moving beyond mere rationalization to prospective prediction [2].

Key Experimental Protocols

Protocol 1: Ligand-Biased Ensemble Docking (LigBEnD)

This hybrid protocol combines receptor structure-based docking with ligand-based similarity to improve pose prediction, especially when protein flexibility is not fully captured by the available ensemble [20].

Prepare the Receptor Ensemble:

- Select multiple protein conformations from a source like the Pocketome database, which provides pre-aligned structures of ligand-binding pockets [20].

- For each conformation, remove the co-crystallized ligand and optimize the protein structure (add hydrogens, sample side-chain rotamers).

- Generate grid potential maps for the binding site that represent electrostatics, hydrophobicity, and hydrogen bonding.

Prepare Ligand-Based APF Templates:

- Convert the co-crystallized ligand(s) into an Atomic Property Field (APF). This represents each atom by a vector of seven physicochemical properties (e.g., H-bond donor, acceptor, lipophilic) [20].

- Calculate seven grid maps to represent these property fields in 3D space.

Dock and Score Candidate Ligands:

- Dock flexible ligands into each receptor conformation in the ensemble.

- For each generated pose, calculate two scores:

- The standard docking score.

- An APF similarity score, which measures how well the pose's atomic properties match the APF template.

- Use a composite score that combines the docking and APF scores to select the final pose.
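The final pose-selection step can be sketched as a weighted sum. This is an illustration under stated assumptions rather than the LigBEnD scoring function from [20]: both scores are assumed to be expressed so that lower is better (the APF term as a pseudo-energy), and the `apf_weight` parameter and the pose dictionaries are hypothetical.

```python
def select_pose(poses, apf_weight=1.0):
    """Pick the pose with the lowest composite of docking score and APF score."""
    # Assumption: both terms are energy-like, so a more negative value is better.
    return min(poses, key=lambda p: p["dock_score"] + apf_weight * p["apf_score"])


# Hypothetical best poses of one ligand in three receptor conformations.
candidate_poses = [
    {"receptor": "conf_A", "dock_score": -9.1, "apf_score": -0.5},
    {"receptor": "conf_B", "dock_score": -8.4, "apf_score": -2.0},
    {"receptor": "conf_C", "dock_score": -7.0, "apf_score": -1.0},
]
best = select_pose(candidate_poses)
```

With the ligand-based term switched off (`apf_weight=0`), the choice reverts to the pose with the best raw docking score, which makes the bias introduced by the APF template easy to inspect.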

Protocol 2: Machine Learning-Guided Ensemble Selection and Affinity Prediction

This protocol uses ensemble learning to select the most important protein conformations and improve binding affinity predictions from ensemble docking data [21].

Curate and Prepare a Non-Redundant Receptor Ensemble:

- Collect all available X-ray structures for your target (e.g., from the PDB).

- Define the binding site based on residues in contact with any ligand across all structures.

- Use a graph-based redundancy removal method to select a diverse, non-redundant set of conformations for initial docking.

Perform Ensemble Docking and Extract Features:

- Dock a set of ligands with known experimental binding affinities into every conformation in your non-redundant ensemble.

- For each ligand, record the best docking score (e.g., from AutoDock Vina or AutoDock4) obtained from each receptor conformation as your feature set.

Train a Machine Learning Model for Affinity Prediction:

- Use a Random Forest or other ensemble learning regressor.

- Input Features: The vector of best docking scores for each ligand across all receptor conformations.

- Target Variable: The experimental binding affinity.

- Train the model to predict affinity from the docking scores.

Identify Critical Conformations and Refine the Ensemble:

- After training, use the model's feature importance measure to rank the contribution of each receptor conformation to the final prediction.

- Select the top-ranked conformations to create a refined, optimal ensemble for future virtual screening, reducing computational cost and false positives.
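The feature layout and the ranking idea in this protocol can be sketched without any ML library. Note the substitution: instead of Random Forest feature importance, the snippet ranks conformations by the absolute Pearson correlation between their docking scores and the experimental affinities. That is a much cruder stand-in, used only to keep the example dependency-free; the conformation names, scores, and affinities are invented.

```python
from statistics import mean


def pearson(xs, ys):
    """Pearson correlation; returns 0.0 when either input has no variance."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5 if var_x and var_y else 0.0


def rank_conformations(score_matrix, affinities, names):
    """score_matrix[l][c] is the best docking score of ligand l in conformation c."""
    columns = list(zip(*score_matrix))  # one column of scores per conformation
    scored = [(abs(pearson(col, affinities)), name)
              for col, name in zip(columns, names)]
    return [name for _, name in sorted(scored, reverse=True)]


# Hypothetical data: 5 ligands docked into 3 receptor conformations.
conf_names = ["conf_1", "conf_2", "conf_3"]
scores = [
    [-5.0, -7.0, -6.0],
    [-6.0, -6.5, -6.0],
    [-7.0, -7.2, -6.0],
    [-8.0, -6.8, -6.0],
    [-9.0, -7.1, -6.0],
]
pkd = [5.0, 6.0, 7.0, 8.0, 9.0]
ranking = rank_conformations(scores, pkd, conf_names)
```

A Random Forest regressor trained on the same matrix would additionally capture non-linear and joint effects between conformations, which is why the protocol relies on it rather than on per-column correlation.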

Research Reagent Solutions

Table: Essential computational tools and data resources for ensemble docking studies.

| Name | Type | Function in Research |

|---|---|---|

| Pocketome [20] | Database | A curated collection of pre-aligned protein-ligand binding pockets from the PDB, providing a convenient starting point for building receptor ensembles. |

| Atomic Property Field (APF) [20] | Computational Method | A ligand-based method that represents molecules as grids of physicochemical properties; used to guide docking and assess 3D similarity independent of molecular topology. |

| ALiBERO [2] | Computational Protocol | A method for generating new receptor conformations through ligand-guided backbone ensemble refinement, useful when experimental structures are inadequate. |

| SCARE [20] | Computational Protocol | A method for generating alternative receptor conformations through side-chain rearrangement and backbone minimization. |

| ChEMBL [2] [19] | Database | A large, open-access repository of bioactive molecules with drug-like properties and their annotated targets, providing experimental activity data for validation. |

| Random Forest (RF) [21] | Machine Learning Algorithm | An ensemble learning method used to create scoring functions that re-rank docking outputs, improving affinity prediction and identifying key receptor conformations. |

Experimental Workflow Diagrams

Workflow for ML-Optimized Ensemble Docking

Ligand-Biased Ensemble Docking (LigBEnD) Workflow

Leveraging 3D-QSAR and Comparative Molecular Field Analysis (CoMFA) for Cliff Detection

Activity cliffs (ACs) represent a significant challenge in quantitative structure-activity relationship (QSAR) modeling and rational drug design. They are defined as pairs of structurally similar compounds that nevertheless exhibit a large difference in their binding affinity for a given biological target [1]. The presence of activity cliffs directly defies the fundamental molecular similarity principle, which states that chemically similar compounds should have similar biological activities [1]. For medicinal chemists, ACs can be puzzling and confound their understanding of structure-activity relationships (SARs), as they reveal that small chemical modifications can have unexpectedly large biological impacts [1].

In computational chemistry, ACs are suspected to form one of the major roadblocks for successful QSAR modeling, as these abrupt changes in potency are expected to negatively influence machine learning algorithms for pharmacological activity prediction [1]. In fact, studies have shown that the density of ACs in a molecular dataset is strongly predictive of its overall modelability by classical descriptor- and fingerprint-based QSAR methods [1]. This technical support article provides troubleshooting guidance and experimental protocols for researchers aiming to detect and manage activity cliffs using 3D-QSAR and Comparative Molecular Field Analysis (CoMFA) approaches.

Troubleshooting Guides

Alignment Issues in 3D-QSAR

Problem: Poor predictive performance of 3D-QSAR/CoMFA models due to incorrect molecular alignment.

Explanation: In 3D-QSAR, unlike most 2D-QSAR, the input data has inherent noise because the correct alignment of molecules is generally unknown [22]. The alignment of molecules provides the majority of the signal for the model, and incorrect alignments will result in models with limited or no predictive power [22].

Solutions:

- Follow a rigorous alignment workflow: Identify an initial reference molecule that represents the dataset well and invest time in establishing its likely bioactive conformation using crystal structures or FieldTemplater [22].

- Use multiple references: For most datasets, 3-4 reference molecules are needed to fully constrain all others. Progressively add references to cover all structural variations in the dataset [22].

- Apply substructure alignment: Ensure the common core of a compound series is always properly aligned while still maximizing electrostatic and shape similarity for the rest of the molecule [22].

- Avoid output-dependent alignment tweaking: Never adjust alignments based on model results or pay more attention to aligning highly active compounds. This introduces bias and invalidates the model [22].

Prevention:

- Complete all alignment work before running the QSAR analysis

- Document alignment rules and reference molecules thoroughly

- Validate alignment choices independently of model performance

Handling False Hits and Low Predictive Power

Problem: High rate of false positives in virtual screening and poor external predictability of 3D-QSAR models.

Explanation: In QSAR-based virtual screening, typically only about 12% of predicted compounds show actual biological activity, meaning nearly 90% of results may be false hits [23]. Activity cliffs exacerbate this problem, because models frequently fail to predict the large potency differences between similar compounds [1].

Solutions:

- Expand training set diversity: Ensure training sets include compounds spanning the chemical space of interest, particularly around known activity cliff regions [1].

- Apply consensus modeling: Combine 2D- and 3D-QSAR models to leverage complementary strengths [23].

- Define applicability domain: Determine the chemical space where the model can make reliable predictions and avoid extrapolation beyond this domain [23].

- Implement rigorous validation: Use external test sets that were not involved in any model development steps [23].

Diagnostic Steps:

- Perform y-scrambling to verify the absence of chance correlations

- Analyze prediction residuals for patterns indicating systematic errors

- Check model performance on compounds involved in known activity cliffs [1]
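The y-scrambling check listed first can be sketched as follows. A one-descriptor least-squares line stands in for the full QSAR model, which is an assumption made to keep the example self-contained; the descriptor values, activities, number of rounds, and seed are all illustrative.

```python
import random


def fit_r2(xs, ys):
    """r² of a one-descriptor least-squares line (stand-in for a full QSAR model)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return (sxy * sxy) / (sxx * syy) if sxx and syy else 0.0


def y_scramble(xs, ys, n_rounds=100, seed=0):
    """Return r² on the real data and the r² values after shuffling the activities."""
    rng = random.Random(seed)
    real = fit_r2(xs, ys)
    scrambled = []
    for _ in range(n_rounds):
        shuffled = list(ys)
        rng.shuffle(shuffled)  # breaks the structure-activity link
        scrambled.append(fit_r2(xs, shuffled))
    return real, scrambled


# Toy descriptor/activity data with a strong linear trend plus small alternating noise.
descriptor = [float(i) for i in range(20)]
activity = [0.5 * x + 4.0 + (0.1 if i % 2 == 0 else -0.1)
            for i, x in enumerate(descriptor)]
real_r2, scrambled_r2 = y_scramble(descriptor, activity)
mean_scrambled_r2 = sum(scrambled_r2) / len(scrambled_r2)
```

A model whose real r² is not clearly above the scrambled distribution is likely fitting chance correlations rather than a genuine structure-activity relationship.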

Model Validation and Robustness Issues

Problem: 3D-QSAR models show good internal statistics but perform poorly on new compounds, particularly those involved in activity cliffs.

Explanation: Model validation is a critical step in QSAR modeling to assess predictive performance, robustness, and reliability [24]. Without proper validation, models may appear statistically sound but fail in practical applications, especially for challenging cases like activity cliffs [1].

Solutions:

- Employ external validation: Use an independent test set that was not used during model development [24].

- Apply multiple validation techniques: Combine leave-one-out (LOO) cross-validation, k-fold cross-validation, and external test set validation [24].

- Use randomization tests: Verify that models outperform those built with randomized activity data [24].

- Check cliff sensitivity specifically: Evaluate model performance specifically on known activity cliff pairs in the test set [1].

Validation Metrics to Monitor:

- q² for internal validation

- r²_pred for external validation

- RMSE (Root Mean Square Error) for both training and test sets

- Specificity and sensitivity for classification models
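The metrics above are simple to compute by hand, which helps when checking what a modeling package reports. The sketch below uses common textbook definitions; in particular, computing r²_pred against the training-set mean is one widely used convention and is an assumption here, since the source does not spell out the formula. The same expression applied to leave-one-out predictions yields q².

```python
import math


def rmse(y_true, y_pred):
    """Root mean square error between observed and predicted activities."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def r2_pred(y_test, y_pred, y_train_mean):
    """External predictive r²: 1 - PRESS / sum of squares about the training mean."""
    press = sum((t - p) ** 2 for t, p in zip(y_test, y_pred))
    ss_total = sum((t - y_train_mean) ** 2 for t in y_test)
    return 1.0 - press / ss_total


def sensitivity_specificity(labels, predictions):
    """Return (sensitivity, specificity) for binary labels where 1 = active."""
    tp = sum(1 for l, p in zip(labels, predictions) if l and p)
    fn = sum(1 for l, p in zip(labels, predictions) if l and not p)
    tn = sum(1 for l, p in zip(labels, predictions) if not l and not p)
    fp = sum(1 for l, p in zip(labels, predictions) if not l and p)
    return tp / (tp + fn), tn / (tn + fp)


# Toy values for illustration only.
external_r2 = r2_pred([6.0, 7.0, 8.0], [6.5, 7.0, 7.5], y_train_mean=7.0)
sens, spec = sensitivity_specificity([1, 1, 0, 0, 1], [1, 0, 0, 1, 1])
```

Reporting RMSE for training and test sets side by side makes overfitting visible: a small training RMSE with a much larger test RMSE is the pattern described in the problem statement.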

Frequently Asked Questions (FAQs)

Q1: Why do 3D-QSAR models particularly struggle with activity cliffs?

A: 3D-QSAR methods like CoMFA assume continuous structure-activity relationships, where small structural changes lead to gradual activity changes [1]. Activity cliffs represent discontinuities in this relationship, where minimal structural modifications cause dramatic potency shifts [1]. These discontinuities often result from complex molecular recognition phenomena such as binding mode changes, specific hydrogen bonding interactions, or subtle steric effects that are challenging to capture with standard molecular field approximations [1].

Q2: What are the key differences between classic 2D-QSAR and 3D-QSAR in handling activity cliffs?

A: The table below summarizes the fundamental differences:

Table: Comparison of 2D-QSAR vs. 3D-QSAR Approaches for Activity Cliff Detection

| Feature | 2D-QSAR | 3D-QSAR (CoMFA/CoMSIA) |

|---|---|---|

| Molecular Representation | Descriptors from molecular graph | 3D molecular fields and steric/electrostatic properties |

| Alignment Dependency | Alignment-free | Highly alignment-dependent |

| Cliff Detection Mechanism | Based on descriptor similarity with activity differences | Based on field similarity with activity differences |

| Sensitivity to Molecular Conformation | Low | High |

| Interpretation of Cliff Causes | Limited to descriptor analysis | Visual field contours suggest steric/electronic causes |

| Performance on Cliffy Compounds | Generally poor, with significant performance drops [1] | Also challenged, but may provide mechanistic insights |

Q3: Can modern graph neural networks outperform classical 3D-QSAR for activity cliff prediction?

A: Current evidence suggests that graph neural networks, such as Graph Isomorphism Networks (GINs), show promise but don't consistently outperform classical methods. Recent studies found that graph isomorphism features are competitive with or superior to classical molecular representations for AC-classification and can serve as baseline AC-prediction models [1]. However, for general QSAR prediction, extended-connectivity fingerprints (ECFPs) still consistently deliver the best performance among tested input representations [1]. Surprisingly, highly nonlinear deep learning models also show performance drops on "cliffy" compounds, similar to classical methods [1].

Q4: How critical is molecular alignment for successful 3D-QSAR analysis?

A: Alignment is fundamentally critical for 3D-QSAR success. As one expert emphasizes, "the three secrets to great 3D-QSAR: alignment, alignment and alignment" [22]. The majority of the signal in 3D-QSAR models comes from the alignments rather than the specific field calculations [22]. Incorrect alignments will produce models with limited or no predictive power, while proper alignment requires significant effort and should be completed before any model development [22].

Q5: What experimental protocols improve 3D-QSAR model robustness for cliff detection?

A: The following workflow provides a systematic approach for developing robust 3D-QSAR models with enhanced cliff detection capability:

Diagram: Experimental workflow for robust 3D-QSAR modeling with activity cliff detection capability

Q6: How can researchers identify whether poor prediction stems from activity cliffs versus general model deficiencies?

A: To distinguish activity cliff-related failures from general model deficiencies:

- Perform pairwise similarity analysis: Identify structurally similar compound pairs using Tanimoto similarity or other metrics [1]

- Calculate potency differences: For similar pairs (typically >0.85 similarity), calculate the absolute activity difference [1]

- Identify cliff compounds: Flag compounds involved in pairs with high similarity but large potency differences [1]

- Compare performance metrics: Separately calculate model performance on cliffy versus non-cliffy compounds [1]

- Visualize structural neighborhoods: Examine the chemical space around mispredicted compounds for cliff patterns [1]

Significantly worse performance on cliffy compounds specifically indicates activity cliffs as the primary issue, while uniformly poor performance suggests general model deficiencies.
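The comparison in the fourth step can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the function names, the toy pIC50 values, and the `is_cliffy` flags are all hypothetical, and the flags are assumed to come from a prior pairwise similarity analysis.

```python
import math

def rmse(y_true, y_pred):
    """Root-mean-square error between two equal-length sequences."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def rmse_by_cliff_status(y_true, y_pred, is_cliffy):
    """Return (rmse_cliffy, rmse_non_cliffy); None where a subset is empty."""
    subsets = {True: ([], []), False: ([], [])}
    for t, p, c in zip(y_true, y_pred, is_cliffy):
        subsets[bool(c)][0].append(t)
        subsets[bool(c)][1].append(p)
    result = []
    for flag in (True, False):
        truths, preds = subsets[flag]
        result.append(rmse(truths, preds) if truths else None)
    return tuple(result)

# Hypothetical measured and predicted pIC50 values for six test compounds.
y_true = [8.0, 5.5, 6.2, 6.0, 7.1, 7.0]
y_pred = [6.9, 6.6, 6.1, 6.2, 7.0, 7.2]
# Assumed output of a prior cliff analysis: the first two compounds form a cliff pair.
is_cliffy = [True, True, False, False, False, False]

rmse_cliffy, rmse_smooth = rmse_by_cliff_status(y_true, y_pred, is_cliffy)
print(f"RMSE cliffy: {rmse_cliffy:.2f}, non-cliffy: {rmse_smooth:.2f}")
```

In this toy case the error on the cliffy subset is several times larger than on the remaining compounds, which is the pattern that points to activity cliffs rather than a generally deficient model.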

Essential Research Reagents and Computational Tools

Table: Essential Research Tools for 3D-QSAR and Activity Cliff Studies

| Tool Category | Specific Software/Resource | Primary Function | Application in Cliff Detection |

|---|---|---|---|

| Molecular Descriptors | Dragon, PaDEL-Descriptor, RDKit, Mordred | Calculate molecular descriptors | Generate 2D descriptors for similarity analysis and cliff identification |

| 3D-QSAR Modeling | SYBYL/CoMFA, Open3DQSAR | Perform 3D-QSAR analysis | Develop CoMFA/CoMSIA models with steric and electrostatic fields |

| Alignment Tools | FieldAlign, Flexible Alignment, ROCS | Molecular superposition | Establish pharmacophore alignment for 3D-QSAR |

| Cheminformatics | RDKit, OpenBabel, ChemAxon | Chemical structure handling | Standardize structures, calculate fingerprints, assess similarity |

| Quantum Chemistry | Gaussian, ORCA | Structure optimization | Obtain reliable 3D geometries and electronic properties |

| Model Validation | QSARINS, scikit-learn | Statistical validation | Implement rigorous validation protocols and applicability domain assessment |

Experimental Protocols

Protocol: Systematic Molecular Alignment for 3D-QSAR

Purpose: To establish a reproducible molecular alignment protocol that maximizes signal for 3D-QSAR while minimizing bias.

Materials:

- Set of molecular structures with known biological activities

- Molecular modeling software with alignment capabilities (e.g., SYBYL, MOE, Open3DALIGN)

- Reference structures (crystal ligand structures if available)

Procedure:

- Identify initial reference molecule: Select a compound that is representative of the dataset, preferably with known bioactive conformation from crystallography [22]

- Perform initial alignment: Align the rest of the dataset to the reference using field-based or substructure alignment methods [22]

- Identify poorly constrained molecules: Systematically review alignments to identify compounds with substituents going into regions not covered by the reference [22]

- Add supplementary references: Select representative examples of poorly aligned compounds and manually adjust their alignment based on chemical knowledge (ignoring activity values), then promote to additional references [22]

- Realign complete dataset: Use multiple references with substructure alignment constraints to realign the entire dataset [22]

- Final alignment check: Verify all alignments make chemical sense without reference to activity values [22]

Critical Notes:

- Alignment must be completed before any QSAR model development

- Never adjust alignment based on model performance or residual analysis

- Document all reference molecules and alignment rules for reproducibility

Protocol: Activity Cliff Identification and Analysis

Purpose: To systematically identify and characterize activity cliffs in a dataset prior to 3D-QSAR modeling.

Materials:

- Dataset of compounds with standardized structures and biological activities

- Cheminformatics toolkit (e.g., RDKit, CDK)

- Similarity calculation and clustering methods

Procedure:

- Standardize molecular structures: Remove salts, normalize tautomers, handle stereochemistry consistently [23]

- Calculate molecular similarity: Compute pairwise structural similarities using appropriate fingerprints (ECFP4, ECFP6 recommended) [1]

- Identify similar pairs: Flag compound pairs with similarity above a defined threshold (typically >0.85 Tanimoto similarity) [1]

- Calculate potency differences: For each similar pair, compute the absolute difference in biological activity (pIC50, pKi, etc.)

- Define activity cliffs: Apply a threshold for significant potency difference (typically >100-fold or 2 log units) to identify cliff pairs [1]

- Characterize cliff compounds: Flag all compounds involved in one or more activity cliffs as "cliffy" compounds [1]

- Analyze structural basis: Examine the specific structural modifications responsible for cliff effects

Analysis Outputs:

- List of activity cliff pairs with similarity and potency difference metrics

- Identification of cliffy compounds for separate model evaluation

- Structural patterns associated with cliff effects

- Dataset modelability assessment based on cliff density [1]
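The pair-flagging steps of this protocol can be sketched as follows. The sketch makes several assumptions that are not part of the protocol itself: fingerprints are represented as plain Python sets of on-bit indices (in practice these would come from an ECFP-type fingerprint computed with a cheminformatics toolkit), the potency threshold is treated as inclusive, and cliff density is taken to be the fraction of similar pairs that are cliffs. All names and data are hypothetical.

```python
from itertools import combinations

def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient of two fingerprints given as sets of on-bit indices."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0

def find_activity_cliffs(fingerprints, potencies, sim_threshold=0.85, potency_gap=2.0):
    """Return (cliff_pairs, cliffy_ids, cliff_density).

    fingerprints: dict of compound id -> set of on-bit indices
    potencies:    dict of compound id -> potency on a log scale (e.g. pIC50)
    """
    cliff_pairs = []
    n_similar = 0
    for a, b in combinations(sorted(fingerprints), 2):
        sim = tanimoto(fingerprints[a], fingerprints[b])
        if sim <= sim_threshold:
            continue  # not similar enough to qualify as a cliff candidate
        n_similar += 1
        gap = abs(potencies[a] - potencies[b])
        if gap >= potency_gap:  # assumption: threshold is inclusive
            cliff_pairs.append((a, b, sim, gap))
    # A compound is "cliffy" if it takes part in at least one cliff pair.
    cliffy_ids = {cid for a, b, _, _ in cliff_pairs for cid in (a, b)}
    # Assumed density definition: cliffs as a fraction of all similar pairs.
    density = len(cliff_pairs) / n_similar if n_similar else 0.0
    return cliff_pairs, cliffy_ids, density

# Toy data: three close analogues and one unrelated compound.
fingerprints = {
    "cpd_A": set(range(20)),
    "cpd_B": set(range(19)) | {99},
    "cpd_C": set(range(20)) | {50},
    "cpd_D": set(range(100, 120)),
}
potencies = {"cpd_A": 8.5, "cpd_B": 6.0, "cpd_C": 8.3, "cpd_D": 5.0}

cliff_pairs, cliffy_ids, density = find_activity_cliffs(fingerprints, potencies)
for a, b, sim, gap in cliff_pairs:
    print(f"{a} / {b}: similarity {sim:.2f}, potency gap {gap:.1f} log units")
```

The all-pairs loop scales quadratically with dataset size, which is acceptable for typical QSAR series but may need a neighbor search or clustering step for large screening collections.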

Protocol: Rigorous 3D-QSAR Model Validation

Purpose: To implement comprehensive validation strategies for 3D-QSAR models with specific attention to activity cliff prediction.

Materials:

- Aligned molecular dataset with biological activities

- 3D-QSAR software (e.g., SYBYL/CoMFA, Open3DQSAR)

- Statistical analysis environment (e.g., R, Python with scikit-learn)

Procedure:

- Data partitioning: Split dataset into training and test sets using rational methods (e.g., Kennard-Stone, sphere exclusion), ensuring cliff compounds are represented in both sets [24]

- Model training: Develop CoMFA/CoMSIA models using training set compounds only

- Internal validation: Perform leave-one-out (LOO) and k-fold cross-validation to assess model robustness [24]

- External validation: Predict test set compounds not used in model development [24]

- Cliff-specific validation: Separately evaluate model performance on cliffy versus non-cliffy compounds [1]

- Randomization test: Verify model significance through y-scrambling [24]

- Applicability domain: Define the chemical space where model predictions are reliable [23]

Validation Metrics:

- q² (cross-validated r²) for internal validation

- r²_pred and RMSE_pred for external validation

- Sensitivity and specificity for cliff detection

- Separate performance metrics for cliffy compounds [1]
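As an illustration of the internal validation and randomization steps, the sketch below computes a leave-one-out q² and a y-scrambling baseline. It is deliberately simplified: a one-descriptor least-squares line stands in for a CoMFA/CoMSIA model, q² is computed as 1 − PRESS/SS with SS taken around the overall mean activity (one common convention), and all names and data are hypothetical.

```python
import random

def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept (single descriptor)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    return slope, my - slope * mx

def loo_q2(xs, ys):
    """Leave-one-out q2 = 1 - PRESS / SS, with SS around the overall mean of y."""
    press = 0.0
    for i in range(len(xs)):
        # Refit the model without compound i, then predict compound i.
        slope, intercept = fit_line(xs[:i] + xs[i + 1:], ys[:i] + ys[i + 1:])
        press += (ys[i] - (slope * xs[i] + intercept)) ** 2
    mean_y = sum(ys) / len(ys)
    ss = sum((y - mean_y) ** 2 for y in ys)
    return 1.0 - press / ss

def y_scrambling(xs, ys, n_rounds=100, seed=0):
    """q2 values obtained after randomly permuting the activities."""
    rng = random.Random(seed)
    scores = []
    for _ in range(n_rounds):
        shuffled = ys[:]
        rng.shuffle(shuffled)
        scores.append(loo_q2(xs, shuffled))
    return scores

# Hypothetical descriptor values and pIC50 activities for eight compounds.
xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [5.1, 5.4, 6.1, 6.4, 7.0, 7.6, 7.9, 8.6]

q2_real = loo_q2(xs, ys)
q2_scrambled = y_scrambling(xs, ys)
mean_scrambled = sum(q2_scrambled) / len(q2_scrambled)
print(f"q2 = {q2_real:.3f}, mean scrambled q2 = {mean_scrambled:.3f}")
```

A model is only credible if its q² lies well above the distribution of scrambled values; a real q² that falls inside that distribution suggests chance correlation.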

Machine Learning and Deep Learning Architectures for Cliff Prediction

Frequently Asked Questions

Q1: Why do my QSAR models consistently fail to predict activity cliffs accurately?

Activity cliffs (ACs) are pairs of structurally similar compounds that exhibit a large difference in binding affinity, directly defying the principle of molecular similarity [1] [7]. This inherent discontinuity in the structure-activity relationship (SAR) landscape is a major roadblock for standard machine learning algorithms [1] [25]. All model types struggle with ACs, but to different degrees: deep learning models, despite their complexity, often show a larger performance drop on AC compounds than traditional machine learning methods based on molecular descriptors [25].

Q2: What is the best molecular representation to use for activity cliff prediction?

Current benchmarking indicates that classical molecular representations can be highly competitive. Extended Connectivity Fingerprints (ECFPs) are a robust baseline for general QSAR performance [1] [25]. However, for the specific task of AC classification, Graph Isomorphism Networks (GINs), a type of graph neural network, have shown promise, being competitive with or even superior to classical fingerprints [1]. For the most interpretable insights, especially when structural data is available, structure-based methods like docking into multiple receptor conformations can be highly effective for rationalizing cliff formation [2].

Q3: My model's overall performance is good, but it fails on critical compounds. How can I evaluate its performance on activity cliffs specifically?

Relying on overall performance metrics like R² can be misleading, as they can be high even when performance on ACs is poor [25]. You should incorporate dedicated, "activity-cliff-centered" metrics during model development and evaluation [25]. Frameworks like MoleculeACE (Activity Cliff Estimation) are specifically designed to benchmark models on their ability to predict the properties of activity cliffs, providing a clearer picture of model performance on these critical edge cases [25].

Q4: How should I structure my training data to improve activity cliff prediction?

Be cautious of data splitting methods. Some studies use compound-pair-based splits, which can lead to information leakage and overoptimistic performance because individual molecules can appear in both training and test sets [1]. Always ensure that the two compounds forming an activity cliff pair are placed in the same split (both in training or both in test) to avoid data leakage and ensure a more realistic evaluation [1].
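One way to keep cliff partners together is to treat cliff pairs as links between compounds and assign whole linked groups to a single split. The sketch below does this with a small union-find structure. It is an illustrative assumption rather than the procedure used in the cited studies, and the function name, compound IDs, and pairs are hypothetical.

```python
import random

def split_keeping_pairs_together(compound_ids, cliff_pairs, test_fraction=0.2, seed=0):
    """Split compounds so that both partners of every cliff pair share a split."""
    parent = {cid: cid for cid in compound_ids}

    def find(cid):
        # Follow parent links to the group's representative, compressing the path.
        while parent[cid] != cid:
            parent[cid] = parent[parent[cid]]
            cid = parent[cid]
        return cid

    # Merge the groups of the two partners of each cliff pair.
    for a, b in cliff_pairs:
        parent[find(a)] = find(b)

    groups = {}
    for cid in compound_ids:
        groups.setdefault(find(cid), []).append(cid)

    group_list = list(groups.values())
    random.Random(seed).shuffle(group_list)

    # Fill the test set group by group until it reaches the target size;
    # because groups are indivisible, the test set may slightly overshoot.
    target = test_fraction * len(compound_ids)
    train, test = [], []
    for members in group_list:
        (test if len(test) < target else train).extend(members)
    return train, test

compound_ids = [f"c{i}" for i in range(10)]
cliff_pairs = [("c0", "c1"), ("c1", "c2"), ("c5", "c6")]

train_ids, test_ids = split_keeping_pairs_together(compound_ids, cliff_pairs)
print("train:", sorted(train_ids))
print("test: ", sorted(test_ids))
```

Because pairs that share a compound are chained into one group (here c0, c1, and c2), datasets with dense cliff networks can produce large indivisible groups, so the realized test fraction should be checked after splitting.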

Troubleshooting Guides

Problem: Poor Model Performance on Activity Cliffs

| Symptom | Potential Cause | Solution |

|---|---|---|

| High overall accuracy but large errors on similar compound pairs. | SAR landscape discontinuity; model learns an overly smooth function. | Incorporate AC-focused metrics (e.g., from MoleculeACE) for model selection [25]. |

| Deep learning model underperforms compared to simpler models on cliffs. | Deep learning's heightened sensitivity to SAR discontinuities. | Use traditional machine learning with molecular descriptors as a strong baseline [25]. |

| Model cannot distinguish the more active compound in a similar pair. | Model misses subtle structural features critical for binding. | Use graph-based models (e.g., GINs) or structure-based docking to capture complex feature interactions [1] [2]. |

| Inconsistent performance across different datasets. | Varying density and types of activity cliffs in different datasets. | Analyze the activity cliff density and landscape of your dataset before modeling [1]. |

Problem: Data Handling and Preparation Issues

| Symptom | Potential Cause | Solution |

|---|---|---|

| Model performance on cliffs seems too good to be true. | Data leakage from improper splitting of cliff pairs. | Split at the level of individual compounds and keep both partners of each cliff pair in the same set [1]. |

| Model fails to account for drastic activity changes from small structural modifications. | Key molecular features (e.g., stereochemistry) are not captured. | Use representations that encode 3D or stereochemical information, especially for targets known to be stereosensitive [7]. |

| Poor generalization in prospective screening. | Training data does not adequately represent the "cliffy" regions of chemical space. | Curate training sets to include matched molecular pairs (MMPs) and known cliffs where possible [2]. |

Experimental Data & Performance

Table 1: Benchmarking Model Performance on Activity Cliffs across Multiple Targets (Based on MoleculeACE [25])

| Model Category | Example Methods | Key Finding on Activity Cliffs |

|---|---|---|

| Traditional Machine Learning | Random Forest (RF), k-Nearest Neighbors (kNN) | Often outperforms more complex deep learning methods on AC compounds [25]. |

| Deep Learning (Graph-based) | Graph Isomorphism Networks (GIN) | Competitive with or superior to classical representations for AC-classification tasks [1]. |

| Deep Learning (String-based) | Models using SMILES strings | Generally struggles with AC prediction [25]. |

| Structure-Based Methods | Ensemble Docking, Template Docking | Can achieve significant accuracy in predicting and rationalizing 3D activity cliffs when structural data is available [2]. |

Table 2: Summary of Key Research Reagents and Computational Tools

| Item | Function in Research |

|---|---|

| Extended Connectivity Fingerprints (ECFPs) | A circular fingerprint that captures radial, atom-centered substructures, widely used for calculating molecular similarity and as a molecular representation [25]. |