Molecular Similarity Analysis: From Foundational Concepts to AI-Driven Applications in Drug Discovery


Abstract

This article provides a comprehensive overview of molecular similarity analysis, a cornerstone concept in cheminformatics and modern drug discovery. It explores the foundational principle that structurally similar molecules often share similar properties and biological activities. The content covers the evolution from traditional descriptor-based methods to advanced AI-driven approaches, detailing their applications in virtual screening, scaffold hopping, and target prediction. Practical guidance is offered for troubleshooting common challenges and optimizing methods for specific tasks like natural product analysis or controlled substance identification. Finally, the article synthesizes key validation strategies and comparative performance analyses across different methods, providing researchers and drug development professionals with a robust framework for selecting and applying the most appropriate molecular similarity techniques in their work.

The Principle of Molecular Similarity: A Cornerstone of Cheminformatics and Drug Design

Core Principle and Theoretical Foundations

The Similarity-Property Principle is a foundational concept in chemistry, particularly in cheminformatics and drug discovery. It posits that molecules with similar structures are likely to exhibit similar properties [1] [2]. These properties can encompass a wide range of physical characteristics (e.g., boiling point, solubility) and biological activities (e.g., pharmacological activity against a target protein) [1].

This principle forms the bedrock of Quantitative Structure-Property Relationship (QSPR) and Quantitative Structure-Activity Relationship (QSAR) modeling, where statistical methods are used to predict the properties or biological activities of molecules based on numerical descriptors derived from their structures [1]. The principle's strength lies in its utility for tasks like lead optimization in drug discovery, where systematic chemical modification of a lead compound is performed to improve its properties while maintaining its core structure and activity [2] [3]. It is crucial to note that similarity is a subjective concept whose definition depends heavily on the context—molecules can be similar in one aspect (e.g., 2D topology) but different in another (e.g., 3D shape) [2]. Furthermore, significant exceptions to the principle, known as "activity cliffs," occur when structurally similar compounds exhibit large differences in biological activity [4].

Quantitative Descriptors and Similarity Metrics

To operationalize the Similarity-Property Principle, molecular structures must be converted into a quantitative format that can be compared computationally. This is primarily achieved through molecular fingerprints and similarity coefficients.

Molecular Fingerprints: Structural Representation

Molecular fingerprints are vector representations that encode the presence or absence of specific structural features or properties within a molecule [3]. They can be broadly categorized as follows:

- Substructure-Preserving Fingerprints: These use a predefined dictionary of structural patterns or linear paths through the molecular graph. They are ideal for substructure searching and similarity assessments based on explicit chemical motifs [3]. Examples include MACCS keys and PubChem fingerprints.

- Feature Fingerprints: These capture characteristics related to structure-activity relationships and are often more effective for activity-based virtual screening [3]. They are not designed for substructure search.

- Circular Fingerprints (e.g., ECFP, FCFP): Capture the local environment around each atom by iteratively considering neighbors out to a specific radius, effectively encoding atom-centered substructures [4] [3].

- Atom-Pair Fingerprints: Encode the topological distance between all pairs of atoms in a molecule, providing information about medium-range features [4].

- Pharmacophore Fingerprints: Encode potential interaction points (e.g., hydrogen bond donors, acceptors, hydrophobic regions) and their spatial relationships, incorporating physico-chemical properties [2] [3].

- Shape-Based Descriptors (e.g., USR; shape-overlay methods such as ROCS serve a related purpose): Describe the three-dimensional shape of a molecule and its surface, enabling scaffold hopping by identifying molecules with similar shapes but different chemical skeletons [5] [3].

Table 1: Comparison of Common Molecular Fingerprint Types

| Fingerprint Type | Representation | Key Features | Common Applications |
|---|---|---|---|
| MACCS Keys [3] | Dictionary-based, Substructure | Predefined structural keys (166 in the public set; a 960-key extended set also exists); interpretable | General similarity searching, substructure filtering |
| Circular (ECFP) [4] [3] | Feature-based, Circular | Encodes atom environments; excellent for activity prediction | Virtual screening, QSAR, machine learning |
| Atom-Pair [4] | Feature-based, Topological | Captures graph distance between atom pairs | Similarity searching, especially for medium-sized molecules |
| Pharmacophore [2] [3] | Feature-based, 2D/3D | Based on physico-chemical interaction features | Virtual screening, bioisosteric replacement |
| Shape-Based (USR) [5] | Feature-based, 3D | Alignment-free; describes molecular shape using atomic distributions | Scaffold hopping, target prediction, drug repurposing |

Similarity Coefficients: Quantifying Comparison

Once molecules are represented as fingerprints, their similarity is quantified using a similarity coefficient. The most widely used metric for binary fingerprints is the Tanimoto coefficient (also known as the Jaccard coefficient) [6] [4] [7].

The Tanimoto coefficient \( T \) between two molecules, A and B, is calculated as

\[ T = \frac{c}{a + b - c} \]

where:

- \( a \) = number of "on" bits in molecule A's fingerprint

- \( b \) = number of "on" bits in molecule B's fingerprint

- \( c \) = number of bits "on" in both A and B [3]

This coefficient ranges from 0 (no similarity) to 1 (identical fingerprints) [7]. While a high Tanimoto score (e.g., T > 0.85 for Daylight fingerprints) is often used as a threshold for similarity, it is a misconception that this universally guarantees similar bioactivity, as this relationship is highly context-dependent [6] [4]. Other similarity and distance metrics include the Dice coefficient, Cosine coefficient, Euclidean distance, and Manhattan distance [3].
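The formula above can be sketched in a few lines of Python. This is a minimal illustration, assuming each fingerprint is stored as a set of "on" bit positions; the two example fingerprints are hypothetical, and scoring two empty fingerprints as 0.0 is a convention chosen here, not something the source specifies.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient T = c / (a + b - c) for two sets of 'on' bit positions."""
    a = len(fp_a)            # bits on in A
    b = len(fp_b)            # bits on in B
    c = len(fp_a & fp_b)     # bits on in both A and B
    denom = a + b - c
    # Assumed convention: two empty fingerprints score 0.0 instead of raising
    return c / denom if denom else 0.0


# Hypothetical fingerprints given as indices of set bits
fp_query = {1, 4, 7, 9}
fp_candidate = {1, 4, 8, 9, 12}

print(tanimoto(fp_query, fp_candidate))  # a=4, b=5, c=3 -> 3 / 6 = 0.5
```

Toolkits such as RDKit ship their own Tanimoto routines for native bit-vector types; the set-based version here is only meant to make the arithmetic visible.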

Experimental Protocols

This section provides a detailed methodology for a standard ligand-based virtual screening workflow, a primary application of the Similarity-Property Principle.

Protocol 1: Ligand-Based Virtual Screening using 2D Fingerprints

Purpose: To identify potential bioactive compounds from a large chemical database by comparing their 2D structural similarity to a known active reference compound.

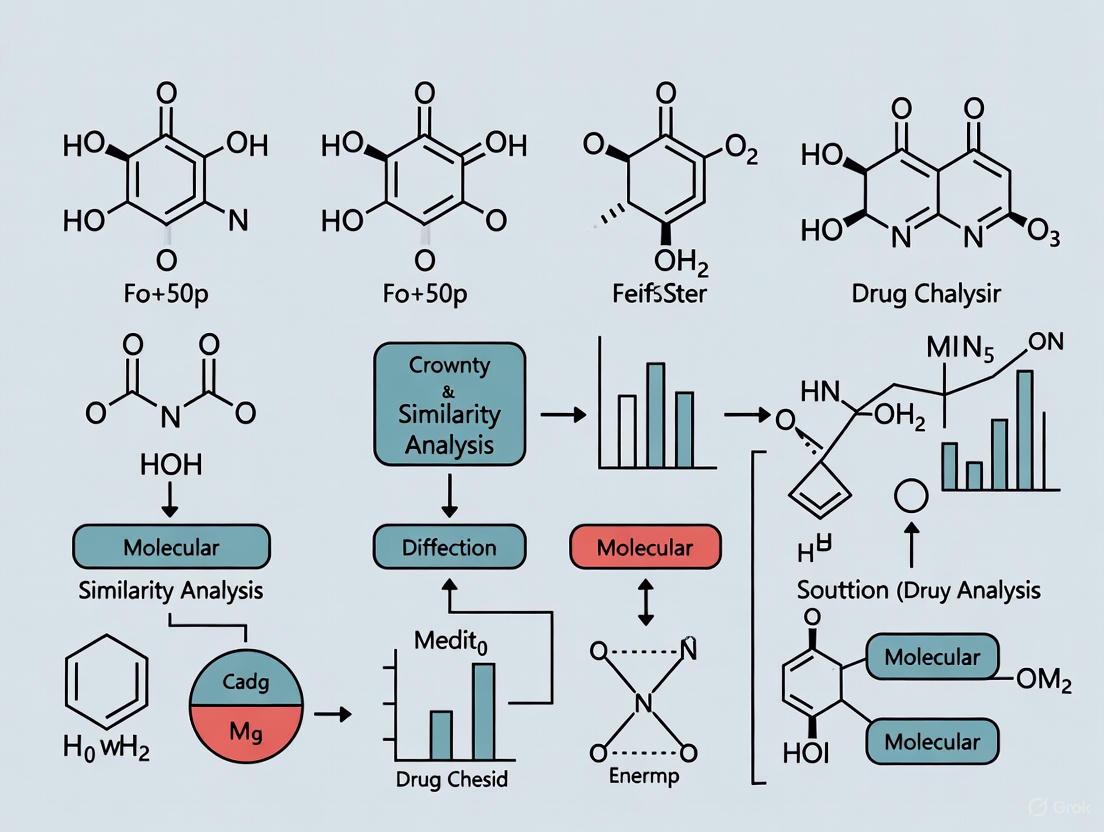

The logical flow for a standard similarity-based virtual screening protocol is visualized above.

Materials and Reagents

- Query Compound: A small molecule with confirmed and potent biological activity against the target of interest. This can be obtained from internal research or public databases like ChEMBL [8] [9].

- Chemical Database: A digital collection of compounds to be screened. Examples include corporate compound libraries, commercially available screening collections, or public databases such as PubChem [8] or ZINC.

- Software/Coding Environment: Cheminformatics toolkits such as RDKit (Python), CDK (Java), or commercial software packages (e.g., ChemAxon, OpenEye) that can generate molecular fingerprints and calculate similarity metrics [9] [3].

- Computing Hardware: A standard computer workstation is sufficient for screening databases of up to a few million compounds. For larger screens, high-performance computing (HPC) clusters or specialized hardware may be required [7].

Step-by-Step Procedure

Prepare Query and Database

- Obtain or draw the structure of the known active query compound in a standard format (e.g., SMILES, SDF).

- Prepare the chemical database by standardizing structures: remove salts, neutralize charges, and generate canonical tautomers if necessary. This ensures consistent fingerprint generation.

Generate Molecular Fingerprints

- Select an appropriate fingerprint type based on your goal (see Table 1). For a general-purpose screen, the ECFP4 (Extended Connectivity Fingerprint with a diameter of 4) is a robust choice [3].

- Using your chosen software, generate the fingerprint for the query molecule and for every molecule in the prepared database.

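Calculate Similarity Scores

- Compute the Tanimoto coefficient between the query fingerprint and the fingerprint of every compound in the prepared database.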

Rank and Analyze Results

- Sort the database compounds in descending order of their Tanimoto similarity score.

- Apply a threshold to select top candidates. While context-dependent, scores above 0.5-0.6 for ECFP4 often indicate meaningful similarity, though higher thresholds (e.g., >0.7) may be used to select fewer, more confident hits [6] [4].

- Visually inspect the top-ranking molecules to confirm the perceived chemical similarity and identify potential scaffold hops.

Select Hits for Validation

- Select a subset of the top-ranking compounds for experimental testing.

- Procure these compounds and subject them to a biological assay to validate the predicted activity.
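The scoring, ranking, and thresholding steps of this protocol can be sketched as follows. This is an illustrative outline only: the fingerprints are assumed to have been generated already (for example with a cheminformatics toolkit) and are represented as sets of "on" bit positions, while the compound names, bit sets, and the 0.5 cutoff are hypothetical values chosen for the example.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient for two fingerprints stored as sets of on-bit indices."""
    c = len(fp_a & fp_b)
    denom = len(fp_a) + len(fp_b) - c
    return c / denom if denom else 0.0


def screen(query_fp, library, threshold=0.5):
    """Score every library compound against the query, keep those at or above
    the threshold, and return them sorted by descending similarity."""
    scored = [(name, tanimoto(query_fp, fp)) for name, fp in library.items()]
    hits = [(name, score) for name, score in scored if score >= threshold]
    return sorted(hits, key=lambda item: item[1], reverse=True)


# Hypothetical precomputed fingerprints (names and bits are placeholders)
library = {
    "cmpd_A": {1, 2, 3, 4},
    "cmpd_B": {1, 2, 3, 9},
    "cmpd_C": {7, 8},
}
query_fp = {1, 2, 3, 4}

hits = screen(query_fp, library, threshold=0.5)
print(hits)  # [('cmpd_A', 1.0), ('cmpd_B', 0.6)]
```

The returned list is what would go forward to visual inspection and hit selection; the threshold should be tuned to the fingerprint type and the target, as noted in the procedure.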

Protocol 2: Creating a Target Profile Fingerprint for Drug Repurposing

Purpose: To predict new therapeutic uses for existing drugs by comparing their biological "target profiles" rather than their chemical structures.

The process for using biological profiles to infer new drug applications is shown above.

Materials and Reagents

- Drug-Target Interaction Databases: Sources of known and predicted interactions between drugs and proteins, such as DrugBank [8], ChEMBL [8] [9], or STITCH.

- Drug of Interest: An approved drug or late-stage clinical candidate for which new indications are sought.

- Data Analysis Environment: A programming environment (e.g., Python/R) capable of handling binary vectors and performing similarity calculations.

Step-by-Step Procedure

Compile Target Interaction Data

- From your chosen database(s), extract a comprehensive list of all human protein targets (e.g., enzymes, ion channels, receptors).

- For the drug of interest, record its known interactions with each target in this list. Interactions can include binding, activation, inhibition, etc.

Construct Binary Fingerprint

- Create a binary vector (fingerprint) where each position corresponds to a unique protein target from the comprehensive list.

- Set a bit to '1' if the drug is known to interact with that target, and '0' otherwise [8]. This vector is the drug's target profile fingerprint.

Build Reference Profile Database

- Construct the same target profile fingerprint for all other drugs in the database with known therapeutic indications.

Calculate Profile Similarity

- Compute the similarity (e.g., using the Tanimoto coefficient) between the target profile of your drug of interest and every other drug in the reference database [8].

- Rank the reference drugs based on their target profile similarity to your query drug.

Hypothesize New Indications

- Analyze the top-ranked, most similar drugs. If your query drug shares a highly similar target profile with drugs used for a different disease, this suggests a potential for drug repurposing [8].

- This hypothesis must be validated through further in vitro and in vivo studies.
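A small sketch of the profile construction and ranking steps is given below. The target identifiers, drug names, and interaction sets are placeholders invented for illustration, not data from DrugBank, ChEMBL, or STITCH; in practice these would be extracted from the chosen database.

```python
# Hypothetical master list of protein targets (one bit position per target)
TARGETS = ["T1", "T2", "T3", "T4", "T5"]

# Hypothetical drug -> interacting targets (placeholder data)
INTERACTIONS = {
    "query_drug": {"T3", "T4"},
    "ref_drug_1": {"T3", "T4", "T5"},
    "ref_drug_2": {"T1", "T2"},
}


def target_profile(drug):
    """Binary vector with 1 where the drug is recorded as hitting the target."""
    hit = INTERACTIONS[drug]
    return [1 if target in hit else 0 for target in TARGETS]


def tanimoto_bits(u, v):
    """Tanimoto coefficient for two equal-length 0/1 vectors."""
    c = sum(x & y for x, y in zip(u, v))
    denom = sum(u) + sum(v) - c
    return c / denom if denom else 0.0


def rank_references(query, references):
    """Rank reference drugs by target-profile similarity to the query drug."""
    q = target_profile(query)
    scored = [(ref, tanimoto_bits(q, target_profile(ref))) for ref in references]
    return sorted(scored, key=lambda item: item[1], reverse=True)


ranking = rank_references("query_drug", ["ref_drug_1", "ref_drug_2"])
print(ranking)
```

With this toy data `ref_drug_1` ranks first because it shares two of its three targets with the query; its indications would then be the starting point for a repurposing hypothesis.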

The Scientist's Toolkit

Table 2: Essential Research Reagents and Resources for Molecular Similarity Analysis

| Item Name | Function / Description | Example Sources / Tools |
|---|---|---|
| Cheminformatics Toolkits | Software libraries for manipulating chemical structures, generating fingerprints, and calculating similarity. | RDKit, CDK (Chemistry Development Kit), OpenEye Toolkits [9] [3] |
| Chemical Structure Databases | Curated collections of chemical compounds with associated structural information and, often, biological data. | PubChem, ChEMBL [8] [9], ZINC, in-house corporate databases |
| Drug-Target Interaction Databases | Databases linking drugs, clinical candidates, or bioactive compounds to their known protein targets. | DrugBank [8], ChEMBL [8], STITCH |
| Molecular Fingerprint Algorithms | The specific algorithms used to convert a chemical structure into a numerical vector representation. | ECFP/FCFP, MACCS Keys, Atom-Pair, Pharmacophore Fingerprints [4] [3] |
| Similarity Coefficient Metrics | Mathematical formulas used to quantify the degree of similarity between two molecular fingerprints. | Tanimoto, Dice, Cosine, Tversky [6] [3] |

Advanced Applications and Current Research

The application of the Similarity-Property Principle extends far beyond simple 2D similarity searches.

- Drug Repurposing: As detailed in Protocol 2, biological profiles (e.g., target interactions, gene expression responses, adverse effect profiles) can be used as "fingerprints" to identify new therapeutic uses for existing drugs, significantly reducing development time and cost [8].

- Scaffold Hopping: 3D shape similarity methods (e.g., ROCS, USR) are powerful tools for identifying compounds with similar bioactivity but different chemical scaffolds, helping to invent around patents or improve drug-like properties [5].

- Predicting Adverse Effects and Drug-Drug Interactions: Similarity principles are applied to predict off-target interactions that may cause adverse drug reactions (ADRs) or dangerous drug-drug interactions (DDIs). By comparing a new drug candidate to compounds with known safety issues, researchers can identify potential risks early in development [8].

- Context-Dependent Similarity for Small Fragments: Recent research addresses the challenge of quantifying similarity for small molecular fragments (e.g., R-groups). New methods, such as Embedded Fragment Vectors (EFVs) adapted from natural language processing, generate vector representations that capture the latent context of a fragment within a series of active compounds, leading to more meaningful similarity assessments for fragment-based design [9].

Molecular similarity analysis serves as a foundational element in modern computational chemistry and drug discovery, enabling researchers to navigate complex chemical spaces and predict molecular behavior. The core premise—that structurally similar molecules exhibit similar properties—underpins critical applications from virtual screening to lead optimization [10] [11]. The rapid integration of big data, machine learning (ML), and generative artificial intelligence (AI) has further heightened the importance of robust molecular similarity quantification [10] [12]. This application note provides a comprehensive overview of current molecular representation methods and similarity metrics, detailing experimental protocols for their evaluation and application in drug discovery pipelines. By framing these components within a practical toolkit for researchers, we aim to bridge the gap between theoretical concepts and their implementation in real-world drug development scenarios.

Molecular Representation Methods

Molecular representations translate chemical structures into computationally readable formats, forming the essential bridge between molecular structure and predictive modeling. These methods have evolved from traditional rule-based approaches to sophisticated AI-driven learning paradigms [12].

Table 1: Classification of Molecular Representation Methods

| Category | Examples | Key Characteristics | Primary Applications |
|---|---|---|---|
| String-Based | SMILES, SELFIES, InChI [12] | Compact string encodings; human-readable | Data storage, exchange; initial input for AI models |
| Descriptor-Based | Molecular weight, hydrophobicity, topological indices [12] | Quantifies physicochemical properties | QSAR modeling, property prediction |
| Fingerprint-Based | Extended-Connectivity Fingerprints (ECFP), MACCS Keys [12] [13] | Binary or numerical vectors encoding substructural patterns | Similarity searching, clustering, virtual screening |
| AI-Driven Learned Representations | Graph Neural Networks, Transformers, Variational Autoencoders [12] | Continuous, high-dimensional embeddings learned from data | Molecular generation, property prediction, scaffold hopping |

Traditional representation methods, particularly molecular fingerprints, remain widely employed due to their computational efficiency and interpretability. Extended-connectivity fingerprints (ECFP) capture local atomic environments through an iterative hashing process that records circular atom neighborhoods, making them invaluable for similarity searching and quantitative structure-activity relationship (QSAR) modeling [12]. Similarly, MACCS keys implement a dictionary of predefined structural fragments to create binary fingerprint vectors suitable for rapid similarity comparisons [13].
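The iterative hashing idea behind circular fingerprints can be illustrated with a deliberately simplified sketch. This is not the ECFP algorithm as implemented in any toolkit: it ignores bond orders, charges, ring membership, and duplicate-environment removal, and the choice of atom invariants (element symbol and heavy-atom degree) and of CRC32 as the hash are assumptions made only to keep the example short and deterministic.

```python
import zlib


def _hash(obj):
    """Deterministic 32-bit hash of a small Python literal (CRC32 of its repr)."""
    return zlib.crc32(repr(obj).encode("utf-8"))


def circular_fingerprint(atoms, bonds, radius=2, n_bits=2048):
    """Toy circular fingerprint.

    atoms: list of element symbols for heavy atoms, e.g. ["C", "C", "O"]
    bonds: list of (i, j) index pairs into `atoms`
    Returns the set of on-bit positions after folding to n_bits.
    """
    neighbors = {i: [] for i in range(len(atoms))}
    for i, j in bonds:
        neighbors[i].append(j)
        neighbors[j].append(i)

    # Layer 0: one identifier per atom from simple invariants
    ids = [_hash((atoms[i], len(neighbors[i]))) for i in range(len(atoms))]
    features = set(ids)

    # Each further layer folds in the identifiers of the direct neighbors,
    # so after k layers an identifier describes the environment out to k bonds
    for layer in range(1, radius + 1):
        ids = [
            _hash((layer, ids[i], tuple(sorted(ids[n] for n in neighbors[i]))))
            for i in range(len(atoms))
        ]
        features.update(ids)

    # Fold the 32-bit identifiers into a fixed-length bit space
    return {f % n_bits for f in features}


# Ethanol heavy-atom graph: C-C-O
ethanol_fp = circular_fingerprint(["C", "C", "O"], [(0, 1), (1, 2)])
print(sorted(ethanol_fp))
```

Because neighbor identifiers are sorted before hashing, the result does not depend on the order in which bonds are listed, which is the property that makes such fingerprints usable for comparing molecules.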

The limitations of traditional methods in capturing complex structure-function relationships have spurred development of AI-driven approaches. Modern techniques employ deep learning architectures including graph neural networks (GNNs), transformers, and variational autoencoders to learn continuous, high-dimensional feature embeddings directly from molecular data [12]. These representations capture both local and global molecular features without relying on predefined rules, enabling more sophisticated modeling of molecular behavior in tasks such as molecular generation, scaffold hopping, and lead optimization [12] [14].

Similarity Metrics and Evaluation

Similarity metrics quantitatively compare molecular representations, with the choice of metric heavily influencing the outcomes of similarity-based analyses. The Tanimoto coefficient (also known as Jaccard similarity) remains the most prevalent metric for binary fingerprints, calculating the ratio of shared features to total unique features between two molecules [13]. Alternative metrics including Dice similarity and Cosine similarity offer different mathematical approaches to quantifying feature overlap, with performance varying across specific applications [13].
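To make the difference between these metrics concrete, the sketch below computes Tanimoto, Dice, and Cosine similarity for the same pair of binary fingerprints. Fingerprints are assumed to be sets of "on" bit positions, and the example bit sets are hypothetical.

```python
import math


def overlap_counts(fp_a, fp_b):
    """Return (a, b, c): on-bits in A, on-bits in B, and on-bits shared by both."""
    return len(fp_a), len(fp_b), len(fp_a & fp_b)


def tanimoto(fp_a, fp_b):
    a, b, c = overlap_counts(fp_a, fp_b)
    return c / (a + b - c) if (a + b - c) else 0.0


def dice(fp_a, fp_b):
    a, b, c = overlap_counts(fp_a, fp_b)
    return 2 * c / (a + b) if (a + b) else 0.0


def cosine(fp_a, fp_b):
    a, b, c = overlap_counts(fp_a, fp_b)
    return c / math.sqrt(a * b) if a and b else 0.0


# Hypothetical fingerprints: a = 4, b = 5, c = 3
fp_1 = {1, 4, 7, 9}
fp_2 = {1, 4, 8, 9, 12}

for name, fn in [("Tanimoto", tanimoto), ("Dice", dice), ("Cosine", cosine)]:
    print(f"{name}: {fn(fp_1, fp_2):.3f}")
```

For binary fingerprints Dice and Tanimoto always produce the same ranking against a fixed query (Dice equals 2T / (1 + T)), so the choice between them mainly affects where a numerical threshold should sit.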

Recent research has highlighted critical considerations in similarity metric selection and evaluation. A 2025 study systematically evaluated the correlation between molecular similarity measures and electronic structure properties using a dataset of over 350 million molecule pairs, proposing a framework based on neighborhood behavior and kernel density estimation (KDE) analysis [10]. This work addresses a significant gap in the field, as previous evaluations primarily relied on biological activity datasets with qualitative metrics, limiting relevance for non-biological domains including electronic structure property prediction [10].

Specialized similarity metrics have also emerged for specific applications. Genheden and Shields (2025) developed a novel similarity score for comparing synthetic routes that combines atom similarity and bond similarity metrics, providing a continuous similarity axis (0-1) that aligns with chemist intuition when evaluating route strategy [15]. This approach demonstrates how domain-specific similarity metrics can offer more meaningful comparisons for specialized tasks in drug development.

Table 2: Performance Comparison of Fingerprint and Similarity Metric Combinations in Target Prediction

| Fingerprint | Similarity Metric | Prediction Accuracy | Optimal Use Cases |
|---|---|---|---|
| Morgan Fingerprint | Tanimoto | High [13] | General target prediction, diverse chemical spaces |
| MACCS Keys | Dice | Moderate [13] | Rapid screening, large database searches |
| ECFP4 | Tanimoto | Moderate-High [13] | Activity prediction, scaffold hopping |

Experimental Protocols

Protocol 1: Similarity-Based Virtual Screening

Purpose: To identify potential bioactive compounds through similarity searching against databases of known active molecules.

Materials:

- Query molecule(s) with desired biological activity

- Chemical database for screening (e.g., ChEMBL, ZINC)

- Computational infrastructure for similarity calculations

- Molecular representation toolkit (fingerprint generation capabilities)

Procedure:

- Representation Generation: Convert query molecule(s) into appropriate molecular representations. For initial screening, Morgan fingerprints (radius 2, 2048 bits) provide a robust balance of specificity and computational efficiency [13].

- Database Preparation: Precompute identical representations for all compounds in the screening database. Implement efficient data structures (e.g., bit vectors) for rapid similarity searching.

- Similarity Calculation: For each database compound, calculate similarity to query using Tanimoto coefficient for fingerprint representations [13].

- Result Ranking: Sort database compounds by descending similarity score.

- Hit Identification: Select top-ranking compounds for further experimental validation, typically considering the top 1-5% of ranked compounds or those exceeding a similarity threshold of 0.7-0.8 [13].

Validation: Assess screening performance through retrospective validation using known active compounds not included in the query set. Calculate enrichment factors and receiver operating characteristic (ROC) curves to quantify screening efficiency [16].
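The two validation measures named above can be computed from a ranked list of similarity scores and known activity labels. The sketch below is one common formulation, assuming labels are 1 for active and 0 for inactive and that at least one compound of each class is present; the scores and labels are made-up illustration data.

```python
def enrichment_factor(scores, labels, fraction):
    """EF = (active rate in the top fraction) / (active rate in the whole set)."""
    ranked = sorted(zip(scores, labels), key=lambda p: p[0], reverse=True)
    n_top = max(1, int(round(fraction * len(ranked))))
    actives_top = sum(label for _, label in ranked[:n_top])
    actives_all = sum(labels)
    return (actives_top / n_top) / (actives_all / len(ranked))


def roc_auc(scores, labels):
    """ROC AUC as the probability that a random active outscores a random
    inactive (ties count as half), computed by direct pair counting."""
    pos = [s for s, l in zip(scores, labels) if l == 1]
    neg = [s for s, l in zip(scores, labels) if l == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical retrospective screen: 10 compounds, 3 known actives
scores = [0.90, 0.80, 0.70, 0.60, 0.50, 0.40, 0.30, 0.20, 0.10, 0.05]
labels = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]

ef_20 = enrichment_factor(scores, labels, fraction=0.2)
auc = roc_auc(scores, labels)
print(f"EF(20%) = {ef_20:.2f}, ROC AUC = {auc:.3f}")
```

Pair counting is quadratic in the number of compounds, which is fine for a sketch; for large screens a rank-based formula or a library implementation would be the usual choice.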

Protocol 2: Evaluating Similarity Measure Correlation with Molecular Properties

Purpose: To quantitatively assess how effectively molecular similarity measures capture specific molecular properties.

Materials:

- Curated dataset of molecules with associated property data (electronic, redox, or optical properties) [10]

- Multiple molecular representation methods (fingerprints, descriptors, learned representations)

- Statistical analysis software environment (Python/R)

- Kernel density estimation (KDE) implementation

Procedure:

- Dataset Curation: Compile a comprehensive dataset of molecule pairs with associated property data. The D3TaLES and OCELOT databases provide valuable sources for electronic structure properties [10].

- Pairwise Calculations: For all molecule pairs, compute:

- Similarity/distance using multiple representation methods

- Absolute property differences for each property of interest

- Neighborhood Analysis: Apply the neighborhood behavior principle, which posits that molecules with high similarity should have small property differences [10].

- KDE Analysis: Implement kernel density estimation to quantify the probability density of property differences across the similarity space [10].

- Correlation Quantification: Calculate correlation coefficients between similarity values and property differences. Use the KDE area ratio to evaluate how well each similarity measure discriminates between similar and dissimilar property pairs [10].

Validation: The framework should be validated using negative controls (random similarity measures) and positive controls (similarity measures known to correlate with specific properties). Statistical significance testing should accompany all correlation analyses [10].
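The pairwise calculation and correlation steps can be sketched as follows. This covers only the simple correlation part of the procedure; the KDE area ratio from [10] is not reproduced here. The fingerprints and property values are invented for illustration, and Pearson correlation is used as one possible choice of coefficient.

```python
from itertools import combinations
import math


def tanimoto(fp_a, fp_b):
    c = len(fp_a & fp_b)
    denom = len(fp_a) + len(fp_b) - c
    return c / denom if denom else 0.0


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


# Hypothetical molecules: (fingerprint as on-bit set, property value)
molecules = {
    "m1": ({1, 2, 3, 4}, 1.0),
    "m2": ({1, 2, 3, 5}, 1.1),
    "m3": ({1, 2, 6, 7}, 1.8),
    "m4": ({8, 9, 10, 11}, 3.0),
}

# For every unordered pair: (similarity, absolute property difference)
pairs = [
    (tanimoto(fp_a, fp_b), abs(prop_a - prop_b))
    for (fp_a, prop_a), (fp_b, prop_b) in combinations(molecules.values(), 2)
]
sims, diffs = zip(*pairs)
r = pearson(sims, diffs)
print(f"{len(pairs)} pairs, Pearson r = {r:.3f}")
```

Under the neighborhood behavior principle a useful similarity measure should give a clearly negative correlation here, since high similarity should go with small property differences.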

Protocol 3: AI-Driven Scaffold Hopping

Purpose: To identify structurally diverse compounds with similar biological activity through advanced molecular representations.

Materials:

- Known active compound(s) (reference scaffolds)

- AI-based molecular representation model (e.g., graph neural network, transformer)

- Chemical space exploration toolkit

- Multi-objective optimization framework

Procedure:

- Representation Learning: Employ graph neural networks or transformer architectures to generate molecular embeddings that capture structural and functional characteristics beyond traditional fingerprints [12].

- Similarity Search in Latent Space: Conduct similarity calculations using continuous distance metrics (Euclidean, Cosine) in the learned latent space rather than traditional fingerprint space [12].

- Diversity Constraints: Implement similarity thresholds that balance structural novelty with maintained bioactivity, typically targeting similarity values of 0.4-0.7 to ensure scaffold diversity while preserving activity [12].

- Multi-parameter Optimization: Integrate additional constraints including synthetic accessibility, physicochemical properties, and potential off-target interactions.

- Experimental Validation: Prioritize and synthesize top candidates for biological testing, focusing on compounds that maintain target interaction while introducing novel scaffold architectures [12].

Validation: Validate scaffold hopping success through experimental confirmation of bioactivity and structural characterization of novel scaffolds. Retrospective validation using known scaffold hops can quantify method performance [12].
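The latent-space search and diversity-window steps can be illustrated with a toy example. The embeddings below are two-dimensional placeholders standing in for vectors produced by a trained model, and applying the 0.4 to 0.7 window to cosine similarity is an assumption of this sketch; the appropriate window depends on the model and metric in use.

```python
import math


def cosine_similarity(u, v):
    """Cosine similarity between two non-zero real-valued vectors."""
    dot = sum(x * y for x, y in zip(u, v))
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(y * y for y in v))
    return dot / (norm_u * norm_v)


def scaffold_hop_candidates(ref_embedding, embeddings, low=0.4, high=0.7):
    """Keep candidates whose similarity to the reference lies in [low, high]:
    similar enough to plausibly retain activity, different enough to be novel."""
    scored = [
        (name, cosine_similarity(ref_embedding, emb))
        for name, emb in embeddings.items()
    ]
    window = [(name, s) for name, s in scored if low <= s <= high]
    return sorted(window, key=lambda item: item[1], reverse=True)


# Hypothetical learned embeddings (real ones would have hundreds of dimensions)
reference = [1.0, 0.0]
candidates = {
    "cand_a": [1.0, 0.1],   # nearly identical to the reference
    "cand_b": [1.0, 1.5],   # moderately similar
    "cand_c": [0.0, 1.0],   # unrelated
}

selected = scaffold_hop_candidates(reference, candidates)
print(selected)
```

Only `cand_b` falls inside the window in this toy data; the surviving candidates would then pass to the multi-parameter optimization step.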

Visualization of Workflows

Figure 1: Similarity-Based Virtual Screening Workflow

Figure 2: AI-Driven Scaffold Hopping Workflow

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for Molecular Similarity Analysis

| Reagent/Tool | Type | Function | Example Sources/Implementations |
|---|---|---|---|
| ChEMBL Database | Bioactivity Database | Provides curated bioactivity data for validation and ground truth mapping [13] [16] | https://www.ebi.ac.uk/chembl/ |
| RDKit | Cheminformatics Toolkit | Open-source platform for molecular representation generation and manipulation | https://www.rdkit.org/ |
| Molecular Fingerprints | Representation Method | Encodes molecular structure as fixed-length vectors for similarity computation [12] [13] | ECFP, Morgan, MACCS implementations in RDKit |
| Tanimoto Coefficient | Similarity Metric | Calculates similarity between binary fingerprint representations [13] | Standard implementation in cheminformatics libraries |
| Kernel Density Estimation | Statistical Tool | Quantifies probability density of property differences across similarity space [10] | SciPy, R statistical environment |
| Graph Neural Networks | AI-Based Representation | Learns continuous molecular embeddings capturing structural and functional features [12] | PyTorch Geometric, Deep Graph Library |
| BoltzGen | Generative AI Model | Generates novel protein binders for challenging therapeutic targets [17] | https://github.com/ram-compbio/BoltzGen |

Discussion and Outlook

Molecular similarity analysis continues to evolve rapidly, with several emerging trends shaping its future development and application. The integration of AI-driven representation methods is gradually supplementing traditional fingerprints, particularly for complex tasks including scaffold hopping and de novo molecular design [12]. These approaches demonstrate enhanced capability in capturing subtle structure-function relationships that elude predefined representation schemes.

Critical evaluation of similarity measures remains an active research area. Recent work highlighting the variable correlation between similarity measures and electronic structure properties underscores the importance of selecting representations aligned with specific property domains [10]. This suggests a movement toward context-dependent similarity assessment rather than one-size-fits-all approaches.

The emergence of specialized similarity metrics for specific applications, such as synthetic route comparison [15], indicates maturation of the field toward addressing nuanced challenges in drug development. Similarly, rigorous benchmarking practices are becoming increasingly standardized, incorporating multiple data sources and evaluation metrics to ensure robust performance assessment [16].

Future directions likely include increased emphasis on multimodal representations that integrate structural, pharmacological, and bioactivity data, as well as greater attention to domain adaptation techniques that address challenges when applying models across diverse chemical domains [18]. As generative AI models continue to advance [17], molecular similarity analysis will play an increasingly crucial role in validating and curating generated compounds, ensuring they occupy biologically relevant chemical spaces while introducing appropriate structural novelty.

Molecular similarity analysis forms the cornerstone of modern chemoinformatics, playing a pivotal role in drug discovery, materials science, and chemical risk assessment. The journey from simple topological indices to complex molecular fingerprints represents a paradigm shift in how scientists quantify and exploit molecular structure. This evolution has fundamentally transformed our capacity to navigate chemical space, predict compound properties, and accelerate the development of new therapeutic agents. Where early researchers relied on hand-calculated numerical descriptors derived from molecular graphs, today's scientists employ high-dimensional fingerprint vectors that capture intricate structural patterns through automated computational workflows. This article traces this critical technological transition, providing both historical context and practical protocols that empower researchers to leverage these powerful tools in contemporary molecular similarity analysis.

Historical Foundations: Topological Indices

Topological indices (TIs) are numerical descriptors derived from the graph representation of molecular structure, where atoms correspond to vertices and bonds to edges. Their development provided the first mathematical framework for quantifying structure-property relationships.

The Early Development of Topological Indices

The foundation of chemical graph theory was laid in 1947 when Harry Wiener introduced the Wiener index to estimate paraffin boiling points, marking the birth of topological indices as computable molecular descriptors [19]. This pioneering work demonstrated that molecular topology alone—independent of bond lengths or angles—could correlate with physicochemical properties [19]. The 1970s witnessed significant expansion with Gutman defining the first and second Zagreb indices (M₁ and M₂), which summed the degrees of adjacent vertices and their products, respectively [20]. This period established TIs as legitimate tools for Quantitative Structure-Property Relationship (QSPR) studies.

The development of topological indices has followed a generational progression:

- First-generation: Integer-based descriptors like the Wiener and Zagreb indices

- Second-generation: Real-number descriptors with enhanced discrimination power

- Third-generation: Stereochemical descriptors accounting for three-dimensional features [21]

From the mid-1970s through the 1990s, researchers developed increasingly sophisticated indices including the Randić index (1975), Balaban index (1982), and atom-bond connectivity (ABC) index (1998) [19]. Each new index aimed to reduce structural degeneracy (where different structures yield identical index values) while improving correlation with experimental properties.

Calculation Methodologies for Topological Indices

The computation of topological indices relies on graph theoretical operations applied to hydrogen-suppressed molecular structures. The following protocol outlines the standard methodology:

Protocol 1: Calculating Degree-Based Topological Indices

Molecular Graph Representation

- Represent the molecule as a graph \( G(V, E) \), where \( V \) represents non-hydrogen atoms and \( E \) represents covalent bonds

- For each vertex \( u \in V(G) \), calculate the degree \( d_u \) (number of adjacent vertices)

Edge Partitioning

- Partition the edge set \( E(G) \) based on the degrees of incident vertices

- For example, \( E_1 = \{ uv \in E(G) \mid d_u = 1,\ d_v = 2 \} \), \( E_2 = \{ uv \in E(G) \mid d_u = 2,\ d_v = 2 \} \), etc.

Index Computation

- Apply the specific mathematical formula for each topological index

- For the first Zagreb index: \( M_1(G) = \sum_{uv \in E(G)} (d_u + d_v) \)

- For the second Zagreb index: \( M_2(G) = \sum_{uv \in E(G)} (d_u \cdot d_v) \)

- For the Randić index: \( R_\alpha(G) = \sum_{rs \in E(G)} (d_r \cdot d_s)^{\alpha} \), where \( \alpha \in \{ 1, -1, \tfrac{1}{2}, -\tfrac{1}{2} \} \) [19]

Validation

- Verify calculations against known benchmark molecules

- Implement in computational environments like Python for accuracy and reproducibility [19]

Figure 1: Workflow for calculating topological indices from molecular structure.
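The steps of Protocol 1 can be sketched in a few lines of standard-library Python. This is a minimal illustration, not a reference implementation: the function names (`vertex_degrees`, `edge_partition`, `degree_indices`) and the edge-list encoding of the hydrogen-suppressed graph are assumptions made for this example, and the default exponent α = −½ is chosen here because it gives the classical Randić connectivity index.

```python
from collections import Counter, defaultdict


def vertex_degrees(edges):
    """Return {vertex: degree} for an undirected, hydrogen-suppressed graph."""
    deg = defaultdict(int)
    for u, v in edges:
        deg[u] += 1
        deg[v] += 1
    return dict(deg)


def edge_partition(edges):
    """Count edges by the (sorted) degree pair of their end vertices."""
    deg = vertex_degrees(edges)
    return Counter(tuple(sorted((deg[u], deg[v]))) for u, v in edges)


def degree_indices(edges, alpha=-0.5):
    """Compute M1, M2 and the general Randic index R_alpha over all edges."""
    deg = vertex_degrees(edges)
    return {
        "M1": sum(deg[u] + deg[v] for u, v in edges),
        "M2": sum(deg[u] * deg[v] for u, v in edges),
        "R": sum((deg[u] * deg[v]) ** alpha for u, v in edges),
    }


# Hypothetical benchmark molecules encoded as carbon-skeleton edge lists.
n_butane = [(0, 1), (1, 2), (2, 3)]   # chain C0-C1-C2-C3
isobutane = [(0, 1), (0, 2), (0, 3)]  # central C0 bonded to three methyls

print(edge_partition(n_butane))   # two (1, 2) edges and one (2, 2) edge
print(degree_indices(n_butane))   # M1 = 10, M2 = 8
print(degree_indices(isobutane))  # M1 = 12, M2 = 9
```

Checking small molecules such as these against hand calculations is one simple way to carry out the validation step before applying the functions to larger structures.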

Contemporary Applications in QSPR

Despite their historical origins, topological indices remain relevant in modern research. Recent studies demonstrate their continued utility in predicting physicochemical properties of bioactive compounds. For polyphenols including ferulic acid and vanillic acid, topological indices show strong predictive correlations with properties like boiling point, molecular weight, and polar surface area [20]. Similar approaches successfully model properties of cancer drugs such as Aminopterin and Daunorubicin, with temperature-based indices yielding statistically significant QSPR models (p-value < 0.05) [22].

Table 1: Selected Topological Indices and Their Applications

| Index Name | Mathematical Formula | Primary Application | Reference |

|---|---|---|---|

| Wiener Index | W(G) = ½Σ_{u,v} d(u,v) | Boiling point prediction | [19] |

| First Zagreb Index | M₁(G) = Σ_{uv∈E(G)} (d_u + d_v) | Molecular complexity | [20] |

| Second Zagreb Index | M₂(G) = Σ_{uv∈E(G)} (d_u · d_v) | Polar surface area | [20] |

| Randić Index | R_α(G) = Σ_{rs∈E(G)} (d_r · d_s)^α | Biological activity | [19] |

| Atom-Bond Connectivity | ABC(G) = Σ_{rs∈E(G)} √[(d_r + d_s − 2)/(d_r · d_s)] | Strain energy | [19] |

| Symmetric Division | SDD(G) = Σ_{uv∈E(G)} (d_u/d_v + d_v/d_u) | Boiling point prediction | [20] |
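Unlike the degree-based indices, the Wiener index in the table depends on shortest-path distances d(u,v) between all vertex pairs. The sketch below computes it by breadth-first search from every vertex; the adjacency-dictionary encoding and the function name `wiener_index` are assumptions made for this illustration.

```python
from collections import deque


def wiener_index(adjacency):
    """Half the sum of shortest-path distances over all ordered vertex pairs."""
    total = 0
    for source in adjacency:
        dist = {source: 0}
        queue = deque([source])
        while queue:  # breadth-first search gives bond-count distances
            u = queue.popleft()
            for v in adjacency[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())
    return total // 2  # each unordered pair was counted twice


# Hydrogen-suppressed carbon skeletons of the two C4H10 isomers.
n_butane = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
isobutane = {0: [1, 2, 3], 1: [0], 2: [0], 3: [0]}

print(wiener_index(n_butane))   # 10
print(wiener_index(isobutane))  # 9
```

The branched isomer has the smaller index because its atoms are, on average, closer together in the graph. This is the kind of purely topological difference that Wiener related to boiling points.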

The Transition to Molecular Fingerprints

The limitations of single numerical descriptors prompted the development of molecular fingerprints—binary or integer vectors that capture structural patterns within molecules.

The Rise of Substructure Fingerprints

Early fingerprint methods encoded the presence of specific structural fragments in molecules using predefined dictionaries. The Molecular ACCess System (MACCS) keys, a set of 166 structural keys (stored by some toolkits, such as RDKit, as a 167-bit vector whose bit 0 is unused), became one of the most widely used systems [23]. Similarly, PubChem fingerprints encompass 881 predefined substructures, transforming molecular representation into orderly digital sequences [24]. In these systems, each bit position corresponds to a specific chemical substructure; a bit set to 1 or 0 indicates the substructure's presence or absence in the target molecule [24].

This approach significantly advanced virtual screening capabilities by enabling rapid similarity assessment through bitstring comparison. However, these dictionary-based fingerprints suffered from limited coverage of chemical space and inability to recognize novel structural patterns absent from predefined fragment lists.
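The bitstring comparison described above, and the coverage limitation of a fixed dictionary, can be illustrated with a toy example. Everything here is hypothetical: the six-entry `KEY_DICTIONARY` stands in for a real key set such as MACCS, and each molecule is given as a hand-annotated set of fragment labels rather than being perceived from its structure by substructure matching.

```python
# Toy dictionary of structural keys (a stand-in for a real key set).
KEY_DICTIONARY = [
    "carboxylic_acid", "ester", "aromatic_ring",
    "hydroxyl", "amide", "halogen",
]


def structural_key_bits(fragments):
    """One bit per dictionary entry: 1 if the fragment is present, else 0."""
    return [1 if key in fragments else 0 for key in KEY_DICTIONARY]


def tanimoto(bits_a, bits_b):
    """Shared on-bits divided by on-bits set in either fingerprint."""
    both = sum(a & b for a, b in zip(bits_a, bits_b))
    either = sum(a | b for a, b in zip(bits_a, bits_b))
    return both / either if either else 0.0


aspirin = structural_key_bits({"carboxylic_acid", "ester", "aromatic_ring"})
salicylic_acid = structural_key_bits({"carboxylic_acid", "hydroxyl", "aromatic_ring"})
print(tanimoto(aspirin, salicylic_acid))  # 0.5 (2 shared bits out of 4 set)

# A fragment missing from the dictionary leaves no trace in the fingerprint.
with_boron = structural_key_bits({"aromatic_ring", "boronic_acid"})
without_boron = structural_key_bits({"aromatic_ring"})
print(with_boron == without_boron)  # True
```

The last comparison shows why dictionary-based keys cannot distinguish molecules that differ only in patterns absent from the predefined list.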

Circular Fingerprints and the Morgan Algorithm

A fundamental advancement came with the introduction of circular fingerprints, particularly the Morgan fingerprint and its implementation as the Extended-Connectivity Fingerprint (ECFP) [25]. Unlike dictionary-based approaches, ECFP algorithms enumerate the atom environments around each non-hydrogen atom up to a specified radius, creating identifiers that capture increasingly larger molecular neighborhoods.

Protocol 2: Generating Extended-Connectivity Fingerprints (ECFP)

Atom Initialization

- Assign initial identifiers to each non-hydrogen atom based on atomic number, bond connectivity, and other atomic features

Iterative Neighborhood Updates

- For each iteration (radius increase):

- Update each atom identifier by combining its current identifier with those of its direct neighbors

- Employ a hashing function to manage combinatorial explosion

Feature Capture

- Collect all atom environment identifiers across all radii (typically r = 0-3)

- Apply folding or feature selection to create fixed-length representation

Final Fingerprint Generation

- Represent the molecule as a bitstring where each bit indicates the presence of specific structural environments

- Common variants include ECFP4 (diameter 4 bonds) and ECFP6 (diameter 6 bonds) [25]
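The iterative update at the heart of Protocol 2 can be sketched without a cheminformatics toolkit. The code below is a deliberately simplified, ECFP-like illustration and not the published algorithm: it seeds each atom only with its element and heavy-atom degree, ignores bond orders, and does not remove duplicate environments. The function names and the use of SHA-1 as the hash are assumptions of this example.

```python
import hashlib


def stable_hash(items):
    """Deterministic 32-bit integer hash of a tuple of values."""
    digest = hashlib.sha1(repr(items).encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big")


def circular_fingerprint(atoms, bonds, radius=2, n_bits=2048):
    """Return the set of on-bit positions of a folded circular fingerprint.

    atoms: list of element symbols (hydrogens omitted)
    bonds: list of (i, j) index pairs between heavy atoms
    """
    neighbors = {i: [] for i in range(len(atoms))}
    for i, j in bonds:
        neighbors[i].append(j)
        neighbors[j].append(i)

    # Radius 0: initial identifier from element and heavy-atom degree.
    ids = {i: stable_hash((atoms[i], len(neighbors[i]))) for i in neighbors}
    features = set(ids.values())

    # Each iteration merges an atom's identifier with those of its direct
    # neighbors, so the encoded environment grows by one bond per step.
    for _ in range(radius):
        ids = {
            i: stable_hash((ids[i], tuple(sorted(ids[j] for j in neighbors[i]))))
            for i in neighbors
        }
        features.update(ids.values())

    # Fold the collected identifiers into a fixed-length bit space.
    return {feature % n_bits for feature in features}


ethanol = circular_fingerprint(["C", "C", "O"], [(0, 1), (1, 2)])
ethanol_renumbered = circular_fingerprint(["O", "C", "C"], [(0, 1), (1, 2)])
print(ethanol == ethanol_renumbered)  # True: independent of atom numbering
```

Sorting the neighbor identifiers before hashing is what makes the result independent of atom order. With `radius=2` the sketch corresponds in spirit to a diameter-4 fingerprint such as ECFP4.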

The ECFP approach excelled at identifying structurally similar compounds with shared bioactivity, becoming the gold standard for small molecule virtual screening [25]. However, its local environment focus limited performance for larger biomolecules where global shape characteristics become increasingly important.

Modern Approaches: Hybrid and Specialized Fingerprints

Contemporary fingerprint development has focused on addressing limitations of previous approaches through hybrid methodologies and specialized applications.

The MAP4 Fingerprint: A Universal Molecular Representation

The MinHashed Atom-Pair fingerprint (MAP4) represents a significant recent advancement by combining the strengths of substructure and atom-pair approaches [25]. MAP4 achieves remarkable performance across diverse molecular classes from small drug-like compounds to large peptides, addressing a critical limitation of previous fingerprints specialized for either small or large molecules.

Protocol 3: Creating MAP4 Fingerprints

Circular Substructure Encoding

- For each non-hydrogen atom j, generate canonical SMILES for circular substructures with radii r = 1 and r = 2 bonds using RDKit

- Designate these as CSᵣ(j)

Atom-Pair Shingle Construction

- Calculate topological distance TP_{j,k} between all atom pairs (j,k)

- Create atom-pair shingles: CSᵣ(j) | TP_{j,k} | CSᵣ(k) for each radius

- Place SMILES strings in lexicographical order

Hashing and MinHashing

- Apply SHA-1 hashing to convert shingles to integers

- Use MinHashing to generate fixed-length fingerprint vectors (typically 1024 or 2048 dimensions)

- Employ the locality-sensitive hashing (LSH) technique for efficient similarity search [25]

Figure 2: MAP4 fingerprint generation workflow combining substructure and atom-pair approaches.
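The shingling and MinHashing steps of Protocol 3 can be sketched as follows. This is an assumption-laden illustration rather than the MAP4 reference code: the environment strings are hypothetical placeholders for the canonical SMILES that RDKit would produce, and seeded SHA-1 hashes stand in for the family of hash functions used in a production MinHash implementation.

```python
import hashlib


def shingle(env_j, distance, env_k):
    """Atom-pair shingle with the two environment strings in lexicographic order."""
    first, second = sorted((env_j, env_k))
    return f"{first}|{distance}|{second}"


def sha1_int(text, seed=0):
    """Seeded SHA-1 hash of a shingle, truncated to a 64-bit integer."""
    data = f"{seed}:{text}".encode("utf-8")
    return int.from_bytes(hashlib.sha1(data).digest()[:8], "big")


def minhash(shingles, n_perm=128):
    """Signature: the minimum hash of the shingle set under each seeded hash."""
    return [min(sha1_int(s, seed) for s in shingles) for seed in range(n_perm)]


def estimated_jaccard(sig_a, sig_b):
    """Fraction of matching signature positions estimates the Jaccard similarity."""
    return sum(a == b for a, b in zip(sig_a, sig_b)) / len(sig_a)


# Hypothetical environment strings; real ones would come from a toolkit.
mol_a = {shingle("CO", 1, "CC"), shingle("CC", 2, "CO"), shingle("CC", 1, "CC")}
mol_b = {shingle("CO", 1, "CC"), shingle("CC", 1, "CC"), shingle("CN", 2, "CC")}

sig_a, sig_b = minhash(mol_a), minhash(mol_b)
print(estimated_jaccard(sig_a, sig_a))  # 1.0
print(estimated_jaccard(sig_a, sig_b))  # estimate of the true Jaccard similarity
```

Because the signature has a fixed length regardless of how many shingles a molecule produces, small molecules and large peptides can be compared in the same space, and equal-length signatures are what locality-sensitive hashing indexes for fast search.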

3D Structural Interaction Fingerprints

While most fingerprints encode 2D molecular structure, 3D structural interaction fingerprints have emerged to capture the spatial relationships critical to molecular recognition. These approaches encode protein-ligand interactions, significantly enhancing binding affinity prediction and structure-activity relationship analysis [26]. By representing interactions such as hydrogen bonds, hydrophobic contacts, and ionic interactions as bit vectors, these fingerprints enable machine learning models to precisely predict binding modes and differentiate ligand functionalities (e.g., distinguishing agonists from antagonists) [26].

Multi-Modal Fingerprint Integration

The Multi Fingerprint and Graph Embedding model (MultiFG) exemplifies the cutting edge of fingerprint technology by integrating multiple fingerprint types with graph-based embeddings [23]. This approach combines MACCS (structural), Morgan (circular), RDKit (topological), and ErG (2D pharmacophore) fingerprints with molecular graph embeddings, then processes these diverse representations through attention-enhanced convolutional networks and Kolmogorov-Arnold Networks (KAN) [23].

In side effect prediction tasks, MultiFG achieved an AUC of 0.929, significantly outperforming previous state-of-the-art models, while demonstrating strong generalization to novel drugs [23]. This multi-modal strategy overcomes limitations of individual fingerprint types by capturing complementary aspects of molecular structure.

Table 2: Performance Comparison of Modern Fingerprint Approaches

| Fingerprint Type | Molecular Scope | Key Advantages | Benchmark Performance |

|---|---|---|---|

| ECFP4/ECFP6 | Small molecules | Excellent for virtual screening, target prediction | Best in class for small molecules [25] |

| Atom-Pair | Large molecules, peptides | Global shape perception, scaffold hopping | Superior for biomolecules [25] |

| MAP4 | Small molecules, biomolecules, metabolome | Universal application, balanced performance | Outperforms others on combined benchmark [25] |

| 3D Interaction | Protein-ligand complexes | Captures spatial interactions, binding modes | Differentiates agonists/antagonists [26] |

| MultiFG (Multi-modal) | Drug side effect prediction | Integrates multiple representations, interpretable | AUC 0.929 for side effect prediction [23] |

Table 3: Key Research Reagents and Computational Tools

| Resource Name | Type | Function | Application Context |

|---|---|---|---|

| RDKit | Open-source cheminformatics toolkit | Fingerprint calculation, molecular manipulation | General-purpose molecular informatics [25] |

| PubChem Fingerprints | Dictionary-based fingerprints | 881 structural keys for molecular representation | Rapid similarity screening [24] |

| MAP4 | MinHashed atom-pair fingerprint | Unified representation for diverse molecular classes | Cross-domain similarity search [25] |

| Topological Index Calculator | Python-based computational tools | Compute Zagreb, Randić, Wiener indices | QSPR modeling and prediction [19] |

| Structural Interaction Fingerprints | 3D protein-ligand analysis | Encode binding site interactions | Structure-based drug design [26] |

| MultiFG Framework | Multi-modal fingerprint integration | Combine fingerprint types with graph embeddings | Enhanced predictive performance [23] |

The evolution from simple topological indices to multi-modal fingerprints reflects a steady increase in abstraction and computational power. Where topological indices provided the foundational insight that molecular topology encodes physicochemical behavior, modern fingerprints exploit this principle through high-dimensional representations that capture increasingly nuanced structural patterns. This progression has expanded our capacity to navigate chemical space, from early boiling point predictions to contemporary applications in drug side effect forecasting and protein-ligand interaction modeling. As molecular representation continues to evolve, integrating these complementary approaches (the interpretability of topological indices and the predictive power of modern fingerprints) will remain essential for addressing complex challenges in drug discovery and molecular design.

Key Applications in Drug Discovery and Chemical Space Exploration

Molecular similarity analysis serves as a foundational principle in modern drug discovery, operating on the concept that structurally similar molecules often exhibit similar biological activities [7] [5]. This principle enables researchers to navigate the vast chemical space (the theoretical universe of all possible organic molecules) efficiently to identify promising therapeutic candidates [27]. The evolution from simple structural comparisons to advanced artificial intelligence (AI)-driven representations has significantly expanded the applications of molecular similarity in scaffold hopping, target prediction, and virtual screening [12]. These methods have become indispensable tools for reducing the immense costs and timelines of traditional drug development, which typically costs more than $2.3 billion and takes 10-15 years per approved drug [28]. This application note details key protocols and methodologies leveraging molecular similarity to address critical challenges in drug discovery, providing researchers with practical frameworks for implementation.

Key Application Areas and Methodologies

Molecular similarity approaches can be broadly categorized based on their underlying representations and methodologies. The table below summarizes the primary approaches, their key characteristics, and representative applications.

Table 1: Overview of Molecular Similarity Approaches in Drug Discovery

| Approach Category | Key Characteristics | Representative Methods | Primary Applications |

|---|---|---|---|

| 2D Fingerprint-Based | Encodes 2D structural patterns as binary vectors; fast and computationally efficient | ECFP, FCFP, MACCS, Atom Pairs [12] [29] | Virtual screening, similarity searching, clustering |

| 3D Shape-Based | Captures molecular volume and steric properties; enables scaffold hopping | USR (Ultrafast Shape Recognition), ROCS [5] | Scaffold hopping, binding mode prediction |

| AI-Driven Representations | Learns continuous molecular features from data using deep learning | Graph Neural Networks, Transformers, VAEs [12] | De novo molecular design, property prediction |

| Ligand-Based Target Prediction | Infers targets based on similarity to known active compounds | MolTarPred, Similarity Ensemble Approach [13] [28] | Polypharmacology profiling, drug repurposing |

| Clinical Property-Based | Utilizes phenotypic effects (side effects, indications) | Jaccard similarity on clinical profiles [30] | Drug repurposing, safety profiling |

Quantitative Comparison of Similarity Methods

The performance of similarity methods varies significantly across different applications. The following table summarizes quantitative comparisons from benchmark studies, providing guidance for method selection.

Table 2: Performance Comparison of Molecular Similarity and Prediction Methods

| Method/Approach | Similarity Metric/Algorithm | Reported Performance | Application Context |

|---|---|---|---|

| Molecular Fingerprints | Tanimoto coefficient [7] | Standard for structural similarity | General similarity screening |

| Clinical Profile Similarity | Jaccard similarity [30] | Superior performance for side effect/indication based prediction | Drug repurposing |

| USR (Shape Similarity) | Inverse Manhattan distance [5] | 1,546-14,238× faster than other 3D methods; successful prospective applications | Virtual screening, scaffold hopping |

| MolTarPred | 2D similarity with Morgan fingerprints [13] | Most effective target prediction method in benchmark | Target identification |

| AI-Based Representation | Graph Neural Networks [12] | Superior capture of structure-function relationships beyond predefined rules | Molecular property prediction |

Application Note 1: Scaffold Hopping via Molecular Similarity

Background and Principles

Scaffold hopping represents a crucial application of molecular similarity in lead optimization, aimed at identifying novel core structures (scaffolds) while maintaining desired biological activity [12]. This approach is particularly valuable for overcoming intellectual property limitations, improving pharmacokinetic properties, or reducing toxicity associated with existing lead compounds [12]. Traditional methods relied on molecular fingerprinting and structural similarity searches, but modern AI-driven approaches using graph neural networks and variational autoencoders have dramatically expanded the ability to explore diverse chemical spaces and generate novel scaffolds absent from existing chemical libraries [12].

Experimental Protocol: Shape-Based Scaffold Hopping

Principle: This protocol utilizes 3D shape similarity to identify structurally diverse compounds with similar biological activity by matching molecular volume and steric properties rather than specific atomic connectivity [5].

Materials and Reagents:

- Query compound with known biological activity

- Database of 3D compound structures (e.g., ZINC, ChEMBL)

- Computational software: RDKit (open-source) or USR-VS web server

Procedure:

Query Preparation:

- Generate a low-energy 3D conformation of the query molecule using conformation generation software

- Ensure proper protonation states for physiological conditions (pH 7.4)

Shape Descriptor Calculation:

- Compute shape descriptors for the query molecule. For USR, this involves:

- a. Calculate the molecular centroid (ctd)

- b. Identify the atom closest to the centroid (cst)

- c. Identify the atom farthest from the centroid (fct)

- d. Identify the atom farthest from fct (ftf)

- e. For each reference point, compute the first three statistical moments (mean, variance, skewness) of the distance distribution to all other atoms [5]

Database Screening:

- Calculate identical shape descriptors for all compounds in the screening database

- Compute similarity scores using the inverse Manhattan distance between query and database compound descriptors: S = 1 / (1 + 1/12 × Σ|M_query - M_database|) [5]

- Rank database compounds by similarity score

Hit Analysis:

- Select top-ranking compounds (typically top 1-5%) for visual inspection

- Verify chemical diversity of selected hits compared to query scaffold

- Progress selected hits to experimental validation

Troubleshooting:

- Low chemical diversity in hits: Adjust similarity threshold or incorporate additional chemical filters

- Computational time concerns: Utilize pre-computed shape descriptors or hardware acceleration [5]
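The USR descriptor and similarity score from the procedure above can be sketched in pure Python. Several details are assumptions of this example: coordinates are plain (x, y, z) tuples from an already generated conformer, distances are taken from each reference point to every atom, and the third moment is reported as the cube root of the third central moment, which is one of several conventions found in USR implementations.

```python
import math


def _moments(distances):
    """Mean, variance and a skewness-like term of a distance distribution."""
    n = len(distances)
    mean = sum(distances) / n
    variance = sum((d - mean) ** 2 for d in distances) / n
    third = sum((d - mean) ** 3 for d in distances) / n
    # Assumption: cube root of the third central moment as the third term.
    skew = math.copysign(abs(third) ** (1.0 / 3.0), third)
    return [mean, variance, skew]


def usr_descriptor(coords):
    """12-component descriptor: 3 moments for each of 4 reference points."""
    n = len(coords)
    ctd = tuple(sum(c[axis] for c in coords) / n for axis in range(3))
    d_ctd = [math.dist(ctd, c) for c in coords]
    cst = coords[d_ctd.index(min(d_ctd))]  # atom closest to the centroid
    fct = coords[d_ctd.index(max(d_ctd))]  # atom farthest from the centroid
    d_fct = [math.dist(fct, c) for c in coords]
    ftf = coords[d_fct.index(max(d_fct))]  # atom farthest from fct
    descriptor = []
    for reference in (ctd, cst, fct, ftf):
        descriptor += _moments([math.dist(reference, c) for c in coords])
    return descriptor


def usr_similarity(desc_a, desc_b):
    """S = 1 / (1 + (1/12) * sum of absolute descriptor differences)."""
    manhattan = sum(abs(a - b) for a, b in zip(desc_a, desc_b))
    return 1.0 / (1.0 + manhattan / 12.0)


# Hypothetical conformer coordinates in angstroms.
query = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (2.2, 1.2, 0.0),
         (3.6, 1.1, 0.4), (4.1, 2.5, 0.9)]
shifted = [(x + 10.0, y - 5.0, z + 3.0) for x, y, z in query]

print(usr_similarity(usr_descriptor(query), usr_descriptor(query)))    # 1.0
print(usr_similarity(usr_descriptor(query), usr_descriptor(shifted)))  # ~1.0
```

Because the descriptor is built only from interatomic distances, it does not change under translation or rotation, which is why no alignment step is needed. Descriptors for a screening database can be computed once and stored, as suggested in the troubleshooting notes.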

Application Note 2: Target Prediction via Ligand Similarity

Background and Principles

Target prediction using ligand similarity operates on the principle that compounds with similar structures often bind to similar biological targets [13] [28]. This approach has become fundamental for understanding polypharmacology, identifying off-target effects, and drug repurposing [13]. The method leverages large-scale bioactivity databases (e.g., ChEMBL, BindingDB) containing experimentally validated compound-target interactions [13]. When a query molecule shows high similarity to compounds with known targets, it can be inferred to interact with the same targets, enabling the generation of testable hypotheses for new therapeutic applications [13].

Experimental Protocol: Similarity-Based Target Fishing

Principle: This protocol identifies potential biological targets for a query compound by comparing its structural features to databases of compounds with annotated targets [13].

Materials and Reagents:

- Query compound (SMILES representation)

- Bioactivity database (ChEMBL recommended)

- Computational tools: MolTarPred or similar target prediction software

- Morgan fingerprints (radius 2, 2048 bits)

Procedure:

Database Preparation:

- Download and preprocess ChEMBL database (version 34 or newer)

- Filter bioactivity data to include only high-confidence interactions (confidence score ≥ 7)

- Retain records with standard values (IC50, Ki, EC50) below 10,000 nM

- Remove duplicate compound-target pairs and non-specific protein targets [13]

Fingerprint Generation:

- Generate Morgan fingerprints for the query compound (radius 2, 2048 bits)

- Utilize precomputed fingerprints for database compounds or generate anew

Similarity Calculation and Target Prediction:

- Calculate Tanimoto similarity between query fingerprint and all database compounds

- Identify top similar compounds (typically 10-15 closest neighbors)

- Extract targets associated with similar compounds

- Rank targets by similarity scores of their associated ligands

Result Interpretation:

- Apply confidence threshold (similarity score > 0.5 typically meaningful)

- Consider target families with multiple hits as higher confidence predictions

- Generate mechanistic hypotheses for top-ranked targets

Validation:

- Experimental confirmation through binding assays or functional testing

- Cross-reference with expression data for physiological relevance

- Case study: fenofibric acid was identified as a potential THRB modulator for thyroid cancer, a repurposing hypothesis of the kind this protocol is designed to generate [13]
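The similarity calculation and target ranking steps of this protocol reduce to a nearest-neighbor search. The sketch below is a minimal illustration under stated assumptions: fingerprints are represented as sets of on-bit indices (in practice they would be Morgan fingerprints from a toolkit), the reference list and target names are hypothetical, and each target is scored by its single most similar ligand, which is only one of several possible ranking rules.

```python
def tanimoto_sets(bits_a, bits_b):
    """Tanimoto coefficient for fingerprints stored as sets of on-bit indices."""
    union = len(bits_a | bits_b)
    return len(bits_a & bits_b) / union if union else 0.0


def predict_targets(query_bits, reference, k=10, min_similarity=0.5):
    """Rank targets of the k nearest reference ligands above a similarity cutoff.

    reference: list of (on_bits, target_name) pairs from an annotated database
    """
    scored = sorted(
        ((tanimoto_sets(query_bits, bits), target) for bits, target in reference),
        key=lambda pair: pair[0],
        reverse=True,
    )[:k]
    best = {}
    for similarity, target in scored:
        if similarity > min_similarity:
            best[target] = max(similarity, best.get(target, 0.0))
    return sorted(best.items(), key=lambda item: item[1], reverse=True)


# Hypothetical annotated ligands (bit sets are illustrative, not real data).
reference = [
    ({1, 2, 3, 4, 5}, "TARGET_A"),
    ({1, 2, 3}, "TARGET_A"),
    ({1, 2, 9, 10}, "TARGET_B"),
    ({20, 21}, "TARGET_C"),
]
query = {1, 2, 3, 4}

print(predict_targets(query, reference, k=3))  # [('TARGET_A', 0.8)]
```

TARGET_B falls below the 0.5 cutoff (similarity 2/6) and is therefore not reported, which mirrors the confidence threshold applied during result interpretation.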

Table 3: Key Research Reagents and Computational Tools for Molecular Similarity Analysis

| Resource Type | Specific Examples | Key Functionality | Access Information |

|---|---|---|---|

| Bioactivity Databases | ChEMBL, BindingDB, PubChem [13] [27] | Source of annotated compound-target interactions | Publicly available |

| Cheminformatics Toolkits | RDKit, OpenBabel | Molecular fingerprint generation, similarity calculation | Open-source |

| Target Prediction Tools | MolTarPred, PPB2, SuperPred [13] | Ligand-based target identification | Standalone codes and web servers |

| Similarity Visualization | Similarity Maps [29] | Visualize atomic contributions to similarity | RDKit implementation |

| 3D Shape Similarity | USR-VS, ROCS [5] | Alignment-free shape comparison | Web servers and commercial |

| Specialized Compound Libraries | Dark Chemical Matter, InertDB [27] | Negative data for model training | Publicly available |

Advanced Applications and Emerging Directions

Visualization of Similarity Relationships

Understanding the atomic contributions to molecular similarity is crucial for rational drug design. Similarity maps provide a visualization strategy that colors atoms based on their contribution to the overall similarity between two molecules or to a machine learning model's prediction [29]. The methodology works by systematically removing the bits associated with each atom from the molecular fingerprint and recalculating the similarity or predicted probability [29]. Atoms are then colored according to the resulting difference: green indicates a positive contribution (similarity decreases when the atom is removed), pink indicates a negative contribution (similarity increases when the atom is removed), and gray represents no change [29]. This approach has been successfully applied to various fingerprint types including atom-pair fingerprints and circular fingerprints like ECFP4 (Morgan2) and FCFP4 (FeatMorgan2) [29].
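The bit-removal idea behind similarity maps can be shown with a toy calculation. The sketch assumes a mapping from each atom of the probe molecule to the fingerprint bits it helps set (a toolkit would derive this mapping; here it is hypothetical), and it removes every bit touched by an atom even if other atoms also set it.

```python
def tanimoto_sets(bits_a, bits_b):
    """Tanimoto coefficient for fingerprints stored as sets of on-bit indices."""
    union = len(bits_a | bits_b)
    return len(bits_a & bits_b) / union if union else 0.0


def atom_contributions(reference_bits, probe_atom_bits):
    """Similarity lost (positive) or gained (negative) when an atom's bits are removed.

    probe_atom_bits: {atom_index: set of bits that atom contributes to}
    """
    all_bits = set().union(*probe_atom_bits.values())
    baseline = tanimoto_sets(reference_bits, all_bits)
    return {
        atom: baseline - tanimoto_sets(reference_bits, all_bits - bits)
        for atom, bits in probe_atom_bits.items()
    }


def color_for(weight):
    """Color coding used for similarity maps, as described in the text."""
    if weight > 0:
        return "green"
    if weight < 0:
        return "pink"
    return "gray"


# Hypothetical example: atoms 0 and 1 set bits shared with the reference,
# atom 2 sets a bit the reference lacks.
reference_bits = {1, 2, 3}
probe_atom_bits = {0: {1}, 1: {2}, 2: {7}}

weights = atom_contributions(reference_bits, probe_atom_bits)
print({atom: color_for(w) for atom, w in weights.items()})
# {0: 'green', 1: 'green', 2: 'pink'}
```

Removing atom 2 raises the similarity from 0.5 to 2/3, so its weight is negative and it would be drawn in pink.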

Integration with Crystal Structure Prediction

Emerging methodologies are integrating molecular similarity with crystal structure prediction (CSP) for materials discovery applications, particularly for organic molecular semiconductors [31]. This approach uses evolutionary algorithms that incorporate CSP into the fitness evaluation of candidate molecules, allowing optimization based on predicted materials properties rather than molecular properties alone [31]. While computationally intensive, reduced sampling schemes (e.g., focusing on the most frequent space groups) have made this approach feasible, demonstrating that crystal structure-aware searching outperforms molecular property-based optimization for identifying molecules with high electron mobilities [31].

Machine Learning Integration

Modern molecular similarity approaches increasingly incorporate machine learning to capture complex, nonlinear relationships between chemical structure and biological activity [12] [28]. Methods such as graph neural networks directly learn molecular representations from data, capturing both local and global molecular features more effectively than predefined representations [12]. These AI-driven representations have shown particular promise in scaffold hopping and de novo molecular design, enabling exploration of chemical spaces beyond those covered by existing compound libraries [12].

The principle that structurally similar molecules are likely to exhibit similar properties is a cornerstone of modern chemical research and drug development [32]. This principle, often expressed as Property = f(Structure), underpins efforts to predict chemical behavior, bioactivity, and environmental fate without exhaustive experimental testing. However, the practical application of this principle is fraught with a fundamental challenge: the quantification of "similarity" is inherently subjective [32]. Any assessment involves two choices: how to represent a molecular structure numerically (a function g, so that Property = f(g(Structure))) and how to relate that representation to a property (the function f). Neither choice is unique, and each can dramatically alter the outcome of a similarity assessment [32]. This subjectivity directly impacts the reliability of predictions in critical areas such as virtual screening, chemical hazard assessment, and the development of Quantitative Structure-Property Relationship (QSPR) models.

This application note explores the sources and implications of this subjectivity. We present quantitative data comparing different predictive methodologies, detailed protocols for evaluating similarity measures, and visualization tools to aid researchers in navigating the complex landscape of molecular similarity analysis.

Quantitative Benchmarking of Predictive Models

The uncertainty inherent in structure-based predictions is evident when comparing outputs from different QSPR software packages. Evaluations on datasets of key physicochemical properties—such as octanol-water (KOW), octanol-air (KOA), and air-water (KAW) partition ratios—reveal significant variations in prediction accuracy and uncertainty metrics [33].

Table 1: Comparison of QSPR Model Performance for Partition Ratio Predictions

| QSPR Software | Reported Uncertainty Metric | Performance on External Data | Factor Increase Needed to Capture 90% of Data |

|---|---|---|---|

| IFSQSAR | 95% Prediction Interval (PI95) from RMSEP | Captures ~90% of external experimental data | 1 (Baseline) |

| OPERA | Expected Prediction Range | Captures significantly less than 90% of data | At least 4 |

| EPI Suite | No explicit uncertainty in output; documentation lists uncertain structures | Captures significantly less than 90% of data | At least 2 |

Furthermore, the performance of similarity-based methods is not always superior to simplistic approaches. In some virtual screening applications, sophisticated fingerprint methods have been shown to perform no better than simple "dumb" descriptors, such as atom counts by element, which contain no structural information [32]. This finding challenges the assumption that more complex molecular representations necessarily lead to more chemically meaningful similarity rankings.

Experimental Protocols for Evaluating Similarity Subjectivity

Protocol: Assessing the Robustness of Chemical Language Models (ChemLMs) with AMORE

Purpose: To evaluate whether a ChemLM recognizes different textual representations (e.g., SMILES) of the same molecule as equivalent, thereby probing its understanding of chemical structure versus mere textual patterns [34].

Principle: The AMORE (Augmented Molecular Retrieval) framework tests the hypothesis that a model's internal embedding for different valid representations of the same molecule should be similar. A model that fails this test is likely learning superficial text features rather than foundational chemical principles [34].

Procedure:

- Dataset Preparation: Compile a set of original molecular SMILES strings, denoted as X = {x1, x2, ..., xn}.

- SMILES Augmentation: For each molecule in X, generate a set of augmented SMILES strings, X' = {x'1, x'2, ..., x'n}, using identity-preserving transformations. These can include:

- Randomizing the atom order (starting atom).

- Using different numbering for rings.

- Varying the representation of aromaticity.

- Explicitly adding or removing hydrogen atoms.

- Embedding Generation: Use the ChemLM under evaluation to encode each original and augmented SMILES string into a fixed-dimensional vector embedding. Let e(xi) represent the embedding of the original SMILES and e(x'j) represent the embedding of an augmented SMILES.

- Distance Calculation: For each original molecule xi, calculate the distance (e.g., Euclidean or cosine distance) between its embedding e(xi) and the embedding of its augmented version e(x'i).

- Nearest-Neighbor Analysis: For each original embedding e(xi), rank all augmented embeddings e(x'1), e(x'2), ..., e(x'n) by their distance. A chemically robust model should rank e(x'i) (the augmentation of the same molecule) as the nearest neighbor.

- Metric Calculation: Calculate the percentage of molecules for which the nearest augmented embedding is the correct one (i.e., x'i is ranked first for xi). A low percentage indicates poor robustness to SMILES variations.

Diagram 1: Workflow for the AMORE Robustness Evaluation Protocol.
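The nearest-neighbor analysis and metric calculation steps can be sketched as a top-1 retrieval accuracy. The example assumes the embeddings have already been produced by the model under evaluation; the two-dimensional vectors and the function name `top1_retrieval_accuracy` are hypothetical and serve only to illustrate the computation with Euclidean distance.

```python
import math


def top1_retrieval_accuracy(original_embeddings, augmented_embeddings):
    """Fraction of molecules whose own augmentation is the nearest augmented embedding.

    Both lists are aligned: index i in each refers to the same molecule.
    """
    hits = 0
    for i, original in enumerate(original_embeddings):
        distances = [math.dist(original, augmented) for augmented in augmented_embeddings]
        nearest = distances.index(min(distances))
        hits += int(nearest == i)
    return hits / len(original_embeddings)


# Hypothetical 2-D embeddings for three molecules.
originals = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]

# A robust model keeps each augmented SMILES close to its original.
robust = [(0.1, 0.0), (0.9, 0.1), (0.0, 1.1)]
# A fragile model confuses the first two molecules after augmentation.
fragile = [(0.9, 0.1), (0.1, 0.0), (0.0, 1.1)]

print(top1_retrieval_accuracy(originals, robust))   # 1.0
print(top1_retrieval_accuracy(originals, fragile))  # one hit out of three
```

A score well below 1.0 on real data would suggest that the model's embeddings track the surface form of the SMILES string more than the underlying structure.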

Protocol: Consensus Prediction and Applicability Domain Analysis for QSPRs

Purpose: To manage prediction uncertainty and identify reliable vs. unreliable predictions when using multiple QSPR models for chemical assessments [33].

Principle: No single QSPR model is universally reliable. A consensus approach, coupled with a defined Applicability Domain (AD), helps recognize and evaluate uncertainty. The AD is "the response and chemical structure space in which the model makes predictions with a given reliability" [33].

Procedure:

- Model Selection: Select multiple QSPR models (e.g., IFSQSAR, OPERA, EPI Suite) that predict the target property.

- Prediction Execution: Run the chemical structure of interest through all selected models to collect predictions and any available uncertainty metrics (e.g., prediction intervals, reliability scores).

- Applicability Domain Check: For each model, assess if the query chemical falls within its AD. Common AD checks include:

- Chemical Similarity: Calculate the similarity (e.g., Tanimoto coefficient using ECFP6 fingerprints) between the query and the model's training set molecules. A low maximum similarity indicates an out-of-AD prediction.

- Leverage/Extrapolation: Determine if the query's descriptor values fall within the multivariate space of the training set.

- Range Check: Verify that the predicted property value is within a theoretically plausible range.

- Consensus Analysis: Compare the predictions from all models. A high degree of agreement among models that include the chemical in their AD increases confidence in the consensus value. Significant disagreement flags high uncertainty.

- Uncertainty Integration: For the final predicted value, report a consensus (e.g., median) along with a measure of dispersion (e.g., range or standard deviation) from the models that passed the AD check. Models with quantified uncertainty (e.g., IFSQSAR's PI95) should be weighted more heavily.

Diagram 2: Workflow for Consensus Prediction and Applicability Domain Analysis.
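A compact way to express the applicability-domain check, consensus analysis, and uncertainty integration steps is shown below. All specifics are assumptions for illustration: the model names and predicted values are hypothetical, the AD test is reduced to a single maximum-similarity threshold of 0.3, and the consensus is an unweighted median, so the heavier weighting of models with quantified uncertainty is not implemented here.

```python
from statistics import median


def consensus_prediction(predictions, min_similarity=0.3):
    """Median and range of predictions from models whose AD contains the query.

    predictions: list of dicts with keys
        'model'                - model name
        'value'                - predicted property value
        'max_train_similarity' - highest Tanimoto similarity to the training set
    """
    in_domain = [p for p in predictions if p["max_train_similarity"] >= min_similarity]
    if not in_domain:
        return None  # no model can be trusted for this chemical
    values = [p["value"] for p in in_domain]
    return {
        "consensus": median(values),
        "spread": max(values) - min(values),
        "models": [p["model"] for p in in_domain],
    }


# Hypothetical log KOW predictions for one query chemical.
predictions = [
    {"model": "model_a", "value": 3.2, "max_train_similarity": 0.8},
    {"model": "model_b", "value": 3.6, "max_train_similarity": 0.6},
    {"model": "model_c", "value": 5.9, "max_train_similarity": 0.1},
]

result = consensus_prediction(predictions)
print(result["models"])     # ['model_a', 'model_b']
print(result["consensus"])  # about 3.4
```

Here the outlying prediction comes from the model whose training set contains nothing similar to the query, so excluding it narrows the spread from 2.7 to about 0.4 log units. Returning `None` when every model fails the check makes the absence of a reliable prediction explicit.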

The Scientist's Toolkit: Key Reagents and Computational Solutions

Table 2: Essential Research Reagents and Computational Tools

| Item / Reagent | Function / Purpose | Relevance to Similarity Analysis |

|---|---|---|

| Extended Connectivity Fingerprints (ECFP6) | Topological molecular descriptor capturing circular atom neighborhoods. | Provides a standardized, high-resolution representation of molecular structure for similarity calculations (Tanimoto coefficient) and clustering [35]. |

| SMILES Strings | Text-based representation of molecular structure. | Serves as the primary input for many ChemLMs and QSPRs. Its non-uniqueness is a key source of subjectivity, necessitating robustness testing [34]. |

| Tanimoto Coefficient (Tc) | Similarity metric calculating the proportion of shared features between two molecular fingerprints. | The most widely used metric for quantifying 2D molecular similarity. A Tc of 1.0 indicates identical fingerprints, while 0.0 indicates no similarity [35]. |

| Applicability Domain (AD) Metrics | A set of rules and boundaries defining a model's reliable prediction space. | Critical for identifying when a model is extrapolating and its predictions become unreliable, thus managing subjectivity and uncertainty [33]. |

| Graph Neural Networks (GNNs) | Machine learning models that operate directly on graph representations of molecules (atoms=nodes, bonds=edges). | Enhances atomic structure representation by learning from connectivity and can be designed to be equivariant (equivGNN), improving accuracy for complex motifs [36]. |

The quantification of chemical similarity is an indispensable yet intrinsically subjective process. The reliability of predictions in drug discovery and chemical safety assessment hinges on acknowledging and actively managing this subjectivity. As demonstrated, performance varies significantly among QSPR models, and even advanced ChemLMs can be fragile when faced with different textual representations of the same molecule. By adopting rigorous evaluation protocols like AMORE, implementing consensus approaches with well-defined Applicability Domains, and leveraging enhanced representation methods like equivariant GNNs, researchers can navigate these challenges more effectively. A transparent and critical approach to molecular similarity analysis is fundamental to generating reliable, actionable data.

A Practical Guide to Molecular Similarity Methods and Their Real-World Applications

Molecular similarity analysis is a cornerstone of modern cheminformatics and drug discovery. It rests on the Similarity-Property Principle, which states that structurally similar molecules are likely to exhibit similar properties [37]. At the heart of computational similarity assessment lie 2D molecular fingerprints, fixed-length vector representations that encode chemical structure information [3]. These descriptors enable rapid comparison of chemical structures across vast compound libraries, facilitating critical discovery workflows including virtual screening, hit identification, and structure-activity relationship (SAR) analysis [38].

This application note focuses on three foundational fingerprint methodologies: Extended Connectivity Fingerprints (ECFP), MACCS structural keys, and path-based hashed fingerprints. Each algorithm embodies a different philosophy for structural representation, leading to distinct performance characteristics in screening scenarios [3] [39]. We provide a quantitative comparison of their properties, detailed protocols for their implementation, and data-driven recommendations for their application in rapid screening environments.

Algorithmic Foundations and Key Differentiators

Extended Connectivity Fingerprints (ECFPs) are circular fingerprints that generate atom-centered substructural features through an iterative process [40]. They capture circular atom environments up to a specified diameter (typically 2, 4, or 6 bonds) and hash these features into a fixed-length bit string [40]. Because ECFPs are not based on predefined structural patterns, they adapt readily to novel chemotypes [40].
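The iterative, atom-centered idea can be illustrated with a deliberately simplified sketch. It is not the published ECFP algorithm: it ignores bond orders, uses only element and degree as the initial atom invariant, and skips duplicate-substructure removal. The function names, the toy molecule encoding, and the use of CRC32 as the hash are all assumptions made for illustration.

```python
import zlib


def stable_hash(obj) -> int:
    """Deterministic 32-bit hash of an object's repr (illustrative choice)."""
    return zlib.crc32(repr(obj).encode("utf-8"))


def circular_fingerprint(atoms, bonds, radius=2, n_bits=1024):
    """Toy ECFP-like fingerprint; returns the set of on-bit positions.

    atoms:  list of element symbols, e.g. ["C", "C", "O"]
    bonds:  list of (i, j) atom-index pairs (bond orders are ignored here)
    radius: number of update iterations (diameter = 2 * radius)
    """
    neighbors = {i: [] for i in range(len(atoms))}
    for i, j in bonds:
        neighbors[i].append(j)
        neighbors[j].append(i)

    # Iteration 0: each atom starts with an identifier built from its
    # element symbol and its number of neighbors.
    ids = {i: stable_hash((atoms[i], len(neighbors[i]))) for i in neighbors}
    features = set(ids.values())

    # Each iteration merges an atom's identifier with the sorted identifiers
    # of its neighbors, so the encoded environment grows by one bond.
    for _ in range(radius):
        ids = {
            i: stable_hash((ids[i], tuple(sorted(ids[j] for j in neighbors[i]))))
            for i in neighbors
        }
        features.update(ids.values())

    # Fold the 32-bit identifiers into a fixed-length bit string.
    return {f % n_bits for f in features}


# Heavy-atom graphs of ethanol (C-C-O) and 1-propanol (C-C-C-O)
ethanol = circular_fingerprint(["C", "C", "O"], [(0, 1), (1, 2)])
propanol = circular_fingerprint(["C", "C", "C", "O"], [(0, 1), (1, 2), (2, 3)])
shared = ethanol & propanol
```

Because neighbor identifiers are sorted before hashing, the result does not depend on the order in which atoms are listed. Production work would rely on a toolkit implementation rather than a sketch like this.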

MACCS Keys are a prime example of structural key fingerprints that utilize a predefined dictionary of 166 structural fragments [39]. Each bit in the MACCS fingerprint corresponds to a specific chemical substructure (e.g., "presence of a carbonyl group" or "aromatic ring count"), providing easily interpretable structural information [39].

Path-based Hashed Fingerprints (exemplified by Daylight-like fingerprints) enumerate all linear paths through the molecular graph up to a predetermined length (typically 5-7 bonds) [3]. These paths are then hashed into a fixed-length bit string, providing a comprehensive representation of molecular connectivity [3].
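As a rough illustration of path enumeration followed by hashing, the sketch below walks all simple linear paths in a small heavy-atom graph and folds each path string into a bit position. It is a simplification: bond orders and aromaticity are ignored, and the function name, input encoding, and CRC32 hash are illustrative assumptions rather than the Daylight scheme.

```python
import zlib


def path_fingerprint(atoms, bonds, max_bonds=3, n_bits=512):
    """Toy path-based fingerprint.

    Returns (paths, on_bits), where paths is the set of canonical element
    sequences found and on_bits is the set of hashed bit positions.
    """
    neighbors = {i: [] for i in range(len(atoms))}
    for i, j in bonds:
        neighbors[i].append(j)
        neighbors[j].append(i)

    paths = set()

    def extend(path):
        symbols = tuple(atoms[k] for k in path)
        # A path and its reverse describe the same fragment, so keep
        # only the lexicographically smaller of the two.
        paths.add(min(symbols, symbols[::-1]))
        if len(path) - 1 == max_bonds:
            return
        for nxt in neighbors[path[-1]]:
            if nxt not in path:  # simple paths only: never revisit an atom
                extend(path + [nxt])

    for start in neighbors:
        extend([start])

    # Hash each path string into a fixed-length bit string.
    on_bits = {zlib.crc32("-".join(p).encode("utf-8")) % n_bits for p in paths}
    return paths, on_bits


# Heavy-atom graph of ethanol: C-C-O
paths, bits = path_fingerprint(["C", "C", "O"], [(0, 1), (1, 2)])
```

For ethanol the enumeration yields five distinct paths (C, O, C-C, C-O, C-C-O); the number of on-bits can be smaller if two paths hash to the same position.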

Table 1: Comparative Characteristics of Major 2D Fingerprint Types

| Feature | ECFP | MACCS Keys | Path-Based Hashed |

|---|---|---|---|

| Algorithm Type | Circular/topological | Structural keys | Path-based/hashed |

| Representation | Atom environments | Predefined fragments | Linear paths |

| Bit Length | Configurable (typically 1024-16384) | Fixed (166 public keys) | Configurable (typically 512-2048) |

| Interpretability | Low (hashed features) | High (defined fragments) | Medium (hashed paths) |

| Substructure Search | Not suitable | Suitable (pre-screening) | Suitable (pre-screening) |

| Optimal Application | Similarity searching, ML | Rapid screening, SAR | General purpose similarity |

Performance Benchmarking in Screening Applications

Comprehensive benchmarking studies provide critical insights into fingerprint performance across different screening scenarios. A large-scale evaluation of 28 different fingerprints revealed that ECFP4 and ECFP6 are among the best performers for ranking diverse structures by similarity, while atom pair fingerprints (a topological descriptor) showed superior performance when ranking very close analogs [37].

Notably, ECFP performance in virtual screening significantly improves when the bit-vector length increases from 1,024 to 16,384, reducing bit collisions and increasing resolution [37]. This enhancement comes at the cost of increased computational resources, highlighting the practical trade-offs in method selection.
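The effect of bit-vector length on collisions can be seen in a small simulation. The feature identifiers below are random 32-bit integers standing in for hashed substructure features (hypothetical data, not taken from any molecule), and folding by modulo is assumed as the mapping onto bit positions.

```python
import random


def fold(feature_ids, n_bits):
    """Map feature identifiers onto bit positions by modulo folding."""
    return {f % n_bits for f in feature_ids}


rng = random.Random(0)  # fixed seed so the run is reproducible
# 80 hypothetical hashed features for a single molecule
features = {rng.getrandbits(32) for _ in range(80)}

# Number of features lost to collisions at each fingerprint length
lost = {n: len(features) - len(fold(features, n)) for n in (1024, 16384)}

for n_bits, n_lost in lost.items():
    print(f"{n_bits:>6} bits: {n_lost} of {len(features)} features collide")
```

Since 1,024 divides 16,384, any two identifiers that collide in the longer vector also collide in the shorter one, so the longer vector can never lose more features than the shorter one under this folding scheme.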

For natural products, which present unique challenges due to their complex stereochemistry and high sp³-carbon content, recent evidence suggests that while ECFP remains a strong performer, other fingerprints may match or exceed its performance for specific bioactivity prediction tasks [41]. This underscores the importance of context-dependent fingerprint selection.

Table 2: Experimental Performance Benchmarks Across Fingerprint Types

| Fingerprint | Virtual Screening (Mean AUC) | Close Analog Ranking | Scaffold Hopping Potential |

|---|---|---|---|

| ECFP4 | 0.78 [37] | Medium | Medium |

| ECFP6 | 0.79 [37] | Medium | High |

| MACCS | 0.72 [37] | Low | Low |

| Topological Torsion | 0.77 [37] | High | Medium |

| Atom Pairs | 0.75 [37] | High [37] | Medium |

Experimental Protocols

Fingerprint Generation Workflows

Protocol 1: ECFP Implementation for Virtual Screening

Purpose: To generate high-resolution ECFP fingerprints optimized for ligand-based virtual screening.

Materials:

- RDKit or Chemaxon JChem cheminformatics toolkit

- Chemical structures in SMILES, SDF, or other standard formats

- Computational resources capable of processing target library size

Procedure:

Structure Standardization:

- Remove salts, neutralize charges, and generate canonical tautomers

- Generate stereochemically aware molecular graphs

- Verify molecular validity and atom typing

ECFP Generation Parameters:

- Set fingerprint diameter to 4 (ECFP4) for balanced performance or 6 (ECFP6) for increased specificity [37]

- Configure bit vector length to 16,384 for optimal virtual screening performance [37]

- Enable count simulation for machine learning applications (ECFC variant)

- Include stereochemical information when relevant to target

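Similarity Calculation:

- Calculate the Tanimoto coefficient between each query fingerprint and every library fingerprint

- Rank library compounds by descending similarity to the query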

Validation:

- Benchmark against known active/inactive datasets

- Calculate enrichment factors and ROC curves

- Verify chemical diversity of retrieved hits
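The difference between binary and count-based (ECFC-style) fingerprints in Protocol 1 can be made concrete with a small worked example. The sketch below assumes the min/max generalization of the Tanimoto coefficient for count vectors, which is one common choice; the feature names and counts are hypothetical.

```python
from collections import Counter


def binary_tanimoto(a, b) -> float:
    """Tanimoto on feature presence/absence only."""
    on_a, on_b = set(a), set(b)
    union = on_a | on_b
    return len(on_a & on_b) / len(union) if union else 0.0


def count_tanimoto(a: Counter, b: Counter) -> float:
    """Generalized Tanimoto on feature counts: sum(min) / sum(max)."""
    keys = set(a) | set(b)
    numerator = sum(min(a[k], b[k]) for k in keys)
    denominator = sum(max(a[k], b[k]) for k in keys)
    return numerator / denominator if denominator else 0.0


# Two hypothetical molecules with the same feature types
# but a different number of methyl groups
mol_a = Counter({"ring": 1, "methyl": 1, "hydroxyl": 1})
mol_b = Counter({"ring": 1, "methyl": 3, "hydroxyl": 1})

print(binary_tanimoto(mol_a, mol_b))  # 1.0: the binary view sees no difference
print(count_tanimoto(mol_a, mol_b))   # 0.6: counts separate the two molecules
```

Here the binary fingerprints are identical (3 shared features out of 3), while the count version gives (1 + 1 + 1) / (1 + 3 + 1) = 0.6, which is why count information can help machine learning models distinguish molecules that differ only in how often a feature occurs.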

Protocol 2: MACCS Keys for Rapid SAR Analysis

Purpose: To utilize MACCS keys for efficient structure-activity relationship analysis and compound clustering.

Materials:

- MDL MACCS 166-key implementation (available in RDKit, OpenBabel, CDK)

- Curated dataset with biological activity data

- Clustering and visualization tools

Procedure:

Fingerprint Generation:

- Generate 166-bit MACCS keys for all compounds in dataset

- Verify fragment detection against known structural features

- Export binary fingerprint matrix for analysis

Similarity Analysis:

- Calculate pairwise Tanimoto similarities across compound set

- Identify nearest neighbors for query compounds

- Generate similarity maps and chemical space distributions

SAR Interpretation:

- Correlate specific key occurrences with biological activity

- Identify key fragments associated with potency changes

- Detect activity cliffs where small structural changes cause large potency shifts [3]

Clustering Application:

- Apply hierarchical clustering or Jarvis-Patrick algorithm

- Select cluster representatives for screening prioritization

- Visualize chemical space coverage using PCA or t-SNE
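The pairwise similarity and activity-cliff steps of Protocol 2 can be sketched as follows. Fingerprints are represented as sets of on-bit indices, and the compound names, bit sets, pIC50 values, and both cutoffs (similarity of at least 0.8, potency difference of at least 2 log units) are hypothetical values chosen for illustration, not recommendations from the cited sources.

```python
from itertools import combinations


def tanimoto(a: set, b: set) -> float:
    """Tanimoto coefficient for two sets of on-bit indices."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def find_activity_cliffs(fingerprints, pactivity, sim_cutoff=0.8, delta_cutoff=2.0):
    """Return pairs that are structurally similar but differ strongly in potency.

    fingerprints: dict of compound id -> set of on-bit indices
    pactivity:    dict of compound id -> potency on a log scale (e.g. pIC50)
    """
    cliffs = []
    for m1, m2 in combinations(sorted(fingerprints), 2):
        sim = tanimoto(fingerprints[m1], fingerprints[m2])
        delta = abs(pactivity[m1] - pactivity[m2])
        if sim >= sim_cutoff and delta >= delta_cutoff:
            cliffs.append((m1, m2, round(sim, 2), round(delta, 2)))
    return cliffs


# Hypothetical fingerprints and potencies
fps = {
    "cpd1": {1, 2, 3, 4, 5, 6, 7, 8, 9},
    "cpd2": {1, 2, 3, 4, 5, 6, 7, 8, 10},
    "cpd3": {20, 21, 22, 23},
}
pic50 = {"cpd1": 8.5, "cpd2": 5.9, "cpd3": 6.0}

cliffs = find_activity_cliffs(fps, pic50)
print(cliffs)  # [('cpd1', 'cpd2', 0.8, 2.6)]
```

In this toy set, cpd1 and cpd2 share 8 of 10 distinct bits yet differ by 2.6 log units, so they are flagged; cpd3 shares no bits with either and is ignored.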

Protocol 3: Performance Validation Benchmarking

Purpose: To quantitatively evaluate fingerprint performance for specific screening applications.

Materials:

- Curated benchmark datasets (e.g., ChEMBL bioactivity data) [37]

- Multiple fingerprint implementations

- Statistical analysis environment

Procedure:

Dataset Curation:

- Select reference molecules with known activities

- Create increasingly diverse analog series [37]

- Include decoy compounds for virtual screening validation

Benchmarking Protocol:

- Generate all fingerprint types for complete dataset

- Calculate similarity matrices for each method

- Perform similarity searching with multiple query compounds

Performance Metrics:

- Calculate enrichment factors (EF₁%, EF₅%)

- Generate ROC curves and calculate AUC values

- Assess early recovery rates (RIE, BEDROC)

- Test statistical significance via paired t-tests

Contextual Interpretation:

- Evaluate performance differences for close analogs vs. diverse compounds

- Assess scaffold hopping capability

- Document computational efficiency and scalability
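Two of the metrics in Protocol 3 are simple enough to compute by hand, which helps when checking toolkit output. The sketch below is a plain-Python version of the enrichment factor and the ROC AUC (via pairwise comparison of active and inactive scores); the scores and labels are hypothetical, and a 20% cutoff is used only because the toy set has ten compounds.

```python
def enrichment_factor(scores, labels, fraction=0.01):
    """EF at a given fraction: hit rate in the top slice / overall hit rate."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    n_total = len(ranked)
    n_top = max(1, int(round(fraction * n_total)))
    actives_total = sum(labels)
    if actives_total == 0:
        return 0.0
    actives_top = sum(label for _, label in ranked[:n_top])
    return (actives_top / n_top) / (actives_total / n_total)


def roc_auc(scores, labels):
    """ROC AUC as the probability that an active outscores an inactive."""
    actives = [s for s, l in zip(scores, labels) if l == 1]
    inactives = [s for s, l in zip(scores, labels) if l == 0]
    if not actives or not inactives:
        raise ValueError("need at least one active and one inactive")
    # Ties between an active and an inactive count as half a win.
    wins = sum((a > i) + 0.5 * (a == i) for a in actives for i in inactives)
    return wins / (len(actives) * len(inactives))


# Hypothetical similarity scores (higher = more similar to the query)
# and activity labels (1 = active, 0 = inactive/decoy)
scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1, 0.05]
labels = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]

ef_20 = enrichment_factor(scores, labels, fraction=0.2)
auc = roc_auc(scores, labels)
print(f"EF20% = {ef_20:.2f}, AUC = {auc:.3f}")
```

With these values the top 20% (two compounds) are both active against an overall hit rate of 3/10, giving EF = 3.33, and 20 of the 21 active/inactive pairs are ordered correctly, giving AUC = 0.952. The pairwise AUC is quadratic in dataset size, so a library routine is preferable for large benchmarks.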

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Tool/Resource | Type | Function | Implementation Examples |

|---|---|---|---|

| RDKit | Open-source cheminformatics | Fingerprint generation, similarity calculation, SAR analysis | ECFP, MACCS, Atom Pair generation [37] |

| ChEMBL Database | Bioactivity database | Benchmark datasets, known active compounds, validation sets | Literature-derived activity data [37] |

| Tanimoto Coefficient | Similarity metric | Quantitative similarity comparison between fingerprints | T = c/(a+b-c) for binary fingerprints [3] |

| GenerateMD (Chemaxon) | Commercial descriptor generation | Production-scale fingerprint generation, database integration | ECFP, chemical fingerprint generation [43] |

| Python Scikit-learn | Machine learning library | Performance metrics, clustering, dimensionality reduction | ROC-AUC, t-SNE visualization [41] |

Application Guidelines and Decision Framework

Fingerprint Selection Strategy

Choose ECFP when:

- Searching diverse chemical libraries in virtual screening campaigns [37]

- Maximum performance in ligand-based virtual screening is critical

- Machine learning applications require high-resolution descriptors [44]

- Novel chemotypes outside predefined fragment libraries are encountered

Choose MACCS Keys when:

- Rapid preliminary screening of large compound libraries is needed

- Interpretability of structural features is important for SAR [39]

- Computational resources are limited (166-bit vectors are efficient)

- Baseline performance comparison is required

Choose Path-Based Hashed Fingerprints when:

- General-purpose similarity searching is required

- Balance between performance and interpretability is needed

- Daylight fingerprint compatibility is desired

Performance Optimization Recommendations

- ECFP Resolution: Increase bit-vector length from 1,024 to 16,384 to reduce bit collisions and improve virtual screening performance, at the cost of additional memory and compute [37]

- Diameter Selection: Use ECFP4 (diameter 4) for most applications; ECFP6 (diameter 6) when finer granularity is required [40]

- Similarity Metrics: Employ Tanimoto for binary fingerprints; alternate metrics (Dice, Cosine) may provide complementary performance [3]

- Composite Approaches: Consider consensus methods combining multiple fingerprint types for challenging targets [44]
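To make the metric comparison concrete, the sketch below evaluates Tanimoto, Dice, and Cosine on the same pair of binary fingerprints, using the notation of Table 3 (a and b are the numbers of on-bits in each fingerprint, c the number they share). The two bit sets are hypothetical.

```python
import math


def overlap_counts(fp1: set, fp2: set):
    """Return (a, b, c): on-bits in fp1, on-bits in fp2, shared on-bits."""
    return len(fp1), len(fp2), len(fp1 & fp2)


def tanimoto(fp1: set, fp2: set) -> float:
    a, b, c = overlap_counts(fp1, fp2)
    return c / (a + b - c) if (a + b - c) else 0.0


def dice(fp1: set, fp2: set) -> float:
    a, b, c = overlap_counts(fp1, fp2)
    return 2 * c / (a + b) if (a + b) else 0.0


def cosine(fp1: set, fp2: set) -> float:
    a, b, c = overlap_counts(fp1, fp2)
    return c / math.sqrt(a * b) if a and b else 0.0


# Hypothetical fingerprints: a = 6, b = 8, c = 3
fp_a = {1, 2, 3, 4, 5, 6}
fp_b = {4, 5, 6, 7, 8, 9, 10, 11}

for name, metric in (("Tanimoto", tanimoto), ("Dice", dice), ("Cosine", cosine)):
    print(f"{name:<9}{metric(fp_a, fp_b):.3f}")
```

For this pair the values are 3/11 ≈ 0.273 (Tanimoto), 6/14 ≈ 0.429 (Dice), and 3/√48 ≈ 0.433 (Cosine). For binary fingerprints Tanimoto never exceeds Dice, and Dice never exceeds Cosine, so similarity thresholds are not interchangeable between metrics.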

ECFP, MACCS keys, and path-based hashed fingerprints each occupy distinct niches in the molecular screening toolkit. ECFPs generally provide superior performance in virtual screening applications, particularly when configured with extended bit lengths and appropriate diameter parameters. MACCS keys offer exceptional efficiency and interpretability for rapid screening and SAR analysis. Path-based hashed fingerprints deliver balanced performance for general similarity searching.

Informed fingerprint selection requires consideration of specific screening goals, chemical space characteristics, and computational constraints. The protocols and benchmarks provided herein enable researchers to implement these critical cheminformatics tools with confidence, accelerating compound discovery and optimization through robust molecular similarity analysis.

Scaffold hopping, a central strategy in modern medicinal chemistry, aims to identify novel molecular backbones that retain the biological activity of a known active compound [45]. This approach is critical for overcoming limitations of existing lead compounds, such as poor pharmacokinetic properties, toxicity, or intellectual property constraints [46] [12]. The underlying principle challenges the simplistic interpretation of the similarity-property principle by demonstrating that structurally diverse compounds can bind the same biological target if they share key molecular interaction capabilities [45].

Three-dimensional (3D) shape and pharmacophore methods have emerged as powerful computational tools enabling successful scaffold hopping. These techniques operate on the premise that a protein binding pocket recognizes specific 3D arrangements of functional features and complementary molecular shape, rather than specific two-dimensional (2D) chemical graphs [47] [48]. By focusing on these 3D characteristics, researchers can identify or design novel chemotypes that maintain the essential biological activity while exploring uncharted chemical space. This application note details the core methodologies, provides practical protocols, and presents performance data for these transformative technologies.

Core Methodologies and Definitions

Key Concepts

- Scaffold Hopping: The process of discovering structurally novel compounds by modifying the central core structure (scaffold) of a known active molecule while preserving its biological activity [45] [46]. Successful scaffold hops are characterized by significant structural novelty combined with conserved biological function.