Molecular Docking for Virtual Screening: A Comprehensive Guide to Protocols, AI Advances, and Best Practices in Drug Discovery

Abstract

This article provides a comprehensive guide to molecular docking protocols for virtual screening, tailored for researchers and drug development professionals. It covers the foundational principles of docking, including key components like conformational search algorithms and scoring functions. The guide details step-by-step methodologies for setting up automated screening pipelines, from compound library preparation to results ranking. It addresses common challenges and offers optimization strategies, including the integration of artificial intelligence and receptor flexibility. Finally, it presents a critical evaluation of current docking tools, comparing traditional and deep learning-based methods across multiple performance metrics to ensure biologically relevant and reproducible results in lead discovery and drug repurposing.

Understanding Molecular Docking: Core Concepts and Components for Virtual Screening

Defining Molecular Docking and Its Role in Modern Drug Discovery

Molecular docking is a computational technique that predicts the preferred orientation and binding conformation of a small molecule (ligand) when bound to a target protein or receptor. By simulating this molecular interaction, docking aims to predict the stability of the resulting complex and estimate the binding affinity, which is crucial for understanding biological function and accelerating drug discovery [1].

A drug's efficacy often depends on specific interactions with its protein target. Effective drug-target interaction requires close proximity and appropriate orientation, so that key regions of the molecular surfaces fit precisely and form a stable complex that exerts the expected biological effect [2]. Molecular docking simulates this process computationally to find the stable complex conformation and to evaluate binding affinity quantitatively through scoring functions [2].

# Key Concepts and Classifications of Docking Methods

Molecular docking methodologies have evolved significantly from rigid body approaches to sophisticated algorithms accounting for molecular flexibility. The table below summarizes the primary classifications.

Table 1: Classifications of Molecular Docking Approaches

| Classification Basis | Type | Key Characteristic | Implication |
|---|---|---|---|
| System Flexibility [1] | Rigid Docking | Treats both ligand and protein as rigid structures. | Low computational cost but may miss key interactions because molecular flexibility is ignored. |
| System Flexibility [1] | Flexible Docking | Accounts for conformational flexibility of the ligand, and sometimes the receptor. | More accurate representation of binding but demands significantly more computational power and time. |
| Computational Approach [2] | Traditional Physics-Based (e.g., Glide, AutoDock Vina) | Relies on empirical rules, heuristic search algorithms, and physics-based scoring functions. | Can be computationally intensive and sometimes limited by the precision of the scoring function. |
| Computational Approach [2] | Deep Learning (DL) Regression-Based | Uses DL models to directly predict binding conformations and energies from input data. | High speed but often fails to produce physically valid poses [2]. |
| Computational Approach [2] | Deep Learning Generative Models (e.g., Diffusion Models) | Generates binding poses through a generative process, like diffusion. | Excels in pose accuracy but can have high steric tolerance, leading to physical implausibilities [2]. |
| Computational Approach [2] | Hybrid Methods (e.g., AI scoring with traditional search) | Integrates traditional conformational searches with AI-driven scoring functions. | Often provides the best balance between accuracy and physical validity [2]. |

# Quantitative Performance of Docking Methods

The performance of docking tools is typically benchmarked across several dimensions, including pose prediction accuracy, physical plausibility, and utility in virtual screening. A comprehensive 2025 study evaluated various methods across three benchmark datasets: the Astex diverse set (known complexes), the PoseBusters benchmark set (unseen complexes), and the DockGen dataset (novel protein binding pockets) [2]. The results reveal a clear performance stratification.

Table 2: Docking Performance Across Benchmark Datasets (Success Rates %)

| Docking Method | Category | Astex Diverse Set: RMSD ≤2Å | Astex Diverse Set: PB-Valid | PoseBusters Benchmark: RMSD ≤2Å | PoseBusters Benchmark: PB-Valid | DockGen (Novel Pockets): RMSD ≤2Å | DockGen (Novel Pockets): PB-Valid |
|---|---|---|---|---|---|---|---|
| Glide SP | Traditional | 81.18 | 97.65 | 68.22 | 97.20 | 52.63 | 94.74 |
| SurfDock | Generative DL | 91.76 | 63.53 | 77.34 | 45.79 | 75.66 | 40.21 |
| DiffBindFR (SMINA) | Generative DL | 75.30 | 58.93 | 47.66 | 46.73 | 35.98 | 45.50 |
| Interformer | Hybrid | 82.35 | 89.41 | 59.81 | 85.98 | 49.12 | 82.46 |
| KarmaDock | Regression DL | 51.76 | 50.00 | 31.78 | 40.19 | 21.05 | 42.11 |

Table Notes: RMSD ≤2Å represents the percentage of predictions with a root-mean-square deviation ≤ 2 Å, indicating high pose accuracy. PB-Valid is the percentage of predictions deemed physically plausible by the PoseBusters toolkit, checking for chemical and geometric consistency [2]. The combined success rate (RMSD ≤2Å & PB-Valid) highlights a key trade-off; for example, while SurfDock has superior pose accuracy, Glide SP and hybrid methods like Interformer consistently achieve better physical validity and a more balanced overall performance [2].
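
The two metrics in the table notes, and their combination, can be computed along the following lines. This is a minimal sketch, not the benchmark's own code: it assumes predicted and reference ligand atoms are listed in the same order and in the same receptor frame (no superposition or symmetry correction), and the function names and toy data are hypothetical.

```python
import math

def rmsd(pred, ref):
    """Root-mean-square deviation (in Å) between two equally ordered lists of (x, y, z)."""
    if len(pred) != len(ref) or not pred:
        raise ValueError("coordinate lists must be non-empty and of equal length")
    squared = sum((p - r) ** 2 for a, b in zip(pred, ref) for p, r in zip(a, b))
    return math.sqrt(squared / len(pred))

def success_rates(results, cutoff=2.0):
    """results: list of (rmsd_value, pb_valid) tuples, one per complex.

    Returns percentages for: RMSD <= cutoff, PB-valid, and both at once.
    """
    n = len(results)
    accurate = sum(1 for value, _ in results if value <= cutoff)
    valid = sum(1 for _, ok in results if ok)
    both = sum(1 for value, ok in results if value <= cutoff and ok)
    return tuple(100.0 * count / n for count in (accurate, valid, both))

# Toy data (hypothetical, not taken from the benchmark study)
toy = [(0.8, True), (1.5, False), (3.2, True), (1.9, True)]
print(success_rates(toy))
```

The third value is the combined success rate: a pose counts only if it is both accurate and physically plausible, which is why a method can score well on one column and poorly overall.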

# A Protocol for Structure-Based Virtual Screening

The following workflow outlines a generalized protocol for a large-scale docking screen, synthesizing best practices for hit identification [3].

Target and Library Preparation

- Protein Structure Preparation: Begin with a high-resolution 3D structure of the target protein, typically from the Protein Data Bank (PDB) [3]. The quality of the receptor structure significantly influences docking calculations, with better results often observed from higher-resolution crystal structures [1]. Protonation states and missing residues should be addressed.

- Compound Library Curation: Select a screening library, such as the publicly available ZINC database of commercially available compounds [3]. For benchmarking, use the Directory of Useful Decoys (DUD), whose decoy molecules are physically similar to known ligands but topologically distinct from them, providing a more stringent test by reducing bias in the enrichment factor [4].

Docking Configuration and Control Calculations

- Control Docking: Before the full screen, dock a set of known active ligands and decoys against the target [3]. This step is critical to validate the docking setup and assess its ability to discriminate binders from non-binders, a process known as enrichment [4].

- Parameter Optimization: Based on the control results, refine docking parameters such as the search space and scoring function weights to ensure they are suitable for your specific target [3].
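
Enrichment from a control docking run is commonly summarized as an enrichment factor. The sketch below uses one common definition (hit rate in the top-ranked fraction divided by the hit rate in the whole set) and assumes that lower docking scores are better; the function name and the toy scores are hypothetical.

```python
def enrichment_factor(scored, fraction=0.01):
    """scored: list of (docking_score, is_active); lower score means better rank.

    EF = (actives in top fraction / size of top fraction) / (all actives / all compounds)
    """
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    ranked = sorted(scored, key=lambda item: item[0])
    n_top = max(1, round(len(ranked) * fraction))
    actives_top = sum(1 for _, active in ranked[:n_top] if active)
    actives_all = sum(1 for _, active in ranked if active)
    if actives_all == 0:
        raise ValueError("the control set contains no actives")
    return (actives_top / n_top) / (actives_all / len(ranked))

# Toy control set: 2 known actives and 8 decoys (hypothetical scores)
control = [(-9.5, True), (-7.0, True), (-9.0, False), (-8.0, False), (-6.5, False),
           (-6.0, False), (-5.5, False), (-5.0, False), (-4.5, False), (-4.0, False)]
print(enrichment_factor(control, fraction=0.2))
```

A value above 1 means actives are concentrated near the top of the ranking; a value near 1 means the setup does no better than random selection and the parameters deserve another look.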

Large-Scale Docking and Hit Prioritization

- Execution: Run the docking program against the entire prepared compound library. With modern computational resources, screening hundreds of millions of compounds is feasible [3].

- Analysis: Rank the output compounds based on their predicted binding affinity (docking score). Visually inspect the predicted binding poses of the top-ranking compounds to check for sensible interactions (e.g., hydrogen bonds, hydrophobic contacts) and physical plausibility [2]. The prioritized hit list should proceed to experimental validation.

Table 3: Key Research Reagents and Computational Tools

| Item Name | Function / Application | Relevant Details |

|---|---|---|

| Directory of Useful Decoys (DUD) [4] | A bias-corrected benchmarking set for evaluating virtual screening performance. | Contains 2,950 ligands across 40 targets, with 36 property-matched decoys per ligand, to provide a stringent test for docking enrichment. |

| ZINC Database [4] [3] | A public database of commercially available compounds for virtual screening. | A primary source for "drug-like" molecules to build screening libraries. |

| PoseBusters [2] | A validation toolkit to evaluate the physical plausibility of docking predictions. | Systematically checks predicted poses for chemical and geometric consistency, including bond lengths, angles, and protein-ligand clashes. |

| AutoDock Vina [2] [1] | A widely used molecular docking program. | An example of a traditional physics-based method with a hybrid scoring function and efficient optimization. |

| Glide [2] [1] | A high-performance docking tool from Schrödinger. | Noted for its exceptional performance in maintaining physical validity (e.g., >94% PB-valid rates across benchmarks) [2]. |

| Deep Learning Docking (e.g., SurfDock) [2] | Next-generation docking using generative or regression models. | Offers superior pose accuracy (e.g., >90% on known complexes) but may produce physically implausible structures, requiring careful validation [2]. |

# Application Note: Docking in Hit Discovery and Optimization

Molecular docking transforms drug discovery by enabling the predictive screening of vast chemical libraries, prioritizing lead compounds for synthesis, and optimizing drug candidates based on their interaction with target proteins [1]. A practical application is identifying natural product ligands for therapeutic targets, such as using flavonol glycosides from Eruca sativa as potential peroxisome proliferator-activated receptor-alpha (PPAR-α) agonists to improve skin barrier function [5]. In such studies, molecular docking simulations can predict how these flavonols bind to the PPAR-α ligand-binding domain, providing a structural basis for their observed agonistic activity and guiding the rational design of more potent analogs [5].

While powerful, molecular docking alone is insufficient to ensure the safety and efficacy of a drug candidate. It predicts binding affinity and interaction but does not account for pharmacokinetics, toxicity, off-target effects, or in vivo behavior [6]. Therefore, follow-up validation through molecular dynamics simulation, ADMET profiling, and in vitro and in vivo experiments remains essential [6].

Molecular docking is an indispensable tool in modern computational drug discovery, enabling researchers to predict how a small molecule ligand interacts with a protein target at the atomic level. The reliability of docking predictions hinges on two fundamental computational components: sampling algorithms, which explore possible ligand conformations and orientations within the protein's binding site, and scoring functions, which evaluate and rank these potential binding modes to predict the most biologically relevant complex [7] [8]. In the context of virtual screening, where thousands to millions of compounds are evaluated in silico, the balanced performance of these components directly impacts the success rate of identifying true hits while managing computational resources [9] [10]. This application note details the core principles, current methodologies, and practical protocols for these essential elements, providing a framework for their effective implementation in structure-based drug discovery pipelines.

Core Component I: Sampling Algorithms

Sampling algorithms address the challenge of exploring the vast conformational and positional space available to a ligand within a protein's binding site. The goal is to generate a set of plausible binding modes, or "poses," that includes near-native configurations resembling the experimentally determined structure.

Classification and Foundations

Sampling algorithms can be broadly categorized by their search strategy and how they handle molecular flexibility. The earliest methods treated both ligand and protein as rigid bodies, searching only six degrees of translational and rotational freedom [7]. While fast, this "lock-and-key" approach has largely been superseded by methods that account for ligand flexibility, and more recently, partial or full receptor flexibility, in line with the "induced-fit" theory of binding [7].

Table 1: Major Classes of Sampling Algorithms in Molecular Docking

| Algorithm Class | Key Principle | Representative Software | Advantages | Limitations |

|---|---|---|---|---|

| Matching Algorithms | Maps ligand into active site based on shape complementarity and chemical features [7]. | DOCK [7], FLOG [7], LibDock [7] | High computational speed, suitable for virtual screening [7]. | Limited handling of flexibility, risk of overlooking valid poses. |

| Incremental Construction | Divides ligand into fragments; docks base fragment and rebuilds ligand incrementally [7]. | FlexX [7], DOCK 4.0 [7] | Efficient handling of ligand flexibility. | Performance can depend on choice of base fragment. |

| Monte Carlo (MC) | Generates new poses via random transformations; accepts or rejects based on energy criteria [7]. | AutoDock (early versions) [7], ICM [7] | Ability to escape local energy minima. | May require many iterations for convergence. |

| Genetic Algorithms (GA) | Encodes poses as "chromosomes"; evolves populations using mutation and crossover [7]. | AutoDock [7], GOLD [7] | Effective search of high-dimensional space. | Computationally intensive; many parameters to tune. |

| Molecular Dynamics (MD) | Simulates physical movements of atoms over time under classical mechanics [7]. | Various (often for refinement) [7] | Most accurate physical model, can model full flexibility. | Extremely high computational cost, poor at crossing energy barriers. |
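
The accept-or-reject step of the Monte Carlo row can be illustrated with the Metropolis criterion. That criterion is an assumption here (the table only says "energy criteria"), and the quadratic `toy_energy` is a hypothetical stand-in for a real scoring function; the temperature is expressed directly in energy units.

```python
import math
import random

def metropolis_accept(delta_e, temperature, rng):
    """Always accept downhill moves; accept uphill moves with probability exp(-dE / T)."""
    if delta_e <= 0:
        return True
    return rng.random() < math.exp(-delta_e / temperature)

def monte_carlo_search(energy, start, step=0.5, n_steps=2000, temperature=1.0, seed=0):
    """Random-walk search that tracks the lowest-energy pose visited."""
    rng = random.Random(seed)
    current, e_current = list(start), energy(start)
    best, e_best = list(current), e_current
    for _ in range(n_steps):
        # Random transformation: perturb every coordinate of the pose
        trial = [x + rng.uniform(-step, step) for x in current]
        e_trial = energy(trial)
        if metropolis_accept(e_trial - e_current, temperature, rng):
            current, e_current = trial, e_trial
            if e_current < e_best:
                best, e_best = list(current), e_current
    return best, e_best

def toy_energy(pose):
    # Hypothetical scoring function with a single minimum at (1, -2, 0.5)
    target = (1.0, -2.0, 0.5)
    return sum((p - t) ** 2 for p, t in zip(pose, target))

best_pose, best_energy = monte_carlo_search(toy_energy, [5.0, 5.0, 5.0])
print(best_pose, best_energy)
```

Because uphill moves are sometimes accepted, the walk can leave a local minimum, which is the advantage listed in the table; the price is that many steps may be needed before the best pose is visited.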

Practical Considerations for Algorithm Selection

The choice of a sampling algorithm involves trade-offs between computational speed, accuracy, and the biological system's complexity. For large-scale virtual screening, matching algorithms or incremental construction methods offer a favorable balance of speed and accuracy [7]. For more precise pose prediction, especially for ligands with high flexibility, stochastic methods like Genetic Algorithms are often preferred [11] [7]. A powerful modern approach is algorithm selection, which uses machine learning to choose the best algorithm for a specific protein-ligand pairing, acknowledging that no single algorithm is optimal for all systems [11].

Core Component II: Scoring Functions

Once a set of candidate poses is generated, scoring functions are used to evaluate and rank them. Their primary roles are pose prediction (identifying the correct binding mode) and binding affinity prediction (estimating the strength of the interaction) [8] [10].

Types of Scoring Functions

Scoring functions are typically classified into four main categories, each with a distinct theoretical foundation.

Table 2: Categories of Scoring Functions for Protein-Ligand Docking

| Function Type | Fundamental Principle | Typical Energy Terms | Advantages | Disadvantages |

|---|---|---|---|---|

| Physics-Based | Based on classical molecular mechanics force fields [12] [8]. | Van der Waals, electrostatic interactions, implicit solvation [12] [13]. | Strong physical basis, transferable. | Computationally expensive; sensitive to inaccuracies in force fields and solvation models. |

| Empirical | Fits weighted energy terms to experimental binding affinity data [12] [8]. | Hydrogen bonds, hydrophobic contacts, rotatable bond penalty, clash term [12]. | Fast calculation, optimized for known data. | Risk of overfitting; limited transferability to novel target classes. |

| Knowledge-Based | Derives potentials from statistical analysis of atom-pair frequencies in structural databases [12] [8]. | Pairwise atomic contact potentials [11] [12]. | Good balance of speed and accuracy [13]. | Quality depends on database size and diversity; physical interpretation is indirect. |

| Machine Learning (ML)-Based | Learns complex relationship between structural features and binding affinity without a pre-defined functional form [12] [10]. | Various structural and chemical descriptors (e.g., intermolecular contacts) [10]. | High predictive accuracy on diverse test sets; ability to capture complex patterns [12]. | Black-box nature; requires large, high-quality training data; potential for overfitting [12]. |
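
To make the "weighted energy terms" of the empirical row concrete, the sketch below scores a pose as a weighted sum of interaction counts. The term names and weights are hypothetical and chosen only for illustration; real empirical functions fit their weights to experimental binding affinities and often use more elaborate functional forms.

```python
# Hypothetical weights (arbitrary units); negative values reward, positive values penalize.
WEIGHTS = {
    "hbond": -0.6,            # per hydrogen bond
    "hydrophobic": -0.04,     # per hydrophobic contact
    "clash": 0.8,             # per steric clash
    "rotatable_bonds": 0.06,  # flexibility penalty per rotatable bond
}

def empirical_score(terms, weights=WEIGHTS):
    """Weighted sum of per-pose interaction terms; lower is better."""
    missing = set(weights) - set(terms)
    if missing:
        raise KeyError(f"missing terms: {sorted(missing)}")
    return sum(weights[name] * terms[name] for name in weights)

# Two hypothetical poses of the same ligand
pose_a = {"hbond": 3, "hydrophobic": 40, "clash": 0, "rotatable_bonds": 5}
pose_b = {"hbond": 1, "hydrophobic": 55, "clash": 2, "rotatable_bonds": 5}

for name, pose in (("pose_a", pose_a), ("pose_b", pose_b)):
    print(name, round(empirical_score(pose), 2))
```

With these illustrative weights, the clash-free pose with more hydrogen bonds scores better, which mirrors how such functions rank candidate poses.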

Advancements in Machine Learning Scoring Functions

ML-based scoring functions represent a significant shift from classical functions. They consistently outperform classical functions in binding affinity prediction and virtual screening tasks [12] [10]. For example, the OnionNet-SFCT model uses an AdaBoost random forest trained on protein-ligand intermolecular contacts and serves as a correction term to the empirical Vina score, significantly improving pose prediction and virtual screening enrichment [10]. A key to robust ML-scoring functions is training them on diverse docking poses rather than only on crystal structures, which improves their ability to discriminate between native and non-native poses [10].

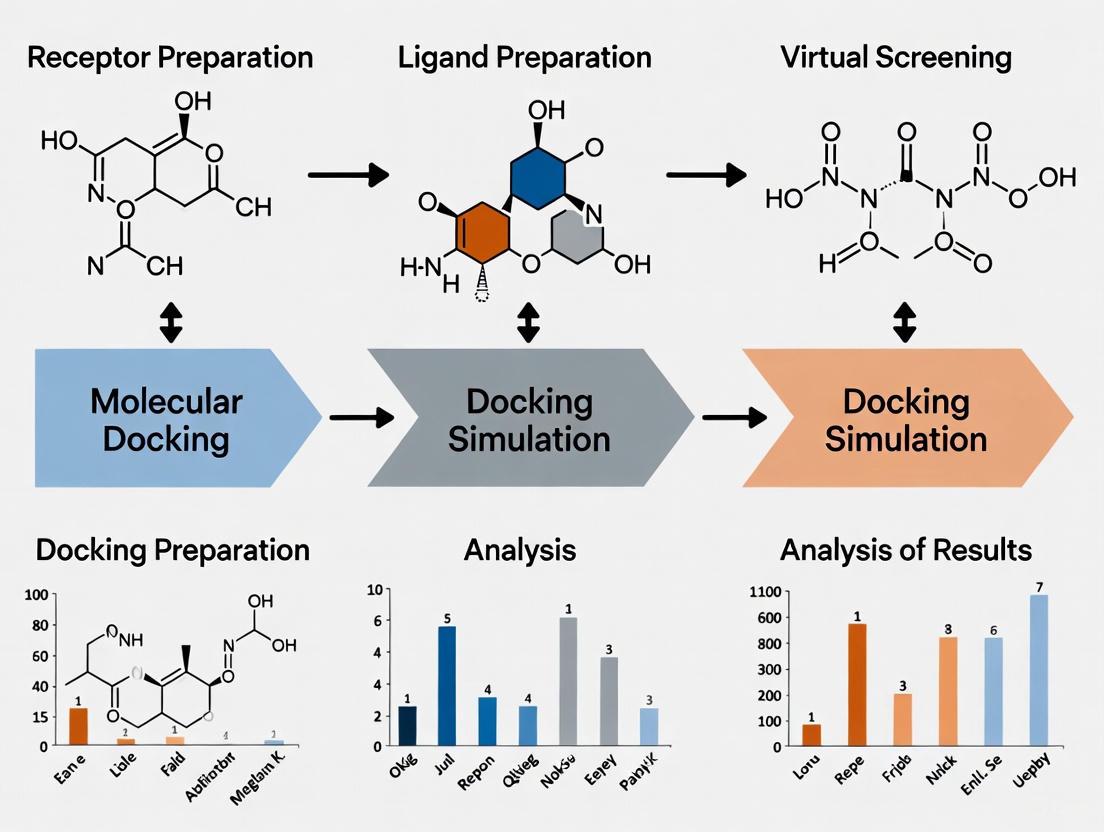

Integrated Docking Workflow

A typical molecular docking protocol integrates both sampling and scoring into a cohesive workflow, often implemented in automated pipelines for virtual screening [9]. The diagram below illustrates the logical flow and decision points in a standard docking experiment.

Diagram 1: Standard molecular docking workflow, illustrating the sequential steps from system preparation to result analysis.

Application Notes & Experimental Protocol

This protocol outlines the steps for performing a virtual screening experiment using AutoDock Vina, a widely used docking program that employs a hybrid stochastic search and an empirical scoring function [9] [10].

Protocol: Virtual Screening of a Compound Library

Objective: To identify potential hit compounds from a library of natural products against a target protein (e.g., New Delhi metallo-β-lactamase-1, NDM-1) [14].

Software Requirements: Unix-like command line environment, AutoDock Vina, Python with the RDKit and scikit-learn (sklearn) libraries, and visualization software (e.g., PyMOL) [9] [14].

Step-by-Step Methodology:

Protein Preparation:

Ligand Library Preparation:

- Source a library of compounds (e.g., 4,561 natural products from ChemDiv) [14].

- Generate 3D structures for each compound and minimize their energy using a force field (e.g., MMFF94 with OpenBabel) [14].

- For each ligand, generate multiple probable tautomers and ionization states at physiological pH.

- Convert all prepared ligands to PDBQT format.

Grid Box Configuration:

- Define the docking search space. If the binding site is known, center the grid box on the native ligand's position.

- Use AutoDockTools to set the grid box center and size. Example for NDM-1: center (x=2.19, y=-40.58, z=2.22) with size (20Å × 16Å × 16Å) [14].

- Save the configuration to a text file.
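
A configuration file for the example box could be generated as sketched below. The keys are standard Vina configuration options; the receptor file name is hypothetical, and mapping the 20 × 16 × 16 Å size onto x, y, z in that order is an assumption.

```python
def vina_config(receptor, center, size, exhaustiveness=10, num_modes=10):
    """Build the text of an AutoDock Vina configuration file."""
    lines = [
        f"receptor = {receptor}",
        f"center_x = {center[0]}",
        f"center_y = {center[1]}",
        f"center_z = {center[2]}",
        f"size_x = {size[0]}",
        f"size_y = {size[1]}",
        f"size_z = {size[2]}",
        f"exhaustiveness = {exhaustiveness}",
        f"num_modes = {num_modes}",
    ]
    return "\n".join(lines) + "\n"

# NDM-1 example values from the step above; "ndm1_receptor.pdbqt" is a placeholder name
config_text = vina_config("ndm1_receptor.pdbqt", (2.19, -40.58, 2.22), (20, 16, 16))
print(config_text)
```

Writing `config_text` to `config.txt` (for example with `pathlib.Path("config.txt").write_text(config_text)`) gives a file that can be passed to Vina through `--config`.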

Docking Execution:

- Run AutoDock Vina in batch mode for the entire library using the prepared configuration.

- Use an exhaustiveness value of 10-20 to balance speed and accuracy [14].

- Generate multiple poses (e.g., 10) per ligand to capture a range of binding modes.

- Command example:

vina --config config.txt --ligand ligand.pdbqt --out docked_ligand.pdbqt --log log.txt
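
Batch mode can be as simple as looping the same command over every ligand file. The sketch below assumes a `vina` executable on the PATH and one PDBQT file per ligand; the directory layout and function names are hypothetical. The `--log` option is left out because newer Vina releases may not accept it; add it back if the installed version does.

```python
import subprocess
from pathlib import Path

def vina_command(ligand, out_dir, config="config.txt", vina="vina"):
    """Build the argument list for docking a single ligand."""
    ligand = Path(ligand)
    out_file = Path(out_dir) / f"{ligand.stem}_docked.pdbqt"
    return [vina, "--config", str(config), "--ligand", str(ligand), "--out", str(out_file)]

def dock_library(ligand_dir, out_dir, config="config.txt", dry_run=True):
    """Dock every *.pdbqt file in ligand_dir; with dry_run=True only build the commands."""
    commands = [vina_command(path, out_dir, config)
                for path in sorted(Path(ligand_dir).glob("*.pdbqt"))]
    if not dry_run:
        Path(out_dir).mkdir(parents=True, exist_ok=True)
        for command in commands:
            # check=True stops the batch on the first failed docking run
            subprocess.run(command, check=True)
    return commands

example = vina_command("ligands/lig001.pdbqt", "docked")
print(" ".join(example))
```

Keeping `dry_run=True` as the default makes it easy to inspect the generated commands before committing compute time to a full library.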

Post-Docking Analysis:

- Pose Ranking: Rank all generated poses from the entire library based on the normalized Vina score (binding affinity in kcal/mol) [14].

- Cluster Analysis: Perform Tanimoto similarity-based clustering on top-ranked compounds using RDKit and k-means clustering to prioritize chemotypes [14].

- Visual Inspection: Visually inspect the top-ranked poses of promising candidates for key interactions (e.g., hydrogen bonds, hydrophobic contacts, metal coordination).
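
The ranking step can be sketched by reading the `REMARK VINA RESULT` lines that Vina writes into its output PDBQT files. The example uses raw scores rather than the normalized score mentioned above, and it shows a plain set-based Tanimoto coefficient in place of RDKit fingerprints; the ligand names and toy file contents are hypothetical.

```python
import re

# First number after "REMARK VINA RESULT:" is the predicted affinity in kcal/mol
RESULT_RE = re.compile(r"^REMARK VINA RESULT:\s+(-?\d+(?:\.\d+)?)", re.MULTILINE)

def best_vina_score(pdbqt_text):
    """Return the most favorable (lowest) affinity found in one Vina output file."""
    scores = [float(value) for value in RESULT_RE.findall(pdbqt_text)]
    if not scores:
        raise ValueError("no 'REMARK VINA RESULT' lines found")
    return min(scores)

def rank_ligands(outputs):
    """outputs: dict of ligand name -> output text. Returns (name, score) pairs, best first."""
    scored = ((name, best_vina_score(text)) for name, text in outputs.items())
    return sorted(scored, key=lambda item: item[1])

def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient between two fingerprints given as collections of on-bit indices."""
    a, b = set(bits_a), set(bits_b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0

# Hypothetical, heavily truncated output files
outputs = {
    "lig_a": "MODEL 1\nREMARK VINA RESULT:      -7.5      0.000      0.000\nENDMDL\n"
             "MODEL 2\nREMARK VINA RESULT:      -7.1      1.812      2.447\nENDMDL\n",
    "lig_b": "MODEL 1\nREMARK VINA RESULT:      -8.2      0.000      0.000\nENDMDL\n",
}
ranking = rank_ligands(outputs)
print(ranking)
print(tanimoto({1, 2, 3}, {2, 3, 4}))
```

Pairwise Tanimoto values such as the one printed here are the similarity measure that a clustering step would use to group the top-ranked compounds into chemotypes.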

The Scientist's Toolkit: Key Research Reagents and Software

Table 3: Essential Computational Tools for Molecular Docking

| Tool / Resource | Type | Primary Function | Application Note |

|---|---|---|---|

| AutoDock Vina [10] [14] | Docking Software | Performs sampling and scoring of ligands. | Balances speed and accuracy; ideal for virtual screening. |

| Glide (Schrödinger) [15] | Docking Software | Uses hierarchical filters and empirical scoring. | High pose prediction accuracy; suitable for lead optimization. |

| GOLD [7] | Docking Software | Uses Genetic Algorithm for sampling. | Robust handling of ligand flexibility. |

| RDKit [14] | Cheminformatics Library | Handles ligand preparation, descriptor calculation, and clustering. | Essential for preprocessing and post-analysis in Python scripts. |

| PDBbind [10] | Database | Curated database of protein-ligand complexes with binding affinities. | Used for training and benchmarking scoring functions. |

| DUD-E / DUD-AD [10] | Benchmark Dataset | Datasets for evaluating virtual screening enrichment. | Used to validate the screening power of a docking protocol. |

| OpenBabel [14] | Chemical Toolbox | Converts file formats and performs ligand energy minimization. | Prepares ligand structures for docking. |

The synergistic performance of sampling algorithms and scoring functions dictates the success of molecular docking in drug discovery. While classical methods remain robust and widely used, the field is increasingly leveraging machine learning to enhance both sampling efficiency, through per-instance algorithm selection, and scoring accuracy, via models trained on large, diverse structural datasets [11] [10]. For researchers, the optimal docking strategy involves careful consideration of the biological question, the available computational resources, and the known limitations of each method. Validating protocols against systems with known experimental outcomes is crucial. The continued integration of more sophisticated ML models and the inclusion of full receptor flexibility promise to further elevate docking from a valuable predictive tool to an even more reliable cornerstone of structure-based drug design.

In the realm of molecular modelling and structure-based drug design, molecular docking predicts the preferred orientation of one molecule to another when bound to form a stable complex [16]. The universe of all possible spatial arrangements of a molecule is its conformational space. Exhaustively exploring this space is a fundamental challenge, as its size grows exponentially with the number of degrees of freedom [17]. Among the various strategies developed, systematic search methods represent a rigorous, grid-based approach that, in principle, can identify all sterically allowed conformations within the resolution of a defined grid, free from the path-dependency and local minima entrapment that can plague stochastic methods [18].

This article details the application of systematic search protocols within the context of virtual screening campaigns. We provide a foundational overview of the method, present quantitative data comparing different search strategies, and offer a detailed protocol for implementing a systematic conformational search using the Z Module in CHARMM, illustrated with a specific application to protein structure prediction.

Theoretical Foundation and Key Concepts

Systematic search operates by dividing the variables governing molecular conformation—typically torsion angles—into a regular grid [18]. Each point on this multi-dimensional grid is evaluated in a defined sequence. This "parallel-generation" approach, where many trial structures are generated from a common starting point, ensures unbiased and even sampling of the conformational space [17]. This is a key advantage over "serial-generation" procedures like Monte Carlo simulations, where uneven sampling can introduce systematic errors [17].
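
A grid-based search over torsion angles can be sketched in a few lines. This is an illustration of the general idea rather than the CHARMM implementation: the three-fold `toy_energy` is a hypothetical stand-in for a real energy function, and every grid point is generated from the same starting definition and evaluated in a fixed order.

```python
import itertools
import math

def torsion_grid(n_torsions, spacing_deg):
    """Yield every combination of torsion angles on a regular grid over 0-360 degrees."""
    if 360 % spacing_deg:
        raise ValueError("spacing must divide 360 evenly")
    values = range(0, 360, spacing_deg)
    return itertools.product(values, repeat=n_torsions)

def toy_energy(angles):
    # Hypothetical three-fold torsional term; minima at 60, 180 and 300 degrees
    return sum(1 + math.cos(math.radians(3 * a)) for a in angles)

def systematic_search(n_torsions, spacing_deg, energy=toy_energy):
    """Evaluate every grid point and return the lowest-energy conformation."""
    return min(torsion_grid(n_torsions, spacing_deg), key=energy)

best = systematic_search(n_torsions=2, spacing_deg=30)
print(best, toy_energy(best))
```

The search is deterministic and visits each grid point exactly once, so repeated runs return the same answer; the number of points, however, grows as a power of the number of torsions.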

The method is particularly powerful in hierarchical build-up procedures, where the conformations of smaller molecular fragments are determined first and then combined to model larger structures [17]. Furthermore, systematic search frameworks can seamlessly integrate statistical information, such as rotamer libraries for protein side chains, to improve efficiency and biological relevance [17].

Table 1: Core Concepts in Conformational Space Exploration.

| Concept | Description | Implication for Docking |

|---|---|---|

| Conformational Space | The set of all possible spatial arrangements (conformations) of a molecule. | The search space for docking is vast; exhaustive exploration is often computationally infeasible for large systems [17]. |

| Systematic Search | A method that samples conformation space by evaluating structures at regular intervals (a grid) across torsional degrees of freedom [18]. | Provides unbiased, complete sampling within grid resolution; avoids missing low-energy regions [17]. |

| Hierarchical Build-up | A strategy where larger structures are assembled from pre-determined low-energy conformations of smaller fragments [17]. | Makes large conformational search problems tractable by breaking them into smaller, manageable sub-problems. |

| Rotamer Libraries | Databases of statistically favored side-chain conformations derived from known protein structures. | Can be integrated into systematic searches to constrain the search to biologically probable states, enhancing efficiency [17]. |

Comparative Analysis of Search Methodologies

While systematic search is powerful, it is one of several strategies for conformational analysis. The choice of method often involves a trade-off between sampling completeness and computational cost.

Table 2: Comparison of Conformational Space Search Methodologies.

| Method | Principle | Advantages | Limitations |

|---|---|---|---|

| Systematic Search | Regular, grid-based sampling of dihedral angles [18]. | Unbiased, comprehensive sampling; not prone to missing minima; deterministic results [17]. | Computational cost grows exponentially with number of rotatable bonds (curse of dimensionality) [17]. |

| Molecular Dynamics (MD) | Simulates physical motions of atoms over time based on classical mechanics. | Accounts for true dynamics and thermodynamics; uses physical force fields [16]. | Computationally expensive; sampling is time-dependent and may be slow to escape local minima. |

| Monte Carlo / Stochastic | Random changes to conformation; acceptance based on energy criteria [17]. | Can overcome energy barriers; more efficient than MD for some problems. | Sampling can be uneven and path-dependent; may miss important regions; results can vary between runs [17]. |

| Genetic Algorithms | Uses evolutionary principles (mutation, crossover) to evolve populations of conformations [16]. | Effective for exploring large, complex search spaces; allows for ligand and limited protein flexibility. | Requires multiple runs; can be slower than shape-based methods for high-throughput virtual screening [16]. |

The following workflow diagram illustrates the logical decision process for selecting and applying a systematic search method, highlighting its role in a broader structure prediction pipeline.

Application Notes: Protocol for a Systematic Conformational Search

This section provides a detailed protocol for performing a systematic conformational search, exemplified by the Z Method as implemented in the CHARMM program [17]. The following case study demonstrates a specific application.

Case Study: 36-Dimensional Prediction of the CheY Protein Structure

The Z Method was applied to predict the tertiary structure of the signal transduction protein CheY (128 residues), comprising 5 α-helices and 5 β strands [17].

- Objective: To predict the native structure of CheY starting from its amino acid sequence and known secondary structure elements (SSEs).

- Challenge: The conformational space was defined by 36 dihedral angles (4 per loop × 9 loops), making an exhaustive grid search at a high resolution computationally prohibitive (e.g., a 10° grid spacing would result in ~10⁴² points) [17].

- Solution: A hierarchical build-up procedure was employed to make the problem tractable [17].
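
The size of such a grid follows from raising the number of values per angle to the power of the number of angles. The sketch below works in log10 to avoid huge integers; the `span_deg` parameter is an assumption added for illustration, since the count depends strongly on whether each angle covers the full 360° or a restricted range.

```python
import math

def log10_grid_points(n_angles, spacing_deg, span_deg=360.0):
    """log10 of the number of grid points: (span / spacing) ** n_angles."""
    values_per_angle = span_deg / spacing_deg
    return n_angles * math.log10(values_per_angle)

# 36 angles on a 10-degree grid, assuming each angle spans the full 360 degrees
full_range = log10_grid_points(36, 10)
# Number of values per angle that would give a total of about 10**42 points
values_for_1e42 = 10 ** (42 / 36)
print(round(full_range, 1), round(values_for_1e42, 1))
```

Under the full-range assumption the exponent comes out near 56, while a total near 10⁴² corresponds to roughly 15 values per angle, for example when angle ranges are restricted. Either way the count is far beyond what can be enumerated, which is the motivation for the hierarchical build-up.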

Experimental Protocol

Step 1: System Setup and Parameterization

- Software: CHARMM molecular simulation program with the Z Module [17].

- Atomic Model: A polar hydrogen representation was used.

- Energy Function: The EEF1 implicit solvation energy function was used to evaluate the energy of each trial conformation [17].

- Initial Structure: The internal coordinates of the ten SSEs were held fixed. The conformational search focused on the dihedral angles in the nine loops connecting these SSEs.

Step 2: Hierarchical Build-up Procedure

- Fragment Generation: The protein was divided into smaller fragments, each containing a subset of the SSEs.

- Systematic Search on Fragments: For each fragment, a systematic search was performed over its relevant subset of dihedral angles. The Z Method was used to generate trial structures by applying grid-based changes to the torsion angles.

- Conformer Selection: For each fragment, a library of low-energy conformations was retained for the next stage.

- Fragment Assembly: Larger fragments were constructed by combining the low-energy conformers of smaller fragments, followed by another round of systematic search and minimization to optimize the interface between them.

- Final Assembly: This process was repeated iteratively until the complete protein structure was assembled.
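
The build-up idea can be shown on a toy problem with four angles split into two fragments. This is a simplified sketch, not the Z Module: the energy terms are hypothetical, and the extra round of search and minimization at the fragment interface described above is reduced to a single rescoring of the combined conformers.

```python
import itertools
import math

GRID = range(0, 360, 60)  # coarse 60-degree grid for illustration

def local_energy(angles):
    # Hypothetical per-angle term with its minimum at 120 degrees
    return sum(1 - math.cos(math.radians(a - 120)) for a in angles)

def full_energy(angles):
    # Local terms plus a hypothetical coupling across the fragment interface
    half = len(angles) // 2
    coupling = 0.5 * (1 - math.cos(math.radians(angles[half - 1] - angles[half])))
    return local_energy(angles) + coupling

def search_fragment(n_angles, keep):
    """Systematic search over one fragment; retain the `keep` lowest-energy conformers."""
    grid = itertools.product(GRID, repeat=n_angles)
    return sorted(grid, key=local_energy)[:keep]

def assemble(conformers_a, conformers_b):
    """Combine retained conformers of two fragments and rescore with the interface term."""
    combined = (a + b for a in conformers_a for b in conformers_b)
    return min(combined, key=full_energy)

library_a = search_fragment(n_angles=2, keep=5)
library_b = search_fragment(n_angles=2, keep=5)
best = assemble(library_a, library_b)
print(best, full_energy(best))
```

Here the two fragment searches evaluate 36 structures each and the assembly another 25, compared with 6⁴ = 1,296 for a direct four-angle grid; the saving grows rapidly with the number of angles, at the cost of possibly discarding a fragment conformer that only becomes favorable in the assembled structure.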

Step 3: Final Refinement and Analysis

- A final energy minimization was performed on the fully assembled structure.

- The predicted structure was compared to the experimentally determined native structure by calculating the Root-Mean-Square Deviation (RMSD) of atomic positions.

Key Outcomes

- Accuracy: The final predicted structure was within 1.56 Å RMSD of the native CheY structure [17].

- Computational Cost: The entire prediction was completed in approximately two-and-a-half days on AMD Opteron processors [17].

- Performance vs. Other Methods: In the same study, Monte Carlo and simulated annealing trials on smaller versions of the problem using the same energy model resulted in less accurate predictions, highlighting the utility of unbiased systematic sampling [17].

The Scientist's Toolkit: Essential Research Reagents and Software

Table 3: Key Software and Computational Resources for Systematic Conformational Searches.

| Tool / Resource | Type | Primary Function in Systematic Search |

|---|---|---|

| CHARMM / Z Module | Molecular Simulation Software | Provides a versatile platform for implementing systematic, grid-based conformational search protocols; enables hierarchical build-up and use of conformer libraries [17]. |

| TINKER / scan | Molecular Modeling Suite | Performs systematic conformational searches by combining large torsional motion with local geometry optimization; allows manual or automatic selection of dihedral angles to rotate [19]. |

| EEF1 | Implicit Solvation Energy Function | An efficient energy function used to evaluate trial conformations, accounting for solvation effects without the cost of explicit water molecules [17]. |

| Rotamer Libraries | Database | Libraries of statistically favored side-chain conformations that can be used to constrain or guide the systematic search, improving biological relevance and computational efficiency [17]. |

Workflow Visualization of the Z Method Protocol

The following diagram details the specific workflow of the Z Method protocol as applied in the CheY case study, from initialization to the final predicted structure.

Molecular docking is a cornerstone technique in computer-aided drug design (CADD) that predicts the preferred orientation and binding affinity of a small molecule (ligand) when bound to a target macromolecule (receptor), such as a protein [20] [1]. The primary challenge in molecular docking is efficiently searching the vast conformational space of the ligand within the receptor's binding site to find the optimal binding pose, a problem that is computationally complex due to the numerous degrees of freedom involved [21]. Stochastic search methods provide powerful solutions to this challenge by using probabilistic algorithms to explore the search space efficiently without requiring an exhaustive, and often infeasible, systematic search [22] [23].

Two of the most prominent stochastic methods employed in docking programs are Genetic Algorithms (GAs) and Monte Carlo (MC) simulations. These methods are particularly valued for their ability to handle flexible ligand docking and their robust global search capabilities, which help in avoiding local minima during optimization [22] [21]. Their integration into docking software has been crucial for the virtual screening of ultra-large chemical libraries, a practice that has become increasingly important in modern drug discovery [24].

Theoretical Foundations of Genetic Algorithms and Monte Carlo Methods

Genetic Algorithms (GAs)

Genetic Algorithms are a class of evolutionary algorithms inspired by the process of natural selection [22] [25]. In the context of molecular docking, a GA treats the ligand's conformation and orientation as an individual's "genetic code." This code typically includes translational, rotational, and torsional degrees of freedom [21]. The algorithm operates through an iterative process of selection, crossover, and mutation to evolve a population of potential solutions toward an optimal binding pose.

The core steps of a GA in molecular docking are:

- Initialization: A population of random ligand poses is generated within the receptor's binding site.

- Evaluation: Each pose is scored using a fitness function, typically the docking scoring function that estimates binding affinity.

- Selection: Poses with better fitness scores are preferentially selected for reproduction.

- Crossover: Genetic material from two parent poses is combined to create offspring poses.

- Mutation: Random changes are introduced to poses to maintain population diversity.

- Generational Replacement: The old population is replaced with the new generation of offspring, and the process repeats.

GAs are particularly effective for docking problems with highly flexible ligands, as they can efficiently explore complex energy landscapes [21]. The Lamarckian Genetic Algorithm used in AutoDock represents a notable variant, where local search is integrated to refine solutions within generations [21].
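The steps listed above can be sketched in a few lines of Python. This is a minimal illustration, not the implementation used by any docking program: `score` is a hypothetical toy function standing in for a docking score, the seven "genes" loosely mimic three translations, three rotations, and one torsion, and binary tournament selection, one-point crossover, Gaussian mutation, and elitism are assumed design choices.

```python
import math
import random

def score(pose):
    """Toy stand-in for a docking score (lower is better); global minimum at all zeros."""
    return sum(x * x - 10.0 * math.cos(2.0 * math.pi * x) + 10.0 for x in pose)

def tournament(population, rng):
    """Selection: return the better of two randomly drawn poses."""
    a, b = rng.sample(population, 2)
    return a if score(a) < score(b) else b

def genetic_search(n_genes=7, pop_size=40, generations=50, mutation_rate=0.2, seed=0):
    rng = random.Random(seed)
    # Initialization: random "poses" (e.g. 3 translations, 3 rotations, 1 torsion).
    population = [[rng.uniform(-5.0, 5.0) for _ in range(n_genes)]
                  for _ in range(pop_size)]
    best = min(population, key=score)
    history = [score(best)]  # best score per generation
    for _ in range(generations):
        offspring = [best[:]]  # assumption: keep the best pose unchanged (elitism)
        while len(offspring) < pop_size:
            p1, p2 = tournament(population, rng), tournament(population, rng)
            cut = rng.randrange(1, n_genes)  # one-point crossover
            child = p1[:cut] + p2[cut:]
            # Mutation: perturb each gene with a small probability.
            child = [g + rng.gauss(0.0, 0.3) if rng.random() < mutation_rate else g
                     for g in child]
            offspring.append(child)
        population = offspring  # generational replacement
        best = min(population, key=score)
        history.append(score(best))
    return best, history
```

Calling `genetic_search()` returns the best pose found and the best score per generation. Because the best pose is carried over unchanged, that score never gets worse from one generation to the next.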

Monte Carlo (MC) Simulations

Monte Carlo methods rely on random sampling to explore the conformational space [22] [26]. The fundamental principle involves generating random changes to the ligand's pose and accepting or rejecting these changes based on a probabilistic criterion.

The Metropolis criterion is the most common acceptance rule in MC-based docking [26]. A newly generated pose is always accepted if it has a more favorable energy (or score) than the previous pose. If the new pose has a less favorable energy, it may still be accepted with a probability given by the Boltzmann factor, \( e^{-\Delta E/RT} \), where \( \Delta E \) is the energy difference, \( R \) is the gas constant, and \( T \) is the temperature parameter [22] [26]. This controlled acceptance of energetically unfavorable moves helps the algorithm escape local minima and conduct a thorough exploration of the search space.

MC methods are often combined with simulated annealing, where the "temperature" parameter is gradually decreased during the simulation. This allows for broad exploration initially and finer tuning as the simulation progresses, improving the likelihood of finding the global minimum [21].
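As a minimal sketch of the Metropolis rule combined with simulated annealing, the snippet below samples a one-dimensional toy energy. The function `toy_energy`, the geometric cooling schedule, and all numeric parameters are illustrative assumptions rather than settings from any docking program. Energies are taken to be in kcal/mol and the temperature parameter in kelvin.

```python
import math
import random

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)

def metropolis_accept(delta_e, temperature, rng):
    """Accept downhill moves always; uphill moves with probability exp(-dE/RT)."""
    if delta_e <= 0.0:
        return True
    return rng.random() < math.exp(-delta_e / (R_KCAL * temperature))

def toy_energy(x):
    """Hypothetical 1-D double-well "score" with a shallow and a deep minimum."""
    return 0.05 * x**4 - x**2 + 0.3 * x

def anneal(start=3.0, t_start=3000.0, t_end=50.0, steps=2000, step_size=0.5, seed=1):
    rng = random.Random(seed)
    x, e = start, toy_energy(start)
    best_x, best_e = x, e
    for i in range(steps):
        # Assumed geometric cooling: broad exploration first, fine tuning later.
        temperature = t_start * (t_end / t_start) ** (i / (steps - 1))
        candidate = x + rng.uniform(-step_size, step_size)  # random move
        e_new = toy_energy(candidate)
        if metropolis_accept(e_new - e, temperature, rng):
            x, e = candidate, e_new
            if e < best_e:
                best_x, best_e = x, e
    return best_x, best_e
```

At high temperature almost every move is accepted, so the walker can cross the barrier between the two wells. As the temperature drops, uphill moves are rejected more often and the search settles into a minimum.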

Quantitative Comparison of Stochastic Search Methods

The table below summarizes the key characteristics, advantages, and disadvantages of Genetic Algorithms and Monte Carlo methods as applied to molecular docking.

Table 1: Comparison of Genetic Algorithms and Monte Carlo Methods in Molecular Docking

| Feature | Genetic Algorithms (GAs) | Monte Carlo (MC) Methods |

|---|---|---|

| Core Principle | Population-based evolution inspired by natural selection [22] [25] | Stochastic sampling based on random moves and probabilistic acceptance [22] [26] |

| Key Operators | Selection, Crossover, Mutation [21] | Random Move Generation, Metropolis Criterion [26] |

| Handling of Flexibility | Excellent for handling highly flexible ligands via torsion angle encoding [21] | Effective for ligand flexibility through random torsional changes [22] |

| Search Capability | Strong global search due to population diversity and crossover [21] | Good global search, especially when combined with simulated annealing [21] |

| Primary Advantage | Efficiently explores complex search spaces and recombines good solution features [24] [21] | Simpler to implement; Metropolis criterion helps escape local minima [22] [26] |

| Primary Disadvantage | Can be computationally intensive due to population management; may require parameter tuning [21] | May require many iterations for convergence; sequential nature can limit parallelization [22] |

| Example Docking Software | GOLD [25], AutoDock [21], REvoLd [24] | AutoDock Vina [21], MCDOCK [21] [23] |

Performance Benchmarking and Application Data

The efficacy of stochastic search methods is demonstrated through their performance in real-world docking benchmarks and applications. The following table quantifies the performance of several algorithm implementations.

Table 2: Performance Benchmarking of Docking Algorithms Utilizing Stochastic Methods

| Algorithm/Software | Core Search Method | Reported Performance | Application Context |

|---|---|---|---|

| REvoLd [24] | Evolutionary Algorithm (EA) | Hit rate improvements by factors of 869 to 1622 vs. random screening; docks 49,000-76,000 molecules for a target screen. | Ultra-large library screening (e.g., Enamine REAL space with >20 billion molecules) [24] |

| GOLD [25] | Genetic Algorithm (GA) | Widely validated and trusted for pose prediction accuracy over >20 years; handles ligand and partial protein flexibility. | Lead identification and optimization in virtual screening [25] |

| MSCA [21] | Multi-Swarm Competitive Algorithm (EA variant) | Competitive performance on CASF-2016 benchmark (285 complexes); improved accuracy with highly flexible ligands. | Novel docking program for challenging flexible ligand problems [21] |

| AutoDock Vina [21] | Monte Carlo & Simulated Annealing | Improved speed and accuracy over AutoDock; widely used for its balance of performance and usability. | General-purpose protein-ligand docking [27] [21] |

Protocol for Virtual Screening Using an Evolutionary Algorithm

This protocol details the use of the REvoLd (RosettaEvolutionaryLigand) algorithm for screening ultra-large make-on-demand combinatorial libraries, such as the Enamine REAL space [24].

Pre-docking Preparation

Receptor Preparation:

- Obtain the three-dimensional structure of the target protein, preferably from high-resolution X-ray crystallography or Cryo-EM. Correct any structural issues (missing atoms, sidechains, loops). Structures prepared with tools like those in Discovery Studio are suitable [25].

- Calculate pKa values and assign protonation states to residues in the binding site.

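- Generate the receptor file in the format required by the docking engine (Rosetta-based protocols such as RosettaLigand read PDB files, whereas AutoDock-family programs use PDBQT), ensuring the correct assignment of flexible residues if required.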

Define the Search Space:

- The algorithm exploits the combinatorial nature of make-on-demand libraries, which are constructed from lists of substrates and chemical reactions [24]. No pre-enumeration of all molecules is required.

- The ligand's conformational space is defined by its rotatable bonds, which are encoded within the algorithm.

REvoLd Docking Procedure

Initialization:

- Generate a random start population of 200 ligand individuals. This size provides sufficient variety to initiate the optimization process effectively [24].

Evolutionary Optimization Cycle:

- Evaluation: Dock all ligands in the current generation against the prepared receptor using the RosettaLigand flexible docking protocol, which accounts for full ligand and receptor flexibility [24]. The docking score serves as the fitness function.

- Selection: Select the top 50 fittest individuals to advance to the next generation. This elitist selection strategy preserves the best solutions.

- Reproduction:

  - Crossover: Perform crossovers between the fit molecules to recombine favorable structural motifs and enforce variance.

  - Mutation: Apply multiple mutation steps:

    - Fragment Switch: Switch single fragments to low-similarity alternatives to maintain well-performing parts while introducing significant local changes.

    - Reaction Switch: Change the reaction of a molecule and search for similar fragments within the new reaction group to explore different regions of the combinatorial space.

- Secondary Optimization Round: Introduce a second round of crossover and mutation that excludes the fittest molecules. This allows lower-scoring ligands with potentially useful molecular information to improve and contribute to the gene pool [24].

Termination:

- Run the evolutionary optimization for 30 generations. This number strikes a good balance between convergence and exploration, with good solutions often appearing after 15 generations and discovery rates flattening after 30 [24].

- For a comprehensive screen, conduct multiple independent runs (e.g., 20 runs per target). The random starting seeds lead to different exploration paths and yield diverse high-scoring motifs [24].
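To illustrate why a combinatorial library does not need to be enumerated, the sketch below encodes each molecule as a reaction plus one fragment per reaction slot and applies simplified versions of the two mutation operators. It is not REvoLd code. The toy `LIBRARY`, all fragment names, and the purely random choice of replacements are hypothetical; the protocol above instead selects low-similarity or similar fragments.

```python
import random

# Hypothetical toy library: reaction name -> one fragment list per reaction slot.
LIBRARY = {
    "amide_coupling": [["acid_a", "acid_b", "acid_c"], ["amine_a", "amine_b"]],
    "suzuki": [["boronate_a", "boronate_b"],
               ["halide_a", "halide_b", "halide_c", "halide_d"]],
}

def library_size(library):
    """Number of distinct products, computed without enumerating them."""
    total = 0
    for slots in library.values():
        products = 1
        for fragments in slots:
            products *= len(fragments)
        total += products
    return total

def random_molecule(library, rng):
    """A molecule is a (reaction, fragments) pair with one fragment per slot."""
    reaction = rng.choice(sorted(library))
    return reaction, tuple(rng.choice(frags) for frags in library[reaction])

def fragment_switch(molecule, library, rng):
    """Replace exactly one fragment; reaction and other fragments are kept."""
    reaction, fragments = molecule
    slot = rng.randrange(len(fragments))
    options = [f for f in library[reaction][slot] if f != fragments[slot]]
    new_fragments = list(fragments)
    new_fragments[slot] = rng.choice(options)
    return reaction, tuple(new_fragments)

def reaction_switch(molecule, library, rng):
    """Move to a different reaction and draw fragments valid for it."""
    reaction = rng.choice([r for r in sorted(library) if r != molecule[0]])
    return reaction, tuple(rng.choice(frags) for frags in library[reaction])

def is_valid(molecule, library):
    """Check that every fragment belongs to the matching slot of the reaction."""
    reaction, fragments = molecule
    slots = library.get(reaction, [])
    return len(slots) == len(fragments) and all(
        f in s for f, s in zip(fragments, slots))
```

In this toy setting the library holds 3 × 2 + 2 × 4 = 14 products, yet only the short fragment lists are stored. The same bookkeeping is what makes a search over billions of make-on-demand compounds tractable.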

Post-docking Analysis

- Ranking: Rank the final population of ligands from all runs based on their docking scores (binding affinity estimates).

- Cluster Analysis: Cluster the top-ranked hits by molecular scaffold to assess the diversity of the discovered compounds.

- Interaction Analysis: Visually inspect the predicted binding poses of the top hits, analyzing key protein-ligand interactions (hydrogen bonds, ionic bonds, van der Waals forces, and hydrophobic interactions) [20].

Workflow Visualization of a Genetic Algorithm for Docking

The following diagram illustrates the iterative workflow of a Genetic Algorithm as applied to the molecular docking process.

The Scientist's Toolkit: Essential Reagents and Software

Table 3: Key Research Reagent Solutions for Stochastic Docking

| Item Name | Function / Purpose | Example Use Case |

|---|---|---|

| REvoLd (Rosetta) [24] | Evolutionary algorithm for screening ultra-large combinatorial libraries without full enumeration. | Targeted exploration of billions of compounds in make-on-demand spaces like Enamine REAL. |

| GOLD [25] | Genetic algorithm-based docking suite for predicting ligand binding with high accuracy. | Lead identification and optimization in structure-based drug design projects. |

| AutoDock Vina [27] [21] | Docking program using a hybrid of Monte Carlo and Simulated Annealing for search. | General-purpose virtual screening and binding pose prediction. |

| QuickVina 2 [27] | A faster variant of AutoDock Vina, optimized for speed. | Accelerating virtual screening workflows on local machines or clusters. |

| jamdock-suite [27] | A protocol of Bash scripts automating a virtual screening pipeline from setup to ranking. | Lowering the access barrier for researchers setting up local virtual screening. |

| RosettaLigand [24] | A flexible docking protocol within Rosetta that allows for full ligand and receptor flexibility. | Used as the docking engine within the REvoLd algorithm for accurate pose scoring. |

| FPocket [27] | Open-source software for detecting and characterizing protein-ligand binding pockets. | Identifying potential binding sites on a protein target before docking. |

| ZINC Database [27] | A public repository of commercially available compounds for virtual screening. | Sourcing ready-to-dock molecular structures for library generation. |

Physics-Based, Empirical, and Knowledge-Based Scoring Functions

Molecular docking is a cornerstone computational method in structural biology and drug discovery, aimed at predicting the three-dimensional structure of a protein-ligand or protein-protein complex and estimating the strength of their interaction [28]. A critical component of the docking pipeline is the scoring function, a mathematical model used to predict the binding affinity of a complex by evaluating the interactions between the molecules [28] [22]. The accuracy of scoring functions is paramount for successful virtual screening, as it directly influences the ability to identify true binding poses and distinguish active compounds from inactive ones [28] [29]. Scoring functions can be broadly categorized into three classical types—physics-based, empirical, and knowledge-based—each with distinct theoretical foundations, advantages, and limitations [28]. This article provides a detailed overview of these scoring function classes, supported by comparative data and experimental protocols, to guide researchers in selecting and applying these tools within virtual screening workflows.

Classification and Theoretical Foundations of Scoring Functions

The table below summarizes the core principles, representative methods, and key characteristics of the three main classes of classical scoring functions.

Table 1: Classification and Characteristics of Classical Scoring Functions

| Function Class | Theoretical Basis | Representative Methods | Key Advantages | Inherent Limitations |

|---|---|---|---|---|

| Physics-Based | Calculates binding energy based on physical force fields (e.g., van der Waals, electrostatics); may include solvation and entropy terms [28] [29]. | MMFF94S-based functions, DockTScore [29] | Strong theoretical foundation; detailed description of molecular interactions [29]. | High computational cost; accuracy depends on force field parameterization [28] [29]. |

| Empirical | Estimates binding affinity as a weighted sum of energy terms, with coefficients fit to experimental binding affinity data [28]. | FireDock, RosettaDock, ZRANK2 [28] | Faster computation; simpler interpretation of energy terms [28] [22]. | Risk of overfitting to training data; performance dependent on dataset quality and diversity [28] [29]. |

| Knowledge-Based | Derives statistical potentials from the observed frequencies of atom or residue pairwise distances in known protein structures via Boltzmann inversion [28]. | AP-PISA, CP-PIE, SIPPER [28] | Good balance between accuracy and computational speed [28]. | Potentials may lack direct physical meaning; performance relies on the size and quality of the structural database [28]. |
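Two of the ideas in the table can be made concrete with a short sketch: an empirical score as a weighted sum of energy terms, and a knowledge-based potential obtained by Boltzmann inversion of distance histograms. The term names, weights, and counts used with these functions are hypothetical. Setting the potential to zero for empty bins is an assumed convention, not a rule from the cited work.

```python
import math

KT = 0.593  # approximate kT in kcal/mol near 298 K

def empirical_score(terms, weights):
    """Empirical scoring: weighted sum of precomputed interaction terms."""
    return sum(weights[name] * value for name, value in terms.items())

def boltzmann_inversion(observed_counts, reference_counts, kt=KT):
    """Knowledge-based potential per distance bin: E = -kT * ln(p_obs / p_ref)."""
    n_obs = sum(observed_counts)
    n_ref = sum(reference_counts)
    potentials = []
    for obs, ref in zip(observed_counts, reference_counts):
        if obs == 0 or ref == 0:
            potentials.append(0.0)  # assumption: treat unobserved bins as neutral
        else:
            ratio = (obs / n_obs) / (ref / n_ref)
            potentials.append(-kt * math.log(ratio))
    return potentials
```

Distance bins that occur more often in known structures than in the reference state receive a negative (favorable) energy, and under-represented bins receive a positive one. In a real empirical function the weights would be fit to experimental binding data rather than chosen by hand.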

The following diagram illustrates the logical relationship between the input data, the core principles, and the output for each class of scoring function.

Quantitative Comparison of Scoring Function Performance

Evaluating scoring functions requires standardized benchmarks. The table below summarizes the performance of various classical and deep learning-based scoring functions across key public datasets, focusing on pose prediction accuracy and virtual screening success.

Table 2: Performance Comparison of Classical and Deep Learning-Based Scoring Functions on Public Benchmarks

| Scoring Method | Type | Pose Prediction Success Rate (RMSD ≤ 2 Å) | Virtual Screening Efficacy (AUC/EF) | Key Strengths / Weaknesses |

|---|---|---|---|---|

| FireDock | Empirical | Varies by dataset and complex [28] | Varies by dataset and complex [28] | Strength: Incorporates flexible refinement and various energy terms. Weakness: Performance can be heterogeneous [28]. |

| RosettaDock | Empirical | Varies by dataset and complex [28] | Varies by dataset and complex [28] | Strength: Comprehensive energy function. Weakness: Computationally intensive [28]. |

| PyDock | Hybrid | Varies by dataset and complex [28] | Varies by dataset and complex [28] | Strength: Balances electrostatics and desolvation energy [28]. |

| AP-PISA | Knowledge-Based | Varies by dataset and complex [28] | Varies by dataset and complex [28] | Strength: Uses multiple potentials for better discrimination [28]. |

| SurfDock | Deep Learning (Generative) | 91.76% (Astex), 77.34% (PoseBusters), 75.66% (DockGen) [2] | Varies by dataset and complex [2] | Strength: Exceptional pose accuracy. Weakness: Suboptimal physical validity (e.g., steric clashes) [2]. |

| Glide SP | Traditional (Physics-Empirical) | Lower than SurfDock [2] | Varies by dataset and complex [2] | Strength: Excellent physical validity (≥94% PB-valid rate). Weakness: Lower pose accuracy than top DL methods [2]. |

| KarmaDock / QuickBind | Deep Learning (Regression) | Low (e.g., ~20-30% on PoseBusters) [2] | Varies by dataset and complex [2] | Strength: Fast prediction. Weakness: Often produces physically invalid poses; poor generalization [2]. |

Experimental Protocols for Scoring Function Evaluation

Protocol for Benchmarking Scoring Functions using CCharPPI

Objective: To objectively evaluate and compare the performance of different scoring functions on a standardized dataset of protein-protein complexes, independent of the docking sampling algorithm [28].

Materials:

- CCharPPI Server: A web-based platform for the comprehensive assessment of scoring functions [28].

- Benchmark Dataset: A curated set of protein-protein complexes with known native structures (e.g., from CAPRI benchmarks) [28].

Procedure:

- Dataset Preparation: Compile a set of candidate docking decoys and their native reference structure for the complexes to be evaluated.

- Server Submission: Submit the ensemble of complex structures to the CCharPPI server.

- Function Selection: On the server, select the scoring functions to be evaluated (e.g., FireDock, ZRANK2, SIPPER).

- Analysis of Results: The server returns a ranked list of models for each scoring function. Key metrics to analyze include:

  - Success Rate: The percentage of cases where a near-native structure is ranked within the top N positions.

  - Scoring Time: Record the computational time required by each function, as runtime impacts large-scale applications [28].
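The top-N success rate can be computed directly from the ranked lists. The sketch below assumes each complex is represented by a list of booleans ordered from best-scored to worst-scored model, where `True` marks a near-native model. This data layout and the function name are illustrative, not the CCharPPI output format.

```python
def top_n_success_rate(rankings, n):
    """Percentage of complexes with at least one near-native model in the top n.

    rankings maps a complex identifier to a list of booleans ordered from the
    best-scored model to the worst; True marks a near-native model.
    """
    if not rankings:
        raise ValueError("rankings must contain at least one complex")
    hits = sum(1 for models in rankings.values() if any(models[:n]))
    return 100.0 * hits / len(rankings)
```

Reporting the rate for several cutoffs (for example top 1, top 10, and top 100) shows whether a scoring function places near-native models at the very top or only somewhere in the upper part of the list.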

Protocol for Developing a Target-Specific Scoring Function

Objective: To create a customized scoring function for a specific protein target class (e.g., proteases, protein-protein interactions) to improve binding affinity prediction accuracy [29].

Materials:

- Training Set: High-quality protein-ligand complex structures with experimental binding affinity data, curated for the specific target class (e.g., from PDBbind) [29].

- Software: Tools for structure preparation (e.g., Maestro Protein Preparation Wizard) and model training (e.g., Scikit-learn for SVM/Random Forest) [29].

Procedure:

- Data Curation:

  - Select complexes from databases like PDBbind based on specific criteria (e.g., EC Number for enzymes).

  - Manually prepare structures: assign correct protonation/tautomeric states using tools like Epik, optimize hydrogen bonding, and remove water molecules [29].

- Feature Calculation: For each complex, compute physics-based and structural descriptors. Essential features include:

  - Van der Waals and electrostatic interaction energies (e.g., using MMFF94S force field).

  - Solvation and lipophilic interaction terms.

  - Ligand torsional entropy contribution.

  - Surface area and contact-based terms [29].

- Model Training:

  - Split the curated dataset into training (e.g., 75%) and test (e.g., 25%) sets.

  - Employ regression algorithms to correlate the calculated features with experimental binding affinity. Start with Multiple Linear Regression (MLR) for interpretability, then progress to non-linear methods like Support Vector Machine (SVM) or Random Forest (RF) for potentially higher accuracy [29].

- Validation: Assess the trained model on the independent test set and public benchmarks (e.g., DUD-E datasets) to evaluate its predictive power and virtual screening utility [29].

The workflow for this protocol is visualized below.

Table 3: Key Software and Data Resources for Scoring Function Development and Application

| Resource Name | Type | Primary Function in Research |

|---|---|---|

| CCharPPI Server [28] | Web Server | Allows assessment of scoring functions independent of the docking process, enabling direct comparison on standardized datasets [28]. |

| PDBbind Database [29] | Curated Database | Provides a large, high-quality collection of protein-ligand complexes with experimentally measured binding affinities for training and testing scoring functions [29]. |

| DUD-E Datasets [29] | Benchmarking Set | Contains benchmark structures for evaluating virtual screening performance, including known actives and decoy molecules for various targets [29]. |

| Maestro (Schrödinger) [29] | Software Suite | Used for comprehensive protein and ligand structure preparation, including protonation state assignment, hydrogen bonding optimization, and energy minimization [29]. |

| MMFF94S Force Field [29] | Molecular Mechanics Model | Provides the fundamental physics-based terms (van der Waals, electrostatics) used as descriptors in modern, physics-informed scoring functions like DockTScore [29]. |

| Scikit-learn / SVM / Random Forest [29] | Machine Learning Library | Offers implementations of robust regression algorithms (SVM, Random Forest) for developing non-linear scoring functions from physics-based and empirical descriptors [29]. |

Application Notes and Best Practices

- Address Data Bias: Be aware that scoring functions may perform well on "in-distribution" data but poorly on "out-of-distribution" complexes. Always test functions on datasets relevant to your specific target [28].

- Consider Trade-offs: Deep learning-based scoring functions can show superior pose accuracy but often produce physically implausible structures (e.g., incorrect bond lengths, steric clashes). Traditional methods like Glide SP consistently demonstrate high physical validity [2].

- Incorporate Solvation and Entropy: A common limitation of many scoring functions is the inadequate treatment of solvation effects and entropic contributions. Prioritize functions that explicitly account for these terms, as they are critical for an accurate description of the binding process [29].

- Validate with Experimental Data: Computational predictions must be validated experimentally. Use techniques like Surface Plasmon Resonance (SPR) or Isothermal Titration Calorimetry (ITC) to confirm binding affinities of top-ranked compounds.

The Critical Role of Public Compound Databases like ZINC

The ZINC database (ZINC Is Not Commercial) is a cornerstone resource in computational drug discovery, providing a free and publicly accessible collection of commercially available or synthesizable small molecules specifically curated for virtual screening [30]. Developed and maintained by the Irwin and Shoichet Laboratories at the University of California, San Francisco (UCSF), ZINC originated in 2005 to bridge the gap between computational predictions and experimental validation by providing researchers with tangible, purchasable compounds [31] [30]. The database has undergone significant evolution, with ZINC20 (released in 2020) offering over 230 million purchasable compounds in ready-to-dock, 3D formats, and an additional 750 million searchable analogs [31]. The most recent iteration, ZINC-22, has expanded dramatically to include over 37 billion 2D-searchable compounds, with more than 4.5 billion available in 3D formats, primarily from make-on-demand libraries [30].

ZINC's core value lies in its meticulous curation and organization. Compounds are annotated with vendor information, physicochemical properties, and biologically relevant states, including protomers and tautomers generated for physiological pH (~7.4) using ChemAxon's JChem suite [30]. The database is organized into "tranches" based on properties such as heavy atom count, lipophilicity (logP), and charge, enabling efficient subset selection for targeted virtual screens [30]. By prioritizing purchasability and synthesizability, ZINC ensures that hits identified through virtual screening can be rapidly procured for experimental validation, significantly accelerating the early stages of drug discovery [30].

Table 1: Key Specifications of Major ZINC Database Versions

| Database Version | Total Compounds | 3D Ready-to-Dock Compounds | Key Features |

|---|---|---|---|

| ZINC (2005) | ~728,000 [30] | ~728,000 [30] | Initial launch with purchasable compounds. |

| ZINC12 (2012) | ~35 million [30] | ~20 million [30] | Continuous catalog updates; property-based subsets. |

| ZINC15 (2015) | >100 million [30] | >100 million [30] | Includes in-stock and make-on-demand compounds. |

| ZINC20 (2020) | 1.4 billion searchable [30] | 230 million purchasable [31] | Integrated analog searching (SmallWorld, Arthor). |

| ZINC-22 (2023) | 54.9 billion (2D) [30] | 5.9 billion [30] | Focus on massive make-on-demand libraries. |

Application in Virtual Screening: Case Studies

The utility of the ZINC database is best demonstrated through its successful application in diverse virtual screening campaigns targeting various diseases. The following case studies illustrate its critical role in identifying novel therapeutic leads.

Identification of SARS-CoV-2 3CL Protease Inhibitors

In response to the COVID-19 pandemic, researchers performed a molecular docking-based virtual screening of 2,000 compounds from the ZINC database against the SARS-CoV-2 3CL protease (3CLpro), a key enzyme responsible for viral replication [32]. The protocol involved preparing the protein structure (PDB ID: 6LU7) by removing water molecules and adding hydrogens, while ZINC compounds were converted to PDBQT format for docking. The screening identified four top compounds—ZINC32960814, ZINC12006217, ZINC03231196, and ZINC33173588—which exhibited high binding affinity with free energy of binding (FEB) values ranging from -12.3 to -11.2 kcal/mol, outperforming the co-crystallized ligand N3 (FEB: -7.5 kcal/mol) [32]. These compounds also showed stable interactions with the catalytic dyad residues (Cys145 and His41) of 3CLpro and fulfilled Lipinski's Rule of Five, indicating promising drug-like properties for further development as anti-COVID-19 agents [32].

Discovery of ROCK2 Inhibitors

A comprehensive study integrated pharmacophore modeling, virtual screening, molecular docking, and molecular dynamics (MD) simulations to discover novel inhibitors for Rho-associated protein kinase 2 (ROCK2), a therapeutic target for cancer, cardiovascular, and neurodegenerative disorders [33]. The initial virtual screening of over 13 million molecules from the ZINC database using a pharmacophore model based on the co-crystal ligand (5YS) of ROCK2 yielded 4,809 hits [33]. Subsequent molecular docking refined this set to compounds with binding affinities between -11.55 and -9.91 kcal/mol. ADMET profiling and MD simulations further identified two promising lead compounds that demonstrated stable binding with the ROCK2 protein, highlighting the power of ZINC in facilitating a multi-tiered computational pipeline for lead identification [33].

Screening for B-Cell Lymphoma 2 (Bcl-2) Inhibitors

Researchers screened 151,837 natural products from the ZINC Natural Product database to find inhibitors of Bcl-2, a target protein overexpressed in small cell lung cancer [34]. The workflow employed pharmacophore-based virtual screening followed by molecular docking validation. The pharmacophore model reduced the initial compound pool by approximately 95.64% to 6,615 candidates [34]. Molecular docking further narrowed this list, identifying a lead compound (tc259) with a binding energy of -11.02 kcal/mol and an inhibition constant of 8.33 nM [34]. This case underscores the value of ZINC's specialized subsets, such as natural products, for exploring specific chemical spaces in oncology drug discovery.
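A docking binding energy and an estimated inhibition constant are commonly linked through \( K_i = e^{\Delta G / RT} \). The sketch below applies this relation, assuming a temperature of 298.15 K. Whether the cited study used exactly this temperature and gas constant is an assumption.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)

def inhibition_constant(delta_g, temperature=298.15):
    """Estimate Ki in mol/L from a binding free energy in kcal/mol."""
    return math.exp(delta_g / (R_KCAL * temperature))

def to_nanomolar(concentration_molar):
    """Convert mol/L to nmol/L."""
    return concentration_molar * 1e9
```

For the reported binding energy, `to_nanomolar(inhibition_constant(-11.02))` gives a value on the order of 8 nM, in line with the inhibition constant quoted for tc259. Because the relation is exponential, a difference of about 1.4 kcal/mol corresponds to roughly a tenfold change in the estimated constant.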

Table 2: Summary of Virtual Screening Case Studies Using the ZINC Database

| Therapeutic Target | Disease Context | ZINC Subset Screened | Key Findings | Citation |

|---|---|---|---|---|

| SARS-CoV-2 3CL Protease | COVID-19 | 2,000 compounds | 4 hits with FEB -12.3 to -11.2 kcal/mol; interactions with catalytic dyad. | [32] |

| ROCK2 Kinase | Cancer, Neurodegenerative Diseases | ~13 million compounds | 2 stable leads identified via pharmacophore screening, docking, and MD simulations. | [33] |

| Bcl-2 Protein | Small Cell Lung Cancer | 151,837 natural products | Lead compound tc259: binding energy -11.02 kcal/mol, Ki 8.33 nM. | [34] |

Experimental Protocols

This section provides detailed, step-by-step protocols for key virtual screening methodologies that leverage the ZINC database, formatted as application notes for laboratory use.

Application Note: Virtual Screening Protocol for Protein Target Using AutoDock Vina

Objective: To identify potential lead compounds from the ZINC database against a protein target of interest using molecular docking.

Materials:

- Protein Data Bank (PDB) structure of target

- ZINC database compounds (e.g., in MOL2 or SDF format)

- Software: AutoDock Tools, AutoDock Vina, Open Babel (if needed for format conversion)

Procedure:

- Protein Preparation: a. Obtain the 3D crystal structure of the target protein from the PDB (e.g., PDB ID: 6LU7). b. Remove all non-essential molecules (water, co-factors, native ligands) using AutoDock Tools (ADT). c. Add polar hydrogen atoms and assign Gasteiger charges. d. Save the prepared structure in PDBQT format.

- Ligand Preparation: a. Download a compound subset from ZINC in a ready-to-dock format like MOL2 or SDF. b. Convert the ligand files to PDBQT format using a tool like Open Babel or the functionality within ADT.

- Grid Box Configuration: a. In ADT, define the docking grid box (e.g., 60 Å x 60 Å x 60 Å) centered on the protein's active site. b. Set the grid spacing parameter to 0.375 Å. c. Save the grid configuration parameters in a config.txt file.

- Virtual Screening Execution: a. Use AutoDock Vina via the command line with the prepared PDBQT files and configuration file. b. For high-throughput screening, script the process to iterate over all ligand files.

- Analysis of Results: a. Extract the binding affinity (in kcal/mol) from the output log files for each compound. b. Rank all screened compounds based on their binding affinity. c. Visually inspect the docking poses of the top-ranking compounds in molecular visualization software (e.g., PyMOL, UCSF Chimera) to analyze key interactions (hydrogen bonds, hydrophobic contacts, etc.) with the active site residues.
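The configuration, execution, and ranking steps can be scripted along the following lines. This is a sketch under stated assumptions: the executable is assumed to be on the PATH as `vina`, all file names and box values are placeholders, and the functions only build the command lists rather than running them. The parser reads the `REMARK VINA RESULT:` lines that Vina writes into its output PDBQT files.

```python
from pathlib import Path

def config_text(receptor, center, size, exhaustiveness=8):
    """Build the contents of a Vina configuration file (box values in angstroms)."""
    cx, cy, cz = center
    sx, sy, sz = size
    lines = [
        f"receptor = {receptor}",
        f"center_x = {cx}", f"center_y = {cy}", f"center_z = {cz}",
        f"size_x = {sx}", f"size_y = {sy}", f"size_z = {sz}",
        f"exhaustiveness = {exhaustiveness}",
    ]
    return "\n".join(lines) + "\n"

def vina_command(config, ligand, out_dir):
    """Command line for docking one ligand; pass the list to subprocess.run."""
    ligand = Path(ligand)
    out_file = Path(out_dir) / f"{ligand.stem}_out.pdbqt"
    return ["vina", "--config", str(config),
            "--ligand", str(ligand), "--out", str(out_file)]

def best_affinity(pdbqt_text):
    """Return the top-pose affinity (kcal/mol) from Vina output, or None."""
    for line in pdbqt_text.splitlines():
        if line.startswith("REMARK VINA RESULT:"):
            return float(line.split()[3])
    return None

def rank_compounds(outputs):
    """Sort (name, affinity) pairs, most negative (best) affinity first."""
    scored = [(name, best_affinity(text)) for name, text in outputs.items()]
    return sorted((s for s in scored if s[1] is not None), key=lambda s: s[1])
```

A screening loop would write `config_text(...)` to config.txt once, call `subprocess.run(vina_command(...), check=True)` for each ligand file, and finally pass the collected output texts to `rank_compounds`.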

Application Note: Pharmacophore-Based Virtual Screening Protocol

Objective: To rapidly filter large sections of the ZINC database using a pharmacophore model prior to molecular docking.

Materials:

- Protein-ligand co-crystal structure or a set of known active compounds.

- Software: Pharmit server or equivalent pharmacophore modeling software.

- ZINC database access.

Procedure:

- Pharmacophore Model Generation: a. Analyze the interactions between a co-crystallized ligand (e.g., 5YS in PDB: 7P6N) and the protein's binding pocket. b. Define critical chemical features, such as hydrogen bond donors, hydrogen bond acceptors, hydrophobic regions, and aromatic rings. c. Use a tool like the Pharmit server to generate the pharmacophore hypothesis based on these features [33].

- Database Screening: a. Input the pharmacophore model into the Pharmit server. b. Set search filters based on Lipinski's Rule of Five (molecular weight ≤ 500 Da, HBD ≤ 5, HBA ≤ 10, logP ≤ 5) to focus on drug-like molecules. c. Execute the search against the ZINC database. The server will return a list of compounds that match the pharmacophore query.

- Hit Selection and Downstream Processing: a. Download the list of matching compounds (hits). b. This refined list of hits then serves as the input for the more computationally intensive molecular docking protocol described in the AutoDock Vina application note above.
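When hits are downloaded together with their computed properties, the Rule of Five filter can also be re-applied locally. The sketch below assumes each compound is a dictionary with the hypothetical keys `mw`, `hbd`, `hba`, and `logp`, and treats the thresholds as inclusive upper bounds, the usual statement of the rule.

```python
def rule_of_five_violations(props):
    """Count Lipinski violations for one compound's precomputed properties."""
    return sum([
        props["mw"] > 500.0,   # molecular weight in Da
        props["hbd"] > 5,      # hydrogen bond donors
        props["hba"] > 10,     # hydrogen bond acceptors
        props["logp"] > 5.0,   # octanol-water partition coefficient
    ])

def filter_drug_like(compounds, max_violations=0):
    """Keep compounds with at most max_violations rule violations."""
    return [c for c in compounds
            if rule_of_five_violations(c) <= max_violations]
```

Setting `max_violations=1` gives the more permissive variant that tolerates a single violation, which can be useful when screening natural products.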

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Resources for Virtual Screening with ZINC

| Resource Name | Type | Primary Function in Workflow | Key Features / Notes |
|---|---|---|---|
| ZINC Database | Compound Library | Source of purchasable/synthesizable small molecules for screening. | Offers pre-filtered subsets (drug-like, lead-like, natural products); ready-to-dock 3D formats [31] [30]. |
| AutoDock Vina | Docking Software | Performs molecular docking to predict ligand binding pose and affinity. | Fast, widely used; command-line interface suitable for screening [32]. |
| Pharmit | Pharmacophore Server | Enables pharmacophore-based virtual screening of large databases. | Web-based; integrates directly with ZINC for real-time screening [33]. |
| Protein Data Bank (PDB) | Data Repository | Source of 3D structural data for the biological target protein. | Essential for preparing the receptor structure for docking. |
| AutoDock Tools (ADT) | Utility Software | Prepares protein and ligand files for docking with AutoDock/Vina. | Used to add hydrogens, assign charges, and define the docking grid box [32]. |
| Schrödinger Maestro | Modeling Suite | Integrated platform for protein prep, ligand prep, docking (Glide), and MD. | Commercial software with a comprehensive toolset for advanced workflows [33]. |

Workflow and Pathway Visualizations

Virtual Screening Workflow

Implementing a Virtual Screening Pipeline: From Setup to Execution

Step-by-Step Guide to Building a Fully Local, Automated Screening Pipeline

Virtual screening has become a cornerstone of modern computational drug discovery, enabling researchers to rapidly identify potential hit compounds from vast chemical libraries. This Application Note provides a detailed, step-by-step protocol for establishing a fully local, automated virtual screening pipeline using exclusively free and open-source software. The protocol is designed to lower the access barrier for researchers new to structure-based drug discovery while improving efficiency for experienced users [35] [27]. By implementing this pipeline, researchers can perform automated virtual screening—from compound library preparation to docking evaluation—entirely within their local computing environment, ensuring data privacy and computational reproducibility without reliance on commercial software or cloud services.

The jamdock-suite presented here exemplifies the trend toward modular, script-based workflows that enhance reproducibility and scalability in virtual screening campaigns. This approach is particularly valuable for drug repurposing studies, where screening libraries of existing drugs (such as FDA-approved compounds) against new biological targets can significantly accelerate therapeutic development [27] [36].

The Scientist's Toolkit: Essential Materials and Reagents

Table 1: Key Research Reagent Solutions for Virtual Screening Pipeline

| Resource Name | Type | Primary Function | Source/Identifier |
|---|---|---|---|
| ZINC Database | Chemical Database | Provides chemical and structural information for millions of commercially available compounds | https://zinc.docking.org/ [27] |
| AutoDock Vina/QuickVina 2 | Docking Software | Performs molecular docking simulations with scoring function optimization | https://github.com/QVina/qvina [27] |
| Open Babel | Chemical Toolbox | Handles chemical format conversion and manipulation | Installed via package manager [27] |
| MGLTools (AutoDockTools) | Molecular Graphics | Prepares receptor and ligand files in PDBQT format | https://ccsb.scripps.edu/mgltools/ [27] |
| fpocket | Binding Site Detection | Identifies and characterizes potential ligand-binding pockets | https://github.com/Discngine/fpocket [27] |
| jamdock-suite | Automation Scripts | Orchestrates the complete virtual screening workflow | https://github.com/jamanso/jamdock-suite [27] |

System Configuration and Installation Protocol

Initial System Setup

The protocol is designed for Unix-like operating systems. Windows 11 users should install Windows Subsystem for Linux (WSL) before proceeding.

Experimental Protocol: WSL Installation for Windows Users

- Open Windows PowerShell as administrator (right-click and select "Run as administrator").

- Execute the command: wsl --install

- Restart the system if required to complete the installation.

- Click on the Ubuntu icon and create a default user account [27].

Experimental Protocol: Software Dependency Installation

- Update and upgrade system packages:

- Install essential packages and software:

- Install AutoDockTools (MGLTools):

- Install fpocket for binding pocket detection:

- Install QuickVina 2 (AutoDock Vina variant):

- Install and configure the jamdock-suite scripts:

The entire installation process requires approximately 35 minutes to complete. After installation, researchers can invoke jamlib, jamreceptor, jamqvina, jamresume, and jamrank directly from any terminal window [27].
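A quick way to confirm that the installation succeeded is to check that the five scripts named above resolve on the current PATH. The following is a small sketch using only the Python standard library. The helper name `missing_commands` is an illustrative assumption and is not part of the jamdock-suite.

```python
import shutil

# Script names as listed in the installation protocol.
JAMDOCK_COMMANDS = ["jamlib", "jamreceptor", "jamqvina", "jamresume", "jamrank"]


def missing_commands(commands=JAMDOCK_COMMANDS):
    """Return the commands that cannot be found on the current PATH."""
    return [name for name in commands if shutil.which(name) is None]
```

An empty list from `missing_commands()` suggests the scripts are installed and reachable. Any names returned point to a step that may need to be repeated or to a PATH entry that was not picked up by the current shell.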

Automated Screening Pipeline: Workflow and Execution

The automated virtual screening pipeline consists of five modular programs that work in sequence to transform raw chemical and receptor data into ranked docking hits. This modular approach provides flexibility, allowing researchers to customize each stage according to their specific research needs [27].

Diagram 1: Automated screening pipeline workflow showing the sequence of five modular programs that transform raw data into ranked docking hits.

Experimental Protocols for Pipeline Execution

Experimental Protocol: Compound Library Generation with jamlib

- Generate a library of FDA-approved drugs:

- Create a custom compound library from specific ZINC subsets:

- Generate a diverse library based on structural similarity:

The jamlib script automatically retrieves compound structures, performs energy minimization, and converts all molecules to PDBQT format required for docking with Vina. This addresses the critical bottleneck of preparing large compound libraries, particularly for FDA-approved drugs whose PDBQT formats are not readily available in ZINC [27].
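The text does not describe how jamlib measures structural similarity, so the following is only an illustration of one common approach to building a diverse subset: greedy selection with a Tanimoto similarity cutoff. It assumes each compound is already represented as a set of "on" fingerprint bit indices. The function names and the 0.7 cutoff are hypothetical.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity of two fingerprint bit sets."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def pick_diverse(fingerprints, max_similarity=0.7):
    """Greedily keep compounds that are dissimilar to all kept so far.

    fingerprints -- dict mapping compound name to a set of bit indices
    """
    kept = []
    for name, fp in fingerprints.items():
        # Keep the compound only if it is below the cutoff against every
        # compound already selected.
        if all(tanimoto(fp, fingerprints[k]) < max_similarity for k in kept):
            kept.append(name)
    return kept
```

The result depends on input order because the selection is greedy. Lowering the cutoff yields a smaller, more diverse library.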

Experimental Protocol: Receptor Preparation with jamreceptor

- Prepare the receptor structure and identify binding pockets:

- Select the desired binding pocket from the fpocket analysis output.

- The script automatically generates the PDBQT file and defines the grid box coordinates based on the selected pocket [27].

The jamreceptor script utilizes fpocket for binding site detection and characterization. Fpocket not only identifies potential binding cavities but also provides druggability scores to facilitate selection of the most relevant docking sites [27].
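To make the grid box step concrete, the sketch below derives a box center and size from the coordinates of the atoms lining a selected pocket and formats them as AutoDock Vina configuration entries (`center_x`, `size_x`, and so on). This is not necessarily how jamreceptor computes the box. The function names and the 4 Å padding are assumptions chosen for illustration.

```python
def grid_box(coords, padding=4.0):
    """Return (center, size) of an axis-aligned box enclosing coords.

    coords  -- iterable of (x, y, z) tuples in angstroms
    padding -- margin in angstroms added on every side (assumed value)
    """
    xs, ys, zs = zip(*coords)
    mins = (min(xs), min(ys), min(zs))
    maxs = (max(xs), max(ys), max(zs))
    center = tuple((lo + hi) / 2 for lo, hi in zip(mins, maxs))
    size = tuple((hi - lo) + 2 * padding for lo, hi in zip(mins, maxs))
    return center, size


def vina_box_lines(center, size):
    """Format the box as AutoDock Vina configuration entries."""
    axes = ("x", "y", "z")
    lines = [f"center_{a} = {c:.3f}" for a, c in zip(axes, center)]
    lines += [f"size_{a} = {s:.3f}" for a, s in zip(axes, size)]
    return lines
```

A box that is too small can cut off valid poses, while an oversized box makes the search more expensive, so the padding is worth adjusting to the ligands being screened.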

Experimental Protocol: Automated Docking with jamqvina

- Execute the docking campaign across the entire compound library:

- For large libraries, monitor progress and resume if interrupted:

The jamqvina script supports execution on local machines, cloud servers, and HPC clusters, offering better scalability than GUI-based tools. The jamresume function ensures robustness during long-running docking processes that may span days [27].
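The idea behind resuming an interrupted campaign can be sketched as follows: compare the ligand library against the results already on disk and dock only what is missing. This is an illustration of the concept and not the jamresume implementation. The directory arguments and the `<ligand>_out.pdbqt` naming are assumptions.

```python
from pathlib import Path


def pending_ligands(library_dir, results_dir, suffix=".pdbqt"):
    """List ligand files that have no non-empty result file yet."""
    results = Path(results_dir)
    pending = []
    for ligand in sorted(Path(library_dir).glob(f"*{suffix}")):
        output = results / f"{ligand.stem}_out{suffix}"
        # Treat missing or zero-byte outputs as unfinished jobs.
        if not output.exists() or output.stat().st_size == 0:
            pending.append(ligand)
    return pending
```

Counting zero-byte files as unfinished guards against jobs that were killed after the output file was created but before any pose was written.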

Experimental Protocol: Results Ranking with jamrank

- Rank docking results using two scoring methods:

- Generate a prioritized list of top hits for further investigation:

The jamrank script evaluates docking outcomes using two scoring methods to help identify the most promising hits, providing researchers with a prioritized list for further experimental validation [27].
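The text does not name the two scoring methods that jamrank uses. Purely as an illustration of why two rankings can disagree, the sketch below orders hits by raw predicted affinity and by ligand efficiency (affinity divided by heavy atom count), a common size normalization. The function name and input layout are hypothetical.

```python
def rank_two_ways(hits):
    """Rank hits by affinity and by ligand efficiency.

    hits -- list of (name, affinity_kcal_mol, heavy_atom_count) tuples
    Returns two lists of names, best first in each.
    """
    by_affinity = sorted(hits, key=lambda h: h[1])
    # Ligand efficiency: affinity per heavy atom; more negative is better.
    by_efficiency = sorted(hits, key=lambda h: h[1] / h[2])
    return [h[0] for h in by_affinity], [h[0] for h in by_efficiency]
```

Large molecules tend to score well on raw affinity simply because they make more contacts, so compounds that rank highly under both orderings are often the more interesting candidates.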

Performance Considerations and Technical Specifications

Computational Requirements and Optimization

Table 2: Performance Characteristics and Resource Requirements

| Pipeline Stage | Time Estimate | Computational Load | Key Dependencies |
|---|---|---|---|
| System Setup | 35 minutes | Low | Internet connection, sudo privileges |
| Library Generation (1000 compounds) | 1-2 hours | Medium | ZINC database access, Open Babel |
| Receptor Preparation | 5-10 minutes | Low | PDB file, fpocket, AutoDockTools |
| Docking (1000 compounds) | 4-24 hours | High | CPU cores, sufficient RAM |
| Results Ranking | 5-15 minutes | Low | Docking output files |

The pipeline is designed to handle libraries of varying sizes, from focused sets of FDA-approved drugs to large custom collections. For ultra-large chemical libraries exceeding one billion molecules, researchers can implement advanced AI-enabled screening approaches like Deep Docking, which can accelerate virtual screening by up to 100-fold through iterative docking of library subsets synchronized with ligand-based prediction of remaining docking scores [37].

The modular nature of the jamdock-suite allows researchers to adapt the pipeline to their specific computational resources. For high-throughput screening, the pipeline can be deployed on high-performance computing clusters, while smaller-scale drug repurposing projects can run effectively on standalone workstations [27].
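For planning purposes, the docking estimate in Table 2 (4-24 hours per 1000 compounds) can be extrapolated to other library sizes. The sketch below assumes that docking time scales linearly with the number of compounds and divides evenly across independent workers. The source states neither, so the output is a rough guide only. The function name is hypothetical.

```python
def docking_hours(n_compounds, workers=1, hours_per_1000=(4.0, 24.0)):
    """Return (low, high) wall-clock hours for docking n_compounds.

    Assumes linear scaling with library size and perfect division of
    work across workers; real runs will deviate from both.
    """
    if workers < 1:
        raise ValueError("workers must be at least 1")
    scale = n_compounds / 1000 / workers
    low, high = hours_per_1000
    return low * scale, high * scale
```

Under these assumptions, 10,000 compounds on four workers would take between 10 and 60 hours, which is the kind of run where the ability to resume an interrupted job matters.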

Applications in Drug Discovery Research

Case Study: Virtual Screening for Antibiotic Resistance

A recent study demonstrated the application of virtual screening approaches to address the critical challenge of antibiotic resistance. Researchers employed molecular docking and molecular dynamics simulations to screen 192 FDA-approved drugs against New Delhi Metallo-β-lactamase-1 (NDM-1), a bacterial enzyme that confers resistance to β-lactam antibiotics [36].

The study identified four repurposed drugs—zavegepant, ubrogepant, atogepant, and tucatinib—as top candidates with favorable binding affinities for NDM-1. Subsequent molecular dynamics simulations confirmed the structural stability of these interactions over time, validating the docking predictions [36]. This case study exemplifies how automated virtual screening pipelines can rapidly identify promising therapeutic candidates for urgent public health threats.

Integration with Downstream Validation

While virtual screening provides valuable computational predictions, hit compounds typically require experimental validation through biochemical assays, structural biology approaches (such as X-ray crystallography), and further medicinal chemistry optimization. The ranked hit list generated by the jamrank script serves as the starting point for these downstream validation studies, prioritizing the most promising candidates for further investigation [27] [36].

This protocol provides researchers with a comprehensive, fully local solution for automated virtual screening that leverages exclusively free and open-source software. The jamdock-suite significantly lowers the barrier to entry for structure-based drug discovery while offering the robustness and flexibility required for production-scale virtual screening campaigns. By implementing this automated pipeline, research teams can accelerate their early drug discovery and repurposing efforts, efficiently transforming chemical libraries into prioritized hit lists for experimental validation.

Within the framework of molecular docking protocols for virtual screening, the initial and crucial step of compound library curation fundamentally determines the quality and efficiency of the entire research pipeline. Structure-based virtual screening is a powerful computational approach for drug discovery, allowing researchers to predict how large libraries of small molecules will interact with a biological target [27]. The success of this method hinges on the availability of properly formatted chemical compounds. The PDBQT file format, essential for popular docking tools like AutoDock Vina, stores molecular structures, atomic coordinates, partial charges, and atom types necessary for docking calculations [38]. However, the absence of PDBQT-format files in major public databases like ZINC can hinder the generation of large compound libraries, making their preparation an arduous and time-consuming task, particularly for users without extensive experience [27]. This application note details standardized protocols for generating both FDA-approved and custom compound libraries in PDBQT format, providing researchers with a robust methodology to lower the access barrier to high-quality virtual screening.
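To illustrate what a PDBQT record holds, the sketch below reads one atom line. It assumes the usual layout in which coordinates occupy the same fixed columns as in PDB files and the partial charge and AutoDock atom type are the last two fields on the line. The function name and the example atom are illustrative.

```python
def parse_pdbqt_atom(line):
    """Parse one ATOM/HETATM record of a PDBQT file into a dict."""
    if not line.startswith(("ATOM", "HETATM")):
        raise ValueError("not an atom record")
    fields = line.split()
    return {
        "name": line[12:16].strip(),
        # Coordinates sit in the same fixed columns as in PDB files.
        "xyz": (float(line[30:38]), float(line[38:46]), float(line[46:54])),
        # PDBQT appends the partial charge and the AutoDock atom type.
        "charge": float(fields[-2]),
        "atom_type": fields[-1],
    }


# Example record built with explicit field widths to keep columns aligned.
example = (
    f"{'ATOM':<6}{1:>5} {'N':^4} {'ALA':>3} A{1:>4}    "
    f"{11.104:8.3f}{6.134:8.3f}{-6.504:8.3f}{1.00:6.2f}{0.00:6.2f}"
    f"    {-0.065:6.3f} {'N':<2}"
)
atom = parse_pdbqt_atom(example)
```

The charge and atom type are the fields that a plain PDB file lacks, which is why structures downloaded in other formats need a conversion step before docking.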

Available Compound Libraries for Virtual Screening

Researchers can curate libraries from various sources, ranging from focused sets of clinically approved drugs to ultra-large collections of commercially available compounds. The table below summarizes key libraries relevant to drug discovery and repurposing projects.

Table 1: Selected Compound Libraries for Virtual Screening

| Library Name | Number of Compounds | Description | Relevant Research Example |

|---|---|---|---|

| NCATS Pharmaceutical Collection (NPC) | 2,807 (v2.1) | Contains all drugs approved by the U.S. FDA and related international agencies [39]. | Drug repurposing for Polycystic Kidney Disease [39]. |

| Genesis Collection | 126,400 | A novel modern chemical library emphasizing high-quality chemical starting points and core scaffolds for derivatization [39]. | Target class profiling of small molecule methyltransferases [39]. |

| Pubchem Collection | 45,879 | A retired Pharma screening collection with a diversity of novel, medicinally-tractable small molecules [39]. | Advancing therapies for Charcot-Marie-Tooth disease type 1A [39]. |