Measuring Success: A Comprehensive Guide to Performance Metrics for Virtual Screening Protocols



Abstract

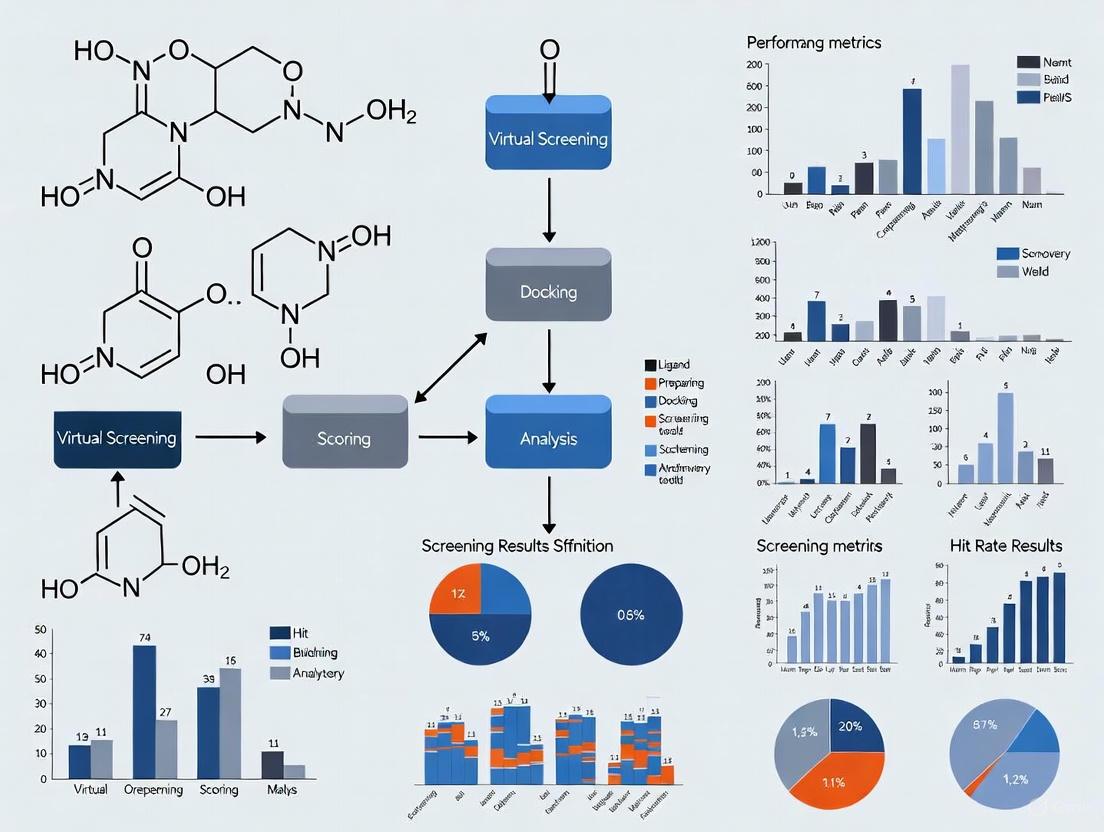

This article provides researchers, scientists, and drug development professionals with a comprehensive framework for evaluating virtual screening (VS) protocols. It covers foundational metrics, explores their application across different VS methodologies, addresses common challenges in result interpretation and optimization, and outlines rigorous validation and comparative analysis techniques. The goal is to equip practitioners with the knowledge to accurately assess VS performance, improve hit rates, and make data-driven decisions in early-stage drug discovery.

Virtual Screening Metrics 101: Core Concepts and Industry Benchmarks

In the field of drug discovery, virtual screening (VS) serves as a fundamental computational technique for identifying initial hit compounds—molecules with biological activity against a therapeutic target—from extensive chemical libraries. Establishing clear criteria for what constitutes a 'hit' is crucial for the success of subsequent lead optimization campaigns [1]. Unlike traditional high-throughput screening (HTS), where statistical analyses of large experimental datasets can inform hit selection, virtual screening typically tests a smaller fraction of higher-scoring compounds, making standardized hit identification less established [1]. This guide objectively compares different hit identification criteria and their impact on the performance of virtual screening protocols, providing a framework for researchers to make informed decisions in their discovery pipelines.

Established Hit Identification Criteria in Virtual Screening

Activity Cut-offs and Experimental Metrics

A critical analysis of virtual screening results published between 2007 and 2011 revealed that only approximately 30% of studies reported a clear, predefined hit cutoff, indicating a lack of consensus in the field [1]. The activity cut-offs employed in these studies generally fall into several potency ranges, with varying prevalence as shown in Table 1.

Table 1: Distribution of Activity Cut-offs in Virtual Screening Studies

| Activity Cut-off Range | Percentage of Studies | Typical Assay Metrics |
|---|---|---|
| 1-25 μM | 32.3% | IC₅₀, EC₅₀, Kᵢ, Kd, % Inhibition |
| 25-50 μM | 12.8% | IC₅₀, EC₅₀, Kᵢ, Kd, % Inhibition |
| 50-100 μM | 12.1% | IC₅₀, EC₅₀, Kᵢ, Kd, % Inhibition |
| 100-500 μM | 13.3% | IC₅₀, EC₅₀, Kᵢ, Kd, % Inhibition |
| >500 μM | 5.9% | IC₅₀, EC₅₀, Kᵢ, Kd, % Inhibition |

Cut-offs at sub-micromolar levels are rarely used in initial virtual screening, as the primary goal is often to identify novel chemical scaffolds suitable for further optimization rather than highly potent compounds from the outset [1]. The most commonly used experimental metrics for defining hits are single-concentration percentage inhibition and concentration-response endpoints like IC₅₀, EC₅₀, Kᵢ, or Kd [1].

Ligand Efficiency Metrics

While fragment-based screening commonly employs ligand efficiency (LE) metrics to normalize experimental activity by molecular size, this practice has not been widely adopted in virtual screening hit identification [1]. Ligand efficiency is calculated as the free energy of binding divided by the number of heavy atoms (or, in related indices, by molecular weight), so that affinity is expressed per unit of molecular size and compounds of different sizes can be compared on a common footing. A key recommendation from literature analyses is to use size-targeted ligand efficiency values as hit identification criteria, which helps prioritize compounds with better prospects for further optimization [1].
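
As a worked illustration of this calculation, consider a hypothetical hit with a dissociation constant of 10 μM and 20 heavy atoms at 298.15 K, using the conventional 1 M standard state. These numbers are assumptions chosen for the arithmetic and are not taken from the cited analysis.

```latex
\[
\Delta G^\circ = RT \ln K_d
  = (1.987 \times 10^{-3}\ \mathrm{kcal\,mol^{-1}\,K^{-1}})(298.15\ \mathrm{K}) \ln(10^{-5})
  \approx -6.82\ \mathrm{kcal\,mol^{-1}}
\]
\[
LE = -\frac{\Delta G^\circ}{N_{\mathrm{heavy}}}
  \approx \frac{6.82}{20}
  \approx 0.34\ \mathrm{kcal\,mol^{-1}}\ \text{per heavy atom}
\]
```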

Comparative Analysis of Virtual Screening Methodologies

Performance of Structure-Based Virtual Screening Protocols

A 2025 systematic evaluation of structure-based virtual screening (SBVS) methodologies for predicting urease inhibitory activity provides insightful performance comparisons across different computational approaches [2]. This study assessed five protocol variants integrating various docking and scoring methods, with their performance quantified using statistical correlation metrics and error-based measures as shown in Table 2.

Table 2: Performance Comparison of SBVS Methodologies

| Methodology | Description | Performance in Compound Ranking | Absolute Binding Energy Prediction |
|---|---|---|---|
| Molecular Docking | Standard rigid or flexible docking | Variable, highly dependent on scoring function | Moderate accuracy |
| Induced-Fit Docking (IFD) | Accounts for sidechain flexibility | Improved over standard docking for flexible sites | Moderate accuracy |
| Quantum-Polarized Ligand Docking (QPLD) | Incorporates quantum mechanical charges | Improved for charged/polar interactions | Moderate accuracy |
| Ensemble Docking (ED) | Uses multiple receptor conformations | Consistently outperforms other docking methods | Moderate accuracy |
| MM-GBSA | Molecular mechanics with solvation | Consistently outperforms other methods | Higher errors in absolute prediction |

The study found that while MM-GBSA and ensemble docking consistently outperformed other methods in compound ranking, MM-GBSA exhibited higher errors in absolute binding energy predictions [2]. The research also investigated the influence of data fusion techniques, revealing that the minimum fusion approach remained robust across all conditions, while increasing the number of docking poses generally reduced predictive accuracy [2].
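
The effect of a fusion rule can be sketched in a few lines. The example below assumes that "minimum fusion" means keeping the most favorable (lowest) score obtained for each compound across poses or receptor conformations; the study's exact definition may differ. The compound names and scores are hypothetical.

```python
from statistics import mean

# Hypothetical docking scores (kcal/mol, more negative = better) for each
# compound across several poses or receptor conformations.
scores = {
    "cpd_A": [-7.2, -9.1, -6.8],
    "cpd_B": [-8.0, -8.2, -8.1],
    "cpd_C": [-5.5, -6.0, -9.4],
}


def fuse(per_run_scores, how="min"):
    """Collapse several scores for one compound into a single value."""
    if how == "min":       # assumed meaning of minimum fusion: keep the best score
        return min(per_run_scores)
    if how == "mean":      # arithmetic-mean fusion, shown for comparison
        return mean(per_run_scores)
    raise ValueError(f"unknown fusion rule: {how}")


def rank(all_scores, how):
    """Return compound names ordered from best to worst fused score."""
    fused = {name: fuse(vals, how) for name, vals in all_scores.items()}
    return sorted(fused, key=fused.get)


min_ranking = rank(scores, "min")
mean_ranking = rank(scores, "mean")
print("min fusion: ", min_ranking)
print("mean fusion:", mean_ranking)
```

With these made-up numbers the two rules produce opposite orderings, which illustrates why the choice of fusion rule and the number of poses pooled can change the measured ranking performance.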

The Impact of Protein Structure Selection

The choice of protein structure significantly impacts virtual screening outcomes. Recent advances in structure prediction, particularly AlphaFold3, have demonstrated potential for generating appropriate protein structures for SBVS, especially for targets lacking experimental structural data [3].

Table 3: Performance of AlphaFold3-Generated Structures in Virtual Screening

| Input Strategy | Screening Performance | Remarks |
|---|---|---|
| No Ligand (Apo) | Baseline performance | Does not capture ligand-induced changes |
| Co-crystallized Ligand | Improved performance | Requires known experimental complex |
| Active Ligand | Highest screening performance | Enhances prediction accuracy of holo form |
| Decoy Ligand | Similar to apo performance | Limited improvement over baseline |

Studies show that holo structures predicted by AlphaFold3 with ligand inclusion yield higher screening performance than apo structures generated without ligand input [3]. Notably, incorporating active ligands enhances screening performance, whereas decoys produce results similar to apo predictions [3]. The use of experimentally determined template structures as references in AlphaFold3 further improves prediction outcomes. Additionally, lower molecular weight ligands tend to generate predicted structures that more closely resemble experimental holo structures, thus improving screening efficacy [3].

Experimental Protocols for Hit Validation

Hit Validation Workflow

Following computational identification of potential hits, experimental validation is essential to confirm biological activity and compound quality. The hit validation process typically consists of a suite of assays designed to eliminate false positives, confirm activity with the intended target, and establish an initial ranking of compounds by activity [4]. A standardized workflow for this process is detailed below:

Diagram Title: Hit Validation and Assessment Workflow

Key Methodologies in Hit Validation

Dose-Response Analysis: Initial screening hits are subjected to concentration-response studies to determine potency metrics (IC₅₀, EC₅₀, Kᵢ, Kd). This confirms the concentration-dependent nature of the activity and provides quantitative data for comparing compounds [4].

Orthogonal Assays: These secondary assays use different physical or technical principles to confirm activity. Common biophysical techniques include Surface Plasmon Resonance (SPR) and Bio-Layer Interferometry (BLI) for direct binding confirmation; Isothermal Titration Calorimetry (ITC) and Thermal Shift Assays for characterizing binding thermodynamics; and NMR Spectroscopy for providing direct evidence of target-ligand complex formation in solution [4].

Counter-Screens: These assays eliminate false positives by testing for assay interference compounds, assessing selectivity against related targets, and screening for general cytotoxicity. This includes applying filters for Pan-Assay Interference Compounds (PAINS) to eliminate promiscuous binders [4].

Advanced Approaches: Active Learning in Virtual Screening

Active learning methods represent a paradigm shift in computer-assisted drug discovery by incorporating adaptive feedback loops into the screening process [5]. Instead of full-deck screening, these algorithms test focused subsets of compounds and use experimental readouts to refine molecule selection for subsequent screening cycles, significantly reducing costs and resource consumption [5].

Modern implementations of active learning, such as Schrödinger's Active Learning Applications, combine machine learning with physics-based data to achieve remarkable efficiency. These platforms can screen billions of compounds by docking only a small, strategically selected subset, recovering approximately 70% of the same top-scoring hits that would have been found from exhaustive docking, for only 0.1% of the computational cost [6].
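
The select, dock, and retrain cycle can be sketched with a toy example. Everything below is hypothetical and is not how any commercial platform is implemented: the library is described by a single made-up descriptor, `dock` is a synthetic stand-in for an expensive docking run, and the surrogate model is a simple nearest-neighbor lookup rather than a trained machine learning model.

```python
# Toy active-learning loop (all names and data are illustrative assumptions).
N_LIBRARY, BATCH, ROUNDS, TOP_K = 2000, 20, 5, 20

# One hypothetical descriptor value per compound, evenly spread over [0, 1].
descriptor = [i / (N_LIBRARY - 1) for i in range(N_LIBRARY)]


def dock(i):
    """Stand-in for an expensive docking run (lower score = better)."""
    return -10.0 + 20.0 * (descriptor[i] - 0.7) ** 2


def predict(i, docked):
    """Surrogate model: reuse the score of the nearest already-docked compound."""
    nearest = min(docked, key=lambda j: abs(descriptor[j] - descriptor[i]))
    return docked[nearest]


# Round 0: dock an evenly spaced seed set.
docked = {i: dock(i) for i in range(0, N_LIBRARY, N_LIBRARY // BATCH)}

for _ in range(ROUNDS):
    pool = [i for i in range(N_LIBRARY) if i not in docked]
    # Rank undocked compounds by predicted score and dock only the best batch.
    pool.sort(key=lambda i: predict(i, docked))
    for i in pool[:BATCH]:
        docked[i] = dock(i)

# Compare with exhaustive docking (affordable only because this is a toy).
true_top = set(sorted(range(N_LIBRARY), key=dock)[:TOP_K])
recall = len(true_top & docked.keys()) / TOP_K
fraction_docked = len(docked) / N_LIBRARY
print(f"docked {fraction_docked:.1%} of the library, "
      f"recovered {recall:.0%} of the true top {TOP_K}")
```

The quantity of interest is the last line: the share of the exhaustive top-scoring set recovered relative to the share of the library that was actually docked. Real score landscapes are far less smooth than this synthetic one, so the recovery seen here says nothing about what to expect in practice.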

Table 4: Key Research Reagents and Computational Tools for Virtual Screening

| Tool Category | Examples | Function | Application in Hit ID |
|---|---|---|---|
| Molecular Docking Software | AutoDock Vina, Glide, GOLD, DOCK | Predicts ligand binding pose and affinity | Primary virtual screening tool [7] |
| Protein Structure Databases | PDB, AlphaFold DB | Provides 3D structures of target proteins | Structure-based screening foundation [8] |
| Compound Libraries | ZINC, ChEMBL, Reaxys | Collections of purchasable or known bioactive compounds | Source of candidate molecules [8] |
| Conformer Generators | OMEGA, ConfGen, RDKit | Predicts 3D conformations of small molecules | Library preparation for 3D methods [8] |
| Scoring Functions | MM-GBSA, Force field-based, Empirical | Ranks compounds by predicted binding affinity | Hit prioritization [7] [2] |
| Ligand-Based Tools | ROCS, Phase, UNITY | Identifies compounds similar to known actives | Alternative when structures unavailable [8] |

Establishing appropriate hit identification criteria requires careful consideration of activity cut-offs, ligand efficiency metrics, and validation protocols. The comparative data presented in this guide demonstrates that methodological choices significantly impact virtual screening outcomes. Ensemble docking and MM-GBSA generally provide superior compound ranking, while the integration of active learning approaches and advanced structure prediction tools like AlphaFold3 can dramatically enhance screening efficiency. A robust hit identification strategy should incorporate size-targeted ligand efficiency metrics, rigorous experimental validation through orthogonal assays, and consideration of both potency and compound quality to ensure successful transition from hits to viable lead compounds.

In the field of computer-aided drug discovery, virtual screening (VS) serves as a fundamental technique for rapidly identifying potential hit compounds from extensive molecular databases. The efficacy of these computational methods hinges on robust performance metrics that quantitatively evaluate their ability to discriminate between active and inactive molecules. While numerous validation metrics exist, Enrichment Factor (EF), Area Under the Receiver Operating Characteristic Curve (AUC-ROC), and Success Rates (often expressed as Hit Rate) have emerged as central indicators for assessing virtual screening performance [9] [10] [11]. These metrics provide complementary insights into different aspects of a method's predictive capability, with EF and Hit Rate focusing on early recognition and AUC-ROC evaluating overall ranking performance.

The selection of appropriate metrics is not merely a technical formality; it directly influences the interpretation of virtual screening results and the subsequent prioritization of compounds for experimental testing. Each metric embodies specific assumptions and sensitivities, making understanding their characteristics, strengths, and limitations essential for researchers, scientists, and drug development professionals who rely on these computational tools [9] [12]. This guide provides a comparative analysis of these key performance indicators, supported by experimental data and detailed methodologies from contemporary research.

Metric Definitions and Theoretical Foundations

Enrichment Factor (EF)

The Enrichment Factor (EF) is a widely used metric that quantifies the concentration of active compounds within a selected top fraction of a ranked database compared to a random distribution [11]. It is defined as the proportion of true active compounds found in the selection set relative to the proportion of true active compounds in the entire dataset [9] [11]. The mathematical formulation is:

\[ EF(\chi) = \frac{n_s / N_s}{n / N} = \frac{N \times n_s}{n \times N_s} \]

Where:

- \(n_s\) = number of true active compounds in the selection set (top-ranked fraction)
- \(N_s\) = total number of compounds in the selection set
- \(n\) = total number of true active compounds in the entire dataset
- \(N\) = total number of compounds in the entire dataset
- \(\chi\) = fraction of the database screened (\(N_s / N\))

The EF metric is highly intuitive and particularly valuable for assessing early enrichment, which is critical in virtual screening campaigns where only a small fraction of a compound library can be tested experimentally [12]. However, a known limitation is its dependence on the ratio of active to inactive compounds in the dataset, and it suffers from a saturation effect once all active compounds are recovered in the early portion of the ranked list [11].
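
A minimal sketch of the EF calculation, assuming the screening result is available as a list of activity labels sorted from best to worst score. The two rankings are hypothetical and are chosen to show the saturation effect.

```python
def enrichment_factor(labels_ranked, fraction):
    """EF at a given fraction of a ranked list.

    labels_ranked: 1 for active, 0 for inactive, best-scored compound first.
    """
    N = len(labels_ranked)
    n = sum(labels_ranked)
    N_s = max(1, round(N * fraction))    # size of the selection set
    n_s = sum(labels_ranked[:N_s])       # actives inside the selection set
    return (N * n_s) / (n * N_s)


# Hypothetical library: 1000 compounds, 10 of them active.
perfect = [1] * 10 + [0] * 990           # all actives ranked first
good = [1, 0] * 10 + [0] * 980           # actives at every other top rank

ef_perfect_1 = enrichment_factor(perfect, 0.01)
ef_good_1 = enrichment_factor(good, 0.01)
ef_perfect_5 = enrichment_factor(perfect, 0.05)
ef_good_5 = enrichment_factor(good, 0.05)
print(ef_perfect_1, ef_good_1, ef_perfect_5, ef_good_5)
```

At the 1% cutoff the two rankings are separated (EF of 100 versus 50), but at 5% both have recovered every active and both give an EF of 20, so the metric can no longer tell them apart. The maximum attainable EF also changes with the cutoff and with the active-to-inactive ratio, which is the dependence noted above.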

Area Under the ROC Curve (AUC-ROC)

The Receiver Operating Characteristic (ROC) curve is a fundamental tool for evaluating the overall ranking performance of virtual screening methods. It plots the True Positive Rate (TPR or Sensitivity) against the False Positive Rate (FPR) across all possible classification thresholds [9] [12]. The Area Under the ROC Curve (AUC-ROC) provides a single scalar value representing the overall ability of the method to rank active compounds higher than inactive ones [12].

The AUC represents the probability that a randomly chosen active compound will be ranked higher than a randomly chosen inactive compound [12]. An ideal ranking yields an AUC of 1.0, while a random ranking gives an AUC of 0.5 [12]. The mathematical components are:

\[ TPR(\chi) = \frac{n_s}{n} \]

\[ FPR(\chi) = \frac{N_s - n_s}{N - n} \]

\[ AUC = \int_{0}^{1} TPR(FPR) \, dFPR \]

A key advantage of AUC-ROC is its independence from the cutoff threshold and the prevalence of actives in the dataset [12]. However, a significant limitation is that it summarizes performance across the entire ranking, which may not adequately reflect early enrichment capabilities that are most relevant in practical virtual screening scenarios [9] [12].
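
The probabilistic reading of the AUC and the integral definition can be checked against each other on a small example. The sketch below uses hypothetical scores and labels, assumes higher scores mean "predicted more active", and assumes there are no tied scores in the trapezoidal version.

```python
def roc_auc_pairwise(scores, labels):
    """Probability that a random active outscores a random inactive (ties count 0.5)."""
    actives = [s for s, y in zip(scores, labels) if y == 1]
    inactives = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((a > d) + 0.5 * (a == d) for a in actives for d in inactives)
    return wins / (len(actives) * len(inactives))


def roc_auc_trapezoid(scores, labels):
    """Area under the ROC curve by the trapezoidal rule (assumes no tied scores)."""
    ranked = [y for _, y in sorted(zip(scores, labels), reverse=True)]
    n_active = sum(ranked)
    n_inactive = len(ranked) - n_active
    tpr = fpr = area = 0.0
    for y in ranked:
        new_tpr = tpr + (y == 1) / n_active
        new_fpr = fpr + (y == 0) / n_inactive
        area += (new_fpr - fpr) * (tpr + new_tpr) / 2
        tpr, fpr = new_tpr, new_fpr
    return area


scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2]   # hypothetical model scores
labels = [1, 1, 0, 1, 0, 0, 1, 0]                   # 1 = active, 0 = inactive

auc_pairs = roc_auc_pairwise(scores, labels)
auc_trap = roc_auc_trapezoid(scores, labels)
print(auc_pairs, auc_trap)
```

Both functions return 0.75 for this ranking: 12 of the 16 active/inactive pairs are ordered correctly, and the stepwise ROC curve encloses the same area.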

Success Rate and Hit Rate

Success Rate, commonly operationalized as Hit Rate (HR), measures the proportion of compounds within a specified top fraction of the ranked database that are active [10]. It is a straightforward metric that directly answers the practical question: "What percentage of the selected compounds are active?" [10]. The calculation is:

\[ HR(\chi) = \frac{n_s}{N_s} \times 100\% \]

This metric corresponds to precision in classification terminology [11]. In a recent study evaluating a novel ligand-based virtual screening approach, average hit rates of 46.3% and 59.2% were reported at the top 1% and 10% of the ranked database across 40 protein targets [10]. These values should be read with that study's own definition in mind: with roughly 36 decoys per active, as in the DUD benchmark, \(n_s / N_s\) at the top 10% cannot exceed about 27%, so the 59.2% figure appears to describe the fraction of all actives recovered at that cutoff rather than a precision value. Hit Rate provides directly interpretable values for decision-making in drug discovery projects but is highly dependent on the chosen threshold and the ratio of actives to inactives in the dataset.
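
Because "hit rate" is used in the literature both for the share of selected compounds that are active and for the share of actives that were found, it helps to compute the two side by side. The sketch below uses a hypothetical, perfectly ranked dataset with one active per 36 decoys; the function names are illustrative.

```python
def hit_rate(labels_ranked, fraction):
    """Percentage of selected compounds that are active (precision, n_s / N_s)."""
    N_s = max(1, round(len(labels_ranked) * fraction))
    return 100.0 * sum(labels_ranked[:N_s]) / N_s


def actives_recovered(labels_ranked, fraction):
    """Percentage of all actives found in the selection (recall, n_s / n)."""
    N_s = max(1, round(len(labels_ranked) * fraction))
    return 100.0 * sum(labels_ranked[:N_s]) / sum(labels_ranked)


# Hypothetical target: 100 actives, 3600 decoys, actives ranked first.
ranked = [1] * 100 + [0] * 3600

hr_1 = hit_rate(ranked, 0.01)
hr_10 = hit_rate(ranked, 0.10)
rec_1 = actives_recovered(ranked, 0.01)
rec_10 = actives_recovered(ranked, 0.10)
print(f"top 1%:  HR = {hr_1:.1f}%, actives recovered = {rec_1:.1f}%")
print(f"top 10%: HR = {hr_10:.1f}%, actives recovered = {rec_10:.1f}%")
```

Even for this ideal ranking, HR at the top 10% is only about 27%, because the selection set (370 compounds) is larger than the number of actives (100). Stating which of the two quantities is being reported avoids misreading published values.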

Table 1: Key Characteristics of Virtual Screening Performance Metrics

| Metric | Mathematical Definition | Key Strength | Primary Limitation | Optimal Value |
|---|---|---|---|---|
| Enrichment Factor (EF) | \(EF(\chi) = \frac{N \times n_s}{n \times N_s}\) | Measures early recognition capability; highly intuitive | Dependent on ratio of actives/inactives; saturation effect | >1 (Higher is better) |
| AUC-ROC | \(AUC = \int_{0}^{1} TPR(FPR) \, dFPR\) | Overall ranking assessment; threshold-independent | Does not specifically measure early enrichment | 1.0 |
| Hit Rate (HR) | \(HR(\chi) = \frac{n_s}{N_s} \times 100\%\) | Directly interpretable for experimental planning | Highly dependent on selection threshold and active ratio | 100% |

Comparative Analysis of Metric Performance

Practical Considerations in Metric Selection

The choice of performance metrics significantly influences the assessment of virtual screening methods. EF and Hit Rate are most valuable when the practical constraint is testing only a small fraction of a compound library, as they directly quantify the yield of actives in this critical early region [9] [10]. In contrast, AUC-ROC provides a more comprehensive evaluation of ranking quality across the entire database, which is important for applications requiring complete database ranking [12].

A critical challenge in virtual screening evaluation is that each metric emphasizes different aspects of performance. The AUC-ROC can sometimes be misleading, as methods with identical AUC values may show dramatically different early enrichment behaviors [12]. This was explicitly demonstrated in research showing that "both the Early (pink) and Late (blue) curves have an AUC of exactly 0.5" despite one showing significantly better early recognition [12]. Consequently, the field has moved toward reporting multiple metrics to present a more complete picture of virtual screening performance.
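
The point can be reproduced with two constructed rankings. These are hypothetical lists built for illustration, not the curves from the cited documentation: one places half of the actives at the very top and half at the very bottom, the other places all actives in the middle of the list.

```python
def auc_from_ranking(labels_ranked):
    """AUC for a best-first list of labels (1 = active, 0 = inactive), no ties."""
    n_active = sum(labels_ranked)
    n_inactive = len(labels_ranked) - n_active
    inactives_seen = 0
    correct_pairs = 0      # (active, inactive) pairs with the active ranked first
    for y in labels_ranked:
        if y == 1:
            correct_pairs += n_inactive - inactives_seen
        else:
            inactives_seen += 1
    return correct_pairs / (n_active * n_inactive)


def enrichment_factor(labels_ranked, fraction):
    N, n = len(labels_ranked), sum(labels_ranked)
    N_s = max(1, round(N * fraction))
    return (N * sum(labels_ranked[:N_s])) / (n * N_s)


# 1000 compounds, 10 actives in both rankings.
early = [1] * 5 + [0] * 990 + [1] * 5       # half found immediately, half last
middle = [0] * 495 + [1] * 10 + [0] * 495   # all actives mid-list

auc_early, auc_middle = auc_from_ranking(early), auc_from_ranking(middle)
ef_early, ef_middle = enrichment_factor(early, 0.01), enrichment_factor(middle, 0.01)
print(f"early:  AUC = {auc_early}, EF(1%) = {ef_early}")
print(f"middle: AUC = {auc_middle}, EF(1%) = {ef_middle}")
```

Both rankings have an AUC of exactly 0.5, yet the first has an EF at 1% of 50 and the second an EF of 0. For a campaign that can only test the top 1%, the two results are very different even though the AUC does not distinguish them.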

Experimental Comparisons from Contemporary Research

Recent comparative studies have provided valuable insights into the behavior of these metrics in practical scenarios. A 2025 study systematically evaluating virtual screening methodologies for predicting urease inhibitory activity found that while Molecular Mechanics/Generalized Born Surface Area (MM-GBSA) and Ensemble Docking (ED) consistently outperformed other methods in compound ranking, the MM-GBSA approach exhibited higher errors in absolute binding energy predictions [2]. This highlights how different methodological choices can affect performance as measured by various metrics.

In developing new virtual screening approaches, researchers often report multiple metrics to demonstrate comprehensive performance. For instance, in the evaluation of a new ligand-based virtual screening approach using the Directory of Useful Decoys (DUD) dataset, the method achieved "an average AUC value of 0.84 ± 0.02" while also reporting that "the average HR values at top 1% and 10% of the active compounds for the 40 targets were 46.3% ± 6.7% and 59.2% ± 4.7%, respectively" [10]. This multi-faceted reporting provides a more complete picture of method capability than any single metric could offer.

Table 2: Experimental Performance Data from Virtual Screening Studies

| Study Context | Methodology | AUC-ROC | EF/HR Performance | Key Findings |
|---|---|---|---|---|
| Ligand-Based VS Approach [10] | New shape-overlapping method (HWZ score) | 0.84 ± 0.02 (average across 40 targets) | HR@1% = 46.3% ± 6.7%; HR@10% = 59.2% ± 4.7% | Improved overall performance with less sensitivity to target choice |
| Structure-Based VS Comparison [2] | MM-GBSA vs. Ensemble Docking | Not reported | MM-GBSA and ED consistently outperformed in ranking | MM-GBSA showed higher errors in absolute binding energy predictions |
| Docking Software Evaluation [9] | Surflex-dock, ICM, AutoDock Vina | Varied by target and method | Early enrichment differed significantly between methods | Performance method- and target-dependent |

Experimental Protocols for Metric Evaluation

Standard Benchmarking Workflow

The reliable evaluation of virtual screening performance metrics requires standardized experimental protocols and high-quality benchmarking datasets. A typical workflow begins with dataset selection and curation, followed by virtual screening execution, and concludes with performance calculation and statistical analysis [9] [13]. The Directory of Useful Decoys (DUD) has emerged as a widely adopted public benchmarking dataset containing known active compounds for 40 targets, with 36 decoys carefully selected for each active compound to minimize bias [9] [10]. This dataset design helps ensure meaningful evaluation of virtual screening methods.

Proper data curation is essential for reliable metric calculation. This process typically includes standardizing chemical structures, removing duplicates, neutralizing salts, and filtering out compounds with unusual elements or structural issues [13]. As demonstrated in recent benchmarking studies, rigorous curation significantly enhances dataset quality and consequently improves the reliability of performance metrics [13]. For example, in one comprehensive benchmarking study, researchers applied automated curation procedures that addressed "the identification and the removal of inorganic and organometallic compounds and mixtures, of those compounds including unusual chemical elements, the neutralization of salts, removal of duplicates at SMILES level and the standardization of chemical structures" [13].

Calculation of Metrics and Statistical Validation

Following virtual screening execution, the resulting ranked lists undergo metric calculation at specified threshold points. Standard practice involves calculating EF and Hit Rate at early recovery points such as 0.5%, 1%, and 2% of the ranked database [12]. AUC-ROC calculation typically employs methods such as the trapezoidal rule to approximate the area under the ROC curve [9]. To ensure statistical robustness, bootstrapping approaches are often employed to estimate confidence intervals, with vROCS software, for instance, reporting "mean value 95% confidence limits" derived from bootstrapping [12].
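
A percentile bootstrap is one common way to obtain such confidence limits. The sketch below is a generic illustration under stated assumptions and is not the procedure used by vROCS: compounds are resampled with replacement, the AUC is recomputed for each resample, and the 2.5th and 97.5th percentiles are reported. The scores are synthetic.

```python
import random


def auc(scores, labels):
    """Pairwise AUC: higher score = predicted more active, ties count 0.5."""
    actives = [s for s, y in zip(scores, labels) if y]
    inactives = [s for s, y in zip(scores, labels) if not y]
    wins = sum((a > d) + 0.5 * (a == d) for a in actives for d in inactives)
    return wins / (len(actives) * len(inactives))


def bootstrap_ci(scores, labels, n_boot=500, level=0.95, seed=0):
    """Percentile bootstrap confidence interval for the AUC."""
    rng = random.Random(seed)
    indices = range(len(scores))
    samples = []
    while len(samples) < n_boot:
        draw = [rng.choice(indices) for _ in indices]   # resample with replacement
        ys = [labels[i] for i in draw]
        if 0 < sum(ys) < len(ys):                       # both classes must be present
            samples.append(auc([scores[i] for i in draw], ys))
    samples.sort()
    lower = samples[int((1 - level) / 2 * n_boot)]
    upper = samples[int((1 + level) / 2 * n_boot) - 1]
    return lower, upper


# Synthetic screen: 20 actives that score higher on average than 180 decoys.
rng = random.Random(1)
labels = [1] * 20 + [0] * 180
scores = ([rng.gauss(1.0, 1.0) for _ in range(20)]
          + [rng.gauss(0.0, 1.0) for _ in range(180)])

point_estimate = auc(scores, labels)
ci_low, ci_high = bootstrap_ci(scores, labels)
print(f"AUC = {point_estimate:.3f}, 95% CI = [{ci_low:.3f}, {ci_high:.3f}]")
```

The same resampling loop can wrap EF or Hit Rate at a fixed cutoff. With only a handful of actives the intervals become wide, which is a useful reminder not to over-interpret small differences between methods.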

Statistical significance testing between different virtual screening methods is increasingly recognized as essential for meaningful comparisons. The p-value implementation in tools like vROCS uses "a one-sided statistical test based on the prior assumption that method B is superior to method A" [12]. This approach allows researchers to determine whether observed differences in metric values reflect true methodological superiority rather than random variation. The interpretation follows standard statistical practice: "If the p-value tends towards 0.0 then the results for the Base run are better than the 'Compare to...' run" [12].

Diagram 1: Virtual screening metric evaluation involves dataset preparation, screening execution, and comprehensive metric calculation with statistical validation.

Advanced Metric Considerations and Emerging Approaches

Limitations and Complementary Metrics

While EF, AUC-ROC, and Hit Rate are widely adopted, they possess limitations that have prompted the development of complementary metrics. The saturation effect of EF occurs when "the actives saturate the early positions of the ranking list and the performance metric cannot get any higher, thereby preventing to distinguish between good and excellent models" [11]. Similarly, AUC-ROC's summarization of overall performance means it "does not directly answer the questions some want posed, i.e. the performance of a method in the top few percent" of the ranked list [12].

To address these limitations, researchers have developed specialized metrics including:

- Relative Enrichment Factor (REF): Addresses the saturation effect by considering the maximum EF achievable at a given cutoff point [11]

- ROC Enrichment (ROCE): Defined as "the fraction of actives found when a given fraction of inactives has been found" [11]

- BEDROC: Boltzmann-Enhanced Discrimination of ROC incorporates an exponential weighting function to emphasize early recognition [9]

- Predictiveness Curves: Transferred from clinical epidemiology, these curves visualize the distribution of scores and their relationship to activity probability, providing intuitive graphical assessment of predictive power [9]
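
The first two metrics in this list are simple to compute from a ranked label list. The sketch below follows the REF description above and the ROCE definition exactly as quoted; other sources define ROCE as a ratio of rates, so treat this as one possible reading. The rankings are hypothetical.

```python
def relative_ef(labels_ranked, fraction):
    """REF: actives found as a percentage of the most that could be found."""
    N, n = len(labels_ranked), sum(labels_ranked)
    N_s = max(1, round(N * fraction))
    return 100.0 * sum(labels_ranked[:N_s]) / min(N_s, n)


def roc_enrichment(labels_ranked, inactive_fraction):
    """Fraction of actives found once a given fraction of inactives has been found."""
    n_active = sum(labels_ranked)
    n_inactive = len(labels_ranked) - n_active
    target = inactive_fraction * n_inactive
    actives_seen = inactives_seen = 0
    for y in labels_ranked:
        if y == 1:
            actives_seen += 1
        else:
            inactives_seen += 1
            if inactives_seen >= target:
                break
    return actives_seen / n_active


# 1000 compounds, 10 actives.
perfect = [1] * 10 + [0] * 990
good = [1, 0] * 10 + [0] * 980

ref_perfect = relative_ef(perfect, 0.01)
ref_good = relative_ef(good, 0.01)
roce_perfect = roc_enrichment(perfect, 0.005)
roce_good = roc_enrichment(good, 0.005)
print(ref_perfect, ref_good, roce_perfect, roce_good)
```

Unlike EF, whose maximum depends on the cutoff and on the number of actives, REF is always bounded between 0 and 100, which makes values at different cutoffs easier to compare.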

The Power Metric and Statistically Robust Alternatives

A more recent development is the Power Metric, introduced as a statistically robust enrichment metric with early recovery capability [11]. This metric is defined as "the fraction of the true positive rate divided by the sum of the true positive and false positive rates, for a given cutoff threshold" [11]. The Power Metric demonstrates robustness with respect to variations in the applied cutoff threshold and the ratio of active to inactive compounds, while maintaining sensitivity to variations in model quality [11].

Other statistically grounded metrics gaining adoption include:

- Matthews Correlation Coefficient (MCC): A balanced measure that can be used on classes of different sizes, returning +1 for perfect prediction, 0 for random prediction, and -1 for total disagreement [11]

- Correct Classification Rate (CCR): Also known as balanced accuracy, defined as the average of sensitivity and specificity [11]

These metrics offer improved statistical properties while addressing the early recognition problem fundamental to virtual screening applications. The ideal characteristics of a virtual screening metric, as outlined by Nicholls, include "independence to extensive variables, statistical robustness, straightforward assessment of error bounds, no free parameters," and being "easily understandable and interpretable" [11].
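
The Power Metric, MCC, and CCR can all be derived from the confusion matrix obtained by treating the top-ranked fraction as "predicted active". The sketch below assumes a best-first list of activity labels; the helper names and the example ranking are illustrative.

```python
from math import sqrt


def confusion_at_cutoff(labels_ranked, fraction):
    """Treat the top fraction as predicted active; return (TP, FP, TN, FN)."""
    N_s = max(1, round(len(labels_ranked) * fraction))
    tp = sum(labels_ranked[:N_s])
    fp = N_s - tp
    fn = sum(labels_ranked[N_s:])
    tn = len(labels_ranked) - N_s - fn
    return tp, fp, tn, fn


def power_metric(tp, fp, tn, fn):
    """TPR / (TPR + FPR) at the chosen cutoff."""
    tpr, fpr = tp / (tp + fn), fp / (fp + tn)
    return tpr / (tpr + fpr) if (tpr + fpr) > 0 else 0.0


def mcc(tp, fp, tn, fn):
    """Matthews correlation coefficient; 0.0 if any marginal is empty."""
    denom = sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom > 0 else 0.0


def ccr(tp, fp, tn, fn):
    """Correct classification rate: mean of sensitivity and specificity."""
    return 0.5 * (tp / (tp + fn) + tn / (tn + fp))


# 1000 compounds, 10 actives at every other rank among the top 20.
ranked = [1, 0] * 10 + [0] * 980
counts = confusion_at_cutoff(ranked, 0.02)   # (10, 10, 980, 0)

pm, m, c = power_metric(*counts), mcc(*counts), ccr(*counts)
print(f"Power = {pm:.3f}, MCC = {m:.3f}, CCR = {c:.3f}")
```

For this example the Power Metric is 0.99, the MCC is about 0.70, and the CCR is about 0.995. The MCC is noticeably lower because it also penalizes the ten decoys that share the selection with the actives.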

Table 3: Advanced Metrics for Virtual Screening Evaluation

| Metric | Calculation | Application Context | Advantage |
|---|---|---|---|
| Relative EF (REF) | \(REF(\chi) = \frac{100 \times n_s}{\min(N \times \chi, n)}\) | Early enrichment assessment | Addresses EF saturation effect; range 0-100 |
| Power Metric | \(Power(\chi) = \frac{TPR(\chi)}{TPR(\chi) + FPR(\chi)}\) | Early recognition problems | Statistically robust; insensitive to prevalence |
| BEDROC | Weighted average of ROC | Early recognition | Emphasizes early ranks with parameter α |
| MCC | \(\frac{TP \times TN - FP \times FN}{\sqrt{(TP+FP)(TP+FN)(TN+FP)(TN+FN)}}\) | Balanced classification assessment | Works well with imbalanced datasets |
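
The table gives BEDROC only in words, so a sketch may help. The code below uses a commonly cited formulation in which the robust initial enhancement (RIE), an exponentially weighted sum over the ranks of the actives, is rescaled to the range 0 to 1 using its best and worst possible values. This formulation and the default α = 20 are assumptions on my part and are not taken from the sources cited here.

```python
from math import exp


def bedroc(labels_ranked, alpha=20.0):
    """BEDROC for a best-first list of labels (1 = active, 0 = inactive)."""
    N, n = len(labels_ranked), sum(labels_ranked)
    ratio = n / N
    # Exponentially weighted sum over the (1-based) ranks of the actives.
    weighted = sum(exp(-alpha * rank / N)
                   for rank, y in enumerate(labels_ranked, start=1) if y == 1)
    # Expected value of the same sum for a random ranking.
    expected_random = ratio * (1 - exp(-alpha)) / (exp(alpha / N) - 1)
    rie = weighted / expected_random
    # RIE for the best and worst possible rankings, used for rescaling.
    rie_max = (1 - exp(-alpha * ratio)) / (ratio * (1 - exp(-alpha)))
    rie_min = (1 - exp(alpha * ratio)) / (ratio * (1 - exp(alpha)))
    return (rie - rie_min) / (rie_max - rie_min)


# 1000 compounds, 10 actives, four hypothetical rankings.
best = [1] * 10 + [0] * 990
worst = [0] * 990 + [1] * 10
early = [1] * 5 + [0] * 990 + [1] * 5       # AUC = 0.5, half the actives on top
middle = [0] * 495 + [1] * 10 + [0] * 495   # AUC = 0.5, all actives mid-list

b_best, b_worst = bedroc(best), bedroc(worst)
b_early, b_middle = bedroc(early), bedroc(middle)
print(f"best = {b_best:.3f}, worst = {b_worst:.3f}, "
      f"early = {b_early:.3f}, middle = {b_middle:.5f}")
```

The last two rankings share an AUC of 0.5, yet BEDROC separates them clearly because of the early-rank weighting. Larger values of α concentrate the weight on a smaller top fraction of the list, so α should always be reported alongside the score.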

Benchmarking Datasets and Software Tools

High-quality benchmarking datasets are fundamental for rigorous virtual screening evaluation. The Directory of Useful Decoys (DUD) is a cornerstone resource containing "known active compounds for 40 targets, including 36 decoys for each active compound" specifically designed to minimize artificial enrichment [9] [10]. More recent specialized datasets include ApisTox, a comprehensive benchmark dataset for classifying small molecule toxicity in honey bees, which demonstrates the expansion of virtual screening applications beyond human drug targets [14].

Specialized software tools enable the calculation and comparison of virtual screening metrics. Commercial packages such as ROCS from OpenEye provide integrated metric calculation, including "ROC curves together with its AUC, 95% confidence limits" and "early enrichment at 0.5%, 1% and 2% of decoys retrieved" [12]. Open-source alternatives and custom scripts implemented in Python, particularly using libraries like RDKit, offer flexibility for specialized analyses and integration with data curation pipelines such as MEHC-Curation, a Python framework for high-quality molecular dataset preparation [15] [13].

Data Curation and Chemical Space Analysis Tools

Robust metric evaluation requires careful dataset preparation. Data curation frameworks address common issues in molecular databases, implementing multi-stage pipelines for "validation, cleaning, normalization" with "integrated duplicate removal and error tracking" [15]. These tools transform "an intricate process into a straightforward operation" essential for reproducible virtual screening research [15].

Chemical space analysis tools ensure the relevance of metric evaluation to specific research contexts. By applying techniques such as Principal Component Analysis (PCA) on molecular descriptors, researchers can visualize and validate that benchmarking datasets adequately represent the chemical space of interest, including "industrial chemicals, approved drugs, and natural chemical products" [13]. This analysis confirms that performance metrics derived from benchmarking studies have validity for real-world applications.

Diagram 2: Metric selection depends on screening goals, with different metrics optimized for early recognition, overall ranking, or statistical robustness.

The comparative analysis of Enrichment Factor, AUC-ROC, and Success Rates reveals a landscape of complementary rather than competing metrics. EF and Hit Rate excel in quantifying early enrichment, the practical scenario in most virtual screening applications. AUC-ROC provides comprehensive assessment of overall ranking capability, while emerging metrics like the Power Metric offer improved statistical robustness. Contemporary research practice favors multi-metric reporting with statistical validation to fully characterize virtual screening performance. As the field advances, the integration of these metrics with rigorous dataset curation and chemical space analysis will continue to enhance the reliability and applicability of virtual screening in drug discovery and development.

In modern drug discovery, the initial identification of small molecules through virtual screening represents a critical funnel that narrows the search space from near-infinite chemical possibilities to a manageable collection of lead compounds [16]. While traditional screening has often prioritized raw binding potency, this approach fails to account for fundamental molecular properties that determine ultimate drug success. The pursuit of potency alone often results in larger, more complex molecules with poor physicochemical properties that face higher rates of attrition in later development stages [1] [17].

Ligand efficiency (LE) and related size-targeted metrics address this challenge by normalizing biological activity against molecular size, lipophilicity, and other key parameters [18] [17]. These metrics provide a more balanced approach to lead selection by answering a crucial question: is the observed affinity worth the molecular "price" being paid in size and lipophilicity? This comparative guide examines the performance, implementation, and practical utility of these critical metrics within virtual screening protocols, providing researchers with data-driven insights for their drug discovery campaigns.

Theoretical Foundations: Defining Ligand Efficiency and Related Metrics

Core Concepts and Calculation Methods

Ligand efficiency metrics are fundamentally based on the principle of normalizing observed affinity by various measures of molecular size or properties. The most basic formulation of ligand efficiency (LE) scales the free energy of binding by the number of non-hydrogen atoms [17] [19]:

\[ LE = -\frac{\Delta G^\circ}{N_{nH}} = -\frac{RT \ln(K_d / C^\circ)}{N_{nH}} \]

where ΔG° represents the standard free energy of binding and Nₙₕ is the number of non-hydrogen atoms. However, this apparently simple calculation harbors a significant thermodynamic limitation: its nontrivial dependency on the concentration unit (C°) used to express affinity [17]. Because the logarithm function cannot take dimensioned arguments, Kd values must be scaled by an arbitrary concentration unit (typically 1 M), meaning LE "cannot be defined objectively in absolute terms for individual compounds because there is no physical basis for favoring a particular value of C° for calculation of LE" [17].
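
The practical consequence of the C° dependence can be seen by computing LE for two hypothetical compounds under two different standard concentrations. The sketch assumes ΔG° = RT ln(Kd/C°) at 298.15 K; the compounds and their values are made up for illustration.

```python
from math import log

R_KCAL = 1.987e-3   # gas constant in kcal mol^-1 K^-1


def ligand_efficiency(kd_molar, n_heavy, c_std=1.0, temp=298.15):
    """LE = -dG/N_nH with dG = RT ln(Kd / C_std); kd_molar and c_std in mol/L."""
    return -R_KCAL * temp * log(kd_molar / c_std) / n_heavy


fragment = {"kd_molar": 1e-4, "n_heavy": 15}   # hypothetical 100 uM fragment
lead = {"kd_molar": 1e-6, "n_heavy": 30}       # hypothetical 1 uM lead

le_fragment_1M = ligand_efficiency(**fragment, c_std=1.0)
le_lead_1M = ligand_efficiency(**lead, c_std=1.0)
le_fragment_1mM = ligand_efficiency(**fragment, c_std=1e-3)
le_lead_1mM = ligand_efficiency(**lead, c_std=1e-3)

print(f"C = 1 M:  fragment {le_fragment_1M:.3f}, lead {le_lead_1M:.3f}")
print(f"C = 1 mM: fragment {le_fragment_1mM:.3f}, lead {le_lead_1mM:.3f}")
```

With a 1 M standard state the fragment looks more efficient (about 0.36 versus 0.27 kcal/mol per heavy atom), whereas with a 1 mM standard state the order reverses (about 0.09 versus 0.14). The ranking of compounds by LE therefore depends on an arbitrary choice of unit, which is the limitation described above.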

The Expanding Universe of Efficiency Metrics

In response to the limitations of basic LE, researchers have developed multiple efficiency metrics that address different aspects of molecular optimization (Table 1).

Table 1: Key Ligand Efficiency Metrics and Their Applications in Virtual Screening

| Metric | Calculation | Primary Application | Advantages | Limitations |
|---|---|---|---|---|
| Ligand Efficiency (LE) | -ΔG°/Nₙₕ [16] [17] | Initial lead selection; Size normalization | Simple calculation; Intuitive "bang for buck" [17] | Concentration unit dependency; Oversimplifies binding physics [17] |
| Lipophilic Ligand Efficiency (LLE/LipE) | pActivity - LogP/D [18] [17] | Balancing potency and lipophilicity | Physically interpretable (transfer from octanol to binding site) [17] | Less relevant for highly ionized compounds [17] |
| Fit Quality (FQ) | [pChEMBL ÷ HA] ÷ [0.0715 + (7.5328 ÷ HA) + (25.7079 ÷ HA²) - (361.4722 ÷ HA³)] [18] | Benchmarking against expected size-affinity relationships | Contextualizes efficiency relative to expected performance [18] | Complex calculation; Limited familiarity |
| Size-Independent LE (SILE) | pChEMBL ÷ HA⁰·³ [18] | Comparing compounds of different sizes | Reduces size bias in efficiency assessment [18] | Empirical exponent choice |
| Binding Efficiency Index (BEI) | pChEMBL / (MW in kDa) [18] | Fragment-based screening | Dimensionless; Easy to calculate [18] | Still has concentration dependency [17] |
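The formulas in Table 1 translate directly into a few helper functions. The sketch below follows the table's expressions as written (pActivity or pChEMBL as the potency term, HA as heavy atom count); the function names and the example compound are illustrative assumptions, not part of any cited tool.

```python
def lipophilic_ligand_efficiency(p_activity, log_p):
    """LLE (LipE) = pActivity - logP (or logD)."""
    return p_activity - log_p

def fit_quality(p_activity, heavy_atoms):
    """FQ = (pActivity / HA) divided by the size-dependent scaling term from Table 1."""
    le = p_activity / heavy_atoms
    le_scale = (0.0715
                + 7.5328 / heavy_atoms
                + 25.7079 / heavy_atoms ** 2
                - 361.4722 / heavy_atoms ** 3)
    return le / le_scale

def size_independent_le(p_activity, heavy_atoms):
    """SILE = pActivity / HA^0.3."""
    return p_activity / heavy_atoms ** 0.3

def binding_efficiency_index(p_activity, mol_weight_da):
    """BEI = pActivity / (molecular weight in kDa)."""
    return p_activity / (mol_weight_da / 1000.0)

# Hypothetical compound: pActivity 8.0, 30 heavy atoms, logP 3.0, MW 420 Da
example = {"p_activity": 8.0, "heavy_atoms": 30, "log_p": 3.0, "mol_weight": 420.0}
print("LLE :", lipophilic_ligand_efficiency(example["p_activity"], example["log_p"]))
print("FQ  :", round(fit_quality(example["p_activity"], example["heavy_atoms"]), 3))
print("SILE:", round(size_independent_le(example["p_activity"], example["heavy_atoms"]), 3))
print("BEI :", round(binding_efficiency_index(example["p_activity"], example["mol_weight"]), 3))
```

In practice the heavy atom count, logP, and molecular weight would come from a cheminformatics toolkit; they are passed in as plain numbers here to keep the sketch dependency-free.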

Comparative Performance Analysis of Efficiency Metrics

Discrimination Power: Differentiating Drugs from Typical Compounds

A comprehensive analysis of 643 marketed drugs acting on 271 targets revealed that efficiency metrics provide exceptional discrimination between successful drugs and typical research compounds. The study found that "96% of drugs have LE or LLE values, or both, greater than the median values of their target comparator compounds" [18]. This striking statistic demonstrates the power of these metrics to identify compounds with drug-like optimization paths, even when comparing molecules acting at the same biological target.

The same research examined multiple metrics across 1,104 drug-target pairs and found consistent differentiation, with recent drugs (approved 2010-2020) displaying "no overall differences in molecular weight, lipophilicity, hydrogen bonding or polar surface area from their target comparator compounds" but being distinguished primarily by "higher potency, ligand efficiency (LE), lipophilic ligand efficiency (LLE), and lower carboaromaticity" [18].

Practical Performance in Virtual Screening Implementation

In direct virtual screening applications, the performance of efficiency metrics varies significantly. One study investigating 13 diverse protein targets found that "smina's docking score did not provide a means to calculate Ki no matter the approach" and that "ranking and/or classification was not markedly improved when including other parameters than docking score alone" [16]. However, the researchers did observe that the "Fit Quality (FQ) metric offers some improvement over smina's docking score on average," though they cautioned that "we could not identify a metric that was superior for all targets" [16].

Table 2: Experimental Performance of Efficiency Metrics Across Different Target Classes

| Study Focus | Targets Evaluated | Key Findings on Metric Performance | Practical Recommendations |
|---|---|---|---|
| Virtual Screening Assessment [16] | 13 diverse targets with ≥10 inhibitors each | FQ offered average improvement over docking score alone; No universal superior metric | Target-specific metric optimization needed; FQ recommended for initial trials |
| Drug vs. Comparator Analysis [18] | 271 targets across multiple classes | LE and LLE differentiated 96% of drugs from median target comparators | LE/LLE thresholds effective for prioritization; Combined approach superior |
| Literature Analysis (2007-2011) [1] | 402 publications across multiple targets | Only ~30% used predefined hit cutoffs; None used LE as primary selection criteria | Standardization needed; Size-targeted LE values recommended for hit identification |

Impact on Hit Identification and Optimization

The implementation of efficiency metrics directly influences the quality of initial hits and their optimization potential. Analysis of virtual screening results published between 2007 and 2011 revealed that only approximately 30% of studies reported a clear, predefined hit cutoff, and that "no clear consensus on hit selection criteria was identified" [1]. Notably, "ligand efficiency was not used as a hit selection metric in any of these reports" despite its potential benefits [1].

Researchers have recommended "the use of size-targeted ligand efficiency values as hit identification criteria" to enable more successful optimization [1]. This approach recognizes that initial hits with superior efficiency provide better starting points for medicinal chemistry, as "the most efficient optimization paths are those for which the necessary potency gains are accompanied by the smallest increases in perceived risk" [17].

Experimental Protocols and Methodologies

Standard Protocol for Efficiency Metric Implementation

The integration of efficiency metrics into virtual screening workflows follows a systematic process that transforms raw docking results into efficiency-normalized rankings. AUDocker LE, a graphical interface for AutoDock Vina, exemplifies this approach by automating the calculation and application of ligand efficiency metrics [19]. The standard methodology involves:

- Molecular Size Determination: Calculation of heavy atom count (non-hydrogen atoms) or molecular weight for each compound in the screening library [19].

- Affinity Measurement: Conversion of the docking score or experimental binding affinity to consistent energy units (typically kcal/mol).

- Efficiency Calculation: Application of the formula LE_ligand = ΔG/N, where ΔG represents binding free energy and N is the number of non-hydrogen atoms [19]. Unlike the -ΔG°/Nₙₕ form in Table 1, this convention carries the sign of ΔG, so more favorable binding gives a more negative LE_ligand.

- Normalization and Selection: Comparison of calculated efficiencies to reference standards using approaches like δLE = LE_ligand/LE_standard, with selection criteria of δLE > 1 or δLE ≥ m+3σ (where m is the average δLE for all compounds against a specific target and σ is the standard deviation) [19].
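The four steps above can be sketched in a few lines of Python. This is an illustrative reimplementation under stated assumptions, not the AUDocker LE code: the function names, the ligand data, and the reference compound are hypothetical, and the population standard deviation is assumed for σ because the source does not specify which estimator is used.

```python
from statistics import mean, pstdev

def le_from_docking(delta_g, n_heavy):
    """LE_ligand = dG / N, keeping the (negative) sign of the docking energy."""
    return delta_g / n_heavy

def select_hits(ligands, le_standard, sigma_rule=False):
    """Return the names of ligands passing the delta-LE criterion.

    ligands: dict mapping name -> (docking energy in kcal/mol, heavy atom count)
    le_standard: LE of the reference compound for the same target
    sigma_rule: if True, apply delta-LE >= m + 3*sigma instead of delta-LE > 1
    """
    ratios = {name: le_from_docking(dg, n) / le_standard
              for name, (dg, n) in ligands.items()}
    if sigma_rule:
        values = list(ratios.values())
        cutoff = mean(values) + 3 * pstdev(values)  # assumed: population sigma
        return {name for name, ratio in ratios.items() if ratio >= cutoff}
    return {name for name, ratio in ratios.items() if ratio > 1.0}

# Hypothetical reference compound and three docked ligands
reference_le = le_from_docking(-9.0, 30)
library = {"lig_A": (-8.0, 20), "lig_B": (-9.5, 40), "lig_C": (-7.0, 22)}
print(sorted(select_hits(library, reference_le)))
```

Because δLE is a ratio of two efficiencies with the same sign, the δLE > 1 criterion is unaffected by whether LE is written with or without the leading minus sign. The m+3σ rule is only meaningful for libraries much larger than this three-compound example.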

[Figure: Ligand Efficiency Screening Workflow. The standard protocol for implementing efficiency metrics in virtual screening, from initial preparation through final hit selection.]

Advanced Normalization Techniques

For complex screening scenarios involving multiple protein targets or diverse chemical libraries, additional normalization approaches address context-dependent variability. One implemented method uses the formula [19]:

V = V₀ / [(ML + MR) / 2]

where V represents the normalized score value assigned to the ligand, V₀ is the binding energy from docking, ML is the average score for all ligands against a specific protein, and MR is the average score for a specific ligand across all proteins [19]. This approach helps mitigate false positives/negatives arising from differential ligand-protein interaction tendencies.
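A minimal sketch of this normalization follows, assuming it takes the form V = V₀ / ((ML + MR) / 2), that is, the raw docking energy divided by the mean of the per-protein and per-ligand averages. That functional form, the function name, and the score matrix are assumptions made for illustration.

```python
from statistics import mean

def normalize_scores(scores):
    """Normalize docking energies across a ligand x protein matrix.

    scores: dict mapping (ligand, protein) -> docking energy V0
    Returns a dict with the same keys holding V = V0 / ((ML + MR) / 2),
    where ML is the mean over all ligands for that protein and
    MR is the mean over all proteins for that ligand (assumed form).
    """
    ligands = {ligand for ligand, _ in scores}
    proteins = {protein for _, protein in scores}
    ml = {p: mean(v for (_, q), v in scores.items() if q == p) for p in proteins}
    mr = {l: mean(v for (k, _), v in scores.items() if k == l) for l in ligands}
    return {(l, p): v0 / ((ml[p] + mr[l]) / 2) for (l, p), v0 in scores.items()}

# Hypothetical docking energies (kcal/mol) for two ligands against two proteins
raw = {
    ("L1", "P1"): -10.0, ("L1", "P2"): -6.0,
    ("L2", "P1"): -8.0,  ("L2", "P2"): -8.0,
}
for key, value in sorted(normalize_scores(raw).items()):
    print(key, round(value, 3))
```

Under this form, a normalized value above 1 means the pair scores better than the average of its protein and ligand baselines, which is how promiscuous ligands and "sticky" binding sites are discounted.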

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Computational Tools for Efficiency-Driven Virtual Screening

| Tool/Resource | Primary Function | Efficiency Metric Support | Implementation Requirements |
|---|---|---|---|
| AUDocker LE [19] | GUI for virtual screening with AutoDock Vina | Automated LE calculation and normalization | Windows OS, Python 2.5, .NET framework |
| RosettaVS [20] | Physics-based virtual screening platform | Customizable metric implementation | High-performance computing cluster |
| OpenVS [20] | AI-accelerated screening platform | Integration with machine learning approaches | CPU/GPU clusters, Linux environment |
| ChEMBL Database [18] | Bioactivity data resource | Reference values for metric benchmarking | Web access or local installation |
| RDKit [18] | Cheminformatics toolkit | Molecular descriptor calculation | Python programming environment |

Integration with Modern Virtual Screening Platforms

Efficiency Metrics in AI-Accelerated Screening

Modern virtual screening platforms increasingly incorporate efficiency metrics directly into their selection pipelines. The OpenVS platform, which leverages artificial intelligence to accelerate screening of billion-compound libraries, integrates efficiency considerations through its combination of "enthalpy calculations (ΔH) with a new model estimating entropy changes (ΔS) upon ligand binding" [20]. This approach recognizes that comprehensive efficiency assessment must account for both energetic components.

The platform employs a two-stage docking protocol with "virtual screening express (VSX) for rapid initial screening, while the virtual screening high-precision (VSH) is a more accurate method used for final ranking of the top hits from the initial screen" [20]. This hierarchical approach enables the practical application of more computationally intensive efficiency assessments to progressively smaller compound subsets.

Performance in Benchmark Studies

In standardized benchmarking, platforms incorporating advanced physics-based scoring have demonstrated superior performance in identifying true binders. RosettaVS achieved "the leading performance to accurately distinguish the native binding pose from decoy structures" in CASF-2016 benchmarks [20]. Particularly impressive was its performance in screening power tests, where "the top 1% enrichment factor from RosettaGenFF-VS (EF1% = 16.72) outperforms the second-best method (EF1% = 11.9) by a significant margin" [20].

This improved enrichment directly supports more effective efficiency-based triage by providing more reliable binding affinity estimates for subsequent efficiency calculations.

Limitations and Critical Considerations

Theoretical and Practical Constraints

Despite their utility, ligand efficiency metrics face significant theoretical and practical challenges that researchers must acknowledge. The fundamental limitation remains that conventional LE "cannot be regarded as physically meaningful because perception of efficiency varies with the concentration unit in which affinity is expressed" [17]. This thermodynamic limitation stems from the logarithm function's inability to take dimensioned arguments.

Practically, metrics may perform inconsistently across different target classes and screening contexts. One comprehensive assessment concluded that "we could not identify a metric that was superior for all targets" [16], highlighting the context-dependent nature of metric performance. Researchers should therefore avoid over-reliance on any single metric and instead consider consensus approaches.

Optimization Pitfalls and Risk Management

Blind pursuit of improved efficiency metrics can lead to suboptimal compound profiles if applied without chemical insight. The incremental nature of drug design means that "the most efficient optimization paths are those for which the necessary potency gains are accompanied by the smallest increases in perceived risk" [17]. However, non-linear relationships between molecular size and affinity can make consistent efficiency gains challenging throughout optimization campaigns.

The field has increasingly recognized that "simple drug design guidelines based on molecular size and/or lipophilicity typically become progressively less useful as more" complex optimization challenges emerge [17]. Therefore, efficiency metrics serve best as guideposts rather than absolute rules in late-stage optimization.

Ligand efficiency metrics have evolved from simple size-normalization concepts to sophisticated tools that balance multiple physicochemical properties against biological activity. The comparative evidence demonstrates that these metrics, particularly when used in combination, can significantly enhance virtual screening outcomes by prioritizing compounds with superior optimization potential.

Future developments will likely address current limitations through improved theoretical foundations, target-specific metric optimization, and deeper integration with machine learning approaches. As virtual screening libraries expand to billions of compounds [20], efficient triage based on these multidimensional metrics will become increasingly critical for computational drug discovery.

Virtual screening (VS) has become an indispensable tool in modern drug discovery, enabling researchers to efficiently prioritize potential drug candidates from vast chemical libraries. As the computational drug discovery field matures, rigorous benchmarking and standardized performance assessment of VS methodologies have emerged as critical components for advancing the field. This comparative guide examines current industry standards for analyzing performance data across published virtual screening campaigns, providing researchers with objective frameworks for evaluating different computational approaches. By synthesizing findings from recent benchmarking studies, this analysis aims to establish evidence-based best practices for VS protocol selection and performance validation within the broader context of performance metrics research.

Performance Benchmarking of Virtual Screening Methodologies

Comparative Analysis of Screening Protocols

Recent comprehensive studies have systematically evaluated multiple virtual screening approaches across various protein targets to establish performance benchmarks. Valdés-Muñoz et al. (2025) conducted a thorough comparison of five protocol variants integrating molecular docking, induced-fit docking (IFD), quantum-polarized ligand docking (QPLD), ensemble docking (ED), and molecular mechanics/generalized Born surface area (MM-GBSA) using multiple crystallographic structures of Helicobacter pylori urease [2].

Table 1: Performance Comparison of Virtual Screening Methodologies

| Methodology | Statistical Correlation | Error Metrics | Key Strengths | Limitations |
|---|---|---|---|---|
| MM-GBSA | High Pearson correlation with pIC₅₀ | Higher absolute binding energy errors | Excellent compound ranking accuracy | Computationally intensive |
| Ensemble Docking (ED) | Strong Spearman ranking correlation | Moderate error metrics | Consistent performance across protein structures | Requires multiple protein structures |
| Induced-Fit Docking (IFD) | Moderate correlation | Variable error rates | Accounts for protein flexibility | High computational cost |
| Quantum-Polarized Ligand Docking (QPLD) | Good for charged compounds | Specialized application | Improved handling of electronic effects | Limited general applicability |
| Standard Molecular Docking | Baseline performance | Standard error profiles | Fast screening capability | Lower ranking accuracy |

The study revealed that MM-GBSA and ensemble docking consistently outperformed other methods in compound ranking, though MM-GBSA exhibited higher errors in absolute binding energy predictions [2]. The research also demonstrated that using pIC₅₀ values as experimental references provided higher Pearson correlations compared to IC₅₀ values, reinforcing the suitability of pIC₅₀ for affinity prediction in VS campaigns.
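For reference, pIC₅₀ is the negative base-10 logarithm of IC₅₀ expressed in mol/L. The sketch below shows the conversion; the function name and unit table are illustrative assumptions.

```python
import math

# Conversion factors from common concentration units to mol/L
UNIT_TO_MOLAR = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9}

def pic50_from_ic50(ic50, unit="nM"):
    """pIC50 = -log10(IC50 in mol/L)."""
    return -math.log10(ic50 * UNIT_TO_MOLAR[unit])

# Hypothetical IC50 values spanning four orders of magnitude
for value, unit in [(10, "uM"), (1, "uM"), (100, "nM"), (1, "nM")]:
    print(f"IC50 = {value} {unit} -> pIC50 = {pic50_from_ic50(value, unit):.2f}")
```

A plausible reason for the stronger Pearson correlations is that docking and MM-GBSA scores approximate free energies, which scale with the logarithm of affinity rather than with affinity itself, so the log-transformed values relate more linearly to the predictions.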

Data Fusion Techniques and Pose Selection

The performance of virtual screening workflows is significantly influenced by data fusion strategies and pose selection parameters. Research has evaluated various fusion approaches including minimum, median, arithmetic, geometric, harmonic, and Euclidean means for combining results from multiple screening protocols [2]. The minimum fusion approach demonstrated particular robustness across diverse conditions, maintaining reliable performance when other techniques showed sensitivity to methodological variations.
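The fusion rules named above can be sketched with the Python standard library. Several choices here are assumptions, since the source does not give implementation details: the rules are applied to per-protocol ranks (1 = best) rather than raw scores, and the "Euclidean" rule is taken to be the Euclidean norm of the rank vector.

```python
import math
from statistics import mean, median, geometric_mean, harmonic_mean

# Each rule maps a list of ranks for one compound to a single fused value
FUSION_RULES = {
    "minimum": min,
    "median": median,
    "arithmetic": mean,
    "geometric": geometric_mean,
    "harmonic": harmonic_mean,
    "euclidean": lambda ranks: math.sqrt(sum(r * r for r in ranks)),  # assumed definition
}

def fuse_ranks(ranks_per_compound, rule="minimum"):
    """Return compound names ordered by fused rank (best first).

    ranks_per_compound: dict mapping compound -> list of ranks, one per protocol
    """
    fuse = FUSION_RULES[rule]
    fused = {name: fuse(ranks) for name, ranks in ranks_per_compound.items()}
    return sorted(fused, key=fused.get)

# Hypothetical ranks from three screening protocols
example_ranks = {"cpd_a": [1, 5, 9], "cpd_b": [3, 3, 3], "cpd_c": [2, 8, 4]}
for rule in FUSION_RULES:
    print(f"{rule:>10}: {fuse_ranks(example_ranks, rule)}")
```

In this toy example the minimum rule favors a compound that any single protocol ranks first, whereas the arithmetic mean favors the compound with consistent mid-table ranks, which illustrates why the choice of fusion rule can change the final ordering.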

Regarding pose selection, studies have investigated the impact of varying numbers of docking poses (ranging from 1 to 100) on ligand ranking accuracy. Contrary to intuitive expectations, increasing the number of poses generally reduced predictive accuracy in many scenarios, highlighting the importance of optimal pose selection rather than maximal pose consideration [2].

Machine Learning Enhancement of Virtual Screening

The integration of machine learning scoring functions has emerged as a transformative approach for enhancing virtual screening performance. A 2025 benchmarking study evaluated structure-based virtual screening across wild-type and quadruple-mutant variants of Plasmodium falciparum dihydrofolate reductase (PfDHFR), comparing three docking tools (AutoDock Vina, PLANTS, and FRED) with two machine learning rescoring approaches (CNN-Score and RF-Score-VS v2) [21].

Table 2: Machine Learning Rescoring Performance for PfDHFR Variants

| Docking Tool | Rescoring Method | Wild-Type EF 1% | Quadruple-Mutant EF 1% | Chemical Diversity |
|---|---|---|---|---|
| PLANTS | CNN-Score | 28 | 24 | High diversity |
| FRED | CNN-Score | 25 | 31 | Moderate diversity |
| AutoDock Vina | RF-Score-VS v2 | 22 | 19 | Improved over baseline |
| PLANTS | None (Default) | 15 | 17 | Standard |
| AutoDock Vina | None (Default) | Worse-than-random | Worse-than-random | Poor |

The findings demonstrated that rescoring with CNN-Score consistently augmented SBVS performance, enriching diverse and high-affinity binders for both PfDHFR variants [21]. Notably, for the wild-type PfDHFR, PLANTS demonstrated the best enrichment when combined with CNN rescoring (EF 1% = 28), while for the quadruple-mutant variant, FRED exhibited the best enrichment with CNN rescoring (EF 1% = 31). The chemotype enrichment analysis further revealed that these rescoring combinations effectively retrieved diverse high-affinity actives at early enrichment stages, addressing a critical challenge in virtual screening campaigns.

Experimental Protocols and Methodologies

Benchmarking Standards and Dataset Preparation

Rigorous virtual screening benchmarking relies on standardized datasets and preparation protocols. The DEKOIS 2.0 benchmark set has emerged as a widely adopted standard, providing challenging decoy sets that enable meaningful performance evaluation [21]. Typical benchmarking protocols employ a ratio of 1 active compound to 30 decoys, ensuring sufficient statistical power for enrichment calculations.
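The active-to-decoy ratio also fixes the ceiling on the enrichment factor, which is useful context when reading reported EF values. The sketch below computes that ceiling; the set size of 40 actives and 1,200 decoys is an assumption chosen to match the 1:30 ratio, and rounding the selected fraction to the nearest whole compound is likewise an assumed convention.

```python
def max_enrichment_factor(n_actives, n_decoys, fraction=0.01):
    """Upper bound on EF at a given fraction, reached when the top slice is all actives."""
    n_total = n_actives + n_decoys
    n_selected = max(1, round(n_total * fraction))  # assumed rounding convention
    best_hits = min(n_selected, n_actives)
    return (best_hits / n_selected) / (n_actives / n_total)

# Assumed benchmark composition consistent with a 1:30 active-to-decoy ratio
print(round(max_enrichment_factor(40, 1200, fraction=0.01), 2))
```

Under these assumptions the maximum attainable EF 1% is 31, so values approaching that figure would correspond to a top 1% composed almost entirely of actives.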

Protein structure preparation follows consistent workflows across studies: crystal structures are obtained from the Protein Data Bank, followed by removal of water molecules, unnecessary ions, redundant chains, and crystallization molecules. Hydrogen atoms are then added and optimized, with the prepared structures saved in appropriate formats for subsequent docking procedures [21].

Small molecule preparation typically involves generating multiple conformations for each ligand, particularly for docking programs like FRED that require pre-generated conformers. Tools such as Omega are commonly employed for conformation generation, while format conversion utilities like OpenBabel facilitate preparation for specific docking tools [21].

Docking Methodologies and Parameters

Docking experiments follow standardized protocols to ensure reproducibility and fair comparison across methods:

AutoDock Vina: Protein and ligand files are converted to PDBQT format using MGLTools. Grid boxes are sized to encompass the binding site (typically 20-25 Å in each dimension) with 1 Å grid spacing. The search efficiency is typically maintained at default settings [21].

PLANTS: Ligand files are converted to mol2 format with correct atom types assigned using SPORES software. The method employs ant colony optimization algorithms for pose prediction [21].

FRED: Requires pre-generated ligand conformations from tools like Omega. The method uses a systematic search approach followed by optimization and scoring [21].

Performance Evaluation Metrics

Standardized metrics enable objective comparison across virtual screening methodologies:

Enrichment Factor (EF): Measures the early recognition capability of active compounds, typically reported at 1% of the screened database.

Area Under the Curve (AUC): Both ROC-AUC and pROC-AUC provide overall performance assessment, with pROC-AUC emphasizing early enrichment.

Statistical Correlation: Pearson and Spearman correlations evaluate the relationship between predicted and experimental binding affinities.

Error Metrics: Mean absolute error (MAE), root-mean-squared error (RMSE), and inlier ratio metric quantify prediction errors [2].
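The correlation and error metrics listed above can be computed without external dependencies, as in the following sketch. Function names and the example data are illustrative; the inlier ratio metric is omitted because its definition is not given here.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)

def average_ranks(values):
    """Ranks starting at 1, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks

def spearman(x, y):
    """Spearman correlation: Pearson correlation of the rank-transformed data."""
    return pearson(average_ranks(x), average_ranks(y))

def mae(predicted, observed):
    """Mean absolute error."""
    return sum(abs(p - o) for p, o in zip(predicted, observed)) / len(predicted)

def rmse(predicted, observed):
    """Root-mean-squared error."""
    return math.sqrt(sum((p - o) ** 2 for p, o in zip(predicted, observed)) / len(predicted))

# Hypothetical predicted versus experimental pIC50 values
predicted = [6.1, 7.4, 5.2, 8.0, 6.8]
observed = [6.0, 7.0, 5.5, 8.3, 6.2]
print(f"Pearson  = {pearson(predicted, observed):.3f}")
print(f"Spearman = {spearman(predicted, observed):.3f}")
print(f"MAE      = {mae(predicted, observed):.3f}")
print(f"RMSE     = {rmse(predicted, observed):.3f}")
```

Reporting Pearson and Spearman together is informative because a method can rank compounds well (high Spearman) while still producing large absolute errors, which matches the MM-GBSA behavior described earlier.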

Visualization of Virtual Screening Workflows

[Figure: Virtual Screening Workflow Integrating Traditional and ML Approaches. A standardized virtual screening workflow combining traditional docking with machine learning-enhanced rescoring.]

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagent Solutions for Virtual Screening

| Tool/Category | Specific Solutions | Primary Function | Application Context |
|---|---|---|---|
| Docking Software | AutoDock Vina, PLANTS, FRED, Glide, SILCS | Molecular docking and pose generation | Structure-based virtual screening campaigns |
| Machine Learning Scoring | CNN-Score, RF-Score-VS v2 | Rescoring docking poses to improve enrichment | Enhancement of traditional docking performance |
| Benchmarking Datasets | DEKOIS 2.0 | Standardized actives and decoys for performance evaluation | Method validation and comparison |
| Structure Preparation | OpenEye Toolkits, MGLTools, SPORES | Protein and ligand preparation for docking studies | Pre-processing for virtual screening |
| Conformation Generation | Omega | Multiple conformation generation for ligands | Ligand preparation for specific docking tools |
| Performance Assessment | Custom Python/R scripts, ROC analysis tools | Calculation of enrichment factors and statistical metrics | Virtual screening campaign evaluation |

Emerging Standards and Future Directions

Active Learning Approaches

Recent advances in active learning virtual screening represent a paradigm shift in handling large chemical libraries. Benchmarking studies have compared active learning protocols across Vina, Glide, and SILCS-based docking at transmembrane binding sites [22]. These workflows iteratively train surrogate models to prioritize promising compounds, significantly reducing the number of required docking calculations while maintaining screening accuracy.

Performance evaluation indicates that Vina-MolPAL achieved the highest top-1% recovery, while SILCS-MolPAL reached comparable accuracy at larger batch sizes while providing more realistic description of heterogeneous membrane environments [22]. These approaches demonstrate how methodological innovations continue to enhance the efficiency and effectiveness of virtual screening campaigns.

Uncertainty Quantification

As artificial intelligence approaches become increasingly integrated into drug discovery, uncertainty quantification has emerged as a critical consideration for establishing trust in model predictions [23]. The reliability of AI predictions is strongly dependent on the applicability domain, with predictions outside this domain potentially misleading decision-making processes.

State-of-the-art uncertainty quantification approaches enable autonomous drug design by providing confidence levels for model predictions, allowing researchers to make informed decisions about which results to prioritize for experimental validation [23]. This represents an important evolution in performance standards for virtual screening, moving beyond simple enrichment metrics to include reliability estimates.

Performance Presentation Standards

The presentation of virtual screening performance data has evolved toward greater transparency and completeness, echoing standards in other fields [24]. Effective performance communication requires clear documentation of methodologies, complete disclosure of relevant parameters, and appropriate contextualization of results.

Best practices include maintaining data and records used to calculate performance metrics, providing detailed supporting information for brief presentations, and clearly identifying any simulated or retrospective results [24]. These standards ensure that virtual screening performance claims are fair, accurate, and complete, enabling meaningful comparison across studies.

The analysis of performance data from published virtual screening campaigns reveals evolving industry standards centered on rigorous benchmarking, methodological transparency, and comprehensive performance assessment. Ensemble docking and MM-GBSA approaches consistently demonstrate strong performance in compound ranking, while machine learning rescoring methods significantly enhance enrichment factors, particularly for challenging targets like resistant enzyme variants. The integration of active learning workflows and uncertainty quantification represents the next frontier in virtual screening standardization, promising more efficient and reliable screening campaigns. As the field advances, adherence to established performance presentation standards ensures the continued progress and credibility of virtual screening in drug discovery.

In the field of computational drug discovery, the ability to objectively evaluate and compare the performance of virtual screening methods is paramount. Foundational benchmark datasets provide the standardized frameworks necessary for this rigorous validation, enabling researchers to assess how well their algorithms can predict binding poses, rank compounds by affinity, and distinguish active drugs from inactive molecules. Among these, the Comparative Assessment of Scoring Functions (CASF) and the Directory of Useful Decoys (DUD) and its enhanced version (DUD-E) have emerged as cornerstone resources. These benchmarks allow for the systematic testing of computational methods under controlled conditions, providing reproducible and comparable results across different studies and methodologies. The integrity of these benchmarks is critical, as they directly influence the development of new scoring functions, docking protocols, and machine learning models in structure-based drug design. This guide provides a comparative analysis of these foundational tools, detailing their structures, applications, and the experimental protocols essential for their use in foundational performance metrics research for virtual screening.

Comparative Analysis of Benchmark Datasets

The CASF and DUD-E benchmarks serve complementary yet distinct roles in the evaluation pipeline. CASF is primarily focused on assessing the predictive power of scoring functions, whereas DUD-E is designed to evaluate a method's capability in virtual screening tasks. The table below summarizes their core characteristics:

Table 1: Core Characteristics of CASF and DUD-E Benchmarks

| Feature | CASF (Comparative Assessment of Scoring Functions) | DUD-E (Directory of Useful Decoys, Enhanced) |
|---|---|---|
| Primary Purpose | Evaluate scoring functions for binding pose prediction (docking power) and affinity ranking (scoring power) [20] [25]. | Evaluate virtual screening methods' ability to distinguish target binders from non-binders (screening power) [26] [27]. |
| Key Metrics | Root Mean Square Deviation (RMSD) for pose prediction; Correlation coefficients (e.g., Pearson's R) for affinity ranking [20] [25]. | Enrichment Factor (EF), particularly EF1%; Area Under the ROC Curve (AUC-ROC) [21] [28]. |
| Dataset Composition | High-quality protein-ligand complexes with experimentally measured binding affinities from the PDBbind database [29] [30]. | Known active compounds paired with property-matched, chemically dissimilar decoy molecules presumed to be inactive [26] [27]. |
| Typical Workflow | Re-docking and re-scoring of known complexes to assess pose reproduction and affinity prediction accuracy [25]. | Docking of a mixed library of actives and decoys, then ranking to see if actives are prioritized [21]. |
| Common Applications | Development and validation of novel scoring functions for binding affinity prediction [30]. | Validation of virtual screening protocols before application to novel targets [26] [21]. |

A critical consideration for researchers is the ongoing evolution and refinement of these benchmarks. For instance, the standard CASF benchmark is derived from the PDBbind database. However, recent studies have revealed substantial data leakage between PDBbind and the CASF test sets, where nearly half of the CASF test complexes have highly similar counterparts in the PDBbind training set [29]. The resulting inflation of apparent performance has led to the proposal of a refined, non-redundant training dataset known as PDBbind CleanSplit to enable a genuine assessment of a model's generalization capability [29]. Similarly, new decoy-generation tools like LIDEB's Useful Decoys (LUDe) have been developed to improve upon DUD-E by generating decoys that are similar to active compounds in physical properties but topologically distinct, thereby reducing the risk of artificial enrichment during benchmarking [26].

Experimental Protocols for Benchmarking

To ensure reproducible and meaningful results, researchers must adhere to standardized experimental protocols when using these benchmarks. The following workflows outline the core methodologies for leveraging CASF and DUD-E.

Protocol for CASF Benchmarking

The CASF benchmark is typically used to evaluate a method's "docking power" (ability to reproduce the native binding pose) and "scoring power" (ability to predict binding affinity). The general workflow is as follows [20] [25]:

- Dataset Acquisition: Obtain the latest CASF benchmark (e.g., CASF-2016 or CASF-2013) from the PDBbind database.

- Protein-Ligand Preparation: Prepare the protein structures by removing water molecules, adding hydrogen atoms, and assigning partial charges. Similarly, prepare the ligand structures from the crystal complexes.

- Re-docking: For each protein-ligand complex in the benchmark, re-dock the ligand into the protein's binding site using the method being evaluated.

- Pose Prediction Assessment (Docking Power): Calculate the Root Mean Square Deviation (RMSD) between the heavy atoms of the top-scoring docked pose and the experimentally determined co-crystallized ligand pose. A pose with an RMSD ≤ 2.0 Å is generally considered successfully docked [31].

- Affinity Prediction Assessment (Scoring Power): Use the scoring function to predict the binding affinity of the native pose. Compare the predicted affinities against the experimental values (e.g., -logKd/Ki) using correlation coefficients like Pearson's R or calculate the root-mean-square error (RMSE).
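The docking-power assessment in the steps above reduces to an RMSD calculation and a success-rate count, sketched below. The sketch assumes both poses are in the same coordinate frame with heavy atoms listed in the same order, and it does not apply symmetry correction, which dedicated tools typically handle; all names and coordinates are hypothetical.

```python
import math

def heavy_atom_rmsd(pose, reference):
    """RMSD between two poses given as lists of (x, y, z) heavy-atom coordinates.

    Assumes identical atom ordering and a shared coordinate frame (no alignment,
    no symmetry correction).
    """
    if len(pose) != len(reference):
        raise ValueError("poses must contain the same number of atoms")
    squared = sum((a - b) ** 2
                  for atom_p, atom_r in zip(pose, reference)
                  for a, b in zip(atom_p, atom_r))
    return math.sqrt(squared / len(pose))

def docking_success_rate(rmsd_values, cutoff=2.0):
    """Fraction of complexes whose top-scoring pose lies within the RMSD cutoff."""
    return sum(r <= cutoff for r in rmsd_values) / len(rmsd_values)

# Hypothetical two-atom ligand displaced by 1 Angstrom along z
docked = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
crystal = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
print(heavy_atom_rmsd(docked, crystal))

# Hypothetical per-complex RMSD values from a re-docking run
print(docking_success_rate([0.5, 1.9, 2.0, 3.5]))
```

Scoring power can then be assessed by correlating predicted and experimental affinities, for example with Pearson's R, over the same set of complexes.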

Protocol for DUD-E Virtual Screening Evaluation

The DUD-E benchmark evaluates a method's performance in a realistic virtual screening scenario—retrieving known active compounds from a large pool of decoys [21] [27]:

- Library Preparation: For a target of interest, compile the set of known active molecules and the corresponding decoys provided by DUD-E.

- Docking and Scoring: Dock the entire combined library (actives + decoys) against the target protein structure. Score all generated poses using the chosen scoring function.

- Ranking and Enrichment Analysis: Rank all compounds based on their best docking score. Analyze this ranked list to calculate performance metrics.

- Key Metric Calculation:

  - Enrichment Factor (EF): Measures the concentration of active compounds at a specific threshold of the ranked list (e.g., top 1%). It is calculated as EF = (Hits_sampled / N_sampled) / (Hits_total / N_total), where a higher EF indicates better early enrichment [21].

  - AUC-ROC: The Area Under the Receiver Operating Characteristic curve, which assesses the overall ability to distinguish actives from decoys across all ranking thresholds.
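Both metrics can be computed from a ranked list of activity labels, as in the following sketch. It assumes the list is already sorted from best to worst docking score, with 1 marking an active and 0 a decoy; the function names, the rounding of the sampled fraction, and the absence of tie handling are simplifying assumptions.

```python
def enrichment_factor(ranked_labels, fraction=0.01):
    """EF = (Hits_sampled / N_sampled) / (Hits_total / N_total)."""
    n_total = len(ranked_labels)
    n_sampled = max(1, round(n_total * fraction))  # assumed rounding convention
    hits_sampled = sum(ranked_labels[:n_sampled])
    hits_total = sum(ranked_labels)
    return (hits_sampled / n_sampled) / (hits_total / n_total)

def roc_auc(ranked_labels):
    """AUC as the fraction of active-decoy pairs in which the active ranks higher."""
    n_actives = sum(ranked_labels)
    n_decoys = len(ranked_labels) - n_actives
    decoys_seen = 0
    misordered_pairs = 0
    for label in ranked_labels:
        if label:
            misordered_pairs += decoys_seen  # decoys ranked above this active
        else:
            decoys_seen += 1
    return 1 - misordered_pairs / (n_actives * n_decoys)

# Hypothetical ranked library: 3 actives among 10 compounds, best score first
labels = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]
print(round(enrichment_factor(labels, fraction=0.2), 3))
print(round(roc_auc(labels), 3))
```

For real benchmark sets with thousands of compounds and tied scores, an established implementation such as the ROC utilities in a statistics library is preferable to this minimal version.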

[Figure: Logical relationship and application of the CASF and DUD-E benchmarks within a virtual screening method development pipeline.]

Performance Data from Comparative Studies

Independent benchmarking studies provide crucial data for comparing the performance of various docking tools and scoring functions. The following table summarizes findings from a recent study evaluating three docking tools and two machine learning re-scoring functions against wild-type (WT) and quadruple-mutant (Q) Plasmodium falciparum Dihydrofolate Reductase (PfDHFR) using the DEKOIS 2.0 benchmark set, which follows the DUD-E paradigm [21].

Table 2: Virtual Screening Performance on PfDHFR Targets (Best EF1% Values)

| Target | Docking Tool | Scoring Function | Performance (EF1%) |
|---|---|---|---|
| WT PfDHFR | PLANTS | CNN-Score | 28 [21] |
| WT PfDHFR | AutoDock Vina | RF-Score-VS v2 / CNN-Score | Improved from worse-than-random to better-than-random [21] |
| Q PfDHFR | FRED | CNN-Score | 31 [21] |
| Q PfDHFR | FRED | Native (CHEMPLP) | 19 [21] |

Key Insights from Data:

- Machine Learning Re-scoring Enhances Performance: In the cases reported, re-scoring docking outputs with ML-based scoring functions such as CNN-Score and RF-Score-VS v2 improved early enrichment (EF1%) over the docking tool's native scoring function. For Q PfDHFR with FRED, for example, EF1% rose from 19 with native scoring to 31 with CNN-Score [21].

- Tool Performance is Target-Dependent: No single docking tool was best for both PfDHFR variants. PLANTS combined with CNN-Score performed best for the wild-type, while FRED with CNN-Score was superior for the resistant quadruple mutant, highlighting the importance of benchmarking against specific targets of interest [21].

Beyond specific docking tools, broader benchmarks have been conducted. For example, the RosettaVS method, when benchmarked on the CASF-2016 dataset, demonstrated a top 1% enrichment factor (EF1%) of 16.72, outperforming the second-best method by a significant margin (EF1% = 11.9) [20]. Furthermore, the HPDAF deep learning model for affinity prediction, trained on the PDBbind CleanSplit to avoid data leakage, achieved a 7.5% increase in Concordance Index and a 32% reduction in Mean Absolute Error on the CASF-2016 dataset compared to its predecessor, DeepDTA [30].
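For readers unfamiliar with the two affinity-prediction metrics named above, the following sketch shows how they are typically computed. It assumes the pairwise definition of the Concordance Index commonly used for affinity models (pairs with tied experimental values are skipped, tied predictions count 0.5); the exact implementation used in [30] is not given here, and the toy values are hypothetical.

```python
def concordance_index(y_true, y_pred):
    """Fraction of comparable pairs whose predicted order matches the experimental order."""
    concordant, comparable = 0.0, 0
    n = len(y_true)
    for i in range(n):
        for j in range(i + 1, n):
            if y_true[i] == y_true[j]:
                continue  # tied experimental values are not comparable
            comparable += 1
            d_true = y_true[i] - y_true[j]
            d_pred = y_pred[i] - y_pred[j]
            if d_pred == 0:
                concordant += 0.5
            elif (d_true > 0) == (d_pred > 0):
                concordant += 1.0
    return concordant / comparable

def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between predicted and experimental values."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

# Hypothetical experimental and predicted affinities (e.g., in pKd units).
experimental = [5.0, 6.2, 7.1, 8.4]
predicted = [5.5, 6.0, 7.9, 7.5]

ci = concordance_index(experimental, predicted)
mae = mean_absolute_error(experimental, predicted)
```

A Concordance Index of 0.5 corresponds to random ordering and 1.0 to a perfect ranking, so it complements MAE, which measures absolute error rather than rank agreement.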

The Scientist's Toolkit: Essential Research Reagents

Successful virtual screening benchmarking relies on a suite of software tools and data resources. The table below details key solutions referenced in the studies analyzed.

Table 3: Essential Research Reagents for Virtual Screening Benchmarking

| Tool / Resource | Type | Primary Function in Benchmarking |
|---|---|---|
| PDBbind Database [29] [30] | Data Resource | A comprehensive database of protein-ligand complexes with experimentally measured binding affinities; serves as the source for the CASF benchmarks. |
| LUDe [26] | Software Tool | An open-source decoy-generation tool designed to create putative inactive compounds that challenge virtual screening models without being topologically similar to known actives. |
| DUBS Framework [31] | Software Tool | A Python framework for rapidly creating standardized benchmarking sets from the Protein Data Bank (PDB), helping to address issues of file format inconsistency. |
| CNN-Score & RF-Score-VS v2 [21] | Scoring Function | Pretrained machine learning scoring functions used to re-score docking poses, often significantly improving the discrimination between active and inactive compounds. |
| OpenVS / RosettaVS [20] | Virtual Screening Platform | An open-source, AI-accelerated virtual screening platform that incorporates active learning and the RosettaVS docking protocol for screening ultra-large chemical libraries. |
| Fpocket [27] | Software Tool | A tool for detecting geometric cavities in protein structures that can serve as potential binding pockets, crucial for benchmarking on apo (unbound) structures. |

The CASF and DUD-E benchmarks are indispensable for foundational analysis in virtual screening research. CASF provides the rigorous framework needed to dissect and improve the components of scoring functions, particularly for binding pose and affinity prediction. In contrast, DUD-E offers a realistic testbed for evaluating the overall performance of a virtual screening pipeline in its core mission: enriching active compounds from a vast molecular library. The experimental protocols and performance data presented herein offer a guide for researchers to conduct standardized, reproducible evaluations. Furthermore, the growing toolkit of resources, from decoy generators like LUDe to ML-based scoring functions, continues to push the field forward. However, researchers must remain alert to inherent benchmark limitations, such as data leakage in older dataset splits and analog bias, and should adopt newly curated, cleaner benchmarks like PDBbind CleanSplit to ensure their methods genuinely advance the state of the art in computational drug discovery.

Applying Metrics Across VS Methods: From Structure-Based to AI-Driven Screening

Structure-based virtual screening (SBVS) is a fundamental computational approach in drug discovery, used to identify hit compounds by predicting their interaction with a target protein of known three-dimensional structure [32] [33] [34]. The performance and predictive accuracy of SBVS workflows are highly dependent on the reliability of molecular docking and scoring functions, necessitating rigorous assessment using specific, well-defined metrics [2] [35]. This guide objectively compares the performance of current SBVS methodologies and scoring functions by examining the experimental data and benchmarks used to evaluate their docking power (accuracy of binding pose prediction), screening power (ability to identify active compounds), and scoring power (binding affinity prediction) [36]. The focus is a comparative analysis of key performance metrics and the experimental protocols used to validate them, giving researchers a framework for selecting a method.

Core Performance Metrics in SBVS

The evaluation of SBVS methods revolves around three principal metrics, each measuring a distinct capability crucial for a successful virtual screen.

- Docking Power: This refers to the ability of a scoring function to identify and rank the correct binding pose of a ligand, typically defined as the one closest to the experimentally determined native structure. Performance is most commonly measured by the Root-Mean-Square Deviation (RMSD) between the predicted pose and the native pose. A lower RMSD indicates higher accuracy, with poses below 2.0 Å generally considered "near-native" [36]. The success rate is often reported as the percentage of complexes for which a near-native pose is ranked first (Top-1 success rate) or within the top few poses.

- Screening Power: Also known as "enrichment ability," this metric evaluates how effectively a method prioritizes known active compounds over inactive ones or decoys in a virtual screen. The standard measure has been the Enrichment Factor (EF), which calculates the concentration of actives in a selected top fraction of the screened library compared to a random selection [37] [35]. Recently, the Bayes Enrichment Factor (EFB) has been proposed as an improved metric that uses random compounds instead of carefully curated decoys, avoids the inherent maximum value limitation of traditional EF, and allows for enrichment estimation at much lower selection fractions [37].

- Scoring Power: This measures the ability to produce binding scores that correlate linearly with experimentally determined binding affinities (e.g., IC50, Ki). It is typically assessed using statistical correlation coefficients like Pearson's R (for linear correlation) and Spearman's ρ (for rank correlation) between predicted scores and experimental values [2].
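Scoring power reduces to two correlation coefficients, which can be sketched with the standard library alone. The example assumes predicted and experimental values are on the same sign convention (for instance, both expressed so that larger means tighter binding); raw docking scores, where more negative is better, would need a sign flip first. Function names and data are illustrative.

```python
import math

def pearson_r(x, y):
    """Pearson linear correlation coefficient."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def average_ranks(values):
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def spearman_rho(x, y):
    """Spearman rank correlation: Pearson's R computed on the ranks."""
    return pearson_r(average_ranks(x), average_ranks(y))

# Hypothetical predicted vs. experimental affinities for four complexes.
predicted = [6.1, 7.0, 7.4, 9.5]
experimental = [5.8, 6.9, 6.5, 9.0]

r = pearson_r(predicted, experimental)
rho = spearman_rho(predicted, experimental)
```

Here the two middle compounds are predicted in the wrong order, which lowers Spearman's ρ to 0.8 while Pearson's R stays high, illustrating why both are usually reported.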

Table 1: Key Performance Metrics for SBVS Evaluation

| Metric | Definition | Common Measures | Interpretation |
|---|---|---|---|
| Docking Power | Ability to predict the correct binding pose | RMSD, Top-1 Success Rate | Lower RMSD and higher success rate are better. |
| Screening Power | Ability to enrich actives in a ranked list | Enrichment Factor (EF), Bayes EF (EFB), AUC | Higher EF/EFB indicates better enrichment of actives. |
| Scoring Power | Ability to predict binding affinity | Pearson's R, Spearman's ρ | Values closer to 1.0 indicate better predictive accuracy. |
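To make the difference between EF and the Bayes Enrichment Factor concrete, the sketch below assumes that EFB at a selection fraction χ is the fraction of known actives scoring at or above a cutoff divided by the fraction of random compounds scoring at or above the same cutoff, with the cutoff set by the top χ of the random set. That reading, the function name, and the toy scores are assumptions; [37] should be consulted for the exact estimator and its confidence intervals.

```python
def bayes_enrichment_factor(active_scores, random_scores, fraction=0.01):
    """Sketch of EFB; assumes higher scores mean 'more likely active'."""
    ranked_random = sorted(random_scores, reverse=True)
    n_selected = max(1, round(fraction * len(ranked_random)))
    threshold = ranked_random[n_selected - 1]  # score cutoff for the top fraction
    frac_random = sum(s >= threshold for s in random_scores) / len(random_scores)
    frac_active = sum(s >= threshold for s in active_scores) / len(active_scores)
    return frac_active / frac_random

# Toy data: 100 random compounds with scores 0..99 and 10 known actives.
random_scores = list(range(100))
active_scores = [99, 97, 96, 50, 40, 30, 20, 10, 5, 1]

efb_10 = bayes_enrichment_factor(active_scores, random_scores, fraction=0.10)
```

Because the actives and the random compounds are counted separately, the ratio is not capped by the active-to-decoy ratio of the library, which is the limitation of the traditional EF noted above.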

Comparative Performance of Scoring Methodologies

Recent studies have systematically evaluated various docking protocols, classical scoring functions, and novel machine-learning-based approaches. The data below summarizes benchmark findings to facilitate comparison.

Performance Comparison of Scoring Functions

Benchmarking studies on standardized datasets like DEKOIS 2.0 and CSAR 2014 provide a direct comparison of screening and docking power across different classes of scoring functions.

Table 2: Comparative Performance of Selected Scoring Functions on Independent Benchmarks

| Scoring Function | Type | Screening Power (EF1%) | Docking Power (Mean Native Pose Rank) | Key Features |
|---|---|---|---|---|
| SCORCH [35] | Machine Learning Consensus | 13.78 (on DEKOIS 2.0) | 5.9 (on CSAR 2014) | Uses multiple poses and RMSD-based labeling; addresses decoy bias. |
| AutoDock Vina [37] [35] | Empirical | ~7.0 (on DUD-E) | 30.4 (as baseline in CSAR 2014) | Widely used classical scoring function. |
| Vinardo [37] | Empirical | ~11.0 (on DUD-E) | Not reported | A variant of the Vina scoring function. |
| Dense (Pose) [37] | Machine Learning | ~21.0 (on DUD-E) | Not reported | Machine-learning model trained for pose prediction. |
| GNINA [36] | Deep Learning (CNN) | Not reported | High success rate on cross-docked poses | Uses a 3D convolutional neural network; trained on cross-docked poses for robustness. |

Comparison of Docking Protocols and Data Fusion Strategies

Beyond individual scoring functions, the overall SBVS protocol—including how docking poses are generated and combined—significantly impacts performance. A comparative study on urease inhibitors evaluated several advanced protocols [2].

Table 3: Comparison of SBVS Protocol Variants for Urease Inhibition Prediction

| SBVS Protocol | Spearman ρ (Ranking) | Pearson R (pIC50) | Key Findings |
|---|---|---|---|
| Molecular Docking | Baseline | Baseline | Performance is highly variable. |
| Ensemble Docking (ED) | High | Moderate | Consistently outperformed single-structure docking in compound ranking. |
| MM-GBSA Rescoring | High | Lower | Excellent ranking but higher errors in absolute binding energy prediction. |
| Induced-Fit Docking (IFD) | Moderate | Moderate | Accounts for side-chain flexibility. |
| QPLD | Moderate | Moderate | Incorporates quantum mechanical effects. |
| Data Fusion: Minimum | N/A | N/A | Most robust fusion technique for combining scores from multiple poses. |
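The data-fusion row can be illustrated with a short sketch. It assumes each compound has several docking scores (from multiple poses or receptor conformations) where more negative is better, so "minimum" fusion keeps the best score per compound; the compound IDs, scores, and function names are hypothetical and not taken from [2].

```python
from statistics import mean

def fuse_scores(scores_by_compound, method="min"):
    """Collapse each compound's list of scores into one representative value."""
    fusers = {"min": min, "mean": mean, "max": max}
    fuse = fusers[method]
    return {cid: fuse(scores) for cid, scores in scores_by_compound.items()}

def rank_compounds(fused):
    """Order compound IDs from best (most negative) to worst fused score."""
    return sorted(fused, key=fused.get)

# Hypothetical docking scores (kcal/mol) for three compounds, three poses each.
scores = {
    "cpd_A": [-7.2, -9.1, -6.8],
    "cpd_B": [-8.5, -8.4, -8.6],
    "cpd_C": [-6.0, -6.3, -5.9],
}

ranking_min = rank_compounds(fuse_scores(scores, "min"))
ranking_mean = rank_compounds(fuse_scores(scores, "mean"))
```

The two rules can disagree: minimum fusion rewards a single very favorable pose (cpd_A), while the mean rewards consistently good scores (cpd_B), so the choice of fusion rule changes the final ranking.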

Experimental Protocols for Benchmarking

To ensure fair and reproducible comparisons, standardized experimental protocols are used for training and evaluating SBVS methods.

Dataset Curation and Preparation

The foundation of any robust benchmark is a high-quality, curated dataset.

- Training with Cross-Docked Poses: To improve model generalizability, datasets like CrossDocked2020 and PDBbind-CrossDocked-Core are constructed by docking ligands into binding pockets of similar but non-identical proteins. This mimics real-world screening scenarios better than re-docking into a native structure and reduces performance inflation [36].

- Addressing Decoy Bias: For screening power assessment, benchmarks like DEKOIS and LIT-PCBA use property-matched decoys to avoid artificial enrichment. The SCORCH methodology emphasizes applying the same preparation and docking procedures to both active ligands and decoys to minimize bias [35].

- Rigorous Dataset Splitting: To prevent data leakage and over-optimistic performance in machine learning models, strategies like refined-core splitting and threefold clustered-cross-validation are employed. The BayesBind benchmark is explicitly designed for ML models, ensuring that its protein targets are structurally dissimilar to those in common training sets like BigBind [37].
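The idea behind clustered cross-validation is that whole clusters of related proteins, not individual complexes, are assigned to folds. The following sketch assumes cluster labels have already been computed (for example from sequence or pocket similarity); the labels, PDB-style IDs, and the greedy balancing rule are illustrative and may differ from the procedures used in the cited studies.

```python
from collections import defaultdict

def clustered_folds(cluster_of, n_folds=3):
    """Assign whole clusters to folds, largest cluster first, to balance fold sizes."""
    members = defaultdict(list)
    for sample, cluster in cluster_of.items():
        members[cluster].append(sample)
    folds = [[] for _ in range(n_folds)]
    for cluster in sorted(members, key=lambda c: -len(members[c])):
        smallest = min(folds, key=len)  # currently smallest fold
        smallest.extend(members[cluster])
    return folds

# Hypothetical complexes mapped to hypothetical protein-family clusters.
cluster_of = {
    "1abc": "kinase", "2def": "kinase", "3ghi": "kinase",
    "4jkl": "protease", "5mno": "protease",
    "6pqr": "GPCR", "7stu": "nuclear_receptor",
}

folds = clustered_folds(cluster_of, n_folds=3)
fold_of = {sample: i for i, fold in enumerate(folds) for sample in fold}
```

Because no cluster is split across folds, a model evaluated on one fold has never seen a close homolog of its test proteins during training, which is the leakage this strategy is meant to prevent.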

Machine Learning Model Training

Advanced MLSFs like SCORCH and GNINA follow detailed training workflows to maximize docking and screening power.

- Feature Engineering: Models are trained using diverse feature sets.

- Data Augmentation: The SCORCH method improves performance by augmenting training data with multiple ligand poses and labeling them based on their RMSD from the native structure, rather than relying on a single pose per complex [35].

- Consensus and Uncertainty Estimation: SCORCH employs a consensus of three different machine learning models (Random Forest, XGBoost, and Deep Neural Network) to improve robustness and provides an uncertainty estimate for its predictions, which helps in prioritizing experimental testing [35].
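One simple way to combine several models and attach an uncertainty is to average their outputs and use their spread as a disagreement measure. This is only an assumed illustration: how SCORCH actually aggregates its three models and derives its uncertainty estimate is not described here, and the scores below are hypothetical.

```python
from statistics import mean, pstdev

def consensus_prediction(model_scores):
    """Return (consensus score, uncertainty) as the mean and spread across models.

    Assumption: mean/standard deviation is used here for illustration only.
    """
    return mean(model_scores), pstdev(model_scores)

# Hypothetical per-compound scores from three models (e.g., RF, XGBoost, DNN).
predictions = {
    "cpd_A": [0.91, 0.88, 0.93],  # models agree
    "cpd_B": [0.95, 0.40, 0.72],  # models disagree
}

consensus = {cid: consensus_prediction(s) for cid, s in predictions.items()}

# Prioritize compounds with a high consensus score and low disagreement.
prioritized = sorted(consensus, key=lambda c: (-consensus[c][0], consensus[c][1]))
```

Under this scheme, a compound on which the models disagree strongly (cpd_B) would be ranked with less confidence than one they agree on (cpd_A), even before its lower mean score is considered.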

Specialized Protocols for Protein Flexibility

Protein flexibility is a major challenge. Ensemble docking and multi-state modeling (MSM) are key strategies to address it.

- Ensemble Docking: This protocol involves docking compound libraries into multiple experimentally determined or simulated conformations of the target protein. Representative scores (e.g., minimum, arithmetic mean) from the multiple docking runs are used for the final ranking [33] [34].