Mastering Molecular Flexibility: Advanced Strategies for Protein Side-Chain Modeling in Drug Discovery Docking

This article provides a comprehensive guide for researchers and drug development professionals on tackling the critical challenge of protein flexibility in molecular docking.


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on tackling the critical challenge of protein flexibility in molecular docking. Moving beyond the limitations of rigid-receptor models, we explore the foundational importance of side-chain and backbone movements driven by induced fit and conformational selection. The review systematically details current methodological approaches, from traditional ensemble docking and side-chain rotamer optimization to cutting-edge deep learning models like DiffDock and AlphaFold-Multimer. We further address practical troubleshooting for common pitfalls such as cryptic pockets and scoring failures, and establish a framework for the validation and comparative analysis of flexible docking methods. By synthesizing insights across these four areas (foundations, methods, troubleshooting, and validation), the article aims to equip scientists with the knowledge to select, apply, and critically evaluate strategies for modeling protein dynamics, thereby enhancing the accuracy and success rate of structure-based drug design.

Why Protein Flexibility Isn't a Bug, It's a Feature: The Biological Imperative for Dynamic Docking

Technical Support Center

Troubleshooting Guides & FAQs

FAQ 1: Why does my docking software (AutoDock Vina, GOLD) fail to predict the correct binding pose for my ligand, even when using a high-resolution crystal structure?

- Answer: This is a classic symptom of rigid docking failure. High-resolution structures are static snapshots, but proteins are dynamic. The binding site side chains or backbone may need to shift to accommodate your ligand—a phenomenon known as "induced fit." Rigid docking cannot model these movements. The predicted pose may clash with side chains that are, in reality, flexible.

FAQ 2: My docking scores (ΔG, Ki) show strong binding, but experimental assays show no activity. What went wrong?

- Answer: This discrepancy often stems from over-reliance on static structures. A favorable score in a rigid docking simulation does not account for the entropic penalty of locking flexible side chains into a single conformation or the energy cost of required backbone movements. Your ligand might score well in a computationally favorable but biologically inaccessible pose.

FAQ 3: How can I identify if my target protein requires flexible docking approaches?

- Answer: Perform a preliminary analysis:

- Compare multiple structures: Align all available PDB structures (apo and holo forms) of your target. Use RMSD analysis on the binding site residues.

- Analyze B-factors: High B-factors (temperature factors) in the binding site region indicate intrinsic flexibility.

- Check for conformational changes: Look for significant side chain rotamer changes or backbone shifts between apo and ligand-bound states.
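The B-factor check above can be sketched in a few lines of Python. This is a minimal illustration, not a validated tool: the function names and the 1.5x ratio threshold are assumptions introduced here, and the parser reads only standard fixed-column ATOM records.

```python
from collections import defaultdict


def mean_bfactor_by_residue(pdb_lines):
    """Average B-factor per (chain, residue number) from PDB ATOM records."""
    totals = defaultdict(lambda: [0.0, 0])
    for line in pdb_lines:
        if not line.startswith("ATOM"):
            continue
        # Fixed PDB columns: chain ID = 22, residue number = 23-26, B-factor = 61-66
        key = (line[21], int(line[22:26]))
        totals[key][0] += float(line[60:66])
        totals[key][1] += 1
    return {key: total / count for key, (total, count) in totals.items()}


def flag_flexible(bfactors, site_residues, ratio=1.5):
    """Binding-site residues whose mean B-factor exceeds ratio x the average residue mean.

    The ratio of 1.5 is an arbitrary illustrative threshold, not a published cutoff.
    """
    overall = sum(bfactors.values()) / len(bfactors)
    return [res for res in site_residues if bfactors.get(res, 0.0) > ratio * overall]
```

Typical use would be `flag_flexible(mean_bfactor_by_residue(open("target.pdb")), site)`, where `site` is a list such as `[("A", 45), ("A", 67)]`. B-factors are resolution- and refinement-dependent, so compare residues within one structure rather than across structures.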

Experimental Protocol: Comparative Analysis of Rigid vs. Flexible Docking

This protocol follows common practice for validating docking approaches.

System Preparation:

- Select a target protein with both apo (unliganded) and holo (liganded) crystal structures available (e.g., a hypothetical Kinase X; the PDB IDs 1ABC [apo] and 1DEF [holo] are used here as placeholders).

- Prepare the protein files (remove water, add hydrogens, assign charges) using a tool like UCSF Chimera or MOE.

- Extract the native co-crystallized ligand from the holo structure.

Rigid Docking Experiment:

- Use the apo protein structure as the rigid receptor.

- Define a docking grid centered on the native ligand's binding site from the holo structure.

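- Dock the native ligand into the apo structure (strictly a cross-docking experiment, since the ligand comes from the holo structure) using a rigid-receptor algorithm (e.g., AutoDock Vina in its default mode, which keeps the receptor fixed while the ligand remains flexible).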

- Record the top-scoring pose and its Root Mean Square Deviation (RMSD) from the native crystallographic pose. An RMSD > 2.0 Å typically indicates a failed prediction.

Flexible Docking Experiment:

- Using the same apo receptor, identify key binding site residues (within 5Å of the native ligand) that differ in conformation between the apo and holo structures.

- Perform docking again, allowing specified side chains (and optionally, backbone segments) to be flexible. Use software such as Schrödinger's Induced Fit Docking protocol (Glide combined with Prime refinement), AutoDockFR, or AutoDock Vina with flexible side chains. Note that FRED (OpenEye) and Glide SP/XP on their own treat the receptor as rigid.

- Record the top-scoring pose and its RMSD.

Data Analysis:

- Compare the RMSD values and visual alignment of the poses. Flexible docking should significantly improve pose prediction accuracy (lower RMSD) for targets with pronounced induced fit.
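As a minimal sketch of the RMSD comparison in this protocol, the following Python computes the heavy-atom RMSD between a predicted and a reference ligand pose and applies the 2.0 Å cutoff. It assumes both poses are already in the same receptor frame (for apo docking, superimpose the apo receptor onto the holo receptor first), that atoms are listed in the same order, and that ligand symmetry is ignored. The function names are illustrative.

```python
import math


def pose_rmsd(predicted, reference):
    """RMSD (in the input units) between two equally ordered lists of (x, y, z)."""
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("poses must be non-empty and contain the same atoms")
    squared = sum(
        (p - r) ** 2
        for atom_p, atom_r in zip(predicted, reference)
        for p, r in zip(atom_p, atom_r)
    )
    return math.sqrt(squared / len(predicted))


def is_successful(rmsd, threshold=2.0):
    """A pose counts as correct when its RMSD does not exceed the threshold (Å)."""
    return rmsd <= threshold


def success_rate(rmsds, threshold=2.0):
    """Fraction of poses at or below the RMSD threshold."""
    return sum(is_successful(r, threshold) for r in rmsds) / len(rmsds)
```

No superposition of the ligand itself is performed on purpose: fitting the ligand onto the reference would hide translation and rotation errors, which are exactly what docking is being tested on.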

Table 1: Representative Docking Results for Kinase X (Hypothetical Data)

| Docking Method | Receptor State | Flexible Residues | Top-Score RMSD (Å) | Calculated ΔG (kcal/mol) | Experimental IC₅₀ (nM) |

|---|---|---|---|---|---|

| Rigid (Vina) | Apo | None | 4.7 | -9.1 | >10,000 |

| Flexible Side Chains | Apo | Lys45, Glu67, Asp92 | 1.2 | -8.5 | 250 |

| Induced-Fit (Full) | Apo | Backbone + Side Chains | 0.9 | -10.2 | 50 |

| Native (Holo) | Holo | N/A | 0.0 | -11.0 | 12 |

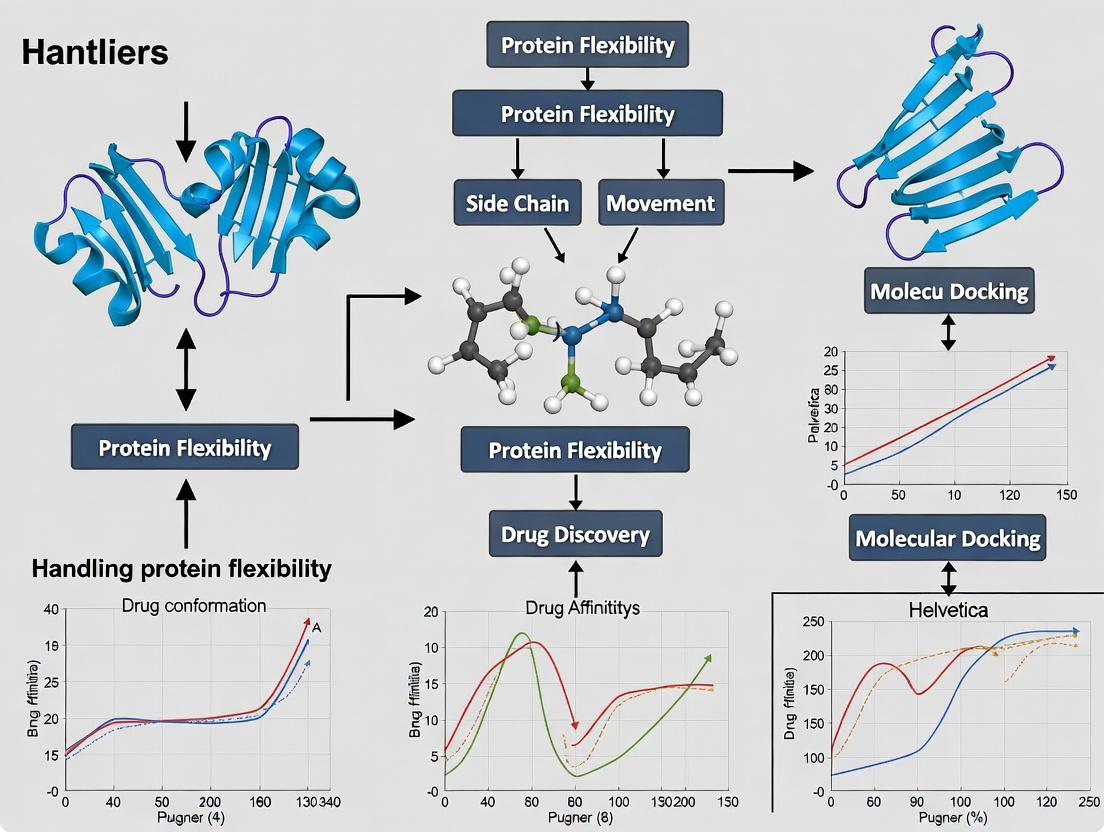

Diagram 1: Rigid vs Flexible Docking Workflow

Diagram 2: Protein Conformational States Impacting Docking

The Scientist's Toolkit: Research Reagent & Software Solutions

| Item Name | Category | Function & Relevance to Flexible Docking |

|---|---|---|

| Molecular Dynamics (MD) Software (e.g., GROMACS, AMBER, NAMD) | Software Suite | Simulates protein movement over time. Used to generate an ensemble of receptor conformations for "ensemble docking" to account for flexibility. |

| Docking Software with Flexibility (e.g., Schrödinger Glide/Induced Fit, MOE, AutoDockFR) | Software Suite | Implements algorithms that allow side-chain rotation, backbone movement, or both during the docking search, moving beyond the rigid lock-and-key model. |

| Protein Data Bank (PDB) Apo Structures | Data Resource | Structures of the target protein without a bound ligand. Essential for setting up realistic, flexible docking simulations that mimic a real-world drug discovery scenario. |

| Normal Mode Analysis (NMA) Tools (e.g., ProDy, ElNemo) | Analysis Tool | Predicts large-scale, collective motions of a protein. These low-frequency modes can be used to generate plausible alternative conformations for docking. |

| Flexibility Resources (e.g., PDBFlex, DynaMine) | Data Resource | PDBFlex curates conformational variability observed across PDB entries, while DynaMine predicts backbone dynamics from sequence. Both help identify inherently flexible regions critical for binding. |

| SiteMap (Schrödinger) or FTMap | Analysis Software | Identifies and characterizes binding sites, including estimating their druggability and potential for flexibility/induced fit. |

Technical Support Center: Troubleshooting Protein Flexibility in Docking

FAQs & Troubleshooting Guides

Q1: My docking poses show poor complementarity despite good overall binding scores. The ligand seems to clash with protein side chains. What is the core biophysical issue and how can I address it? A: This often indicates a failure to account for the Induced Fit model. The rigid receptor you used does not represent the conformation the protein adopts upon ligand binding. You are likely docking into a static crystal structure that is not fully complementary to your ligand's unbound shape.

- Troubleshooting Steps:

- Pre-process with side chain rotamer libraries: Use a tool like SCWRL4 or Rosetta's fixbb application to sample probable side chain conformations around the binding pocket before docking.

- Employ ensemble docking: Dock your ligand into an ensemble of multiple receptor conformations (from NMR, MD simulations, or multiple crystal structures). This approximates the conformational selection model, in which the ligand picks out a pre-existing receptor state.

- Use flexible docking protocols: Switch to a docking algorithm that explicitly allows for side chain (and sometimes backbone) flexibility during the docking search, such as Schrödinger's Induced Fit Docking (Glide with Prime), AutoDockFR, or AutoDock Vina in its flexible side chains mode.

Q2: How do I decide whether to use an Induced Fit Docking (IFD) protocol or Ensemble Docking for my target? A: The choice depends on the known conformational variability of your target and computational resources.

- Use Ensemble Docking when:

- Multiple apo/holo structures are available (e.g., from PDB).

- The target is known to have large-scale domain motions or distinct conformational states.

- You are screening large compound libraries and need a faster first-pass method.

- Use Induced Fit Docking when:

- Only one static (often apo) structure is available.

- You are working on a few key lead compounds and need detailed pose prediction.

- You suspect significant side chain rearrangements or small backbone shifts are crucial for binding.

Q3: My Molecular Dynamics (MD) simulations show the protein populates many states. How do I select representative structures for Ensemble Docking? A: You must cluster your MD trajectory based on binding site geometry.

- Experimental Protocol:

- Trajectory Alignment: Align all frames of your MD trajectory to a reference (e.g., on the Cα atoms of the binding-site residues).

- Binding Site Atom Selection: Define the set of residues (and their atoms) that line the binding pocket.

- Calculate RMSD Matrix: Calculate the pairwise Root Mean Square Deviation (RMSD) for the selected atoms across all frames.

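- Cluster: Use a clustering algorithm that accepts a precomputed distance matrix (such as hierarchical clustering, k-medoids, or DBSCAN) on the RMSD matrix to group similar conformations. Plain k-means needs coordinates rather than pairwise distances, so it cannot be applied to the RMSD matrix directly.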

- Extract Representatives: Select the central frame (closest to the cluster centroid) from each of the top 5-10 most populated clusters. This set comprises your docking ensemble.
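The clustering and representative-selection steps above can be sketched as follows. This example swaps in the simple neighbour-counting scheme often called GROMOS clustering (repeatedly take the frame with the most neighbours within a cutoff), then reports the medoid of each cluster as its representative. The function name, the cutoff, and the nested-list matrix layout are assumptions made for illustration.

```python
def cluster_representatives(rmsd, cutoff, max_clusters=10):
    """Cluster frames from a symmetric pairwise RMSD matrix (list of lists).

    Returns (representative_frame, member_frames) tuples, largest cluster first.
    """
    remaining = set(range(len(rmsd)))
    clusters = []
    while remaining and len(clusters) < max_clusters:
        best_members = []
        for i in sorted(remaining):
            # Neighbours of frame i among frames not yet assigned to a cluster
            members = [j for j in remaining if rmsd[i][j] <= cutoff]
            if len(members) > len(best_members):
                best_members = sorted(members)
        # Medoid: the member with the smallest summed RMSD to the other members
        medoid = min(best_members, key=lambda m: sum(rmsd[m][j] for j in best_members))
        clusters.append((medoid, best_members))
        remaining -= set(best_members)
    return clusters
```

The pairwise matrix itself would normally come from a trajectory library (e.g., MDAnalysis or cpptraj, both listed in the toolkit table). The cost of this sketch grows quadratically with the number of frames per pass, so subsample long trajectories before clustering.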

Q4: In induced fit protocols, how do I balance computational cost vs. accuracy when defining the flexible residue region? A: Incorrect region selection leads to long runtimes or inaccurate poses.

- Step-by-Step Guide:

- Initial Rigid Docking: First, dock your ligand into the rigid receptor. Generate a large number of poses (e.g., 100-200).

- Identify Interacting Residues: Analyze the top 20-30 poses. Any protein residue with an atom within 5-7 Å of any ligand atom in any of these poses should be flagged.

- Define the Flexible Shell: The flexible region should include all flagged residues. Optionally, add a second shell of residues that are covalently connected to the first shell to allow for collective motion.

- Refinement: Run the full IFD protocol (e.g., Schrödinger's Induced Fit Docking with Glide and Prime, or MOE induced fit) using this defined region. The table below provides quantitative guidance.
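The residue-flagging step in this guide amounts to a distance query over the top-ranked poses. A minimal sketch, assuming protein atoms are supplied as `(residue_id, (x, y, z))` pairs and each pose as a list of ligand coordinates (both layouts are hypothetical and chosen only for this example):

```python
import math


def flexible_residues(protein_atoms, ligand_poses, cutoff=5.0):
    """Residues with any atom within `cutoff` Å of any ligand atom in any pose."""
    flagged = set()
    for residue_id, coord in protein_atoms:
        if residue_id in flagged:
            continue  # one close atom is enough to flag the whole residue
        for pose in ligand_poses:
            if any(math.dist(coord, atom) <= cutoff for atom in pose):
                flagged.add(residue_id)
                break
    return sorted(flagged)
```

Raising `cutoff` from 5 to 7 Å enlarges the flexible shell and with it the runtime of the refinement stage, so it is worth printing the number of flagged residues before launching the full protocol.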

Table 1: Quantitative Comparison of Core Docking Strategies for Flexibility

| Strategy | Core Model Addressed | Typical CPU Time per Ligand | Key Output Metric | Best For |

|---|---|---|---|---|

| Rigid Receptor Docking | Lock-and-Key (limited) | 1-5 minutes | Docking Score (ΔG) | High-throughput virtual screening of stable binding sites. |

| Ensemble Docking | Conformational Selection | 5-30 minutes (per ensemble member) | Consensus Score/Rank across ensemble | Targets with known pre-existing multiple conformations. |

| Soft-Potential Docking | Partial Induced Fit | 5-15 minutes | Docking Score with van der Waals buffering | Moderate side chain adjustments without explicit flexibility. |

| Side-Chain Flexible Docking | Induced Fit (local) | 10-60 minutes | Docking Score & refined side chain χ angles | Local side chain rearrangements upon ligand binding. |

| Full Induced Fit Docking | Induced Fit (full) | 1-8 hours per ligand | Refined Pose, Protein-Ligand H-bonds, MM/GBSA ΔG | Final lead optimization, detailed binding mode analysis. |

Experimental Protocol: A Combined Conformational Selection & Induced Fit Workflow

Title: Integrated Workflow for Handling Protein Flexibility in Docking

Objective: To accurately predict ligand binding modes for a flexible target by combining ensemble docking (conformational selection) with subsequent induced fit refinement.

Materials & Reagents: See "The Scientist's Toolkit" below.

Methodology:

- Ensemble Generation (Conformational Selection Sampling):

- Source 3-5 distinct experimental structures of your target from the PDB (prioritizing different liganded states and apo forms).

- Alternatively, run a short (100-200 ns) MD simulation of the apo protein. Cluster the trajectory as described in Q3 above to generate 5 representative conformers.

- Prepare each protein structure (remove waters, add hydrogens, optimize H-bonds, assign partial charges) using your standard molecular modeling suite.

- Ensemble Docking:

- Dock your ligand library into each prepared receptor conformation using a standard rigid-receptor docking algorithm (e.g., Glide HTVS or SP).

- For each ligand, retain the top 5 poses from each receptor conformation.

- Ranking: Score all poses from all ensemble members with a consistent, more rigorous scoring function (e.g., Glide SP or MM/GBSA). Rank ligands by their best score across the entire ensemble.

- Induced Fit Refinement (Induced Fit Modeling):

- Select the top 10-20 ligand hits and their best-scoring receptor conformation from the ensemble docking step.

- Subject each ligand-receptor complex to an Induced Fit Docking protocol.

- Define Flexibility: Allow residues within 8 Å of the docked ligand pose to be flexible (both side chains and backbone).

- Run the refinement cycle (docking → protein structure refinement → redocking).

- Validation & Analysis:

- Compare the final IFD poses to the initial ensemble docking pose. Analyze key interaction changes.

- Validate top poses using binding free energy calculations (e.g., FEP, MM/PBSA) or, ideally, by comparison with a newly obtained co-crystal structure.

Diagrams

Title: Integrated Flexibility Docking Workflow

Title: Conformational Selection vs. Induced Fit Models

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Protein Flexibility Research

| Item/Resource | Function/Benefit | Example/Tool |

|---|---|---|

| Molecular Dynamics Software | Samples the conformational landscape of an apo or holo protein over time. | GROMACS, AMBER, NAMD, Desmond (Schrödinger) |

| Conformational Ensemble Database | Provides pre-existing ensembles of protein conformations (experimental or simulated) for ensemble docking. | PDBFlex, Dynameomics |

| Protein Preparation Suite | Adds hydrogens, optimizes H-bond networks, corrects protonation states, and minimizes structures for docking. | Protein Preparation Wizard (Maestro), MOE QuickPrep, UCSF Chimera |

| Docking Software with Flexibility | Performs docking while allowing protein side chains (and sometimes backbone) to move. | Glide (Induced Fit Docking), MOE (Induced Fit), AutoDockFR, RosettaLigand |

| Free Energy Perturbation (FEP) Software | Provides high-accuracy binding free energy predictions for final pose validation and ranking. | FEP+ (Schrödinger), AMBER, CHARMM, OpenMM |

| Side Chain Rotamer Library | Provides statistically probable side chain conformations for remodeling binding pockets. | SCWRL4, Rosetta, Dunbrack Library (incorporated in most suites) |

| Clustering & Analysis Tool | Analyzes MD trajectories or pose sets to identify representative conformations. | MDAnalysis (Python), cpptraj (AMBER), VMD, Scikit-learn (for clustering) |

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: In my docking simulation, the side chains of the receptor's binding pocket are collapsing into unrealistic conformations, leading to poor pose prediction. How can I address this? A1: This is a common issue when side-chain flexibility is sampled without restraint. Implement side-chain flexibility using a rotamer library approach. Pre-generate a set of probable rotameric states for key pocket residues (e.g., Tyr, Arg, Lys, Glu) using tools like SCWRL4 or Rosetta's fixbb application. Perform docking against each relevant combinatorial state or use a "soft" potential that allows for minor side-chain movement during docking. Ensure your chosen rotamer library is compatible with your force field.

Q2: My target protein has a flexible loop near the binding site that is missing from the crystal structure or in a non-representative conformation. What experimental and computational strategies can I use? A2: First, consult alternative experimental structures (NMR, cryo-EM) from the PDB. If none exist:

- Experimentally: Consider limited proteolysis coupled with mass spectrometry to identify flexible regions. Hydrogen-deuterium exchange (HDX-MS) can map solvent-accessible, dynamic loops.

- Computationally: Use molecular dynamics (MD) simulations to sample loop conformations. Cluster the MD trajectories to generate representative loop models for ensemble docking. Alternatively, use loop modeling tools such as MODELLER or Rosetta's loop modeling protocols to generate in silico conformers.

Q3: When performing ensemble docking to account for domain shifts, how do I select which protein conformers from the PDB to include in my ensemble? A3: Do not simply select all available structures. Analyze the ensemble for redundancy and relevance:

- Cluster by conformation: Use a metric like backbone RMSD on the domain of interest to cluster similar structures.

- Select representatives: Choose the centroid structure from each major cluster.

- Prioritize relevance: Prefer structures bound to ligands (any ligand), especially those pharmacologically similar to your compound. Also, consider structures solved under different conditions (e.g., pH, ionic strength).

- Validate: Cross-dock known ligands to ensure the ensemble can reproduce native binding modes.

Q4: How do I quantitatively evaluate if accounting for protein flexibility has significantly improved my virtual screening results? A4: Use standardized metrics and compare against a rigid receptor control. Key performance indicators (KPIs) include:

Table 1: Key Metrics for Evaluating Flexible Docking Protocols

| Metric | Description | Target Improvement vs. Rigid |

|---|---|---|

| Enrichment Factor (EF₁%) | Concentration of true hits in the top 1% of ranked list. | Increase of >50% is significant. |

| Area Under the ROC Curve (AUC) | Overall ability to discriminate actives from decoys. | Statistically significant increase (p<0.05, paired t-test). |

| Root-Mean-Square Deviation (RMSD) | Accuracy of top-ranked pose for known ligands. | Reduction to <2.0 Å. |

| Pose Recovery Rate | Percentage of known ligands docked within 2.0 Å of native pose. | Increase of >20 percentage points. |
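Two of the metrics in the table can be computed directly from a ranked list of scores and activity labels. The sketch below assumes that lower (more negative) docking scores are better and that actives are labelled `True`. The function names are illustrative, and the AUC uses the pairwise definition (the probability that a random active outscores a random decoy).

```python
def enrichment_factor(scores, is_active, fraction=0.01):
    """EF at `fraction`: hit rate in the top slice divided by the overall hit rate."""
    ranked = sorted(zip(scores, is_active), key=lambda pair: pair[0])
    n_top = max(1, round(len(ranked) * fraction))
    hits_top = sum(1 for _, active in ranked[:n_top] if active)
    total_actives = sum(1 for active in is_active if active)
    if total_actives == 0:
        raise ValueError("at least one active compound is required")
    return (hits_top / n_top) / (total_actives / len(ranked))


def roc_auc(scores, is_active):
    """Probability that a random active scores lower than a random decoy (ties = 0.5)."""
    actives = [s for s, a in zip(scores, is_active) if a]
    decoys = [s for s, a in zip(scores, is_active) if not a]
    if not actives or not decoys:
        raise ValueError("both actives and decoys are required")
    wins = sum((a < d) + 0.5 * (a == d) for a in actives for d in decoys)
    return wins / (len(actives) * len(decoys))
```

Run both functions once on the rigid-receptor ranking and once on the flexible ranking of the same library. The maximum achievable EF at 1% is bounded by the library composition, so compare methods on identical active and decoy sets.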

Troubleshooting Guides

Issue: High Computational Cost of Full Flexibility Methods.

Symptoms: Docking a single ligand takes hours/days; screening a library is infeasible.

Solution Guide:

- Hybrid Approach: Use a multi-step protocol. Step 1: Rapid rigid-receptor docking (e.g., Vina) of the entire library. Step 2: Take the top 1000-5000 hits and re-dock them using a flexible side-chain method (e.g., AutoDockFR or AutoDock Vina with flexible side chains).

- Focused Flexibility: Restrict side-chain movement to residues within 5-8 Å of the docked ligand in the initial pose.

- Employ Caching: If using MD-based ensembles, pre-generate and grid all receptor conformations to avoid on-the-fly calculations.

Issue: Generation of Unphysiological Protein Conformations.

Symptoms: Docked ligands are buried in pockets that are sterically impossible in a real protein; abnormal torsion angles.

Solution Guide:

- Constraint Application: Apply harmonic positional restraints to protein backbone atoms during minimization/relaxation steps.

- Energy Thresholds: Reject any docking pose where the protein's internal energy (strain) exceeds a predefined threshold (e.g., 10 kcal/mol above the starting crystal structure).

- Check Steric Clashes: Post-docking, filter poses where ligand atoms clash heavily (van der Waals overlap > 0.5 Å) with fixed backbone atoms.
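The steric clash check in this guide can be sketched as a van der Waals overlap test. The radii below are the commonly used Bondi values. The data layout (`(element, (x, y, z))` tuples) and function names are assumptions for illustration, and the test deliberately ignores hydrogen-bonded pairs, which legitimately approach more closely than the sum of their radii.

```python
import math

# Bondi van der Waals radii in Å for the most common elements
VDW_RADII = {"H": 1.20, "C": 1.70, "N": 1.55, "O": 1.52, "S": 1.80}


def worst_overlap(ligand_atoms, backbone_atoms):
    """Largest value of (r_i + r_j - distance) over all ligand/backbone atom pairs."""
    worst = float("-inf")
    for element_l, xyz_l in ligand_atoms:
        for element_b, xyz_b in backbone_atoms:
            overlap = VDW_RADII[element_l] + VDW_RADII[element_b] - math.dist(xyz_l, xyz_b)
            worst = max(worst, overlap)
    return worst


def passes_clash_filter(ligand_atoms, backbone_atoms, max_overlap=0.5):
    """True when no ligand atom overlaps a fixed backbone atom by more than max_overlap Å."""
    return worst_overlap(ligand_atoms, backbone_atoms) <= max_overlap
```

In practice the filter is applied to heavy atoms only, and polar donor/acceptor pairs are either excluded or given a more permissive threshold.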

Experimental Protocols

Protocol 1: Generating a Side-Chain Rotamer Ensemble for Docking

Objective: Create a set of plausible side-chain conformations for a binding site.

Materials: See "The Scientist's Toolkit" below.

Method:

- Prepare the protein structure (PDB file): Remove water, add hydrogens, and assign protonation states at the target pH (e.g., with PDB2PQR; MolProbity can add hydrogens and check Asn/Gln/His flips).

- Identify flexible residues: Select all residues with any heavy atom within 10 Å of the binding site centroid.

- Generate rotamers: Run SCWRL4 from the command line:

scwrl4 -i input.pdb -o output.pdb -s input.rotamer.config

The file passed with -s is SCWRL4's sequence file, which controls which residues are repacked and which keep their input conformation.

- Cluster and select: For each residue, cluster the generated rotamers by chi-angle similarity. Select the centroid and the most divergent rotamer from each cluster.

- Create combinatorial states: For a pocket with 4 key residues, each with 3 selected rotamers, you would generate 3⁴ = 81 receptor files for ensemble docking.
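The combinatorial step is a Cartesian product over the per-residue rotamer selections. A minimal sketch, in which the residue labels and rotamer names are placeholders rather than output from any specific tool:

```python
from itertools import product


def enumerate_receptor_states(rotamers_by_residue):
    """Yield one {residue: rotamer} assignment per combinatorial receptor state."""
    residues = sorted(rotamers_by_residue)
    for combo in product(*(rotamers_by_residue[res] for res in residues)):
        yield dict(zip(residues, combo))


# Hypothetical pocket: 4 residues with 3 selected rotamers each
pocket = {res: ["rot1", "rot2", "rot3"] for res in ("Lys45", "Glu67", "Asp92", "Tyr110")}
states = list(enumerate_receptor_states(pocket))
print(len(states))  # 3**4 = 81 receptor states
```

Because the count grows exponentially with the number of residues, it is usually worth discarding states with internal side-chain clashes before writing receptor files.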

Protocol 2: Loop Conformational Sampling via Short MD Simulation

Objective: Sample accessible states of a missing or flexible loop (≤ 15 residues).

Method:

- System Preparation: Use CHARMM-GUI to solvate the protein in a TIP3P water box, add 0.15 M NaCl ions. Use the CHARMM36m force field.

- Simulation: Run in AMBER, GROMACS, or NAMD:

a. Minimization: 5000 steps of steepest descent.

b. NVT equilibration: 100 ps, heating to 300 K.

c. NPT equilibration: 100 ps at 1 bar.

d. Production run: 50-100 ns, saving coordinates every 10 ps.

- Trajectory Analysis: Align trajectories to the protein's stable core (not the loop). Cluster the loop backbone conformations using the gmx cluster tool (GROMACS) with an RMSD cutoff of 2.0 Å (entered as 0.2, because GROMACS expects the cutoff in nm).

- Model Extraction: Extract the protein structure corresponding to the centroid of the top 3-5 most populated clusters for use in docking.

Visualizations

Title: Decision Workflow for Handling Protein Flexibility in Docking

Title: Detailed Rotamer Ensemble Generation Protocol

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Resources for Protein Flexibility Studies

| Item / Resource | Type | Primary Function |

|---|---|---|

| SCWRL4 | Software | Predicts protein side-chain conformations using a backbone-dependent rotamer library. |

| Rosetta Software Suite | Software | Provides comprehensive tools for de novo protein structure prediction, loop modeling, and flexible docking. |

| GROMACS / AMBER | Software | High-performance molecular dynamics packages for sampling protein conformational dynamics. |

| PyMOL / ChimeraX | Software | Visualization and analysis of structural ensembles, measurement of RMSD, and cavity analysis. |

| CHARMM36m / AMBER ff19SB | Force Field | Optimized molecular mechanics parameter sets for accurate simulation of protein dynamics. |

| Protein Data Bank (PDB) | Database | Repository of experimental protein structures to source conformational ensembles. |

| MolProbity / PDB2PQR | Web Service | Validates and prepares protein structures, assigns protonation states for simulation/docking. |

| Glide (Schrödinger) | Software | Docking program with advanced options for handling receptor flexibility (induced fit). |

| FRED (OpenEye) | Software | Rigid-receptor docking tool designed for high-throughput screening against pre-generated receptor ensembles. |

Troubleshooting Guides & FAQs

Q1: Why does my docking program fail to predict the correct binding pose for a ligand known to bind from crystallography, even when using the crystal structure? A: This is often due to minor side chain adjustments in the binding site upon ligand binding, which are not captured by rigid-receptor docking. The static crystal structure may have side chain conformers incompatible with the docking pose.

- Troubleshooting Steps:

- Visually inspect the crystal ligand pose versus your top predicted pose. Look for clashes with side chains.

- Perform a short molecular dynamics (MD) relaxation of the protein-ligand complex from the crystal structure, then re-dock into the relaxed receptor.

- Use a docking program that incorporates side chain flexibility (e.g., induced fit) or perform ensemble docking (see Protocol 1).

Q2: My virtual screen against a single protein structure yielded many high-scoring compounds, but hit rates in experimental validation were very low. What went wrong? A: This is a classic sign of poor enrichment due to receptor rigidity. The static structure represents only one conformational state. Compounds that score well against this state may not bind to the protein's other biologically relevant conformations, leading to false positives.

- Troubleshooting Steps:

- Analyze if your target protein is known to have multiple conformations (e.g., from multiple PDB entries).

- Implement an ensemble docking approach using representative structures from different conformational states (see Protocol 1).

- Apply a consensus scoring strategy across multiple conformations to penalize compounds that only fit one state.

Q3: When generating a conformational ensemble for my target, how many structures are sufficient, and how should I select them? A: There is no universal number, but the goal is to cover the relevant conformational space without introducing redundancy.

- Troubleshooting Steps:

- Start with available experimental structures (apo, holo, with different ligands).

- Use clustering analysis (e.g., on backbone RMSD or binding site residue RMSD) on MD simulation snapshots or generated conformers.

- Select cluster centroids that represent distinct binding site shapes. Typically, 5-10 well-chosen structures can significantly improve enrichment over a single structure.

- Validate your ensemble by re-docking known active ligands and decoys to ensure it improves early enrichment (EF1%).

Q4: My induced fit docking (IFD) protocol is computationally expensive and time-consuming. Are there efficient alternatives? A: Yes, for large-scale virtual screening, full IFD on millions of compounds is impractical.

- Troubleshooting Steps:

- Use a two-stage protocol: First, screen against a rigid receptor ensemble using fast docking. Second, subject top-ranked compounds (e.g., top 1000) to a more rigorous IFD or side chain optimization protocol.

- Consider using softened-potential or flexible side chain methods during the initial screening phase, which are faster than full IFD.

- Utilize homology models or conformers generated with normal mode analysis as a cheaper alternative to MD for ensemble generation.

Experimental Protocols

Protocol 1: Ensemble Docking for Improved Virtual Screening Enrichment

Objective: To improve the identification of true active compounds (enrichment) in virtual screening by accounting for receptor flexibility.

Methodology:

- Ensemble Generation:

- Collect all available experimental structures (apo, holo, mutant forms) of the target from the PDB.

- If limited structures exist, perform molecular dynamics (MD) simulation of the apo protein or use conformational sampling tools (e.g., FRODA, tCONCOORD) to generate diverse snapshots.

- Cluster the pool of structures based on the RMSD of binding site residues.

- Select the centroid structure from each major cluster to form the docking ensemble.

- Preparation:

- Prepare each protein structure consistently: add hydrogens, assign protonation states, and optimize hydrogen bonds.

- Prepare the ligand library in a corresponding 3D format with enumerated tautomers/protonation states.

- Docking Execution:

- Dock the entire compound library into each receptor conformation in the ensemble using a standard docking program (e.g., AutoDock Vina, Glide, GOLD).

- Score Integration:

- For each compound, retain the best score across all ensemble members (best-rank or best-score method).

- Alternatively, use the average score across the ensemble, though best-rank is often more effective.

- Analysis:

- Rank the entire library based on the integrated score.

- Calculate enrichment factors (EF) and plot ROC curves to compare performance against single-structure docking.
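The score integration step of this protocol can be sketched as follows, assuming docking scores are stored per conformer in a nested dictionary and that lower scores are better. The dictionary layout, conformer labels, and function name are hypothetical.

```python
def integrate_scores(scores_by_conformer, method="best"):
    """Combine per-conformer docking scores into one ranked list of (ligand, score).

    scores_by_conformer: {conformer_name: {ligand_name: score}}, lower is better.
    method: "best" keeps each ligand's lowest score, "mean" averages the scores.
    """
    per_ligand = {}
    for scores in scores_by_conformer.values():
        for ligand, score in scores.items():
            per_ligand.setdefault(ligand, []).append(score)
    if method == "best":
        combined = {lig: min(vals) for lig, vals in per_ligand.items()}
    elif method == "mean":
        combined = {lig: sum(vals) / len(vals) for lig, vals in per_ligand.items()}
    else:
        raise ValueError("method must be 'best' or 'mean'")
    return sorted(combined.items(), key=lambda item: item[1])


ensemble = {
    "conf_A": {"lig1": -7.0, "lig2": -9.5},
    "conf_B": {"lig1": -10.0, "lig2": -6.5},
}
print(integrate_scores(ensemble, method="best"))
print(integrate_scores(ensemble, method="mean"))
```

With the best-score rule, `lig1` ranks first because one conformer accommodates it very well. With the mean rule, `lig1` still leads but by a much smaller margin, which illustrates why the two schemes can reorder a hit list.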

Protocol 2: Induced Fit Docking (IFD) for Pose Prediction

Objective: To accurately predict the binding pose of a ligand by allowing side chain and backbone adjustments in the binding site.

Methodology:

- Initial Docking:

- Prepare the rigid receptor structure and ligand.

- Perform standard docking with a softened potential (van der Waals radii scaled by a factor of 0.5-0.8) to generate an initial set of ligand poses.

- Protein Refinement:

- For each ligand pose (or a selected subset of top poses), refine the surrounding protein residues (typically within 5-10 Å of the ligand).

- Use a protein structure prediction or minimization algorithm (e.g., Prime, Rosetta) to optimize side chains and local backbone.

- Redocking:

- Redock the ligand into each refined protein structure from the Protein Refinement step, keeping the receptor rigid.

- Scoring & Selection:

- Score each final protein-ligand complex using a more detailed scoring function (e.g., MM-GBSA, scoring with implicit solvation).

- Select the pose with the most favorable overall energy as the final predicted pose.

Table 1: Impact of Ensemble Docking on Virtual Screening Enrichment

| Target Protein | Method | # of Actives Found (Top 1%) | EF1%* | Reference/Note |

|---|---|---|---|---|

| HIV-1 Protease | Single Crystal Structure | 12 | 12.0 | Baseline |

| HIV-1 Protease | Ensemble (4 MD snapshots) | 21 | 21.0 | 75% improvement |

| Kinase AKT1 | Single Structure (Apo) | 5 | 5.0 | Baseline |

| Kinase AKT1 | Ensemble (3 PDB states) | 14 | 14.0 | 180% improvement |

| GPCR (Beta-2) | Homology Model | 8 | 8.0 | Baseline |

| GPCR (Beta-2) | Ensemble (5 MD states) | 17 | 17.0 | 112% improvement |

*Enrichment Factor at 1% of the screened database.

Table 2: Pose Prediction Accuracy with Flexible vs. Rigid Docking

| Target (PDB Code) | Rigid Receptor Docking RMSD (Å)* | Induced Fit/Flexible Docking RMSD (Å)* | Improvement |

|---|---|---|---|

| 1KIM (Thymidine Kinase) | 4.7 | 1.2 | 74% |

| 3PTB (Trypsin) | 3.8 | 0.9 | 76% |

| 1HWR (HIV-1 Protease) | 5.2 | 1.5 | 71% |

| 2J5C (Kinase) | 4.1 | 1.8 | 56% |

*Average RMSD of the top-ranked pose compared to the crystallographic ligand pose for a benchmark set of re-docked complexes.

Diagrams

Title: Diagnosing Poor Virtual Screening Results

Title: Ensemble Docking Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Tools for Handling Flexibility in Docking

| Item | Category | Function/Benefit |

|---|---|---|

| Molecular Dynamics Software (e.g., GROMACS, AMBER, NAMD) | Conformational Sampling | Generates physically realistic protein conformational ensembles for ensemble docking. |

| Induced Fit Docking Suite (e.g., Schrödinger IFD, MOE Induced Fit) | Flexible Docking | Allows side-chain/backbone movement during docking for accurate pose prediction. |

| Normal Mode Analysis Tools (e.g., ProDy, ElNémo) | Conformational Sampling | Efficiently samples large-scale, low-energy protein motions to generate relevant conformers. |

| Clustering Algorithms (e.g., MDTraj, GROMOS) | Ensemble Analysis | Identifies representative structures from large ensembles of conformations (MD, PDB). |

| MM-GBSA/MM-PBSA Scripts | Scoring & Validation | Provides more rigorous binding free energy estimates for post-docking pose ranking and validation. |

| Curated Benchmark Sets (e.g., DUD-E, CSAR) | Validation | Provides datasets with known actives and decoys to validate enrichment protocols. |

| Structure Preparation Tools (e.g., PDBFixer, MolProbity, Protein Prep Wizard) | Pre-processing | Ensures consistent protonation, missing residue/atom handling, and steric clash removal. |

The Computational Toolbox: From Rotamer Libraries to AI Co-Folding for Flexible Docking

Troubleshooting Guides & FAQs

Q1: My side chain packing with a rotamer library is yielding unrealistically high clash scores. What are the primary causes and solutions? A: This typically indicates issues with the library itself or its application.

- Cause 1: Library-Protein Backbone Mismatch. The rotamer library was derived from a different backbone conformation or resolution range than your target protein.

- Solution: Use a backbone-dependent library. Ensure the χ-angle distributions in your library are filtered for backbone φ/ψ angles similar to your target. Re-evaluate your choice between a statistical (e.g., Dunbrack) or a conformationally diverse (e.g., Penultimate) library.

- Cause 2: Inadequate van der Waals Radii or Clash Criteria.

- Solution: Check the atomic radii parameters in your energy function. Standard CHARMM or AMBER radii may need scaling (e.g., 0.8-0.9x) for packing calculations. Adjust the clash cutoff energy.

- Protocol: To diagnose, systematically run packing on a high-resolution crystal structure where side chains are known. If the native rotamer is not found or scores poorly, the library/energy function is at fault.
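The effect of radius scaling on clash counts can be illustrated with a minimal sketch. It assumes a simple hard-sphere overlap test (two atoms clash when their distance is below the scaled sum of their radii), which is a simplification of a real van der Waals term; the coordinates, radii, and the function name `clashes` are illustrative and not taken from any force field.

```python
import math

def clashes(atoms_a, atoms_b, scale=1.0):
    """Count atom pairs closer than scale * (r_a + r_b).

    Each atom is (x, y, z, radius) in Å. Hard-sphere overlap only.
    """
    count = 0
    for xa, ya, za, ra in atoms_a:
        for xb, yb, zb, rb in atoms_b:
            d = math.dist((xa, ya, za), (xb, yb, zb))
            if d < scale * (ra + rb):
                count += 1
    return count

# Hypothetical side-chain atoms and neighboring atoms (illustrative values).
side_chain = [(0.0, 0.0, 0.0, 1.7), (1.5, 0.0, 0.0, 1.7)]
neighbors = [(3.2, 0.0, 0.0, 1.7), (0.0, 3.0, 0.0, 1.55)]

full = clashes(side_chain, neighbors, scale=1.0)   # unscaled radii
soft = clashes(side_chain, neighbors, scale=0.85)  # radii scaled to 85%
print(full, soft)
```

With scaled radii, marginal contacts stop counting as clashes while the severe overlap is still flagged, which is the behavior the scaling is meant to produce.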

Q2: When implementing Dead-End Elimination (DEE), the algorithm terminates early without finding a solution or runs excessively long. How do I troubleshoot this? A: DEE performance is highly sensitive to the pruning criteria and energy function.

- Cause 1: Overly Relaxed or Stringent DEE Criteria (∆E). A loose criterion fails to prune rotamers, causing combinatorial explosion. A very strict criterion prunes necessary rotamers, leading to no solution.

- Solution: Implement incremental DEE. Start with Goldstein DEE (stronger, safer). If slow, apply Split DEE or use an initial ∆E margin of 2-3 kcal/mol, tightening it to 0.5-1 kcal/mol in later cycles. Monitor the number of rotamer pairs pruned per cycle.

- Cause 2: Inaccurate or Noisy Energy Function.

- Solution: The DEE theorem requires a pairwise decomposable energy function. Verify that your total energy is a sum of self (Eself) and pairwise (Epair) terms. Noise from non-pairwise terms (e.g., some solvation models) violates DEE assumptions.

- Protocol: Use the following logic flow to adjust DEE parameters:

Troubleshooting DEE Implementation Flow
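As a concrete reference for the pruning step, here is a minimal sketch of one pass of the Goldstein criterion: rotamer r at position i is eliminated if some other rotamer t satisfies E(i_r) - E(i_t) + Σ_j min_s [E(i_r, j_s) - E(i_t, j_s)] > 0. The nested-list energy layout, the `margin` argument (an assumed safety threshold; 0 gives the standard criterion), and the toy energies are illustrative assumptions, not the data structures of any particular package.

```python
def goldstein_prune(self_e, pair_e, margin=0.0):
    """One pass of Goldstein DEE over all positions.

    self_e[i][r]       : self energy of rotamer r at position i
    pair_e[i][j][r][s] : pair energy of rotamer r at i with rotamer s at j
    Returns the set of (position, rotamer) pairs that can be eliminated.
    """
    n = len(self_e)
    pruned = set()
    for i in range(n):
        for r in range(len(self_e[i])):
            for t in range(len(self_e[i])):
                if t == r or (i, t) in pruned:
                    continue  # the witness t must still be alive
                gap = self_e[i][r] - self_e[i][t]
                for j in range(n):
                    if j == i:
                        continue
                    gap += min(
                        pair_e[i][j][r][s] - pair_e[i][j][t][s]
                        for s in range(len(self_e[j]))
                        if (j, s) not in pruned
                    )
                if gap > margin:
                    pruned.add((i, r))
                    break
    return pruned

# Toy system: 2 positions, 2 rotamers each (energies in kcal/mol, made up).
self_e = [[0.0, 5.0], [0.0, 1.0]]
pair_e = [
    [None, [[0.0, 0.5], [-1.0, 0.0]]],
    [[[0.0, -1.0], [0.5, 0.0]], None],
]
pruned = goldstein_prune(self_e, pair_e)
print(sorted(pruned))
```

In this toy case both second rotamers are eliminated, leaving the combination (0, 0), which is also the lowest-energy assignment by direct enumeration. Counting `len(pruned)` per cycle gives the pruning-rate monitor suggested above.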

Q3: My Monte Carlo (MC) simulation for side chain sampling gets trapped in a high-energy local minimum. What advanced MC strategies can I employ? A: Basic Metropolis MC is prone to this. Implement enhanced sampling protocols.

- Solution 1: Use Replica Exchange Monte Carlo (REMC).

- Protocol: Run N parallel simulations (replicas) at different temperatures (e.g., from 300K to 500K). After a set number of steps, attempt to swap configurations between adjacent temperatures with a probability based on their energies and temperatures. This allows high-energy states at high T to be refined at low T.

- Solution 2: Implement a Hybrid Move Set.

- Protocol: Do not only change one rotamer at a time. Every 100-1000 steps, propose a "concerted rotation" move for a loop or a "side chain flip" for buried residues. This disrupts local minima.

- Solution 3: Utilize a Simulated Annealing Schedule.

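- Protocol: Start the MC simulation at a high temperature (e.g., 1000K) and geometrically cool to the target temperature (e.g., 300K) over 50,000-100,000 steps. A cooling factor (α) of 0.99 to 0.999 reaches 300K after only about 120 to 1,200 temperature updates, so apply it once per block of steps (roughly every 40-800 steps, depending on α and run length) rather than at every step; cooling at every step over this run length would require α ≈ 0.99998-0.99999.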
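The geometric cooling arithmetic can be checked with a short sketch. It assumes the schedule T_n = T_0 · α^n and a standard Metropolis acceptance test with energies in kcal/mol; the function names are illustrative.

```python
import math

def cooling_factor(t_start, t_end, n_updates):
    """Geometric factor alpha such that t_end = t_start * alpha**n_updates."""
    return (t_end / t_start) ** (1.0 / n_updates)

def updates_needed(t_start, t_end, alpha):
    """Number of geometric cooling updates needed to reach t_end."""
    return math.ceil(math.log(t_end / t_start) / math.log(alpha))

def metropolis_accept(delta_e, temperature, rand, k_b=0.0019872):
    """Metropolis test; delta_e in kcal/mol, k_b in kcal/(mol*K)."""
    if delta_e <= 0:
        return True
    return rand < math.exp(-delta_e / (k_b * temperature))

alpha_per_step = cooling_factor(1000.0, 300.0, 50_000)
n_999 = updates_needed(1000.0, 300.0, 0.999)
n_99 = updates_needed(1000.0, 300.0, 0.99)
print(f"{alpha_per_step:.6f}", n_999, n_99)
```

Under these assumptions, α = 0.999 reaches 300K in roughly 1,200 updates and α = 0.99 in about 120, so the number of MC steps between temperature updates has to be chosen to fill the intended run length.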

Q4: How do I choose between a deterministic method (like DEE) and a stochastic method (like MC) for my specific protein system? A: The choice depends on system size, required guarantee, and computational resources.

| Criterion | Dead-End Elimination (DEE) | Monte Carlo (MC) / REMC |

|---|---|---|

| System Size | Best for systems with < 200 residues to pack. | Scalable to very large systems (e.g., protein complexes). |

| Solution Guarantee | Finds global minimum if pairwise energy and criteria are met. | Finds near-optimal solution; no absolute guarantee. |

| Computational Cost | High memory for pairwise matrices; time can explode for large systems. | Lower memory; time is controllable by step count. |

| Flexibility Handling | Requires discrete rotamers; backbone is fixed. | Can be integrated with backbone flexibility via moves. |

| Best Use Case | Final precise packing of a protein core after backbone modeling. | Initial exploratory sampling, docking, flexible loops. |

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function / Explanation |

|---|---|

| Dunbrack Backbone-Dependent Rotamer Library | The standard statistical library. Provides χ-angle probabilities and frequencies based on backbone φ/ψ angles, crucial for realistic sampling. |

| Penultimate Rotamer Library | A conformationally diverse library derived from high-resolution structures with minimal filtering. Useful for exploring rare or strained conformations. |

| SCWRL4 Software | A widely used algorithm that combines a rotamer library with DEE and graph theory for fast, deterministic side-chain placement. |

| Rosetta Packer | A sophisticated, stochastic Monte Carlo-based packing algorithm within the Rosetta suite. Uses an annealer and a custom rotamer library for high-resolution design. |

| CHARMM36 / AMBER ff19SB Force Fields | Provide the essential van der Waals parameters, atomic radii, and torsion energy terms for calculating the self and pairwise energies during rotamer evaluation. |

| MolProbity | A validation server used to diagnose packing errors. Provides clashscores, rotamer outliers, and Ramachandran plots to assess the quality of packed models. |

Experimental Protocol: Integrating DEE and MC for High-Resolution Docking

Objective: Refine the side chains at a protein-ligand interface after initial rigid docking.

Methodology:

- Input Preparation: Extract the protein-ligand complex from the docking pose. Define the sampling region (residues within 8Å of the ligand) and the background region (all other residues).

- Initial Optimization (Deterministic Stage):

- Apply the SCWRL4 algorithm to pack all side chains of the protein using a backbone-dependent rotamer library.

- Fix the side chains in the background region in their SCWRL4-optimized conformations.

- Flexible Refinement (Stochastic Stage):

- On the sampling region, run a Replica Exchange Monte Carlo (REMC) simulation.

- Energy Function: Use a simplified molecular mechanics force field (e.g., van der Waals, electrostatics, implicit GB/SA solvation).

- Parameters: Run 8 replicas exponentially spaced from 300K to 450K. Perform 50,000 MC steps per replica. Attempt replica swaps every 100 steps. Proposed moves include single-rotamer changes and occasional rigid-body "wiggles" of the ligand.

- Analysis & Validation:

- Cluster the low-temperature replica trajectories from the final 20,000 steps.

- Select the centroid of the largest cluster as the final refined model.

- Validate using MolProbity to ensure no new steric clashes or rotamer outliers were introduced.

Hybrid DEE-MC Side Chain Refinement Workflow
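The REMC parameters in the stochastic stage can be sketched as follows. This assumes a geometric (exponentially spaced) temperature ladder and the standard replica-exchange acceptance rule p = min(1, exp[(1/kT_i - 1/kT_j)(E_i - E_j)]); the energies in the example are made up.

```python
import math

K_B = 0.0019872  # Boltzmann constant in kcal/(mol*K)

def temperature_ladder(t_min, t_max, n_replicas):
    """Geometrically spaced temperatures from t_min to t_max."""
    ratio = (t_max / t_min) ** (1.0 / (n_replicas - 1))
    return [t_min * ratio**k for k in range(n_replicas)]

def swap_probability(e_i, t_i, e_j, t_j):
    """Probability of exchanging the configurations held at t_i and t_j."""
    delta = (1.0 / (K_B * t_i) - 1.0 / (K_B * t_j)) * (e_i - e_j)
    return 1.0 if delta >= 0 else math.exp(delta)

ladder = temperature_ladder(300.0, 450.0, 8)
print([round(t, 1) for t in ladder])

# Cold replica holds the higher energy: the swap is always accepted.
p_downhill = swap_probability(-100.0, 300.0, -105.0, 450.0)
# Cold replica already holds the lower energy: the swap is accepted only sometimes.
p_uphill = swap_probability(-105.0, 300.0, -100.0, 450.0)
```

Swaps are normally attempted only between neighboring temperatures in the ladder, since the acceptance probability falls off quickly as the temperature gap grows.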

Troubleshooting Guides & FAQs

Q1: In ensemble docking, my ligand consistently docks to only one or two receptor conformers out of a large ensemble. How do I ensure broader sampling? A1: This indicates a bias in your ensemble generation or scoring. First, verify that your ensemble (e.g., from MD simulations, NMR models, or multiple crystal structures) represents biologically relevant conformational diversity. Use principal component analysis to check for clustering. In the docking setup, ensure you are using a consensus scoring approach across all frames, not just the top score from a single conformer. A common protocol is:

- Dock the ligand against each receptor conformer independently using standard rigid-receptor settings.

- Re-score all generated poses from all dockings with a single, robust scoring function (e.g., MM/GBSA).

- Analyze the distribution of top-ranked poses across the ensemble to identify consensus binding modes.
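The pooling and distribution analysis in this protocol can be sketched in a few lines. The dictionary keys, conformer names, and energies below are hypothetical; the re-scored value is assumed to be an energy-like quantity where lower is better.

```python
from collections import Counter

def consensus_summary(poses, top_n=10):
    """Pool re-scored poses from all conformers and summarize the top ranks.

    poses: list of dicts with keys 'conformer', 'pose_id', 'rescored'.
    Returns the top_n poses and how many of them came from each conformer.
    """
    ranked = sorted(poses, key=lambda p: p["rescored"])
    top = ranked[:top_n]
    return top, Counter(p["conformer"] for p in top)

# Hypothetical re-scored energies (kcal/mol) for three receptor conformers.
poses = [
    {"conformer": "md_frame_01", "pose_id": 1, "rescored": -42.1},
    {"conformer": "md_frame_01", "pose_id": 2, "rescored": -39.8},
    {"conformer": "xray_apo", "pose_id": 1, "rescored": -35.0},
    {"conformer": "xray_holo", "pose_id": 1, "rescored": -41.5},
    {"conformer": "xray_holo", "pose_id": 2, "rescored": -30.2},
]
top, per_conformer = consensus_summary(poses, top_n=3)
print(per_conformer)
```

If nearly all top-ranked poses come from a single conformer, that is the bias described in the question and a cue to revisit the ensemble's diversity.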

Q2: When applying soft docking, how do I determine the optimal van der Waals (vdW) scaling parameters to avoid excessive false positives? A2: Optimal parameters are system-dependent. A recommended experimental protocol is:

- Benchmarking: Use a set of known binders and decoys for your target.

- Titration: Perform docking with a range of vdW scaling factors for the receptor (e.g., 0.8, 0.9, 1.0) and ligand (e.g., 0.8, 1.0).

- Validation: Calculate the enrichment factor (EF) or area under the ROC curve (AUC) for each parameter set.

- Selection: Choose the parameter set that maximizes early enrichment (e.g., EF1%). Typically, receptor softness values between 0.8-0.9 and ligand softness at 1.0 provide a good starting point.
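The enrichment factor used in the validation step can be computed as below. This is a minimal sketch assuming lower docking scores are better and labels are 1 for actives and 0 for decoys; the synthetic ranking is for illustration only.

```python
def enrichment_factor(scores, labels, fraction=0.01):
    """EF at a given fraction of the ranked list.

    EF = (actives in top fraction / size of top fraction)
         / (total actives / total compounds)
    """
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0])
    n_top = max(1, int(round(fraction * len(ranked))))
    hits_top = sum(label for _, label in ranked[:n_top])
    total_actives = sum(labels)
    return (hits_top / n_top) / (total_actives / len(ranked))

# Synthetic screen: 1000 compounds, 10 actives, 5 of them ranked in the top 10.
scores = [float(i) for i in range(1000)]
labels = [1 if (i < 5 or 500 <= i < 505) else 0 for i in range(1000)]
ef1 = enrichment_factor(scores, labels, fraction=0.01)
print(ef1)
```

Running this once per vdW scaling combination and comparing the resulting EF1% values gives the selection criterion described above.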

Q3: During on-the-fly side-chain relaxation, the binding site collapses or distorts unrealistically. What controls can prevent this? A3: This is often due to inadequate restraints. Implement a multi-step protocol:

- Restraint Strategy: Apply harmonic restraints to the protein backbone atoms and to side-chains beyond the defined "flexible region" (e.g., residues within 5-7 Å of the ligand). Use a force constant of 5-10 kcal/mol/Ų.

- Staged Minimization: First, minimize only the ligand pose. Then, minimize the selected flexible side-chains while keeping the ligand partially restrained. Finally, perform a light minimization of the entire complex with very low restraints.

- Sampling: Use a hybrid Monte Carlo/Minimization algorithm rather than simple gradient descent to escape local minima.

Q4: How do I choose between these three flexibility methods for a new target with no known binders? A4: Base your choice on the expected scale and type of flexibility, informed by preliminary analysis.

| Method | Best For | Recommended Preliminary Analysis |

|---|---|---|

| Ensemble Docking | Large-scale, pre-existing conformational changes (e.g., domain movements, allostery). | Analyze available PDB structures for the target. Run short, unconstrained MD to observe major collective motions. |

| Soft Docking | Small, local side-chain adjustments and induced-fit with minimal backbone movement. | Examine B-factors in crystal structures; high B-factors indicate intrinsic flexibility. Good for high-throughput virtual screening. |

| On-the-Fly Relaxation | Precise modeling of induced-fit where the binding site geometry is unknown. | Use when the apo and holo structures differ significantly in side-chain rotamers. Best for lead optimization after initial hits. |

Q5: What are the common computational pitfalls that lead to long run times in on-the-fly relaxation, and how can they be mitigated? A5: The main pitfalls are an overly large flexible region and exhaustive sampling. Mitigation strategies:

- Define a Minimal Flexible Residue Set: Use only side-chains with atoms within a cutoff (e.g., 5.0 Å) of the docked ligand pose. Do not make the entire binding site flexible.

- Use a Pruned Rotamer Library: Employ a library of common rotamers (e.g., from Dunbrack's library) as starting points instead of complete freedom.

- Limit Cycles: Set strict iteration limits for the relaxation loop (e.g., 50-100 cycles of rotamer trial and minimization).

- Parallelization: Perform relaxation on multiple ligand poses independently in parallel.

Experimental Protocols

Protocol 1: Generating and Validating a Receptor Ensemble for Docking

Objective: Create a diverse, relevant ensemble of protein conformations for ensemble docking. Steps:

- Source Structures: Collect all available experimental (NMR, X-ray) structures of the target from the PDB. Include both apo and holo forms.

- Superimposition: Superimpose all structures on a reference (e.g., the structure with the highest resolution) using the protein backbone.

- Cluster: Perform RMSD-based clustering (cutoff ~1.5-2.0 Å for Cα atoms of the binding site) to identify representative conformers.

- MD Simulation (Optional but recommended): Solvate and neutralize a representative structure. Run an unbiased MD simulation (50-100 ns). Extract snapshots at regular intervals (e.g., every 1 ns).

- Consensus Binding Site Analysis: Align all MD snapshots and experimental structures. Calculate the per-residue RMSF to identify consistently flexible regions.

- Final Ensemble Selection: Select 5-10 structures that maximize the diversity of binding site volume and side-chain rotamer states, as quantified by tools like POVME or SiteMap.

Protocol 2: Implementing a Soft Docking Workflow with AutoDock Vina

Objective: Perform a virtual screen with implicit flexibility using softened potentials. Steps:

- Prepare Receptor and Ligands: Use AutoDockTools to add hydrogens, compute Gasteiger charges, and save receptor and ligands in PDBQT format.

- Define a Spacious Search Grid: Center the grid box on the binding site. Set box dimensions 20-25% larger than typical rigid docking to accommodate minor shifts.

- Modify Configuration File: Create a Vina configuration file (conf.txt) with the following key parameters:

- Execute Docking with Soft Parameters: Run Vina with an external scoring function wrapper (like smina) that allows vdW scaling:

- Post-processing: Analyze the variance in top-pose coordinates compared to rigid docking results.
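The enlarged search box can be written into a Vina configuration file with a small helper. This is a sketch, not the configuration used by the authors: it uses only standard Vina configuration keys (receptor, ligand, center_x/y/z, size_x/y/z, exhaustiveness, num_modes), the file names and box values are placeholders, and the vdW softening itself is not set here because it depends on the scoring options of the docking program.

```python
def vina_config(receptor, ligand, center, rigid_size, enlarge=1.25,
                exhaustiveness=8, num_modes=9):
    """Return Vina configuration text with an enlarged search box.

    center and rigid_size are (x, y, z) tuples in Å; enlarge=1.25 makes
    each box edge 25% longer than the rigid-docking box.
    """
    lines = [f"receptor = {receptor}", f"ligand = {ligand}"]
    for axis, value in zip("xyz", center):
        lines.append(f"center_{axis} = {value:.3f}")
    for axis, value in zip("xyz", rigid_size):
        lines.append(f"size_{axis} = {value * enlarge:.3f}")
    lines.append(f"exhaustiveness = {exhaustiveness}")
    lines.append(f"num_modes = {num_modes}")
    return "\n".join(lines) + "\n"

# Placeholder file names and box geometry.
text = vina_config("receptor.pdbqt", "ligand.pdbqt",
                   center=(12.5, -3.0, 8.2), rigid_size=(20.0, 20.0, 20.0))
print(text)
```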

Protocol 3: On-the-Fly Side-Chain Relaxation using Rosetta

Objective: Refine a docked pose by optimizing side-chain conformations. Steps:

- Input Preparation: Start with a protein-ligand complex PDB file. Generate a Rosetta parameter file (LIG.params) for the ligand using molfile_to_params.py.

- Define Flexibility: Create a resfile (flex.resfile) specifying which side-chains to repack/minimize (e.g., a NATRO default line, then START, then 47 A NATAA to let residue 47 of chain A repack without changing its amino acid identity).

- Run Relaxation: Execute the Rosetta relax protocol with constraints and ligand awareness.

- Cluster Outputs: Cluster the output decoys based on ligand RMSD and select the lowest-energy structure from the largest cluster as the final refined model.
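A resfile for the flexibility step can be generated programmatically. The sketch below assumes the common pattern of a NATRO default (keep the input rotamer) with NATAA (repack, same amino acid) on the selected residues; the residue numbers are placeholders.

```python
def make_resfile(flexible_residues, default="NATRO", flexible="NATAA"):
    """Build Rosetta resfile text.

    flexible_residues: iterable of (residue_number, chain) tuples.
    The default command applies to every residue not listed after START.
    """
    lines = [default, "START"]
    for resnum, chain in sorted(flexible_residues, key=lambda r: (r[1], r[0])):
        lines.append(f"{resnum} {chain} {flexible}")
    return "\n".join(lines) + "\n"

# Placeholder residues to repack.
resfile_text = make_resfile([(102, "A"), (47, "A"), (15, "B")])
print(resfile_text)
```

Using PIKAA instead of NATAA would allow the listed amino acid types at that position, which turns the run into a design calculation rather than a pure side-chain relaxation.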

Visualizations

Title: Decision Flowchart for Flexibility Methods

Title: Ensemble Docking Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item/Reagent | Function in Flexibility Modeling |

|---|---|

| Molecular Dynamics Software (e.g., GROMACS, AMBER, NAMD) | Generates dynamic ensembles of protein conformations through physics-based simulations, providing input structures for ensemble docking. |

| Protein Data Bank (PDB) Structures | Source of multiple experimental conformations (apo/holo, mutant, bound to different ligands) to build initial static ensembles. |

| Docking Suite with Scripting (e.g., AutoDock Vina, smina, DOCK6) | Core engine for pose generation. Scripting allows automation over multiple receptor files and parameter sets for ensemble & soft docking. |

| Rosetta Modeling Suite | Provides robust protocols for on-the-fly side-chain repacking and relaxation with advanced scoring functions and rotamer libraries. |

| Consensus Scoring Scripts (Python/bash) | Custom scripts to aggregate, re-score, and rank poses from multiple docking runs, enabling meta-analysis of ensemble results. |

| Structure Analysis Tools (e.g., PyMOL, VMD, MDAnalysis) | Visualize conformational changes, measure RMSD/RMSF, and analyze binding site volumes and interactions pre- and post-relaxation. |

| Rotamer Library (e.g., Dunbrack 2011) | A curated set of statistically preferred side-chain dihedral angles, used to limit the search space during on-the-fly refinement. |

| MM/GBSA or MM/PBSA Scripts (e.g., in AMBER) | More rigorous, physics-based scoring method used to re-evaluate and rank poses from initial docking screens across ensembles. |

Troubleshooting Guides & FAQs

Q1: My Molecular Dynamics (MD) simulation of a protein-ligand complex becomes unstable and crashes within the first few nanoseconds. What are the primary causes and solutions? A: This is often due to incorrect system preparation or force field parameters.

- Check 1: Ligand Parameters. Ensure your ligand's topology and force field parameters (charges, bond definitions) are correctly generated using tools like antechamber (GAFF) or CGenFF. Manually inspect the generated parameter file for missing terms.

- Check 2: System Neutralization and Solvation. The system must be electrostatically neutral. Add counterions (Na+, Cl-) to cancel the net charge; in GROMACS, genion replaces solvent molecules with ions, so it runs after solvation. Use a solvent box (e.g., TIP3P water) with sufficient padding (≥1.0 nm from the protein).

- Check 3: Energy Minimization. Perform rigorous, multi-step minimization before heating. Start with steepest descent (5,000 steps) on the solute heavy atoms with restraints, then on the entire system.

- Protocol: A robust protocol is:

- pdb2gmx for protein.

- antechamber & parmchk2 for ligand.

- tleap (AmberTools) or manual assembly in GROMACS to combine.

- Solvate with solvate.

- Neutralize with genion.

- Minimization → NVT equilibration (100ps, 300K) → NPT equilibration (100ps, 1 bar) → Production MD.

Q2: The conformational ensemble from my MD simulation is too narrow and doesn't capture the expected large-scale motion seen in experiments. How can I enhance sampling? A: Standard MD is limited by timescales. Employ enhanced sampling techniques.

- Solution 1: Replica Exchange Molecular Dynamics (REMD). Run parallel simulations at different temperatures, allowing exchanges to overcome energy barriers. Requires significant computational resources.

- Solution 2: Metadynamics. Apply a history-dependent bias potential along defined Collective Variables (CVs) (e.g., dihedral angles, distance). This pushes the system to explore new states.

- Solution 3: Accelerated MD (aMD). Boost the potential energy surface to reduce barrier heights, promoting broader sampling with a single simulation.

- Recommendation: For initial broad exploration, aMD is computationally efficient. For characterizing transitions between known states, use Metadynamics with well-chosen CVs.

Q3: Normal Mode Analysis (NMA) with an elastic network model yields unrealistic, symmetric low-frequency modes for my multi-domain protein. What's wrong? A: This typically arises from an inappropriate coarse-graining cutoff or model initialization.

- Check 1: Cut-off Distance. The default cutoff (e.g., 10-15Å) for connecting springs in the ENM might be too low for large domains or too high, creating spurious connections. Systematically test cutoffs between 8-20Å.

- Check 2: Input Structure. NMA analyzes equilibrium fluctuations. Ensure your input PDB structure is energy-minimized to remove steric clashes, which distort the harmonic potential.

- Check 3: Missing Residues. Large gaps in the structure can create artificially soft regions. Consider using a homology model to fill gaps or analyze domains separately.

- Protocol for Robust NMA:

- Minimize the crystal structure using a simple force field (e.g., CHARMM or GROMOS).

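- Use the ProDy Python API: from prody import *.

- Parse structure and select Cα atoms: calphas = parsePDB('your.pdb').select('calpha').

- Build ENM: anm = ANM('Your Protein'), then anm.buildHessian(calphas, cutoff=12.0) and anm.calcModes(n_modes=20).

- Visually inspect the lowest-frequency non-trivial modes. In a full diagonalization these are modes 7-20 (after the first 6 trivial rotational/translational modes); ProDy's calcModes omits the zero modes by default, so the modes it returns are already non-trivial.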
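To see why the cutoff matters for multi-domain proteins, it helps to count how many springs the network contains at each cutoff. The sketch below uses a made-up Cα arrangement of two compact four-residue "domains" separated by 14 Å; it only counts contacts and does not compute modes.

```python
import math

def spring_count(coords, cutoff):
    """Number of residue pairs connected by a spring at the given cutoff (Å)."""
    n = len(coords)
    return sum(
        1
        for i in range(n)
        for j in range(i + 1, n)
        if math.dist(coords[i], coords[j]) <= cutoff
    )

# Hypothetical Cα positions: two square "domains" shifted 14 Å along x.
domain_a = [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (3.8, 3.8, 0.0), (0.0, 3.8, 0.0)]
domain_b = [(x + 14.0, y, z) for x, y, z in domain_a]
coords = domain_a + domain_b

counts = {cutoff: spring_count(coords, cutoff) for cutoff in (8.0, 12.0, 16.0, 20.0)}
print(counts)
```

At the smallest cutoff the two domains are not connected at all, while at the largest every residue is linked to every other, so the inter-domain motion is either unconstrained or artificially stiff. Scanning the cutoff and checking where inter-domain springs appear is a quick sanity check before running the full analysis.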

Q4: How do I quantitatively compare and select the most relevant conformations from a combined MD-NMA ensemble for subsequent docking studies? A: Use clustering based on structural similarity and rank by relevance (e.g., population, energy, mode collectivity).

- Step 1: Dimensionality Reduction. Perform Principal Component Analysis (PCA) on the Cα atom positions of the combined trajectory (MD frames + NMA-generated deformations along low-frequency modes).

- Step 2: Clustering. Apply the k-means or DBSCAN algorithm on the first 2-3 principal components to identify distinct conformational clusters.

- Step 3: Selection. From each major cluster, select the centroid structure (most representative) and the lowest potential energy structure (if energy data is available from MD).

- Data Presentation: Summarize the clusters as below.

Table 1: Conformational Cluster Analysis from Combined MD-NMA Sampling

| Cluster ID | Population (%) | Avg. RMSD from Crystal (Å) | Representative Use Case for Docking |

|---|---|---|---|

| 1 (Closed) | 45.2 | 1.1 | Dock known competitive inhibitors. |

| 2 (Open-I) | 28.7 | 3.5 | Dock allosteric modulators or large substrates. |

| 3 (Open-II) | 15.1 | 4.2 | Investigative docking for novel chemotypes. |

| 4 (Twisted) | 11.0 | 5.8 | Likely irrelevant; high energy. |

Experimental Protocol: Generating an NMA-Augmented Ensemble for Ensemble Docking

- Input: High-resolution crystal structure (PDB format).

- Preprocessing: Remove water, ligands, and ions. Add missing side chains with SCWRL4 or Modeller.

- Energy Minimization: Minimize the structure in vacuum using 500 steps of steepest descent followed by 1500 steps of conjugate gradient (e.g., with GROMACS or NAMD).

- Normal Mode Analysis: Using the minimized structure, perform NMA with an elastic network model (cutoff=12Å). Extract the 10 lowest-frequency non-trivial modes.

- Conformation Generation: Displace the structure along each mode (± 2 standard deviations, 5 steps each direction) to generate 100 deformed structures.

- MD Refinement: Subject each deformed structure (and the original) to a short, restrained MD simulation (100ps, positional restraints on Cα) in implicit solvent to relax side chains and remove clashes.

- Clustering: Cluster all 101 refined structures based on Cα RMSD using a hierarchical algorithm (cutoff=2.5Å).

- Final Ensemble: Select the centroid of each cluster with >5% population for the final docking ensemble.
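The structure count in the conformation generation step follows from the displacement grid: 10 modes × 2 directions × 5 steps = 100 deformed structures, plus the original for 101. A minimal sketch of that grid, with amplitudes expressed in units of the mode's standard deviation and evenly spaced steps assumed:

```python
def displacement_grid(n_modes=10, max_sigma=2.0, steps_per_direction=5):
    """List (mode_index, amplitude_in_sigma) pairs for mode displacements.

    Amplitudes are evenly spaced from -max_sigma to +max_sigma, skipping 0
    because the undisplaced structure is added to the ensemble separately.
    """
    step = max_sigma / steps_per_direction
    amplitudes = [
        k * step
        for k in range(-steps_per_direction, steps_per_direction + 1)
        if k != 0
    ]
    return [(mode, amp) for mode in range(n_modes) for amp in amplitudes]

grid = displacement_grid()
n_structures = len(grid) + 1  # deformed structures plus the original
print(len(grid), n_structures)
```

Each pair would then be applied as new_coords = coords + amplitude × sigma × mode_vector, where sigma and mode_vector come from the NMA output.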

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in MD/NMA Sampling |

|---|---|

| GROMACS | Open-source MD software suite for high-performance simulation, energy minimization, and trajectory analysis. |

| AMBER/NAMD | Alternative MD packages with advanced force fields and enhanced sampling algorithms. |

| ProDy | Python toolkit for protein dynamics analysis, including NMA, PCA, and elastic network models. |

| MDAnalysis | Python library for analyzing MD trajectories (RMSD, clustering, distances). |

| PyMOL | Molecular visualization system for inspecting structures, trajectories, and conformational changes. |

| CHARMM36/AMBER ff19SB | Modern, state-of-the-art force fields for accurate modeling of protein dynamics and interactions. |

| PLUMED | Open-source plugin for free-energy calculations and enhanced sampling (Metadynamics, Umbrella Sampling). |

| GalaxyDock3 | Example of a docking server capable of performing ensemble docking across multiple protein conformations. |

Diagram 1: Workflow for Enhanced Conformational Sampling

Diagram 2: Sampling Techniques in Thesis Context

Technical Support Center

Frequently Asked Questions (FAQs) & Troubleshooting Guides

Q1: DiffDock frequently outputs poses with high confidence scores that are physically unrealistic (e.g., severe steric clashes, incorrect binding mode). What are the primary checks and corrective steps? A: This often stems from input preprocessing or model limitations.

- Check 1: Verify your protein and ligand input files. Ensure the protein PDB is clean (no missing heavy atoms in binding site residues, standard atom names). For the ligand, ensure correct protonation state and stereochemistry. Use tools like Open Babel or RDKit to sanitize.

- Check 2: Review the confidence metrics. DiffDock reports a confidence score for each pose; it does not output pLDDT, which is a per-residue AlphaFold metric available only when the receptor is a predicted model. A high-confidence pose in a low-pLDDT binding site may be unreliable. Filter using: confidence > 0.8 and binding-site pLDDT > 70, calibrating the confidence cutoff for your DiffDock version because the raw score is not a probability.

- Corrective Step: Use DiffDock's top poses as initial guesses for a subsequent refinement with a physics-based method (e.g., AMBER/CHARMM minimization or MD simulation). This resolves clashes and improves local geometry without losing the overall binding pose.

Q2: When using AlphaFold-Multimer for complex prediction before docking, how do we handle regions with very low pLDDT (e.g., <50) in the predicted interface? A: Low pLDDT indicates high uncertainty in side chain or backbone placement, a key challenge in modeling protein flexibility.

- Protocol 1: Ensemble Docking. Run AlphaFold-Multimer 5-10 times with different random seeds to generate a structural ensemble. Dock your ligand against all members of this ensemble.

- Protocol 2: Flexible Loop Refinement. Use the predicted aligned error (PAE) matrix to identify flexible interface loops. Employ specialized tools like Rosetta Relax or AlphaFold2's built-in relaxation on these low-confidence regions before docking.

- Protocol 3: Consider bypassing AF-Multimer for that specific region and instead use a curated multiple sequence alignment (MSA) or experimental fragment data if available.

Q3: FlexPose successfully generates multiple protein conformations, but the computational cost is prohibitive for large-scale virtual screening. What optimization strategies are valid? A: FlexPose models flexibility but requires strategic use.

- Strategy 1: Targeted Flexibility. Restrict the flexible modeling to a defined binding site radius (e.g., 10-15Å around the known or predicted ligand location). This drastically reduces the number of residues and degrees of freedom.

- Strategy 2: Clustering & Representative Selection. Generate a large ensemble once for your target. Cluster the resulting structures using RMSD (e.g., with GROMACS gmx cluster). Select 3-5 centroid structures from the largest clusters as representative conformers for screening.

- Strategy 3: Hybrid Pipeline. Use a faster method (like DiffDock) for initial rigid screening, then apply FlexPose refinement only to the top 100-1000 hits.

Q4: How do we integrate these three tools (DiffDock, AlphaFold-Multimer, FlexPose) into a coherent workflow that respects protein flexibility? A: Follow this sequential protocol designed to address hierarchical flexibility.

Experimental Protocol: Integrated Flexibility-Conscious Docking Workflow

- Input Preparation: Prepare protein sequence(s) and ligand SMILES/3D structure.

- Complex Structure Prediction: Run AlphaFold-Multimer (if docking to a protein-protein interface) or AlphaFold2 (for a single chain). Analyze pLDDT and PAE.

- Conformational Ensemble Generation: Feed the AF2-predicted structure into FlexPose. Generate an ensemble (N=20-50) focusing on low pLDDT regions and binding site side chains.

- Ensemble Docking: For each conformer in the ensemble, run DiffDock (predicting M=40 poses per conformer).

- Pose Aggregation & Ranking: Pool all poses (N x M). Re-rank using a consensus score combining DiffDock confidence, physical energy score (from a quick MM/GBSA calculation), and consistency across the ensemble.

- Refinement: Subject the top 5-10 consensus poses to final all-atom molecular dynamics (MD) simulation or energy minimization in explicit solvent.
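The re-ranking step combines three terms on different scales, so one simple option is to standardize each term and sum them. The sketch below is one possible scheme, not the authors' scoring function: the equal weights, the z-score normalization, and the `support` field (fraction of conformers reproducing a similar pose) are assumptions, and the pose values are made up.

```python
from statistics import mean, pstdev

def zscores(values):
    """Standardize values; returns zeros if all values are identical."""
    mu, sigma = mean(values), pstdev(values)
    return [0.0 if sigma == 0 else (v - mu) / sigma for v in values]

def consensus_rank(poses, weights=(1.0, 1.0, 1.0)):
    """Rank poses by a weighted sum of standardized terms (best first).

    Each pose has 'confidence' (higher is better), 'energy' (lower is
    better, e.g. an MM/GBSA estimate) and 'support' (higher is better).
    """
    w_conf, w_energy, w_support = weights
    z_conf = zscores([p["confidence"] for p in poses])
    z_energy = zscores([p["energy"] for p in poses])
    z_support = zscores([p["support"] for p in poses])
    scored = [
        (w_conf * c - w_energy * e + w_support * s, p["name"])
        for p, c, e, s in zip(poses, z_conf, z_energy, z_support)
    ]
    return [name for _, name in sorted(scored, reverse=True)]

# Hypothetical pooled poses.
poses = [
    {"name": "A", "confidence": 0.9, "energy": -45.0, "support": 0.6},
    {"name": "B", "confidence": 0.2, "energy": -30.0, "support": 0.1},
    {"name": "C", "confidence": 0.5, "energy": -48.0, "support": 0.8},
]
ranking = consensus_rank(poses)
print(ranking)
```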

Q5: What are the key quantitative benchmarks comparing the performance of DiffDock, FlexPose, and traditional docking on flexible targets? A: Recent literature highlights the following performance metrics on standard benchmarks like PDBbind and a flexible subset of D3R Grand Challenges.

Table 1: Comparative Performance Metrics for Flexible Docking Tools

| Tool / Method | Success Rate (RMSD < 2Å) | Typical Runtime per Ligand | Explicitly Models Protein Flexibility? | Key Strength |

|---|---|---|---|---|

| DiffDock (latest) | ~38% (general) / 52% (flexible subset) | 10-30 sec (GPU) | No (but ensemble-based) | Ultra-fast pose generation, excellent on large motions. |

| FlexPose | N/A (conformer generator) | Minutes to hours (GPU) | Yes (backbone & side chain) | Generates diverse, physics-informed protein states. |

| AutoDock Vina | ~22% (flexible subset) | 1-5 min (CPU) | Limited (side chains only) | Widely used, robust baseline. |

| AlphaFold-Multimer | ~30% (interface RMSD < 2Å) | Hours (GPU/TPU) | Implicitly via MSA | Predicts novel complexes de novo. |

| Integrated Pipeline | ~58% (flexible targets, estimated) | Hours (GPU cluster) | Yes (full protocol) | Addresses hierarchical flexibility end-to-end. |

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Research Reagent Solutions for End-to-End Flexible Docking

| Item / Resource | Function / Purpose | Example/Provider |

|---|---|---|

| AlphaFold2/3 & Multimer | Provides de novo protein or complex structures from sequence, the foundational input for docking when no structure exists. | Google DeepMind, ColabFold, LocalColabFold. |

| DiffDock Model Weights | Pre-trained neural network parameters enabling fast, diffusion-based ligand docking. | Available on GitHub (gcorso/DiffDock). |

| FlexPose Codebase | Implements the SE(3)-equivariant model for generating protein conformational ensembles. | Available on GitHub (DeepGraphLearning/FlexPose). |

| Curated Flexible Benchmark Sets | Datasets for training and evaluating on challenging, flexible binding sites. | PDBFlex, D3R Grand Challenge targets, PoseBusters benchmark. |

| MD Simulation Package | For post-docking refinement and validation in explicit solvent. | GROMACS, AMBER, NAMD, OpenMM. |

| MM/GBSA Scripts | For rapid post-docking binding energy estimation and re-scoring. | Tools integrated in AMBER, Schrödinger Prime, or standalone scripts. |

| Structure Cleaning Suite | Prepares PDB files, adds hydrogens, corrects protonation states. | PDBFixer, MolProbity, UCSF Chimera. |

| Ligand Parameterization Tool | Generates topology and parameter files for small molecules in MD. | ACPYPE (AnteChamber PYthon Parser interfacE), CHARMM-GUI. |

Visualization: Workflows and Relationships

Title: Integrated Flexible Docking Workflow

Title: Thesis Context: Tools Addressing Flexibility Types

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During MD simulations for cryptic pocket prediction, my protein structure becomes unstable or unfolds. What could be the cause and how do I fix it? A: This is often due to inadequate equilibration or excessive force on applied probes. First, ensure a stepwise equilibration protocol: 1) Solvate and minimize the system. 2) Perform 100ps NVT equilibration, slowly heating from 0K to 300K with backbone restraints (force constant 10 kcal/mol/Ų). 3) Perform 1ns NPT equilibration, gradually releasing restraints. If using probe-based methods (like CPtraj), reduce the probe force constant from a typical 1.0 kcal/mol/Ų to 0.2-0.5 kcal/mol/Ų to prevent distortion. Monitor RMSD and radius of gyration during equilibration before production runs.

Q2: My cryptic pocket detection algorithm yields too many false positives. How can I refine the results? A: Filter predictions using a consensus and conservation approach. Implement the following workflow: 1) Run at least two different detection methods (e.g., MD with cryptic finder, and machine learning like P2Rank). 2) Cluster predicted pockets based on spatial overlap (≥50%). 3) Cross-reference with evolutionary conservation scores from ConSurf; true functional pockets often show higher conservation. 4) Validate with short, targeted docking of fragment libraries; pockets that bind diverse fragments with sensible poses are more likely to be true.

Q3: When incorporating backbone flexibility in docking, the computational cost becomes prohibitive. What are the current efficient strategies? A: Utilize ensemble docking with pre-generated conformational states. Follow this protocol: 1) Generate an ensemble using accelerated MD (aMD) or conformational flooding to sample states faster. Key parameters: aMD dihedral boost energy of 5-6 kcal/mol and alpha factor of 0.2. 2) Cluster the ensemble (RMSD cutoff 2.5Å) to a manageable number (e.g., 5-10 representative structures). 3) Dock ligands against each member in parallel. 4) Use a consensus scoring function that weights results by the cluster population. This balances cost and coverage.
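The population-weighted consensus in step 4 can be as simple as a weighted average of each ligand's best score per conformer. The sketch below assumes that form; the conformer names, populations, and scores are hypothetical.

```python
def population_weighted_score(scores, populations):
    """Average per-conformer docking scores, weighted by cluster population.

    scores: {conformer: best docking score for one ligand (lower = better)}
    populations: {conformer: cluster population, any positive units}
    """
    total = sum(populations[c] for c in scores)
    return sum(scores[c] * populations[c] for c in scores) / total

# Hypothetical cluster populations (%) and docking scores (kcal/mol).
populations = {"closed": 50.0, "open": 30.0, "twisted": 20.0}
scores = {"closed": -8.0, "open": -10.0, "twisted": -6.0}
weighted = population_weighted_score(scores, populations)
print(weighted)
```

Weighting by population favors ligands that score well against frequently visited states. If rare but druggable states matter, as with cryptic pockets, reporting the best single-conformer score alongside the weighted value avoids discarding them.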

Q4: How do I handle significant side-chain rearrangements when docking into a flexible pocket? A: Employ a two-stage protocol combining soft docking and explicit side-chain optimization. Methodology: 1) Stage 1 (Soft Docking): Perform initial docking with a "soft" potential that allows for minor clashes (van der Waals scaling factor 0.8-0.9). This identifies plausible binding regions. 2) Stage 2 (Side-Chain Refinement): For top poses, use a tool like RosettaFlexPepDock or Schrödinger's Induced Fit module. Define flexible residue shells (5Å around the ligand). Run short Monte Carlo/Minimization cycles (typically 50 cycles) to repack and minimize side chains.

Q5: My experimental validation (e.g., X-ray) does not show the predicted cryptic pocket. What are common reasons? A: The primary reason is the lack of a stabilizing ligand or allosteric effector in the experimental system. Cryptic pockets are often ligand-induced. For validation:

- Co-crystallize with the fragment/hit identified in silico to stabilize the open state.

- Use hydrogen-deuterium exchange mass spectrometry (HDX-MS) to detect increased solvent accessibility in the predicted region upon ligand binding.

- Consider whether crystal packing forces may be inhibiting the conformational change; try solution-based techniques like NMR.

Data Presentation

Table 1: Comparison of Computational Methods for Cryptic Pocket Detection

| Method | Principle | Avg. CPU Time* | Success Rate† | Key Limitation |
|---|---|---|---|---|
| MD with Probes (analyzed with cpptraj) | Apply external probes during simulation | 48-72 hours | ~65% | Can distort protein if probe force is high |
| Machine Learning (P2Rank) | Trained on known pocket features | 5-10 minutes | ~70% | Limited to patterns seen in training data |
| Normal Mode Analysis (NMA) | Low-frequency collective motions | 1-2 hours | ~40% | Often misses large, anharmonic motions |
| Metadynamics w/ CVs | Bias simulation with collective variables | 96+ hours | ~75% | Defining optimal CVs is non-trivial |

*Time for a typical 300-residue protein on a standard 24-core node. †Defined as predicting a known cryptic pocket within 4Å RMSD of its experimental open structure.

Table 2: Performance of Flexible Docking Strategies on the DUD-E Diverse Set

| Strategy | Backbone Treatment | Side-Chain Treatment | Avg. RMSD (Å) | Enrichment Factor (EF1%) | Computational Cost (Relative to Rigid) |
|---|---|---|---|---|---|
| Rigid Receptor | Fixed | Fixed | 5.8 | 12.1 | 1x |
| Ensemble Docking | Multiple states | Fixed per state | 2.5 | 25.4 | 5-10x |
| Induced Fit (IFD) | Fixed | Flexible & repacked | 2.1 | 28.7 | 50-100x |
| Full Flexible (e.g., Rosetta) | Flexible (minimal) | Flexible & repacked | 1.8 | 30.5 | 1000x+ |

Experimental Protocols

Protocol 1: Identifying Cryptic Pockets Using Accelerated Molecular Dynamics (aMD) and Grid Inhomogeneous Solvation Theory (GIST)

- System Preparation: Prepare the protein structure (e.g., from PDB) using pdb4amber, removing heteroatoms. Add missing hydrogens and side chains with Chimera or PDB2PQR. Solvate in a TIP3P water box with 10Å buffer. Neutralize with Na+/Cl- ions.

- Parameterization & Minimization: Use the ff14SB force field in AMBER/pmemd.cuda. Minimize in two stages: 1) Solvent only (5000 steps), 2) Full system (10000 steps).

- Equilibration: Heat the system from 0K to 300K over 100ps (NVT, backbone restraint 10 kcal/mol/Ų). Then equilibrate at 1 atm over 1ns (NPT, gradually reducing restraints to 0).

- aMD Production Run: Apply aMD boost potentials to dihedral angles. Typical settings: dihedral boost energy = 5.0 kcal/mol, alpha_d = 0.2. Run a 200-500ns simulation using pmemd.cuda.

- Trajectory Analysis: Use cpptraj to extract frames every 100ps. Calculate per-residue RMSF to identify flexible regions. Use GIST analysis on water occupancy and thermodynamics to map regions of displaceable water, indicating potential cryptic pockets.

- Pocket Detection: Input the ensemble of frames into PocketMiner or MDpocket to cluster and rank transient cavities.
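
The per-residue RMSF calculation in the trajectory analysis step is normally done with a trajectory tool (cpptraj provides an atomicfluct command for this), but the underlying arithmetic is short enough to show directly. The sketch below assumes frames that are already superposed on a common reference and uses a toy two-atom trajectory; the 1.5 Å flexibility threshold and the function names are illustrative assumptions, not values from the protocol.

```python
import math


def per_residue_rmsf(trajectory):
    """RMSF per atom from pre-aligned frames.

    trajectory: list of frames; each frame is a list of (x, y, z) tuples,
    one per C-alpha atom, already superposed on a common reference.
    """
    n_frames = len(trajectory)
    n_atoms = len(trajectory[0])
    rmsf = []
    for i in range(n_atoms):
        # Mean position of atom i over all frames.
        mean = [sum(frame[i][k] for frame in trajectory) / n_frames for k in range(3)]
        # Mean squared displacement from that mean position.
        msd = sum(
            sum((frame[i][k] - mean[k]) ** 2 for k in range(3))
            for frame in trajectory
        ) / n_frames
        rmsf.append(math.sqrt(msd))
    return rmsf


def flexible_residues(rmsf, threshold=1.5):
    """Indices of residues whose RMSF exceeds the threshold (in Å)."""
    return [i for i, value in enumerate(rmsf) if value > threshold]


# Toy trajectory: atom 0 is static, atom 1 moves ±2 Å along x about its mean.
trajectory = [
    [(0.0, 0.0, 0.0), (5.0, 0.0, 0.0)],
    [(0.0, 0.0, 0.0), (9.0, 0.0, 0.0)],
]
rmsf = per_residue_rmsf(trajectory)
print(rmsf, flexible_residues(rmsf))
```

Residues flagged this way are candidates for closer inspection with GIST and the pocket detection tools; high RMSF alone does not establish that a pocket opens.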

Protocol 2: Ensemble Docking with Backbone Flexibility

- Ensemble Generation: Starting from an apo structure, run a short (50-100ns) conventional MD simulation. Cluster the trajectory using cpptraj (RMSD on Cα atoms, cutoff 2.5Å). Select the top 5 centroid structures and the original apo structure to form an initial ensemble.

- Ensemble Refinement (Optional): For each centroid, run local conformational sampling using Rosetta relax or Schrödinger Prime to refine side chains and make minor backbone adjustments.

- Grid Preparation: For each receptor structure, prepare a docking grid in AutoDock or Schrödinger Glide. Ensure the grid center encompasses the region of interest and is large enough (≥20Å per side) to accommodate different pocket shapes.

- Parallel Docking: Dock the ligand library against each ensemble member independently using standard precision (SP) or high-throughput virtual screening (HTVS) mode.

- Pose Consensus & Scoring: Collect all poses. Cluster poses based on ligand heavy-atom RMSD (<2.0Å). Calculate a consensus score: Final Score = (Docking Score) - (Cluster Size Weight) + (Ensemble Frequency Weight).

- Validation: Visually inspect top poses from different ensemble members for consistency in binding mode despite receptor variations.
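
The pose clustering step can be sketched with a greedy, score-ordered scheme. The code below is one possible approach, not the method of any particular docking package: it assumes all poses share the same atom ordering, that coordinates are in a common receptor frame (so no superposition is applied), and that lower scores are better. It ignores ligand symmetry, which a production RMSD routine would need to handle. The pose data are hypothetical.

```python
import math


def heavy_atom_rmsd(pose_a, pose_b):
    """RMSD between two poses with identical atom ordering, no superposition."""
    if len(pose_a) != len(pose_b):
        raise ValueError("poses must have the same number of atoms")
    sq = sum(math.dist(a, b) ** 2 for a, b in zip(pose_a, pose_b))
    return math.sqrt(sq / len(pose_a))


def cluster_poses(poses, cutoff=2.0):
    """Greedy clustering of (score, coords) tuples.

    Poses are visited from best to worst score; each pose joins the first
    cluster whose seed (best-scored member) lies within the cutoff,
    otherwise it seeds a new cluster.
    """
    clusters = []
    for score, coords in sorted(poses, key=lambda p: p[0]):
        for cluster in clusters:
            if heavy_atom_rmsd(coords, cluster[0][1]) < cutoff:
                cluster.append((score, coords))
                break
        else:
            clusters.append([(score, coords)])
    return clusters


# Hypothetical two-atom ligand poses pooled from several ensemble members.
poses = [
    (-7.5, [(0.5, 0.0, 0.0), (1.5, 0.0, 0.0)]),
    (-8.0, [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]),
    (-7.0, [(10.0, 0.0, 0.0), (11.0, 0.0, 0.0)]),
]
clusters = cluster_poses(poses)
print([len(c) for c in clusters])
```

Cluster sizes obtained this way can feed the cluster-size term of the consensus score, and counting how many distinct receptor structures contribute to a cluster gives the ensemble-frequency term.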

Mandatory Visualization

Diagram 1: Workflow for Cryptic Pocket Discovery & Targeting

Diagram 2: Multi-Stage Flexible Docking Decision Logic

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Cryptic Pocket Studies

| Item | Function / Purpose | Example Tools / Software |
|---|---|---|
| Enhanced Sampling Suites | Accelerates exploration of conformational landscape beyond typical MD timescales. | AMBER (pmemd.cuda w/ aMD), GROMACS (PLUMED plugin), NAMD (Collective Variable-based MetaDynamics) |
| Pocket Detection Algorithms | Identifies and characterizes cavities, including transient ones, from structural ensembles. | MDpocket, P2Rank, PocketMiner, Fpocket |
| Flexible Docking Software | Performs ligand docking allowing for receptor flexibility (backbone and/or side-chain). | Schrödinger (Induced Fit Docking), AutoDockFR, RosettaLigand, HADDOCK |
| Ensemble Generation Tools | Creates representative sets of protein conformations for ensemble-based approaches. | cpptraj, Bio3D, MOE, Conformer Selection via NMA |
| Solvent Analysis Tools | Analyzes water dynamics and energetics to locate displaceable water sites (hydrophobic hotspots). | Grid Inhomogeneous Solvation Theory (GIST) in AMBER, Placevent |
| Fragment Libraries | Small, diverse chemical fragments for experimental probing of predicted cryptic pockets. | Maybridge Rule of 3 Fragment Library, FDA Fragment Library, in-house curated fragments |
| Validation Suites | Integrates computational predictions with experimental data for cross-verification. | HDX-MS analysis software (HDExaminer), X-ray crystallography (PHENIX, CCP4), NMR chemical shift analysis (SHIFTX2) |

Solving the Flexibility Puzzle: Diagnosing Failures and Optimizing Docking Protocols

Troubleshooting Guides & FAQs

Q1: My docking run produced no viable poses (no hits). How do I determine if the issue is with sampling or scoring? A: This is a primary diagnostic question. Follow this systematic check:

- Check Sampling Coverage: First, ensure your sampling algorithm explored the conformational space adequately. Visually inspect a large number of generated poses (e.g., 1000+) superposed on the receptor. If no pose places the ligand near the known or predicted binding site, sampling has failed.

- Perform a Control Re-dock: If you have a known native complex, separate the ligand and re-dock it back into the binding site. Use very high exhaustiveness or number of poses. If the native pose is not reproduced among the top scores, the scoring function is likely problematic for this specific system.

- Analyze Pose Clustering: Even with a poor scoring function, good sampling often generates a few correct poses scattered among many decoys. Cluster your output poses by RMSD. If a cluster near the correct pose exists but is ranked poorly, the issue is primarily scoring.
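
The three checks above reduce to a simple decision rule when a native pose is available for comparison. The following sketch assumes a control re-dock in which each output pose has an RMSD to the native pose and the poses are listed in score-ranked order; the function name, labels, and the 2.0 Å cutoff default are illustrative choices.

```python
def diagnose_docking(pose_rmsds, success_cutoff=2.0, top_n=1):
    """Classify a re-docking run from pose RMSDs listed in score-ranked order.

    pose_rmsds: RMSD (Å) of each pose to the native pose, best-scored pose first.
    """
    if not pose_rmsds:
        return "no poses generated"
    # Ranks (1-based) of all poses that are close to the native pose.
    correct_ranks = [
        rank for rank, rmsd in enumerate(pose_rmsds, start=1)
        if rmsd < success_cutoff
    ]
    if not correct_ranks:
        return "sampling failure"      # no near-native pose was ever generated
    if correct_ranks[0] <= top_n:
        return "success"               # near-native pose is ranked at the top
    return "scoring failure"           # near-native pose exists but is ranked poorly


print(diagnose_docking([5.1, 6.3, 7.0]))
print(diagnose_docking([4.0, 1.2, 8.0]))
print(diagnose_docking([0.8, 4.0]))
```

Raising top_n (for example to 5 or 10) relaxes the definition of success to "a near-native pose appears among the top few", which is often the more useful criterion before rescoring.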

Q2: I get poses in the correct binding site, but they are geometrically implausible (bad clashes, wrong orientation). What does this indicate? A: This typically indicates a scoring function failure. The function is not penalizing steric clashes or rewarding correct interactions (e.g., hydrogen bonds, hydrophobic packing) strongly enough. It can also point to inadequate protein preparation, such as incorrect protonation states of key side chains or missing structural waters.

Q3: How can I diagnostically decouple sampling from scoring in a real-world experiment? A: Implement a cross-docking and re-docking protocol.

- Re-docking: Dock the native ligand back into its original receptor structure. High success here validates the docking protocol for a static snapshot.

- Cross-docking: Dock the same ligand into other receptor conformations (e.g., from different crystallographic structures or MD snapshots). Failure here often highlights protein flexibility issues that ligand sampling alone cannot overcome.

Q4: My docking works for some ligand classes but fails for others. Is this sampling or scoring? A: This is most often a scoring problem. Most scoring functions are parameterized on specific chemical moieties and interactions. Failure on a new chemotype suggests the function cannot accurately estimate its binding affinity. Consider using a consensus score or a machine-learning-based scoring function trained on diverse data.

Q5: What specific experimental controls can I run to validate my docking protocol? A: Use a decoy set or benchmark dataset such as the Directory of Useful Decoys, Enhanced (DUD-E). A robust protocol should:

- Enrich known active compounds over decoys.

- Reproduce known binding modes (pose prediction).

- Show a correlation (not necessarily linear) between docking scores and experimental binding affinities (Ki/IC50) for a congeneric series.
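
Enrichment of actives over decoys is usually reported as an enrichment factor at a small fraction of the ranked list (EF1% in the tables of this article). A minimal sketch of that calculation follows, assuming lower scores are better and that the top fraction is rounded to a whole number of compounds; the screening data are synthetic.

```python
def enrichment_factor(scored, fraction=0.01):
    """Enrichment factor at a given fraction of the score-ranked list.

    scored: list of (score, is_active) tuples; more negative score = better.
    EF = (active rate in the top fraction) / (active rate in the whole set).
    """
    n_total = len(scored)
    n_actives = sum(1 for _, active in scored if active)
    if n_total == 0 or n_actives == 0:
        raise ValueError("need at least one compound and one active")
    n_top = max(1, round(n_total * fraction))
    ranked = sorted(scored, key=lambda item: item[0])
    hits = sum(1 for _, active in ranked[:n_top] if active)
    return (hits / n_top) / (n_actives / n_total)


# Synthetic screen: 1000 compounds, 20 actives, 5 of which land in the top 10.
scored = (
    [(-12.0, True)] * 5
    + [(-11.0, False)] * 5
    + [(-6.0, False)] * 975
    + [(-5.0, True)] * 15
)
ef1 = enrichment_factor(scored, fraction=0.01)
print(ef1)
```

An EF of 1 corresponds to random selection, and the maximum achievable value is bounded by both 1/fraction and the ratio of total compounds to actives.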

Diagnostic Workflow & Experimental Protocols

Diagnostic Decision Framework

The following workflow provides a step-by-step diagnostic path.

Diagram: Diagnostic Decision Tree for Failed Docks

Key Experimental Protocols

Protocol 1: Re-docking & Cross-Docking Validation

- Preparation: Prepare the protein structure(s) consistently: add hydrogens, assign partial charges, optimize side-chain orientations for ambiguous residues.

- Re-docking: Extract the native ligand, generate a 3D conformation, and define a docking grid centered on its centroid. Dock with high sampling (exhaustiveness > 100, poses > 1000).

- Analysis: Calculate the Root-Mean-Square Deviation (RMSD) of the top-ranked pose vs. the native pose. Success is typically defined as RMSD < 2.0 Å.

- Cross-Docking: Repeat the docking into an ensemble of alternative receptor conformations (from NMR, MD, or multiple crystal structures).
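
Once top-pose RMSDs have been collected for both experiments, the comparison between re-docking and cross-docking comes down to two success rates. The sketch below uses the RMSD < 2.0 Å criterion from the analysis step; the RMSD values are hypothetical and the helper name is an assumption.

```python
def success_rate(top_pose_rmsds, cutoff=2.0):
    """Fraction of docking runs whose top-ranked pose is within cutoff of native."""
    if not top_pose_rmsds:
        raise ValueError("no docking runs supplied")
    return sum(1 for r in top_pose_rmsds if r < cutoff) / len(top_pose_rmsds)


# Hypothetical top-pose RMSDs (Å) for the same ligands in two experiments.
redock_rmsds = [0.6, 1.1, 1.8, 2.4]       # native receptor structure
crossdock_rmsds = [1.5, 3.2, 4.8, 6.0]    # alternative receptor conformations

redock = success_rate(redock_rmsds)
crossdock = success_rate(crossdock_rmsds)
print(f"re-docking: {redock:.0%}, cross-docking: {crossdock:.0%}, gap: {redock - crossdock:.0%}")
```

A large gap between the two rates suggests that receptor conformation, rather than the docking protocol itself, is limiting accuracy.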

Protocol 2: Consensus Scoring Diagnostic

- Run Docking: Generate a large pose ensemble (e.g., 1000 poses).

- Multi-Score Evaluation: Score the entire ensemble with 3-4 fundamentally different scoring functions (e.g., force-field-based, empirical, knowledge-based).

- Rank and Compare: Rank poses by each score independently. Identify poses that consistently rank highly across multiple functions.

- Interpretation: If a pose is top-ranked by multiple functions, it is a high-confidence prediction. Disagreement indicates scoring function bias or an inherently difficult case.
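
The rank-and-compare step can be implemented as a rank-by-vote scheme, one of several possible consensus strategies. The sketch assumes every pose has been scored by every function and that all scores follow a "more negative is better" convention; the scoring-function labels and score values are placeholders.

```python
def consensus_ranking(score_table, top_k=10):
    """Rank-by-vote consensus across scoring functions.

    score_table: dict mapping scoring-function name -> dict of pose_id -> score
                 (more negative = better for every function).
    Returns pose ids sorted by the number of functions that place them in
    their top_k, with ties broken by mean rank.
    """
    votes, rank_sums = {}, {}
    for scores in score_table.values():
        ranked = sorted(scores, key=scores.get)  # best pose first
        for rank, pose_id in enumerate(ranked, start=1):
            rank_sums[pose_id] = rank_sums.get(pose_id, 0) + rank
            if rank <= top_k:
                votes[pose_id] = votes.get(pose_id, 0) + 1
    n_funcs = len(score_table)
    return sorted(
        rank_sums,
        key=lambda p: (-votes.get(p, 0), rank_sums[p] / n_funcs),
    )


# Placeholder scores for three poses from three different scoring functions.
score_table = {
    "force_field": {"A": -50.0, "B": -45.0, "C": -30.0},
    "empirical": {"A": -8.1, "B": -8.5, "C": -6.0},
    "knowledge_based": {"A": -120.0, "B": -110.0, "C": -90.0},
}
order = consensus_ranking(score_table, top_k=1)
print(order)
```

Working with ranks rather than raw scores avoids having to normalize functions that report energies on different scales.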

Data Presentation

Table 1: Typical Success Rates for Re-docking vs. Cross-Docking on Common Benchmarks

| Benchmark Set | Re-docking Success (RMSD < 2Å) | Cross-docking Success (RMSD < 2Å) | Implied Dominant Challenge |
|---|---|---|---|
| Rigid Protein Benchmark | 85-95% | 75-90% | Minor Flexibility |
| High-Flexibility Targets | 70-85% | 30-50% | Protein Flexibility |
| Diverse Decoy Set (DUD-E) | N/A | Enrichment Factor (EF1%) > 10 | Scoring Function Specificity |

Table 2: Diagnostic Signals and Their Likely Causes

| Observed Result | Likely Sampling Issue | Likely Scoring Issue | Recommended Action |
|---|---|---|---|
| No poses in binding site | Primary | Secondary | Increase search space, use global docking. |
| Correct pose found but not top-ranked | Secondary | Primary | Use consensus scoring, rescore with MD/MM-GBSA. |
| Poor correlation with activity series | Unlikely | Primary | Try machine-learning or customized scoring. |
| Works for some proteins, not others | Possible | Primary | Check protein prep (protonation, waters). |
| Poses have high steric clash | Unlikely | Primary | Adjust van der Waals scaling parameters. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Docking Diagnostics

| Item | Function in Diagnostics | Example Software/Tool |

|---|---|---|

| Protein Structure Ensemble | Provides alternative conformations to test sampling robustness against flexibility. | PDB, MOE, Concoord, MD Simulation Trajectories |

| Decoy Database | Evaluates scoring function's ability to distinguish true binders from similar non-binders. | DUD-E, DEKOIS 2.0 |