Machine Learning for Caco-2 Permeability Prediction: A Comprehensive Guide for Drug Development

Abstract

This article provides a comprehensive analysis of machine learning (ML) applications for predicting Caco-2 cell permeability, a critical parameter in oral drug development. It explores the foundational challenges of the Caco-2 assay and the subsequent need for in silico models. The piece delves into a wide array of methodological approaches, from traditional QSPR to advanced graph neural networks and multitask learning, highlighting their implementation on platforms like KNIME. It further addresses crucial troubleshooting aspects, including data curation and managing applicability domains for complex modalities like cyclic peptides and targeted protein degraders. Finally, the article offers a comparative validation of different ML models, examines their transferability to industrial settings, and discusses the integration of these predictions with advanced in vitro systems to enhance the prediction of human intestinal absorption for researchers and drug development professionals.

The Caco-2 Gold Standard and the Imperative for Machine Learning

The Caco-2 cell model, derived from human colorectal adenocarcinoma, has been the preeminent in vitro tool for predicting intestinal drug absorption and permeability for over three decades. Its gold-standard status stems from its ability to spontaneously differentiate into enterocyte-like cells that form polarized monolayers with well-developed tight junctions and brush borders, closely mimicking the human intestinal epithelium. This application note details the experimental protocols for using this benchmark model, its critical applications in drug discovery, and its evolving role as a data source for modern machine learning algorithms for permeability prediction. By providing a biologically relevant, reproducible, and high-throughput-compatible system, the Caco-2 model continues to be an indispensable asset for researchers and drug development professionals, forming a critical experimental foundation for advanced in silico methodologies.

In the realm of drug development, oral administration remains the preferred route due to its convenience and patient compliance, making good intestinal absorption a prerequisite for clinical success [1] [2]. The Caco-2 (Cancer coli-2) cell line, established from a human colon carcinoma, has emerged as the most widely utilized in vitro model for predicting human intestinal drug absorption since its introduction in the 1970s [3] [4]. The model's supremacy originates from its unique biological characteristics: when cultured under standard conditions, Caco-2 cells undergo spontaneous differentiation into a polarized monolayer expressing key features of small intestinal enterocytes, including microvilli structures, brush border enzymes, and various carrier transport systems [3]. This application note elucidates why this model maintains its benchmark status, provides detailed protocols for its implementation, and explores its integral role in the development of machine learning frameworks for permeability prediction, thereby bridging classical experimental biology with cutting-edge computational science.

Key Characteristics and Strengths of the Caco-2 Model

The enduring utility of the Caco-2 model in pharmaceutical research is anchored in several defining strengths that collectively justify its gold-standard status.

Predictive Power for Passive Diffusion: The differentiated Caco-2 monolayer forms tight junctions on Transwell inserts, creating a robust biological barrier that enables reliable prediction of drug permeability for passively diffused compounds, a critical parameter in the Biopharmaceutics Classification System (BCS) [5] [2].

Expression of Relevant Transporters and Enzymes: Unlike simpler artificial membranes, Caco-2 cells express a variety of drug-metabolizing enzymes (e.g., cytochrome P450 enzymes, phase II enzymes) and transporter proteins (e.g., P-glycoprotein (P-gp), Multidrug Resistance-Associated Proteins (MRPs), and Breast Cancer Resistance Protein (BCRP)) that are instrumental in carrier-mediated drug absorption and efflux [3] [2]. This allows for the investigation of active transport and efflux mechanisms.

High Reproducibility and Ease of Use: The model allows for consistent and reproducible results across experiments, a vital requirement for comparative studies and regulatory submissions [5]. Furthermore, the cells are relatively straightforward to culture, making them accessible to most laboratories [4].

Fast Differentiation and Functional Markers: Caco-2 cells differentiate relatively rapidly, expressing mature functional properties of enterocytes. The monolayer exhibits high transepithelial electrical resistance (TEER), a key indicator of barrier integrity, and expresses most receptors and enzymes found in the normal intestinal epithelium [4].

Table 1: Key Functional Transporters Expressed in Caco-2 Cell Models and Their Roles

| Transporter | Localization in Caco-2 | Primary Role in Drug Absorption | Example Substrates |
|---|---|---|---|
| P-gp (MDR1) | Apical membrane | Effluxes drugs back into the lumen, reducing bioavailability | Digoxin, Fexofenadine, Paclitaxel [2] |
| BCRP | Apical membrane | Excretion of conjugates and efflux of various compounds | Daunorubicin, Rosuvastatin, Topotecan [2] |
| MRP2 | Apical membrane | Efflux of phase II metabolites (e.g., glucuronides) | Cisplatin, Indinavir [2] |
| PepT1 | Apical membrane | Uptake of di/tri-peptides and peptidomimetic drugs | Valacyclovir, Ampicillin, Captopril [2] |

Applications in Drug Discovery and Development

The Caco-2 cell model serves as a versatile workhorse across multiple stages of the drug discovery and development pipeline, providing critical data that informs decision-making.

Intestinal Absorption and Permeability Screening

The primary application of the Caco-2 model is the prediction of oral drug absorption. By measuring the apparent permeability coefficient (Papp) of a compound as it traverses the cell monolayer from the apical (AP, luminal) to the basolateral (BL, blood) compartment, researchers can classify compounds as having high, medium, or low permeability [1] [6]. This data is fundamental for BCS classification and for prioritizing lead compounds during early-stage discovery [1].

Mechanistic Studies of Transport Pathways

The model is indispensable for elucidating the precise mechanisms by which compounds cross the intestinal epithelium. Studies can determine whether absorption occurs via transcellular (across cells) or paracellular (between cells) routes, and whether the process is passive or involves carrier-mediated uptake or efflux [3] [4]. For instance, the role of efflux transporters like P-gp can be probed by using specific inhibitors and comparing the bidirectional transport (AP→BL vs. BL→AP) to calculate an efflux ratio [6] [2].
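The efflux-ratio comparison described above can be sketched in a few lines. This is a minimal illustration, assuming Papp values for both transport directions have already been measured; the function name and the numeric values are hypothetical and not taken from any cited study.

```python
def efflux_ratio(papp_ab: float, papp_ba: float) -> float:
    """Return Papp(BL->AP) divided by Papp(AP->BL).

    Both inputs must use the same unit (e.g., cm/s).
    """
    if papp_ab <= 0:
        raise ValueError("Papp (AP->BL) must be positive")
    return papp_ba / papp_ab


# Hypothetical Papp values (cm/s) for one compound, measured without
# and with a P-gp inhibitor in the transport buffer.
er_control = efflux_ratio(papp_ab=1.0e-6, papp_ba=8.0e-6)
er_inhibited = efflux_ratio(papp_ab=4.0e-6, papp_ba=5.0e-6)

print(f"Efflux ratio without inhibitor: {er_control:.2f}")
print(f"Efflux ratio with inhibitor:    {er_inhibited:.2f}")
```

A ratio that falls toward 1 when the inhibitor is present is consistent with transporter-mediated efflux; the cutoff used to flag a substrate is a laboratory choice and is not specified here.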

Predicting Herb-Drug and Food-Drug Interactions

Caco-2 cells are widely used to screen for potential interactions between conventional drugs and herbal supplements or food components. These interactions often occur via inhibition or induction of metabolic enzymes or drug transporters in the gut [2]. For example, a study on polyphenols like hesperetin, which showed a high efflux ratio, suggests a potential for interaction with efflux transporters [6].

Assessing Mucosal Toxicity and Barrier Function

The integrity of the Caco-2 monolayer, routinely monitored by measuring TEER, provides a sensitive platform to assess the potential mucosal toxicity of new chemical entities or formulations. A decline in TEER indicates a compromise of the barrier function, which can be further investigated by measuring the expression of tight junction proteins [4].

Diagram 1: Standard Caco-2 Permeability Assay Workflow

Detailed Experimental Protocol: The 7-Day Caco-2 Assay

While traditional Caco-2 differentiation takes 21 days, a well-validated 7-day protocol offers a time- and resource-saving alternative for high-throughput screening during lead optimization [1]. The following protocol outlines the key steps.

Materials and Reagents

Table 2: The Scientist's Toolkit: Essential Reagents for Caco-2 Assays

| Item | Function/Description | Example/Note |
|---|---|---|
| Caco-2 Cells | The core cellular model. | Use low-passage cells (< passage 30) to ensure consistency [4]. |
| Transwell Inserts | Porous membrane supports for cell growth and polarization. | Typical pore size: 0.4 μm or 3.0 μm. |
| Dulbecco's Modified Eagle Medium (DMEM) | Standard culture medium. | Supplemented with 10-20% Fetal Bovine Serum (FBS), 1% Non-Essential Amino Acids (NEAA), and 1% L-Glutamine. |
| Transport Buffer | Physiologically relevant buffer for permeability assays. | e.g., Hanks' Balanced Salt Solution (HBSS) with 10 mM HEPES, pH 7.4. |
| Transepithelial Electrical Resistance (TEER) Meter | To non-invasively monitor the integrity and tightness of the cell monolayer. | Acceptable TEER values typically exceed 300 Ω·cm² [3]. |
| LC-MS/MS or HPLC System | For sensitive and accurate quantification of the test compound in the samples. | Essential for determining apparent permeability (Papp). |

Protocol Steps

Cell Seeding and Culture: Seed Caco-2 cells onto the apical side of collagen-coated Transwell inserts at a high density (e.g., 1.0 × 10⁵ cells/cm²). Culture the cells for 7 days, changing the medium every 48 hours. The cells are maintained at 37°C in a humidified atmosphere of 5% CO₂ [1].

Monolayer Integrity Validation: Prior to the experiment, validate the integrity of the differentiated monolayer by measuring TEER. Only use inserts with TEER values above a pre-defined threshold (e.g., > 300 Ω·cm²). Alternatively, the permeability of a paracellular marker like Lucifer Yellow can be used to confirm tight junction formation [3].

Permeability Experiment:

- Pre-incubation: Wash the monolayers twice with pre-warmed transport buffer.

- Dosing: Add the test compound dissolved in transport buffer to the donor compartment (AP for AP→BL permeability; BL for BL→AP efflux studies). Add corresponding blank buffer to the receiver compartment.

- Incubation: Place the plates in an orbital shaker (e.g., 50-60 rpm) at 37°C to minimize the unstirred water layer effect. The standard incubation time is 2 hours, with sampling from the receiver compartment at multiple time points (e.g., 30, 60, 90, 120 min) for kinetic analysis [1] [6].

Sample Analysis and Calculation:

- Analyze the concentration of the test compound in the receiver chamber samples using a validated analytical method (e.g., LC-MS/MS).

- Calculate the apparent permeability coefficient (Papp) using the formula: \[ P_{app} = \frac{dQ}{dt} \times \frac{1}{A \times C_0} \] where \(dQ/dt\) is the transport rate (mol/s), \(A\) is the surface area of the membrane (cm²), and \(C_0\) is the initial concentration in the donor compartment (mol/mL) [6].
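The Papp calculation above can be illustrated with a short script. This is a sketch under stated assumptions: the transport rate dQ/dt is estimated as the least-squares slope of cumulative receiver amount versus time, the amounts are assumed to be already corrected for any volume removed at sampling, and the insert area, donor concentration, and time-course values are hypothetical.

```python
def linear_slope(x, y):
    """Ordinary least-squares slope of y against x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    return sxy / sxx


def papp_cm_per_s(times_s, amounts_mol, area_cm2, c0_mol_per_ml):
    """Apparent permeability: (dQ/dt) / (A * C0).

    Units: (mol/s) / (cm^2 * mol/cm^3) = cm/s, since 1 mL = 1 cm^3.
    """
    dq_dt = linear_slope(times_s, amounts_mol)  # mol/s
    return dq_dt / (area_cm2 * c0_mol_per_ml)


# Hypothetical time course: sampling at 30, 60, 90, and 120 min.
times_s = [1800, 3600, 5400, 7200]
# Cumulative amount in the receiver compartment (mol), illustrative only.
amounts_mol = [2.016e-10, 4.032e-10, 6.048e-10, 8.064e-10]
area_cm2 = 1.12          # assumed insert area
c0_mol_per_ml = 1.0e-8   # 10 µM donor concentration expressed in mol/mL

papp = papp_cm_per_s(times_s, amounts_mol, area_cm2, c0_mol_per_ml)
print(f"Papp = {papp:.2e} cm/s")
```

Fitting a slope across several time points, rather than using a single end-point, makes it easier to spot a lag phase or loss of sink conditions before the value is reported.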

The Caco-2 Model in the Era of Machine Learning

The rich, high-quality experimental data generated from Caco-2 assays provides the foundational datasets required to train and validate sophisticated machine learning (ML) models for permeability prediction, accelerating early-stage drug design.

Data Generation for Model Training

ML models, including recent message-passing neural networks (MPNNs) and AutoML frameworks like CaliciBoost, require large, curated datasets of molecular structures and their corresponding Caco-2 permeability values (e.g., Papp) for training [7] [8] [9]. The experimental protocols described above are the primary source of this critical data.

Addressing Class Imbalance with Advanced ML

A significant challenge in building multiclass permeability predictors is the inherent class imbalance in available datasets. Strategies such as an XGBoost classifier combined with oversampling techniques like ADASYN have been used to address this, reaching a test accuracy of 0.717 and a Matthews correlation coefficient (MCC) of 0.512 [7].

Feature Selection and Model Interpretation

The performance of ML models is heavily dependent on molecular feature representation. Studies have demonstrated that 2D/3D molecular descriptors (e.g., PaDEL, Mordred) are particularly effective for Caco-2 prediction [9]. Furthermore, tools like SHAP analysis are applied to interpret the best-performing models, elucidating which molecular descriptors are most influential in determining permeability, thereby providing valuable insights for medicinal chemists [7].

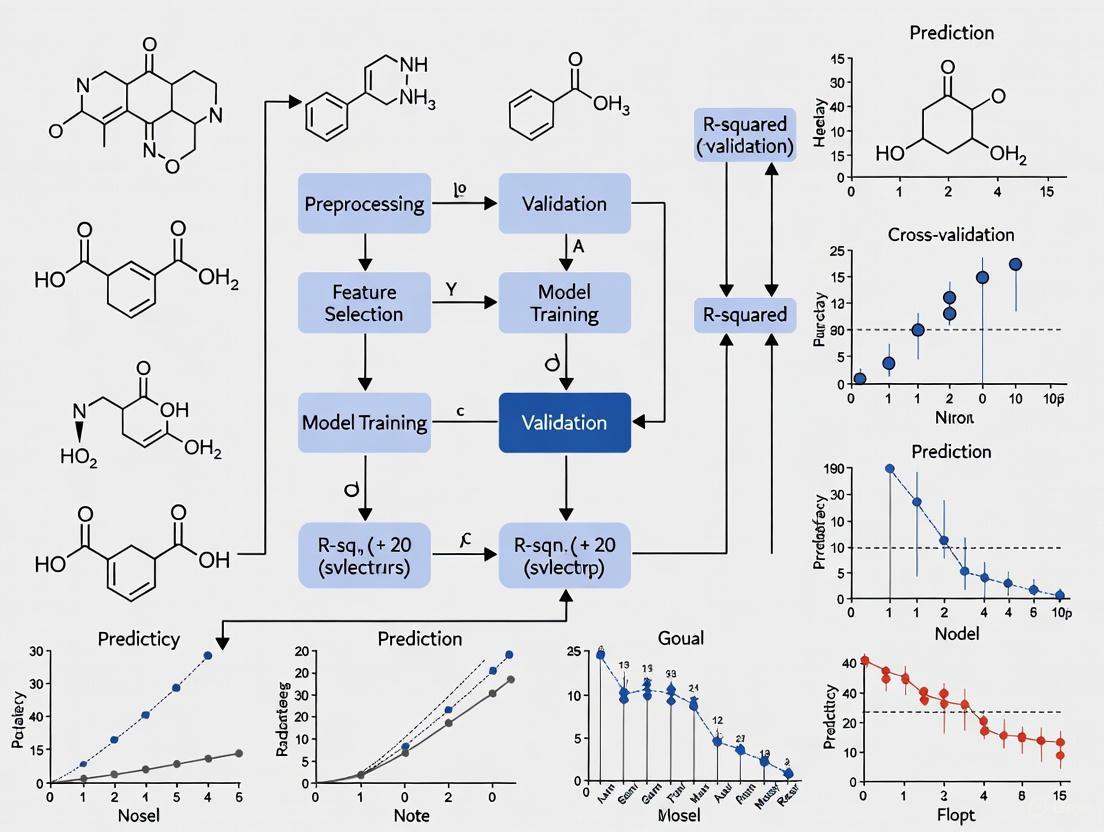

Diagram 2: Caco-2 Data in ML Permeability Prediction

Limitations and Future Perspectives

Despite its benchmark status, the Caco-2 model has recognized limitations, which are driving innovation toward more physiologically relevant systems.

Lack of Cellular Heterogeneity: The model primarily consists of enterocytes, lacking other key intestinal cell types like goblet cells (which secrete mucus), enteroendocrine cells, and M-cells [5] [4]. This is being addressed by developing co-culture models, such as combining Caco-2 with mucus-producing HT29-MTX cells [3] [10].

Variable and Non-Physiological Expression of Enzymes/Transporters: The expression levels of certain metabolic enzymes (e.g., Carboxylesterases CES1/CES2, CYP3A4) and transporters can be low or non-physiological compared to the human intestine [5]. This can lead to inaccurate predictions for prodrugs or compounds that are their substrates.

Extended Differentiation Time: The traditional 21-day culture period is a bottleneck for high-throughput screening. The adoption of accelerated protocols (e.g., 7-day) and the use of engineered scaffolds are mitigating this issue [1] [10].

The future lies in integrating data from next-generation models, such as primary human stem cell-derived models (e.g., RepliGut) and gut-on-a-chip microphysiological systems (MPS), which offer more human-relevant expression of enzymes and transporters [5]. Furthermore, the fluidic integration of gut models with other organs (e.g., liver) in multi-organ chips provides a transformative approach to model first-pass metabolism and predict systemic bioavailability more accurately [5]. These advanced systems will generate even richer biological data, further powering the next generation of machine learning predictive models.

The Caco-2 cell model remains the undisputed benchmark for in vitro intestinal permeability assessment due to its robust biology, proven predictive power, and extensive validation history. Its well-characterized protocols for evaluating passive and active transport mechanisms provide an indispensable framework for drug development. Crucially, the high-quality, experimentally derived permeability data from Caco-2 assays serves as the essential fuel for the development of sophisticated machine learning algorithms, creating a powerful synergy between traditional lab-based science and modern in silico prediction. As the field advances, the Caco-2 model will continue to be a critical point of reference and a foundational tool, even as its limitations are addressed by more complex and human-relevant next-generation models.

Within drug discovery, the accurate assessment of intestinal permeability is a critical determinant of a compound's potential for oral bioavailability. The Caco-2 cell monolayer model has emerged as the in vitro gold standard for this purpose, owing to its morphological and functional similarity to human intestinal enterocytes [10] [11]. However, its integration into high-throughput screening (HTS) paradigms is significantly hampered by three interconnected challenges: extended experimental timelines, substantial resource costs, and inherent experimental variability [10] [12] [13]. This Application Note delineates these challenges and details how the adoption of accelerated protocols and machine learning (ML) models can de-bottleneck the permeability screening process, providing researchers with efficient and reliable tools for early-stage drug development.

The traditional Caco-2 protocol requires a prolonged cultivation period of 21 to 24 days for the cells to fully differentiate into a polarized monolayer [10] [11]. This timeframe is incompatible with the rapid pace of modern drug discovery, necessitating faster solutions. Furthermore, the assay is labor-intensive and requires specialized materials and analytical equipment, contributing to its high cost [14] [13]. Compounding these issues is the heterogeneity of the Caco-2 cell line itself and differences in experimental protocols across laboratories, which lead to considerable variability in reported permeability measurements [13]. This variability limits the reliability of data and complicates the construction of large, consistent datasets needed for robust quantitative structure-property relationship (QSPR) modeling.

Experimental Challenges and Accelerated Protocol

The primary experimental bottlenecks of the traditional Caco-2 assay are its duration and operational complexity. The extended differentiation time increases risks of microbial contamination and demands significant laboratory resources [11]. To address this, an accelerated 7-day protocol has been developed, enabling higher-throughput screening without sacrificing data quality [12].

Accelerated 7-Day Caco-2 Permeability Assay

This protocol outlines the procedure for establishing functional Caco-2 monolayers in a 96-well format within one week, optimized for direct UV compound analysis.

2.1.1 Research Reagent Solutions & Materials

Table 1: Essential Materials for the 7-Day Caco-2 Assay

| Item Name | Function/Description |
|---|---|
| Caco-2 Cells | Human colon adenocarcinoma cell line, capable of differentiating into enterocyte-like cells. |
| 96-Well Polycarbonate Filter Plates | Supports high-density cell seeding and monolayer formation for permeability measurement. |
| Novel Cell Culture Boxes | Allows complete submergence of culture plates; medium is exchanged outside the plate to enhance productivity and minimize contamination. |
| UV-Transparent Transport Buffer | Enables direct quantification of permeated drug via UV absorption, eliminating need for complex sample preparation. |
| High Glucose DMEM Medium | Standard culture medium for supporting high-density cell growth and differentiation. |

2.1.2 Step-by-Step Methodology

- Cell Seeding: Seed Caco-2 cells at a high density (e.g., 100,000 cells per well) onto 96-well polycarbonate filter plates.

- Accelerated Cultivation: Place the seeded plates into novel cell culture boxes, fully submerged in standard culture medium. Incubate at 37°C, 5% CO₂ for 7 days. The medium outside the plate is exchanged regularly, but the individual wells are not accessed, reducing labor and contamination risk.

- Monolayer Integrity Check: Post-cultivation, confirm the integrity and functionality of the monolayers, for example, by measuring Transepithelial Electrical Resistance (TEER) or using marker compounds.

- Permeability Assay: a. Aspirate the culture medium from both the apical (AP) and basolateral (BL) compartments. b. Add the test compound dissolved in the UV-transparent transport buffer to the donor compartment (e.g., AP side for A-B permeability). c. Fill the receiver compartment (e.g., BL side) with the UV-transparent buffer. d. Incubate the plate under standard conditions (e.g., 37°C) with gentle agitation for the desired duration (e.g., 2 hours).

- Sample Analysis: Directly transfer samples from the receiver compartment to a UV-compatible microplate. Quantify the concentration of the permeated compound by measuring its UV absorption. Calculate the apparent permeability (Papp) using standard equations.

2.1.3 Protocol Advantages

- Time Efficiency: Reduces cell culture time from 21 days to 7 days, drastically accelerating throughput [12].

- Cost-Effectiveness: Minimizes reagent use and labor through the 96-well format and non-invasive feeding system.

- Analytical Simplicity: The direct UV method eliminates the need for liquid chromatography/tandem mass spectrometry (LC/MS/MS) for many compounds, simplifying analysis and reducing costs [12]. For even higher throughput, multiplexed LC/MS/MS systems (e.g., four-way multiplexed electrospray interface) can be employed to maximize analytical speed [15].

The following workflow diagram illustrates the streamlined, accelerated protocol and its position within a broader R&D pipeline that integrates machine learning.

Machine Learning for Predictive Permeability Assessment

Computational models, particularly machine learning algorithms, offer a powerful strategy to overcome the limitations of experimental screening. By learning from existing experimental data, these models can predict the Caco-2 permeability of novel compounds instantly, prioritizing synthesis and testing for the most promising candidates [14] [11].

Performance Comparison of ML Algorithms

Multiple studies have systematically benchmarked various ML algorithms for Caco-2 permeability prediction. The table below summarizes the performance of prominent models, demonstrating that ensemble and graph-based methods often achieve superior accuracy.

Table 2: Benchmarking Performance of Selected Machine Learning Models for Caco-2 Permeability Prediction

| Model Name | Model Type | Key Features/Molecular Representation | Reported Performance (Test Set) | Source/Reference |
|---|---|---|---|---|
| CaliciBoost (AutoML) | Automated ML Ensemble | Combines multiple feature representations (PaDEL, Mordred descriptors); Uses Bayesian optimization. | Best MAE on benchmark datasets. 15.73% MAE reduction with 3D vs. 2D descriptors. | [16] |
| XGBoost | Gradient Boosting | Combined Morgan fingerprints and RDKit 2D descriptors. | Superior performance in comparative study; R² ~0.76, RMSE ~0.38. | [17] [11] |
| SVM-RF-GBM Ensemble | Hybrid Ensemble | Combined SVM, Random Forest, and Gradient Boosting. | RMSE = 0.38, R² = 0.76. | [17] |
| Directed-MPNN (D-MPNN) | Graph Neural Network | Molecular graph representation; captures complex structural relationships. | Consistently top performance in cyclic peptide benchmark. | [18] [19] |
| Random Forest (RF) | Ensemble (Bagging) | Morgan fingerprints or RDKit 2D descriptors; robust to overfitting. | RMSE between 0.43–0.51 on large validation sets. | [13] [11] |
| Atom-Attention MPNN (AA-MPNN) | Graph Neural Network | Integrates self-attention with contrastive learning; highlights critical substructures. | Enhanced predictive accuracy and model interpretability. | [19] |

QSPR Model Building Protocol

For researchers aiming to develop their own predictive models, the following general protocol provides a robust framework.

3.2.1 Data Curation and Preprocessing

- Data Collection: Compile experimental Caco-2 Papp values from public databases (e.g., ChEMBL, CycPeptMPDB) and literature [18] [14] [11].

- Standardization: Standardize molecular structures using toolkits like RDKit (e.g., neutralizing charges, handling tautomers) to ensure consistency [11].

- Data Cleaning: Resolve duplicate entries by averaging measurements with low standard deviation (e.g., ≤ 0.3). Exclude entries with missing critical data [11].
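The duplicate-resolution step above can be sketched as follows. This is a minimal illustration with several assumptions that are not stated in the source: permeability is stored as log10 Papp, structures have already been standardized to a canonical SMILES string that serves as the key, the 0.3 threshold applies to the sample standard deviation in log units, and compounds whose replicates disagree by more than the threshold are discarded. The records are hypothetical.

```python
from collections import defaultdict
from statistics import mean, stdev


def merge_duplicates(records, max_sd=0.3):
    """Average replicate log Papp values per structure.

    records: iterable of (canonical_smiles, log_papp or None).
    Entries with a missing value are skipped. Structures whose
    replicates have a sample standard deviation above max_sd are
    dropped (an assumed policy; other choices are possible).
    """
    grouped = defaultdict(list)
    for smiles, log_papp in records:
        if log_papp is None:
            continue  # exclude entries with missing critical data
        grouped[smiles].append(log_papp)

    merged = {}
    for smiles, values in grouped.items():
        sd = stdev(values) if len(values) > 1 else 0.0
        if sd <= max_sd:
            merged[smiles] = mean(values)
    return merged


# Hypothetical records: (canonical SMILES, log10 Papp in cm/s)
records = [
    ("CCO", -4.5),
    ("CCO", -4.7),        # consistent replicates: averaged
    ("c1ccccc1", -4.0),
    ("c1ccccc1", -5.5),   # inconsistent replicates: dropped
    ("CC(=O)O", -5.1),    # single measurement: kept as-is
    ("CCN", None),        # missing value: excluded
]

merged = merge_duplicates(records)
for smiles, value in merged.items():
    print(smiles, round(value, 2))
```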

3.2.2 Molecular Featurization

Convert standardized molecular structures into numerical representations. Common approaches include:

- Molecular Descriptors: Calculate physicochemical descriptors (e.g., LogP, TPSA, molecular weight) using software like RDKit, PaDEL, or Mordred. The incorporation of 3D descriptors can significantly boost performance [17] [16].

- Fingerprints: Generate structural fingerprints such as Morgan (ECFPs) or MACCS keys to encode molecular substructures [16] [11].

- Graph Representations: For graph neural networks, represent atoms as nodes and bonds as edges in a molecular graph [18] [19] [11].

3.2.3 Model Training and Validation

- Data Splitting: Split the curated dataset into training, validation, and test sets. Use random splits for general performance assessment and scaffold splits to rigorously evaluate generalization to novel chemotypes [18].

- Algorithm Selection: Train a diverse set of algorithms (e.g., RF, XGBoost, SVM, GNNs). AutoML frameworks like AutoGluon can automate model selection and hyperparameter optimization [16] [14].

- Validation: Perform rigorous internal validation (e.g., k-fold cross-validation) and external validation on a hold-out test set. Use metrics like RMSE, R², and MAE for regression tasks. Apply Y-randomization and applicability domain analysis to ensure model robustness [13] [11].
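The regression metrics named in the validation step can be computed as shown below. This is a dependency-free sketch for illustration; in practice a library implementation would normally be used. The observed and predicted log Papp values are hypothetical.

```python
import math


def regression_metrics(y_true, y_pred):
    """Return RMSE, MAE, and R² for paired observed/predicted values."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    n = len(y_true)
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    ss_res = sum(r * r for r in residuals)
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return {
        "rmse": math.sqrt(ss_res / n),
        "mae": sum(abs(r) for r in residuals) / n,
        "r2": 1.0 - ss_res / ss_tot,  # coefficient of determination
    }


# Hypothetical hold-out set: observed vs. predicted log10 Papp
y_true = [-4.0, -5.0, -6.0, -5.0]
y_pred = [-4.5, -5.0, -5.5, -5.0]

metrics = regression_metrics(y_true, y_pred)
print({name: round(value, 3) for name, value in metrics.items()})
```

Reporting the same metrics for both a random split and a scaffold split makes the gap between in-distribution and novel-chemotype performance visible.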

The logical flow for building and deploying a high-quality predictive model is summarized in the following diagram.

The challenges of time, cost, and variability inherent in the traditional Caco-2 assay are no longer insurmountable barriers to high-throughput permeability screening. The integration of accelerated experimental protocols, which reduce cultivation time from 21 days to 7 days, with highly predictive machine learning models establishes a powerful, synergistic strategy. This combined approach enables drug discovery researchers to efficiently prioritize lead compounds with favorable permeability characteristics early in the development pipeline. By adopting these methodologies, laboratories can significantly enhance productivity, reduce reliance on costly and time-consuming experimental screens, and accelerate the journey of oral drug candidates from the bench to the clinic.

The application of machine learning (ML) to predict Caco-2 permeability represents a paradigm shift in drug discovery, offering the potential to rapidly prioritize compounds with favorable intestinal absorption profiles. However, the predictive accuracy of these sophisticated algorithms is fundamentally constrained by a critical upstream bottleneck: the scarcity of high-quality, consistent experimental permeability data for model training and validation [11] [20]. This data hurdle stems from the inherent biological and technical variability of the Caco-2 assay system itself, which, if not meticulously managed, propagates noise and uncertainty into computational models, limiting their reliability and applicability domain.

The Caco-2 cell line, derived from human colorectal adenocarcinoma, is the "gold standard" in vitro model for predicting human intestinal permeability due to its ability to differentiate into enterocyte-like cells expressing relevant transporters and forming tight junctions [21] [22]. Nevertheless, this biological complexity is a double-edged sword. The heterogeneity of Caco-2 subpopulations and significant inter-laboratory variations in culture methods, assay conditions, and validation protocols lead to substantial discrepancies in reported permeability coefficients (Papp) for the same compounds [21] [20]. One analysis found "substantial differences for absolute apparent permeability coefficients (Papp) of compounds between datasets from various laboratories with high normalized RMSE values in the range of 0.46 to 0.58" [20]. This variability, compounded by challenges in data curation from public sources, creates a significant barrier to developing robust, generalizable ML models that perform consistently across diverse chemical space.

The Data Scarcity and Variability Challenge

The journey from cell culture to a final Papp value is fraught with potential sources of variation that directly affect data quality and consistency, both of which are essential for ML.

- Cell Culture Conditions: The Caco-2 cell line exhibits high internal heterogeneity and external variability [21]. Factors such as the number of cell passages, seeding density, and the duration of cell differentiation (typically 21–24 days) can significantly influence the formation and integrity of the cell monolayer, thereby affecting permeability measurements [20] [22].

- Assay Protocol Differences: Variations in transport buffer composition, incubation time, and pH can alter compound permeability [21]. A critical mitigating strategy is the inclusion of Bovine Serum Albumin (BSA) in the assay buffer. BSA reduces non-specific binding of lipophilic compounds to plasticware and improves their aqueous solubility, leading to more accurate concentration measurements and higher recovery rates, which is crucial for generating reliable data for BCS Class II compounds [22].

- Validation and Standardization Gaps: While regulatory agencies provide acceptance criteria for Caco-2 model validation, they do not specify a detailed protocol [21]. This lack of standardized methodology across laboratories directly contributes to dataset inconsistencies that hinder the aggregation of high-quality data from public sources for ML training.

Consequences for Machine Learning

The experimental variability translates directly into several concrete problems for ML development that affect model reliability and usability.

- Limitations in Data Quantity and Quality: The "paucity of high-quality Caco-2 permeability data has impeded the development of accurate models with a wide applicability domain" [11]. This data scarcity is a major bottleneck for training complex models, particularly deep learning architectures that typically require large amounts of consistent data.

- Noise and Uncertain Labels in Training Data: When models are trained on aggregated data from multiple sources with inherent experimental noise, they learn from "uncertain" labels. This noise can cap the achievable performance of even the most advanced algorithms and reduce the model's ability to generalize to new, unseen compounds [20].

- Impaired Model Generalizability and Transferability: The performance of a model trained on public data often drops when applied to proprietary industrial datasets. One study noted that while boosting models "retained a degree of predictive efficacy when applied to industry data," there was a noticeable performance degradation, highlighting the domain shift problem caused by data inconsistency [11].

Standardized Experimental Protocols for High-Quality Data Generation

To overcome the data hurdle, rigorous standardization of experimental procedures is paramount. The following protocol provides a framework for generating consistent, high-quality Caco-2 permeability data suitable for ML model development.

Cell Culture and Monolayer Preparation

Objective: To establish a fully differentiated and functional Caco-2 cell monolayer.

Procedure:

1. Cell Seeding: Seed Caco-2 cells at a density of 1 × 10⁵ cells/cm² on collagen-coated polyester Transwell inserts [23].

2. Cell Differentiation: Culture the cells for 18–22 days to achieve full differentiation, changing the culture medium every 48 hours [22] [23]. Maintain at 37°C in a humidified atmosphere with 5% CO₂.

3. Monolayer Integrity Verification: Prior to permeability assays, confirm monolayer integrity using:

- Transepithelial Electrical Resistance (TEER): Acceptable values are >1000 Ω·cm² for 24-well plates and >500 Ω·cm² for 96-well plates [23].

- Paracellular Flux Marker: Use Lucifer Yellow. The apparent permeability (Papp) should be ≤ 1 × 10⁻⁶ cm/s, and the paracellular flux should be ≤ 0.5–0.7% [22] [23].
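The integrity criteria above lend themselves to an automated acceptance check before data enter a training set. The sketch below encodes the TEER and Lucifer Yellow Papp thresholds quoted in the text; requiring both criteria at once, the function name, and the example readings are assumptions made for illustration.

```python
# Thresholds quoted in the protocol above
TEER_MIN_OHM_CM2 = {24: 1000.0, 96: 500.0}  # keyed by plate format (wells)
LY_PAPP_MAX_CM_S = 1.0e-6                   # Lucifer Yellow Papp limit


def monolayer_passes(plate_wells, teer_ohm_cm2, ly_papp_cm_s):
    """Return True if a monolayer meets both integrity criteria.

    Requiring TEER and Lucifer Yellow together is an assumed policy;
    a laboratory may accept either measurement on its own.
    """
    if plate_wells not in TEER_MIN_OHM_CM2:
        raise ValueError(f"no TEER threshold defined for {plate_wells}-well plates")
    teer_ok = teer_ohm_cm2 > TEER_MIN_OHM_CM2[plate_wells]
    ly_ok = ly_papp_cm_s <= LY_PAPP_MAX_CM_S
    return teer_ok and ly_ok


# Hypothetical readings for three inserts
ok_24 = monolayer_passes(24, teer_ohm_cm2=1200.0, ly_papp_cm_s=5.0e-7)
low_teer_96 = monolayer_passes(96, teer_ohm_cm2=450.0, ly_papp_cm_s=5.0e-7)
leaky_24 = monolayer_passes(24, teer_ohm_cm2=1200.0, ly_papp_cm_s=2.0e-6)
print(ok_24, low_teer_96, leaky_24)
```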

Permeability Assay and Sample Analysis

Objective: To determine the apparent permeability coefficient (Papp) of test compounds. Procedure: 1. Assay Preparation:

- Prepare test and reference compounds in transport buffer (e.g., HBSS). A starting concentration of 10 µM is suggested for unknown compounds [23].

- For efflux assessment, include transport in both apical-to-basolateral (A-B) and basolateral-to-apical (B-A) directions.

- Pre-warm all solutions to 37°C.

2. Compound Incubation:

- Add the compound solution to the donor compartment and fresh buffer to the receiver compartment.

- Incubate for 2 hours at 37°C with gentle agitation [23].

3. Sample Collection and Analysis:

- Collect samples from both donor and receiver compartments at the end of incubation.

- Use a validated bioanalytical method for quantification. A UPLC-MS/MS method capable of simultaneously quantifying multiple markers is recommended for efficiency and consistency [24].

4. Data Calculation and Acceptance Criteria:

- Calculate Papp using the formula (P_{app} = \frac{dQ/dt}{C_0 \times A}), where (dQ/dt) is the permeation rate (nmol/s), (C_0) is the initial donor concentration (nmol/mL), and (A) is the monolayer area (cm²) [22] [23].

- Calculate percent recovery: (\% Recovery = \frac{Total\ compound\ recovered}{Initial\ compound} \times 100). Low recovery may indicate solubility, binding, or metabolism issues [22].

- Include reference compounds for assay validation (Table 1).
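
The calculations in step 4 can be sketched in a few lines. The function names are hypothetical. The efflux ratio, Papp(B-A) divided by Papp(A-B), is the conventional summary of the bidirectional measurement mentioned under Assay Preparation; it is added here as an assumption rather than taken from the protocol text. Because 1 mL equals 1 cm³, the units given above yield Papp directly in cm/s.

```python
def papp_cm_per_s(dq_dt_nmol_s: float, c0_nmol_ml: float, area_cm2: float) -> float:
    """Apparent permeability: (dQ/dt) / (C0 * A).

    nmol/mL is the same as nmol/cm^3, so the result is in cm/s.
    """
    if c0_nmol_ml <= 0 or area_cm2 <= 0:
        raise ValueError("C0 and A must be positive")
    return dq_dt_nmol_s / (c0_nmol_ml * area_cm2)


def percent_recovery(total_recovered_nmol: float, initial_nmol: float) -> float:
    """Mass balance: compound recovered at the end versus compound dosed."""
    if initial_nmol <= 0:
        raise ValueError("Initial amount must be positive")
    return 100.0 * total_recovered_nmol / initial_nmol


def efflux_ratio(papp_b_to_a: float, papp_a_to_b: float) -> float:
    """Papp(B-A) / Papp(A-B); not defined in the protocol text (assumption)."""
    if papp_a_to_b <= 0:
        raise ValueError("Papp(A-B) must be positive")
    return papp_b_to_a / papp_a_to_b


# Illustrative numbers only: 10 uM donor (10 nmol/mL), a 0.33 cm^2 insert,
# and 0.05 nmol appearing in the receiver over a 2 h (7200 s) incubation.
example_papp = papp_cm_per_s(0.05 / 7200, 10.0, 0.33)
```

With these illustrative inputs the result falls in the low 10⁻⁶ cm/s range, which is the scale used for the reference compounds in the table below.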

The following workflow diagram summarizes the key steps for generating reliable Caco-2 data.

Reference Compounds for Assay Validation

Table 1: Essential Reference Compounds for Caco-2 Assay Validation and Standardization

| Compound | Function | Expected Papp (×10⁻⁶ cm/s) | Permeability Class | Key Mechanism |

|---|---|---|---|---|

| Atenolol | Low permeability marker [23] | ~1.64 [21] | Low | Passive paracellular transport [24] |

| Propranolol | High permeability marker [23] | ~30.76 [21] | High | Passive transcellular diffusion [24] |

| Digoxin | P-gp substrate marker [23] | N/A | Efflux substrate | P-glycoprotein-mediated efflux |

| Verapamil | P-gp inhibitor control [24] [23] | N/A | High Permeability / Inhibitor | Passive transcellular diffusion & P-gp inhibition [24] |

| Quinidine | Efflux marker [24] | N/A | High Permeability / Efflux | Passive diffusion & P-gp substrate [24] |

| Metoprolol | High permeability marker [23] | ~37.33 [21] | High | Passive transcellular diffusion |

Computational Strategies for Noisy and Limited Data

When high-quality experimental data is limited, specific computational strategies can help build more robust models.

Advanced Machine Learning Approaches

- Algorithm Selection: Ensemble methods like XGBoost and Random Forest have demonstrated strong performance in predicting Caco-2 permeability, often outperforming other models, particularly on complex datasets [11] [7] [20]. Their inherent robustness to noise makes them well-suited for handling experimental variability.

- Data Balancing Techniques: For classification tasks, dataset imbalance poses a significant challenge. Balancing strategies such as ADASYN oversampling can improve model performance; XGBoost classifiers trained with this method achieved a test accuracy of 0.717 and an MCC of 0.512 [7].

- Representation Learning and Deep Learning: Combining multiple molecular representations gives the model complementary information. Using Morgan fingerprints alongside RDKit 2D descriptors provides both substructure and global physicochemical information [11]. Furthermore, Message Passing Neural Networks (MPNNs) and Atom-Attention MPNNs (AA-MPNNs) learn directly from molecular graph structures, capturing topological features that are important for permeability [11] [8].
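
Accuracy and the Matthews correlation coefficient (MCC), the two metrics quoted for the balanced classifier, are both computed from the confusion matrix. The sketch below uses the standard definitions with hypothetical function names. MCC is the more informative of the two on imbalanced permeability classes, because it stays near zero for a model that simply predicts the majority class.

```python
import math


def confusion_counts(y_true, y_pred):
    """Count TP, TN, FP, FN for binary labels (1 = positive class)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp, tn, fp, fn


def accuracy(tp, tn, fp, fn):
    """Fraction of all predictions that are correct."""
    return (tp + tn) / (tp + tn + fp + fn)


def mcc(tp, tn, fp, fn):
    """Matthews correlation coefficient; returns 0.0 when undefined."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denom == 0:
        return 0.0
    return (tp * tn - fp * fn) / denom
```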

Data Curation and Model Validation Best Practices

Robust model development requires rigorous data preparation and validation, not just advanced algorithms.

- Data Curation Workflow: Implement a multi-step curation process [11] [20]:

- Molecular Standardization: Standardize structures to consistent tautomer and neutral forms.

- Duplicate Management: For compounds with multiple measurements, calculate the mean and standard deviation. Retain only those with low standard deviation (e.g., ≤ 0.3) to ensure data consistency [11].

- Descriptor Calculation and Variable Selection: Use recursive feature selection to eliminate correlated and uninformative descriptors, simplifying the model and enhancing interpretability [20].

- Rigorous Model Validation:

- Employ Y-randomization tests to ensure the model is not learning by chance [11].

- Define the Applicability Domain to identify compounds for which predictions are reliable [11].

- Use an external validation set from a different source (e.g., an industrial in-house dataset) to assess real-world generalizability [11].
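
The Duplicate Management step in the curation workflow above can be sketched as follows. This is a minimal illustration, assuming that records are already keyed by a standardized structure string and that values are on the log scale. The function name is hypothetical, and the use of the sample standard deviation is an assumption, since the text does not say which estimator was used.

```python
from collections import defaultdict
from statistics import mean, stdev


def aggregate_replicates(records, max_sd=0.3):
    """Collapse replicate measurements into one value per compound.

    records: iterable of (structure_key, log_papp) pairs.
    Compounds whose replicates disagree by more than max_sd are dropped;
    the rest are represented by the mean of their replicates.
    """
    grouped = defaultdict(list)
    for key, value in records:
        grouped[key].append(value)

    curated = {}
    for key, values in grouped.items():
        # A single measurement has no spread to assess; keep it as-is.
        sd = stdev(values) if len(values) > 1 else 0.0
        if sd <= max_sd:
            curated[key] = mean(values)
    return curated
```

Grouping on a standardized key matters here: if the same compound appears under two different SMILES strings, its replicates will not be pooled and the consistency filter is silently bypassed.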

The following diagram visualizes this integrated computational pipeline.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Research Reagents and Tools for Caco-2 Permeability Studies

| Reagent / Tool | Function | Example Use-Case |

|---|---|---|

| Caco-2 Ready-to-Use Plates | Pre-seeded, differentiated monolayers for immediate assay use. | Reduces inter-laboratory variability and saves 3 weeks of culture time (e.g., CacoReady) [23]. |

| BSA (Bovine Serum Albumin) | Added to transport buffer to improve solubility of lipophilic compounds and reduce non-specific binding. | Crucial for obtaining reliable data for BCS Class II compounds, because it increases recovery and enables accurate Papp determination [22]. |

| Validated UPLC-MS/MS Method | Simultaneous quantification of multiple permeability markers with high sensitivity and specificity. | Enables high-throughput, precise measurement of key analytes like atenolol, propranolol, quinidine, and verapamil in a single run [24]. |

| Reference Compound Kit | A set of well-characterized control compounds for assay validation. | Ensures consistency and regulatory compliance by verifying monolayer performance and assay accuracy (see Table 1) [21] [23]. |

| Automated KNIME Workflow | Open-source platform for building automated QSPR modeling workflows. | Facilitates data curation, feature selection, model building, and virtual screening of Caco-2 permeability [20]. |

The hurdle of high-quality, consistent Caco-2 permeability data is a significant but surmountable challenge in the age of machine learning for drug discovery. Overcoming it requires a dual-pronged strategy: a steadfast commitment to experimental rigor and standardization at the bench to generate reliable data, coupled with the intelligent application of robust computational methods designed to handle the noise and scarcity inherent in existing datasets. By adopting standardized protocols, leveraging advanced ML algorithms like XGBoost and AA-MPNN, and implementing rigorous data curation and validation practices, researchers can transform this data hurdle into a foundation for predictive models that truly accelerate the development of orally administered drugs.

The assessment of intestinal permeability represents a critical hurdle in the early stages of oral drug development. For decades, the Caco-2 cell assay, derived from human colorectal adenocarcinoma cells, has served as the gold standard for in vitro permeability assessment due to its morphological and functional similarity to human enterocytes [20] [11]. This assay is endorsed by regulatory bodies for classifying compounds according to the Biopharmaceutics Classification System (BCS) [11]. However, the extensive cultivation period of 7-24 days required for cell differentiation, coupled with substantial costs and experimental variability, renders traditional Caco-2 assays impractical for high-throughput screening [20] [11].

The transition from in vitro to in silico methods addresses these limitations through machine learning (ML) and quantitative structure-property relationship (QSPR) modeling. By leveraging computational power, these approaches enable rapid permeability prediction for vast chemical libraries, significantly accelerating candidate selection [20] [17]. This application note details the implementation of ML models for Caco-2 permeability prediction, providing researchers with validated protocols and frameworks to integrate these tools into early drug discovery workflows.

Performance Benchmarking of ML Models

The evaluation of diverse machine learning algorithms has identified several high-performing approaches for Caco-2 permeability prediction. The following table summarizes the performance metrics of recently developed models, demonstrating the current state of the art in this field.

Table 1: Performance Metrics of Recent Caco-2 Permeability Prediction Models

| Model Name | Algorithm Type | Dataset Size | Key Metrics | Reference |

|---|---|---|---|---|

| KNIME Workflow | Consensus Random Forest | >4,900 molecules | RMSE: 0.43-0.51; R²: 0.57-0.61 (validation sets) | [20] |

| XGBoost Model | Gradient Boosting | 5,654 compounds | Superior performance on test sets vs. comparable models | [11] |

| SVM-RF-GBM Ensemble | Multiple Algorithms | 1,817 compounds | RMSE: 0.38; R²: 0.76 (test set) | [17] |

| CaliciBoost | AutoML (AutoGluon) | 906 compounds (TDC) | Best MAE performance with PaDEL, Mordred descriptors | [16] |

| CPMP | Molecular Attention Transformer | 1,310 compounds | R²: 0.62 (Caco-2 test set) | [25] |

Beyond these specific implementations, systematic comparisons of algorithms reveal that boosting methods (XGBoost, GBM) frequently outperform other approaches, while ensemble models that combine multiple algorithms often achieve the highest performance [11] [17]. The incorporation of 3D molecular descriptors from PaDEL and Mordred has been shown to reduce mean absolute error by approximately 16% compared to using 2D features alone [16].
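
For reference, the metrics reported in the table follow the usual definitions, written here for (n) compounds with observed values (y_i), predicted values (\hat{y}_i), and observed mean (\bar{y}), all on the logPapp scale. The last line shows how a relative MAE reduction such as the roughly 16% figure is computed. Individual studies may define R² slightly differently (for example, as a squared correlation coefficient), so these formulas are a general guide rather than a statement of what each cited paper used.

```latex
\begin{aligned}
\mathrm{MAE}  &= \frac{1}{n}\sum_{i=1}^{n} \lvert y_i - \hat{y}_i \rvert \\
\mathrm{RMSE} &= \sqrt{\frac{1}{n}\sum_{i=1}^{n} \left(y_i - \hat{y}_i\right)^2} \\
R^2           &= 1 - \frac{\sum_{i=1}^{n} \left(y_i - \hat{y}_i\right)^2}
                          {\sum_{i=1}^{n} \left(y_i - \bar{y}\right)^2} \\
\text{Relative MAE reduction} &=
  \frac{\mathrm{MAE}_{\mathrm{2D}} - \mathrm{MAE}_{\mathrm{2D+3D}}}
       {\mathrm{MAE}_{\mathrm{2D}}} \times 100\%
\end{aligned}
```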

Experimental Protocol for Model Development

Data Curation and Preprocessing

The foundation of any robust QSPR model lies in the quality and consistency of the underlying data. The following protocol outlines the essential steps for data preparation:

Data Collection and Aggregation: Collect experimental Caco-2 permeability values (Papp) from publicly available datasets such as those compiled in TDC (Therapeutics Data Commons) or OCHEM [11] [16]. Convert permeability measurements to consistent units (×10⁻⁶ cm/s) and transform them to a base-10 logarithmic scale (logPapp) for modeling [20] [11].
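
A minimal sketch of the unit harmonization and log transformation follows. The unit labels in the lookup table are hypothetical; real datasets label their units in many ways, and some already store log-transformed values, so the source of each record should be checked before applying a conversion like this one.

```python
import math

# Multipliers that bring a reported Papp value to cm/s.
# The keys are illustrative labels, not a standard vocabulary.
UNIT_TO_CM_S = {
    "cm/s": 1.0,
    "1e-6 cm/s": 1e-6,
    "nm/s": 1e-7,  # 1 nm = 1e-7 cm
}


def log_papp(value: float, unit: str) -> float:
    """Return log10 of Papp expressed in units of 1e-6 cm/s."""
    if unit not in UNIT_TO_CM_S:
        raise ValueError(f"Unknown unit label: {unit!r}")
    papp_cm_s = value * UNIT_TO_CM_S[unit]
    if papp_cm_s <= 0:
        raise ValueError("Papp must be positive to take a logarithm")
    return math.log10(papp_cm_s / 1e-6)
```

For example, a value reported as 30.76 ×10⁻⁶ cm/s and the same measurement reported as 307.6 nm/s map to the same logPapp, which is what allows records from different sources to be pooled.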

Data Curation and Standardization:

- Apply structural standardization using RDKit's MolStandardize module to achieve consistent tautomer states and neutral forms [11].

- Remove entries with missing permeability values and identify duplicate compounds.

- For molecules with multiple measurements, calculate mean values and standard deviations. Retain only entries whose standard deviation does not exceed a chosen cutoff (values from 0.3 to 0.5 have been used) to minimize experimental variability [20] [11].

Dataset Partitioning: Split the curated dataset into training, validation, and test sets using an 8:1:1 ratio. For more rigorous validation, implement scaffold-based splitting to assess model performance on structurally novel compounds [11] [16].

Molecular Representation and Feature Selection

The choice of molecular representation significantly impacts model performance and interpretability:

Descriptor Calculation: Compute 2D and 3D molecular descriptors using tools such as RDKit, PaDEL, or Mordred. These capture key physicochemical properties including molecular weight, logP, topological polar surface area (TPSA), hydrogen bond donors/acceptors, and rotatable bonds [17] [16].

Fingerprint Generation: Generate structural fingerprints such as Morgan fingerprints (ECFPs) with a radius of 2 and 1024 bits to encode molecular substructures [11] [16].

Feature Selection:

Model Training and Validation

The model development phase requires careful algorithm selection and validation:

Algorithm Selection: Train multiple algorithm types including Random Forest, XGBoost, Support Vector Machines (SVM), and neural networks to identify the best performer for your specific dataset [11] [17].

Hyperparameter Optimization: Conduct hyperparameter tuning via grid search or Bayesian optimization, using k-fold cross-validation (typically 5-fold) to prevent overfitting [11] [7].

Model Validation:

- Perform Y-randomization testing to confirm models do not learn chance correlations [11] [25].

- Define the applicability domain to identify compounds for which predictions are reliable [11].

- Evaluate models using external test sets and, when available, proprietary industry datasets to assess transferability [11].
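
The Y-randomization test listed above can be illustrated with a deliberately simple stand-in model. The sketch fits a one-descriptor least-squares line in place of a real QSPR model and reports training-set R², purely to show the mechanics: the score on the true labels should be far higher than the average score obtained after shuffling the labels. All function names are hypothetical.

```python
import random


def fit_line(x, y):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


def r_squared(x, y, slope, intercept):
    """Coefficient of determination of the fitted line on (x, y)."""
    my = sum(y) / len(y)
    ss_res = sum((b - (slope * a + intercept)) ** 2 for a, b in zip(x, y))
    ss_tot = sum((b - my) ** 2 for b in y)
    return 1.0 - ss_res / ss_tot


def y_randomization(x, y, n_rounds=100, seed=0):
    """Return (R2 on true labels, mean R2 over label-shuffled refits)."""
    rng = random.Random(seed)
    true_r2 = r_squared(x, y, *fit_line(x, y))
    shuffled = []
    for _ in range(n_rounds):
        y_perm = list(y)
        rng.shuffle(y_perm)  # break the structure-property link
        shuffled.append(r_squared(x, y_perm, *fit_line(x, y_perm)))
    return true_r2, sum(shuffled) / n_rounds
```

In practice the same loop is wrapped around the actual training routine, and the comparison is usually made on cross-validated or held-out scores rather than training-set fit.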

The following workflow diagram illustrates the complete model development process:

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of ML models for Caco-2 permeability prediction requires both computational tools and experimental reagents for model training and validation.

Table 2: Essential Research Reagents and Computational Tools for Caco-2 Permeability Prediction

| Category | Tool/Reagent | Specific Examples | Function/Purpose |

|---|---|---|---|

| Computational Tools | Analytics Platforms | KNIME, Python/R | Workflow development and model implementation |

| Computational Tools | Cheminformatics | RDKit, PaDEL, Mordred | Molecular descriptor and fingerprint calculation |

| Computational Tools | Machine Learning | Scikit-learn, XGBoost, AutoGluon | Algorithm implementation and automation |

| Computational Tools | Deep Learning | D-MPNN, Molecular Attention Transformer | Advanced neural network architectures |

| Experimental Materials | Cell Lines | Caco-2 (ATCC HTB-37) | Gold standard in vitro permeability model |

| Experimental Materials | Culture Reagents | DMEM, FBS, Non-essential amino acids, Penicillin/Streptomycin | Cell line maintenance and differentiation |

| Experimental Materials | Transport Buffers | HBSS, MES, HEPES | Permeability assay physiological conditions |

| Experimental Materials | Reference Compounds | Metoprolol, Propranolol (high permeability), Atenolol (low permeability) | Assay validation and QC |

| Data Resources | Public Databases | TDC, OCHEM, ChEMBL | Experimental permeability data for training |

Implementation Workflow for Drug Discovery

Integrating in silico Caco-2 permeability prediction into existing drug discovery pipelines requires a systematic approach. The following workflow enables efficient compound prioritization:

This workflow begins with a virtual compound library that undergoes multi-parameter optimization, with Caco-2 permeability prediction serving as a critical filter. Top-ranked compounds are synthesized, and their permeability is confirmed through experimental assays. This integrated approach significantly reduces the number of compounds requiring synthesis and testing, accelerating the discovery timeline and reducing costs [20] [17].

For lead optimization, Matched Molecular Pair Analysis (MMPA) can identify specific chemical transformations that improve permeability, providing medicinal chemists with actionable structural insights [11]. Additionally, model interpretability techniques such as SHAP analysis reveal which molecular descriptors most significantly impact permeability predictions, enabling data-driven structural modification [7].

Machine learning models for Caco-2 permeability prediction represent a paradigm shift in early drug discovery, effectively bridging the gap between in vitro assessment and high-throughput screening needs. The integration of these in silico tools enables researchers to prioritize compounds with favorable absorption characteristics before synthesis, optimizing resource allocation and accelerating the identification of viable drug candidates. As algorithms advance and datasets expand, these predictive models will play an increasingly central role in developing orally bioavailable therapeutics, ultimately enhancing the efficiency and success rate of drug development programs.

A Landscape of ML Algorithms and Workflows for Permeability Prediction

Within the paradigm of modern drug discovery, the accurate prediction of Caco-2 cell permeability stands as a critical determinant for assessing the oral bioavailability potential of drug candidates. This application note delineates a comprehensive, experimentally-validated framework for leveraging machine learning algorithms—specifically Random Forest (RF), eXtreme Gradient Boosting (XGBoost), Support Vector Machine (SVM), and the Directed Message Passing Neural Network (DMPNN)—to forecast Caco-2 permeability. The content is framed within a broader thesis that posits the integration of robust machine learning models with diverse molecular representations can significantly augment the efficiency and predictive accuracy of early-stage drug development pipelines. The protocols herein are designed for an audience of researchers, scientists, and drug development professionals engaged in cheminformatics and predictive ADMET modeling.

Performance Benchmarking: A Quantitative Synopsis

A consolidated summary of key benchmarking studies provides a quantitative foundation for algorithm selection. The performance of RF, XGBoost, SVM, and DMPNN varies significantly based on the dataset, molecular representations, and splitting strategies employed.

Table 1: Benchmark Performance of Algorithms for Caco-2 Permeability Prediction

| Algorithm | Molecular Representation | Dataset | Key Metric | Performance | Citation |

|---|---|---|---|---|---|

| XGBoost | Morgan FP + RDKit 2D Descriptors | Large Caco-2 (n=5,654) | Test Set Performance | Top performer vs. RF, SVM, GBM, DMPNN | [11] |

| DMPNN | Molecular Graph | Large Caco-2 (n=5,654) | Test Set Performance | Comparable performance, outperformed by XGBoost | [11] |

| Random Forest (RF) | Morgan FP + RDKit 2D Descriptors | Large Caco-2 (n=5,654) | Test Set Performance | Evaluated, outperformed by XGBoost | [11] |

| SVM | Morgan FP + RDKit 2D Descriptors | Large Caco-2 (n=5,654) | Test Set Performance | Evaluated, outperformed by XGBoost | [11] |

| XGBoost | Multi-source Feature Fusion | Cyclic Peptide (n=5,826) | AUROC | 0.9546 (in top-performing fusion model) | [26] |

| DMPNN | Molecular Graph | Cyclic Peptide (n=5,826) | Performance across tasks | Consistently top performance in regression and classification | [18] |

| Random Forest (RF) | Fingerprints / Descriptors | Cyclic Peptide (n=5,826) | Performance | Achieved comparable performance to advanced models | [18] |

| SVM | Fingerprints / Descriptors | Cyclic Peptide (n=5,826) | Performance | Achieved comparable performance to advanced models | [18] |

Table 2: Impact of Data Splitting Strategy on Model Generalizability (Cyclic Peptide Data)

| Data Splitting Strategy | Description | Implication for Model Generalizability |

|---|---|---|

| Random Split | Dataset divided randomly into training, validation, and test sets. | Higher reported generalizability due to chemical similarity between splits. [18] |

| Scaffold Split | Splits are based on molecular scaffolds, separating structurally distinct compounds. | Lower model generalizability; provides a more rigorous assessment of model robustness. [18] |
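
The difference between the two strategies comes down to whether whole scaffold groups are kept together. The sketch below shows one common greedy variant of a scaffold split, assuming the scaffold string for each compound has already been computed elsewhere (for instance as a Bemis-Murcko scaffold). The function name and input format are hypothetical, and published benchmarks may order or assign groups differently.

```python
from collections import defaultdict


def scaffold_split(scaffold_by_id, frac_train=0.8, frac_valid=0.1):
    """Assign whole scaffold groups to train, validation, or test.

    scaffold_by_id: dict mapping compound id -> precomputed scaffold string.
    Larger groups are placed first, so rare scaffolds tend to end up in
    the validation and test sets.
    """
    groups = defaultdict(list)
    for compound_id, scaffold in scaffold_by_id.items():
        groups[scaffold].append(compound_id)

    # Largest groups first; ties are broken by first member for determinism.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))

    n_total = len(scaffold_by_id)
    train, valid, test = [], [], []
    for group in ordered:
        if len(train) + len(group) <= frac_train * n_total:
            train.extend(group)
        elif len(valid) + len(group) <= frac_valid * n_total:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test
```

Because no scaffold is shared between the training and test sets, test performance reflects extrapolation to new chemotypes, which is why scores under this split are typically lower than under a random split.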

Experimental Protocols

Protocol 1: Dataset Curation and Preprocessing for Caco-2 Permeability Modeling

This protocol outlines the steps for constructing a robust, machine-learning-ready dataset from public sources.

- Objective: To compile and standardize experimental Caco-2 permeability data from heterogeneous public datasets into a curated, non-redundant dataset suitable for model training.

- Materials & Software: RDKit, Python environment, publicly available datasets (e.g., from TDC, OCHEM, and literature [11] [16]).

- Procedure:

- Data Aggregation: Combine Caco-2 permeability data from multiple public datasets. One benchmark study aggregated data from three sources, resulting in an initial set of 7,861 compounds [11].

- Unit Conversion and Value Assignment: Convert all apparent permeability (Papp) values to a consistent unit (e.g., ×10⁻⁶ cm/s) and apply a base-10 logarithmic transformation. For compounds with multiple measurements, calculate the mean and standard deviation. Retain only entries with a standard deviation ≤ 0.3 to minimize uncertainty, using the mean value for modeling [11].

- Molecular Standardization: Process all molecular structures using a tool like RDKit's MolStandardize module. This generates consistent canonical tautomer states and final neutral forms while preserving stereochemistry [11].

- Deduplication: Remove duplicate entries to create a non-redundant dataset. The aforementioned study resulted in a final curated set of 5,654 compounds [11].

- Data Splitting: Partition the curated dataset into training, validation, and test sets. A common ratio is 8:1:1. To ensure robust evaluation, perform this partitioning 10 times with different random seeds and report average performance metrics [11]. For a more rigorous assessment of generalizability to novel chemotypes, a scaffold-based split is recommended [18].
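
The repeated 8:1:1 partitioning in the Data Splitting step can be sketched as below. The `evaluate` argument is a hypothetical callback standing in for whatever routine trains a model on the training and validation sets and returns a test-set metric. Using the seeds 0 to 9 is an assumption for illustration.

```python
import random
from statistics import mean, stdev


def split_811(items, seed):
    """Shuffle with a fixed seed and cut into 80% / 10% / 10%."""
    rng = random.Random(seed)
    shuffled = list(items)
    rng.shuffle(shuffled)
    n_train = int(0.8 * len(shuffled))
    n_valid = int(0.1 * len(shuffled))
    train = shuffled[:n_train]
    valid = shuffled[n_train:n_train + n_valid]
    test = shuffled[n_train + n_valid:]
    return train, valid, test


def repeated_evaluation(items, evaluate, n_repeats=10):
    """Run evaluate(train, valid, test) over several seeds.

    Returns the mean and sample standard deviation of the metric, so that
    results are not tied to one lucky or unlucky partition.
    """
    scores = []
    for seed in range(n_repeats):
        train, valid, test = split_811(items, seed)
        scores.append(evaluate(train, valid, test))
    return mean(scores), stdev(scores)
```

Reporting the spread alongside the mean makes it easier to judge whether a difference between two algorithms is larger than the split-to-split variation.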

Protocol 2: Comprehensive Molecular Featurization

This protocol describes the generation of multiple molecular representations to train and evaluate different algorithms.

- Objective: To represent chemical structures in computer-interpretable formats that encapsulate structural and physicochemical information critical for permeability prediction.

- Materials & Software: RDKit, PaDEL, Mordred, or CDDD software/descriptors.

- Procedure:

- 2D Molecular Descriptors: Generate a comprehensive set of physicochemical descriptors using tools like RDKit, PaDEL, or Mordred. These capture global properties such as molecular weight, logP, and topological polar surface area (TPSA) [11] [16].

- 3D Molecular Descriptors: Compute descriptors that require 3D conformational information using tools like PaDEL or Mordred. Studies have shown that incorporating 3D descriptors can reduce the Mean Absolute Error (MAE) by over 15% compared to using 2D features alone [16].

- Structural Fingerprints: Generate binary bit vectors that represent the presence or absence of specific substructures.

- Molecular Graphs: For graph-based models like DMPNN, represent a molecule as a graph (G=(V,E)), where (V) represents atoms (nodes) and (E) represents bonds (edges). This is the native input for the DMPNN algorithm as implemented in packages like ChemProp [18] [11].
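
To make the graph representation (G=(V,E)) concrete, the sketch below builds the heavy-atom graph of ethanol by hand. The class is hypothetical and far simpler than what a package such as ChemProp constructs internally, where atoms and bonds carry feature vectors. Each bond is expanded into two directed edges, since directed message passing operates on bond directions.

```python
from dataclasses import dataclass


@dataclass
class MolGraph:
    """Minimal molecular graph: V = atoms, E = bonds."""
    atoms: list   # element symbol per atom index
    bonds: list   # (atom_i, atom_j, bond_order) per undirected bond

    def directed_edges(self):
        """Expand each undirected bond into two directed edges."""
        edges = []
        for i, j, order in self.bonds:
            edges.append((i, j, order))
            edges.append((j, i, order))
        return edges

    def neighbors(self, idx):
        """Indices of atoms bonded to atom idx."""
        return sorted(j for i, j, _ in self.directed_edges() if i == idx)


# Ethanol (SMILES: CCO), heavy atoms only: C0 - C1 - O2
ethanol = MolGraph(atoms=["C", "C", "O"], bonds=[(0, 1, 1.0), (1, 2, 1.0)])
```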

Protocol 3: Model Training, Validation, and Industrial Application

This protocol covers the training, hyperparameter optimization, and critical validation steps for developing a production-ready model.

- Objective: To train, optimize, and validate machine learning models for Caco-2 permeability prediction, and to assess their transferability to industrial settings.

- Materials & Software: Python libraries (Scikit-learn, XGBoost, ChemProp), access to an in-house industrial dataset for external validation (optional).

- Procedure:

- Algorithm Selection and Training:

- Ensemble Methods (RF, XGBoost): Train using concatenated molecular features (e.g., Morgan fingerprints + 2D descriptors). XGBoost has been identified as a top performer in direct comparisons [11] [27].

- Support Vector Machine (SVM): Train using the same feature sets, typically requiring feature scaling for optimal performance.

- Deep Learning (DMPNN): Train using molecular graphs as input. The DMPNN architecture directly learns from the graph structure without the need for hand-crafted features [18] [11].

- Hyperparameter Optimization: Employ a five-fold cross-validation approach on the training set to optimize model-specific hyperparameters. This mitigates overfitting and ensures robust model selection [7].

- Model Validation:

- Internal Validation: Evaluate the optimized model on the held-out test set from the public data, reporting metrics like MAE, RMSE, and R².

- Y-Randomization Test: Validate the model's robustness by shuffling the target permeability values and re-training. A significant drop in performance confirms the model learned true structure-property relationships and not chance correlations [11].

- Applicability Domain Analysis: Define the chemical space where the model's predictions are reliable, often using methods like leverage or distance-based approaches [11].

- Industrial Validation: Test the model's transferability by evaluating its performance on a proprietary, in-house dataset (e.g., from a pharmaceutical company like Shanghai Qilu). This step is critical for verifying real-world utility [11].
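
A distance-based applicability domain check, one of the options named above, can be sketched with Tanimoto similarity on fingerprint bit sets. Fingerprints are represented here as sets of "on" bit indices, the function names are hypothetical, and the 0.3 similarity cutoff is an arbitrary illustrative value that would need to be calibrated for a real model.

```python
def tanimoto(bits_a: set, bits_b: set) -> float:
    """Tanimoto similarity between two sets of 'on' fingerprint bits."""
    union = bits_a | bits_b
    if not union:
        return 0.0
    return len(bits_a & bits_b) / len(union)


def in_applicability_domain(query_bits, training_fingerprints, threshold=0.3):
    """Flag a query as in-domain if it resembles any training compound.

    Returns (is_in_domain, similarity_to_nearest_training_compound).
    """
    nearest = max(tanimoto(query_bits, fp) for fp in training_fingerprints)
    return nearest >= threshold, nearest
```

Predictions for compounds flagged as out of domain are not necessarily wrong, but they deserve lower confidence, which is particularly relevant when a model trained on public data is applied to an in-house collection.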


Workflow Visualization

The following diagram illustrates the integrated experimental and computational pipeline for Caco-2 permeability prediction.

Caco-2 Permeability Prediction Workflow

Table 3: Key Software and Data Resources for Caco-2 Permeability Modeling

| Tool / Resource | Type | Function in Research | Citation |

|---|---|---|---|

| RDKit | Cheminformatics Software | Open-source toolkit for molecular standardization, descriptor calculation (RDKit2D), and fingerprint generation (Morgan). | [11] |

| PaDEL Descriptors | Molecular Descriptor Software | Calculates a comprehensive set of 2D and 3D molecular descriptors for featurization. | [16] |

| Mordred Descriptors | Molecular Descriptor Software | Computes a large set of 2D and 3D molecular descriptors, often used alongside PaDEL. | [16] |

| ChemProp | Deep Learning Framework | Specialized software for implementing DMPNN and other graph neural networks for molecular property prediction. | [18] [11] |

| XGBoost | Machine Learning Library | Library implementing the gradient boosting framework, frequently a top performer in benchmark studies. | [11] [27] |

| AutoGluon (AutoML) | Automated Machine Learning Framework | Automates the machine learning pipeline, including feature preprocessing, model selection, and hyperparameter tuning. | [16] |

| Therapeutics Data Commons (TDC) | Data Resource | Provides curated benchmarks, including Caco-2 permeability datasets for model training and evaluation. | [16] |

| OCHEM Database | Data Resource | Online chemical database with a large collection of experimental Caco-2 permeability measurements. | [16] |

Within the critical field of machine learning (ML) for drug discovery, the accurate prediction of Caco-2 permeability serves as a vital benchmark for assessing intestinal absorption and oral bioavailability of potential drug candidates [16] [11]. The performance of these predictive models is profoundly influenced by the choice of molecular representation, which translates chemical structures into a computer-readable format [8]. This application note provides a detailed comparison of three predominant representation types—molecular fingerprints, 2D descriptors, and molecular graphs—framed within the context of Caco-2 permeability prediction research. We summarize quantitative performance data, provide standardized protocols for implementation, and outline essential computational toolkits to guide researchers in selecting and applying the most effective representation for their specific project needs.

Comparative Performance Analysis

Systematic evaluations reveal that the predictive performance of molecular representations can vary based on the dataset and ML algorithm used. The following table summarizes key findings from recent benchmarking studies for Caco-2 permeability prediction.

Table 1: Comparative Performance of Molecular Representations and Model Combinations for Caco-2 Permeability Prediction

| Molecular Representation | Example Algorithms | Reported Performance (Metric, Value) | Key Strengths |

|---|---|---|---|

| 2D/3D Descriptors (RDKit, PaDEL, Mordred) | LightGBM [28], XGBoost [11], AutoGluon (CaliciBoost) [16] | MAE: 0.38-0.40 [29], Best MAE for PaDEL/Mordred [16] | High interpretability, encodes physicochemical properties, effective on small-to-medium datasets [16] [29]. |

| Molecular Fingerprints (Morgan/ECFP, MACCS) | SVM-RF-GBM Ensemble [29], Random Forest [11] | R²: 0.76 [29], RMSE: 0.38 [29] | Captures substructure patterns, computationally efficient, widely used for similarity searches [30]. |

| Molecular Graphs (D-MPNN, AA-MPNN) | Graph Neural Networks (GNNs) with Contrastive Learning [8] | Improved predictive accuracy vs. traditional methods [8] | Learns features directly from molecular structure; no need for hand-crafted features; high potential with sufficient data [8] [11]. |

| Hybrid Representations (Descriptors + Fingerprints) | CombinedNet [11], Consensus Models [20] | RMSE: 0.43-0.51 for validation sets [20] | Combines global (descriptors) and local (fingerprints) information; can leverage strengths of multiple representations [11]. |

The CaliciBoost study, which used Automated Machine Learning (AutoML), identified PaDEL, Mordred, and RDKit descriptors as particularly effective for Caco-2 prediction [16]. Notably, adding 3D descriptors to 2D features reduced the Mean Absolute Error (MAE) by 15.73%, highlighting the value of three-dimensional structural information [16]. For larger chemical spaces, particularly those beyond the Rule of Five (bRo5), the combination of the LightGBM algorithm with RDKit descriptors has proven to be an efficient and effective setup for a simple global model [28].

Detailed Experimental Protocols

Protocol 1: Building a Model with 2D Descriptors and Ensemble Methods

This protocol is ideal for projects that have small to medium-sized datasets and require interpretable models.

Data Curation and Standardization

- Collect SMILES strings and experimental logPapp values from sources like TDC (e.g., Caco2_Wang with 906 compounds) or OCHEM (with ~9,402 compounds) [16].

- Standardize molecular structures using RDKit's StandardizeSmiles() and Cleanup() functions to ensure consistent representation. Remove duplicates and entries with high experimental variability (e.g., standard deviation of replicates > 0.3) [28] [11].

Descriptor Calculation and Feature Selection

- Calculate a comprehensive set of 2D descriptors using RDKit (e.g., Descriptors.descList for 209 descriptors) [28], PaDEL, or Mordred software.

- Perform feature selection to reduce dimensionality and mitigate overfitting. Use a recursive feature elimination (RFE) approach combined with a genetic algorithm (GA) to select the most informative descriptors (e.g., reducing from 523 to 41 predictors) [29].

Model Training with AutoML or Boosting

- Split the data using a time-split or scaffold split to simulate real-world forecasting or assess generalization to novel chemotypes [28] [16].

- Employ an AutoML framework like AutoGluon or a boosting algorithm like XGBoost/LightGBM. For example, in AutoGluon, specify the task as regression and provide the descriptor table as input. The framework will handle algorithm selection and hyperparameter tuning [16].

- Key hyperparameters for LightGBM include setting the maximum number of leaves to 35, the number of boosted trees to 2000, and the learning rate to 0.05 [28].
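
The LightGBM settings quoted above can be captured as a small configuration. Mapping "maximum number of leaves" to `num_leaves` and "number of boosted trees" to `n_estimators` is an interpretation based on LightGBM's scikit-learn-style parameter names; the cited work may have used a different interface. The helper function is hypothetical and imports LightGBM lazily, so the configuration can be inspected without the package installed.

```python
# Hyperparameters quoted in the text, using LightGBM's scikit-learn-style names.
LGBM_PARAMS = {
    "num_leaves": 35,       # maximum number of leaves per tree
    "n_estimators": 2000,   # number of boosted trees
    "learning_rate": 0.05,
}


def build_regressor(params=None):
    """Create an untrained LightGBM regressor from the settings above."""
    # Imported here so that reading LGBM_PARAMS does not require LightGBM.
    from lightgbm import LGBMRegressor

    return LGBMRegressor(**(params or LGBM_PARAMS))
```

A small learning rate paired with a large number of trees is a common combination; the remaining parameters are left at their library defaults in this sketch.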

Model Validation and Interpretation

- Validate the model on a held-out test set and, if available, an external validation set or an in-house pharmaceutical dataset to assess transferability [11].

- Use SHAP (SHapley Additive exPlanations) analysis to interpret the model and identify which molecular descriptors (e.g., topological polar surface area, logP) are most critical for permeability predictions [16].

Protocol 2: Implementing a Graph Neural Network with Contrastive Learning

This protocol is suited for projects with larger datasets that aim to leverage deep learning without heavy feature engineering.

Molecular Graph Construction

Self-Supervised Pretraining

- Pretrain an Atom-Attention Message Passing Neural Network (AA-MPNN) encoder on a large dataset of unlabeled molecules using contrastive learning [8].

- Generate positive samples for contrastive learning via graph augmentation techniques like atom masking, where random atoms in the molecule are masked. The model learns to create similar embeddings for the original and masked molecules [8].
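
The atom-masking augmentation described above can be illustrated on a plain list of atom symbols. This is a toy sketch only: the mask token, the 15% default masking fraction, and the function name are assumptions, and a real implementation would mask atom feature vectors inside the graph encoder's input rather than strings.

```python
import random

MASK_TOKEN = "[MASK]"  # hypothetical placeholder for a masked atom


def mask_atoms(atom_symbols, mask_fraction=0.15, seed=None):
    """Return a copy of the atom list with a random subset masked.

    The original and masked versions form a positive pair for
    contrastive pretraining. At least one atom is always masked.
    """
    if not 0 < mask_fraction <= 1:
        raise ValueError("mask_fraction must be in (0, 1]")
    if not atom_symbols:
        return [], []
    rng = random.Random(seed)
    n_mask = max(1, round(mask_fraction * len(atom_symbols)))
    masked_idx = set(rng.sample(range(len(atom_symbols)), n_mask))
    masked = [
        MASK_TOKEN if i in masked_idx else symbol
        for i, symbol in enumerate(atom_symbols)
    ]
    return masked, sorted(masked_idx)
```

Only the atom labels change; the bond structure is left intact, so the encoder has to infer the masked atoms' identity from their chemical context.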

Supervised Fine-Tuning

- After pretraining, add a feed-forward network (FFN) head to the encoder for the downstream regression task of predicting logPapp [8].

- Fine-tune the entire model (encoder and FFN) on the curated, labeled Caco-2 permeability dataset. This allows the model to adapt its general molecular knowledge to the specific property prediction task.

Model Evaluation and Attention Visualization

- Evaluate the fine-tuned model on a scaffold-split test set to assess its ability to generalize to new molecular scaffolds.

- Leverage the self-attention mechanisms in the AA-MPNN to visualize which atoms and substructures the model "attends to" when making a prediction, thereby enhancing model interpretability [8].

Workflow Diagram: Comparative Model Evaluation

The following diagram illustrates a generalized workflow for the systematic evaluation of different molecular representations, as discussed in the protocols above.

The Scientist's Toolkit: Essential Research Reagents & Computational Solutions

Table 2: Key Software and Tools for Molecular Representation and Modeling

| Tool Name | Type | Primary Function in Research | Key Advantage |
|---|---|---|---|
| RDKit [28] [11] | Cheminformatics Library | Calculates molecular descriptors (RDKit descriptors), generates fingerprints (Morgan/ECFP), and standardizes structures. | Open-source, widely adopted, and integrated into many workflows (e.g., KNIME). |
| PaDEL & Mordred [16] | Descriptor Calculation Software | Generates a comprehensive set of 2D and 3D molecular descriptors. | High descriptor coverage; Mordred includes 3D descriptors which can significantly boost performance [16]. |
| AutoGluon [16] | Automated Machine Learning (AutoML) | Automates the ML pipeline including feature preprocessing, model selection, and hyperparameter tuning. | Accessible for non-experts, produces strong baseline models with minimal code. |
| KNIME Analytics Platform [20] | Workflow Management | Provides a visual interface for building, validating, and deploying automated QSPR prediction workflows. | Promotes reproducibility and allows integration of various nodes for data handling, descriptor calculation, and ML. |
| ChemProp [11] | Deep Learning Framework | Specialized for molecular property prediction using Directed Message Passing Neural Networks (D-MPNN). | User-friendly implementation of state-of-the-art graph neural networks for molecules. |

The selection of an optimal molecular representation is a foundational step in building robust ML models for Caco-2 permeability prediction. For many practical applications in drug discovery, particularly with limited data, 2D and 3D descriptors used with boosting algorithms or AutoML provide an excellent balance of performance, interpretability, and computational efficiency [28] [16] [11]. For large, diverse datasets, molecular graphs combined with advanced GNNs and contrastive learning represent the cutting edge, offering high accuracy and novel insights without manual feature engineering [8]. Researchers are encouraged to validate their chosen approach on external or project-specific internal datasets to ensure real-world applicability, and to consider hybrid representations to fully leverage the complementary strengths of different molecular encoding strategies [11] [20].

Leveraging Multitask Learning (MTL) to Improve Predictions Across Related Endpoints

Within the broader scope of developing machine learning algorithms for Caco-2 permeability prediction, Multitask Learning (MTL) has emerged as a powerful paradigm to overcome a critical challenge in drug discovery: data scarcity. Traditional single-task models for predicting absorption, distribution, metabolism, and excretion (ADME) properties, including Caco-2 permeability, often suffer from limited generalization performance when training data is insufficient [31]. MTL addresses this by simultaneously learning multiple related tasks, allowing for shared representations and information transfer across tasks. This approach has demonstrated superior predictive accuracy and generalization compared to single-task models, particularly for ADME endpoints with limited experimental data [32] [31].

The MTL Paradigm in ADME Prediction

Conceptual Framework

Multitask learning operates on the principle that related tasks often share underlying biological or physicochemical determinants. In the context of ADME prediction, properties such as Caco-2 permeability, blood-brain barrier (BBB) penetration, and solubility are influenced by common molecular characteristics [8] [31]. By learning these tasks jointly, MTL models can identify and leverage these shared factors, leading to more robust and accurate predictions.

Quantitative Performance Advantages

Recent studies have demonstrated the benefits of MTL approaches over traditional single-task models across various ADME parameters. The table below summarizes results for five of the endpoints from a comprehensive study that developed an AI model capable of predicting ten different ADME parameters [31].

Table 1: Performance of MTL with Fine-Tuning (GNNMT+FT) for ADME Prediction

| ADME Parameter | Description | Number of Compounds | MTL Performance Advantage |
|---|---|---|---|
| Papp Caco-2 | Permeability coefficient (Caco-2) | 5,581 | Achieved highest performance versus conventional methods [31] |
| fubrain | Fraction unbound in brain homogenate | 587 | Addressed data scarcity, improving generalization [31] |
| Solubility | Solubility | 14,392 | Achieved highest performance versus conventional methods [31] |
| CLint | Hepatic intrinsic clearance | 5,256 | Achieved highest performance versus conventional methods [31] |
| Fup human | Fraction unbound in human plasma | 3,472 | Achieved highest performance versus conventional methods [31] |

This MTL approach, which combines multitask learning with subsequent fine-tuning for each specific ADME parameter, achieved the highest performance for seven out of ten ADME parameters compared to conventional methods [31]. The success is particularly notable for parameters with limited data, such as fubrain, where MTL mitigates overfitting by leveraging shared information from related tasks.

Protocol for Implementing MTL in Caco-2 Permeability Prediction

Data Compilation and Curation

Purpose: To assemble a high-quality, multi-task dataset for model training. Steps:

- Data Collection: Compile experimental data for Caco-2 permeability (Papp Caco-2) and related ADME parameters from public databases and in-house sources. Key parameters include fraction unbound in plasma (fup), solubility, hepatic intrinsic clearance (CLint), and blood-to-plasma ratio (Rb) [31].

- Data Curation: Standardize molecular structures using tools like the RDKit plugin in KNIME. Remove duplicates and mixtures. Address experimental variability by calculating mean values and standard deviations for compounds with repeated measurements [20].

- Data Partitioning: Split the data into training, validation, and test sets. A recommended split is 70% for training, 10% for validation, and 20% for independent testing [33]. Ensure the chemical space is well-represented across all splits.
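The curation and partitioning steps above can be sketched as follows. This is a minimal illustration using only the standard library; the function names, the compound identifiers, and the logPapp values are hypothetical, and the plain random shuffle does not by itself check that chemical space is well represented in every split.

```python
import random
import statistics
from collections import defaultdict


def aggregate_replicates(records):
    """Collapse repeated measurements per compound into (mean, standard deviation).

    records: iterable of (compound_id, log_papp) pairs.
    A compound measured only once gets a standard deviation of 0.0.
    """
    by_compound = defaultdict(list)
    for compound_id, value in records:
        by_compound[compound_id].append(value)
    return {
        cid: (statistics.mean(vals),
              statistics.stdev(vals) if len(vals) > 1 else 0.0)
        for cid, vals in by_compound.items()
    }


def split_70_10_20(compound_ids, seed=42):
    """Shuffle compound IDs and cut them into train/validation/test lists."""
    ids = sorted(compound_ids)          # fixed starting order for reproducibility
    random.Random(seed).shuffle(ids)
    n_train = len(ids) * 7 // 10        # 70% training
    n_valid = len(ids) // 10            # 10% validation, remainder is the test set
    return ids[:n_train], ids[n_train:n_train + n_valid], ids[n_train + n_valid:]


# Hypothetical measurements: 20 compounds, one of them measured twice.
records = [(f"cpd-{i}", -5.0 - 0.1 * i) for i in range(20)] + [("cpd-0", -5.4)]
aggregated = aggregate_replicates(records)
train_ids, valid_ids, test_ids = split_70_10_20(aggregated.keys())
```

Aggregating before splitting ensures that replicate measurements of one compound cannot end up on both sides of the train/test boundary.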

Molecular Representation and Feature Selection

Purpose: To convert chemical structures into a computer-interpretable format that captures relevant features. Steps:

- Descriptor Calculation: Generate molecular descriptors and fingerprints from 2D structures. Common choices include:

- Feature Selection: Reduce dimensionality to minimize noise and overfitting.

  - Apply a missing value cut-off (e.g., 10%) and remove low-variance descriptors [20].

  - Use recursive feature elimination with a Random Forest permutation importance score to identify the most predictive descriptors [20] [17].

  - Perform correlation analysis (Pearson correlation ≥ 0.85) to eliminate highly correlated features [20].
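The filtering steps that do not require a trained model (missing-value cut-off, low-variance removal, and correlation pruning) can be sketched in plain Python. This is an illustration under stated assumptions rather than the workflow of [20]: the variance threshold, the mean imputation of remaining gaps, the rule of keeping the first descriptor of a correlated pair, and the descriptor values are all hypothetical. Recursive feature elimination is omitted because it depends on a fitted Random Forest.

```python
import statistics


def pearson(x, y):
    """Pearson correlation coefficient of two equally long numeric lists."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


def filter_descriptors(table, max_missing=0.10, min_variance=1e-8, max_corr=0.85):
    """Filter a descriptor table given as {name: [values]}, None marking a gap."""
    kept = {}
    for name, values in table.items():
        missing = sum(v is None for v in values) / len(values)
        if missing > max_missing:
            continue  # too many missing values
        present = [v for v in values if v is not None]
        fill = statistics.fmean(present)
        column = [fill if v is None else v for v in values]  # mean imputation (assumption)
        if statistics.pvariance(column) <= min_variance:
            continue  # (near-)constant descriptor
        if any(abs(pearson(column, other)) >= max_corr for other in kept.values()):
            continue  # redundant with a descriptor that was already kept
        kept[name] = column
    return kept


# Hypothetical descriptor values for ten compounds.
table = {
    "TPSA": [20.0, 45.0, 60.0, 90.0, 120.0, 75.0, 33.0, 110.0, 52.0, 81.0],
    "TPSA_scaled": [2.0, 4.5, 6.0, 9.0, 12.0, 7.5, 3.3, 11.0, 5.2, 8.1],
    "logP": [3.1, 2.0, 4.2, 0.5, 1.1, 3.8, 2.7, 0.2, 4.5, 1.9],
    "constant": [1.0] * 10,
    "sparse": [None, None, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0],
}
selected = filter_descriptors(table)
```

In this toy table, `sparse` fails the missing-value cut-off, `constant` fails the variance check, and `TPSA_scaled` is dropped as redundant with `TPSA`, leaving `TPSA` and `logP`.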

Model Architecture and Training

Purpose: To construct and train a Multitask Learning model capable of predicting multiple ADME endpoints. Steps:

- Model Selection: Implement a Graph Neural Network (GNN) based MTL architecture. GNNs directly process molecular graphs, effectively characterizing complex structures [31].

- Multitask Pretraining:

  - Graph Embedding: Use a graph-embedding function, f_θ(G_i), to map a molecular graph G_i to an embedding vector h_i [31].

  - Shared Layers: The initial layers of the network are shared across all tasks to learn a common representation.

  - Task-Specific Heads: Following the shared layers, implement separate output layers, g_θm(h_i), for each ADME parameter m (e.g., Caco-2 permeability, solubility) [31].

  - Loss Function: Minimize the total multitask loss, L_MT, which is the sum of Smooth L1 losses for all tasks (Equation 4 & 5) [31].

- Task-Specific Fine-Tuning:

  - Use the pretrained shared layers from the multitask model as a fixed feature extractor.

  - Re-train only the task-specific output layers for each ADME parameter individually, minimizing the loss L_FT(m) for each task m (Equation 6) [31]. This step adapts the general knowledge to the specifics of each endpoint.
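The multitask objective described above, a sum of Smooth L1 losses over tasks, can be written out in a few lines. The sketch below is not the implementation from [31]: the per-task averaging, the skipping of unmeasured endpoints, the `beta` threshold of 1.0, the task names, and the numeric values are assumptions made for illustration.

```python
def smooth_l1(prediction, target, beta=1.0):
    """Smooth L1 loss for one prediction: quadratic near zero, linear beyond beta."""
    diff = abs(prediction - target)
    if diff < beta:
        return 0.5 * diff * diff / beta
    return diff - 0.5 * beta


def multitask_loss(predictions, targets):
    """Sum over tasks of the mean Smooth L1 loss on the measured compounds.

    predictions, targets: {task name: [values]}, one value per molecule.
    A target of None means the endpoint was not measured for that molecule
    and is skipped (masking is an assumption of this sketch).
    """
    total = 0.0
    for task, y_true in targets.items():
        pairs = [(p, t) for p, t in zip(predictions[task], y_true) if t is not None]
        if pairs:
            total += sum(smooth_l1(p, t) for p, t in pairs) / len(pairs)
    return total


# Hypothetical labels and predictions for three molecules and two endpoints.
targets = {"caco2_logPapp": [-5.0, -6.0, None], "solubility": [None, -3.0, -4.5]}
predictions = {"caco2_logPapp": [-5.5, -4.0, -5.2], "solubility": [-2.0, -3.2, -4.5]}

loss_mt = multitask_loss(predictions, targets)

# Fine-tuning on one endpoint uses the same loss restricted to that task.
loss_ft_caco2 = multitask_loss(
    {"caco2_logPapp": predictions["caco2_logPapp"]},
    {"caco2_logPapp": targets["caco2_logPapp"]},
)
```

Skipping missing labels is what lets sparsely measured endpoints share a training batch with well-populated ones; during fine-tuning only the parameters of the selected task head would be updated against the single-task term.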

Model Validation and Explanation

Purpose: To evaluate model performance and interpret predictions for lead optimization. Steps:

- Performance Validation: Assess the model using the held-out test set. Report Root Mean Square Error (RMSE) and R-squared (R²) for regression tasks, and Accuracy/AUC for classification tasks [20] [17].

- Interpretability Analysis: Apply explainable AI techniques, such as the Integrated Gradients (IG) method, to the MTL model. IG quantifies the contribution of individual atoms or substructures to the predicted ADME values, providing visual and quantitative insights for medicinal chemists [31].

- Prospective Validation: Test the model on external compound sets, such as known clinical candidates before and after lead optimization, to validate its predictive power and utility in a real-world drug discovery context [31].
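For the regression metrics named above, the definitions are short enough to state directly. The following sketch computes RMSE and R² on a held-out set; the logPapp values are hypothetical and serve only to make the example runnable.

```python
import math


def rmse(y_true, y_pred):
    """Root mean square error between measured and predicted values."""
    squared = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared) / len(squared))


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - residual sum of squares / total sum of squares."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Hypothetical held-out logPapp values and model predictions.
measured = [-4.5, -5.0, -5.5, -6.0]
predicted = [-4.7, -5.1, -5.2, -6.3]

test_rmse = rmse(measured, predicted)
test_r2 = r_squared(measured, predicted)
```

Note that R² computed this way can be negative on an external set when the model predicts worse than the mean of the measured values, which is itself a useful warning sign for poor transferability.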

Workflow Visualization

Diagram 1: MTL for ADME Prediction Workflow. This workflow outlines the key stages for developing a Multitask Learning model, from data preparation to final validation, highlighting the shared representation learning and task-specific adaptation crucial for MTL success [31] [33].

The Scientist's Toolkit: Essential Research Reagents & Platforms

Table 2: Key Software Tools and Platforms for MTL in Drug Discovery

| Tool/Platform Name | Type | Primary Function in MTL Research | Access |
|---|---|---|---|
| KNIME Analytics Platform [20] | Workflow Platform | Data curation, descriptor calculation, and automated QSPR model development. | Freely Available |
| RDKit [20] | Cheminformatics Library | Calculation of molecular descriptors and fingerprints within KNIME or Python environments. | Open Source |
| Enalos Cloud Platform [8] | Web Service | Provides pre-built models (e.g., AA-MPNN with Contrastive Learning) for predicting BBB and Caco-2 permeability. | Online Platform |
| Baishenglai (BSL) [34] | Comprehensive Platform | Integrates seven core tasks (e.g., property prediction, DTI) using GNNs and other advanced ML techniques. | Freely Available Online |
| kMoL Package [31] | Programming Library | Used for constructing Graph Neural Network (GNN) models, including multitask architectures. | Not Specified |
| scikit-learn [33] | Programming Library | Provides implementation of base learners like Random Forest for building MTL stacks (e.g., MTForestNet). | Open Source |

Integrating Multitask Learning into the predictive modeling toolkit for Caco-2 permeability and related ADME endpoints represents a significant advancement over single-task approaches. By leveraging shared information across tasks, MTL mitigates the challenges of data scarcity, enhances prediction accuracy for low-data endpoints, and provides more robust models for virtual screening and lead optimization. The protocols and resources outlined herein provide a foundation for researchers to implement and benefit from this powerful machine learning paradigm, ultimately contributing to more efficient and informed drug discovery pipelines.

Within drug discovery, predicting intestinal permeability is a critical step for assessing the potential oral bioavailability of new chemical entities. The Caco-2 cell line, derived from human colorectal adenocarcinoma, serves as a well-established in vitro model for this purpose, mimicking the human intestinal mucosa [35]. However, experimental permeability assessment is time-consuming, expensive, and subject to protocol-related variability, limiting its throughput in early discovery stages [35].

The integration of machine learning (ML) with automated workflow platforms like KNIME Analytics Platform presents a powerful strategy to overcome these limitations. This document provides detailed application notes and protocols for developing supervised recursive machine learning approaches on the KNIME platform to create reliable prediction models for Caco-2 permeability, framed within broader research on machine learning algorithms for this endpoint [35]. These automated workflows enable the high-throughput screening necessary for virtual compound libraries, facilitating faster and more cost-effective decision-making.

Workflow Methodology

The development of a robust Caco-2 permeability prediction model involves a multi-step process, from data collection and curation to model deployment. The methodology below is adapted from a published study that created an automated prediction platform using a curated dataset of over 4,900 molecules [35].

Data Collection and Curation

Data quality is the foundation of any reliable model. The initial step involves gathering experimental Caco-2 permeability data from public sources.

- Data Sources: The model can be constructed from several publicly available datasets, such as those published by Wang et al. (2016; 1,272 compounds), Wang and Cheng (2017; 1,827 compounds), and Wang et al. (2020; 4,464 compounds) [35].

- Data Standardization: All apparent permeability (