Lipophilicity Descriptors in QSAR: From Foundational Concepts to Advanced Applications in Drug Discovery



Abstract

This article provides a comprehensive overview of lipophilicity descriptors and their pivotal role in Quantitative Structure-Activity Relationship (QSAR) studies. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of lipophilicity, including its critical influence on a compound's absorption, distribution, and transport across biological membranes. The scope extends to methodological approaches for descriptor calculation and measurement, covering both traditional and emerging computational and chromatographic techniques. It addresses common challenges in model interpretation and optimization, highlighting advanced strategies from recent research. Finally, the article offers a rigorous framework for the validation and comparative analysis of lipophilicity descriptors against biological endpoints and ADMET properties, underscoring their indispensable value in designing effective and safe therapeutics.

Lipophilicity Fundamentals: Why This Key Physicochemical Property Governs Drug Activity

Lipophilicity is a fundamental physicochemical property in drug discovery, exerting a profound influence on a compound's absorption, distribution, metabolism, excretion, and toxicity (ADMET). In Quantitative Structure-Activity Relationship (QSAR) studies, lipophilicity descriptors are pivotal for building reliable models that correlate chemical structure with biological activity [1]. The partition coefficient (logP) and distribution coefficient (logD) are the two primary metrics used to quantify lipophilicity. These descriptors are integral to the "hydrophobic pharmacophore" concept in 3D-QSAR, significantly impacting ligand binding affinity and the success of virtual screening efforts [1]. While traditional rules like Lipinski's Rule of Five emphasized logP, the field is increasingly moving towards a more nuanced understanding, recognizing logD's critical role in predicting the behavior of ionizable compounds in varying physiological environments [2]. This guide provides a comparative analysis of logP and logD, underpinned by experimental data and their application in modern drug design.

Fundamental Concepts: logP vs. logD

logP is the base-10 logarithm of the partition coefficient P, the ratio of the equilibrium concentrations of the neutral (unionized) form of a compound in two immiscible solvents, typically 1-octanol and water [2]. It is a constant for a given compound at a specified temperature.

logD is the base-10 logarithm of the distribution coefficient D, the ratio of the summed concentrations of all forms of a compound (neutral, ionized, and partially ionized) in the two phases at a specific pH [3] [2]. Unlike logP, logD is pH-dependent.

For a monoprotic acid or base, logD can be related to logP through the compound's ionization constant (pKa), under the common assumption that only the neutral species partitions into the octanol phase [3]:

logD = logP - log(1 + 10^(pH - pKa)) (for acids, Δi = +1)

logD = logP - log(1 + 10^(pKa - pH)) (for bases, Δi = -1)
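The two equations above can be evaluated directly. The following is a minimal sketch, assuming a monoprotic compound whose ionized form does not partition into octanol; the function name `log_d` and the example values are illustrative and do not come from the cited sources.

```python
import math


def log_d(log_p: float, pka: float, ph: float, kind: str) -> float:
    """Estimate logD at a given pH for a monoprotic acid or base.

    Assumes only the neutral species enters the octanol phase.
    """
    if kind == "acid":
        exponent = ph - pka   # acids ionize as pH rises above pKa
    elif kind == "base":
        exponent = pka - ph   # bases ionize as pH falls below pKa
    else:
        raise ValueError("kind must be 'acid' or 'base'")
    return log_p - math.log10(1.0 + 10.0 ** exponent)


# Hypothetical acid with logP 3.5 and pKa 4.4, evaluated at pH 7.4.
print(round(log_d(3.5, 4.4, 7.4, "acid"), 2))  # prints 0.5
```

At pH equal to pKa, half of the compound is ionized and logD is about 0.3 log units below logP, which is a quick sanity check for any implementation.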

Table 1: Core Differences Between logP and logD

| Feature | Partition Coefficient (logP) | Distribution Coefficient (logD) |
|---|---|---|
| Chemical Species Measured | Neutral (unionized) form only [2] | All forms: neutral, ionized, and partially ionized [2] |
| pH Dependence | Constant, independent of pH [2] | Variable, depends on the pH of the aqueous phase [2] |
| Physicochemical Basis | Differential solubility of neutral species in octanol and water [3] | Apparent lipophilicity accounting for ionization state at a specific pH [3] |
| Primary Application | Fundamental measure of intrinsic lipophilicity for neutral compounds | More accurate prediction of solubility and permeability for ionizable drugs under physiological conditions [2] |

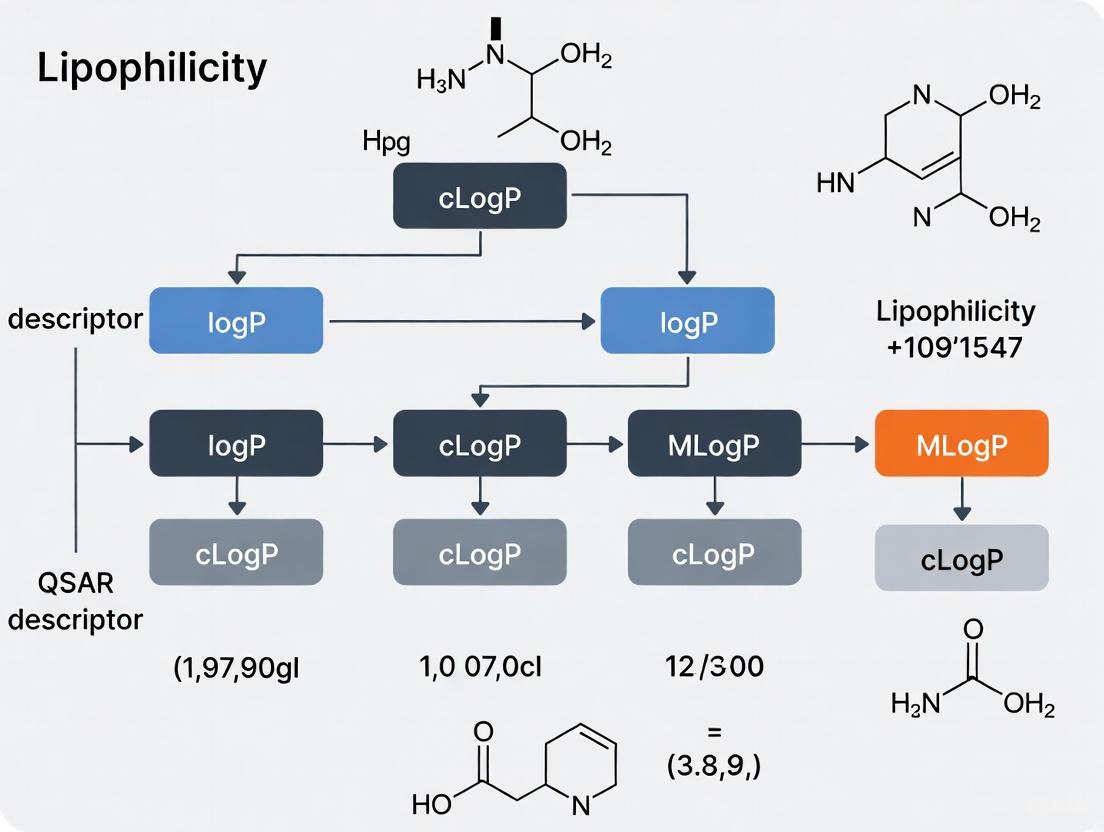

Diagram 1: Relationship between logP, logD, and drug properties. logP only measures the neutral species, while logD accounts for both neutral and ionized forms, which directly influence key properties like permeability and solubility.

Experimental Determination: Methodologies and Protocols

Several experimental techniques are employed to determine logP and logD values, each with distinct advantages and limitations.

Shake-Flask Method

The classical shake-flask method is considered a reference standard [4]. It involves dissolving the compound in a mixture of 1-octanol and a buffer solution (e.g., at pH 7.4 for logD7.4), vigorously shaking to allow partitioning, separating the phases by centrifugation, and quantifying the compound concentration in each phase using analytical techniques like UV spectrophotometry or HPLC [4]. While direct, this method is labor-intensive, requires high compound purity, and can be challenging for compounds with extreme logP values [4].
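The final calculation step of the shake-flask procedure can be sketched as follows. This is a minimal illustration, assuming the two phase concentrations have already been quantified in the same units; the function names and replicate values are hypothetical.

```python
import math


def shake_flask_log_d(conc_octanol: float, conc_aqueous: float) -> float:
    """logD (or logP for a neutral compound) from measured phase concentrations."""
    if conc_octanol <= 0 or conc_aqueous <= 0:
        raise ValueError("both concentrations must be positive")
    return math.log10(conc_octanol / conc_aqueous)


def mean_log_d(replicates):
    """Average logD over replicate (octanol, aqueous) concentration pairs."""
    values = [shake_flask_log_d(c_oct, c_aq) for c_oct, c_aq in replicates]
    return sum(values) / len(values)


# Hypothetical replicate measurements in arbitrary but matching units.
print(mean_log_d([(50.0, 0.5), (48.0, 0.5), (52.0, 0.5)]))
```

The positivity check reflects the practical limit noted above: for compounds with extreme logP values, one phase concentration can fall below the detection limit and the ratio becomes unreliable.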

Chromatographic Methods

Reversed-phase high-performance liquid chromatography (RP-HPLC) offers a robust and resource-sparing alternative [5]. This method correlates a compound's retention on a hydrophobic column with its lipophilicity. A calibration curve is built from reference standards with well-established logP values, and the retention factor of an unknown compound is then used to estimate its logP/logD [5] [4]. The method is suitable for high-throughput analysis and requires minimal amounts of compound, making it valuable for early-stage discovery [5].
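The calibration step can be sketched in a few lines. This is an illustrative example only, assuming the usual retention factor k = (t_R - t_0) / t_0 with t_0 the column dead time and a linear relationship between log k and logP; the standards, retention times, and function names are hypothetical and chosen so the arithmetic is easy to follow.

```python
import math


def log_retention_factor(t_r: float, t_0: float) -> float:
    """log k, where k = (t_R - t_0) / t_0 and t_0 is the column dead time."""
    if t_r <= t_0:
        raise ValueError("retention time must exceed the dead time")
    return math.log10((t_r - t_0) / t_0)


def fit_line(xs, ys):
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical calibration set: (retention time in minutes, reference logP).
DEAD_TIME = 1.0
STANDARDS = [(2.0, 1.0), (11.0, 2.5), (101.0, 4.0)]

log_ks = [log_retention_factor(t, DEAD_TIME) for t, _ in STANDARDS]
ref_log_ps = [log_p for _, log_p in STANDARDS]
slope, intercept = fit_line(log_ks, ref_log_ps)


def estimate_log_p(t_r: float) -> float:
    """Read an unknown compound's logP off the calibration line."""
    return slope * log_retention_factor(t_r, DEAD_TIME) + intercept


print(round(estimate_log_p(11.0), 2))
```

Because the estimate is only as good as the calibration line, predictions for compounds eluting outside the retention range of the standards should be treated with caution.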

Potentiometric Titration

This approach is applicable to ionizable compounds and involves titrating the sample in a two-phase system (octanol/water) [4]. The shift in the titration curve compared to a water-only system is used to calculate the logP. This method is limited to compounds with acid-base properties and requires high sample purity [4].

Table 2: Comparison of Key Experimental Methods for Lipophilicity Determination

| Method | Principle | Advantages | Disadvantages | Typical Throughput |
|---|---|---|---|---|
| Shake-Flask [4] | Direct partitioning between octanol and water | Considered a gold standard; direct measurement | Laborious; requires high compound purity; difficult for compounds with extreme logP values | Low |
| RP-HPLC [5] [4] | Correlation of retention time with lipophilicity | High-throughput, low compound consumption, robust against impurities | Indirect measurement; requires calibration standards | High |
| Potentiometric Titration [4] | pKa shift in a biphasic system | Provides pKa and logP simultaneously | Limited to ionizable compounds; requires high purity | Medium |

Computational Prediction: Models and Performance

Computational approaches are indispensable for high-throughput virtual screening. Models range from traditional fragment-based methods to modern machine learning algorithms.

- Fragment-Based Methods: These methods, such as those in the ClogP software, operate on the principle of averaged contributions of simple molecular fragments, which are corrected against large experimental datasets [3].

- Whole-Molecule Approaches: These use molecular lipophilicity potential (MLP) or topological indices to predict logP [3].

- Machine Learning (ML) and QSAR Models: Recent advances use ML to build correction models that improve upon commercial software predictions. For instance, models trained with public or proprietary data using ClogP and predicted pKa as descriptors have shown enhanced predictive capability for logD [6] [7].

- Quantum Chemical Calculations: These methods use descriptors derived from electronic structure calculations (e.g., atomic partial charges, dipole moments) or directly calculate solvation free energy using continuum solvation models to predict logP [3].

Table 3: Comparison of Computational logP/logD Prediction Tools and Performance

| Tool/Method | Type | Key Features | Reported Performance / Notes |
|---|---|---|---|
| ClogP [6] [3] | Fragment-based | Industry standard; uses summed fragment contributions | Can lead to systematic errors for chemically related series [6] |
| RTlogD Model [4] | Machine Learning (Graph Neural Network) | Integrates knowledge from chromatographic retention time, microscopic pKa, and logP via multitask learning | Superior performance compared to commonly used algorithms; addresses data scarcity [4] |
| AZlogD74 (AstraZeneca) [4] | Machine Learning | In-house model trained on >160,000 molecules; continuously updated | High performance due to extensive, proprietary dataset [4] |
| Quantum Chemical-based QSPR [3] | QM-based Descriptors | Uses atomic charges, MO energies, etc., from semi-empirical or DFT calculations | High computational cost; accuracy depends on solvation model [3] |

Diagram 2: General workflow for developing a QSAR model for logP/logD prediction, highlighting the cyclical process of validation and refinement.

The Scientist's Toolkit: Essential Reagents and Materials

Table 4: Key Research Reagent Solutions for Lipophilicity Studies

| Reagent / Material | Function in Experimentation |
|---|---|
| 1-Octanol | Standard organic phase that mimics lipid environments in shake-flask experiments; octanol-water values also serve as the reference for computational models [4] [8]. |
| Buffer Solutions (various pH) | Aqueous phase to control pH environment, critical for logD determination and simulating physiological conditions (e.g., pH 7.4) [2] [8]. |
| Reverse-Phase HPLC Columns (e.g., C18) | Stationary phase for chromatographic lipophilicity measurement; interacts with analytes based on hydrophobicity [5]. |
| Reference Standards (e.g., known logP drugs) | Compounds with well-established logP values for calibrating chromatographic methods or validating new assays [5]. |

The accurate definition and determination of lipophilicity via logP and logD are cornerstones of rational drug design. While logP remains a fundamental descriptor of intrinsic lipophilicity, logD provides a more physiologically relevant picture for ionizable compounds, which constitute the vast majority of drugs. The choice between them in QSAR studies must be intentional. Experimental methods like shake-flask and HPLC provide crucial ground-truth data, while modern computational strategies—especially machine learning models that leverage large datasets and transfer learning—are rapidly closing the gap between prediction and reality. As drug discovery ventures into novel chemical spaces beyond the Rule of Five, a sophisticated understanding and application of these lipophilicity descriptors will be more critical than ever for optimizing compound efficacy and safety.

Lipophilicity, quantitatively expressed as the partition coefficient (log P), is a fundamental molecular descriptor that profoundly influences the pharmacokinetic and pharmacodynamic profile of bioactive compounds. Within Quantitative Structure-Activity Relationship (QSAR) studies, lipophilicity serves as a critical independent variable for predicting a compound's behavior in biological systems. This property dictates passive diffusion across lipid bilayers, influencing absorption and distribution, while simultaneously impacting interactions with metabolic enzymes and transporters, thereby affecting metabolism, excretion, and toxicity (ADMET). The optimization of lipophilicity is therefore a central challenge in medicinal chemistry, as it represents a delicate balance between achieving sufficient membrane permeation and avoiding excessive tissue accumulation or non-specific binding that leads to toxicity. This guide objectively compares the experimental and computational methodologies used to quantify lipophilicity and its downstream effects on biological endpoints, providing a framework for researchers to select appropriate tools for drug development.

Experimental and In Silico Paradigms for Assessing Lipophilicity

The accurate determination of lipophilicity is a critical first step in understanding a compound's biological behavior. Researchers can choose from established experimental techniques or modern in silico prediction tools, each with distinct advantages and limitations. The following table provides a comparative overview of these key methodologies.

Table 1: Comparison of Methodologies for Lipophilicity Assessment

| Method Type | Specific Method | Key Output | Key Advantages | Key Limitations |
|---|---|---|---|---|
| Experimental | Shake-Flask [9] | log P (Partition Coefficient) | Considered a gold standard; direct measurement [9]. | Time-consuming; requires high compound purity; limited log P range (~-2 to 4) [9]. |
| Experimental | Chromatographic (RP-TLC, RP-HPLC) [9] | RM0, log k0 (Chromatographic Lipophilicity Indices) | High-throughput; small sample amount; good reproducibility [9]. | Indirect measure; values are system-dependent and relative to standards. |
| In Silico | Consensus Computational Models (e.g., iLOGP, XLOGP3) [9] [10] | log P (Predicted Partition Coefficient) | Extremely fast; no physical sample required; ideal for virtual screening [9]. | Accuracy varies by algorithm and chemical space; potential for significant prediction errors, especially for novel scaffolds [10]. |
| In Silico | Multitask Neural Networks (e.g., OCHEM) [10] | Simultaneous prediction of solubility & lipophilicity | High-throughput; can model multiple related properties at once [10]. | Performance depends on the quality and breadth of training data; "black box" nature can limit interpretability. |

Lipophilicity as the Primary Driver of Membrane Permeation

Membrane permeation is the first major biological hurdle a drug candidate must overcome. The Parallel Artificial Membrane Permeation Assay (PAMPA) is a widely adopted high-throughput model for evaluating passive transcellular permeability, a process heavily governed by lipophilicity.

Key Experimental Protocol: PAMPA for Permeability Screening

A standard PAMPA protocol involves creating a lipid-impregnated filter that acts as an artificial membrane between a donor and an acceptor well plate [11]. The test compound is added to the donor well, and its appearance in the acceptor compartment is measured over time, typically using UV spectroscopy or LC-MS, to calculate an apparent permeability coefficient (Papp) [11]. Critical steps include:

- Membrane Formation: A microfiltration plate is coated with a lipid solution (e.g., egg lecithin in n-dodecane or other solvents like 1,9-decadiene or hexadecane) to mimic the biological barrier [11].

- Assay Execution: The donor plate is filled with buffer (pH 7.3) containing the test compound and sandwiched with the membrane plate and an acceptor plate. The system is incubated unstirred or with minimal agitation for a set period (e.g., several hours) [11].

- Analysis and UWL Correction: The concentration in the acceptor compartment is analyzed. For highly hydrophobic compounds (calculated log Papp > -4.5), the observed permeability is often lower than predicted due to the barrier of the unstirred water layer (UWL) and membrane retention; this necessitates correction via bilinear QSAR models [11].
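The permeability calculation at the end of the assay can be sketched as follows. This is a minimal example, assuming the widely used two-compartment end-point equation with no membrane retention and no UWL correction; it is not necessarily the exact expression used in [11], and the function name, units, and numerical values are illustrative.

```python
import math


def pampa_permeability(c_acceptor, c_donor, v_donor, v_acceptor, area, time_s):
    """Effective permeability (cm/s) from end-point concentrations.

    Concentrations share one unit, volumes are in cm^3, area in cm^2,
    time in seconds. Assumes no compound is retained in the membrane.
    """
    # Concentration both wells would reach at full equilibration.
    c_eq = (c_donor * v_donor + c_acceptor * v_acceptor) / (v_donor + v_acceptor)
    ratio = c_acceptor / c_eq
    if not 0.0 <= ratio < 1.0:
        raise ValueError("acceptor concentration must be below equilibrium")
    factor = (v_donor * v_acceptor) / ((v_donor + v_acceptor) * area * time_s)
    return -factor * math.log(1.0 - ratio)


# Hypothetical 5 h incubation with 0.3 mL donor and 0.2 mL acceptor wells.
pe = pampa_permeability(
    c_acceptor=21.74, c_donor=85.51,
    v_donor=0.3, v_acceptor=0.2, area=0.3, time_s=5 * 3600,
)
print(f"log Pe = {math.log10(pe):.2f}")
```

For highly lipophilic compounds, the mass-balance assumption in this sketch breaks down because a significant fraction of the compound stays in the membrane, which is one reason the correction described in the last step is needed.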

Data Correlation: PAMPA Permeability and Caco-2 Cell Models

PAMPA permeability coefficients show a strong correlation with permeability from more complex, cell-based models like Caco-2, which themselves are used to predict human intestinal absorption [11]. This validates PAMPA as a reliable, high-throughput tool for early-stage permeability screening. The relationship between lipophilicity and permeability is not linear; beyond an optimal log P, the UWL becomes the rate-limiting barrier.
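The non-linear dependence of permeability on log P is often described with a bilinear function. The sketch below assumes the general Kubinyi-type bilinear form, a·log P − b·log(β·P + 1) + c; the coefficients are hypothetical and are not the fitted values from [11].

```python
import math


def bilinear_log_perm(log_p, a, b, beta, c):
    """Kubinyi-type bilinear model: a*logP - b*log10(beta*P + 1) + c."""
    return a * log_p - b * math.log10(beta * 10.0 ** log_p + 1.0) + c


def optimal_log_p(a, b, beta):
    """log P at which the bilinear curve peaks; defined only for b > a > 0."""
    if not b > a > 0:
        raise ValueError("a maximum exists only when b > a > 0")
    return math.log10(a / (beta * (b - a)))


# Hypothetical coefficients chosen for illustration only.
A, B, BETA, C = 1.0, 2.0, 0.01, -6.0
peak = optimal_log_p(A, B, BETA)
for lp in (0.0, peak, 4.0):
    print(f"logP {lp:.1f} -> log Papp {bilinear_log_perm(lp, A, B, BETA, C):.2f}")
```

With b equal to a, the same function levels off to a plateau instead of declining, which corresponds to a limiting barrier such as the UWL capping the observed permeability.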

Figure 1: The Permeation Pathway. This diagram visualizes the pathway of a compound crossing a lipid membrane, highlighting the unstirred water layer (UWL) as a critical barrier for highly lipophilic molecules.

The Distribution Dilemma: Lipophilicity and Blood-Brain Barrier Penetration

Distribution to specific tissues, particularly the brain, is a key determinant of a drug's efficacy and safety. The Blood-Brain Barrier (BBB) is a highly selective endothelial membrane that tightly regulates central nervous system (CNS) access.

Key Experimental & Computational Protocol: Evaluating BBB Permeability

BBB permeability can be assessed through in vivo or in silico methods.

- In Vivo Measurement: The gold-standard metric is log BB, the logarithm of the ratio of the steady-state concentration of a drug in the brain to that in the blood or plasma [12]. This is determined by administering the compound to laboratory rodents, followed by tissue extraction and bioanalysis [12].

- In Silico QSAR Modeling: Given the cost and ethical concerns of in vivo studies, QSAR models are widely used for prediction. A robust QSAR workflow involves:

  - Data Curation: Compiling a large dataset of chemical structures and their corresponding experimental log BB values from public sources like ChEMBL and the literature [12] [13].

  - Descriptor Calculation & Model Training: Calculating molecular descriptors (e.g., topological, physicochemical) and using machine learning algorithms (e.g., Multiple Linear Regression (MLR), Random Forest (RF), or deep learning frameworks like ImageMol) to build a predictive model [12] [14].

  - Validation: Rigorously validating the model using external test sets and statistical measures like sensitivity, negative predictivity, and coverage to ensure real-world applicability [12].
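The validation statistics named in the last step can be computed from a list of true and predicted classes. This is a minimal sketch, assuming a binary BBB-permeable (1) versus non-permeable (0) classification and assuming that coverage means the fraction of compounds for which the model issues a prediction; out-of-domain compounds are marked `None`. These conventions are assumptions, not definitions taken from [12].

```python
def validation_metrics(y_true, y_pred):
    """Sensitivity, negative predictivity, and coverage for a binary classifier.

    y_pred entries of None mark compounds outside the applicability domain.
    """
    scored = [(t, p) for t, p in zip(y_true, y_pred) if p is not None]
    tp = sum(1 for t, p in scored if t == 1 and p == 1)
    fn = sum(1 for t, p in scored if t == 1 and p == 0)
    tn = sum(1 for t, p in scored if t == 0 and p == 0)
    nan = float("nan")
    return {
        "sensitivity": tp / (tp + fn) if (tp + fn) else nan,
        "negative_predictivity": tn / (tn + fn) if (tn + fn) else nan,
        "coverage": len(scored) / len(y_true) if y_true else nan,
    }


# Hypothetical external test set of eight compounds.
truth = [1, 1, 1, 0, 0, 0, 1, 0]
preds = [1, 1, 0, 0, 0, 1, None, None]
print(validation_metrics(truth, preds))
```

Reporting coverage alongside the accuracy-type metrics makes it visible when a model looks good only because it declines to predict the difficult compounds.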

Data Correlation: Molecular Properties and BBB Penetration

QSAR models consistently identify a set of core physicochemical properties that govern BBB permeability [12]. These include molecular size/polar surface area, hydrogen bonding potential, and critically, lipophilicity [12]. Compounds with moderate lipophilicity and low polar surface area generally demonstrate superior passive diffusion across the BBB. However, highly lipophilic compounds may be substrates for efflux transporters like P-glycoprotein (P-gp), which actively pumps them out of the brain, underscoring the need for a balanced approach [12].

Table 2: Key Descriptors in BBB Permeability QSAR Models

| Molecular Descriptor Category | Specific Examples | Influence on log BB |
|---|---|---|
| Lipophilicity | Calculated log P (e.g., ALOGP, MLOGP) [12] | A primary driver; optimal mid-range values favor permeation. |
| Polarity & Size | Topological Polar Surface Area (TPSA), Molecular Weight [12] | Inverse relationship; high TPSA/MW reduces permeation. |
| Hydrogen Bonding | Number of Hydrogen Bond Donors/Acceptors [12] | Inverse relationship; high counts reduce permeation. |

An Integrated View: Lipophilicity within the ADMET Spectrum

Lipophilicity's influence extends far beyond permeation and distribution, affecting the entire ADMET profile. Modern drug discovery employs integrated in silico workflows to profile compounds early on.

Key Experimental & Computational Protocol: Comprehensive ADMET Prediction

A standard computational ADMET assessment involves:

- Structure Preparation and Optimization: 2D chemical structures are drawn (e.g., with ChemDraw) and converted to 3D structures. Energy minimization and geometry optimization are then performed with a quantum-chemical method such as Density Functional Theory (DFT), for example the B3LYP functional with the 6-31G basis set, to obtain a stable low-energy conformer [15] [16].

- Descriptor Calculation and Drug-Likeness Filtering: Software like PaDEL-Descriptor is used to calculate thousands of molecular descriptors (constitutional, topological, quantum-chemical) [15] [17]. Compounds are first filtered through rules like Lipinski's Rule of Five, Veber, or Egan rules to flag those with potentially poor oral bioavailability [15] [9].

- ADMET Endpoint Prediction: The optimized structures and/or their descriptors are used as input for predictive platforms such as SwissADME and pkCSM. These tools estimate a wide range of properties, including solubility, BBB permeability, CYP450 enzyme inhibition, and hepatotoxicity [15] [9] [18].
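The drug-likeness filtering step can be sketched as below. The example assumes the descriptor values have already been computed by an external tool and uses the commonly quoted thresholds for Lipinski's Rule of Five and the Veber rules; the class and function names are hypothetical, and allowing one Lipinski violation is a convention assumed here.

```python
from dataclasses import dataclass


@dataclass
class Descriptors:
    mol_weight: float      # g/mol
    log_p: float           # calculated octanol-water logP
    h_donors: int          # hydrogen bond donors
    h_acceptors: int       # hydrogen bond acceptors
    rotatable_bonds: int
    tpsa: float            # topological polar surface area, square angstroms


def lipinski_violations(d: Descriptors) -> int:
    """Count Rule of Five violations."""
    checks = [
        d.mol_weight > 500,
        d.log_p > 5,
        d.h_donors > 5,
        d.h_acceptors > 10,
    ]
    return sum(checks)


def passes_lipinski(d: Descriptors, max_violations: int = 1) -> bool:
    return lipinski_violations(d) <= max_violations


def passes_veber(d: Descriptors) -> bool:
    """Veber criteria: at most 10 rotatable bonds and TPSA at most 140."""
    return d.rotatable_bonds <= 10 and d.tpsa <= 140


# Hypothetical compound with precomputed descriptors.
candidate = Descriptors(350.0, 2.1, 2, 5, 4, 75.0)
print(passes_lipinski(candidate), passes_veber(candidate))  # prints True True
```

Such filters only flag compounds for review; they are not a substitute for the endpoint predictions in the final step.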

Data Correlation: The Lipophilicity-Toxicity Link

A strong correlation exists between high lipophilicity and an increased risk of toxicity and adverse drug reactions [9]. This is due to several factors:

- Promiscuous Binding: Lipophilic compounds are more likely to engage in off-target interactions, leading to unintended pharmacological effects and toxicity [9].

- Metabolic Instability: High lipophilicity often makes compounds more susceptible to metabolism by cytochrome P450 enzymes, potentially generating reactive, toxic metabolites [14].

- Tissue Accumulation: Excessive lipophilicity leads to sequestration in adipose tissue and other lipid-rich compartments, resulting in a long half-life and potential chronic toxicity [9].

Figure 2: The log P - ADMET Relationship. This diagram illustrates the direct influence of lipophilicity (log P) on ADME properties and its established correlation with toxicity risks.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful investigation into lipophilicity and its biological effects relies on a suite of specific reagents, software, and experimental systems.

Table 3: Essential Research Reagents and Tools

| Tool / Reagent Name | Function / Role | Field of Application |
|---|---|---|
| Egg Lecithin / n-dodecane [11] | Lipid solution for creating the artificial membrane in PAMPA. | In Vitro Permeability |
| Caco-2 Cell Line [11] | Human colon adenocarcinoma cell line used as an in vitro model of intestinal permeability. | In Vitro Permeability |
| RP-TLC Plates [9] | Reversed-phase thin-layer chromatography plates for experimental determination of lipophilicity indices (RM0). | Lipophilicity Measurement |
| PaDEL-Descriptor [15] [17] | Open-source software for calculating molecular descriptors for QSAR model building. | Computational Chemistry |
| SwissADME / pkCSM [15] [9] | Freely accessible web tools for predicting the ADMET profile of chemical compounds. | ADMET Prediction |
| DFT (B3LYP/6-31G) [15] [16] | A computational method for optimizing molecular geometry and calculating quantum-chemical descriptors. | QSAR / Molecular Modeling |
| ImageMol [14] | A self-supervised image representation learning framework for predicting molecular properties and targets. | AI in Drug Discovery |
| PharmaBench [13] | A large, curated benchmark dataset for developing and validating ADMET predictive models. | Data Science / Cheminformatics |

In Quantitative Structure-Activity Relationship (QSAR) studies, molecular descriptors serve as the fundamental numerical representations that bridge chemical structure with biological activity and physicochemical properties. For predicting lipophilicity—a critical parameter governing drug absorption, distribution, metabolism, and excretion (ADME)—the selection of appropriate descriptor methodologies directly impacts model accuracy and interpretability. Fragment-based, atomic contribution, and property-based descriptors represent three distinct philosophical approaches to quantifying molecular characteristics, each with unique strengths and limitations for specific research applications in drug development. As the field advances, understanding the core distinctions, computational requirements, and optimal use cases for each descriptor type enables researchers to make informed decisions in QSAR model development, particularly for complex properties like lipophilicity that influence compound behavior in biological systems.

Theoretical Foundations and Methodologies

Fragment-Based Descriptors

Fragment-based descriptors operate on the principle that molecular properties emerge from identifiable structural subunits within a compound. These descriptors systematically decompose molecules into functional groups, rings, or other chemically meaningful substructures, representing the molecule as a collection of these fragments. The foundation of this approach lies in fragment-based drug discovery (FBDD), where small, low-complexity molecular fragments serve as efficient starting points for lead optimization [19] [20]. Unlike traditional high-throughput screening that uses large, drug-like molecules, FBDD utilizes smaller fragments (typically ≤20 heavy atoms) that display more 'atom-efficient' binding interactions despite lower initial affinity [19].

Key Methodology: The process typically begins with molecular fragmentation using algorithms such as Retrosynthetic Combinatorial Analysis Procedure (RECAP) or Breaking of Retrosynthetically Interesting Chemical Substructures (BRICS) [19] [20]. These techniques apply chemically logical cleavage rules to break molecules at specific bond types while ensuring the resulting fragments maintain chemical validity. For example, RECAP fragmentation identifies and breaks bonds formed through common chemical reactions, generating fragments that resemble building blocks used in synthetic chemistry [20]. The resulting fragments are then encoded as binary fingerprints indicating presence or absence, or as count descriptors quantifying occurrences within the parent molecule.
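The encoding step at the end of this process can be illustrated without any cheminformatics library. The sketch below assumes fragmentation has already been done by a tool implementing RECAP or BRICS rules; the fragment strings are hypothetical placeholders for that output, and the function names are illustrative.

```python
from collections import Counter


def build_vocabulary(fragment_lists):
    """Sorted list of every distinct fragment seen in the dataset."""
    return sorted({frag for frags in fragment_lists for frag in frags})


def count_vector(fragments, vocabulary):
    """Occurrences of each vocabulary fragment in one molecule."""
    counts = Counter(fragments)
    return [counts.get(frag, 0) for frag in vocabulary]


def binary_fingerprint(fragments, vocabulary):
    """1 if the fragment is present in the molecule, else 0."""
    return [1 if n else 0 for n in count_vector(fragments, vocabulary)]


# Hypothetical fragment lists for two molecules (placeholder SMILES-like strings).
dataset = [
    ["c1ccccc1", "C(=O)N", "c1ccccc1"],
    ["C(=O)N", "CO"],
]
vocab = build_vocabulary(dataset)
for frags in dataset:
    print(count_vector(frags, vocab), binary_fingerprint(frags, vocab))
```

Count vectors keep the information that a fragment occurs twice, which can matter for additive properties such as lipophilicity, while binary fingerprints are more compact and less sensitive to molecule size.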

Recent advances include the development of specialized foundation models like FragAtlas-62M, a GPT-2 based chemical language model trained on over 62 million fragments from the ZINC-22 database [21]. Such models achieve 99.90% chemical validity in fragment generation while maintaining broad coverage of fragment chemical space, enabling more comprehensive fragment descriptor development [21]. The generated fragments can be further described using properties such as the fraction of sp3-hybridized carbon atoms (Fsp3), plane of best fit (PBF), and principal moments of inertia (PMI) to capture three-dimensional characteristics [22].

Atomic Contribution Descriptors

Atomic contribution approaches conceptualize molecules as collections of atoms, with each atom contributing incrementally to the overall molecular properties. These methods typically assign values to atoms based on their type, hybridization state, and chemical environment, then sum these atomic contributions to arrive at molecular-level predictions. The fundamental premise is that properties like lipophilicity can be approximated as the sum of contributions from all constituent atoms, modified by their molecular context.

Key Methodology: The development of atomic contribution descriptors begins with parameterization using experimental data. Researchers derive atomic coefficients by fitting contributions to measured properties across diverse chemical datasets. For lipophilicity prediction, atom-additive schemes such as the Ghose-Crippen approach underlying ALogP use a large set of experimental log P values to determine contribution parameters for atoms in different environments [23]; ClogP, by contrast, sums fragment contributions and belongs to the fragment-based family. The methodology accounts for factors such as atomic hybridization, bond types, and proximity to functional groups that might influence the atom's contribution.
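The additive premise can be sketched as a simple lookup-and-sum. The atom-type labels and contribution values below are invented placeholders for illustration and are not parameters from any published scheme; a real implementation would take both from a fitted parameter set.

```python
# Placeholder atom-type contributions (hypothetical, not published values).
ATOM_CONTRIBUTIONS = {
    "C_aromatic": 0.30,
    "C_aliphatic": 0.15,
    "O_hydroxyl": -0.30,
    "N_amine": -0.50,
}


def additive_log_p(atom_types, contributions=ATOM_CONTRIBUTIONS, intercept=0.0):
    """Sum per-atom contributions to approximate a molecular logP."""
    unknown = sorted(set(atom_types) - set(contributions))
    if unknown:
        # Refuse to guess for atom types outside the parameter set.
        raise KeyError(f"no parameters for atom types: {unknown}")
    return intercept + sum(contributions[t] for t in atom_types)


# A phenol-like atom list: six aromatic carbons and one hydroxyl oxygen.
phenol_like = ["C_aromatic"] * 6 + ["O_hydroxyl"]
print(round(additive_log_p(phenol_like), 2))  # prints 1.5 with these placeholders
```

Raising an error for unparameterized atom types, rather than silently treating them as zero, keeps the prediction inside the chemical space the parameters were fitted on.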

A more sophisticated implementation appears in Property-Labelled Materials Fragments (PLMF), which adapts fragment descriptors for inorganic crystals by differentiating atoms based on tabulated chemical and physical properties [24]. In this approach, atoms are "colored" according to properties including Mendeleev group and period numbers, valence electron count, atomic mass, electron affinity, ionization potentials, electronegativity, and polarizability [24]. The model establishes connectivity through Voronoi-Dirichlet partitioning and considers both path fragments (linear strands of up to four atoms) and circular fragments (coordination polyhedra) to capture the atomic environment [24].

Recent innovations include novel atom-pair descriptors that integrate both fingerprint and property characteristics [23]. These descriptors represent molecules as lists of atom-pair feature sets, with each set containing atom types for two atoms, their relationship features (connectivity, ring membership), and isomerism information (cis-trans configuration) [23]. This hybrid approach maintains atomic-level resolution while capturing important relational information between atomic pairs.

Property-Based Descriptors

Property-based descriptors utilize whole-molecule physicochemical properties as predictive variables in QSAR models. These descriptors capture emergent molecular properties that result from the complex interplay of atomic and fragment contributions, providing a more holistic representation of molecular characteristics. For lipophilicity prediction, property-based descriptors offer the advantage of directly incorporating relevant physicochemical information rather than inferring it from structural components.

Key Methodology: Property-based descriptor calculation begins with the computation of fundamental molecular properties using specialized software tools. Commonly used platforms include PaDEL-Descriptor, Open Babel, and RDKit, which can compute thousands of molecular descriptors from chemical structure inputs [17] [23]. These descriptors encompass diverse property categories including topological indices, constitutional descriptors, electronic properties, and geometrical descriptors.

For lipophilicity modeling, key property-based descriptors often include the logarithm of the octanol-water partition coefficient (LogP), topological polar surface area (TPSA), molecular weight, hydrogen bond donor and acceptor counts, rotatable bond count, and molar refractivity [17] [23]. The calculation methods vary: for example, LogP may be computed using fragment-based methods like ClogP or atom-based contribution methods like ALogP as implemented in VEGA software [25]. Similarly, TPSA calculations employ fragment-based approaches that sum contributions from polar atom types, representing a hybrid between fragment and property-based methodologies [23].

Advanced implementations incorporate machine learning to optimize descriptor importance during model training. Counter-propagation artificial neural networks (CPANN) can dynamically adjust molecular descriptor importance values for different structural classes of molecules, enhancing model adaptability to diverse compound sets [26]. This approach improves classification performance while maintaining interpretability through identification of key molecular features responsible for specific endpoint predictions [26].

Table 1: Core Methodological Principles of Three Descriptor Types

| Descriptor Type | Fundamental Unit | Calculation Approach | Key Algorithms/Methods |
|---|---|---|---|
| Fragment-Based | Molecular substructures | Decomposition into functional units | RECAP, BRICS, Retrosynthetic rules [20] |
| Atomic Contribution | Individual atoms | Summation of atomic parameters | Atom-additive logP schemes (e.g., ALogP), Atom-type descriptors, PLMF [24] [23] |
| Property-Based | Whole-molecule properties | Direct computation from structure | PaDEL, RDKit, Topological indices [17] [23] |

Experimental Protocols and Workflow Implementation

Standardized QSAR Modeling Workflow

Implementing a robust QSAR modeling approach requires a systematic workflow that ensures reproducible and predictive models. The following protocol outlines a standardized procedure applicable across descriptor types, with specific considerations for lipophilicity prediction:

Dataset Curation and Preprocessing: Compile a diverse set of compounds with experimentally measured lipophilicity values (e.g., LogP). The dataset should encompass sufficient structural diversity to support model generalization while maintaining a balanced representation of chemical classes. Remove compounds with questionable measurements or structural errors. In one cyclodextrin affinity study, for example, researchers cleaned the data by removing structural and activity outliers, retaining only one-to-one complexes measured in water at pH 7 and 298±2 K [17].

Chemical Structure Standardization: Process all chemical structures through standardized normalization procedures including neutralization of salts, tautomer standardization, and removal of duplicate structures. This ensures consistent descriptor calculation across the chemical dataset.

Descriptor Calculation: Compute molecular descriptors using selected approaches. For fragment-based descriptors, apply fragmentation algorithms like RECAP or BRICS. For atomic contribution methods, implement parameterized atomic contribution schemes. For property-based descriptors, calculate physicochemical properties using software like PaDEL-Descriptor, which can generate over 1,000 chemical descriptors for each molecule [17].

Dataset Splitting: Divide the dataset into training, testing, and external validation sets using representative sampling of the target property values. A common approach employs a 75:25 train-to-test split with multiple partitions to ensure robustness, plus an external validation set comprising 15% of the data withheld from model development [17].
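As a rough sketch of the splitting step, the snippet below withholds 15% of the compounds as an external set and divides the remainder 75:25 into training and test sets. The function name `split_dataset`, the `(identifier, logP)` tuple layout, and the plain shuffled split are assumptions made for illustration; sampling that is representative of the target property values, as the protocol recommends, would need an additional stratification step that is not shown here.

```python
import random


def split_dataset(records, external_fraction=0.15, test_fraction=0.25, seed=0):
    """Return (train, test, external) lists drawn from `records`.

    The external set is removed first; the remaining compounds are then
    split into training and test sets according to `test_fraction`.
    """
    rng = random.Random(seed)          # fixed seed keeps the partition reproducible
    shuffled = list(records)
    rng.shuffle(shuffled)

    n_external = round(len(shuffled) * external_fraction)
    external = shuffled[:n_external]
    modelling = shuffled[n_external:]  # data available for model development

    n_test = round(len(modelling) * test_fraction)
    test = modelling[:n_test]
    train = modelling[n_test:]
    return train, test, external


# Hypothetical data set: 100 compounds with made-up logP values.
compounds = [(f"cmpd_{i}", 0.05 * i) for i in range(100)]
train, test, external = split_dataset(compounds, seed=1)
print(len(train), len(test), len(external))
```

Calling the function with several different `seed` values gives the multiple partitions mentioned above while leaving the external-set size unchanged.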

Model Training and Validation: Develop QSAR models using appropriate statistical or machine learning methods. Validate models using both internal (cross-validation) and external validation techniques. For the cyclodextrin affinity models, researchers performed leave-one-out cross-validation, y-randomization, and applicability domain analysis to ensure model reliability [17].
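The two internal checks named here, leave-one-out cross-validation and y-randomization, can be illustrated with a deliberately small example. The sketch below uses a single-descriptor least-squares line in place of a real QSAR model; the helper names and the data are hypothetical, and the cross-validated Q² is taken as 1 minus PRESS divided by the total sum of squares about the mean, which is one common convention.

```python
import random


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def loo_q2(xs, ys):
    """Leave-one-out Q2: refit without each compound, then predict it."""
    press = 0.0
    for i in range(len(xs)):
        slope, intercept = fit_line(xs[:i] + xs[i + 1:], ys[:i] + ys[i + 1:])
        press += (ys[i] - (slope * xs[i] + intercept)) ** 2
    mean_y = sum(ys) / len(ys)
    ss_total = sum((y - mean_y) ** 2 for y in ys)
    return 1.0 - press / ss_total


def y_randomization(xs, ys, n_rounds=50, seed=0):
    """Q2 values obtained after shuffling the responses (should be poor)."""
    rng = random.Random(seed)
    scores = []
    for _ in range(n_rounds):
        shuffled = list(ys)
        rng.shuffle(shuffled)
        scores.append(loo_q2(xs, shuffled))
    return scores


# Hypothetical descriptor values and measured responses.
descriptor = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0]
response = [1.9, 3.1, 4.0, 5.2, 5.9, 7.1, 8.0, 8.9]
scrambled = y_randomization(descriptor, response)
print("Q2 (real data):", round(loo_q2(descriptor, response), 3))
print("mean Q2 (scrambled):", round(sum(scrambled) / len(scrambled), 3))
```

A model whose Q² on scrambled responses approaches its Q² on the real data is probably fitting chance correlations, which is the failure y-randomization is meant to expose.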

Model Interpretation and Application: Interpret the validated models to identify key structural features influencing lipophilicity. Apply the models to predict lipophilicity for new chemical entities, ensuring predictions fall within the model's applicability domain.
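The text does not say how the applicability domain should be defined. One of the simplest options, shown below as an assumption rather than as the method used in the cited studies, is a bounding-box check: a new compound is considered inside the domain only if each of its descriptor values lies within the range spanned by the training set. All names and numbers are illustrative.

```python
def descriptor_ranges(training_rows):
    """Return a (min, max) pair for every descriptor column."""
    columns = list(zip(*training_rows))
    return [(min(column), max(column)) for column in columns]


def in_domain(row, ranges):
    """True if every descriptor value falls inside its training range."""
    return all(low <= value <= high for value, (low, high) in zip(row, ranges))


# Hypothetical training descriptors: [calculated logP, molecular weight].
training = [[1.0, 200.0], [3.0, 350.0], [2.0, 500.0]]
ranges = descriptor_ranges(training)

print(in_domain([2.5, 300.0], ranges))  # inside both ranges
print(in_domain([4.2, 300.0], ranges))  # logP exceeds the training maximum
```

Range checks are coarse because they ignore correlations between descriptors; leverage-based or distance-based definitions are common alternatives.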

Specialized Protocols by Descriptor Type

Fragment-Based Protocol: Implement molecular fragmentation using RECAP or similar rules that break molecules at specific bond types (amide, ester, etc.) [20]. Encode the resulting fragments as binary fingerprints or count vectors. For 3D characterization, compute shape descriptors such as principal moments of inertia (PMI) and plane of best fit (PBF) to capture molecular geometry [22].
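The encoding step can be sketched independently of the fragmentation itself. In the example below the fragment labels are hypothetical placeholders standing in for the output of a RECAP or BRICS run; the code only shows how such lists become count vectors or binary fingerprints over a shared vocabulary.

```python
from collections import Counter


def build_vocabulary(fragment_lists):
    """Sorted list of every distinct fragment seen in the data set."""
    return sorted({frag for frags in fragment_lists for frag in frags})


def count_vector(fragments, vocabulary):
    """Number of occurrences of each vocabulary fragment in one molecule."""
    counts = Counter(fragments)
    return [counts[frag] for frag in vocabulary]


def binary_fingerprint(fragments, vocabulary):
    """1 if the fragment is present in the molecule, otherwise 0."""
    present = set(fragments)
    return [1 if frag in present else 0 for frag in vocabulary]


# Placeholder fragment labels for two hypothetical molecules.
molecules = [["amide", "phenyl", "phenyl"], ["ester", "phenyl"]]
vocabulary = build_vocabulary(molecules)

for fragments in molecules:
    print(count_vector(fragments, vocabulary), binary_fingerprint(fragments, vocabulary))
```

A fragment absent from the vocabulary is silently ignored by both encoders, which mirrors the limitation of fragment-based descriptors with novel substructures.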

Atomic Contribution Protocol: Calculate atomic contributions using established schemes like ClogP or develop custom atomic parameters by fitting experimental data. For complex systems, implement Voronoi-Dirichlet partitioning to establish atomic connectivity as used in Property-Labelled Materials Fragments (PLMF) for inorganic crystals [24].

Property-Based Protocol: Compute a comprehensive set of molecular properties using software like PaDEL-Descriptor. Select relevant descriptors through feature selection techniques to avoid overfitting. For enhanced predictive performance, implement machine learning approaches like counter-propagation artificial neural networks (CPANN) that dynamically adjust descriptor importance during training [26].

Diagram Title: Comprehensive QSAR Modeling Workflow with Descriptor Pathways

Comparative Performance Analysis

Quantitative Performance Metrics

Evaluating descriptor performance requires assessment across multiple metrics including predictive accuracy, computational efficiency, interpretability, and applicability domain. The following table summarizes comparative performance based on published studies:

Table 2: Performance Comparison of Descriptor Types in QSAR Modeling

| Performance Metric | Fragment-Based | Atomic Contribution | Property-Based |
|---|---|---|---|
| Predictive Accuracy (R²) | 0.912 (PCR model on acylshikonin derivatives) [27] | Varies by parameterization | 0.7-0.8 (Cyclodextrin affinity models) [17] |
| Interpretability | High (direct structural correlation) | Moderate (atomic parameter interpretation) | Variable (property-structure relationship) |
| Computational Demand | Moderate to High | Low to Moderate | Moderate |
| Novel Compound Handling | Limited to known fragments | Good for covered atom types | Good within chemical space |
| 3D Structure Encoding | Good (PBF, PMI metrics) [22] | Limited without extensions | Good (geometrical descriptors) |
| Application Scope | Drug discovery, FBDD [19] | Lipophilicity, solubility | Broad QSAR/QSPR |

Case Studies in Lipophilicity Prediction

Fragment-Based Success: In studies of acylshikonin derivatives with antitumor activity, fragment-based descriptors coupled with principal component regression (PCR) demonstrated exceptional predictive performance (R² = 0.912, RMSE = 0.119) [27]. The models identified specific electronic and hydrophobic fragments as key determinants of cytotoxic activity, providing clear structural insights for lead optimization.

Atomic Contribution Efficiency: Atomic contribution methods like ClogP remain widely used for lipophilicity prediction due to their computational efficiency and reasonable accuracy across diverse chemical classes. These methods excel at rapid screening of large compound libraries, though they may struggle with novel atom environments not well-represented in training data.

Property-Based Versatility: For predicting cyclodextrin-drug interactions relevant to drug delivery systems, property-based QSAR models achieved R² values of 0.7-0.8 while offering significant time efficiency—calculating in minutes what docking programs accomplished in hours [17]. These models successfully integrated diverse molecular properties including hydrogen bonding capacity, polar surface area, and steric factors to predict complexation behavior.

Table 3: Essential Computational Tools for Descriptor Calculation and QSAR Modeling

| Tool/Resource | Type | Function | Access |
|---|---|---|---|
| RDKit | Cheminformatics Library | Molecular fragmentation, descriptor calculation, fingerprint generation | Open-source [20] |
| PaDEL-Descriptor | Software | Calculates >1,000 molecular descriptors and >10 fingerprint types | Open-source [17] |
| ZINC Database | Fragment Library | Source of commercially available fragments for FBDD | Freely accessible [21] |
| VEGA | QSAR Platform | Multiple models for persistence, bioaccumulation, mobility prediction | Freeware [25] |
| EPI Suite | Predictive Suite | Estimates physicochemical properties and environmental fate | Freeware [25] |
| CPANN | Modeling Algorithm | Counter-propagation ANN with dynamic descriptor importance | Research code [26] |
| FragAtlas-62M | Foundation Model | GPT-2 based fragment generator trained on 62M fragments | Openly available [21] |

The comparative analysis of fragment-based, atomic contribution, and property-based descriptors reveals distinctive profiles that recommend each for specific scenarios in lipophilicity prediction and QSAR modeling. Fragment-based descriptors excel in drug discovery contexts where structural interpretability and fragment-based optimization strategies are paramount, particularly for targets with known fragment binding sites. Atomic contribution methods offer computational efficiency for high-throughput lipophilicity screening of large compound libraries, though they may lack precision for complex molecular environments. Property-based descriptors provide versatile whole-molecule representations that capture emergent physicochemical properties, making them valuable for complex phenomena like cyclodextrin complexation and environmental fate prediction.

For researchers focused on lipophilicity descriptors in QSAR studies, the optimal approach frequently involves strategic combination of these descriptor types—using atomic contribution methods for initial screening, fragment-based descriptors for lead optimization with clear structure-activity guidance, and property-based descriptors for complex ADMET prediction where multiple physicochemical factors interact. As artificial intelligence and machine learning continue to advance, particularly through specialized foundation models like FragAtlas-62M for fragments and adaptive algorithms like CPANN for descriptor optimization, the integration of these descriptor paradigms will likely yield increasingly sophisticated and predictive models for lipophilicity and other critical pharmaceutical properties.

The Central Role of Lipophilicity in Established and Modern QSAR Frameworks

Lipophilicity, quantitatively expressed as the partition coefficient (logP) between n-octanol and water, represents one of the most fundamental molecular descriptors in Quantitative Structure-Activity Relationship (QSAR) modeling [28]. This parameter measures a compound's relative affinity for lipid versus aqueous environments, making it critically influential in determining a drug's absorption, distribution, transport across biological membranes, and ultimate biological activity [29] [28]. In classic and modern drug design, lipophilicity serves as a pivotal factor affecting various chemical and biological properties, including solubility, cell membrane penetration, protein-binding strength, metabolism, and elimination [1] [29]. The central principle is that drugs must be lipophilic enough to penetrate lipid membranes yet not so lipophilic that they become trapped or exhibit poor aqueous solubility [29].

The significance of lipophilicity extends across the entire drug discovery pipeline, from initial compound screening to lead optimization. Its influence on a molecule's ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) profile makes it an indispensable parameter for predicting the behavior of candidate molecules in biological systems [29]. This comprehensive review examines the evolution of lipophilicity descriptors from classical QSAR approaches to their integration within modern artificial intelligence-driven frameworks, comparing methodological protocols and highlighting advanced applications in contemporary drug discovery.

Classical Lipophilicity Descriptors and Experimental Determination

Theoretical Foundations and Calculation Methods

The classical determination of lipophilicity employs the shake-flask method, which directly measures the partitioning of a compound between n-octanol and water phases, expressed as $\log P = \log_{10}\left([\text{Drug}]_{n\text{-octanol}} / [\text{Drug}]_{\text{water}}\right)$ [28]. This experimental approach, while considered a gold standard, is tedious, time-consuming, and difficult to automate, particularly for compounds prone to degradation [29].
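The definition translates directly into a short calculation. The sketch below assumes that both equilibrium concentrations are reported in the same units and that replicate measurements are simply averaged; the function names and the triplicate values are hypothetical.

```python
import math


def shake_flask_log_p(conc_octanol, conc_water):
    """logP from equilibrium concentrations expressed in identical units."""
    if conc_octanol <= 0 or conc_water <= 0:
        raise ValueError("concentrations must be positive")
    return math.log10(conc_octanol / conc_water)


def mean_log_p(replicates):
    """Average logP over replicate (octanol, water) concentration pairs."""
    values = [shake_flask_log_p(octanol, water) for octanol, water in replicates]
    return sum(values) / len(values)


# Hypothetical triplicate measurement, concentrations in mol/L.
replicates = [(1.9e-3, 2.1e-5), (2.0e-3, 2.0e-5), (2.1e-3, 1.9e-5)]
print(round(mean_log_p(replicates), 2))
```

Because the ratio is taken before the logarithm, a compound that is 100 times more concentrated in n-octanol than in water has a logP of 2.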

To address these limitations, computational methods for determining logP have been developed based on different theoretical methodologies. Fragment-based approaches (e.g., AClogP) calculate molecular lipophilicity by summing contributions from constituent fragments and correction factors [30] [29]. Atomic contribution methods (e.g., AlogP) compute logP by assigning values to individual atoms within the molecular structure, while property-based methods (e.g., MLOGP) utilize linear regression based on physicochemical properties [29]. These computational approaches offer significant advantages, including short computation time and the ability to obtain logP parameters before compound synthesis, making them economically and ecologically attractive [29].

Table 1: Classical Computational Methods for Lipophilicity Determination

| Method Name | Algorithm Type | Theoretical Basis | Key Characteristics |
|---|---|---|---|
| AClogP | Fragment-based | Fragmental contributions | Sums fragment values with correction factors |
| AlogP | Atomic-based | Atomic contributions | Calculates from individual atom contributions |
| MLOGP | Property-based | Linear regression | Uses physicochemical parameters in regression model |
| XLOGP2/XLOGP3 | Atomic-based | Atom-based with correction factors | Incorporates structural corrections for accuracy |
| KOWWIN | Fragment-based | Group contribution method | EPISuite model for logKow prediction |

Chromatographic Methods for Lipophilicity Assessment

Chromatographic techniques, particularly Reversed-Phase Thin-Layer Chromatography (RP-TLC) and High-Performance Liquid Chromatography (HPLC), have emerged as practical alternatives for experimental lipophilicity determination [29]. These methods utilize octadecylsilanized silica gel (RP-18) as the stationary phase, which mimics the structure of long-chain fatty acids in biological membranes, with water-organic solutions typically serving as the mobile phase [29].

The primary advantages of chromatographic approaches include:

- Speed and repeatability compared to traditional shake-flask methods

- Insensitivity to contaminants or degradation products

- Wider dynamic range and online detection capabilities

- Reduced sample handling and minimal sample sizes required

In RP-TLC, the relative lipophilicity is expressed as RM0, which can be converted to the chromatographic hydrophobicity index (logPTLC) for direct comparison with calculated logP values [29]. This methodology has been successfully applied to diverse compound classes, including angularly condensed diquino- and quinonaphthothiazines with potential anticancer and antioxidant activities [29].
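The source does not spell out how RM0 is obtained. A widely used route, assumed here, is to convert each retardation factor Rf into RM = log10(1/Rf - 1), fit RM linearly against the organic-modifier fraction C of the mobile phase (RM = RM0 + b·C), and read RM0 as the intercept at C = 0. The sketch below follows that route with hypothetical Rf values; the subsequent conversion of RM0 to logPTLC requires a calibration against reference compounds and is not shown.

```python
import math


def r_m(rf):
    """RM = log10(1/Rf - 1) for a retardation factor with 0 < Rf < 1."""
    if not 0.0 < rf < 1.0:
        raise ValueError("Rf must lie strictly between 0 and 1")
    return math.log10(1.0 / rf - 1.0)


def extrapolate_rm0(modifier_fractions, rf_values):
    """Least-squares fit of RM = RM0 + b * C; returns (RM0, b)."""
    rms = [r_m(rf) for rf in rf_values]
    n = len(rms)
    mean_c = sum(modifier_fractions) / n
    mean_rm = sum(rms) / n
    sxx = sum((c - mean_c) ** 2 for c in modifier_fractions)
    sxy = sum((c - mean_c) * (rm - mean_rm)
              for c, rm in zip(modifier_fractions, rms))
    slope = sxy / sxx
    return mean_rm - slope * mean_c, slope


# Hypothetical Rf values at 50-80% (v/v) organic modifier.
fractions = [0.5, 0.6, 0.7, 0.8]
rf_values = [0.15, 0.24, 0.36, 0.50]
rm0, slope = extrapolate_rm0(fractions, rf_values)
print(f"RM0 = {rm0:.2f}, slope b = {slope:.2f}")
```

A more lipophilic compound is retained more strongly on the RP-18 phase, so it shows lower Rf values and a larger extrapolated RM0.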

Lipophilicity in Modern QSAR Frameworks

Integration with Machine Learning and Artificial Intelligence

The emergence of machine learning (ML) and artificial intelligence (AI) has revolutionized QSAR modeling, enabling the development of highly predictive models that capture complex, non-linear relationships between molecular structure and biological activity [31]. Algorithms including Support Vector Machines (SVM), Random Forests (RF), and Gradient-Boosted Trees (GBT) have demonstrated robust performance in lipophilicity-related QSAR tasks [32] [31].

Recent advances incorporate deep learning approaches such as Multilayer Perceptrons (MLP), Graph Neural Networks (GNNs), and SMILES-based transformers, which can learn molecular representations directly from structural data without manual descriptor engineering [31]. These models have shown exceptional performance in predicting lipophilicity and related properties, with one study reporting 96% accuracy and an F1 score of 0.97 for MLP models predicting lung surfactant inhibition—a property closely tied to lipophilicity [32].
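For readers less familiar with the two classification metrics quoted above, the following sketch shows how accuracy and the F1 score are derived from predicted and true binary labels. The labels are invented for illustration and are unrelated to the cited study.

```python
def classification_metrics(y_true, y_pred):
    """Return (accuracy, F1) for binary labels coded as 0 and 1."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # true negatives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives

    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return accuracy, 0.0
    return accuracy, 2 * precision * recall / (precision + recall)


# Invented labels: 1 = inhibitor, 0 = non-inhibitor.
y_true = [1, 1, 1, 0, 0, 1]
y_pred = [1, 1, 0, 0, 1, 1]
accuracy, f1 = classification_metrics(y_true, y_pred)
print(round(accuracy, 3), round(f1, 3))
```

Accuracy counts all correct calls equally, whereas F1 balances precision and recall for the positive class, so the two can diverge on imbalanced data sets.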

Table 2: Machine Learning Performance in Lipophilicity-Focused QSAR

| ML Algorithm | Application Context | Key Performance Metrics | Computational Efficiency |
|---|---|---|---|
| Multilayer Perceptron (MLP) | Lung surfactant inhibition prediction | 96% accuracy, F1 score: 0.97 | Moderate computational cost |
| Support Vector Machines (SVM) | Environmental fate of cosmetic ingredients | High performance for logKow prediction | Lower computation cost |
| Random Forest (RF) | Molecular descriptor selection | Robustness, built-in feature selection | Handles noisy data effectively |
| Gradient-Boosted Trees (GBT) | Toxicity and bioactivity prediction | High predictive accuracy | Ensemble learning approach |
| Graph Neural Networks (GNN) | End-to-end molecular property prediction | Captures structural relationships | Requires significant training data |

Three-Dimensional Descriptors and Quantum Mechanical Approaches

Modern QSAR frameworks have expanded beyond traditional 2D lipophilicity descriptors to incorporate three-dimensional parameters that provide more comprehensive representations of molecular interactions [1]. These 3D-QSAR approaches utilize continuum solvation models and quantum mechanical-based descriptors to gain novel insights into structure-activity relationships [1].

Key advancements in this domain include:

- Quantum chemical descriptors such as HOMO-LUMO gap, dipole moment, molecular orbital energies, and electrostatic potential surfaces that model electronic properties influencing bioactivity [31]

- 4D descriptors that account for conformational flexibility by considering ensembles of molecular structures rather than single static conformations [31]

- Continuum solvation models that provide more accurate representations of molecules under physiological conditions [1]

These sophisticated descriptors have proven particularly valuable in modeling complex biological interactions where molecular shape, electronic distribution, and dynamic flexibility significantly influence binding affinity and specificity [1].

Experimental Protocols and Methodological Comparisons

Benchmarking Studies and Validation Frameworks

Robust validation is essential for assessing the reliability of lipophilicity descriptors in QSAR models. Recent research has developed synthetic benchmark datasets with pre-defined patterns to systematically evaluate interpretation approaches [33] [34]. These datasets represent tasks with varying complexity levels, from simple atom-based additive properties to pharmacophore hypotheses, enabling quantitative metrics of interpretation performance [34].

Standardized experimental workflows for lipophilicity-focused QSAR typically include:

- Dataset Curation - Collecting compounds with comparable activity values obtained through standardized experimental protocols [35]

- Descriptor Calculation - Computing molecular descriptors using tools like DRAGON, PaDEL, and RDKit [31]

- Model Training - Applying machine learning algorithms with appropriate cross-validation strategies [32]

- Validation - Assessing model performance using both internal (R², Q²) and external validation datasets [35]

- Interpretation - Applying explainable AI techniques like SHAP and LIME to identify critical molecular features [31]

Case Study: Lipophilicity Determination of Diquino- and Quinonaphthothiazines

A recent investigation exemplifies the integrated experimental-computational approach to lipophilicity assessment [29]. This study evaluated 21 newly synthesized diquinothiazines and quinonaphthothiazines with potential anticancer and antioxidant activities using both computational and chromatographic methods.

Experimental Protocol:

- Computational Screening - Eight different algorithms (AlogPs, AClogP, AlogP, MLOGP, XLOGP2, XLOGP3, logP, and ClogP) calculated logP values for all tested compounds

- Chromatographic Analysis - RP-TLC determined experimental RM0 values, converted to logPTLC

- Descriptor Correlation - Computed logP values correlated with chromatographic measurements and ADME parameters

- Drug-likeness Assessment - Applied Lipinski's, Veber's, and Egan's rules to evaluate pharmaceutical potential

Key Findings:

- Calculated logP values varied significantly depending on the algorithm used

- Most programs did not differentiate between isomeric structures, though AlogPs and MLOGP showed some discriminatory capability

- Linear correlations between logPTLC and predicted ADME parameters generally showed poor predictive power

- All tested compounds complied with drug-likeness rules, suggesting potential as orally active therapeutics [29]
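The drug-likeness assessment in this protocol amounts to a handful of threshold checks. The sketch below uses the commonly quoted cut-offs for the three rule sets (Lipinski: MW ≤ 500, logP ≤ 5, H-bond donors ≤ 5, H-bond acceptors ≤ 10; Veber: rotatable bonds ≤ 10, TPSA ≤ 140 Å²; Egan: logP ≤ 5.88, TPSA ≤ 131.6 Å²). These values are not listed in the text and should be checked against the original publications; the property dictionary and the tolerance of one Lipinski violation are likewise assumptions.

```python
def lipinski_violations(props):
    """Number of Rule-of-Five criteria that the compound breaks."""
    return sum([
        props["mw"] > 500,
        props["logp"] > 5,
        props["hbd"] > 5,
        props["hba"] > 10,
    ])


def passes_veber(props):
    """Veber criteria on flexibility and polar surface area."""
    return props["rotatable_bonds"] <= 10 and props["tpsa"] <= 140


def passes_egan(props):
    """Egan criteria on lipophilicity and polar surface area."""
    return props["logp"] <= 5.88 and props["tpsa"] <= 131.6


def drug_likeness(props, max_lipinski_violations=1):
    """Summarise the three rule sets as a dictionary of booleans."""
    return {
        "lipinski": lipinski_violations(props) <= max_lipinski_violations,
        "veber": passes_veber(props),
        "egan": passes_egan(props),
    }


# Hypothetical precomputed properties for a single compound.
compound = {"mw": 350.4, "logp": 3.2, "hbd": 1, "hba": 5,
            "rotatable_bonds": 4, "tpsa": 60.0}
print(drug_likeness(compound))
```

The logP entry could come from any of the calculation methods compared in the study, so a compound close to a threshold may pass or fail depending on the algorithm chosen.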

Visualization of Lipophilicity-QSAR Workflows

Diagram Title: Experimental Lipophilicity Determination Pathway

Diagram Title: AI-Integrated QSAR Modeling Framework

Table 3: Essential Resources for Lipophilicity-Focused QSAR Research

| Resource Category | Specific Tools/Software | Primary Application | Key Features |
|---|---|---|---|
| Descriptor Calculation | RDKit, Mordred, PaDEL, DRAGON | Molecular descriptor computation | 1D-3D descriptor libraries, open-source access |
| Classical QSAR Modeling | QSARINS, Build QSAR | Statistical model development | MLR, PLS, PCR algorithms with validation tools |
| Machine Learning Frameworks | scikit-learn, XGBoost, KNIME | ML model implementation | SVM, RF, GBT algorithms with hyperparameter tuning |
| Deep Learning Platforms | PyTorch, Lightning, Deepchem | Neural network development | GNN, MLP, transformer architectures |
| Experimental Determination | CDS (Constrained Drop Surfactometer) | Laboratory lipophilicity measurement | Surface tension analysis for surfactant inhibition |
| Validation & Interpretation | SHAP, LIME, Benchmark datasets | Model explainability and validation | Feature importance ranking, performance metrics |

Lipophilicity maintains its central role in QSAR frameworks, evolving from a simple partition coefficient to a multifaceted descriptor integrated with advanced computational approaches. The convergence of classical lipophilicity measures with modern machine learning and artificial intelligence has created powerful predictive tools that accelerate drug discovery and optimization. Future developments will likely focus on enhanced interpretation methods for complex "black box" models, standardized benchmarking datasets, and integrated multi-parameter optimization platforms that balance lipophilicity with other critical molecular properties for improved drug design outcomes.

As the field advances, the synergy between experimental lipophilicity determination and computational prediction will continue to strengthen, providing researchers with increasingly sophisticated methods to navigate chemical space and identify promising therapeutic candidates with optimal physicochemical profiles for desired biological activities.

Calculating and Applying Lipophilicity Descriptors: Computational and Experimental Methodologies

In quantitative structure-activity relationship (QSAR) studies, lipophilicity represents one of the most fundamental molecular descriptors influencing biological activity, environmental fate, and pharmacokinetic properties [36] [37]. The partition coefficient (LogP), defined as the ratio of a compound's concentration in n-octanol to its concentration in water at equilibrium, quantitatively expresses this lipophilicity for the neutral, un-ionized form of a molecule [37]. Within drug discovery, LogP profoundly impacts absorption, distribution, metabolism, excretion, and toxicity (ADMET) properties, serving as a key parameter in influential rules such as Lipinski's "Rule of Five" [38]. Similarly, in environmental science, LogP helps predict chemical bioaccumulation and mobility [25]. Given the resource-intensive nature of experimental LogP determination, which involves methods like shake-flask or slow-stir techniques with a standard deviation ranging from 0.01 to 0.84 log units, in silico prediction tools have become indispensable for rapid screening and compound prioritization [38]. These computational algorithms provide researchers with efficient means to estimate this critical parameter, though their methodological approaches and performance characteristics vary significantly. This guide provides an objective comparison of four established prediction algorithms—ALogP, XLOGP, ClogP, and MLOGP—to inform their appropriate application in scientific research.

Theoretical Foundations and Algorithmic Classifications

LogP prediction algorithms can be broadly categorized into three methodological groups based on their fundamental approach to calculating lipophilicity [39]. Understanding these classifications is crucial for selecting the appropriate tool for a given chemical space or research question.

Atomic Methods (ALogP): These methods operate on the principle that a molecule's logP can be derived from the sum of contributions from its individual constituent atoms. Each atom type is assigned a specific value, and the final logP is calculated through a simple, additive table look-up process. This approach is generally fast and well-suited for smaller molecules with non-complex aromatic systems [39].
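The additive table look-up can be illustrated in a few lines. The atom-type labels and contribution values below are invented purely for demonstration and are not the published Ghose-Crippen parameters; a real implementation would also have to assign atom types from the molecular graph, which is omitted here.

```python
# Made-up contributions per atom type (illustrative only, not real parameters).
ATOM_CONTRIBUTIONS = {
    "C_aliphatic": 0.30,
    "C_aromatic": 0.25,
    "O_hydroxyl": -0.45,
    "N_amine": -0.60,
}


def additive_log_p(atom_counts, table=ATOM_CONTRIBUTIONS):
    """Sum the tabulated contribution of every atom in the molecule."""
    unknown = [atom for atom in atom_counts if atom not in table]
    if unknown:
        # An atom type outside the table cannot be scored at all.
        raise KeyError(f"no parameters for atom types: {unknown}")
    return sum(table[atom] * count for atom, count in atom_counts.items())


# Hypothetical atom-type counts for a phenol-like molecule.
phenol_like = {"C_aromatic": 6, "O_hydroxyl": 1}
print(round(additive_log_p(phenol_like), 2))
```

The explicit error for unknown atom types reflects the main weakness of the approach: the prediction is only as complete as the parameter table behind it.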

Fragment/Compound Methods (ClogP, XLOGP): These approaches utilize a dataset of experimentally determined logP values for full compounds or molecular fragments, modeling them using QSPR or other regression techniques. The final prediction sums the contributions of the identified fragments along with applicable correction factors. This method often demonstrates superior accuracy for complex but standard small molecules, particularly those with complex aromaticity, provided the molecule contains structural motifs similar to those in the model's training set [39]. Although "ClogP" is often used generically to mean "calculated logP," it technically refers to a specific proprietary method owned by BioByte Corp./Pomona College [39]. XLOGP is grouped here only loosely: version 2.0 is an atom-additive method that incorporates 90 distinct atom types and 10 correction factors to enhance its accuracy and robustness, which effectively makes it a hybrid approach [40].

Property-Based Methods (MLogP): These methods utilize whole-molecule physicochemical properties or topological descriptors as inputs to a regression model, rather than relying on atom or fragment contributions. Moriguchi's MLogP, a seminal example, initially used counts of lipophilic and hydrophilic atoms in its regression, explaining nearly 75% of the variance in a dataset of 1,230 compounds. Later versions incorporated 11 correction factors, increasing the explained variance to 91% [39]. This method is computationally fast and was historically valuable for screening large chemical libraries.

Table 1: Fundamental Classifications and Characteristics of LogP Algorithms

| Algorithm | Primary Classification | Core Computational Principle | Key Developer / Owner |
|---|---|---|---|
| ALogP | Atomic | Summation of contributions from individual atom types | Ghose, A.K. & Crippen, G.M. [39] |
| XLOGP | Hybrid (Atom+Corrections) | Atom-additive method with 90 atom types & 10 correction factors | Dr. Renxiao Wang [40] |
| ClogP | Fragment/Compound | Summation of contributions from molecular fragments with corrections | BioByte Corp. / Pomona College [39] |
| MLogP | Property-Based | Regression model based on counts of lipophilic/hydrophilic atoms and correction factors | Moriguchi, I. et al. [39] |

Performance Comparison and Experimental Validation

The ultimate value of a predictive model lies in its accuracy and reliability when compared to experimental data. Independent comparative studies provide the most objective basis for evaluating performance.

A comprehensive study by Pyka et al. (2006) evaluated 193 drugs with different pharmacological activities, comparing various theoretical logP values against experimental n-octanol-water partition coefficients (logP_exp) [36]. The findings offer a direct, empirical comparison of several algorithms' performance. The study concluded that the experimental partition coefficients correlated best with those calculated by the logP(Kowwin) and AlogPs methods [36]. Furthermore, it established that the prediction of experimental logP was feasible based on logP(Kowwin), AlogPs, and ClogP for fifteen specific drugs, including adrenalin, ibuprofen, and theophylline [36].

A separate analysis cited in the literature reported root mean square error (RMSE) values for several methods against experimental measurements: MLogP showed an RMSE of 2.03 log units, while the fragment-based ClogP had an RMSE of 1.23 log units [38]. In contrast, the developers of XLOGP version 2.0 reported a strong correlation (r = 0.973) with a standard error (s) of 0.349 for a large set of 1,853 organic compounds [40]. These figures are not directly comparable, because they may derive from different validation datasets and conditions.

Table 2: Comparative Performance Metrics of LogP Algorithms

| Algorithm | Reported Correlation (r) / RMSE | Validation Set Size & Context | Noted Strengths / Limitations |
|---|---|---|---|
| ALogP | High correlation with logP_exp [36] | 193 drugs [36] | Suitable for smaller, simpler molecules [39] |
| XLOGP | r = 0.973, s = 0.349 [40] | 1,853 organic compounds [40] | Robust; handles larger electronic effects via corrections [40] |
| ClogP | RMSE = 1.23 [38]; Useful for prediction [36] | Independent analysis [38]; 193 drugs [36] | Accurate for standard small molecules; proprietary [39] |
| MLogP | RMSE = 2.03 [38] | Independent analysis [38] | Fast for large datasets; potentially less accurate [39] [38] |

Experimental Protocols for LogP Prediction Validation

For researchers aiming to validate or apply these in silico tools, understanding the experimental and computational protocols used in benchmark studies is essential. The following methodologies are commonly employed in the field.

Benchmarking Predictive Accuracy

The core protocol for validating LogP algorithms involves comparing computational predictions against experimentally determined values. The study by Pyka et al. is representative of this approach [36]. The methodology can be summarized as follows:

- Compound Selection: A diverse set of compounds (e.g., 193 drugs of different pharmacological classes) is selected [36].

- Experimental Determination: The experimental n-octanol-water partition coefficient (logP_exp) for each compound is measured, typically using the shake-flask method under controlled conditions (temperature, pH) to ensure consistency and capture the un-ionized species [36] [37].

- Computational Prediction: The logP values for the same set of compounds are calculated using the various theoretical methods under investigation (e.g., AlogPs, ClogP, etc.) [36].

- Statistical Analysis: The theoretical values are statistically compared to the experimental benchmark data. This involves calculating correlation coefficients (r), standard errors (s), and/or root mean square errors (RMSE) to objectively quantify predictive performance [36] [40] [38].
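The statistical comparison in the final step can be sketched as follows. The function names and the logP values are hypothetical; the example is built so that the predictions are offset from the experimental values by a constant, which shows why a correlation coefficient alone can hide a systematic error that RMSE reveals.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def rmse(predicted, experimental):
    """Root mean square error in log units."""
    squared = [(p - e) ** 2 for p, e in zip(predicted, experimental)]
    return math.sqrt(sum(squared) / len(squared))


# Hypothetical values: every prediction is 0.5 log units too high.
log_p_exp = [1.0, 2.0, 3.0, 4.0]
log_p_calc = [1.5, 2.5, 3.5, 4.5]
print(round(pearson_r(log_p_calc, log_p_exp), 3), round(rmse(log_p_calc, log_p_exp), 3))
```

Reporting both metrics for each algorithm, on the same compound set, makes comparisons across methods considerably easier to interpret.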

Machine Learning-Driven QSAR Workflow

More modern approaches integrate machine learning (ML) with traditional QSAR, a workflow that can be adapted for developing or refining logP predictors. A 2024 study on fungicidal mixtures outlines this general protocol [41]:

- Data Set Preparation: The biological activity data (e.g., EC50 for fungicidal inhibition) and associated chemical structures are compiled.

- Descriptor Calculation: The 3D molecular geometries of the compounds are optimized using computational chemistry software (e.g., HyperChem). Molecular descriptors are then calculated, which can include constitutional, topological, and quantum-chemical descriptors [41].

- Model Building & Validation: Multiple modeling techniques are applied:

  - Multiple Linear Regression (MLR): Establishes a linear correlation between selected descriptors and activity.

  - Machine Learning Models (ANN/SVM): Non-linear models like Artificial Neural Networks (ANN) and Support Vector Machines (SVM) are trained. The dataset is typically divided into training and validation sets. The models' predictive performance is then evaluated using metrics like R² for internal cross-validation (R²cv) and external validation (R²test) [41].

A Practical Guide for Algorithm Selection

Choosing the most suitable LogP algorithm depends on the nature of the chemical space and the research objective. Based on the comparative analysis, the following guidance is recommended [39]:

- For Simple, Small Molecules (e.g., Fragment-Sized): ALogP is often sufficient and computationally efficient. However, a hybrid method like XLOGP may provide better accuracy.

- For Complex, Standard Small Molecules (Typical Drug-Like Compounds): Fragment-based methods such as ClogP are frequently the most accurate. Hybrid methods like XLOGP serve as a strong second choice.

- For Complex, Non-Standard Molecules (with Rare Motifs): Performance can be variable. Testing both a fragment-based method like ClogP and a hybrid method like XLOGP is advisable. If resources allow, determining logP experimentally for a few representative compounds allows for empirical validation of the best predictive model for that specific chemical series.

- For High-Throughput Screening of Large Libraries: Property-based methods like the original MLogP or other modern, fast algorithms are designed for speed with large datasets, though this may come at a cost to accuracy.

Table 3: Key Software and Resources for logP Prediction and QSAR Modeling

| Tool / Resource | Function / Description | Representative Algorithms |
|---|---|---|
| VEGA | A platform integrating various (Q)SAR models for toxicity and property prediction. | ALogP [25] |
| EPI Suite | A comprehensive suite for screening-level assessment of environmental fate. | KOWWIN (logP) [25] |
| ADMETLab | A web-based platform for systematic ADMET evaluation of chemicals. | Integrated LogP predictors [25] |
| ChemAxon | Provides a suite of cheminformatics software for drug discovery. | Multiple methods (VG, KlogP) [39] |
| BioByte ClogP | The proprietary implementation of the classic fragment-based method. | ClogP [39] |
| Molecular Structure Optimizer (e.g., HyperChem) | Software for drawing and energy-minimizing 3D molecular structures. | Used for descriptor calculation in custom QSAR [41] |

ALogP, XLOGP, ClogP, and MLogP each offer distinct advantages rooted in their theoretical approaches. No single algorithm outperforms all others in every chemical context. Fragment-based and hybrid methods such as ClogP and XLOGP generally perform well for drug-like molecules, while atom-based methods such as ALogP offer speed for simpler compounds. The choice of tool should be guided by the chemistry involved, the required balance between speed and accuracy, and, where possible, validation against experimental data for the compound series of interest. Understanding these differences helps researchers make informed use of in silico predictions when optimizing compound properties.

Lipophilicity, quantified as the logarithm of the n-octanol-water partition coefficient (logP) or distribution coefficient (logD), is a fundamental physicochemical parameter in Quantitative Structure-Activity Relationship (QSAR) studies [42] [43]. It serves as a critical descriptor for predicting the pharmacokinetic and pharmacodynamic behavior of drug candidates, influencing absorption, distribution, metabolism, excretion, and toxicity (ADMET) profiles [44] [42]. While in silico calculation methods exist, their accuracy can be variable, making experimental determination essential for reliable data [3] [42] [43]. Chromatographic techniques have emerged as powerful, indirect proxies for measuring lipophilicity, offering advantages in speed, reproducibility, and applicability across a wide polarity range [43] [45]. This guide objectively compares the performance of key chromatographic methods—Reversed-Phase Thin-Layer Chromatography (RP-TLC), Reversed-Phase High-Performance Liquid Chromatography (RP-HPLC), and Ultra-Performance Liquid Chromatography/Mass Spectrometry (UPLC/MS)—for logP determination in a research context.

Chromatographic methods estimate lipophilicity by correlating a compound's retention behavior in a reversed-phase system with its partitioning in a biphasic system. The retention factor, derived from this behavior, can be mathematically transformed to predict logP values [42] [43].
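The transformation can be written out as a worked set of relations. The block below is a sketch under common assumptions: retention is taken to vary linearly with the organic modifier fraction (a Soczewiński-Wachtmeister type relationship), and the final calibration against logP is a simple linear fit to reference compounds. The symbols \( \varphi \) (volume fraction of organic modifier), \( S \) (slope), and \( a \), \( b \) (calibration coefficients) are introduced here for illustration and do not appear in the source.

```latex
\begin{align}
R_M    &= \log\!\left(\frac{1}{R_F} - 1\right), & R_M    &= R_{M0} - S\,\varphi \\
\log k &= \log\!\left(\frac{t_R - t_0}{t_0}\right), & \log k &= \log k_w - S\,\varphi \\
\log P &\approx a\,\log k_w + b
\end{align}
```

Setting \( \varphi = 0 \) gives the extrapolated indices \( R_{M0} \) and \( \log k_w \); the last line only holds within the range and compound class of the reference set used to fit \( a \) and \( b \).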

Key Chromatographic Methods

The table below summarizes the core principles, outputs, and key performance characteristics of the main chromatographic techniques used for logP estimation.

Table 1: Comparison of Key Chromatographic Methods for Lipophilicity Assessment

| Method | Core Principle & Lipophilicity Output | Key Performance Characteristics |
|---|---|---|
| RP-TLC | The retention parameter \( R_M \) is calculated from the retardation factor \( R_F \): \( R_M = \log(1/R_F - 1) \). \( R_{M0} \) (extrapolated to zero organic modifier) is used as a lipophilicity index [44] [45]. | Throughput: High (parallel analysis). Cost: Low. Advantages: Simple, fast, uses minimal solvents, suitable for impure samples [44] [45]. Limitations: Lower resolution compared to HPLC. |
| RP-HPLC | The lipophilicity index is the logarithm of the retention factor, \( \log k = \log[(t_R - t_0)/t_0] \). \( \log k_w \) (extrapolated to 100% water) is correlated with logP [43] [45]. | Throughput: Moderate. Cost: Moderate. Advantages: High reproducibility, robust, insensitive to impurities, broad dynamic range, high resolution [46] [43]. Limitations: Can be time-consuming for highly lipophilic compounds. |
| UPLC/MS | Uses sub-2 µm particles for high-pressure separation. Provides \( \log k \) or \( \log k_w \) analogous to HPLC. Coupling with MS offers definitive analyte identification [45]. | Throughput: High. Cost: High. Advantages: Very fast analysis, high resolution and sensitivity, superior peak capacity, selective detection with MS [45]. Limitations: Requires sophisticated instrumentation and method development. |
| HILIC | Retains polar compounds via hydrophilic interactions. Complementary to RP-LC, covering a different chemical space, particularly for very polar compounds (logD < 0) [47]. | Throughput: Moderate. Cost: Moderate to High. Advantages: Excellent for very polar/ionic analytes overlooked by RP-LC [47]. Limitations: Sensitive to operational parameters, can exhibit broad peak widths (~7 s) [47]. |

Quantitative Method Comparison Data

A systematic 2025 study compared multiple chromatographic platforms using 127 environmentally relevant compounds, providing a direct performance comparison for logP determination [47]. The data below highlights the complementarity of different techniques.

Table 2: Analytical Coverage of Chemical Space by Chromatographic Platform (Adapted from [47])

| Chromatographic Platform | Approximate Coverage of Analytes with logD > 0 | Approximate Coverage of Analytes with logD < 0 (Very Polar) | Typical Peak Width | Key Application Note |
|---|---|---|---|---|
| RP-LC | ~90% | Low | ~4 seconds | Gold standard for non-polar to moderately polar compounds. |
| SFC | ~70% | Up to ~60% | ~2.5 seconds | Narrowest peaks; good complement to RP-LC. |
| HILIC | <30% | Up to ~60% | ~7 seconds | Essential for polar ionic compounds; broader peaks. |
| IC | <30% | Performance better in negative mode | ~17 seconds | Suitable for ionic analytes; requires specific eluents. |
| RP-LC + SFC | >94% combined coverage | >94% combined coverage | N/A | Optimal combination for extended coverage. |
| RP-LC + HILIC | >94% combined coverage | >94% combined coverage | N/A | Optimal combination for extended coverage. |

Detailed Experimental Protocols

Protocol for RP-TLC logP Determination

This protocol is adapted from a 2025 study on the lipophilicity of neuroleptics and a 2024 study on PDE10A inhibitors [44] [45].

- Stationary Phase: Use pre-coated silica gel RP-18 F~254~ plates (e.g., from Merck).

- Mobile Phase: Prepare binary mixtures of a water-miscible organic modifier and water or buffer. Common modifiers include methanol, acetonitrile, or 1,4-dioxane [44]. Prepare a series of concentrations (e.g., from 40% to 80% organic modifier in 5% increments) [45].

- Sample Preparation: Dissolve analytes in a suitable solvent like methanol to a concentration of ~1 mg/mL [45].

- Application: Apply sample solutions as bands (e.g., 5 mm) onto the plate using an automatic applicator (e.g., CAMAG Linomat). Spot 10 µL of each solution [45].

- Chromatography Development: Place the plate in a vertical chamber previously saturated with the mobile phase vapor for about 20 minutes. Develop the chromatogram over a fixed distance (e.g., 9 cm) [45].

- Detection: Visualize spots under UV light at appropriate wavelengths (e.g., 254 nm or 366 nm) [45].

- Data Calculation:

  - Measure the retardation factor \( R_F \) for each spot: \( R_F = \text{distance traveled by solute} / \text{distance traveled by solvent front} \).

  - Calculate the \( R_M \) value: \( R_M = \log(1/R_F - 1) \).

  - For each compound, plot \( R_M \) values against the concentration of the organic modifier in the mobile phase.

  - Extrapolate the linear plot to 0% organic modifier to obtain \( R_{M0} \), which is used as the chromatographic lipophilicity index [44] [45].
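The data-calculation steps above can be sketched in a few lines of Python. The example assumes an ordinary least-squares fit for the extrapolation. The migration distances are hypothetical values for a single compound, chosen only to illustrate the arithmetic, and the helper names are illustrative.

```python
import math


def rm_from_rf(rf):
    """Convert a retardation factor R_F (0 < R_F < 1) to R_M = log10(1/R_F - 1)."""
    if not 0.0 < rf < 1.0:
        raise ValueError("R_F must lie strictly between 0 and 1")
    return math.log10(1.0 / rf - 1.0)


def linear_fit(x, y):
    """Least-squares fit y = intercept + slope * x; returns (intercept, slope)."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need at least two paired points")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


# Hypothetical measurements for one compound (placeholders, not study data).
modifier_percent = [50.0, 60.0, 70.0, 80.0]   # % organic modifier in mobile phase
solute_distance_cm = [1.8, 3.0, 4.5, 6.0]     # distance traveled by the spot
front_distance_cm = 9.0                       # fixed development distance

rf_values = [d / front_distance_cm for d in solute_distance_cm]
rm_values = [rm_from_rf(rf) for rf in rf_values]

# The intercept at 0% modifier is the lipophilicity index R_M0.
rm0, slope = linear_fit(modifier_percent, rm_values)
print(f"R_M0 = {rm0:.2f}, slope = {slope:.4f} per % modifier")
```

In practice the linearity of the plot should be checked over the chosen modifier range before the intercept is trusted, since extrapolating far beyond the measured concentrations amplifies any curvature.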

Protocol for RP-HPLC logP Determination Using an AQbD Approach

This protocol is based on a 2025 study developing a method for Favipiravir, showcasing an Analytical Quality by Design (AQbD) framework [46].

- Column: A C18 column (e.g., Inertsil ODS-3, 250 mm x 4.6 mm, 5 µm) is standard. For metal-sensitive compounds, use columns with inert hardware [46] [48].

- Mobile Phase: A typical mobile phase is a mixture of acetonitrile and an aqueous buffer (e.g., 20 mM disodium hydrogen phosphate, pH adjusted to 3.1 with phosphoric acid). An isocratic elution with 18% acetonitrile and 82% buffer can be used [46].

- System Parameters: Flow rate of 1.0 mL/min, column temperature at 30°C, and detection by DAD at 323 nm [46].

- Data Calculation:

  - Record the retention time \( t_R \) of the analyte and the void time \( t_0 \) of an unretained compound (e.g., methanol or sodium nitrate).

  - Calculate the retention factor \( k \): \( k = (t_R - t_0)/t_0 \).

  - The logarithm of the retention factor, \( \log k \), serves as a lipophilicity index under isocratic conditions. For a more universal measure, \( \log k \) can be determined at several organic modifier concentrations and extrapolated to 100% aqueous mobile phase to obtain \( \log k_w \) [43].
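A minimal sketch of this calculation follows, extended by one step that the protocol does not spell out: converting \( \log k_w \) to an estimated logP through a linear calibration on reference compounds. That calibration step, the helper names, and all numerical values (retention times, reference logP values) are assumptions made for illustration.

```python
import math


def log_k(t_r, t_0):
    """Log retention factor: log10((t_R - t_0) / t_0)."""
    if t_0 <= 0 or t_r <= t_0:
        raise ValueError("require t_R > t_0 > 0")
    return math.log10((t_r - t_0) / t_0)


def linear_fit(x, y):
    """Least-squares fit y = intercept + slope * x; returns (intercept, slope)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def extrapolate_log_kw(modifier_fraction, retention_times, t_0):
    """Fit log k against modifier fraction; the intercept at 0 is log k_w."""
    log_ks = [log_k(t, t_0) for t in retention_times]
    intercept, _ = linear_fit(modifier_fraction, log_ks)
    return intercept


# Hypothetical isocratic runs for one analyte (times in minutes).
t_0 = 1.0
modifier_fraction = [0.40, 0.50, 0.60]
retention_times = [11.0, 4.16, 2.0]
log_kw = extrapolate_log_kw(modifier_fraction, retention_times, t_0)

# Hypothetical reference compounds: (log k_w, literature logP) pairs.
ref_log_kw = [1.0, 2.0, 3.0, 4.0]
ref_logp = [0.9, 1.8, 2.7, 3.6]
b, a = linear_fit(ref_log_kw, ref_logp)       # logP ~ a * log k_w + b

estimated_logp = a * log_kw + b
print(f"log k_w = {log_kw:.2f}, estimated logP = {estimated_logp:.2f}")
```

The calibration is only meaningful when the reference compounds are run on the same column and mobile-phase system as the analyte and span its expected lipophilicity.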

Figure 1: Generic Workflow for HPLC-Based logP Determination.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of chromatographic logP methods requires specific materials. The following table details essential solutions and materials, with recommendations informed by current vendor offerings in 2025 [48].

Table 3: Essential Research Reagents and Materials for Chromatographic logP Determination

| Item Category | Specific Examples & Descriptions | Function in logP Determination |
|---|---|---|
| Stationary Phases | RP-18 F~254~ TLC Plates (e.g., Merck Si 60 RP-18 F~254~): Pre-coated plates for RP-TLC [45]. | The non-polar phase for retention; backbone of reversed-phase chromatography. |
| | U/HPLC C18 Columns: (e.g., Fortis Evosphere C18/AR, Waters Acquity UPLC BEH C18) [45] [48]. | High-efficiency columns for fast and high-resolution separations. |
| | Alternative Selectivity Phases: Biphenyl (e.g., Horizon Aurashell Biphenyl), Phenyl-Hexyl (e.g., Advanced Materials Technology Halo) [48]. | Provide complementary selectivity for challenging separations (e.g., isomers). |
| Mobile Phase Modifiers | Methanol, Acetonitrile, 1,4-Dioxane [44]. | Organic modifiers that control elution strength and retention. |
| Buffers & Additives | Ammonium Acetate/Ammonia (pH 7.4), Disodium Hydrogen Phosphate (pH 3.1), Formic Acid [46] [45]. | Control pH and ionic strength to suppress analyte ionization and modulate retention. |
| Specialized Columns | Inert Hardware Columns: (e.g., Restek Raptor Inert, Advanced Materials Technology Halo Inert) [48]. | Minimize metal-analyte interactions, improving peak shape and recovery for metal-sensitive compounds (e.g., phosphates). |
| | HILIC Columns: (e.g., Waters Acquity Premier BEH Amide) [47]. | Essential for retaining and analyzing very polar compounds that are not held by RP-LC. |

No single chromatographic method is best for every logP determination; each has distinct strengths and applications within QSAR research [47]. RP-TLC remains a valuable tool for high-throughput, low-cost initial screening. RP-HPLC is the robust, versatile workhorse for most applications, while UPLC/MS offers superior speed and sensitivity for complex mixtures. As demonstrated in Table 2, combining RP-LC with a complementary technique such as HILIC or SFC is the most effective strategy for covering a broad chemical space, so that highly polar and ionic analytes are not missed in lipophilicity screening [47].

The choice of method should be guided by the specific research needs:

- For rapid screening of a large number of compounds, use RP-TLC.

- For high-accuracy, reproducible logP data of well-defined compounds, use RP-HPLC.

- For analyzing complex mixtures or when definitive identification is required, use UPLC/MS.

- For compounds that are highly polar (logD < 0) or ionic, incorporate HILIC or IC into the workflow.

Figure 2: Strategic Selection of Chromatographic Methods for logP Determination.
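The selection guidance above can be expressed as a small rule-based helper. This is a sketch only: the function name, its parameters, and the choice to fall back to RP-HPLC when no other criterion applies are assumptions for illustration, not part of the cited studies.

```python
def select_methods(log_d=None, ionic=False, large_screen=False,
                   complex_mixture=False, needs_identification=False):
    """Suggest chromatographic methods following the four rules listed above."""
    methods = []
    if large_screen:
        methods.append("RP-TLC")                 # rapid screening of many compounds
    if complex_mixture or needs_identification:
        methods.append("UPLC/MS")                # mixtures or definitive identification
    if not methods:
        methods.append("RP-HPLC")                # assumed default: accurate, reproducible data
    if ionic or (log_d is not None and log_d < 0):
        methods.append("HILIC or IC")            # very polar or ionic analytes
    return methods


# Hypothetical use cases.
print(select_methods(large_screen=True))
print(select_methods(log_d=-1.2))
print(select_methods(complex_mixture=True, ionic=True))
```

The polar and ionic rule is additive in this sketch, mirroring the wording "incorporate HILIC or IC into the workflow" rather than replacing the primary method.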

The efficacy and pharmacokinetic profile of a drug candidate are strongly influenced by its molecular properties, among which lipophilicity is one of the most important. In Quantitative Structure-Activity Relationship (QSAR) studies, lipophilicity descriptors are fundamental for building robust predictive models because lipophilic interactions contribute substantially to host-guest interactions and ligand binding affinity [1]. The design of acylshikonin derivatives as antitumor agents illustrates how an integrated computational workflow that prioritizes lipophilicity can streamline drug discovery.

This case study objectively compares the performance of different methodological components within a comprehensive QSAR-Docking-ADMET workflow. By detailing experimental protocols and presenting quantitative data, this guide serves as a framework for researchers aiming to implement a similar strategy for optimizing anticancer agents.

The integrated workflow for optimizing acylshikonin derivatives follows a sequential, multi-stage protocol that synergizes various computational techniques. This approach is designed to iteratively refine and validate potential drug candidates before costly synthetic and experimental efforts.

Workflow Diagram

The following diagram illustrates the logical sequence and feedback loops within the standard integrated workflow.

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful execution of this workflow relies on a suite of specialized software and computational tools. The table below catalogues the essential "research reagents" for each phase of the process.

Table 1: Key Research Reagent Solutions for the Integrated Workflow

| Tool Category | Example Software/Platform | Primary Function in Workflow |

|---|---|---|

| Chemical Modeling | Schrödinger Maestro [49], OCHEM [10] | Provides an integrated environment for molecular modeling, QSAR, and property prediction. |

| Descriptor Calculation | Density Functional Theory (DFT) [50], Molecular Force Fields (MMFF) [51] | Calculates quantum mechanical and physicochemical descriptors (e.g., polarizability, HBD) for QSAR. |