Ligand-Based Virtual Screening: A 2025 Guide to Maximizing Enrichment Rates in Early Drug Discovery


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on evaluating and optimizing ligand-based virtual screening (LBVS) enrichment rates. It covers foundational principles, from defining enrichment rates and benchmarking sets to avoiding common biases. The review details cutting-edge methodologies, including deep learning and fragment-based approaches, and explores strategies for troubleshooting and performance optimization. Finally, it offers a rigorous framework for the validation and comparative assessment of LBVS methods against structure-based techniques, synthesizing key takeaways to enhance R&D efficiency and success rates in modern drug discovery pipelines.

Core Concepts and Benchmarking Foundations for LBVS Enrichment

In the face of rising research and development costs, which now exceed $3.5 billion per novel drug, the pharmaceutical industry is in a persistent battle to improve its R&D efficiency [1]. For researchers using ligand-based virtual screening (LBVS)—a method to identify new bioactive compounds from large chemical libraries by comparing them to known active ligands—quantifying success is not just beneficial; it is essential. At the heart of this quantification is the enrichment rate, a crucial metric for evaluating the performance of virtual screening approaches and ensuring that limited R&D resources are focused on the most promising candidates [2].

This guide will objectively compare the methods and datasets used to measure enrichment rates, providing scientists with the experimental protocols and tools needed to conduct rigorous and unbiased assessments of their LBVS campaigns.

Understanding Enrichment Rates

In virtual screening, the primary goal is to "filter out thousands of nonbinders in silico" and identify a shortlist of molecules with a high probability of being true binders [2]. The enrichment rate measures how effectively a screening method achieves this goal.

Conceptually, ligand enrichment is "a metric to assess the capacity to place true ligands at the top-rank of the screen list among a pool of a large number of decoys" [2]. In practice, a virtual screen ranks all compounds in a library from most to least likely to be active. A method with good enrichment will have concentrated the true active molecules at the very top of this list. A poor method will scatter them randomly throughout the ranking. High enrichment rates in early screening directly translate to more efficient downstream research, as they reduce the cost and time associated with synthesizing and experimentally testing non-binders [2].

The standard tool for visualizing and quantifying this performance is the Enrichment Factor (EF) plot, often derived from a retrospective screening simulation using a benchmarking set.

Comparative Analysis of Benchmarking Data and Methods

The accurate measurement of enrichment rates relies on benchmarking sets—curated collections of known active ligands and presumed inactive molecules (decoys) [2]. The quality of these sets is paramount, as biases can lead to a misleadingly optimistic or pessimistic assessment of a method's true power. The table below summarizes key LBVS-specific benchmarking sets.

Table 1: Key Ligand-Based Virtual Screening (LBVS) Benchmarking Sets

| Dataset Name | Source of Actives | Source of Inactives/Decoys | Key Features and Considerations |
|---|---|---|---|
| Maximum Unbiased Validation (MUV) [2] | PubChem (actives with EC50) [2] | PubChem (inactives) [2] | Specifically designed to be maximally unbiased; uses a background of ~500 decoys per active to reduce the chance of artificial enrichment [2]. |
| DUD LIB VS 1.0 [2] | DUD ligands [2] | DUD decoys [2] | An early LBVS-specific set derived from the Directory of Useful Decoys (DUD) [2]. |
| REPROVIS-DB [2] | Reference compounds and experimentally confirmed hits from prior LBVS applications [5] | Screening databases from those applications [5] | The "database of reproducible virtual screens"; compiles data from previously published LBVS applications [5]. |

The choice of benchmarking set is critical because an unsuitable set can produce a biased assessment that does not reflect real-world performance. The three main types of bias to avoid are [2]:

- "Analogue bias": When the active molecules in the set are too structurally similar to each other, making it easy for similarity-based methods to perform well without demonstrating broad applicability.

- "Artificial enrichment": When decoys are not property-matched to actives (e.g., differing significantly in molecular weight or lipophilicity), allowing trivial filters, rather than true predictive power, to separate them.

- "False negatives": When the set of presumed inactives accidentally contains molecules that are actually active against the target, which penalizes methods that correctly identify them.

Experimental Protocols for Assessing Enrichment Rates

To ensure a fair and objective comparison of different LBVS methods, a standardized experimental protocol must be followed. The workflow below outlines the key steps for a retrospective enrichment assessment.

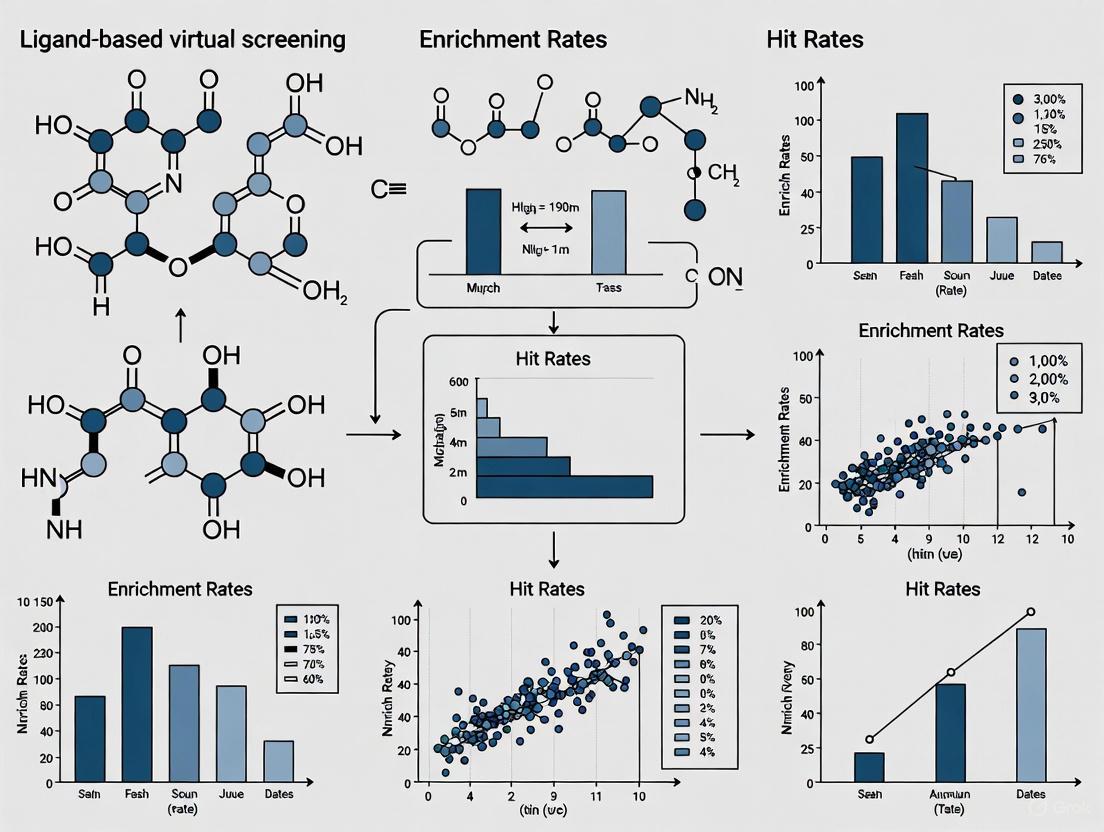

Diagram 1: Workflow for enrichment rate assessment. This process evaluates Ligand-Based Virtual Screening (LBVS) method performance using benchmarking sets.

Detailed Methodology

Select an Unbiased Benchmarking Set: Choose a dataset designed for LBVS, such as MUV, to minimize the biases outlined above [2]. The set should contain a list of confirmed active ligands and a larger pool of property-matched decoys.

Execute the Virtual Screen: Run the LBVS method (e.g., a similarity search or a Quantitative Structure-Activity Relationship (QSAR) model) on the entire benchmarking set. The method will compute a score (e.g., a similarity value or a predicted probability of activity) for every molecule in the set.

Generate a Rank-Ordered List: Sort all compounds—both actives and decoys—based on their scores, from most to least likely to be active.

Calculate Performance Metrics: The ranked list is used to compute enrichment metrics. A common and robust metric is the Enrichment Factor (EF), which can be calculated at a specific fraction of the screened library (e.g., EF1%).

- Formula:

$$\mathrm{EF} = \frac{\mathrm{Hits}_{\mathrm{sampled}} / N_{\mathrm{sampled}}}{\mathrm{Hits}_{\mathrm{total}} / N_{\mathrm{total}}}$$

- Variables:

  - $\mathrm{Hits}_{\mathrm{sampled}}$: Number of known active ligands found within the top-ranked fraction (e.g., the top 1%).

  - $N_{\mathrm{sampled}}$: Total number of compounds in that top-ranked fraction (e.g., 1% of the total library size).

  - $\mathrm{Hits}_{\mathrm{total}}$: Total number of known active ligands in the entire benchmarking set.

  - $N_{\mathrm{total}}$: Total number of compounds in the entire benchmarking set.

- An EF of 1 indicates random performance; higher values indicate better enrichment.

Analyze and Compare: Plot the cumulative number of active compounds found versus the fraction of the library screened (a ROC curve can also be used). Compare the EF plots and EF values of different methods to determine which one performs best for your target of interest.
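The ranking and EF steps above can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: the function name `enrichment_factor`, the rounding rule used to size the top fraction, and the toy scores are all assumptions made for the example, and ties are simply left in input order.

```python
from typing import Sequence


def enrichment_factor(scores: Sequence[float],
                      is_active: Sequence[bool],
                      fraction: float = 0.01) -> float:
    """EF at the given top fraction of a ranked benchmarking set."""
    if len(scores) != len(is_active):
        raise ValueError("scores and is_active must have the same length")
    n_total = len(scores)
    hits_total = sum(is_active)
    if n_total == 0 or hits_total == 0:
        raise ValueError("need at least one compound and one active")

    # Rank compounds from highest to lowest score (higher = more likely active).
    ranked = sorted(zip(scores, is_active), key=lambda pair: pair[0], reverse=True)

    # Size of the top-ranked fraction (assumption: round, keep at least one compound).
    n_sampled = max(1, int(round(fraction * n_total)))
    hits_sampled = sum(active for _, active in ranked[:n_sampled])

    return (hits_sampled / n_sampled) / (hits_total / n_total)


# Toy data (hypothetical): 10 actives among 1000 compounds, 5 of them in the top 10 ranks.
active_ranks = {0, 1, 2, 3, 4, 50, 100, 200, 400, 800}
scores = [1000 - i for i in range(1000)]          # rank 0 has the best score
labels = [i in active_ranks for i in range(1000)]
ef1 = enrichment_factor(scores, labels, fraction=0.01)
```

In this toy set the top 1% holds 10 compounds, 5 of which are active, so EF1% = (5/10) / (10/1000) = 50. Note that EF is bounded by the composition of the set: with 1% actives, the value at the top 1% cannot exceed 100.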

Successful enrichment rate evaluation depends on both data and software. The following table details key resources for building and executing LBVS experiments.

Table 2: Essential Research Reagents and Computational Tools for LBVS

| Item / Resource | Type | Function in Enrichment Evaluation |
|---|---|---|
| MUV Dataset [2] | Benchmarking Data | A publicly available, maximum-unbiased set used to fairly evaluate and compare LBVS methods without analogue bias or artificial enrichment [2]. |
| Chembench [2] | Software Platform | A publicly accessible workflow management system that incorporates QSAR modeling workflows for LBVS, enabling researchers to build and apply predictive models [2]. |
| 2D Structural Fingerprints [2] | Computational Descriptor | A pivotal tool for LBVS; these are vector representations of molecular structure used to calculate similarity between molecules, forming the basis of many screening methods [2]. |
| Support Vector Machine (SVM) [3] | Machine Learning Algorithm | A type of ligand-based scoring function that can be trained on known active and inactive molecules to predict the activity of new compounds, guiding molecule generation or prioritization [3]. |
| Directory of Useful Decoys (DUD/DUD-E) [2] | Benchmarking Data | While designed for structure-based screening, its ligands and property-matched decoys are sometimes adapted or used in LBVS evaluations, as seen in DUD LIB VS 1.0 [2]. |

In an era of intense pressure to improve pharmaceutical R&D productivity, leveraging robust metrics is not optional [1]. For scientists employing ligand-based virtual screening, a rigorous and unbiased evaluation of enrichment rates is a cornerstone of research efficiency. By using well-designed benchmarking sets like MUV, following standardized experimental protocols, and correctly interpreting enrichment factors, research teams can objectively compare computational methods. This disciplined approach ensures that valuable wet-lab resources are dedicated to the most promising virtual hits, ultimately accelerating the journey toward discovering novel therapeutics.

Ligand-Based Virtual Screening (LBVS) is a fundamental approach in modern drug discovery that identifies potential bioactive compounds by leveraging the chemical similarity and shared properties of known active molecules, without requiring 3D structural information of the target protein. The accuracy and effectiveness of LBVS methodologies must be rigorously evaluated through benchmarking sets—carefully curated collections of known active compounds and presumed inactive molecules (decoys) that mimic real-world screening scenarios [2]. These benchmarking sets enable researchers to assess the ligand enrichment power of various VS approaches, providing crucial metrics on their ability to prioritize true actives over decoys in retrospective screening experiments [4].

The development of specialized benchmarking sets for LBVS presents unique challenges distinct from those for Structure-Based Virtual Screening (SBVS). While SBVS-specific sets like Directory of Useful Decoys (DUD) and DUD-E have been widely available, ready-to-apply datasets specifically designed for LBVS have remained limited [5] [2]. This primer examines the evolution, methodological foundations, and current landscape of LBVS-specific benchmarking sets, with particular focus on their critical role in producing unbiased evaluations of virtual screening performance within ligand enrichment rate research.

The Evolution of Benchmarking Sets: From General to LBVS-Specific

Historical Context and Key Challenges

The development of benchmarking datasets has evolved significantly from initially using random decoys to sophisticated strategies that minimize evaluation biases. Early benchmarking efforts utilized simple property-matched decoys, but these often introduced systematic biases that compromised virtual screening assessments [4]. Three critical issues have been identified in benchmarking set quality:

- Artificial Enrichment: Occurs when ligands differ significantly from decoys in the low-dimensional space of physicochemical properties or molecular topologies, allowing trivial discrimination based on obvious dissimilarities rather than genuine method performance [5] [2].

- Analogue Bias: Results from overrepresentation of structural analogues in the active set, which can artificially inflate the performance of similarity-based LBVS methods [5] [2].

- False Negatives: Arises when decoys presumed inactive are actually active against the target, leading to underestimation of method performance [2].

The Transition to LBVS-Specific Designs

While SBVS-specific benchmarking sets like DUD [2], DUD-E [6], DEKOIS [2], and GLL/GDD [5] became increasingly available, their direct application to LBVS evaluation remained problematic due to inherent structural biases. This limitation prompted the development of dedicated LBVS-specific benchmarking sets designed to address the unique requirements of similarity-based screening approaches [5].

Table 1: Historical Overview of Major Virtual Screening Benchmarking Sets

| Name | Publication Year | Primary Design Purpose | Decoy Selection Strategy | Notable Features |
|---|---|---|---|---|
| DUD | 2006 | SBVS | Property-matched but structurally dissimilar [2] | First major systematic benchmarking set; 36 decoys per ligand [2] |
| MUV | 2009 | LBVS | Based on PubChem bioactivity data using refined nearest neighbor analysis [2] | Specifically designed to minimize analogue bias; 500 decoys per ligand [2] |
| DUD LIB VS 1.0 | 2009 | LBVS | Clustering of actives to enlarge chemical diversity [2] | Applied weighting scheme based on ROC metric following ligand clustering [5] |
| REPROVIS-DB | 2011 | LBVS | Compiles data from prior LBVS applications [5] | Includes reference compounds, screening databases, and experimentally confirmed hits [5] |
| ULS/UDS | 2014 | LBVS | Property matching with topological dissimilarity [5] | Unbiased Ligand/Decoy Sets with three-strategy bias reduction [5] |
| MUBD-HDACs | 2015 | Both SBVS & LBVS | Maximal unbiased benchmarking for HDACs [6] | Covers all 4 classes including 14 HDAC isoforms; applicable to both approaches [6] |

Key LBVS-Specific Benchmarking Sets and Their Methodologies

Maximum Unbiased Validation (MUV)

The MUV dataset represents a foundational LBVS-specific benchmarking approach derived from PubChem bioactivity data. Its design employs a refined nearest neighbor analysis, borrowed from spatial statistics, to minimize analogue bias [2] [4]. The MUV selection strategy addresses the overrepresentation of structural analogues by ensuring that active compounds are separated by a sufficient distance in chemical space, thereby creating a more challenging and realistic benchmarking scenario [2].

Unbiased Ligand Set (ULS) and Unbiased Decoy Set (UDS)

The ULS/UDS methodology introduces a comprehensive workflow specifically designed to address LBVS benchmarking requirements [5]. This approach incorporates three main strategies to minimize biases:

- Analogues Excluding: Actively removes structural analogues from the active compound set to reduce analogue bias [5].

- Physicochemical Properties-Based Strategy: Matches decoys to actives on physicochemical properties so that both occupy the same region of property space [5].

- Topology-Based Strategy: Maintains structural dissimilarity in chemical topology between actives and decoys to avoid false negatives [5].

This methodology was specifically validated on GPCR targets, demonstrating a significant reduction in both "artificial enrichment" and "analogue bias" compared to the GPCR Ligand Library (GLL)/GPCR Decoy Database (GDD) set [5].
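The two decoy-side strategies above (property matching and topological dissimilarity) can be illustrated with a short sketch. It is not the published ULS/UDS procedure: the `Compound` record, the choice of molecular weight and logP as the matched properties, the tolerances, and the similarity cutoff are all hypothetical values picked for the example, and fingerprints are represented simply as sets of "on" bit indices.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Compound:
    name: str
    mol_weight: float
    logp: float
    fingerprint: FrozenSet[int]   # indices of "on" bits in a 2D fingerprint


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    """Tanimoto coefficient between two bit sets."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def select_decoys(actives: List[Compound], candidates: List[Compound],
                  mw_tol: float = 25.0, logp_tol: float = 1.0,
                  max_sim: float = 0.75) -> List[Compound]:
    """Keep candidates that are property-matched to at least one active
    yet topologically dissimilar to every active (thresholds are assumptions)."""
    decoys = []
    for cand in candidates:
        # Property matching: limits artificial enrichment.
        property_matched = any(
            abs(cand.mol_weight - act.mol_weight) <= mw_tol
            and abs(cand.logp - act.logp) <= logp_tol
            for act in actives
        )
        # Topological dissimilarity: lowers the risk of picking a hidden active.
        dissimilar = all(
            tanimoto(cand.fingerprint, act.fingerprint) < max_sim
            for act in actives
        )
        if property_matched and dissimilar:
            decoys.append(cand)
    return decoys


actives = [Compound("A1", 300.0, 2.0, frozenset({1, 2, 3, 4}))]
candidates = [
    Compound("C1", 310.0, 2.5, frozenset({10, 11, 12})),     # matched, dissimilar
    Compound("C2", 305.0, 2.1, frozenset({1, 2, 3, 4, 5})),  # too similar (0.8)
    Compound("C3", 500.0, 5.0, frozenset({20, 21})),         # properties mismatch
]
selected = [c.name for c in select_decoys(actives, candidates)]
```

Only `C1` survives both filters here. In practice the property set and cutoffs would need to be tuned per target and checked against the bias diagnostics used to validate the benchmarking set.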

Maximal Unbiased Benchmarking Data Sets for HDACs (MUBD-HDACs)

MUBD-HDACs extends unbiased benchmarking principles to histone deacetylase targets. The set covers all 4 HDAC classes (including the Class III sirtuin family) and 14 HDAC isoforms, comprising 631 inhibitors and 24,609 unbiased decoys [6]. Its development demonstrated applicability to both LBVS and SBVS approaches and addressed the limited coverage of HDAC isoforms in existing benchmarking resources [6]. MUBD-HDACs also introduced a novel metric, NLBScore, to detect "2D bias" and "LBVS favorable" effects within benchmarking sets [6].

Table 2: Comparative Analysis of Major LBVS-Specific Benchmarking Sets

| Benchmarking Set | Chemical Space Coverage | Bias Reduction Strategies | Target Coverage | Decoys per Ligand Ratio |
|---|---|---|---|---|
| MUV | PubChem-derived actives and inactives [2] | Spatial statistics and nearest neighbor analysis [2] | Targets with sufficient PubChem bioactivity data [2] | 500 [2] |
| ULS/UDS | GPCR-focused from GLL/GDD [5] | Three-strategy approach: analogues excluding, property and topology filtering [5] | 17 agonists/antagonists sets of 10 GPCRs [5] | 39 (original GLL/GDD ratio) [5] |
| MUBD-HDACs | HDAC inhibitors from ChEMBL [6] | Maximal unbiased benchmarking with NLBScore metric [6] | 14 HDAC isoforms [6] | ~39 (24,609 decoys for 631 ligands) [6] |
| DUD LIB VS 1.0 | DUD ligands with enhanced diversity [2] | Ligand clustering to enlarge chemical diversity [5] | Limited to targets in original DUD [2] | Not specified |

Methodological Deep Dive: Constructing Unbiased Benchmarking Sets

Workflow for Unbiased Benchmarking Set Construction

The construction of maximal unbiased benchmarking sets follows a systematic workflow designed to ensure chemical diversity among the actives while keeping decoys physicochemically similar to, yet topologically dissimilar from, those actives [5] [6].

Experimental Protocols for Benchmarking Set Validation

The validation of benchmarking set quality typically employs Leave-One-Out (LOO) Cross-Validation using multiple LBVS approaches [5] [6]. The standard experimental protocol involves:

VS Method Selection: Employ diverse LBVS methods including 2D similarity searching (using structural fingerprints like MACCS and FCFP_6) and physicochemical property-based screening ("simp" method) [5].

Cross-Validation Scheme: Implement LOO-CV where each active compound is systematically left out as a query against a screening database containing the remaining actives and all decoys [5].

Performance Metrics: Calculate average AUC (Area Under the Curve) of ROC (Receiver Operating Characteristic) curves across all queries [5]. Additional metrics include Enrichment Factors (EF) at early screening percentages (EF1%, EF5%) [7].

Bias Assessment: Compare performance with known biased sets (e.g., GLL/GDD) to quantify reduction in artificial enrichment [5]. Implement the NLBScore metric to detect residual 2D bias [6].

This protocol ensures that the benchmarking sets provide a challenging but fair evaluation platform that reflects real-world LBVS application scenarios while minimizing systematic biases.
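As a rough illustration of the LOO-CV scheme described above, the sketch below uses each active in turn as the query, ranks the remaining actives and all decoys by Tanimoto similarity to it, and averages the resulting ROC AUC values. The function names and the set-of-bits fingerprint representation are assumptions for this example; a real study would use fingerprints such as MACCS or FCFP_6 from a cheminformatics toolkit, and the pairwise AUC below is chosen for clarity, not speed.

```python
from statistics import mean
from typing import FrozenSet, List, Sequence


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    """Tanimoto coefficient between two bit sets."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def roc_auc(active_scores: Sequence[float], decoy_scores: Sequence[float]) -> float:
    """AUC as the probability that a random active outscores a random decoy
    (ties count one half)."""
    wins = 0.0
    for a in active_scores:
        for d in decoy_scores:
            if a > d:
                wins += 1.0
            elif a == d:
                wins += 0.5
    return wins / (len(active_scores) * len(decoy_scores))


def loo_cv_mean_auc(actives: List[FrozenSet[int]],
                    decoys: List[FrozenSet[int]]) -> float:
    """Leave each active out as the query and average the per-query AUC."""
    if len(actives) < 2 or not decoys:
        raise ValueError("need at least two actives and one decoy")
    aucs = []
    for i, query in enumerate(actives):
        remaining = actives[:i] + actives[i + 1:]
        active_scores = [tanimoto(query, fp) for fp in remaining]
        decoy_scores = [tanimoto(query, fp) for fp in decoys]
        aucs.append(roc_auc(active_scores, decoy_scores))
    return mean(aucs)


# Toy fingerprints (hypothetical bit indices).
toy_actives = [frozenset({1, 2, 3}), frozenset({1, 2, 4}), frozenset({1, 2, 5})]
toy_decoys = [frozenset({7, 8, 9}), frozenset({1, 8, 9})]
mean_auc = loo_cv_mean_auc(toy_actives, toy_decoys)
```

The toy actives are close analogues of one another, so every query separates them perfectly from the decoys and the mean AUC is 1.0. That is exactly the kind of result that should prompt a check for analogue bias before it is read as method performance.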

Table 3: Key Research Reagent Solutions for LBVS Benchmarking Studies

| Resource | Type | Primary Function | Access Information |
|---|---|---|---|
| MUBD-HDACs | Benchmarking set | Maximal unbiased benchmarking for histone deacetylases [6] | Freely available at http://www.xswlab.org/ [6] |
| DUD-E Server | Decoy generation tool | Generates target-specific decoys for SBVS [6] | http://dude.docking.org/generate [6] |
| DecoyFinder | Decoy generation tool | Builds target-specific decoy sets using DUD algorithm [5] | http://urvnutrigenomica-ctns.github.io/DecoyFinder/ [2] |
| ZINC Database | Compound library | Source of putative inactive compounds for decoy selection [2] [4] | https://zinc.docking.org/ [2] |
| ChEMBL Database | Bioactivity database | Source of known active compounds for ligand set construction [6] | https://www.ebi.ac.uk/chembl/ [6] |
| GPCR Ligand Library (GLL) | Specialized benchmarking set | Ligand and decoy sets for GPCR targets [5] | http://cavasotto-lab.net/Databases/GDD/ [5] |

Current Trends and Future Perspectives

The field of LBVS benchmarking continues to evolve with emerging methodologies and technologies. Recent advances include the integration of artificial intelligence and machine learning approaches to further enhance the quality and applicability of benchmarking sets [8] [9]. The development of Alpha-Pharm3D, which utilizes 3D pharmacophore fingerprints to predict ligand-protein interactions, represents one such innovation that shows promise for improving virtual screening accuracy [9].

Additionally, there is growing recognition of the need for benchmarking sets that can adequately address the challenges posed by difficult targets such as protein-protein interactions, allosteric sites, and resistant mutant variants [7]. The comprehensive benchmarking of both wild-type and quadruple-mutant PfDHFR variants demonstrates this evolving trend toward addressing real-world drug discovery challenges [7].

Future directions in LBVS benchmarking will likely focus on the development of dynamic benchmarking sets that can adapt to expanding chemical space, incorporate experimental validation data more systematically, and provide more nuanced assessment of scaffold-hopping capability—a critical requirement for successful lead discovery in LBVS campaigns.

In the field of computer-aided drug discovery, virtual screening (VS) has become an indispensable technique for identifying bioactive compounds against specific targets in a cost-effective and time-efficient manner [10] [2]. Retrospective small-scale virtual screening based on benchmarking datasets has been widely used to estimate ligand enrichments of VS approaches in prospective, real-world drug discovery efforts [10] [2]. The performance of each virtual screening approach is typically measured by ligand enrichment, a metric that assesses the capacity to place true ligands at the top-rank of the screen list among a pool of a large number of decoys—presumed inactives not likely to bind to the target [10] [2]. The combination of true ligands and their associated decoys is known as the benchmarking set [10].

However, the intrinsic differences between benchmarking sets and real screening chemical libraries can cause significantly biased assessment outcomes [10] [2]. The quality of these benchmarking sets becomes crucial for fair and comprehensive evaluation of virtual screening methods [2]. When benchmarking sets contain inherent biases, they cannot accurately reflect the realistic enrichment power of various approaches for prospective virtual screening campaigns, potentially leading to overestimated performance metrics and misguided method selection in actual drug discovery projects [10] [2]. This article examines the three main types of biases—analogue bias, artificial enrichment, and false negatives—that plague virtual screening validation and provides comparative analysis of methodologies to overcome these challenges.

Types of Benchmarking Bias in Virtual Screening

Analogue Bias

Analogue bias occurs when a benchmarking set contains chemically similar structures (analogues) within the ligand set, making the enrichment unrealistically easy and causing performance overestimation [2] [11]. This type of bias is characterized by highly similar chemical structures in the ligand set, which can artificially inflate the perceived performance of ligand-based virtual screening approaches that rely on chemical similarity measures [11]. When structurally analogous compounds dominate the active ligand set, similarity-based methods can achieve impressive early enrichment simply by recognizing familiar structural patterns, without demonstrating true predictive power for diverse chemotypes [10]. This creates a misleading assessment that doesn't reflect real-world screening scenarios where discovering novel structural classes is often the primary objective.

The problem of analogue bias is particularly pronounced in benchmarking sets that were compiled without careful consideration of chemical diversity [10]. Early benchmarking sets often gathered all known actives for a target without applying sufficient structural clustering or diversity selection, resulting in overrepresentation of certain chemical scaffolds [10]. This bias disproportionately benefits similarity-based methods in comparative assessments, potentially leading researchers to select suboptimal approaches for prospective campaigns where structural novelty is essential [11].
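One simple way to get a feel for analogue bias in a ligand set is to ask how many actives have a close structural neighbor among the other actives. The sketch below is an illustrative diagnostic only; it is not the MUV nearest neighbor analysis or the NLBScore metric, and the function name `analogue_fraction`, the cutoff, and the toy fingerprints are assumptions.

```python
from typing import FrozenSet, List


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    """Tanimoto coefficient between two bit sets."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def analogue_fraction(active_fps: List[FrozenSet[int]],
                      threshold: float = 0.7) -> float:
    """Fraction of actives whose nearest other active reaches the similarity
    threshold (the threshold is an assumed, adjustable cutoff)."""
    if len(active_fps) < 2:
        return 0.0
    flagged = 0
    for i, fp in enumerate(active_fps):
        nearest = max(
            tanimoto(fp, other)
            for j, other in enumerate(active_fps) if j != i
        )
        if nearest >= threshold:
            flagged += 1
    return flagged / len(active_fps)


# Toy ligand set (hypothetical): two close analogues and one distinct chemotype.
ligand_fps = [
    frozenset({1, 2, 3, 4}),
    frozenset({1, 2, 3, 5}),     # Tanimoto 0.6 to the first ligand
    frozenset({10, 11, 12}),
]
fraction_with_analogue = analogue_fraction(ligand_fps, threshold=0.5)
```

Here two of the three ligands have a neighbor at or above the cutoff, giving a fraction of 2/3. A high value suggests that clustering or diversity selection of the actives is worth considering before the set is used to compare similarity-based methods.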

Artificial Enrichment

Artificial enrichment bias is mainly caused by significant mismatching of low-dimensional physicochemical properties between designed decoys and ligands [2] [11]. This bias makes ligand enrichment of virtual screening approaches unrealistically easy, leading to performance overestimation [11]. In structure-based virtual screening, this occurs when decoys are physically or chemically distinguishable from active ligands in ways that scoring functions can easily detect, without actually recognizing true binding interactions [10].

The directory of useful decoys (DUD) dataset and its enhanced version DUD-E were specifically designed to address this bias by ensuring that decoys resemble actives in physical properties but differ in topology [10] [2]. However, if property matching is insufficient, the decoys become artificially easy to distinguish from true binders, creating an unrealistic assessment scenario [10]. For example, if decoys systematically differ in molecular weight, lipophilicity, or polar surface area, even simplistic scoring functions can achieve high enrichment by recognizing these property disparities rather than genuine binding affinity [10] [11]. This provides an exaggerated view of method performance that doesn't translate to real screening libraries where such systematic differences don't exist.
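A quick check for this kind of property mismatch is to compare the distribution of a single property between actives and decoys. The sketch below computes a standardized mean difference, scaled by the standard deviation of the combined set; this particular statistic, the function name, and the molecular weight values are assumptions chosen for illustration and do not come from the DUD or DUD-E protocols.

```python
from statistics import mean, pstdev
from typing import Sequence


def standardized_mean_difference(active_values: Sequence[float],
                                 decoy_values: Sequence[float]) -> float:
    """Difference in means between actives and decoys, in units of the
    standard deviation of the combined set (0.0 if there is no spread)."""
    spread = pstdev(list(active_values) + list(decoy_values))
    if spread == 0:
        return 0.0
    return (mean(active_values) - mean(decoy_values)) / spread


# Hypothetical molecular weights (Da).
mismatched = standardized_mean_difference(
    [400.0, 420.0, 440.0],              # actives
    [250.0, 260.0, 270.0, 280.0],       # decoys that are systematically lighter
)
matched = standardized_mean_difference(
    [300.0, 310.0, 320.0],
    [300.0, 310.0, 320.0],
)
```

The mismatched set gives a difference of roughly two standard deviations, which means a filter on molecular weight alone would already separate most actives from decoys. The matched set gives 0.0, the situation property matching aims for.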

False Negatives

False negative bias occurs when presumed inactives in the decoy set turn out to be actives, thereby reducing the apparent ligand enrichment and potentially causing researchers to overlook valuable screening methods [10] [11]. This problem extends beyond traditional virtual screening benchmarks; recent research on DNA-encoded chemical libraries (DECLs) has revealed widespread false negatives that impair machine learning-based lead prediction [12].

In DECL selections, studies have found that numerous active compounds are frequently missed, with multiple false negatives for each identified hit [12]. The presence of the DNA-conjugation linker has been identified as a factor contributing to the underdetection of active molecules, as it can influence binding behavior and obscure true activity [12]. This bias compromises the predictive power of DECL data for prioritizing hits, anticipating target selectivity, and training machine learning models [12]. The false negative problem is particularly insidious because it leads to underestimation of method performance and may cause researchers to abandon potentially effective screening approaches due to artificially depressed enrichment metrics.

Table 1: Characteristics and Impacts of Major Benchmarking Biases

| Bias Type | Main Causes | Impact on VS Assessment | Common in Dataset Types |
|---|---|---|---|
| Analogue Bias | Chemically similar structures in ligand set | Overestimation of LBVS performance | Early benchmarking sets without diversity control |
| Artificial Enrichment | Physicochemical property mismatches between decoys and ligands | Overestimation of SBVS performance | Poorly constructed decoy sets |
| False Negatives | Active compounds misclassified as inactives | Underestimation of method performance | DECL data and sets with insufficient activity testing |

Experimental Assessment of Benchmarking Bias

Benchmarking Datasets and Protocols

Multiple standardized datasets have been developed to address benchmarking biases in virtual screening. The Directory of Useful Decoys (DUD) and its enhanced version DUD-E are among the most widely used benchmarking sets for structure-based virtual screening approaches [10] [2]. DUD-E comprises 102 targets with 22,886 active compounds and 1.4 million decoys, employing a property-matching strategy to generate decoys that resemble actives in physical properties but differ in topology [10] [2]. For ligand-based virtual screening, the Maximum Unbiased Validation (MUV) dataset was specifically designed to avoid analogue bias by ensuring that active compounds are structurally diverse while decoys are selected from confirmed inactives through neighborhood-based analysis [10] [2].

The experimental protocol for bias assessment typically involves running virtual screening algorithms on these benchmarking sets and evaluating their performance using enrichment metrics [10] [13]. The critical step is comparing performance across different dataset types to identify inconsistencies that may indicate bias susceptibility. For example, a method that performs well on DUD but poorly on MUV might be leveraging analogue bias, while one that shows the reverse pattern might be sensitive to the different decoy selection strategies [10] [2]. The leave-one-out cross-validation (LOO CV) approach has been used to demonstrate that maximum-unbiased benchmarking sets show consistent performance as measured by property matching, ROC curves, and AUCs [10].

Performance Metrics and Statistical Evaluation

The hit enrichment curve is commonly used to summarize the effectiveness of a virtual screening campaign, plotting the proportion of active ligands identified (recall) as a function of the fraction of ligands tested [13]. A key consideration in evaluating these curves is that uncertainty is often large at the small testing fractions that are most relevant to researchers [13]. Appropriate statistical inference must account for two sources of correlation that are often overlooked: correlation across different testing fractions within a single algorithm, and correlation between competing algorithms [13].
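The curve itself is straightforward to compute from a ranked list, as the sketch below shows. It returns point estimates of recall only and makes no attempt at the confidence intervals or correlation-aware tests discussed here; the function name, the rounding rule for the tested fraction, and the toy ranking are assumptions for the example.

```python
from typing import List, Sequence, Tuple


def hit_enrichment_curve(scores: Sequence[float],
                         is_active: Sequence[bool],
                         fractions: Sequence[float]) -> List[Tuple[float, float]]:
    """Recall of actives at each tested fraction of the ranked library."""
    ranked = [
        active for _, active in
        sorted(zip(scores, is_active), key=lambda pair: pair[0], reverse=True)
    ]
    n_total = len(ranked)
    hits_total = sum(ranked)
    if hits_total == 0:
        raise ValueError("need at least one active")
    curve = []
    for fraction in fractions:
        n_tested = int(round(fraction * n_total))   # assumed rounding rule
        recall = sum(ranked[:n_tested]) / hits_total
        curve.append((fraction, recall))
    return curve


# Toy ranking (hypothetical): 20 compounds, actives at ranks 0, 1, 5 and 15.
toy_scores = [20 - i for i in range(20)]
toy_labels = [i in {0, 1, 5, 15} for i in range(20)]
curve = hit_enrichment_curve(toy_scores, toy_labels, [0.1, 0.5, 1.0])
```

For this toy ranking the recall is 0.5 after testing 10% of the library, 0.75 after 50%, and 1.0 at 100%. With only four actives, moving a single compound in or out of the top 10% changes the first value by 0.25, which is a small-scale view of why uncertainty at small testing fractions is large.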

The EmProc method has been developed as an effective approach for hypothesis testing and constructing confidence intervals for hit enrichment curves [13]. This method is particularly important because traditional statistical tests assuming independent binomial proportions are inappropriate due to the correlation introduced when determining testing order based on scores from all ligands [13]. For the comparative assessment of scoring functions, the CASF-2016 benchmark provides standardized tests for docking power (ability to identify native binding poses), scoring power (ranking binding affinities), and screening power (distinguishing binders from non-binders) [14].

Table 2: Standardized Benchmarking Datasets for Virtual Screening

| Dataset | Primary VS Type | Key Features | Target Coverage | Decoy Selection Strategy |
|---|---|---|---|---|
| DUD/DUD-E | Structure-based | Property-matched decoys | 102 targets | Physical property matching with topological dissimilarity |
| MUV | Ligand-based | Avoids analogue bias | 17 targets | Neighborhood-based analysis of PubChem data |
| DEKOIS | Structure-based | Focus on difficult decoys | Multiple targets | Optimized to be difficult for docking programs |
| MUBD-hCRs | Both | Maximum unbiased design | 13 chemokine receptors | Spatial random distribution with property matching |

Methodological Approaches to Overcome Bias

Maximum Unbiased Benchmarking Sets (MUBD)

Recent advances in benchmarking methodologies have led to the development of maximum unbiased benchmarking datasets (MUBD) designed to minimize all three major types of bias [11]. The unique feature of the MUBD approach is its pursuit of spatial random distribution of compounds in the decoy set while maintaining good property matching [10]. This methodology has been implemented in tools like MUBD-DecoyMaker and successfully applied to build benchmarking sets for various target classes, including human histone deacetylases (HDACs) and chemokine receptors [10] [11].

For chemokine receptors, the MUBD-hCRs dataset encompasses 13 subtypes, composed of 404 ligands and 15,756 decoys, with demonstrated chemical diversity in ligands and maximal unbiased decoys in terms of both "artificial enrichment" and "analogue bias" [11]. The validation studies show that MUBD-hCRs performs effectively in ligand enrichment assessments of both structure-based and ligand-based virtual screening approaches compared to other publicly available benchmarking datasets [11]. The key innovation in MUBD is the application of a uniform selection policy that doesn't preferentially exclude certain compound types, thereby maintaining chemical diversity while controlling for physicochemical properties [10] [11].

AI-Driven and Flexible Docking Approaches

Artificial intelligence approaches are increasingly being applied to address benchmarking biases in virtual screening. AI-driven methods enhance protein-ligand interaction predictions across pose prediction, scoring, and virtual screening tasks [8]. Geometric deep learning models and hybrid approaches integrating sequence and structure-based embeddings have shown particular promise in refining ligand binding site identification and improving scoring functions [8]. These methods can surpass traditional docking approaches by better capturing the complex relationships between protein features and ligand binding.

The RosettaVS platform exemplifies recent advances, incorporating receptor flexibility through modeling of sidechain and limited backbone movement, which proves critical for targets requiring induced conformational changes upon ligand binding [14]. This platform employs a modified docking protocol with two modes: virtual screening express (VSX) for rapid initial screening and virtual screening high-precision (VSH) with full receptor flexibility for final ranking of top hits [14]. Benchmarking results demonstrate that RosettaGenFF-VS achieves leading performance in distinguishing native binding poses from decoy structures and identifies the best binding small molecules within the top 1% ranked molecules, surpassing other methods [14].

Diagram 1: Comprehensive approach to bias mitigation in virtual screening. The framework illustrates how multiple methodological solutions converge to reduce benchmarking bias and improve real-world screening performance.

Comparative Performance of Bias-Reduced Methodologies

Benchmarking Results Across Dataset Types

Comparative studies evaluating virtual screening methods on different benchmarking datasets reveal significant performance variations that highlight the impact of bias correction. The MUBD-hCRs dataset, when applied to chemokine receptors CXCR4 and CCR5, demonstrated capabilities in designating optimal virtual screening approaches that differed from recommendations based on more biased datasets [11]. Similarly, the RosettaVS method showed top performance on the CASF-2016 benchmark, with an enrichment factor of 16.72 at the top 1%, significantly outperforming the second-best method (EF1% = 11.9) [14].

The screening power test, which assesses the capability of a scoring function to identify true binders among negative small molecules, shows that bias-reduced methods maintain performance across diverse target types and chemical spaces [14]. Analysis of various screening power subsets demonstrates significant improvements in more polar, shallower, and smaller protein pockets compared to other methods [14]. This consistent performance across challenging target classes indicates that the bias reduction approaches translate to generalized improvements rather than target-specific optimization.

Statistical Validation of Method Improvements

Robust statistical validation is essential for confirming that observed performance improvements result from genuine methodological advances rather than random variation or residual biases. Recent work on confidence bands and hypothesis tests for hit enrichment curves addresses the critical need for appropriate uncertainty quantification in virtual screening assessment [13]. The EmProc-based confidence bands provide simultaneous coverage with minimal width, enabling proper comparison of entire enrichment curves rather than just individual points [13].

These statistical approaches are particularly valuable given the extremely imbalanced nature of virtual screening datasets, where active compounds may represent less than 1% of the total compounds screened [13]. By accounting for correlation between different testing fractions and between competing algorithms, these methods prevent false conclusions about method superiority that could arise from improper handling of uncertainty [13]. The implementation of these statistical techniques in accessible software tools makes rigorous comparison of bias-reduced methodologies practical for research groups without specialized statistical expertise.

Table 3: Performance Comparison of Bias-Reduced Virtual Screening Methods

| Method/Dataset | Enrichment Factor (Top 1%) | ROC AUC | Early Enrichment | Bias Resistance |
|---|---|---|---|---|
| RosettaVS | 16.72 | 0.78 | Excellent | High |
| MUBD-hCRs | 14.35 | 0.75 | Very Good | Very High |
| DUD-E | 11.90 | 0.72 | Good | Medium |
| Traditional Methods | 8.45 | 0.65 | Moderate | Low |

The Scientist's Toolkit: Research Reagent Solutions

Essential Benchmarking Datasets

DUD-E (Directory of Useful Decoys Enhanced): Contains 102 targets with 22,886 active compounds and 1.4 million decoys. Uses property-matching strategy to generate decoys that resemble actives in physical properties but differ in topology. Essential for structure-based virtual screening validation [10] [2].

MUV (Maximum Unbiased Validation): Specifically designed for ligand-based virtual screening with 17 targets. Avoids analogue bias through structurally diverse active compounds and neighborhood-based decoy selection from confirmed PubChem inactives [10] [2].

MUBD-hCRs (Maximal Unbiased Benchmarking Data Sets for human Chemokine Receptors): Covers 13 chemokine receptor subtypes with 404 ligands and 15,756 decoys. Validated for chemical diversity and unbiased decoys, applicable to both structure-based and ligand-based approaches [11].

CASF-2016 Benchmark: Standardized benchmark for scoring function assessment with 285 diverse protein-ligand complexes. Provides tests for docking power, scoring power, and screening power with carefully designed train/test splits [14].

Statistical Validation Tools

EmProc Method: Provides hypothesis testing and confidence intervals for hit enrichment curves, specifically designed to handle correlation across testing fractions and between algorithms. Essential for proper statistical inference in virtual screening assessment [13].

Confidence Band Procedures: Enable simultaneous inference along entire hit enrichment curves rather than just at individual points. Critical for comprehensive method comparison while controlling Type I error rates [13].

Specialized Software Platforms

MUBD-DecoyMaker: Implementation of the maximum unbiased benchmarking dataset methodology. Enables researchers to build custom benchmarking sets that minimize analogue bias, artificial enrichment, and false negatives [11].

RosettaVS: Open-source virtual screening platform incorporating receptor flexibility and active learning for efficient screening of billion-compound libraries. Demonstrates state-of-the-art performance on standard benchmarks [14].

OpenVS: AI-accelerated virtual screening platform integrating all necessary components for drug discovery. Supports screening of multi-billion compound libraries with both high-speed and high-precision modes [14].

The identification and mitigation of analogue bias, artificial enrichment, and false negatives represent critical challenges in the validation of virtual screening methods. Through the development of maximum unbiased benchmarking datasets, improved statistical validation methods, and AI-enhanced screening platforms, the field has made substantial progress toward more reliable assessment of virtual screening performance. The comparative analysis presented in this guide demonstrates that bias-reduced methodologies consistently outperform traditional approaches across multiple benchmarking scenarios, providing more accurate predictions of real-world screening utility. As these advanced tools and datasets become more widely adopted, they promise to enhance the efficiency and success rates of structure-based drug discovery campaigns, ultimately accelerating the delivery of new therapeutic agents for human disease.

In the field of computer-aided drug discovery, virtual screening (VS) has become an indispensable technique for rapidly identifying potential hit compounds from extensive chemical libraries. The success of any VS campaign, whether ligand-based or structure-based, hinges on the computational method's ability to discriminate between active and inactive molecules. To quantify this discrimination power, researchers rely on a set of well-established performance metrics, primarily Enrichment Factors (EF), Receiver Operating Characteristic (ROC) curves, and the Area Under the Curve (AUC). These metrics provide the quantitative foundation for comparing different virtual screening approaches and validating new methodologies against established benchmarks. For anyone evaluating ligand-based VS enrichment rates, understanding the proper application, interpretation, and limitations of these metrics is essential for developing more effective screening protocols.

The fundamental challenge in VS methodology evaluation is balancing global assessment (how a method performs across an entire database) with early enrichment (how well it identifies actives at the very top of a ranked list). While the AUC provides a single-figure summary of overall performance, the early enrichment metrics address the practical reality of drug discovery, where researchers typically have resources to test only the top-ranked compounds. This comparative guide examines the theoretical foundations, calculation methodologies, and practical interpretations of these key metrics, supported by experimental data from leading studies and software implementations.

Theoretical Foundations of Key Metrics

Receiver Operating Characteristic (ROC) Curves and Area Under Curve (AUC)

The Receiver Operating Characteristic (ROC) curve is a fundamental graphical representation of a virtual screening method's ability to discriminate between active and inactive compounds across all possible classification thresholds [15]. In a typical ROC plot, the true positive rate (sensitivity) is plotted on the Y-axis against the false positive rate (1-specificity) on the X-axis as the score threshold decreases [16]. The top-scoring compounds appear closest to the origin, and an ideal ROC curve would rise vertically to 100% true positives before moving horizontally, indicating that all active compounds were identified before any inactive ones [16].

The Area Under the ROC Curve (AUC) provides a single numeric value summarizing the overall performance, with a perfect method achieving AUC = 1.0 and random selection yielding AUC = 0.5 [15] [16]. The AUC represents the probability that a randomly chosen active compound will be ranked higher than a randomly chosen inactive compound [16]. While AUC is valuable as a global performance measure, a significant limitation is that different ROC curves can yield identical AUC values while having markedly different early enrichment characteristics, which is critically important in practical virtual screening scenarios [15] [16].
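The probabilistic reading of AUC can be checked directly on a small example. The sketch below is a minimal illustration and is not taken from the cited studies: the function name `roc_auc` and the scores are hypothetical, higher scores are assumed to mean "more likely active", and ties are counted as half a win.

```python
from itertools import product


def roc_auc(active_scores, inactive_scores):
    """AUC as the fraction of (active, inactive) pairs ranked correctly.

    Assumes higher score = predicted more active; ties count as 0.5.
    """
    wins = 0.0
    for active, inactive in product(active_scores, inactive_scores):
        if active > inactive:
            wins += 1.0
        elif active == inactive:
            wins += 0.5
    return wins / (len(active_scores) * len(inactive_scores))


# Hypothetical screening scores for 3 actives and 5 inactives.
actives = [0.9, 0.8, 0.4]
inactives = [0.7, 0.5, 0.3, 0.2, 0.1]

auc = roc_auc(actives, inactives)          # 13 of 15 pairs are ordered correctly
perfect_auc = roc_auc([3.0, 2.0], [1.0, 0.0])
print(f"AUC = {auc:.3f}, perfect ranking AUC = {perfect_auc:.1f}")
```

The pairwise form is quadratic in the number of compounds, so it is meant for checking intuition on small sets rather than for scoring full libraries.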

Table 1: Interpretation of AUC Values

| AUC Value | Performance Interpretation | Probability of Correct Ranking |
|---|---|---|
| 0.5 | Random | 50% |
| 0.7-0.8 | Acceptable | 70-80% |
| 0.8-0.9 | Excellent | 80-90% |
| 0.9-1.0 | Outstanding | 90-100% |

Enrichment Factor (EF) and Early Enrichment Metrics

The Enrichment Factor (EF) addresses the critical "early recognition" problem in virtual screening by measuring the concentration of active compounds at the top fraction of a ranked list [15]. EF is calculated as the fraction of actives found in a specified top percentage of the screened database divided by the fraction of actives expected from random selection [17]. This metric is particularly valuable because it directly corresponds to how virtual screening is used in practice, where researchers typically only test the top 1-5% of ranked compounds due to resource constraints [15].

Early Enrichment is typically reported at specific cutoffs such as 0.5%, 1%, or 2% of the ranked database [16]. The formula for calculating EF at a given cutoff (X%) is:

EF = (Number of actives in top X% / Total number of actives) / (X% of database / Total database size) [17]

Unlike AUC, EF is highly dependent on the ratio of active to inactive compounds in the dataset, which complicates direct comparisons between studies with different database compositions [15]. To address this limitation, ROC enrichment (ROCe) has been proposed as an alternative early enrichment metric that represents the ability of a test to discriminate between active and inactive compounds at a specific percentage of false positives retrieved [15].
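As a worked example of the EF formula above, the following sketch computes EF at a 1% cutoff for a hypothetical ranked list and also reports the largest EF that the dataset composition allows. The function names, the rounding of the cutoff to a whole number of compounds, and the toy data are assumptions made for illustration.

```python
def enrichment_factor(labels_ranked, fraction):
    """EF at a cutoff, for 1/0 activity labels sorted best-scored first."""
    n_total = len(labels_ranked)
    n_actives = sum(labels_ranked)
    n_top = max(1, round(fraction * n_total))   # compounds in the top X%
    hits = sum(labels_ranked[:n_top])           # actives found in the top X%
    return (hits / n_actives) / (n_top / n_total)


def max_enrichment_factor(n_total, n_actives, fraction):
    """Upper bound on EF: every slot in the top X% is filled with an active."""
    n_top = max(1, round(fraction * n_total))
    best_hits = min(n_top, n_actives)
    return (best_hits / n_actives) / (n_top / n_total)


# Hypothetical library: 1000 compounds, 10 actives, 4 of them in the top 10.
ranked_labels = [1, 1, 0, 1, 0, 0, 1, 0, 0, 0] + [0] * 984 + [1] * 6

ef_1pct = enrichment_factor(ranked_labels, 0.01)
ceiling_sparse = max_enrichment_factor(1000, 10, 0.01)    # 1% actives
ceiling_dense = max_enrichment_factor(1000, 100, 0.01)    # 10% actives
print(ef_1pct, ceiling_sparse, ceiling_dense)
```

The two ceilings differ by a factor of ten even though the cutoff is the same, which is one concrete way to see why EF values from datasets with different active-to-inactive ratios are hard to compare directly.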

Specialized Metrics: BEDROC and Chemical Diversity Assessment

To overcome limitations in both AUC and EF, researchers have developed specialized metrics that provide more nuanced performance assessments. The Boltzmann-Enhanced Discrimination of ROC (BEDROC) incorporates an exponential weighting scheme that assigns greater importance to active compounds found early in the ranked list [17] [15]. This metric uses an adjustable parameter (α) to control how strongly the ranking is weighted toward the very top compounds, providing a tunable balance between global and early recognition assessment [15].
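The effect of the exponential weighting can be seen in a short calculation. The sketch below follows the commonly used formulation in which BEDROC is the robust initial enhancement (RIE) rescaled between its minimum and maximum attainable values; the function name, the default α = 20, and the toy rankings are assumptions for illustration rather than values taken from the cited sources.

```python
import math


def bedroc(labels_ranked, alpha=20.0):
    """BEDROC for 1/0 activity labels sorted best-scored first.

    Computed as RIE rescaled to [0, 1] using its minimum and maximum values.
    alpha controls how strongly early ranks are weighted (assumed default: 20).
    """
    n_total = len(labels_ranked)
    n_actives = sum(labels_ranked)
    if n_actives == 0 or n_actives == n_total:
        raise ValueError("need at least one active and one inactive")
    ratio = n_actives / n_total

    # Exponentially weighted sum over the ranks (1-based) of the actives.
    weighted = sum(
        math.exp(-alpha * rank / n_total)
        for rank, label in enumerate(labels_ranked, start=1)
        if label
    )
    # Expected value of the weighted sum per active under random ranking.
    random_term = (1.0 / n_total) * (1.0 - math.exp(-alpha)) / (
        math.exp(alpha / n_total) - 1.0
    )
    rie = weighted / (n_actives * random_term)

    rie_max = (1.0 - math.exp(-alpha * ratio)) / (ratio * (1.0 - math.exp(-alpha)))
    rie_min = (1.0 - math.exp(alpha * ratio)) / (ratio * (1.0 - math.exp(alpha)))
    return (rie - rie_min) / (rie_max - rie_min)


# Hypothetical rankings of 100 compounds containing 5 actives.
perfect = [1] * 5 + [0] * 95
worst = [0] * 95 + [1] * 5
mixed = [1, 0, 1, 0, 0, 1] + [0] * 92 + [1, 1]

b_perfect, b_worst, b_mixed = bedroc(perfect), bedroc(worst), bedroc(mixed)
print(f"{b_perfect:.3f} {b_mixed:.3f} {b_worst:.3f}")
```

Raising `alpha` concentrates the weight on the first few ranks, so the same ranking can score quite differently under different α values; BEDROC figures are only comparable when α is reported.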

For evaluating chemical diversity in addition to pure enrichment, average-weighted ROC (awROC) and average-weighted AUC (awAUC) have been developed [15]. These approaches weight active compounds based on their cluster membership, giving more credit to methods that identify actives from different chemical families rather than multiple similar compounds from a single scaffold [15]. A significant challenge with these diversity-aware metrics is their sensitivity to the specific clustering methodology used to define chemical families [15].
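A small example makes the cluster weighting concrete. The sketch below assumes one simple weighting scheme, in which each active receives weight 1 / (number of clusters × size of its cluster) so that every chemical family contributes equally; the function name `aw_auc`, the cluster labels, and the scores are hypothetical, and the cited work may define the weights differently.

```python
from collections import Counter


def aw_auc(active_scores, active_clusters, inactive_scores):
    """Cluster-weighted AUC: every cluster of actives contributes equally.

    Assumed weighting: weight = 1 / (n_clusters * cluster_size) per active.
    """
    cluster_sizes = Counter(active_clusters)
    n_clusters = len(cluster_sizes)
    total = 0.0
    for score, cluster in zip(active_scores, active_clusters):
        # Fraction of inactives this active outranks (ties count as 0.5).
        outranked = sum(
            1.0 if score > d else 0.5 if score == d else 0.0
            for d in inactive_scores
        )
        weight = 1.0 / (n_clusters * cluster_sizes[cluster])
        total += weight * outranked / len(inactive_scores)
    return total


# Three well-ranked actives share one scaffold; a fourth, from a different
# scaffold, is ranked poorly.
active_scores = [0.9, 0.85, 0.8, 0.2]
active_clusters = ["scaffold_1", "scaffold_1", "scaffold_1", "scaffold_2"]
inactive_scores = [0.7, 0.5, 0.3, 0.1]

weighted = aw_auc(active_scores, active_clusters, inactive_scores)
# Giving every active its own cluster recovers the ordinary AUC.
unweighted = aw_auc(active_scores, range(len(active_scores)), inactive_scores)
print(unweighted, weighted)
```

The unweighted value is inflated by three analogues of the same scaffold, while the weighted value penalizes the method for missing the second chemical family. Changing the cluster assignment changes the result, which is the clustering sensitivity noted above.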

Experimental Comparison of Virtual Screening Methods

Performance Benchmarking on Standardized Datasets

Virtual screening methodologies are typically validated against standardized databases containing known active compounds and carefully selected decoy molecules. The Directory of Useful Decoys (DUD) and its enhanced version DUD-E have emerged as widely accepted benchmarks for these evaluations [18] [19]. These databases provide non-active compounds (decoys) with similar physicochemical properties to actives but different chemical structures, creating challenging test conditions that mimic real screening scenarios [19].

Table 2: Performance Comparison of Virtual Screening Methods on DUD/DUD-E Datasets

| Method | Average AUC | Average EF 1% | Targets Tested | Key Innovation |
|---|---|---|---|---|
| HWZ Score [18] | 0.84 ± 0.02 | 46.3% ± 6.7% (hit rate at top 1%) | 40 DUD targets | New shape-overlapping procedure and scoring function |
| ENS-VS [19] | 0.982 | 52.77 | 37 DUD-E targets | Ensemble learning with multiple classifiers |
| SIEVE-Score [19] | 0.912 | 42.64 | 37 DUD-E targets | Machine learning scoring function |
| RosettaVS (VSH mode) [14] | Superior to other methods | High early enrichment | Multiple targets | Receptor flexibility modeling and improved forcefield |

Recent advances in machine learning have demonstrated significant improvements in virtual screening performance. The ENS-VS method integrates support vector machine, decision tree, and Fisher linear discriminant classifiers, using a combination of protein-ligand interaction terms and ligand structure descriptors. It achieved an average EF 1% of 52.77 on DUD-E datasets, substantially outperforming traditional docking programs like AutoDock Vina and other machine learning approaches [19]. Similarly, the HWZ score-based virtual screening approach demonstrated robust performance across 40 DUD targets, with an average AUC of 0.84 and a hit rate of 46.3% at the top 1% of ranked compounds [18]. Note that this hit rate is a percentage of actives recovered, not an enrichment factor, so it is not directly comparable to the EF 1% values reported for the other methods.

Experimental Protocols for Method Validation

Standardized experimental protocols are essential for meaningful comparison between different virtual screening approaches. The typical workflow for benchmarking studies includes:

Dataset Preparation: Researchers select targets from standard databases like DUD-E or DEKOIS 2.0, ensuring adequate numbers of active compounds (typically >200) for reliable statistical analysis [19]. Structurally similar compounds between training and test sets are excluded to prevent bias.

Molecular Docking: All active and decoy compounds are docked into the target's binding site using programs such as AutoDock Vina, with the best pose selected based on the docking score [19].

Feature Calculation: For machine learning approaches, descriptors are computed including protein-ligand interaction energy terms and ligand structure representations [19].

Model Training: In target-specific methods, machine learning models are trained using active compounds as positives and decoys as negatives, often employing techniques to address class imbalance [19].

Performance Evaluation: The trained models are used to rank compounds, and standard metrics (AUC, EF, BEDROC) are calculated using tools like Rocker [17].

Statistical Validation: Bootstrapping methods are typically employed to generate confidence intervals, and p-values are calculated when comparing different methods to determine statistical significance [16].

The Rocker tool has become a valuable resource for standardized performance calculation, providing AUC, BEDROC, and enrichment factors with both linear and logarithmic ROC curve visualization capabilities [17]. This open-source tool helps ensure consistency in metric calculation across different studies.
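The statistical validation step of the workflow above can be sketched with a percentile bootstrap. This is a minimal illustration under stated assumptions, not the procedure of any cited study: actives and inactives are resampled separately (a stratified bootstrap, so no resample loses all its actives), the scores are synthetic, and `bootstrap_auc_ci` is a hypothetical helper name.

```python
import random


def roc_auc(active_scores, inactive_scores):
    """Fraction of (active, inactive) pairs ranked correctly; ties count 0.5."""
    wins = 0.0
    for a in active_scores:
        for d in inactive_scores:
            wins += 1.0 if a > d else 0.5 if a == d else 0.0
    return wins / (len(active_scores) * len(inactive_scores))


def bootstrap_auc_ci(active_scores, inactive_scores, n_boot=200, level=0.95, seed=0):
    """Percentile bootstrap confidence interval for AUC (stratified resampling)."""
    rng = random.Random(seed)
    estimates = []
    for _ in range(n_boot):
        # Resample each class with replacement, keeping class sizes fixed.
        a = rng.choices(active_scores, k=len(active_scores))
        d = rng.choices(inactive_scores, k=len(inactive_scores))
        estimates.append(roc_auc(a, d))
    estimates.sort()
    tail = (1.0 - level) / 2.0
    low_idx = int(tail * n_boot)
    high_idx = min(n_boot - 1, int((1.0 - tail) * n_boot))
    return estimates[low_idx], estimates[high_idx]


# Synthetic scores: actives are shifted upward relative to inactives.
data_rng = random.Random(42)
actives = [data_rng.gauss(1.0, 1.0) for _ in range(20)]
inactives = [data_rng.gauss(0.0, 1.0) for _ in range(200)]

point_estimate = roc_auc(actives, inactives)
ci_low, ci_high = bootstrap_auc_ci(actives, inactives)
print(f"AUC = {point_estimate:.3f}, 95% CI = [{ci_low:.3f}, {ci_high:.3f}]")
```

The same resampling loop can wrap an enrichment-factor or BEDROC calculation instead of AUC. With few actives the interval is typically wide, which is worth reporting alongside any claimed difference between methods.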

Advanced Considerations in Metric Selection and Interpretation

The Early Recognition Problem and Metric Limitations

The fundamental tension in virtual screening metric selection stems from the early recognition problem: the practical need to identify active compounds within the very top fraction of a ranked list competes with the theoretical desire for a comprehensive assessment of ranking quality [15]. While AUC provides a global performance measure, it cannot distinguish methods that perform well at early recognition from those that excel at overall ranking [15] [16]. This limitation is particularly problematic in real-world drug discovery, where only the top 1-5% of compounds typically undergo experimental testing.

Each primary metric carries specific limitations that researchers must consider when interpreting results. EF values are highly dependent on the ratio of active to inactive compounds in the dataset and become less reliable when fewer inactive molecules are present [15]. The BEDROC metric, while addressing early recognition, depends on an adjustable parameter (α) that controls the strength of early weighting and requires careful parameter selection [15]. AUC values can be misleadingly high for targets with many actives, as the metric naturally increases with the number of active compounds in the dataset [16].

Best Practices for Comprehensive Assessment

Leading researchers recommend a multi-metric approach to virtual screening evaluation that addresses both global and early recognition performance [15] [16]. The following practices represent current consensus in the field:

Report both AUC and early enrichment (EF at 0.5%, 1%, 2%) to provide complete performance characterization [16].

Use standardized datasets like DUD-E with consistent active:decoy ratios to enable cross-study comparisons [19].

Include confidence intervals for all metrics using bootstrapping methods to communicate statistical uncertainty [16].

Consider chemical diversity through awAUC or similar metrics when scaffold hopping is a research priority [15].

Provide statistical significance testing (p-values) when comparing methods to distinguish meaningful improvements from random variation [16].

The virtual screening community continues to debate optimal metric selection, with different research groups advocating for specific approaches based on their screening priorities and methodological focus [15]. This lack of consensus underscores the importance of transparent reporting and multiple metric inclusion to enable readers to form comprehensive assessments of method performance.

Table 3: Essential Research Reagent Solutions for Virtual Screening

| Reagent/Resource | Type | Function | Example Sources |
|---|---|---|---|
| DUD/DUD-E Database | Compound Library | Provides validated active/decoy sets for benchmarking | dud.docking.org |
| DEKOIS 2.0 | Compound Library | Benchmarking sets with potential active compounds excluded | DEKOIS website |
| Rocker | Software Tool | Calculates AUC, EF, BEDROC and visualizes ROC curves | jyu.fi/rocker |
| ROCS | Virtual Screening Software | Shape-based screening with industry-standard metrics | OpenEye Scientific |
| AutoDock Vina | Docking Software | Open-source docking for structure-based screening | Scripps Research |
| Chemical Fingerprints | Computational Descriptors | Represent molecular structure for similarity searching | Various cheminformatics packages |

The comprehensive evaluation of virtual screening methods requires careful consideration of multiple performance metrics, each with distinct strengths and limitations. Enrichment Factors provide crucial insight into early recognition capability, ROC curves and AUC provide global performance assessment, and specialized metrics like BEDROC and awAUC address specific screening objectives such as early enrichment and chemical diversity. The experimental data from benchmark studies consistently shows that modern approaches, particularly those incorporating machine learning and ensemble methods, significantly outperform traditional docking programs across these metrics.

Within the broader context of ligand-based virtual screening enrichment rate research, this analysis demonstrates that no single metric can fully capture method performance. Researchers should select metrics aligned with their specific screening objectives—whether prioritizing early enrichment, scaffold hopping, or overall ranking quality—while maintaining transparency in reporting and statistical rigor in analysis. As the field continues to evolve, standardization of evaluation protocols and metric reporting will be essential for meaningful cross-study comparisons and continued methodological advancement.

Advanced Methodologies: From Deep Learning to Fragment-Based Screening

In the field of computer-aided drug discovery, Ligand-Based Virtual Screening (LBVS) is a fundamental technique for identifying potential drug candidates by comparing molecules against known active compounds, especially when 3D protein structural data is limited or unavailable. The core challenge in LBVS lies in achieving high enrichment rates—the ability to prioritize truly active molecules over inactive ones in large chemical libraries. The adoption of deep learning architectures has significantly transformed this landscape, offering superior capabilities in learning complex molecular patterns directly from data. This guide objectively compares the performance of three prominent deep learning architectures—Graph Neural Networks (GNNs), Transformers, and Convolutional Neural Networks (CNNs)—in enhancing LBVS enrichment rates, providing a synthesis of current experimental data and methodologies for researchers and drug development professionals.

Deep learning architectures excel in LBVS by automatically learning relevant molecular representations from input data, moving beyond the limitations of traditional expert-crafted descriptors. The table below summarizes the core characteristics and strengths of each architecture in the context of LBVS.

Table 1: Core Architectural Characteristics in LBVS

| Architecture | Primary Data Representation | Key Mechanism | Reported Strength in LBVS |
|---|---|---|---|
| Graph Neural Networks (GNNs) | Molecular Graph (Atoms as nodes, bonds as edges) | Message-passing between connected nodes | Learns intrinsic structural and topological relationships; superior with expert-crafted descriptors [20] [21]. |
| Transformers | Molecular Sequence (e.g., SMILES, Amino Acid Sequence) | Self-attention weighing the importance of different sequence parts | Excels at capturing long-range dependencies within sequences for affinity prediction [22]. |
| Convolutional Neural Networks (CNNs) | 3D Grid (Voxelized structure) or 1D/2D Fingerprints | Convolutional filters scanning local features | Powerful feature extractors from structured data; effective as scoring functions [23] [7]. |

Quantitative benchmarking across studies reveals how these architectures perform on key LBVS metrics, particularly enrichment at early stages (EF1%) and overall area under the curve (AUC).

Table 2: Comparative LBVS Performance Metrics Across Architectures

| Architecture / Model | Target / Benchmark | Key Performance Metric | Reported Result | Comparative Context |
|---|---|---|---|---|
| GCN with Descriptors [20] [21] | Ligand-Based VS | Not Specified | Significant improvement over descriptor-only or GCN-only models | Simpler GNNs with descriptors can match complex models. |
| SphereNet with Descriptors [20] [21] | Ligand-Based VS | Not Specified | Marginal improvement over standalone model | |
| Ligand-Transformer [22] | Mutant EGFRLTC Kinase | Experimental Validation | Identification of low nanomolar potency inhibitors | Accurately predicts binding affinity and population shifts. |
| Alpha-Pharm3D [9] | NK1R & other targets | AUROC | ~90% | Competitive performance on diverse datasets. |
| CNN-Score (Rescoring) [7] | PfDHFR (Malaria target) | EF1% | 28 (WT), 31 (Quadruple Mutant) | Consistently improved performance over classical docking. |

Analysis of Key Experimental Findings

The Synergistic Power of GNNs and Expert-Crafted Descriptors

A pivotal finding from recent research is that integrating GNNs with traditional expert-crafted chemical descriptors creates a synergistic effect that significantly boosts LBVS performance [20] [21]. This hybrid approach combines the strength of deep learning in automatic feature discovery with the domain knowledge embedded in classical descriptors. The benefits of this integration, however, are architecture-dependent. Studies show that models like GCN and SchNet improve markedly when descriptors are added, whereas more complex GNNs like SphereNet show only marginal gains [20]. When combined with descriptors, even simpler GNNs can reach performance levels comparable to their more sophisticated counterparts, suggesting a path toward more computationally efficient and interpretable models without sacrificing efficacy [21].

Transformers for Sequence-Based Affinity Prediction

Transformer architectures, particularly the Ligand-Transformer model, introduce a powerful sequence-based approach to LBVS [22]. This method uses the amino acid sequence of the target protein and the molecular topology of the small molecule to predict the binding affinity and characterize the conformational population shifts upon binding. This capability is crucial for understanding the molecular mechanisms of drug action. Applied to the mutant EGFRLTC kinase, Ligand-Transformer successfully identified inhibitors with low nanomolar potency, demonstrating its practical utility in lead discovery [22]. Its sequence-based nature offers a distinct advantage when 3D structural data is limited or of low quality.

CNNs as Powerful Scoring Functions

CNNs continue to be highly effective, particularly when applied as scoring functions to re-rank docking outputs. In a benchmark study against Plasmodium falciparum Dihydrofolate Reductase (PfDHFR), rescoring docking poses with CNN-Score significantly enhanced early enrichment [7]. For the wild-type enzyme, the combination of PLANTS docking and CNN rescoring achieved an EF1% of 28, while for the resistant quadruple mutant, FRED docking with CNN rescoring achieved an impressive EF1% of 31 [7]. This demonstrates CNN-based scoring's robustness and its critical role in improving the success rate of virtual screening, especially against challenging drug-resistant targets.

Detailed Experimental Protocols

To ensure reproducibility and provide a clear technical framework, this section outlines the key experimental methodologies cited in the comparative analysis.

Protocol: Integrating GNNs with Expert Descriptors for LBVS

This protocol is based on the work by Liu et al. (2025) on synergistic integration [20] [21].

- Data Preparation and Splitting: Curate a dataset of molecules with known activity states (active/inactive). Implement a scaffold split to partition the data into training and test sets, ensuring that molecules with core structural similarities are separated. This evaluates the model's ability to generalize to novel chemotypes, closely mimicking real-world discovery challenges [20].

- Feature Generation:

  - GNN Representation: For each molecule, generate a molecular graph representation. Process it through a GNN (e.g., GCN, SchNet, SphereNet) to obtain a learned vector representation (embedding) [20] [21].

  - Expert-Crafted Descriptors: Calculate a set of traditional chemical descriptors (e.g., molecular weight, logP, topological indices, pharmacophore fingerprints) for the same molecule [21].

- Feature Integration: Concatenate the GNN-learned embedding vector with the vector of expert-crafted descriptors to form a unified molecular representation [21].

- Model Training and Validation: Train a classifier (e.g., a fully connected network) on the concatenated features using the training set. Validate the model's performance on the scaffold-split test set, focusing on enrichment metrics (e.g., EF1%, AUROC) to assess its virtual screening power [20].
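The splitting and feature-integration steps of this protocol can be sketched without committing to a particular GNN or descriptor package. In the example below, the scaffold identifiers, embeddings, and descriptor values are placeholders (in practice they would come from a cheminformatics toolkit and a trained GNN), and `scaffold_split` and `concatenate_features` are hypothetical helper names, not functions from the cited work.

```python
from collections import defaultdict


def scaffold_split(scaffolds, test_fraction=0.2):
    """Assign whole scaffold groups to train or test so no scaffold spans both.

    Larger groups fill the training set first; once the training target is
    reached, remaining (rarer) scaffolds go to the test set.
    """
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)
    ordered = sorted(groups.values(), key=len, reverse=True)
    n_train_target = len(scaffolds) - round(test_fraction * len(scaffolds))
    train, test = [], []
    for members in ordered:
        if len(train) + len(members) <= n_train_target:
            train.extend(members)
        else:
            test.extend(members)
    return train, test


def concatenate_features(embedding, descriptors):
    """Join a learned embedding and expert descriptors into one feature vector."""
    return list(embedding) + list(descriptors)


# Placeholder scaffold labels for 10 molecules.
scaffolds = ["A", "A", "A", "B", "B", "C", "D", "A", "B", "E"]
train_idx, test_idx = scaffold_split(scaffolds, test_fraction=0.2)

# Placeholder 4-dimensional embedding and 3 descriptors for one molecule.
embedding = [0.12, -0.40, 0.88, 0.05]
descriptors = [312.4, 2.7, 1.0]   # e.g. molecular weight, logP, a fingerprint bit
features = concatenate_features(embedding, descriptors)

train_scaffolds = {scaffolds[i] for i in train_idx}
test_scaffolds = {scaffolds[i] for i in test_idx}
print(train_idx, test_idx, len(features))
```

Descriptors such as molecular weight sit on a very different scale from learned embeddings, so in a real pipeline they would usually be standardized on the training set before concatenation; that step is omitted here for brevity.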

Protocol: Rescoring Docking Poses with CNN-Based Scoring Functions

This protocol is derived from the benchmarking study on PfDHFR targets [7].

- Initial Docking: Perform molecular docking of a benchmark dataset (containing known actives and decoys) against the target protein using one or more docking programs (e.g., AutoDock Vina, PLANTS, FRED). Generate multiple poses per ligand.

- Pose Preparation: Collect the top poses generated for each ligand by the docking program.

- CNN Rescoring: Process each protein-ligand complex pose through a pre-trained CNN-based scoring function (e.g., CNN-Score). The CNN typically takes a voxelized 3D representation of the binding site as input, and outputs a predicted binding score or affinity [7].

- Ranking and Evaluation: Re-rank all ligands based on the best CNN-Score obtained for any of their poses. Compare this new ranking against the original docking ranking. Calculate enrichment factors (EF1%) and AUC to quantify the improvement in identifying true actives, particularly at the very top of the ranked list [7].
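The ranking step above reduces to keeping each ligand's best pose score and sorting. The sketch below illustrates that logic with made-up numbers; the ligand names, scores, and helper functions are hypothetical, and it assumes that docking scores are "lower is better" while the rescoring function is "higher is better".

```python
def rank_by_best_pose(pose_scores, higher_is_better=True):
    """Rank ligands by the best score among their poses, best ligand first."""
    pick = max if higher_is_better else min
    best = {ligand: pick(scores) for ligand, scores in pose_scores.items()}
    return sorted(best, key=best.get, reverse=higher_is_better)


def hits_in_top(ranking, actives, n_top):
    """Count known actives among the first n_top ligands of a ranking."""
    return sum(1 for ligand in ranking[:n_top] if ligand in actives)


# Hypothetical docking scores (energy-like: lower is better), several poses each.
docking_scores = {
    "lig1": [-6.0, -7.1],
    "lig2": [-9.2, -8.0],
    "lig3": [-8.5],
    "lig4": [-7.0, -6.5],
}
# Hypothetical rescoring output for the same poses (higher is better).
cnn_scores = {
    "lig1": [0.2, 0.9],
    "lig2": [0.5, 0.4],
    "lig3": [0.1],
    "lig4": [0.7, 0.3],
}
actives = {"lig1", "lig4"}

docking_ranking = rank_by_best_pose(docking_scores, higher_is_better=False)
cnn_ranking = rank_by_best_pose(cnn_scores, higher_is_better=True)
print(docking_ranking, hits_in_top(docking_ranking, actives, 2))
print(cnn_ranking, hits_in_top(cnn_ranking, actives, 2))
```

Note that the pose with the best rescoring value need not be the pose the docking program ranked first, which is why all retained poses are rescored rather than only the top docking pose.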

Visualizing Workflows and Architectures

The following diagrams illustrate the core experimental workflows and architectural integrations described in this guide.

[Diagram: GNN-Descriptor Hybrid Workflow]

[Diagram: CNN Rescoring for Virtual Screening]

The following table details key computational tools, datasets, and resources essential for implementing the deep learning architectures for LBVS discussed in this guide.

Table 3: Key Research Reagents and Computational Resources

| Item Name | Type | Primary Function in LBVS | Relevant Architecture |
|---|---|---|---|
| RDKit | Software Library | Cheminformatics toolkit for descriptor calculation, molecular graph generation, and conformer sampling [9]. | GNNs, Hybrid Models |
| GNN-Descriptor Code [20] [21] | Code Repository | Implements the synergistic integration of graph neural networks with expert-crafted molecular descriptors. | GNNs, Hybrid Models |
| Ligand-Transformer [22] | Model / Algorithm | A transformer-based model for predicting protein-ligand binding affinity from sequence and molecular topology data. | Transformers |
| CNN-Score [7] | Pre-trained Model | A convolutional neural network-based scoring function for re-ranking and improving virtual screening hit rates. | CNNs |
| DEKOIS 2.0 [7] | Benchmark Dataset | Provides benchmark sets with known actives and carefully selected decoys for rigorous VS evaluation. | All (Evaluation) |
| PDBbind [24] [25] | Database | A comprehensive database of protein-ligand complexes with binding affinity data for training and testing scoring functions. | All (Training) |
| ChEMBL [9] | Database | A large-scale database of bioactive molecules with drug-like properties, used for model training and validation. | All (Training) |

Leveraging Molecular Fingerprints and 3D Pharmacophore Models for Enhanced Similarity Searching

Molecular similarity is a foundational principle in modern drug discovery: structurally similar molecules are more likely to exhibit similar biological properties [26] [27]. The principle has become even more important in today's data-intensive research environment, where similarity measures underpin many machine learning procedures for virtual screening (VS) [26]. Molecular representation, the transformation of molecular structures into computer-readable formats, provides the bridge between chemical structures and their predicted biological, chemical, or physical properties [28]. As drug discovery tasks grow more sophisticated, the choice of molecular representation directly affects the effectiveness of similarity searching and the enrichment rates of virtual screening campaigns [28] [29].

Molecular fingerprints and 3D pharmacophore models represent two complementary approaches to molecular representation, each with distinct strengths and limitations. Molecular fingerprints encode structural or physicochemical information into fixed-length bit strings or numerical vectors, enabling rapid similarity comparisons across large compound libraries [29]. Pharmacophore models, by contrast, abstract molecules into their essential functional features—such as hydrogen bond donors/acceptors, hydrophobic regions, and charged groups—arranged in three-dimensional space [30]. This review provides a comprehensive comparison of these methodologies within the context of ligand-based virtual screening, examining their theoretical foundations, practical implementations, and performance in enriching active compounds from screening libraries. We focus specifically on how these complementary techniques can be leveraged to improve early hit identification in drug discovery pipelines.

Theoretical Foundations and Methodologies

Molecular Fingerprints: Encoding Chemical Information

Molecular fingerprints function as highly compressed representations that transform chemical structures into consistent numerical formats suitable for computational analysis [29]. These representations can be broadly categorized into several types based on their underlying algorithms and the chemical information they capture:

Dictionary-based fingerprints (also called structural keys) use predefined functional groups or substructure motifs where each bit position represents the presence or absence of a specific molecular feature [29]. Common examples include Molecular ACCess System (MACCS) and PubChem fingerprints, which are particularly effective for rapid substructure searching and filtering [31] [29].

Circular fingerprints dynamically generate molecular fragments rather than relying on predefined dictionaries. These algorithms center on each non-hydrogen atom and extend radially to include neighboring atoms through iterative processes [29]. The widely used Extended Connectivity Fingerprints (ECFP) belong to this category and are considered a de facto standard for encoding drug-like compounds [28] [31]. Related implementations include Functional Class Fingerprints (FCFP) which incorporate pharmacophore-like features [31] [29].

Topological fingerprints capture structural information based on molecular graph theory, representing molecules as mathematical constructs with atoms as vertices and bonds as edges [29]. Examples include Atom Pairs and Topological Torsion descriptors, which encode connectivity patterns and atomic properties throughout the molecular framework [29].

Pharmacophore fingerprints represent a hybrid approach that combines structural and functional representation. These fingerprints identify key pharmacophoric points and encode their pairwise or triplet relationships within the molecular structure [31] [29].
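The iterative neighborhood expansion behind circular fingerprints, described above, can be illustrated with a toy implementation. This is a simplified sketch and not the ECFP algorithm itself: it assumes atoms are described only by their element label, ignores bond orders, and uses CRC32 as a stand-in hash. The function name `circular_fingerprint` and the hand-written molecular graphs are hypothetical.

```python
import zlib

def circular_fingerprint(atoms, bonds, radius=2, n_bits=1024):
    """Toy circular fingerprint: returns the set of on-bit positions.

    atoms: list of heavy-atom labels, e.g. ["C", "C", "O"]
    bonds: list of (i, j) atom-index pairs
    """
    neighbors = {i: [] for i in range(len(atoms))}
    for i, j in bonds:
        neighbors[i].append(j)
        neighbors[j].append(i)

    def stable_hash(text):
        # CRC32 is deterministic across runs, unlike Python's built-in hash().
        return zlib.crc32(text.encode("utf-8"))

    # Iteration 0: each identifier depends only on the atom's own label.
    ids = [stable_hash(label) for label in atoms]
    features = set(ids)
    for _ in range(radius):
        # Each round folds the sorted neighbor identifiers into the atom's own,
        # so the identifier describes an environment one bond larger.
        ids = [
            stable_hash(
                f"{ids[i]}|" + ",".join(str(x) for x in sorted(ids[j] for j in neighbors[i]))
            )
            for i in range(len(atoms))
        ]
        features.update(ids)
    # Fold the identifiers into a fixed-length bit space.
    return {f % n_bits for f in features}

# Hand-written heavy-atom graphs for ethanol (C-C-O) and 1-propanol (C-C-C-O).
ethanol = circular_fingerprint(["C", "C", "O"], [(0, 1), (1, 2)])
propanol = circular_fingerprint(["C", "C", "C", "O"], [(0, 1), (1, 2), (2, 3)])
shared = ethanol & propanol
print(len(ethanol), len(propanol), len(shared))
```

The default `radius=2` mirrors the convention behind ECFP4, whose "4" refers to a diameter of four bonds. Production implementations such as the Morgan fingerprints in RDKit use richer atom invariants and bond information, so their bit patterns will differ from this sketch.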

The similarity between fingerprint representations is typically quantified using the Jaccard-Tanimoto coefficient, which measures the overlap between two binary vectors relative to their union [31]. This metric enables rapid comparison of molecular pairs across large screening libraries.
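For two fingerprints with on-bit sets A and B, the coefficient is T(A, B) = |A ∩ B| / |A ∪ B|. The sketch below computes it directly from sets of on-bit indices. The bit positions are made up for illustration, and returning 0.0 for two empty fingerprints is a convention chosen here rather than a universal rule.

```python
def tanimoto(bits_a, bits_b):
    """Jaccard-Tanimoto coefficient between two sets of on-bit indices."""
    a, b = set(bits_a), set(bits_b)
    union = len(a | b)
    if union == 0:
        return 0.0  # convention chosen here for two empty fingerprints
    return len(a & b) / union

# Hypothetical on-bit positions for a query and a library compound.
fp_query = {1, 4, 7, 9, 12}
fp_candidate = {1, 4, 8, 9, 15, 20}

# 3 shared bits / 8 bits in the union
print(tanimoto(fp_query, fp_candidate))  # 0.375
```

Because the calculation reduces to set intersection and union, it maps naturally onto bitwise AND/OR and population counts, which is what makes it fast enough for large libraries.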

3D Pharmacophore Models: Capturing Essential Interactions

Pharmacophore models represent a more abstract approach to molecular representation, focusing on the spatial arrangement of features essential for biological activity rather than specific structural motifs [30]. The International Union of Pure and Applied Chemistry defines a pharmacophore as "an ensemble of steric and electronic features that is necessary to ensure the optimal supramolecular interactions with a specific biological target and to trigger (or block) its biological response" [29].

Modern computational approaches to pharmacophore modeling include:

- Ligand-based pharmacophore generation, which derives common feature arrangements from structurally diverse active compounds [30]. The recently developed TransPharmer model exemplifies this approach, using topological pharmacophore fingerprints to guide molecular generation and scaffold hopping [30].

- Structure-based pharmacophore generation, which extracts interaction features from protein-ligand complex structures when structural data are available [29].

- Pharmacophore fingerprinting, which systematically captures the spatial relationships between pharmacophoric features within a single molecule, enabling similarity comparisons based on potential interaction capabilities rather than structural similarity [31] [29].
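A pairwise pharmacophore fingerprint can be sketched as a count of (feature, feature, distance) triplets. The example below is a minimal illustration that uses topological (bond-count) distances in place of 3D coordinates and assumes the pharmacophore features have already been assigned to atoms by hand. It is not the ErG or TransPharmer encoding, and the function names and the five-atom chain are hypothetical.

```python
from collections import Counter, deque
from itertools import combinations

def topological_distances(n_atoms, bonds, source):
    """Breadth-first search: number of bonds from `source` to each reachable atom."""
    neighbors = {i: [] for i in range(n_atoms)}
    for i, j in bonds:
        neighbors[i].append(j)
        neighbors[j].append(i)
    dist = {source: 0}
    queue = deque([source])
    while queue:
        current = queue.popleft()
        for nxt in neighbors[current]:
            if nxt not in dist:
                dist[nxt] = dist[current] + 1
                queue.append(nxt)
    return dist

def pharmacophore_pairs(n_atoms, bonds, features):
    """Count (feature, feature, bond distance) triplets over all feature pairs.

    features: dict mapping atom index -> pharmacophore label, e.g. {0: "donor"}
    """
    counts = Counter()
    for a, b in combinations(sorted(features), 2):
        d = topological_distances(n_atoms, bonds, a).get(b)
        if d is None:
            continue  # the two atoms sit in disconnected fragments
        first, second = sorted((features[a], features[b]))
        counts[(first, second, d)] += 1
    return counts

# Hypothetical linear five-atom chain with hand-assigned features.
bonds = [(0, 1), (1, 2), (2, 3), (3, 4)]
features = {0: "donor", 2: "hydrophobe", 4: "acceptor"}
pairs = pharmacophore_pairs(5, bonds, features)
print(pairs)
```

Two molecules with different scaffolds but the same feature pairs at the same distances would produce identical counts here, which is the property that makes this family of encodings useful for scaffold hopping.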

Pharmacophore models are particularly valuable for scaffold hopping—identifying structurally distinct compounds that share similar biological activity—as they abstract away structural details while preserving the essential functional arrangement required for target interaction [28] [30].

Comparative Performance Analysis

Virtual Screening Enrichment Metrics

To objectively evaluate the performance of molecular fingerprints and pharmacophore models in similarity-based virtual screening, we analyzed multiple benchmark studies focusing on key enrichment metrics. The table below summarizes the comparative performance of different molecular representation methods across various screening tasks:

Table 1: Performance Comparison of Molecular Representation Methods in Virtual Screening

| Method Category | Specific Method | Enrichment Factor (EF1%) | Scaffold Hopping Capability | Best Application Context |
|---|---|---|---|---|
| Circular Fingerprints | ECFP4 [31] | Moderate to High (5-25) | Limited | Drug-like compounds, QSAR modeling |
| Circular Fingerprints | FCFP4 [31] | Moderate (5-20) | Moderate | Functional activity prediction |
| Pharmacophore Fingerprints | ErG fingerprints [30] | High (15-30) | High | Scaffold hopping, bioactivity-based screening |
| Pharmacophore Fingerprints | TransPharmer [30] | Very High (20-50) | Very High | De novo generation, pharmacophore-constrained design |
| Topological Fingerprints | Atom Pairs [29] | Moderate (8-18) | Moderate | Structural diversity, complex scaffolds |
| Dictionary-based Fingerprints | MACCS [31] | Low to Moderate (3-15) | Low | Rapid screening, substructure search |

Case Study: TransPharmer in Kinase Inhibitor Discovery

A recent prospective validation of the TransPharmer model demonstrated the power of pharmacophore-informed approaches for scaffold hopping in practical drug discovery settings [30]. Researchers applied this generative model to design novel Polo-like Kinase 1 (PLK1) inhibitors with distinct structural scaffolds from known actives. The methodology followed this workflow:

Pharmacophore Fingerprint Extraction: Topological pharmacophore features were encoded from known active PLK1 inhibitors using multi-scale, interpretable fingerprints [30].

GPT-based Molecular Generation: A generative pre-training transformer framework generated novel molecular structures conditioned on the pharmacophore fingerprints [30].

Synthesis and Experimental Validation: Four generated compounds featuring a new 4-(benzo[b]thiophen-7-yloxy)pyrimidine scaffold were synthesized and tested for PLK1 inhibition [30].

The results were striking: three of the four synthesized compounds showed submicromolar activity, with the most potent compound (IIP0943) exhibiting 5.1 nM potency against PLK1—comparable to the reference inhibitor at 4.8 nM [30]. Additionally, IIP0943 demonstrated high selectivity for PLK1 over related kinases and submicromolar activity in inhibiting HCT116 cell proliferation [30]. This case study illustrates how pharmacophore-based approaches can successfully identify novel bioactive scaffolds that might be overlooked by traditional fingerprint-based similarity methods.

Performance in Natural Products Chemical Space

The chemical space of natural products presents particular challenges for molecular representation due to structural complexity, higher fractions of sp³-hybridized carbons, and increased stereochemical diversity [31]. A comprehensive benchmark study evaluated 20 different fingerprinting algorithms on over 100,000 unique natural products from the COCONUT and CMNPD databases, with performance assessed through both similarity searching and QSAR modeling tasks [31].

The research revealed that different fingerprint encodings can provide fundamentally different views of the natural product chemical space, leading to substantial variations in pairwise similarity and virtual screening performance [31]. While extended-connectivity fingerprints (ECFPs) represent the de facto standard for drug-like compounds, other fingerprints matched or outperformed them for bioactivity prediction of natural products [31]. This highlights the importance of selecting representation methods appropriate for the specific chemical space being investigated, particularly for structurally complex compound classes like natural products.

Table 2: Specialized Applications and Limitations of Molecular Representation Methods

| Representation Method | Key Applications | Key Limitations | Data Requirements |
|---|---|---|---|
| 2D Molecular Fingerprints | High-throughput screening, scaffold hopping within similar chemotypes [29] | Limited capture of 3D conformational features [30] | Large compound libraries with structural annotations |
| 3D Pharmacophore Models | Scaffold hopping across diverse chemotypes, structure-based design [30] | Conformational dependence, higher computational cost [30] | Known actives or protein-ligand complex structures |
| Protein-Ligand Interaction Fingerprints | Binding mode prediction, target-specific screening [29] | Requires structural data, limited to known binding sites [29] | High-quality protein-ligand complex structures |

Integrated Workflows and Best Practices

Experimental Protocols for Method Validation

Based on the reviewed literature, we recommend the following experimental protocol for evaluating molecular representation methods in virtual screening campaigns:

Benchmark Dataset Curation: Assemble a diverse set of known active compounds and matched decoys for the target of interest. Include multiple scaffold classes to properly assess scaffold-hopping capability [31].

Method Selection and Implementation:

Similarity Calculation and Compound Ranking: Calculate Tanimoto coefficients for fingerprint methods or pharmacophore overlap scores for 3D methods [30] [31]. Rank the screening database by similarity to known active reference compounds.

Enrichment Analysis: Calculate enrichment factors at progressive fractions of the screened database (EF1%, EF5%) and plot receiver operating characteristic curves to visualize method performance [30] [31].

Scaffold Diversity Assessment: Analyze the structural diversity of top-ranked compounds using scaffold network analysis or molecular clustering to ensure the method identifies chemically novel hits [30].
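The enrichment analysis step of this protocol can be sketched as follows. The enrichment factor at x% is the hit rate among the top x% of the ranked library divided by the hit rate of the whole library, and the ROC AUC equals the fraction of (active, decoy) pairs in which the active is ranked higher. The sketch assumes a strict ranking without tied scores and at least one active and one decoy. The ranked list and function names are hypothetical.

```python
def enrichment_factor(ranked_labels, fraction):
    """EF at a given fraction of a ranked list (labels: 1 = active, 0 = decoy)."""
    n_total = len(ranked_labels)
    n_actives = sum(ranked_labels)
    n_top = max(1, round(n_total * fraction))
    hits_top = sum(ranked_labels[:n_top])
    # (hits_top / n_top) / (n_actives / n_total), rearranged to one division
    return (hits_top * n_total) / (n_top * n_actives)

def roc_auc(ranked_labels):
    """ROC AUC as the fraction of (active, decoy) pairs ranked correctly."""
    n_actives = sum(ranked_labels)
    n_decoys = len(ranked_labels) - n_actives
    decoys_seen = 0
    misordered = 0
    for label in ranked_labels:
        if label == 1:
            misordered += decoys_seen  # decoys ranked above this active
        else:
            decoys_seen += 1
    return 1.0 - misordered / (n_actives * n_decoys)

# Hypothetical ranked library: 1000 compounds, 10 actives, best-scored first.
ranked = [0] * 1000
for position in (0, 2, 5, 8, 40, 120, 300, 450, 700, 900):
    ranked[position] = 1

print(enrichment_factor(ranked, 0.01))  # 4 actives in the top 10 -> 40.0
print(enrichment_factor(ranked, 0.05))  # 5 actives in the top 50 -> 10.0
print(roc_auc(ranked))
```

The example shows why both metrics are usually reported together: the early enrichment is strong even though several actives are ranked deep in the list, which pulls the AUC down.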

This workflow can be visualized in the following diagram:

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 3: Key Research Reagents and Computational Tools for Molecular Similarity Screening

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| RDKit [30] [31] | Open-source cheminformatics library | Fingerprint calculation, molecular manipulation | General-purpose molecular representation and similarity searching |
| OpenBabel | Chemical toolbox | Format conversion, descriptor calculation | Preprocessing of chemical structures from diverse sources |
| TransPharmer [30] | Generative model with pharmacophore fingerprints | De novo molecular generation under pharmacophore constraints | Scaffold hopping, lead optimization with maintained bioactivity |
| ErG Fingerprints [30] | Pharmacophore fingerprint | 2D pharmacophore similarity evaluation | Rapid scaffold hopping in virtual screening |
| CETSA [32] | Experimental target engagement platform | Cellular target engagement validation | Experimental confirmation of computational predictions |
| AutoDock [32] | Molecular docking software | Structure-based binding pose prediction | Complementary validation of similarity-based approaches |