Ligand-Based ADMET Prediction: A Comprehensive Guide to Models, Methods, and Best Practices for Drug Developers


Abstract

This article provides a thorough exploration of ligand-based models for predicting the Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) of small molecules—a critical component in reducing late-stage drug development failures. Tailored for researchers, scientists, and drug development professionals, we cover the foundational principles of these in silico methods, detail the latest machine learning algorithms and feature representations, and offer strategies for troubleshooting and optimizing model performance. A dedicated section on validation and benchmarking discusses robust evaluation techniques, including cross-validation with statistical testing and performance on external datasets, to ensure model reliability. By synthesizing current research and practical applications, this guide aims to equip practitioners with the knowledge to build and deploy more predictive and trustworthy ADMET models.

Understanding ADMET and the Power of Ligand-Based Modeling

The early and accurate prediction of Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties is a critical determinant of success in the drug discovery pipeline. Ligand-based computational models, which predict these properties directly from chemical structure information, have emerged as indispensable tools for prioritizing promising drug candidates and reducing late-stage attrition rates. The development and rigorous benchmarking of such models rely fundamentally on access to high-quality, curated experimental data. This application note provides a detailed guide to the primary public data sources and benchmarking platforms essential for research on ligand-based ADMET prediction models. We focus on the Therapeutics Data Commons (TDC) and the ChEMBL database, and further introduce specialized resources like PharmaBench, equipping researchers with the protocols needed to navigate, utilize, and contribute to this evolving landscape [1] [2] [3].

The Therapeutics Data Commons (TDC)

The Therapeutics Data Commons (TDC) is a unifying platform designed to systematically access and evaluate machine learning models across the entire spectrum of therapeutics development [4] [5]. It provides a structured collection of AI-ready datasets and curated benchmarks, with a significant emphasis on ADMET properties. Its three-tiered hierarchical structure—organizing data into problems, tasks, and datasets—facilitates targeted access to relevant data for specific machine learning goals, such as single-instance prediction of molecular properties [4].

A key feature of TDC is its ADMET Benchmark Group, a carefully curated collection of 22 datasets that are central to ligand-based ADMET model development and evaluation [6]. TDC is minimally dependent on external packages, and any dataset can be retrieved with only a few lines of Python code, making it highly accessible for both beginners and experts [4].

ChEMBL Database

ChEMBL is a manually curated database of bioactive molecules with drug-like properties, integrating chemical, bioactivity, and genomic data [3]. It serves as a foundational resource for data mining in drug discovery. For ADMET research, ChEMBL provides a vast repository of experimental results extracted from the scientific literature, including data on metabolic stability, protein binding, and toxicity [1] [7].

A primary challenge with using raw data from ChEMBL and similar sources is the complexity of data annotation. Experimental results for the same compound can vary significantly under different conditions (e.g., pH, measurement technique), and these critical experimental conditions are often embedded within unstructured assay description texts rather than explicit data columns [1]. This necessitates sophisticated data processing and filtering workflows to construct reliable benchmark datasets.

Specialized ADMET Benchmarks: PharmaBench

To address the limitations of existing benchmarks, such as small dataset sizes and poor representation of drug-like compounds, new resources like PharmaBench have been developed. PharmaBench is a comprehensive benchmark set for ADMET properties, comprising eleven datasets and 52,482 entries [1] [7].

Its creation leveraged a multi-agent data mining system based on Large Language Models (LLMs) to efficiently identify and extract experimental conditions from 14,401 bioassays in the ChEMBL database [1]. This innovative approach allows for the merging and standardization of entries from multiple sources based on key experimental parameters, resulting in a larger and more clinically relevant benchmark that is particularly suited for training modern AI models [1] [7].

Table 1: Summary of Key Public Data Sources for ADMET Prediction

| Data Source | Core Focus | Key Features | Notable Use Case |

|---|---|---|---|

| Therapeutics Data Commons (TDC) | Unified ML benchmarks for therapeutics | Hierarchical API, 22 ADMET datasets, leaderboards, ready-to-use data loaders [6] [4] | Benchmarking model performance on standardized ADMET tasks [8] |

| ChEMBL | Manually curated bioactivity data | Integrates chemical, bioactivity, and genomic data from literature [3] | Source of raw experimental data for building new custom datasets [1] |

| PharmaBench | Enhanced ADMET benchmarks | LLM-curated experimental conditions, 52,482 entries, focused on drug-like compounds [1] [7] | Training and evaluating models on a large, condition-aware dataset |

Protocols for Accessing and Utilizing Benchmarks

Protocol 1: Accessing the TDC ADMET Benchmark Group

This protocol details the steps to retrieve a benchmark dataset from the TDC ADMET Group, train a model, and evaluate its performance, which is a prerequisite for submission to the TDC leaderboard [8].

Procedure

- Initialize the Benchmark Group: Import `admet_group` and initialize the benchmark group object. It is recommended to specify a path to store the data.

- Retrieve a Specific Benchmark: Obtain a specific benchmark, for example, `Caco2_Wang`. The `get` method returns a dictionary containing the benchmark's name, the combined training/validation set (`train_val`), and the test set (`test`).

- Generate Training and Validation Splits: Use the TDC utility function to split the `train_val` data into training and validation sets using a scaffold split, which groups compounds by their molecular backbone to assess generalization to novel chemotypes. Execute this over multiple seeds (e.g., 1 to 5) to ensure robust performance measurement [8].

- Train Model and Generate Predictions: Within the seed loop, train your model using the `train` and `valid` sets. After training, generate predictions (`y_pred_test`) for the benchmark's test set.

- Evaluate Model Performance: After completing the runs, use the TDC evaluator to calculate the average performance and standard deviation across all seeds.
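
The procedure above can be sketched as a small helper. This is a minimal illustration, not the official TDC submission script: `run_admet_benchmark` and the `fit_predict` callback are hypothetical names introduced here, and the method names `get`, `get_train_valid_split`, and `evaluate_many` are assumed to match the PyTDC `admet_group` interface as documented at the time of writing.

```python
from typing import Callable, Dict, List, Sequence


def run_admet_benchmark(group, name: str, fit_predict: Callable,
                        seeds: Sequence[int] = (1, 2, 3, 4, 5)) -> Dict:
    """Run one benchmark over several seeds and aggregate the results.

    `group` is assumed to behave like tdc.benchmark_group.admet_group.
    `fit_predict(train, valid, test)` is a hypothetical user callback that
    trains a model and returns predictions for `test`.
    """
    predictions_list: List[Dict] = []
    for seed in seeds:
        benchmark = group.get(name)
        bench_name, test = benchmark["name"], benchmark["test"]
        # Split the combined train/validation data (assumed: the "default"
        # split type of the ADMET group is the scaffold split).
        train, valid = group.get_train_valid_split(
            benchmark=bench_name, split_type="default", seed=seed)
        y_pred_test = fit_predict(train, valid, test)
        predictions_list.append({bench_name: y_pred_test})
    # Aggregate the benchmark metric across all seeds.
    return group.evaluate_many(predictions_list)


# Usage with the real package (assumes PyTDC is installed and data can be
# downloaded); `my_fit_predict` is a placeholder for your own model code:
# from tdc.benchmark_group import admet_group
# group = admet_group(path="data/")
# results = run_admet_benchmark(group, "Caco2_Wang", my_fit_predict)
```

Passing the group object in as an argument keeps the loop independent of any particular model and makes it easy to exercise with a stub before downloading data.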

Protocol 2: Data Preprocessing and Cleaning for ADMET Modeling

Public datasets often contain noise and inconsistencies that can severely compromise model performance. This protocol outlines a standardized data cleaning workflow, as emphasized in recent benchmarking studies [9].

Procedure

- Standardize SMILES Representations: Use a tool like the `standardiser` by Atkinson et al. to convert SMILES strings into a consistent canonical representation. This includes handling tautomers and neutralizing charges [9].

- Remove Inorganics and Organometallics: Filter out inorganic salts and organometallic compounds that are not relevant for small-molecule drug discovery.

- Extract Parent Organic Compounds: For compounds in salt form, strip the salt components to isolate the parent organic compound, which is typically the entity of interest for property prediction.

- Deduplicate Compounds: Identify and handle duplicate entries based on canonical SMILES.

- For regression tasks, if the reported values for duplicates fall within a pre-defined range (e.g., within 20% of the inter-quartile range), keep the first entry. If the values are highly inconsistent, remove the entire group.

- For classification tasks, keep duplicates only if all labels are identical (all 0 or all 1); otherwise, remove the group [9].

- Address Data Skewness: For regression endpoints with highly skewed distributions (e.g., clearance, volume of distribution), apply a log-transformation to the target values to make the distribution more normal and improve model stability [9].
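
The deduplication and skewness steps can be illustrated with a short, library-free sketch. It assumes SMILES strings have already been canonicalized upstream, and it interprets the "within 20% of the inter-quartile range" rule as: the spread (max minus min) of a duplicate group must not exceed 0.2 times the IQR of all reported values. That interpretation and the function names are assumptions made for illustration.

```python
import math
import statistics
from collections import defaultdict


def deduplicate_regression(records, iqr_fraction=0.2):
    """records: list of (canonical_smiles, value) pairs.

    Keeps the first value of each consistent duplicate group and drops
    groups whose spread exceeds iqr_fraction * IQR of all values.
    """
    values = [value for _, value in records]
    q1, _, q3 = statistics.quantiles(values, n=4)
    tolerance = iqr_fraction * (q3 - q1)
    groups = defaultdict(list)
    for smiles, value in records:
        groups[smiles].append(value)
    return [(smiles, vals[0]) for smiles, vals in groups.items()
            if max(vals) - min(vals) <= tolerance]


def deduplicate_classification(records):
    """Keep a compound only if all of its duplicate labels agree."""
    groups = defaultdict(set)
    for smiles, label in records:
        groups[smiles].add(label)
    return [(smiles, next(iter(labels))) for smiles, labels in groups.items()
            if len(labels) == 1]


def log_transform(values):
    """log10-transform strictly positive, skewed endpoint values."""
    return [math.log10(v) for v in values]
```

Singletons pass through unchanged because their spread is zero; only groups with conflicting measurements are removed.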

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Ligand-based ADMET Modeling

| Tool / Reagent | Type | Function in Research |

|---|---|---|

| RDKit | Cheminformatics Library | Calculates molecular descriptors (e.g., Morgan fingerprints, topological descriptors), handles molecule I/O, and performs substructure searching [9]. |

| OpenAI GPT-4 API | Large Language Model | Powers advanced data curation systems (e.g., multi-agent LLM) to extract experimental conditions from unstructured text in bioassay descriptions [1] [7]. |

| Chemprop | Deep Learning Library | Provides implementations of Message Passing Neural Networks (MPNNs) specifically designed for molecular property prediction [9]. |

| scikit-learn | Machine Learning Library | Offers implementations of classical ML models (e.g., Random Forest, SVM) and utilities for data splitting, hyperparameter tuning, and evaluation [9]. |

Experimental Workflow for ADMET Model Benchmarking

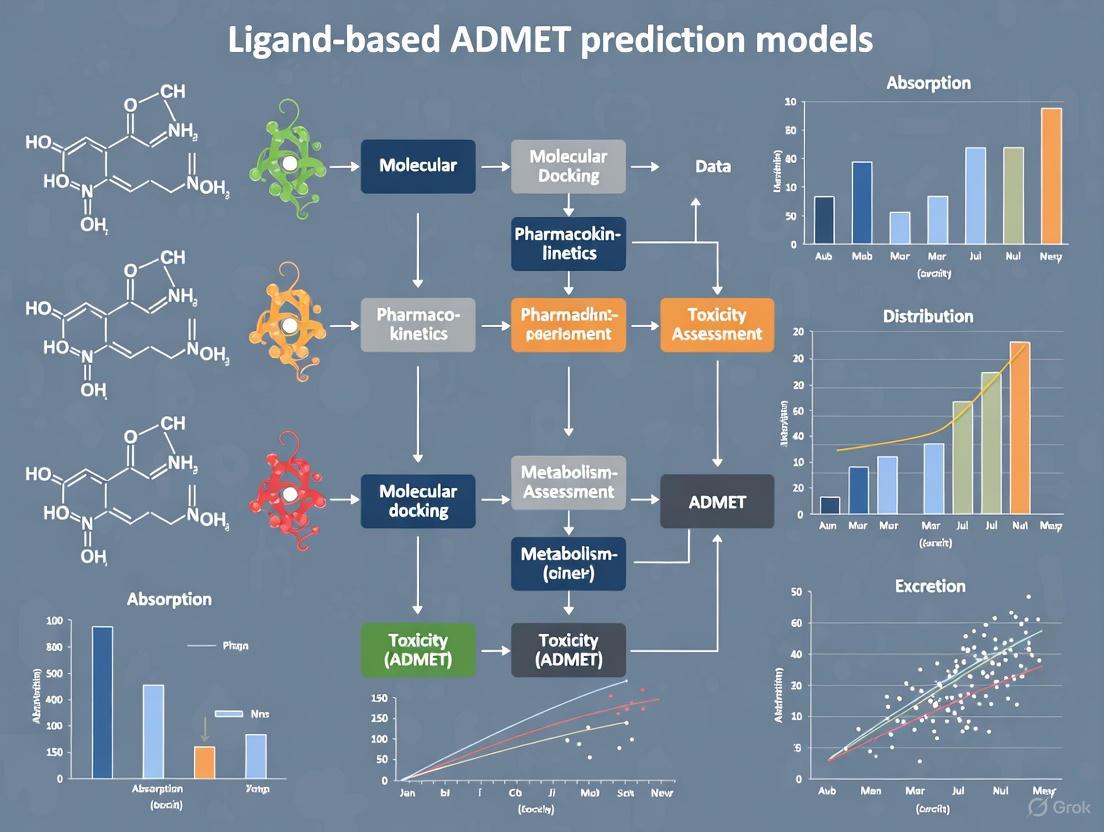

The diagram below illustrates the integrated experimental workflow for building and benchmarking a ligand-based ADMET prediction model, from data acquisition to final evaluation.

ADMET Model Benchmarking Workflow

The reliable prediction of ADMET properties is a cornerstone of modern computational drug discovery. This application note has detailed the protocols and resources necessary to conduct rigorous research in this field. By leveraging structured benchmarking platforms like TDC, foundational data sources like ChEMBL, and emerging, robustly curated resources like PharmaBench, researchers can develop and validate ligand-based models with greater confidence. Adherence to the provided protocols for data access, preprocessing, and model evaluation will promote reproducibility and facilitate meaningful comparisons across different algorithmic approaches, ultimately accelerating the development of safer and more effective therapeutics.

Building and Applying Predictive ADMET Models: From Algorithms to Workflow Integration

The early and accurate prediction of Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties is a critical determinant in the success of drug discovery and development [2] [10]. Ligand-based in silico models, which predict these properties directly from chemical structure, have become indispensable tools for prioritizing compounds with optimal pharmacokinetics and minimal toxicity risks [10]. The performance of these models hinges on the choice of machine learning (ML) algorithm and its synergy with molecular feature representations. This Application Note provides a structured, comparative evaluation of four prominent ML algorithms—Random Forests, Support Vector Machines, Gradient Boosting, and Deep Neural Networks—within the context of building robust ligand-based ADMET prediction models. We summarize quantitative benchmarking results, detail experimental protocols for model training and evaluation, and provide a curated toolkit of research reagents to facilitate implementation.

Algorithm Performance Comparison

Evaluating algorithms on benchmark ADMET tasks reveals their relative strengths. The following table synthesizes key performance metrics from recent comparative studies as a guide for initial algorithm selection.

Table 1: Comparative Performance of Machine Learning Algorithms for ADMET Prediction

| Algorithm | Best-suited ADMET Tasks | Reported Accuracy/Performance | Key Strengths | Key Limitations |

|---|---|---|---|---|

| Tree-based Ensemble (RF, LGBM) | Classification & regression on small-molecule datasets [9] [11] | LGBM: 90.33% Accuracy, 97.31% AUROC (Anticancer ligand prediction) [11] | High accuracy, robust to noise, fast training, native feature importance [11] [12] | Struggles with extrapolation beyond chemical space of training data [9] |

| Support Vector Machine (SVM) | Not reported in the cited studies | Not reported in the cited studies | Effective in high-dimensional spaces [2] | Performance heavily dependent on kernel and hyperparameter choice [9] |

| Gradient Boosting (LGBM, CatBoost) | General ADMET tasks, leaderboard benchmarks [9] | Top performer in structured data benchmarks, outperforming RF and SVM in some studies [9] | State-of-the-art on many tabular benchmarks, handles mixed data types | Can be prone to overfitting without careful tuning [9] |

| Deep Neural Network (DNN/MPNN) | Tasks with complex structure-activity relationships [9] [13] | Highly variable; can outperform on some endpoints, underperform on others vs. trees [9] | Capable of learning features directly from SMILES or graphs (e.g., Chemprop) [9] | High computational cost, requires large data, risk of overfitting on small datasets [9] |

Experimental Protocols for Model Development

Data Acquisition and Curation Protocol

Objective: To gather and standardize a high-quality dataset for model training.

- Step 1: Source Data. Obtain molecular structures (as SMILES strings) and corresponding experimental ADMET endpoint values from public databases such as ChEMBL, PubChem, or specialized benchmarks like PharmaBench [7] and the Therapeutics Data Commons (TDC) [9].

- Step 2: Clean and Standardize.

- Remove Inorganics/Salts: Filter out inorganic salts, organometallic compounds, and extract the organic parent compound from salt forms [9].

- Standardize SMILES: Use a standardization toolkit (e.g., the `standardiser` tool from Atkinson et al.) to canonicalize SMILES and adjust tautomers, then remove duplicates. Inconsistent measurements for the same compound should be reconciled by keeping the first entry if values are consistent, or removing the entire group if not [9].

- Step 3: Curate Assay Conditions. For endpoints like solubility, use a multi-agent LLM system to extract critical experimental conditions (e.g., buffer, pH) from assay descriptions to ensure data consistency [7].

- Step 4: Data Splitting. Split the cleaned dataset into training, validation, and test sets using a scaffold split to assess the model's ability to generalize to novel chemical structures [9] [7].
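
The scaffold split in Step 4 can be sketched as follows. The function takes precomputed scaffold keys (one per compound) so that it stays dependency-free; in practice these keys would typically be Bemis-Murcko scaffold SMILES, for example from RDKit's `MurckoScaffold.MurckoScaffoldSmiles`. The greedy "largest groups first" assignment is one common convention, not necessarily the one used in the cited benchmarks.

```python
from collections import defaultdict


def scaffold_split(scaffolds, frac_train=0.8, frac_valid=0.1):
    """Assign whole scaffold groups to train/valid/test.

    scaffolds: list of scaffold keys, one per compound.
    Returns three lists of compound indices.
    """
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)
    # Largest groups first, so rare scaffolds tend to land in valid/test.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    n = len(scaffolds)
    train, valid, test = [], [], []
    for group in ordered:
        if len(train) + len(group) <= frac_train * n:
            train.extend(group)
        elif len(valid) + len(group) <= frac_valid * n:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test
```

Because every compound sharing a scaffold goes to the same partition, test-set performance reflects generalization to chemotypes the model has not seen.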

Feature Calculation and Selection Protocol

Objective: To generate informative numerical representations of molecules.

- Step 1: Calculate Molecular Descriptors. Use cheminformatics toolkits like RDKit or PaDEL to compute a comprehensive set of 1D and 2D molecular descriptors and fingerprints (e.g., Morgan fingerprints) [9] [11].

- Step 2: Apply Feature Selection.

- Variance & Correlation Filter: Remove features with near-zero variance (e.g., variance < 0.05) and then eliminate one feature from any pair with a Pearson correlation > 0.85 to reduce multicollinearity [11].

- Boruta Algorithm: Employ this wrapper method with a Random Forest classifier to identify features with statistically significant importance compared to shadow features [11]. The final feature set should consist of the features confirmed by Boruta.
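
The variance and correlation filter described in Step 2 can be written in a few lines. This sketch covers only that pre-filter (the Boruta step is not shown); the thresholds mirror the example values in the text, and the `columns` layout (descriptor name mapped to a list of values) is an assumption for illustration.

```python
import statistics


def pearson(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


def filter_features(columns, min_variance=0.05, max_corr=0.85):
    """columns: dict mapping descriptor name -> list of values.

    Drops near-constant descriptors, then greedily keeps a descriptor only
    if it is not highly correlated with any descriptor already kept.
    """
    candidates = [name for name, vals in columns.items()
                  if statistics.pvariance(vals) >= min_variance]
    selected = []
    for name in candidates:
        if all(abs(pearson(columns[name], columns[kept])) <= max_corr
               for kept in selected):
            selected.append(name)
    return selected
```

Note that the greedy pass keeps whichever member of a correlated pair appears first, so column order influences the result.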

Model Training and Evaluation Protocol

Objective: To train and robustly evaluate the performance of different algorithms.

- Step 1: Implement Algorithms. Use standard libraries: `scikit-learn` for RF and SVM, `LightGBM` or `CatBoost` for gradient boosting, and `Chemprop` for MPNNs.

- Step 2: Hyperparameter Optimization. Conduct a dataset-specific hyperparameter search using Bayesian optimization or grid search within a cross-validation loop on the training set.

- Step 3: Validate with Statistical Testing. Perform k-fold cross-validation (k=5 or 10) on the training set and apply statistical hypothesis tests (e.g., paired t-test) to compare the performance distributions of different models or feature sets. This identifies statistically significant improvements [9].

- Step 4: Final Evaluation. Retrain the best model on the entire training set and evaluate its performance on the held-out scaffold-split test set using relevant metrics (e.g., AUC-ROC, RMSE, Accuracy) [9].
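
As a worked illustration of Step 3, the paired t statistic for two models evaluated on the same cross-validation folds can be computed directly. The fold scores below are invented for the example, and the critical value 2.776 is the standard two-sided 5% threshold for 4 degrees of freedom (5 folds). Because cross-validation folds share training data, the test is only approximate.

```python
import math
import statistics


def paired_t_statistic(scores_a, scores_b):
    """t statistic for paired per-fold scores of two models."""
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    mean_diff = statistics.fmean(diffs)
    std_err = statistics.stdev(diffs) / math.sqrt(len(diffs))
    return mean_diff / std_err


# Hypothetical AUROC values from the same 5 folds for two models.
model_a = [0.82, 0.85, 0.80, 0.84, 0.83]
model_b = [0.78, 0.80, 0.79, 0.79, 0.80]

t_stat = paired_t_statistic(model_a, model_b)
T_CRIT_DF4 = 2.776  # two-sided, alpha = 0.05, 4 degrees of freedom
significant = abs(t_stat) > T_CRIT_DF4
```

With these made-up scores the statistic exceeds the critical value, so the difference would be reported as significant at the 5% level.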

Diagram 1: Model development workflow.

The Scientist's Toolkit: Essential Research Reagents

The following table lists key software, data resources, and descriptors required for developing ligand-based ADMET models.

Table 2: Essential Research Reagents for Ligand-based ADMET Modeling

| Reagent / Resource | Type | Function in ADMET Modeling | Key Features |

|---|---|---|---|

| RDKit | Software Library | Calculates molecular descriptors and fingerprints; handles SMILES standardization [9] [11]. | Provides RDKit descriptors, Morgan fingerprints, and basic molecular operations. |

| PaDELPy | Software Library | Computes molecular descriptors and fingerprints from SMILES strings [11]. | Extracts a large set of 1D/2D descriptors and fingerprints for model featurization. |

| Therapeutics Data Commons (TDC) | Data Resource | Provides curated benchmark datasets and leaderboards for ADMET properties [9]. | Standardized datasets for fair model comparison and evaluation. |

| PharmaBench | Data Resource | A comprehensive, recently introduced benchmark set for ADMET properties [7]. | Larger size and greater chemical diversity than previous benchmarks. |

| Mol2Vec | Molecular Representation | Generates vector embeddings of molecular substructures for use with DNNs [13]. | An endpoint-agnostic featurization method that captures substructure context. |

| Scikit-learn | Software Library | Implements classic ML algorithms (RF, SVM) and model evaluation tools [11]. | Provides a unified API for training, tuning, and evaluating traditional models. |

| Chemprop | Software Library | Implements Message Passing Neural Networks (MPNNs) for molecular property prediction [9]. | A state-of-the-art DNN framework that learns directly from molecular graphs. |

| Boruta Algorithm | Feature Selection Method | Identifies statistically significant features from a high-dimensional set [11]. | A robust wrapper method that reduces overfitting and improves model interpretability. |

This Application Note provides a structured framework for selecting and implementing machine learning algorithms in ligand-based ADMET prediction. Quantitative benchmarks and experimental protocols indicate that tree-based ensemble methods like LightGBM often provide a powerful and efficient baseline, while Deep Neural Networks (e.g., MPNNs in Chemprop) offer a compelling alternative for tasks with complex structure-activity relationships, provided sufficient data is available [9] [11]. The critical steps of rigorous data curation, appropriate feature selection, and evaluation using scaffold splits with statistical testing are paramount for developing models that generalize reliably to novel chemical entities. By leveraging the protocols and resources detailed herein, researchers can make informed decisions in their model-building process, ultimately accelerating the identification of viable drug candidates.

Within drug discovery, the assessment of Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties is crucial for de-risking candidate molecules. A primary safety concern is drug-induced cardiotoxicity, often resulting from the unintended blockade of the human Ether-à-go-go-Related Gene (hERG) potassium channel. Inhibition of this channel can cause acquired Long QT Syndrome (LQTS), a severe cardiac side effect that has led to the withdrawal of numerous pharmaceuticals from the market [14] [15]. Consequently, the development of robust in silico models to predict hERG liability early in the discovery pipeline is a significant focus within ligand-based ADMET prediction research.

This application note details a structured protocol for building a high-performance, ligand-based classification model for hERG-mediated cardiotoxicity. The framework integrates modern machine learning (ML) techniques with rigorous data curation and validation practices, providing a reliable tool for prioritizing compounds with reduced cardiotoxicity risk [14].

Background and Significance

The hERG potassium channel is vital for the repolarization phase of the cardiac action potential. Its central cavity is notably promiscuous, binding to structurally diverse small molecules, which makes predicting this off-target activity particularly challenging [14] [15]. Regulatory agencies like the FDA and EMA now require thorough hERG liability assessments, making predictive models an indispensable component of the preclinical toolkit [15].

While in vitro assays exist, they are often labor-intensive, low-throughput, and costly. Ligand-based in silico models, which predict activity based solely on chemical structure, offer a scalable and cost-effective alternative for screening large virtual compound libraries before synthesis [14] [16].

The following diagram illustrates the end-to-end computational workflow for developing the hERG cardiotoxicity prediction model.

Materials and Reagents

Research Reagent Solutions

The following table lists the essential computational tools and data resources required to implement the described protocol.

Table 1: Essential Research Reagents and Computational Tools

| Item Name | Function/Application in Protocol | Specific Notes & Variants |

|---|---|---|

| ChEMBL Database | Primary public repository for bioactive molecules with curated hERG assay data. | Used v25 for model training; v28 for temporal validation [14]. |

| PubChem BioAssay | Supplementary source of hERG inhibition data, both HTS and non-HTS. | Used to build larger, more realistic datasets [15]. |

| KNIME Analytics Platform | Open-source platform for data pipelining, curation, and analysis. | Integrates nodes for RDKit, SDF handling, and machine learning [14] [17]. |

| RDKit | Open-source cheminformatics toolkit. | Used for calculating molecular descriptors and fingerprints within KNIME [17]. |

| VSURF Algorithm | Feature selection method to identify the most relevant molecular descriptors. | Reduces overfitting and improves model interpretability [14]. |

| SMOTE Technique | Data sampling method to handle class imbalance by generating synthetic minority-class instances. | Crucial for improving model sensitivity to hERG blockers [14]. |

Methodology

Data Curation and Preparation

Principle: The predictive power of any QSAR model is fundamentally dependent on the quality of its underlying data. A meticulous, multi-stage curation process is therefore imperative [14] [15].

Protocol:

- Data Retrieval: Extract hERG activity data from public repositories like ChEMBL (Target ID: CHEMBL240) and PubChem. Prioritize entries with IC50 values measured against the human channel in direct binding assays [14].

- Standardization:

- Activity Labeling: Binarize continuous IC50 values into "blocker" and "non-blocker" classes. While a threshold of 10 µM is common, a more stringent 1 µM threshold is often more relevant for identifying critical concerns in drug development programs [14].

- Deduplication: Remove duplicate molecules, retaining only the most potent or reliable measurement for each unique chemical structure [17].
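
The labeling and deduplication steps can be sketched together. This example assumes IC50 values are already in micromolar and that structures are identified by canonical SMILES; it keeps the most potent (lowest) IC50 per structure and treats values at or below the threshold as blockers. The function name and the inclusive threshold are assumptions.

```python
def label_herg(records, threshold_um=10.0):
    """records: iterable of (canonical_smiles, ic50_um) pairs.

    Returns a dict mapping each unique structure to 1 (blocker) or
    0 (non-blocker), based on its most potent measurement.
    """
    most_potent = {}
    for smiles, ic50 in records:
        if smiles not in most_potent or ic50 < most_potent[smiles]:
            most_potent[smiles] = ic50
    return {smiles: int(ic50 <= threshold_um)
            for smiles, ic50 in most_potent.items()}
```

Calling the function with `threshold_um=1.0` gives the more stringent labeling mentioned above, which typically shrinks the blocker class and increases class imbalance.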

Molecular Descriptor Calculation and Feature Selection

Principle: Molecular structures must be translated into a numerical representation (descriptors or fingerprints) that machine learning algorithms can process.

Protocol:

- Descriptor Calculation: Use cheminformatics toolkits like RDKit or alvaDesc to compute a comprehensive set of molecular features. These can include:

- Feature Selection: Apply a feature selection algorithm like VSURF to the initial, high-dimensional descriptor set. This step identifies a reduced subset of descriptors most relevant to hERG binding, which mitigates the "curse of dimensionality," reduces noise, and enhances model interpretability [14].

Model Training with Machine Learning

Principle: Employing a diverse set of ML algorithms and handling class imbalance robustly leads to more generalizable and predictive models.

Protocol:

- Data Splitting: Implement a temporal validation split. Use older data (e.g., from ChEMBL v25) for training and newer, previously unseen data (e.g., from ChEMBL v28) for testing. This approach provides a realistic estimate of a model's performance on future compounds [14].

- Address Class Imbalance: Apply the Synthetic Minority Over-sampling Technique (SMOTE) to the training set only. This technique generates synthetic examples of the minority class (typically hERG blockers) to balance the class distribution, preventing the model from being biased toward the majority class [14].

- Algorithm Selection and Training: Train multiple classifier types on the processed training data. Common high-performing algorithms for this task include [14] [15] [17]:

- Random Forest (RF)

- eXtreme Gradient Boosting (XGBoost)

- Deep Neural Networks (DNN) / Multilayer Perceptron (MLP)

- Support Vector Machine (SVM)
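
To make the SMOTE step concrete, the core idea (interpolating between a minority-class sample and one of its nearest minority-class neighbours) can be sketched without external libraries. This is a simplified illustration rather than the reference algorithm; production code would more typically use the `SMOTE` class from the imbalanced-learn package, applied to the training split only.

```python
import random


def smote(minority, n_synthetic, k=3, seed=0):
    """Generate synthetic minority-class points by interpolation.

    minority: list of feature vectors (lists of floats), at least two.
    Returns n_synthetic new feature vectors.
    """
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_synthetic):
        base = rng.choice(minority)
        # k nearest minority neighbours of the base point (squared Euclidean).
        neighbours = sorted(
            (p for p in minority if p is not base),
            key=lambda p: sum((a - b) ** 2 for a, b in zip(base, p)),
        )[:k]
        neighbour = rng.choice(neighbours)
        gap = rng.random()  # position along the segment base -> neighbour
        synthetic.append([a + gap * (b - a) for a, b in zip(base, neighbour)])
    return synthetic
```

Synthetic points always lie on a line segment between two real minority samples, which is why oversampling must never touch the test set: it would leak interpolated copies of held-out compounds.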

Model Validation and Evaluation

Principle: A rigorous, multi-faceted evaluation strategy is essential to confirm model robustness and predictive power.

Protocol:

- Performance Metrics: Evaluate the model on a held-out test set using a suite of metrics to get a complete picture [14] [16]:

- Balanced Accuracy (BA): Crucial for imbalanced datasets.

- Area Under the ROC Curve (AUC): Measures overall ranking performance.

- Sensitivity (Recall): Ability to correctly identify true hERG blockers.

- Specificity: Ability to correctly identify true non-blockers.

- Matthews Correlation Coefficient (MCC): A balanced measure considering all confusion matrix categories.

- Benchmarking: Compare the performance of your final model against existing published models (e.g., DeepHIT, CardioTox) using the same external test set to establish its relative advantage [14].
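
The threshold-dependent metrics listed above all derive from the confusion matrix, as the following sketch shows (AUC is omitted because it requires ranked scores rather than hard labels). Labels are assumed to be 1 for blockers and 0 for non-blockers, with both classes present in `y_true`.

```python
import math


def classification_metrics(y_true, y_pred):
    """Sensitivity, specificity, balanced accuracy, and MCC for 0/1 labels."""
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    tn = sum(t == 0 and p == 0 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "balanced_accuracy": (sensitivity + specificity) / 2,
        "mcc": mcc,
    }
```

For example, with 3 true positives, 1 false negative, 4 true negatives, and 2 false positives, sensitivity is 0.75, specificity is about 0.67, balanced accuracy about 0.71, and MCC about 0.41.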

Anticipated Results and Analysis

When the above protocol is executed successfully, one can expect the development of a highly predictive model. For instance, a model based on this workflow achieved a maximum balanced accuracy of 0.91 and an AUC of 0.95 on a robustly curated dataset of ~8,000 compounds [14].

Table 2: Example Performance Metrics for Different Model Types

| Model Type | Balanced Accuracy | AUC | Sensitivity | Specificity | Key Strengths |

|---|---|---|---|---|---|

| Random Forest | 0.89 | 0.94 | 0.85 | 0.93 | High interpretability, robust to noise. |

| XGBoost | 0.91 | 0.95 | 0.87 | 0.95 | High performance, handles complex relationships. |

| Deep Neural Network | 0.90 | 0.94 | 0.88 | 0.92 | Automatic feature learning from raw inputs. |

| Stacking Ensemble (HERGAI) | N/A | N/A | 0.94 (at 1µM) | N/A | State-of-the-art performance; identifies potent blockers [15]. |

Model Interpretation

Beyond mere prediction, understanding the chemical features associated with hERG blockade is critical for medicinal chemists. The model can be interpreted by analyzing:

- Feature Importance: For tree-based models (RF, XGBoost), the built-in feature importance scores can be calculated. This analysis often highlights descriptors related to lipophilicity, molecular size, and the presence of specific hydrophobic or basic nitrogen-containing groups as key determinants of hERG binding [17].

- Applicability Domain (AD): The model's reliability is confined to its AD—the chemical space defined by its training data. Techniques like Isometric Stratified Ensemble (ISE) mapping can be used to estimate the AD and flag compounds for which predictions may be less reliable [17].
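
As a simple stand-in for an applicability-domain check, a nearest-neighbour similarity test is sketched below. This is not the ISE mapping technique named above; it is a common, simpler alternative that flags a query compound as out of domain when its highest Tanimoto similarity to any training compound falls below a cutoff. Fingerprints are represented as sets of on-bit indices, and the 0.3 cutoff is an arbitrary illustrative value.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto similarity of two fingerprints given as sets of on-bits."""
    a, b = set(fp_a), set(fp_b)
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def in_domain(query_fp, training_fps, min_similarity=0.3):
    """True if the query is similar enough to at least one training compound."""
    nearest = max(tanimoto(query_fp, fp) for fp in training_fps)
    return nearest >= min_similarity
```

Predictions for compounds that fail this check can still be reported, but are best accompanied by a reliability warning.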

Troubleshooting

Table 3: Common Issues and Recommended Solutions

| Problem | Potential Cause | Solution |

|---|---|---|

| Low Sensitivity (missing true blockers) | Severe class imbalance in the training data. | Apply SMOTE or other resampling techniques. Adjust the classification threshold based on the ROC curve. |

| Low Specificity (too many false alarms) | Model is overly complex or training data contains noisy non-blocker labels. | Strengthen data curation. Perform more aggressive feature selection to reduce overfitting. |

| Poor Performance on External Set | Dataset shift; the external set is chemically different from the training set. | Implement temporal validation from the start. Define and check the model's Applicability Domain for new predictions. |

| Model is a "Black Box" | Use of complex algorithms like DNNs without interpretation tools. | Use model-agnostic interpretation tools (e.g., SHAP) or prioritize inherently more interpretable models like Random Forest. |

This application note provides a comprehensive, proven protocol for developing a predictive model for hERG-mediated cardiotoxicity. By emphasizing rigorous data curation, the use of diverse machine learning algorithms, and robust temporal validation, this ligand-based framework delivers a tool with high predictive power. Integrating such a model into early drug discovery workflows enables researchers to proactively identify and mitigate cardiotoxicity risks, thereby accelerating the development of safer therapeutic agents.

The optimization of Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties represents a critical challenge in modern drug discovery. The high failure rate of drug candidates in clinical trials due to unfavorable pharmacokinetic and safety profiles has necessitated the early integration of ADMET forecasting into the discovery pipeline [18]. Within the broader context of ligand-based ADMET prediction models research, multi-objective optimization has emerged as a transformative approach, enabling the simultaneous balancing of multiple, often competing, molecular properties. Unlike single-parameter optimization, which may improve one property at the expense of others, multi-objective strategies aim to identify chemical designs that represent the optimal compromise across a full spectrum of ADMET and efficacy criteria [19].

The rise of artificial intelligence (AI) and machine learning (ML) has catalyzed the development of sophisticated computational platforms capable of navigating this complex molecular design space. These tools leverage a variety of ligand-based representations—from classical molecular descriptors and fingerprints to advanced graph neural networks—to predict ADMET endpoints and guide molecular optimization [9] [18]. This application note provides an overview of emerging platforms in this domain, with a specific focus on their application within ligand-based model frameworks. We detail the operational protocols for key tools and benchmark their performance, providing researchers with a practical guide for implementing these technologies in drug discovery workflows.

Several advanced software platforms now integrate multi-objective optimization capabilities for ADMET property design. These systems typically combine high-fidelity predictive models with algorithms that efficiently explore chemical space to identify structures satisfying multiple target profiles.

Table 1: Comparison of Multi-Objective ADMET Optimization Platforms

| Platform Name | Core AI/ML Methodology | Optimization Strategy | Key ADMET Properties Addressed | Model Representation |

|---|---|---|---|---|

| ChemMORT [19] | Deep Learning | Multi-Objective Particle Swarm Optimization (MOPSO) | Poly (ADP-ribose) polymerase-1 inhibitor optimization; Inverse QSAR | Not Specified |

| ADMETboost [20] | Extreme Gradient Boosting (XGBoost) | Ensemble feature learning | 22 ADMET benchmark tasks from TDC (e.g., Caco2 permeability, bioavailability, toxicity) | Fingerprints & Descriptors (MACCS, ECFP, Mordred) |

| ADMET-AI [21] | Graph Neural Network (Chemprop-RDKit) | High-throughput screening and prioritization | 41 ADMET datasets from TDC; BBB penetration, hERG, solubility, ClinTox | Graph-based & RDKit descriptors |

| ADMET Predictor [22] | Proprietary AI/ML | ADMET Risk scoring; "soft" threshold rules | >175 properties; solubility, logD, pKa, CYP metabolism, DILI | Atomic and molecular descriptors |

| ACD/ADME Suite [23] | QSAR and rule-based | Integrated physicochemical modeling | BBB penetration, CYP450, P-gp, bioavailability, Vd, PPB | Structure-based physicochemical |

A critical differentiator among these platforms is their approach to molecular representation. Ligand-based models rely exclusively on chemical structure information, featurizing molecules using either learned representations (e.g., graph neural networks used by ADMET-AI) or predefined feature sets (e.g., the ensemble of fingerprints and descriptors used by ADMETboost) [9] [21] [20]. For instance, ADMETboost employs an ensemble of six distinct featurizers including RDKit descriptors and Mordred descriptors to enable sufficient learning for its XGBoost models, which have achieved top rankings on the Therapeutics Data Commons (TDC) benchmark leaderboard [20].

The optimization algorithms themselves vary. ChemMORT utilizes Multi-Objective Particle Swarm Optimization (MOPSO), a population-based stochastic algorithm that explores chemical space by simulating the social behavior of particles [19]. In contrast, commercial suites like ADMET Predictor implement rule-based systems such as their "ADMET Risk" score, which uses soft thresholds to quantify a molecule's potential liabilities against a profile calibrated from known successful drugs [22].

Experimental Protocols and Workflows

Protocol for Benchmarking ADMET Model Performance

Robust evaluation is fundamental to reliable ADMET prediction. The following protocol, adapted from recent benchmarking studies, outlines a standardized process for training and evaluating ligand-based ADMET models [9] [24].

Data Curation and Standardization

- Compound Standardization: Standardize compound representations using a tool like that from Atkinson et al. [9]. This includes neutralizing salts, removing inorganics and organometallics, adjusting tautomers, and generating canonical SMILES.

- Duplicate Removal: Remove duplicate compounds. For continuous data, average values if the standardized standard deviation is <0.2; otherwise, remove the group. For classification, keep only entries with identical labels [9] [24].

- Outlier Detection: Identify and remove response outliers using Z-score analysis (e.g., |Z-score| > 3) and inter-dataset inconsistencies [24].
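The duplicate and outlier rules above can be sketched in a few lines of standard-library Python. This is a minimal illustration, not the cited studies' code: the function names are hypothetical, SMILES are assumed to be canonicalized already, and "standardized standard deviation" is interpreted here as the plain standard deviation of values that are already on a standardized scale.

```python
from collections import defaultdict
from statistics import mean, pstdev


def merge_regression_duplicates(records, max_std=0.2):
    """records: iterable of (canonical_smiles, value) pairs.

    Returns {smiles: value}, averaging consistent duplicate groups and
    dropping groups whose spread reaches the threshold.
    """
    groups = defaultdict(list)
    for smiles, value in records:
        groups[smiles].append(value)
    merged = {}
    for smiles, values in groups.items():
        # Assumption: values are already standardized, so the plain
        # standard deviation is compared against max_std.
        if len(values) == 1 or pstdev(values) < max_std:
            merged[smiles] = mean(values)
        # Otherwise the whole group is dropped as inconsistent.
    return merged


def drop_zscore_outliers(merged, z_max=3.0):
    """Remove entries whose response lies more than z_max SDs from the mean."""
    values = list(merged.values())
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return dict(merged)
    return {s: v for s, v in merged.items() if abs((v - mu) / sigma) <= z_max}
```

Note that a Z-score cutoff of 3 can only flag points in datasets with more than about ten compounds, so for very small sets a different rule would be needed.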

Data Splitting

- Use scaffold splitting to partition the dataset into training (80%) and test (20%) sets. This evaluates a model's ability to generalize to structurally novel compounds, simulating a real-world application scenario [20].
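As a rough sketch of the idea, the split below groups compounds by a precomputed scaffold string and assigns whole groups to either set, so no scaffold appears on both sides. The scaffold strings are assumed to come from an external tool (for example, Bemis-Murcko scaffolds generated with RDKit); the function name and the largest-group-first ordering are illustrative choices, not the exact TDC procedure.

```python
from collections import defaultdict


def scaffold_split(scaffolds, train_frac=0.8):
    """scaffolds: list of scaffold strings, index-aligned with the compounds.

    Returns (train_indices, test_indices); a scaffold never spans both sets.
    """
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)
    # Largest scaffold families first, so rare scaffolds tend to end up
    # in the test set and probe generalization to novel chemotypes.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    cutoff = train_frac * len(scaffolds)
    train, test = [], []
    for group in ordered:
        if len(train) + len(group) <= cutoff:
            train.extend(group)
        else:
            test.extend(group)
    return sorted(train), sorted(test)
```

Because whole groups are moved, the realized ratio only approximates 80/20 when scaffold families are large relative to the dataset.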

Model Training with Hyperparameter Optimization

- For a given model (e.g., XGBoost), perform 5-fold cross-validation on the training set.

- Conduct a randomized grid search to optimize hyperparameters (e.g., n_estimators, max_depth, learning_rate). The parameter set with the highest average cross-validation performance is selected for the final model [20].
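The training step can be illustrated with a small, library-free sketch of randomized search scored by 5-fold cross-validation. The `evaluate` callback is a hypothetical stand-in for "fit the model on the training indices and score it on the validation indices"; the search space values are illustrative and not taken from [20].

```python
import random
from statistics import mean


def kfold_indices(n_samples, k, seed=0):
    """Shuffle sample indices and deal them into k roughly equal folds."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]


def random_search(param_space, evaluate, n_iter, n_samples, k=5, seed=0):
    """Sample n_iter configurations; return (best_mean_cv_score, best_params).

    evaluate(params, train_idx, val_idx) -> score, higher is better
    (hypothetical callback that trains and scores one model).
    """
    rng = random.Random(seed)
    folds = kfold_indices(n_samples, k, seed)
    best = None
    for _ in range(n_iter):
        params = {name: rng.choice(values) for name, values in param_space.items()}
        scores = []
        for i, val_idx in enumerate(folds):
            train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
            scores.append(evaluate(params, train_idx, val_idx))
        avg = mean(scores)
        if best is None or avg > best[0]:
            best = (avg, params)
    return best
```

In practice the callback would wrap an XGBoost fit; for error metrics such as MAE, return the negated value so that higher remains better.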

Model Evaluation and Validation

- Hold-out Test Set: Evaluate the final model on the scaffold-held-out test set.

- Performance Metrics:

- Regression Tasks (e.g., solubility, logD): Use Mean Absolute Error (MAE) and Spearman's correlation coefficient (ρ) [20] [24].

- Classification Tasks (e.g., hERG inhibition, Ames mutagenicity): Use Area Under the Receiver Operating Characteristic Curve (AUROC) and Area Under the Precision-Recall Curve (AUPRC) [20].

- Statistical Significance Testing: Integrate cross-validation with statistical hypothesis testing (e.g., paired t-tests) to confirm the significance of performance differences between model configurations [9].
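For reference, three of the metrics named above can be written out explicitly. This is a minimal standard-library sketch with hypothetical function names; AUPRC is omitted, and in practice an established library implementation would normally be preferred.

```python
from statistics import mean


def mae(y_true, y_pred):
    """Mean absolute error."""
    return mean(abs(t - p) for t, p in zip(y_true, y_pred))


def average_ranks(values):
    """1-based ranks; tied values share the average of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def pearson(x, y):
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


def spearman(y_true, y_pred):
    """Spearman's rho: Pearson correlation computed on the ranks."""
    return pearson(average_ranks(y_true), average_ranks(y_pred))


def auroc(labels, scores):
    """Rank-based (Mann-Whitney) AUROC for 0/1 labels."""
    ranks = average_ranks(scores)
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    rank_sum = sum(r for r, label in zip(ranks, labels) if label == 1)
    return (rank_sum - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)
```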

Protocol for Multi-Objective Optimization with ChemMORT

The ChemMORT platform exemplifies a closed-loop design-make-test-analyze cycle for inverse QSAR, automating the search for novel compounds that meet multiple desired ADMET and activity profiles [19].

Objective Definition

- Define the primary objective, typically a target biological activity (e.g., IC50 for a specific enzyme inhibition).

- Define ADMET constraints, which may include properties like aqueous solubility, hERG channel blocking potential, cytochrome P450 inhibition, and human intestinal absorption. Set acceptable thresholds or desired value ranges for each.

Initial Model Training

- Train a predictive QSAR model for the primary activity objective using a curated dataset of known actives and inactives.

- Train individual ADMET property models or access pre-trained models for the defined constraint endpoints.

Multi-Objective Particle Swarm Optimization (MOPSO)

- Initialization: Generate an initial population of candidate molecular structures.

- Iterative Search:

- Evaluation: Score each candidate molecule in the population using the trained QSAR and ADMET models.

- Fitness Assignment: Calculate a composite fitness score based on the defined multi-objective function (balancing primary activity and ADMET constraints).

- Swarm Update: Update the position and velocity of each "particle" (candidate) in the chemical space based on its own experience and the swarm's best-known positions, exploring new structural analogs.

- Termination: The process iterates until a stopping criterion is met (e.g., a maximum number of iterations or convergence of the fitness score).
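The swarm update can be made concrete with the textbook particle swarm rule, in which each velocity is pulled toward the particle's own best position and toward a swarm leader. The sketch below assumes candidates are encoded as continuous vectors and uses illustrative values for the inertia weight `w` and the acceleration coefficients `c1` and `c2`; it does not reproduce ChemMORT's actual implementation. In multi-objective variants the leader is typically drawn from an archive of non-dominated solutions rather than being a single global best.

```python
import random


def pso_step(positions, velocities, personal_best, leader,
             w=0.7, c1=1.5, c2=1.5, rng=None):
    """One velocity and position update for every particle.

    positions, velocities, personal_best: lists of equal-length float vectors.
    leader: the guiding position shared by the swarm in this step.
    """
    rng = rng or random.Random(0)
    new_positions, new_velocities = [], []
    for x, v, p in zip(positions, velocities, personal_best):
        r1, r2 = rng.random(), rng.random()
        v_next = [
            w * vi + c1 * r1 * (pi - xi) + c2 * r2 * (gi - xi)
            for xi, vi, pi, gi in zip(x, v, p, leader)
        ]
        new_velocities.append(v_next)
        new_positions.append([xi + vi for xi, vi in zip(x, v_next)])
    return new_positions, new_velocities
```

After each step the new positions would be decoded to molecules, rescored with the QSAR and ADMET models, and used to refresh the personal bests and the leader.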

Output and Analysis

- The output is a Pareto front of optimized compounds, representing the best possible trade-offs between the primary activity and the ADMET constraints.

- These candidate structures can then be prioritized for synthesis and experimental validation.
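To make the notion of a Pareto front concrete, the sketch below filters a set of scored candidates down to the non-dominated ones. It assumes every objective has been oriented so that higher is better (for example, negated hERG risk); the candidate names and scores in the usage note are invented for illustration.

```python
def dominates(a, b):
    """True if a is at least as good as b everywhere and strictly better once."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(candidates):
    """candidates: dict mapping a name to a tuple of objective scores.

    Returns the names of candidates that no other candidate dominates.
    """
    return [
        name
        for name, scores in candidates.items()
        if not any(
            dominates(other, scores)
            for other_name, other in candidates.items()
            if other_name != name
        )
    ]
```

For example, with scores given as (potency, solubility), `pareto_front({"A": (0.9, 0.2), "B": (0.5, 0.8), "C": (0.4, 0.7)})` keeps A and B, because C is worse than B on both objectives.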

The Scientist's Toolkit: Key Research Reagents and Computational Solutions

Successful implementation of multi-objective ADMET optimization relies on a suite of computational "reagents": software libraries, descriptors, and databases that form the building blocks of the predictive models.

Table 2: Essential Computational Reagents for Ligand-Based ADMET Modeling

| Reagent Category | Specific Tool / Database | Primary Function in Workflow |

|---|---|---|

| Cheminformatics Libraries | RDKit [9] [20] | Core cheminformatics operations: SMILES parsing, descriptor calculation (rdkit_desc), fingerprint generation (Morgan), and molecular standardization. |

| Molecular Descriptors | Mordred Descriptors [20] | Calculates a comprehensive set of ~1,800 2D and 3D chemical descriptors directly from molecular structure. |

| Molecular Fingerprints | Extended Connectivity Fingerprints (ECFP) [20] | Generates circular topological fingerprints that capture molecular substructures and are widely used for similarity searching and ML. |

| Molecular Fingerprints | MACCS Keys [20] | A set of 166 predefined structural binary keys used for substructure screening and molecular representation. |

| Benchmark Data | Therapeutics Data Commons (TDC) [9] [21] [20] | Provides curated, standardized benchmark datasets and splits for fair evaluation of ADMET prediction models across multiple tasks. |

| Machine Learning Framework | XGBoost [20] | A powerful tree-based gradient boosting framework that often achieves state-of-the-art performance on tabular data from fingerprint/descriptor features. |

| Deep Learning Framework | Chemprop [21] | A message-passing neural network specifically designed for molecular property prediction, capable of learning directly from molecular graphs. |

| Reference Drug Database | DrugBank [21] | A database of approved drugs used as a reference set to contextualize ADMET predictions (e.g., percentiles for solubility or toxicity). |

The integration of multi-objective optimization platforms into the drug discovery pipeline marks a significant advancement in the quest for safer and more effective therapeutics. Tools like ChemMORT, ADMETboost, and ADMET-AI provide powerful, AI-driven solutions to the complex challenge of balancing potency with pharmacokinetics and safety [19] [21] [20]. As demonstrated, their effectiveness is underpinned by robust experimental protocols for model benchmarking and optimization, which emphasize data curation, appropriate data splitting, and rigorous statistical validation [9] [24].

The continued evolution of these platforms is inextricably linked to progress in the broader field of ligand-based ADMET prediction models. Future directions point toward the use of even larger and more diverse training datasets, the development of more sophisticated molecular representations, and the tighter integration of these predictive tools with generative AI for de novo molecular design [18]. By leveraging the protocols and resources detailed in this application note, researchers can confidently employ these emerging tools to accelerate the identification of viable drug candidates with optimized ADMET profiles.

Overcoming Challenges: Strategies for Robust and Generalizable ADMET Models

In ligand-based ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) prediction, data quality is not merely a technical concern but a fundamental determinant of model reliability and translational success. Molecular property prediction models are exceptionally vulnerable to data quality issues, where noisy measurements, inconsistencies, and duplicates can significantly distort structure-activity relationships and compromise prediction accuracy [9]. The transformative potential of artificial intelligence in drug discovery remains contingent on addressing these foundational data challenges, as inadequate data quality leads to inaccurate property predictions that can misdirect entire compound optimization campaigns [25].

Research indicates that poor data quality costs organizations an average of $12.9 million annually, with scientific enterprises facing additional costs from misdirected research and development efforts [26]. Within ADMET prediction specifically, public datasets are frequently criticized for data cleanliness issues ranging from inconsistent SMILES representations and duplicate measurements with varying values to inconsistent binary labels for identical compounds [9]. These problems are compounded when models trained on one data source must be applied to different datasets, a common scenario in practical drug discovery settings.

Understanding Data Quality Issues in Scientific Datasets

Taxonomy of Data Quality Problems

Data quality issues in ADMET datasets manifest in several distinct forms, each with particular implications for predictive modeling:

Table 1: Common Data Quality Issues in ADMET Datasets

| Issue Type | Description | Impact on ADMET Prediction |

|---|---|---|

| Noisy Measurements | Experimental variability, measurement errors, or inconsistent assay conditions | Introduces uncertainty in structure-activity relationships, reduces model precision |

| Inconsistent Data | Conflicting values for the same field across systems or inconsistent formats | Creates contradictory learning signals, compromises model reliability |

| Duplicate Data | Multiple entries for the same entity with conflicting or redundant information | Skews dataset representativeness, biases model parameters |

| Incomplete Data | Missing values or entire rows in datasets | Reduces effective dataset size, introduces selection bias |

| Inaccurate Data | Data points that fail to represent real-world values | Misleads model optimization, produces systematically flawed predictions |

| Outdated Data | Information that is no longer current or relevant | Limits model applicability to contemporary chemical space |

| Mislabeled Data | Incorrect assignment of labels or categories | Corrupts fundamental supervised learning process |

These data quality dimensions collectively determine the signal-to-noise ratio in datasets, which directly correlates with model performance ceilings. Research indicates that data processing and cleanup can consume over 30% of analytics teams' time due to poor data quality and availability [27].

Root Causes in ADMET Data Generation

The primary sources of data quality issues in ADMET contexts include:

- Assay variability: Different experimental conditions, measurement techniques, and laboratory protocols introduce systematic inconsistencies [9].

- Data integration problems: Combining data from multiple sources (literature, proprietary assays, public databases) without adequate standardization.

- Human annotation errors: Manual data entry mistakes, misclassification, and subjective interpretation of results.

- Evolving standards: Changes in measurement protocols, reporting requirements, and scientific understanding over time.

- Molecular representation inconsistencies: Variations in SMILES strings, stereochemistry representation, and tautomer handling [9].

Experimental Protocols for Data Quality Assurance

Comprehensive Data Cleaning Protocol for ADMET Datasets

This protocol provides a systematic approach for cleaning ADMET datasets prior to model development, based on established methodologies in cheminformatics [9].

Materials and Software Requirements

Table 2: Essential Tools for ADMET Data Cleaning

| Tool Name | Type | Primary Function | Application in ADMET Context |

|---|---|---|---|

| RDKit | Cheminformatics library | Molecular descriptor calculation, SMILES handling | Standardization of molecular representations, descriptor calculation |

| DataWarrior | Visualization software | Data profiling and visualization | Interactive inspection of molecular datasets, outlier detection |

| Custom standardization scripts | Computational protocol | SMILES canonicalization | Consistent molecular representation across datasets |

| Python/Pandas | Programming environment | Data manipulation and analysis | Implementation of cleaning pipelines, duplicate management |

Step-by-Step Procedure

Remove Inorganic Salts and Organometallic Compounds

- Remove compounds containing any element outside the allowed organic set (H, C, N, O, F, P, S, Cl, Br, I, B, Si)

- Justification: ADMET properties primarily concern organic molecules; inclusion of organometallics introduces confounding factors

Extract Organic Parent Compounds from Salt Forms

- Identify and separate salt counterions using predefined salt lists

- Retain only the organic parent compound for property prediction

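- Exclusion criteria: Use a truncated salt list that omits components which can themselves be parent organic compounds (e.g., citrate/citric acid); in practice, exclude salt-list entries containing two or more carbons so that such components are not stripped as counterions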

Standardize Tautomeric Representations

- Apply consistent tautomerization rules to ensure identical compounds have identical representations

- Use standardized tools with modified organic element definitions to include boron and silicon

Canonicalize SMILES Strings

- Generate canonical SMILES representations using consistent algorithms

- Ensure stereochemistry is explicitly and consistently represented

Deduplication with Consistency Rules

- Identify duplicate molecular representations

- For consistent duplicates (target values exactly the same for binary tasks, or within 20% of the inter-quartile range for regression tasks): keep the first entry

- For inconsistent duplicates: remove the entire group to avoid contradictory training signals
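A minimal sketch of this deduplication rule follows. It assumes SMILES are already canonical and interprets "within 20% of the inter-quartile range" as: the spread (max minus min) of a duplicate group must not exceed 0.2 times the IQR of all target values in the dataset. That interpretation, like the function name, is an assumption made for illustration.

```python
from collections import defaultdict
from statistics import quantiles


def deduplicate(records, task="regression", iqr_fraction=0.2):
    """records: list of (canonical_smiles, target) in original order.

    Keeps the first entry of each consistent group and drops
    inconsistent groups entirely.
    """
    groups = defaultdict(list)
    for smiles, target in records:
        groups[smiles].append(target)
    tolerance = 0.0  # binary tasks: labels must match exactly
    if task == "regression":
        q1, _, q3 = quantiles([t for _, t in records], n=4)
        tolerance = iqr_fraction * (q3 - q1)
    kept = []
    for smiles, targets in groups.items():
        if max(targets) - min(targets) <= tolerance:
            kept.append((smiles, targets[0]))
    return kept
```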

Visual Inspection and Validation

- Use DataWarrior for final dataset inspection

- Manually verify ambiguous cases and edge conditions

- Document all cleaning decisions for reproducibility

Quality Control Measures

- Implement automated validation checks for SMILES validity and molecular integrity

- Maintain audit trails of all removed compounds with justifications

- Compare dataset statistics before and after cleaning to identify systematic biases

- For specialized endpoints like solubility: remove all salt complexes as different salts of the same compound may have different properties

Data Quality Assessment Framework

The data quality assessment framework provides quantitative metrics for evaluating dataset integrity across multiple dimensions relevant to ADMET prediction.

Table 3: Data Quality Metrics for ADMET Datasets

| Quality Dimension | Measurement Approach | Acceptance Threshold | Evaluation Frequency |

|---|---|---|---|

| Accuracy | Cross-reference with validated benchmark compounds | ≥ 98% match with reference values | Pre-processing |

| Completeness | Percentage of missing values in critical fields | ≤ 2% missing mandatory fields | Pre-processing & quarterly |

| Consistency | Uniformity of molecular representations and assay values | ≥ 97% consistency across representations | Pre-processing |

| Uniqueness | Proportion of duplicate molecular entries | < 1% duplicate records | Pre-processing |

| Timeliness | Assay date assessment and technology relevance | Appropriate to contemporary discovery practices | Annual review |

| Validity | Conformance to structural and biochemical rules | 100% valid molecular structures | Pre-processing |
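Two of the dimensions in Table 3, completeness and uniqueness, are simple enough to check automatically. The sketch below is one possible way to do so; the record layout (dicts with `smiles` and `value` keys) and the function name are assumptions, and the thresholds are the ones listed in the table.

```python
def quality_report(records, required=("smiles", "value"),
                   max_missing=0.02, max_duplicates=0.01):
    """records: list of dicts. Returns fractions and pass/fail flags."""
    n = len(records)
    # A record counts as incomplete if any mandatory field is absent or empty.
    missing = sum(
        1 for r in records if any(r.get(field) in (None, "") for field in required)
    )
    seen, duplicates = set(), 0
    for r in records:
        key = r.get("smiles")
        if key in seen:
            duplicates += 1
        elif key:
            seen.add(key)
    missing_fraction = missing / n
    duplicate_fraction = duplicates / n
    return {
        "missing_fraction": missing_fraction,
        "completeness_ok": missing_fraction <= max_missing,   # <= 2% missing
        "duplicate_fraction": duplicate_fraction,
        "uniqueness_ok": duplicate_fraction < max_duplicates,  # < 1% duplicates
    }
```

Accuracy, consistency, and validity checks depend on reference data and a cheminformatics toolkit, so they are left out of this sketch.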

Implementation Workflow for Data Quality Management

[Figure: Comprehensive workflow for addressing data quality issues in ADMET prediction projects.]

Integration with Model Development Workflow

The relationship between data quality processes and model development stages is critical for successful ADMET prediction implementation.

Research Reagent Solutions for Data Quality Management

Table 4: Essential Research Reagents for ADMET Data Quality Management

| Tool/Category | Specific Examples | Primary Function | Application Context |

|---|---|---|---|

| Data Quality Tools | Great Expectations, Soda Core, OvalEdge | Automated validation, monitoring | Pipeline data validation, quality dashboards |

| Cheminformatics Libraries | RDKit, Chemprop | Molecular standardization, descriptor calculation | SMILES canonicalization, feature generation |

| Data Profiling Tools | OpenRefine, DataWarrior | Data assessment, visualization | Initial data exploration, outlier identification |

| Workflow Management | Apache Airflow, Nextflow | Pipeline orchestration | Reproducible data processing workflows |

| Molecular Standardization | Custom standardization scripts | Consistent representation | Tautomer normalization, salt stripping |

Systematic approaches to tackling data quality issues—including noisy measurements, inconsistencies, and duplicates—are fundamental to advancing ligand-based ADMET prediction models. The protocols and frameworks presented herein provide researchers with structured methodologies for ensuring data integrity throughout the model development lifecycle. By implementing comprehensive data cleaning procedures, establishing rigorous quality assessment metrics, and maintaining continuous monitoring systems, research teams can significantly enhance the reliability and predictive power of their ADMET models. As the field progresses toward increasingly sophisticated AI-driven approaches, these foundational data quality practices will remain essential for translating computational predictions into successful therapeutic outcomes.

In the field of ligand-based ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) prediction, machine learning (ML) models have become indispensable tools for accelerating drug discovery. However, the performance and reliability of these models are critically dependent on their ability to generalize to new, unseen chemical data. Overfitting represents a fundamental challenge, where a model learns patterns specific to its training data—including noise and outliers—but fails to perform accurately on external test sets or prospective compounds. This Application Note examines how strategic hyperparameter tuning and dataset-specific optimization methodologies can mitigate overfitting, thereby enhancing the predictive robustness of ADMET models. Within the broader thesis of advancing ligand-based ADMET prediction, these practices are not merely procedural but are essential for building trust in computational tools that guide critical decisions in drug development pipelines.

The Overfitting Challenge in ADMET Prediction

The high-dimensional nature of molecular descriptor data, often comprising thousands of fingerprints and physicochemical properties, makes ADMET models particularly susceptible to overfitting. This is exacerbated by the relatively small, noisy, and imbalanced datasets typically available in the domain [9] [2]. The conventional practice of indiscriminately concatenating multiple feature representations without systematic justification can further amplify this risk, leading to models that excel on internal validation but disappoint in practical, external validation scenarios [9]. The consequences are tangible: inaccurate predictions can misdirect medicinal chemistry efforts, contributing to the high attrition rates observed in later stages of drug development [2]. Therefore, a disciplined approach to model construction, emphasizing generalization capacity, is paramount.

Methodologies for Robust Model Development

Data Preprocessing and Feature Selection

A foundational step in preventing overfitting is the curation of high-quality input data. This begins with rigorous data cleaning to remove inconsistent measurements, standardize molecular representations, and eliminate duplicates [9]. Subsequently, strategic feature selection reduces dimensionality, filters out noise, and retains the most informative molecular descriptors.

Protocol: Multistep Feature Selection for Dimensionality Reduction

- Objective: To identify a robust subset of molecular descriptors that contribute meaningfully to the prediction task, thereby reducing model complexity and overfitting potential.

- Materials: A dataset of molecules represented by a high-dimensional vector of molecular descriptors or fingerprints.

- Procedure:

- Variance Threshold Filtering: Calculate the variance of each feature across the dataset. Remove all features with a variance below a predefined threshold (e.g., 0.05), as these low-variance descriptors contribute minimal information [11].

- Correlation Filtering: Compute the Pearson correlation coefficient for all pairs of remaining features. Where pairs exhibit a correlation coefficient exceeding a set threshold (e.g., 0.85), remove one of the features to mitigate multicollinearity [11].

- Wrapper Method (Boruta Algorithm): Employ the Boruta algorithm, a wrapper method built around a Random Forest classifier. This method compares the importance of original features against "shadow features" (randomly permuted versions) to identify descriptors with statistically significant importance scores [11]. Retain only the features confirmed by this analysis.

- Validation: The performance of the selected feature subset should be evaluated using a nested cross-validation strategy to ensure that the selection process itself does not leak information and induce optimism bias.
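The first two filtering steps of this protocol can be sketched without external libraries, as below. The thresholds mirror the examples in the text; the function names and the "keep the first feature of a correlated pair" policy are illustrative assumptions. The Boruta step is not shown because it requires a Random Forest implementation.

```python
from statistics import mean, pvariance


def pearson(x, y):
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


def filter_features(columns, min_variance=0.05, max_corr=0.85):
    """columns: dict mapping feature name to its list of values.

    Returns the names that survive variance and correlation filtering.
    """
    # Step 1: drop near-constant features.
    kept = [name for name, col in columns.items() if pvariance(col) >= min_variance]
    # Step 2: greedily keep a feature only if it is not highly correlated
    # with any feature already selected.
    selected = []
    for name in kept:
        if all(abs(pearson(columns[name], columns[other])) <= max_corr
               for other in selected):
            selected.append(name)
    return selected
```

To avoid leakage, these filters should be fitted on the training portion of each cross-validation fold only, in line with the validation note above.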

Hyperparameter Tuning Strategies

Hyperparameters control the learning process itself. Tuning them is essential for finding the optimal balance between bias and variance.

Protocol: Systematic Hyperparameter Optimization

- Objective: To identify the hyperparameter configuration that maximizes a model's generalization performance on unseen data.

- Materials: A cleaned and feature-selected training dataset; a defined ML algorithm (e.g., LightGBM, Random Forest); a search space of hyperparameters.

- Procedure:

- Define Search Space: Identify key hyperparameters to optimize. For tree-based ensembles like LightGBM, these often include num_leaves (model complexity), learning_rate, feature_fraction (random feature selection per tree), and lambda_l1/lambda_l2 (L1 and L2 regularization strengths) [11] [2].

- Select Search Methodology:

- Grid Search: Exhaustively searches over a specified subset of hyperparameters. Best for small, discrete search spaces.

- Random Search: Samples hyperparameter combinations randomly from a defined space. Often more efficient than grid search for high-dimensional spaces.

- Bayesian Optimization: Builds a probabilistic model of the objective function (e.g., validation score) to direct the search towards promising configurations, typically offering superior efficiency [2].

- Implement Nested Cross-Validation: To obtain an unbiased estimate of model performance and mitigate overfitting during tuning, use a nested setup. An inner loop (e.g., 5-fold CV) performs the hyperparameter search on the training fold, while an outer loop (e.g., 5-fold CV) evaluates the best-found model on the held-out validation fold [9].

- Validation: The final model, configured with the optimized hyperparameters, must be evaluated on a completely held-out test set that was not involved in the tuning process.
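The nested cross-validation step in this protocol can be sketched as two loops: the inner loop selects hyperparameters using only the outer training fold, and the outer loop scores the selected configuration on data the search never saw. The `fit_score` callback is hypothetical; it stands for "train with these parameters on the first index set and return a score on the second".

```python
import random
from statistics import mean


def make_folds(indices, k, seed=0):
    """Shuffle the given indices and deal them into k folds."""
    idx = list(indices)
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]


def nested_cv(n_samples, candidates, fit_score, outer_k=5, inner_k=5):
    """Return (mean_outer_score, per_fold_scores).

    candidates: list of hyperparameter dicts to compare.
    fit_score(params, train_idx, eval_idx) -> score, higher is better.
    """
    outer_folds = make_folds(range(n_samples), outer_k)
    outer_scores = []
    for i, test_idx in enumerate(outer_folds):
        train_idx = [j for f, fold in enumerate(outer_folds) if f != i for j in fold]
        inner_folds = make_folds(train_idx, inner_k, seed=i + 1)

        def inner_score(params):
            # Mean validation score across inner folds, training data only.
            scores = []
            for m, val_idx in enumerate(inner_folds):
                fit_idx = [j for f, fold in enumerate(inner_folds) if f != m for j in fold]
                scores.append(fit_score(params, fit_idx, val_idx))
            return mean(scores)

        best = max(candidates, key=inner_score)
        outer_scores.append(fit_score(best, train_idx, test_idx))
    return mean(outer_scores), outer_scores
```

The list of candidates could come from a grid, random sampling, or a Bayesian optimizer; the nesting logic stays the same.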

Dataset-Specific Model Optimization

The "one-size-fits-all" approach is often suboptimal in ADMET prediction. Dataset-specific optimization involves tailoring the model architecture and representation to the unique characteristics of each endpoint's data.

Protocol: Iterative Representation and Architecture Selection

- Objective: To determine the optimal combination of molecular representation and ML algorithm for a specific ADMET dataset.

- Materials: A cleaned dataset for a specific ADMET endpoint; multiple molecular representations (e.g., RDKit descriptors, Morgan fingerprints, learned embeddings); multiple ML algorithms (e.g., SVM, Random Forest, LightGBM, Neural Networks) [9].

- Procedure:

- Baseline Establishment: Train a simple model (e.g., Random Forest with default parameters) using a standard representation to establish a performance baseline.

- Iterative Representation Testing: Systematically train and evaluate the chosen model architecture using different molecular representations and their reasoned combinations, rather than naive concatenation [9].

- Architecture Comparison: Compare different ML algorithms using the best-performing representation(s) from the previous step.

- Statistical Hypothesis Testing: Apply statistical tests (e.g., paired t-test) on the cross-validation results to determine if the performance improvements from optimization steps are statistically significant [9].

- Validation: The optimized, dataset-specific model should be evaluated on an external test set from a different data source to simulate a practical deployment scenario and truly assess its generalizability [9].
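For the statistical testing step, a paired t-test on matched cross-validation folds reduces to a short calculation. The sketch below returns the t statistic and degrees of freedom only; converting them to a p-value requires a t-distribution table or a statistics library. The fold scores in the usage note are invented for illustration.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t(scores_a, scores_b):
    """Paired t statistic for two models scored on the same CV folds.

    Returns (t, degrees_of_freedom).
    """
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    n = len(diffs)
    sd = stdev(diffs)  # sample standard deviation of the fold-wise differences
    if sd == 0:
        raise ValueError("all fold differences are identical; t is undefined")
    return mean(diffs) / (sd / sqrt(n)), n - 1
```

With five folds, `paired_t([0.80, 0.82, 0.79, 0.85, 0.81], [0.78, 0.79, 0.78, 0.80, 0.80])` gives a t statistic of roughly 3.2 on 4 degrees of freedom, which exceeds the two-sided 5% critical value of 2.776.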

Key Experimental Results and Data

The following tables summarize quantitative findings from recent studies that implement the aforementioned protocols, demonstrating their impact on model performance and robustness.

Table 1: Impact of Feature Selection and Model Tuning on Predictive Performance

| Study / Model | Endpoint(s) | Key Methodology | Result / Performance Impact |

|---|---|---|---|

| ACLPred [11] | Anticancer ligand prediction | Multistep feature selection (Variance, Correlation, Boruta) + LightGBM tuning | Accuracy: 90.33%, AUROC: 97.31% on independent test data. |

| Benchmarking Study [9] | Multiple ADMET properties | Dataset-specific representation selection + hyperparameter tuning + statistical testing | Significant performance improvement over non-optimized models; enhanced generalizability to external data. |

| ChemMORT [28] | Multi-objective ADMET optimization | Latent space representation + Particle Swarm Optimization | Effective optimization of multiple ADMET endpoints while maintaining bioactivity. |

Table 2: Essential Research Reagent Solutions for ADMET Modeling

| Research Reagent / Tool | Type | Function in Experiment |

|---|---|---|

| RDKit [9] [11] | Cheminformatics Library | Calculates molecular descriptors (rdkit_desc), generates Morgan fingerprints, and handles SMILES standardization. |

| PaDELPy [11] | Descriptor Calculation | Computes a comprehensive set of 1D and 2D molecular descriptors and fingerprints. |

| Boruta [11] | Feature Selection Algorithm | Identifies statistically significant features using a Random Forest-based wrapper method. |

| Scikit-learn [11] [2] | ML Library | Provides implementations for variance thresholding, correlation analysis, and various ML algorithms and validation techniques. |

| LightGBM / XGBoost [11] [28] | ML Algorithm | Gradient boosting frameworks known for high performance on structured data; offer built-in regularization to combat overfitting. |

| Therapeutics Data Commons (TDC) [9] [29] | Data Repository | Provides curated public datasets for ADMET-associated properties for benchmarking and model training. |

Workflow and Pathway Visualizations

Comprehensive Model Optimization Workflow

[Figure: Integrated workflow for developing a robust, generalizable ADMET prediction model, incorporating the protocols for data preprocessing, feature selection, hyperparameter tuning, and validation discussed in this note.]

Hyperparameter Tuning via Nested Cross-Validation

[Figure: The nested cross-validation process, a critical protocol for obtaining unbiased performance estimates during hyperparameter tuning and preventing overfitting to a single validation set.]

Within the domain of ligand-based Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) prediction, the reliability of machine learning (ML) models is paramount. A significant challenge that compromises this reliability is the external data dilemma: the sharp performance degradation often observed when models trained on public data sources are applied to proprietary industrial datasets or data from different experimental protocols [30] [31]. This dilemma stems from dataset shifts arising from differences in experimental conditions, measurement techniques, and population biases inherent in data collected from disparate sources [7]. As ADMET models become increasingly integrated into early-stage drug discovery, assessing and mitigating the impact of these shifts is critical for building trust in in silico predictions and avoiding costly late-stage failures. This Application Note addresses this challenge by providing structured protocols for evaluating model performance across different data sources, grounded in the context of ligand-based ADMET prediction research.

Core Challenge: Data Variability and Its Impact on Model Generalization

The core of the external data dilemma lies in the heterogeneity of ADMET data. Public benchmarks, while invaluable, often differ substantially from the compounds encountered in industrial drug discovery pipelines. For instance, the mean molecular weight of compounds in some public solubility datasets is around 204 Dalton, whereas compounds in active drug discovery projects typically range from 300 to 800 Dalton [7]. This represents a fundamental shift in the chemical space being modeled.

Furthermore, experimental results for identical compounds can vary significantly under different conditions. For solubility, factors such as buffer type, pH level, and experimental procedure can lead to different measured values for the same molecule [7]. Similar variability exists for other ADMET endpoints. When a model trained on one source of data, with its specific experimental conditions and compound distributions, is applied to a different source, this dataset shift can lead to a precipitous drop in predictive performance, undermining the model's practical utility [30].

Benchmarking Evidence: Quantifying the Performance Gap

Recent benchmarking studies have quantitatively illustrated the performance gap that emerges in cross-source validation scenarios. The following table summarizes key findings from recent investigations into this external data dilemma.

Table 1: Documented Performance Gaps in Cross-Source Model Validation

| ADMET Endpoint | Training Source | Test Source | Reported Performance Gap | Citation |
|---|---|---|---|---|
| General ADMET Properties | Public TDC Datasets | Internal Pharma Data | Model performance assessed in practical scenario; specific metrics not detailed in excerpt | [30] [32] |
| Caco-2 Permeability | Combined Public Datasets | Shanghai Qilu In-house Dataset | Boosting models "retained a degree of predictive efficacy" on industry data | [31] |
| Multiple ADMET Endpoints | Isolated Proprietary Data | Federated Multi-Pharma Data | Federated models achieved 40-60% reduction in prediction error vs. isolated models | [33] |
| Human Plasma Protein Binding (hPPB) | TDC (ppbr_az) | Biogen In-house Data | Evaluation of models trained on one source and tested on another for the same property | [30] |

These findings underscore a consistent theme: models optimized for internal validation on a single data source frequently experience a significant drop in performance when faced with data from a new source. This highlights the inadequacy of traditional hold-out validation and necessitates more robust evaluation protocols.

Experimental Protocol for Cross-Source Model Validation

To systematically assess model robustness against the external data dilemma, we propose the following detailed experimental protocol. This workflow is designed to be integrated into the standard model development cycle for ligand-based ADMET predictions.

The diagram below outlines the key stages of the cross-source validation protocol.

Step-by-Step Methodology

Step 1: Data Acquisition and Curation

- Data Collection: Secure datasets for a target ADMET property (e.g., Caco-2 permeability) from at least two distinct sources (e.g., public repositories like TDC [30] and an internal pharmaceutical company dataset [31]).

- Data Cleaning and Standardization: Apply a rigorous cleaning pipeline to all datasets to ensure consistency and minimize noise. This should include:

  - SMILES Standardization: Use tools like the standardisation tool by Atkinson et al. to achieve consistent molecular representations, including handling of salts and tautomers [30].

  - Duplicate Removal: Identify and remove duplicate compounds. For entries with multiple measurements, retain only those with consistent values (e.g., within 20% of the inter-quartile range for regression tasks) [30] [31].

  - Outlier Inspection: Employ visualization tools like DataWarrior for manual inspection of the resultant clean datasets to identify and remove obvious outliers [30].
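The duplicate-handling rule in Step 1 can be sketched as follows. This is a minimal illustration under stated assumptions, not the pipeline used in the cited studies: it assumes SMILES strings have already been standardized, it reads "within 20% of the inter-quartile range" as "replicate spread (max minus min) no larger than 0.2 times the dataset-wide IQR", and it averages the replicates that pass. The function name `consolidate_replicates` and the `tolerance` parameter are hypothetical.

```python
from collections import defaultdict
from statistics import mean, quantiles


def consolidate_replicates(records, tolerance=0.20):
    """Collapse replicate measurements per compound.

    records: iterable of (standardized_smiles, measured_value) pairs.
    Returns {smiles: mean_value} for compounds whose replicate spread is
    at most tolerance * IQR of all values; inconsistent compounds are dropped.
    """
    records = list(records)
    values = [value for _, value in records]

    # Dataset-level quartiles (assumption: the IQR is taken over all measurements).
    q1, _, q3 = quantiles(values, n=4)
    max_spread = tolerance * (q3 - q1)

    # Group every measurement under its standardized SMILES.
    grouped = defaultdict(list)
    for smiles, value in records:
        grouped[smiles].append(value)

    kept = {}
    for smiles, vals in grouped.items():
        # Singletons have zero spread and are always kept.
        if max(vals) - min(vals) <= max_spread:
            kept[smiles] = mean(vals)
    return kept
```

Dropping a compound whose replicates disagree is the conservative choice; a project that cannot afford to lose data might instead keep the median, but that would be a different rule from the one described above.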

Step 2: Model Training and Optimization

- Baseline Model Training: Train a set of diverse ML models (e.g., Random Forest, XGBoost, Support Vector Machines, and Message Passing Neural Networks like Chemprop [30]) on the primary training set (e.g., from a public source).

- Feature Representation: Investigate different molecular representations, including classical descriptors (e.g., RDKit 2D descriptors), fingerprints (e.g., Morgan fingerprints), and deep-learned representations [30] [31]. The choice of representation can significantly impact model generalizability.

- Hyperparameter Optimization: Tune model hyperparameters using a validation set split from the primary training data, employing techniques like cross-validation.

Step 3: Cross-Source Validation and Evaluation

- Internal Validation: Evaluate the optimized models on a standard hold-out test set from the same data source as the training set. This establishes a baseline performance metric.

- External Validation: Apply the trained models directly to the entirety of the second, external dataset (e.g., the internal pharmaceutical dataset) without any retraining. This step is crucial for simulating a real-world scenario where a model is deployed on data from a new lab.

- Performance Comparison: Calculate the same performance metrics (e.g., R², RMSE for regression; AUC, accuracy for classification) on both the internal and external test sets. The difference in performance quantifies the impact of the dataset shift.
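The performance comparison in Step 3 amounts to computing identical metrics on both test sets and reporting the difference. The sketch below does this for a regression endpoint using only the standard library. The function names and the dictionary layout are illustrative assumptions; the predictions are assumed to come from the same frozen model, with no retraining on the external source.

```python
from math import sqrt


def rmse(y_true, y_pred):
    """Root-mean-square error."""
    return sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def r2(y_true, y_pred):
    """Coefficient of determination; negative when worse than predicting the mean."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def cross_source_report(internal, external):
    """internal / external: (y_true, y_pred) tuples from the same trained model."""
    report = {}
    for name, (y_true, y_pred) in (("internal", internal), ("external", external)):
        report[name] = {"rmse": rmse(y_true, y_pred), "r2": r2(y_true, y_pred)}
    # Positive numbers here mean the model is worse on the external source.
    report["gap"] = {
        "rmse_increase": report["external"]["rmse"] - report["internal"]["rmse"],
        "r2_drop": report["internal"]["r2"] - report["external"]["r2"],
    }
    return report
```

Note that R² is computed against the mean of each test set separately, so a shift in the label distribution between sources affects R² even when RMSE is unchanged; reporting both metrics helps separate the two effects.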

Step 4: Analysis and Reporting

- Statistical Hypothesis Testing: To move beyond single-score comparisons, integrate cross-validation with statistical hypothesis testing. For example, use a paired t-test or Wilcoxon signed-rank test on the performance distributions from multiple cross-validation runs to determine if the performance drop on the external dataset is statistically significant [30].

- Applicability Domain (AD) Analysis: Assess whether performance degradation on the external set is linked to compounds falling outside the model's applicability domain. Models are typically more reliable for compounds structurally similar to their training data [31].

- Error Analysis: Investigate the characteristics of compounds for which the model makes the largest errors on the external set. This can reveal systematic biases in the training data or the external data.
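One common way to link the applicability domain and error analysis steps is to bin external-set errors by each compound's similarity to its nearest training neighbor. The sketch below assumes fingerprints are supplied as Python sets of on-bit indices (for example, derived from Morgan fingerprints computed elsewhere) and uses a Tanimoto cutoff of 0.4. Both the cutoff and the function names are illustrative assumptions, not values taken from the cited studies.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two sets of fingerprint on-bits."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def nearest_training_similarity(query_fp, training_fps):
    """Similarity of a query compound to its closest training compound."""
    return max(tanimoto(query_fp, fp) for fp in training_fps)


def errors_by_domain(external, training_fps, threshold=0.4):
    """Mean absolute error for in-domain vs. out-of-domain external compounds.

    external: list of (fingerprint_set, y_true, y_pred) tuples.
    A compound counts as in-domain when its nearest-neighbor Tanimoto
    similarity to the training set is at least `threshold`.
    """
    buckets = {"in_domain": [], "out_of_domain": []}
    for fp, y_true, y_pred in external:
        sim = nearest_training_similarity(fp, training_fps)
        key = "in_domain" if sim >= threshold else "out_of_domain"
        buckets[key].append(abs(y_true - y_pred))
    # None marks an empty bucket rather than a zero error.
    return {k: (sum(v) / len(v) if v else None) for k, v in buckets.items()}
```

If the out-of-domain error is clearly larger than the in-domain error, the performance drop is at least partly explained by chemical-space shift; if the two are similar, differences in assay conditions between sources become the more likely cause.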

The following table details key software, databases, and computational tools essential for implementing the described cross-source validation protocols.

Table 2: Key Research Reagents and Computational Tools for Cross-Source Validation

| Tool/Resource Name | Type | Primary Function in Validation | Relevance to External Data Dilemma |
|---|---|---|---|
| Therapeutics Data Commons (TDC) [30] | Data Repository | Provides curated, public benchmark datasets for ADMET properties. | Serves as a standard source of public data for initial model training and benchmarking. |
| RDKit [30] | Cheminformatics Toolkit | Calculates molecular descriptors (e.g., RDKit 2D) and fingerprints (e.g., Morgan). | Enables consistent featurization of molecules from different sources into a common representation space. |
| Chemprop [30] [31] | Deep Learning Library | Implements Message Passing Neural Networks (MPNNs) for molecular property prediction. | Allows training of graph-based models that can learn directly from molecular structure. |
| PharmaBench [7] | Data Benchmark | A comprehensive benchmark set for ADMET properties, created by merging entries from different sources using LLMs. | Provides a larger and more diverse dataset for training, potentially improving model generalizability. |
| Apheris Federated ADMET Network [33] | Modeling Platform | Enables federated learning, allowing models to be trained across distributed proprietary datasets without data centralization. | A cutting-edge solution for increasing the effective chemical space a model learns from, directly addressing data diversity limitations. |

Mitigation Strategies and Future Directions

While rigorous validation identifies the problem, several strategies can mitigate the external data dilemma:

- Federated Learning: This approach allows multiple institutions to collaboratively train a model on their combined data without sharing the underlying data, thus preserving privacy. Federated models have been shown to systematically outperform models trained on isolated datasets, with performance improvements scaling with the number and diversity of participants [33].

- Data Curation and Fusion: Initiatives like PharmaBench use Large Language Models (LLMs) to extract experimental conditions from assay descriptions, enabling a more intelligent fusion of data from different sources by accounting for the context of the experiments [7].

- Utilizing Structured Feature Selection: Moving beyond the common practice of indiscriminately concatenating different molecular representations, a structured approach to feature selection can help identify the most robust and generalizable features for a specific prediction task [30].

The external data dilemma presents a significant barrier to the reliable deployment of ligand-based ADMET models in practical drug discovery. However, by adopting a structured evaluation protocol that incorporates cross-source validation, statistical testing, and applicability domain analysis, researchers can rigorously quantify model limitations and build more robust predictive tools. The integration of emerging strategies like federated learning and advanced data curation holds the promise of developing next-generation ADMET models with truly generalizable predictive power across the diverse chemical and biological space of modern drug discovery.

In the field of drug discovery, ligand-based ADMET prediction models have become indispensable tools for early risk assessment of candidate compounds. However, the transition from traditional machine learning to more complex deep learning architectures has created a critical need for model interpretability—the ability to understand which specific molecular features drive predictions of absorption, distribution, metabolism, excretion, and toxicity. The "black box" nature of many advanced algorithms poses significant challenges for medicinal chemists who require actionable insights to guide molecular design. Model interpretability addresses this gap by revealing the contribution of individual molecular descriptors, fingerprints, and structural motifs to ADMET endpoint predictions, thereby building trust in predictions and providing meaningful directions for chemical optimization [9] [2].

The importance of explainable artificial intelligence (XAI) in ADMET prediction extends beyond mere technical curiosity; it represents a fundamental requirement for effective drug design. By identifying features that positively influence desirable ADMET properties or flag structural alerts associated with toxicity, interpretable models transform predictive outputs into concrete design strategies [11]. This document outlines standardized protocols and application notes for interpreting ligand-based ADMET models, providing researchers with methodologies to extract and validate the molecular features that underpin critical predictions in the drug development pipeline.

Core Concepts and Methodological Frameworks

Molecular Representations and Their Interpretability

The foundation of any interpretable ligand-based model lies in its molecular representation scheme. Different representations offer varying balances between predictive performance and inherent interpretability. Traditional fingerprint-based and descriptor-based approaches provide a transparent mapping between molecular structures and input features, whereas learned representations from graph neural networks or language models often require additional post-processing techniques to elucidate feature importance [34].

Classical Molecular Descriptors numerically encode physicochemical properties (e.g., molecular weight, logP, polar surface area) and topological features of compounds. These descriptors are inherently interpretable as they correspond to well-understood chemical properties that medicinal chemists routinely utilize [11] [2]. Molecular Fingerprints, such as Morgan fingerprints (also known as ECFP), encode the presence of specific substructures or atomic environments within a molecule as bit vectors. While excellent for similarity searching and machine learning, their interpretability requires mapping activated bits back to corresponding chemical substructures [9] [34]. Deep Learning Representations, including embeddings from graph neural networks and transformers, capture complex, high-dimensional patterns but represent the greatest interpretability challenge. Techniques such as attention mechanism analysis and gradient-based feature attribution are typically required to interpret these models [18] [34].

Techniques for Model Interpretation

Interpretability techniques can be broadly categorized as intrinsic (leveraging properties of inherently interpretable models) or post-hoc (applied after model training to explain its behavior). Tree-based models like Random Forest and LightGBM offer intrinsic interpretability through feature importance scores derived from split criteria such as Gini impurity or information gain [11]. For more complex models, including deep neural networks, post-hoc methods like SHapley Additive exPlanations (SHAP) and Local Interpretable Model-agnostic Explanations (LIME) have become standard tools. SHAP in particular provides a unified approach grounded in cooperative game theory: it attributes to each feature its average marginal contribution to the prediction across all possible feature subsets, offering both global and local interpretability [11].
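To make the "marginal contribution" idea concrete, the sketch below computes exact Shapley values for a single prediction by enumerating every feature coalition. It is an illustration of the underlying quantity, not how the `shap` library computes it; exhaustive enumeration scales exponentially with the number of features and is only practical for a handful of them. Replacing absent features with a fixed baseline vector is one simplifying assumption among several possible choices, and the function name `shapley_values` is hypothetical.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, x, baseline):
    """Exact Shapley values for one instance `x` relative to `baseline`.

    predict: callable taking a list of feature values and returning a float.
    Features outside a coalition are set to their baseline value.
    """
    n = len(x)

    def value(coalition):
        masked = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(masked)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for coalitions of this size: |S|! (n-|S|-1)! / n!
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                # Marginal contribution of feature i when added to coalition s.
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi
```

A useful sanity check is the efficiency property: the attributions sum to the difference between the prediction for `x` and the prediction for the baseline. For a model with an interaction term, the interaction's effect is split equally between the features involved.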

Experimental Protocols for Feature Importance Analysis

Protocol 1: Implementing SHAP for Tree-Based ADMET Models

This protocol details the application of SHAP analysis to tree-based ensemble models, such as LightGBM, to interpret ADMET prediction models, following the approach demonstrated in ACLPred for anticancer activity prediction [11].

- Objective: To identify and visualize molecular descriptors that most significantly influence ADMET endpoint predictions.

- Materials: Pre-processed dataset of compounds with calculated molecular descriptors and experimental ADMET values; trained tree-based model (e.g., LightGBM, Random Forest); Python environment with the `shap`, `pandas`, and `matplotlib` libraries.

- Procedure:

  - Model Training: Train a tree-based ensemble model (e.g., LightGBM) on the standardized ADMET dataset using best practices, including cross-validation.

  - SHAP Explainer Initialization: Initialize a `TreeExplainer` object from the `shap` library using the trained model.

  - SHAP Value Calculation: Calculate SHAP values for all compounds in the validation set or a representative sample using the