Integrating Ligand-Based and Structure-Based Methods in Drug Discovery: A Comprehensive Guide to Combined Virtual Screening Approaches

Abstract

This article provides a comprehensive examination of combined Ligand-Based (LB) and Structure-Based (SB) virtual screening approaches in modern drug discovery. It explores the foundational principles behind LB and SB method integration, detailing practical sequential, parallel, and hybrid implementation strategies. The content addresses common methodological challenges including protein flexibility and scoring function limitations, while presenting troubleshooting frameworks for optimization. Through validation case studies and comparative performance analysis, we demonstrate how integrated LB-SB approaches significantly enhance hit rates and success probabilities over single-modality methods. This resource offers drug development professionals actionable insights for implementing robust virtual screening pipelines that leverage the complementary strengths of both informational domains.

The Synergistic Foundation: Understanding LB and SB Virtual Screening Integration

Defining Ligand-Based and Structure-Based Virtual Screening Core Concepts

Virtual screening (VS) is a cornerstone of modern computational drug discovery, providing a cost-effective method for identifying promising hit compounds from vast chemical libraries. The two primary computational strategies are Ligand-Based Virtual Screening (LBVS) and Structure-Based Virtual Screening (SBVS). A growing body of evidence indicates that hybrid approaches, which combine these methods, consistently achieve superior performance in enrichment studies by mitigating the inherent limitations of each standalone technique [1] [2]. This guide provides an objective comparison of these core methodologies, supported by experimental data and detailed protocols.

Core Concepts and Methodologies

Ligand-Based Virtual Screening (LBVS)

LBVS relies on the "similarity-property principle," which posits that structurally similar molecules are likely to have similar biological activities [2]. This approach requires known active ligands for a target but does not need the target's 3D structure.

- Key Techniques: LBVS methods include quantitative structure-activity relationship (QSAR) models, pharmacophore searching, and 3D shape-based similarity comparisons [1] [2]. Tools like ROCS and eSim rapidly overlay 3D chemical structures to maximize the similarity of pharmacophoric features such as shape, electrostatics, and hydrogen-bonding potential [1].

- Typical Workflow: A known active compound is used as a query to screen a chemical library. Compounds are ranked based on their similarity to the query, and the top-ranked compounds are selected for experimental testing [3].
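The ranking step of this workflow can be sketched in a few lines. The example below is a minimal illustration, not how ROCS or eSim operate: it swaps 3D overlay for a much simpler 2D stand-in, the Tanimoto coefficient on fingerprint bit sets. The compound names and bit sets are hypothetical.

```python
def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto (Jaccard) coefficient between two sets of 'on' fingerprint bits."""
    if not a and not b:
        return 0.0
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


def rank_by_similarity(query, library):
    """Return (name, similarity) pairs sorted from most to least similar."""
    scored = [(name, tanimoto(query, bits)) for name, bits in library.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical fingerprints: each integer stands for one "on" bit.
query = frozenset({1, 2, 3, 4})
library = {
    "cmpd_A": frozenset({1, 2, 3, 4, 5}),  # 4 shared bits out of 5 distinct bits
    "cmpd_B": frozenset({1, 2, 9}),        # 2 shared bits out of 5 distinct bits
    "cmpd_C": frozenset({7, 8}),           # no shared bits
}

ranking = rank_by_similarity(query, library)
top_hits = [name for name, _ in ranking[:2]]  # compounds nominated for testing
```

In a real campaign the bit sets would come from a cheminformatics toolkit, and the cutoff for "top-ranked" would depend on assay capacity rather than a fixed count.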

Structure-Based Virtual Screening (SBVS)

SBVS depends on the 3D atomic structure of a biological target, typically a protein, to identify ligands that bind favorably to a specific binding site [4] [5].

- Key Technique - Molecular Docking: This is the most common SBVS method. It involves predicting the binding pose of a small molecule within a protein's binding site and scoring the interaction to estimate binding affinity [4] [6].

- Typical Workflow: The protein structure is prepared, a chemical library is pre-processed, and then each compound is computationally "docked" into the binding site. The resulting poses are scored and ranked, and the top candidates are selected for experimental validation [4].

Quantitative Performance Comparison

The table below summarizes the performance of various virtual screening methods based on published benchmarking studies and real-world applications.

| Method Category | Specific Method / Context | Performance Metric | Reported Result | Key Finding / Context |
|---|---|---|---|---|
| SBVS (Docking) | RosettaGenFF-VS [7] | Top 1% Enrichment Factor (EF1%) | 16.72 | Outperformed other physics-based scoring functions on the CASF-2016 benchmark. |
| SBVS (Docking) | PLANTS (WT PfDHFR) [8] | EF1% | 28 | Best enrichment when combined with CNN-based rescoring for wild-type P. falciparum enzyme. |
| SBVS (Docking) | FRED (Quadruple Mutant PfDHFR) [8] | EF1% | 31 | Best enrichment when combined with CNN-based rescoring for a drug-resistant malaria enzyme variant. |
| LBVS (Shape-Based) | HWZ Score (Average across 40 DUD targets) [3] | Area Under ROC Curve (AUC) | 0.84 ± 0.02 | Demonstrated robust, above-random performance and less sensitivity to target choice. |
| Hybrid (Sequential LB/SB) | Ultra-large Library for CB2 Antagonists [9] | Experimental Hit Rate | 55% (6 of 11 compounds) | A massive 140M compound library was docked; synthesized top candidates showed very high validation success. |
| Hybrid (Consensus Scoring) | LFA-1 Inhibitor Optimization [1] | Mean Unsigned Error (MUE) | Significant Drop | A hybrid model averaging LBVS (QuanSA) and SBVS (FEP+) predictions performed better than either method alone. |

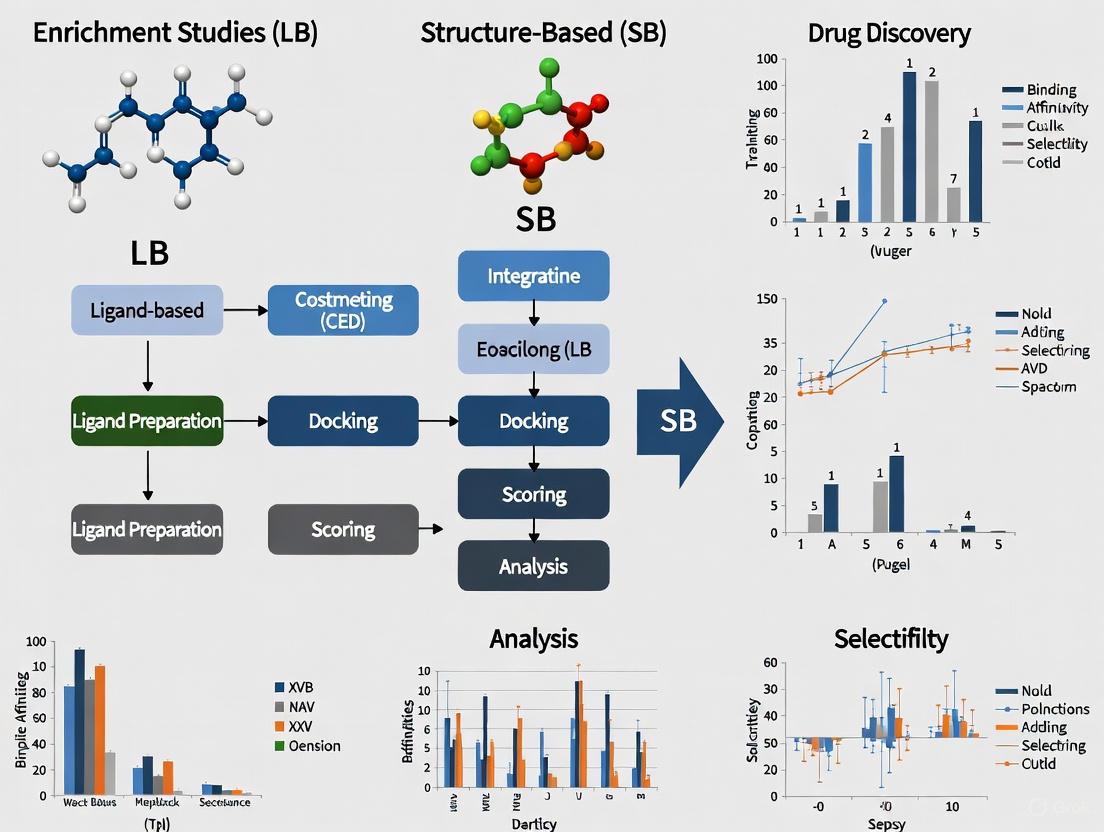

Diagram: Virtual Screening Workflow Strategies. The diagram illustrates the parallel, sequential, and hybrid pathways for combining LBVS and SBVS, which can mitigate the limitations of each individual method [1] [2].

Detailed Experimental Protocols

Protocol 1: Structure-Based Screening of an Ultra-Large Library

This protocol, derived from a successful campaign to discover Cannabinoid Type II receptor (CB2) antagonists, details the screening of a library of hundreds of millions of compounds [9].

- Step 1: Library Enumeration. A combinatorial library of 140 million compounds was built in silico using the SuFEx click chemistry reaction scheme and building blocks retrieved from commercial vendor servers like Enamine and ZINC15 [9].

- Step 2: Receptor Model Preparation & Benchmarking. A 4D structural model of the CB2 receptor was created by combining its crystal structure with two ligand-guided optimized conformations (for antagonists and agonists). This model accounted for binding site flexibility and showed better discrimination of known binders from decoys than the crystal structure alone [9].

- Step 3: Virtual Ligand Screening & Compound Selection. The entire library was docked into the 4D receptor model. The top ~340,000 compounds were re-docked with higher precision. Final compounds for synthesis were nominated based on a combination of docking score, predicted binding pose, chemical novelty, and synthetic tractability [9].

- Step 4: Experimental Validation. Of 11 compounds synthesized and tested, 6 showed CB2 antagonist potency better than 10 μM, yielding a high hit rate of 55% and validating the screening approach [9].

Protocol 2: Benchmarking Docking Tools with Machine Learning Rescoring

This protocol assesses the performance of docking programs against wild-type and drug-resistant variants of a malaria target, Plasmodium falciparum Dihydrofolate Reductase (PfDHFR) [8].

- Step 1: Protein and Benchmark Set Preparation. Crystal structures for both wild-type (WT) and quadruple-mutant (QM) PfDHFR were prepared. The DEKOIS 2.0 protocol was used to create a benchmark set containing known active molecules and challenging decoy molecules for each variant [8].

- Step 2: Docking Experiments. Three docking tools—AutoDock Vina, PLANTS, and FRED—were used to screen the benchmark sets against both protein variants [8].

- Step 3: Machine Learning Rescoring. The docking poses generated by each program were rescored using two pretrained machine learning scoring functions: CNN-Score and RF-Score-VS v2 [8].

- Step 4: Performance Evaluation. The screening performance was quantified using the Area Under the ROC Curve (AUC) and the Early Enrichment Factor at 1% (EF1%). The study found that rescoring with CNN-Score consistently improved performance, with the best combinations being PLANTS/CNN for WT (EF1%=28) and FRED/CNN for QM (EF1%=31) [8].
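The two metrics in Step 4 are straightforward to compute from a ranked benchmark. The sketch below uses the common definitions (EF as the hit rate in the top slice divided by the overall hit rate, and AUC as the probability that a random active outscores a random decoy). It assumes higher scores are better, and the toy benchmark is hypothetical, not data from [8].

```python
def enrichment_factor(scores, labels, fraction=0.01):
    """EF at `fraction`: hit rate in the top slice divided by the overall hit rate."""
    n = len(scores)
    n_top = max(1, int(round(n * fraction)))
    order = sorted(range(n), key=lambda i: scores[i], reverse=True)
    actives_in_top = sum(labels[i] for i in order[:n_top])
    total_actives = sum(labels)
    return (actives_in_top / n_top) / (total_actives / n)


def roc_auc(scores, labels):
    """AUC as the fraction of (active, decoy) pairs ranked correctly; ties count 0.5."""
    actives = [s for s, y in zip(scores, labels) if y == 1]
    decoys = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((a > d) + 0.5 * (a == d) for a in actives for d in decoys)
    return wins / (len(actives) * len(decoys))


# Toy benchmark (hypothetical): 1000 compounds, 10 actives, index 0 scores highest.
scores = [float(s) for s in range(1000, 0, -1)]
active_positions = {0, 1, 2, 100, 200, 300, 400, 500, 600, 700}
labels = [1 if i in active_positions else 0 for i in range(1000)]

ef1 = enrichment_factor(scores, labels, fraction=0.01)  # 3 actives in the top 10: about 30
auc = roc_auc(scores, labels)
```

Because actives make up 1% of this toy set, EF1% cannot exceed 100 here. The ceiling depends on the active-to-decoy ratio, which is one reason EF values from different benchmarks are hard to compare directly.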

The Scientist's Toolkit: Key Research Reagents & Software

| Category | Item / Software | Primary Function in VS |
|---|---|---|
| SBVS Docking Software | AutoDock Vina [8] [6] | Widely used, open-source docking program for pose prediction and scoring. |
| | PLANTS [8] | Docking tool known for its performance in benchmark studies, especially with ML rescoring. |
| | FRED [8] | Docking program that excels in screening performance for specific targets like resistant enzymes. |
| | Glide (Schrödinger) [6] [7] | High-performance commercial docking software, often a top performer in accuracy benchmarks. |
| LBVS & Hybrid Software | ROCS (OpenEye) [3] [1] | Industry-standard for 3D shape-based similarity screening and molecular overlay. |
| | QuanSA (Optibrium) [1] | LBVS method that builds 3D binding-site models to predict both pose and quantitative affinity. |
| Machine Learning Tools | CNN-Score [8] | A pretrained deep learning scoring function used to rescore docking poses and improve enrichment. |
| | RF-Score-VS v2 [8] | A random forest-based scoring function designed to improve virtual screening hit rates. |
| Chemical Libraries | Enamine REAL [9] [2] | Source of ultra-large, synthetically accessible compound libraries for screening. |
| Benchmarking Sets | DEKOIS 2.0 [8] | Public benchmark sets containing active molecules and property-matched decoys to evaluate VS performance. |
| | DUD (Directory of Useful Decoys) [3] [7] | A benchmark dataset with 40 targets, used to test a method's ability to distinguish actives from inactives. |

Performance Analysis and Limitations

While SBVS often provides excellent enrichment, its accuracy is highly dependent on the quality of the protein structure and the docking scoring function. Notably, a 2025 systematic evaluation revealed that despite advances, traditional physics-based docking methods like Glide SP still consistently excel in producing physically plausible poses compared to many deep learning-based docking paradigms, which can generate chemically invalid structures despite good pose accuracy metrics [6].

LBVS is computationally efficient but can be limited by the chemical diversity of the known actives, potentially missing novel scaffolds [2]. The integration of both methods into a hybrid workflow, as evidenced by the case studies and performance tables, consistently leads to improved outcomes, higher hit rates, and more robust predictions by leveraging their complementary strengths [1] [2].

In the pursuit of novel therapeutic compounds, virtual screening (VS) stands as a cornerstone technology within the drug discovery pipeline. Computational approaches for VS are broadly classified into two complementary categories: ligand-based (LB) and structure-based (SB) methods [10]. LB techniques exploit the structural and physicochemical properties of known active ligands to screen for similar compounds through the molecular similarity principle. In contrast, SB methods, most notably molecular docking, utilize the three-dimensional (3D) structure of the target protein to identify compounds that exhibit structural and chemical complementarity to the binding site [10].

The central challenge in modern drug discovery lies in the immense diversity of the chemical universe, estimated to contain between 10^20 and 10^24 synthesizable molecules [10]. This vast chemical space necessitates robust computational strategies capable of efficiently discriminating between active and inactive compounds. While both LB and SB methods have demonstrated widespread success in identifying drug-like candidates, each approach has inherent strengths and limitations that influence its application and performance. Recognizing these complementary characteristics has stimulated the development of integrated computational frameworks that combine LB and SB techniques, creating a more holistic strategy that leverages all available chemical and structural information to enhance the success of drug discovery projects [10].

Ligand-Based Methods: Foundations and Applications

Ligand-based virtual screening (LBVS) operates under the fundamental principle of molecular similarity, which posits that structurally similar molecules are likely to exhibit similar biological activities [10]. This approach requires knowledge of known active compounds but does not depend on structural information about the biological target. LBVS methodologies employ a diverse array of molecular descriptors to quantify similarity, including one-dimensional (1D) and two-dimensional (2D) descriptors that encode chemical composition and topological features, as well as three-dimensional (3D) descriptors associated with molecular fields, molecular shape and volume, and pharmacophore models [10]. Pharmacophore models are particularly powerful as they abstract the essential steric and electronic features necessary for molecular recognition of a biological target.

The primary strength of LBVS lies in its computational efficiency, enabling the rapid screening of extremely large chemical libraries at relatively low computational cost [10]. This efficiency makes LB methods particularly valuable in the early stages of drug discovery when structural information about the target may be limited or unavailable. Additionally, LBVS can successfully identify novel chemotypes through scaffold hopping, where compounds with different structural backbones but similar spatial arrangement of key functional groups are recognized as potential hits.

However, LBVS suffers from several significant limitations. A major shortcoming is the template bias, where the screening results are heavily dependent on the choice of reference ligand(s) used to build the model [10]. This can lead to overfitting and a tendency to identify compounds that are structurally similar to known actives but may not offer significant advantages. Furthermore, LB methods typically require activity data for model calibration, including information about poorly active or inactive compounds, which may not always be available in sufficient quantity or quality [10]. The effectiveness of LBVS is also highly dependent on the molecular descriptors and similarity metrics employed, with different choices potentially yielding substantially different results.

Structure-Based Methods: Principles and Implementation

Structure-based virtual screening (SBVS) relies on the 3D atomic structure of the target protein, typically obtained through X-ray crystallography, NMR spectroscopy, or cryo-electron microscopy. The most widely used SBVS technique is molecular docking, which predicts the preferred orientation (pose) of a small molecule when bound to a protein target, followed by scoring functions that estimate the binding affinity [10]. Docking algorithms explore the conformational space of the ligand within the binding site, evaluating complementarity based on shape, electrostatic interactions, hydrogen bonding, and hydrophobic effects.

The key advantage of SBVS is its ability to identify novel chemotypes that may be structurally distinct from known actives but still complement the binding site geometry and chemistry [10]. This approach is particularly valuable for targeting novel proteins or allosteric sites where few known actives exist. SB methods also provide atomic-level insights into ligand-target interactions, facilitating rational medicinal chemistry optimization of hit compounds.

Despite its powerful capabilities, SBVS faces several formidable challenges. A major limitation is the proper accounting of protein flexibility, as binding sites can adopt diverse conformational states through side chain rearrangements, loop movements, and even remodeling of secondary structural elements upon ligand binding [10]. The treatment of water molecules represents another significant challenge, as bridging waters or ordered water networks can critically mediate ligand-protein interactions but are difficult to accurately model in docking calculations [10]. Perhaps the most persistent limitation lies in the approximate nature of scoring functions, which must balance computational efficiency with binding affinity prediction accuracy [10]. These functions often struggle to account for all relevant physicochemical contributions to binding, particularly entropic effects and desolvation penalties. Additionally, the computational cost of SBVS is generally higher than LBVS, especially when incorporating protein flexibility or using more sophisticated scoring approaches.

Integrated Approaches: Combining LB and SB Methodologies

The complementary nature of LB and SB methods has motivated the development of integrated strategies that leverage the strengths of both approaches while mitigating their individual limitations. These hybrid frameworks can be broadly categorized into three main architectures: sequential, parallel, and truly hybrid approaches [10].

Sequential Strategies

Sequential approaches implement a multi-step VS pipeline where LB and SB techniques are applied consecutively to progressively filter virtual libraries toward the most promising candidates [10]. A typical sequential protocol employs computationally efficient LB methods for initial library filtering, followed by more computationally demanding SB techniques for refined assessment of the pre-filtered compound set. This strategy optimizes the trade-off between computational efficiency and methodological sophistication throughout the screening process. However, a potential drawback of strictly sequential approaches is that they may not fully exploit all available information simultaneously, as each step operates with limited data types [10].
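The funnel logic of a sequential protocol can be sketched as follows. This is a minimal illustration under stated assumptions: `lb_score` and `sb_score` are hypothetical callables standing in for a similarity search and a docking run, higher LB scores and lower (more negative) SB scores are taken as better, and the toy library values are invented.

```python
from typing import Callable, Dict, List, Tuple


def sequential_screen(
    library: Dict[str, dict],
    lb_score: Callable[[dict], float],
    sb_score: Callable[[dict], float],
    keep_fraction: float = 0.1,
    n_final: int = 3,
) -> List[Tuple[str, float]]:
    """Cheap LB prefilter on everything, then costly SB scoring on survivors only."""
    # Stage 1: rank the whole library by the LB score and keep the top slice.
    by_lb = sorted(library, key=lambda name: lb_score(library[name]), reverse=True)
    n_keep = max(n_final, int(len(by_lb) * keep_fraction))
    survivors = by_lb[:n_keep]
    # Stage 2: run the SB score only on survivors; lowest score ranks first.
    docked = [(name, sb_score(library[name])) for name in survivors]
    return sorted(docked, key=lambda pair: pair[1])[:n_final]


# Hypothetical library with precomputed similarity and docking values.
library = {
    f"cmpd_{i:02d}": {"sim": i / 20, "dock": -5.0 - (i % 7)} for i in range(20)
}
calls = {"sb": 0}


def toy_lb(compound: dict) -> float:
    return compound["sim"]


def toy_sb(compound: dict) -> float:
    calls["sb"] += 1  # count how often the expensive step runs
    return compound["dock"]


hits = sequential_screen(library, toy_lb, toy_sb, keep_fraction=0.25, n_final=3)
```

Here the expensive step runs on 5 of 20 compounds. The toy data also shows the cost of that saving: `cmpd_13` has the best docking value in the library (-11.0) but is discarded by the LB prefilter before it is ever docked.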

Parallel Strategies

In parallel approaches, LB and SB methods are executed independently on the same compound library, and the results are combined afterward to select candidates for biological testing [10]. The independent rankings from each method can be integrated using various data fusion techniques, such as rank aggregation or consensus scoring. This strategy has demonstrated meaningful increases in both performance and robustness compared to single-modality approaches [10]. However, the effectiveness of parallel strategies can be sensitive to target-specific characteristics, including the nature of the template ligand used for similarity searching and the specific conformational state of the protein structure used for docking [10].
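One simple form of rank aggregation is to average each compound's rank across the two independent screens. The sketch below assumes similarity scores where higher is better and docking scores where lower is better; mean-rank fusion is only one of several possible fusion rules, and the scores are hypothetical.

```python
def ranks(scores, higher_is_better=True):
    """Map each compound to its 1-based rank (1 = best)."""
    ordered = sorted(scores, key=scores.get, reverse=higher_is_better)
    return {name: position for position, name in enumerate(ordered, start=1)}


def fuse_by_mean_rank(lb_scores, sb_scores):
    """Average the LB and SB ranks; the lowest mean rank comes first."""
    lb_rank = ranks(lb_scores, higher_is_better=True)   # similarity
    sb_rank = ranks(sb_scores, higher_is_better=False)  # docking energy
    fused = {name: (lb_rank[name] + sb_rank[name]) / 2 for name in lb_scores}
    return sorted(fused.items(), key=lambda pair: pair[1])


# Hypothetical results of two independent screens of the same five compounds.
lb_scores = {"A": 0.9, "B": 0.8, "C": 0.7, "D": 0.6, "E": 0.5}
sb_scores = {"A": -9.0, "B": -7.0, "C": -10.0, "D": -6.0, "E": -8.0}

consensus = fuse_by_mean_rank(lb_scores, sb_scores)
```

Working on ranks rather than raw scores sidesteps the fact that similarity coefficients and docking energies live on different scales, at the price of discarding how large the score gaps are.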

Hybrid Strategies

True hybrid approaches represent the most integrated framework, where LB and SB elements are combined into a unified methodology that simultaneously leverages both types of information [10]. These methods may involve pharmacophore constraints derived from known actives to guide docking calculations, or similarity metrics that incorporate complementarity to the binding site. Hybrid strategies aim to capitalize fully on the synergistic potential of LB and SB information, potentially offering superior performance compared to sequential or parallel implementations [10].

Table 1: Classification of Combined LB-SB Virtual Screening Strategies

| Strategy Type | Implementation | Advantages | Limitations |
|---|---|---|---|
| Sequential | LB and SB methods applied in consecutive steps | Optimizes computational efficiency; Progressive filtering | Does not exploit all available information simultaneously |
| Parallel | LB and SB methods run independently; results combined | Increased performance and robustness; Reduces method-specific bias | Sensitivity to target structural details; Requires effective data fusion |
| Hybrid | LB and SB elements integrated into unified methodology | Maximizes synergistic potential; Holistic use of available information | Increased implementation complexity; Methodological development challenges |

Experimental Evidence and Case Studies

The practical effectiveness of combined LB-SB approaches has been demonstrated in several prospective drug discovery campaigns. These case studies provide compelling evidence for the enhanced performance achievable through integrated methodologies.

In one representative application, Spadaro and colleagues utilized a pharmacophoric model derived from X-ray crystallographic data in conjunction with LBVS techniques to identify novel inhibitors of the 17β-hydroxysteroid dehydrogenase type 1 (17β-HSD1) enzyme [10]. This integrated approach led to the discovery of a keto-derivative compound exhibiting inhibitory potency in the nanomolar range, demonstrating the successful application of structure-informed ligand-based screening [10].

A second compelling example comes from the work of Debnath and colleagues, who employed a combined VS strategy to discover selective non-hydroxamate histone deacetylase 8 (HDAC8) inhibitors [10]. Their methodology began with pharmacophore-based screening of approximately 4.3 million compounds, followed by ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) filtering of the top hits, and subsequent molecular docking evaluation [10]. This multi-tiered approach identified compounds SD-01 and SD-02, which demonstrated exceptional inhibitory activity against HDAC8 with IC50 values of 9.0 and 2.7 nM, respectively [10]. This case study exemplifies how sequential application of complementary VS techniques can yield highly potent chemical entities.

Table 2: Representative Case Studies of Combined LB-SB Virtual Screening

| Study Target | LB Method | SB Method | Key Results | Citation |
|---|---|---|---|---|
| 17β-HSD1 Inhibitors | Pharmacophore model | X-ray crystallographic data | Nanomolar-range inhibitor identified | [10] |
| HDAC8 Inhibitors | Pharmacophore screening | Molecular docking | Compounds with IC50 values of 9.0 and 2.7 nM | [10] |

The experimental workflow for combined LB-SB approaches typically follows a structured pathway that systematically integrates multiple computational techniques. The diagram below illustrates a generalized workflow for a sequential LB-SB virtual screening protocol.

Essential Research Reagents and Computational Tools

The implementation of effective LB-SB virtual screening campaigns requires access to specialized software tools, databases, and computational resources. The table below summarizes key resources that facilitate integrated VS approaches.

Table 3: Essential Research Reagents and Computational Tools for LB-SB Virtual Screening

| Resource Category | Specific Examples | Function in LB-SB Workflow |
|---|---|---|
| Chemical Databases | ZINC, ChEMBL, PubChem | Source of compound libraries for screening |
| Protein Data Resources | Protein Data Bank (PDB) | Source of 3D protein structures for SBVS |
| LBVS Software | ROCS, Phase, Open3DALIGN | Molecular similarity searching, pharmacophore modeling |
| SBVS Software | AutoDock, GOLD, Glide, FRED | Molecular docking and binding pose prediction |
| Hybrid Platforms | Schrödinger Suite, MOE | Integrated environments supporting both LB and SB methods |
| Visualization Tools | PyMOL, Chimera, Maestro | Analysis and interpretation of screening results |

The strategic integration of ligand-based and structure-based virtual screening methods represents a powerful paradigm in modern computational drug discovery. As evidenced by the case studies and methodological frameworks discussed herein, combined LB-SB approaches consistently demonstrate enhanced performance compared to individual methods alone, leveraging their complementary strengths while mitigating their respective limitations. The sequential, parallel, and hybrid implementation strategies offer flexible frameworks adaptable to various research scenarios and information availability.

Future developments in this field will likely focus on several key areas. Improved handling of protein flexibility and explicit solvent effects in docking calculations remains a priority for enhancing SBVS accuracy. Advances in machine learning and artificial intelligence are creating opportunities for more sophisticated molecular representations and scoring functions that seamlessly integrate LB and SB information. Additionally, the increasing availability of large-scale bioactivity data and protein structural information continues to enrich the knowledge base from which both LB and SB methods draw. As these computational technologies mature and experimental validation continues to demonstrate their value, integrated LB-SB approaches will undoubtedly play an increasingly central role in accelerating the discovery of novel therapeutic agents.

Historical Evolution and Drivers for Combined LB-SB Approaches

The landscape of computer-aided drug discovery has been fundamentally shaped by the complementary strengths and weaknesses of two primary computational methodologies: structure-based (SB) and ligand-based (LB) approaches. Structure-based methods, including molecular docking, leverage three-dimensional structural information of the biological target to predict ligand binding, while ligand-based techniques utilize known active compounds to search for structurally similar molecules with comparable biological activity under the molecular similarity principle [11] [12]. Individually, each approach carries significant limitations—LB methods exhibit bias toward the reference template and may overfit to input structures, whereas SB methods struggle with accounting for full protein flexibility and accurately estimating binding affinities with scoring functions [11].

The historical evolution of virtual screening (VS) has progressively demonstrated that a holistic framework integrating both LB and SB techniques can enhance the success of drug discovery projects by exploiting available information for both ligands and targets [11]. This review examines the developmental trajectory, key methodological drivers, and performance benchmarks of combined LB-SB approaches, providing a comprehensive comparison guided by experimental data from enrichment studies.

Strategic Approaches for Combining LB and SB Methods

The integration of LB and SB virtual screening has crystallized into three principal strategic frameworks, each with distinct operational logics and implementation pathways.

Sequential Strategies

Concept and Workflow: Sequential approaches divide the virtual screening pipeline into consecutive filtering steps, typically applying faster LB techniques for initial prefiltering before employing more computationally intensive SB methods for final candidate selection [11]. This strategy optimizes the trade-off between computational cost and screening accuracy.

Historical Implementation: A representative example is the workflow employed by Debnath et al., who screened a database of 4.3 million compounds initially using a pharmacophore model (LB), followed by ADMET filtering, and finally molecular docking (SB) [11]. This sequential cascade successfully identified histone deacetylase 8 (HDAC8) inhibitors with nanomolar potency, demonstrating the practical efficacy of this approach [11].

Parallel Strategies

Concept and Workflow: Parallel strategies execute LB and SB screening independently, then combine the results through consensus scoring or rank-based fusion techniques [11] [13]. This approach leverages the orthogonal perspectives of both methods to achieve more robust predictions.

Historical Implementation: The study by Spadaro et al. exemplified parallel integration by using a pharmacophoric model derived from X-ray crystallographic data (SB) in conjunction with LBVS techniques to identify novel inhibitors of 17β-hydroxysteroid dehydrogenase type 1 [11]. The parallel application led to the discovery of a keto-derivative compound with nanomolar inhibitory potency [11].

Hybrid Strategies

Concept and Workflow: Hybrid strategies create deeply integrated frameworks where LB and SB elements inform each other throughout the screening process, often through iterative feedback loops [11]. These approaches aim to achieve true synergy rather than simple combination.

Historical Implementation: RosettaAMRLD represents an advanced hybrid approach that integrates a Monte Carlo Metropolis algorithm with reaction-driven molecule proposal and similarity-guided fragment sampling [14]. This method combines the target-specific insights of SB approaches with the chemical guidance of LB similarity assessments in a single workflow for de novo drug design [14].

Table 1: Comparison of Strategic Frameworks for Combining LB and SB Methods

| Strategy Type | Key Characteristics | Advantages | Limitations | Representative Examples |
|---|---|---|---|---|
| Sequential | Step-wise application of LB then SB methods | Computational efficiency; Progressive filtering | Early LB stage might exclude viable compounds | Pharmacophore screening followed by docking [11] |
| Parallel | Independent execution with combined results | Robustness through consensus; Complementary strengths | Requires normalization of different scoring systems | Consensus scoring with molecular similarity and docking [13] |
| Hybrid | Deeply integrated with iterative feedback | Synergistic effects; Adaptive optimization | Implementation complexity; Computational intensity | RosettaAMRLD with similarity-guided docking [14] |

Performance Benchmarks and Experimental Data

Rigorous benchmarking studies have quantitatively assessed the performance gains achieved through combined LB-SB approaches compared to individual methods.

Enrichment Metrics and Comparative Performance

A comprehensive assessment of hybrid methods combining lipophilic molecular similarity and docking across 44 datasets encompassing 41 different targets demonstrated consistent improvement over pure LB or SB methods [13]. The hybrid approaches enhanced both early recognition capability (as measured by ROCe%) and overall performance (as measured by AUC) in virtual screening campaigns [13].

Table 2: Performance Comparison of Pure vs. Combined LB-SB Methods in Virtual Screening

| Method Category | Early Enrichment (ROCe%) | Overall Performance (AUC) | Chemical Diversity of Identified Actives | Implementation Considerations |
|---|---|---|---|---|
| Pure LB Methods | Variable; highly target-dependent | Moderate to high | Limited by reference compound bias | Fast computation; Limited by known actives |
| Pure SB Methods | Inconsistent due to scoring function limitations | Moderate | Potentially high but uneven | Computationally intensive; Requires target structure |
| Combined LB-SB Methods | Consistently superior | Highest across targets | Enhanced diversity | Balanced computational cost; Maximizes available information |

Target-Dependent Performance Variations

The effectiveness of combined strategies exhibits significant dependency on target characteristics. Methods incorporating 3D similarity approaches like PharmScreen, which computes the 3D distribution of atomic lipophilicity from quantum mechanical-based continuum solvation calculations, showed particularly strong performance when integrated with docking programs including Glide, rDock, and GOLD [13]. The synergy between these methods helped mitigate individual limitations, resulting in more reliable identification of active compounds across diverse target classes [13].

Experimental Protocols and Methodologies

Protocol for Combined Molecular Similarity and Docking

Objective: To implement a hybrid LB-SB virtual screening protocol that enhances hit rates and chemical diversity of identified active compounds.

Workflow Components:

- Input Preparation: Target protein structure preparation and compound library curation

- Lipophilic Molecular Similarity Calculation: Use PharmScreen to compute 3D similarity based on atomic lipophilicity distributions derived from quantum mechanical calculations [13]

- Molecular Docking: Perform docking with selected programs (Glide, rDock, or GOLD) [13]

- Score Integration: Combine similarity measurements and docking scores using mixed strategies for re-ranking screened ligands [13]

- Validation: Assess performance using early enrichment (ROCe%) and overall performance (AUC) metrics across diverse target sets [13]

Key Parameters: The protocol employs a dynamic weighting scheme that balances the contributions of similarity scores and docking scores, optimized through benchmarking across multiple targets [13].
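The score integration step can be sketched as follows. This is a simplified illustration and not the weighting scheme of [13]: it uses a single fixed `weight` (a hypothetical parameter) rather than a dynamic one, standardizes both score types to z-scores, and flips the sign of docking scores on the assumption that lower docking scores are better.

```python
from statistics import mean, pstdev


def zscores(values):
    """Standardize a list of numbers to zero mean and unit variance."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


def fuse_scores(similarity, docking, weight=0.5):
    """Blend similarity (higher is better) with docking (lower is better)."""
    sim_z = zscores(similarity)
    dock_z = zscores([-d for d in docking])  # flip sign so higher is better
    return [weight * s + (1.0 - weight) * d for s, d in zip(sim_z, dock_z)]


def rerank(names, similarity, docking, weight=0.5):
    """Return compound names ordered from best to worst fused score."""
    fused = fuse_scores(similarity, docking, weight)
    return [n for _, n in sorted(zip(fused, names), reverse=True)]


# Made-up scores for three compounds
names = ["a", "b", "c"]
similarity = [0.9, 0.5, 0.1]
docking = [-5.0, -9.0, -7.0]
lb_only = rerank(names, similarity, docking, weight=1.0)
sb_only = rerank(names, similarity, docking, weight=0.0)
```

Setting `weight` to 1.0 or 0.0 recovers the pure LB or pure SB ranking, so the same function can be used to benchmark the blend against both baselines.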

Reaction-Driven De Novo Design Protocol

Objective: To generate novel, synthetically accessible ligands through combined SB docking and LB similarity guidance.

Workflow Components:

- Input Complex: Protein-ligand complex with defined binding pocket (from experimental structures or docking) [14]

- Combinatorial Library: Predefined reactions and corresponding reagents from ultralarge libraries (e.g., Enamine REAL space) [14]

- Monte Carlo Sampling: Iterative ligand generation through similarity-guided fragment sampling using Tversky similarity index [14]

- Ligand Evaluation: Binding assessment using RosettaLigand flexible docking capabilities [14]

- Multi-round Optimization: Cascaded sampling workflow extending best-performing routes to escape local minima [14]

Key Innovation: The geometrically weighted sampling approach prioritizes fragments with higher structural similarity to reference molecules while maintaining exploration of diverse chemical space, ensuring both synthetic accessibility and binding optimization [14].
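The Tversky index mentioned in the sampling step is an asymmetric generalization of the Tanimoto coefficient. A minimal sketch on sets of "on" fingerprint bits is shown below. The default `alpha` and `beta` values are illustrative assumptions and are not taken from [14].

```python
def tversky(a, b, alpha=0.7, beta=0.3):
    """Tversky index between two collections of fingerprint bits.

    alpha weights features unique to `a`, beta weights features unique to `b`.
    alpha = beta = 1 gives the Tanimoto coefficient.
    """
    a, b = set(a), set(b)
    common = len(a & b)
    denom = common + alpha * len(a - b) + beta * len(b - a)
    return common / denom if denom else 0.0


reference = {1, 2, 3, 4}   # bits set in a reference molecule (toy data)
fragment = {1, 2}          # bits set in a candidate fragment (toy data)

symmetric = tversky(reference, fragment, alpha=1.0, beta=1.0)
substructure_like = tversky(reference, fragment, alpha=0.0, beta=1.0)
```

With `alpha=0` the bits that only the reference has are ignored, so a fragment fully contained in the reference scores 1.0. This asymmetry is what makes the index convenient for comparing small fragments against larger reference ligands.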

Diagram 1: Hybrid LB-SB Virtual Screening Workflow. This diagram illustrates the parallel execution of LB and SB methods followed by score integration, as implemented in successful combined screening protocols [11] [13].

Table 3: Key Computational Tools and Resources for Combined LB-SB Approaches

| Tool/Resource | Type | Primary Function | Application Context |

|---|---|---|---|

| PharmScreen | LB Similarity Tool | Computes 3D molecular similarity based on atomic lipophilicity | Lipophilic similarity assessment in hybrid screening [13] |

| Glide, rDock, GOLD | SB Docking Programs | Predict ligand binding poses and scores using scoring functions | Structure-based component of hybrid screening [13] |

| RosettaAMRLD | Hybrid Design Platform | Reaction-driven de novo ligand design with similarity guidance | Integrated LB-SB design without known binders [14] |

| Enamine REAL Space | Chemical Library | Ultralarge collection of synthetically accessible compounds | Source of feasible chemical structures for design [14] |

| RIFDock | SB Docking Algorithm | Rapid interface docking using rotamer interaction fields | Broad exploration of binding modes in protein design [15] |

The historical evolution of combined LB-SB approaches represents a paradigm shift in computational drug discovery, moving from isolated applications to integrated frameworks that leverage the synergistic potential of complementary methodologies. Experimental benchmarks across diverse targets consistently demonstrate that hybrid strategies outperform individual methods in both early enrichment and overall identification of active compounds, while also enhancing the chemical diversity of hits [13].

The developmental trajectory of these integrated approaches has been driven by several key factors: (1) the need to mitigate individual methodological limitations through combination; (2) the availability of increasingly accurate SB structural data, enhanced by advances like AlphaFold; and (3) the expansion of synthetically accessible chemical spaces for LB guidance [11] [14]. As the field progresses, the continued refinement of integration strategies, scoring functions, and benchmarking standards will further solidify the role of combined LB-SB approaches as indispensable tools in modern drug discovery pipelines.

Diagram 2: Classification of Combined LB-SB Strategic Approaches. This diagram outlines the three primary frameworks for integrating ligand-based and structure-based methods in virtual screening, as categorized in the literature [11].

Molecular Docking, Pharmacophore Modeling, and Molecular Similarity

Virtual screening (VS) is a cornerstone of modern computational drug discovery, enabling researchers to efficiently identify potential hit compounds from vast chemical libraries. The methodologies are broadly classified into two categories: structure-based (SB) methods, which rely on the three-dimensional structure of the target protein, and ligand-based (LB) methods, which utilize the structural and physicochemical properties of known active ligands [10]. Molecular docking, a primary SB technique, predicts the preferred orientation of a small molecule within a target's binding site. Pharmacophore modeling and molecular similarity searches, both LB approaches, identify new candidates based on the essential interaction features of known actives or their overall structural resemblance [10].

Individually, these methods have proven successful, but they possess distinct limitations. LB methods can be biased toward the chemical scaffolds of the training set, while SB methods like docking often struggle with accurately scoring compounds and accounting for full protein flexibility [10]. Consequently, the integration of LB and SB techniques into combined strategies has emerged as a powerful approach to overcome these weaknesses, creating a holistic framework that leverages all available information to enhance the success of drug discovery projects [10]. This guide provides a comparative analysis of these core techniques and explores how their synergy leads to more robust enrichment in virtual screening campaigns.

Core Concepts and Comparative Analysis

Key Terminology and Definitions

- Molecular Docking: A structure-based computational method that predicts the preferred orientation (pose) and binding affinity of a small molecule (ligand) when bound to a macromolecular target (e.g., a protein). The quality of the prediction is evaluated using a scoring function [6] [16].

- Pharmacophore Modeling: A ligand-based method that abstracts the essential steric and electronic features of a bioactive molecule that are necessary for its molecular recognition by a target. A pharmacophore model does not represent a real molecule, but a map of interactions such as hydrogen bond donors/acceptors, hydrophobic regions, and charged centers [17] [18].

- Molecular Similarity: A fundamental concept in chemoinformatics which posits that structurally similar molecules are likely to have similar properties or biological activities. This principle is operationalized using molecular descriptors or fingerprints (e.g., ECFP) to calculate similarity metrics and search chemical databases [19].

Performance Comparison of Core Techniques

The table below summarizes the fundamental characteristics, strengths, and weaknesses of each method.

Table 1: Comparative Overview of Key Virtual Screening Methods

| Feature | Molecular Docking | Pharmacophore Modeling | Molecular Similarity |

|---|---|---|---|

| Primary Classification | Structure-Based (SB) | Ligand-Based (LB) | Ligand-Based (LB) |

| Required Input | 3D Protein Structure, Ligand Structure | Known Active Ligand(s) and/or Protein Structure | Known Active Ligand(s) |

| Underlying Principle | Complementarity and energetic favorability of ligand binding to a protein pocket | Abstraction of key chemical features responsible for biological activity | The "similar property principle": similar structures imply similar activity |

| Key Output | Predicted binding pose & affinity score | A 3D query of chemical features for database screening | A ranked list of compounds based on similarity to a reference |

| Major Strengths | Provides structural insights into binding modes; can discover novel scaffolds | Highly interpretable; efficient for high-throughput screening; less computationally expensive than docking | Fast and scalable to very large libraries; excellent for finding analogs |

| Major Limitations | Scoring function inaccuracies; handling protein flexibility is challenging [10] | Dependent on quality and diversity of input ligands; may miss novel chemotypes [10] | Inherent bias towards the reference scaffold; can miss active but structurally distinct compounds |

Performance of Docking Methods: Traditional vs. Deep Learning

Recent advances have introduced deep learning (DL) into molecular docking. A 2025 systematic evaluation compared traditional and DL-based docking methods across multiple dimensions, including pose prediction accuracy and physical validity (e.g., plausible bond lengths, absence of steric clashes) [6]. The following table synthesizes key findings from this study.

Table 2: Performance Comparison of Traditional and Deep Learning Docking Methods [6]

| Method Type | Representative Tools | Pose Accuracy (RMSD ≤ 2 Å) | Physical Validity (PB-Valid Rate) | Key Characteristics |

|---|---|---|---|---|

| Traditional | Glide SP, AutoDock Vina | Moderate to High | High (≥94% across datasets) | Robust and reliable; excels at producing physically plausible poses. |

| Generative Diffusion | SurfDock, DiffBindFR | High (e.g., SurfDock: >70%) | Moderate (e.g., SurfDock: ~40-63%) | Superior pose accuracy but often produces physically implausible poses. |

| Regression-Based | KarmaDock, QuickBind | Variable, often lower | Low | Often fail to produce physically valid poses; fast but less reliable. |

| Hybrid (AI Scoring) | Interformer | Moderate | High | Balances pose accuracy with physical validity; combines AI scoring with traditional search. |
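The pose accuracy column relies on the RMSD ≤ 2 Å success criterion. The sketch below shows how such a success rate could be computed. It assumes predicted and reference poses share the same receptor frame and atom ordering, and it does not correct for molecular symmetry, which dedicated tools usually handle.

```python
import math


def rmsd(coords_a, coords_b):
    """Root-mean-square deviation between two matched lists of (x, y, z)."""
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("coordinate lists must be non-empty and equal length")
    sq = sum(
        (xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
        for (xa, ya, za), (xb, yb, zb) in zip(coords_a, coords_b)
    )
    return math.sqrt(sq / len(coords_a))


def success_rate(rmsds, cutoff=2.0):
    """Fraction of poses whose RMSD to the reference is within the cutoff."""
    return sum(1 for r in rmsds if r <= cutoff) / len(rmsds)


# Toy two-atom ligand: second atom displaced by 2 Å along z
predicted = [(0.0, 0.0, 0.0), (1.0, 0.0, 2.0)]
reference = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
pose_rmsd = rmsd(predicted, reference)
rate = success_rate([0.5, 1.9, 2.0, 3.5])
```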

Integration Strategies: LB-SB Synergy in Action

Combining LB and SB methods can mitigate their individual limitations and leverage their complementary strengths. The primary integration strategies, as classified in the literature, are sequential, parallel, and hybrid [10].

Workflow Diagrams for Combined LB-SB Strategies

The following diagrams illustrate the three main strategies for integrating ligand-based and structure-based virtual screening.

Sequential Strategy Workflow

Parallel Strategy Workflow

Hybrid Strategy Workflow

Experimental Protocols for Combined Workflows

The protocols below detail how these integration strategies are implemented in practice, based on successful case studies.

Protocol 1: Sequential LB → SB Screening for Kinase Inhibitors

This protocol is based on a collaborative compound proposal contest for identifying inhibitors of tyrosine-protein kinase Yes [20].

- Ligand-Based Pre-filtering: The large compound library (e.g., 2.2 million compounds) is first screened using LB methods. This involves:

- Similarity Search: Calculate 2D Tanimoto indices between library compounds and known active ligands. Select compounds exceeding a threshold (e.g., >0.55) [20].

- Pharmacophore Screening: Generate a pharmacophore model from known actives and screen the library to find compounds that match the essential features [20].

- Structure-Based Refinement: The reduced subset (e.g., a few thousand compounds) from Step 1 is subjected to molecular docking.

- Hit Selection: Select top-ranked compounds from the docking study for experimental validation.
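The similarity pre-filter in Protocol 1 can be sketched with fingerprints represented as sets of "on" bits. The 0.55 threshold comes from the text above. The data layout and the choice to keep a compound when its best match to any known active exceeds the threshold are assumptions made for illustration.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two sets of fingerprint bits."""
    a, b = set(fp_a), set(fp_b)
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def prefilter(library, actives, threshold=0.55):
    """Keep compounds whose best similarity to any active exceeds threshold.

    library: dict mapping compound name -> set of fingerprint bits
    actives: list of fingerprint bit sets for known active ligands
    """
    kept = []
    for name, fp in library.items():
        best = max(tanimoto(fp, ref) for ref in actives)
        if best > threshold:
            kept.append((name, best))
    # Most similar compounds first, ready to be passed on to docking
    return sorted(kept, key=lambda item: item[1], reverse=True)


# Toy fingerprints (bit indices are arbitrary)
actives = [{1, 2, 3, 4, 5}]
library = {"x": {1, 2, 3, 4}, "y": {1, 2, 9, 10}, "z": {1, 2, 3, 4, 5, 6}}
survivors = prefilter(library, actives)
```

Only the surviving subset would then be docked, which is where the sequential strategy saves most of its computational cost.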

Protocol 2: Structure-Based Pharmacophore with LB Screening for hMPV Inhibitors

This hybrid protocol demonstrates the direct integration of SB and LB information into a single query [21].

- Structure-Based Model Generation:

- Obtain the 3D structure of the target (e.g., hMPV RNA-dependent RNA polymerase, PDB: 8FPJ).

- Analyze the binding site of a co-crystallized inhibitor. Define the critical chemical interaction features (e.g., hydrogen bond donors/acceptors, aromatic rings, hydrophobic regions) to create a structure-based pharmacophore model [21].

- Ligand-Based Virtual Screening:

- Use the generated pharmacophore model as a 3D query to screen large commercial databases (e.g., MolPort, ZINC) [21].

- Retrieve compounds that map successfully to all or most of the pharmacophore features.

- Further Filtering and Validation:

Table 3: Key Computational Tools and Databases for Virtual Screening

| Item Name | Category/Type | Primary Function in Research |

|---|---|---|

| AutoDock Vina [6] | Molecular Docking Software | Predicts protein-ligand binding poses and scores binding affinities using an efficient search algorithm and scoring function. |

| Glide [6] [16] | Molecular Docking Software | Performs high-accuracy hierarchical docking and scoring, often used for final refinement stages. |

| DiffDock [17] | Deep Learning Docking | Uses a diffusion model to generate ligand binding poses, showing high pose prediction accuracy. |

| PHASE [17] | Pharmacophore Modeling | Creates, validates, and screens databases using highly customizable pharmacophore models. |

| AncPhore [17] | Pharmacophore Modeling | Identifies anchor pharmacophore features from protein-ligand complexes and supports virtual screening. |

| DiffPhore [17] | AI-based Pharmacophore | A knowledge-guided diffusion framework for 3D ligand-pharmacophore mapping and binding conformation generation. |

| Extended-Connectivity Fingerprints (ECFP) [22] | Molecular Fingerprint | Encodes molecular structure into a fixed-length bit string for rapid similarity searching and machine learning. |

| ZINC/ChEMBL [21] [20] | Chemical Database | Publicly accessible databases of commercially available and bioactive compounds for virtual screening. |

| PyRx [21] | Virtual Screening Platform | An open-source GUI that integrates docking and screening tools for a streamlined workflow. |

| SwissADME [21] | ADMET Prediction Tool | Web-based tool for predicting key pharmacokinetic and drug-like properties of hit compounds. |

Molecular docking, pharmacophore modeling, and molecular similarity searches each offer unique advantages for virtual screening. The choice of method depends heavily on the available structural and ligand information. As performance data show, traditional docking methods like Glide remain robust for producing physically valid poses, while emerging deep learning methods show promise in pose accuracy but require further refinement [6]. The most significant trend, however, is the move toward integrated LB-SB strategies. Whether sequential, parallel, or truly hybrid, these combined approaches leverage the strengths of each paradigm to achieve a level of enrichment and hit diversity that is difficult to attain with any single method [10] [20]. The continued development of AI-powered tools, particularly those that natively bridge LB and SB concepts like DiffPhore [17], is set to further solidify this holistic framework as the future of computational hit discovery.

Theoretical Basis for Enhanced Performance Through Integration

Computer-aided drug discovery (CADD) has emerged as a transformative force in pharmaceutical research, significantly reducing the time and costs associated with traditional high-throughput screening while expanding the diversity of searchable compounds [14] [23]. CADD methodologies are broadly categorized into two complementary paradigms: structure-based (SB) methods, which simulate interactions between small molecules and target protein structures, and ligand-based (LB) methods, which infer structure-activity relationships from known binders and non-binders [14] [24]. The integration of these approaches represents a paradigm shift in computational drug discovery, leveraging the complementary strengths of each method to overcome their individual limitations and enhance predictive performance.

The therapeutic imperative for this integration is substantial. Conventional drug discovery remains exceptionally resource-intensive, requiring the better part of a decade and costing between $161 million and $4.54 billion to bring a new drug to market [14]. Nearly half of these costs are incurred during early discovery stages, creating an urgent need for more efficient screening methodologies [14]. Furthermore, the estimated drug-like chemical space exceeds 10^60 molecules, far surpassing the practical screening capacity of current SB virtual screening campaigns, which are limited to libraries of approximately 10^9 molecules [14]. A search space of this size calls for navigation strategies that prioritize promising regions for exploration.

Table 1: Fundamental Approaches in Computer-Aided Drug Design

| Approach | Core Methodology | Data Requirements | Key Strengths | Primary Limitations |

|---|---|---|---|---|

| Structure-Based (SB) | Molecular docking, binding site analysis | Protein 3D structures | Target-specific insights; novel scaffold identification | Dependent on quality of structural data; computationally expensive |

| Ligand-Based (LB) | QSAR, pharmacophore modeling, similarity searching | Known active/inactive compounds | Effective when structure unavailable; leverages historical data | Limited to chemical space similar to known actives |

| Integrated LB-SB | Hybrid models combining both methodologies | Structural and ligand activity data | Enhanced coverage of chemical space; improved prediction accuracy | Implementation complexity; data integration challenges |

The recent proliferation of protein structural data, accelerated by deep-learning advances such as AlphaFold, has created unprecedented opportunities for SB methods [14] [23]. Simultaneously, the expansion of chemical bioactivity databases has enriched the foundation for LB approaches. This confluence of data resources, combined with methodological innovations in machine learning and chemoinformatics, establishes an ideal environment for developing integrated LB-SB frameworks that can more effectively navigate the complex landscape of drug discovery.

Methodological Framework: Experimental Protocols for LB-SB Integration

Transfer Learning Architecture for Bioactivity Prediction

A pioneering protocol for LB-SB integration employs transfer learning to enhance bioactivity predictions for pharmaceutically relevant targets, particularly G protein-coupled receptors (GPCRs) including opioid receptors [24]. This methodology addresses the critical challenge of limited training data for specific biological targets by leveraging knowledge from larger, related datasets.

Experimental Protocol:

- Data Curation: Bioactivity data for target receptors (δ-, μ-, and κ-opioid receptors) are retrieved from the IUPHAR/BPS Guide to Pharmacology and ChEMBL database (Release 32) [24]. Inactive ligands are defined as those with potency worse than 10 μM (pKi, pIC50, or pEC50 < 5).

- Descriptor Calculation:

- LB Descriptors: 43 molecular descriptors encompassing physical-chemical properties, atom counts, and topological indices are computed using RDKit's ComputeProperties function [24].

- SB Descriptors: Protein-ligand complexes from the PDB are processed using iChem's cavity-based pharmacophores. Similarity values are calculated between ligand conformers and cavity-derived pharmacophores, with features derived from maximum, average, and 75th percentile similarity scores [24].

- Model Architecture: Two neural network architectures are implemented:

- Dense Neural Network (DNN): Processes concatenated LB and SB feature vectors through multiple fully connected layers.

- Graph Convolutional Network (GCN): Operates directly on molecular graph structures to learn relevant features [24].

- Transfer Learning Implementation: Models are pretrained using supervised learning on a larger dataset encompassing the entire opioid receptor subfamily, then fine-tuned on target-specific datasets for individual receptor subtypes [24].
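The data curation and descriptor steps above can be sketched in plain Python. The pX < 5 cutoff for inactives and the maximum, average, and 75th percentile summaries come from the protocol. Treating every other ligand as active, the nanomolar input unit, the linear-interpolation percentile, and all function names are assumptions made for this illustration.

```python
import math
from statistics import mean


def p_activity(value_nm):
    """Convert a potency in nM to its negative log10 molar value (pKi etc.)."""
    return 9.0 - math.log10(value_nm)


def label(value_nm, cutoff=5.0):
    """Return 0 (inactive) if pX < cutoff, otherwise 1 (assumed active)."""
    return 0 if p_activity(value_nm) < cutoff else 1


def percentile(values, q):
    """Percentile with linear interpolation, q given in [0, 100]."""
    ordered = sorted(values)
    pos = (len(ordered) - 1) * q / 100.0
    lo, hi = math.floor(pos), math.ceil(pos)
    return ordered[lo] + (ordered[hi] - ordered[lo]) * (pos - lo)


def sb_features(conformer_similarities):
    """Summarize per-conformer pharmacophore similarities as three numbers."""
    return [
        max(conformer_similarities),
        mean(conformer_similarities),
        percentile(conformer_similarities, 75),
    ]


def combined_features(lb_descriptors, conformer_similarities):
    """Concatenate LB descriptors with SB summary features for a DNN input."""
    return list(lb_descriptors) + sb_features(conformer_similarities)


# Toy ligand: two LB descriptors and five conformer similarity values
features = combined_features([300.0, 2.5], [0.2, 0.4, 0.6, 0.8, 1.0])
```

The resulting flat vector corresponds to the concatenated input described for the DNN. The GCN variant would instead consume the molecular graph directly.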

Reaction-Driven De Novo Design with RosettaAMRLD

The Rosetta Automated Monte Carlo Reaction-based Ligand Design (RosettaAMRLD) protocol integrates SB design with reaction-driven synthesis planning to ensure synthetic accessibility [14]. This approach combines the flexible ligand docking capabilities of the Rosetta software suite with ultralarge combinatorial libraries to generate novel, synthetically accessible drug-like molecules.

Experimental Protocol:

- Input Preparation: A protein-ligand complex (from experimental structures or docking) and a combinatorial library with predefined reactions and corresponding reagents.

- Monte Carlo Metropolis Algorithm: Iterative process consisting of:

- Ligand Generation: Similarity-guided fragment sampling using RDKit Daylight-like Fingerprints and Tversky index for asymmetric similarity calculations [14].

- Geometrically Weighted Sampling: Favors fragments with higher similarity to reference molecules while maintaining diversity through ranking-based weighting [14].

- Alignment and Evaluation: Proposed ligands are aligned to previous ligands and evaluated for binding using Rosetta's scoring functions [14].

- Reaction-Based Assembly: Fragments are placed in "reaction containers" corresponding to predefined chemical reactions, with products generated virtually by combining fragments from each component sub-container [14].

- Cascaded Sampling Workflow: The protocol is repeated multiple times, extending only the best-performing routes to efficiently explore chemical space and escape local minima [14].
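Two ingredients of the protocol above, rank-based geometric weighting and Metropolis acceptance, can be sketched as follows. This is not the RosettaAMRLD implementation: the decay `ratio`, the `temperature`, and the function names are hypothetical, and scores are assumed to be energy-like (lower is better).

```python
import math
import random


def geometric_weights(n, ratio=0.8):
    """Sampling weights that decay geometrically with similarity rank."""
    raw = [ratio ** rank for rank in range(n)]
    total = sum(raw)
    return [w / total for w in raw]


def sample_fragment(fragments_ranked, rng, ratio=0.8):
    """Pick one fragment; entries earlier in the ranked list are favored."""
    weights = geometric_weights(len(fragments_ranked), ratio)
    return rng.choices(fragments_ranked, weights=weights, k=1)[0]


def metropolis_accept(old_score, new_score, temperature, rng):
    """Always accept improvements; accept worse scores with Boltzmann odds."""
    if new_score <= old_score:
        return True
    return rng.random() < math.exp(-(new_score - old_score) / temperature)


rng = random.Random(0)  # fixed seed so the sketch is reproducible
fragments = ["frag_most_similar", "frag_mid", "frag_least_similar"]
weights = geometric_weights(len(fragments), ratio=0.5)
choice = sample_fragment(fragments, rng, ratio=0.5)
accepted = metropolis_accept(-50.0, -55.0, temperature=1.0, rng=rng)
```

Because every fragment keeps a non-zero weight, less similar fragments are still sampled occasionally, which matches the stated goal of favoring similarity while maintaining diversity.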

Performance Comparison: Quantitative Assessment of Integrated Approaches

Benchmarking Transfer Learning Efficacy for Opioid Receptors

The integrated LB-SB approach with transfer learning demonstrates substantial improvements in bioactivity prediction accuracy compared to conventional methods. When applied to opioid receptor targets, this methodology significantly enhances model performance, which is particularly valuable for targets with limited training data.

Table 2: Performance Comparison of Bioactivity Prediction Methods for Opioid Receptors

| Methodology | Receptor Subtype | Accuracy (%) | Precision | Recall | AUC-ROC | Training Data Size |

|---|---|---|---|---|---|---|

| LB-Only DNN | MOR | 74.2 | 0.71 | 0.75 | 0.79 | 1,847 ligands |

| SB-Only DNN | MOR | 76.8 | 0.74 | 0.77 | 0.81 | 25 complex structures |

| Integrated LB-SB DNN | MOR | 82.5 | 0.80 | 0.83 | 0.87 | Combined features |

| LB-Only GCN | KOR | 72.6 | 0.70 | 0.73 | 0.77 | 1,923 ligands |

| Integrated LB-SB GCN | KOR | 84.3 | 0.82 | 0.85 | 0.89 | Combined features |

| Transfer Learning DNN | DOR | 88.7 | 0.86 | 0.89 | 0.92 | Pretrained on full OR family |

The performance advantage of integrated approaches is particularly pronounced for the κ-opioid receptor (KOR), where the Graph Convolutional Network achieves an AUC-ROC of 0.89 with integrated LB-SB features compared to 0.77 with LB features alone [24]. Similarly, for the δ-opioid receptor (DOR), transfer learning improves prediction accuracy to 88.7% compared to 76-82% with standard approaches [24]. This demonstrates the substantial benefit of leveraging both structural information and ligand descriptors within a transfer learning framework.

Evaluating RosettaAMRLD Performance Across Protein Targets

RosettaAMRLD has been rigorously benchmarked across diverse protein target classes, demonstrating consistent advantages over random sampling and fragment-based approaches in generating high-quality ligand candidates with improved docking scores and synthetic accessibility.

Table 3: RosettaAMRLD Performance Across Protein Target Classes

| Protein Target | Target Class | Method | Mean Docking Score (REU) | Synthetic Accessibility | Diversity (Tanimoto) | Key Interactions Recapitulated |

|---|---|---|---|---|---|---|

| TrmD | Methyltransferase | Random Sampling | -42.3 | Not assessed | 0.82 | Limited |

| TrmD | Methyltransferase | RosettaAMRLD | -58.7 | High (reaction-based) | 0.79 | 94% of native contacts |

| CDK2 | Kinase | Fragment-Based | -51.2 | Variable | 0.85 | 78% of key interactions |

| CDK2 | Kinase | RosettaAMRLD | -63.4 | High (reaction-based) | 0.81 | 92% of key interactions |

| OX1R | GPCR | Random Sampling | -39.8 | Not assessed | 0.88 | Limited |

| OX1R | GPCR | RosettaAMRLD | -55.1 | High (reaction-based) | 0.83 | 89% of native contacts |

The benchmarking results demonstrate RosettaAMRLD's consistent ability to generate novel ligands with significantly improved docking scores compared to random sampling: mean scores are roughly 15-16 REU (Rosetta Energy Units) more favorable for the TrmD and OX1R comparisons in Table 3 [14]. Multiround iteration further enhances output quality, resulting in molecules with in silico properties exceeding those of known actives while maintaining high synthetic accessibility through reaction-driven design [14]. The method successfully explores ultralarge chemical spaces (leveraging combinatorial libraries such as Enamine REAL space exceeding 30 billion compounds) while preserving key protein-ligand interactions found in known actives [14].

Successful implementation of integrated LB-SB approaches requires specialized computational tools and data resources. The following table summarizes key research reagent solutions essential for conducting these advanced computational experiments.

Table 4: Essential Research Reagent Solutions for Integrated LB-SB Studies

| Resource Category | Specific Tool/Resource | Key Functionality | Application in LB-SB Integration |

|---|---|---|---|

| Chemical Libraries | Enamine REAL Space | >30 billion make-on-demand compounds | Ultralarge virtual screening; reaction-based design [14] |

| Bioactivity Databases | IUPHAR/BPS Guide to Pharmacology | Curated bioactive ligands & targets | Training data for LB models; bioactivity benchmarks [24] |

| Bioactivity Databases | ChEMBL | Bioactivity data from literature | Model training; negative data for machine learning [24] |

| Structural Databases | Protein Data Bank (PDB) | Experimental protein structures | SB docking; binding site analysis [24] |

| Cheminformatics | RDKit | Molecular descriptor calculation | Fingerprint generation; similarity calculations [14] [24] |

| Molecular Modeling | Rosetta Software Suite | Flexible ligand docking | Binding affinity evaluation; pose optimization [14] |

| Deep Learning | DeepChem | Neural network architectures | DNN/GCN implementation; multi-task learning [24] |

| Descriptor Calculation | iChem Volsite Tool | Cavity-based pharmacophores | SB molecular descriptor generation [24] |

| Conformer Generation | OpenEye Toolkits | 3D conformer enumeration | SB descriptor calculation; shape-based screening [24] |

The integration of ligand-based and structure-based approaches represents a significant methodological advancement in computational drug discovery. The experimental evidence demonstrates that hybrid LB-SB frameworks consistently outperform single-modality approaches across multiple performance metrics, including prediction accuracy, docking scores, and ability to recapitulate key molecular interactions while maintaining synthetic accessibility.

The transfer learning architecture for opioid receptors achieves 84-89% prediction accuracy by leveraging knowledge transfer across related targets [24], while RosettaAMRLD generates novel ligands with mean docking scores roughly 15-16 REU more favorable than random sampling in the Table 3 comparisons [14]. These performance advantages stem from the complementary nature of LB and SB information: LB methods provide robust activity landscapes based on chemical similarity, and SB approaches offer atomic-level insights into binding interactions.

For researchers and drug development professionals, adopting these integrated methodologies requires specialized computational resources and expertise but offers substantial returns in screening efficiency and hit rates. As computational power increases and algorithms evolve, further refinement of these integrated approaches will continue to enhance their predictive accuracy and practical utility. The ongoing expansion of chemical and structural databases, combined with methodological innovations in deep learning and reaction-driven design, positions integrated LB-SB strategies as essential components of the modern drug discovery toolkit with potential to significantly accelerate the identification of novel therapeutic candidates.

Implementation Frameworks: Sequential, Parallel, and Hybrid LB-SB Strategies

In the demanding field of drug discovery, virtual screening (VS) stands as a cornerstone methodology for identifying novel bioactive molecules from vast chemical libraries. Computational VS approaches are broadly classified into two categories: ligand-based (LB) methods, which rely on the structural and physicochemical properties of known active compounds, and structure-based (SB) methods, which utilize the three-dimensional structure of the biological target. While each has proven successful, their complementary strengths and weaknesses have stimulated the development of hybrid strategies that integrate both approaches into a cohesive framework [10].

Among these hybrid strategies, sequential approaches that progressively filter chemical libraries from LB pre-screening to SB refinement have emerged as a particularly efficient and powerful paradigm. These methods acknowledge that LB techniques, with their lower computational cost, are ideal for rapidly narrowing down large libraries, while more computationally demanding SB methods can be reserved for the detailed assessment of a prioritized subset of compounds [10]. This article provides a comparative guide to these sequential LB-to-SB protocols, framing them within broader research on combined methods and detailing the experimental data, protocols, and tools that underpin their success.

Theoretical Foundations and Comparative Frameworks

The rationale for combining LB and SB methods stems from their inherent limitations. LBVS can be biased toward the reference template and may miss novel chemotypes, while SBVS struggles with protein flexibility and the accurate scoring of binding affinities [10]. Sequential approaches aim to optimize the trade-off between computational expense and predictive accuracy.

Classification of Hybrid Virtual Screening Strategies

A useful classification system, as proposed by Drwal and Griffith, categorizes combined LB-SB strategies into three main types [10]:

- Sequential Approaches: The VS pipeline is divided into consecutive steps, with the output of one step serving as the input for the next. This typically involves LB pre-filtering followed by SB refinement.

- Parallel Approaches: LB and SB methods are run independently on the entire chemical library, and the results are combined at the end to select candidates.

- Hybrid Approaches: LB and SB information is integrated into a single, unified computational formalism.
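To make the parallel category concrete, the sketch below runs two independent rankings over the same library and combines their top-N lists at the end. The union and intersection rules, and the assumption that similarity is "higher is better" while docking is "lower is better", are illustrative choices and are not prescribed by [10].

```python
def top_n(scores, n, higher_is_better=True):
    """Names of the n best-scoring compounds in a name -> score mapping."""
    order = sorted(scores, key=scores.get, reverse=higher_is_better)
    return set(order[:n])


def parallel_selection(similarity, docking, n, mode="union"):
    """Combine independent LB and SB top-n lists by union or intersection."""
    lb_hits = top_n(similarity, n, higher_is_better=True)
    sb_hits = top_n(docking, n, higher_is_better=False)
    return lb_hits | sb_hits if mode == "union" else lb_hits & sb_hits


# Made-up scores for four compounds
similarity = {"a": 0.9, "b": 0.8, "c": 0.3, "d": 0.2}
docking = {"a": -9.5, "b": -9.0, "c": -10.0, "d": -5.0}
broad = parallel_selection(similarity, docking, n=2, mode="union")
strict = parallel_selection(similarity, docking, n=2, mode="intersection")
```

The union favors coverage and chemical diversity, while the intersection favors confidence by keeping only compounds that both methods rank highly.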

This guide focuses on the sequential approach, which is widely adopted for its practical balance of efficiency and depth.

Experimental Protocols and Workflow Design

A typical sequential LB-to-SB workflow involves a multi-stage funnel designed to efficiently prioritize the most promising candidates. The specific methodologies employed at each stage can vary, but the underlying logic remains consistent.

General Sequential Workflow

The following diagram illustrates the standard workflow for a sequential LB-to-SB virtual screening protocol.

Stage 1: Ligand-Based Pre-screening

The primary goal of this initial stage is to rapidly reduce the chemical space of the virtual library to a manageable number of candidates.

- Objective: Enrich the dataset with compounds that have a high similarity to known active molecules.

- Common Methods:

- 2D Molecular Similarity: Uses molecular fingerprints (e.g., ECFP, FCFP) and similarity coefficients (e.g., Tanimoto) to compare structures [10].

- Pharmacophore Modeling: Identifies compounds that match a 3D arrangement of chemical features essential for biological activity [10].

- Quantitative Structure-Activity Relationship (QSAR) Models: Predicts activity based on mathematical models derived from known active and inactive compounds.

- Protocol Details: A known active ligand (or a set of actives) is used as a reference. The entire virtual library is screened, and each compound is assigned a similarity score. A threshold is set, and only the top-ranking compounds (e.g., 1-5% of the library) proceed to the next stage. This step is computationally inexpensive, allowing for the screening of millions of compounds in a short time.
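
The pre-screening step above can be sketched in a few lines. This is a minimal illustration, not a production pipeline: fingerprints are represented as plain Python sets of on-bit indices, and the names `lb_prefilter`, `keep_fraction`, and the toy compounds are hypothetical. In practice the fingerprints would come from a cheminformatics toolkit.

```python
from typing import Dict, List, Set, Tuple


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Tanimoto coefficient between two fingerprints stored as sets of on-bits."""
    if not a and not b:
        return 0.0
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


def lb_prefilter(
    library: Dict[str, Set[int]],
    references: List[Set[int]],
    keep_fraction: float = 0.05,
) -> List[Tuple[str, float]]:
    """Score each compound by its best similarity to any reference, keep the top fraction."""
    scored = [
        (name, max(tanimoto(fp, ref) for ref in references))
        for name, fp in library.items()
    ]
    scored.sort(key=lambda item: item[1], reverse=True)
    n_keep = max(1, round(len(scored) * keep_fraction))
    return scored[:n_keep]


# Toy data: hypothetical on-bit indices, not real ECFP output.
reference_actives = [{1, 2, 3, 4}]
toy_library = {
    "cmpd_A": {1, 2, 3, 4},
    "cmpd_B": {1, 2, 3, 9},
    "cmpd_C": {7, 8, 9},
    "cmpd_D": {1, 2},
}
shortlist = lb_prefilter(toy_library, reference_actives, keep_fraction=0.5)
print(shortlist)
```

Taking the maximum similarity over several reference actives is one possible design choice; averaging over references is an alternative when the actives are structurally diverse.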

Stage 2: Structure-Based Refinement

The filtered library from Stage 1 is then subjected to a more rigorous, computationally intensive assessment based on the target's 3D structure.

- Objective: Evaluate the binding mode and affinity of the prioritized compounds within the target's binding site.

- Common Methods:

  - Molecular Docking: Predicts the preferred orientation (pose) of a small molecule within the protein's binding pocket. Scoring functions are then used to estimate the binding affinity [10].

  - Molecular Mechanics/Generalized Born Surface Area (MM/GBSA): A more refined but costly method to calculate binding free energies from docked complexes.

- Protocol Details: The 3D structure of the target protein (from X-ray crystallography, cryo-EM, or homology modeling) is prepared by adding hydrogen atoms, assigning charges, and defining the binding site. Each compound from the LB-pre-screened list is docked into the site. Compounds are ranked based on their docking scores, and the top-ranked poses are visually inspected for key interactions (e.g., hydrogen bonds, hydrophobic contacts, pi-stacking). The final selection of hits for experimental validation is made from this refined list.
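
The ranking step of the refinement stage might look like the sketch below. The docking engine is replaced by a hypothetical callable (`dock`) that returns a score per compound, and the sketch assumes that lower (more negative) scores indicate better predicted binding. That convention is common but depends on the docking program, so it is an assumption here. The scores are made up for illustration.

```python
from typing import Callable, Iterable, List, Tuple


def sb_refine(
    shortlist: Iterable[str],
    dock: Callable[[str], float],
    n_inspect: int = 2,
) -> List[Tuple[str, float]]:
    """Dock every shortlisted compound and return the best-scoring ones.

    Assumes lower (more negative) scores mean better predicted binding.
    """
    scored = [(name, dock(name)) for name in shortlist]
    scored.sort(key=lambda item: item[1])
    return scored[:n_inspect]


# Hypothetical stand-in for a docking engine: a lookup of made-up scores.
fake_scores = {"cmpd_A": -9.1, "cmpd_B": -7.4, "cmpd_D": -8.3}
to_inspect = sb_refine(fake_scores.keys(), fake_scores.__getitem__, n_inspect=2)
print(to_inspect)
```

The returned compounds are the ones that would go forward to visual inspection of their poses; the score alone is not treated as a final decision.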

Performance Comparison and Experimental Data

The effectiveness of the sequential approach is demonstrated by its successful application in numerous drug discovery projects, often yielding hit rates competitive with experimental high-throughput screening.

Representative Case Studies

The table below summarizes key examples from the literature where sequential LB-to-SB strategies led to the identification of potent inhibitors.

Table 1: Case Studies of Successful Sequential LB-SB Virtual Screening

| Target | LB Pre-screening Method | SB Refinement Method | Identified Hit | Reported Potency (IC₅₀) | Key Outcome |
|---|---|---|---|---|---|
| 17β-HSD1 Enzyme [10] | Pharmacophore model derived from X-ray data | Molecular Docking | Keto-derivative compound | Nanomolar range | Novel inhibitor identified via holistic framework. |
| HDAC8 Enzyme [10] | Pharmacophore model & ADMET filtering | Molecular Docking | SD-01, SD-02 | 9.0 nM, 2.7 nM | Selective non-hydroxamate inhibitors discovered from 4.3M compound library. |

Comparative Performance Data

Studies have shown that sequential approaches can significantly enhance screening efficiency. For instance, a prospective study by Swann et al. demonstrated that parallel or sequentially combined methods increase both performance and robustness compared to single-modality approaches [10]. The hit rates from such combined strategies are often substantially higher than those from standalone LB or SB methods, though the results can be sensitive to the specific target and the quality of the structural and ligand data used [10].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Implementing a sequential VS pipeline requires a suite of specialized software tools and databases. The following table outlines key resources essential for conducting these studies.

Table 2: Key Research Reagent Solutions for Sequential LB-SB Workflows

| Tool/Resource Name | Type | Primary Function in Workflow |
|---|---|---|
| Molecular Fingerprints (ECFP/FCFP) | Computational Descriptor | LB Stage: Encodes molecular structure for rapid similarity searching. |
| Pharmacophore Modeling Software | Computational Algorithm | LB Stage: Defines and searches for essential 3D chemical features for bioactivity. |
| Protein Data Bank (PDB) | Structural Database | SB Stage: Source of 3D protein structures for docking and binding site analysis. |
| Molecular Docking Software | Computational Algorithm | SB Stage: Predicts ligand binding pose and provides a scoring function for ranking. |
| Chemical Vendor Libraries | Compound Database | Source of commercially available molecules for the initial virtual library. |

Sequential approaches that leverage progressive filtering from ligand-based pre-screening to structure-based refinement represent a powerful and pragmatic strategy in modern drug discovery. By rationally combining the speed of LB methods with the mechanistic insight of SB techniques, this pipeline efficiently navigates the immense complexity of chemical space to identify high-quality hit compounds. The documented success stories and available toolkit make it an indispensable methodology for researchers aiming to accelerate the early stages of drug development. As computational power grows and algorithms become more sophisticated, the integration of adaptive and AI-driven elements will likely further enhance the precision and predictive power of these sequential protocols.

The escalating complexity of modern drug discovery, coupled with the exponential growth of virtual chemical libraries now encompassing billions of molecules, has necessitated a paradigm shift toward high-performance computing solutions [23]. Within this context, parallel implementation strategies have emerged as critical enablers for managing computational workloads that would otherwise be prohibitive. These approaches are particularly transformative for combined ligand-based and structure-based (LB-SB) methods, which integrate complementary virtual screening techniques to enhance hit identification rates while mitigating the limitations inherent in each individual approach [11].

The strategic integration of independent execution—where computational tasks are distributed across multiple processing units—with rank fusion techniques that mathematically combine results from diverse screening methods, represents a sophisticated framework for accelerating early-stage drug discovery [25] [26]. This article provides a comparative analysis of parallel implementation architectures within hybrid virtual screening, examining their performance characteristics, experimental validation, and practical implementation requirements to guide researchers in selecting appropriate strategies for their specific discovery pipelines.

Foundational Concepts: Independent Execution and Rank Fusion

Parallelization Paradigms in Virtual Screening

Parallel computing in drug discovery operates primarily through two complementary approaches:

Embarrassingly Parallel Distribution: This strategy involves distributing independent screening tasks across multiple computing nodes without inter-process communication. In virtual screening, this typically manifests as different molecules or molecular subsets being processed simultaneously across cluster nodes [25]. The pOptiPharm implementation exemplifies this approach by automating molecule distribution between available nodes in a cluster, enabling linear scaling with chemical database size [25].

Within-Algorithm Parallelization: This more sophisticated approach parallelizes components of a single algorithmic instance. The RosettaAMRLD framework employs this through its Monte Carlo Metropolis algorithm, where multiple ligand candidates are generated and evaluated concurrently [14]. Similarly, pOptiPharm implements a second parallelization layer that distributes the optimization of individual molecule poses across multiple threads [25].
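
The embarrassingly parallel paradigm can be illustrated with a short sketch. This is not the pOptiPharm implementation: the function names, the round-robin partitioning, and the placeholder scoring (string length standing in for a real screening score) are all assumptions made for illustration. Threads are used only to keep the example portable; CPU-bound screening would normally use separate processes or a cluster scheduler.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List, Sequence


def partition(items: Sequence[str], n_chunks: int) -> List[List[str]]:
    """Split items into n_chunks round-robin slices of near-equal size."""
    return [list(items[i::n_chunks]) for i in range(n_chunks)]


def score_chunk(chunk: List[str]) -> List[tuple]:
    """Placeholder scoring: string length stands in for a real screening score."""
    return [(mol, float(len(mol))) for mol in chunk]


def screen_in_parallel(molecules: Sequence[str], n_workers: int = 4) -> List[tuple]:
    """Score independent chunks concurrently, then merge and sort the results."""
    chunks = partition(molecules, n_workers)
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        per_chunk = list(pool.map(score_chunk, chunks))
    merged = [pair for chunk in per_chunk for pair in chunk]
    return sorted(merged, key=lambda pair: pair[1], reverse=True)


smiles = ["CCO", "c1ccccc1", "CC(=O)O", "CCN(CC)CC", "O"]
results = screen_in_parallel(smiles, n_workers=2)
print(results)
```

Because no chunk depends on another, the only coordination needed is the final merge, which is why this pattern tends to scale with the number of workers.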

Reciprocal Rank Fusion: Mathematical Foundation

Reciprocal Rank Fusion (RRF) provides a robust mathematical framework for aggregating results from multiple virtual screening methods. The core RRF formula calculates a unified score for each document (or molecule) across different rankers [26]:

RRF(d) = Σ(r ∈ R) 1 / (k + r(d))

Where:

- d represents a document (or molecule in virtual screening)

- R is the set of rankers (different virtual screening methods)

- k is a smoothing constant (typically 60)

- r(d) is the rank of document d in ranker r

The RRF algorithm operates on the principle of reciprocal ranking, assigning greater weight to higher positions (lower rank numbers) across different retrieval methods [26]. The constant k serves as a smoothing factor that prevents any single retriever from dominating the results and enables effective tie-breaking for lower-ranked items [26].
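
A minimal sketch of the RRF formula is given here, assuming 1-based ranks and that a molecule absent from a ranked list contributes nothing for that list (one common convention, not something the source specifies). The molecule names and the two ranked lists are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List


def reciprocal_rank_fusion(rankings: List[List[str]], k: int = 60) -> Dict[str, float]:
    """Sum 1 / (k + rank) over every ranked list in which a molecule appears.

    Ranks are 1-based. A molecule missing from a list adds nothing for that list.
    """
    scores: Dict[str, float] = defaultdict(float)
    for ranked in rankings:
        for rank, mol in enumerate(ranked, start=1):
            scores[mol] += 1.0 / (k + rank)
    return dict(scores)


# Hypothetical output of two independent screens, best compound first.
docking_rank = ["mol_B", "mol_A", "mol_C"]
similarity_rank = ["mol_A", "mol_C", "mol_B"]

fused = reciprocal_rank_fusion([docking_rank, similarity_rank])
consensus = sorted(fused, key=fused.get, reverse=True)
print(consensus)
```

In this toy case mol_A comes out on top because it is ranked first or second by both methods, while mol_B, first in one list but last in the other, falls behind it.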

Table 1: Key Characteristics of Rank Fusion Methods

| Method | Mathematical Basis | Advantages | Typical Applications |
|---|---|---|---|
| Reciprocal Rank Fusion (RRF) | Σ 1/(k + rank) | Robust to outliers, balances multiple evidence sources | Hybrid LB-SB screening, multi-method consensus |
| Geometrically Weighted Sampling | Ranking-based weighted selection | Promotes diversity while favoring similarity | Fragment sampling in de novo design [14] |
| Scaled Bliss Synergy | Combination index modeling | Accounts for higher-order drug interactions | Therapeutic synergy analysis [27] |

Comparative Analysis of Parallel Implementation Architectures

Framework Architectures and Implementation Approaches

Table 2: Parallel Virtual Screening Frameworks: Architectural Comparison

| Framework | Parallelization Strategy | Fusion Method | Chemical Space Coverage | Target Classes Validated |
|---|---|---|---|---|
| RosettaAMRLD [14] | Monte Carlo with reaction-driven sampling | Geometrically weighted similarity fusion | ~30 billion compounds (Enamine REAL) | Kinases (CDK2), GPCRs (OX1R), Transferases (TrmD) |
| pOptiPharm [25] | Two-layer: database distribution & pose optimization | Shape similarity maximization | Large-scale molecular databases | Not target-specific (general LBVS) |
| COMBImage2 [27] | Plate-based experimental parallelization | Temporal response pattern mining | 246+ drug combinations | Glioblastoma (CUSP9v4 protocol) |
| V-SYNTHES [23] | Synthon-based modular screening | Docking score integration | 11 billion compounds | GPCRs, Kinases |

[Figure: core operational workflows of the parallel frameworks compared in Table 2.]

Experimental Performance and Benchmarking Data

Table 3: Experimental Performance Metrics of Parallel Screening Approaches

| Framework | Sampling Efficiency | Docking Score Improvement | Computational Resource Requirements | Validation Outcomes |
|---|---|---|---|---|
| RosettaAMRLD [14] | Superior to random sampling (exact metrics not specified) | Significant improvement over known actives | High (Monte Carlo sampling + reaction processing) | Novel, synthetically accessible ligands with active-like poses |
| pOptiPharm [25] | Finds better solutions than sequential OptiPharm | Not applicable (shape-based method) | Near-linear scaling with processing units | Reduced computation time proportional to units |
| Ultra-Large Docking [23] | Enabled screening of billions of compounds | Subnanomolar hits for GPCR targets | Specialized hardware (GPU clusters) | Clinical candidates identified from 8.2B compounds |

Experimental Protocols and Implementation Guidelines

Protocol 1: RosettaAMRLD for De Novo Ligand Design

Methodology:

- Input Preparation: Prepare protein-ligand complex with defined binding pocket (from experimental structures or docking results). Even poorly docked complexes or those with low-affinity ligands can serve as starting points [14].

- Library Configuration: Access combinatorial fragment libraries (e.g., Enamine REAL space) with predefined reactions and corresponding reagents.

- Parallel Sampling Execution:

  - Encode input molecule and library fragments using RDKit Daylight-like Fingerprints.

  - Rank fragments based on Tversky similarity index to reference molecule.

  - Employ geometrically weighted sampling to populate reaction containers.

  - Generate candidate molecules through virtual reaction of sampled fragments.

- Pose Evaluation: Align proposed ligands to previous ligand and evaluate binding using RosettaLigand scoring functions.

- Iterative Refinement: Implement cascaded sampling workflow, extending only the best-performing routes to escape local minima.

Key Parameters:

- Similarity metric: Tversky index for fragments, Tanimoto similarity for candidates.

- Sampling: Geometrically weighted to balance similarity and diversity.

- Termination: User-defined candidate limits or convergence metrics.
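
The fragment-ranking and sampling steps can be sketched as follows. This is an illustration, not the RosettaAMRLD code: fingerprints are plain sets of on-bits, and the geometric-series weighting (weight proportional to `p * (1 - p) ** rank`), the parameter `p`, and sampling with replacement are assumptions about what "geometrically weighted" could mean in practice. All names and toy fragments are hypothetical.

```python
import random
from typing import Dict, List, Set


def tversky(query: Set[int], candidate: Set[int], alpha: float = 0.5, beta: float = 0.5) -> float:
    """Tversky index; alpha weights query-only bits, beta weights candidate-only bits."""
    shared = len(query & candidate)
    denom = shared + alpha * len(query - candidate) + beta * len(candidate - query)
    return shared / denom if denom else 0.0


def geometric_weights(n: int, p: float = 0.3) -> List[float]:
    """Weight for 0-based rank i proportional to p * (1 - p) ** i, normalised to sum to 1."""
    raw = [p * (1 - p) ** i for i in range(n)]
    total = sum(raw)
    return [w / total for w in raw]


def sample_fragments(
    reference: Set[int],
    fragments: Dict[str, Set[int]],
    n_draws: int,
    p: float = 0.3,
    seed: int = 0,
) -> List[str]:
    """Rank fragments by Tversky similarity, then draw with rank-based geometric weights."""
    ranked = sorted(fragments, key=lambda name: tversky(reference, fragments[name]), reverse=True)
    weights = geometric_weights(len(ranked), p)
    rng = random.Random(seed)
    # Sampling with replacement: similar fragments are favoured, dissimilar ones stay reachable.
    return rng.choices(ranked, weights=weights, k=n_draws)


# Toy data: hypothetical on-bit indices, not real fingerprints.
reference_fp = {1, 2, 3, 4}
toy_fragments = {"frag_1": {1, 2, 3}, "frag_2": {1, 2, 8, 9}, "frag_3": {7, 8}}
draws = sample_fragments(reference_fp, toy_fragments, n_draws=5)
print(draws)
```

A larger `p` concentrates the draws on the most similar fragments, while a smaller `p` flattens the weights and increases diversity, which mirrors the similarity-versus-diversity balance described in the key parameters.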

Protocol 2: pOptiPharm for Parallel Ligand-Based Screening

Methodology:

- Infrastructure Setup: Configure computing cluster with distributed memory architecture.

- Database Partitioning: Automatically distribute molecules between available nodes using embarrassingly parallel paradigm.

- Parallel Optimization:

  - Initialize multiple OptiPharm instances across nodes.

  - For each molecule, optimize ten decision variables (seven for rotation, three for displacement).

  - Implement thread-pool for parallel pose optimization within each instance.

- Result Aggregation: Collect optimized poses from all nodes.

- Rank Fusion: Apply similarity maximization to generate final ranking.

Key Parameters:

- Population size (M): Impacts exploration depth.

- Iterations (tmax): Controls reproduction-selection-improvement cycles.

- Radius (Rtmax): Decreases from search space diameter to specified value, connecting global and local exploration.

Protocol 3: Hybrid LB-SB with Reciprocal Rank Fusion

Methodology:

- Independent Screening Execution:

  - Run structure-based docking screening in parallel.

  - Simultaneously execute ligand-based similarity screening.

  - Apply additional specialized screening methods as available.

- Result Ranking: Generate ordered lists from each screening method.

- RRF Calculation: For each molecule, compute RRF score = Σ 1/(60 + rank) across all screening methods.