In Silico logP Prediction: A Comprehensive 2024 Comparison of Methods, Tools, and Best Practices

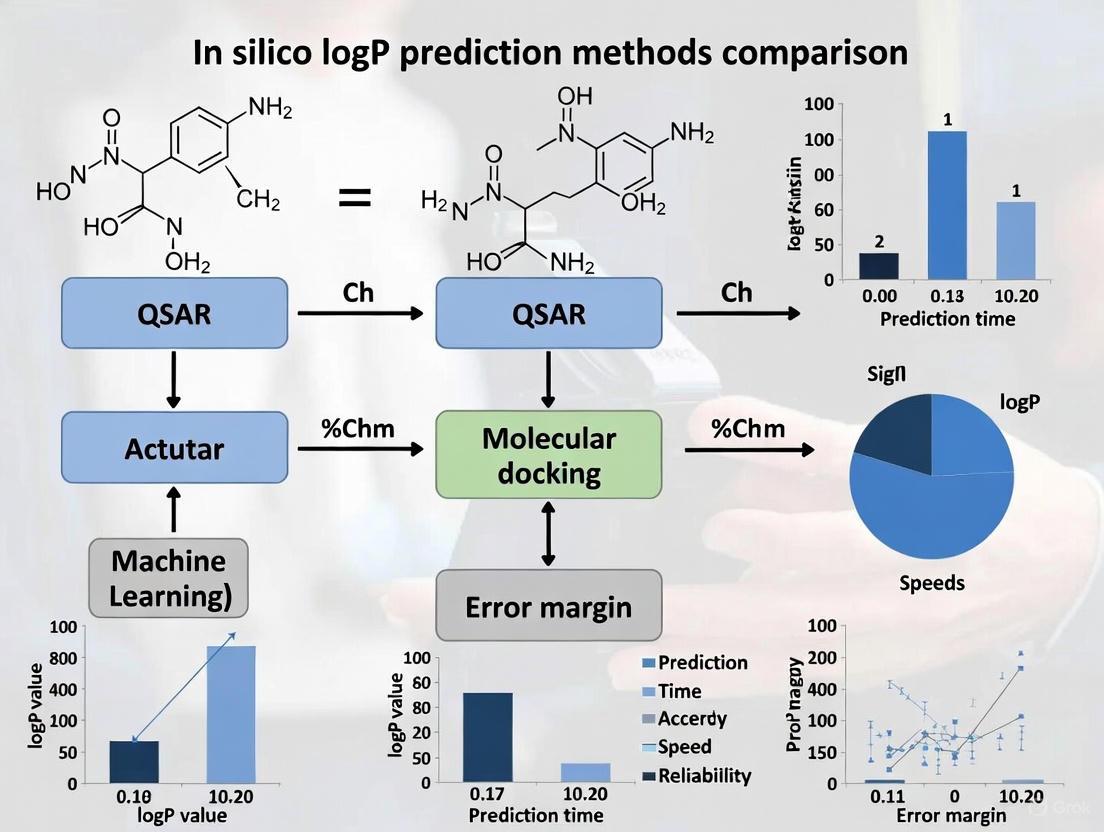


Abstract

This article provides a comprehensive analysis of in silico logP prediction methods, a critical parameter in drug discovery for optimizing pharmacokinetic profiles. We explore the foundational principles of molecular lipophilicity and its impact on ADMET properties. The review systematically compares traditional substructure-based and property-based methods with modern machine learning and AI-driven approaches, including tools like SwissADME and ADMET Predictor. We address common challenges in predicting logP for complex molecules and provide troubleshooting strategies. Furthermore, we present a rigorous validation framework based on recent benchmarking studies, evaluating predictive performance across diverse chemical spaces. This guide is tailored for researchers and drug development professionals seeking to select and apply the most effective logP prediction strategies for their projects.

Understanding logP: The Fundamental Driver of Drug Disposition and Efficacy

Lipophilicity, the physicochemical property describing how a compound partitions between a lipid-like and an aqueous environment, is a fundamental determinant in the absorption, distribution, metabolism, excretion, and toxicity (ADMET) of pharmaceutical compounds. Accurately predicting and optimizing ADMET properties early in the drug development process is essential for selecting compounds with optimal pharmacokinetics and minimal toxicity, thereby mitigating the risk of late-stage failures [1]. For decades, Lipinski's Rule of Five has served as a central guideline for identifying orally active drugs, with the calculated octanol-water partition coefficient (logP) identified as one of the key parameters [2]. The rule proposed that a good "druggable" compound should have a logP value of less than 5, among other criteria [2].
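As a rough illustration of how the Rule of Five is applied in practice, the sketch below counts violations for a compound record. The thresholds are the commonly cited ones (molecular weight above 500, logP above 5, more than 5 hydrogen-bond donors, more than 10 hydrogen-bond acceptors); the `Compound` class and the example property values are hypothetical and only serve the demonstration.

```python
from dataclasses import dataclass


@dataclass
class Compound:
    """Minimal property record; all values are supplied by the caller."""
    mol_weight: float        # g/mol
    logp: float              # calculated octanol-water logP
    h_bond_donors: int
    h_bond_acceptors: int


def ro5_violations(c: Compound) -> list:
    """Return the names of the Rule-of-Five criteria that the compound violates."""
    violations = []
    if c.mol_weight > 500:
        violations.append("mol_weight")
    if c.logp > 5:
        violations.append("logp")
    if c.h_bond_donors > 5:
        violations.append("h_bond_donors")
    if c.h_bond_acceptors > 10:
        violations.append("h_bond_acceptors")
    return violations


# Hypothetical examples: a small drug-like molecule and a large bRo5-like one.
small = Compound(mol_weight=180.2, logp=1.2, h_bond_donors=1, h_bond_acceptors=4)
large = Compound(mol_weight=1200.0, logp=6.5, h_bond_donors=6, h_bond_acceptors=14)
print(ro5_violations(small))
print(ro5_violations(large))
```

A violation count is a screening heuristic rather than a verdict, which is consistent with the growing number of approved bRo5 drugs discussed in the next paragraph.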

However, the landscape of drug discovery is evolving. As the explored chemical space expands beyond small molecules, there is an increasing number of approved oral drug compounds that go beyond the Rule of 5 (bRo5). These include larger compounds such as macrocycles, protein-based agents, and multispecific drugs like antibody-drug conjugates (ADCs) and proteolysis targeting chimeras (PROTACs) [2]. This expansion has necessitated a more nuanced understanding of lipophilicity, particularly the critical distinction between logP and logD, which is the focus of this application note.

Theoretical Foundations: logP and logD

The Partition Coefficient (logP)

The partition coefficient, logP, is a definitive measure of a compound's inherent lipophilicity. It quantifies the equilibrium distribution of a single, unionized compound between two immiscible phases: typically, 1-octanol (representing lipid membranes) and water (representing biological fluids) [3]. Mathematically, logP is defined as:

[ \text{logP} = \log_{10} \left( \frac{[\text{Drug}]_{\text{octanol}}}{[\text{Drug}]_{\text{water}}} \right) ]

where ([\text{Drug}]_{\text{octanol}}) and ([\text{Drug}]_{\text{water}}) represent the concentrations of the unionized drug in the octanol and aqueous phases, respectively [3]. A higher logP value indicates greater lipophilicity, which generally correlates with improved passive membrane permeability. Conversely, a lower logP value indicates higher hydrophilicity and, typically, better aqueous solubility [3].

The Distribution Coefficient (logD)

The distribution coefficient, logD, provides a more physiologically relevant measure of lipophilicity because it accounts for a critical factor: ionization. Unlike logP, which only considers the neutral form of a compound, logD considers the distribution of all forms of a compound—ionized, partially ionized, and unionized—at a specific pH [2]. Its definition is:

[ \text{logD} = \log_{10} \left( \frac{[\text{Drug}]_{\text{octanol}}}{[\text{Drug}]_{\text{water}} + [\text{Ion}]_{\text{water}}} \right) ]

where ([\text{Ion}]_{\text{water}}) represents the concentration of the ionized form in the aqueous phase [3]. logD is therefore pH-dependent and should always be reported with the corresponding pH value (e.g., logD at pH 7.4) [2].

The Critical Distinction and Relationship

The fundamental distinction lies in their treatment of ionization. LogP is a constant for a given compound, reflecting the lipophilicity of its neutral form. LogD is a variable that changes with pH, reflecting the actual lipophilicity of the compound under specific biological conditions [2]. For compounds without ionizable groups, logP and logD are identical across all pH values. However, for the vast majority of drug candidates that contain ionizable sites, logD provides a far more accurate picture of a compound's behavior [2].

A theoretical relationship exists between logD, logP, and pKa. For a monoprotic acid, the equation is:

[ \text{logD} = \text{logP} - \log \left( 1 + 10^{(\text{pH} - \text{pKa})} \right) ]

For a monoprotic base, the relationship is:

[ \text{logD} = \text{logP} - \log \left( 1 + 10^{(\text{pKa} - \text{pH})} \right) ]

These equations demonstrate how ionization at a given pH dramatically affects the observed lipophilicity [3]. The following diagram illustrates the logical relationship between these key properties and their collective impact on drug behavior.

Figure 1: The relationship between compound properties, logP/logD, and ADMET outcomes. logD integrates the effects of ionization (governed by pKa and pH) to provide a physiologically relevant lipophilicity metric.
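The two monoprotic relationships above can be evaluated directly. The following is a minimal sketch, assuming (as the equations do) that only the neutral species partitions into octanol; the function name `logd_monoprotic` and the example compound (logP 3.0, pKa 4.4) are hypothetical.

```python
import math


def logd_monoprotic(logp: float, pka: float, ph: float, kind: str) -> float:
    """logD of a monoprotic acid or base from logP, pKa and pH.

    Assumes the ionized form does not enter the octanol phase.
    """
    if kind == "acid":
        exponent = ph - pka      # acids ionize as pH rises above pKa
    elif kind == "base":
        exponent = pka - ph      # bases ionize as pH falls below pKa
    else:
        raise ValueError("kind must be 'acid' or 'base'")
    return logp - math.log10(1.0 + 10.0 ** exponent)


# Hypothetical weak acid, evaluated at a gastric and a plasma-like pH.
for ph in (1.5, 7.4):
    print(ph, round(logd_monoprotic(3.0, 4.4, ph, "acid"), 2))
```

For this hypothetical acid, logD stays close to logP at low pH and falls by roughly three log units at pH 7.4, where the compound is almost fully ionized.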

Critical Roles in ADMET Properties

Lipophilicity is not merely a number to be recorded; it is a property that profoundly influences the entire journey of a drug through the body.

Absorption and Permeability

For oral drugs, absorption requires traversing the lipid bilayers of the intestinal epithelium. While a sufficiently lipophilic character (as indicated by logD) is necessary for passive diffusion through these membranes, an excessively high logD can be detrimental. It can lead to poor dissolution in the gastrointestinal fluids or sequestration in food components, ultimately reducing absorption [2] [3]. The changing pH environment of the GI tract, from the highly acidic stomach (pH 1.5-3.5) to the more neutral intestines (pH 6-7.4), means that a drug's logD, not its logP, determines its effective permeability at each site [2].

Distribution and Volume of Distribution (VDss)

Once absorbed, a drug must distribute to its site of action. Lipophilicity is a key driver of tissue distribution and penetration, including crossing the blood-brain barrier. A recent sensitivity analysis demonstrated that logP is the most influential physicochemical parameter in determining the human volume of distribution at steady state (VDss) for neutral and weakly basic drugs [4]. High lipophilicity (logP > 5) can enhance a drug's ability to cross the blood-brain barrier, but it can also lead to excessive tissue accumulation and a large VDss, potentially necessitating higher loading doses [5] [4]. Furthermore, accuracy in logP values is critical, as methods for predicting VDss show varying sensitivity to this parameter; some methods significantly overpredict distribution for highly lipophilic compounds (logP > 3.5) [4].

Metabolism, Excretion, and Toxicity

Lipophilicity directly influences a drug's metabolic fate. Highly lipophilic compounds are more likely to be substrates for metabolic enzymes, particularly cytochrome P450s, which can lead to rapid clearance or the generation of reactive metabolites [1]. From an excretion standpoint, hydrophilic compounds (low logD) are more readily eliminated via the kidneys, while lipophilic compounds often require metabolic conversion to more hydrophilic forms before they can be excreted in urine or bile. Elevated lipophilicity is also correlated with an increased risk of promiscuous binding to off-target proteins and specific toxicities, such as phospholipidosis and inhibition of cardiac ion channels [1] [4].

In Silico Prediction Methods and Performance

The experimental determination of logP, via methods like the shake-flask technique, is labor-intensive, costly, and can be subject to experimental variability (standard deviations can range from 0.01 to 0.84 log units) [6]. Consequently, a variety of in silico prediction methods have been developed, which can be broadly categorized as follows.

- Substructure-Based Methods: These include fragmental (e.g., CLOGP) and atom-based methods. They operate by cutting molecules into defined fragments or down to single atoms, summing the contributions of these substructures to arrive at a final logP value [7] [6].

- Property-Based Methods: These approaches utilize descriptions of the entire molecule, such as topological descriptors or 3D-structure representations, and employ techniques ranging from simple linear regressions to complex machine learning models [7] [5].

- Recent Machine Learning Advances: Modern deep-learning models leverage sophisticated molecular representations. For instance, Mol2vec generates high-dimensional vector embeddings of molecules and their substructures, which can be used with models like multi-layer perceptrons (MLP), convolutional neural networks (Conv1D), and long short-term memory networks (LSTM) to achieve state-of-the-art prediction accuracy [5].

Quantitative Performance Comparison of Prediction Tools

The predictive performance of various methods can be benchmarked on public challenges and independent studies. The following table summarizes reported accuracy metrics for several representative methods.

Table 1: Performance Comparison of logP Prediction Methods

| Method / Tool | Type | Reported RMSE | Reported MAE | Key Characteristics | Source / Dataset |
|---|---|---|---|---|---|
| Chemaxon logP | Atomic Increments (Empirical) | 0.31 | 0.23 | Improved implementation of atomic increments; high accuracy on blind challenge [8]. | SAMPL 6 Challenge (11 compounds) [8] |
| MF-LOGP | Random Forest (Formula-based) | 0.77 | 0.52 | Uses only molecular formula as input; no structural information required; reported R² of 0.83 [6]. | Independent validation (2,713 compounds) [6] |
| Deep Learning (Mol2vec) | Deep Learning Ensemble | ~0.60 (approx. from graph) | N/R | Uses Mol2vec embeddings; reported to outperform MPNN and Graph Convolution models [5]. | Lipophilicity dataset (4,200 molecules) [5] |
| ACD/LogP GALAS | Hybrid (GALAS) | N/R | N/R | 80% of predictions within 0.5 log units for new training set; incorporates local similarity adjustment [9]. | Internal Validation (>1,000 compounds) [9] |
| Reference (clogP Biobyte) | Fragmental | 0.82 | 0.68 | Included as a common reference method in benchmarks [8]. | SAMPL 6 Challenge [8] |

N/R = Not Reported in the sourced context.
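For readers reproducing such benchmarks, the two error metrics in the table can be computed as in the sketch below. The predicted and experimental values are made-up placeholders, not data from any cited study.

```python
import math


def rmse(predicted, observed):
    """Root mean square error between paired predictions and measurements."""
    errors = [p - o for p, o in zip(predicted, observed)]
    return math.sqrt(sum(e * e for e in errors) / len(errors))


def mae(predicted, observed):
    """Mean absolute error between paired predictions and measurements."""
    errors = [abs(p - o) for p, o in zip(predicted, observed)]
    return sum(errors) / len(errors)


# Placeholder values for illustration only.
predicted = [1.0, 2.0, 3.0]
observed = [1.0, 2.0, 5.0]
print(round(rmse(predicted, observed), 3), round(mae(predicted, observed), 3))
```

Because squaring weights large errors more heavily, RMSE is never smaller than MAE on the same data set; a reported MAE that exceeds the RMSE is a sign of a transcription error.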

The Scientist's Toolkit: Key Software and Reagents

Table 2: Essential Research Tools for Lipophilicity Prediction and Analysis

| Tool / Reagent | Function / Description | Use Case in Research |
|---|---|---|
| ACD/Percepta Platform | Software suite providing multiple logP and logD predictors (Classic, GALAS, Consensus), along with pKa and solubility prediction [9] [10]. | Integrated physicochemical property profiling; generating QMRF/QPRF reports for regulatory compliance [9]. |
| Chemaxon JChem Suite | Provides empirical logP prediction based on an atomic increments approach with proprietary extensions [8]. | LogP prediction integrated into chemical drawing, database management, and workflow tools like KNIME [8]. |
| Mol2vec | An unsupervised machine learning algorithm that generates high-dimensional vector representations of molecules from their substructures [5]. | Creating molecular descriptor vectors for use in custom deep-learning models for property prediction [5]. |
| n-Octanol and Water | The standard solvent system for both experimental measurement and the theoretical definition of logP [6] [3]. | Used in shake-flask or slow-stir experiments to determine experimental partition coefficients [6]. |
| Buffers (various pH) | Aqueous solutions to control the pH environment for experimental measurements. | Essential for the determination of pH-dependent distribution coefficients (logD) [2]. |

Experimental and Computational Protocols

Protocol: Shake-Flask Determination of logP

This protocol outlines the standard method for the experimental determination of the octanol-water partition coefficient [6].

- Preparation: Pre-saturate 1-octanol and water (or buffer) with each other by mixing them thoroughly and allowing them to separate before use. This ensures volume stability during the experiment.

- Partitioning: Dissolve a known amount of the compound of interest in a suitable volume of one phase (e.g., the octanol-saturated water phase). Combine this with an equal volume of water-saturated octanol in a separation flask.

- Equilibration: Shake the mixture vigorously for a predetermined time at a constant temperature to establish equilibrium.

- Separation: Allow the phases to separate completely. This may require centrifugation if an emulsion has formed.

- Analysis: Carefully separate the two phases and quantify the concentration of the compound in each phase using a suitable analytical method (e.g., HPLC-UV, LC-MS).

- Calculation: Calculate logP as the log10 of the ratio of the concentration in the octanol phase to the concentration in the aqueous phase.
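The final calculation step can be sketched as follows. This is a minimal example assuming both phase concentrations are expressed in the same units; the replicate concentrations are invented for illustration, and the helper names are hypothetical.

```python
import math
import statistics


def shake_flask_logp(c_octanol: float, c_water: float) -> float:
    """logP from the equilibrium concentrations in each phase (same units)."""
    if c_octanol <= 0 or c_water <= 0:
        raise ValueError("both concentrations must be positive and quantifiable")
    return math.log10(c_octanol / c_water)


def summarize_replicates(pairs):
    """Mean and sample standard deviation of logP over replicate flasks."""
    values = [shake_flask_logp(c_oct, c_wat) for c_oct, c_wat in pairs]
    spread = statistics.stdev(values) if len(values) > 1 else 0.0
    return statistics.mean(values), spread


# Invented replicate measurements: (octanol, water) concentrations in µg/mL.
replicates = [(98.0, 1.02), (101.0, 0.97), (100.0, 1.00)]
mean_logp, sd_logp = summarize_replicates(replicates)
print(round(mean_logp, 2), round(sd_logp, 2))
```

Reporting the replicate standard deviation alongside the mean makes the experimental variability explicit when the value is later used to benchmark predictions.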

Protocol: In Silico logP Prediction with Commercial Software

This general workflow describes the process for predicting logP using standard commercial software like ACD/Percepta or Chemaxon [9] [10].

- Input Structure: Provide the chemical structure of the compound. This can be done by drawing the structure directly in the software's interface, importing a molecular file (e.g., SDF, MOL), or providing a SMILES string or InChI code.

- Algorithm Selection: Select the desired prediction algorithm (e.g., Classic, GALAS, Consensus) based on the desired balance of speed, interpretability, and accuracy.

- Execution: Run the calculation. The software will process the structure based on its internal model.

- Analysis of Results: Review the predicted logP value. Many software packages provide additional data, such as:

- A reliability index or confidence interval.

- A calculation protocol breaking down contributions from different molecular fragments.

- A list of similar structures from the training set with their experimental values.

- Reporting: Generate a report of the results, which can often be formatted for regulatory submission (e.g., QPRF report).

Workflow for Integrating logP/logD in Lead Optimization

The following diagram outlines a recommended workflow for applying lipophilicity metrics in a drug discovery program to de-risk ADMET issues early in the process.

Figure 2: A cyclical lead optimization workflow integrating computational prediction and experimental measurement of lipophilicity to guide compound design.

The distinction between logP and logD is not merely academic; it is a fundamental consideration for successful drug design. While logP describes the intrinsic lipophilicity of a neutral molecule, logD provides the critical, pH-contextualized view necessary for predicting a compound's behavior in the varied physiological environments of the human body. As drug discovery ventures further into challenging chemical space, including beyond-Rule-of-5 compounds, the accurate prediction and measurement of these parameters become even more vital.

The integration of robust in silico tools, which are continuously improving in accuracy through advanced machine learning and larger training sets, allows for early and efficient screening of compound libraries. However, these predictions must be validated with careful experimental protocols as compounds advance. A strategic workflow that leverages both computational and experimental assessments of lipophilicity provides a powerful framework for steering lead optimization efforts, helping to balance potency with desirable ADMET properties and ultimately increasing the probability of developing successful therapeutic agents.

The octanol-water partition coefficient (logP) is a fundamental physicochemical parameter that quantifies a compound's hydrophobicity or lipophilicity. It is defined as the base-10 logarithm of the equilibrium concentration ratio of a neutral compound in the n-octanol and water phases. For ionizable compounds, the pH-dependent distribution coefficient (logD) is used instead [11]. This parameter serves as an extrathermodynamic reference scale that expresses differences in the non-ideality of a compound's solution in organic solvent versus water [11]. The molecular basis of partitioning lies in the transfer free energy (ΔG) required to move a molecule from water to octanol, driven by the balance of molecular interactions including hydrogen bonding capacity, molecular bulk properties, and dispersion forces [12].

In pharmaceutical research and environmental toxicology, logP profoundly influences drug bioavailability, membrane permeability, and bioaccumulation potential [13] [11]. Its prediction from chemical structure remains an active area of research, with applications spanning from early drug discovery to environmental risk assessment [14] [15].

Molecular Interactions Governing Partitioning Behavior

Key Structural Determinants of logP

Partitioning behavior emerges from specific molecular interactions and structural features:

- Molecular Bulk/Volume: Larger molecular volumes generally increase lipophilicity by enhancing non-polar interactions with the octanol phase [12] [4]

- Hydrogen-Bonding Capacity: Compounds with strong hydrogen bond donors (A) and acceptors (B) favor the aqueous phase, reducing logP [12] [11]

- Dipole-Dipole Interactions: Molecular polarity and polarizability (S) influence partitioning through differential solvation in polar versus non-polar environments [11]

- Ionization State: For ionizable compounds, the neutral species partitions more readily into octanol, while ionized forms favor water [16] [11]

These factors collectively determine a molecule's preference for the octanol or aqueous phase, with hydrogen-bonding and molecular volume being particularly dominant [12] [11].

Established Experimental Protocols for logP Determination

Shake-Flask Method (OECD TG 107)

Principle: The classic direct measurement method where compounds are partitioned between pre-saturated octanol and water phases through vigorous mixing [11].

Detailed Protocol:

- Phase Preparation: Pre-saturate high-purity water with n-octanol and n-octanol with water by mixing overnight at constant temperature (typically 25°C)

- Separation: Allow phases to separate completely; use the saturated phases for experimentation

- Equilibration: Add compound to the system, shake vigorously for 30-60 minutes to establish equilibrium

- Phase Separation: Centrifuge if necessary to achieve complete phase separation

- Analysis: Quantify compound concentration in both phases using appropriate analytical methods (HPLC, UV-Vis)

- Calculation: Determine logP = log10([compound]octanol/[compound]water)

Applicability: Optimal for logP values between -2 and 4; requires compound stability and analytical detection in both phases [11].

Reverse-Phase High Performance Liquid Chromatography (RP-HPLC, OECD TG 117)

Principle: An indirect method correlating chromatographic retention behavior with partitioning coefficients [16] [11].

Detailed Protocol for Basic Compounds (IS-RPLC) [16]:

- Column Selection: Silica-based C18 column (e.g., 250 × 4.6 mm, 5 μm)

- Mobile Phase: Methanol/phosphate buffer (pH 7.0-10.0), pre-saturated with octanol

- Calibration: Analyze at least 6 reference compounds with known logP values at varying methanol fractions (φ = 0.1-0.7)

- Retention Measurement: Inject test compounds, measure retention factors (k) at multiple mobile phase compositions

- Data Analysis: Plot logk vs. φ, determine logkw (retention factor in 100% aqueous mobile phase)

- QSRR Modeling: Apply quantitative structure-retention relationship: logD = a × logkw + b × ne + c × A + d × B + e, where ne represents electrostatic charge, A and B represent hydrogen bonding parameters [16]

Advantages: Minimal compound requirement, applicable to impure samples, high throughput capability [16] [11].
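The data-analysis step of this protocol (plotting log k against φ and extrapolating to a purely aqueous mobile phase) amounts to a straight-line fit whose intercept at φ = 0 is log kw. The sketch below assumes the relationship is linear over the measured range; the retention data are synthetic, and the QSRR coefficients a to e are not implemented because they must come from the calibration set.

```python
def fit_line(x, y):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def extrapolate_log_kw(phi, log_k):
    """Return (log_kw, slope): the fitted log k at phi = 0 and the fitted slope."""
    slope, intercept = fit_line(phi, log_k)
    return intercept, slope


# Synthetic retention data generated from log k = 2.5 - 3.0 * phi.
phi = [0.3, 0.4, 0.5, 0.6]
log_k = [2.5 - 3.0 * p for p in phi]
log_kw, slope = extrapolate_log_kw(phi, log_k)
print(round(log_kw, 3), round(slope, 3))
```

Real log k versus φ plots often curve at low organic-modifier fractions, so the extrapolated value depends on which compositions are included in the fit.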

Table 1: Comparison of Key Experimental logP Determination Methods

| Method | logP Range | Precision | Throughput | Key Limitations |
|---|---|---|---|---|
| Shake-Flask (OECD 107) | -2 to 4 | ±0.3 log units | Low | Emulsion formation, concentration dependence [11] |
| Slow-Stirring (OECD 123) | 4.5 to 8.2 | ±0.3-0.5 log units | Low | Long equilibration times, adsorption issues [11] |
| Generator Column (EPA 830.7560) | 1 to 6 | ±0.3 log units | Medium | Complex apparatus, limited to higher logP [11] |
| RP-HPLC (OECD 117) | 0 to 6 | ±0.5 log units | High | Requires reference compounds, stationary phase dependence [16] [11] |
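One way to use the applicability ranges in this table is to shortlist experimental methods from a predicted logP before any measurement is planned. The sketch below simply encodes the ranges listed above; the function name is hypothetical, and the shortlist ignores the other constraints in the table (precision, throughput, compound stability).

```python
# Applicability ranges copied from the comparison table above.
METHOD_RANGES = {
    "Shake-Flask (OECD 107)": (-2.0, 4.0),
    "Slow-Stirring (OECD 123)": (4.5, 8.2),
    "Generator Column (EPA 830.7560)": (1.0, 6.0),
    "RP-HPLC (OECD 117)": (0.0, 6.0),
}


def candidate_methods(expected_logp: float) -> list:
    """List the methods whose stated logP range covers the expected value."""
    return [
        name
        for name, (low, high) in METHOD_RANGES.items()
        if low <= expected_logp <= high
    ]


print(candidate_methods(5.0))
print(candidate_methods(-1.0))
```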

In Silico Prediction Methods: From Fragment-Based to Deep Learning Approaches

Fragment-Based and Atom-Typer Methods

These approaches decompose molecular structures into substructural elements with defined contributions:

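- Fragment Contribution Methods: (\text{logP} = \sum_i a_i f_i + \sum_j b_j F_j), where (f_i) is the contribution of fragment (i), (a_i) is the number of times that fragment occurs, (F_j) is a correction factor for fragment interactions, and (b_j) is the number of times that correction applies [17] [11]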

- Atom-Typer Methods: Each atom is classified by its chemical environment using descriptors such as atomic number, hybridization, and neighboring atoms [17]
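The additive scheme used by fragment contribution methods can be sketched in a few lines. The fragment names, contribution values, and correction factor below are hypothetical placeholders chosen only to show the bookkeeping; real methods rely on fitted parameter tables.

```python
def fragment_logp(fragment_counts, fragment_values, correction_counts, correction_values):
    """Sum fragment contributions and correction factors, each weighted by its count."""
    base = sum(n * fragment_values[name] for name, n in fragment_counts.items())
    corrections = sum(n * correction_values[name] for name, n in correction_counts.items())
    return base + corrections


# Hypothetical parameter tables (not taken from any published method).
FRAGMENT_VALUES = {"CH3": 0.9, "CH2": 0.5, "OH": -1.6}
CORRECTION_VALUES = {"proximity": 0.1}

# A toy molecule decomposed into one CH3, one CH2 and one OH, with one correction.
estimate = fragment_logp(
    {"CH3": 1, "CH2": 1, "OH": 1},
    FRAGMENT_VALUES,
    {"proximity": 1},
    CORRECTION_VALUES,
)
print(round(estimate, 2))
```

A lookup failure (`KeyError`) in this sketch corresponds to the "missing fragment" problem that motivates atom-based methods.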

JPlogP Case Study [17]: The JPlogP method uses a six-digit atom-type code: A (charge+1), BB (atomic number), C (non-hydrogen bond count), DD (element-specific hybridization and environment). The model was trained on predicted data from multiple methods (AlogP, XlogP2, SlogP, XlogP3) to distill collective knowledge into a single model, demonstrating improved performance on pharmaceutical-like compounds [17].
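To make the six-digit layout concrete, the sketch below assembles a code string from its four fields. It illustrates only the A/BB/C/DD packing described above; the rules that assign the element-specific DD digits are not reproduced here, so DD is passed in by the caller, and the function name is hypothetical.

```python
def atom_type_code(formal_charge: int, atomic_number: int,
                   heavy_bond_count: int, special: int) -> str:
    """Pack the A, BB, C and DD fields into a six-digit atom-type string."""
    a = formal_charge + 1                      # A: charge + 1
    if not 0 <= a <= 9:
        raise ValueError("formal charge outside the single-digit range")
    if not 0 <= atomic_number <= 99:
        raise ValueError("atomic number must fit in two digits")
    if not 0 <= heavy_bond_count <= 9:
        raise ValueError("non-hydrogen bond count must fit in one digit")
    if not 0 <= special <= 99:
        raise ValueError("DD field must fit in two digits")
    return f"{a}{atomic_number:02d}{heavy_bond_count}{special:02d}"


# Neutral carbon with three non-hydrogen bonds and an assumed DD value of 2.
print(atom_type_code(0, 6, 3, 2))
```

Each distinct code then acts as a key into a table of fitted atomic contributions, which are summed over the molecule in the same way as fragment values.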

Linear Solvation Energy Relationships (LSER)

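LSER models express the partition coefficient as a linear combination of solute descriptors, each representing a specific molecular interaction [12] [11]:

[ \text{logP} = c + eE + sS + aA + bB + vV ]

where (c) is a system-specific constant (the regression intercept) and: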

- E: Excess molar refraction

- S: Polarity/polarizability

- A: Hydrogen-bond acidity (donor strength)

- B: Hydrogen-bond basicity (acceptor strength)

- V: McGowan characteristic volume

- e,s,a,b,v: Solvent-specific coefficients

Molecular size (V) and hydrogen-bond basicity (B) typically dominate the equation, with larger molecules favoring octanol and stronger H-bond acceptors favoring water [11].
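A small numerical sketch of the LSER calculation follows, assuming the usual Abraham form logP = c + eE + sS + aA + bB + vV. The octanol-water coefficients and the benzene descriptors are commonly quoted literature values entered from memory; they do not come from the sources cited here and should be checked against a primary reference before use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SoluteDescriptors:
    E: float  # excess molar refraction
    S: float  # polarity/polarizability
    A: float  # hydrogen-bond acidity
    B: float  # hydrogen-bond basicity
    V: float  # McGowan characteristic volume


@dataclass(frozen=True)
class SystemCoefficients:
    c: float
    e: float
    s: float
    a: float
    b: float
    v: float


def lser_logp(d: SoluteDescriptors, k: SystemCoefficients) -> float:
    """Evaluate logP = c + eE + sS + aA + bB + vV."""
    return k.c + k.e * d.E + k.s * d.S + k.a * d.A + k.b * d.B + k.v * d.V


# Assumed values for illustration; verify before relying on them.
OCTANOL_WATER = SystemCoefficients(c=0.088, e=0.562, s=-1.054, a=0.034, b=-3.460, v=3.814)
BENZENE = SoluteDescriptors(E=0.610, S=0.52, A=0.0, B=0.14, V=0.7164)

print(round(lser_logp(BENZENE, OCTANOL_WATER), 2))
```

With these assumed coefficients, the large positive v and large negative b terms show numerically why molecular size and hydrogen-bond basicity dominate the equation.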

Advanced Deep Learning Approaches

Recent deep neural network (DNN) models directly learn structure-property relationships from large datasets:

DNN Architecture and Training [18]:

- Input Representation: Molecular graphs or SMILES strings

- Data Augmentation: Inclusion of all potential tautomeric forms significantly improves model robustness and accuracy

- Performance: Best models achieve a root mean square error (RMSE) of 0.47 log units on test data, approaching the experimental variability (0.2-0.4 log units) [18]

- Advantage: Automatic feature learning eliminates need for manual descriptor selection

Table 2: Comparison of In Silico logP Prediction Approaches

| Method Type | Representative Tools | Theoretical Basis | Performance (RMSE) | Key Advantages |
|---|---|---|---|---|
| Fragment-Based | ClogP, ACD/logP, KOWWIN | Additive constitutive principles | 0.5-1.0 log units [17] [18] | Interpretability, well-established |
| Atom-Based | XlogP2, XlogP3, AlogP, JPlogP | Atomic contributions with corrections | 0.4-0.8 log units [17] | Broad applicability, no missing fragments |
| Property-Based | MlogP, LSER-based methods | Physicochemical descriptors | 0.5-0.9 log units [11] | Mechanistic insight, QSRR compatibility |
| Deep Learning | DNNtaut, ALOGPS, OCHEM | Pattern recognition in large datasets | 0.3-0.5 log units [18] | Automatic feature learning, high accuracy |

Consensus Modeling: A Strategy for Enhanced Reliability

The Consensus Modeling Workflow

Individual prediction methods exhibit variable performance across different chemical classes, with no single method consistently superior [11]. Consolidated logP values, derived as the mean of at least five valid estimates from independent methods (experimental and computational), provide more robust hydrophobicity measures with variability typically within 0.2 log units [11].

Figure 1: Consensus Modeling Workflow for Robust logP Prediction
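The consolidation rule described above (the mean of at least five valid estimates) can be sketched as follows. The method names and values in the example are placeholders, and treating `None` as an invalid or missing estimate is an assumption of this sketch.

```python
import statistics


def consolidated_logp(estimates: dict, min_estimates: int = 5):
    """Mean and sample standard deviation over the valid (non-None) estimates.

    Raises ValueError when fewer than `min_estimates` valid values are available.
    """
    valid = [value for value in estimates.values() if value is not None]
    if len(valid) < min_estimates:
        raise ValueError(
            f"need at least {min_estimates} valid estimates, got {len(valid)}"
        )
    return statistics.mean(valid), statistics.stdev(valid)


# Placeholder estimates from six hypothetical sources; one failed to return a value.
estimates = {
    "method_a": 2.0,
    "method_b": 2.2,
    "method_c": 2.1,
    "method_d": 1.9,
    "method_e": 2.3,
    "method_f": None,
}
mean_value, spread = consolidated_logp(estimates)
print(round(mean_value, 2), round(spread, 2))
```

Returning the spread together with the mean lets a large disagreement between methods be flagged instead of being hidden by the average.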

Table 3: Essential Research Reagents and Computational Tools for logP Studies

| Category | Specific Items/Resources | Function/Application | Key Characteristics |
|---|---|---|---|
| Experimental Materials | HPLC-grade n-octanol | Organic phase for partitioning | High purity, water-saturated |
| Experimental Materials | Buffer solutions (various pH) | Aqueous phase control | Phosphate buffers commonly used |
| Experimental Materials | C18 columns (silica-based) | Stationary phase for RP-HPLC | Different pore sizes for varied analytes |
| Experimental Materials | Reference compounds | Method calibration and validation | Known logP values, structural diversity |
| Computational Tools | OPERA | Physicochemical property predictions | QSAR-ready descriptors [19] |
| Computational Tools | DeepChem | Deep learning library for chemistry | Graph convolution capabilities [18] |
| Computational Tools | SwissADME, admetSAR | Web-based property prediction | Multiple endpoints including logP [13] |
| Computational Tools | Titania (Enalos Cloud) | Integrated property prediction | OECD-validated models [13] |
| Data Resources | PhysProp Database | Experimental logP data | Historical reference dataset |
| Data Resources | ChemPharos | Curated chemical data | FAIR data principles [13] |
| Data Resources | PubChem BioAssay | Bioactivity and property data | Large-scale screening data [13] |

The relationship between chemical structure and octanol-water partitioning is governed by fundamental molecular interactions including hydrogen bonding, molecular volume, and polarity. For reliable logP determination in research and regulatory contexts:

- Apply Method Appropriately: Match experimental or computational methods to compound characteristics and required precision

- Implement Consensus Approaches: Combine multiple estimation methods to minimize individual method biases and uncertainties [11]

- Consider Chemical Domain: Select methods with demonstrated performance for specific chemical classes of interest

- Account for Ionization: Use logD for ionizable compounds and ensure proper pH control or specification

As computational methods advance, particularly deep learning approaches with robust molecular representations, the accuracy and applicability domains of logP prediction continue to expand, supporting more efficient drug discovery and environmental risk assessment.

The octanol-water partition coefficient (logP) is a fundamental physicochemical property that serves as a key indicator of a compound's lipophilicity. In drug discovery and development, logP has a direct correlation with a molecule's absorption, distribution, metabolism, excretion, and toxicity (ADMET) properties, making it a critical parameter in computer-aided drug design (CADD) [20]. LogP represents the ratio of a compound's concentration in n-octanol (representing lipid membranes) to its concentration in water (representing biological fluids) at equilibrium, typically expressed as a logarithmic value [21]. This application note explores the crucial relationship between logP and key drug fate processes, providing structured data, experimental protocols, and computational approaches for researchers and drug development professionals engaged in comparing in silico logP prediction methods.

logP in Drug Absorption and Distribution

Cellular Permeability and Absorption

Lipophilicity, as quantified by logP, plays a pivotal role in a compound's ability to permeate cell membranes and achieve optimal oral bioavailability. For effective absorption, a compound must strike a balance in lipophilicity: sufficiently lipophilic to traverse lipid bilayers, yet sufficiently water-soluble to dissolve in biological fluids. logP values between 2 and 5 are typically associated with this balance and with favorable absorption characteristics [20].

The relationship between logP and membrane permeability follows a parabolic pattern, where both extremely low and high logP values result in poor absorption. Excessively hydrophilic compounds (low logP) cannot partition into lipid membranes, while highly lipophilic compounds (high logP) may become trapped within the membrane or exhibit poor dissolution in gastrointestinal fluids.

Blood-Brain Barrier Penetration

For drugs targeting the central nervous system (CNS), appropriate logP values are indispensable for crossing the blood-brain barrier (BBB). CNS drugs generally require a higher degree of lipophilicity to cross the BBB effectively and reach their target sites within the brain [20]. However, this relationship is complex, as excessive lipophilicity can increase the likelihood of recognition by efflux transporters such as P-glycoprotein (P-gp), which actively removes compounds from the brain [22].

Passive diffusion across the BBB, a non-saturable mechanism dependent on a compound's partition into the lipid membrane, is primarily governed by lipophilicity. Therefore, logP serves as a key predictor for initial BBB permeability assessment during CNS drug development [22].

Table 1: Optimal logP Ranges for Key ADME Processes

| ADME Process | Optimal logP Range | Biological Rationale |
|---|---|---|
| General Oral Absorption | 2 - 5 | Balances membrane permeability with aqueous solubility for gastrointestinal absorption [20] |
| CNS Penetration | Moderately higher within 2-5 range | Enhanced lipophilicity required for BBB passive diffusion, but balance needed to avoid efflux transporter recognition [20] |
| Solubility Formulation | Lower end of range preferred | High logP inversely correlates with aqueous solubility; lower values facilitate dissolution [20] |

Figure 1. ADMET Relationships of logP. Diagram illustrates how logP influences key drug disposition characteristics.

logP in Solubility, Toxicity, and Side Effects

Aqueous Solubility and Formulation Challenges

A compound's logP and its aqueous solubility are inversely correlated, with high logP values often signaling poor water solubility [20]. This presents significant challenges in formulation and delivery, as a balance must be struck between lipophilicity for effective cellular absorption and aqueous solubility for systemic availability.

Understanding and optimizing logP is critical in developing drug formulations that achieve this balance. The logP value can inform the choice of formulation strategies, guiding the selection of appropriate excipients and delivery systems that enhance the solubility of lipophilic drugs. This, in turn, improves bioavailability, ensuring that drugs can be effectively absorbed into the bloodstream and reach their intended targets within the body [20].

Toxicity and Tissue Accumulation

In drug development, accurately predicting and managing the toxicity and side effects of potential pharmaceutical compounds is paramount. Compounds characterized by very high logP values pose a particular concern, as they may preferentially accumulate in lipid-rich tissues, potentially leading to adverse toxicity levels [20]. This underscores the importance of closely monitoring and optimizing logP values throughout the drug design process to mitigate such risks effectively.

Furthermore, a nuanced understanding of how a compound's logP value influences its interactions with biological targets enables scientists to modify the drug's chemical structure judiciously. Such strategic modifications aim to minimize unwanted interactions that could result in side effects, thereby enhancing the drug's therapeutic index [20].

Table 2: logP-Related Formulation and Toxicity Considerations

| Property | Relationship with logP | Consequence & Mitigation Strategy |
|---|---|---|
| Aqueous Solubility | Inverse correlation | Challenge: Poor solubility limits dissolution and absorption. Mitigation: Formulation approaches (e.g., surfactants, liposomes, solid dispersions) [20] |
| Tissue Accumulation | Positive correlation (high logP) | Challenge: Accumulation in lipid-rich tissues (e.g., adipose, liver) leading to long-term or unpredictable toxicity [20]. Mitigation: Structural modification to reduce logP; therapeutic monitoring. |
| Non-Specific Binding | Positive correlation | Challenge: Increased binding to non-target proteins and tissues, reducing free drug concentration and potentially increasing background signal in imaging agents [22]. Mitigation: Optimize logP and introduce polar functional groups. |

Computational logP Prediction Methods

The experimental measurement of logP can be costly and time-consuming, driving the development of computational prediction methods [23]. These in silico models can be broadly classified into several families, each with distinct advantages and limitations.

- Atom-based methods (e.g., ALOGP) sum additive contributions of individual atoms. They are simple and fast but may lack accuracy for complex structures where electronic effects are significant [23].

- Fragment-based methods (e.g., CLOGP) sum hydrophobic contributions of larger molecular fragments and apply correction factors for interactions. They generally perform better than atom-based methods for larger molecules [23].

- Topology/Graph-based models use 2D molecular descriptors or modern deep neural networks (DNNs) trained on molecular graphs [23].

- Property-based methods apply theoretically rigorous physicochemical principles, such as calculating the transfer free energy from water to octanol using molecular mechanics (MM) or quantum mechanics (QM) approaches [23].
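The additive principle shared by atom-based and fragment-based methods can be sketched in a few lines of Python. This is only an illustration of the bookkeeping: the contribution values and the `additive_logp` helper are hypothetical placeholders, not the published ALOGP or CLOGP parameters, and the resulting number has no predictive meaning.

```python
# Toy additive logP estimate: sum of per-atom contributions plus an optional
# correction term (as fragment-based schemes add for intramolecular effects).
# The values below are made-up placeholders, NOT real ALOGP/CLOGP parameters.
HYPOTHETICAL_ATOM_CONTRIB = {
    "C": 0.30,
    "H": 0.10,
    "O": -0.40,
    "N": -0.60,
}


def additive_logp(atom_counts, contrib=HYPOTHETICAL_ATOM_CONTRIB, corrections=0.0):
    """Sum contribution * count over all atom types, then add corrections."""
    total = 0.0
    for element, count in atom_counts.items():
        if element not in contrib:
            # Mirrors a real limitation: unknown atom/fragment types cannot be scored.
            raise KeyError(f"no contribution available for atom type {element!r}")
        total += contrib[element] * count
    return total + corrections


# Ethanol (C2H6O) expressed as simple atom counts.
ethanol_counts = {"C": 2, "H": 6, "O": 1}
toy_value = additive_logp(ethanol_counts)
print(f"toy additive estimate: {toy_value:.2f}")
```

Real implementations differ mainly in how finely atoms or fragments are typed and in how the correction terms are derived, which is where the accuracy differences discussed above come from.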

Performance Benchmarking

Recent benchmarking studies assess the performance of various computational tools for predicting logP and other physicochemical properties. One comprehensive review evaluated twelve software tools implementing QSAR models and found that models for physicochemical properties generally outperformed those for toxicokinetic properties [24]. The study emphasized the importance of external validation and assessing performance within the model's applicability domain.

A study on the FElogP model, which uses molecular mechanics Poisson-Boltzmann surface area (MM-PBSA) to calculate transfer free energy, reported a root mean square error (RMSE) of 0.91 log units and a Pearson correlation (R) of 0.71 when validated against a diverse set of 707 molecules from the ZINC database [23]. This performance was superior to several commonly used QSPR and machine learning-based models in this specific benchmark.
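For readers who want to reproduce this kind of benchmark on their own data, the two reported statistics can be computed as below. This is a minimal sketch using only the standard library; the function names and the short example lists are assumptions for illustration and are not taken from the cited study.

```python
import math


def rmse(predicted, observed):
    """Root mean square error between predicted and experimental logP values."""
    if len(predicted) != len(observed) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    squared = [(p - o) ** 2 for p, o in zip(predicted, observed)]
    return math.sqrt(sum(squared) / len(squared))


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("inputs must have equal length of at least 2")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


# Hypothetical predicted vs. measured logP values (illustration only).
predicted_logp = [1.2, 2.9, 0.4, 3.8]
measured_logp = [1.0, 3.3, 0.9, 3.5]
print(f"RMSE = {rmse(predicted_logp, measured_logp):.2f}")
print(f"R    = {pearson_r(predicted_logp, measured_logp):.2f}")
```

Reporting both values matters because RMSE captures absolute error in log units while R only captures how well the ranking and trend are reproduced.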

Table 3: Comparison of logP Prediction Method Families

| Method Family | Examples | Key Principles | Advantages | Limitations |
|---|---|---|---|---|
| Atom-Based | ALOGP [23] | Sum of atom contributions | Fast computation; simple implementation | Less accurate for complex or large molecules; misses specific interactions |
| Fragment-Based | CLOGP [23] | Sum of fragment constants + corrections | Handles larger molecules well; accounts for intramolecular effects | Dependent on fragment library completeness; training-set dependent [23] |
| Topology/Graph-Based | MlogP, DNN models [23] | Uses 2D topological descriptors or molecular graphs | Can capture complex patterns without explicit rules; modern DNNs are powerful | Can be a "black box"; performance heavily reliant on training data quality/diversity [23] |
| Property-Based | FElogP [23] | MM-PBSA/GBSA calculation of transfer free energy | Physically rigorous principle; not directly parameterized on experimental logP | Higher computational cost; requires 3D structures and molecular mechanics parameters [23] |

Experimental Protocols

Shake-Flask Method (Gold Standard)

Principle: This method directly measures the partition coefficient by equilibrating the compound between n-octanol and water phases, followed by quantification of the solute concentration in each phase [23].

Procedure:

- Phase Saturation: Pre-saturate n-octanol with water and water with n-octanol by shaking equal volumes together for 24 hours. Allow phases to separate for another 24 hours before use.

- Sample Preparation: Dissolve a known amount of the test compound in a suitable volume of either the water-saturated octanol or the octanol-saturated water in a sealed vial or tube.

- Equilibration: Equilibrate the system by shaking mechanically for 24-48 hours at constant temperature (e.g., 25°C). Ensure shaker speed is sufficient for mixing but not so high as to form emulsions.

- Phase Separation: Centrifuge the mixture if necessary to achieve complete phase separation.

- Quantification: Carefully separate the two phases. Analyze the concentration of the compound in each phase using a validated analytical method (e.g., HPLC-UV, GC, or LC-MS). The initial concentration in the spiked phase should be verified.

- Calculation: $\log P = \log_{10}\left(C_{\text{octanol}} / C_{\text{water}}\right)$, where $C$ is the equilibrium concentration in the indicated phase.
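The final calculation step, together with a simple check of the amount recovered against the amount added, can be sketched as follows. The function names, units (µg/mL, mL, µg), and numerical readings are assumptions chosen for illustration, not values from a real experiment.

```python
import math


def shake_flask_logp(c_octanol, c_water):
    """log10 of the octanol/water equilibrium concentration ratio."""
    if c_octanol <= 0 or c_water <= 0:
        raise ValueError("both concentrations must be positive")
    return math.log10(c_octanol / c_water)


def mass_balance_recovery(amount_added, c_octanol, v_octanol, c_water, v_water):
    """Fraction of the added compound recovered across both phases.

    Values far from 1.0 suggest adsorption, degradation, or emulsion problems.
    """
    recovered = c_octanol * v_octanol + c_water * v_water
    return recovered / amount_added


# Hypothetical readings: concentrations in ug/mL, volumes in mL, amount in ug.
logp = shake_flask_logp(c_octanol=250.0, c_water=2.5)
recovery = mass_balance_recovery(
    amount_added=505.0, c_octanol=250.0, v_octanol=2.0, c_water=2.5, v_water=2.0
)
print(f"logP = {logp:.2f}, recovery = {recovery:.0%}")
```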

Chromatographic Method (High-Throughput Alternative)

Principle: The reversed-phase high performance liquid chromatography (RP-HPLC) retention time of a compound correlates with its lipophilicity. The method is calibrated with compounds of known logP values [23] [20].

Procedure:

- Chromatographic System: Use a standardized RP-HPLC system with a C18 column and a mobile phase of water and a water-miscible organic solvent (e.g., methanol or acetonitrile).

- Mobile Phase: Isocratic or gradient elution can be used. For isocratic methods, a mobile phase composition that provides adequate retention for the analytes must be determined.

- Calibration: Inject a series of standard compounds with known, reliably measured logP values covering a wide range. Record their retention times (or capacity factors, k').

- Measurement: Inject the test compound and measure its retention time under identical conditions.

- Calculation: Construct a calibration curve by plotting the known logP values of the standards against their measured log retention parameters (e.g., log k'). Use the regression equation from this curve to calculate the logP of the test compound based on its retention time.
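The calibration and calculation steps amount to an ordinary least-squares fit of known logP against log k'. A minimal standard-library sketch follows; the `fit_line` helper and the standards' values are hypothetical and chosen only so the arithmetic is easy to follow.

```python
def fit_line(x, y):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(x)
    if n < 2 or n != len(y):
        raise ValueError("need at least two paired points")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical calibration standards: measured log k' and literature logP.
standards_log_k = [0.0, 0.5, 1.0, 1.5]
standards_logp = [1.0, 2.0, 3.0, 4.0]

slope, intercept = fit_line(standards_log_k, standards_logp)

# Apply the regression equation to a test compound measured under
# identical chromatographic conditions (hypothetical log k' = 0.75).
test_compound_logp = slope * 0.75 + intercept
print(f"logP = {slope:.2f} * log k' + {intercept:.2f}")
print(f"estimated logP of test compound: {test_compound_logp:.2f}")
```

Estimates are only as reliable as the calibration range, so a test compound whose log k' falls outside the range spanned by the standards should be treated with caution.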

Figure 2. Computational logP Prediction Workflow. Decision tree outlining the general workflow for different families of in silico logP prediction methods.

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Computational Tools and Resources for logP Prediction Research

| Tool / Resource Name | Type / Category | Primary Function in Research | Access / Note |
|---|---|---|---|
| OPERA | QSAR Software Suite | Open-source battery of QSAR models for predicting logP and other PC properties; includes applicability domain assessment [24]. | Freely available |
| SwissADME | Web Service | Provides multiple logP predictions (iLOGP, XLOGP3, WLOGP) alongside other ADME parameters for a comprehensive profile [21]. | Freely available online |
| RDKit | Cheminformatics Library | Open-source toolkit for cheminformatics and machine learning; used for structure standardization, descriptor calculation, and model building [24]. | Freely available (Python) |
| ADMET Predictor | Commercial Platform | Comprehensive commercial software for predicting ADMET properties, including logP, using proprietary models [21]. | Commercial license |
| BIOVIA Discovery Studio | Commercial Modeling Suite | Integrated environment for molecular modeling and simulation, including logP calculation tools [21]. | Commercial license |
| PubChem PUG REST API | Database Access | Programmatic interface to retrieve chemical structures (SMILES) and property data for dataset curation [24]. | Freely available |
| ZINC Database | Compound Library | Publicly accessible database of commercially available compounds; source of curated structures and experimental data for benchmarking [23]. | Freely available |

Application Notes

The prediction of the n-octanol/water partition coefficient (logP) is a cornerstone of modern drug discovery, influencing a compound's absorption, distribution, metabolism, excretion, and toxicity (ADMET) [23]. The journey from empirical observations to sophisticated in silico models represents a paradigm shift in how chemists design new therapeutic agents. This note details the historical evolution and current methodologies for logP prediction, providing a framework for their application in a research setting.

Historical Foundation and Key Developments

The conceptual foundation for logP prediction was laid by Hansch and Fujita in the 1960s with the development of the substituent constant method [25]. This approach calculated a molecule's logP by adding a substituent's π-constant to the measured logP of a parent compound [26]. While revolutionary, its major limitation was the dependency on a measured logP value for every new parent structure [25]. This spurred the development of more generalizable "fragment-based" methods, such as the CLOGP program from Pomona College. CLOGP was designed to deconstruct any molecule into its constituent fragments automatically, using updatable data tables to reassemble them into a logP value while accounting for intramolecular interactions [25]. A key philosophical tenet of the CLOGP development team was to base calculations on known solvation forces and physical chemistry principles, rather than relying solely on statistical correlations [25]. The subsequent emergence of atom-based and later, topology and property-based methods, has significantly expanded the toolkit available to researchers [23].

Modern Methodologies and Performance

Contemporary logP prediction methods can be broadly categorized, each with distinct advantages and limitations as summarized in Table 1.

Table 1: Comparison of Modern logP Prediction Methodologies

| Method Type | Representative Examples | Core Principle | Key Advantages | Reported Performance (RMSE on ZINC707*) |
|---|---|---|---|---|
| Fragment-Based | CLOGP [23] | Summation of hydrophobic contributions from molecular fragments with correction factors [23]. | High interpretability; based on physical chemistry principles [25]. | >1.00 (est.) [23] |
| Atom-Based | AlogP, XlogP [23] [17] | Summation of contributions from individual atoms, often with corrections for neighboring atoms [23]. | Fast calculation; suitable for high-throughput screening. | ~1.13 (OpenBabel) [23] |
| Topology/ML-Based | DNN Models, MlogP [23] | Use of topological descriptors or deep neural networks on molecular graphs to predict logP [23]. | Can capture complex, non-additive effects without explicit rules. | 1.23 (DNN) [23] |
| Property-Based (Physical) | FElogP [23] | Calculation via solvation free energy using Molecular Mechanics Poisson-Boltzmann Surface Area (MM-PBSA) [23]. | Rigorous physical basis; not dependent on a specific training set. | 0.91 [23] |
| Consensus/Ensemble | JPlogP [17] | Distills knowledge from multiple prediction methods into a single model trained on averaged predictions [17]. | Mitigates individual model bias; often superior performance on pharmaceutical-like compounds [17]. | N/A |

*The ZINC707 dataset is a structurally diverse set of molecules with high-quality measurement data, providing a rigorous benchmark [23].

The performance of any logP predictor is highly dependent on the chemical space of the test set [17]. Models trained on public datasets like PhysProp may not perform as well on molecules typical of pharmaceutical research [17]. The FElogP model, which calculates logP from the water-to-octanol transfer free energy, achieved the lowest error among the methods compared on the diverse ZINC707 benchmark (RMSE = 0.91, R = 0.71), outperforming several established QSPR and machine learning models [23]. Meanwhile, consensus approaches like JPlogP, which leverage the knowledge embedded in multiple existing predictors, have been shown to be particularly effective for drug-like molecules [17].

Critical Application in Pharmacokinetic Prediction

Accurate logP prediction is not an academic exercise; it is critical for predicting key pharmacokinetic parameters. Volume of distribution at steady state (VDss) is one such parameter, and its prediction is highly sensitive to the input logP value [4]. A recent sensitivity analysis demonstrated that among six different methods for predicting human VDss, the Rodgers-Rowland method is highly sensitive to logP, often leading to significant over-prediction for lipophilic drugs (logP > 3), while methods like Oie-Tozer and TCM-New are more robust [4]. This underscores the importance of selecting both an accurate logP value and a VDss prediction method that is appropriate for the compound's lipophilicity.

Experimental Protocols

Protocol: Measurement of logP via the Shake-Flask Method

The shake-flask method is a classical, direct technique for measuring logP [23].

Principle: A solute is allowed to distribute between immiscible water-saturated n-octanol and n-octanol-saturated water phases. The partition coefficient is determined from the concentration ratio at equilibrium [23].

Materials:

- Research Reagent Solutions:

  - n-Octanol (HPLC grade)

  - Deionized water (HPLC grade)

  - Compound of interest (high purity)

  - Phosphate buffer (if needed for pH control)

  - Analytical instrument (e.g., HPLC-UV, LC/MS/MS) [27]

Procedure:

- Preparation of Saturated Solvents: Mutually saturate n-octanol and water by mixing them in a separatory funnel for 24 hours. Allow the phases to separate fully and use them for all subsequent steps.

- Sample Preparation: Prepare a solution of the test compound in a suitable solvent (e.g., DMSO), ensuring the final concentration in the partitioning system is below its solubility limit in both phases.

- Partitioning: Add an appropriate volume of the compound stock solution to a vial. Evaporate the solvent under a stream of nitrogen. Add precisely measured volumes of the water-saturated n-octanol and n-octanol-saturated water to the vial.

- Equilibration: Seal the vial and agitate it on a mechanical shaker for a predetermined time (e.g., 1 hour) at a constant temperature (e.g., 25°C) to reach equilibrium, mixing thoroughly but not so vigorously that an emulsion forms.

- Phase Separation: Centrifuge the vial to achieve complete and sharp separation of the two phases.

- Quantification: Carefully sample from each phase and analyze the solute concentration using a calibrated analytical method such as HPLC-UV or LC/MS/MS [27].

- Calculation: Calculate logP using the formula $\log P = \log_{10}\left([C]_{\text{octanol}} / [C]_{\text{water}}\right)$, where $[C]$ is the concentration in the respective phase.

Critical Notes:

- This method is best suited for compounds with logP in the range of -2 to 4.

- For ionizable compounds, the pH of the aqueous phase must be carefully controlled so that the compound is in its neutral form (typically 1-2 pH units below the pKa for acids, or above the pKa for bases). Corrections for ionization are required if this is not the case [25].

- Vigorous shaking can lead to emulsion formation, resulting in inaccurate measurements [25].

Protocol: In Silico logP Prediction Using a Free Energy-Based Method (FElogP)

This protocol outlines the steps for predicting logP using the physical property-based FElogP method, which leverages molecular dynamics simulations [23].

Principle: logP is calculated from the transfer free energy of moving a molecule from water to n-octanol, derived from solvation free energies computed using the MM-PBSA approach [23].

Materials (Software/Tools):

- Research Reagent Solutions (Computational):

  - Structure Editor: e.g., Avogadro, ChemDraw (for drawing and initial geometry optimization).

  - Force Field Parametrization Tool: e.g., Antechamber (for assigning GAFF2 force field parameters) [23].

  - Molecular Dynamics Engine: e.g., AMBER, GROMACS, OpenMM (for running solvation simulations).

  - MM-PBSA/GBSA Tool: e.g., `MMPBSA.py` from AmberTools (for calculating solvation free energies from trajectories) [23].

Procedure:

- Input Structure Preparation: Generate a 3D structure of the molecule of interest. Perform geometry optimization using semi-empirical or DFT methods to obtain a low-energy conformation.

- System Setup: Assign atomic partial charges (e.g., using AM1-BCC) and force field parameters (e.g., GAFF2). Solvate the molecule in periodic boxes of water (e.g., TIP3P) and n-octanol, ensuring sufficient padding between the solute and box edges.

- Molecular Dynamics Simulation:

  - Energy Minimization: Remove any bad contacts in the system.

  - Heating: Gradually heat the system to the target temperature (e.g., 298.15 K).

  - Equilibration: Run simulations under constant pressure and temperature (NPT ensemble) until the system density and energy stabilize.

  - Production Run: Perform an extended simulation (e.g., 10-100 ns) to collect a trajectory for free energy analysis.

- Free Energy Calculation: Use the MM-PBSA method on frames extracted from the production trajectory. This involves calculating the average of the molecular mechanics energy, and the polar and non-polar solvation energies ($\Delta G_{\text{PB}}$ and $\Delta G_{\text{SA}}$) for the solute in both water and n-octanol [23].

- logP Calculation: Compute the solvation free energy in each solvent ($\Delta G_{\text{solv}}$). The logP is then calculated using the fundamental relationship: $\log P = \left(\Delta G_{\text{solv}}^{\text{water}} - \Delta G_{\text{solv}}^{\text{octanol}}\right) / (RT \ln 10)$ [23].
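The last step of the procedure is a unit conversion from free energy to log units. The sketch below assumes solvation free energies in kcal/mol; the function name and the example energies are hypothetical, and it covers only this final conversion, not the simulation or MM-PBSA analysis.

```python
import math

# Gas constant in kcal/(mol*K); assumes free energies are given in kcal/mol.
R_KCAL_PER_MOL_K = 0.0019872041


def logp_from_solvation(dg_solv_water, dg_solv_octanol, temperature_k=298.15):
    """logP = (dG_solv(water) - dG_solv(octanol)) / (R * T * ln 10).

    A more negative (more favorable) solvation free energy in octanol than
    in water gives a positive logP, i.e. a lipophilic compound.
    """
    rt_ln10 = R_KCAL_PER_MOL_K * temperature_k * math.log(10)
    return (dg_solv_water - dg_solv_octanol) / rt_ln10


# Hypothetical solvation free energies in kcal/mol (illustration only).
example_logp = logp_from_solvation(dg_solv_water=-6.0, dg_solv_octanol=-9.0)
print(f"logP = {example_logp:.2f}")
```

At 298.15 K the denominator is roughly 1.36 kcal/mol, so an error of about 1.4 kcal/mol in the transfer free energy shifts the predicted logP by one full log unit.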

The Scientist's Toolkit

Table 2: Essential Research Reagents, Tools, and Software for logP Studies

| Item Name | Function/Application | Specific Examples / Notes |
|---|---|---|
| n-Octanol & Water | The standard solvent system for partition coefficient measurement [23]. | Must be mutually saturated before use to ensure volume stability and thermodynamic consistency. |
| HPLC-UV / LC-MS/MS | Analytical instruments for quantifying solute concentration in the shake-flask method [27] [23]. | Provides high sensitivity and specificity; essential for low-concentration samples. |
| Rapid Equilibrium Dialysis (RED) | Device for measuring fraction unbound in plasma (fup), a key parameter in pharmacokinetic modeling that relates to logP [27]. | Used in conjunction with logP for mechanistic VDss predictions [27]. |
| Molecular Dynamics Engine | Software for simulating the physical movements of atoms and molecules over time. | GROMACS, AMBER, OpenMM; core component for physical property-based methods like FElogP [23]. |
| MM-PBSA/GBSA Tools | Computes solvation free energies from MD trajectories, enabling logP prediction via transfer free energy [23]. | A key utility in methods like FElogP; implementations are available in packages like AMBER. |
| logP Prediction Software | Programs for fast, in silico estimation of logP. | CLOGP (fragment-based), ACD/logP (fragment-based), OpenBabel (atom-based) [23]. |
| Machine Learning Platforms | Environment for building and deploying custom or pre-trained logP prediction models. | KNIME, Python (with scikit-learn, deepchem); used in methods like JPlogP and DNN models [17]. |

Within modern drug discovery, the optimization of a compound's Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties is as crucial as targeting its biological activity. Key to this optimization are three fundamental physicochemical properties: the partition coefficient (logP), the dissociation constant (pKa), and the aqueous solubility (LogS). These properties are deeply interconnected, collectively governing a molecule's behavior in biological systems. This Application Note delineates the essential relationships between logP, pKa, and solubility, and provides detailed protocols for their in silico and experimental determination, framed within a broader research context comparing computational logP prediction methods.

Theoretical Foundations and Interrelationships

Defining the Core Properties

- logP: The partition coefficient, logP, quantifies a compound's lipophilicity by measuring its concentration ratio between two immiscible phases: 1-octanol and water. It is defined as $\log P = \log_{10}\left([\text{Drug}]_{\text{octanol}} / [\text{Drug}]_{\text{water}}\right)$ and is a property of the neutral, unionized molecule [3]. LogP is a primary indicator of a compound's ability to cross lipid membranes.

- pKa: The pKa value indicates the strength of an acid or a base. It is the pH at which 50% of the molecule is ionized. The ionization state of a molecule, which changes with environmental pH, profoundly impacts its solubility and permeability [3] [28].

- LogS: Aqueous solubility (often expressed as LogS) is the base-10 logarithm of a compound's solubility in water, with solubility expressed in moles per liter. It determines the maximum concentration available for absorption in the gastrointestinal tract [3].

The Critical Relationship: logP, pKa, and logD

A molecule's effective lipophilicity in a specific pH environment is described by its distribution coefficient (logD). Unlike logP, logD accounts for all species present—both ionized and unionized—in the aqueous phase. The relationship between logP and pKa is mathematically embodied in the calculation of logD.

For a monoprotic acid: LogD = LogP - log(1 + 10^(pH - pKa)) [3]

For a monoprotic base: LogD = LogP - log(1 + 10^(pKa - pH))

This relationship, visualized in the diagram below, is critical for drug design. A drug must possess a balanced lipophilicity profile: sufficient hydrophilicity to be soluble in aqueous environments like blood (pH ~7.4), and sufficient lipophilicity to cross lipid membranes. This balance is often a moving target, as a drug encounters different pH environments throughout the body, from the highly acidic stomach (pH 1.5-3.5) to the more neutral intestines (pH 6-7.4) [3].

Figure 1: The Interplay of pH, pKa, logP, and logD in Determining Bioavailability. The diagram illustrates how the environmental pH and a molecule's intrinsic pKa govern its ionization state, which in turn determines the distribution coefficient (LogD). LogD directly influences the critical balance between aqueous solubility and membrane permeability, ultimately impacting bioavailability.
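The two logD expressions given above for monoprotic acids and bases translate directly into code. The sketch below evaluates them across the pH values mentioned in the text; the function names and the example compound (logP 3.0, acidic pKa 4.0) are hypothetical.

```python
import math


def logd_monoprotic_acid(logp, pka, ph):
    """logD = logP - log10(1 + 10**(pH - pKa)) for a monoprotic acid."""
    return logp - math.log10(1 + 10 ** (ph - pka))


def logd_monoprotic_base(logp, pka, ph):
    """logD = logP - log10(1 + 10**(pKa - pH)) for a monoprotic base."""
    return logp - math.log10(1 + 10 ** (pka - ph))


def logd_profile(logp, pka, ph_values, is_acid=True):
    """Return (pH, logD) pairs over a list of pH values."""
    fn = logd_monoprotic_acid if is_acid else logd_monoprotic_base
    return [(ph, fn(logp, pka, ph)) for ph in ph_values]


# Hypothetical acid with logP = 3.0 and pKa = 4.0, evaluated at stomach,
# intestinal, and blood pH values quoted in the text.
for ph, logd in logd_profile(3.0, 4.0, [1.5, 3.5, 6.0, 7.4]):
    print(f"pH {ph:>4}: logD = {logd:5.2f}")
```

For this example acid, logD stays close to logP in the stomach and drops by several log units at blood pH, which is the solubility versus permeability trade-off the figure describes.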

Experimental Determination: Core Protocols

Reliable experimental data is the foundation for validating in silico predictions. The following protocols outline standard methods for determining logP and pKa.

Protocol: HPLC-Based logP Determination

This robust, high-throughput method estimates logP without traditional octanol-water shaking, using Reverse-Phase High-Performance Liquid Chromatography (RP-HPLC) [29].

Research Reagent Solutions

Table 1: Essential Materials for HPLC-Based logP Analysis

| Item | Function |
|---|---|
| RP-HPLC System | Analytical instrument for separation and detection. |
| C18 Column | Non-polar stationary phase that interacts with analytes based on hydrophobicity. |
| Aqueous Buffer (e.g., pH 6, 9) | Mobile phase component mimicking physiological conditions. |
| Organic Solvent (e.g., Acetonitrile, Methanol) | Mobile phase component for eluting hydrophobic compounds. |
| Drug/Compound Standards | Analytes for which logP is to be determined. |
| Reference Standards with known logP | Compounds with well-established logP values for creating a calibration curve. |

Step-by-Step Workflow

- Mobile Phase Preparation: Prepare a series of mobile phases with varying ratios of aqueous buffer (e.g., pH 6.0) and organic solvent (e.g., acetonitrile).

- System Equilibration: Equilibrate the HPLC system and the C18 column with each mobile phase composition until a stable baseline is achieved.

- Reference Standard Analysis: Inject the reference standards and record their retention times (tR) for each mobile phase composition, together with the column dead time (t0) from an unretained marker.

- Calibration Curve: For each reference standard, calculate the retention factor k = (tR - t0) / t0 at each composition and plot log k against the percentage of organic solvent. Extrapolate, typically by linear regression, to 0% organic solvent (100% water) to obtain log kw. Create a calibration curve by plotting the known logP values of the references against their log kw values.

- Analyte Measurement: Inject the compound of unknown logP and repeat the retention measurement and extrapolation described for the reference standards to determine its log kw.

- logP Calculation: Use the calibration curve to convert the log kw of the unknown compound into a logP value [29].
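The extrapolation to 100% water can be sketched as a linear fit of log k against the organic solvent fraction, with the intercept taken as log kw. This assumes the usual retention factor definition k = (tR - t0) / t0 and a linear relationship over the measured range; the helper names and the retention times are hypothetical.

```python
import math


def retention_factor(t_r, t_0):
    """k = (tR - t0) / t0, where t0 is the column dead time."""
    if t_r <= t_0:
        raise ValueError("retention time must exceed the dead time")
    return (t_r - t_0) / t_0


def fit_line(x, y):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def log_kw(organic_fractions, retention_times, t_0):
    """Extrapolate log k to 0% organic solvent (the fit intercept)."""
    log_k = [math.log10(retention_factor(t, t_0)) for t in retention_times]
    _slope, intercept = fit_line(organic_fractions, log_k)
    return intercept


# Hypothetical run: organic fraction (v/v) and retention times in minutes.
fractions = [0.40, 0.50, 0.60]
times_min = [7.31, 4.16, 2.58]
print(f"log kw = {log_kw(fractions, times_min, t_0=1.0):.2f}")
```

The resulting log kw values for the reference standards would then be regressed against their known logP values, and the same equation applied to the analyte.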

Protocol: Potentiometric (pH-Metric) pKa and logP Determination

This method determines pKa and logP simultaneously by monitoring pH changes during a titration [28].

Research Reagent Solutions

Table 2: Essential Materials for Potentiometric Titration

| Item | Function |
|---|---|
| Sirius T3 Instrument (or equivalent) | Automated analytical system for performing titrations and measuring pH. |
| pH Electrode | Precisely measures the hydrogen ion concentration in the solution. |
| Titrant (Acid, e.g., HCl) | Standardized solution for decreasing the pH of the sample solution. |
| Titrant (Base, e.g., KOH) | Standardized solution for increasing the pH of the sample solution. |
| Water-Miscible Cosolvent (e.g., Methanol, DMSO) | Aids in dissolving compounds with poor aqueous solubility. |
| Inert Gas (e.g., Nitrogen) | Bubbled through the solution to exclude carbon dioxide. |

Step-by-Step Workflow

- Sample Preparation: Dissolve 2-5 mg of the solid compound in a water-cosolvent mixture (e.g., water-methanol) to ensure complete dissolution [28].

- Acid-Base Titration:

  - The instrument titrates the sample with a strong acid (e.g., HCl) to a low pH, ensuring the compound is fully protonated.

  - It then performs a reverse titration with a strong base (e.g., KOH) back to a high pH.

  - The entire process is monitored with a precision pH electrode.

- pKa Calculation: The pKa value is calculated from the resulting titration curve by identifying the pH at the inflection point, which corresponds to the pH where half of the molecules are ionized.

- logP Determination (Dual-Phase Titration): For logP, the titration is repeated in a two-phase system of water and octanol. The difference in the titration curves between the one-phase (aqueous) and two-phase systems is used to calculate the partition coefficient of the neutral species (logP) [28].
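One simplified way to see how the curve difference encodes logP is through the shift in apparent pKa between the aqueous and two-phase titrations. The sketch below assumes a monoprotic compound whose neutral form alone partitions into octanol, in which case the apparent pKa shifts by log10(1 + r·P), where r is the octanol/water volume ratio. This is a textbook simplification, not the instrument's actual refinement procedure, and the function name and example numbers are hypothetical.

```python
import math


def logp_from_pka_shift(pka_aqueous, pka_apparent, volume_ratio, is_acid=True):
    """Estimate logP from the apparent pKa shift in a water/octanol titration.

    Assumes only the neutral species partitions into octanol:
      acid: pKa_apparent = pKa + log10(1 + r * P)
      base: pKa_apparent = pKa - log10(1 + r * P)
    where r = V_octanol / V_water and P is the partition coefficient.
    """
    if volume_ratio <= 0:
        raise ValueError("volume ratio must be positive")
    shift = pka_apparent - pka_aqueous if is_acid else pka_aqueous - pka_apparent
    partition = (10 ** shift - 1) / volume_ratio
    if partition <= 0:
        raise ValueError("pKa shift has the wrong sign for this compound type")
    return math.log10(partition)


# Hypothetical acid: aqueous pKa 4.0, apparent pKa 6.0 with equal phase volumes.
print(f"logP = {logp_from_pka_shift(4.0, 6.0, volume_ratio=1.0):.2f}")
```

Lipophilic acids therefore show an upward pKa shift in the presence of octanol and lipophilic bases a downward one, and adjusting the volume ratio lets the shift stay measurable across a wide logP range.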

In Silico Prediction Methods and Performance

Computational tools offer a rapid and cost-effective alternative for predicting logP, especially in the early stages of drug discovery.

Different software vendors employ a variety of algorithms, each with its own strengths:

- Group Contribution Methods: These methods, used by tools like Molinspiration miLogP and Chemaxon's logP, calculate logP by summing atom-based or fragment-based contributions derived from large training sets of experimental data [8] [30].

- GALAS (Global, Adjusted Locally According to Similarity): This methodology, implemented in ACD/Percepta, builds a global model that is then refined based on the similarity of the query compound to structures with known experimental data in the training library [9] [10].

- Consensus Models: Some platforms, such as ACD/Percepta, offer a consensus logP that averages the results from multiple independent algorithms (e.g., Classic and GALAS) to improve prediction reliability [10].

Quantitative Accuracy Comparison of Prediction Tools

Benchmarking studies, such as the blind SAMPL (Statistical Assessment of the Modeling of Proteins and Ligands) challenges, provide objective comparisons of predictive accuracy.

Table 3: Benchmarking Accuracy of Selected logP Prediction Tools

| Software / Method | Algorithm Type | Reported Accuracy (RMSE*) | Training Set Size | Key Application Notes |
|---|---|---|---|---|
| Chemaxon (SAMPL 6) | Empirical / Group Contribution | 0.31 [8] | Proprietary Extensions | Achieved highest accuracy in the SAMPL 6 blind challenge. |
| ACD/LogP GALAS (v2025) | GALAS | ~0.5 log units for 80% of new compounds [9] | >22,000 compounds [9] [10] | Improved from v2024; expanded coverage for bRo5 space (PROTACs, peptides). |
| Molinspiration miLogP | Group Contribution | stdev = 0.428 [30] | >12,000 molecules [30] | 80.2% of predictions have error < 0.5; known for robustness. |
| ALOGPS 2.1 | Neural Network (E-state indices) | rms = 0.35 [31] | 12,908 molecules [31] | Provides predictions from multiple public algorithms for comparison. |
| Reference: MOE (various) | Multiple | 0.543 - 0.605 (RMSE on SAMPL 6) [8] | Varies | Serves as a common reference point for performance comparison. |

*RMSE: Root Mean Square Error

Figure 2: Generalized Workflow for In Silico logP Prediction. The process begins with a chemical structure input, which is processed by one or more prediction algorithms. These algorithms utilize different methodologies (e.g., group contribution, neural networks) to compute a logP value, often accompanied by a reliability index to gauge prediction confidence.

Application in Drug Discovery and Development

Understanding and applying the relationships between logP, pKa, and solubility is vital for rational drug design.

- Informing Lead Optimization: A compound's calculated logD profile can guide structural modifications. For instance, adding an ionizable group or altering substituents can adjust the pKa, thereby shifting the logD curve to achieve a better balance of solubility and permeability at the target physiological pH [3] [28].

- Predicting Pharmacokinetic Properties: LogP and logD are key inputs for Quantitative Structure-Activity Relationship (QSAR) models that predict ADMET properties. They correlate with intestinal absorption, plasma protein binding, volume of distribution, and penetration of the blood-brain barrier [3] [14].

- Application to Natural Products: Natural compounds often fall outside the "rule of five" and present unique challenges like poor solubility or chemical instability. In silico profiling of logP, pKa, and solubility allows for the early identification of these challenges, guiding the selection of viable candidates from natural product libraries for further investigation [14].

The interplay between logP, pKa, and solubility forms a cornerstone of physicochemical property analysis in drug discovery. While logP defines intrinsic lipophilicity, its operational value is realized through logD, which incorporates the critical dimension of ionization as a function of pH and pKa. A multidisciplinary approach that integrates robust experimental protocols with state-of-the-art in silico predictions is essential for accurately profiling compounds. As computational models continue to improve in accuracy and expand their coverage to novel chemical spaces like PROTACs and cyclic peptides, their role in de-risking drug candidates and accelerating the path to the clinic will only become more pronounced [9].

Computational logP Prediction: From Traditional Methods to Modern AI Solutions

The octanol-water partition coefficient (logP) is a fundamental physicochemical property that measures a compound's lipophilicity, serving as a critical parameter in drug discovery for predicting absorption, distribution, metabolism, excretion, and toxicity (ADMET) profiles [23]. Substructure-based approaches represent one of the two primary categories of computational methods for predicting logP, operating on the fundamental principle that a molecule's lipophilicity can be approximated by the sum of contributions from its constituent parts [7]. These methods can be broadly classified into atom-based approaches, which decompose molecules to the single-atom level, and fragmental methods, which utilize larger molecular fragments as the fundamental contribution units [7] [17]. The underlying hypothesis of these additive methods is that molecular lipophilicity is primarily determined by the hydrophobic and hydrophilic contributions of discrete structural components, though successful implementations typically incorporate correction factors to account for intramolecular interactions that deviate from perfect additivity [32].

These computational approaches have gained importance in pharmaceutical research because experimental logP determination can be costly, time-consuming, and challenging for compounds that are unstable or difficult to synthesize [23]. By providing rapid in silico estimates of lipophilicity, substructure-based methods enable medicinal chemists to prioritize compounds with favorable drug-like properties early in the discovery pipeline, in line with established guidelines such as Lipinski's Rule of Five, which specifies logP < 5 for good oral bioavailability [33] [32].

Quantitative Comparison of Method Performance

Table 1: Performance comparison of substructure-based logP prediction methods

| Method | Approach Type | Key Features | Reported Performance | Applicable Chemical Space |
|---|---|---|---|---|
| JPlogP | Atom-based | 6-digit atom typing system; trained on consensus predictions | High performance on pharmaceutical benchmark | Drug-like molecules [17] |
| XLOGP3 | Atom & Fragment | Uses molecular fragments with correction factors | N/A | Broad organic compounds [23] [32] |
| ALOGP | Atom-based | Simple atomic contributions | N/A | Small molecules [23] |
| CLOGP | Fragment-based | Fragment constants with interaction corrections | Overestimates for large, flexible molecules [23] | |
| MRlogP | Machine Learning | Transfer learning; uses atomic & topological descriptors | RMSE 0.715 (PHYSPROP) | Drug-like molecules (QED > 0.67) [32] |

Table 2: Performance benchmarks across different datasets

| Method | Public Dataset (N=266) | Nycomed Dataset (N=882) | Pfizer Dataset (N=95,809) | Martel Dataset (N=707) |
|---|---|---|---|---|
| AAM (Baseline) | Baseline RMSE | Baseline RMSE | Baseline RMSE | N/A |
| Majority of Methods | Reasonable results | Variable performance | Variable performance | N/A |
| Successful Methods | 30 methods tested | Only 7 methods successful | Only 7 methods successful | N/A |
| Simple NC/NHET Equation | Comparable to many programs | Comparable to many programs | Comparable to many programs | N/A |
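To give a sense of how simple the NC/NHET baseline in the table is, the sketch below estimates logP from only the number of carbon atoms (NC) and the number of heteroatoms (NHET). The coefficients are the values commonly quoted for this equation in the benchmarking literature; they are reproduced here as an assumption and should be checked against the original study before use. The function name `simple_logp` is hypothetical.

```python
def simple_logp(n_carbon: int, n_hetero: int) -> float:
    """Baseline logP estimate from atom counts alone.

    Assumed form: logP = 1.46 + 0.11 * NC - 0.11 * NHET.
    The coefficients are quoted from the literature as an assumption;
    verify them against the original benchmarking study.
    """
    return 1.46 + 0.11 * n_carbon - 0.11 * n_hetero


# Benzene has six carbons and no heteroatoms.
benzene_estimate = simple_logp(n_carbon=6, n_hetero=0)
print(round(benzene_estimate, 2))
```

Because the equation ignores connectivity entirely, it is useful mainly as a sanity baseline: a program that cannot beat it on a given dataset adds little value there.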

The performance of substructure-based logP predictors varies significantly across different chemical spaces [7]. While many methods demonstrate reasonable accuracy on public datasets with limited molecular diversity, their performance often declines with increasing molecular complexity and size [7]. A comprehensive evaluation of logP prediction methods revealed that accuracy generally decreases as the number of non-hydrogen atoms in a molecule increases, highlighting a key limitation of additive approaches [7]. Notably, only seven of the tested methods maintained acceptable performance across both public and large industrial datasets [7].

For drug discovery applications, methods specifically trained or optimized on pharmaceutical-like chemical space generally outperform those developed for broader applications [17]. The Martel dataset, comprising 707 structurally diverse drug-like molecules with consistently measured logP values, has emerged as a valuable benchmark for evaluating predictive accuracy in relevant chemical space [23] [17]. On this challenging dataset, many popular methods show larger errors (RMSE > 1.0) than they report on traditional benchmarks [23].
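The benchmark comparisons above rest on a few simple statistics. The following sketch computes RMSE and R², and also stratifies RMSE by heavy-atom count to expose the size dependence discussed earlier. The function names, the bin width, and the toy records are all illustrative assumptions, not values from the cited studies.

```python
import math
from collections import defaultdict


def rmse(y_true, y_pred):
    """Root-mean-square error between experimental and predicted values."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def r_squared(y_true, y_pred):
    """Coefficient of determination, 1 - SS_res / SS_tot."""
    mean_t = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_t) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def rmse_by_size(records, bin_width=10):
    """Group (heavy_atom_count, experimental, predicted) records into
    size bins and report the RMSE of each bin, keyed by the bin's lower edge."""
    bins = defaultdict(list)
    for n_heavy, exp, pred in records:
        bins[(n_heavy // bin_width) * bin_width].append((exp, pred))
    return {
        lower: rmse([e for e, _ in pairs], [p for _, p in pairs])
        for lower, pairs in sorted(bins.items())
    }


# Made-up records for illustration only: (heavy atoms, experimental, predicted).
toy_records = [(12, 1.0, 1.2), (18, 2.0, 1.7), (25, 3.0, 3.8), (29, 2.5, 1.5), (41, 4.0, 5.5)]
for lower, value in rmse_by_size(toy_records).items():
    print(f"{lower}-{lower + 9} heavy atoms: RMSE = {value:.2f}")
```

Reporting the error per size bin rather than as a single number makes it easier to see whether a method's headline accuracy carries over to the larger molecules typical of current drug candidates.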

Experimental Protocols

Protocol 1: Implementing Atom-Based Contribution Methods

Principle: Atom-based methods calculate logP by summing predetermined contribution values for each atom in a molecule, often with corrections for specific molecular environments [23] [32].

Procedure:

- Molecular Standardization:
  - Input molecular structures in SMILES or SDF format
  - Remove salts and standardize tautomeric forms using tools like RDKit [32]
  - Generate a canonical tautomeric representation for consistent atom typing
- Atom Typing:
  - Classify each atom according to the method's predefined atom types
  - Example: In JPlogP, each atom type is encoded as a 6-digit number (A-BB-C-DD) representing charge, atomic number, connectivity, and a special classifier [17]
- Contribution Summation:
  - Retrieve pre-calculated hydrophobic contribution values for each atom type
  - Sum all atomic contributions to obtain a preliminary logP estimate
  - Apply any correction factors the method defines for specific structural features
- Validation:
  - Compare predicted values against experimental data for benchmark compounds
  - Calculate performance metrics (RMSE, R²) to evaluate accuracy
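The atom typing and summation steps of Protocol 1 can be sketched in a few lines. This is a toy illustration under stated assumptions, not the JPlogP implementation: the `Atom` record, the reading of the A-BB-C-DD layout (charge offset by one so it stays a single non-negative digit), and every contribution value in `TOY_CONTRIBUTIONS` are hypothetical. In practice the atom properties would come from a cheminformatics toolkit after standardization.

```python
from dataclasses import dataclass

ATOMIC_NUMBER = {"C": 6, "N": 7, "O": 8}


@dataclass(frozen=True)
class Atom:
    element: str
    charge: int = 0           # formal charge
    heavy_neighbors: int = 0  # number of attached non-hydrogen atoms
    special: int = 0          # method-specific classifier (hypothetical)


def atom_type(atom: Atom) -> str:
    """Pack atom properties into a 6-digit A-BB-C-DD string.

    Assumption: the charge digit is stored as charge + 1.
    """
    return (
        f"{atom.charge + 1:d}"
        f"{ATOMIC_NUMBER[atom.element]:02d}"
        f"{atom.heavy_neighbors:d}"
        f"{atom.special:02d}"
    )


def predict_logp(atoms, contributions, corrections=()):
    """Sum per-atom contributions plus corrections.

    Returns the estimate and the list of atom types that had no
    tabulated contribution, so gaps are reported rather than hidden.
    """
    total, untyped = 0.0, []
    for atom in atoms:
        key = atom_type(atom)
        if key in contributions:
            total += contributions[key]
        else:
            untyped.append(key)
    return total + sum(corrections), untyped


# Hypothetical contribution values, for illustration only.
TOY_CONTRIBUTIONS = {"106100": 0.5, "106200": 0.3, "108100": -1.0}

# Ethanol heavy atoms: CH3, CH2, OH.
ethanol = [Atom("C", heavy_neighbors=1), Atom("C", heavy_neighbors=2), Atom("O", heavy_neighbors=1)]
estimate, untyped = predict_logp(ethanol, TOY_CONTRIBUTIONS)
print([atom_type(a) for a in ethanol], round(estimate, 2), untyped)
```

Returning the untyped atoms alongside the estimate is a deliberate choice: a prediction that silently skipped atoms should be treated as outside the method's applicability domain.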

Protocol 2: Implementing Fragmental Contribution Methods

Principle: Fragmental methods decompose molecules into larger structural units (fragments) with predetermined contribution values, often demonstrating improved accuracy for complex molecules compared to atom-based approaches [23].

Procedure:

- Fragment Identification:
  - Apply predefined fragmentation rules to decompose the molecule into standard fragments
  - Identify overlapping fragments and apply hierarchy rules for fragment selection
  - Classify fragments based on structural characteristics and bonding patterns
- Fragment Contribution Calculation:
- Special Case Handling:
- Result Compilation:
  - Sum all fragment contributions and correction factors
  - Apply global adjustment factors if implemented in the method
  - Generate the final logP prediction with uncertainty estimation
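The fragment selection and compilation steps of Protocol 2 can be illustrated with a small sketch. It assumes that substructure matching has already been done elsewhere and that each match is given as a fragment name plus the atom indices it covers. The hierarchy rule used here (larger fragments win overlaps), the fragment names, and the constants are hypothetical choices for illustration and do not reproduce CLOGP or any other published scheme.

```python
def select_fragments(matches):
    """Resolve overlapping matches greedily, preferring larger fragments.

    matches: list of (fragment_name, atom_indices) tuples.
    Returns the chosen fragment names and the set of covered atoms.
    """
    chosen, covered = [], set()
    for name, atoms in sorted(matches, key=lambda m: len(m[1]), reverse=True):
        atoms = set(atoms)
        if covered.isdisjoint(atoms):
            chosen.append(name)
            covered |= atoms
    return chosen, covered


def fragment_logp(matches, n_atoms, constants, corrections=0.0):
    """Sum fragment constants and corrections.

    Also reports fragments without a tabulated constant and atoms that
    no fragment covered, both of which signal an unreliable estimate.
    """
    chosen, covered = select_fragments(matches)
    missing = [f for f in chosen if f not in constants]
    uncovered = sorted(set(range(n_atoms)) - covered)
    total = sum(constants[f] for f in chosen if f in constants) + corrections
    return total, missing, uncovered


# Hypothetical constants, for illustration only.
TOY_CONSTANTS = {"carboxylic_acid": -1.0, "methyl": 0.9, "methylene": 0.5}

# Propanoic acid heavy atoms: C0 (CH3), C1 (CH2), C2, O3, O4.
toy_matches = [
    ("carbonyl", (2, 3)),            # overlaps with the larger acid fragment
    ("carboxylic_acid", (2, 3, 4)),
    ("methyl", (0,)),
    ("methylene", (1,)),
]
total, missing, uncovered = fragment_logp(toy_matches, n_atoms=5, constants=TOY_CONSTANTS)
print(round(total, 2), missing, uncovered)
```

Real fragmental methods use more elaborate hierarchy rules than a size ordering, but the bookkeeping is the same: every atom should end up in exactly one fragment, and anything left over needs special handling.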

Protocol 3: Consensus Approach Implementation

Principle: Combining predictions from multiple methods often improves accuracy and reliability by leveraging complementary strengths of different approaches [17] [32].

Procedure:

- Method Selection:
  - Select 3-5 diverse substructure-based methods with different underlying approaches
  - Include both atom-based (e.g., ALOGP, XLOGP3) and fragment-based methods when possible
  - Ensure methods cover relevant chemical space for target compounds
- Prediction Generation:
  - Execute each selected method using standardized molecular inputs
  - Apply method-specific parameters as recommended by developers
  - Capture all individual predictions and associated metadata
- Result Integration:
  - Calculate the arithmetic mean of all predictions as the consensus value
  - Alternatively, use weighted averaging based on known method performance for specific compound classes
  - Estimate uncertainty from the standard deviation of individual predictions
- Validation and Application:
  - Establish the applicability domain based on training set coverage
  - Identify outliers where methods show significant disagreement (>2 log units)
  - Flag predictions with high uncertainty for experimental verification priority
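The result integration and flagging steps of Protocol 3 reduce to a few statistics over the individual predictions. The sketch below is a minimal version, assuming the per-method predictions have already been collected into a dictionary. The method labels, the weights, and the choice to measure "disagreement" as the spread between the highest and lowest prediction are assumptions made for illustration.

```python
import statistics


def consensus(predictions, weights=None, max_spread=2.0):
    """Combine per-method logP predictions into a consensus estimate.

    predictions: dict mapping method name to predicted logP (two or more).
    weights: optional dict of per-method weights; plain mean if omitted.
    max_spread: flag the compound if max - min exceeds this many log units.
    """
    values = list(predictions.values())
    if weights is None:
        value = statistics.fmean(values)
    else:
        total_weight = sum(weights[m] for m in predictions)
        value = sum(weights[m] * p for m, p in predictions.items()) / total_weight
    spread = max(values) - min(values)
    return {
        "logp": value,
        "stdev": statistics.stdev(values),  # sample standard deviation
        "spread": spread,
        "needs_experiment": spread > max_spread,
    }


# Made-up predictions from three hypothetical methods.
agreeing = consensus({"method_a": 2.1, "method_b": 2.6, "method_c": 3.2})
disagreeing = consensus({"method_a": 2.1, "method_b": 2.6, "method_c": 5.0})
print(round(agreeing["logp"], 2), agreeing["needs_experiment"])
print(round(disagreeing["logp"], 2), disagreeing["needs_experiment"])
```

A weighted variant is obtained by passing, for example, `weights={"method_a": 1, "method_b": 1, "method_c": 2}`; the weights would normally come from each method's measured error on a compound class similar to the query.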

The Scientist's Toolkit

Table 3: Essential research reagents and computational tools for substructure-based logP prediction

| Tool/Resource | Type | Function | Access |
|---|---|---|---|
| RDKit | Cheminformatics Library | Molecular standardization, descriptor calculation, fingerprint generation | Open Source [32] |
| OpenBabel | Chemical Toolbox | Format conversion, FP4 fingerprint generation | Open Source [32] |
| JPlogP | Atom-Based Predictor | logP prediction using optimized atom typing system | Open Source [17] |
| XLOGP3 | Atom & Fragment Method | logP prediction using combined atomic and fragmental approach | Open Source [23] |
| ALOGP | Atom-Based Predictor | Simple atomic contribution method | Open Source [23] |
| VEGA | Platform | Multiple logP prediction methods implementation | Free Access [32] |
| Martel Dataset | Benchmark Data | 707 diverse drug-like molecules with consistent logP measurements | Publicly Available [23] [17] |
| PHYSPROP Database | Training Data | Curated experimental physicochemical properties | Publicly Available [17] [32] |

Workflow Visualization

Figure 1: Workflow for substructure-based logP prediction demonstrating parallel atom-based and fragment-based approaches with consensus integration.

Technical Considerations and Limitations

Substructure-based logP prediction methods, while computationally efficient and widely applicable, face several important limitations that researchers must consider. A significant challenge is the decline in prediction accuracy with increasing molecular size and complexity, as additive approaches often fail to adequately capture emergent hydrophobic effects in large, flexible molecules [7] [23]. This limitation manifests particularly in pharmaceutical applications where molecular weight trends have increased over time, resulting in systematic overprediction of logP for contemporary drug candidates [23].

The chemical space coverage of training data significantly impacts method performance, with specialized approaches like MRlogP (trained on drug-like molecules with QED > 0.67) demonstrating superior accuracy within their intended domain compared to general-purpose methods [32]. This highlights the importance of selecting methods appropriate for specific research contexts rather than relying on universal solutions.

The "missing fragment problem" represents another key limitation: when a novel chemical motif is absent from the training data, the method has no reliable contribution value for it and may assign a spurious one [34]. This issue can be mitigated through approaches that incorporate comprehensive fragment libraries or employ transfer learning techniques that leverage both experimental and predicted data [32]. Recent advances include hybrid methods that combine substructure-based approaches with machine learning on molecular descriptors or graph-based representations, potentially offering improved accuracy while maintaining interpretability [33] [34].

Future methodological developments will likely focus on integrating physicochemical principles more explicitly into substructure-based frameworks, enhancing domain-specific optimization, and developing improved correction schemes for complex molecular interactions that deviate from simple additivity assumptions.

Property-based techniques are the second major approach to the in silico prediction of molecular properties, particularly the octanol-water partition coefficient (logP). Unlike substructure-based methods that decompose molecules into fragments, property-based techniques use holistic molecular descriptors and empirical relationships to predict lipophilicity. These methods rely on computed physicochemical properties and topological descriptors that capture key aspects of molecular structure and electronic environment, and they relate these descriptors quantitatively to logP through statistical modeling and machine learning [7] [35]. Within pharmaceutical research and drug development, these techniques enable rapid virtual screening of compound libraries and optimization of lead compounds for desirable absorption, distribution, metabolism, excretion, and toxicity (ADMET) profiles, significantly reducing reliance on costly and time-consuming experimental measurements [15] [36].

The theoretical foundation of property-based logP prediction rests on linear free-energy relationships (LFERs) that connect molecular structural features to partitioning behavior between octanol and water phases. These approaches capture the underlying physicochemical principles governing solute partitioning, including hydrophobic effects, polar interactions, and solvation energies [35]. The computational efficiency and strong interpretability of property-based models have established them as indispensable tools in modern cheminformatics and drug discovery pipelines, particularly when handling large chemical databases where fragment-based methods may struggle with novel molecular scaffolds [7] [17].
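In its simplest form, a linear free-energy relationship of this kind expresses logP as a weighted sum of molecular descriptors. The notation below is introduced here for illustration: $d_k$ are the $K$ descriptors computed for a molecule, and $c_0, c_1, \dots, c_K$ are coefficients fitted to experimental data.

$$
\log P \;\approx\; c_0 + \sum_{k=1}^{K} c_k\, d_k
$$

The fitted coefficients carry the interpretability mentioned above: the sign and magnitude of each $c_k$ indicate how strongly the corresponding property pushes a solute toward the octanol or the water phase. Nonlinear machine learning models replace the sum with a more flexible function of the same descriptors.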

Topological Descriptors in logP Prediction

Theoretical Basis and Key Descriptors

Topological descriptors are mathematical representations of molecular structure derived from graph theory, where atoms are represented as vertices and bonds as edges in a molecular graph [37] [36]. These two-dimensional descriptors encode information about molecular connectivity, branching, and size without requiring three-dimensional coordinates or conformational analysis. The calculation of topological indices is computationally efficient and easily automated, making them particularly valuable for high-throughput screening of large chemical databases [37].

The most significant topological descriptors applied in logP prediction include:

- Topological Polar Surface Area (TPSA): Calculated as the sum of contributions from polar atoms (oxygen, nitrogen, and attached hydrogens) based on a fragment-based approach that avoids 3D structure calculation [38]. TPSA strongly correlates with membrane permeability and intestinal absorption, making it particularly valuable for pharmaceutical applications.

- Wiener Index: One of the earliest topological indices, originally defined for alkanes as the sum of the shortest-path distances (counted in bonds) between all pairs of carbon atoms [36]. This index correlates with molecular volume and branching and has been used to predict boiling points of hydrocarbons.

- Zagreb Indices: Degree-based indices computed from the vertex degrees of the molecular graph (for example, the sum of squared degrees), capturing molecular branching complexity [37].

- Randić Index: Evaluates molecular branching and connectivity, providing insights into molecular complexity and hydrophobic surface area [37].
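Several of the indices listed above can be computed directly from a hydrogen-suppressed molecular graph. The sketch below uses the standard textbook definitions (Wiener: sum of shortest-path distances over all atom pairs; first and second Zagreb: sum of squared degrees and sum of degree products over bonds; Randić: sum of inverse square roots of degree products over bonds). The adjacency-list representation and function names are choices made here for illustration; TPSA is omitted because it requires a tabulated set of polar fragment contributions.

```python
import math
from collections import deque


def bfs_distances(adj, start):
    """Shortest-path distances (in bonds) from start to every reachable atom."""
    dist = {start: 0}
    queue = deque([start])
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist


def wiener_index(adj):
    """Sum of distances over all unordered atom pairs."""
    total = sum(d for s in adj for d in bfs_distances(adj, s).values())
    return total // 2  # each pair was counted from both ends


def bonds(adj):
    """Each bond once, as an ordered (low, high) index pair."""
    return [(u, v) for u in adj for v in adj[u] if u < v]


def zagreb_indices(adj):
    """First (sum of squared degrees) and second (sum of degree products) Zagreb indices."""
    deg = {u: len(nbrs) for u, nbrs in adj.items()}
    m1 = sum(d * d for d in deg.values())
    m2 = sum(deg[u] * deg[v] for u, v in bonds(adj))
    return m1, m2


def randic_index(adj):
    """Sum over bonds of 1 / sqrt(deg(u) * deg(v))."""
    deg = {u: len(nbrs) for u, nbrs in adj.items()}
    return sum(1.0 / math.sqrt(deg[u] * deg[v]) for u, v in bonds(adj))


# Carbon skeletons of two C4H10 isomers.
n_butane = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
isobutane = {0: [1, 2, 3], 1: [0], 2: [0], 3: [0]}

for name, graph in (("n-butane", n_butane), ("isobutane", isobutane)):
    print(name, wiener_index(graph), zagreb_indices(graph), round(randic_index(graph), 3))
```

The two isomers have the same formula but different index values, which is the point of these descriptors: they encode branching that atom counts alone cannot distinguish.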