Implementing Random Forest for ADMET Classification: A Practical Guide for Drug Development


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on implementing Random Forest (RF) models for predicting Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties. It covers the foundational rationale for choosing RF, detailed methodological workflows for model development, advanced strategies for troubleshooting and optimizing performance on complex biomedical data, and rigorous validation techniques. By synthesizing current best practices and case studies, this guide aims to equip scientists with the knowledge to build robust, predictive ADMET classification models that can reduce late-stage attrition and accelerate the drug discovery pipeline.

Why Random Forest? Foundations for Robust ADMET Prediction

The Critical Role of ADMET Prediction in Reducing Drug Attrition

A fundamental challenge in modern drug discovery is the high failure rate of drug candidates, with approximately 40–45% of clinical attrition attributed to unfavorable absorption, distribution, metabolism, excretion, and toxicity (ADMET) properties. [1] The typical drug development process spans 10 to 15 years, making early-stage prioritization of viable candidates crucial for reducing costs and improving success rates. [2] Traditional experimental ADMET assessment is often time-consuming, cost-intensive, and limited in scalability, creating a critical bottleneck. [2] The integration of artificial intelligence (AI) and machine learning (ML), including random forest models, has revolutionized this landscape by enabling rapid, cost-effective, and reproducible in silico prediction of ADMET properties. These computational approaches allow researchers to filter extensive compound libraries early in the discovery pipeline, significantly enhancing the probability of advancing molecules with optimal druglike characteristics. [3] [2]

Current AI and ML Approaches in ADMET Prediction

The fusion of AI with computational chemistry has transformed molecular modeling and property prediction. While this article focuses on the implementation of random forest models, it is important to contextualize them within the broader ecosystem of AI/ML approaches.

- Core Algorithms: Support vector machines, random forests, and deep learning models such as graph neural networks (GNNs) and transformers are widely employed. These algorithms support molecular representation, virtual screening, and ADMET property prediction. [3]

- Generative Models: Generative adversarial networks (GANs) and variational autoencoders (VAEs) enable de novo drug design, creating novel molecular structures optimized for desired properties. [3]

- Federated Learning: This emerging technique allows multiple pharmaceutical organizations to collaboratively train models on distributed proprietary datasets without sharing confidential data. This approach systematically expands the model's chemical coverage and improves robustness, addressing limitations posed by isolated, non-representative datasets. [1] Cross-pharma studies have demonstrated that federated models consistently outperform local baselines, with performance gains scaling with the number and diversity of participants. [1]

Table 1: Key ML Algorithms for ADMET Prediction and Their Applications

| Algorithm Category | Examples | Primary Applications in ADMET |

|---|---|---|

| Supervised Learning | Random Forests, Support Vector Machines | Classification and regression tasks for solubility, permeability, toxicity [2] |

| Deep Learning | Graph Neural Networks, Transformers | Molecular representation learning, endpoint prediction from chemical structure [3] [4] |

| Generative Models | GANs, Variational Autoencoders | De novo design of novel compounds with optimized ADMET profiles [3] |

| Federated Learning | Multi-institutional collaborative models | Training on distributed private data to improve model generalizability [1] |

Implementing Random Forest for ADMET Classification: Protocols and Workflows

Random Forest, an ensemble ML method, is particularly well-suited for ADMET classification tasks due to its robustness against overfitting and ability to handle high-dimensional data. The following section outlines a detailed protocol for developing and applying Random Forest models in this context.

Data Acquisition and Preprocessing

The development of a robust random forest model begins with acquiring a high-quality, curated dataset. Key public data repositories include ChEMBL, PubChem, and the Therapeutics Data Commons (TDC), which provides 41 benchmark ADMET datasets. [4] Data preprocessing is critical for model performance and involves several key steps: [2]

- Data Cleaning: Remove duplicates, correct erroneous structures, and handle missing values.

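- Normalization: Scale numerical features to a standard range when the pipeline includes scale-sensitive steps or comparison models (e.g., SVMs). Tree-based models such as Random Forest split on feature thresholds and do not themselves require scaling.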

- Feature Selection: Identify and retain the most predictive molecular descriptors. Filter methods (e.g., correlation-based feature selection) can efficiently remove redundant features, while wrapper or embedded methods often yield more optimal feature subsets at a higher computational cost. [2] Studies have shown that models trained on non-redundant, selected features can achieve over 80% accuracy. [2]
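As a rough illustration of the filter approach mentioned above, the sketch below drops near-constant descriptors and then greedily removes any descriptor that is highly correlated with one already kept. The function name `filter_features` and both thresholds are assumptions chosen for this example, not values from the cited studies.

```python
import numpy as np

def filter_features(X, var_threshold=1e-8, corr_threshold=0.95):
    """Return the column indices kept by a variance filter and a correlation filter."""
    X = np.asarray(X, dtype=float)
    # Step 1: drop (near-)constant columns, which carry no information.
    keep = [j for j in range(X.shape[1]) if X[:, j].var() > var_threshold]
    # Step 2: greedily drop columns highly correlated with an already selected one.
    corr = np.atleast_2d(np.abs(np.corrcoef(X[:, keep], rowvar=False)))
    selected_pos = []
    for pos in range(len(keep)):
        if all(corr[pos, q] < corr_threshold for q in selected_pos):
            selected_pos.append(pos)
    return [keep[p] for p in selected_pos]

# Toy descriptor matrix: column 1 nearly duplicates column 0, column 3 is constant.
rng = np.random.default_rng(0)
a = rng.normal(size=200)
b = rng.normal(size=200)
X = np.column_stack([a, 2.0 * a + 0.01 * rng.normal(size=200), b, np.ones(200)])
kept = filter_features(X)
print(kept)
```

Wrapper and embedded methods would replace step 2 with a model-driven search, at a higher computational cost.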

Molecular Representation and Feature Engineering

For random forest models, molecules are typically represented using fixed-length numerical vectors known as molecular descriptors. These can be categorized as: [2]

- 1D Descriptors: Constitutional descriptors (e.g., molecular weight, atom count).

- 2D Descriptors: Topological descriptors (e.g., molecular connectivity indices).

- 3D Descriptors: Geometrical descriptors (e.g., surface area, volume).

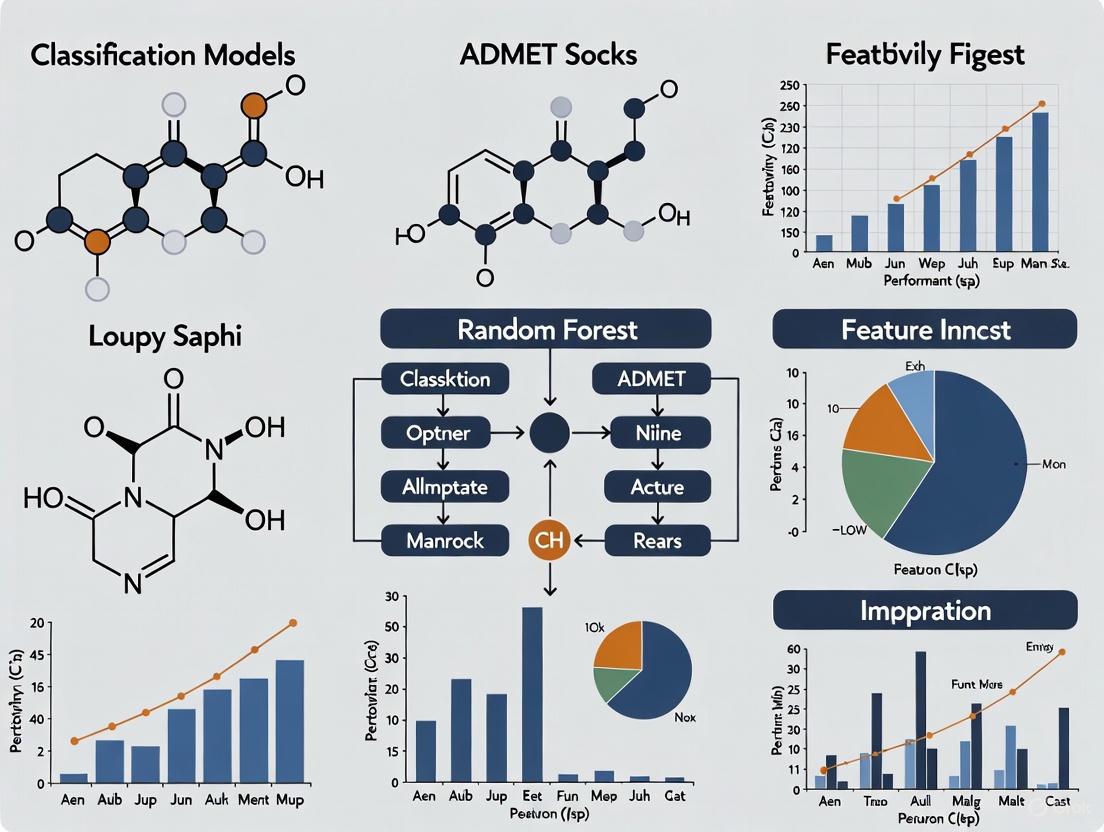

Software packages like RDKit, Dragon, and MOE are commonly used to calculate thousands of these descriptors from molecular structures. [2] The figure above illustrates the complete workflow for building a Random Forest ADMET classification model.

Model Training and Validation Protocol

A rigorous training and validation protocol is essential for developing a reliable model.

- Data Splitting: Use a temporal split or scaffold-based split to separate data into training and test sets. This mimics real-world drug discovery scenarios where models predict properties for novel chemical scaffolds. [5]

- Hyperparameter Tuning: Optimize key Random Forest parameters such as the number of trees in the forest (n_estimators), maximum depth of trees (max_depth), and the number of features considered for splitting (max_features). Utilize cross-validation on the training set for this purpose.

- Model Validation: Employ k-fold cross-validation across multiple seeds to evaluate model performance robustly. The final model should be assessed on a held-out test set that was not used during training or tuning. [1] [2]

- Performance Metrics: For classification tasks, use metrics including Area Under the Receiver Operating Characteristic Curve (AUROC), accuracy, precision, and recall. Benchmark performance against null models and established noise ceilings to confirm significant gains. [1] [4]
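The validation steps above can be sketched with scikit-learn as follows. This is a minimal example under stated assumptions: the data are synthetic (`make_classification` stands in for a featurized ADMET dataset), the folds are random stratified folds rather than scaffold-aware ones, and the null model is a `DummyClassifier` that always predicts the class prior. The helper name `auroc_distribution` is hypothetical.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.dummy import DummyClassifier
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score

# Synthetic, imbalanced stand-in for a featurized ADMET dataset (assumption).
X, y = make_classification(n_samples=400, n_features=30, n_informative=8,
                           weights=[0.7, 0.3], random_state=0)

def auroc_distribution(make_model, seeds=(0, 1, 2), n_splits=5):
    """Collect one AUROC per fold and per seed instead of a single score."""
    scores = []
    for seed in seeds:
        cv = StratifiedKFold(n_splits=n_splits, shuffle=True, random_state=seed)
        scores.extend(cross_val_score(make_model(seed), X, y, cv=cv, scoring="roc_auc"))
    return np.array(scores)

rf_scores = auroc_distribution(
    lambda seed: RandomForestClassifier(n_estimators=100, random_state=seed))
null_scores = auroc_distribution(lambda seed: DummyClassifier(strategy="prior"))

print(f"RF   AUROC: {rf_scores.mean():.3f} +/- {rf_scores.std():.3f}")
print(f"Null AUROC: {null_scores.mean():.3f}")
```

On real compound data, the fold assignment would need to respect scaffolds or assay dates (for example by passing scaffold labels as groups to a group-aware splitter) so that the reported distribution reflects performance on novel chemotypes.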

Table 2: Experimental Protocol for Random Forest-based ADMET Model Development

| Step | Protocol Description | Key Parameters & Considerations |

|---|---|---|

| Data Curation | Extract structures and assay data from public (e.g., TDC) or proprietary databases. | Assay consistency, structural duplicates, experimental variability. |

| Descriptor Calculation | Compute 1D, 2D, and/or 3D molecular descriptors using software like RDKit. | Feature quality over quantity; aim for non-redundant, informative descriptors. |

| Model Training | Train Random Forest classifier using a scaffold-based split of the data. | n_estimators: 100-1000; max_depth: avoid overfitting; max_features: 'sqrt' or 'log2'. |

| Model Validation | Evaluate using k-fold cross-validation and a final hold-out test set. | Use multiple random seeds and folds to get a performance distribution, not a single score. [1] |

| Performance Benchmarking | Compare AUROC, accuracy, etc., against baseline models and published benchmarks. | The TDC ADMET Leaderboard provides a standard for comparison. [4] |

Essential Research Tools and Platforms

The successful implementation of ADMET prediction models relies on a suite of software tools and platforms. The following table details key reagents and computational solutions for this field.

Table 3: Research Reagent Solutions for ADMET Prediction

| Tool/Platform Name | Type | Key Functionality |

|---|---|---|

| ADMET-AI [4] | Web Server / Python Package | Fast, accurate predictions for 41 ADMET endpoints; provides percentiles relative to approved drugs for context. |

| ADMETlab 3.0 [6] | Web Server | Comprehensive evaluation of 119 ADMET and physicochemical endpoints using a Directed Message Passing Neural Network (DMPNN). |

| ADMET Predictor [7] | Commercial Software Platform | Predicts over 175 properties, includes AI-driven drug design, PBPK simulations, and an "ADMET Risk" score for compound prioritization. |

| Therapeutics Data Commons (TDC) [4] | Data Repository & Benchmark | Provides curated datasets and a leaderboard for benchmarking models on ADMET prediction tasks. |

| RDKit [4] | Cheminformatics Library | Calculates molecular descriptors and fingerprints; essential for feature generation for Random Forest models. |

| Apheris Federated ADMET Network [1] | Federated Learning Platform | Enables collaborative model training across multiple institutions without centralizing proprietary data. |

Recent community-wide blind challenges, such as the ASAP Discovery x OpenADMET challenge, have provided rigorous testing grounds for these tools. These challenges involve predicting crucial endpoints like human and mouse liver microsomal stability, solubility (KSOL), and permeability (MDR1-MDCKII) for novel compounds, accurately simulating real-world drug discovery hurdles. [5] Top-performing approaches in these benchmarks, which often include models trained on broad, well-curated data, have demonstrated 40–60% reductions in prediction error compared to simpler models. [1]

The field of in silico ADMET prediction is rapidly evolving. Future directions include the development of hybrid AI-quantum computing frameworks, integration of multi-omics data for a more holistic biological view, and a growing emphasis on model interpretability to build trust and facilitate regulatory acceptance. [3] The adoption of federated learning promises to overcome the critical limitation of data scarcity by unlocking the collaborative potential of privately held datasets across the pharmaceutical industry. [1]

In conclusion, AI-powered ADMET prediction, strategically implemented with robust models like Random Forest, is no longer a supplementary tool but a cornerstone of modern drug discovery. By enabling the early identification and mitigation of pharmacokinetic and toxicity liabilities, these computational approaches directly address the primary cause of clinical phase attrition. This leads to a more efficient discovery pipeline, significant cost savings, and an increased likelihood of delivering safe and effective therapeutics to patients.

Ensemble learning is a powerful machine learning paradigm that operates on a simple but effective principle: combining multiple base models to create a single, superior predictive model. This approach mitigates the weaknesses of individual models, leading to enhanced accuracy, robustness, and generalization on unseen data. The core idea is analogous to seeking multiple expert opinions before making a critical decision—the collective judgment is often more reliable than any single viewpoint. In chemical data analysis, particularly for complex tasks like predicting Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties, ensemble methods have demonstrated significant success [3] [2].

Several popular techniques exist for creating ensembles. Bagging (Bootstrap Aggregating) reduces model variance by training multiple base learners on different random subsets of the original data and then aggregating their predictions. Boosting sequentially trains models, with each new model focusing on the errors of the previous ones, thereby reducing bias. Stacking combines the predictions of multiple heterogeneous models using a meta-learner to produce the final output [8] [9]. The Random Forest algorithm is a quintessential example of an ensemble method that leverages the bagging technique to great effect.

The Random Forest Algorithm: A Deep Dive

Random Forest is an ensemble learning method that constructs a multitude of decision trees during training. Its design incorporates two layers of randomness to ensure that the individual trees are de-correlated, which is key to its superior performance.

The algorithm operates through the following mechanism:

- Bootstrap Sampling: From the original dataset of size N, multiple new training sets are created by randomly sampling N instances with replacement. This process, known as bootstrapping, means each tree is trained on a slightly different subset of the data.

- Feature Randomness: At each node of a decision tree, the algorithm does not consider all available features to find the best split. Instead, it randomly selects a subset of features (for classification, often of size equal to the square root of the total number of features) and determines the optimal split from within this subset.

- Tree Construction: Each decision tree is grown fully on its bootstrapped sample, drawing a fresh random feature subset at every split, typically without pruning.

- Aggregation: For a regression task, the final prediction is the average of the predictions from all individual trees. For a classification task, it is the majority vote [10].

This two-fold random process ensures that the trees in the "forest" are diverse. While individual trees might be highly sensitive to the training data (high variance), averaging their results cancels out this noise, leading to a stable and accurate model. The key parameters that can be tuned in a Random Forest include the number of trees in the forest, the maximum depth of each tree, the minimum number of samples required to split a node, and the number of features to consider at each split.
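To make the mechanism concrete, the following sketch assembles a small forest by hand from scikit-learn decision trees: bootstrap sampling, per-split feature randomness, unpruned trees, and a majority vote. It is an illustration on synthetic data, not a replacement for `RandomForestClassifier`; the dataset, the number of trees (51, odd to avoid tied votes), and the variable names are assumptions made for the example.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.tree import DecisionTreeClassifier

X, y = make_classification(n_samples=300, n_features=20, n_informative=6, random_state=0)
X_train, y_train, X_test, y_test = X[:200], y[:200], X[200:], y[200:]

rng = np.random.default_rng(0)
trees = []
for _ in range(51):
    # Bootstrap sampling: draw N row indices with replacement.
    idx = rng.integers(0, len(X_train), size=len(X_train))
    # Feature randomness: max_features="sqrt" samples a feature subset at every split.
    # No max_depth is set, so each tree is grown fully (no pruning).
    tree = DecisionTreeClassifier(max_features="sqrt",
                                  random_state=int(rng.integers(2**31 - 1)))
    trees.append(tree.fit(X_train[idx], y_train[idx]))

# Aggregation: majority vote over the per-tree class predictions (labels are 0/1).
votes = np.stack([t.predict(X_test) for t in trees])  # shape (n_trees, n_test)
forest_pred = (votes.mean(axis=0) > 0.5).astype(int)

single_acc = np.mean([(t.predict(X_test) == y_test).mean() for t in trees])
forest_acc = (forest_pred == y_test).mean()
print(f"mean single-tree accuracy: {single_acc:.3f}, voted forest accuracy: {forest_acc:.3f}")
```

Note that scikit-learn's own `RandomForestClassifier` aggregates by averaging the trees' predicted class probabilities rather than counting hard votes, so its output can differ slightly from this hand-rolled version.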

Key Advantages for Chemical and ADMET Data

Random Forest offers a suite of advantages that make it particularly well-suited for handling the intricacies of chemical data and ADMET prediction tasks in drug discovery.

Handling Structured/Tabular Data: Chemical data is often represented in a structured, tabular format, with rows representing molecules and columns representing molecular descriptors or fingerprints. Random Forest consistently demonstrates top-tier performance on such data, often outperforming more complex deep learning models [10] [11].

Robustness to Noise and Irrelevant Features: High-throughput screening and molecular descriptor calculation can generate datasets with many features, not all of which are relevant to the target property. Random Forest is inherently robust to noisy features and irrelevant descriptors, as the random feature selection process makes it unlikely that a single spurious feature will dominate all trees [2] [12].

No Requirement for Feature Scaling: Unlike algorithms like Support Vector Machines (SVMs) that are sensitive to the scale of input data, Random Forest is based on decision trees that make splits based on feature thresholds. This makes it immune to the scale of the input features, simplifying the data preprocessing pipeline [2].
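A quick way to see this scale invariance is to train two forests with the same random seed, one on raw features and one on rescaled features, and compare their predictions. The sketch below uses synthetic integer-valued features and power-of-two scale factors so that the rescaling is exact in floating point; both choices, and the toy labeling rule, are assumptions made for the illustration.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

rng = np.random.default_rng(0)
# Integer-valued, count-like descriptors (synthetic stand-in).
X = rng.integers(0, 50, size=(300, 8)).astype(float)
y = (X[:, 0] + X[:, 1] > 50).astype(int)

# Put every column on a very different scale.
scale = np.array([1, 2, 4, 8, 16, 32, 64, 128], dtype=float)
X_scaled = X * scale

rf_raw = RandomForestClassifier(n_estimators=50, random_state=0).fit(X[:200], y[:200])
rf_scaled = RandomForestClassifier(n_estimators=50, random_state=0).fit(X_scaled[:200], y[:200])

# Fraction of test rows on which the two forests make the same prediction.
agreement = (rf_raw.predict(X[200:]) == rf_scaled.predict(X_scaled[200:])).mean()
print(f"prediction agreement after rescaling: {agreement:.3f}")
```

Because each split depends only on the ordering of values within a feature, the two forests should agree on essentially every test row, which is why a scaling step can usually be omitted for Random Forest.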

Implicit Feature Importance Analysis: A significant benefit for scientific inquiry is the ability of Random Forest to provide a ranked list of feature importance. This helps medicinal chemists and computational scientists identify which molecular descriptors (e.g., LogP, polar surface area, specific functional groups) are most influential for a given ADMET endpoint, thereby offering valuable insights into the underlying structure-property relationships [2] [13].

Effectiveness on Small to Medium-Sized Datasets: Drug discovery projects, especially in early stages, may have limited experimental data. Random Forest is known to perform well even with smaller datasets, unlike deep learning models which typically require vast amounts of data to avoid overfitting [14] [11].

Model Interpretability with SHAP: While ensemble models can be seen as "black boxes," techniques like SHapley Additive exPlanations (SHAP) can be applied to interpret the predictions. SHAP quantifies the contribution of each feature to an individual prediction, enhancing model transparency and trustworthiness for critical decision-making in drug development [8].

Proven Performance in Practical Scenarios: Empirical studies and benchmarks have repeatedly confirmed the strong performance of Random Forest in ADMET prediction. It has been shown to outperform traditional QSAR models and provides a robust baseline against which more complex models are often compared [2] [12] [14].

The following table summarizes its performance as documented in recent scientific literature.

Table 1: Documented Performance of Random Forest in Various Predictive Tasks

| Application Domain | Reported Performance Metrics | Context / Comparison |

|---|---|---|

| ADMET Prediction | High accuracy in predicting solubility, permeability, metabolism, and toxicity [2]. | Outperforms traditional QSAR models; provides rapid, cost-effective screening [2]. |

| Chemical Safety | Accuracy: 0.983, Precision: 0.903, Recall: 0.781, F1-score: 0.863, AUC: 0.963 [8]. | RF-XGBoost ensemble model for predicting chemical production accidents. |

| Molecular Property Prediction | Robust performance across multiple benchmark datasets [11]. | A strong performer compared to various representation learning models. |

| Pairwise Molecular Modeling | Competitive performance in predicting ADMET property differences [14]. | Used as a benchmark against specialized deep learning models like DeepDelta. |

Experimental Protocol for ADMET Classification

This section provides a detailed, step-by-step protocol for developing a Random Forest model to classify compounds based on a specific ADMET property, such as hepatic clearance or hERG inhibition.

Data Collection and Preprocessing

- Data Sourcing: Obtain a dataset from public repositories such as the Therapeutics Data Commons (TDC), ChEMBL, or PubChem. The dataset should contain molecular structures (as SMILES strings or InChIs) and the corresponding experimental values for the target ADMET property [2] [12].

- Data Cleaning and Curation:

- Standardization: Standardize all SMILES strings using a tool like the one from Atkinson et al. to ensure consistent representation. This includes removing salts, neutralizing charges, and generating canonical tautomers [12].

- Duplicate Removal: Identify and remove duplicate molecules. If duplicates have conflicting property values, either average them or remove the entire group to avoid ambiguity.

- Outlier Handling: Visually inspect the distribution of the target property and consider removing extreme outliers that may represent measurement errors.

- Data Splitting: Split the cleaned dataset into training (~70%), validation (~15%), and hold-out test (~15%) sets. Use scaffold splitting so that molecules sharing a core structure are assigned to the same set and the validation and test sets contain scaffolds not seen during training. This evaluates the model's ability to generalize to novel chemotypes, which is crucial for real-world drug discovery [12] [11].
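One common way to implement a scaffold split is sketched below using RDKit's Bemis-Murcko scaffold utility. The function `scaffold_split`, the greedy largest-group-first assignment, and the toy SMILES list are assumptions for illustration; benchmark suites may use a different assignment rule.

```python
from collections import defaultdict
from rdkit.Chem.Scaffolds import MurckoScaffold

def scaffold_split(smiles_list, frac_train=0.7, frac_valid=0.15):
    """Assign whole scaffold groups to train/valid/test so no scaffold is shared."""
    groups = defaultdict(list)
    for i, smi in enumerate(smiles_list):
        scaffold = MurckoScaffold.MurckoScaffoldSmiles(smiles=smi, includeChirality=False)
        groups[scaffold].append(i)  # acyclic molecules share the empty scaffold ""
    # Largest groups first, so rare scaffolds tend to end up in validation/test.
    ordered = sorted(groups.values(), key=len, reverse=True)
    n = len(smiles_list)
    train, valid, test = [], [], []
    for members in ordered:
        if len(train) + len(members) <= frac_train * n:
            train += members
        elif len(valid) + len(members) <= frac_valid * n:
            valid += members
        else:
            test += members
    return train, valid, test

smiles = ["c1ccccc1O", "c1ccccc1N", "c1ccccc1C", "c1ccccc1Cl", "c1ccncc1C",
          "c1ccncc1O", "C1CCCCC1O", "c1ccc2ccccc2c1", "CCO", "CCN"]
train_idx, valid_idx, test_idx = scaffold_split(smiles)
print(len(train_idx), len(valid_idx), len(test_idx))
```

With small datasets the realized fractions can deviate noticeably from the 70/15/15 target, because scaffold groups are never broken up.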

Feature Engineering and Molecular Representation

- Descriptor Calculation: Calculate molecular descriptors using software like RDKit. These are numerical representations of molecular properties (e.g., molecular weight, LogP, number of hydrogen bond donors/acceptors, polar surface area) [2] [11].

- Fingerprint Generation: Generate 2D molecular fingerprints. The Extended-Connectivity Fingerprint (ECFP), particularly ECFP4 (radius=2) or ECFP6 (radius=3) with a bit length of 1024 or 2048, is a standard and effective choice for capturing molecular substructures [11].

- Feature Selection (Optional): To reduce dimensionality and mitigate overfitting, apply feature selection methods. Filter methods (e.g., removing low-variance or highly correlated features) or embedded methods (which use the model's own feature importance) are commonly used [2].
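The descriptor and fingerprint steps above might look like the following with RDKit. This is a sketch, assuming a recent RDKit release that provides the `rdFingerprintGenerator` module; the helper name `featurize` and the choice of five descriptors are illustrative only.

```python
import numpy as np
from rdkit import Chem
from rdkit.Chem import Descriptors, rdFingerprintGenerator

# Morgan fingerprint with radius 2 (ECFP4-like), folded to 2048 bits.
morgan = rdFingerprintGenerator.GetMorganGenerator(radius=2, fpSize=2048)

def featurize(smiles):
    """Return (descriptor vector, fingerprint bit vector) for one SMILES string."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"could not parse SMILES: {smiles!r}")
    descriptors = np.array([
        Descriptors.MolWt(mol),          # molecular weight
        Descriptors.MolLogP(mol),        # Crippen logP estimate
        Descriptors.NumHDonors(mol),     # hydrogen bond donors
        Descriptors.NumHAcceptors(mol),  # hydrogen bond acceptors
        Descriptors.TPSA(mol),           # topological polar surface area
    ])
    fingerprint = morgan.GetFingerprintAsNumPy(mol)
    return descriptors, fingerprint

desc, fp = featurize("CC(=O)Oc1ccccc1C(=O)O")  # aspirin
print(desc.round(2), int(fp.sum()), "bits set")
```

Descriptor vectors and fingerprints can be used separately or concatenated into one feature matrix before training.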

Table 2: Essential Research Reagent Solutions for Random Forest-based ADMET Modeling

| Tool / Resource Name | Type | Primary Function in Workflow |

|---|---|---|

| RDKit | Cheminformatics Library | Calculates molecular descriptors (e.g., 2D descriptors) and generates fingerprints (e.g., Morgan/ECFP fingerprints) from molecular structures [12] [11]. |

| scikit-learn | Machine Learning Library | Provides the implementation for the Random Forest classifier/regressor, data splitting, preprocessing, and model evaluation metrics [14]. |

| Therapeutics Data Commons (TDC) | Data Repository | Supplies curated, publicly available datasets for ADMET and other drug discovery-related prediction tasks [12]. |

| SHAP Library | Model Interpretation Tool | Explains the output of the trained Random Forest model by quantifying the contribution of each input feature to individual predictions [8]. |

| Scaffold Split Method | Data Splitting Algorithm | Groups molecules by their Bemis-Murcko scaffolds and splits the data to ensure different core structures are in training and test sets, assessing model generalizability [12]. |

Model Training and Validation

- Baseline Model Training: Train a standard Random Forest model on the training set using default hyperparameters from a library like scikit-learn.

- Hyperparameter Tuning: Optimize the model performance on the validation set by tuning key hyperparameters. A common strategy is Grid Search or Randomized Search.

- n_estimators: Number of trees in the forest (e.g., 100, 500, 1000).

- max_depth: Maximum depth of the trees (e.g., 10, 20, None).

- min_samples_split: Minimum number of samples required to split an internal node.

- min_samples_leaf: Minimum number of samples required to be at a leaf node.

- max_features: Number of features to consider for the best split (e.g., 'sqrt', 'log2').

- Cross-Validation: Perform k-fold cross-validation (e.g., k=5 or k=10) on the training set to obtain a robust estimate of the model's performance and stability during the tuning process [2] [12].
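A randomized search over these hyperparameters, scored by cross-validated ROC-AUC on the training set, can be sketched as below. The data are synthetic, and the candidate values (in particular the small `n_estimators` options and `n_iter=8`) are assumptions chosen to keep the example fast rather than recommended settings.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import RandomizedSearchCV, StratifiedKFold

# Synthetic stand-in for the featurized training set (assumption).
X_train, y_train = make_classification(n_samples=300, n_features=25,
                                       n_informative=8, random_state=0)

param_distributions = {
    "n_estimators": [100, 200],
    "max_depth": [10, 20, None],
    "min_samples_split": [2, 5, 10],
    "min_samples_leaf": [1, 2, 4],
    "max_features": ["sqrt", "log2"],
}

search = RandomizedSearchCV(
    RandomForestClassifier(random_state=0),
    param_distributions=param_distributions,
    n_iter=8,                      # number of sampled configurations
    scoring="roc_auc",
    cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=0),
    random_state=0,
)
search.fit(X_train, y_train)
print(search.best_params_, round(search.best_score_, 3))
```

After fitting, `search.best_estimator_` is refit on the full training set with the selected configuration and can then be evaluated once on the held-out test set.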

Model Evaluation and Interpretation

- Performance Assessment: Evaluate the final, tuned model on the held-out test set. For a classification task, report key metrics such as Accuracy, Precision, Recall, F1-score, and Area Under the ROC Curve (AUC-ROC) [8] [12].

- Model Interpretation:

- Global Interpretation: Extract and plot the model's built-in feature importance to understand which molecular descriptors contribute most to the predictions overall.

- Local Interpretation: Use the SHAP library to explain individual predictions. Create summary plots and force plots to illustrate how each feature pushes the model's output for a single compound towards a particular class [8].
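The global interpretation step can be sketched with scikit-learn alone. The example below reads the built-in impurity-based importances and, as a cross-check, computes permutation importance on held-out data. Permutation importance is substituted here as a model-agnostic global measure; it is not SHAP and does not give per-compound explanations. The data are synthetic and the descriptor names `desc_0` to `desc_9` are hypothetical.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

# shuffle=False keeps the 3 informative features in columns 0-2 (synthetic data).
X, y = make_classification(n_samples=400, n_features=10, n_informative=3,
                           n_redundant=0, shuffle=False, random_state=0)
names = [f"desc_{i}" for i in range(X.shape[1])]  # hypothetical descriptor names
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0)

rf = RandomForestClassifier(n_estimators=200, random_state=0).fit(X_train, y_train)

# Built-in importance: mean decrease in impurity, normalized to sum to 1.
impurity = rf.feature_importances_
# Permutation importance: drop in test ROC-AUC when one feature is shuffled.
perm = permutation_importance(rf, X_test, y_test, scoring="roc_auc",
                              n_repeats=10, random_state=0)

for i in np.argsort(impurity)[::-1][:3]:
    print(f"{names[i]}: impurity={impurity[i]:.3f}, permutation={perm.importances_mean[i]:.3f}")
```

Impurity-based importances are computed on training data and can favor high-cardinality features, so comparing them against a held-out measure is a useful sanity check before drawing structure-property conclusions.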

The following diagram illustrates the complete experimental workflow.

Figure: Random Forest ADMET Modeling Workflow

Advanced Applications and Ensemble Techniques

While powerful on its own, Random Forest is often used as a base component in more sophisticated ensemble architectures to push the boundaries of predictive performance.

Stacking Ensembles: A stacking ensemble combines multiple base models (e.g., Random Forest, Support Vector Machines, XGBoost) by using a meta-learner to blend their predictions. For instance, a study on chemical safety accidents demonstrated that a stacking ensemble of RF and XGBoost achieved superior performance (Accuracy: 0.983, F1-score: 0.863) compared to any single model [8]. The logical flow of a stacking ensemble is shown below.

Figure: Stacking Ensemble Model Architecture

Pairwise Modeling with DeepDelta: A novel application involves moving beyond predicting absolute properties to predicting property differences between two molecules. The DeepDelta approach, which uses a deep neural network, has been shown to outperform traditional methods where Random Forest and other models predict properties for single molecules and the differences are calculated by subtraction. This highlights an area where specialized architectures can surpass standard Random Forest, though Random Forest remains a strong benchmark [14].

In conclusion, Random Forest is a versatile, robust, and powerful algorithm that serves as an indispensable tool for researchers tackling the challenges of chemical data analysis and ADMET prediction. Its straightforward implementation, combined with its high performance and interpretability, makes it an excellent starting point for any modeling pipeline and a reliable benchmark for evaluating more complex methodologies.

The application of Random Forest (RF) algorithms has become a cornerstone in modern computational pharmacology, offering a robust framework for predicting critical molecular properties. This ensemble learning method, known for its high accuracy and resistance to overfitting, is particularly effective at modeling the complex, non-linear relationships between a molecule's physicochemical descriptors and its biological activity [15]. Within drug discovery, RF models are revolutionizing the early-stage assessment of drug-likeness and the prediction of peptide therapeutic properties, enabling researchers to prioritize promising candidates with a higher probability of clinical success [15] [16]. This Application Note details three case studies and provides a standardized protocol for implementing RF in ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) classification research.

Case Studies in Drug-Likeness and Peptide Property Prediction

Case Study 1: Prediction of Peptide Drug-Likeness Using Rule-Based Violations

A 2025 study provides a direct and quantifiable example of using RF to predict peptide drug-likeness based on established structural rules [15].

- Objective: To develop fast and reliable computational filters for assessing the drug-likeness and potential oral developability of peptide therapeutics, which often fall outside the scope of classical small-molecule rules like Lipinski's Rule of Five (Ro5) [15].

- Dataset: The research curated a large dataset of over 300,000 drug and non-drug molecules from PubChem. Molecular descriptors were extracted using the RDKit cheminformatics toolkit, and violation counts for three rule sets were generated: the classic Ro5, the peptide-oriented beyond Rule of Five (bRo5), and Muegge's criteria [15].

- Model Implementation: RF classifier and regressor models were trained with varying numbers of trees (10, 20, and 30) to predict violation counts for these rules [15].

- Key Results: The developed RF models demonstrated exceptional performance in learning the relationships between molecular descriptors and rule violations, achieving near-perfect metrics on the training data. The model predictions showed strong agreement with established computational platforms like SwissADME, validating their use as a rapid preliminary filter [15].

Table 1: Performance Metrics of RF Models for Predicting Rule Violations [15]

| Rule Set | RF Model (Number of Trees) | Accuracy | Precision | Recall | F1-Score |

|---|---|---|---|---|---|

| Ro5 | 10 | 1.0 | 1.0 | 1.0 | 1.0 |

| Ro5 | 20 | 1.0 | 1.0 | 1.0 | 1.0 |

| Ro5 | 30 | 1.0 | 1.0 | 1.0 | 1.0 |

| bRo5 | 10 | 0.999 | 0.999 | 0.999 | 0.999 |

| bRo5 | 20 | 0.999 | 0.999 | 0.999 | 0.999 |

| bRo5 | 30 | 0.999 | 0.999 | 0.999 | 0.999 |

| Muegge | 10 | 0.985 | 0.985 | 0.985 | 0.985 |

| Muegge | 20 | 0.986 | 0.986 | 0.986 | 0.986 |

| Muegge | 30 | 0.986 | 0.986 | 0.986 | 0.986 |

The study concluded that RF models provide a powerful in-silico filter for peptide drug-likeness, capable of supporting the prioritization of orally developable candidates [15].
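For context on the prediction target in Case Study 1, the sketch below counts Lipinski Rule of Five violations from four precomputed descriptors, using the standard Ro5 thresholds (molecular weight above 500, logP above 5, more than 5 hydrogen bond donors, more than 10 hydrogen bond acceptors). The `DescriptorRow` container and the example values are illustrative assumptions; the bRo5 and Muegge criteria are not shown because their thresholds are not given in this article.

```python
from dataclasses import dataclass

@dataclass
class DescriptorRow:
    """Precomputed descriptors for one molecule (hypothetical container)."""
    mol_weight: float
    logp: float
    h_donors: int
    h_acceptors: int

def ro5_violations(d: DescriptorRow) -> int:
    """Count how many of the four Lipinski Rule of Five criteria are violated."""
    return sum([
        d.mol_weight > 500,
        d.logp > 5,
        d.h_donors > 5,
        d.h_acceptors > 10,
    ])

small_molecule = DescriptorRow(mol_weight=180.2, logp=1.3, h_donors=1, h_acceptors=3)
large_peptide_like = DescriptorRow(mol_weight=1202.6, logp=3.0, h_donors=5, h_acceptors=12)

print(ro5_violations(small_molecule), ro5_violations(large_peptide_like))
```

Because such counts are a deterministic function of the input descriptors, a Random Forest trained on those same descriptors can reproduce them almost exactly, which is consistent with the near-perfect scores in the table above.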

Case Study 2: ADME-Informed Drug-Likeness Classification with ADME-DL

Moving beyond simple structural rules, a 2025 study introduced ADME-DL, a novel pipeline that leverages RF on top of pharmacokinetic-informed embeddings for a more biologically grounded assessment of drug-likeness [16].

- Objective: To overcome the limitations of purely structural screening methods by integrating interdependent ADME properties into drug-likeness prediction, thereby bridging the gap towards clinical viability [16].

- Dataset & Feature Engineering: The model first used 21 specific ADME endpoints (e.g., Caco-2 permeability, Pgp substrate, CYP inhibition) from public sources. A key innovation was the use of a sequential multi-task learning approach (A→D→M→E) to pretrain molecular foundation models, creating an ADME-informed embedding space that reflects a compound's pharmacological lifecycle [16].

- Model Implementation: A Random Forest classifier was then trained on these ADME-enriched embeddings to distinguish approved drugs from non-drug compounds found in large chemical libraries [16].

- Key Results: The ADME-DL framework achieved an improvement of up to +2.4% over state-of-the-art, structure-only baselines. This demonstrates that respecting the inherent dependencies between ADME tasks produces more relevant and accurate predictions, effectively encoding pharmacokinetic principles into the drug-likeness classification [16].

Case Study 3: Stacking Ensemble Model for Anti-Inflammatory Peptide Prediction

Further demonstrating the versatility of tree-based methods for peptide therapeutics, a 2022 study developed AIPStack, a stacking ensemble model for predicting anti-inflammatory peptides (AIPs) [17].

- Objective: To accurately and efficiently identify AIPs for the treatment of inflammation [17].

- Model Implementation: The AIPStack model used a two-layer stacking ensemble architecture. The first layer (base-classifiers) consisted of Random Forest and Extremely Randomized Tree models. The second layer (meta-classifier) used a Logistic Regression model to combine the outputs from the base-classifiers. Peptide sequences were represented using hybrid features fused from two amino acid composition descriptors [17].

- Key Results: The proposed AIPStack model achieved an AUC of 0.819, an accuracy of 0.755, and an MCC of 0.510 on an independent test set, outperforming existing AIP predictors. The study also used SHAP analysis to interpret the model and highlight the essential sequence features required for AIP activity [17].
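The two-layer architecture described for AIPStack (Random Forest and Extremely Randomized Trees as base classifiers, Logistic Regression as meta-classifier) can be approximated with scikit-learn's `StackingClassifier`. This is a structural sketch on synthetic data, not the authors' implementation: the features, hyperparameters, and train/test split are assumptions.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import (ExtraTreesClassifier, RandomForestClassifier,
                              StackingClassifier)
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import matthews_corrcoef, roc_auc_score
from sklearn.model_selection import train_test_split

# Synthetic stand-in for the peptide feature matrix (assumption).
X, y = make_classification(n_samples=400, n_features=20, n_informative=6, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0)

stack = StackingClassifier(
    estimators=[  # first layer: base classifiers
        ("rf", RandomForestClassifier(n_estimators=100, random_state=0)),
        ("et", ExtraTreesClassifier(n_estimators=100, random_state=0)),
    ],
    final_estimator=LogisticRegression(),  # second layer: meta-classifier
    stack_method="predict_proba",          # meta-features are base-model probabilities
    cv=5,                                  # out-of-fold predictions train the meta-classifier
)
stack.fit(X_train, y_train)

auc = roc_auc_score(y_test, stack.predict_proba(X_test)[:, 1])
mcc = matthews_corrcoef(y_test, stack.predict(X_test))
print(f"AUC={auc:.3f}, MCC={mcc:.3f}")
```

Training the meta-classifier on out-of-fold predictions, as `cv=5` does here, keeps the second layer from simply learning the base models' training-set overfit.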

Experimental Protocol: Building an RF Model for ADMET Classification

This protocol outlines the steps for constructing a robust RF model for molecular property prediction, incorporating best practices from recent literature.

The following diagram illustrates the end-to-end experimental workflow for building and interpreting an RF model for ADMET classification.

Step-by-Step Detailed Methodology

Step 1: Data Curation and Cleaning

Curate a dataset of molecules with associated experimental ADMET properties from public sources like ChEMBL, PubChem, or specialized benchmarks such as PharmaBench [18] or the Therapeutics Data Commons (TDC) [12]. Implement a rigorous cleaning pipeline:

- Standardize SMILES: Use tools to generate consistent canonical SMILES representations, adjust tautomers, and extract the parent organic compound from salts [12].

- Remove Inorganics: Filter out inorganic salts, organometallic compounds, and fragments [12].

- Deduplicate: Remove duplicate compounds, keeping the first entry if target values are consistent, or removing the entire group if values are inconsistent [12].
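The deduplication rule in the last bullet can be sketched in plain Python. This is a minimal illustration, assuming the SMILES strings have already been canonicalized upstream and that labels are categorical; the function name `deduplicate` and the `(smiles, label)` record layout are illustrative choices, not part of any cited toolkit.

```python
from collections import defaultdict


def deduplicate(records):
    """Collapse duplicate compounds in a list of (canonical_smiles, label) pairs.

    Groups whose labels all agree keep their first entry; groups with
    conflicting labels are dropped entirely.
    """
    labels_by_smiles = defaultdict(list)
    for smiles, label in records:
        labels_by_smiles[smiles].append(label)

    kept = []
    seen = set()
    for smiles, label in records:  # input order, so the first entry is the one kept
        if smiles in seen:
            continue
        seen.add(smiles)
        if len(set(labels_by_smiles[smiles])) == 1:
            kept.append((smiles, label))
    return kept


records = [
    ("CCO", 1),
    ("c1ccccc1", 0),
    ("CCO", 1),      # consistent duplicate: the first entry is kept
    ("CC(=O)O", 1),
    ("CC(=O)O", 0),  # inconsistent duplicate: the whole group is removed
]
clean = deduplicate(records)
```

For regression endpoints, "consistent" would need a numeric tolerance rather than exact equality; that threshold is a modeling decision and is not shown here.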

Step 2: Feature Representation and Engineering

Compute molecular descriptors and fingerprints for each compound. Common choices include:

- RDKit Descriptors: A set of 200+ physicochemical descriptors (e.g., molecular weight, logP, H-bond donors/acceptors) [15] [12].

- Morgan Fingerprints (ECFP): Circular fingerprints encoding molecular substructures and topology [12].

- ADME-Informed Embeddings (Advanced): For a more powerful model, use pre-trained molecular foundation models (e.g., graph neural networks) that have been fine-tuned on ADME tasks to generate informative embedding vectors as features [16].

Step 3: Dataset Splitting

Partition the cleaned and featurized dataset into training, validation, and test sets. To ensure a rigorous evaluation and avoid artificial inflation of performance, use a scaffold split [12] [18]. This method groups molecules by their Bemis-Murcko scaffolds and assigns each scaffold group to a single split, so that the test set contains chemotypes the model has not seen during training, thereby testing its ability to generalize to novel chemotypes.
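A scaffold split can be sketched with RDKit as follows. This is a simplified greedy variant, assuming RDKit is installed; the function name `scaffold_split`, the 80/10/10 defaults, and the largest-group-first ordering are illustrative assumptions rather than the exact procedure of the cited benchmarks.

```python
from collections import defaultdict

from rdkit import Chem
from rdkit.Chem.Scaffolds import MurckoScaffold


def scaffold_split(smiles_list, frac_train=0.8, frac_valid=0.1):
    """Return (train, valid, test) index lists; no scaffold is shared across sets."""
    groups = defaultdict(list)
    for idx, smi in enumerate(smiles_list):
        mol = Chem.MolFromSmiles(smi)
        if mol is None:
            continue  # unparsable SMILES are skipped in this sketch
        scaffold = MurckoScaffold.MurckoScaffoldSmiles(mol=mol)
        groups[scaffold].append(idx)

    # Assign whole scaffold groups, largest first, filling train, then valid, then test
    ordered = sorted(groups.values(), key=len, reverse=True)
    n_total = sum(len(group) for group in ordered)
    train, valid, test = [], [], []
    for group in ordered:
        if len(train) + len(group) <= frac_train * n_total:
            train.extend(group)
        elif len(valid) + len(group) <= frac_valid * n_total:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test


smiles = [
    "Cc1ccccc1", "CCc1ccccc1", "Oc1ccccc1",   # benzene scaffold
    "Cc1ccncc1", "CCc1ccncc1",                # pyridine scaffold
    "NC1CCCCC1", "OC1CCCCC1",                 # cyclohexane scaffold
    "c1ccc2ccccc2c1",                         # naphthalene scaffold
    "CCO", "CCN",                             # acyclic (empty scaffold)
]
train, valid, test = scaffold_split(smiles)
```

On very small datasets such as this toy list, the realized split fractions can deviate noticeably from the requested ones because whole scaffold groups are moved together.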

Step 4: Random Forest Model Training and Tuning

Train the RF model on the training set. While RF is less prone to overfitting than a single decision tree, hyperparameter tuning can optimize performance.

- Key Hyperparameters: n_estimators (number of trees), max_depth (maximum tree depth), min_samples_split (minimum samples required to split a node) [15].

- Tuning Method: Use cross-validation on the training set (e.g., 5-fold) to find the optimal hyperparameters that maximize the chosen performance metric (e.g., ROC-AUC, F1-score).

Step 5: Model Evaluation and Interpretation

Evaluate the final model on the held-out test set using appropriate metrics.

- Classification Metrics: Accuracy, Precision, Recall, F1-Score, and Area Under the Receiver Operating Characteristic Curve (ROC-AUC) [15] [17].

- Feature Importance Analysis: Determine which molecular features most influenced the model's predictions using techniques like Gini importance, Permutation Feature Importance (PFI), or SHapley Additive exPlanations (SHAP) [19] [20]. This provides critical insight into the structural drivers of the ADMET property.
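The classification metrics listed above can be computed with scikit-learn as in the sketch below. The data are a synthetic stand-in for a featurized ADMET set (an assumption for illustration), and a random stratified split is used only for brevity; in a real study the scaffold-based test set would take its place.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import (accuracy_score, f1_score, precision_score,
                             recall_score, roc_auc_score)
from sklearn.model_selection import train_test_split

# Synthetic, imbalanced binary dataset standing in for descriptors and labels
X, y = make_classification(n_samples=400, n_features=20, n_informative=8,
                           weights=[0.7, 0.3], random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0)

model = RandomForestClassifier(n_estimators=200, random_state=0)
model.fit(X_train, y_train)

y_pred = model.predict(X_test)               # hard class labels
y_score = model.predict_proba(X_test)[:, 1]  # probability of the positive class

metrics = {
    "accuracy": accuracy_score(y_test, y_pred),
    "precision": precision_score(y_test, y_pred, zero_division=0),
    "recall": recall_score(y_test, y_pred, zero_division=0),
    "f1": f1_score(y_test, y_pred, zero_division=0),
    "roc_auc": roc_auc_score(y_test, y_score),  # needs scores, not hard labels
}
```

ROC-AUC is computed from predicted probabilities, whereas the other four metrics depend on the 0.5 decision threshold implied by `predict`; on imbalanced endpoints that threshold may deserve its own tuning.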

Visualization and Interpretation of Random Forest Models

Understanding the "why" behind a model's prediction is crucial for building trust and generating scientific insights. The following diagram outlines a multi-granularity approach to interpreting a trained RF model.

Explanation of the Interpretation Workflow:

- Global Interpretation: Techniques like feature importance provide a top-level view of which features (e.g., logP, PSA, HBD) are most influential across the entire dataset for the model's predictions [19] [20].

- Cluster-Based Interpretation: For a deeper dive, decision trees within the forest can be clustered based on the similarity of their decision rules and predictions. This allows researchers to identify common "modes of reasoning" within the complex ensemble, moving beyond an oversimplified summary or the impractical task of analyzing every single tree [21]. Visualization of these clusters can be done via Rule Plots and Feature Plots [21].

- Local Interpretation: Methods like SHAP can explain the prediction for a single molecule, quantifying how each of its features contributed to the final outcome, which is invaluable for debugging and candidate optimization [17] [20].
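For the global level, the two scikit-learn importance measures can be compared side by side, as in this sketch. It uses synthetic data in which only the first three columns carry signal (an assumption made so the result is easy to check), and the feature names are hypothetical. Local, per-molecule explanations with SHAP require the separate shap package and are not shown.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

feature_names = [f"desc_{i}" for i in range(10)]  # hypothetical descriptor names

# shuffle=False keeps the 3 informative features in columns 0-2
X, y = make_classification(n_samples=500, n_features=10, n_informative=3,
                           n_redundant=0, shuffle=False, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0)

model = RandomForestClassifier(n_estimators=200, random_state=0)
model.fit(X_train, y_train)

# Gini (impurity-based) importance: computed from the training data, sums to 1
gini = model.feature_importances_

# Permutation importance: drop in test-set score when one feature is shuffled
perm = permutation_importance(model, X_test, y_test,
                              n_repeats=10, random_state=0)

top_gini = feature_names[int(np.argmax(gini))]
top_perm = feature_names[int(np.argmax(perm.importances_mean))]
```

Gini importance can favor high-cardinality or correlated features, so checking it against permutation importance on held-out data is a common sanity check before drawing structural conclusions.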

Table 2: Key Software, Databases, and Computational Tools

| Category | Item | Function / Description |

|---|---|---|

| Cheminformatics & Descriptor Calculation | RDKit | An open-source toolkit for cheminformatics. Used to compute molecular descriptors (e.g., for Ro5, bRo5), generate fingerprints, and handle standardization of chemical structures [15] [12]. |

| Public Data Sources | PubChem | A database of chemical molecules and their activities against biological assays. Serves as a primary source for drug and non-drug molecules [15]. |

| Public Data Sources | ChEMBL | A manually curated database of bioactive molecules with drug-like properties. Provides high-quality SAR and ADMET data [18]. |

| Benchmark Datasets | Therapeutics Data Commons (TDC) | A collection of curated datasets and benchmarks for machine learning in drug discovery, including numerous ADMET prediction tasks [12] [16] [18]. |

| Benchmark Datasets | PharmaBench | A recent, comprehensive benchmark for ADMET properties, designed to be more representative of compounds in drug discovery projects than previous sets [18]. |

| Machine Learning & Modeling | scikit-learn | A core Python library for machine learning. Provides implementations of the Random Forest algorithm, model evaluation metrics, and tools like permutation importance [19]. |

| Model Interpretation | SHAP (SHapley Additive exPlanations) | A game theory-based method to explain the output of any machine learning model. Used to quantify the contribution of each feature to individual predictions [19] [17] [20]. |

Within modern drug discovery, the in silico prediction of Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties has become indispensable for reducing late-stage attrition rates. Among the various machine learning (ML) techniques applied, Random Forest (RF) has established itself as a particularly robust and widely-used algorithm. This Application Note delineates the comparative strengths of RF against other prominent ML algorithms in the context of ADMET modeling, providing researchers with structured quantitative data, detailed experimental protocols, and actionable guidelines for model implementation within a broader thesis on RF-based ADMET classification.

Performance Comparison of ML Algorithms in ADMET Tasks

Extensive benchmarking studies provide critical insights into the performance of various ML algorithms. The following tables summarize key findings from recent large-scale analyses.

Table 1: Overall Algorithm Performance Across Diverse ADMET Datasets [12]

| Algorithm | Typical Use Case | Key Strengths | Common Limitations |

|---|---|---|---|

| Random Forest (RF) | Classification & Regression on small to medium-sized datasets | High robustness, handles mixed data types, provides feature importance, less prone to overfitting than deep learning on small data | Performance can plateau with very large data; may be outperformed by boosting in some regression tasks |

| XGBoost | Regression tasks (e.g., Caco-2 permeability) | Often superior predictive accuracy on structured data, efficient handling of missing values | Can be more sensitive to hyperparameters, greater risk of overfitting without careful tuning |

| Support Vector Machine (SVM) | Classification tasks with clear margins | Effective in high-dimensional spaces, strong theoretical foundations | Performance heavily dependent on kernel and parameter choice; less interpretable |

| Message Passing Neural Network (MPNN) | Tasks with abundant data and complex structural relationships | Captures intricate molecular topology directly from graphs | Requires very large datasets; high computational cost; risk of overfitting on small data |

Table 2: Quantitative Performance on Specific ADMET Endpoints [12] [22]

| ADMET Endpoint | Best Performing Algorithm | Key Metric Performance | Comparative RF Performance |

|---|---|---|---|

| Caco-2 Permeability | XGBoost [22] | Superior R² on regression tasks | Strong, but generally slightly lower R² than XGBoost |

| Toxicity Classification (e.g., Tox21) | Random Forest [12] | High AUC and robustness on public benchmarks | Consistently ranks as a top performer for classification |

| Metabolic Stability | Ensemble Methods (RF, XGBoost) | High accuracy with scaffold splits | Demonstrates excellent generalization and data efficiency |

| Solubility | LightGBM / XGBoost | Low RMSE on regression | Competitive, but often outperformed by gradient boosting |

Experimental Protocol for Building RF-Based ADMET Models

This protocol outlines a standardized workflow for developing and validating robust RF models for ADMET property prediction, incorporating best practices from recent literature.

Data Acquisition and Curation

Objective: To gather and standardize a high-quality molecular dataset for model training. Materials: Public databases (e.g., ChEMBL, TDC, PharmaBench), RDKit, Python environment. Procedure:

- Data Sourcing: Obtain molecular structures (as SMILES strings) and corresponding experimental ADMET values from curated public sources like the Therapeutics Data Commons (TDC) or PharmaBench [12] [18].

- Data Cleaning: Implement a rigorous cleaning pipeline using a standardized tool kit [12]:

- Remove inorganic salts and organometallic compounds.

- Extract the organic parent compound from salt forms.

- Standardize tautomers to consistent functional group representations.

- Canonicalize all SMILES strings.

- De-duplicate entries, retaining the first entry for consistent duplicates or removing the entire group for inconsistent measurements.

- Data Splitting: Partition the cleaned dataset into training, validation, and test sets using an 8:1:1 ratio. For a more rigorous assessment of generalizability, use scaffold splitting to ensure that molecules with different core structures are present in different splits [12] [22].

Molecular Feature Representation

Objective: To convert molecular structures into numerical features interpretable by the RF algorithm. Materials: RDKit cheminformatics toolkit. Procedure:

- Fingerprints: Generate Morgan fingerprints (also known as circular fingerprints) using RDKit with a radius of 2 and a bit vector length of 1024. This captures local atomic environments [12] [22].

- Descriptors: Calculate RDKit 2D descriptors, which include a set of physicochemical properties such as molecular weight, logP, topological polar surface area (TPSA), and hydrogen bond donors/acceptors. Normalize these descriptors [22].

- (Optional) Feature Combination: Investigate the performance of a combined feature set by concatenating Morgan fingerprints and 2D descriptors. A structured feature selection process is recommended over simple concatenation [12].
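The fingerprint and descriptor steps can be sketched with RDKit as follows, assuming a reasonably recent RDKit release that provides the `rdFingerprintGenerator` module. The five descriptors chosen and the helper name `featurize` are illustrative; the protocol's full 2D descriptor set is larger.

```python
import numpy as np
from rdkit import Chem
from rdkit.Chem import Descriptors, rdFingerprintGenerator

# Morgan generator with the radius and bit length quoted in the protocol
morgan_gen = rdFingerprintGenerator.GetMorganGenerator(radius=2, fpSize=1024)

# A small, illustrative subset of RDKit 2D descriptors
DESCRIPTOR_FUNCS = {
    "MolWt": Descriptors.MolWt,
    "MolLogP": Descriptors.MolLogP,
    "TPSA": Descriptors.TPSA,
    "NumHDonors": Descriptors.NumHDonors,
    "NumHAcceptors": Descriptors.NumHAcceptors,
}


def featurize(smiles):
    """Return (fingerprint bits, descriptor values) for one SMILES string."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Could not parse SMILES: {smiles!r}")
    fingerprint = morgan_gen.GetFingerprintAsNumPy(mol).astype(float)
    descriptors = np.array(
        [func(mol) for func in DESCRIPTOR_FUNCS.values()], dtype=float)
    return fingerprint, descriptors


fp, desc = featurize("CC(=O)Oc1ccccc1C(=O)O")  # aspirin
combined = np.concatenate([fp, desc])  # naive concatenation, shown for shape only
```

Random forests split on thresholds, so descriptor normalization does not change the fitted trees; it matters mainly when the same features are reused with scale-sensitive models.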

Model Training and Hyperparameter Optimization

Objective: To train an RF model with optimized hyperparameters for maximum predictive performance. Materials: Scikit-learn library in Python. Procedure:

- Baseline Model: Instantiate a baseline RandomForestRegressor or RandomForestClassifier from scikit-learn with default parameters.

- Hyperparameter Tuning: Conduct a grid search or randomized search with 5-fold cross-validation on the training set to optimize key parameters:

- n_estimators: Number of trees in the forest (typical range: 100-1000).

- max_depth: Maximum depth of each tree (typical range: 10-100, or None).

- min_samples_split: Minimum number of samples required to split an internal node (typical range: 2-10).

- min_samples_leaf: Minimum number of samples required to be at a leaf node (typical range: 1-4).

- Model Training: Train the final RF model on the entire training set using the identified optimal hyperparameters.
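The tuning procedure above might look like the following with scikit-learn's `RandomizedSearchCV`. The search ranges mirror the typical ranges listed; the synthetic data, the ROC-AUC scoring choice, and the very small `n_iter` are assumptions made to keep the sketch quick to run.

```python
from scipy.stats import randint
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import RandomizedSearchCV

# Synthetic stand-in for the featurized training set
X_train, y_train = make_classification(n_samples=200, n_features=30,
                                       n_informative=10, random_state=0)

param_distributions = {
    "n_estimators": randint(100, 1001),   # 100-1000 trees (upper bound exclusive)
    "max_depth": [10, 30, 100, None],     # None lets trees grow until leaves are pure
    "min_samples_split": randint(2, 11),  # 2-10
    "min_samples_leaf": randint(1, 5),    # 1-4
}

search = RandomizedSearchCV(
    RandomForestClassifier(random_state=0),
    param_distributions=param_distributions,
    n_iter=3,           # kept tiny for the sketch; use far more draws in practice
    cv=5,               # 5-fold cross-validation on the training set only
    scoring="roc_auc",
    random_state=0,
)
search.fit(X_train, y_train)

# With the default refit=True, best_estimator_ is retrained on all of X_train
best_model = search.best_estimator_
best_params = search.best_params_
```

For a regression endpoint, `RandomForestRegressor` with a scoring string such as `"neg_root_mean_squared_error"` would replace the classifier and metric used here.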

Model Validation and Evaluation

Objective: To assess the model's predictive accuracy and generalizability robustly. Procedure:

- Performance Metrics: Evaluate the model on the held-out test set using task-appropriate metrics:

- Regression (e.g., Caco-2 Papp): R², Mean Absolute Error (MAE), Root Mean Squared Error (RMSE).

- Classification (e.g., Toxicity): Area Under the ROC Curve (AUC), Accuracy, F1-Score.

- Statistical Validation: Perform Y-randomization tests to ensure the model's performance is not due to chance correlations [22].

- Applicability Domain Analysis: Define the model's applicability domain using methods like leverage or distance-based measures to identify molecules for which predictions are reliable [22].

- External Validation: Where possible, test the model on a completely external dataset from a different source (e.g., an in-house pharmaceutical company dataset) to evaluate its real-world transferability [12] [22].
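A Y-randomization test can be approximated with scikit-learn's `permutation_test_score`, which refits the model on label-shuffled copies of the data and compares the resulting scores with the score on the true labels. The synthetic dataset and the small number of permutations below are assumptions chosen to keep the example fast.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, permutation_test_score

# Synthetic stand-in for a featurized classification endpoint
X, y = make_classification(n_samples=200, n_features=20, n_informative=6,
                           random_state=0)

model = RandomForestClassifier(n_estimators=100, random_state=0)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

# score: cross-validated ROC-AUC on the true labels
# perm_scores: the same metric after shuffling y (one value per permutation)
# p_value: fraction of permutations scoring at least as well as the true labels
score, perm_scores, p_value = permutation_test_score(
    model, X, y, scoring="roc_auc", cv=cv,
    n_permutations=20, random_state=0)
```

If the true-label score is not clearly separated from the permuted-label scores, the apparent performance may stem from chance correlations. With 20 permutations the smallest attainable p-value is 1/21, so more permutations are needed for a finer estimate.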

Diagram 1: RF ADMET modeling workflow.

The Scientist's Toolkit: Essential Research Reagents & Platforms

Table 3: Key Software, Databases, and Tools for RF-based ADMET Modeling

| Tool Name | Type | Primary Function in ADMET Modeling | Reference |

|---|---|---|---|

| RDKit | Cheminformatics Library | Generates molecular features (fingerprints, 2D descriptors); handles SMILES standardization. | [12] [22] |

| Therapeutics Data Commons (TDC) | Data Repository | Provides curated, publicly available benchmark datasets for ADMET property prediction. | [12] [18] |

| PharmaBench | Data Repository | Offers a large-scale, condition-aware ADMET benchmark dataset compiled using LLM-based curation. | [18] |

| Scikit-learn | ML Library | Provides implementation of Random Forest and other ML algorithms for model building and evaluation. | [22] |

| Chemprop | Deep Learning Library | Implements Message Passing Neural Networks (MPNNs) for comparative analysis against RF. | [12] |

| Deep-PK / DeepTox | Specialized AI Platform | AI-driven platforms for pharmacokinetics and toxicity prediction; useful for benchmarking. | [3] |

Application Notes & Case Studies

Case Study: Caco-2 Permeability Prediction

A 2025 benchmark study provides a direct comparison of RF and other algorithms for predicting Caco-2 permeability, a critical metric for oral absorption [22].

Findings:

- XGBoost demonstrated the best overall performance for this regression task.

- Random Forest delivered strong, reliable performance and was notably robust, showing less variance across different data splits compared to more complex models.

- The study highlighted that while deep learning models like DMPNN can work well, they did not outperform the carefully tuned tree-based ensembles on this specific endpoint, underscoring the data-efficiency of RF.

Recommendation: For Caco-2 modeling, begin with RF as a robust baseline. If marginal performance gains are critical, invest resources in tuning XGBoost.

Strategic Selection of Molecular Representations

The choice of molecular representation significantly impacts RF performance [12].

Guidelines:

- Morgan Fingerprints are a powerful default choice for RF, effectively capturing substructural information.

- RDKit 2D Descriptors provide complementary information related to physicochemical properties.

- Combined Features: Systematic, dataset-specific feature selection (e.g., using RF's built-in feature importance) for combining fingerprints and descriptors is superior to naive concatenation and can lead to performance improvements.
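One way to approximate importance-driven feature selection on a combined feature set is scikit-learn's `SelectFromModel` wrapped around a random forest. In this sketch the "fingerprint" block is random bits and the "descriptor" block is synthetic, both assumptions for illustration; the median threshold is likewise an arbitrary choice.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import SelectFromModel

rng = np.random.default_rng(0)

# Stand-in descriptor block with some informative columns
X_desc, y = make_classification(n_samples=300, n_features=10,
                                n_informative=4, random_state=0)
# Stand-in fingerprint block: uninformative random bits
X_fp = rng.integers(0, 2, size=(300, 64)).astype(float)

X_combined = np.hstack([X_fp, X_desc])  # naive concatenation as the starting point

# Keep only features whose RF importance is at or above the median importance
selector = SelectFromModel(
    RandomForestClassifier(n_estimators=200, random_state=0),
    threshold="median",
)
selector.fit(X_combined, y)

X_selected = selector.transform(X_combined)
kept_columns = selector.get_support(indices=True)
```

In a real workflow the selector should be fitted on the training split only, ideally inside a scikit-learn `Pipeline`, so that the held-out data do not influence which features are retained.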

Diagram 2: Feature representation impact on RF.

Random Forest remains a cornerstone algorithm for ADMET modeling due to its exceptional robustness, interpretability, and consistent performance across diverse endpoints, particularly with the small-to-medium-sized datasets typical in drug discovery. While gradient boosting methods like XGBoost may achieve marginally superior accuracy in certain regression tasks, and deep learning models like MPNNs excel with abundant data and complex structural relationships, RF's reliability and low risk of overfitting make it an ideal baseline model and a strong candidate for production use. Its ability to provide feature importance metrics further aids chemists in understanding the structural drivers of ADMET properties, thereby bridging the gap between predictive modeling and scientific insight. For researchers building ADMET classification models, implementing the standardized protocols and validation frameworks outlined in this document will ensure the development of robust, generalizable, and impactful RF models.

Building Your Model: A Step-by-Step RF Implementation Workflow

The accurate prediction of Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties constitutes a critical component in modern drug discovery, serving as a fundamental determinant of a compound's efficacy, safety, and ultimate clinical success [18] [23]. Early assessment and optimization of ADMET properties are essential for mitigating the risk of late-stage failures and for the successful development of new therapeutic agents. The development of computational approaches provides a fast and cost-effective means for drug discovery, allowing researchers to focus on candidates with better ADMET potential and reduce labor-intensive and time-consuming wet-lab experiments [18]. For researchers implementing machine learning models such as random forest for ADMET classification and regression, the selection of high-quality, representative benchmarking data is as crucial as the choice of algorithm itself. This application note provides a detailed guide to sourcing and utilizing three pivotal resources—PharmaBench, ChEMBL, and the Therapeutics Data Commons (TDC)—with specific protocols for their application in random forest-based ADMET modeling research.

A comparative analysis of the featured resources reveals distinct advantages and specializations, which are summarized quantitatively in Table 1. This comparison enables informed selection based on specific research requirements.

Table 1: Comparative Analysis of ADMET Data Resources

| Resource | Primary Focus & Description | Key Strengths | Dataset Scale (Examples) | Data Processing Level |

|---|---|---|---|---|

| PharmaBench [18] [24] [25] | A comprehensive benchmark set created using a multi-agent LLM system to extract and standardize experimental conditions from bioassays. | LLM-curated experimental conditions; extensive data cleaning and standardization; focus on drug-like compounds (MW 300-800 Da) | 52,482 final entries for AI modeling; 11 ADMET datasets; sourced from 14,401 bioassays | Highly processed, model-ready benchmarks with train/test splits. |

| TDC ADMET Group [26] [23] [4] | A centralized benchmark group aggregating curated datasets from various published sources for fair model comparison. | Well-established leaderboard; standardized scaffold splits; diverse property coverage (22 datasets) | CYP2D6 Inhibition: 13,130 entries; BBB Penetration: 1,975 entries; Solubility: 9,982 entries | Pre-processed, standardized benchmarks with predefined splits. |

| ChEMBL [27] [28] [29] | A manually curated database of bioactive molecules with drug-like properties, aggregating data from scientific literature. | Vast repository of raw bioactivity data; manually curated targets and compounds; includes diverse assay types (Binding, Functional, ADMET) | Over 5.4 million bioactivity measurements; more than 1 million compounds; 5,200 protein targets | Raw and standardized data; requires significant pre-processing for ML. |

Detailed Resource Profiles and Experimental Protocols

PharmaBench: An LLM-Enhanced Benchmark

PharmaBench directly addresses limitations in existing benchmarks, specifically their small size and lack of representation of compounds used in actual drug discovery projects [18]. Its creation involved a sophisticated, multi-agent Large Language Model (LLM) system to mine experimental conditions from unstructured bioassay descriptions, which are critical for normalizing conflicting results for the same compound under different experimental setups [18]. The final resource provides 52,482 curated entries across eleven key ADMET properties, making it particularly suited for training and evaluating robust machine learning models [24].

Protocol 1: Implementing Random Forest with PharmaBench Data

- Data Acquisition: Clone the PharmaBench repository from GitHub (mindrank-ai/PharmaBench) and load the desired dataset (e.g., BBB for blood-brain barrier penetration) using the provided scripts in the data/final_datasets/ path [24].

- Feature Calculation: Using the standardized SMILES strings provided, calculate molecular descriptors (e.g., using RDKit) or fingerprints (e.g., Morgan fingerprints) for each compound. These will serve as the feature matrix (X) for the random forest model.

- Target Assignment: Use the provided experimental values as the prediction target (y). For classification tasks like BBB, the labels are binary (e.g., penetrating vs. non-penetrating) [24].

- Data Splitting: Utilize the provided scaffold_train_test_label or random_train_test_label to ensure a fair model evaluation. The scaffold split is recommended to assess a model's ability to generalize to novel chemotypes [18] [24].

- Model Training and Validation: Train a random forest classifier/regressor (e.g., using scikit-learn) on the training set and validate its performance on the designated test set using appropriate metrics (e.g., AUROC for classification, MAE for regression).

Therapeutics Data Commons (TDC) ADMET Benchmark Group

The TDC provides a unified platform for accessing and benchmarking models on ADMET predictions. Its benchmark group is formulated from 22 datasets, each with predefined training, validation, and test sets created using scaffold splitting to simulate real-world generalization challenges [26]. This makes it an ideal resource for direct model comparison and for researchers seeking a standardized evaluation framework.

Protocol 2: Accessing and Evaluating on TDC Benchmarks

- Environment Setup: Install the TDC package using pip (pip install PyTDC).

- Benchmark Initialization: Import the ADMET group and load a specific benchmark, for example, the Caco-2 permeability dataset [26].

- Model Training: Train your random forest model on the train_val data. Perform hyperparameter optimization via cross-validation on this set.

- Prediction and Evaluation: Generate predictions (y_pred) for the held-out test set and use TDC's evaluation function to obtain the performance metric [26].
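The steps of Protocol 2 can be wrapped in a small helper, sketched below. It assumes the benchmark group object behaves as described in the TDC documentation: `group.get(name)` returns a mapping with `"name"`, `"train_val"`, and `"test"` entries whose tables carry `Drug` (SMILES) and `Y` (target) columns, and `group.evaluate` accepts a dictionary keyed by benchmark name. The helper name `run_benchmark` and the caller-supplied `featurize` function are hypothetical.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor


def run_benchmark(group, name, featurize, seed=0):
    """Train an RF regressor on one TDC-style benchmark and evaluate it.

    `group` is expected to expose get(name) and evaluate(predictions);
    `featurize` maps one SMILES string to a 1D feature vector.
    """
    benchmark = group.get(name)
    train_val, test = benchmark["train_val"], benchmark["test"]

    X_train = np.vstack([featurize(smi) for smi in train_val["Drug"]])
    X_test = np.vstack([featurize(smi) for smi in test["Drug"]])

    model = RandomForestRegressor(n_estimators=300, random_state=seed)
    model.fit(X_train, np.asarray(train_val["Y"], dtype=float))

    # Predictions are keyed by the benchmark's own name for evaluation
    predictions = {benchmark["name"]: model.predict(X_test)}
    return group.evaluate(predictions)


# Intended use with the real package (needs PyTDC and a data download):
#   from tdc.benchmark_group import admet_group
#   group = admet_group(path="data/")
#   results = run_benchmark(group, "Caco2_Wang", featurize=my_featurizer)
```

Passing the group in as an argument keeps the helper independent of the download step, which also makes it straightforward to exercise with a stand-in object before running against the real benchmark. Classification benchmarks would use `RandomForestClassifier` and predicted probabilities instead.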

ChEMBL: A Primary Source for Data Curation

ChEMBL is a foundational resource for bioactivity data, manually extracted from peer-reviewed literature [29]. It contains binding, functional, and ADMET information for millions of compounds. Unlike the pre-curated benchmarks above, using ChEMBL directly offers maximum flexibility but requires significant data curation effort. This involves querying for specific assay types (e.g., 'A' for ADME) and then standardizing units, managing salt forms, and dealing with data variability [28] [29].

Protocol 3: Building a Custom ADMET Dataset from ChEMBL

- Data Retrieval: Access data via the ChEMBL web interface or by downloading the complete database. Filter assays by type (e.g., 'A' for ADME, 'P' for Physicochemical) to find relevant datasets, such as human PPBR (Plasma Protein Binding Rate) [28] [23].

- Data Standardization and Filtering:

- Apply confidence score filters (e.g., ≥ 8) to ensure high-quality target assignments [28].

- Standardize compounds to parent structures, stripping salt forms to ensure consistency.

- Filter for consistent units and standard relation (e.g., standard_units == 'nM', standard_relation == '=').

- Use the data_validity_comment field to flag or remove potentially erroneous data points [28].

- Data Cleaning and Deduplication: A critical step is resolving duplicate measurements for the same compound. Implement a strategy such as keeping the median value or the value from the most reliable assay source.

- Curate the Final Set: Apply drug-likeness filters (e.g., molecular weight between 300-800 Daltons) if desired, and finally split the curated dataset using scaffold-based methods to prepare it for model training [18] [12].
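The filtering and median-based deduplication steps of Protocol 3 can be sketched with pandas. The column names follow the ChEMBL activity fields mentioned above; the toy rows and their values are made up for illustration, and the nM unit filter is simply the example given in the protocol.

```python
import pandas as pd

# Toy activity export; values are invented for illustration
raw = pd.DataFrame({
    "canonical_smiles": ["CCO", "CCO", "c1ccccc1", "CCN", "CCN", "CCC"],
    "standard_value": [10.0, 30.0, 5.0, 100.0, 120.0, 7.0],
    "standard_units": ["nM", "nM", "nM", "nM", "uM", "nM"],
    "standard_relation": ["=", "=", "=", "=", "=", ">"],
    "data_validity_comment": [None, None, "Outside typical range",
                              None, None, None],
})

# Keep exact measurements in one unit with no validity flag
mask = (
    (raw["standard_units"] == "nM")
    & (raw["standard_relation"] == "=")
    & raw["data_validity_comment"].isna()
)
filtered = raw[mask]

# Resolve duplicate measurements per compound by taking the median
curated = (
    filtered.groupby("canonical_smiles", as_index=False)["standard_value"]
    .median()
)
result = dict(zip(curated["canonical_smiles"], curated["standard_value"]))
```

The median is robust to a single outlying assay; when replicate values span orders of magnitude, dropping the compound may be safer than averaging, which is a curation decision outside this sketch.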

Workflow Visualization

The logical relationship and data flow between these resources and the modeling process can be visualized in the following diagram.

Successful implementation of ADMET prediction models relies on a suite of software tools and data resources. Table 2 details key components of the research toolkit.

Table 2: Essential Research Reagents and Resources for ADMET Modeling

| Tool/Resource | Type | Primary Function in ADMET Research |

|---|---|---|

| RDKit | Cheminformatics Library | Calculates molecular descriptors (e.g., RDKit descriptors) and fingerprints (e.g., Morgan fingerprints) from SMILES strings, which are essential features for random forest models [12]. |

| scikit-learn | Machine Learning Library | Provides the implementation for the Random Forest algorithm (e.g., RandomForestClassifier and RandomForestRegressor), along with utilities for model evaluation and hyperparameter tuning. |

| PharmaBench | Data Resource | Offers a large-scale, pre-processed benchmark with experimental conditions extracted by LLMs, ideal for testing model generalizability on drug-like compounds [18] [24]. |

| TDC Python API | Data Resource & API | Facilitates easy access to multiple curated ADMET benchmarks with standardized splits, enabling rapid prototyping and fair model comparison [26] [23]. |

| ChEMBL Web Services | Data Resource & API | Provides access to a vast repository of raw bioactivity data, allowing for the construction of custom, task-specific datasets for ADMET modeling [27] [29]. |

| Scaffold Split Methods | Data Processing Method | Generates training and test sets based on molecular Bemis-Murcko scaffolds, ensuring that models are tested on structurally distinct compounds, which better simulates real-world performance [26] [12]. |

Data Preprocessing, Cleaning, and Handling Missing Values

In the field of drug discovery and development, the accurate prediction of Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties stands as a critical bottleneck, with traditional experimental approaches being time-consuming, cost-intensive, and limited in scalability [2]. Machine learning (ML), particularly random forest algorithms, has emerged as a transformative tool for early-stage ADMET prediction, offering enhanced accuracy and reduced experimental burden [2]. The performance of these ML models is profoundly influenced by the quality of input data, making robust data preprocessing, cleaning, and missing value handling not merely preliminary steps but foundational components of reliable ADMET classification research. This article outlines structured protocols and application notes to guide researchers in effectively implementing these critical data preparation phases within the context of ADMET prediction using random forest models.

Understanding Missing Data Mechanisms in ADMET Studies

The appropriate handling of missing values begins with a clear understanding of the underlying mechanisms, as this dictates the selection of imputation methods and influences potential biases in the resulting model.

Table 1: Classification of Missing Data Mechanisms

| Mechanism | Acronym | Definition | Example in ADMET Context |

|---|---|---|---|

| Missing Completely at Random | MCAR | The missingness is unrelated to any observed or unobserved data [30] [31]. | A sample is lost due to a technical instrument failure, independent of its molecular properties. |

| Missing at Random | MAR | The missingness is related to other observed variables but not the missing value itself [30] [31]. | The likelihood of a solubility measurement being missing depends on the compound's molecular weight, which is fully recorded. |

| Not Missing at Random | NMAR | The missingness is related to the unobserved missing value itself [31] [32]. | A highly toxic compound is systematically missing a toxicity endpoint because it proved fatal at low doses in preliminary tests. |

| Structurally Missing | - | The data is logically absent and does not apply to the observation [30] [31]. | A metabolic stability value for a compound that is not metabolized by the tested enzyme system. |

Critical Steps in Data Preprocessing for ADMET Modeling

A systematic workflow is essential for transforming raw, often messy data into a clean dataset suitable for training robust random forest models [33].

Data Acquisition and Initial Exploration

The first step involves gathering the dataset from public or proprietary repositories. Common public databases for ADMET-related properties include ChEMBL, PubChem, and DrugBank [2]. Upon import, initial exploration should assess data shape, types of variables (continuous, categorical), and the presence of missing values and outliers.

Handling Missing Values

Missing data is a pervasive issue. While simple methods like listwise deletion (removing rows with any missing values) are available, they can lead to significant data loss and biased models [33]. Imputation—replacing missing values with plausible estimates—is generally preferred. The choice of imputation strategy should align with the identified missing data mechanism (see Table 1).

Encoding Categorical Data

Random forest algorithms require all input to be numerical. Categorical variables, such as salt form or specific assay types, must be converted. One-hot encoding is a robust technique that creates new binary (0/1) columns for each category [30] [33]. This avoids imposing an arbitrary ordinal relationship on categories that lack a natural order.
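As a minimal illustration of one-hot encoding, the pandas sketch below expands a hypothetical `salt_form` column into binary indicator columns; the column names and values are invented for the example.

```python
import pandas as pd

df = pd.DataFrame({
    "logP": [1.2, 3.4, 2.1],
    "salt_form": ["hydrochloride", "free_base", "sodium"],
})

# One binary column per category; the numeric column passes through unchanged
encoded = pd.get_dummies(df, columns=["salt_form"], dtype=int)
dummy_columns = [c for c in encoded.columns if c.startswith("salt_form_")]
```

Inside a modeling pipeline, scikit-learn's `OneHotEncoder(handle_unknown="ignore")` is often preferred because it remembers the categories seen during training and applies the same columns to new data.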

Feature Scaling

While random forests are generally robust to the scale of features, scaling can be beneficial for interpretation and is essential if the preprocessed data will later be used with other algorithms sensitive to feature magnitude (e.g., SVMs or neural networks) [33]. Standard Scaler (which centers data to have a mean of 0 and standard deviation of 1) or Robust Scaler (which uses median and interquartile range and is resistant to outliers) are commonly used methods [33] [34].

Figure 1: A generalized data preprocessing workflow for preparing ADMET data for machine learning. RF stands for Random Forest.

Data Splitting

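The final, critical step is to split the dataset into training, validation, and testing sets. The training set is used to build the random forest model. The validation set is used for hyperparameter tuning and model selection, while the test set is held back entirely until the very end to provide an unbiased evaluation of the final model's generalization performance [33]. A typical split is 70/15/15 or 80/10/10. Preprocessing steps that learn parameters from the data, such as imputation and scaling, should be fitted on the training set only and then applied unchanged to the validation and test sets, so that no information from the held-out data leaks into training.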
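A 70/15/15 split can be obtained by calling scikit-learn's `train_test_split` twice, as sketched here on synthetic data. Stratifying on the label is an added assumption that keeps class proportions similar across the three sets.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split

X, y = make_classification(n_samples=1000, n_features=20, random_state=0)

# First cut: 70% training, 30% held out
X_train, X_tmp, y_train, y_tmp = train_test_split(
    X, y, test_size=0.30, stratify=y, random_state=0)

# Second cut: split the held-out 30% evenly into validation and test
X_valid, X_test, y_valid, y_test = train_test_split(
    X_tmp, y_tmp, test_size=0.50, stratify=y_tmp, random_state=0)
```

Changing the two `test_size` values to 0.20 and 0.50 gives the 80/10/10 alternative.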

Advanced Random Forest-Based Imputation for Missing Values

For high-stakes ADMET prediction, advanced imputation methods that model complex relationships within the data are recommended. Two powerful, random forest-based techniques are Miss Forest and MICE Forest.

Miss Forest Protocol

Miss Forest is an iterative imputation method that can handle mixed data types (continuous and categorical) and complex, non-linear relationships without assuming a specific data distribution [31].

Experimental Protocol:

- Initialization: Make an initial rough imputation for all missing values, for example, using the mean/mode.

- Iteration: For each variable with missing values, X_j:

a. Set the currently imputed X_j as the target variable.

b. Use all other variables as features to train a Random Forest model on the observed values of X_j.

c. Use the trained model to predict the missing values in X_j.

d. Update the dataset with the new imputations for X_j.

- Stopping Criterion: Repeat the iteration step for all variables, cycling through multiple iterations until the imputations stabilize. Stabilization is typically defined as when the difference in imputed values between two consecutive iterations increases for the first time, or a pre-set maximum number of iterations is reached [31].
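A Miss Forest-style loop can be approximated with scikit-learn's `IterativeImputer` using a random forest as the per-variable estimator. This is a stand-in, not the Miss Forest implementation itself: it handles continuous variables only and stops on scikit-learn's own tolerance or `max_iter` rather than the criterion described above. The synthetic data are an assumption for illustration.

```python
import numpy as np
from sklearn.experimental import enable_iterative_imputer  # noqa: F401
from sklearn.ensemble import RandomForestRegressor
from sklearn.impute import IterativeImputer

rng = np.random.default_rng(0)

# Four features; the last is strongly related to the first
X_full = rng.normal(size=(200, 4))
X_full[:, 3] = 2.0 * X_full[:, 0] + rng.normal(scale=0.1, size=200)

# Hide 30 values of the last column
X_missing = X_full.copy()
missing_rows = rng.choice(200, size=30, replace=False)
X_missing[missing_rows, 3] = np.nan

imputer = IterativeImputer(
    estimator=RandomForestRegressor(n_estimators=50, random_state=0),
    initial_strategy="mean",  # rough initial fill, as in the initialization step
    max_iter=5,
    skip_complete=True,       # do not model columns that have no missing values
    random_state=0,
)
X_imputed = imputer.fit_transform(X_missing)

# Compare against plain mean imputation on the hidden values
true_values = X_full[missing_rows, 3]
rmse_rf = np.sqrt(np.mean((X_imputed[missing_rows, 3] - true_values) ** 2))
rmse_mean = np.sqrt(np.mean((np.nanmean(X_missing[:, 3]) - true_values) ** 2))
```

Because the hidden column depends on another feature, the forest-based imputation recovers it far better than the column mean; on real ADMET data the gain depends on how strongly the descriptors are related.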

Figure 2: The iterative imputation workflow of the Miss Forest algorithm.

MICE Forest Protocol

Multiple Imputation by Chained Equations (MICE) is a flexible framework that can be powered by a Random Forest model (often implemented via the LightGBM library) [31]. Instead of producing a single imputed dataset, MICE generates multiple versions, each with different plausible imputations, allowing for the quantification of imputation uncertainty.

Experimental Protocol:

- Kernel Initialization: Create an initial ImputationKernel with the raw, missing data.

- Multiple Dataset Generation: Run the mice(...) algorithm for a specified number of iterations (m) and for a specified number of imputed datasets (n). This creates n separate, complete datasets.

- Model Training & Pooling: Train your final random forest ADMET model on each of the n imputed datasets. Aggregate the results (e.g., average predictions or parameters) to obtain a final model that accounts for the uncertainty introduced by the missing data [31].
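The train-and-pool step can be sketched as follows. This does not use the MiceForest API: as a stand-in, it assumes scikit-learn's IterativeImputer with a RandomForestRegressor, run with different random seeds to produce several plausible completed datasets. That is only a rough approximation of proper multiple imputation, and the function names, dataset count, and toy data are illustrative.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier, RandomForestRegressor
from sklearn.experimental import enable_iterative_imputer  # noqa: F401
from sklearn.impute import IterativeImputer

def impute_many(X_train, X_new, n_datasets=3, n_iter=3):
    """Return n_datasets (train, new) pairs, each imputed with a different seed."""
    pairs = []
    for seed in range(n_datasets):
        imputer = IterativeImputer(
            estimator=RandomForestRegressor(n_estimators=30, random_state=seed),
            max_iter=n_iter,
            random_state=seed,
        )
        # Fit on training data only, then reuse the fitted imputer on new data.
        pairs.append((imputer.fit_transform(X_train), imputer.transform(X_new)))
    return pairs

def pooled_predict_proba(pairs, y_train):
    """Train one classifier per imputed dataset and average the probabilities."""
    probs = []
    for seed, (X_tr, X_te) in enumerate(pairs):
        clf = RandomForestClassifier(n_estimators=100, random_state=seed)
        clf.fit(X_tr, y_train)
        probs.append(clf.predict_proba(X_te)[:, 1])
    # The spread across datasets is a crude proxy for imputation uncertainty.
    return np.mean(probs, axis=0), np.std(probs, axis=0)

# Toy data with roughly 10% of entries removed at random.
X, y = make_classification(n_samples=150, n_features=6, n_informative=4, random_state=0)
rng = np.random.default_rng(0)
X[rng.random(X.shape) < 0.1] = np.nan
X_train, y_train, X_new = X[:120], y[:120], X[120:]

pairs = impute_many(X_train, X_new)
mean_prob, spread = pooled_predict_proba(pairs, y_train)
```

Compounds with a large spread are those whose predicted class depends strongly on how the missing values were filled in, which is the kind of uncertainty multiple imputation is meant to expose.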

Table 2: Comparison of Advanced Random Forest Imputation Methods

| Feature | Miss Forest | MICE Forest |

|---|---|---|

| Core Principle | Iterative, model-based single imputation. | Multiple Imputation, accounts for uncertainty. |

| Output | One complete dataset. | Multiple complete datasets (e.g., 5 or 10). |

| Handling of Data Types | Excellent for mixed data types [31]. | Excellent for mixed data types. |

| Robustness to Outliers & Non-linearity | High, due to Random Forest's inherent properties [31]. | High. |

| Computational Load | High (multiple RF models per iteration). | Very High (multiple RF models across multiple datasets). |

| Best Use Case | High-precision imputation for a final model when computational resources are less constrained. | When quantifying the uncertainty introduced by missing data is a priority. |

The Scientist's Toolkit: Essential Reagents for Data Preprocessing

Table 3: Key Software and Libraries for Data Preprocessing in ADMET Research

| Tool / Library | Language | Primary Function | Application Note |

|---|---|---|---|

| Scikit-learn | Python | Comprehensive ML library including RandomForestRegressor/Classifier, SimpleImputer, KNNImputer, and scaling tools [30] [35]. | The workhorse for most preprocessing and model-building tasks. Well-documented and widely supported. |

| MissingPy | Python | Provides an implementation of the Miss Forest algorithm [31]. | The go-to library for applying the Miss Forest imputation technique directly. |

| MiceForest | Python | Enables fast MICE imputation using LightGBM (a gradient-boosted decision tree framework; like RF, a tree ensemble) [31]. | Ideal for performing multiple imputation on large ADMET datasets efficiently. |

| Pandas & NumPy | Python | Foundational libraries for data manipulation, analysis, and numerical computations [30] [31]. | Essential for all stages of data loading, cleaning, and transformation before model training. |

Practical Application: A Python Code Snippet for Miss Forest

The following code demonstrates the application of Miss Forest to impute missing values in a dataset, a common step before training an ADMET classifier.
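A minimal sketch of the Miss Forest protocol, written directly against scikit-learn rather than calling MissingPy. It assumes purely numeric features (the full algorithm also handles categorical columns with a classifier). The function name miss_forest_numeric, the column names, and the toy values are illustrative and do not come from a real ADMET dataset.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import RandomForestRegressor

def miss_forest_numeric(X, max_iter=10, n_estimators=50, random_state=0):
    """Miss Forest-style imputation for a numeric array containing NaNs."""
    X = np.asarray(X, dtype=float)
    mask = np.isnan(X)
    # Initialization: fill every gap with its column mean.
    X_imp = np.where(mask, np.nanmean(X, axis=0), X)
    # Visit columns with missing values, least-missing first.
    cols = [j for j in np.argsort(mask.sum(axis=0)) if mask[:, j].any()]
    prev_diff = np.inf
    for _ in range(max_iter):
        X_old = X_imp.copy()
        for j in cols:
            obs, mis = ~mask[:, j], mask[:, j]
            other = np.delete(np.arange(X.shape[1]), j)
            rf = RandomForestRegressor(n_estimators=n_estimators, random_state=random_state)
            # Train on rows where column j was observed, predict where it was not.
            rf.fit(X_imp[obs][:, other], X_imp[obs, j])
            X_imp[mis, j] = rf.predict(X_imp[mis][:, other])
        # Stopping criterion: normalized change between consecutive imputations.
        diff = np.sum((X_imp - X_old) ** 2) / np.sum(X_imp ** 2)
        if diff > prev_diff:
            return X_old  # change increased, so keep the previous imputation
        prev_diff = diff
    return X_imp

# Toy descriptor table with correlated columns and about 10% missing entries.
rng = np.random.default_rng(0)
logp = rng.normal(2.5, 1.0, size=120)
df = pd.DataFrame({
    "logP": logp,
    "MW": 300 + 40 * logp + rng.normal(0, 10, size=120),
    "TPSA": 90 - 15 * logp + rng.normal(0, 5, size=120),
})
df_missing = df.mask(rng.random(df.shape) < 0.1)
df_imputed = pd.DataFrame(miss_forest_numeric(df_missing.to_numpy()), columns=df.columns)
```

In a real workflow the imputation model would be fitted on the training split only, so that no information from the test set leaks into the imputed values.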

Within the rigorous context of ADMET classification research, data preprocessing is not a mere technicality but a pivotal factor that determines the success of subsequent random forest models. A systematic approach—beginning with the diagnosis of missing data mechanisms, proceeding through careful handling of missing values using sophisticated methods like Miss Forest or MICE Forest, and culminating in proper encoding and splitting—is paramount. By adhering to the detailed protocols and application notes outlined herein, researchers and drug development professionals can significantly enhance the reliability, accuracy, and translational potential of their predictive models, thereby streamlining the arduous path of drug discovery and development.

In the context of implementing Random Forest (RF) for ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) classification models, feature engineering is not merely a preliminary step but a critical determinant of model success. RF, an ensemble learning method, excels at identifying complex relationships within high-dimensional data, making it a popular choice for predicting molecular properties [3] [2]. The algorithm's performance, however, is inherently dependent on the quality and relevance of the input features. Molecular descriptors and fingerprints serve as numerical representations that translate chemical structures into a format computable by machine learning models like RF [36]. The strategic selection and design of these features, grounded in chemical domain knowledge, directly influence the model's ability to generalize and provide interpretable insights, which is paramount for making reliable decisions in drug development pipelines.

Molecular Representation: A Primer for Random Forest Input

For an RF model to process chemical structures, they must first be converted into fixed-length numerical vectors. These representations encode different aspects of molecular structure and properties, which the RF algorithm uses to construct its decision trees.

Molecular Descriptors: These are numerical values that capture a molecule's physicochemical properties and topological characteristics. They can range from simple counts of atoms (constitutional descriptors) to more complex properties like logP (lipophilicity), molecular weight, or the number of hydrogen bond donors and acceptors, which are often used in rules-of-thumb like Lipinski's Rule of Five [37] [2]. Software like RDKit and PaDEL-Descriptor is commonly used to calculate thousands of such descriptors [12].

Molecular Fingerprints: These are typically bit-vectors (strings of 0s and 1s) where each bit indicates the presence or absence of a particular substructure or structural pattern in the molecule [36]. They are highly effective for RF models as they efficiently capture substructural information that is relevant to biological activity.

The table below summarizes the primary types of fingerprints and their relevance to ADMET prediction with RF.

Table 1: Classification and Characteristics of Molecular Fingerprints

| Fingerprint Type | Core Principle | Key Examples | Advantages for ADMET/RF |

|---|---|---|---|

| Dictionary-Based (Structural Keys) [36] | Predefined list of structural fragments; bits correspond to specific substructures. | MACCS, PubChem | Fast computation; interpretable; good for scaffold hopping. |

| Circular [36] | Captures circular neighborhoods around each atom up to a specified radius; not predefined. | ECFP, FCFP | Captures novel features; excellent for activity prediction; de facto standard in QSAR. |

| Topological (Path-Based) [36] | Based on molecular graph theory; enumerates all linear paths of bonds. | Daylight, Atom Pairs | Encodes overall molecular topology; good for similarity searching. |

| Pharmacophore [36] | Represents spatial arrangements of functional features critical for binding. | 3-point, 4-point Pharmacophore | Incorporates 3D molecular information; relevant for mechanism-based models. |

Experimental Protocols for Feature Engineering and Model Training

Protocol: Data Preprocessing and Standardization for ADMET Datasets

Objective: To clean and standardize molecular data from public sources (e.g., ChEMBL, TDC) to ensure consistency and reliability for model training [18] [12].

- SMILES Standardization: Use toolkits like RDKit to convert all SMILES strings into a canonical form. This includes stripping salts, neutralizing charges, and removing stereochemistry if not relevant [12].

- Duplicate Removal: Identify and remove duplicate molecular entries. For entries with conflicting activity values, apply a consistency check (e.g., remove the entire group if values are inconsistent) [12].

- Activity Thresholding: For regression data (e.g., IC50 values), convert to binary classification labels (e.g., "active" vs. "inactive") based on domain-knowledge-driven thresholds [37].

- Data Splitting: Split the cleaned dataset into training (e.g., 80%) and test (e.g., 20%) sets using scaffold-based splitting to assess the model's ability to generalize to novel chemotypes [12].
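The duplicate handling, activity thresholding, and scaffold-based splitting steps above can be sketched as follows. This is an illustration under assumptions: the column names, the 1000 nM activity cut-off, and the 10-fold consistency limit are hypothetical choices, and scaffolds are passed in as precomputed strings (for example, Murcko scaffold SMILES generated with RDKit) so that the sketch has no cheminformatics dependency.

```python
from collections import defaultdict

import pandas as pd

def dedupe_and_label(df, threshold_nm=1000.0, max_fold=10.0):
    """Merge duplicate structures, drop conflicting groups, add a binary label.

    Assumed columns: 'smiles' (already canonicalized) and 'ic50_nm'.
    """
    stats = df.groupby("smiles")["ic50_nm"].agg(["min", "max", "median"])
    # Consistency check: discard a structure whose replicates span > max_fold.
    consistent = stats[stats["max"] <= max_fold * stats["min"]]
    out = consistent["median"].rename("ic50_nm").reset_index()
    out["active"] = (out["ic50_nm"] <= threshold_nm).astype(int)
    return out

def scaffold_split(scaffolds, test_frac=0.2):
    """Assign whole scaffold groups to train or test; return index lists."""
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)
    train, test = [], []
    n_train_target = (1.0 - test_frac) * len(scaffolds)
    # Largest scaffold families go to training first; the rest fill the test set.
    for members in sorted(groups.values(), key=len, reverse=True):
        if len(train) + len(members) <= n_train_target:
            train.extend(members)
        else:
            test.extend(members)
    return train, test

raw = pd.DataFrame({
    "smiles": ["CCO", "CCO", "c1ccccc1", "c1ccccc1", "CCN"],
    "ic50_nm": [100.0, 120.0, 50.0, 5000.0, 2000.0],
})
clean = dedupe_and_label(raw)

scaffolds = ["A"] * 4 + ["B"] * 3 + ["C"] * 2 + ["D"]
train_idx, test_idx = scaffold_split(scaffolds)
```

Because every scaffold lands entirely in one subset, test-set performance reflects how the model handles chemotypes it has not seen during training.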

Protocol: Calculating and Selecting Molecular Features

Objective: To generate a diverse set of molecular features and select the most informative subset for model training.

- Feature Generation:

- Feature Combination: Investigate the performance of individual feature sets and their logical combinations (e.g., concatenating ECFP fingerprints with a select number of physicochemical descriptors) to create a richer representation [12].

- Feature Selection:
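The comparison of individual feature sets against their concatenation can be sketched as below. The arrays are random stand-ins for real fingerprints and descriptors, the label is synthetic, and the use of cross-validated MCC as the comparison metric is an assumption made for illustration.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import make_scorer, matthews_corrcoef
from sklearn.model_selection import cross_val_score

rng = np.random.default_rng(0)
n_compounds = 200
fingerprints = rng.integers(0, 2, size=(n_compounds, 64))  # stand-in for ECFP bits
descriptors = rng.normal(size=(n_compounds, 5))            # stand-in for logP, MW, ...

# Synthetic label that depends on one bit and one descriptor,
# so each feature block carries part of the signal.
y = ((fingerprints[:, 0] == 1) | (descriptors[:, 0] > 0)).astype(int)

feature_sets = {
    "fingerprints": fingerprints,
    "descriptors": descriptors,
    "combined": np.hstack([fingerprints, descriptors]),
}

mcc_scorer = make_scorer(matthews_corrcoef)
scores = {}
for name, X in feature_sets.items():
    model = RandomForestClassifier(n_estimators=100, random_state=0)
    # 5-fold stratified cross-validation; report the mean MCC per feature set.
    scores[name] = cross_val_score(model, X, y, cv=5, scoring=mcc_scorer).mean()

for name, score in scores.items():
    print(f"{name}: mean MCC = {score:.3f}")
```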

Protocol: Building and Evaluating a Random Forest ADMET Classifier

Objective: To train an optimized RF model and evaluate its performance rigorously.

- Model Training: Train an RF classifier (e.g., using sklearn.ensemble.RandomForestClassifier) on the training set using the selected features.

- Hyperparameter Optimization: Perform a grid or random search over key hyperparameters such as n_estimators (number of trees), max_depth, and max_features (number of features considered for a split) using cross-validation [12].

- Model Evaluation:

  - Metrics: Calculate accuracy, Matthews Correlation Coefficient (MCC), sensitivity, and specificity on the held-out test set [37].

  - Validation: Employ k-fold cross-validation combined with statistical hypothesis testing (e.g., Mann-Whitney U test) to check that observed performance differences are statistically significant rather than due to random chance [12].
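The training, tuning, and evaluation steps can be sketched with scikit-learn as follows. The data are synthetic, and the search grid, the number of sampled configurations, and the use of MCC as the tuning objective are illustrative assumptions rather than recommended settings.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import (accuracy_score, confusion_matrix, make_scorer,
                             matthews_corrcoef)
from sklearn.model_selection import RandomizedSearchCV, train_test_split

# Synthetic, mildly imbalanced stand-in for a featurized ADMET dataset.
X, y = make_classification(n_samples=400, n_features=30, n_informative=8,
                           weights=[0.7, 0.3], random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

param_distributions = {
    "n_estimators": [100, 200, 300],
    "max_depth": [None, 5, 10],
    "max_features": ["sqrt", "log2", 0.3],
}
search = RandomizedSearchCV(
    RandomForestClassifier(random_state=0),
    param_distributions=param_distributions,
    n_iter=6,                                   # sample 6 of the 27 combinations
    cv=5,                                       # 5-fold CV on the training set only
    scoring=make_scorer(matthews_corrcoef),
    random_state=0,
)
search.fit(X_train, y_train)

# Evaluate the refitted best model once on the held-out test set.
y_pred = search.best_estimator_.predict(X_test)
tn, fp, fn, tp = confusion_matrix(y_test, y_pred, labels=[0, 1]).ravel()
metrics = {
    "accuracy": accuracy_score(y_test, y_pred),
    "mcc": matthews_corrcoef(y_test, y_pred),
    "sensitivity": tp / (tp + fn),   # true positive rate
    "specificity": tn / (tn + fp),   # true negative rate
}
print(search.best_params_)
print({name: round(float(value), 3) for name, value in metrics.items()})
```

Reporting sensitivity and specificity alongside accuracy guards against a model that scores well simply by predicting the majority class.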

Figure 1: High-level workflow for building an RF-based ADMET classifier.

Interpreting Random Forest Models for Scientific Insight

A key advantage of using RF in a research setting is its interpretability through feature importance measures. Understanding which features drive predictions can yield valuable scientific insights.

- Gini Importance: This built-in measure (often called "mean decrease in impurity") calculates the total reduction in node impurity (e.g., Gini index) attributable to a feature across all trees in the forest. A higher value indicates a feature that is more frequently used and effective for splitting data [19].

- Permutation Feature Importance: A more robust technique that evaluates the drop in model performance (e.g., accuracy) when the values of a specific feature are randomly shuffled in the test set. A large drop indicates that the feature is important for the model's predictions [19] [38].

- SHAP (SHapley Additive exPlanations) Values: This method quantifies the marginal contribution of each feature to the prediction for a single instance. It provides a unified measure of importance and is particularly useful for understanding individual predictions [19].
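The first two measures are available directly in scikit-learn and can be compared on the same fitted model, as in the sketch below. The data are synthetic, with the first three columns constructed to be the informative ones, and the feature names are placeholders. SHAP is not shown because it relies on the separate shap package.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

# With shuffle=False, columns 0-2 are informative and the rest are noise.
X, y = make_classification(n_samples=400, n_features=10, n_informative=3,
                           n_redundant=0, shuffle=False, random_state=0)
feature_names = [f"feat_{i}" for i in range(X.shape[1])]
X_train, X_test, y_train, y_test = train_test_split(
    X, y, stratify=y, random_state=0
)

rf = RandomForestClassifier(n_estimators=200, random_state=0).fit(X_train, y_train)

# Gini importance: computed from the training data while the trees are built.
gini = rf.feature_importances_

# Permutation importance: accuracy drop when a column is shuffled in the test set.
perm = permutation_importance(rf, X_test, y_test, n_repeats=10,
                              scoring="accuracy", random_state=0)

gini_rank = np.argsort(gini)[::-1]
perm_rank = np.argsort(perm.importances_mean)[::-1]
for i in gini_rank[:3]:
    print(f"{feature_names[i]}: gini={gini[i]:.3f}, "
          f"permutation={perm.importances_mean[i]:.3f}")
```

When the two rankings disagree, the permutation result is usually the safer guide, since it is measured on held-out data rather than derived from the training process.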

Table 2: Comparison of Feature Importance Interpretation Methods

| Method | Mechanism | Advantages | Limitations |

|---|---|---|---|

| Gini Importance [19] | Sum of impurity decrease across all nodes using the feature. | Fast to compute; native to RF. | Biased towards high-cardinality features. |

| Permutation Importance [19] [38] | Measures performance drop after feature permutation. | Statistically sound; easy to understand. | Computationally more expensive. |

| SHAP Values [19] | Based on cooperative game theory; assigns contribution per prediction. | Consistent and locally accurate; explains single predictions. | Computationally intensive. |

Figure 2: Pathways for interpreting a trained Random Forest model.

Case Study: Optimizing an HCV NS3 Inhibitor Classification Model

A recent study provides a concrete example of this workflow in action. The research aimed to classify compounds as active or inactive against the Hepatitis C virus (HCV) NS3 protein using RF [37].

- Dataset: 290 bioactive compounds with known IC50 values were retrieved from ChEMBL and labeled based on activity thresholds [37].

- Feature Engineering: Twelve different molecular fingerprint descriptors were generated and tested using the PaDEL-Descriptor software. This included the CDK graph-only fingerprint, extended fingerprints, and others [37].

- Model Training and Selection: An RF model was trained and optimized alongside other classifiers (e.g., SVM, IBk). The model utilizing the CDK graph-only fingerprint was identified as the best performer [37].

- Results: The optimized RF model achieved an accuracy of 89.66% and an MCC of 0.795 on the test set, underscoring the impact of selecting the optimal molecular representation [37]. The study also performed a chemical space analysis using descriptors like Molecular Weight and LogP, confirming a statistically significant distinction between active and inactive compounds, which validated the model's decision boundaries [37].

The Scientist's Toolkit: Essential Research Reagents and Software

Table 3: Key Software and Resources for Feature Engineering in ADMET Modeling

| Tool / Resource | Type | Primary Function | Application Note |

|---|---|---|---|

| RDKit [12] | Cheminformatics Library | Calculates molecular descriptors, fingerprints, and handles molecule standardization. | Open-source; widely used for prototyping and production of descriptor-based features. |

| PaDEL-Descriptor [37] | Software Descriptor Calculator | Computes a comprehensive set of 1D, 2D, and fingerprint descriptors. | Useful for generating a wide array of features directly from SMILES strings. |

| Therapeutics Data Commons (TDC) [18] [12] | Benchmark Datasets | Provides curated, scaffold-split ADMET datasets for model training and evaluation. | Critical for benchmarking model performance against community standards. |

| WEKA [37] | Machine Learning Workbench | Provides a GUI and API for implementing and comparing multiple ML algorithms, including RF. | Beneficial for researchers who prefer a graphical interface for rapid model prototyping. |

| scikit-learn [19] | Machine Learning Library | Python library for building and evaluating RF models, including feature importance. | Industry standard for implementing and deploying optimized RF models in Python. |