From Lock and Key to AI: The Evolution of Structure-Based Ligand Discovery

Abstract

This article chronicles the transformative journey of structure-based ligand discovery, a pivotal methodology in rational drug design. It explores the foundational shift from serendipitous discovery to a target-driven science, initiated by Emil Fischer's 'lock and key' hypothesis. We delve into the core methodological pillars—from early X-ray crystallography to modern cryo-EM and AI-powered structure prediction—that enable the visualization and exploitation of target structures. The discussion addresses persistent challenges like protein flexibility and cryptic pockets, outlining computational solutions such as molecular dynamics simulations. Finally, the article validates the approach through its clinical successes, assesses its impact on reducing the cost and time of drug development, and forecasts future directions fueled by artificial intelligence and ultra-large library screening, providing a comprehensive resource for researchers and drug development professionals.

The Foundational Shift: From Serendipity to Rational Design

The landscape of modern drug discovery is increasingly dominated by rational, structure-based approaches, powered by advanced computational tools and high-resolution structural biology [1] [2]. This present state, however, rests on a history shaped by two fundamental paradigms: serendipitous discovery and systematic chemical modification [1] [3]. Before X-ray crystallography, nuclear magnetic resonance (NMR), and cryo-electron microscopy (cryo-EM) made it possible to visualize drug targets precisely, scientists relied on chance observation and the meticulous derivatization of known active molecules [3]. This "Pre-Structure Era" was characterized not by a lack of methodology but by a different kind of scientific ingenuity, one that leveraged phenotypic observation, clinical correlation, and synthetic chemistry to develop life-saving therapeutics. This section delineates the core principles and methodologies of this era, framing them within the historical context of ligand discovery research. It provides a detailed technical guide to the experimental approaches that underpinned drug discovery when the three-dimensional structure of biological targets remained largely unknown.

The Serendipity Paradigm: Discovery Through Observation

The serendipity paradigm refers to the discovery of therapeutic agents through unexpected observations during research aimed at unrelated goals or through the keen interpretation of clinical or experimental anomalies [1]. This approach did not rely on a predefined hypothesis about a specific molecular target but was driven by phenotypic outcomes, either in patients or in biological assays.

Foundational Examples and Workflows

Classic examples of serendipitous drug discovery share a common theme: an astute investigator recognized the significance of an unexpected result.

- Penicillin: The discovery by Alexander Fleming of the antibacterial properties of the Penicillium mold following the accidental contamination of a bacterial culture is the archetypal example. This observation, followed by its development into a systemic therapeutic, revolutionized the treatment of bacterial infections and established a new class of drugs [1] [3].

- Chlordiazepoxide: The first benzodiazepine was discovered during synthetic chemistry work aimed at developing new dyes, demonstrating how research in one field can yield groundbreaking therapeutics in another [1].

- Cyclosporin: Initially investigated as an antifungal antibiotic, it was subsequently found to possess potent immunosuppressive properties, which ultimately transformed the field of organ transplantation [1].

- Sildenafil (Viagra): Sildenafil was originally developed as an antihypertensive agent; an unexpected side effect observed during its development led to its repurposing and the creation of an entirely new pharmacological class for treating erectile dysfunction [1].

The generalized workflow for this paradigm, from initial observation to therapeutic application, is illustrated below.

Experimental Protocols for Isolation and Characterization

Following an initial observation, the critical next step was to isolate and characterize the active substance. The general protocol for a natural product discovery, such as penicillin, involved:

- Fermentation and Production: The producing organism (e.g., Penicillium mold) was cultured in large-scale fermentation broths to produce sufficient quantities of the active compound.

- Extraction and Solvent Partitioning: The broth was filtered to separate the biomass. The filtrate was then subjected to liquid-liquid extraction using organic solvents (e.g., amyl acetate) to concentrate the active principle from the aqueous medium.

- Bioassay-Guided Fractionation: The crude extract was subjected to a series of purification steps, such as column chromatography or counter-current distribution. Each fraction was tested for biological activity using a relevant assay (e.g., a zone-of-inhibition assay on a bacterial lawn for antibiotics). Only fractions retaining activity were processed further.

- Purification and Crystallization: Active fractions were further purified through techniques like recrystallization to obtain the pure compound for structural elucidation.

- Structural Elucidation: The chemical structure of the pure compound was determined using available analytical techniques, which in the early era included elemental analysis, melting point determination, and functional group tests. Later, techniques like mass spectrometry and NMR became standard.

- In Vivo Efficacy and Toxicity Testing: The purified compound was administered to animal models of the disease to confirm its therapeutic efficacy and to conduct preliminary assessments of its safety and pharmacokinetics.
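
The selection logic of bioassay-guided fractionation described above can be sketched in a few lines of Python. This is a minimal illustration only: the `Fraction` class, the fraction names, the zone-of-inhibition values, and the 10 mm activity threshold are all hypothetical and are not taken from any historical protocol.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Fraction:
    """A purification fraction with a hypothetical zone-of-inhibition readout."""
    name: str
    inhibition_zone_mm: float
    subfractions: List["Fraction"] = field(default_factory=list)


def active_path(fraction: Fraction, threshold_mm: float = 10.0) -> List[str]:
    """Return the names of fractions that remain active at each purification round.

    Inactive fractions are discarded and their subfractions are never examined,
    mirroring the rule that only fractions retaining activity are processed further.
    """
    if fraction.inhibition_zone_mm < threshold_mm:
        return []
    kept = [fraction.name]
    for sub in fraction.subfractions:
        kept.extend(active_path(sub, threshold_mm))
    return kept


# Hypothetical fractionation tree: crude extract -> column fractions -> subfractions.
crude = Fraction("crude extract", 18.0, [
    Fraction("F1", 2.0),
    Fraction("F2", 21.0, [Fraction("F2a", 0.0), Fraction("F2b", 24.0)]),
    Fraction("F3", 4.0),
])

result = active_path(crude)
print(result)
```

In this toy tree the activity is followed from the crude extract into F2 and then F2b, while F1, F3, and F2a are dropped at the first round in which they test inactive.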

Table 1: Key Reagent Solutions in Serendipitous Natural Product Discovery

| Research Reagent / Material | Function in Experimental Protocol |
|---|---|
| Fermentation Broth | Production medium for the organism generating the active natural product. |
| Selective Growth Media | To culture and isolate the specific bacterium or fungus of interest. |
| Organic Solvents (e.g., Amyl Acetate, Chloroform) | For liquid-liquid extraction to concentrate the active compound from aqueous solutions. |
| Chromatography Media (e.g., Silica Gel, Alumina) | For fractionating crude extracts based on differential adsorption to isolate the active component. |
| Bacterial Lawn (e.g., Staphylococcus culture) | A bioassay system to detect and quantify antimicrobial activity during purification. |
| Animal Disease Models (e.g., infected mice) | To confirm the in vivo therapeutic efficacy of the purified substance. |

The Chemical Modification Paradigm: Systematic Derivatization

When a biologically active compound (a "lead" molecule) was identified—whether through serendipity or other means—but its properties were suboptimal, systematic chemical modification became the primary tool for improvement [1] [3]. This approach was conducted without knowledge of the target's structure and was guided entirely by the relationship between chemical structure and observed biological activity, known as Structure-Activity Relationships (SAR).

Foundational Examples and Rationale

The goal of chemical modification was to enhance desirable drug properties while minimizing drawbacks. Key historical examples include:

- Aspirin (Acetylsalicylic Acid): The natural product salicylic acid, found in willow bark, was known for its anti-inflammatory and analgesic effects but caused severe gastric irritation. Chemical acetylation of the phenolic hydroxyl group yielded aspirin, which provided a more stable and significantly less irritating prodrug that is hydrolyzed to salicylic acid in the body [1].

- Ranitidine vs. Cimetidine: Ranitidine is a chemical modification of the first H2-receptor antagonist, cimetidine. The change in the chemical structure resulted in a drug with higher potency, a longer half-life, and a better side-effect profile [1].

- Pindolol vs. Propranolol: As a derivative of the beta-blocker propranolol, pindolol was designed to have intrinsic sympathomimetic activity and, crucially, to avoid the first-pass metabolism in the liver, leading to higher and more predictable oral bioavailability [1].

The logical framework for deciding which chemical modifications to pursue is outlined in the following diagram.

Experimental Protocols for SAR-Driven Modification

The process of hit-to-lead optimization through chemical modification followed an iterative "Design-Make-Test-Analyze" (DMTA) cycle, even if not formally named as such at the time [4]. A generalized protocol for this process is as follows:

Define the Lead Optimization Goals (Design): Based on the profile of the initial hit compound, define the specific properties to be improved. These could include:

- Potency: Increasing affinity for the target (e.g., lowering IC50 or EC50).

- Selectivity: Reducing activity against related off-targets to minimize side effects.

- Pharmacokinetics (PK): Improving absorption, distribution, metabolic stability, and excretion (ADME).

- Solubility: Enhancing water solubility for better formulation and absorption.

- Toxicity: Eliminating or reducing mechanism-based or off-target toxicities.

Synthesize Analogues (Make): A library of analogues is synthesized where specific parts of the lead molecule are systematically altered. Common modifications include:

- Side-chain homologation: Varying the length of alkyl chains.

- Bioisosteric replacement: Replacing a functional group with another that has similar physicochemical properties (e.g., replacing a carboxylic acid with a tetrazole ring to maintain acidity while altering metabolism).

- Ring closure/opening: Creating or breaking cyclic structures to alter conformation and rigidity.

- Introducing/changing steric hindrance: To block metabolically vulnerable sites on the molecule.

Biological and Pharmacological Testing (Test): The synthesized analogues are subjected to a cascade of in vitro and in vivo assays.

- Primary In Vitro Assay: A biochemical or cell-based assay to determine potency and efficacy (e.g., enzyme inhibition, receptor binding affinity).

- Selectivity Assays: Testing against related targets (e.g., other receptor subtypes) to assess specificity.

- Early ADME Profiling: This includes assays for metabolic stability in liver microsomes, permeability in Caco-2 cell monolayers, and plasma protein binding.

- In Vivo Efficacy and PK: Promising compounds are advanced to animal models to confirm therapeutic effect and to characterize pharmacokinetic parameters like bioavailability, half-life, and clearance.

Data Analysis and SAR Establishment (Analyze): The biological data from the tested analogues are compiled and analyzed to identify correlations between specific chemical features and the observed biological effects. This SAR table guides the design of the next generation of compounds, initiating a new DMTA cycle.
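
The Analyze step can be illustrated with a small sketch that turns assay results into a ranked SAR table. All compound names, modifications, and IC50 values below are invented for illustration, and the two derived columns (potency gain relative to the lead, and a simple off-target/target selectivity ratio) are one possible choice of summary metrics rather than a prescribed method.

```python
# Hypothetical assay results:
# (compound, modification, target IC50 in nM, off-target IC50 in nM)
analogues = [
    ("lead", "none", 500.0, 1000.0),
    ("A1", "side-chain homologation", 120.0, 900.0),
    ("A2", "bioisosteric replacement", 40.0, 4000.0),
    ("A3", "ring closure", 800.0, 800.0),
]


def sar_table(rows, lead_name="lead"):
    """Build a SAR summary sorted from most to least potent relative to the lead."""
    lead_ic50 = next(r[2] for r in rows if r[0] == lead_name)
    table = []
    for name, change, ic50, off_target_ic50 in rows:
        table.append({
            "compound": name,
            "modification": change,
            # > 1 means the analogue is more potent than the lead (lower IC50).
            "potency_gain": lead_ic50 / ic50,
            # Higher values mean weaker off-target activity relative to the target.
            "selectivity": off_target_ic50 / ic50,
        })
    return sorted(table, key=lambda r: r["potency_gain"], reverse=True)


for row in sar_table(analogues):
    print(row)
```

With these made-up numbers, the bioisosteric replacement (A2) ranks first on both potency and selectivity, which is the kind of observation that would steer the next Design round.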

Table 2: Key Reagent Solutions in Chemical Modification and SAR Studies

| Research Reagent / Material | Function in Experimental Protocol |
|---|---|
| Chemical Synthesis Reagents | Starting materials, catalysts, and solvents for the synthetic modification of the lead compound. |
| In Vitro Target Assay (e.g., purified enzyme, cell membrane prep) | To determine the primary potency (IC50, Ki) of new analogues against the intended target. |
| Liver Microsomes (from various species) | An in vitro system to predict metabolic stability and identify potential metabolites. |
| Caco-2 Cell Line | A model of the human intestinal epithelium used to predict oral absorption and permeability. |
| Animal Plasma/Serum | For determining plasma protein binding, which influences the free fraction of drug available for activity. |
| Relevant Animal Disease Model | To validate the in vivo efficacy of optimized lead compounds. |

Table 3: Impact of Chemical Modification in Historical Drug Examples

| Parent Compound | Derivative Drug | Key Chemical Change | Impact on Drug Properties |
|---|---|---|---|
| Salicylic Acid | Aspirin | Acetylation of phenolic -OH | ↓ Gastric irritation, ↑ stability [1] |
| Cimetidine | Ranitidine | Change from imidazole to furan ring, with substituted diaminonitroethene | ↑ Potency, ↑ half-life, ↓ side effects [1] |
| Propranolol | Pindolol | Incorporation of an indole ring and other modifications | Avoids first-pass metabolism, ↑ bioavailability [1] |
| Natural Paclitaxel | Semi-synthetic Paclitaxel | Modification of side chains | Improved production yield and efficacy [3] |

The Scientist's Toolkit: Core Materials and Methods

The research reagent solutions and essential materials that defined the Pre-Structure Era toolkit were foundational to both serendipitous discovery and chemical modification efforts.

Table 4: The Pre-Structure Era Scientist's Toolkit

| Tool / Material | Category | Brief Explanation of Function |
|---|---|---|
| Fermentation & Extraction Systems | Serendipity / Natural Products | Enabled the production and initial concentration of active compounds from microbial sources. |
| Chromatography Systems | Both | The cornerstone of purification, separating complex mixtures into individual components for testing and identification. |
| Animal Disease Models | Both | Provided the primary in vivo system for confirming therapeutic efficacy and assessing toxicity before human trials. |
| Chemical Synthesis Laboratory | Chemical Modification | Enabled the deliberate and systematic alteration of lead compounds to explore SAR. |
| In Vitro Bioassays | Both | Provided a means to quantitatively measure biological activity (e.g., antimicrobial zones of inhibition, enzyme activity). |
| Basic Analytical Instruments (e.g., NMR, MS) | Both | Allowed for the determination of the molecular structure of isolated natural products and synthesized analogues. |

The Pre-Structure Era, governed by the paradigms of chance discovery and chemical modification, was a period of profound achievement that laid the essential groundwork for modern pharmacology [3]. The methodologies developed during this time—bioassay-guided fractionation, systematic SAR analysis, and the iterative DMTA cycle—established core principles that remain relevant today. While the tools were different, the fundamental goals of identifying efficacious and safe therapeutics were the same. The serendipitous discoveries provided the initial chemical matter, and the rigorous application of chemical modification refined these leads into usable drugs. This historical context is crucial for understanding the evolution of drug discovery. It highlights that the current paradigm of structure-based ligand discovery did not emerge in a vacuum but is a sophisticated extension of these early principles, now augmented with powerful structural and computational tools that allow for a more targeted and efficient approach [2] [5]. The legacy of the Pre-Structure Era is a testament to the power of observation, chemical intuition, and persistent optimization in the face of profound biological complexity.

This whitepaper examines Emil Fischer's 1894 'lock and key' hypothesis, a foundational concept that has profoundly influenced the fields of enzymology and structure-based ligand discovery. We detail the historical context of its proposal, the key experimental evidence that supported and refined it, and its enduring legacy in modern drug development. The discussion is framed within the broader history of structural biology, highlighting how this seminal idea provided the initial conceptual framework for rational drug design, ultimately enabling the precise targeting of biomacromolecules that is central to contemporary pharmaceutical research. The trajectory from Fischer's rigid model to today's dynamic understanding of molecular recognition is explored, underscoring its critical role in shaping a century of scientific progress.

In the late 19th century, understanding how enzymes achieve their remarkable specificity—the ability to discriminate between very similar chemical molecules—was a central challenge in biochemistry. Prior to Fischer's work, Louis Pasteur had observed stereospecificity in fermentation, noting that microorganisms could distinguish between the d- and l-forms of tartaric acid [6] [7]. However, the mechanistic basis for this discrimination remained a mystery. The scientific community was engaged in a debate between vitalists, who believed a "life-force" was necessary for complex transformations, and those who sought purely chemical explanations [6]. It was within this context that Emil Fischer, a German chemist at the University of Berlin, conducted his studies on the interactions between enzymes and their substrates. His work sought to provide a structural and chemical rationale for the observed specificity of enzymatic reactions, moving beyond vitalist principles and toward a mechanistic model based on molecular geometry.

Fischer's Seminal Work: The 1894 Hypothesis

In his 1894 paper, "Einfluss der Configuration auf die Wirkung der Enzyme" (Influence of Configuration on the Action of Enzymes), Fischer proposed a structural interpretation of enzyme selectivity [7]. Based on his experiments with sugars and hydrolytic enzymes, he concluded that for an enzyme to act upon a substrate, the two molecules must possess complementary geometric forms. He articulated this concept with a powerful analogy: "To use a picture, I should say that the enzyme and substrate must fit each other like a lock and a key" [7].

This lock and key model posited several foundational principles that would guide biochemical research for decades [8] [9]:

- Complementary Shapes: The enzyme (the lock) and the substrate (the key) possess specific, complementary three-dimensional geometries.

- Rigid Interaction: The model implied that both the enzyme's active site and the substrate are pre-formed, rigid structures that do not change upon binding.

- Specificity Mechanism: The precise steric fit explained the enzyme's high degree of specificity; only the correctly shaped "key" (substrate) could fit into the "keyhole" (active site) of the enzyme.

This hypothesis was groundbreaking because it moved the explanation of biological specificity from the realm of abstract vitalism to the tangible world of molecular structure and chemistry. It provided a testable framework for investigating enzyme action and set the stage for the field of structural biochemistry.

Experimental Validation and Key Methodologies

Fischer's hypothesis was a theoretical prediction that required rigorous experimental validation. The following decades saw critical experiments that tested and ultimately confirmed the structural basis of his model.

Key Historical Experiments

Table 1: Key Experiments Validating the Structural Nature of Enzymes and Substrate Binding.

| Experiment (Year) | Lead Researcher(s) | Key Methodology | Finding & Significance |
|---|---|---|---|
| First Enzyme Crystallization (1926) | James B. Sumner [6] | Purification & Crystallization: Isolated and crystallized the enzyme urease from jack beans. | Confirmed enzymes are proteins; demonstrated they are discrete chemical entities with a defined structure, a prerequisite for the lock-and-key model. |
| Crystallization of Digestive Enzymes (1930s) | John H. Northrop [6] | Crystallization: Successfully crystallized pepsin, trypsin, and chymotrypsin. | Further solidified that enzymes are proteins, reinforcing the structural basis of their function. |
| Determination of Protein Primary Structure (1951-1955) | Frederick Sanger [6] | Sequencing: Determined the complete amino acid sequence of insulin. | Revealed that proteins have a unique, defined sequence, establishing a foundation for understanding structure-function relationships. |
| First Protein 3D Structures (1958-1960) | John Kendrew & Max Perutz [6] | X-ray Crystallography: Solved the structures of myoglobin and hemoglobin. | Provided the first direct visual evidence of the complex three-dimensional structure of proteins, confirming they possess unique folds. |
| Lysozyme with Inhibitor Complex (1965) | David Chilton Phillips et al. [6] | X-ray Crystallography: Solved the structure of lysozyme with a bound inhibitor. | First visualization of an enzyme's active site with a ligand; directly showed complementary shape and specific atomic interactions, offering definitive proof for Fischer's concept. |

Detailed Experimental Protocol: Enzyme Crystallization and Structure Determination

The most definitive validation of the lock-and-key model came from X-ray crystallography. The following protocol outlines the general methodology used in these groundbreaking studies, such as the work on lysozyme [6]:

Protein Purification:

- Isolate the enzyme from its biological source (e.g., egg white for lysozyme) using techniques like salt precipitation, chromatography, and ultrafiltration.

- Assess purity using activity assays and gel electrophoresis.

Crystallization:

- Use vapor diffusion or batch crystallization methods.

- Prepare a concentrated, pure solution of the enzyme.

- Mix the enzyme solution with a precipitant solution (e.g., ammonium sulfate, polyethylene glycol) under controlled conditions of pH and temperature.

- Allow for slow, ordered formation of protein crystals over days to weeks.

X-ray Data Collection:

- Mount a single crystal in a capillary tube or cryo-loop.

- Expose the crystal to a collimated X-ray beam.

- Measure the diffraction pattern produced as the crystal is rotated.

Phase Problem Solution and Electron Density Map Calculation:

- Use methods like Multiple Isomorphous Replacement (MIR) or Molecular Replacement (if a related structure is known) to determine the phase of the diffracted waves.

- Combine amplitude and phase information to compute an electron density map.
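
For reference, the combination of amplitudes and phases into an electron density map is conventionally written as a Fourier synthesis. The expression below is the standard textbook form; the notation is an assumption introduced here and is not taken from the cited studies.

```latex
\rho(x, y, z) = \frac{1}{V} \sum_{h} \sum_{k} \sum_{l}
    \lvert F(hkl) \rvert \, e^{i\alpha(hkl)} \, e^{-2\pi i (hx + ky + lz)}
```

Here V is the unit-cell volume, |F(hkl)| is the structure-factor amplitude obtained from the measured intensity of reflection (h, k, l), α(hkl) is its phase, and (x, y, z) are fractional coordinates in the unit cell. Only the amplitudes are measured directly, which is why the phases must be recovered by methods such as MIR or Molecular Replacement.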

Model Building and Refinement:

- Fit the known amino acid sequence of the enzyme into the electron density map.

- For enzyme-inhibitor complexes, build the model of the inhibitor into the electron density observed in the active site.

- Use iterative computational refinement to adjust the atomic model to best fit the experimental data.

Evolution of the Model: From Rigid Lock-and-Key to Dynamic Recognition

While foundational, Fischer's original model was eventually recognized as overly simplistic. The rigid lock-and-key concept could not fully explain certain enzymatic phenomena, such as allosteric regulation or the stabilization of the transition state [8] [10]. This led to the development of more sophisticated models.

The Induced Fit Model

In 1958, Daniel Koshland proposed the induced fit model to address the limitations of Fischer's hypothesis [8] [11]. This model states that the initial interaction between enzyme and substrate is relatively weak, but that these weak interactions rapidly induce conformational changes in the enzyme's structure. These changes strengthen binding and create a more optimal catalytic environment [11]. The enzyme's active site is not a static lock but a dynamic entity that molds itself around the substrate.

The Keyhole-Lock-Key Model

A more recent refinement is the keyhole-lock-key model, which accounts for enzymes with deeply buried active sites [10]. This model incorporates the role of access tunnels (the keyholes) that connect the solvent to the internal active site (the lock). Substrates must first navigate these tunnels before binding, adding another layer of specificity and regulation to the catalytic cycle [10].

The Toolkit for Research: Essential Reagents and Materials

The experimental journey to validate and refine the lock-and-key hypothesis relied on a suite of biochemical and structural biology tools.

Table 2: Key Research Reagent Solutions for Enzymology and Structural Studies.

| Research Reagent / Material | Function & Application in Context |
|---|---|
| Purified Enzyme Preparations | Essential for in vitro studies of enzyme kinetics and specificity. Early work used extracts (e.g., diastase, pepsin), while modern research requires highly purified proteins for crystallization [6]. |
| Substrate Analogs & Inhibitors | Used to probe the geometry and chemical properties of the active site. Transition state analogs were crucial for validating Pauling's theory of transition state stabilization, a refinement of the lock-and-key model [6] [10]. |
| Crystallization Kits | Commercial screens containing diverse precipitant conditions to systematically identify optimal parameters for growing high-quality protein crystals for X-ray studies. |
| Synchrotron Radiation | High-intensity X-ray source used in modern crystallography for studying very small crystals and collecting high-resolution diffraction data, enabling detailed visualization of enzyme-ligand interactions. |
| Molecular Modeling Software | Computational tools to visualize, dock ligands, and simulate the dynamics of enzyme-substrate interactions, directly testing the predictions of induced fit and keyhole-lock-key models [2]. |

Impact on Modern Structure-Based Ligand Discovery

Fischer's lock-and-key hypothesis is the intellectual cornerstone of structure-based drug design (SBDD). The fundamental principle that a ligand's biological activity is determined by its complementary fit to a protein target directly underpins modern pharmaceutical research [1] [2].

- Rational Drug Design: The lock-and-key analogy provided the initial conceptual framework for designing drugs to fit specific molecular targets. This shifted drug discovery from a serendipitous process to a rational one [3]. SBDD involves using the three-dimensional structure of a therapeutic target to identify and optimize lead compounds that bind with high affinity and specificity [2].

- Virtual Screening and Molecular Docking: Computational methods now allow for the in silico screening of millions of compounds against a target protein's structure. Docking algorithms score compounds based on their predicted complementary fit to the binding site, a direct application of Fischer's principle [2].

- Success Stories: Many FDA-approved drugs are triumphs of SBDD, which traces its lineage to Fischer's hypothesis. Notable examples include HIV-1 protease inhibitors (e.g., amprenavir), the antibiotic norfloxacin, and dorzolamide, a carbonic anhydrase inhibitor for glaucoma [2].
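
The idea of ranking compounds by predicted complementary fit can be shown with a deliberately simplified toy score. The sketch below sums a 12-6 Lennard-Jones-style term over ligand-pocket atom pairs; it is not the scoring function of any real docking program, and the coordinates, the preferred contact distance `r0`, and the well depth `eps` are hypothetical values chosen only to make the ranking easy to follow.

```python
import math


def pair_score(r, r0=4.0, eps=1.0):
    """12-6 Lennard-Jones-style term: minimum of -eps at r == r0, large and positive on clashes."""
    ratio = r0 / r
    return eps * (ratio ** 12 - 2 * ratio ** 6)


def complementarity_score(ligand_atoms, pocket_atoms):
    """Sum the pair term over all ligand-pocket atom pairs (lower is better)."""
    return sum(
        pair_score(math.dist(a, b))
        for a in ligand_atoms
        for b in pocket_atoms
    )


# Hypothetical two-atom "pocket" and three single-atom "ligand poses" (coordinates in angstroms).
pocket = [(0.0, 0.0, 0.0), (8.0, 0.0, 0.0)]
candidates = {
    "good_fit": [(4.0, 0.0, 0.0)],    # sits at the preferred distance from both pocket atoms
    "clash": [(1.0, 0.0, 0.0)],       # overlaps the first pocket atom
    "too_far": [(4.0, 20.0, 0.0)],    # barely interacts with the pocket
}

ranked = sorted(candidates, key=lambda name: complementarity_score(candidates[name], pocket))
print(ranked)
```

The pose at the preferred distance scores best, the distant pose scores near zero, and the clashing pose is heavily penalized. Real scoring functions add terms for hydrogen bonding, electrostatics, desolvation, and ligand flexibility, none of which are modeled here.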

Table 3: Applications of the Lock-and-Key Principle in Modern Drug Discovery.

| Application | Description | Direct Link to Lock-and-Key Concept |
|---|---|---|
| Structure-Based Drug Design (SBDD) | Using the 3D structure of a biological target to design therapeutic molecules. | The core premise is designing a "key" (drug) to fit a "lock" (protein target). |
| Fragment-Based Drug Discovery | Identifying small, weak-binding molecular fragments and optimizing them into potent drugs. | Relies on the initial complementary binding of a fragment to a part of the "lock" [3]. |
| Virtual Screening | Computationally screening large compound libraries against a target structure. | Uses scoring functions to rank molecules based on their predicted geometric and chemical complementarity [2]. |
| PROTACs | Bifunctional molecules that recruit cellular machinery to degrade disease-causing proteins. | One end of the PROTAC must have a complementary fit to the target protein, the other to an E3 ubiquitin ligase [3]. |

Emil Fischer's 1894 'lock and key' hypothesis was a paradigm shift that elegantly linked molecular structure to biological function. While modern science has revealed a much more dynamic and nuanced picture of molecular recognition—encompassing induced fit, conformational selection, and the role of access tunnels—the core intuition of Fischer's analogy remains profoundly correct and influential. It provided the essential conceptual vocabulary and research agenda that guided the development of enzymology and structural biology. Today, its legacy is embedded in the very fabric of rational drug discovery, where the quest for the perfect "key" to a pathological "lock" continues to drive innovation in the development of new therapeutics. This conceptual breakthrough established the foundational principle for a century of structure-based ligand discovery research, demonstrating that the precise interaction of complementary shapes is a fundamental tenet of molecular biology.

The development of Captopril and HIV Protease Inhibitors (PIs) represents a foundational milestone in the history of structure-based ligand discovery. These successes demonstrated the transformative potential of rationally designing drugs based on the three-dimensional structure of biological targets, moving beyond traditional serendipitous discovery methods. Both drug classes target proteolytic enzymes but emerged from distinct starting points: Captopril from natural product investigation and HIV PIs from targeted antiviral strategy. Their development validated protease inhibition as a powerful therapeutic approach for treating diverse human diseases, from cardiovascular disorders to infectious diseases, and established core principles that continue to guide modern drug discovery efforts. This review examines the structural insights, design strategies, and clinical impacts of these pioneering agents within the broader context of structure-based drug discovery research.

Captopril: The First Orally Active ACE Inhibitor

Discovery and Structural Insights

Captopril's development marked the first successful application of structure-based design for a protease inhibitor, originating from investigations of the Brazilian pit viper (Bothrops jararaca) venom [12] [13]. Researchers discovered that peptides in the venom potently inhibited Angiotensin-Converting Enzyme (ACE), a zinc metalloprotease critical in the Renin-Angiotensin-Aldosterone System (RAAS) that regulates blood pressure [14]. The key structural insight was that these bradykinin-potentiating peptides contained a terminal Ala-Pro sequence that interacted with the ACE active site [14].

Using this natural template, researchers at E.R. Squibb & Sons designed captopril to emulate the C-terminal dipeptide of these venom peptides while incorporating features to enhance oral bioavailability [12] [13]. The final optimized structure contained several critical elements:

- A thiol (SH) group that coordinates with the zinc ion in the ACE active site

- An L-proline group that docks into the S2' pocket of ACE, enhancing specificity and oral bioavailability

- A methyl group adjacent to the thiol that optimizes fit within the S1' pocket [13]

This rational design process, completed in 1975, resulted in the first orally active ACE inhibitor, approved for medical use in 1980 [13].

Mechanism of Action and Therapeutic Impact

Captopril exerts its antihypertensive effects through specific inhibition of ACE, a key enzyme in the RAAS pathway. The mechanism involves:

- Blocking Angiotensin II Production: ACE normally converts angiotensin I to the potent vasoconstrictor angiotensin II; captopril prevents this conversion [13]

- Potentiating Bradykinin: ACE inactivates the vasodilator bradykinin; captopril inhibits this inactivation, promoting vasodilation [13] [15]

The clinical introduction of captopril transformed cardiovascular treatment, providing a targeted therapeutic approach with fewer side effects than previous antihypertensive agents [12]. Its success validated RAAS modulation as a strategy for treating hypertension and congestive heart failure, paving the way for subsequent ACE inhibitors and related agents.

Table 1: Key Properties of Captopril

| Property | Description | Clinical Significance |

|---|---|---|

| Target Enzyme | Angiotensin-Converting Enzyme (ACE) | Zinc metalloprotease in RAAS pathway |

| Mechanism | Competitive inhibition via zinc coordination | Reversible blockade of angiotensin II formation |

| Bioavailability | 70-75% | Good oral absorption |

| Half-Life | 1.9-3 hours | Requires 2-3 times daily dosing |

| Key Structural Features | Thiol group (zinc binding), L-proline (bioavailability) | Enables potent inhibition and oral activity |

| Primary Indications | Hypertension, congestive heart failure, diabetic nephropathy | First-line therapy for various cardiovascular conditions |

Experimental Characterization

The binding affinity and inhibitory potency of captopril were characterized through established biochemical and pharmacological methods:

ACE Inhibition Assay: Enzyme activity is typically measured using hippuryl-histidyl-leucine (HHL) as a substrate. ACE cleaves HHL to produce hippuric acid, which is quantified spectrophotometrically or by HPLC. Captopril's IC₅₀ (concentration causing 50% inhibition) is in the low nanomolar range [14].

Radioligand Binding Studies: Competition experiments with labeled angiotensin I determine captopril's binding affinity (Kᵢ) for ACE, demonstrating tight-binding inhibition with dissociation constants typically <10 nM [15].

In Vivo Pharmacology: Blood pressure reduction is measured in hypertensive animal models (e.g., spontaneously hypertensive rats, renal hypertensive dogs) following oral administration, establishing dose-response relationships and duration of action [12].
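
As a rough illustration of how an IC₅₀ can be read off an inhibition assay such as the HHL assay described above, the sketch below interpolates on a log-concentration scale and then converts the result to Kᵢ with the Cheng-Prusoff relation for competitive inhibitors. The dose-response points, substrate concentration, and Km are made-up placeholder values, not measurements from the cited studies, and the function names are illustrative.

```python
import math

def ic50_from_curve(concs_nM, pct_inhibition):
    """Estimate IC50 by linear interpolation in log10(concentration).

    Assumes concentrations are sorted ascending and that inhibition
    rises monotonically through 50%.
    """
    points = list(zip(concs_nM, pct_inhibition))
    for (c_lo, y_lo), (c_hi, y_hi) in zip(points, points[1:]):
        if y_lo <= 50.0 <= y_hi and y_hi > y_lo:
            frac = (50.0 - y_lo) / (y_hi - y_lo)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10.0 ** log_ic50
    raise ValueError("curve does not cross 50% inhibition")

def cheng_prusoff_ki(ic50, substrate_conc, km):
    """Ki = IC50 / (1 + [S]/Km); valid for simple competitive inhibition."""
    return ic50 / (1.0 + substrate_conc / km)

# Hypothetical dose-response data (illustrative only).
concs = [1.0, 3.0, 10.0, 30.0, 100.0]   # inhibitor concentration, nM
inhib = [8.0, 20.0, 50.0, 78.0, 93.0]   # percent inhibition of ACE activity

ic50 = ic50_from_curve(concs, inhib)
# [S] and Km must share units (mM here); both values are assumptions.
ki = cheng_prusoff_ki(ic50, substrate_conc=5.0, km=2.5)
print(f"IC50 ~ {ic50:.1f} nM, Ki ~ {ki:.2f} nM")
```

In practice a four-parameter logistic fit would normally replace the interpolation, and the Cheng-Prusoff conversion only holds when the enzyme concentration is well below Kᵢ.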

HIV Protease Inhibitors: Transforming AIDS Treatment

Structural Biology and Rational Design

The design of HIV protease inhibitors represented one of the most sophisticated applications of structure-based drug discovery in the late 20th century. HIV-1 protease is an aspartic protease that functions as a homodimer, with each monomer contributing one catalytic aspartic acid residue (Asp25 and Asp25') to form the active site [16]. The enzyme is essential for viral replication, processing the Gag and Gag-Pol polyprotein precursors into functional viral proteins [16] [17].

Key structural insights guiding inhibitor design included:

- Catalytic Mechanism: HIV protease cleaves peptide bonds through a water-mediated nucleophilic attack, forming a tetrahedral transition state [16] [14]

- Substrate Specificity: The enzyme recognizes and cleaves at specific sequences, particularly between Phe-Pro, Phe-Leu, and Phe-Thr residues [18]

- Flap Dynamics: Two flexible, glycine-rich β-hairpin flaps close over the active site upon substrate binding [16]

First-generation inhibitors (saquinavir, ritonavir, indinavir) incorporated non-cleavable transition-state isosteres such as hydroxyethylene or hydroxyethylamine moieties to mimic the tetrahedral intermediate of substrate hydrolysis [16]. These designs exploited the enzyme's extended substrate-binding site, typically making interactions across at least seven subsites (S4 to S4') [16].

Evolution of HIV Protease Inhibitors

The initial success of first-generation HIV PIs was followed by continued optimization to address limitations including poor bioavailability, metabolic instability, and emerging drug resistance.

Table 2: Evolution of HIV Protease Inhibitors

| Generation | Representative Agents | Key Advances | Clinical Impact |

|---|---|---|---|

| First-Generation | Saquinavir (1995), Ritonavir (1996), Indinavir (1996), Nelfinavir (1997) | Proof-of-concept for transition-state mimics; introduction of HAART | Dramatic reductions in viral load and AIDS-related mortality |

| Second-Generation | Lopinavir (2000), Atazanavir (2003), Darunavir (2006) | Improved resistance profiles; better tolerability; once-daily dosing options | Effective treatment of PI-resistant virus; simplified regimens |

| Pharmacokinetic Enhancers | Low-dose ritonavir, cobicistat | CYP3A4 inhibition to boost PI concentrations | Enhanced efficacy, reduced pill burden, improved adherence |

The introduction of HIV protease inhibitors in the mid-1990s, combined with reverse transcriptase inhibitors, marked the beginning of Highly Active Antiretroviral Therapy (HAART), which transformed HIV/AIDS from a fatal disease to a manageable chronic condition [18] [16]. Between 1995 and 1996, the introduction of PIs was correlated with a significant increase in survival time in AIDS patients, dwarfing the effect of previously used antiretroviral agents [18].

Experimental Characterization of HIV Protease Inhibitors

The development of HIV PIs relied on sophisticated biochemical and structural biology methods:

Protease Enzyme Assays: Inhibitor potency is determined using fluorogenic or chromogenic substrates that mimic natural cleavage sites (e.g., sequences from the Gag-Pol polyprotein). Inhibition constants for first-generation PIs fall in the picomolar to sub-nanomolar range (saquinavir Kᵢ = 0.12 nM; ritonavir Kᵢ = 0.015 nM) [16].

Crystallographic Studies: X-ray structures of inhibitor-protease complexes revealed detailed binding interactions. Analyses showed inhibitors typically form hydrogen bonds of 2.68-3.24 Å with protease active site residues, with strongest interactions occurring with the flexible flap regions (residues 48-50) [16].

Cell-Based Antiviral Assays: Inhibition of viral replication is quantified in HIV-infected T-cell lines (e.g., MT-4, CEM-SS) measuring protection from cytopathic effects or reduction in p24 antigen production. EC₅₀ values (concentration for 50% protection) are determined for lead compounds [16].

Resistance Profiling: Susceptibility to clinical HIV isolates with defined resistance mutations is assessed through phenotypic antiviral assays, guiding optimization of second-generation inhibitors with improved resistance profiles [16].
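
Because the Kᵢ values quoted above are comparable to or below typical assay enzyme concentrations, the measured IC₅₀ of such inhibitors depends on how much enzyme is present. A minimal sketch of the Morrison tight-binding equation makes this concrete. The Kᵢ values come from the text; the 1 nM enzyme concentration is an assumption chosen only for illustration.

```python
import math

def morrison_fractional_activity(e_total, i_total, ki_app):
    """Fractional activity v_i/v_0 for a tight-binding inhibitor (Morrison equation).

    All concentrations must share the same units (nM here).
    """
    s = e_total + i_total + ki_app
    # Concentration of enzyme-inhibitor complex from the quadratic binding equation.
    ei_complex = (s - math.sqrt(s * s - 4.0 * e_total * i_total)) / 2.0
    return 1.0 - ei_complex / e_total

def tight_binding_ic50(e_total, ki_app):
    """IC50 = Ki_app + [E]/2, which follows from the Morrison equation at 50% activity."""
    return ki_app + e_total / 2.0

ENZYME_NM = 1.0  # assumed active protease concentration in the assay

for name, ki in [("saquinavir", 0.12), ("ritonavir", 0.015)]:
    ic50 = tight_binding_ic50(ENZYME_NM, ki)
    activity = morrison_fractional_activity(ENZYME_NM, ic50, ki)
    print(f"{name}: Ki = {ki} nM, IC50 ~ {ic50:.3f} nM, activity at IC50 = {activity:.2f}")
```

Under these assumptions both inhibitors show an IC₅₀ dominated by the enzyme concentration, which is why Kᵢ rather than IC₅₀ is the more informative figure for very potent compounds.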

Research Reagent Solutions

Table 3: Essential Research Tools for Protease Inhibitor Development

| Research Reagent | Application | Function in Discovery Pipeline |

|---|---|---|

| Recombinant Proteases | Enzyme inhibition assays | Source of purified target enzyme for high-throughput screening |

| Fluorogenic Substrates | Kinetic characterization | Enable continuous monitoring of protease activity and inhibition |

| Crystallography Systems | Structure determination | Facilitate elucidation of enzyme-inhibitor complexes for SBDD |

| Cell-Based Reporter Assays | Antiviral activity assessment | Quantify functional inhibition in biologically relevant systems |

| Clinical Isolate Panels | Resistance profiling | Evaluate efficacy against resistant mutant enzymes and viruses |

Pathway and Experimental Visualizations

RAAS Pathway and ACE Inhibition

Diagram Title: RAAS Pathway and Captopril Mechanism

HIV Protease Inhibitor Development Workflow

Diagram Title: HIV Protease Inhibitor Development Pipeline

The successes of captopril and HIV protease inhibitors established enduring paradigms in structure-based drug discovery. Captopril demonstrated that rational design based on natural product templates could yield therapeutics with novel mechanisms of action, while HIV protease inhibitors showed that targeting pathogen-specific enzymes with designed transition-state analogs could produce transformative treatments for infectious diseases. Together, these pioneers validated protease inhibition as a therapeutic strategy and structure-based design as a powerful discovery approach. Their development stories continue to inform current drug discovery efforts, particularly in targeting challenging enzyme classes, and represent foundational case studies in the ongoing evolution of rational therapeutic design.

The 20th century witnessed a revolutionary transformation in pharmaceutical science: the shift from serendipitous drug discovery to rational drug design. This paradigm moved the field from a reliance on observation, chance, and the screening of natural products to an approach grounded in the principled understanding of disease mechanisms, molecular targets, and the three-dimensional structure of biological molecules [1]. The core of rational drug design lies in the inventive process of discovering new medications based on knowledge of a biological target, designing molecules that are complementary in shape and charge to the biomolecular target with which they interact [19]. This methodology stands in stark contrast to the earlier "molecular roulette" approach that dominated drug discovery until the late 19th century, where medicines were often concocted with a mixture of empiricism and prayer, and the difference between a poison and a medicine was often merely the dose [20] [21]. The rise of rational drug design represents a fundamental reorientation in how scientists conceptualize the interaction between drugs and their targets, ultimately enabling the development of therapies that precisely intervene in disease pathways.

Foundational Concepts and Early Theoretical Frameworks

The conceptual groundwork for rational drug design was laid through key theoretical advances that provided a framework for understanding molecular interactions. In 1894, Emil Fischer introduced the seminal "lock and key" model, originally to explain enzyme-substrate specificity; applied to drug-receptor interaction, it proposes that the drug and the receptor interact as rigid bodies without changing their conformations [1] [19]. This model established the principle of molecular complementarity, suggesting that a drug (the "key") must sterically and chemically fit its biological target (the "lock") to elicit an effect.

This initial concept was later refined by Daniel Koshland in the 1950s with his proposal of the "induced fit" hypothesis [1]. Koshland recognized that both the drug and the receptor molecule undergo conformational changes during interaction, adopting the most suitable conformation to connect with each other. This dynamic understanding of molecular recognition, which has since been proven many times by X-ray structures and in silico simulations, became a critical consideration for designing effective drugs. These theoretical models established the fundamental principle that the biological activity of a compound is determined by its specific three-dimensional structure and its interaction with the target site.

Technological Enablers: The Tools That Made Rational Design Possible

The transition to rational drug design was propelled by parallel advances in structural biology and analytical techniques that enabled researchers to visualize biological molecules at atomic resolution.

Key Analytical Techniques in Structural Biology

Table 1: Fundamental analytical techniques that enabled rational drug design

| Technique | Underlying Principle | Contribution to Drug Design | Era of Significant Impact |

|---|---|---|---|

| X-ray Crystallography | Determines 3D structure by measuring diffraction patterns of X-rays through crystalline samples | Provided first atomic-level views of protein structures and drug-target complexes [3] | 1960s-present |

| Nuclear Magnetic Resonance (NMR) Spectroscopy | Uses magnetic fields to determine structure of molecules in solution | Enabled study of protein dynamics and ligand binding in near-physiological conditions [3] | 1980s-present |

| Cryo-Electron Microscopy (Cryo-EM) | Images frozen hydrated samples with electrons to determine macromolecular structures | Allows visualization of large complexes and membrane proteins difficult to crystallize [3] | 2010s-present |

| Homology Modeling | Predicts 3D structure based on similarity to known protein structures | Enabled target modeling when experimental structures were unavailable [2] | 1990s-present |

These structural biology techniques provided the essential windows into the atomic world that made structure-based design feasible. The determination of the carboxypeptidase A structure by Quiocho and Lipscomb in 1967 via X-ray crystallography marked a pivotal moment, providing one of the first detailed views of a zinc-metalloprotease active site that would later prove critical for ACE inhibitor design [22].

Key Historical Milestones in Rational Drug Design

The development of rational drug design progressed through several distinct phases, each building upon previous discoveries and technological innovations.

The Birth of Chemotherapy and the Magic Bullet Concept

In the early 20th century, Paul Ehrlich pioneered the concept of "magic bullets"—therapies that would selectively target disease-causing organisms without harming the host [23]. Although Ehrlich's work predated true rational design, his systematic screening of hundreds of organic arsenic compounds (leading to the 606th compound, Salvarsan, for syphilis treatment) established the principle of selective toxicity and systematic screening that would inform later approaches [23].

The Captopril Breakthrough: A Case Study in Early Rational Design

The development of Captopril, the first angiotensin-converting enzyme (ACE) inhibitor, which received US FDA approval in 1981, represents the first unequivocal success of structure-based rational drug design [22]. This project demonstrated how knowledge of enzyme mechanism and active site architecture could guide drug discovery.

Experimental Protocol: The Captopril Development Process

The methodology followed by researchers at Squibb (Cushman, Ondetti, and colleagues) provides a template for early rational drug design:

Target Identification and Validation: Angiotensin-converting enzyme (ACE) was identified as a key regulator of blood pressure via the renin-angiotensin system [22].

Natural Product Insight: Observation that Brazilian viper (Bothrops jararaca) venom caused dramatic blood pressure drops led to isolation of ACE-inhibitory peptides [22].

Lead Compound Isolation: Researchers isolated and characterized teprotide, a nine-amino-acid peptide from venom that potently inhibited ACE [22].

Clinical Validation: Intravenous teprotide demonstrated blood pressure-lowering effects in humans, confirming ACE inhibition as a viable therapeutic strategy [22].

Enzyme Mechanism Studies: ACE was identified as a zinc metalloprotease based on its inhibition by chelating agents and reactivation by zinc ions [22].

Active Site Modeling: Researchers constructed a conceptual model of the ACE active site by analogy with carboxypeptidase A (whose structure was known), identifying key features including a zinc ion at the catalytic site [22].

Inhibitor Design Strategy: Based on a published carboxypeptidase A inhibitor (benzylsuccinic acid), researchers designed succinyl amino acid derivatives that mimicked the transition state of peptide hydrolysis [22].

Structure-Activity Optimization: Systematic modification of the lead compound (2-methyl succinyl proline) yielded captopril, where replacement of a carboxylate with a thiol group increased potency 1000-fold due to stronger zinc coordination [22].
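
To put the 1000-fold potency gain in energetic terms, the short sketch below converts a fold change in binding constant into a free-energy difference using ΔΔG = -RT ln(fold). It assumes the potency ratio reflects a ratio of dissociation constants at 298.15 K; the function name is illustrative.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)

def ddg_from_fold_change(fold, temperature_k=298.15):
    """Binding free-energy change (kcal/mol) for a fold improvement in Ki or Kd."""
    if fold <= 0:
        raise ValueError("fold change must be positive")
    return -R_KCAL * temperature_k * math.log(fold)

ddg = ddg_from_fold_change(1000.0)
print(f"1000-fold tighter binding corresponds to about {ddg:.1f} kcal/mol")
```

Under these assumptions the thiol-for-carboxylate swap is worth roughly 4 kcal/mol of additional binding energy, a large gain for a single functional-group change.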

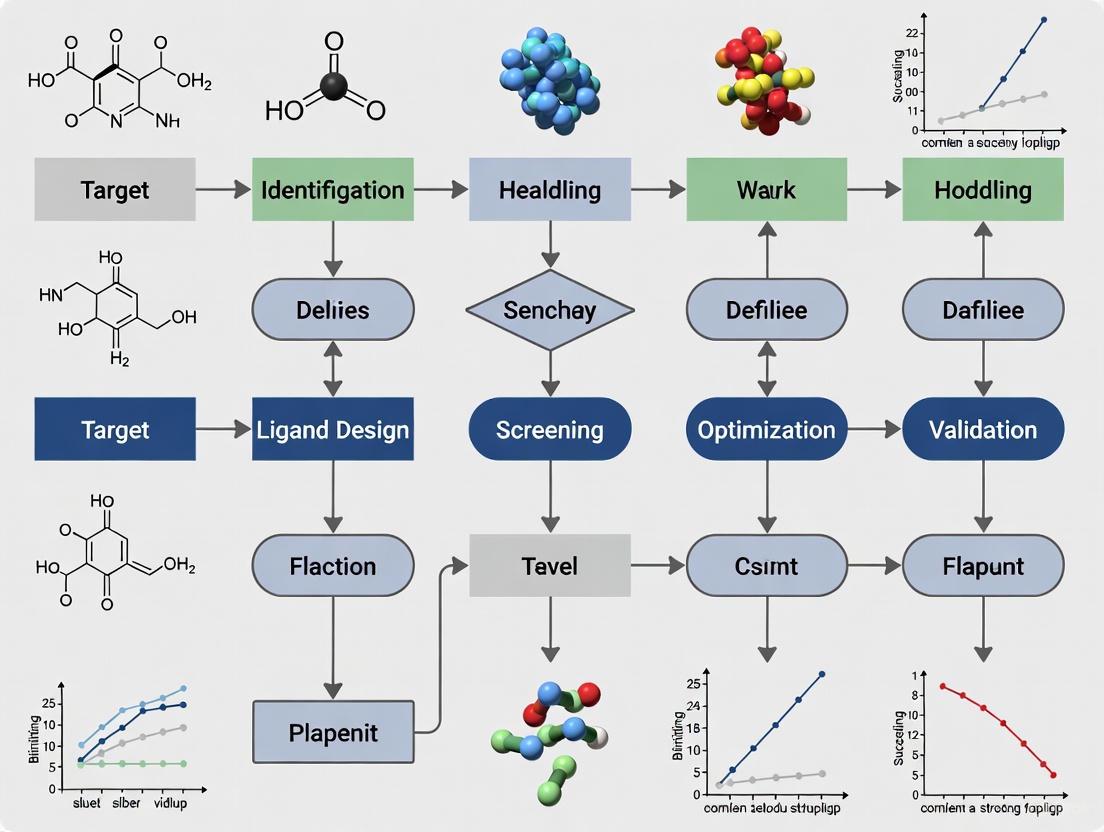

Diagram 1: The rational design workflow for Captopril discovery

The HIV Protease Inhibitors: Computational Design Matures

The 1990s witnessed another landmark achievement with the development of HIV protease inhibitors, which represented the maturation of structure-based drug design [2]. The approach combined X-ray crystallography of the protease target with computational methods:

Structure Determination: X-ray crystallography revealed HIV protease as a C2-symmetric homodimer with an active site at its center [2].

Structure-Based Design: Researchers designed symmetric inhibitors that mimicked the natural peptide substrate but incorporated non-cleavable transition-state isosteres.

Computational Optimization: Molecular modeling and dynamics simulations guided the optimization of inhibitor binding affinity and selectivity.

The success of HIV protease inhibitors demonstrated the power of combining high-resolution structural information with computational methods, validating structure-based drug design as a productive approach for antiviral development [2].

The Epigenetic Therapeutics: Rational Design Expands to New Target Classes

The discovery of epigenetic drugs further illustrates the expansion of rational approaches to new biological domains. The early epigenetic agents like 5-azacytidine (azacytidine) and 5-aza-2'-deoxycytidine (decitabine) were initially developed as nucleoside analogs in the 1960s without knowledge of their epigenetic mechanism [24]. Their ability to inhibit DNA methyltransferases (DNMTs) through incorporation into DNA and trapping the enzymes was only discovered in 1980 by Jones and Taylor [24]. This understanding of mechanism then enabled the rational design of improved epigenetic therapies, including later histone deacetylase (HDAC) inhibitors such as vorinostat [24].

Evolution of Methodological Approaches

The methodological sophistication of rational drug design evolved significantly throughout the 20th century, progressing from basic concepts to computationally intensive approaches.

The Scientist's Toolkit: Essential Research Reagents and Technologies

Table 2: Key research reagents and technologies that enabled rational drug design

| Research Tool | Function in Drug Design | Specific Examples |

|---|---|---|

| Zinc Metalloprotease Assays | Quantitative evaluation of ACE inhibition | Cushman's first quantitative ACE assay [22] |

| Recombinant Protein Expression | Production of pure target proteins for structural studies | Cloning and expression of therapeutic targets [2] |

| Crystallization Screening Kits | Identification of conditions for protein crystallization | Sparse matrix screens for X-ray crystallography [2] |

| Molecular Modeling Software | Visualization and manipulation of 3D molecular structures | Early packages for protein-ligand docking [2] [19] |

| Synchrotron Radiation Sources | High-intensity X-rays for protein crystallography | Enabled structure determination of challenging targets [3] |

The Computational Revolution: From Manual Docking to Automated Screening

The latter part of the 20th century saw computational methods become increasingly integrated into the drug design process. Early molecular mechanics methods allowed researchers to estimate the strength of intermolecular interactions between small molecules and their biological targets [19]. The development of docking algorithms and scoring functions enabled virtual screening of compound libraries, dramatically accelerating the identification of lead compounds [2] [19].
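
The idea behind a molecular mechanics estimate of interaction strength can be sketched with a pairwise Lennard-Jones plus Coulomb sum. This is a toy score under stated assumptions, not the scoring function of any particular docking program: the atom parameters, the distance-independent dielectric of 4, and the coordinates are all hypothetical.

```python
import math
from dataclasses import dataclass

COULOMB_K = 332.06  # approximate conversion factor, kcal*Angstrom/(mol*e^2)

@dataclass
class Atom:
    x: float
    y: float
    z: float
    charge: float      # partial charge in units of e
    epsilon: float     # Lennard-Jones well depth, kcal/mol
    r_min_half: float  # half the minimum-energy distance, Angstrom

def pair_energy(a, b, dielectric=4.0):
    """Lennard-Jones 12-6 plus Coulomb energy for one atom pair (kcal/mol)."""
    r = math.dist((a.x, a.y, a.z), (b.x, b.y, b.z))
    eps = math.sqrt(a.epsilon * b.epsilon)   # geometric-mean well depth
    r_min = a.r_min_half + b.r_min_half      # arithmetic-mean style contact distance
    ratio6 = (r_min / r) ** 6
    lennard_jones = eps * (ratio6 * ratio6 - 2.0 * ratio6)
    coulomb = COULOMB_K * a.charge * b.charge / (dielectric * r)
    return lennard_jones + coulomb

def interaction_score(ligand_atoms, receptor_atoms):
    """Sum pair energies over all ligand-receptor atom pairs."""
    return sum(pair_energy(lig, rec) for lig in ligand_atoms for rec in receptor_atoms)

# Hypothetical two-atom pocket and one-atom ligand, for illustration only.
ligand = [Atom(0.0, 0.0, 0.0, -0.4, 0.15, 1.7)]
receptor = [Atom(3.0, 0.0, 0.0, 0.3, 0.10, 1.8), Atom(0.0, 4.0, 0.0, -0.2, 0.12, 1.9)]
print(f"interaction score: {interaction_score(ligand, receptor):.2f} kcal/mol")
```

Production scoring functions add terms for hydrogen bonding, desolvation, and entropy, and virtual screening repeats an evaluation of this kind over many poses and many compounds.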

Diagram 2: Structure-based drug design workflow in the computational era

Impact and Legacy: Transforming Pharmaceutical Development

The adoption of rational drug design principles had profound effects on pharmaceutical development, shifting investment from traditional phenotypic screening to target-based approaches. Analysis of pharmaceutical company portfolios showed that by 2001, roughly 60-70% of discovery portfolios were allocated to drugs with novel targets, many identified through genomic and structure-based approaches [20]. Furthermore, targets with stronger validation of their biological role in human disease, often established through genetic evidence, demonstrated significantly lower failure rates in clinical development due to lack of efficacy [20].

The rational design paradigm also fundamentally changed the skill sets required for drug discovery, creating demand for specialists in structural biology, bioinformatics, and computational chemistry alongside traditional medicinal chemists [25]. This interdisciplinary approach would eventually pave the way for 21st-century innovations, including fragment-based drug discovery and the targeting of protein-protein interactions [3].

The rise of rational drug design during the 20th century represents one of the most significant transformations in pharmaceutical science. Beginning with theoretical models of drug-receptor interactions and progressing through landmark successes like Captopril and HIV protease inhibitors, the field evolved from conceptual foundations to practical application driven by advances in structural biology and computational methods. This paradigm shift moved drug discovery from a largely empirical process to an engineering discipline grounded in detailed understanding of biological mechanisms and molecular recognition. The legacy of these 20th-century developments continues to shape modern drug discovery, providing the essential methodological framework for today's targeted therapies and precision medicines.

Methodological Pillars: Techniques Powering Modern Ligand Discovery

The field of structural biology, propelled by techniques such as X-ray crystallography, cryo-electron microscopy (cryo-EM), and nuclear magnetic resonance (NMR) spectroscopy, has fundamentally revolutionized drug discovery. The ability to determine the three-dimensional structures of biological macromolecules at atomic or near-atomic resolution has transformed the process of ligand discovery from a purely empirical endeavor to a rational, structure-based science [26]. This whitepaper provides an in-depth technical guide to these core experimental methods, framing their development and application within the broader historical context of structure-based ligand discovery research. We detail the fundamental principles, experimental workflows, and unique capabilities of each technique, emphasizing their complementary roles in elucidating protein-ligand interactions for therapeutic development. Designed for researchers, scientists, and drug development professionals, this document also presents structured comparisons, detailed methodologies, and essential resource tables to serve as a practical reference in the ongoing effort to relate structural information to biological function [27].

Historical Context and the Rise of Structure-Based Drug Design

The foundation of structure-based ligand discovery was laid over a century ago with Paul Ehrlich's introduction of the "pharmacophore" concept, which defined the properties of a compound responsible for its pharmacological effect [26]. However, the field's "big bang" was ignited by the first atomic-level protein structures, beginning with myoglobin at 2-Å resolution in 1960, determined using X-ray crystallography [27]. For decades, X-ray crystallography remained the dominant technique, with over 112,000 protein structures deposited in the Protein Data Bank (PDB) [27]. Its success was fueled by technological and methodological advances, including synchrotron radiation sources, cryo-cooling to mitigate radiation damage, and robust phasing methods like multi-wavelength anomalous dispersion (MAD) [27].

NMR spectroscopy emerged as a powerful alternative for determining protein structures in solution, offering the unique advantage of probing molecular dynamics and conformational states without crystallization [28] [29]. More recently, cryo-EM has experienced a "resolution revolution," driven by advances in direct electron detectors and image processing software, enabling high-resolution structure determination of large complexes and membrane proteins that were previously intractable [27] [30]. This evolution has established a versatile toolkit where these techniques are no longer seen as mutually exclusive but are increasingly combined to tackle the complex challenges of modern drug discovery [31].

Core Principles and Technical Comparison

X-ray Crystallography

Fundamental Principle: X-ray crystallography determines structure by measuring the diffraction patterns produced when a beam of X-rays interacts with a crystalline sample. The positions and intensities of the diffraction spots are used to compute an electron density map, into which an atomic model is built [32] [31]. The quality of the structure is heavily dependent on the degree of order within the crystal.

Key Outputs: The refined model includes atomic coordinates, occupancy, and atomic displacement parameters (ADPs or B-factors), which describe atomic displacement due to thermal motion and static disorder [32].
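
For intuition about what a B-factor means physically, an isotropic B-factor relates to the mean-square atomic displacement by B = 8π²⟨u²⟩. The sketch below inverts that relation; the example B values are arbitrary and the function name is illustrative.

```python
import math

def rms_displacement(b_factor):
    """RMS displacement (Angstrom) along one direction from an isotropic B-factor (Angstrom^2)."""
    if b_factor < 0:
        raise ValueError("B-factor must be non-negative")
    return math.sqrt(b_factor / (8.0 * math.pi ** 2))

# Arbitrary example values spanning a well-ordered core atom to a mobile loop atom.
for b in (10.0, 20.0, 60.0):
    print(f"B = {b:5.1f} A^2  ->  rms displacement ~ {rms_displacement(b):.2f} A")
```

The relation cannot separate thermal motion from static disorder, which is why B-factors give only indirect information about dynamics.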

Cryo-Electron Microscopy (Cryo-EM)

Fundamental Principle: In single-particle cryo-EM, a beam of high-energy electrons is used to image individual macromolecules flash-frozen in a thin layer of vitreous ice. Thousands of two-dimensional projection images are computationally classified, aligned, and averaged to reconstruct a three-dimensional density map [30] [31]. This method avoids the need for crystallization and preserves the sample in a near-native state.

Key Outputs: The result is a 3D electron density map, often at near-atomic resolution, which is used for model building. Modern cryo-EM can resolve structures to atomic resolution (e.g., 1.2 Å) [30].
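
Why averaging many particle images helps can be shown with a small simulation: if the underlying signal is identical in every image and the noise is independent, the noise in the average falls roughly as 1/√N. The sketch assumes perfectly aligned particles and Gaussian noise, which real data only approximate; the pixel count and noise level are arbitrary.

```python
import random
import statistics

def averaged_noise_std(n_images, n_pixels=2000, noise_sigma=10.0, seed=0):
    """Standard deviation of pure noise remaining after averaging n_images images."""
    rng = random.Random(seed)
    sums = [0.0] * n_pixels
    for _ in range(n_images):
        for i in range(n_pixels):
            sums[i] += rng.gauss(0.0, noise_sigma)
    averaged = [s / n_images for s in sums]
    return statistics.pstdev(averaged)

for n in (1, 4, 25, 100):
    print(f"N = {n:3d} images  ->  residual noise std ~ {averaged_noise_std(n):.2f}")
```

Since the signal is unchanged by averaging, the signal-to-noise ratio grows roughly with the square root of the number of particles, which is one reason reconstructions draw on so many particle images.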

Nuclear Magnetic Resonance (NMR) Spectroscopy

Fundamental Principle: NMR spectroscopy exploits the magnetic properties of atomic nuclei (e.g., ¹H, ¹³C, ¹⁵N) in a strong magnetic field. The analysis of chemical shifts, J-couplings, and nuclear Overhauser effects (NOEs) provides information on interatomic distances, dihedral angles, and overall dynamics, enabling the calculation of a 3D structure of a protein in solution [28] [29].

Key Outputs: NMR yields an ensemble of structures that represent the conformational landscape of the protein in solution, offering direct insight into molecular dynamics and flexibility [28].
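
The way NOEs translate into distance restraints can be illustrated with the r⁻⁶ dependence of NOE intensity. The sketch below calibrates an unknown distance against a reference proton pair under the isolated spin-pair approximation; the 1.8 Å reference distance and the intensities are assumed values for illustration.

```python
def noe_distance(intensity, ref_intensity, ref_distance=1.8):
    """Estimate an interproton distance (Angstrom) from relative NOE intensity.

    Uses I proportional to r**-6, so r = r_ref * (I_ref / I) ** (1/6).
    """
    if intensity <= 0 or ref_intensity <= 0:
        raise ValueError("intensities must be positive")
    return ref_distance * (ref_intensity / intensity) ** (1.0 / 6.0)

# Hypothetical cross-peak intensities relative to a reference peak of 100.
for peak in (100.0, 20.0, 2.0):
    print(f"intensity {peak:6.1f}  ->  r ~ {noe_distance(peak, 100.0):.2f} A")
```

The steep distance dependence means NOEs are typically observable only for protons within about 5 to 6 Å, and in practice they are usually binned into loose upper-bound restraints rather than treated as exact distances.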

Table 1: Quantitative Comparison of Key Technical Parameters

| Parameter | X-ray Crystallography | Cryo-EM | NMR Spectroscopy |

|---|---|---|---|

| Typical Resolution | Atomic (often <2.0 Å) | Near-atomic to atomic (now often <3 Å) [30] | Atomic, but detail can be limited by molecular tumbling |

| Sample State | Static, crystalline lattice | Near-native, vitrified solution [31] | Dynamic, solution |

| Ideal Size Range | < several hundred kDa [27] | >~100 kDa (smaller targets now possible) [30] | <~50 kDa (limits pushed with techniques) [28] |

| Sample Consumption | High (for crystallization trials) | Low [30] | High (for concentration) [28] |

| Throughput | High (for established crystals) | Moderate to High (increasingly automated) | Low to Moderate |

| Key Advantage | High-resolution precise atomic coordinates [27] | Avoids crystallization; handles large complexes/membrane proteins [30] | Probes dynamics and transient states in solution [28] |

| Key Limitation | Crystallization bottleneck; crystal packing artifacts | Resolution can be limited for small, flexible targets | Intrinsically low sensitivity; molecular size limit |

Table 2: Strengths and Limitations in Drug Discovery Context

| Aspect | X-ray Crystallography | Cryo-EM | NMR Spectroscopy |

|---|---|---|---|

| Target Flexibility | Challenged by high flexibility (poor electron density) | Can deconvolute conformational heterogeneity [27] | Ideal for characterizing dynamics and disordered proteins [28] |

| Membrane Proteins | Challenging, but many successes | Highly effective (e.g., GPCRs) [30] | Limited by size and need for membrane mimetics |

| Ligand Screening | Excellent for fragment screening (FBDD) via soaking [32] [33] | Emerging for FBDD, especially for large targets [30] | Excellent for detecting weak, transient binding in FBDD [29] |

| Dynamic Information | Indirect, via temperature factors/occupancy; time-resolved studies possible | Time-resolved methods emerging to capture kinetics [34] | Direct measurement of dynamics over multiple timescales [28] |

| Structure Validation | Agreement with electron density and stereochemistry (R/Rfree) [32] | Agreement with 3D map and stereochemistry | Agreement with experimental restraints (NOEs, couplings) and stereochemistry |
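
The R/Rfree validation metric mentioned for crystallography can be sketched as follows: R = Σ| |Fo| − |Fc| | / Σ|Fo|, computed separately over reflections used in refinement (Rwork) and a held-out test set (Rfree). The amplitudes and test-set flags below are invented, and the scale factor applied between observed and calculated amplitudes in real refinement is omitted for brevity.

```python
def r_factor(f_obs, f_calc):
    """Crystallographic R-factor from observed and calculated structure-factor amplitudes."""
    numerator = sum(abs(abs(o) - abs(c)) for o, c in zip(f_obs, f_calc))
    denominator = sum(abs(o) for o in f_obs)
    return numerator / denominator

def r_work_and_free(f_obs, f_calc, free_flags):
    """Split reflections by test-set flag and return (R_work, R_free)."""
    work = [(o, c) for o, c, free in zip(f_obs, f_calc, free_flags) if not free]
    test = [(o, c) for o, c, free in zip(f_obs, f_calc, free_flags) if free]
    r_work = r_factor([o for o, _ in work], [c for _, c in work])
    r_free = r_factor([o for o, _ in test], [c for _, c in test])
    return r_work, r_free

# Hypothetical amplitudes; the last two reflections are held out as the test set.
f_obs = [100.0, 50.0, 25.0, 80.0, 60.0, 40.0]
f_calc = [90.0, 55.0, 30.0, 76.0, 66.0, 35.0]
flags = [False, False, False, False, True, True]

r_work, r_free = r_work_and_free(f_obs, f_calc, flags)
print(f"R_work = {r_work:.3f}, R_free = {r_free:.3f}")
```

A large gap between Rwork and Rfree is commonly read as a sign of overfitting the model to the data.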

Detailed Experimental Protocols

X-ray Crystallography Workflow for Ligand Screening

The following protocol is typical for fragment-based drug discovery (FBDD) using crystal soaking [32] [33].

Protein Purification and Crystallization:

- Purify the target protein to homogeneity using standard chromatographic techniques (e.g., affinity, size exclusion).

- Identify initial crystallization conditions using high-throughput screening of sparse-matrix screens.

- Optimize hit conditions to produce large, single, and well-diffracting crystals.

Ligand Soaking and Harvesting:

- Ligand Preparation: Prepare a concentrated stock solution of the ligand (or fragment) in a solvent compatible with the crystal (typically DMSO or the crystallization mother liquor).

- Soaking: Transfer a single crystal into a stabilizing solution (mother liquor) containing the ligand. The ligand concentration is typically high (e.g., 1-10 mM) to drive binding despite potentially weak affinity (KD in µM-mM range). Soaking times can range from minutes to hours.

- Cryo-protection and Harvesting: After soaking, transfer the crystal to a cryo-protectant solution (e.g., mother liquor with added glycerol or ethylene glycol) to prevent ice formation. Flash-cool the crystal in liquid nitrogen.
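
The reason soaks use millimolar ligand concentrations follows from a simple binding isotherm: with ligand in large excess over protein, the expected site occupancy is [L] / ([L] + KD). The sketch below tabulates this for a few hypothetical fragment affinities; it assumes a single site at equilibrium and ignores solubility limits and crystal-packing effects.

```python
def expected_occupancy(ligand_mM, kd_mM):
    """Equilibrium fractional occupancy of a single site with ligand in excess."""
    if ligand_mM < 0 or kd_mM <= 0:
        raise ValueError("ligand must be non-negative and KD positive")
    return ligand_mM / (ligand_mM + kd_mM)

# Hypothetical fragment affinities (KD) and soak concentrations, all in mM.
for kd in (0.1, 1.0, 5.0):
    row = ", ".join(
        f"{conc:g} mM -> {expected_occupancy(conc, kd):.2f}" for conc in (1.0, 10.0)
    )
    print(f"KD = {kd:g} mM: {row}")
```

Occupancies well below about 0.5 tend to give weak difference density, which is why weak binders are soaked at the highest concentration the crystal tolerates.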

Data Collection and Processing:

- Transport the crystal to a synchrotron X-ray source or use a home-source X-ray generator.

- Collect a complete X-ray diffraction dataset by rotating the crystal in the X-ray beam and recording diffraction images on a detector.

- Index and integrate the diffraction images to obtain intensities and scale the data.

Structure Solution and Analysis:

- Phasing: Obtain phase information, typically by molecular replacement using a known high-resolution structure of the unliganded protein as a search model.

- Model Building and Refinement: Build the protein model into the experimental electron density map. The difference electron density map (Fo-Fc) is calculated to reveal areas of unaccounted density, indicating the bound ligand.

- Ligand Fitting: Fit the ligand into the positive difference density, refine its position and occupancy, and validate the binding mode through analysis of protein-ligand interactions.

Single-Particle Cryo-EM Workflow

This protocol outlines the key steps for determining a protein-ligand complex structure using single-particle cryo-EM [30].

Sample Preparation and Vitrification:

- Sample Optimization: Purify the protein or complex of interest. Incubate with the ligand to form the complex. The sample must be monodisperse and stable. For membrane proteins, this often involves solubilization in detergents or nanodiscs.

- Grid Preparation: Apply a small volume (e.g., 3-5 µL) of the sample to a perforated carbon grid (e.g., Quantifoil or C-flat).

- Blotting and Plunge-freezing: Blot away excess liquid with filter paper and immediately plunge the grid into a cryogen (liquid ethane or a mixture of ethane/propane) cooled by liquid nitrogen. This vitrifies the water, preserving the particles in a glass-like, hydrated state.

Data Collection:

- Load the vitrified grid into a high-end transmission electron microscope (TEM) equipped with a field emission gun (FEG) and a direct electron detector (DED).

- Collect "movies" (a series of frames) of the sample at a defined defocus under low-dose conditions (e.g., ~1-2 e⁻/Ų/frame) to minimize beam-induced motion and radiation damage.

Image Processing and 3D Reconstruction:

- Pre-processing: Motion-correct the movies and estimate the contrast transfer function (CTF) for each micrograph.

- Particle Picking: Automatically select hundreds of thousands to millions of individual protein particles from the micrographs.

- 2D Classification: Classify the extracted particle images into 2D class averages to remove non-particle images, junk, and obvious contaminants.

- Initial Model Generation: Create an initial 3D model ab initio or by using an existing low-resolution structure as a reference.

- 3D Classification and Refinement: Perform 3D classification to isolate structurally homogeneous subsets of particles, often revealing different conformational states or the presence/absence of a ligand. Refine the selected particle subset to generate a high-resolution 3D reconstruction.

Model Building and Refinement:

- Model Building: Build an atomic model into the high-resolution cryo-EM density map, either de novo or, more often, starting from an existing model of the protein.

- Refinement and Validation: Refine the atomic model against the cryo-EM map, ensuring proper stereochemistry and good fit to the density.

NMR Workflow for Fragment Screening

This protocol focuses on the use of NMR for identifying fragment hits in FBDD, which is one of its primary applications in drug discovery [29].

Sample and Library Preparation:

- Protein Labeling: For target-observed NMR, the protein is typically uniformly labeled with ¹⁵N and/or ¹³C isotopes. This is achieved by expressing the protein in minimal media containing ¹⁵N-ammonium sulfate and ¹³C-glucose as the sole nitrogen and carbon sources.

- Fragment Library: A library of 500-2000 compounds adhering to the "rule of three" (MW ≤ 300, cLogP ≤ 3, H-bond donors/acceptors ≤ 3) is assembled. Fragments are often pooled into groups of 5-10 for ligand-observed NMR, with care to avoid signal overlap.
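The "rule of three" filter and the pooling step can be sketched in a few lines. This example assumes the descriptors (molecular weight, cLogP, H-bond donor and acceptor counts) have already been computed by a cheminformatics tool; the `Fragment` class, the function names, and the default pool size of 8 are hypothetical choices for illustration. The pooling shown is a plain chunking and does not check for NMR signal overlap, which a real library design would need to do.

```python
from dataclasses import dataclass
from typing import List, Sequence, TypeVar

T = TypeVar("T")


@dataclass(frozen=True)
class Fragment:
    """Precomputed descriptors for one library compound (hypothetical container)."""
    name: str
    mol_weight: float   # Da
    clogp: float
    h_donors: int
    h_acceptors: int


def passes_rule_of_three(f: Fragment) -> bool:
    """True if the fragment satisfies MW <= 300, cLogP <= 3, HBD <= 3, HBA <= 3."""
    return (
        f.mol_weight <= 300
        and f.clogp <= 3
        and f.h_donors <= 3
        and f.h_acceptors <= 3
    )


def pool_fragments(fragments: Sequence[T], pool_size: int = 8) -> List[Sequence[T]]:
    """Split the library into consecutive pools of at most pool_size members."""
    if pool_size < 1:
        raise ValueError("pool_size must be at least 1")
    return [fragments[i:i + pool_size] for i in range(0, len(fragments), pool_size)]


if __name__ == "__main__":
    # Made-up descriptor values, used only to exercise the filter.
    library = [
        Fragment("frag_A", 150.0, 1.2, 1, 2),
        Fragment("frag_B", 320.0, 2.0, 1, 2),   # fails on molecular weight
        Fragment("frag_C", 299.9, 3.0, 3, 3),
    ]
    kept = [f for f in library if passes_rule_of_three(f)]
    print([f.name for f in kept])
    print([len(pool) for pool in pool_fragments(kept, pool_size=2)])
```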

Hit Screening (Two Primary Methods):

- Ligand-Observed NMR:

  - Experiment: Conduct ¹H 1D line-broadening, saturation transfer difference (STD), or WaterLOGSY experiments on a mixture of fragments in the presence of the protein.

  - Readout: A change in the NMR signal of the fragment (e.g., line broadening, signal attenuation) indicates binding. This method is fast and requires no isotopic labeling of the protein.

- Target-Observed NMR:

  - Experiment: Perform a ¹⁵N-¹H Heteronuclear Single Quantum Coherence (HSQC) experiment on the labeled protein in the absence and presence of the fragment.

  - Readout: Chemical shift perturbations (CSPs) or line broadening in the protein's HSQC spectrum upon fragment addition indicate binding and can often pinpoint the binding site.
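For the target-observed readout, per-residue ¹H and ¹⁵N shift changes are usually combined into a single CSP value before deciding which residues are perturbed. The sketch below assumes a commonly used weighted Euclidean form, CSP = sqrt(ΔδH² + (α·ΔδN)²), with α = 0.14 as the nitrogen scaling factor and a "mean plus one standard deviation" cutoff. Both are conventions chosen here for illustration, not values specified by this protocol, and the function names are hypothetical.

```python
import math
from statistics import mean, pstdev
from typing import Dict, List, Tuple


def combined_csp(delta_h: float, delta_n: float, n_scale: float = 0.14) -> float:
    """Weighted 1H/15N chemical shift perturbation in ppm."""
    return math.sqrt(delta_h ** 2 + (n_scale * delta_n) ** 2)


def perturbed_residues(
    shifts: Dict[int, Tuple[float, float]], n_sd: float = 1.0
) -> List[int]:
    """Residues whose combined CSP exceeds mean + n_sd * SD over all residues.

    shifts maps residue number -> (delta_H, delta_N) in ppm, measured between
    the free and fragment-bound HSQC spectra.
    """
    csps = {res: combined_csp(dh, dn) for res, (dh, dn) in shifts.items()}
    cutoff = mean(csps.values()) + n_sd * pstdev(csps.values())
    return sorted(res for res, value in csps.items() if value > cutoff)


if __name__ == "__main__":
    # Made-up shift differences for five residues; only residue 45 moves noticeably.
    example = {
        10: (0.01, 0.05),
        11: (0.01, 0.05),
        12: (0.01, 0.05),
        13: (0.01, 0.05),
        45: (0.12, 0.80),
    }
    print(perturbed_residues(example))
```

Residues flagged this way are candidates for the binding site, but shifts can also arise from allosteric or conformational effects, so the list is a starting point rather than a definitive map.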

Hit Validation and Characterization:

- Dose-Response: Confirm hits by performing a titration with the individual fragment and measuring CSPs to estimate binding affinity (KD).

- Binding Site Mapping: Map the CSPs onto the protein structure to identify the binding site.

- Structure Determination (Optional): For promising hits, a full structure of the protein-fragment complex can be determined using intermolecular NOEs, paramagnetic relaxation enhancement (PRE), and other NMR restraints.
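The dose-response step can be illustrated with a small fitting sketch. It assumes a 1:1 binding model in fast exchange, where the observed CSP equals the maximal CSP multiplied by the fraction of protein bound, and it estimates KD by a simple grid search with a closed-form least-squares solution for the maximal CSP at each candidate KD. The grid search is a deliberately simple stand-in for the nonlinear least-squares fitting normally used; the function names and all numbers are illustrative assumptions.

```python
import math
from typing import Iterable, Sequence, Tuple


def fraction_bound(p_total: float, l_total: float, kd: float) -> float:
    """Fraction of protein bound for 1:1 binding (all concentrations in the same units)."""
    s = p_total + l_total + kd
    return (s - math.sqrt(s * s - 4.0 * p_total * l_total)) / (2.0 * p_total)


def fit_kd(
    p_total: float,
    ligand_concs: Sequence[float],
    csps: Sequence[float],
    kd_grid: Iterable[float],
) -> Tuple[float, float]:
    """Return (kd, csp_max) minimizing the squared error over the candidate KD grid."""
    best = None
    for kd in kd_grid:
        f = [fraction_bound(p_total, l, kd) for l in ligand_concs]
        denom = sum(x * x for x in f)
        if denom == 0.0:
            continue
        # For a fixed KD the model is linear in csp_max, so it has a closed form.
        csp_max = sum(x * y for x, y in zip(f, csps)) / denom
        sse = sum((csp_max * x - y) ** 2 for x, y in zip(f, csps))
        if best is None or sse < best[0]:
            best = (sse, kd, csp_max)
    if best is None:
        raise ValueError("no usable KD candidates")
    return best[1], best[2]


if __name__ == "__main__":
    # Synthetic titration: 50 uM protein, true KD 500 uM, maximal CSP 0.2 ppm.
    ligand = [100.0, 250.0, 500.0, 1000.0, 2000.0, 4000.0]
    observed = [0.2 * fraction_bound(50.0, l, 500.0) for l in ligand]
    print(fit_kd(50.0, ligand, observed, range(50, 2001, 50)))
```

Because fragments bind weakly, titrations often cannot reach saturation, which leaves the maximal CSP and KD strongly correlated; fitted values are best read as estimates.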

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents and Materials for Structural Biology

| Item | Function/Description |
|---|---|
| High-Purity Target Protein | The biological macromolecule of interest (e.g., enzyme, receptor, complex). Must be purified to homogeneity and functionally active. |
| Crystallization Screening Kits | Commercial sparse-matrix screens (e.g., from Hampton Research, Molecular Dimensions) containing hundreds of conditions to identify initial crystallization leads. |
| Cryo-EM Grids | Specimen supports, typically gold or copper with a perforated carbon film (e.g., Quantifoil), onto which the sample is applied for vitrification. |
| Direct Electron Detector (DED) | A key hardware advancement for cryo-EM that records images with high signal-to-noise and allows for motion correction of movie frames [30]. |
| Isotopically Labeled Compounds | ¹⁵N-labeled ammonium salts and ¹³C-labeled glucose for producing isotopically enriched protein samples required for multidimensional NMR experiments [29]. |
| Fragment Library | A collection of 500-2000 small, soluble compounds following the "Rule of 3" for use in FBDD campaigns via X-ray, NMR, or cryo-EM [29] [33]. |
| Ligands/Inhibitors | Small molecules, substrates, or drug candidates whose binding interactions with the target protein are to be characterized. |
| Cryo-Protectants | Chemicals like glycerol, ethylene glycol, or sucrose used to prevent ice crystal formation in protein crystals during cryo-cooling for X-ray data collection [27]. |
| Detergents/Membrane Mimetics | Agents like n-Dodecyl-β-D-maltoside (DDM), amphipols, or nanodiscs used to solubilize and stabilize membrane proteins for all structural studies. |
| Data Processing Software Suites | Integrated software for structure determination (e.g., CCP4, Phenix for crystallography; RELION, cryoSPARC for cryo-EM; CYANA, XPLOR-NIH for NMR) [35]. |

Integrated Applications in Drug Discovery

The true power of modern structural biology lies in the integrated use of X-ray crystallography, cryo-EM, and NMR to address complex problems in drug discovery.

Fragment-Based Drug Discovery (FBDD): FBDD has become a mainstream approach for identifying chemical starting points. NMR and X-ray crystallography are particularly powerful for the initial identification of weakly binding fragments (screening) and for guiding their optimization into lead compounds with high affinity [29] [33]. Cryo-EM is increasingly being applied to FBDD for large targets like RNA polymerase or viral spike proteins [30].

Targeting Challenging Protein Classes: Cryo-EM has revolutionized the study of membrane proteins, such as G-protein-coupled receptors (GPCRs), and large, dynamic complexes like the RNA exosome. It provides structures in near-native environments without the constraints of crystal packing [30] [31]. NMR remains unparalleled for characterizing intrinsically disordered proteins (IDPs) and mapping protein-protein interactions (PPIs), offering insights into regions that are invisible to crystallography [28].

Capturing Dynamics for Drug Design: Understanding molecular dynamics is crucial for designing effective drugs. Time-resolved cryo-EM is emerging as a technique to visualize rare intermediate states and conformational changes during biochemical reactions, providing invaluable insights for designing drugs that target specific functional states [34]. NMR inherently provides atomic-level information on dynamics and populations of conformational states on timescales from picoseconds to seconds [28]. This dynamic information is essential for understanding allosteric regulation and designing drugs that exploit these mechanisms.

Combining Techniques: A common and powerful integrative approach involves docking high-resolution X-ray or NMR structures of individual components into a lower-resolution cryo-EM map of a large complex. This method, known as "hybrid" or "integrative" modeling, allows researchers to interpret the architecture and mechanism of large molecular machines that are difficult to crystallize as a whole [31].

X-ray crystallography, cryo-EM, and NMR spectroscopy form a complementary and powerful toolkit that has firmly established structure-based design as a cornerstone of modern drug discovery. The historical trajectory from the first protein structures to today's dynamic and integrative approaches demonstrates a field in constant evolution. The "resolution revolution" in cryo-EM has democratized high-resolution structure determination for many challenging targets, while advancements in NMR and X-ray methods continue to deepen our understanding of molecular interactions and dynamics. The future of structure-based ligand discovery lies in the synergistic combination of these techniques, further enhanced by machine learning and artificial intelligence, to visualize and target the full complexity of biological macromolecules in health and disease. This integrated, dynamics-aware approach holds the promise of accelerating the development of novel therapeutics for some of the most challenging human diseases.

For decades, the ability to accurately determine and predict the three-dimensional structure of proteins from their amino acid sequences has represented one of the most significant challenges in structural biology. Knowledge of protein tertiary structure provides invaluable insights into molecular function, guides experimental design, and facilitates the development of therapeutics for disease. Two computational approaches have fundamentally transformed this landscape: the established methodology of homology modeling and the revolutionary artificial intelligence system AlphaFold. The progression from homology modeling to AlphaFold represents a paradigm shift in structure-based ligand discovery research, dramatically accelerating the pace of biological investigation and drug development [36] [37]. This review examines the technical foundations, comparative performance, and practical applications of these transformative technologies within the context of modern drug discovery.

Historical Foundations: Homology Modeling

Principles and Methodologies

Homology modeling, also known as comparative modeling, operates on the fundamental biological principle that protein three-dimensional structure is more evolutionarily conserved than amino acid sequence. The method relies on the existence of a homologous, experimentally determined template structure to predict the conformation of a target protein sequence [38] [39]. The accuracy of the resulting model correlates closely with the degree of sequence identity between the target and template: models built on templates sharing more than 50% sequence identity are generally considered sufficiently accurate for drug discovery applications, while those built at below 25% identity are considered tentative at best [38].
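The identity thresholds above can be made concrete with a short sketch that computes percent identity from a pairwise alignment and maps it onto the tiers described in the text. Definitions of sequence identity differ in the denominator used; this example assumes identity is counted over aligned, non-gap columns. The function names and the "intermediate" label for the 25-50% range are assumptions for illustration.

```python
def percent_identity(aligned_target: str, aligned_template: str, gap: str = "-") -> float:
    """Percent identity over columns where neither sequence has a gap."""
    if len(aligned_target) != len(aligned_template):
        raise ValueError("aligned sequences must have equal length")
    aligned = 0
    matches = 0
    for a, b in zip(aligned_target, aligned_template):
        if a == gap or b == gap:
            continue  # skip gapped columns
        aligned += 1
        if a == b:
            matches += 1
    return 100.0 * matches / aligned if aligned else 0.0


def model_confidence(identity: float) -> str:
    """Map percent identity onto the reliability tiers quoted in the text."""
    if identity > 50.0:
        return "generally suitable for drug discovery"
    if identity < 25.0:
        return "tentative"
    return "intermediate"


if __name__ == "__main__":
    # Toy alignment: six aligned columns, five identical residues.
    ident = percent_identity("ACDE-FG", "ACDQWFG")
    print(round(ident, 1), model_confidence(ident))
```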

The homology modeling process constitutes a multi-step workflow that requires careful execution at each stage to produce a reliable protein model [38]:

- Template Identification and Fold Recognition: The target sequence is compared against databases of known protein structures using search tools like BLAST or more sensitive profile-based methods such as PSI-BLAST and Hidden Markov Models to identify suitable templates [38].

- Target-Template Alignment: Accurate sequence alignment is critical, as alignment errors are the primary source of significant deviations in comparative models. This step often employs multiple sequence alignment tools like ClustalW, T-Coffee, or ProbCons [38].

- Model Building: The actual protein model is constructed using the template structure as a scaffold. Common techniques include rigid-body assembly, segment matching, and spatial restraint satisfaction [38].

- Loop and Side-Chain Modeling: Regions of structural variation (loops) and side-chain conformations are modeled, often through conformational searches against structural libraries [36] [38].

- Model Refinement and Validation: The initial model undergoes energy minimization and molecular dynamics simulation to relieve steric clashes and improve stereochemistry. The final model is validated using geometric checks and statistical Z-scores [38].

Applications and Limitations in Drug Discovery

Homology modeling established itself as an indispensable tool for generating structural hypotheses when experimental structures were unavailable. The approach proved particularly valuable for identifying ligand binding sites, understanding substrate specificity, and annotating protein function [38]. In structure-based drug design, homology models provided a structural context for virtual screening and rational ligand optimization, especially for target classes like G protein-coupled receptors (GPCRs) where experimental structures were historically difficult to obtain [26] [38].

However, the methodology contained inherent limitations. Template availability presented a significant constraint, with suitable templates unavailable for a substantial proportion of protein sequences [40]. Model accuracy decreased substantially with lower sequence identity to templates, particularly in loop regions and side-chain placements [38] [40]. The approach also fundamentally could not predict structures for proteins with no evolutionary relatives of known structure, leaving entire protein families structurally uncharacterized [39].

The AlphaFold Revolution

A Technical Leap in Structure Prediction

The development of AlphaFold by DeepMind, particularly the AlphaFold2 version unveiled at the CASP14 assessment in 2020, represented a quantum leap in protein structure prediction accuracy. The system demonstrated the ability to predict protein structures with atomic-level accuracy competitive with experimental methods in a majority of cases, solving a five-decade-old grand challenge in biology [37] [39] [41].

Unlike homology modeling, AlphaFold employs a novel deep learning architecture that integrates physical and biological knowledge about protein structure with multiple sequence alignments [41]. The neural network comprises two primary components:

- The Evoformer: A novel neural network block that processes inputs through attention-based mechanisms to generate representations of multiple sequence alignments and residue pairs. This module enables direct reasoning about spatial and evolutionary relationships within the protein [41].

- The Structure Module: This component introduces an explicit 3D structure in the form of a rotation and a translation for each residue of the protein. It employs an equivariant transformer to reason implicitly about the unrepresented side-chain atoms and uses a loss function that emphasizes the orientational correctness of residues [41].
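The idea of representing each residue by a rotation and a translation can be illustrated with a toy example: a rigid frame (R, t) maps atom positions defined in a residue's local coordinate system to global coordinates via x_global = R·x_local + t. The sketch below is not AlphaFold code. The local backbone coordinates are placeholder values rather than the idealized geometry used by the actual system, and the function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Vector = Tuple[float, float, float]
Matrix = List[List[float]]


def rotation_z(angle_rad: float) -> Matrix:
    """3x3 rotation matrix for a rotation about the z axis."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


def apply_frame(rotation: Matrix, translation: Sequence[float], local_point: Sequence[float]) -> Vector:
    """Map a point from a residue's local frame to global coordinates: R @ x + t."""
    x, y, z = (
        sum(rotation[i][j] * local_point[j] for j in range(3)) + translation[i]
        for i in range(3)
    )
    return (x, y, z)


if __name__ == "__main__":
    # Placeholder local backbone geometry (Angstrom), with C-alpha at the origin.
    local_backbone = {"N": (-0.5, 1.4, 0.0), "CA": (0.0, 0.0, 0.0), "C": (1.5, 0.0, 0.0)}
    # One residue frame: rotate 90 degrees about z, then shift 10 A along x.
    R, t = rotation_z(math.pi / 2), (10.0, 0.0, 0.0)
    for atom, xyz in local_backbone.items():
        print(atom, apply_frame(R, t, xyz))
```

Because every residue carries its own frame, updating a frame moves that residue's backbone atoms rigidly, which is what allows the network to adjust local structure without first enforcing chain connectivity.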

A key innovation is the system's iterative refinement process, termed "recycling," where outputs are repeatedly fed back into the same modules, significantly enhancing prediction accuracy [41]. The network is trained on structures from the Protein Data Bank and can directly predict the 3D coordinates of all heavy atoms for a given protein using primary amino acid sequence and aligned homologous sequences as inputs [41].

Unprecedented Scale and Accessibility