Docking Scoring Functions Compared: A 2025 Guide to Performance, Pitfalls, and AI Advances

Abstract

Accurate scoring functions are critical for the success of molecular docking in structure-based drug design. This article provides a comprehensive, up-to-date comparison of docking scoring function performance for researchers and drug development professionals. We explore the foundational principles of classical and machine-learning-based functions, detail methodological approaches for their application in virtual screening, and offer practical strategies for troubleshooting and optimization. Finally, we present a rigorous validation framework, comparing the performance of various functions across different targets and highlighting how emerging artificial intelligence (AI) methods are reshaping the field. The insights synthesized here aim to guide the selection and application of scoring functions to improve the efficiency and success rate of virtual screening campaigns.

The Building Blocks of Prediction: Understanding Scoring Function Types and Mechanisms

Scoring functions are the computational engines of molecular docking, tasked with predicting the binding mode and affinity of a ligand to its biological target. They achieve this by approximating the interaction energy between the molecules, serving as a critical filter to identify the most likely binding poses from millions of possibilities and to rank compounds in virtual screening campaigns [1] [2]. Their performance directly impacts the success rate of structure-based drug design, influencing the accuracy of predicted protein-ligand complexes and the efficient identification of promising hit compounds [3].

The field is characterized by a diversity of approaches, each with distinct strengths and weaknesses. The core task of a scoring function can be broken down into several key capabilities, or "powers," that are used to benchmark their performance.

- Docking Power: The ability to identify the correct binding pose of a ligand, typically defined as a pose with a Root Mean Square Deviation (RMSD) of less than 2 Å from the experimental structure [2].

- Scoring Power: The capability to compute a binding score that correlates linearly with experimentally measured binding affinities [2].

- Ranking Power: The proficiency to correctly rank a series of ligands bound to the same protein based on their binding affinity [2].

- Screening Power: The effectiveness in distinguishing true binders from non-binders (decoys) in a virtual screen, often measured by enrichment factors [2].
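
As an illustration of how two of these powers are commonly quantified, the sketch below computes a Pearson correlation (scoring power) and a Spearman rank correlation (ranking power) using only the Python standard library. The ligand values are hypothetical, and the code assumes that a higher predicted value means stronger binding (for example a predicted pKd); docking scores where more negative is better would need their sign flipped first.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient between two equally long sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def ranks(values):
    """1-based ranks in ascending order; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = mean_rank
        i = j + 1
    return result

def scoring_power(predicted, experimental):
    """Linear correlation between predicted scores and measured affinities."""
    return pearson(predicted, experimental)

def ranking_power(predicted, experimental):
    """Spearman correlation: Pearson correlation of the rank-transformed values."""
    return pearson(ranks(predicted), ranks(experimental))

# Hypothetical predicted and experimental pKd values for five ligands of one target.
predicted = [5.1, 6.0, 6.4, 7.9, 8.2]
experimental = [4.8, 6.5, 6.1, 7.2, 9.0]
print(round(scoring_power(predicted, experimental), 3))
print(round(ranking_power(predicted, experimental), 3))
```

Benchmarks differ in which rank statistic they report, so the Spearman coefficient here is one reasonable choice rather than a fixed standard.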

A Comparative Taxonomy of Scoring Function Methodologies

Scoring functions are traditionally categorized by their underlying theoretical foundations. The table below outlines the main types, their core principles, and representative examples.

Table 1: Classification and comparison of classical and machine learning-based scoring functions.

| Category | Core Principle | Representative Examples | Key Characteristics |
|---|---|---|---|
| Physics-Based | Calculates energy using classical force fields summing Van der Waals, electrostatic, and sometimes solvation terms [3]. | GROMOS96 [4], AMBER | High computational cost; explicit physical representation [3]. |
| Empirical-Based | Fits weighted energy terms (e.g., H-bonding, hydrophobic) to experimental binding affinity data using linear regression [3] [5]. | MOE's London dG, Alpha HB [5], Glide XP, AutoDock Vina's function [6] | Faster computation; performance depends on training data [3]. |
| Knowledge-Based | Derives potentials from statistical analysis of atom-pair frequencies in known protein-ligand structures (Boltzmann inversion) [3]. | AP-PISA, SIPPER [3] | Good balance of speed and accuracy [3]. |
| Hybrid | Combines elements from the above categories into a single scoring scheme [3]. | PyDock, HADDOCK [3] | Aims to leverage the strengths of multiple approaches. |
| Machine/Deep Learning (ML/DL) | Learns complex, non-linear relationships between protein-ligand structural features and binding affinities or native poses [3] [2]. | Various 3D-CNNs, Graph Neural Networks [3] [2] | No predetermined functional form; requires large training datasets [2]. |

Performance Comparison: Classical vs. Deep Learning and Across Software

Independent benchmarking studies reveal that no single scoring function excels universally across all tasks. The following table summarizes quantitative performance data from recent comparative assessments.

Table 2: Experimental performance comparison of selected scoring functions across different benchmarks.

| Scoring Function | Type | Docking Power (Pose Selection) | Key Comparative Findings |
|---|---|---|---|
| MOE (London dG & Alpha HB) | Empirical | N/A | Showed the highest pairwise comparability and performance in a 2025 InterCriteria Analysis (ICrA) on the CASF-2013 benchmark [1] [5]. |
| AutoDock Vina | Empirical | Used as a common baseline in DL studies [2]. | A 2024 review noted that DL-based pose selectors frequently outperform classical SFs like Vina in identifying near-native poses [2]. |
| Deep Learning Pose Selectors | Deep Learning | Superior to classical SFs like PLANTS ChemPLP, Glide XP, and AutoDock Vina [2]. | Designed specifically for pose selection, overcoming limitations of affinity-based SFs; performance depends on training data [2]. |
| NMRScore | Experimental Data-Based | Outperformed 8 docking program SFs (AutoDock, Dock, Glide, MOE, etc.) in ranking native-like poses for FKBP [7]. | Uses NMR chemical shift perturbations as a scoring metric, showing excellent correlation with correct poses [7]. |

Beyond pose prediction, the screening power of scoring functions is critical for drug discovery. A 2025 study on large-scale docking benchmarks highlighted that machine learning models trained on docking scores can effectively prioritize molecules for testing. For example, models trained on just 1% of a massive docking library could identify a significant fraction of the top 0.01% scoring compounds, demonstrating the potential for ML to augment traditional scoring in virtual screening [8].

Experimental Protocols for Benchmarking Scoring Functions

To ensure fair and reproducible comparisons, the community relies on standardized benchmarks and protocols. The most cited protocol involves using the CASF (Comparative Assessment of Scoring Functions) benchmark.

The CASF Benchmark Methodology

The CASF benchmark, particularly the CASF-2013 and CASF-2016 versions, provides a high-quality dataset of protein-ligand complexes from the PDBbind database for a head-to-head evaluation of scoring functions [1] [5]. A typical workflow for assessing docking power is as follows:

- Dataset Curation: A set of protein-ligand complexes with high-resolution crystal structures and reliable binding affinity data is selected (e.g., the 195 complexes in CASF-2013) [5].

- Re-docking: For each complex, the native ligand is extracted and then re-docked into its protein's binding site using the docking program of interest. This generates multiple candidate poses for each ligand [5].

- Pose Scoring and Selection: The scoring function under evaluation is used to score all generated poses. The top-ranked pose (the one with the "best" score, e.g., most negative) is selected as the prediction.

- Accuracy Calculation: The Root Mean Square Deviation (RMSD) between the top-ranked predicted pose and the experimentally determined co-crystallized ligand structure is calculated.

- Performance Metric: The docking power is reported as the percentage of test cases where the top-ranked pose has an RMSD below a defined threshold (commonly 2 Å), indicating a successful, near-native prediction [2].

This process was employed in a 2025 study comparing MOE's scoring functions, which also analyzed other outputs like the best docking score and the score of the pose with the lowest RMSD to provide a multi-faceted performance assessment [5].
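
The docking-power workflow described above can be sketched in a few lines. This is a minimal illustration, not the CASF reference implementation: it assumes poses are given as coordinate lists with identical atom ordering, applies no symmetry correction, and treats lower scores as better. The data structure and the toy coordinates are hypothetical.

```python
import math

def rmsd(pose, reference):
    """RMSD between two poses with identical atom ordering (no superposition)."""
    if len(pose) != len(reference):
        raise ValueError("poses must contain the same number of atoms")
    squared = sum((p - r) ** 2 for a, b in zip(pose, reference) for p, r in zip(a, b))
    return math.sqrt(squared / len(pose))

def docking_power(complexes, threshold=2.0):
    """Fraction of complexes whose best-scored pose is within `threshold` Å of the crystal pose.

    Each complex is a dict with "reference" (crystal coordinates) and
    "poses" (list of (score, coordinates) tuples); lower score = better.
    """
    successes = 0
    for c in complexes:
        _, best_coords = min(c["poses"], key=lambda p: p[0])
        if rmsd(best_coords, c["reference"]) < threshold:
            successes += 1
    return successes / len(complexes)

def shifted(coords, dx):
    """Helper for the toy data: translate a pose along x by dx Å."""
    return [(x + dx, y, z) for x, y, z in coords]

# Hypothetical two-atom "ligand" and two re-docking results.
reference = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0)]
complexes = [
    # Top-scored pose is near-native: counted as a success.
    {"reference": reference,
     "poses": [(-9.0, shifted(reference, 0.5)), (-7.0, shifted(reference, 5.0))]},
    # Top-scored pose is 3 Å off although a near-native pose exists: a failure.
    {"reference": reference,
     "poses": [(-8.5, shifted(reference, 3.0)), (-8.0, shifted(reference, 0.3))]},
]
print(docking_power(complexes))
```

The second complex shows why docking power measures the scoring function rather than the sampling: a near-native pose was generated but not ranked first.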

Protocol for Novel Scoring Methods like NMRScore

Alternative methods like NMRScore employ a different, experimentally grounded protocol [7]:

- Pose Generation: Multiple docking poses for a protein-ligand complex are generated using one or several docking programs.

- Chemical Shift Calculation: NMR chemical shifts are calculated for each of the generated docking poses.

- Experimental Comparison: The calculated chemical shifts for each pose are compared to the actual experimental NMR chemical shifts.

- Scoring and Ranking: The NMRScore is defined as the RMSD between the calculated and experimental chemical shifts. A lower NMRScore indicates a closer match to the true native structure, allowing poses to be ranked accordingly [7].
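
The final scoring and ranking step of this protocol can be illustrated as follows. The sketch assumes that chemical shifts have already been calculated for each pose by some external tool (that step is not shown), and the shift values and pose names are hypothetical placeholders.

```python
import math

def nmr_score(calculated, experimental):
    """RMSD between calculated and experimental chemical shifts (same nuclei, same order)."""
    if len(calculated) != len(experimental):
        raise ValueError("shift lists must have the same length")
    squared = sum((c - e) ** 2 for c, e in zip(calculated, experimental))
    return math.sqrt(squared / len(experimental))

def rank_poses(pose_shifts, experimental):
    """Return pose names sorted from lowest (best) to highest NMRScore."""
    return sorted(pose_shifts, key=lambda name: nmr_score(pose_shifts[name], experimental))

# Hypothetical shifts in ppm for three nuclei.
experimental = [8.10, 7.45, 9.02]
pose_shifts = {
    "pose_a": [8.12, 7.40, 9.00],
    "pose_b": [8.60, 7.90, 8.50],
    "pose_c": [8.20, 7.50, 9.10],
}
for name in rank_poses(pose_shifts, experimental):
    print(name, round(nmr_score(pose_shifts[name], experimental), 3))
```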

For researchers conducting comparative studies on scoring functions, several key resources and tools are indispensable.

Table 3: Key research reagents and resources for benchmarking scoring functions.

| Resource Name | Type | Function in Research |
|---|---|---|
| PDBbind Database | Curated Database | Provides a comprehensive collection of protein-ligand complexes with experimentally measured binding affinity data, serving as the foundation for benchmarks like CASF [5]. |
| CASF Benchmark | Standardized Benchmark | Offers a ready-to-use subset of PDBbind for the fair and standardized comparison of scoring functions' docking, scoring, ranking, and screening powers [5]. |
| CCharPPI Server | Computational Server | Allows for the evaluation of scoring functions independent of the docking process, enabling isolated assessment of the scoring step [3]. |
| Large-Scale Docking (LSD) Database | Benchmarking Database | Provides access to docking scores and results for billions of molecules across multiple targets, useful for training ML models and benchmarking screening power [8]. |
| Smina | Docking Software | A fork of AutoDock Vina that offers enhanced control over scoring terms and command-line usability, facilitating customized docking and scoring experiments [9]. |

In summary, scoring functions are indispensable tools in computational drug discovery, but their performance is highly variable and context-dependent. Empirical functions like those in MOE and Vina are widely used, but deep learning methods are emerging as powerful alternatives, particularly for the critical task of pose selection. Rigorous benchmarking using standardized protocols and databases like CASF is essential for selecting the appropriate scoring function for a specific research goal. The ongoing integration of machine learning and novel data sources like NMR chemical shifts promises to further enhance the accuracy and reliability of these computational tools.

In structure-based drug discovery, molecular docking is a pivotal technique for predicting how a small molecule (ligand) binds to a target protein. The reliability of this process depends critically on the scoring function, a mathematical model that approximates the binding affinity between the ligand and protein by calculating their interaction energy [1] [10]. Scoring functions are employed to determine the binding mode and site of a ligand, predict binding affinity, and identify potential drug leads for a given protein target [10]. Despite intensive research, accurate and rapid prediction of protein-ligand interactions remains a central challenge in molecular docking, driving continuous development and refinement of scoring methodologies [10] [11].

These functions are commonly grouped into three classical types (physics-based, empirical, and knowledge-based) alongside modern machine learning-based approaches, with hybrid methods combining elements from multiple categories [10] [3]. This guide provides a comparative analysis of these scoring function paradigms, examining their theoretical foundations, performance characteristics, and practical applications in drug development workflows.

Theoretical Foundations and Classification

Each of these categories takes a distinct theoretical approach to quantifying molecular interactions.

Physics-Based Scoring Functions

Physics-based scoring functions use classical force fields to calculate binding energy through fundamental physical interactions. They typically sum Van der Waals and electrostatic interactions between the protein and ligand, sometimes incorporating solvent effects, polarization, and charge features for improved accuracy [3]. These functions are often designed for use in molecular dynamics simulations and may require explicit treatment of water or an implicit solvent model [11]. The GBVI/WSA dG function in MOE (Molecular Operating Environment) represents an example of a force-field based scoring function [5]. While physically rigorous, these methods generally incur high computational costs [3].

Empirical Scoring Functions

Empirical scoring functions estimate binding affinity by summing a series of weighted energy terms parameterized to reproduce experimental binding affinities or binding poses [11]. They incorporate physically meaningful terms similar to force-field functions but may also include more complex, heuristic terms for hydrophobic and desolvation interactions not easily addressed by purely physical models [11]. The weights for different terms are typically determined using linear regression or other fitting techniques against training datasets of known protein-ligand complexes [10] [11]. Examples include London dG, ASE, Affinity dG, and Alpha HB in MOE, and the default scoring functions in AutoDock Vina and smina [11] [5]. These functions are typically less prone to overfitting due to constraints imposed by physical terms [11].

Knowledge-Based Scoring Functions

Knowledge-based (statistical-potential) scoring functions derive simplified potentials directly from structural databases using Boltzmann inversion of the pairwise distance distributions between atoms or residues of the two binding partners [3]. This approach seeks to approximate complex physical interactions using large numbers of simple terms learned from existing protein-ligand complex structures [11]. However, the resulting scoring function may lack immediate physical interpretation, and the numerous terms increase overfitting risk, necessitating rigorous validation protocols [11]. Methods such as AP-PISA and CP-PIE fall into this category [3]. These functions generally offer a good balance between accuracy and speed [3].
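
To make the Boltzmann inversion step concrete, the sketch below converts distance-bin counts for one atom-pair type into a potential using u(r) = -kT ln(p_obs(r) / p_ref(r)). The counts are hypothetical, and the choice of reference state (here simply a second histogram) and the small pseudocount are assumptions; published potentials differ mainly in how they define that reference.

```python
import math

KT = 0.593  # kcal/mol, approximately k_B * T at 298 K

def boltzmann_inversion(observed_counts, reference_counts, pseudocount=1e-6):
    """Pair potential per distance bin: u(r) = -kT * ln(p_obs(r) / p_ref(r))."""
    obs_total = sum(observed_counts)
    ref_total = sum(reference_counts)
    potential = []
    for obs, ref in zip(observed_counts, reference_counts):
        # The pseudocount avoids log(0) for empty bins.
        p_obs = obs / obs_total + pseudocount
        p_ref = ref / ref_total + pseudocount
        potential.append(-KT * math.log(p_obs / p_ref))
    return potential

# Hypothetical counts for one atom-pair type in three distance bins.
observed = [10, 60, 30]   # contacts seen in known complexes
reference = [30, 40, 30]  # contacts expected in the reference state
for u in boltzmann_inversion(observed, reference):
    print(round(u, 3))
```

Bins that are over-represented relative to the reference state receive a negative (favorable) energy, under-represented bins a positive one, and a pose is then scored by summing these terms over all atom pairs.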

Machine Learning-Based Scoring Functions

Machine learning (ML) and deep learning (DL) approaches represent a modern evolution beyond classical functions. These methods learn complex transfer functions that map combinations of interface features, energy terms, and accessible surface area to a predicted score [3]. Unlike traditional empirical functions with fixed parametric forms, ML-based functions can capture non-linear relationships between structural features and binding affinity, often demonstrating superior performance when sufficient training data is available [12]. These include random forest models and neural networks trained on structural and interaction fingerprints [12].

Hybrid Scoring Functions

Hybrid approaches combine elements from multiple scoring function categories to leverage their complementary strengths. For instance, HADDOCK incorporates terms for Van der Waals forces, electrostatic interactions, desolvation energy, and experimental data restraints [3]. PyDock balances electrostatic and desolvation energies [3]. These methods aim to overcome limitations of individual approaches through strategic combination of different scoring methodologies.

Table 1: Fundamental Characteristics of Scoring Function Types

| Function Type | Theoretical Basis | Parametrization Method | Key Advantages | Inherent Limitations |
|---|---|---|---|---|
| Physics-Based | Classical molecular mechanics | First principles | Strong physical interpretability | High computational cost |
| Empirical | Multi-parameter regression | Linear regression on experimental data | Computational efficiency; Physical terms | Dependent on training data quality |
| Knowledge-Based | Statistical mechanics | Boltzmann inversion on structural databases | Balanced accuracy/speed | Potential overfitting; Less physical interpretation |
| Machine Learning | Pattern recognition | Model training on diverse features | Handles non-linear relationships; High accuracy with sufficient data | Black-box nature; Data hunger |
| Hybrid | Combined principles | Multiple approaches | Leverages complementary strengths | Increased complexity |

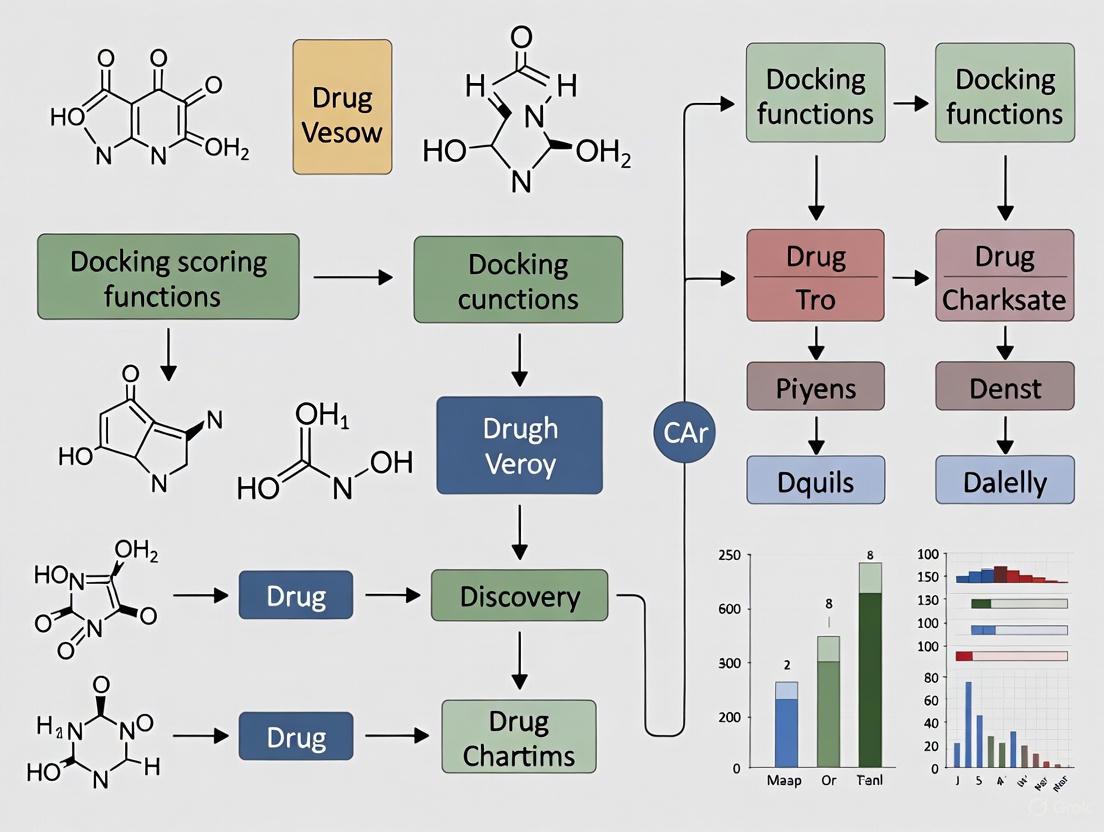

Figure 1: Classification Framework for Molecular Docking Scoring Functions

Performance Comparison and Benchmarking

Comparative Assessment Metrics

Scoring functions are typically evaluated using multiple performance metrics that reflect their capabilities in different docking scenarios:

- Pose Prediction Accuracy: Measured by the root mean square deviation (RMSD) between predicted poses and co-crystallized ligand structures, with lower RMSD values indicating better performance [1] [5].

- Binding Affinity Prediction: Assessed through the correlation between predicted and experimental binding affinities, commonly reported as the coefficient of determination (R²) [13].

- Virtual Screening Enrichment: Evaluated using metrics like Area Under the Curve (AUC) and Enrichment Factor (EF) that measure the ability to prioritize active compounds over decoys in database screening [14] [15].

- Consistency and Robustness: Performance stability across diverse protein targets and ligand chemotypes [3] [13].
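
The two virtual screening metrics named above can be computed directly from a ranked list. The following is a minimal sketch on hypothetical data, assuming that lower docking scores are better and that labels are 1 for actives and 0 for decoys. The AUC is computed as the probability that a randomly chosen active outranks a randomly chosen decoy, which is equivalent to the area under the ROC curve.

```python
def enrichment_factor(scores, labels, fraction=0.01):
    """EF = (active rate in the top fraction) / (active rate in the whole library)."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0])  # best score first
    n_top = max(1, round(len(ranked) * fraction))
    top_actives = sum(label for _, label in ranked[:n_top])
    return (top_actives / n_top) / (sum(labels) / len(labels))

def roc_auc(scores, labels):
    """Probability that a random active scores better than a random decoy (ties count 0.5)."""
    actives = [s for s, l in zip(scores, labels) if l == 1]
    decoys = [s for s, l in zip(scores, labels) if l == 0]
    wins = sum(1.0 if a < d else 0.5 if a == d else 0.0 for a in actives for d in decoys)
    return wins / (len(actives) * len(decoys))

# Hypothetical screen: 10 compounds, 2 actives, already listed from best to worst score.
scores = [-10, -9, -8, -7, -6, -5, -4, -3, -2, -1]
labels = [1, 0, 1, 0, 0, 0, 0, 0, 0, 0]
print(enrichment_factor(scores, labels, fraction=0.2))
print(roc_auc(scores, labels))
```

An EF of 1 corresponds to random selection, and the maximum achievable EF depends on the fraction chosen and on the ratio of actives to decoys, so EF values are only comparable between studies that use the same settings.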

Performance Across Scoring Function Types

Recent benchmarking studies reveal distinct performance patterns across scoring function categories:

Table 2: Comparative Performance of Scoring Function Types Across Benchmarks

| Function Type | Pose Prediction | Affinity Prediction | Virtual Screening | Computational Speed | Consistency Across Targets |
|---|---|---|---|---|---|
| Physics-Based | Variable [5] | Moderate (R² ~0.3-0.5) [13] | Moderate | Slow | Variable |
| Empirical | Good (BestRMSD) [5] | Moderate (R² ~0.3-0.5) [11] | Good (AUC ~0.8) [15] | Fast | Moderate |
| Knowledge-Based | Good [3] | Moderate | Good | Fast | Moderate |
| Machine Learning | Good [12] | Good (R² ~0.69) [13] | Excellent | Fast (after training) | Good |
| Hybrid | Good [3] | Moderate to Good | Good | Moderate | Good |

A pairwise comparison of five MOE scoring functions using InterCriteria Analysis revealed that London dG and Alpha HB showed the highest comparability, while the lowest RMSD was identified as the best-performing docking output metric [1] [5]. In virtual screening contexts, Glide's empirical scoring function demonstrated strong performance with an average AUC of 0.80 across 39 target systems, recovering 34% of known actives in the top 2% of screened compounds [15].

Semiempirical quantum-mechanical approaches such as SQM2.20 show particularly strong binding affinity prediction, achieving an average R² of 0.69 across ten diverse protein targets in the PL-REX benchmark dataset. This is similar to the accuracy of much more expensive density functional theory (DFT) calculations, but obtained in minutes rather than days [13].

Consensus Scoring Approaches

Consensus scoring combines multiple scoring functions to improve reliability. However, research indicates that simple consensus methods using freely available programs like AutoDock Vina, smina, and idock perform equal to or worse than the highest-scoring individual program (smina in this case) [14]. This contrasts with studies using more diverse commercial programs where consensus approaches showed benefits, suggesting that consensus scoring works best when combining fundamentally different scoring methodologies rather than similar ones [14].
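
One simple way to build a consensus is to average each compound's rank across programs. The sketch below illustrates that idea with hypothetical scores; rank averaging is an assumption chosen for illustration and is not necessarily the scheme evaluated in [14].

```python
def rank_by_score(scores):
    """Map compound id -> rank (1 = best), assuming lower score = better."""
    ordered = sorted(scores, key=scores.get)
    return {compound: i + 1 for i, compound in enumerate(ordered)}

def consensus_ranking(score_tables):
    """Average each compound's rank across programs and sort by mean rank."""
    rank_tables = [rank_by_score(table) for table in score_tables]
    compounds = list(rank_tables[0])
    mean_rank = {c: sum(r[c] for r in rank_tables) / len(rank_tables) for c in compounds}
    return sorted(compounds, key=mean_rank.get)

# Hypothetical scores (kcal/mol) for three compounds from three programs.
vina = {"A": -9.1, "B": -8.0, "C": -7.5}
smina = {"A": -8.5, "B": -8.9, "C": -6.0}
idock = {"A": -9.0, "B": -7.0, "C": -7.2}
print(consensus_ranking([vina, smina, idock]))
```

Working with ranks rather than raw scores sidesteps the fact that different programs report scores on different scales, but it cannot add information when the underlying scoring functions are closely related.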

Experimental Protocols and Methodologies

Standard Benchmarking Protocols

Rigorous assessment of scoring functions requires standardized benchmarking datasets and methodologies:

- Dataset Curation: High-quality datasets like CASF-2013 (195 protein-ligand complexes), CSAR-NRC HiQ (343 curated structures), and PL-REX (10 diverse protein targets) provide consistent benchmarking frameworks [11] [5] [13]. These datasets encompass diverse protein families, ligand chemotypes, and binding affinities.

- Evaluation Workflow: Standard protocols involve re-docking ligands into protein structures, generating multiple poses, scoring them with different functions, and comparing results to experimental reference data using metrics like RMSD for pose prediction and correlation coefficients for affinity prediction [1] [5].

- Cross-Validation: Proper validation requires clustered cross-validation to assess model generalizability and avoid overfitting to specific targets or ligand types [11].
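
The idea behind clustered cross-validation is that related complexes never appear on both sides of a train/test split. The sketch below assumes that each complex has already been assigned a cluster label (for example by target family or sequence similarity; the labels and identifiers here are hypothetical) and distributes whole clusters across folds.

```python
def clustered_folds(cluster_of, n_folds=3):
    """Assign whole clusters to folds so that no cluster is split across folds."""
    clusters = {}
    for complex_id, cluster_id in cluster_of.items():
        clusters.setdefault(cluster_id, []).append(complex_id)
    folds = [[] for _ in range(n_folds)]
    # Greedy balancing: place the largest clusters first into the currently smallest fold.
    for members in sorted(clusters.values(), key=len, reverse=True):
        min(folds, key=len).extend(members)
    return folds

# Hypothetical complexes and their cluster labels.
cluster_of = {
    "complex_1": "kinase", "complex_2": "kinase",
    "complex_3": "protease", "complex_4": "protease", "complex_5": "protease",
    "complex_6": "nuclear_receptor",
}
for i, fold in enumerate(clustered_folds(cluster_of)):
    print(i, fold)
```

Each fold is then held out in turn while the model is trained on the remaining folds, so reported performance reflects behavior on clusters the model has not seen.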

Figure 2: Standard Benchmarking Workflow for Scoring Function Evaluation

Case Study: Empirical Function Development

The development of custom empirical scoring functions demonstrates a systematic methodology:

- Term Selection: Starting with a diverse set of interaction terms (Gaussian, repulsion, hydrogen bonding, hydrophobic, electrostatic, desolvation) [11].

- Parameter Optimization: Using linear regression or similar techniques to fit term weights to experimental binding affinity data [11].

- Training and Validation: Employing cross-validation on high-quality datasets like CSAR-NRC HiQ 2010 to prevent overfitting and ensure generalizability [11].

- Implementation: Integrating optimized functions into docking software like smina for practical application [11].

This approach yielded a custom scoring function that improved sampling of low RMSD poses compared to the default AutoDock Vina scoring function [11].
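
The parameter optimization step can be illustrated with an ordinary least-squares fit of term weights. This is a minimal standard-library sketch under simplifying assumptions: three hypothetical interaction terms, noise-free synthetic affinities, no intercept, and no cross-validation. It is not the fitting procedure used in [11].

```python
def solve_linear_system(a, b):
    """Solve a·x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x

def fit_term_weights(terms, affinities):
    """Least-squares weights via the normal equations (XᵀX) w = Xᵀy."""
    k = len(terms[0])
    xtx = [[sum(row[i] * row[j] for row in terms) for j in range(k)] for i in range(k)]
    xty = [sum(row[i] * y for row, y in zip(terms, affinities)) for i in range(k)]
    return solve_linear_system(xtx, xty)

# Hypothetical per-complex term values: [gaussian, repulsion, hydrogen_bond].
terms = [[10, 1, 2], [8, 0.5, 3], [12, 2, 1], [6, 0.2, 4], [9, 1.5, 0]]
true_weights = [-0.5, 0.8, -1.2]  # used only to generate synthetic affinities
affinities = [sum(w * t for w, t in zip(true_weights, row)) for row in terms]
print([round(w, 3) for w in fit_term_weights(terms, affinities)])
```

With real data the affinities are noisy and the terms are correlated, which is why the training and validation step matters as much as the fit itself.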

Case Study: Target-Specific Machine Learning Function

For target-specific applications, specialized machine learning workflows have demonstrated success:

- Data Collection: Retrieving experimental data from databases like BindingDB for the specific target of interest [12].

- Feature Engineering: Generating interaction fingerprints (IFP, SIFP) and chemical descriptors (ECFP4, ECFP6, MACCS) from protein-ligand complexes [12].

- Model Training: Applying random forest classifiers and regressors to learn relationships between features and binding affinities [12].

- Validation: Assessing performance through enrichment factor analysis and molecular dynamics simulations to verify binding stability [12].

This approach for SARS-CoV-2 3CLpro inhibitors achieved an area under the precision-recall curve of 0.80, outperforming generic scoring functions [12].

The Scientist's Toolkit

Successful scoring function development and application relies on several key resources:

Table 3: Essential Research Tools for Scoring Function Development and Application

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| MOE (Molecular Operating Environment) | Commercial Software | Provides multiple scoring functions (London dG, Alpha HB, etc.) | Drug discovery platform with diverse scoring capabilities [5] |
| smina | Open Source Software | AutoDock Vina fork optimized for scoring and custom functions | Academic research, custom scoring function development [11] |
| CSAR-NRC HiQ Dataset | Benchmark Data | 343 curated protein-ligand structures with reliable affinities | Training and validation of scoring functions [11] |
| CASF-2013 | Benchmark Data | 195 protein-ligand complexes from PDBbind database | Comparative assessment of scoring functions [5] |
| PL-REX Dataset | Benchmark Data | High-quality structures and affinities for 10 diverse targets | Rigorous validation of scoring accuracy [13] |
| CCharPPI Server | Web Service | Assessment of scoring functions independent of docking | Isolated evaluation of scoring components [3] |
| BindingDB | Database | Experimental binding affinities for target-specific applications | Training target-specific scoring functions [12] |

The comparative analysis of scoring function paradigms reveals that each approach offers distinct advantages and limitations. Empirical functions provide an effective balance of accuracy and speed for routine virtual screening, while physics-based methods offer stronger physical foundations at higher computational cost. Knowledge-based approaches deliver reasonable performance across multiple applications, and machine learning methods show promising results, particularly for target-specific applications.

The development of SQM2.20 demonstrates how semiempirical quantum-mechanical methods can bridge the gap between fast approximate functions and computationally intensive quantum calculations, achieving DFT-level accuracy in minutes rather than days [13]. Meanwhile, target-specific machine learning functions show how leveraging experimental data for particular protein targets can yield superior performance compared to generic functions [12].

Future directions in scoring function development will likely focus on hybrid approaches that combine the strengths of multiple methodologies, increased incorporation of quantum-mechanical calculations as computational resources grow, wider application of machine learning techniques to capture complex relationships, and development of improved benchmark datasets with high-quality experimental data across diverse target classes. As these methodologies evolve, scoring functions will continue to enhance their critical role in structure-based drug discovery, providing increasingly reliable predictions of molecular interactions to accelerate therapeutic development.

Molecular docking is a cornerstone of computational drug discovery, used to predict how small molecule ligands interact with protein targets. The heart of any docking protocol is its scoring function (SF), which approximates binding affinity by calculating the interaction energy between a ligand and a biomacromolecule. For decades, classical scoring functions—categorized as physics-based, empirical, or knowledge-based—have dominated the field. However, these traditional approaches often rely on simplified physical models or linear regression techniques, which have plateaued in performance for critical tasks like virtual screening (VS) and binding affinity prediction [16] [3].

The influx of large-scale structural and binding data, coupled with advances in computational power, has fueled a paradigm shift toward machine learning (ML) and deep learning (DL) scoring functions. Unlike classical functions, ML/DL SFs can learn complex, non-linear relationships directly from data, bypassing the need for pre-defined mathematical formulas or explicit physical approximations [16] [17]. This article provides a comparative performance analysis of this new paradigm, objectively evaluating ML/DL scoring functions against classical alternatives and within their own burgeoning categories. We synthesize findings from recent benchmark studies to offer drug discovery researchers a clear guide to the capabilities, optimal applications, and practical implementation of these powerful new tools.

Performance Benchmarking: Quantitative Comparisons

The superiority of ML/DL scoring functions is consistently demonstrated across multiple benchmarks, particularly in virtual screening and affinity prediction.

Virtual Screening and Binding Affinity Prediction

Table 1: Virtual Screening Performance on the DUD-E Benchmark

| Scoring Function | Type | Hit Rate (Top 1%) | Hit Rate (Top 0.1%) | Notes |
|---|---|---|---|---|
| RF-Score-VS [16] | Machine Learning (Random Forest) | 55.6% | 88.6% | Trained on 15,426 active and 893,897 inactive molecules from DUD-E. |
| CNN-Score [18] | Deep Learning (Convolutional Neural Network) | ~3x Vina's rate [18] | - | Significant improvement over classical SFs. |
| AutoDock Vina [16] | Classical (Empirical) | 16.2% | 27.5% | Baseline for comparison. |

Table 2: Performance Against Resistant Malaria Target (PfDHFR)

| Method | Variant | Best Enrichment (EF 1%) | Key Finding |
|---|---|---|---|
| PLANTS + CNN-Score [18] | Wild-Type (WT) | 28 | Best performance for WT PfDHFR. |
| FRED + CNN-Score [18] | Quadruple-Mutant (Q) | 31 | Best performance for resistant variant. |
| AutoDock Vina [18] | Both | Worse-than-random (WT) | Performance improved to better-than-random with ML re-scoring. |

The data show that ML SFs substantially enhance the early enrichment that is crucial for practical drug discovery. RF-Score-VS achieves a hit rate at the top 0.1% that is more than three times Vina's (88.6% vs. 27.5%), demonstrating an exceptional ability to prioritize the most promising candidates [16]. Furthermore, ML re-scoring can salvage the performance of weaker docking tools, as seen with Vina, transforming their output from worse-than-random to useful for screening [18]. This capability is especially valuable for challenging targets like drug-resistant enzymes.

Pose Prediction and Physical Plausibility

While ML/DL methods excel in scoring, their performance in generating physically plausible binding poses is more nuanced. A comprehensive 2025 evaluation of docking methods across the Astex diverse set, PoseBusters benchmark, and DockGen dataset reveals a critical performance hierarchy [17].

Table 3: Docking Pose Accuracy and Physical Validity (Combined Success Rate: RMSD ≤ 2 Å & Physically Valid)

| Method Category | Example Methods | Astex Diverse Set | PoseBusters (Unseen) | DockGen (Novel Pockets) |
|---|---|---|---|---|
| Traditional Methods | Glide SP | ~61% (est.) | High | Maintains >94% physical validity [17] |
| Hybrid Methods | Interformer | ~55% (est.) | Moderate | Better balance than pure DL [17] |
| Generative Diffusion | SurfDock, DiffBindFR | ~61% (SurfDock) | ~39% (SurfDock) | ~33% (SurfDock) |
| Regression-Based DL | KarmaDock, GAABind | Lowest | Lowest | Lowest |

This analysis reveals that generative diffusion models like SurfDock achieve superior pose accuracy (e.g., >75% RMSD ≤ 2 Å across benchmarks), but often produce poses with steric clashes or incorrect hydrogen bonding, leading to low physical validity [17]. In contrast, traditional methods and hybrid approaches (AI scoring with traditional conformational search) offer the best balance between accurate and physically plausible pose generation. Regression-based DL models, which directly predict ligand coordinates, frequently fail to produce chemically valid structures [17].
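
The gap between pose accuracy and the combined success rate is easiest to see when the two criteria are counted separately. The sketch below does this for hypothetical predictions; the `valid` flag is assumed to have been computed beforehand by a plausibility checker such as PoseBusters, whose invocation is not shown here.

```python
def success_rates(results, rmsd_cutoff=2.0):
    """Return the fractions of poses that are accurate, physically valid, and both."""
    n = len(results)
    accurate = sum(r["rmsd"] <= rmsd_cutoff for r in results)
    valid = sum(r["valid"] for r in results)
    combined = sum(r["rmsd"] <= rmsd_cutoff and r["valid"] for r in results)
    return {"rmsd_ok": accurate / n, "valid": valid / n, "combined": combined / n}

# Hypothetical predictions: RMSD to the crystal pose (Å) and a precomputed validity flag.
results = [
    {"rmsd": 1.2, "valid": True},
    {"rmsd": 1.8, "valid": False},  # accurate but physically implausible (e.g., steric clash)
    {"rmsd": 3.5, "valid": True},
    {"rmsd": 0.9, "valid": True},
]
print(success_rates(results))
```

In this toy case 75% of poses pass the RMSD criterion but only 50% pass both, which mirrors the pattern reported for methods with high pose accuracy and low physical validity.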

Experimental Protocols and Methodologies

Benchmarking studies follow rigorous protocols to ensure fair and generalizable comparisons. Understanding these methodologies is key to interpreting the data.

Common Benchmarking Datasets

- DUD-E (Directory of Useful Decoys - Enhanced): A standard benchmark for virtual screening. It contains 102 targets, each with a set of confirmed active molecules and "decoys": molecules with similar physicochemical properties but different topology that are presumed to be inactive [16]. This tests an SF's ability to discriminate true binders from non-binders.

- CASF (Comparative Assessment of Scoring Functions): A core set from the PDBbind database, often used to evaluate scoring power (binding affinity prediction), ranking power (relative ranking of ligands), docking power (pose prediction), and screening power [1] [19].

- DEKOIS: Another virtual screening benchmark set, used for cross-validation and testing generalizability [16] [18].

Validation Strategies

To prevent overfitting and ensure model generalizability, especially for ML/DL methods, studies employ strict cross-validation strategies [16]:

- Per-Target: A separate ML model is trained and tested exclusively on data (actives and decoys) for a single protein target.

- Horizontal Split: Training and test sets contain data from all targets, simulating a scenario where known ligands exist for the targets being screened.

- Vertical Split: Training and test sets contain data from completely different targets. This represents the most challenging scenario of predicting binding for a protein with no known ligands and tests the model's ability to generalize to novel targets.
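
The three strategies differ only in how records are partitioned. The sketch below is a minimal illustration, assuming each record is a `(target, ligand_id, label)` tuple; the function names, the 20% test fraction, and the placeholder target names are assumptions made for this example and are not taken from the cited study.

```python
import random
from collections import defaultdict

def per_target_split(records, target, test_fraction=0.2, seed=0):
    """Train and test only on ligands that belong to one target."""
    subset = [r for r in records if r[0] == target]
    rng = random.Random(seed)
    rng.shuffle(subset)
    n_test = max(1, int(len(subset) * test_fraction))
    return subset[n_test:], subset[:n_test]

def horizontal_split(records, test_fraction=0.2, seed=0):
    """Every target contributes ligands to both the training and the test set."""
    by_target = defaultdict(list)
    for rec in records:
        by_target[rec[0]].append(rec)
    train, test = [], []
    for target in sorted(by_target):
        tr, te = per_target_split(by_target[target], target, test_fraction, seed)
        train.extend(tr)
        test.extend(te)
    return train, test

def vertical_split(records, test_fraction=0.2, seed=0):
    """Hold out whole targets so that no test target is seen during training."""
    targets = sorted({r[0] for r in records})
    rng = random.Random(seed)
    rng.shuffle(targets)
    n_test = max(1, int(len(targets) * test_fraction))
    held_out = set(targets[:n_test])
    train = [r for r in records if r[0] not in held_out]
    test = [r for r in records if r[0] in held_out]
    return train, test

# Hypothetical toy data: five targets with ten ligands each.
records = [(t, f"{t}_lig{i}", i % 2)
           for t in ("target_A", "target_B", "target_C", "target_D", "target_E")
           for i in range(10)]
v_train, v_test = vertical_split(records)
h_train, h_test = horizontal_split(records)
```

In the vertical split the sets of targets in `v_train` and `v_test` are disjoint by construction, which is what makes it the hardest of the three settings.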

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Software and Resources for Scoring Function R&D

| Resource Name | Type/Function | Brief Description |

|---|---|---|

| MolScore [20] | Benchmarking & Evaluation Framework | An open-source Python framework that unifies scoring, evaluation, and benchmarking for generative models and de novo drug design. |

| CCharPPI [3] | Scoring Evaluation Server | A web server that allows for the assessment of scoring functions independent of their native docking programs. |

| RF-Score-VS [16] | Machine Learning SF | A ready-to-use random forest-based scoring function optimized for virtual screening performance. |

| CNN-Score [18] | Deep Learning SF | A convolutional neural network-based scoring function that shows consistent improvement in virtual screening enrichment. |

| PoseBusters [17] | Validation Toolkit | A toolkit to systematically evaluate docking predictions for physical and chemical plausibility, complementing RMSD metrics. |

| DOCKSTRING [20] | Benchmarking Suite | A benchmark suite that includes docking tasks against specific protein targets for evaluating generative models. |

Integrated Workflows and Logical Relationships

The application of ML/DL scoring functions often involves a multi-step workflow, from data preparation to final evaluation. The diagram below outlines this process and the role of different scoring function types.

Discussion and Future Directions

The benchmarks reviewed here position ML and DL scoring functions as the stronger choice for virtual screening and binding affinity prediction, with substantial performance gains over classical methods [16] [18]. However, the paradigm is not without its challenges. A significant hurdle is generalization: ML/DL models can struggle when encountering proteins or binding pockets that are underrepresented in their training data, limiting their application in novel target discovery [17]. Furthermore, as the pose prediction analysis shows, physical plausibility remains a concern for many pure DL methods, particularly regression-based models [17].

The future of scoring functions lies in addressing these limitations. Promising directions include:

- Hybrid Methods: Combining the robust pose generation of traditional search algorithms with the superior scoring power of AI, as seen in methods like Interformer, offers a balanced and practical solution [17].

- Physics-Informed ML: Integrating physical principles and energy terms into ML models, as explored in frameworks like DockBind, could enhance the physical realism and generalizability of predictions [21].

- Focus on Data Quality and Diversity: Improving the size, quality, and chemical/structural diversity of training datasets is paramount for building more robust models that perform reliably across the proteome.

In conclusion, while classical scoring functions still hold value for certain tasks like generating physically sound initial poses, the new paradigm of ML/DL scoring is here to stay. For researchers aiming to maximize the success of their virtual screening campaigns, leveraging ML/DL functions for re-scoring docking poses is no longer an advanced tactic but a necessary standard.

Molecular docking is a cornerstone of modern computational drug discovery, enabling researchers to predict how small molecules (ligands) interact with biological targets (proteins) [5]. The accuracy of these predictions hinges critically on scoring functions, which are mathematical algorithms used to predict the binding affinity and orientation of a ligand within a protein's binding site [3]. These functions approximate the complex energetics of molecular interactions, and their performance directly impacts the success of virtual screening and structure-based drug design [5].

Scoring functions can be broadly classified into four main categories, each with a distinct theoretical foundation for assessing protein-ligand complexes. Physics-based functions use classical force fields to calculate interactions, while empirical functions sum weighted energy terms derived from experimental data [5] [3]. Knowledge-based methods employ statistical potentials from databases of known structures, and the emerging Machine Learning/Deep Learning (ML/DL) approaches learn complex relationships directly from data [3]. The following diagram illustrates the logical relationships and classification of these primary scoring function types.

Selecting an appropriate scoring function is a significant challenge for researchers. This guide provides an objective, data-driven comparison of the major scoring approaches, detailing their inherent strengths and weaknesses to inform method selection in drug discovery projects.

Comparative Analysis of Scoring Function Categories

The table below summarizes the core characteristics, strengths, and weaknesses of the four primary scoring function categories.

Table 1: Comparative overview of scoring function categories

| Category | Theoretical Basis | Key Strengths | Inherent Weaknesses |

|---|---|---|---|

| Physics-Based [3] | Classical force fields (van der Waals, electrostatics) | Strong theoretical foundation; good transferability | High computational cost; limited by implicit solvation models |

| Empirical-Based [5] [3] | Linear regression to experimental binding affinities | Fast calculation; optimized for binding affinity prediction | Risk of overfitting; limited to represented chemical space in training set |

| Knowledge-Based [3] | Statistical potentials from structural databases (e.g., PDB) | Good balance of speed and accuracy; no need for parameter fitting | Dependence on database quality and size; limited by data completeness |

| Machine Learning/Deep Learning [3] | Complex non-linear models trained on structural and energy data | High potential accuracy; ability to capture complex patterns | Large data requirements; "black box" nature; potential poor generalization |

In-Depth Category Performance

Empirical-Based Functions

These functions, such as London dG and Alpha HB in MOE software, calculate binding affinity by summing up a series of weighted energy terms describing hydrogen bonding, hydrophobic interactions, and entropy loss [5]. A pairwise comparison study using InterCriteria Analysis (ICrA) on the CASF-2013 benchmark revealed that Alpha HB and London dG showed the highest comparability, suggesting consistent performance across a diverse set of protein-ligand complexes [5] [1]. Their primary strength is computational efficiency, making them suitable for high-throughput virtual screening. However, their performance can degrade when applied to protein complexes or ligand chemotypes not well-represented in their training data [3].
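
To make the "sum of weighted terms" idea concrete, the following is a minimal sketch of a generic empirical score. It is not the functional form of London dG or Alpha HB; the three descriptors and all weights are hypothetical values chosen for illustration, whereas a real function would fit its weights by regression against experimental affinities.

```python
from dataclasses import dataclass

@dataclass
class InteractionTerms:
    n_hbonds: float             # number of protein-ligand hydrogen bonds
    hydrophobic_contact: float  # buried hydrophobic contact measure (arbitrary units)
    n_rotatable_bonds: float    # crude proxy for conformational entropy loss

# Hypothetical weights in kcal/mol per unit of each term (assumed, not fitted).
WEIGHTS = {"intercept": -1.0, "hbond": -0.6, "hydrophobic": -0.03, "rotor": 0.25}

def empirical_score(terms, weights=WEIGHTS):
    """Return a predicted binding free energy as a weighted sum of terms."""
    return (weights["intercept"]
            + weights["hbond"] * terms.n_hbonds
            + weights["hydrophobic"] * terms.hydrophobic_contact
            + weights["rotor"] * terms.n_rotatable_bonds)

pose = InteractionTerms(n_hbonds=3, hydrophobic_contact=100, n_rotatable_bonds=4)
score = empirical_score(pose)  # more negative means more favorable in this sketch
```

Because evaluation is a handful of multiplications per pose, this form explains the speed advantage noted above; it also shows why transferability depends on how well the fitted weights cover the chemistry being scored.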

Knowledge-Based Functions

Knowledge-based scoring functions offer a favorable balance between accuracy and computational speed [3]. Methods like AP-PISA and SIPPER leverage the growing repository of protein structures in the Protein Data Bank (PDB) to derive statistical potentials [3]. They operate on the principle that frequently observed atomic interactions in experimental structures are likely to be energetically favorable. A key advantage is that they do not require explicit parameter fitting for different energy terms. Their main limitation is their dependency on the completeness and quality of the underlying structural database, which can lead to biases against novel protein complexes or rare interaction types [3].
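
The principle that frequent contacts are favorable is commonly expressed through an inverse Boltzmann relation, E(r) = -kT ln(p_obs(r) / p_ref(r)). The sketch below applies that relation to binned distance counts for one atom-pair type. It is a generic illustration, not the procedure used by AP-PISA or SIPPER; the bin counts, the uniform reference state, and the pseudocount are assumptions.

```python
import math

KT = 0.592  # kcal/mol at roughly 298 K

def statistical_potential(observed_counts, reference_counts, pseudocount=1.0):
    """Convert distance-bin counts into energies via the inverse Boltzmann relation."""
    n_bins = len(observed_counts)
    obs_total = sum(observed_counts) + pseudocount * n_bins
    ref_total = sum(reference_counts) + pseudocount * n_bins
    potential = []
    for obs, ref in zip(observed_counts, reference_counts):
        p_obs = (obs + pseudocount) / obs_total  # pseudocount avoids log(0)
        p_ref = (ref + pseudocount) / ref_total
        potential.append(-KT * math.log(p_obs / p_ref))
    return potential

# Hypothetical counts for four distance bins of a single atom-pair type.
observed = [5, 40, 30, 25]
reference = [25, 25, 25, 25]
energies = statistical_potential(observed, reference)
```

Bins that are over-represented relative to the reference receive negative (favorable) energies, and under-represented bins receive positive ones. The dependence on database completeness is visible here: a sparsely populated bin is dominated by the pseudocount rather than by data.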

Physics-Based Functions

Physics-based functions, such as GBVI/WSA dG in MOE, use explicit physical energy terms like van der Waals forces and electrostatics to calculate interaction energies [5] [3]. These methods have a strong theoretical foundation and are generally more transferable across different systems. However, they suffer from high computational costs and often rely on simplified approximations for solvation effects and entropy, which can limit their predictive accuracy [3]. They are often used for detailed analysis of a limited number of candidate complexes rather than initial high-throughput screening.
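
As an illustration of the energy terms involved, the sketch below sums a 12-6 Lennard-Jones term and a Coulomb term over ligand-protein atom pairs. It is a generic force-field-style example and not the GBVI/WSA dG formulation; the single `epsilon` and `r_min` shared by all atom pairs, the constant dielectric, and the cutoff are simplifying assumptions, and solvation and entropy are omitted entirely.

```python
import math

COULOMB_CONSTANT = 332.06  # kcal*Angstrom/(mol*e^2)

def pair_energy(r, epsilon, r_min, q_i, q_j, dielectric=4.0):
    """Lennard-Jones plus Coulomb energy for one atom pair at distance r > 0."""
    ratio6 = (r_min / r) ** 6
    lennard_jones = epsilon * (ratio6 ** 2 - 2.0 * ratio6)
    coulomb = COULOMB_CONSTANT * q_i * q_j / (dielectric * r)
    return lennard_jones + coulomb

def interaction_energy(ligand_atoms, protein_atoms,
                       epsilon=0.1, r_min=3.8, cutoff=12.0):
    """Sum pair energies; each atom is ((x, y, z), partial_charge)."""
    total = 0.0
    for lig_xyz, lig_q in ligand_atoms:
        for prot_xyz, prot_q in protein_atoms:
            r = math.dist(lig_xyz, prot_xyz)
            if r < cutoff:
                total += pair_energy(r, epsilon, r_min, lig_q, prot_q)
    return total

# Hypothetical two-atom system with opposite partial charges.
ligand = [((0.0, 0.0, 0.0), -0.5)]
protein = [((3.8, 0.0, 0.0), 0.5)]
energy = interaction_energy(ligand, protein)
```

The double loop over atom pairs is the source of the computational cost mentioned above; production codes reduce it with precomputed grids or neighbor lists.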

Machine/Deep Learning-Based Functions

ML/DL approaches represent the cutting edge, using algorithms to learn complex scoring functions directly from data. These models can integrate a wide variety of features, including interface characteristics, energy terms, and solvent-accessible surface areas [3]. Their key strength is the potential to capture complex, non-linear relationships that classical functions might miss, leading to higher accuracy. For instance, some 3D convolutional neural network (3D-CNN) models have been successfully validated on the CASF-2013 benchmark [5]. The drawbacks include their "black box" nature, which makes interpretation difficult, and a high risk of poor generalization if the model is applied to data outside its training distribution [3].

Quantitative Performance Data from Benchmark Studies

Robust benchmarking is essential for an objective comparison. The CASF (Comparative Assessment of Scoring Functions) benchmark is a widely accepted standard for evaluating scoring functions [5]. The table below summarizes key quantitative findings from recent benchmark studies, including the CASF-2013 dataset and larger-scale surveys.

Table 2: Key performance metrics of selected scoring functions from benchmark studies

| Scoring Function | Category | Key Performance Metric | Result | Context & Dataset |

|---|---|---|---|---|

| FMS (DOCK) [22] | Hybrid (Pharmacophore + Energy) | Pose Reproduction Success | 93.5% (20% increase vs. SGE) | SB2012 database (1,043 complexes) |

| FMS + SGE (DOCK) [22] | Hybrid | Pose Reproduction Success | 98.3% | SB2012 database (1,043 complexes) |

| Alpha HB & London dG (MOE) [5] [1] | Empirical | Pairwise Comparability (ICrA) | Highest | CASF-2013 dataset (195 complexes) |

| BestRMSD (MOE) [5] | N/A (Docking Output) | Docking Output Performance | Best-performing | CASF-2013 dataset (195 complexes) |

| Classical Methods (e.g., ZRANK2, FireDock) [3] | Empirical / Knowledge-Based | Runtime | Fast | Large-scale docking applications |

| DL-based Methods [3] | Machine/Deep Learning | Runtime | Variable (can be high) | Large-scale docking applications |

Insights from Specialized Scoring Approaches

The Pharmacophore Matching Similarity (FMS) scoring function in DOCK demonstrates the power of combining geometric and chemical feature matching with traditional energy scoring. When used alone, FMS dramatically improved pose reproduction success by approximately 20% compared to the standard grid energy (SGE) score. When combined with SGE, the success rate reached 98.3% across 1,043 protein-ligand complexes [22]. This highlights a major strength: the ability to leverage known inhibitor geometries to guide docking. Its weakness may lie in its dependency on a well-defined reference pharmacophore, which might not be available for all targets.

Experimental Protocols for Benchmarking

To ensure the reproducibility and reliability of scoring function evaluations, standardized experimental protocols are used. The following diagram outlines a typical workflow for a comparative assessment study.

Detailed Methodology

The typical benchmark study involves several critical stages:

Dataset Curation: A high-quality, diverse set of protein-ligand complexes with known 3D structures and binding affinity data is essential. The CASF-2013 benchmark subset of the PDBbind database, containing 195 carefully selected protein-ligand complexes, is a prime example [5] [1]. This diversity ensures that scoring functions are tested across various protein families and ligand chemotypes.

Molecular Docking and Pose Generation: For each complex in the dataset, the native ligand is re-docked into its protein's binding site. Studies often save numerous candidate poses (e.g., 30) per ligand to test the scoring function's ability to identify the correct conformation [5].

Performance Metrics and Outputs: The evaluation typically uses multiple docking outputs to assess different capabilities:

- Pose Reproduction (BestRMSD): The lowest Root-Mean-Square Deviation (RMSD) between any predicted pose and the co-crystallized experimental structure. This measures the sampling capability and the scoring function's ability to identify a correct geometry. This metric was identified as the best-performing docking output in the MOE study [5].

- Scoring Power: The correlation between the best docking score (BestDS) and the experimentally measured binding affinity (e.g., -logKd/Ki). This assesses the function's ability to predict binding strength [5].

- Ranking Power: The ability to correctly rank-order multiple ligands based on their predicted affinity against a single target.

Data Analysis: Advanced analysis techniques, such as InterCriteria Analysis (ICrA), can be applied to perform pairwise comparisons of scoring functions and reveal complex relationships not always captured by simple correlation analysis [5].
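
The core of a pairwise ICrA comparison can be sketched as counting, over all pairs of complexes, how often two criteria order the pair the same way (degree of agreement) or the opposite way (degree of disagreement). The code below is a simplified reading of that idea and not the implementation used in the cited study; treating ties as uncertainty and the example values are assumptions.

```python
from itertools import combinations

def _sign(x):
    return (x > 0) - (x < 0)

def intercriteria_pair(values_a, values_b):
    """Return (agreement, disagreement) degrees for two criteria over the same objects."""
    n = len(values_a)
    agree = disagree = 0
    for i, j in combinations(range(n), 2):
        sign_a = _sign(values_a[i] - values_a[j])
        sign_b = _sign(values_b[i] - values_b[j])
        if sign_a == 0 or sign_b == 0:
            continue  # ties add to neither count (treated as uncertainty here)
        if sign_a == sign_b:
            agree += 1
        else:
            disagree += 1
    total_pairs = n * (n - 1) / 2
    return agree / total_pairs, disagree / total_pairs

# Hypothetical scores from two scoring functions on four complexes.
scores_sf1 = [1.0, 2.0, 3.0, 4.0]
scores_sf2 = [1.0, 3.0, 2.0, 4.0]
mu, nu = intercriteria_pair(scores_sf1, scores_sf2)
```

A pair of scoring functions with agreement close to 1 and disagreement close to 0 would be described as highly comparable in this framing.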

Successful docking studies rely on a suite of software tools, datasets, and computational resources. The table below details key components of the modern computational scientist's toolkit.

Table 3: Essential resources for docking and scoring function research

| Resource Name | Type | Primary Function in Research | Relevance to Scoring |

|---|---|---|---|

| PDBbind Database [5] | Database | Comprehensive collection of protein-ligand complexes with binding affinity data. | Provides curated data for training empirical and knowledge-based functions and for benchmark tests. |

| CASF Benchmark [5] [1] | Benchmark Set | Standardized subset of PDBbind for comparative assessment of scoring functions. | Enables objective, head-to-head performance comparison of different scoring methods. |

| Molecular Operating Environment (MOE) [5] | Software Suite | Integrated drug discovery platform with multiple embedded scoring functions. | Contains five scoring functions (London dG, ASE, Affinity dG, Alpha HB, GBVI/WSA dG) for direct comparison. |

| DOCK [22] | Docking Software | Structure-based design program supporting various scoring functions, including FMS. | Allows for pharmacophore-based and energy-based scoring, including hybrid approaches. |

| CCharPPI Server [3] | Web Server | Community server for computational scoring of protein-protein complexes. | Enables the evaluation of scoring functions independent of the docking process. |

| InterCriteria Analysis (ICrA) [5] | Analysis Method | Multi-criterion decision-making approach for pairwise comparison. | Helps reveal nuanced relations and comparability between different scoring functions. |

From Theory to Practice: Implementing Docking and Scoring in Virtual Screening

This guide provides an objective comparison of molecular docking workflows, focusing on the critical steps of protein preparation and pose generation. We synthesize data from recent benchmarking studies to help you select the most effective protocols and tools for your drug discovery projects.

Workflow Components and Scoring Function Fundamentals

A robust molecular docking workflow is essential for accurate prediction of how small molecule ligands interact with protein targets. This process typically involves protein preparation, ligand preparation, docking simulation, and pose scoring. The scoring function, which approximates the binding affinity by calculating the interaction energy between a ligand and a protein, is a key element determining the success of docking protocols [5].

Scoring functions are generally categorized into four main types [23]:

- Physics-based functions calculate binding energy using classical force fields, summing van der Waals and electrostatic interactions.

- Empirical functions estimate binding affinity by summing weighted energy terms parameterized against experimental data.

- Knowledge-based functions use statistical potentials derived from pairwise atom distances in known structures.

- Machine Learning (ML)-based functions learn complex relationships between structural features and binding affinities from large datasets.

Each category offers distinct trade-offs between computational speed, accuracy, and physical interpretability. The choice of scoring function directly impacts the reliability of virtual screening and binding mode prediction [23].

Quantitative Comparison of Docking Performance

Benchmarking studies provide critical data on the performance of various docking programs and scoring functions. The tables below summarize key metrics from recent comprehensive evaluations.

Table 1: Docking program performance on COX-1 and COX-2 enzymes for pose prediction [24]

| Docking Program | Success Rate (RMSD < 2 Å) | Key Characteristics |

|---|---|---|

| Glide | 100% | Outstanding pose prediction accuracy |

| GOLD | 82% | Reliable performance |

| AutoDock | 75% | Widely used, moderate performance |

| FlexX | 59% | Lower success rate |

| Molegro Virtual Docker (MVD) | Not specified in top performers | Included in initial evaluation |

Table 2: Performance comparison of MOE scoring functions on CASF-2013 benchmark [5]

| MOE Scoring Function | Type | Key Findings from Pairwise Comparison |

|---|---|---|

| Alpha HB | Empirical | Highest comparability with London dG |

| London dG | Empirical | Highest comparability with Alpha HB |

| ASE | Empirical | Performance varies by output metric |

| Affinity dG | Empirical | Performance varies by output metric |

| GBVI/WSA dG | Force-field | Performance varies by output metric |

Table 3: Virtual screening performance on DUD-E benchmark (102 targets) [16]

| Scoring Function | Type | Hit Rate at Top 1% | Hit Rate at Top 0.1% | Pearson Correlation |

|---|---|---|---|---|

| RF-Score-VS | Machine Learning | 55.6% | 88.6% | 0.56 |

| AutoDock Vina | Empirical | 16.2% | 27.5% | -0.18 |

The data reveals that machine-learning scoring functions like RF-Score-VS can substantially outperform classical functions in virtual screening scenarios, showing remarkable enrichment of active compounds, particularly in the top percentage of ranked molecules [16]. For pose prediction, Glide demonstrated exceptional performance in correctly predicting binding modes of COX inhibitors [24].

Experimental Protocols and Benchmarking Methodologies

Dataset Curation and Preparation

Robust benchmarking requires high-quality, curated datasets. The CASF-2013 benchmark subset of the PDBbind database provides a standardized set of 195 protein-ligand complexes with binding affinity data [5]. The DUD-E (Directory of Useful Decoys, Enhanced) dataset offers 102 protein targets with active ligands and property-matched decoys, enabling virtual screening performance assessment [16].

Protein preparation typically involves:

- Removing redundant chains, water molecules, and ions

- Adding missing hydrogen atoms and correcting protonation states

- Generating appropriate receptor grids centered on binding sites
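
The first protein-preparation step can be sketched with plain text filtering on the fixed-column PDB format. This is a minimal illustration and no substitute for a dedicated preparation tool: it does not add hydrogens or assign protonation states, the helper names are hypothetical, and the ion list is an assumption (structural or catalytic metals often need to be kept).

```python
WATER_NAMES = {"HOH", "WAT", "DOD"}
# Assumed ion list for this sketch; review per target before removing metals.
ION_NAMES = {"NA", "K", "CL"}

def pdb_line(record, serial, atom, res, chain, res_seq):
    """Format a minimal fixed-column PDB coordinate line (coordinates omitted)."""
    return f"{record:<6}{serial:>5} {atom:<4} {res:>3} {chain}{res_seq:>4}"

def clean_pdb(pdb_text, keep_chain="A", drop_residues=WATER_NAMES | ION_NAMES):
    """Keep one chain and drop waters and listed ions; other records pass through."""
    kept = []
    for line in pdb_text.splitlines():
        if line[:6].strip() in ("ATOM", "HETATM"):
            res_name = line[17:20].strip()  # PDB columns 18-20
            chain_id = line[21:22]          # PDB column 22
            if chain_id != keep_chain or res_name in drop_residues:
                continue
        kept.append(line)
    return "\n".join(kept)

example = "\n".join([
    pdb_line("ATOM", 1, "N", "ALA", "A", 1),
    pdb_line("ATOM", 2, "N", "GLY", "B", 1),
    pdb_line("HETATM", 3, "O", "HOH", "A", 101),
    pdb_line("HETATM", 4, "NA", "NA", "A", 201),
    pdb_line("HETATM", 5, "C1", "LIG", "A", 301),
    "END",
])
cleaned = clean_pdb(example)
```

In the example, only the chain A alanine atom, the ligand atom, and the `END` record survive.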

Ligand preparation includes:

- Generating 3D conformations from molecular structures

- Assigning appropriate bond orders and formal charges

- Energy minimization and tautomer enumeration

Docking and Evaluation Protocols

For pose prediction, the root mean square deviation (RMSD) between docked poses and experimental reference structures serves as the primary metric. An RMSD value below 2.0 Å generally indicates successful docking [24].

Studies typically evaluate multiple docking outputs [5]:

- Best docking score: The most favorable predicted affinity

- Best RMSD: The lowest RMSD between predicted and native poses

- RMSD of best-score pose: The accuracy of the top-ranked pose

- Score of best-RMSD pose: The affinity prediction for the most accurate pose
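
Given a list of candidate poses for one complex, the four outputs above reduce to two lookups. The sketch below assumes each pose is a `(score, rmsd)` pair with lower scores being more favorable, and includes a plain coordinate RMSD that assumes identical atom ordering with no alignment or symmetry correction; the function names are hypothetical.

```python
import math

def rmsd(coords_a, coords_b):
    """Root-mean-square deviation between two equally ordered coordinate lists."""
    squared = sum(math.dist(a, b) ** 2 for a, b in zip(coords_a, coords_b))
    return math.sqrt(squared / len(coords_a))

def docking_outputs(poses):
    """Summarize poses given as (score, rmsd_to_native) pairs; lower score is better."""
    best_score_pose = min(poses, key=lambda pose: pose[0])
    best_rmsd_pose = min(poses, key=lambda pose: pose[1])
    return {
        "best_docking_score": best_score_pose[0],
        "best_rmsd": best_rmsd_pose[1],
        "rmsd_of_best_score_pose": best_score_pose[1],
        "score_of_best_rmsd_pose": best_rmsd_pose[0],
    }

# Hypothetical poses for one ligand: (docking score, RMSD in Angstrom).
poses = [(-9.1, 3.4), (-8.7, 1.2), (-7.9, 0.8)]
summary = docking_outputs(poses)
shift = rmsd([(0, 0, 0), (1, 0, 0)], [(0, 0, 2), (1, 0, 2)])
```

In this toy case the best-scored pose lies 3.4 Å from the native structure while a 0.8 Å pose exists, which is the kind of scoring failure that sampling-only metrics such as best RMSD do not reveal.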

For virtual screening, performance is measured using:

- Enrichment factors: The fold-enrichment of active compounds at early screening stages

- Receiver Operating Characteristic (ROC) curves: Plotting true positive rate against false positive rate

- Area Under the Curve (AUC): Overall performance metric ranging from 0 to 1, where 0.5 corresponds to random ranking and 1.0 to perfect separation of actives from decoys
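
Both metrics can be computed directly from a scored list of actives and decoys. The sketch below assumes that lower scores are more favorable and that labels are 1 for actives and 0 for decoys; the ROC AUC is computed through its equivalence with the probability that a randomly chosen active outranks a randomly chosen decoy. Function names and example data are hypothetical.

```python
def enrichment_factor(scores, labels, fraction=0.01):
    """Hit rate in the top fraction divided by the hit rate of the whole library."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0])
    n_top = max(1, round(len(ranked) * fraction))
    actives_in_top = sum(label for _, label in ranked[:n_top])
    overall_rate = sum(labels) / len(labels)
    return (actives_in_top / n_top) / overall_rate

def roc_auc(scores, labels):
    """Probability that a random active scores better (lower) than a random decoy."""
    actives = [s for s, label in zip(scores, labels) if label == 1]
    decoys = [s for s, label in zip(scores, labels) if label == 0]
    wins = 0.0
    for active_score in actives:
        for decoy_score in decoys:
            if active_score < decoy_score:
                wins += 1.0
            elif active_score == decoy_score:
                wins += 0.5  # ties count as half a win
    return wins / (len(actives) * len(decoys))

# Hypothetical screen of ten compounds in which the two actives rank first.
scores = [-10, -9, -8, -7, -6, -5, -4, -3, -2, -1]
labels = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
ef_20 = enrichment_factor(scores, labels, fraction=0.2)
auc = roc_auc(scores, labels)
```

The pairwise AUC loop is quadratic in library size; it is written this way for clarity, and a rank-based formulation would be preferable for large screens.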

Cross-validation strategies are critical for machine-learning scoring functions [16]:

- Per-target: Training and testing on the same target

- Horizontal split: Training and testing sets contain different ligands from the same targets

- Vertical split: Training and testing on completely different protein targets

Workflow Visualization

The following diagram illustrates the key stages and decision points in a robust docking workflow:

Research Reagent Solutions

Table 4: Essential tools for molecular docking workflows

| Tool/Category | Representative Examples | Primary Function |

|---|---|---|

| Commercial Software | MOE (Molecular Operating Environment), Glide, GOLD | Integrated docking platforms with multiple scoring functions |

| Open-Source Docking Tools | AutoDock, AutoDock Vina, smina, DOCK | Molecular docking with customizable parameters |

| Specialized Scoring Functions | RF-Score-VS, NNScore, SFCscore | Machine-learning based scoring and ranking |

| Benchmark Datasets | CASF-2013, CSAR-NRC HiQ, DUD-E | Standardized datasets for method validation |

| Protein Preparation Tools | AutoDock Tools, Schrödinger Protein Preparation Wizard | Structure cleanup, protonation, and optimization |

| Ligand Preparation Tools | OpenBabel, Omega, LigPrep | 2D to 3D conversion, tautomer generation, energy minimization |

Establishing a robust workflow from protein preparation to pose generation requires careful consideration of both the docking tools and scoring functions. The benchmarking data presented reveals that while tools like Glide excel in pose prediction, machine-learning scoring functions like RF-Score-VS offer substantial advantages in virtual screening enrichment.

The optimal workflow depends on the specific research goal: pose prediction versus virtual screening. For pose prediction, emphasis should be placed on sampling algorithms and their integration with accurate scoring functions. For virtual screening, machine-learning scoring functions trained on appropriate data provide superior enrichment of active compounds. By implementing the standardized protocols and controls outlined in this guide, researchers can enhance the reliability and reproducibility of their molecular docking studies.

The accurate prediction of how a small molecule (ligand) binds to a protein target is a cornerstone of structure-based drug design. Central to this molecular docking process are scoring functions, which are mathematical models used to predict the binding affinity and orientation of a ligand within a protein's binding site. The reliability of these scoring functions directly impacts the success of virtual screening and lead optimization campaigns. Given the proliferation of both classical and machine learning-based scoring functions, the question of how to objectively evaluate and compare their performance has become paramount. This is where public benchmark data sets play an indispensable role. These standardized collections of protein-ligand complexes provide a common framework for the comparative assessment of scoring algorithms, enabling researchers to identify strengths, weaknesses, and optimal use cases for different docking tools. This guide focuses on two of the most influential benchmarks in the field: the Directory of Useful Decoys, Enhanced (DUD-E) and the Comparative Assessment of Scoring Functions (CASF) benchmark, detailing their composition, proper application, and how they are used to objectively quantify performance in molecular docking.

Core Public Data Sets for Docking Validation

Directory of Useful Decoys, Enhanced (DUD-E)

The Directory of Useful Decoys, Enhanced (DUD-E) was developed to address limitations identified in its predecessor, DUD. It serves as a community standard for benchmarking docking programs in virtual screening tasks, which focus on distinguishing potential active compounds from non-binders.

- Design and Composition: DUD-E contains 102 targets across diverse protein classes, including kinases, proteases, nuclear receptors, GPCRs, and ion channels. It comprises 22,886 clustered ligands drawn from ChEMBL, each with confirmed binding affinity. A key feature is that each ligand is paired with 50 property-matched decoys—molecules that are physically similar to the ligands (in terms of molecular weight, logP, number of rotatable bonds, and hydrogen-bond donors/acceptors) but are topologically dissimilar to minimize the likelihood of actual binding. This design creates a challenging and unbiased benchmark for testing a scoring function's ability to enrich true ligands amid a background of deceivingly similar non-binders [25].

- Primary Application: The principal metric for evaluation on DUD-E is enrichment, which measures how effectively a scoring function prioritizes known ligands over decoys in a virtual screening workflow. DUD-E is particularly valued for its focus on screening power [26] [27].

Comparative Assessment of Scoring Functions (CASF)

The Comparative Assessment of Scoring Functions (CASF) benchmark, built upon the PDBbind database, is designed for the comprehensive evaluation of scoring functions across multiple capabilities beyond just virtual screening.

- Design and Composition: The CASF benchmark (e.g., CASF-2013, CASF-2016) is a high-quality curated set of hundreds of protein-ligand complexes with experimentally determined binding structures and affinities [5]. Its "core set" is selected to maximize structural diversity and the quality of experimental data.

- Primary Application: CASF provides a framework for evaluating scoring functions across four distinct, critical metrics [26] [27]:

- Scoring Power: The linear correlation between predicted scores and experimentally measured binding affinities.

- Ranking Power: The capability to correctly rank the binding affinities of different ligands for a single target.

- Docking Power: The ability to identify the native binding pose (or one close to it) from a set of computer-generated decoy poses.

- Screening Power: Similar to DUD-E, it evaluates the enrichment of known binders over non-binders.

The following table summarizes the key characteristics of these two benchmark data sets.

Table 1: Key Characteristics of DUD-E and CASF Benchmark Data Sets

| Feature | DUD-E | CASF |

|---|---|---|

| Primary Purpose | Virtual Screening / Enrichment | Holistic Scoring Function Assessment |

| Core Application | Screening Power | Scoring, Ranking, Docking, & Screening Power |

| Ligands | 22,886 clustered ligands with known activity [25] | Hundreds of complexes with binding affinity data [5] |

| Decoys | 50 property-matched decoys per ligand [25] | Computer-generated decoy poses; non-binders |

| Key Metrics | Enrichment (e.g., AUC, early enrichment) | Pearson's R (Scoring), Spearman's ρ (Ranking), Success Rate (Docking) [26] |

| Target Diversity | 102 targets, including GPCRs & ion channels [25] | Diverse set from the PDBbind database |

Experimental Protocols for Benchmarking

To ensure reproducible and objective comparisons, standardized protocols are employed when using DUD-E and CASF.

Benchmarking with DUD-E

A typical virtual screening benchmark using DUD-E follows a structured workflow, illustrated below.

The process involves preparing the DUD-E data for a specific target, which includes the known active ligands and their matched decoys. The docking program and its scoring function are then used to rank the entire combined set of actives and decoys. The resulting ordered list is analyzed to compute enrichment metrics. A common and telling metric is the area under the receiver operating characteristic curve (AUC), where a perfect enrichment yields an AUC of 1.0 and random performance gives an AUC of 0.5. Early enrichment, such as the fraction of true actives recovered in the top 1% or 2% of the ranked list, is often considered even more critical for assessing practical utility in large-scale virtual screens [15].
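
Two early-enrichment quantities are easy to conflate: the fraction of all actives recovered in the top of the list, and the hit rate, which is the fraction of the top-ranked compounds that are active. The sketch below computes both from the same ranking, assuming lower scores are better and labels are 1 for actives and 0 for decoys; the function name and toy data are hypothetical.

```python
def top_fraction_metrics(scores, labels, fraction=0.01):
    """Return active recovery and hit rate within the top-ranked fraction."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0])
    n_top = max(1, round(len(ranked) * fraction))
    actives_in_top = sum(label for _, label in ranked[:n_top])
    return {
        "active_recovery": actives_in_top / sum(labels),  # share of all actives found
        "hit_rate": actives_in_top / n_top,               # share of the top list that is active
    }

# Hypothetical screen: 200 compounds, 4 actives, 3 of them ranked in the top 10.
scores = list(range(200))
labels = [0] * 200
for position in (0, 2, 5, 150):
    labels[position] = 1
metrics = top_fraction_metrics(scores, labels, fraction=0.05)
```

Here the same ranking gives an active recovery of 0.75 but a hit rate of 0.3, so reported percentages are only comparable when the definition is stated.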

Benchmarking with CASF

The CASF benchmark employs a more multi-faceted workflow to evaluate the four key powers of a scoring function.

For scoring power, the scoring function is applied to the native crystal structures of the complexes in the CASF core set. The predicted scores are then correlated against the experimental binding affinities (e.g., Kd or Ki) using Pearson's correlation coefficient (R). For ranking power, the function is used to score multiple ligands for a single target, and the ranking of these ligands by score is compared to their experimental ranking using Spearman's rank correlation coefficient (ρ). For docking power, a set of decoy poses (including a near-native pose) is generated for each complex. The scoring function's ability to identify the near-native pose as the best-scoring one is measured as a success rate. Finally, the screening power test evaluates the function's ability to identify true binders for a target from a pool of non-binders [26] [27].
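
The first three powers map onto standard statistics, sketched below with the standard library only. The code assumes predicted scores are oriented so that higher means stronger binding (docking energies would need a sign flip), and the 2.0 Å success threshold is an assumption borrowed from the common convention rather than from a specific CASF release.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient (scoring power)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)

def ranks(values):
    """1-based ranks in ascending order; tied values share their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = mean_rank
        i = j + 1
    return result

def spearman_rho(x, y):
    """Spearman rank correlation (ranking power) as Pearson on ranks."""
    return pearson_r(ranks(x), ranks(y))

def docking_success_rate(top_pose_rmsds, threshold=2.0):
    """Fraction of complexes whose best-scored pose is within the RMSD threshold."""
    return sum(r < threshold for r in top_pose_rmsds) / len(top_pose_rmsds)

# Hypothetical predicted scores and experimental -log(Kd) values for one target.
predicted = [5.1, 6.3, 7.0, 8.2]
experimental = [4.8, 6.9, 6.5, 8.0]
scoring_power = pearson_r(predicted, experimental)
ranking_power = spearman_rho(predicted, experimental)
docking_power = docking_success_rate([0.5, 1.9, 2.5, 4.0])
```

Scoring power is computed over the whole core set, whereas ranking power is computed per target and then averaged, so the two can diverge for the same scoring function.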

Comparative Performance Data

Benchmarking studies consistently reveal that no single scoring function excels across all tasks, highlighting a performance trade-off.

Performance on DUD-E

Reported performance on DUD-E and its predecessor DUD varies significantly between methods. For instance, in one evaluation on 39 targets from the original DUD set, the Glide (SP) docking program achieved an average AUC of 0.80 and recovered an average of 25% of known actives in the top 1% of its ranked list [15]. This level of performance is considered robust, though top-performing methods can achieve higher metrics. Newer machine-learning consensus methods, such as CoBDock, also report strong performance on DUD-E, leveraging multiple docking algorithms to improve accuracy [28].

Performance on CASF

The multi-faceted nature of CASF makes it an excellent tool for revealing the specialized strengths of different scoring functions. The table below summarizes a hypothetical comparison based on trends observed in the literature.

Table 2: Hypothetical Comparative Performance of Different Scoring Function Types on CASF Metrics

| Scoring Function Type | Scoring Power (Pearson's R) | Docking Power (Top 1 Success Rate) | Screening Power (Enrichment Factor) | Notes |

|---|---|---|---|---|

| Classical Empirical (e.g., GlideScore) | Moderate (~0.6) | High (>85%) | High | Balanced performance for docking & screening [26] [15] |

| ML-based Regression Models | High (>0.8) | Low to Moderate | Low | Excellent affinity prediction, poor pose ID [26] [27] |

| ML-based with Δ-ML/Data Augmentation | High (>0.8) | High (>85%) | High | Balanced, high performance across tasks [26] |

| Knowledge-based/Statistical | Moderate | Moderate | Moderate | Good balance of speed and accuracy [23] |

A specific 2025 study comparing the five scoring functions within the Molecular Operating Environment (MOE) software on the CASF-2013 set found that the lowest RMSD (BestRMSD) was the best-performing docking output for pose prediction. Furthermore, the two empirical scoring functions, Alpha HB and London dG, demonstrated the highest comparability and performance in their analysis [1] [5].

The Scientist's Toolkit: Essential Research Reagents

To conduct rigorous docking benchmarks, researchers rely on a suite of publicly available data and software.

Table 3: Essential Resources for Docking Benchmarking Studies

| Resource Name | Type | Primary Function in Benchmarking | Access |
|---|---|---|---|
| DUD-E | Benchmark Data Set | Provides targets, active ligands, and property-matched decoys for virtual screening enrichment tests [25]. | http://dude.docking.org |
| PDBbind & CASF | Benchmark Data Set | Provides a comprehensive collection of protein-ligand complexes with binding affinities for holistic scoring function assessment [26]. | http://www.pdbbind.org.cn |
| Smiles2Dock | Benchmark Data Set | A large-scale, ML-ready dataset with docking scores for over 1.7M ligands against 15 AlphaFold2 proteins [29]. | https://huggingface.co/datasets/tlemenestrel/Smiles2Dock |
| AutoDock Vina | Docking Software | A widely used, open-source docking program often used as a baseline or component in consensus methods [28]. | http://vina.scripps.edu |
| P2Rank | Cavity Detection Tool | Predicts ligand binding sites on protein structures, often used to guide blind docking protocols [29] [28]. | https://github.com/rdk/p2rank |
| RDKit | Cheminformatics Toolkit | An open-source toolkit for cheminformatics used for ligand preparation, descriptor calculation, and file format conversion [27]. | https://www.rdkit.org |

The rigorous and objective evaluation of molecular docking scoring functions is a critical component of methodological development in computational drug discovery. Public benchmark data sets, most notably DUD-E for virtual screening enrichment and CASF for multi-task assessment, provide the standardized test beds for this evaluation. Applied consistently, these benchmarks reveal a clear landscape: classical force-field and empirical functions often provide robust, balanced performance, while modern machine-learning-based functions can achieve superior results in specific tasks, such as binding affinity prediction. The emerging trend is toward balanced multi-task scoring functions, often leveraging machine learning to correct classical scores or to create novel, physics-informed models. For practitioners, the choice of a scoring function should be guided by its demonstrated performance on these benchmarks in the specific task of interest (pose prediction, affinity ranking, or virtual screening), so that computational predictions rest on validated performance.

Molecular docking is a cornerstone of computational drug discovery, enabling researchers to predict how small molecule ligands interact with protein targets. While docking algorithms can generate numerous potential binding poses, a critical bottleneck remains: the scoring function (SF) that evaluates these poses and predicts binding affinity. Traditional SFs, whether physics-based, empirical, or knowledge-based, often struggle with accuracy due to their simplified treatment of complex molecular interactions and their reliance on predetermined functional forms [30]. This limitation directly impacts the reliability of virtual screening (VS) campaigns, where the ability to distinguish true binders from non-binders is paramount.

The emergence of machine learning (ML) has introduced a paradigm shift in scoring function development. Unlike classical approaches, ML scoring functions do not assume a fixed relationship between structural features and binding affinity. Instead, they infer this relationship directly from experimental data, capturing complex, non-linear patterns that traditional methods miss [30]. This review explores the powerful strategy of combining conventional docking tools with ML-based rescoring, presenting a comprehensive analysis of performance gains, practical methodologies, and future directions for this integrated approach.

Performance Comparison: Traditional Docking vs. ML-Rescoring

Quantitative benchmarks demonstrate that ML rescoring consistently enhances virtual screening performance across diverse protein targets. The following tables summarize key findings from recent large-scale evaluations.

Table 1: Virtual Screening Enrichment (EF1%) for PfDHFR Antimalarial Target [18]

| Docking Method | Rescoring SF | Wild-Type EF1% | Quadruple-Mutant EF1% |
|---|---|---|---|
| PLANTS | CNN-Score | 28 | - |
| FRED | CNN-Score | - | 31 |
| AutoDock Vina | None (Default) | Worse-than-random | - |
| AutoDock Vina | RF/CNN | Better-than-random | - |

Table 2: Tiered Performance of Docking Paradigms (CASF Benchmark) [17]

| Performance Tier | Method Class | Representative Examples | Key Characteristics |
|---|---|---|---|
| 1 (Best) | Traditional Methods | Glide SP | High physical validity (>94% PB-valid) |
| 2 | Hybrid AI Scoring | Interformer | Balanced pose accuracy and validity |
| 3 | Generative Diffusion | SurfDock, DiffBindFR | Superior pose accuracy (>70% RMSD ≤2Å) |
| 4 | Regression-based Models | KarmaDock, QuickBind | Often produce physically invalid poses |

Table 3: Performance Metrics for Machine Learning Scoring Functions [31]

| Scoring Function | Baseline SF | ML Method | Scoring Power (R) | Screening Power |
|---|---|---|---|---|
| ΔLin_F9XGB | Lin_F9 | XGBoost | 0.853 (local optimized poses) | Superior on LIT-PCBA dataset |
| ΔVinaXGB | AutoDock Vina | XGBoost | Top performer on CASF-2016 | Robust across tasks |
| ΔVinaRF20 | AutoDock Vina | Random Forest | High | Good screening power |

Experimental Protocols for ML Rescoring

Standard Rescoring Workflow

The typical rescoring pipeline involves sequential execution of traditional docking followed by ML-based evaluation. A recent benchmarking study on PfDHFR inhibitors exemplifies this protocol [18]:

Protein Preparation: Crystal structures (PDB IDs: 6A2M for wild-type, 6KP2 for quadruple-mutant) are prepared by removing water molecules, unnecessary ions, and redundant chains. Hydrogen atoms are added and optimized using tools like OpenEye's "Make Receptor".

Ligand and Decoy Preparation: Active compounds and decoy molecules from the DEKOIS 2.0 benchmark set are prepared using Omega and OpenBabel to generate multiple conformations and appropriate file formats.

Traditional Docking: Three docking tools—AutoDock Vina, PLANTS, and FRED—are used to generate poses. The docking grid is defined to encompass the entire binding site.

ML Rescoring: The generated poses are rescored using pretrained ML SFs (CNN-Score and RF-Score-VS v2) without modifying the poses themselves.

Performance Evaluation: Enrichment factors (EF1%), pROC curves, and chemotype enrichment are calculated to quantify screening performance.
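The rescoring step of this pipeline can be sketched in a few lines. The example below is a hedged illustration of the data flow only: poses are left untouched, each one receives a new score from a rescoring function, and ligands are ranked by their best rescored pose. The `Pose` record, the `ml_score` callable, and the "higher is better" convention are assumptions made for the sketch; they are not the interfaces of CNN-Score or RF-Score-VS v2.

```python
from typing import Callable, Dict, List, NamedTuple, Tuple


class Pose(NamedTuple):
    ligand_id: str
    pose_file: str        # path to a docked pose (hypothetical layout)
    docking_score: float  # native score from the docking program, kept for reference


def rescore_and_rank(poses: List[Pose],
                     ml_score: Callable[[Pose], float]) -> List[Tuple[str, float]]:
    """Rank ligands by the best ML score over their docked poses.

    `ml_score` stands in for a pretrained scoring function; higher = more
    likely active (assumed). Pose coordinates are never modified.
    """
    best: Dict[str, float] = {}
    for pose in poses:
        score = ml_score(pose)
        if pose.ligand_id not in best or score > best[pose.ligand_id]:
            best[pose.ligand_id] = score
    return sorted(best.items(), key=lambda item: item[1], reverse=True)


# Toy usage with a lookup table in place of a real ML scoring function.
poses = [
    Pose("L1", "L1_pose1.sdf", -7.0),
    Pose("L1", "L1_pose2.sdf", -6.5),
    Pose("L2", "L2_pose1.sdf", -9.0),
]
toy_scores = {"L1_pose1.sdf": 0.2, "L1_pose2.sdf": 0.9, "L2_pose1.sdf": 0.5}
ranking = rescore_and_rank(poses, lambda p: toy_scores[p.pose_file])
print(ranking)
```

In this toy case the ML score promotes L1 above L2 even though L2 has the better docking score, which is the kind of reordering that changes enrichment.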

Delta Machine Learning Protocol

The Δ-machine learning approach has emerged as a particularly effective strategy for developing robust SFs [31]. This method learns a correction term to an existing baseline SF, leveraging the physical principles embedded in classical functions while enhancing accuracy with ML:

Training Set Construction: Curate a diverse set of protein-ligand complexes with experimental binding affinities (e.g., from PDBbind). Include crystal poses, locally optimized poses, and docked poses to ensure robustness.

Feature Engineering: Develop comprehensive feature sets encompassing protein-ligand interaction descriptors (e.g., polar-polar, polar-nonpolar, and nonpolar-nonpolar interactions in different distance ranges) and ligand-specific features.

Model Training: Employ ML algorithms like XGBoost to train a model that predicts the difference between experimental binding affinities and baseline SF predictions.

Validation: Rigorously test the resulting Δ-SF using benchmarks like CASF-2016 that evaluate scoring, ranking, docking, and screening power across diverse protein families.
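The core idea of the protocol above, learning a correction to a baseline score rather than the affinity itself, can be shown with a deliberately simple model. In the sketch below, ordinary least squares on a single hypothetical descriptor stands in for XGBoost, and all numbers are made-up toy values; it illustrates the Δ-learning structure, not the published ΔLin_F9XGB or ΔVinaXGB models.

```python
from typing import Sequence, Tuple


def fit_delta_model(features: Sequence[float],
                    baseline: Sequence[float],
                    experimental: Sequence[float]) -> Tuple[float, float]:
    """Fit residual = slope * feature + intercept by ordinary least squares.

    The training target is (experimental - baseline), i.e. the error of the
    baseline scoring function, not the experimental affinity itself.
    """
    residuals = [y - b for y, b in zip(experimental, baseline)]
    n = len(features)
    mean_x = sum(features) / n
    mean_r = sum(residuals) / n
    sxx = sum((x - mean_x) ** 2 for x in features)
    sxr = sum((x - mean_x) * (r - mean_r) for x, r in zip(features, residuals))
    slope = sxr / sxx
    intercept = mean_r - slope * mean_x
    return slope, intercept


def predict_delta_sf(feature: float, baseline_score: float,
                     model: Tuple[float, float]) -> float:
    """Final prediction = baseline score + learned correction."""
    slope, intercept = model
    return baseline_score + slope * feature + intercept


# Toy data: the baseline underestimates affinity by 0.5 * feature + 1.0.
features = [0.0, 1.0, 2.0, 3.0]          # hypothetical interaction descriptor
baseline = [5.0, 5.5, 6.0, 6.5]          # baseline SF predictions
experimental = [6.0, 7.0, 8.0, 9.0]      # made-up experimental affinities
model = fit_delta_model(features, baseline, experimental)
print(predict_delta_sf(4.0, 7.0, model))
```

Because the baseline term is added back at prediction time, the physics encoded in the classical function is retained and the learned model only has to capture what the baseline gets wrong.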

ML Rescoring Workflow

The Scientist's Toolkit: Essential Research Reagents and Software

Table 4: Key Research Tools for Docking and ML Rescoring

| Tool Name | Type | Primary Function | Application Context |
|---|---|---|---|
| AutoDock Vina | Docking Program | Conformational search and scoring | Generating initial ligand poses |
| PLANTS | Docking Program | Protein-ligand docking | Pose generation with swarm algorithm |
| FRED | Docking Program | Exhaustive docking | High-throughput pose generation |
| CNN-Score | ML Scoring Function | Pose rescoring using CNN | Improving virtual screening enrichment |
| RF-Score-VS v2 | ML Scoring Function | Random forest-based scoring | Distinguishing actives from decoys |
| ΔLin_F9XGB | ML Scoring Function | Delta machine learning SF | Superior scoring/ranking across pose types |
| PDBbind | Database | Curated binding affinity data | Training and benchmarking SFs |
| DEKOIS 2.0 | Benchmark Set | Active/decoy complexes | Virtual screening performance evaluation |

Discussion and Future Perspectives

The integration of ML rescoring with traditional docking represents a significant advancement in structure-based drug design. The benchmarks summarized above indicate that rescoring docked poses with ML SFs generally improves enrichment over the docking program's native score alone. The two components are complementary: traditional docking provides physically plausible starting conformations, while ML SFs leverage large datasets to identify complex binding patterns [30] [18].

Despite these promising results, important limitations and research challenges remain. First, the generalization of ML SFs to novel protein targets or binding pockets outside their training distribution requires further investigation [17]. Second, while ML rescoring improves enrichment, some deep learning methods generate poses with questionable physical validity despite favorable RMSD values [17]. Finally, the computational cost of some ML approaches may limit their application to ultra-large libraries, though this continues to improve with hardware and algorithmic advances.

Future developments will likely focus on target-specific SFs that leverage transfer learning for improved performance on novel targets, multi-task learning that incorporates additional biological data, and explainable AI approaches to interpret the structural basis of ML predictions. The field is also moving toward end-to-end deep learning pipelines that integrate pose generation and scoring in a unified framework.

The powerful synergy between traditional docking tools and machine learning rescoring functions has demonstrably enhanced the accuracy and reliability of structure-based virtual screening. Quantitative benchmarks across diverse protein targets reveal that ML rescoring consistently improves enrichment over traditional scoring functions alone, particularly for challenging drug-resistant targets. The experimental protocols and toolkit resources outlined in this review provide researchers with practical guidance for implementing these methods in their drug discovery pipelines. As machine learning algorithms continue to evolve and structural databases expand, the rescoring paradigm will play an increasingly vital role in accelerating the identification of novel therapeutic compounds.

The persistent global health challenge of malaria is significantly compounded by the emergence of drug-resistant strains of the Plasmodium falciparum parasite. The enzyme Plasmodium falciparum Dihydrofolate Reductase (PfDHFR), crucial for the parasite's DNA synthesis, represents a critical therapeutic target. Resistance to antifolate drugs, such as pyrimethamine, primarily arises from mutations in the PfDHFR active site, most notably the quadruple-mutant (Q) variant (N51I/C59R/S108N/I164L) [18] [32]. This case study examines a comprehensive benchmarking analysis that evaluated the performance of structure-based virtual screening (SBVS) using classical docking tools enhanced by machine learning (ML) re-scoring against both wild-type (WT) and resistant (Q) PfDHFR variants [18]. The findings provide a validated computational framework for accelerating the discovery of novel antimalarial agents effective against resistant malaria.

Experimental Protocols & Workflow

The study employed a rigorous SBVS benchmarking protocol to assess and enhance the prediction of high-affinity binders for PfDHFR [18].

Protein and Benchmark Set Preparation

- Protein Structures: The crystal structures of the wild-type PfDHFR (PDB ID: 6A2M) and the quadruple-mutant variant (PDB ID: 6KP2) were retrieved from the Protein Data Bank. Protein preparation was performed using OpenEye's "Make Receptor" tool, which involved removing water molecules and extraneous ions, adding hydrogen atoms, and optimizing the resulting structures [18].

- DEKOIS 2.0 Benchmark Set: A high-quality benchmark set was compiled for each PfDHFR variant using the DEKOIS 2.0 protocol. For each target, 40 known bioactive molecules were curated from the literature and BindingDB. Subsequently, 1,200 decoy molecules, matched to the actives in physicochemical properties but presumed inactive, were generated for each set, maintaining a challenging 1:30 ratio of active to decoy compounds [18].

- Ligand Preparation: The small molecule structures were prepared using the Omega software to generate multiple conformations. File formats were converted as needed for the different docking programs using OpenBabel and SPORES software [18].
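The composition of the benchmark set described above (40 actives and 1,200 decoys per target) fixes the highest enrichment factor any method can reach. The short calculation below works this out, assuming the common definition of EF as the hit rate in the top fraction divided by the hit rate in the whole library; the function name and the rounding of the top-1% cutoff are choices made for this sketch.

```python
def max_enrichment_factor(n_actives: int, n_decoys: int,
                          fraction: float = 0.01) -> float:
    """Upper bound on EF at `fraction`, reached when the top slice holds only actives."""
    n_total = n_actives + n_decoys
    n_top = max(1, round(n_total * fraction))   # 1% of 1,240 compounds -> 12
    best_hits = min(n_top, n_actives)           # cannot find more actives than exist
    hit_rate_top = best_hits / n_top
    hit_rate_library = n_actives / n_total
    return hit_rate_top / hit_rate_library


ef_ceiling = max_enrichment_factor(40, 1200)
print(round(ef_ceiling, 2))
```

Under these assumptions the ceiling is about 31 (1,240 / 40). If the study used the same definition, the EF1% of 31 reported for FRED with CNN-Score on the quadruple mutant [18] would correspond to a top 1% made up almost entirely of actives.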

Docking and Machine Learning Re-scoring

- Docking Tools: Three widely used generic docking programs were evaluated:

  - AutoDock Vina (version 1.5.7)

  - PLANTS (version 1.2)

  - FRED (from OpenEye, version 4.3.2.0)

  The docking grid boxes were centered on the active site of the respective protein structures [18].

- Machine Learning Re-scoring: The ligand poses generated by each docking tool were subsequently re-scored using two pre-trained machine learning scoring functions:

  - CNN-Score: A scoring function based on a convolutional neural network.

  - RF-Score-VS v2: A scoring function for virtual screening based on a random forest algorithm [18].
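The protocol above states only that the docking grid boxes were centered on the active site. One common way to do this, assumed here rather than taken from the study, is to center the box on the co-crystallized ligand and pad its extent on every side. The function name, the 5 Å padding, and the toy coordinates below are illustrative.

```python
from typing import Iterable, Tuple

Vec3 = Tuple[float, float, float]


def box_from_ligand(coords: Iterable[Vec3], padding: float = 5.0) -> Tuple[Vec3, Vec3]:
    """Return (center, size) of an axis-aligned box around ligand atom coordinates.

    `padding` (in Å) is added on each side of the ligand's extent; 5 Å is an
    arbitrary illustrative default, not a value reported in the study.
    """
    xs, ys, zs = zip(*coords)
    center = tuple((max(axis) + min(axis)) / 2 for axis in (xs, ys, zs))
    size = tuple((max(axis) - min(axis)) + 2 * padding for axis in (xs, ys, zs))
    return center, size


# Toy ligand with two atoms spanning 2 x 4 x 6 Å.
center, size = box_from_ligand([(0.0, 0.0, 0.0), (2.0, 4.0, 6.0)])
print(center, size)
```

The resulting center and size values would then be passed to each docking program in whatever box or binding-site format it expects.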