Demystifying QSAR Validation: A Practical Guide to the OECD Principles for Drug Discovery


Abstract

This article provides a comprehensive guide to the OECD principles for QSAR validation, a cornerstone of modern computational toxicology and drug discovery. Tailored for researchers, scientists, and development professionals, it covers the fundamental rationale behind the principles, a step-by-step methodological breakdown of their application, common pitfalls and optimization strategies, and their role in regulatory acceptance versus alternative frameworks. The goal is to equip practitioners with the knowledge to build, validate, and confidently deploy robust, reliable QSAR models for predictive safety and efficacy assessment.

What Are the OECD QSAR Principles and Why Do They Matter in Biomedical Research?

The Genesis and Global Impact of the OECD Validation Framework

Within the context of a broader thesis on OECD principles for QSAR (Quantitative Structure-Activity Relationship) validation, this whitepaper details the genesis and global impact of the OECD Validation Framework. Established to promote the regulatory acceptance of (Q)SAR models for chemical hazard assessment, the framework provides a standardized, principle-based approach to ensure scientific rigor and reliability. Its development was driven by the need for efficient, animal-free safety assessment methods within regulatory decision-making, aligning with global efforts in green chemistry and the 3Rs (Replacement, Reduction, and Refinement of animal testing).

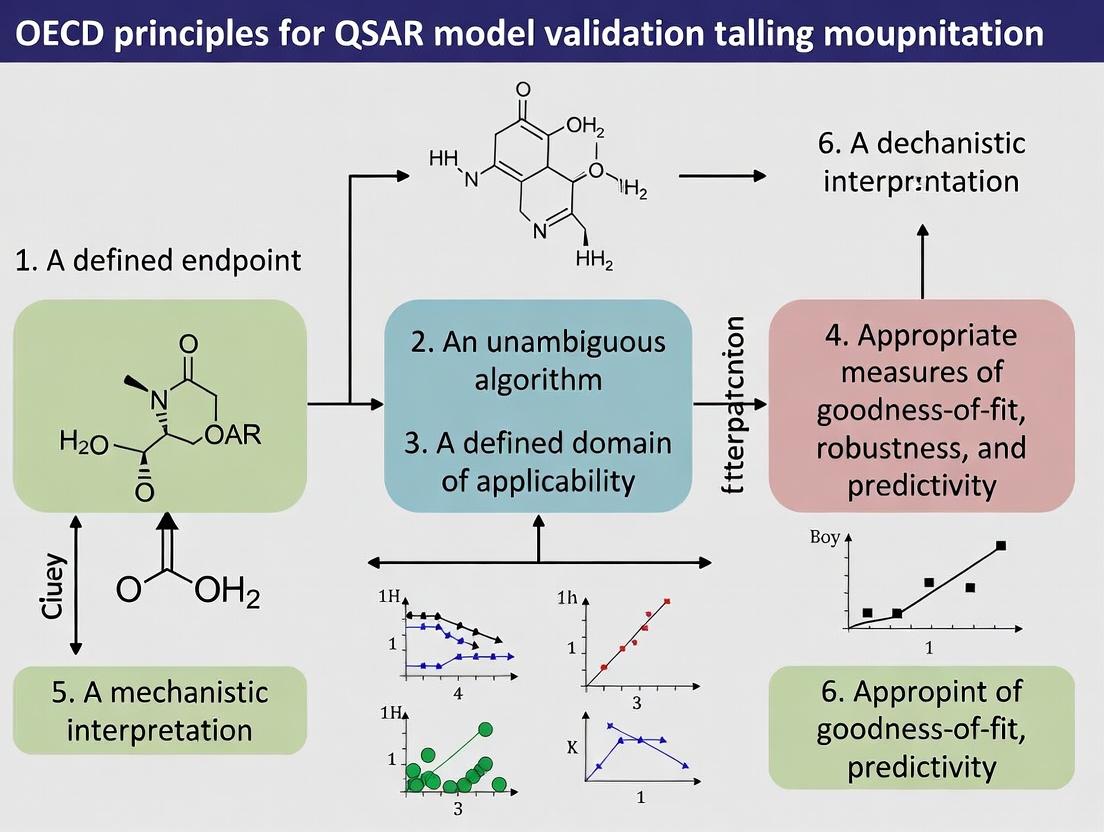

Genesis: The Five OECD Principles for QSAR Validation

The cornerstone of the framework is the set of five validation principles, formally adopted in 2004 (OECD Series on Testing and Assessment No. 49). They were established to evaluate whether a (Q)SAR model is scientifically valid for a specific regulatory purpose.

Table 1: The Five OECD Principles for QSAR Validation

| Principle Number | Principle Name | Core Requirement |
|---|---|---|
| 1 | A defined endpoint | The endpoint being predicted must be unambiguously defined and relevant to a biological or regulatory question. |
| 2 | An unambiguous algorithm | The algorithm for generating the prediction must be described in a transparent and reproducible manner. |
| 3 | A defined domain of applicability | The chemical scope of the model must be clearly defined, indicating the substances for which its predictions are reliable. |
| 4 | Appropriate measures of goodness-of-fit, robustness, and predictivity | The model's performance must be assessed using internal (training set) and external (test set) validation statistics. |
| 5 | A mechanistic interpretation, if possible | A description of the mechanistic link between the chemical descriptors and the endpoint strengthens scientific confidence. |

Figure: Logical Flow of OECD QSAR Validation Principles

Experimental Protocol for QSAR Model Validation

Following the OECD principles, a standard validation protocol involves sequential steps.

Detailed Methodology for Key Validation Experiments:

- Endpoint Curation & Data Preparation: Assemble a high-quality dataset with measured endpoint values (e.g., LC50, mutagenicity). Apply strict quality controls (e.g., removing duplicates, standardizing structures, and discarding unreliable measurements). Split data into a training set (≈70-80%) and a hold-out external test set (≈20-30%) using defined algorithms (e.g., Kennard-Stone, sphere exclusion).

- Model Development & Internal Validation: On the training set, compute molecular descriptors. Develop the model using a chosen algorithm (e.g., Partial Least Squares, Random Forest). Perform internal validation via techniques like:

- Cross-validation (CV): Typically 5-fold or 10-fold. The training set is partitioned into k folds; the model is rebuilt k times, each time predicting the held-out fold, and the pooled predictions are summarized (e.g., as Q²).

- Y-scrambling: The endpoint values are randomly shuffled and the model is rebuilt several times; scrambled models should perform markedly worse than the real model, confirming that it is not based on chance correlation.

- External Validation & Domain Definition: Apply the final model, frozen from the training step, to the external test set. Calculate external validation metrics (see Table 2). Define the Applicability Domain using methods such as leverage (Williams plot), distance-based measures, or descriptor ranges.

- Performance Assessment & Reporting: Calculate and report all statistical metrics for both internal and external validation. Provide a transparent description of the algorithm and, if available, a mechanistic rationale.
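The rational splitting step above can be illustrated with a minimal, pure-Python sketch of the Kennard-Stone algorithm. It assumes the descriptors have already been scaled (e.g., autoscaled) so that Euclidean distances are meaningful; the function name `kennard_stone` and the toy data are illustrative, not taken from any particular package. Note that Kennard-Stone deliberately places the most extreme compounds in the training set, so the test set tends to interpolate within it.

```python
import math
from typing import List, Sequence, Tuple


def euclidean(a: Sequence[float], b: Sequence[float]) -> float:
    """Euclidean distance between two descriptor vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def kennard_stone(X: List[Sequence[float]], n_train: int) -> Tuple[List[int], List[int]]:
    """Split compound indices into (train, test) with the Kennard-Stone max-min rule.

    X holds one pre-scaled descriptor vector per compound.
    """
    n = len(X)
    if not 2 <= n_train <= n:
        raise ValueError("n_train must be between 2 and len(X)")

    # Seed the training set with the two most distant compounds.
    _, i0, j0 = max(
        (euclidean(X[i], X[j]), i, j) for i in range(n) for j in range(i + 1, n)
    )
    selected = [i0, j0]
    remaining = [k for k in range(n) if k not in selected]

    # Distance from each remaining compound to its nearest selected compound.
    nearest = {k: min(euclidean(X[k], X[s]) for s in selected) for k in remaining}

    while len(selected) < n_train:
        # Add the compound that is farthest from everything already selected.
        nxt = max(remaining, key=lambda k: nearest[k])
        selected.append(nxt)
        remaining.remove(nxt)
        for k in remaining:
            nearest[k] = min(nearest[k], euclidean(X[k], X[nxt]))

    return selected, remaining


# Toy example: five compounds described by two scaled descriptors.
X = [[0.0, 0.0], [1.0, 1.0], [0.5, 0.5], [0.1, 0.0], [0.9, 1.0]]
train_idx, test_idx = kennard_stone(X, n_train=3)
print(train_idx, test_idx)  # [0, 1, 2] [3, 4]
```

The two compounds closest to the corners of descriptor space end up in the test set here only because the corners themselves were already taken; with real data, the split should still be checked against the applicability domain.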

Table 2: Key Quantitative Metrics for QSAR Validation (Principle 4)

| Metric | Formula / Description | Acceptability Threshold (Typical) | Purpose |
|---|---|---|---|
| R² (Coefficient of Determination) | R² = 1 - (SSE/SST) | > 0.6 | Goodness-of-fit for training set. |
| Q² (Cross-validated R²) | Calculated during CV (e.g., LOO, 5-fold). | > 0.5 | Measure of internal robustness/predictivity. |
| RMSE (Root Mean Square Error) | RMSE = √[Σ(Ŷᵢ - Yᵢ)²/n] | Context-dependent; lower is better. | Overall error magnitude. |
| MAE (Mean Absolute Error) | MAE = Σ\|Ŷᵢ - Yᵢ\|/n | Context-dependent; lower is better. | Robust measure of average error. |
| Sensitivity (for Classification) | TP / (TP + FN) | > 0.7-0.8 | Ability to identify true positives. |
| Specificity (for Classification) | TN / (TN + FP) | > 0.7-0.8 | Ability to identify true negatives. |
| Concordance (for Classification) | (TP + TN) / Total | > 0.75-0.8 | Overall classification accuracy. |

SSE: Sum of Squared Errors of prediction; SST: Total Sum of Squares; Ŷᵢ: Predicted value; Yᵢ: Experimental value; n: number of compounds; TP: True Positive; TN: True Negative; FP: False Positive; FN: False Negative.
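The Table 2 metrics are simple enough to compute directly. The following is a minimal, dependency-free Python sketch; the function names are illustrative, and classification labels are assumed to be coded as 1 (positive) and 0 (negative).

```python
import math
from typing import Dict, Sequence


def regression_metrics(y_obs: Sequence[float], y_pred: Sequence[float]) -> Dict[str, float]:
    """R², RMSE and MAE as defined in Table 2."""
    n = len(y_obs)
    mean_obs = sum(y_obs) / n
    sse = sum((o - p) ** 2 for o, p in zip(y_obs, y_pred))  # sum of squared errors
    sst = sum((o - mean_obs) ** 2 for o in y_obs)           # total sum of squares
    return {
        "R2": 1 - sse / sst,
        "RMSE": math.sqrt(sse / n),
        "MAE": sum(abs(o - p) for o, p in zip(y_obs, y_pred)) / n,
    }


def classification_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Sensitivity, specificity and concordance from a 2x2 confusion matrix."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "concordance": (tp + tn) / len(pairs),
    }


reg = regression_metrics([1.0, 2.0, 3.0, 4.0], [1.1, 1.9, 3.2, 3.8])
cls = classification_metrics([1, 1, 1, 0, 0, 0, 0, 1], [1, 0, 1, 0, 0, 1, 0, 1])
print(reg)
print(cls)  # {'sensitivity': 0.75, 'specificity': 0.75, 'concordance': 0.75}
```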

Figure: Experimental Workflow for QSAR Model Validation

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Tools and Resources for QSAR Development & Validation

| Item/Resource | Function in QSAR Validation | Example(s) |
|---|---|---|
| Curated Chemical Databases | Source of high-quality experimental endpoint data for model training and testing. | EPA CompTox Chemicals Dashboard, OECD QSAR Toolbox, ChEMBL. |
| Chemical Standardization Tools | Ensure consistent representation of chemical structures (e.g., tautomers, salts) before descriptor calculation. | RDKit, OpenBabel, KNIME. |
| Molecular Descriptor Software | Calculate numerical representations of chemical structures that serve as model input variables. | DRAGON, PaDEL-Descriptor, RDKit Descriptors. |
| Machine Learning/Modeling Platforms | Provide algorithms for building regression and classification models and performing internal validation. | R (caret, randomForest), Python (scikit-learn), WEKA, MOE. |
| Applicability Domain (AD) Tools | Implement algorithms to define the chemical space where model predictions are considered reliable. | AMBIT, standalone AD software within the QSAR Toolbox. |
| Validation Statistics Software/Code | Calculate the suite of performance metrics required by OECD Principle 4. | Custom scripts in R/Python, QSARINS, Model Validation reports in KNIME. |
| OECD QSAR Toolbox | Integrative software supporting grouping, read-across, and profiling, with built-in functionality for applying the OECD principles; a primary tool for regulatory application of (Q)SARs and filling data gaps. | OECD QSAR Toolbox. |

Global Impact and Regulatory Adoption

The framework has become a global benchmark, transforming regulatory science and chemical management.

Table 4: Global Impact of the OECD QSAR Validation Framework

| Region/Program | Impact and Adoption | Key Legislation/Context |
|---|---|---|
| European Union | Cornerstone of REACH legislation. Allows use of (Q)SAR predictions instead of testing for specific endpoints, provided they meet OECD principles. | REACH (EC 1907/2006), ECHA Guidance on QSARs. |
| United States | Used by EPA for chemical screening and prioritization under TSCA. Integrated into the Endocrine Disruptor Screening Program (EDSP). | TSCA, EPA's New Chemicals Program, OCSPP guidelines. |
| International Collaboration | Facilitates mutual acceptance of data (MAD) among OECD member countries, reducing non-tariff trade barriers. | OECD Mutual Acceptance of Data (MAD) system. |
| Global Harmonization | Provides a common language and standard, enabling joint projects and data sharing worldwide (e.g., IATA). | Integrated Approaches to Testing and Assessment (IATA). |
| Industry | Provides a clear roadmap for developing in-house models for early screening and R&D decision-making, reducing costs and animal use. | Internal safety assessment, green chemistry design. |

Figure: Global Impact Pathways of the OECD Framework

The OECD Validation Framework for QSARs, grounded in its five principles, has evolved from a theoretical construct into a foundational element of modern regulatory toxicology and green chemistry. By providing a rigorous, transparent, and internationally harmonized methodology for assessing model credibility, it has catalyzed the regulatory acceptance of non-animal methods, fostered global cooperation, and established an enduring standard for predictive science in chemical safety assessment. Its continued evolution remains critical for addressing new endpoints and emerging chemical challenges.

Within the context of quantitative structure-activity relationship (QSAR) model validation for regulatory use, the Organisation for Economic Co-operation and Development (OECD) principles provide the definitive framework. This whitepaper offers an in-depth technical guide to these five principles, explaining their role as a cornerstone in predictive toxicology and drug development research. Adherence to these principles ensures that QSAR models are scientifically valid, transparent, and fit for purpose in chemical risk assessment and pharmaceutical screening.

The Five OECD Principles: A Technical Deconstruction

The OECD principles were established to facilitate the regulatory acceptance of QSAR models. The following table summarizes the core quantitative and qualitative requirements of each principle.

Table 1: The Five OECD Principles for QSAR Validation

| Principle | Core Requirement | Key Metrics & Descriptors |
|---|---|---|
| 1. A defined endpoint | The biological or chemical effect being predicted must be unambiguous. | Experimental protocol identifier (e.g., OECD TG 471); measured variable (e.g., LD50, EC50, Ames test result); units of measurement (e.g., mg/L, mmol/L, binary (+/-)). |
| 2. An unambiguous algorithm | A clear description of the computational procedure used to generate the prediction. | Algorithm type (e.g., Multiple Linear Regression, Random Forest, Neural Network); algorithm software & version; complete set of equations and/or source code. |
| 3. A defined domain of applicability | The chemical space and response range for which the model is reliable must be specified. | Structural/descriptor ranges (e.g., log P: -2 to 5, MW: 50-500 g/mol); applicability domain method (e.g., leverage, distance-based, PCA); percentage of training set within domain (typically >80%). |
| 4. Appropriate measures of goodness-of-fit, robustness, and predictivity | The model must be statistically validated internally and externally. | Goodness-of-fit: R², RMSE (training set); robustness: Q² (LOO or LCO-CV), sPRESS; predictivity: R²ext, RMSEext, concordance, sensitivity/specificity (test set). |
| 5. A mechanistic interpretation, if possible | The model should be associated with a biologically meaningful mechanism. | Key molecular descriptors (e.g., log P, HOMO/LUMO, polar surface area); correlation with known toxicophores or pharmacophores; alignment with Adverse Outcome Pathways (AOPs). |

Experimental Protocols for QSAR Validation

The validation of a QSAR model against the OECD principles requires rigorous experimental design. The following protocols are standard in the field.

Protocol 1: Defining the Applicability Domain (Principle 3)

Objective: To mathematically define the chemical space where the model's predictions are reliable. Methodology:

- Descriptor Calculation: Compute relevant molecular descriptors (e.g., constitutional, topological, electronic) for the training set compounds.

- Space Definition: Use a method such as:

- Leverage Approach: Calculate the hat matrix H = X(XᵀX)⁻¹Xᵀ, where X is the training-set descriptor matrix; the leverage of a new compound with descriptor vector x is h = xᵀ(XᵀX)⁻¹x. The warning leverage h* is typically set to 3(p+1)/n, where p is the number of model descriptors and n is the number of training compounds. A new compound with h > h* is outside the domain.

- Distance-Based Approach: Calculate the standardized Euclidean distance of a new compound to its k-nearest neighbors in the training set in descriptor space. A distance exceeding a predefined threshold (e.g., the maximum distance observed in the training set) places the compound outside the domain.

- Documentation: Report the method, parameters, and the percentage of the training set considered "inside" the domain.
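The leverage calculation in Protocol 1 can be sketched in plain Python as follows. This is an illustrative sketch, not a reference implementation: it prepends an intercept column so that X has p + 1 columns (matching the 3(p+1)/n warning leverage), inverts XᵀX with a small Gauss-Jordan routine that is only suitable for a handful of descriptors, and the class name `LeverageDomain` is hypothetical. In practice a linear-algebra library would replace the hand-written inverse.

```python
from typing import List, Sequence

Matrix = List[List[float]]


def invert(a: Matrix) -> Matrix:
    """Gauss-Jordan inversion with partial pivoting (small matrices only)."""
    n = len(a)
    aug = [list(map(float, row)) + [1.0 if i == j else 0.0 for j in range(n)]
           for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("X^T X is singular; check for collinear descriptors")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        pv = aug[col][col]
        aug[col] = [v / pv for v in aug[col]]
        for r in range(n):
            if r != col:
                factor = aug[r][col]
                aug[r] = [rv - factor * cv for rv, cv in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]


class LeverageDomain:
    """Leverage-based applicability domain with warning leverage h* = 3(p+1)/n."""

    def __init__(self, X_train: List[Sequence[float]]):
        Xa = [[1.0, *row] for row in X_train]   # prepend intercept column
        k = len(Xa[0])                           # p + 1 columns
        xtx = [[sum(r[i] * r[j] for r in Xa) for j in range(k)] for i in range(k)]
        self.xtx_inv = invert(xtx)
        self.h_star = 3 * k / len(Xa)

    def leverage(self, x: Sequence[float]) -> float:
        """h = x^T (X^T X)^-1 x for one compound (intercept term included)."""
        xa = [1.0, *x]
        k = len(xa)
        return sum(xa[i] * self.xtx_inv[i][j] * xa[j] for i in range(k) for j in range(k))

    def in_domain(self, x: Sequence[float]) -> bool:
        return self.leverage(x) <= self.h_star


# One descriptor, ten training compounds: h* = 3 * 2 / 10 = 0.6.
X_train = [[float(v)] for v in range(10)]
ad = LeverageDomain(X_train)
print(ad.h_star)                                   # 0.6
print(ad.in_domain([4.0]), ad.in_domain([20.0]))   # True False
```

As a sanity check, the leverages of the training compounds always sum to the number of model parameters (p + 1), which is 2 in this toy example.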

Protocol 2: External Validation of Predictivity (Principle 4)

Objective: To assess the model's ability to predict new, untested data. Methodology:

- Data Splitting: Before model development, randomly divide the full dataset into a Training Set (~70-80%) for model building and a Test Set (~20-30%) for validation. Ensure both sets represent the chemical and response space.

- Model Development: Develop the QSAR model using only the training set data.

- Prediction & Evaluation: Use the finalized model to predict the endpoint values for the withheld test set.

- Statistical Calculation: Compute external validation metrics:

- R²ext: Coefficient of determination for the test set predictions.

- RMSEext: Root mean square error for the test set.

- Concordance Correlation Coefficient (CCC): Measures agreement between observed and predicted values.

- For classification models: Calculate Sensitivity, Specificity, and Accuracy.
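The external metrics listed above are straightforward to compute once test-set predictions are available. The sketch below is a minimal illustration under two stated assumptions: R²ext is computed with the test-set mean in the denominator (one of several variants in use; others substitute the training-set mean), and the CCC follows Lin's definition, which, unlike a plain correlation coefficient, penalizes systematic bias between observed and predicted values. Function names are illustrative.

```python
import math
from typing import Sequence


def r2_ext(y_obs: Sequence[float], y_pred: Sequence[float]) -> float:
    """External R² using the test-set mean of the observed values."""
    mean_obs = sum(y_obs) / len(y_obs)
    sse = sum((o - p) ** 2 for o, p in zip(y_obs, y_pred))
    sst = sum((o - mean_obs) ** 2 for o in y_obs)
    return 1 - sse / sst


def rmse_ext(y_obs: Sequence[float], y_pred: Sequence[float]) -> float:
    """Root mean square error on the external test set."""
    return math.sqrt(sum((o - p) ** 2 for o, p in zip(y_obs, y_pred)) / len(y_obs))


def ccc(y_obs: Sequence[float], y_pred: Sequence[float]) -> float:
    """Lin's concordance correlation coefficient."""
    n = len(y_obs)
    mo, mp = sum(y_obs) / n, sum(y_pred) / n
    cov = sum((o - mo) * (p - mp) for o, p in zip(y_obs, y_pred))
    var_o = sum((o - mo) ** 2 for o in y_obs)
    var_p = sum((p - mp) ** 2 for p in y_pred)
    return 2 * cov / (var_o + var_p + n * (mo - mp) ** 2)


y_test = [1.0, 2.0, 3.0, 4.0]
biased = [2.0, 3.0, 4.0, 5.0]  # perfectly correlated but shifted by +1
print(ccc(y_test, y_test))      # 1.0
print(round(ccc(y_test, biased), 3), round(r2_ext(y_test, biased), 3))  # 0.714 0.2
```

The shifted predictions have a Pearson correlation of 1 with the observations, yet both CCC and R²ext drop, which is why agreement-type metrics are preferred for external validation.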

Visualizing the QSAR Validation Workflow

The logical process of developing and validating an OECD-compliant QSAR model is depicted below.

Figure: QSAR Model Development and Validation Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing the OECD principles requires specific tools and materials. The following table lists key resources.

Table 2: Key Research Reagent Solutions for QSAR Validation

| Item | Function in QSAR Validation |
|---|---|
| Curated Chemical Databases (e.g., EPA CompTox, ChEMBL) | Provide high-quality, structured biological endpoint data for model training and testing (Principle 1). |
| Cheminformatics Software (e.g., RDKit, PaDEL-Descriptor) | Generate standardized molecular descriptors and fingerprints necessary for algorithm development and domain definition (Principles 2 & 3). |
| Statistical & ML Platforms (e.g., R, Python/scikit-learn, KNIME) | Implement modeling algorithms, perform cross-validation, and calculate all required goodness-of-fit/predictivity metrics (Principles 2 & 4). |
| Applicability Domain Toolkits (e.g., AMBIT, ISIDA/DA) | Specialized software for calculating leverage, distances, and other measures to formally define the model's domain (Principle 3). |
| Adverse Outcome Pathway (AOP) Knowledge Bases (e.g., OECD AOP Wiki) | Provide structured biological knowledge to support mechanistic interpretation of model descriptors (Principle 5). |
| QSAR Reporting Formats (e.g., QMRF, QPRF) | Standardized templates for documenting all model parameters and validation results, ensuring transparency and regulatory compliance. |

Quantitative Structure-Activity Relationship (QSAR) models, once primarily tools for chemical hazard assessment and regulatory compliance, have undergone a paradigm shift. Their application now critically underpins modern drug discovery pipelines. This whitepaper details this expansion, firmly framing the discussion within the context of the Organisation for Economic Co-operation and Development (OECD) principles for QSAR validation. We provide a technical guide on implementing these principles to develop robust, reliable models suitable for both regulatory submission and early-stage pharmaceutical research.

The migration of QSARs from regulatory toxicology to drug discovery necessitates an unwavering commitment to model validation. In regulatory contexts (e.g., REACH, ICH), validation ensures predictions are defensible for priority-setting and risk assessment. In drug discovery, it builds confidence in virtual screening, lead optimization, and ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) prediction. The OECD principles provide the universal framework for this rigor.

The OECD Principles: A Framework for Reliability

For a QSAR model to be considered valid for use, it must satisfy the following five principles:

- A defined endpoint: The biological or chemical effect being modeled must be unambiguous and experimentally measurable.

- An unambiguous algorithm: A transparent description of the mathematical procedure and software used.

- A defined domain of applicability: Explicit boundaries within which the model's predictions are reliable.

- Appropriate measures of goodness-of-fit, robustness, and predictivity: Quantitative statistical validation.

- A mechanistic interpretation, if possible: Relating model descriptors to biological or chemical mechanisms increases scientific plausibility.

Core Methodologies & Experimental Protocols

Protocol for Developing an OECD-Compliant QSAR Model

Objective: To construct a validated QSAR model for predicting a specific endpoint (e.g., hERG channel inhibition, aqueous solubility).

Materials & Data:

- Chemical Dataset: A curated set of compounds with reliable, experimental endpoint data.

- Descriptor Calculation Software: e.g., DRAGON, PaDEL-Descriptor, RDKit.

- Modeling Platform: e.g., Python/R with scikit-learn/keras, WEKA, MOE.

- Validation Suite: Software for calculating the validation metrics required by OECD Principle 4.

Procedure:

- Data Curation: Clean structures, remove duplicates, correct experimental errors. Standardize chemical representation (e.g., tautomer, protonation state at physiological pH).

- Descriptor Generation & Filtering: Calculate molecular descriptors (2D, 3D) and fingerprints. Remove constant, near-constant, and highly correlated descriptors.

- Data Splitting: Partition data into Training Set (∼70-80%), Test Set (∼10-15%), and an external Validation Set (∼10-15%) not used in any model building.

- Model Building (Training Phase): Apply machine learning algorithms (e.g., Random Forest, Support Vector Machine, Neural Networks) on the training set. Use internal validation (e.g., 5-fold cross-validation) to tune hyperparameters.

- Internal Validation: Assess the model on the held-out Test Set. Calculate performance metrics (see Table 1).

- Domain of Applicability (DA) Definition: Establish a DA using methods like leverage (Williams plot), distance-based measures (e.g., Euclidean distance in descriptor space), or probability density-based approaches.

- External Validation: The ultimate test. Predict the endpoint for the external Validation Set compounds. Performance must meet pre-defined acceptance criteria.

- Mechanistic Interpretation: Analyze descriptor importance (e.g., feature ranking from Random Forest, PLS coefficients) to link molecular properties to the endpoint.
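The descriptor-filtering step in the procedure above can be sketched as below. This is an illustrative pure-Python version under assumed defaults, not a prescribed procedure: a descriptor is treated as near-constant when a single value accounts for more than 95% of compounds, and a descriptor is dropped when its absolute Pearson correlation with an already-kept descriptor exceeds 0.95 (a greedy rule that keeps whichever descriptor appears first). Both cut-offs and the descriptor names are hypothetical.

```python
import math
from collections import Counter
from typing import Dict, List, Sequence


def pearson(a: Sequence[float], b: Sequence[float]) -> float:
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    if va == 0 or vb == 0:
        return 0.0  # undefined for constant columns; treat as uncorrelated
    return cov / math.sqrt(va * vb)


def filter_descriptors(table: Dict[str, List[float]],
                       max_mode_share: float = 0.95,
                       max_abs_r: float = 0.95) -> List[str]:
    """Return descriptor names kept after near-constant and correlation filtering."""
    # 1) Drop constant / near-constant descriptors.
    varied = []
    for name, values in table.items():
        mode_count = Counter(values).most_common(1)[0][1]
        if mode_count / len(values) <= max_mode_share:
            varied.append(name)
    # 2) Greedy correlation filter, in input order.
    kept: List[str] = []
    for name in varied:
        if all(abs(pearson(table[name], table[k])) <= max_abs_r for k in kept):
            kept.append(name)
    return kept


descriptors = {
    "MW":    [100.0, 150.0, 200.0, 250.0, 300.0],
    "MW_x2": [200.0, 300.0, 400.0, 500.0, 600.0],  # redundant copy of MW
    "const": [1.0, 1.0, 1.0, 1.0, 1.0],
    "logP":  [1.0, 3.0, 2.0, 5.0, 4.0],
}
print(filter_descriptors(descriptors))  # ['MW', 'logP']
```

Because the correlation filter is greedy, the order of descriptors matters; fitting these filters on the training set only keeps the test set genuinely unseen.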

Protocol for Applying a QSAR Model in Virtual Screening

Objective: To computationally prioritize compounds from a large library for experimental testing.

Procedure:

- Library Preparation: Prepare a database of purchasable or in-house compounds (e.g., 1M molecules). Standardize structures.

- Descriptor Calculation: Compute the same set of descriptors used in the trained model.

- DA Filtering: For each compound, check if it falls within the model's DA. Flag or exclude outliers.

- Prediction & Ranking: Generate predictions for all compounds within the DA. Rank them by favorable predicted activity/property.

- Diversity & Visual Inspection: Select a subset of top-ranked compounds ensuring structural diversity. Perform expert chemoinformatic review.
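The screening steps can be strung together roughly as follows. This is a hypothetical sketch: `predict` and `in_domain` stand in for a trained model and its applicability-domain check, fingerprints are represented as sets of on-bit indices, higher predicted values are assumed to be more favorable, and the greedy Tanimoto cut-off of 0.7 used for diversity is an illustrative choice rather than a standard.

```python
from typing import Callable, FrozenSet, List, Sequence, Tuple

Compound = Tuple[str, Sequence[float], FrozenSet[int]]  # (id, descriptors, fingerprint bits)


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def prioritize(library: List[Compound],
               predict: Callable[[Sequence[float]], float],
               in_domain: Callable[[Sequence[float]], bool],
               top_n: int,
               max_sim: float = 0.7) -> Tuple[List[str], List[str]]:
    """Return (selected ids, ids flagged as outside the applicability domain)."""
    scored, flagged = [], []
    for cid, desc, fp in library:
        if not in_domain(desc):
            flagged.append(cid)          # no prediction is made outside the DA
            continue
        scored.append((predict(desc), cid, fp))
    scored.sort(key=lambda t: t[0], reverse=True)  # best predicted first

    picked: List[Tuple[float, str, FrozenSet[int]]] = []
    for score, cid, fp in scored:
        if len(picked) >= top_n:
            break
        # Skip compounds too similar to one already picked (diversity filter).
        if all(tanimoto(fp, other_fp) < max_sim for _, _, other_fp in picked):
            picked.append((score, cid, fp))
    return [cid for _, cid, _ in picked], flagged


library = [
    ("A", [1.0], frozenset({1, 2, 3})),
    ("B", [0.9], frozenset({1, 2, 3})),   # near-duplicate of A
    ("C", [0.5], frozenset({7, 8})),
    ("D", [5.0], frozenset({9})),         # outside the toy domain
]
selected, outside = prioritize(library, predict=lambda d: d[0],
                               in_domain=lambda d: d[0] <= 2.0, top_n=2)
print(selected, outside)  # ['A', 'C'] ['D']
```

The shortlist produced this way is only a starting point for the expert review described in the last step.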

Data Presentation: Performance Metrics for Validated QSARs

Table 1: Key Statistical Metrics for QSAR Model Validation

| Metric | Formula / Description | Interpretation | Acceptability Threshold (Typical) |
|---|---|---|---|
| Goodness-of-Fit | Measures model performance on training data | | |
| R² (Training) | Coefficient of Determination | Proportion of variance explained by the model. | > 0.7 |
| RMSE (Training) | Root Mean Square Error | Average magnitude of prediction error. | Context-dependent. |
| Robustness | Measures model stability via internal CV | | |
| Q²(LOO) or R²(CV) | Predictive squared correlation coefficient from leave-one-out or k-fold CV. | Should be close to R² (training). | > 0.6 (some guidelines accept Q² > 0.5) |
| Predictivity | Measures performance on unseen data | | |
| R² (Test/Ext) | R² on external test/validation set. | Best available estimate of performance on new compounds. | > 0.6 |
| RMSE (Test/Ext) | RMSE on external set. | Should be comparable to training RMSE. | Context-dependent. |
| Classification Metrics | For categorical endpoints (e.g., active/inactive) | | |
| Accuracy | (TP+TN)/(TP+TN+FP+FN) | Overall correct classification rate. | > 0.7 |
| Sensitivity/Recall | TP/(TP+FN) | Ability to identify true actives. | > 0.7 |
| Specificity | TN/(TN+FP) | Ability to identify true inactives. | > 0.7 |
| AUC-ROC | Area Under ROC Curve | Overall ranking performance. | > 0.8 |

TP=True Positive, TN=True Negative, FP=False Positive, FN=False Negative
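The AUC-ROC in Table 1 can be computed without plotting a curve, because it equals the probability that a randomly chosen active is scored above a randomly chosen inactive (ties counted as one half). A minimal sketch, assuming labels coded as 1/0 and that higher scores mean "more likely active"; the quadratic pairwise loop is written for clarity, not speed.

```python
from typing import Sequence


def auc_roc(y_true: Sequence[int], scores: Sequence[float]) -> float:
    """AUC via pairwise comparison of actives (1) and inactives (0)."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one active and one inactive")
    wins = 0.0
    for p in pos:
        for q in neg:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5   # ties count half
    return wins / (len(pos) * len(neg))


print(auc_roc([1, 1, 0, 0], [0.9, 0.4, 0.5, 0.1]))  # 0.75
```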

Visualizing the Integrated Workflow

Figure: OECD-Compliant QSAR Model Development and Application Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials & Tools for QSAR Modeling

| Item/Category | Example Product/Software | Primary Function in QSAR Workflow |
|---|---|---|
| Chemical Databases | PubChem, ChEMBL, ZINC15, DrugBank | Sources of experimental bioactivity and property data for model training and validation. |
| Descriptor Calculation | RDKit (Open Source), DRAGON, MOE, PaDEL-Descriptor | Generates numerical representations (descriptors/fingerprints) of molecular structures. |
| Modeling & ML Platforms | Python (scikit-learn, TensorFlow), R (caret), WEKA, KNIME | Provides algorithms for building regression/classification models (RF, SVM, ANN, etc.). |
| Validation Software | QSAR-Co, MFMLab, in-house scripts | Calculates OECD validation metrics and defines the Domain of Applicability. |
| Cheminformatics Suites | OpenBabel, ChemAxon JChem, Schrödinger Suite | Handles chemical file format conversion, standardization, and basic molecular properties. |
| Visualization | Matplotlib/Seaborn (Python), Spotfire, Graphviz | Creates plots for model diagnostics (Williams plots, ROC curves) and workflow diagrams. |
| High-Performance Computing | Local Clusters, Cloud (AWS, GCP) | Provides computational power for descriptor calculation and training on large datasets. |

The development and validation of Quantitative Structure-Activity Relationship (QSAR) models represent a cornerstone in modern computational toxicology and drug discovery. This guide is framed within the broader thesis that adherence to the Organisation for Economic Co-operation and Development (OECD) principles for QSAR validation is not merely a regulatory checkbox but a foundational framework for ensuring scientific integrity. These principles—a defined endpoint, an unambiguous algorithm, a defined domain of applicability, appropriate measures of goodness-of-fit, robustness, and predictivity, and a mechanistic interpretation, where possible—provide the scaffold for achieving reliability, transparency, and regulatory readiness. For researchers and drug development professionals, rigorous implementation of these principles translates to trustworthy predictions that can confidently inform safety assessments and early-stage lead optimization.

Core Methodologies & Experimental Protocols for QSAR Validation

Protocol for Defining the Applicability Domain (AD)

The Applicability Domain defines the chemical space on which the model is trained and for which its predictions are reliable.

- Descriptor Calculation: Compute a relevant set of molecular descriptors (e.g., topological, electronic, geometrical) for the entire training set using standardized software (e.g., RDKit, Dragon).

- Domain Characterization: Employ a combination of methods:

- Range-Based: For each descriptor, define the min/max values observed in the training set.

- Distance-Based: Calculate the similarity of a new compound to the training set. Common metrics include the Euclidean distance or Mahalanobis distance in the principal component space of the descriptors.

- Leverage Approach: Compute the leverage (h) for a new compound using the descriptor matrix (X) of the training set: $h = x^T (X^T X)^{-1} x$, where x is the descriptor vector of the new compound. A leverage greater than the warning leverage $h^* = 3p/n$ (where p is the number of model parameters, i.e., descriptors plus the intercept, and n is the number of training compounds) indicates the compound is outside the AD.

- Decision Rule: A compound is considered within the AD only if it satisfies all chosen criteria (e.g., within range for >95% of descriptors and similarity distance below a defined threshold).
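The range-based and distance-based checks, combined with the decision rule above, might look like this in a minimal sketch. Assumptions not taken from the protocol: descriptors are already standardized, the distance is the mean Euclidean distance to the k nearest training compounds, and when no threshold is supplied it defaults to the largest such distance among the training compounds themselves (each compared with the others, excluding itself). The class name is hypothetical.

```python
import math
from typing import List, Optional, Sequence


class RangeDistanceDomain:
    """AD check: descriptor-range coverage AND k-nearest-neighbour distance."""

    def __init__(self, X_train: List[Sequence[float]], k: int = 3,
                 range_cutoff: float = 0.95,
                 dist_threshold: Optional[float] = None):
        self.X = [list(map(float, row)) for row in X_train]
        columns = list(zip(*self.X))
        self.lo = [min(c) for c in columns]
        self.hi = [max(c) for c in columns]
        self.k = k
        self.range_cutoff = range_cutoff
        if dist_threshold is None:
            # Assumed default: largest kNN distance seen within the training set.
            dist_threshold = max(self.knn_distance(x, exclude=i)
                                 for i, x in enumerate(self.X))
        self.dist_threshold = dist_threshold

    def knn_distance(self, x: Sequence[float], exclude: Optional[int] = None) -> float:
        """Mean Euclidean distance to the k nearest training compounds."""
        dists = sorted(math.dist(x, t) for i, t in enumerate(self.X) if i != exclude)
        nearest = dists[: self.k]
        return sum(nearest) / len(nearest)

    def in_range_fraction(self, x: Sequence[float]) -> float:
        """Fraction of descriptors lying within the training min/max range."""
        inside = sum(lo <= v <= hi for v, lo, hi in zip(x, self.lo, self.hi))
        return inside / len(x)

    def in_domain(self, x: Sequence[float]) -> bool:
        # Both criteria must hold, as in the decision rule above.
        return (self.in_range_fraction(x) > self.range_cutoff
                and self.knn_distance(x) <= self.dist_threshold)


X_train = [[0, 0], [1, 0], [0, 1], [1, 1], [0.5, 0.5]]
ad = RangeDistanceDomain(X_train, k=2)
print(ad.in_domain([0.5, 0.4]), ad.in_domain([3.0, 3.0]))  # True False
```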

Protocol for Assessing Robustness (Internal Validation)

Robustness evaluates the model's stability to perturbations in the training data.

- Resampling Procedure: Perform k-fold cross-validation (typically k=5 or 10) or repeated leave-many-out validation.

- Model Training & Prediction: For each iteration, hold out a subset of data, train the model on the remaining data, and predict the held-out values.

- Metric Calculation: Calculate performance metrics (e.g., $Q^2$ (cross-validated R²), RMSEcv) for the predictions across all iterations.

- Acceptance Criterion: A model is generally considered robust if $Q^2 > 0.5$, though domain-specific thresholds apply.
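The resampling procedure above, together with the y-scrambling check mentioned earlier, can be sketched as follows. This is a minimal illustration, not a prescribed implementation: a one-descriptor least-squares line (`fit_line`) stands in for whatever modeling algorithm is actually used, folds are formed by shuffling indices with a fixed seed, and Q² is computed as 1 − PRESS/SST using the overall mean of y. All function names are hypothetical.

```python
import random
from typing import Callable, List, Sequence

Fit = Callable[[List[float], List[float]], Callable[[float], float]]


def fit_line(x: List[float], y: List[float]) -> Callable[[float], float]:
    """Toy stand-in model: least-squares line on a single descriptor."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sxx
    intercept = my - slope * mx
    return lambda v: intercept + slope * v


def q2_kfold(x: Sequence[float], y: Sequence[float], k: int = 5,
             seed: int = 0, fit: Fit = fit_line) -> float:
    """Q² = 1 - PRESS / SST, with PRESS accumulated over k held-out folds."""
    idx = list(range(len(y)))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    press = 0.0
    for fold in folds:
        held_out = set(fold)
        train = [i for i in idx if i not in held_out]
        model = fit([x[i] for i in train], [y[i] for i in train])  # refit without the fold
        press += sum((y[i] - model(x[i])) ** 2 for i in fold)
    mean_y = sum(y) / len(y)
    sst = sum((v - mean_y) ** 2 for v in y)
    return 1 - press / sst


def y_scrambling(x: Sequence[float], y: Sequence[float], rounds: int = 20,
                 k: int = 5, seed: int = 0, fit: Fit = fit_line) -> List[float]:
    """Q² values obtained after randomly permuting the endpoint values."""
    rng = random.Random(seed)
    scores = []
    for r in range(rounds):
        y_perm = list(y)
        rng.shuffle(y_perm)
        scores.append(q2_kfold(x, y_perm, k=k, seed=r, fit=fit))
    return scores


x = [float(i) for i in range(20)]
y = [2 * v + 1 + (0.1 if i % 2 else -0.1) for i, v in enumerate(x)]
q2 = q2_kfold(x, y)
scrambled = y_scrambling(x, y)
print(round(q2, 4), max(scrambled) < q2)
```

A genuine structure-activity signal should give a Q² well above every scrambled value; if scrambled models score comparably, the original fit is likely a chance correlation.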

Protocol for Assessing Predictivity (External Validation)

Predictivity is the ultimate test of a model's performance on truly independent data.

- Data Splitting: Initially, the full dataset is rationally split into a training set (~70-80%) and a completely independent test set (~20-30%). Splitting should ensure the test set lies within the AD of the model built on the training set.

- Blind Prediction: The model is built exclusively on the training set. Its finalized form (algorithm, parameters) is then used to predict the endpoint values for the test set compounds without any further adjustment.

- Metric Calculation: Calculate external validation metrics comparing predictions to experimental values for the test set (see Table 1).

Data Presentation: Key Validation Metrics

Table 1: Core Quantitative Metrics for QSAR Model Validation

| Metric | Formula | Interpretation | Ideal Value |
|---|---|---|---|
| R² (Fit) | $1 - \frac{\sum (y_i - \hat{y}_i)^2}{\sum (y_i - \bar{y})^2}$ | Goodness-of-fit for training data. | > 0.7 |
| Q² (LOO-CV) | $1 - \frac{\sum (y_i - \hat{y}_{(i)})^2}{\sum (y_i - \bar{y})^2}$ | Internal robustness via leave-one-out cross-validation; $\hat{y}_{(i)}$ is the prediction for compound $i$ from a model fitted without it. | > 0.5 |
| RMSE | $\sqrt{\frac{1}{n} \sum (y_i - \hat{y}_i)^2}$ | Average prediction error (same units as y). | As low as possible |
| RMSEext | $\sqrt{\frac{1}{n_{ext}} \sum (y_{ext} - \hat{y}_{ext})^2}$ | Average error on the external test set. | Comparable to RMSE |
| CCC | $\frac{2 \cdot \sum (y_i - \bar{y})(\hat{y}_i - \bar{\hat{y}})}{\sum (y_i - \bar{y})^2 + \sum (\hat{y}_i - \bar{\hat{y}})^2 + n(\bar{y} - \bar{\hat{y}})^2}$ | Concordance correlation coefficient; measures agreement. | Close to 1 |
| MAE | $\frac{1}{n} \sum \lvert y_i - \hat{y}_i \rvert$ | Mean Absolute Error; less sensitive to outliers than RMSE. | As low as possible |
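For an ordinary least-squares model, the Q² (LOO-CV) entry in Table 1 does not actually require n refits: the leave-one-out residual equals the ordinary residual divided by (1 − hᵢᵢ), where hᵢᵢ is the compound's leverage. The sketch below checks this identity numerically for a one-descriptor model. It applies only to linear least squares, not to machine-learning models such as Random Forest, and the data are made up for illustration.

```python
from typing import List, Tuple


def fit_line(x: List[float], y: List[float]) -> Tuple[float, float]:
    """Least-squares intercept and slope for a single descriptor."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sxx
    return my - slope * mx, slope


def sst(y: List[float]) -> float:
    my = sum(y) / len(y)
    return sum((b - my) ** 2 for b in y)


def q2_loo_shortcut(x: List[float], y: List[float]) -> float:
    """Q² from PRESS computed via e_i / (1 - h_ii), without refitting."""
    n = len(x)
    mx = sum(x) / n
    sxx = sum((a - mx) ** 2 for a in x)
    b0, b1 = fit_line(x, y)
    press = 0.0
    for a, b in zip(x, y):
        h = 1 / n + (a - mx) ** 2 / sxx     # leverage for a one-descriptor model
        e = b - (b0 + b1 * a)               # ordinary residual
        press += (e / (1 - h)) ** 2         # leave-one-out residual
    return 1 - press / sst(y)


def q2_loo_refit(x: List[float], y: List[float]) -> float:
    """Same quantity by brute force: refit n times, each time leaving one compound out."""
    press = 0.0
    for i in range(len(x)):
        xs, ys = x[:i] + x[i + 1:], y[:i] + y[i + 1:]
        b0, b1 = fit_line(xs, ys)
        press += (y[i] - (b0 + b1 * x[i])) ** 2
    return 1 - press / sst(y)


x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
y = [1.2, 1.9, 3.2, 3.8, 5.1, 6.3]
print(q2_loo_shortcut(x, y), q2_loo_refit(x, y))  # equal up to floating-point rounding
```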

Table 2: Summary of OECD Principle Implementation Workflow

| OECD Principle | Technical Implementation Method | Output/Documentation for Transparency |
|---|---|---|
| 1. Defined Endpoint | Use standardized experimental protocols (e.g., OECD TG). | Clear endpoint definition, units, measurement conditions. |
| 2. Unambiguous Algorithm | Use open-source scripts (Python/R) or fully described commercial software settings. | Published code, software name/version, all equation parameters. |
| 3. Defined Applicability Domain | Leverage, PCA, or similarity-based methods (see the AD protocol above). | List of descriptors with ranges, similarity threshold value. |
| 4a. Goodness-of-Fit & Robustness | Calculate R², RMSE; perform cross-validation (see the robustness protocol above). | Table of internal validation metrics (as in Table 1). |
| 4b. Predictivity | External validation with hold-out test set (see the predictivity protocol above). | Table of external validation metrics and scatter plot. |
| 5. Mechanistic Interpretation | Descriptor significance analysis, mapping to known pathways. | Discussion of key descriptors and their physicochemical meaning. |

Visualizing the QSAR Validation Workflow

Figure: QSAR Validation Workflow Aligned with OECD Principles

Figure: Decision Logic for Applying a Validated QSAR Model

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Resources for QSAR Development & Validation

| Item / Solution | Function in QSAR Workflow | Example Source / Tool |
|---|---|---|
| Curated Chemical/Activity Databases | Provides high-quality training and test data with standardized endpoints. | ChEMBL, PubChem, OECD QSAR Toolbox. |
| Chemical Descriptor Software | Generates numerical representations of molecular structures for modeling. | DRAGON, PaDEL-Descriptor, RDKit (Open Source). |
| Chemoinformatics & Modeling Suites | Platforms for data analysis, model building, and validation. | KNIME, Orange Data Mining, scikit-learn (Python). |
| Applicability Domain Scripts | Implements algorithms to define and assess chemical domain borders. | AMBIT (Toxtree), in-house Python/R scripts. |
| Statistical Validation Packages | Automates calculation of fit, robustness, and predictivity metrics. | caret (R), scikit-learn model_selection (Python). |
| Mechanistic Alert & Profiling Tools | Links structural features to potential toxicological mechanisms. | OECD QSAR Toolbox, Sarah Nexus, Derek Nexus. |
| Reporting Template (QMRF) | Ensures transparent and standardized reporting of models for regulatory submission. | OECD (Q)SAR Model Reporting Format (QMRF). |

Adherence to the OECD principles for QSAR validation provides a systematic, defensible, and transparent pathway from model conception to regulatory application. By implementing the detailed protocols for domain definition, robustness, and predictivity testing outlined herein, researchers generate not just predictive models, but credible scientific evidence. The resulting reliability builds trust in computational predictions, the inherent transparency facilitates peer review and collaboration, and together, they form the bedrock of regulatory readiness—enabling the confident use of QSAR models to support critical decisions in drug development and chemical safety assessment.

Implementing the OECD Principles: A Step-by-Step Guide to QSAR Model Development

Quantitative Structure-Activity Relationship (QSAR) models are pivotal computational tools in modern regulatory science and drug discovery, enabling the prediction of chemical properties, toxicity, and biological activity. Their reliable application, however, hinges on rigorous validation. The Organisation for Economic Co-operation and Development (OECD) established a set of five principles to ensure the regulatory acceptability of QSAR models. The first and foundational principle is "a defined endpoint." This principle mandates a clear, unambiguous definition of the biological or chemical effect being modeled, forming the bedrock upon which a curated dataset is built. This technical guide elaborates on the operationalization of this principle, detailing the methodologies for endpoint specification and the subsequent construction of a high-quality, fit-for-purpose dataset.

Deconstructing the "Defined Endpoint"

A defined endpoint is not merely a label (e.g., "mutagenicity"). It is a precise operational specification of the biological effect, the experimental conditions under which it was measured, and the units of measurement. Ambiguity here propagates through model development, leading to unreliable and non-interpretable predictions.

Core Components of a Defined Endpoint:

- Biological/Chemical Phenomenon: The specific effect (e.g., Ames test mutagenicity, LogP for lipophilicity, IC50 for kinase inhibition).

- Assay Protocol & Experimental Conditions: Standardized test guidelines (e.g., OECD TG 471 for Ames test), species, cell line, exposure time, pH, temperature.

- Measured Value and Units: The quantitative or qualitative result (e.g., revertant count, partition coefficient, molar concentration).

- Data Type: Continuous (e.g., pIC50), categorical (e.g., active/inactive), or ordinal.

Table 1: Examples of Poorly vs. Well-Defined Endpoints

| Poorly Defined Endpoint | Well-Defined Endpoint (OECD-aligned) |
|---|---|
| "Cytotoxicity" | "In vitro cell viability inhibition measured in human hepatocarcinoma (HepG2) cells after 48h exposure, expressed as half-maximal inhibitory concentration (IC50) in µM, following OECD Guidance Document 129." |
| "Water Solubility" | "Intrinsic water solubility (S_w) measured in pure water at 25°C using the shake-flask method (OECD TG 105), expressed in mol/L." |
| "hERG Blockage" | "Inhibition of the human Ether-à-go-go-Related Gene potassium channel current measured via patch-clamp electrophysiology in transfected mammalian cells, expressed as percentage inhibition at 10 µM test concentration." |

Protocol for Building a Curated Dataset

Once the endpoint is rigorously defined, the creation of a curated dataset follows a systematic, multi-stage protocol. This process transforms raw, scattered data into a reliable model-ready resource.

Experimental Protocol for Data Curation

Stage 1: Data Sourcing and Aggregation

- Objective: Collect all available relevant data from public and proprietary sources.

- Methodology:

- Identify relevant databases (e.g., PubChem BioAssay, ChEMBL, EPA's CompTox Chemicals Dashboard, DrugBank).

- Perform structured queries using chemical identifiers (SMILES, InChIKey, CAS RN) and endpoint-specific keywords aligned with the definition.

- Extract associated metadata (assay ID, experimental parameters, measurement values, confidence scores).

- Log all sources with provenance information (Source, ID, Access Date).

Stage 2: Data Standardization and Harmonization

- Objective: Ensure chemical structures and data values are consistent and comparable.

- Methodology:

- Chemical Structure Standardization: Use toolkits (e.g., RDKit, OpenBabel) to: desalt molecules, neutralize charges, generate canonical SMILES, remove duplicates, and verify valence correctness.

- Endpoint Value Harmonization: Convert all activity values to a uniform scale and unit (e.g., all IC50 to pIC50 = -log10(IC50[M])). For categorical data, apply consistent classification thresholds (e.g., active if IC50 < 10 µM).
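The endpoint value harmonization in Stage 2 can be sketched in a few lines of Python. This is a minimal illustration, assuming raw IC50 values arrive with a unit string such as "nM" or "uM"; the unit labels, the `TO_MOLAR` table and the `ic50_to_pic50`/`classify` names are hypothetical, not part of any library. The 10 µM activity threshold from the text corresponds to pIC50 = 5.

```python
import math

# Hypothetical conversion table from source units to molar (M); extend as needed.
TO_MOLAR = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9, "pM": 1e-12}


def ic50_to_pic50(value: float, unit: str) -> float:
    """Convert an IC50 in the given unit to pIC50 = -log10(IC50 in M)."""
    if value <= 0:
        raise ValueError("IC50 must be a positive concentration")
    return -math.log10(value * TO_MOLAR[unit])


def classify(pic50: float, cutoff: float = 5.0) -> str:
    """Binary label: 'active' if IC50 < 10 uM, i.e. pIC50 > 5."""
    return "active" if pic50 > cutoff else "inactive"


if __name__ == "__main__":
    for value, unit in [(10.0, "nM"), (0.5, "uM"), (50.0, "uM")]:
        p = ic50_to_pic50(value, unit)
        print(f"{value} {unit}: pIC50 = {p:.2f} ({classify(p)})")
```

Converting everything to a single log-molar scale first means the classification threshold is applied once, in one place, which keeps the decision rule easy to document in the dataset README.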

Stage 3: Quality Control and Curation

- Objective: Identify and resolve errors, inconsistencies, and outliers.

- Methodology:

- Plausibility Filtering: Remove physically impossible values (e.g., negative solubility, LogP > 25).

- Outlier Detection: Employ statistical (e.g., Z-score, IQR) and chemical domain expertise to flag outliers for manual review.

- Conflict Resolution: For multiple measurements on the same compound, apply rules: prioritize data from the definitive assay (as per endpoint definition), use the highest quality source, or compute a weighted average. Document all decisions.

- Chemical Space Analysis: Use principal component analysis (PCA) or t-SNE on molecular descriptors to visualize coverage and identify clusters/voids.
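Two of the Stage 3 rules, statistical outlier flagging and conflict resolution for replicate measurements, can be sketched with the Python standard library. This is an assumption-laden illustration: it uses the IQR rule (k = 1.5) for flagging, and it resolves replicates by taking the median, which is a swapped-in choice rather than the source-priority or weighted-average rules listed above. The 1 log-unit spread limit that routes a compound to manual review is likewise a hypothetical default.

```python
import statistics
from collections import defaultdict


def iqr_outlier_flags(values, k=1.5):
    """Flag values outside [Q1 - k*IQR, Q3 + k*IQR] for manual review."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [not (low <= v <= high) for v in values]


def resolve_conflicts(records, max_spread=1.0):
    """Collapse replicate measurements per compound key (e.g. InChIKey).

    records: iterable of (key, value) pairs, values on a log scale such as pIC50.
    Returns (resolved, review): resolved maps key -> median value; compounds whose
    replicates span more than max_spread log units go to review instead.
    """
    grouped = defaultdict(list)
    for key, value in records:
        grouped[key].append(value)
    resolved, review = {}, {}
    for key, vals in grouped.items():
        if max(vals) - min(vals) > max_spread:
            review[key] = sorted(vals)
        else:
            resolved[key] = statistics.median(vals)
    return resolved, review
```

Flagged values are returned rather than deleted, in keeping with the text's requirement that outliers receive manual review and that every decision is documented.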

Stage 4: Final Dataset Assembly and Documentation

- Objective: Produce a fully annotated, ready-to-use dataset.

- Methodology:

- Assemble the final list of unique, standardized chemical structures.

- Attach the harmonized endpoint value for each compound.

- Include crucial metadata columns: compound identifier, endpoint value, endpoint definition, data source, confidence flag.

- Create a comprehensive README document detailing all curation steps, decision rules, and software versions used.

Figure: QSAR Dataset Curation Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Tools and Resources for Endpoint Definition and Dataset Curation

| Tool/Resource Name | Category | Primary Function | Key Features for Curation |
|---|---|---|---|
| ChEMBL | Public Database | Repository of bioactive molecules with drug-like properties. | Provides standardized bioactivity data (IC50, Ki, etc.) linked to detailed assay descriptions, enabling precise endpoint mapping. |
| OECD QSAR Toolbox | Software Platform | Grouping of chemicals into categories and filling data gaps. | Critical for applying OECD principles, identifying analogue chemicals, and accessing regulatory datasets for endpoint clarification. |
| RDKit | Open-Source Cheminformatics | Programming toolkit for cheminformatics. | Performs chemical standardization, descriptor calculation, and substructure analysis essential for data cleaning and exploration. |
| KNIME Analytics Platform | Data Analytics Integration | Visual programming for data pipelining. | Enables building reproducible, documented workflows that integrate data sourcing, standardization, and modeling steps. |
| PubChem | Public Database | World's largest collection of freely accessible chemical information. | Aggregates data from hundreds of sources, useful for initial data gathering and cross-referencing activity values. |
| pKa & LogP Predictors (e.g., ChemAxon, ACD/Labs) | Predictive Software | Calculates key physicochemical properties. | Used to flag implausible experimental values during quality control and to generate predictive descriptors. |
| EPA CompTox Chemicals Dashboard | Regulatory Database | Access to EPA-curated chemistry, toxicity, and exposure data. | Provides high-quality, well-defined toxicity endpoints aligned with OECD test guidelines for environmental QSARs. |

Data Presentation: Quantitative Analysis of a Curated Dataset

The impact of curation is demonstrable. The following table summarizes a hypothetical but realistic analysis comparing raw aggregated data to the final curated dataset for an Ames mutagenicity model (Endpoint: Binary outcome from Salmonella typhimurium reverse mutation assay, following OECD TG 471).

Table 3: Impact of Curation on Dataset Quality for an Ames Mutagenicity Model

| Metric | Raw Aggregated Data | After Stage 2 (Standardization) | Final Curated Dataset (After Stage 3) |
|---|---|---|---|
| Total Unique Compounds | 12,500 | 11,200 (10.4% reduction) | 9,850 (21.2% reduction) |
| Inconsistent Activity Labels | ~850 compounds with conflicting calls | Resolved to single label per compound | All conflicts resolved via rule-based prioritization |
| Presence of Inorganic/Salts | 320 entries | Removed (0 retained) | Removed |
| Duplicates (by InChIKey) | ~1,300 duplicate entries | Removed (0 retained) | Removed |
| Data Source Coverage | 18 different databases | Harmonized from 18 sources | 4 high-priority sources retained for final model |
| Activity Ratio (Active:Inactive) | 42:58 | 45:55 | 40:60 (after outlier removal) |

Principle 1 is not an administrative formality but a scientific imperative. A meticulously defined endpoint provides the "true north" for all subsequent model development. The rigorous, transparent process of building a curated dataset directly addresses the fundamental OECD tenets of transparency (documented process) and scientific robustness (reliable input data). Without this disciplined foundation, even the most sophisticated algorithms (Principle 2) and validation statistics (Principle 4) risk producing models that are numerically sound but scientifically meaningless. Therefore, investing substantial effort in defining the endpoint and curating the dataset is the most critical step in developing a QSAR model fit for purpose in regulatory decision-making or drug discovery.

Within the Organisation for Economic Co-operation and Development (OECD) principles for the validation of Quantitative Structure-Activity Relationship (QSAR) models, Principle 2 is fundamental for ensuring scientific rigor and regulatory acceptance. It states that a model should be associated with "an unambiguous algorithm." This principle mandates that the methodology used to generate a predictive model is transparent, fully described, and reproducible by an independent party. For researchers and drug development professionals, this moves beyond mere model performance; it requires a defensible, stepwise rationalization of the chosen algorithm, its parameters, and its suitability for the specific endpoint being predicted. This guide details the technical implementation of this principle in modern computational chemistry and cheminformatics workflows.

Core Concept: Defining "Unambiguous Algorithm"

An unambiguous algorithm is a precisely defined, step-by-step computational procedure. In a QSAR context, this encompasses the entire modeling pipeline:

- Molecular Structure Representation: How chemical structures are converted into numerical or graphical descriptors.

- Descriptor Calculation & Selection: The exact set of molecular descriptors and the method for their calculation and selection.

- Mathematical Form of the Model: The type of model (e.g., linear regression, support vector machine, random forest, neural network) and its exact equation or architecture.

- Fitting Procedure: The optimization method and its associated parameters (e.g., learning rate, convergence criteria, number of trees).

- Applicability Domain Definition: The method for determining the chemical space where the model's predictions are reliable.

Ambiguity in any step compromises the model's reproducibility and challenges its use in regulatory decision-making.

Detailed Methodologies for Key Algorithmic Steps

Protocol for Molecular Descriptor Calculation and Rationalization

Objective: To generate a consistent, reproducible, and chemically meaningful numerical representation of compounds.

Procedure:

- Standardization: Apply a canonical standardization protocol (e.g., using RDKit or OpenBabel) to all input structures: neutralize charges, remove salts, generate canonical tautomers, and enforce specific stereochemistry rules.

- Descriptor Suite Selection: Choose a defined suite of descriptors a priori based on mechanistic understanding of the endpoint. Example suites include: RDKit 2D descriptors, Mordred descriptors, or Dragon-like subsets (e.g., topological, constitutional, electronic).

- Calculation: Compute all descriptors in the chosen suite using a specified software version (e.g., the mordred library v1.2.0).

- Descriptor Filtering & Reduction: a. Remove descriptors with zero or near-zero variance (variance < 1e-7). b. Remove one of any pair of descriptors with an absolute Pearson correlation |r| > 0.95. c. Apply a variance inflation factor (VIF) threshold (< 5) to reduce multicollinearity in linear models.

- Documentation: Record the final descriptor list, their calculated values for the training set, and the software/version used.
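Filtering steps a to c can be sketched with NumPy. This is a minimal illustration under stated assumptions: descriptors arrive as a compounds × descriptors array, the earlier column of each correlated pair is the one kept (an arbitrary but deterministic rule), and VIF elimination removes the worst descriptor one at a time. The function names are hypothetical.

```python
import numpy as np


def filter_descriptors(X, names, var_tol=1e-7, corr_tol=0.95):
    """Steps a and b: drop near-constant descriptors, then one of each highly
    correlated pair (|Pearson r| > corr_tol), keeping the earlier column."""
    X = np.asarray(X, dtype=float)
    keep = np.var(X, axis=0) >= var_tol
    X, names = X[:, keep], [n for n, k in zip(names, keep) if k]
    if X.shape[1] < 2:
        return X, names
    corr = np.abs(np.corrcoef(X, rowvar=False))
    drop = set()
    for i in range(corr.shape[0]):
        if i in drop:
            continue
        for j in range(i + 1, corr.shape[1]):
            if j not in drop and corr[i, j] > corr_tol:
                drop.add(j)
    cols = [c for c in range(X.shape[1]) if c not in drop]
    return X[:, cols], [names[c] for c in cols]


def vif(X):
    """VIF_j = 1 / (1 - R_j^2), read off the diagonal of the inverse correlation matrix."""
    corr = np.corrcoef(np.asarray(X, dtype=float), rowvar=False)
    return np.diag(np.linalg.inv(corr))


def drop_high_vif(X, names, max_vif=5.0):
    """Step c: iteratively remove the descriptor with the largest VIF until all VIF < max_vif."""
    X, names = np.asarray(X, dtype=float), list(names)
    while X.shape[1] > 1:
        v = vif(X)
        worst = int(np.argmax(v))
        if v[worst] < max_vif:
            break
        X = np.delete(X, worst, axis=1)
        names.pop(worst)
    return X, names
```

Running the variance filter first matters: a constant column has an undefined correlation, so removing it before `np.corrcoef` avoids NaN entries in the correlation matrix.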

Protocol for Model Algorithm Selection and Training

Objective: To select and train a predictive model with a fully specified, reproducible algorithm.

Procedure:

- Data Splitting: Perform a defined split (e.g., 70/15/15) into training, validation (for hyperparameter tuning), and external test sets. Use a stratified method for classification to preserve class ratios. Seed all random number generators (e.g., random_state=42).

- Algorithm Rationalization: Justify the choice of algorithm (e.g., Random Forest) based on data characteristics: non-linearity, descriptor dimensionality, and endpoint nature (categorical/continuous).

- Hyperparameter Definition & Tuning: a. Define the hyperparameter search space explicitly (see Table 1). b. Use a specified cross-validation method (e.g., 5-fold stratified CV) on the training set only. c. Employ a defined search strategy (e.g., Bayesian optimization for 50 iterations) to identify the optimal hyperparameters, optimizing for a predefined metric (e.g., balanced accuracy for classification).

- Final Model Training: Train the final model using the optimized hyperparameters on the entire training set.

- Model Serialization: Save the final model object (e.g., as a .pkl file) along with all necessary metadata (scalers, descriptor list, applicability domain model).
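The seeded, stratified 70/15/15 split from the first step can be sketched with NumPy alone. This is an illustrative helper (the name `stratified_split` is hypothetical); in practice a library splitter such as scikit-learn's `train_test_split` with its `stratify` argument could be applied twice to reach the same three-way division.

```python
import numpy as np


def stratified_split(y, fractions=(0.70, 0.15, 0.15), seed=42):
    """Return sorted index arrays (train, valid, test) with class ratios preserved."""
    rng = np.random.default_rng(seed)  # one documented seed drives every shuffle
    y = np.asarray(y)
    parts = ([], [], [])
    for cls in np.unique(y):
        # Shuffle the indices of this class, then cut them in the requested proportions
        idx = rng.permutation(np.flatnonzero(y == cls))
        n_train = int(round(fractions[0] * len(idx)))
        n_valid = int(round(fractions[1] * len(idx)))
        parts[0].extend(idx[:n_train])
        parts[1].extend(idx[n_train:n_train + n_valid])
        parts[2].extend(idx[n_train + n_valid:])
    return tuple(np.sort(np.array(p, dtype=int)) for p in parts)
```

Because the generator is seeded once, calling the function again with the same labels and seed reproduces the identical partition, which is exactly what Principle 2 asks an independent party to be able to do.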

Table 1: Example Hyperparameter Search Space for Common Algorithms

| Algorithm | Hyperparameter | Rationale for Inclusion | Specified Search Range/Options |
|---|---|---|---|
| Random Forest | n_estimators | Controls ensemble size/complexity | [100, 200, 500] |
| | max_depth | Limits tree depth to prevent overfitting | [5, 10, 20, None] |
| | min_samples_split | Minimum samples required to split a node | [2, 5, 10] |
| Support Vector Machine (RBF) | C | Regularization parameter | Log-uniform: [1e-3, 1e3] |
| | gamma | Kernel coefficient (inverse of the RBF kernel width) | Log-uniform: [1e-4, 1e1] |
| Gradient Boosting | learning_rate | Shrinkage of tree contributions | [0.01, 0.05, 0.1] |
| | n_estimators | Number of boosting stages | [100, 200] |
| | max_depth | Individual tree depth | [3, 5, 7] |

Adherence to Principle 2 enables fair, unambiguous comparison of model performance. Below is a template for reporting key metrics.

Table 2: Commonly Reported Performance Metrics for QSAR Models (Example Data)

| Metric | Purpose | Calculation | Acceptability Threshold (Example) | Model A (RF) | Model B (SVM) |
|---|---|---|---|---|---|
| Q² (LOO-CV) | Internal predictive ability | 1 - (PRESS/SS_total) | > 0.5 | 0.72 | 0.68 |
| R²test | Predictive correlation on external test set | Cov(Y,Ŷ)² / (σ_Y² σ_Ŷ²), i.e., the squared Pearson correlation | > 0.6 | 0.75 | 0.70 |
| RMSEtest | Prediction error magnitude | √(Σ(Ŷi-Yi)²/n) | Context-dependent | 0.45 | 0.52 |
| Sensitivity | Ability to identify positives | TP / (TP + FN) | > 0.7 | 0.85 | 0.78 |
| Specificity | Ability to identify negatives | TN / (TN + FP) | > 0.7 | 0.82 | 0.88 |
| Balanced Accuracy | Overall accuracy for imbalanced data | (Sensitivity + Specificity) / 2 | > 0.7 | 0.835 | 0.83 |
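The calculations in the table can be written out directly, which also makes the definitions unambiguous in the sense of Principle 2. A minimal NumPy sketch follows; the function names are hypothetical, classification labels are assumed to be 0/1 with 1 as the positive class, and the code does not reproduce the example values in the table.

```python
import numpy as np


def q2_from_press(y_obs, y_cv):
    """Q2 = 1 - PRESS / SS_total, using cross-validated (e.g. LOO) predictions."""
    y_obs, y_cv = np.asarray(y_obs, float), np.asarray(y_cv, float)
    press = np.sum((y_obs - y_cv) ** 2)
    return 1.0 - press / np.sum((y_obs - y_obs.mean()) ** 2)


def r2_test(y_obs, y_pred):
    """Squared Pearson correlation: Cov(Y, Yhat)^2 / (var(Y) * var(Yhat))."""
    return float(np.corrcoef(y_obs, y_pred)[0, 1] ** 2)


def rmse(y_obs, y_pred):
    """Root mean square error of the predictions."""
    diff = np.asarray(y_pred, float) - np.asarray(y_obs, float)
    return float(np.sqrt(np.mean(diff ** 2)))


def classification_metrics(y_true, y_pred):
    """Sensitivity, specificity and balanced accuracy for 0/1 labels (1 = positive)."""
    t, p = np.asarray(y_true), np.asarray(y_pred)
    tp = np.sum((t == 1) & (p == 1))
    tn = np.sum((t == 0) & (p == 0))
    fp = np.sum((t == 0) & (p == 1))
    fn = np.sum((t == 1) & (p == 0))
    sens, spec = tp / (tp + fn), tn / (tn + fp)
    return float(sens), float(spec), float((sens + spec) / 2)
```

Note that the squared Pearson correlation is insensitive to a constant bias in the predictions, which is one reason the table pairs it with an error measure such as RMSE.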

Visualizing the QSAR Modeling Workflow

Figure: Unambiguous QSAR Model Development Workflow

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Computational Tools for Implementing Principle 2

| Item/Category | Specific Examples | Function & Role in Ensuring an Unambiguous Algorithm |
|---|---|---|
| Cheminformatics Library | RDKit, OpenBabel | Performs canonical structure standardization, descriptor calculation, and substructure searching. Version control is critical. |
| Descriptor Calculation Suite | Mordred, PaDEL, Dragon | Generates a comprehensive, reproducible set of molecular descriptors from standardized structures. |
| Machine Learning Framework | Scikit-learn, XGBoost, TensorFlow/PyTorch | Provides well-documented, versioned implementations of algorithms with controlled random seeds for reproducibility. |
| Hyperparameter Optimization | Optuna, Scikit-optimize, GridSearchCV | Systematically and reproducibly searches the defined parameter space to identify optimal model settings. |
| Model Serialization | Joblib (*.pkl), ONNX, PMML | Saves the exact model state, including all weights, parameters, and scaling factors, for independent reloading and prediction. |
| Version Control System | Git, with platforms like GitHub/GitLab | Tracks every change to code, descriptors, and model parameters, providing a complete audit trail. |
| Containerization | Docker, Singularity | Encapsulates the entire software environment (OS, libraries, code) to guarantee identical execution across different machines. |
| Applicability Domain Tool | AMBIT, DCDistance, PCA-based methods | Implements a specified method to define the chemical space where the model's predictions are considered reliable. |

The Organisation for Economic Co-operation and Development (OECD) Principles for the Validation of (Quantitative) Structure-Activity Relationship [(Q)SAR] Models provide a foundational framework for regulatory acceptance of predictive computational tools. Principle 3 explicitly mandates that a model must be accompanied by a "definition of its applicability domain" (AD). This principle acknowledges that no model is universally valid; its reliability is confined to the chemical space for which it was developed and validated. Within drug development, defining the AD is critical for assessing the reliability of predictions for novel compounds, thereby mitigating risk in decision-making processes related to lead optimization, toxicity assessment, and prioritization of synthetic targets.

Theoretical Foundation and Significance

The Applicability Domain represents the response and chemical structure space of the training set, characterized by the model's descriptors and the modeled response. Predictions for new compounds falling within this domain are considered reliable, while extrapolation outside the AD carries higher uncertainty. Key conceptual approaches include:

- Range-Based Methods: Define boundaries based on the range of individual descriptor values in the training set.

- Distance-Based Methods: Assess the similarity of a new compound to the training set molecules (e.g., leverage, Euclidean distance, Mahalanobis distance).

- Geometric Methods: Define the convex hull of the training set in the descriptor space.

- Probability Density Distribution Methods: Estimate the probability density of the training set.

Failure to define and respect the AD can lead to inaccurate predictions, wasted resources, and potential safety issues in downstream development.

Methodologies for Defining the Applicability Domain

Descriptor Range-Based Approach (Bounding Box)

This method defines the AD as the multidimensional rectangle spanned by the minimum and maximum values of each descriptor used in the model.

Experimental Protocol:

- Descriptor Calculation: Compute all model descriptors for the training set compounds.

- Range Determination: For each descriptor i, identify its minimum (min_i) and maximum (max_i) value across the training set.

- AD Criterion Definition: A query compound is considered within the AD if, for every descriptor i, its value x_i satisfies min_i - δ ≤ x_i ≤ max_i + δ, where δ is a small tolerance (often 0 or a scaled fraction of the range).

- Application: For a new compound, calculate its descriptors and verify compliance with all ranges. Flag any descriptor value outside the defined bounds.
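The bounding-box protocol translates almost line for line into NumPy. In this minimal sketch the tolerance δ is assumed to be a fraction of each descriptor's training range (one of the options mentioned above); the function names are hypothetical.

```python
import numpy as np


def fit_range_ad(X_train, delta=0.0):
    """Per-descriptor bounds; delta is a tolerance expressed as a fraction of each range."""
    X = np.asarray(X_train, dtype=float)
    lo, hi = X.min(axis=0), X.max(axis=0)
    pad = delta * (hi - lo)
    return lo - pad, hi + pad


def in_range_ad(X_query, bounds):
    """Return (in_ad, violations): in_ad[i] is True only if every descriptor is in range."""
    lo, hi = bounds
    Xq = np.atleast_2d(np.asarray(X_query, dtype=float))
    violations = (Xq < lo) | (Xq > hi)  # per-descriptor flags, as the protocol requires
    return ~violations.any(axis=1), violations
```

Returning the per-descriptor violation matrix, not just the overall verdict, supports the "flag any descriptor value outside the defined bounds" step and tells the user which descriptor caused the exclusion.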

Leverage and Williams Plot

Leverage (h_i) measures a compound's influence on its own prediction and its position in the descriptor space relative to the model's centroid. The Williams plot combines leverage and standardized residuals.

Experimental Protocol:

- Model Matrix: For a linear model with p descriptors and n training compounds, construct the n × (p+1) model matrix X (including the intercept column).

- Leverage Calculation: Calculate the hat matrix H = X(XᵀX)⁻¹Xᵀ. The leverage of compound i is the i-th diagonal element of H (h_ii). The warning leverage h* is typically set to 3(p+1)/n.

- Standardized Residuals: Compute the cross-validated or externally validated standardized residuals for each compound.

- Plotting: Generate a Williams plot with leverage (h_i) on the x-axis and standardized residual on the y-axis. Define AD boundaries at h* and ±3 standardized residual units.

- Interpretation: Compounds with high leverage (h_i > h*) are structurally influential or outliers in descriptor space. Compounds with high residuals (beyond ±3 standardized units) are response outliers.
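The leverage calculation and the two Williams-plot cut-offs can be sketched in NumPy. This assumes an ordinary linear model with an intercept; the function names are hypothetical, and the `einsum` call evaluates xᵢᵀ(XᵀX)⁻¹xᵢ row by row, which yields the diagonal of H without forming the full n × n matrix. The same formula gives the leverage of a query compound.

```python
import numpy as np


def leverages(X_train, X_query=None):
    """Leverage h = x^T (X^T X)^-1 x with an intercept column; also returns h* = 3(p+1)/n."""
    X = np.column_stack([np.ones(len(X_train)), np.asarray(X_train, dtype=float)])
    xtx_inv = np.linalg.inv(X.T @ X)
    n, p_plus_1 = X.shape
    h_star = 3.0 * p_plus_1 / n
    if X_query is None:
        Q = X  # diagonal of H for the training set
    else:
        Q = np.column_stack([np.ones(len(X_query)), np.asarray(X_query, dtype=float)])
    # Row-wise quadratic form q_i^T A q_i, without building the n x n hat matrix
    h = np.einsum("ij,jk,ik->i", Q, xtx_inv, Q)
    return h, h_star


def williams_flags(h, std_resid, h_star, resid_limit=3.0):
    """Structural outliers (h > h*) and response outliers (|standardized residual| > limit)."""
    return np.asarray(h) > h_star, np.abs(np.asarray(std_resid)) > resid_limit
```

A useful sanity check is that the training-set leverages sum to p+1, the trace of the hat matrix.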

Distance-Based Methods: k-Nearest Neighbors (k-NN)

This approach assesses the similarity of a query compound to its nearest neighbors in the training set within the multidimensional descriptor space.

Experimental Protocol:

- Descriptor Space Normalization: Standardize all descriptors (e.g., zero mean, unit variance) to ensure equal weighting in distance calculation.

- Distance Metric Selection: Choose a suitable metric (e.g., Euclidean, Manhattan, Mahalanobis).

- Threshold Determination: For each training set compound, calculate the mean distance to its k nearest neighbors within the training set. Establish a threshold distance d_thr as, for example, the 90th percentile of these mean distances.

- AD Assessment: For a new compound, find its k nearest neighbors in the training set and compute the mean distance d_q. If d_q ≤ d_thr, the compound is within the AD.
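The k-NN protocol can be sketched as follows. This minimal version assumes Euclidean distance on autoscaled descriptors, k = 3 and the 90th-percentile threshold from the example; brute-force pairwise distances are fine for a few thousand compounds, while larger sets would call for a spatial index. Function names are hypothetical.

```python
import numpy as np


def _euclidean(A, B):
    """Pairwise Euclidean distances between rows of A and rows of B."""
    return np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1))


def fit_knn_ad(X_train, k=3, percentile=90):
    """Standardize descriptors, then set d_thr at a percentile of training mean k-NN distances."""
    X = np.asarray(X_train, dtype=float)
    mu, sd = X.mean(axis=0), X.std(axis=0)
    sd[sd == 0] = 1.0                  # guard against constant descriptors
    Z = (X - mu) / sd
    D = _euclidean(Z, Z)
    np.fill_diagonal(D, np.inf)        # a compound is not its own neighbour
    mean_knn = np.sort(D, axis=1)[:, :k].mean(axis=1)
    return {"mu": mu, "sd": sd, "Z": Z, "k": k,
            "d_thr": float(np.percentile(mean_knn, percentile))}


def knn_in_ad(X_query, model):
    """Return (in_ad, d_q): d_q is the mean distance to the k nearest training compounds."""
    Zq = (np.atleast_2d(np.asarray(X_query, dtype=float)) - model["mu"]) / model["sd"]
    d_q = np.sort(_euclidean(Zq, model["Z"]), axis=1)[:, :model["k"]].mean(axis=1)
    return d_q <= model["d_thr"], d_q
```

Query compounds are scaled with the training-set mean and standard deviation, never their own, so the distances remain comparable to the threshold.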

Table 1: Common Applicability Domain Methods and Their Key Parameters

| Method | Core Metric | Typical Threshold | Advantages | Limitations |
|---|---|---|---|---|
| Descriptor Range | Per-descriptor value | min_i, max_i | Simple, intuitive, fast to compute. | Does not account for correlation between descriptors. High-dimensional space can be overly restrictive. |
| Leverage | Hat value (h_i) | h* = 3(p+1)/n | Integrated with model structure. Identifies influential points. | Primarily for linear models. Requires matrix inversion. |
| k-NN Distance | Mean distance to k neighbors | Percentile-based (e.g., 90th) | Intuitive similarity measure. Non-parametric. | Computationally intensive for large sets. Choice of k and metric is critical. |
| PCA-Based Domain | Score in principal component space | Hotelling's T², DModX | Handles descriptor correlation. Reduces dimensionality. | Interpretation of PCs can be complex. |

Table 2: Example AD Assessment for a Hypothetical hERG Inhibition QSAR Model

| Compound ID | Prediction (pIC50) | Experimental (pIC50) | In AD? (Y/N) | Reason if Outside |
|---|---|---|---|---|
| Train-045 | 5.2 | 5.3 | Y | - |
| Train-128 | 6.8 | 4.9 | N | High residual (Response outlier) |
| New-001 | 6.1 | N/A | Y | All descriptors within range, leverage < h* |
| New-002 | 7.5 | N/A | N | Mean k-NN distance > d_thr (Structural outlier) |

Visualizing the Applicability Domain Concept

Figure: Decision Flow for Model Applicability Assessment

Figure: Structural Outlier Outside the Convex Hull AD

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Resources for AD Development and Assessment

| Item / Solution | Function in AD Definition | Example/Note |
|---|---|---|
| Chemical Descriptor Software | Calculates molecular fingerprints, topological, electronic, and geometric descriptors for training and query sets. | Dragon, MOE, RDKit, PaDEL-Descriptor. |
| Cheminformatics Libraries | Provides programming tools for similarity searching, distance calculations, and AD algorithm implementation. | RDKit, CDK, ChemPy. |
| Model Development Suites | Often include built-in modules for leverage calculation, PCA, and domain estimation. | SIMCA (for PLS), KNIME, Orange. |
| Curated Chemical Databases | Source of training set structures and associated biological data; quality is paramount. | ChEMBL, PubChem, DrugBank. |
| Statistical Software/Environments | For advanced statistical distance measures (Mahalanobis), clustering, and threshold optimization. | R, Python (SciPy, scikit-learn), MATLAB. |
| Standardized Data Formats | Ensures interoperability between tools in the AD assessment workflow. | SMILES, SDF, CSV. |

Implementation Workflow and Best Practices

Detailed Protocol for a Consolidated AD Assessment:

- Training Set Curation: Assemble a high-quality, curated set of compounds with measured endpoints. Ensure the set is chemically diverse yet relevant to the target chemical space.

- Descriptor Calculation & Selection: Calculate a broad pool of descriptors, then apply feature selection to reduce dimensionality, removing redundant or highly correlated variables while retaining those relevant to the model.

- Model Training: Develop the QSAR model using the selected descriptors and training set.

- Multi-Method AD Definition:

  - Calculate descriptor ranges for the final descriptor set.

  - Compute the leverage warning threshold h* for the model.

  - Perform PCA on the training set descriptors. Calculate the critical Hotelling's T² (for scores) and DModX (for residuals) thresholds at a chosen confidence level (e.g., 95%).

  - Determine the optimal k and threshold distance d_thr for the k-NN approach via cross-validation.

- AD Integration: Establish a consensus rule. For example: a query compound is considered within the AD only if it passes all criteria (within all descriptor ranges, leverage < h*, T² and DModX below critical limits, and mean distance < d_thr). A more relaxed rule might require passing 3 out of 4.

- Reporting: Document all AD criteria, thresholds, and software used. The AD must be transportable and transparent for end-users.
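Two pieces of this workflow not covered by the earlier method descriptions, the PCA-based Hotelling's T² check and the consensus rule, can be sketched in NumPy. Important simplifications, all assumptions: the T² limit is taken as an empirical percentile of the training T² values instead of the F-distribution-based critical value, DModX is omitted, and the number of components is fixed at 2. Function and criterion names are hypothetical.

```python
import numpy as np


def fit_pca_t2(X_train, n_components=2, confidence=95):
    """PCA on autoscaled descriptors; T2 limit = empirical percentile of training T2."""
    X = np.asarray(X_train, dtype=float)
    mu, sd = X.mean(axis=0), X.std(axis=0, ddof=1)
    sd[sd == 0] = 1.0
    Z = (X - mu) / sd
    _, s, vt = np.linalg.svd(Z, full_matrices=False)
    P = vt[:n_components].T                           # loadings
    score_var = s[:n_components] ** 2 / (len(Z) - 1)  # variance of each score
    model = {"mu": mu, "sd": sd, "P": P, "var": score_var}
    model["t2_lim"] = float(np.percentile(hotelling_t2(X, model), confidence))
    return model


def hotelling_t2(X_query, model):
    """Hotelling's T2 = sum over components of t_a^2 / var(t_a)."""
    Z = (np.atleast_2d(np.asarray(X_query, dtype=float)) - model["mu"]) / model["sd"]
    return ((Z @ model["P"]) ** 2 / model["var"]).sum(axis=1)


def consensus_in_ad(checks, min_pass=None):
    """checks: criterion name -> bool. Strict (all must pass) by default; min_pass relaxes it."""
    passed = sum(bool(v) for v in checks.values())
    return passed >= (len(checks) if min_pass is None else min_pass)
```

For example, a compound that passes the range, leverage and k-NN checks but fails T² is outside the AD under the strict rule and inside it under the relaxed "3 out of 4" rule, so the chosen rule must be reported alongside the thresholds.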

Best Practices:

- Use Multiple Methods: A consensus approach increases robustness.

- Visualize: Always use Williams plots, PCA score plots, and distance distributions to communicate the AD.

- Context Matters: The strictness of the AD should reflect the model's purpose (screening vs. regulatory).

- Continuous Refinement: As more reliable data becomes available, the training set and AD can be expanded.

Within the OECD framework for the validation of Quantitative Structure-Activity Relationship (QSAR) models, Principle 4 is a critical determinant of model reliability and regulatory acceptance. It mandates that a model must be assessed using both internal validation (to ensure robustness and prevent overfitting) and external validation (to evaluate predictive power and generalizability). This principle moves beyond simple statistical goodness-of-fit to a rigorous, protocol-driven evaluation of model performance. For researchers and drug development professionals, the implementation of robust validation measures is non-negotiable for translating computational predictions into credible scientific insights or regulatory submissions.

Core Validation Metrics: Quantitative Frameworks

Robust validation requires the calculation of specific, interpretable metrics. The following tables summarize the key quantitative measures for internal and external validation.

Table 1: Core Internal Validation Metrics & Thresholds

| Metric | Formula / Method | Ideal Threshold | Purpose & Interpretation |
|---|---|---|---|
| Q² (LOO or LMO) | $Q^2 = 1 - \frac{\sum (y_{obs} - y_{pred})^2}{\sum (y_{obs} - \bar{y}_{train})^2}$ | > 0.5 | Cross-validated coefficient of determination. Measures model robustness and protection against overfitting. |
| RMSECV | $\sqrt{\frac{\sum_{i=1}^{n} (y_{i} - \hat{y}_{i(i)})^2}{n}}$ | Low, context-dependent | Cross-validated Root Mean Square Error. Quantifies average prediction error in model units. |
| Y-Randomization | Coefficient of determination (R² or Q²) after scrambling the response variable. | Significant drop in performance (e.g., R² < 0.3) | Confirms model is not based on chance correlation. Typically repeated >50 times. |
| Applicability Domain (AD) - Leverage | $h_i = \mathbf{x}_i^T (\mathbf{X}^T\mathbf{X})^{-1} \mathbf{x}_i$ | $h_i \leq h^* = \frac{3(p+1)}{n}$ (p descriptors, n training compounds) | Identifies whether a prediction is an interpolation (within AD) or an extrapolation (outside AD). |

Table 2: Core External Validation Metrics & Thresholds

| Metric | Formula / Method | Commonly Used Threshold | Purpose & Interpretation |
|---|---|---|---|
| R²ext | $R^2_{ext} = 1 - \frac{\sum (y_{obs,ext} - y_{pred,ext})^2}{\sum (y_{obs,ext} - \bar{y}_{train})^2}$ | > 0.6 | Predictive power for the external set. Uses the training set mean (numerically identical to Q²F1). |
| Q²F1, Q²F2, Q²F3 | Variants differing in the denominator: F1 uses deviations of external values from the training-set mean, F2 from the external-set mean, and F3 uses the training-set variance (each sum normalized by its set size). | > 0.6 | Predictive squared correlation coefficients. Q²F3 is often preferred. |
| RMSEext | $\sqrt{\frac{\sum (y_{obs,ext} - y_{pred,ext})^2}{n_{ext}}}$ | Comparable to RMSECV | Average prediction error for the external set. |
| CCC (Concordance Correlation Coefficient) | $\rho_c = \frac{2 s_{xy}}{s_x^2 + s_y^2 + (\bar{x} - \bar{y})^2}$ | > 0.85 | Measures agreement between observed and predicted values (precision & accuracy). |
| MAEext | $\frac{\sum \lvert y_{obs,ext} - y_{pred,ext} \rvert}{n_{ext}}$ | Low, context-dependent | Mean Absolute Error. Less sensitive to outliers than RMSE. |
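The external metrics can be computed together from the training responses, the external responses and the external predictions. A minimal NumPy sketch, assuming population (1/n) moments in the CCC as in the formula above; the function name and dictionary keys are hypothetical.

```python
import numpy as np


def external_metrics(y_train, y_ext, y_ext_pred):
    """External-set metrics; CCC uses population (1/n) variances and covariance."""
    y_tr = np.asarray(y_train, dtype=float)
    y, yp = np.asarray(y_ext, dtype=float), np.asarray(y_ext_pred, dtype=float)
    press = np.sum((y - yp) ** 2)
    ss_tr = np.sum((y_tr - y_tr.mean()) ** 2)
    s_xy = np.mean((y - y.mean()) * (yp - yp.mean()))
    return {
        # Deviations from the training mean: identical to the R2ext formula in the table
        "Q2_F1": 1 - press / np.sum((y - y_tr.mean()) ** 2),
        # Deviations from the external-set mean
        "Q2_F2": 1 - press / np.sum((y - y.mean()) ** 2),
        # Mean squared error on the external set relative to the training-set variance
        "Q2_F3": 1 - (press / len(y)) / (ss_tr / len(y_tr)),
        "RMSE_ext": np.sqrt(press / len(y)),
        "MAE_ext": np.mean(np.abs(y - yp)),
        "CCC": 2 * s_xy / (y.var() + yp.var() + (y.mean() - yp.mean()) ** 2),
    }
```

For instance, with training responses 1 to 5 and external observations [1, 2, 3] predicted as [1.5, 2, 3.5], Q²F1 = 0.9, Q²F2 = 0.75 and CCC = 8/9, which shows how strongly the choice of denominator can move the headline number.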

Experimental Protocols for Validation

Protocol for Internal Validation via k-Fold Cross-Validation

Objective: To estimate model robustness and predictive ability within the training data.

- Dataset Preparation: Standardize descriptors and scale the response variable if necessary. Let n = total number of training compounds.

- Data Splitting: Randomly partition the dataset into k subsets (folds) of approximately equal size. Common k values are 5 or 10.

- Iterative Training/Validation: For i = 1 to k:

  - Hold out fold i as the temporary validation set.

  - Train the QSAR model using the remaining k-1 folds.

  - Use the trained model to predict the activities of compounds in fold i.

  - Record the predicted values.

- Metric Calculation: After all iterations, every training compound has a cross-validated prediction. Calculate Q², RMSECV, etc., from these predictions.
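The k-fold loop can be sketched for the simplest case, an ordinary least-squares model fitted with `numpy.linalg.lstsq`. The OLS choice, the seed and the function name are assumptions for illustration; any other learner can be dropped into the loop, and any data-dependent preprocessing (scaling, descriptor selection) should then be refitted inside each fold to avoid leakage.

```python
import numpy as np


def kfold_q2_ols(X, y, k=5, seed=42):
    """k-fold CV for an ordinary least-squares QSAR; returns (Q2, RMSECV, y_cv)."""
    y = np.asarray(y, dtype=float)
    X = np.asarray(X, dtype=float).reshape(len(y), -1)
    n = len(y)
    # Random partition into k folds of approximately equal size
    folds = np.array_split(np.random.default_rng(seed).permutation(n), k)
    y_cv = np.empty(n)
    for hold in folds:
        train = np.setdiff1d(np.arange(n), hold)
        A = np.column_stack([np.ones(len(train)), X[train]])
        coef, *_ = np.linalg.lstsq(A, y[train], rcond=None)
        # Predict the held-out fold with a model that never saw it
        y_cv[hold] = np.column_stack([np.ones(len(hold)), X[hold]]) @ coef
    press = np.sum((y - y_cv) ** 2)
    q2 = 1.0 - press / np.sum((y - y.mean()) ** 2)
    return q2, float(np.sqrt(press / n)), y_cv
```

Setting k equal to the number of compounds turns this into leave-one-out cross-validation, the LOO variant of Q² listed in the metrics table.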

Protocol for External Validation with a True Test Set

Objective: To evaluate the model's predictive power on unseen, independent data.

- Initial Data Division: Before any model development, randomly divide the full dataset into a Training Set (~70-80%) and a Hold-Out Test Set (~20-30%). Ensure both sets span the chemical and activity space (stratified sampling).

- Model Development: Develop the final QSAR model using only the Training Set. This includes descriptor selection, algorithm optimization, and internal validation (as per the k-fold cross-validation protocol above).

- Final Model Locking: Fix all model parameters (coefficients, selected descriptors, scaling factors).

- External Prediction: Apply the locked model to the descriptors of the Hold-Out Test Set to generate predictions.

- Metric Calculation: Calculate R²ext, Q²F1-F3, RMSEext, CCC, and MAEext by comparing these predictions to the experimental values of the Test Set.

Protocol for Y-Randomization Testing

Objective: To verify the model is not the result of chance correlation.

- Baseline Model: Build the QSAR model with the original training data and response variable (Y). Record its R² and Q².

- Randomization Iteration: Repeat the following 50-100 times:

  - Randomly permute (shuffle) the values of the response vector (Y) relative to the descriptor matrix (X).

  - Build a new model using the same descriptor set and modeling technique with the scrambled Y.

  - Record the R² and Q² of this randomized model.

- Statistical Analysis: Plot the distribution of randomized model performance metrics. Calculate the mean and standard deviation. The original model's performance should be a significant outlier (e.g., p < 0.05 from a t-test) compared to the distribution of randomized model performances.
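A compact Y-randomization sketch for a linear model follows. It records R² only (Q² would be obtained the same way from cross-validated predictions), and it summarizes significance with an empirical p-value and a Z-score rather than a t-test; that is a swapped-in choice, and the function names and defaults are hypothetical.

```python
import numpy as np


def ols_r2(X, y):
    """Fitted R2 of an ordinary least-squares model with intercept."""
    A = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    resid = y - A @ coef
    return 1.0 - resid @ resid / np.sum((y - y.mean()) ** 2)


def y_randomization(X, y, n_iter=100, seed=0):
    """Refit on permuted responses; returns (r2_orig, r2_rand, p_emp, z)."""
    X, y = np.asarray(X, dtype=float), np.asarray(y, dtype=float)
    rng = np.random.default_rng(seed)
    r2_orig = ols_r2(X, y)
    r2_rand = np.array([ols_r2(X, rng.permutation(y)) for _ in range(n_iter)])
    # Share of scrambled models at least as good as the real one (+1 avoids p = 0)
    p_emp = (np.sum(r2_rand >= r2_orig) + 1) / (n_iter + 1)
    z = (r2_orig - r2_rand.mean()) / r2_rand.std(ddof=1)
    return r2_orig, r2_rand, p_emp, z
```

With 100 permutations the smallest attainable empirical p-value is 1/101, so more iterations are needed if a stricter significance level is required.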

Visualizing the Validation Workflow & Relationships

Figure: Principle 4 Validation Process Workflow

Figure: Decision Logic for QSAR Model Acceptance

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Tools & Resources for QSAR Validation

| Item / Solution | Function in Validation | Example / Specification |
|---|---|---|
| Chemical Descriptor Software | Generates numerical representations of molecular structures for model building. | DRAGON, PaDEL-Descriptor, RDKit, MOE. |
| Modeling & Validation Suite | Platform for algorithm training, internal CV, and metric calculation. | scikit-learn (Python), R (caret, pls), SIMCA, KNIME. |
| External Validation Dataset | A curated, chemically diverse set of compounds with high-quality experimental data, held out from training. | Public sources: ChEMBL, PubChem BioAssay. Must be truly external. |
| Applicability Domain Tool | Software or script to calculate leverage, distance-based metrics, or PCA-based boundaries. | AMBIT (Toxtree), in-house scripts using PCA & Hotelling's T². |
| Y-Randomization Script | Custom script to automate response permutation and model recalibration. | Python (NumPy, scikit-learn), R with for-loop. Minimum 50 iterations. |
| Statistical Analysis Package | For advanced metric calculation (CCC, confidence intervals) and graphical analysis. | R (DescTools), GraphPad Prism, Python (SciPy, statsmodels). |
| Standardized Reporting Template | Checklist or document to ensure all OECD validation principles are reported transparently. | Based on OECD QSAR Toolbox reporting formats or journal-specific guidelines. |

The Organisation for Economic Co-operation and Development (OECD) Principles for the Validation of (Quantitative) Structure-Activity Relationships [(Q)SARs] provide a foundational framework for regulatory acceptance of computational models in chemical safety assessment and drug development. Principle 5 states that a (Q)SAR should be associated with "a mechanistic interpretation, if possible." Although that qualifier makes it the only conditional principle, a mechanistic interpretation strongly influences a model's scientific validity, reliability, and domain of applicability, and it elevates a model from a statistical correlation to a scientifically defensible tool. Mechanistic interpretation provides the biological or physicochemical rationale linking molecular structure to the predicted activity or property, thereby offering transparency, enhancing trust, and supporting more confident extrapolation beyond the training set.

Defining Mechanistic Interpretation in (Q)SAR

Mechanistic interpretation refers to the elucidation of the biological, chemical, or physical processes that explain why a specific molecular structure leads to a particular endpoint. It moves beyond the "black box" by connecting molecular descriptors (e.g., logP, HOMO/LUMO energies, polar surface area, presence of toxicophores) to biologically relevant events.

Core Components:

- Biological Pathway Alignment: The descriptor profile of a compound should be logically linked to known molecular initiating events (MIEs) and key events (KEs) in an Adverse Outcome Pathway (AOP) or therapeutic mode-of-action pathway.

- Physicochemical Rationale: For properties like absorption or solubility, descriptors must relate to established physical chemistry principles (e.g., lipophilicity and membrane permeability).

- Domain of Applicability Definition: A mechanistic basis allows for the clear definition of the chemical space where the model is reliable, as compounds sharing the mechanism are likely to be predicted accurately.

Methodologies for Establishing Mechanistic Interpretation

Establishing mechanistic interpretation is a multi-faceted process integrating computational, in chemico, and in vitro data.

Descriptor Analysis and Profiling

- Protocol: Quantify the contribution of each model descriptor to the endpoint (e.g., PLS regression coefficients or variable importance in projection (VIP) scores, or decision-tree and random-forest feature importances) and identify the most influential descriptors. For each top descriptor, review the literature to establish its known mechanistic role in the endpoint (e.g., electrophilicity descriptors for skin sensitization, which relate to the MIE of covalent binding to skin proteins).

- Data Requirement: The model's descriptor importance list and comprehensive scientific literature.

Read-Across within the Applicability Domain

- Protocol: For a new query compound, identify its nearest neighbors in the training set using distance metrics (e.g., Euclidean, Mahalanobis). Manually curate and compare the mechanistic profiles (toxicophores, metabolic soft spots, etc.) of the query and its neighbors. Prediction confidence is high only if mechanistic similarity underpins the structural similarity.

- Data Requirement: A well-annotated training set with known mechanisms or toxicophores.
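The nearest-neighbour step of this read-across protocol can be illustrated with Tanimoto similarity on binary fingerprints. The sketch assumes fingerprints are already available as 0/1 NumPy arrays (in practice they would come from a cheminformatics toolkit). The structural-alert annotations, the helper names, and the exact-match concordance rule are hypothetical choices made for illustration, not an established scoring scheme.

```python
import numpy as np


def tanimoto_matrix(query_fps, train_fps):
    """Tanimoto similarity |A∩B| / |A∪B| between rows of two 0/1 fingerprint arrays."""
    q = np.asarray(query_fps, dtype=int)
    t = np.asarray(train_fps, dtype=int)
    common = q @ t.T                                     # bits set in both
    union = q.sum(axis=1)[:, None] + t.sum(axis=1)[None, :] - common
    # Convention assumed here: two empty fingerprints have similarity 0.
    return np.where(union > 0, common / np.maximum(union, 1), 0.0)


def nearest_neighbours(query_fps, train_fps, k=3):
    """Indices and similarities of the k most similar training compounds per query."""
    sim = tanimoto_matrix(query_fps, train_fps)
    idx = np.argsort(-sim, axis=1, kind="stable")[:, :k]
    return idx, np.take_along_axis(sim, idx, axis=1)


def alert_concordance(query_alerts, neighbour_alerts):
    """Hypothetical rule: share of neighbours with exactly the query's structural alerts."""
    return float(np.mean([set(a) == set(query_alerts) for a in neighbour_alerts]))


# Toy 8-bit fingerprints and hypothetical alert annotations.
train = np.array([
    [1, 1, 0, 0, 1, 0, 0, 0],
    [1, 1, 0, 0, 0, 0, 0, 0],
    [0, 0, 1, 1, 0, 0, 1, 0],
    [1, 0, 1, 0, 1, 0, 0, 1],
])
train_alerts = [["Michael acceptor"], ["Michael acceptor"], [], ["aldehyde"]]
query = np.array([[1, 1, 0, 0, 1, 0, 0, 1]])
query_alerts = ["Michael acceptor"]

idx, sims = nearest_neighbours(query, train, k=2)
conc = alert_concordance(query_alerts, [train_alerts[i] for i in idx[0]])
print(idx[0], sims[0], conc)
```

Here the query's two nearest neighbours are training compounds 0 (similarity 0.75) and 3 (0.6), but only one of them shares its alert, giving a concordance of 0.5: structural proximity that is only partly backed by mechanistic similarity, which the protocol would treat as lower confidence.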

Experimental Validation of the Hypothesized Mechanism

- Protocol: Select representative compounds from different prediction categories (e.g., high-activity, low-activity). Employ targeted in vitro assays designed to probe the specific Key Event predicted by the model. For example, for an endocrine disruption model based on estrogen receptor (ER) binding, confirm predictions using a standardized ER transactivation assay (e.g., OECD TG 455).

- Data Requirement: Compounds, relevant cell lines or biochemical kits, and assay protocols.

Table 1: Summary of Key Methodological Approaches for Mechanistic Interpretation

| Methodology | Primary Objective | Key Output | Typical Quantitative Metrics |
|---|---|---|---|
| Descriptor Analysis | Link model variables to biological/chemical theory | Mechanistic hypothesis for descriptor-endpoint relationship | Descriptor importance weight (from PLS, Random Forest); correlation coefficient (r) with endpoint. |
| Read-Across Analysis | Ensure predictions are based on mechanistic similarity, not just statistical proximity | Justification for inclusion within the Applicability Domain | Similarity distance (Tanimoto index, Euclidean distance); Mechanistic alert concordance. |
| In Vitro Assay Validation | Confirm the biological activity predicted by the model | Experimental evidence supporting the mechanistic basis | IC50/EC50 values; Assay-specific positive/negative call rates vs. model prediction. |
| Adverse Outcome Pathway (AOP) Mapping | Frame model predictions within a regulatory-relevant biological narrative | AOP network diagram showing where the model predicts MIEs or KEs | Weight of Evidence (WoE) score for AOP alignment. |

Visualization of Workflow and Pathways

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Mechanistic QSAR Investigation

| Reagent / Material | Provider Examples | Primary Function in Mechanistic Studies |
|---|---|---|
| Direct Peptide Reactivity Assay (DPRA) Kit | Thermo Fisher, Eurofins | In chemico test (OECD TG 442C) that quantifies covalent binding to synthetic cysteine- and lysine-containing peptides, directly probing the Molecular Initiating Event of the skin sensitization AOP. |
| AREc32 Cell Line | ATCC, commercial labs | Luciferase reporter gene cell line under the control of the Antioxidant Response Element (ARE). Used to confirm activation of the Keap1-Nrf2 pathway, a key event in many toxicity pathways. |
| Stably Transfected ERα, AR CALUX Assays | PerkinElmer, BioDetection Systems | Cell-based bioassays for specific nuclear receptor activation (estrogen/androgen receptor), validating endocrine disruption mechanisms. |
| Metabolite Generation Systems (e.g., S9, Hepatocytes) | Corning, BioIVT | Incubated with test compounds to generate bioactive metabolites, exploring mechanisms that involve bioactivation. |
| CYP450 Inhibition Assay Kits (Fluorogenic) | Promega, Thermo Fisher | High-throughput screening to determine whether a compound's toxicity or drug-drug interaction (DDI) mechanism involves inhibition of specific cytochrome P450 enzymes. |
| Reactive Oxygen Species (ROS) Detection Probes (DCFH-DA, DHE) | Abcam, Cayman Chemical | Probes for flow cytometry or fluorescence microscopy, used to validate oxidative stress as a putative mechanism predicted by descriptors related to redox potential. |
| Pan-Assay Interference Compounds (PAINS) Filters | Various computational libraries | Substructure filters that flag compounds known to cause assay interference, ensuring mechanistic signals are genuine. |

The integration of computational workflows into modern drug discovery and chemical safety assessment represents a paradigm shift, fundamentally guided by the Organisation for Economic Co-operation and Development (OECD) principles for the validation of Quantitative Structure-Activity Relationship (QSAR) models. These principles—(1) a defined endpoint, (2) an unambiguous algorithm, (3) a defined domain of applicability, (4) appropriate measures of goodness-of-fit, robustness, and predictivity, and (5) a mechanistic interpretation, where possible—provide the essential framework for transforming standalone in silico tools into reliable components of a decision-support system. This technical guide details the methodology for building a validated, integrated workflow that transitions from predictive computation to actionable insight, ensuring regulatory and scientific rigor.

Core Integrated Workflow Architecture

The end-to-end workflow integrates data curation, model application, validation, and interpretation into a cohesive decision-support pipeline.

Diagram Title: Integrated QSAR Workflow with OECD Principles

Detailed Methodologies and Experimental Protocols

Protocol: Chemical Standardization and Descriptor Calculation

Objective: To generate reproducible, high-quality chemical structure data for modeling.

- Input: Chemical structures in SMILES, SDF, or MOL file format.

- Standardization (KNIME, PaDEL, or RDKit):

  - Salts and solvents are removed using a predefined fragmentation protocol.

  - Structures are neutralized (if required by the model).

  - Tautomers are enumerated and canonicalized to a standard form.

  - 3D geometries are generated (e.g., using CORINA or RDKit's ETKDG) and minimized with the MMFF94 force field.

- Descriptor Calculation:

  - A predefined set of 2D and 3D molecular descriptors (e.g., topological, electronic, geometrical) is calculated using software such as PaDEL-Descriptor, RDKit, or Dragon.

  - Descriptors with zero variance or high pairwise correlation (|r| > 0.95) are removed to reduce dimensionality.

- Output: A standardized dataset in CSV or ARFF format.
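The descriptor reduction step (removing zero-variance descriptors and one member of each pair with |r| > 0.95) can be sketched in NumPy. The greedy rule used here, which keeps the first descriptor of a correlated pair in column order, is an assumption; other pipelines drop the descriptor with the higher mean correlation or the weaker relevance to the endpoint. The function name, descriptor names, and data are hypothetical.

```python
import numpy as np


def filter_descriptors(X, names, corr_threshold=0.95, var_tol=1e-12):
    """Drop zero-variance descriptors, then greedily drop highly inter-correlated ones."""
    X = np.asarray(X, dtype=float)
    keep = np.flatnonzero(X.var(axis=0) > var_tol)       # step 1: variance filter
    if keep.size == 0:
        return X[:, keep], []
    r = np.atleast_2d(np.abs(np.corrcoef(X[:, keep], rowvar=False)))
    selected = []
    for j in range(keep.size):
        # Step 2: keep descriptor j only if |r| <= threshold with every descriptor kept so far.
        if all(r[j, s] <= corr_threshold for s in selected):
            selected.append(j)
    cols = keep[selected]
    return X[:, cols], [names[c] for c in cols]


# Synthetic descriptor table (hypothetical names).
rng = np.random.default_rng(1)
a = rng.normal(size=50)
b = rng.normal(size=50)
X = np.column_stack([a, 2 * a + 0.01 * rng.normal(size=50), b, np.full(50, 3.0)])
names = ["desc_A", "desc_A_scaled", "desc_B", "constant"]
X_f, kept = filter_descriptors(X, names)
print(kept)  # ['desc_A', 'desc_B']
```

The near-duplicate `desc_A_scaled` is removed by the correlation filter and `constant` by the variance filter. The thresholds should be fixed before modeling and reported, since they change which descriptors the final model can use.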

Protocol: QSAR Model Application and Domain of Applicability (DA) Assessment

Objective: To generate a reliable prediction with a defined confidence metric.

- Model Loading: A pre-validated QSAR model (e.g., a partial least squares (PLS) or random forest model) is loaded. Its endpoint and algorithm are documented per OECD Principles 1 and 2.

- Descriptor Scaling: Input descriptor values are scaled with the parameters derived from the training set (e.g., mean-centering and unit-variance scaling using the training-set mean and standard deviation), not with statistics recomputed on the new compounds.

- Prediction: The scaled descriptors are passed to the model to generate a numerical or categorical prediction (e.g., pLC50, mutagenicity class).

- Applicability Domain Assessment:

  - Method (Leverage/Williams Plot): Calculate the leverage (h) of the new chemical as h = xᵀ(XᵀX)⁻¹x, where x is the chemical's scaled descriptor vector and X is the training-set descriptor matrix.

  - The critical leverage h* is defined as 3p'/n, where p' is the number of model variables + 1, and n is the number of training compounds.

  - Decision Rule: If h > h*, the prediction is a structural extrapolation and is flagged as unreliable.

  - Standardized Residuals: For compounds with measured values (training or external test set), those with an absolute standardized residual greater than 3 standard deviation units are flagged as response outliers.
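The leverage and residual checks above can be sketched with NumPy for a linear model. The sketch assumes autoscaled descriptors (training-set mean and standard deviation) and a training matrix augmented with a column of ones for the intercept, which is what makes p' = p + 1 and makes the training leverages sum to p'. Dividing residuals by the training residual standard error is one common standardization among several. The helper names, data, query compounds, and "measured" query values are all synthetic or hypothetical.

```python
import numpy as np


def autoscale_params(X_train):
    """Training-set mean and standard deviation used to scale every input."""
    return X_train.mean(axis=0), X_train.std(axis=0, ddof=1)


def with_intercept(Xs):
    """Prepend a column of ones so the design matrix has p' = p + 1 columns."""
    return np.column_stack([np.ones(Xs.shape[0]), Xs])


def leverages(X_train_aug, X_query_aug):
    """h_i = x_iᵀ (XᵀX)⁻¹ x_i for each query row x_i."""
    xtx_inv = np.linalg.pinv(X_train_aug.T @ X_train_aug)
    return np.einsum("ij,jk,ik->i", X_query_aug, xtx_inv, X_query_aug)


# Synthetic training set and a least-squares model on autoscaled descriptors.
rng = np.random.default_rng(7)
X_tr = rng.normal(size=(40, 3))
y_tr = X_tr @ np.array([1.0, -0.5, 0.3]) + rng.normal(scale=0.2, size=40)
mu, sd = autoscale_params(X_tr)
Xa = with_intercept((X_tr - mu) / sd)
coef, *_ = np.linalg.lstsq(Xa, y_tr, rcond=None)

n, p_prime = Xa.shape
h_star = 3 * p_prime / n                                  # critical leverage 3p'/n
resid = y_tr - Xa @ coef
s = np.sqrt(resid @ resid / (n - p_prime))                # residual standard error
h_train = leverages(Xa, Xa)                               # sums to p' by construction

# Two query compounds: one near the training centroid, one far outside it.
X_q = np.array([[0.1, -0.2, 0.0], [6.0, 6.0, -6.0]])
Qa = with_intercept((X_q - mu) / sd)                      # scaled with TRAINING statistics
h_q = leverages(Xa, Qa)
outside_ad = h_q > h_star

# Hypothetical measured values, used only to illustrate the residual check.
y_q_pred = Qa @ coef
y_q_obs = y_q_pred + np.array([0.1, 1.5])
std_resid = (y_q_obs - y_q_pred) / s
response_outlier = np.abs(std_resid) > 3
print(f"h* = {h_star:.2f}", h_q.round(3), outside_ad, std_resid.round(2), response_outlier)
```

Plotting `std_resid` against leverage, with lines at ±3 and at h*, gives the Williams plot: points to the right of h* are structural extrapolations, and points beyond ±3 are response outliers.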

Protocol: Internal Validation (Y-Randomization)

Objective: To confirm the model's robustness and lack of chance correlation.

- The original response variable (Y) of the training set is randomly shuffled.

- A new model is built using the original descriptor matrix (X) and the scrambled Y-values.

- This process is repeated 100-200 times.

- The performance metrics (e.g., Q², R²) of the scrambled models are recorded.

- Success Criterion: The performance of the original model must be significantly better (e.g., p < 0.05) than the distribution of performances from the scrambled models.

Data Presentation: Model Performance Metrics

Table 1: Summary of Key Validation Metrics for QSAR Models Aligned with OECD Principle 4

| Metric | Formula | Interpretation | Threshold for Acceptance |
|---|---|---|---|
| R² (Coefficient of Determination) | R² = 1 - (SSres/SStot) | Goodness-of-fit for training data. Proportion of variance explained. | > 0.6 (context-dependent) |
| Q² (LOO-CV) | Q² = 1 - (Σ(yᵢ - ŷ₍ᵢ₎)² / Σ(yᵢ - ȳ)²) | Internal predictivity using Leave-One-Out Cross-Validation, where ŷ₍ᵢ₎ is the prediction for compound i from the model refitted without it. | > 0.5 (typically) |
| RMSE (Root Mean Square Error) | RMSE = √[Σ(yᵢ - ŷᵢ)²/n] | Average magnitude of prediction error. | As low as possible, relative to data range. |
| MAE (Mean Absolute Error) | MAE = Σ\|yᵢ - ŷᵢ\|/n | Average error magnitude, less sensitive to large individual errors than RMSE. | As low as possible. |
| Sensitivity (for Classification) | TP / (TP + FN) | Ability to identify true positives. | > 0.7 (context-dependent) |
| Specificity (for Classification) | TN / (TN + FP) | Ability to identify true negatives. | > 0.7 (context-dependent) |
| Concordance (Accuracy) | (TP + TN) / Total | Overall correct classification rate. | > 0.75 (context-dependent) |
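The metrics in Table 1 can be computed with scikit-learn as sketched below. The regression helper computes Q² from leave-one-out predictions using the formula in the table; RMSE and MAE are shown on the fitted training values, but the same calls apply unchanged to LOO or external-set predictions. The classification helper assumes binary labels with 1 as the positive (e.g., active or toxic) class. Helper names and all data are hypothetical.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.metrics import confusion_matrix, mean_absolute_error, mean_squared_error, r2_score
from sklearn.model_selection import LeaveOneOut, cross_val_predict


def regression_metrics(model, X, y):
    """R², LOO Q², RMSE and MAE for a regression QSAR model."""
    y = np.asarray(y, dtype=float)
    y_fit = model.fit(X, y).predict(X)
    # ŷ₍ᵢ₎: prediction for compound i from a model refitted without it.
    y_loo = cross_val_predict(model, X, y, cv=LeaveOneOut())
    return {
        "R2": r2_score(y, y_fit),
        "Q2_LOO": 1 - np.sum((y - y_loo) ** 2) / np.sum((y - y.mean()) ** 2),
        "RMSE": np.sqrt(mean_squared_error(y, y_fit)),
        "MAE": mean_absolute_error(y, y_fit),
    }


def classification_metrics(y_true, y_pred):
    """Sensitivity, specificity and concordance for binary labels (1 = positive)."""
    tn, fp, fn, tp = confusion_matrix(y_true, y_pred, labels=[0, 1]).ravel()
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "concordance": (tp + tn) / (tp + tn + fp + fn),
    }


rng = np.random.default_rng(3)
X = rng.normal(size=(30, 3))
y = X @ np.array([1.0, 2.0, -1.0]) + rng.normal(scale=0.1, size=30)
reg = regression_metrics(LinearRegression(), X, y)

cls = classification_metrics([1, 1, 1, 1, 0, 0, 0, 0, 0, 0],
                             [1, 1, 1, 0, 0, 0, 0, 0, 1, 0])
print(reg)
print(cls)  # sensitivity 0.75, specificity ≈ 0.833, concordance 0.8
```

For an ordinary least-squares model, Q²(LOO) can never exceed R², so a large gap between the two is an early warning of overfitting.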

Mechanistic Interpretation and Pathway Mapping

To satisfy OECD Principle 5, predictions are linked to potential biological mechanisms. For an endocrine disruption endpoint, a simplified Adverse Outcome Pathway (AOP) can be visualized.

Diagram Title: Integrating QSAR into an Adverse Outcome Pathway (AOP)

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software and Database Tools for QSAR Workflow Integration

| Item/Software | Primary Function | Relevance to Workflow |
|---|---|---|