Deep Neural Networks in Toxicity Endpoint Prediction: Transforming Drug Safety with AI


Abstract

This article provides a comprehensive overview of the application of Deep Neural Networks (DNNs) for predicting chemical and drug toxicity endpoints. Aimed at researchers, scientists, and drug development professionals, it explores foundational principles and key architectural models, including Multilayer Perceptrons (MLPs), Convolutional Neural Networks (CNNs), Graph Neural Networks (GNNs), and Transformers. It then describes their implementation for toxicity endpoints such as hepatotoxicity, cardiotoxicity, and carcinogenicity, strategies for overcoming common challenges such as data scarcity and limited model interpretability, rigorous validation and benchmarking practices, and the future trajectory of AI-driven predictive toxicology in enhancing the efficiency and safety of biomedical research.

The Foundation of AI in Predictive Toxicology: Core Concepts and Urgent Needs

The Critical Role of Toxicity Prediction in Modern Drug Discovery and Public Health

Toxicity prediction has become a cornerstone of modern drug discovery, playing a pivotal role in ensuring patient safety and reducing late-stage drug development failures. Traditional toxicity assessment methods relying on animal experiments face significant challenges including high costs, lengthy timelines, ethical concerns, and limited accuracy in human extrapolation [1] [2]. The emergence of artificial intelligence (AI) and deep learning technologies is fundamentally reshaping this landscape, enabling more accurate, efficient, and mechanism-based toxicity evaluation early in the drug development pipeline [3] [4].

Approximately 30% of drug development failures are attributed to safety concerns, making toxicity a leading cause of attrition, second only to lack of efficacy [1] [2]. Furthermore, nearly 30% of marketed drugs are subsequently withdrawn due to unforeseen toxic reactions [2]. These statistics underscore the need for robust toxicity prediction frameworks that can identify potential safety issues before drugs enter clinical trials or reach the market. AI-powered models, particularly deep neural networks, can predict diverse toxicity endpoints by learning complex patterns from chemical structures, biological assays, and multi-omics data [5] [3].

This application note explores the transformative impact of deep learning in predictive toxicology, with a specific focus on toxicity endpoint prediction. We provide detailed protocols for implementing state-of-the-art models, comprehensive data analysis frameworks, and essential research tools that empower scientists to integrate these advanced methodologies into their drug discovery workflows.

Essential Databases for Toxicity Endpoint Prediction

The development of robust deep learning models for toxicity prediction relies on comprehensive, high-quality datasets. The table below summarizes key publicly available databases that serve as valuable resources for training and validating toxicity prediction models.

Table 1: Essential Databases for Toxicity Endpoint Prediction Research

| Database Name | Data Content & Scope | Key Endpoints Covered | Utility in DL Research |
|---|---|---|---|
| TOXRIC [1] [6] | 80,081 unique compounds; 122,594 toxicity measurements | Multi-species acute toxicity (59 endpoints) | Training data for multi-condition toxicity prediction |
| Tox21 [5] [3] | 8,249 compounds; 12 high-throughput assays | Nuclear receptor signaling, stress response pathways | Benchmark for multi-task deep learning models |
| ToxCast [7] [3] | ~4,746 chemicals; hundreds of endpoints | High-throughput screening data for various mechanisms | Biological feature extraction for in vivo toxicity prediction |
| ChEMBL [1] [3] | Manually curated bioactive molecules | ADMET data, bioactivity data | Pre-training molecular representation models |
| DrugBank [1] [3] | Comprehensive drug information | Drug targets, interactions, adverse reactions | Contextualizing toxicity within pharmacological profiles |
| PubChem [1] [6] | Massive chemical substance database | Structure, activity, toxicity data | Large-scale feature extraction and model training |
| hERG Central [3] | >300,000 experimental records | Cardiotoxicity (hERG channel inhibition) | Specialized cardiotoxicity prediction |

These databases enable researchers to access diverse toxicity data spanning multiple species, administration routes, and measurement indicators. The TOXRIC database, for instance, provides comprehensive acute toxicity data covering 15 test species, 8 administration routes, and 3 measurement indicators, making it particularly valuable for developing models that can extrapolate across experimental conditions [6]. Similarly, Tox21 has served as a critical benchmark in community-wide challenges to compare computational toxicity prediction methods [5].

Deep Learning Approaches for Toxicity Endpoint Prediction

Molecular Representation Strategies

Deep learning models for toxicity prediction employ diverse molecular representation strategies, each with distinct advantages for capturing relevant chemical information:

Graph-Based Representations: Molecular graphs with atoms as nodes and bonds as edges enable Graph Neural Networks (GNNs) to learn directly from molecular topology [5] [3]. This approach naturally captures structural alerts and functional groups associated with toxicity.
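To make the graph representation concrete, the sketch below converts a SMILES string into the two structures most GNN libraries expect: per-atom feature vectors and a directed edge list. It assumes RDKit is installed; `smiles_to_graph` and its four atom features are hypothetical, illustrative choices rather than a standard featurization.

```python
from rdkit import Chem


def smiles_to_graph(smiles):
    """Return (node_features, edge_index) for one molecule.

    node_features: one [atomic_number, degree, formal_charge, is_aromatic] list per heavy atom
                   (an illustrative feature set, assumed for this sketch)
    edge_index:    list of (src, dst) atom-index pairs; every bond appears in both directions
    """
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Could not parse SMILES: {smiles!r}")

    node_features = [
        [atom.GetAtomicNum(), atom.GetDegree(), atom.GetFormalCharge(), int(atom.GetIsAromatic())]
        for atom in mol.GetAtoms()
    ]

    edge_index = []
    for bond in mol.GetBonds():
        i, j = bond.GetBeginAtomIdx(), bond.GetEndAtomIdx()
        edge_index.append((i, j))  # message i -> j
        edge_index.append((j, i))  # and j -> i, since molecular graphs are undirected
    return node_features, edge_index
```

For aniline (`"Nc1ccccc1"`) this yields 7 heavy-atom nodes and 14 directed edges (7 bonds), which can be converted to tensors for a GNN framework such as PyTorch Geometric.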

Image-Based Representations: Convolutional neural networks (CNNs) or Vision Transformers applied to 2D structural images of chemical compounds have achieved competitive performance in toxicity classification tasks [5] [8]. In [5], for instance, the DenseNet121 architecture performed best at extracting discriminative features from molecular images.

Sequence-Based Representations: SMILES strings processed through Recurrent Neural Networks (RNNs) or Transformer architectures learn contextualized embeddings of chemical structures [5] [3]. Models like ChemBERTa treat chemical structures as linguistic sequences to capture structural patterns correlated with toxicity.
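Sequence models first split each SMILES string into tokens. A minimal sketch, assuming the atom-level regular expression below (one widely used pattern, not the tokenizer of ChemBERTa or any other specific model) and a hypothetical `build_vocab` helper:

```python
import re
from typing import Dict, List

# Atom-level SMILES tokens: bracket atoms, two-letter halogens, aromatic atoms,
# branches, bonds, and ring-closure digits (including %NN). Assumed pattern.
SMILES_TOKEN_PATTERN = re.compile(
    r"(\[[^\]]+]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|\/|:|~|@|\?|>|\*|\$|%[0-9]{2}|[0-9])"
)


def tokenize_smiles(smiles: str) -> List[str]:
    tokens = SMILES_TOKEN_PATTERN.findall(smiles)
    # Fail loudly instead of silently dropping characters the pattern does not cover.
    if "".join(tokens) != smiles:
        raise ValueError(f"Untokenizable characters in {smiles!r}")
    return tokens


def build_vocab(smiles_list: List[str]) -> Dict[str, int]:
    """Map each token to an integer id; id 0 is reserved for padding (an assumption)."""
    vocab = {"<pad>": 0}
    for smi in smiles_list:
        for tok in tokenize_smiles(smi):
            vocab.setdefault(tok, len(vocab))
    return vocab
```

Keeping `Cl` and `Br` as single tokens matters: a character-level split would turn chlorine into a carbon followed by a stray `l`.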

Multimodal Fusion: Integrating multiple representation types (e.g., molecular descriptors with structural images) through joint fusion mechanisms has shown enhanced predictive performance by capturing complementary chemical information [8].

Advanced Architectural Frameworks

Recent research has introduced sophisticated neural architectures specifically designed for toxicity prediction challenges:

The ToxACoL (Adjoint Correlation Learning) framework addresses data scarcity for specific endpoints by modeling relationships between multiple toxicity endpoints using graph topology [6]. This approach enables knowledge transfer from data-rich to data-scarce endpoints through graph convolution operations, significantly improving prediction accuracy for human-specific toxicity endpoints with limited data [6].

Multimodal deep learning architectures combine Vision Transformers for processing molecular structure images with Multilayer Perceptrons for handling numerical chemical property data [8]. A joint fusion mechanism integrates features from both modalities, achieving superior predictive accuracy compared to single-modality approaches [8].
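The joint fusion idea can be sketched in PyTorch as follows. This is a hypothetical module, not the architecture from [8]: it assumes the image branch has already produced a fixed-size embedding (for example the [CLS] vector of a Vision Transformer, which in a full joint-fusion model would be trained end to end with the rest of the network), and all layer sizes are placeholders.

```python
import torch
from torch import nn


class JointFusionClassifier(nn.Module):
    """Hypothetical joint fusion of an image embedding and numerical chemical properties.

    image_dim: width of the image-branch embedding (assumed, e.g. a ViT [CLS] vector)
    desc_dim:  number of numerical chemical-property features (assumed)
    """

    def __init__(self, image_dim=768, desc_dim=200, hidden=256, n_tasks=1, dropout=0.2):
        super().__init__()
        # MLP branch for numerical chemical property data
        self.desc_mlp = nn.Sequential(
            nn.Linear(desc_dim, hidden), nn.ReLU(), nn.Dropout(dropout),
            nn.Linear(hidden, hidden), nn.ReLU(),
        )
        # Projection of the image embedding to the same width as the MLP branch
        self.image_proj = nn.Sequential(nn.Linear(image_dim, hidden), nn.ReLU())
        # Joint fusion: concatenate both modalities and learn a shared classifier,
        # so the loss gradient updates both branches together
        self.fusion_head = nn.Sequential(
            nn.Linear(2 * hidden, hidden), nn.ReLU(), nn.Dropout(dropout),
            nn.Linear(hidden, n_tasks),
        )

    def forward(self, image_emb, descriptors):
        fused = torch.cat([self.image_proj(image_emb), self.desc_mlp(descriptors)], dim=-1)
        return self.fusion_head(fused)  # raw logits; torch.sigmoid gives probabilities
```

Concatenation is the simplest fusion operator; attention-based or gated fusion are common alternatives when one modality is noisier than the other.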

Explainable AI techniques such as Grad-CAM visualizations and SHAP analysis provide interpretable insights into model predictions by highlighting molecular substructures or features contributing to toxicity classifications [5] [3]. This transparency is crucial for building trust in AI predictions and facilitating scientific discovery.

Experimental Protocols for Toxicity Endpoint Prediction

Protocol: Implementing an Image-Based Toxicity Prediction Pipeline Using DenseNet121

This protocol details the implementation of a deep learning pipeline for toxicity prediction using 2D molecular structure images based on the DenseNet121 architecture [5].

Materials and Reagents

- Chemical Compounds: Curated set of compounds with known toxicity endpoints from Tox21 or TOXRIC databases

- Computational Environment: Python 3.8+ with PyTorch or TensorFlow deep learning frameworks

- Software Libraries: RDKit for molecular structure processing, OpenCV for image preprocessing, scikit-learn for model evaluation

Procedure

Data Preparation and Molecular Image Generation

- Obtain SMILES strings and corresponding toxicity labels from selected database

- Convert SMILES to 2D molecular structures using RDKit

- Generate standardized 224×224 pixel RGB images with white background and black structure depictions

- Apply data augmentation techniques (rotation, translation, slight scaling) to improve model generalization

- Split the dataset into training (70%), validation (15%), and test (15%) sets using scaffold splitting, so that molecules sharing a core scaffold never appear in more than one set
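The image-generation and splitting steps can be sketched with RDKit as below. The function names are hypothetical; `useBWAtomPalette()` is used to obtain the black-on-white depiction described above, and the greedy largest-scaffold-first assignment is one common way to implement a Bemis-Murcko scaffold split, not necessarily the one used in [5].

```python
import io
from collections import defaultdict

import numpy as np
from PIL import Image
from rdkit import Chem
from rdkit.Chem.Draw import rdMolDraw2D
from rdkit.Chem.Scaffolds import MurckoScaffold


def smiles_to_image(smiles, size=224):
    """Render a black-on-white 2D depiction as a (size, size, 3) uint8 RGB array."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Could not parse SMILES: {smiles!r}")
    drawer = rdMolDraw2D.MolDraw2DCairo(size, size)
    drawer.drawOptions().useBWAtomPalette()  # black atoms and bonds instead of element colours
    rdMolDraw2D.PrepareAndDrawMolecule(drawer, mol)  # computes 2D coordinates, then draws
    drawer.FinishDrawing()
    png_bytes = drawer.GetDrawingText()
    return np.asarray(Image.open(io.BytesIO(png_bytes)).convert("RGB"))


def scaffold_split(smiles_list, frac_train=0.70, frac_valid=0.15):
    """Deterministic Bemis-Murcko scaffold split returning (train, valid, test) index lists.

    All molecules sharing a scaffold go to the same subset; the largest scaffold
    groups fill the training set first. Acyclic molecules share the empty scaffold.
    """
    groups = defaultdict(list)
    for idx, smi in enumerate(smiles_list):
        scaffold = MurckoScaffold.MurckoScaffoldSmiles(smiles=smi, includeChirality=False)
        groups[scaffold].append(idx)

    ordered = sorted(groups.values(), key=lambda g: (len(g), g[0]), reverse=True)
    n = len(smiles_list)
    train, valid, test = [], [], []
    for group in ordered:
        if len(train) + len(group) <= frac_train * n:
            train.extend(group)
        elif len(valid) + len(group) <= frac_valid * n:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test
```

Augmentation (rotation, translation, slight scaling) can then be applied to the rendered arrays on the fly, for example with `torchvision.transforms.RandomAffine`, after the split so that augmented copies of a molecule never cross subsets.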

Model Architecture Implementation

- Implement DenseNet121 backbone with pretrained weights on ImageNet

- Modify final classification layer to output 12 units (for Tox21 endpoints) with sigmoid activation

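- Keep DenseNet121's built-in normalization: each dense layer already applies batch normalization before its convolutions (BN-ReLU-Conv ordering), which stabilizes training without additional layers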

Key configuration parameters:

- Input shape: (224, 224, 3)

- Optimization: Adam optimizer with learning rate 0.001

- Loss function: Binary cross-entropy for multi-label classification

- Batch size: 32

Model Training and Optimization

- Train for up to 100 epochs, with early stopping based on validation loss

- Implement learning rate reduction on plateau (factor=0.5, patience=5)

- Apply gradient clipping to prevent exploding gradients

- Monitor per-endpoint AUROC and overall mean AUROC
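The training ingredients listed above (plateau-based learning-rate reduction, gradient clipping, early stopping, and per-endpoint AUROC) might be wired together as in this sketch. The early-stopping patience of 10 and the clipping norm of 1.0 are assumptions, since the protocol does not specify them; `val_loss_fn` is a hypothetical callable that returns the validation loss for the current model.

```python
import math

import numpy as np
import torch
from sklearn.metrics import roc_auc_score


def per_endpoint_auroc(y_true, y_prob):
    """AUROC for each endpoint column, ignoring NaN labels; NaN if a column has one class."""
    aucs = []
    for j in range(y_true.shape[1]):
        observed = ~np.isnan(y_true[:, j])
        labels = y_true[observed, j]
        if len(np.unique(labels)) < 2:
            aucs.append(float("nan"))  # AUROC is undefined without both classes
            continue
        aucs.append(float(roc_auc_score(labels, y_prob[observed, j])))
    return aucs


def mean_auroc(aucs):
    valid = [a for a in aucs if not math.isnan(a)]
    return sum(valid) / len(valid) if valid else float("nan")


class EarlyStopping:
    """Signal a stop once validation loss fails to improve for `patience` epochs."""

    def __init__(self, patience=10):
        self.patience = patience
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        if val_loss < self.best:
            self.best, self.bad_epochs = val_loss, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


def train_one_epoch(model, loader, optimizer, loss_fn, max_grad_norm=1.0):
    model.train()
    total = 0.0
    for inputs, labels in loader:
        optimizer.zero_grad()
        loss = loss_fn(model(inputs), labels)
        loss.backward()
        # Rescale gradients whose global norm exceeds max_grad_norm.
        torch.nn.utils.clip_grad_norm_(model.parameters(), max_grad_norm)
        optimizer.step()
        total += loss.item()
    return total / max(len(loader), 1)


def fit(model, train_loader, val_loss_fn, optimizer, loss_fn, max_epochs=100, patience=10):
    """Train for up to max_epochs, halving the LR when validation loss plateaus (patience=5)."""
    scheduler = torch.optim.lr_scheduler.ReduceLROnPlateau(optimizer, mode="min", factor=0.5, patience=5)
    stopper = EarlyStopping(patience)
    epochs_run = 0
    for _ in range(max_epochs):
        epochs_run += 1
        train_one_epoch(model, train_loader, optimizer, loss_fn)
        val_loss = val_loss_fn(model)
        scheduler.step(val_loss)
        if stopper.step(val_loss):
            break
    return epochs_run
```

Endpoints whose test labels contain only one class are reported as NaN and excluded from the mean, rather than silently scored.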

Model Interpretation Using Explainable AI

- Implement Grad-CAM visualization to highlight molecular regions influencing predictions

- Generate Grad-CAM heatmaps for both correct and incorrect predictions

- Correlate highlighted regions with known structural alerts for toxicity
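Grad-CAM weights the feature maps of a convolutional layer by the spatially averaged gradients of the target logit and keeps the positive part of the weighted sum. A minimal PyTorch sketch using a forward hook and a tensor hook (the function name `grad_cam` is hypothetical):

```python
import torch
import torch.nn.functional as F


def grad_cam(model, target_layer, images, task_idx):
    """Grad-CAM heatmaps of shape (N, H, W), scaled to [0, 1], for one output logit.

    target_layer: module whose output is a (N, C, h, w) feature map.
    """
    store = {}

    def forward_hook(module, inputs, output):
        store["acts"] = output
        # Record d(logit)/d(activation) when backpropagation reaches this tensor.
        output.register_hook(lambda grad: store.__setitem__("grads", grad))

    handle = target_layer.register_forward_hook(forward_hook)
    try:
        model.eval()
        logits = model(images)
        model.zero_grad()
        logits[:, task_idx].sum().backward()
    finally:
        handle.remove()

    acts, grads = store["acts"].detach(), store["grads"].detach()
    weights = grads.mean(dim=(2, 3), keepdim=True)           # per-channel importance
    cam = F.relu((weights * acts).sum(dim=1, keepdim=True))   # (N, 1, h, w)
    cam = F.interpolate(cam, size=images.shape[-2:], mode="bilinear", align_corners=False)
    cam = cam.squeeze(1)
    flat = cam.flatten(1)
    lo = flat.min(dim=1, keepdim=True).values
    hi = flat.max(dim=1, keepdim=True).values
    return ((flat - lo) / (hi - lo + 1e-8)).view_as(cam)      # per-image min-max scaling
```

For a torchvision DenseNet121, `model.features.denseblock4` is a plausible target layer (an assumption); torchvision's DenseNet applies an in-place ReLU to the output of `model.features` itself, which can interfere with hook-based gradient capture. Overlaying the heatmap on the rendered molecule image shows which atoms and bonds drove the prediction, which can then be compared with known structural alerts.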

Expected Outcomes

This pipeline should achieve competitive performance on the Tox21 benchmark, with expected mean AUROC >0.80. The Grad-CAM visualizations should identify chemically meaningful substructures associated with toxicity endpoints, providing both predictive accuracy and mechanistic insights.

Protocol: Multi-Endpoint Toxicity Prediction with ToxACoL Framework

This protocol implements the ToxACoL framework for multi-condition acute toxicity assessment, specifically designed to address data scarcity challenges [6].

Materials and Reagents

- Toxicity Dataset: TOXRIC database or comparable multi-endpoint acute toxicity data

- Computational Resources: High-memory GPU workstation (≥16GB VRAM)

- Software Dependencies: PyTorch Geometric for graph operations, NetworkX for graph visualization

Procedure

Endpoint Graph Construction

- Extract multi-condition endpoints (species, administration routes, measurement indicators)

- Calculate endpoint relationships based on experimental condition similarity and cross-endpoint compound toxicity correlations

- Construct endpoint graph topology where nodes represent endpoints and edges represent relationship strength
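The protocol does not spell out how relationship strength is computed, so the following is a hypothetical scheme, not the ToxACoL definition: each edge weight mixes the fraction of shared experimental conditions with the absolute Pearson correlation of toxicity values over compounds measured in both endpoints, and weak edges are dropped. `alpha`, `min_overlap`, and `threshold` are placeholder values.

```python
import numpy as np


def condition_similarity(cond_a, cond_b):
    """Fraction of shared condition fields, e.g. (species, route, indicator)."""
    return sum(a == b for a, b in zip(cond_a, cond_b)) / len(cond_a)


def endpoint_graph(conditions, tox_matrix, alpha=0.5, min_overlap=5, threshold=0.3):
    """Symmetric weighted adjacency (E, E) between toxicity endpoints.

    conditions: list of E condition tuples, one per endpoint
    tox_matrix: (n_compounds, E) toxicity values, NaN where a compound was not tested
    """
    n_endpoints = len(conditions)
    adj = np.zeros((n_endpoints, n_endpoints))
    for i in range(n_endpoints):
        for j in range(i + 1, n_endpoints):
            both = ~np.isnan(tox_matrix[:, i]) & ~np.isnan(tox_matrix[:, j])
            corr = 0.0
            if both.sum() >= min_overlap:  # need enough shared compounds for a correlation
                r = np.corrcoef(tox_matrix[both, i], tox_matrix[both, j])[0, 1]
                corr = 0.0 if np.isnan(r) else abs(r)
            weight = alpha * condition_similarity(conditions[i], conditions[j]) + (1 - alpha) * corr
            if weight >= threshold:
                adj[i, j] = adj[j, i] = weight  # undirected edge; diagonal stays zero
    return adj
```

The resulting matrix can be passed to NetworkX (`networkx.from_numpy_array`) for visual inspection of which endpoints cluster together.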

Adjoint Correlation Mechanism Implementation

- Implement dual learning branches for compounds and endpoints

- Initialize compound representations using learned features from pretrained GNN

- Initialize endpoint representations using one-hot encoding of experimental conditions

Key hyperparameters:

- Graph convolution layers: 3

- Hidden dimension: 256

- Correlation operation: concatenation followed by linear transformation

- Dropout rate: 0.3

Knowledge Transfer via Graph Convolution

- Perform message passing on endpoint graph to propagate information between related endpoints

- Update endpoint-aware compound representations at each layer

- Apply residual connections to preserve original compound features
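The dual-branch design and graph-based knowledge transfer can be illustrated with a simplified PyTorch module. This is a sketch inspired by the description above, not the published ToxACoL implementation [6]: it uses a dense, symmetrically normalized endpoint adjacency instead of PyTorch Geometric, a linear projection of a one-hot condition encoding to initialize endpoints, and generic compound feature vectors (for example pretrained GNN embeddings) as input. The defaults follow the listed hyperparameters (3 layers, hidden size 256, dropout 0.3); everything else, including the helper names, is assumed.

```python
import numpy as np
import torch
from torch import nn


def condition_one_hot(conditions):
    """One-hot encode each condition field and concatenate them -> (E, n_categories)."""
    blocks = []
    for values in zip(*conditions):  # one tuple of values per field
        index = {c: k for k, c in enumerate(sorted(set(values)))}
        block = np.zeros((len(values), len(index)))
        for row, v in enumerate(values):
            block[row, index[v]] = 1.0
        blocks.append(block)
    return np.hstack(blocks)


def normalize_adjacency(adj):
    """Symmetric GCN normalization D^-1/2 (A + I) D^-1/2."""
    a_hat = adj + torch.eye(adj.shape[0])
    d_inv_sqrt = a_hat.sum(dim=1).pow(-0.5)
    return d_inv_sqrt[:, None] * a_hat * d_inv_sqrt[None, :]


class EndpointCorrelationSketch(nn.Module):
    def __init__(self, compound_dim, endpoint_dim, n_endpoints, hidden=256, n_layers=3, dropout=0.3):
        super().__init__()
        self.compound_in = nn.Linear(compound_dim, hidden)  # compound branch
        self.endpoint_in = nn.Linear(endpoint_dim, hidden)  # endpoint branch
        self.endpoint_convs = nn.ModuleList([nn.Linear(hidden, hidden) for _ in range(n_layers)])
        self.fuse = nn.ModuleList([nn.Linear(2 * hidden, hidden) for _ in range(n_layers)])
        self.dropout = nn.Dropout(dropout)
        # Task-specific output heads: one weight vector and bias per endpoint.
        self.head_w = nn.Parameter(torch.randn(n_endpoints, hidden) * hidden ** -0.5)
        self.head_b = nn.Parameter(torch.zeros(n_endpoints))

    def forward(self, compound_feats, endpoint_feats, adj):
        a_norm = normalize_adjacency(adj)
        h_e = self.endpoint_in(endpoint_feats)                         # (E, H)
        h_c = self.compound_in(compound_feats)                          # (B, H)
        z = h_c.unsqueeze(1).expand(-1, h_e.shape[0], -1)               # (B, E, H)
        for conv, fuse in zip(self.endpoint_convs, self.fuse):
            h_e = torch.relu(conv(a_norm @ h_e))                        # GCN step over endpoints
            pair = torch.cat([z, h_e.unsqueeze(0).expand_as(z)], dim=-1)  # concatenation
            update = torch.relu(fuse(a_norm @ pair))                    # neighbour mixing + linear
            z = z + self.dropout(update)                                # residual keeps compound features
        return (z * self.head_w).sum(-1) + self.head_b                  # (B, E) predictions
```

Because every layer mixes each endpoint-aware compound representation with those of neighbouring endpoints, an endpoint with few labels still receives gradient signal through the shared layers and its well-populated neighbours.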

Multi-Task Prediction and Optimization

- Implement task-specific output heads for each endpoint

- Apply balanced sampling or loss weighting to address endpoint data imbalance

- Optimize using AdamW optimizer with weight decay 0.01
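For the optimization step, one option is to weight each endpoint's loss by the inverse square root of its label count and to ignore missing labels. The weighting scheme, the learning rate, and the helper names are assumptions; the sketch also assumes continuous acute-toxicity targets (mean squared error), so binary endpoints would need a cross-entropy loss instead.

```python
import torch


def endpoint_weights(label_matrix):
    """Inverse-sqrt label-count weights, rescaled to average 1 across endpoints."""
    counts = (~torch.isnan(label_matrix)).sum(dim=0).float().clamp(min=1)
    w = counts.rsqrt()  # scarce endpoints get larger weights
    return w * (w.numel() / w.sum())


def weighted_masked_mse(pred, target, weights):
    """Squared error averaged over observed entries, weighted per endpoint column."""
    mask = ~torch.isnan(target)
    sq_err = (pred - torch.nan_to_num(target)) ** 2 * weights  # weights broadcast over columns
    return (sq_err * mask).sum() / mask.sum().clamp(min=1)


def make_optimizer(model, lr=1e-3):
    return torch.optim.AdamW(model.parameters(), lr=lr, weight_decay=0.01)
```

The square root is a compromise: full inverse-frequency weighting can let an endpoint with a handful of labels dominate the gradient.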

Expected Outcomes

The ToxACoL model should demonstrate significant performance improvements (43-87%) for data-scarce human endpoints compared to single-task learning approaches. The framework should effectively transfer knowledge from data-rich to data-scarce endpoints, reducing required training data by 70-80% for sparse endpoints [6].

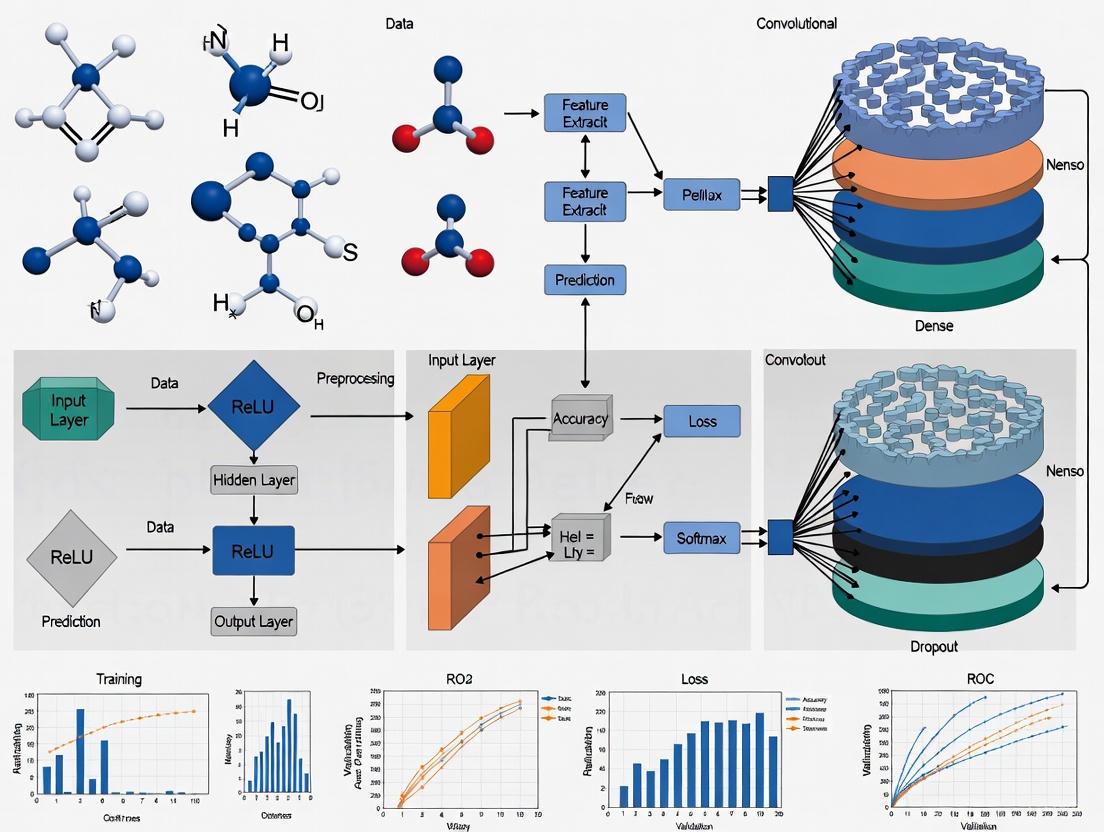

Visualization of Deep Learning Workflows

Diagram: Multi-Modal Toxicity Prediction Workflow.

Diagram: ToxACoL Framework Architecture.

Table 2: Essential Research Reagent Solutions for Toxicity Prediction Research

| Resource Category | Specific Tools & Platforms | Key Functionality | Application in Toxicity Prediction |
|---|---|---|---|
| Deep Learning Frameworks | PyTorch, TensorFlow, PyTorch Geometric | Model implementation and training | Building and training custom neural network architectures |
| Cheminformatics Libraries | RDKit, OpenBabel, ChemAxon | Molecular representation and manipulation | Generating molecular descriptors, fingerprints, and structure images |
| Toxicity Databases | TOXRIC, Tox21, ToxCast, ChEMBL | Curated toxicity data sources | Model training, validation, and benchmarking |
| Molecular Visualization | PyMOL, ChimeraX, RDKit Visualization | 3D/2D structure analysis | Interpretability and structural alert identification |
| Explainable AI Tools | SHAP, Captum, Grad-CAM | Model interpretation and visualization | Identifying toxicity-related molecular substructures |
| High-Performance Computing | NVIDIA GPUs, Google Colab, AWS EC2 | Computational acceleration | Training large-scale deep learning models |
| Web Platforms | ToxACoL Online, admetSAR | Accessibility and deployment | Rapid toxicity prediction for experimentalists |

Deep learning approaches are revolutionizing toxicity prediction in drug discovery by enabling more accurate, efficient, and interpretable assessment of potential safety issues. The protocols and frameworks presented in this application note provide researchers with practical methodologies for implementing state-of-the-art toxicity prediction models in their workflows. By integrating multimodal molecular representations, leveraging endpoint relationships through graph-based learning, and incorporating explainable AI techniques, these advanced approaches address critical challenges in predictive toxicology.

The continued evolution of deep learning architectures, coupled with the growing availability of high-quality toxicity data, promises to further enhance our ability to identify toxic compounds early in the drug development pipeline. Earlier identification can reduce reliance on animal testing and lower late-stage attrition rates, ultimately accelerating the delivery of safer therapeutics to patients. As these technologies mature, their integration into standardized drug discovery workflows will become increasingly essential for maintaining competitiveness and ensuring patient safety in pharmaceutical development.

The field of in silico toxicology has undergone a profound transformation, evolving from traditional Quantitative Structure-Activity Relationships (QSARs) to sophisticated artificial intelligence (AI) and deep learning (DL) models [9] [10]. This paradigm shift addresses a critical challenge in drug development: the accurate prediction of adverse drug reactions (ADRs), which remain a major cause of high attrition rates and significant financial losses [9]. Traditional toxicity testing methods, such as in vitro assays and animal studies, often fail to predict human-specific toxicities accurately due to species differences and limited scalability [9]. The emergence of AI and machine learning (ML) has introduced transformative approaches that leverage large-scale datasets—including omics profiles, chemical properties, and electronic health records (EHRs)—to provide early and accurate identification of toxicity risks [9]. This evolution not only improves the efficiency of drug discovery but also aligns with the 3Rs principle (Replacement, Reduction, and Refinement) by minimizing reliance on animal testing [9] [11].

The journey from QSAR to deep learning represents more than just a technical upgrade; it signifies a fundamental change in how toxicological data is integrated and interpreted. QSAR models, which predict toxicological effects based solely on chemical structure, have shown considerable success but are limited by their exclusive reliance on structural data [10]. This shortcoming is particularly evident in drug toxicity assessment, where minor structural modifications can result in significant toxicity changes, as seen in the case of ibuprofen (safe) and ibufenac (hepatotoxic), which differ by only a single methyl group [10]. Advances in AI now enable the development of models that integrate diverse data types and uncover complex toxicity mechanisms, thereby enhancing predictive accuracy and providing explainable insights [9] [12].

The QSAR Foundation and Its Limitations

Core Principles and Applications

Quantitative Structure-Activity Relationships (QSARs) have been the cornerstone of computational toxicology for decades, operating on the fundamental principle that a chemical's biological or toxicological activity is determined by its molecular structure [10]. These models employ various machine learning (ML) algorithms to predict toxicity from chemical representations known as chemical descriptors, which quantify properties such as lipophilicity, electronic distribution, and steric factors [11] [10]. In forensic toxicology, QSAR techniques provide a quick and economical means to anticipate the effects of substances related to cases like poisoning and the detection of new psychoactive drug compounds [11]. A typical QSAR workflow begins with thorough data curation, involving the collection of details about the chemical's structure, related analogs, and any known toxicological endpoints [11]. This is followed by descriptor computation and model selection, where prediction algorithms are used to forecast toxicity endpoints, including acute toxicity, organ toxicity, and carcinogenicity [11].
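As an illustration of descriptor computation and model fitting, the sketch below calculates a few classical descriptors with RDKit (Wildman-Crippen logP for lipophilicity, molar refractivity as a rough steric proxy, topological polar surface area for polarity) and fits a scaled logistic-regression QSAR model with scikit-learn. The descriptor set, the model choice, and the helper names are assumptions made for brevity.

```python
import numpy as np
from rdkit import Chem
from rdkit.Chem import Crippen, Descriptors, rdMolDescriptors
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


def qsar_descriptors(smiles):
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Could not parse SMILES: {smiles!r}")
    return {
        "logP": Crippen.MolLogP(mol),                  # lipophilicity estimate
        "molar_refractivity": Crippen.MolMR(mol),      # rough steric/polarizability proxy
        "tpsa": rdMolDescriptors.CalcTPSA(mol),        # topological polar surface area
        "mol_weight": Descriptors.MolWt(mol),
        "h_donors": rdMolDescriptors.CalcNumHBD(mol),
        "h_acceptors": rdMolDescriptors.CalcNumHBA(mol),
    }


def descriptor_matrix(smiles_list):
    return np.array([list(qsar_descriptors(s).values()) for s in smiles_list])


def fit_qsar(smiles_list, labels):
    """Standardize descriptors, then fit a logistic-regression toxicity classifier."""
    model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
    return model.fit(descriptor_matrix(smiles_list), labels)
```

Applied to ibuprofen (`CC(C)Cc1ccc(cc1)C(C)C(=O)O`) and ibufenac (`CC(C)Cc1ccc(cc1)CC(=O)O`), these descriptors differ only slightly: molecular weight by one CH2 unit (about 14 Da) and polar surface area not at all, which illustrates why purely structure-based models struggle to separate their hepatotoxicity profiles.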

Limitations of Traditional QSAR Approaches

Despite their successes, traditional QSAR models face significant limitations that restrict their application in modern drug development. Their exclusive reliance on chemical structure often fails to capture the complex biological interactions and mechanisms underlying toxicity [10]. This limits their predictive power for drugs in which small modifications cause major toxicity changes, as the ibuprofen/ibufenac pair demonstrates [10]. Furthermore, many QSAR tools struggle with novel scaffolds and unusual ring conformations (e.g., bicyclic organophosphates), and newly emerging compounds such as designer opioids may fall outside their training sets, yielding uncertain predictions [11]. This lack of mechanistic context and limited ability to generalize to structurally novel compounds is a critical gap in traditional QSAR approaches, motivating methods that incorporate broader chemical knowledge and biological context.
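A common way to flag compounds that fall outside a model's training set is an applicability-domain check based on nearest-neighbour Tanimoto similarity. This technique is not described in the sources cited here; the sketch assumes 2048-bit Morgan fingerprints (radius 2) and an arbitrary similarity cutoff of 0.4, and the function names are hypothetical.

```python
from rdkit import Chem, DataStructs
from rdkit.Chem import rdFingerprintGenerator

_MORGAN = rdFingerprintGenerator.GetMorganGenerator(radius=2, fpSize=2048)


def fingerprint(smiles):
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Could not parse SMILES: {smiles!r}")
    return _MORGAN.GetFingerprint(mol)


def in_applicability_domain(query_smiles, training_smiles, min_similarity=0.4):
    """Return (is_in_domain, nearest_similarity) for one query compound."""
    train_fps = [fingerprint(s) for s in training_smiles]
    sims = DataStructs.BulkTanimotoSimilarity(fingerprint(query_smiles), train_fps)
    nearest = max(sims)
    return nearest >= min_similarity, nearest
```

Predictions for compounds flagged as out of domain can then be reported as uncertain rather than as confident toxic or non-toxic calls.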

Table 1: Evolution of In Silico Toxicology Approaches

| Era | Primary Approach | Key Features | Limitations |
|---|---|---|---|
| Traditional | QSAR (Quantitative Structure-Activity Relationships) [11] [10] | Relies on chemical structure and descriptors; employs classical ML algorithms; well-established workflow | Limited to structural information; struggles with novel scaffolds; misses complex biological mechanisms |
| Modern | Deep Learning & QKAR (Quantitative Knowledge-Activity Relationships) [12] [10] | Integrates diverse data (omics, clinical, knowledge); uses multi-task deep neural networks; provides explainable predictions via methods like CEM | Requires large, high-quality datasets; "black-box" nature requires explainability methods; computationally intensive |

The Rise of AI and Deep Learning in Toxicology

Multi-Task Deep Learning for Enhanced Predictions

The application of deep learning (DL) frameworks represents a significant advancement in predictive toxicology. Modern approaches use multi-task deep neural networks (MTDNNs) that simultaneously model in vitro, in vivo, and clinical toxicity data, overcoming the limitations of single-task models that predict toxicity for each platform separately [12]. This multi-task paradigm acknowledges that a single molecule can produce many different responses across assays and organisms, allowing more comprehensive toxicity profiling [12]. MTDNNs have been reported to predict toxicity accurately for all endpoints, including clinical ones, as measured by the area under the Receiver Operating Characteristic curve and balanced accuracy [12]. Advanced molecular representations further improve clinical toxicity predictions over existing models: pre-trained SMILES embeddings (SE) encode relationships between chemicals within the datasets, whereas simpler representations such as Morgan fingerprints (FP) only vectorize the presence of substructures [12].

Knowledge-Enhanced Models: The QKAR Framework

A novel framework termed Quantitative Knowledge-Activity Relationships (QKAR) has emerged to enhance toxicity predictions by leveraging domain-specific knowledge through large language models (LLMs) and text embedding [10]. QKAR models predict drug toxicity using knowledge representations generated from comprehensive drug summaries created by AI models like GPT-4o, which are then converted to numerical vectors using text embedding models [10]. These knowledge representations capture semantic relationships, contextual details, and syntactic structures, making them effective for classification tasks [10]. Research on drug-induced liver injury (DILI) and drug-induced cardiotoxicity (DICT) has demonstrated that QKARs consistently outperform traditional QSARs, particularly in differentiating drugs with similar structures but different toxicity profiles [10]. The integration of knowledge-based and structure-based representations, termed Q(K + S)ARs, offers further enhanced prediction accuracy by combining both domain-specific knowledge and structural data [10].

Application Notes: Implementing Advanced In Silico Models

Protocol 1: Developing a Multi-Task Deep Neural Network for Toxicity Prediction

Objective

To develop a multi-task deep neural network (MTDNN) capable of simultaneously predicting in vitro, in vivo, and clinical toxicity endpoints using different molecular representations.

Materials and Data Preparation

- Chemical Compounds: Curate datasets with known toxicity endpoints (e.g., ClinTox for clinical toxicity, Tox21 for in vitro assays, RTECS for in vivo acute oral toxicity) [12].

- Molecular Representations:

- Morgan Fingerprints (FP): Compute using cheminformatics software (e.g., RDKit). These are circular fingerprints that vectorize the presence of substructures within increasing radii around each atom [12].

- SMILES Embeddings (SE): Generate pre-trained embeddings using a neural network-based model that translates from non-canonical SMILES to canonical SMILES, encoding relationships between chemicals [12].

- Data Splitting: Split data into training, validation, and test sets. For temporal validation, split based on drug approval years to simulate real-world application (e.g., pre-1997 vs. post-1997 for DILI) [10].
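The fingerprint and temporal-split steps might look like this. `morgan_matrix` and `temporal_split` are hypothetical helpers; radius 2 with 2,048 bits is a common default rather than a value given in [12], and assigning the cutoff year itself to the test set is an assumption. The SMILES-embedding model is not reproduced here.

```python
import numpy as np
from rdkit import Chem
from rdkit.Chem import rdFingerprintGenerator


def morgan_matrix(smiles_list, radius=2, n_bits=2048):
    """Stack Morgan bit vectors into a (n_molecules, n_bits) float32 matrix."""
    gen = rdFingerprintGenerator.GetMorganGenerator(radius=radius, fpSize=n_bits)
    rows = []
    for smi in smiles_list:
        mol = Chem.MolFromSmiles(smi)
        if mol is None:
            raise ValueError(f"Could not parse SMILES: {smi!r}")
        rows.append(np.array(gen.GetFingerprint(mol).ToList(), dtype=np.float32))
    return np.vstack(rows)


def temporal_split(approval_years, cutoff=1997):
    """Drugs approved before `cutoff` form the training pool; later approvals are held out."""
    train = [i for i, year in enumerate(approval_years) if year < cutoff]
    test = [i for i, year in enumerate(approval_years) if year >= cutoff]
    return train, test
```

A temporal split is usually harder than a random one, because later drugs tend to explore chemistry that earlier approvals did not cover.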

Model Architecture and Training

- Network Architecture: Design a deep neural network with shared hidden layers and separate output layers for each task (in vitro, in vivo, clinical) [12].

- Training Protocol: Train the model using backpropagation and gradient descent. Utilize a loss function that combines the losses from all tasks. Implement early stopping based on validation performance to prevent overfitting.

- Performance Evaluation: Assess model performance using metrics such as the area under the Receiver Operating Characteristic curve (AUC-ROC), balanced accuracy, and precision-recall curves [12].
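A minimal PyTorch sketch of the shared-trunk architecture, with one output head per task group and a combined loss that skips missing labels. Layer widths, dropout, and the task sizes in the final line (12 Tox21 assays, 2 ClinTox tasks, 1 in vivo label) are assumptions, and treating every task as binary is a simplification.

```python
import torch
from torch import nn


class MultiTaskDNN(nn.Module):
    """Shared hidden layers followed by a separate linear head for each task group."""

    def __init__(self, in_dim, task_sizes, hidden=(1024, 512), dropout=0.3):
        super().__init__()
        layers, prev = [], in_dim
        for width in hidden:
            layers += [nn.Linear(prev, width), nn.ReLU(), nn.Dropout(dropout)]
            prev = width
        self.shared = nn.Sequential(*layers)
        self.heads = nn.ModuleDict({name: nn.Linear(prev, n) for name, n in task_sizes.items()})

    def forward(self, x):
        h = self.shared(x)  # representation shared by all tasks
        return {name: head(h) for name, head in self.heads.items()}


def combined_loss(outputs, targets):
    """Sum of per-task BCE losses over observed labels (NaN marks a missing label)."""
    total = torch.zeros(())
    for name, logits in outputs.items():
        y = targets[name]
        mask = ~torch.isnan(y)
        if mask.any():
            total = total + nn.functional.binary_cross_entropy_with_logits(logits[mask], y[mask])
    return total


model = MultiTaskDNN(in_dim=2048, task_sizes={"in_vitro": 12, "in_vivo": 1, "clinical": 2})
```

Because a given compound usually has labels for only some task groups, the masking is what lets in vitro, in vivo, and clinical datasets be trained in one model.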

Model Explanation Using Contrastive Explanations

- Method: Adapt the Contrastive Explanations Method (CEM) to explain the DNN's predictions by identifying pertinent positive (PP) and pertinent negative (PN) features [12].

- Output: PPs represent the minimal necessary substructures (toxicophores) for a toxic prediction, while PNs represent substructures whose absence is critical for the prediction or whose addition would flip the prediction from toxic to non-toxic [12].

- Validation: Compare identified PPs against known toxicophores (e.g., unsubstituted bonded heteroatoms, aromatic amines, Michael acceptors) to validate explanations [12].
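Validating explanations against known toxicophores can be partly automated with SMARTS substructure searches. The two patterns below (aromatic primary amine, and an α,β-unsaturated carbonyl as a Michael acceptor) are simplified assumptions rather than a curated alert library, and the sketch assumes the explanation has already been mapped back to atom indices of the molecule.

```python
from rdkit import Chem

# Simplified, illustrative alert patterns (assumed, not a curated library).
TOXICOPHORES = {
    "aromatic_amine": "c[NX3;H2]",
    "michael_acceptor": "C=CC=O",
}
_PATTERNS = {name: Chem.MolFromSmarts(smarts) for name, smarts in TOXICOPHORES.items()}


def _mol(smiles):
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:
        raise ValueError(f"Could not parse SMILES: {smiles!r}")
    return mol


def matched_toxicophores(smiles):
    """Names of alerts present anywhere in the molecule."""
    mol = _mol(smiles)
    return sorted(name for name, patt in _PATTERNS.items() if mol.HasSubstructMatch(patt))


def alerts_supported_by_explanation(smiles, explained_atoms):
    """Alerts whose matched atoms overlap the atoms highlighted by an explanation."""
    mol, explained = _mol(smiles), set(explained_atoms)
    hits = []
    for name, patt in _PATTERNS.items():
        if any(explained & set(match) for match in mol.GetSubstructMatches(patt)):
            hits.append(name)
    return sorted(hits)
```

For aniline (`Nc1ccccc1`), an explanation highlighting the nitrogen (atom 0) is supported by the aromatic-amine alert, whereas one highlighting only a distant ring carbon is not.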

Diagram 1: MTDNN workflow for toxicity prediction.

Protocol 2: Building a Quantitative Knowledge-Activity Relationship (QKAR) Model

Objective

To develop a QKAR model for predicting specific drug toxicity endpoints (e.g., DILI, DICT) by leveraging domain-specific knowledge from text embeddings.

Knowledge Representation Generation

- Knowledge Summarization: Use a large language model (e.g., GPT-4o) with specialized prompts to generate comprehensive knowledge summaries for each drug [10]. Employ prompts of varying specificity, for example a simple toxicity summary (SimpleTox) and a comprehensive pharmacological and toxicological summary (PharmTox) [10].

- Text Embedding: Convert the generated knowledge summaries (or just drug names for a baseline) into high-dimensional vector representations using a text embedding model (e.g., text-embedding-3-large), resulting in a 3072-dimensional vector for each drug [10].

Model Development and Comparison with QSAR

- Machine Learning Algorithms: Apply multiple ML algorithms with distinct complexity levels (e.g., K-Nearest Neighbors, Logistic Regression, Support Vector Machine, Random Forest, Extreme Gradient Boosting) on the knowledge representations [10].

- QSAR Baseline: Develop comparable QSAR models using traditional chemical descriptors on the identical dataset [10].

- Model Evaluation: Conduct a rigorous performance comparison between QKAR and QSAR models using appropriate metrics (e.g., AUC, accuracy, precision, recall) on a held-out test set. Use a temporal split to simulate prospective prediction [10].
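The QKAR-versus-QSAR comparison reduces to fitting the same algorithms on different feature matrices and scoring them on the same held-out split. A scikit-learn sketch, assuming knowledge embeddings and chemical descriptors are already available as NumPy arrays; XGBoost is omitted to keep dependencies to scikit-learn, and concatenating the two matrices is one simple way to form a Q(K+S)AR input.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC


def candidate_models(seed=0):
    """Algorithms of increasing complexity; fresh, unfitted instances on every call."""
    return {
        "knn": make_pipeline(StandardScaler(), KNeighborsClassifier(n_neighbors=5)),
        "logreg": make_pipeline(StandardScaler(), LogisticRegression(max_iter=2000)),
        "svm": make_pipeline(StandardScaler(), SVC(probability=True, random_state=seed)),
        "rf": RandomForestClassifier(n_estimators=300, random_state=seed),
    }


def compare_representations(features, labels, train_idx, test_idx):
    """Test-set AUC for every (representation, algorithm) pair.

    features: dict name -> (n_drugs, d) matrix, e.g.
              {"QKAR": emb, "QSAR": desc, "Q(K+S)AR": np.hstack([emb, desc])}
    """
    results = {}
    for rep_name, X in features.items():
        for algo_name, model in candidate_models().items():
            model.fit(X[train_idx], labels[train_idx])
            prob = model.predict_proba(X[test_idx])[:, 1]
            results[(rep_name, algo_name)] = roc_auc_score(labels[test_idx], prob)
    return results
```

Using identical `train_idx`/`test_idx` (for example from an approval-year split) for every representation keeps the comparison fair: any AUC difference then reflects the features, not the data partition.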

Table 2: Key Research Reagent Solutions for In Silico Toxicology

| Reagent / Resource | Type | Primary Function | Example Sources/Tools |
|---|---|---|---|
| Toxicity Datasets | Data | Provide curated experimental data for model training and validation. | ClinTox [12], Tox21 Challenge [12], DILIst [10], DICTrank [10] |
| Molecular Descriptors | Computational Feature | Represent chemical structures numerically for QSAR models. | Morgan Fingerprints [12], Chemical Descriptors (lipophilicity, steric factors) [11] [10] |
| Knowledge Embeddings | Computational Feature | Represent domain knowledge as numerical vectors for QKAR models. | GPT-4o generated summaries [10], text-embedding-3-large model [10] |
| Contrastive Explanation Method (CEM) | Software/Algorithm | Explains model predictions by identifying pertinent positive/negative features. | Adapted CEM for molecular structures [12] |

Comparative Analysis and Future Directions

Performance Evaluation of Different Modeling Approaches

Comparative analyses reveal the significant advantages of modern AI approaches over traditional methods. In studies comparing QKARs and QSARs for DILI and DICT prediction using identical datasets, QKARs consistently outperformed QSARs across different knowledge representations and machine learning algorithms [10]. The level of knowledge embedded in the representation directly affected performance, with comprehensive pharmacological toxicology (PharmTox) summaries yielding better predictions than simple summaries (SimpleTox) or drug names alone [10]. Furthermore, multi-task deep learning models have demonstrated superior performance in clinical toxicity prediction compared to single-task models, particularly when using pre-trained SMILES embeddings [12]. These models also facilitate transfer learning, where a base model trained on abundant in vivo or in vitro data can be fine-tuned for clinical toxicity prediction, minimizing the need for extensive clinical data [12].

Table 3: Performance Comparison of Modeling Approaches

| Model Type | Data Input | Key Advantage | Reported Performance |
|---|---|---|---|
| Traditional QSAR [10] | Chemical Structure Descriptors | Established, interpretable | Baseline performance for DILI/DICT prediction |
| Multi-Task DNN [12] | Morgan Fingerprints (FP) / SMILES Embeddings (SE) | Simultaneous multi-endpoint prediction; transfer learning | SE inputs improved clinical toxicity predictions vs. benchmarks |
| QKAR (Knowledge-Based) [10] | Text Embeddings of Drug Knowledge | Captures complex biological context beyond structure | Consistently outperformed QSARs on DILI and DICT |
| Hybrid Q(K+S)AR [10] | Integrated Knowledge & Structure | Leverages both structural and contextual information | Highest prediction accuracy for drug toxicity endpoints |

Future Perspectives and Implementation Challenges

The future of in silico toxicology is poised to see increased implementation of AI-powered techniques, streamlining toxicological investigations and enhancing overall accuracy in forensic and regulatory evaluations [11]. However, several challenges remain to be addressed for widespread adoption. Data quality and standardization are critical, as models require large-scale, high-quality datasets for training [9]. Model interpretability continues to be a concern, necessitating robust explanation methods like CEM to build trust among end-users and regulators [12]. Regulatory acceptance requires thorough validation and alignment with legal standards, particularly in forensic settings where evidence must conform to strict admissibility criteria [11]. Financial considerations also play a role, with break-even analyses indicating that forensic labs need to conduct a sufficient volume of analyses (e.g., >625 per year) to achieve cost efficiency through in silico strategies [11]. As these challenges are addressed, AI and deep learning models will increasingly revolutionize predictive toxicology, ensuring safer and more efficient drug development processes.

Diagram 2: Future directions for in silico toxicology.

Within drug discovery and development, the accurate prediction of toxicological endpoints is paramount to ensuring patient safety and reducing late-stage compound attrition. Hepatotoxicity, cardiotoxicity, carcinogenicity, and genotoxicity represent critical organ-specific and systemic toxicity concerns that are traditionally identified through costly, time-consuming, and ethically challenging in vivo studies [2]. The emergence of deep neural networks (DNNs) and other artificial intelligence (AI) methodologies offers a paradigm shift, enabling the data-driven prediction of these endpoints from chemical structure and in vitro data [7] [2]. This Application Note delineates key experimental protocols, quantitative endpoints, and pathway mechanisms essential for generating high-quality data to train and validate robust DNN models for toxicity prediction. By framing toxicity within a computational research context, we provide a framework for integrating experimental biology with machine learning to advance predictive toxicology.

Hepatotoxicity

Pathophysiology and Clinical Relevance

Drug-Induced Liver Injury (DILI), or hepatotoxicity, is the leading cause of acute liver failure and a major reason for drug withdrawal from the market [13] [14]. DILI can be classified as either intrinsic (dose-dependent and predictable, as with acetaminophen) or idiosyncratic (unpredictable and often host-dependent, as with certain antibiotics) [13] [14]. The liver's susceptibility stems from its central role in metabolizing xenobiotics, often generating reactive metabolites that can cause oxidative stress, mitochondrial dysfunction, and direct cellular damage [13] [15].

Key In Vitro Protocol: Mechanistic Endpoints in Primary Human Hepatocytes

Application: This protocol is designed for the early identification of compounds with the potential to cause severe DILI (sDILI) by measuring key mechanistic endpoints in a physiologically relevant model system. The resulting data are well suited to training DNN models that predict clinical hepatotoxicity from in vitro readouts [15].

Materials:

- Cell Model: Primary cultured human hepatocytes (pooled from multiple donors to capture population variability).

- Test Article: Drug candidates dissolved in DMSO (final concentration typically ≤0.1%).

- Key Reagents:

- ATP Assay Kit (e.g., luminescence-based).

- Cell-permeable fluorescent ROS probe (e.g., CM-H2DCFDA).

- GSH Assay Kit (e.g., colorimetric or fluorescent).

- Caspase-3/7 Activation Assay Kit (e.g., luminescent or fluorescent).

Procedure:

- Cell Seeding and Compound Treatment: Plate primary human hepatocytes in collagen-coated plates and allow to adhere. Treat cells with a range of drug concentrations (e.g., 1-100 µM) and a vehicle control for a defined period (e.g., 24-72 hours).

- Endpoint Measurement:

- Cellular ATP Content: Lyse cells and add ATP assay substrate. Measure luminescence, which is proportional to ATP concentration.

- Reactive Oxygen Species (ROS): Incubate cells with the ROS probe. Measure fluorescence intensity, which increases with ROS production.

- Glutathione (GSH) Depletion: Lyse cells and use a GSH assay kit to quantify the remaining reduced glutathione.

- Caspase Activation: Add a caspase-3/7 substrate to cells. Measure luminescence or fluorescence, which increases with caspase activity.

- Data Analysis:

- Generate dose-response curves for all endpoints.

- Calculate the Area Under the Curve (AUC) for each dose-response.

- Compute the ROS/ATP AUC ratio, identified as a superior predictor for distinguishing sDILI compounds [15].
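The AUC and ratio computations in the data-analysis steps can be sketched as follows. This is a minimal illustration that assumes responses are already normalized to the vehicle control (ROS as fold-change, ATP as fraction of control) and that each AUC is taken over log10 concentration with the trapezoidal rule; neither convention is specified above, so match them to the original method in [15]. Because this note describes the metric both as a ratio of AUCs and as the AUC of the ROS/ATP ratio, both variants are shown.

```python
import math

def log_dose_auc(concentrations_um, responses):
    """Trapezoidal area under a dose-response curve on a log10(concentration) axis."""
    if len(concentrations_um) != len(responses) or len(responses) < 2:
        raise ValueError("need at least two paired (concentration, response) points")
    points = sorted(zip(concentrations_um, responses))
    x = [math.log10(c) for c, _ in points]
    y = [r for _, r in points]
    return sum((x[i + 1] - x[i]) * (y[i] + y[i + 1]) / 2.0 for i in range(len(x) - 1))

def ratio_of_aucs(conc_um, ros_fold, atp_fraction):
    """Variant 1: AUC(ROS) / AUC(ATP); high values = oxidative stress with energy loss."""
    return log_dose_auc(conc_um, ros_fold) / log_dose_auc(conc_um, atp_fraction)

def auc_of_ratio(conc_um, ros_fold, atp_fraction):
    """Variant 2: AUC of the per-concentration ROS/ATP ratio."""
    ratio = [r / a for r, a in zip(ros_fold, atp_fraction)]
    return log_dose_auc(conc_um, ratio)

# Hypothetical readouts for one compound at three concentrations (µM)
conc = [1, 10, 100]
ros = [1.0, 2.0, 3.0]    # fold-change vs. vehicle (assumed normalization)
atp = [1.0, 0.5, 0.25]   # fraction of vehicle ATP (assumed normalization)
print(ratio_of_aucs(conc, ros, atp))
print(auc_of_ratio(conc, ros, atp))
```

Either value can then be used as the numerical input feature described in Table 1; the choice of variant should follow whichever definition the training labels were validated against.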

Table 1: Quantitative Endpoints for Hepatotoxicity Assessment

| Endpoint | Measurement | Implication for DILI | Utility in DNN Training |

|---|---|---|---|

| Clinical Biomarkers | Serum ALT, AST, ALP, Total Bilirubin [13] | Indicator of hepatocellular necrosis and liver function impairment. | Labels for supervised learning of clinical outcomes. |

| ROS/ATP AUC Ratio | Area under the dose-response curve of the ROS to ATP ratio [15] | High value indicates oxidative stress coupled with energy depletion, strongly associated with sDILI. | Highly informative numerical input feature for classification models. |

| Cellular ATP Content | Luminescence signal proportional to intracellular ATP [15] | Depletion indicates mitochondrial dysfunction and loss of energy homeostasis. | Input feature for predicting mechanistic toxicity. |

| GSH Depletion | Colorimetric/fluorometric measurement of reduced glutathione [15] | Reflects exhaustion of the primary antioxidant defense system, increasing susceptibility to oxidative stress. | Input feature for predicting metabolic activation and oxidative stress. |

Hepatotoxicity Pathway Diagram

Diagram Title: Key Molecular Pathways in Drug-Induced Hepatotoxicity

Cardiotoxicity

Pathophysiology and Clinical Relevance

Cardiotoxicity encompasses a range of adverse effects on the heart, from arrhythmias to heart failure. A primary mechanism of drug-induced cardiotoxicity is inhibition of the potassium channel encoded by the human Ether-à-go-go-Related Gene (hERG). Blocking this channel delays cardiac repolarization, prolonging the QT interval (acquired long QT syndrome) and increasing the risk of torsades de pointes [16] [2]. Beyond hERG, radiation oncology studies have shown that damage to specific cardiac substructures is linked to distinct clinical syndromes, such as pericardial events after atrial irradiation and ischemic events after left ventricle exposure [17].

Key In Silico and Clinical Endpoints

Application: Predicting cardiotoxicity, especially hERG inhibition, is a standard component of safety pharmacology. DNN models can leverage both structural data for in silico hERG prediction and clinical dosimetry data to forecast organ-level damage.

Table 2: Quantitative Endpoints for Cardiotoxicity Assessment

| Endpoint | Measurement | Implication for Cardiotoxicity | Utility in DNN Training |

|---|---|---|---|

| hERG IC50 | In vitro patch-clamp assay measuring concentration for 50% hERG channel inhibition [16] [2] | Direct indicator of arrhythmogenic risk; low IC50 signifies high risk. | Core endpoint for classification and regression models from chemical structure. |

| Left Ventricle (LV) V30 | Volume of LV receiving ≥30 Gy of radiation [17] | Strongly correlated with subsequent ischemic events (e.g., myocardial infarction). | Feature for DNN models predicting toxicity from radiotherapy dosimetry. |

| Right Atrium (RA) V30 | Volume of RA receiving ≥30 Gy of radiation [17] | Strongly correlated with pericardial events (e.g., pericarditis, effusion). | Feature for DNN models predicting toxicity from radiotherapy dosimetry. |

| Clinical Events | Diagnosis of pericarditis, myocardial infarction, significant arrhythmia [17] | Confirms clinical manifestation of cardiotoxicity. | Gold-standard labels for supervised learning of clinical outcomes. |
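Two of the quantitative endpoints above translate directly into model inputs and labels. The sketch below converts a hERG IC50 into pIC50 and a binary label, and computes a Vx dose-volume metric (such as V30) from per-voxel doses of a contoured structure. The 10 µM hERG cut-off is a commonly used convention rather than a value given in this note, and the flat list of voxel doses is an assumed simplification of a treatment-planning dose grid.

```python
import math

def pic50_from_ic50_um(ic50_um: float) -> float:
    """pIC50 = -log10(IC50 in mol/L); the input IC50 is in micromolar."""
    if ic50_um <= 0:
        raise ValueError("IC50 must be positive")
    return -math.log10(ic50_um * 1e-6)

def herg_blocker_label(ic50_um: float, cutoff_um: float = 10.0) -> int:
    """1 = hERG blocker (IC50 below the cut-off), 0 = non-blocker. Cut-off is an assumption."""
    return int(ic50_um < cutoff_um)

def v_x(voxel_doses_gy, threshold_gy=30.0, voxel_volume_cc=None):
    """Vx for one structure: the volume receiving >= threshold_gy.

    Returns percent of the structure volume, or cc if voxel_volume_cc is given.
    """
    if not voxel_doses_gy:
        raise ValueError("structure contains no voxels")
    n_hot = sum(1 for d in voxel_doses_gy if d >= threshold_gy)
    if voxel_volume_cc is None:
        return 100.0 * n_hot / len(voxel_doses_gy)
    return n_hot * voxel_volume_cc

# Hypothetical inputs
print(pic50_from_ic50_um(1.0), herg_blocker_label(1.0))  # a potent blocker
lv_doses = [12.0, 25.0, 31.5, 44.0]                      # Gy, one value per LV voxel
print(v_x(lv_doses))                                     # LV V30 as % of volume
```

pIC50 is usually preferred over raw IC50 as a regression target because potencies span several orders of magnitude.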

Carcinogenicity & Genotoxicity

Pathophysiology and Clinical Relevance

Carcinogenicity is the ability of a substance to induce tumors, while genotoxicity refers to its capacity to damage DNA, which is a key initiating event in carcinogenesis [18]. Over 90% of known human chemical carcinogens are genotoxic [18]. Mechanisms include inducing mutations (e.g., in oncogenes like KRAS or tumor suppressors like TP53), causing chromosomal aberrations, and promoting epigenetic modifications that dysregulate gene expression [18] [19].

Key In Vitro and In Silico Protocols

Application: A battery of tests is employed to assess genotoxic potential. Data from these assays, along with chemical structure information, is used to build DNN models for predicting carcinogenic risk without long-term animal studies.

Ames Test Protocol (for Bacterial Reverse Mutation)

- Purpose: To identify mutagenic compounds via their ability to induce revertant mutations in bacterial strains.

- Materials: Salmonella typhimurium strains (e.g., TA98, TA100, TA1535) with and without metabolic activation (S9 fraction from rat liver) [18] [16].

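- Procedure: Expose the histidine-requiring bacteria to the test compound and plate them on minimal glucose agar, on which only revertants that have regained the ability to synthesize histidine can form colonies. Count the revertant colonies after incubation. A significant, dose-dependent increase in revertants indicates mutagenicity.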

- DNN Integration: The binary outcome (mutagenic/non-mutagenic) and the dose-response data from the Ames test serve as critical labels for training and validating DNN models [16].
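The decision rule in the procedure (a significant, dose-dependent increase in revertants) can be turned into a label-generating function. This is a simplified screening heuristic, assuming a fold-over-vehicle rule (a widely used convention of 2-fold for TA98/TA100 and 3-fold for TA1535) plus a response that rises up to its peak; it is not the full statistical evaluation described in OECD Test Guideline 471, and the thresholds should be treated as assumptions.

```python
from statistics import mean

# Assumed fold-over-vehicle thresholds per strain (a common heuristic, not a regulatory rule)
FOLD_THRESHOLDS = {"TA98": 2.0, "TA100": 2.0, "TA1535": 3.0}

def ames_call(strain, vehicle_counts, dose_counts):
    """Classify one strain/S9 condition as 'positive', 'equivocal' or 'negative'.

    vehicle_counts: replicate revertant colony counts for the vehicle control.
    dose_counts: list of (dose, [replicate colony counts]) pairs, any order.
    """
    control = mean(vehicle_counts)
    if control <= 0:
        raise ValueError("vehicle control must have a positive mean count")
    means = [mean(counts) for _, counts in sorted(dose_counts, key=lambda p: p[0])]
    folds = [m / control for m in means]
    threshold = FOLD_THRESHOLDS.get(strain, 2.0)

    peak = max(range(len(folds)), key=folds.__getitem__)
    # Dose-related: counts are non-decreasing from the lowest dose up to the peak.
    # A drop above the peak is tolerated because it often reflects cytotoxicity.
    rising = peak > 0 and all(means[i] <= means[i + 1] for i in range(peak))

    if folds[peak] >= threshold and rising:
        return "positive"
    if folds[peak] >= threshold:
        return "equivocal"  # large increase, but no dose-response trend
    return "negative"

# Hypothetical TA98 plate counts
print(ames_call("TA98", [20, 22, 18], [(1, [25, 27]), (10, [45, 47]), (100, [80, 84])]))
```

A model would typically use the per-compound call (positive vs. negative, with equivocal results reviewed or excluded) as its training label.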

In Silico Genotoxicity Prediction Workflow

- Purpose: To rapidly screen virtual compound libraries for structural alerts associated with genotoxicity.

- Materials: Chemical structures of compounds (e.g., in SMILES format); in silico prediction software (e.g., using QSAR, machine learning, or expert rule-based systems) [18] [2].

- Procedure: Input chemical structures into the prediction platform. The platform identifies toxicophores (structural fragments associated with toxicity, such as specific aromatic amines or alkylating agents) and computes probabilities for endpoints like Ames positivity [16].

- DNN Integration: The identified toxicophores and predicted probabilities can be used as input features for more complex DNN models, or the entire process can be replaced by an end-to-end DNN that learns structural alerts directly from molecular graphs [2].

Genotoxicity and Carcinogenicity Pathway Diagram

Diagram Title: Genotoxicity to Carcinogenicity Pathway and Assay Links

Table 3: Key Assays for Genotoxicity and Carcinogenicity Assessment

| Assay Endpoint | System | Measurement | Utility in DNN Training |

|---|---|---|---|

| Ames Test | In vitro (Bacteria) | Count of reverse mutations; positive/negative result [16]. | Primary label for supervised learning of mutagenicity from chemical structure. |

| In Silico Ames Prediction | In silico (Computational) | Probability of Ames test positivity; identification of toxicophores [16]. | Input feature or direct prediction target for structure-based models. |

| Micronucleus Assay | In vitro (Mammalian cells) | Frequency of micronuclei in cytoplasm, indicating chromosomal damage [18]. | Label for predicting clastogenic and aneugenic activity. |

| Carcinogenicity Bioassay | In vivo (Rodents) | Incidence and multiplicity of tumors after long-term exposure. | Gold-standard label for carcinogenicity prediction models, though scarce. |

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for Toxicity Endpoint Investigation

| Research Reagent | Function and Application | Example Use Case |

|---|---|---|

| Primary Human Hepatocytes | Gold-standard in vitro model for hepatotoxicity; retains human-specific drug metabolism and toxicity pathways [15]. | Measuring mechanistic endpoints (ROS, ATP, GSH) for DILI prediction. |

| hERG-Expressing Cell Lines | Cell lines (e.g., HEK293, CHO) engineered to stably express the hERG potassium channel. | In vitro patch-clamp electrophysiology to determine IC50 for cardiotoxicity risk assessment [2]. |

| S9 Metabolic Activation Fraction | Liver homogenate containing cytochrome P450 enzymes and other metabolizing enzymes. | Added to in vitro assays like the Ames test to simulate mammalian metabolic activation of pro-mutagens [18]. |

| ToxCast Bioactivity Database | A large-scale database from the U.S. EPA containing in vitro screening results for thousands of chemicals across hundreds of assay endpoints [7]. | A primary data source for training and validating multi-task DNN models for various toxicity endpoints. |

| Molecular Descriptors & Fingerprints | Numerical representations of chemical structure (e.g., molecular weight, logP, ECFP fingerprints). | Input features for QSAR and DNN models predicting toxicity endpoints directly from chemical structure [7] [2]. |

For researchers developing deep neural networks (DNNs) for toxicity prediction, selecting high-quality, appropriately scaled data is a critical first step. The Tox21, ToxCast, ChEMBL, and DrugBank databases provide complementary chemical and bioactivity data at different scales and with distinct foci, making them suitable for various stages of model development.

Table 1: Core Quantitative Profile of Key Toxicology Databases

| Database | Primary Focus & Data Type | Approximate Scale (Unique Chemicals) | Key Applicability for DNN Development |

|---|---|---|---|

| Tox21 [20] | Quantitative High-Throughput Screening (qHTS); in vitro bioactivity | Part of a ~10,000 substance library [21] | Ideal for training models on high-quality, consistent qHTS data from a defined chemical set. |

| ToxCast (EPA) [21] [22] | High-Throughput Screening; diverse in vitro bioactivity profiles | ~9,400 substances (DTXSIDs) [21] | Provides massive, multi-endpoint bioactivity data for diverse chemical structures. |

| ChEMBL [23] | Manually curated bioactivity data (drug-like molecules) | >2.4 million "research compounds"; 17,500+ drugs/clinical candidates [23] | Excellent for pre-training or developing models on a vast array of drug-target interactions. |

| DrugBank [24] [23] | Comprehensive drug data (approved & investigational) | Contains comprehensive drug data [23] | Provides detailed, structured data on approved drugs for clinical toxicity endpoint modeling. |

Table 2: Data Accessibility and Structural Features

| Database | Access Model | Key Structural Data Provided | Toxicity Endpoint Annotations |

|---|---|---|---|

| Tox21 [25] | Open Access | Chemical structure, annotations [25] | Screening data for pathways (e.g., nuclear receptor, stress response) [20] |

| ToxCast [21] [26] | Open Data | DSSTox standard chemical fields (structure, CASRN, etc.) [21] | Assay endpoints for mitochondrial function, nuclear receptors, etc. [21] [22] |

| ChEMBL [23] | Open Access | Chemical structure or biological sequence [23] | Bioactivity data (e.g., IC50, Ki); integrated with drug safety warnings [23] |

| DrugBank [24] [23] | Free for non-commercial use [23] | Chemical structure, detailed drug metabolism info [24] | Drug-protein interactions, adverse event reports, cytochrome P450 enzyme data [24] |

Experimental Protocols for Data Access and Utilization

Protocol: Accessing and Processing ToxCast & Tox21 Data for DNN Input

This protocol details the steps to acquire and structure ToxCast and Tox21 data, which are essential for creating high-quality training datasets for deep neural networks.

Materials and Software Requirements

- Computing Environment: Computer with internet access and R/Python installed.

- R Packages: tcpl (ToxCast Data Analysis Pipeline), tcplFit2, ctxR [21].

- Data Sources: EPA CompTox Chemicals Dashboard, ToxCast Data Download Page, Tox21 Data Browser [21] [25].

Procedure

- Data Acquisition:

a. Navigate to the EPA's "Exploring ToxCast Data" page [21].

b. Download the most recent invitrodb MySQL database package (e.g., v4.3) and the associated tcpl R package [21].

c. Alternatively, access data programmatically via the CTX Bioactivity API [21] or extract specific chemical sets from the CompTox Chemicals Dashboard [25].

- Data Loading and Initial Processing:

a. Install and load the tcpl package in your R environment.

b. Use the tcpl functions to load the invitrodb database and run initial queries. The package fits curves to the concentration-response data to generate activity metrics [21].

c. For Tox21 data, access the quantitative high-throughput screening (qHTS) data via the Tox21 Data Browser or directly from PubChem [25]. These data include chemical structures, annotations, and quality control information.

- Data Curation and Integration:

a. Data Cleaning: Filter the data on the quality control flags provided in the datasets to ensure reliability.

b. Feature Engineering: Extract and calculate molecular descriptors (e.g., molecular weight, logP, topological polar surface area) from the chemical structures (SMILES/InChI) using libraries such as RDKit in Python.

c. Label Assignment: Use the activity calls and potency metrics (e.g., AC50 values) from the ToxCast/Tox21 assays as labels for your DNN model. Assays can be grouped by biological pathway (e.g., the estrogen receptor pathway) to create more robust endpoint labels [21] [22].

- Dataset Assembly for Machine Learning:

a. Merge the curated bioactivity data with the engineered molecular features.

b. Split the final dataset into training, validation, and test sets, ensuring that chemicals from the same structural series are not split across sets to prevent data leakage.
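The label-assignment and splitting steps of this protocol can be sketched in plain Python. The example assumes the ToxCast/Tox21 hit calls have already been exported into per-chemical records and that each chemical carries a precomputed scaffold key (for example a Bemis-Murcko scaffold SMILES, which RDKit's MurckoScaffold module can generate). The majority-vote pathway label, the largest-series-first assignment, and the split fractions are illustrative choices, not prescribed by the protocol.

```python
import random
from collections import defaultdict

def pathway_label(hit_calls):
    """Majority vote over assay hit calls in one pathway (1 active, 0 inactive, None untested).

    Ties resolve to inactive; returns None if no assay in the pathway was tested.
    """
    tested = [h for h in hit_calls if h is not None]
    if not tested:
        return None
    return int(2 * sum(tested) > len(tested))

def scaffold_split(records, frac_train=0.8, frac_valid=0.1, seed=0):
    """Assign whole scaffold groups to train/valid/test so no series straddles splits.

    records: iterable of dicts with 'id' and 'scaffold' keys.
    """
    groups = defaultdict(list)
    for rec in records:
        groups[rec["scaffold"]].append(rec["id"])
    n = sum(len(ids) for ids in groups.values())

    keys = list(groups)
    random.Random(seed).shuffle(keys)                      # random tie-breaking
    keys.sort(key=lambda k: len(groups[k]), reverse=True)  # largest series first (stable sort)

    train, valid, test = [], [], []
    for k in keys:
        ids = groups[k]
        if len(train) + len(ids) <= frac_train * n:
            train.extend(ids)
        elif len(valid) + len(ids) <= frac_valid * n:
            valid.extend(ids)
        else:
            test.extend(ids)
    return train, valid, test
```

Assigning large series to training pushes rarer scaffolds into the test set, which gives a more conservative estimate of performance on novel chemistry than a random split.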

Protocol: Leveraging ChEMBL and DrugBank for Model Context and Enhancement

This protocol outlines how to integrate the rich, drug-focused data from ChEMBL and DrugBank to augment DNN models initially trained on ToxCast/Tox21 data.

Materials and Software Requirements

- Data Sources: ChEMBL website via EBI, DrugBank [27] [24] [23].

- Tools: SQL skills or web services for accessing ChEMBL; knowledge of DrugBank's data structure [23].

Procedure

- Data Retrieval:

a. ChEMBL: Access the database via its web interface or download the complete SQL dump. Use the molecule_dictionary table to identify approved drugs and clinical candidates, which are clearly distinguished from research compounds [23].

b. DrugBank: Download the dataset after registering and agreeing to its license terms for non-commercial use. Parse the XML or CSV files to extract detailed drug information, adverse effects, and drug-target interactions [24] [23].

- Data Integration for Model Augmentation:

a. Transfer Learning: Pre-train a DNN on the large bioactivity corpus in ChEMBL (>2.4 million compounds) to learn general representations of chemical structures and their biological effects, then fine-tune it on the smaller, more specific ToxCast/Tox21 dataset [23] [2].

b. Feature Enrichment: Use DrugBank's detailed annotations (e.g., cytochrome P450 interactions, metabolic pathways, and known adverse effects) as additional input features or as multi-task learning targets to improve model robustness and clinical relevance [24].

c. Validation Set Creation: Use the approved drugs present in both ChEMBL and DrugBank as a high-quality, clinically relevant external test set to evaluate the model's predictive power on real-world compounds [23]. Because these drugs are also part of ChEMBL, remove them from the pre-training and fine-tuning data first; otherwise the test set leaks into training and overstates performance.
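The ChEMBL retrieval step and the external test-set construction can be sketched with Python's built-in sqlite3 module against a locally downloaded ChEMBL SQLite release. The query assumes the molecule_dictionary and compound_structures tables and the max_phase, molregno and standard_inchi_key columns of the ChEMBL schema (max_phase = 4 marks approved drugs); verify these against the release you download. The DrugBank and training-set InChIKey sets are assumed to have been extracted separately, and the file name in the usage comment is hypothetical.

```python
import sqlite3

APPROVED_DRUGS_SQL = """
SELECT cs.standard_inchi_key, md.chembl_id
FROM molecule_dictionary AS md
JOIN compound_structures AS cs ON cs.molregno = md.molregno
WHERE md.max_phase = 4
  AND cs.standard_inchi_key IS NOT NULL
"""

def approved_drugs(conn: sqlite3.Connection) -> dict:
    """Map InChIKey -> ChEMBL ID for approved drugs (max_phase = 4)."""
    return dict(conn.execute(APPROVED_DRUGS_SQL).fetchall())

def external_test_set(approved: dict, drugbank_keys: set, training_keys: set) -> dict:
    """Approved drugs found in both ChEMBL and DrugBank, excluding every compound
    (matched by InChIKey) that was used for pre-training or fine-tuning."""
    shared = set(approved) & set(drugbank_keys)
    return {k: approved[k] for k in sorted(shared - set(training_keys))}

# Usage (hypothetical file name and key sets):
# conn = sqlite3.connect("chembl_XX.db")
# test_set = external_test_set(approved_drugs(conn), drugbank_keys, training_keys)
```

Matching on InChIKey rather than on names avoids salt-form and synonym mismatches between the two databases, although stereoisomers and salts may still need standardization first.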

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Computational Tools and Data Resources

| Tool/Resource | Function in Protocol | Access Link / Reference |

|---|---|---|

| tcpl R Package | Core data processing, curve-fitting, and visualization for ToxCast data [21]. | EPA Exploring ToxCast Data Page [21] |

| CompTox Chemicals Dashboard | Web-based interface for exploring and downloading ToxCast/Tox21 chemical and bioactivity data [22] [25]. | https://comptox.epa.gov/dashboard |

| Tox21 Data Browser | Access and visualize Tox21 qHTS data, including concentration-response curves [25]. | Tox21.gov Resources [25] |

| ChEMBL Database | Provides manually curated bioactivity data for drug-like molecules for model pre-training and validation [27] [23]. | https://www.ebi.ac.uk/chembl/ |

| RDKit | Open-source cheminformatics toolkit for calculating molecular descriptors and fingerprints from chemical structures. | https://www.rdkit.org/ |

| DrugBank Database | Provides detailed drug metadata, interactions, and adverse effects for feature enrichment and clinical validation [24] [23]. | https://go.drugbank.com/ |

Advanced DNN Architectures and Their Application to Toxicity Endpoints

Accurate prediction of chemical toxicity is a pivotal research area in chemistry, biotechnology, and national defense, with critical implications for public safety, environmental health, and drug development [8]. The widespread use of industrial chemicals, pesticides, and pharmaceuticals necessitates precise toxicological assessments to ensure regulatory compliance and minimize harm [8]. However, the inherent complexity of chemical substances and scarcity of comprehensive datasets have hindered progress in this field. Existing prediction models often rely on narrow datasets focused on specific toxic endpoints, which limits their generalizability and practical application [8].

Traditional machine learning techniques, including Quantitative Structure-Activity Relationship (QSAR) models, have demonstrated moderate success but frequently fall short due to their reliance on manually engineered features and inability to effectively model non-linear relationships inherent in chemical data [8]. While deep learning models offer transformative potential by leveraging advanced architectures to extract complex patterns, existing approaches are often restricted to single-modality inputs, failing to capitalize on the synergistic benefits of multi-modal data fusion [8].

This application note details an innovative multimodal deep learning framework that integrates chemical property data with molecular structure images to enhance toxicity prediction accuracy. By combining a Vision Transformer (ViT) for image-based feature extraction with a Multilayer Perceptron (MLP) for numerical data processing, the proposed model enables simultaneous evaluation of diverse toxicological endpoints through a joint fusion mechanism [8]. The protocols described herein provide researchers with comprehensive methodologies for implementing this advanced predictive system within toxicity endpoint prediction research.

Background and Significance

Multimodal learning represents a paradigm shift in computational toxicology, addressing fundamental limitations of single-modality approaches. Conventional models utilizing either molecular descriptors or structural images in isolation fail to capture the complementary chemical information necessary for robust toxicity assessment [8]. The integration of diverse molecular representations—including graphs, SMILES strings, 2D images, and NMR spectra—has demonstrated consistent performance improvements across multiple toxicity benchmarks [28].

Recent advancements in attention mechanisms and fusion strategies have enabled more effective integration of heterogeneous chemical data. The Mixture of Experts (MoE) architecture, particularly when incorporated into attention mechanisms, has shown remarkable capability in processing modalities of molecular images, graphs, and fingerprints, achieving up to 8.33% higher AUROC and 9.11% higher AUPRC compared to conventional methods [29]. Similarly, frameworks incorporating mitochondrial toxicity data alongside structural representations have enhanced hepatotoxicity prediction, achieving AUC values up to 0.81 [30].

Transformer-based architectures have emerged as particularly powerful tools for molecular property prediction due to their ability to autonomously learn long-range atom-to-atom interactions on a global scale [31]. However, these models may struggle to capture intricate substructure details such as covalent bonds and functional groups. The integration of topological data analysis to extract multi-scale topological features from 3D structural information addresses this limitation by providing comprehensive representations of local substructure information [31].

Model Architecture and Implementation

The proposed multimodal framework employs a joint intermediate fusion strategy to combine information from chemical structure images and property data at an intermediate processing stage. This approach preserves unique characteristics of each modality while enabling the model to learn interactions between different data types [8]. The architecture consists of two primary processing streams converging through a fusion mechanism for final toxicity prediction.

Component Specifications

Image Processing Backbone: Vision Transformer (ViT)

The image processing component utilizes a Vision Transformer (ViT) architecture to extract features from 2D structural images of chemical compounds [8]. The implementation specifications are detailed below:

- Architecture: ViT-Base/16 model following Dosovitskiy et al. [8]

- Input Specifications: 224 × 224 pixel resolution images processed as 16 × 16-pixel patches

- Pre-training: ImageNet-21k dataset

- Fine-tuning: Custom dataset of 4,179 molecular structure images

- Feature Extraction: 128-dimensional output feature vector

- Parameter Count: 98,688 trainable parameters in the MLP dimensionality reduction layer

The ViT model processes input images according to the transformation: f_img = ViT(I), where I ∈ ℝ^(H×W×C) represents the input image of height H, width W, and C channels [8].
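For the stated input size, the patch arithmetic works out as follows: a 224 × 224 image cut into 16 × 16 patches yields (224/16)² = 196 patches, and with C = 3 colour channels each flattened patch has 16 × 16 × 3 = 768 values, which ViT-Base/16 linearly projects to its 768-dimensional token embedding. The sketch below shows only the patch-splitting step in plain Python (nested lists, an assumed RGB input), not the full ViT.

```python
def patchify(image, patch=16):
    """Split an H x W x C image (nested lists) into flattened, non-overlapping patches.

    Patches are returned in row-major order, each as a list of patch*patch*C values.
    """
    h, w, c = len(image), len(image[0]), len(image[0][0])
    if h % patch or w % patch:
        raise ValueError("height and width must be multiples of the patch size")
    patches = []
    for top in range(0, h, patch):
        for left in range(0, w, patch):
            patches.append([image[r][col][ch]
                            for r in range(top, top + patch)
                            for col in range(left, left + patch)
                            for ch in range(c)])
    return patches

# A blank 224 x 224 RGB image (hypothetical input)
blank = [[[0.0, 0.0, 0.0] for _ in range(224)] for _ in range(224)]
tokens = patchify(blank)
print(len(tokens), len(tokens[0]))
```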

Tabular Data Processing: Multi-Layer Perceptron (MLP)

The chemical property data processing stream employs a Multi-Layer Perceptron (MLP) to handle numerical and categorical features [8]. The technical specifications include:

- Input: Tabular data X ∈ ℝ^(n_features), where n_features denotes the number of chemical descriptors

- Output: 128-dimensional feature vector f_tab

- Transformation: f_tab = MLP(X)

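- Parameter Count: (D_tab + 1) × 128 trainable parameters, where D_tab is the tabular input dimensionality (D_tab × 128 weights plus 128 biases, i.e., a single fully connected layer mapping to the 128-dimensional output)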

Fusion Mechanism and Classification

The fusion layer concatenates feature vectors from both modalities to create a comprehensive representation [8]:

- Fusion Operation: f_fused = [f_img; f_tab] ∈ ℝ^256

- Classification: Fully connected layer with sigmoid activation for binary toxicity prediction
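The fusion and classification steps reduce to a concatenation followed by a single logistic unit. The sketch below illustrates them in plain Python, treating the two 128-dimensional embeddings as given (in practice they come from the ViT and MLP encoders, usually built in a deep learning framework) and using randomly initialized weights as stand-ins for trained ones. It also evaluates the (D_tab + 1) × 128 parameter formula for a single fully connected layer; D_tab = 20 is a hypothetical value.

```python
import math
import random

EMBED_DIM = 128  # per-modality embedding size stated above

def dense_param_count(d_in: int, d_out: int) -> int:
    """Weights plus biases of one fully connected layer: (d_in + 1) * d_out."""
    return (d_in + 1) * d_out

def fuse(f_img, f_tab):
    """Joint intermediate fusion by concatenation: f_fused = [f_img; f_tab]."""
    if len(f_img) != EMBED_DIM or len(f_tab) != EMBED_DIM:
        raise ValueError("each modality embedding must be 128-dimensional")
    return list(f_img) + list(f_tab)

def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

class FusionClassifier:
    """Fully connected layer (256 -> 1) with sigmoid output for binary toxicity."""

    def __init__(self, seed: int = 0):
        rng = random.Random(seed)
        self.weights = [rng.gauss(0.0, 0.05) for _ in range(2 * EMBED_DIM)]  # untrained stand-ins
        self.bias = 0.0

    def predict_proba(self, f_img, f_tab) -> float:
        fused = fuse(f_img, f_tab)
        z = sum(w * x for w, x in zip(self.weights, fused)) + self.bias
        return sigmoid(z)

print(dense_param_count(20, EMBED_DIM))     # tabular layer for a hypothetical D_tab = 20
print(dense_param_count(2 * EMBED_DIM, 1))  # fusion classifier
rng = random.Random(1)
f_img = [rng.random() for _ in range(EMBED_DIM)]
f_tab = [rng.random() for _ in range(EMBED_DIM)]
print(FusionClassifier().predict_proba(f_img, f_tab))
```

Concatenation keeps each modality's features intact and leaves it to the classifier weights to learn how much each contributes; attention- or gating-based fusion (as in the MoE variants discussed above) replaces this fixed combination with an input-dependent one.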

Experimental Setup and Performance Metrics

The model was evaluated using standardized toxicity datasets with multiple endpoints. Performance was assessed through the following metrics [8]:

- Accuracy: Proportion of correct predictions among total predictions

- F1-Score: Harmonic mean of precision and recall

- Pearson Correlation Coefficient (PCC): Linear correlation between predicted and actual values

- ROC-AUC: Area under Receiver Operating Characteristic curve

- AUPRC: Area under Precision-Recall curve
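The metrics listed above can be computed without external dependencies, as sketched below; in practice, scikit-learn's metrics module offers equivalent, better-tested functions. The 0.5 threshold for turning probabilities into class predictions and the use of average precision as the AUPRC estimator are assumptions, since this note does not state which conventions [8] used.

```python
import math

def confusion(y_true, y_pred):
    """Return (tp, fp, fn, tn) for binary labels."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    return tp, fp, fn, tn

def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def f1(y_true, y_pred):
    """Harmonic mean of precision and recall, written as 2TP / (2TP + FP + FN)."""
    tp, fp, fn, _ = confusion(y_true, y_pred)
    return 2 * tp / (2 * tp + fp + fn) if tp else 0.0

def pearson(x, y):
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def roc_auc(y_true, scores):
    """Probability that a random positive outranks a random negative (ties count 0.5)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def average_precision(y_true, scores):
    """Step-wise AUPRC estimate: mean precision at the rank of each positive (no tie handling)."""
    ranked = sorted(zip(scores, y_true), key=lambda p: p[0], reverse=True)
    hits, precisions = 0, []
    for rank, (_, t) in enumerate(ranked, start=1):
        if t == 1:
            hits += 1
            precisions.append(hits / rank)
    return sum(precisions) / len(precisions)

# Hypothetical labels and predicted probabilities
y = [0, 0, 1, 1]
s = [0.1, 0.4, 0.35, 0.8]
y_hat = [int(p >= 0.5) for p in s]
print(accuracy(y, y_hat), f1(y, y_hat), roc_auc(y, s), average_precision(y, s))
```

For imbalanced toxicity endpoints, AUPRC is usually more informative than ROC-AUC, because it is sensitive to how many false positives accompany each recovered toxic compound.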

Table 1: Performance Metrics of Multimodal Fusion Model

| Metric | ViT+MLP fusion model [8] | Reference value from related multimodal studies |
|---|---|---|
| Accuracy | 0.872 | - |
| F1-Score | 0.86 | - |
| PCC | 0.9192 | - |
| ROC-AUC | - | 0.831 (MoltiTox, Tox21) [28] |
| AUPRC | - | 9.11% improvement vs. baseline (MEMOL) [29] |

Table 2: Comparative Performance Across Multimodal Architectures

| Model | Modalities | Key Innovation | Performance |

|---|---|---|---|

| ViT+MLP Fusion [8] | Images, Chemical Properties | Joint Intermediate Fusion | Accuracy: 0.872, F1: 0.86 |

| MoltiTox [28] | Graphs, SMILES, Images, 13C NMR | Attention-Based Fusion | ROC-AUC: 0.831 on Tox21 |

| MEMOL [29] | Images, Graphs, Fingerprints | Mixture of Experts + Multi-head Attention | AUROC: +8.33%, AUPRC: +9.11% |

| M3Hep [30] | SMILES, Graphs, Mitochondrial Toxicity | Masking Strategy | AUC: 0.81, MCC: 0.49 |

| Topological Fusion [31] | 3D Structures | Topological Simplices | Improvement: 1.2-3.0% on classification tasks |

Protocols and Application Notes

Dataset Curation and Preprocessing

Molecular Structure Image Acquisition

- Source Databases: Programmatic extraction from PubChem and eChemPortal using CAS numbers [8]

- Collection Method: Python-based web crawler for systematic image retrieval

- Chemical Diversity: Curated selection of organic and inorganic compounds encompassing pharmaceuticals, agrochemicals, and industrial chemicals with diverse functional groups, stereochemical configurations, and molecular sizes [8]

- Image Specifications: 224×224 pixel resolution, 16×16 patch size, normalized to ImageNet-21k statistics

Chemical Property Data Collection

- Data Types: Numerical descriptors and categorical features [8]

- Preprocessing: Normalization and standardization to optimize for deep learning applications

- Integration: Alignment of chemical properties with structural images using CAS numbers
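Because images and property records are joined on CAS numbers, a malformed or mistyped CAS number silently drops or mismatches a compound. The sketch below validates the CAS check digit (the last digit equals the sum of the preceding digits, each multiplied by its position counted from the right, modulo 10), joins the two sources, and z-score standardizes each numeric descriptor using statistics from the training compounds only. The record layouts and descriptor names are hypothetical.

```python
import re
from statistics import mean, pstdev

CAS_PATTERN = re.compile(r"^(\d{2,7})-(\d{2})-(\d)$")

def is_valid_cas(cas: str) -> bool:
    """Check CAS Registry Number format and check digit."""
    m = CAS_PATTERN.match(cas.strip())
    if not m:
        return False
    digits = (m.group(1) + m.group(2))[::-1]  # rightmost digit gets weight 1
    checksum = sum((i + 1) * int(d) for i, d in enumerate(digits)) % 10
    return checksum == int(m.group(3))

def align_by_cas(image_paths: dict, properties: dict) -> dict:
    """Keep compounds with a valid CAS number present in both sources."""
    shared = set(image_paths) & set(properties)
    return {cas: (image_paths[cas], properties[cas])
            for cas in sorted(shared) if is_valid_cas(cas)}

def fit_standardizer(train_rows: list, keys: list) -> dict:
    """Per-descriptor mean and population standard deviation from training rows only."""
    stats = {}
    for k in keys:
        values = [row[k] for row in train_rows]
        sd = pstdev(values)
        stats[k] = (mean(values), sd if sd > 0 else 1.0)  # constant column: avoid divide-by-zero
    return stats

def standardize(row: dict, stats: dict) -> dict:
    return {k: (row[k] - mu) / sd for k, (mu, sd) in stats.items()}
```

Fitting the standardizer on training compounds only, and then applying it unchanged to validation and test compounds, keeps information from held-out data out of the preprocessing.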

Model Training Protocol

Vision Transformer Fine-tuning

- Base Model: Pre-trained ViT-Base/16 weights [8]

- Fine-tuning Dataset: 4,179 molecular structure images [8]

- Optimization: Adaptive moment estimation (Adam) with learning rate decay

- Regularization: Dropout, weight decay, and early stopping
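The optimization and regularization settings can be made concrete with a framework-agnostic helper for early stopping and a step learning-rate decay. The patience, minimum improvement, decay factor, and step size below are hypothetical defaults, not values reported for the ViT fine-tuning in [8]; deep learning frameworks provide equivalent built-ins, such as PyTorch's learning-rate schedulers.

```python
class EarlyStopping:
    """Stop when validation loss has not improved by min_delta for `patience` epochs."""

    def __init__(self, patience: int = 5, min_delta: float = 1e-4):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, epoch: int, val_loss: float) -> bool:
        """Record one epoch; return True if training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best, self.best_epoch, self.bad_epochs = val_loss, epoch, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience

def step_decay(base_lr: float, epoch: int, factor: float = 0.5, every: int = 10) -> float:
    """Multiply the learning rate by `factor` once every `every` epochs."""
    return base_lr * factor ** (epoch // every)

# Hypothetical validation losses from a fine-tuning run
stopper = EarlyStopping(patience=2)
for epoch, loss in enumerate([0.70, 0.55, 0.50, 0.51, 0.52, 0.49]):
    lr = step_decay(1e-4, epoch)  # would be passed to the optimizer for this epoch
    if stopper.step(epoch, loss):
        print(f"stop at epoch {epoch}; best epoch {stopper.best_epoch}")
        break
```

The weights from `best_epoch`, not the last epoch, are the ones to keep when training stops.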

Multimodal Integration Training

- Fusion Strategy: Joint intermediate fusion with concatenation [8]

- Training Schedule: Progressive unfreezing of modality-specific encoders

- Balancing: Class weight adjustment for imbalanced toxicity endpoints
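Class-weight adjustment and progressive unfreezing can be expressed as small helpers. The sketch assumes the common "balanced" weighting w_c = N / (K · N_c), the same heuristic scikit-learn uses for class_weight="balanced", applied in a weighted binary cross-entropy, together with a hypothetical three-stage unfreezing schedule (fusion head first, then the tabular MLP, then the ViT). The module names and stage boundaries are illustrative, not taken from [8].

```python
import math
from collections import Counter

def balanced_class_weights(labels):
    """w_c = N / (K * N_c) for each class c."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {c: n / (k * n_c) for c, n_c in counts.items()}

def weighted_bce(y_true, probs, weights, eps=1e-7):
    """Mean binary cross-entropy with per-class weights."""
    total = 0.0
    for t, p in zip(y_true, probs):
        p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
        loss = -(t * math.log(p) + (1 - t) * math.log(1.0 - p))
        total += weights[t] * loss
    return total / len(y_true)

def trainable_modules(epoch, unfreeze_mlp_at=2, unfreeze_vit_at=5):
    """Hypothetical progressive-unfreezing schedule for the fusion model."""
    modules = ["fusion_head"]
    if epoch >= unfreeze_mlp_at:
        modules.append("tabular_mlp")
    if epoch >= unfreeze_vit_at:
        modules.append("vit_encoder")
    return modules

labels = [0] * 8 + [1] * 2  # hypothetical endpoint with 20% toxic compounds
print(balanced_class_weights(labels))
```

Unfreezing the large pre-trained ViT last lets the randomly initialized fusion head settle first, so its early, noisy gradients do not overwrite the pre-trained image features.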

Interpretation and Validation

- Visualization: Attention mapping for ViT to identify salient molecular regions

- Validation: Cross-validation on multiple toxicity endpoints

- Benchmarking: Comparison against state-of-the-art unimodal and multimodal baselines

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Item | Function | Implementation Notes |

|---|---|---|

| Vision Transformer (ViT) | Extracts features from molecular structure images | Use ViT-Base/16 architecture; fine-tune on molecular images [8] |

| Multilayer Perceptron (MLP) | Processes numerical chemical property data | Configure based on feature dimensions; output 128-dimensional vector [8] |

| Joint Fusion Layer | Combines image and numerical features | Concatenate modality outputs to 256-dimensional vector [8] |

| Molecular Image Dataset | Provides structural information for model training | Curate from PubChem/eChemPortal; ensure chemical diversity [8] |

| Chemical Property Data | Supplies quantitative descriptors for compounds | Normalize and align with image data using CAS numbers [8] |

| Topological Simplices | Captures fine-grained substructure information | Extract 1D/2D simplices from 3D molecular data [31] |

| Mixture of Experts (MoE) | Enhances multimodal integration | Employ sparse gating for expert selection [29] |

Workflow Visualization

Molecular Structure and Property Fusion Workflow

The integration of chemical properties and molecular structure images through ViT and MLP architectures represents a significant advancement in toxicity prediction capabilities. The documented framework demonstrates robust performance across multiple metrics, with an accuracy of 0.872, F1-score of 0.86, and PCC of 0.9192 [8]. This multimodal approach effectively addresses limitations of single-modality models by leveraging complementary chemical information.

The protocols and application notes provided herein offer researchers comprehensive guidance for implementing these advanced predictive systems. Future directions include incorporation of additional modalities such as 13C NMR spectra [28] and mitochondrial toxicity data [30], enhanced interpretability through attention mechanisms, and extension to broader toxicity endpoints. As multimodal learning continues to evolve, these frameworks will play an increasingly vital role in accelerating drug discovery and improving chemical safety assessment.

Leveraging Multi-task Deep Learning for Simultaneous Prediction of Multiple Endpoints

In the field of drug development, toxicity remains a major cause of candidate failure, contributing significantly to the high cost of marketed drugs [12]. Traditional single-task learning (STL) models, which predict toxicity endpoints in isolation, fail to leverage the inherent relatedness between various toxicity manifestations across different biological platforms. Multi-task deep learning (MTDL) has emerged as a powerful paradigm that simultaneously learns multiple related tasks by leveraging both task-specific and shared information, leading to streamlined model architectures, improved performance, and enhanced generalizability [32]. This application note details the theoretical foundations, experimental protocols, and practical implementation of MTDL frameworks for the simultaneous prediction of multiple toxicity endpoints, within the broader context of deep neural networks for toxicity prediction research.

Theoretical Foundations and Benefits of Multi-task Learning

Multi-task learning is a learning paradigm that jointly learns multiple related tasks, moving away from the traditional approach of handling tasks in isolation [32]. In the context of toxicity prediction, this involves training a single model on diverse endpoints spanning in vitro, in vivo, and clinical platforms. The fundamental principle is that learning signals from multiple related tasks are integrated during updates of shared model parameters, which allows the model to leverage mutual insights, particularly benefiting tasks with limited data [32] [33].

The key advantage of MTDL over STL is its ability to improve data efficiency and model robustness. By sharing representations across tasks, MTDL reduces the risk of overfitting on small datasets and can enhance prediction accuracy for endpoints with sparse data [34] [35]. This is particularly valuable in toxicology, where clinical toxicity data is often limited but can be informed by more abundant in vitro and in vivo data [12]. However, a challenge known as the "Robin Hood effect" can occur, where performance improvements on some tasks come at the cost of reduced accuracy on others, highlighting the importance of appropriate task grouping strategies [33].
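In practice, the shared-parameter idea is usually implemented as one network with a shared trunk and one output per task, trained with a loss that sums per-task terms and skips missing labels, since Tox21-style datasets leave many compound-assay pairs untested. The plain-Python sketch below shows only that masked loss; the optional per-task weights are one simple, assumed knob for the task-balancing problem behind the "Robin Hood effect", not the method of the cited studies.

```python
import math

def masked_multitask_bce(y_true, probs, task_weights=None, eps=1e-7):
    """Average binary cross-entropy over observed labels only.

    y_true: per-compound lists of labels (1, 0, or None for missing), one entry per task.
    probs:  matching per-compound lists of predicted probabilities from the shared model.
    task_weights: optional per-task weights (default 1.0 for every task).
    Returns (overall_loss, per_task_loss); per-task loss is None for tasks with no labels.
    """
    n_tasks = len(y_true[0])
    weights = task_weights or [1.0] * n_tasks
    sums, counts = [0.0] * n_tasks, [0] * n_tasks
    for labels, ps in zip(y_true, probs):
        for j, (t, p) in enumerate(zip(labels, ps)):
            if t is None:
                continue  # missing label: excluded from the loss
            p = min(max(p, eps), 1.0 - eps)
            sums[j] += -(t * math.log(p) + (1 - t) * math.log(1.0 - p))
            counts[j] += 1
    per_task = [s / c if c else None for s, c in zip(sums, counts)]
    observed = [(w, l) for w, l in zip(weights, per_task) if l is not None]
    if not observed:
        raise ValueError("no observed labels in this batch")
    overall = sum(w * l for w, l in observed) / sum(w for w, _ in observed)
    return overall, per_task

# Hypothetical batch: 2 compounds x 2 tasks, one label missing
loss, per_task = masked_multitask_bce([[1, None], [0, 1]], [[0.5, 0.9], [0.5, 0.5]])
print(loss, per_task)
```

Averaging within each task before combining keeps data-rich tasks from dominating the shared gradient purely through label count, which is one reason small tasks can benefit from joint training.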

Experimental Data and Performance Comparison

Key Studies in MTDL for Toxicity Prediction

Recent research has demonstrated the successful application of MTDL frameworks to toxicity prediction. A 2023 study developed a multi-task deep neural network (MTDNN) using two molecular representations: Morgan Fingerprints (FP) and pre-trained SMILES embeddings (SE). This model simultaneously predicted in vitro (12 Tox21 assay endpoints), in vivo (mouse acute oral toxicity), and clinical toxicity (clinical trial failure due to toxicity from ClinTox) [12]. The model showed accurate predictions across all endpoints, with the SMILES embeddings particularly improving clinical toxicity predictions compared to existing benchmarks [12].

Another large-scale study from 2021 curated the largest publicly available multi-species acute toxicity dataset, comprising over 80,000 compounds measured against 59 acute systemic toxicity endpoints. The study developed multiple single- and multi-task models, including Random Forest, deep neural networks (DNNs), and convolutional/graph convolutional neural networks. The results demonstrated that a consensus model from three multi-task learning approaches significantly outperformed other models, particularly for the 29 smaller tasks (with fewer than 300 compounds) [34].

Quantitative Performance Comparison

Table 1: Performance comparison of modeling approaches on toxicity datasets.

| Study | Dataset & Endpoints | Model Architecture | Key Findings |
|---|---|---|---|
| Maynard et al. (2023) [12] | In vitro: 12 Tox21 assays; in vivo: mouse acute oral toxicity; clinical: ClinTox | Multi-task DNN with Morgan FP and SMILES SE | Improved clinical toxicity predictions vs. MoleculeNet benchmarks; comparable to state-of-the-art for specific in vitro, in vivo, and clinical endpoints |
| Large-scale Acute Toxicity (2021) [34] | 59 acute toxicity endpoints (>80,000 compounds) | ST-RF, ST-DNN; MT-DNN; consensus model | Consensus model (from 3 MTL approaches) outperformed the others, particularly on the 29 smaller tasks (<300 compounds) |
| Phan et al. (2020s) [35] | Various applications | Gradient-based MTL with flat minima seeking | Outperformed existing gradient-based MTL techniques; improved task performance, model robustness, and calibration |

Table 2: Comparison of single-task vs. multi-task learning characteristics.

| Aspect | Single-Task Learning (STL) | Multi-Task Learning (MTL) |
|---|---|---|
| Data Efficiency | May perform poorly on tasks with limited data [34] | Leverages related tasks to improve performance on data-sparse tasks [34] [35] |
| Computational Cost | Separate model for each task increases resource demands [35] | Single shared backbone reduces redundant feature calculations [35] |
| Generalizability | Higher risk of overfitting, especially on small datasets [35] | Improved generalization through shared representations [35] |
| Key Challenge | Neglects inter-task relationships | Potential for gradient conflicts and negative transfer [35] |

Detailed Experimental Protocols

Data Curation and Processing Protocol

Objective: To create a standardized, high-quality dataset for training MTDL models from diverse public data sources.

Materials: Raw data from public databases (e.g., ChemIDplus, Tox21, ClinTox); KNIME Analytics Platform or similar; ChemAxon Standardizer software.

Procedure:

- Data Extraction: Collect molecular structures and associated toxicity endpoints from relevant databases. For acute toxicity, extract dose measurements reported in mg/kg, μg/kg, or ng/kg and convert them to a common unit [34].

- Compound Standardization:

  - Strip salts, solvents, and counterions.

  - Remove large organic compounds (≥ 2,000 Da), mixtures, and inorganic compounds.

  - Standardize specific chemotypes (aromatic, nitro groups, sulfo groups, tautomers, protonation states) using ChemAxon Standardizer [34].

- Duplicate Handling:

  - Identify duplicate compound entries.

  - If duplicates have discordant potencies (>0.2 -log units), exclude both entries.

  - If potencies are similar, calculate the average value and retain one entry [34].

- Endpoint Filtering: Remove endpoints with fewer than 100 reported measurements to ensure model reliability [34].

- Data Splitting: Partition the curated dataset into training, validation, and test sets (e.g., 80/10/10 split) using stratified sampling to maintain endpoint distribution.
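The duplicate-handling and endpoint-filtering rules above can be sketched in plain Python. This assumes potencies have already been converted to a negative-log scale and, for groups of more than two duplicates, treats a group as discordant when its max-min spread exceeds 0.2; the function names and the record layout are illustrative, not taken from [34].

```python
from collections import defaultdict
from statistics import mean

DISCORDANCE_THRESHOLD = 0.2  # max spread (in -log units) tolerated among duplicates
MIN_MEASUREMENTS = 100       # endpoints with fewer records are dropped


def merge_duplicates(records):
    """records: iterable of (compound_id, endpoint, value), value on a -log scale.

    Duplicate (compound, endpoint) entries are averaged when they agree within the
    threshold and discarded entirely when they are discordant.
    """
    grouped = defaultdict(list)
    for compound, endpoint, value in records:
        grouped[(compound, endpoint)].append(value)
    merged = {}
    for key, values in grouped.items():
        if max(values) - min(values) > DISCORDANCE_THRESHOLD:
            continue  # discordant duplicates: exclude every entry
        merged[key] = mean(values)
    return merged


def filter_endpoints(merged, min_count=MIN_MEASUREMENTS):
    """Keep only endpoints measured for at least `min_count` distinct compounds."""
    counts = defaultdict(int)
    for _, endpoint in merged:
        counts[endpoint] += 1
    return {key: v for key, v in merged.items() if counts[key[1]] >= min_count}


# Hypothetical records: c1 duplicates agree (spread 0.1), c2 duplicates disagree (0.5).
records = [
    ("c1", "rat_oral", 2.10), ("c1", "rat_oral", 2.20),
    ("c2", "rat_oral", 1.00), ("c2", "rat_oral", 1.50),
]
merged = merge_duplicates(records)
kept = filter_endpoints(merged)  # empty here: one compound is far below 100
```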

Molecular Representation and Feature Generation

Objective: To convert standardized chemical structures into numerical representations suitable for deep learning.

Materials: Curated chemical structures; RDKit or similar cheminformatics library.

Procedure:

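- Morgan Fingerprints (FP):

  - Generate Morgan (circular) fingerprints from the standardized structures with RDKit, e.g., as 1024-bit vectors matching the input-layer size used in the architecture protocol below.

- SMILES Embeddings (SE):

  - Use a neural network-based model (e.g., sequence-to-sequence) trained to translate non-canonical SMILES into canonical SMILES.

  - Take the model's learned latent representation as the embedding; this process encodes relationships between chemicals within the datasets as continuous vector representations [12].

- Alternative Representations (Optional):

  - Other RDKit fingerprints, such as Avalon fingerprints, can be used in place of or alongside Morgan fingerprints.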
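To show how a circular fingerprint turns a molecular graph into a fixed-length bit vector, here is a simplified Morgan/ECFP-style sketch on hand-built heavy-atom graphs. It is an illustration only: the atom invariants (atomic number and degree), the CRC32 hash, and the folding scheme are simplifying assumptions, and RDKit's Morgan fingerprint generator, which uses richer atom invariants, is what should be used in practice.

```python
import zlib


def _hash(values) -> int:
    """Deterministic 32-bit hash of a sequence of ints (Python's str hash is salted)."""
    return zlib.crc32(repr(tuple(values)).encode())


def circular_fingerprint(atoms, bonds, radius=2, n_bits=1024):
    """Simplified Morgan/ECFP-style bit vector.

    atoms: list of atomic numbers; bonds: list of (i, j, bond_order).
    Each iteration rebuilds an atom's identifier from its previous identifier plus
    the sorted (bond order, neighbor identifier) pairs, so identifiers describe
    circular neighborhoods of growing radius. Each identifier is folded into n_bits.
    """
    neighbors = {i: [] for i in range(len(atoms))}
    for i, j, order in bonds:
        neighbors[i].append((j, order))
        neighbors[j].append((i, order))
    # Radius-0 invariant: atomic number and heavy-atom degree (simplified vs. RDKit).
    ids = [_hash((z, len(neighbors[i]))) for i, z in enumerate(atoms)]
    bits = [0] * n_bits
    for ident in ids:
        bits[ident % n_bits] = 1
    for _ in range(radius):
        new_ids = []
        for i in range(len(atoms)):
            env = sorted((order, ids[j]) for j, order in neighbors[i])
            new_ids.append(_hash([ids[i]] + [x for pair in env for x in pair]))
        ids = new_ids
        for ident in ids:
            bits[ident % n_bits] = 1
    return bits


# Heavy-atom graphs (hydrogens implicit): ethanol C-C-O and dimethyl ether C-O-C.
ethanol = circular_fingerprint([6, 6, 8], [(0, 1, 1), (1, 2, 1)])
dimethyl_ether = circular_fingerprint([6, 8, 6], [(0, 1, 1), (1, 2, 1)])
print(sum(ethanol), sum(dimethyl_ether), ethanol == dimethyl_ether)
```

The two isomers share a formula but differ in connectivity, so their neighborhood identifiers, and hence their bit vectors, differ.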

Multi-task DNN Architecture and Training Protocol

Objective: To construct and train a deep neural network capable of simultaneous prediction of multiple toxicity endpoints.

Materials: Processed dataset with molecular representations and endpoint labels; deep learning framework (e.g., TensorFlow/Keras, PyTorch).

Procedure:

- Model Architecture Design:

  - Input Layer: Size corresponding to the molecular representation dimension (e.g., 1024 for Morgan FP).

  - Shared Hidden Layers: 2-3 fully connected (dense) layers with ReLU activation functions. These layers learn features common across all tasks [12] [35].

  - Task-Specific Heads: Multiple output branches, one for each endpoint, with appropriate activation functions (sigmoid for binary classification, softmax for multi-class, linear for regression) [12].

- Training with Gradient Balancing:

  - Loss Function: Use a weighted sum of task-specific losses, with binary cross-entropy for binary classification endpoints. Because most compounds are labeled for only a subset of endpoints, mask missing labels so that they contribute neither loss nor gradient.

  - Gradient Handling: Implement gradient balancing techniques, such as the gradient manipulation methods PCGrad, CAGrad, or IMTL [35], to mitigate conflicts between task gradients, optionally combined with:

    - Flat Minima Seeking: Apply Sharpness-Aware Minimization (SAM) to find flat regions in the loss landscape, improving generalization [35].

- Hyperparameter Optimization:

  - Perform a grid search over the number of epochs, batch size, activation functions, learning rate of the Adam optimizer, and number of neurons in the dense layers [34].

  - Use validation set performance for early stopping and model selection.

- Model Evaluation:

  - Assess performance on the held-out test set using endpoint-appropriate metrics: area under the ROC curve (AUC-ROC), balanced accuracy, precision, recall, and F1-score [12].

  - Compare against single-task baseline models to quantify MTL benefits.
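The flat-minima step can be illustrated with the basic Sharpness-Aware Minimization update: perturb the weights by rho along the normalized gradient, take the gradient at that perturbed point, and apply it to the unperturbed weights. This minimal NumPy sketch uses a toy quadratic loss with one sharp and one flat direction; the learning rate, rho, and the loss itself are assumptions, and how [35] couples SAM with gradient-based MTL is not reproduced here.

```python
import numpy as np


def sam_step(w, grad_fn, lr=0.1, rho=0.05):
    """One Sharpness-Aware Minimization update.

    1. Move to the approximate worst-case point inside an L2 ball of radius rho.
    2. Evaluate the gradient there.
    3. Apply that gradient to the original (unperturbed) weights.
    """
    g = grad_fn(w)
    norm = np.linalg.norm(g)
    if norm == 0.0:
        return w.copy()           # already at a stationary point
    eps = rho * g / norm          # ascent direction scaled to radius rho
    g_sharp = grad_fn(w + eps)    # gradient at the perturbed point
    return w - lr * g_sharp


# Toy loss L(w) = 0.5 * w^T H w: curvature 10 (sharp) and 0.1 (flat).
H = np.diag([10.0, 0.1])


def loss(w):
    return 0.5 * w @ H @ w


def grad(w):
    return H @ w


w0 = np.array([1.0, 1.0])
w = w0.copy()
for _ in range(50):
    w = sam_step(w, grad, lr=0.05, rho=0.05)
print(loss(w0), loss(w))
```

In a network, `w` would be the flattened parameters and `grad_fn` a mini-batch gradient, so each SAM step costs two backward passes.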

Diagram 1: MTDL Experimental Workflow

Visualization of Model Architectures and Relationships

Multi-task DNN Architecture for Toxicity Prediction

Diagram 2: MT-DNN Model Architecture
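The shared-trunk, multi-head architecture from the training protocol can be sketched as a NumPy forward pass with a masked, weighted binary cross-entropy. Layer sizes, the He-style initialization, and the NaN convention for unmeasured endpoints are assumptions for illustration; only sigmoid heads for binary endpoints are shown, and a real model would be trained in a framework such as TensorFlow/Keras or PyTorch.

```python
import numpy as np


def init_mtdnn(n_in, hidden, n_tasks, rng):
    """Shared dense trunk plus one single-logit head per binary endpoint."""
    sizes = [n_in] + list(hidden)
    trunk = [(rng.normal(0.0, np.sqrt(2.0 / a), (a, b)), np.zeros(b))
             for a, b in zip(sizes[:-1], sizes[1:])]
    heads = [(rng.normal(0.0, np.sqrt(1.0 / sizes[-1]), (sizes[-1], 1)), np.zeros(1))
             for _ in range(n_tasks)]
    return trunk, heads


def forward(x, trunk, heads):
    """Return sigmoid probabilities of shape (n_samples, n_tasks)."""
    h = x
    for w, b in trunk:                    # shared layers: features common to all tasks
        h = np.maximum(h @ w + b, 0.0)    # ReLU
    logits = np.hstack([h @ w + b for w, b in heads])  # one column per endpoint head
    return 1.0 / (1.0 + np.exp(-logits))


def masked_bce(probs, labels, task_weights, eps=1e-7):
    """Weighted sum of per-task binary cross-entropies; NaN labels are ignored."""
    mask = ~np.isnan(labels)
    y = np.nan_to_num(labels)             # placeholder 0 where the label is missing
    p = np.clip(probs, eps, 1.0 - eps)
    bce = -(y * np.log(p) + (1.0 - y) * np.log(1.0 - p)) * mask
    per_task = bce.sum(axis=0) / np.maximum(mask.sum(axis=0), 1)
    return float(np.dot(task_weights, per_task))


rng = np.random.default_rng(0)
x = rng.integers(0, 2, size=(4, 1024)).astype(float)  # stand-in for 1024-bit Morgan FPs
labels = np.array([[1, 0, np.nan],
                   [0, np.nan, 1],
                   [np.nan, 1, 0],
                   [1, 1, np.nan]])                      # NaN = endpoint not measured
trunk, heads = init_mtdnn(1024, [512, 128], n_tasks=3, rng=rng)
probs = forward(x, trunk, heads)
loss = masked_bce(probs, labels, task_weights=np.ones(3) / 3)
```

Masking matters because, in merged multi-source datasets, compounds typically carry labels for only some endpoints; filling the gaps with zeros without a mask would silently treat them as negatives.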

Gradient Balancing in Multi-task Learning

Diagram 3: Gradient Balancing Concept
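PCGrad, one of the gradient manipulation methods listed in Table 3, illustrates the gradient-balancing idea: whenever one task's gradient has a negative dot product with another task's gradient, the conflicting component is projected away before the gradients are summed. This is a minimal sketch assuming per-task gradients on the shared parameters have been flattened into vectors and other tasks are visited in random order; CAGrad and IMTL use different rules that are not shown.

```python
import numpy as np


def pcgrad(task_grads, rng=None):
    """Combine per-task gradients with a PCGrad-style projection.

    For each task gradient, remove the component that conflicts with (has a negative
    dot product with) each other task's original gradient, visiting the other tasks
    in random order, then sum the projected gradients.
    """
    if rng is None:
        rng = np.random.default_rng()
    projected = []
    for i, g_i in enumerate(task_grads):
        g = g_i.astype(float)                    # astype returns a copy
        others = [j for j in range(len(task_grads)) if j != i]
        for j in rng.permutation(others):
            g_j = task_grads[j]
            dot = g @ g_j
            if dot < 0:                          # conflict: project onto g_j's normal plane
                g -= dot / (g_j @ g_j) * g_j
        projected.append(g)
    return np.sum(projected, axis=0)


# Two conflicting task gradients: plain summation would cancel the first coordinate.
g_tox21 = np.array([1.0, 0.0])
g_clin = np.array([-1.0, 1.0])
print(g_tox21 + g_clin, pcgrad([g_tox21, g_clin]))
```

Non-conflicting gradients pass through unchanged, so the method only intervenes where tasks pull the shared parameters in opposing directions.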

Table 3: Key computational tools and resources for MTDL in toxicity prediction.

| Resource Category | Specific Tool/Resource | Function and Application |
|---|---|---|
| Public Data Sources | ChemIDplus [34], Tox21 Challenge [12], ClinTox [12], ChEMBL [34] | Provide curated in vitro, in vivo, and clinical toxicity data for model training and benchmarking. |
| Cheminformatics Tools | RDKit [34], ChemAxon Standardizer [34] | Process chemical structures, generate molecular fingerprints (e.g., Morgan, Avalon), and standardize compounds. |
| Deep Learning Frameworks | TensorFlow/Keras [34], PyTorch | Implement and train multi-task DNN architectures with flexible configuration of shared and task-specific layers. |
| Model Interpretation | Contrastive Explanations Method (CEM) [12] | Identify pertinent positive and negative features (toxicophores) that drive model predictions for increased trustworthiness. |
| Specialized MTL Methods | PCGrad [35], CAGrad [35], IMTL [35] | Advanced gradient manipulation algorithms that balance learning across tasks and mitigate negative transfer. |

Graph Neural Networks (GNNs) for Direct Molecular Graph Analysis and Interpretability

Graph Neural Networks (GNNs) have emerged as transformative tools in molecular property prediction, fundamentally shifting the paradigm from traditional descriptor-based methods to direct molecular graph analysis [36] [37]. In toxicity endpoint prediction research, GNNs excel by natively representing molecules as graph structures where atoms correspond to nodes and bonds to edges, thereby preserving the intrinsic topological information of chemical compounds [36]. This representation enables GNNs to learn features directly from molecular geometry and connectivity, capturing complex structure-property relationships essential for accurate toxicity assessment [2] [37].
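To make the atoms-as-nodes, bonds-as-edges idea concrete, the sketch below applies one graph-convolution layer with the widely used symmetric-normalized propagation rule, H' = ReLU(D^-1/2 (A + I) D^-1/2 H W), to the heavy-atom graph of ethanol. The one-hot atom features, identity weights, and mean-pool readout are illustrative assumptions rather than the configuration of any cited model.

```python
import numpy as np


def gcn_layer(adj, h, w):
    """One graph-convolution layer: H' = ReLU(D^-1/2 (A + I) D^-1/2 H W).

    adj: (n_atoms, n_atoms) bond adjacency matrix; h: (n_atoms, d_in) atom features.
    """
    a_hat = adj + np.eye(adj.shape[0])          # self-loops keep each atom's own features
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    norm = a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]
    return np.maximum(norm @ h @ w, 0.0)


# Ethanol heavy atoms C0-C1-O2: atoms are nodes, bonds are edges.
adj = np.array([[0, 1, 0],
                [1, 0, 1],
                [0, 1, 0]], dtype=float)
h = np.array([[1, 0], [1, 0], [0, 1]], dtype=float)  # one-hot features: [is_C, is_O]
w = np.eye(2)                                        # identity weights expose the mixing
h1 = gcn_layer(adj, h, w)
graph_embedding = h1.mean(axis=0)                    # mean-pool readout over atoms
print(h1)
```

After one layer the oxygen row has picked up carbon information from its bonded neighbor, while C0, two bonds away from the oxygen, has not; stacking layers widens this receptive field.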

The integration of GNNs into toxicology research addresses critical limitations of conventional Quantitative Structure-Activity Relationship (QSAR) models, which often rely on pre-defined molecular fingerprints and neglect complex biological interactions underlying compound toxicity [38]. Recent advancements have demonstrated that GNNs achieve superior predictive performance by modeling multiscale toxicological mechanisms, from molecular-level metabolic activation and covalent modifications to cellular-level mitochondrial dysfunction and oxidative stress [2]. Furthermore, incorporating biological knowledge graphs encompassing genes, pathways, and assays provides richer semantic context and structured prior knowledge, significantly enhancing both predictive accuracy and mechanistic interpretability [38].

Advanced GNN Architectures for Molecular Property Prediction

Kolmogorov-Arnold Graph Neural Networks (KA-GNNs)

The recently proposed Kolmogorov-Arnold GNN (KA-GNN) framework integrates Fourier-based Kolmogorov-Arnold network modules into the three fundamental components of GNNs: node embedding, message passing, and readout [36]. This architecture replaces conventional multilayer perceptrons with learnable univariate functions based on Fourier series, enabling accurate modeling of both low-frequency and high-frequency structural patterns in molecular graphs [36]. The Fourier-based formulation provides strong theoretical approximation guarantees grounded in Carleson's convergence theorem and Fefferman's multivariate extension, ensuring expressive power for complex molecular representations [36].
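The Fourier-based replacement for an MLP layer can be sketched as follows: every input-output pair gets its own learnable univariate function, a truncated Fourier series phi(x) = sum over k of a_k cos(kx) + b_k sin(kx), and each output sums these functions over its inputs. This NumPy sketch covers only that layer; the number of frequencies, the initialization, and the class name are assumptions, and how KA-GNN inserts such layers into node embedding, message passing, and readout is not reproduced.

```python
import numpy as np


class FourierKANLayer:
    """KAN layer whose learnable univariate functions are truncated Fourier series.

    y_j = sum_i phi_ij(x_i) + c_j,  phi_ij(x) = sum_{k=1..K} a_ijk cos(k x) + b_ijk sin(k x)
    """

    def __init__(self, d_in, d_out, n_freq=4, seed=0):
        rng = np.random.default_rng(seed)
        scale = 1.0 / np.sqrt(d_in * n_freq)
        self.a = rng.normal(0.0, scale, (d_in, d_out, n_freq))  # cosine coefficients
        self.b = rng.normal(0.0, scale, (d_in, d_out, n_freq))  # sine coefficients
        self.c = np.zeros(d_out)                                # output bias
        self.k = np.arange(1, n_freq + 1)                       # frequencies 1..K

    def __call__(self, x):
        # x: (n, d_in) -> kx: (n, d_in, K)
        kx = x[:, :, None] * self.k[None, None, :]
        # Sum over inputs i and frequencies k for every output j.
        return (np.einsum("nik,iok->no", np.cos(kx), self.a)
                + np.einsum("nik,iok->no", np.sin(kx), self.b) + self.c)


layer = FourierKANLayer(d_in=16, d_out=8, n_freq=4)
node_feats = np.random.default_rng(1).normal(size=(3, 16))  # e.g., 3 atoms, 16 features
out = layer(node_feats)
```

Low-k terms capture smooth trends and high-k terms capture sharp variation, which is the low/high-frequency flexibility described above; with integer frequencies each learned function is also 2π-periodic in its input.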

Two architectural variants, KA-Graph Convolutional Networks (KA-GCN) and KA-Graph Attention Networks (KA-GAT), have consistently outperformed conventional GNNs across seven molecular benchmarks [36]. In KA-GCN, node embeddings are computed by passing concatenated atomic features and neighboring bond features through KAN layers, while message passing follows the GCN scheme with feature updates via residual KANs [36]. KA-GAT incorporates edge embeddings by fusing each bond's features with the features of the two atoms it connects, enabling more expressive representation learning [36].

Knowledge Graph-Enhanced GNN Frameworks

Integrating toxicological knowledge graphs with GNNs significantly enhances predictive performance for toxicity endpoints [38]. Heterogeneous graph models enriched with knowledge graph information substantially outperform traditional models relying solely on structural features across multiple metrics, including AUC, F1-score, accuracy, and balanced accuracy [38]. The GPS (General, Powerful, Scalable) graph transformer model achieved an AUC of 0.956 on the Tox21 androgen receptor (NR-AR) task, highlighting the critical role of biological mechanism information in toxicity prediction [38].

The construction of toxicological knowledge graphs (ToxKG) incorporates multiple entity types—including chemicals, genes, pathways, key events, molecular initiating events, and adverse outcomes—with biologically meaningful relationships such as CHEMICALBINDSGENE, CHEMICALDECREASESEXPRESSION, and GENEINPATHWAY [38]. This structured representation captures complex compound-gene-pathway associations, providing richer biological context for toxicity prediction models [38].
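A knowledge graph like ToxKG is commonly stored as (head, relation, tail) triples. The sketch below groups hypothetical triples, using the relation names given above, by (source type, relation, target type), the edge-type layout that heterogeneous GNNs such as R-GCN or HGT operate on, and then follows chemical-gene-pathway paths to show the biological context that two hops of message passing could reach (assuming messages flow along edges in both directions). Entity identifiers and helper names are placeholders.

```python
from collections import defaultdict

# Hypothetical triples using the relation types named in the text; IDs are placeholders.
triples = [
    ("chemical:C1", "CHEMICALBINDSGENE", "gene:G1"),
    ("chemical:C1", "CHEMICALDECREASESEXPRESSION", "gene:G2"),
    ("gene:G1", "GENEINPATHWAY", "pathway:P1"),
    ("gene:G2", "GENEINPATHWAY", "pathway:P1"),
    ("gene:G3", "GENEINPATHWAY", "pathway:P2"),
]


def build_hetero_graph(triples):
    """Group edges by (source type, relation, target type)."""
    edges = defaultdict(list)
    for head, rel, tail in triples:
        key = (head.split(":")[0], rel, tail.split(":")[0])
        edges[key].append((head, tail))
    return dict(edges)


def pathways_reached(graph, chemical):
    """Pathways linked to a chemical through any chemical-gene relation (two hops)."""
    genes = {t for (src, _, _), pairs in graph.items() if src == "chemical"
             for h, t in pairs if h == chemical}
    return {t for h, t in graph.get(("gene", "GENEINPATHWAY", "pathway"), []) if h in genes}


graph = build_hetero_graph(triples)
print(sorted(graph))
print(pathways_reached(graph, "chemical:C1"))
```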

Interpretable GNN Architectures

The black-box nature of conventional GNNs limits their interpretability and, in turn, trust in their predictions for critical applications such as drug safety assessment [39]. To address this challenge, SEAL (Substructure Explanation via Attribution Learning) introduces an interpretable GNN that attributes model predictions to meaningful molecular subgraphs [39]. By decomposing input graphs into chemically relevant fragments and explicitly reducing inter-fragment message passing, SEAL achieves strong alignment between fragment contributions and model predictions [39]. Extensive evaluations show that SEAL outperforms other explainability methods in both quantitative attribution metrics and human-aligned interpretability, providing more intuitive and trustworthy explanations to domain experts [39].
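The two ideas described for SEAL, splitting a molecule into fragments and damping message passing between them so that a prediction can be attributed to fragments, can be illustrated with a toy NumPy function. This is explicitly not SEAL's implementation: the fragment assignment, the `alpha` damping factor, the single message-passing round, and the additive readout are all hypothetical choices, made so that fragment contributions sum exactly to the toy model's logit.

```python
import numpy as np


def fragment_attribution(adj, h, w, v, fragments, alpha=0.1):
    """Toy fragment-level attribution (hypothetical, in the spirit of SEAL).

    adj: (n, n) bond adjacency; h: (n, d) atom features; w: (d, d) message weights;
    v: (d,) readout vector; fragments: length-n array of fragment ids;
    alpha: weight on bonds crossing fragment boundaries (alpha < 1 damps them).
    Returns (logit, {fragment_id: contribution}); contributions sum to the logit.
    """
    same = fragments[:, None] == fragments[None, :]
    a = adj * np.where(same, 1.0, alpha) + np.eye(adj.shape[0])  # damp cross-fragment bonds
    h1 = np.maximum(a @ h @ w, 0.0)                              # one message-passing round
    atom_scores = h1 @ v                                         # additive per-atom scores
    contrib = {int(f): float(atom_scores[fragments == f].sum()) for f in np.unique(fragments)}
    return float(atom_scores.sum()), contrib


# Four-atom chain split (hypothetically) into two fragments: atoms {0, 1} and {2, 3}.
adj = np.array([[0, 1, 0, 0],
                [1, 0, 1, 0],
                [0, 1, 0, 1],
                [0, 0, 1, 0]], dtype=float)
fragments = np.array([0, 0, 1, 1])
h = np.random.default_rng(0).random((4, 3))
w, v = np.eye(3), np.ones(3)
logit, contrib = fragment_attribution(adj, h, w, v, fragments)
print(logit, contrib)
```

With `alpha = 0`, a fragment's contribution depends only on its own atoms, which is the limiting case of reduced inter-fragment message passing.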

Quantitative Performance Analysis

Comparative Performance of GNN Architectures on Tox21 Dataset

Table 1: Performance comparison of GNN models on Tox21 dataset with knowledge graph integration

| GNN Model | Type | NR-AR AUC | Average AUC | Key Strengths |
|---|---|---|---|---|
| GPS | Heterogeneous | 0.956 | 0.891 | Best overall performance with biological context |
| HGT | Heterogeneous | 0.942 | 0.878 | Effective for complex heterogeneous relations |
| R-GCN | Heterogeneous | 0.928 | 0.865 | Models relational dependencies |
| HRAN | Heterogeneous | 0.919 | 0.857 | Hierarchical attention mechanisms |
| GAT | Homogeneous | 0.874 | 0.812 | Attention-based neighbor weighting |
| GCN | Homogeneous | 0.851 | 0.794 | Standard graph convolution baseline |

Source: Adapted from [38]

Performance of KA-GNNs on Molecular Benchmarks