Computer-Aided Drug Discovery: A Comprehensive Overview of AI, Methods, and Future Trends


Abstract

This article provides a comprehensive overview of Computer-Aided Drug Discovery (CADD), a transformative force that integrates computational biology, chemistry, and artificial intelligence to streamline drug development. Aimed at researchers, scientists, and drug development professionals, it explores the foundational principles of CADD, detailing both structure-based and ligand-based design methods. The scope extends to practical applications in virtual screening and molecular docking, an honest examination of current methodological challenges and limitations, and a forward-looking analysis of how AI and machine learning are validating and reshaping the field. The content synthesizes the latest trends and data to offer a realistic perspective on how computational approaches are rationalizing and accelerating the journey from concept to clinic.

The CADD Revolution: From Serendipity to Rational Design

Computer-Aided Drug Design (CADD) represents a transformative interdisciplinary field that integrates computational chemistry, molecular modeling, bioinformatics, and cheminformatics to accelerate and rationalize drug discovery and development processes [1]. This methodology fundamentally shifts pharmaceutical research from traditional trial-and-error approaches toward a hypothesis-driven paradigm based on understanding atomic-level interactions between chemical compounds and biological targets [2] [1]. At its core, CADD utilizes computational power to model, predict, and optimize how small molecules interact with biological targets—typically proteins or nucleic acids—before synthesis and experimental testing [1]. The emergence of CADD as a central pillar in modern pharmaceutical research coincides with critical advancements in structural biology, which provides three-dimensional architectures of biomolecules, and the exponential growth of computational power that enables complex simulations [2].

The origins of CADD date back several decades, to a period when drug discovery relied heavily on serendipity and empirical screening [1]. Initially, molecular modeling was limited to experts in physical organic chemistry using command-line software [1]. As experimental methods in structural biology, particularly X-ray crystallography and nuclear magnetic resonance (NMR) spectroscopy, began generating detailed three-dimensional structures of biological targets, researchers gained the ability to design drugs rationally from structural information [1]. This paradigm shift accelerated with improvements in computer hardware, the rise of high-throughput screening methods, and advancements in molecular modeling algorithms [1]. Today, CADD has transitioned from a supplementary tool to a central component in drug discovery pipelines across both academic research and the pharmaceutical industry [3].

Fundamental Methodologies in CADD

CADD methodologies are broadly categorized into two complementary approaches: structure-based drug design (SBDD) and ligand-based drug design (LBDD). The selection between these approaches depends primarily on the availability of structural information about the biological target and known active compounds [4] [5].

Structure-Based Drug Design (SBDD)

Structure-based drug design relies directly on the three-dimensional structure of the biological target, typically obtained through experimental methods such as X-ray crystallography, cryo-electron microscopy, or NMR spectroscopy, or through computational approaches such as homology modeling when experimental data are unavailable [1] [5]. The fundamental premise of SBDD is that knowing the target's atomic structure lets researchers design molecules whose shape and chemistry complement its binding pockets, thereby modulating the target's biological function [1].

Molecular docking serves as a cornerstone technique in SBDD, predicting the preferred orientation and position of a small molecule (ligand) when bound to its target protein [2]. Docking algorithms generate multiple binding poses and rank them using scoring functions that estimate binding affinity based on various energy terms and interaction patterns [2] [1]. These scoring functions may be physics-based, empirical, or knowledge-based, with recent innovations incorporating machine learning to improve prediction accuracy [1]. Virtual screening, an extension of docking, enables the computational assessment of vast compound libraries against a target to identify potential hit compounds [2]. This approach dramatically reduces the number of compounds requiring experimental testing by prioritizing the most promising candidates [4] [5].

Molecular dynamics (MD) simulations complement static structural methods by modeling the time-dependent behavior of biomolecular systems [2] [1]. By solving Newton's equations of motion for all atoms in the system, MD simulations capture conformational fluctuations, binding pocket dynamics, and allosteric communication pathways that influence drug binding [1]. Advanced sampling techniques like metadynamics and replica exchange methods help overcome temporal limitations, while hardware advances like GPU computing have extended accessible simulation timescales [1]. MD simulations provide insights into binding mechanisms, residence times, and conformational changes induced by ligand binding—information inaccessible through static approaches alone [1].
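To make "solving Newton's equations of motion" concrete, the sketch below integrates a single particle on a harmonic "bond" with the velocity Verlet scheme. The choice of integrator, the one-dimensional toy system, and all parameter values (`k`, `mass`, `dt`) are illustrative assumptions and are not taken from the cited sources; production MD packages handle many atoms, full force fields, thermostats, and constraints.

```python
import math

def velocity_verlet(x, v, force, mass, dt, n_steps):
    """Integrate m * a = F(x) for one particle in 1D; returns [(x, v), ...]."""
    trajectory = [(x, v)]
    a = force(x) / mass
    for _ in range(n_steps):
        x = x + v * dt + 0.5 * a * dt * dt   # advance position
        a_new = force(x) / mass              # force at the new position
        v = v + 0.5 * (a + a_new) * dt       # advance velocity with averaged acceleration
        a = a_new
        trajectory.append((x, v))
    return trajectory

# Toy system (arbitrary units): harmonic spring standing in for a bond.
k, mass = 1.0, 1.0

def harmonic_force(x):
    return -k * x

def total_energy(x, v):
    return 0.5 * mass * v * v + 0.5 * k * x * x

traj = velocity_verlet(x=1.0, v=0.0, force=harmonic_force, mass=mass, dt=0.01, n_steps=1000)
e_start = total_energy(*traj[0])
e_end = total_energy(*traj[-1])
print(f"energy drift after {len(traj) - 1} steps: {abs(e_end - e_start):.2e}")
```

Monitoring the total energy, as in the last lines, is a simple sanity check on the time step: a drift that grows steadily usually means `dt` is too large for the fastest motion in the system.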

Table 1: Key Software Tools for Structure-Based Drug Design

| Tool | Primary Application | Advantages | Limitations |
|---|---|---|---|
| AutoDock Vina [2] | Molecular docking | Fast, accurate, easy to use | Less accurate for complex systems |
| GROMACS [2] | Molecular dynamics simulations | High performance, open-source | Steep learning curve |
| AlphaFold2 [2] | Protein structure prediction | High accuracy, no template needed | Limited accuracy for certain protein classes |
| Rosetta [2] | Protein structure prediction | Ab initio modeling capabilities | Computationally intensive |
| SWISS-MODEL [2] | Homology modeling | Fully automated, user-friendly | Dependent on template availability |

Ligand-Based Drug Design (LBDD)

When three-dimensional structural information of the biological target is unavailable, ligand-based drug design offers powerful alternative approaches that leverage known active compounds [4] [5]. LBDD operates on the fundamental similarity principle—that molecules with similar structural features tend to exhibit similar biological activities [1].

Quantitative Structure-Activity Relationship (QSAR) modeling represents a foundational LBDD technique that employs statistical methods to correlate quantitative molecular descriptors with biological activity [2] [1]. Molecular descriptors encompass structural, electronic, and physicochemical properties that numerically encode characteristics relevant to molecular recognition and binding [1]. QSAR models enable the prediction of biological activity for new compounds based on their structural features, guiding lead optimization efforts by identifying which chemical modifications may enhance potency [2].

Pharmacophore modeling identifies the essential steric and electronic features necessary for molecular recognition at a biological target [1]. A pharmacophore represents an abstract description of molecular features—including hydrogen bond donors/acceptors, hydrophobic regions, aromatic rings, and charged groups—and their spatial arrangement that confers biological activity [1]. Pharmacophore models serve as templates for virtual screening of compound databases to identify new chemical entities containing the critical features required for activity [1].

Table 2: Core Techniques in Ligand-Based Drug Design

| Technique | Methodology | Applications | Key Considerations |
|---|---|---|---|
| QSAR Modeling [2] [1] | Statistical correlation of molecular descriptors with biological activity | Lead optimization, activity prediction | Model applicability domain, descriptor selection |
| Pharmacophore Modeling [1] | Identification of essential molecular features for biological activity | Virtual screening, de novo design | Feature definition, conformational coverage |
| Molecular Similarity [1] | Comparison of molecular fingerprints or descriptors | Hit identification, scaffold hopping | Similarity metric selection, representation method |

Experimental Protocols in CADD

Molecular Docking Protocol

A standardized molecular docking protocol provides a systematic approach for predicting ligand binding modes and estimating binding affinities [2] [1]:

Target Preparation: Obtain the three-dimensional structure of the biological target from experimental sources (Protein Data Bank) or computational modeling [1]. Remove water molecules and cofactors unless functionally relevant. Add hydrogen atoms, assign partial charges, and define atom types using appropriate force fields.

Binding Site Identification: Characterize the target's binding site using computational methods. Grid generation defines the spatial coordinates for docking calculations, typically encompassing the known active site or predicted binding regions [1].

Ligand Preparation: Generate three-dimensional structures of candidate ligands from chemical databases. Assign proper bond orders, add hydrogen atoms, and optimize geometry using molecular mechanics force fields. Generate possible tautomeric states and stereoisomers.

Docking Execution: Perform the docking calculation using selected software (e.g., AutoDock Vina, GOLD, Glide) [2]. The docking algorithm samples possible ligand conformations and orientations within the binding site, evaluating each pose using a scoring function [2] [1].

Pose Analysis and Ranking: Analyze the resulting binding poses based on scoring function values and interaction patterns. Identify key molecular interactions (hydrogen bonds, hydrophobic contacts, π-stacking) that contribute to binding affinity and specificity.

Validation: Validate the docking protocol by redocking known ligands and comparing predicted versus experimental binding modes. Calculate root-mean-square deviation (RMSD) values to assess pose prediction accuracy [1].
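The RMSD check in the validation step can be sketched in a few lines. The example assumes both poses list the same atoms in the same order and share the receptor's coordinate frame, so no superposition is applied; the coordinates are made up for illustration. A cutoff of about 2 Å is a commonly quoted threshold for a successful redocking, but it is an assumption here and not a value given by the sources.

```python
import math

def rmsd(coords_a, coords_b):
    """RMSD between two equally ordered lists of (x, y, z) atom positions."""
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("coordinate lists must be non-empty and of equal length")
    squared = sum(
        (ax - bx) ** 2 + (ay - by) ** 2 + (az - bz) ** 2
        for (ax, ay, az), (bx, by, bz) in zip(coords_a, coords_b)
    )
    return math.sqrt(squared / len(coords_a))

# Hypothetical heavy-atom coordinates in angstroms.
crystal_pose = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0)]
docked_pose = [(0.1, 0.0, 0.0), (1.5, 0.2, 0.0), (1.4, 1.5, 0.2)]

value = rmsd(crystal_pose, docked_pose)
REDOCK_CUTOFF = 2.0  # assumed threshold, in angstroms
print(f"RMSD = {value:.2f} A, pose reproduced: {value <= REDOCK_CUTOFF}")
```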

QSAR Modeling Protocol

Quantitative Structure-Activity Relationship modeling follows a rigorous protocol to develop predictive models [2] [1]:

Data Curation: Compile a dataset of compounds with corresponding biological activity values (e.g., IC50, Ki). Ensure chemical structures are standardized and activity data is consistent. Divide the dataset into training (∼80%) and test (∼20%) sets.

Molecular Descriptor Calculation: Compute numerical descriptors encoding structural, electronic, and physicochemical properties using software like Dragon or RDKit. Descriptors may include topological indices, electronic parameters, steric factors, and hydrophobicity measures.

Descriptor Selection and Reduction: Apply feature selection methods to identify the most relevant descriptors, eliminating redundant or uninformative variables. Use techniques like principal component analysis (PCA) to reduce dimensionality and avoid overfitting.

Model Development: Employ statistical or machine learning algorithms (e.g., multiple linear regression, partial least squares, random forest, support vector machines) to correlate descriptors with biological activity [2]. Optimize model parameters through cross-validation.

Model Validation: Assess model performance using both internal (cross-validation) and external (test set prediction) validation [1]. Evaluate using metrics including R², Q², and root-mean-square error (RMSE).

Model Interpretation: Analyze the contribution of individual descriptors to biological activity, deriving insights into structural features that enhance or diminish potency. Apply the model to predict activity of new compounds and guide chemical optimization.
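The validation metrics named in the protocol can be illustrated with a deliberately small example: a one-descriptor linear model, a held-out test set, and leave-one-out cross-validation for Q². The data points (a logP-like descriptor against pIC50-like activities) are invented, and Q² is computed here as 1 - PRESS/SS_tot using the overall mean, which is one common convention; real QSAR work would use many descriptors and a dedicated modeling library.

```python
import math

def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def r_squared(observed, predicted):
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot

def rmse(observed, predicted):
    return math.sqrt(sum((o - p) ** 2 for o, p in zip(observed, predicted)) / len(observed))

def q_squared_loo(xs, ys):
    """Leave-one-out cross-validated R^2: refit without each compound, then predict it."""
    predictions = []
    for i in range(len(xs)):
        slope, intercept = fit_line(xs[:i] + xs[i + 1:], ys[:i] + ys[i + 1:])
        predictions.append(slope * xs[i] + intercept)
    return r_squared(ys, predictions)

# Hypothetical dataset: descriptor value and measured activity per compound.
descriptor = [1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0, 4.5, 5.0, 5.5]
activity = [5.1, 5.4, 6.1, 6.4, 7.0, 7.6, 7.9, 8.6, 9.0, 9.4]

test_idx = {2, 7}  # 20% held out for external validation
train_x = [x for i, x in enumerate(descriptor) if i not in test_idx]
train_y = [y for i, y in enumerate(activity) if i not in test_idx]
test_x = [descriptor[i] for i in sorted(test_idx)]
test_y = [activity[i] for i in sorted(test_idx)]

slope, intercept = fit_line(train_x, train_y)
r2_train = r_squared(train_y, [slope * x + intercept for x in train_x])
q2 = q_squared_loo(train_x, train_y)
rmse_test = rmse(test_y, [slope * x + intercept for x in test_x])
print(f"R2(train)={r2_train:.3f}  Q2(LOO)={q2:.3f}  RMSE(test)={rmse_test:.3f}")
```

A Q² that falls far below the training R² is a typical warning sign of overfitting, which is why the protocol asks for both internal and external validation.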

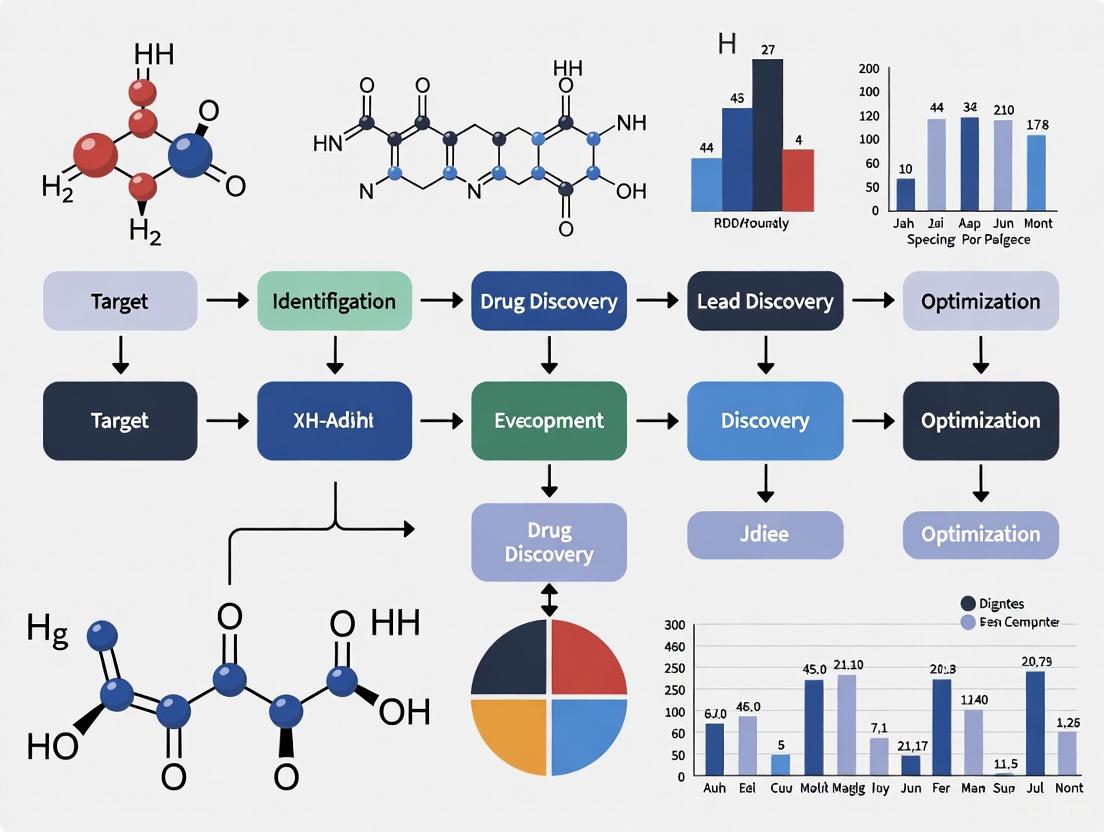

CADD Workflow and Signaling Pathways

CADD Methodology Integration Workflow: This diagram illustrates the integrated workflow of computer-aided drug design, highlighting the synergy between structure-based and ligand-based approaches.

Successful implementation of CADD methodologies requires access to specialized computational tools, databases, and software resources. The following table catalogs essential components of the modern computational chemist's toolkit:

Table 3: Essential Research Reagent Solutions for CADD

| Resource Category | Specific Tools/Platforms | Function and Application |
|---|---|---|
| Protein Structure Databases [2] | Protein Data Bank (PDB), AlphaFold Protein Structure Database | Provide experimentally determined and predicted protein structures for target identification and characterization |
| Compound Libraries [1] | ZINC, ChEMBL, PubChem | Curated collections of small molecules for virtual screening and lead identification |
| Molecular Docking Software [2] | AutoDock Vina, GOLD, Glide, DOCK | Predict binding modes and affinities of small molecules to biological targets |
| Molecular Dynamics Packages [2] | GROMACS, NAMD, AMBER, OpenMM | Simulate time-dependent behavior of biomolecular systems and ligand-target complexes |
| Cheminformatics Platforms [2] | RDKit, Open Babel, ChemAxon | Process chemical structures, calculate molecular descriptors, and handle chemical data |
| QSAR Modeling Tools [1] | KNIME, Orange, WEKA | Develop and validate quantitative structure-activity relationship models |
| Visualization Software [5] | PyMOL, Chimera, Discovery Studio | Visualize molecular structures, binding interactions, and simulation trajectories |
| High-Performance Computing [3] | GPU clusters, Cloud computing platforms, Supercomputing resources | Provide computational power for demanding simulations and large-scale virtual screens |

Current Applications and Success Stories

CADD has demonstrated significant impact across multiple therapeutic areas, accelerating drug discovery while reducing costs and attrition rates [4] [5]. Notable successes include:

Antiviral Drug Discovery: During the COVID-19 pandemic, CADD tools were deployed to rapidly screen existing drugs and identify candidates targeting SARS-CoV-2 proteins like the main protease (Mpro) and spike protein [5]. Molecular docking, molecular dynamics, and virtual screening approaches identified potential inhibitors for experimental validation, compressing discovery timelines significantly [3].

Oncology Therapeutics: Structure-based approaches have contributed to developing targeted kinase inhibitors with enhanced specificity and reduced off-target effects [5]. CADD methods have enabled the design of inhibitors targeting specific mutant variants, such as second-generation inhibitors for mutant isocitrate dehydrogenase 1 (mIDH1) in acute myeloid leukemia to address drug resistance [3].

Antibiotic Development: CADD approaches are being leveraged to combat antimicrobial resistance by designing novel molecules targeting bacterial enzymes [5]. For oral diseases, CADD has facilitated the development of peptide-based drugs, small molecules, and plant-derived compounds targeting dental caries, periodontitis, and oral cancer [6].

Protein-Protein Interaction Modulators: Targeting traditionally "undruggable" protein-protein interactions represents a frontier in drug discovery where CADD plays a crucial role [7]. Computational methods help identify and optimize small molecules and peptidomimetics that disrupt pathological protein interactions [7].

Challenges and Future Directions

Despite substantial advances, CADD faces several persistent challenges that represent opportunities for methodological improvement [1] [3]:

Accuracy of Scoring Functions: The limited accuracy of current scoring functions for molecular docking remains a significant constraint, often generating false positives or failing to correctly rank ligands due to complexities in modeling solvation effects, entropy contributions, and protein flexibility [1] [3].

Sampling Limitations: While enhanced sampling techniques have improved molecular dynamics simulations, accurately capturing rare events such as ligand unbinding or allosteric transitions remains computationally intensive and time-consuming [1].

Data Quality and Availability: The predictive performance of CADD methods, particularly machine learning approaches, depends heavily on the quality, completeness, and diversity of training data [3]. Biased datasets toward well-studied target classes can limit generalizability [3].

Integration of Multi-Omics Data: Effectively incorporating diverse biological data—genomics, proteomics, metabolomics—into drug design pipelines remains challenging due to standardization issues and computational complexity [3].

Future directions in CADD research focus on addressing these limitations through technological innovation [8] [3]:

Artificial Intelligence and Machine Learning: AI/ML approaches are revolutionizing CADD by improving predictive accuracy of binding affinities, enabling de novo molecular design, and extracting maximal knowledge from available data [2] [7] [8]. Deep learning models show particular promise for molecular property prediction and generative chemistry [8].

Hybrid Methodologies: Combining physics-based simulations with machine learning leverages the complementary strengths of both approaches [7]. Neural network potentials, for example, aim to achieve quantum mechanical accuracy at molecular mechanics computational cost [8].

Quantum Computing: Though still in early stages, quantum computing holds potential to solve complex molecular simulations and optimization problems currently intractable for classical computers [8].

Democratization through Cloud Computing: Cloud-based platforms and improved software accessibility are making advanced CADD capabilities available to smaller research institutions and startups, broadening participation in computational drug discovery [9].

As CADD continues evolving, its integration with experimental approaches and emerging technologies promises to further accelerate therapeutic development, ultimately enabling more precise and effective treatments for diverse diseases [3]. The ongoing synthesis of biological insight and computational technology positions CADD as an indispensable component of 21st-century pharmaceutical research [5].

The field of drug discovery has undergone a profound transformation, shifting from traditional serendipitous findings to a precision-driven engineering discipline. This paradigm shift represents a fundamental reimagining of pharmaceutical development, moving from resource-intensive screening toward targeted rational design powered by computational intelligence. The serendipitous discoveries that once defined the field, such as penicillin, have given way to rational drug design approaches that target specific biological mechanisms with increasing precision [10]. This transition has accelerated dramatically with advances in computational power, biomolecular spectroscopy, and artificial intelligence, enabling researchers to explore chemical spaces beyond human capabilities and predict molecular behavior with unprecedented accuracy [11] [12].

The limitations of traditional approaches became increasingly apparent as pharmaceutical industries faced significant challenges in delivering safe and effective medicines. The historical reliance on high-throughput screening of compound libraries, while technologically advanced, often produced drugs with significant toxicity and severe side effects due to off-target interactions [11]. Modern system-based pharmacology now aims to address these challenges by integrating chemical, molecular, and systematic information to design small molecules with controlled toxicity and minimized side effects [11]. This whitepaper examines the core computational methodologies driving this transformation, provides detailed experimental protocols, and explores the emerging trends that will define the future of rational drug development.

Core Methodologies in Computer-Aided Drug Discovery

Ligand-Based Drug Design Approaches

Ligand-based drug design (LBDD) operates on the fundamental principle that a ligand's structure contains all necessary information to infer its mechanism of action and biological properties [11]. This approach is particularly valuable when the three-dimensional structure of the target protein is unknown or difficult to obtain. The methodology extracts essential chemical features from biologically active compounds to construct predictive models that guide the design of novel therapeutic agents with optimized properties.

The chemical similarity principle forms the theoretical foundation of LBDD, positing that structurally similar molecules likely share similar biological activities [11]. This principle enables large-scale database searches to identify compounds with improved bioactivities based on known active structures. Mathematically, chemical structures are represented as graphs where atoms constitute vertices and chemical bonds form edges [11]. Advanced chemoinformatics algorithms then extract key characteristics from these molecular graphs—including vertex count, bond connectivity, and molecular paths—to create distinctive chemical fingerprints that facilitate similarity comparisons.
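The graph view of a molecule and the idea of path-derived fingerprints can be sketched as follows. This is a simplified illustration and not the Daylight or Open Babel algorithm: atoms are labeled only by element, hydrogens and bond orders are ignored, and the hashing scheme, `n_bits`, and `max_bonds` are arbitrary choices made for the example.

```python
import hashlib

def enumerate_paths(atoms, bonds, max_bonds=3):
    """All simple paths with up to max_bonds bonds, as tuples of element symbols."""
    neighbors = {i: [] for i in range(len(atoms))}
    for a, b in bonds:
        neighbors[a].append(b)
        neighbors[b].append(a)

    paths = set()

    def walk(path):
        labels = tuple(atoms[i] for i in path)
        # A path and its reverse are the same feature; store one canonical form.
        paths.add(min(labels, labels[::-1]))
        if len(path) - 1 == max_bonds:
            return
        for nxt in neighbors[path[-1]]:
            if nxt not in path:
                walk(path + [nxt])

    for start in range(len(atoms)):
        walk([start])
    return paths

def path_fingerprint(atoms, bonds, n_bits=64, max_bonds=3):
    """Hash every path to a bit position; return the set of 'on' bit indices."""
    on_bits = set()
    for labels in enumerate_paths(atoms, bonds, max_bonds):
        digest = hashlib.sha256("-".join(labels).encode()).digest()
        on_bits.add(int.from_bytes(digest[:4], "big") % n_bits)
    return on_bits

# Ethanol heavy-atom graph: vertices C, C, O and edges C-C, C-O.
ethanol_atoms = ["C", "C", "O"]
ethanol_bonds = [(0, 1), (1, 2)]

ethanol_paths = enumerate_paths(ethanol_atoms, ethanol_bonds)
ethanol_fp = path_fingerprint(ethanol_atoms, ethanol_bonds)
print(sorted(ethanol_paths, key=lambda p: (len(p), p)))
print(sorted(ethanol_fp))
```

Because every path in ethanol also occurs in 1-propanol, the ethanol fingerprint produced this way is a subset of the propanol fingerprint, which is the property that makes such bit sets useful for substructure-aware similarity searches.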

Table 1: Key Chemical Fingerprinting Methods in Ligand-Based Drug Design

| Fingerprint Type | Representative Examples | Key Features | Primary Applications |
|---|---|---|---|
| Path-Based Fingerprints | Daylight, Open Babel FP2 | Uses molecular paths of varying length (number of bonds) as features; offers high specificity because each feature depends on a unique path | Similarity searching, lead optimization |
| Substructure-Based Fingerprints | MACCS Keys | Employs predefined substructures; characterizes molecules via binary presence/absence arrays | Scaffold hopping, functional group analysis |
| Hybrid Approaches | Extended Connectivity Fingerprints (ECFP) | Combines path information with chemical properties; balances specificity and diversity | Machine learning models, polypharmacology studies |

The LBDD workflow follows a systematic process: (1) a target molecule with desired biological activity serves as the query for chemical database searches; (2) similar ligands with analogous biological properties are identified using similarity metrics; (3) original ligands are structurally modified to suggest novel molecules with enhanced activities [11]. The Tanimoto index serves as the predominant similarity metric, quantifying shared feature bits between two fingerprints on a scale of 0-1, with values of 0.7-0.8 typically indicating significant chemical similarity [11].
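For two fingerprints with a and b bits set and c bits in common, the Tanimoto index is c / (a + b - c). The sketch below applies that formula to fingerprints stored as sets of "on" bit positions; the bit sets are invented for illustration, and returning 0.0 for two empty fingerprints is a convention chosen here.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient between two fingerprints given as sets of 'on' bits."""
    if not bits_a and not bits_b:
        return 0.0  # convention chosen here for two empty fingerprints
    shared = len(bits_a & bits_b)
    return shared / (len(bits_a) + len(bits_b) - shared)

# Hypothetical fingerprints (bit positions that are switched on).
query = {1, 4, 7, 9, 12, 15, 20, 23}
candidate = {1, 4, 7, 9, 12, 15, 20, 31}
decoy = {2, 4, 8, 16, 31}

print(f"query vs candidate: {tanimoto(query, candidate):.2f}")  # 0.78
print(f"query vs decoy:     {tanimoto(query, decoy):.2f}")      # 0.08
```

With the 0.7-0.8 range quoted above, the candidate would count as similar to the query and the decoy would not.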

Ligand-based approaches have evolved beyond simple similarity searching to incorporate sophisticated target prediction algorithms. Methods like the Similarity Ensemble Approach (SEA) calculate similarity values against random backgrounds using BLAST-like algorithms to overcome the limitations of bioactivity cliffs [11]. Furthermore, network polypharmacology has emerged as a comprehensive framework for analyzing drug-target interactions, utilizing bipartite networks to map complex drug-gene interactions and identify both primary targets and off-target effects [11].

Structure-Based Drug Design Approaches

Structure-based drug design (SBDD) represents the cornerstone of rational drug discovery, leveraging detailed three-dimensional structural knowledge of biological targets to design therapeutic compounds with precise molecular interactions [11]. This approach has been revolutionized by advances in structural biology techniques, including X-ray crystallography, nuclear magnetic resonance (NMR) spectroscopy, and cryo-electron microscopy, which provide atomic-resolution insights into protein-ligand interactions.

The SBDD paradigm enables researchers to identify shape-complementary ligands that optimize interactions with specific binding sites on target proteins [11]. When a validated disease target with a known crystal structure is available, structure-based approaches facilitate the de novo design of ligands that bind with high affinity and specificity. The integration of molecular modeling and structure-activity relationship (SAR) analysis has become instrumental in optimizing lead compounds through iterative design cycles [11].

Molecular docking, a fundamental technique in SBDD, computationally predicts the preferred orientation of a small molecule when bound to its target protein. This method employs sophisticated sampling algorithms to generate plausible binding poses and scoring functions to rank these poses based on their predicted binding affinities. Docking studies provide critical insights into molecular recognition processes and guide the optimization of lead compounds through structure-based design strategies.

Table 2: Principal Structure-Based Drug Design Methods and Applications

| Method Category | Key Techniques | Data Requirements | Output Deliverables |
|---|---|---|---|
| Molecular Docking | Rigid/flexible docking, ensemble docking | Protein 3D structure, ligand library | Binding poses, affinity predictions, binding site analysis |
| Structure-Based Virtual Screening | High-throughput docking, pharmacophore screening | Target structure, compound database | Hit identification, lead compound prioritization |
| Binding Site Analysis | Pocket detection, residue networking, solvent mapping | Protein structure, molecular dynamics trajectories | Allosteric site identification, hot spot prediction |
| Molecular Dynamics Simulations | All-atom MD, enhanced sampling, free energy calculations | Initial protein-ligand complex, force field parameters | Binding stability, conformational dynamics, mechanism of action |

The convergence of SBDD with artificial intelligence has produced transformative capabilities in drug discovery. Hybrid AI-structure/ligand-based virtual screening with deep learning significantly boosts hit rates and scaffold diversity [12]. These integrated approaches enable ultra-large-scale virtual screening of billions of compounds and predictive modeling of ADMET (absorption, distribution, metabolism, excretion, and toxicity) properties, dramatically accelerating the lead identification and optimization processes [12].

Experimental Protocols and Workflows

AI-Driven De Novo Molecular Design Protocol

The integration of artificial intelligence with traditional computational methods has established powerful new paradigms for de novo molecular design. This protocol outlines the workflow for generating novel therapeutic compounds using AI-driven approaches, demonstrating how these methods can compress discovery timelines from years to months.

Step 1: Target Identification and Validation

- Utilize computational models to identify and validate disease-modifying targets through genomic, proteomic, and structural data integration

- Implement federated learning approaches to collaboratively train models across institutions without sharing sensitive data, enhancing predictive accuracy while maintaining privacy [10]

- Apply AlphaFold-generated protein structures when experimental structures are unavailable, leveraging its database of over 200 million predicted structures [10]

Step 2: Molecular Generation and Optimization

- Employ deep graph networks and generative models to create novel molecular structures with desired properties

- In a 2025 case study, researchers used this approach to generate 30,000 designs for molecules targeting a fibrosis-related protein in just 21 days [10]

- Implement transfer learning to fine-tune models on specific target classes, enhancing generation efficiency and success rates

Step 3: Synthesis and Experimental Validation

- Prioritize candidates for synthesis based on predicted binding affinity, drug-likeness, and synthetic accessibility

- The same 2025 study synthesized six generated molecules, with two tested in cells and the most promising candidate evaluated in mice, completing the entire process in 46 days [10]

- Validate target engagement using Cellular Thermal Shift Assay (CETSA) to confirm direct binding in intact cells and tissues [13]
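Drug-likeness, one of the prioritization criteria in Step 3, is often approximated with Lipinski's rule of five. The sketch below is an illustration under that assumption and does not describe the filter used in the cited study; the candidate names and property values are hypothetical, and in practice the properties would be computed by a cheminformatics toolkit.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    name: str
    mol_weight: float       # g/mol
    logp: float             # octanol-water partition coefficient
    h_bond_donors: int
    h_bond_acceptors: int

def lipinski_violations(c):
    """Count how many of the four rule-of-five limits a candidate exceeds."""
    return sum([
        c.mol_weight > 500,
        c.logp > 5,
        c.h_bond_donors > 5,
        c.h_bond_acceptors > 10,
    ])

def passes_rule_of_five(c, max_violations=1):
    # Tolerating a single violation is a common reading of the rule.
    return lipinski_violations(c) <= max_violations

# Hypothetical generated molecules with precomputed properties.
candidates = [
    Candidate("cand-A", 342.4, 2.1, 2, 5),
    Candidate("cand-B", 612.7, 6.3, 4, 9),
    Candidate("cand-C", 498.6, 5.4, 1, 7),
]

shortlist = [c.name for c in candidates if passes_rule_of_five(c)]
print(shortlist)  # ['cand-A', 'cand-C']
```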

This AI-driven workflow can shorten discovery substantially: platforms such as Exscientia's have reportedly cut the traditional drug discovery timeline from 4.5 years to 12-15 months [10].

Virtual Screening and Hit Identification Protocol

Virtual screening has become a frontline tool in modern drug discovery, enabling computational triaging of large compound libraries before resource-intensive experimental work. This protocol details the integrated structure-based and ligand-based virtual screening approach for hit identification.

Step 1: Library Preparation and Compound Curation

- Compile compound libraries from commercial sources, in-house collections, or virtually generated molecules

- Prepare structures through geometry optimization, protonation state assignment, and tautomer generation

- Calculate molecular descriptors and fingerprints for ligand-based screening approaches

Step 2: Structure-Based Virtual Screening

- Prepare the target protein structure through hydrogen addition, charge assignment, and binding site definition

- Conduct high-throughput docking with tools such as AutoDock to filter for binding potential, and use SwissADME to filter for drug-likeness [13]

- Recent advancements demonstrate that integrating pharmacophoric features with protein-ligand interaction data can boost hit enrichment rates by more than 50-fold compared to traditional methods [13]
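Hit enrichment, as mentioned in the last bullet, is usually reported as an enrichment factor: the hit rate in the top-ranked fraction of the library divided by the hit rate of the whole library. The sketch below computes it for an invented ranked screen; the library size, the labels, and the 1% cutoff are assumptions for illustration only.

```python
def enrichment_factor(ranked_labels, top_fraction):
    """EF = (actives in top fraction / size of top fraction) / (all actives / library size).

    ranked_labels: 1 for a known active, 0 otherwise, sorted best score first.
    """
    n_total = len(ranked_labels)
    n_top = max(1, int(round(n_total * top_fraction)))
    actives_total = sum(ranked_labels)
    if actives_total == 0:
        raise ValueError("library contains no known actives")
    actives_top = sum(ranked_labels[:n_top])
    return (actives_top / n_top) / (actives_total / n_total)

# Hypothetical screen of 1000 compounds containing 10 known actives.
ranked = [1, 1, 0, 1, 0, 0, 1, 0, 0, 0] + [0] * 984 + [1] * 6

ef_1pct = enrichment_factor(ranked, 0.01)
print(f"EF(1%) = {ef_1pct:.1f}")  # 40.0
```

An enrichment factor of 1 corresponds to random selection, so the value here means the top 1% of the ranked list holds 40 times more actives than a random 1% sample would on average.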

Step 3: Ligand-Based Virtual Screening

- For targets without experimental structures, employ ligand-based similarity searching using known active compounds as queries

- Apply chemical similarity networks to cluster diverse chemical structures into distinct scaffolds or chemotypes [11]

- Correlate each chemotype with specific molecular targets using consensus statistical schemes similar to those used in protein-protein interaction networks [11]

Step 4: Hit Prioritization and Validation

- Integrate results from multiple screening approaches to generate a prioritized list of candidate compounds

- Apply ADMET prediction models to filter compounds with unfavorable pharmacokinetic or toxicity profiles

- Select top candidates for experimental validation using binding assays and functional studies
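One simple way to integrate results from several screening approaches is rank averaging. The sketch below merges a docking score (lower is better) with a similarity score (higher is better) into one prioritized list. Rank averaging is only one of several possible consensus schemes and is an assumption here; the compound identifiers and scores are hypothetical.

```python
def ranks(scores, higher_is_better):
    """Map compound -> rank (1 = best) for one screening method."""
    sign = -1.0 if higher_is_better else 1.0
    ordered = sorted(scores, key=lambda c: (sign * scores[c], c))
    return {compound: position for position, compound in enumerate(ordered, start=1)}

def consensus_ranking(docking_scores, similarity_scores):
    """Order compounds by the mean of their docking rank and similarity rank."""
    shared = docking_scores.keys() & similarity_scores.keys()
    dock = ranks({c: docking_scores[c] for c in shared}, higher_is_better=False)
    sim = ranks({c: similarity_scores[c] for c in shared}, higher_is_better=True)
    mean_rank = {c: (dock[c] + sim[c]) / 2 for c in shared}
    # Ties in mean rank are broken alphabetically to keep the output deterministic.
    return sorted(shared, key=lambda c: (mean_rank[c], c))

# Hypothetical results: docking energies (kcal/mol) and Tanimoto similarities.
docking = {"cpd-1": -9.2, "cpd-2": -7.1, "cpd-3": -8.4, "cpd-4": -6.0, "cpd-5": -8.9}
similarity = {"cpd-1": 0.62, "cpd-2": 0.81, "cpd-3": 0.74, "cpd-4": 0.30, "cpd-5": 0.45}

prioritized = consensus_ranking(docking, similarity)
print(prioritized)  # ['cpd-1', 'cpd-2', 'cpd-3', 'cpd-5', 'cpd-4']
```

Working with ranks instead of raw scores avoids having to put docking energies and similarity values on a common scale.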

Virtual Screening Workflow: This diagram illustrates the integrated structure-based and ligand-based virtual screening protocol for hit identification in rational drug discovery.

Target Engagement Validation Protocol

Target engagement validation represents a critical bridge between computational predictions and biological activity. This protocol outlines the experimental workflow for confirming that computationally designed compounds interact with their intended targets in physiologically relevant systems.

Step 1: Cellular Thermal Shift Assay (CETSA)

- Apply CETSA to validate direct target binding in intact cells and native tissue environments

- Expose cells or tissue samples to the test compound across a range of concentrations and temperatures

- Measure thermal stabilization of the target protein using immunoblotting or high-resolution mass spectrometry

- A 2024 study successfully applied CETSA to quantify drug-target engagement of DPP9 in rat tissue, confirming dose- and temperature-dependent stabilization ex vivo and in vivo [13]

Step 2: Competitive Ligand-Binding Assays (CLBA)

- For GPCR targets and other membrane receptors, implement CLBA to characterize receptor-ligand interactions

- Titrate the test compound against a radiolabeled or fluorescently labeled reference ligand

- Quantify binding affinity from displacement curves: the measured IC50 is converted to an inhibition constant (Ki) using the dissociation constant (Kd) of the labeled reference ligand

- Modern nonradioactive assay alternatives overcome previous limitations associated with radioisotope use [14]
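For a competitive assay, the conversion from a displacement IC50 to Ki is commonly done with the Cheng-Prusoff relation, Ki = IC50 / (1 + [L]/Kd). The sketch below assumes simple single-site competition; the function name and the example concentrations are hypothetical.

```python
def cheng_prusoff_ki(ic50: float, ligand_conc: float, ligand_kd: float) -> float:
    """Convert a displacement IC50 to Ki for single-site competitive binding.

    ic50        -- test-compound concentration giving 50 % displacement
    ligand_conc -- concentration of the labeled reference ligand in the assay
    ligand_kd   -- dissociation constant of the labeled reference ligand
    All three values must use the same concentration unit.
    """
    if ic50 <= 0 or ligand_kd <= 0 or ligand_conc < 0:
        raise ValueError("ic50 and ligand_kd must be > 0; ligand_conc must be >= 0")
    return ic50 / (1.0 + ligand_conc / ligand_kd)

# Hypothetical assay: IC50 = 100 nM, labeled ligand at 2 nM with Kd = 2 nM.
ki_nM = cheng_prusoff_ki(ic50=100.0, ligand_conc=2.0, ligand_kd=2.0)
```

When the labeled ligand is used at its Kd, as in this example, Ki is half the IC50. The relation does not hold for allosteric or non-equilibrium binding.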

Step 3: Functional Activity Assessment

- Determine whether the compound acts as an agonist, antagonist, or allosteric modulator

- Measure downstream signaling responses relevant to the target pathway

- For GPCR targets, monitor second messenger production (cAMP, Ca2+) and β-arrestin recruitment

- Establish potency (EC50/IC50) and efficacy (Emax) values for lead optimization
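Potency and efficacy are usually read from a concentration-response curve. As a minimal sketch, assuming the widely used four-parameter logistic (Hill) model, the response at a given concentration can be computed as follows; the parameter values are hypothetical.

```python
def hill_response(conc: float, bottom: float, top: float,
                  ec50: float, hill: float = 1.0) -> float:
    """Four-parameter logistic response at a given compound concentration.

    bottom/top -- lower and upper plateaus (top - bottom reflects efficacy)
    ec50       -- concentration giving a half-maximal response (potency)
    hill       -- Hill slope
    """
    if conc < 0 or ec50 <= 0:
        raise ValueError("conc must be >= 0 and ec50 must be > 0")
    if conc == 0:
        return bottom
    return bottom + (top - bottom) / (1.0 + (ec50 / conc) ** hill)

# Hypothetical agonist: baseline 0 %, maximum 100 %, EC50 = 10 nM, slope 1.
concentrations_nM = (0.1, 1.0, 10.0, 100.0, 1000.0)
curve = [(c, hill_response(c, 0.0, 100.0, 10.0)) for c in concentrations_nM]
half_max = hill_response(10.0, 0.0, 100.0, 10.0)
```

In a real analysis the four parameters are fitted to measured responses by nonlinear regression. For an inhibitor, the same form is used with the plateaus swapped (bottom above top), so the curve falls with concentration and the midpoint is reported as IC50.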

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of rational drug discovery requires specialized research tools and reagents that enable both computational predictions and experimental validation. The following table details essential components of the modern drug discovery toolkit.

Table 3: Essential Research Reagents and Solutions for Rational Drug Discovery

| Tool/Reagent Category | Specific Examples | Function and Application | Key Features |
|---|---|---|---|
| Target Identification Platforms | Genome-wide pan-GPCR screening platform [14] | Systematic exploration of compound-target interactions across entire protein families | Enables high-throughput screening against hundreds of GPCRs simultaneously |
| Structural Biology Resources | AlphaFold database, Protein Data Bank | Provides 3D structural information for target-based drug design | AlphaFold has generated over 200 million structures, vastly expanding structural coverage [10] |
| Chemical Databases | ChEMBL, PubChem, DrugBank, BindingDB [11] | Target-annotated chemical libraries for ligand-based design and target prediction | Curated bioactivity data for similarity searching and machine learning |
| Cellular Target Engagement Assays | CETSA (Cellular Thermal Shift Assay) [13] | Quantitative measurement of drug-target binding in physiologically relevant environments | Confirms binding in intact cells and tissues, bridging biochemical and cellular efficacy |
| Virtual Screening Software | AutoDock, SwissADME [13] | Computational prediction of binding interactions and drug-like properties | Enables triaging of large compound libraries before synthesis and testing |
| AI-Driven Design Tools | Deep graph networks, generative models [13] [12] | De novo molecular generation and optimization | Dramatically compresses discovery timelines; enabled 46-day discovery cycle in case study [10] |

Emerging Trends and Future Perspectives

Integration of Artificial Intelligence and Federated Learning

Artificial intelligence has evolved from a promising disruptive technology to a foundational capability in modern drug discovery [13]. The integration of AI throughout the drug development pipeline has accelerated critical stages including target identification, candidate screening, pharmacological evaluation, and quality control [12]. This AI-driven transformation is not merely accelerating existing processes but enabling fundamentally new approaches to drug design.

Federated learning represents a particularly promising paradigm for collaborative drug discovery while addressing data privacy concerns. This machine learning technique allows models to be trained across multiple institutions without sharing sensitive proprietary data [10]. Instead of transferring data to a central server, each participating organization computes model updates using their local data, and only these updates are shared to improve a collective model. This approach enables pharmaceutical companies to leverage diverse datasets while protecting intellectual property, potentially reducing both time and cost in the drug discovery process [10].
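The update-sharing scheme described above can be illustrated with federated averaging on a toy linear model. This is a sketch under stated assumptions: two hypothetical sites, a one-feature regression, and a server that averages site weights in proportion to sample counts. Production systems add secure aggregation and other safeguards that are not shown here.

```python
def local_update(weights, data, lr=0.05, epochs=20):
    """Run gradient descent on one site's private data; return new weights.

    Only the returned (w, b) pair leaves the site, never `data` itself.
    """
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)

def federated_average(updates, sizes):
    """Combine site models, weighting each by its number of samples."""
    total = sum(sizes)
    w = sum(u[0] * n for u, n in zip(updates, sizes)) / total
    b = sum(u[1] * n for u, n in zip(updates, sizes)) / total
    return (w, b)

# Hypothetical private datasets at two institutions, both following y = 2x + 1.
site_data = [
    [(0.0, 1.0), (1.0, 3.0)],
    [(2.0, 5.0), (3.0, 7.0), (4.0, 9.0)],
]

global_weights = (0.0, 0.0)
for _ in range(100):  # communication rounds
    updates = [local_update(global_weights, d) for d in site_data]
    global_weights = federated_average(updates, [len(d) for d in site_data])
```

After the communication rounds the shared model recovers the common trend even though neither site ever sees the other's data points.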

The future of AI in drug discovery will likely see increased emphasis on interpretable AI and explainable results, particularly as regulatory agencies require greater transparency in computational approaches [15]. As these technologies mature, we can anticipate more sophisticated multi-objective optimization algorithms that simultaneously balance potency, selectivity, and developability criteria in molecular design.

Tackling Undruggable Targets and New Modalities

Rational drug discovery is increasingly expanding beyond traditional small molecules to address undruggable targets through innovative approaches. The 2025 Gordon Research Conference on Computer-Aided Drug Design highlights a growing focus on targeted protein degradation, biologics engineering, and other novel therapeutic modalities [7]. These approaches represent the next frontier in drug discovery, targeting previously inaccessible disease mechanisms.

New modalities are increasingly becoming mainstream as the field looks to drug biologically complex targets that have strong biological rationales [7]. Computational methods are evolving to support the design of protein degraders, RNA-targeting agents, and other sophisticated therapeutic approaches that operate through novel mechanisms of action. The 2025 conference program specifically includes sessions on "Computational Methods for New Modalities" and "Building the Future Biologics," reflecting the strategic importance of these approaches [7].

The convergence of machine learning and physics-based computational chemistry holds particular promise for addressing these complex targets [7]. By combining data-driven insights with fundamental physical principles, researchers can develop more accurate predictive models for challenging systems where limited experimental data is available. This integration represents a powerful approach to expand the druggable genome and develop therapies for previously untreatable conditions.

Evolution of Drug Discovery Paradigms: This timeline visualization shows the transition from traditional methods to the emerging next-generation approaches combining AI and physics-based modeling.

Quantum Computing and Next-Generation Simulation

Quantum mechanics is increasingly finding practical application in drug discovery, particularly for modeling electronic interactions and covalent bonding [7]. The 2025 Gordon Research Conference (GRC) program includes dedicated sessions on "Quantum Mechanics in Drug Design," highlighting its growing importance in addressing challenging chemical phenomena [7]. While still emerging, quantum-inspired algorithms and early quantum computing applications show promise for revolutionizing molecular simulations.

The combination of machine learning and molecular dynamics simulations enables researchers to explore biological processes at unprecedented temporal and spatial scales [15]. These approaches provide insights into conformational dynamics, allosteric mechanisms, and binding processes that were previously inaccessible to direct observation. Since 2020, AI-based molecular dynamics simulation has emerged as a research hotspot, particularly applied to COVID-19, disease prognosis, and cancer therapeutics [15].

As these technologies mature, we anticipate a shift toward truly predictive in silico drug development, where computational models accurately forecast clinical efficacy and safety during early design stages. This capability would represent the ultimate realization of the paradigm shift from trial-and-error to targeted rational drug discovery, potentially transforming pharmaceutical development from a high-risk venture to a precision engineering discipline.

The paradigm shift from traditional trial-and-error to targeted rational drug discovery represents a fundamental transformation in pharmaceutical science. This transition has been enabled by advances in computational power, structural biology, and artificial intelligence that allow researchers to approach drug development as a precision engineering challenge rather than a screening endeavor. The integration of computer-aided drug discovery methodologies throughout the research pipeline has dramatically improved efficiency, with AI-driven platforms compressing discovery timelines from years to months [10] and increasing hit rates by more than 50-fold in some cases [13].

The future of drug discovery will be characterized by increasingly sophisticated hybrid approaches that combine physics-based modeling with data-driven machine learning [7] [12]. These methodologies will expand the druggable genome to include previously inaccessible targets and enable the development of novel therapeutic modalities beyond traditional small molecules. Furthermore, technologies like federated learning will facilitate collaborative model development while preserving data privacy, potentially accelerating innovation across the pharmaceutical industry [10].

As these computational technologies continue to evolve, they promise to further reduce the risks, costs, and timelines associated with drug development. However, successful translation will require tight integration between computational predictions and experimental validation, with techniques like CETSA providing critical bridges between in silico designs and biological activity [13]. The organizations that master this integration—combining computational foresight with robust experimental validation—will lead the next wave of pharmaceutical innovation, delivering more effective and safer medicines to patients through rational design principles.

Computer-Aided Drug Design (CADD) has transitioned from a supplementary tool to a central component in modern drug discovery pipelines, offering more efficient and cost-effective approaches to identify and optimize therapeutic agents [3]. The global CADD market is experiencing rapid growth, fueled by increasing investments, technological innovation, and the rising demand for quicker, more affordable drug development processes [16]. CADD integrates computational tools with traditional pharmacological methods to streamline the discovery and development of novel therapeutic agents [3]. Within this framework, two primary computational strategies have emerged: Structure-Based Drug Design (SBDD) and Ligand-Based Drug Design (LBDD). These methodologies differ fundamentally in their starting points and information requirements but share the common goal of accelerating the identification of viable drug candidates while reducing resource consumption [17] [18]. This guide provides an in-depth technical examination of both approaches, their methodologies, applications, and emerging trends, framed within the broader context of computer-aided drug discovery research.

Structure-Based Drug Design (SBDD)

Core Principle and Definition

Structure-Based Drug Design is a methodology that relies on the three-dimensional structural information of the biological target, typically a protein, to design or optimize small molecule compounds [17]. The core idea is "structure-centric," utilizing the detailed architecture of the target's binding site to guide the development of molecules that can bind with high affinity and specificity [17]. This approach is applicable when the three-dimensional structure of the target is known, often obtained through experimental techniques such as X-ray crystallography, nuclear magnetic resonance (NMR), or cryo-electron microscopy (cryo-EM), or predicted computationally using AI tools like AlphaFold [18] [19].

Key Techniques and Methodologies

Target Structure Determination

The SBDD process begins with obtaining a high-resolution structure of the target protein [17].

- X-ray Crystallography: This method determines the three-dimensional structure of protein crystals by analyzing their X-ray diffraction patterns. It is often used for proteins with relatively stable structures that are easy to crystallize [17].

- Nuclear Magnetic Resonance (NMR): NMR studies the structure, dynamics, and interactions of molecules in solution without requiring crystallization. It is particularly suitable for proteins that cannot form crystals and for studying flexible, dynamically changing structures [17].

- Cryo-Electron Microscopy (Cryo-EM): This technique obtains high-resolution three-dimensional images of macromolecular complexes without crystallization. It is ideal for membrane proteins, viruses, and large multiprotein complexes [17].

- Computational Prediction (e.g., AlphaFold): AI-based tools like AlphaFold can predict protein structures with high reliability, providing models for targets where experimental structures are unavailable. The AlphaFold database has released over 214 million predicted protein structures, vastly expanding the potential targets for SBDD [19].

Molecular Docking

Molecular docking is a core SBDD technique that predicts the preferred orientation (pose) of a small molecule ligand when bound to its target protein. The process involves searching the conformational space of the ligand within the protein's binding site and scoring the resulting complexes to estimate binding affinity [18]. Docking is valuable for both virtual screening and lead optimization, helping to rationalize structural modifications to improve a lead compound's binding affinity and potency [18]. A significant challenge is effectively handling the flexibility of both the ligand and the protein target [18].

Molecular Dynamics (MD) Simulations

MD simulations model the physical movements of atoms and molecules over time, providing insights into the dynamic behavior of protein-ligand complexes [19]. They help account for protein flexibility, sample conformational changes, and reveal cryptic pockets not evident in static structures. The Relaxed Complex Method is a systematic approach that uses representative target conformations from MD simulations for docking studies, improving the chances of identifying valid binding modes [19]. Enhanced sampling methods like accelerated MD (aMD) help overcome energy barriers for more efficient exploration of the energy landscape [19].
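At its core, an MD engine repeatedly integrates Newton's equations of motion. The sketch below shows one common integrator, velocity Verlet, applied to a single particle on a harmonic spring. This is a toy system chosen so the result can be checked against the analytical solution; it is not a biomolecular force field, and all parameter values are hypothetical.

```python
import math

def velocity_verlet(x, v, force, mass, dt, steps):
    """Integrate one-dimensional motion with the velocity Verlet scheme.

    Returns the final position, final velocity, and the position trajectory.
    """
    a = force(x) / mass
    trajectory = [x]
    for _ in range(steps):
        x = x + v * dt + 0.5 * a * dt * dt   # advance position
        a_new = force(x) / mass              # force at the new position
        v = v + 0.5 * (a + a_new) * dt       # advance velocity with averaged acceleration
        a = a_new
        trajectory.append(x)
    return x, v, trajectory

# Toy system: unit mass on a spring with k = 1, so x(t) = cos(t) analytically.
k = 1.0
x_final, v_final, traj = velocity_verlet(
    x=1.0, v=0.0, force=lambda pos: -k * pos, mass=1.0, dt=0.01, steps=1000
)
energy = 0.5 * v_final ** 2 + 0.5 * k * x_final ** 2
```

The total energy stays close to its initial value of 0.5, which is the property that makes this family of integrators suitable for long simulations. Real MD packages apply the same idea to many thousands of atoms with far more complex force fields.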

Free-Energy Perturbation (FEP)

FEP is a computationally intensive method used during lead optimization to quantitatively estimate the binding free energies resulting from small structural changes to a molecule [18]. It provides highly accurate affinity predictions but is generally limited to small perturbations around a known reference structure [18].
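One standard way to write the underlying estimate is the Zwanzig exponential-averaging relation, ΔG(A→B) = -kT ln⟨exp(-(U_B - U_A)/kT)⟩_A, where the average runs over configurations sampled in state A. The sketch below assumes the energy differences have already been computed from a simulation; the sample values and function names are hypothetical, and production FEP uses many intermediate states and more robust estimators.

```python
import math

K_B_KCAL = 0.0019872041  # Boltzmann constant in kcal/(mol*K)

def zwanzig_delta_g(delta_u, temperature=298.15):
    """Free-energy change A -> B from energy differences U_B - U_A (kcal/mol)
    evaluated on configurations sampled in state A."""
    kt = K_B_KCAL * temperature
    exponents = [-du / kt for du in delta_u]
    # Log-sum-exp form avoids overflow for large energy differences.
    m = max(exponents)
    log_mean = m + math.log(sum(math.exp(e - m) for e in exponents) / len(exponents))
    return -kt * log_mean

def relative_binding_free_energy(dg_complex, dg_solvent):
    """Thermodynamic cycle: ddG_bind = dG(A->B, bound) - dG(A->B, in solvent)."""
    return dg_complex - dg_solvent

# Hypothetical energy differences (kcal/mol) for one small perturbation.
dg_complex = zwanzig_delta_g([0.8, 1.1, 0.9, 1.2, 1.0])
dg_solvent = zwanzig_delta_g([1.9, 2.2, 2.0, 2.1, 1.8])
ddg_bind = relative_binding_free_energy(dg_complex, dg_solvent)
```

A negative `ddg_bind` indicates that the modified ligand is predicted to bind more tightly than the reference. The estimator converges poorly when states A and B differ substantially, which is one reason the method is restricted to small perturbations.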

Experimental Protocol: A Typical SBDD Workflow

- Target Selection and Structure Preparation: A target protein implicated in a disease pathway is identified. Its 3D structure is obtained via experimental methods or computational prediction and prepared for simulation (e.g., adding hydrogen atoms, assigning partial charges) [17] [19].

- Binding Site Analysis: The protein structure is analyzed to identify and characterize potential binding pockets, focusing on features like shape, hydrophobicity, and key amino acid residues [17].

- Virtual Screening: Large libraries of compounds are docked into the binding site. Each compound is scored and ranked based on predicted binding affinity [18] [19]. This step narrows a library of thousands of compounds or more down to a few dozen promising candidate molecules.

- Hit Validation and Lead Optimization: The top-ranking virtual hits are procured or synthesized and tested experimentally for binding affinity and biological activity. For confirmed hits, iterative cycles of structure-based design and synthesis are performed—often guided by docking, MD, and FEP—to optimize potency, selectivity, and drug-like properties [17] [18].

- In Vitro and In Vivo Validation: Optimized lead compounds undergo further biological testing in cellular and animal models to assess efficacy and safety before potential clinical development [17].

Ligand-Based Drug Design (LBDD)

Core Principle and Definition

Ligand-Based Drug Design is an approach used when the three-dimensional structure of the target protein is unknown or unresolved [17]. Instead of relying on direct structural information of the target, LBDD infers the characteristics of the binding site and designs new active compounds by analyzing a set of known active ligands that bind to the target of interest [17] [18]. The fundamental assumption is that structurally similar molecules are likely to exhibit similar biological activities, a concept known as the "similarity principle" [18].

Key Techniques and Methodologies

Quantitative Structure-Activity Relationship (QSAR)

QSAR is a mathematical modeling technique that relates quantitative measures of molecular structure (descriptors) to biological activity [17] [18]. Molecular descriptors can include electronic properties, hydrophobicity, steric parameters, and more. A QSAR model is built using data from known active compounds and can then predict the activity of new compounds, helping prioritize molecules for synthesis and testing [17]. While traditional 2D QSAR models require large datasets, advanced 3D QSAR methods, particularly those using physics-based representations, can predict activity with limited structure-activity data and generalize well across chemically diverse ligands [18].
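The simplest possible instance of this idea is a one-descriptor linear model fitted by ordinary least squares. The sketch below assumes a single descriptor (a logP-like value) and pIC50 as the activity measure; the data points are hypothetical, and real QSAR models use many descriptors together with external validation.

```python
def fit_linear_qsar(descriptor, activity):
    """Ordinary least-squares fit of activity = slope * descriptor + intercept."""
    n = len(descriptor)
    mean_x = sum(descriptor) / n
    mean_y = sum(activity) / n
    sxx = sum((x - mean_x) ** 2 for x in descriptor)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(descriptor, activity))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

def r_squared(descriptor, activity, slope, intercept):
    """Coefficient of determination on the training data."""
    mean_y = sum(activity) / len(activity)
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(descriptor, activity))
    ss_tot = sum((y - mean_y) ** 2 for y in activity)
    return 1.0 - ss_res / ss_tot

# Hypothetical training set: descriptor values and measured pIC50.
logp = [1.0, 2.0, 3.0, 4.0]
pic50 = [5.1, 5.9, 7.1, 7.9]

slope, intercept = fit_linear_qsar(logp, pic50)
fit_quality = r_squared(logp, pic50, slope, intercept)
predicted_pic50 = slope * 2.5 + intercept  # prediction for an untested compound
```

A high training R² on four points says little about predictive power; held-out compounds are needed to judge whether the model generalizes.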

Pharmacophore Modeling

A pharmacophore model defines the essential molecular features and their spatial arrangement necessary for a molecule to interact with a target and elicit a biological response [17]. These features can include hydrogen bond donors and acceptors, hydrophobic regions, charged groups, and aromatic rings. The model is generated from the common features of a set of known active molecules and can be used as a query to screen compound databases for new scaffolds (scaffold hopping) that fulfill the same pharmacophoric requirements [17].

Similarity-Based Virtual Screening

This technique identifies potential hits from large chemical libraries by comparing candidate molecules against one or more known active compounds [18]. Similarity can be assessed using 2D molecular fingerprints (encoding molecular substructures) or 3D descriptors (such as molecular shape, electrostatic potentials, or pharmacophore alignments) [18]. Successful 3D similarity screening requires accurate alignment of candidate structures with the reference active molecule(s) [18].
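For 2D fingerprints, the comparison is typically a Tanimoto coefficient over bit vectors. The following sketch stores each fingerprint as a Python integer and ranks a library by its best similarity to any query active; the 8-bit fingerprints and compound names are hypothetical stand-ins for fingerprints produced by a cheminformatics toolkit.

```python
def tanimoto_bits(a: int, b: int) -> float:
    """Tanimoto coefficient between two fingerprints stored as integers."""
    union = bin(a | b).count("1")
    if union == 0:
        return 0.0
    return bin(a & b).count("1") / union

def rank_by_similarity(queries, library, top_n=None):
    """Rank library compounds by their best similarity to any query active."""
    scored = [
        (name, max(tanimoto_bits(fp, q) for q in queries))
        for name, fp in library.items()
    ]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_n]

# Hypothetical 8-bit fingerprints.
known_actives = [0b11110000]
library = {
    "lib_1": 0b11110001,
    "lib_2": 0b00110011,
    "lib_3": 0b00001111,
}
ranking = rank_by_similarity(known_actives, library)
```

Taking the maximum over several query actives is one possible fusion rule; averaging the similarities is another and tends to favor compounds that resemble the active set as a whole.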

Experimental Protocol: A Typical LBDD Workflow

- Ligand Set Compilation and Curation: A collection of known active ligands for the target of interest is assembled from literature or proprietary databases. The biological activity data (e.g., IC50, Ki) for these compounds is also gathered [17] [18].

- Molecular Descriptor Calculation and Model Building: For QSAR, relevant molecular descriptors are computed for all compounds in the training set. A statistical or machine learning model is then built to correlate the descriptors with the biological activity [17] [18]. For a pharmacophore model, the active ligands are aligned, and their common chemical features are abstracted into a 3D query [17].

- Database Screening and Activity Prediction: A virtual compound library is screened using the developed model. In QSAR, the model predicts the activity of each compound in the library. In pharmacophore or similarity screening, the database is searched for molecules that match the model or are sufficiently similar to the known actives [18].

- Hit Identification and Validation: The top-ranked compounds from the virtual screen are selected, acquired, and subjected to experimental testing to validate their activity against the target [17].

- Iterative Optimization: The newly tested compounds, whether active or inactive, provide additional data points to refine the QSAR or pharmacophore model, leading to an iterative cycle of prediction and testing for further lead optimization [18].

Comparative Analysis: SBDD vs. LBDD

Table 1: Comparison of Structure-Based and Ligand-Based Drug Design

| Aspect | Structure-Based Drug Design (SBDD) | Ligand-Based Drug Design (LBDD) |
|---|---|---|
| Fundamental Requirement | 3D structure of the target protein [17] [19] | Set of known active ligands [17] [18] |
| Core Principle | Direct design based on complementarity to the binding site [17] | Inference from similarity and quantitative analysis of known actives [17] [18] |
| Primary Techniques | Molecular Docking, Molecular Dynamics, Free-Energy Perturbation [18] [19] | QSAR, Pharmacophore Modeling, Similarity Search [17] [18] |
| Key Advantage | Provides atomic-level insight into binding interactions; enables rational design [17] [18] | Applicable when target structure is unknown; generally faster and less resource-intensive [17] [18] |
| Main Limitation | Dependent on the availability and quality of the target structure [17] [18] | Limited by the quantity and quality of known active compounds; may introduce bias [18] |
| Ideal Use Case | Target with a known or predictable high-resolution structure [19] | Well-established target with many known ligands, or a novel target with some known modulators [17] |

Table 2: Market Share and Growth Trends (2024 Data) [16] [20]

| Segment | Leading Approach (2024) | Projected Growth |
|---|---|---|
| By Type | Structure-Based Drug Design (SBDD) ~55% share | Ligand-Based Drug Design (LBDD) fastest growing |
| By Technology | Molecular Docking ~40% share | AI/ML-based drug design fastest growing |
| By Application | Cancer Research ~35% share | Infectious diseases segment fastest growing |
| By End-User | Pharmaceutical & Biotech Companies ~60% share | Academic & Research Institutes fastest growing |

Integrated and Hybrid Approaches

The distinction between SBDD and LBDD is not rigid, and combining them often yields superior results by leveraging their complementary strengths [18]. Integrated workflows can mitigate the limitations inherent in each standalone method.

Sequential Integration

A common strategy is to use LBDD for initial rapid filtering of large compound libraries, followed by SBDD for a more detailed analysis of the narrowed-down candidate set [18]. For instance, a library of millions of compounds can first be screened using a 2D similarity search or a QSAR model to select a few thousand diverse candidates. This subset then undergoes more computationally intensive molecular docking. This sequential approach improves overall efficiency by applying resource-intensive methods only to the most promising compounds [18].

Hybrid and Parallel Screening

Advanced pipelines employ parallel screening, where both SBDD and LBDD methods are run independently on the same compound library [18]. The results are then combined using a consensus scoring framework. For example, a compound's final rank could be derived from multiplying its individual ranks from docking and from a ligand-based similarity search. This favors compounds that are highly ranked by both methods, increasing confidence in the selection [18]. Another strategy is to select the top-ranked compounds from each method independently, ensuring a diverse set of candidates and reducing the risk of missing true actives due to the limitations of one approach [18].
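The rank-product consensus mentioned above can be sketched as follows. It assumes docking scores where more negative is better and similarity scores where higher is better; the compound names and score values are hypothetical, and tied scores are not given special treatment.

```python
def ranks(scores: dict, higher_is_better: bool) -> dict:
    """Map each compound to its 1-based rank under one scoring method."""
    ordered = sorted(scores, key=scores.get, reverse=higher_is_better)
    return {name: position + 1 for position, name in enumerate(ordered)}

def rank_product_consensus(docking_scores: dict, similarity_scores: dict) -> list:
    """Order compounds by the product of their docking and similarity ranks."""
    dock_rank = ranks(docking_scores, higher_is_better=False)  # more negative = better
    sim_rank = ranks(similarity_scores, higher_is_better=True)
    product = {name: dock_rank[name] * sim_rank[name] for name in docking_scores}
    # A lower product is better; compound name breaks ties deterministically.
    return sorted(product, key=lambda name: (product[name], name))

# Hypothetical results for the same four compounds from both methods.
docking = {"c1": -9.5, "c2": -8.0, "c3": -10.2, "c4": -6.1}
similarity = {"c1": 0.82, "c2": 0.40, "c3": 0.55, "c4": 0.90}

consensus_order = rank_product_consensus(docking, similarity)
```

Here `c3` comes first because it ranks well under both methods, whereas `c4` tops the similarity list but is penalized for its poor docking score. The alternative strategy of taking the top compounds from each method separately would keep `c4` as a candidate.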

Diagram: A decision workflow for integrating SBDD and LBDD approaches in a drug discovery campaign.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for SBDD and LBDD

| Reagent / Material | Function / Application | Context of Use |
|---|---|---|
| Target Protein | The biological macromolecule (e.g., enzyme, receptor) implicated in the disease pathway. | Required for experimental structure determination (SBDD) and for biochemical/cellular assays to validate computational predictions (SBDD & LBDD) [17]. |
| Known Active Ligands | Small molecules with confirmed activity and binding affinity for the target. | Serve as the foundational dataset for building QSAR/pharmacophore models (LBDD) and as positive controls and references for docking (SBDD) [17] [18]. |
| Compound Libraries | Large, diverse collections of small molecules (commercial, in-house, or virtual). | Source for virtual screening to identify novel hit compounds (SBDD & LBDD) [19]. Ultra-large libraries (e.g., Enamine REAL) now contain billions of molecules [19]. |
| Crystallization Kits | Pre-formulated solutions to facilitate the growth of protein crystals. | Essential for obtaining protein structures via X-ray crystallography (SBDD) [17]. |
| Isotopically Labeled Nutrients (e.g., ¹⁵N, ¹³C) | Used to culture proteins for Nuclear Magnetic Resonance (NMR) studies. | Required for multi-dimensional NMR experiments to determine protein structure and dynamics in solution (SBDD) [17]. |
| Structure Prediction Software (e.g., AlphaFold) | AI-based tools for predicting protein 3D structures from amino acid sequences. | Provides structural models for targets without experimental structures, enabling SBDD for a wider range of targets [18] [19]. |

The fields of SBDD and LBDD are being profoundly transformed by the integration of Artificial Intelligence (AI) and Machine Learning (ML) [16] [12] [21]. AI enables rapid de novo molecular generation, ultra-large-scale virtual screening, and predictive modeling of ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) properties [12]. Hybrid AI-structure/ligand-based screening with deep learning is boosting hit rates and scaffold diversity [12]. The market segment for AI/ML-based drug design is projected to be the fastest-growing in terms of technology [16] [20].

Another significant trend is the expansion of accessible chemical space through ultra-large virtual libraries, which now encompass billions of readily synthesizable compounds, dramatically increasing the odds of finding novel and potent hits [19]. Furthermore, the fusion of AI-driven design with automated laboratories is poised to revolutionize drug discovery timelines, creating closed-loop systems that can design, synthesize, and test molecules with minimal human intervention [12].

In conclusion, both SBDD and LBDD are powerful, complementary pillars of computer-aided drug discovery. The choice between them depends on the available structural and ligand information. SBDD offers a direct, rational path when the target structure is known, while LBDD provides a powerful inference-based alternative when it is not. The future lies not in using them in isolation, but in their intelligent integration, augmented by the growing power of AI and machine learning, to accelerate the delivery of new therapeutics for patients in need.

The field of computer-aided drug discovery (CADD) is undergoing a revolutionary transformation, driven by the powerful convergence of two technological forces: unprecedented advances in structural biology and the exponential growth in computational power. For decades, drug discovery relied heavily on traditional experimental methods that were often time-consuming and costly. The emergence of sophisticated structural biology techniques, particularly cryo-electron microscopy (cryo-EM) and cryo-electron tomography (cryo-ET), has provided researchers with an increasingly clear view of biological macromolecules at near-atomic resolution [22]. Simultaneously, computational capacity has grown at a rate exceeding Moore's Law, enabling the application of artificial intelligence and massive virtual screening campaigns to drug design [23]. This whitepaper examines how these dual forces are reshaping the landscape of drug discovery, providing researchers with an unprecedented toolkit for understanding disease mechanisms and developing novel therapeutics.

Advances in Structural Biology

The Resolution Revolution: From In Vitro to In Situ

Structural biology has evolved dramatically from its beginnings in X-ray crystallography to the current era of in situ structural biology. Where previous techniques required isolated, purified proteins in non-native environments, modern approaches aim to observe biomolecular entities within their full cellular context to fully grasp their interactions and functions [22]. This shift represents a fundamental change in perspective – from studying components in isolation to understanding systems in context.

The peak of this advancement has been achieved through cryo-electron microscopy (cryo-EM), which has matured to facilitate the study of large macromolecular assemblies and molecular machines in their native cellular environment [22]. Key milestones in this evolution include:

- 1958: John Kendrew reports the first X-ray structure of myoglobin at ~6 Å resolution [22]

- 1985: Kurt Wüthrich reports the first NMR protein structure [22]

- 2010s-Present: cryo-EM and cryo-ET enable near-atomic resolution of cellular structures [22]

Modern Structural Biology Techniques and Applications

Table 1: Key Structural Biology Techniques Driving Drug Discovery

| Technique | Resolution Range | Key Applications in Drug Discovery | Notable Advantages |
|---|---|---|---|
| Cryo-EM Single Particle | Near-atomic to atomic [22] | Membrane protein structure determination, large complexes [22] | Handles difficult-to-crystallize targets, minimal sample preparation |
| Cryo-Electron Tomography (Cryo-ET) | Near-atomic in situ [22] | Cellular context visualization, organelle architecture [22] | Preserves native cellular environment, captures molecular machines in action |
| Serial Femtosecond Crystallography | Atomic [24] | G protein-coupled receptors (GPCRs), time-resolved studies [24] | Enables room temperature data collection, time-resolved structural studies |
| Microcrystal Electron Diffraction (MicroED) | Atomic [24] | Small crystal structures, natural products [24] | Works with nanocrystals unsuitable for X-ray crystallography |
| Integrative Modeling | Multi-scale [22] | Supercomplex assembly, dynamic processes [22] | Combines multiple data sources for comprehensive models |

These techniques have enabled groundbreaking applications in drug discovery, including the structural analysis of G protein-coupled receptors (GPCRs) – major drug targets – in various functional states, providing crucial insights for structure-based drug design [24]. Furthermore, cryo-ET has revealed the structure and arrangement of the mitochondrial oxidative phosphorylation machinery within intact cells using cryo-lamella focused ion beam (FIB) milling combined with subtomogram averaging [22].

Experimental Workflow: In Situ Structural Analysis via Cryo-ET

The following diagram illustrates a representative workflow for in situ structural analysis using cryo-electron tomography, a key methodology in modern structural biology:

Cryo-ET Workflow for In Situ Structural Biology

This workflow enables researchers to achieve near-atomic resolution structures within native cellular environments, revolutionizing our understanding of complex biological processes and facilitating targeted drug design.

Exponential Growth in Computational Power

Unprecedented Computational Demand

The computational requirements for modern CADD and AI-driven research are growing at an extraordinary pace that exceeds traditional metrics. According to recent analyses, AI's computational needs are growing more than twice as fast as Moore's Law, pushing toward 100 gigawatts of new demand in the US by 2030 [23]. This exponential growth is largely driven by the training of increasingly large and complex AI models for drug discovery applications.

The scale of this demand becomes clear when examining current projections:

Table 2: Projected Computational Power Demand for AI and Data Centers

| Year | Projected Global AI Data Center Power Demand | Comparative Scale | Key Drivers |
|---|---|---|---|
| 2025 | 10 GW additional capacity [25] | More than total power capacity of Utah [25] | Large language model training, molecular dynamics simulations |
| 2027 | 68 GW total capacity [25] | Nearly equivalent to California's total 2022 capacity (86 GW) [25] | Ultra-large virtual screening, generative AI for molecular design |
| 2030 | 200 GW global compute requirements [23]; 327 GW global power demand [25] | 10% of total US electricity consumption [26] | Personalized medicine models, whole-cell simulations |

This unprecedented demand creates significant infrastructure challenges, with building the required data centers necessitating approximately $500 billion of capital investment each year – a staggering sum that far exceeds any anticipated government subsidies [23].

Meeting the Computational Challenge

Multiple approaches are emerging to address these massive computational requirements:

Behind-the-Meter Generation: Data center developers are increasingly building their own power generation on-site rather than relying solely on utility companies. In Texas, the Stargate project involving OpenAI and Oracle is building 10 gas turbines to serve as backup power [26].

Alternative Energy Sources: Natural gas is expected to power about 60% of new datacenter demand, with a growing interest in nuclear power, including small modular reactors [26].

Algorithmic Efficiency: Innovations in AI algorithms promise to reduce computational demands. Techniques such as mixed-precision matrix computation, chain-of-thought prompting, and large model distillation boost performance while lowering computational load [23].

Demand Response Programs: Researchers at Duke University estimate that if datacenter operators agreed to dial back power use during just 1% of their expected uptime, it would create "curtailment-enabled headroom" equivalent to 125 GW of power capacity [26].

Synergistic Applications in Drug Discovery

Integrated Computational Methodologies

The convergence of advanced structural data and massive computational power has enabled several transformative approaches to drug discovery:

Ultra-Large Virtual Screening

Structure-based virtual screening has scaled dramatically, now enabling the screening of gigascale chemical spaces containing billions of compounds [24]. This approach leverages the growing database of protein structures and massive computational resources to identify novel drug candidates with unprecedented efficiency. For example, combined physics-based and machine learning methods enabled a computational screen of 8.2 billion compounds, with selection of a clinical candidate achieved after just 10 months and only 78 molecules synthesized [24].

The workflow for ultra-large virtual screening demonstrates the integration of computational approaches:

Figure: Ultra-Large Virtual Screening Workflow
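The combination of machine learning and physics-based scoring described above is often organized as a funnel: a cheap model ranks the whole library, and only a shortlist goes on to the expensive calculation. The sketch below illustrates that pattern only. Both scoring functions are hypothetical stand-ins that return pseudo-random numbers, the library is a range of integer identifiers, and the stage sizes are arbitrary; it is not the pipeline used in the cited study.

```python
import heapq
import random
from typing import Callable, Iterable, List


def cheap_score(compound_id: int) -> float:
    """Hypothetical fast surrogate (stands in for an ML model). Lower is better."""
    return random.Random(compound_id).uniform(-12.0, 0.0)


def expensive_score(compound_id: int) -> float:
    """Hypothetical slow score (stands in for physics-based docking).

    Reuses the surrogate's first random draw and adds noise, so the two
    scores are correlated but not identical.
    """
    rng = random.Random(compound_id)
    base = rng.uniform(-12.0, 0.0)
    return base + rng.gauss(0.0, 1.0)


def top_k(ids: Iterable[int], scorer: Callable[[int], float], k: int) -> List[int]:
    """Return the k lowest-scoring ids without sorting the full library."""
    return heapq.nsmallest(k, ids, key=scorer)


def screening_funnel(library: Iterable[int], prefilter_keep: int, final_keep: int) -> List[int]:
    """Stage 1: cheap score on everything. Stage 2: expensive score on the shortlist."""
    shortlist = top_k(library, cheap_score, prefilter_keep)
    return top_k(shortlist, expensive_score, final_keep)


# Toy library of 20,000 ids; real campaigns stream billions of compounds.
library = range(20_000)
hits = screening_funnel(library, prefilter_keep=1_000, final_keep=78)
print(len(hits), "compounds selected for follow-up")
```

The design point is that the expensive scorer runs on 1,000 compounds rather than 20,000, so the cost of the campaign is set mostly by how cheap and how well-correlated the first-stage model is.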

Cellular-Scale Simulations

Advanced computational resources now enable the modeling of entire cellular environments. Researchers at the University of Groningen have employed coarse-grained modeling to construct dynamical 3D models of whole cells, integrating structural data from multiple sources to create comprehensive simulations of cellular processes [22]. These simulations provide unprecedented insights into drug mechanisms of action within physiological contexts.
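Coarse-grained models reduce cost by replacing groups of atoms with single interaction sites, often called beads. The sketch below shows the core mapping step, placing each bead at the center of mass of its atoms. The eight-atom chain and the four-atoms-per-bead grouping are toy assumptions chosen for illustration and do not reproduce any published Martini mapping.

```python
from typing import Dict, List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def center_of_mass(masses: Sequence[float], coords: Sequence[Vec3]) -> Vec3:
    """Mass-weighted average position of a group of atoms."""
    total = sum(masses)
    x = sum(m * c[0] for m, c in zip(masses, coords)) / total
    y = sum(m * c[1] for m, c in zip(masses, coords)) / total
    z = sum(m * c[2] for m, c in zip(masses, coords)) / total
    return (x, y, z)


def coarse_grain(
    masses: Sequence[float],
    coords: Sequence[Vec3],
    bead_map: Dict[str, List[int]],
) -> Dict[str, Vec3]:
    """Map atoms to beads; bead_map gives the atom indices for each bead."""
    return {
        name: center_of_mass([masses[i] for i in idx], [coords[i] for i in idx])
        for name, idx in bead_map.items()
    }


# Toy system: eight equal-mass atoms spaced 1 unit apart along the x axis.
atom_masses = [12.0] * 8
atom_coords = [(float(i), 0.0, 0.0) for i in range(8)]
mapping = {"B1": [0, 1, 2, 3], "B2": [4, 5, 6, 7]}  # hypothetical grouping

beads = coarse_grain(atom_masses, atom_coords, mapping)
print(beads)
```

Eight particles become two, and the saving compounds at cellular scale, where fewer particles also permit longer simulation time steps.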

Research Reagent Solutions: Computational Tools for Drug Discovery

Table 3: Essential Computational Tools and Their Applications in CADD

| Tool Category | Specific Tools/Platforms | Function in Drug Discovery | Key Applications |
|---|---|---|---|
| Structure Prediction | AlphaFold 2/3, RFdiffusion, ESM [27] | Predict 3D protein structures from sequence | Target identification, structure-based design |
| Virtual Screening | V-SYNTHES, Molecular docking platforms [24] | Screen billions of compounds for binding affinity | Hit identification, lead optimization |
| Molecular Dynamics | Martini Coarse-Grained Model [22] | Simulate molecular movements and interactions | Binding mechanism analysis, allostery studies |
| Integrative Modeling | Integrative Modeling Platform (IMP) [22] | Combine multiple data sources for structural models | Complex assembly modeling, molecular machine analysis |
| AI-Driven Design | Generative AI models, Deep learning frameworks [24] | Design novel drug candidates with desired properties | De novo drug design, molecular optimization |

Case Studies: Successful Integration in Therapeutic Development

CADD in Oral Diseases

Computer-aided drug design has demonstrated significant success in developing treatments for oral diseases, including dental caries, periodontitis, and oral cancer. CADD has been applied to the development of peptide-based drugs, small molecules, and plant extracts for oral diseases, showcasing its versatility across therapeutic modalities [6]. Specific applications include:

- Antibacterial Therapies: Targeting glucosyltransferase C of Streptococcus mutans to prevent dental caries formation [6]

- Anti-Cancer Approaches: Repurposing ginsenoside C and Rg1 as treatments for oral cancer through computational screening [6]

- Anti-Inflammatory Strategies: Designing inhibitors for inflammatory pathways involved in periodontitis [6]

Accelerated Discovery Timelines

The combination of structural insights and computational power has dramatically compressed drug discovery timelines. In one notable example, researchers used generative AI to identify a lead candidate in just 21 days, followed by rapid synthesis and in vitro and in vivo testing [24]. The 8.2-billion-compound screen noted earlier, which reached a clinical candidate after only 10 months and 78 synthesized molecules [24], shows a similar gain in efficiency over traditional methods.

Future Perspectives and Challenges

Emerging Trends

The field of computer-aided drug discovery continues to evolve rapidly, with several emerging trends shaping its future:

Cellular-Scale Structural Biology: The ongoing development of cryo-ET and correlative microscopy techniques aims to build a comprehensive cell structure atlas detailing the anatomy and morphology of cellular content at near-atomic resolution [22].

Generative AI for Drug Design: Beyond predictive models, generative AI systems are now capable of designing novel drug candidates with specific properties, potentially unlocking entirely new chemical spaces for therapeutic development [27].

Quantum Computing Applications: Though still in early stages, quantum computing holds promise for addressing particularly challenging computational problems in drug discovery, such as precise binding energy calculations and complex protein folding predictions [23].

Critical Challenges

Despite remarkable progress, significant challenges remain:

Infrastructure Demands: The enormous power requirements for advanced computation create potential bottlenecks. Global AI data center power demand could reach 68 GW by 2027 – nearly doubling global data center power requirements from 2022 [25].

Methodological Integration: Effectively combining data from multiple structural biology techniques and computational approaches requires sophisticated integration platforms and standardized protocols [22].

Validation Gaps: Computational predictions must be rigorously validated experimentally, and mismatches in virtual screening can lead to false positives that must be identified through laboratory testing [6].
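The validation gap can be made concrete by comparing a list of computational predictions against assay results. The sketch below is a minimal illustration; the compound identifiers and outcomes are hypothetical and do not come from any cited study.

```python
from typing import Dict, Set


def validation_summary(predicted_hits: Set[str], confirmed_actives: Set[str]) -> Dict[str, float]:
    """Compare virtual-screening predictions with experimental results."""
    true_pos = predicted_hits & confirmed_actives
    false_pos = predicted_hits - confirmed_actives   # predicted, but inactive in the assay
    missed = confirmed_actives - predicted_hits      # active, but not predicted
    hit_rate = len(true_pos) / len(predicted_hits) if predicted_hits else 0.0
    return {
        "true_positives": len(true_pos),
        "false_positives": len(false_pos),
        "missed_actives": len(missed),
        "confirmed_hit_rate": hit_rate,
    }


# Hypothetical example: five predicted hits, three compounds active in the assay.
predicted = {"c1", "c2", "c3", "c4", "c5"}
confirmed = {"c2", "c5", "c9"}

summary = validation_summary(predicted, confirmed)
print(summary)
```

Tracking the confirmed hit rate across campaigns gives a simple measure of how far a given screening protocol can be trusted before compounds are synthesized.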

The continued synergy between structural biology and computational power will undoubtedly drive further innovations in drug discovery. As these fields advance, they promise to deliver more effective therapeutics with greater efficiency, ultimately transforming how we treat human disease.

The development of zanamivir (marketed as Relenza) represents a seminal achievement in pharmaceutical research, serving as the first celebrated success story for structure-based computer-aided drug design (CADD) [28]. This neuraminidase inhibitor emerged in the late 1990s as a therapeutic agent against both influenza A and B viruses, establishing an entirely new class of antiviral agents and validating computational approaches to drug discovery [29] [30]. For researchers and drug development professionals, the zanamivir case study demonstrates the powerful synergy of structural biology, computational chemistry, and rational drug design—a paradigm that has since influenced countless other drug discovery programs [31].

This whitepaper examines the historical context, design strategy, and experimental validation of zanamivir, framing its development within the broader evolution of CADD methodology. The journey from viral protein structure determination to clinically approved medication marked a transition from serendipitous discovery to targeted, rational drug design, establishing a blueprint that would reshape modern pharmaceutical development [28].

Historical Context and Influenza Treatment Landscape

The Clinical Need for Influenza Therapeutics

Prior to the 1990s, the therapeutic arsenal against influenza was severely limited. Influenza represented a substantial global health burden, affecting hundreds of millions annually and causing significant morbidity and mortality, particularly among high-risk populations including the elderly, those with chronic respiratory conditions, and immunocompromised individuals [32]. In Australia alone, approximately 3,000 deaths each winter were attributed to influenza or its complications [32].

The available antivirals, amantadine and rimantadine, targeted the M2 ion channel but were effective only against influenza A viruses and faced rapid emergence of resistance [33] [31]. Additionally, vaccines provided variable protection due to the constant antigenic drift and shift of influenza viruses, creating an urgent need for novel therapeutic approaches that could target conserved viral elements across multiple strains [33].

The Emergence of Structure-Based Drug Design

The 1980s witnessed critical advancements that would enable zanamivir's development. The publication of the first neuraminidase crystal structure by Colman, Varghese, and Laver in 1983 provided the essential structural blueprint for rational inhibitor design [30]. This breakthrough revealed the atomic details of the enzyme's active site, a cavity conserved across influenza A and B strains that would become the target for drug design [30] [34].

Concurrently, computational power was increasing exponentially, making it feasible to perform complex molecular simulations and calculations that were previously impractical [28]. The convergence of structural biology and computational chemistry created the foundation for what would become the first successful application of structure-based drug design against an infectious disease target.

The Rational Drug Design Strategy

Target Identification and Validation

Neuraminidase (also known as sialidase) was identified as a promising drug target due to its essential role in the influenza virus life cycle. This viral surface enzyme cleaves terminal sialic acid residues from host-cell receptors and from viral glycoproteins, enabling the release and spread of progeny virions from infected cells [30] [35]. Without functional neuraminidase, influenza viruses aggregate at the cell surface and cannot initiate new infections [35].

Critical to its attractiveness as a target, the neuraminidase active site was found to be highly conserved across influenza A and B strains, suggesting that inhibitors targeting this site might demonstrate broad-spectrum activity and have a higher barrier to resistance [30] [35]. This conservation stemmed from the enzyme's essential catalytic function, which could not tolerate significant mutation without compromising viral fitness.

Structural Insights and Lead Compound

The design strategy began with analysis of the natural substrate, sialic acid (N-acetylneuraminic acid), and a known weak inhibitor, 2-deoxy-2,3-didehydro-N-acetylneuraminic acid (DANA) [29] [30]. DANA, identified in 1974, served as a structural template but possessed insufficient potency for clinical development [29].

X-ray crystallographic studies of neuraminidase complexes revealed key insights about the active site architecture [30] [34]. Particularly important was the identification of three key regions:

- A negatively charged zone that aligned with the C4 hydroxyl group of DANA

- A conserved glutamic acid residue (Glu119) that could form salt bridges with positively charged groups

- A hydrophobic pocket adjacent to the glycerol side chain

These structural features informed the strategy for designing more potent inhibitors through systematic modification of the DANA scaffold [30].

Computational Design and Optimization

The rational design of zanamivir employed computational modeling techniques that were groundbreaking for their time. Using the GRID software developed by Molecular Discovery, researchers probed the neuraminidase active site to identify energetically favorable interactions and optimal positions for specific functional groups [29].

This computational analysis revealed two critical modifications to the DANA scaffold:

Replacement of the C4 hydroxyl with an amino group: The GRID software identified a negatively charged region in the active site that aligned with the C4 hydroxyl group of DANA. Replacing the hydroxyl with a positively charged amino group created a salt bridge with the conserved glutamic acid residue Glu119, improving binding affinity approximately 100-fold [29].

Introduction of a guanidino group: Further analysis revealed that Glu119 was positioned at the bottom of a conserved pocket perfectly sized to accommodate a larger, more basic guanidine group. This substitution replaced the C4 hydroxyl with a guanidino moiety, creating even stronger electrostatic interactions with the acidic residues in the active site [29] [30].
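The probing step behind these two modifications can be illustrated with a simplified grid scan: a probe is placed at each point of a lattice around the binding site, and its interaction energy with nearby site atoms is evaluated. The sketch below is written in the spirit of that approach and is not the GRID program's actual energy function. The two negatively charged site atoms, the dielectric constant, and the Lennard-Jones parameters are all assumed values chosen for illustration.

```python
import itertools
import math
from typing import List, Tuple

Vec3 = Tuple[float, float, float]

COULOMB_K = 332.06   # kcal*Angstrom/(mol*e^2), standard conversion constant
DIELECTRIC = 4.0     # assumed effective dielectric constant
EPSILON = 0.1        # assumed Lennard-Jones well depth, kcal/mol
SIGMA = 3.0          # assumed Lennard-Jones contact distance, Angstrom


def probe_energy(point: Vec3, probe_charge: float, site_atoms: List[Tuple[Vec3, float]]) -> float:
    """Coulomb plus 12-6 Lennard-Jones energy of a probe at `point`."""
    energy = 0.0
    for position, charge in site_atoms:
        r = max(math.dist(point, position), 0.5)  # clamp to avoid division by zero
        energy += COULOMB_K * probe_charge * charge / (DIELECTRIC * r)
        sr6 = (SIGMA / r) ** 6
        energy += 4.0 * EPSILON * (sr6 * sr6 - sr6)
    return energy


def scan_grid(site_atoms: List[Tuple[Vec3, float]], probe_charge: float,
              lo: float = -6.0, hi: float = 6.0, step: float = 0.5) -> Tuple[Vec3, float]:
    """Return the grid point with the lowest probe energy, and that energy."""
    n = int(round((hi - lo) / step)) + 1
    axis = [lo + i * step for i in range(n)]
    best = min(itertools.product(axis, repeat=3),
               key=lambda p: probe_energy(p, probe_charge, site_atoms))
    return best, probe_energy(best, probe_charge, site_atoms)


# Toy acidic site: two oxygen-like atoms carrying -0.5 e each (hypothetical).
acidic_site = [((0.0, 0.0, 0.0), -0.5), ((2.2, 0.0, 0.0), -0.5)]

best_cation, e_cation = scan_grid(acidic_site, probe_charge=+1.0)
best_neutral, e_neutral = scan_grid(acidic_site, probe_charge=0.0)
print(f"cationic probe: {e_cation:.1f} kcal/mol at {best_cation}")
print(f"neutral probe:  {e_neutral:.1f} kcal/mol at {best_neutral}")
```

In this toy model the cationic probe finds a far deeper energy minimum next to the acidic atoms than the neutral probe does, which mirrors the reasoning that led from a hydroxyl to positively charged amino and guanidino substituents at C4.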

The resulting compound—4-guanidino-Neu5Ac2en, later named zanamivir—functioned as a transition-state analogue inhibitor that tightly bound the neuraminidase active site with nanomolar affinity [30]. The design strategy exemplified structure-based drug design, leveraging atomic-level structural information to systematically optimize a lead compound into a potent therapeutic agent.
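The affinity figures quoted in this section can be translated into binding free energies through the standard relation ΔG° = RT ln(Kd). The sketch below applies it to a 100-fold affinity gain and to a dissociation constant of 1 nM. The temperature of 298.15 K and the 1 nM value are assumptions made for illustration, not measured values for zanamivir.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def binding_free_energy(kd_molar: float, temperature_k: float = 298.15) -> float:
    """Standard binding free energy, dG = RT ln(Kd), with a 1 M standard state."""
    return R_KCAL * temperature_k * math.log(kd_molar)


def ddg_for_fold_improvement(fold: float, temperature_k: float = 298.15) -> float:
    """Change in binding free energy when Kd decreases by a factor of `fold`."""
    return -R_KCAL * temperature_k * math.log(fold)


ddg_100_fold = ddg_for_fold_improvement(100.0)   # 100-fold tighter binding
dg_nanomolar = binding_free_energy(1e-9)         # illustrative Kd of 1 nM

print(f"100-fold gain in affinity: {ddg_100_fold:.2f} kcal/mol")
print(f"Kd = 1 nM corresponds to:  {dg_nanomolar:.2f} kcal/mol")
```

At room temperature a 100-fold gain corresponds to roughly 2.7 kcal/mol, which is on the scale of a single well-placed charged interaction and is consistent with the salt-bridge rationale described above.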

Experimental Validation and Methodologies

The computational predictions required rigorous experimental validation through a series of methodological approaches that confirmed both the mechanism of action and therapeutic potential of zanamivir.

Structural Biology and Binding Confirmation