Comparative Analysis of Fragment Screening Methods: A Strategic Guide for Modern Drug Discovery

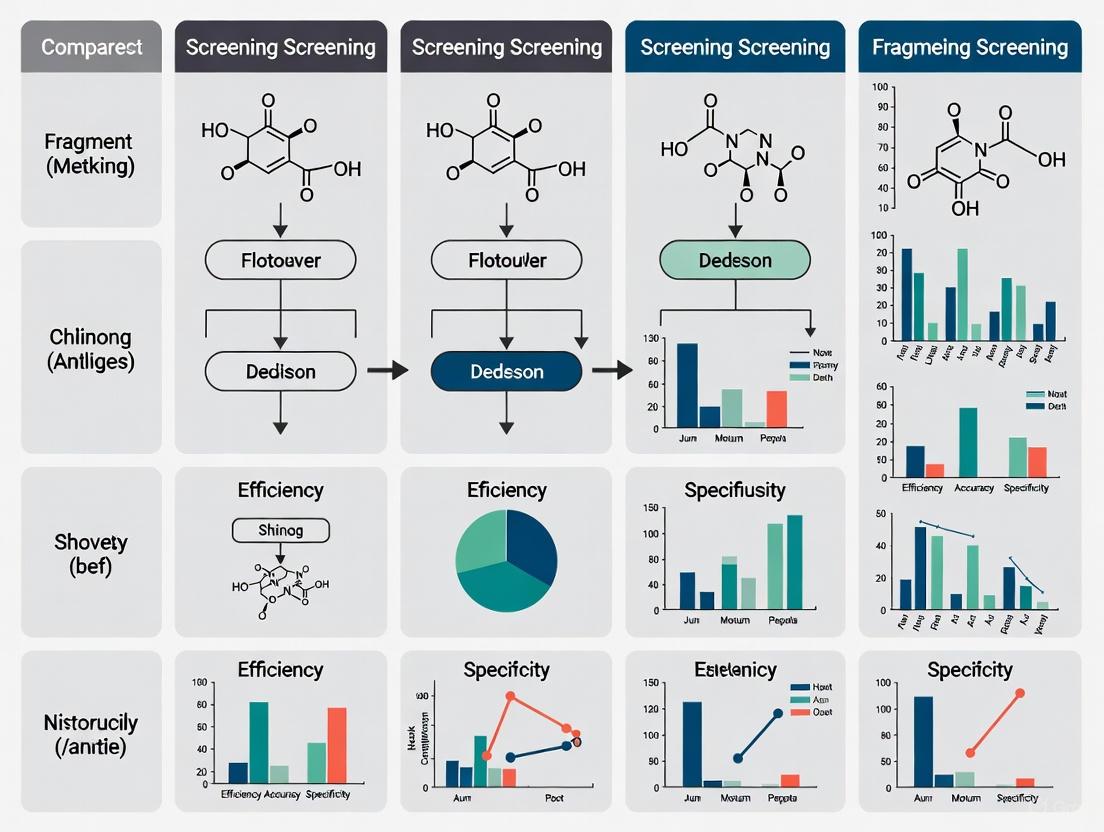

Abstract

This article provides a comprehensive comparative analysis of fragment screening methodologies essential for early-stage drug discovery. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of Fragment-Based Drug Discovery (FBDD), contrasts the application and performance of key biophysical techniques—including X-ray crystallography, NMR, SPR, MST, and TSA—and addresses critical troubleshooting and optimization strategies. By synthesizing current trends, validation frameworks, and real-world case studies, this review serves as a strategic guide for selecting and implementing optimal fragment screening approaches to efficiently identify novel chemical matter for challenging therapeutic targets.

The Foundation of FBDD: Principles, Libraries, and Evolving Landscape

Core Principles and Advantages of FBDD over High-Throughput Screening

In target-based drug discovery, the initial identification of bioactive molecules, or "hits," is a critical first step. For decades, High-Throughput Screening (HTS) has been the dominant method, testing vast libraries of hundreds of thousands to millions of drug-like compounds [1]. Fragment-Based Drug Discovery (FBDD) has emerged as a powerful complementary approach, using smaller, simpler molecular fragments to find starting points for drug development [2] [3]. While HTS screens large, complex molecules, FBDD leverages the efficient binding properties of low-molecular-weight fragments, offering distinct advantages, particularly for challenging targets [4]. This guide provides an objective, data-driven comparison of these two strategies to inform selection for drug discovery projects.

Core Principles and Comparative Analysis

Fundamental Characteristics and Differences

The core difference between the two approaches lies in the starting compounds. HTS libraries consist of complex, drug-like molecules, while FBDD libraries are composed of small, low-complexity fragments.

Table 1: Fundamental Characteristics of FBDD and HTS

| Characteristic | Fragment-Based Drug Discovery (FBDD) | High-Throughput Screening (HTS) |
|---|---|---|
| Library Size | 1,000 - 3,000 compounds [1] [5] | Hundreds of thousands to millions of compounds [1] |
| Molecular Weight | Typically < 300 Da [2] [1] | Typically 400 - 650 Da [1] |
| Physicochemical Rules | Rule of 3 (Ro3): MW ≤ 300, HBD ≤ 3, HBA ≤ 3, cLogP ≤ 3 [2] [6] | Rule of 5 (Ro5) for drug-likeness [1] |
| Typical Hit Affinity | Weak (µM - mM range) [2] [5] | Stronger (nM - low µM range) [2] |
| Primary Screening Methods | Biophysical (SPR, NMR, X-ray, DSF) [3] [4] | Biochemical activity-based assays [1] |
| Hit Rate | Generally higher for the chemical space sampled [4] | Typically ~1% [1] |

Key Strategic Advantages of FBDD

- Efficient Exploration of Chemical Space: The simplicity of fragments means that a small library of 1,000-2,000 compounds can sample a much greater proportion of available chemical space than a vastly larger HTS library [2] [4]. This is because the number of possible molecules grows exponentially with molecular size.

- Higher Atom Efficiency and Ligand Efficiency: Fragments, despite having low absolute affinity, often display high ligand efficiency (binding energy per atom) [2] [4]. They form efficient, high-quality interactions with the target, providing an optimal starting point for optimization [1].

- Success with "Undruggable" Targets: FBDD has proven particularly effective against challenging target classes, such as protein-protein interactions (PPIs) and allosteric sites, which are often difficult to address with larger HTS compounds [2] [4]. Notable successes include venetoclax (targeting BCL-2) and sotorasib (targeting KRAS G12C) [2] [7].

Methodological Comparisons: Workflows and Techniques

Screening Methodologies and Experimental Protocols

The fundamental difference in hit affinity dictates the required screening technologies. HTS primarily uses biochemical assays to measure functional inhibition or activation. In contrast, FBDD relies on sensitive biophysical methods to detect weak binding directly.

Table 2: Comparison of Key FBDD Screening Methods and Protocols

| Method | Key Principle | Typical Experimental Protocol | Key Data Output |
|---|---|---|---|
| Surface Plasmon Resonance (SPR) | Measures change in refractive index near a sensor surface when a ligand binds to an immobilized target protein [3]. | 1. Immobilize target protein on biosensor chip. 2. Inject fragment solutions over chip surface. 3. Monitor binding response in real time [4]. | Sensorgram data providing binding affinity (KD) and kinetics (kon, koff) [4]. |
| X-ray Crystallography | Provides an atomic-resolution 3D structure of the fragment bound to the target protein [6]. | 1. Soak fragments into crystals of the target protein. 2. Collect X-ray diffraction data. 3. Solve and analyze electron density to find bound fragments [6] [8]. | Precise fragment binding mode and protein conformational changes [6]. |
| Nuclear Magnetic Resonance (NMR) | Detects changes in the magnetic properties of atomic nuclei upon fragment binding [3]. | 1. Titrate fragments into protein solution. 2. Monitor chemical shifts of protein or fragment signals. 3. Analyze perturbation of NMR spectra [3]. | Confirmation of binding; can also identify the binding site. |
| Differential Scanning Fluorimetry (DSF) | Measures the shift in a protein's thermal stability (Tm) upon fragment binding using a fluorescent dye [3]. | 1. Incubate protein with fragment and fluorescent dye. 2. Gradually increase temperature. 3. Monitor fluorescence to determine Tm shift [3]. | ΔTm (change in melting temperature) indicates stabilization from binding. |
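The ΔTm readout listed for DSF can be illustrated with a minimal sketch. It assumes an idealized two-state sigmoidal melt curve and estimates Tm as the temperature of steepest fluorescence increase. The function names, the curve model, and the synthetic Tm values are all hypothetical; practical analyses often fit a Boltzmann model to noisy data instead.

```python
import math

def melt_curve(temps, tm, slope=1.5):
    """Synthetic two-state melt curve (illustrative only): normalized fluorescence."""
    return [1.0 / (1.0 + math.exp((tm - t) / slope)) for t in temps]

def estimate_tm(temps, fluorescence):
    """Estimate Tm as the midpoint of the interval with the largest dF/dT."""
    best_slope, best_tm = float("-inf"), None
    for i in range(len(temps) - 1):
        d = (fluorescence[i + 1] - fluorescence[i]) / (temps[i + 1] - temps[i])
        if d > best_slope:
            best_slope = d
            best_tm = 0.5 * (temps[i] + temps[i + 1])
    return best_tm

# Temperature ramp from 25 to 95 degrees C in 0.5 degree steps
temps = [25.0 + 0.5 * i for i in range(141)]

# Hypothetical protein alone and protein plus fragment
apo = melt_curve(temps, tm=52.25)
with_fragment = melt_curve(temps, tm=54.25)

# A positive shift suggests stabilization on binding
delta_tm = estimate_tm(temps, with_fragment) - estimate_tm(temps, apo)
print(f"dTm = {delta_tm:.2f} C")
```

For fragments, shifts are often small, so the derivative-based estimate shown here would normally be applied to replicate measurements rather than a single curve.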

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful execution of FBDD campaigns requires specific reagents and materials, particularly for the sensitive biophysical methods involved.

Table 3: Key Research Reagent Solutions for FBDD

| Reagent / Material | Function in FBDD | Application Notes |
|---|---|---|
| Fragment Library | A curated collection of 1,000-3,000 low molecular weight compounds following the Rule of Three [2] [6]. | Libraries are designed for maximal chemical diversity and often include 3D-shaped fragments to overcome planarity [2]. |
| Stable, Purified Protein | The target for fragment screening. Required in highly pure, monodisperse form for biophysical assays [1]. | Quality and stability are paramount. Milligram quantities are often needed, especially for X-ray crystallography [5]. |
| SPR Biosensor Chips | Surface for immobilizing the target protein to measure fragment binding in real-time [3] [4]. | Different chip chemistries (e.g., CM5, NTA) allow for various immobilization strategies [3]. |
| Crystallization Reagents | Sparse matrix screens and optimization reagents to grow high-quality, diffraction-ready crystals of the target protein [6] [8]. | Essential for X-ray crystallography-based screening. |
| Synchrotron Beamtime | Access to high-intensity X-ray sources for rapid collection of diffraction data from protein crystals [6] [8]. | Enables high-throughput X-ray fragment screening. |

Both HTS and FBDD are established, powerful strategies for hit identification. The choice between them is target-dependent and often influenced by institutional resources and expertise [1]. HTS is a broad, agnostic approach that can directly identify potent, drug-like inhibitors but requires significant infrastructure and can suffer from low hit rates. FBDD is a targeted, efficient approach that provides high-quality starting points with superior ligand efficiency, proving particularly valuable for intractable targets, albeit often requiring structural biology support and extensive medicinal chemistry optimization. Understanding these core principles and practical requirements enables research teams to make an informed strategic choice for their drug discovery programs.

Fragment-Based Drug Discovery (FBDD) has matured from a specialized technique into a mainstream approach widely used across industrial and academic settings for identifying novel therapeutic compounds [9]. This method involves screening small, low molecular weight organic molecules (fragments) against a biological target, providing efficient starting points for drug development. The foundational concept for defining these fragments was established in 2003 with the proposal of the "Rule of Three" (Ro3) [9] [2]. The Ro3 serves as a set of guidelines for designing fragment libraries, analogous to the role of Lipinski's Rule of Five for drug-like compounds, ensuring fragments possess properties suitable for subsequent optimization into drug candidates [10].

The rationale behind using fragments lies in their superior efficiency in exploring chemical space and their ability to form high-quality interactions with target proteins [2]. Due to their low complexity, fragments often engage in more atom-efficient binding interactions compared to larger molecules, avoiding suboptimal contacts that can complicate optimization [11]. This efficiency is quantified by ligand efficiency—binding free energy normalized for the number of non-hydrogen atoms—which is typically higher for fragments than for larger hits identified through high-throughput screening (HTS) [11]. The Rule of Three provides a standardized framework to capitalize on these advantages by ensuring fragments maintain optimal physicochemical properties for effective screening and subsequent lead development [2] [10].
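Ligand efficiency as defined above can be written as a short calculation. The sketch below assumes the common convention ΔG = RT ln(Kd) with a 1 M standard state at 298.15 K; the function names and the two example compounds are hypothetical and chosen only to show why a weak fragment can still be the more efficient binder.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)

def binding_free_energy(kd_molar, temperature_k=298.15):
    """Binding free energy dG = RT ln(Kd) in kcal/mol (negative when Kd < 1 M)."""
    if kd_molar <= 0:
        raise ValueError("Kd must be positive")
    return R_KCAL * temperature_k * math.log(kd_molar)

def ligand_efficiency(kd_molar, heavy_atoms, temperature_k=298.15):
    """LE = -dG / N_heavy, in kcal/mol per non-hydrogen atom."""
    if heavy_atoms <= 0:
        raise ValueError("heavy atom count must be positive")
    return -binding_free_energy(kd_molar, temperature_k) / heavy_atoms

# Hypothetical fragment: Kd = 1 mM, 12 heavy atoms
fragment_le = ligand_efficiency(1e-3, 12)
# Hypothetical HTS hit: Kd = 1 uM, 35 heavy atoms
hts_hit_le = ligand_efficiency(1e-6, 35)

print(f"fragment LE = {fragment_le:.2f}, HTS hit LE = {hts_hit_le:.2f}")
```

In this illustration the fragment binds a thousand times more weakly yet contributes more binding energy per atom, which is the sense in which fragments are described as efficient starting points.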

The Rule of Three: Definition and Evolution

Core Principles and Parameters

The Rule of Three was originally defined to describe the desirable physicochemical properties for molecules included in FBDD screening collections [9]. The core criteria establish clear boundaries for fragment characteristics, emphasizing small size and reduced complexity to maximize binding efficiency and optimization potential.

Table 1: The Original Rule of Three Criteria

| Physicochemical Property | Rule of Three Threshold | Rationale |
|---|---|---|
| Molecular Weight (MW) | ≤ 300 Da | Limits molecular size and complexity [9] [2] |
| clogP | ≤ 3 | Controls lipophilicity, improving solubility and reducing promiscuity [9] [2] |
| Hydrogen Bond Donors (HBD) | ≤ 3 | Restricts the number of polar groups donating H-bonds [9] [2] |
| Hydrogen Bond Acceptors (HBA) | ≤ 3 | Limits the number of polar groups accepting H-bonds [9] [2] |
| Number of Rotatable Bonds (NROT) | ≤ 3 | Reduces flexibility, favoring pre-organization for binding [9] [10] |
| Polar Surface Area (PSA) | ≤ 60 Ų | Ensures sufficient polarity for aqueous solubility [9] [10] |
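The thresholds in the table translate directly into a library filter. The following is a minimal sketch that assumes the descriptors have already been computed by a cheminformatics toolkit; the `FragmentProps` class, the function names, and the two example fragments with their property values are hypothetical. The `max_violations` argument is included only to illustrate a more lenient, guideline-style use of the criteria.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class FragmentProps:
    """Precomputed descriptors for one fragment (values supplied by the caller)."""
    name: str
    mw: float     # molecular weight, Da
    clogp: float  # calculated logP
    hbd: int      # hydrogen bond donors
    hba: int      # hydrogen bond acceptors
    nrot: int     # rotatable bonds
    psa: float    # polar surface area, square angstroms

# Upper limits taken from the Rule of Three table
RO3_LIMITS = {"mw": 300.0, "clogp": 3.0, "hbd": 3, "hba": 3, "nrot": 3, "psa": 60.0}

def ro3_violations(frag):
    """Return the names of the criteria that the fragment exceeds."""
    return [key for key, limit in RO3_LIMITS.items() if getattr(frag, key) > limit]

def passes_ro3(frag, max_violations=0):
    """Strict filter by default; raise max_violations for a lenient filter."""
    return len(ro3_violations(frag)) <= max_violations

# Hypothetical fragments with made-up descriptor values
frag_a = FragmentProps("frag_A", mw=151.2, clogp=1.1, hbd=2, hba=2, nrot=1, psa=49.3)
frag_b = FragmentProps("frag_B", mw=287.3, clogp=2.4, hbd=1, hba=4, nrot=3, psa=58.0)

for frag in (frag_a, frag_b):
    print(frag.name, ro3_violations(frag), passes_ro3(frag), passes_ro3(frag, 1))
```

Here `frag_B` fails the strict filter on its acceptor count alone but passes when one violation is tolerated.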

The relationship between the Rule of Three and the better-known Lipinski's Rule of Five is intentional. The Ro3 was designed to provide a starting point of simple molecules that can be rationally optimized into leads that comply with Lipinski's guidelines for oral bioavailability [10]. However, a key distinction exists: while Lipinski's rules predict oral bioavailability of drug candidates, the Rule of Three evaluates fragments for their suitability as optimization starting points [10].

Practical Application and Contemporary Interpretation

In practice, the Rule of Three is often treated as a guideline rather than a strict rule [12] [2]. A decade after its introduction, the original authors noted that some criteria, particularly the limits on hydrogen bond donors and acceptors, had not been applied uniformly, partly due to ambiguities in how these properties are defined [9]. For instance, whether the nitrogen in a tertiary amide should count as a hydrogen bond acceptor remains a point of discussion, leading to variations in library design [12].

Experimental evidence supports a flexible application. A landmark study screening 364 fragments against endothiapepsin found that while all 11 crystallographically validated hits had molecular weights <300 and only one had ClogP > 3, the majority exceeded the limit for "Lipinski acceptors" [12]. However, when hydrogen bond acceptors were counted more judiciously (excluding atoms like aniline nitrogens), only one fragment violated the Ro3 acceptor criterion. This suggests that a contextual interpretation of the parameters, rather than rigid adherence, is most productive [12].

Modern fragment libraries have evolved, and successful fragments often violate at least one Rule of Three parameter, most commonly the hydrogen bond acceptor count [2]. The critical consensus is that molecular weight and ClogP are the most important parameters to control, as they directly impact molecular obesity and compound tractability [12] [2].

Table 2: Evolution of Fragment Properties in Modern FBDD

| Aspect | Early Strict Interpretation | Modern Flexible Application |
|---|---|---|
| Adherence | Treated as a strict filter for library selection | Viewed as a guideline; some violations are acceptable [2] |
| Key Parameters | All parameters considered equally | MW and ClogP are prioritized as most critical [12] |
| HBA/HBD Counts | Strictly ≤3, with predefined atom typing | More nuanced counting; functional group context matters [12] |
| Library Diversity | Limited to strict Ro3 compliance | Broader diversity, includes "3D" and complex fragments [2] |
| Hit Identification | Potential for reduced chemotype variety [12] | Increased variety of chemotypes, improving hit discovery [12] |

Experimental Methodologies for Fragment Screening

Fragment screening requires specialized experimental protocols due to the inherently weak nature of fragment-target interactions (typically in the µM–mM range) [10]. Standard biochemical assays used in HTS are often insufficiently sensitive, necessitating robust biophysical techniques and a screening cascade for hit validation.

Key Biophysical Screening Techniques

Identifying and validating fragment hits typically follows a cascade that integrates multiple orthogonal methods. The most widely used primary screening techniques are described here.

Surface Plasmon Resonance (SPR) is exceptionally well-suited for FBDD due to its sensitivity in detecting weak interactions and ability to provide kinetic data [13]. Modern implementations use multiplexed strategies, screening fragments against multiple complementary surfaces or target conditions simultaneously. This approach expands the range of addressable targets, including large dynamic proteins, multi-protein complexes, and aggregation-prone proteins [13]. For instance, SPR biosensor methods have been successfully applied to challenging targets like acetylcholine-binding protein (AChBP), lysine demethylase 1 in complex with a corepressor (LSD1/CoREST), and human tau protein [13]. Recent advancements include high-throughput SPR screening over large target panels, enabling rapid ligandability assessment and affinity cluster mapping across many targets in days rather than years [14].
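The affinity and kinetic quantities that SPR reports are related by simple expressions for 1:1 binding. The sketch below is an assumption-laden illustration, not a vendor analysis routine: it uses KD = koff/kon and the steady-state isotherm Req = Rmax·C/(KD + C), then recovers KD from noiseless synthetic responses with a coarse log-grid scan. All names, rate constants, and concentrations are hypothetical.

```python
def kd_from_kinetics(kon, koff):
    """Equilibrium dissociation constant for 1:1 binding: KD = koff / kon (M)."""
    if kon <= 0:
        raise ValueError("kon must be positive")
    return koff / kon

def steady_state_response(conc, kd, rmax):
    """1:1 steady-state isotherm: Req = Rmax * C / (KD + C)."""
    return rmax * conc / (kd + conc)

def fit_steady_state(concs, responses, log_kd_min=-6.0, log_kd_max=-1.0, steps=500):
    """Scan KD on a log grid; for each KD, solve Rmax by linear least squares."""
    best = None
    for i in range(steps + 1):
        kd = 10 ** (log_kd_min + (log_kd_max - log_kd_min) * i / steps)
        occupancy = [c / (kd + c) for c in concs]
        rmax = (sum(r * f for r, f in zip(responses, occupancy))
                / sum(f * f for f in occupancy))
        sse = sum((r - rmax * f) ** 2 for r, f in zip(responses, occupancy))
        if best is None or sse < best[0]:
            best = (sse, kd, rmax)
    return best[1], best[2]

# Hypothetical fragment with fast kinetics: kon = 1e4 1/(M*s), koff = 2 1/s
true_kd = kd_from_kinetics(1e4, 2.0)  # 2e-4 M, i.e. 200 uM

# Synthetic two-fold dilution series (M) and noiseless responses (RU)
concs = [15.6e-6, 31.25e-6, 62.5e-6, 125e-6, 250e-6, 500e-6, 1e-3]
responses = [steady_state_response(c, true_kd, 30.0) for c in concs]

kd_fit, rmax_fit = fit_steady_state(concs, responses)
print(f"KD ~ {kd_fit * 1e6:.0f} uM, Rmax ~ {rmax_fit:.1f} RU")
```

Steady-state analysis is shown because fragment association and dissociation are often too fast for reliable kinetic fitting; with real, noisy data a proper nonlinear least-squares routine would replace the grid scan.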

X-ray Crystallography has emerged as a powerful primary screening method, facilitated by specialized high-throughput platforms at synchrotron facilities [11]. The "crystallography-first" approach is bolstered by evidence that pre-screening with other biophysical methods can lead to the loss of valuable hits [11]. The Diamond Light Source XChem facility, for example, has been responsible for more than 50% of all publicly disclosed crystallographic fragment-screening campaigns [11]. Technological advances in beamline instrumentation, detectors, and robotics now allow collection of hundreds of datasets daily, making large-scale crystallographic screening feasible [11].

Nuclear Magnetic Resonance (NMR) spectroscopy was one of the first methods used for FBDD and remains a cornerstone technique [11] [10]. It is particularly valuable for detecting binding and quantifying weak affinities, even without detailed structural information initially.

Hit Validation and Optimization

After primary screening, confirmed hits undergo rigorous validation. A minimum of two orthogonal methods—techniques based on different physical principles—is typically required to eliminate false positives and confirm specific binding [10]. This cascade often includes:

- Dose-response analysis to determine binding affinity and ligand efficiency.

- Competition assays with known ligands to identify binding site and mode.

- Structural characterization (X-ray crystallography) to elucidate binding pose [10].
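The two-orthogonal-method requirement can be expressed as a simple set operation over per-method hit lists. This sketch assumes each dictionary key represents a technique based on a distinct physical principle; the function name, fragment identifiers, and hit lists are hypothetical.

```python
from collections import defaultdict

def confirmed_hits(hits_by_method, min_methods=2):
    """Return fragments detected by at least min_methods distinct methods."""
    methods_per_fragment = defaultdict(set)
    for method, fragments in hits_by_method.items():
        for fragment in fragments:
            methods_per_fragment[fragment].add(method)
    return {
        fragment: sorted(methods)
        for fragment, methods in methods_per_fragment.items()
        if len(methods) >= min_methods
    }

# Hypothetical primary-screen results
hits_by_method = {
    "SPR": {"F01", "F07", "F12", "F30"},
    "NMR": {"F07", "F12", "F44"},
    "DSF": {"F12", "F30", "F51"},
}

validated = confirmed_hits(hits_by_method)
for fragment in sorted(validated):
    print(fragment, validated[fragment])
```

Fragments seen by only one technique (here F01, F44, and F51) are not discarded outright in practice; they are simply not yet confirmed under this criterion.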

Validated hits are then optimized through structure-guided strategies:

- Fragment Growing: Adding functional groups to enhance interactions.

- Fragment Linking: Connecting two fragments that bind nearby sites.

- Fragment Merging: Combining features of multiple hits into a single molecule [15].

Table 3: Essential Research Reagents and Solutions for Fragment Screening

| Reagent/Solution | Function in FBDD | Application Example |
|---|---|---|
| Stable, Purified Target Protein | Core reagent for screening assays; requires high purity and structural integrity | Used in all biophysical methods (SPR, X-ray, NMR); essential for complex formation [13] |
| Rule of Three Fragment Library | Diverse collection of 500-2000 low molecular weight compounds for primary screening | Commercially available or custom-designed libraries; basis for initial hit identification [2] [10] |
| Biosensor Chips (e.g., CM5, NTA) | Immobilization surfaces for SPR-based screening | Used in multiplexed SPR strategies to capture target proteins or complexes [13] |
| Crystallization Reagents & Soaking Solutions | Facilitate structural studies of fragment binding | Used in high-throughput crystallographic screening at synchrotron facilities [11] |
| Orthogonal Validation Assays | Secondary screens to confirm binding and reduce false positives | Intact mass spectrometry, peptide mapping, reactivity assays (for covalent fragments) [16] |

Emerging Trends and Future Perspectives

Fragment-based drug discovery continues to evolve with several emerging trends pushing beyond traditional Rule of Three boundaries. Covalent FBDD has gained significant traction, with specialized libraries incorporating warheads designed to engage nucleophilic amino acid residues like cysteine, lysine, and histidine [16]. These libraries balance warhead diversity with structural flexibility, enabling targeted covalent inhibition previously challenging with conventional approaches [16]. Companies like Frontier Medicines are pioneering platforms that unite fragment-based and covalent discovery to target previously intractable proteins [14].

The integration of artificial intelligence and machine learning with FBDD is accelerating discovery cycles. AI-driven analysis tools, such as Biacore Insight Software, can reduce data analysis time by over 80% while enhancing reproducibility [14]. Computational methods like F-SAPT (Functional-group Symmetry-Adapted Perturbation Theory) provide unprecedented quantum chemical insights into protein-ligand interactions, guiding optimization strategies [14].

There is also growing recognition of the need for increased three-dimensional (3D) character in fragment libraries. Traditional sets often contain predominantly planar, aromatic structures, but incorporating fragments with a higher fraction of sp³-hybridized carbons (Fsp³) improves solubility and opens up underexplored chemical space [2]. Finally, the field is grappling with challenges of data management and sharing as crystallographic fragment screening generates massive datasets. Establishing effective mechanisms for preserving and sharing these heterogeneous datasets is crucial for advancing research and training AI models [11].

The Rule of Three has provided an invaluable framework for defining fragments in FBDD over the past two decades, contributing directly to approved drugs like vemurafenib, venetoclax, and sotorasib [2] [15]. While its core principles of low molecular weight, minimal complexity, and controlled lipophilicity remain foundational, contemporary application favors a flexible interpretation prioritizing molecular weight and clogP as the most critical parameters [12] [2]. Successful FBDD campaigns rely on sophisticated biophysical screening methods, orthogonal hit validation, and structure-guided optimization—processes that have been revolutionized by high-throughput crystallography, multiplexed SPR, and computational advances [11] [13]. As the field continues to evolve with covalent targeting, AI integration, and expanded library design, the Rule of Three will likely continue serving as a flexible guideline rather than a rigid rule, enabling innovative approaches to previously "undruggable" targets [14] [2].

Design and Curation of Effective Fragment Libraries for Maximum Diversity

Fragment-Based Drug Discovery (FBDD) has evolved into a mature and powerful strategy for generating novel lead compounds, offering distinct advantages for challenging therapeutic targets where traditional high-throughput screening often fails [15]. This approach uses highly sensitive biophysical methods to identify low molecular weight fragments (typically MW < 300 Da) that bind weakly to a target; these initial hits are subsequently optimized into potent leads through structure-guided strategies [15]. The fundamental theoretical advantage of FBDD lies in its efficient exploration of chemical space: the number of possible fragment-sized molecules is orders of magnitude smaller than the number of possible lead-sized molecules, enabling more comprehensive coverage with significantly smaller compound collections [17] [11]. Consequently, maximizing diversity is a paramount objective in fragment library design, as library composition directly influences the sampling efficiency of chemical space and the novelty of potential hit compounds [18].

The critical challenge in implementing a successful FBDD approach lies in designing a fragment library that balances size with diversity. While smaller libraries reduce time and monetary costs, they must contain sufficient structural diversity to maximize the probability of identifying novel hit compounds against diverse biological targets [18]. This comparative analysis examines quantitative metrics for assessing library diversity, explores the relationship between library size and diversity, evaluates experimental methodologies for library validation, and provides strategic recommendations for designing optimized fragment libraries within the broader context of fragment screening methods research.

Quantitative Metrics for Assessing Fragment Library Diversity

The concept of "diversity" in fragment libraries requires precise quantitative definition to enable meaningful comparisons between different library selections. Researchers primarily utilize three categories of diversity descriptors, each with distinct advantages and applications [18].

Table 1: Diversity Metrics for Fragment Library Assessment

| Metric Category | Specific Metrics | Definition | Application in FBDD |
|---|---|---|---|
| Structural Descriptors | Tanimoto Similarity [18] | Pairwise similarity measure based on molecular fingerprints | Lower values indicate greater dissimilarity between fragments |
| Structural Descriptors | Richness [18] | Number of unique structural fingerprints in a library | Measures coverage of chemical space features |
| Structural Descriptors | True Diversity [18] | Effective number of structural features considering proportional abundances | Incorporates both uniqueness and evenness of feature distribution |
| Physicochemical Descriptors | Principal Moments of Inertia (PMI) [19] | Ratio of moments of inertia describing molecular shape | Quantifies 3D character from rod-like to disk-like to spherical |
| Physicochemical Descriptors | Fraction of sp3 carbons (Fsp3) [19] | Number of sp3 hybridized carbons divided by total carbon count | Measures saturation and structural complexity |
| Functional Descriptors | Bioactivity profiles [18] | Compound activities against panel of biological targets | Most relevant but resource-intensive to acquire |

Structural descriptors, particularly molecular fingerprints, serve as the most routinely applied diversity measures in FBDD. Extended-connectivity fingerprints effectively represent chemical structures by capturing bond connectivity patterns [18]. True diversity, derived from ecological diversity indices, is a particularly sophisticated metric that considers not only the number of unique structural features but also their proportional abundances [18] [17]. A library with a more even distribution of feature abundances has higher true diversity than one with the same number of features but an uneven distribution [18]. Physicochemical descriptors provide complementary information, with PMI analysis specifically addressing three-dimensional shape diversity. This is a critical consideration because conventional fragment libraries often overrepresent flat, aromatic structures [19].
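The three structural metrics can be sketched on toy data. The example below represents each fingerprint as a set of integer feature identifiers (a stand-in for the on-bits of a real fingerprint), interprets richness as the count of unique features across the library, and computes true diversity as the exponential of the Shannon entropy of feature abundances. That last choice (the order-1 Hill number from ecology) is an assumption; the cited study may use a different order or feature definition. All names and data are hypothetical.

```python
import math
from collections import Counter

def tanimoto(fp_a, fp_b):
    """Tanimoto similarity of two feature sets: |A and B| / |A or B|."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 1.0

def richness(fingerprints):
    """Number of unique features present anywhere in the library."""
    return len(set().union(*fingerprints))

def true_diversity(fingerprints):
    """Effective number of features: exp(Shannon entropy of feature abundances)."""
    counts = Counter(feature for fp in fingerprints for feature in fp)
    total = sum(counts.values())
    entropy = -sum((c / total) * math.log(c / total) for c in counts.values())
    return math.exp(entropy)

# Toy library in which every feature occurs exactly once (even distribution)
even_library = [{1, 2}, {3, 4}, {5, 6}]
# Toy library with the same six features, but feature 1 dominates
uneven_library = [{1, 2}, {1, 3}, {1, 4}, {1, 5}, {1, 6}]

print(tanimoto({1, 2, 3}, {2, 3, 4}))
print(richness(even_library), richness(uneven_library))
print(true_diversity(even_library), true_diversity(uneven_library))
```

Both toy libraries have the same richness, yet the uneven one has a lower effective number of features, which mirrors the evenness argument made above.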

Library Size Versus Diversity: Quantitative Relationships

Comprehensive analysis of size-diversity relationships reveals that while library size significantly impacts diversity, there exists a point of diminishing returns where additional compounds provide minimal diversity gains or even decrease certain diversity metrics.

In a landmark study, researchers evaluated libraries ranging from 100 to 100,000 compounds selected from 227,787 commercially available fragments [18]. The results demonstrated several critical relationships essential for optimal library design:

Table 2: Size-Diversity Relationships in Fragment Libraries

| Library Size | Marginal Richness (fingerprints/compound) | True Diversity Trend | Percentage of Total Available Fragments |
|---|---|---|---|
| 100 fragments | 28.9 | Rapid increase | 0.04% |
| 1000 fragments | ~20.1 (estimated) | Increasing | 0.44% |
| 2000 fragments | ~15.5 (estimated) | Near maximum | 0.88% |
| 5000 fragments | 13.4 | Plateau phase | 2.19% |
| 17,666 fragments | Negative values observed | Maximum reached | 7.76% |
| 100,000 fragments | Minimal gains | Declining | 43.89% |

The data reveal several crucial design principles. First, marginal richness (the number of additional unique fingerprints gained per additional fragment) declines dramatically as library size increases [18]. Diversity-based selections of 100 fragments achieved 28.9 new fingerprints per compound, while the efficiency dropped to 13.4 fingerprints per compound when expanding from 2,000 to 5,000 fragments [18]. This demonstrates that smaller, well-designed libraries provide substantially greater diversity per compound than larger libraries.
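Diminishing marginal richness can be reproduced on a toy scale. The sketch below uses a greedy selection that always adds the fragment contributing the most unseen features and records the gain at each step. Greedy selection is an assumption made for illustration, since the text does not state the selection algorithm used in the cited study. The helper `percent_of_pool` uses the 227,787-fragment pool size quoted above; all other names and data are hypothetical.

```python
def greedy_richness_selection(fingerprints, k):
    """Pick up to k fragments, each time adding the one with the most new features.

    Returns the chosen indices and the number of new features each pick added.
    Ties are broken in favor of the lowest index.
    """
    covered, chosen, gains = set(), [], []
    remaining = dict(enumerate(fingerprints))
    for _ in range(min(k, len(fingerprints))):
        best = max(remaining, key=lambda i: (len(remaining[i] - covered), -i))
        gains.append(len(remaining[best] - covered))
        covered |= remaining.pop(best)
        chosen.append(best)
    return chosen, gains

def percent_of_pool(library_size, pool_size=227_787):
    """Library size as a percentage of the commercially available pool."""
    return 100.0 * library_size / pool_size

# Toy fingerprints as sets of feature identifiers
toy_pool = [{1, 2, 3, 4}, {3, 4, 5}, {1, 2}, {6}, {4, 5, 6, 7}]
chosen, gains = greedy_richness_selection(toy_pool, 3)
print(chosen, gains)
print(f"{percent_of_pool(2_052):.2f}% of the pool")
```

Under greedy selection the per-pick gain can never increase, so each added fragment contributes the same or fewer new features than the one before it.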

Second, and perhaps most surprisingly, true diversity reaches an optimum at approximately 17,666 fragments (7.76% of available compounds) and subsequently declines [18]. This occurs because commercially available compounds themselves are not perfectly diverse, and beyond a certain point, additional compounds introduce structural redundancy that decreases the overall evenness of feature distribution [17].

Remarkably, only 2,052 fragments (less than 1% of available compounds) are required to achieve the same level of true diversity as the entire collection of 227,787 commercially available fragments [18]. This finding has profound implications for library design, suggesting that optimally selected fragment libraries of approximately 1,000-2,000 compounds can provide diversity equivalent to much larger collections while dramatically reducing screening resources [18] [17]. This size range is consistent with successful FBDD campaigns and commonly used fragment libraries, which typically contain 1,000-2,000 compounds [18] [6].

Experimental Protocols for Library Validation and Screening

Library Selection and Preparation Methodologies

Robust experimental protocols ensure that theoretical diversity translates into practical screening success. The process typically begins with compound filtering using "Rule of 3" criteria (MW ≤ 300, HBD ≤ 3, HBA ≤ 3, cLogP ≤ 3) and removal of undesirable functionalities using PAINS filters and medicinal chemistry expertise [6] [19]. For the European Fragment Screening Library (EFSL), this initial filtering resulted in 734 qualified fragments from an initial collection [6].

Structural clustering follows filtering, typically using MACCS fingerprints with Tanimoto distance thresholds (e.g., 0.635-0.647) to group structurally similar compounds [6]. From these clusters, representatives are selected based on lowest intra-cluster Tanimoto distance and highest predicted solubility [6]. Finally, medicinal chemistry curation applies heuristic criteria to exclude borderline fragments, compounds with unusually high sp3-character, low predicted solubility, or unfavorable polar atom distributions [6].
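The clustering and representative-selection steps can be sketched as follows. The text specifies Tanimoto distance thresholds but not the clustering algorithm, so this example assumes a simple leader (sphere-exclusion style) scheme and interprets "lowest intra-cluster Tanimoto distance" as the lowest mean distance to the other cluster members, with ties broken by higher predicted solubility. Fingerprints are toy feature sets rather than MACCS keys, and every name and value is hypothetical.

```python
def tanimoto_distance(fp_a, fp_b):
    """1 - Tanimoto similarity for two feature sets."""
    union = len(fp_a | fp_b)
    return 1.0 - (len(fp_a & fp_b) / union if union else 1.0)

def leader_cluster(fingerprints, threshold):
    """Assign each fragment to the first cluster whose leader lies within threshold."""
    clusters = []  # each cluster is a list of indices; element 0 is the leader
    for i, fp in enumerate(fingerprints):
        for cluster in clusters:
            if tanimoto_distance(fp, fingerprints[cluster[0]]) <= threshold:
                cluster.append(i)
                break
        else:
            clusters.append([i])
    return clusters

def pick_representative(cluster, fingerprints, solubility):
    """Member with the lowest mean distance to the rest; ties go to higher solubility."""
    def mean_distance(i):
        others = [j for j in cluster if j != i]
        if not others:
            return 0.0
        return sum(tanimoto_distance(fingerprints[i], fingerprints[j])
                   for j in others) / len(others)
    return min(cluster, key=lambda i: (mean_distance(i), -solubility[i]))

# Toy fingerprints and hypothetical predicted solubilities (arbitrary units)
toy_fps = [{1, 2, 3, 4}, {1, 2, 3, 5}, {1, 2, 3}, {10, 11, 12}, {10, 11, 13}]
toy_solubility = [1.0, 2.0, 0.5, 0.8, 1.5]

clusters = leader_cluster(toy_fps, threshold=0.64)
representatives = [pick_representative(c, toy_fps, toy_solubility) for c in clusters]
print(clusters, representatives)
```

Leader clustering depends on input order, so a production workflow would more likely use an order-independent method; the point here is only how a distance threshold and the two selection criteria interact.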

For crystallographic screening, specialized preparation protocols are essential. The EFSL-96 library was prepared as dried compounds on MRC 96-well low-profile plates using acoustic transfer systems, with compounds provided as 100 mM DMSO-d6 stocks [6]. This format ensures compatibility with high-throughput crystallography workflows and prevents DMSO-related damage to protein crystals.

Crystallographic Screening Workflow

The crystallographic screening workflow begins with protein production and purification, requiring highly pure, monodisperse protein that reproducibly forms high-resolution crystals [6] [20]. For example, endothiapepsin was purified to a concentration of 5 mg/mL in sodium acetate buffer (pH 4.6), while the NS2B–NS3 Zika protease construct was expressed in BL21(DE3) cells and purified using immobilized metal affinity and size exclusion chromatography [6].

Crystal growth follows rigorous protocols to ensure uniformity essential for PanDDA analysis [20]. Endothiapepsin crystals were grown in sitting drops by mixing protein solution with reservoir solution containing PEG 4000 and ammonium acetate, with microseeding employed to improve reproducibility [6]. The NS2B–NS3 Zika protease was crystallized using optimized conditions with high protein concentration (40 mg/mL) [6].

Fragment soaking introduces compounds into the crystal lattice, either individually or in cocktails [11] [20]. Specialized equipment like Formulatrix RockImagers with Echo dispensers enables precise compound delivery into crystallization drops without damaging crystals [20]. Soaking times vary from hours to overnight, with DMSO tolerance being a critical factor [20].

Data collection increasingly occurs at synchrotron facilities with specialized fragment-screening platforms [11]. These facilities provide high-throughput data collection capabilities, with some capable of collecting hundreds of datasets daily [11]. Data processing utilizes specialized pipelines like PanDDA (Pan-Dataset Density Analysis) to detect weak fragment binding by analyzing multiple datasets simultaneously and amplifying the signal of low-occupancy ligands [20].

Case Study: Implementation and Validation of a Diverse Fragment Subset

The implementation and validation of the EFSL-96 library provides compelling evidence for the effectiveness of carefully designed, diverse fragment subsets [6]. This 96-member sub-library was derived from the larger 1,056-compound European Fragment Screening Library through a rigorous selection process [6].

In validation screens against two biologically relevant targets, EFSL-96 demonstrated impressive performance:

- Endothiapepsin: 31% hit rate (30 fragments from 96)

- NS2B–NS3 Zika protease: 18% hit rate (17 fragments from 96) [6]
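The reported percentages follow directly from the hit counts. The sketch below reproduces them and, as an addition that is not part of the cited study, attaches a 95% Wilson score interval to show how much uncertainty a 96-member screen carries. Function names are hypothetical.

```python
import math

def hit_rate(hits, screened):
    """Fraction of screened fragments that were confirmed as hits."""
    if screened <= 0:
        raise ValueError("screened must be positive")
    return hits / screened

def wilson_interval(hits, screened, z=1.96):
    """Wilson score interval for a binomial proportion (z = 1.96 for about 95%)."""
    p = hit_rate(hits, screened)
    denom = 1.0 + z * z / screened
    center = (p + z * z / (2 * screened)) / denom
    half_width = z * math.sqrt(
        p * (1 - p) / screened + z * z / (4 * screened ** 2)
    ) / denom
    return center - half_width, center + half_width

# Hit counts reported for the two EFSL-96 validation screens
endo_rate = hit_rate(30, 96)
zika_rate = hit_rate(17, 96)
endo_low, endo_high = wilson_interval(30, 96)

print(f"Endothiapepsin: {endo_rate:.1%} ({endo_low:.1%} to {endo_high:.1%})")
print(f"Zika protease:  {zika_rate:.1%}")
```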

These hit rates compare favorably with typical fragment screening campaigns and confirm that a well-designed, compact library can efficiently identify binders to diverse protein targets [6] [15]. Additionally, the library design enabled rapid hit expansion, as fragments were derived from the larger European Chemical Biology Library (ECBL) containing nearly 100,000 compounds [6]. This integrated design allowed identification of follow-up compounds through consultation with medicinal chemistry experts, yielding two validated follow-up binders for each target within a very short timeframe without requiring synthetic chemistry [6].

This case study demonstrates that small, highly diverse fragment libraries can provide substantial practical advantages in screening efficiency, cost-effectiveness, and rapid follow-up compound identification.

Essential Research Reagents and Tools for Fragment Screening

Successful implementation of fragment screening campaigns requires specialized reagents, tools, and infrastructure. The following table details essential components of a fragment screening toolkit.

Table 3: Essential Research Reagents and Tools for Fragment Screening

| Category | Specific Tools/Reagents | Function/Purpose | Examples/Specifications |

|---|---|---|---|

| Fragment Libraries | EFSL-96 [6] | Pre-validated diverse fragment subset | 96 fragments, high solubility, dried compounds in 96-well format |

| Fragment Libraries | 3D-Shaped Fragment Library [19] | Specialized library with enhanced 3D character | 15,000 fragments, Fsp3 > 0.47, chiral centers ≥ 1 |

| Protein Production | Expression Systems [6] | Recombinant protein production | BL21(DE3) E. coli cells, pET vectors |

| Protein Production | Purification Systems [6] | Protein purification | HisTrap columns, size exclusion chromatography |

| Crystallization | Crystallization Screens [20] | Initial crystal condition identification | Commercial sparse matrix screens, optimized conditions |

| Crystallization | Imaging Systems [20] | Crystal detection and monitoring | Formulatrix RockImager with automated imaging |

| Soaking & Harvesting | Acoustic Liquid Handlers [6] [20] | Precise compound transfer | Echo acoustic dispensers, nanoliter transfer |

| Soaking & Harvesting | Crystal Mounting Tools [20] | Crystal manipulation and cryo-cooling | Micro-loops, crystal shifting instruments |

| Data Collection | Synchrotron Facilities [11] | High-throughput X-ray data collection | Diamond Light Source, Canadian Light Source |

| Data Analysis | PanDDA Software [20] | Weak fragment binding detection | Identifies low-occupancy ligands across multiple datasets |

| Data Analysis | Structural Biology Software [20] | Structure solution and refinement | Coot, Phenix, Buster |

Comparative Analysis and Strategic Recommendations

Based on comprehensive analysis of diversity metrics, size relationships, and experimental results, several strategic recommendations emerge for designing and curating effective fragment libraries:

Optimal Library Size and Composition

The evidence strongly supports targeted libraries of 1,000-2,000 compounds as optimal for most FBDD campaigns [18]. This size range captures the maximum diversity efficiency while remaining practically manageable. For specialized applications or resource-limited settings, even smaller libraries of 96-500 carefully selected fragments can provide substantial coverage, as demonstrated by the EFSL-96 validation [6]. Libraries should prioritize structural diversity over sheer size, using quantitative metrics like true diversity to guide selection [18] [17].

Diversity Assessment and Quality Control

Library design should implement multiple diversity metrics rather than relying on a single measure. Combining Tanimoto similarity, richness, and true diversity provides complementary perspectives on library coverage [18]. Additionally, 3D shape diversity should be specifically addressed through PMI analysis and Fsp3 metrics, as conventional libraries often overrepresent flat, aromatic structures [19]. Integration of medicinal chemistry expertise remains essential for removing problematic compounds and applying heuristic knowledge beyond algorithmic selection [6].
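The three metrics named above can be sketched in a few lines. This is an illustrative sketch under stated assumptions: fingerprints are represented as sets of hypothetical feature identifiers, richness is taken as the number of distinct cluster (or scaffold) labels, and true diversity is computed as the exponential of the Shannon entropy of cluster abundances. The cited work may define these quantities differently.

```python
import math
from collections import Counter
from itertools import combinations

def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto (Jaccard) similarity between two feature sets."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)

def mean_pairwise_similarity(fingerprints) -> float:
    """Average Tanimoto similarity over all fragment pairs (lower = more diverse)."""
    pairs = list(combinations(fingerprints, 2))
    return sum(tanimoto(a, b) for a, b in pairs) / len(pairs)

def richness(cluster_labels) -> int:
    """Number of distinct clusters represented in the library."""
    return len(set(cluster_labels))

def true_diversity(cluster_labels) -> float:
    """Effective number of clusters: exp of the Shannon entropy of abundances."""
    counts = Counter(cluster_labels)
    total = sum(counts.values())
    entropy = -sum((c / total) * math.log(c / total) for c in counts.values())
    return math.exp(entropy)

# Hypothetical fingerprints and cluster assignments for a five-fragment library.
fingerprints = [
    frozenset({1, 2, 3}), frozenset({2, 3, 4}), frozenset({7, 8}),
    frozenset({8, 9}), frozenset({1, 9, 10}),
]
clusters = ["A", "A", "B", "B", "C"]

print(f"mean pairwise Tanimoto: {mean_pairwise_similarity(fingerprints):.2f}")
print(f"richness: {richness(clusters)}")
print(f"true diversity: {true_diversity(clusters):.2f}")
```

Richness counts clusters regardless of how evenly they are populated, whereas true diversity drops below the richness value when a few clusters dominate, which is why the two are reported together.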

Practical Implementation Considerations

For crystallographic screening, compound solubility and crystal compatibility are critical practical factors that must be addressed during library design [6] [20]. Additionally, integration with larger compound collections for hit expansion significantly enhances library utility, as demonstrated by the EFSL-ECBL connection [6]. Finally, access to specialized infrastructure including synchrotron beamlines, acoustic liquid handlers, and advanced computational tools like PanDDA is essential for successful implementation [11] [20].

The strategic design of fragment libraries balancing diversity with practical considerations provides a powerful foundation for successful fragment-based drug discovery campaigns against increasingly challenging therapeutic targets.

Global Research Trends and Bibliometric Insights in FBDD (2015-2024)

Fragment-Based Drug Discovery (FBDD) has established itself as a fundamental pillar in modern pharmaceutical research, offering a complementary approach to traditional High-Throughput Screening (HTS). This methodology involves identifying small, low molecular weight compounds (fragments) that bind weakly to biological targets, then systematically optimizing them into potent drug candidates through structure-guided chemistry [2] [3]. Over the past decade (2015-2024), FBDD has matured into a widely adopted strategy with proven success against challenging targets, including protein-protein interactions and previously "undruggable" oncogenic proteins [15] [21]. The approach's core strength lies in its efficient sampling of chemical space; smaller fragment libraries can explore a proportionally greater range of chemical diversity compared to larger HTS libraries composed of more complex molecules [2].

This guide provides a comprehensive bibliometric analysis and comparative evaluation of FBDD research trends, experimental methodologies, and technological advancements between 2015 and 2024. By synthesizing quantitative publication data, methodological comparisons, and emerging technological integrations, we aim to deliver an objective resource for researchers and drug development professionals navigating the evolving fragment screening landscape.

Bibliometric Analysis: Global Research Output and Influential Trends

Publication Metrics and Geographic Distribution

A systematic analysis of the Web of Science Core Collection database from January 1, 2015, to November 1, 2024, identified 1,301 primary research articles on FBDD [22] [23]. The field demonstrated fluctuating but sustained growth with an average annual publication growth rate of 1.42% and significant international collaboration, with 34.82% of articles featuring cross-border co-authorship [23]. The research output revealed interesting citation patterns, with the highest average citations per article occurring in 2018, while more recent publications (2023-2024) showed decreased citation rates, potentially reflecting a shift in research focus or the natural delay in citation accumulation [23].

Table 1: Global Research Contributions to FBDD (2015-2024)

| Country | Number of Publications | Percentage of Total Output |

|---|---|---|

| United States | 889 | 31.5% |

| China | 719 | 25.5% |

| Other Countries | 1,212 | 43.0% |

Geographically, the United States and China emerged as the dominant contributors to FBDD research, with 889 and 719 publications respectively during the analysis period [22] [23]. This duopoly reflects significant investment in pharmaceutical research and structural biology capabilities within both nations. Note that the country counts in Table 1 sum to 2,820, more than the 1,301 articles identified, which suggests that the table tallies country appearances on co-authored papers rather than unique articles. Prominent research institutions driving FBDD innovation included the Centre National de la Recherche Scientifique (CNRS) in France, the University of Cambridge in the United Kingdom, and the Chinese Academy of Sciences [22] [23].

Key Research Topics and Conceptual Evolution

Keyword co-occurrence analysis of the 3,020 author keywords identified in the bibliometric dataset reveals the conceptual structure and evolving research priorities within the FBDD field [22] [23]. The core research directions during this period focused on "fragment-based drug discovery," "molecular docking," and "drug discovery" [22] [23]. These keyword clusters reflect the integrated nature of modern FBDD, which combines experimental screening with computational approaches.
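Keyword co-occurrence analysis amounts to counting how often two author keywords appear on the same article. The sketch below shows that counting step on a handful of hypothetical records. The records are made up for illustration and are not drawn from the bibliometric dataset, and dedicated bibliometric tools add normalization and clustering on top of this step.

```python
from collections import Counter
from itertools import combinations

def keyword_cooccurrence(records):
    """Count how many records contain each unordered pair of keywords."""
    pair_counts = Counter()
    for keywords in records:
        # Normalize case/whitespace and drop duplicates within a record.
        unique = sorted({k.strip().lower() for k in keywords})
        pair_counts.update(combinations(unique, 2))
    return pair_counts

# Hypothetical author-keyword lists, one per article.
records = [
    ["Fragment-based drug discovery", "Molecular docking", "Drug discovery"],
    ["molecular docking", "drug discovery", "virtual screening"],
    ["fragment-based drug discovery", "machine learning"],
]

for pair, count in keyword_cooccurrence(records).most_common(3):
    print(pair, count)
```

The resulting pair counts form the edge weights of the keyword network from which clusters such as those described above are derived.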

The temporal evolution of research focus shows a noticeable shift from foundational methodology development toward applications for challenging target classes and integration with emerging technologies. Specifically, the latter part of the analysis period (2020-2024) demonstrated increased attention to "virtual screening," "machine learning," and "undruggable targets" [15] [21]. This trend aligns with several successful applications of FBDD against difficult targets, most notably the KRAS G12C inhibitor sotorasib, which was approved in 2021 and originated from fragment-based approaches [2] [21].

Comparative Analysis of Fragment Screening Methodologies

Experimental Protocols for Major Screening Techniques

FBDD relies on sensitive biophysical techniques to detect the weak binding interactions (typically in the μM to mM range) characteristic of fragment hits [3] [24]. The following section details standardized experimental protocols for the primary screening methods employed in FBDD.

Surface Plasmon Resonance (SPR) Protocol

Purpose: To detect fragment binding in real time and determine kinetic parameters (association/dissociation rates) and affinity [3].

Procedure:

- Immobilize the purified target protein on a biosensor chip via covalent coupling or high-affinity capture [3].

- Flow fragments individually over the chip surface at high concentration (typically 0.1-10 mM) [24].

- Monitor the change in refractive index at the chip surface, which correlates with mass changes upon fragment binding [3] [24].

- Analyze the sensorgram data to calculate association (k_a) and dissociation (k_d) rate constants, from which the equilibrium dissociation constant (K_D = k_d/k_a) is derived [3].

Critical Considerations: Protein immobilization must preserve native conformation. Reference surface subtraction is essential to correct for nonspecific binding and buffer effects [3].
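The final analysis step rests on a few standard 1:1 binding relationships, sketched below. The numerical values are hypothetical, and a real analysis would fit these models to reference-subtracted sensorgrams using the instrument's own software rather than evaluate them directly.

```python
def equilibrium_kd(k_a: float, k_d: float) -> float:
    """K_D (M) from association k_a (1/(M*s)) and dissociation k_d (1/s)."""
    return k_d / k_a

def steady_state_response(conc: float, kd: float, r_max: float) -> float:
    """Equilibrium response (RU) for 1:1 binding at analyte concentration conc (M)."""
    return r_max * conc / (kd + conc)

def theoretical_rmax(mw_fragment: float, mw_protein: float,
                     immobilized_ru: float, stoichiometry: int = 1) -> float:
    """Maximum expected response (RU) given the immobilization level."""
    return (mw_fragment / mw_protein) * immobilized_ru * stoichiometry

# Hypothetical fragment: fast on, fast off, weak affinity.
kd = equilibrium_kd(k_a=1.0e4, k_d=5.0)  # 5e-4 M, i.e. 500 micromolar
r_max = theoretical_rmax(mw_fragment=200.0, mw_protein=30_000.0, immobilized_ru=6_000.0)

print(f"K_D = {kd * 1e6:.0f} uM, theoretical Rmax = {r_max:.0f} RU")
for conc_um in (50, 500, 5000):
    response = steady_state_response(conc_um * 1e-6, kd, r_max)
    print(f"{conc_um:>5} uM -> {response:.1f} RU")
```

Because fragments are small relative to the immobilized protein, the theoretical Rmax is low, which is one reason careful reference subtraction matters at this stage. Responses well above the theoretical Rmax are often treated as a warning sign for nonspecific or superstoichiometric binding.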

Nuclear Magnetic Resonance (NMR) Spectroscopy Protocol

Purpose: To identify fragment binding and map the binding site on the target protein [3] [24].

Procedure:

- Prepare isotope-labeled (¹⁵N, ¹³C) protein for protein-observed experiments, or use unlabeled protein with reference fragments for ligand-observed experiments [3].

- Collect either protein-observed or ligand-observed NMR spectra.

- Titrate fragments to determine binding affinity from dose-dependent chemical shift changes [3].

Critical Considerations: The protein must be stable, soluble, and of a molecular weight compatible with the chosen experiment. High fragment concentrations (up to 1 mM) are typically used [3].
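The titration step can be illustrated with two small calculations: a combined ¹H/¹⁵N chemical shift perturbation (CSP) and the fraction of protein bound under a 1:1 binding model. The nitrogen weighting factor of 0.14 is one commonly used convention and is an assumption here, as are all numerical values. A real analysis would fit K_D to the full titration curve.

```python
import math

def combined_csp(delta_h_ppm: float, delta_n_ppm: float, n_weight: float = 0.14) -> float:
    """Combined 1H/15N chemical shift perturbation in ppm (weighted Euclidean distance)."""
    return math.sqrt(delta_h_ppm**2 + (n_weight * delta_n_ppm)**2)

def fraction_bound(protein: float, ligand: float, kd: float) -> float:
    """Fraction of protein bound for 1:1 binding; total concentrations and kd in M."""
    b = protein + ligand + kd
    return (b - math.sqrt(b**2 - 4 * protein * ligand)) / (2 * protein)

def observed_shift(protein: float, ligand: float, kd: float, csp_max: float) -> float:
    """Observed CSP in fast exchange: fraction bound times the fully bound shift."""
    return csp_max * fraction_bound(protein, ligand, kd)

# Hypothetical residue shift and titration of a 1 mM-affinity fragment
# into 50 micromolar protein.
print(f"CSP = {combined_csp(0.03, 0.20):.3f} ppm")
for ligand_mm in (0.25, 0.5, 1.0, 2.0):
    shift = observed_shift(50e-6, ligand_mm * 1e-3, kd=1e-3, csp_max=0.10)
    print(f"{ligand_mm:>4} mM fragment -> {shift:.3f} ppm")
```

For weak binders the curve is far from saturation at accessible fragment concentrations, so the fitted K_D and the fully bound shift are strongly correlated and should be interpreted cautiously.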

X-ray Crystallography Screening Protocol

Purpose: To obtain atomic-resolution structures of fragment-bound complexes for structure-based design [24].

Procedure:

- Generate reproducible crystals of the target protein [24].

- Soak crystals in solutions containing high concentrations of fragments (typically 10-100 mM) or co-crystallize with fragments [24].

- Collect high-resolution X-ray diffraction data at synchrotron sources.

- Solve structures and identify electron density corresponding to bound fragments [15] [24].

Critical Considerations: Requires robust, well-diffracting crystals. Soaking conditions must be optimized to avoid crystal damage. High fragment solubility is crucial [24].

Differential Scanning Fluorimetry (DSF) Protocol

Purpose: To identify fragment binding through thermal stabilization of the target protein [3].

Procedure:

- Incubate purified protein with fragments and a fluorescent dye (e.g., SYPRO Orange) that binds hydrophobic regions exposed upon denaturation [3].

- Gradually increase temperature (typically 25-95°C) while monitoring fluorescence.

- Determine the melting temperature (Tm), the midpoint of the unfolding transition.

- Identify positive hits as fragments that significantly increase Tm (ΔTm > 1°C) compared to protein alone [3].

Critical Considerations: Protein concentration is typically low (μM range) with a high ligand:protein ratio. False positives and false negatives can occur, so orthogonal validation is recommended [3].
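The Tm determination and hit-calling steps can be sketched as follows. The melt curves here are simulated with an idealized two-state sigmoid, which is an assumption for illustration only: real DSF traces are noisy and usually decay after the transition, so they need smoothing or model fitting. The 1°C threshold follows the protocol above.

```python
import math

def simulate_melt_curve(temps, tm, slope=2.0):
    """Idealized two-state unfolding curve (Boltzmann sigmoid), normalized 0 to 1."""
    return [1.0 / (1.0 + math.exp((tm - t) / slope)) for t in temps]

def estimate_tm(temps, fluorescence):
    """Return the temperature where the central-difference dF/dT is largest."""
    best_temp, best_slope = None, float("-inf")
    for i in range(1, len(temps) - 1):
        slope = (fluorescence[i + 1] - fluorescence[i - 1]) / (temps[i + 1] - temps[i - 1])
        if slope > best_slope:
            best_temp, best_slope = temps[i], slope
    return best_temp

def is_hit(tm_protein_alone, tm_with_fragment, threshold=1.0):
    """Flag a fragment when it raises Tm by more than the threshold (in °C)."""
    return (tm_with_fragment - tm_protein_alone) > threshold

temps = [25.0 + 0.5 * i for i in range(141)]  # 25 to 95 °C in 0.5 °C steps
tm_apo = estimate_tm(temps, simulate_melt_curve(temps, tm=55.0))
tm_frag = estimate_tm(temps, simulate_melt_curve(temps, tm=57.5))

print(f"Tm (protein alone) = {tm_apo:.1f} °C, Tm (with fragment) = {tm_frag:.1f} °C")
print(f"delta Tm = {tm_frag - tm_apo:.1f} °C, hit: {is_hit(tm_apo, tm_frag)}")
```

In practice the threshold is usually set relative to the scatter of replicate protein-only wells rather than as a fixed value.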

Performance Comparison of Screening Methodologies

Table 2: Comparative Analysis of Fragment Screening Techniques

| Method | Throughput | Protein Consumption | Information Obtained | Approximate Cost | Key Limitations |

|---|---|---|---|---|---|

| SPR | Medium | Low (μg per fragment) | Affinity, kinetics | $$$ | Immobilization may affect function |

| NMR | Low | High (mg) | Binding site, affinity | $$$$ | Requires isotopic labeling; low throughput |

| X-ray | Low | Medium (mg) | Atomic structure | $$$$ | Requires crystallizable protein |

| DSF | High | Very Low (ng) | Thermal shift | $ | Indirect binding measure; false positives |

| ITC | Very Low | High (mg) | Affinity, thermodynamics | $$$ | Very low throughput; high protein use |

This comparative analysis reveals a clear trade-off between throughput, structural information depth, and resource requirements. While X-ray crystallography provides the most detailed structural data essential for fragment optimization, it demands significant resources and crystallizable proteins [24]. SPR offers a balanced approach with medium throughput and rich kinetic information, making it valuable for early screening phases [3]. DSF provides the highest throughput with minimal protein consumption but requires orthogonal validation due to its indirect measurement of binding [3].

Technological Integration and Workflow Visualization

The Modern FBDD Workflow

The contemporary FBDD process integrates multiple screening technologies with computational approaches in an iterative workflow [15]. The following diagram illustrates this integrated approach:

Emerging Technologies: AI and Machine Learning Integration

A significant trend in recent FBDD research (2020-2024) is the integration of artificial intelligence and machine learning to accelerate various stages of the discovery pipeline [15] [21]. Computational approaches now complement experimental screening at multiple points:

- Virtual Screening: Molecular docking of fragment libraries prior to experimental screening to prioritize compounds [24].

- Hit Optimization: Free energy perturbation (FEP) calculations and QSAR models guide medicinal chemistry efforts [15] [21].

- Library Design: AI-driven generative models design novel fragments with optimized properties [21].

This integration has created hybrid screening platforms that combine biophysical screening with AI/ML to enhance hit discovery and filter artefacts [15]. The application of these computational technologies has demonstrated particular utility for optimizing fragment growth vectors and predicting synthetic accessibility, potentially reducing the number of design-synthesize-test cycles required [21].

Research Reagent Solutions for FBDD Implementation

Table 3: Essential Research Reagents and Materials for FBDD

| Reagent/Material | Function | Specification | Key Suppliers |

|---|---|---|---|

| Fragment Libraries | Source of screening compounds | 1,000-2,000 compounds; MW 150-300 Da; Rule of 3 compliance | Life Chemicals [25], Custom synthesis |

| Biosensor Chips | SPR protein immobilization | CM5, NTA, or CAP chips for different coupling strategies | Cytiva, Bruker, Reichert |

| NMR Isotopes | Protein labeling for NMR | ¹⁵N-ammonium chloride; ¹³C-glucose for uniform labeling | Cambridge Isotopes, CortecNet |

| Crystallization Kits | Protein crystallization screening | Sparse matrix screens (e.g., JCSG, Morpheus) | Hampton Research, Molecular Dimensions |

| Thermal Shift Dyes | DSF protein denaturation detection | SYPRO Orange, 5000X concentrate in DMSO | Thermo Fisher, Life Technologies |

| Surface Plasmon Resonance Systems | Fragment binding analysis | Biacore systems or equivalent | Cytiva, Bruker, Nicoya |

| NMR Spectrometers | Fragment binding detection | High-field (600-900 MHz) with cryoprobes | Bruker, JEOL |

| X-ray Diffraction Systems | Structural characterization | Home source or synchrotron access | Rigaku, synchrotron facilities |

The selection of appropriate reagent solutions significantly impacts FBDD success. For fragment libraries, diversity and quality outweigh sheer size, with optimal libraries containing 1,000-2,000 compounds that maximize coverage of chemical space while maintaining favorable physicochemical properties [25] [2]. Commercial libraries typically follow the "Rule of Three" (molecular weight ≤300, cLogP ≤3, hydrogen bond donors ≤3, hydrogen bond acceptors ≤3) though these are not absolute requirements [2] [3]. Recent trends emphasize libraries with greater three-dimensionality and sp³ character to address flat aromatic scaffolds that dominate traditional sets [2].
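The "Rule of Three" criteria translate directly into a simple filter. The sketch below assumes the four descriptors have already been computed for each fragment (for example with a cheminformatics toolkit). The fragment names and values are hypothetical, and the `max_violations` parameter reflects the point above that the rule is a guideline rather than a hard cutoff.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Fragment:
    name: str
    mol_weight: float       # Da
    clogp: float
    h_bond_donors: int
    h_bond_acceptors: int

def rule_of_three_violations(fragment: Fragment) -> list[str]:
    """Return the names of the Rule of Three criteria that a fragment fails."""
    checks = {
        "molecular weight <= 300": fragment.mol_weight <= 300,
        "cLogP <= 3": fragment.clogp <= 3,
        "H-bond donors <= 3": fragment.h_bond_donors <= 3,
        "H-bond acceptors <= 3": fragment.h_bond_acceptors <= 3,
    }
    return [criterion for criterion, passed in checks.items() if not passed]

def passes_rule_of_three(fragment: Fragment, max_violations: int = 0) -> bool:
    """Accept a fragment if it fails at most max_violations criteria."""
    return len(rule_of_three_violations(fragment)) <= max_violations

# Hypothetical fragments with precomputed descriptors.
library = [
    Fragment("frag_a", 180.2, 1.4, 1, 3),
    Fragment("frag_b", 342.0, 3.6, 2, 5),
    Fragment("frag_c", 295.3, 2.9, 3, 4),
]

for fragment in library:
    failed = rule_of_three_violations(fragment)
    print(fragment.name, "passes" if not failed else f"fails: {', '.join(failed)}")
```

Setting `max_violations=1` would retain `frag_c`, which misses only the acceptor criterion. This is one way to apply the rule flexibly.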

Case Studies: Successful FBDD Applications and Clinical Translations

The impact of FBDD is demonstrated by several FDA-approved drugs and clinical candidates derived from fragment approaches. Two notable examples highlight the methodology's versatility:

Sotorasib (KRAS G12C Inhibitor)

Sotorasib represents a landmark achievement in FBDD, targeting the KRAS G12C mutant previously considered "undruggable" [2] [21]. The discovery campaign identified fragment hits binding to a previously unrecognized pocket adjacent to the switch II region [21]. Structure-guided optimization, primarily through fragment growing, transformed a weak mM binder into a nM inhibitor that covalently targets the cysteine residue of the G12C mutant [21]. This case exemplifies FBDD's ability to address challenging targets through identification of novel binding sites.

Venetoclax (BCL-2 Inhibitor)

Venetoclax, approved for certain types of leukemia, originated from fragment screening using NMR spectroscopy [15] [21]. Initial fragments binding to the BCL-2 protein were optimized through a combination of fragment growing and merging strategies [21]. The resulting drug represents one of the first successful examples of targeting a protein-protein interaction interface, demonstrating FBDD's utility for this difficult target class [21].

The bibliometric analysis and methodological comparison presented herein demonstrate that FBDD has matured into a robust, widely adopted approach in drug discovery. The period from 2015 to 2024 witnessed the methodology's expansion from primarily academic and biotech applications to widespread pharmaceutical implementation [22] [26]. Future research directions are likely to emphasize several key areas based on emerging trends:

First, the integration of computational technologies, particularly artificial intelligence and machine learning, will continue to accelerate screening and optimization cycles [15] [21]. These approaches will enhance virtual screening capabilities and enable more predictive optimization of fragment hits. Second, methodological innovations will expand FBDD to more challenging target classes, including membrane proteins, RNA targets, and complex multiprotein systems [15] [5]. Finally, the combination of FBDD with complementary approaches like DNA-encoded library technology will provide integrated strategies for difficult targets [5].

As FBDD continues to evolve, its proven track record against diverse target classes, including previously "undruggable" targets, positions it as a cornerstone methodology for future therapeutic development. The ongoing technological innovations and deepened theoretical understanding highlighted in this analysis suggest that FBDD will remain a dynamic and impactful field in the coming decade.

Fragment-Based Drug Discovery (FBDD) has established itself as a powerful and versatile approach in modern drug development. By starting with small, low-molecular-weight compounds known as fragments, FBDD provides an efficient path to novel chemical starting points, especially for challenging targets once considered "undruggable" [27]. This guide provides a comparative analysis of successful drugs and candidates originating from FBDD, detailing the screening methodologies and experimental protocols that underpin their development.

FBDD Success Stories: From Fragments to Medicines

The track record of FBDD includes several FDA-approved drugs and a robust pipeline of clinical candidates. The following table summarizes key achievements in the field.

Table 1: FDA-Approved Drugs Originating from Fragment-Based Drug Discovery

| Drug Name (Generic) | Target | Indication | Key Screening Method(s) | Year Approved |

|---|---|---|---|---|

| Vemurafenib (Zelboraf) [27] | BRAF kinase [27] | Melanoma [27] | Not Specified in Sources | 2011 [27] |

| Capivasertib [28] | AKT kinase [28] | Oncology [28] | Not Specified in Sources | 2023 (reported as approved in a 2025 publication) [28] |

| Multiple other drugs [27] [28] | Various | Oncology | Various | 7-8 total approved drugs as of 2025 [14] [27] [28] |

Beyond approved drugs, the FBDD pipeline is robust, with close to 70 drug candidates in clinical trials as of early 2025 [14]. Recent clinical candidates highlighted in conferences include:

- Novel, reversible pan-RAS inhibitors for cancer, discovered through FBDD and developed into macrocyclic analogues [14].

- ABBV-973, a potent, pan-allele small molecule STING agonist for intravenous administration, which was optimized from a fragment hit [14].

- Pyrazolocarboxamide RIP2 Kinase inhibitors for inflammatory diseases, discovered via a fragment screening and design program [14].

Methodological Comparison in FBDD

The success of FBDD relies on a suite of sensitive biophysical and structural techniques to detect the weak binding interactions characteristic of fragments. The workflow typically involves a primary screen followed by orthogonal validation and structural elaboration.

Table 2: Comparison of Key Experimental Techniques in Fragment-Based Drug Discovery

| Technique | Primary Application in FBDD | Key Experimental Protocol Details | Advantages |

|---|---|---|---|

| X-Ray Crystallography [6] | Primary screening & hit validation; provides atomic-resolution structures [6]. | Protein crystals are soaked with fragments. Data collection at synchrotrons; structures solved by molecular replacement [6]. | Directly visualizes binding mode; identifies novel allosteric sites [14] [6]. |

| Surface Plasmon Resonance (SPR) [27] | Primary screening & kinetic characterization [14] [27]. | Target protein immobilized on sensor chip. Fragments injected; binding responses (Rmax), kinetics (kon, koff), and affinity (KD) measured [27]. | Label-free; provides kinetic and affinity data; can be high-throughput [14] [27]. |

| Nuclear Magnetic Resonance (NMR) [29] | Primary screening & affinity measurement [29]. | Ligand-observed (1D 1H: STD, T1ρ) or protein-observed (2D 1H-15N HSQC) methods used [29]. | Detects weak interactions; provides structural information on binding [29]. |

| Microscale Thermophoresis (MST) [6] | Binding validation & affinity measurement [6]. | Measures mobility of fluorescently labeled protein in a temperature gradient upon fragment binding [6]. | Requires minimal sample volume; works in complex biological buffers [6]. |

| Virtual Screening (GCNCMC) [30] | Computational hit identification & binding mode prediction [30]. | Grand Canonical Nonequilibrium Candidate Monte Carlo simulations insert/delete fragments in a defined protein region during MD simulations [30]. | Finds occluded binding sites; predicts affinities without restraints; complements experimental methods [30]. |

Case Study Protocols: From Screen to Candidate

Crystallographic Fragment Screening against Zika Virus Protease

A 2025 study detailed a screen against the challenging NS2B–NS3 Zika protease complex [6].

- Library: A diverse 96-fragment subset of the European Fragment Screening Library (EFSL-96) was used [6].

- Experimental Protocol: The library was prepared as dried compounds in 96-well plates. Protein crystals were grown and soaked with fragments. Data were collected at synchrotron facilities, and structures were solved to identify bound fragments [6].

- Hit Expansion: Follow-up binders were rapidly identified by testing related, larger compounds from the broader European Chemical Biology Library (ECBL), demonstrating an efficient hit expansion strategy [6].

Label-Free Biosensor (GCI) Screening for SLC Transporter Targets

Evotec developed a novel workflow for targeting challenging Solute Carrier (SLC) transporters [31].

- Assay Development: A real-time kinetics assay was developed using Grating Coupled Interferometry (GCI), a label-free biosensor technique [31].

- Screening: A 3,000-fragment library was screened using this GCI approach [31].

- Hit Expansion: Validated fragment hits informed a machine learning (ML) model, which was used to select 1,000 lead-like compounds from a 250,000-compound library. This ML-guided approach achieved a 4× higher hit rate compared to random screening [31].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful FBDD campaigns depend on specialized reagents and tools. The table below lists key solutions used in the featured experiments and the broader field.

Table 3: Key Research Reagent Solutions for Fragment-Based Drug Discovery

| Research Reagent / Tool | Function in FBDD Workflow |

|---|---|

| Fragment Libraries (e.g., EFSL-96 [6], F2X [6]) | Curated collections of small molecules designed for high diversity and optimal physicochemical properties, serving as the primary source for screening hits. |

| Biacore T200 SPR System [27] | An instrument platform for label-free, high-sensitivity fragment screening and kinetic characterization. |

| Synchrotron Beamlines [6] | High-intensity X-ray sources enabling rapid data collection for crystallographic fragment screening. |

| Control Compounds & Mutant Proteins [27] | Used in assay development and as counterscreens to discriminate specific from non-specific fragment binding. |

| Mnova Software Suite [29] | Specialized NMR data analysis tools for processing screening data (Mnova Screen 1D/2D) and calculating binding affinities (Mnova Binding). |

| Machine Learning Models [31] | Computational tools that leverage bioactivity and structural fingerprints to enable efficient hit expansion from fragments into lead-like chemical space. |

Visualizing the FBDD Workflow and Method Interplay

The following diagram illustrates the standard FBDD workflow and how key experimental methods integrate into the hit-to-lead process.

[Figure: FBDD Workflow and Methods]

Fragment-Based Drug Discovery has proven its significant value in delivering approved medicines and a rich clinical pipeline, particularly in oncology. Its strength lies in its ability to efficiently identify high-quality starting points against challenging biological targets. The continued evolution of integrated strategies—combining powerful experimental techniques like crystallography and SPR with computational methods like machine learning and advanced molecular simulations—is further accelerating the FBDD process. This synergy ensures that FBDD remains a cornerstone of modern drug discovery, poised to deliver the next generation of innovative therapeutics.

A Deep Dive into Screening Technologies: From Theory to Practical Application

Fragment-based drug discovery (FBDD) has revolutionized pharmaceutical development by identifying weakly potent, small molecule starting points for lead development. The fundamental premise involves screening low molecular weight compounds (typically <300 Da) against a target protein, resulting in higher hit rates and more efficient exploration of diverse chemical space compared to traditional high-throughput screening [32] [33]. While numerous biophysical methods exist for fragment screening—including nuclear magnetic resonance (NMR), surface plasmon resonance (SPR), and differential scanning fluorimetry—X-ray crystallography has emerged as a powerful primary screening tool that provides direct structural insights unmatched by other techniques [32] [33]. The "crystallography first" paradigm advocates for employing X-ray crystallography as the primary screening method, enabling immediate three-dimensional structural readouts of protein-fragment complexes and accelerating the structure-based drug design process [32].

Historically, X-ray crystallography was under-appreciated as a primary screening tool due to perceptions of low throughput and technical difficulty [32] [33]. However, pioneering work by researchers like Mattos and Ringe with multiple solvent crystallographic structures (MSCS), and subsequent developments by pharmaceutical companies including Abbott Laboratories (CrystaLEADS) and Astex Therapeutics (Pyramid platform), demonstrated the feasibility and power of crystallographic screening [32]. Recent technical advances in synchrotron beamlines, robotic crystal handling, detectors, and data collection software have dramatically increased throughput, making crystallographic screening comparable to other techniques in terms of timeline while providing superior structural information [32] [33] [34]. This evolution has positioned crystallography as a compelling first choice for fragment screening campaigns when suitable protein crystals are available.

Comparative Analysis: X-ray Crystallography Versus Other Screening Methods

Technical Comparison of Primary Screening Methods

The following table provides a systematic comparison of X-ray crystallography against other predominant fragment screening methodologies, highlighting the unique advantages of the crystallography-first approach.

| Screening Method | Key Advantages | Inherent Limitations | Primary Information Obtained | Optimal Use Case |

|---|---|---|---|---|

| X-ray Crystallography | Direct 3D structural data; Unmatched range of detectable binding affinity (millimolar to sub-nanomolar); Reveals novel/allosteric binding sites; Identifies binding mode and protein conformational changes [32] [33] [35]. | Requires robust, high-resolution crystals; Throughput limited by crystal handling and data collection; Higher initial resource investment for crystal system development [32] [36]. | Atomic-resolution structure of protein-fragment complex; Precise binding pose and interactions [32]. | Primary screening when robust crystal systems exist; Targets with potential for allosteric modulation. |

| NMR Spectroscopy | Detects weak binding events; Provides quantitative affinity data (KD); Probes binding in solution (no crystal packing artifacts) [37] [34]. | Lower structural resolution than crystallography; Limited by protein size for some experiments; Can be resource-intensive for protein labeling [37] [34]. | Binding confirmation, approximate binding location, and quantitative binding affinity [34]. | Solution-based screening prior to crystallography; Validating binding events detected by other methods. |

| Surface Plasmon Resonance (SPR) | Provides real-time kinetic data (kon, koff); High sensitivity; Amenable to automation and relatively high throughput [34]. | Cannot determine structural basis of binding; Prone to false positives from non-specific binding; Requires immobilization of target protein [34]. | Binding kinetics and affinity; Stoichiometry of binding [34]. | Secondary validation of fragment hits; Kinetic profiling. |

| Differential Scanning Fluorimetry | Low material consumption; Technically simple and low-cost; Rapid screening capability [32]. | Indirect measurement of binding (thermal stability); High false positive/negative rates; No structural information [32]. | Thermal shift (ΔTm) indicating potential stabilization from binding. | Initial low-cost triage of fragment libraries. |

Quantitative Performance Metrics

Data from successful screening campaigns provide concrete evidence of the performance of the crystallography-first approach, as detailed in the table below.

| Performance Metric | X-ray Crystallography | NMR Spectroscopy | Surface Plasmon Resonance |
|---|---|---|---|
| Typical Hit Rate | 1-5% (SGX) [34]; ~3-8% (various targets) [32] | 0.01 - 0.8% (Abbott, 23 targets) [34] | Varies significantly by target |
| Affinity Range | Millimolar to sub-nanomolar [32] | High micromolar to low millimolar [34] | High micromolar to nanomolar |
| Throughput (Compounds/Week) | 1,000-2,000 fragments [35] | Varies by method; generally high | Very high (can exceed 10,000) |
| Key Differentiating Output | 3D atomic structure of complex [32] | Binding site mapping and affinity [34] | Kinetic parameters (kon, koff) [34] |

A notable example of the power of crystallographic screening comes from a 2024 campaign against Schistosoma mansoni thioredoxin glutathione reductase (SmTGR), where researchers screened 768 fragments and observed 49 binding events involving 35 distinct fragments across 16 binding sites, providing a rich structural foundation for inhibitor design [36].
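The SmTGR numbers can be turned into simple ratios for comparison with the hit rates tabulated above. The sketch below is illustrative only: the function name is hypothetical, and defining the hit rate as distinct bound fragments divided by fragments screened is an assumption, since campaigns differ in how they count hits.

```python
def crystallographic_hit_summary(n_screened, n_events, n_hit_fragments, n_sites):
    """Summarise a crystallographic fragment screen with simple ratios."""
    if n_screened <= 0 or n_hit_fragments <= 0 or n_sites <= 0:
        raise ValueError("counts must be positive")
    return {
        # Assumed definition: distinct bound fragments / fragments screened.
        "hit_rate_pct": 100.0 * n_hit_fragments / n_screened,
        # Values above 1 mean some fragments bind at more than one site.
        "events_per_hit_fragment": n_events / n_hit_fragments,
        "events_per_site": n_events / n_sites,
    }


# Counts reported for the SmTGR campaign [36].
summary = crystallographic_hit_summary(
    n_screened=768, n_events=49, n_hit_fragments=35, n_sites=16
)
for name, value in summary.items():
    print(f"{name}: {value:.2f}")
```

Under this definition the campaign's hit rate is about 4.6%, and the ratio of 1.4 binding events per hit fragment reflects fragments that were observed at more than one site.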

The Crystallographic Screening Workflow: From Cocktail Design to Hit Identification

The crystallography-first paradigm relies on a streamlined, iterative process that maximizes structural information yield. The following diagram illustrates the integrated workflow that connects computational design, experimental execution, and AI-driven analysis in modern crystallographic screening.

Critical Workflow Components and Methodologies

Fragment Library and Cocktail Design

The design of the fragment library and its organization into screening cocktails is a critical foundation for success. Multiple strategies have been developed by leading organizations:

- Diversity-Oriented Design: Astex Pharmaceuticals pioneered grouping 4 fragments per cocktail with strong emphasis on chemical diversity to facilitate deconvolution and reduce multiple fragment binding [32].

- Shape-Similarity Approach: Johnson & Johnson designed cocktails of 5 compounds with similar shapes, taking advantage of potential multiple-fragment binding to strengthen electron density [32].

- Computational Design: The University of Washington's Biomolecular Structure Center used shape fingerprint analysis to design 68 cocktails of 10 structurally diverse compounds from a carefully filtered 680-compound library [32].
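As a rough illustration of diversity-driven cocktail assembly, the sketch below distributes a 680-compound library into 68 cocktails of 10 with a greedy rule. It is not the published procedure: the fingerprints are random stand-ins (the cited work used shape fingerprints), Tanimoto similarity on bit sets is an assumed metric, and all function names are hypothetical.

```python
import random


def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints stored as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def design_cocktails(fingerprints, n_cocktails, cocktail_size):
    """Greedy assignment: each compound joins the non-full cocktail whose
    most similar current member is least similar to it."""
    if len(fingerprints) > n_cocktails * cocktail_size:
        raise ValueError("not enough cocktail slots for the library")
    cocktails = [[] for _ in range(n_cocktails)]
    for idx, fp in enumerate(fingerprints):
        best, best_score = None, None
        for members in cocktails:
            if len(members) >= cocktail_size:
                continue
            # Worst-case similarity to anything already in this cocktail.
            score = max((tanimoto(fp, fingerprints[j]) for j in members), default=0.0)
            if best_score is None or score < best_score:
                best, best_score = members, score
        best.append(idx)
    return cocktails


# Hypothetical library: 680 random fingerprints, 16 on-bits out of 256.
rng = random.Random(0)
library = [frozenset(rng.sample(range(256), 16)) for _ in range(680)]
cocktails = design_cocktails(library, n_cocktails=68, cocktail_size=10)
print(len(cocktails), {len(c) for c in cocktails})
```

A shape-similarity strategy of the kind described for Johnson & Johnson would invert the selection rule and pick the cocktail with the highest similarity instead of the lowest.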

Modern approaches increasingly incorporate AI and machine learning. For instance, AbbVie calculated druggability scores for the entire human genome and integrated ESM-2 embeddings with ligand data using AffinityNet to predict binding affinity with exceptional accuracy [38].

Crystal Preparation and Soaking Methods

The success of crystallographic screening is heavily dependent on crystal quality and robustness. Key considerations include:

- Crystal Engineering: Prior to fragment screening of HIV-1 reverse transcriptase (RT), researchers introduced point mutations and C-terminal truncations to reduce surface entropy and improve resolution [32].

- Soaking Methodologies: Efficient soaking protocols must balance fragment concentration (typically 50-200 mM) with crystal stability, requiring optimization of soaking time, temperature, and cryoprotection [32] [39].

- Crystal Robustness: The Medical Structural Genomics for Protozoan Parasites Consortium reported that only 19 of 26 protein targets produced fragment binding, with limitations including poor resolution (worse than 2.8 Å) and reduced crystal stability in soaking conditions [32].
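The trade-off between fragment concentration and crystal stability is partly a matter of solvent load. The sketch below assumes fragments are held as a 1 M stock in 100% DMSO and diluted into a 2 µL soaking drop; both values and the function name are hypothetical and chosen only to show the arithmetic.

```python
def soak_mix(stock_mM, target_mM, final_volume_uL):
    """Volumes for diluting a DMSO fragment stock into soaking solution.

    Returns (stock volume in µL, buffer volume in µL, final DMSO % v/v),
    assuming the stock is dissolved in 100% DMSO.
    """
    if not 0 < target_mM <= stock_mM:
        raise ValueError("target must be positive and not exceed the stock")
    stock_uL = final_volume_uL * target_mM / stock_mM
    buffer_uL = final_volume_uL - stock_uL
    dmso_pct = 100.0 * stock_uL / final_volume_uL
    return stock_uL, buffer_uL, dmso_pct


# Span the 50-200 mM soaking range quoted in the text.
for target in (50, 100, 200):
    stock_uL, buffer_uL, dmso_pct = soak_mix(
        stock_mM=1000, target_mM=target, final_volume_uL=2.0
    )
    print(f"{target} mM soak: {stock_uL:.2f} µL stock + "
          f"{buffer_uL:.2f} µL buffer, {dmso_pct:.0f}% DMSO")
```

With these assumed values, moving from 50 mM to 200 mM raises the DMSO content from 5% to 20%, which is one reason soaking time and cryoprotection need to be re-optimized as concentration increases.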

The XChem facility at Diamond Light Source has extensively streamlined these processes, generating 35,000 datasets from uniquely soaked crystals in 2017 alone [40].

Data Collection and Hit Identification

Technical improvements have dramatically accelerated data collection and analysis:

- Automated Data Collection: Robotic crystal mounting, powerful detectors, and automated data collection software have significantly increased throughput [32] [33].

- High-Throughput Processing: Streamlined pipelines like those at XChem package structure solution calculations into single processes that provide ready-to-view maps for evaluating fragment binding [40].

- Hit Validation: Electron density maps must be carefully evaluated to distinguish genuine fragment binding from solvent effects or crystal artifacts [39].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of the crystallography-first paradigm requires specific reagents and materials, as detailed in the following table.

| Reagent/Material | Function in Crystallographic Screening | Key Considerations |
|---|---|---|
| Fragment Library | Collection of 500-2,000 low molecular weight compounds (<300 Da) for screening [39]. | Diversity, solubility (>50 mM in DMSO), chemical stability, absence of reactive groups [32] [39]. |
| Crystallization Reagents | Precipitants, buffers, and salts to produce robust, high-resolution protein crystals [35]. | Compatibility with soaking conditions; minimal interference with potential binding sites [35]. |
| Cryoprotectants | Compounds (e.g., glycerol, ethylene glycol) to prevent ice formation during cryocooling [32]. | Must preserve crystal integrity while not displacing bound fragments [32]. |
| Halogenated Fragments | Brominated compounds for anomalous diffraction; fluorinated compounds for 19F NMR studies [33]. | Aid in fragment identification and confirm binding through multiple methods [33]. |
| High-Throughput Crystallization Plates | Specialized plates for efficient crystal production and soaking [40]. | Compatibility with automated liquid handling and crystal harvesting systems [40]. |

Synergy with Modern Computational Approaches

The crystallography-first approach powerfully integrates with contemporary AI and computational drug discovery methods, creating an accelerated feedback loop for lead optimization.

AI and Foundation Models

The structural data generated by crystallographic screening provides essential training data for biological foundation models (BioFMs). Companies like AbbVie have leveraged ESM-2 embeddings integrated with ligand data to predict binding affinity with exceptional accuracy [38]. The concept of the "informacophore"—minimal chemical structures combined with computed molecular descriptors and machine-learned representations—is emerging as a data-driven extension of traditional pharmacophore modeling [41].

Federated Learning and Collaborative Discovery

Federated learning approaches address the challenge of accessing diverse structural data while protecting intellectual property. The AI Structural Biology (AISB) consortium, with participants from Johnson & Johnson and AbbVie, leverages federated learning to collaboratively train AI models across distributed datasets without exposing underlying proprietary data [38]. This enables shared insights that enhance drug specificity and improve molecular interactions.

The "Lab in the Loop" Paradigm

Genentech's concept of a "lab in the loop" creates a tightly integrated, iterative cycle where AI models trained on experimental data generate predictions that guide laboratory experiments [38]. As new crystallographic data is produced, it feeds back into the models to refine them and improve accuracy. This continuous feedback loop allows researchers to explore vast chemical spaces and simultaneously optimize multiple therapeutic properties.

The crystallography-first paradigm represents a powerful strategy for fragment-based drug discovery when appropriate crystal systems are available. Its unparalleled ability to provide direct three-dimensional structural information on fragment binding accelerates the entire drug discovery process, from initial hit identification to lead optimization. While the approach requires significant initial investment in crystal system development and infrastructure, the returns in structural insights and design guidance can substantially reduce later-stage attrition.

Successful implementation requires careful consideration of several factors: the availability of robust, high-resolution crystals; appropriate fragment library design; streamlined soaking and data collection protocols; and integration with computational methods. As AI and automation continue to advance, the synergy between crystallographic screening and computational prediction will likely further enhance the throughput and impact of the crystallography-first approach, solidifying its role as a cornerstone of modern structure-based drug discovery.

Fragment-Based Drug Discovery (FBDD) has matured into a powerful strategy for generating novel therapeutic leads, particularly for challenging targets considered "undruggable" by traditional high-throughput screening (HTS) [42] [15]. This approach identifies low molecular weight fragments (typically <300 Da) that bind weakly to a target, which are then optimized into potent leads through structure-guided strategies [15]. The fundamental advantage of FBDD lies in its efficient exploration of chemical space; smaller fragment libraries provide broader coverage than much larger libraries of drug-like compounds [11]. Since fragments bind with high ligand efficiency (binding free energy per heavy atom), they provide excellent starting points for optimization into drugs with favorable properties [11].
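Ligand efficiency can be computed directly from a dissociation constant and a heavy-atom count. The sketch below uses the standard relation ΔG = RT ln K~d~ and divides by the number of heavy atoms; the two example compounds are hypothetical and chosen only to contrast a weak fragment with a potent drug-like molecule.

```python
import math

R_KCAL = 1.987e-3  # gas constant in kcal/(mol*K)


def ligand_efficiency(kd_molar, heavy_atoms, temp_k=298.15):
    """Ligand efficiency in kcal/mol per heavy atom: LE = -RT ln(Kd) / N_heavy."""
    if kd_molar <= 0 or heavy_atoms <= 0:
        raise ValueError("Kd and heavy atom count must be positive")
    delta_g = R_KCAL * temp_k * math.log(kd_molar)  # negative for Kd < 1 M
    return -delta_g / heavy_atoms


# Hypothetical examples: a 1 mM fragment vs. a 10 nM drug-like compound.
fragment_le = ligand_efficiency(kd_molar=1e-3, heavy_atoms=12)
lead_le = ligand_efficiency(kd_molar=1e-8, heavy_atoms=38)
print(f"fragment LE: {fragment_le:.2f} kcal/mol per heavy atom")
print(f"lead LE:     {lead_le:.2f} kcal/mol per heavy atom")
```

In this illustration the millimolar fragment has a ligand efficiency of roughly 0.34, higher than the roughly 0.29 of the nanomolar compound, which is the sense in which a weak fragment can still be an efficient binder.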

The identification of these weakly binding fragments relies exclusively on highly sensitive biophysical methods that can detect interactions with dissociation constants (K~d~) in the high micromolar to millimolar range [15]. Among the numerous techniques available, Nuclear Magnetic Resonance (NMR), Surface Plasmon Resonance (SPR), and Microscale Thermophoresis (MST) have emerged as foundational tools in the FBDD workflow [43]. These "biophysical workhorses" enable researchers to detect and validate fragment binding, each offering distinct advantages, limitations, and specific applications. This guide provides a comparative analysis of these three core techniques, examining their underlying principles, experimental requirements, and performance characteristics to inform method selection for fragment screening campaigns.

Core Principles and Workflows

Nuclear Magnetic Resonance (NMR) Spectroscopy

NMR spectroscopy probes fragment binding by detecting changes in the magnetic properties of atomic nuclei. It can be conducted in two primary modes: protein-observed NMR, which monitors chemical shift perturbations in isotopically labeled (^15^N or ^13^C) proteins upon fragment binding, and ligand-observed NMR, which detects changes in the properties of fragment molecules when they interact with the target protein [43] [44]. Protein-observed NMR provides detailed information on the binding site and can distinguish between specific and non-specific binding, but it requires significant amounts of labeled protein and is generally limited to proteins under ~40 kDa due to spectral broadening in larger molecules [43]. Ligand-observed methods such as Saturation Transfer Difference (STD) and WaterLOGSY are more versatile for screening and can handle larger proteins without labeling, but provide less detailed structural information [43].
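In protein-observed experiments, per-residue shift changes in the two dimensions are commonly combined into a single perturbation value and compared against a cutoff. The sketch below is one such convention, not a method taken from the cited sources: the 0.14 scaling of the nitrogen dimension, the mean-plus-one-SD cutoff, and the residue data are all assumptions.

```python
import math
import statistics


def combined_csp(d_h_ppm, d_n_ppm, n_scale=0.14):
    """Combined 1H/15N chemical shift perturbation in ppm.

    n_scale down-weights the wider 15N shift range; 0.14 is an assumed value.
    """
    return math.sqrt(d_h_ppm ** 2 + (n_scale * d_n_ppm) ** 2)


def flag_perturbed(shifts, n_sd=1.0):
    """Return residues whose CSP exceeds mean + n_sd * population SD."""
    csp = {res: combined_csp(dh, dn) for res, (dh, dn) in shifts.items()}
    values = list(csp.values())
    cutoff = statistics.mean(values) + n_sd * statistics.pstdev(values)
    return sorted(res for res, value in csp.items() if value > cutoff)


# Hypothetical (delta 1H, delta 15N) shift changes in ppm on fragment addition.
shifts = {
    "G12": (0.01, 0.05), "A13": (0.02, 0.10),
    "L45": (0.12, 0.60), "V46": (0.15, 0.80),
    "K80": (0.01, 0.02), "S81": (0.00, 0.04),
    "T90": (0.02, 0.03), "D91": (0.01, 0.06),
}
print(flag_perturbed(shifts))
```

Residues flagged this way cluster around the binding site when binding is specific, which is how protein-observed NMR provides an approximate binding location.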

Surface Plasmon Resonance (SPR)

SPR is a label-free technique that measures biomolecular interactions in real time through changes in the refractive index near a sensor surface [45] [43]. One binding partner (typically the protein target) is immobilized on a dextran-coated gold chip, while the other (the fragment) flows over the surface in solution [45]. Binding events increase the local refractive index, causing a measurable shift in the SPR angle [43]. This response is recorded in resonance units (RU) over time, generating sensorgrams that provide kinetic information (association rate constant k~on~ and dissociation rate constant k~off~) in addition to the equilibrium dissociation constant K~d~ [45]. A significant advantage of SPR is its sensitivity to low-affinity fragment binding: affinities can be measured reliably down to a K~d~ of around 500 µM, and weaker interactions have also been reported [43].
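The relationship between the kinetic constants and the shape of the response curve can be sketched with the standard 1:1 Langmuir binding model. The rate constants, maximum response, and injection time below are hypothetical values chosen to give a K~d~ of 500 µM; real fragment data often need corrections (bulk refractive index, DMSO calibration) that this sketch ignores.

```python
import math


def spr_response(t, conc, kon, koff, rmax, t_off):
    """Response (RU) of a 1:1 Langmuir interaction.

    Analyte at concentration conc (M) is injected from t = 0 to t = t_off (s);
    buffer alone flows afterwards, so the complex dissociates.
    """
    if t < 0:
        return 0.0
    kd = koff / kon
    r_eq = rmax * conc / (conc + kd)   # steady-state plateau
    k_obs = kon * conc + koff          # observed association rate
    if t <= t_off:
        return r_eq * (1.0 - math.exp(-k_obs * t))
    r_end = r_eq * (1.0 - math.exp(-k_obs * t_off))
    return r_end * math.exp(-koff * (t - t_off))


# Hypothetical fragment: fast on, fast off, Kd = koff / kon = 500 µM.
kon, koff = 1.0e4, 5.0                 # M^-1 s^-1 and s^-1
kd = koff / kon
for t in (0.1, 1.0, 30.0, 30.5, 31.0):
    ru = spr_response(t, conc=kd, kon=kon, koff=koff, rmax=20.0, t_off=30.0)
    print(f"t = {t:5.1f} s  response = {ru:6.3f} RU")
```

Injecting the fragment at a concentration equal to K~d~ gives a plateau at half of the maximum response, and the fast dissociation typical of fragments appears as a near-square sensorgram.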

Microscale Thermophoresis (MST)

MST quantifies biomolecular interactions by measuring the directed movement of molecules through a microscopic temperature gradient [46] [47]. This movement, known as thermophoresis, depends on molecular properties including size, charge, and hydration shell—all of which typically change upon binding [45] [47]. In a standard MST experiment, one binding partner is fluorescently labeled, and an infrared laser creates a localized temperature gradient in the sample [47]. The instrument monitors fluorescence changes as molecules move through this gradient, with binding-induced changes in thermophoretic behavior enabling quantification of affinity [47]. A key advantage of MST is its ability to measure interactions under near-native conditions, including in complex biological liquids like cell lysates, and with very low sample consumption [47]. An extension called kinetic MST (KMST) can also determine binding kinetics alongside affinity in a single experiment by analyzing thermal relaxation processes [46].
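Affinity is typically extracted from an MST titration by fitting the signal to a 1:1 binding isotherm. The sketch below simulates a noise-free 16-point dilution series and recovers K~d~ by a coarse grid search. It is a simplified illustration: the signal plateaus are treated as known, the numbers are hypothetical, and real analyses fit the plateaus and K~d~ together on noisy data.

```python
import math


def fraction_bound(ligand, target, kd):
    """Fraction of labeled target bound, from the exact 1:1 quadratic solution."""
    s = ligand + target + kd
    return (s - math.sqrt(s * s - 4.0 * ligand * target)) / (2.0 * target)


def mst_signal(ligand, target, kd, f_unbound, f_bound):
    """Normalized fluorescence as a linear mix of unbound and bound states."""
    return f_unbound + (f_bound - f_unbound) * fraction_bound(ligand, target, kd)


def fit_kd(ligands, signals, target, f_unbound, f_bound):
    """Grid search over log-spaced Kd values (1 nM to 100 mM), least squares."""
    candidates = [10 ** (e / 20.0) for e in range(-180, -19)]

    def sse(kd):
        return sum(
            (mst_signal(l, target, kd, f_unbound, f_bound) - s) ** 2
            for l, s in zip(ligands, signals)
        )

    return min(candidates, key=sse)


# Hypothetical titration: 50 nM labeled target, fragment diluted 2-fold from 5 mM.
target, true_kd = 50e-9, 2e-4
ligands = [5e-3 / 2 ** i for i in range(16)]
signals = [mst_signal(l, target, true_kd, 900.0, 880.0) for l in ligands]
kd_fit = fit_kd(ligands, signals, target, 900.0, 880.0)
print(f"fitted Kd = {kd_fit * 1e6:.0f} µM")
```

For weak fragments the top of the dilution series must reach several times K~d~ to define the bound plateau, so fragment solubility often limits how well millimolar affinities can be determined.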

Comparative Performance Analysis

Technical Specifications and Performance Metrics

The table below summarizes the key technical characteristics and performance metrics of NMR, SPR, and MST in the context of fragment screening.

Table 1: Technical Specifications and Performance Comparison

| Parameter | NMR | SPR | MST |

|---|---|---|---|

| Detection Principle | Chemical shift changes/magnetization transfer | Refractive index change near sensor surface | Thermophoretic movement in temperature gradient |

| Throughput | Medium (ligand-observed); Low (protein-observed) | High | Medium to High |

| Sample Consumption | High (5–50 mg protein) [43] | Low | Very Low (<5 μL) [46] |

| Labeling Required | No (for most modes); Isotopic labeling for protein-observed | No | Yes (fluorescent label for one partner) |

| Immobilization Required | No | Yes (one partner) | No |

| Affinity Range (Kd) | µM–mM [43] | ~500 µM to nM [43] | nM–mM [47] |