Building Robust QSAR Models: A Comprehensive Workflow from Data to Validation

Abstract

This article provides a complete guide to the Quantitative Structure-Activity Relationship (QSAR) modeling workflow, tailored for researchers and drug development professionals. It covers foundational principles, including molecular descriptors and data curation, then progresses to advanced methodological applications of both classical and machine learning algorithms. The guide addresses critical troubleshooting and optimization strategies to avoid common pitfalls and concludes with rigorous internal and external validation techniques to ensure model reliability and regulatory acceptance. By synthesizing traditional best practices with emerging trends like AI integration and performance metric reevaluation, this resource serves as a practical handbook for developing predictive, interpretable, and impactful QSAR models in modern drug discovery.

Laying the Groundwork: Core Principles and Data Preparation for QSAR

Quantitative Structure-Activity Relationship (QSAR) modeling represents a cornerstone methodology in cheminformatics and computer-aided drug design. These computational models mathematically correlate the physicochemical properties or theoretical molecular descriptors of chemical compounds with their biological activity or chemical properties [1]. The foundational principle underpinning QSAR is that molecular structure determines properties, which in turn govern biological activity, enabling the prediction of activities for novel compounds without the need for immediate synthesis and experimental testing [1] [2].

The QSAR paradigm has evolved significantly from its origins in the early 1960s with Hansch analysis, which utilized simple physicochemical parameters like lipophilicity (log P) and electronic effects (Hammett constants) [3] [4]. Today, the field encompasses thousands of potential molecular descriptors and employs sophisticated machine learning algorithms, including deep learning techniques that define the emerging field of "deep QSAR" [5] [4]. This evolution has expanded QSAR's applications beyond drug discovery to include toxicology prediction, environmental risk assessment, and material science, making it an indispensable tool across numerous scientific disciplines [1] [2].

Essential Components of QSAR Modeling

Molecular Descriptors: Quantifying Chemical Structure

Molecular descriptors are numerical representations that encode specific structural, topological, or physicochemical features of molecules, serving as the independent variables in QSAR models [6] [4]. The selection of appropriate descriptors is critical, as they must comprehensively represent molecular properties, correlate with biological activity, be computationally feasible, and possess distinct chemical interpretability [4].

Table 1: Major Categories of Molecular Descriptors in QSAR

| Descriptor Category | Description | Examples | Applications |
|---|---|---|---|
| Constitutional | Describe molecular composition without geometry | Molecular weight, atom count, bond count | Basic characterization of drug-likeness |
| Topological | Encode molecular connectivity patterns | Molecular connectivity indices, Wiener index | Modeling absorption and distribution |
| Geometric | Capture 3D spatial characteristics | Molecular volume, surface area, inertia moments | Receptor-ligand complementarity studies |
| Electronic | Quantify electronic distribution | Partial charges, dipole moment, HOMO/LUMO energies | Modeling interactions with enzyme active sites |
| Thermodynamic | Represent energy-related properties | Log P (lipophilicity), molar refractivity, solubility | Predicting bioavailability and permeability |

The accuracy and relevance of descriptors directly govern the predictive power and interpretability of QSAR models. The field has witnessed a transition from simple, interpretable descriptors to high-dimensional descriptor spaces, facilitated by software tools like PaDEL-Descriptor, Dragon, and RDKit [6] [4]. This evolution presents the critical challenge of balancing descriptor dimensionality with model interpretability and computational efficiency [4].

Mathematical Algorithms: From Linear Regression to Deep Learning

QSAR modeling employs diverse mathematical approaches to establish quantitative relationships between descriptors and biological activity. These can be broadly categorized into linear and non-linear methods [6].

Linear QSAR models, including Multiple Linear Regression (MLR) and Partial Least Squares (PLS), assume a direct, additive relationship between molecular descriptors and biological response. These models offer high interpretability, as the contribution of each descriptor is represented by a coefficient in a linear equation [6]. The general form of a linear QSAR model is:

\[ \text{Activity} = b + \sum_{i=1}^{n} w_i \times \text{Descriptor}_i \]

where \(w_i\) are the model coefficients, \(b\) is the intercept, and \(n\) is the number of descriptors [6].
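As a minimal sketch of how the linear form above is evaluated for one compound, the following Python snippet computes a predicted activity from a descriptor vector. The function name `predict_activity`, the two descriptors, and all coefficient values are hypothetical and chosen only for illustration; they do not come from any fitted model.

```python
def predict_activity(descriptors, weights, intercept):
    """Evaluate Activity = b + sum_i w_i * Descriptor_i for one compound."""
    if len(descriptors) != len(weights):
        raise ValueError("descriptors and weights must have the same length")
    return intercept + sum(w * d for w, d in zip(weights, descriptors))


# Hypothetical two-descriptor model: logP and a hydrogen-bond donor count.
weights = [0.5, -1.0]   # w_1, w_2 (illustrative values only)
intercept = 3.0         # b
compound = [1.0, 2.0]   # Descriptor_1 = logP, Descriptor_2 = donor count

predicted = predict_activity(compound, weights, intercept)
print(predicted)
```

Because each term is a coefficient times a descriptor, the sign and size of each weight can be read directly as that descriptor's contribution, which is the interpretability advantage described above.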

Non-linear QSAR models capture more complex structure-activity relationships using techniques such as Support Vector Machines (SVM), Random Forest (RF), and Artificial Neural Networks (ANNs) [6]. The general form of a non-linear QSAR model is:

\[ \text{Activity} = f(\text{Descriptor}_1, \text{Descriptor}_2, \ldots, \text{Descriptor}_n) \]

where \(f\) is a non-linear function learned from the data [6]. These methods often demonstrate superior predictive performance for complex biological endpoints but can function as "black boxes" with limited interpretability [5].

Recent advances incorporate deep learning architectures that automatically learn relevant feature representations from molecular structures, potentially reducing the dependency on pre-defined descriptors [5] [7]. The emergence of graph-based models that operate directly on molecular graphs represents a particularly promising direction [5].

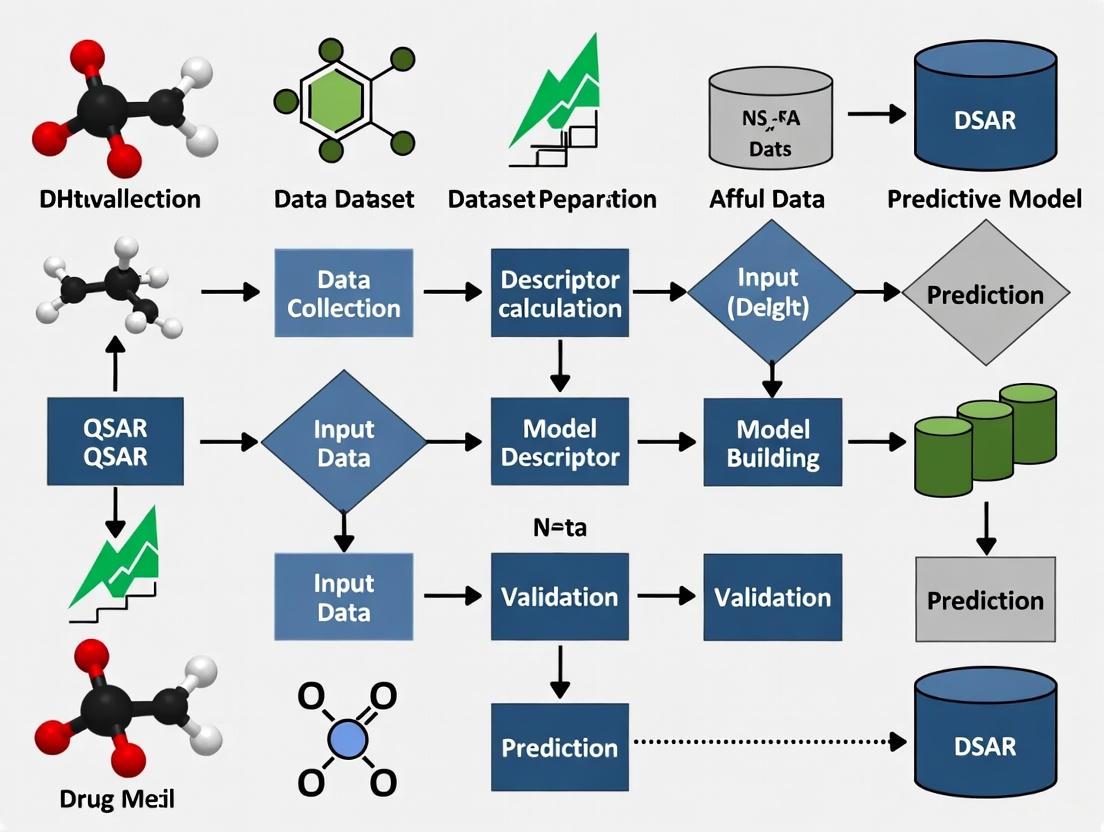

Diagram 1: QSAR Model Development Workflow. This flowchart outlines the standard procedure for developing validated QSAR models, from initial data collection through to final predictive application.

Application Note: QSAR in Anti-Malarial Drug Discovery

Experimental Background and Objectives

The emergence of artemisinin resistance in Plasmodium falciparum has created an urgent need for novel antimalarial agents with new mechanisms of action [8]. Dihydroorotate dehydrogenase (DHODH) represents a promising drug target as it catalyzes a critical step in pyrimidine biosynthesis essential for parasite proliferation [8]. This application note details a QSAR study aimed at developing predictive classification models for identifying novel PfDHODH inhibitors, demonstrating the practical implementation of the QSAR paradigm in addressing a significant public health challenge.

Detailed Experimental Protocol

Data Set Curation and Preparation

- Data Source: IC~50~ values for PfDHODH inhibitors were extracted from the ChEMBL database (ChEMBL ID CHEMBL3486), a manually curated repository of bioactive molecules with drug-like properties [8].

- Data Curation: The initial data set was rigorously curated to remove duplicates, compounds with missing activity values, and those falling outside relevant potency ranges. This resulted in a final set of 465 inhibitors for model development [8].

- Activity Classification: Continuous IC~50~ values were converted to categorical classes (active/inactive) using appropriate threshold values based on biological significance.

- Data Splitting: The curated data set was divided into training (~80%) and external test (~20%) sets using statistical methods such as the Kennard-Stone algorithm to ensure representative chemical space coverage [8] [6].
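The Kennard-Stone idea mentioned in the splitting step can be sketched in a few lines of Python. This is an illustrative implementation under stated assumptions, not the procedure used in the cited study: compounds are represented as plain numeric descriptor tuples, distances are Euclidean, and the function name `kennard_stone` and the toy data are hypothetical.

```python
import math


def kennard_stone(points, n_train):
    """Return indices of n_train points selected by the Kennard-Stone rule."""
    n = len(points)
    if not 2 <= n_train <= n:
        raise ValueError("n_train must be between 2 and len(points)")
    # Pairwise Euclidean distances in descriptor space.
    dist = [[math.dist(p, q) for q in points] for p in points]
    # Seed the training set with the two most distant compounds.
    pairs = ((i, j) for i in range(n) for j in range(i + 1, n))
    first, second = max(pairs, key=lambda ij: dist[ij[0]][ij[1]])
    selected = [first, second]
    remaining = [i for i in range(n) if i not in selected]
    while len(selected) < n_train:
        # Add the compound whose nearest selected neighbour is farthest away.
        nxt = max(remaining, key=lambda c: min(dist[c][s] for s in selected))
        selected.append(nxt)
        remaining.remove(nxt)
    return selected


# Toy one-descriptor data set (hypothetical values).
descriptors = [(0.0,), (1.0,), (2.0,), (10.0,), (5.0,)]
train_idx = kennard_stone(descriptors, n_train=3)
test_idx = [i for i in range(len(descriptors)) if i not in train_idx]
print(train_idx, test_idx)
```

The selection spreads the training compounds across the descriptor range, so the test compounds tend to fall inside the region the model has seen. The full distance matrix costs memory quadratic in the number of compounds, which is manageable for a few hundred molecules but may need a different approach for very large sets.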

Molecular Descriptor Calculation and Feature Selection

- Descriptor Types: Twelve distinct sets of chemical fingerprints (binary structural keys representing molecular features) were calculated for all compounds using cheminformatics software [8].

- Feature Selection: Recursive feature elimination was employed to identify the most informative molecular descriptors, removing 62-99% of redundant features to reduce noise and prevent model overfitting [8] [9].

- Data Balancing: Both undersampling and oversampling techniques were applied to address class imbalance, with balanced oversampling proving most effective for this data set [8].
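The source does not specify how the oversampling was implemented. As one possible reading, the sketch below shows simple random oversampling, in which minority-class compounds are duplicated at random until every class matches the largest one. The function name `random_oversample` and the example labels are hypothetical.

```python
import random
from collections import Counter


def random_oversample(samples, labels, seed=0):
    """Duplicate minority-class samples at random until all classes are equal in size."""
    rng = random.Random(seed)
    by_class = {}
    for sample, label in zip(samples, labels):
        by_class.setdefault(label, []).append(sample)
    target = max(len(group) for group in by_class.values())
    out_samples, out_labels = [], []
    for label, group in by_class.items():
        # Draw extra copies (with replacement) to reach the target size.
        extra = [rng.choice(group) for _ in range(target - len(group))]
        for sample in group + extra:
            out_samples.append(sample)
            out_labels.append(label)
    return out_samples, out_labels


# Hypothetical compound IDs: one active (1) and three inactives (0).
compounds = ["a", "b", "c", "d"]
activity = [1, 0, 0, 0]
balanced_samples, balanced_labels = random_oversample(compounds, activity)
print(Counter(balanced_labels))
```

In practice, balancing is usually applied to the training set only, after the split, so that duplicated compounds cannot leak into the external test set.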

Model Building and Optimization

- Algorithm Selection: Twelve different machine learning algorithms were evaluated, including Random Forest (RF), Support Vector Machines (SVM), and Neural Networks [8].

- Hyperparameter Tuning: Model hyperparameters were optimized via grid search with 5-fold cross-validation on the training set to maximize predictive performance [8].

- Model Training: Final models were trained using the optimized parameters on the complete training set.

Model Validation and Evaluation

- Internal Validation: Model performance was assessed via cross-validation on the training set using the Matthews correlation coefficient (MCC) as the primary metric [8].

- External Validation: The final model was evaluated on the held-out test set to estimate real-world predictive performance [8] [6].

- Applicability Domain: The chemical space where the model could make reliable predictions was characterized to guide appropriate application [10].
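Since MCC is the primary metric in this protocol, a short sketch of how it is computed from a binary confusion matrix may help. The labels below are hypothetical, and returning 0 when the denominator is zero is a common convention rather than something stated in the source.

```python
import math


def matthews_corrcoef(y_true, y_pred):
    """MCC for binary labels encoded as 1 (active) and 0 (inactive)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denom == 0:
        return 0.0  # convention when a row or column of the matrix is empty
    return (tp * tn - fp * fn) / denom


# Hypothetical labels: one active compound is missed by the model.
y_true = [1, 1, 0, 0]
y_pred = [1, 0, 0, 0]
mcc = matthews_corrcoef(y_true, y_pred)
print(round(mcc, 3))
```

MCC uses all four cells of the confusion matrix, which is why it is often preferred over accuracy for imbalanced active/inactive data. scikit-learn offers an equivalent `sklearn.metrics.matthews_corrcoef` function for production use.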

Key Results and Research Implications

The optimized Random Forest model using SubstructureCount fingerprints demonstrated superior performance with MCC values of 0.97 (training), 0.78 (cross-validation), and 0.76 (external test set), indicating high predictive accuracy and robustness [8]. Feature importance analysis using the Gini index revealed that nitrogenous groups, fluorine atoms, oxygen-containing functionalities, aromatic moieties, and chirality centers were critical structural features influencing PfDHODH inhibitory activity [8].

This QSAR study successfully identified key structural determinants for PfDHODH inhibition, providing valuable insights for medicinal chemistry optimization of lead compounds. The validated model enables virtual screening of compound libraries to identify novel chemotypes with potential anti-malarial activity, significantly accelerating the drug discovery process against artemisinin-resistant malaria [8].

Advanced Protocols in QSAR Modeling

Comprehensive Model Validation Framework

Robust validation is imperative for developing reliable QSAR models with true predictive power [1] [6]. The following multi-tiered validation protocol must be implemented:

Table 2: Comprehensive QSAR Model Validation Strategy

| Validation Type | Protocol | Key Metrics | Acceptance Criteria |
|---|---|---|---|
| Internal Validation | 5-fold or 10-fold cross-validation | Q², R², RMSE | Q² > 0.6 for regression; MCC > 0.6 for classification |
| External Validation | Prediction on completely held-out test set | Predictive R², Concordance | R²~pred~ > 0.6; Strong correlation (p < 0.05) |
| Randomization Test | Y-scrambling with multiple iterations | R²~random~, Q²~random~ | Significant difference from original model (p < 0.01) |
| Applicability Domain | Leverage approaches, distance measures | Williams plot, PCA-based distance | >80% of predictions within domain |

Internal validation via cross-validation assesses model robustness by iteratively partitioning the training data and measuring predictive performance across folds [6]. External validation using a completely independent test set provides the most realistic estimate of a model's predictive power for novel compounds [6]. Y-randomization tests confirm that model performance stems from genuine structure-activity relationships rather than chance correlations [1]. Defining the applicability domain is crucial for identifying the chemical space where the model can make reliable predictions [10].
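The internal validation and Y-randomization checks described above can be illustrated with a small, self-contained sketch. It makes several simplifying assumptions that are not in the source: a single descriptor, an ordinary least-squares line as the model, leave-one-out rather than 5- or 10-fold cross-validation, and Q² defined as 1 − PRESS/SS~tot~ around the full-data mean. All data values and function names are hypothetical.

```python
import random
from statistics import mean


def fit_line(x, y):
    """Ordinary least squares for one descriptor: returns (slope, intercept)."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    return slope, my - slope * mx


def r_squared(y_obs, y_pred):
    """1 - SS_res / SS_tot, with SS_tot taken around the mean of y_obs."""
    my = mean(y_obs)
    ss_res = sum((o - p) ** 2 for o, p in zip(y_obs, y_pred))
    ss_tot = sum((o - my) ** 2 for o in y_obs)
    return 1.0 - ss_res / ss_tot


def loo_q2(x, y):
    """Leave-one-out Q^2: each compound is predicted by a model fitted without it."""
    preds = []
    for i in range(len(x)):
        slope, intercept = fit_line(x[:i] + x[i + 1:], y[:i] + y[i + 1:])
        preds.append(slope * x[i] + intercept)
    return r_squared(y, preds)


def y_scrambled_r2(x, y, n_iter=100, seed=0):
    """R^2 values of models refitted on randomly permuted activities."""
    rng = random.Random(seed)
    scores = []
    for _ in range(n_iter):
        y_perm = y[:]
        rng.shuffle(y_perm)
        slope, intercept = fit_line(x, y_perm)
        scores.append(r_squared(y_perm, [slope * xi + intercept for xi in x]))
    return scores


# Hypothetical descriptor values and activities.
x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
y = [1.1, 1.9, 3.2, 3.8, 5.1, 6.2, 6.8, 8.1]

slope, intercept = fit_line(x, y)
r2 = r_squared(y, [slope * xi + intercept for xi in x])
q2 = loo_q2(x, y)
scrambled = y_scrambled_r2(x, y)
print(round(r2, 3), round(q2, 3), round(mean(scrambled), 3))
```

A model that captures a genuine relationship should show a Q² close to its fitted R² and scrambled R² values that are much lower than both. If the scrambled models score nearly as well as the original, the apparent fit is likely a chance correlation.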

Table 3: Essential Computational Tools for QSAR Modeling

| Tool Category | Representative Software/Services | Primary Function | Key Features |
|---|---|---|---|
| Descriptor Calculation | PaDEL-Descriptor, Dragon, RDKit, Mordred | Generate molecular descriptors from structures | 1D-3D descriptors, fingerprint generation, batch processing |
| Cheminformatics Platforms | KNIME, Orange, Pipeline Pilot | Workflow automation and data pipelining | Visual programming, data preprocessing, model integration |
| Machine Learning Libraries | Scikit-learn, TensorFlow, PyTorch | Algorithm implementation and model building | Extensive algorithm collections, neural networks, hyperparameter optimization |
| Chemical Databases | ChEMBL, PubChem, ZINC | Source of chemical structures and bioactivity data | Annotated bioactivities, commercial availability, structural diversity |
| Model Validation Suites | QSAR Model Reporting Format (QMRF), OECD QSAR Toolbox | Standardized model validation and reporting | Regulatory compliance, standardized metrics, interoperability |

Deep QSAR and Emerging Methodologies

The integration of deep learning with traditional QSAR has created the emerging subfield of "deep QSAR" [5]. These approaches leverage neural networks with multiple hidden layers to automatically learn relevant feature representations from molecular structures, potentially surpassing the predictive performance of traditional descriptor-based methods [5] [7].

Advanced deep QSAR protocols include:

- Graph Neural Networks that operate directly on molecular graphs, inherently capturing topological relationships [5]

- Multi-task learning frameworks that simultaneously predict multiple biological endpoints, leveraging shared representations across related tasks [5]

- Hybrid models integrating QSAR with molecular dynamics simulations to incorporate structural and dynamical information [7]

- Generative models for de novo molecular design that create novel chemical structures with optimized properties [5]

Diagram 2: Comparison of Traditional and Deep QSAR Approaches. This diagram contrasts descriptor-based QSAR, which relies on pre-calculated molecular features, with deep QSAR methods that automatically learn relevant representations from raw molecular inputs.

The QSAR paradigm has evolved from simple linear regression models based on handfuls of interpretable descriptors to complex, high-dimensional models capable of predicting diverse biological endpoints with remarkable accuracy [4]. This evolution has been driven by advances in three critical areas: the emergence of larger, higher-quality datasets; the development of more sophisticated molecular descriptors; and the adoption of powerful machine learning algorithms, particularly deep learning architectures [5] [4].

Future developments in QSAR modeling will likely focus on expanding applicability domains to encompass more diverse chemical space, improving model interpretability through explainable AI techniques, and enhancing predictive reliability for novel chemotypes [4]. The integration of QSAR with structural biology information through hybrid approaches, along with the adoption of multi-task and transfer learning strategies, promises to further increase the utility of these models in drug discovery pipelines [7]. As these methodologies continue to mature, QSAR will remain an indispensable component of the molecular design toolkit, enabling more efficient exploration of chemical space and acceleration of therapeutic development.

In the disciplined pursuit of drug discovery, Quantitative Structure-Activity Relationship (QSAR) modeling serves as a fundamental in silico technique that correlates the structural properties of molecules with their biological activity [11]. The predictive power and interpretability of these models are wholly dependent on the molecular descriptors used as input variables. Molecular descriptors are numerical quantities that encode chemical information derived from a molecule's symbolic representation, transforming molecular structures into useful numbers for computational analysis [12] [13].

The selection of appropriate descriptors is therefore not merely a preliminary step but a critical decision point that dictates the success of any QSAR workflow. Descriptors span multiple levels of complexity—from simple atomic counts to sophisticated quantum mechanical calculations—each capturing different facets of molecular structure and properties [13] [14]. This article provides a structured overview of essential molecular descriptors across this complexity spectrum, presents practical protocols for their application, and integrates this knowledge within a comprehensive QSAR model development framework, empowering researchers to make informed choices in their molecular design efforts.

A Hierarchical Taxonomy of Molecular Descriptors

Molecular descriptors can be systematically classified based on the dimensionality of the molecular representation from which they are derived and the chemical information they encode. This hierarchical taxonomy progresses from simple, easily computed descriptors to complex, information-rich ones, with each category serving distinct purposes in QSAR modeling [13] [14].

Table 1: Classification of Molecular Descriptors by Dimensionality and Type

| Descriptor Class | Information Content | Key Examples | Typical QSAR Application |
|---|---|---|---|
| 0D (Constitutional) | Atomic composition & counts; additive properties | Molecular weight, atom counts, molecular formula [13] [14] | Preliminary screening, drug-likeness filters (e.g., Lipinski's Rule of 5) |
| 1D (Substructural) | Presence/absence or count of specific fragments | Functional group counts, hydrogen bond donors/acceptors, rotatable bonds [13] | Pharmacophore feature identification, toxicity prediction |
| 2D (Topological) | Atomic connectivity & molecular graph features | Wiener index, Zagreb index, connectivity indices, Kier & Hall descriptors [11] [12] | High-throughput virtual screening, similarity searching |
| 3D (Geometric/Steric) | 3D atomic coordinates & spatial arrangement | Molecular volume, surface area, 3D-MoRSE descriptors, WHIM descriptors [12] [13] | Modeling stereoselective interactions, receptor fit prediction |
| 3D (Quantum Chemical) | Electronic distribution & energetics | Partial atomic charges, HOMO/LUMO energies, dipole moment, polarizability [15] [16] | Modeling electronic-driven interactions, reaction mechanism studies |
| 4D (Interaction Fields) | Ligand-probe interaction energies in 3D space | GRID, CoMFA, CoMSIA fields [13] [14] | Detailed structure-based design, understanding binding interactions |

The foundational principle when selecting descriptors is that the information content of the descriptors should be appropriately matched to the complexity of the biological endpoint being modeled [13]. Using overly simplistic descriptors for a complex phenomenon may yield uninformative models, while using highly complex descriptors for a simple property may introduce noise and lead to overfitting [11] [13]. The following sections detail the primary descriptor classes within this hierarchy.

0D and 1D Descriptors: The Constitutional and Substructural Foundation

0D descriptors are derived from the chemical formula alone and require no information about molecular structure or connectivity [13]. They include simple counts of atoms and bonds, molecular weight, and sums of basic atomic properties. Their information content is low and they often have high degeneracy (the same value for different molecules, including isomers), but they are straightforward to calculate and interpret, and are invaluable for constructing simple, robust models for properties like molar refractivity [13].
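To make the degeneracy point concrete, the sketch below derives two 0D descriptors (atom counts and molecular weight) from a formula string alone. The parser handles only simple formulas without parentheses or charges, the atomic mass table is a small rounded subset, and the function names are hypothetical.

```python
import re

# Rounded standard atomic weights for a few common elements.
ATOMIC_MASS = {"H": 1.008, "C": 12.011, "N": 14.007, "O": 15.999, "F": 18.998}


def parse_formula(formula):
    """Return {element: count} for a simple formula such as 'C2H6O'."""
    counts = {}
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] = counts.get(element, 0) + (int(number) if number else 1)
    return counts


def molecular_weight(formula):
    """Sum of atomic masses weighted by atom counts."""
    return sum(ATOMIC_MASS[el] * n for el, n in parse_formula(formula).items())


# Ethanol and dimethyl ether share the formula C2H6O, so every 0D
# descriptor computed from the formula is identical for both isomers.
atom_counts = parse_formula("C2H6O")
mol_weight = molecular_weight("C2H6O")
print(atom_counts, round(mol_weight, 3))
```

Because the input is only the formula, no 0D descriptor can distinguish the alcohol from the ether. Separating them requires at least the substructural (1D) or connectivity (2D) information described next.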

1D descriptors incorporate substructural information, typically representing the presence, absence, or frequency of specific functional groups or fragments in a molecule [13]. These include counts of hydrogen bond donors and acceptors, rotatable bonds (a measure of flexibility), and rings. Such descriptors form the basis of popular drug-likeness rules and are essential in substructural analysis for identifying toxicophores or other activity-defining fragments [17].

2D Descriptors: Harnessing the Power of Molecular Topology

2D descriptors, or topological indices, are derived from the hydrogen-suppressed molecular graph, where atoms are represented as vertices and bonds as edges [11] [13]. They encode patterns of atomic connectivity and are invariant to the molecule's conformation. Key categories include:

- Connectivity Indices (e.g., Randić, Kier & Hall): These capture the degree of branching in a molecule and have been successfully correlated with various physicochemical properties [11].

- Wiener Index: One of the earliest topological indices, defined as the sum of the shortest path distances between every pair of atoms in the molecular graph, related to molecular volume and boiling points [11].

- Kappa Shape Indices: Describe the molecular shape and flexibility based on the graph's topology [11].

A significant advantage of 2D descriptors is their computational efficiency and independence from molecular conformation, making them ideal for high-throughput screening of large chemical databases [11] [14]. In many practical applications, models built with 2D descriptors perform as well as, or even better than, those built with more complex 3D descriptors [14].
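The Wiener index definition given above translates directly into a breadth-first search over the hydrogen-suppressed graph. In this sketch, molecules are written by hand as adjacency dictionaries of heavy atoms; the representation and the function name `wiener_index` are illustrative choices, not a library API.

```python
from collections import deque


def wiener_index(adjacency):
    """Sum of shortest-path lengths over all unordered atom pairs."""
    total = 0
    for source in adjacency:
        # Breadth-first search gives shortest paths in an unweighted graph.
        dist = {source: 0}
        queue = deque([source])
        while queue:
            atom = queue.popleft()
            for neighbour in adjacency[atom]:
                if neighbour not in dist:
                    dist[neighbour] = dist[atom] + 1
                    queue.append(neighbour)
        total += sum(dist.values())
    return total // 2  # each pair was counted once from each end


# Hydrogen-suppressed graphs of two C4H10 isomers (carbon atoms only).
n_butane = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
isobutane = {0: [1, 2, 3], 1: [0], 2: [0], 3: [0]}

w_linear = wiener_index(n_butane)
w_branched = wiener_index(isobutane)
print(w_linear, w_branched)
```

The linear isomer gets the larger value because its atoms are, on average, farther apart in the graph. This is how a topological index separates isomers that share every 0D descriptor, and no 3D conformation is needed to compute it.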

3D Descriptors: Encoding Spatial and Electronic Reality

3D descriptors require the 3D spatial coordinates of a molecule's atoms and thus capture stereochemical and geometric information that 2D descriptors cannot [13]. This class can be further divided into steric/geometric and quantum chemical descriptors.

Steric and Geometric Descriptors include simple measures like molecular volume, solvent-accessible surface area, and moment of inertia, which describe the overall size and shape of the molecule [17]. More sophisticated 3D descriptors, such as WHIM (Weighted Holistic Invariant Molecular) and 3D-MoRSE (3D Molecule Representation of Structures based on Electron diffraction), are holistic representations that are invariant to translation and rotation [12] [13].

Quantum Chemical Descriptors are derived from quantum mechanical calculations and provide detailed insight into a molecule's electronic structure and reactivity [15] [16]. Key descriptors include:

- HOMO/LUMO Energies: The energies of the Highest Occupied and Lowest Unoccupied Molecular Orbitals, indicating a molecule's ability to donate or accept electrons.

- Partial Atomic Charges: Describe the electron density distribution and are critical for modeling electrostatic interactions.

- Dipole Moment and Polarizability: Measure the overall molecular polarity and its response to an electric field.

These descriptors are indispensable for modeling biological activities where electronic effects, such as charge-transfer interactions or covalent binding, play a dominant role [16]. Their calculation, however, is computationally intensive and requires careful geometry optimization [15].

4D Descriptors and Beyond: Capturing Interaction Landscapes

4D descriptors extend the concept further by incorporating interaction energy information within a 3D grid. In methods like GRID, CoMFA (Comparative Molecular Field Analysis), and CoMSIA (Comparative Molecular Similarity Indices Analysis), the molecule is placed in a 3D lattice, and its interaction energies with various chemical probes (e.g., water, methyl group, carbonyl oxygen) are computed at each grid point [13] [14]. This rich data captures the molecule's potential interaction preferences with a biological target, providing a direct link to structure-based design principles.

Diagram 1: A strategic workflow for selecting molecular descriptors within a QSAR model development pipeline, highlighting key decision points.

Essential Protocols for Descriptor Calculation and Selection

Protocol 1: Calculation of a Comprehensive 2D Descriptor Set Using RDKit

Objective: To compute a diverse set of 2D molecular descriptors (constitutional, topological, and electronic) directly from SMILES strings using the open-source RDKit library.

Materials:

- Software: Python environment with RDKit installed.

- Input Data: A file containing molecular structures as SMILES strings and a corresponding compound identifier.

Procedure:

- Environment Setup: Install the RDKit library via conda (`conda install -c conda-forge rdkit`).

- Data Loading: Read the input file (e.g., CSV) using pandas.

- Molecule Object Generation: Iterate through the SMILES strings and convert each into an RDKit molecule object. Include sanitization checks to handle invalid structures.

- Descriptor Calculation: Utilize RDKit's descriptor modules (`rdkit.Chem.Descriptors` and `rdkit.ML.Descriptors`) to calculate a comprehensive set of properties. Key descriptors to include are:

  - Constitutional: Molecular weight, number of heavy atoms, rotatable bonds, H-bond donors/acceptors.

  - Topological: Topological polar surface area (TPSA), graph-based indices.

  - Electronic: Crippen logP and molar refractivity.

- Data Output: Compile all calculated descriptors into a structured data frame and export to a CSV file for subsequent modeling.
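The procedure above might look roughly like the following sketch. It assumes RDKit is installed, uses an in-memory list of (identifier, SMILES) pairs in place of the input file, and selects a small illustrative subset of functions from `rdkit.Chem.Descriptors`. The helper name `compute_descriptors` and the example records are hypothetical.

```python
from rdkit import Chem
from rdkit.Chem import Descriptors

# Illustrative subset of RDKit descriptor functions, keyed by column name.
DESCRIPTOR_FUNCS = {
    "MolWt": Descriptors.MolWt,
    "HeavyAtomCount": Descriptors.HeavyAtomCount,
    "NumRotatableBonds": Descriptors.NumRotatableBonds,
    "NumHDonors": Descriptors.NumHDonors,
    "NumHAcceptors": Descriptors.NumHAcceptors,
    "TPSA": Descriptors.TPSA,
    "MolLogP": Descriptors.MolLogP,  # Crippen logP
    "MolMR": Descriptors.MolMR,      # Crippen molar refractivity
}


def compute_descriptors(records):
    """records: iterable of (compound_id, smiles). Returns (rows, failed_ids)."""
    rows, failed = [], []
    for compound_id, smiles in records:
        # MolFromSmiles returns None when parsing or sanitization fails.
        mol = Chem.MolFromSmiles(smiles)
        if mol is None:
            failed.append(compound_id)
            continue
        row = {"id": compound_id, "smiles": smiles}
        row.update({name: func(mol) for name, func in DESCRIPTOR_FUNCS.items()})
        rows.append(row)
    return rows, failed


# Hypothetical input: one valid structure and one with an unclosed ring.
records = [("ethanol", "CCO"), ("broken", "C1CC")]
rows, failed = compute_descriptors(records)
print(rows[0]["HeavyAtomCount"], failed)
```

The resulting list of dictionaries can be passed to `pandas.DataFrame(rows)` and written out with `to_csv` for the data output step. Keeping the failed identifiers makes it easy to report which structures were dropped during curation.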

Notes: This protocol is highly efficient for large datasets. RDKit computes these descriptors from the 2D graph, requiring no 3D conformation, which makes it exceptionally fast [14].

Protocol 2: Feature Selection Using VSURF in a QSAR Workflow

Objective: To identify a non-redundant, biologically relevant subset of descriptors from a large initial pool to build a robust, interpretable, and predictive QSAR model while avoiding overfitting.

Materials:

- Software: R statistical environment with the `VSURF` package installed. (Note: This method is also integrated into automated workflow tools like the KNIME-based workflow cited [18].)

- Input Data: A data matrix where rows are compounds and columns are the extensive set of calculated molecular descriptors and the corresponding biological activity values.

Procedure:

- Data Preprocessing: Clean the descriptor matrix by removing descriptors with near-zero variance or high pairwise correlation.

- VSURF Execution: Run the `VSURF` function, which is a Random Forest-based algorithm that operates in three steps [18]:

  - Step 1 (Thresholding): Eliminates descriptors irrelevant to the activity.

  - Step 2 (Interpretation): Selects the subset of descriptors that are related to the activity.

  - Step 3 (Prediction): Refines the subset obtained in Step 2 by removing redundant descriptors, yielding a small set that contributes meaningfully to prediction accuracy.

- Output Analysis: The final output of VSURF is a minimal set of descriptors that are most predictive. The relative importance of these descriptors should be examined to gain mechanistic insights.
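The data preprocessing step of this protocol (dropping near-constant and highly correlated descriptors before feature selection) can be sketched independently of VSURF. The example is written in Python for consistency with the other examples here, although the protocol itself runs in R. The variance and correlation cutoffs, the function names, and the toy descriptor table are assumptions for illustration only.

```python
from statistics import mean, pvariance


def pearson(a, b):
    """Pearson correlation coefficient of two equal-length numeric lists."""
    ma, mb = mean(a), mean(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    var_a = sum((x - ma) ** 2 for x in a)
    var_b = sum((y - mb) ** 2 for y in b)
    return cov / (var_a * var_b) ** 0.5


def prefilter(descriptors, min_variance=1e-8, max_abs_corr=0.95):
    """Return the names of descriptors that survive both filters, in input order."""
    kept = []
    for name, values in descriptors.items():
        if pvariance(values) <= min_variance:
            continue  # near-constant column carries no information
        if any(abs(pearson(values, descriptors[k])) > max_abs_corr for k in kept):
            continue  # redundant with a descriptor that was already kept
        kept.append(name)
    return kept


# Hypothetical descriptor table: column name -> one value per compound.
table = {
    "MW": [100.0, 200.0, 300.0, 400.0],
    "MW_shifted": [101.0, 201.0, 301.0, 401.0],  # perfectly correlated with MW
    "constant": [1.0, 1.0, 1.0, 1.0],            # zero variance
    "logP": [1.0, 3.0, 2.0, 0.5],
}
kept = prefilter(table)
print(kept)
```

This greedy filter keeps the first descriptor of each correlated group, so the column order determines which one survives. Placing the more interpretable descriptors first is one simple way to bias the result toward an explainable model.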

Notes: Feature selection is a critical step in QSAR model development. It improves model interpretability, reduces the risk of overfitting from noisy descriptors, and can provide faster and more cost-effective models [11] [18].

Table 2: Key Software Tools for Molecular Descriptor Calculation

| Tool Name | Descriptor Coverage | Key Features | License |
|---|---|---|---|
| alvaDesc [12] | 0D to 3D, Fingerprints | Comprehensive, user-friendly GUI & CLI, updated in 2025 | Commercial |
| Dragon [12] [17] | 0D to 3D, >5000 descriptors | Historically a gold standard, now discontinued | Was Commercial |
| RDKit [12] [17] | 0D, 2D, 3D, Fingerprints | Open-source, Python API, active development, highly customizable | Free Open Source |
| Mordred [12] | 0D, 2D, 3D | Open-source, based on RDKit, calculates >1800 descriptors, Python library | Free Open Source |
| PaDEL-Descriptor [12] [17] | 0D, 2D, 3D, Fingerprints | Based on the Chemistry Development Kit (CDK), GUI and CLI, now discontinued | Free |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for a QSAR Workflow

| Tool / Resource | Category | Function in QSAR Workflow |
|---|---|---|
| RDKit [12] [17] | Cheminformatics Library | Core calculation of 2D/3D descriptors and fingerprints; molecule handling. |
| VSURF R Package [18] | Feature Selection Algorithm | Identifies relevant, non-redundant descriptors from a large initial pool. |
| KNIME Analytics Platform [18] | Workflow Management | Provides a visual interface to build, execute, and manage the entire QSAR pipeline. |
| alvaDesc [12] | Descriptor Software | Computes a vast array of 0D-3D descriptors for robust model development. |
| SYNTHIA Retrosynthesis [19] | Synthesis Planning | Aids in the design of synthetically accessible compounds identified via QSAR. |

Concluding Remarks

The strategic selection of molecular descriptors is a cornerstone of effective QSAR model development. As explored throughout this article, the descriptor landscape is hierarchically structured, ranging from fast-computing constitutional descriptors to mechanistically insightful quantum chemical indices. The guiding principle for the modeler is to align the complexity of the descriptors with the specific biological endpoint and the project's goals, whether it be high-throughput virtual screening or detailed mechanistic elucidation [11] [13].

The future of descriptors in QSAR is being shaped by the integration of artificial intelligence and machine learning. Recent research focuses on developing methods for the dynamic adjustment of descriptor importance [20] and on leveraging deep learning to automatically derive optimal molecular representations from raw structural data [17]. Furthermore, the push for model interpretability remains paramount, as evidenced by the OECD's principle that QSAR models should have a mechanistic interpretation, wherever possible [20]. By thoughtfully applying the protocols and principles outlined in this article, researchers can harness the full power of molecular descriptors to accelerate the rational design of novel, effective therapeutics.

Within the Quantitative Structure-Activity Relationship (QSAR) model development workflow, the initial stages of data collection and curation are not merely preliminary steps but are fundamentally critical to the success and reliability of any subsequent computational analysis. The principle of "garbage in, garbage out" is acutely relevant; even the most sophisticated machine learning algorithms cannot compensate for poor-quality input data [21]. Robust data collection and curation strategies ensure that the developed models are predictive, interpretable, and suitable for regulatory acceptance. These processes involve gathering relevant chemical structures and their associated biological activities, followed by a rigorous protocol to check their correctness, standardize them, and produce consistent, ready-to-use datasets for cheminformatic analysis [22]. This document outlines detailed application notes and protocols for these foundational stages, framed within the broader context of a QSAR model development thesis.

The Critical Role of Data Quality in QSAR

The evolution of QSAR from basic linear models to advanced machine learning and AI-based techniques has placed an even greater emphasis on dataset quality, reproducibility, and the clear definition of a model's applicability domain [21]. High-quality, well-curated data is the bedrock upon which robust, predictive models are built. Inadequate attention to data quality at this stage can introduce biases and errors that propagate through the entire workflow, leading to models with poor predictive performance and limited practical utility. Furthermore, regulatory guidelines, such as those from the OECD, emphasize the importance of reliable data for ensuring model credibility in chemical safety and pharmaceutical applications [21]. A standard procedure for data retrieval and curation, implemented in freely available workflows, has been recognized as a tool of high interest in the field of computational chemistry [22].

Consequences of Inadequate Data Curation

Neglecting rigorous data curation can lead to several critical failures in QSAR modeling:

- Introduction of Biases and Errors: Incorrect structures or inconsistent activity data can skew the model's learning process.

- Poor Predictive Performance: Models built on unreliable data fail to generalize to new, external chemicals.

- Limited Regulatory Acceptance: Models that do not adhere to best practices for data quality, as outlined in OECD guidelines, are unlikely to be accepted for regulatory decision-making [21].

- Misleading Structure-Activity Relationships: The core objective of the model is compromised, leading to incorrect hypotheses about the chemical features responsible for biological activity.

Data Collection and Curation Protocol

The following protocol provides a detailed methodology for the collection and curation of chemical data to develop a high-quality dataset for QSAR modeling. The entire workflow is also summarized in Figure 1.

Data Collection and Acquisition

Objective: To gather a comprehensive set of chemical structures and their corresponding biological activity data from reliable public and/or proprietary sources.

Materials and Reagents:

- Public Chemical Databases (e.g., PubChem, ChEMBL): Provide a rich source of bioactive molecules and their properties.

- Proprietary Corporate Databases: Internal collections of synthesized and tested compounds.

- Literature Mining Tools: Software to extract chemical and biological data from scientific publications.

Procedure:

- Define Scope and Endpoint: Clearly delineate the chemical space and the biological activity or toxicity endpoint of interest (e.g., IC50, LD50). This defines the model's applicability domain from the outset [21].

- Identify Data Sources: Select appropriate public and/or proprietary databases from which to retrieve chemical data, typically in the form of SMILES (Simplified Molecular-Input Line-Entry System) strings [22].

- Data Retrieval: Extract chemical structures (as SMILES, SDF, or other formats) and their associated experimental endpoint values. Ensure that all data is associated with consistent units of measurement.

Data Curation and Standardization

Objective: To check the correctness of the retrieved chemical data and curate them to produce a consistent and ready-to-use dataset [22].

Procedure:

- Structure Verification:

- Check the validity of all SMILES strings or structural files.

- Remove any entries that contain atoms other than those in the defined set (e.g., no heavy metals for a drug-like dataset).

- Check and correct for valency errors.

- Standardization (See Table 1 for common tasks):

- Tautomer Standardization: Select a single, representative tautomer for each molecule to ensure consistency.

- Charge Standardization: Standardize protonation states to a relevant pH (e.g., pH 7.4) using appropriate tools.

- Stereochemistry: Define and consistently represent stereochemical centers. Consider defining racemic mixtures explicitly if the experimental data does not distinguish enantiomers.

- Descriptor Calculation: Use open-source software like PaDEL-Descriptor to calculate molecular descriptors and fingerprints [21].

- Activity Data Verification:

- Unit Consistency: Ensure all biological activity values (e.g., IC50, Ki) are reported in the same unit (e.g., nM, µM).

- Duplicate Removal: Identify and resolve duplicate entries for the same chemical structure. Prefer the most reliable measurement or calculate an average if multiple valid measurements exist.

- Outlier Detection: Visually and statistically inspect the distribution of activity values to identify and investigate potential outliers that may represent experimental errors. The use of binned histograms can be highly effective for visual outlier detection [23].
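
The activity-verification steps above can be sketched with the Python standard library alone. This is a minimal illustration under stated assumptions, not a prescribed implementation: the records, the unit table `TO_NM`, and the helper names are hypothetical, replicate measurements are averaged on the pIC50 (log) scale, and outliers are flagged with Tukey's interquartile-range rule as one possible statistical check.

```python
import math
import statistics
from collections import defaultdict

# Hypothetical raw records: (canonical SMILES, IC50 value, unit)
records = [
    ("CCO", 1.0, "uM"),
    ("CCO", 1200.0, "nM"),
    ("c1ccccc1O", 50.0, "nM"),
    ("CC(=O)O", 0.5, "mM"),
]

TO_NM = {"nM": 1.0, "uM": 1e3, "mM": 1e6}  # conversion factors to nanomolar


def to_pic50(value, unit):
    """Convert an IC50 given in nM, uM or mM to pIC50 = -log10(IC50 in mol/L)."""
    ic50_nm = value * TO_NM[unit]
    return 9.0 - math.log10(ic50_nm)


def merge_duplicates(rows):
    """Group by structure and average replicate measurements on the pIC50 scale."""
    grouped = defaultdict(list)
    for smiles, value, unit in rows:
        grouped[smiles].append(to_pic50(value, unit))
    return {smi: statistics.mean(vals) for smi, vals in grouped.items()}


def iqr_outliers(values, k=1.5):
    """Return values outside [Q1 - k*IQR, Q3 + k*IQR] (Tukey's rule)."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    low, high = q1 - k * (q3 - q1), q3 + k * (q3 - q1)
    return [v for v in values if v < low or v > high]


curated = merge_duplicates(records)  # one pIC50 per unique structure
```

Flagged values are candidates for investigation rather than automatic removal, since a genuine activity cliff can look like an outlier.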

Dataset Preparation for Modeling

Objective: To create the final, curated dataset that is partitioned for model training and validation.

Procedure:

- Chemical Diversity Analysis: Assess the structural diversity of the curated dataset using calculated descriptors to ensure a representative coverage of the chemical space.

- Data Splitting: Split the curated dataset into training and test sets using methods such as random splitting or time-split cross-validation [21]. This step is critical for estimating how well the model will predict new compounds prospectively.

- Format for Analysis: Structure the data into a single, well-formatted table with column headers in the first row. Each row should represent a unique compound, and each column should represent an attribute of that compound, such as a descriptor or the activity value [23].

Figure 1: Data Curation Workflow. This diagram outlines the logical sequence of steps for curating chemical data for QSAR modeling.

Data Presentation and Analysis

This section summarizes key quantitative aspects and reagent solutions involved in the data curation process for easy comparison and implementation.

Table 1: Common Data Standardization Tasks in QSAR Curation

| Standardization Task | Description | Common Tools/Functions |

|---|---|---|

| Tautomer Standardization | Selects a single, representative tautomeric form for each molecule to ensure consistency. | RDKit (CanonicalTautomer), OpenBabel |

| Charge Standardization | Adjusts protonation states to a defined pH (e.g., 7.4) to reflect physiological conditions. | RDKit, MOE, ChemAxon Marvin |

| Stereochemistry Definition | Explicitly defines stereocenters; important for chiral activity differences. | RDKit, CDK (Chemistry Development Kit) |

| Descriptor Calculation | Generates numerical representations of molecular structures. | PaDEL-Descriptor [21], RDKit, Dragon |

| Duplicate Removal | Identifies and consolidates or removes identical chemical structures. | In-house scripts, KNIME, Pipeline Pilot |

Table 2: Essential Research Reagent Solutions for QSAR Data Curation

| Item / Solution | Function / Purpose |

|---|---|

| KNIME Analytics Platform | An open-source platform for implementing automated workflows for data retrieval, curation, and machine learning in QSAR [22]. |

| RDKit | An open-source cheminformatics toolkit used for molecule standardization, descriptor calculation, and scaffold analysis. |

| PaDEL-Descriptor Software | Open-source software to calculate a comprehensive set of molecular descriptors and fingerprints [21]. |

| Public Chemical Databases (e.g., ChEMBL, PubChem) | Provide large, annotated chemical datasets of bioactive molecules for model building. |

| Curated In-House Compound Libraries | Proprietary collections of chemically diverse compounds with high-quality, internally generated activity data. |

The development of robust Quantitative Structure-Activity Relationship (QSAR) models fundamentally depends on the quality and consistency of the underlying chemical structure input. The concept of "QSAR-ready" structures describes chemical representations that have undergone standardized preparation to ensure molecular descriptors calculated from them accurately reflect the compounds' properties and biological activities. This process is particularly critical for tautomerizable molecules, which constitute approximately 25% of marketed drugs and can exist as multiple structures interconverting through proton movement and bond rearrangement [24] [25]. Without proper standardization, the same compound represented in different tautomeric states can yield different molecular fingerprints, hydrophobicities, pKa values, and three-dimensional shapes, ultimately compromising QSAR model accuracy, repeatability, and reliability [26] [24].

This application note details standardized protocols for achieving QSAR-ready chemical structures through automated standardization workflows with particular emphasis on tautomer handling. Framed within the broader context of QSAR model development workflow research, we provide comprehensive methodologies, practical tools, and validation approaches to ensure chemical data quality prior to model building.

The Critical Challenge of Tautomerism in QSAR

Prevalence and Impact on Modeling

Tautomerism presents a multifaceted challenge for computer-aided molecular design. Analysis of marketed drugs reveals that 26% exist as an average of three tautomers, potentially increasing dataset size by 1.64-fold when all forms are considered [24]. Different tautomers of the same molecule exhibit distinct molecular fingerprints, hydrophobicities, pKa values, 3D shapes, and electrostatic properties [24]. Furthermore, proteins frequently preferentially bind to a tautomer that may be present in low abundance in aqueous solution, creating discrepancies between experimental conditions and computational representations [24].

The proper treatment of tautomers affects virtually every aspect of QSAR modeling:

- Library Design: Similarity or diversity assessments may inadvertently include similar molecules encoded as different tautomers

- Descriptor Calculation: QSAR algorithms must decide which tautomer(s) to use for molecular descriptor calculation

- Model Interpretation: Structure-activity relationships become complicated when tautomerism influences biological activity measurements

- Virtual Screening: Docking protocols must determine which tautomers to include and account for tautomerization in scoring functions [24]

Thermodynamic Considerations and Environmental Dependence

Tautomeric ratios are highly dependent on molecular structure and solvent environment [24]. Small changes in structure or solvent can dramatically alter tautomer distributions, complicating the assignment of physical property measurements to specific chemical structures and identification of bioactive species from tautomeric mixtures. Table 1 summarizes key factors influencing tautomeric equilibria.

Table 1: Factors Influencing Tautomeric Equilibria

| Factor | Impact on Tautomerism | Example |

|---|---|---|

| Solvent Environment | Dramatically shifts tautomer ratios | 4-Hydroxypyridine exists predominantly as 4-pyridone in water [24] |

| Substituent Effects | Electronic properties influence preferred form | Ortho-nitro group favors open form in ring-chain tautomerism [24] |

| Intramolecular H-bonding | Can stabilize otherwise less favored tautomers | Intramolecular H-bonds in enol forms can increase their prevalence [24] |

| Protein Binding | Macromolecules may selectively bind minor tautomers | Barbiturate analogue bound to matrix metalloproteinase 8 as minor tautomer (20 kcal/mol less stable in solution) [24] |

| Measurement Context | Experimental conditions affect observed ratios | NMR may detect multiple tautomers while crystallography might capture only one [24] |

Automated Workflows for QSAR-Ready Standardization

Comprehensive Standardization Protocol

The "QSAR-ready" workflow represents a systematic approach to chemical structure standardization prior to QSAR modeling. Implemented within the KNIME workflow environment, this automated protocol ensures consistent molecular representations across diverse chemical datasets [26]. The workflow comprises three high-level steps:

- Structure Encoding and Reading: Chemical structures are read from various input formats and converted into in-memory molecular representations

- Identifier Cross-Referencing: Existing chemical identifiers are cross-referenced for consistency verification

- Structure Standardization: A series of operations transforms structures into standardized representations [26]

The following diagram illustrates the complete QSAR-ready standardization workflow:

Tautomer Handling Methodologies

Tautomer standardization represents perhaps the most critical step in the QSAR-ready workflow. Multiple approaches exist for addressing tautomerism in computational chemistry, each with distinct advantages and limitations:

Rule-Based Tautomer Standardization

Most automated QSAR workflows implement rule-based systems that transform tautomers into a single canonical representation. These systems typically:

- Enumerate possible tautomers based on molecular structure

- Apply predetermined rules to select the predominant tautomer

- Generate standardized output for descriptor calculation [26]

This approach balances computational efficiency with reasonable accuracy for most QSAR applications, particularly when processing large chemical datasets.

Quantum Mechanical Approaches

For higher accuracy requirements, quantum mechanics (QM) based methods provide a more rigorous foundation for tautomer prediction. These approaches calculate the relative energies of different tautomers to determine their stability and prevalence. Traditional QM methods like Density Functional Theory (DFT) calculations offer accuracy but remain computationally prohibitive for large datasets [25].

Emerging hybrid quantum chemistry-quantum computation workflows show promise for efficient prediction of preferred tautomeric states. These approaches:

- Select active-space molecular orbitals based on quantum chemistry methods

- Map Hamiltonian onto quantum devices using efficient encoding methods

- Employ variational quantum eigensolver (VQE) algorithms for ground state estimation [25]

While still in development, quantum computing approaches may eventually enable accurate tautomer prediction with reduced computational resources compared to traditional QM methods [25].

Experimental Protocols and Implementation

Protocol: QSAR-Ready Standardization in KNIME

This protocol details the implementation of an automated QSAR-ready workflow using the KNIME Analytics Platform [26]:

Materials:

- Chemical structures in SMILES, SDF, or other standard formats

- KNIME Analytics Platform (version 4.0 or higher)

- CDK (Chemistry Development Kit) nodes or RDKit nodes within KNIME

- "QSAR-ready" workflow components [26]

Procedure:

- Data Input Configuration

- Configure file reader nodes to import chemical structures

- Specify input format (SMILES, SDF, etc.) and character encoding

- Include appropriate identifier fields for cross-referencing

- Structure Standardization

- Implement desalting step to remove counterions and salts

- Apply stereochemistry stripping for 2D QSAR models

- Configure tautomer standardization parameters:

- Set maximum number of tautomers to generate (default: 100)

- Define reaction patterns for proton movement

- Specify timeout for tautomer enumeration (default: 60 seconds)

- Apply nitro group standardization to consistent representations

- Implement valence correction to fix invalid valence states

- Configure neutralization rules for ionizable groups

- Duplicate Handling

- Set similarity threshold for duplicate identification (typically 0.95-1.0 Tanimoto)

- Define precedence rules for duplicate selection (e.g., highest data quality)

- Implement duplicate removal or grouping

- Output Generation

- Export standardized structures in desired format

- Include audit trail of applied transformations

- Generate summary statistics of standardization results
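
The duplicate-handling step can be illustrated with a small pure-Python sketch. It assumes each compound's fingerprint is already available as a set of on-bit indices (the compound IDs and bit sets below are hypothetical) and uses a simple greedy grouping against the first member of each group, which is one of several reasonable strategies rather than the workflow's own algorithm.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient |A & B| / |A | B| for two sets of on-bit indices."""
    a, b = set(bits_a), set(bits_b)
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def group_duplicates(fingerprints, threshold=1.0):
    """Greedy grouping: a compound joins the first group whose representative
    (its first member) it matches at or above the threshold."""
    groups = []
    for cid, bits in fingerprints.items():
        for group in groups:
            if tanimoto(bits, fingerprints[group[0]]) >= threshold:
                group.append(cid)
                break
        else:
            groups.append([cid])  # no match found: start a new group
    return groups


# Hypothetical fingerprints as sets of on-bit indices
fps = {
    "cpd1": {1, 5, 9, 12},
    "cpd2": {1, 5, 9, 12},
    "cpd3": {1, 5, 9, 40},
    "cpd4": {2, 3},
}
exact_groups = group_duplicates(fps, threshold=1.0)
```

Identical fingerprints do not guarantee identical structures, so groups found at a threshold of 1.0 are usually confirmed with a canonical identifier such as a canonical SMILES or InChIKey before records are merged.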

Validation:

- Compare molecular descriptor variance before and after standardization

- Verify consistent representation of known tautomer pairs

- Assess impact on QSAR model performance using control datasets
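
For readers working outside KNIME, the same sequence of operations can be approximated in Python with RDKit's `rdMolStandardize` module. The sketch below is an assumption-laden stand-in, not the KNIME workflow of [26]: the function name `qsar_ready_smiles` is hypothetical, the order of operations is one reasonable choice, and RDKit's default normalization and tautomer rules may differ from those used in the published workflow.

```python
from rdkit import Chem
from rdkit.Chem.MolStandardize import rdMolStandardize

_chooser = rdMolStandardize.LargestFragmentChooser()
_uncharger = rdMolStandardize.Uncharger()
_tautomers = rdMolStandardize.TautomerEnumerator()


def qsar_ready_smiles(smiles, strip_stereo=True):
    """Return a standardized canonical SMILES, or None if the input cannot be parsed."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:                        # invalid SMILES or failed sanitization
        return None
    mol = rdMolStandardize.Cleanup(mol)    # normalize functional groups, reionize
    mol = _chooser.choose(mol)             # desalt: keep the largest fragment
    mol = _uncharger.uncharge(mol)         # neutralize where possible
    mol = _tautomers.Canonicalize(mol)     # pick one canonical tautomer
    if strip_stereo:                       # optional, for 2D QSAR models
        Chem.RemoveStereochemistry(mol)
    return Chem.MolToSmiles(mol)


# Two tautomers of the same compound should collapse to one representation
same = qsar_ready_smiles("Oc1ccccn1") == qsar_ready_smiles("O=c1cccc[nH]1")
```

Running known tautomer pairs through such a function is a quick way to perform the "consistent representation" validation check listed above.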

Protocol: Tautomer Enumeration and Scoring

For applications requiring explicit consideration of multiple tautomeric states, this protocol describes a comprehensive tautomer handling approach:

Materials:

- Chemical structure with tautomerizable functional groups

- Tautomer enumeration software (e.g., ChemAxon, OpenEye)

- Computational resources for quantum mechanical calculations (optional)

Procedure:

- Tautomer Enumeration

- Identify tautomerizable functional groups (keto-enol, lactam-lactim, etc.)

- Generate all possible tautomers considering:

- Proton movement between heteroatoms

- Ring-chain equilibria

- Valence tautomerism

- Apply ring conformation analysis where relevant

- Tautomer Scoring

- Method A: Empirical Scoring

- Apply rule-based prioritization (e.g., favor keto over enol forms)

- Use known thermodynamic preferences for common scaffolds

- Consider steric and electronic effects of substituents

- Method B: Quantum Mechanical Scoring

- Perform geometry optimization for each tautomer

- Calculate relative energies using appropriate theory level (e.g., B3LYP/6-31G*)

- Include solvation effects using implicit solvent models (e.g., PCM, SMD)

- Apply Boltzmann distribution to estimate population ratios

- Representation Selection

- For single-representation QSAR: Select lowest energy tautomer

- For multi-representation QSAR: Include all tautomers above population threshold (e.g., >5%)

- Weight contributions according to estimated populations if needed
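
The Boltzmann step in Method B and the population threshold used for representation selection can be made concrete with a short calculation. The relative energies below are hypothetical placeholders (in practice they would come from the QM calculations, ideally as free energies in solution), and the helper name is an assumption made for illustration.

```python
import math

R_KCAL = 0.0019872  # gas constant in kcal/(mol*K)


def boltzmann_populations(rel_energies, temperature=298.15):
    """Map each tautomer to its Boltzmann population, given energies in kcal/mol."""
    rt = R_KCAL * temperature
    e_min = min(rel_energies.values())  # shift so the lowest energy is zero
    weights = {t: math.exp(-(e - e_min) / rt) for t, e in rel_energies.items()}
    total = sum(weights.values())
    return {t: w / total for t, w in weights.items()}


# Hypothetical relative energies for three tautomers of one compound
energies = {"keto": 0.0, "enol": 1.0, "zwitterion": 4.0}
pops = boltzmann_populations(energies)

dominant = max(pops, key=pops.get)                   # single-representation QSAR
selected = [t for t, p in pops.items() if p > 0.05]  # multi-representation QSAR
```

At room temperature a difference of about 1 kcal/mol already shifts the ratio to roughly 5:1, which is why small errors in computed energies can change which tautomers pass a population threshold.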

Validation:

- Compare predicted dominant tautomers with experimental crystal structures where available

- Validate population estimates against spectroscopic data

- Assess consistency across different enumeration algorithms

Essential Tools for QSAR-Ready Standardization

The Scientist's Toolkit: Software Solutions

Table 2: Essential Tools for Achieving QSAR-Ready Chemical Structures

| Tool Name | Type | Key Features | Tautomer Handling | License |

|---|---|---|---|---|

| KNIME with Chemistry Extensions | Workflow Platform | Automated QSAR-ready workflow, visual pipeline design, descriptor calculation [26] | Rule-based standardization with customizable parameters | Open Source |

| QSPRpred | Python Package | Data set curation, model building, serialization of preprocessing steps [27] | Integration with external tautomer standardization libraries | Open Source |

| QSAR Toolbox | Comprehensive Application | Data gap filling, metabolic simulation, read-across, category building [28] | Integrated tautomer profiling and metabolism simulation | Free |

| PaDEL-Descriptor | Descriptor Calculator | Molecular descriptor and fingerprint calculation, includes pre-processing [29] | Basic structure standardization prior to descriptor calculation | Open Source |

| Epik | Tautomer Prediction | pKa prediction, tautomer enumeration, ligand preparation for docking | Physics-based methods for tautomer population estimation | Commercial |

Implementation Considerations for Automated Workflows

When implementing automated QSAR-ready workflows, several critical factors ensure success:

Data Quality Assessment:

- Evaluate dataset modelability before extensive processing

- Identify potential representation inconsistencies early

- Establish baseline quality metrics for comparison [9]

Feature Selection Integration:

- Implement efficient variable selection to remove redundant descriptors

- Proper feature selection has been reported to reduce prediction error by 19% on average

- It has also been reported to increase the percentage of variance explained (PVE) by 49% compared to models without feature selection [9]
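
As a simple illustration of removing redundant descriptors, the sketch below drops constant columns and any descriptor that is highly correlated with one already kept. This is a generic filter chosen for clarity, not the variable selection method evaluated in [9]; the descriptor table, the 0.95 cutoff, and the function names are assumptions.

```python
import math


def pearson(x, y):
    """Pearson correlation; returns 0.0 if either vector is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy) if sxx > 0 and syy > 0 else 0.0


def drop_redundant(descriptors, max_abs_r=0.95):
    """Keep descriptors in order, skipping constant columns and any column
    correlated above max_abs_r with a descriptor that was already kept."""
    kept = []
    for name, column in descriptors.items():
        if len(set(column)) == 1:
            continue  # a constant descriptor carries no information
        if all(abs(pearson(column, descriptors[k])) <= max_abs_r for k in kept):
            kept.append(name)
    return kept


# Hypothetical descriptor table: one list of values per descriptor
table = {
    "MolWt": [180.2, 151.2, 206.3, 194.2],
    "MolWt_x2": [360.4, 302.4, 412.6, 388.4],  # perfectly correlated with MolWt
    "nRings": [1, 1, 1, 1],                    # constant across compounds
    "logP": [1.2, 0.5, 3.5, -0.1],
}
kept = drop_redundant(table)
```

To avoid information leakage, a filter like this should be fitted on the training set only and then applied unchanged to test compounds.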

Reproducibility and Deployment:

- Serialize complete preprocessing workflows alongside models

- Ensure consistent application of standardization to new compounds

- Document all transformation parameters for regulatory compliance [27]

Impact on QSAR Model Performance

Proper structure standardization significantly enhances QSAR model reliability. Studies demonstrate that automated QSAR-ready workflows:

- Improve model accuracy through consistent molecular representation

- Enhance model reproducibility across different research groups

- Increase descriptor reliability by eliminating representation artifacts [26]

For tautomer-rich datasets, appropriate handling can determine model success. Comparative studies show that models built with standardized tautomer representations outperform those using raw chemical inputs, particularly for endpoints sensitive to hydrogen bonding and molecular shape [24].

Achieving QSAR-ready structures through automated standardization and systematic tautomer handling represents a foundational step in robust QSAR model development. The protocols and methodologies detailed in this application note provide researchers with practical approaches to address chemical representation challenges, particularly for the approximately 25% of drug-like molecules capable of tautomerism.

Future developments in this field will likely include:

- Increased integration of quantum mechanical methods for tautomer prediction

- Hybrid approaches combining rule-based efficiency with QM accuracy

- Machine learning models trained on both structural and energetic data

- Expanded tautomer databases supporting empirical method development

As QSAR modeling continues to evolve in pharmaceutical development and regulatory science, ensuring chemical structure quality through standardized "QSAR-ready" protocols will remain essential for building predictive, reliable, and interpretable models.

In Quantitative Structure-Activity Relationship (QSAR) modeling, the fundamental principle is that a compound's biological activity is a function of its chemical structure [30] [6]. The reliability of any QSAR model is intrinsically linked to how well the chemical space of its training data is defined and how this definition is used to assess new predictions. Two critical, interconnected processes govern this reliability: dataset splitting, which ensures a rigorous evaluation of the model's generalizability, and the definition of the applicability domain (AD), which identifies the region of chemical space where the model's predictions are reliable [31] [32]. Proper implementation of these steps is essential for building trust in model outputs and for effective decision-making in drug discovery [31]. This document outlines standardized protocols for these crucial components within a QSAR model development workflow.

Dataset Splitting Strategies

Dataset splitting partitions available data into training and test sets, simulating the model's performance on unseen compounds. The choice of strategy significantly impacts performance estimates [33].

Table 1: Comparison of Dataset Splitting Methods in QSAR Modeling.

| Splitting Method | Core Principle | Advantages | Limitations | Suitable For |

|---|---|---|---|---|

| Random Split [33] | Compounds assigned randomly to training/test sets. | Simple, fast to implement. | Overly optimistic performance; test set molecules are often highly similar to training set molecules. | Initial algorithm benchmarking. |

| Scaffold Split [33] | Groups molecules by Bemis-Murcko scaffolds; all molecules sharing a scaffold are placed in the same set. | Reduces artificial inflation of performance; ensures test scaffolds are novel. | Can separate chemically similar molecules with different scaffolds. | Realistic simulation of scaffold-hopping discovery. |

| Butina Split [33] | Clusters molecules using molecular fingerprints (e.g., Morgan) via the Butina algorithm; entire clusters are assigned to a set. | Accounts for overall molecular similarity, not just core scaffolds. | Clustering results and subsequent split are sensitive to algorithm parameters. | General-purpose evaluation of model generalizability. |

| Time Split [33] | Uses the temporal order of data acquisition; older data for training, newer data for testing. | Best mimics real-world discovery where future compounds are predicted from past data. | Requires timestamped data, which is not always available. | Prospective validation with historical project data. |

| Step-Forward Cross-Validation (SFCV) [34] | Sorts data by a property (e.g., logP) and sequentially expands the training set. | Mimics chemical optimization; tests extrapolation to more drug-like space. | Complex setup; requires a meaningful property for sorting. | Assessing model performance during lead optimization. |
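
The ordered strategies in the table (time split and step-forward cross-validation) share one mechanism: sort the compounds by a key, then train on the earlier portion and test on what follows. A minimal pure-Python sketch is given below; the function name, the number of bins, and the ascending sort direction are assumptions, and the same code produces a time-based scheme if timestamps are passed instead of logP values.

```python
def step_forward_folds(values, n_bins=5):
    """Yield (train_idx, test_idx) pairs: sort compounds by a property, cut the
    order into n_bins contiguous bins, train on bins 0..i and test on bin i+1."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    size, extra = divmod(len(order), n_bins)
    bins, start = [], 0
    for b in range(n_bins):
        stop = start + size + (1 if b < extra else 0)
        bins.append(order[start:stop])
        start = stop
    for i in range(n_bins - 1):
        train = [idx for chunk in bins[: i + 1] for idx in chunk]
        yield train, bins[i + 1]


# Hypothetical logP values for ten compounds
logp = [3.1, 0.2, 4.5, 1.7, 2.4, 5.0, 0.9, 3.8, 2.9, 1.1]
folds = list(step_forward_folds(logp, n_bins=5))
```

Each fold tests the model on compounds that lie beyond the property range seen in training, so the resulting scores describe extrapolation rather than interpolation.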

Experimental Protocol: Scaffold Split with Cross-Validation

This protocol ensures that molecules with structurally distinct cores are separated between training and test sets, providing a rigorous assessment of a model's ability to generalize to novel chemotypes [33].

Detailed Methodology:

- Input Data: A dataset of compounds represented by SMILES strings and their corresponding biological activity values (e.g., pIC₅₀).

- Software & Tools: RDKit and a scikit-learn compatible environment.

- Procedure:

- Scaffold Generation: For each molecule SMILES, generate its Bemis-Murcko scaffold using the `get_bemis_murcko_clusters` function (or equivalent from the `useful_rdkit_utils` package) [33]. This process iteratively removes monovalent atoms to reveal the core molecular framework.

- Group Assignment: Use the generated scaffold identifiers as the grouping key for the split.

- Split Execution: Instantiate the `GroupKFoldShuffle` object from `useful_rdkit_utils` with the desired number of splits (e.g., `n_splits=5`) and `shuffle=True` to randomize the order of scaffolds in each fold [33].

- Index Generation: Call the `split` method, providing the molecular descriptors (e.g., fingerprint vectors), the activity values (e.g., `df.logS`), and the scaffold group labels (e.g., `df.bemis_murcko`). The method returns the indices for the training and test sets for each cross-validation fold, ensuring no scaffold is present in both sets in any given fold [33].
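
The grouping logic behind a shuffled scaffold split can be shown without any third-party dependency. The sketch below is a pure-Python stand-in written for illustration; it is not the `GroupKFoldShuffle` implementation, and it assumes the scaffold label for each compound has already been computed (for example with RDKit). The function name and example scaffolds are hypothetical.

```python
import random
from collections import defaultdict


def scaffold_kfold(scaffolds, n_splits=5, seed=0):
    """Yield (train_idx, test_idx) pairs such that no scaffold spans both sets."""
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)
    if len(groups) < n_splits:
        raise ValueError("need at least as many distinct scaffolds as folds")
    keys = sorted(groups)               # deterministic starting order
    random.Random(seed).shuffle(keys)   # randomize the scaffold order
    folds = [[] for _ in range(n_splits)]
    for key in keys:
        # place the whole scaffold group into the currently smallest fold
        min(folds, key=len).extend(groups[key])
    for test in folds:
        held_out = set(test)
        train = [i for i in range(len(scaffolds)) if i not in held_out]
        yield train, sorted(test)


# Hypothetical precomputed scaffold SMILES, one per compound
scaffolds = [
    "c1ccccc1", "c1ccccc1", "c1ccncc1", "c1ccncc1",
    "C1CCCCC1", "c1ccc2ccccc2c1", "c1ccccc1", "C1CCNCC1",
]
splits = list(scaffold_kfold(scaffolds, n_splits=3, seed=1))
```

Changing `seed` gives a different assignment of scaffolds to folds, which makes it possible to repeat the cross-validation and report the spread of the performance estimate.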

Diagram 1: Workflow for performing a scaffold-based split of a chemical dataset.

Defining the Applicability Domain (AD)

The Applicability Domain is the chemical space defined by the training compounds and the model algorithm within which predictions are considered reliable [32]. As models are not universal, the AD is a necessary condition for establishing prediction confidence [35] [31]. A model's performance degrades as queried compounds move farther from the training chemical space [32].

Table 2: Common Techniques for Defining the Applicability Domain (AD).

| AD Method | Description | Key Metric | Interpretation |

|---|---|---|---|

| Leverage [30] | Measures a compound's distance from the centroid of the training data in descriptor space. | Williams plot (Leverage vs. Standardized Residual). | High leverage compounds are outside the structural AD. |

| k-Nearest Neighbors (k-NN) Density [32] | Calculates the local density of training points around a new compound. | Average Euclidean distance to k-nearest neighbors in training set. | Low density indicates a sparse region; prediction is less reliable. |

| Reliability-Density Neighbourhood (RDN) [32] | An advanced method combining local data density, prediction bias, and precision. | A composite score based on density and local model reliability. | Maps "safe" and "unsafe" regions, identifying holes in the chemical space. |

| Conformal Prediction (CP) [31] | A framework providing uncertainty quantification and prediction intervals for individual predictions. | Prediction interval or set, calibrated at a user-defined confidence level (1-α). | Wider intervals or empty sets indicate lower confidence; the method ensures validity. |
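
Of the methods in the table, the k-nearest-neighbor distance check is the simplest to sketch. The example below assumes descriptors are already scaled and uses the 95th percentile of the training compounds' own mean k-NN distances as the cutoff; that percentile, the helper names, and the toy data are illustrative assumptions rather than recommended settings.

```python
import math


def mean_knn_distance(point, reference, k):
    """Mean Euclidean distance from a point to its k nearest reference points."""
    dists = sorted(math.dist(point, ref) for ref in reference)
    return sum(dists[:k]) / k


def knn_ad_threshold(train, k=3, quantile=0.95):
    """Cutoff taken from the distribution of each training compound's mean
    distance to its k nearest other training compounds."""
    scores = sorted(
        mean_knn_distance(x, train[:i] + train[i + 1:], k)
        for i, x in enumerate(train)
    )
    return scores[min(len(scores) - 1, int(quantile * len(scores)))]


def in_domain(query, train, threshold, k=3):
    """A query is inside the AD if it sits in a sufficiently dense region."""
    return mean_knn_distance(query, train, k) <= threshold


# Hypothetical, already scaled two-descriptor training set
train = [(0.0, 0.0), (0.1, 0.2), (0.2, 0.1), (0.3, 0.3), (0.2, 0.4), (0.4, 0.2)]
threshold = knn_ad_threshold(train)
```

A query near the training cloud passes the check, while a distant one is flagged so that its prediction can be reported with a warning or withheld.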

Experimental Protocol: Reliability-Density Neighbourhood (RDN)

The RDN method offers a robust AD by mapping local reliability across the chemical space, characterizing each training instance by its neighbourhood density, bias, and precision [32].

Detailed Methodology:

- Input: A trained QSAR model and its standardized training set descriptors.

- Software: The RDN R package (available at https://github.com/machLearnNA/RDN) [32].

- Procedure:

- Feature Selection: Optimize the set of molecular descriptors used for distance calculations. The top features selected by an algorithm like ReliefF often yield better AD characterization than using the entire feature set [32].

- Parameter Calibration: Set the parameter `k`, the number of nearest neighbors to consider for the local density and reliability calculations.

- Calculate Training Set Properties: For each training compound, compute:

- Density: The average Euclidean distance to its k nearest neighbors in the training set.

- Bias: The difference between the measured activity and the model's predicted activity for that compound.

- Precision: The standard deviation of predictions from an ensemble of models (or from cross-validation) for the compound.

- Define Local Thresholds: Establish a unique reliability threshold for each training compound based on the calculated density, bias, and precision.

- Assess New Compounds: For a new query compound:

- Find its k nearest neighbors in the training set.

- Compute its distance to these neighbors.

- Determine if it falls within the combined reliability-density neighbourhood of the training compounds. A compound is inside the AD if it is sufficiently close to reliable and dense regions of the training space [32].
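
The per-compound quantities in this procedure can be sketched in plain Python. The code below is a heavily simplified illustration and does not reproduce the RDN R package: bias is taken as an absolute difference, the membership rule in `inside_ad` (within the local density radius of at least one training compound whose bias and precision fall under fixed cutoffs) is a hypothetical stand-in for the method's local thresholds, and all data are invented.

```python
import math
import statistics


def rdn_training_profile(descriptors, measured, ensemble_preds, k=3):
    """For each training compound compute density (mean distance to its k
    nearest training neighbours), bias (|measured - mean prediction|) and
    precision (standard deviation of the ensemble predictions)."""
    profile = []
    for i, x in enumerate(descriptors):
        dists = sorted(math.dist(x, y) for j, y in enumerate(descriptors) if j != i)
        preds = ensemble_preds[i]
        profile.append({
            "density": sum(dists[:k]) / k,
            "bias": abs(measured[i] - statistics.mean(preds)),
            "precision": statistics.stdev(preds),
        })
    return profile


def inside_ad(query, descriptors, profile, max_bias=0.3, max_sd=0.3):
    """Hypothetical rule: the query must lie within the density radius of at
    least one training compound that is both unbiased and precise."""
    return any(
        math.dist(query, x) <= p["density"]
        and p["bias"] <= max_bias
        and p["precision"] <= max_sd
        for x, p in zip(descriptors, profile)
    )


# Hypothetical scaled descriptors, measured activities and ensemble predictions
descriptors = [(0.0, 0.0), (0.1, 0.2), (0.2, 0.1), (0.3, 0.3), (2.0, 2.0)]
measured = [5.0, 5.2, 5.1, 5.4, 7.0]
ensemble_preds = [
    [5.1, 4.9, 5.0], [5.2, 5.3, 5.1], [5.0, 5.2, 5.1],
    [5.5, 5.3, 5.4], [5.0, 6.5, 8.0],
]
profile = rdn_training_profile(descriptors, measured, ensemble_preds)
```

In this toy data the isolated fifth compound is poorly predicted, so a query close to it is rejected even though a training neighbour exists, which is the kind of "unsafe" region the method is designed to expose.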

Diagram 2: The process for assessing a new compound using the Reliability-Density Neighbourhood (RDN) applicability domain method.

Advanced Protocol: Conformal Prediction for Uncertainty Quantification

Conformal Prediction (CP) provides a mathematically rigorous framework for quantifying prediction uncertainty. It is particularly useful for handling distribution shifts and restoring model reliability on new chemical domains without full retraining [31].

Detailed Methodology:

- Data Splitting: Split the initial training data into a proper training set and a calibration set.

- Model Training: Train the QSAR model on the proper training set.

- Nonconformity Score Calculation: Use the calibration set to compute nonconformity scores, which measure how different a data point is from the training examples [31].

- Prediction Interval Generation: For a new test compound, the CP framework produces a prediction interval (for regression) or a prediction set (for classification) with a user-specified confidence level (e.g., 90%). The width of the interval or the content of the set inherently communicates the uncertainty of the prediction [31].

- Recalibration for New Domains: If the model is applied to a new, dissimilar chemical space (e.g., a new series of cyclic peptides), the exchangeability assumption may break. To restore confidence, recalibrate the CP by replacing the original calibration set with a small subset of data from the new targeted domain. This strategy has been shown to restore model validity efficiently without the computational cost of retraining [31].
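
For regression, the calibration and recalibration steps reduce to a few lines. The sketch below shows split (inductive) conformal prediction with absolute residuals as the nonconformity score, which is one common choice and not necessarily the one used in [31]; the calibration values and function names are hypothetical.

```python
import math


def conformal_quantile(calib_true, calib_pred, alpha=0.1):
    """Finite-sample quantile of the absolute-residual nonconformity scores."""
    scores = sorted(abs(y - p) for y, p in zip(calib_true, calib_pred))
    n = len(scores)
    rank = math.ceil((n + 1) * (1 - alpha))  # 1-based rank into sorted scores
    if rank > n:
        return math.inf  # too few calibration points for this confidence level
    return scores[rank - 1]


def predict_interval(y_pred, q):
    """Symmetric prediction interval around a point prediction."""
    return (y_pred - q, y_pred + q)


# Hypothetical calibration set from the original chemical domain
calib_pred = [5.0, 6.0, 5.0, 7.0, 5.0, 6.0, 5.0, 5.0, 6.0]
calib_true = [5.1, 6.2, 4.7, 7.4, 5.5, 6.6, 4.3, 5.8, 6.9]
q = conformal_quantile(calib_true, calib_pred, alpha=0.2)

# Recalibration: swap in measurements from the new domain, keep the model fixed
new_true = [5.2, 6.4, 4.4, 7.8, 6.0, 7.2, 3.6, 6.6, 7.8]
q_new = conformal_quantile(new_true, calib_pred, alpha=0.2)
interval = predict_interval(6.3, q_new)
```

The model itself is never refitted here. Only the quantile changes, and the wider interval obtained after recalibration reflects the larger errors observed in the new domain.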

The Scientist's Toolkit: Essential Research Reagents & Software

Table 3: Key software tools and resources for implementing dataset splitting and applicability domain analysis.

| Tool/Resource | Type | Primary Function | Application in Protocol |

|---|---|---|---|

| RDKit [34] [33] | Cheminformatics Library | Calculates molecular descriptors, fingerprints, and scaffolds. | Core component for featurization and scaffold-based splitting. |

| scikit-learn [33] | Machine Learning Library | Provides model algorithms and data splitting utilities (e.g., GroupKFold). | Implementation of ML models and integration with custom splitters. |

| RDN Package [32] | R Library | Implements the Reliability-Density Neighbourhood AD method. | Used in Protocol 2.1 to define the applicability domain. |

| CIMtools [35] | Python Library | Contains featurization and AD methods for chemical reactions. | Example of specialized tools for reaction-based modeling. |

| useful_rdkit_utils [33] | Utility Package | Provides helper functions, including GroupKFoldShuffle. | Enables reproducible scaffold-splitting with cross-validation. |

From Theory to Practice: Model Building Algorithms and Implementation

In modern drug discovery, Quantitative Structure-Activity Relationship (QSAR) modeling serves as a computational bridge between chemical structure and biological activity, enabling researchers to predict compound properties before synthesis. The selection of an appropriate algorithm—whether linear or non-linear—represents a critical decision point that directly influences model interpretability, predictive accuracy, and ultimate utility in pharmaceutical development. These mathematical models correlate molecular descriptors (quantitative representations of chemical structures) with biological activities through statistical learning methods, forming the backbone of ligand-based drug design [30] [6] [36].

The fundamental principle underlying QSAR is that molecular structure quantitatively determines biological effect, expressed mathematically as Activity = f(D₁, D₂, D₃...), where D represents molecular descriptors [30]. This relationship can be modeled using either linear functions that assume additive descriptor contributions or non-linear functions that capture complex interactions. The evolution of QSAR has progressed from simple linear regression applied to congeneric series to sophisticated machine learning approaches capable of handling diverse chemical spaces with complex, non-linear structure-activity relationships [36] [37].

Theoretical Foundations: Linear vs. Non-Linear Methods

Linear QSAR Methods

Linear QSAR models establish a direct, proportional relationship between molecular descriptors and biological activity, operating under the assumption that descriptor contributions are additive and independent. These methods generate highly interpretable models through explicit coefficient estimates for each descriptor, making them particularly valuable for mechanistic interpretation and regulatory applications [6] [37].

The general form of a linear QSAR model is: Activity = w₁D₁ + w₂D₂ + ... + wₙDₙ + b, where w represents coefficient weights, D denotes molecular descriptors, and b is the model intercept [6]. Among linear approaches, Multiple Linear Regression (MLR) has been one of the most widely used mapping techniques in QSAR research for decades, providing transparent models where the influence of each structural feature is quantitatively expressed [30]. Partial Least Squares (PLS) regression offers an alternative linear approach that handles descriptor multicollinearity by projecting variables into a latent space that maximizes covariance with the response variable, making it particularly useful for datasets with correlated descriptors [37] [38].

Non-Linear QSAR Methods

Non-linear QSAR methods capture complex, non-additive relationships between molecular structure and biological activity that linear models cannot adequately represent. These approaches are particularly valuable when activity depends on synergistic descriptor interactions or when the underlying structure-activity relationship follows complex patterns [6] [37].

The general form of a non-linear QSAR model is: Activity = f(D₁, D₂, D₃...), where f represents a non-linear function learned from data [6]. Artificial Neural Networks (ANNs) mimic biological neural systems through interconnected nodes that process descriptor inputs, with multi-layer architectures capable of learning hierarchical representations [30] [37]. Support Vector Machines (SVMs) operate by mapping descriptor data into high-dimensional feature spaces where optimal separation hyperplanes are constructed, demonstrating particular effectiveness with limited samples and high-dimensional descriptors [37]. Additional non-linear approaches include Random Forests (RF), which aggregate predictions from multiple decision trees to improve accuracy and reduce overfitting [37], and Radial Basis Function (RBF) networks that employ localized activation functions to capture non-linear patterns, sometimes combined with PLS in hybrid approaches like RBF-PLS [38].
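To make the distinction concrete, the following sketch compares a linear model with a Random Forest on synthetic data where activity depends on the product of two descriptors, a purely non-additive effect. The data and hyperparameters are illustrative assumptions.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
X = rng.uniform(-1, 1, size=(400, 3))
# Activity driven by a D1 x D2 interaction; D3 is irrelevant
y = 2.0 * X[:, 0] * X[:, 1] + rng.normal(scale=0.05, size=400)

X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, random_state=0)

linear = LinearRegression().fit(X_tr, y_tr)
forest = RandomForestRegressor(n_estimators=200, random_state=0).fit(X_tr, y_tr)

# R² on held-out compounds
r2_linear = linear.score(X_te, y_te)
r2_forest = forest.score(X_te, y_te)
```

The additive linear model cannot represent the interaction term, so its held-out R² stays low, while the tree ensemble recovers most of the signal without the interaction being specified in advance.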

Table 1: Characteristics of Primary QSAR Modeling Algorithms

| Algorithm | Type | Key Advantages | Limitations | Ideal Use Cases |

|---|---|---|---|---|

| Multiple Linear Regression (MLR) | Linear | High interpretability, simple implementation, minimal parameters | Assumes linearity and descriptor independence, sensitive to multicollinearity | Congeneric series, mechanistic interpretation, regulatory applications |

| Partial Least Squares (PLS) | Linear | Handles correlated descriptors, works with high-dimensional data | Reduced interpretability of latent variables, still assumes linearity | Descriptor-rich environments, spectral data, aligned congeneric series |

| Artificial Neural Networks (ANN) | Non-linear | Captures complex relationships, high predictive power, fault tolerance | Black-box nature, extensive data requirements, computationally intensive | Large diverse datasets, complex SAR, when prediction accuracy is prioritized |

| Support Vector Machines (SVM) | Non-linear | Effective in high dimensions, robust to outliers, strong theoretical foundation | Parameter sensitivity, limited interpretability, computational cost with large datasets | Moderate-sized datasets, non-linear patterns, classification tasks |

| Random Forests (RF) | Non-linear | Handles non-linearity, built-in feature importance, robust to outliers | Limited extrapolation, ensemble interpretation challenges | Diverse chemical spaces, feature selection, robust performance needs |

Algorithm Selection Criteria

Dataset Characteristics and Size

Dataset size and diversity fundamentally influence algorithm selection, with linear methods generally requiring fewer samples than their non-linear counterparts. For congeneric series (typically 20-100 compounds) with gradual structural modifications, MLR and PLS often yield interpretable, predictive models by capturing primary structure-activity trends [30] [38]. As chemical diversity increases, introducing complex, non-linear relationships, ANN and RF models typically demonstrate superior predictive performance by detecting patterns that linear methods miss [30] [39]. Extremely large datasets (thousands to millions of compounds) enable deep learning architectures to automatically learn relevant features and complex representations without explicit descriptor engineering [37].

The activity distribution within the dataset further guides algorithm choice. Balanced datasets with approximately normal activity distributions suit most algorithms, while highly skewed distributions with activity cliffs may benefit from non-linear methods that better handle discontinuities. When working with high-dimensional descriptor spaces (hundreds to thousands of descriptors), PLS and RF offer inherent dimensionality reduction, while MLR requires careful feature selection to avoid overfitting [37] [38].

Model Interpretability vs. Predictive Accuracy

The interpretability-accuracy tradeoff represents a central consideration in algorithm selection, with significant implications for drug discovery decision-making. Linear models provide direct mechanistic insights through descriptor coefficients that quantify each structural feature's contribution to activity—for example, identifying how hydrophobicity or electronic properties influence binding [30] [40]. This transparency is particularly valuable during lead optimization, where understanding structure-activity relationships guides structural modifications [36].

Non-linear models often achieve higher predictive accuracy, particularly for complex endpoints involving multiple interacting mechanisms, but operate as "black boxes" with limited intuitive interpretation [39] [40]. Recent advances in model interpretation tools, including SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations), help mitigate this limitation by quantifying feature importance in non-linear models [37]. The choice ultimately depends on project goals: early discovery prioritizing candidate screening may favor accurate predictions, while mechanism-driven optimization requires interpretable models [40] [37].

Implementation practicalities, including computational infrastructure and analytical expertise, significantly constrain algorithm selection. Linear methods like MLR and PLS are computationally efficient, running on standard hardware with minimal programming expertise, while ANN and deep learning approaches demand substantial computational resources (GPUs), programming proficiency, and specialized libraries like TensorFlow or PyTorch [37]. The development timeline further influences choices, with linear models typically requiring less tuning and validation time than complex non-linear approaches [6].

Table 2: Empirical Performance Comparison Across QSAR Studies

| Study Context | Dataset Size | Best Performing Algorithm | Key Performance Metrics | Comparative Algorithms |

|---|---|---|---|---|

| NF-κB inhibitors [30] | 121 compounds | ANN ([8.11.11.1] architecture) | Superior reliability and prediction accuracy | Multiple Linear Regression (MLR) |

| Anti-HIV indolyl aryl sulfones [39] | 97 compounds | Artificial Neural Network (ANN) | External prediction r² = 0.781 | Stepwise regression, GFA-MLR, PLS |

| HIV-1 reverse transcriptase inhibitors [38] | 111 compounds | RBF-PLS (hybrid) | Significantly superior to CoMFA/CoMSIA | MLR, PLS, RBF neural network |

| Antioxidant capacity of phenolics [6] | Not specified | Artificial Neural Network (ANN) | Stronger predictive performance | Partial Least Squares (PLS) |

Experimental Protocols

Protocol 1: Implementing Multiple Linear Regression QSAR

Multiple Linear Regression (MLR) represents a foundational approach for linear QSAR modeling, particularly effective with congeneric series and moderately sized datasets (20-100 compounds) where interpretability is prioritized [30] [38].

Step-by-Step Procedure:

- Data Preparation and Curation: Collect and standardize chemical structures, removing duplicates, inorganic compounds, and mixtures. Verify biological activity data consistency, converting all values to a common scale (typically pIC₅₀ or pEC₅₀) [36].

- Descriptor Calculation and Preprocessing: Compute molecular descriptors using software such as DRAGON, PaDEL, or RDKit. Perform descriptor preprocessing by removing constant/near-constant descriptors and scaling remaining descriptors to zero mean and unit variance [6] [37].

- Feature Selection: Apply feature selection techniques like Stepwise Regression, Genetic Algorithm, or Ant Colony Optimization to identify the most relevant, non-collinear descriptors. Validate selected descriptors for minimal intercorrelation (VIF < 5) [38].

- Dataset Division: Split data into training (70-80%) and test (20-30%) sets using rational methods (e.g., Kennard-Stone) to ensure representative chemical space coverage in both sets [39] [6].

- Model Training: Perform MLR analysis on the training set using statistical software (R, Python/scikit-learn) to derive the linear equation: pIC₅₀ = b + c₁D₁ + c₂D₂ + ... + cₙDₙ.

- Model Validation: Apply the developed model to predict test set activities. Calculate internal (Q², R²) and external validation metrics (R²ₜₑₛₜ, RMSE) following OECD guidelines [41] [42].

- Applicability Domain Definition: Establish the model's applicability domain using the leverage approach to identify compounds that fall within the acceptable structural space [30].
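Several steps of the procedure above can be sketched with NumPy alone: the VIF check, a Kennard-Stone split, the MLR fit, and a leverage-based applicability domain. This is a minimal sketch on synthetic data. The helper names (`vif`, `kennard_stone`, `leverages`) are hypothetical, and the warning leverage h* = 3(p + 1)/n is a commonly used convention assumed here rather than a value taken from the cited studies.

```python
import numpy as np

def vif(X):
    """Variance inflation factor, 1 / (1 - R_j^2), for each descriptor column."""
    X = np.asarray(X, dtype=float)
    factors = []
    for j in range(X.shape[1]):
        # Regress descriptor j on all other descriptors (plus an intercept)
        A = np.column_stack([np.ones(len(X)), np.delete(X, j, axis=1)])
        coef, *_ = np.linalg.lstsq(A, X[:, j], rcond=None)
        resid = X[:, j] - A @ coef
        r2 = 1.0 - resid.var() / X[:, j].var()
        factors.append(1.0 / max(1.0 - r2, 1e-12))
    return np.array(factors)

def kennard_stone(X, n_train):
    """Indices of n_train maximally spread samples, and of the remainder."""
    X = np.asarray(X, dtype=float)
    dist = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    first, second = np.unravel_index(np.argmax(dist), dist.shape)
    selected = [int(first), int(second)]   # start with the two most distant compounds
    remaining = [k for k in range(len(X)) if k not in selected]
    while len(selected) < n_train:
        # Pick the candidate farthest from its nearest already-selected sample
        d_min = dist[np.ix_(remaining, selected)].min(axis=1)
        selected.append(remaining.pop(int(np.argmax(d_min))))
    return np.array(selected), np.array(remaining)

def leverages(X_train, X_query):
    """Leverage h = x (X'X)^-1 x' of each query row, with an intercept column."""
    A = np.column_stack([np.ones(len(X_train)), X_train])
    Q = np.column_stack([np.ones(len(X_query)), X_query])
    return np.einsum("ij,jk,ik->i", Q, np.linalg.pinv(A.T @ A), Q)

# Synthetic stand-in for a scaled descriptor matrix and pIC50 values
rng = np.random.default_rng(1)
X = rng.normal(size=(50, 3))
X = np.column_stack([X, X[:, 0] + rng.normal(scale=0.05, size=50)])  # redundant column
y = 6.0 + 0.9 * X[:, 0] - 0.6 * X[:, 1] + rng.normal(scale=0.2, size=50)

vif_all = vif(X)          # columns 0 and 3 inflate each other
X_sel = X[:, :3]          # drop the redundant descriptor
train_idx, test_idx = kennard_stone(X_sel, n_train=40)

# MLR fit on the training set: pIC50 = b + c1*D1 + c2*D2 + c3*D3
A_train = np.column_stack([np.ones(len(train_idx)), X_sel[train_idx]])
coef, *_ = np.linalg.lstsq(A_train, y[train_idx], rcond=None)
y_pred = np.column_stack([np.ones(len(test_idx)), X_sel[test_idx]]) @ coef
rmse_test = float(np.sqrt(np.mean((y[test_idx] - y_pred) ** 2)))

# Leverage-based applicability domain
h_train = leverages(X_sel[train_idx], X_sel[train_idx])
h_star = 3 * (X_sel.shape[1] + 1) / len(train_idx)
outside_ad = leverages(X_sel[train_idx], X_sel[test_idx]) > h_star
```

Because Kennard-Stone places the most extreme compounds in the training set, the test compounds tend to lie inside the covered descriptor space. That makes the external statistics somewhat optimistic compared with a random or scaffold-based split, which is worth keeping in mind when comparing models.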

Troubleshooting Tips:

- Address multicollinearity through feature selection or PLS regression

- If the model shows poor predictive ability, reconsider descriptor selection or explore non-linear methods

- Validate model robustness through y-scrambling or bootstrapping
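The y-scrambling check mentioned above can be sketched as follows. The function name `y_scramble` is hypothetical, the data are synthetic, and the mean cross-validated R² from scikit-learn is used as a stand-in for Q², which is an approximation rather than the exact leave-one-out definition.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import cross_val_score

def y_scramble(model, X, y, n_rounds=50, cv=5, seed=0):
    """Cross-validated R² for the true activities and for permuted activities."""
    rng = np.random.default_rng(seed)
    q2_true = cross_val_score(model, X, y, cv=cv, scoring="r2").mean()
    # Shuffling y breaks the structure-activity link while keeping X intact
    q2_perm = np.array([
        cross_val_score(model, X, rng.permutation(y), cv=cv, scoring="r2").mean()
        for _ in range(n_rounds)
    ])
    return q2_true, q2_perm

rng = np.random.default_rng(7)
X = rng.normal(size=(80, 3))
y = 6.0 + X @ np.array([0.8, -0.5, 0.3]) + rng.normal(scale=0.2, size=80)

q2_true, q2_perm = y_scramble(LinearRegression(), X, y)
```

A model that has learned a real relationship should score far above every scrambled run. If the scrambled scores approach the true score, the original fit is likely a chance correlation.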

Protocol 2: Implementing Artificial Neural Network QSAR

Artificial Neural Networks (ANNs) provide powerful non-linear modeling capabilities for complex structure-activity relationships, particularly with larger, diverse datasets (>100 compounds) where prediction accuracy outweighs interpretability needs [30] [39].

Step-by-Step Procedure:

- Data Preparation and Descriptor Calculation: Follow the first two steps of the MLR protocol (data preparation and curation, then descriptor calculation and preprocessing), paying particular attention to data quality, as ANNs are sensitive to noise and outliers.

- Feature Selection and Dataset Division: Perform feature selection using methods compatible with non-linear relationships (e.g., Random Forest importance). Split data into training (70%), validation (15%), and test (15%) sets [30] [37].

- Network Architecture Design: Determine the optimal network architecture through iterative experimentation. Begin with a single hidden layer whose neuron count lies between the input and output layer sizes. The NF-κB inhibitor study employed an [8.11.11.1] architecture with two hidden layers [30].

- Parameter Optimization: Systematically optimize critical parameters including learning rate (0.01-0.1), momentum (0.5-0.9), and number of training epochs. Implement early stopping using the validation set to prevent overfitting [30].

- Model Training and Validation: Train the network using the backpropagation algorithm. Monitor training and validation error to identify the optimal stopping point. Evaluate the final model on the test set using external validation metrics [30] [39].

- Model Interpretation: Apply interpretation techniques such as Partial Dependence Plots or Sensitivity Analysis to extract mechanistic insights from the "black-box" model [40] [37].
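The training steps above might look like the following in scikit-learn. This is a sketch on synthetic descriptors, not a reproduction of the NF-κB study: it borrows only the 8-11-11-1 layer layout, and the activation function, batch size, and patience are assumptions chosen for the example.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.neural_network import MLPRegressor
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(3)
X = rng.normal(size=(400, 8))   # 8 synthetic descriptors
y = (X[:, 0] - 0.5 * X[:, 1] + 0.5 * X[:, 2] * X[:, 3]
     + rng.normal(scale=0.1, size=400))

# Hold out 15% of the data as the external test set
X_dev, X_test, y_dev, y_test = train_test_split(
    X, y, test_size=0.15, random_state=0
)

ann = make_pipeline(
    StandardScaler(),
    MLPRegressor(
        hidden_layer_sizes=(11, 11),       # 8 inputs, 1 output: 8-11-11-1
        activation="tanh",
        solver="sgd",                      # backpropagation with momentum
        learning_rate_init=0.01,
        momentum=0.9,
        batch_size=32,
        early_stopping=True,               # stop when validation score stalls
        validation_fraction=0.15 / 0.85,   # about 15% of the full dataset
        n_iter_no_change=20,
        max_iter=2000,
        random_state=0,
    ),
)
ann.fit(X_dev, y_dev)

r2_test = ann.score(X_test, y_test)
layer_shapes = [w.shape for w in ann[-1].coefs_]
epochs_run = ann[-1].n_iter_
```

With `early_stopping=True`, scikit-learn carves the validation set out of the data passed to `fit`, so the 70/15/15 split is obtained by holding out the test set first and setting `validation_fraction` relative to the remainder.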

Troubleshooting Tips:

- If validation error increases while training error decreases, reduce model complexity or increase training data

- For unstable predictions, implement ensemble averaging of multiple networks

- Use regularization techniques (dropout, weight decay) to improve generalization
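Two of these tips, ensemble averaging and weight decay, can be sketched together. The example assumes scikit-learn's `MLPRegressor`, where the `alpha` parameter sets the L2 penalty (weight decay); dropout is not available in that estimator and would need a deep learning library. The data, network size, and ensemble size are illustrative.

```python
import numpy as np
from sklearn.neural_network import MLPRegressor

rng = np.random.default_rng(5)
X = rng.normal(size=(300, 6))
y = np.tanh(X[:, 0]) + 0.5 * X[:, 1] * X[:, 2] + rng.normal(scale=0.1, size=300)
X_train, y_train = X[:240], y[:240]
X_test, y_test = X[240:], y[240:]

def rmse(y_true, y_hat):
    """Root-mean-square error between observed and predicted activities."""
    return float(np.sqrt(np.mean((y_true - y_hat) ** 2)))

# Same architecture, different random initializations
members = [
    MLPRegressor(
        hidden_layer_sizes=(16,),
        alpha=1e-2,              # L2 penalty (weight decay)
        early_stopping=True,
        max_iter=1000,
        random_state=seed,
    ).fit(X_train, y_train)
    for seed in range(5)
]

member_preds = np.array([m.predict(X_test) for m in members])
ensemble_pred = member_preds.mean(axis=0)   # average the member predictions

member_rmse = [rmse(y_test, p) for p in member_preds]
ensemble_rmse = rmse(y_test, ensemble_pred)
```

The RMSE of the averaged prediction can never exceed the mean RMSE of the individual networks, so averaging removes the risk of relying on an unluckily initialized model, at the cost of training several networks.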

QSAR Algorithm Selection Workflow