Building a Robust Virtual Screening Workflow: From Molecular Docking Basics to AI-Enhanced Validation

This article provides a comprehensive guide for researchers and drug development professionals on establishing a rigorous virtual screening workflow with molecular docking.


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on establishing a rigorous virtual screening workflow with molecular docking. It begins by deconstructing the core components and foundational theory of virtual screening, highlighting common pitfalls with incompatible programs and lost reproducibility[citation:1][citation:4]. The guide then details a step-by-step methodological pipeline, from target analysis and compound library preparation to executing docking simulations and analyzing results[citation:2][citation:4]. A dedicated section addresses critical troubleshooting and optimization strategies to overcome the inherent limitations of scoring functions and improve biological relevance[citation:2][citation:7]. Finally, the article explores advanced validation techniques and comparative analyses, including consensus scoring and AI-driven methods, to distinguish true binders from false positives and ensure reliable hit identification[citation:3][citation:6][citation:9]. This end-to-end resource is designed to equip scientists with the knowledge to build efficient, reproducible, and predictive virtual screening campaigns.

Laying the Groundwork: Understanding Virtual Screening Fundamentals and Core Concepts

Virtual Screening (VS) is a computational methodology used to identify promising lead compounds from vast chemical libraries by predicting their interaction with a biological target. Within a molecular docking research thesis, establishing a robust VS workflow is critical for prioritizing compounds for in vitro validation, optimizing resource allocation, and accelerating early drug discovery.

Primary Objectives:

- Efficiency: Rapidly reduce millions of compounds to a manageable number (< 1000) for detailed study.

- Enrichment: Increase the probability of identifying true active molecules (hits) over inactive ones.

- Fidelity: Employ sequential filters that balance computational cost with predictive accuracy.

- Reproducibility: Implement a documented, standardized protocol for consistent results.

Hierarchical Filtering Strategy: A Multi-Tiered Funnel

The core strategy employs a cascade of filters, increasing in complexity and accuracy while decreasing the number of compounds.

Table 1: Hierarchical Filtering Tiers in Virtual Screening

| Tier | Filter Name | Primary Objective | Typical Library Reduction | Computational Cost | Key Metrics |

|---|---|---|---|---|---|

| 1 | Property & Drug-Likeness | Remove compounds with unfavorable ADMET/physical properties. | 80-90% | Very Low | Lipinski's Rule of 5, QED, PAINS alerts. |

| 2 | Pharmacophore/Shape | Retain compounds matching essential interaction features or 3D shape of a known active. | 50-70% (of Tier 1 output) | Low | Fit value, RMSD to query shape. |

| 3 | Molecular Docking (Standard Precision) | Predict binding pose and score affinity for all compounds passing Tiers 1 & 2. | 90-95% (of Tier 2 output) | Medium | Docking Score (e.g., Glide SP Score, Vina score). |

| 4 | Molecular Docking (High Precision) | Refine top poses from Tier 3 with more rigorous scoring. | 10-20% (of Tier 3 output) | High | MM-GBSA/MM-PBSA ΔG, Prime score. |

| 5 | Visual Inspection & Clustering | Final curation based on interaction patterns and chemical diversity. | 20-50% (of Tier 4 output) | Very High (expert time) | Interaction analysis, cluster representatives. |
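As a rough arithmetic check on the funnel, the sketch below propagates a library through the five tiers of Table 1. It assumes that the "Typical Library Reduction" column gives the fraction of compounds removed at each tier (the table does not state this explicitly); the starting size of one million compounds and the name `funnel_counts` are illustrative choices only.

```python
# Fraction of compounds REMOVED at each tier, read from Table 1 as (low, high).
# Assumption: the "Typical Library Reduction" column is a removal fraction.
TIER_REDUCTION = [
    ("Property & Drug-Likeness", 0.80, 0.90),
    ("Pharmacophore/Shape", 0.50, 0.70),
    ("Docking (Standard Precision)", 0.90, 0.95),
    ("Docking (High Precision)", 0.10, 0.20),
    ("Visual Inspection & Clustering", 0.20, 0.50),
]


def funnel_counts(n_start, removed_fractions):
    """Return the number of compounds left after each successive tier."""
    counts = []
    remaining = n_start
    for fraction in removed_fractions:
        remaining = round(remaining * (1.0 - fraction))
        counts.append(remaining)
    return counts


library_size = 1_000_000  # hypothetical starting library
least_strict = funnel_counts(library_size, [low for _, low, _ in TIER_REDUCTION])
most_strict = funnel_counts(library_size, [high for _, _, high in TIER_REDUCTION])

for (name, _, _), loose, strict in zip(TIER_REDUCTION, least_strict, most_strict):
    print(f"{name:32s} {strict:>8,d} to {loose:>8,d} compounds remain")
```

Under this reading, a one-million-compound library ends with roughly 600 to 7,200 compounds, so meeting the "< 1000" efficiency objective requires working near the stricter end of each range.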

Detailed Application Notes and Protocols

Protocol 3.1: Tier 1 – Property-Based Filtering

- Objective: Filter out compounds with poor drug-likeness or obvious undesirable moieties.

- Software: Open-source tools like RDKit or commercial suites (e.g., Schrödinger Canvas, MOE).

- Method:

- Input: Raw compound library (e.g., ZINC20, Enamine REAL) in SMILES or SDF format.

- Calculate Descriptors: Compute molecular weight, LogP, hydrogen bond donors/acceptors, topological polar surface area (TPSA).

- Apply Rules: Filter for compliance with Lipinski's Rule of 5 (or appropriate guidelines for beyond Rule of 5 space).

- Pan-Assay Interference Compounds (PAINS) Filter: Remove compounds matching PAINS substructures using a validated filter set.

- Output: A cleaned library for subsequent structure-based filtering.

Protocol 3.2: Tier 3 – Standard Precision Docking

- Objective: Score and rank compounds based on predicted binding affinity and pose.

- Software: AutoDock Vina, GNINA, Schrödinger Glide SP.

- Method (Using AutoDock Vina):

- Receptor Preparation: From a protein crystal structure (PDB), remove water, add hydrogens, assign charges (e.g., using AutoDockTools or MGLTools).

- Ligand Preparation: Convert filtered compounds to 3D, minimize energy, assign flexible torsions.

- Grid Box Definition: Define a search space centered on the binding site. Example coordinates and size (in Å): `center_x = 10.5, center_y = 22.0, center_z = 18.0, size_x = 20, size_y = 20, size_z = 20`.

- Docking Execution: Run Vina once per ligand, for example `vina --receptor protein.pdbqt --ligand ligand.pdbqt --config config.txt --out ligand_out.pdbqt`. Use an `--exhaustiveness` setting of 8-32 for a balance of speed and accuracy. Vina 1.1.2 also accepts `--log log.txt`; that option was removed in Vina 1.2, which prints the score table to standard output.

- Post-processing: Extract docking scores (in kcal/mol) from the log or from the `REMARK VINA RESULT` lines of each output PDBQT file. Select the top 1-5% of compounds based on score for Tier 4.
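The post-processing step can be sketched in a few lines of Python. This is a minimal illustration, assuming the scores are read from the `REMARK VINA RESULT:` lines that Vina writes into its output PDBQT files; the ligand names, the score values, and the helper names `best_vina_score` and `top_fraction` are hypothetical.

```python
def best_vina_score(pdbqt_text):
    """Return the most favorable (lowest) score found in one Vina output file."""
    scores = [
        # Fields after the colon: affinity, RMSD lower bound, RMSD upper bound.
        float(line.split(":", 1)[1].split()[0])
        for line in pdbqt_text.splitlines()
        if line.startswith("REMARK VINA RESULT:")
    ]
    if not scores:
        raise ValueError("no 'REMARK VINA RESULT' line found")
    return min(scores)


def top_fraction(scores_by_ligand, fraction):
    """Keep the best-scoring fraction of ligands (always at least one)."""
    ranked = sorted(scores_by_ligand.items(), key=lambda item: item[1])
    n_keep = max(1, int(len(ranked) * fraction))
    return ranked[:n_keep]


# Hypothetical output snippets for three ligands (atom records omitted).
example_outputs = {
    "lig_a": "MODEL 1\nREMARK VINA RESULT:    -8.4      0.000      0.000\nENDMDL\n"
             "MODEL 2\nREMARK VINA RESULT:    -7.9      1.812      2.964\nENDMDL\n",
    "lig_b": "MODEL 1\nREMARK VINA RESULT:    -6.1      0.000      0.000\nENDMDL\n",
    "lig_c": "MODEL 1\nREMARK VINA RESULT:    -9.2      0.000      0.000\nENDMDL\n",
}

best_scores = {name: best_vina_score(text) for name, text in example_outputs.items()}
selected = top_fraction(best_scores, fraction=0.05)
print(selected)
```

In a real screen the dictionary would be filled by reading one output file per ligand; with only three ligands the 5% cut-off falls back to keeping a single compound.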

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Computational Tools & Datasets

| Item | Function in VS Workflow | Example/Provider |

|---|---|---|

| Compound Libraries | Source of small molecules for screening. | ZINC20 (free), Enamine REAL (commercial), MCULE (commercial). |

| Protein Data Bank (PDB) | Source of 3D macromolecular structures for target preparation. | www.rcsb.org |

| Cheminformatics Toolkit | For ligand preparation, descriptor calculation, and filtering. | RDKit (open-source), Schrödinger LigPrep (commercial). |

| Molecular Docking Software | Core engine for pose prediction and scoring. | AutoDock Vina (open-source), Glide (commercial), GOLD (commercial). |

| Free Energy Calculations | For high-affinity prediction post-docking. | Schrödinger Prime MM-GBSA (commercial), AMBER (open-source). |

| Visualization Software | Critical for final pose inspection and analysis. | PyMOL (commercial/open-source), Maestro (commercial), UCSF ChimeraX (free). |

| High-Performance Computing (HPC) | Infrastructure to run computationally intensive steps. | Local clusters, cloud computing (AWS, Azure). |

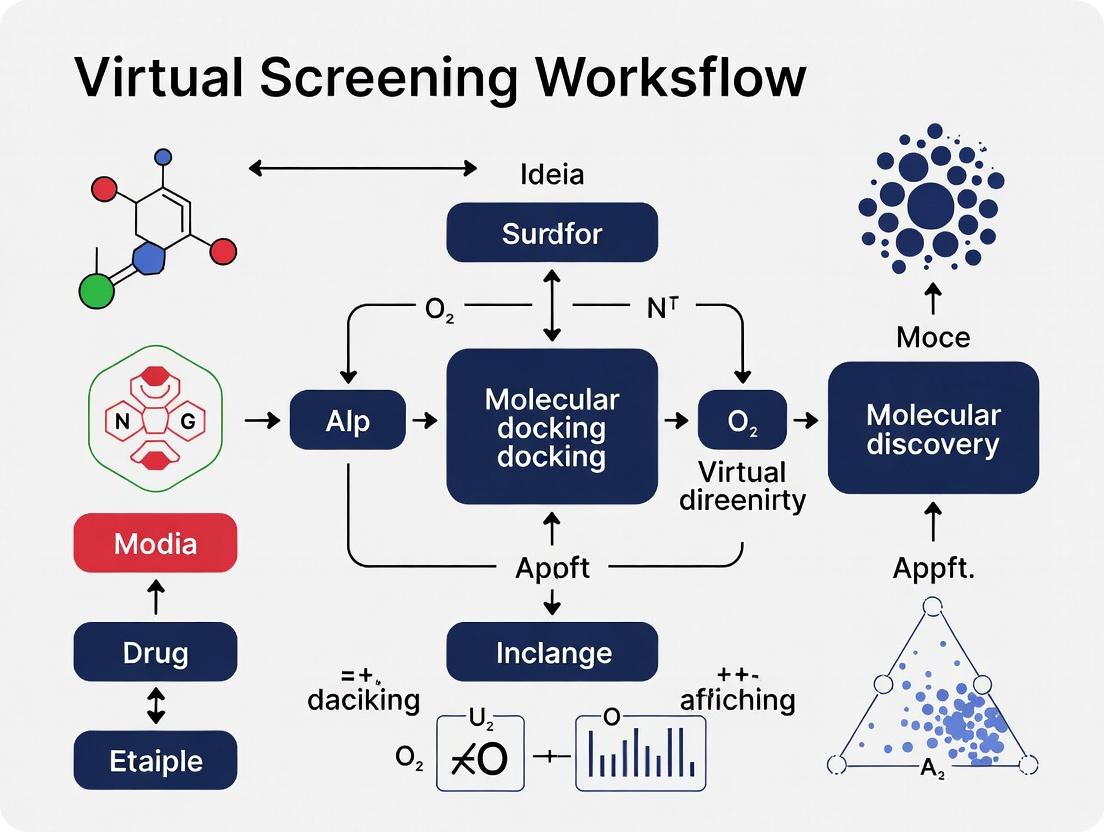

Workflow Visualization

Diagram Title: Hierarchical Virtual Screening Workflow Funnel

Molecular docking is a pivotal computational technique in structural biology and drug discovery, enabling the prediction of the preferred orientation of a small molecule (ligand) when bound to a target macromolecule (receptor). Within a virtual screening workflow, a robust docking protocol is essential for efficiently identifying novel lead compounds. This document details the core components, protocols, and practical considerations for establishing a reliable molecular docking pipeline.

Ligand Preparation

The initial step involves curating and optimizing the 3D structures of small molecules for docking.

Protocol: Standard Ligand Preparation

Objective: To generate accurate, energetically minimized, and protonated 3D ligand structures in a format suitable for docking.

- Source Compounds: Obtain 2D structures (e.g., SDF, SMILES) from databases like ZINC, PubChem, or in-house libraries.

- Generate 3D Conformations: Use tools like Open Babel (`obabel -ismi input.smi -osdf -O output.sdf --gen3d`) or RDKit to convert 2D representations to 3D.

- Add Hydrogens and Protonation States: At a physiological pH of 7.4 ± 0.5, assign correct protonation and tautomeric states using tools like `epik` (Schrödinger) or `molconvert` (ChemAxon). For metal-complexing ligands, consider alternative states.

- Energy Minimization: Perform a brief molecular mechanics optimization (e.g., using the MMFF94 or UFF force field) to relieve steric clashes. This step is often integrated into the 3D generation process.

- Output Format: Convert all prepared ligands into a unified format (e.g., MOL2, SDF, PDBQT for AutoDock) with appropriate atom types and partial charges.

Key Quantitative Considerations in Ligand Preparation

Table 1: Common Ligand Preparation Software and Their Characteristics

| Software/Tool | Primary Method | Typical Processing Speed (molecules/sec) | Key Strength | Common Output Format |

|---|---|---|---|---|

| Open Babel | Rule-based, Force Field | 100-500 | Open-source, fast batch processing | SDF, MOL2, PDBQT |

| RDKit | Rule-based, Force Field | 50-200 | Programmable (Python), extensive cheminformatics | SDF, MOL2 |

| LigPrep (Schrödinger) | OPLS4 Force Field, Epik | 10-50 | Accurate tautomer/protonation state enumeration | MAE |

| MOE | MMFF94 Force Field | 20-80 | Integrated suite with visualization | MDB, MOL2 |

Receptor Preparation

The accuracy of the receptor (protein/nucleic acid) structure is the most critical factor influencing docking success.

Protocol: Protein Receptor Preparation from a PDB File

Objective: To generate a clean, all-atom, energetically reasonable protein structure for docking.

- Structure Selection & Import: Download the target protein structure (e.g., from the Protein Data Bank, PDB). Prefer high-resolution (<2.0 Å) structures with a relevant ligand co-crystallized.

- Initial Cleaning: Remove all non-essential molecules: crystallographic water molecules, ions, and original bound ligands. Retain structurally important water molecules or cofactors (e.g., heme, Mg²⁺).

- Add Missing Components: Add missing hydrogen atoms. Model missing side chains (e.g., using SCWRL4) and, if necessary, short missing loops.

- Assign Protonation States & Tautomers: For histidine, aspartate, glutamate, lysine, etc., assign correct protonation states at pH 7.4. Use tools like PDB2PQR or the H++ server. Pay special attention to the active site residues.

- Energy Minimization: Perform restrained minimization of the added hydrogens and side chains to remove steric clashes, keeping the protein backbone fixed. Tools: AMBER, CHARMM, or UCSF Chimera.

- Define the Binding Site: Based on the co-crystallized ligand or known catalytic residues, define the search space (grid box) for docking. Center coordinates and box dimensions must be recorded.
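One common way to define the search space is to center the box on the co-crystallized ligand. The sketch below reads ligand atoms from PDB-format text using the fixed coordinate columns of `HETATM` records and derives a box center and size. The residue name `LIG`, the 8 Å padding, and the coordinates are assumptions chosen for illustration, not values from a real structure.

```python
def ligand_coordinates(pdb_text, resname):
    """Collect (x, y, z) for HETATM records belonging to one residue name."""
    coords = []
    for line in pdb_text.splitlines():
        # PDB fixed columns: residue name 18-20, x 31-38, y 39-46, z 47-54.
        if line.startswith("HETATM") and line[17:20].strip() == resname:
            coords.append((float(line[30:38]), float(line[38:46]), float(line[46:54])))
    return coords


def grid_box(coords, padding=8.0):
    """Center the box on the ligand's bounding box and pad every side."""
    center, size = [], []
    for axis in range(3):
        values = [atom[axis] for atom in coords]
        low, high = min(values), max(values)
        center.append(round((low + high) / 2.0, 3))
        size.append(round((high - low) + 2.0 * padding, 3))
    return tuple(center), tuple(size)


# Hypothetical structure fragment: one protein atom, three ligand atoms, one water.
example_pdb = "\n".join([
    "ATOM      1  CA  ALA A   1      11.000  21.000  17.000",
    "HETATM    1  C1  LIG A 301       8.000  20.000  16.000",
    "HETATM    2  N1  LIG A 301      13.000  24.000  20.000",
    "HETATM    3  O1  LIG A 301      10.500  22.000  18.000",
    "HETATM    4  O   HOH A 401      30.000  30.000  30.000",
])

center, size = grid_box(ligand_coordinates(example_pdb, "LIG"))
print("center:", center)
print("size:", size)
```

Recording the resulting center and size alongside the structure identifier keeps the box definition reproducible across reruns.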

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Receptor Preparation and Docking

| Item/Category | Specific Examples | Function in Workflow |

|---|---|---|

| Structure Visualization | UCSF Chimera, PyMOL, Maestro | Visual inspection, cleaning, binding site analysis, and result visualization. |

| Force Fields | AMBER ff19SB, CHARMM36, OPLS4 | Provide parameters for energy minimization and scoring function calculations. |

| Protonation State Tools | PROPKA (integrated), H++ server, Epik | Predict pKa values and assign correct protonation states of residues at a given pH. |

| Docking Suites | AutoDock Vina/GPU, Glide (Schrödinger), GOLD | Perform the core docking simulation, sampling ligand poses and scoring them. |

| Scoring Function Libraries | AutoDock4.2, ChemPLP (GOLD), GlideScore | Algorithms that rank predicted ligand poses based on estimated binding affinity. |

Title: Workflow for Protein Receptor Preparation

Docking Execution

This phase involves the computational sampling of ligand conformations and orientations within the defined binding site.

Protocol: Running a Virtual Screen with AutoDock Vina

Objective: To dock a library of prepared ligands against a prepared receptor to generate pose and affinity predictions.

- Input Preparation: Ensure the receptor is in PDBQT format (`prepare_receptor4.py` from AutoDockTools). Ensure all ligands are in PDBQT format (`prepare_ligand4.py`).

- Configuration File: Create a `conf.txt` file specifying the receptor and ligand files and the search space (the center and size of the grid box recorded during receptor preparation).

- Run Docking: Execute the command `vina --config conf.txt --out results.pdbqt`. Vina 1.1.2 additionally accepts `--log results.log`; the option was removed in Vina 1.2, which prints the score table to standard output. For batch screening, a shell script to iterate over individual ligands is recommended.

- Output Collection: The output (`results.pdbqt`) contains multiple predicted poses per ligand, each with a docking score (in kcal/mol). Extract the top-scoring pose for each ligand for analysis.
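The configuration and batch steps can also be scripted from Python instead of a shell loop. The sketch below only builds the configuration text and one command per ligand; it does not run Vina. The file names, the output directory `docked`, and the helper names are hypothetical, and the configuration keys used (`receptor`, `center_*`, `size_*`, `exhaustiveness`) are the standard Vina option names.

```python
from pathlib import PurePosixPath


def vina_config_text(receptor, center, size, exhaustiveness=8):
    """Build the text of a Vina configuration file shared by all ligands."""
    axes = ("x", "y", "z")
    lines = [f"receptor = {receptor}"]
    lines += [f"center_{axis} = {value}" for axis, value in zip(axes, center)]
    lines += [f"size_{axis} = {value}" for axis, value in zip(axes, size)]
    lines.append(f"exhaustiveness = {exhaustiveness}")
    return "\n".join(lines) + "\n"


def vina_commands(ligand_paths, config_path="conf.txt", out_dir="docked"):
    """Return one argument list per ligand; the ligand is passed on the command line."""
    commands = []
    for ligand in ligand_paths:
        stem = PurePosixPath(ligand).stem
        commands.append([
            "vina", "--config", config_path,
            "--ligand", ligand,
            "--out", f"{out_dir}/{stem}_out.pdbqt",
        ])
    return commands


config = vina_config_text("protein.pdbqt", (10.5, 22.0, 18.0), (20, 20, 20))
commands = vina_commands(["ligands/lig_001.pdbqt", "ligands/lig_002.pdbqt"])

print(config)
for command in commands:
    print(" ".join(command))
```

Each argument list could then be handed to `subprocess.run` or a job scheduler; keeping the generated configuration text under version control documents the exact search space used.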

Quantitative Performance Metrics

Table 3: Typical Docking Parameters and Performance

| Docking Program | Scoring Function | Typical Exhaustiveness/Search Effort | Approx. Time/Ligand (CPU) | Output Metric (Unit) |

|---|---|---|---|---|

| AutoDock Vina | Hybrid (Vina) | 8-32 (default=8) | 30-90 seconds | Affinity (kcal/mol) |

| AutoDock-GPU | AutoDock4 force field (AD4) | 50 | 2-10 seconds* | Affinity (kcal/mol) |

| Glide (SP) | GlideScore | Standard Precision (SP) | 1-2 minutes | GScore (kcal/mol) |

| GOLD | ChemPLP, GoldScore | Default (10x GA runs) | 1-3 minutes | Fitness Score |

- *Using NVIDIA GPU acceleration.

Post-Docking Analysis and Scoring

The final step involves interpreting results, ranking compounds, and selecting hits.

Protocol: Analyzing Docking Results and Hit Selection

Objective: To identify credible binding poses and rank ligands based on calculated binding affinities and interaction patterns.

- Pose Clustering & Inspection: Visually inspect the top-ranked poses of high-scoring ligands using PyMOL or Chimera. Look for consistency in binding mode (pose clustering) and key interactions (H-bonds, salt bridges, hydrophobic contacts).

- Rescoring: Apply a secondary, more rigorous scoring function (e.g., an MM/GBSA calculation using AMBER or Schrödinger Prime) to the top 100-1000 poses to improve ranking accuracy. This step is computationally expensive.

- Interaction Fingerprinting: Generate interaction fingerprints (IFPs) to compare the binding mode of hits to a known active/native ligand.

- Consensus Scoring: Combine rankings from multiple scoring functions to mitigate the limitations of any single function and improve hit identification robustness.

- Hit Selection Criteria: Select compounds based on a combination of:

- Favorable docking score (e.g., ≤ -7.0 kcal/mol for Vina).

- Plausible binding mode forming key interactions.

- Drug-like properties (filter using Lipinski's Rule of Five).

- Commercial availability or synthetic feasibility.
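The consensus scoring and score-threshold steps above can be illustrated with a simple rank-averaging scheme. This is one of several possible consensus strategies, shown here as a sketch; the two score tables are hypothetical, and the second function is assumed to be a fitness-type score where higher is better.

```python
def ranks(scores, higher_is_better=False):
    """Map each ligand to its rank (1 = best) under one scoring function."""
    ordered = sorted(scores, key=scores.get, reverse=higher_is_better)
    return {ligand: position for position, ligand in enumerate(ordered, start=1)}


def consensus_rank(score_tables):
    """Average the per-function ranks; score_tables holds (scores, higher_is_better)."""
    per_function = [ranks(scores, flag) for scores, flag in score_tables]
    ligands = per_function[0].keys()
    mean_rank = {
        ligand: sum(table[ligand] for table in per_function) / len(per_function)
        for ligand in ligands
    }
    # Ties in mean rank are broken alphabetically to keep the order deterministic.
    return sorted(mean_rank.items(), key=lambda item: (item[1], item[0]))


# Hypothetical scores for four ligands from two scoring functions.
vina_scores = {"lig_a": -9.1, "lig_b": -7.4, "lig_c": -8.2, "lig_d": -6.0}  # lower is better
fitness_scores = {"lig_a": 82.0, "lig_b": 60.5, "lig_c": 78.3, "lig_d": 65.2}  # higher is better

consensus = consensus_rank([(vina_scores, False), (fitness_scores, True)])

# Apply the example docking-score criterion (<= -7.0 kcal/mol) on top of the consensus order.
shortlist = [ligand for ligand, _ in consensus if vina_scores[ligand] <= -7.0]
print(consensus)
print(shortlist)
```

Rank averaging avoids mixing scores that live on different scales, at the cost of discarding how large the score differences are.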

Title: Post-Docking Analysis and Hit Selection Workflow

A systematic docking workflow comprising meticulous ligand/receptor preparation, controlled docking execution, and critical post-docking analysis forms the backbone of a reliable virtual screening campaign. Each component introduces specific parameters and choices that must be optimized and validated for the target of interest. Integrating these components into an automated, reproducible pipeline is essential for leveraging molecular docking effectively in modern drug discovery research.

Application Notes and Protocols

This document details the foundational steps required to establish a robust, reliable, and reproducible virtual screening (VS) workflow for molecular docking research. Success in VS is contingent on rigorous upfront preparation, which directly dictates the quality of downstream computational experiments and the likelihood of identifying true bioactive compounds.

Bibliographic Research: Defining the Biological and Chemical Landscape

Objective: To comprehensively understand the disease context, biological target, known ligands, and existing structure-activity relationships (SAR) before any computational experiment begins.

Protocol:

- Target Identification & Validation Review:

- Sources: PubMed, Google Scholar, ClinicalTrials.gov, OMIM, UniProt.

- Action: Perform keyword searches (e.g., "target name," "disease pathogenesis," "genetic validation," "knockout phenotype"). Collect and review primary literature and meta-analyses supporting the target's role in the disease.

- Deliverable: A summary document with key validation evidence (genetic, pharmacological, clinical).

- Ligand and SAR Data Mining:

- Sources: ChEMBL, PubChem, BindingDB, Patent databases (e.g., USPTO, Espacenet).

- Action: Query the target (by name, UniProt ID) across databases. Download bioactivity data (IC50, Ki, Kd). Filter for high-confidence data (e.g., unambiguous assay type, reported equilibrium constants).

- Deliverable: A curated dataset of known actives, inactive analogs, and associated metadata (Table 1).

- Structural Biology Review:

- Sources: Protein Data Bank (PDB), PDBsum, literature.

- Action: Search for experimentally determined structures (X-ray, Cryo-EM) of the target, preferably in complex with relevant ligands or tool compounds. Assess resolution, ligand occupancy, and any conformational states.

Table 1: Quantitative Summary of Curated Bibliographic Data for a Hypothetical Kinase Target

| Data Category | Source | Count | Key Metric (Median) | Purpose in VS Workflow |

|---|---|---|---|---|

| Bioactivity Records | ChEMBL v33 | 4,250 entries | Ki = 18 nM | Define active/inactive thresholds; train machine learning models. |

| Unique Small Molecules | PubChem/ChEMBL | 1,850 compounds | MW: 415 Da | Create a diverse decoy set for benchmarking. |

| High-Resolution Structures | PDB | 42 structures | Resolution: 2.1 Å | Guide binding site definition, receptor preparation, and docking protocol validation. |

| Known Clinical Candidates | PubMed/Patents | 12 compounds | Phase II (Max) | Inform chemical tractability and potential off-target effects. |

Data Collection and Curation: Building Reproducible Inputs

Objective: To transform bibliographic information into clean, machine-readable data for computational setup.

Protocol:

- Ligand Database Curation for Screening:

- Source Library Selection: Choose a public (e.g., ZINC) or commercial (e.g., Enamine REAL) compound library. Apply filters based on drug-likeness (e.g., Lipinski's Rule of Five, PAINS filters, reactive groups).

- Preparation: Download SMILES strings or 2D structures. Standardize tautomers, protonation states (at physiological pH 7.4), and generate 3D conformers using tools like RDKit or Open Babel.

- File Format: Generate multi-conformer databases in industry-standard formats (e.g., .sdf, .mol2).

- Receptor Structure Preparation:

- Structure Selection: Prioritize structures with high resolution (<2.5 Å), relevant ligands, and minimal mutations. Consider the biological oligomeric state.

- Preparation Workflow: Use a software suite (e.g., Schrödinger's Protein Preparation Wizard, UCSF Chimera, MOE) to: add missing hydrogen atoms, assign bond orders, correct missing side chains, and optimize H-bond networks.

- Protonation States: Use empirical pKa prediction tools (e.g., PROPKA) to determine the protonation states of key binding site residues (His, Asp, Glu) in the context of the bound ligand and physiological pH.

Table 2: Research Reagent Solutions for Data Collection & Preparation

| Item / Software Solution | Provider / Example | Function in Protocol |

|---|---|---|

| Chemical Database | ZINC20, Enamine REAL, MCULE | Provides vast, purchasable libraries of small molecules for virtual screening. |

| Cheminformatics Toolkit | RDKit, Open Babel | Used for molecular standardization, descriptor calculation, file format conversion, and filtering. |

| Protein Preparation Suite | Schrödinger Maestro, MOE, UCSF Chimera | Integrates tools for adding hydrogens, assigning charges, optimizing H-bonds, and refining protein structures. |

| pKa Prediction Tool | PROPKA, Epik (Schrödinger) | Predicts protonation states of amino acid side chains at a specified pH, critical for accurate electrostatics. |

| Structure Visualization | PyMOL, UCSF Chimera | Enables visual inspection of binding sites, ligand interactions, and structural quality. |

Target Assessment: Defining the Docking Universe

Objective: To critically evaluate the target's druggability and define precise parameters for molecular docking experiments.

Protocol:

- Binding Site Analysis and Characterization:

- Tools: CASTp, fpocket, SiteMap (Schrödinger).

- Action: Identify and rank potential binding pockets on the protein surface. Characterize them by volume, depth, hydrophobicity, and enclosure.

- Deliverable: Selection of the primary, biologically relevant binding site for docking.

- Docking Protocol Validation (Critical Step):

- Reference Set: From the curated bibliographic data, create a set of known active ligands and decoy molecules (inactive or presumed inactive with similar physchem properties).

- Re-docking & Cross-docking: Re-dock the native ligand to its original structure to test pose reproduction (RMSD < 2.0 Å). Cross-dock multiple actives into multiple receptor structures to assess protocol robustness.

- Enrichment Assessment: Perform a virtual screen of the active/decoy set. Calculate enrichment factors (EF) and plot Receiver Operating Characteristic (ROC) curves to evaluate the docking protocol's ability to prioritize actives over decoys (Table 3).
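The pose-reproduction test in the re-docking step comes down to a root-mean-square deviation over matched heavy atoms. The sketch below computes it without any superposition, since the docked and crystallographic poses share the receptor's coordinate frame. It assumes both poses list the same atoms in the same order and applies no symmetry correction; the coordinates are invented for the example.

```python
import math


def pose_rmsd(reference, docked):
    """Heavy-atom RMSD (in Å) between two poses given as lists of (x, y, z)."""
    if not reference or len(reference) != len(docked):
        raise ValueError("poses must contain the same, non-zero number of atoms")
    squared = sum(
        (xr - xd) ** 2 + (yr - yd) ** 2 + (zr - zd) ** 2
        for (xr, yr, zr), (xd, yd, zd) in zip(reference, docked)
    )
    return math.sqrt(squared / len(reference))


# Hypothetical three-atom ligand: the docked pose is shifted by (0.6, 0.8, 0.0) Å.
crystal_pose = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0)]
docked_pose = [(x + 0.6, y + 0.8, z) for x, y, z in crystal_pose]

value = pose_rmsd(crystal_pose, docked_pose)
print(f"RMSD = {value:.2f} Å, reproduces pose: {value < 2.0}")
```

For ligands with symmetric groups (e.g., a phenyl ring or a carboxylate), a symmetry-aware RMSD from a cheminformatics toolkit would be the safer choice, because atom-order RMSD can overestimate the deviation.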

Table 3: Benchmarking Metrics for Docking Protocol Validation

| Validation Test | Success Criteria | Typical Benchmark Value | Interpretation |

|---|---|---|---|

| Pose Reproduction (RMSD) | < 2.0 Å | 1.2 Å | Protocol accurately reproduces the experimental binding mode. |

| Enrichment Factor at 1% (EF1%) | > 10 | 15.3 | The protocol retrieves 15x more actives in the top 1% of ranked list than a random selection. |

| Area Under ROC Curve (AUC) | > 0.7 | 0.82 | The protocol has good overall discriminatory power between actives and decoys. |
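Both enrichment metrics in Table 3 can be computed directly from a ranked list of scores and active/decoy labels. The enrichment factor at a fraction x is the hit rate in the top x of the list divided by the hit rate in the whole set, and the ROC AUC equals the probability that a randomly chosen active scores better than a randomly chosen decoy. The sketch below assumes lower scores are better; the 200-compound benchmark is synthetic.

```python
def enrichment_factor(scores, labels, fraction):
    """EF = (actives in top fraction / size of top fraction) / (all actives / all compounds)."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0])
    n_top = max(1, round(fraction * len(ranked)))
    actives_in_top = sum(label for _, label in ranked[:n_top])
    return (actives_in_top / n_top) / (sum(labels) / len(ranked))


def roc_auc(scores, labels):
    """AUC as the fraction of (active, decoy) pairs ranked correctly; ties count half."""
    actives = [s for s, label in zip(scores, labels) if label == 1]
    decoys = [s for s, label in zip(scores, labels) if label == 0]
    correct = 0.0
    for active in actives:
        for decoy in decoys:
            if active < decoy:
                correct += 1.0
            elif active == decoy:
                correct += 0.5
    return correct / (len(actives) * len(decoys))


# Synthetic benchmark: 10 actives and 190 decoys with made-up docking scores.
active_scores = [s / 100 for s in (-1000, -980, -901, -851, -791, -751, -701, -651, -601, -501)]
decoy_scores = [(-800 + 2 * i) / 100 for i in range(190)]

all_scores = active_scores + decoy_scores
all_labels = [1] * len(active_scores) + [0] * len(decoy_scores)

ef1 = enrichment_factor(all_scores, all_labels, 0.01)
auc = roc_auc(all_scores, all_labels)
print(f"EF1% = {ef1:.1f}, AUC = {auc:.3f}")
```

The pairwise AUC loop is quadratic in the number of compounds, which is fine for benchmark sets of a few thousand molecules; larger sets are better served by a rank-based formula or a statistics library.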

Visualization of Workflows

Title: Virtual Screening Foundational Workflow

Title: Receptor Structure Preparation Protocol

Title: Docking Protocol Validation Process

Application Notes

Within the context of establishing a robust virtual screening workflow for molecular docking research, the preparation of a high-quality virtual compound library is a critical foundational step. The quality of input structures directly determines the reliability of docking poses and subsequent scoring. This protocol details the essential preprocessing steps: chemical standardization, representative conformer generation, and 3D structure preparation for docking. These steps ensure molecular consistency, account for ligand flexibility, and produce structures compatible with the steric and chemical requirements of the target binding site.

Protocols for Virtual Library Preparation

Protocol 1: Compound Standardization and Cleaning

Objective: To normalize molecular representation, correct errors, and remove undesired compounds to create a consistent, high-quality starting library.

Materials:

- Input compound library (e.g., in SDF or SMILES format).

- Software: RDKit (v2024.03.1 or later), Open Babel (v3.1.1 or later), or KNIME with relevant chemical nodes.

Procedure:

- Format Conversion: If necessary, convert all inputs to a consistent format (e.g., SMILES) using Open Babel: `obabel -isdf input.sdf -osmi -O output.smi`. (The legacy `babel` executable is no longer shipped with Open Babel 3.x.)

- Sanitization & Valence Correction: Use RDKit's `Chem.SanitizeMol()` to check that valences are chemically valid and that aromaticity is perceived consistently. Molecules that fail sanitization should be repaired or discarded.

- Standardization Rules:

- Neutralization: Strip salts and counterions. Remove small fragments (e.g., solvents) based on molecular weight.

- Tautomer Standardization: Apply a consistent tautomerization rule (e.g., using RDKit's `TautomerEnumerator` or the MolVS algorithm) to represent each compound in a canonical tautomeric form.

- Stereochemistry: Explicitly define stereocenters; flag or remove compounds with undefined stereochemistry if required.

- Functional Group Standardization: Normalize representations of nitro groups, sulfoxides, and other groups that have multiple common notations.

- Descriptor Filtering: Apply calculated property filters to remove compounds that violate drug-likeness rules (see Table 1). Use RDKit's Descriptors module.

- Duplicate Removal: Identify and remove duplicates based on canonical isomeric SMILES or InChIKey.

Protocol 2: Conformer Generation and Geometrical Optimization

Objective: To generate an ensemble of low-energy 3D conformers for each standardized molecule, representing its accessible conformational space.

Materials:

- Standardized molecules from Protocol 1.

- Software: RDKit, Open Babel, or OMEGA (OpenEye).

Procedure:

- Initial 3D Generation: For each molecule, generate an initial 3D conformation using RDKit's `EmbedMolecule()` function (based on distance geometry), preferably with the ETKDGv3 parameters for better performance.

- Conformer Ensemble Generation:

- Set parameters: `numConfs=50`, `pruneRmsThresh=0.5` Å (preliminary clustering).

- Use ETKDG for generation. ETKDG is a distance-geometry method with knowledge-based torsion preferences rather than a force field; MMFF94 is applied afterwards in the optimization step.

- Conformer Optimization: Minimize the energy of each generated conformer using a force field (e.g., MMFF94 or UFF) with `maxIters=200`. In RDKit: `MMFFOptimizeMoleculeConfs()`.

- Ensemble Pruning: Cluster conformers based on heavy-atom RMSD (typical threshold: 1.0 Å). Retain the lowest-energy conformer from each cluster, ensuring a maximum final set (e.g., 10-20 conformers per molecule).
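The ensemble pruning step can be sketched as a greedy, energy-ordered selection, which is a simplification of full clustering: conformers are visited from lowest to highest energy, and one is kept only if it lies at least the RMSD threshold away from every conformer already kept. The sketch assumes the pairwise heavy-atom RMSD matrix (after alignment) and the force-field energies were computed beforehand; all numbers are invented.

```python
def prune_conformers(energies, rmsd_matrix, threshold=1.0, max_keep=10):
    """Return indices of retained conformers, lowest energy first."""
    by_energy = sorted(range(len(energies)), key=lambda index: energies[index])
    kept = []
    for index in by_energy:
        # Keep the conformer only if it differs enough from all retained ones.
        if all(rmsd_matrix[index][other] >= threshold for other in kept):
            kept.append(index)
        if len(kept) == max_keep:
            break
    return kept


# Hypothetical relative energies (kcal/mol) and aligned heavy-atom RMSDs (Å).
energies = [2.1, 0.0, 0.4, 3.0, 1.2]
rmsd_matrix = [
    [0.0, 1.8, 1.6, 0.4, 2.2],
    [1.8, 0.0, 0.3, 1.9, 1.5],
    [1.6, 0.3, 0.0, 1.7, 1.4],
    [0.4, 1.9, 1.7, 0.0, 2.0],
    [2.2, 1.5, 1.4, 2.0, 0.0],
]

kept = prune_conformers(energies, rmsd_matrix, threshold=1.0, max_keep=10)
print(kept)
```

Here conformer 2 is dropped as a near-duplicate of the global minimum (conformer 1), and conformer 3 as a near-duplicate of conformer 0, so each retained structure is the lowest-energy representative of its neighborhood.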

Protocol 3: 3D Structure Preparation for Docking

Objective: To prepare the final 3D molecular structures in a format ready for docking simulations, including protonation state assignment and file format conversion.

Materials:

- Low-energy conformer ensembles from Protocol 2.

- Software: Open Babel, Schrödinger's LigPrep, or MOE's Protonate 3D.

- Target receptor's binding site pH information.

Procedure:

- Protonation State Assignment: Assign physiologically relevant protonation states at the target pH (typically pH 7.4 ± 0.5). Use tools like Open Babel's `-p` flag (combined with `--gen3d` when starting from SMILES) or dedicated tools like Epik.

- Command example: `obabel -ismi molecule.smi -osdf -O output.sdf --gen3d -p 7.4`.

- Partial Charge Assignment: Assign partial atomic charges compatible with the chosen docking software's force field. Common methods include Gasteiger-Marsili (fast) or MMFF94 charges.

- In RDKit: `AllChem.ComputeGasteigerCharges(mol)`.

- Final Format Conversion: Convert the prepared 3D structures to the specific file format required by the docking engine (e.g., PDBQT for AutoDock Vina and AutoDock4/AutoDock-GPU, MOL2 for DOCK, SDF or MAE for Glide).

- For PDBQT (AutoDock): Use Open Babel: `obabel -isdf prepared.sdf -opdbqt -O output.pdbqt`.

Data Presentation

Table 1: Standard Quantitative Filters for Virtual Library Curation

| Filter Name | Typical Threshold | Purpose | Common Tool/Descriptor |

|---|---|---|---|

| Molecular Weight (MW) | 150 - 500 Da | Enforces Lipinski's Rule of 5, promotes oral bioavailability. | rdkit.Chem.Descriptors.MolWt |

| Octanol-Water Partition Coefficient (LogP) | ≤ 5 | Controls lipophilicity, impacts membrane permeability & solubility. | rdkit.Chem.Crippen.MolLogP |

| Hydrogen Bond Donors (HBD) | ≤ 5 | Limits capacity to donate H-bonds, per Rule of 5. | rdkit.Chem.Lipinski.NumHDonors |

| Hydrogen Bond Acceptors (HBA) | ≤ 10 | Limits capacity to accept H-bonds, per Rule of 5. | rdkit.Chem.Lipinski.NumHAcceptors |

| Rotatable Bonds (RB) | ≤ 10 | Controls molecular flexibility, linked to oral bioavailability. | rdkit.Chem.Lipinski.NumRotatableBonds |

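| Polar Surface Area (TPSA) | ≤ 140 Ų | Predicts passive membrane permeability and oral absorption; a stricter cutoff (roughly ≤ 90 Ų) is commonly used when blood-brain barrier penetration is required. | rdkit.Chem.rdMolDescriptors.CalcTPSA |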

| Formal Charge | -2 to +2 | Removes highly charged species, improving compound handling. | rdkit.Chem.rdmolops.GetFormalCharge |
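The thresholds in Table 1 translate directly into a filter function. The sketch below works on descriptor values that are assumed to have been computed beforehand (for example with the RDKit functions listed in the table); the compound identifiers and descriptor values are made up, and `failed_filters` is a hypothetical helper name.

```python
# (lower bound, upper bound) per descriptor, taken from Table 1; None means unbounded.
FILTERS = {
    "mw": (150.0, 500.0),
    "logp": (None, 5.0),
    "hbd": (None, 5),
    "hba": (None, 10),
    "rotatable_bonds": (None, 10),
    "tpsa": (None, 140.0),
    "formal_charge": (-2, 2),
}


def failed_filters(descriptors):
    """Return the names of all filters that a compound violates."""
    failures = []
    for name, (low, high) in FILTERS.items():
        value = descriptors[name]
        if (low is not None and value < low) or (high is not None and value > high):
            failures.append(name)
    return failures


# Hypothetical precomputed descriptors for three library compounds.
library = {
    "cmpd_1": {"mw": 342.4, "logp": 2.8, "hbd": 2, "hba": 5,
               "rotatable_bonds": 4, "tpsa": 78.5, "formal_charge": 0},
    "cmpd_2": {"mw": 612.7, "logp": 6.3, "hbd": 3, "hba": 9,
               "rotatable_bonds": 9, "tpsa": 120.1, "formal_charge": 0},
    "cmpd_3": {"mw": 98.1, "logp": 0.2, "hbd": 1, "hba": 2,
               "rotatable_bonds": 1, "tpsa": 40.0, "formal_charge": 0},
}

report = {name: failed_filters(values) for name, values in library.items()}
passed = [name for name, failures in report.items() if not failures]
print(report)
print(passed)
```

Returning the list of violated filters, rather than a plain pass/fail flag, makes it easy to log why each compound was removed and to relax individual thresholds for targets that sit outside classical Rule-of-5 space.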

Table 2: Comparison of Conformer Generation Methods

| Method/Software | Algorithm Basis | Speed | Handling of Macrocycles | Key Parameter (Typical Value) | Optimal Use Case |

|---|---|---|---|---|---|

| RDKit ETKDGv3 | Distance Geometry + Knowledge-based Torsion Preferences | Fast | Good with constraints | numConfs (50), pruneRmsThresh (0.5 Å) | High-throughput, general-purpose screening. |

| OMEGA (OpenEye) | Systematic Rule-based + Torsion Driving | Medium | Excellent | MaxConfs (200), RMSD (1.0 Å) | High-accuracy studies, demanding flexibility. |

| Open Babel (--confab) | Systematic Rotor Search | Slow (exhaustive) | Fair | --rcutoff (6.5), --conf (1000000) | Exhaustive search for small, flexible molecules. |

| Conformator | Incremental Construction | Fast | Good | max_conformers (100) | Fast generation for large libraries. |

Visualization

Title: Virtual Library Preparation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Virtual Library Preparation

| Item Name | Function in Protocol | Example (Version/Provider) | Key Use |

|---|---|---|---|

| Chemical Toolkit | Core library for molecule manipulation, descriptor calculation, and conformer generation. | RDKit (2024.03.1) | Protocols 1 & 2: Sanitization, filtering, ETKDG conformer generation. |

| File Format Converter | Converts between >100 chemical file formats; performs basic 3D generation and protonation. | Open Babel (3.1.1) | Protocol 1 (format), Protocol 3 (protonation, PDBQT conversion). |

| Tautomer Standardizer | Applies consistent rules to generate a canonical tautomeric form for each molecule. | MolVS (in RDKit) / IGraph | Protocol 1: Reduces redundancy and ensures representation consistency. |

| Conformer Generator | Specialized software for generating comprehensive, high-quality conformer ensembles. | OMEGA (OpenEye) | Protocol 2: Alternative for high-accuracy, macrocycle-aware conformer sampling. |

| Protonation Tool | Predicts and assigns dominant microspecies at a given pH for 3D structures. | Epik (Schrödinger) / Open Babel | Protocol 3: Critical for accurate representation of ionization states at physiological pH. |

| Workflow Platform | Visual platform to integrate, automate, and document the entire preparation pipeline. | KNIME / Nextflow | Orchestrates all protocols into a reproducible, scalable workflow. |

Within a virtual screening workflow, molecular docking predicts the preferred orientation and binding affinity of a small molecule (ligand) within a target protein’s binding site. The core computational challenge is the efficient exploration of an astronomically large conformational and orientational space. Foundational algorithms addressing this challenge are broadly categorized into three paradigms: Systematic, Stochastic, and Incremental Construction methods. This article details their application, protocols, and integration into a robust screening pipeline.

The following table summarizes the quantitative performance characteristics and typical use cases of the three foundational algorithm classes.

Table 1: Comparative Analysis of Foundational Docking Algorithms

| Algorithm Class | Core Principle | Search Completeness | Computational Speed | Typical Use Case | Representative Software |

|---|---|---|---|---|---|

| Systematic | Explores all degrees of freedom via a fixed grid or exhaustive enumeration. | High (within defined intervals) | Slow to Moderate | Binding site mapping, focused library docking | DOCK (rigid-body matching), GRAMM, FRED, Glide (SP, XP) |

| Stochastic | Uses random moves (Monte Carlo, GA) guided by scoring to sample space. | Probabilistic, depends on runtime | Moderate to Fast | High-throughput virtual screening of large libraries | AutoDock Vina, GOLD (genetic algorithm) |

| Incremental Construction | Builds ligand pose inside site by fragmenting and regrowing. | High for built fragments | Moderate | Docking flexible ligands with many rotatable bonds | FlexX, Surflex-Dock, DOCK (anchor-and-grow) |

Detailed Experimental Protocols

Protocol 1: Systematic Docking with a Grid-Based Approach (e.g., DOCK)

Objective: To perform an exhaustive search of ligand orientations within a pre-defined binding site grid.

Receptor Preparation:

- Obtain the target protein structure (PDB format). Remove water molecules and heteroatoms not part of the binding site.

- Add hydrogen atoms and assign partial charges using a force field (e.g., AMBER, CHARMM). Optimize side-chain conformations of ambiguous residues.

- Define the binding site using a molecular surface (e.g., Connolly surface) of the receptor.

Grid Generation:

- Enclose the binding site in a 3D box with user-defined dimensions (e.g., 20Å x 20Å x 20Å).

- Discretize the box into grid points. Pre-calculate and store physicochemical properties (e.g., electrostatic potential, van der Waals potential) at each point.
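The grid generation step can be illustrated with a minimal sketch. It assumes a hypothetical two-atom receptor, a 1 Å spacing, and a bare Coulomb-like sum in arbitrary units; production programs use their own grid formats, finer spacings, and additional potential terms.

```python
import math

def build_grid(center, size, spacing):
    """Return a list of (x, y, z) points filling a box of the given size."""
    counts = [int(round(s / spacing)) + 1 for s in size]
    origin = [c - s / 2.0 for c, s in zip(center, size)]
    points = []
    for i in range(counts[0]):
        for j in range(counts[1]):
            for k in range(counts[2]):
                points.append((origin[0] + i * spacing,
                               origin[1] + j * spacing,
                               origin[2] + k * spacing))
    return points

def coulomb_like_potential(point, atoms, min_dist=1.0):
    """Sum q / r over receptor atoms; r is clamped to avoid singularities."""
    total = 0.0
    for x, y, z, charge in atoms:
        r = math.dist(point, (x, y, z))
        total += charge / max(r, min_dist)
    return total

# Hypothetical receptor atoms: (x, y, z, partial charge)
receptor_atoms = [(0.0, 0.0, 0.0, -0.5), (3.0, 0.0, 0.0, 0.5)]

grid = build_grid(center=(1.5, 0.0, 0.0), size=(4.0, 4.0, 4.0), spacing=1.0)

# Pre-compute once; pose scoring then becomes a lookup instead of a pairwise sum
potential = {p: coulomb_like_potential(p, receptor_atoms) for p in grid}
```

Storing the potential per grid point is what makes the later scoring step cheap: each ligand atom is scored by lookup or interpolation rather than by summing over all receptor atoms.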

Ligand Preparation:

- Generate 3D structures for ligand library. Assign appropriate protonation states and partial charges (matching the receptor force field).

- For each ligand, enumerate multiple conformers to account for flexibility.

Pose Exploration & Scoring:

- Systematically match ligand atoms to favorable grid points using clique detection or other geometric hashing techniques.

- Score each generated pose using the pre-computed grid potentials and a force field-based scoring function.

- Cluster similar poses and output the top-ranked solutions.

Protocol 2: Stochastic Docking using a Monte Carlo/Genetic Algorithm (e.g., AutoDock Vina)

Objective: To efficiently sample the ligand's conformational space within the binding site using stochastic optimization.

System Setup:

- Prepare receptor and ligand files in PDBQT format, which includes atomic coordinates, partial charges, and atom types.

- Define the search space by specifying the center (x, y, z) and size (in Ångströms) of a 3D box encompassing the binding site.

Algorithm Execution:

- The algorithm initializes a population of random ligand conformations and orientations within the search box.

- Iterative Cycle (Monte Carlo/Genetic Algorithm):

a. Perturbation: Generate new poses by applying random translations, rotations, and torsional changes.

b. Evaluation: Score the new pose using a rapid scoring function (Vina uses an empirical scoring function whose term weights were fitted to experimental binding data).

c. Acceptance/Selection: Based on the Metropolis criterion (or fitness ranking in GA), accept or reject the new pose for the next generation.

- Continue for a predefined number of iterations or until convergence.

Post-Processing:

- Collect all unique, low-energy poses from the final population.

- Perform local energy minimization of the top poses.

- Output a user-defined number of top-scoring poses (e.g., 9) for visual inspection.
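The perturb, evaluate, and accept cycle of Protocol 2 can be sketched as a plain Metropolis Monte Carlo loop. This is an illustration only: `toy_score` is a hypothetical stand-in for a docking score, the pose is a flat list of six numbers, and no real docking program is invoked.

```python
import math
import random

def toy_score(pose):
    """Hypothetical stand-in for a docking score: lowest at the origin."""
    return sum(v * v for v in pose)

def metropolis_search(score_fn, start, steps=2000, step_size=0.5,
                      temperature=1.0, seed=0):
    """Return the best pose and score found by a Metropolis random walk."""
    rng = random.Random(seed)
    current = list(start)
    current_score = score_fn(current)
    best, best_score = list(current), current_score
    for _ in range(steps):
        # Perturbation: random displacement of every pose variable
        trial = [v + rng.uniform(-step_size, step_size) for v in current]
        trial_score = score_fn(trial)
        delta = trial_score - current_score
        # Metropolis criterion: always accept improvements,
        # accept worse poses with probability exp(-delta / T)
        if delta <= 0 or rng.random() < math.exp(-delta / temperature):
            current, current_score = trial, trial_score
            if current_score < best_score:
                best, best_score = list(current), current_score
    return best, best_score

# Three position-like and three torsion-like variables (illustrative values)
start_pose = [5.0, -4.0, 3.0, 1.0, -2.0, 0.5]
best_pose, best_score = metropolis_search(toy_score, start_pose)
```

Accepting some worse poses is what lets the search escape local minima; lowering `temperature` makes the walk greedier.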

Protocol 3: Incremental Construction Docking (e.g., Glide SP/XP)

Objective: To precisely dock flexible ligands by constructing optimal poses within the binding site incrementally.

Receptor Grid Preparation:

- Generate a much finer grid than in systematic methods, capturing van der Waals and electrostatic properties of the receptor.

- Generate complementary "pharmacophore" grids that describe favorable interaction sites (H-bond donors/acceptors, hydrophobic patches).

Ligand Fragmentation:

- Identify the ligand's core fragment (largest rigid segment). The remaining parts are treated as rotatable side chains.

Placement Phase:

- Systematically position the core fragment at thousands of locations and orientations within the binding site grid.

- Score each placement using grid-based potentials. Retain the top several hundred placements.

Construction & Refinement Phase:

- For each retained core placement, incrementally add the ligand's rotatable groups in multiple torsional minima.

- Prune unpromising partial constructions to manage combinatorial explosion.

- Once the full ligand is reconstructed, perform a final minimization and optimization of the pose using the OPLS force field and a more rigorous scoring function (GlideScore).
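The grow-and-prune logic of the construction phase resembles a beam search. The sketch below is a simplified illustration, not Glide's actual algorithm: the torsion options, the `toy_partial_score` function, and its target angles are all hypothetical.

```python
def incremental_build(n_groups, torsion_options, partial_score, beam_width=3):
    """Grow a ligand one rotatable group at a time, keeping the best partial builds."""
    beam = [()]  # each entry is a tuple of torsion angles chosen so far
    for _ in range(n_groups):
        candidates = [partial + (angle,)
                      for partial in beam
                      for angle in torsion_options]
        candidates.sort(key=partial_score)
        # Prune unpromising partial constructions to limit combinatorial growth
        beam = candidates[:beam_width]
    return beam[0]

def toy_partial_score(torsions, targets=(60, 180, -60)):
    """Hypothetical score: total deviation from made-up ideal torsion angles."""
    return sum(abs(t - targets[i]) for i, t in enumerate(torsions))

best_torsions = incremental_build(3, (-60, 60, 180), toy_partial_score)
```

With `beam_width` partial builds retained per step, the cost grows linearly with the number of rotatable groups instead of exponentially, at the risk of discarding a build that would have scored well once completed.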

Visual Workflows

Title: Systematic Grid-Based Docking Workflow

Title: Stochastic Search Docking Cycle

Title: Incremental Construction Docking Steps

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Virtual Screening Docking

| Reagent / Material | Function in Workflow | Example / Notes |

|---|---|---|

| Protein Structure Database | Source of 3D atomic coordinates for the target receptor. | RCSB Protein Data Bank (PDB), AlphaFold DB. |

| Small Molecule Library | Collection of compounds to be screened virtually. | ZINC, Enamine REAL, MCULE, in-house corporate libraries. |

| Molecular File Format Converters | Tools to ensure consistent formatting and atom typing. | Open Babel, RDKit, MOE. Converts SDF, MOL2, PDB to PDBQT, etc. |

| Force Field Parameters | Set of equations and constants defining molecular mechanics potentials. | OPLS4, CHARMM36, AMBER ff19SB. Used for scoring and refinement. |

| Scoring Function | Mathematical method to predict binding affinity of a pose. | Empirical (Chemscore), Force Field-based, Knowledge-based, Machine Learning (NNScore, RF-Score). |

| Visualization & Analysis Software | For inspecting docking poses, interactions, and analyzing results. | PyMOL, ChimeraX, Maestro, Discovery Studio. |

| High-Performance Computing (HPC) Cluster | Computational resource to run thousands of docking jobs in parallel. | Local CPU/GPU clusters or cloud computing (AWS, Azure). |

A Step-by-Step Guide to Constructing Your Virtual Screening and Docking Pipeline

Within the thesis framework for establishing a robust virtual screening workflow, the initial and most critical phase is the comprehensive analysis of the biological target and its binding site(s). This step directly informs all subsequent parameter selections for molecular docking, determining the success or failure of the entire campaign. This protocol details the methodologies for acquiring, analyzing, and characterizing protein targets and binding pockets to enable informed setup of docking simulations.

Target Acquisition and Preprocessing Protocol

Objective: To obtain a high-quality, biologically relevant 3D structure of the target protein.

Methodology:

- Target Identification: Using public databases (UniProt, PubMed), confirm the target's role in the disease pathway.

- Structure Retrieval:

- Access the Protein Data Bank (PDB) using the target's UniProt ID or name.

- Apply filters: Resolution ≤ 2.5 Å, Homo sapiens source organism, X-ray crystallography method.

- If multiple structures exist, prioritize complexes with relevant ligands/native substrates.

- Alternative: For targets without experimental structures, build a homology model with a server such as SWISS-MODEL, or retrieve an AlphaFold2-predicted structure from AlphaFold DB.

- Structure Preparation:

- Using software like UCSF Chimera or Maestro's Protein Preparation Wizard:

- Remove all non-protein entities except essential cofactors or crystallographic waters.

- Add missing hydrogen atoms and assign protonation states at physiological pH (7.4).

- Optimize hydrogen-bonding networks.

- Perform energy minimization to relieve steric clashes.


Binding Site Analysis and Characterization Protocol

Objective: To define and quantitatively characterize the primary binding pocket and any potential allosteric sites.

Methodology:

- Site Identification:

- Ligand-based: If a co-crystallized ligand exists, define the binding site as residues within 5-8 Å of the ligand.

- De novo prediction: Use computational tools like FTMap, SiteMap (Schrödinger), or DoGSiteScout to detect potential binding cavities.

- Pocket Characterization: Calculate the following physicochemical and geometric descriptors for each identified site:

- Volume & Surface Area: Using POVME or CASTp.

- Hydrophobicity: Proportion of non-polar residues.

- Electrostatics: Calculate partial charge distribution via APBS.

- Solvent Accessibility: Via DSSP algorithm.

- Residue Flexibility: Analyze B-factors from the PDB file; high values indicate high flexibility.

- Conservation Score: Use ConSurf to analyze evolutionary conservation of lining residues.
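Ligand-based site definition reduces to a distance filter. The sketch below assumes atoms are already parsed into simple tuples; the coordinates are hypothetical and the residue labels are illustrative.

```python
import math

def residues_near_ligand(protein_atoms, ligand_coords, cutoff=5.0):
    """Return sorted residue labels with any atom within `cutoff` Å of the ligand."""
    selected = set()
    for residue, coord in protein_atoms:
        if any(math.dist(coord, lig) <= cutoff for lig in ligand_coords):
            selected.add(residue)
    return sorted(selected)

# Hypothetical atoms: (residue label, (x, y, z))
protein_atoms = [
    ("LYS78", (1.0, 0.0, 0.0)),
    ("ASP184", (4.0, 3.0, 0.0)),
    ("ARG112", (12.0, 0.0, 0.0)),
]
ligand_coords = [(0.0, 0.0, 0.0), (1.5, 1.0, 0.0)]

site_residues = residues_near_ligand(protein_atoms, ligand_coords, cutoff=5.0)
```

Widening `cutoff` toward 8 Å pulls in second-shell residues, which is useful when choosing candidates for flexible side-chain treatment.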

Table 1: Quantitative Binding Site Descriptors for Exemplar Target Kinase XYZ (PDB: 7ABC)

| Descriptor | Primary Site (ATP) | Allosteric Site | Measurement Tool |

|---|---|---|---|

| Volume (ų) | 485 | 312 | DoGSiteScout |

| Surface Area (Ų) | 420 | 275 | DoGSiteScout |

| Hydrophobicity (%) | 65% | 45% | PLIP |

| Avg. B-factor | 45.2 | 62.8 | PDB Data |

| Conservation Score | High (8/9) | Medium (5/9) | ConSurf |

| Predicted Druggability | High | Moderate | SiteMap |

Diagram: Virtual Screening Workflow - Target Analysis Phase

Diagram Title: Target Analysis Informs Docking Parameters

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Target and Binding Site Analysis

| Tool/Resource | Type | Primary Function | Access |

|---|---|---|---|

| RCSB Protein Data Bank | Database | Repository for experimentally determined 3D structures of proteins/nucleic acids. | https://www.rcsb.org |

| AlphaFold Protein Structure Database | Database | Repository of highly accurate predicted protein structures generated by AlphaFold2. | https://alphafold.ebi.ac.uk |

| UCSF Chimera | Software | Interactive visualization and analysis of molecular structures; preparation tasks. | https://www.cgl.ucsf.edu/chimera/ |

| PyMOL | Software | Molecular visualization system for rendering high-quality images and analysis. | https://pymol.org/ |

| Schrödinger Suite (Maestro) | Software Platform | Integrated platform for protein preparation, site analysis (SiteMap), and docking. | Commercial |

| DoGSiteScout | Web Server | Automated binding site detection, analysis, and druggability prediction. | https://dogsite.zbh.uni-hamburg.de |

| ConSurf | Web Server | Estimation of evolutionary conservation of amino acid positions in a protein. | https://consurf.tau.ac.il |

| APBS | Software | Modeling electrostatics in biomolecular systems via Poisson-Boltzmann equation. | https://www.poissonboltzmann.org |

Parameter Selection Protocol Based on Analysis

Objective: To translate binding site analysis into specific docking software parameters.

Methodology:

- Grid Generation:

- Center: Defined by centroid of the co-crystallized ligand or the predicted pocket center.

- Dimensions: Must encompass the entire characterized pocket volume with a margin of ≥ 10 Å in each direction.

- Search Algorithm & Flexibility:

- Rigid Receptor Docking: Suitable for pockets with low average B-factors (< 50) and no major sidechain conformational changes.

- Flexible Sidechains: If analysis shows high B-factors or known induced-fit mechanisms, designate key lining residues (e.g., gatekeepers) as flexible.

- Ensemble Docking: If multiple distinct conformations exist (e.g., apo/holo structures), dock against an ensemble grid.

- Scoring Function Consideration:

- Empirical (e.g., Glide SP): Preferred for well-defined, hydrophobic pockets.

- Force-field based (e.g., GoldScore in GOLD): May be better for polar sites with explicit water networks.

- Consensus scoring from different functions can improve reliability.
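The grid centre and dimensions can be derived directly from co-crystallized ligand coordinates. This sketch assumes hypothetical coordinates and writes the result using the box keys of an AutoDock Vina configuration file (`center_x`, `size_x`, and so on); other programs need their own format.

```python
def box_from_ligand(coords, margin=10.0):
    """Centre the box on the ligand centroid and keep `margin` Å beyond every atom."""
    n = len(coords)
    center = tuple(sum(c[i] for c in coords) / n for i in range(3))
    size = tuple(
        2.0 * (max(abs(c[i] - center[i]) for c in coords) + margin)
        for i in range(3)
    )
    return center, size

def vina_box_lines(center, size):
    """Format the box as lines for a Vina-style configuration file."""
    keys = ["center_x", "center_y", "center_z", "size_x", "size_y", "size_z"]
    return [f"{key} = {value:.3f}" for key, value in zip(keys, center + size)]

# Hypothetical ligand heavy-atom coordinates (Å)
ligand_coords = [(10.0, 20.0, 30.0), (14.0, 22.0, 30.0), (12.0, 24.0, 34.0)]

center, size = box_from_ligand(ligand_coords, margin=10.0)
config_lines = vina_box_lines(center, size)
```

Because the box is centred on the centroid rather than the midpoint of the ligand's extent, each dimension is sized from the farthest atom so the margin holds on both sides.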

Table 3: Analysis-Driven Docking Parameter Selection for Kinase XYZ

| Analysis Result | Docking Parameter Implication | Selected Value |

|---|---|---|

| Pocket Volume = 485 ų | Grid Box Size (XYZ) | 30 x 30 x 30 Š|

| High Hydrophobicity (65%) | Scoring Function Weighting | Favor van der Waals terms |

| Flexible Loop (B-factor > 60) | Flexible Residues | Arg112, Asp184 |

| Conserved Catalytic Lysine | Constraint | Hydrogen-bond to Lys78 |

| Co-crystallized Water Network | Water Handling | Retain key bridging water |

Within a thesis focused on establishing a robust virtual screening (VS) workflow, curating a high-quality ligand library is a critical second step, following target preparation. The quality and chemical diversity of this library directly dictate the success of subsequent molecular docking and scoring stages. A poorly curated library, plagued by errors, lack of diversity, or inappropriate drug-like properties, will lead to wasted computational resources and high false-negative rates. This Application Note details the protocols for constructing a library suitable for structure-based virtual screening (SBVS), emphasizing reproducibility, chemical tractability, and broad coverage of chemical space to maximize the probability of identifying novel hit compounds.

Key Concepts & Data Requirements

The objective is to transform raw compound collections (commercial, in-house, or public databases) into a refined, ready-to-dock library. Key quantitative metrics for library assessment are summarized below.

Table 1: Key Quantitative Metrics for Ligand Library Assessment

| Metric | Target Range / Criteria | Purpose & Rationale |

|---|---|---|

| Initial Compound Count | 10^5 - 10^7+ | Defines the starting chemical space for screening. |

| Lipinski's Rule of 5 Violations | ≤ 1 (for oral drugs) | Filters for compounds with likely good oral bioavailability. |

| PAINS (Pan Assay Interference Compounds) Alerts | 0 | Removes compounds with known promiscuous, assay-interfering motifs. |

| REOS (Rapid Elimination of Swill) Alerts | 0 | Filters out compounds with undesirable reactive or toxic functional groups. |

| Chemical Diversity (Tanimoto Coefficient) | Average TC < 0.6 (for diverse set) | Ensures broad exploration of chemical space; clusters similar compounds. |

| Final Library Size | 10^3 - 10^5 | A manageable number for detailed molecular docking studies. |

| Molecular Weight (MW) | 150 - 500 Da | Optimizes for drug-likeness and ligand efficiency. |

| Log P (octanol-water) | -2 to 5 | Ensures appropriate hydrophobicity for membrane permeability and solubility. |

| Rotatable Bonds | ≤ 10 | Favors compounds with potential for better oral bioavailability. |

| Formal Charge | -2 to +2 | Avoids highly charged species with potential permeability issues. |

Detailed Experimental Protocols

Protocol 3.1: Initial Data Acquisition and Format Standardization

Objective: To gather compound structures from diverse sources and convert them into a consistent, standardized format.

- Source Selection: Download compounds from chosen databases (e.g., ZINC20, ChEMBL, MCULE, Enamine REAL). For a thesis project, consider a focused subset like "ZINC20 Fragments" or "ChEMBL Bioactive Molecules."

- File Format: Acquire structures in SMILES (Simplified Molecular Input Line Entry System) or SDF (Structure-Data File) format.

- Standardization (Using OpenEye Toolkit or RDKit):

a. Tautomer Standardization: Apply a consistent tautomerization rule (e.g., favouring the most abundant tautomer at pH 7.4).

b. Chirality: Explicitly define stereochemistry; consider enumerating unknown chiral centres if computationally feasible for the library size.

c. Protonation State: Generate the major microspecies at physiological pH (7.4) using a tool like molcharge.

d. 2D to 3D Conversion: Generate an initial 3D conformation using a fast method (e.g., coordinate embedding followed by a short MMFF94 minimization).

e. Output: Save all standardized structures in a single SDF file.

Protocol 3.2: Application of Drug-Like and Lead-Like Filters

Objective: To remove compounds with undesirable physicochemical properties or structural alerts.

- Calculate Descriptors: For all standardized compounds, compute key descriptors: Molecular Weight (MW), LogP (e.g., using XLogP or MolLogP), Hydrogen Bond Donors (HBD), Hydrogen Bond Acceptors (HBA), Rotatable Bonds, Formal Charge.

- Apply Hard Filters:

a. Remove any compound failing more than one of Lipinski's Rule of 5 criteria (MW ≤ 500, LogP ≤ 5, HBD ≤ 5, HBA ≤ 10).

b. Optionally apply a "lead-like" filter: MW 250-350, LogP ≤ 3.5.

c. Filter based on Rotatable Bonds (e.g., ≤ 10) and Polar Surface Area (e.g., ≤ 140 Ų).

- Remove Unwanted Chemistries: Screen the library against structural alert lists using a KNIME workflow or scripts with RDKit:

a. PAINS: Eliminate compounds matching any of the 480 PAINS substructure filters.

b. REOS/Unwanted Functionality: Remove compounds containing reactive groups (e.g., aldehydes, epoxides, Michael acceptors), metals, or toxicophores.

- Output: Generate a filtered SDF file annotated with all calculated properties.
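The hard filters above can be expressed as a small predicate over precomputed descriptors. The sketch assumes the descriptors have already been calculated by a cheminformatics toolkit and stored in dictionaries; the compound records and key names are hypothetical.

```python
def lipinski_violations(d):
    """Count Rule of 5 violations from a dict of precomputed descriptors."""
    return sum([
        d["mw"] > 500,
        d["logp"] > 5,
        d["hbd"] > 5,
        d["hba"] > 10,
    ])

def passes_filters(d, max_violations=1, max_rot_bonds=10, max_tpsa=140.0):
    """Apply the Rule of 5, rotatable-bond, and polar-surface-area cut-offs."""
    return (lipinski_violations(d) <= max_violations
            and d["rot_bonds"] <= max_rot_bonds
            and d["tpsa"] <= max_tpsa)

# Hypothetical compounds with made-up descriptor values
library = [
    {"id": "cpd_1", "mw": 342.4, "logp": 2.1, "hbd": 2, "hba": 5, "rot_bonds": 4, "tpsa": 78.0},
    {"id": "cpd_2", "mw": 612.7, "logp": 6.3, "hbd": 3, "hba": 9, "rot_bonds": 8, "tpsa": 95.0},
    {"id": "cpd_3", "mw": 512.0, "logp": 4.2, "hbd": 1, "hba": 7, "rot_bonds": 12, "tpsa": 88.0},
    {"id": "cpd_4", "mw": 520.5, "logp": 3.0, "hbd": 4, "hba": 8, "rot_bonds": 6, "tpsa": 120.0},
]

kept_ids = [d["id"] for d in library if passes_filters(d)]
```

Here `cpd_2` is rejected for two Rule of 5 violations and `cpd_3` for exceeding the rotatable-bond limit, while `cpd_4` survives with a single violation.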

Protocol 3.3: Ensuring Chemical Diversity (Clustering and Maximum Diversity Selection)

Objective: To select a representative, non-redundant subset of compounds that maximally covers the available chemical space.

- Fingerprint Generation: Encode the chemical structures of the filtered library into binary bitstrings (fingerprints). Morgan fingerprints (circular fingerprints, ECFP4-like) with a radius of 2 and 1024 bits are recommended.

- Calculate Similarity Matrix: Compute the pairwise Tanimoto similarity coefficient for all compounds based on their fingerprints.

- Perform Clustering: Use a clustering algorithm to group similar compounds.

a. Method: Butina clustering (sphere exclusion algorithm) is efficient for large sets.

b. Parameter: Set a similarity threshold (e.g., 0.7-0.8 Tanimoto similarity). Compounds within this threshold are considered similar.

- Select Representatives: From each cluster, select a single representative compound. Common strategies include selecting the centroid compound or the compound with the best "drug-likeness" score (e.g., lowest LogP, fewest rotatable bonds).

- Output: A final, non-redundant SDF file ready for energy minimization and docking.
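The similarity and clustering steps can be sketched without any toolkit by treating each fingerprint as a set of "on" bit indices. This is a simplified Butina-style sphere exclusion written for illustration; the fingerprints are hypothetical, and a real library would use toolkit-generated Morgan fingerprints and an optimized implementation.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two sets of 'on' bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def butina_like_clusters(fingerprints, threshold=0.7):
    """Cluster by similarity; the first index in each cluster is its centroid."""
    n = len(fingerprints)
    neighbours = [set() for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            if tanimoto(fingerprints[i], fingerprints[j]) >= threshold:
                neighbours[i].add(j)
                neighbours[j].add(i)
    # Visit compounds with the most neighbours first
    order = sorted(range(n), key=lambda i: (-len(neighbours[i]), i))
    assigned = set()
    clusters = []
    for i in order:
        if i in assigned:
            continue
        members = [i] + sorted(neighbours[i] - assigned)
        assigned.update(members)
        clusters.append(members)
    return clusters

# Hypothetical fingerprints as sets of bit indices
fingerprints = [
    {1, 2, 3, 4},
    {1, 2, 3, 4, 5},
    {1, 2, 3, 5},
    {10, 11, 12},
    {10, 11, 12, 13},
]

clusters = butina_like_clusters(fingerprints, threshold=0.7)
representatives = [members[0] for members in clusters]
```

Picking `members[0]` implements the centroid strategy; selecting by a drug-likeness score instead only changes the last line.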

Visual Workflow Diagram

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools & Resources for Ligand Library Curation

| Item / Resource | Function / Purpose | Example/Provider |

|---|---|---|

| Compound Databases | Source of molecular structures for screening. | ZINC20, ChEMBL, PUBCHEM, Enamine, MCULE. |

| Cheminformatics Toolkits | Programming libraries for molecule manipulation, descriptor calculation, and filtering. | RDKit (Open-Source), OpenEye Toolkits (Commercial), CDK. |

| KNIME / Pipeline Pilot | Visual workflow platforms for automating multi-step curation protocols without extensive coding. | KNIME Analytics Platform with Cheminformatics Extensions. |

| Filtering Rules & Alerts | Pre-defined substructure patterns to identify problematic compounds. | PAINS filters, REOS rules, In-house toxicophore lists. |

| Clustering Software | Tools to group similar compounds and select diverse subsets. | RDKit (Butina module), Butina clustering scripts. |

| Conformer Generator | Software to produce low-energy 3D conformations for docking. | OMEGA (OpenEye), RDKit's ETKDG, CONFGEN. |

| High-Performance Computing (HPC) | Cluster or cloud resources for computationally intensive steps like fingerprinting and clustering on large libraries. | Local HPC cluster, AWS/GCP cloud instances. |

| Database Management System | To store, query, and manage metadata for the curated library. | SQLite, PostgreSQL with molecular extensions (e.g., the RDKit cartridge). |

Within the broader thesis of establishing a robust virtual screening workflow, the selection and configuration of docking software constitute a critical juncture. This stage determines the accuracy, speed, and reliability of predicting ligand-receptor interactions. These Application Notes provide a comparative analysis of current popular molecular docking tools, their intrinsic search algorithms, and detailed protocols for initial configuration and validation, aimed at enabling researchers to make informed decisions for their specific projects.

Comparative Analysis of Popular Docking Software

The following table summarizes the core characteristics, algorithms, and suitability of widely used docking software as of recent analyses.

Table 1: Comparison of Popular Molecular Docking Software and Core Algorithms

| Software | License Type | Core Search Algorithm(s) | Scoring Function(s) | Typical Use Case & Throughput | Key Configuration Parameters |

|---|---|---|---|---|---|

| AutoDock Vina | Open Source (Apache) | Iterated Local Search (ILS), Monte Carlo | Vina, Vinardo (customizable) | High-throughput virtual screening; balance of speed/accuracy. | exhaustiveness, num_modes, energy_range, search space (center, size). |

| AutoDock-GPU | Open Source (LGPL) | Lamarckian Genetic Algorithm (LGA) | AutoDock4.2 (empirical) | High-throughput, leveraging GPU acceleration. | ga_run_number, ga_pop_size, grid spacing, grid box definition. |

| Glide (Schrödinger) | Commercial | Systematic, exhaustive search of torsional space, Monte Carlo | GlideScore (empirical, force-field based) | High-accuracy pose prediction, lead optimization. | Precision mode (SP, XP), ligand sampling (flexible/rigid), post-docking minimization. |

| GOLD (CCDC) | Commercial | Genetic Algorithm (GA) | GoldScore, ChemScore, ASP, ChemPLP | Protein-ligand docking with full ligand flexibility, water handling. | Number of GA operations, population size, niche size, ligand flexibility parameters. |

| rDock | Open Source (LGPL) | Stochastic search (Simulated Annealing, Genetic Algorithm) | Rbt scoring function (contact, polar, etc.) | High-throughput screening, structure-based design. | Number of runs, cavity definition, scoring function weights. |

| UCSF DOCK | Academic License | Anchor-and-Grow, rigid body minimization | Grid-based scoring (contact, energy) | Large-scale database screening, academic research. | Anchor selection, growth parameters, bump filter tolerance. |

| QuickVina 2 | Open Source (Apache) | Vina search accelerated by heuristics that skip unpromising local optimizations | Modified Vina scoring | Ultra-fast screening with acceptable accuracy. | Similar to Vina, with optimized defaults for speed. |

| smina (Vina fork) | Open Source (Apache) | Vina-based, customizable optimization | Vina, custom (e.g., for scoring function development) | Customized docking, scoring function development, focused screening. | exhaustiveness, scoring function customization, minimization options. |

Table 2: Quantitative Performance Benchmarking (Representative Data)

| Software | Avg. RMSD (Å) [1] | Avg. Time per Ligand (s) [2] | Success Rate (Top-Scoring Pose <2Å) [3] | Required Computational Resources |

|---|---|---|---|---|

| AutoDock Vina | 1.5 - 2.5 | 30 - 120 | ~70-80% | Moderate CPU. |

| AutoDock-GPU | 1.5 - 2.5 | 5 - 30 | ~70-80% | High-end NVIDIA GPU. |

| Glide (XP) | 1.2 - 2.0 | 120 - 600 | ~80-90% | High CPU/Memory (cluster recommended). |

| GOLD (ChemPLP) | 1.3 - 2.2 | 60 - 300 | ~75-85% | Moderate CPU. |

| rDock | 1.8 - 3.0 | 15 - 60 | ~65-75% | Moderate CPU. |

Notes: [1] Root-mean-square deviation of predicted vs. crystallographic pose. [2] Highly dependent on ligand/protein complexity and exhaustiveness settings. [3] Varies significantly by protein target and test set.

Experimental Protocols

Protocol 3.1: Standardized Setup and Configuration for a Docking Run

This protocol outlines the essential steps for preparing a docking experiment, applicable to most software with tool-specific adaptations.

Materials: Prepared protein structure (PDB format, protonated, charges assigned), prepared ligand library (SDF/MOL2 format, energy-minimized), docking software installed, high-performance computing (HPC) or workstation.

Procedure:

- Receptor Preparation:

- Load the protein PDB file into a molecular viewer (e.g., PyMOL, UCSF Chimera).

- Remove all non-essential molecules (water, ions, co-crystallized ligands except critical ones).

- Add missing hydrogen atoms and assign protonation states at physiological pH (using tools like pdb4amber, PROPKA, or software-specific utilities like Schrödinger's Protein Preparation Wizard).

- Define and save the binding site region. Note the 3D coordinates of its center (x, y, z) and its spatial extent.

Ligand Library Preparation:

- Convert ligand library to a consistent format (e.g., SDF).

- Generate plausible 3D conformations and protonation states at pH 7.4 ± 0.5 (using LigPrep, Open Babel, or MOE).

- Perform a brief energy minimization (e.g., using MMFF94 or UFF force field).

Software-Specific Grid/Box Generation:

- For grid-based methods (AutoDock Vina, DOCK), generate an energy grid centered on the binding site coordinates identified in Step 1. The box size should encompass the entire site with a margin of ~5-10 Å.

- Critical Parameter: Adjust size_x, size_y, size_z (or equivalent) to be neither too small (misses poses) nor too large (increases noise/computation time).

Docking Parameter Configuration:

- Select the appropriate search algorithm (see Table 1).

- Set the exhaustiveness/rigor parameter. For screening, a balance is needed (e.g., Vina exhaustiveness=8-32). For final pose prediction, increase this value.

- Define the number of output poses per ligand (typically 5-20).

- Enable or disable post-docking minimization based on need for speed vs. pose refinement.

Execution and Output:

- Run the docking job via command line or GUI.

- Outputs typically include a file with all ranked poses (e.g., output.pdbqt, docking_score.dat) and a log file.

Validation: Dock a known native ligand (from a co-crystal structure) back into its receptor. A successful re-docking should yield an RMSD < 2.0 Å for the top-scoring pose.
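The re-docking check comes down to an in-place RMSD between matched atoms. The sketch below assumes both poses list the same heavy atoms in the same order and share the receptor's coordinate frame, so no refitting is applied; it does not correct for ligand symmetry, which dedicated tools handle. The coordinates are hypothetical.

```python
import math

def pose_rmsd(coords_a, coords_b):
    """In-place RMSD between two poses with identical atom ordering."""
    if len(coords_a) != len(coords_b):
        raise ValueError("poses must contain the same number of atoms")
    squared = sum(math.dist(a, b) ** 2 for a, b in zip(coords_a, coords_b))
    return math.sqrt(squared / len(coords_a))

# Hypothetical crystallographic and docked heavy-atom coordinates (Å)
crystal_pose = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
docked_pose = [(0.0, 1.0, 0.0), (1.0, 1.0, 0.0), (2.0, 1.0, 0.0)]

rmsd = pose_rmsd(crystal_pose, docked_pose)
redocking_ok = rmsd < 2.0  # success threshold stated in the protocol
```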

Protocol 3.2: Benchmarking Docking Software Performance

Objective: To compare the pose prediction accuracy of two selected docking programs against a validated test set.

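Materials: PDBbind refined set, containing protein-ligand complexes with known binding poses (DUD-E, the Database of Useful Decoys: Enhanced, supplies actives and decoys and is better suited to enrichment benchmarking than to pose prediction). Software A (e.g., AutoDock Vina), Software B (e.g., GOLD).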

Procedure:

- Dataset Curation: Select 20-50 diverse protein-ligand complexes with high-resolution crystal structures (<2.2 Å).

- Preparation: Prepare each protein and its native ligand separately using Protocol 3.1.

- Re-docking Experiment: For each complex, dock the prepared ligand into its prepared protein receptor using the standard configuration for Software A and Software B. Use the known binding site coordinates.

- Pose Comparison: For the top-scoring pose from each run, calculate the RMSD between the docked pose and the crystallographic pose (after superimposing the protein structures). Use tools like Open Babel, PyMOL, or software-specific scripts.

- Analysis: For each software, calculate the success rate (percentage of complexes with RMSD < 2.0 Å) and the average RMSD across the dataset. Generate a scatter plot (Software A RMSD vs. Software B RMSD).

Interpretation: Software with higher success rates and lower average RMSD demonstrates better pose prediction accuracy for the tested set. This benchmark should inform software selection for similar targets.
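The analysis step can be summarized in a few lines once per-complex RMSD values are available. The numbers below are hypothetical placeholders, not benchmark results.

```python
from statistics import mean

def summarize(rmsds, cutoff=2.0):
    """Return the fraction of complexes below `cutoff` Å and the mean RMSD."""
    success_rate = sum(r < cutoff for r in rmsds) / len(rmsds)
    return {"success_rate": success_rate, "mean_rmsd": mean(rmsds)}

# Hypothetical top-pose RMSD values (Å), one per complex
results = {
    "Software A": [0.8, 1.5, 2.6, 1.1],
    "Software B": [1.9, 3.2, 2.4, 0.7],
}

summary = {name: summarize(values) for name, values in results.items()}
```

Reporting both metrics matters because a few badly failed poses can inflate the mean RMSD while leaving the success rate unchanged.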

Visualizations

Title: Molecular Docking Setup and Validation Workflow

Title: Core Search Algorithms and Representative Software

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Molecular Docking

| Item/Resource | Function/Explanation | Example/Provider |

|---|---|---|

| Protein Data Bank (PDB) | Primary repository for 3D structural data of proteins and nucleic acids. Source of receptor structures for docking. | rcsb.org |

| PDBbind Database | Curated database of protein-ligand complexes with binding affinity data. Essential for benchmarking and training. | pdbbind.org.cn |

| ZINC / Molport | Databases of commercially available compounds for virtual screening; ZINC provides purchasable molecules in ready-to-dock formats. | zinc.docking.org, molport.com |

| Open Babel / RDKit | Open-source cheminformatics toolkits. Critical for file format conversion, ligand preparation, and basic molecular properties calculation. | openbabel.org, rdkit.org |

| UCSF Chimera / PyMOL | Molecular visualization software. Used for protein-ligand complex analysis, binding site visualization, and figure generation. | cgl.ucsf.edu/chimera/, pymol.org |

| MGLTools (AutoDockTools) | GUI for preparing files, setting up grids, and analyzing results for the AutoDock suite of programs. | ccsb.scripps.edu |

| High-Performance Computing (HPC) Cluster | Essential for performing large-scale virtual screening campaigns, which require thousands to millions of docking calculations. | Institutional clusters or cloud services (AWS, Azure, GCP). |

| PROPKA / PDB2PQR | Software for predicting pKa values of protein residues and generating physiologically realistic protonation states. | github.com/jensengroup/propka |

| GNINA / Smina | Docking frameworks based on AutoDock Vina, supporting convolutional neural network scoring and customization. Useful for advanced users. | github.com/gnina/gnina |

This protocol details the critical execution phase of a molecular docking-based virtual screening workflow. Following the preparation of ligands, receptor, and grid parameter files, this step focuses on the deployment of docking simulations across available computational resources. Efficient management of batch jobs is essential to process thousands to millions of compounds in a timely and cost-effective manner, transforming prepared inputs into binding affinity and pose predictions.

Core Concepts and Quantitative Landscape

The computational demands of docking are dictated by the search algorithm, ligand flexibility, and system size. The following table summarizes key performance metrics for common docking software.

Table 1: Computational Resource Requirements for Common Docking Software

| Software Package | Typical CPU Core Usage per Job | Average Runtime per Ligand (Small Molecule) | Key Resource Determinants | Native Batch System Support? |

|---|---|---|---|---|

| AutoDock Vina | 1 | 30 - 120 seconds | Exhaustiveness, grid size | Yes (via command line) |

| AutoDock4/GPU | 1 / 1 GPU | 10 - 60 seconds (GPU) | Number of GA runs, population size | Script-based |

| DOCK 3.7 | 1 | 1 - 5 minutes | Anchor orientation search, minimization iterations | Yes |

| GOLD | 1 | 1 - 3 minutes | Genetic algorithm operations, flexibility | Yes (config-driven) |

| Glide (SP/XP) | 1-8 (scales) | 45 - 180 seconds | Precision setting, sampling density | Yes (Schrödinger suite) |

| rDock | 1 | 20 - 90 seconds | Number of runs, sampling | Yes |

| FlexX | 1 | 1 - 2 minutes | Fragment placement, optimization | Yes |

| SwissDock | 1 (per submission) | Variable (web service) | Cluster queue load | Web-based |

| HADDOCK | Multi-core (MPI) | Minutes to hours (per complex) | Refinement steps, explicit solvent | Yes (job arrays) |

| Ledock | 1 | 20 - 60 seconds | Simplex optimization cycles | Script-based |

Experimental Protocols

Protocol 3.1: Configuring and Executing a Local Multi-Core Docking Batch (Using AutoDock Vina)

Objective: To efficiently distribute a library of 10,000 pre-prepared ligands across available CPU cores on a local workstation or server.

Materials:

- Workstation/Server with ≥ 8 CPU cores and ≥ 16 GB RAM.

- Prepared receptor file (receptor.pdbqt).

- Prepared grid configuration file (conf.txt).

- Directory containing 10,000 ligand files in .pdbqt format (ligands/).

- AutoDock Vina (v1.2.3 or later) installed.

- GNU Parallel or a custom Python scripting environment.

Methodology:

- Environment Setup: Create a project directory with subdirectories: inputs/ligands_pdbqt/, outputs/, and scripts/.

- Batch Script Generation: Create a Python script (generate_jobs.py) to produce a list of docking commands.

- Parallel Execution Using GNU Parallel: Execute jobs, utilizing all but one CPU core.

- Monitoring: Use system monitoring tools (htop, top) to track CPU utilization and ensure all cores are engaged.
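A possible shape for generate_jobs.py is sketched below. It assumes the `vina` executable is on the PATH and uses Vina's `--receptor`, `--ligand`, `--config`, `--cpu`, and `--out` options; the file names are illustrative. As a Python alternative to GNU Parallel, `run_all` dispatches the commands through a thread pool sized to all but one CPU core.

```python
import os
import subprocess
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path

def build_commands(ligand_paths, out_dir, receptor="receptor.pdbqt", config="conf.txt"):
    """Return one Vina command (as an argument list) per ligand file."""
    commands = []
    for ligand in map(Path, ligand_paths):
        out_file = Path(out_dir) / f"{ligand.stem}_out.pdbqt"
        commands.append([
            "vina", "--receptor", receptor, "--ligand", str(ligand),
            "--config", config, "--cpu", "1", "--out", str(out_file),
        ])
    return commands

def run_all(commands, workers=None):
    """Run commands concurrently and return their exit codes in order."""
    workers = workers or max(1, (os.cpu_count() or 2) - 1)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(lambda cmd: subprocess.run(cmd).returncode, commands))

# Illustrative ligand paths; in practice, list the files in inputs/ligands_pdbqt/
commands = build_commands(
    ["inputs/ligands_pdbqt/lig_0001.pdbqt", "inputs/ligands_pdbqt/lig_0002.pdbqt"],
    "outputs",
)
```

Calling `run_all(commands)` starts the docking runs. Each job is pinned to one core with `--cpu 1` so that parallelism comes from running many ligands at once rather than from threads inside a single job. To use GNU Parallel instead, the commands can be written one per line to a text file and passed to `parallel -j N < commands.txt`.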

Protocol 3.2: Submitting Docking Jobs to a High-Performance Computing (HPC) Cluster (SLURM Example)

Objective: To submit a massive virtual screen (1 million compounds) as a job array to an HPC cluster using a workload manager (SLURM).

Materials:

- Access to an HPC cluster with SLURM workload manager.

- Docking software (e.g., DOCK3.7) installed and environment modules loaded.

- Pre-prepared sphere_cluster file, grid (grid.bmp), and ligand library split into numbered directories.

Methodology:

- Prepare Directory Structure: Organize ligands into subdirectories (e.g., split_1/ to split_100/), each containing 10,000 ligand .mol2 files.

- Create a Docking Script Template (dock_template.sh):

Submit the Job Array:

Monitor Job Status:
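The template and submission steps above can be sketched as a small Python helper that renders a SLURM array script. This is an assumption-laden illustration rather than the original dock_template.sh: the job name, time limit, and log path are hypothetical, and the docking invocation itself is left as a caller-supplied placeholder because it is site- and version-specific.

```python
SLURM_TEMPLATE = """#!/bin/bash
#SBATCH --job-name={job_name}
#SBATCH --array=1-{n_chunks}
#SBATCH --cpus-per-task=1
#SBATCH --time={time_limit}
#SBATCH --output=logs/%x_%A_%a.out

# Each array task processes one ligand subdirectory, e.g. split_17/
cd "split_${{SLURM_ARRAY_TASK_ID}}" || exit 1

# Placeholder: site-specific docking invocation supplied by the caller
{dock_command}
"""


def render_array_script(n_chunks, dock_command,
                        job_name="vs_dock", time_limit="24:00:00"):
    """Return the text of a SLURM job-array script with one task per chunk."""
    if n_chunks < 1:
        raise ValueError("n_chunks must be at least 1")
    return SLURM_TEMPLATE.format(
        job_name=job_name,
        n_chunks=n_chunks,
        time_limit=time_limit,
        dock_command=dock_command,
    )
```

Writing the rendered text to a file and passing it to sbatch covers submission; squeue -u $USER and sacct -j <job_id> then report queue state and accounting for the array. Partition, account, and module lines vary by cluster and are omitted here.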

Visualizations

Diagram 1: HPC Docking Batch Workflow

Diagram 2: Local Parallel Docking Resource Allocation

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Docking Execution & Management

| Item/Category | Example Solution(s) | Primary Function in Execution Phase |

|---|---|---|

| Docking Software | AutoDock Vina, DOCK3.7, Glide, GOLD, rDock | Core engine for performing conformational search and scoring of ligand-receptor interactions. |

| Job Scheduler | SLURM, PBS Pro, Sun Grid Engine (SGE), LSF | Manages computational resources on HPC clusters, schedules and prioritizes batch jobs. |

| Parallelization Tool | GNU Parallel, Python Multiprocessing, MPI (for MD) | Enables simultaneous execution of multiple docking jobs on multi-core CPUs. |

| Containerization | Docker, Singularity/Apptainer | Ensures software portability and reproducible environments across different compute infrastructures. |

| Workflow Manager | Snakemake, Nextflow, Apache Airflow | Automates multi-step pipelines (docking -> scoring -> analysis), handling dependencies and failures. |

| Data Management | SQLite, PostgreSQL, HDF5 | Stores and queries large volumes of docking results (poses, scores, metadata) efficiently. |

| Monitoring | htop, sacct (SLURM), Prometheus + Grafana | Provides real-time insight into CPU/GPU, memory, and storage utilization during large-scale runs. |

| Scripting Language | Python, Bash, Perl | Glue for automating job generation, submission, and preliminary result parsing. |

Post-docking analysis is the critical stage where computational predictions are transformed into prioritized, chemically interpretable hypotheses. Following the automated docking of a compound library, this step involves analyzing the ensemble of predicted ligand poses, evaluating their quality, and ranking compounds for experimental follow-up. This protocol, framed within a comprehensive virtual screening workflow, details systematic methods for pose clustering, interaction profiling, and initial hit ranking to identify the most promising lead candidates.

Key Analytical Metrics & Quantitative Data

The following metrics are calculated for each docked ligand to enable comparison and ranking.

Table 1: Core Metrics for Post-Docking Analysis

| Metric | Description | Ideal Range/Value | Purpose in Ranking |

|---|---|---|---|

| Docking Score (Affinity) | Estimated binding free energy (e.g., Vina score, Glide GScore). | More negative values (e.g., < -8.0 kcal/mol for strong binders). | Primary indicator of predicted binding strength. |

| Ligand Efficiency (LE) | Docking score per heavy atom (Score / HA). | More negative than -0.3 kcal/mol/HA (i.e., < -0.3). | Normalizes affinity by size, identifying efficient binders. |

| RMSD (Root Mean Square Deviation) | Measures pose similarity to a reference (e.g., co-crystal ligand). | < 2.0 Å for pose reproduction. | Assesses pose reliability and clustering consistency. |

| Intermolecular Interactions | Counts of specific bonds (H-bonds, halogen bonds, π-stacking). | Target-dependent; more specific interactions are favorable. | Qualifies binding mode and specificity. |

| Molecular Similarity (Tanimoto) | Similarity to known active compounds. | > 0.5 suggests structural resemblance. | Leverages existing SAR data. |

| Pharmacophore Match | Fraction of required chemical features satisfied. | 1.0 (full match). | Ensures pose aligns with design constraints. |

Table 2: Typical Pose Clustering Parameters

| Parameter | Value/Setting | Rationale |

|---|---|---|

| Clustering Algorithm | Hierarchical (average linkage) or K-means. | Groups geometrically similar poses. |

| RMSD Cutoff | 1.5 - 2.5 Å. | Balances granularity and cluster number. |

| Minimum Cluster Size | 2-5 poses. | Filters out singleton, potentially spurious poses. |

| Representative Pose | Centroid (lowest RMSD to cluster center) or top-scoring pose. | Selects pose for detailed interaction analysis. |

Experimental Protocols

Protocol 5.1: Pose Clustering and Consensus Selection

Objective: To group similar ligand binding modes and identify a consensus, representative pose for each compound, reducing stochastic docking noise.

- Pose Extraction: From the docking output file (e.g., .sdf, .pdbqt), extract all saved poses (e.g., top 5-10 per compound) along with their scores.

- Alignment: Superimpose all poses onto the rigid protein structure from the docking simulation using the protein's alpha carbons as the reference.

- RMSD Matrix Calculation: For every pair of ligand poses, calculate the all-atom RMSD in the shared receptor frame established by the alignment step. Do not re-fit one ligand pose onto another, since that would mask differences in position and orientation. Use obrms (Open Babel) or cctbx libraries in a Python script.

- Clustering Execution:

  - Hierarchical Clustering: Using the RMSD matrix, perform agglomerative clustering with the average linkage method. Cut the dendrogram at the specified RMSD cutoff (e.g., 2.0 Å) to define clusters.

  - Alternative - K-means: Use the KMeans class from scikit-learn on pose coordinate data, determining k by the elbow method.

- Representative Pose Selection: For each cluster, select the centroid pose (pose with the lowest average RMSD to all other cluster members). Alternatively, select the top-scoring pose within the cluster.

- Output: Generate a new file containing only the representative pose for each ligand, annotated with cluster ID and size.
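The clustering and representative-selection steps can be illustrated with a dependency-free sketch. It assumes poses are already in a common receptor frame with identical atom ordering, and it ignores symmetry-equivalent atoms, which a dedicated tool would handle. All function names are hypothetical. In practice a library routine (for example SciPy's hierarchical clustering) would replace the hand-written loop, which is kept here only to make the average-linkage logic explicit.

```python
import math


def pose_rmsd(a, b):
    """In-place RMSD between two poses given as equal-length lists of (x, y, z)."""
    sq = sum((p - q) ** 2 for u, v in zip(a, b) for p, q in zip(u, v))
    return math.sqrt(sq / len(a))


def rmsd_matrix(poses):
    """Symmetric pairwise RMSD matrix for a list of poses."""
    n = len(poses)
    dist = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            dist[i][j] = dist[j][i] = pose_rmsd(poses[i], poses[j])
    return dist


def average_linkage_clusters(dist, cutoff):
    """Agglomerative clustering; stop merging once the closest pair exceeds cutoff."""
    clusters = [[i] for i in range(len(dist))]

    def linkage(c1, c2):
        # Average linkage: mean of all inter-cluster pairwise distances.
        return sum(dist[i][j] for i in c1 for j in c2) / (len(c1) * len(c2))

    while len(clusters) > 1:
        d, a, b = min(
            (linkage(clusters[a], clusters[b]), a, b)
            for a in range(len(clusters))
            for b in range(a + 1, len(clusters))
        )
        if d > cutoff:
            break
        clusters[a] = clusters[a] + clusters[b]
        del clusters[b]
    return clusters


def centroid(cluster, dist):
    """Member with the lowest summed (hence average) RMSD to the other members."""
    return min(cluster, key=lambda i: sum(dist[i][j] for j in cluster))
```

Stopping when the closest pair of clusters is farther apart than the cutoff is equivalent to cutting the average-linkage dendrogram at that height, because merge distances never decrease under average linkage.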

Protocol 5.2: Protein-Ligand Interaction Profiling

Objective: To qualitatively and quantitatively characterize the binding mode of each representative ligand pose.

- Interaction Fingerprinting: Use software like PLIP (Protein-Ligand Interaction Profiler), Schrödinger's Interaction Fingerprint, or a custom RDKit/Biopython script.

- Run Analysis: Process the complex of the protein and the representative pose through the chosen tool to detect:

- Hydrogen bonds (donor, acceptor, distance, angle)

- Hydrophobic contacts

- Halogen bonds

- π-Stacking (face-to-face, edge-to-face)

- Salt bridges

- Metal coordination

- Data Tabulation: For each ligand, compile a binary vector (fingerprint) indicating the presence/absence of interactions with specific protein residues (e.g., "ASP93:H-bond"). Create a summary table (see Table 1).

- Visual Inspection: Manually inspect top-ranked complexes in a molecular viewer (e.g., PyMOL, ChimeraX) to confirm key interactions and binding mode plausibility.
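The data tabulation step can be sketched as below. The input is assumed to be a mapping from ligand ID to interaction labels of the form "RESIDUE:type", already parsed from whichever profiling tool was used; the label strings and the function name are hypothetical.

```python
def build_fingerprints(interactions_by_ligand):
    """Turn per-ligand interaction labels into aligned binary vectors.

    Returns (features, fingerprints): features is the sorted list of all
    labels seen across ligands, and fingerprints maps each ligand ID to a
    list of 0/1 flags in the same order as features.
    """
    features = sorted(
        {label for labels in interactions_by_ligand.values() for label in labels}
    )
    fingerprints = {}
    for ligand, labels in interactions_by_ligand.items():
        present = set(labels)
        fingerprints[ligand] = [int(f in present) for f in features]
    return features, fingerprints


def has_interaction(ligand, label, features, fingerprints):
    """True if the ligand's fingerprint has the given interaction bit set."""
    return label in features and fingerprints[ligand][features.index(label)] == 1
```

Keeping one shared, sorted feature list means every ligand's vector has the same length and column meaning, so the vectors can be stacked directly into the summary table or used to filter for a required contact.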

Protocol 5.3: Composite Hit Ranking Strategy

Objective: To integrate multiple metrics into a single priority score for initial hit selection.

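- Data Normalization: Normalize each relevant metric (Docking Score, LE, Interaction Count, etc.) to a 0-1 scale using min-max scaling; z-score standardization is an alternative when a bounded range is not required. Orient every metric so that higher means better before combining: docking scores and LE, where more negative is better, must be inverted.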

- Weight Assignment: Assign subjective weights (summing to 1) to each metric based on project goals. Example: Docking Score (0.4), LE (0.3), Interaction Match to Key Residue (0.3).

- Composite Score Calculation: For each compound i, calculate the weighted sum: Composite_Score_i = Σ_j (Weight_j * Normalized_Metric_ij)

- Ranking & Filtering: Rank all compounds by the composite score in descending order. Apply logical filters (e.g., remove compounds violating Lipinski's Rule of 5, or lacking a key interaction) to generate a final priority list.

- Output: Generate a ranked hit list table with all calculated metrics and the composite score for decision-making.
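The composite ranking can be sketched in a few lines. This is a minimal illustration under stated assumptions: metrics are supplied as equal-length lists, min-max scaling is used, and a per-metric flag (a hypothetical higher_is_better mapping) inverts metrics such as docking score where more negative is better. The metric names are placeholders.

```python
def min_max(values, higher_is_better=True):
    """Scale values to [0, 1]; invert when lower raw values are better."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # No spread: the metric cannot discriminate, so use a neutral value.
        return [0.5] * len(values)
    scaled = [(v - lo) / (hi - lo) for v in values]
    return scaled if higher_is_better else [1.0 - s for s in scaled]


def composite_scores(metrics, weights, higher_is_better):
    """Weighted sum of normalized metrics, one score per compound."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    n = len(next(iter(metrics.values())))
    totals = [0.0] * n
    for name, weight in weights.items():
        normalized = min_max(metrics[name], higher_is_better[name])
        for i, value in enumerate(normalized):
            totals[i] += weight * value
    return totals


def rank_compounds(scores):
    """Indices of compounds sorted by composite score, best first."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
```

With the example weights from the protocol (docking score 0.4, LE 0.3, key-residue interaction 0.3), a compound with the best docking score and the key interaction can still outrank one with better ligand efficiency, which is the intended effect of weighting by project goals.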

Visualization of Workflows

Post-Docking Analysis & Hit Ranking Workflow

Metrics for Composite Hit Ranking

The Scientist's Toolkit: Key Research Reagents & Software

Table 3: Essential Tools for Post-Docking Analysis

| Item | Type | Function/Benefit |

|---|---|---|

| PLIP (Protein-Ligand Interaction Profiler) | Software/Web Server | Automates detection and visualization of non-covalent interactions from PDB files. |

| RDKit | Open-Source Cheminformatics Library | Provides Python tools for molecular manipulation, fingerprinting, and similarity calculations. |

| MDTraj | Python Library | Efficiently loads and analyzes molecular dynamics trajectories and structures, useful for RMSD calculations. |

| Scikit-learn | Python ML Library | Offers robust implementations of clustering (K-means, Hierarchical) and data normalization methods. |

| PyMOL/ChimeraX | Molecular Visualization | Critical for manual inspection and validation of binding poses and interaction networks. |

| KNIME or Pipeline Pilot | Workflow Automation | Enables the construction of reproducible, graphical post-docking analysis pipelines without extensive coding. |

| Custom Python Scripts | Code | Essential for integrating different tools, calculating custom metrics (e.g., composite scores), and batch processing. |

Navigating Challenges: Troubleshooting Common Pitfalls and Optimizing Performance

In a virtual screening (VS) workflow built on molecular docking, the scoring function is the component that determines predicted binding affinity. Its performance is constrained by three core limitations: Accuracy (systematic prediction errors), Reproducibility (sensitivity to initial conditions and parameters), and the Rescoring Problem (inconsistent rankings when different functions score the same poses). This section provides application notes and protocols to diagnose and mitigate these issues.

Quantitative Assessment of Scoring Function Limitations

Table 1: Benchmark Performance of Common Scoring Functions (2023-2024 Data)

| Scoring Function (Class) | Typical Correlation (R²) vs. Experimental ΔG | RMSE (kcal/mol) | Primary Known Bias | Rescoring Concordance* |

|---|---|---|---|---|

| AutoDock Vina (Empirical) | 0.40 - 0.55 | 2.8 - 3.5 | Over-penalizes hydrophobic enclosures | Low (0.3-0.4) |

| Glide SP (Empirical) | 0.45 - 0.60 | 2.5 - 3.2 | Sensitive to ligand strain | Medium (0.4-0.5) |

| Glide XP (Empirical) | 0.50 - 0.65 | 2.2 - 3.0 | Favors specific H-bond geometries | Medium (0.4-0.5) |

| Gold: ChemPLP (Empirical) | 0.50 - 0.63 | 2.3 - 3.1 | Balanced, slight van der Waals bias | Medium (0.5-0.6) |

| CHARMM-based MM/GBSA (FF-based) | 0.55 - 0.70 | 2.0 - 2.8 | Dependent on solvation model accuracy | High (0.6-0.7) |

| Rosetta REF2015 (Physics-informed) | 0.60 - 0.75 | 1.8 - 2.5 | Computationally intensive; loop flexibility | High (0.6-0.75) |

| DeepDock (Machine Learning) | 0.65 - 0.80 | 1.5 - 2.2 | Training set dependency; black box | Variable (0.5-0.8) |

*Rescoring Concordance: Spearman's ρ between top-100 ranks from different functions on the same pose set.
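As an illustration of the concordance measure, Spearman's ρ can be computed as the Pearson correlation of rank-transformed scores. The sketch below is a plain-Python version that gives tied scores their average rank; it assumes both score lists refer to the same compounds in the same order, and the function names are hypothetical. A statistics library would normally be used instead.

```python
import math


def average_ranks(values):
    """1-based ranks in ascending order; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman_rho(scores_a, scores_b):
    """Spearman rank correlation between two score lists for the same compounds."""
    ra, rb = average_ranks(scores_a), average_ranks(scores_b)
    n = len(ra)
    mean_a, mean_b = sum(ra) / n, sum(rb) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(ra, rb))
    sd_a = math.sqrt(sum((x - mean_a) ** 2 for x in ra))
    sd_b = math.sqrt(sum((y - mean_b) ** 2 for y in rb))
    if sd_a == 0 or sd_b == 0:
        raise ValueError("correlation is undefined when all scores are identical")
    return cov / (sd_a * sd_b)
```

Because only ranks enter the calculation, the two scoring functions may use different units or scales; what matters is whether they order the compounds similarly.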

| Variability Source | Impact on Score (ΔScore Range) | Mitigation Protocol Reference |

|---|---|---|

| Protein Preparation (Protonation) | 1.5 - 4.0 kcal/mol | Section 4.1 |

| Ligand Tautomer/Protomer State | 2.0 - 5.0 kcal/mol | Section 4.2 |

| Random Seed (Docking Algorithm) | 0.5 - 2.5 kcal/mol | Section 4.3 |

| Grid Placement & Size | 1.0 - 3.0 kcal/mol | Section 4.4 |

| Crystallographic Water Handling | 1.0 - 6.0 kcal/mol | Section 4.5 |

Experimental Protocols for Evaluation & Mitigation

Protocol 4.1: Systematic Protein Preparation for Reproducible Scoring

Objective: Standardize receptor structure to minimize scoring variability.

- Source: Obtain PDB structure. Prefer high-resolution (<2.0 Å) structures.

- Processing: Remove all non-protein entities (original ligands, ions, water) except critical co-factors.

- Protonation: Use a consistent tool (e.g., PDB2PQR, MolProbity, Protein Preparation Wizard).

  - Set pH to physiological 7.4 ± 0.2.

  - Assign His, Glu, Asp, Lys states using PROPKA.

  - Document all assigned states.

- Energy Minimization: Apply a restrained minimization (RMSD constraint of 0.3 Å) using OPLS4 or CHARMM36 force field to relieve steric clashes.

- Output: Generate a ready-to-dock receptor file (.pdbqt, .mae) and a detailed preparation report.

Protocol 4.2: Ligand State Enumeration & Preparation

Objective: Ensure biologically relevant ligand states are considered.

- Initial Format: Start with ligand in SMILES or SDF format.

- Tautomer/Protomer Generation: Use LigPrep (Schrödinger) or cxcalc (ChemAxon) to generate likely states at pH 7.4 ± 0.5. Set energy window to 5-10 kcal/mol.

- Conformer Generation: For each state, generate a low-energy conformer ensemble (e.g., 10-50 conformers using OMEGA).

- File Preparation: Convert all final structures to a docking-ready format with correct partial charges (e.g., Gasteiger).

Protocol 4.3: Multi-Seed Docking to Assess Scoring Reproducibility