Block Relevance (BR) Analysis: A Strategic Framework for Safer Method Comparison and Enhanced Drug Candidate Prioritization

Abstract

This article provides a comprehensive overview of Block Relevance (BR) analysis, a computational tool that deconvolutes the balance of intermolecular interactions in QSPR/PLS models to enhance drug discovery. Tailored for researchers, scientists, and drug development professionals, it explores BR analysis from its foundational principles to its practical application in comparing methods for measuring lipophilicity and permeability. The content delves into methodological implementation, troubleshooting common challenges, and validating BR analysis against other comparative frameworks. By synthesizing key insights, the article demonstrates how BR analysis accelerates drug candidate prioritization and supports the adoption of Model-Informed Drug Development (MIDD) and fit-for-purpose modeling strategies, ultimately leading to more efficient and reliable decision-making in pharmaceutical R&D.

What is Block Relevance Analysis? Deconvoluting Interactions for Smarter Drug Discovery

Defining Block Relevance (BR) Analysis in Computational Pharmacology

Block Relevance (BR) analysis is a computational tool that deconvolutes the balance of intermolecular interactions governing drug discovery-related phenomena, described by Quantitative Structure-Property Relationship (QSPR) and Partial Least Squares (PLS) models [1]. This method allows researchers to make the assessment of drug-likeness faster and more efficient, particularly in selecting optimal experimental methods for measuring critical properties like lipophilicity and permeability, thereby speeding up drug candidate prioritization [1].

Core Concept and Mathematical Foundation

BR analysis operates by dissecting complex, multi-factorial biological and chemical interactions into more manageable components or "blocks". Each block represents a distinct set of intermolecular forces or structural features that collectively influence a drug's behavior and properties [1].

The methodology has recently been implemented in MATLAB, providing researchers with an accessible computational framework for performing these analyses [1]. By applying BR analysis to QSPR/PLS models, researchers can identify which specific molecular interactions dominate particular drug discovery phenomena, enabling more informed decisions in method selection and candidate optimization.

Comparative Applications in Drug Discovery

Lipophilicity Measurement Optimization

Objective: Identify the most appropriate chromatographic system to provide reliable surrogates for log P~oct~ (octanol-water partition coefficient) and log P in apolar environments [1].

Experimental Protocol:

- System Selection: Multiple chromatographic systems with varying stationary and mobile phases are evaluated

- Model Development: QSPR models are built correlating chromatographic retention factors with computed molecular descriptors

- BR Analysis: Deconvolutes the balance of intermolecular interactions governing retention in each system

- Validation: Systems whose interaction patterns most closely match traditional log P~oct~ measurements are identified as optimal surrogates

Performance Comparison: BR analysis enables identification of chromatographic systems that most accurately replicate the intermolecular interaction balance of reference lipophilicity measures, providing more reliable and high-throughput alternatives to traditional shake-flask methods.
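
The matching step in the protocol above can be sketched in a few lines of Python. This is a minimal illustration, not the published procedure: the source does not state which similarity measure is used to compare interaction patterns, so cosine similarity is an assumption here, and the system names and signed block contributions are made-up values chosen only to show the mechanics.

```python
import math

# Block order used for every profile below (block names follow the BR literature).
BLOCKS = ["Size", "OH2", "N1", "O", "DRY", "Others"]

def cosine_similarity(a, b):
    """Cosine of the angle between two block-contribution vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def rank_surrogates(reference, candidates):
    """Sort candidate systems from most to least similar to the reference profile."""
    scores = {name: cosine_similarity(reference, profile)
              for name, profile in candidates.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

# Hypothetical signed block contributions (assumed values, not measured data).
reference_logp_oct = [0.60, -0.30, -0.25, -0.05, 0.20, 0.05]
candidate_systems = {
    "system_A": [0.55, -0.28, -0.30, -0.04, 0.22, 0.03],
    "system_B": [0.50, -0.10, -0.05, -0.45, 0.10, 0.02],
}

ranking = rank_surrogates(reference_logp_oct, candidate_systems)
for name, score in ranking:
    print(f"{name}: similarity {score:.3f}")
```

With these illustrative numbers, system_A ranks first because its profile is nearly parallel to the reference, whereas system_B gives far more weight to the O block. A distance-based measure would serve equally well. The point is only that BR profiles turn method selection into a comparison of vectors.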

Permeability Assessment Standardization

Objective: Check the universality of passive permeability across different cell types and identify which Parallel Artificial Membrane Permeability Assay (PAMPA) method provides the same interaction balance as cell-based systems [1].

Experimental Protocol:

- Data Collection: Permeability data are gathered from cell-based assays (Caco-2, MDCK), PAMPA variants, and computational models

- Descriptor Calculation: Molecular descriptors encoding physicochemical properties are computed for each compound

- Model Construction: PLS models are built predicting permeability from molecular descriptors

- BR Analysis: The balance of interactions governing permeability in each system is deconvoluted and compared

- System Matching: PAMPA methods with interaction profiles most similar to cell-based systems are identified as optimal predictors

Performance Comparison: Systems whose BR profiles most closely align with cell-based assays provide more physiologically relevant permeability predictions, bridging the gap between high-throughput screening and biological relevance.

Comparative Performance Data

Table 1: BR Analysis Performance in Method Selection and Candidate Prioritization

| Application Area | Traditional Approach Limitations | BR Analysis Advantages | Documented Impact |
|---|---|---|---|
| Lipophilicity Assessment | Time-consuming shake-flask methods; uncertain correlation between chromatographic systems and biological membranes [1] | Identifies optimal chromatographic log P~oct~ surrogates; reveals interaction balance with apolar environments [1] | Faster and more reliable measurement of critical drug-likeness parameters [1] |
| Permeability Prediction | Discrepancies between cell-based and artificial membrane assays; poor translatability of high-throughput methods [1] | Confirms universality of passive permeability drivers; identifies PAMPA methods mimicking cell-based interaction balance [1] | More efficient candidate prioritization with reduced late-stage attrition due to permeability issues [1] |
| Drug Candidate Prioritization | Reliance on single parameters or black-box models; limited understanding of interaction drivers [1] | Deconvolutes balance of intermolecular forces; enables strategic optimization of desired properties [1] | Accelerated lead optimization through targeted molecular design [1] |

Workflow and System Requirements

Essential Research Reagent Solutions

Table 2: Key Research Reagents and Computational Tools for BR Analysis

| Resource Category | Specific Tools/Platforms | Function in BR Analysis |
|---|---|---|
| Computational Environment | MATLAB with BR implementation [1] | Primary computational platform for performing BR analysis |
| Molecular Descriptors | Various physicochemical descriptor packages [1] | Calculate parameters encoding molecular structure and properties |
| Lipophilicity Measurement | Chromatographic systems (HPLC, UPLC) [1] | Generate experimental log P surrogates for model building |
| Permeability Assessment | Cell-based assays (Caco-2, MDCK); PAMPA variants [1] | Provide experimental permeability data for correlation studies |
| Validation Tools | Traditional shake-flask log P; Cell-based permeability benchmarks [1] | Verify predictions against gold standard methods |

Integration with Broader Computational Pharmacology

BR analysis represents a specialized approach within the expanding computational pharmacology landscape, which integrates both phenotypic and target-based drug discovery through data acquisition and analysis at multiple biological levels [2]. This methodology aligns with the growing emphasis on computational technologies in drug discovery, including structure-based virtual screening, deep learning predictions of ligand properties, and analysis of ultralarge chemical spaces [3].

The technique addresses specific challenges in method validation and candidate prioritization by providing mechanistic insights into the intermolecular forces driving measured properties, moving beyond black-box predictions to actionable understanding of structure-property relationships.

Block Relevance (BR) analysis is an advanced chemometric tool designed to deconvolute the complex balance of intermolecular forces that govern physicochemical properties and biological outcomes in drug discovery. As a computational methodology, it operates on Quantitative Structure-Property Relationship (QSPR) models built using Partial Least Squares (PLS) regression. The primary innovation of BR analysis lies in its ability to transform intricate arrays of molecular descriptors into interpretable blocks corresponding to distinct types of intermolecular interactions. This addresses a fundamental challenge in QSPR modeling: while statistical models can effectively predict properties, they often function as "black boxes" with limited mechanistic insights.

The technique was developed to overcome the limitations of traditional QSPR approaches, particularly when dealing with ionized compounds and complex retention mechanisms in chromatography. By aggregating molecular descriptors into property-related groups, BR analysis provides researchers with a visual framework to quantify and compare the contributions of hydrophobic effects, hydrogen bonding, electrostatic interactions, and molecular size to the overall property being modeled. This deconvolution capability makes it particularly valuable for guiding molecular design in pharmaceutical chemistry, where understanding the dominant intermolecular forces can accelerate the optimization of drug candidates.

The Operational Framework of BR Analysis

Fundamental Components and Workflow

The BR analysis methodology relies on a specific classification system that categorizes molecular descriptors into six fundamental blocks, each representing a distinct type of intermolecular interaction. The DRY block represents hydrophobic interactions, quantifying a molecule's affinity for lipophilic environments. The OH2 block characterizes interactions with water molecules, reflecting solvation effects. Hydrogen bonding capabilities are divided into two complementary components: the O block describes a solute's ability to act as a hydrogen bond donor, while the N1 block captures its capacity as a hydrogen bond acceptor. The Size block accounts for molecular dimensions and shape-related effects, and finally, an "Others" block captures additional molecular descriptors that represent imbalances between hydrophilic and hydrophobic regions on molecular surfaces [4].

The operational workflow of BR analysis begins with the generation of a comprehensive set of molecular descriptors, typically using software such as VolSurf+. These descriptors are then systematically assigned to their respective interaction blocks. A PLS regression model is built using these blocks as variables, with the resulting model coefficients indicating the relative contribution (relevance) of each interaction type to the property being predicted. The final output consists of visual representations that display the percentage contribution of each block, allowing researchers to immediately identify which intermolecular forces dominate the property under investigation [1].
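
The final aggregation step, from per-descriptor contributions to a percentage per block, can be illustrated as follows. This is a simplified sketch under stated assumptions: it takes each block's relevance to be its share of the summed absolute descriptor importance, which may differ from the calculation used in the published MATLAB implementation. The descriptor names, block assignments, and importance values are hypothetical.

```python
from collections import defaultdict

def block_relevance(importance, block_of):
    """Sum absolute per-descriptor importance within each block, as a percentage.

    importance: descriptor name -> importance value (e.g. a PLS coefficient)
    block_of:   descriptor name -> block label
    """
    totals = defaultdict(float)
    for descriptor, value in importance.items():
        totals[block_of[descriptor]] += abs(value)
    grand_total = sum(totals.values())
    if grand_total == 0:
        raise ValueError("all importance values are zero")
    return {block: 100.0 * total / grand_total for block, total in totals.items()}

# Hypothetical descriptors and values, for illustration only.
block_of = {
    "size_1": "Size", "size_2": "Size", "water_1": "OH2",
    "hbd_1": "O", "hba_1": "N1", "dry_1": "DRY",
}
importance = {
    "size_1": 0.8, "size_2": 0.4, "water_1": -0.3,
    "hbd_1": -0.2, "hba_1": -0.2, "dry_1": 0.1,
}

relevance = block_relevance(importance, block_of)
for block, percent in sorted(relevance.items(), key=lambda item: -item[1]):
    print(f"{block:>5}: {percent:5.1f} %")
```

In this toy case the Size block accounts for 60% of the total, so a reader of the resulting bar chart would conclude that molecular dimensions dominate the modeled property. Using absolute values discards the sign of each contribution. A fuller treatment would report positive and negative contributions separately.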

Technical Implementation

From a technical perspective, BR analysis has been implemented in MATLAB, providing researchers with an accessible interface for applying this methodology to their QSPR challenges [1]. The analysis requires careful preparation of the molecular dataset, appropriate calculation of molecular descriptors, and strategic selection of the PLS parameters. A key advantage of this implementation is its compatibility with standard molecular descriptor packages, facilitating integration into existing QSPR workflows.

Recent applications have highlighted the importance of descriptor selection, particularly when dealing with ionized compounds. While VolSurf+ descriptors effectively handle neutral molecules, their performance with fully ionized compounds can be suboptimal. To address this limitation, researchers have developed complementary strategies incorporating charge-based descriptors and Multiple Linear Regression (MLR) approaches alongside the standard BR analysis framework [4].

Experimental Protocols for BR Analysis

Core Methodological Steps

Implementing BR analysis requires careful execution of several sequential steps to ensure robust and interpretable results. The following workflow outlines the key stages in applying BR analysis to deconvolute intermolecular interactions:

Step 1: Dataset Curation involves assembling a structurally diverse set of compounds with experimentally measured values for the target property. For chromatography applications, this entails measuring retention factors (log k) for each compound across multiple mobile phase compositions [4]. For permeability studies, measured permeability coefficients (log Papp) from systems like PAMPA or cell monolayers are required [5].

Step 2: Molecular Descriptor Calculation utilizes software such as VolSurf+ to compute a comprehensive array of molecular descriptors from 3D molecular structures. These descriptors encode information about molecular size, shape, hydrophobicity, and polar interactions.

Step 3: Block Assignment categorizes the calculated descriptors into the six predefined interaction blocks (DRY, OH2, O, N1, Size, and Others) based on their physicochemical interpretation.

Step 4: PLS Model Development constructs a statistical model linking the descriptor blocks to the experimental property values. The PLS algorithm is particularly suited for handling the collinearity often present between molecular descriptors.

Step 5: Block Relevance Calculation determines the relative contribution of each block to the PLS model, typically expressed as percentage relevance values.

Step 6: Interpretation and Validation involves mechanistic interpretation of the block relevance pattern and rigorous validation using test sets not included in model building.
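
To make Step 4 concrete, the sketch below fits a single-response PLS model (PLS1) with the classical NIPALS algorithm, using only the Python standard library. It is a teaching example rather than the BR implementation: the two-column matrix stands in for a real descriptor table, the function names are hypothetical, and descriptor scaling and cross-validated selection of the number of components are omitted.

```python
def pls1_fit(X, y, n_components):
    """Fit PLS1 by NIPALS on column-centered data."""
    n, m = len(X), len(X[0])
    x_mean = [sum(row[j] for row in X) / n for j in range(m)]
    y_mean = sum(y) / n
    E = [[row[j] - x_mean[j] for j in range(m)] for row in X]  # X residuals
    f = [value - y_mean for value in y]                        # y residuals
    components = []
    for _ in range(n_components):
        # Weight vector: direction in X of maximal covariance with y.
        w = [sum(E[i][j] * f[i] for i in range(n)) for j in range(m)]
        norm = sum(v * v for v in w) ** 0.5
        if norm < 1e-12:
            break  # no covariance left to explain
        w = [v / norm for v in w]
        t = [sum(E[i][j] * w[j] for j in range(m)) for i in range(n)]  # scores
        tt = sum(v * v for v in t)
        p = [sum(E[i][j] * t[i] for i in range(n)) / tt for j in range(m)]  # X loadings
        q = sum(f[i] * t[i] for i in range(n)) / tt                        # y loading
        # Deflate both blocks before extracting the next component.
        E = [[E[i][j] - t[i] * p[j] for j in range(m)] for i in range(n)]
        f = [f[i] - q * t[i] for i in range(n)]
        components.append((w, p, q))
    return x_mean, y_mean, components

def pls1_predict(model, x):
    """Predict y for one sample by replaying the deflation steps."""
    x_mean, y_mean, components = model
    e = [x[j] - x_mean[j] for j in range(len(x))]
    y_hat = y_mean
    for w, p, q in components:
        t = sum(e[j] * w[j] for j in range(len(e)))
        y_hat += q * t
        e = [e[j] - t * p[j] for j in range(len(e))]
    return y_hat

# Toy data: the response is an exact linear function of two "descriptors".
X = [[1.0, 2.0], [2.0, 1.0], [3.0, 4.0], [4.0, 3.0], [5.0, 6.0]]
y = [2 * a - b + 1 for a, b in X]
model = pls1_fit(X, y, n_components=2)
prediction = pls1_predict(model, [6.0, 5.0])
print(round(prediction, 6))
```

With as many components as independent descriptors, PLS1 coincides with ordinary least squares, which is why the toy model reproduces the linear relationship exactly. On real descriptor sets, which are highly collinear, far fewer components than descriptors are retained, and the held-out test set from Step 6 guides that choice.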

Practical Application in Chromatography Characterization

In a detailed study characterizing the retention mechanism of the Celeris Arginine stationary phase, researchers applied BR analysis to a dataset of 100 pharmaceutically relevant compounds (36 neutrals, 26 acids, and 38 bases). Retention factors were measured at eight different concentrations of acetonitrile (from 10% to 90% v/v) in the mobile phase. The BR analysis implementation followed a specific protocol [4]:

- Chromatographic Conditions: The mobile phase consisted of acetonitrile and ammonium acetate buffer (20 mM, pH 7.0). The flow rate was maintained at 1.0 mL/min, and detection was performed with a PDA detector.

- Descriptor Calculation: VolSurf+ descriptors were computed for all compounds, with special attention to the representation of different ionization states.

- Model Validation: The dataset was divided into training and test sets to validate the predictive ability of the resulting models.

For acidic compounds, where VolSurf+ descriptors showed limitations, researchers supplemented the analysis with additional descriptors derived from Gasteiger-Marsili partial atomic charges, enabling more accurate modeling of electrostatic interactions [4].

Comparative Analysis: BR Analysis vs Alternative Methods

Performance Comparison with Other QSPR Approaches

The following table summarizes how BR analysis compares to other commonly used methods for interpreting intermolecular interactions in QSPR models:

Table 1: Comparison of BR Analysis with Alternative QSPR Interpretation Methods

| Method | Key Features | Interpretability | Handling of Ionized Compounds | Implementation Complexity |
|---|---|---|---|---|
| BR Analysis | Deconvolutes interactions into predefined blocks; Visual output of contribution percentages | High - Direct quantification of interaction types | Moderate - Requires supplementary descriptors for optimal performance [4] | Medium - Requires specialized MATLAB implementation [1] |
| Traditional QSPR/PLS | Models overall property without mechanistic decomposition | Low - Functions as "black box" without additional interpretation steps | Variable - Depends on descriptor set | Low - Standard statistical software |
| Linear Solvation Energy Relationships (LSER) | Uses solvatochromic parameters; Well-established theoretical basis | Medium - Parameters have physicochemical meaning | Good - Established approaches for ions | Low - Standard linear regression |
| Hydrophobic-Subtraction Model | Specific to chromatography; Five-parameter system | Medium - Limited to chromatographic context | Good - Specifically designed for ionic interactions | Medium - Specialized implementation |

Application-Specific Performance Metrics

In practical applications, BR analysis has demonstrated particular strengths in specific domains. The table below compares its performance across different application areas in pharmaceutical research:

Table 2: Performance of BR Analysis in Different Application Contexts

| Application Area | Key Insights Generated | Dominant Interactions Identified | Comparison to Experimental Results |
|---|---|---|---|
| Chromatographic Retention (Celeris Arginine Column) | Switch from reversed-phase to normal-phase mode between 10-20% MeCN; Strong affinity for anions [4] | Size and O blocks for neutrals; Electrostatic interactions for acids | High correlation with experimental retention factors (r values up to 0.99 for some phases) [4] |
| IAM Chromatography for Permeability Prediction | log KwIAM mainly describes molecular dimensions; Δlog KwIAM reflects polarity [5] | Size block dominant for log KwIAM; O and N1 blocks for Δlog KwIAM | Successful prediction of PAMPA permeability when combined with PSA [5] |
| Lipophilicity Measurement Selection | Identification of optimal chromatographic systems as log P~oct~ surrogates [1] | Variable block relevance patterns across different systems | High correlation with reference partition coefficients |

Key Research Reagents and Computational Tools

Successful implementation of BR analysis requires specific computational tools and research reagents. The following table outlines the key resources referenced in the literature:

Table 3: Essential Research Reagents and Computational Tools for BR Analysis

| Resource | Type | Specific Function | Application in BR Analysis |
|---|---|---|---|
| VolSurf+ Software | Computational Tool | Calculates molecular descriptors from 3D structures [4] | Generates input descriptors for block assignment |
| MATLAB with BR Analysis Implementation | Computational Platform | Provides environment for BR analysis calculations [1] | Executes core BR analysis algorithm and visualization |
| IAM.PC.DD2 Chromatographic Column | Research Reagent | Mimics cell membrane environment [5] | Generates retention data for permeability predictions |
| Celeris Arginine Column | Research Reagent | Mixed-mode stationary phase with arginine functionality [4] | Provides retention data for interaction deconvolution |
| CORAL Software | Computational Tool | Builds QSPR models using SMILES notation [6] | Alternative QSPR approach for comparison with BR results |

Interpreting BR Analysis Results: A Decision Framework

Pathway to Mechanistic Insights

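The interpretation of BR analysis outputs follows a logical pathway that translates numerical relevance values into actionable chemical insights. The pathway runs through five stages: reading the block relevance pattern, identifying the dominant interactions, determining the mechanism behind the property, guiding molecular design, and validating the conclusions experimentally.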

The process begins with examining the Block Relevance Percentages and Interaction Contribution Pattern from the BR analysis. For example, in the characterization of the Celeris Arginine column, the analysis revealed that retention of neutral compounds was primarily governed by the Size and O blocks, while acidic compounds showed electrostatically-driven retention [4].

The next critical step involves Identifying Dominant Interactions from the pattern of block contributions. A dominance of the DRY block indicates hydrophobic interactions are primary, while strong O and N1 block contributions highlight the importance of hydrogen bonding. In the IAM chromatography study, BR analysis revealed that the retention descriptor log KwIAM was primarily influenced by the Size block, indicating its relationship with molecular dimensions rather than specific polar interactions [5].

This understanding then enables researchers to Determine Property Mechanism at a fundamental level. For instance, the switch in relative block contributions observed when changing mobile phase composition from 10% to 20% acetonitrile in the Celeris Arginine column study visually demonstrated the transition from reversed-phase to normal-phase separation mechanisms [4].

The final stages involve using these insights to Guide Molecular Design decisions and implement Experimental Validation. In permeability optimization, understanding that Δlog KwIAM primarily reflects polar interactions (O and N1 blocks) allows medicinal chemists to strategically modify hydrogen bonding groups to improve membrane permeation while maintaining target binding [5].

BR analysis represents a significant advancement in the interpretation of QSPR/PLS models, transforming statistical correlations into mechanistically meaningful insights. Its ability to deconvolute complex intermolecular interactions into quantitatively defined contributions provides researchers with a powerful decision-support tool for molecular design and method optimization. The methodology has proven particularly valuable in pharmaceutical applications, including chromatographic characterization, permeability prediction, and lipophilicity assessment.

As QSPR modeling continues to evolve with increasingly sophisticated machine learning algorithms, the interpretability challenge becomes more pressing. BR analysis addresses this need by maintaining a direct connection between statistical models and physicochemical reality. Future developments will likely enhance the methodology's capability to handle ionized compounds and incorporate dynamic properties, further solidifying its role as an essential component of the computational chemist's toolkit.

The Critical Role of BR Analysis in a Modern Model-Informed Drug Development (MIDD) Framework

In modern Model-Informed Drug Development (MIDD), the validation and comparison of analytical methods are fundamental to ensuring the reliability of quantitative models that support critical decisions. MIDD represents an essential framework that uses quantitative methods to balance the risks and benefits of drug products in development, helping to improve clinical trial efficiency and increase the probability of regulatory success [7] [8]. Within this framework, method comparison studies serve as the backbone for verifying that different measurement systems produce consistent, reproducible results—a prerequisite for any credible model output.

The emergence of Block Relevance (BR) Analysis represents a significant methodological advancement for comparing measurement techniques in pharmaceutical research. As MIDD approaches continue to gain prominence in regulatory decision-making, with the FDA maintaining dedicated programs to discuss their application, the need for robust, statistically sound comparison methodologies has never been greater [7]. BR Analysis provides a structured approach to evaluate methodological agreement while accounting for variability sources that traditional approaches might overlook, thereby strengthening the overall MIDD framework by ensuring the primary data inputs to models are trustworthy.

Understanding BR Analysis: Core Principles and Workflow

Theoretical Foundations

BR Analysis is a sophisticated methodological framework designed to assess the agreement between two or more measurement techniques while systematically accounting for structured variability within datasets. Unlike simpler correlation-based approaches, BR Analysis operates on the principle that measurement systems must be evaluated across the entire data relevance space—the full spectrum of conditions and sample characteristics encountered in practical use. The methodology identifies "blocks" of data with similar properties or relevance structures, then performs comparative analyses within these homogeneous groupings to provide a more nuanced understanding of methodological agreement.

The theoretical underpinnings of BR Analysis address several limitations of traditional method comparison approaches. Whereas conventional techniques might treat all data points as independent and identically distributed, BR Analysis recognizes that structured heterogeneity—such as differences between patient subgroups, experimental batches, or operational conditions—can significantly impact agreement metrics. By implementing a blocking strategy that groups experimental units similar to one another, the methodology controls for extraneous variability, thereby providing clearer insights into the true methodological differences [9]. This approach aligns with established statistical principles of blocking, where the goal is to arrange experimental units into groups that are similar to one another to minimize the effect of nuisance variables on the outcome of interest [10].

Operational Workflow

The implementation of BR Analysis follows a structured, sequential process designed to ensure comprehensive methodological assessment. The workflow progresses through distinct phases, from initial study design to final interpretation, with each stage building upon the previous one to create a robust analytical framework. The following diagram illustrates this sequential process:

Diagram 1: BR Analysis Operational Workflow illustrates the sequential process for implementing Block Relevance analysis in method comparison studies.

The workflow begins with objective definition, where the specific methodological comparison goals are established, including determination of the primary and secondary endpoints for agreement assessment. This is followed by blocking factor identification, where potential sources of structured variability are selected based on their known or suspected influence on measurement outcomes. Common blocking factors in pharmaceutical applications include instrument calibration batches, operator differences, sample storage conditions, and patient demographic or physiologic characteristics [10] [9].

The data collection phase involves obtaining paired measurements from both methods across all predefined blocks, ensuring that each block contains a complete set of comparative measurements. Subsequently, block-wise analysis is performed, where agreement metrics are calculated separately within each homogeneous block. Finally, cross-block synthesis integrates the block-specific findings into overall agreement estimates, providing both generalized conclusions and block-specific insights that inform the final interpretation of methodological compatibility.

Comparative Analysis of Method Comparison Techniques

Established Method Comparison Approaches

To properly contextualize the value of BR Analysis, it is essential to understand the landscape of established method comparison techniques used in pharmaceutical research. The following table summarizes the key methodologies, their underlying principles, and typical applications within MIDD:

Table 1: Established Method Comparison Approaches in Pharmaceutical Research

| Method | Statistical Foundation | Key Outputs | Common MIDD Applications |
|---|---|---|---|
| Correlation Analysis | Pearson/Spearman correlation coefficients | r-value, p-value, R² | Preliminary assay comparison, high-throughput screening validation |
| Linear Regression | Ordinary least squares estimation | Slope, intercept, confidence bands | Bioanalytical method transfers, instrument qualification |
| Bland-Altman Analysis | Mean differences and variability | Bias, limits of agreement, trend identification | Clinical biomarker assay validation, pharmacokinetic assay comparison [11] |
| BR Analysis | Blocked variance decomposition | Block-specific agreement, relevance-weighted metrics | Complex biological assays, multi-site trial method harmonization, subgroup-specific method validation |

The Bland-Altman method has been particularly influential in method comparison studies, having been cited as "the standard approach for assessment of agreement between two methods of measurement" across various disciplines [11]. This approach evaluates agreement by plotting the differences between two methods against their averages, providing intuitive visualization of bias and the range of agreement. However, its conventional implementation does not explicitly account for structured heterogeneity in the dataset—a limitation that BR Analysis specifically addresses through its blocking methodology.
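
For readers unfamiliar with the calculation, a conventional Bland-Altman summary can be computed as in the sketch below. The paired values are invented for illustration, and the 95% limits of agreement use the usual bias ± 1.96 × SD of the differences, which assumes the differences are approximately normally distributed.

```python
from statistics import mean, stdev

def bland_altman(method_a, method_b):
    """Return bias, 95% limits of agreement and the (average, difference) plot points."""
    diffs = [a - b for a, b in zip(method_a, method_b)]
    bias = mean(diffs)
    sd = stdev(diffs)  # sample standard deviation of the differences
    return {
        "bias": bias,
        "lower_loa": bias - 1.96 * sd,
        "upper_loa": bias + 1.96 * sd,
        "points": [((a + b) / 2, a - b) for a, b in zip(method_a, method_b)],
    }

# Invented paired measurements from two methods on the same four samples.
method_a = [10.0, 12.0, 14.0, 16.0]
method_b = [9.0, 12.0, 13.0, 16.0]

summary = bland_altman(method_a, method_b)
print(f"bias = {summary['bias']:.2f}")
print(f"limits of agreement = {summary['lower_loa']:.2f} to {summary['upper_loa']:.2f}")
```

Every pair here contributes to a single pooled bias and a single pair of limits, regardless of which batch, operator, or subgroup it came from. That pooling is the limitation the blocked approach is meant to address.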

Quantitative Performance Comparison

When evaluated across key performance dimensions, BR Analysis demonstrates distinct advantages for complex methodological comparisons in MIDD contexts. The following table presents a comparative assessment based on established metrics for method comparison techniques:

Table 2: Performance Comparison of Method Comparison Techniques

| Evaluation Metric | Correlation Analysis | Linear Regression | Bland-Altman Method | BR Analysis |
|---|---|---|---|---|
| Bias Detection Sensitivity | Low | Moderate | High | Very High |
| Structured Variability Handling | None | None | Low (without modifications) | High (explicit blocking) |
| Multi-Scenario Applicability | Single condition | Single condition | Single condition | Multiple relevance blocks |
| Regulatory Acceptance | Limited as standalone | Established with limitations | Well-established | Emerging with strong rationale |
| Implementation Complexity | Low | Low to Moderate | Moderate | High |
| Subgroup-Specific Insights | None | None | Limited | Comprehensive |

The enhanced performance of BR Analysis in handling structured variability is particularly valuable in MIDD applications, where models often must account for diverse patient populations, disease states, and experimental conditions. By explicitly acknowledging and modeling this heterogeneity through blocking factors, BR Analysis provides more nuanced agreement assessments that reflect real-world complexity [9]. This approach aligns with the "fit-for-purpose" philosophy emphasized in modern MIDD, where models and methods must be appropriately aligned with their specific context of use and questions of interest [12].

BR Analysis Experimental Protocol for MIDD Applications

Study Design and Blocking Structure

Implementing BR Analysis requires meticulous experimental design focused on appropriate blocking factor selection and sample allocation. The foundational principle is "block what you can; randomize what you cannot," emphasizing controlled management of major nuisance variables while randomizing minor sources of variation [9]. For a typical bioanalytical method comparison in drug development, the following blocking structure is recommended:

- Primary Blocking Factor: Instrument/Platform (e.g., LC-MS/MS systems from different vendors)

- Secondary Blocking Factor: Operator/Technician (multiple trained personnel)

- Tertiary Blocking Factor: Sample Characteristics (e.g., plasma matrix from different patient subgroups)

- Quaternary Blocking Factor: Temporal Batch (different run days to account for inter-day variability)

Each block should contain a complete set of paired measurements from both methods under comparison, with sample size determined through appropriate power calculations. For most pharmaceutical applications, a minimum of 5-8 blocks with 20-30 paired measurements per block provides robust statistical power for detecting clinically relevant differences. The following diagram illustrates a typical blocking structure for method comparison in drug development:

Diagram 2: BR Analysis Blocking Structure shows the hierarchical arrangement of blocking factors in a pharmaceutical method comparison study.

Data Collection and Analytical Procedures

The data collection phase must ensure paired measurements are obtained under identical conditions within each block, with randomization of measurement order to avoid systematic bias. For each sample, both methods should be applied in quick succession or parallel, depending on technical feasibility. The resulting dataset should contain:

- Block identifier for each measurement pair

- Raw measurements from both methods

- Relevant covariates (e.g., sample quality indicators, processing time)

- Technical replication indicators where applicable

The analytical procedure follows a sequential variance decomposition approach:

- Within-Block Agreement Assessment: Calculate standard agreement metrics (bias, precision, limits of agreement) separately for each block

- Between-Block Variability Quantification: Assess the consistency of agreement metrics across different blocks

- Relevance-Weighted Integration: Combine block-specific estimates using weights reflecting the clinical or analytical relevance of each block

- Overall Agreement Estimation: Derive comprehensive agreement metrics with associated confidence intervals

This approach facilitates identification of not just whether two methods agree on average, but specifically under which conditions they demonstrate acceptable or problematic agreement—information critical for establishing context-specific method validity in MIDD applications.
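The first three steps of the sequential procedure can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function names, the example measurements, and the relevance weights are all hypothetical, and the limits of agreement use the conventional bias ± 1.96 SD form. Confidence intervals for the overall estimate (step 4) would normally come from a mixed-effects model in one of the statistical packages listed in the toolkit below.

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass
class BlockAgreement:
    block: str
    n: int
    bias: float      # mean of (new - reference)
    sd: float        # standard deviation of the differences
    loa_low: float   # bias - 1.96 * sd
    loa_high: float  # bias + 1.96 * sd


def within_block_agreement(block, pairs):
    """Step 1: agreement metrics for one block of (reference, new) pairs."""
    diffs = [new - ref for ref, new in pairs]
    bias = mean(diffs)
    sd = stdev(diffs)
    return BlockAgreement(block, len(diffs), bias, sd,
                          bias - 1.96 * sd, bias + 1.96 * sd)


def between_block_sd(results):
    """Step 2: spread of the block-level biases across blocks."""
    return stdev([r.bias for r in results])


def weighted_bias(results, weights):
    """Step 3: relevance-weighted mean bias; weights are chosen by the analyst."""
    total = sum(weights[r.block] for r in results)
    return sum(weights[r.block] * r.bias for r in results) / total


# Hypothetical paired measurements (reference, new) for two blocks.
data = {
    "site_A": [(10.0, 10.2), (12.0, 11.9), (15.0, 15.3)],
    "site_B": [(10.0, 10.8), (12.0, 12.6), (15.0, 15.7)],
}
# Hypothetical relevance weights: site_B is judged three times as relevant.
weights = {"site_A": 1.0, "site_B": 3.0}

results = [within_block_agreement(name, pairs) for name, pairs in data.items()]
overall = weighted_bias(results, weights)

for r in results:
    print(f"{r.block}: bias={r.bias:.3f}, LoA=({r.loa_low:.3f}, {r.loa_high:.3f})")
print(f"between-block SD of bias: {between_block_sd(results):.3f}")
print(f"relevance-weighted bias: {overall:.3f}")
```

Keeping the block-level results as separate records, rather than pooling the differences immediately, is what allows a block with poor agreement to remain visible after the estimates are combined.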

Essential Research Toolkit for BR Analysis Implementation

Successful implementation of BR Analysis in MIDD requires both specialized statistical tools and domain-specific resources. The following table catalogues essential components of the BR Analysis research toolkit:

Table 3: Research Reagent Solutions for BR Analysis Implementation

| Tool Category | Specific Tools/Platforms | Primary Function | MIDD Integration |
|---|---|---|---|
| Statistical Software | R with nlme/lme4 packages, SAS PROC MIXED, Python statsmodels | Mixed-effects modeling, variance component analysis | Interfaces with pharmacometric platforms (NONMEM, Monolix) |
| Data Management | Electronic Laboratory Notebooks, CDISC Standards-compliant databases | Structured data collection, metadata management | Supports FDA submission data standards [7] |
| Visualization Tools | ggplot2 (R), Matplotlib (Python), Spotfire | Method comparison plots, block-specific agreement visualization | Enables model-informed decision making [12] |
| Quality Control Materials | Certified reference standards, pooled quality control samples | Method performance monitoring, inter-block calibration | Aligns with ICH Q2(R1) validation guidelines |
| Computational Resources | High-performance computing clusters, cloud-based analytics platforms | Large-scale simulation, virtual population generation | Supports PBPK, QSP modeling in MIDD [12] [13] |

The integration of these tools creates a robust ecosystem for BR Analysis implementation. Particularly important is the connection between statistical platforms used for method comparison and specialized MIDD software for pharmacometric analysis, as this enables direct utilization of validated methods in downstream modeling and simulation activities that inform drug development decisions [12] [13].

Case Study: BR Analysis in a Pharmacokinetic Assay Comparison

Experimental Context and Objectives

A practical application of BR Analysis was implemented during the development of a novel immunosuppressant drug, where a new automated immunoassay platform needed validation against an established LC-MS/MS reference method for therapeutic drug monitoring. The critical question was whether the new method could be deployed across multiple clinical sites without introducing systematic biases that might impact pharmacokinetic model predictions and subsequent dosing recommendations.

The study incorporated four blocking factors: (1) three clinical sites with different operational environments, (2) two sample concentration ranges (therapeutic vs. toxic levels), (3) three sample storage durations (fresh, 1-week, 1-month frozen), and (4) two reagent lots. Each block contained 25 paired measurements (36 blocks and 900 pairs in total if all factor levels were fully crossed), creating a comprehensive dataset for evaluating method agreement across clinically relevant conditions.
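The size of such a design is easy to check by enumerating the blocks. The sketch below assumes the four factors were fully crossed, which the description does not state explicitly; the level labels are placeholders chosen for illustration.

```python
from itertools import product

# Factor levels as described in the case study; labels are placeholders.
sites = ["site_1", "site_2", "site_3"]
concentration_ranges = ["therapeutic", "toxic"]
storage_durations = ["fresh", "1_week", "1_month_frozen"]
reagent_lots = ["lot_1", "lot_2"]
PAIRS_PER_BLOCK = 25

# Assumption: every combination of factor levels forms one block.
blocks = list(product(sites, concentration_ranges, storage_durations, reagent_lots))
n_blocks = len(blocks)
n_pairs = n_blocks * PAIRS_PER_BLOCK

print(n_blocks, n_pairs)  # 36 900
```

If the real design were only partially crossed, the same enumeration could be filtered down to the combinations that were actually run.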

Results and MIDD Impact

The BR Analysis revealed that while overall agreement between methods was excellent (mean bias: -1.2%, 95% limits of agreement: -8.7% to +6.3%), significant variation existed across blocks. Specifically, the new immunoassay showed a positive bias of +5.8% at toxic-range concentrations in samples stored frozen for one month, in contrast to the slightly negative overall bias. This finding would have been obscured in a conventional pooled method comparison, and it prompted additional method optimization before multi-site deployment.

The impact on the MIDD framework was substantial: the comprehensive understanding of methodological limitations enabled more informed interpretation of pharmacokinetic data across sites and conditions. Population pharmacokinetic models incorporating this understanding demonstrated reduced unexplained variability and more accurate exposure-response predictions, ultimately supporting better dosing recommendations for special populations. This case exemplifies how BR Analysis strengthens the MIDD framework by ensuring the quality and interpretability of primary data feeding into quantitative models.

BR Analysis represents a methodological advancement for method comparison in modern MIDD frameworks. By explicitly addressing structured heterogeneity through blocking methodology, it provides more nuanced, context-rich agreement assessments than conventional approaches. This granular understanding of methodological performance across different conditions is consistent with the "fit-for-purpose" philosophy emphasized in contemporary drug development, where models and methods must be appropriately matched to their specific context of use [12].

As MIDD continues to evolve, incorporating increasingly sophisticated approaches like quantitative systems pharmacology and machine learning [12] [13], the need for robust method comparison techniques will only intensify. BR Analysis addresses this need by providing a structured framework for ensuring methodological reliability across the diverse conditions encountered throughout drug development. Its implementation strengthens the entire MIDD ecosystem by verifying that the primary data underlying complex models are trustworthy, ultimately supporting more reliable drug development decisions and regulatory submissions [7].

The Need for Reliable Lipophilicity and Permeability Measurement Methods

In drug discovery, lipophilicity and cellular permeability are pivotal physicochemical parameters that strongly influence a compound's absorption, distribution, and ultimately its bioavailability. Lipophilicity, commonly quantified by the octanol/water partition coefficient (LogP) or its pH-dependent counterpart (LogD), influences solubility, metabolic stability, and nonspecific binding. Membrane permeability dictates a therapeutic compound's ability to traverse cellular barriers to reach intracellular targets, a challenge particularly pronounced for difficult-to-drug targets involving protein-protein interactions. The interplay between these properties is complex: while enhanced lipophilicity generally improves permeability, it can simultaneously reduce aqueous solubility, creating a delicate balance that researchers must optimize. The high attrition rate in drug development, in which inadequate pharmacokinetic properties have historically been cited as a major cause of failure (figures of around 40% are often quoted for older development pipelines), underscores the necessity for reliable, predictive measurement methods throughout the discovery pipeline. This guide provides an objective comparison of current methodologies, enabling researchers to select optimal strategies for characterizing these critical parameters.

Comparative Analysis of Measurement Techniques

Experimental Methods for Lipophilicity Assessment

Table 1: Comparison of Experimental Lipophilicity Measurement Methods

| Method | Key Principle | Throughput | Cost | Key Advantages | Major Limitations |
|---|---|---|---|---|---|
| Shake-Flask Method | Direct partitioning between octanol and water phases followed by concentration measurement [14] | Low | Low | Considered a gold standard; experimentally straightforward | Time-consuming; requires sensitive analytical methods for accurate quantification [14] |
| Chromatographic Methods (e.g., RP-HPLC, TLC) | Measures retention time/factor correlated with partitioning behavior [1] | Medium-High | Low-Medium | High throughput; small sample requirements; wide LogP range | Requires calibration with standards; correlation with LogP may be compound-dependent |
| Electrochemical Methods | Potential difference measurement related to transfer energy across interfaces | Low | Medium | Provides mechanistic insights | Limited to ionizable compounds; specialized equipment required |
| Microfluidic Methods | Automated miniaturized partitioning in microchannels | Emerging | High initially | Very low sample consumption; rapid measurement | Newer technology; limited validation across diverse chemotypes |

The shake-flask method remains a benchmark technique, as exemplified in a comparative study of resveratrol and pterostilbene where researchers used UV spectrophotometry at 266 nm after partitioning to determine LogD values, confirming pterostilbene's superior lipophilicity due to its methoxy substitutions [14]. However, for high-throughput screening during early discovery, chromatographic methods derived from reverse-phase HPLC often provide practical advantages despite being indirect measures.
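Because chromatographic methods are indirect, they rely on a calibration against standards with known LogP, as noted in the table above. A minimal sketch of such a calibration is shown below, assuming a simple linear relationship between the logarithm of the retention factor (log k) and LogP. The calibration values are invented for illustration and do not come from any cited study; in practice the linearity and the applicability to a given chemotype would need to be verified.

```python
from statistics import mean


def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


# Hypothetical calibration standards: log k values and reference LogP values.
standards_log_k = [0.0, 0.5, 1.0, 1.5]
standards_logp = [1.0, 2.0, 3.0, 4.0]

slope, intercept = fit_line(standards_log_k, standards_logp)


def estimate_logp(log_k):
    """Chromatographic LogP estimate from the fitted calibration line."""
    return slope * log_k + intercept


print(f"LogP = {slope:.2f} * log k + {intercept:.2f}")
print(f"Estimated LogP at log k = 0.75: {estimate_logp(0.75):.2f}")
```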

Methodologies for Permeability Assessment

Table 2: Comparison of Membrane Permeability Assessment Platforms

| Method | Membrane Type | Physiological Relevance | Throughput | Cost | Key Applications |
|---|---|---|---|---|---|
| PAMPA (Parallel Artificial Membrane Permeability Assay) | Artificial phospholipid membranes on filters [15] [16] | Low-Medium (passive diffusion only) | High | Low | Early-stage passive permeability screening; formulation optimization [17] |
| Caco-2 Model | Human colorectal adenocarcinoma cell line [15] | High (includes transporters & metabolism) | Medium | Medium | Intestinal absorption prediction; active transport assessment |
| MDCK Model | Madin-Darby canine kidney cells [15] [18] | Medium-High | Medium | Medium | Blood-brain barrier modeling; general permeability screening |
| Everted Gut Sac | Actual intestinal tissue from rodents | High (intact tissue structure) | Low | Low | Regional intestinal absorption studies |
| Organ-on-a-Chip | Microfluidic systems with living cells [15] | Very High (dynamic flow, shear stress) | Low | High | Mechanistic studies; disease modeling; complex absorption pathways |

Cell-based systems like Caco-2 remain preferred for determining the apparent permeability coefficient (Papp), as they mimic the human intestinal epithelium with functional transporters and tight junctions [15]. However, the extended cultivation time (21 days) and the absence of a mucus layer are limitations, the latter of which can be addressed through co-cultures with mucin-producing HT29-MTX cells [15]. PAMPA offers a cost-effective, cell-free alternative for evaluating passive transcellular permeability, useful for high-volume screening despite lacking biological complexity [16] [18].

Recent advancements focus on enhancing physiological relevance through three-dimensional models, including induced pluripotent stem cells, organ-on-a-chip systems, and cell spheroids, which promise improved predictability in permeability studies [15]. The choice between methods involves balancing reproducibility, physiological relevance, cost, and cultivation time against project needs.

Detailed Experimental Protocols for Key Methods

Protocol: Shake-Flask Method for LogD Determination

Materials and Reagents:

- n-octanol (high-performance liquid chromatography grade)

- Buffer solution (typically phosphate-buffered saline at physiologically relevant pH)

- Test compound solution in appropriate solvent

- UV-Visible spectrophotometer or HPLC system with detection capabilities

Procedure:

- Pre-saturation of Phases: Pre-saturate n-octanol and buffer solution by mixing equal volumes overnight with gentle agitation. Separate the phases before use to ensure thermodynamic equilibrium during partitioning experiments.

- Sample Preparation: Prepare stock solution of test compound in methanol or DMSO at 1 mg/mL concentration. Use serial dilutions to establish standard curves for concentration determination [14].

- Partitioning: Combine equal volumes (typically 1-10 mL each) of n-octanol and buffer in a glass vial. Add test compound to achieve final concentration well below its solubility limit. Cap vials tightly and shake for 4-24 hours at constant temperature (typically 25°C or 37°C) to reach partitioning equilibrium.

- Phase Separation: After equilibration, allow phases to separate completely (centrifugation at 3000 × g for 15 minutes may be necessary). Carefully separate the two phases, avoiding cross-contamination.

- Concentration Analysis: Quantify compound concentration in both phases using UV spectrophotometry at predetermined maximum absorbance wavelength (e.g., 266 nm for resveratrol and pterostilbene) [14] or HPLC with appropriate detection. Ensure measurements fall within the linear range of standard curves.

- Calculation: Calculate LogD using the formula: LogD = log10 (Concentration in octanol phase / Concentration in buffer phase)

Technical Considerations:

- For compounds with UV interference, alternative detection methods like LC-MS/MS provide enhanced sensitivity and specificity [14].

- Maintain precise temperature control throughout the experiment as partitioning is temperature-dependent.

- Include quality control compounds with known LogD values to validate method performance.

- For ionizable compounds, conduct measurements at multiple pH values to characterize the pH-partition profile.
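The calculation step of the shake-flask protocol is simple enough to express directly. The sketch below assumes both concentrations are already expressed in the same units and lie within the linear range of the standard curve; the function name and example values are illustrative only.

```python
from math import log10


def log_d(conc_octanol, conc_buffer):
    """LogD = log10(concentration in octanol / concentration in buffer).

    Both concentrations must use the same units and be strictly positive.
    """
    if conc_octanol <= 0 or conc_buffer <= 0:
        raise ValueError("Concentrations must be positive to take a logarithm.")
    return log10(conc_octanol / conc_buffer)


# Hypothetical example: 100-fold higher concentration in the octanol phase.
print(log_d(conc_octanol=50.0, conc_buffer=0.5))  # 2.0
```

The explicit check for non-positive values matters in practice: a concentration below the quantification limit in one phase should be reported as such rather than silently converted into an extreme LogD.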

Protocol: Parallel Artificial Membrane Permeability Assay (PAMPA)

Materials and Reagents:

- Multiwell filter plates (typically 96-well format)

- Phospholipid solution (e.g., 2% lecithin in dodecane)

- Buffer solutions (e.g., Prisma HT buffer at pH 7.4 for intestinal permeability)

- Donor and acceptor plates

- UV plate reader or LC-MS system for quantification

Procedure:

- Membrane Formation: Add phospholipid solution to filter membranes and incubate to form artificial lipid bilayers. The polyvinylidene fluoride (PVDF) filters impregnated with n-dodecane are commonly used as lipophilic membranes [17].

- Sample Preparation: Prepare test compound in donor buffer at desired concentration (typically 10-100 μM). Include reference compounds with known permeability values for validation.

- Assay Setup: Add donor solution to donor compartment and buffer to acceptor compartment. Assemble the system ensuring no air bubbles form at membrane interface.

- Incubation: Incubate at room temperature or 37°C for 2-16 hours with minimal disturbance to prevent disruption of the unstirred water layer (UWL), which can significantly impact permeability measurements [17].

- Sample Analysis: Quantify compound concentration in both donor and acceptor compartments after incubation using UV spectrophotometry (if concentration and specificity allow) or LC-MS/MS for better sensitivity.

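- Calculation: Calculate apparent permeability (Papp) using the formula: Papp = (VA × CA) / (A × T × CD), where VA is the acceptor volume, CA is the acceptor concentration at the end of incubation, A is the membrane area, T is the incubation time, and CD is the initial donor concentration. With VA in cm³, A in cm², T in seconds, and CA and CD in the same concentration units, Papp is obtained in cm/s.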

Technical Considerations:

- The thickness of the UWL, which can be 1500-4000 μm in non-stirred conditions, often dominates apparent permeability for highly lipophilic compounds [17].

- Membrane composition can be modified to mimic specific biological barriers (e.g., blood-brain barrier).

- For low-solubility compounds, include solubility-enhancing agents but account for their effect on free drug concentration.
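The Papp formula from the calculation step can be sketched as follows. The function and parameter names are hypothetical, as are the example values. This simplified expression treats the donor concentration as effectively constant, so it is best suited to cases where only a small fraction of the compound has crossed the membrane.

```python
def apparent_permeability(v_acceptor_cm3, c_acceptor, area_cm2, time_s, c_donor_initial):
    """Papp = (VA * CA) / (A * T * CD), returned in cm/s.

    c_acceptor and c_donor_initial must share the same concentration units.
    """
    if min(v_acceptor_cm3, area_cm2, time_s, c_donor_initial) <= 0:
        raise ValueError("Volume, area, time and donor concentration must be positive.")
    return (v_acceptor_cm3 * c_acceptor) / (area_cm2 * time_s * c_donor_initial)


# Hypothetical example: 0.3 mL acceptor volume, 0.3 cm^2 membrane area,
# 4 h incubation, 100 uM initial donor and 1 uM final acceptor concentration.
papp = apparent_permeability(
    v_acceptor_cm3=0.3,
    c_acceptor=1.0,
    area_cm2=0.3,
    time_s=4 * 3600,
    c_donor_initial=100.0,
)
print(f"Papp = {papp:.3e} cm/s")
```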

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Lipophilicity and Permeability Studies

| Reagent/Material | Function/Application | Key Considerations |
|---|---|---|
| Caco-2 Cell Line | Human intestinal epithelial model for permeability studies [15] | Requires 21-day differentiation; expresses transporters and enzymes |
| MDCK Cells | Canine kidney cell line for general permeability assessment [15] [18] | Faster differentiation (7 days); lower expression of human transporters |
| PAMPA Membrane Components | Artificial membrane formation (e.g., PVDF filters with n-dodecane) [17] | Lipid composition can be tailored for specific barriers (e.g., BBB) |
| Prisma HT Buffer | Universal buffer for permeability assays across pH range 3-10 [17] | Maintains consistent ionic strength and buffering capacity |
| HPLC/MS Grade Solvents | High-purity solvents for analytical quantification and sample preparation | Essential for reducing background interference in sensitive detection |
| Hydroxypropyl-β-cyclodextrin (HP-β-CD) | Solubility-enhancing agent for poorly soluble compounds [17] | Improves solubility but decreases permeability by reducing free drug concentration |
| Dimethyl Sulfoxide (DMSO) | Universal solvent for compound stock solutions | Maintain final concentration below 1% to avoid cellular toxicity and artifactual permeability |

Method Selection Through Block Relevance Analysis

Block Relevance (BR) analysis implemented in MATLAB has emerged as a computational tool that deconvolutes the balance of intermolecular interactions governing drug discovery-related phenomena described by QSPR/PLS models [1]. This methodology provides a systematic framework for selecting optimal measurement approaches by:

- Identifying Optimal Surrogates: BR analysis helps identify the best chromatographic systems for providing reliable logP octanol surrogates and logP values in apolar environments, guiding method selection based on the specific molecular properties of interest [1].

- Evaluating Method Universality: For permeability assessment, BR analysis enables researchers to check the universality of passive permeability measurements among different cell types and identify which PAMPA methodology provides the same picture in terms of balance of intermolecular interactions as cell-based systems [1].

- Informing Candidate Prioritization: By elucidating the critical intermolecular forces governing permeability for specific compound classes, BR analysis accelerates drug candidate prioritization, making the choice of methods for measuring lipophilicity and permeability safer and more efficient [1].

The application of BR analysis is particularly valuable when navigating the trade-offs between high-throughput screening methods (e.g., PAMPA, chromatographic LogP) and biologically relevant systems (e.g., Caco-2, MDCK), ensuring that selected methodologies capture the essential physicochemical interactions governing permeability for specific chemical series.

Visualizing Experimental Workflows and Method Relationships

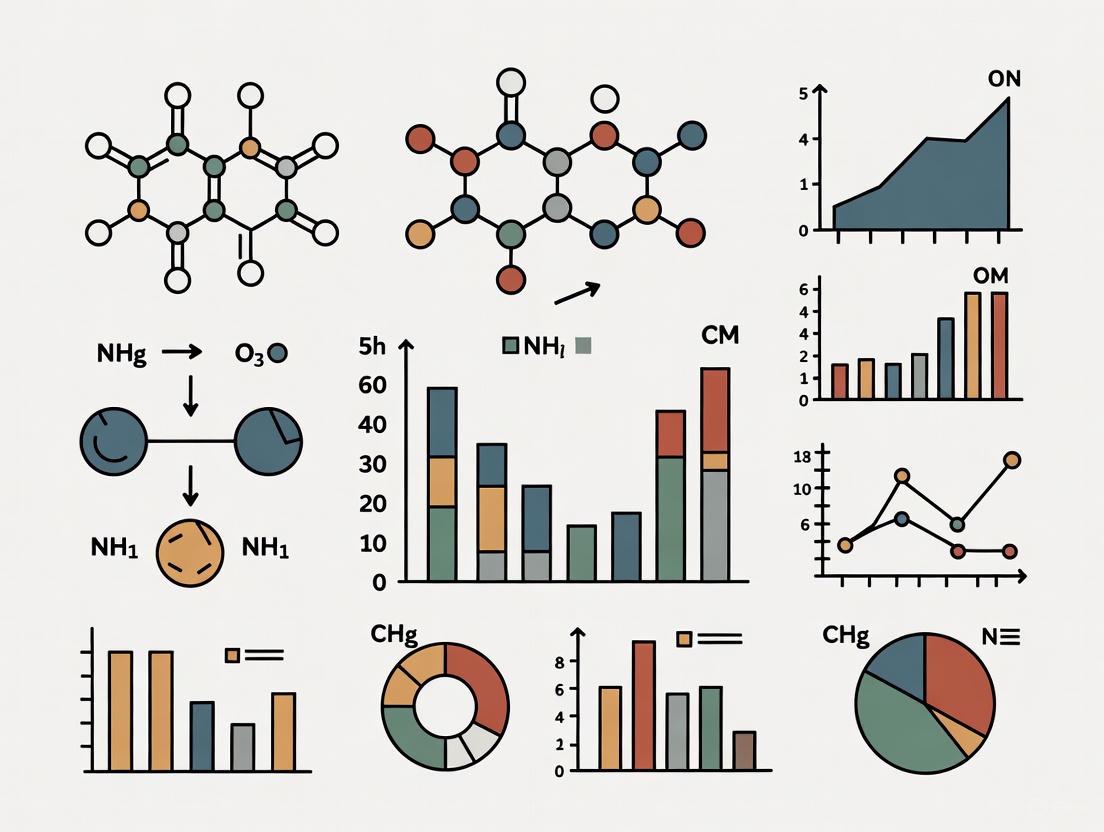

Lipophilicity and Permeability Assessment Workflow

This diagram illustrates the integrated approach to method selection, wherein data from various lipophilicity and permeability assessments feed into Block Relevance analysis to inform optimal methodology selection and candidate prioritization.

The expanding toolkit for measuring lipophilicity and permeability offers researchers multiple pathways for compound characterization, each with distinct advantages and limitations. Reliability in assessment emerges not from any single method but from strategic method selection aligned with specific discovery phase requirements—from high-throughput screening in early stages to physiologically complex models for lead optimization. The shake-flask method provides benchmark lipophilicity data but lacks the throughput needed for large compound libraries, while chromatographic methods offer practical alternatives with appropriate calibration. For permeability, PAMPA efficiently captures passive transcellular diffusion, while cell-based models like Caco-2 incorporate biological complexity including active transport processes.

The emerging application of Block Relevance analysis adds a complementary, mechanistic layer to method selection, enabling assessment of which methods best capture the critical intermolecular interactions governing permeability for specific compound classes. As the field advances, integration of computational predictions with experimental validation, coupled with systematic method evaluation frameworks, will continue to enhance the reliability and efficiency of these critical measurements in drug discovery pipelines.

Implementing BR Analysis: From Lipophilicity Measurement to Permeability Prediction

A Step-by-Step Workflow for Conducting a Block Relevance Analysis

Block Relevance (BR) analysis is an advanced computational technique used to interpret complex QSPR/PLS (Quantitative Structure-Property Relationship/Partial Least Squares) models in pharmaceutical and chemical research [1]. This methodology allows researchers to deconvolute the balance of intermolecular interactions governing drug discovery phenomena by grouping descriptors into interpretable blocks and quantifying their relative importance [19]. The primary value of BR analysis lies in its ability to make the choice of methods for measuring key properties like lipophilicity and permeability safer while accelerating drug candidate prioritization [1]. Unlike conventional statistical approaches that provide single-metric outputs, BR analysis offers a nuanced understanding of which molecular descriptors predominantly influence the biological property or experimental method under investigation.

Within method comparison research, BR analysis serves as a powerful tool for determining whether different experimental techniques probe the same underlying physicochemical phenomena [1]. For instance, it can identify whether various permeability assays (e.g., PAMPA vs. cell-based systems) provide the same picture in terms of the balance of intermolecular interactions, thereby guiding researchers toward more reliable method selection [1]. The analysis achieves this by graphically representing the relevance of different descriptor blocks within a validated PLS model, moving beyond simple statistical correlation to provide mechanistically interpretable results.

Theoretical Foundations and Key Concepts

Core Principles of BR Analysis

BR analysis operates on the fundamental principle that molecular properties and biological activities emerge from distinct yet interconnected types of intermolecular interactions. The methodology groups descriptors into conceptually coherent blocks (e.g., hydrophobicity, polarity, hydrogen bonding, size/shape) and quantifies their relative contributions to the overall model [19] [20]. This block-based approach aligns with the complex reality that pharmacological properties rarely depend on a single molecular characteristic but rather on a balanced combination of multiple factors.

The analysis is typically performed on pre-validated PLS models, ensuring that the underlying statistical foundation is robust before interpreting the relative block contributions [19]. BR analysis extends traditional multivariate statistics by transforming abstract mathematical models into mechanistically interpretable frameworks. This is particularly valuable in pharmaceutical research where understanding the structural determinants of properties like permeability and lipophilicity is crucial for rational drug design [1].

Comparative Context with Alternative Methods

BR analysis occupies a unique position within the landscape of statistical methods for analyzing complex datasets. The table below compares its characteristics against other common approaches:

Table 1: Comparison of BR Analysis with Alternative Multivariate Methods

| Method | Primary Function | Interpretability | Handling of Descriptor Groups | Application Context |
|---|---|---|---|---|
| Block Relevance (BR) Analysis | Deconvoluting balance of interactions in QSPR/PLS models | High (visual and quantitative) | Explicitly groups descriptors into interpretable blocks | Method comparison, mechanistic interpretation |
| Traditional PLS Regression | Modeling relationship between X and Y variables | Moderate (variable importance but no grouping) | Treats descriptors individually | Predictive modeling |
| Multiple Regression: Block Analysis | Hierarchical variable entry in regression models | Moderate (sequential R² changes) | User-defined entry blocks | Covariate adjustment, hierarchical modeling |
| Stochastic Block Models | Inferring network structure from connectivity patterns | Variable (depends on implementation) | Groups nodes based on connection patterns | Network analysis, metadata-structure relationships |

Experimental Design and Prerequisites

Research Reagent Solutions and Essential Materials

Conducting a proper BR analysis requires specific computational tools and chemical data resources. The following table details the essential components:

Table 2: Essential Research Reagents and Computational Tools for BR Analysis

| Item | Specification/Function | Application Context |
|---|---|---|
| MATLAB Software | Platform for BR analysis implementation with recent BR toolbox [1] | Primary computational environment for analysis execution |
| VolSurf+ Descriptors | Computed molecular descriptors for physicochemical properties [20] | Standardized descriptor calculation for structural properties |
| Experimental Partition Coefficients | logP values from octanol/water, toluene/water, or other solvent systems [20] | Experimental data for model calibration and validation |
| Chromatographic Retention Data | HPLC or IAM chromatography measurements as logP surrogates [1] | High-throughput alternative to shake-flask logP determination |
| Permeability Assay Data | PAMPA or cell-based (e.g., Caco-2) permeability measurements [1] | Biological performance data for permeability model development |
| Chemical Dataset | 200+ compounds with structural diversity and measured properties [20] | Representative compound set for model development |

Data Requirements and Compound Selection

A robust BR analysis begins with careful experimental design. The compound set should include sufficient structural diversity to probe the various intermolecular interactions relevant to the property being studied. Research indicates that datasets of 200+ compounds provide a solid foundation for reliable models [20]. Each compound must be characterized with comprehensive experimental data, including partition coefficients in multiple solvent systems (e.g., logPoct and logPtol) [20], chromatographic retention factors where applicable, and permeability measurements across different assay systems.

Critical to this process is the careful curation of descriptors, which should comprehensively represent major physicochemical domains including size, shape, hydrophobicity, polarity, and hydrogen bonding capacity [19] [20]. The VolSurf+ platform has been particularly useful in this context: the cited study used 82 VolSurf+ descriptors, which can be logically grouped into blocks representing distinct intermolecular interaction types [20]. Additionally, compounds capable of forming intramolecular hydrogen bonds (IMHB) should be identified and potentially processed separately, as their behavior may differ significantly from compounds without this property [20].

Step-by-Step Workflow Implementation

Stage 1: Data Collection and Preprocessing

The initial stage focuses on assembling and quality-checking all necessary data components:

- Compound Selection and Curation: Compile a structurally diverse set of 200+ compounds with reliable experimental data. Remove compounds that may form intramolecular hydrogen bonds (IMHB) unless IMHB is a specific focus of the study [20].

- Experimental Data Collection: Gather measured properties (e.g., logP values, permeability coefficients) from validated experimental protocols. Ensure consistency in measurement conditions across all compounds.

- Descriptor Calculation: Compute physicochemical descriptors using appropriate software (e.g., VolSurf+). Aim for comprehensive coverage of major molecular interaction domains.

- Descriptor Block Definition: Group individual descriptors into conceptually coherent blocks representing specific interaction types (e.g., hydrogen bond acidity, hydrophobicity, molecular size) [19] [20]. Typically, 5-7 blocks provide sufficient granularity without excessive fragmentation.

- Data Quality Validation: Perform consistency checks, including range validation, outlier detection, and assessment of missing data. Address any quality issues before proceeding to model development.
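For the block definition step in Stage 1, a quick consistency check helps confirm that every descriptor is assigned to exactly one block before modeling. The sketch below is only an illustration: the descriptor names and block labels are invented placeholders and do not correspond to actual VolSurf+ descriptor names or to the block definitions used in the cited studies.

```python
from collections import Counter


def check_block_assignment(blocks, descriptors):
    """Report descriptors that are duplicated, unassigned, or unknown."""
    counts = Counter(d for members in blocks.values() for d in members)
    return {
        "duplicated": sorted(d for d, c in counts.items() if c > 1),
        "unassigned": sorted(set(descriptors) - set(counts)),
        "unknown": sorted(set(counts) - set(descriptors)),
    }


# Placeholder descriptor names (hypothetical, for illustration only).
descriptors = ["size_1", "size_2", "hydrophobic_1", "hbd_1", "hba_1", "polar_1"]

# Placeholder block definition containing two deliberate mistakes:
# "hbd_1" appears in two blocks and "polar_1" is not assigned at all.
blocks = {
    "size": ["size_1", "size_2"],
    "hydrophobicity": ["hydrophobic_1"],
    "hbd": ["hbd_1"],
    "hba": ["hba_1", "hbd_1"],
}

report = check_block_assignment(blocks, descriptors)
print(report)
# {'duplicated': ['hbd_1'], 'unassigned': ['polar_1'], 'unknown': []}
```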

Workflow diagram: the complete BR analysis process, from data preparation to interpretation.

Stage 2: PLS Model Development and Validation

Before conducting BR analysis, a robust PLS model must be developed and validated:

- Model Training: Develop the initial PLS model using the predefined descriptor blocks as predictors and the target property (e.g., permeability, logP) as the response variable.

- Component Selection: Determine the optimal number of PLS components using cross-validation to avoid overfitting while capturing meaningful variance in the data.

- Model Validation: Apply rigorous statistical validation including leave-one-out cross-validation, external test set validation, and y-scrambling to ensure model robustness and prevent chance correlations.

- Performance Metrics: Calculate standard model quality metrics including R²X, R²Y, Q², and RMSE (Root Mean Square Error) to quantify model performance.

The validated PLS model serves as the foundation for the subsequent BR analysis, ensuring that the block relevance interpretation is based on a statistically sound model.
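To make the Stage 2 steps concrete, the sketch below fits a single-response PLS model with a NIPALS-style deflation scheme and computes a leave-one-out Q² as 1 − PRESS/SS. It is written in plain Python for transparency and is not the MATLAB implementation referenced in this article. The function names and the small synthetic dataset are assumptions made for illustration, and real work would normally rely on an established PLS library together with external validation and y-scrambling.

```python
from math import sqrt


def _center(X, y):
    n, m = len(X), len(X[0])
    x_mean = [sum(row[j] for row in X) / n for j in range(m)]
    y_mean = sum(y) / n
    Xc = [[row[j] - x_mean[j] for j in range(m)] for row in X]
    yc = [v - y_mean for v in y]
    return Xc, yc, x_mean, y_mean


def pls1_fit(X, y, n_components):
    """Fit a single-response PLS model; returns means and per-component (w, p, q)."""
    Xc, yc, x_mean, y_mean = _center(X, y)
    m = len(Xc[0])
    components = []
    for _ in range(n_components):
        # Weight vector: direction in X most covariant with the current y residual.
        w = [sum(row[j] * yi for row, yi in zip(Xc, yc)) for j in range(m)]
        norm = sqrt(sum(v * v for v in w))
        if norm < 1e-12:
            break  # nothing left in y for X to explain
        w = [v / norm for v in w]
        t = [sum(a * b for a, b in zip(row, w)) for row in Xc]  # scores
        tt = sum(v * v for v in t)
        p = [sum(row[j] * ti for row, ti in zip(Xc, t)) / tt for j in range(m)]
        q = sum(yi * ti for yi, ti in zip(yc, t)) / tt
        # Deflate X and y before extracting the next component.
        Xc = [[row[j] - ti * p[j] for j in range(m)] for row, ti in zip(Xc, t)]
        yc = [yi - q * ti for yi, ti in zip(yc, t)]
        components.append((w, p, q))
    return x_mean, y_mean, components


def pls1_predict(model, x):
    """Predict y for one sample by replaying the deflation steps."""
    x_mean, y_mean, components = model
    r = [xi - mi for xi, mi in zip(x, x_mean)]
    y_hat = y_mean
    for w, p, q in components:
        t = sum(a * b for a, b in zip(r, w))
        y_hat += q * t
        r = [ri - t * pi for ri, pi in zip(r, p)]
    return y_hat


def q2_loo(X, y, n_components):
    """Leave-one-out cross-validated Q2 = 1 - PRESS / SS_total."""
    y_bar = sum(y) / len(y)
    press = 0.0
    for i in range(len(y)):
        model = pls1_fit(X[:i] + X[i + 1:], y[:i] + y[i + 1:], n_components)
        press += (y[i] - pls1_predict(model, X[i])) ** 2
    return 1.0 - press / sum((v - y_bar) ** 2 for v in y)


# Synthetic, noise-free data generated as y = 2*x1 - x2 + 3 (illustration only).
X = [[1, 2], [2, 1], [3, 5], [4, 3], [5, 8], [6, 4]]
y = [2 * a - b + 3 for a, b in X]

model = pls1_fit(X, y, n_components=2)
print(f"prediction for [7, 2]: {pls1_predict(model, [7, 2]):.3f}")
print(f"LOO Q2 with 2 components: {q2_loo(X, y, 2):.3f}")
```

Comparing Q² across increasing numbers of components, and stopping when it no longer improves, is one common way to carry out the component selection step described above.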

Stage 3: BR Analysis Execution and Interpretation

The core analysis phase focuses on extracting and interpreting block relevance information:

- Relevance Calculation: Execute the BR analysis algorithm to quantify the relative contribution of each predefined descriptor block to the PLS model. The MATLAB implementation automatically calculates these relevance values [1].

- Visualization: Generate graphical representations of block relevance, typically showing each block's contribution as a percentage of the total model information content [19] [20].

- Dominant Factor Identification: Identify which descriptor blocks (e.g., hydrogen bond acidity, hydrophobicity) dominate the model, providing mechanistic insight into the structural determinants of the studied property.

- Method Comparison: Compare BR profiles across different experimental methods (e.g., various PAMPA methods or cell-based assays) to determine whether they probe similar balance of interactions [1].
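The published BR algorithm is not reproduced here. As a simplified stand-in for the visualization and method comparison steps, the sketch below sums a per-descriptor importance score within each block, expresses each block as a percentage of the total, and compares two such profiles with a cosine similarity. The importance scores, descriptor names, and block labels are all hypothetical, and the choice of importance measure (for example squared coefficients or VIP-like scores) is an assumption left to the analyst.

```python
from math import sqrt


def block_profile(importance, blocks):
    """Percentage share of total descriptor importance carried by each block."""
    raw = {name: sum(importance[d] for d in members)
           for name, members in blocks.items()}
    total = sum(raw.values())
    return {name: 100.0 * value / total for name, value in raw.items()}


def profile_similarity(profile_a, profile_b):
    """Cosine similarity between two block profiles defined on the same blocks."""
    keys = sorted(profile_a)
    dot = sum(profile_a[k] * profile_b[k] for k in keys)
    norm_a = sqrt(sum(profile_a[k] ** 2 for k in keys))
    norm_b = sqrt(sum(profile_b[k] ** 2 for k in keys))
    return dot / (norm_a * norm_b)


# Hypothetical block definition and importance scores for two assays.
blocks = {"hydrophobicity": ["d1", "d2"], "hbd": ["d3"], "size": ["d4"]}
importance_assay_1 = {"d1": 3.0, "d2": 1.0, "d3": 4.0, "d4": 2.0}
importance_assay_2 = {"d1": 1.0, "d2": 1.0, "d3": 6.0, "d4": 2.0}

profile_1 = block_profile(importance_assay_1, blocks)
profile_2 = block_profile(importance_assay_2, blocks)

print(profile_1)  # {'hydrophobicity': 40.0, 'hbd': 40.0, 'size': 20.0}
print(profile_2)  # {'hydrophobicity': 20.0, 'hbd': 60.0, 'size': 20.0}
print(f"similarity: {profile_similarity(profile_1, profile_2):.3f}")
```

A similarity close to 1 would suggest that two assays are governed by a similar balance of interactions, although any threshold for "similar enough" is a judgment call rather than something this sketch can supply.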

Application Example: ΔlogP(oct-tol) Analysis

Experimental Protocol

A published application of BR analysis examined the dominant molecular features influencing ΔlogP(oct-tol) (the difference between octanol/water and toluene/water partition coefficients) [20]. The specific experimental protocol included:

- Data Compilation: Collection of over 200 experimental logPtol values from literature along with their corresponding logPoct values [20].

- Compound Filtering: Processing of the dataset to remove molecules potentially capable of forming intramolecular hydrogen bonds (IMHB) using purposely-built in-house software [20].

- Descriptor Calculation: Computation of 82 VolSurf+ descriptors for each compound to comprehensively represent physicochemical properties [20].

- Block Definition: Grouping of descriptors into six interpretable blocks representing major interaction types (e.g., hydrogen bond donating capacity, hydrogen bond accepting capacity, hydrophobicity, size/polarity) [20].

- Model Development: Construction of a PLS model correlating the VolSurf+ descriptors with experimental ΔlogP(oct-tol) values.

- BR Analysis: Application of BR analysis to determine the relative contribution of each descriptor block to the PLS model.
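The data compilation and filtering steps of this protocol can be sketched as follows. The record layout, the `imhb_flag` field, and all values are hypothetical. The in-house IMHB detection software mentioned above is not reproduced here, so the flag is simply assumed to have been set beforehand.

```python
def delta_logp_oct_tol(records):
    """Return {name: logP_oct - logP_tol} for compounds not flagged for IMHB."""
    return {
        record["name"]: record["logp_oct"] - record["logp_tol"]
        for record in records
        if not record["imhb_flag"]
    }


# Hypothetical compounds and values, for illustration only.
records = [
    {"name": "cmpd_A", "logp_oct": 2.0, "logp_tol": 0.5, "imhb_flag": False},
    {"name": "cmpd_B", "logp_oct": 3.0, "logp_tol": 2.75, "imhb_flag": False},
    {"name": "cmpd_C", "logp_oct": 1.5, "logp_tol": 1.0, "imhb_flag": True},
]

delta = delta_logp_oct_tol(records)  # cmpd_C is excluded by the IMHB filter
```

The resulting ΔlogP(oct-tol) values would form the Y-block of the PLS model, with the 82 descriptors per compound as the X-block.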

Results and Interpretation

The BR analysis revealed that hydrogen bond donor (HBD) properties of solutes predominantly govern ΔlogP(oct-tol), with this single block showing dominant relevance in the PLS model [20]. This finding supported the use of ΔlogP(oct-tol) as an experimental measure for estimating HBD properties of solutes and clarified its role in intramolecular hydrogen bonding (IMHB) interpretation schemes [20].

The results of this analysis, summarized qualitatively in the table below, illustrate how BR analysis identifies the dominant molecular drivers of a complex physicochemical phenomenon:

Table 3: BR Analysis Results for ΔlogP(oct-tol) Study

| Descriptor Block | Relative Relevance (qualitative) | Interpretation | Key Molecular Features |
|---|---|---|---|
| Hydrogen Bond Donor (HBD) | Dominant (exact percentage not reported) | Primary determinant of ΔlogP(oct-tol) | Hydrogen bond acidity, donor strength |
| Hydrophobicity | Moderate | Secondary influence | Lipophilicity, partition behavior |
| Hydrogen Bond Acceptor | Moderate | Tertiary influence | Hydrogen bond basicity |
| Size/Polarity | Lower | Minor contribution | Molecular volume, polar surface area |
| Other Blocks | Combined lower relevance | Supplementary effects | Various specific interactions |

Comparative Analysis of Experimental Methods

Lipophilicity Measurement Methods

BR analysis enables systematic comparison of different methodological approaches for measuring key drug properties. The table below summarizes findings from comparative studies of lipophilicity measurement methods:

Table 4: BR Analysis Comparison of Lipophilicity Measurement Methods

| Method Category | Specific Method | Key Strengths | Limitations | Block Relevance Profile |
|---|---|---|---|---|
| Partition Coefficients | Shake-flask logP_oct | Well-established, widely used | Time-consuming, compound requirements | Balanced representation of multiple interaction types |
| Partition Coefficients | logP_tol | Sensitivity to HBD properties | Less common, limited database | Strong emphasis on HBD block [20] |
| Chromatographic Systems | IAM chromatography | High-throughput, low sample requirement | Indirect measure, requires calibration | Can be optimized to mimic logP_oct [1] |
| Chromatographic Systems | Specific HPLC conditions | Method flexibility, high precision | System-dependent results | BR analysis identifies best logP surrogate [1] |

Permeability Assay Comparison

BR analysis has been particularly valuable in comparing different permeability assessment methods:

- PAMPA vs. Cell-Based Assays: BR analysis can determine which PAMPA (Parallel Artificial Membrane Permeability Assay) method provides the same picture in terms of balance of intermolecular interactions as cell-based systems like Caco-2 [1].

- Universality Assessment: The analysis allows researchers to check the universality of passive permeability measurements among different cell types, identifying whether different cellular models probe the same fundamental physicochemical interactions [1].

- Method Selection Guidance: By comparing the BR profiles of different permeability assays, researchers can select methods that align with their specific research goals, whether for early high-throughput screening or mechanistic permeability studies.
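One way to make the comparisons listed above concrete is to score how far apart two BR profiles are. The source does not prescribe a similarity measure, so the sketch below assumes a simple one: half the summed absolute difference between two percentage profiles, which is 0 for identical profiles and 100 for profiles with no overlap. The assay names and profile values are hypothetical.

```python
def profile_distance(profile_a, profile_b):
    """Half the summed absolute difference between two percentage profiles."""
    blocks = set(profile_a) | set(profile_b)
    return 0.5 * sum(
        abs(profile_a.get(block, 0.0) - profile_b.get(block, 0.0))
        for block in blocks
    )


def closest_method(reference, candidates):
    """Return the candidate whose profile lies closest to the reference profile."""
    return min(candidates, key=lambda name: profile_distance(reference, candidates[name]))


# Hypothetical BR profiles (percent per block), for illustration only.
caco2 = {"HBD": 40.0, "Hydrophobicity": 40.0, "Size": 20.0}
pampa_variants = {
    "pampa_x": {"HBD": 35.0, "Hydrophobicity": 45.0, "Size": 20.0},
    "pampa_y": {"HBD": 10.0, "Hydrophobicity": 70.0, "Size": 20.0},
}

best = closest_method(caco2, pampa_variants)
```

In this toy case `pampa_x` would be chosen as the closer match to the cell-based reference. A real comparison would also need to account for the uncertainty of each profile before declaring two assays equivalent.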

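In each of these cases, method comparison rests on aligning BR profiles: two assays are considered to probe the same balance of intermolecular interactions when their block relevance profiles closely overlap.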

Best Practices and Implementation Guidelines

Methodological Considerations

Successful implementation of BR analysis requires attention to several critical methodological aspects:

- Descriptor Block Design: Carefully consider the theoretical foundation when grouping descriptors into blocks. Blocks should be conceptually coherent and represent distinct aspects of molecular interactions. Typically, 5-7 blocks provide sufficient granularity without becoming unwieldy.

- Model Validation Priority: Never conduct BR analysis on an unvalidated PLS model. The interpretation is only as reliable as the underlying statistical model, making rigorous validation through cross-validation and external testing essential.

- Data Quality Over Quantity: While larger datasets (200+ compounds) are preferable, data quality and appropriate structural diversity are more important than sheer numbers. Ensure experimental data is reliable and consistent across the compound set.

- Contextual Interpretation: Always interpret BR results in the context of the specific scientific question. The same descriptor block may show different relevance patterns depending on the property being modeled and the compound set being studied.

Common Technical Challenges and Solutions

Several technical challenges may arise during BR analysis implementation:

- Block Definition Ambiguity: Some descriptors may logically belong to multiple blocks. Establish clear decision rules for descriptor assignment prior to analysis and document any ambiguous cases.

- Overfitting Risks: With numerous descriptors and potential blocks, overfitting is a constant risk. Address this through appropriate validation techniques and by ensuring adequate compound-to-descriptor ratios.

- Software Implementation: The MATLAB implementation requires appropriate computational resources and familiarity with the platform. Ensure access to the specific BR analysis toolbox and appropriate technical support.

- Result Communication: Effectively communicating complex BR analysis results to diverse audiences can be challenging. Develop clear visualization strategies and contextual explanations to make findings accessible to both specialists and non-specialists.
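Two of the challenges above, ambiguous block assignment and the compound-to-descriptor ratio, lend themselves to a quick automated check before any modeling starts. The sketch below is a hypothetical helper, not part of the BR toolbox. It reports descriptors assigned to more than one block, descriptors left unassigned, and the ratio of compounds to descriptors. No acceptance threshold is built in because the source does not give one.

```python
def check_block_design(blocks, descriptors, n_compounds):
    """Report duplicate and unassigned descriptors plus the compound/descriptor ratio."""
    first_block = {}
    duplicates = []
    for block, members in blocks.items():
        for descriptor in members:
            if descriptor in first_block:
                # Descriptor already claimed by an earlier block.
                duplicates.append((descriptor, first_block[descriptor], block))
            else:
                first_block[descriptor] = block
    unassigned = [d for d in descriptors if d not in first_block]
    return {
        "duplicates": duplicates,
        "unassigned": unassigned,
        "compounds_per_descriptor": n_compounds / len(descriptors),
    }


# Hypothetical block design, for illustration only.
blocks = {"HBD": ["d1", "d2"], "Size": ["d2", "d3"]}
descriptors = ["d1", "d2", "d3", "d4"]

report = check_block_design(blocks, descriptors, n_compounds=8)
```

Here `d2` would be reported as belonging to both blocks and `d4` as unassigned, which are exactly the cases worth documenting before the analysis is run.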

When properly implemented, BR analysis provides a powerful framework for method comparison research, enabling evidence-based selection of experimental approaches and accelerating the drug development process through more informed decision-making [1].

Lipophilicity, commonly measured as the partition coefficient in an octanol-water system (log P~oct~), is a fundamental physicochemical property in drug discovery. It profoundly influences a compound's absorption, distribution, metabolism, and excretion (ADME) properties. Direct experimental determination of log P~oct~ can be slow and costly, leading to the widespread use of chromatographic methods, such as High-Performance Liquid Chromatography (HPLC), to generate surrogate indices. A core challenge is that not all chromatographic systems are equivalent; the retention times they produce reflect a unique balance of intermolecular forces between the solute, mobile phase, and stationary phase. The critical question is: which chromatographic system provides a retention index that best mimics the specific intermolecular interaction balance found in the octanol-water partitioning system?

Block Relevance (BR) analysis addresses this challenge directly. It is a computational tool that deconvolutes the balance of intermolecular interactions governing a given drug discovery-related phenomenon described by a QSPR/PLS model [1]. For lipophilicity, BR analysis allows researchers to dissect the interaction patterns within a chromatographic system and compare them to the reference pattern of the octanol-water system. This process enables the objective identification of the optimal chromatographic system whose retention mechanism best correlates with true log P~oct~, thereby making the choice of method for measuring lipophilicity safer and speeding up drug candidate prioritization [1].

The Scientist's Toolkit: Essential Materials and Reagents

The following table details key reagents, solutions, and equipment essential for conducting experiments aimed at identifying optimal chromatographic surrogates for log P~oct~.

Table 1: Essential Research Reagents and Solutions for Chromatographic Lipophilicity Assessment

| Item Name | Function/Description |
|---|---|
| Reference Compounds | A validated set of drug-like compounds with known experimental log P~oct~ values. Serves as the calibration standard for the chromatographic method. |
| Test Compound Series | A diverse set of 30-50 compounds representing the chemical space of interest, used to build and validate the QSPR model [21]. |
| Supelcosil LC-ABZ Column | A specific HPLC column noted in research for its application in determining chromatographic indices for lipophilicity [21]. |
| LC-18-DB Column | A standard reversed-phase (C18) column used in one of the compared chromatographic systems for lipophilicity screening. |
| IAM.PC.DD2 Column | An Immobilized Artificial Membrane column that models phospholipid binding, assessing a different interaction profile compared to standard reversed-phase columns. |
| Octanol-Water System | The gold-standard partitioning system for measuring true lipophilicity (log P~oct~). Provides the benchmark data for correlation with chromatographic retention indices. |
| Chromatographic Solvents | High-purity methanol, acetonitrile, and buffered aqueous solutions (e.g., phosphate buffer) used to create reproducible mobile phases. |
| Modern U/HPLC System | An Ultra-High-Performance Liquid Chromatography system (e.g., Shimadzu i-Series, Agilent Infinity III) capable of high-pressure operation (e.g., up to 1300 bar) and delivering highly stable flow rates for precise retention time measurement [22]. |
| Chromatography Data System (CDS) | Software (e.g., Clarity CDS, LabSolutions) for instrument control, data acquisition, and processing of retention time data [22]. |

Methodologies and Experimental Protocols

Core Experimental Workflow

The process of identifying an optimal chromatographic surrogate for log P~oct~ involves a sequence of key steps, from system setup to data interpretation using BR analysis.

Detailed Experimental Protocols

Protocol 1: Establishing a Baseline with Reference log P~oct~ Values

Objective: To obtain a reliable set of experimental log P~oct~ values for a training set of compounds, which will serve as the benchmark for evaluating chromatographic surrogates.

- Compound Selection: Assemble a diverse set of 30-50 drug-like compounds with known, reliably measured log P~oct~ values from literature or previous in-house experiments [21].

- Shake-Flask Method (if required):

  - Prepare a solution of the test compound at a known concentration, below its solubility limit, in n-octanol pre-saturated with water.

  - Mix this solution with an equal volume of water pre-saturated with n-octanol.

  - Agitate the mixture for 24 hours at a constant temperature (e.g., 25°C) to reach partitioning equilibrium.

  - Separate the phases by centrifugation.

  - Quantify the concentration of the compound in both the octanol and water phases using a validated analytical method (e.g., UV spectrophotometry or HPLC).

  - Calculate log P~oct~ using the formula: log P~oct~ = log (Concentration~octanol~ / Concentration~water~).

- Data Compilation: Create a database of the consensus log P~oct~ values for the entire compound set.
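The calculation step of the shake-flask protocol can be sketched as below. The function names are hypothetical, and taking the consensus value as the arithmetic mean of replicate log P~oct~ values is an assumption, since the source does not say how consensus values are derived. Both concentrations must be expressed in the same units.

```python
import math


def log_p_oct(conc_octanol, conc_water):
    """log10 of the octanol/water concentration ratio at equilibrium."""
    if conc_octanol <= 0 or conc_water <= 0:
        raise ValueError("Both phase concentrations must be positive.")
    return math.log10(conc_octanol / conc_water)


def consensus_log_p(replicates):
    """Mean log P over replicate (octanol, water) concentration pairs."""
    values = [log_p_oct(c_oct, c_wat) for c_oct, c_wat in replicates]
    return sum(values) / len(values)


# Hypothetical replicate measurements (same concentration units in both phases).
replicates = [(100.0, 1.0), (1000.0, 1.0)]
consensus = consensus_log_p(replicates)
```

Averaging is done on the log scale rather than on the raw concentration ratios, so a single high replicate does not dominate the consensus value.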

Protocol 2: Chromatographic Retention Index Determination

Objective: To measure the retention factors of the training set compounds across different chromatographic systems.

- Chromatographic System Selection: Choose multiple HPLC systems varying in stationary phase chemistry. Key examples include:

  - System A: Supelcosil LC-ABZ column [21].

  - System B: Standard LC-18-DB column.

  - System C: IAM.PC.DD2 column.

- Method Conditions:

  - Mobile Phase: For reversed-phase systems (A & B), use a binary methanol/buffer mobile phase (e.g., 10-50 mM phosphate buffer, pH 7.4). For IAM, use a similar organic/buffer system. Isocratic elution is assumed here, since the retention factor formula below is defined for a constant mobile phase composition; gradient runs call for a gradient-based index instead.

  - Flow Rate: 1.0 mL/min (adjust for column dimensions and system requirements).

  - Detection: UV detection at appropriate wavelengths for the compounds.

  - Temperature: Maintain column oven at 25°C or 37°C.

- Data Collection:

  - Inject each compound and record the retention time (t~R~).

  - Measure the column void time (t~0~) using an unretained compound like uracil or sodium nitrate.

  - Calculate the retention factor (k) for each compound: k = (t~R~ - t~0~) / t~0~.

  - The chromatographic index used for modeling is typically the logarithm of the retention factor, log k.
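The data collection arithmetic above is short enough to sketch directly. The function names and retention times are hypothetical. The input checks reflect the fact that log k is undefined for a compound eluting at or before the void time.

```python
import math


def retention_factor(t_r, t_0):
    """Retention factor k = (t_R - t_0) / t_0, with both times in the same units."""
    if t_0 <= 0:
        raise ValueError("Void time t_0 must be positive.")
    if t_r <= t_0:
        raise ValueError("Retention time must exceed the void time.")
    return (t_r - t_0) / t_0


def log_k(t_r, t_0):
    """Base-10 logarithm of the retention factor, the index used for modeling."""
    return math.log10(retention_factor(t_r, t_0))


# Hypothetical retention times in minutes, for illustration only.
t_0 = 1.0                                # void time from an unretained marker
retention_times = {"cmpd_A": 11.0, "cmpd_B": 2.0}
log_k_values = {name: log_k(t_r, t_0) for name, t_r in retention_times.items()}
```

Each chromatographic system would yield its own set of log k values for the same compounds, and each set becomes the Y-block of a separate PLS model.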

Protocol 3: Data Analysis via Block Relevance (BR) Analysis

Objective: To deconvolute the intermolecular interactions in each chromatographic system and identify the one whose interaction profile best matches that of the octanol-water system.

- Model Building:

  - Construct a Partial Least Squares (PLS) regression model for each chromatographic system. The independent variables (X-block) are physicochemical descriptors of the compounds (e.g., VolSurf+, Abraham, or other molecular descriptors). The dependent variable (Y-block) is the chromatographic retention index (log k) for that system.

- BR Analysis Execution:

  - Apply BR analysis to the developed PLS model. The analysis dissects the model's predictive components to reveal the "relevance" or contribution of different blocks of intermolecular interactions (e.g., hydrophobicity, polarity, hydrogen-bonding) to the overall retention mechanism [1] [21].

  - Separately, build a PLS model where the Y-block is the experimental log P~oct~ value. Perform BR analysis on this model to reveal the balance of interactions governing octanol-water partitioning.

- Profile Comparison and System Selection: