Beyond the Molecule: How the Similarity Principle is Revolutionizing Modern Drug Design



Abstract

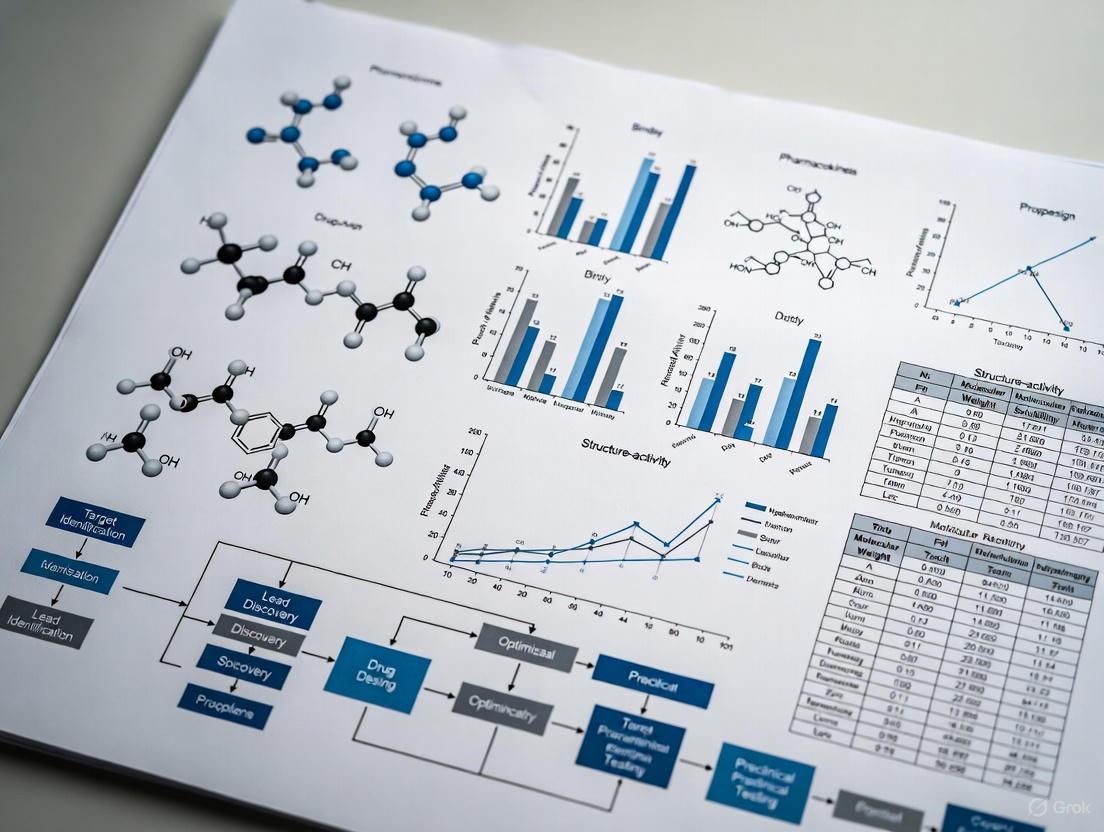

This article provides a comprehensive exploration of the molecular similarity principle, a cornerstone concept in drug discovery asserting that structurally similar molecules tend to have similar properties. Tailored for researchers and drug development professionals, it covers the foundational theory, modern computational methodologies like 2D/3D similarity screening and AI-driven informacophores, and addresses critical challenges in data bias and model interpretability. The content further examines the empirical validation of these approaches through case studies and performance comparisons, offering a holistic view of how similarity-based strategies are accelerating the development of novel therapeutics, from small molecules to advanced modalities.

The Bedrock of Discovery: Deconstructing the Molecular Similarity Principle

The similarity principle is a foundational concept in drug discovery, positing that structurally similar molecules are likely to exhibit similar biological activities [1]. For decades, this principle has been the driving force behind the field, guiding tasks from initial hit identification to lead optimization [1]. Historically, this principle was applied through the chemical intuition of experienced medicinal chemists, who visually recognized structural motifs associated with desired pharmacological properties [2]. This perspective has evolved from a qualitative, intuition-based guideline to a quantitative, computational rule powered by artificial intelligence (AI) and machine learning (ML). This transformation is reshaping the entire drug discovery pipeline, enabling the systematic exploration of ultra-large chemical spaces and facilitating more efficient identification of novel therapeutic candidates [3] [4].

The Classical Foundation: Chemical Intuition and Heuristics

The classical application of the similarity principle in medicinal chemistry is rooted in pattern recognition and heuristic reasoning. Medicinal chemists have long relied on the visual inspection of molecular structures to identify key scaffolds and functional groups responsible for biological activity.

Historical Roots and Scaffold-Centric Chemistry

The roots of rational drug design (RDD) can be traced back over a century to the work of Langmuir, and it was formally established in the 1950s when theoretical insights into drug-receptor interactions and experimental drug testing began to continuously reinforce one another [2]. The process of bioisosteric replacement exemplifies the traditional application of the similarity principle. It involves finding a balance between maintaining the desired biological activity of a molecule and optimizing drug-like properties that influence its efficacy, such as solubility, lipophilicity, and metabolic stability [2]. In practice, this often relied on limited and sometimes unstructured data, depending heavily on the intuition of a highly experienced chemist to identify preferable sites for efficient chemical modifications on a scaffold molecule [2].

The Scaffold-Hopping Paradigm

Scaffold hopping is a critical strategy that directly exploits the similarity principle. Introduced in 1999, it aims to discover new core structures (backbones) while retaining similar biological activity to the original molecule [4]. This strategy is vital for improving pharmacokinetic profiles, reducing toxicity, and navigating around existing patents [4]. Sun et al. (2012) classified scaffold hopping into four main categories of increasing complexity [4]:

- Heterocycle replacements

- Ring opening or closure

- Peptidomimetics

- Topology-based hopping

Traditionally, scaffold hopping was achieved using molecular fingerprinting and structure similarity searches. These methods maintain key molecular interactions by substituting critical functional groups with alternatives that preserve binding contributions, such as hydrogen bonding patterns and hydrophobic interactions [4].

The Computational Evolution: Quantifying Molecular Similarity

The transition from intuition to computational rule required the development of methods to numerically represent and compare molecules. This led to the creation of various molecular representation and descriptor systems.

Traditional Molecular Representation Methods

Traditional methods rely on explicit, rule-based feature extraction to translate molecules into a computer-readable format [4].

Table 1: Traditional Molecular Representation Methods

| Method Type | Examples | Key Characteristics | Primary Applications |
|---|---|---|---|
| String-Based | SMILES, InChI [4] | Linear string representations of molecular structure; human-readable. | Basic storage, search, and exchange of chemical structures. |
| Molecular Descriptors | Molecular weight, hydrophobicity, topological indices [4] | Quantify specific physical or chemical properties of molecules. | QSAR modeling, physicochemical property prediction. |
| Molecular Fingerprints | Extended-Connectivity Fingerprints (ECFPs) [4] | Encode substructural information as binary strings or numerical vectors. | Similarity search, clustering, virtual screening, QSAR. |

These representations are computationally efficient and have been widely used for tasks like similarity search and quantitative structure-activity relationship (QSAR) modeling [4]. However, they often struggle to capture the subtle and intricate relationships between molecular structure and function, especially as drug discovery problems increase in complexity [4].

The Rise of AI-Driven Representations

Modern AI-driven methods have ushered in a new paradigm, shifting from predefined rules to data-driven learning [4]. These approaches leverage deep learning models to directly extract and learn intricate features from large molecular datasets.

Table 2: Modern AI-Driven Molecular Representation Methods

| Method Category | Key Models/Techniques | How it Works | Advantages in Capturing Similarity |
|---|---|---|---|
| Language Model-Based | Transformers, BERT [4] | Treats molecular sequences (e.g., SMILES) as a chemical language, tokenizing them into vectors. | Learns contextual relationships between atoms and substructures in a sequence. |
| Graph-Based | Graph Neural Networks (GNNs) [4] | Represents a molecule as a graph with atoms as nodes and bonds as edges; learns features from this topology. | Inherently captures the connectivity and topological structure of molecules. |
| Multimodal & Contrastive Learning | Variational Autoencoders (VAEs), Contrastive Learning [4] | Combines multiple data types (e.g., structure, bioactivity) or learns by contrasting similar and dissimilar pairs. | Generates representations that integrate diverse data, going beyond pure structural similarity. |

These AI-driven representations can capture non-linear relationships and nuances in molecular structure that are often missed by traditional methods, allowing for a more comprehensive exploration of chemical space and the discovery of novel scaffolds with unique properties [4].

The Modern Paradigm: Extending Similarity to Biological Activity Space

A pivotal advancement in the computational application of the similarity principle is the recognition that similarity is not just a chemical concept but extends to biological activity.

The Chemical Checker: A Unified Bioactivity Signature

The Chemical Checker (CC) provides a processed, harmonized, and integrated bioactivity database for about 800,000 small molecules [1]. It systematically expands the similarity principle beyond chemical structure by representing bioactivity data at five levels of increasing complexity, from chemical properties to clinical outcomes [1].

Bioactivity levels in the Chemical Checker [1]

This framework allows for the comparison of molecules based on their integrated bioactivity signatures, which are vector representations of their effects across these different levels. This facilitates the discovery of compounds that reverse or mimic biological signatures of disease, even when their chemical structures are unrelated [1].
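The idea of finding compounds that mimic or reverse a disease signature can be sketched with a simple correlation between signature vectors. This is only an illustration: the signatures below are invented numbers, the compound names and the 0.8 threshold are hypothetical, and the Chemical Checker's own data and tooling are not used.

```python
from math import sqrt

def pearson(u, v):
    """Pearson correlation between two equal-length signature vectors."""
    n = len(u)
    mean_u, mean_v = sum(u) / n, sum(v) / n
    cov = sum((a - mean_u) * (b - mean_v) for a, b in zip(u, v))
    norm_u = sqrt(sum((a - mean_u) ** 2 for a in u))
    norm_v = sqrt(sum((b - mean_v) ** 2 for b in v))
    return cov / (norm_u * norm_v)

# Hypothetical signatures (illustrative values, not real bioactivity data).
disease_signature = [1.2, -0.8, 0.5, -1.5, 0.9]
compound_signatures = {
    "cpd_mimic": [1.0, -0.6, 0.4, -1.2, 1.1],
    "cpd_reverse": [-1.1, 0.9, -0.3, 1.4, -0.8],
    "cpd_unrelated": [0.2, 0.1, -0.3, 0.0, 0.1],
}

scores = {name: pearson(sig, disease_signature)
          for name, sig in compound_signatures.items()}

# Strong positive correlation: the compound mimics the signature.
mimics = [name for name, s in scores.items() if s > 0.8]
# Strong negative correlation: the compound may reverse the signature.
reversers = [name for name, s in scores.items() if s < -0.8]
```

Note that none of this depends on chemical structure: two compounds with unrelated scaffolds can still be neighbors in signature space.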

The Informacophore: A Data-Driven Pharmacophore

The "informacophore" is a modern concept that extends the traditional, heuristic-based pharmacophore. It represents the minimal chemical structure, combined with computed molecular descriptors, fingerprints, and machine-learned representations, that is essential for a molecule to exhibit biological activity [2]. By identifying and optimizing informacophores through the analysis of ultra-large datasets, researchers can significantly reduce biased intuitive decisions and accelerate the drug discovery process [2].

Experimental Protocols and Computational Workflows

The practical application of the computational similarity principle involves several key methodologies and workflows.

Ultra-Large Virtual Screening

The development of ultra-large, "make-on-demand" virtual libraries containing tens of billions of compounds has made direct empirical screening of every molecule infeasible [2] [3]. Ultra-large virtual high-throughput screening (vHTS) instead uses computational methods to prioritize a manageable number of compounds for experimental testing.

Protocol: Structure-Based Virtual Screening via Docking

- Target Preparation: Obtain the 3D structure of the target protein (e.g., from crystallography or cryo-EM [3]) and prepare it by adding hydrogen atoms, assigning partial charges, and defining binding sites.

- Library Preparation: Curate a virtual library of small molecules (e.g., from ZINC20 or Enamine's "tangible" libraries [2] [3]), generating plausible 3D conformers for each compound.

- Molecular Docking: Use docking software (e.g., Gorgulla et al.'s open-source platform [3]) to computationally pose each molecule within the target's binding site and score the strength of the interaction.

- Hit Prioritization: Rank the docked compounds based on their docking scores and other criteria (e.g., drug-likeness, synthetic accessibility). Select the top-ranking compounds for experimental validation.
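The final hit-prioritization step of this protocol can be sketched as a filter followed by a sort. The `DockedCompound` record, the compound IDs, the scores, and the molecular-weight and logP cut-offs are all assumptions made for illustration; real campaigns use the scoring conventions and filters of their chosen docking software.

```python
from dataclasses import dataclass

@dataclass
class DockedCompound:
    compound_id: str
    docking_score: float  # assumed convention: more negative = better predicted binding
    mol_weight: float     # g/mol
    logp: float           # calculated lipophilicity

def prioritize(compounds, top_n=2, max_mw=500.0, max_logp=5.0):
    """Drop compounds failing simple drug-likeness cut-offs, then rank by score."""
    passing = [c for c in compounds
               if c.mol_weight <= max_mw and c.logp <= max_logp]
    return sorted(passing, key=lambda c: c.docking_score)[:top_n]

# Hypothetical docking results.
library = [
    DockedCompound("cpd-001", -9.8, 412.5, 3.1),
    DockedCompound("cpd-002", -11.2, 640.0, 6.2),  # best score, fails both filters
    DockedCompound("cpd-003", -8.4, 350.2, 2.0),
    DockedCompound("cpd-004", -10.1, 488.9, 4.7),
]

hits = prioritize(library)
hit_ids = [c.compound_id for c in hits]
```

Filtering before ranking keeps a compound with an excellent score but poor properties from crowding out tractable candidates.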

AI-Driven Scaffold Hopping and Molecule Generation

Modern AI methods enable scaffold hopping in a more data-driven and comprehensive way.

Protocol: Deep Learning for Scaffold Hopping

- Model Training: Train a deep learning model, such as a Graph Neural Network (GNN) or a Variational Autoencoder (VAE), on a large dataset of bioactive molecules. The model learns to create a continuous, multidimensional chemical space (a latent space) where molecules with similar bioactivity are positioned near each other, regardless of scaffold differences [4].

- Representation Generation: Encode a known active molecule (the query) into this latent space to obtain its vector representation.

- Neighborhood Exploration: Search the latent space for molecular vectors that lie close to the query vector. These neighbors are predicted to have similar activity but may have different core structures.

- Molecule Generation & Optimization: Use generative models (e.g., VAEs, GANs) to design novel molecular structures directly within the promising regions of the latent space, effectively creating new scaffolds with a high probability of possessing the desired activity [4].

AI-driven scaffold hopping workflow [4]
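The neighborhood-exploration step can be illustrated with a small nearest-neighbor search. This is a minimal sketch: the four-dimensional vectors stand in for embeddings that a trained encoder would produce, the molecule names are hypothetical, and cosine similarity is one reasonable choice of metric rather than the one prescribed by any particular model.

```python
from math import sqrt

def cosine_similarity(u, v):
    """Cosine of the angle between two latent vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (sqrt(sum(a * a for a in u)) * sqrt(sum(b * b for b in v)))

def nearest_neighbors(query_vec, library, k=2):
    """Return the k (name, similarity) pairs closest to the query vector."""
    scored = [(name, cosine_similarity(query_vec, vec))
              for name, vec in library.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)[:k]

# Hypothetical embeddings of a query active and three library molecules.
query = [0.9, 0.1, 0.4, 0.0]
latent_library = {
    "scaffold_A_analog": [0.8, 0.2, 0.5, 0.1],
    "scaffold_B_hop": [0.7, 0.0, 0.3, 0.1],
    "inactive_decoy": [-0.5, 0.9, -0.2, 0.6],
}

neighbors = nearest_neighbors(query, latent_library)
neighbor_names = [name for name, _ in neighbors]
```

For libraries with millions of vectors, the exhaustive scan shown here would normally be replaced by an approximate nearest-neighbor index.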

Table 3: Key Research Reagent Solutions for Computational Similarity-Based Discovery

| Resource Category | Specific Examples | Function in Research |
|---|---|---|
| Ultra-Large Virtual Libraries | Enamine REAL Space (65B+ compounds), OTAVA (55B+ compounds) [2]; ZINC20 [3] | Provides access to vast chemical spaces of "make-on-demand" molecules for virtual screening. |
| AI-Driven Discovery Platforms | Exscientia's Centaur Chemist, Insilico Medicine's Generative AI platform, Schrödinger's physics-enabled platform [5] | Integrated platforms that use AI for target identification, generative chemistry, and lead optimization. |
| Bioactivity Databases | The Chemical Checker (CC) [1] | Provides standardized bioactivity signatures across multiple levels for ~800k molecules, enabling similarity searches in biological activity space. |
| Molecular Representation Tools | Extended-Connectivity Fingerprints (ECFPs), Graph Neural Network frameworks [4] | Converts molecular structures into numerical formats suitable for machine learning and similarity calculations. |

The journey of the similarity principle from a guiding intuition in the mind of a medicinal chemist to a quantifiable, computable rule represents a paradigm shift in drug discovery. The integration of AI-driven molecular representations, the extension of similarity to biological activity space through resources like the Chemical Checker, and the development of advanced computational protocols have created a powerful, data-driven framework. This modern interpretation of the similarity principle allows researchers to navigate the vastness of chemical space with unprecedented precision and scale, systematically identifying and optimizing novel therapeutic candidates while explicitly accounting for the complex relationship between structure and biological function. This evolution continues to be a critical driver in reducing the time and cost associated with bringing new medicines to patients.

The principle that "similar compounds tend to have similar properties" represents a fundamental working hypothesis in modern medicinal chemistry and drug discovery [6]. This molecular similarity principle, also known as the "similar property principle," underpins virtually all ligand-based drug design methods and has created a broad range of cheminformatics tools proven useful for finding new lead compounds [7]. However, this seemingly straightforward principle conceals a fundamental challenge: similarity is inherently subjective and context-dependent [7]. As noted by Barbosa et al., "no single 'absolute' measure of molecular similarity can be conceived," and molecular similarity scores should be considered "tunable tools that need to be adapted to each problem to solve" [6]. This article explores the multifaceted nature of molecular similarity, examining how perspective and context dictate appropriate similarity methodologies across different drug discovery applications, and provides practical experimental frameworks for researchers navigating this complex landscape.

The Multiple Dimensions of Molecular Similarity

Defining Molecular Similarity Across Representations

Molecules can be compared through numerous lenses, each revealing different aspects of potential similarity. The choice of representation fundamentally alters which molecules are considered similar and directly impacts the success of virtual screening, bioisosteric replacement, and scaffold hopping efforts [7].

Table 1: Molecular Similarity Perspectives and Their Applications

| Similarity Perspective | Description | Typical Applications | Key Advantages | Principal Limitations |
|---|---|---|---|---|
| 2D Structural Similarity | Based on atomic connectivity and molecular topology [7] | Similarity searching, analog series expansion [7] | Fast computation, intuitive for chemists, high transparency [8] [7] | Limited scaffold hopping ability, no 3D information [8] |
| 3D Shape Similarity | Comparison of molecular volumes and steric outlines [8] [7] | Scaffold hopping, virtual screening, target prediction [8] | Enables identification of structurally different but shape-similar molecules [8] [7] | Computational cost, conformation dependence, alignment sensitivity [8] |
| Surface Physicochemical | Comparison of electrostatic potential, hydrophobicity, polarizability on molecular surfaces [7] | Bioisosteric replacement, lead optimization [7] | Captures key interaction determinants for binding, explains activity of structurally diverse compounds [7] | Requires accurate 3D structures and property calculations [7] |
| Pharmacophore Similarity | Comparison of spatial arrangement of key interaction features [7] | Virtual screening, multi-target drug design [7] | Focuses on essential interaction capabilities, abstracts from specific chemistry [7] | Pharmacophore model quality dependent, feature definition critical [7] |
| H-Bond Pattern Similarity | Comparison of hydrogen bond donor/acceptor spatial patterns [7] | Understanding binding modes, scaffold flipping [7] | Explains unexpected binding orientations, addresses specificity determinants [7] | May miss other important interactions (e.g., hydrophobic) [7] |

The Subjectivity of Molecular Similarity

The inherent subjectivity of similarity becomes clear when the same molecular pairs are examined through different filters. As illustrated on drugdesign.org, molecules that appear dramatically different in two-dimensional connectivity may reveal striking similarities when compared using three-dimensional shape or surface electrostatic potential representations [7]. This relativity extends to the choice of molecular descriptors, which can be broadly categorized as either "global" (providing a condensed description of the entire molecule, such as LogP) or "local" (describing properties of specific regions, fragments, or atoms) [7].

The context-dependency of relevant molecular characteristics means that a descriptor valuable for predicting one property (e.g., lipophilicity) may be entirely inadequate for predicting another (e.g., metabolic stability) [7]. For instance, replacing an oxygen linker with a secondary amine may introduce minimal changes in lipophilicity but can have "radical repercussions if the group is involved in specific hydrogen bond interactions with the receptor" [7]. This underscores why similarity cannot be an absolute concept but must instead be tailored to the specific biological context and property being investigated.

Experimental Methodologies for Similarity Assessment

Molecular Alignment Protocols

Maximizing and revealing similarities between molecules frequently requires their alignment within a common reference frame [7]. Molecular alignments are widely used for 1D, 2D, and 3D comparisons, with 3D superimpositions being particularly valuable for understanding shared pharmacophores and shape characteristics [7].

3D Molecular Alignment Protocol:

- Conformational Sampling: Generate representative low-energy conformers for each molecule using tools like MOE's LowModeMD or other conformational analysis methods [9]

- Feature Definition: Identify key pharmacophoric features (hydrogen bond donors/acceptors, aromatic rings, hydrophobic regions, charged groups) [7]

- Alignment Optimization: Use atom-based or feature-based fitting algorithms to maximize overlap of critical regions

- Similarity Quantification: Calculate shape overlap using Tanimoto coefficients or other similarity metrics [8]

- Visual Validation: Inspect alignments to ensure pharmacologically relevant overlap [7]
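The similarity-quantification step above can be sketched with a first-order Gaussian volume overlap. The sketch assumes the two molecules are already aligned, treats every atom as an identical spherical Gaussian, and uses an arbitrary width parameter `ALPHA`; the coordinates are invented. Production tools use element-specific radii and optimize the superposition as well.

```python
from math import exp, pi

ALPHA = 0.8  # Gaussian width parameter in 1/Angstrom^2 (illustrative value)

def overlap(mol_a, mol_b, alpha=ALPHA):
    """Sum of pairwise overlap integrals of unit-amplitude atomic Gaussians."""
    prefactor = (pi / (2.0 * alpha)) ** 1.5
    total = 0.0
    for xa, ya, za in mol_a:
        for xb, yb, zb in mol_b:
            d2 = (xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
            total += prefactor * exp(-alpha * d2 / 2.0)
    return total

def shape_tanimoto(mol_a, mol_b):
    """Shape Tanimoto: O_AB / (O_AA + O_BB - O_AB)."""
    o_ab = overlap(mol_a, mol_b)
    return o_ab / (overlap(mol_a, mol_a) + overlap(mol_b, mol_b) - o_ab)

# Hypothetical pre-aligned atom coordinates in Angstrom.
reference = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (3.0, 0.0, 0.0)]
shifted = [(x, y + 1.0, z) for x, y, z in reference]
far_away = [(x + 50.0, y, z) for x, y, z in reference]

score_self = shape_tanimoto(reference, reference)
score_shifted = shape_tanimoto(reference, shifted)
score_far = shape_tanimoto(reference, far_away)
```

Because the score depends on the relative placement of the two molecules, the alignment step directly determines the value obtained here.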

Similarity Maps Visualization Methodology

Similarity maps provide a powerful visualization strategy for understanding atomic contributions to fingerprint similarity or machine learning model predictions [10]. This methodology makes the often-opaque similarity calculations interpretable by highlighting which specific atoms and regions contribute positively or negatively to overall similarity.

Experimental Protocol for Similarity Maps Generation:

- Fingerprint Generation: Calculate molecular fingerprints (atom-pair, circular, or feature-based fingerprints) for reference and test molecules using RDKit or similar toolkits [10]

- Baseline Similarity Calculation: Compute original similarity (typically using Dice or Tanimoto coefficients) between reference and test molecule fingerprints [10]

- Atomic Contribution Analysis:

  - For each atom in the test molecule, identify all fingerprint bits set by that atom

  - Temporarily remove these bits from the test molecule fingerprint

  - Recalculate similarity between modified fingerprint and reference fingerprint [10]

  - Compute similarity difference: $\Delta s = s_{\text{orig}} - s_{\text{mod}}$

- Weight Normalization: Normalize atomic weights by dividing by the maximum absolute weight value [10]

- Visualization Generation:

  - Calculate bivariate Gaussian distributions centered at each atom position

  - Generate topography-like map using color scheme (green: positive Δs, pink: negative Δs, gray: no change) [10]

  - Superimpose atomic coordinates with Gaussian distributions and contour plots

Figure 1: Similarity Maps Workflow - Visualization of atomic contributions to molecular similarity
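The atomic-contribution and normalization steps can be sketched in a few lines. Fingerprints are modeled as plain Python sets of on-bit indices, and the `atom_bits` mapping (which bits each atom sets) is supplied by hand; both are simplifying assumptions, since a toolkit such as RDKit would derive them from the molecule. The bit values are invented.

```python
def dice(fp_a, fp_b):
    """Dice similarity between two fingerprints given as sets of on-bits."""
    if not fp_a and not fp_b:
        return 0.0
    return 2.0 * len(fp_a & fp_b) / (len(fp_a) + len(fp_b))

def atomic_weights(ref_fp, test_fp, atom_bits):
    """Normalized per-atom similarity differences (s_orig - s_mod)."""
    s_orig = dice(ref_fp, test_fp)
    weights = {}
    for atom, bits in atom_bits.items():
        s_mod = dice(ref_fp, test_fp - bits)  # remove the bits set by this atom
        weights[atom] = s_orig - s_mod
    max_abs = max(abs(w) for w in weights.values())
    if max_abs == 0:
        return weights
    return {atom: w / max_abs for atom, w in weights.items()}

# Hypothetical fingerprints and atom-to-bit assignments.
reference_fp = {1, 2, 3, 4}
test_fp = {1, 2, 3, 7, 8}
atom_bits = {0: {1, 2}, 1: {3}, 2: {7, 8}}

weights = atomic_weights(reference_fp, test_fp, atom_bits)
```

Atom 0 receives the largest positive weight because removing its bits lowers the similarity most, while atom 2 receives a negative weight because its bits are absent from the reference, so removing them raises the similarity.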

Shape Similarity Assessment Methods

Three-dimensional shape similarity has gained significant attention for its applications in virtual screening, target prediction, and scaffold hopping [8]. These methods can be broadly classified as alignment-free or alignment-based approaches, each with distinct advantages and limitations.

Table 2: 3D Shape Similarity Methodologies

| Method Category | Representative Approaches | Key Algorithmic Features | Computational Efficiency | Scaffold Hopping Capability |
|---|---|---|---|---|
| Alignment-Based Methods | ROCS, Phase Shape Screening [8] | Molecular superposition, volume overlap calculation [8] | Computationally expensive, performance depends on alignment quality [8] | Excellent, enables identification of diverse chemotypes with similar shapes [8] |
| Alignment-Free Methods | USR, USRCAT, Electroshape [8] | Atomic distance distributions from key points (centroid, etc.) [8] | Extremely fast, suitable for ultra-large library screening [8] | Good, but may miss subtle shape complementarities [8] |
| Surface-Based Methods | Spherical harmonics, 3D Zernike descriptors [8] | Mathematical representation of molecular surface [8] | Moderate to fast, depends on representation complexity [8] | Moderate, captures global shape properties well [8] |
| Gaussian Overlay Methods | Rapid Overlay of Chemical Structures [8] | Atom-centered Gaussian functions to represent molecular volume [8] | Moderate, optimization required for best overlay [8] | Excellent, widely used for scaffold hopping [8] |

Shape Similarity Screening Protocol:

- Conformer Generation: Generate multiple low-energy conformers for each database molecule

- Shape Representation:

  - For alignment-based methods: Generate molecular surfaces or Gaussian volume representations

  - For alignment-free methods: Calculate atomic distance distributions (e.g., USR: four distributions, measured from the molecular centroid, the atom closest to the centroid, the atom farthest from the centroid, and the atom farthest from that atom) [8]

- Similarity Quantification:

  - Alignment-based: Calculate volume overlap using Tanimoto-like coefficients

  - Alignment-free: Compare distance distributions using similarity metrics

- Ranking and Visualization: Sort database compounds by shape similarity and visually inspect top hits
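The alignment-free branch of this protocol can be sketched with a USR-style descriptor. The code summarizes each of the four distance distributions by three moments, giving 12 numbers per conformer, and compares descriptors with an inverse scaled Manhattan distance. The exact moment definitions differ between implementations; using the mean, standard deviation, and signed cube root of the third central moment is an assumption here, and the coordinates are invented.

```python
from math import copysign, sqrt

def _dist(p, q):
    return sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))

def _moments(distances):
    """Mean, standard deviation, and signed cube root of the third central moment."""
    n = len(distances)
    mean = sum(distances) / n
    var = sum((d - mean) ** 2 for d in distances) / n
    third = sum((d - mean) ** 3 for d in distances) / n
    return [mean, sqrt(var), copysign(abs(third) ** (1.0 / 3.0), third)]

def usr_descriptor(coords):
    """12-value shape descriptor from four reference points."""
    n = len(coords)
    ctd = tuple(sum(c[i] for c in coords) / n for i in range(3))  # centroid
    cst = min(coords, key=lambda c: _dist(c, ctd))  # atom closest to centroid
    fct = max(coords, key=lambda c: _dist(c, ctd))  # atom farthest from centroid
    ftf = max(coords, key=lambda c: _dist(c, fct))  # atom farthest from fct
    descriptor = []
    for ref in (ctd, cst, fct, ftf):
        descriptor.extend(_moments([_dist(c, ref) for c in coords]))
    return descriptor

def usr_similarity(desc_a, desc_b):
    """Score in (0, 1]; 1 means identical descriptors."""
    diff = sum(abs(a - b) for a, b in zip(desc_a, desc_b))
    return 1.0 / (1.0 + diff / len(desc_a))

# Hypothetical conformers (coordinates in Angstrom).
extended = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (2.2, 1.3, 0.0),
            (3.7, 1.3, 0.5), (4.1, 2.6, 1.0)]
translated = [(x + 10.0, y - 5.0, z + 2.0) for x, y, z in extended]
compact = [(0.0, 0.0, 0.0), (1.2, 0.0, 0.0), (0.0, 1.2, 0.0),
           (0.0, 0.0, 1.2), (1.0, 1.0, 1.0)]

sim_translated = usr_similarity(usr_descriptor(extended), usr_descriptor(translated))
sim_compact = usr_similarity(usr_descriptor(extended), usr_descriptor(compact))
```

Because the descriptor is built only from interatomic distances, translating or rotating a conformer leaves it unchanged, which is why no alignment step is needed.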

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 3: Research Reagent Solutions for Molecular Similarity Analysis

| Tool/Category | Specific Examples | Functionality | Accessibility |
|---|---|---|---|
| Molecular Visualization | UCSF Chimera, UCSF ChimeraX, PyMOL [11] | Interactive analysis and presentation graphics of molecular structures and related data [11] | Free for noncommercial use, multiple platforms [11] |
| Cheminformatics Toolkits | RDKit, MOE, Schrödinger Suite [10] [9] | Fingerprint generation, similarity calculation, descriptor computation [10] [9] | RDKit: Open-source; MOE/Schrödinger: Commercial [10] [9] |
| Shape Similarity Tools | USR (USR-VS), ROCS, Phase Shape [8] | Ultrafast shape recognition, molecular volume comparison [8] | USR-VS: Webserver available; ROCS/Phase: Commercial [8] |
| Similarity Visualization | Similarity Maps [10] | Visualize atomic contributions to similarity or machine learning predictions [10] | Open-source implementation available [10] |
| Fingerprint Algorithms | ECFP4, FCFP4, Atom Pair, MACCS Keys [10] | Structural representation for similarity searching and machine learning [10] | Implemented in RDKit and other cheminformatics platforms [10] |

Advanced Applications: Multi-Source Similarity Networks in Drug Repositioning

Beyond single-molecule comparisons, similarity concepts extend to network-based approaches that integrate multiple relationship types among drugs, diseases, and targets. Recent advances in computational drug repositioning demonstrate the power of integrating multiple disease similarity networks—phenotypic, ontological, and molecular—to predict novel drug-disease associations [12].

Multi-Source Disease Similarity Network Protocol:

- Network Construction: Build the individual disease similarity networks (phenotypic, ontological, and molecular) [12]

- Network Integration: Combine similarity networks into disease multiplex networks (e.g., DiSimNetOHG) [12]

- Heterogeneous Network Formation: Connect drug similarity networks with disease multiplex networks using known drug-disease associations [12]

- Prediction Algorithm: Apply adapted Random Walk with Restart (RWR) algorithm to rank candidate drug-disease associations [12]

Figure 2: Multi-Source Similarity Network - Drug repositioning workflow
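The prediction step can be illustrated with a plain Random Walk with Restart on a small merged graph. This is a simplified sketch, not the adapted multiplex-heterogeneous algorithm of [12]: all layers are collapsed into one weighted graph, and the node names, edge weights, and restart probability are invented for illustration.

```python
def random_walk_with_restart(adjacency, seeds, restart=0.3, tol=1e-10, max_iter=1000):
    """Iterate p <- restart * p0 + (1 - restart) * W p until convergence."""
    nodes = list(adjacency)
    p0 = {n: (1.0 / len(seeds) if n in seeds else 0.0) for n in nodes}
    p = dict(p0)
    for _ in range(max_iter):
        nxt = {n: restart * p0[n] for n in nodes}
        for u in nodes:
            total_weight = sum(adjacency[u].values())
            if total_weight == 0:
                continue
            # Spread the walker's mass at u over its neighbors by edge weight.
            for v, w in adjacency[u].items():
                nxt[v] += (1.0 - restart) * p[u] * w / total_weight
        delta = sum(abs(nxt[n] - p[n]) for n in nodes)
        p = nxt
        if delta < tol:
            break
    return p

# Hypothetical merged network: drug similarity, disease similarity,
# and one known drug-disease association (drug_A treats disease_1).
graph = {
    "drug_A": {"drug_B": 0.8, "disease_1": 1.0},
    "drug_B": {"drug_A": 0.8, "disease_2": 1.0},
    "disease_1": {"drug_A": 1.0, "disease_2": 0.6},
    "disease_2": {"drug_B": 1.0, "disease_1": 0.6, "disease_3": 0.2},
    "disease_3": {"disease_2": 0.2},
}

scores = random_walk_with_restart(graph, seeds={"drug_A"})
candidates = sorted(
    (n for n in scores if n.startswith("disease") and n != "disease_1"),
    key=lambda n: scores[n],
    reverse=True,
)
```

Here `disease_2` ranks above `disease_3` as a repositioning candidate for `drug_A` because it is reachable through both a similar drug and a similar disease.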

This integrated approach demonstrates that both disease multiplex and multiplex-heterogeneous networks "outperform their single-layer counterparts," validating the fundamental thesis that incorporating multiple similarity perspectives enhances predictive accuracy in drug discovery [12].

The subjective nature of molecular similarity is not a limitation to be overcome but rather a fundamental characteristic that researchers must embrace and exploit. As demonstrated throughout this technical guide, context and perspective fundamentally dictate which molecules are considered similar and which computational approaches will prove most fruitful. The "optimal validation of the hypothesis that molecules that are neighbors in the Structural Space will also display similar properties" requires careful selection of molecular descriptors and similarity metrics tailored to each specific problem [6]. From simple 2D fingerprint comparisons to complex multi-source similarity networks, successful application of the similarity principle demands explicit consideration of which molecular characteristics are most relevant for the biological context and therapeutic question at hand. By understanding and leveraging the multifaceted nature of similarity—through appropriate alignment strategies, visualization tools, and multi-perspective approaches—drug discovery researchers can more effectively navigate chemical space and accelerate the identification of novel therapeutic agents.

Molecular similarity is a foundational concept in drug discovery, pervading our understanding and rationalization of chemistry. The core principle, often summarized as "similar molecules have similar properties," has served as the backbone for many computational approaches in pharmaceutical research [13] [14]. This principle enables researchers to predict the behavior of novel compounds based on their resemblance to molecules with known activities, thereby streamlining the drug development process. The concept of molecular similarity has evolved from a simple qualitative hypothesis to a sophisticated quantitative framework that encompasses multiple dimensions of molecular characteristics [14]. In modern computational chemistry, similarity measures are crucial for machine learning supervised and unsupervised procedures, virtual screening, and chemical space exploration [13].

The application of molecular similarity extends across the entire drug discovery pipeline, from initial hit identification to lead optimization. However, the definition of "similarity" itself is multifaceted, encompassing different representations and contexts. Traditionally focused on structural similarity, the concept now broadly includes physicochemical properties, biological activity profiles, and three-dimensional shape characteristics [14]. This whitepaper provides a comprehensive technical exploration of the three primary dimensions of molecular similarity—2D structure, 3D shape, and physicochemical properties—within the context of modern drug discovery research. We examine the theoretical foundations, methodological approaches, experimental protocols, and practical applications of each similarity paradigm, providing researchers with a sophisticated toolkit for navigating chemical space efficiently.

Theoretical Foundations of Molecular Similarity

The Similarity Principle and Its Paradoxes

The similarity principle in drug discovery operates on the fundamental assumption that the presence and arrangement of different chemical functionalities within a molecular structure determine intramolecular and intermolecular interactions, which in turn govern chemical forces that result in differences in physical, chemical, and biological properties [14]. This principle suggests that structurally similar compounds should behave similarly in biological systems, enabling property prediction and data gap filling for untested compounds.

However, this principle is not without its exceptions and paradoxes. The concepts of "similarity paradox" and "activity cliffs" present intriguing challenges where small structural modifications can lead to dramatic changes in biological activity [14]. These exceptions highlight the complex nature of molecular interactions and underscore the importance of considering multiple similarity contexts rather than relying solely on structural resemblance. The biological activity of a compound is determined by a complex interplay of structural features, electronic properties, and three-dimensional characteristics that collectively influence its interaction with biological targets.
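Activity cliffs can be flagged by looking for pairs that are structurally similar yet differ strongly in potency. The sketch below does this with set-based fingerprints and also reports a ratio in the style of the Structure-Activity Landscape Index (potency gap divided by one minus similarity), a measure not named in this article. The fingerprints, pIC50 values, and both thresholds are invented for illustration.

```python
from itertools import combinations

def tanimoto(fp_a, fp_b):
    """Tanimoto similarity between fingerprints given as sets of on-bits."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0

def find_activity_cliffs(compounds, sim_cutoff=0.75, potency_gap=2.0):
    """Return (name1, name2, similarity, gap, ratio) for cliff-forming pairs."""
    cliffs = []
    for (n1, (fp1, p1)), (n2, (fp2, p2)) in combinations(compounds.items(), 2):
        sim = tanimoto(fp1, fp2)
        gap = abs(p1 - p2)
        if sim >= sim_cutoff and gap >= potency_gap:
            ratio = gap / (1.0 - sim) if sim < 1.0 else float("inf")
            cliffs.append((n1, n2, sim, gap, ratio))
    return cliffs

# Hypothetical data: name -> (fingerprint on-bits, pIC50).
compounds = {
    "parent": ({1, 2, 3, 4, 5, 6, 7, 8}, 8.5),
    "methyl_analog": ({1, 2, 3, 4, 5, 6, 7, 9}, 5.9),
    "fluoro_analog": ({1, 2, 3, 4, 5, 6, 7, 8, 10}, 8.2),
    "distant": ({20, 21, 22}, 4.0),
}

cliffs = find_activity_cliffs(compounds)
```

Only the parent and its methyl analog form a cliff in this toy set: they share most bits, yet their potencies differ by more than two log units.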

Quantitative Framework for Similarity Assessment

The transition from qualitative to quantitative similarity assessment has been crucial for computational drug discovery. Similarity analysis involves two primary components: (1) structural representations and (2) quantitative measurements of similarity between these representations [8]. Various molecular representations have been developed, including physicochemical properties, topological indices, molecular graphs, pharmacophore features, and molecular shapes. Similarly, multiple metrics exist for quantifying similarity between representations, with the Tanimoto coefficient being the most popular and widely used similarity measure [8].
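For binary fingerprints, the Tanimoto coefficient has a compact closed form. Writing $a$ and $b$ for the number of on-bits in fingerprints $A$ and $B$, and $c$ for the number of on-bits they share (this notation is introduced here for illustration), the Tanimoto and Dice coefficients are:

$$
T(A,B) = \frac{c}{a + b - c}, \qquad D(A,B) = \frac{2c}{a + b}
$$

As a worked example, with $a = 5$, $b = 4$, and $c = 3$, we get $T = 3/(5 + 4 - 3) = 0.5$ and $D = 6/9 \approx 0.67$. Both range from 0 (no shared bits) to 1 (identical fingerprints), and Dice is never smaller than Tanimoto for the same pair.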

The quantitative framework enables researchers to move beyond subjective assessments to objective, computable metrics that can be correlated with biological outcomes. This mathematical formalization of similarity has been essential for developing predictive models in chemoinformatics, including quantitative structure-activity relationships (QSAR), read-across (RA), and more recently, read-across structure-activity relationships (RASAR) [14].

Methodological Approaches to Molecular Similarity

2D Structural Similarity

Two-dimensional structural similarity methods rely on the topological structure of molecules, representing atoms as nodes and bonds as edges in a molecular graph. These approaches are among the fastest, most efficient, and most popular similarity search methods in chemoinformatics [8].

Molecular Fingerprints and Descriptors

Molecular fingerprints encode molecular structures into binary strings or numerical vectors that facilitate rapid similarity comparison. Extended-connectivity fingerprints (ECFP) are particularly widely used to represent local atomic environments in a compact and efficient manner, making them invaluable for representing complex molecules [4]. These traditional representations are especially effective for similarity search, clustering, and quantitative structure-activity relationship modeling due to their computational efficiency and concise format [4].

Table 1: Common 2D Molecular Fingerprints and Their Applications

| Fingerprint Type | Description | Common Applications | Advantages |
|---|---|---|---|
| Extended-Connectivity Fingerprints (ECFP) | Circular topological fingerprints capturing atomic environments | Virtual screening, QSAR, similarity searching | Capture local structure effectively; widely validated |
| Path-Based Fingerprints | Enumeration of all linear fragment paths up to specified length | Similarity searching, clustering | Comprehensive structural coverage |
| MACCS Keys | Predefined structural keys based on 166 common chemical substructures | Rapid similarity assessment, clustering | Highly interpretable; fast computation |
| Atom Pair Fingerprints | Pairs of atoms with their topological distances | Scaffold hopping, similarity searching | Less dependent on central framework |
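To make the path-based entry in the table concrete, the following toy sketch enumerates linear atom paths in a hand-built molecular graph and hashes each path to a bit position. It is a simplified illustration under stated assumptions: bond orders and hydrogens are ignored, the graph is supplied manually rather than parsed from a file, and the function name, `max_len`, and `n_bits` are hypothetical choices rather than the parameters of any published fingerprint.

```python
import zlib


def path_fingerprint(atoms, bonds, max_len=3, n_bits=64):
    """Return the on-bits of a toy path-based fingerprint.

    atoms: list of element symbols; bonds: list of (i, j) atom-index pairs.
    max_len is the maximum number of atoms in an enumerated path.
    """
    neighbors = {i: [] for i in range(len(atoms))}
    for i, j in bonds:
        neighbors[i].append(j)
        neighbors[j].append(i)

    on_bits = set()

    def walk(path):
        forward = "-".join(atoms[k] for k in path)
        backward = "-".join(atoms[k] for k in reversed(path))
        # A path and its reverse describe the same fragment; keep one form.
        canonical = min(forward, backward)
        # crc32 gives a deterministic hash, folded into n_bits positions.
        on_bits.add(zlib.crc32(canonical.encode()) % n_bits)
        if len(path) < max_len:
            for nxt in neighbors[path[-1]]:
                if nxt not in path:
                    walk(path + [nxt])

    for start in range(len(atoms)):
        walk([start])
    return on_bits


# Heavy-atom graphs for ethanol (C-C-O) and propan-1-ol (C-C-C-O).
ethanol = path_fingerprint(["C", "C", "O"], [(0, 1), (1, 2)])
propanol = path_fingerprint(["C", "C", "C", "O"], [(0, 1), (1, 2), (2, 3)])
print(sorted(ethanol), sorted(propanol))
```

Because every path found in ethanol also occurs in propan-1-ol, the ethanol bits form a subset of the propanol bits, which is the kind of overlap a Tanimoto comparison then quantifies.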

String-Based Representations

The Simplified Molecular Input Line Entry System (SMILES) provides a compact and efficient way to encode chemical structures as strings of ASCII characters, translating complex molecular structures into linear sequences that can be easily processed by computer algorithms [4]. Despite the emergence of more sophisticated representations, SMILES remains a mainstream molecular representation method due to its human-readability and compact nature [4]. Newer variations such as SELFIES (Self-Referencing Embedded Strings) have been developed to address syntactic and semantic constraints in traditional SMILES strings, ensuring that every string represents a valid molecular structure.
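As an illustration of how such linear strings are handed to algorithms, the sketch below splits a SMILES string into tokens (bracket atoms, two-letter halogens, single-letter atoms, bonds, branches, and ring-closure digits). The regular expression is a simplified assumption for illustration; it checks only that every character is consumed and does not validate that the string encodes a chemically sensible molecule.

```python
import re

# Order matters: bracket atoms and two-letter halogens are tried before
# single letters so that "Cl" is not split into "C" and "l".
SMILES_TOKEN = re.compile(
    r"\[[^\]]+\]|Br|Cl|%\d{2}|[A-Za-z]|\d|[=#\-+\\/().:@*~$]"
)


def tokenize_smiles(smiles: str) -> list:
    """Split a SMILES string into a list of tokens."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"unrecognized characters in SMILES: {smiles!r}")
    return tokens


print(tokenize_smiles("CC(=O)Oc1ccccc1C(=O)O"))  # aspirin
```

Token sequences of this kind are a common input format for sequence-based models, whereas fingerprint methods usually start from the parsed molecular graph instead.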

3D Shape Similarity

The three-dimensional shape of molecules has been widely recognized as a key determinant for biological activity, as shape complementarity between ligand and receptor is necessary for bringing them sufficiently close to form critical interactions [8]. Molecules with similar shapes are likely to fit the same binding pockets and thereby exhibit similar biological activity, making 3D shape similarity a powerful approach for scaffold hopping and bioisostere replacement.

Alignment-Based Methods

Alignment-based methods rely on finding the optimal superposition between molecules to evaluate shape similarity. These approaches are highly effective in identifying shape similarities but computationally expensive. They enable comparison of surface properties such as hydrophobicity and polarity, and visualization of molecular alignments, which provides valuable insights for molecular design [8]. However, suboptimal molecular alignment can lead to errors in similarity comparison, making the quality of alignment critical for accurate assessment.
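The dependence on alignment quality can be illustrated with a simplified Gaussian overlap score evaluated at a fixed pose. The sketch below models each atom as a spherical Gaussian and computes a shape Tanimoto from first-order pairwise overlaps only. It is an assumption-laden illustration: the width parameter `alpha`, the coordinates, and the function names are hypothetical, no superposition search is performed, and real tools use more elaborate parameterizations.

```python
import math


def gaussian_overlap(coords_a, coords_b, alpha=0.8):
    """Sum of pairwise overlap integrals of atom-centred Gaussians.

    For two Gaussians exp(-alpha*|r-a|^2) and exp(-alpha*|r-b|^2) the
    overlap integral is (pi/(2*alpha))**1.5 * exp(-alpha/2 * |a-b|^2).
    """
    prefactor = (math.pi / (2.0 * alpha)) ** 1.5
    total = 0.0
    for a in coords_a:
        for b in coords_b:
            total += prefactor * math.exp(-0.5 * alpha * math.dist(a, b) ** 2)
    return total


def shape_tanimoto(coords_a, coords_b, alpha=0.8):
    """Shape Tanimoto O_AB / (O_AA + O_BB - O_AB) for the given pose."""
    o_ab = gaussian_overlap(coords_a, coords_b, alpha)
    o_aa = gaussian_overlap(coords_a, coords_a, alpha)
    o_bb = gaussian_overlap(coords_b, coords_b, alpha)
    return o_ab / (o_aa + o_bb - o_ab)


# Hypothetical heavy-atom coordinates in angstroms.
mol = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (2.2, 1.2, 0.0), (3.6, 1.3, 0.4)]
misaligned = [(x + 3.0, y, z) for x, y, z in mol]

print(shape_tanimoto(mol, mol))         # perfectly superposed copy
print(shape_tanimoto(mol, misaligned))  # same shape, poor alignment
```

The second score is lower even though the two shapes are identical, which is why alignment-based methods must search for the superposition that maximizes overlap before reporting a similarity.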

Alignment-Free Methods

Alignment-free methods are independent of molecular position and orientation, making them significantly faster and suitable for screening large compound databases. These include atom distance-based descriptors such as Ultrafast Shape Recognition (USR) and its derivatives [8]. USR calculates the distribution of all atom distances from four reference positions: the molecular centroid (ctd), the closest atom to ctd (cst), the farthest atom from ctd (fct), and the atom farthest from fct (ftf) [8]. This method enables rapid shape comparison without requiring structural alignment.
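A minimal sketch of a USR-style descriptor follows. For each of the four reference positions it summarizes the distribution of atom distances by three moments, giving a 12-number vector, and compares two vectors with an inverse scaled Manhattan distance. The exact moment normalization differs between implementations, so the choice of mean, variance, and third central moment here is an assumption, as are the function names and the coordinates.

```python
import math


def _moments(distances):
    """Mean, variance and third central moment of a list of distances."""
    n = len(distances)
    mean = sum(distances) / n
    var = sum((d - mean) ** 2 for d in distances) / n
    third = sum((d - mean) ** 3 for d in distances) / n
    return [mean, var, third]


def usr_descriptor(coords):
    """12-element shape descriptor from four reference positions."""
    n = len(coords)
    ctd = tuple(sum(c[k] for c in coords) / n for k in range(3))  # centroid
    d_ctd = [math.dist(c, ctd) for c in coords]
    cst = coords[d_ctd.index(min(d_ctd))]  # atom closest to the centroid
    fct = coords[d_ctd.index(max(d_ctd))]  # atom farthest from the centroid
    d_fct = [math.dist(c, fct) for c in coords]
    ftf = coords[d_fct.index(max(d_fct))]  # atom farthest from fct
    descriptor = []
    for ref in (ctd, cst, fct, ftf):
        descriptor += _moments([math.dist(c, ref) for c in coords])
    return descriptor


def usr_similarity(desc_a, desc_b):
    """Score in (0, 1]; 1 means identical descriptors."""
    manhattan = sum(abs(a - b) for a, b in zip(desc_a, desc_b))
    return 1.0 / (1.0 + manhattan / len(desc_a))


# Hypothetical coordinates; the second copy is rotated and translated.
mol = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (2.2, 1.2, 0.0),
       (3.6, 1.3, 0.4), (-0.7, -1.2, 0.3)]
moved = [(-y + 5.0, x - 2.0, z + 1.0) for x, y, z in mol]
print(usr_similarity(usr_descriptor(mol), usr_descriptor(moved)))
```

Because the descriptor is built only from interatomic distances, the rotated and translated copy scores essentially 1, which is what makes the approach alignment-free.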

Table 2: Comparison of 3D Shape Similarity Methods

| Method Category | Representative Techniques | Computational Efficiency | Key Advantages | Limitations |
|---|---|---|---|---|
| Alignment-Based | Molecular superposition algorithms | Low to moderate | Visualizable results; accounts for chemical features | Sensitive to initial conformation; computationally intensive |
| Atom Distance-Based | USR, USRCAT, Electroshape | High | Extremely fast; no alignment needed | May miss specific chemical features |
| Surface-Based | Spherical harmonics, 3D Zernike descriptors | Moderate | Comprehensive surface representation | Computationally demanding for large databases |
| Gaussian Overlay | ROCS, Shaper | Moderate | Good balance of speed and accuracy | Dependent on molecular conformation |

Physicochemical Property Similarity

Beyond structural and shape-based similarities, physicochemical properties provide a complementary dimension for molecular comparison. Properties such as molecular weight, hydrophobicity (logP), hydrogen bond donors/acceptors, polar surface area, and flexibility influence a molecule's absorption, distribution, metabolism, excretion, and toxicity (ADMET) profile [15].

The Chemical Checker provides an integrated framework that extends the similarity principle beyond chemical structure to biological activity space [16]. It divides bioactivity data into five levels of increasing complexity: from chemical properties to clinical outcomes, with intermediate levels including targets, off-targets, networks, and cellular information [16]. By expressing bioactivity data in vector format, the Chemical Checker enables similarity comparison based on multidimensional biological activity signatures rather than just chemical structure.
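The idea of comparing compounds level by level can be sketched with a plain cosine similarity over signature vectors. The vectors, level names, and function below are hypothetical placeholders for illustration only; they are not the Chemical Checker's actual signatures or its distance measure.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


# Hypothetical three-dimensional signatures for two compounds.
sig_a = {"chemistry": [0.9, 0.1, 0.3], "targets": [0.20, 0.80, 0.50]}
sig_b = {"chemistry": [0.1, 0.9, 0.2], "targets": [0.25, 0.75, 0.55]}

per_level = {
    level: cosine_similarity(sig_a[level], sig_b[level]) for level in sig_a
}
print(per_level)
```

In this toy case the two compounds look dissimilar at the chemistry level but nearly identical at the target level, the kind of relationship that a structure-only comparison would miss.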

Experimental Protocols and Methodologies

Protocol for 3D Shape-Based Virtual Screening

Shape-based virtual screening has become a method of choice in an increasing number of drug discovery campaigns, particularly for scaffold hopping and identifying structurally diverse active compounds [8]. The following protocol outlines a standard workflow for 3D shape-based screening:

Query Preparation: Select a known active compound with demonstrated biological activity against the target of interest. Generate a low-energy 3D conformation using molecular mechanics methods (e.g., MMFF94 or GAFF force fields). Consider multiple conformations if the molecule has significant flexibility.

Shape Query Generation: Calculate the molecular shape descriptor using the chosen method (e.g., USR, ROCS). For alignment-based methods, this may involve defining pharmacophoric features in addition to shape points.

Database Preparation: Prepare a database of compounds in 3D format. Generate plausible 3D conformations for each database compound, considering multiple conformers for flexible molecules. Common databases include ZINC, ChEMBL, or corporate collections.

Similarity Calculation: Compute shape similarity between the query and each database compound using the appropriate metric (e.g., Tanimoto combo score in ROCS). For alignment-based methods, this involves finding the optimal superposition that maximizes shape overlap.

Result Analysis and Prioritization: Rank compounds based on shape similarity scores. Apply additional filters based on drug-likeness (e.g., Lipinski's Rule of Five), chemical diversity, or specific pharmacophoric requirements. Select top candidates for experimental testing.
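The final prioritization step can be sketched as a filter followed by a sort. The example below assumes shape scores and physicochemical properties have already been computed by upstream tools; the `Hit` record, the compound names, and the allowance of one Rule of Five violation are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Hit:
    name: str
    shape_score: float  # similarity to the query, higher is better
    mol_weight: float   # Da
    logp: float
    h_donors: int
    h_acceptors: int


def lipinski_violations(hit: Hit) -> int:
    """Count violations of Lipinski's Rule of Five."""
    return sum([
        hit.mol_weight > 500,
        hit.logp > 5,
        hit.h_donors > 5,
        hit.h_acceptors > 10,
    ])


def prioritize(hits, max_violations=1, top_n=10):
    """Drop compounds with too many violations, then rank by shape score."""
    passing = [h for h in hits if lipinski_violations(h) <= max_violations]
    return sorted(passing, key=lambda h: h.shape_score, reverse=True)[:top_n]


# Hypothetical screening output.
hits = [
    Hit("cpd-A", 0.82, 342.4, 2.9, 2, 5),
    Hit("cpd-B", 0.91, 612.7, 6.3, 4, 9),  # two violations: MW and logP
    Hit("cpd-C", 0.77, 410.5, 4.1, 1, 6),
    Hit("cpd-D", 0.88, 520.0, 3.2, 3, 7),  # one violation: MW
]
for hit in prioritize(hits):
    print(hit.name, hit.shape_score)
```

Here the top-scoring compound is removed by the drug-likeness filter, illustrating why similarity rank alone is rarely the only selection criterion.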

Protocol for 2D Similarity Searching and RASAR Modeling

The integration of similarity principles with quantitative modeling has led to the development of novel approaches like read-across structure-activity relationships (RASAR), which combine traditional QSAR with similarity-based reasoning [14]. The following protocol outlines the workflow for 2D similarity searching and RASAR model development:

Descriptor Calculation: Compute 2D molecular descriptors and fingerprints for all compounds in the dataset. Common descriptors include ECFP, MACCS keys, and topological indices.

Similarity Matrix Generation: Calculate pairwise similarity between all compounds using an appropriate similarity metric (e.g., Tanimoto coefficient for binary fingerprints, Euclidean distance for continuous descriptors).

Similarity Descriptor Creation: For each compound, create similarity descriptors based on its similarity to compounds with known activity. This may include:

- Average similarity to known actives

- Maximum similarity to known actives

- Similarity to nearest neighbor

- Similarity profile across multiple reference compounds
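The similarity descriptors listed above can be sketched as follows, using Tanimoto similarity on fingerprints stored as sets of on-bits. The descriptor names and the toy training set are hypothetical, and published RASAR implementations define a richer set of similarity and error-based descriptors than shown here.

```python
def tanimoto(a: set, b: set) -> float:
    shared = len(a & b)
    union = len(a) + len(b) - shared
    return shared / union if union else 0.0


def similarity_descriptors(query_fp, training):
    """training: list of (fingerprint, is_active) pairs with known activity."""
    sims_all = [tanimoto(query_fp, fp) for fp, _ in training]
    sims_active = [s for s, (_, active) in zip(sims_all, training) if active]
    if not sims_active:
        raise ValueError("training set contains no known actives")
    return {
        "mean_sim_actives": sum(sims_active) / len(sims_active),
        "max_sim_actives": max(sims_active),
        "nn_sim": max(sims_all),  # similarity to the nearest neighbor overall
        "profile": sims_all,      # similarity to every reference compound
    }


# Hypothetical fingerprints (sets of on-bit indices) and activity labels.
training = [
    ({1, 2, 3, 5}, True),
    ({1, 2, 7, 8}, True),
    ({1, 2, 3, 4, 9}, False),
]
print(similarity_descriptors({1, 2, 3, 4}, training))
```

In this example the nearest neighbor is an inactive compound, so `nn_sim` exceeds `max_sim_actives`; carrying both values into the model lets it weigh that conflict.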

Model Building: Combine traditional molecular descriptors with similarity descriptors to build predictive models using machine learning algorithms (e.g., random forest, support vector machines, neural networks).

Model Validation: Validate model performance using external test sets or cross-validation, ensuring the model generalizes to new chemical entities.
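As one possible form of cross-validation, the sketch below runs a leave-one-out loop around a simple similarity-weighted read-across predictor. The predictor is a stand-in chosen to keep the example dependency-free; it is not the random forest, support vector machine, or neural network mentioned above, and the dataset and parameter `k` are hypothetical.

```python
def tanimoto(a: set, b: set) -> float:
    shared = len(a & b)
    union = len(a) + len(b) - shared
    return shared / union if union else 0.0


def read_across(query_fp, training, k=2):
    """Similarity-weighted mean activity of the k most similar compounds."""
    ranked = sorted(
        ((tanimoto(query_fp, fp), y) for fp, y in training), reverse=True
    )[:k]
    weight = sum(s for s, _ in ranked)
    if weight == 0:
        # No similar compound at all: fall back to an unweighted mean.
        return sum(y for _, y in ranked) / len(ranked)
    return sum(s * y for s, y in ranked) / weight


def leave_one_out_rmse(dataset, k=2):
    """Predict each compound from all the others and report the RMSE."""
    squared_errors = []
    for i, (fp, y) in enumerate(dataset):
        rest = dataset[:i] + dataset[i + 1:]
        squared_errors.append((read_across(fp, rest, k) - y) ** 2)
    return (sum(squared_errors) / len(squared_errors)) ** 0.5


# Hypothetical fingerprints paired with pIC50-like activity values.
dataset = [
    ({1, 2, 3}, 6.0),
    ({1, 2, 3, 4}, 6.0),
    ({10, 11, 12}, 8.0),
    ({10, 11, 12, 13}, 8.0),
]
print(leave_one_out_rmse(dataset))
```

Leave-one-out estimates tend to be optimistic when close analogs of every compound remain in the training set, so an external test set of structurally distinct compounds is a useful complement.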

Advanced 3D Molecular Generation with Interaction-Guided Design

Recent advances in generative modeling have enabled the design of novel molecules with specific 3D shape and interaction profiles. The DeepICL framework exemplifies this approach by leveraging universal patterns of protein-ligand interactions as prior knowledge [17]. The experimental workflow involves:

Interaction Condition Setting: Analyze protein atoms of the given binding site and assign interaction types (hydrogen bonds, salt bridges, hydrophobic interactions, π-π stacking). Categorize each protein atom into one of seven classes: anion, cation, hydrogen bond donor, hydrogen bond acceptor, aromatic, hydrophobic, or non-interacting.

Interaction Pattern Extraction: For training complexes, use tools like the Protein-Ligand Interaction Profiler (PLIP) to identify non-covalent interactions from reference structures [17].

Conditional Molecular Generation: Employ deep generative models (e.g., DeepICL) to sequentially generate ligand atoms based on the 3D context of the pocket and specific interaction conditions.

Validation of Generated Molecules: Assess generated ligands for binding pose stability, affinity, geometric pattern compliance, diversity, and novelty through computational methods and experimental testing.

Visualization of Molecular Similarity Relationships

Molecular Similarity Assessment Framework

Table 3: Essential Computational Tools for Molecular Similarity Research

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| RDKit | Open-source cheminformatics library | Molecular fingerprint generation, descriptor calculation, substructure searching | General-purpose cheminformatics, 2D similarity assessment |
| OpenBabel | Chemical toolbox | Format conversion, descriptor calculation, molecular alignment | Preprocessing of chemical data, interoperability between tools |
| ROCS (Rapid Overlay of Chemical Structures) | Commercial software | 3D shape-based alignment and similarity calculation | Scaffold hopping, 3D similarity screening |
| USR-VS | Web server | Ultrafast shape recognition for virtual screening | Large-scale shape-based screening without alignment |
| PLIP (Protein-Ligand Interaction Profiler) | Open-source tool | Detection and analysis of non-covalent protein-ligand interactions | Interaction-guided drug design, 3D interaction analysis |
| Chemical Checker | Bioinformatics resource | Integrated bioactivity signatures across multiple levels | Multi-dimensional similarity assessment beyond structure |
| ZINC Database | Public compound database | Curated collection of commercially available compounds | Source compounds for virtual screening |
| ChEMBL Database | Public bioactivity database | Curated bioactivity data for drug-like molecules | Reference data for similarity-based prediction |
| Schrödinger Suite | Commercial drug discovery platform | Comprehensive tools for molecular modeling and simulation | Integrated workflow for structure-based drug design |
| OpenEye Toolkit | Commercial cheminformatics toolkit | High-performance molecular modeling and shape similarity | Large-scale virtual screening, lead optimization |

Advanced Applications in Drug Discovery

Scaffold Hopping and Bioisostere Replacement

Scaffold hopping represents one of the most valuable applications of molecular similarity in drug discovery, aimed at discovering new core structures while retaining similar biological activity [4]. This approach enables researchers to overcome limitations of existing leads, such as toxicity, metabolic instability, or intellectual property constraints. Sun et al. classified scaffold hopping into four main categories of increasing complexity: heterocyclic substitutions, ring opening/closure, peptide mimicry, and topology-based hops [4].

Modern AI-driven molecular generation methods have transformed scaffold hopping through data-driven exploration of chemical diversity. Techniques such as variational autoencoders (VAEs) and generative adversarial networks (GANs) are increasingly utilized to design entirely new scaffolds absent from existing chemical libraries while tailoring molecules to possess desired properties [4]. These approaches leverage advanced molecular representations, such as graph-based embeddings or deep learning-generated features, which capture non-linear relationships beyond manual descriptors.

Interaction-Guided Drug Design

The integration of 3D shape similarity with specific interaction patterns has enabled more sophisticated structure-based design approaches. Frameworks like DeepICL demonstrate how interaction-aware conditioning can guide molecular generation to fulfill specific interaction profiles within target binding pockets [17]. This approach leverages the universal nature of protein-ligand interactions (hydrogen bonds, salt bridges, hydrophobic interactions, and π-π stacking) as prior knowledge to enhance generalizability, particularly in data-limited scenarios.

In practice, interaction-guided design involves analyzing protein atoms in a binding site and establishing interaction conditions that specify desired interaction types and roles. During molecular generation, these conditions guide atom addition to ensure complementary interactions with the target protein [17]. This methodology has shown promise in designing potential mutant-selective inhibitors and addressing practical challenges where specific interaction sites play crucial roles in binding affinity and selectivity.

Multi-Dimensional Similarity and RASAR Modeling

The integration of different similarity contexts has led to the development of novel modeling approaches such as quantitative read-across structure-activity relationships (q-RASAR), which combine traditional QSAR with similarity-based reasoning [14]. RASAR models use similarity descriptors in conjunction with conventional molecular descriptors to build predictive models with enhanced external predictivity compared to standard QSAR approaches [14].

This methodology has been applied across various domains, including predictive toxicology, nanotoxicity assessment, and materials property prediction. By leveraging multiple dimensions of similarity—structural, physicochemical, and biological—RASAR models provide a more comprehensive framework for predicting molecular properties and activities, particularly in data-limited scenarios where traditional statistical modeling approaches face challenges.

Future Perspectives and Challenges

The field of molecular similarity continues to evolve with advances in artificial intelligence, data availability, and computational resources. Several emerging trends are shaping the future of similarity-based drug discovery:

Geometric Deep Learning: Equivariant graph neural networks and other geometric deep learning approaches are enhancing the capability to model 3D molecular structures and their interactions [18] [19]. Models such as DMDiff incorporate SE(3)-equivariance and distance-aware attention mechanisms to better capture spatial relationships in molecular systems [18].

Multi-Modal Representation Learning: The integration of multiple molecular representations—including graphs, sequences, 3D structures, and quantum chemical properties—through cross-modal learning frameworks provides more comprehensive molecular characterization [19]. Approaches like MolFusion's multi-modal fusion and SMICLR's integration of structural and sequential data highlight the potential of these hybrid representations [19].

Self-Supervised Learning: The application of self-supervised learning techniques to molecular data enables leveraging vast unannotated chemical databases to learn meaningful representations [19]. Methods like molecular contrastive learning and pretext task-based pre-training generate transferable representations that enhance performance on downstream prediction tasks with limited labeled data.

Despite these advances, significant challenges remain. Data scarcity, representational inconsistency, interpretability, and computational costs present ongoing obstacles in molecular similarity research [19]. Furthermore, the effective integration of domain knowledge with data-driven approaches requires continued development to ensure that similarity methods remain grounded in chemical and biological principles.

Molecular similarity provides a powerful conceptual framework and practical toolkit for navigating chemical space in drug discovery. The multifaceted nature of similarity—encompassing 2D structure, 3D shape, and physicochemical properties—offers complementary perspectives for compound comparison, prediction, and design. While traditional similarity methods continue to provide value in many applications, advances in artificial intelligence, particularly in geometric deep learning and multi-modal representation, are expanding the scope and capability of similarity-based approaches.

The integration of similarity principles with structural biology and interaction profiling represents a particularly promising direction, enabling more targeted and effective molecular design. As the field continues to evolve, the thoughtful combination of data-driven methods with domain knowledge and principled approaches will be essential for realizing the full potential of molecular similarity in accelerating drug discovery and development.

The concept that similar molecules tend to exhibit similar biological properties represents a foundational pillar of modern medicinal chemistry and drug discovery [20]. This molecular similarity principle, though only explicitly defined with the advent of computers, has been implicitly employed by medicinal chemists for decades through strategies like bioisosteric replacement and scaffold hopping [20]. These approaches leverage structural and functional similarity to optimize key drug properties while maintaining or enhancing biological activity. They demonstrate how systematic molecular modifications can yield compounds with improved pharmacokinetics, reduced toxicity, and novel intellectual property positions. This technical guide examines the historical applications, quantitative outcomes, and experimental protocols underlying these similarity-based strategies, providing researchers with a framework for their application in contemporary drug development programs.

Core Concepts and Definitions

Bioisosteric Replacement

Bioisosterism involves the substitution of a molecular fragment with another that shares similar steric and electronic characteristics, thereby preserving similar biological properties [21]. This approach is widely employed to improve potency, selectivity, and pharmacokinetic profiles [21]. Bioisosteres are traditionally classified into two main categories:

- Classical Bioisosteres: These share similar valency and size (e.g., -OH and -NH₂) and are rooted in the early work of Langmuir, Grimm, and Erlenmeyer [22]. They include mono-, di-, tri-, and tetra-valent atom replacements and ring equivalents [22].

- Non-classical Bioisosteres: These do not obey strict steric and electronic definitions but mimic biological effects through spatial or electrostatic similarity [21]. This category includes ring vs. non-cyclic structures, exchangeable groups, and shape mimics [22].

Molecular Mimicry

Molecular mimicry extends beyond simple atom or group replacement to encompass the imitation of natural molecules in their interaction with biological systems. This includes peptidomimetics, where small molecules are designed to mimic the structural features and biological function of peptides, thereby overcoming limitations like poor metabolic stability and low bioavailability [23]. The example of methotrexate and dihydrofolate binding to dihydrofolate reductase illustrates how molecules with different 2D structures can achieve similar binding through complementary hydrogen-bonding patterns [20].

Scaffold Hopping

Scaffold hopping, also known as lead hopping, aims to identify structurally novel compounds with significantly different molecular backbones while maintaining similar biological activities [23]. This strategy explores novel chemical space to overcome limitations of existing scaffolds, such as poor physicochemical properties or intellectual property constraints. Scaffold hopping can be classified into four major categories [23]:

- Heterocycle Replacements: Swapping atoms within a core heterocycle while maintaining outward-facing vectors.

- Ring Opening or Closure: Modifying molecular flexibility by opening or closing ring systems.

- Peptidomimetics: Replacing peptide scaffolds with non-peptide structures that mimic their spatial arrangement.

- Topology/Shape-Based Hopping: Designing cores with different connectivity but similar three-dimensional shapes.

Quantitative Analysis of Bioisosteric Replacements

Systematic analysis of bioisosteric replacements across pharmacological targets reveals significant and consistent impacts on biological activity. The following table summarizes quantitative data on potency shifts for specific bioisosteric exchanges derived from large-scale ChEMBL database analysis [21].

Table 1: Experimentally Determined Potency Shifts for Common Bioisosteric Replacements

| Bioisosteric Replacement | Target Protein | Mean ΔpChEMBL | Number of Pairs | Statistical Significance (p-value) |
|---|---|---|---|---|
| Ester → Secondary Amide | Muscarinic Acetylcholine Receptor M2 (CHRM2) | -1.26 | 14 | < 0.01 |
| Phenyl → Furanyl | Adenosine A2A Receptor (ADORA2A) | +0.58 | 88 | < 0.01 |
| Phenyl → Furanyl | Adenosine A1 Receptor (ADORA1) | +0.14 | 66 | Not Significant |
| Secondary Amide → Ester | Various Off-targets | Variable | 5 significant cases | < 0.05 |
| Carboxylic Acid → Various | Various Off-targets | Variable | 4 significant cases | < 0.05 |

This data demonstrates that bioisosteric replacements can produce statistically significant potency shifts at specific targets. The differential effect of phenyl-to-furanyl substitutions at ADORA2A versus ADORA1 receptors highlights the potential for selective potency modulation – a crucial consideration in optimizing compound selectivity [21]. Among 58 off-target replacement cases with more than ten compound pairs, 56 exhibited statistically significant potency shifts (p < 0.05), with 53 associated with inhibition and 5 with activation [21].

Experimental Protocols and Workflows

KNIME Workflow for Systematic Bioisostere Assessment

A reproducible, semi-automated KNIME workflow was developed to systematically evaluate bioisosteric replacements across multiple targets [21]. The protocol involves the following key steps:

- Compound Pair Extraction: Identify compound pairs featuring literature-curated common bioisosteric exchanges from databases like ChEMBL.

- Activity Data Retrieval: Retrieve pChEMBL values (negative logarithms of activity values) across a panel of safety-relevant off-target proteins.

- Data Filtering: Apply filters for exact molecular weight (≤600 Da), exclusion of labeled isotopes, and removal of tripeptides and larger peptides.

- Quality Assessment: Calculate pair-level quality metrics including:

  - Document Consistency Ratio: Assesses consistency of source data documentation.

  - Assay Context Consistency Ratio: Evaluates consistency of assay conditions.

- Statistical Analysis: Calculate mean pChEMBL shifts and associated statistical significance using appropriate tests (e.g., t-test).

- Selectivity Profiling: Analyze pChEMBL shifts at secondary targets to determine selectivity profiles.

This workflow enables systematic, data-driven evaluation of potency shifts induced by bioisosteric replacements, aiding in the identification of substitutions associated with off-target potency increases or decreases during lead optimization [21].
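The activity-retrieval and statistical-analysis steps can be sketched in a few lines. The example converts activity values to pChEMBL, computes the per-pair shift, and derives a paired t statistic from the shifts. It is a minimal sketch under stated assumptions: activities are taken to be in nanomolar, the input pairs are hypothetical, and the p-value lookup (for example with `scipy.stats.ttest_rel`) is left out to keep the block dependency-free. It does not reproduce the KNIME workflow itself.

```python
import math
import statistics


def pchembl(activity_nm: float) -> float:
    """-log10 of a molar activity given in nM, i.e. 9 - log10(value in nM)."""
    return 9.0 - math.log10(activity_nm)


def paired_shift_stats(pairs_nm):
    """pairs_nm: (activity_before_nM, activity_after_nM) per matched pair.

    Returns (mean pChEMBL shift, paired t statistic, degrees of freedom).
    Assumes at least two pairs and that the shifts are not all identical.
    """
    if len(pairs_nm) < 2:
        raise ValueError("need at least two compound pairs")
    shifts = [pchembl(after) - pchembl(before) for before, after in pairs_nm]
    mean_shift = statistics.mean(shifts)
    std_err = statistics.stdev(shifts) / math.sqrt(len(shifts))
    return mean_shift, mean_shift / std_err, len(shifts) - 1


# Hypothetical matched pairs: activity before and after a replacement.
pairs = [(10.0, 100.0), (20.0, 400.0), (5.0, 40.0)]
mean_shift, t_stat, dof = paired_shift_stats(pairs)
print(f"mean shift = {mean_shift:.2f}, t = {t_stat:.2f}, df = {dof}")
```

A negative mean shift indicates that the replacement lowered potency at that target; comparing the t statistic against a t distribution with the reported degrees of freedom gives the significance level.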

Scaffold-Hopping Protocol for Molecular Glue Development

A scaffold-hopping approach for developing molecular glues stabilizing the 14-3-3σ/ERα complex utilized the following methodology [24]:

- Anchor Identification: From a known molecular glue (compound 127), identify a deeply buried "anchor" motif (p-chloro-phenyl ring serving as a phenylalanine anchor).

- Pharmacophore Definition: Define three additional pharmacophore points representing key ligand-protein interactions.

- Virtual Screening: Use AnchorQuery software to screen a library of ~31 million compounds that are synthesizable via one-step multi-component reactions.

- Scaffold Selection: Prioritize hits based on RMSD fit to original scaffold and molecular weight (<400 Da).

- Synthetic Exploration: Employ Groebke-Blackburn-Bienaymé multi-component reaction chemistry for rapid derivatization.

- Biophysical Characterization: Evaluate hits using intact mass spectrometry, TR-FRET, and SPR.

- Structural Validation: Determine crystal structures of ternary complexes to guide optimization.

- Cellular Assay: Confirm PPI stabilization in live cells using NanoBRET assay with full-length proteins.

Pathway and Workflow Visualization

KNIME Bioisostere Analysis Workflow: A semi-automated workflow for systematic evaluation of bioisosteric replacements.

Scaffold-Hopping for Molecular Glues: Computational design and optimization workflow for PPI stabilizers.

Table 2: Key Research Reagents and Computational Tools for Similarity-Based Drug Design

| Tool/Resource | Type | Primary Function | Application Example |
|---|---|---|---|
| KNIME Analytics Platform | Workflow Environment | Data pipelining and analysis | Semi-automated analysis of bioisosteric replacements across target panels [21] |
| ChEMBL Database | Chemical Database | Bioactivity data repository | Source of compound pairs and pChEMBL values for bioisostere analysis [21] |
| AnchorQuery | Virtual Screening Software | Pharmacophore-based screening of MCR libraries | Scaffold hopping for molecular glues targeting 14-3-3/ERα complex [24] |
| RDKit | Cheminformatics Toolkit | Molecular fingerprint generation and similarity calculation | Chemical space analysis and molecular descriptor calculation [25] |
| MACCS Keys | Molecular Fingerprint | Structural key representation of molecules | Similarity assessment between drugs and endogenous metabolites [25] |
| Groebke-Blackburn-Bienaymé Reaction | Multi-component Reaction | Synthesis of imidazo[1,2-a]pyridines | Rapid generation of diverse molecular glue scaffolds [24] |
| Intact Mass Spectrometry | Biophysical Assay | Detection of protein-ligand complexes | Identification of molecular glue binding to 14-3-3/ERα complex [24] |
| NanoBRET | Cellular Assay | Protein-protein interaction monitoring in live cells | Cellular validation of PPI stabilization by molecular glues [24] |

Historical Case Studies in Drug Discovery

Morphine to Tramadol: Ring Opening for Improved Safety Profile

The evolution from morphine to tramadol represents an early successful application of scaffold hopping through ring opening [23]. Morphine, a potent analgesic with significant addiction potential and side effects, features a rigid 'T'-shaped structure with multiple fused rings. Tramadol was developed by breaking six ring bonds and opening three fused rings, resulting in a more flexible molecule [23]. Despite very different 2D structures, 3D superposition demonstrates conservation of key pharmacophore features: the positively charged tertiary amine, aromatic ring, and hydroxyl group (methoxyl group in tramadol, which is demethylated by CYP2D6) [23]. This scaffold hop reduced potency but significantly improved the safety profile, with tramadol exhibiting almost complete oral absorption and longer duration of action [23].

Antihistamine Development: Progressive Scaffold Optimization

The development of antihistamines provides a compelling case study of progressive scaffold optimization through ring closure and heterocycle replacement [23]. The classical antihistamine pheniramine features two aromatic rings joined to a central atom with a positive charge center. Through ring closure, cyproheptadine was developed by locking both aromatic rings to the active conformation and introducing a piperidine ring to reduce flexibility, significantly improving binding affinity to the H1-receptor [23]. Further optimization through isosteric replacement of one phenyl ring with thiophene produced pizotifen, which demonstrated improved efficacy for migraine treatment [23]. Replacement of a phenyl ring with pyridine in azatadine further improved solubility while maintaining antihistamine activity [23]. These examples demonstrate how small, rational changes to molecular scaffolds can result in different activity profiles and medical uses.

Contemporary Applications and Future Perspectives

AI-Enhanced Similarity Assessment

Artificial intelligence is transforming molecular similarity assessment in drug design. AI models, particularly deep learning approaches, can capture complex structure-activity relationships that traditional similarity metrics might miss [26]. These models can process multiple molecular representations simultaneously – including 2D structures, 3D conformations, and physicochemical properties – to provide more holistic similarity assessments [26]. AI-powered tools are being increasingly applied to predict bioisosteric replacements and scaffold hops with higher accuracy, accelerating lead optimization cycles [26].

Expanding the E3 Ligase Toolbox for Targeted Protein Degradation

In the rapidly advancing field of targeted protein degradation, bioisosteric replacement and scaffold hopping are crucial for expanding the E3 ligase toolbox beyond the currently dominant cereblon, VHL, MDM2, and IAP ligases [27]. Research efforts are now focusing on developing degraders that recruit underutilized E3 ligases including DCAF16, DCAF15, DCAF11, KEAP1, and FEM1B [27]. These expansions require careful optimization of molecular glues and PROTACs through similarity-based design strategies to achieve selective target degradation while minimizing off-target effects.

The historical applications of bioisosteric replacement, molecular mimicry, and scaffold hopping demonstrate the enduring power of the similarity principle in drug design. As computational methods advance, these strategies continue to evolve, enabling more systematic and predictive optimization of therapeutic agents across an expanding range of target classes.

The systematic discovery of new therapeutics relies on a central, guiding hypothesis: similar molecules exhibit similar biological activities. This principle of similarity forms the cornerstone of modern drug discovery, providing a predictive framework for identifying and optimizing chemical compounds. At its core, this hypothesis enables researchers to infer the properties of novel molecules based on the known properties of structurally related compounds, creating a rational pathway through the vastness of chemical space [28]. The operationalization of this principle has evolved from simple chemical analoging to sophisticated computational approaches that quantitatively define and exploit molecular relationships across the entire drug discovery pipeline.

The economic and temporal constraints of modern drug development necessitate such predictive principles. With the average drug taking over a decade and billions of dollars to reach patients, efficiency in the early discovery phases—particularly hit identification and lead optimization—becomes critical [29] [30]. The similarity hypothesis directly addresses this need by providing a strategic compass for navigating chemical exploration, significantly increasing the probability of success while conserving resources. This technical guide examines how this central hypothesis is applied across contemporary hit identification and lead optimization workflows, detailing the experimental and computational methodologies that transform this theoretical principle into practical discovery engines.

The Similarity Principle in Hit Identification Strategies

Hit identification (Hit ID) represents the crucial initial stage of drug discovery where molecules with desirable biological activity against a therapeutic target are identified [29]. The similarity principle informs several key strategic decisions in Hit ID campaign design:

Compound Library Design and Screening Strategies

The composition of screening libraries directly reflects the similarity hypothesis. Libraries are curated to contain compounds with proven lead-like properties, good solubility, and chemical diversity to maximize the probability of identifying quality hits [29]. The strategic application of similarity occurs through several distinct screening approaches:

Table 1: Hit Identification Screening Strategies Informed by Similarity Principles

| Screening Approach | Similarity Application | Key Considerations |
|---|---|---|
| High-Throughput Screening (HTS) [29] [30] | Broad chemical diversity maximizes chance encounters with similar active scaffolds | Requires large libraries (>100,000 compounds); High resource investment |
| Focused Screening [30] | Targets compounds similar to known binders of target family | Requires prior structural knowledge; Higher hit rate but limited novelty |
| Virtual Screening [30] [28] | Computational similarity searching against known actives | Rapid and cost-effective; Dependent on model quality |
| Fragment-Based Screening [30] | Identifies simple, similar structural motifs with weak binding | Requires specialized detection methods; Followed by fragment assembly |

The strategic selection of screening approach depends heavily on available target information. When substantial knowledge exists about ligands for similar targets, focused screening or virtual screening leveraging similarity metrics typically provides more efficient exploration of chemical space [30]. Conversely, for novel targets with limited ligand information, diverse HTS campaigns offer the best opportunity to identify novel chemotypes that can later serve as similarity search queries.

Experimental Protocols for Similarity-Driven Hit Identification

Protocol 1: Virtual Screening Workflow Using Chemical Similarity

- Query Selection: Identify one or more known active compounds against the target of interest. These may come from public databases (ChEMBL, PubChem) or prior internal screening data [28].

- Fingerprint Generation: Encode the molecular structures of the query compounds into chemical fingerprints. Common fingerprint families include structural keys (e.g., MACCS), circular fingerprints (e.g., ECFP), and path-based fingerprints (e.g., Daylight).

- Similarity Calculation: Screen the virtual compound library by calculating the Tanimoto similarity index between the query and each library compound:

Tanimoto Similarity = (Number of features common to both molecules) / (Total number of unique features across both molecules)

Compounds with similarity values above a chosen cutoff, typically 0.7-0.8, are prioritized for further evaluation [28].

- Hit Confirmation: Subject computationally prioritized compounds to experimental validation in primary assays.
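The similarity calculation and prioritization steps of Protocol 1 can be sketched in a few lines of Python. This is a minimal illustration, not a production workflow: the fingerprints are hypothetical hand-written sets of "on" bit indices, the compound names are invented, and the 0.7 cutoff is taken from the range quoted above. In practice the fingerprints would come from a cheminformatics toolkit.

```python
def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto coefficient: shared on-bits divided by all unique on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def screen(query, library, threshold=0.7):
    """Return (compound_id, similarity) pairs at or above threshold, best first."""
    scored = ((cid, tanimoto(query, fp)) for cid, fp in library.items())
    hits = [(cid, s) for cid, s in scored if s >= threshold]
    return sorted(hits, key=lambda pair: pair[1], reverse=True)


# Hypothetical fingerprints: each set holds the indices of bits that are "on".
query_fp = frozenset({1, 4, 7, 9, 12, 15, 18, 21})
library_fps = {
    "cmpd_A": frozenset({1, 4, 7, 9, 12, 15, 18, 22}),  # 7 shared bits, 9 in the union
    "cmpd_B": frozenset({1, 4, 7, 9, 12, 15, 18, 21}),  # identical to the query
    "cmpd_C": frozenset({2, 3, 5, 9, 30}),              # 1 shared bit, 12 in the union
}

hits = screen(query_fp, library_fps, threshold=0.7)
for compound_id, similarity in hits:
    print(f"{compound_id}: {similarity:.3f}")
```

Only `cmpd_B` (identical) and `cmpd_A` (7/9, about 0.78) pass the cutoff; `cmpd_C` shares a single bit with the query and is filtered out before any experimental follow-up.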

Protocol 2: Focused Library Design for Protein Families

- Target Analysis: Identify conserved structural features or binding motifs across the target protein family (e.g., kinase ATP-binding site).

- Pharmacophore Modeling: Define essential molecular features required for target binding based on known ligands [30].

- Similarity Searching: Query corporate or commercial compound collections using the pharmacophore model or known active scaffolds as similarity queries.

- Library Assembly: Curate a focused screening set enriched with compounds sharing these similarity characteristics, typically numbering 1,000-10,000 compounds [30].
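As a rough sketch of the last two steps of Protocol 2, the snippet below filters candidates by a required pharmacophore feature set and then keeps the compounds most similar to known actives, up to a size cap. Everything here is an assumption made for illustration: the feature names, the candidate records, and the precomputed similarity values are hypothetical, and a real pharmacophore match would also consider 3D geometry.

```python
def assemble_focused_library(candidates, required_features, max_size):
    """Keep candidates that carry every required feature, ranked by similarity.

    candidates maps compound_id -> (feature_set, similarity_to_nearest_known_active).
    """
    passing = [
        (cid, similarity)
        for cid, (features, similarity) in candidates.items()
        if required_features <= features  # subset test: all required features present
    ]
    passing.sort(key=lambda pair: pair[1], reverse=True)
    return [cid for cid, _ in passing[:max_size]]


# Hypothetical pharmacophore model and candidate annotations.
required = {"hbond_donor", "hbond_acceptor", "aromatic_ring"}
candidates = {
    "c1": ({"hbond_donor", "hbond_acceptor", "aromatic_ring"}, 0.82),
    "c2": ({"hbond_acceptor", "aromatic_ring"}, 0.91),  # lacks the donor, rejected
    "c3": ({"hbond_donor", "hbond_acceptor", "aromatic_ring", "halogen"}, 0.64),
    "c4": ({"hbond_donor", "hbond_acceptor", "aromatic_ring"}, 0.75),
}

focused = assemble_focused_library(candidates, required, max_size=2)
print(focused)  # ['c1', 'c4']
```

Note that `c2` is excluded despite having the highest similarity score, which illustrates why the pharmacophore filter and the similarity ranking are applied as separate steps.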

Quantitative Similarity Methods in Lead Optimization

Once initial hits are identified, the similarity hypothesis guides the lead optimization process through more nuanced quantitative approaches that explore structure-activity relationships (SAR).

Chemoinformatic Methods for Similarity Quantification

Table 2: Quantitative Methods for Leveraging Similarity in Lead Optimization

| Method | Technical Approach | Application in Lead Optimization |
|---|---|---|
| Chemical Similarity Networks [28] | Clusters compounds based on structural similarity using Tanimoto distances | Identifies distinct chemotypes; Reveals SAR patterns across structural classes |
| Similarity Ensemble Approach (SEA) [28] | Calculates similarity against random background using BLAST-like algorithm | Predicts potential off-target interactions and polypharmacology |
| Structural Poly-Pharmacology [28] | Uses 3D ligand structure similarity to identify scaffold hops | Suggests novel scaffolds with maintained activity; Designs out toxicity |
| QSAR Modeling [31] | Relates quantitative molecular descriptors to biological activity | Predicts potency of analogous compounds before synthesis |

These quantitative methods enable a more sophisticated application of the similarity principle that moves beyond simple structural analogy to include similarity in physicochemical properties, binding interactions, and network behavior.
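To make the chemical similarity network idea from Table 2 concrete, the sketch below links compounds whose Tanimoto similarity meets a threshold and reads clusters off as connected components. It is one simple way to build such a network, assumed here for illustration; the fingerprints, compound names, and the 0.5 threshold are hypothetical, and published workflows may use different clustering rules.

```python
from itertools import combinations


def tanimoto(a: set, b: set) -> float:
    """Tanimoto coefficient between two sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def similarity_network(fingerprints, threshold):
    """Adjacency map with an edge wherever pairwise similarity >= threshold."""
    graph = {cid: set() for cid in fingerprints}
    for (i, fp_i), (j, fp_j) in combinations(fingerprints.items(), 2):
        if tanimoto(fp_i, fp_j) >= threshold:
            graph[i].add(j)
            graph[j].add(i)
    return graph


def connected_components(graph):
    """Group nodes into clusters (candidate chemotypes) by depth-first search."""
    seen, clusters = set(), []
    for start in graph:
        if start in seen:
            continue
        stack, component = [start], set()
        while stack:
            node = stack.pop()
            if node in component:
                continue
            component.add(node)
            stack.extend(graph[node] - component)
        seen |= component
        clusters.append(sorted(component))
    return clusters


# Hypothetical fingerprints for three structural series.
fps = {
    "a1": {1, 2, 3, 4}, "a2": {1, 2, 3, 5}, "a3": {1, 2, 3, 4, 5},
    "b1": {10, 11, 12}, "b2": {10, 11, 12, 13},
    "c1": {20, 21},
}
clusters = connected_components(similarity_network(fps, threshold=0.5))
print(clusters)  # [['a1', 'a2', 'a3'], ['b1', 'b2'], ['c1']]
```

Each cluster can then be inspected as a separate chemotype, and a singleton such as `c1` flags a compound with no close analogs in the set.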

Advanced Applications: AI and Active Learning

Recent advances have integrated the similarity principle with generative artificial intelligence (AI) to create iterative optimization systems. These systems employ active learning frameworks where:

- Initial compounds with known activity are used to train generative models.

- These models propose novel compounds with structural similarity but optimized properties.

- Proposed compounds are evaluated computationally or experimentally.

- Results are fed back to retrain the model, creating a continuous improvement cycle [32].

For example, a recently developed workflow combining variational autoencoders with active learning cycles successfully generated novel, diverse scaffolds for CDK2 and KRAS targets while maintaining predicted affinity. This approach yielded experimentally confirmed nanomolar inhibitors for CDK2, demonstrating the power of combining similarity principles with modern AI methodologies [32].
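The generate, evaluate, and retrain cycle described above can be reduced to a toy skeleton. This is deliberately not the variational autoencoder workflow from [32]: each "molecule" is a single number, `propose` is a stand-in for a generative model, `oracle` is a stand-in for a docking score or assay, and "retraining" is simplified to reusing the best-scoring candidates as seeds for the next round. All names and values are assumptions chosen only to show the control flow.

```python
import random


def propose(seeds, rng, n=20):
    """Stand-in generative model: small perturbations of current seed 'molecules'."""
    return [s + rng.uniform(-1.0, 1.0) for s in rng.choices(seeds, k=n)]


def oracle(x):
    """Stand-in evaluation (docking or assay); the score peaks at x = 3.0."""
    return -(x - 3.0) ** 2


def active_learning(initial, cycles=5, keep=5, seed=0):
    rng = random.Random(seed)
    training = list(initial)
    history = []
    for _ in range(cycles):
        candidates = propose(training, rng)            # propose similar candidates
        ranked = sorted(candidates + training, key=oracle, reverse=True)  # evaluate
        training = ranked[:keep]                       # feed results back as new seeds
        history.append(oracle(training[0]))            # best score after this cycle
    return training, history


final, history = active_learning([0.0, 0.5, 1.0])
print([round(score, 3) for score in history])
```

Because each round keeps the previous seeds in the ranking, the best score can never get worse from one cycle to the next, which is the "continuous improvement" property in its simplest form.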

Visualization of Workflows

Hit Identification and Optimization Workflow

AI-Driven Molecular Optimization

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Similarity-Driven Drug Discovery

| Reagent/Material | Function in Similarity-Based Discovery | Application Notes |
|---|---|---|
| Diverse Compound Libraries [29] | Provides chemical matter for initial similarity searching; Should contain lead-like compounds with proven chemical diversity | Libraries of 100,000+ compounds common for HTS; Quality control critical for reliable SAR |
| Focused/Target-Class Libraries [30] | Enriched with compounds similar to known binders of specific protein families; Increases hit rates for related targets | Typically 1,000-10,000 compounds; Requires prior knowledge of target class |
| Fragment Libraries [30] | Minimal structural motifs for identifying fundamental similarity requirements; Weak binders optimized through similarity-guided assembly | Typically <300 Da; Requires sensitive detection methods (SPR, NMR, MS) |
| Assay Reagents [29] | Enables validation of similarity predictions through biological testing; Includes recombinant proteins, cell lines, detection reagents | Robust, pharmacologically sensitive assays essential for reliable SAR |
| Chemoinformatic Tools [28] | Quantifies molecular similarity; Enables virtual screening and SAR analysis | Multiple fingerprint types and similarity metrics should be evaluated |

The principle that similar molecules exhibit similar biological activities remains the fundamental hypothesis guiding efficient drug discovery. This central premise provides the strategic foundation for hit identification campaigns and the tactical direction for lead optimization efforts. While the core hypothesis remains unchanged, its implementation has evolved dramatically from simple chemical analoging to sophisticated computational approaches that quantitatively explore chemical space.

Modern drug discovery leverages this similarity principle across multiple dimensions—from the initial design of screening libraries to the application of AI-driven generative chemistry in lead optimization. The continued integration of this time-tested hypothesis with emerging technologies ensures that similarity-based reasoning will remain essential for addressing the ongoing challenge of efficiently navigating the vast chemical universe to discover novel therapeutics. As quantitative and systems pharmacology approaches continue to mature, the similarity principle provides the necessary conceptual framework for integrating diverse data types into coherent predictive models that accelerate the delivery of new medicines to patients.

From Theory to Toolbox: Computational Methods and Real-World Applications

The similarity principle is a foundational concept in drug design, positing that structurally similar molecules are likely to exhibit similar biological activities [33]. This principle enables researchers to prioritize compound synthesis and testing by predicting activity based on structural resemblance to known active molecules. However, a significant challenge lies in quantitatively defining and measuring "structural similarity", a problem addressed through computational approaches using molecular fingerprints and similarity metrics [33]. Molecular fingerprints serve as a bridge between chemical structures and their biological properties, creating mathematical representations that enable rapid comparison of large compound libraries [34]. These representations have become indispensable in modern cheminformatics, supporting critical tasks including virtual screening, quantitative structure-activity relationship (QSAR) modeling, and scaffold hopping in drug discovery research [4] [35].

Molecular Fingerprints: Encoding Chemical Information

Definition and Core Characteristics

Molecular fingerprints are computational representations that encode chemical structures into fixed-length vectors, transforming structural features into formats suitable for machine learning algorithms and similarity calculations [34]. Effective fingerprints share key characteristics: they represent local molecular structures, combine efficiently to represent entire molecules, and maintain mutually independent features [34].

Types of 2D Fingerprints

Table 1: Major Categories of 2D Molecular Fingerprints

| Fingerprint Category | Basis of Representation | Key Examples | Typical Vector Length | Primary Applications |
|---|---|---|---|---|
| Dictionary-Based (Structural Keys) | Predefined structural fragments | MACCS, PubChem fingerprints | 166-881 bits | Substructure search, rapid filtering [34] [35] |
| Circular Fingerprints | Atomic environments within specific radii | ECFP, FCFP | 1024-2048 bits | Similarity search, QSAR, virtual screening [34] [35] [36] |
| Topological (Path-Based) Fingerprints | Linear paths through molecular graph | Daylight, FP2 | 256-2048 bits | Similarity searching, substructure matching [35] [33] |
| Pharmacophore Fingerprints | Functional interaction features | 2D pharmacophore, PH2, PH3 | Varies | Activity prediction, binding mode analysis [35] [36] |
| Atom-Pair Fingerprints | Atom pairs with topological distances | Atom Pairs (AP) | Varies | Similarity comparisons, medium-range features [33] [36] |

Dictionary-Based Fingerprints (Structural Keys)

Dictionary-based fingerprints, also called structural keys, utilize predefined dictionaries of functional groups, substructure motifs, or fragments [34]. Each bit position in the fingerprint corresponds to a specific structural feature, with "1" indicating presence and "0" indicating absence of that feature in the molecule [34]. Common examples include Molecular ACCess System (MACCS) with 166 structural keys and PubChem fingerprints [34] [35]. These fingerprints excel in rapid substructure searching and database filtering due to their direct mapping to specific chemical features.
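The bit-per-feature mapping can be illustrated with a toy key dictionary. The five keys below are hypothetical and far smaller than a real set such as MACCS, and the molecules are represented as hand-annotated sets of fragment names rather than parsed structures, so the sketch shows only the encoding logic and the screening test it enables.

```python
# Hypothetical key dictionary; position in this list is the bit position.
KEYS = ["carboxylic_acid", "primary_amine", "aromatic_ring", "halogen", "hydroxyl"]


def structural_key_fingerprint(fragments: set) -> list:
    """Bit i is 1 if the molecule contains KEYS[i], otherwise 0."""
    return [1 if key in fragments else 0 for key in KEYS]


def may_contain(candidate_fp: list, query_fp: list) -> bool:
    """Screening test: every bit set in the query must also be set in the candidate."""
    return all(q <= c for q, c in zip(query_fp, candidate_fp))


# Hand-annotated fragments for an aspirin-like molecule ("ester" is not a key, so it is ignored).
aspirin_fp = structural_key_fingerprint({"carboxylic_acid", "aromatic_ring", "ester"})
print(aspirin_fp)  # [1, 0, 1, 0, 0]

aromatic_acid_query = structural_key_fingerprint({"carboxylic_acid", "aromatic_ring"})
halogen_query = structural_key_fingerprint({"halogen"})
print(may_contain(aspirin_fp, aromatic_acid_query))  # True
print(may_contain(aspirin_fp, halogen_query))        # False
```

The `may_contain` check is why structural keys suit database filtering: a candidate that lacks any bit required by the query can be discarded without a full substructure match. Features outside the dictionary, like the ester here, are invisible to the fingerprint.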

Circular Fingerprints

Circular fingerprints generate molecular representations by iteratively exploring the environment around each atom, extending to neighboring atoms up to a specified radius [34]. Unlike dictionary-based approaches, circular fingerprints dynamically generate structural fragments rather than relying on predefined patterns [36]. The most prominent examples are Extended-Connectivity Fingerprints (ECFPs) and Functional-Class Fingerprints (FCFPs) [34]. ECFPs have become a de facto standard for similarity searching and QSAR modeling, particularly for drug-like molecules [36].
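The iterative neighborhood expansion can be sketched as follows. This is a heavily simplified illustration in the spirit of circular fingerprints, not the ECFP algorithm itself: atoms are described only by element symbol and number of neighbors, bond orders are ignored, duplicate environments are not pruned, and the `zlib.crc32` hash and 1024-bit folding are assumptions chosen to keep the example deterministic and dependency-free.

```python
import zlib


def stable_hash(obj) -> int:
    """Deterministic 32-bit hash (the built-in hash() is salted for strings)."""
    return zlib.crc32(repr(obj).encode())


def circular_fingerprint(atoms, bonds, radius=2, n_bits=1024):
    """atoms: list of element symbols; bonds: list of (i, j) atom-index pairs."""
    neighbors = {i: [] for i in range(len(atoms))}
    for i, j in bonds:
        neighbors[i].append(j)
        neighbors[j].append(i)

    # Radius 0: each atom's identifier depends only on the atom itself.
    ids = [stable_hash((symbol, len(neighbors[i]))) for i, symbol in enumerate(atoms)]
    features = set(ids)

    for _ in range(radius):
        # Each round folds in the neighbors' current identifiers,
        # so the encoded environment grows by one bond per iteration.
        ids = [
            stable_hash((ids[i], sorted(ids[n] for n in neighbors[i])))
            for i in range(len(atoms))
        ]
        features.update(ids)

    # Fold the identifiers into a fixed number of bit positions.
    return {feature % n_bits for feature in features}


# Ethanol heavy atoms written in two different atom orders: C-C-O and O-C-C.
fp_a = circular_fingerprint(["C", "C", "O"], [(0, 1), (1, 2)])
fp_b = circular_fingerprint(["O", "C", "C"], [(0, 1), (1, 2)])
print(fp_a == fp_b)  # True
```

Sorting the neighbor identifiers before hashing is what makes the result independent of atom numbering, and no predefined fragment dictionary is needed because the features are generated from the molecule itself.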

Topological and Path-Based Fingerprints

Topological fingerprints analyze molecular connectivity through paths or fragments within the molecular graph [35]. Path-based fingerprints examine all linear paths of bonds and atoms up to a predetermined length, typically 5-7 atoms, hashing each unique path to generate the fingerprint [33]. Examples include Daylight and FP2 fingerprints [35]. These representations capture connectivity patterns that can relate to molecular properties and biological activity.
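Path enumeration and hashing can be sketched in the same dependency-free style. This is an assumption-laden toy, not the Daylight or FP2 implementation: paths are described by element symbols only, bond types and ring closures are ignored, and the `zlib.crc32` hash and bit count are illustrative choices.

```python
import zlib


def linear_paths(atoms, bonds, max_atoms=7):
    """Enumerate unique linear atom paths containing up to max_atoms atoms."""
    neighbors = {i: [] for i in range(len(atoms))}
    for i, j in bonds:
        neighbors[i].append(j)
        neighbors[j].append(i)

    paths = set()

    def walk(path):
        labels = tuple(atoms[i] for i in path)
        # A path read forwards or backwards is the same path; keep one canonical form.
        paths.add(min(labels, labels[::-1]))
        if len(path) < max_atoms:
            for nxt in neighbors[path[-1]]:
                if nxt not in path:  # never revisit an atom within one path
                    walk(path + [nxt])

    for start in range(len(atoms)):
        walk([start])
    return paths


def path_fingerprint(atoms, bonds, max_atoms=7, n_bits=1024):
    """Hash each unique path to a bit position."""
    return {
        zlib.crc32("-".join(path).encode()) % n_bits
        for path in linear_paths(atoms, bonds, max_atoms)
    }


# Ethanol heavy atoms: C-C-O
ethanol_paths = linear_paths(["C", "C", "O"], [(0, 1), (1, 2)])
print(sorted(ethanol_paths, key=lambda p: (len(p), p)))
# [('C',), ('O',), ('C', 'C'), ('C', 'O'), ('C', 'C', 'O')]
ethanol_fp = path_fingerprint(["C", "C", "O"], [(0, 1), (1, 2)])
```

Because several paths may hash to the same bit, a set bit does not identify a unique fragment, which is the main practical difference from structural keys.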

Specialized Fingerprint Variants