Beyond Rigid Docking: Mastering Protein Flexibility with Deep Learning and Ensemble Methods


Abstract

Molecular docking, a cornerstone of structure-based drug design, has long been hampered by the challenge of protein flexibility. Traditional rigid docking methods offer incomplete representations of biological reality, often failing to predict accurate binding modes. This article provides a comprehensive overview for researchers and drug development professionals on the critical evolution toward flexible docking. We explore the foundational concepts of induced fit and conformational selection, detail the latest methodological advances including deep learning diffusion models and ensemble docking, and offer a comparative analysis of their performance in pose prediction, physical plausibility, and virtual screening. Finally, we present troubleshooting strategies for common pitfalls and discuss future directions, highlighting how integrating flexibility is transforming computational predictions into biomedical breakthroughs.

The Protein Flexibility Imperative: From Lock-and-Key to Induced Fit

FAQs: Understanding Rigid Body Docking and Its Challenges

FAQ 1: What is the fundamental limitation of traditional rigid body docking? The core limitation is the treatment of proteins as static, unmoving structures. In reality, proteins are dynamic, and their side chains, loops, and sometimes even secondary structures shift and move upon binding. Rigid body docking, which uses Fast Fourier Transform (FFT) algorithms for computational efficiency, cannot account for these conformational changes. This "rigid body assumption" introduces clear limitations on accuracy and reliability [1].

FAQ 2: What specific errors can this limitation cause in my results? This limitation can lead to several common issues:

- Scoring Failures: Docked conformations close to the native structure may not have the lowest energies, while incorrect, low-energy conformations are ranked highly [1].

- Pose Prediction Inaccuracy: The method's reduced sensitivity to shape complementarity can result in the failure to identify the correct binding pose, especially when side chains or backbone atoms must rearrange to accommodate the ligand [2].

- Cross-Docking Failures: A protein structure crystallized with one ligand may have an active site biased toward that specific molecule. Attempting to dock a different ligand into this rigid structure often fails if critical conformational shifts are not accounted for [2].

FAQ 3: My docking run completed, but the top-ranked pose looks wrong. What should I do? This is a classic symptom of scoring failure due to rigidity. Your next steps should be:

- Do not trust a single top pose. Rigid body methods must retain a large set of low-energy docked structures for further processing. Always analyze multiple top-ranked clusters, not just the first one [1].

- Inspect the interface. Check for steric clashes that a slightly flexible side chain could resolve. Poor electrostatic or desolvation energy terms at the interface can also explain why a correct pose was poorly ranked [1].

- Move to refinement. Use a method that incorporates flexibility—even if just for side chains—to refine the top clusters from your rigid body docking experiment.
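The advice to examine clusters rather than a single pose can be sketched as a greedy, population-based clustering. This is a minimal illustration under stated assumptions: the function name `greedy_cluster`, the user-supplied `distance` callable, and the `radius` cut-off are all hypothetical, and the code is not ClusPro's implementation.

```python
from typing import Callable, List, Sequence, Tuple, TypeVar

Pose = TypeVar("Pose")


def greedy_cluster(
    poses: Sequence[Pose],
    distance: Callable[[Pose, Pose], float],
    radius: float,
) -> List[Tuple[int, List[int]]]:
    """Group poses by repeatedly picking the pose with the most neighbours.

    Returns a list of (center_index, member_indices), largest clusters first.
    `distance` is any symmetric pose-to-pose metric (e.g. an interface RMSD).
    """
    remaining = list(range(len(poses)))
    clusters: List[Tuple[int, List[int]]] = []
    while remaining:
        best_center, best_members = remaining[0], []
        for i in remaining:
            # Neighbours of pose i among the poses not yet assigned
            members = [j for j in remaining
                       if distance(poses[i], poses[j]) <= radius]
            if len(members) > len(best_members):
                best_center, best_members = i, members
        clusters.append((best_center, best_members))
        assigned = set(best_members)
        remaining = [j for j in remaining if j not in assigned]
    return clusters
```

Inspecting the centers of the first few clusters, instead of only the single lowest-energy pose, is one way to act on the recommendation above.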

FAQ 4: Are there specific types of complexes where rigid docking is known to fail? Yes. Performance is strongly linked to the conformational change between the unbound and bound states.

- Easy: Complexes with minimal backbone and side-chain movement (151 of 230 in the BM5 benchmark) [1].

- Medium/Difficult: Complexes involving enzymes, antibodies, and other proteins that undergo "induced fit" upon binding. The performance drops significantly for these 79 targets in the BM5 benchmark [1].

Troubleshooting Guide: Addressing Rigid Docking Failures

Problem: Inaccurate Poses Due to Protein Flexibility

Symptoms:

- High root-mean-square deviation (RMSD) of the ligand pose compared to a known experimental structure.

- Obvious steric clashes at the binding interface in the top-ranked models.

- Failure to recover key protein-ligand interactions (e.g., hydrogen bonds, hydrophobic contacts).

Solutions:

1. Employ Flexible Refinement Protocols

- Procedure: Use the output from a fast, rigid-body global docking server (like ClusPro) as the input for a more computationally intensive, flexible local refinement tool. This hybrid approach first identifies a broad region of the protein surface that might be the binding site and then allows for side-chain or limited backbone movement to optimize the fit.

- Rationale: This combines the exhaustive sampling speed of FFT-based docking with the accuracy of methods that can model induced fit [1].

2. Utilize Ensemble Docking

- Procedure: Instead of a single rigid protein structure, dock your ligand against an ensemble of multiple receptor conformations. This ensemble can be derived from:

- Multiple crystal structures of the same protein.

- NMR models.

- Snapshots from a Molecular Dynamics (MD) simulation [2].

- Rationale: This technique accounts for protein flexibility by allowing the ligand to select its optimal binding partner from a set of pre-generated conformations, a mechanism known as "conformational selection" [2].

3. Leverage Alignment-Based Docking

- Procedure: If a structure of your target protein bound to a similar ligand is available, use a method like CSAlign-Dock. This aligns your new ligand to the reference ligand within the binding pocket, considering full ligand flexibility, to predict the new complex structure [3].

- Rationale: This bypasses the ab initio sampling problem by using the known protein conformation from a related complex as a template, which can be superior to rigid-body docking, especially in cross-docking scenarios [3].

Problem: Physically Implausible or Clashing Poses

Symptoms:

- Ligand atoms intersecting with protein atoms in the predicted binding pose.

- Distorted ligand geometry (e.g., abnormal bond lengths or angles).

Solutions:

1. Check and Optimize Ligand Preparation

- Procedure:

- Ensure your ligand is in the correct PDBQT format, which includes atomic partial charges and atom types [4].

- Before docking, perform energy minimization of the ligand structure to resolve any unrealistic geometry from source libraries [5].

- Carefully define rotatable bonds. Lock bonds in rings or double bonds to preserve critical chemical geometry [5].

- Rationale: Many docking failures originate from poorly prepared input files. A chemically sensible and well-minimized ligand is crucial for successful pose prediction.

2. Evaluate the Scoring Function

- Procedure: Be aware that scoring functions differ in how strongly they penalize steric clashes. Some modern deep learning methods, for example, may generate poses with a good RMSD but severe steric clashes [6]. Use a tool like PoseBusters to check the physical validity of your output poses [6].

- Rationale: A pose with a favorable score but obvious clashes is likely a scoring artifact and not a biologically relevant model.
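A quick first-pass clash screen can be sketched as follows. This is a deliberately simplified illustration, not the set of checks PoseBusters performs: the function name `count_clashes` and the 2.0 Å distance cut-off are assumptions chosen for the example, and only heavy-atom coordinates are expected as input.

```python
import math
from typing import Sequence, Tuple

Coord = Tuple[float, float, float]


def count_clashes(
    ligand_xyz: Sequence[Coord],
    protein_xyz: Sequence[Coord],
    min_dist: float = 2.0,  # assumed cut-off in Å, for illustration only
) -> int:
    """Count ligand-protein atom pairs closer than `min_dist` Å."""
    clashes = 0
    for lig_atom in ligand_xyz:
        for prot_atom in protein_xyz:
            if math.dist(lig_atom, prot_atom) < min_dist:
                clashes += 1
    return clashes
```

A non-zero count for a well-scored pose is a cue to run a full validation tool before trusting the pose.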

Performance Data: Rigid Body Docking Success Rates

The following table summarizes the performance of a leading rigid-body docking server (ClusPro) on a standard benchmark (BM5), categorized by the difficulty level of the complex [1].

Table 1: Performance of Rigid Body Docking Across Complex Types

| Complex Category | Number of Targets | DockQ Score Range | CAPRI Accuracy Rating |

|---|---|---|---|

| Rigid-Body (Easy) | 151 | > 0.49 | Medium to High |

| Medium Difficulty | 45 | 0.23 - 0.49 | Acceptable to Medium |

| Difficult | 34 | < 0.23 | Incorrect |
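The DockQ ranges in Table 1 can be turned into a small classifier. This sketch assumes the commonly cited DockQ cut-offs of 0.23, 0.49, and 0.80; the 0.80 boundary between Medium and High is not stated in the table and is taken from the DockQ literature, and the function name `capri_class_from_dockq` is hypothetical.

```python
def capri_class_from_dockq(dockq: float) -> str:
    """Map a DockQ score in [0, 1] to a CAPRI-style quality label."""
    if not 0.0 <= dockq <= 1.0:
        raise ValueError("DockQ must lie between 0 and 1")
    if dockq < 0.23:
        return "Incorrect"
    if dockq < 0.49:
        return "Acceptable"
    if dockq < 0.80:  # assumed Medium/High boundary, not given in Table 1
        return "Medium"
    return "High"
```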

The table below compares the general performance characteristics of different docking methodologies, highlighting the trade-offs involved.

Table 2: Comparison of Docking Methodologies

| Methodology | Typical Pose Accuracy* | Key Strength | Key Limitation |

|---|---|---|---|

| Traditional Rigid-Body | ~50-75% [2] | Computational speed, global sampling | Cannot handle protein flexibility |

| Fully Flexible Docking | ~80-95% [2] | High accuracy for induced fit | Computationally expensive |

| Deep Learning (Generative) | ~75-90% RMSD ≤ 2Å [6] | High pose accuracy, speed | May produce physically invalid poses [6] |

| Hybrid (AI + Search) | Balanced performance [6] | Good balance of accuracy and physical validity | Search efficiency can be an issue [6] |

*Pose accuracy rates are highly dependent on the specific target and benchmark used.

Experimental Workflow: From Rigid Body Docking to Refined Models

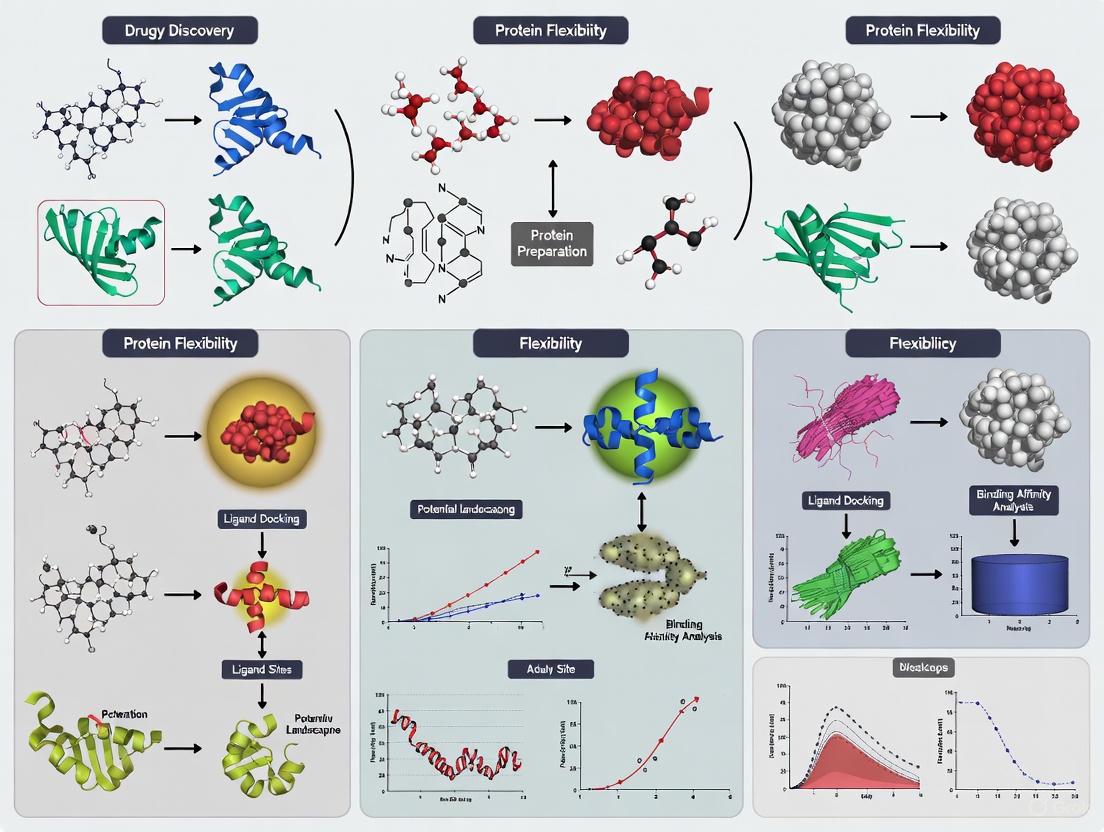

The following diagram illustrates a robust experimental strategy that uses rigid-body docking as a starting point and incorporates methods to overcome its limitations.

The Scientist's Toolkit: Key Research Reagents and Computational Tools

Table 3: Essential Resources for Advanced Docking Studies

| Tool / Resource | Type | Primary Function | Relevance to Flexibility |

|---|---|---|---|

| ClusPro Server [1] | Rigid-Body Docking Server | FFT-based global sampling and clustering. | Provides a fast starting point; top clusters are inputs for flexible refinement. |

| AutoDock Vina [6] | Docking Software | Traditional physics-based docking with stochastic search. | Widely used; a standard for comparative studies. |

| Molecular Dynamics (MD) [7] | Simulation Software | Simulates physical movements of atoms over time. | Generates ensembles of protein conformations for ensemble docking. |

| CSAlign-Dock [3] | Alignment-Based Docking | Docks a ligand using a known reference complex. | Accounts for protein conformational changes by leveraging template structures. |

| PoseBusters [6] | Validation Toolkit | Checks docking poses for physical and chemical plausibility. | Critical for identifying failures, especially from AI models that may ignore steric clashes. |

| Rosetta Software Suite [8] | Modeling Suite | Provides flexible backbone and high-resolution refinement protocols. | Used to model and analyze flexible regions in protein structures and assemblies. |

For decades, the understanding of molecular binding was dominated by the rigid "lock and key" model. However, advanced structural biology has revealed that proteins are highly dynamic macromolecules. This dynamism is crucial for function and is described by two primary, and often complementary, binding mechanisms: Induced Fit and Conformational Selection [2] [9].

Traditionally, these mechanisms were viewed as mutually exclusive. Induced Fit (IF) proposes that a ligand first binds to the protein's predominant state, inducing a conformational change to a stable bound complex. In contrast, Conformational Selection (CS) posits that the protein exists in an equilibrium of multiple conformations, and the ligand selectively binds to and stabilizes a pre-existing, minor population [10] [11]. Modern research, supported by binding flux analysis and advanced kinetics, now recognizes that IF and CS are not a strict dichotomy but can operate alongside each other within a thermodynamic cycle to produce the final ligand-target complex [10].

Understanding which mechanism dominates is critical in drug discovery, as it influences the selectivity, duration of action, and residence time of a drug on its target [10] [12]. This guide provides troubleshooting support for researchers grappling with the practical challenges of distinguishing these mechanisms within molecular docking studies.

Conceptual Framework & Key Differences

The core challenge is to correctly identify the temporal order of binding and conformational change. The following diagram illustrates the pathways and their interplay within a thermodynamic cycle.

Quantitative Comparison of Mechanisms

The table below summarizes the key characteristics that experimentally distinguish these mechanisms.

| Feature | Induced Fit (IF) | Conformational Selection (CS) |

|---|---|---|

| Temporal Order | Conformational change occurs after initial ligand binding [11]. | Conformational change occurs before ligand binding [11]. |

| Key Intermediate | A transient, initial encounter complex (P:L) [10]. | A pre-existing, excited protein state (P*) [10]. |

| Dominance at Low [Ligand] | Lower contribution; increases with ligand concentration [10]. | Typically dominates at low ligand concentrations [10]. |

| Observed Rate (k_obs) vs. [L] | Symmetric U-shape: k_obs has a minimum and is symmetric around [L]₀ = [P]₀ + K_d [11]. | Asymmetric or monotonic: k_obs decreases monotonically for k_e < k₋ and has an asymmetric minimum for k_e > k₋, where k_e is the conformational excitation rate and k₋ the ligand unbinding rate [11]. |

| Ligand Specificity | Binds a broader population, inducing the "correct" fit. | Highly selective for a specific, pre-formed conformation. |

| Role in Drug Design | Often associated with achieving a long residence time on the target [10]. | Can be exploited to target specific, potentially inactive, protein states [12]. |

Successful experimental analysis requires a suite of specialized reagents and computational tools.

| Tool / Reagent | Function / Description | Relevance to Binding Mechanisms |

|---|---|---|

| Site-Directed Spin Labeling (SDSL) | Covalent attachment of spin labels (e.g., MTSSL) to engineered cysteine residues [12]. | Enables EPR distance measurements to probe conformational states and dynamics. |

| p38α MAP Kinase Constructs | Panel of double-cysteine mutants for distance mapping (e.g., p38α-119, 251) [12]. | Model system for studying A-loop conformational equilibrium (DFG-in/out). |

| Type I & II Kinase Inhibitors | Small molecules that bind distinct kinase conformations (e.g., SB203580, Sorafenib) [12]. | Tool compounds to selectively stabilize specific sub-states (CS vs. IF). |

| MMM Software Toolbox | Multiscale Modeling of Macromolecules for spin label multilateration [12]. | Converts EPR distance data into 3D probabilistic maps of flexible regions. |

| Structural Alphabets (SAs) | Libraries of small protein fragments for precise backbone conformation analysis [9]. | Analyzes backbone deformability and conformational changes from structural data. |

| Normal Modes Analysis (NMA) | Computational method to calculate a protein's collective motions [13]. | Predicts low-energy conformational changes for ensemble generation in docking. |

Troubleshooting FAQs & Experimental Guides

FAQ 1: How can I determine if my ligand binds via Induced Fit or Conformational Selection?

Answer: Distinguishing the mechanism requires a combination of kinetic and structural experiments. A critical first step is to analyze the chemical relaxation rate (k_obs) as a function of both ligand and protein concentration.

Problem: Under pseudo-first-order conditions (high ligand concentration), an increase in k_obs with [L] can be misinterpreted, as it is possible in both IF and CS mechanisms [11].

Solution: Perform relaxation experiments (e.g., temperature jump) across a wide range of ligand and protein concentrations. Plot k_obs versus the total ligand concentration [L]₀.

- If the plot is symmetric (see Diagram A below), the mechanism is Induced Fit.

- If the plot is asymmetric or shows a monotonic decrease (see Diagram B below), the mechanism is Conformational Selection [11].

This general method works for all concentrations and avoids the ambiguity of pseudo-first-order approximations.

Protocol: Chemical Relaxation Kinetics

- Prepare Solutions: Prepare a series of samples with a fixed total protein concentration ([P]₀) and varying total ligand concentrations ([L]₀). Ensure [L]₀ covers a range both below and above [P]₀.

- Perturb Equilibrium: Rapidly perturb the equilibrium of each sample using a fast technique such as a temperature or pressure jump.

- Monitor Relaxation: Use a stopped-flow apparatus or a similar fast-detection method to monitor the system's relaxation back to the new equilibrium. A spectroscopic signal (e.g., fluorescence) that reports on binding is typically used.

- Fit Data: Fit the relaxation trajectory to a single or multi-exponential function to extract the dominant relaxation rate, k_obs.

- Plot and Analyze: Plot k_obs as a function of [L]₀ for different fixed values of [P]₀. Analyze the shape of the curves as described above [11].
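The "Fit Data" step can be sketched for the simplest case. This minimal example assumes a single-exponential relaxation, S(t) = S_inf + A·exp(−k_obs·t), and that the final equilibrium signal `s_inf` is already known (for instance from the plateau of the trace); both the function name `fit_k_obs` and that assumption are illustrative, and multi-exponential data would need a proper nonlinear fit.

```python
import math
from typing import Sequence


def fit_k_obs(times: Sequence[float], signal: Sequence[float],
              s_inf: float) -> float:
    """Estimate k_obs from a single-exponential relaxation trace.

    Performs a linear least-squares fit of ln|S(t) - s_inf| against t;
    the negative slope of that line is the relaxation rate.
    """
    xs, ys = [], []
    for t, s in zip(times, signal):
        deviation = abs(s - s_inf)
        if deviation > 0.0:  # skip points that already sit on the plateau
            xs.append(t)
            ys.append(math.log(deviation))
    n = len(xs)
    if n < 2:
        raise ValueError("need at least two points away from the plateau")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0.0:
        raise ValueError("time points must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return -sxy / sxx
```

Repeating this fit for every [L]₀ at a fixed [P]₀ gives the points needed for the k_obs versus [L]₀ plot.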

FAQ 2: My docking simulations are failing. How can I account for protein flexibility in my model?

Problem: Standard rigid-body docking fails when the protein's binding site undergoes conformational changes upon ligand binding. This can result in low docking scores for true binders and an inability to predict the correct binding pose [2] [13].

Solution: Implement a flexible docking strategy that moves beyond a single, static protein structure. The general workflow is outlined below.

Protocol: Flexible Docking Workflow

- Preprocessing & Ensemble Generation:

- Source: Collect multiple experimental structures (X-ray, NMR) of the target protein in different conformational states (e.g., apo, holo, with different ligands) [13].

- Generate: Use computational methods like Molecular Dynamics (MD) simulations or Normal Modes Analysis (NMA) to generate an ensemble of plausible conformations [13]. This ensemble simulates the Conformational Selection model.

- Cross-Docking: Perform rigid-body docking of your ligand against each conformation in the generated ensemble.

- Refinement (Induced Fit): Take the top poses from the cross-docking step and subject them to a refinement stage. This stage allows for small-scale movements of the protein backbone and side-chains, as well as rigid-body adjustments of the ligand, to optimize the fit. This step models the Induced Fit mechanism [13].

- Scoring: Re-score the refined complexes using a scoring function that accounts for the energy cost of protein deformation. This helps to identify the most biologically plausible complex [13].

FAQ 3: How can I directly observe the conformational states of a flexible protein region?

Problem: Highly flexible regions, like the activation loop in kinases, are often poorly resolved or missing in X-ray crystal structures, making it difficult to characterize their conformational landscape [12].

Solution: Employ Electron Paramagnetic Resonance (EPR) spectroscopy with site-directed spin labeling to measure distances and probe conformational distributions directly.

Protocol: EPR with SLiK (Spin Labels in Kinases)

- Design Double Mutants: Generate a panel of protein constructs (e.g., p38α MAPK) with two cysteine mutations each. One label is placed in the flexible region of interest (e.g., the A-loop at residue 172), and the other is placed in a stable, rigid reference region [12].

- Spin Labeling: Label the cysteine residues with a stable spin label like MTSSL.

- DEER/PELDOR Measurements: Perform pulsed EPR distance measurements (DEER/PELDOR) on the apo protein and in the presence of saturating ligand concentrations. This technique yields distance distributions between the two spin labels.

- Data Analysis:

- Apo State: A broad, multimodal distance distribution indicates a dynamic equilibrium between multiple conformational sub-states of the flexible loop.

- Ligand-Bound State: A shift to a narrow, unimodal distribution indicates that the ligand has selected and stabilized a specific sub-state (Conformational Selection). A change in the distribution shape not present in the apo state may suggest an Induced Fit mechanism [12].

- Multilateration: Use software like the MMM toolbox to combine distance distributions from multiple double mutants and compute a 3D probability map for the position of the flexible loop, providing a spatial model of its conformational ensemble [12].
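The comparison of apo and ligand-bound distance distributions in the Data Analysis step can be made semi-quantitative with a mean and a width. This is a sketch only: `distribution_stats` is a hypothetical helper, the input is assumed to be a discretized distance distribution P(r) on a common grid, and a standard deviation summarizes breadth but does not by itself prove that a distribution is multimodal.

```python
import math
from typing import Sequence, Tuple


def distribution_stats(distances: Sequence[float],
                       probabilities: Sequence[float]) -> Tuple[float, float]:
    """Return (mean, standard deviation) of a discretized P(r).

    `probabilities` need not be normalized; they are rescaled internally.
    """
    if len(distances) != len(probabilities):
        raise ValueError("distances and probabilities must have equal length")
    total = sum(probabilities)
    if total <= 0.0:
        raise ValueError("distribution has no weight")
    mean = sum(r * p for r, p in zip(distances, probabilities)) / total
    variance = sum(p * (r - mean) ** 2
                   for r, p in zip(distances, probabilities)) / total
    return mean, math.sqrt(variance)
```

A marked drop in width between the apo and ligand-bound samples is consistent with the narrowing described above; inspecting the full distributions remains necessary to decide whether the bound-state peak was already populated in the apo state.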

Molecular docking techniques are essential for predicting how a small molecule (ligand) interacts with a biological target. The table below summarizes the key techniques for handling protein flexibility [14] [15].

Table 1: Key Molecular Docking Techniques for Handling Protein Flexibility

| Technique Name | Primary Objective | Key Advantage | Consideration for Protein Flexibility |

|---|---|---|---|

| Re-docking [14] | Validate docking protocol accuracy by re-docking a known ligand. | Provides a straightforward control to test computational settings. | Treats the protein as a rigid body; sensitive to minor conformational changes from the original crystal structure. |

| Cross-docking [14] | Test a docking protocol's ability to handle different ligands by docking multiple ligands into a single protein structure. | Assesses the robustness of a chosen protein conformation for docking diverse compounds. | Uses a single, rigid protein conformation; may fail if ligands induce different conformational changes. |

| Ensemble Docking [14] | Account for inherent protein flexibility by docking against multiple protein conformations. | Provides a more realistic representation of ligand binding by sampling different protein states. | Explicitly incorporates protein flexibility by using an ensemble of structures (e.g., from MD simulations or multiple crystals). |

| Blind Docking [14] | Identify novel binding sites on a protein without prior knowledge of their location. | Unbiased exploration of the entire protein surface. | Scans a rigid protein structure; can identify alternative binding pockets but may miss induced-fit effects. |

Frequently Asked Questions: Technique Selection & Results

Q: How do I validate my molecular docking protocol? A: Re-docking is the primary method for validation [14]. The co-crystallized ligand is extracted and re-docked into its original binding site. The predicted pose is compared to the experimental one, typically by calculating the Root-Mean-Square Deviation (RMSD). An RMSD value below 2.0 Å is generally considered a successful prediction, indicating your protocol can reproduce the known binding mode [14].

Q: My re-docking worked well, but cross-docking fails for ligands with different scaffolds. Why? A: This is a common challenge rooted in protein flexibility [14] [15]. A single, rigid protein structure used in cross-docking may be optimized for its native ligand but not accommodate others that induce different conformational changes. To address this, consider using ensemble docking, which uses multiple protein structures to account for flexibility [14].

Q: What is the difference between AutoDock Vina and AutoDock 4? A: Both come from the same lab, but AutoDock Vina is a later-generation program with a completely new scoring function and search algorithm [16]. On average, Vina offers better speed and accuracy, though the best program can be target-dependent [16].

Q: Why are my docking results non-deterministic (different each time)? A: The docking algorithm in tools like AutoDock Vina is a stochastic (random) global optimization process [16]. Even with identical inputs, starting from different random seeds can lead to different results. It is good practice to perform multiple runs and analyze the statistical properties of the outcomes [16].
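The recommendation to run several seeds and look at the statistics can be sketched as below. The helper `summarize_runs` is hypothetical and works on numbers you have already collected (one best score per independent run); it does not call any docking program, and it assumes that lower scores are better, as with Vina-style energies in kcal/mol.

```python
import statistics
from typing import Dict, Sequence


def summarize_runs(best_scores: Sequence[float]) -> Dict[str, float]:
    """Summarize the best docking score obtained in each independent run."""
    if not best_scores:
        raise ValueError("no runs supplied")
    n = len(best_scores)
    return {
        "n_runs": float(n),
        "mean": statistics.mean(best_scores),
        # Sample standard deviation is undefined for a single run
        "stdev": statistics.stdev(best_scores) if n > 1 else 0.0,
        "best": min(best_scores),  # assumes lower score = better
    }
```

A large spread across seeds suggests the search has not converged, which is a reason to increase the search effort before interpreting the poses.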

Troubleshooting Common Docking Problems

Table 2: Troubleshooting Guide for Molecular Docking Experiments

| Problem | Possible Cause | Solution |

|---|---|---|

| High RMSD in Re-docking | Incorrect protonation states of ligand or receptor [16]. | Check and correct the protonation states of key residues and the ligand for the physiological pH of interest. |

| High RMSD in Re-docking | The search space is defined incorrectly [16]. | Ensure the search space center and size are correct. Remember, in AutoDock Vina, size is in Ångstroms, not grid points [16]. |

| Poor Cross-docking Performance | The chosen rigid protein structure cannot accommodate the new ligand due to induced fit [14]. | Switch to ensemble docking using multiple protein conformations to account for flexibility [14] [15]. |

| Inaccurate Binding Energy Prediction | The scoring function is inexact and has inherent limitations [16]. | Use docking scores for relative ranking, not absolute binding energy prediction. Correlate results with experimental data. |

| Docked conformation is unreasonable | Ligand or receptor was not prepared correctly (e.g., 2D ligand input, missing atoms) [16]. | Ensure proper 3D ligand geometry and that all missing side chains/atoms in the receptor have been modeled. |

| Warning about large search space volume | The defined search space is very large (e.g., >27,000 ų) [16]. | Reduce the search space size if possible. If a large space is necessary, increase the exhaustiveness parameter to improve the search [16]. |
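The search-space checks in the table can be sketched in a few lines. The helpers below are hypothetical; they assume a box built around a reference ligand with a user-chosen `padding` (5 Å here is only an example value), sizes expressed in Å, and the 27,000 Å³ volume mentioned above as the warning threshold.

```python
from typing import Sequence, Tuple

Coord = Tuple[float, float, float]


def box_from_ligand(coords: Sequence[Coord],
                    padding: float = 5.0) -> Tuple[Coord, Coord]:
    """Return (center, size) of an axis-aligned box around the ligand.

    `padding` (Å) is added on every side; its default is an assumption.
    """
    if not coords:
        raise ValueError("ligand has no atoms")
    axes = list(zip(*coords))  # three tuples: all x, all y, all z
    center = tuple((max(a) + min(a)) / 2.0 for a in axes)
    size = tuple((max(a) - min(a)) + 2.0 * padding for a in axes)
    return center, size


def box_volume(size: Coord) -> float:
    """Volume of the search box in Å^3."""
    return size[0] * size[1] * size[2]


def is_large_search_space(size: Coord, limit: float = 27000.0) -> bool:
    """True when the box exceeds the volume that triggers the warning."""
    return box_volume(size) > limit
```

When `is_large_search_space` returns True and the box cannot be reduced, raising the exhaustiveness setting is the remedy suggested in the table.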

Experimental Protocols

Protocol 1: Standard Re-docking Validation

This protocol is used to validate a molecular docking setup by reproducing a known experimental result [14].

- Obtain the Structure: Download a protein-ligand complex structure from the Protein Data Bank (PDB).

- Prepare Structures:

- Protein: Remove the ligand, all water molecules, and any irrelevant cofactors. Add hydrogen atoms and assign partial charges using your chosen molecular graphics or docking software.

- Ligand: Extract the bound, co-crystallized ligand from the PDB file. Ensure it is correctly protonated for the relevant pH.

- Define the Search Space: Center the docking search box on the original ligand's binding site. A typical box size is 20 × 20 × 20 Å to give the ligand some room to move.

- Perform Docking: Dock the prepared ligand into the prepared protein structure using your selected docking program.

- Analyze Results: Calculate the RMSD between the top-ranked docked pose and the original co-crystallized ligand pose. An RMSD of less than 2.0 Å is considered a successful validation [14].
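The RMSD comparison in the final step can be sketched as follows. This minimal version assumes both poses are in the same coordinate frame (no superposition is performed, which is the usual convention for re-docking), that atoms are listed in the same order, and that hydrogens have been removed; it does not correct for ligand symmetry. The function name `ligand_rmsd` is illustrative.

```python
import math
from typing import Sequence, Tuple

Coord = Tuple[float, float, float]


def ligand_rmsd(docked: Sequence[Coord], reference: Sequence[Coord]) -> float:
    """In-place heavy-atom RMSD (Å) between two poses of the same ligand."""
    if len(docked) != len(reference) or not docked:
        raise ValueError("poses must be non-empty and have matching atoms")
    squared = sum(math.dist(a, b) ** 2 for a, b in zip(docked, reference))
    return math.sqrt(squared / len(docked))


def is_successful_redock(rmsd: float, cutoff: float = 2.0) -> bool:
    """Apply the 2.0 Å success criterion described in the protocol."""
    return rmsd < cutoff
```

For symmetric ligands, a symmetry-aware RMSD from a cheminformatics toolkit is preferable, since atom-order matching can overestimate the deviation.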

Protocol 2: Ensemble Docking for Flexible Receptors

This protocol is used when protein flexibility is a major concern, such as when docking against a protein with known multiple conformations or when cross-docking fails [14] [15].

- Assemble the Ensemble: Collect multiple structures of the target protein. These can come from:

- Multiple PDB entries of the same protein (e.g., with different ligands bound).

- A single PDB entry, using models from an NMR ensemble.

- Molecular Dynamics (MD) Simulations: Snapshots from an MD simulation trajectory, such as those available from the ATLAS database, provide a robust set of conformations [17].

- Prepare Structures: Prepare each protein conformation in the ensemble as in Protocol 1.

- Dock: Perform a separate docking run for each ligand against every protein conformation in the ensemble.

- Analyze Results: Combine the results from all docking runs. The best-scoring pose across the entire ensemble is typically selected as the final prediction, providing a model that accounts for protein flexibility.
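The final selection step can be sketched as a simple reduction over per-conformation results. The data layout (a dictionary mapping a conformation identifier to a list of `(pose_id, score)` pairs) and the function name `best_across_ensemble` are assumptions made for this example, as is the convention that a lower score is better.

```python
from typing import Dict, List, Tuple


def best_across_ensemble(
    results: Dict[str, List[Tuple[str, float]]]
) -> Tuple[str, str, float]:
    """Return (conformation_id, pose_id, score) of the best-scoring pose.

    Assumes lower scores are better (e.g. predicted energies in kcal/mol).
    """
    best = None
    for conformation_id, poses in results.items():
        for pose_id, score in poses:
            if best is None or score < best[2]:
                best = (conformation_id, pose_id, score)
    if best is None:
        raise ValueError("no poses found in any conformation")
    return best
```

Recording which receptor conformation produced the winning pose is useful in its own right, because it indicates which protein state the ligand appears to prefer.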

The Scientist's Toolkit

Table 3: Essential Research Reagents & Computational Tools

| Item / Resource | Function / Description | Relevance to Docking Experiment |

|---|---|---|

| Protein Data Bank (PDB) | A repository for 3D structural data of proteins and nucleic acids. | The primary source for initial protein and protein-ligand complex structures for re-docking and cross-docking studies. |

| AutoDock Vina | A widely used molecular docking program for predicting ligand binding modes and affinities. | The core engine for performing the docking simulation itself. Known for its speed and user-friendliness [16]. |

| ATLAS Database | A database of standardized all-atom molecular dynamics (MD) simulations for a representative set of proteins [17]. | A key source for obtaining multiple protein conformations (an ensemble) to use in ensemble docking, directly addressing protein flexibility [17]. |

| PDBQT File Format | The required input file format for AutoDock Vina. Contains atomic coordinates, partial charges, and atom types. | The prepared protein and ligand files must be in this format. Preparation typically involves adding polar hydrogens and assigning atom types. |

| Molecular Graphics System (e.g., PyMOL) | Software for visualizing molecular structures, surfaces, and docking results. | Critical for analyzing input structures, defining docking search boxes, and visually inspecting the final docked poses. |

| Molecular Dynamics (MD) Software (e.g., GROMACS) | Software for simulating the physical movements of atoms and molecules over time. | Used to generate alternative protein conformations for ensemble docking, capturing the dynamic behavior of the protein in solution [17]. |

FAQs: Understanding Protein Flexibility in Drug Discovery

Q1: Why is considering protein flexibility so critical in virtual screening?

Accounting for protein flexibility is crucial because a single, rigid protein structure gives an incomplete representation of the protein's native state. Experimental studies clearly show conformational differences between a protein's unbound (apo) and bound (holo) states [2]. When a rigid receptor structure is used to dock ligands that require different binding site geometries (a problem known as cross-docking), the active site is often biased toward its original ligand, leading to failed docking attempts and missed hits [2]. Rigid docking typically achieves pose-prediction success rates of only 50% to 75%, whereas methods that incorporate full protein flexibility can raise pose prediction accuracy to 80–95% [2].

Q2: What are the fundamental mechanisms of protein flexibility upon ligand binding?

Two primary models explain the conformational changes:

- Induced Fit: The ligand binding event itself induces a conformational change in the protein [2].

- Conformational Selection: The protein exists in an equilibrium of multiple conformations in solution. The ligand selectively binds to and stabilizes a pre-existing complementary conformation, shifting the population distribution [2]. Research suggests these are not mutually exclusive, and a mixed binding mechanism is likely for many systems [2].

Q3: What are the main technical challenges in implementing flexible docking?

The primary challenge is the immense computational cost associated with the large number of degrees of freedom a protein possesses. Directly modeling binding site flexibility is difficult due to the vast conformational space that must be sampled and the difficulties in formulating a perfectly accurate energy function to score these conformations [18]. This creates a trade-off between computational efficiency and biological accuracy that researchers must navigate.

Q4: How does structural simplification in lead optimization relate to protein flexibility?

Structural simplification is a lead optimization strategy that reduces molecular complexity and "molecular obesity" by removing unnecessary rings or chiral centers, often improving pharmacokinetic properties [19]. A simplified, more rigid ligand may have fewer degrees of freedom to accommodate, potentially reducing the conformational demands on the protein. However, the simplified ligand must still retain the key pharmacophores necessary for binding to the flexible protein target [19].

Troubleshooting Common Experimental Issues

Problem 1: Poor Pose Prediction and Enrichment in Virtual Screening

- Symptoms: Docked ligand poses have high Root-Mean-Square Deviation (RMSD) from known crystallographic poses; inability to distinguish true binders from decoys (poor enrichment).

- Solutions:

- Use Ensemble Docking: Instead of a single structure, dock into an ensemble of protein conformations. This ensemble can be built from multiple experimental structures (e.g., from the PDB), conformations from molecular dynamics simulations, or homology models [2] [20]. Tools like FlexE create a "united protein description" from a structural ensemble, allowing combinatorial joining of different conformations during docking [21].

- Employ Advanced Docking Software: Utilize modern docking programs that explicitly model protein flexibility. For example, the RosettaVS protocol incorporates full receptor side-chain flexibility and limited backbone movement, which has been shown to be critical for accurate predictions on standard benchmarks [22].

- Validate with Cross-Docking: Test your docking protocol by cross-docking various known ligands from different crystal structures into a single receptor structure. If performance is poor, it indicates a strong need for a flexible docking approach [2].
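The "poor enrichment" symptom above can be quantified before and after switching to a flexible protocol. The following is a minimal sketch, assuming docking scores where lower means better and binary labels (1 = known active, 0 = decoy); the function names and the toy numbers are illustrative and are not taken from any cited tool.

```python
def enrichment_factor(scores, labels, fraction=0.01):
    """Enrichment factor in the top `fraction` of the ranked list.

    Assumes the docking convention that a lower score is a better score.
    """
    n = len(scores)
    n_actives = sum(labels)
    if n == 0 or n_actives == 0:
        raise ValueError("need at least one compound and one active")
    n_top = max(1, round(n * fraction))
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0])
    hits_top = sum(label for _, label in ranked[:n_top])
    # Ratio of the active rate in the top slice to the active rate overall.
    return (hits_top / n_top) / (n_actives / n)


def roc_auc(scores, labels):
    """Probability that a random active scores better than a random decoy."""
    actives = [s for s, lab in zip(scores, labels) if lab == 1]
    decoys = [s for s, lab in zip(scores, labels) if lab == 0]
    if not actives or not decoys:
        raise ValueError("need at least one active and one decoy")
    wins = 0.0
    for a in actives:
        for d in decoys:
            if a < d:
                wins += 1.0
            elif a == d:
                wins += 0.5  # ties count as half a win
    return wins / (len(actives) * len(decoys))


# Toy data (made up): three actives among ten compounds.
scores = [-9.5, -9.0, -8.0, -7.5, -7.0, -6.5, -6.0, -5.5, -5.0, -4.5]
labels = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]
print(enrichment_factor(scores, labels, fraction=0.2))
print(roc_auc(scores, labels))
```

Running the same two metrics on a rigid single-structure protocol and on an ensemble protocol, with identical actives and decoys, gives a like-for-like comparison.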

Problem 2: Inaccurate Binding Affinity Predictions Due to Rigid Receptors

- Symptoms: Scoring functions fail to correctly rank compounds by their experimental binding affinity, even when pose prediction is reasonable.

- Solutions:

- Incorporate Entropic Considerations: Standard scoring functions often fail to adequately estimate entropy changes (∆S) upon binding. Advanced methods, like the improved RosettaGenFF-VS, combine enthalpy calculations with an entropy model, leading to superior performance in affinity ranking [22].

- Model Key Water Molecules: The presence and displacement of active site water molecules significantly impact binding affinity. Use algorithms that can classify water molecules and simulate their contribution to multi-part interactions. Decide on a strategy for handling displaceable water molecules during the docking simulation [20].

Problem 3: High Computational Cost of Flexible Docking

- Symptoms: Screening a large compound library is prohibitively slow or computationally infeasible.

- Solutions:

- Adopt a Hierarchical Screening Protocol: Use a fast, initial screening mode to filter millions of compounds, followed by a high-precision mode for top hits. For instance, RosettaVS offers a Virtual Screening Express (VSX) mode for rapid screening and a Virtual Screening High-precision (VSH) mode for final ranking [22].

- Leverage Active Learning: Integrate your workflow with an AI-accelerated platform that uses active learning. This involves training a target-specific neural network during docking to intelligently select the most promising compounds for full, expensive docking calculations, drastically reducing the number of required simulations [22].

- Focus Flexibility: Restrict flexibility considerations to key binding site residues identified through prior knowledge or computational analysis, rather than treating the entire protein as flexible.
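The hierarchical idea can be sketched independently of any particular docking engine. The example below is an illustration only: `fast_score` and `precise_score` are hypothetical callables standing in for an express mode and a high-precision mode, and `keep_fraction` is an assumed parameter, not a RosettaVS setting.

```python
def hierarchical_screen(compounds, fast_score, precise_score, keep_fraction=0.1):
    """Two-stage screen: cheap scoring for everything, expensive scoring for survivors.

    Lower scores are treated as better. Returns (compound, precise_score)
    pairs sorted from best to worst.
    """
    if not 0 < keep_fraction <= 1:
        raise ValueError("keep_fraction must be in (0, 1]")
    # Stage 1: rank the whole library with the cheap function.
    stage1 = sorted(compounds, key=fast_score)
    n_keep = max(1, int(len(stage1) * keep_fraction))
    survivors = stage1[:n_keep]
    # Stage 2: spend the expensive function only on the survivors.
    rescored = [(c, precise_score(c)) for c in survivors]
    return sorted(rescored, key=lambda pair: pair[1])


# Toy usage with made-up scoring functions on integer "compounds".
hits = hierarchical_screen(
    list(range(100)),
    fast_score=lambda c: abs(c - 50),
    precise_score=lambda c: (c - 48) ** 2,
    keep_fraction=0.1,
)
print(hits[:3])
```

The expensive function is called only `keep_fraction` of the time, which is where the saving comes from; the price is that any true binder the cheap stage ranks poorly is lost.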

Performance Data for Flexible Docking Methods

The tables below summarize reported performance from evaluations of flexible docking methods, providing a benchmark for expectations.

Table 1: Virtual Screening Performance on the DUD Dataset

| Method | AUC (Area Under Curve) | ROC Enrichment | Notes |
|---|---|---|---|
| RosettaVS (VSH Mode) | State-of-the-art | State-of-the-art | Incorporates receptor flexibility and an entropy model [22]. |
| Other Physics-Based Methods | Variable, generally lower | Variable, generally lower | Performance depends on the specific method and target [22]. |

Table 2: Pose Prediction Accuracy of FlexE on 105 PDB Structures

| Performance Metric | Result | Threshold |
|---|---|---|
| Overall Placement Success | 83% (50/60 ligands) | RMSD < 2.0 Å [21] |
| Comparison to Rigid Cross-Docking | Similar quality to best single-structure result | - [21] |

Experimental Protocols

Protocol 1: Ensemble-Based Flexible Docking with FlexE

Objective: To dock a flexible ligand into a protein binding site that exhibits structural variations.

Materials: Protein structure ensemble (e.g., from PDB or MD simulation), ligand structure, FlexE software.

Methodology:

- Prepare the Ensemble: Superimpose all protein structures in the ensemble onto a common reference frame.

- Generate United Protein Description: FlexE internally processes the superimposed structures, merging similar regions and treating dissimilar areas (e.g., different side-chain rotamers or loop conformations) as separate, discrete alternatives [21].

- Define Incompatibilities: The software builds an incompatibility graph to manage combinations of alternatives that are geometrically or logically exclusive.

- Dock the Ligand: Using an incremental construction algorithm (derived from FlexX), FlexE places the flexible ligand into the united protein description. During placement, it selects the optimal combination of protein conformations that best fits the ligand, as determined by the scoring function [21].

- Analyze Results: Examine the top-ranked poses and the specific protein conformation selected for each.
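The interplay between discrete alternatives and the incompatibility graph can be illustrated with a small brute-force enumeration. This is a conceptual sketch only: FlexE selects combinations during incremental construction rather than enumerating them, and the region names, alternative labels, and data layout below are hypothetical.

```python
from itertools import product


def valid_combinations(alternatives, incompatible):
    """Yield every choice of one alternative per variable region that
    contains no incompatible pair.

    alternatives: dict mapping region name -> list of alternative labels
    incompatible: set of frozensets, each holding two (region, label) pairs
                  that must not occur together (the incompatibility edges)
    """
    regions = sorted(alternatives)
    for choice in product(*(alternatives[r] for r in regions)):
        picked = list(zip(regions, choice))
        clash = any(
            frozenset((picked[i], picked[j])) in incompatible
            for i in range(len(picked))
            for j in range(i + 1, len(picked))
        )
        if not clash:
            yield dict(picked)


# Hypothetical binding site: one side chain with two rotamers, one loop with two states.
alternatives = {
    "Lys45": ["rot1", "rot2"],
    "loop_80_85": ["open", "closed"],
}
# rot2 of Lys45 would overlap the closed loop, so that pair is excluded.
incompatible = {frozenset({("Lys45", "rot2"), ("loop_80_85", "closed")})}

for combo in valid_combinations(alternatives, incompatible):
    print(combo)
```

With two regions of two alternatives each, four combinations exist and one is excluded. The number of combinations grows multiplicatively with each additional variable region, which is why a focused ensemble matters for run time.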

Protocol 2: AI-Accelerated Virtual Screening with the OpenVS Platform

Objective: To efficiently screen an ultra-large chemical library (billions of compounds) against a flexible target.

Materials: Target protein structure(s), multi-billion compound library (e.g., in SDF format), OpenVS platform, HPC cluster.

Methodology:

- Target Preparation: Prepare the protein structure, specifying the binding site and allowing for side-chain and limited backbone flexibility in the protocol [22].

- Library Preparation: Curate the compound library, generating relevant tautomers, protomers, and stereoisomers.

- Configure Active Learning: Set up the active learning loop where a neural network is continuously trained on the fly to predict the docking scores of unscreened compounds based on those already docked [22].

- Run Hierarchical Docking:

- Stage 1 (VSX): The platform uses the RosettaVS express mode to rapidly screen compounds prioritized by the active learning model.

- Stage 2 (VSH): The top hits from VSX are re-docked using the high-precision mode of RosettaVS, which includes full receptor flexibility, for final ranking [22].

- Post-Screening Analysis: Chemically cluster the final hit-list, examine key interaction patterns, and select compounds for experimental testing.
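The active learning loop in this protocol can be sketched in a few lines. The example below is not the OpenVS implementation: it swaps the target-specific neural network for a 1-nearest-neighbour surrogate so that it runs without dependencies, and `dock`, `featurize`, `n_init`, `batch`, and `rounds` are all hypothetical names introduced for illustration.

```python
import random


def active_learning_screen(library, dock, featurize,
                           n_init=10, batch=10, rounds=5, seed=0):
    """Dock only part of a library, guided by a cheap surrogate model.

    Surrogate (an assumption of this sketch): an undocked compound is
    predicted to score like its nearest already-docked neighbour in
    feature space. Lower scores are better.
    """
    rng = random.Random(seed)
    pool = list(library)
    rng.shuffle(pool)

    # Seed the surrogate with a random initial batch of real docking runs.
    docked = {c: dock(c) for c in pool[:n_init]}
    remaining = pool[n_init:]

    for _ in range(rounds):
        if not remaining:
            break

        def predict(c):
            fc = featurize(c)
            nearest = min(
                docked,
                key=lambda d: sum((a - b) ** 2 for a, b in zip(fc, featurize(d))),
            )
            return docked[nearest]

        # Dock the compounds the surrogate currently considers most promising.
        remaining.sort(key=predict)
        chosen, remaining = remaining[:batch], remaining[batch:]
        for c in chosen:
            docked[c] = dock(c)

    return sorted(docked.items(), key=lambda item: item[1])


# Toy usage: integer "compounds" with a made-up docking function.
ranked = active_learning_screen(
    range(200), dock=lambda c: abs(c - 137), featurize=lambda c: (c,)
)
print(len(ranked), ranked[:3])
```

In this toy run only 60 of 200 compounds are docked. A purely greedy selection like this one can get stuck near early hits, so practical schemes usually mix in some exploration.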

Workflow Visualization

AI-Accelerated Flexible Docking Workflow

The Scientist's Toolkit: Essential Research Reagents & Software

Table 3: Key Resources for Flexible Docking Research

| Tool / Resource | Type | Primary Function in Flexible Docking |
|---|---|---|
| FlexE | Software | Docks flexible ligands into an ensemble of protein structures by creating a united protein description with combinatorial conformations [21]. |
| RosettaVS | Software Suite | A state-of-the-art physics-based docking protocol that allows for full side-chain and limited backbone flexibility during virtual screening [22]. |
| Protein Data Bank (PDB) | Database | Source for experimentally determined protein structures to build conformational ensembles for docking [2] [18]. |
| ZINC / PubChem | Database | Public repositories of purchasable and virtual compounds for building screening libraries [18]. |
| Homology Models | Computational Model | Provides a 3D protein model when an experimental structure is unavailable, though flexibility considerations become even more critical [23] [20]. |
| Molecular Dynamics (MD) | Simulation Method | Generates an ensemble of protein conformations through simulation of physical movements, useful for capturing flexibility beyond crystal structures [20]. |

A Practical Toolkit: From Ensemble Docking to Deep Learning Co-folding

Traditional molecular docking often treats the protein target as a rigid structure, which is an incomplete representation of reality. Experimental studies have clearly demonstrated conformational differences between a receptor's unbound (apo) and bound (holo) states [2]. When docking is performed against a single, rigid protein structure, the results can be biased toward the specific ligand that was co-crystallized. This bias is known as the cross-docking problem [2], and it can lead to high rates of false positives and false negatives in virtual screening.

Ensemble docking addresses this limitation by using multiple protein conformations to represent the dynamic, flexible nature of the target. This approach is grounded in the conformational selection model of ligand binding, where the ligand selects its preferred binding partner from an ensemble of available protein states [24] [2]. By docking candidate ligands into a diverse set of protein conformations, researchers can more accurately model the biologically relevant binding process and improve the prediction of binding modes and affinities.

An effective ensemble docking study relies on a representative set of protein conformations. The two primary sources for these structures are experimental data and computer simulations.

Experimental Structures from the Protein Data Bank (PDB)

For many pharmaceutically relevant targets, the PDB contains numerous X-ray structures solved in complex with different ligands.

- Process: Curate all available structures for your target, then remove redundant conformations to create a manageable and diverse set.

- Advantage: Experimentally determined structures provide atomic-level detail of physically realistic conformations.

- Challenge: The pattern of disordered segments can differ significantly between structures, especially when comparing apo and holo forms or structures with and without binding partners like cyclin [25].

A graph-based redundancy removal method has been shown to be more efficient and less subjective for selecting representative structures than traditional clustering-based methods [25].

Computational Conformations from Molecular Dynamics (MD)

Molecular Dynamics simulations generate a trajectory of protein movement by simulating its physical motions over time.

- Process: After running an MD simulation (e.g., 4 ns after equilibration), the trajectory is clustered based on the Root Mean Square Deviation (RMSD) of atoms in the binding site. Representative snapshots (medoids) from each cluster form the docking ensemble [26] [27].

- Advantage: MD can sample conformational states that are not captured by crystallography but may be biologically relevant for ligand binding.

- Consideration: The sampling may be limited by the timescale of the simulation, as slow conformational changes can occur over timescales beyond what is typically practical to simulate [24].
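Once the trajectory has been clustered, picking the representative snapshot for each cluster is a small computation. The sketch below assumes a precomputed pairwise binding-site RMSD matrix and cluster labels from whatever clustering tool was used; the function name and the toy matrix are illustrative.

```python
def cluster_medoids(dist, labels):
    """Return {cluster label: index of the medoid frame}.

    dist:   square matrix (list of lists) of pairwise RMSD values in Å
    labels: cluster label of each frame, in the same order as `dist`
    The medoid is the member with the smallest summed distance to all
    other members of its cluster.
    """
    clusters = {}
    for idx, label in enumerate(labels):
        clusters.setdefault(label, []).append(idx)

    medoids = {}
    for label, members in clusters.items():
        medoids[label] = min(
            members, key=lambda i: sum(dist[i][j] for j in members)
        )
    return medoids


# Toy example: five frames, two clusters (values are made up).
dist = [
    [0.0, 1.0, 2.0, 5.0, 5.2],
    [1.0, 0.0, 1.0, 4.8, 5.0],
    [2.0, 1.0, 0.0, 4.9, 5.1],
    [5.0, 4.8, 4.9, 0.0, 0.5],
    [5.2, 5.0, 5.1, 0.5, 0.0],
]
labels = ["A", "A", "A", "B", "B"]
print(cluster_medoids(dist, labels))
```

Unlike a cluster centroid, a medoid is an actual simulated frame, so it can be written out and docked against directly.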

Table: Comparison of Methods for Generating Protein Conformational Ensembles

| Method | Key Features | Advantages | Limitations |
|---|---|---|---|
| Experimental PDB Structures | Uses multiple X-ray or NMR structures from the PDB. | High-resolution, experimentally validated conformations. | May be biased toward specific ligand-bound states; limited conformational diversity. |
| Molecular Dynamics (MD) | Computational simulation of protein movement; snapshots are clustered. | Can discover novel, druggable states not seen in crystals [24]. | Computationally expensive; force field inaccuracies; limited sampling of slow motions. |

The following diagram illustrates a typical workflow for creating and using an ensemble from Molecular Dynamics simulations:

Troubleshooting Common Issues in Ensemble Docking

FAQ: How many protein conformations should I use in my ensemble?

There is a trade-off between computational cost and accuracy. Using more conformations can better represent flexibility but increases cost and the risk of false-positive pose predictions [25]. Machine learning can help select the most important conformations. For example, one study on CDK2 showed that a few of the most important conformations were sufficient to achieve high accuracy in affinity prediction, greatly reducing the necessary ensemble size [25]. When using MD, studies suggest that 6-8 clusters can be sufficient to make an ensemble, though some protocols use more (e.g., 20) for broader sampling [26] [27].

FAQ: My ensemble docking results are poor, with incorrect poses and zero energies. What went wrong?

This specific error, where results show only one structure per cluster and zero energies, was reported in a HADDOCK forum. The solution was to check the residue numbering in the input files. If the residue numbering in your ensemble PDB files does not match the numbering used in your restraint definitions, the docking calculation will fail because the restraints are not applied correctly [28]. Always verify the consistency of your input files.
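A quick consistency check of this kind can be scripted before launching a run. The sketch below reads residue numbers from the fixed columns of PDB ATOM/HETATM records and reports which restraint residues are missing from each ensemble member. It does not parse HADDOCK restraint files: the restraint residues are assumed to be supplied as a set of (chain, residue number) pairs, and the function names are hypothetical.

```python
def residues_in_pdb(pdb_text):
    """Collect (chain ID, residue number) pairs from ATOM/HETATM records.

    Uses the fixed-column PDB layout: chain ID in column 22 and residue
    sequence number in columns 23-26.
    """
    found = set()
    for line in pdb_text.splitlines():
        if line.startswith(("ATOM", "HETATM")) and len(line) >= 26:
            chain = line[21]
            resseq = int(line[22:26])
            found.add((chain, resseq))
    return found


def missing_restraint_residues(pdb_texts, restraint_residues):
    """For each ensemble member, list restraint residues absent from it.

    pdb_texts:          dict mapping a structure name -> PDB file contents
    restraint_residues: iterable of (chain ID, residue number) pairs
    """
    wanted = set(restraint_residues)
    return {
        name: sorted(wanted - residues_in_pdb(text))
        for name, text in pdb_texts.items()
    }
```

An empty list for every ensemble member means the numbering is at least consistent with the restraints; any non-empty list points to the structure that needs renumbering.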

FAQ: How do I choose the best docking program for my target?

The performance of docking programs and scoring functions can be highly target-dependent [25]. A general benchmarking study found that AutoDock Vina tends to reproduce more accurate binding poses, while AutoDock4 gives binding affinities that correlate better with experimental values [25]. However, the authors emphasize that for a specific target, a receptor-specific benchmarking is desirable to decide on the best tool. If possible, test multiple programs against a set of known actives and decoys for your target.

FAQ: Can ensemble docking handle large conformational changes like "DFG-flip" in kinases?

Yes, this is a key strength of the method. Kinases are a classic example: the DFG motif can adopt at least two distinct conformations (DFG-in and DFG-out) depending on the bound inhibitor. If a rigid docking protocol uses only a DFG-in structure, it will fail to correctly dock a compound that requires the DFG-out conformation. Ensemble docking that includes both states can successfully handle such cases by providing the correct protein conformation for each ligand type [27].

Enhancing Ensemble Docking with Machine Learning

A powerful advancement is combining ensemble docking with machine learning (ML) to improve the prediction of drug binding. The process generally involves:

- Feature Generation: Perform ensemble docking to generate a set of features for each ligand, such as individual docking scores and energy terms from multiple protein conformations [26].

- Model Training: Use these features, along with labels identifying active versus decoy compounds, to train a machine learning classifier (e.g., Random Forest or K-Nearest Neighbors) [26] [25].

- Conformation Selection: ML can rank the importance of different protein conformations in the ensemble for correctly classifying actives. This identifies a small subset of the most informative structures [25].

- Prediction: The trained ML model is used to predict new active compounds, often with much higher accuracy than using docking scores alone. One study reported over 99% classification accuracy when using features from a well-correlated protein conformation [26].

This integrated approach tackles the "optimum ensemble size" problem by identifying a minimal set of critical conformations, reducing computational cost while maintaining, or even improving, predictive accuracy [25].
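The feature-generation and model-training steps can be illustrated with a dependency-free classifier. The cited studies use library implementations such as those in scikit-learn; the hand-written k-nearest-neighbours function below is a stand-in for illustration, and the score vectors are made up. Each ligand is described by its docking score against each conformation in the ensemble.

```python
from collections import Counter


def knn_predict(train_features, train_labels, query, k=3):
    """Classify a ligand by majority vote among its k nearest training ligands.

    Each feature vector holds one docking score per protein conformation;
    distance is squared Euclidean distance between score vectors.
    """
    order = sorted(
        range(len(train_features)),
        key=lambda i: sum((a - b) ** 2 for a, b in zip(train_features[i], query)),
    )
    votes = Counter(train_labels[i] for i in order[:k])
    return votes.most_common(1)[0][0]


# Made-up scores (kcal/mol) against a three-conformation ensemble.
train_features = [
    (-9.0, -8.5, -9.2), (-8.8, -9.1, -8.9), (-9.3, -8.7, -9.0),  # actives
    (-5.0, -5.5, -4.8), (-5.2, -4.9, -5.1), (-4.7, -5.3, -5.0),  # decoys
]
train_labels = [1, 1, 1, 0, 0, 0]

print(knn_predict(train_features, train_labels, (-8.9, -8.8, -9.0)))
print(knn_predict(train_features, train_labels, (-5.1, -5.0, -5.0)))
```

Dropping one column of the feature vectors at a time and re-checking accuracy on held-out ligands is one simple way to see which conformations carry the signal.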

Table: Key Research Reagents and Software Solutions

| Tool / Reagent | Type | Primary Function in Ensemble Docking |
|---|---|---|
| AutoDock Vina [26] | Docking Software | Performs the core docking calculation, scoring ligand poses for a given protein conformation. |
| AMBER ff14SB [27] | Force Field | Provides parameters for atoms during Molecular Dynamics simulations to generate ensembles. |
| Lead Finder [27] | Docking Software | Docking algorithm used in the Flare software for pose generation and scoring. |
| Scikit-learn [26] | Machine Learning Library | Provides algorithms (e.g., Random Forest) for analyzing docking results and classifying active compounds. |
| Dragon Software [26] | Descriptor Calculator | Calculates molecular descriptors for drugs, which can be used as features in machine learning models. |
| Directory of Useful Decoys, Enhanced (DUD-E) [26] | Database | Provides known active and decoy compounds for a target, essential for training and validating models. |

The relationship between ensemble docking and machine learning can be summarized in the following workflow, which leads to improved prediction of drug binding:

Molecular docking is a cornerstone of modern, structure-based drug design. A significant challenge in this field is accounting for the inherent flexibility of protein targets, as side-chain or even backbone adjustments frequently occur upon ligand binding, a phenomenon known as induced fit [29] [21]. Traditional docking tools often treat the protein receptor as a single, rigid structure, which can lead to failures in predicting correct binding modes for ligands that require conformational changes in the protein [2] [18].

FlexE is a software tool specifically designed to address the problem of protein structure variations during docking calculations [29] [21]. Its core innovation is the united protein description approach. FlexE takes an ensemble of protein structures (which could represent flexibility, point mutations, or alternative homology models) and superimposes them to create a single, united representation [21]. In this model, similar parts of the structures are merged, while dissimilar regions, such as alternative side-chain conformations or varying loops, are treated as discrete alternatives. During the docking process, FlexE can combinatorially join these alternative conformations to create new, valid protein structures that best fit the flexible ligand being docked [29]. This method incorporates protein flexibility directly during the ligand placement phase, rather than as a post-optimization step, leading to more accurate and reliable docking outcomes [21].

Experimental Protocols & Workflows

Key Workflow of FlexE

The following diagram illustrates the core process of creating a united protein description and docking a flexible ligand.

Step-by-Step Protocol for a FlexE Docking Experiment

This protocol provides a detailed methodology for running a standard docking calculation with FlexE, using an ensemble of protein structures to account for flexibility.

Objective: To dock a flexible ligand into a protein target, considering protein structure variations present in a given ensemble.

Primary Software: FlexE. Note that FlexE is derived from FlexX and utilizes its incremental construction algorithm and scoring function, adapted for the ensemble approach [21].

Procedure:

Preparation of the Protein Structure Ensemble:

- Source: Collect an ensemble of protein structures from the Protein Data Bank (PDB). This ensemble should represent the conformational variability of your target (e.g., apo/holo forms, structures bound to different ligands, NMR ensembles) [21].

- Selection Criteria: Structures in the ensemble must have a highly similar backbone trace. FlexE is designed to handle side-chain variations and slight loop movements, not large-scale domain rearrangements [21].

- Preprocessing: Superimpose all structures of the ensemble onto a common reference frame. This is a prerequisite for generating the united protein description [21].

Preparation of the Ligand:

- Source: Obtain the 3D structure of the ligand from a database like PubChem or ZINC, or draw it using a molecular building tool [18].

- Energy Minimization: Optimize the ligand's geometry and minimize its internal energy before docking, using either molecular mechanics software with an appropriate force field or a quantum chemistry package (e.g., Gaussian) [18].

Generation of the United Protein Description:

- Input: Provide the superimposed ensemble of protein structures to FlexE.

- Process: FlexE automatically generates the united description by merging identical regions and identifying discrete alternative conformations for varying parts [21]. This step creates the combinatorial search space for protein conformations.

Execution of the Docking Calculation:

- Input: Provide the united protein description and the prepared, flexible ligand to FlexE.

- Algorithm: FlexE uses an incremental construction algorithm to build the ligand within the flexible active site. For each partial ligand placement, it determines the optimal combination of protein conformations with respect to the scoring function [21].

- Internal Handling: The software manages dependencies between alternative protein conformations (incompatibilities) using a graph representation, ensuring only valid, combined protein structures are considered [21].

Analysis of Results:

- Output: FlexE produces a ranked list of ligand poses.

- Validation: The quality of the top-ranked pose is typically assessed by calculating the Root Mean Square Deviation (RMSD) between the predicted ligand pose and its known position in an experimental crystal structure [29] [21]. A successful docking typically has an RMSD below 2.0 Å.

- Inspection: Visually analyze the top poses using molecular visualization tools (e.g., PyMOL, Chimera) to check for sensible intermolecular interactions.

Protocol for Cross-Docking Benchmarking

This methodology is used to evaluate the performance of FlexE against traditional rigid-receptor docking, as described in its validation studies [21].

Objective: To compare the performance of FlexE (flexible receptor) against sequential docking into single, rigid receptor structures (cross-docking).

Application: Used for method validation and performance assessment.

Procedure:

- Dataset Curation: Select several protein structure ensembles from the PDB. Each ensemble must contain multiple crystal structures of the same protein with different ligands bound [21].

- Ligand Preparation: Extract the ligands from each complex in the ensemble. These will be re-docked for testing.

- FlexE Run: Dock each ligand into the united protein description created from its entire parent ensemble.

- Cross-Docking Run: For the same ligand, perform a separate docking calculation into each individual protein structure within the ensemble using a standard rigid-receptor docking tool (e.g., FlexX). Merge the results from all these individual runs into a single ranked list.

- Performance Analysis:

- For both methods, calculate the RMSD of the predicted ligand pose against the experimental pose for each test case.

- Determine the success rate for each method, typically defined as the percentage of ligands docked with an RMSD below 2.0 Å [29] [21].

- Compare the computational time required by FlexE versus the accumulated time for all cross-docking runs.
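The performance analysis above reduces to three small computations: merging the per-structure ranked lists, measuring pose RMSD, and counting successes under the 2.0 Å threshold. The sketch below assumes poses are given as lists of (x, y, z) coordinates with identical atom ordering and applies no symmetry correction; the function names and structure identifiers are illustrative.

```python
import math


def rmsd(pose_a, pose_b):
    """RMSD in Å between two poses with the same atoms in the same order.

    No superposition and no symmetry correction are applied.
    """
    if len(pose_a) != len(pose_b) or not pose_a:
        raise ValueError("poses must be non-empty and of equal length")
    squared = sum(
        (xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
        for (xa, ya, za), (xb, yb, zb) in zip(pose_a, pose_b)
    )
    return math.sqrt(squared / len(pose_a))


def success_rate(top_pose_rmsds, threshold=2.0):
    """Fraction of ligands whose top-ranked pose is below the RMSD threshold."""
    if not top_pose_rmsds:
        raise ValueError("no results to evaluate")
    return sum(r < threshold for r in top_pose_rmsds) / len(top_pose_rmsds)


def merge_cross_docking(runs):
    """Merge per-structure results into one list, best (lowest) score first.

    runs: dict mapping structure name -> list of (score, pose_id) tuples
    """
    merged = [
        (score, structure, pose_id)
        for structure, poses in runs.items()
        for score, pose_id in poses
    ]
    return sorted(merged)


# Toy usage with made-up numbers.
runs = {
    "structure_A": [(-20.1, "pose1")],
    "structure_B": [(-25.3, "pose1"), (-18.0, "pose2")],
}
print(merge_cross_docking(runs)[0])
print(success_rate([0.8, 1.9, 2.0, 3.5]))
```

Because both methods are scored against the same experimental poses with the same threshold, the two success rates are directly comparable.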

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: What are the main advantages of using FlexE over standard rigid-receptor docking? A1: FlexE significantly improves the ability to find correct ligand binding modes when protein flexibility is a critical factor. It prevents the failure to dock potential inhibitors that would be missed using a single, rigid protein structure [21]. While the quality of its top solutions is similar to the best outcome from exhaustive cross-docking, its computing time is often significantly lower because it avoids the need to dock into every single structure sequentially [29] [21].

Q2: My protein undergoes large domain movements upon ligand binding. Can FlexE handle this? A2: No. FlexE is designed for proteins where the "overall structure and the general shape of the active site are conserved." It explicitly handles side-chain flexibility and slight loop movements, but large main-chain variations, such as domain movements, are beyond its scope [21].

Q3: Where do I source the protein ensemble for a FlexE calculation? A3: The primary source is the Protein Data Bank (PDB), using multiple experimentally determined structures (e.g., from X-ray crystallography) of the same protein [21]. The ensemble is not limited to experimental structures; you can also use structures from molecular dynamics simulations, models generated with rotamer libraries, or ambiguous homology models, provided they are structurally superimposed [21].

Q4: What does the "united protein description" actually mean? A4: It is a computational model created from the superimposed input structures. In this model, parts of the protein that are identical across all structures are represented once. Parts that differ (e.g., a side-chain with multiple conformations) are stored as explicit alternatives. During docking, FlexE can pick and choose from these alternatives to "assemble" a protein conformation that best complements the ligand [21].

Common Error Scenarios and Solutions

| Problem | Possible Cause | Solution |
|---|---|---|
| Docking fails to produce a pose with low RMSD for a known ligand. | The input protein ensemble may lack a conformation critical for binding the specific ligand. | Expand the ensemble by including more relevant structures from the PDB or by generating new conformations using computational methods like molecular dynamics. |
| The docking calculation is taking an excessively long time. | The combinatorial space of protein conformations might be too large due to many variable regions in the ensemble. | Check the size and diversity of your input ensemble. Consider curating a more focused ensemble with only the most relevant conformational states. |
| FlexE cannot read my input protein files. | The PDB file format may be non-standard or missing critical information like atom types or residues. | Use standard protein preparation steps: add missing hydrogen atoms, assign correct protonation states, and remove water molecules and heteroatoms unless critical [18]. Ensure all structures in the ensemble are correctly superimposed. |
| The top docking pose has steric clashes with the protein. | The scoring function's balance between different energy terms (van der Waals, hydrogen bonding, etc.) may be suboptimal for your system. | Inspect more than just the top-ranked pose. The correct binding mode might be present but ranked lower. Consider post-docking refinement with energy minimization [2]. |

Performance Data & Technical Specifications

Quantitative Performance of FlexE

The following table summarizes the key performance metrics for FlexE as reported in its foundational evaluation study [21].

| Metric | Value / Finding | Context |
|---|---|---|
| Success Rate (RMSD < 2.0 Å) | 83% (50 out of 60 ligands) | Evaluation across 10 protein ensembles (105 PDB structures + 1 model) [29] [21]. |
| Comparison to Cross-Docking | Results of "similar quality" to the best solution from sequential rigid docking. | FlexE achieves comparable pose prediction accuracy without requiring prior knowledge of the best single structure to use [21]. |
| Average Computing Time | ~5.5 minutes per ligand | Measured on a common workstation for placing one ligand into the united protein description [29] [21]. |
| Time vs. Cross-Docking | "Significantly lower than accumulated run times for single structures." | Avoids the linear time increase of docking a ligand into every single structure in the ensemble [21]. |

Essential Research Reagent Solutions

This table details the key materials and computational resources required for conducting experiments with FlexE.

| Item / Reagent | Function in the Experiment | Notes & Specifications |
|---|---|---|
| Protein Structure Ensemble | Provides the set of conformations to model protein flexibility, point mutations, or alternative models. | Typically derived from the PDB. Structures must be superimposed and have a conserved backbone [21]. |
| Ligand Database | Source of small molecules to be docked. Used for virtual screening or specific pose prediction. | Common sources: ZINC, PubChem, NCI. Ligands should be prepared (energy-minimized, correct tautomers) [18]. |
| Molecular Visualization Tool (e.g., PyMOL) | For preparing input structures, analyzing docking results, and visualizing predicted binding poses and protein-ligand interactions. | Essential for qualitative validation and interpreting the structural basis of docking scores. |
| Scoring Function | Evaluates the binding energetics of the predicted ligand-receptor complexes to rank potential poses. | FlexE uses the FlexX scoring function, adapted for the ensemble approach [21]. Docking scoring functions in general evaluate terms such as van der Waals, hydrogen bonding, electrostatic, and torsional energies [18]. |

Conceptual Diagrams

The Cross-Docking Problem

The diagram below illustrates the fundamental challenge that FlexE is designed to solve: a ligand may not dock correctly into a single rigid protein structure if the protein's binding site conformation is incompatible.

Troubleshooting Common Technical Issues

FAQ 1: My model produces ligand poses with physically unrealistic bond lengths or angles. How can I correct this?

This is a common issue, particularly with some early deep learning docking models. The solution depends on the tool you are using.

- If using EquiBind: The model includes a dedicated post-prediction step to correct for physical constraints. This step finds a set of coordinates that maximizes the similarity to the initial prediction while preserving bond lengths and angles via a closed-form, differentiable solution. Ensure this correction step is enabled in your pipeline [30].

- If using a model without built-in correction: Consider using a separate energy minimization tool (e.g., from molecular dynamics suites like OpenMM or GROMACS) to refine the predicted pose. This applies physical force fields to relax the structure and fix unrealistic geometries [31].

- General Advice: Newer models like DiffDock are explicitly designed to reduce such errors. If you frequently encounter this problem, switching to a diffusion-based generative model like DiffDock, which produces fewer steric clashes, is recommended [32].

FAQ 2: How should I interpret the confidence score from DiffDock for my predicted complex?

DiffDock provides a confidence score for its top-predicted pose. According to the developers, this score indicates the model's confidence in the structural quality of the prediction, not the binding affinity. A rough guideline for interpretation is [33]:

- c > 0: High confidence

- -1.5 < c < 0: Moderate confidence

- c < -1.5: Low confidence

The developers note that these thresholds assume the complex is similar to those in the training data (e.g., a drug-like molecule and a medium-sized protein). For large ligands, large protein complexes, or unbound protein conformations, you should shift these intervals downward [33].
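For batch processing, the guideline above can be wrapped in a small helper. This is a sketch under stated assumptions: the source does not say how to treat scores exactly equal to 0 or -1.5, so the boundaries are assigned to the lower band here, and the `shift` parameter is a hypothetical way to move the intervals downward for harder cases (the appropriate magnitude is not specified in the source).

```python
def interpret_confidence(c, shift=0.0):
    """Map a DiffDock confidence score to a coarse label.

    Thresholds follow the rough guideline: c > 0 high, -1.5 < c < 0
    moderate, c < -1.5 low. Boundary values fall into the lower band
    (an assumption of this sketch). Pass a negative `shift` to move both
    thresholds downward for large ligands, large complexes, or apo
    structures.
    """
    high_cutoff = 0.0 + shift
    low_cutoff = -1.5 + shift
    if c > high_cutoff:
        return "high"
    if c > low_cutoff:
        return "moderate"
    return "low"


for score in (0.3, -0.7, -2.0):
    print(score, interpret_confidence(score))
```

The label describes confidence in the predicted structure only; it says nothing about binding affinity.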

FAQ 3: Can I use DiffDock for protein-peptide docking or to predict binding affinity?

- Protein-Peptide Docking: The standard DiffDock model was designed, trained, and tested for small-molecule docking to proteins. While it may run with peptides as input, its performance on larger biomolecules is not guaranteed. For protein-peptide interactions, specialized tools like RAPiDock are recommended [34]. For rigid protein-protein docking, you can explore DiffDock-PP [33].

- Binding Affinity Prediction: No, DiffDock does not predict binding affinity. It predicts the 3D structure of the complex and outputs a confidence score for that structure's quality. To estimate affinity, you should combine DiffDock's structural prediction with other tools like docking scoring functions (e.g., GNINA), MM/GBSA, or absolute binding free energy calculations, often after a relaxation step of the predicted pose [33].

FAQ 4: My docking performance is poor when using an unbound (apo) protein structure. How can I account for protein flexibility?

Handling unbound protein structures is a major challenge because proteins often undergo conformational changes (induced fit) upon ligand binding [2]. Here are several strategies:

- Use Flexible Docking Models: Newer models are being developed to handle protein flexibility. FlexPose enables end-to-end flexible modeling of protein-ligand complexes. DynamicBind uses equivariant geometric diffusion networks to model protein backbone and sidechain flexibility, which can help reveal cryptic pockets [31].

- Ensemble Docking: If your model is rigid, a traditional but effective method is to dock against an ensemble of multiple protein conformations. These can be obtained from experimental structures (e.g., from the PDB) of the same protein with different ligands or from computational methods like molecular dynamics (MD) simulations [2] [35].

- Leverage AlphaFold-Generated Structures: If experimental structures are unavailable, you can use predicted structures from AlphaFold. However, note that these often represent a single, ground-state conformation and may not capture the flexibility needed for binding. Performance can be improved by using AlphaFold to generate models with different random seeds or by using the recently released NeuralPLexer, which can predict structural differences between apo and bound forms [32].

Quantitative Performance Comparison

The table below summarizes the key performance metrics of leading deep learning docking tools as reported in the literature, providing a basis for method selection.

Table 1: Performance Comparison of Deep Learning Docking Tools

| Tool | Core Methodology | Reported Performance | Key Advantages / Limitations |
|---|---|---|---|
| EquiBind [30] [31] | Geometric Deep Learning (Equivariant Graph Neural Network) | ~100x faster than next fastest method; Mean RMSD nearly half of next most accurate method (on its benchmark). | Extreme speed; Direct, one-shot prediction. Limitations: Can produce physically unrealistic poses (26% with steric clashes); Does not model protein flexibility [30] [32]. |
| DiffDock [31] [32] [36] | Generative Diffusion Model | 38% of top predictions with RMSD < 2Å (PDBBind); DiffDock-L improves this to 50%. < 3% of predictions had steric clashes [32]. | High accuracy; Few steric clashes; Confidence estimation; Better generalization to unbound structures (22% success vs. ~10% for others) [31] [36]. |
| RAPiDock [34] | Diffusion Generative Model (for peptides) | 93.7% success rate at top-25 predictions; ~270x faster than AlphaFold2-Multimer. | Specialized for protein-peptide docking; Handles post-translational modifications; High speed and accuracy for its domain [34]. |
| Traditional Tools (e.g., VINA, GLIDE) [31] [36] | Search-and-Score | Performance varies widely; Often outperformed by DL in blind docking but can be strong with known pockets. | Well-established; Interpretable scoring functions. Limitations: Computationally demanding; Struggle with protein flexibility [31] [36]. |

Essential Experimental Protocols

Protocol: Running a Basic Docking Prediction with DiffDock

This protocol provides a step-by-step guide for using DiffDock, a state-of-the-art tool for small molecule docking.

Environment Setup

- Clone the official DiffDock repository: `git clone https://github.com/gcorso/DiffDock.git`

- Navigate to the root directory and set up the Conda environment as described in the `README.md` file. A Docker container is also available for easier deployment [33].

Input Preparation

Running the Docking Calculation

Output Interpretation

- DiffDock will output several predicted poses ranked by its confidence score. Refer to the confidence score guidelines in FAQ 2 to assess the reliability of the top prediction [33].

- Visually inspect the top poses in a molecular viewer to check for reasonable binding interactions.

Protocol: Assessing Performance with Cross-Docking

Cross-docking is a rigorous method to evaluate a docking protocol's ability to handle realistic protein conformational changes.

Objective: To simulate a real-world scenario where a ligand is docked into a protein conformation that was solved with a different ligand or in its apo (unbound) state [31] [2].

Dataset Curation

- Identify a protein target with multiple experimentally determined structures in the Protein Data Bank (PDB). The set should include [31] [2]:

- Holo structures: Structures bound to different ligands.

- Apo structure: The unbound structure, if available.

- A classic example is maltose-binding protein, for which both a maltose-bound structure (PDB entry 1ANF) and apo structures are available [30].


Experimental Setup

- For each holo structure, extract the ligand. Then dock this ligand into every other holo structure and into the apo structure of the same protein [2].

- This tests the model's robustness to the natural conformational variability of the protein's binding site.
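The setup above amounts to enumerating every (ligand source, receptor) pair except the self-docking pair. A minimal sketch follows, using hypothetical structure identifiers; the function name is illustrative and not part of any docking package.

```python
from typing import List, Optional, Tuple


def cross_docking_pairs(
    holo_ids: List[str],
    apo_id: Optional[str] = None,
) -> List[Tuple[str, str]]:
    """Return (ligand_source, receptor) pairs: the ligand from each holo
    structure is docked into every other holo structure and into the apo
    structure (if one is given). Self-docking pairs are excluded."""
    receptors = list(holo_ids) + ([apo_id] if apo_id else [])
    return [
        (source, receptor)
        for source in holo_ids
        for receptor in receptors
        if receptor != source
    ]


# Hypothetical identifiers for three holo structures and one apo structure.
pairs = cross_docking_pairs(["holo_1", "holo_2", "holo_3"], apo_id="apo")
```

With N holo structures and one apo structure this yields N × N docking runs, which is worth estimating before launching a large benchmark.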

Analysis

- Calculate the Root-Mean-Square Deviation (RMSD) between the predicted ligand pose and the experimentally observed pose.

- A prediction is typically considered successful if the heavy-atom RMSD is less than 2.0 Å [31] [36].

- Compare the success rates across different protein conformations to gauge the impact of protein flexibility on your method.
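The analysis steps above can be sketched in a few lines. This version assumes that predicted and reference ligand coordinates are already in the same receptor frame and list heavy atoms in the same order. It does no superposition and no symmetry correction, which a production analysis would normally need. All names are illustrative.

```python
import math
from typing import Sequence, Tuple

Coord = Tuple[float, float, float]


def heavy_atom_rmsd(pred: Sequence[Coord], ref: Sequence[Coord]) -> float:
    """RMSD (in the units of the input, e.g. Å) between matched heavy atoms.
    Assumes identical atom ordering and a shared coordinate frame."""
    if len(pred) != len(ref) or len(pred) == 0:
        raise ValueError("pred and ref must be non-empty and the same length")
    squared = sum(
        (p[i] - r[i]) ** 2 for p, r in zip(pred, ref) for i in range(3)
    )
    return math.sqrt(squared / len(pred))


def success_rate(rmsds: Sequence[float], threshold: float = 2.0) -> float:
    """Fraction of predictions with RMSD strictly below the threshold."""
    if not rmsds:
        raise ValueError("rmsds must be non-empty")
    return sum(r < threshold for r in rmsds) / len(rmsds)


# Toy example: a two-atom ligand shifted by 1 Å along z.
reference = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
predicted = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
toy_rmsd = heavy_atom_rmsd(predicted, reference)
toy_rate = success_rate([0.8, 1.9, 2.0, 3.5])
```

Computing `success_rate` separately for each receptor conformation (for example, holo versus apo) gives the comparison described in the last step.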

Workflow Visualization: DiffDock Architecture

The following diagram illustrates the key stages of the DiffDock algorithm, which uses a diffusion process to predict ligand poses.

[Figure: DiffDock's Diffusion-Based Docking Process]

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Resources for Deep Learning Docking

| Resource / Tool | Type | Function in Research |
|---|---|---|
| PDBBind [31] [33] | Database | A comprehensive, curated database of protein-ligand complexes with binding affinity data. Used for training and benchmarking docking models. |
| ESMFold [33] | Software | A protein language model that can predict protein structures from sequences. Integrated into DiffDock to fold proteins when only a sequence is provided. |
| RDKit [33] | Software Cheminformatics Library | Handles ligand input, processing SMILES strings or file formats (.sdf, .mol2), and calculates molecular features for the model. |
| AlphaFold2/3 [34] [32] | Software | Provides highly accurate protein structure predictions for targets without experimental structures. Crucial for expanding the scope of docking studies. |
| Molecular Dynamics (MD) Suites (e.g., GROMACS, OpenMM) | Software | Used for post-docking refinement of predicted poses (energy minimization) and for generating conformational ensembles for flexible docking. |

Frequently Asked Questions (FAQs)

Q1: Why is it important to account for backbone flexibility in molecular docking? Traditional docking methods often treat the protein receptor as a rigid structure, which is an incomplete representation. Experimental data shows that proteins exist as ensembles of conformations, and ligands can bind by selecting from these pre-existing states or inducing new ones [2]. Accounting for backbone flexibility is crucial for accurate pose prediction, understanding allosteric regulation, and overcoming drug resistance, as it more accurately reflects the true biological process of binding [2] [37].

Q2: What are the main challenges in modeling large backbone conformational changes? The primary challenge is the vast computational resources required to sample the protein's many degrees of freedom. Other significant challenges include:

- Sampling Failure: Inability to adequately explore the vast conformational space to find the correct binding pose.

- Scoring Failure: Difficulty in accurately ranking the correct, flexible protein-ligand complex among many decoy conformations [2].

- Data Shortage: A lack of large-scale training data that captures the structural information along transition pathways, which is a bottleneck for developing deep learning models [38].

Q3: My steered molecular dynamics (SMD) simulation is causing the entire protein-ligand complex to drift. How can I prevent this? A common practice in SMD is to apply a harmonic restraint to the protein backbone to prevent drift. Instead of restraining all heavy atoms or all Cα atoms—which can overly restrict natural protein motion—a more effective method is to restrain only the Cα atoms of residues located at a distance greater than 1.2 nm from the ligand. This approach minimizes unrealistic constraints on the active site while effectively preventing global rotation [39].

Q4: Are some types of residues more important for mediating conformational changes? Yes, statistical analyses of proteins with multiple states show that residue contacts involving amino acids with long, flexible side chains—such as ARG-GLU, GLN-GLU, and GLN-GLN—are more abundant in proteins undergoing conformational changes. These residues facilitate the formation and breakage of specific interactions, like ionic locks or hydrogen bonds, which trigger movements of domains or secondary structures [38].

Troubleshooting Common Experimental Issues

Table 1: Common Problems and Solutions in Flexibility Simulations

| Problem Symptom | Potential Cause | Recommended Solution |
|---|---|---|
| Low docking accuracy or failure to predict known binding mode. | Rigid receptor approximation; inability of the binding site to adapt to the ligand. | Use an ensemble of protein structures (e.g., from experiments or simulations) for docking [2] [37]. |
| Unphysical drift of the entire protein-ligand complex during SMD simulations. | Insufficient or inappropriate restraint of the protein backbone. | Apply harmonic restraints to Cα atoms located >1.2 nm from the ligand instead of restraining all atoms [39]. |
| Inaccurate side-chain flexibility predictions in fixed-backbone design. | The fixed backbone is too restrictive and doesn't allow for correlated movements. | Incorporate a simple model of backbone flexibility, such as Backrub motions, into Monte Carlo simulations [40]. |
| Inability to predict complex conformational changes like fold-switching. | Standard models struggle with global topological changes. | Employ a specialized deep learning model trained on a large-scale database of protein transition pathways [38]. |

Quantitative Data on Method Performance

Table 2: Performance Comparison of Flexibility Modeling Methods

| Method Category | Key Metric | Result / Performance | Context & Notes |
|---|---|---|---|
| Fixed-Backbone Model (Side-chain sampling only) | RMSD of predicted vs. experimental NMR order parameters | 0.26 [40] | Baseline performance for side-chain flexibility prediction. |
| Flexible-Backbone Model (Incorporating Backrub motions) | RMSD of predicted vs. experimental NMR order parameters | Significant improvement for 10 of 17 proteins [40] | More accurately models coupled side-chain/backbone motion. |
| Rigid Receptor Docking | Success rate for pose prediction | 50-75% [2] | Performance ceiling for rigid docking protocols. |
| Fully Flexible Docking | Success rate for pose prediction | 80-95% [2] | Highlights the benefit of incorporating protein flexibility. |

Detailed Experimental Protocols

Protocol 1: Incorporating Backbone Flexibility with Backrub Motions for Improved Side-Chain Modeling

This protocol uses Monte Carlo simulations with Backrub motions to more accurately model side-chain conformational variability, validated against NMR data [40].

- Initial Structure Preparation: Start with a high-resolution protein crystal structure.

- Generate Backbone Ensemble: Use a Backrub motion algorithm to generate an ensemble of backbone conformations. These motions are small, local shifts inspired by conformational changes observed in ultra-high-resolution crystal structures.

- Sample Side-Chains: For each backbone conformation in the ensemble, perform Metropolis Monte Carlo simulations to sample side-chain conformations from multiple rotameric states.

- Calculate Order Parameters: Compute the side-chain order parameters (S²) from the resulting conformational ensemble.

- Validation: Compare the computed S² values with experimental NMR methyl relaxation order parameters to validate the model's accuracy.
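The last two steps of the protocol can be illustrated with a short sketch. It uses the standard ensemble-averaged expression S² = (3/2) Σᵢⱼ ⟨uᵢuⱼ⟩² − 1/2 over unit bond vectors, which is assumed here; the source does not state which formula [40] used. The code also assumes all conformations share a common molecular frame and that each input vector lies along the methyl symmetry axis. Function names are illustrative.

```python
import math
from typing import Sequence, Tuple

Vec = Tuple[float, float, float]


def order_parameter(vectors: Sequence[Vec]) -> float:
    """Ensemble-averaged order parameter S^2 for one bond vector.
    S^2 = 1.5 * sum_ij <u_i u_j>^2 - 0.5, with u the normalized vectors."""
    n = len(vectors)
    if n == 0:
        raise ValueError("at least one vector is required")
    units = []
    for x, y, z in vectors:
        norm = math.sqrt(x * x + y * y + z * z)
        units.append((x / norm, y / norm, z / norm))
    total = 0.0
    for i in range(3):
        for j in range(3):
            mean_ij = sum(u[i] * u[j] for u in units) / n
            total += mean_ij ** 2
    return 1.5 * total - 0.5


def order_parameter_rmsd(pred: Sequence[float], expt: Sequence[float]) -> float:
    """RMSD between computed and experimental S^2 values, site by site."""
    if len(pred) != len(expt) or len(pred) == 0:
        raise ValueError("inputs must be non-empty and the same length")
    return math.sqrt(sum((p - e) ** 2 for p, e in zip(pred, expt)) / len(pred))


# Limiting cases: a fixed vector (fully rigid) and six axis-aligned
# orientations sampled equally (fully disordered).
s2_rigid = order_parameter([(0.0, 0.0, 2.0)] * 5)
s2_isotropic = order_parameter(
    [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]
)
```

The two limiting cases give a quick sanity check: a rigid vector should yield a value near 1 and an isotropic distribution a value near 0.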

Protocol 2: A Balanced Restraint Strategy for Steered Molecular Dynamics (SMD)

This protocol outlines a method for applying backbone restraints in SMD simulations that prevents global drift without overly restricting relevant protein flexibility [39].

- System Setup: Prepare the protein-ligand complex using standard molecular dynamics procedures (solvation, ionization, energy minimization).

- Identify Restraint Atoms: Calculate the distance from every Cα atom in the protein to the ligand. Select all Cα atoms where this distance is greater than 1.2 nm.

- Apply Restraints: Apply a harmonic restraint potential to the selected subset of Cα atoms during the SMD simulation.

- Pulling Simulation: Perform the SMD simulation by applying a constant velocity or force to the ligand, pulling it away from the binding site.

- Analysis: Monitor the force-displacement profile and analyze the breaking of specific ligand-protein interactions along the unbinding pathway.
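The restraint-selection step of this protocol can be sketched as follows. Coordinates are assumed to be in nanometres, and the Cα-to-ligand distance is taken as the minimum distance to any ligand atom. That definition is an assumption: the source does not say whether the minimum distance or a centre-of-mass distance is meant. The function names are hypothetical and not tied to any MD package.

```python
import math
from typing import Dict, List, Sequence, Tuple

Coord = Tuple[float, float, float]


def select_restrained_calphas(
    calphas: Dict[int, Coord],
    ligand_atoms: Sequence[Coord],
    cutoff_nm: float = 1.2,
) -> List[int]:
    """Return residue IDs whose C-alpha atom is farther than cutoff_nm from
    every ligand atom (minimum-distance definition, assumed here)."""
    selected = []
    for resid, ca in calphas.items():
        min_dist = min(math.dist(ca, atom) for atom in ligand_atoms)
        if min_dist > cutoff_nm:
            selected.append(resid)
    return sorted(selected)


def harmonic_restraint_energy(pos: Coord, ref: Coord, k: float) -> float:
    """Harmonic restraint U = 0.5 * k * |pos - ref|^2 for one atom."""
    return 0.5 * k * math.dist(pos, ref) ** 2


# Toy system: three residues along x and a two-atom ligand near the origin.
toy_calphas = {1: (0.0, 0.0, 0.0), 2: (1.0, 0.0, 0.0), 3: (2.5, 0.0, 0.0)}
toy_ligand = [(0.0, 0.0, 0.0), (0.2, 0.0, 0.0)]
restrained = select_restrained_calphas(toy_calphas, toy_ligand)
```

In practice the resulting residue list would be translated into the restraint or index-group syntax of the MD engine in use, and the force constant `k` chosen according to that engine's units.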

Essential Research Reagent Solutions

| Item Name | Function / Application | Reference |
|---|---|---|
| Backrub Motion Model | Models small, correlated backbone-side-chain motions to improve flexibility predictions in protein design. | [40] |
| Steered Molecular Dynamics (SMD) | Simulates the forced unbinding of a ligand from a protein, useful for studying dissociation pathways and kinetics. | [39] |
| Multi-State (MS) Protein Dataset | A large-scale database of 2,635 proteins with simulated transition pathways between two conformational states; useful for training and validating new models. | [38] |
| Molecular Dynamics with Enhanced Sampling | Combines MD with methods like metadynamics to calculate free energy landscapes and identify transition pathways for complex conformational changes. | [38] |
| Ensemble Docking | Docks a ligand into multiple pre-generated protein conformations to simulate conformational selection; a practical way to incorporate flexibility. | [2] [37] |

Workflow and Pathway Visualizations

[Figure: Flexible Docking Workflow]

[Figure: Conformational Change Triggers]

Identifying Cryptic Pockets with DynamicBind and Other Advanced Tools

Frequently Asked Questions (FAQs)

FAQ 1: What is a cryptic pocket, and why is it important in drug discovery?