Beyond R²: A Modern Framework for Validating QSAR Model Predictive Power in Drug Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the critical process of validating Quantitative Structure-Activity Relationship (QSAR) models. It covers the foundational principles of QSAR validation, explores advanced methodological approaches including machine learning and 3D-QSAR, addresses common troubleshooting and optimization challenges, and offers a comparative analysis of validation criteria. With the rising use of QSAR for virtual screening of ultra-large chemical libraries, the article synthesizes current best practices, highlights emerging trends such as the shift towards Positive Predictive Value (PPV) for hit identification, and emphasizes the importance of a model's Applicability Domain (AD) to ensure reliable and regulatory-ready predictions in biomedical research.

The Pillars of Predictive Power: Core Principles of QSAR Validation

In the high-stakes landscape of pharmaceutical development, where the average cost to bring a single new drug to market reaches $2.6 billion and the process spans 10 to 15 years, the margin for error is vanishingly small [1]. This immense financial investment and extended timeline are compounded by staggering failure rates, with approximately 90% of drug candidates that enter human trials ultimately failing to receive approval [1]. Within this challenging environment, Quantitative Structure-Activity Relationship (QSAR) models have emerged as indispensable computational tools, promising to accelerate discovery timelines and improve the identification of promising candidates. However, the predictive power of these models—and thus their value in de-risking drug development—is entirely dependent on rigorous, multifaceted validation protocols.

Validation serves as the critical bridge between computational prediction and experimental reality, transforming QSAR models from speculative tools into reliable decision-support systems. As drug discovery increasingly leverages artificial intelligence and machine learning, with venture funding for healthcare AI reaching $1.8 billion in the first half of 2025 alone, the need for robust validation frameworks has never been more pressing [2]. This guide examines the why and how of QSAR validation, providing researchers with practical methodologies for assessing model performance within the context of modern drug discovery's immense challenges and opportunities.

The Stakes: Quantifying Drug Discovery Risks and Costs

The pharmaceutical industry operates under a unique risk profile characterized by protracted timelines, massive capital investment, and devastating attrition rates. Understanding this context is essential for appreciating why rigorous model validation is not merely an academic exercise but a business imperative.

The Drug Development Timeline and Attrition

The journey from discovery to market approval is a decade-plus marathon fraught with obstacles at every stage. The table below quantifies this challenging pathway, highlighting where effective predictive models can have the greatest impact on reducing attrition [1].

Table 1: Drug Development Lifecycle with Probability of Success

| Development Stage | Average Duration | Probability of Transition to Next Stage | Primary Reason for Failure |
|---|---|---|---|
| Discovery & Preclinical | 2-4 years | ~0.01% (to approval) | Toxicity, lack of effectiveness |
| Phase I Clinical Trials | 2.3 years | ~52% | Unmanageable toxicity/safety |
| Phase II Clinical Trials | 3.6 years | ~29% | Lack of clinical efficacy |
| Phase III Clinical Trials | 3.3 years | ~58% | Insufficient efficacy, safety |
| FDA Review | 1.3 years | ~91% | Safety/efficacy concerns |

Phase II trials represent the single largest hurdle in drug development, with a success rate of only 29% [1]. This phase serves as the epicenter of value destruction, where wrong decisions about which candidates to advance to expensive Phase III trials lead to the largest possible waste of capital. Predictive models that can accurately forecast efficacy before or during Phase II trials therefore offer the highest potential return on investment by preventing catastrophic late-stage failures.
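To make the attrition figures concrete, the stage-wise transition probabilities in Table 1 can be multiplied into a cumulative probability of approval for a candidate entering Phase I. The short sketch below assumes the stages are independent, which is a simplification; the variable and function names are illustrative only.

```python
from math import prod

# Clinical-stage transition probabilities as listed in Table 1.
transition_probability = {
    "Phase I": 0.52,
    "Phase II": 0.29,
    "Phase III": 0.58,
    "FDA Review": 0.91,
}

def cumulative_success(probabilities):
    """Multiply stage-wise probabilities (assumes stages are independent)."""
    return prod(probabilities)

overall = cumulative_success(transition_probability.values())
print(f"Phase I to approval: {overall:.1%}")
```

Under these numbers roughly 8% of candidates entering Phase I reach approval, which is in line with the failure rate of about 90% quoted earlier.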

The Financial Implications of Failure

The true cost of drug development extends beyond direct out-of-pocket expenses to include capitalized costs that account for the time value of money invested over more than a decade with no guarantee of return. Clinical trials alone consume 68-69% of total R&D expenditures [1]. Each late-stage failure represents not only the direct costs invested in that specific compound but also the opportunity cost of not pursuing more promising candidates. In this context, high-quality predictive models that improve decision-making offer substantial financial protection, potentially saving hundreds of millions of dollars in avoidable development costs.

QSAR Validation Fundamentals: From Theory to Practice

The Evolution of QSAR Validation Paradigms

Traditional best practices for QSAR modeling have emphasized dataset balancing and balanced accuracy as key objectives [3]. This approach aimed to create models that could equally well predict both active and inactive compounds across an entire external set. However, contemporary research has revealed that these traditional norms require revision for modern virtual screening applications against ultra-large chemical libraries containing billions of compounds [3].

The emerging paradigm recognizes that for virtual screening—where the practical goal is to select a small number of hits (e.g., 128 compounds corresponding to a single screening plate) from libraries of millions of compounds—models with the highest Positive Predictive Value (PPV), also known as precision, are substantially more valuable [3]. This shift acknowledges that both training sets and virtual screening libraries are inherently imbalanced toward inactive compounds, and that the operational constraint of being able to test only a tiny fraction of predicted actives changes the optimal model performance metrics.

Key Validation Metrics and Their Applications

Different validation metrics serve distinct purposes in evaluating model performance. The table below compares traditional and contemporary approaches to QSAR validation, highlighting their appropriate contexts of use.

Table 2: Comparison of QSAR Validation Metrics and Approaches

| Validation Metric | Traditional Application | Modern Virtual Screening Application | Interpretation |
|---|---|---|---|
| Balanced Accuracy (BA) | Primary metric for lead optimization | Less relevant for imbalanced screening | Measures overall classification performance across all compounds |
| Positive Predictive Value (PPV) | Secondary consideration | Primary metric for hit identification | Measures proportion of true actives among predicted actives |
| Area Under ROC Curve (AUROC) | Global performance assessment | Limited value for top-ranked predictions | Measures overall ranking quality across all thresholds |
| BEDROC | Specialized use | Better than AUROC but parameter-dependent | Emphasizes early enrichment with adjustable weighting |
| PPV at Fixed N | Not traditionally used | Most relevant for practical screening | Measures expected experimental hit rate for top N compounds |

Research demonstrates that models optimized for PPV can achieve hit rates at least 30% higher than those optimized for balanced accuracy when selecting the top 128 compounds for experimental testing [3]. This performance difference directly translates to more efficient use of experimental resources and increased probability of identifying genuine hits.
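The difference between the two metrics is easy to see in code. The following minimal sketch computes PPV over the top N ranked compounds alongside balanced accuracy at a fixed score threshold. The toy scores, labels, and the 0.5 threshold are made up for illustration and are not taken from the cited study.

```python
def ppv_at_n(scores, labels, n):
    """Fraction of true actives (label 1) among the n highest-scoring compounds."""
    if n <= 0:
        raise ValueError("n must be positive")
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    top = ranked[:n]
    return sum(label for _, label in top) / len(top)

def balanced_accuracy(predicted, labels):
    """Mean of sensitivity and specificity for binary 0/1 labels."""
    tp = sum(1 for p, y in zip(predicted, labels) if p == 1 and y == 1)
    tn = sum(1 for p, y in zip(predicted, labels) if p == 0 and y == 0)
    positives = sum(labels)
    negatives = len(labels) - positives
    return 0.5 * (tp / positives + tn / negatives)

# Toy example: eight compounds, three of them experimentally active.
scores = [0.95, 0.90, 0.80, 0.70, 0.40, 0.30, 0.20, 0.10]
labels = [1, 1, 0, 1, 0, 0, 0, 0]

top3_ppv = ppv_at_n(scores, labels, 3)
predicted = [1 if s >= 0.5 else 0 for s in scores]
ba = balanced_accuracy(predicted, labels)
print(f"PPV@3 = {top3_ppv:.2f}, BA = {ba:.2f}")
```

In a screening campaign, `n` would be set to the number of compounds that can actually be tested (128 in the plate example above), so the metric reads directly as the expected experimental hit rate.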

Experimental Design for QSAR Validation

Comprehensive Validation Workflow

Robust QSAR validation requires a multi-stage approach that progresses from computational assessments to experimental confirmation. The integrated workflow below ensures thorough evaluation of model performance and practical utility.

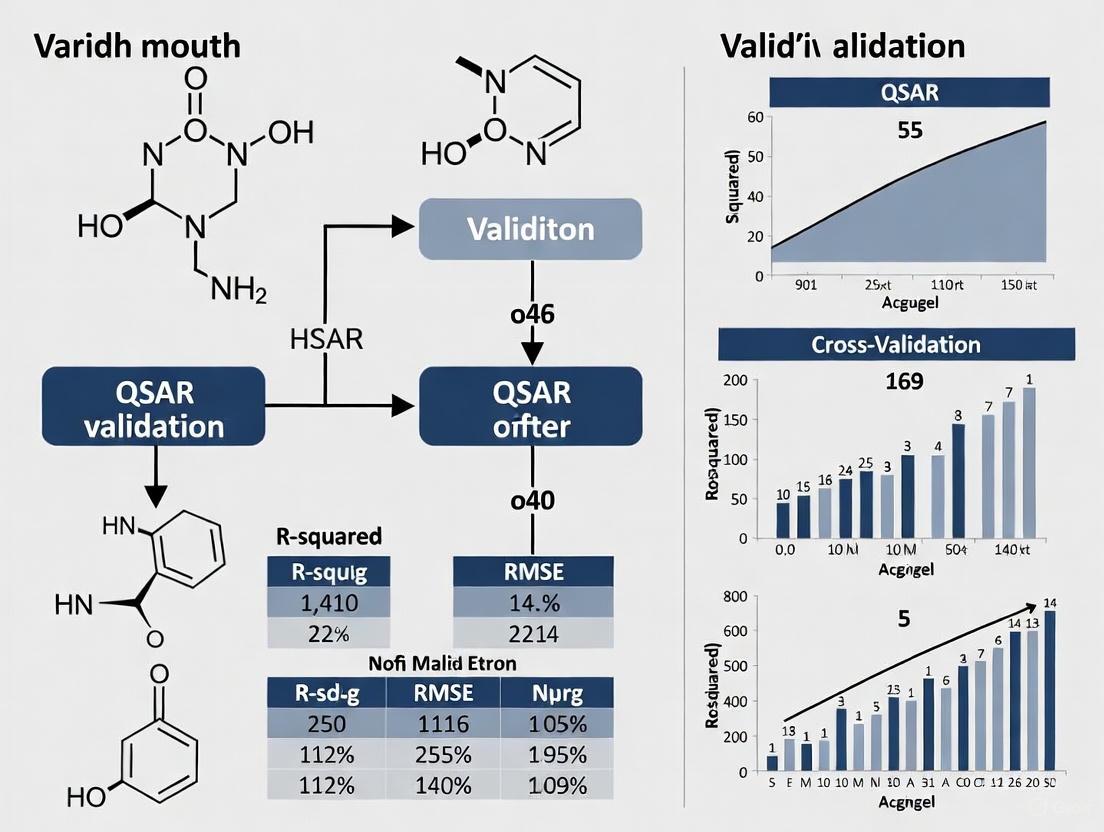

QSAR Model Validation Workflow: A multi-stage approach from data preparation to experimental confirmation.

Detailed Experimental Protocols

Computational Validation Methods

10-Fold Cross-Validation Protocol:

- Dataset Division: Randomly split the curated dataset into 10 equal subsets while maintaining activity distribution

- Iterative Training/Testing: Use 9 subsets for training and the remaining subset for testing, repeating this process 10 times

- Performance Aggregation: Calculate average performance metrics (R², PPV, etc.) across all 10 iterations

- Purpose: Provides robust estimate of model performance while maximizing data usage for both training and validation [4]
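The fold construction described above can be sketched in plain Python. This version stratifies on class labels; for continuous activities one would first bin the values, which is not shown here. The `fit_and_score` callback is a hypothetical placeholder for whatever model training and scoring routine is in use.

```python
import random
from collections import defaultdict

def stratified_k_fold(labels, k=10, seed=0):
    """Return k lists of indices, each with a similar class distribution."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)
    folds = [[] for _ in range(k)]
    offset = 0
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal each class round-robin across folds; the running offset
        # keeps the overall fold sizes even.
        for position, index in enumerate(indices):
            folds[(offset + position) % k].append(index)
        offset += len(indices)
    return folds

def cross_validate(labels, k, fit_and_score):
    """Average fit_and_score(train_indices, test_indices) over k folds."""
    folds = stratified_k_fold(labels, k)
    scores = []
    for held_out in folds:
        held = set(held_out)
        train = [i for i in range(len(labels)) if i not in held]
        scores.append(fit_and_score(train, held_out))
    return sum(scores) / len(scores)

# Toy dataset: 20 actives and 80 inactives.
labels = [1] * 20 + [0] * 80
folds = stratified_k_fold(labels, k=10)
print([sum(labels[i] for i in fold) for fold in folds])  # actives per fold
```

Each compound appears in exactly one held-out fold, so every prediction used in the averaged metric comes from a model that never saw that compound during training.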

External Validation Set Protocol:

- Initial Split: Reserve 20-30% of the complete dataset before any model training or feature selection

- Stratified Sampling: Maintain similar distributions of activity values and chemical structures in both training and test sets

- Blind Testing: Apply the fully-trained model to the external set only once, after all model parameters are fixed

- Purpose: Simulates real-world prediction scenario on completely new compounds [4] [3]

Experimental Validation Methods

MTT Cell Viability Assay:

- Purpose: Measure compound cytotoxicity and anti-proliferative effects

- Cell Lines: Utilize relevant cancer cell lines (e.g., A549 for lung cancer, MCF-7 for breast cancer) with normal cell controls (e.g., HEK-293, VERO)

- Procedure: Seed cells in 96-well plates, treat with serial compound dilutions, incubate with MTT reagent, and measure absorbance at 570nm

- Output: Dose-response curves and IC₅₀ values for correlation with predicted pIC₅₀ values [4]
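Comparing measured IC₅₀ values with predicted pIC₅₀ values requires a unit-aware conversion, since pIC₅₀ is the negative base-10 logarithm of the IC₅₀ expressed in molar units. A minimal sketch follows; the function name and the set of supported units are choices made for this example.

```python
import math

# Conversion factors from common concentration units to mol/L.
UNIT_TO_MOLAR = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9}

def pic50_from_ic50(ic50, unit="nM"):
    """Convert an IC50 in the given unit to pIC50 = -log10(IC50 in mol/L)."""
    if ic50 <= 0:
        raise ValueError("IC50 must be positive")
    return -math.log10(ic50 * UNIT_TO_MOLAR[unit])

print(round(pic50_from_ic50(100, "nM"), 2))  # 100 nM corresponds to pIC50 of 7
print(round(pic50_from_ic50(1, "uM"), 2))    # 1 uM corresponds to pIC50 of 6
```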

Cellular Thermal Shift Assay (CETSA):

- Purpose: Confirm direct target engagement in intact cells

- Procedure: Treat cells with test compounds, heat at different temperatures, isolate soluble proteins, and detect target protein levels via Western blot or mass spectrometry

- Validation: Dose-dependent and temperature-dependent stabilization of target protein indicates direct binding

- Advantage: Provides physiologically relevant confirmation of target engagement in cellular context [5]

Wound Healing and Clonogenic Assays:

- Purpose: Evaluate functional effects on cell migration and long-term proliferation

- Methods: Create "wounds" in confluent cell monolayers and measure closure over time (migration); plate single cells and count colony formation after 7-14 days (clonogenic)

- Application: Functional validation of anti-cancer activity beyond direct cytotoxicity [4]

Case Study: FGFR-1 Inhibitor QSAR Model

Model Development and Performance

A recent study developing a QSAR model for FGFR-1 inhibitors exemplifies comprehensive validation practice [4]. Researchers curated a dataset of 1,779 compounds from the ChEMBL database, calculated molecular descriptors using Alvadesc software, and employed multiple linear regression (MLR) for model development [4].

The model demonstrated strong predictive performance with an R² value of 0.7869 for the training set and 0.7413 for the test set, indicating good generalization ability [4]. Experimental follow-up supported its practical utility: the model identified oleic acid as a promising FGFR-1 inhibitor, which subsequently showed substantial inhibitory effects on A549 and MCF-7 cancer cells with low cytotoxicity in normal cell lines [4].

Integrated Computational and Experimental Workflow

The FGFR-1 inhibitor study exemplifies the modern approach to QSAR validation, combining multiple computational and experimental techniques in an integrated workflow.

Integrated Validation Workflow for FGFR-1 Inhibitor QSAR Model

Successful QSAR model development and validation requires specialized computational tools and experimental reagents. The table below details key resources referenced in the studies discussed.

Table 3: Essential Research Reagents and Computational Tools for QSAR Validation

| Tool/Reagent | Category | Primary Function | Application Context |
|---|---|---|---|
| VEGA QSAR Platform | Software | Integrated QSAR modeling and toxicity prediction | Environmental fate assessment of cosmetic ingredients [6] |
| EPI Suite | Software | Environmental parameter estimation | Persistence, bioaccumulation potential prediction [6] |
| Alvadesc Software | Software | Molecular descriptor calculation | FGFR-1 inhibitor QSAR model development [4] |
| AutoDock | Software | Molecular docking and virtual screening | Binding mode analysis and pose prediction [5] |
| CETSA (Cellular Thermal Shift Assay) | Experimental | Target engagement validation in intact cells | Confirmation of direct drug-target interactions [5] |
| MTT Assay Reagents | Experimental | Cell viability and cytotoxicity measurement | Experimental validation of predicted bioactive compounds [4] |
| ChEMBL Database | Data | Curated bioactivity database | Source of training compounds for QSAR models [4] [3] |
| eMolecules Explore/REAL Space | Data | Ultra-large chemical libraries | Virtual screening for hit identification [3] |

In the high-risk, high-reward domain of drug discovery, QSAR model validation is more than a technical requirement; it is a strategic imperative. The paradigm shift from balanced accuracy to PPV optimization for virtual screening applications reflects the evolving sophistication of computational drug discovery and its tighter integration with practical experimental constraints. As AI and machine learning play increasingly prominent roles in pharmaceutical R&D, with venture funding for healthcare AI reaching $1.8 billion in the first half of 2025 alone, rigorous validation remains the non-negotiable foundation ensuring these powerful tools deliver on their promise [2].

The integrated validation framework presented here, combining comprehensive computational assessment with targeted experimental confirmation, provides a roadmap for researchers to build confidence in their predictive models and make better-informed decisions throughout the drug discovery pipeline. In an industry where a single late-stage failure can cost hundreds of millions of dollars, investment in rigorous QSAR validation represents not just scientific best practice, but essential risk management.

Quantitative Structure-Activity Relationship (QSAR) modeling represents a cornerstone computational approach in modern drug discovery and environmental chemistry, mathematically linking chemical structures to biological activity or physicochemical properties [7]. The fundamental principle underpinning QSAR is that structural variations systematically influence biological activity, enabling the prediction of compounds not yet synthesized or tested [7]. While internal validation using training data provides an initial performance estimate, true predictive power is unequivocally established through rigorous external validation—assessing model performance on completely independent compounds not used in model development [8]. This distinction separates academically interesting models from practically useful tools capable of guiding real-world decision-making in pharmaceutical research and regulatory science.

The traditional reliance on internal validation metrics alone has proven insufficient for guaranteeing predictive performance. As demonstrated in a comprehensive 2022 study analyzing 44 reported QSAR models, employing the coefficient of determination (r²) alone could not reliably indicate model validity [8]. External validation remains the primary method for checking the reliability of developed models for predicting the activity of not-yet-synthesized compounds, yet the field lacks consensus on optimal validation criteria [8]. This guide systematically compares contemporary validation approaches, providing researchers with experimentally-grounded protocols for distinguishing truly predictive models from those that merely fit existing data.

Comparative Analysis of QSAR Model Performance Across Domains

Performance Evaluation in Environmental Fate Prediction

Recent comparative studies of QSAR models for predicting environmental fate parameters of cosmetic ingredients reveal significant performance variations across model types and endpoints. A 2025 systematic evaluation identified top-performing models for persistence, bioaccumulation, and mobility assessment, highlighting that qualitative predictions classified by REACH and CLP regulatory criteria generally prove more reliable than quantitative predictions [6].

Table 1: Top-Performing QSAR Models for Environmental Fate Prediction of Cosmetic Ingredients

| Endpoint | Property | Best-Performing Models | Key Findings |
|---|---|---|---|
| Persistence | Ready Biodegradability | Ready Biodegradability IRFMN (VEGA), Leadscope (Danish QSAR), BIOWIN (EPISUITE) | Highest predictive performance for classifying biodegradable cosmetic ingredients [6] |
| Bioaccumulation | Log Kow | ALogP (VEGA), ADMETLab 3.0, KOWWIN (EPISUITE) | Most appropriate for lipophilicity prediction [6] |
| Bioaccumulation | BCF | Arnot-Gobas (VEGA), KNN-Read Across (VEGA) | Superior performance for bioconcentration factor prediction [6] |
| Mobility | Log Koc | OPERA v1.0.1 (VEGA), KOCWIN-Log Kow (VEGA) | Most relevant models for soil adsorption coefficient prediction [6] |

This comprehensive analysis emphasized the significant role of the Applicability Domain (AD) in evaluating QSAR model reliability, with predictions falling within a model's AD demonstrating substantially higher reliability [6].

Performance Metrics and Validation in Drug Discovery Applications

The evaluation of QSAR models for drug discovery has evolved significantly, with emerging evidence challenging traditional validation paradigms. A 2025 commentary established that traditional practices of dataset balancing and optimizing for balanced accuracy are suboptimal for virtual screening of modern large chemical libraries [3]. Instead, models with the highest Positive Predictive Value (PPV) built on imbalanced training sets demonstrate superior performance for identifying hit compounds within the limited screening capacity of standard well plates (e.g., 128 molecules) [3].

Table 2: Comparison of QSAR Validation Metrics for Virtual Screening

| Metric | Traditional Application | Limitations for Virtual Screening | Recommended Use Context |
|---|---|---|---|
| Balanced Accuracy (BA) | Lead optimization for small compound sets | Misleading for imbalanced screening libraries; emphasizes global over early enrichment | Limited utility for HTVS; consider deprecation [3] |
| Positive Predictive Value (PPV) | General classification performance | Requires calculation on top N predictions | Optimal for HTVS; directly measures hit rate in nominated compounds [3] |
| Area Under ROC (AUROC) | Overall ranking capability | Does not emphasize early enrichment; can be high even with poor early performance | Moderate utility; insufficient alone for HTVS assessment [3] |
| BEDROC | Early enrichment emphasis | Complex parameterization (α parameter); difficult to interpret | Better than AUROC but less intuitive than PPV [3] |

Experimental data demonstrates that training on imbalanced datasets achieves a hit rate at least 30% higher than using balanced datasets, with PPV effectively capturing this performance difference without parameter tuning [3]. This represents a paradigm shift in how the field should conceptualize predictive power for specific applications like high-throughput virtual screening (HTVS).

Experimental Protocols for Assessing Predictive Power

Systematic External Validation Methodology

Robust external validation requires standardized protocols to ensure meaningful comparisons across studies. The following workflow outlines a comprehensive approach to external validation based on analysis of current best practices:

Figure 1: Comprehensive workflow for external validation of QSAR models.

The critical steps in this protocol include:

Database Selection and Preparation: Use a comprehensive database such as ChEMBL (version 34), which contains over 2.4 million compounds and 20.7 million interactions across 15,598 targets [9]. Apply data quality filters, such as excluding entries associated with non-specific targets and removing duplicate compound-target pairs [9]. For enhanced reliability, a confidence score threshold (e.g., ≥7 in ChEMBL, indicating direct protein complex subunits assigned) ensures that only well-validated interactions are included [9].
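The filters described in this step could look like the sketch below. The record layout is an assumption for illustration: the keys `compound_id`, `target_id`, `confidence_score`, and `nonspecific_target` are hypothetical names, not the actual ChEMBL schema or API fields.

```python
def filter_interactions(records, min_confidence=7):
    """Keep one record per compound-target pair that passes the quality filters.

    Each record is a dict with hypothetical keys: compound_id, target_id,
    confidence_score, and an optional boolean nonspecific_target flag.
    """
    seen = set()
    kept = []
    for record in records:
        if record.get("nonspecific_target", False):
            continue  # drop entries flagged as non-specific targets
        if record["confidence_score"] < min_confidence:
            continue  # drop poorly validated interactions
        pair = (record["compound_id"], record["target_id"])
        if pair in seen:
            continue  # drop duplicate compound-target pairs
        seen.add(pair)
        kept.append(record)
    return kept

records = [
    {"compound_id": "C1", "target_id": "T1", "confidence_score": 9},
    {"compound_id": "C1", "target_id": "T1", "confidence_score": 8},  # duplicate pair
    {"compound_id": "C2", "target_id": "T1", "confidence_score": 5},  # low confidence
    {"compound_id": "C3", "target_id": "T2", "confidence_score": 7,
     "nonspecific_target": True},                                     # non-specific
    {"compound_id": "C4", "target_id": "T2", "confidence_score": 7},
]
clean = filter_interactions(records)
print([(r["compound_id"], r["target_id"]) for r in clean])
```

This sketch keeps the first record seen for each pair. A real pipeline would more likely aggregate replicate measurements, for example by taking a median.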

Strategic Dataset Splitting: Move beyond random splitting to more rigorous approaches such as time-split validation (simulating real-world predictive scenarios) or structural clustering-based splits that keep structurally similar compounds on the same side of the split. These approaches reduce data leakage and provide a more realistic assessment of predictive power on genuinely novel chemotypes.
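A clustering-based split can be sketched as follows, assuming each compound has already been assigned a cluster identifier (for instance by scaffold or by fingerprint clustering, neither of which is shown). Whole clusters are assigned to either the test set or the training set, so no chemotype is shared between them.

```python
import random
from collections import defaultdict

def cluster_split(cluster_ids, test_fraction=0.2, seed=0):
    """Assign whole clusters to the test set until it reaches test_fraction."""
    members = defaultdict(list)
    for index, cluster in enumerate(cluster_ids):
        members[cluster].append(index)
    clusters = list(members)
    random.Random(seed).shuffle(clusters)
    target = test_fraction * len(cluster_ids)
    train, test = [], []
    for cluster in clusters:
        # Fill the test set first; once it is large enough, everything
        # else goes to training.
        bucket = test if len(test) < target else train
        bucket.extend(members[cluster])
    return train, test

# Toy cluster assignments for 12 compounds (identifiers are made up).
cluster_ids = ["a"] * 5 + ["b"] * 3 + ["c"] * 2 + ["d"] * 2
train, test = cluster_split(cluster_ids, test_fraction=0.25)
print(sorted({cluster_ids[i] for i in test}), len(train), len(test))
```

Because clusters are kept intact, the test set can overshoot the requested fraction when a large cluster is drawn. A time-split follows the same idea with a date cutoff in place of cluster membership.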

Comprehensive Metric Evaluation: Implementing multi-faceted assessment including:

- For regression models: R², RMSE, and concordance correlation coefficient

- For classification models: PPV (particularly for top-ranked predictions), BEDROC, and traditional metrics like sensitivity and specificity

- Comparative metrics: r₀² and r′₀² for regression through the origin [8]
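The regression metrics listed above can be computed with a few lines of standard-library Python. This is a sketch under stated assumptions: the concordance correlation coefficient uses Lin's definition with population (1/n) moments, and `r0_squared` implements one common form of the through-origin statistic. Conventions for r₀² differ between software packages, so values should be compared only when the same definition is used.

```python
import math

def mean(values):
    return sum(values) / len(values)

def r_squared(observed, predicted):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    centre = mean(observed)
    ss_res = sum((y - p) ** 2 for y, p in zip(observed, predicted))
    ss_tot = sum((y - centre) ** 2 for y in observed)
    return 1.0 - ss_res / ss_tot

def rmse(observed, predicted):
    """Root mean squared error of the predictions."""
    return math.sqrt(mean([(y - p) ** 2 for y, p in zip(observed, predicted)]))

def concordance_cc(observed, predicted):
    """Lin's concordance correlation coefficient (population moments)."""
    mo, mp = mean(observed), mean(predicted)
    var_o = mean([(y - mo) ** 2 for y in observed])
    var_p = mean([(p - mp) ** 2 for p in predicted])
    cov = mean([(y - mo) * (p - mp) for y, p in zip(observed, predicted)])
    return 2.0 * cov / (var_o + var_p + (mo - mp) ** 2)

def r0_squared(a, b):
    """Fit a = k * b through the origin; return 1 - SS_res / SS_tot of a."""
    k = sum(x * y for x, y in zip(a, b)) / sum(y * y for y in b)
    centre = mean(a)
    ss_res = sum((x - k * y) ** 2 for x, y in zip(a, b))
    ss_tot = sum((x - centre) ** 2 for x in a)
    return 1.0 - ss_res / ss_tot

# Toy pIC50 values, made up for illustration.
observed = [5.0, 6.0, 7.0, 8.0]
predicted = [5.2, 5.9, 7.1, 7.8]
print(round(r_squared(observed, predicted), 3),
      round(rmse(observed, predicted), 3),
      round(concordance_cc(observed, predicted), 3),
      round(r0_squared(observed, predicted), 3),   # r0^2
      round(r0_squared(predicted, observed), 3))   # r0'^2 (roles swapped)
```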

Benchmarking with Synthetic Datasets

Systematic evaluation of QSAR interpretation approaches utilizes synthetic datasets with pre-defined patterns, enabling quantitative assessment of interpretation method performance. These benchmarks include:

Simple Additive End-points: Dataset properties determined by atom counts (e.g., nitrogen atoms only, or nitrogen minus oxygen atoms) with expected atomic contributions of 1, -1, or 0 [10].

Context-Dependent End-points: Properties dependent on local chemical context, such as the number of specific functional groups (e.g., amide groups encoded with SMARTS pattern NC=O) [10].

Pharmacophore-like Settings: Classification where compounds are labeled "active" if they contain specific 3D patterns, simulating real-world scenarios where activity depends on spatial molecular features [10].

These synthetic benchmarks enable quantitative evaluation of interpretation performance by comparing retrieved patterns against known "ground truth" structural determinants [10].
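As an illustration of the simple additive case, the sketch below builds the "nitrogen minus oxygen" endpoint and scores a set of per-atom attributions against the known ground truth. Molecules are represented as plain lists of heavy-atom element symbols, and mean absolute error is used as the score; both are simplifying assumptions for this example rather than the benchmark's actual data format or metrics.

```python
# Ground-truth atomic contributions for the "N minus O" endpoint:
# +1 per nitrogen, -1 per oxygen, 0 for every other atom.
GROUND_TRUTH = {"N": 1.0, "O": -1.0}

def expected_contributions(atoms):
    """Per-atom ground-truth contributions for a list of element symbols."""
    return [GROUND_TRUTH.get(symbol, 0.0) for symbol in atoms]

def endpoint_value(atoms):
    """Synthetic property value: number of N atoms minus number of O atoms."""
    return sum(expected_contributions(atoms))

def attribution_error(atoms, attributions):
    """Mean absolute error between attributions and the ground truth."""
    expected = expected_contributions(atoms)
    return sum(abs(e - a) for e, a in zip(expected, attributions)) / len(atoms)

# Heavy atoms of acetamide, CC(=O)N, in SMILES order.
acetamide = ["C", "C", "O", "N"]
print(endpoint_value(acetamide))                             # N and O cancel out
print(attribution_error(acetamide, [0.0, 0.0, -1.0, 1.0]))   # perfect attribution
print(attribution_error(acetamide, [0.0, 0.0, 0.0, 0.0]))    # uninformative attribution
```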

Table 3: Essential Research Reagent Solutions for QSAR Modeling

| Tool Category | Specific Tools | Function and Application |
|---|---|---|
| Descriptor Calculation | PaDEL-Descriptor, Dragon, RDKit, Mordred | Generate molecular descriptors quantifying structural, physicochemical, and electronic properties [7] |
| QSAR Platforms | VEGA, EPI Suite, T.E.S.T., ADMETLab 3.0, Danish QSAR Models | Integrated platforms for specific endpoint predictions (e.g., environmental fate) [6] |
| Target Prediction | MolTarPred, PPB2, RF-QSAR, TargetNet, CMTNN | Ligand-centric and target-centric approaches for drug target identification [9] |
| Bioactivity Databases | ChEMBL, PubChem, BindingDB, DrugBank | Sources of experimentally validated bioactivity data for model training and validation [9] |
| Validation Benchmarks | Synthetic benchmark datasets | Data with predefined structure-activity relationships for interpretation method validation [10] |

Recent comparative studies indicate that MolTarPred demonstrates particularly strong performance for molecular target prediction, with Morgan fingerprints and Tanimoto scores outperforming alternative fingerprint/similarity metric combinations [9]. For environmental applications, the VEGA and EPISUITE platforms contain some of the best-performing models for persistence, bioaccumulation, and mobility endpoints [6].
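The Tanimoto score mentioned here is the size of the intersection of two fingerprints divided by the size of their union. The sketch below represents each fingerprint as a set of "on" bit indices, which is an assumption made to keep the example dependency-free. The bit sets and compound names are invented, and the nearest-neighbour lookup illustrates ligand-centric similarity search in general rather than any specific tool's implementation.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    a, b = set(bits_a), set(bits_b)
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def most_similar(query_bits, library):
    """Return (name, similarity) of the library entry closest to the query."""
    name = max(library, key=lambda key: tanimoto(query_bits, library[key]))
    return name, tanimoto(query_bits, library[name])

# Hypothetical on-bit sets standing in for Morgan fingerprints.
library = {
    "ligand_A": {1, 5, 9, 12},
    "ligand_B": {2, 3, 4, 20},
    "ligand_C": {1, 5, 9, 40, 41},
}
query = {1, 5, 9, 12, 30}
print(most_similar(query, library))
```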

Decision Framework for QSAR Model Selection and Application

The choice of optimal QSAR models and validation approaches must be guided by the specific application context. The following decision pathway illustrates the critical considerations:

Figure 2: Decision pathway for selecting QSAR validation strategies based on application context.

This framework highlights that predictive power must be defined relative to specific use cases. For virtual screening of ultra-large libraries, models with the highest PPV trained on imbalanced datasets significantly outperform balanced alternatives, delivering at least 30% more true positives in the top predictions [3]. In contrast, lead optimization may still benefit from balanced accuracy focus, while regulatory assessment prioritizes qualitative classification reliability within well-defined applicability domains [6].

True predictive power in QSAR modeling extends far beyond excellent model fit to existing data. The evidence compiled in this guide demonstrates that rigorous external validation, appropriate metric selection for specific applications, and strict adherence to applicability domain boundaries collectively determine a model's real-world utility. The field is evolving from traditional practices focused on balanced accuracy toward more nuanced, application-specific validation paradigms.

Particularly significant is the emerging understanding that different QSAR applications demand specialized validation approaches. The discovery that imbalanced training sets optimize PPV for virtual screening represents a fundamental shift in best practices for hit identification campaigns [3]. Simultaneously, the consistent superiority of qualitative predictions for regulatory classification endpoints reinforces the context-dependent nature of predictive power [6]. These advances, coupled with robust benchmarking using synthetic datasets with known ground truths [10], provide researchers with an expanded toolkit for developing and selecting QSAR models with genuine predictive power rather than retrospective descriptive capability. As the field continues to mature, the integration of these validation principles will be essential for advancing predictive modeling in drug discovery and regulatory science.

In the fields of drug discovery and chemical safety assessment, Quantitative Structure-Activity Relationship (QSAR) models have become indispensable tools for predicting the biological activity and toxicity of chemicals. These computational models establish mathematical relationships between chemical structures and biological responses, enabling researchers to prioritize compounds for synthesis and testing while reducing reliance on animal studies. However, the proliferation of QSAR methodologies and the variable quality of predictions created an urgent need for standardized validation frameworks to ensure scientific rigor and regulatory acceptance. This need was particularly amplified by legislation like the European Union's REACH regulation (Registration, Evaluation, Authorisation and Restriction of Chemicals), which explicitly encourages the use of QSAR predictions to fill data gaps while requiring demonstrated scientific validity [11].

The Organisation for Economic Co-operation and Development (OECD) addressed this challenge by developing a harmonized framework for QSAR validation. Originally formulated in 2004 and subsequently refined through international collaboration, the OECD Principles for the Validation of (Q)SAR Models provide a systematic approach to establishing confidence in QSAR predictions [12] [11]. These principles have since become the cornerstone for regulatory assessment of computational models, forming the basis for the newer OECD (Q)SAR Assessment Framework (QAF) which offers further guidance for regulatory evaluation of both models and predictions [13] [14]. This guide examines each OECD principle in detail, compares its implementation across different modeling approaches, and provides experimental data demonstrating how these principles contribute to predictive power in real-world applications.

The Five OECD Principles: Deconstruction and Analysis

The OECD principles establish five fundamental criteria that QSAR models should meet to be considered valid for regulatory purposes. Together, these principles ensure transparency, scientific robustness, and practical utility of QSAR predictions.

Table 1: The Five OECD Principles for QSAR Validation

| Principle | Core Requirement | Regulatory Importance |
|---|---|---|
| Defined Endpoint | Clear specification of the biological effect being predicted | Ensures appropriate interpretation and use of predictions [11] |
| Unambiguous Algorithm | Transparent model algorithm and calculation methodology | Enables verification and reproducibility of results [11] |
| Defined Applicability Domain | Clear description of model scope and limitations | Identifies when predictions are reliable [15] |
| Appropriate Validation | Statistical measures of goodness-of-fit, robustness, and predictivity | Quantifies model performance and reliability [11] |
| Mechanistic Interpretation | Biological plausibility of descriptor-endpoint relationship (if possible) | Increases scientific confidence in predictions [11] |

Principle 1: Defined Endpoint

The first principle requires a transparently defined endpoint, with a clear understanding of the associated biological effect and of the experimental conditions under which it was measured. This principle addresses the challenge that models can be constructed using data measured under different conditions and various experimental protocols, potentially leading to inconsistent predictions [11]. A well-defined endpoint includes not only the specific biological parameter (e.g., IC₅₀, BCF, LD₅₀) but also the experimental system, measurement methodology, and units of expression.

In regulatory contexts, this principle ensures that QSAR predictions align with the specific data requirements of the assessment. For example, the OECD QSAR Toolbox facilitates this by providing organized databases with clearly documented endpoints and associated experimental protocols [16]. When comparing models predicting biodegradability of cosmetic ingredients, researchers found that models with precisely defined endpoints like "Ready Biodegradability" produced more reliable regulatory classifications than those with vaguely defined degradation endpoints [6]. This precision in endpoint definition directly impacts the utility of predictions for decision-making.

Principle 2: Unambiguous Algorithm

The second principle mandates an unambiguous algorithm for model construction and application. This requires complete transparency about the mathematical formula, structural descriptors, and computational procedures used to generate predictions. The algorithm must be described in sufficient detail to allow independent replication of the model and its predictions [11]. This principle faces challenges with commercial models where algorithms may be protected as intellectual property, creating barriers to regulatory acceptance.

Modern implementations of this principle have evolved with advancing technology. While traditional QSAR relied on linear regression and readily interpretable equations, contemporary approaches incorporate machine learning (ML) and deep learning techniques including Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs) [17]. These advanced algorithms can capture complex, non-linear relationships but present challenges for interpretation. To satisfy Principle 2, developers must provide full architectural specifications, feature engineering methodologies, and hyperparameter values. The emergence of explainable AI (XAI) techniques like SHapley Additive exPlanations (SHAP) in modern QSAR implementations helps maintain transparency even with complex models [18].

Principle 3: Defined Applicability Domain

The third principle requires a defined applicability domain (AD) that specifies the model's limitations in chemical space and response space. The AD identifies the types of chemicals for which the model can generate reliable predictions based on the structural and response characteristics of its training set [15]. This principle acknowledges that no QSAR model is universally applicable, and predictions for chemicals outside the AD are potentially unreliable.

Table 2: Comparison of Applicability Domain Approaches

| Method Category | Key Methodology | Advantages | Limitations |

|---|---|---|---|

| Range-Based (Bounding Box) | Range of individual descriptors; p-dimensional hyper-rectangle [15] | Simple implementation | Cannot identify empty regions; ignores descriptor correlations |

| Geometric (Convex Hull) | Smallest convex area containing training set [15] | Accounts for descriptor correlations | Computationally complex with high dimensions; misses internal empty regions |

| Distance-Based | Distance measures (Mahalanobis, Euclidean) from training centroid [15] | Handles correlated descriptors (Mahalanobis) | Threshold definition challenging; may not reflect data density |

| Probability Density-Based | Probability density distribution of training set [15] | Reflects actual data distribution | Computationally intensive |

Research demonstrates that AD definition significantly impacts prediction reliability. A comparative study of bioconcentration factor (BCF) models found that distance-based methods using Mahalanobis distance provided the most balanced identification of extrapolations, while range-based methods tended to be overconservative [15]. In environmental fate assessment of cosmetic ingredients, predictions were considerably more reliable for compounds falling within the model's AD, highlighting the critical importance of AD assessment in regulatory applications [6].
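The contrast between range-based and distance-based checks can be illustrated with a minimal sketch. It assumes NumPy, a synthetic two-descriptor training set, and a 95th-percentile distance threshold; the function names and the threshold choice are illustrative assumptions, not taken from the cited studies.

```python
import numpy as np

def bounding_box_in_domain(X_train, x_query):
    """Range-based AD: every descriptor must lie within the training min/max."""
    lo, hi = X_train.min(axis=0), X_train.max(axis=0)
    return bool(np.all((x_query >= lo) & (x_query <= hi)))

def mahalanobis_distance(X_train, x_query):
    """Distance of a query from the training centroid, scaled by the covariance."""
    centroid = X_train.mean(axis=0)
    cov = np.cov(X_train, rowvar=False)
    cov_inv = np.linalg.pinv(cov)  # pseudo-inverse tolerates near-singular covariance
    diff = x_query - centroid
    return float(np.sqrt(diff @ cov_inv @ diff))

# Synthetic training set with two strongly correlated descriptors.
rng = np.random.default_rng(0)
d1 = rng.normal(size=200)
X_train = np.column_stack([d1, d1 + 0.1 * rng.normal(size=200)])

# Assumed threshold: 95th percentile of the training compounds' own distances.
train_dist = np.array([mahalanobis_distance(X_train, x) for x in X_train])
threshold = np.percentile(train_dist, 95)

# A query inside both descriptor ranges but far from the correlation trend.
x_query = np.array([1.5, -1.5])
in_box = bounding_box_in_domain(X_train, x_query)
in_maha = bool(mahalanobis_distance(X_train, x_query) <= threshold)
```

Here the query passes the bounding-box check but fails the Mahalanobis check, which is the "ignores descriptor correlations" limitation listed in Table 2.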

Principle 4: Appropriate Validation Measures

The fourth principle requires appropriate measures of goodness-of-fit, robustness, and predictivity. This involves both internal validation (using the training data) and external validation (using independent test data) to demonstrate model performance [11]. Traditional validation metrics include R² (coefficient of determination) for regression models and balanced accuracy for classification models, along with cross-validation techniques like leave-one-out (LOO) and leave-many-out (LMO) [11].

Contemporary research has revealed that traditional validation paradigms require refinement for specific applications. For virtual screening of large chemical libraries, where the practical goal is identifying active compounds within limited experimental testing capacity, Positive Predictive Value (PPV) has emerged as a more relevant metric than balanced accuracy [3]. Studies demonstrate that models trained on imbalanced datasets (reflecting real-world prevalence of inactive compounds) and optimized for PPV can achieve hit rates at least 30% higher than models trained on balanced datasets, despite having lower balanced accuracy [3].
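For a screening campaign, PPV is most naturally evaluated on the top-ranked compounds that would actually be tested. The following sketch computes it for the n highest-scoring predictions; the labels and scores are hypothetical, and `ppv_at_top_n` is an illustrative helper name rather than a function from any cited tool.

```python
def ppv_at_top_n(y_true, scores, n):
    """Fraction of true actives among the n highest-scoring compounds."""
    ranked = sorted(zip(scores, y_true), key=lambda pair: pair[0], reverse=True)
    top = ranked[:n]
    return sum(label for _, label in top) / len(top)

# Hypothetical screening output: 1 = experimentally active, 0 = inactive.
y_true = [1, 0, 1, 0, 0, 0, 1, 0, 0, 0]
scores = [0.95, 0.90, 0.80, 0.40, 0.35, 0.30, 0.20, 0.10, 0.05, 0.02]

hit_rate_top3 = ppv_at_top_n(y_true, scores, n=3)
hit_rate_all = ppv_at_top_n(y_true, scores, n=len(y_true))
```

Comparing the hit rate in the top-ranked subset with the overall prevalence of actives shows how much enrichment the ranking provides within a fixed testing budget.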

Advanced modern implementations like Bio-QSARs for ecotoxicity prediction have achieved exceptional predictive performance (R² up to 0.92 on independent test sets) by combining large training data with machine learning algorithms like Gaussian Process Boosting that accommodate mixed effects [18]. These models employ comprehensive validation strategies that include both chemical and biological applicability domains.

Principle 5: Mechanistic Interpretation

The fifth principle recommends a mechanistic interpretation where possible, encouraging consideration of the biological phenomenon and how molecular descriptors relate to the underlying mechanism of action [11]. While recognizing that a definitive mechanism may not always be known, this principle pushes model developers beyond black-box correlations toward biologically plausible relationships.

Modern QSAR implementations have enhanced mechanistic interpretation through several approaches. The OECD QSAR Toolbox facilitates mechanistic thinking through "profilers" that incorporate structural alerts based on known toxicological mechanisms, such as covalent binding to proteins or DNA [16]. Similarly, Bio-QSAR models explicitly incorporate biological descriptors like Dynamic Energy Budget parameters and taxonomic information, creating more mechanistically transparent predictions [18]. In kinase-targeted drug discovery, integration of QSAR with structural biology and machine learning has enabled more interpretable models that capture complex structure-activity relationships, advancing both predictive accuracy and mechanistic understanding [17].

Experimental Comparison: OECD Implementation Across Modeling Platforms

To objectively compare how different modeling approaches implement OECD principles, we examined several publicly available QSAR platforms and research implementations. The comparative analysis focused on performance metrics, applicability domain characterization, and regulatory utility.

Table 3: Experimental Performance Comparison of QSAR Models for Environmental Endpoints

| Platform/Model | Endpoint | Performance Metrics | Applicability Domain Implementation | OECD Principle Compliance |

|---|---|---|---|---|

| Bio-QSAR 2.0 [18] | Aquatic toxicity | R² up to 0.92 (test set) | Feature importance-weighted AD | Principles 1-5 fully addressed |

| VEGA IRFMN [6] | Ready biodegradability | High qualitative reliability | Defined AD with reliability index | Principles 1, 3, 4 well implemented |

| EPI Suite BIOWIN [6] | Biodegradation | Relevant for persistence assessment | Limited AD definition | Principles 1, 2, 4 partially addressed |

| Danish QSAR [6] | Persistence | High performance for classification | Defined structural rules | Principles 1, 3, 5 well implemented |

| ADMETLab 3.0 [6] | Log Kow | High performance for bioaccumulation | Multiple AD measures | Principles 1, 2, 4 well implemented |

Experimental Protocol for Model Validation

The validation methodology employed in comparative studies typically follows a standardized protocol:

Data Curation: High-quality datasets with reliable experimental measurements are compiled from sources like the OECD QSAR Toolbox databases [16].

Data Preprocessing: Chemical structures are standardized, duplicates removed, and descriptors calculated.

Dataset Division: Data is split into training (model development) and test (model validation) sets, typically using 80:20 or similar ratios with appropriate stratification.

Model Training: Various algorithms (linear regression, random forest, neural networks, etc.) are applied with hyperparameter optimization.

Performance Assessment: Multiple metrics are calculated including sensitivity, specificity, accuracy, balanced accuracy, PPV, and Matthews Correlation Coefficient (MCC) [16].

Applicability Domain Characterization: Using range-based, distance-based, or density-based methods to define interpolation space [15].

Mechanistic Analysis: Examining descriptor contributions and alignment with known biological mechanisms.

This protocol ensures comprehensive evaluation of all OECD principles, with particular emphasis on external validation (Principle 4) and applicability domain (Principle 3).
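The metrics named in the Performance Assessment step all derive from the four cells of a binary confusion matrix. The sketch below shows the standard definitions; the counts are hypothetical, and it assumes each class and each predicted class contains at least one compound so that no denominator is zero.

```python
import math

def classification_metrics(tp, fp, tn, fn):
    """Standard binary classification metrics from confusion-matrix counts."""
    sensitivity = tp / (tp + fn)   # true positive rate
    specificity = tn / (tn + fp)   # true negative rate
    ppv = tp / (tp + fp)           # positive predictive value (precision)
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    mcc_denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "accuracy": accuracy,
        "balanced_accuracy": (sensitivity + specificity) / 2,
        "ppv": ppv,
        "mcc": (tp * tn - fp * fn) / mcc_denominator,
    }

# Hypothetical external test set: 60 actives and 140 inactives.
metrics = classification_metrics(tp=40, fp=10, tn=130, fn=20)
```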

Key Experimental Findings

Research comparing QSAR models for predicting environmental fate parameters of cosmetic ingredients demonstrated that qualitative predictions aligned with REACH classification criteria were generally more reliable than quantitative predictions [6]. This highlights the importance of Principle 1 (defined endpoint) in regulatory contexts.

Studies of applicability domain methods revealed that while distance-based approaches like Mahalanobis distance effectively identified extrapolations, their performance was highly dependent on threshold definition strategies [15]. The most effective thresholds considered both distances of training compounds from their mean and average distances from their first five nearest neighbors.
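The nearest-neighbor component of such a threshold can be sketched as follows. This is an illustration only: it assumes Euclidean distance, k = 5, and a 95th-percentile cut-off on the training compounds' own scores, none of which is specified in the text above, and it omits the distance-from-mean component.

```python
import numpy as np

def mean_knn_distance(X_ref, x, k=5, exclude_self=False):
    """Average Euclidean distance from x to its k nearest reference compounds."""
    d = np.sort(np.linalg.norm(X_ref - x, axis=1))
    if exclude_self:
        d = d[1:]  # drop the zero distance of a training compound to itself
    return float(d[:k].mean())

# Synthetic training descriptors (100 compounds, 3 descriptors).
rng = np.random.default_rng(1)
X_train = rng.normal(size=(100, 3))

# Assumed threshold: 95th percentile of the training compounds' own scores.
train_scores = np.array(
    [mean_knn_distance(X_train, x, exclude_self=True) for x in X_train]
)
threshold = float(np.percentile(train_scores, 95))

near_query = mean_knn_distance(X_train, np.zeros(3))      # in a dense region
far_query = mean_knn_distance(X_train, np.full(3, 6.0))   # far from all data
```

Unlike a centroid distance, this score reflects local data density, so a query in an empty interior region of descriptor space can also be flagged.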

Assessment of profilers in the OECD QSAR Toolbox showed variable performance for different endpoints. While some structural alerts demonstrated high predictivity for mutagenicity and skin sensitization, others required refinement to improve precision [16]. This underscores the ongoing need for Principle 5 (mechanistic interpretation) to guide profiler development.

Implementing OECD principles requires specific computational tools and resources. The following table outlines key components of the QSAR researcher's toolkit.

Table 4: Essential Research Reagent Solutions for QSAR Validation

| Tool/Resource | Function | Implementation of OECD Principles |

|---|---|---|

| OECD QSAR Toolbox [16] | Chemical category formation and read-across | Provides profilers for mechanistic interpretation (Principle 5) and database with defined endpoints (Principle 1) |

| VEGA Platform [6] | QSAR model repository and prediction | Implements defined applicability domains (Principle 3) with reliability indices |

| EPI Suite [6] | Environmental fate parameter prediction | Offers well-documented algorithms (Principle 2) for specific endpoints |

| ADMETLab 3.0 [6] | ADMET property prediction | Provides comprehensive validation statistics (Principle 4) and applicability domain assessment |

| Danish QSAR Models [6] | Specific endpoint prediction | Demonstrates mechanistic structural rules (Principle 5) for chemical categories |

The OECD Principles for QSAR Validation have established a foundational framework that continues to evolve alongside computational toxicology science. From their initial formulation as five discrete principles, they have expanded into more comprehensive assessment frameworks like the OECD QSAR Assessment Framework (QAF) that provide detailed guidance for regulatory evaluation of both models and predictions [13] [14]. The experimental evidence presented demonstrates that consistent application of these principles significantly enhances prediction reliability and regulatory acceptance.

Future directions in QSAR validation will likely include more sophisticated applicability domain definitions that incorporate biological similarity in addition to chemical similarity [18], enhanced emphasis on model interpretability through explainable AI techniques [18] [3], and development of standardized validation approaches for novel machine learning architectures [17]. As these advances mature, the core OECD principles provide a stable conceptual foundation ensuring that methodological innovation translates to scientifically valid and regulatorily useful prediction tools.

The predictive power of a Quantitative Structure-Activity Relationship (QSAR) model is not determined solely by its statistical fit to the training data, but by its proven reliability and robustness through rigorous validation. For researchers and drug development professionals, employing models without proper validation carries significant risks, including wasted resources and misleading conclusions. The Organisation for Economic Co-operation and Development (OECD) has established fundamental principles for validating QSAR models, requiring a defined endpoint, an unambiguous algorithm, a defined applicability domain, appropriate measures of goodness-of-fit, robustness, and predictivity, and, whenever possible, a mechanistic interpretation [15] [19] [20]. This guide focuses on three interlinked concepts central to the third and fourth OECD principles: the Applicability Domain (AD), which establishes the model's boundaries; Robustness, which assesses the model's stability; and the identification of Chance Correlation, which guards against statistically significant but scientifically meaningless models. A systematic approach to these factors is essential for developing QSAR models that provide trustworthy predictions for drug discovery.

Core Concept 1: Applicability Domain (AD)

The Applicability Domain (AD) defines the chemical, structural, and response space in which a QSAR model's predictions are considered reliable [19]. It represents the boundaries of the training data used to build the model, ensuring that predictions are made primarily via interpolation rather than risky extrapolation. The fundamental principle is that a model can only be expected to make accurate predictions for compounds that are sufficiently similar to those in its training set [15]. Defining the AD is crucial because the prediction error of QSAR models has been shown to increase as the distance (e.g., Tanimoto distance on Morgan fingerprints) to the nearest training set compound increases [21].
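As a minimal illustration of the distance-to-training-set idea, the sketch below computes the Tanimoto distance between fingerprints represented as sets of on-bit indices. The fingerprints are hand-written placeholders; in practice they would come from a cheminformatics toolkit, and the helper names are assumptions for illustration.

```python
def tanimoto_distance(fp_a, fp_b):
    """1 - |A & B| / |A | B| for fingerprints stored as sets of on-bit indices."""
    union = len(fp_a | fp_b)
    if union == 0:
        return 0.0  # two empty fingerprints are treated as identical
    return 1.0 - len(fp_a & fp_b) / union

def distance_to_training_set(query_fp, training_fps):
    """Tanimoto distance from the query to its nearest training compound."""
    return min(tanimoto_distance(query_fp, fp) for fp in training_fps)

# Placeholder fingerprints (sets of on-bit positions).
training_fps = [{1, 5, 9, 12}, {1, 5, 20}, {3, 7, 9}]
query_fp = {1, 5, 9}

nearest = distance_to_training_set(query_fp, training_fps)
```

A larger `nearest` value means the query is less similar to anything the model was trained on, so its prediction deserves less confidence.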

Methodologies for Defining the Applicability Domain

Several algorithmic approaches exist to characterize the interpolation space of a model, each with distinct methodologies and limitations. The table below summarizes the most common AD methods.

Table 1: Comparison of Key Applicability Domain (AD) Methods

| Method Category | Specific Method | Core Principle | Key Advantages | Key Limitations |

|---|---|---|---|---|

| Range-Based | Bounding Box [15] | Defines a p-dimensional hyper-rectangle based on min/max values of each descriptor. | Simple, intuitive, easy to implement. | Cannot identify empty regions or account for descriptor correlations. |

| Geometric | Convex Hull [15] | Defines the smallest convex area containing the entire training set. | Provides a compact geometric boundary. | Computationally complex for high-dimensional data; cannot identify internal empty regions. |

| Distance-Based | Leverage (Hat Matrix) [15] [19] | Calculates the leverage of a query compound from the hat matrix, a quantity closely related to its Mahalanobis distance from the centroid of the training set. | Handles correlated descriptors; well-established for regression. | Requires inversion of the descriptor cross-product matrix (XᵀX); can be sensitive to outliers. |

| Distance-Based | Euclidean/City Block [15] | Measures distance to training set centroid or neighbors using standard metrics. | Simple distance calculation. | Requires pre-processing (e.g., PCA) to handle correlated descriptors. |

| Probability Density-Based | Kernel Methods [19] | Estimates the probability density distribution of the training set in descriptor space. | Accounts for data distribution density; can identify dense and sparse regions. | More computationally intensive than simpler methods. |

| Classifier-Based (for Classification QSAR) | Class Probability Estimate [22] | Uses the model's own estimated probability of class membership to define reliability. | Directly related to the classifier's confidence; often top-performing. | Specific to the classifier used; requires well-calibrated probability estimates. |

A benchmark study comparing AD measures for classification models found that class probability estimates—a confidence estimation method that uses the underlying classifier's information—consistently performed best at differentiating reliable from unreliable predictions. In contrast, novelty detection methods that rely only on the explanatory variables were generally less powerful [22].
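A class-probability-based reliability filter can be sketched in a few lines. The probabilities below are hypothetical model outputs for a binary classifier, and the 0.8 cut-off is an assumed value for illustration; the approach presumes reasonably well-calibrated probabilities, as noted in the table.

```python
def confidence(prob_active):
    """Confidence of a binary prediction: probability of the predicted class."""
    return max(prob_active, 1.0 - prob_active)

def split_by_confidence(probs, min_confidence=0.8):
    """Return indices of predictions judged reliable and unreliable."""
    reliable, unreliable = [], []
    for i, p in enumerate(probs):
        if confidence(p) >= min_confidence:
            reliable.append(i)
        else:
            unreliable.append(i)
    return reliable, unreliable

# Hypothetical predicted probabilities of the "active" class.
probs = [0.97, 0.55, 0.10, 0.62, 0.85]
reliable, unreliable = split_by_confidence(probs)
```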

Workflow for Applicability Domain Assessment

The following diagram illustrates a generalized workflow for assessing if a new compound falls within a QSAR model's Applicability Domain.

Diagram 1: Assessing if a compound is within the QSAR model's Applicability Domain. The compound must pass all defined AD checks (e.g., range, distance, probability) for its prediction to be considered reliable.
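The "pass all checks" logic of this workflow might look like the sketch below, which combines a range check with a leverage check. It assumes NumPy, synthetic descriptors, and the commonly used warning leverage h* = 3(p + 1)/n; the function names are illustrative.

```python
import numpy as np

def leverage(X_train, x_query):
    """Hat value h = x^T (X^T X)^-1 x, with an intercept column added."""
    Xc = np.column_stack([np.ones(len(X_train)), X_train])
    xq = np.concatenate([[1.0], x_query])
    return float(xq @ np.linalg.pinv(Xc.T @ Xc) @ xq)

def within_domain(X_train, x_query):
    """A compound is in the AD only if every individual check passes."""
    n, p = X_train.shape
    h_star = 3 * (p + 1) / n  # warning leverage (a common convention)
    in_range = np.all(
        (x_query >= X_train.min(axis=0)) & (x_query <= X_train.max(axis=0))
    )
    checks = {
        "range": bool(in_range),
        "leverage": leverage(X_train, x_query) <= h_star,
    }
    return all(checks.values()), checks

# Synthetic training descriptors (50 compounds, 2 descriptors).
rng = np.random.default_rng(3)
X_train = rng.normal(size=(50, 2))

ok_inside, checks_inside = within_domain(X_train, np.array([0.0, 0.0]))
ok_outside, checks_outside = within_domain(X_train, np.array([10.0, 10.0]))
```

Returning the per-check results alongside the overall verdict makes it possible to report why a compound was flagged.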

Core Concept 2: Robustness

A robust QSAR model is one whose predictive performance remains stable and is not overly sensitive to small perturbations in the training data or model parameters. Robustness testing ensures that the model captures a true underlying structure-activity relationship rather than memorizing noise or idiosyncrasies of a specific dataset split.

Key Techniques for Robustness Validation

1. Cross-Validation (CV): This is the primary and most common method for internal validation of robustness.

- Protocol: The training set is randomly divided into k equal-sized groups (folds). A model is built k times, each time using k-1 folds for training and the remaining fold for validation. The process is repeated to ensure each fold serves as the validation set once. The average predictive performance across all k folds is reported.

- Interpretation: A model with consistent performance across all folds is considered robust. A high variance in performance between folds indicates potential instability [23].

2. Double Cross-Validation (DCV): Also known as nested cross-validation, this technique provides a more rigorous assessment, especially for models requiring internal hyperparameter optimization.

- Protocol: An outer k-fold CV loop is set up. For each training set of the outer loop, an inner m-fold CV is performed to optimize the model's hyperparameters. The model is then refit with the optimal parameters on the complete outer training set and evaluated on the outer test set [23].

- Interpretation: DCV offers a nearly unbiased estimate of the true prediction error and is crucial for preventing model selection bias and over-optimism [23].

3. Consensus Prediction: This approach leverages the "wisdom of the crowd" to enhance robustness.

- Protocol: Multiple QSAR models are developed for the same endpoint using different algorithms, descriptors, or data splits. The final prediction for a new compound is derived as the average (for regression) or majority vote (for classification) of the predictions from all individual models.

- Interpretation: 'Intelligent' consensus prediction, which selectively combines models, has been shown to be more externally predictive than single models [23].
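The cross-validation and double cross-validation protocols above can be sketched together, since the inner loop of DCV is itself a plain k-fold CV. This is a minimal illustration assuming NumPy, synthetic data, and closed-form ridge regression with the penalty `alpha` as the single hyperparameter; it is not the procedure of any specific cited tool.

```python
import numpy as np

def fit_ridge(X, y, alpha):
    """Closed-form ridge regression on centered data; returns (coef, intercept)."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc = X - x_mean
    coef = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(X.shape[1]), Xc.T @ (y - y_mean))
    return coef, y_mean - x_mean @ coef

def mse(model, X, y):
    coef, intercept = model
    return float(np.mean((X @ coef + intercept - y) ** 2))

def k_fold_mse(X, y, alpha, folds):
    """Plain k-fold CV: each fold is held out once; returns the mean error."""
    errors = []
    for i, held_out in enumerate(folds):
        train = np.concatenate([f for j, f in enumerate(folds) if j != i])
        errors.append(mse(fit_ridge(X[train], y[train], alpha), X[held_out], y[held_out]))
    return float(np.mean(errors))

def double_cv(X, y, alphas, outer_k=5, inner_m=4, seed=0):
    rng = np.random.default_rng(seed)
    outer_folds = np.array_split(rng.permutation(len(y)), outer_k)
    outer_errors, chosen = [], []
    for i, test in enumerate(outer_folds):
        train = np.concatenate([f for j, f in enumerate(outer_folds) if j != i])
        X_tr, y_tr = X[train], y[train]
        # Inner loop: choose alpha using the outer training data only.
        inner_folds = np.array_split(rng.permutation(len(y_tr)), inner_m)
        best = min(alphas, key=lambda a: k_fold_mse(X_tr, y_tr, a, inner_folds))
        # Refit with the chosen alpha on the full outer training set.
        model = fit_ridge(X_tr, y_tr, best)
        outer_errors.append(mse(model, X[test], y[test]))
        chosen.append(best)
    return outer_errors, chosen

# Synthetic data: only the first of ten descriptors carries signal.
rng = np.random.default_rng(7)
X = rng.normal(size=(120, 10))
y = 2.0 * X[:, 0] + 0.5 * rng.normal(size=120)
outer_errors, chosen = double_cv(X, y, alphas=[0.01, 1.0, 100.0])
```

The spread of `outer_errors` across folds gives a rough view of stability, and because each outer test fold is never seen during hyperparameter selection, their mean is not inflated by model selection bias.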

Workflow for Assessing Model Robustness

The diagram below outlines a sequential protocol for thoroughly evaluating the robustness of a QSAR model.

Diagram 2: A workflow for evaluating model robustness using cross-validation and consensus approaches. High consistency and low variance in performance are key indicators of a robust model.
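The simple (non-selective) form of consensus prediction reduces to averaging or voting. The sketch below uses only the Python standard library; the individual model outputs are hypothetical, and the "intelligent" selective variants mentioned above are not implemented here.

```python
from collections import Counter
from statistics import mean

def consensus_regression(predictions):
    """Average the predicted values of the individual models."""
    return mean(predictions)

def consensus_classification(labels):
    """Majority vote over the predicted class labels."""
    return Counter(labels).most_common(1)[0][0]

# Hypothetical predictions for one compound from three models.
pic50_consensus = consensus_regression([6.1, 5.8, 6.4])
class_consensus = consensus_classification(["active", "inactive", "active"])
```

Using an odd number of classifiers avoids tied votes in the binary case.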

Core Concept 3: Chance Correlation

Chance correlation occurs when a model appears to have strong statistical significance but is, in fact, modeling random noise rather than a true structure-activity relationship. This is a significant risk in QSAR modeling due to the high dimensionality of descriptor spaces and the potential for overfitting.

The Y-Randomization Test

The primary experimental protocol to detect chance correlation is the Y-Randomization test (or label scrambling).

- Protocol: The biological activity values (Y-response) of the training set are randomly shuffled, while the descriptor matrix (X) is kept unchanged. A new QSAR model is then built using the scrambled activities and the original modeling protocol. This process is repeated many times (e.g., 100-1000 iterations) [24] [20].

- Interpretation: The performance metrics (e.g., R², Q²) of the models built with scrambled data are recorded. For a valid model, performance on the original data should be significantly higher than that of any model built with randomized data, and should itself meet commonly used acceptance thresholds (R² > 0.6 and Q² > 0.5). If the models with randomized data achieve similar performance, this strongly indicates that the original model is a product of chance correlation [24].
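A minimal Y-randomization sketch is shown below. It assumes NumPy, synthetic data, and ordinary least squares as the entire "modeling protocol"; in a real study any descriptor selection step would also have to be repeated inside each scrambled run. The empirical p-value is an added convenience, not part of the protocol described above.

```python
import numpy as np

def r2_fit(X, y):
    """Training-set R² of an ordinary least-squares model with intercept."""
    Xc = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(Xc, y, rcond=None)
    residuals = y - Xc @ coef
    return 1.0 - np.sum(residuals ** 2) / np.sum((y - y.mean()) ** 2)

def y_randomization(X, y, n_iter=200, seed=0):
    """Refit the model on shuffled activities while keeping X unchanged."""
    rng = np.random.default_rng(seed)
    r2_true = r2_fit(X, y)
    r2_random = np.array([r2_fit(X, rng.permutation(y)) for _ in range(n_iter)])
    # Empirical p-value: share of scrambled models that match or beat the real one.
    p_value = (np.sum(r2_random >= r2_true) + 1) / (n_iter + 1)
    return r2_true, r2_random, p_value

# Synthetic data with a genuine structure-activity relationship.
rng = np.random.default_rng(11)
X = rng.normal(size=(60, 4))
y = X @ np.array([1.0, -1.0, 0.5, 2.0]) + 0.3 * rng.normal(size=60)

r2_true, r2_random, p_value = y_randomization(X, y)
```

If the distribution of `r2_random` overlaps the original R², the original fit should be treated as a possible chance correlation.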

Quantitative Thresholds: Beyond the Y-randomization test, adherence to established quantitative thresholds for key metrics is vital. As noted in a study on porphyrin-based photosensitizers, "a QSAR model is acceptable when it has an r² value greater than 0.6 and r² (CV) greater than 0.5" [24]. The coefficient of determination (r²) measures goodness-of-fit, while the cross-validated coefficient (q², the r² (CV) of the quotation) measures internal predictive power. A high r² coupled with a low q² is a classic sign of overfitting.
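For reference, the two statistics are conventionally defined as follows. The notation is an assumption for illustration: y_i is the observed activity, ŷ_i the fitted value from the full model, ŷ_(i) the prediction for compound i from a model built without it (leave-one-out or the fold that excludes it), and ȳ the mean observed activity.

```latex
r^2 = 1 - \frac{\sum_{i=1}^{n} \left( y_i - \hat{y}_i \right)^2}
               {\sum_{i=1}^{n} \left( y_i - \bar{y} \right)^2},
\qquad
q^2 = 1 - \frac{\sum_{i=1}^{n} \left( y_i - \hat{y}_{(i)} \right)^2}
               {\sum_{i=1}^{n} \left( y_i - \bar{y} \right)^2}
```

Because ŷ_(i) never sees compound i during fitting, q² cannot exceed r² by construction of an overfitted model, and a large gap between the two points to memorized noise.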

Integrated Validation in Practice: An Experimental Case Study

A 2025 study on acylshikonin derivatives provides a clear example of an integrated computational framework that implicitly addresses AD, robustness, and chance correlation [25]. The research employed QSAR modeling, molecular docking, and ADMET prediction to identify antitumor compounds.

- Experimental Protocol: The study evaluated 24 derivatives. Molecular descriptors were calculated and reduced via Principal Component Analysis (PCA). Multiple QSAR models were built using Partial Least Squares (PLS), Principal Component Regression (PCR), and Multiple Linear Regression (MLR). The best model (PCR) was selected based on its high predictive performance (R² = 0.912, RMSE = 0.119). Docking studies against the target 4ZAU and ADMET profiling were used for further validation [25].

- Validation in Context:

- Robustness: Comparing multiple modeling algorithms (PLS, PCR, MLR) and selecting the best performer on statistical metrics reduces dependence on any single algorithm, although it is model selection rather than consensus prediction in the sense described above.

- Applicability Domain: While not explicitly defined with a specific algorithm, the domain is inherently bounded by the structural and chemical features of the 24 acylshikonin derivatives studied. Predictions are reliable for compounds similar to this chemical space.

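- Chance Correlation: The high R² value (0.912) is well above the 0.6 threshold, and the model identifies key electronic and hydrophobic descriptors that admit a mechanistic interpretation [25] [24]. Both observations are consistent with a genuine structure-activity relationship, although with only 24 compounds a Y-randomization test would be needed to rule out chance correlation explicitly.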

Table 2: Key Software and Computational Tools for QSAR Validation

| Tool / Resource Name | Type/Category | Primary Function in Validation | Relevance to AD, Robustness, Chance Correlation |

|---|---|---|---|

| CORAL Software [26] | QSAR/QSPR Modeling | Builds models using SMILES notations and Monte Carlo optimization. | Uses target functions (TF1-TF3) with IIC/CII to improve robustness and reduce overfitting. |

| DTCLab Online Tools [23] | Validation Suite | Provides tools for double cross-validation, consensus prediction, and small dataset modeling. | Directly assesses robustness and predictivity; helps define reliable predictions. |

| MATLAB / Python (scikit-learn) [15] | Programming Environment | Provides a flexible platform for implementing custom AD methods and validation protocols. | Enables coding of range-based, distance-based, and Y-randomization tests. |

| Tanimoto Distance on Morgan Fingerprints [21] | Similarity/Distance Metric | Quantifies the structural similarity between molecules based on their molecular fingerprints. | A core metric for defining the Applicability Domain in chemical space. |

| Index of Ideality of Correlation (IIC) & Correlation Intensity Index (CII) [26] | Statistical Benchmark | Advanced metrics that improve model performance by accounting for correlation and residuals. | Enhances model robustness and predictive power for test sets. |

A rigorous evaluation of a QSAR model's predictive power extends far beyond a high R² value for the training data. It requires a holistic validation strategy that systematically addresses the model's Applicability Domain, its Robustness to data variation, and the risk of Chance Correlation. As demonstrated by modern studies and available tools, best practices involve using multiple algorithms and consensus predictions, explicitly defining the chemical space of the AD using distance or probability-based methods, and rigorously testing for chance correlations through Y-randomization. By adhering to this multi-faceted validation framework, researchers in drug development can significantly increase their confidence in QSAR predictions, leading to more efficient and successful discovery pipelines.

In the field of Quantitative Structure-Activity Relationship (QSAR) modeling, the pursuit of predictive power is fundamentally a question of data. The development of QSAR models, which use mathematical relationships to connect chemical structures to biological activities or properties, relies entirely on the quality, scope, and integrity of the underlying experimental data [27]. As computational methods evolve from traditional statistical approaches to sophisticated artificial intelligence (AI) and machine learning (ML) algorithms, the principle of "garbage in, garbage out" becomes increasingly pertinent [27] [28]. The predictive validity, applicability domain, and ultimate utility of any QSAR model are constrained by the data from which it was born.

The core challenge in QSAR modeling lies in confronting the "empirical" or "fuzzy" nature of many molecular activities [27]. Unlike quantum chemistry methods that calculate properties with clear physical interactions, many biological activities arise from complex, multifaceted mechanisms that are difficult to express with explicit mathematical relationships [27]. This inherent complexity places extraordinary demands on the datasets used to train QSAR models, requiring sufficient structural diversity and experimental consistency to capture meaningful patterns. This review examines how dataset characteristics—size, quality, and diversity—govern model validity within the broader thesis of QSAR predictive power research, providing researchers with evidence-based guidance for constructing robust predictive models.

Critical Data Dimensions in QSAR Modeling

Dataset Size and Structural Diversity

The size and structural diversity of training datasets directly determine a QSAR model's ability to generalize to new chemical entities. A comprehensive bibliometric analysis of QSAR publications from 2014-2023 reveals a clear trend toward larger datasets, driven by the increasing availability of public bioactivity databases and the data requirements of deep learning methods [27]. While traditional QSAR models might be built on dozens or hundreds of compounds, modern AI-driven approaches can leverage thousands or millions of data points to capture complex structure-activity relationships [29].

Structural diversity within a dataset is as crucial as its size. Models trained on structurally similar compounds may demonstrate high predictive accuracy within that narrow chemical space but fail dramatically when applied to structurally distinct molecules [27]. Datasets must encompass a wide variety of chemical scaffolds, functional groups, and physicochemical properties to build models with broad applicability domains [27]. The evolution of public databases like ChEMBL, which now contains over 2.4 million compounds and 20.7 million bioactivity measurements, has significantly expanded the potential chemical space for QSAR model development [9].

Table 1: Impact of Dataset Size on QSAR Model Performance

| Dataset Size | Model Type | Performance Characteristics | Limitations |

|---|---|---|---|

| Small (<1,000 compounds) | Classical QSAR (MLR, PLS) | Limited complexity, high interpretability | Poor generalization, narrow applicability domain |

| Medium (1,000-10,000 compounds) | Machine Learning (RF, SVM) | Better predictive power, captures nonlinear relationships | May miss rare activity patterns |

| Large (>10,000 compounds) | Deep Learning (GNN, Transformers) | High accuracy, identifies complex patterns | Computational intensity, requires careful regularization |

Data Quality and Experimental Consistency

The accuracy and consistency of experimental measurements underlying QSAR datasets profoundly impact model reliability. High-quality data with standardized measurement protocols and clear documentation of experimental conditions produces more robust and reproducible models [30]. Inconsistent experimental data—arising from different assay protocols, measurement techniques, or laboratory conditions—introduces noise that can obscure genuine structure-activity relationships and lead to misleading models [27] [31].

The source and curation of data significantly influence quality. For example, the ChEMBL database assigns a confidence score from 0 (target unknown) to 9 (direct single protein target assigned) to quantify the reliability of target assignments [9]. Filtering data based on such quality metrics can substantially improve model performance. A systematic study on dopamine transporter (DAT) QSAR models demonstrated that enhanced dataset quality through meticulous filtering positively impacted predictive power, independent of dataset size increases [30].
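Filtering on a confidence score is straightforward once the records are loaded. The sketch below uses plain Python dictionaries; the record layout, field names, compound identifiers, and activity values are all hypothetical placeholders rather than an actual ChEMBL export, and the default cut-off of 7 is an assumed example value.

```python
# Hypothetical bioactivity records; field names are illustrative only.
records = [
    {"compound": "CPD-1", "target": "DAT", "confidence_score": 9, "pchembl": 7.2},
    {"compound": "CPD-2", "target": "DAT", "confidence_score": 4, "pchembl": 6.1},
    {"compound": "CPD-3", "target": "DAT", "confidence_score": 8, "pchembl": 5.5},
    {"compound": "CPD-4", "target": "DAT", "confidence_score": 0, "pchembl": 8.0},
]

def filter_by_confidence(rows, min_score=7):
    """Keep only records whose target assignment meets the minimum confidence."""
    return [row for row in rows if row["confidence_score"] >= min_score]

high_confidence = filter_by_confidence(records)
retained_fraction = len(high_confidence) / len(records)
```

Tracking `retained_fraction` makes the trade-off explicit: stricter filtering improves label reliability but shrinks the training set.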

Dataset Balancing for Virtual Screening

The ratio of active to inactive compounds in classification-based QSAR models requires careful consideration based on the model's intended application. Traditional best practices often recommended balancing datasets to achieve high balanced accuracy (BA), but recent research indicates this approach may be suboptimal for virtual screening applications [3].

For virtual screening of ultra-large chemical libraries, where the practical goal is to identify a small number of true actives for experimental testing (typically 128 compounds or fewer due to well-plate constraints), models with high Positive Predictive Value (PPV) built on imbalanced training sets outperform balanced models [3]. Empirical studies demonstrate that training on imbalanced datasets achieves a hit rate at least 30% higher than using balanced datasets when evaluating the top predictions [3]. This paradigm shift emphasizes that optimal dataset construction depends critically on the model's context of use.

Experimental Comparisons and Case Studies

Comparative Performance of Target Prediction Methods

A systematic benchmark study compared seven target prediction methods using a shared dataset of FDA-approved drugs to evaluate their performance in predicting drug-target interactions [9]. The study assessed both target-centric approaches (which build predictive models for each target) and ligand-centric approaches (which leverage similarity to known active compounds), with methods including MolTarPred, PPB2, RF-QSAR, TargetNet, ChEMBL, CMTNN, and SuperPred [9].

Table 2: Performance Comparison of Target Prediction Methods

| Method | Type | Algorithm | Data Source | Key Findings |

|---|---|---|---|---|

| MolTarPred | Ligand-centric | 2D similarity | ChEMBL 20 | Most effective method overall; Morgan fingerprints with Tanimoto score performed best |

| RF-QSAR | Target-centric | Random Forest | ChEMBL 20&21 | Performance varies by target family |

| TargetNet | Target-centric | Naïve Bayes | BindingDB | Depends on structural fingerprint diversity |

| ChEMBL | Target-centric | Random Forest | ChEMBL 24 | Suitable for novel protein targets |

| CMTNN | Target-centric | Neural Network | ChEMBL 34 | Benefits from large dataset |

| PPB2 | Ligand-centric | Nearest Neighbor/Naïve Bayes/DNN | ChEMBL 22 | Performance depends on similarity threshold |

| SuperPred | Ligand-centric | 2D/fragment/3D similarity | ChEMBL and BindingDB | Multiple similarity approaches |

MolTarPred emerged as the most effective method overall, particularly when using Morgan fingerprints with Tanimoto similarity scores [9]. The study also demonstrated that high-confidence filtering of training data (using only interactions with confidence scores ≥7) improved prediction reliability, though at the cost of reduced recall, making such filtering less ideal for drug repurposing applications where broad target coverage is desired [9].
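The general ligand-centric idea, ranking candidate targets by the similarity of a query to their known ligands, can be sketched as below. This is a generic nearest-neighbor illustration, not MolTarPred's actual implementation; fingerprints are placeholder sets of on-bit indices and the target names are hypothetical.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto similarity for fingerprints stored as sets of on-bit indices."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0

def rank_targets(query_fp, known_ligands):
    """Score each target by its most similar known ligand, highest first.

    known_ligands is a list of (fingerprint, target_name) pairs.
    """
    best = {}
    for fp, target in known_ligands:
        score = tanimoto(query_fp, fp)
        if score > best.get(target, -1.0):
            best[target] = score
    return sorted(best.items(), key=lambda item: item[1], reverse=True)

# Placeholder ligand-target annotations.
known_ligands = [
    ({1, 2, 3, 4}, "target_A"),
    ({1, 2, 9}, "target_B"),
    ({1, 2, 3, 8}, "target_A"),
    ({7, 8, 9}, "target_C"),
]
ranking = rank_targets({1, 2, 3}, known_ligands)
```

Because the score depends only on the nearest annotated ligand, coverage and quality of the underlying bioactivity data directly limit what such a method can predict.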

Case Study: SARS-CoV-2 Mpro Inhibitor Screening

A compelling case study illustrating the consequences of limited dataset size involves the virtual screening for SARS-CoV-2 main protease (Mpro) inhibitors [31]. Researchers combined Hologram-QSAR (HQSAR) and Random Forest-QSAR (RF-QSAR) models based on merely 25 synthetic SARS-CoV-2 Mpro inhibitors to virtually screen the Brazilian Compound Library (BraCoLi) [31].

Despite selecting 24 top-ranked compounds for experimental testing, none showed inhibitory activity at 10 µM concentration [31]. This failure was attributed primarily to the extremely small training set, which was insufficient to capture the essential structural features required for Mpro inhibition. The study highlights how inadequate training data, even when combined with sophisticated algorithms, can produce models with high rates of false positives and limited practical utility [31].

Impact of Data Quality on DAT QSAR Models

Research on dopamine transporter (DAT) inhibitors provides strong evidence for the critical importance of data quality [30]. By systematically comparing DAT QSAR models trained on different versions of ChEMBL data, researchers demonstrated that enhanced dataset quality through meticulous filtering and standardization significantly improved predictive performance, even with comparable dataset sizes [30].

The study established rigorous filtering criteria for creating high-quality training sets, including specific divisions of pharmacological assays and data types. The resulting models showed substantially improved predictive power for novel compounds, validating that data quality management is as important as data quantity in QSAR development [30].

Essential Research Reagents and Computational Tools

Modern QSAR research relies on a sophisticated ecosystem of data resources, software tools, and computational frameworks. The table below catalogues key solutions mentioned in recent literature.

Table 3: Essential Research Reagent Solutions for QSAR Modeling

| Resource Category | Specific Tools/Databases | Primary Function | Key Features |
|---|---|---|---|
| Bioactivity Databases | ChEMBL, PubChem, BindingDB | Source of experimental training data | Annotated compound-target interactions, confidence scores |
| Molecular Descriptors | DRAGON, PaDEL, RDKit | Compute molecular features | 1D-4D descriptors, fingerprint calculations |
| Commercial QSAR Platforms | DeepAutoQSAR, Schrödinger | Automated model building | Integrated workflows, uncertainty estimation |
| Open Source Models | MolTarPred, RF-QSAR, CMTNN | Target prediction | Similarity searching, machine learning algorithms |
| Validation Tools | QSARINS, Build QSAR | Model assessment | Applicability domain, statistical validation |

Emerging Approaches and Future Directions

Integration of Multi-modal Data

The integration of diverse data types represents a promising frontier in QSAR modeling. Combining traditional bioactivity data with information from molecular dynamics simulations, quantum mechanical calculations, and omics technologies can provide a more comprehensive foundation for model building [28]. This multi-modal approach helps address the "fuzzy" nature of molecular activities by capturing complementary aspects of molecular behavior [27] [28].

The iterative framework that integrates wet lab experiments, molecular dynamics simulations, and machine learning techniques shows particular promise for improving model accuracy and mechanistic interpretability [28]. This framework creates a virtuous cycle where model predictions inform new experiments, and experimental results refine the models, progressively enhancing predictive power while maintaining connection to physiological reality [28].

Quantum Machine Learning for Data-Scarce Scenarios

Quantum machine learning (QML) approaches offer potential advantages for QSAR modeling, particularly in data-limited scenarios [32]. Research comparing classical and quantum classifiers found that quantum classifiers demonstrated superior generalization power when training data was limited and when using reduced feature sets [32].

After applying principal component analysis (PCA) for dimensionality reduction, quantum classifiers outperformed classical counterparts, especially when only a small number of features were selected [32]. This quantum advantage suggests promising applications in early-stage drug discovery where comprehensive bioactivity data may be scarce, though these approaches remain experimental and require specialized implementation.

Best Practices for Model Validation

Robust validation methodologies are essential for assessing true model performance, especially given the critical influence of dataset characteristics. The diagram below outlines a comprehensive validation workflow that addresses common pitfalls in QSAR model development.

The validity and utility of QSAR models remain inextricably linked to their foundational datasets. Size, diversity, quality, and appropriate balancing each play distinct but interconnected roles in determining model performance. Evidence from comparative studies and case investigations consistently demonstrates that sophisticated algorithms cannot compensate for deficient training data. Rather, the most powerful QSAR approaches emerge from the thoughtful integration of comprehensive, well-curated experimental data with computational methods appropriately matched to the research context and application goals.

As the field advances, researchers must continue to prioritize data quality management alongside algorithmic innovation. The development of larger, more diverse bioactivity databases, combined with improved data standardization and curation practices, will expand the boundaries of QSAR predictive power. Furthermore, the adoption of context-specific validation metrics and a more nuanced understanding of dataset balancing requirements will enhance the practical impact of QSAR approaches in drug discovery and chemical safety assessment. Through continued attention to data as the fundamental component of model development, QSAR research will maintain its essential role in bridging chemical structure and biological activity.

From Theory to Practice: A Toolkit for QSAR Validation Methods

In quantitative structure-activity relationship (QSAR) modeling, the strategic division of data into training and test sets represents a fundamental step for developing predictive and reliable models. The core objective of any QSAR study extends beyond merely fitting a model to existing data; it aims to create a robust mathematical relationship capable of accurately predicting the biological activity or physicochemical properties of new, unseen compounds [33]. This predictive capability is paramount in drug development, where models inform critical decisions about compound synthesis and prioritization. The division of available data into training and test sets simulates this real-world application, wherein the training set serves for model construction and the test set provides an unbiased evaluation of its predictive power [34] [35].

The validation process in QSAR modeling typically employs several strategies, including internal validation (cross-validation), validation by dividing the dataset, true external validation on new data, and data randomization [33]. While internal validation methods, such as leave-one-out (LOO) cross-validation, are valuable for assessing robustness, they often yield over-optimistic performance estimates and are insufficient alone [33] [35]. External validation through a dedicated test set is considered the gold standard for estimating a model's generalization error, as it provides a rigorous test using data that played no role in model building or selection [36] [35]. This practice helps mitigate overfitting—where a model learns noise and specific patterns from the training data that do not generalize—and protects against model selection bias, ensuring that the reported performance metrics are trustworthy and reflective of true predictive ability [35]. Consequently, the strategy employed for splitting data directly impacts the validity and practical utility of a QSAR model in a drug discovery pipeline.
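To make the gap between fitted and cross-validated statistics concrete, the sketch below compares the ordinary R² of a one-descriptor linear model with its leave-one-out q². The data are invented, the function names are hypothetical, and q² is computed here as 1 − PRESS/SS_tot using the overall mean of the responses, which is one common convention among several.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def r_squared(xs, ys):
    """Fitted R^2: the model is scored on the same data it was trained on."""
    slope, intercept = fit_line(xs, ys)
    mean_y = sum(ys) / len(ys)
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1 - ss_res / ss_tot


def q2_loo(xs, ys):
    """Leave-one-out q^2: each compound is predicted by a model that never saw it."""
    mean_y = sum(ys) / len(ys)
    press = 0.0
    for i in range(len(xs)):
        train_x = xs[:i] + xs[i + 1:]
        train_y = ys[:i] + ys[i + 1:]
        slope, intercept = fit_line(train_x, train_y)
        press += (ys[i] - (slope * xs[i] + intercept)) ** 2
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1 - press / ss_tot


# Invented example: one descriptor (e.g. logP) against activity (e.g. pIC50).
log_p = [1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0, 4.5]
pic50 = [5.1, 5.4, 6.2, 6.0, 6.9, 7.1, 7.4, 8.2]

r2 = r_squared(log_p, pic50)
q2 = q2_loo(log_p, pic50)
print(f"R2 = {r2:.3f}, q2(LOO) = {q2:.3f}")
```

For a least-squares model q² cannot exceed the fitted R², but it is still computed from the same compounds used for model development, so it complements an external test set and does not replace it.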

Foundational Splitting Methodologies and Comparative Performance

Various methodologies exist for partitioning data, each with distinct advantages, limitations, and appropriate application contexts. The choice of method can significantly influence the perceived and actual performance of the resulting QSAR model.

Common Data Splitting Techniques

Random Splitting: This is the most straightforward approach: data points are assigned at random to the training and test sets according to a predefined ratio. While simple to implement, a purely random split may not preserve the underlying structure or distribution of the data, so performance estimates can vary noticeably from one split to the next, and a single favorable split can make a model look better than it is [34] [33]. It is most suitable for large, homogeneous datasets.

Stratified Splitting: For datasets with an imbalanced distribution of the target variable (e.g., a few highly active compounds among many less active ones), stratified splitting ensures that the training and test sets maintain the same proportion of classes or categories. This leads to more representative and reliable performance evaluation, particularly for classification tasks or when dealing with skewed activity ranges [34].

Time-Based Splitting: In scenarios involving time-series or sequentially generated data, a time-based split is essential. It divides the data based on temporal order, using older data for training and newer data for testing. This method preserves temporal dependencies and provides a realistic assessment of a model's ability to predict future outcomes, which is crucial for models intended for prospective use [34].

Rational Methods Based on Chemical Space (e.g., Kennard-Stone): Rather than selecting compounds at random, rational approaches choose the training set so that it is representative of the entire chemical space covered by the dataset. Methods like the Kennard-Stone algorithm select training compounds that are uniformly distributed across the descriptor space. Studies have shown that when training and test sets were generated by random division or by an activity-range algorithm, the resulting models often lacked predictive power. In contrast, good external validation statistics were achieved when sets were selected based on clusters within the descriptor space, so that the training set adequately spanned the chemical diversity of the test set [33].
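As an illustration of the chemical-space approach, here is a minimal sketch of Kennard-Stone selection using Euclidean distance in descriptor space. The two-dimensional descriptor values are invented, `kennard_stone` is a hypothetical helper name, and real applications would normally scale the descriptors first.

```python
import math


def kennard_stone(points, n_train):
    """Return indices of n_train points chosen by the Kennard-Stone algorithm."""
    n = len(points)
    if not 2 <= n_train <= n:
        raise ValueError("n_train must be between 2 and the number of points")
    dist = [[math.dist(p, q) for q in points] for p in points]

    # Seed the training set with the two most distant points.
    first, second = max(
        ((i, j) for i in range(n) for j in range(i + 1, n)),
        key=lambda pair: dist[pair[0]][pair[1]],
    )
    selected = [first, second]
    remaining = [i for i in range(n) if i not in (first, second)]

    # Repeatedly add the point whose nearest selected neighbour is farthest away.
    while len(selected) < n_train:
        next_idx = max(remaining, key=lambda i: min(dist[i][s] for s in selected))
        selected.append(next_idx)
        remaining.remove(next_idx)
    return selected


# Invented 2D descriptors; points 1 and 3 are near-duplicates of points 0 and 2.
descriptors = [
    (0.0, 0.0), (0.1, 0.2), (2.0, 2.0), (1.9, 1.8),
    (1.0, 1.1), (0.0, 1.5), (1.6, 0.2),
]
train_idx = kennard_stone(descriptors, n_train=4)
test_idx = [i for i in range(len(descriptors)) if i not in train_idx]
print("train:", train_idx, "test:", test_idx)
```

The selected training compounds sit at the extremes of the descriptor space, so every test compound lies inside the region the training set spans. One consequence worth keeping in mind is that such a test set is, by construction, easier than a truly external one.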

Impact of Splitting Ratio on Model Performance

The proportion of data allocated to the training and test sets is another critical consideration, though no universally optimal ratio exists. Common rules of thumb suggest allocating 60-80% of data for training, with the remaining 10-20% each for validation and test sets [34]. The validation set is used for model tuning and selection, while the test set is held back for a final, unbiased assessment [34].

Research has demonstrated that the impact of training set size on predictive quality is dataset-dependent. A study on three different QSAR datasets found that reducing the training set size significantly impacted the predictive ability for some datasets (e.g., cytoprotection data of anti-HIV thiocarbamates) but had negligible effects for others (e.g., bioconcentration factor data) [33]. This indicates that the optimal size of the training set should be determined based on the specific data set, the types of descriptors used, and the statistical methods employed [33]. A larger test set generally provides a more precise estimate of the prediction error but reduces the amount of data available for training, which can be detrimental for small datasets.
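A three-way split following these rules of thumb might look like the sketch below. The 70/15/15 ratio, the fixed seed, and the function name `three_way_split` are illustrative assumptions, not recommendations from the cited studies.

```python
import random


def three_way_split(items, train_frac=0.7, val_frac=0.15, seed=0):
    """Shuffle once, then cut into training, validation, and test subsets."""
    if not 0 < train_frac < 1 or not 0 <= val_frac < 1 or train_frac + val_frac >= 1:
        raise ValueError("fractions must leave a non-empty share for the test set")
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # seeded so the split is reproducible
    n_train = round(train_frac * len(shuffled))
    n_val = round(val_frac * len(shuffled))
    return (
        shuffled[:n_train],
        shuffled[n_train:n_train + n_val],
        shuffled[n_train + n_val:],
    )


compounds = [f"cmpd_{i:03d}" for i in range(100)]
train, val, test = three_way_split(compounds)
print(len(train), len(val), len(test))
```

Recording the seed alongside the reported statistics makes the split reproducible. Repeating the split with several seeds gives a rough sense of how much the estimates depend on the particular partition.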

Table 1: Comparison of Common Data Splitting Methods in QSAR

| Splitting Method | Key Principle | Advantages | Limitations | Ideal Use Case |
|---|---|---|---|---|
| Random Splitting | Random assignment based on a fixed ratio | Simple, fast to implement | May not capture data structure; can lead to high variance in performance estimates | Large, homogeneous datasets |
| Stratified Splitting | Maintains class distribution of the target variable | Ensures representative splits for imbalanced data | Primarily for classification problems | Datasets with imbalanced activity/class distribution |
| Time-Based Splitting | Chronological division of data | Preserves temporal order; realistic for forecasting | Not applicable for non-sequential data | Time-series or prospectively generated chemical data |
| Chemical Space-Based | Selects data to cover descriptor space uniformly | Maximizes representativeness of training set | Computationally more intensive | All datasets, especially small to moderate size |
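The stratified row of Table 1 can be illustrated with a short sketch that splits each class separately, so that a rare "active" class keeps the same share in both subsets. The labels, the 20% test fraction, and the function name `stratified_split` are assumptions made for the example.

```python
import random
from collections import defaultdict


def stratified_split(labels, test_frac=0.2, seed=0):
    """Return (train_indices, test_indices) with per-class proportions preserved."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    rng = random.Random(seed)
    train, test = [], []
    for label in sorted(by_class):
        members = by_class[label]
        rng.shuffle(members)
        # Aim for at least one test compound per class without emptying
        # that class from the training set.
        n_test = min(len(members) - 1, max(1, round(test_frac * len(members))))
        test.extend(members[:n_test])
        train.extend(members[n_test:])
    return sorted(train), sorted(test)


# Invented imbalanced dataset: 10 actives among 100 compounds.
labels = ["active"] * 10 + ["inactive"] * 90
train_idx, test_idx = stratified_split(labels)
n_active_test = sum(1 for i in test_idx if labels[i] == "active")
print(len(train_idx), len(test_idx), n_active_test)
```

With a purely random 20% split of the same data, the number of actives in the test set would vary from split to split and could occasionally be zero, which makes class-sensitive metrics unstable.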

Advanced Protocols: Double Cross-Validation and Adaptive Splitting

To address the limitations of a single, static split, more advanced validation protocols have been developed. These methods use data more efficiently and provide a more comprehensive evaluation of model performance and stability.

Double Cross-Validation

Double cross-validation (DCV), also known as nested cross-validation, is a robust technique that combines both model selection and model assessment within a single framework [35]. It consists of two nested loops: an outer loop and an inner loop.

The DCV process can be summarized as follows. In the outer loop, the data are repeatedly split into training and test sets; unlike a single split, this process is repeated many times (e.g., 100 iterations). For each split, the following occurs:

1. The test set is set aside for final model assessment.
2. The training set is passed to the inner loop, where it is further split into construction and validation sets (e.g., via 5-fold or 10-fold cross-validation).
3. The inner loop is used for model building and hyperparameter tuning (model selection), and the model with the best inner-loop performance is selected.
4. The selected model is evaluated on the untouched test set from the outer loop [35].

A key advantage of DCV is that it provides a nearly unbiased estimate of the prediction error under model uncertainty, as the test data in the outer loop are completely independent of the model selection process [35]. Compared to a single hold-out method, DCV offers a more realistic and stable picture of model quality by averaging performance over multiple splits [35].
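The two nested loops can be sketched as follows. To keep the example self-contained, a one-descriptor k-nearest-neighbour regressor stands in for the QSAR model and the number of neighbours k is the only hyperparameter. The data are synthetic, all function names are hypothetical, and 20 outer iterations are used instead of the 100 mentioned above purely to keep the run short.

```python
import random


def knn_predict(train, x, k):
    """Predict y at x as the mean of the k nearest training responses."""
    nearest = sorted(train, key=lambda pair: abs(pair[0] - x))[:k]
    return sum(y for _, y in nearest) / len(nearest)


def mse(train, held_out, k):
    """Mean squared error of a k-NN model built on train, scored on held_out."""
    return sum((y - knn_predict(train, x, k)) ** 2 for x, y in held_out) / len(held_out)


def inner_cv_select(train, candidates, n_folds, rng):
    """Inner loop: choose k by n-fold cross-validation on the training set only."""
    data = list(train)
    rng.shuffle(data)
    folds = [data[i::n_folds] for i in range(n_folds)]
    scores = {}
    for k in candidates:
        errors = []
        for i, validation in enumerate(folds):
            construction = [p for j, fold in enumerate(folds) if j != i for p in fold]
            errors.append(mse(construction, validation, k))
        scores[k] = sum(errors) / n_folds
    return min(scores, key=scores.get)


def double_cross_validation(data, candidates=(1, 3, 5), n_outer=20,
                            test_frac=0.2, n_folds=5, seed=0):
    """Outer loop: repeated splits; each test set is used for assessment only."""
    rng = random.Random(seed)
    n_test = max(1, round(test_frac * len(data)))
    outer_errors, chosen = [], []
    for _ in range(n_outer):
        shuffled = list(data)
        rng.shuffle(shuffled)
        test, train = shuffled[:n_test], shuffled[n_test:]
        k = inner_cv_select(train, candidates, n_folds, rng)  # model selection
        chosen.append(k)
        outer_errors.append(mse(train, test, k))              # model assessment
    return sum(outer_errors) / n_outer, chosen


# Synthetic data: a linear descriptor-activity trend plus Gaussian noise.
noise = random.Random(1)
dataset = [(i / 4, 0.8 * (i / 4) + 5 + noise.gauss(0, 0.3)) for i in range(40)]

dcv_error, chosen_k = double_cross_validation(dataset)
print(f"DCV estimate of MSE: {dcv_error:.3f}")
print("k selected in each outer iteration:", chosen_k)
```

The list of selected k values is worth inspecting alongside the error estimate: if the inner loop picks a different hyperparameter in most outer iterations, the model selection step itself is unstable on this dataset.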

The Adaptive Splitting Design

A novel approach called "adaptive splitting" has been proposed to optimize the trade-off between data used for model discovery and for external validation in prospective studies. This method is particularly relevant when a fixed total sample size is available.