Beyond R²: A Comprehensive Framework for Evaluating QSAR Model Predictive Ability in Drug Discovery


Abstract

This article provides a modern, comprehensive guide for researchers and drug development professionals on evaluating the predictive ability of Quantitative Structure-Activity Relationship (QSAR) models. It moves beyond traditional metrics like R² to explore foundational principles, advanced methodological applications, common troubleshooting and optimization strategies, and rigorous validation protocols. By synthesizing current best practices, including the use of machine learning, rigorous external validation, and applicability domain assessment, this resource aims to equip scientists with the knowledge to build, validate, and deploy reliable QSAR models for virtual screening, lead optimization, and predictive toxicology, thereby enhancing efficiency and decision-making in the drug discovery pipeline.

The Foundations of QSAR Predictive Ability: Why Going Beyond R² is Non-Negotiable

In the field of Quantitative Structure-Activity Relationship (QSAR) modeling, the coefficient of determination (R²) has long been a default metric for evaluating model performance. However, reliance on this single parameter is a critical oversimplification that can mask significant prediction errors and lead to misleading conclusions in drug discovery and toxicology. This guide surveys the multi-metric toolkit required for a rigorous assessment of QSAR model predictive ability and synthesizes current research and experimental data into a practical validation protocol for scientists.

The Deception of R²: Why a Single Metric Fails

A high R² value is often mistakenly equated with a reliable and predictive model. Recent systematic analyses demonstrate that this reliance is dangerously misplaced. A 2022 study examining 44 reported QSAR models found that R² alone could not indicate model validity, as models with acceptably high R² values sometimes showed poor predictive performance on external test sets [1]. This occurs because R² measures the proportion of variance explained relative to the mean of the training data. Consequently, for datasets with a wide range of biological activity values, R² can achieve deceptively high values (>0.5) without accurately reflecting the true, absolute differences between observed and predicted values for new compounds [2] [3].
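
The effect of activity range on R² can be illustrated with a small simulation. The sketch below is not taken from the cited studies: the activity ranges, the error standard deviation of 1.5 log units, and the sample size are all hypothetical values chosen for illustration. It applies the same prediction errors to a wide-range and a narrow-range dataset and compares R² with the mean absolute error.

```python
import numpy as np

def r_squared(y_obs, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot (about the observed mean)."""
    y_obs = np.asarray(y_obs, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_obs - y_pred) ** 2)
    ss_tot = np.sum((y_obs - y_obs.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)

def mean_absolute_error(y_obs, y_pred):
    """Average absolute difference between observed and predicted values."""
    diff = np.asarray(y_obs, dtype=float) - np.asarray(y_pred, dtype=float)
    return float(np.mean(np.abs(diff)))

rng = np.random.default_rng(0)
n = 2000

# Hypothetical pIC50-like activities: one wide-range set, one narrow-range set.
y_wide = rng.uniform(0.0, 10.0, size=n)
y_narrow = rng.uniform(4.0, 6.0, size=n)

# Identical prediction errors are applied to both sets (assumed sd = 1.5 log units).
errors = rng.normal(0.0, 1.5, size=n)

r2_wide = r_squared(y_wide, y_wide + errors)
r2_narrow = r_squared(y_narrow, y_narrow + errors)
mae = mean_absolute_error(y_wide, y_wide + errors)

print(f"R2 (wide range):   {r2_wide:.2f}")
print(f"R2 (narrow range): {r2_narrow:.2f}")
print(f"MAE (both sets):   {mae:.2f} log units")
```

Under these assumptions the wide-range set reports an R² above 0.5 even though the typical error exceeds one log unit, while the same errors on the narrow-range set give a negative R². The absolute error is identical in both cases, which is the information R² alone does not convey.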

Beyond R²: The Essential Validation Metrics Toolkit

Robust QSAR validation requires a suite of metrics that evaluate different aspects of model performance, from its internal consistency to its predictive power on unseen chemicals. The most important metrics are summarized in the table below.

Table 1: Key Metrics for Comprehensive QSAR Model Validation

| Metric Category | Specific Metric | Interpretation & Threshold | Primary Function |
|---|---|---|---|
| External Validation | R²pred (Predictive R²) | > 0.6 is generally acceptable [1]. | Measures model performance on an external test set not used in training. |
| Slope of Regression Lines | k and k' | Should be between 0.85 and 1.15 [1]. | Checks for systematic prediction bias between observed vs. predicted and vice versa. |
| rm² Metrics | rm²(LOO), rm²(test), rm²(overall) | A more stringent measure; higher values are better [2] [3]. | Assesses predictive ability based on actual differences, not training set mean. |
| Concordance | Concordance Correlation Coefficient (CCC) | > 0.8 indicates a valid model [1]. | Evaluates how well observed and predicted values fall on the line of perfect concordance. |
| Error-based | Mean Absolute Error (MAE) | Lower values indicate better performance; should be considered relative to the activity range [1]. | Provides an intuitive measure of average prediction error. |
| Categorical Analysis | Matthews Correlation Coefficient (MCC) | Values close to +1 indicate perfect prediction, 0 no better than random, -1 inverse prediction [4]. | A reliable measure for classification models, especially with unbalanced datasets. |

The rm² metric is particularly noteworthy as a stringent validation tool. It was developed specifically to overcome the limitations of R² by focusing on the actual difference between observed and predicted values, independent of the training set mean [2] [3]. It has three variants: rm²(LOO) for internal validation (leave-one-out), rm²(test) for external validation, and rm²(overall) for a combined assessment [2].
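
The metrics in Table 1 can be computed with a few lines of NumPy. The sketch below is illustrative rather than a reference implementation: the function names are hypothetical, and the formulas follow commonly used definitions (predictive R² relative to the training-set mean, regression-through-origin slopes, Lin's concordance correlation coefficient, and rm² = r² × (1 − √|r² − r₀²|)). Published variants of r₀² and rm² differ in detail, so the exact formulation should be checked against [1] to [3] before results are reported.

```python
import numpy as np

def regression_validation_metrics(y_obs, y_pred, y_train_mean):
    """External-validation metrics for a regression QSAR model (one common formulation)."""
    y_obs = np.asarray(y_obs, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)

    # Predictive R2: residuals compared with spread around the TRAINING-set mean.
    ss_res = np.sum((y_obs - y_pred) ** 2)
    r2_pred = 1.0 - ss_res / np.sum((y_obs - y_train_mean) ** 2)

    # Slopes of the regression lines through the origin (both directions).
    k = np.sum(y_obs * y_pred) / np.sum(y_pred ** 2)
    k_prime = np.sum(y_obs * y_pred) / np.sum(y_obs ** 2)

    # Squared Pearson correlation and its through-origin counterpart.
    r2 = np.corrcoef(y_obs, y_pred)[0, 1] ** 2
    r0_2 = 1.0 - np.sum((y_obs - k * y_pred) ** 2) / np.sum((y_obs - y_obs.mean()) ** 2)
    rm2 = r2 * (1.0 - np.sqrt(abs(r2 - r0_2)))

    # Lin's concordance correlation coefficient (population variances).
    mean_o, mean_p = y_obs.mean(), y_pred.mean()
    cov = np.mean((y_obs - mean_o) * (y_pred - mean_p))
    ccc = 2.0 * cov / (y_obs.var() + y_pred.var() + (mean_o - mean_p) ** 2)

    mae = np.mean(np.abs(y_obs - y_pred))
    return {"r2_pred": float(r2_pred), "k": float(k), "k_prime": float(k_prime),
            "rm2": float(rm2), "ccc": float(ccc), "mae": float(mae)}

def matthews_corrcoef(y_true, y_pred):
    """MCC for binary labels (1 = active, 0 = inactive)."""
    t = np.asarray(y_true, dtype=bool)
    p = np.asarray(y_pred, dtype=bool)
    tp, tn = int(np.sum(t & p)), int(np.sum(~t & ~p))
    fp, fn = int(np.sum(~t & p)), int(np.sum(t & ~p))
    denom = np.sqrt(float((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn)))
    return (tp * tn - fp * fn) / denom if denom > 0 else 0.0

# Illustrative values only: every prediction is exactly one log unit too high.
y_obs = np.array([4.0, 5.0, 6.0, 7.0, 8.0])
biased = regression_validation_metrics(y_obs, y_obs + 1.0, y_train_mean=6.0)
perfect = regression_validation_metrics(y_obs, y_obs, y_train_mean=6.0)
print(biased)
```

In the biased example the Pearson correlation is perfect, yet CCC, rm², and the slopes all move away from their ideal values. This is the kind of systematic error that correlation-based summaries alone would miss.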

Experimental Protocols for Robust QSAR Validation

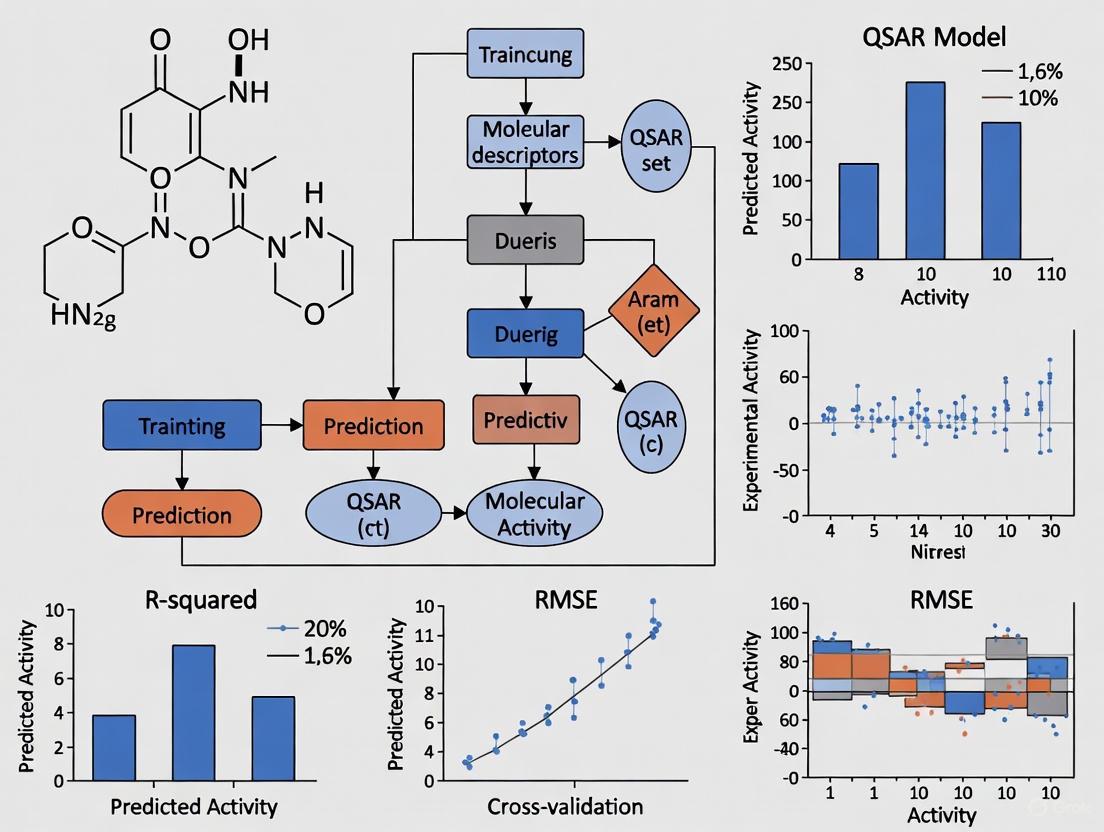

The following workflow, derived from established methodologies in the literature, provides a detailed protocol for developing and validating a QSAR model that truly assesses predictive ability.

This workflow illustrates the critical steps, including data splitting and overfitting checks, necessary for rigorous QSAR model validation.

Step-by-Step Protocol:

- Data Curation and Splitting: Curate a high-quality dataset of compounds with reliable experimental activity values. The dataset must be divided into a training set (~75%) for model construction and a validation set (~25%) for final, external testing [4]. The training set is often further sub-divided for the optimization process.

- Model Construction with Overfitting Control: Build the model using the active training set. The optimization process should be monitored using a calibration set; the point where the correlation coefficient for the calibration set peaks and begins to decline indicates the start of overfitting, and optimization should be stopped there [4].

- Comprehensive External Validation: Apply the finalized model to the held-out validation set. This step is non-negotiable for proving generalizability. The prediction results for this set are used to calculate the full suite of validation metrics from Table 1 [4] [1].

- Applicability Domain (AD) Assessment: Define the model's AD—the chemical space defined by the structures and properties of the training compounds. Predictions for new compounds falling outside this domain should be considered unreliable [5] [6].
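
The splitting and early-stopping steps above can be sketched as follows. This is a minimal illustration, not the procedure of [4]: the random split, the 20% calibration fraction, and the function names are assumptions, and the calibration-set correlation values are made up for the example.

```python
import numpy as np

def split_dataset(n_compounds, validation_fraction=0.25,
                  calibration_fraction=0.2, seed=0):
    """Randomly split compound indices into active training, calibration, and validation sets."""
    rng = np.random.default_rng(seed)
    idx = rng.permutation(n_compounds)

    # Hold out ~25% for final external validation; it is never used during optimization.
    n_val = int(round(validation_fraction * n_compounds))
    validation, training = idx[:n_val], idx[n_val:]

    # Sub-divide the remaining training data (the calibration fraction is an assumption).
    n_cal = int(round(calibration_fraction * len(training)))
    calibration, active_training = training[:n_cal], training[n_cal:]
    return active_training, calibration, validation

def stopping_epoch(calibration_r):
    """Epoch index at which the calibration-set correlation peaks."""
    return int(np.argmax(calibration_r))

active, calib, valid = split_dataset(100)

# Illustrative calibration-set correlation per optimization epoch (not real data).
calib_r_history = [0.41, 0.58, 0.69, 0.74, 0.73, 0.70]
best_epoch = stopping_epoch(calib_r_history)
print(len(active), len(calib), len(valid), best_epoch)
```

Optimization would be stopped at `best_epoch`, after which the calibration-set correlation declines. In practice a rational split (for example by chemical clustering) may be preferable to a purely random one.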

The Scientist's Toolkit: Essential Research Reagents & Software

Successful QSAR modeling relies on a combination of software tools, computational methods, and statistical measures.

Table 2: Essential QSAR Research Reagents & Tools

| Tool Category | Example Tools & Metrics | Function & Application |
|---|---|---|
| Software Platforms | VEGA, EPI Suite, CORAL, DRAGON, ADMETLab 3.0 [5] [4] [7] | Used for descriptor calculation, model development, and toxicity/ADMET prediction. |
| Statistical & ML Algorithms | Multiple Linear Regression (MLR), Partial Least Squares (PLS), Random Forest (RF), Support Vector Machines (SVM), Neural Networks [7] [8] | The core algorithms for building the relationship between molecular structure and activity. |
| Validation Metrics | R²pred, rm², CCC, MCC [2] [1] [3] | The key statistical reagents for quantifying model predictability and reliability. |
| Molecular Descriptors | LogP, Molecular Weight, Topological Indices, 3D Conformational descriptors [7] [8] | Numerical representations of molecular structures that serve as the input variables for models. |
| Benchmark Datasets | Synthetic datasets with pre-defined patterns (e.g., atom counts, pharmacophores) [9] | Used for controlled evaluation and validation of interpretation approaches and model behavior. |

The research is clear: definitive judgment of a QSAR model's predictive ability requires moving beyond the comfort of a high R². A model's validity is not a binary state but a composite picture built from multiple lines of evidence. Scientists must adopt a rigorous, multi-metric approach that includes external validation with a held-out test set, the use of stringent metrics like rm² and CCC, and a clear definition of the model's Applicability Domain. By systematically implementing these protocols and tools, researchers can build more reliable, trustworthy models that genuinely accelerate drug discovery and safety assessment.

The Organisation for Economic Co-operation and Development (OECD) has established a foundational framework to ensure the scientific validity and regulatory acceptance of Quantitative Structure-Activity Relationship (QSAR) models. In an era of increasing interest in alternatives to animal testing, the regulatory acceptance of QSAR methods hinges on demonstrating their scientific rigor [10]. The OECD principles provide a standardized approach for validating QSAR models, ensuring they rest on a solid scientific foundation for use in regulatory decision-making [11]. These principles have since evolved into the more comprehensive (Q)SAR Assessment Framework (QAF), which offers detailed guidance for regulators, model developers, and users to evaluate the confidence and uncertainties in QSAR models and their predictions [10] [12].

The Five OECD Validation Principles for QSAR Models

The OECD guidelines establish five core principles that a QSAR model must fulfill to be considered valid for regulatory application. These principles provide a systematic framework for both developing and evaluating models, with the overarching goal of ensuring their scientific robustness and practical utility in chemical safety assessment [11].

Table 1: The Five OECD Principles for (Q)SAR Validation

| Principle | Core Requirement | Key Significance |
|---|---|---|
| 1. Defined Endpoint | A clearly defined measurable outcome or property of interest (e.g., toxicity, binding affinity). | Ensures scientific clarity and purpose, forming the basis for model interpretation and regulatory application. |
| 2. Unambiguous Algorithm | A transparent algorithm that generates predictions from chemical structure data. | Guarantees reproducibility of results and allows for scientific scrutiny of the model's mechanics. |
| 3. Defined Domain of Applicability | A specified chemical space within which the model's predictions are considered reliable. | Manages uncertainty by setting boundaries for reliable prediction, preventing misuse on inappropriate chemicals. |
| 4. Appropriate Measures of Goodness-of-Fit, Robustness, and Predictivity | Statistical evidence demonstrating the model's performance on both training and external validation data. | Quantifies the model's internal consistency (fit), stability (robustness), and performance on new data (predictivity). |
| 5. A Mechanistic Interpretation | A proposed association between molecular descriptors and the endpoint, providing context for predictions. | Offers a plausible scientific rationale, increasing confidence in the model beyond a purely statistical correlation. |

Advanced Validation Protocols and Experimental Methodologies

While the OECD principles set the foundational criteria, advanced protocols are required to rigorously assess a model's predictive performance and limitations. These methodologies address critical issues such as experimental error, prediction confidence, and applicability domain.

Validation Using Predictive Distributions and KL Divergence

A sophisticated approach to validation involves representing QSAR predictions not as single values, but as predictive probability distributions. This method acknowledges that both predictions and experimental measurements have associated uncertainty [13].

- Methodology: This framework assumes prediction and experimental errors are Gaussian distributed. Each data point is represented by a mean (μ, the predicted or measured value) and a standard deviation (σ, the associated error). A QSAR model must therefore provide both the prediction value (μ) and a quantitative error estimate (σ) for each prediction [13].

- Validation Metric: The quality of the predictive distributions is assessed using Kullback-Leibler (KL) divergence, an information-theoretic measure of the difference between two probability distributions. For Gaussian distributions, the KL divergence between the true experimental distribution (P) and the model's predictive distribution (Q) is calculated as [13]:

$$\mathrm{KL} = \ln\frac{\sigma_p}{\sigma_q} + \frac{\sigma_q^2 + (\mu_q - \mu_p)^2}{2\sigma_p^2} - \frac{1}{2}$$

- Implementation: The mean KL divergence across a test set (KL_AVE) provides a single metric to compare different models. A lower KL_AVE indicates the model delivers predictive distributions that are both accurate and properly represent the prediction uncertainty. This metric uniquely combines the two modeling objectives of prediction accuracy and error estimation into a single objective [13].
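
A direct transcription of this expression into code might look like the sketch below. The function names and the example values are hypothetical. The expression is implemented exactly as written in the text; in the usual KL(A‖B) notation this particular form corresponds to the divergence of the experimental distribution P from the predictive distribution Q taken as the reference, so the direction convention should be confirmed against [13] before comparing with published KL_AVE values.

```python
import numpy as np

def gaussian_kl(mu_p, sigma_p, mu_q, sigma_q):
    """KL term for each compound, transcribed from the expression in the text.

    (mu_p, sigma_p): experimental value and its error; (mu_q, sigma_q): prediction and its error.
    """
    mu_p, sigma_p = np.asarray(mu_p, dtype=float), np.asarray(sigma_p, dtype=float)
    mu_q, sigma_q = np.asarray(mu_q, dtype=float), np.asarray(sigma_q, dtype=float)
    return (np.log(sigma_p / sigma_q)
            + (sigma_q ** 2 + (mu_q - mu_p) ** 2) / (2.0 * sigma_p ** 2)
            - 0.5)

def kl_ave(mu_p, sigma_p, mu_q, sigma_q):
    """Mean KL divergence across a test set."""
    return float(np.mean(gaussian_kl(mu_p, sigma_p, mu_q, sigma_q)))

# Illustrative experimental values with an assumed measurement error of 0.3 log units.
mu_exp = np.array([6.0, 7.2])
sd_exp = np.array([0.3, 0.3])

# Two hypothetical models with the same point predictions but different error estimates.
mu_model = np.array([6.1, 7.0])
kl_calibrated = kl_ave(mu_exp, sd_exp, mu_model, np.array([0.3, 0.3]))
kl_overconfident = kl_ave(mu_exp, sd_exp, mu_model, np.array([0.05, 0.05]))
print(kl_calibrated, kl_overconfident)
```

Both hypothetical models make the same point predictions, but the one that reports unrealistically small error bars receives a higher KL_AVE. This illustrates how the metric penalizes poor uncertainty estimates in addition to poor accuracy.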

Assessing the Impact of Experimental Noise

A critical methodological consideration is the treatment of experimental error in model training and validation. A common assertion is that a model's predictive accuracy cannot exceed the accuracy of its training data. However, research suggests this is a misconception arising from how models are evaluated [14].

- Experimental Design: To test this hypothesis, studies add simulated Gaussian-distributed random error to datasets. Models are then trained on the error-laden data but evaluated on both error-laden and error-free test sets [14].

- Key Finding: Results demonstrate that the Root Mean Squared Error (RMSE) when evaluated on error-free test sets is consistently lower than when evaluated on error-laden test sets. This indicates that QSAR models can make predictions that lie closer to the true values than their noisy training data would suggest. The perceived "hard limit" on predictivity is actually a limit of our evaluation methodology, not the model's capability [14].

- Implication for Validation: This finding underscores the importance of acknowledging experimental uncertainty in both training and test sets. Relying solely on error-laden test sets for validation can provide a flawed measure of a model's true performance, particularly for endpoints with high inherent variability like toxicological measurements [14].
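
The experimental design described above can be reproduced in miniature. The sketch below is a simplified illustration, not the setup of [14]: it assumes a linear ground truth, five synthetic descriptors, ordinary least squares as the model, and a noise standard deviation of 0.5. All of these choices are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(42)
n_train, n_test, n_desc, noise_sd = 200, 200, 5, 0.5

# Synthetic "true" structure-activity relationship (assumed linear for simplicity).
true_coef = rng.normal(size=n_desc)
X_train = rng.normal(size=(n_train, n_desc))
X_test = rng.normal(size=(n_test, n_desc))
y_train_true = X_train @ true_coef
y_test_true = X_test @ true_coef

# Add simulated Gaussian experimental error to both training and test activities.
y_train_noisy = y_train_true + rng.normal(0.0, noise_sd, size=n_train)
y_test_noisy = y_test_true + rng.normal(0.0, noise_sd, size=n_test)

# Train on error-laden data only (ordinary least squares with an intercept).
A_train = np.column_stack([np.ones(n_train), X_train])
coef, *_ = np.linalg.lstsq(A_train, y_train_noisy, rcond=None)
y_pred = np.column_stack([np.ones(n_test), X_test]) @ coef

def rmse(a, b):
    """Root mean squared error between two arrays."""
    return float(np.sqrt(np.mean((np.asarray(a) - np.asarray(b)) ** 2)))

rmse_vs_noisy = rmse(y_test_noisy, y_pred)   # conventional evaluation
rmse_vs_clean = rmse(y_test_true, y_pred)    # evaluation against error-free values
print(f"RMSE vs error-laden test set: {rmse_vs_noisy:.3f}")
print(f"RMSE vs error-free test set:  {rmse_vs_clean:.3f}")
```

A correctly specified linear model is the most favorable case for this effect, because the random errors in the training data average out during fitting. With real endpoints the gap between the two RMSE values would depend on model misspecification and on whether the experimental error is actually random.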

Quantifying Prediction Confidence and Domain Extrapolation

For classification models, defining the applicability domain can be achieved through quantitative measures of prediction confidence and domain extrapolation.

- Prediction Confidence Calculation: In methods like Decision Forest (a consensus QSAR technique), the confidence level for a prediction can be calculated based on the mean probability value (P_i) output by the model. The formula is [15]:

$$\text{Confidence} = 2\,|P_i - 0.5|$$

This scales confidence between 0 and 1, with high confidence for predictions where P_i approaches 1 (active) or 0 (inactive) [15].

- Domain Extrapolation: This measures how far a test compound is from the chemistry space of the training set. Models with larger and more diverse training sets generally maintain higher accuracy for predictions that require greater extrapolation [15].

- Validation Outcome: Studies show that models have poor accuracy for chemicals in the low-confidence domain and good accuracy for those in the high-confidence domain. Accuracy decreases as the degree of domain extrapolation increases [15].
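
The confidence formula and the high/low confidence comparison can be sketched as below. The function names, the confidence threshold of 0.4, and the example probabilities are hypothetical and chosen only to illustrate the calculation; they are not taken from [15].

```python
import numpy as np

def prediction_confidence(p_active):
    """Confidence = |P_i - 0.5| * 2, scaled between 0 and 1."""
    p = np.asarray(p_active, dtype=float)
    return np.abs(p - 0.5) * 2.0

def accuracy_by_confidence(p_active, y_true, threshold=0.4):
    """Accuracy in the high- and low-confidence domains (threshold is an assumption)."""
    p = np.asarray(p_active, dtype=float)
    y = np.asarray(y_true, dtype=int)
    confidence = prediction_confidence(p)
    correct = (p >= 0.5).astype(int) == y
    high = confidence >= threshold

    def accuracy(mask):
        return float(correct[mask].mean()) if mask.any() else float("nan")

    return accuracy(high), accuracy(~high)

# Illustrative mean probabilities from a consensus classifier and the true labels.
p_active = [0.95, 0.10, 0.55, 0.45, 0.80, 0.40]
y_true = [1, 0, 0, 1, 1, 0]

confidence = prediction_confidence(p_active)
acc_high, acc_low = accuracy_by_confidence(p_active, y_true)
print(confidence, acc_high, acc_low)
```

Reporting accuracy separately for the two domains makes it explicit which predictions a user can act on and which should be treated as unreliable.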

Table 2: Key Research Reagents and Computational Tools for QSAR Validation

| Tool / Reagent | Type | Primary Function in Validation |
|---|---|---|
| Molconn-Z Descriptors | Software | Generates 2D molecular structure descriptors that define chemical space for model development and applicability domain [15]. |
| Decision Forest (DF) | Algorithm | A consensus modeling method that combines multiple decision trees to improve predictive accuracy and reduce overfitting [15]. |
| Kullback-Leibler (KL) Divergence | Statistical Metric | Quantifies the information loss when a model's predictive distribution diverges from the "true" experimental distribution [13]. |
| Applicability Domain (AD) Metric | Methodological Framework | Defines the chemical space where a model's predictions are reliable, often using distance-to-model measures [13]. |
| Gaussian Process Regression | Algorithm | A probabilistic machine learning approach that natively outputs predictive distributions, quantifying uncertainty for each prediction [13]. |

The (Q)SAR Assessment Framework (QAF): Evolving Beyond the Principles

Building upon the original five principles, the OECD has developed the more comprehensive (Q)SAR Assessment Framework (QAF). The QAF provides detailed guidance for regulators to evaluate (Q)SAR models and their predictions in a consistent and transparent manner [10] [12].

- Extended Scope: The QAF not only includes assessment elements for the original five principles for evaluating models but also establishes new principles for evaluating individual predictions and results from multiple predictions [12].

- Regulatory Tool: It serves as a checklist for regulatory assessors, providing clear criteria to evaluate the scientific validity of (Q)SAR submissions. Simultaneously, it gives model developers and users clear requirements to meet for regulatory acceptance [10].

- Goal: The publication of the QAF is expected to increase regulatory use and acceptance of QSARs and may serve as a template for building similar frameworks for other New Approach Methodologies (NAMs) [10].

The OECD guidelines, embodied in the five validation principles and now expanded in the (Q)SAR Assessment Framework, provide an essential and systematic methodology for establishing confidence in QSAR models. By adhering to these principles and employing advanced validation protocols (such as predictive distributions, KL-divergence assessment, and rigorous applicability domain definition), researchers and regulators can better quantify and communicate the uncertainties and confidence associated with QSAR predictions. This structured approach is fundamental to increasing the regulatory uptake of QSARs and other non-animal methods, ultimately supporting more efficient and evidence-based chemical safety assessments.

Quantitative Structure-Activity Relationship (QSAR) modeling represents a cornerstone methodology in computational chemistry and drug discovery, enabling researchers to predict biological activity, physicochemical properties, and toxicological endpoints of chemical compounds based on their molecular structures [16]. The fundamental principle of QSAR methodology can be expressed as Biological activity = f(physicochemical parameters), where mathematical relationships quantitatively connect molecular structures with their biological effects through computational analysis [17]. These models have become indispensable tools for virtual screening, data gap-filling, and prioritization for testing in pharmaceutical development and environmental risk assessment [18].

The reliability of any QSAR model is fundamentally constrained by the quality of its input data and the rigor of its validation protocols. With the exponential growth of publicly available chemical data, establishing standardized protocols for building robust QSAR models has become increasingly important for ensuring predictive accuracy and regulatory acceptance [19]. This guide provides a comprehensive comparison of methodologies, tools, and best practices for developing QSAR models that meet modern scientific and regulatory standards across diverse applications from drug discovery to environmental toxicology.

Foundational Components of QSAR Modeling

Essential Elements and Workflow

Constructing a reliable QSAR model requires systematic execution of sequential steps, each contributing to the overall predictive performance and applicability of the final model. The major stages, in order, are data collection, chemical standardization, molecular descriptor calculation, model building, and rigorous validation [17].

Research Reagent Solutions: Essential Tools for QSAR Modeling

Table 1: Essential Computational Tools for QSAR Model Development

| Tool Category | Representative Solutions | Primary Function | Application Context |
|---|---|---|---|
| Workflow Platforms | KNIME [19] [18], Galaxy [19], Pipeline Pilot [19] | Automated workflow management | End-to-end QSAR model building and standardization |
| Chemical Standardization | QSAR-ready Workflow [18], CVSP [18], MolVS [18] | Structure curation and normalization | Preparing consistent molecular representations |
| Descriptor Calculation | RDKit [18], Dragon, MOE | Molecular descriptor generation | Converting structures to numerical features |
| Modeling Algorithms | Random Forest [20], Multiple Linear Regression [17], Artificial Neural Networks [17] | Machine learning algorithms | Establishing structure-activity relationships |
| Validation Frameworks | OECD QSAR Toolbox, KNIME Validation Nodes [19] | Model performance assessment | Internal and external validation |

Experimental Protocols: Comparative Methodologies for Robust QSAR

Data Curation and Standardization Protocols

The initial data preparation phase critically influences all subsequent modeling stages. Automated frameworks have been developed to systematically address common data quality issues through sequential standardization operations. The "QSAR-ready" workflow exemplifies this approach, performing structure desalting, stereochemistry stripping, tautomer normalization, nitro group standardization, valence correction, and neutralization where applicable [18]. This protocol can remove 62-99% of redundant data, significantly enhancing model reliability [19].

Comparative studies demonstrate that systematic preprocessing substantially improves model performance. On average, feature selection reduces prediction error by approximately 19% and increases the percentage of variance explained (PVE) by 49% compared to models built without feature selection [19]. The modelability (MODI) score serves as a preliminary assessment of a dataset, with datasets scoring above 0.6 typically producing models with average PVE scores of 0.71 [19].

Model Building and Validation Frameworks

Multiple algorithmic approaches exist for establishing quantitative relationships between molecular descriptors and biological activities. Comparative studies on Nuclear Factor-κB (NF-κB) inhibitors demonstrate that Artificial Neural Network (ANN) models often outperform traditional Multiple Linear Regression (MLR) approaches in predictive accuracy [17]. The optimal algorithm selection depends on dataset characteristics, with ANN models particularly effective for capturing non-linear relationships in complex biological systems.

Robust validation represents the most critical component of QSAR model development. Its essential elements are internal validation, external validation, and applicability domain definition.

Internal validation typically employs cross-validation techniques (5-10 fold), while external validation utilizes a completely independent test set (typically 20-30% of the original data) that remains unused during model development [17]. The applicability domain (AD) definition, frequently implemented using the leverage method, establishes the boundary within which the model generates reliable predictions [17]. Without proper AD assessment, model extrapolations become statistically unsupported.
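
The leverage method mentioned above can be sketched with NumPy. The example assumes the widely used warning threshold h* = 3(p + 1)/n, where p is the number of descriptors and n the number of training compounds; the function name and the synthetic descriptor values are hypothetical, and [17] may apply a different cutoff.

```python
import numpy as np

def leverage_domain(X_train, X_query):
    """Leverage of query compounds relative to the training descriptor matrix.

    Returns (leverages, warning threshold h*, boolean mask of in-domain compounds).
    """
    X_train = np.asarray(X_train, dtype=float)
    X_query = np.asarray(X_query, dtype=float)
    n, p = X_train.shape

    # Include an intercept column so leverage reflects distance from the training centroid.
    A = np.column_stack([np.ones(n), X_train])
    Q = np.column_stack([np.ones(len(X_query)), X_query])

    # h_i = q_i^T (A^T A)^-1 q_i for each query compound.
    xtx_inv = np.linalg.pinv(A.T @ A)
    leverages = np.einsum("ij,jk,ik->i", Q, xtx_inv, Q)

    h_star = 3.0 * (p + 1) / n   # commonly used warning leverage (assumption)
    return leverages, h_star, leverages <= h_star

rng = np.random.default_rng(0)
X_train = rng.normal(size=(50, 3))            # synthetic descriptors, illustration only

centroid = X_train.mean(axis=0)
X_query = np.vstack([centroid, centroid + 10.0])   # one typical and one remote compound

leverages, h_star, in_domain = leverage_domain(X_train, X_query)
print(leverages, h_star, in_domain)
```

A compound at the training centroid has the minimum possible leverage (1/n), while the remote compound exceeds h* and would be flagged as an extrapolation. Leverage only captures distance in descriptor space, so it is often combined with other applicability domain checks.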

Comparative Performance Analysis of QSAR Approaches

Quantitative Comparison of Modeling Algorithms

Table 2: Performance Comparison of QSAR Modeling Approaches Across Applications

| Model Type | Dataset Size | Application Area | Performance Metrics | Relative Advantages |
|---|---|---|---|---|
| Random Forest [20] | 3,592 chemicals | Repeat dose toxicity prediction | RMSE: 0.71 log10-mg/kg/day, R²: 0.53 | Handles complex descriptor interactions, robust to outliers |
| ANN [17] | 121 compounds | NF-κB inhibitor prediction | Superior to MLR in reliability | Captures non-linear relationships, complex pattern recognition |
| MLR [17] | 121 compounds | NF-κB inhibitor prediction | Good interpretability | Simple implementation, transparent coefficient interpretation |
| Consensus Model [20] | 1,247 chemicals | Repeat dose toxicity | RMSE: 0.69 log10-mg/kg/day, R²: 0.43 | Improved robustness through ensemble prediction |
| Automated QSAR [19] | 30 different problems | Multi-endpoint modeling | 19% error reduction with feature selection | Minimal user expertise required, standardized protocol |

Domain-Specific Model Performance

Comparative analysis of QSAR applications reveals significant performance variations across different domains. For environmental fate prediction of cosmetic ingredients, specific models demonstrate distinctive strengths: the Ready Biodegradability IRFMN model (VEGA), Leadscope model (Danish QSAR Model), and BIOWIN model (EPI Suite) show the highest performance for predicting persistence [5]. For bioaccumulation assessment, the ALogP (VEGA), ADMETLab 3.0, and KOWWIN (EPI Suite) models excel at Log Kow prediction, while the Arnot-Gobas (VEGA) and KNN-Read Across (VEGA) models perform best for bioconcentration factor (BCF) prediction [5].

These domain-specific comparisons highlight that model selection must consider both the target endpoint and the chemical space of interest. Qualitative predictions classified by regulatory criteria (e.g., REACH and CLP) often prove more reliable than quantitative predictions, with the applicability domain playing a crucial role in evaluating model reliability [5].

Advanced Applications and Future Directions

Emerging Applications in Drug Discovery and Toxicology

Recent advances in QSAR methodology have expanded applications into increasingly complex domains. In COVID-19 drug discovery, QSAR models have enabled rapid virtual screening of compound libraries against SARS-CoV-2 protein targets, significantly accelerating identification of potential inhibitors [16]. The integration of classification-based QSAR data mining with receptor-ligand interaction analysis has established efficient frameworks for emergency drug development.

In toxicological assessment, QSAR models predicting repeat dose toxicity point-of-departure (POD) values incorporate uncertainty quantification through confidence interval estimation [20]. This approach acknowledges the inherent variability in experimental training data (biological variability, methodological differences) and provides more realistic prediction intervals for risk assessment applications. Enrichment analysis demonstrates that such models can successfully identify 80% of the 5% most potent chemicals within the top 20% of predictions, enabling effective prioritization for regulatory review [20].
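
The enrichment statistic described above (the share of the most potent chemicals recovered within the top-ranked predictions) can be computed as in the sketch below. The function name and the simulated data are hypothetical, and the code assumes that a lower POD value means higher potency; it is not the analysis pipeline of [20].

```python
import numpy as np

def enrichment(y_obs, y_pred, potent_fraction=0.05, selected_fraction=0.20):
    """Fraction of the truly most potent chemicals found among the top-ranked predictions.

    Assumes lower values (e.g., log10 POD in mg/kg/day) indicate higher potency.
    """
    y_obs = np.asarray(y_obs, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    n = len(y_obs)
    n_potent = max(1, int(round(potent_fraction * n)))
    n_selected = max(1, int(round(selected_fraction * n)))

    truly_potent = set(np.argsort(y_obs)[:n_potent].tolist())
    predicted_top = set(np.argsort(y_pred)[:n_selected].tolist())
    return len(truly_potent & predicted_top) / n_potent

rng = np.random.default_rng(7)
log_pod_obs = rng.normal(1.0, 1.0, size=1000)                  # simulated observations
log_pod_pred = log_pod_obs + rng.normal(0.0, 0.7, size=1000)   # simulated noisy predictions

recovered = enrichment(log_pod_obs, log_pod_pred)
perfect = enrichment(log_pod_obs, log_pod_obs)
print(f"Recovered {recovered:.0%} of the 5% most potent chemicals in the top 20% of predictions")
```

This statistic is useful for prioritization because it evaluates ranking quality at the potent end of the distribution, which an overall RMSE or R² does not isolate.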

Integration with Modern Cheminformatics Platforms

Contemporary QSAR development increasingly leverages automated workflow platforms like KNIME that provide integrated environments for chemical standardization, descriptor calculation, model building, and validation [19] [18]. These platforms facilitate reproducible model development while maintaining transparency at each processing stage. The availability of open-source tools for structure standardization has particularly addressed critical data quality issues that previously compromised model reliability and reproducibility [18].

The evolution of QSAR modeling continues toward fully automated frameworks that minimize manual intervention while maintaining scientific rigor. Such systems enable researchers lacking extensive machine learning expertise to develop reliable models while providing customization options for advanced users [19]. This democratization of QSAR technology supports broader adoption across scientific disciplines while maintaining standards for model validation and interpretation.

The comparative analysis presented in this guide demonstrates that robust QSAR model development requires careful consideration of multiple factors including data quality, algorithmic selection, validation rigor, and applicability domain definition. Automated standardization protocols significantly enhance model reliability by ensuring consistent molecular representation, while advanced machine learning approaches like ANN and Random Forest often outperform traditional statistical methods for complex endpoints.

The optimal QSAR modeling strategy depends substantially on the specific application context. For regulatory applications with requirements for mechanistic interpretability, MLR models may be preferred despite potentially lower predictive accuracy. For screening applications prioritizing prediction reliability, ANN or ensemble methods offer superior performance. Across all domains, rigorous validation and clear definition of applicability domains remain essential for generating scientifically defensible predictions. As QSAR methodologies continue to evolve, integration with automated workflow platforms and adoption of standardized validation protocols will further enhance their utility in scientific research and regulatory decision-making.

Quantitative Structure-Activity Relationship (QSAR) modeling represents a cornerstone of modern computational drug discovery and toxicology. These statistical models correlate chemical structure descriptors with biological activity to predict the potency of untested compounds [21]. The reliability of these predictions, however, hinges on a model's ability to generalize beyond its training data. This guide examines three critical challenges—overfitting, chance correlation, and the Structure-Activity Relationship (SAR) paradox—that can compromise predictive accuracy, particularly when models are applied to real-world drug discovery pipelines. Understanding these pitfalls is essential for developing robust QSAR models that deliver meaningful insights for researchers and drug development professionals.

Understanding the Core Pitfalls

Overfitting

Overfitting occurs when a model learns not only the underlying relationship in the training data but also its noise and random fluctuations. An overfitted model exhibits excellent performance on its training compounds but fails to accurately predict the activity of new, external compounds [22]. This pitfall frequently arises in QSAR due to the high-dimensional nature of descriptor spaces, where the number of calculated molecular descriptors often vastly exceeds the number of training compounds [23] [22]. Techniques like stepwise regression in such contexts can easily generate models that appear statistically sound but possess little to no predictive power.
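
The risk posed by a descriptor count close to the number of compounds can be shown with pure noise. In the sketch below, both the descriptors and the activities are random, so no real relationship exists; the dataset size (30 compounds, 25 descriptors) and the function name are hypothetical. The leave-one-out statistic uses the closed-form expression for ordinary least squares, in which each left-out residual equals the fitted residual divided by (1 − h_ii).

```python
import numpy as np

def fit_r2_and_loo_q2(X, y):
    """Training R2 and leave-one-out Q2 for ordinary least squares with an intercept."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    n = len(y)
    A = np.column_stack([np.ones(n), X])

    H = A @ np.linalg.pinv(A)                 # hat matrix: fitted values = H @ y
    residuals = y - H @ y
    ss_tot = np.sum((y - y.mean()) ** 2)
    r2 = 1.0 - np.sum(residuals ** 2) / ss_tot

    # Closed-form leave-one-out residuals for linear least squares.
    loo_residuals = residuals / (1.0 - np.diag(H))
    q2 = 1.0 - np.sum(loo_residuals ** 2) / ss_tot
    return float(r2), float(q2)

rng = np.random.default_rng(1)
n_compounds, n_descriptors = 30, 25

X_random = rng.normal(size=(n_compounds, n_descriptors))   # descriptors with no real signal
y_random = rng.normal(size=n_compounds)                     # activities unrelated to X

r2_train, q2_loo = fit_r2_and_loo_q2(X_random, y_random)
print(f"Training R2: {r2_train:.2f}, LOO Q2: {q2_loo:.2f}")
```

The model fits the training activities well despite there being nothing to learn, and the cross-validated Q² exposes the problem. This gap between fitted and cross-validated performance is a typical signature of overfitting.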

Chance Correlation

Chance correlation refers to the generation of statistically significant but scientifically meaningless models that arise from the random alignment of descriptor values with biological activity measures. This risk is amplified when testing a large number of descriptor combinations against a single biological endpoint without proper statistical controls [23]. The model appears to find a meaningful relationship, but the correlation is merely accidental and will not hold for new data sets.

The SAR Paradox

The fundamental assumption of most QSAR approaches is that similar molecules have similar activities. The SAR paradox contradicts this principle, stating that it is not universally true that similar molecules have similar activities [21] [24]. This manifests dramatically in "activity cliffs" (ACs), where pairs of highly similar compounds exhibit unexpectedly large differences in potency [25]. For example, a small modification like the addition of a single hydroxyl group can lead to a thousand-fold change in binding affinity [25]. These cliffs form discontinuities in the SAR landscape and are a major source of prediction error for QSAR models, which often struggle to anticipate such abrupt changes [25].
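
One simple way to flag candidate activity cliffs is to search for compound pairs that are highly similar yet differ strongly in potency. The sketch below uses plain Python sets of "on" fingerprint bits and Tanimoto similarity so that it runs without a cheminformatics library. The thresholds (similarity ≥ 0.9, potency difference ≥ 2 log units), the function names, and the toy fingerprints are all assumptions, not the definition used in [25].

```python
from itertools import combinations

def tanimoto(bits_a, bits_b):
    """Tanimoto similarity between two fingerprints given as sets of 'on' bit indices."""
    a, b = set(bits_a), set(bits_b)
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def find_activity_cliffs(fingerprints, p_activity, min_similarity=0.9, min_delta=2.0):
    """Return (i, j, similarity, delta) for similar pairs with a large potency gap.

    p_activity holds log-scale potencies (e.g., pIC50); thresholds are illustrative.
    """
    cliffs = []
    for (i, fp_i), (j, fp_j) in combinations(enumerate(fingerprints), 2):
        similarity = tanimoto(fp_i, fp_j)
        delta = abs(p_activity[i] - p_activity[j])
        if similarity >= min_similarity and delta >= min_delta:
            cliffs.append((i, j, similarity, delta))
    return cliffs

# Toy example: compound 1 differs from compound 0 by a single extra bit
# (for instance one added substituent) but is about a thousand-fold more potent.
fingerprints = [
    set(range(20)),
    set(range(20)) | {99},
    set(range(30, 50)),
]
p_activity = [6.0, 9.1, 6.2]

cliffs = find_activity_cliffs(fingerprints, p_activity)
print(cliffs)
```

Pairs identified this way can be held out as a dedicated cliff-rich test set, which gives a more demanding estimate of model performance than a random split.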

Comparative Analysis of Pitfalls and Validation Strategies

The table below summarizes the characteristics of each pitfall and the primary methodologies used to detect and prevent them.

Table 1: Comparison of QSAR Pitfalls and Detection Methodologies

| Pitfall | Core Issue | Impact on Model | Key Detection & Prevention Methods |
|---|---|---|---|
| Overfitting | Model over-adapts to training set noise | High training accuracy, poor external predictivity | Internal validation (e.g., LOO-CV), external validation with test set, Y-scrambling [21] [26] [23] |
| Chance Correlation | Finding random, non-causal correlations | Statistically significant but non-predictive models | Y-scrambling (response randomization), careful feature selection, external validation [21] [23] |
| SAR Paradox | Small structural changes cause large activity differences | High prediction error for "activity cliff" compounds | Matched molecular pair analysis (MMPA), model performance assessment on cliff-rich test sets [21] [25] |

A 2022 study evaluating 44 reported QSAR models highlighted the necessity of robust validation, finding that relying on the coefficient of determination (r²) for the training set alone is insufficient to prove model validity [26]. The study emphasized that established external validation criteria have individual advantages and disadvantages and should be used in combination [26].

Experimental Protocols for Validating QSAR Models

Standard Workflow for QSAR Model Development and Validation

The following protocol outlines the essential steps for building a validated QSAR model, integrating checks for overfitting and chance correlation.

Diagram Title: QSAR Model Validation Workflow

Step 1: Data Curation and Preparation. Collect and curate a set of compounds with consistent, experimentally measured biological activity (e.g., IC₅₀, Ki). Standardize molecular structures (e.g., remove salts, generate canonical tautomers) to ensure descriptor calculation consistency [25].

Step 2: Descriptor Calculation and Pre-processing. Compute a wide range of molecular descriptors (e.g., topological, electronic, geometrical) using software such as Dragon or RDKit [22] [27]. Pre-process the descriptor matrix by removing constants and near-constants, and potentially reducing multicollinearity [22].

Step 3: Dataset Division. Split the data into training and test sets. The split should ensure the test set is representative of the chemical space covered by the training set. Methods like Kennard-Stone or sphere exclusion are often preferred over random splitting [21] [23].
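
As a rough illustration of the Kennard-Stone idea mentioned in Step 3, the sketch below picks training compounds so that they span the descriptor space and leaves the remainder as the test set. It assumes a plain NumPy descriptor matrix and Euclidean distance; the function name `kennard_stone` and the synthetic descriptors are illustrative and not taken from the cited studies.

```python
import numpy as np

def kennard_stone(X, n_train):
    """Select n_train row indices by the Kennard-Stone max-min rule.

    Returns (train_indices, test_indices). Assumes n_train >= 2.
    """
    X = np.asarray(X, dtype=float)
    # Full pairwise Euclidean distance matrix (fine for small data sets).
    dist = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=-1)

    # Seed the training set with the two most distant compounds.
    i, j = np.unravel_index(np.argmax(dist), dist.shape)
    selected = [int(i), int(j)]
    remaining = [k for k in range(len(X)) if k not in selected]

    while len(selected) < n_train:
        # For each candidate, distance to its closest already-selected compound.
        min_d = dist[np.ix_(remaining, selected)].min(axis=1)
        # Add the candidate that is farthest from everything selected so far.
        pick = remaining[int(np.argmax(min_d))]
        selected.append(pick)
        remaining.remove(pick)
    return selected, remaining

# Synthetic stand-in for a scaled descriptor matrix (40 compounds, 3 descriptors).
rng = np.random.default_rng(0)
X = rng.normal(size=(40, 3))
train_idx, test_idx = kennard_stone(X, n_train=30)
print(len(train_idx), "training compounds,", len(test_idx), "test compounds")
```

Because the extremes of the descriptor space are selected first, the held-out compounds tend to fall inside the region covered by the training set, which is the property Step 3 asks for. Descriptors would normally be scaled before computing distances.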

Step 4: Feature Selection. Apply feature selection algorithms (e.g., Genetic Algorithm, Stepwise Elimination, or modern techniques like the Elastic Net [23]) to the training set to identify the most relevant descriptors and mitigate overfitting.

Step 5: Model Construction. Build the QSAR model using the selected descriptors and training set. Common algorithms include Partial Least Squares (PLS), Random Forest (RF), and Support Vector Machines (SVM) [21] [27].

Step 6: Internal Validation and Y-Scrambling

- Internal Validation: Perform cross-validation (e.g., Leave-One-Out (LOO) or Leave-Many-Out) on the training set to assess model robustness [21] [23].

- Y-Scrambling: Intentionally scramble the response variable (biological activity) and attempt to rebuild the model. A robust model should fail to produce a significant correlation after multiple scramblings, whereas a model prone to chance correlation will often still appear significant [21] [26].

Step 7: External Validation. Use the untouched test set to evaluate the model's true predictive power. Key metrics include ( Q^2_{ext} ), ( r_m^2 ), and the Concordance Correlation Coefficient (CCC) [21] [26].

Step 8: Define Applicability Domain (AD). Characterize the chemical space of the training set to define the model's Applicability Domain. This helps identify when a prediction is being made for a compound that is too dissimilar from the training data to be reliable [5] [24].
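
The Y-scrambling check from Step 6 can be sketched in a few lines. The example below is a minimal illustration, assuming an ordinary least-squares model and synthetic data; the helper names `fit_r2` and `y_scramble`, the number of scrambling rounds, and the empirical p-value are choices made for this sketch rather than a prescribed protocol.

```python
import numpy as np

def fit_r2(X, y):
    """Training-set R² of an ordinary least-squares fit with intercept."""
    A = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    resid = y - A @ coef
    return 1.0 - (resid @ resid) / np.sum((y - y.mean()) ** 2)

def y_scramble(X, y, n_rounds=200, seed=0):
    """Refit the model on permuted responses and compare with the real fit."""
    rng = np.random.default_rng(seed)
    r2_true = fit_r2(X, y)
    r2_perm = np.array([fit_r2(X, rng.permutation(y)) for _ in range(n_rounds)])
    # Empirical p-value: share of scrambled models that fit at least as well.
    p_value = (np.sum(r2_perm >= r2_true) + 1) / (n_rounds + 1)
    return r2_true, r2_perm, p_value

# Synthetic data: 60 "compounds", 5 descriptors, 3 of which carry signal.
rng = np.random.default_rng(42)
X = rng.normal(size=(60, 5))
y = X @ np.array([1.0, -0.5, 0.0, 0.3, 0.0]) + rng.normal(scale=0.3, size=60)

r2_true, r2_perm, p_value = y_scramble(X, y)
print(f"R2 (real y) = {r2_true:.2f}")
print(f"mean R2 (scrambled y) = {r2_perm.mean():.2f}, p = {p_value:.3f}")
```

A model whose scrambled-response R² values sit close to the real one offers little evidence of a genuine structure-activity relationship. In a full workflow, feature selection would also be repeated inside each scrambling round, since selecting descriptors once on the real responses can hide chance correlation.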

Protocol for Investigating the SAR Paradox and Activity Cliffs

This specialized protocol assesses a model's sensitivity to the SAR paradox.

Step 1: Identify Activity Cliffs (ACs). From the dataset, identify all pairs of compounds that meet two criteria:

- High Structural Similarity: Measured by a similarity metric such as the Tanimoto coefficient on fingerprints (e.g., ECFP4). A threshold of >0.85 is often used.

- Large Potency Difference: The absolute difference in activity ( |ΔpIC₅₀| ) exceeds a predefined threshold (e.g., 2 log units) [25].

Step 2: Construct a "Cliff-Rich" Test Set. Compile a test set consisting primarily of compounds involved in identified AC pairs. The remaining compounds can form a control test set [25].

Step 3: Model Performance Assessment. Apply the QSAR model to predict the activities of both the "cliff-rich" test set and the control test set. Compare the prediction errors (e.g., Mean Absolute Error) between the two sets. A significant performance drop on the "cliff-rich" set indicates low AC-sensitivity [25].

Step 4: Implement MMPA. Use Matched Molecular Pair Analysis (MMPA) to systematically identify single-point modifications and their associated activity changes. Coupling MMPA with QSAR predictions can help flag potential activity cliffs that the model fails to capture [21].
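
The two criteria in Step 1 of this protocol translate directly into a pairwise scan. The sketch below is a toy illustration: each fingerprint is represented as a Python set of "on" bit indices (in practice these would come from a fingerprinting tool such as RDKit), and the thresholds 0.85 and 2 log units are the example values quoted above. The function names and the three toy compounds are hypothetical.

```python
from itertools import combinations

def tanimoto(a, b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    a, b = set(a), set(b)
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def find_activity_cliffs(fps, pic50, sim_cut=0.85, dpot_cut=2.0):
    """Return (i, j, similarity, |delta pIC50|) for every activity-cliff pair."""
    cliffs = []
    for i, j in combinations(range(len(fps)), 2):
        sim = tanimoto(fps[i], fps[j])
        dpot = abs(pic50[i] - pic50[j])
        if sim > sim_cut and dpot > dpot_cut:
            cliffs.append((i, j, sim, dpot))
    return cliffs

# Toy data: compounds 0 and 1 differ by a single bit but by 3.1 log units.
fps = [
    set(range(0, 20)),         # compound 0
    set(range(0, 21)),         # compound 1: one extra bit
    set(range(10, 30)),        # compound 2: structurally different
]
pic50 = [5.0, 8.1, 5.2]

cliffs = find_activity_cliffs(fps, pic50)
cliff_compounds = sorted({k for i, j, _, _ in cliffs for k in (i, j)})
print(cliffs)            # pairs flagged as activity cliffs
print(cliff_compounds)   # candidates for the "cliff-rich" test set
```

The compounds collected in `cliff_compounds` would form the "cliff-rich" test set of Step 2, with the rest serving as the control set.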

The table below lists key computational tools and concepts vital for developing and validating rigorous QSAR models.

Table 2: Key Research Reagent Solutions for QSAR Modeling

| Tool/Resource | Category | Primary Function in QSAR |
|---|---|---|
| Dragon Software | Descriptor Calculator | Calculates thousands of molecular descriptors from 0D to 3D [26] |
| RDKit | Cheminformatics Library | Open-source platform for descriptor calculation, fingerprinting, and model building [25] |
| VEGA Platform | Integrated QSAR Tool | Provides access to multiple validated (Q)SAR models, ideal for regulatory assessment [5] |
| EPI Suite | Predictive Tool Suite | Estimates physicochemical properties and environmental fate; contains models like BIOWIN and KOWWIN [5] |
| Applicability Domain (AD) | Conceptual Framework | Defines the chemical space region where the model's predictions are considered reliable [5] [24] |
| Y-Scrambling | Validation Technique | Tests for chance correlation by randomizing the response variable during validation [21] [26] |
| Matched Molecular Pair Analysis (MMPA) | Analytical Method | Systematically identifies small structural changes and their impact on activity to study cliffs [21] |

Navigating the pitfalls of overfitting, chance correlation, and the SAR paradox is not merely an academic exercise but a practical necessity for effective computational drug design. The comparative data and experimental protocols presented here demonstrate that a single validation metric is inadequate. A comprehensive strategy—incorporating rigorous internal and external validation, Y-scrambling, and specific assessment of activity cliff prediction—is required to establish trust in a QSAR model's predictions. As the field progresses, integrating these robust validation practices with emerging techniques like graph neural networks and advanced applicability domain definitions will be crucial for developing predictive models that reliably guide lead optimization and compound prioritization.

The Critical Role of the Applicability Domain (AD) in Defining Model Scope

In cheminformatics and predictive toxicology, the Applicability Domain (AD) of a Quantitative Structure-Activity Relationship (QSAR) model defines the boundaries within which the model's predictions are considered reliable. It represents the chemical, structural, or biological space covered by the training data used to build the model [28]. The fundamental principle is that a model's predictive performance is primarily valid for interpolation within the training data space rather than extrapolation to regions of chemical space that are significantly different from the compounds used during model development [28] [29].

According to the Organisation for Economic Co-operation and Development (OECD) Guidance Document, defining an AD is a mandatory requirement for validating QSAR models intended for regulatory purposes [28]. This formal recognition underscores the critical importance of the AD concept in ensuring the proper application of computational models in safety assessment and decision-making processes.

Methodological Approaches for Defining Applicability Domains

Classification of AD Methods

Methods for characterizing the interpolation space of QSAR models can be broadly categorized into several approaches, each with distinct theoretical foundations and implementation strategies.

Table 1: Categories of Applicability Domain Methods

| Method Category | Key Principles | Representative Techniques |
|---|---|---|
| Range-Based Methods | Define boundaries based on descriptor ranges in training data | Bounding Box, Optimal Prediction Space |
| Distance-Based Methods | Assess similarity to training compounds using distance metrics | Euclidean Distance, Mahalanobis Distance, k-Nearest Neighbors |
| Geometric Methods | Define geometric boundaries encompassing training data | Convex Hull, Leverage (Hat Matrix) |
| Probability-Density Methods | Model the probability distribution of training data | Kernel Density Estimation (KDE) |
| Model-Specific Confidence Measures | Utilize internal classifier confidence indicators | Class Probability Estimates, Ensemble Variance |

Detailed Methodological Protocols

Range-Based and Geometric Methods: The leverage approach computes the leverage of compound ( i ), the corresponding diagonal element of the hat matrix, as ( h_i = x_i^T (X^T X)^{-1} x_i ), where ( X ) is the training-set descriptor matrix and ( x_i ) is the descriptor vector for compound ( i ) [30]. A threshold is typically defined as ( h^* = 3 \times (M + 1)/N ), where ( M ) is the number of descriptors and ( N ) is the number of training examples. Compounds with leverage values ( h_i > h^* ) are considered X-outliers (outside the AD) [30].
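
A minimal sketch of the leverage check follows, assuming NumPy and synthetic descriptors. One assumption is made explicit here: an intercept column is prepended to the descriptor matrix, which is what makes the ( M + 1 ) in the threshold match the number of fitted parameters. The function name `leverage` and the two query compounds are illustrative.

```python
import numpy as np

def leverage(X_train, X_query):
    """Leverage h = x^T (X^T X)^{-1} x of each query row w.r.t. the training set.

    Assumption for this sketch: an intercept column of ones is added, so the
    model has M + 1 parameters for M descriptors.
    """
    A = np.column_stack([np.ones(len(X_train)), X_train])
    Q = np.column_stack([np.ones(len(X_query)), X_query])
    core = np.linalg.pinv(A.T @ A)
    # Row-wise quadratic form q_i^T core q_i.
    return np.einsum("ij,jk,ik->i", Q, core, Q)

rng = np.random.default_rng(0)
X_train = rng.normal(size=(50, 3))        # N = 50 compounds, M = 3 descriptors
N, M = X_train.shape
h_star = 3 * (M + 1) / N                  # warning leverage threshold

X_query = np.array([
    [0.1, -0.2, 0.3],                     # close to the training cloud
    [8.0, 8.0, 8.0],                      # far outside it
])
h = leverage(X_train, X_query)
inside = h <= h_star
print("h* =", h_star)
print("leverages:", np.round(h, 3), "inside AD:", inside)
```

A useful sanity check is that the training-set leverages sum to the number of model parameters, ( M + 1 ), since they are the diagonal of the hat matrix.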

Distance-Based Methods: The Z-kNN approach measures the distance between a query compound and its nearest neighbors in the training set [30]. A commonly used threshold is ( D_c = Z\sigma + \langle y \rangle ), where ( \langle y \rangle ) is the average and ( \sigma ) is the standard deviation of Euclidean distances between nearest neighbors in the training set, with ( Z ) typically set to 0.5 [30].
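
The distance threshold above can be sketched as follows, assuming Euclidean distances on a NumPy descriptor matrix and a single nearest neighbor. The decision rule used here, flagging a query compound as outside the AD when its nearest-neighbor distance exceeds ( D_c ), is the usual reading of the method but is stated as an assumption; the function names and synthetic data are illustrative.

```python
import numpy as np

def nn_distances(A, B, exclude_self=False):
    """Distance from each row of A to its nearest row in B."""
    d = np.linalg.norm(A[:, None, :] - B[None, :, :], axis=-1)
    if exclude_self:
        # When A and B are the same set, ignore the zero self-distance.
        np.fill_diagonal(d, np.inf)
    return d.min(axis=1)

def zknn_threshold(X_train, Z=0.5):
    """D_c = Z * sigma + <y> over training nearest-neighbor distances."""
    d_nn = nn_distances(X_train, X_train, exclude_self=True)
    return Z * d_nn.std() + d_nn.mean()

rng = np.random.default_rng(1)
X_train = rng.normal(size=(100, 4))
D_c = zknn_threshold(X_train, Z=0.5)

X_query = np.vstack([
    X_train[0] + 0.01,        # almost identical to a training compound
    np.full(4, 10.0),         # far from every training compound
])
in_domain = nn_distances(X_query, X_train) <= D_c
print(f"D_c = {D_c:.3f}, in domain: {in_domain}")
```

Larger values of ( Z ) widen the domain and accept more query compounds at the cost of less reliable predictions near the boundary.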

Probability-Density Methods: Kernel Density Estimation (KDE) models the probability density of the training data in feature space, providing a likelihood value for new compounds [31]. This approach naturally accounts for data sparsity and can handle arbitrarily complex geometries of data distributions without being restricted to single connected regions [31].

Model-Specific Confidence Measures: For classification models, class probability estimates consistently outperform other measures for differentiating between reliable and unreliable predictions [32]. These probability estimates directly reflect the confidence in class assignment and show strong correlation with prediction accuracy.

Experimental Benchmarking of AD Methods

Performance Comparison of AD Measures

Comprehensive benchmarking studies have evaluated the effectiveness of various AD measures for classification QSAR models. These studies typically use the Area Under the Receiver Operating Characteristic Curve (AUC ROC) as the primary performance metric, assessing how well each AD measure ranks predictions from most reliable to least reliable [32].

Table 2: Benchmarking Performance of AD Measures for Classification Models

| AD Measure Category | Example Methods | Average Performance (AUC ROC) | Key Strengths |
|---|---|---|---|
| Class Probability Estimates | RF class probability, SVM probability | 0.85-0.95 (varies by classifier) | Directly related to misclassification probability |
| Novelty Detection Methods | Leverage, k-NN distance, 1-Class SVM | 0.70-0.85 | Identifies structurally unusual compounds |
| Ensemble-Based Methods | Prediction variance, consensus metrics | 0.80-0.90 | Captures model uncertainty effectively |
| Hybrid Approaches | ADAN, CLASS-LAG, consensus methods | 0.75-0.90 | Combines multiple reliability aspects |

A landmark benchmarking study demonstrated that class probability estimates consistently perform best for differentiating between reliable and unreliable predictions across multiple classification techniques (Random Forests, Support Vector Machines, Neural Networks, etc.) and datasets [32]. Previously proposed alternatives to class probability estimates generally do not perform better and are often inferior.

Impact on Model Performance

The effectiveness of AD methods varies significantly based on the difficulty of the classification problem. The impact of defining an applicability domain is most pronounced for intermediately difficult problems (AUC ROC range 0.7-0.9), where appropriate AD definition can substantially improve prediction reliability [32].

For regression QSAR models, the standard deviation of model predictions has been suggested as one of the most reliable approaches for AD determination [28]. Studies have consistently shown that prediction errors increase with distance from the training set, regardless of the specific QSAR algorithm or distance metric employed [29].

Advanced Workflow for AD Assessment

The process of assessing a compound's position within a model's Applicability Domain involves multiple steps and decision points, as illustrated in the following workflow:

Table 3: Key Computational Tools for AD Assessment

| Tool/Resource | Type | Key AD Features | Application Context |
|---|---|---|---|
| VEGA | Integrated QSAR Platform | Multiple AD metrics, regulatory acceptance | Predictive toxicology, cosmetic ingredient assessment [5] |
| CORAL | QSAR Modeling Software | Model self-consistency system, random model evaluation | Mutagenicity prediction, model reliability estimation [33] |
| RDKit | Cheminformatics Library | Molecular descriptors, fingerprint calculations | General QSAR model development [34] |
| One-Class SVM | Machine Learning Algorithm | Novelty detection, boundary definition | Identifying compounds dissimilar to training set [30] |
| Random Forests | Ensemble Classification | Built-in class probability estimates | High-performance classification with natural confidence scores [32] |
| Kernel Density Estimation | Statistical Method | Probability density-based domain definition | Advanced AD determination for complex data distributions [31] |

Expanding Applications Beyond Traditional QSAR

The concept of applicability domain has expanded significantly beyond its traditional use in QSAR to become a general principle for assessing model reliability across domains such as nanotechnology, material science, and predictive toxicology [28]. In nanoinformatics, AD definition is particularly crucial for nanomaterial property and toxicity prediction, where data scarcity and heterogeneity require careful definition of model boundaries [28].

More recently, the AD framework has been extended to Quantitative Reaction-Property Relationship (QRPR) models, which predict characteristics of chemical reactions rather than individual compounds [30]. This presents unique challenges as chemical reactions are more complex objects whose properties depend on reactant and product structures as well as experimental conditions [30].

Current Challenges and Future Directions

Despite methodological advances, several challenges remain in AD research and implementation. There is still no single, universally accepted algorithm for defining applicability domains, and different methods may produce varying results for the same compounds [28] [35]. This highlights the need for continued benchmarking and standardization efforts.

The relationship between traditional AD concepts and modern machine learning approaches presents both challenges and opportunities. While conventional QSAR models show performance degradation outside their AD, modern deep learning algorithms have demonstrated remarkable extrapolation capabilities in other domains such as image recognition [29]. This suggests that as algorithm power and training data volume increase, applicability domains may effectively widen [29].

Emerging approaches like conformal prediction offer alternative frameworks for quantifying prediction uncertainty, producing confidence intervals with guaranteed validity under certain assumptions [34]. While not replacing traditional AD methods, these techniques provide complementary approaches to assessing prediction reliability.
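
To make the idea concrete, the sketch below shows split (inductive) conformal prediction for a regression model, one common variant of the approach. It assumes a simple least-squares model, synthetic data, and exchangeability between calibration and test compounds, which is the main assumption behind the coverage guarantee. All function names are illustrative.

```python
import numpy as np

def conformal_halfwidth(abs_residuals, alpha=0.1):
    """Interval half-width from calibration residuals (split conformal)."""
    scores = np.sort(np.asarray(abs_residuals, dtype=float))
    n = len(scores)
    # Finite-sample corrected rank of the (1 - alpha) quantile.
    k = int(np.ceil((n + 1) * (1 - alpha)))
    return np.inf if k > n else scores[k - 1]

def design(X):
    """Descriptor matrix with an intercept column."""
    return np.column_stack([np.ones(len(X)), X])

rng = np.random.default_rng(7)

def make_data(n):
    X = rng.normal(size=(n, 3))
    y = X @ np.array([0.8, -0.4, 0.2]) + rng.normal(scale=0.5, size=n)
    return X, y

X_tr, y_tr = make_data(200)      # used to fit the model
X_cal, y_cal = make_data(500)    # held out to calibrate interval width
X_te, y_te = make_data(2000)     # new compounds

coef, *_ = np.linalg.lstsq(design(X_tr), y_tr, rcond=None)

def predict(X):
    return design(X) @ coef

q = conformal_halfwidth(np.abs(y_cal - predict(X_cal)), alpha=0.1)
# Prediction interval for each test compound: predict(x) +/- q.
coverage = np.mean(np.abs(y_te - predict(X_te)) <= q)
print(f"half-width = {q:.2f}, empirical coverage = {coverage:.3f}")
```

With `alpha=0.1` the intervals are designed to contain the true value for about 90% of exchangeable new compounds on average. This plain variant gives every compound the same interval width; compound-specific widths require normalized nonconformity scores, which are not shown here.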

As the field progresses, the development of more sophisticated AD methods that can automatically adapt to different model types and data characteristics will be essential for advancing the reliable application of QSAR and related approaches in chemical risk assessment and drug discovery.

Modern Methodologies and Practical Applications in QSAR Evaluation

Quantitative Structure-Activity Relationship (QSAR) modeling has undergone a remarkable evolution over the past six decades, transitioning from classical statistical approaches to sophisticated artificial intelligence (AI)-driven algorithms [36] [37]. This transformation has fundamentally enhanced how researchers predict the biological activity, toxicity, and physicochemical properties of chemical compounds, thereby accelerating drug discovery and environmental risk assessment. The journey from classical linear models to modern deep learning architectures represents a paradigm shift in computational chemistry, enabling researchers to navigate increasingly complex chemical spaces with greater predictive accuracy [37] [38].

This review systematically compares five key modeling approaches—Multiple Linear Regression (MLR), Partial Least Squares (PLS), Random Forest (RF), Support Vector Machines (SVM), and Deep Neural Networks (DNN)—within the context of evaluating QSAR model predictive ability. By examining experimental performance data, methodological protocols, and emerging best practices, we provide researchers and drug development professionals with a comprehensive framework for selecting appropriate modeling techniques based on their specific research objectives, dataset characteristics, and computational resources [39] [36].

Comparative Analysis of QSAR Modeling Techniques

Fundamental Principles and Algorithmic Characteristics

MLR and PLS represent classical statistical approaches in QSAR modeling. MLR establishes a linear relationship between molecular descriptors and biological activity using ordinary least squares estimation, while PLS addresses multicollinearity issues by projecting variables into a latent space that maximizes covariance with the response variable [37]. These methods remain valued for their interpretability, computational efficiency, and well-established validation protocols [37].

Machine learning methods like RF and SVM introduced non-linear modeling capabilities. RF operates as an ensemble method, constructing multiple decision trees and aggregating their predictions, which provides robustness against overfitting [37]. SVM, particularly through its kernel trick, maps data into higher-dimensional spaces to find optimal separating hyperplanes, making it effective for complex structure-activity relationships [39] [37].

Deep learning approaches, especially DNN, represent the most advanced evolution in QSAR modeling. These architectures feature multiple hidden layers that automatically learn hierarchical feature representations from raw molecular descriptors, eliminating the need for manual feature engineering and capturing intricate nonlinear patterns [40] [37].

Experimental Performance Comparison

Recent comparative studies provide quantitative insights into the predictive performance of different QSAR modeling techniques across various chemical domains. The table below summarizes key experimental findings from published studies.

Table 1: Comparative Performance of QSAR Modeling Techniques

| Model | Dataset/Case Study | Key Performance Metrics | Reference |
|---|---|---|---|
| SVM | HIV-1 Protease Inhibitors (48 compounds) | Predictive performance comparable to PLS in external validation; failed y-randomization test | [39] |
| DNN | TNBC Inhibitors (7,130 compounds) | R² = 0.94 (test set) with 6,069 training compounds; superior to RF, PLS, and MLR | [40] |
| RF | PfDHODH Inhibitors (465 compounds) | Accuracy >80%; MCC = 0.76 (test set); robust feature importance interpretation | [41] |
| DNN | Kinase Inhibition (559-5,675 compounds) | Accuracy improvement up to 14% over standalone RF and SVM for various kinases | [42] |
| PLS/MLR | TNBC Inhibitors (7,130 compounds) | R² = 0.65 (test set) with 6,069 training compounds; performance dropped significantly with smaller training sets | [40] |
| XGBoost-DNN Hybrid | Kinase Inhibition (Multiple datasets) | 5-14% accuracy improvement across 30+ kinase datasets compared to conventional methods | [42] |

These experimental results demonstrate several key trends. First, machine learning and deep learning approaches generally outperform classical methods, particularly with large, complex datasets [40]. Second, hybrid models that combine multiple algorithmic approaches often achieve superior performance compared to individual methods [42]. Third, the performance advantage of advanced methods becomes more pronounced with larger training datasets [40].

Table 2: Characteristic Strengths and Limitations Across QSAR Techniques

| Model | Strengths | Limitations | Ideal Use Cases |
|---|---|---|---|
| MLR | High interpretability; minimal computational requirements; low overfitting risk when descriptors are few relative to compounds | Limited to linear relationships; sensitive to descriptor correlation | Small datasets with clear linear relationships; preliminary screening; regulatory applications |
| PLS | Handles correlated descriptors; works with more descriptors than observations; good for data reduction | Primarily captures linear relationships; model interpretation can be challenging | Spectral data; datasets with high multicollinearity; lead optimization series |
| SVM | Effective in high-dimensional spaces; versatile kernel functions; strong theoretical foundations | Parameter sensitivity; computational intensity with large datasets; black-box nature | Moderate-sized datasets with complex, non-linear structure-activity relationships |
| RF | Handles non-linear relationships; robust to outliers and noise; provides feature importance metrics | Limited extrapolation capability; tendency to overfit with noisy datasets; memory intensive | Diverse chemical libraries; feature selection studies; datasets with complex interactions |
| DNN | Automatic feature engineering; superior performance with large datasets; models complex non-linearities | Extensive data requirements; computational intensity; pronounced black-box character | Large-scale virtual screening; complex biological endpoints; multi-task learning |

Experimental Protocols and Validation Frameworks

Methodological Considerations for Model Development

Robust QSAR model development requires careful attention to dataset preparation, descriptor selection, and validation protocols. The standard workflow encompasses data collection and curation, molecular descriptor calculation, dataset division, model training, validation, and applicability domain definition [38].

Data Curation and Splitting: Best practices recommend rigorous curation to remove duplicates and errors, followed by appropriate dataset division. For the HIV-1 protease inhibitor study, researchers employed a hierarchical cluster analysis (HCA)-based approach to split 48 compounds into training (32 compounds) and external validation (16 compounds) sets, ensuring representative chemical space coverage [39]. For larger datasets, such as the 7,130 TNBC inhibitors, random splitting with 6,069 training and 1,061 test compounds was effectively employed [40].

Descriptor Calculation and Selection: Molecular descriptors quantitatively encode structural and physicochemical properties. Extended Connectivity Fingerprints (ECFPs) and Functional-Class Fingerprints (FCFPs) are widely used topological descriptors that capture circular atom environments [40]. Studies frequently employ multiple descriptor types (e.g., AlogP, ECFP, FCFP) followed by feature selection techniques like recursive feature elimination or mutual information ranking to reduce dimensionality and minimize overfitting [40] [37].

Validation Protocols: Comprehensive validation is essential for assessing model predictive ability. Internal validation typically involves cross-validation techniques (e.g., leave-one-out, leave-N-out), while external validation uses completely held-out test sets [39]. Additional validation methods include y-randomization (scrambling response variables to test for chance correlations) and assessing model performance within a well-defined applicability domain [39] [38].

Performance Metrics and Evaluation Criteria

The choice of evaluation metrics depends on whether the QSAR model is formulated as a regression or classification problem. For regression models, common metrics include R² (coefficient of determination), Q² (cross-validated R²), RMSE (root mean square error), and MAE (mean absolute error) [37] [43]. For classification models, metrics include accuracy, sensitivity, specificity, balanced accuracy (BA), Matthews Correlation Coefficient (MCC), and positive predictive value (PPV) [41] [36].

Recent research has highlighted the importance of selecting metrics aligned with the model's intended use. For virtual screening applications where identifying active compounds from extremely large libraries is the goal, PPV (precision) may be more informative than balanced accuracy, as it directly measures the proportion of true actives among predicted actives [36]. As one study noted, "training on imbalanced datasets achieves a hit rate at least 30% higher than using balanced datasets" for virtual screening tasks [36].
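
The difference between balanced accuracy and PPV is easiest to see on a worked example. The sketch below computes the classification metrics named above from confusion-matrix counts for two hypothetical models screening a library of 10,000 compounds with 100 true actives. The counts are invented purely for illustration and do not come from the cited study.

```python
import math

def screening_metrics(tp, fp, tn, fn):
    """Common classification metrics from confusion-matrix counts."""
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    ppv = tp / (tp + fp)
    mcc = (tp * tn - fp * fn) / math.sqrt(
        (tp + fp) * (tp + fn) * (tn + fp) * (tn + fn)
    )
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "balanced_accuracy": (sensitivity + specificity) / 2,
        "ppv": ppv,
        "mcc": mcc,
    }

# Hypothetical model A: finds most actives but flags many inactives.
model_a = screening_metrics(tp=90, fp=1980, tn=7920, fn=10)
# Hypothetical model B: finds fewer actives but its hit list is much cleaner.
model_b = screening_metrics(tp=40, fp=20, tn=9880, fn=60)

for name, m in (("A", model_a), ("B", model_b)):
    print(name, {k: round(v, 3) for k, v in m.items()})
```

Model A has the higher balanced accuracy (0.85 versus about 0.70), yet only about 4% of its predicted actives are real, compared with about 67% for model B. When only a small number of top-ranked compounds can be tested experimentally, the second model is the more useful one, which is the argument made for PPV above.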

Diagram 1: QSAR Model Development Workflow. The standardized process for developing validated QSAR models, from data collection through deployment.

Emerging Trends and Best Practices

Paradigm Shifts in Model Selection and Evaluation

The field of QSAR modeling is experiencing several paradigm shifts driven by advances in AI and the availability of large-scale chemical data. Traditional best practices that emphasized dataset balancing and balanced accuracy as primary metrics are being reconsidered for virtual screening applications [36]. Modern research indicates that for hit identification tasks, models with the highest positive predictive value (PPV) trained on imbalanced datasets often outperform balanced models in practical screening scenarios [36].

Another significant trend involves the integration of hybrid approaches that combine the strengths of multiple algorithms. For kinase inhibition prediction, a hybrid model combining XGBoost with deep neural networks achieved 5-14% accuracy improvements across 30+ kinase datasets compared to standalone methods [42]. The XGBoost model processed structured features, while the DNN refined probability estimates, demonstrating how strategic algorithm combinations can enhance predictive performance.

Interpretation and Explainability Advances

As AI-driven QSAR models become more complex, addressing their "black-box" nature through improved interpretation techniques has gained importance. Feature importance analysis using methods like SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) enables researchers to understand which molecular descriptors most influence predictions [37]. For example, in a study on PfDHODH inhibitors, the Gini index was used to identify that nitrogenous groups, fluorine atoms, oxygenation patterns, aromatic moieties, and chirality significantly influenced inhibitory activity [41].

The movement toward explainable AI (XAI) in QSAR modeling represents a crucial development for regulatory acceptance and mechanistic understanding. As one review noted, "Ensemble learning methods such as Random Forest are preferred for their robustness, built-in feature selection, and ability to handle noisy data" while maintaining some degree of interpretability [37].

Research Reagent Solutions

Table 3: Essential Computational Tools for Modern QSAR Research

| Resource Category | Specific Tools | Primary Function | Application in QSAR Studies |
|---|---|---|---|
| Descriptor Calculation | PaDEL-Descriptor, DRAGON, RDKit | Compute molecular descriptors/fingerprints | Generating ECFP, FCFP, and physicochemical descriptors for model development [40] [37] |
| Model Development Platforms | Scikit-learn, TensorFlow, PyTorch | Implement ML/DL algorithms | Building RF, SVM, and DNN models with standardized APIs [40] [42] |
| Chemical Databases | ChEMBL, PubChem, ZINC | Source bioactivity and compound data | Training set compilation and external validation [40] [36] |
| Validation Software | QSARINS, Orange | Model validation and visualization | Calculating R², Q², MCC, and defining applicability domains [38] |
| Interpretation Tools | SHAP, LIME | Explain model predictions | Identifying influential molecular descriptors in complex models [37] |

Diagram 2: Algorithm Selection Framework. A decision pathway for selecting appropriate QSAR modeling techniques based on dataset characteristics and research constraints.

The evolution of QSAR modeling from classical statistical approaches to AI-driven methodologies has significantly expanded the horizons of predictive chemical modeling. Classical techniques like MLR and PLS remain valuable for interpretable modeling with limited datasets, while machine learning methods like RF and SVM offer robust performance for moderately complex problems. Deep learning approaches demonstrate superior performance for large-scale virtual screening and complex endpoint prediction, though at the cost of interpretability and computational requirements [40] [37] [42].

The optimal selection of QSAR modeling techniques depends critically on the research context—including dataset size, chemical diversity, required interpretability, and computational resources. As the field advances, hybrid approaches that combine the strengths of multiple algorithms, along with improved model interpretation techniques, are poised to further enhance predictive accuracy and mechanistic understanding. Future developments will likely focus on integrating QSAR with structural biology approaches, enhancing explainable AI, and adapting to emerging regulatory standards for chemical safety assessment [37] [38].

For researchers navigating this complex landscape, the key to success lies in matching methodological sophistication to specific research questions while maintaining rigorous validation standards. By doing so, the QSAR community can continue to advance drug discovery and chemical safety assessment through computationally-driven insights.

In Quantitative Structure-Activity Relationship (QSAR) modeling, the ultimate goal is to develop statistical models capable of making accurate and reliable predictions of biological activity or physicochemical properties for new, untested chemicals [21]. The process of QSAR model development extends beyond mere data fitting to encompass a rigorous validation framework that ensures model robustness and predictive power [44] [1]. Without proper validation, QSAR models may appear statistically significant for the data used to create them yet fail miserably when applied to new chemical entities, potentially leading to costly errors in drug development or chemical safety assessment.

This guide focuses on four key validation metrics—q², Concordance Correlation Coefficient (CCC), rₘ², and Root Mean Square Error (RMSE)—that serve as crucial indicators of model performance. Each metric provides a distinct perspective on model quality, with strengths and limitations that must be understood within the context of the broader QSAR validation paradigm [45] [1] [46]. The validation process must address multiple aspects, including internal validation (assessing model robustness), external validation (evaluating predictive power on new data), and applicability domain assessment (determining the chemical space where reliable predictions can be made) [21].

Metric Fundamentals: Conceptual Frameworks and Mathematical Formulations

Leave-One-Out Cross-Validated R² (q²)

The q² statistic, also known as the leave-one-out cross-validated R², is one of the most widely used metrics for internal validation in QSAR studies [44]. It is calculated by systematically removing one compound from the training set, developing a model with the remaining compounds, predicting the activity of the removed compound, and repeating this process for all compounds in the training set. The mathematical formulation of q² is:

q² = 1 - PRESS/SSₜₒₜₐₗ

where PRESS is the Prediction Error Sum of Squares and SSₜₒₜₐₗ is the total sum of squares of the response values [44]. Despite its popularity, a crucial limitation identified in multiple studies is that high q² values (>0.5) do not automatically guarantee high predictive power for external test sets [44]. This metric should be viewed as a necessary but not sufficient condition for model acceptability.
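
The leave-one-out procedure described above can be sketched in a few lines. This is a minimal illustration, assuming an ordinary least-squares model with an intercept and assuming SSₜₒₜₐₗ is taken around the mean of the full training set; the function name `loo_q2` is hypothetical and not taken from any cited work.

```python
import numpy as np

def loo_q2(X, y):
    """Leave-one-out q2 = 1 - PRESS / SS_total for an ordinary least-squares model."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    n = len(y)
    # Add an intercept column so the linear model is y = b0 + X @ b.
    A = np.column_stack([np.ones(n), X])
    press = 0.0
    for i in range(n):
        keep = np.arange(n) != i  # leave compound i out of the fit
        coef, *_ = np.linalg.lstsq(A[keep], y[keep], rcond=None)
        press += (y[i] - A[i] @ coef) ** 2  # squared error on the held-out compound
    # Assumption: total sum of squares is taken around the full training-set mean.
    ss_total = np.sum((y - y.mean()) ** 2)
    return 1.0 - press / ss_total
```

A descriptor that is perfectly linear in the response yields q² of 1, while a descriptor unrelated to the response can yield values well below 0.5 or even negative.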

Concordance Correlation Coefficient (CCC)

The Concordance Correlation Coefficient (CCC) was introduced as a more stringent measure for external validation that evaluates both precision and accuracy in predictions [45]. Unlike traditional correlation coefficients, CCC assesses the agreement between observed and predicted values by measuring how far they deviate from the line of perfect concordance (y = x). The formula for CCC is:

CCC = (2 × Cov(X,Y)) / (Var(X) + Var(Y) + (μₓ - μᵧ)²)

where Cov(X,Y) is the covariance between observed (X) and predicted (Y) values, Var(X) and Var(Y) are their respective variances, and μₓ and μᵧ are their means [45]. With a potential range from -1 to 1, values closer to 1 indicate better agreement, and a threshold of CCC > 0.8 is generally recommended for accepting a model as predictive [45] [1].
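
As a minimal sketch of the formula above, the function below computes CCC with population (1/n) variances and covariance, which is an assumption about normalization; the name `concordance_cc` is illustrative only.

```python
import numpy as np

def concordance_cc(observed, predicted):
    """Concordance correlation coefficient between observed and predicted values."""
    x = np.asarray(observed, dtype=float)
    y = np.asarray(predicted, dtype=float)
    mx, my = x.mean(), y.mean()
    # Assumption: population (1/n) covariance and variances are used throughout.
    cov = np.mean((x - mx) * (y - my))
    return 2.0 * cov / (x.var() + y.var() + (mx - my) ** 2)
```

The mean-difference term in the denominator is what separates CCC from a plain correlation: predictions that are perfectly correlated with the observations but shifted by a constant offset still have a Pearson r of 1, yet their CCC falls below 1.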

Modified r² (rₘ²)

The rₘ² metric was developed to address limitations in traditional validation parameters by considering the actual difference between observed and predicted response values without reference to the training set mean [2]. This parameter has three variants: rₘ²(LOO) for internal validation, rₘ²(test) for external validation, and rₘ²(overall) for combined performance assessment [2]. The calculation involves:

rₘ² = r² × (1 - √(r² - r₀²))

where r² is the coefficient of determination between observed and predicted values, and r₀² is calculated using regression through origin [1]. This metric serves as a more stringent measure for assessing model predictivity compared to traditional parameters [2].
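
The calculation can be sketched as follows. Several conventions here are assumptions rather than details given in the text: r² is taken as the squared Pearson correlation, r₀² comes from regressing observed on predicted values through the origin, and an absolute value is placed inside the square root to guard against small negative differences. The name `rm2` is hypothetical.

```python
import numpy as np

def rm2(observed, predicted):
    """r_m^2 = r^2 * (1 - sqrt(|r^2 - r0^2|)) for observed vs. predicted values."""
    y = np.asarray(observed, dtype=float)
    yhat = np.asarray(predicted, dtype=float)
    # Squared Pearson correlation between observed and predicted values.
    r2 = np.corrcoef(y, yhat)[0, 1] ** 2
    # Slope of the least-squares line through the origin (observed on predicted).
    k = np.sum(y * yhat) / np.sum(yhat ** 2)
    # Assumed convention: r0^2 compares through-origin residuals with the
    # spread of the observed values around their own mean.
    r02 = 1.0 - np.sum((y - k * yhat) ** 2) / np.sum((y - y.mean()) ** 2)
    return r2 * (1.0 - np.sqrt(abs(r2 - r02)))
```

Under these assumptions, predictions that track the observations perfectly but carry a constant offset are penalized even though r² equals 1, because the through-origin fit no longer matches.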

Root Mean Square Error (RMSE)

Root Mean Square Error (RMSE) quantifies the average magnitude of prediction error in the units of the response variable, providing an intuitive measure of model accuracy [47] [48]. It is calculated as:

RMSE = √(Σ(yᵢ - ŷᵢ)²/n)

where yᵢ represents the actual values, ŷᵢ represents the predicted values, and n is the number of observations [47]. RMSE is particularly valuable because it weights larger errors more heavily due to the squaring of individual errors, making it sensitive to outliers [47] [48]. Values closer to 0 indicate better model performance, with the metric having a range from 0 to positive infinity [48].
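
A short sketch of the RMSE formula, with a hypothetical function name `rmse`, makes the effect of squaring visible:

```python
import numpy as np

def rmse(observed, predicted):
    """Root mean square error in the units of the response variable."""
    y = np.asarray(observed, dtype=float)
    yhat = np.asarray(predicted, dtype=float)
    return float(np.sqrt(np.mean((y - yhat) ** 2)))

# Both prediction sets below have the same mean absolute error (0.5),
# but the single large error in the second set doubles the RMSE.
even_errors = rmse([1, 2, 3, 4], [1.5, 2.5, 3.5, 4.5])  # 0.5
one_outlier = rmse([1, 2, 3, 4], [1, 2, 3, 6])          # 1.0
```

Spreading a total absolute error of 2 evenly over four compounds gives an RMSE of 0.5, whereas concentrating it on one compound gives 1.0.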

Comprehensive Metric Comparison: Strengths, Limitations, and Performance

Table 1: Comparative Analysis of Key QSAR Validation Metrics

| Metric | Primary Use | Ideal Value | Key Strengths | Major Limitations |
|---|---|---|---|---|
| q² | Internal validation | >0.5 | Standard practice; simple interpretation; computationally efficient | Overestimates predictive ability; insufficient alone for model acceptance [44] |
| CCC | External validation | >0.8 | Measures precision and accuracy; stable and restrictive; identifies bias | Less familiar to some researchers; requires external test set [45] [1] |
| rₘ² | Internal & external validation | Higher values better | Stringent assessment; multiple variants for different validation types | Complex calculation; multiple variants can cause confusion [2] [1] |
| RMSE | Overall error assessment | Closer to 0 better | Intuitive interpretation (same units as response); standard metric in many fields | Sensitive to outliers; scale-dependent; decreases with added variables [47] [48] |

Table 2: Performance Comparison of Validation Metrics Based on Empirical Studies

| Study Context | q² Performance | CCC Performance | rₘ² Performance | RMSE Performance | Key Findings |
|---|---|---|---|---|---|
| 44 QSAR models analysis [1] | Inconsistent correlation with true predictivity | 96% agreement with other measures; most precautionary | Varied performance based on calculation method | Not specifically reported | CCC showed highest stability and restrictiveness |
| Large dataset simulation [45] | Not the primary focus | Most restrictive measure | Not the primary focus | Not the primary focus | CCC recommended as complementary/alternative measure |
| Regression metrics comparison [46] | Theoretical flaws identified | Satisfied all mathematical principles | Theoretical flaws identified | Not specifically evaluated | QF₃² satisfied all conditions while others showed flaws |

Critical Insights from Comparative Studies

Empirical evidence from multiple studies reveals that no single metric provides a complete picture of model quality [1]. The 2022 comparative study of 44 QSAR models demonstrated that while traditional metrics like q² and R² are widely used, they frequently fail to detect poorly predictive models when used in isolation [1]. The same study found that CCC showed approximately 96% agreement with other validation measures in accepting models as predictive while being the most precautionary metric [1].

Research by Chirico et al. highlighted that CCC is conceptually simple and demonstrates stability and restrictiveness, making it particularly valuable when validation measures provide conflicting results [45]. Meanwhile, Todeschini et al. identified theoretical flaws in several Q² metrics, noting that only the QF₃² metric satisfied all stated mathematical conditions for proper validation [46].

Experimental Protocols for Metric Evaluation

Standard QSAR Model Development and Validation Workflow

Diagram 1: QSAR Model Development and Validation Workflow. This standardized protocol ensures comprehensive evaluation of model performance using multiple validation metrics at different stages.

Data Collection and Curation Protocol

The foundation of any reliable QSAR model begins with meticulous data collection and curation. Based on established benchmarking methodologies [49]:

Data Source Identification: Collect experimental data from peer-reviewed literature and reputable databases using systematic search strategies across multiple scientific databases (PubMed, Scopus, Web of Science).

Structural Standardization: Convert all chemical structures to standardized isomeric SMILES notation using tools such as the PubChem PUG REST service. Remove inorganic compounds, organometallics, and mixtures.

Data Quality Control:

- Identify duplicates and resolve them by averaging their experimental values when the standardized standard deviation is <0.2

- Remove intra-outliers using Z-score analysis (|Z-score| > 3)

- Eliminate inter-outliers (compounds with inconsistent values across datasets)
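
The duplicate and intra-outlier steps of the quality control list can be sketched as below. Two points are assumptions made for illustration, since the text does not define them: "standardized standard deviation" is interpreted as the standard deviation divided by the absolute mean, and duplicate groups that exceed the 0.2 limit are dropped. The function names and the compound identifiers in the usage example are hypothetical.

```python
import numpy as np
from collections import defaultdict

def merge_duplicates(records, max_rel_sd=0.2):
    """records: iterable of (structure_id, value). Returns {structure_id: mean value}."""
    groups = defaultdict(list)
    for structure_id, value in records:
        groups[structure_id].append(float(value))
    merged = {}
    for structure_id, values in groups.items():
        mean, sd = np.mean(values), np.std(values)
        if sd == 0:
            rel_sd = 0.0  # single measurement or identical replicates
        else:
            # Assumed definition: standard deviation divided by |mean|.
            rel_sd = sd / abs(mean) if mean != 0 else np.inf
        if rel_sd < max_rel_sd:
            merged[structure_id] = float(mean)
        # Assumption: groups whose replicates disagree too much are dropped.
    return merged

def remove_z_outliers(merged, z_max=3.0):
    """Drop entries whose value lies more than z_max standard deviations from the mean."""
    values = np.array(list(merged.values()), dtype=float)
    sd = values.std()
    if sd == 0:
        return dict(merged)
    z = (values - values.mean()) / sd
    return {key: val for (key, val), zi in zip(merged.items(), z) if abs(zi) <= z_max}

# Usage with made-up identifiers and values.
records = [("cmpd_A", 1.0), ("cmpd_A", 1.1), ("cmpd_B", 1.0), ("cmpd_B", 3.0)]
clean = remove_z_outliers(merge_duplicates(records))
```

Note that a |Z-score| above 3 can only occur in datasets with more than about ten values, so this filter has no effect on very small sets.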

Chemical Space Analysis: Characterize the chemical space using circular fingerprints (e.g., FCFP) and principal component analysis to ensure representative coverage of relevant chemical categories.

Validation Metric Implementation Protocol

The implementation of validation metrics should follow a systematic, tiered approach:

Internal Validation Phase:

- Perform leave-one-out or leave-many-out cross-validation

- Calculate q² and rₘ²(LOO) to assess model robustness

- Conduct Y-scrambling to verify absence of chance correlations

External Validation Phase:

- Apply developed model to completely independent test set

- Calculate CCC, rₘ²(test), and RMSE

- Compare observed vs. predicted values using multiple metrics

Applicability Domain Assessment:

- Define the chemical space where reliable predictions can be made

- Identify extrapolations beyond the model's scope

- Report domain boundaries along with predictions
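
The text does not prescribe a specific applicability domain method. One widely used option, shown here purely as an illustrative assumption, is the leverage approach, in which a query compound is flagged when its leverage exceeds the warning value h* = 3p'/n (p' is the number of model parameters including the intercept, n the number of training compounds). The function name `leverage_domain` is hypothetical.

```python
import numpy as np

def leverage_domain(X_train, X_query):
    """Return (leverages, h_star, inside) for query compounds against a training set."""
    Xt = np.asarray(X_train, dtype=float)
    Xq = np.asarray(X_query, dtype=float)
    n = Xt.shape[0]
    # Include an intercept column, matching a linear model with a constant term.
    A = np.column_stack([np.ones(n), Xt])
    Q = np.column_stack([np.ones(Xq.shape[0]), Xq])
    xtx_inv = np.linalg.pinv(A.T @ A)
    # Leverage h_i = q_i^T (A^T A)^-1 q_i for every query row q_i.
    h = np.einsum("ij,jk,ik->i", Q, xtx_inv, Q)
    h_star = 3.0 * A.shape[1] / n  # warning leverage 3p'/n
    return h, h_star, h <= h_star
```

Reporting the `inside` flag next to each prediction is one way to satisfy the last bullet above: predictions for compounds outside the domain are extrapolations and should be treated with caution.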

Table 3: Essential Software and Resources for QSAR Model Development and Validation

| Tool/Resource | Type | Key Features | Utility in Validation |
|---|---|---|---|
| QSAR Toolbox [50] | Software Suite | Data gap filling, read-across, category formation, metabolic simulation | Provides workflows for validation and applicability domain assessment |
| OPERA [49] | QSAR Model Suite | Open-source, various PC properties and toxicity endpoints | Built-in model validation and applicability domain assessment |
| RDKit | Cheminformatics Library | Chemical descriptor calculation, fingerprint generation | Essential for preprocessing and feature generation for validation |
| PubChem PUG | Data Service | Chemical structure retrieval, property data access | Source of experimental data for model development and validation |

The comprehensive evaluation of QSAR models requires a multi-metric approach that addresses different aspects of model quality and predictive power. Based on the comparative analysis presented in this guide, researchers should:

Implement a tiered validation strategy that includes both internal (q², rₘ²(LOO)) and external (CCC, rₘ²(test), RMSE) validation metrics rather than relying on any single parameter.

Prioritize CCC for external validation due to its stability, restrictiveness, and ability to detect bias in predictions, particularly when dealing with conflicting results from other metrics.

Recognize the fundamental limitation of q² as a necessary but insufficient condition for model acceptance, understanding that high q² values do not guarantee external predictive ability.

Utilize RMSE for intuitive error interpretation in the original units of the response variable while being mindful of its sensitivity to outliers and scale dependence.

Apply the rₘ² metric for stringent assessment of model predictivity, particularly when working with datasets having wide ranges of response variables.

The optimal validation framework incorporates multiple complementary metrics alongside rigorous applicability domain assessment to provide a comprehensive evaluation of QSAR model reliability. This multifaceted approach ensures that models deployed in drug discovery, chemical safety assessment, and regulatory decision-making possess demonstrable predictive power for new chemical entities.

External validation represents the definitive benchmark for assessing the predictive ability of Quantitative Structure-Activity Relationship (QSAR) models in drug discovery. This guide objectively compares predominant validation methodologies—single hold-out, double cross-validation, and true external validation—against established regulatory principles. We synthesize experimental data from comparative studies to evaluate performance stability, bias, and regulatory acceptance. Supporting protocols, visual workflows, and essential research tools are provided to equip scientists with frameworks for implementing rigorous, compliant model validation. Evidence indicates that while single hold-out validation exhibits significant performance variability, double cross-validation provides more reliable error estimation under model uncertainty, and true external validation remains the gold standard for confirming real-world predictive utility [51] [26] [52].

QSAR modeling mathematically links molecular descriptors to biological activities to enable predictive toxicology and drug discovery [53]. The OECD Principles for QSAR Validation establish that appropriate measures of goodness-of-fit, robustness, and predictivity are essential for regulatory acceptance [54]. External validation specifically addresses predictivity—a model's ability to accurately forecast activities for new chemicals not used in model development [54] [55].

Without rigorous external validation, QSAR models risk model selection bias and overfitting, where models memorize training data patterns but fail to generalize [51]. Studies demonstrate that relying solely on internal validation or correlation coefficients (r²) provides insufficient evidence of predictive power [26]. This guide compares established external validation protocols to establish definitive benchmarks for predictive QSAR modeling.

Comparative Analysis of External Validation Methods

We evaluate three primary external validation approaches against critical performance metrics derived from empirical studies [51] [26] [52].

Table 1: Comparative Performance of External Validation Methods

| Validation Method | Key Principle | Performance Stability | Regulatory Acceptance | Primary Use Case |
|---|---|---|---|---|
| Single Hold-Out | One-time random split into training/test sets | High variation across different splits [52] | OECD compliant with sufficient sample size [54] | Large datasets (>100 compounds) |
| Double Cross-Validation | Nested training/validation loops with repeated splits [51] | Lower variability than single split [51] | Accepted with documented protocol [54] | Small to medium datasets with model uncertainty |
| True External Validation | Completely independent compounds from different sources [56] [54] | Gold standard for real-world performance [56] | Highest regulatory confidence [54] | Final model verification before deployment |
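
The nested structure of double cross-validation can be sketched as follows. This is an illustration under stated assumptions rather than the protocol of the cited studies: ridge regression stands in for the model, the inner loop selects the penalty from a list of strictly positive candidates, and the outer loop estimates prediction error on compounds never used for that selection. All function names are hypothetical.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Closed-form ridge regression on centered data; returns (coef, intercept)."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc = X - x_mean
    coef = np.linalg.solve(Xc.T @ Xc + lam * np.eye(X.shape[1]), Xc.T @ (y - y_mean))
    return coef, y_mean - x_mean @ coef

def ridge_predict(model, X):
    coef, intercept = model
    return X @ coef + intercept

def kfold_indices(n, k, rng):
    """Split a random permutation of range(n) into k folds."""
    return np.array_split(rng.permutation(n), k)

def cv_rmse(X, y, lam, folds):
    """Cross-validated RMSE of ridge regression for one penalty value."""
    sq_err = 0.0
    for test in folds:
        train = np.setdiff1d(np.concatenate(folds), test)
        model = ridge_fit(X[train], y[train], lam)
        sq_err += np.sum((y[test] - ridge_predict(model, X[test])) ** 2)
    return np.sqrt(sq_err / len(y))

def double_cv_rmse(X, y, lambdas, outer_k=5, inner_k=4, seed=0):
    """Outer loop estimates error; inner loop picks the penalty on training data only."""
    rng = np.random.default_rng(seed)
    X, y = np.asarray(X, dtype=float), np.asarray(y, dtype=float)
    sq_err = 0.0
    for test in kfold_indices(len(y), outer_k, rng):
        train = np.setdiff1d(np.arange(len(y)), test)
        Xtr, ytr = X[train], y[train]
        inner = kfold_indices(len(train), inner_k, rng)
        # Model selection sees only the outer training fold.
        best = min(lambdas, key=lambda lam: cv_rmse(Xtr, ytr, lam, inner))
        model = ridge_fit(Xtr, ytr, best)
        sq_err += np.sum((y[test] - ridge_predict(model, X[test])) ** 2)
    return np.sqrt(sq_err / len(y))
```

Because the penalty is chosen without access to the outer test fold, the resulting error estimate is not inflated by model selection bias. Repeating the procedure with different seeds gives a sense of the split-to-split variability that a single hold-out split would hide.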

Table 2: Empirical Performance Comparison Across 44 QSAR Models [26]

| Validation Metric | Acceptance Threshold | Models Meeting Threshold | Key Limitation |

|---|---|---|---|