Benchmarking Molecular Docking Software: A Comprehensive Guide to Accuracy, Performance, and Real-World Application

Abstract

This article provides a systematic review of molecular docking software benchmarking, crucial for researchers and drug development professionals who rely on computational predictions. It explores the foundational principles of docking accuracy, evaluates the performance of major programs like Glide, AutoDock, and GOLD in controlled and real-world scenarios, and discusses common methodological pitfalls. The content further examines the transformative impact of machine learning and hybrid approaches on virtual screening throughput and pose prediction. Finally, it offers a comparative analysis of classical versus next-generation tools and outlines best practices for validating docking results to ensure reliability in biomedical research.

Understanding Docking Accuracy: Core Concepts and Performance Metrics

Molecular docking is a cornerstone of computational drug discovery, enabling researchers to predict how small molecules interact with protein targets. However, defining a "successful" docking prediction requires a multifaceted approach that goes beyond a single metric. This guide provides a comparative analysis of the key performance indicators used to evaluate molecular docking tools, equipping researchers with the knowledge to critically assess software output and select the most appropriate methods for their projects.

Beyond RMSD: A Multidimensional Framework for Docking Evaluation

The evaluation of molecular docking software has evolved significantly. While the Root-Mean-Square Deviation (RMSD) remains a fundamental metric for measuring geometric accuracy, it is now understood that a low RMSD alone is insufficient to define a successful pose. A pose with an RMSD ≤ 2 Å relative to an experimentally determined reference structure is traditionally considered a correct prediction [1]. However, this metric does not assess the physical plausibility of the interaction [2].

Contemporary benchmarking emphasizes a dual-metric approach that integrates geometric accuracy with physical and chemical validity [2] [1]. This shift is driven by the finding that some deep learning models, particularly regression-based approaches, can generate poses with favorable RMSD values that are nevertheless physically implausible, containing steric clashes, incorrect bond lengths, or unrealistic torsion angles [2]. Frameworks like PoseBusters have been developed to systematically evaluate these aspects, defining a "PB-valid" pose as one that passes a comprehensive suite of checks for stereochemistry, bond lengths, planarity, and intermolecular clashes [1]. The combined success rate—the percentage of predictions that are both accurate (RMSD ≤ 2 Å) and physically valid (PB-valid)—is emerging as a more robust standard for comparing docking methods [2].
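The dual-metric idea can be made concrete with a minimal sketch. It assumes the predicted and reference poses are already in the same coordinate frame with atoms listed in the same order, and that a validity flag per pose comes from an external check such as PoseBusters. The function names are illustrative, and symmetry-corrected RMSD is deliberately left out.

```python
import math

def heavy_atom_rmsd(pred, ref):
    """RMSD between two matched lists of (x, y, z) coordinates.

    Assumes identical atom ordering and a shared coordinate frame;
    no alignment or symmetry correction is performed.
    """
    if len(pred) != len(ref) or not pred:
        raise ValueError("pose and reference need the same, non-zero atom count")
    squared = sum((p - r) ** 2 for a, b in zip(pred, ref) for p, r in zip(a, b))
    return math.sqrt(squared / len(pred))

def combined_success_rate(results, rmsd_cutoff=2.0):
    """Fraction of predictions that are both accurate and physically valid.

    results: list of (rmsd, pb_valid) pairs, where pb_valid is a bool
    supplied by an external plausibility check.
    """
    if not results:
        raise ValueError("no results to evaluate")
    hits = sum(1 for rmsd, pb_valid in results if rmsd <= rmsd_cutoff and pb_valid)
    return hits / len(results)
```

A pose with a low RMSD but a failed validity check counts as a failure under this definition, which is what separates the combined rate from the plain RMSD success rate.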

Table 1: Key Metrics for Evaluating Molecular Docking Performance

| Metric Category | Specific Metric | Definition | Interpretation & Threshold |
|---|---|---|---|
| Geometric Accuracy | Root-Mean-Square Deviation (RMSD) | Square root of the average squared distance between atoms in predicted and reference poses [1]. | ≤ 2.0 Å: high accuracy; ≤ 5.0 Å: often considered acceptable [1] |
| Physical Validity | PB-Valid Rate [2] [1] | Percentage of poses that pass all physical plausibility checks. | A binary outcome (Yes/No); higher is better. |
| Physical Validity | Bond Length/Angle Tolerance | Checks if bond lengths/angles are within 0.75-1.25x reference values [1]. | Must be within bounds to be valid. |
| Physical Validity | Steric Clashes | Measures unrealistic overlap between ligand and protein atoms. | Volume overlap with protein must not exceed 7.5% [1]. |
| Interaction Recovery | Interaction Fidelity | Ability to recapitulate key molecular interactions (e.g., H-bonds, hydrophobic contacts) [2]. | Qualitative and quantitative assessment; critical for biological relevance. |
| Virtual Screening (VS) Performance | Receiver Operating Characteristic (ROC) Analysis [3] | Evaluates a method's ability to distinguish true binders from non-binders in a screen. | Area Under the Curve (AUC) ≥ 0.70 indicates a good classifier [3]. |
| Virtual Screening (VS) Performance | Specificity & Sensitivity [3] | Measures the rate of true negatives and true positives identified. | High specificity reduces false positives; balance with sensitivity is key. |

Comparative Performance of Docking Paradigms

Recent comprehensive studies have evaluated a wide range of docking methods, from traditional physics-based tools to modern deep learning models. These can be broadly categorized into traditional methods, generative diffusion models, regression-based models, and hybrid methods [2]. Their performance varies significantly across the different metrics of success.

Generative diffusion models, such as SurfDock, demonstrate superior pose prediction accuracy, achieving RMSD ≤ 2 Å success rates of roughly 70-90% on standard benchmarks [2]. However, they often lag in physical validity, with PB-valid rates sometimes falling below 50% on challenging datasets, indicating a tendency to produce steric clashes or incorrect bond geometries [2]. In contrast, traditional methods like Glide SP consistently excel in physical validity, maintaining PB-valid rates above 94% across diverse tests, though their pose accuracy can be lower than that of the best-in-class AI models [2]. This makes traditional methods a reliable choice for generating chemically sensible structures.

Regression-based deep learning models frequently struggle on both fronts, often failing to produce physically valid poses and showing lower overall accuracy, which places them in a lower performance tier [2]. The most balanced performance often comes from hybrid methods that integrate AI-driven scoring functions with traditional conformational search algorithms. Furthermore, the integration of Convolutional Neural Network (CNN) scores, as implemented in the docking suite GNINA, has proven effective for improving virtual screening outcomes. Using a CNN score cutoff (e.g., 0.9) to filter poses before ranking by binding affinity can significantly enhance specificity—reducing false positives—with only a minor loss in sensitivity [3].
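The filter-then-rank step described above can be sketched as follows. The dictionary keys (`ligand`, `cnn_score`, `affinity`) are hypothetical placeholders and do not reproduce GNINA's actual output format. The sketch also assumes that a larger `affinity` value means stronger predicted binding, which must be inverted if the affinity is reported as an energy.

```python
def filter_then_rank(poses, cnn_cutoff=0.9):
    """Drop poses below the CNN score cutoff, then rank the rest by affinity.

    poses: list of dicts with hypothetical keys 'ligand', 'cnn_score'
           (0 to 1) and 'affinity' (assumed: higher = stronger binding).
    """
    kept = [p for p in poses if p["cnn_score"] >= cnn_cutoff]
    return sorted(kept, key=lambda p: p["affinity"], reverse=True)

# Illustrative input: ligand "b" has the best affinity but a low CNN score,
# so it is removed before ranking.
example = [
    {"ligand": "a", "cnn_score": 0.95, "affinity": 6.1},
    {"ligand": "b", "cnn_score": 0.50, "affinity": 9.0},
    {"ligand": "c", "cnn_score": 0.92, "affinity": 7.4},
]
ranked = filter_then_rank(example)
```

Filtering first trades a few true binders with low pose confidence for fewer false positives, which is the specificity gain the text describes.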

Table 2: Comparative Performance of Docking Method Types

| Docking Paradigm | Representative Tools | Pose Accuracy (RMSD) | Physical Validity (PB-Valid) | General Notes & Best Use Cases |
|---|---|---|---|---|
| Traditional Methods | Glide SP, AutoDock Vina [2] | Moderate to High | Very High (e.g., >94% [2]) | Robust and reliable; excellent for generating chemically plausible poses. |
| Generative Diffusion Models | SurfDock, DiffBindFR [2] | Very High (e.g., 70-90% [2]) | Moderate to Low (e.g., 40-60% [2]) | Top-tier geometric accuracy; often requires post-processing for physical validity. |
| Regression-Based Models | KarmaDock, QuickBind [2] | Low to Moderate | Low | Often produce invalid poses; performance lags behind other paradigms [2]. |
| Hybrid Methods | Interformer [2] | High | High | Offers the best balance between accuracy and physical realism [2]. |
| CNN-Scored Docking | GNINA [3] | N/A (Scoring Function) | N/A (Scoring Function) | Highly effective for improving virtual screening specificity and candidate ranking [3]. |

Experimental Protocols for Benchmarking Docking Tools

To ensure fair and reproducible comparisons between docking software, researchers rely on standardized experimental protocols and benchmark datasets. The typical workflow involves preparing protein and ligand structures, running docking calculations with various tools, and then evaluating the outputs against a known reference.

Benchmark Datasets and Preparation

Rigorous benchmarking requires curated datasets with experimentally validated protein-ligand complexes. Key datasets include:

- The Astex Diverse Set: A classic set of high-quality complexes often used for initial validation [2].

- PoseBusters Benchmark Set: Comprises complexes released after 2021 to test generalization on novel, unseen structures [2] [1].

- DockGen Dataset: Specifically designed to evaluate performance on novel protein binding pockets, challenging a method's ability to generalize beyond its training data [2].

The standard preparation protocol involves using crystal structures from the Protein Data Bank (PDB). The native ligand is typically removed from the binding site, and both the protein and ligand structures are processed (adding hydrogens, assigning charges) using tools like prepare_receptor.py and prepare_ligand.py from software suites such as ADFR [3].

Evaluation and Analysis Workflow

Once docking is complete, the generated poses are systematically analyzed:

- Pose Prediction Accuracy: The predicted ligand pose is aligned with the co-crystallized reference ligand, and the heavy-atom RMSD is calculated [2] [1].

- Physical Plausibility Check: Tools like the PoseBusters toolkit are used to validate the chemical and geometric correctness of the top-ranked pose against multiple criteria [2] [1].

- Virtual Screening Assessment: To evaluate screening utility, methods are tested on their ability to rank known active compounds (true positives) higher than known inactives (true negatives) using ROC analysis [3].
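For the ROC step, the AUC can be computed without plotting a curve: it equals the probability that a randomly chosen active is scored above a randomly chosen inactive. The sketch below uses that pairwise (Mann-Whitney) formulation. It assumes that higher scores mean "more likely active", so docking energies where lower is better would need to be negated first.

```python
def roc_auc(scores, labels):
    """AUC via pairwise comparison of actives against inactives.

    scores: one number per compound, higher = more likely active (assumed).
    labels: 1 for known actives, 0 for known inactives or decoys.
    Ties between an active and an inactive count as half a win.
    """
    actives = [s for s, y in zip(scores, labels) if y == 1]
    inactives = [s for s, y in zip(scores, labels) if y == 0]
    if not actives or not inactives:
        raise ValueError("need at least one active and one inactive")
    wins = 0.0
    for a in actives:
        for d in inactives:
            if a > d:
                wins += 1.0
            elif a == d:
                wins += 0.5
    return wins / (len(actives) * len(inactives))
```

The quadratic loop is fine for benchmark-sized sets; for very large libraries a rank-based implementation would be the usual substitute.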

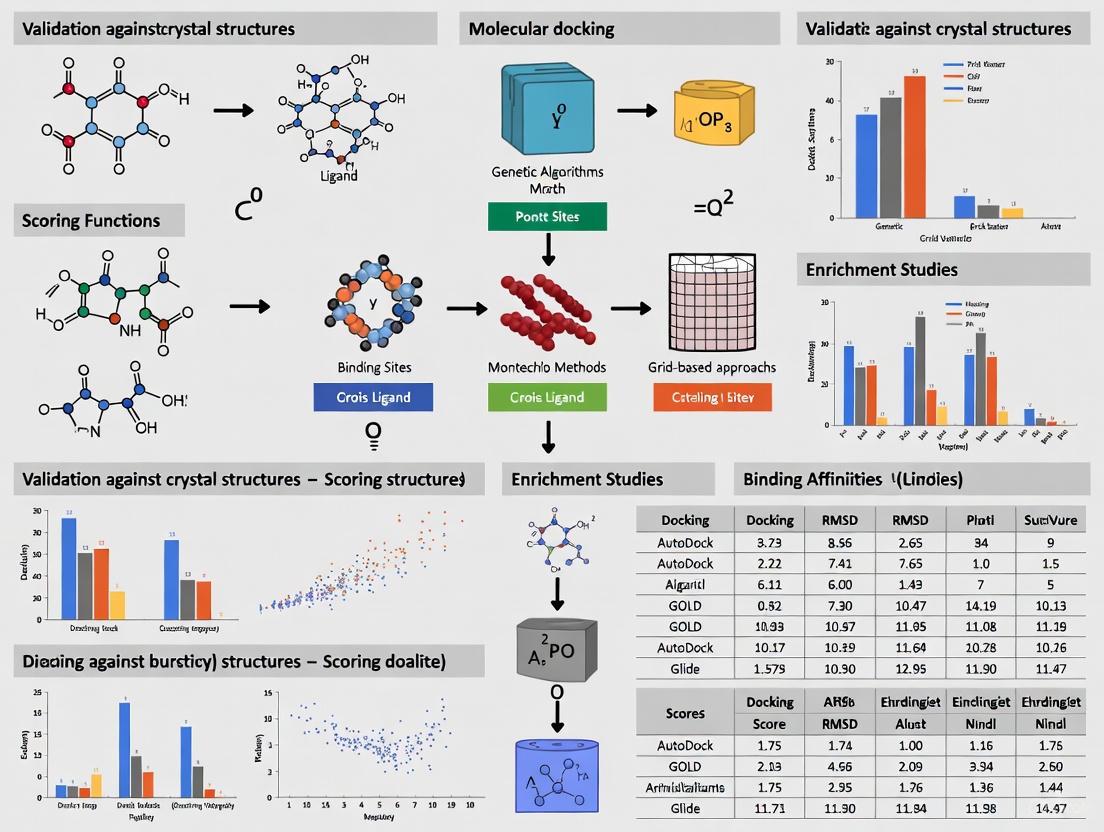

Diagram 1: Docking evaluation workflow.

A successful docking study relies on a combination of software tools, computational resources, and data repositories. The table below lists key "research reagents" for conducting and evaluating molecular docking experiments.

Table 3: Essential Research Reagents and Resources for Molecular Docking

| Resource Type | Name | Function & Application |
|---|---|---|
| Software & Tools | AutoDock Vina, GNINA, Glide [2] [3] | Core docking programs for pose generation and scoring. |
| Software & Tools | PoseBusters Toolkit [2] [1] | Validation suite for assessing the physical plausibility of docking poses. |
| Software & Tools | UCSF Chimera/ChimeraX [3] | Molecular visualization and preparation tool. |
| Databases | Protein Data Bank (PDB) [4] | Primary repository for experimentally determined 3D structures of proteins and complexes. |
| Databases | PDBbind [5] [6] | Curated database of protein-ligand complexes with binding affinity data. |
| Databases | ZINC [3] | Publicly available database of commercially available compounds for virtual screening. |
| Computational Resources | GPU Acceleration [7] [3] | Critical for running deep learning-based docking methods efficiently. |
| Computational Resources | High-CPU Computing [3] | Necessary for traditional docking methods and large-scale virtual screens. |

The field of molecular docking is in a dynamic state of advancement, with deep learning models pushing the boundaries of pose prediction accuracy. However, this guide underscores that true success in molecular docking is multidimensional. Relying solely on RMSD is an outdated practice. A rigorous assessment must integrate geometric accuracy (RMSD), physical plausibility (e.g., PB-valid), and virtual screening performance (e.g., AUC).

For researchers, the choice of tool should be guided by the specific task. Generative diffusion models show immense promise for achieving high pose accuracy, while traditional and hybrid methods currently offer greater reliability in producing physically realistic results. As the field evolves, the integration of AI-powered pose generation with physics-based validation and refinement is likely to become the gold standard, ensuring that computational predictions are not only accurate but also chemically meaningful and biologically relevant.

Molecular docking stands as a cornerstone computational technique in structural biology and drug discovery, enabling researchers to predict how small molecules and biological macromolecules interact. The utility of any docking program hinges on its performance in controlled, idealized test sets, which provide standardized benchmarks for evaluating predictive accuracy. For scientists engaged in rational drug design and the study of protein interactions, selecting the correct computational tool is paramount. This guide provides an objective, data-driven comparison of top docking programs, detailing their performance on established benchmark tests. It synthesizes findings from recent, rigorous evaluations to offer a clear overview of the current landscape, empowering researchers to make informed choices based on their specific project needs concerning pose prediction, virtual screening, and handling diverse target types.

Key Performance Metrics and Evaluation Frameworks

To ensure a fair and meaningful comparison, the field relies on standardized metrics and benchmark datasets. Understanding these evaluation frameworks is crucial for interpreting performance data.

- Pose Prediction Accuracy: The primary metric is the Root-Mean-Square Deviation (RMSD) between the atom positions of a docked ligand pose and its experimentally determined crystal structure. A prediction is typically considered successful if the heavy-atom RMSD is less than 2.0 Å, indicating high spatial overlap with the native structure [8] [2].

- Virtual Screening Performance: This measures a program's ability to prioritize active compounds over inactive ones in a large database. Performance is quantified using Receiver Operating Characteristic (ROC) curves and the Area Under the Curve (AUC). A higher AUC indicates better enrichment of true actives. The Enrichment Factor (EF) at a top fraction of the screened database (e.g., EF1% or EF2%) is also a common metric [8].

- Physical Plausibility: Beyond RMSD, a predicted pose must be chemically and physically realistic. Tools like the PoseBusters toolkit systematically evaluate docking predictions against constraints including bond lengths, angles, stereochemistry, and the absence of severe protein-ligand steric clashes [2]. The PB-valid rate is the percentage of predictions that pass these checks.

- Generalization Testing: Modern evaluations test methods on diverse datasets to assess robustness. These often include a core set (e.g., the Astex diverse set of known complexes), a challenging set (e.g., the PoseBusters benchmark of unseen complexes), and an out-of-distribution set (e.g., DockGen, featuring novel protein binding pockets) to evaluate performance on unfamiliar targets [2].
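The enrichment factor mentioned in the list above compares the hit rate in the top-ranked fraction of a screen with the hit rate in the whole library. A minimal sketch follows, assuming higher scores rank first and that the top fraction is rounded to the nearest whole number of compounds (benchmarks differ on this rounding convention).

```python
def enrichment_factor(scores, labels, fraction=0.01):
    """EF at a given top fraction of the ranked library.

    EF = (actives in top fraction / size of top fraction)
         / (total actives / library size)
    scores: higher = ranked earlier (assumed); labels: 1 active, 0 decoy.
    """
    if len(scores) != len(labels) or not scores:
        raise ValueError("scores and labels must be non-empty and equal length")
    total_actives = sum(labels)
    if total_actives == 0:
        raise ValueError("library contains no actives")
    ranked = sorted(zip(scores, labels), key=lambda t: t[0], reverse=True)
    n_top = max(1, int(round(fraction * len(ranked))))
    actives_top = sum(y for _, y in ranked[:n_top])
    return (actives_top / n_top) / (total_actives / len(ranked))
```

An EF of 1 means the ranking is no better than random selection. The maximum possible value is capped by the ratio of library size to number of actives, so EF values are only comparable between screens with similar active-to-decoy ratios.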

Performance Comparison of Ligand Docking Programs

Recent comprehensive studies have evaluated a wide array of docking methods, from traditional physics-based tools to modern deep learning (DL) approaches, across multiple benchmarks.

Quantitative Performance on Standardized Tests

Table 1: Pose Prediction Accuracy and Physical Validity Across Benchmark Datasets

| Method Category | Method Name | Astex Diverse Set (RMSD ≤ 2 Å) | PoseBusters Set (RMSD ≤ 2 Å) | PoseBusters Set (PB-Valid) | DockGen Set (RMSD ≤ 2 Å) |
|---|---|---|---|---|---|
| Traditional | Glide SP | High Accuracy [2] | >97% Valid [2] | >97% Valid [2] | Good Performance [2] |
| Traditional | AutoDock Vina | Not Provided | Not Provided | Not Provided | Not Provided |
| Generative DL | SurfDock | 91.8% [2] | 77.3% [2] | 45.8% [2] | 75.7% [2] |
| Regression DL | KarmaDock | Low Accuracy [2] | Low Accuracy [2] | Low Validity [2] | Low Accuracy [2] |
| Hybrid | Interformer | Balanced Performance [2] | Balanced Performance [2] | Balanced Performance [2] | Balanced Performance [2] |

Table 2: Performance in Virtual Screening and Cyclooxygenase Docking

| Method Name | Virtual Screening (Avg. AUC on DUD Set) | VS Early Enrichment (Top 1%) | COX-1/2 Pose Prediction (RMSD < 2 Å) |
|---|---|---|---|
| Glide | 0.80 [9] | 25% [9] | 100% [8] |
| GOLD | Not Provided | Not Provided | 82% [8] |
| AutoDock | Not Provided | Not Provided | 59% [8] |
| FlexX | Not Provided | Not Provided | 76% [8] |
| Molegro Virtual Docker (MVD) | Not Provided | Not Provided | Not Provided |

Analysis of Performance by Method Category

- Traditional Physics-Based Methods: Tools like Glide SP and AutoDock Vina remain highly competitive. Glide, in particular, demonstrates a remarkable balance of high pose prediction accuracy and exceptional physical plausibility, with PB-valid rates consistently exceeding 97% across diverse test sets [2]. Its empirical scoring function is designed to maximize the separation between strong and weak binders, contributing to its top-tier performance in virtual screening, with an average AUC of 0.80 on the DUD set [9].

- Generative Deep Learning Models: Methods like SurfDock show exceptional performance in pose accuracy, achieving over 91% success on the Astex set [2]. This highlights the power of diffusion models to generate geometrically correct poses. However, a significant weakness is their tendency to produce physically implausible results, with PB-valid rates sometimes falling below 50% on challenging sets [2]. This indicates a potential disconnect between learned distributions and fundamental physical constraints.

- Regression-Based Deep Learning Models: Approaches such as KarmaDock have struggled in benchmarks, often ranking lowest in both pose accuracy and physical validity [2]. This suggests that directly regressing atomic coordinates without a robust generative or sampling mechanism is a challenging paradigm.

- Hybrid Methods: Tools like Interformer, which integrate traditional conformational searches with AI-driven scoring functions, aim to strike a balance. They deliver performance that is more robust than regression-based DL methods and can offer a better balance of accuracy and physical validity than purely generative approaches [2].

Performance Comparison of Protein-Protein Docking Programs

The docking of two proteins presents a distinct set of challenges due to larger, flatter interfaces. The evaluation framework often involves classifying predictions as acceptable, medium, or high quality based on interface metrics [10].

Quantitative Performance on Protein-Protein Complexes

Table 3: Protein-Protein Docking Success Rates (Top-5)

| Method Category | Method Name | Docking vs. Holo Structures (%) | Docking vs. Apo Structures (%) | Antibody-Antigen Docking (%) |
|---|---|---|---|---|
| Traditional | HDOCK | 85.2% [10] | 12.8% [10] | Not Provided |
| Deep Learning | AlphaFold3 | Not Provided | 78.0% [10] | 31.8% [10] |
| Deep Learning | AlphaFold-Multimer | Not Provided | Not Provided | Substantially Outperformed by AF3 [10] |

Analysis of Performance Trends in Protein-Protein Docking

- The AlphaFold3 Revolution: AlphaFold3 has set a new benchmark in protein-protein docking, particularly when working with apo (unbound) structures, achieving a top-5 success rate of 78% [10]. This dramatically outperforms traditional FFT-based methods like HDOCK, which manage only 12.8% in the same scenario, highlighting the limitation of the rigid-body assumption when proteins undergo conformational change upon binding [10].

- Limits of Rigid-Body Docking: Traditional methods like ClusPro and HDOCK perform significantly better when docking against holo (bound) structures, as this bypasses the challenge of side-chain and backbone flexibility [11]. This underscores that their performance is highly dependent on the structural input.

- Specialized Complexes: Docking antibody-antigen complexes remains a particularly difficult task. While AlphaFold3 substantially outperforms its predecessor, AlphaFold-Multimer, its top-5 success rate of 31.8% indicates there is still considerable room for improvement in modeling these highly specific interactions [10].

- Generalization Gaps: A critical finding from recent benchmarks is that all deep learning-based protein-protein docking methods exhibit markedly reduced performance on out-of-distribution data, revealing a significant challenge for generalizability beyond the types of complexes seen in training [10].

Experimental Protocols for Benchmarking

To ensure reproducibility and fair comparisons, benchmarking studies adhere to standardized experimental protocols.

Diagram 1: Molecular docking evaluation workflow

The typical workflow for a docking benchmark, as visualized in Diagram 1, involves several key stages. The following protocols are compiled from recent, rigorous evaluations [8] [12] [2]:

- Data Set Curation: Benchmarks use well-curated test sets. For ligand docking, this involves collecting high-resolution crystal structures of protein-ligand complexes from the PDBbind database or specialized sets like Astex or DUD [8] [2]. For protein-protein docking, benchmarks use datasets like DockingBenchmark 5.5 or the newer PPCBench for out-of-distribution testing [10].

- Structure Preparation: Protein structures are meticulously prepared before docking.

- Docking Execution: Each docking program is run according to its standard protocol, often using multiple levels of precision (e.g., Glide's HTVS, SP, and XP modes). For virtual screening evaluations, each program is used to rank a library of known active ligands and decoy molecules [8].

- Performance Analysis: The resulting poses and rankings are analyzed against the ground truth. For pose prediction, the RMSD of each top-scoring pose is calculated. For virtual screening, ROC curves are plotted and AUC values are calculated to measure enrichment [8] [2].

Table 4: Essential Resources for Docking Benchmarking

| Resource Name | Type | Primary Function in Evaluation |
|---|---|---|
| PDBbind Database | Database | A comprehensive collection of protein-ligand complex structures and binding affinities, used for training and testing scoring functions [5]. |
| CAPRI (Critical Assessment of PRedicted Interactions) | Community Initiative | A blind prediction experiment that provides a standard framework for assessing protein-protein docking methods [13]. |
| Astex Diverse Set | Benchmark Dataset | A carefully curated set of high-quality protein-ligand complexes used to test pose prediction accuracy [2]. |
| DUD (Directory of Useful Decoys) | Benchmark Dataset | A dataset containing known active ligands and computationally generated decoy molecules for evaluating virtual screening enrichment [9]. |
| PoseBusters | Validation Tool | A toolkit to check the physical plausibility and chemical correctness of docked ligand poses [2]. |
| AlphaFold Protein Structure Database | Database | A repository of predicted protein structures generated by AlphaFold, increasingly used as input for docking when experimental structures are unavailable [12]. |

The systematic comparison of top docking programs reveals a nuanced landscape where no single tool dominates all metrics. Traditional methods like Glide maintain a strong position, offering robust, physically plausible predictions and excellent virtual screening performance. Deep learning methods, particularly generative models like SurfDock, have made staggering advances in pure pose prediction accuracy but often at the cost of physical realism, limiting their immediate reliability. For protein-protein docking, AlphaFold3 represents a paradigm shift, especially for apo-structure docking, though all methods struggle with generalization and highly specific interactions like antibody-antigen binding.

The choice of software must therefore be guided by the specific research goal. For lead optimization in drug discovery, where understanding precise interactions is key, a traditional tool with high physical validity may be preferable. For rapid virtual screening of large libraries where speed is critical, the balance of accuracy and speed offered by tools like Glide SP or hybrid DL methods is advantageous. As the field evolves, the integration of AI with rigorous physical principles appears to be the most promising path toward more reliable and generalizable docking solutions. Researchers are advised to consider these performance characteristics in the context of their own targets and to perform validation where possible, especially when working with novel protein families or when using predicted structures.

The development of fast Fourier transform (FFT) algorithms marked a revolutionary advancement in computational structural biology, enabling the systematic sampling of billions of complex conformations and transforming protein-protein docking from a theoretical concept into a practical tool [14]. FFT-based methods, which correlate the surfaces of two proteins by fixing one and moving the other across a grid, provided the computational efficiency necessary for global docking without prior knowledge of the binding site [14]. This approach underpins widely used docking servers such as ClusPro, ZDOCK, and GRAMM, with ClusPro alone serving over 15,000 registered users and performing 98,300 docking calculations in 2019 [14].

However, this computational efficiency comes at a significant cost: the rigid body assumption. This simplification treats proteins as static, unchanging structures, ignoring the dynamic conformational changes that frequently occur during biological binding events [14] [7]. While "soft" docking scoring functions allow for minor steric overlaps to mitigate this issue, the core limitation remains—the inability to model the induced fit and conformational selection mechanisms that are fundamental to molecular recognition [14] [7]. This article examines the fundamental limitations imposed by this assumption, evaluates the performance of traditional docking against modern flexible alternatives, and explores the critical role of benchmarking in driving methodological progress.

How Rigid Body Docking Works: The FFT Engine and Its Scoring Functions

The Core FFT Sampling Methodology

At the heart of traditional rigid body docking lies a precise, grid-based sampling system. One protein (the receptor) is fixed at the origin of a 3D grid, while the second protein (the ligand) is placed on a movable grid. The interaction energy is calculated as a sum of correlation functions, a mathematical formulation that allows for simultaneous evaluation of all translational degrees of freedom using FFTs, with only rotations requiring explicit consideration [14].
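The correlation form can be written out explicitly. The notation below is introduced here for illustration and is not taken from the source: $R_p$ and $L_p$ denote the receptor and ligand grid functions for the $p$-th energy term, and $\mathbf{t} = (\alpha, \beta, \gamma)$ is the translation of the ligand grid. For one fixed rotation of the ligand:

```latex
E(\mathbf{t}) \;=\; \sum_{p=1}^{P} \sum_{\mathbf{x}} R_p(\mathbf{x})\, L_p(\mathbf{x} + \mathbf{t}),
\qquad
\sum_{\mathbf{x}} R_p(\mathbf{x})\, L_p(\mathbf{x} + \mathbf{t})
\;=\; \mathcal{F}^{-1}\!\left[\, \overline{\mathcal{F}[R_p]} \cdot \mathcal{F}[L_p] \,\right](\mathbf{t})
```

The second identity is the correlation theorem for real-valued grids. On an $N \times N \times N$ grid it yields the energy for every translation at once in $O(N^3 \log N)$ operations per term, instead of the $O(N^6)$ needed to evaluate each translation directly. This is why only the rotations need to be enumerated explicitly.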

The sampling density is controlled by key parameters. The translational grid step typically ranges from 0.8 Å to 1.2 Å, determining the fineness of the search. The number of rotational orientations, often described as a 5 to 12-degree step size in Euler angles, defines the angular coverage. This exhaustive sampling enables the evaluation of billions of conformations, systematically exploring the rotational and translational space to identify geometrically complementary poses [14].

Scoring Function Composition

To rank the billions of generated poses, rigid body docking employs scoring functions composed of linearly weighted energy terms. These typically include:

- Shape Complementarity: Attractive and repulsive van der Waals terms that evaluate surface fit, often with "soft" penalties to allow minor overlaps [14].

- Electrostatic Interactions: Coulombic potentials that model attractive and repulsive charge interactions [14].

- Desolvation Energy: Structure-based potentials that account for the hydrophobic effect and energy cost of removing water from the interface [14].

A significant challenge lies in determining the optimal weighting coefficients for these terms. Research indicates that testing hundreds of coefficient combinations can reveal the theoretical accuracy limits for specific complexes, though no single combination performs optimally across all targets [14].
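The weighting problem can be illustrated with a small sketch. It assumes each pose carries precomputed energy terms and a flag marking whether it is near-native. It then scans a grid of candidate coefficients and reports the combination that ranks the near-native pose best. The term names, data layout, and selection criterion are all assumptions made for illustration, not the procedure of any particular docking program.

```python
from itertools import product

def weighted_score(terms, weights):
    """Linear combination of energy terms; lower is better."""
    return sum(weights[name] * value for name, value in terms.items())

def scan_weights(poses, grid):
    """Try every weight combination and track the near-native pose's rank.

    poses: list of dicts with 'terms' (name -> energy) and 'near_native' (bool);
           exactly one near-native pose is assumed to be present.
    grid:  dict mapping each term name to a list of candidate weights.
    Returns (best_rank, best_weights), where rank 1 is the lowest score.
    The first combination reaching the best rank is kept.
    """
    names = list(grid)
    best = None
    for combo in product(*(grid[n] for n in names)):
        weights = dict(zip(names, combo))
        ranked = sorted(poses, key=lambda p: weighted_score(p["terms"], weights))
        rank = next(i for i, p in enumerate(ranked, start=1) if p["near_native"])
        if best is None or rank < best[0]:
            best = (rank, weights)
    return best
```

Running such a scan per complex gives an upper bound on what the functional form can achieve for that target. Because the best weights differ from target to target, a single coefficient set is necessarily a compromise.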

Table 1: Core Components of Traditional Rigid Body Docking

| Component | Function | Common Implementation |
|---|---|---|
| FFT Sampling | Exhaustively searches translational/rotational space | Grid-based correlation with 0.8-1.2 Å steps |
| Shape Complementarity | Measures geometric surface fit | "Soft" van der Waals potential with overlap tolerance |
| Electrostatic Terms | Models charge-charge interactions | Coulombic potential calculated via FFT correlation |
| Desolvation Terms | Accounts for hydrophobic effect & dehydration penalty | Knowledge-based potentials (e.g., DARS) |

Figure 1: The Rigid Body Docking Workflow. This flowchart illustrates the sequential process of traditional FFT-based docking, from initial protein placement through sampling, scoring, and final model generation.

Quantitative Performance Benchmarks: Assessing Accuracy Across Complex Types

Performance on a Standardized Protein-Protein Docking Benchmark

Rigorous evaluation using established benchmarks provides crucial insights into the practical performance of rigid body docking. Analysis of the Protein Docking Benchmark 5.0 (BM5), which contains 230 protein complexes with known bound and unbound structures, reveals how accuracy varies significantly with complex type and conformational flexibility [14].

Table 2: ClusPro Performance on BM5 Benchmark by Complex Type

| Complex Category | Number of Targets | Success Rate (Acceptable or Better) | Key Challenges |
|---|---|---|---|
| Rigid-Body (Easy) | 151 | Highest | Minimal conformational change |
| Medium Difficulty | 45 | Moderate | Interface side-chain adjustments |
| Difficult | 34 | Lowest | Large backbone movements |
| Antibody-Antigen | 40 | Variable | CDR loop flexibility |
| Enzyme-Containing | 88 | Variable | Active site rearrangements |

The data shows a clear trend: rigid body methods perform acceptably or better for more complexes than flexible docking methods overall, but the latter can achieve higher accuracy for specific targets involving substantial conformational changes [14]. This highlights the context-dependent value of each approach.

The Critical Role of Evaluation Metrics

The docking community employs standardized metrics to evaluate prediction accuracy. The Critical Assessment of PRedicted Interactions (CAPRI) defines four accuracy categories—incorrect, acceptable, medium, and high—based on three parameters: the fraction of native contacts, ligand RMSD after receptor superposition, and interface RMSD [14]. The DockQ score integrates these measures into a continuous value from 0 to 1, where scores >0.80 indicate high accuracy, 0.49-0.80 medium accuracy, and 0.23-0.49 acceptable accuracy [14]. These metrics enable consistent cross-method comparisons in community-wide blind trials.
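The DockQ thresholds quoted above translate directly into a small classifier. How values that fall exactly on a boundary are assigned is an assumption of this sketch, since the text gives only the ranges.

```python
def dockq_category(score):
    """Map a DockQ score in [0, 1] to a CAPRI-style quality label.

    Boundary handling (assumed): > 0.80 high, 0.49 to 0.80 medium,
    0.23 to < 0.49 acceptable, below 0.23 incorrect.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError("DockQ scores lie between 0 and 1")
    if score > 0.80:
        return "high"
    if score >= 0.49:
        return "medium"
    if score >= 0.23:
        return "acceptable"
    return "incorrect"
```

A benchmark success rate of "acceptable or better" is then simply the fraction of targets whose best-ranked model does not map to "incorrect".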

Fundamental Limitations of the Rigid Body Paradigm

The Conformational Change Challenge

The most significant limitation of rigid body docking is its inability to handle conformational changes upon binding. Proteins are dynamic entities whose side chains and backbones frequently rearrange during complex formation. The rigid body assumption treats them as static crystal structures, creating a fundamental mismatch with biological reality [14]. This challenge manifests differently across complexity levels:

- Side-Chain Rearrangements: Even modest side-chain rotations at interfaces can prevent correct pose identification when using unbound structures [14].

- Backbone Movements: Large-scale domain shifts or loop rearrangements, common in allosteric proteins and antibody-antigen recognition, present the most severe challenges and often lead to complete docking failure [14].

- Induced Fit: The phenomenon where binding sites remodel to accommodate ligands cannot be captured, limiting accuracy for many enzyme-containing complexes [14].

Scoring Function Limitations and the Energy-Accuracy Gap

The mathematical requirement for scoring functions to be expressed as sums of correlation functions for FFT implementation constrains their physical sophistication. This frequently leads to the "energy-accuracy gap," where poses close to the native structure do not necessarily have the lowest energies, while low-energy conformations may occur far from the X-ray structures [14]. Consequently, rigid body methods must retain large sets of low-energy decoys (typically thousands) for subsequent clustering and refinement, hoping this set includes near-native configurations [14].

Beyond Rigidity: Emerging Flexible Docking Approaches

Traditional Flexible Docking Methods

To address rigid body limitations, several traditional approaches incorporate flexibility:

- Soft Docking: Allows limited steric overlap at interfaces, tolerating minor conformational changes without explicitly modeling them [14].

- Side-Chain Flexibility: Methods that optimize side-chain conformations during or after rigid body docking, though backbone typically remains fixed [14].

- Ensemble Docking: Uses multiple receptor conformations from NMR ensembles or molecular dynamics simulations to represent natural flexibility [7].

The Deep Learning Revolution in Molecular Docking

Recent years have witnessed a surge in deep learning (DL) approaches that fundamentally reshape molecular docking:

- Equivariant Models: Methods like EquiBind use equivariant graph neural networks to identify interaction "key points" and predict optimal ligand placement [7].

- Diffusion Models: DiffDock applies diffusion models to molecular docking, iteratively refining ligand poses from noise to plausible binding configurations through learned denoising score functions [7].

- Flexible Co-folding: Emerging approaches like FlexPose enable end-to-end flexible modeling of protein-ligand complexes regardless of input conformation (apo or holo) [7].

These DL methods demonstrate particular strength in blind docking scenarios (predicting binding sites without prior knowledge), though they may underperform traditional methods when docking to known pockets [7]. However, challenges remain, including physical implausibilities in predicted structures and generalization beyond training data [7] [15].

Table 3: Comparison of Docking Methodologies and Their Capabilities

| Method Type | Representative Tools | Handles Flexibility | Computational Cost | Best Application Context |

|---|---|---|---|---|

| Rigid Body Docking | ClusPro, ZDOCK, GRAMM | Limited (soft docking only) | Low | Preliminary screening, rigid complexes |

| Traditional Flexible Docking | SwarmDock, HADDOCK | Moderate (side-chains, ensembles) | Medium | Complexes with known flexibility |

| Deep Learning Docking | DiffDock, EquiBind, FlexPose | High (full co-folding) | Low (after training) | Novel targets, blind docking |

The Essential Role of Benchmarking in Docking Methodology Development

Established Docking Benchmarks

The progression of docking methodologies relies heavily on robust, community-accepted benchmarking practices. Several key datasets enable standardized evaluations:

- Protein Docking Benchmark (BM5): Contains 230 protein-protein complexes with bound and unbound structures, categorized by difficulty and complex type [14].

- CAPRI/CASP Experiments: Community-wide blind prediction experiments that provide unbiased assessment of docking methods on unpublished targets [14].

- PDBbind: A comprehensive collection of protein-ligand complexes for small molecule docking evaluation [16] [15].

- CARA Benchmark: Focuses on compound activity prediction for real-world drug discovery applications, addressing gaps between previous benchmarks and practical scenarios [17].

Critical Benchmarking Insights and Future Directions

Recent benchmarking reveals several critical insights. The PoseBusters tool, which analyzes physical and chemical consistency, has shown that DL methods do not necessarily surpass traditional approaches in producing physically plausible poses, and that their performance degrades significantly for proteins with less than 30% sequence similarity to the training data [15]. This highlights the generalization challenges of data-driven approaches.

Future benchmarking must address key challenges including dataset diversity, realistic train-test splitting to prevent data leakage, incorporation of activity cliffs (where similar molecules show dramatically different binding), and the development of multi-faceted evaluation metrics that balance spatial accuracy with physical plausibility [18] [17].

The Scientist's Toolkit: Essential Research Reagents for Docking Benchmarking

Table 4: Key Resources for Docking Method Development and Evaluation

| Resource | Type | Function and Utility | Access |

|---|---|---|---|

| Protein Data Bank (PDB) | Data Repository | Source of experimental protein structures for docking trials and method training | Public |

| Protein Docking Benchmark 5.0 | Benchmark Dataset | Curated set of 230 complexes with bound/unbound structures for standardized evaluation | Public |

| PDBbind | Benchmark Dataset | Comprehensive collection of protein-ligand complexes with binding affinity data | Public |

| CAPRI Evaluation Framework | Assessment Protocol | Standardized metrics and procedures for blind docking assessment | Public |

| ClusPro Server | Docking Tool | Automated rigid body docking server implementing FFT-based sampling | Web server |

| PoseBusters | Validation Tool | Checks predicted complexes for physical and chemical plausibility | Open source |

| CARA Benchmark | Benchmark Dataset | Focuses on real-world compound activity prediction scenarios | Public |

Figure 2: The Docking Methodology Development Cycle. This circular workflow demonstrates how community benchmarking drives iterative improvement in docking algorithms, from challenge participation through method refinement.

The rigid body assumption makes large-scale docking computationally feasible through FFT-based sampling, but it introduces fundamental limitations in modeling biomolecular interactions. Benchmarking reveals that rigid body methods like ClusPro provide acceptable or better models for more complexes than flexible docking approaches, yet flexible approaches achieve superior accuracy for specific targets involving substantial conformational changes [14]. This performance landscape suggests a pragmatic path forward: context-aware method selection.

For preliminary screening or complexes with minimal flexibility, traditional rigid body docking offers an efficient and often sufficient solution. However, for systems involving significant conformational changes, modern flexible approaches—particularly emerging deep learning methods that explicitly model protein flexibility—show increasing promise despite current challenges with physical plausibility and generalization [7] [15]. The future of molecular docking lies not in a single dominant methodology, but in the continued development and intelligent application of diverse approaches, rigorously validated through community benchmarking efforts that mirror the successful CASP model for protein structure prediction [18]. As benchmarking practices evolve to better capture real-world scenarios and method capabilities, they will continue to guide the strategic selection and development of docking tools for specific research and drug discovery applications.

Molecular docking is a cornerstone of computational drug discovery, enabling researchers to predict how small molecules interact with target proteins. Its accuracy hinges on two core components: the search algorithm, which explores possible ligand orientations (poses), and the scoring function, which evaluates and ranks these poses. This guide deconstructs these components by benchmarking popular docking software, providing a clear comparison of their performance in real-world tasks.

Docking Performance at a Glance: A Quantitative Benchmark

The accuracy of molecular docking software is typically measured in two ways. The first is its ability to predict a ligand's correct binding pose, usually defined as a Root-Mean-Square Deviation (RMSD) of less than 2 Å from the experimentally determined structure. The second is its power to identify active compounds in virtual screening (VS), measured by metrics such as the area under the Receiver Operating Characteristic (ROC) curve (AUC) [8] [2].

Table 1: Comparative Performance of Docking Software in Pose Prediction and Virtual Screening.

| Docking Program | Pose Prediction Success (RMSD < 2 Å) | Virtual Screening AUC (Average) | Key Strengths |

|---|---|---|---|

| Glide | 85% - 100% [8] [9] | 0.80 [9] | High pose accuracy and physical validity; excellent for structure-based design. |

| GOLD | ~82% [8] | Data Not Provided | Robust performance across diverse protein targets. |

| AutoDock | ~59% [8] | Data Not Provided | Widely used open-source tool. |

| FlexX | ~73% [8] | Data Not Provided | Fast docking using a fragment-based approach. |

| SurfDock | 76% - 92% [2] | Data Not Provided | Superior pose accuracy among deep learning methods. |

| DiffBindFR | 31% - 75% [2] | Data Not Provided | Generative model with good performance on known complexes. |

| Boltz-2 | Data Not Provided | ~0.42 (Binding Affinity Correlation) [19] | Emerging co-folding model for affinity prediction. |

The Toolkit for Docking Benchmarking

Standardized datasets and software form the foundation of reliable docking benchmarks. The experiments cited in this guide rely on the following key resources.

Table 2: Essential Research Reagents and Resources for Docking Benchmarking.

| Resource Name | Type | Primary Function in Benchmarking |

|---|---|---|

| PDBbind Database [16] [7] | Curated Dataset | A comprehensive collection of protein-ligand complexes with binding affinity data, used to test scoring and pose prediction. |

| Astex Diverse Set [2] [9] | Curated Dataset | A set of high-quality, drug-like protein-ligand complexes used for evaluating pose prediction accuracy. |

| DUD Dataset [9] | Curated Dataset | A benchmark set for virtual screening, containing known active molecules and decoys to test a method's ability to enrich actives. |

| PoseBusters [2] | Validation Tool | A toolkit to check the physical plausibility and geometric integrity of predicted docking poses. |

| CCharPPI Server [13] | Evaluation Server | A web server designed for the independent assessment of scoring functions, separate from docking algorithms. |

Decoding the Experimental Protocols

To ensure fair and interpretable comparisons, benchmarking studies follow rigorous, standardized protocols. The key methodologies are outlined below.

The Pose Prediction Protocol

The standard protocol for evaluating binding mode prediction is re-docking: the native ligand is extracted from a protein-ligand crystal structure and then docked back into the prepared protein structure [8] [20]. The resulting top-ranked pose is compared to the original experimental pose by calculating the RMSD between the atomic coordinates. An RMSD of less than 2.0 Å is typically considered a successful prediction [8]. This protocol tests a docking program's core ability to reproduce a known binding mode.
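The re-docking criterion above reduces to a short calculation. This is a minimal sketch under stated assumptions: both poses are given as coordinate arrays in the same receptor frame with atoms listed in the same order, and no symmetry correction is applied (equivalent atoms in symmetric groups are not swapped). The function names are hypothetical.

```python
import numpy as np


def ligand_rmsd(predicted_xyz, reference_xyz):
    """RMSD in Å between two poses with identical atom ordering.

    No superposition is performed: in docking benchmarks the poses are
    compared in the fixed receptor frame.
    """
    pred = np.asarray(predicted_xyz, dtype=float)
    ref = np.asarray(reference_xyz, dtype=float)
    if pred.shape != ref.shape:
        raise ValueError("poses must contain the same atoms in the same order")
    squared_dist = np.sum((pred - ref) ** 2, axis=1)
    return float(np.sqrt(np.mean(squared_dist)))


def success_rate(rmsd_values, cutoff=2.0):
    """Fraction of complexes whose top-ranked pose has RMSD below the cutoff."""
    rmsd_values = np.asarray(rmsd_values, dtype=float)
    return float(np.mean(rmsd_values < cutoff))


# Example: a pose displaced by 1 Å along z, then a four-complex benchmark.
reference = [[0.0, 0.0, 0.0], [1.5, 0.0, 0.0]]
predicted = [[0.0, 0.0, 1.0], [1.5, 0.0, 1.0]]
rmsd = ligand_rmsd(predicted, reference)
rate = success_rate([0.5, 1.9, 2.5, 4.0])
```

For ligands with symmetric groups, a symmetry-aware RMSD from a cheminformatics toolkit is generally preferable, because a naive atom-by-atom comparison can overstate the error.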

The Virtual Screening Protocol

To evaluate a program's ability to distinguish active compounds from inactive ones, researchers use a retrospective virtual screening protocol [8] [9]. A library of known active ligands for a specific target is mixed with a large set of "decoy" molecules—structurally similar but presumed inactive compounds. This combined library is docked, and the resulting scores are used to rank the compounds. The ranking is analyzed using a Receiver Operating Characteristic (ROC) curve, with the Area Under the Curve (AUC) quantifying the screening power, where a higher AUC indicates better performance [8].
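The ROC AUC used in this protocol has a direct interpretation: it is the probability that a randomly chosen active is ranked ahead of a randomly chosen decoy. The sketch below computes it by explicit pairwise comparison. It assumes that lower (more negative) docking scores are better, which is common but not universal, and the function name is hypothetical.

```python
import numpy as np


def roc_auc(scores, is_active, lower_is_better=True):
    """ROC AUC as the fraction of (active, decoy) pairs ranked correctly.

    Ties between an active and a decoy count as half a correct pair.
    """
    s = np.asarray(scores, dtype=float)
    y = np.asarray(is_active, dtype=bool)
    if lower_is_better:
        s = -s  # after negation, larger always means "ranked earlier"
    actives, decoys = s[y], s[~y]
    if actives.size == 0 or decoys.size == 0:
        raise ValueError("need at least one active and one decoy")
    wins = np.sum(actives[:, None] > decoys[None, :])
    ties = np.sum(actives[:, None] == decoys[None, :])
    return float((wins + 0.5 * ties) / (actives.size * decoys.size))


# Example: two actives and two decoys with hypothetical docking scores.
auc = roc_auc([-10.0, -5.0, -9.0, -4.0], [True, True, False, False])
```

The pairwise form uses memory proportional to actives times decoys, which is fine for benchmark-sized libraries; a rank-based implementation scales better for very large screens.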

Assessing Physical Plausibility

Beyond RMSD, a critical evaluation is the physical validity of predicted poses. Tools like PoseBusters [2] check for chemical and geometric consistency, including proper bond lengths and angles and the absence of severe steric clashes between the ligand and protein. A pose may have a good RMSD but be physically implausible, which limits its utility in drug design.
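To make the idea of a steric clash concrete, the sketch below counts ligand-protein atom pairs that sit closer than a distance cutoff. It is a deliberately crude stand-in and does not reproduce PoseBusters or its criteria; the function name and the 2.0 Å default cutoff are illustrative assumptions, and a real check would use element-specific radii.

```python
import numpy as np


def count_clashes(ligand_xyz, protein_xyz, min_distance=2.0):
    """Count ligand-protein atom pairs closer than `min_distance` (Å).

    The uniform cutoff is an illustrative assumption, not a validated
    criterion.
    """
    lig = np.asarray(ligand_xyz, dtype=float)
    prot = np.asarray(protein_xyz, dtype=float)
    # Pairwise distance matrix of shape (n_ligand_atoms, n_protein_atoms).
    distances = np.linalg.norm(lig[:, None, :] - prot[None, :, :], axis=2)
    return int(np.sum(distances < min_distance))


# Example: one ligand atom sits 1 Å from a protein atom, the other is clear.
ligand = [[0.0, 0.0, 0.0], [5.0, 0.0, 0.0]]
protein = [[1.0, 0.0, 0.0], [10.0, 0.0, 0.0]]
n_clashes = count_clashes(ligand, protein)
```

Reporting such a count next to the RMSD makes it visible when a geometrically close pose overlaps the receptor.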

Docking Benchmark Workflow: This diagram illustrates the standard experimental workflow for benchmarking molecular docking software, from system preparation to the three primary evaluation pathways.

The Rise of Deep Learning in Molecular Docking

Deep learning (DL) has introduced a paradigm shift, moving beyond traditional search-and-score methods. Models like SurfDock (a generative diffusion model) and DynamicBind have shown remarkable pose prediction accuracy, sometimes surpassing traditional methods [2] [7]. However, a multidimensional evaluation reveals a critical trade-off: while DL models like SurfDock achieve high pose accuracy (e.g., 91.8% on the Astex set), they often generate poses with poorer physical validity (63.5% valid) compared to traditional methods like Glide SP (97.7% valid) [2]. This indicates that DL models can produce poses that look correct overall but contain unrealistic atomic clashes or bond geometries.

The Critical Role of the Target Protein

Docking performance is not uniform across all targets; it is significantly influenced by the type of target protein [20]. Proteins with deep, buried active sites (e.g., acetylcholinesterase) pose different challenges than those with open, flexible sites (e.g., kinases). This target-dependent performance means a program that excels for one protein class may be less accurate for another. Consequently, benchmarking across a diverse set of protein structures is essential for a comprehensive evaluation [20].

Docking Method Performance Tiers: A 2025 systematic evaluation classified docking methods into four distinct tiers based on their combined success rate (RMSD ≤ 2 Å and physical validity), revealing that traditional and hybrid methods currently offer the most balanced performance [2].

Practical Docking Protocols: From Standard Procedures to Advanced Workflows

Molecular docking is a cornerstone of computational drug discovery, and the objective evaluation of docking software is critical for its advancement. Standardized benchmarking sets provide the essential foundation for fair and reproducible comparisons, allowing researchers to identify the strengths and weaknesses of different methodologies. Among these, the Directory of Useful Decoys, Enhanced (DUD-E) and the PDBbind database have emerged as pivotal resources for benchmarking key aspects of docking performance, from virtual screening enrichment to binding pose and affinity prediction [21] [22] [23]. This guide provides a comparative analysis of contemporary docking methods using these standardized benchmarks.

The Critical Role of Benchmarking in Molecular Docking

The evaluation of molecular docking software extends beyond simple predictive capability; it assesses a method's utility in real-world drug discovery scenarios. Reliable benchmarking sets must control for common biases, such as the correlation between molecular size and docking scores, to ensure that enrichment reflects genuine recognition of complementary chemistry rather than artifact [22]. Standardized databases like DUD-E and PDBbind provide carefully curated, publicly available datasets that enable the direct comparison of different docking algorithms on a level playing field.

DUD-E is specifically designed to benchmark virtual screening performance. It provides a set of known active compounds alongside "decoys"—molecules that are physically similar to the actives but are topologically dissimilar to minimize the likelihood of actual binding. This construction tests a docking program's ability to prioritize true binders from a background of challenging, property-matched non-binders [21] [22].

PDBbind offers a comprehensive collection of experimentally measured binding affinity data (Kd, Ki, and IC50) for biomolecular complexes found in the Protein Data Bank (PDB). By linking structural information with energetic data, it serves as a central resource for developing and testing scoring functions for binding pose prediction and affinity estimation [23].

Table 1: Key Characteristics of DUD-E and PDBbind Databases

| Database | Primary Benchmarking Purpose | Contents | Key Features |

|---|---|---|---|

| DUD-E [21] [22] | Virtual Screening Enrichment | 22,886 active compounds against 102 targets; ~50 property-matched decoys per active. | Decoys are matched on physicochemical properties (MW, logP, HBD, HBA) but are topologically dissimilar. Includes novel targets like GPCRs and ion channels. |

| PDBbind [23] | Binding Pose & Affinity Prediction | >12,000 biomolecular complexes with experimental binding affinity data; includes a curated "refined set" and a "core set" for scoring studies. | Links 3D structural data from the PDB with quantitative binding affinity data. Provides a curated refined set for high-quality benchmarking. |

Performance Comparison of Docking Methodologies

A comprehensive 2025 study systematically evaluated traditional and deep learning (DL) docking methods across multiple benchmarks, including DUD-E and others, providing critical insights into their performance across several key dimensions [2].

Pose Prediction Accuracy and Physical Validity

The study classified docking methods into distinct performance tiers based on their success in predicting binding poses within 2.0 Å root-mean-square deviation (RMSD) of the crystal structure while also producing physically plausible structures (as validated by the PoseBusters toolkit) [2].

Table 2: Performance Tiers of Docking Methods (Adapted from Li et al., 2025) [2]

| Performance Tier | Methodology | Representative Tools | Key Characteristics |

|---|---|---|---|

| Tier 1: Best Balance | Traditional & Hybrid Methods | Glide SP, Interformer | Excellent physical validity (>94% PB-valid rates); hybrid methods combine AI scoring with traditional conformational search. |

| Tier 2: High Pose Accuracy | Generative Diffusion Models | SurfDock, DiffBindFR | Superior pose prediction accuracy (e.g., SurfDock >70% RMSD ≤2Å across datasets) but often produce steric clashes or incorrect H-bonds. |

| Tier 3: Lower Performance | Regression-Based Models | KarmaDock, GAABind, QuickBind | Often fail to produce physically valid poses; performance lags behind other paradigms. |

The data reveals a critical trade-off: while generative diffusion models like SurfDock excel in pose accuracy, they frequently generate structures with physical imperfections. Conversely, traditional methods like Glide SP maintain exceptional physical plausibility, and hybrid methods like Interformer strike the most practical balance between these objectives [2].

Virtual Screening Performance on DUD-E

Performance in pose prediction does not always translate directly to effectiveness in virtual screening (VS), a primary application in drug discovery. The ability to correctly rank active compounds above decoys in a DUD-E benchmark is a crucial test of a method's utility for lead identification.

Regression-based models and some generative approaches, despite lower pose accuracy, can still achieve competitive enrichment in VS, as they may learn to recognize key interaction features that correlate with binding [2]. The 2025 study notes that hybrid methods, which integrate AI-driven scoring functions with traditional search algorithms, often demonstrate robust VS performance by leveraging the strengths of both approaches [2]. Another study on blind docking, CoBdock-2, also demonstrated its effectiveness on the DUD-E benchmark, highlighting how method-specific optimizations can lead to successful VS application [24].

Experimental Protocols for Benchmarking

To ensure reproducible and fair comparisons, researchers should adhere to standardized experimental protocols when using DUD-E and PDBbind.

Standardized Workflow for Benchmarking

The following diagram outlines a generalized workflow for conducting a molecular docking software benchmark using these standardized sets.

Protocol for Virtual Screening with DUD-E

- Target Selection: Select relevant targets from the DUD-E database (102 available). Each target provides a set of active ligands and property-matched decoys [21] [22].

- Structure Preparation: Use the provided protein structure files for each target. DUD-E offers a single, carefully selected X-ray structure per target, optimized for docking. Pay attention to the preparation notes, which may include guidance on handling crystallographic waters, histidine protonation states, and side-chain flips [22].

- Docking Execution: Dock the entire library of actives and decoys for a target against its prepared protein structure. It is critical to use the same docking parameters and box size for every compound to ensure a fair ranking.

- Analysis of Results: Rank the compounds based on their docking scores. Calculate enrichment metrics, such as the Enrichment Factor (EF) at a given percentage of the screened library (e.g., EF1% or EF10%) or the Area Under the Receiver Operating Characteristic Curve (AUC-ROC). These metrics quantify how well the method prioritizes actives over decoys.
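The enrichment factor named in the analysis step can be computed as follows. This is a minimal sketch under stated assumptions: lower docking scores are better, the top fraction is truncated to a whole number of compounds (at least one), and the function name is hypothetical. Conventions for rounding the cutoff differ between studies.

```python
import numpy as np


def enrichment_factor(scores, is_active, fraction=0.01, lower_is_better=True):
    """EF at a given fraction: hit rate in the top slice / overall hit rate."""
    s = np.asarray(scores, dtype=float)
    y = np.asarray(is_active, dtype=bool)
    n_total = s.size
    n_actives = int(y.sum())
    if n_actives == 0:
        raise ValueError("the library contains no actives")
    # Number of compounds in the top slice, truncated, never below one.
    n_top = max(1, int(fraction * n_total))
    order = np.argsort(s if lower_is_better else -s, kind="stable")
    hits_in_top = int(y[order[:n_top]].sum())
    return (hits_in_top / n_top) / (n_actives / n_total)


# Example: 10 actives that all outrank 90 decoys (hypothetical scores).
scores = list(range(100))
labels = [True] * 10 + [False] * 90
ef10 = enrichment_factor(scores, labels, fraction=0.10)
```

An EF of 1 corresponds to random selection, so values should always be read against the maximum achievable for the library's active-to-decoy ratio.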

Protocol for Pose and Affinity Prediction with PDBbind

- Dataset Selection: Use the PDBbind "refined set" for general benchmarking or the "core set" for scoring function tests. These sets are curated to remove low-quality structures and ensure data integrity [23].

- Pose Prediction (Re-docking): For each protein-ligand complex in the test set, remove the native ligand and use the docking program to re-predict its binding pose without reference to the crystal pose. Prepare every protein structure with the same procedure, typically starting from the receptor coordinates of the complex.

- Accuracy Assessment: Compare the predicted ligand pose to the experimentally determined crystal structure pose. The primary metric is the Root-Mean-Square Deviation (RMSD) of the ligand's heavy atoms. A prediction is typically considered successful if the RMSD is ≤ 2.0 Å. Additionally, use validation tools like PoseBusters to check the physical plausibility of the predicted pose [2].

- Affinity Prediction (Scoring): Use the docking program's scoring function to predict the binding affinity for the native (crystal) pose. Calculate the correlation (e.g., Pearson's R or Spearman's ρ) between the predicted scores and the experimental binding affinity data provided by PDBbind.
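The correlation step of this protocol can be sketched with NumPy alone. The function names are hypothetical, and the Spearman implementation below assumes there are no tied values (a library routine with tie handling is the safer choice for real data).

```python
import numpy as np


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    return float(np.corrcoef(np.asarray(x, float), np.asarray(y, float))[0, 1])


def spearman_rho(x, y):
    """Spearman rank correlation, assuming no ties in either sequence."""
    def ranks(values):
        # argsort of argsort yields the 0-based rank of each element.
        return np.argsort(np.argsort(np.asarray(values, dtype=float)))
    return pearson_r(ranks(x), ranks(y))


# Example: hypothetical predicted scores against experimental affinities.
predicted = [1.0, 2.0, 3.0, 4.0]
experimental = [1.0, 4.0, 9.0, 16.0]
r = pearson_r(predicted, experimental)
rho = spearman_rho(predicted, experimental)
```

Because many docking scores are more negative for stronger predicted binding while affinities expressed as pKd or pKi increase with binding strength, a well-performing scoring function can show a negative coefficient; stating the sign convention alongside the result avoids misreading.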

Key Technical Considerations

- Docking Box Size: The search space size significantly impacts accuracy. One study recommends an optimal docking box size of 2.9 times the radius of gyration (Rg) of the ligand, which has been shown to improve both pose prediction and virtual screening ranking compared to default settings [25].

- Generalization Testing: To truly assess robustness, benchmark methods on datasets designed to test generalization, such as the DockGen set for novel protein pockets. Many DL methods show degraded performance when faced with proteins or ligands that are topologically distinct from their training data [2].
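The box-size recommendation above is easy to apply programmatically. The sketch below computes an unweighted radius of gyration from ligand coordinates and scales it by 2.9. Treating the result as the edge length of a cubic box and ignoring atomic masses are assumptions made for illustration, and the function names are hypothetical.

```python
import numpy as np


def radius_of_gyration(coords):
    """Unweighted radius of gyration (Å): RMS distance from the centroid."""
    xyz = np.asarray(coords, dtype=float)
    centroid = xyz.mean(axis=0)
    return float(np.sqrt(np.mean(np.sum((xyz - centroid) ** 2, axis=1))))


def docking_box_edge(coords, factor=2.9):
    """Suggested cubic box edge (Å) as `factor` times the ligand Rg."""
    return factor * radius_of_gyration(coords)


# Example: a two-atom toy "ligand" whose atoms lie 1 Å from the centroid.
toy_ligand = [[1.0, 0.0, 0.0], [-1.0, 0.0, 0.0]]
rg = radius_of_gyration(toy_ligand)
edge = docking_box_edge(toy_ligand)
```

Deriving the box from each ligand keeps the search space proportional to molecule size, whereas a single fixed box can be too generous for fragments and too tight for large ligands.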

Table 3: Key Resources for Molecular Docking Benchmarking

| Resource Name | Type | Primary Function in Benchmarking |

|---|---|---|

| DUD-E [21] | Benchmark Database | Provides actives and decoys for evaluating virtual screening enrichment. |

| PDBbind [23] | Benchmark Database | Provides structures with binding affinities for testing scoring and pose prediction. |

| PoseBusters [2] | Validation Tool | Checks docking predictions for physical plausibility and geometric correctness. |

| AutoDock Vina [2] [25] | Docking Software | Widely used traditional docking program for performance comparison. |

| Glide SP [2] | Docking Software | High-performance traditional docking method often used as a reference. |

| Diffusion Models (e.g., SurfDock) [2] | DL Docking Software | Represents state-of-the-art in pose accuracy for deep learning methods. |

| Hybrid Methods (e.g., Interformer) [2] | DL Docking Software | Combines AI scoring with traditional search for a balanced approach. |

The systematic benchmarking of molecular docking software using DUD-E and PDBbind reveals a nuanced landscape. Traditional methods like Glide SP and AutoDock Vina remain robust, particularly in producing physically valid structures. The emergence of deep learning has introduced powerful new paradigms, with generative diffusion models achieving superior pose accuracy, though often at the cost of physical plausibility. Currently, hybrid methods that integrate AI with traditional conformational searches appear to offer the most balanced performance [2].

For researchers, the choice of tool should be guided by the specific task: generative models for maximum pose accuracy, traditional methods for physical reliability, and hybrid methods for a balanced approach in virtual screening. Future developments must address the generalization challenges of DL methods, improve their physical realism, and continue to leverage standardized benchmarks like DUD-E and PDBbind to drive the field toward more reliable and effective computational drug discovery.

Molecular docking has become an indispensable tool in computational drug discovery, enabling researchers to predict how small molecules interact with biological targets. The accuracy of these predictions, however, varies significantly with the chosen software, scoring function, and task. In docking benchmark studies, structured workflows for re-docking, cross-docking, and virtual screening serve as the frameworks for objective performance evaluation. These protocols establish standardized methodologies that allow meaningful comparison across docking tools, replacing claims of theoretical capability with empirically validated performance in realistic drug discovery scenarios. Recent advances in machine learning and deep learning have further transformed the docking landscape, introducing new scoring functions and sampling algorithms that require rigorous assessment through these established workflows [7] [26].

This guide provides a comprehensive comparison of contemporary molecular docking software performance across these fundamental tasks, synthesizing experimental data from current benchmarking studies to offer evidence-based recommendations for researchers, scientists, and drug development professionals.

Experimental Protocols and Benchmarking Methodologies

Defining Core Docking Tasks and Evaluation Metrics

The performance assessment of molecular docking tools requires careful definition of specific tasks and corresponding evaluation metrics. Current research recognizes several distinct docking challenges with varying levels of difficulty and real-world relevance [7]:

Re-docking: This task involves extracting a ligand from its co-crystallized protein structure and docking it back into the same holo conformation. It represents the simplest case and serves primarily to evaluate a method's ability to reproduce a known binding pose when provided with an ideal receptor structure. Performance is typically measured by the root-mean-square deviation (RMSD) between the predicted pose and the experimental structure, with an RMSD ≤ 2.0 Å generally considered successful [7] [3].

Cross-docking: A more challenging task where a ligand from one protein-ligand complex is docked into a different conformation of the same protein (often from a complex with another ligand). This better simulates real-world drug discovery scenarios where the true binding conformation is unknown. Cross-docking success also uses RMSD measurements but typically results in lower success rates due to protein flexibility and induced fit effects [27].

Virtual Screening (VS): This large-scale application aims to identify potential binders from vast libraries of compounds. Performance is evaluated by the ability to enrich true active compounds over decoys (non-binders), typically measured by the Area Under the Receiver Operating Characteristic Curve (AUC), enrichment factors (EF) at early screening stages (e.g., EF1%), and pROC curves that assess chemotype enrichment [28] [3].

Apo-docking: Docking to unbound (apo) receptor structures, which presents significant challenges due to conformational differences between apo and holo states. This represents a highly realistic but difficult setting for practical drug discovery [7].

Blind docking: The most challenging task that requires prediction of both the binding site location and ligand pose without prior knowledge of the binding site [7].

Standardized Benchmarking Datasets and Preparation Protocols

To ensure fair comparisons across different docking tools, researchers have developed standardized benchmarking datasets and consistent preparation protocols:

| Data Source | Description | Application | Key Features |

|---|---|---|---|

| Cross-Docking Benchmark [27] | 4,399 protein-ligand complexes across 95 protein targets | Cross-docking and pose prediction | Categorized by difficulty (easy, medium, hard, very hard); docking-ready structures |

| DEKOIS 2.0 [28] | Benchmark sets with known bioactive molecules and structurally similar "decoy" molecules | Virtual screening performance evaluation | Challenging decoy sets; used for targets like PfDHFR, SARS-CoV-2 proteins |

| PDBbind [7] [26] | Comprehensive collection of protein-ligand complexes with binding affinity data | General docking and scoring validation | Curated experimental structures and binding data |

| DUD-E [27] | Directory of Useful Decoys, Enhanced | Virtual screening enrichment | Systematically designed decoys that are physically similar but chemically different from actives |

Standardized Protein Preparation Workflow:

- Structure Retrieval: Obtain crystal structures from Protein Data Bank (e.g., PDB IDs: 6A2M for WT PfDHFR, 6KP2 for quadruple-mutant PfDHFR) [28]

- Preprocessing: Remove water molecules, unnecessary ions, redundant chains, and crystallization molecules using tools like OpenEye's "Make Receptor" or the PyMOL API [28] [27]

- Hydrogen Addition: Add and optimize hydrogen atoms considering correct protonation states

- File Format Conversion: Convert to appropriate formats for docking software (PDBQT for AutoDock Vina, mol2 for PLANTS and FRED) [28]

Ligand Preparation Protocol:

- Library Curation: Collect known active compounds and generate decoys (typically in 1:30 active:decoy ratio for virtual screening benchmarks) [28]

- Conformer Generation: Use tools like Omega to generate multiple conformations for each ligand [28]

- Format Standardization: Convert to software-specific formats (PDBQT for AutoDock Vina, mol2 for PLANTS) using OpenBabel or SPORES [28]

Performance Comparison Across Docking Tasks

Pose Prediction Accuracy: Re-docking vs. Cross-docking

The performance gap between re-docking and cross-docking highlights the significant challenge posed by protein flexibility. Recent studies demonstrate that while most modern docking tools achieve high success rates in re-docking, their performance varies considerably in more realistic cross-docking scenarios.

Table 1: Pose Prediction Performance Across Docking Tools

| Software | Re-docking Success Rate (% <2Å RMSD) | Cross-docking Success Rate (% <2Å RMSD) | Notable Features | Experimental Conditions |

|---|---|---|---|---|

| CryoXKit with AutoDock-GPU [29] | Significant improvement over baseline | Significant improvement over baseline | Uses experimental density bias; no prior pharmacophore definition | Tested with high-resolution X-ray crystallography (XRC) and cryo-EM density maps |

| GNINA 1.3 [26] | High (exact % not specified) | Improved accuracy with CNN scoring | CNN scoring on atomic density grids; knowledge-distilled models for faster screening | CrossDocked2020 v1.3 dataset; updated training data |

| DiffDock [7] | State-of-the-art accuracy | State-of-the-art accuracy | Diffusion model-based; SE(3)-equivariant architecture; lower computational cost | PDBBind test set; demonstrates superior performance to traditional methods |

| AutoDock Vina [28] | Standard performance | Standard performance | Commonly used baseline; empirical scoring function | Standard benchmarking protocols |

| PLANTS [28] | Standard performance | Standard performance | Ant colony optimization algorithm | DEKOIS 2.0 benchmark sets |

The integration of experimental data directly into docking workflows shows particular promise. CryoXKit, which incorporates experimental density information from cryo-EM or X-ray crystallography as a biasing potential, demonstrated "significant improvements in re-docking and cross-docking" compared to unmodified force fields [29]. This approach addresses a fundamental limitation in transferring information between complexes without requiring expert intervention in coordinate determination.

Deep learning approaches have also shown remarkable progress. DiffDock, which applies diffusion models to molecular docking, "achieved state-of-the-art accuracy on a PDBBind test set, while operating at a fraction of the computational cost compared with traditional methods" [7]. However, these methods still face challenges with physical realism in predictions, including proper stereochemistry, bond lengths, and steric interactions.

Virtual Screening Performance and Enrichment

Virtual screening performance represents a critical metric for practical drug discovery applications, where the ability to identify true binders from large compound libraries directly impacts research efficiency.

Table 2: Virtual Screening Performance Against PfDHFR Variants [28]

| Docking Tool | ML Rescoring | WT PfDHFR EF1% | Quadruple-Mutant PfDHFR EF1% | Key Findings |
|---|---|---|---|---|
| PLANTS | None (default) | Not specified | Not specified | Baseline performance |
| PLANTS | CNN-Score | 28 | Not specified | Best enrichment for WT variant |
| AutoDock Vina | None (default) | Worse-than-random | Not specified | Poor default screening performance |
| AutoDock Vina | RF-Score-VS v2 | Better-than-random | Not specified | Significant improvement with ML rescoring |
| AutoDock Vina | CNN-Score | Better-than-random | Not specified | Significant improvement with ML rescoring |
| FRED | None (default) | Not specified | Not specified | Baseline performance |
| FRED | CNN-Score | Not specified | 31 | Best enrichment for resistant variant |

The data reveal several important patterns. First, machine learning-based rescoring consistently enhances virtual screening performance, sometimes transforming worse-than-random screening into useful enrichment. As the study notes, "re-scoring with RF and CNN significantly improved AutoDock Vina's screening performance from worse-than-random to better-than-random" [28].

Second, different docking tools may show variable performance against different protein variants. In the case of PfDHFR, PLANTS with CNN rescoring achieved the best enrichment for the wild-type (EF1% = 28), while FRED with CNN rescoring performed best against the drug-resistant quadruple mutant (EF1% = 31) [28]. This suggests that tool selection may need to be tailored to specific biological contexts.
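For readers unfamiliar with the EF1% values quoted above, the following sketch shows how an enrichment factor is commonly computed: the hit rate among the top-ranked fraction of the library divided by the hit rate across the whole library. The function name, the rounding of the top-fraction size, and the toy library are assumptions for illustration and are not the protocol of the cited study.

```python
def enrichment_factor(scores, is_active, fraction=0.01, lower_is_better=True):
    """Enrichment factor at a given fraction of the ranked library.

    EF = (actives in top fraction / size of top fraction)
         / (total actives / library size)
    EF = 1 corresponds to random selection; EF < 1 is worse than random.
    """
    if len(scores) != len(is_active) or not scores:
        raise ValueError("scores and labels must be non-empty and equal in length")
    n_total = len(scores)
    n_actives = sum(is_active)
    if n_actives == 0:
        raise ValueError("library contains no actives")
    n_top = max(1, round(fraction * n_total))
    # Rank compounds from best to worst score
    order = sorted(range(n_total), key=lambda i: scores[i],
                   reverse=not lower_is_better)
    hits = sum(is_active[i] for i in order[:n_top])
    return (hits / n_top) / (n_actives / n_total)

# Toy library: 1000 compounds, 20 actives, 5 of them ranked in the top 10
scores = [float(i) for i in range(1000)]            # lower score = better rank
is_active = [i < 5 or 100 <= i < 115 for i in range(1000)]

print(enrichment_factor(scores, is_active))          # top 1% of the library
```

In this toy case the top 1% holds half actives against a 2% base rate, so the enrichment is 25-fold; with 2% actives the theoretical maximum at 1% would be 50.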

The evaluation also highlighted that "pROC-Chemotype plots analysis revealed that these re-scoring combinations effectively retrieved diverse and high-affinity actives at early enrichment," addressing both binding affinity and chemical diversity in lead identification [28].

Addressing Protein Flexibility and Resistance Mutations

Protein flexibility remains a fundamental challenge in molecular docking, particularly relevant to cross-docking and virtual screening against mutant variants. Traditional docking methods typically treat proteins as rigid bodies while allowing ligand flexibility, but this simplification fails to capture essential biological dynamics [7].

Recent deep learning approaches aim to address this limitation. Methods like FlexPose enable "end-to-end flexible modeling of the 3D structure of protein-ligand complexes irrespective of input protein conformation (apo or holo)" [7]. Similarly, DynamicBind uses "equivariant geometric diffusion networks to model protein backbone and sidechain flexibility," potentially revealing cryptic binding pockets not evident in static structures [7].

The performance against drug-resistant targets highlights the importance of these advancements. In the PfDHFR benchmarking, the quadruple mutant (N51I/C59R/S108N/I164L) represents a clinically relevant resistance mechanism that alters binding site geometry and chemistry. The maintained screening performance against this variant, with FRED+CNN achieving EF1% = 31, demonstrates the potential of current approaches to address challenging drug targets [28].

Integrated Workflow Diagrams

[Figure: Molecular Docking Benchmarking Workflow]

[Figure: Structure-Based Virtual Screening Protocol with ML Rescoring]

Table 3: Key Research Reagent Solutions for Docking Benchmarks

| Category | Resource | Specific Examples | Function and Application |
|---|---|---|---|
| Docking Software | Traditional Tools | AutoDock Vina, PLANTS, FRED, Surflex-Dock | Baseline docking performance; search and score algorithms [28] [30] |
| Docking Software | ML-Enhanced Tools | GNINA, DiffDock, CryoXKit | Improved accuracy with machine learning and experimental data integration [29] [7] [26] |
| Scoring Functions | Classical Functions | AutoDock4 force field, Vina scoring | Traditional physics-based or empirical scoring [29] |
| Scoring Functions | Machine Learning Scores | CNN-Score, RF-Score-VS v2 | Enhanced binding affinity prediction and pose ranking [28] [3] [26] |
| Benchmark Datasets | Pose Prediction | Cross-Docking Benchmark, Astex Diverse Set | Standardized evaluation of pose prediction accuracy [27] [30] |
| Benchmark Datasets | Virtual Screening | DEKOIS 2.0, DUD-E | Assessment of screening enrichment and early recognition [28] [27] |
| Preparation Tools | Structure Processing | OpenEye Toolkits, SPORES, MGLTools | Protein and ligand preparation for docking experiments [28] [27] |
| Preparation Tools | File Conversion | OpenBabel, RDKit | Format interoperability between different docking programs [28] [3] |
| Specialized Modules | Flexibility Handling | FlexPose, DynamicBind | Address protein flexibility and conformational changes [7] |
| Specialized Modules | Covalent Docking | GNINA 1.3 Covalent Module | Prediction of covalent ligand binding [26] |

Based on the comprehensive benchmarking data and experimental protocols analyzed, several key recommendations emerge for researchers selecting and implementing molecular docking workflows:

For pose prediction in re-docking scenarios, the deep learning method DiffDock and the density-guided CryoXKit both report strong performance, the latter when experimental structural data are available for integration. For cross-docking applications where protein flexibility is a concern, tools that incorporate receptor flexibility or use experimental density guidance show significant advantages over rigid-receptor methods.

In virtual screening campaigns, combining traditional docking tools with machine learning rescoring improved enrichment over default docking scores in the PfDHFR benchmark [28]. Specifically, initial docking with AutoDock Vina, FRED, or PLANTS followed by rescoring with CNN-Score or RF-Score-VS v2 yielded higher enrichment factors, including against a challenging drug-resistant enzyme variant.
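The dock-then-rescore pipeline amounts to generating poses with one tool and re-ranking them by a second score. A minimal sketch of the re-ranking step follows; the `Hit` record, the score conventions (docking score lower is better, ML score higher is better), and the example values are all hypothetical and do not reproduce the output format of any specific docking or rescoring program.

```python
from typing import NamedTuple

class Hit(NamedTuple):
    name: str
    dock_score: float  # docking score; lower is better (assumed convention)
    ml_score: float    # rescoring output; higher is better (assumed convention)

def rank_by_docking(hits):
    """Order hits by the docking program's own score."""
    return sorted(hits, key=lambda h: h.dock_score)

def rank_by_rescoring(hits, top_n=None):
    """Re-rank docked hits by the ML rescoring value, optionally truncating."""
    ranked = sorted(hits, key=lambda h: h.ml_score, reverse=True)
    return ranked if top_n is None else ranked[:top_n]

# Illustrative values only
hits = [
    Hit("lig_a", -9.1, 0.20),
    Hit("lig_b", -7.4, 0.85),
    Hit("lig_c", -8.3, 0.60),
]

print([h.name for h in rank_by_docking(hits)])
print([h.name for h in rank_by_rescoring(hits)])
```

Keeping both rankings side by side makes it easy to compute enrichment for each and check whether rescoring actually helps on a given target.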

For specialized applications, recent advancements such as GNINA 1.3's covalent docking capabilities address important niche requirements, while tools like FlexPose show promise for handling significant conformational changes in apo-to-holo transitions.

The benchmarking protocols and comparative data presented provide a framework for evidence-based tool selection, enabling researchers to match software capabilities with specific project requirements in drug discovery pipelines. As the field continues to evolve with increasingly sophisticated machine learning approaches, these structured workflows and evaluation metrics will remain essential for validating new methodologies and ensuring continued progress in computational molecular docking accuracy.

In the field of computer-aided drug design, the accuracy of molecular docking predictions is fundamentally limited by the principle of "garbage in, garbage out." Even the most sophisticated docking algorithms cannot compensate for poorly prepared protein and ligand structures. As benchmarking studies reveal, structural artifacts and input errors in starting structures directly compromise the reliability of scoring functions and the predictive power of virtual screening workflows [31]. This guide examines the critical preparation steps necessary to minimize input errors, supported by experimental data comparing the performance of different tools and methodologies within a structured benchmarking framework.

The Critical Role of Input Preparation in Docking Accuracy

Molecular docking aims to predict the bound conformation and binding affinity of small molecules to protein targets, playing a pivotal role in structure-based drug discovery [32]. The process relies on computational algorithms to identify the optimal fit between two molecules based on physicochemical principles and non-covalent interactions including hydrogen bonds, ionic interactions, van der Waals forces, and hydrophobic effects [32].

Recent benchmarking efforts demonstrate that input preparation quality directly impacts docking success rates. Studies evaluating protein-ligand docking methods found that using native holo-protein structures (proteins in their ligand-bound form) resulted in success rates of approximately 52%, while using predicted structures or apo-form proteins (proteins without ligands) substantially reduced performance [33]. The quality of ligand structures proves equally critical, with one study noting that certain AI methods produced chemically invalid ligands despite sophisticated algorithms [33].

Step-by-Step Protein Preparation Protocol

Initial Structure Acquisition and Assessment

Begin by selecting a protein structure from the Protein Data Bank (PDB) based on these key criteria:

- High resolution (preferably <2.0 Å)

- Presence of a relevant co-crystallized ligand

- Minimal missing residues in the binding site region

- Favorable crystallographic R-factors

Benchmark data suggest that structures with resolution worse than 3.0 Å may substantially reduce docking accuracy [34].
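The selection criteria above can be applied as a simple filter over candidate structures. The sketch below assumes the relevant metadata has already been collected into records; the `StructureRecord` fields, the candidate entries, and the R-free cutoff of 0.25 are illustrative assumptions, with only the 2.0 Å resolution preference taken from the text.

```python
from dataclasses import dataclass

@dataclass
class StructureRecord:
    label: str                  # hypothetical identifier, not a real PDB ID
    resolution: float           # Å
    has_relevant_ligand: bool   # co-crystallized ligand in the site of interest
    missing_site_residues: int  # unresolved residues in the binding site
    r_free: float               # crystallographic R-free

def passes_criteria(s, max_resolution=2.0, max_missing=0, max_r_free=0.25):
    """Return True if a structure meets all selection criteria.

    max_r_free is an assumed, adjustable threshold, not a value from the text.
    """
    return (
        s.resolution < max_resolution
        and s.has_relevant_ligand
        and s.missing_site_residues <= max_missing
        and s.r_free <= max_r_free
    )

candidates = [
    StructureRecord("cand_A", 1.6, True, 0, 0.21),
    StructureRecord("cand_B", 3.2, True, 0, 0.29),   # resolution too low
    StructureRecord("cand_C", 1.9, False, 0, 0.22),  # no relevant ligand
]

selected = [s.label for s in candidates if passes_criteria(s)]
print(selected)
```

Exposing the thresholds as parameters lets the filter be relaxed for targets where few high-resolution holo structures exist.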

Structure Processing and Repair

The HiQBind workflow exemplifies a systematic approach to correcting common protein structure issues [31]:

- Add missing atoms: Use tools like ProteinFixer to complete residues with absent atoms

- Correct protonation states: Ensure histidine residues and other titratable groups reflect physiological conditions

- Fix loop regions: Model missing loops or residues, particularly near binding pockets

- Remove steric clashes: Implement energy minimization to resolve atomic overlaps

Comparative studies show that proper structure correction can improve pose prediction success rates by 15-20% in benchmark evaluations [31].
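Before any repair step, it helps to detect which residues are incomplete. The following sketch flags residues lacking backbone atoms by reading fixed-column PDB `ATOM` records. It is only a detection aid under simplifying assumptions (standard residues, no alternate locations) and does not reproduce the HiQBind ProteinFixer module; `atom_line` is a hypothetical helper used solely to build toy input.

```python
BACKBONE = {"N", "CA", "C", "O"}

def residues_missing_backbone(pdb_lines):
    """Map (chain, residue number, residue name) to its missing backbone atoms."""
    atoms = {}
    for line in pdb_lines:
        if not line.startswith("ATOM"):
            continue
        # Fixed PDB columns: atom name 13-16, residue name 18-20,
        # chain ID 22, residue sequence number 23-26
        key = (line[21], int(line[22:26]), line[17:20].strip())
        atoms.setdefault(key, set()).add(line[12:16].strip())
    return {
        key: sorted(BACKBONE - names)
        for key, names in atoms.items()
        if BACKBONE - names
    }

def atom_line(serial, name, resname, chain, resseq, x=0.0, y=0.0, z=0.0):
    """Build a minimal fixed-column ATOM record for the toy example."""
    return (f"ATOM  {serial:>5} {name:<4} {resname:>3} {chain}{resseq:>4}"
            f"    {x:8.3f}{y:8.3f}{z:8.3f}")

toy_pdb = [
    atom_line(1, "N", "GLY", "A", 1),
    atom_line(2, "CA", "GLY", "A", 1),
    atom_line(3, "C", "GLY", "A", 1),
    atom_line(4, "O", "GLY", "A", 1),
    atom_line(5, "N", "ALA", "A", 2),
    atom_line(6, "CA", "ALA", "A", 2),   # C and O are absent for this residue
]

print(residues_missing_backbone(toy_pdb))
```

A fuller check would also compare side-chain atoms against a residue template and look for gaps in residue numbering near the binding pocket.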

Binding Site Preparation

- Explicitly define the binding pocket based on experimental data when available

- Retain crucial water molecules that mediate protein-ligand interactions

- Ensure proper metalloprotein coordination for targets with metal ions

Step-by-Step Ligand Preparation Protocol

Initial Structure Generation

- Obtain ligand structures from reliable databases such as PubChem or ZINC

- Convert 2D representations to 3D coordinates using tools like RDKit or CORINA

- Assign correct bond orders and formal charges based on chemical knowledge

Ligand Optimization and Validation

The HiQBind workflow includes a specialized LigandFixer module that addresses common issues [31]:

- Correct bond orders and aromaticity assignments

- Generate appropriate protonation states at physiological pH

- Ensure proper stereochemistry for chiral centers

- Validate chemical correctness using rule-based checkers

Studies indicate that ligand preparation errors account for approximately 25% of docking failures in virtual screening campaigns [31].
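The protonation-state step can be reasoned about with the Henderson-Hasselbalch relation, which gives the fraction of a titratable group that is protonated at a given pH. The sketch below is a back-of-the-envelope aid rather than a replacement for dedicated protonation tools; the pKa values in the example are illustrative assumptions, and pH 7.4 is used as a typical physiological value.

```python
def fraction_protonated(pka, ph=7.4):
    """Fraction of a single titratable site in its protonated form.

    Derived from Henderson-Hasselbalch: pH = pKa + log10([A-]/[HA]),
    so [HA] / ([HA] + [A-]) = 1 / (1 + 10**(pH - pKa)).
    Treats the site as independent of any other titratable groups.
    """
    return 1.0 / (1.0 + 10 ** (ph - pka))

# Illustrative pKa values (assumptions, not measured for a specific ligand)
example_sites = {
    "aliphatic amine (pKa ~9.5)": 9.5,
    "carboxylic acid (pKa ~4.5)": 4.5,
    "imidazole-like (pKa ~6.5)": 6.5,
}

for label, pka in example_sites.items():
    print(f"{label}: {fraction_protonated(pka):.3f} protonated at pH 7.4")
```

Groups with a pKa within about one unit of the working pH exist as a meaningful mixture of states, which is why preparing more than one protonation form for docking is often worthwhile.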

Conformational Sampling

- Generate multiple conformers for flexible ligands

- Ensure coverage of relevant torsion angles and ring conformations

- Balance computational efficiency with conformational completeness
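The trade-off between efficiency and completeness is easy to see with a systematic torsion grid, where the number of candidate conformers grows exponentially with the number of rotatable bonds. The sketch below only enumerates torsion-angle combinations; it is a simplified stand-in for illustration, and dedicated conformer generators use more sophisticated sampling and also handle ring conformations.

```python
from itertools import product

def torsion_grid(n_rotatable, step_deg=120):
    """Lazily yield every combination of torsion angles on a regular grid."""
    angles = range(0, 360, step_deg)
    return product(angles, repeat=n_rotatable)

def grid_size(n_rotatable, step_deg=120):
    """Number of grid conformers: (angles per bond) ** (rotatable bonds)."""
    return len(range(0, 360, step_deg)) ** n_rotatable

# Coarse sampling of a small ligand stays tractable...
print(grid_size(3, step_deg=120))
# ...while finer sampling of a flexible ligand grows very quickly.
print(grid_size(10, step_deg=60))

# First few torsion combinations for a ligand with two rotatable bonds
for combo in list(torsion_grid(2))[:4]:
    print(combo)
```

In practice the grid is pruned by energy or clash filters, or replaced by stochastic sampling with a cap on the number of retained conformers.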

Experimental Benchmarking of Preparation Methodologies

Performance Comparison of Docking Tools with Properly Prepared Inputs

Table 1: Success rates of docking programs on diverse protein-ligand complexes with optimized inputs

| Docking Method | Input Requirements | Success Rate (ligand RMSD ≤ 2 Å) | Key Strengths |
|---|---|---|---|
| AutoDock Vina | Native holo structure + pocket definition | 52% | Speed, ease of use [33] |
| GNINA | CNN scoring + Vina sampling | Superior to Vina in VS | Enhanced active ligand identification [34] |
| Umol-pocket | Sequence + ligand SMILES | 45% | No experimental structure needed [33] |
| RoseTTAFold All-Atom | Sequence + ligand data | 42% | Integrated protein-ligand prediction [33] |
| DiffDock + AF2 | AF2 predicted structure | 21% | Uses predicted structures [33] |

Impact of Preparation Quality on Docking Success

Table 2: Effect of input quality on docking performance metrics

| Preparation Factor | Performance Metric | Well-Prepared | Poorly-Prepared |
|---|---|---|---|
| Protein structure resolution | Pose prediction accuracy | High (<2.0 Å) | Low (>3.0 Å) [34] |
| Ligand chemical validity | Method success rate | 98% valid (Umol) | As low as 1% (some AI methods) [33] |
| Binding site definition | Virtual screening enrichment | Significant improvement (GNINA) | Moderate (Vina) [34] |
| Protein flexibility handling | Success on diverse targets | 69% (Umol at 3 Å) | 58% (Vina at 3 Å) [33] |

Workflow Visualization: Protein-Ligand Preparation Process