Benchmarking Machine Learning for QSAR: A Practical Guide to Models, Metrics, and Modern Applications in Drug Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on benchmarking machine learning algorithms for Quantitative Structure-Activity Relationship (QSAR) modeling. It covers foundational principles, from classical statistical methods to advanced deep learning and graph neural networks. The scope extends to practical methodological considerations, including molecular representation selection and task-specific model application for virtual screening or lead optimization. It addresses critical troubleshooting aspects like data quality, feature selection, and tackling dataset imbalance. Finally, the article details rigorous validation protocols, comparative performance analysis across algorithms, and the importance of applicability domain assessment. By synthesizing current best practices and emerging trends, this guide aims to equip scientists with the knowledge to build robust, predictive, and reliable QSAR models that accelerate the drug discovery pipeline.

From Linear Models to Deep Learning: Core Principles of QSAR Modeling

Quantitative Structure-Activity Relationship (QSAR) modeling has evolved from classical statistical approaches to modern artificial intelligence (AI)-driven methods. This shift has moved drug discovery away from trial and error and toward a data-driven discipline [1]. AI integration gives researchers faster, more accurate, and more scalable ways to identify therapeutic compounds, with the aim of reducing the high costs and long timelines of traditional drug development [1] [2]. This guide compares the performance of classical and modern QSAR methodologies and summarizes the experimental data and benchmarking protocols that researchers and drug development professionals need.

The Foundations and Evolution of QSAR Modeling

From Classical Descriptors to Deep Learning Representations

The core of QSAR lies in representing chemical structures numerically. The evolution of these representations mirrors the journey of the field itself:

- 1D/2D Descriptors: Classical QSAR relied on numerical encodings of fundamental chemical properties (e.g., molecular weight) or topological indices derived from 2D structures [1].

- 3D/4D Descriptors: These descriptors incorporated molecular shape, electrostatic potentials, and even conformational flexibility, providing a more realistic representation of molecules under physiological conditions [1].

- Quantum Chemical Descriptors: Descriptors such as HOMO-LUMO energy gaps and dipole moments were introduced to model electronic properties influencing bioactivity [1].

- Deep Descriptors: Modern AI, particularly graph neural networks (GNNs) and autoencoders, generates "deep descriptors" directly from molecular graphs or SMILES strings. These are data-driven, hierarchical features that capture complex, abstract molecular patterns without manual engineering [1].

The Progression of Modeling Algorithms

The statistical engines of QSAR have advanced from simple linear models to highly complex nonlinear architectures:

- Classical Era: The foundation was built on Multiple Linear Regression (MLR) and Partial Least Squares (PLS), prized for their simplicity, speed, and interpretability. These models are effective when relationships between descriptors and biological activity are linear [1] [3].

- Machine Learning Rise: Algorithms like Support Vector Machines (SVM), Random Forests (RF), and k-Nearest Neighbors (kNN) became standard tools. They handle high-dimensional data and capture nonlinear relationships without strict assumptions about data distribution. Random Forests, in particular, are favored for their robustness to noisy data and their built-in feature importance estimates, which support feature selection [1].

- Deep Learning Dominance: The current state-of-the-art employs Graph Neural Networks (GNNs), transformers, and other deep learning architectures. These models learn directly from molecular structure, automatically discovering relevant features and complex structure-activity patterns that are often intractable for earlier methods [1] [4].

Table 1: Evolution of QSAR Modeling Techniques

| Era | Representative Algorithms | Key Characteristics | Typical Molecular Representations |
|---|---|---|---|
| Classical | Multiple Linear Regression (MLR), Partial Least Squares (PLS) | Linear, highly interpretable, relies on assumptions of normality and linearity | 1D/2D descriptors (e.g., molecular weight, topological indices) |
| Machine Learning | Support Vector Machines (SVM), Random Forests (RF), k-Nearest Neighbors (kNN) | Can capture non-linear relationships, more robust to noisy data | 2D/3D descriptors, fingerprints, quantum chemical descriptors |
| Deep Learning | Graph Neural Networks (GNNs), Transformers, Directed Message Passing Neural Network (DMPNN) | End-to-end learning, automatically learns features from raw data, high predictive power | Molecular graphs, SMILES strings, "deep descriptors" |

Performance Benchmarking: Classical vs. Machine Learning vs. Deep Learning

Predictive Performance on Diverse Tasks

Systematic benchmarking reveals clear performance trends across different modeling eras. A comprehensive benchmark of 13 AI methods for predicting cyclic peptide membrane permeability demonstrated that model performance is strongly dependent on molecular representation and architecture [4]. In this study, which used a large, curated dataset from the CycPeptMPDB database, graph-based models, particularly the Directed Message Passing Neural Network (DMPNN), consistently achieved top performance across regression, binary classification, and soft-label classification tasks [4].

Furthermore, the benchmark showed that models trained on the regression task generally outperformed those trained on the classification tasks for predicting permeability. Although deep learning models ranked highest overall, simpler models such as Random Forest and SVM remained competitive, which indicates that the optimal model is task-dependent [4].

Generalizability and the Scaffold Split Challenge

A critical test for any QSAR model is its ability to generalize to new, structurally distinct chemicals. Benchmarking studies often use a "scaffold split," where the test set contains molecules with core structures not seen during training, to simulate real-world discovery scenarios.

The cyclic peptide permeability study found that models evaluated with this more rigorous scaffold split generalized substantially worse than the same models evaluated with random splitting [4]. This performance drop is a recognized challenge in QSAR. It underscores the risk of overfitting to local chemical patterns in the training data, a pitfall to which complex deep learning models are particularly susceptible without proper validation.

Table 2: Benchmarking Model Performance and Generalizability

| Model / Approach | Reported Performance (Example) | Interpretability | Generalizability (Scaffold Split) |
|---|---|---|---|
| Classical (e.g., PLS) | Lower predictive power on complex, non-linear relationships | High (Model coefficients are directly interpretable) | Varies, can be good for congeneric series |
| Machine Learning (e.g., Random Forest) | Competitive performance, often strong for medium-sized datasets | Medium (Feature importance available, but local explanations needed) | Good, but can decrease with high dimensionality |
| Deep Learning (e.g., DMPNN) | Top performance in systematic benchmarks (e.g., Cyclic Peptide Permeability) [4] | Low (Inherent "black box"; requires post-hoc interpretation tools) | Can be substantially lower than with random splits [4] |

Experimental Protocols for Benchmarking QSAR Models

Data Preparation and Splitting Strategies

Robust benchmarking requires careful data curation and splitting to avoid over-optimistic performance estimates.

- Dataset Curation: The CARA (Compound Activity benchmark for Real-world Applications) benchmark emphasizes using data from real-world assays, distinguishing between two primary application categories [5]:

  - Virtual Screening (VS) Assays: Contain compounds with a diffused, widespread distribution of pairwise similarities, mimicking screening from diverse chemical libraries.

  - Lead Optimization (LO) Assays: Contain congeneric compounds with high pairwise similarities, representing a series of structurally related analogs [5].

- Data Splitting:

  - Random Split: The dataset is randomly divided into training, validation, and test sets. This provides a baseline measure of performance but can overestimate real-world applicability.

  - Scaffold Split: Molecules are split based on their Murcko scaffolds, ensuring the test set contains core structures not present in the training set. This is a more rigorous test of a model's ability to generalize to novel chemotypes [4] [5].
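The scaffold split described above can be sketched in a few lines. This is a minimal illustration and not the procedure of any cited benchmark: it assumes each molecule already carries a scaffold identifier (for example a Murcko scaffold SMILES computed beforehand with a cheminformatics toolkit), and the function name `scaffold_split`, the scaffold labels, and the "largest groups go to training first" heuristic are assumptions made for this example.

```python
from collections import defaultdict


def scaffold_split(scaffolds, test_fraction=0.2):
    """Split molecule indices so that no scaffold appears in both sets.

    `scaffolds` holds one precomputed scaffold identifier per molecule.
    """
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)

    # Assumed heuristic: fill the training set with the largest scaffold
    # groups first, so rare scaffolds tend to land in the test set.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))

    n_test = int(round(test_fraction * len(scaffolds)))
    n_train_target = len(scaffolds) - n_test

    train, test = [], []
    for group in ordered:
        # A whole group is assigned to one side, never divided.
        if len(train) + len(group) <= n_train_target:
            train.extend(group)
        else:
            test.extend(group)
    return sorted(train), sorted(test)


# Hypothetical scaffold labels for ten molecules.
scaffolds = ["benzene"] * 4 + ["pyridine"] * 3 + ["indole"] * 2 + ["furan"]
train_idx, test_idx = scaffold_split(scaffolds, test_fraction=0.3)

train_scaffolds = {scaffolds[i] for i in train_idx}
test_scaffolds = {scaffolds[i] for i in test_idx}
print("train:", train_idx, sorted(train_scaffolds))
print("test: ", test_idx, sorted(test_scaffolds))
```

Because whole scaffold groups are assigned to one side, the realized test fraction can deviate from the requested one when groups are large; a random split has no such constraint, which is part of why it tends to give more optimistic estimates.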

Validation and Interpretation Benchmarks

Beyond simple prediction accuracy, a comprehensive benchmark must assess model interpretability and robustness.

- Validation Techniques: Established protocols include internal validation (e.g., cross-validation), external validation with a held-out test set, and Y-scrambling to check for chance correlations [3]. Defining the model's Applicability Domain (AD) is crucial to understand the chemical space where its predictions are reliable [6].

- Interpretability Benchmarks: Synthetic datasets with pre-defined patterns allow for quantitative evaluation of interpretation methods. For example, benchmarks have been developed in which the target property is the number of nitrogen atoms in a molecule. The performance of an interpretation method (e.g., SHAP, LIME) is then measured by its ability to assign positive contributions to nitrogen atoms and zero contributions to all other atoms, which provides a "ground truth" for validation [7] [8].
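Of the validation techniques listed above, Y-scrambling is the easiest to illustrate. The sketch below is an assumption-laden toy: it uses a single synthetic descriptor, an ordinary least-squares line as the "model", and hypothetical helper names (`r_squared_linear`, `y_scrambling`). The idea it shows is general: refit the model on randomly permuted activities and check that the scrambled fits are clearly worse than the real one.

```python
import random
import statistics


def r_squared_linear(x, y):
    """R^2 of an ordinary least-squares line fitted to (x, y)."""
    mean_x, mean_y = statistics.fmean(x), statistics.fmean(y)
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return 1.0 - ss_res / ss_tot


def y_scrambling(x, y, n_rounds=200, seed=0):
    """Return the true R^2 and the R^2 values obtained on shuffled activities."""
    rng = random.Random(seed)
    true_r2 = r_squared_linear(x, y)
    scrambled = []
    for _ in range(n_rounds):
        y_perm = list(y)
        rng.shuffle(y_perm)  # break the descriptor-activity link
        scrambled.append(r_squared_linear(x, y_perm))
    return true_r2, scrambled


# Synthetic data: one descriptor with a genuine linear effect plus noise.
data_rng = random.Random(42)
x = [i / 10 for i in range(40)]
y = [2.0 * xi + data_rng.gauss(0, 0.3) for xi in x]

true_r2, scrambled_r2 = y_scrambling(x, y)
fraction_as_good = sum(r >= true_r2 for r in scrambled_r2) / len(scrambled_r2)
print(f"true R^2 = {true_r2:.3f}")
print(f"max scrambled R^2 = {max(scrambled_r2):.3f}")
print(f"fraction of scrambled fits as good as the real one = {fraction_as_good:.3f}")
```

If a noticeable fraction of scrambled models matched the real model, the original fit would be suspect as a chance correlation. In practice the same loop would wrap the full modeling pipeline, including descriptor selection, rather than a one-variable regression.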

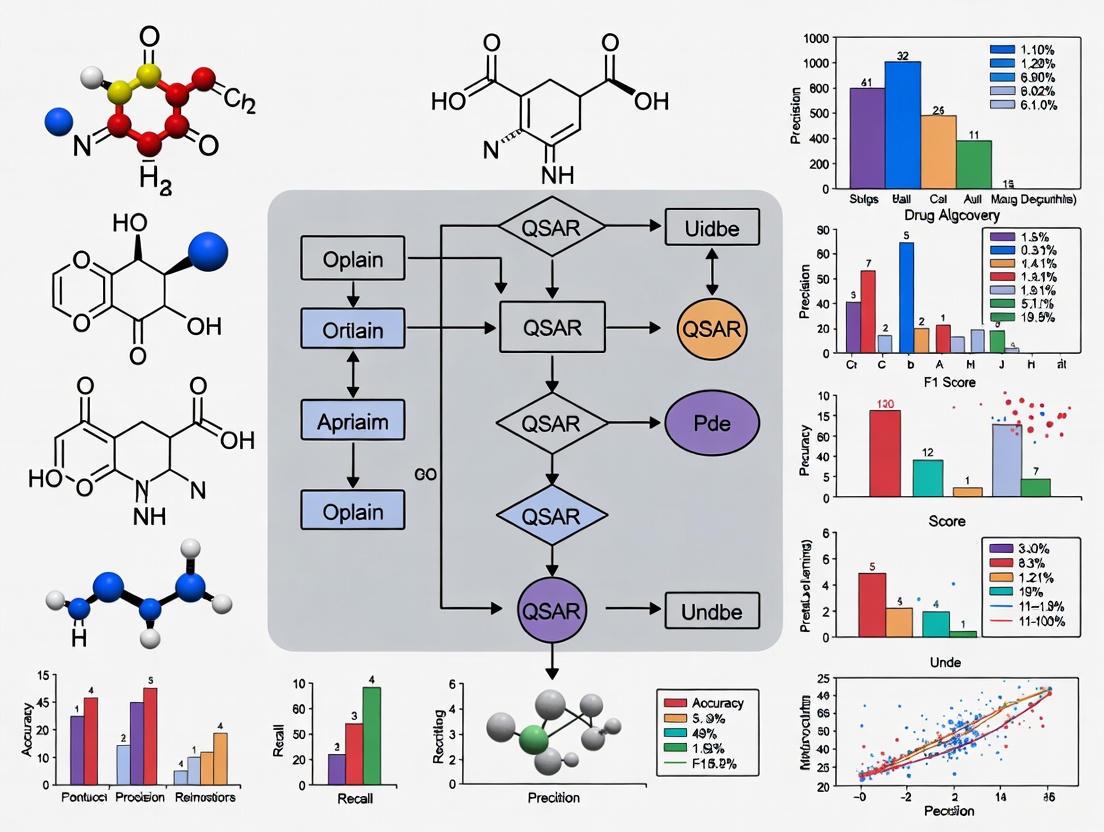

The following workflow diagram illustrates the key stages in a robust QSAR benchmarking experiment:

The Scientist's Toolkit: Essential Reagents for QSAR Research

Table 3: Key Software and Databases for Modern QSAR Research

| Tool Name | Type | Primary Function in QSAR |
|---|---|---|
| RDKit | Cheminformatics Library | An open-source toolkit for cheminformatics, used for descriptor calculation, fingerprint generation, and fundamental molecular operations [4]. |
| scikit-learn | Machine Learning Library | A comprehensive library for Python providing classical ML algorithms (RF, SVM, PLS) and utilities for model evaluation and hyperparameter tuning [1]. |
| DeepChem | Deep Learning Library | An open-source platform that simplifies the development of deep learning models on chemical data, including Graph Neural Networks [7]. |
| ChEMBL | Public Database | A manually curated database of bioactive molecules with drug-like properties, providing a vast source of experimental data for training and testing models [5]. |
| VEGA | QSAR Platform | A platform integrating various (Q)SAR models, particularly useful for regulatory applications like predicting environmental fate and toxicity [6]. |
| SHAP (SHapley Additive exPlanations) | Interpretation Tool | A unified framework for interpreting model predictions by quantifying the contribution of each feature to an individual prediction, crucial for "black box" models [1] [9]. |

The evolution of QSAR from classical MLR and PLS to modern AI is a journey from interpretable linear models to powerful, non-linear predictors. Benchmarking studies consistently show that modern AI methods, especially graph-based models, deliver superior predictive accuracy [1] [4]. However, this power comes with trade-offs: increased computational complexity, a greater risk of overfitting to training data scaffolds, and reduced inherent interpretability. The choice of model is not a simple declaration of a winner but a strategic decision. Researchers must balance the need for predictive power against the requirements for generalizability, speed, and interpretability in their specific project phase, whether that is initial high-throughput virtual screening or the detailed, mechanism-driven optimization of lead compounds. The future of QSAR lies not in a single algorithm, but in the continued development and thoughtful application of a diverse, well-understood toolkit.

In Quantitative Structure-Activity Relationship (QSAR) modeling, molecular descriptors are fundamental numerical representations that translate chemical information into a quantifiable format suitable for machine learning algorithms. These descriptors formally represent the final result of a logical and mathematical procedure that transforms chemical information encoded within a symbolic representation of a molecule into a useful number [10]. The selection of appropriate molecular representations significantly impacts model performance in predicting biological activities and physicochemical properties, making descriptor choice a critical consideration in benchmarking studies for drug discovery applications [11] [5].

Molecular descriptors are broadly classified by their dimensionality, which corresponds to the complexity of structural information they encode. This classification system ranges from simple 0D descriptors that require no structural information to sophisticated 4D descriptors that account for conformational flexibility and molecular interactions [1] [10]. As the field of computational drug discovery advances, understanding the strengths, limitations, and appropriate applications of each descriptor type becomes essential for building robust QSAR models that can reliably predict molecular properties in real-world scenarios [5] [12].

Classification and Definitions of Molecular Descriptors

0D and 1D Descriptors

0D descriptors represent the simplest form of molecular representation, requiring no information about molecular structure or atom connectivity. These descriptors are derived directly from the chemical formula and include basic molecular properties such as atom counts, molecular weight, and atom-type frequencies. For example, the chemical formula C₇H₇Cl for p-chlorotoluene provides sufficient information to calculate these descriptors. Other examples include sum or average values of atomic properties such as mass, polarizability, or hydrophobic constants. While 0D descriptors exhibit high degeneracy (equal values for different molecular structures) and contain relatively low chemical information, they provide a valuable foundation for modeling certain physicochemical properties and are computationally efficient to calculate [10].
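As a minimal sketch of the idea, the snippet below derives a few 0D descriptors from nothing but a formula string, using the p-chlorotoluene example from the text (written in plain ASCII as `C7H7Cl`). The function name `zero_d_descriptors` and the small atomic-weight table are assumptions made for this illustration; the parser handles only simple formulas without brackets or charges.

```python
import re

# Rounded standard atomic weights for a handful of common elements.
ATOMIC_WEIGHT = {
    "H": 1.008, "C": 12.011, "N": 14.007, "O": 15.999,
    "F": 18.998, "S": 32.06, "Cl": 35.45, "Br": 79.904,
}


def zero_d_descriptors(formula):
    """Compute simple 0D descriptors from a chemical formula string."""
    counts = {}
    # Each match is an element symbol followed by an optional count.
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] = counts.get(element, 0) + (int(number) if number else 1)

    n_atoms = sum(counts.values())
    mol_weight = sum(ATOMIC_WEIGHT[el] * n for el, n in counts.items())
    return {
        "atom_counts": counts,
        "n_atoms": n_atoms,
        "n_heavy_atoms": n_atoms - counts.get("H", 0),
        "mol_weight": round(mol_weight, 3),
    }


chlorotoluene = zero_d_descriptors("C7H7Cl")
print(chlorotoluene)

# Degeneracy: n-butane and isobutane share the formula C4H10, so every
# 0D descriptor is identical for the two isomers.
butane = zero_d_descriptors("C4H10")
isobutane = zero_d_descriptors("C4H10")
print(butane == isobutane)
```

The last comparison makes the degeneracy concrete: since the input is only the formula, isomers are indistinguishable by construction.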

1D descriptors incorporate substructural information through the identification of functional groups and structural fragments within the molecule. These descriptors include counts of specific functional groups (e.g., hydroxyl, carbonyl, amino groups), hydrogen bond donors and acceptors, rotatable bonds, and ring systems. The substructure list representation forms the basis for molecular fingerprints, which are binary vectors indicating the presence or absence of specific structural patterns. 1D descriptors offer more detailed structural information than 0D descriptors while remaining computationally inexpensive to generate. They are particularly valuable for rapid similarity assessments and initial screening phases in drug discovery pipelines [13] [10].
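The link between substructure lists, binary fingerprints, and rapid similarity assessment can be sketched as follows. The feature keys and the per-molecule annotations are hand-assigned and hypothetical; a real workflow would detect substructures with a cheminformatics toolkit. The Tanimoto coefficient used here is a standard similarity measure for binary fingerprints.

```python
# Hypothetical substructure keys defining the fingerprint positions.
FEATURE_KEYS = ["hydroxyl", "carbonyl", "amino", "aromatic_ring", "halogen"]


def substructure_fingerprint(present_features, feature_keys=FEATURE_KEYS):
    """Binary vector: 1 if the substructure is present, else 0."""
    return [1 if key in present_features else 0 for key in feature_keys]


def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient: shared on-bits divided by total on-bits."""
    both = sum(a & b for a, b in zip(fp_a, fp_b))
    either = sum(a | b for a, b in zip(fp_a, fp_b))
    return both / either if either else 0.0


# Hand-assigned annotations for three small molecules (illustrative only).
phenol = substructure_fingerprint({"hydroxyl", "aromatic_ring"})
aniline = substructure_fingerprint({"amino", "aromatic_ring"})
acetone = substructure_fingerprint({"carbonyl"})

print("phenol :", phenol)
print("aniline:", aniline)
print("phenol vs aniline:", round(tanimoto(phenol, aniline), 3))
print("phenol vs acetone:", round(tanimoto(phenol, acetone), 3))
```

Phenol and aniline share the aromatic ring but differ in the substituent, so one of three on-bits is common; phenol and acetone share nothing.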

2D Descriptors

2D descriptors, also known as topological descriptors, are derived from the molecular graph representation that defines atom connectivity without considering spatial coordinates. In this representation, atoms correspond to vertices and bonds to edges in a graph structure. These descriptors are graph invariants that capture structural patterns through mathematical transformations of the molecular connectivity matrix. Common 2D descriptors include connectivity indices, path counts, graph-theoretical indices, and information-theoretic measures that encode molecular branching, shape, and complexity [13] [10].

The advantage of 2D descriptors lies in their ability to discriminate between structural isomers while remaining independent of molecular conformation. They provide a balanced approach between informational content and computational requirements, making them widely applicable across diverse QSAR modeling scenarios. Popular software packages such as Dragon and RDKit can calculate comprehensive sets of 2D descriptors from molecular structure inputs [10] [12].
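A small worked example shows how a graph invariant separates structural isomers. The Wiener index, a classic topological index, is the sum of shortest-path distances over all pairs of atoms in the hydrogen-suppressed molecular graph. The adjacency dictionaries below are written by hand for n-butane and isobutane, and the function name `wiener_index` is chosen for this sketch.

```python
from collections import deque


def wiener_index(adjacency):
    """Sum of shortest-path lengths over all unordered atom pairs."""
    total = 0
    for source in adjacency:
        # Breadth-first search gives shortest bond-count distances.
        dist = {source: 0}
        queue = deque([source])
        while queue:
            node = queue.popleft()
            for neighbor in adjacency[node]:
                if neighbor not in dist:
                    dist[neighbor] = dist[node] + 1
                    queue.append(neighbor)
        total += sum(dist.values())
    # Every pair was counted twice (once from each end).
    return total // 2


# Hydrogen-suppressed graphs: carbon atoms as vertices, C-C bonds as edges.
n_butane = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}    # C-C-C-C chain
isobutane = {0: [1, 2, 3], 1: [0], 2: [0], 3: [0]}   # central C with three methyls

print("n-butane :", wiener_index(n_butane))
print("isobutane:", wiener_index(isobutane))
```

Both molecules have the formula C4H10, so their 0D descriptors coincide, yet the more compact, branched isomer has the smaller Wiener index. No 3D coordinates are needed, which is why such indices are independent of conformation.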

3D Descriptors

3D descriptors incorporate spatial molecular geometry by utilizing the three-dimensional coordinates of atoms within a molecule. These descriptors capture steric and electronic features that influence molecular interactions and biological activity, including molecular surface area, volume, shape parameters, and electrostatic potential distributions. Unlike 2D descriptors, 3D representations can distinguish between stereoisomers and account for conformational effects that significantly impact biological activity [14] [10].

The calculation of 3D descriptors requires energy-minimized molecular structures, which introduces computational complexity and potential uncertainties related to conformational sampling. Despite these challenges, 3D descriptors provide enhanced performance for modeling endpoints strongly influenced by molecular shape and steric factors. Common approaches for 3D similarity assessment include volume overlap methods (e.g., ROCS), surface-based comparisons, and field-based techniques that evaluate molecular interaction potentials [14].

Graph-Based Representations

Graph-based representations directly utilize the molecular graph structure as input for machine learning algorithms, particularly graph neural networks (GNNs). In this approach, atoms are represented as nodes (with features such as element type, hybridization, and charge), while bonds are represented as edges (with features such as bond type and conjugation). Message Passing Neural Networks (MPNNs) and other GNN architectures then learn molecular representations by iteratively exchanging information between connected atoms, effectively capturing complex structural patterns without manual feature engineering [11] [15].
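The message-passing idea can be reduced to a toy sketch. The code below is deliberately simplified and is not an MPNN implementation: it has no learned weights, no nonlinearity, and no edge features, and the function names are invented for this example. It only shows the mechanics of one round of neighbor aggregation followed by a sum readout, using the heavy atoms of ethanol (C-C-O) with one-hot element features.

```python
def message_passing_step(node_features, edges):
    """One round of sum aggregation: each atom adds its neighbors' features.

    A trained GNN would apply learned transformations here; this sketch
    keeps only the information flow along bonds.
    """
    n_nodes = len(node_features)
    dim = len(node_features[0])
    messages = [[0.0] * dim for _ in range(n_nodes)]
    for a, b in edges:  # undirected bonds: information flows both ways
        for k in range(dim):
            messages[a][k] += node_features[b][k]
            messages[b][k] += node_features[a][k]
    return [
        [h + m for h, m in zip(node_features[i], messages[i])]
        for i in range(n_nodes)
    ]


def readout(node_features):
    """Sum atom vectors into a single molecule-level vector."""
    return [sum(column) for column in zip(*node_features)]


# Ethanol heavy atoms: C(0)-C(1)-O(2); features are [is_carbon, is_oxygen].
atoms = [[1, 0], [1, 0], [0, 1]]
bonds = [(0, 1), (1, 2)]

updated = message_passing_step(atoms, bonds)
molecule_vector = readout(updated)
print("updated atom features:", updated)
print("molecule vector:", molecule_vector)
```

After one step the two carbons, which started with identical features, are already distinguishable because only one of them is bonded to oxygen. Repeating the step lets each atom see progressively larger neighborhoods.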

Graph-based methods have demonstrated state-of-the-art performance across various molecular property prediction benchmarks, as they naturally represent molecular topology and can learn task-specific representations directly from data. The Directed Message Passing Neural Network (DMPNN, also written D-MPNN) architecture has shown particular promise in molecular property prediction challenges, often outperforming traditional descriptor-based approaches when sufficient training data is available [16] [15].

Performance Benchmarking Across Descriptor Types

Comparative Performance in ADME-Tox Prediction

Recent benchmarking studies provide quantitative comparisons of descriptor performance across critical ADME-Tox (Absorption, Distribution, Metabolism, Excretion, and Toxicity) endpoints. These evaluations reveal consistent patterns in descriptor effectiveness for different prediction tasks, highlighting the importance of strategic descriptor selection based on the specific modeling objective and available data characteristics [12].

Table 1: Performance Comparison of Descriptor Types Across ADME-Tox Targets

| ADME-Tox Target | Best Performing Descriptor | Algorithm | Key Finding |
|---|---|---|---|
| Ames Mutagenicity | 2D Descriptors | XGBoost | Superior to combined descriptors |
| P-glycoprotein Inhibition | 2D Descriptors | XGBoost | Superior to combined descriptors |
| hERG Inhibition | 2D Descriptors | XGBoost | Superior to combined descriptors |
| Hepatotoxicity | 2D Descriptors | XGBoost | Superior to combined descriptors |
| Blood-Brain Barrier Permeability | 2D Descriptors | XGBoost | Superior to combined descriptors |
| CYP 2C9 Inhibition | 2D Descriptors | XGBoost | Superior to combined descriptors |
| General ADMET | 3D Descriptors + Morgan Fingerprints | Optimized Models | Best overall performance [11] |

A comprehensive assessment of descriptor performance across six ADME-Tox targets revealed that traditional 2D descriptors consistently outperformed fingerprint-based representations and their combinations when used with the XGBoost algorithm. Surprisingly, 2D descriptors achieved better performance than models using all examined descriptor sets combined for almost every dataset, challenging the conventional practice of concatenating multiple representations without systematic optimization [12].

For specific ADMET prediction tasks, optimized combinations of descriptors and algorithms have demonstrated superior performance. A recent benchmarking study highlighted that careful feature selection and model optimization can significantly enhance prediction accuracy, with 3D descriptors and Morgan fingerprints contributing to top-performing models for various ADMET endpoints [11].

Performance Across Machine Learning Algorithms

Descriptor performance exhibits significant dependence on the machine learning algorithm employed, with different algorithms showing distinct preferences for specific descriptor types based on their underlying learning mechanisms and the characteristics of the chemical space being modeled.

Table 2: Algorithm-Descriptor Performance Interactions

| Algorithm | Best Performing Descriptor Type | Application Context | Performance Notes |
|---|---|---|---|
| XGBoost | 2D Descriptors | ADME-Tox Classification | Consistent superiority across targets [12] |
| RPropMLP | 3D Descriptors | Specific ADME-Tox Targets | Competitive with 2D descriptors [12] |
| Random Forest | Morgan Fingerprints | General Molecular Properties | Robust performance [16] |
| Graph Neural Networks | Graph Representations | Binding Affinity Prediction | State-of-the-art with sufficient data [16] |
| k-Nearest Neighbors | Compression-Based (MolZip) | Limited Data Scenarios | Competitive with fingerprints [16] |
| Support Vector Machines | Extended Connectivity Fingerprints | Various Molecular Properties | Good performance with balanced data [16] |

The interaction between algorithm choice and descriptor selection highlights the importance of considering both components simultaneously during model optimization. Tree-based methods like XGBoost and Random Forest demonstrate robust performance with traditional 2D descriptors and fingerprints, while neural network architectures often achieve superior results with learned representations from graph-based inputs or specialized descriptor sets [12] [16].

Experimental Protocols and Benchmarking Methodologies

Standardized Benchmarking Workflow

Robust evaluation of molecular descriptors requires carefully designed experimental protocols that account for dataset characteristics, model selection, and performance validation. The following workflow diagram illustrates a comprehensive benchmarking methodology derived from recent ADMET prediction studies:

This systematic approach ensures fair comparison between descriptor types by controlling for confounding factors such as data quality, model architecture, and evaluation metrics. The workflow emphasizes the importance of statistical hypothesis testing alongside conventional performance metrics to establish significant differences between descriptor combinations [11].

Data Curation and Preprocessing Protocols

High-quality data curation forms the foundation of reliable descriptor benchmarking. Standardized protocols include:

- Salt Removal and Standardization: Extraction of parent organic compounds from salt complexes using tools like the standardisation tool by Atkinson et al. with modifications to include boron and silicon as organic elements [11].

- Tautomer Normalization: Consistent representation of tautomeric forms to ensure standardized descriptor calculation [11].

- Duplicate Handling: Removal of inconsistent duplicate measurements while retaining consistent duplicates based on predefined criteria (exact match for classification, within 20% IQR for regression) [11].

- Structural Filtering: Application of heavy atom and element filters (C, H, N, O, S, P, F, Cl, Br, I) to ensure chemical relevance and computational stability [12].

- Geometry Optimization: Generation of 3D structures using tools like Macromodel (Schrödinger suite), followed by energy minimization to obtain low-energy conformations for 3D descriptor calculation [12].

These preprocessing steps address common data quality issues in public chemical databases, including inconsistent SMILES representations, fragmented structures, duplicate measurements with conflicting values, and inconsistent labeling across training and test sets [11].
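The duplicate-handling rule from the list above can be sketched as follows. The interpretation is an assumption of this example, not a confirmed detail of the cited protocol: for regression, a compound's repeated measurements are treated as consistent when their spread is at most 20% of the interquartile range (IQR) of all values in the dataset, and consistent duplicates are merged by averaging; for classification, duplicates are kept only when every label matches. Function names and data are hypothetical.

```python
import statistics
from collections import defaultdict


def merge_regression_duplicates(records, tolerance=0.2):
    """Keep compounds whose repeated values agree within tolerance * IQR.

    `records` is a list of (compound_id, value) pairs. Consistent
    duplicates are averaged (an assumed merge rule); others are dropped.
    """
    all_values = [value for _, value in records]
    q1, _, q3 = statistics.quantiles(all_values, n=4)
    max_spread = tolerance * (q3 - q1)

    groups = defaultdict(list)
    for compound_id, value in records:
        groups[compound_id].append(value)

    return {
        compound_id: statistics.fmean(values)
        for compound_id, values in groups.items()
        if max(values) - min(values) <= max_spread
    }


def merge_classification_duplicates(records):
    """Keep compounds whose repeated labels are all identical."""
    groups = defaultdict(set)
    for compound_id, label in records:
        groups[compound_id].add(label)
    return {
        compound_id: next(iter(labels))
        for compound_id, labels in groups.items()
        if len(labels) == 1
    }


# Hypothetical measurements: A is measured twice consistently, B is not.
regression_records = [
    ("A", 5.0), ("A", 5.1), ("B", 6.0), ("B", 8.0), ("C", 7.0), ("D", 4.0),
]
classification_records = [("A", 1), ("A", 1), ("B", 0), ("B", 1), ("C", 0)]

clean_regression = merge_regression_duplicates(regression_records)
clean_classification = merge_classification_duplicates(classification_records)
print(clean_regression)
print(clean_classification)
```

Compound B is removed in both cases because its repeated measurements conflict, while compound A survives with a single merged value.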

Evaluation Metrics and Validation Strategies

Comprehensive benchmark studies employ multiple evaluation metrics to assess different aspects of model performance:

- Classification Tasks: Accuracy, sensitivity, specificity, Matthews Correlation Coefficient (MCC), AUC-ROC

- Regression Tasks: R², Mean Absolute Error (MAE), Root Mean Square Error (RMSE)

- Model Robustness: Q² (cross-validated R²), external validation performance, learning curves
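For reference, several of the metrics listed above can be computed directly from their definitions. The sketch below uses only the standard library and made-up labels and predictions; libraries such as scikit-learn provide equivalent, better-tested implementations.

```python
import math


def mcc(y_true, y_pred):
    """Matthews Correlation Coefficient for binary labels (0/1)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom else 0.0


def regression_metrics(y_true, y_pred):
    """Return MAE, RMSE, and R^2 for paired true and predicted values."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    ss_res = sum(e ** 2 for e in errors)
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return {
        "mae": sum(abs(e) for e in errors) / n,
        "rmse": math.sqrt(ss_res / n),
        "r2": 1.0 - ss_res / ss_tot,
    }


# Made-up imbalanced classification example: 3 actives, 5 inactives.
labels = [1, 1, 1, 0, 0, 0, 0, 0]
predicted = [1, 1, 0, 0, 0, 0, 0, 1]
accuracy = sum(t == p for t, p in zip(labels, predicted)) / len(labels)
print(f"accuracy = {accuracy:.3f}, MCC = {mcc(labels, predicted):.3f}")

# Made-up regression example.
reg = regression_metrics([1.0, 2.0, 3.0, 4.0], [1.5, 2.0, 2.5, 4.0])
print(reg)
```

In the classification example accuracy is 0.75 while MCC is about 0.47, which illustrates why MCC is often preferred for imbalanced data: it accounts for all four cells of the confusion matrix.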

Advanced validation strategies include scaffold splitting to assess generalization to novel chemotypes, temporal splitting to simulate real-world application scenarios, and cross-validation with statistical testing to establish significant performance differences [11] [5]. The integration of hypothesis testing with conventional cross-validation provides enhanced reliability in model selection, particularly in noisy domains like ADMET prediction [11].
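One way to combine cross-validation with hypothesis testing is a paired test on per-fold scores. The sketch below computes a paired t-statistic for two descriptor sets evaluated on the same five folds. The fold scores are invented, the choice of a paired t-test is an assumption of this example rather than the procedure of the cited study, and the critical value 2.776 is the two-sided 5% threshold of Student's t distribution with 4 degrees of freedom.

```python
import math
import statistics


def paired_t_statistic(scores_a, scores_b):
    """t-statistic for the mean per-fold difference between two models."""
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    mean_diff = statistics.fmean(diffs)
    std_err = statistics.stdev(diffs) / math.sqrt(len(diffs))
    return mean_diff / std_err


# Invented AUC scores on the same five cross-validation folds.
auc_2d_descriptors = [0.81, 0.79, 0.83, 0.80, 0.82]
auc_fingerprints = [0.78, 0.77, 0.80, 0.79, 0.79]

t_stat = paired_t_statistic(auc_2d_descriptors, auc_fingerprints)
T_CRITICAL_DF4 = 2.776  # two-sided, alpha = 0.05, 4 degrees of freedom

print(f"t = {t_stat:.2f}")
print("significant at 5%:", abs(t_stat) > T_CRITICAL_DF4)
```

Cross-validation folds share training data, so the scores are not fully independent and a plain paired t-test tends to be optimistic. Corrected resampled tests or non-parametric alternatives are commonly used for that reason.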

Essential Research Reagents and Computational Tools

Software and Libraries for Descriptor Calculation

Table 3: Essential Computational Tools for Molecular Descriptor Research

| Tool Name | Descriptor Types | Primary Function | Application Context |
|---|---|---|---|
| RDKit | 2D, 3D, Fingerprints | Cheminformatics Platform | Standard for descriptor calculation [11] [12] |
| Dragon | 1D, 2D, 3D | Comprehensive Descriptor Calculation | Gold standard for traditional descriptors [10] |
| PaDEL | 1D, 2D | Descriptor Calculation | Alternative to Dragon [1] |
| Chemprop | Graph Representations | Message Passing Neural Networks | State-of-the-art GNN implementation [11] |
| Schrödinger Suite | 3D | Molecular Modeling & Optimization | Industry-standard for 3D structure preparation [12] |
| scikit-learn | N/A | Machine Learning Algorithms | Standard ML implementations [16] |
| MolZip | Compression-Based | Novel Representation Learning | Alternative approach for limited data [16] |

These tools represent the essential software infrastructure for calculating molecular descriptors and building predictive QSAR models. RDKit has emerged as the de facto standard for open-source cheminformatics, providing comprehensive implementation of 2D descriptors, 3D descriptors, and molecular fingerprints. Commercial packages like Dragon offer the most extensive collections of molecular descriptors, with thousands of calculated parameters spanning multiple dimensions of chemical information [10] [12].

Specialized implementations like Chemprop provide optimized graph neural network architectures that directly learn from molecular graph representations, while novel approaches like MolZip explore alternative paradigms using compressed molecular representations that can achieve competitive performance without extensive training [11] [16].

Benchmark Datasets and Chemical Spaces

Robust evaluation of molecular descriptors requires diverse chemical benchmarks that represent real-world application scenarios:

- Therapeutics Data Commons (TDC): Curated ADMET benchmarks with standardized splits and evaluation protocols [11]

- MoleculeNet: Comprehensive collection of molecular property datasets for benchmarking machine learning algorithms [16]

- ChEMBL: Large-scale bioactivity data from scientific literature, enabling practical benchmark construction [5]

- PubChem: Publicly available screening data for specific targets like CYP isoforms [12]

The CARA (Compound Activity benchmark for Real-world Applications) framework addresses important distinctions between virtual screening (VS) and lead optimization (LO) scenarios, which present different compound distribution patterns and modeling challenges [5]. This differentiation is crucial for meaningful descriptor evaluation, as performance characteristics may vary significantly between these distinct application contexts.

The benchmarking evidence consistently demonstrates that 2D descriptors provide robust performance across diverse ADME-Tox prediction tasks, particularly when paired with tree-based algorithms like XGBoost. Their computational efficiency, structural interpretability, and strong predictive performance make them a practical choice for many QSAR applications. However, 3D descriptors and graph-based representations offer complementary advantages for specific endpoints influenced by molecular shape and stereochemistry, particularly as data availability increases [12] [11].

Future research directions include the development of optimized descriptor selection methodologies that move beyond conventional concatenation approaches, adaptive representation strategies that dynamically adjust to specific prediction tasks and data characteristics, and integrated multi-scale representations that combine the strengths of different descriptor types while mitigating their individual limitations [11] [15]. The integration of domain knowledge with data-driven representation learning continues to offer promising pathways for enhancing molecular property prediction in real-world drug discovery applications.

Quantitative Structure-Activity Relationship (QSAR) modeling represents a cornerstone of modern computational drug discovery and toxicology, enabling researchers to predict the biological activity or toxicity of compounds from their chemical structures. Over recent decades, the field has undergone a significant evolution, transitioning from classical statistical approaches to increasingly sophisticated machine learning (ML) algorithms. Among these, Random Forest and Support Vector Machines (SVM) have established themselves as reliable, high-performing classical methods. More recently, Graph Neural Networks (GNNs) have emerged as a powerful deep learning approach capable of learning directly from molecular graph structures. This guide provides an objective comparison of these algorithms' performance, experimental protocols, and applicability within QSAR modeling, framed within the broader context of benchmarking for pharmaceutical and toxicological research.

Algorithm Performance Comparison in QSAR Tasks

The performance of machine learning algorithms varies significantly across different QSAR tasks, dataset sizes, and evaluation metrics. The tables below summarize quantitative performance data from recent studies, providing a benchmark for algorithm selection.

Table 1: Overall Performance Comparison Across Diverse QSAR Tasks

| Algorithm | Best Reported Performance | Key Strengths | Common Challenges | Ideal Use Cases |
|---|---|---|---|---|
| Random Forest | R² = 0.835 [17] | Robust to noise, provides feature importance, handles non-linear relationships | Can still overfit small or very noisy datasets, less interpretable than linear models | Lead optimization, medium-sized datasets, feature selection [17] [1] |
| SVM | Accuracy = 0.862 [18] | Effective in high-dimensional spaces, strong theoretical foundations | Performance depends on kernel choice; memory-intensive for large datasets | Virtual screening, binary classification tasks [18] [1] |
| GNN | High Explainability & Predictivity scores [19] | Learns molecular representations directly from graphs, superior for activity cliffs | "Black-box" nature, high computational resource demand, requires large data | Complex pattern recognition, explainable AI tasks, activity cliff prediction [19] |

Table 2: Performance on Specific QSAR Benchmarks

| Algorithm | Dataset / Task | Performance Metric | Result | Context & Notes |

|---|---|---|---|---|

| Random Forest | Anticancer Flavones (MCF-7 Cell Line) [17] | R² (Test Set) | 0.820 | Superior performance vs. XGBoost and ANN on this dataset |

| Random Forest | Anticancer Flavones (HepG2 Cell Line) [17] | R² (Test Set) | 0.835 | Demonstrated consistent accuracy across cell lines |

| SVM | World Happiness Index (Classification) [18] | Accuracy | 86.2% | Non-chemical tabular dataset, so not a QSAR benchmark; tied with Logistic Regression, Decision Tree, and Neural Network for top performance |

| Consensus Model | Rat Acute Oral Toxicity (CCM) [20] | Under-prediction Rate | 2% | Most health-protective model; combines multiple models |

| GNN (ACES-GNN) | 30 Pharmacological Targets [19] | Improved Explainability | 28/30 datasets | Framework integrates explanation supervision into training |

| GNN (ACES-GNN) | 30 Pharmacological Targets [19] | Improved Predictivity & Explainability | 18/30 datasets | Shows positive correlation between prediction accuracy and explanation quality |

Experimental Protocols and Methodologies

Benchmarking Frameworks and Data Sourcing

Robust benchmarking of QSAR models requires carefully designed frameworks that reflect real-world challenges. The CARA (Compound Activity benchmark for Real-world Applications) benchmark addresses this by distinguishing between two primary drug discovery tasks: Virtual Screening (VS) and Lead Optimization (LO) [5]. VS assays involve screening large, diverse compound libraries, resulting in datasets with "diffused" molecular patterns. In contrast, LO assays involve optimizing a lead compound, resulting in datasets with "aggregated" patterns of highly similar, congeneric compounds [5]. This distinction is critical, as an algorithm may excel in one task but underperform in the other. Performance evaluation must also adapt to the task: for VS, the Positive Predictive Value (PPV)—the hit rate within the top-ranked compounds—is often more relevant than balanced accuracy, as it reflects the practical constraint of being able to test only a limited number of compounds experimentally [21].
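
To make the top-ranked PPV idea concrete, the following is a minimal sketch of a hit-rate-at-k calculation. The function name `hit_rate_at_k` and the toy scores and labels are illustrative assumptions, not part of CARA or of any cited study.

```python
def hit_rate_at_k(scores, labels, k):
    """Fraction of true actives among the k highest-scoring compounds."""
    if k <= 0 or k > len(scores):
        raise ValueError("k must be between 1 and the number of compounds")
    # Rank compound indices by predicted score, highest first.
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    top = ranked[:k]
    return sum(labels[i] for i in top) / k


# Toy data (hypothetical): predicted scores and labels (1 = active, 0 = inactive).
scores = [0.91, 0.15, 0.78, 0.40, 0.66, 0.05]
labels = [1, 0, 0, 0, 1, 0]
print(hit_rate_at_k(scores, labels, k=3))  # two of the top three are active
```

In practice, k would be set to the number of compounds that can actually be tested, so the metric mirrors the experimental budget.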

Algorithm Training and Validation Protocols

Data Preprocessing and Feature Selection: For classical ML algorithms like Random Forest and SVM, molecular structures are typically converted into numerical descriptors (e.g., physicochemical properties, topological indices) or fingerprints (e.g., ECFP). Dimensionality reduction techniques like Principal Component Analysis (PCA) are often applied to avoid overfitting [1]. For GNNs, this step is automated, as the model learns features directly from the graph representation of the molecule, where atoms are nodes and bonds are edges [19].

Model Validation and Performance Metrics: Rigorous validation is essential. Standard practice involves splitting data into training, validation, and external test sets. For regression tasks (e.g., predicting IC₅₀ values), common metrics include the coefficient of determination (R²) and Root Mean Square Error (RMSE). For classification tasks (e.g., active/inactive), metrics include accuracy, precision, recall, and the area under the receiver operating characteristic curve (AUROC) [17] [1]. As previously mentioned, PPV is gaining traction for evaluating virtual screening performance [21].
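
As a reference for the regression metrics named above, here is a small sketch that computes R² and RMSE from first principles. The helper names and the toy activity values are assumptions chosen for illustration; a library implementation would normally be used instead.

```python
import math


def rmse(y_true, y_pred):
    """Root mean square error between observed and predicted values."""
    n = len(y_true)
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / n)


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Toy values (hypothetical), e.g. observed vs. predicted pIC50.
y_true = [5.0, 6.0, 7.0, 8.0]
y_pred = [5.5, 6.0, 6.5, 8.0]
print(r_squared(y_true, y_pred), rmse(y_true, y_pred))
```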

The following workflow diagram illustrates a standardized protocol for developing and benchmarking QSAR models.

The Scientist's Toolkit: Essential Research Reagents & Platforms

Successful QSAR modeling relies on a suite of software tools, databases, and computational platforms. The following table details key resources used in the field.

Table 3: Essential Research Reagents & Computational Platforms

| Tool / Resource Name | Type | Primary Function in QSAR | Relevance to Algorithms |

|---|---|---|---|

| ChEMBL [5] | Public Database | Curated database of bioactive molecules with drug-like properties | Primary source of training data for all algorithms. |

| CARA Benchmark [5] | Benchmarking Framework | Provides a standardized benchmark for VS and LO tasks | Critical for objective, real-world performance comparison of RF, SVM, and GNN. |

| SHAP [1] | Interpretation Library | Explains output of ML models by computing feature importance | Commonly applied to interpret Random Forest and SVM models. |

| ACES-GNN Framework [19] | Specialized GNN Architecture | Enhances GNN interpretability and accuracy for Activity Cliffs (ACs) | Specific implementation for GNNs, addressing the "black-box" issue. |

| OpenML [22] | Open-Science Platform | Enables sharing of datasets, tasks, and model evaluations in uniform standards | Supports reproducible benchmarking and meta-learning for all algorithms. |

| OCHEM [23] | Online Modeling Environment | Platform for building QSAR models with various descriptor packages | Used for developing consensus models; cited in large-scale toxicity prediction challenges. |

The benchmarking data and experimental protocols outlined in this guide demonstrate that there is no single "best" algorithm for all QSAR modeling scenarios. Random Forest remains a highly robust and effective choice for many standard classification and regression tasks, particularly with structured molecular descriptors. Support Vector Machines continue to offer strong performance, especially in classification tasks. The rise of Graph Neural Networks represents a significant shift, offering superior capability in learning complex structure-activity relationships directly from molecular graphs and providing insights into challenging phenomena like activity cliffs.

Future progress in the field will likely be driven by hybrid and consensus approaches that leverage the strengths of multiple algorithms [20] [23], a stronger emphasis on explainable AI (XAI) to build trust and provide actionable insights [19] [1], and the development of more sophisticated benchmarking platforms like CARA that closely mimic real-world discovery pipelines [5]. As datasets continue to grow and algorithms evolve, the objective comparison of their performance will remain fundamental to advancing QSAR research and accelerating drug discovery.

Quantitative Structure-Activity Relationship (QSAR) modeling stands as a cornerstone of modern computational drug discovery. These models mathematically link the structural and physicochemical properties of chemical compounds to their biological activity, enabling the prediction of properties for novel compounds and guiding the design of new therapeutics [24]. As chemical datasets grow in size and complexity, and machine learning (ML) algorithms become increasingly sophisticated, a rigorous and well-defined workflow is paramount for developing robust, predictive, and reliable QSAR models. This guide provides a comparative examination of the key stages in the QSAR pipeline—data curation, model building, and validation—synthesizing insights from contemporary benchmarking studies to inform researchers and drug development professionals.

Data Curation: The Foundation of Predictive Models

The adage "garbage in, garbage out" is acutely relevant to QSAR modeling. The quality of the input data directly dictates the performance and reliability of the final model [25]. Data curation is the critical first step to ensure the dataset is valid, consistent, and ready for computational analysis.

A typical curation pipeline involves several standardized steps [26] [25] [27]:

- Validation: Checking SMILES strings for syntactic and semantic correctness to ensure they represent valid chemical structures.

- Cleaning: Removing salts, neutralizing charges, and standardizing tautomeric forms to create a consistent molecular representation.

- Normalization: Applying standardized rules to handle stereochemistry and other structural features.

- Duplicate Removal: Identifying and aggregating or removing duplicate molecular structures to prevent bias. Conflicting activity values for the same structure are often resolved by removal [26].

- Activity Data Standardization: Converting all biological activities to a common unit and scale (e.g., log-transform IC50 values) [24].

Specialized tools like the MEHC-Curation Python framework have been developed to automate this intricate process, transforming it into a standardized and user-friendly operation that significantly enhances downstream model performance [25].
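
Two of the curation steps above, activity standardization and duplicate handling, can be sketched without any cheminformatics toolkit. This is not the MEHC-Curation API: the function names, the `max_spread` tolerance of one log unit, and the assumption that each structure already has a canonical key (for example a canonical SMILES) are all illustrative.

```python
import math
from collections import defaultdict


def ic50_nm_to_pic50(ic50_nm):
    """Convert an IC50 in nanomolar to pIC50 = -log10(IC50 in molar)."""
    if ic50_nm <= 0:
        raise ValueError("IC50 must be positive")
    return 9.0 - math.log10(ic50_nm)


def merge_duplicates(records, max_spread=1.0):
    """Average pIC50 per structure; drop structures whose values conflict.

    records: iterable of (structure_key, pic50) pairs.
    max_spread: hypothetical tolerance in log units between replicate values.
    """
    grouped = defaultdict(list)
    for key, value in records:
        grouped[key].append(value)
    merged = {}
    for key, values in grouped.items():
        if max(values) - min(values) <= max_spread:
            merged[key] = sum(values) / len(values)
    return merged


records = [
    ("CCO", ic50_nm_to_pic50(100.0)),          # about 7.0
    ("CCO", ic50_nm_to_pic50(200.0)),          # about 6.7, consistent replicate
    ("c1ccccc1", ic50_nm_to_pic50(10.0)),      # about 8.0
    ("c1ccccc1", ic50_nm_to_pic50(100000.0)),  # about 4.0, conflicting replicate
]
merged = merge_duplicates(records)
print(merged)
```

Whether conflicting replicates are removed or resolved some other way is a project decision; the removal shown here follows the option mentioned in the list above.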

The Critical Role of Data Splitting

Once curated, the dataset must be split into training, validation, and test sets. Benchmarking studies reveal that the splitting strategy profoundly impacts the perceived generalizability of a model. Two common approaches are [4]:

- Random Splitting: Compounds are assigned randomly to training and test sets. This often leads to optimistic performance estimates, as the test set molecules are likely structurally similar to those in the training set.

- Scaffold Splitting: The dataset is split based on molecular scaffolds (core structures), ensuring that molecules in the test set have different core frameworks from those in the training set. This is a more rigorous test of a model's ability to extrapolate to truly novel chemotypes.

A comprehensive benchmark of 13 ML models for predicting cyclic peptide permeability demonstrated that scaffold splitting "yields substantially lower model generalizability compared to random splitting," highlighting the importance of using a rigorous splitting scheme to avoid overestimating real-world performance [4].
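
The grouping logic behind scaffold splitting can be sketched as follows. The sketch assumes that one scaffold identifier per compound has already been computed elsewhere (for example, Bemis-Murcko scaffold SMILES from a cheminformatics toolkit); the function name and the rule of filling the training set with the largest scaffold groups first are illustrative choices, not the procedure used in [4].

```python
from collections import defaultdict


def scaffold_split(scaffolds, test_fraction=0.2):
    """Return (train_idx, test_idx) so that no scaffold spans both sets.

    scaffolds: one precomputed scaffold identifier per compound.
    """
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)
    # Fill the training set with the largest scaffold groups first, so that
    # rarer scaffolds tend to end up in the test set.
    ordered = sorted(groups.values(), key=len, reverse=True)
    n_train_target = len(scaffolds) - round(len(scaffolds) * test_fraction)
    train_idx, test_idx = [], []
    for members in ordered:
        if len(train_idx) + len(members) <= n_train_target:
            train_idx.extend(members)
        else:
            test_idx.extend(members)
    return train_idx, test_idx


# Placeholder scaffold labels (hypothetical) for ten compounds.
scaffolds = ["A", "A", "A", "A", "B", "B", "B", "C", "C", "D"]
train_idx, test_idx = scaffold_split(scaffolds, test_fraction=0.2)
print(train_idx, test_idx)
```

Because whole scaffold groups are assigned together, the realized test fraction only approximates the requested one.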

QSAR Model Building: A Comparative Look at Algorithms and Representations

The model-building stage involves selecting molecular representations, calculating descriptors, and choosing machine learning algorithms to establish the structure-activity relationship.

Molecular Representations and Descriptors

Molecules can be represented numerically in several ways, which in turn influences the choice of ML algorithm [4] [28]:

- Molecular Descriptors/Fingerprints: Hand-crafted numerical representations based on physicochemical knowledge (e.g., molecular weight, logP, topological indices). Morgan Fingerprints (ECFP) are a widely used type that encodes circular substructures [4] [29].

- SMILES Strings: A text-based representation of the molecular structure, allowing the application of Natural Language Processing (NLP) models like RNNs and Transformers [4].

- Molecular Graphs: Atoms are represented as nodes and bonds as edges. This representation is the foundation for Graph Neural Networks (GNNs), which have shown superior performance in recent benchmarks [4].

- 2D Molecular Images: A less common approach where SMILES strings are converted into 2D images, enabling the use of Convolutional Neural Networks (CNNs) [4].

Benchmarking Machine Learning Algorithms

The choice of algorithm depends on the problem's complexity, dataset size, and desired interpretability. A systematic benchmark of 13 AI methods for predicting cyclic peptide membrane permeability provides critical insights [4]. The study evaluated models across four representation types and three prediction tasks (regression, binary classification, and soft-label classification).

Performance Comparison of Select Model Architectures (Cyclic Peptide Permeability Prediction) [4]

| Model Category | Example Algorithms | Key Findings |

|---|---|---|

| Graph-based | DMPNN, GNNs | "Consistently achieve top performance across tasks." [4] |

| Fingerprint-based (Classical ML) | Random Forest (RF), Support Vector Machine (SVM) | "Can achieve comparable performances" to more complex models, offering a strong baseline [4]. |

| SMILES-based (NLP) | RNN, Transformer | Performance is generally lower than graph and fingerprint-based models in this specific benchmark [4]. |

| Image-based | CNN | A less explored approach; performance can be competitive but is often outmatched by other methods [4]. |

The study concluded that graph-based models, particularly the Directed Message Passing Neural Network (DMPNN), consistently achieved top performance [4]. Furthermore, it found that framing the problem as a regression task generally outperformed classification approaches [4].

Another benchmarking effort, the CARA benchmark, highlighted that optimal training strategies can differ based on the drug discovery task. For Virtual Screening (VS) assays with structurally diverse compounds, meta-learning and multi-task learning were effective. In contrast, for Lead Optimization (LO) assays involving congeneric series, training separate QSAR models on individual assays already yielded decent performance [5].

QSAR Model Validation: Assessing Predictive Power and Utility

Robust validation is non-negotiable for assessing a model's true predictive power and applicability domain. This involves both internal and external validation techniques [24].

Internal and External Validation

- Internal Validation: Uses only the training data to estimate performance, typically through k-fold Cross-Validation or Leave-One-Out Cross-Validation (LOO-CV). This helps prevent overfitting during model tuning but may yield optimistic performance estimates [24].

- External Validation: The gold standard for evaluating model performance. A fully independent test set, not used in any part of model development, is used to assess the model's ability to generalize to new data [24].
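
A minimal sketch of k-fold index generation for internal validation is shown below. It assumes the external test set has already been set aside, so `n_samples` counts training compounds only; the function name and seed handling are illustrative.

```python
import random


def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, val_idx) pairs for k-fold cross-validation."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Deal the shuffled indices into k folds of near-equal size.
    folds = [indices[i::k] for i in range(k)]
    for i, val_idx in enumerate(folds):
        train_idx = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train_idx, val_idx


splits = list(k_fold_indices(10, k=5))
for train_idx, val_idx in splits:
    print(sorted(val_idx))
```

Setting `k` equal to `n_samples` reduces this to leave-one-out cross-validation.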

Performance Metrics: Beyond Balanced Accuracy

The choice of performance metric must align with the model's intended application. A paradigm shift is occurring, particularly for models used in virtual screening [21].

- Balanced Accuracy (BA): The average of sensitivity and specificity. Traditionally, maximizing BA has been a key objective, often leading to the practice of balancing imbalanced training datasets (where inactive compounds far outnumber actives) through down-sampling [21].

- Positive Predictive Value (PPV or Precision): The proportion of predicted active compounds that are truly active. In virtual screening of ultra-large libraries, where only a tiny fraction of top-ranked compounds can be tested experimentally, PPV is a more relevant and critical metric than BA [21].

A recent study demonstrated that models trained on imbalanced datasets and selected for high PPV achieved a hit rate at least 30% higher than models trained on balanced datasets for the same number of tested compounds. This finding argues for a shift in best practices when QSAR models are developed for virtual screening rather than for lead optimization [21].
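
The difference between the two metrics is easiest to see from confusion-matrix counts. The sketch below uses invented counts for two hypothetical models on an imbalanced screen; the numbers are not taken from [21] and serve only to show that the model with the higher BA need not have the higher PPV.

```python
def balanced_accuracy(tp, fp, tn, fn):
    """Average of sensitivity and specificity."""
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return (sensitivity + specificity) / 2


def positive_predictive_value(tp, fp):
    """Proportion of predicted actives that are truly active."""
    return tp / (tp + fp)


# Hypothetical screen of 10,000 compounds containing 100 actives.
# Model A flags many compounds; model B flags few but is more precise.
model_a = dict(tp=80, fp=990, tn=8910, fn=20)
model_b = dict(tp=40, fp=60, tn=9840, fn=60)

for name, m in (("A", model_a), ("B", model_b)):
    ba = balanced_accuracy(**m)
    ppv = positive_predictive_value(m["tp"], m["fp"])
    print(name, round(ba, 3), round(ppv, 3))
```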

Experimental Protocol: Benchmarking Model Performance

To objectively compare different QSAR methodologies, as done in the cyclic peptide permeability study, a standard protocol can be followed [4]:

- Dataset Curation: Obtain a high-quality, curated dataset with reliable experimental data (e.g., from CycPeptMPDB or ChEMBL).

- Data Splitting: Apply both random and scaffold splitting strategies (e.g., 80/10/10 for train/validation/test) to assess generalizability.

- Model Training: Train a diverse set of models spanning different representations and architectures (e.g., RF, SVM, DMPNN, RNN) on the training set.

- Hyperparameter Tuning: Optimize model hyperparameters using the validation set, or cross-validation within the training data, without touching the test set.

- Performance Evaluation: Use the held-out test set to calculate key metrics. For classification, use Balanced Accuracy (BA), Area Under the ROC Curve (AUC-ROC), and Positive Predictive Value (PPV). For regression, use R² and Root Mean Square Error (RMSE).
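
For the evaluation step, AUC-ROC can be computed directly from its ranking interpretation: the probability that a randomly chosen active is scored above a randomly chosen inactive. The sketch below is a quadratic-time illustration with hypothetical scores; for large test sets a library routine would be preferable.

```python
def auc_roc(scores, labels):
    """AUROC as the chance that a random active outranks a random inactive."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one active and one inactive")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a win
    return wins / (len(pos) * len(neg))


# Toy test-set predictions (hypothetical).
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]
labels = [1, 1, 0, 1, 0, 0]
print(auc_roc(scores, labels))
```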

The Scientist's Toolkit: Essential Research Reagents

| Tool / Resource | Function / Description | Examples |

|---|---|---|

| Descriptor Calculation Software | Generates numerical descriptors from molecular structures. | PaDEL-Descriptor, DRAGON, RDKit, Mordred [24] |

| Curation & Workflow Platforms | Automates data cleaning, validation, and machine learning pipelines. | MEHC-Curation (Python) [25], KNIME workflows [26] [27] |

| Public Bioactivity Databases | Sources of experimentally measured compound activities for training and testing. | ChEMBL [30] [5], BindingDB [30], PubChem [5] |

| Machine Learning Libraries | Provides implementations of algorithms for model building. | scikit-learn (RF, SVM), Deep Graph Library (GNNs), TensorFlow/PyTorch |

The integrated QSAR workflow, from rigorous data curation to thoughtful model building and stringent validation, is essential for developing predictive computational tools in drug discovery. Benchmarking studies consistently show that graph-based models like DMPNN are top performers, and that regression can be more effective than classification for certain tasks. Most importantly, the field is moving towards application-specific validation; prioritizing Positive Predictive Value (PPV) over Balanced Accuracy is crucial for virtual screening applications where the goal is to maximize the yield of true active compounds from a small set of experimental tests. By adhering to these principles and leveraging the growing toolkit of automated workflows and benchmarks, researchers can more reliably harness QSAR modeling to accelerate drug discovery.

Building and Applying Robust QSAR Models: Methods and Real-World Use Cases

The selection of molecular feature representations is a critical first step in building Quantitative Structure-Activity Relationship (QSAR) models for drug discovery. These representations transform chemical structures into numerical vectors that machine learning algorithms can process. The three primary categories of molecular features include expert-designed fingerprints, molecular descriptors, and deep-learned embeddings, each with distinct strengths and limitations. For researchers and drug development professionals, understanding the performance characteristics of these representations across various benchmarking scenarios is essential for developing predictive and robust models. This guide provides a comprehensive comparison based on current experimental studies to inform optimal feature selection for QSAR research.

Molecular Feature Representation Types

Expert-Designed Fingerprints

Molecular fingerprints are bit or count vectors that encode the presence or absence of specific structural patterns or substructures within a molecule. They are categorized based on their algorithmic foundations:

- Circular Fingerprints (e.g., ECFP, FCFP, Morgan): Generate molecular features by considering the circular neighborhood around each atom at different radii, dynamically creating fragment identifiers without relying on a predefined fragment list [31] [32].

- Substructure-based Fingerprints (e.g., MACCS, PubChem): Use a predefined dictionary of structural fragments or keys; each bit in the fingerprint corresponds to the presence or absence of one of these specific substructures [33] [32].

- Path-based Fingerprints (e.g., AtomPair, Daylight): Analyze linear paths or the shortest distances between pairs of atoms in the molecular graph, hashing these paths into a fixed-size vector [12] [32].

- Pharmacophore Fingerprints (e.g., PH2, PH3): Encode atoms based on pharmacophore features (e.g., hydrogen bond donor/acceptor) and capture pairs or triplets of these features, representing potential molecular interaction patterns [32].
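
The step shared by hashed fingerprints, mapping an open-ended set of fragment identifiers onto a fixed-size bit vector, can be illustrated with a toy example. The fragment strings below are placeholders rather than output from a real enumeration, and `zlib.crc32` is a stand-in hash chosen for determinism; actual toolkits use their own fragment definitions and hashing schemes.

```python
import zlib


def fold_fragments(fragments, n_bits=1024):
    """Hash fragment identifiers into a fixed-length binary fingerprint."""
    bits = [0] * n_bits
    for fragment in fragments:
        # Map each fragment to a bit position; distinct fragments can collide.
        position = zlib.crc32(fragment.encode("utf-8")) % n_bits
        bits[position] = 1
    return bits


# Hypothetical fragment strings, as if enumerated from a molecular graph.
fragments = ["C-C", "C-O", "C-C-O"]
fp = fold_fragments(fragments, n_bits=64)
print(sum(fp), "bits set out of", len(fp))
```

Shorter vectors increase the chance of collisions between unrelated fragments, which is one reason the bit length is treated as a parameter to report.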

Molecular Descriptors

Molecular descriptors are numerical values representing theoretical or experimental physicochemical properties of a compound. They are traditionally categorized by dimensionality [12]:

- 1D Descriptors: Global molecular properties such as molecular weight, atom count, and logP.

- 2D Descriptors: Topological descriptors derived from the molecular graph, including connectivity indices and topological polar surface area (TPSA).

- 3D Descriptors: Geometrical descriptors based on the three-dimensional conformation of a molecule, such as steric or electrostatic field values as used in 3D-QSAR approaches [34].

Deep-Learned Embeddings

Deep-learned embeddings are continuous vector representations of molecules generated by deep learning models, often in a task-specific or self-supervised manner:

- Graph Neural Networks (GNNs): Learn directly from the molecular graph structure, where nodes represent atoms and edges represent bonds. GNNs update atom representations by aggregating information from their neighbors [33] [31].

- SMILES-Based Embeddings: Models like BERT or CNNs process Simplified Molecular-Input Line-Entry System (SMILES) strings as textual data, learning representations either from the character sequence or tokenized substructures [31] [35].

- Unsupervised Embeddings (e.g., Mol2vec): Generate continuous vectors for molecules by applying word embedding algorithms like Word2vec to molecular substructures, creating representations independent of downstream prediction tasks [31].

The following diagram illustrates the workflow for generating and utilizing these different representations in a QSAR modeling pipeline.

Performance Comparison in QSAR Modeling

Benchmarking on Diverse Molecular Property Prediction Tasks

Extensive benchmarking studies across various molecular property prediction tasks reveal how representation choice impacts model performance. The table below summarizes key findings from large-scale comparative analyses.

Table 1: Performance Comparison of Molecular Representations Across Benchmarking Studies

| Representation Category | Specific Type | Reported Performance Advantages | Key Limitations |

|---|---|---|---|

| Traditional Fingerprints | MACCS Keys | Competitive performance in many classification tasks, high interpretability [36]. | Limited structural resolution due to small size (166 bits). |

| Traditional Fingerprints | Circular (ECFP) | Considered state-of-the-art for drug-like molecules, strong in virtual screening [36] [32]. | May underperform on complex natural products [32]. |

| Traditional Fingerprints | Path-based (AtomPair) | Good performance in specific ADME-Tox targets [12]. | Performance varies significantly with dataset and target [12]. |

| Molecular Descriptors | 1D & 2D Descriptors | Superior for predicting physical properties (e.g., solubility, melting point) [36]. | Require careful curation and removal of correlated descriptors [12]. |

| Molecular Descriptors | 3D Descriptors | Provide complementary information on shape and electrostatics for binding affinity prediction [34]. | Computationally intensive, conformation-dependent [34]. |

| Deep-Learned Embeddings | Graph Neural Networks (GNNs) | Outperform other methods in taste prediction tasks; learn rich structural features directly from graphs [33]. | Data-hungry; can be outperformed by simpler methods on small datasets [31]. |

| Deep-Learned Embeddings | SMILES-Based (e.g., BERT) | Can capture contextual semantic information from SMILES strings [35]. | Performance highly dependent on pre-training corpus and tokenization strategy [35]. |

| Deep-Learned Embeddings | Unsupervised (e.g., Mol2vec) | Competitive performance in some regression and classification tasks [31]. | Tend to underperform supervised embeddings and traditional representations [37] [31]. |

Impact of Dataset Size and Composition

The optimal choice of molecular representation is highly dependent on the size and nature of the training dataset:

Low-Data Regimes: In scenarios with limited training data (e.g., fewer than 5,000 compounds), traditional fingerprints and molecular descriptors typically outperform deep-learned representations. For instance, one benchmarking study noted that "traditional fingerprints tend to outperform learned representations in low data scenarios" [31]. Similarly, quantum machine learning classifiers have shown advantages over classical ones specifically when the number of training samples and features is limited [38].

High-Data Regimes: With larger datasets (e.g., >20,000 compounds), the performance gap narrows, and deep learning methods often become competitive. End-to-end deep learning models demonstrate "comparable performance to, and at times surpass, that of models trained on molecular fingerprints" when sufficient data is available [31].

Specialized Chemical Spaces: Representation performance can vary significantly across different chemical domains. For natural products, which possess distinct structural characteristics (e.g., higher stereochemical complexity), certain path-based and pharmacophore fingerprints can match or exceed the performance of ECFP, the de facto standard for drug-like compounds [32].

Consensus and Hybrid Modeling

Combining different feature representations seems intuitively beneficial, but experimental evidence presents a nuanced picture:

Limited Consensus Benefits: A comprehensive comparison concluded that "combining different molecular feature representations typically does not give a noticeable improvement in performance compared to individual feature representations" [36]. This suggests significant information overlap between different representation types.

Notable Exceptions: In specific applications, carefully designed hybrid models can yield benefits. For taste prediction, a "molecular fingerprints + GNN consensus model" emerged as the top performer, indicating the complementary strengths of expert-designed features and learned representations in this domain [33].

Experimental Protocols for Performance Evaluation

Standardized Benchmarking Methodology

To ensure fair and reproducible comparison of molecular representations, researchers should adhere to a standardized experimental protocol:

Data Curation and Splitting

- Source: Utilize standardized public datasets like ChemTastesDB [33], ChEMBL [31], or MoleculeNet benchmarks [36].

- Preprocessing: Apply consistent structure standardization (salt removal, neutralization, stereochemistry handling) using toolkits like RDKit or the ChEMBL structure pipeline [31] [32].

- Splitting: Implement rigorous train/validation/test splits (common ratios: 70/10/20 or 80/20) with stratification to maintain class distribution, particularly for imbalanced datasets [33].
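
A stratified 70/10/20 split of the kind described above can be sketched in a few lines. The function name, the rounding rule, and the toy label vector are assumptions for illustration; the point is only that each class is shuffled and partitioned separately so that class ratios carry over to every subset.

```python
import random
from collections import defaultdict


def stratified_split(labels, fractions=(0.7, 0.1, 0.2), seed=0):
    """Return (train, val, test) index lists with class ratios preserved."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    rng = random.Random(seed)
    train, val, test = [], [], []
    for members in by_class.values():
        rng.shuffle(members)
        n_train = round(len(members) * fractions[0])
        n_val = round(len(members) * fractions[1])
        train.extend(members[:n_train])
        val.extend(members[n_train:n_train + n_val])
        test.extend(members[n_train + n_val:])  # remainder goes to the test set
    return train, val, test


# Imbalanced toy labels (hypothetical): 10 actives, 90 inactives.
labels = [1] * 10 + [0] * 90
train, val, test = stratified_split(labels)
print(len(train), len(val), len(test))
```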

Feature Generation

- Fingerprints: Generate with consistent parameters (e.g., ECFP4 with radius=2, 1024 bits) [31].

- Descriptors: Calculate comprehensive sets (e.g., using PaDEL software) followed by removal of constant and highly correlated descriptors [12] [39].

- Deep Embeddings: Use established architectures (GNNs, BERT) with standardized hyperparameters and, where applicable, leverage publicly available pre-trained models [33] [35].
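
The descriptor clean-up mentioned above (removing constant and highly correlated columns) can be sketched as a greedy filter. The 0.95 correlation cutoff, the function names, and the toy descriptor table are illustrative assumptions rather than values taken from the cited studies.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def filter_descriptors(columns, max_abs_corr=0.95):
    """Drop constant columns, then columns highly correlated with a kept one.

    columns: dict mapping descriptor name -> list of values, one per compound.
    """
    kept = []
    for name, values in columns.items():
        if max(values) == min(values):
            continue  # constant descriptor carries no information
        if any(abs(pearson(values, columns[k])) > max_abs_corr for k in kept):
            continue  # redundant with a descriptor that was already kept
        kept.append(name)
    return kept


# Toy descriptor table (hypothetical values for four compounds).
columns = {
    "mol_weight": [180.2, 250.3, 310.4, 150.1],
    "heavy_atoms": [13, 18, 22, 11],  # nearly collinear with mol_weight
    "n_rings": [1, 1, 1, 1],          # constant
    "logp": [1.2, 3.5, 0.4, 2.8],
}
print(filter_descriptors(columns))
```

The greedy order matters: the first descriptor of a correlated pair is the one retained, so column order should be chosen deliberately.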

Model Training and Evaluation

- Algorithms: Employ diverse algorithms including Random Forests, XGBoost, and neural networks to ensure robustness across model types [12] [36].

- Validation: Use nested cross-validation to prevent data leakage and overfitting [36].

- Metrics: Report multiple performance metrics (e.g., AUC-ROC, accuracy, RMSE) appropriate for classification and regression tasks [12].

Table 2: Key Research Reagents and Software for Molecular Representation

| Category | Tool Name | Primary Function | Application in Research |

|---|---|---|---|

| Fingerprint Generation | RDKit | Open-source cheminformatics toolkit; generates multiple fingerprints (Morgan, RDKitFP, AtomPair) and descriptors [31] [32]. | Standard for molecular representation calculation; used in most benchmarking studies. |

| Descriptor Calculation | PaDEL-Descriptor | Calculates 1D, 2D descriptors and fingerprints from molecular structures [39]. | Used in QSAR studies for comprehensive descriptor generation [39]. |

| Deep Learning Frameworks | DeepChem | Deep learning library for drug discovery; implements GNNs, transformers, and various molecular featurizers [31]. | Provides standardized implementations of deep learning models for fair comparison. |

| 3D-QSAR | OpenEye Orion | Implements 3D-QSAR using shape and electrostatic featurizations for binding affinity prediction [34]. | Specialized tool for 3D molecular representation and activity modeling. |

| Benchmarking Platforms | DeepMol | Python package for benchmarking compound representations on drug sensitivity prediction [31]. | Enables systematic comparison of representations on standardized tasks. |

Case Study: Taste Prediction Benchmarking

A comprehensive 2023 study provides a detailed protocol for comparing representations on taste prediction [33]:

- Dataset: 2,601 molecules from ChemTastesDB with sweet, bitter, and umami classifications.

- Representations: Compared Morgan, PubChem, Daylight, RDKit, ESPF, and ErG fingerprints against CNN and GNN models.

- Model Training: Split data 7:1:2 (train:validation:test), using DeepPurpose package for implementation.

- Key Finding: GNN-based models outperformed other approaches, and the combination of molecular fingerprints and GNNs achieved the best performance, demonstrating the potential of hybrid models in specific domains.

The benchmarking evidence clearly indicates that no single molecular representation consistently outperforms all others across every QSAR task. Traditional fingerprints like ECFP and MACCS remain strong, computationally efficient baselines, particularly for drug-like molecules and in low-data scenarios. Molecular descriptors, especially 2D ones, excel at predicting physicochemical properties, while 3D descriptors provide unique value for binding affinity prediction. Deep-learned embeddings show remarkable promise, with GNNs achieving state-of-the-art performance in specific domains like taste prediction, though they typically require larger datasets to reach their full potential.

For researchers building QSAR models, the selection strategy should be guided by the specific problem context: the target property, dataset size and composition, and available computational resources. Empirical validation on representative data remains the gold standard for identifying the optimal molecular representation for any given drug discovery application.

In modern computer-assisted drug discovery, the one-size-fits-all approach to Quantitative Structure-Activity Relationship (QSAR) modeling is increasingly being replaced by task-specific strategies. The performance requirements for machine learning models differ significantly depending on whether they are used for virtual screening of massive chemical libraries or the lead optimization of smaller, focused compound series [21]. Virtual screening aims to identify novel hit compounds from millions of candidates, while lead optimization refines a small set of promising compounds to enhance their activity and properties. This guide examines the distinct objectives, optimal performance metrics, dataset preparation strategies, and experimental protocols for each task, providing researchers with a framework for selecting and benchmarking appropriate QSAR methodologies.

Table 1: Core Objectives and Challenges in QSAR Applications

| Aspect | Virtual Screening | Lead Optimization |

|---|---|---|

| Primary Goal | Identify novel hit compounds from ultra-large libraries [21] | Enhance potency and properties of a congeneric series [21] |

| Chemical Space | Broad and diverse exploration [40] | Focused, local exploration [21] |

| Key Challenge | Managing extreme dataset imbalance (>99% inactives) [21] | Achieving balanced predictive performance for similar compounds |

| Practical Constraint | Only a small fraction of top-ranked compounds can be tested experimentally [21] | Accurate prediction of small potency changes |

Performance Metrics: Selecting the Right Benchmark

The evaluation metrics that best indicate model utility vary dramatically between virtual screening and lead optimization tasks. For virtual screening, where the goal is to select a small number of compounds for experimental testing from billions of candidates, positive predictive value (PPV), also known as precision, is the most critical metric [21]. PPV measures the proportion of true actives among those predicted as active, directly determining the experimental hit rate. In contrast, for lead optimization, where models must reliably predict activity for all compounds in a series, balanced accuracy (BA) remains the preferred metric as it ensures equal performance in predicting both active and inactive compounds [21].

Table 2: Key Performance Metrics for Virtual Screening vs. Lead Optimization

| Metric | Virtual Screening Priority | Lead Optimization Priority | Rationale |
|---|---|---|---|
| Positive Predictive Value (PPV) | Critical [21] | Secondary | Directly impacts experimental hit rate in early discovery |
| Balanced Accuracy (BA) | Less relevant [21] | Critical [21] | Ensures reliable prediction across all compounds in a series |
| Sensitivity/Recall | Moderate | High | Important for finding all potential actives in a focused series |
| Area Under ROC Curve (AUROC) | Limited value [21] | Valuable | Measures overall ranking ability without focusing on top predictions |
| Enrichment Factor (EF) | Useful early enrichment | Limited value | Measures concentration of actives in top fraction |
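To make the difference between these metrics concrete, the following minimal sketch computes balanced accuracy, PPV among the top-k ranked compounds, and the enrichment factor on a toy library. The function names, the toy labels and scores, and the 0.5 classification threshold are illustrative assumptions, not values taken from [21].

```python
from typing import Sequence


def balanced_accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Mean of sensitivity and specificity for binary labels (1 = active)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    pos = sum(y_true)
    neg = len(y_true) - pos
    return 0.5 * (tp / pos + tn / neg)


def ppv_at_k(y_true: Sequence[int], scores: Sequence[float], k: int) -> float:
    """Fraction of true actives among the k highest-scoring compounds."""
    ranked = sorted(zip(scores, y_true), key=lambda pair: pair[0], reverse=True)
    top = ranked[:k]
    return sum(label for _, label in top) / len(top)


def enrichment_factor(y_true: Sequence[int], scores: Sequence[float], k: int) -> float:
    """Hit rate in the top k divided by the hit rate of the whole library."""
    base_rate = sum(y_true) / len(y_true)
    return ppv_at_k(y_true, scores, k) / base_rate


# Hypothetical toy library: 10 compounds, 2 of them active.
labels = [1, 0, 0, 1, 0, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.1, 0.7, 0.2, 0.3, 0.1, 0.05, 0.4, 0.15]
preds = [1 if s >= 0.5 else 0 for s in scores]  # assumed 0.5 threshold

print("BA:", balanced_accuracy(labels, preds))
print("PPV@2:", ppv_at_k(labels, scores, 2))
print("EF@2:", enrichment_factor(labels, scores, 2))
```

In this toy case the model has a high balanced accuracy, yet only one of the two top-ranked compounds is a true active. This is the kind of gap that motivates reporting PPV on the selected batch for virtual screening.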

Dataset Preparation: Balancing Act vs. Real-World Imbalance

Dataset preparation strategies fundamentally differ between these applications. For lead optimization, best practices traditionally recommend dataset balancing through techniques like down-sampling the majority class to create models with high balanced accuracy [21]. However, for virtual screening, maintaining real-world imbalance in training datasets produces superior results. Studies demonstrate that training on imbalanced datasets achieves a hit rate at least 30% higher than using balanced datasets when evaluating top-scoring compounds in batch sizes relevant to experimental high-throughput screening (e.g., 128 molecules) [21].

This paradigm shift acknowledges that both training and virtual screening sets are highly imbalanced in favor of inactive compounds. Models trained on balanced datasets, while achieving higher balanced accuracy, typically show lower PPV, making them less effective for the primary goal of virtual screening: to maximize the number of true actives in the small subset of compounds selected for experimental testing [21].
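The two dataset preparation strategies can be illustrated with a short sketch of random down-sampling of the majority class. The record layout, the `active` key, and the 1% active rate are hypothetical. For virtual screening, the recommendation above corresponds to skipping this step and training on the original, imbalanced records.

```python
import random


def downsample_majority(records, label_key="active", seed=0):
    """Return a balanced copy by randomly discarding majority-class records."""
    rng = random.Random(seed)
    actives = [r for r in records if r[label_key] == 1]
    inactives = [r for r in records if r[label_key] == 0]
    minority, majority = sorted((actives, inactives), key=len)
    kept = rng.sample(majority, len(minority))
    balanced = minority + kept
    rng.shuffle(balanced)
    return balanced


def active_fraction(records, label_key="active"):
    """Share of records labeled active."""
    return sum(r[label_key] for r in records) / len(records)


# Hypothetical screening set: 10 actives among 1,000 compounds.
dataset = [{"id": i, "active": 1 if i < 10 else 0} for i in range(1000)]
balanced = downsample_majority(dataset)

print(len(dataset), active_fraction(dataset))    # imbalanced set for virtual screening
print(len(balanced), active_fraction(balanced))  # balanced set for lead optimization
```

Down-sampling discards most of the inactive compounds, so the balanced model sees far fewer examples of what a typical library member looks like.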

Experimental Protocols and Workflow Design

Virtual Screening Protocol for Ultra-Large Libraries

The massive scale of make-on-demand chemical libraries containing billions of compounds necessitates specialized workflows that combine machine learning with traditional structure-based methods [40].

Machine Learning-Guided Docking Workflow:

- Initial Docking: Perform molecular docking on a structurally diverse subset (e.g., 1 million compounds) from the ultra-large library [40].

- Classifier Training: Train a classification algorithm (CatBoost with Morgan2 fingerprints recommended) to identify top-scoring compounds based on docking results [40].

- Conformal Prediction: Apply the conformal prediction framework to select compounds from the multi-billion-scale library for docking, controlling the error rate of predictions [40].

- Focused Docking: Dock only the selected subset (typically 1-10% of the full library) to identify final candidates for experimental testing [40].

This protocol reduces computational cost by more than 1,000-fold while maintaining high sensitivity (0.87-0.88), enabling practical virtual screening of billion-compound libraries [40].

Diagram 1: ML-guided virtual screening workflow for ultra-large libraries.
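The conformal selection step in this workflow can be sketched as follows. The sketch assumes a class-conditional (Mondrian) inductive conformal predictor with nonconformity defined as one minus the predicted probability of the class. The text above does not specify these details, so treat them as assumptions. The probabilities stand in for the outputs of a trained classifier, and the compound names and the significance level `epsilon` are hypothetical.

```python
def class_p_value(calibration_scores, new_score):
    """Conformal p-value: share of calibration examples at least as nonconforming."""
    n_greater_equal = sum(1 for s in calibration_scores if s >= new_score)
    return (n_greater_equal + 1) / (len(calibration_scores) + 1)


def mondrian_prediction_set(prob_active, calib_probs, calib_labels, epsilon):
    """Return the set of labels (0/1) that cannot be rejected at level epsilon."""
    # Nonconformity = 1 - predicted probability of the example's own class,
    # collected separately per class (the Mondrian, class-conditional variant).
    calib_nc = {0: [], 1: []}
    for p, y in zip(calib_probs, calib_labels):
        calib_nc[y].append(1.0 - (p if y == 1 else 1.0 - p))
    labels = set()
    for label in (0, 1):
        p_label = prob_active if label == 1 else 1.0 - prob_active
        if class_p_value(calib_nc[label], 1.0 - p_label) > epsilon:
            labels.add(label)
    return labels


# Hypothetical calibration set: predicted P(top-scoring) and true docking class.
calib_probs = [0.9, 0.8, 0.7, 0.6, 0.4, 0.3, 0.2, 0.1, 0.05, 0.15]
calib_labels = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]

# Hypothetical library compounds with classifier probabilities.
library_probs = {"cmpd_A": 0.95, "cmpd_B": 0.02, "cmpd_C": 0.5}

# Keep only compounds confidently predicted as top-scoring (prediction set {1}).
to_dock = [
    name
    for name, p in library_probs.items()
    if mondrian_prediction_set(p, calib_probs, calib_labels, epsilon=0.25) == {1}
]
print(to_dock)
```

Lowering `epsilon` makes the predictor more cautious: fewer labels are rejected, so more compounds receive ambiguous prediction sets.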

Lead Optimization Protocol Using Consensus QSAR

For lead optimization, consensus modeling with ensemble approaches has demonstrated superior performance for predicting activity and optimizing molecular structures within a congeneric series [41].

Consensus QSAR Modeling Protocol:

- Descriptor Calculation and Selection: Calculate molecular descriptors and fingerprints, followed by feature selection using methods like Classification and Regression Trees (CART) to identify key molecular descriptors [41] [42].

- Multiple Model Development: Develop individual QSAR models using diverse algorithms including Support Vector Machine (SVM), Artificial Neural Network (ANN), and Random Forest (RF) [41] [42].

- Consensus Prediction: Combine predictions from multiple models through consensus or majority voting approaches [41].

- Validation: Validate models using 5-fold cross-validation, y-randomization, and external test sets to ensure robustness and generalizability [41].

This approach has achieved strong predictive performance, with test-set R² > 0.93 for regression models and accuracy up to 92% for classification tasks, in optimizing dual 5-HT1A/5-HT7 serotonin receptor inhibitors [41].

Diagram 2: Consensus QSAR modeling workflow for lead optimization.
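The consensus prediction step can be sketched in a few lines. The per-model predictions below are hypothetical placeholders for the outputs of trained SVM, ANN, and RF models. Majority voting for classes and simple averaging for continuous values are shown as two common ways to build a consensus, not necessarily the exact scheme used in [41].

```python
from collections import Counter
from statistics import mean


def majority_vote(predictions_per_model):
    """Combine class labels from several models, compound by compound."""
    consensus = []
    for votes in zip(*predictions_per_model):
        label, _ = Counter(votes).most_common(1)[0]
        consensus.append(label)
    return consensus


def mean_consensus(predictions_per_model):
    """Average continuous predictions (e.g. predicted potency) across models."""
    return [mean(values) for values in zip(*predictions_per_model)]


# Hypothetical class predictions for four compounds (1 = active).
svm_cls = [1, 0, 1, 0]
ann_cls = [1, 1, 0, 0]
rf_cls = [0, 1, 1, 0]
print(majority_vote([svm_cls, ann_cls, rf_cls]))

# Hypothetical regression predictions for two compounds.
print(mean_consensus([[7.0, 6.0], [8.0, 6.5], [7.5, 7.0]]))
```

An odd number of models avoids ties in binary majority voting. With an even number, a tie-breaking rule would need to be defined explicitly.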

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Essential Computational Tools for QSAR Modeling Tasks

| Tool/Resource | Function | Virtual Screening Utility | Lead Optimization Utility |
|---|---|---|---|
| Morgan Fingerprints [40] | Molecular representation using circular substructures | High-performance feature for classifiers [40] | Useful for capturing local molecular features |
| RDKit Descriptors [11] | Calculation of 2D molecular descriptors | Baseline feature set | Interpretable molecular properties |
| Conformal Prediction Framework [40] | Provides valid confidence measures for predictions | Critical for error rate control in imbalanced data [40] | Limited application |
| Consensus Modeling [41] | Combines predictions from multiple algorithms | Moderate utility | Critical - boosts accuracy and robustness [41] |
| Molecular Docking Software | Structure-based binding affinity prediction | Initial screening and training data generation [40] | Limited to target structure availability |
| Applicability Domain Assessment [41] | Defines model's reliable prediction space | Moderate utility for diverse libraries | Critical for interpolating within chemical series |
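As an illustration of applicability domain assessment, the sketch below flags a query compound as inside the domain when its nearest training-set neighbor exceeds a Tanimoto similarity threshold. This nearest-neighbor criterion, the 0.5 threshold, and the fingerprints (represented as sets of on-bit indices) are assumptions chosen for illustration. The method used in [41] may differ.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto similarity between two fingerprints stored as sets of on-bits."""
    if not fp_a and not fp_b:
        return 0.0
    return len(fp_a & fp_b) / len(fp_a | fp_b)


def in_domain(query_fp, training_fps, threshold=0.5):
    """True if the most similar training compound reaches the threshold."""
    nearest = max(tanimoto(query_fp, fp) for fp in training_fps)
    return nearest >= threshold


# Hypothetical fingerprints (on-bit indices) for a small training series.
training_fps = [{1, 2, 3, 4}, {2, 3, 5, 8}]

close_analog = {1, 2, 3, 9}      # shares 3 of 5 distinct bits with the first compound
novel_scaffold = {10, 11, 12}    # shares no bits with the training set

print(in_domain(close_analog, training_fps))
print(in_domain(novel_scaffold, training_fps))
```

In practice the on-bit sets would come from a fingerprinting tool such as the Morgan fingerprints listed in the table, and the threshold would be tuned on held-out data.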

The benchmarking data and experimental protocols presented demonstrate that optimal QSAR model performance requires strategic alignment between methodology and application context. Virtual screening campaigns benefit from models trained on imbalanced datasets and evaluated by positive predictive value, leveraging machine learning-guided workflows to navigate billion-compound libraries efficiently. Conversely, lead optimization requires models with high balanced accuracy built on balanced training sets, often achieved through consensus modeling approaches. By adopting these task-specific paradigms, researchers can significantly improve the efficiency and success rates of their drug discovery pipelines.

The integration of machine learning (ML) into Quantitative Structure-Activity Relationship (QSAR) modeling has revolutionized drug discovery, shifting the paradigm from traditional trial-and-error approaches to data-driven predictive science [43] [1]. This transformation is particularly evident in critical discovery areas, including the prediction of Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties, assessment of endocrine disruption potential via estrogen receptor alpha (ERα) binding, and identification of inhibitors for intricate signaling targets such as Nuclear Factor kappa B (NF-κB) [43] [44] [45]. Accurately predicting these endpoints in the early stages of drug development is crucial for reducing late-stage attrition, which remains a primary challenge in pharmaceutical R&D [46] [47].

Benchmarking studies reveal that the performance of ML models is highly dependent on the choice of molecular representations and feature selection techniques, often more so than the underlying algorithm itself [11]. For instance, systematic feature selection and model validation strategies can significantly enhance the reliability of ADMET predictions, moving beyond the conventional practice of indiscriminately concatenating different molecular representations [11]. Similarly, advanced ML-based three-dimensional QSAR (3D-QSAR) models have demonstrated superior performance over traditional two-dimensional approaches for predicting ERα binding affinity, highlighting the evolutionary trajectory of computational methodologies [44]. This guide provides a comparative analysis of these applications, detailing experimental protocols, benchmarking data, and essential computational toolkits to guide researchers in selecting and optimizing ML models for specific discovery contexts.

Case Study 1: ADMET Property Prediction

Experimental Protocols and Model Benchmarking

Predicting ADMET properties early in the drug discovery pipeline is vital for prioritizing viable lead compounds. A comprehensive benchmarking study [11] established a rigorous protocol for this task. The process begins with data curation and cleaning from public sources like the Therapeutics Data Commons (TDC), followed by the calculation of diverse molecular descriptors and fingerprints (e.g., RDKit descriptors, Morgan fingerprints) [11]. Subsequently, a structured approach to feature selection and combination is employed, moving beyond simple concatenation. Finally, models are evaluated using cross-validation with statistical hypothesis testing and assessed on external test sets to ensure robustness and generalizability [11].
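One way to combine cross-validation with hypothesis testing is a paired test on per-fold scores. The sketch below uses an exact sign-flip permutation test, which is an assumption on our part since the specific test is not named above. The per-fold MAE values are hypothetical.

```python
from itertools import product
from statistics import mean


def paired_permutation_p_value(scores_a, scores_b):
    """Exact two-sided sign-flip test on per-fold score differences."""
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    observed = abs(mean(diffs))
    n_extreme = 0
    n_total = 0
    # Under the null hypothesis, each fold difference is equally likely
    # to have either sign, so enumerate all 2**n sign assignments.
    for signs in product((1, -1), repeat=len(diffs)):
        permuted = abs(mean([s * d for s, d in zip(signs, diffs)]))
        if permuted >= observed - 1e-12:
            n_extreme += 1
        n_total += 1
    return n_extreme / n_total


# Hypothetical MAE per fold for two feature sets evaluated on the same 5 folds.
mae_descriptors = [0.30, 0.28, 0.31, 0.29, 0.27]
mae_fingerprints = [0.35, 0.33, 0.34, 0.36, 0.31]

print(paired_permutation_p_value(mae_descriptors, mae_fingerprints))
```

With only five folds the smallest attainable two-sided p-value is 2/32, which is one reason repeated cross-validation is often used when comparing models this way.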

Table 1: Benchmarking Performance of ML Models on Key ADMET Endpoints [11]

| ADMET Endpoint | Best-Performing Model | Key Molecular Representation | Performance Metric |
|---|---|---|---|
| Human Plasma Protein Binding | LightGBM | RDKit Descriptors + Morgan Fingerprints | MAE: 0.28 (log unit) |
| Caco-2 Permeability | Random Forest | Morgan Fingerprints | BA: 0.81 |
| Hepatic Clearance | Support Vector Machine (SVM) | RDKit Descriptors | MAE: 0.41 (log unit) |
| hERG Cardiotoxicity | SVM | Molecular Embeddings | BA: 0.76 |
| Solubility (Kinetic) | LightGBM | Constitutional Descriptors | MAE: 0.52 (log unit) |

The data reveals that no single algorithm dominates all ADMET endpoints. While tree-based models like LightGBM and Random Forest often excel, Support Vector Machines (SVM) can be optimal for specific tasks like hERG cardiotoxicity prediction [11]. The choice of molecular representation is equally critical; simpler fingerprints and descriptors frequently match or surpass the performance of more complex, deep-learned embeddings for these ligand-based prediction tasks [11].

Impact of Data Diversity and Federated Learning

A significant challenge in ADMET prediction is the degradation of model performance when applied to novel chemical scaffolds. Recent initiatives demonstrate that data diversity and representativeness are more impactful for predictive accuracy and generalization than model architecture alone [46]. Federated learning has emerged as a powerful technique to overcome data limitations by enabling collaborative model training across distributed, proprietary datasets from multiple pharmaceutical companies without centralizing sensitive data [46]. This approach systematically extends the model's effective domain, leading to more robust predictors and a reported 40–60% reduction in prediction error for endpoints like human liver microsomal clearance and solubility [46].
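The idea of training without centralizing data can be illustrated with a toy federated averaging loop. Each site fits a one-parameter linear model on its own data, and only the model weights are shared and averaged in proportion to each site's sample count. Federated averaging, the one-parameter model, the learning rate, and the data are all assumptions made for illustration. The aggregation scheme used in [46] is not described above.

```python
def local_update(weight, data, lr=0.1, epochs=5):
    """Run a few least-squares gradient steps for y ~ weight * x on one site's data."""
    for _ in range(epochs):
        grad = sum(2 * (weight * x - y) * x for x, y in data) / len(data)
        weight -= lr * grad
    return weight


def federated_average(global_weight, sites, rounds=20):
    """Average locally updated weights, weighted by each site's sample count."""
    for _ in range(rounds):
        local = [(local_update(global_weight, data), len(data)) for data in sites]
        total = sum(n for _, n in local)
        global_weight = sum(w * n for w, n in local) / total
    return global_weight


# Hypothetical private datasets held by two sites; both follow y = 2 * x.
site_a = [(1.0, 2.0), (2.0, 4.0)]
site_b = [(3.0, 6.0), (0.5, 1.0), (1.5, 3.0)]

# Only weights leave each site; the raw (x, y) pairs are never pooled.
shared_weight = federated_average(0.0, [site_a, site_b])
print(round(shared_weight, 6))
```

Production systems add secure aggregation and far larger models, but the exchange pattern is the same: parameters travel, data stay local.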

Case Study 2: Estrogen Receptor Alpha (ERα) Binding Affinity

Machine Learning-based 3D-QSAR Models

The binding of endocrine-disrupting chemicals (EDCs) to Estrogen Receptor Alpha (ERα) is a major mechanism of toxicity and a key target for therapeutic intervention. A recent study [44] developed advanced machine learning-based 3D-QSAR models to predict the relative binding affinity (RBA) of small molecules to ERα. The methodology involved building models using a dataset from the VEGA IRFMN-RBA model and employing algorithms such as Multilayer Perceptron (MLP), Random Forest (RF), and Support Vector Machine (SVM). These 3D-QSAR models were validated against an external dataset and benchmarked against conventional VEGA models [44].

Table 2: Performance Comparison of ML-based 3D-QSAR Models for ERα Binding [44]

| Prediction Model | Accuracy | Sensitivity | Specificity | Notes |
|---|---|---|---|---|
| MLP 3D-QSAR | 0.89 | 0.91 | 0.87 | Emerged as the most robust model |
| Random Forest 3D-QSAR | 0.86 | 0.88 | 0.84 | Good performance with built-in feature importance |
| SVM 3D-QSAR | 0.85 | 0.87 | 0.83 | Effective in high-dimensional space |
| Conventional VEGA 2D-QSAR | 0.81 | 0.79 | 0.83 | Baseline model for performance comparison |

The results demonstrate that all ML-based 3D-QSAR models outperformed the conventional VEGA model, with the MLP model showing the highest accuracy and sensitivity [44]. This underscores the advantage of integrating three-dimensional structural information with powerful non-linear machine learning algorithms for predicting specific molecular interactions like ERα binding.

Counter-Propagation Artificial Neural Networks (CPANN)

An alternative, highly accurate approach for predicting receptor binding utilizes Counter-Propagation Artificial Neural Networks (CPANN). Researchers developed six CPANN models to predict compound binding to androgen and estrogen receptors (ERα and ERβ) as agonists or antagonists [48]. The models were trained on data from the EPA's CompTox Chemicals Dashboard, using DRAGON-derived structural descriptors. Validation via leave-one-out (LOO) tests showed exceptional performance, with prediction accuracy ranging from 94% to 100% for the various receptor models [48]. This highlights CPANN as a powerful tool for the safety prioritization of chemicals regarding endocrine disruption.
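Leave-one-out validation itself is model-agnostic and can be sketched briefly. In the example below a 1-nearest-neighbor classifier stands in for the CPANN, which is not implemented here. The two-dimensional descriptor vectors and the labels are hypothetical.

```python
def nearest_neighbor_predict(train_x, train_y, query):
    """1-nearest-neighbor stand-in for the real model (squared Euclidean distance)."""
    distances = [sum((a - b) ** 2 for a, b in zip(x, query)) for x in train_x]
    return train_y[distances.index(min(distances))]


def leave_one_out_accuracy(features, labels, predict=nearest_neighbor_predict):
    """Hold out each compound once, train on the rest, and score the prediction."""
    correct = 0
    for i in range(len(features)):
        train_x = features[:i] + features[i + 1:]
        train_y = labels[:i] + labels[i + 1:]
        if predict(train_x, train_y, features[i]) == labels[i]:
            correct += 1
    return correct / len(features)


# Hypothetical descriptor vectors; the last compound is a borderline binder.
features = [
    [0.0, 0.1], [0.1, 0.0], [0.2, 0.2],   # non-binders
    [1.0, 0.9], [0.9, 1.0], [1.1, 1.1],   # binders
    [0.5, 0.5],                           # binder lying close to the non-binders
]
labels = [0, 0, 0, 1, 1, 1, 1]

print(leave_one_out_accuracy(features, labels))
```

Any function with the same `(train_x, train_y, query)` signature can be passed as `predict`, so the loop can wrap a different model without changes.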

Case Study 3: NF-κB Inhibition Prediction

The NfκBin Model and Workflow