AZlogD74: Unveiling the Model Powering Modern Drug Discovery

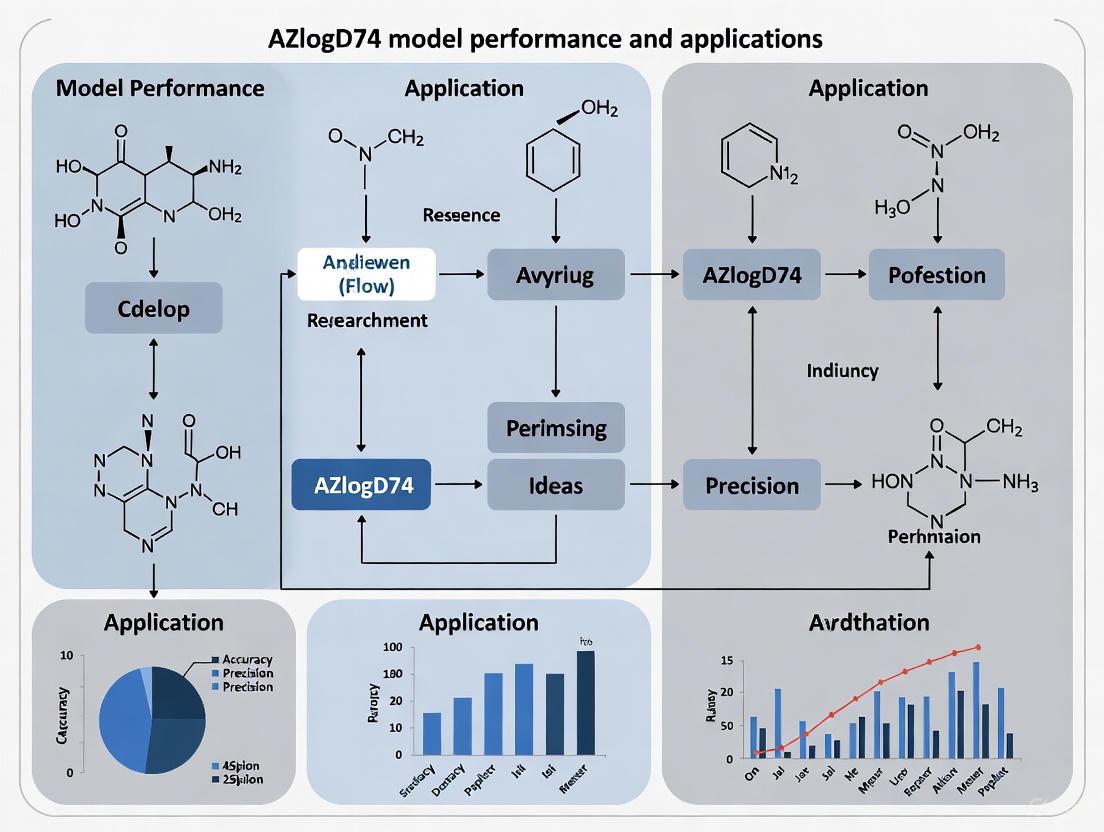


Abstract

This article provides a comprehensive exploration of AstraZeneca's AZlogD74 model, a pivotal tool for predicting lipophilicity in drug discovery. Aimed at researchers and development professionals, we delve into the critical role of logD7.4, the model's advanced architecture leveraging a massive proprietary dataset, and its practical application for optimizing drug candidates. The discussion extends to troubleshooting common prediction challenges, a comparative analysis with other tools, and the model's profound impact on improving the efficiency and success rate of bringing safer, more effective therapies to market.

Why logD7.4 is a Cornerstone of Successful Drug Development

Lipophilicity is a fundamental physical property that significantly influences various aspects of drug behavior, including solubility, permeability, metabolism, distribution, protein binding, and toxicity [1]. In pharmaceutical development, the balance between lipophilicity and hydrophilicity of a drug candidate is crucial for its success [2]. For decades, Lipinski's Rule of Five has served as a key guideline for identifying orally active drugs, with lipophilicity—quantified as logP—being one of its core components [2]. This rule proposes that a "druggable" compound should have a calculated octanol-water partition coefficient (logP) value not greater than 5, among other criteria [2]. However, as drug discovery explores chemical spaces beyond traditional small molecules, scientists recognize that logP alone provides an incomplete picture of a compound's behavior in different biological environments. This recognition has led to the increased importance of the distribution coefficient (logD), which accounts for the pH-dependent ionization of molecules—a critical factor since approximately 95% of drugs contain ionizable groups [1].

Accurate prediction and measurement of lipophilicity parameters remain challenging yet essential for evaluating drug candidates and optimizing compound properties in the drug discovery process [1]. This article examines the critical distinction between logP and logD, their computational and experimental determination methods, and their applications in modern drug development, with particular attention to advanced prediction models like AstraZeneca's AZlogD74.

Defining logP and logD: Key Concepts and Differences

logP: The Partition Coefficient

The partition coefficient, logP, quantifies a compound's distribution between two immiscible liquids, typically n-octanol and water [2]. This value represents the logarithm of the ratio of the compound's concentrations in the organic phase (n-octanol) and the aqueous phase (water). A higher logP value indicates greater lipophilicity, suggesting the compound may more readily cross cell membranes but may also have poorer aqueous solubility [3]. The critical limitation of logP is that its calculation assumes the compound exists solely in its unionized form [2]. For compounds that do not ionize, this measurement provides a consistent lipophilicity value across all pH conditions.

logD: The Distribution Coefficient

Unlike logP, the distribution coefficient (logD) describes the pH-dependent lipophilicity of a compound, accounting for all forms present at a specific pH—including ionized, partially ionized, and unionized species [2]. Similarly to logP, a higher logD value indicates greater lipophilicity [3]. For non-ionizable compounds, logD equals logP across the entire pH range [3]. However, for ionizable compounds—which constitute the majority of pharmaceutical agents—logD varies with pH and provides a more accurate representation of a compound's behavior in various biological environments where pH differs significantly [2]. Of particular interest in drug discovery is logD at physiological pH 7.4 (logD7.4), as this reflects conditions in blood and tissues [1].
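For a monoprotic compound, the difference between the two coefficients can be written explicitly. The relations below are the standard Henderson-Hasselbalch-based sketch. They assume that only the neutral species partitions into n-octanol, so ion-pair partitioning is neglected.

$$
\log D_{\mathrm{pH}} = \log P - \log_{10}\left(1 + 10^{\,\mathrm{pH} - \mathrm{p}K_a}\right) \qquad \text{(weak acid)}
$$

$$
\log D_{\mathrm{pH}} = \log P - \log_{10}\left(1 + 10^{\,\mathrm{p}K_a - \mathrm{pH}}\right) \qquad \text{(weak base)}
$$

As a worked example with hypothetical values, take an acid with logP = 3.0 and pKa = 4.4. At pH 7.4, logD = 3.0 - log10(1 + 10^3), which is approximately 0.0. That is roughly three log units below its logP. For a non-ionizable compound the correction term vanishes and logD = logP.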

Comparative Analysis: logP vs. logD

Table 1: Key Differences Between logP and logD

| Parameter | logP | logD |
|---|---|---|
| Definition | Partition coefficient for unionized species only | Distribution coefficient accounting for all species |
| pH Dependence | pH-independent | pH-dependent |
| Ionization Consideration | Does not account for ionization | Accounts for ionization state |
| Representation | Single value for a compound | Value specific to each pH |
| Physiological Relevance | Limited for ionizable compounds | High, especially at pH 7.4 |
| Measurement Complexity | Relatively straightforward | More complex due to pH considerations |

The fundamental distinction between these parameters has significant practical implications. For example, a compound such as 5-methoxy-2-[1-(piperidin-4-yl)propyl]pyridine, which has two ionization centers (pyridine with pKa 4.8 and piperidine with pKa 10.9), would ionize to different extents throughout the gastrointestinal tract [2]. At physiologically relevant pH (1-8), ionization drastically affects the distribution coefficient, meaning the lipophilicity and membrane permeability suggested by logP may be highly misleading compared to the actual behavior predicted by logD [2].
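The sketch below puts numbers on this example. It estimates how far logD falls below logP across the pH range, using the two pKa values quoted above. It makes two assumptions:

- Protonation is sequential and macroscopic, so the piperidine nitrogen protonates first.
- Only the neutral species enters n-octanol.

The function names are illustrative. Real compounds can deviate from this picture, for instance through ion-pair partitioning.

```python
import math


def neutral_fraction_diprotic_base(ph: float, pka_high: float, pka_low: float) -> float:
    """Fraction of a diprotic base present as the neutral species B.

    Assumes sequential protonation: the more basic site (pka_high) is
    protonated first to give BH+, then the weaker site (pka_low) gives BH2(2+).
    """
    ratio_mono = 10 ** (pka_high - ph)              # [BH+] / [B]
    ratio_di = 10 ** (pka_high + pka_low - 2 * ph)  # [BH2(2+)] / [B]
    return 1.0 / (1.0 + ratio_mono + ratio_di)


def logd_minus_logp(ph: float, pka_high: float, pka_low: float) -> float:
    """logD - logP, assuming only the neutral species partitions into octanol."""
    return math.log10(neutral_fraction_diprotic_base(ph, pka_high, pka_low))


if __name__ == "__main__":
    # pKa values quoted in the text: piperidine 10.9, pyridine 4.8
    for ph in (1.0, 2.0, 4.0, 6.0, 7.4, 8.0):
        print(f"pH {ph:4.1f}: logD - logP = {logd_minus_logp(ph, 10.9, 4.8):7.2f}")
```

Under these assumptions, the neutral fraction at pH 7.4 is only about 3 × 10⁻⁴, so logD is roughly 3.5 units below logP. The fraction drops by many more orders of magnitude toward gastric pH.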

Theoretical Foundations and Calculation Methods

Computational Approaches for logP/logD Prediction

Numerous computational methods have been developed to predict logP and logD values, falling into two major categories: substructure-based and property-based methods [4].

Substructure-based methods dissect molecules into fragments (fragmental methods) or down to the single-atom level (atom-based methods), with the final logP calculated by summing the contributions of these substructures [4]. These approaches leverage the concept that molecular lipophilicity can be approximated by the additive contributions of its components. Pioneering work by Hansch, Leo, and Rekker established fragment-based methods that assign hydrophobic values to molecular substructures, enabling logP calculation through their summation [5] [4].
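The additive idea can be shown in a few lines. The sketch below sums per-fragment contributions, weighted by fragment counts, and adds an optional correction term.

The fragment labels and values are hypothetical placeholders chosen for illustration. They are not the published Hansch-Leo or Rekker constants, and the printed number is not a real logP prediction. Real schemes also apply their own structure-dependent correction factors.

```python
from collections import Counter

# Hypothetical fragment contributions in log units, for illustration only.
# Published schemes (e.g. Hansch-Leo, Rekker) define their own fragments,
# values, and correction factors.
FRAGMENT_VALUES = {
    "CH3": 0.70,
    "CH2": 0.50,
    "OH": -1.10,
    "C6H5": 1.90,
}


def additive_logp(fragments: Counter, correction: float = 0.0) -> float:
    """Estimate logP as the count-weighted sum of fragment contributions plus a correction."""
    unknown = set(fragments) - set(FRAGMENT_VALUES)
    if unknown:
        raise KeyError(f"no contribution defined for: {sorted(unknown)}")
    return sum(FRAGMENT_VALUES[frag] * count for frag, count in fragments.items()) + correction


if __name__ == "__main__":
    # Toy fragment counts for a hypothetical molecule
    toy = Counter({"C6H5": 1, "CH2": 2, "OH": 1})
    print(round(additive_logp(toy), 2))
```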

Property-based approaches utilize descriptors of the entire molecule, including empirical methods, 3D-structure representations, and topological descriptors [4]. These methods often employ advanced computational techniques, including machine learning and graph neural networks (GNNs), which use graph representation learning of entire molecules [1]. Such approaches have shown success in quantitative structure-property relationship (QSPR) modeling but face challenges due to limited experimental logD datasets [1].

Advanced Predictive Models: The Case of AZlogD74

Pharmaceutical companies have developed proprietary models to predict logD values with superior performance, leveraging their extensive and confidential datasets. AstraZeneca's AZlogD74 model is trained on a dataset of over 160,000 molecules, which the company continuously updates with new measurements [1]. This massive dataset size far exceeds what is typically available in public domains or academic settings, contributing to the model's enhanced predictive capability.

A novel approach to addressing data limitations comes from the RTlogD model, which combines pre-training on chromatographic retention time (RT) datasets, incorporation of microscopic pKa values as atomic features, and integration of logP as an auxiliary task within a multitask learning framework [1]. Chromatographic retention time correlates strongly with lipophilicity, and the available data surpasses what is accessible for logP and pKa [1]. This model demonstrates how transfer learning and multi-task learning can improve prediction accuracy by leveraging related physicochemical properties.

Experimental Determination Methods

Laboratory Techniques for Lipophilicity Assessment

Several experimental techniques have been developed to measure logD7.4 values, each with distinct advantages and limitations:

Shake-flask method: This traditional approach involves vigorously mixing the compound with n-octanol (as the organic phase) and a buffer solution (as the aqueous phase) at physiological pH 7.4 [1]. After separation of the phases, the compound concentration in each phase is quantified, typically using analytical techniques like HPLC or UV spectroscopy. While considered a reference method, the shake-flask approach is labor-intensive, requires relatively large amounts of compound, and can be challenging for compounds with poor solubility [1] [5].

Chromatographic techniques: Methods such as high-performance liquid chromatography (HPLC) and reverse-phase thin-layer chromatography (RP-TLC) rely on the distribution behavior of compounds between stationary and mobile phases to estimate lipophilicity [1] [6]. RP-TLC methods typically use non-polar stationary phases (e.g., RP-2, RP-8, RP-18) with various organic modifiers as mobile phases [6] [7]. These methods offer higher throughput than shake-flask but provide indirect assessment of logD7.4 and may be less accurate [1].

Potentiometric titration: This approach involves dissolving samples in a water-octanol system and titrating with acid or base while monitoring pH changes [1]. It is limited to compounds with acid-base properties and requires high sample purity but can provide comprehensive information about the ionization behavior [1].

Methodological Considerations and Challenges

Experimental logD determination faces several challenges, particularly for compounds with limited solubility, which can lead to significant measurement errors [5]. Research has shown that filtering out compounds with kinetic solubility below 25-100 μM can reduce standard deviation in logD measurements without notably affecting median ΔlogD7.4 values [5]. This highlights the importance of considering solubility in both experimental design and data interpretation.
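A filter of this kind is easy to apply before summarizing ΔlogD7.4 data. The sketch below uses a hypothetical record type and threshold parameter. It drops records whose kinetic solubility is below a chosen cut-off, then reports the count, median, and sample standard deviation of the remaining ΔlogD7.4 values.

How a pair's solubility is recorded is an assumption; one option is the lower of the two compounds' values. The sketch does not reproduce the cited analysis. It only illustrates the filtering step.

```python
import statistics
from typing import Iterable, NamedTuple


class SolubilityRecord(NamedTuple):
    """Hypothetical record pairing a kinetic solubility with a ΔlogD7.4 value."""
    solubility_um: float  # kinetic solubility in μM (e.g. the less soluble compound of a pair)
    delta_logd: float     # ΔlogD7.4 associated with a structural change


def summarize_delta_logd(records: Iterable[SolubilityRecord], min_solubility_um: float = 0.0):
    """Return (count, median, sample stdev) of ΔlogD7.4 after dropping poorly soluble records."""
    kept = [r.delta_logd for r in records if r.solubility_um >= min_solubility_um]
    if len(kept) < 2:
        raise ValueError("need at least two records to estimate a standard deviation")
    return len(kept), statistics.median(kept), statistics.stdev(kept)
```

You can inspect the effect on spread and median directly by running the summary with and without a threshold in the 25-100 μM range.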

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagents and Materials for Lipophilicity Studies

| Reagent/Material | Function/Application | Examples/Specifications |
|---|---|---|
| n-Octanol | Standard organic solvent for partition/distribution studies | High-purity grade; saturated with aqueous buffer |
| pH Buffers | Maintain physiological pH conditions during measurements | Typically phosphate-buffered saline at pH 7.4 |
| Chromatographic Phases | Stationary phases for chromatographic methods | RP-2, RP-8, RP-18 for reverse-phase techniques |
| Organic Modifiers | Mobile phase components for chromatography | Acetone, acetonitrile, 1,4-dioxane, methanol |
| Reference Compounds | Method validation and calibration | Compounds with well-established logP/logD values |
| Analytical Instruments | Quantification of compound concentrations | HPLC systems with UV/Vis or MS detection |

Lipophilicity in Drug Absorption and Distribution

Mechanisms of Membrane Permeation

Drug absorption is determined by the drug's ability to cross several semipermeable cell membranes before reaching the systemic circulation [8]. The most common mechanism of absorption for drugs is passive diffusion, where drug molecules move according to the concentration gradient from higher to lower concentration [9] [8]. This process can be described by Fick's law of diffusion and occurs through either aqueous or lipid pathways.

The lipid-aqueous partition coefficient significantly influences passive diffusion, with more lipophilic compounds generally demonstrating better membrane permeability [9]. However, extremely lipophilic compounds may have poor aqueous solubility, limiting their dissolution and absorption. Additionally, the ionization state of a compound dramatically affects its absorption characteristics, as only the un-ionized form is typically lipophilic enough to diffuse readily across lipid cell membranes [8].
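Fick's first law makes the role of partitioning explicit: for a simple homogeneous membrane, permeability scales with the membrane/water partition coefficient. The sketch below assumes that model and treats only the un-ionized fraction as permeant. It also assumes the same pH, and therefore the same un-ionized fraction, on both sides of the membrane.

Parameter names are hypothetical. Octanol/water logP or logD is only a surrogate for the true membrane partition coefficient.

```python
def passive_flux(diffusivity: float, partition_coeff: float, thickness: float,
                 c_donor: float, c_acceptor: float, fraction_neutral: float = 1.0) -> float:
    """Steady-state passive flux per unit area (Fick's first law).

    J = (D * K / h) * f_neutral * (C_donor - C_acceptor)

    diffusivity      D: diffusion coefficient in the membrane (e.g. cm^2/s)
    partition_coeff  K: membrane/water partition coefficient of the neutral form
    thickness        h: membrane thickness (e.g. cm)
    fraction_neutral: un-ionized share of the dissolved drug, assumed equal on both sides
    """
    if thickness <= 0:
        raise ValueError("membrane thickness must be positive")
    if not 0.0 <= fraction_neutral <= 1.0:
        raise ValueError("fraction_neutral must lie between 0 and 1")
    permeability = diffusivity * partition_coeff / thickness
    return permeability * fraction_neutral * (c_donor - c_acceptor)
```

Raising K tenfold raises the flux tenfold in this model. Lowering the un-ionized fraction scales the flux down proportionally.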

pH-Partition Hypothesis and Physiological Implications

The pH-partition hypothesis explains how pH gradients across membranes influence drug absorption based on a compound's pKa and the environmental pH [8]. According to this principle:

- Weakly acidic drugs exist primarily in their un-ionized form in acidic environments (like the stomach), favoring absorption in these regions [8].

- Weakly basic drugs are predominantly ionized in acidic environments and un-ionized in more basic environments, favoring absorption in the intestine [8].
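The Henderson-Hasselbalch equation gives the un-ionized fraction that underlies these statements. The sketch below compares a hypothetical weak acid (pKa 4.0) and weak base (pKa 9.0). It uses pH values assumed to represent the stomach (1.5) and small intestine (6.5). Real luminal pH varies with region and fed state.

```python
def fraction_unionized(ph: float, pka: float, kind: str) -> float:
    """Henderson-Hasselbalch fraction of a monoprotic drug in its un-ionized form."""
    if kind == "acid":
        return 1.0 / (1.0 + 10 ** (ph - pka))
    if kind == "base":
        return 1.0 / (1.0 + 10 ** (pka - ph))
    raise ValueError("kind must be 'acid' or 'base'")


if __name__ == "__main__":
    # Assumed representative pH values and hypothetical pKa values
    sites = {"stomach": 1.5, "small intestine": 6.5}
    for kind, pka in (("acid", 4.0), ("base", 9.0)):
        for site, ph in sites.items():
            f = fraction_unionized(ph, pka, kind)
            print(f"weak {kind} (pKa {pka}) in {site} (pH {ph}): {f:.2e} un-ionized")
```

The acid is mostly un-ionized at gastric pH. The base's un-ionized fraction is about five orders of magnitude higher in the intestine than in the stomach, though it remains largely ionized at pH 6.5.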

However, in practice, most drug absorption occurs in the small intestine regardless of a drug's acid-base character, due to its extensive surface area resulting from villi and microvilli, and more permeable membranes [9] [8]. This apparent paradox highlights the complexity of drug absorption and the limitations of relying solely on logP for predicting drug behavior.

Application in Drug Design and Beyond

Property-Based Design and Optimization

Lipophilicity optimization represents a crucial aspect of modern drug design. Studies have demonstrated that compounds with moderate logD7.4 values (typically between 1 and 3) exhibit optimal pharmacokinetic and safety profiles, leading to improved therapeutic effectiveness [1] [5]. High lipophilicity has been associated with increased risk of toxic events and poor aqueous solubility, while excessively low lipophilicity may limit membrane permeability and absorption [1] [2].

Matched molecular pair (MMP) analysis has enabled quantification of lipophilic contributions for common functional groups used in medicinal chemistry [5]. This approach identifies how specific structural modifications affect lipophilicity, providing valuable guidance for lead optimization. For example, research at Genentech established ΔlogD7.4 values for numerous substituents, finding that phenyl substitution represents one of the most lipophilic changes, while heterocyclic bioisosteres like pyridazines can decrease lipophilicity by up to 0.80 units [5].
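Summarizing matched pairs is simple bookkeeping once the pairs have been identified. That identification step is assumed to be done upstream with a cheminformatics toolkit.

The sketch below groups pairs by a hypothetical transformation label and reports, for each transformation, the number of pairs and the median ΔlogD7.4. The median is used because it is less sensitive to outlying measurements than the mean. The labels and values in the example are made up.

```python
import statistics
from collections import defaultdict


def summarize_mmp(pairs):
    """Aggregate matched molecular pairs by transformation.

    pairs: iterable of (transformation_label, logd_parent, logd_product),
    where each pair differs only by the named structural change.
    Returns {label: (n_pairs, median ΔlogD7.4)}, with ΔlogD7.4 = product - parent.
    """
    deltas = defaultdict(list)
    for label, logd_parent, logd_product in pairs:
        deltas[label].append(logd_product - logd_parent)
    return {label: (len(d), statistics.median(d)) for label, d in deltas.items()}


if __name__ == "__main__":
    # Hypothetical transformation labels and made-up logD7.4 values
    example = [
        ("transform_A", 1.0, 3.0),
        ("transform_A", 0.5, 2.5),
        ("transform_A", 2.0, 3.5),
        ("transform_B", 3.0, 2.25),
    ]
    for label, (n, median_delta) in summarize_mmp(example).items():
        print(f"{label}: n={n}, median ΔlogD7.4={median_delta:+.2f}")
```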

Applications Beyond Pharmaceutical Sciences

While pharmaceutical applications dominate lipophilicity research, the concepts of logP and logD extend to other fields:

Environmental chemistry: Scientists study the behavior of chemicals affected by the pH of different soils or water bodies, where interest lies in the partitioning of species that actually exist at environmental pH values, not just neutral forms that may not dominate [2].

Separation science: Understanding partition and distribution coefficients aids in developing new separation and extraction methods, enabling chemists to select optimal pH conditions for separating positional isomers and maximizing extraction yield [2].

The distinction between logP and logD represents more than a technical nuance—it embodies a fundamental principle in modern drug design recognizing the dynamic interplay between molecular structure, ionization state, and biological environment. While logP provides a valuable baseline for understanding intrinsic lipophilicity, logD offers a more physiologically relevant perspective that accounts for the pH-dependent ionization crucial for most pharmaceutical compounds.

Advanced prediction models like AstraZeneca's AZlogD74 demonstrate how large, proprietary datasets combined with sophisticated computational approaches can enhance lipophilicity prediction, addressing limitations in publicly available data. The continued refinement of these models, alongside robust experimental methods for verification, will further improve our ability to design compounds with optimal physicochemical properties for therapeutic success.

As drug discovery ventures beyond traditional chemical space into larger, more complex molecules, the accurate characterization and prediction of lipophilicity through both logP and logD will remain essential for balancing the competing demands of solubility, permeability, and metabolic stability in successful drug candidates.

Lipophilicity, quantified as the distribution coefficient between n-octanol and buffer at physiological pH 7.4 (logD7.4), represents a fundamental molecular property with profound implications throughout drug discovery and development. Unlike logP, which describes the partition coefficient of only the neutral species, logD7.4 accounts for the distribution of all ionization states of a compound at physiological pH, making it particularly relevant for drug molecules, approximately 95% of which contain ionizable groups [10]. This parameter significantly affects various aspects of drug behavior, including solubility, permeability, metabolism, distribution, protein binding, and toxicity [10] [11]. Optimal logD7.4 values are associated with improved safety and pharmacokinetic profiles, while extremes in lipophilicity have been linked to either increased risk of toxic events or limited drug absorption and metabolism [10]. Consequently, accurate prediction and measurement of logD7.4 have become indispensable for evaluating drug candidates and optimizing compound properties in modern drug discovery pipelines.

Fundamental Relationships: How logD7.4 Influences Key ADMET Properties

Solubility and Permeability

The lipophilicity of a compound, as expressed by logD7.4, pulls solubility and permeability in opposite directions. Higher logD7.4 values (greater lipophilicity) generally correlate with lower aqueous solubility but higher membrane permeability [10]. This trade-off creates a fundamental challenge in drug design: structures must achieve sufficient solubility for dissolution while maintaining adequate permeability for absorption. Compounds with excessively high logD7.4 values may penetrate membranes well but suffer from poor aqueous solubility, potentially limiting their bioavailability. Conversely, compounds with very low logD7.4 values may be highly soluble but too poorly permeable to reach their therapeutic targets [10].

Distribution and Metabolism

logD7.4 significantly influences a drug's distribution within the body, including its ability to cross biological barriers such as the blood-brain barrier (BBB) [12]. Compounds with moderate logD7.4 values typically demonstrate optimal distribution profiles, while extremely lipophilic molecules may undergo extensive tissue binding and sequestration, reducing their available concentration for therapeutic action [10]. Additionally, logD7.4 affects metabolic clearance, with lipophilic compounds generally being more susceptible to cytochrome P450-mediated metabolism [13]. The lipophilicity of functional groups directly relates to excretion endpoints such as clearance, highlighting the interconnectedness of logD7.4 across the entire ADMET spectrum [13].

Toxicity Profiles

Elevated lipophilicity has been consistently associated with an increased risk of toxic events, as demonstrated in animal studies conducted by pharmaceutical companies [10]. High logD7.4 values may contribute to off-target toxicity through promiscuous binding to unintended biological targets and increased potential for drug-drug interactions [14]. Furthermore, lipophilicity has been shown to help distinguish aggregators from non-aggregators in drug discovery, providing insights into potential toxicity mechanisms [10]. These relationships underscore the critical importance of maintaining logD7.4 within an optimal range to minimize toxicity risks while preserving therapeutic efficacy.

Experimental Methodologies for logD7.4 Determination

Established Experimental Techniques

Several experimental approaches have been developed to measure logD7.4 values, each with distinct advantages and limitations:

Table 1: Comparison of Experimental logD7.4 Determination Methods

| Method | Principle | Advantages | Limitations | Throughput |
|---|---|---|---|---|
| Shake-Flask | Direct measurement of distribution between n-octanol and aqueous buffer phases [10] | Considered reference method; direct measurement | Labor-intensive; requires large compound amounts; slow [10] | Low |
| Chromatographic Techniques (e.g., HPLC) | Indirect assessment based on retention time behavior between mobile and stationary phases [10] | Simple; stable against impurities; higher throughput [10] | Indirect measurement; less accurate than shake-flask [10] | Medium |
| Potentiometric Titration | Titration with acid/base in two-phase system; measures pH-dependent distribution [10] | Provides additional pKa information; can be automated | Limited to ionizable compounds; requires high purity [10] | Medium |

Standardized Shake-Flask Protocol

The shake-flask method remains the gold standard for experimental logD7.4 determination. The following protocol outlines the key steps for reliable measurement:

Solution Preparation: Saturate n-octanol with phosphate buffer (pH 7.4) and vice versa by mixing equal volumes and shaking for 24 hours followed by phase separation [10].

Compound Distribution: Dissolve the test compound in either the aqueous or organic phase at a concentration typically below 0.01 M to avoid aggregation effects.

Equilibration: Combine 1.5 mL of each phase in a glass vial and shake mechanically for 2-4 hours at constant temperature (25°C) to reach distribution equilibrium.

Phase Separation: Allow phases to separate completely, then centrifuge if necessary to achieve clear phase separation.

Concentration Analysis: Quantify compound concentration in both phases using appropriate analytical methods (e.g., UV spectroscopy, HPLC). The logD7.4 is calculated as:

logD7.4 = log10([Compound]octanol / [Compound]aqueous)

where [Compound] represents the concentration in each phase [10].

Validation: Include reference compounds with known logD7.4 values to validate method performance and ensure consistency across experiments.
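The calculation and validation steps above can be sketched as follows. The helper names are hypothetical. The 0.3 log-unit acceptance tolerance for reference compounds is an assumption for illustration and is not part of the cited protocol. Concentrations are assumed to be already corrected for any dilution made before analysis.

```python
import math
import statistics


def logd_from_concentrations(c_octanol: float, c_aqueous: float) -> float:
    """logD7.4 = log10([compound]octanol / [compound]aqueous); both in the same units."""
    if c_octanol <= 0 or c_aqueous <= 0:
        raise ValueError("both phase concentrations must be positive (above the detection limit)")
    return math.log10(c_octanol / c_aqueous)


def replicate_logd(pairs):
    """Mean and sample standard deviation of logD7.4 over replicate (c_oct, c_aq) pairs."""
    values = [logd_from_concentrations(c_oct, c_aq) for c_oct, c_aq in pairs]
    spread = statistics.stdev(values) if len(values) > 1 else 0.0
    return statistics.mean(values), spread


def reference_passes(measured: float, expected: float, tolerance: float = 0.3) -> bool:
    """Check a reference compound against its known logD7.4 (tolerance is an assumption)."""
    return abs(measured - expected) <= tolerance
```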

Computational Prediction Models for logD7.4

The experimental determination of logD7.4 remains resource-intensive, driving the development of computational prediction methods. These approaches range from traditional Quantitative Structure-Property Relationship (QSPR) models to advanced artificial intelligence (AI) techniques:

Table 2: Comparison of logD7.4 Prediction Tools and Models

| Tool/Model | Approach | Key Features | Performance | Access |
|---|---|---|---|---|
| RTlogD [10] | Graph Neural Network with multi-source knowledge transfer | Pre-training on chromatographic RT; microscopic pKa features; logP as auxiliary task | Superior to commonly used algorithms [10] | Academic |
| AZlogD74 (AstraZeneca) [10] | Proprietary model trained on extensive in-house data | Database of >160,000 molecules; continuously updated with new measurements [10] | High performance (leverages large proprietary dataset) [10] | Commercial |
| ADMETlab2.0 [10] | Comprehensive ADMET prediction platform | Includes logD7.4 among multiple property predictions | Commonly used benchmark [10] | Web tool |
| ALOGPS [10] | Traditional QSPR approach | Established algorithm; wide historical usage | Reference for comparison studies [10] | Web tool |

The RTlogD Model: Architecture and Innovation

The RTlogD model represents a significant advancement in logD7.4 prediction by addressing the fundamental challenge of limited experimental data through knowledge transfer from multiple related domains [10]. Its architecture incorporates three key innovations:

Chromatographic Retention Time Pre-training: The model is initially pre-trained on a large dataset of nearly 80,000 chromatographic retention time measurements, which are influenced by lipophilicity, thereby allowing the model to learn relevant molecular representations before fine-tuning on the smaller logD7.4 dataset [10].

Microscopic pKa Integration: Atomic-level pKa values are incorporated as atomic features, providing granular information about ionizable sites and ionization capacity that directly impacts distribution behavior at pH 7.4 [10].

Multitask Learning with logP: The model simultaneously learns logD7.4 and logP predictions, allowing the domain knowledge contained within logP data to serve as an inductive bias that improves learning efficiency and prediction accuracy for the primary logD7.4 task [10].

This multi-faceted approach demonstrates how leveraging correlated properties can enhance model performance despite limited primary data, offering a framework for addressing similar challenges in other molecular property predictions.
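A common way to express the multitask idea is a shared model with one output per task and a combined loss. The sketch below assumes that formulation. It adds a primary logD7.4 loss to a down-weighted logP loss, and masks out missing labels because not every molecule has both measurements. The weighting scheme and names are hypothetical and are not taken from the RTlogD publication.

```python
from typing import Optional, Sequence


def masked_mse(preds: Sequence[float], targets: Sequence[Optional[float]]) -> float:
    """Mean squared error over molecules that have a label; None marks a missing label."""
    terms = [(p - t) ** 2 for p, t in zip(preds, targets) if t is not None]
    return sum(terms) / len(terms) if terms else 0.0


def multitask_loss(pred_logd, true_logd, pred_logp, true_logp, aux_weight: float = 0.5) -> float:
    """Primary logD7.4 loss plus a down-weighted auxiliary logP loss.

    aux_weight is a hypothetical hyperparameter, not a value from RTlogD.
    """
    return masked_mse(pred_logd, true_logd) + aux_weight * masked_mse(pred_logp, true_logp)
```

In a real training loop, these terms would be computed on tensors from a shared graph encoder and minimized jointly. Plain Python lists are used here only to keep the sketch self-contained.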

Multi-Task Graph Learning Frameworks

Beyond specialized logD7.4 models, broader ADMET prediction frameworks have demonstrated the value of multi-task learning approaches. The MTGL-ADMET framework employs a "one primary, multiple auxiliaries" paradigm that adaptively selects appropriate auxiliary tasks to boost performance on a specific primary task, even accepting potential degradation in the auxiliary tasks themselves [13]. This approach utilizes status theory and maximum flow algorithms to identify friendly auxiliary tasks and estimate potential performance gains, resulting in improved prediction accuracy for ADMET endpoints including those related to lipophilicity [13].

Table 3: Key Research Reagents and Computational Tools for logD7.4 Research

| Category | Item/Resource | Specification/Function | Application Notes |
|---|---|---|---|
| Experimental Materials | n-Octanol (HPLC grade) | Organic phase for distribution studies | Pre-saturate with buffer pH 7.4 before use [10] |
| | Phosphate Buffer (pH 7.4) | Aqueous phase simulating physiological conditions | Pre-saturate with n-octanol before use [10] |
| | Reference Compounds | Known logD7.4 values (e.g., caffeine, warfarin) | Method validation and quality control [10] |
| Computational Datasets | ChEMBLdb29 [10] | Public repository of bioactive molecules | Source of experimental logD7.4 data for modeling |
| | Lipophilicity Dataset [15] | 1,130 compounds with logD7.4 values | Benchmarking for regression modeling and cheminformatics |
| | Chromatographic RT Dataset [10] | ~80,000 retention time measurements | Pre-training data for transfer learning approaches |
| Software Tools | Graph Neural Networks | Molecular graph representation learning | Base architecture for modern prediction models [10] |
| | Multi-task Learning Frameworks | Simultaneous learning of related tasks | Improves performance on data-scarce endpoints [13] |

The critical impact of logD7.4 on ADMET properties underscores its essential role as an optimization parameter in drug discovery. Maintaining logD7.4 within an optimal range (typically avoiding extreme values) proves crucial for balancing the competing demands of solubility, permeability, distribution, and toxicity [10]. The development of sophisticated prediction models like RTlogD and MTGL-ADMET demonstrates how innovative computational approaches can address the challenge of limited experimental data through knowledge transfer and multi-task learning [10] [13]. For drug development professionals, these advances provide increasingly reliable tools for prospective logD7.4 optimization, potentially reducing late-stage attrition due to unfavorable ADMET properties. As these models continue to evolve through the incorporation of larger datasets and more sophisticated algorithms, their integration into early-stage drug design workflows promises to enhance the efficiency of developing compounds with optimal pharmacokinetic and safety profiles.

Lipophilicity, quantified as the distribution coefficient between n-octanol and buffer at physiological pH 7.4 (logD7.4), represents a fundamental physical property that profoundly influences various aspects of drug behavior. This parameter significantly affects a compound's solubility, permeability, metabolism, distribution, protein binding, and toxicity, making it crucial for successful drug discovery and design [16]. Bhal and colleagues proposed that logD be considered in the "Rule of Five" in place of the more commonly used logP, reflecting its greater relevance for ionizable compounds, which constitute approximately 95% of all drugs [16]. Compounds with moderate logD7.4 values demonstrate optimal pharmacokinetic and safety profiles, leading to improved therapeutic effectiveness [16]. The critical importance of accurate logD7.4 determination has driven the development of various experimental measurement techniques, each presenting distinct challenges and limitations that impact their implementation in modern drug discovery pipelines.

Comparative Analysis of Traditional logD7.4 Measurement Methods

Experimental techniques for determining logD7.4 values have evolved to address different needs within drug discovery, yet each method carries specific limitations that affect their applicability. The most commonly employed approaches include the shake-flask method, chromatographic techniques, and potentiometric titration, each with varying degrees of throughput, accuracy, and practical constraints [16]. The following table summarizes the key characteristics, advantages, and limitations of these primary experimental methods.

Table 1: Comparison of Traditional Experimental Methods for logD7.4 Measurement

| Method | Key Principle | Dynamic Range | Throughput | Key Advantages | Major Limitations |
|---|---|---|---|---|---|
| Shake-Flask | Direct partitioning between octanol and aqueous buffer phases | Limited by analytical detection | Low | Considered gold standard; direct measurement | Labor-intensive; requires large compound amounts; limited by solubility [16] |
| Chromatographic (HPLC) | Distribution behavior between mobile and stationary phases | 6+ orders of magnitude [17] | High | Simple, stable against impurities; high reproducibility; minimal sample requirement [17] | Indirect assessment; less accurate; requires calibration [16] |
| Potentiometric Titration | Titration with KOH/HCl in n-octanol/buffer system | Limited to acid-base compounds | Medium | Provides additional ionization data | Limited to compounds with acid-base properties; requires high sample purity [16] |

Detailed Experimental Protocols and Methodologies

Shake-Flask Method Protocol

The shake-flask method remains the gold standard for direct logD7.4 measurement, despite its practical challenges. The standardized protocol involves:

- Phase Preparation: Saturate n-octanol with phosphate buffer (pH 7.4) and vice versa to prevent volume changes during partitioning.

- Compound Partitioning: Dissolve the test compound in the pre-saturated octanol phase, then mix with an equal volume of buffer phase using vigorous shaking for 1-2 hours at constant temperature (typically 25°C).

- Phase Separation: Allow the mixture to stand for phase separation, then centrifuge if necessary to achieve complete separation.

- Concentration Analysis: Quantify the compound concentration in both phases using appropriate analytical methods such as UV spectroscopy, HPLC, or LC-MS.

- Calculation: Calculate logD7.4 using the formula: logD7.4 = log10([compound]octanol/[compound]buffer).

The critical challenges include the need for sensitive analytical methods to detect low concentrations in both phases, potential compound degradation during shaking, and emulsion formation that complicates phase separation [16]. Additionally, this method requires relatively large amounts of purified compound (typically milligrams), making it unsuitable for early discovery stages where compound availability is limited.

Chromatographic Method Protocol

Chromatographic approaches offer higher throughput alternatives to shake-flask methods, with the following standardized protocol:

- System Calibration: Establish a calibration curve using neutral compounds with well-established logD7.4 values (e.g., 5-10 reference compounds spanning the expected logD range).

- Chromatographic Conditions: Utilize a C18 stationary phase with a mobile phase consisting of phosphate buffer (pH 7.4) and methanol under isocratic conditions with rigorous pH control [17].

- Retention Time Measurement: Inject test compounds and measure retention times under identical conditions.

- Capacity Factor Calculation: Calculate the capacity factor (k') using the formula k' = (tR - t0)/t0, where tR is the compound retention time and t0 is the void time.

- logD7.4 Determination: Convert log k' to logD7.4 using the established calibration curve.
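The last two steps can be sketched with NumPy. The sketch assumes a linear relationship between log k' and logD7.4 over the calibrant range. This is a common working assumption for such calibrations, but the exact model used in [17] may differ. The calibrant values and function names are hypothetical.

```python
import numpy as np


def capacity_factor(t_r, t_0):
    """k' = (tR - t0) / t0; retention and void times must share a unit."""
    t_r = np.asarray(t_r, dtype=float)
    if np.any(t_r <= t_0):
        raise ValueError("retention times must exceed the void time")
    return (t_r - t_0) / t_0


def fit_calibration(t_r_ref, logd_ref, t_0):
    """Least-squares line logD7.4 = slope * log10(k') + intercept over the calibrants."""
    log_k = np.log10(capacity_factor(t_r_ref, t_0))
    slope, intercept = np.polyfit(log_k, np.asarray(logd_ref, dtype=float), deg=1)
    return slope, intercept


def predict_logd(t_r, t_0, slope, intercept):
    """Map measured retention times onto the logD7.4 scale via the calibration line."""
    return slope * np.log10(capacity_factor(t_r, t_0)) + intercept


if __name__ == "__main__":
    t0 = 1.0                                    # void time, min (hypothetical)
    ref_tr = [1.5, 2.0, 3.0, 5.0, 9.0]          # calibrant retention times, min
    ref_logd = [0.4, 1.0, 1.6, 2.2, 2.8]        # calibrant logD7.4 values
    slope, intercept = fit_calibration(ref_tr, ref_logd, t0)
    print(predict_logd([2.5, 6.0], t0, slope, intercept))
```

Predictions are only trustworthy inside the logD range spanned by the calibrants, which is why the calibrant set should cover the expected range.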

This method's advantages include a dynamic range spanning six orders of magnitude, no solubility limitations, minimal sample requirements (micrograms), and high reproducibility due to UHPLC system precision [17]. However, it provides an indirect assessment of logD7.4 and may show deviations from shake-flask values due to differences in the underlying chemical system (C18 phase versus octanol) [17].

Potentiometric Titration Protocol

Potentiometric titration approaches provide an alternative for compounds with acid-base properties:

- Sample Preparation: Dissolve the compound in a mixture of n-octanol and aqueous buffer.

- Titration Procedure: Titrate with standardized potassium hydroxide or hydrochloric acid while monitoring pH changes.

- Data Analysis: Calculate logD7.4 from the titration curve shifts between aqueous and octanol-water systems.

This method simultaneously provides pKa values but is restricted to ionizable compounds and requires high sample purity to avoid interference [16].
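Because the titration yields both logP and pKa, logD7.4 can be derived from them. Below is a minimal sketch for a compound with a single ionizable group. It assumes that only the neutral species partitions into octanol, so ion-pair partitioning is ignored; this assumption breaks down when ionized species also partition. The function name is hypothetical.

```python
import math


def logd_monoprotic(logp: float, pka: float, ph: float = 7.4, kind: str = "acid") -> float:
    """logD at a given pH for a compound with one ionizable group.

    acid: logD = logP - log10(1 + 10**(pH - pKa))
    base: logD = logP - log10(1 + 10**(pKa - pH))
    """
    if kind == "acid":
        ionized_to_neutral = 10 ** (ph - pka)   # [A-] / [HA]
    elif kind == "base":
        ionized_to_neutral = 10 ** (pka - ph)   # [BH+] / [B]
    else:
        raise ValueError("kind must be 'acid' or 'base'")
    return logp - math.log10(1 + ionized_to_neutral)


if __name__ == "__main__":
    # Hypothetical acid (logP 3.0, pKa 5.4) and base (logP 3.0, pKa 9.4).
    print(round(logd_monoprotic(3.0, 5.4, kind="acid"), 2))
    print(round(logd_monoprotic(3.0, 9.4, kind="base"), 2))
```

Both example compounds are ionized by two pH units at pH 7.4, so each logD comes out roughly two log units below its logP.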

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful logD7.4 measurement requires specific reagents and materials optimized for each methodological approach. The following table details essential research solutions and their functions in experimental workflows.

Table 2: Essential Research Reagent Solutions for logD7.4 Measurement

| Reagent/Material | Function/Role | Application Notes |
|---|---|---|
| n-Octanol (HPLC grade) | Organic phase simulating biological membranes | Must be pre-saturated with buffer; purity critical for accurate partitioning [16] |
| Phosphate Buffer (pH 7.4) | Aqueous phase simulating physiological conditions | Must be pre-saturated with n-octanol; pH requires precise verification before use [16] |
| C18 Chromatographic Column | Stationary phase for hydrophobic interactions | Column chemistry and age affect retention time reproducibility [17] |
| logD Calibrants | Reference compounds for chromatographic calibration | Set should cover expected logD range (-1 to 5+); neutral compounds preferred [17] |
| UHPLC System with PDA/MS detection | Analytical instrumentation for concentration measurement | Enables precise retention time measurement and impurity detection [17] |

Methodological Limitations and Implications for Drug Discovery

The technical challenges inherent in traditional logD7.4 measurement methods have direct consequences for drug discovery efficiency and decision-making. The labor-intensive nature of shake-flask methods, combined with their substantial compound requirements, creates a significant bottleneck in compound profiling, particularly during early discovery stages when material is limited [16]. Chromatographic methods offer improved throughput but introduce their own limitations. They measure retention behavior on C18 stationary phases rather than direct octanol-water partitioning, which can lead to systematic deviations from shake-flask values [17].

These experimental hurdles have prompted the pharmaceutical industry to invest in computational approaches to supplement or replace traditional measurements. As noted in recent research, "Pharmaceutical companies have harnessed their proprietary models to predict logD values. In comparison to academic endeavors, these models exhibit superior performance owing to the utilization of their extensive and confidential datasets" [16]. For instance, AstraZeneca's AZlogD74 model is trained on a dataset of over 160,000 molecules, which they continuously update with new measurements [16].

The transition toward computational prediction is further justified by the recognition that "the limited availability of data for logD modeling poses a significant challenge to achieving satisfactory generalization capability" [16]. This challenge is directly addressed by innovative models like RTlogD, which leverages knowledge from multiple sources including chromatographic retention time, microscopic pKa, and logP to enhance prediction accuracy despite limited experimental logD data [16].

Diagram 1: Experimental Challenges Driving Computational Adoption in logD7.4 Prediction. This workflow illustrates how methodological limitations in traditional techniques create bottlenecks that motivate the development of AI/ML prediction models.

The experimental hurdles associated with traditional logD7.4 measurement methods present significant challenges for modern drug discovery workflows. While each established technique offers specific advantages, their collective limitations in throughput, compound requirements, and technical complexity have driven the pharmaceutical industry toward computational solutions. The emergence of robust prediction models like AstraZeneca's AZlogD74 and the academically developed RTlogD, which leverages transfer learning from chromatographic retention time and other related properties, represents a paradigm shift in lipophilicity assessment [16]. These models address the fundamental challenge of limited experimental data availability while providing the throughput necessary for contemporary drug discovery. The optimal approach likely involves a strategic integration of targeted experimental measurements to validate and refine computational predictions, creating a synergistic workflow that maximizes both accuracy and efficiency in compound optimization and development.

Lipophilicity, measured as the distribution coefficient between n-octanol and buffer at physiological pH 7.4 (logD7.4), represents a fundamental physical property with profound implications for drug behavior. This parameter significantly affects various aspects of pharmaceutical performance, including solubility, permeability, metabolism, distribution, protein binding, and toxicity [10]. In drug-like molecules, optimal lipophilicity provides better safety and pharmacokinetic profiles, making accurate logD7.4 prediction crucial for successful drug discovery and design [10]. Whereas logP describes the differential solubility of a neutral compound, logD7.4 offers greater relevance for drug research as it accounts for the pH-dependent lipophilicity of ionizable compounds, which constitute approximately 95% of all drugs [10]. The central challenge in logD7.4 prediction lies in the limited availability of high-quality experimental data, creating a significant gap between industrial and academic modeling capabilities that directly impacts model generalization performance.

The disparity between industrial and academic logD7.4 datasets creates a fundamental limitation for academic model generalization. Pharmaceutical companies like AstraZeneca have developed proprietary models such as AZlogD74 trained on massive in-house databases containing over 160,000 molecules, which they continuously update with new measurements [10]. Similarly, Bayer generates thousands of new logD data points annually, and Merck & Co. invests significantly in leveraging institutional knowledge to guide experimental endeavors [10]. These expansive, curated datasets provide industrial models with distinct advantages in accuracy and generalizability.

In stark contrast, academic research relies predominantly on public datasets, which are considerably smaller in scale. For instance, one widely used public resource cited in the literature contains only 1,130 hand-curated compounds with experimental logD7.4 values [18]. Another study utilized the DB29 dataset from ChEMBLdb29, which nonetheless suffers from significant limitations in data quantity and diversity compared to industrial counterparts [10]. This orders-of-magnitude difference in training data creates an inherent disadvantage for academic models, constraining their ability to learn the complex structure-property relationships necessary for accurate prediction across diverse chemical spaces.

Table: Comparison of logD7.4 Data Resources in Industrial vs. Academic Settings

| Resource Type | Data Source | Approximate Dataset Size | Update Frequency | Key Characteristics |
|---|---|---|---|---|
| Industrial Database | AstraZeneca AZlogD74 | >160,000 molecules | Continuous with new measurements | Proprietary, diverse chemical space, high-quality standardized measurements |
| Industrial Database | Bayer in-house data | Thousands of new points annually | Annual updates | Targeted compounds, institutional knowledge integration |
| Academic Public Dataset | ChEMBL DB29-data | Limited (exact size not specified) | Irregular | Mixed experimental methods, requires extensive curation |
| Academic Public Dataset | nanxstats/logd74 | 1,130 compounds | Static | Hand-curated, shake-flask method focus |

Academic Innovation: Knowledge Transfer to Bridge the Data Gap

Faced with limited direct logD7.4 measurements, academic researchers have developed innovative knowledge transfer strategies to leverage related chemical properties. The RTlogD model exemplifies this approach by integrating three complementary data sources to compensate for limited logD7.4 data [10].

Chromatographic Retention Time as a Pre-training Task

Chromatographic retention time (RT) demonstrates a strong correlation with lipophilicity and offers a substantially larger dataset, with nearly 80,000 molecules available in public collections [10]. The RTlogD framework employs transfer learning by first pre-training on RT prediction, exposing the model to a broader chemical space. This pre-trained model is subsequently fine-tuned on the limited logD7.4 data, significantly enhancing generalization capability compared to models trained exclusively on logD7.4 data [10].
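The pre-train-then-fine-tune idea can be illustrated without a GNN. The sketch below is a deliberately simplified linear stand-in, not the RTlogD implementation, and rests on several assumptions:

- Molecules are already encoded as fixed-length descriptor vectors.
- Synthetic data replace the RT and logD7.4 sets, with both tasks on a common scale.
- Fine-tuning is written as ridge regression shrunk toward the pre-trained weights, rather than as gradient updates of a network.

```python
import numpy as np


def fit_ridge(X, y, lam, prior=None):
    """Closed-form solution of argmin_w ||X w - y||^2 + lam * ||w - prior||^2."""
    d = X.shape[1]
    prior = np.zeros(d) if prior is None else prior
    A = X.T @ X + lam * np.eye(d)
    b = X.T @ y + lam * prior
    return np.linalg.solve(A, b)


rng = np.random.default_rng(0)
d = 20                                      # descriptor length (hypothetical)
w_rt = rng.normal(size=d)                   # synthetic "RT" relationship
w_logd = w_rt + 0.1 * rng.normal(size=d)    # related but not identical logD task

# Large source set (stand-in for the ~80,000 RT molecules) and a tiny target set.
X_rt = rng.normal(size=(2000, d))
y_rt = X_rt @ w_rt + 0.1 * rng.normal(size=2000)
X_logd = rng.normal(size=(10, d))
y_logd = X_logd @ w_logd + 0.1 * rng.normal(size=10)
X_test = rng.normal(size=(500, d))
y_test = X_test @ w_logd

w_pre = fit_ridge(X_rt, y_rt, lam=1e-3)                       # "pre-training"
w_transfer = fit_ridge(X_logd, y_logd, lam=1.0, prior=w_pre)  # fine-tune near w_pre
w_scratch = fit_ridge(X_logd, y_logd, lam=1.0)                # logD-only baseline


def held_out_mae(w):
    """Mean absolute error on the synthetic held-out logD set."""
    return float(np.mean(np.abs(X_test @ w - y_test)))


print(f"logD-only MAE: {held_out_mae(w_scratch):.2f}  transfer MAE: {held_out_mae(w_transfer):.2f}")
```

With only 10 target examples for 20 weights, the logD-only fit is underdetermined. The transfer fit instead starts from weights that are already close to the target, so on this synthetic data its held-out error should be clearly lower.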

logP as an Auxiliary Task in Multitask Learning

Although logP describes the partition coefficient only for neutral compounds, it shares fundamental lipophilicity information with logD7.4. The RTlogD model incorporates logP prediction as a parallel task within a multitask learning framework, allowing the model to leverage domain information from logP to improve logD7.4 prediction accuracy [10]. This approach uses logP as an inductive bias to enhance learning efficiency despite limited logD7.4 examples.
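In a shared-encoder network, the logD7.4 and logP heads are trained jointly by summing their losses. Below is a minimal sketch of such a combined loss. It assumes mean squared error for both tasks, NaN for a missing label, and a hypothetical weight `aux_weight` on the logP term; the weighting actually used by RTlogD is not stated here.

```python
import numpy as np


def masked_mse(pred, target):
    """Mean squared error over entries whose label is present (NaN = missing)."""
    pred = np.asarray(pred, dtype=float)
    target = np.asarray(target, dtype=float)
    mask = ~np.isnan(target)
    if not mask.any():
        return 0.0
    return float(np.mean((pred[mask] - target[mask]) ** 2))


def multitask_loss(pred_logd, pred_logp, y_logd, y_logp, aux_weight=0.5):
    """Primary logD7.4 loss plus a down-weighted auxiliary logP loss.

    Molecules labelled for only one property still contribute to that task.
    """
    return masked_mse(pred_logd, y_logd) + aux_weight * masked_mse(pred_logp, y_logp)


if __name__ == "__main__":
    nan = float("nan")
    y_logd = [1.0, nan, 2.0]        # second molecule has no measured logD
    y_logp = [nan, 3.0, 2.5]        # first molecule has no measured logP
    loss = multitask_loss([1.5, 0.0, 2.0], [0.0, 3.5, 2.5], y_logd, y_logp)
    print(loss)  # 0.1875
```

The masking lets the much larger pool of logP-labelled molecules shape the shared representation without inventing logD labels for them.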

Microscopic pKa Values as Atomic Features

Microscopic pKa values provide atom-specific ionization information critical for understanding pH-dependent partitioning behavior. By incorporating predicted acidic and basic microscopic pKa values as atomic features, the RTlogD model gains valuable insights into ionizable sites and ionization capacity, enabling enhanced lipophilicity prediction for different molecular ionization forms [10]. This approach offers more specific ionization information compared to macroscopic pKa values.
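Below is a minimal sketch of appending predicted microscopic pKa values to per-atom feature vectors, with these assumptions:

- The pKa values come from an external predictor, which is not shown.
- A two-element one-hot element encoding is used purely for illustration.
- Each pKa slot is paired with a presence flag. This encoding scheme is our assumption, not RTlogD's published featurization.

The illustrative acidic site uses acetic acid's pKa of about 4.76.

```python
from typing import List, Optional, Sequence


def add_pka_features(base: Sequence[Sequence[float]],
                     acidic_pka: Sequence[Optional[float]],
                     basic_pka: Sequence[Optional[float]]) -> List[List[float]]:
    """Append [acidic pKa, is acidic site, basic pKa, is basic site] to each atom.

    None means the atom is not a predicted site of that type; its pKa slot is
    set to 0.0 and its flag to 0.0, so the model can tell "absent" from pKa 0.
    """
    if not (len(base) == len(acidic_pka) == len(basic_pka)):
        raise ValueError("one entry per atom is required in every input")
    features = []
    for row, pka_a, pka_b in zip(base, acidic_pka, basic_pka):
        extra = [
            pka_a if pka_a is not None else 0.0, 1.0 if pka_a is not None else 0.0,
            pka_b if pka_b is not None else 0.0, 1.0 if pka_b is not None else 0.0,
        ]
        features.append(list(row) + extra)
    return features


if __name__ == "__main__":
    # Acetic acid heavy atoms: CH3, C(=O), =O, -OH with one-hot [is_C, is_O].
    base = [[1, 0], [1, 0], [0, 1], [0, 1]]
    acidic = [None, None, None, 4.76]   # illustrative value on the hydroxyl oxygen
    basic = [None, None, None, None]
    for atom in add_pka_features(base, acidic, basic):
        print(atom)
```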

Experimental Protocol and Model Performance Comparison

Data Curation and Preprocessing Methodology

The experimental protocol for evaluating the RTlogD model involved rigorous data curation from ChEMBLdb29 to create the DB29-data dataset [10]. Quality control measures included: (1) removing records with pH values outside 7.2-7.6 to ensure physiological relevance; (2) eliminating records with solvents other than octanol for consistency; (3) manual verification and correction of data errors, including identification of values not logarithmically transformed and transcription errors through cross-referencing with primary literature [10]. This meticulous curation process resulted in a high-quality benchmark dataset for model training and evaluation.
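The automated parts of these filters can be sketched as follows. The record layout, the accepted solvent spellings, and the |logD| > 10 plausibility threshold used to flag suspected untransformed values are illustrative assumptions. In the protocol described above, problem records were resolved by manual checking against the primary literature, which code cannot replace.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple

OCTANOL_NAMES = {"octanol", "n-octanol", "1-octanol"}  # assumed spellings


@dataclass
class LogDRecord:
    compound_id: str
    value: float     # reported logD7.4
    ph: float        # assay pH
    solvent: str     # organic phase


def curate(records: List[LogDRecord], ph_min: float = 7.2, ph_max: float = 7.6,
           max_abs_logd: float = 10.0) -> Tuple[List[LogDRecord], List[LogDRecord]]:
    """Return (kept, flagged_for_manual_review)."""
    kept, flagged = [], []
    for rec in records:
        if not ph_min <= rec.ph <= ph_max:
            continue                      # (1) outside the physiological pH window
        if rec.solvent.strip().lower() not in OCTANOL_NAMES:
            continue                      # (2) non-octanol organic phase
        if not math.isfinite(rec.value) or abs(rec.value) > max_abs_logd:
            flagged.append(rec)           # (3) implausible: possibly not log-transformed
            continue
        kept.append(rec)
    return kept, flagged


if __name__ == "__main__":
    raw = [
        LogDRecord("cpd-1", 2.1, 7.4, "octanol"),
        LogDRecord("cpd-2", 1.5, 6.5, "octanol"),       # dropped: pH
        LogDRecord("cpd-3", 0.8, 7.4, "cyclohexane"),   # dropped: solvent
        LogDRecord("cpd-4", 125.0, 7.4, "n-Octanol"),   # flagged: looks like a raw ratio
    ]
    kept, flagged = curate(raw)
    print([r.compound_id for r in kept], [r.compound_id for r in flagged])
```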

Ablation Study Design

Ablation studies systematically evaluated the contribution of each knowledge component by comparing: (1) baseline model trained solely on logD7.4 data; (2) model with RT pre-training only; (3) model with logP multi-task learning only; (4) model with microscopic pKa features only; and (5) the complete RTlogD integration incorporating all three knowledge sources [10]. This experimental design enabled quantitative assessment of each component's relative importance to overall prediction performance.

Comparative Performance Analysis

The RTlogD model demonstrated superior performance compared to commonly used algorithms and prediction tools, including ADMETlab2.0, PCFE, ALOGPS, FP-ADMET, and the commercial software Instant Jchem [10]. The integration of multiple knowledge sources consistently improved prediction accuracy across diverse chemical structures, particularly for compounds structurally distinct from those in the limited logD7.4 training data.

Table: Performance Comparison of logD7.4 Prediction Methods

| Prediction Method | Type | Key Features | Reported Performance | Data Requirements |
|---|---|---|---|---|
| RTlogD (Academic) | Integrated knowledge model | RT pre-training, logP multi-task, microscopic pKa | Superior to common algorithms | Limited logD7.4 data, leverages auxiliary data |
| AZlogD74 (AstraZeneca) | Industrial proprietary | >160,000 molecule training set | High accuracy (specific metrics not published) | Extensive proprietary data |
| ADMETlab2.0 | Web-based platform | Multiple ADMET endpoints | Benchmark performance | Public data |
| ALOGPS | Academic algorithm | Virtual Computational Chemistry Laboratory | Established benchmark | Public data |
| Instant Jchem | Commercial software | Chemoinformatics platform | Commercial standard | Mixed data sources |

The Scientist's Toolkit: Essential Research Reagent Solutions

Table: Key Research Reagents and Computational Tools for logD7.4 Modeling

| Resource/Tool | Type | Function/Application | Access |
|---|---|---|---|
| n-Octanol/Buffer System | Experimental reagent | Standard solvent system for shake-flask logD7.4 determination | Laboratory supply |
| High-Performance Liquid Chromatography (HPLC) | Instrumentation | Chromatographic retention time measurement for correlation with logD7.4 | Core facility |
| ChEMBL Database | Public data resource | Source of experimental bioactivity data including limited logD7.4 values | Public access |
| nanxstats/logd74 Dataset | Curated public dataset | 1,130 compounds with experimental logD7.4 values for benchmarking | GitHub repository |
| Microscopic pKa Predictor | Computational tool | Calculation of atom-specific ionization constants for atomic features | Various tools |
| Graph Neural Networks (GNNs) | Modeling framework | Molecular graph representation learning for QSPR modeling | Open-source implementations |

Implications for Drug Discovery and Development

The data gap between academic and industrial logD7.4 modeling has direct consequences for drug discovery efficiency. Accurate lipophilicity prediction is particularly crucial for optimizing pharmacokinetic and safety profiles of drug candidates, as compounds with moderate logD7.4 values exhibit optimal therapeutic effectiveness [10]. In precision oncology drug development, where single-arm trials often lack control groups, robust predictive models become increasingly valuable for decision-making [19]. The knowledge transfer strategies exemplified by RTlogD represent promising approaches for mitigating data limitations, potentially accelerating the development of novel therapeutics like AZD-9574 (a PARP1 inhibitor currently in Phase II trials for non-small cell lung cancer) by enabling more reliable property prediction early in the discovery pipeline [20].

The generalization challenge in academic logD7.4 prediction models stems fundamentally from limited data resources compared to industrial counterparts. While innovative knowledge transfer methods like RTlogD demonstrate promising approaches to mitigate this gap through chromatographic retention time, logP, and microscopic pKa integration, the underlying data disparity remains a significant constraint. Future progress may require novel collaborative frameworks that enable secure knowledge sharing between industry and academia while protecting intellectual property. Additionally, continued development of transfer learning and multi-task approaches that leverage complementary data sources will be essential for advancing predictive modeling capabilities in academic settings, ultimately contributing to more efficient drug discovery and development pipelines.

In modern drug discovery, accurately predicting the lipophilicity of a molecule, represented by its logD7.4 value, is crucial for optimizing its absorption, distribution, metabolism, and excretion (ADME) properties. While public datasets for this key parameter are often limited, proprietary in-house databases have emerged as a significant source of competitive advantage. AstraZeneca's AZlogD74 model, trained on a proprietary database of over 160,000 molecules, exemplifies this strategic edge. This guide provides an objective comparison of the AZlogD74 model's performance against other publicly available tools, detailing the experimental methodologies that underpin its superior predictive power and its practical applications in drug discovery pipelines.

The In-House Data Advantage in logD Prediction

The challenge of predicting the distribution coefficient at pH 7.4 (logD7.4) stems from the complex, pH-dependent ionization behavior of drug-like molecules. Publicly available experimental logD7.4 data is scarce, which severely limits the generalization capability and performance of quantitative structure-property relationship (QSPR) models built upon them [10].

- Scale and Specificity: AstraZeneca's AZlogD74 model leverages a massive, curated internal database of over 160,000 experimental logD7.4 values [10]. This scale provides a rich and diverse chemical space for training robust AI models.

- Continuous Enrichment: Unlike static public datasets, this proprietary database is continuously updated with new experimental measurements generated from AstraZeneca's ongoing drug metabolism and pharmacokinetics (DMPK) studies, ensuring the model evolves with the latest data [10] [21].

- From Data to Knowledge: The integration of this extensive data into predictive models is a core component of AstraZeneca's R&D strategy. The company embeds data science and AI across its research activities to identify targets, predict molecular properties, and increase the probability of clinical success [22] [23].

The following diagram illustrates how this large-scale internal data creates a foundational advantage for predictive modeling.

Comparative Performance Analysis

AstraZeneca's AZlogD74 model demonstrates superior performance compared to commonly used academic and commercial logD prediction tools. The following table summarizes a comparative analysis based on a time-split test set, which evaluates the model's ability to generalize to new, previously unseen chemical structures.

Table 1: Performance Comparison of logD7.4 Prediction Tools

| Tool / Model | Basis of Method | Reported Mean Absolute Error (MAE) | Key Differentiating Feature |
|---|---|---|---|
| AstraZeneca AZlogD74 | In-house data (160k+ molecules); Advanced AI/GNN | Not explicitly stated; "Superior performance" [10] | Unmatched scale of proprietary, high-quality training data |
| ADMETlab 2.0 [10] | QSPR/ML on public data | ~0.67 (reported in independent studies) | Comprehensive ADMET endpoint platform |
| ALOGPS [10] | Associative Neural Network | ~0.70 (reported in independent studies) | Early and widely-used online predictor |
| FP-ADMET [10] | Molecular fingerprint-based ML | Information missing from source | Focus on specific molecular representations |
| Instant Jchem [10] | Commercial software; QSPR | Information missing from source | Integrated chemical database and property calculation |

The time-split validation is a rigorous testing method that mirrors real-world drug discovery, where models are applied to compounds synthesized after the model was built. AZlogD74's performance in this context highlights its generalization capability, a direct benefit of training on a vast and diverse internal dataset that captures a wider spectrum of chemical space and property trends than publicly available data [10].
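A time-split evaluation is straightforward to set up. The sketch below assumes each record carries a measurement date; the field names `measured` and `logd` are hypothetical. It uses the training-set mean as a placeholder model and reports MAE and RMSE, the two error metrics quoted in this article's tables. The data are invented for illustration.

```python
import math
from datetime import date
from statistics import mean


def time_split(records, cutoff: date):
    """Train on records measured before `cutoff`, test on those from `cutoff` onward."""
    train = [r for r in records if r["measured"] < cutoff]
    test = [r for r in records if r["measured"] >= cutoff]
    return train, test


def mae(y_true, y_pred):
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("need equal-length, non-empty inputs")
    return mean(abs(t - p) for t, p in zip(y_true, y_pred))


def rmse(y_true, y_pred):
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("need equal-length, non-empty inputs")
    return math.sqrt(mean((t - p) ** 2 for t, p in zip(y_true, y_pred)))


if __name__ == "__main__":
    data = [
        {"measured": date(2021, 3, 1), "logd": 1.0},
        {"measured": date(2021, 9, 1), "logd": 2.0},
        {"measured": date(2022, 2, 1), "logd": 3.0},
        {"measured": date(2023, 5, 1), "logd": 2.5},
        {"measured": date(2023, 8, 1), "logd": 1.0},
    ]
    train, test = time_split(data, cutoff=date(2023, 1, 1))
    baseline = mean(r["logd"] for r in train)          # trivial "model": training mean
    y_true = [r["logd"] for r in test]
    y_pred = [baseline] * len(test)
    print(mae(y_true, y_pred), round(rmse(y_true, y_pred), 3))
```

Unlike a random split, the cutoff guarantees that no test compound was available when the model was fitted, which is what makes this a stricter test of generalization.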

In contrast, academic models often rely on public data from sources like ChEMBL, which, while valuable, are orders of magnitude smaller and can be heterogeneous in experimental quality. Some models attempt to overcome data scarcity through data augmentation with predicted values or by incorporating related physicochemical properties like logP and pKa into multi-task learning frameworks [10]. While innovative, these approaches can inherit and amplify prediction errors.

Experimental Protocols and the Scientist's Toolkit

The robustness of the AZlogD74 model is rooted in both the quality of its underlying data and the sophisticated machine learning architectures employed. The experimental workflow for generating and utilizing this model involves several key stages, from data generation to model deployment.

Data Curation and Preprocessing Protocol

The foundation of the model is a high-quality, internally consistent dataset. The curation process involves:

- Source and Measurement: Experimental logD7.4 values are primarily determined using the shake-flask method at pH 7.4, with n-octanol as the organic phase and buffer as the aqueous phase. Chromatographic and potentiometric approaches may also be used for specific compound classes [10].

- Data Cleaning: Rigorous pre-processing is applied to remove records with incorrect pH values (outside 7.2-7.6) or solvents other than octanol. Furthermore, data is manually verified against primary literature to correct for transcription errors or values that were not logarithmically transformed [10].

- Feature Engineering: Molecular structures are typically represented as graphs for input into Graph Neural Networks (GNNs). Atoms become nodes, and bonds become edges, allowing the model to learn directly from the fundamental connectivity of the molecule [22].
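The graph representation described in the list above can be sketched without dependencies. In practice a cheminformatics toolkit would parse structures from SMILES; here the graph is written by hand. The `MolGraph` class and the heavy-atom-only convention are illustrative assumptions, not AstraZeneca's featurization.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class MolGraph:
    atoms: List[str]                    # node labels (element symbols)
    bonds: List[Tuple[int, int, int]]   # undirected edges: (atom_i, atom_j, bond_order)

    def edge_index(self) -> Tuple[List[int], List[int]]:
        """Source/target lists with each bond stored in both directions."""
        src, dst = [], []
        for i, j, _ in self.bonds:
            src += [i, j]
            dst += [j, i]
        return src, dst

    def neighbors(self, atom: int) -> List[int]:
        """Indices of atoms bonded to `atom`."""
        out = []
        for i, j, _ in self.bonds:
            if i == atom:
                out.append(j)
            elif j == atom:
                out.append(i)
        return sorted(out)


if __name__ == "__main__":
    # Acetic acid, CH3-C(=O)-OH, heavy atoms only (hydrogens left implicit).
    acetic_acid = MolGraph(
        atoms=["C", "C", "O", "O"],
        bonds=[(0, 1, 1), (1, 2, 2), (1, 3, 1)],
    )
    print(acetic_acid.edge_index())
    print(acetic_acid.neighbors(1))  # [0, 2, 3]
```

Storing each bond in both directions matches how message passing treats chemical bonds: information flows both ways along every edge.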

Model Architecture and Training Workflow

AstraZeneca employs advanced AI frameworks for molecular property prediction. While the exact architecture of AZlogD74 is proprietary, the company's public research, such as the Edge Set Attention (ESA) framework developed with the University of Cambridge, offers insights into its potential technical foundations [22].

- Architecture: The ESA model uses a graph attention approach, which is particularly well-suited for analyzing molecular structures. It represents molecules as graphs, where atoms are nodes and chemical bonds are edges [22].

- Mechanism: This approach allows the AI to learn and predict molecular properties based on the structure and connectivity of the molecule. The "attention" mechanism enables the model to weigh the importance of different atoms and bonds in the context of the whole molecule when making a prediction, leading to more accurate and interpretable results [22].

- Training Regime: The model is trained on the massive in-house dataset, leveraging the continuous influx of new data to periodically refine and update the model, ensuring its predictions remain state-of-the-art [10].
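To make the attention idea concrete, here is a generic single-head, masked scaled dot-product attention over atoms in NumPy. It is an illustrative assumption, not the ESA architecture or AZlogD74. ESA is built around sets of edges rather than atoms, and GAT uses a different scoring function. The weights here are random and untrained.

```python
import numpy as np


def masked_self_attention(h, mask, w_q, w_k, w_v):
    """Single-head scaled dot-product attention restricted by a boolean mask.

    h: (n, d_in) features; mask[i, j] is True if element i may attend to j.
    Every row of `mask` needs at least one True entry (e.g. a self-loop).
    Returns the updated (n, d_out) features and the (n, n) attention weights.
    """
    q, k, v = h @ w_q, h @ w_k, h @ w_v
    scores = (q @ k.T) / np.sqrt(k.shape[1])
    scores = np.where(mask, scores, -np.inf)             # forbid non-neighbors
    scores = scores - scores.max(axis=1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=1, keepdims=True)
    return weights @ v, weights


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    adj = np.array([[0, 1, 0, 0],
                    [1, 0, 1, 1],
                    [0, 1, 0, 0],
                    [0, 1, 0, 0]], dtype=bool)        # acetic acid heavy-atom graph
    mask = adj | np.eye(4, dtype=bool)                # let each atom attend to itself
    h = rng.normal(size=(4, 8))
    w_q, w_k, w_v = (rng.normal(size=(8, 8)) for _ in range(3))
    out, attn = masked_self_attention(h, mask, w_q, w_k, w_v)
    print(np.round(attn, 2))
```

Each row of `attn` holds the weights one atom assigns to itself and its bonded neighbors. Inspecting these weights is the source of the interpretability mentioned above.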

Table 2: Essential Research Reagent Solutions for logD7.4 Modeling

| Reagent / Solution | Function in Experimental Protocol |
|---|---|
| n-Octanol | Serves as the organic phase in the shake-flask method, simulating the lipidic environment of cell membranes. |
| Buffer Solution (pH 7.4) | Maintains the aqueous phase at physiological pH during distribution coefficient measurement. |
| Reference Compounds | A set of compounds with known, reliably measured logD values used for method calibration and validation. |

The workflow below illustrates the process of transforming raw data into a validated predictive model.

Application in Drug Discovery and Development

The AZlogD74 model is not an isolated tool but is deeply integrated into AstraZeneca's end-to-end drug discovery engine, contributing directly to its enhanced R&D productivity.

- Informing Compound Design: Medicinal chemists use AZlogD74 predictions to guide the synthesis of new compounds, optimizing lipophilicity to achieve a balance between permeability and solubility, thereby reducing the risk of toxic events and improving safety profiles [10] [21].

- Integration with Broader AI Strategy: The model is part of a larger ecosystem of AI tools. AstraZeneca reports that more than 90% of its small molecule discovery pipeline is now AI-assisted, accelerating the identification of promising drug candidates and increasing the probability of clinical success [22].

- Impact on R&D Productivity: The application of such data-driven models is a key factor in AstraZeneca's transformation of its R&D performance. The company has achieved a five-fold improvement in the proportion of pipeline molecules advancing from pre-clinical investigation to Phase III completion, rising from 4% to 19% [21].

AstraZeneca's strategic investment in building and maintaining a proprietary database of over 160,000 molecules provides a formidable and sustainable advantage in the critical task of logD7.4 prediction. The AZlogD74 model, powered by this unique asset and advanced AI like graph attention networks, delivers demonstrably superior performance against academic and commercial alternatives. This capability is deeply embedded in the company's R&D workflow, directly contributing to the design of higher-quality drug candidates and an overall more productive and successful discovery pipeline. For researchers and scientists, this case underscores the paramount importance of high-quality, large-scale data as the foundation for the next generation of predictive AI in drug discovery.

Inside AZlogD74: Architecture, Data, and Real-World Workflow Integration

Molecular representation learning has catalyzed a paradigm shift in computational chemistry and drug discovery, transitioning from reliance on manually engineered descriptors to the automated extraction of features using deep learning. This transition enables data-driven predictions of molecular properties, inverse design of compounds, and accelerated discovery of chemical and crystalline materials [24]. Among the various deep learning architectures, Graph Neural Networks (GNNs) have emerged as particularly powerful frameworks for molecular modeling because they naturally represent molecules as graph structures where atoms correspond to nodes and chemical bonds to edges [25] [24]. This graph-based representation provides a more realistic depiction of molecules compared to traditional linear representations like SMILES strings or fixed-length fingerprints, allowing GNNs to explicitly encode relationships between atoms and capture both structural and dynamic molecular properties [26] [24].

The fundamental operation of GNNs centers on the message passing mechanism, in which each node (atom) collects and processes information from its neighboring nodes (bonded atoms) [25] [27]. Because this step is repeated across layers, each atom incorporates information from increasingly distant atoms, allowing the network to learn complex chemical environments and build comprehensive molecular representations [27]. Different GNN architectures implement this core concept with variations in how messages are computed, aggregated, and updated, leading to different trade-offs in expressive power, computational efficiency, and interpretability [25] [26].
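Below is a minimal NumPy sketch of sum-aggregation message passing, plus a helper that makes the growing receptive field explicit. The update rule (sum over neighbors, separate self and neighbor weight matrices, ReLU) is one common textbook choice assumed for illustration. The architectures compared below differ in exactly these choices.

```python
import numpy as np


def message_passing_layer(h, adj, w_self, w_neigh):
    """One round: h_i' = ReLU(h_i W_self + sum over bonded j of h_j W_neigh)."""
    return np.maximum(0.0, h @ w_self + adj @ h @ w_neigh)


def receptive_field(adj, n_layers):
    """Boolean matrix: [i, j] is True if atom j can influence atom i after n_layers."""
    n = adj.shape[0]
    reach = np.eye(n, dtype=bool)
    step = adj.astype(bool) | np.eye(n, dtype=bool)
    for _ in range(n_layers):
        reach = (reach.astype(int) @ step.astype(int)) > 0
    return reach


if __name__ == "__main__":
    # Four heavy atoms in a chain, e.g. the carbon skeleton of butane: 0-1-2-3.
    adj = np.array([[0, 1, 0, 0],
                    [1, 0, 1, 0],
                    [0, 1, 0, 1],
                    [0, 0, 1, 0]], dtype=float)
    rng = np.random.default_rng(0)
    h = rng.normal(size=(4, 5))
    w_self, w_neigh = rng.normal(size=(5, 5)), rng.normal(size=(5, 5))
    for _ in range(2):                      # two layers sharing weights, for brevity
        h = message_passing_layer(h, adj, w_self, w_neigh)
    for k in (1, 2, 3):
        print(k, receptive_field(adj, k)[0].astype(int))  # atoms visible to atom 0
```

For atom 0 the printed rows grow from `[1 1 0 0]` to `[1 1 1 1]`. Each additional layer extends its view by one bond.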

Comparative Analysis of GNN Architectures for Molecular Property Prediction

Fundamental GNN Architectures

Multiple GNN architectures have been developed with distinct approaches to processing molecular graph data. Graph Convolutional Networks (GCNs) represent one of the most fundamental architectures, applying convolution operations to graphs where a node's features are combined with a weighted average of its neighbors' features [25]. Graph Attention Networks (GAT) enhance this approach by incorporating attention mechanisms that assign variable weights to different neighbors, allowing the model to focus more on important interactions and providing inherent interpretability through attention weights [25]. Graph Isomorphism Networks (GIN) were specifically designed to maximize expressive power by leveraging theoretical insights from graph isomorphism testing, enabling them to distinguish subtle structural differences between molecules [25] [28].
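The aggregation rules that distinguish these architectures can be compared on a toy input. This NumPy sketch omits learnable weights and nonlinearities. It implements the standard symmetric GCN normalization and the GIN rule of (1 + ε) times the node's own features plus the sum of its neighbors' features, and contrasts them with a plain mean. GAT's learned attention weights are not shown. The example reproduces the textbook argument that sum aggregation can separate neighborhoods that a mean cannot.

```python
import numpy as np


def gcn_propagate(h, adj):
    """GCN-style propagation D^-1/2 (A + I) D^-1/2 H (weights and nonlinearity omitted)."""
    a_hat = adj + np.eye(adj.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    return (d_inv_sqrt[:, None] * a_hat * d_inv_sqrt[None, :]) @ h


def mean_neighbors(h, adj):
    """Plain mean over bonded neighbors (assumes every atom has at least one)."""
    return (adj @ h) / adj.sum(axis=1, keepdims=True)


def gin_aggregate(h, adj, eps=0.0):
    """GIN aggregation before its MLP: (1 + eps) * h_i + sum of neighbor features."""
    return (1.0 + eps) * h + adj @ h


if __name__ == "__main__":
    # Center atom (index 0) with one vs. two identical neighbors; all features equal.
    one = np.array([[0, 1], [1, 0]], dtype=float)
    two = np.array([[0, 1, 1], [1, 0, 0], [1, 0, 0]], dtype=float)
    h1, h2 = np.ones((2, 1)), np.ones((3, 1))
    print("mean:", mean_neighbors(h1, one)[0, 0], mean_neighbors(h2, two)[0, 0])
    print("GIN :", gin_aggregate(h1, one)[0, 0], gin_aggregate(h2, two)[0, 0])
    print("GCN :", gcn_propagate(h1, one)[0, 0], gcn_propagate(h2, two)[0, 0])
```

The mean returns the same value for both center atoms, whereas GIN's sum separates them. GCN's degree normalization also happens to distinguish these two cases, so the toy example illustrates the mean-versus-sum argument rather than a general ranking of the architectures.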

More recent advancements have integrated Kolmogorov-Arnold networks (KANs) with GNNs to create KA-GNNs, which replace traditional multilayer perceptrons with learnable univariate functions placed on the connections (edges) of the neural network [29]. These models leverage the Kolmogorov-Arnold representation theorem to achieve improved expressivity, parameter efficiency, and interpretability compared to conventional GNNs. KA-GNNs integrate KAN modules into the three fundamental components of GNNs: node embedding, message passing, and readout, creating a unified framework with enhanced approximation capabilities [29]. Experimental results across multiple molecular benchmarks demonstrate that KA-GNN variants consistently outperform conventional GNNs in both prediction accuracy and computational efficiency [29].

Performance Comparison Across Molecular Tasks

Table 1: Performance Comparison of GNN Architectures on Molecular Property Prediction Tasks

| Architecture | logD7.4 Prediction (RMSE) | Protein Energy Prediction | Computational Efficiency | Key Advantages |
|---|---|---|---|---|
| RTlogD | ~0.7 [10] | N/A | Medium | Transfer learning from chromatographic data |
| KA-GNN | N/A | High accuracy on DISPEF [27] | High | Superior expressivity & parameter efficiency [29] |
| GIN | N/A | N/A | Medium | High discriminative power for graph structures [28] |
| Graph Transformers | Comparable to GNNs [26] | N/A | Fast inference (0.4s) [26] | Flexibility & multimodality [26] |
| 3D-GNN (PaiNN) | N/A | High accuracy [27] | Medium (3.9s inference) [26] | Incorporation of spatial geometry |

Table 2: Training Time Comparison Across Architecture Types (Seconds/Epoch)

| Architecture Type | Model | Average Training Time (s/epoch) | Average Inference Time (s) |
|---|---|---|---|
| 2D Models | ChemProp | 21.5 | 2.3 |
| 2D Models | GIN-VN | 16.2 | 2.4 |
| 2D Models | Graph Transformer | 3.7 | 0.4 |
| 3D Models | ChIRo | 49.1 | 6.9 |
| 3D Models | PaiNN | 20.7 | 3.9 |
| 3D Models | 3D Graph Transformer | 3.9 | 0.4 |
| 4D Models | PaiNN | 147.1 | 31.3 |
| 4D Models | SchNet | 99.7 | 24.4 |
| 4D Models | Graph Transformer | 22.0 | 2.7 |

The performance advantages of specialized GNN architectures are evident across diverse molecular prediction tasks. For logD7.4 prediction, which is crucial for understanding drug disposition, the RTlogD framework demonstrates how incorporating knowledge from multiple sources—including chromatographic retention time (RT), microscopic pKa, and logP—significantly enhances prediction accuracy [10]. This model addresses the fundamental challenge of limited logD experimental data by leveraging transfer learning from larger RT datasets containing nearly 80,000 molecules, followed by fine-tuning on logD data [10]. The resulting model outperforms commonly used algorithms and commercial prediction tools, highlighting the value of transfer learning and multi-task learning approaches in molecular property prediction [10].

For larger biomolecular systems, recent benchmarking on the DISPEF dataset (Dataset of Implicit Solvation Protein Energies and Forces) reveals key insights into GNN performance scalability [27]. This dataset comprises over 200,000 proteins with sizes up to 12,499 atoms, providing a rigorous testbed for evaluating GNN architectures on biologically relevant systems [27]. Models like SchNet and EGNN demonstrate particularly strong performance on these challenging tasks, though computational efficiency remains a concern for large-scale applications [27]. The introduction of multiscale architectures, such as the proposed Schake model, shows promise for delivering transferable and computationally efficient energy and force predictions for large proteins [27].

Experimental Protocols and Methodologies

logD7.4 Prediction with RTlogD Framework

The RTlogD framework combines several learning strategies to compensate for the limited amount of experimental logD data [10]. It is first pre-trained on a chromatographic retention time (RT) dataset of nearly 80,000 molecules, exploiting the correlation between RT and lipophilicity. The pre-trained model is then fine-tuned on a curated logD7.4 dataset (DB29-data) that contains only values measured at physiological pH 7.4 by established methods: shake-flask, chromatographic techniques, and potentiometric titration [10]. Before training, the dataset is preprocessed for quality, including manual verification and the correction of transcription errors.
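The pre-train-then-fine-tune pattern can be illustrated in a few lines. RTlogD itself is a graph neural network; the sketch below instead uses a linear model and synthetic toy data (not real RT or logD measurements) purely to show the mechanics of warm-starting the small target task from weights learned on a large source task. The function names, learning rates, and epoch counts are illustrative assumptions.

```python
import numpy as np

def train_linear(X, y, w=None, lr=0.05, epochs=500):
    """Full-batch gradient descent on mean squared error for y ~ X @ w.
    Passing a pre-trained w warm-starts training, i.e. fine-tuning."""
    w = np.zeros(X.shape[1]) if w is None else w.copy()
    for _ in range(epochs):
        grad = 2.0 * X.T @ (X @ w - y) / len(y)  # gradient of the MSE
        w -= lr * grad
    return w

def mse(X, y, w):
    return float(np.mean((X @ w - y) ** 2))

rng = np.random.default_rng(0)
d = 8
# Synthetic toy data: one hidden "lipophilicity" direction drives both the
# large source task (stand-in for RT) and the small target task (stand-in for logD).
shared = rng.normal(size=d)
w_logd_true = 0.9 * shared + 0.1 * rng.normal(size=d)

X_rt = rng.normal(size=(5000, d))
y_rt = X_rt @ shared + 0.1 * rng.normal(size=5000)
X_logd = rng.normal(size=(40, d))
y_logd = X_logd @ w_logd_true + 0.1 * rng.normal(size=40)
X_test = rng.normal(size=(1000, d))
y_test = X_test @ w_logd_true

# 1) Pre-train on the large source task.
w_pre = train_linear(X_rt, y_rt)
# 2) Fine-tune on the small target set, starting from the pre-trained weights.
w_ft = train_linear(X_logd, y_logd, w=w_pre, lr=0.01, epochs=100)
# Baseline with the same training budget, but starting from zero.
w_scratch = train_linear(X_logd, y_logd, lr=0.01, epochs=100)

print("fine-tuned test MSE:", mse(X_test, y_test, w_ft))
print("from-scratch test MSE:", mse(X_test, y_test, w_scratch))
```

Because the source and target share most of their underlying structure in this toy setup, the warm-started model starts close to a good solution; whether real RT data transfers this well to logD is exactly what the RTlogD ablations are meant to test.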

A key feature of RTlogD is the use of microscopic pKa values as atomic features, which tell the model which sites are ionizable and how readily they ionize [10]. In addition, logP is learned as an auxiliary task within a multitask framework, providing an inductive bias that improves learning efficiency and prediction accuracy [10]. Ablation studies confirm that the RT, pKa, and logP components each contribute to the improved performance. For evaluation, the researchers curated a time-split dataset of molecules reported within the past two years, providing a stringent test of how well the model generalizes to novel chemical structures [10].
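Two practical details of this setup can be sketched under stated assumptions. In a multitask dataset, most molecules have a logD value but no logP (or vice versa), so the loss is usually computed only over observed labels. The helper below masks missing labels (encoded as NaN) per task and weights the auxiliary logP term; the weight of 0.5 is a hypothetical choice, not a value reported for RTlogD. The second helper shows one possible, hypothetical way to attach microscopic pKa information to per-atom feature vectors; RTlogD's actual encoding may differ.

```python
import numpy as np

def masked_multitask_mse(pred, target, task_weights):
    """pred, target: (n_molecules, n_tasks); NaN in target marks a missing label.
    Returns the weighted sum over tasks of the MSE on observed labels only."""
    pred = np.asarray(pred, dtype=float)
    target = np.asarray(target, dtype=float)
    observed = ~np.isnan(target)
    total = 0.0
    for t, weight in enumerate(task_weights):
        mask = observed[:, t]
        if mask.any():  # skip tasks with no labels in this batch
            err = pred[mask, t] - target[mask, t]
            total += weight * float(np.mean(err ** 2))
    return total

def append_pka_features(atom_features, atom_pka):
    """Hypothetical encoding: append an ionizable-site flag and the microscopic
    pKa (0.0 where the atom is not ionizable) to each atom's feature vector."""
    atom_features = np.asarray(atom_features, dtype=float)
    is_site = np.array([p is not None for p in atom_pka], dtype=float)
    pka = np.array([0.0 if p is None else float(p) for p in atom_pka])
    return np.column_stack([atom_features, is_site, pka])

# Column 0 = logD7.4 (main task), column 1 = logP (auxiliary); NaN = not measured.
# Illustrative numbers only.
pred = [[1.0, 2.0], [0.5, 1.0], [2.0, 3.0]]
target = [[1.5, np.nan], [0.5, 1.5], [np.nan, 3.0]]
loss = masked_multitask_mse(pred, target, task_weights=(1.0, 0.5))

feats = append_pka_features([[1, 0], [0, 1], [1, 0]], [None, 4.8, None])
```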

KA-GNN Implementation and Training

The Kolmogorov-Arnold Graph Neural Networks (KA-GNNs) implement a novel architecture that systematically integrates Fourier-based KAN modules across the entire GNN pipeline [29]. The experimental protocol involves two primary variants: KA-GCN (KAN-augmented Graph Convolutional Network) and KA-GAT (KAN-augmented Graph Attention Network), which replace conventional MLP-based transformations with Fourier-based KAN modules in node embedding initialization, message passing, and graph-level readout components [29]. The Fourier-series-based univariate functions within KAN layers enable effective capture of both low-frequency and high-frequency structural patterns in molecular graphs, enhancing function approximation capabilities compared to traditional activation functions.
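The core idea of a Fourier-based KAN layer can be written compactly. In the minimal sketch below, each input-output pair (i, j) has its own learnable univariate function, a truncated Fourier series φ_ij(x) = Σ_k a_ijk·cos(kx) + b_ijk·sin(kx) for k = 1..K, and output j is the sum of φ_ij(x_i) over inputs plus a bias. The class name, initialization scale, and number of frequencies are assumptions for illustration; the published KA-GNN layers may add normalization or other terms.

```python
import numpy as np

class FourierKANLayer:
    """Sketch of a Fourier-KAN layer: y[:, j] = sum_i sum_k a[j,i,k] cos(k x_i)
    + b[j,i,k] sin(k x_i) + bias[j], with frequencies k = 1..n_freq."""

    def __init__(self, in_dim, out_dim, n_freq=4, seed=0):
        rng = np.random.default_rng(seed)
        scale = 1.0 / np.sqrt(in_dim * n_freq)  # keeps output variance moderate
        self.a = rng.normal(scale=scale, size=(out_dim, in_dim, n_freq))
        self.b = rng.normal(scale=scale, size=(out_dim, in_dim, n_freq))
        self.bias = np.zeros(out_dim)
        self.k = np.arange(1, n_freq + 1)

    def __call__(self, x):
        # x: (batch, in_dim) -> kx: (batch, in_dim, n_freq)
        kx = x[:, :, None] * self.k
        # Sum over input dimensions i and frequencies k for every output o.
        y = np.einsum("bik,oik->bo", np.cos(kx), self.a)
        y += np.einsum("bik,oik->bo", np.sin(kx), self.b)
        return y + self.bias

layer = FourierKANLayer(in_dim=3, out_dim=2, n_freq=4)
x = np.random.default_rng(1).normal(size=(5, 3))
y = layer(x)  # shape (5, 2)
```

Low frequencies (small k) capture smooth trends while higher frequencies capture sharper variation, which is the intuition behind the claim that these layers model both low- and high-frequency structural patterns.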

Node features are updated through residual KAN connections rather than standard MLPs [29]. In KA-GCN, each node's initial embedding is computed by passing the concatenation of its atomic features and the average of its neighboring bond features through a KAN layer, so that data-dependent trigonometric transformations encode both atomic identity and local chemical context [29]. The researchers ground the approximation power of the Fourier-KAN architecture in Carleson's convergence theorem and Fefferman's multivariate extension, showing that it can approximate any square-integrable multivariate function [29]. Experiments on seven molecular benchmarks support this superior approximation capability relative to standard two-layer MLPs, and the models offer improved interpretability by highlighting chemically meaningful substructures [29].
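The KA-GCN node initialization and a residual KAN update can be sketched on a toy three-atom chain (for example, the heavy atoms of ethanol, C-C-O), with random placeholder features. This is a simplified illustration under several assumptions: the helper names are hypothetical, neighbor messages are aggregated by a plain mean rather than the normalization used in the paper, and the graph readout is a simple mean instead of a KAN readout.

```python
import numpy as np

def fourier_kan(x, a, b):
    """y[:, o] = sum_i sum_k a[o,i,k] cos(k x_i) + b[o,i,k] sin(k x_i), k = 1..K."""
    k = np.arange(1, a.shape[2] + 1)
    kx = x[:, :, None] * k
    return (np.einsum("nik,oik->no", np.cos(kx), a)
            + np.einsum("nik,oik->no", np.sin(kx), b))

def init_params(in_dim, out_dim, n_freq, rng):
    s = 1.0 / np.sqrt(in_dim * n_freq)
    shape = (out_dim, in_dim, n_freq)
    return rng.normal(scale=s, size=shape), rng.normal(scale=s, size=shape)

def initial_node_embeddings(atom_feats, bond_feats, edges, params):
    """Each atom's input is [own atom features || mean of incident bond features]."""
    n_atoms = atom_feats.shape[0]
    bond_sum = np.zeros((n_atoms, bond_feats.shape[1]))
    degree = np.zeros(n_atoms)
    for bond_idx, (u, v) in enumerate(edges):
        for atom in (u, v):
            bond_sum[atom] += bond_feats[bond_idx]
            degree[atom] += 1
    bond_mean = bond_sum / np.maximum(degree, 1)[:, None]  # isolated atoms get zeros
    return fourier_kan(np.hstack([atom_feats, bond_mean]), *params)

def residual_kan_layer(h, edges, params):
    """One message-passing step: mean over neighbors, KAN transform, residual add."""
    agg = np.zeros_like(h)
    deg = np.zeros(h.shape[0])
    for u, v in edges:
        agg[u] += h[v]
        agg[v] += h[u]
        deg[u] += 1
        deg[v] += 1
    agg /= np.maximum(deg, 1)[:, None]
    return h + fourier_kan(agg, *params)

rng = np.random.default_rng(0)
atom_feats = rng.normal(size=(3, 4))  # placeholder atom descriptors
bond_feats = rng.normal(size=(2, 2))  # placeholder bond descriptors
edges = [(0, 1), (1, 2)]              # C-C and C-O bonds
hidden = 8
h0 = initial_node_embeddings(atom_feats, bond_feats, edges, init_params(4 + 2, hidden, 3, rng))
h1 = residual_kan_layer(h0, edges, init_params(hidden, hidden, 3, rng))
graph_repr = h1.mean(axis=0)  # simplified mean readout
```

The residual form means that if a layer's KAN coefficients are all zero, the layer passes node features through unchanged, which tends to make deeper stacks easier to train.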

Essential Research Reagents and Computational Tools

Table 3: Essential Research Reagents and Computational Tools for GNN Molecular Modeling

| Tool/Resource | Type | Primary Function | Key Features |
|---|---|---|---|
| PyTorch Geometric (PyG) | Software Library | GNN Implementation | Optimized GNN operations, high flexibility [25] |
| Deep Graph Library (DGL) | Software Library | Graph Neural Networks | Support for TensorFlow/PyTorch, large graph optimization [25] |
| QM9 Dataset | Molecular Dataset | Model Training/Benchmarking | 134k stable small organic molecules with quantum properties [28] |
| DISPEF Dataset | Protein Dataset | Protein Energy Prediction | 207,454 proteins with implicit solvation energies [27] |
| ChEMBL Database | Chemical Database | Experimental Data Source | Bioactivity data, drug-like molecules [10] |
| Graph Transformers | Algorithm | Molecular Representation | Flexibility, multimodality, attention mechanisms [26] |
| Fourier-KAN Layers | Algorithm | Function Approximation | Learnable activation functions, strong theoretical guarantees [29] |

Successful implementation of GNNs for molecular representation requires not only architectural innovations but also robust computational frameworks and carefully curated datasets. PyTorch Geometric (PyG) and Deep Graph Library (DGL) represent two of the most widely adopted libraries for GNN implementation, providing optimized operations for graph-based learning and supporting both research prototyping and production deployment [25]. These frameworks offer comprehensive implementations of fundamental GNN architectures including GCN, GAT, GraphSAGE, and more recent variants, significantly accelerating model development and experimentation.
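To make concrete what these libraries optimize, the sketch below computes one graph convolution with the Kipf-Welling propagation rule, H' = σ(D̂^(-1/2) Â D̂^(-1/2) H W) with Â = A + I, using dense NumPy arrays. It is not the API of PyG (whose `GCNConv` layer implements this rule with sparse message passing) or DGL; the function name, the one-hot element features, and the choice of `tanh` are assumptions for illustration.

```python
import numpy as np

def gcn_layer(adj, h, w, activation=np.tanh):
    """Dense GCN layer: activation(D^-1/2 (A + I) D^-1/2 @ h @ w)."""
    a_hat = adj + np.eye(adj.shape[0])            # add self-loops
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))  # degrees include the self-loop
    norm = d_inv_sqrt[:, None] * a_hat * d_inv_sqrt[None, :]
    return activation(norm @ h @ w)

# Heavy-atom graph of ethanol (C-C-O) with one-hot [is_C, is_O] features.
adj = np.array([[0, 1, 0],
                [1, 0, 1],
                [0, 1, 0]], dtype=float)
h = np.array([[1, 0],
              [1, 0],
              [0, 1]], dtype=float)

rng = np.random.default_rng(0)
w = rng.normal(size=(2, 4))  # random weights standing in for learned parameters
h1 = gcn_layer(adj, h, w)    # shape (3, 4)
```

The symmetric normalization keeps high-degree atoms from dominating the aggregated messages; production libraries perform the same computation on sparse edge lists so it scales to large batches of molecules.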

The quality and scope of molecular datasets critically influence model performance and generalizability. The QM9 dataset has served as a fundamental benchmark for small molecule property prediction, containing approximately 134,000 stable small organic molecules with comprehensive quantum mechanical properties [28]. For larger biomolecular systems, the recently introduced DISPEF dataset provides implicit solvation free energies for over 200,000 proteins ranging in size from 16 to 1,022 amino acids, enabling rigorous evaluation of GNN scalability to biologically relevant systems [27]. Additionally, the ChEMBL database offers extensive bioactivity data for drug-like molecules, serving as a valuable resource for training models on pharmaceutically relevant properties including logD7.4 [10].

Graph Neural Networks have established themselves as powerful frameworks for molecular representation learning, demonstrating superior performance across diverse chemical tasks from simple property prediction to complex biomolecular modeling. Architectural innovations such as attention mechanisms, Kolmogorov-Arnold networks integration, and 3D-geometric learning continue to push the boundaries of molecular representation capabilities. The comparative analysis presented in this overview highlights that while general-purpose GNN architectures provide strong baseline performance, task-specific adaptations incorporating domain knowledge—such as the RTlogD framework for logD7.4 prediction—deliver superior results for specialized applications.

The evolving landscape of GNN architectures faces several persistent challenges, including computational scalability for large biomolecules, data scarcity for specialized properties, and the need for improved interpretability in predictive modeling. Emerging strategies such as transfer learning, multi-task training, and self-supervised pre-training show significant promise in addressing these limitations, particularly for data-constrained scenarios common in drug discovery applications. As these methodologies continue to mature and integrate with complementary approaches from geometric deep learning and quantum chemistry, GNN-based molecular representations are poised to become increasingly central to accelerated drug discovery and materials design pipelines.

In modern drug discovery, the accuracy of predictive models is fundamentally dependent on the quality, size, and continuity of the experimental data upon which they are built. This is particularly true for the prediction of lipophilicity, quantified as the distribution coefficient at physiological pH (logD7.4), a critical property influencing a compound's absorption, distribution, metabolism, excretion, and toxicity (ADMET) [10]. While numerous in silico logD7.4 prediction tools exist, their performance varies significantly, largely determined by their underlying dataset strategies. This guide objectively compares the dataset foundations of several prominent logD prediction tools, with a focus on AstraZeneca's AZlogD74 model, to illustrate how data curation, quality control, and continuous updating protocols directly impact predictive performance and practical utility in a research setting.

Comparative Analysis of logD7.4 Prediction Tools

The following table summarizes the key characteristics of the dataset foundations for various logD7.4 prediction tools, highlighting the critical differences in their approaches to data sourcing, quality, and maintenance.

Table 1: Comparison of Dataset Foundations for logD7.4 Prediction Tools

| Tool Name | Reported Data Source & Size | Key Data Curation & QC Protocols | Update Strategy for Experimental Data | Primary Experimental Method(s) |
|---|---|---|---|---|
| AZlogD74 (AstraZeneca) | Extensive proprietary database of >160,000 molecules [10] | Not publicly detailed; inferred to use internal standardized assays. | Continuous model updates with new internal measurements [10] | In-house shake-flask, chromatographic, or potentiometric methods [10] |
| RTlogD (Academic Model) | ChEMBLdb29 (Publicly available data) [10] | Rigorous manual verification; pH range (7.2-7.6) and solvent (octanol) filtering; error correction from primary literature [10] | Not specified; typically dependent on new public data releases and research cycles. | Shake-flask, chromatographic, and potentiometric titration from literature [10] |
| ADMETlab 2.0 | Diverse public databases (e.g., ChEMBL) [10] | Automated and manual data curation pipelines; standardization of molecular structures. | Periodic updates with new versions of underlying public databases. | Aggregated from various literature sources and public databases |
| ALOGPS | Publicly available data | Not specified in detail; relies on the curation of source public datasets. | Not specified; model is static between major releases. | Aggregated from various literature sources |

Detailed Experimental Protocols for Dataset Construction

The reliability of any predictive model depends directly on the rigor of its dataset construction. The protocols behind the AZlogD74 model are proprietary and not publicly documented, although they presumably rely on standardized internal assays. By contrast, the open academic RTlogD model documents a meticulous, multi-stage process for building its dataset from public sources, which serves as a useful template for robust dataset creation [10].

Data Sourcing and Consolidation

- Source Identification: Experimental logD values are primarily sourced from large-scale public repositories such as ChEMBL, which aggregates data from thousands of scientific publications [10].

- Metadata Extraction: Crucial experimental metadata is extracted for each data point, including the pH of measurement, the solvent system used, the experimental method (e.g., shake-flask), and the original literature source.

Data Quality Control and Curation

This stage is critical for ensuring data integrity and model generalizability.

- pH Filtering: To ensure relevance to physiological conditions, only records with pH values between 7.2 and 7.6 are retained for the logD7.4 model [10].

- Solvent Filtering: Records utilizing solvent systems other than the standard n-octanol/water are eliminated to maintain consistency [10].

- Manual Verification and Error Correction: A rigorous, manual check is performed against primary literature sources to identify and correct two common error types [10]:
  - Transformation Errors: Correcting values where the partition coefficient was not logarithmically transformed.
  - Transcription Errors: Rectifying discrepancies between the value in the database and the value reported in the original publication.
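The filtering and error-correction steps above can be sketched as code. Everything here is hypothetical: the record fields, the accepted solvent labels, the 0.01 log-unit tolerance, and the compound IDs and values are illustrative only, not data or code from RTlogD. The literature value is assumed to have already been read from the primary publication on the log scale, standing in for the manual check the text describes.

```python
import math
from dataclasses import dataclass

@dataclass
class LogDRecord:
    compound_id: str
    value: float    # value as stored in the database
    ph: float
    solvent: str
    method: str
    source: str

# Hypothetical solvent labels treated as the standard n-octanol/water system.
OCTANOL_LABELS = {"octanol", "n-octanol", "1-octanol"}

def passes_filters(rec, ph_min=7.2, ph_max=7.6):
    """Keep only records measured at pH 7.2-7.6 in n-octanol/water."""
    return ph_min <= rec.ph <= ph_max and rec.solvent.strip().lower() in OCTANOL_LABELS

def classify_against_literature(db_value, lit_value, tol=0.01):
    """Compare a stored value with the log-scale value from the primary publication.
    Returns (error_type, corrected_value)."""
    if abs(db_value - lit_value) <= tol:
        return "ok", db_value
    if db_value > 0 and abs(math.log10(db_value) - lit_value) <= tol:
        return "transformation_error", math.log10(db_value)  # raw D stored, not log D
    return "transcription_error", lit_value

# Illustrative records, not real measurements.
records = [
    LogDRecord("cmpd-1", 1.8, 7.4, "n-octanol", "shake-flask", "paper A"),
    LogDRecord("cmpd-2", 2.1, 6.5, "n-octanol", "shake-flask", "paper B"),    # fails pH filter
    LogDRecord("cmpd-3", 0.9, 7.4, "cyclohexane", "shake-flask", "paper C"),  # fails solvent filter
    LogDRecord("cmpd-4", 100.0, 7.5, "1-octanol", "chromatographic", "paper D"),
]
kept = [r for r in records if passes_filters(r)]
literature = {"cmpd-1": 1.8, "cmpd-4": 2.0}
curated = {r.compound_id: classify_against_literature(r.value, literature[r.compound_id])
           for r in kept}
print(curated)
```

In practice the comparison against the original publication is manual; code like this is most useful for flagging suspicious entries (for example, values that match the literature only after a log10 transform) for a human reviewer.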

Data Integration for Enhanced Modeling

- Auxiliary Data Incorporation: To offset the scarcity of logD data, some models, such as RTlogD, transfer knowledge from larger related datasets. These include [10]:
  - Chromatographic Retention Time (RT): Used as a source task for pre-training, leveraging its strong correlation with lipophilicity.
  - logP and pKa Values: logP is learned as an auxiliary task in a multitask learning framework, while microscopic pKa values are supplied as atomic features that describe ionization states.
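Why logP and pKa carry so much information about logD7.4 can be seen from the textbook relation for a compound with a single ionizable group: logD = logP − log10(1 + 10^(pH − pKa)) for an acid and logD = logP − log10(1 + 10^(pKa − pH)) for a base. The sketch below assumes that only the neutral species partitions into octanol (ion-pair partitioning is ignored) and is not the method RTlogD uses; RTlogD learns the relationship from data, which also covers multiprotic and zwitterionic compounds where this simple formula breaks down. The inputs are hypothetical values.

```python
import math

def logd_monoprotic(logp, pka, ph=7.4, kind="acid"):
    """Estimate logD at a given pH for a compound with one ionizable group,
    assuming only the neutral species partitions into octanol."""
    if kind == "acid":
        ionized_to_neutral = 10 ** (ph - pka)   # [A-]/[HA]
    elif kind == "base":
        ionized_to_neutral = 10 ** (pka - ph)   # [BH+]/[B]
    else:
        raise ValueError("kind must be 'acid' or 'base'")
    return logp - math.log10(1 + ionized_to_neutral)

# Hypothetical inputs, not measured values:
print(logd_monoprotic(logp=3.0, pka=4.4, kind="acid"))  # acid ionized ~1000:1 at pH 7.4
print(logd_monoprotic(logp=3.0, pka=9.4, kind="base"))  # base protonated ~100:1 at pH 7.4
```

A carboxylic acid with pKa 4.4 is therefore about three log units less lipophilic at pH 7.4 than its logP suggests, which illustrates why logP alone can mislead for ionizable drugs.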

The workflow for this comprehensive dataset curation and application is illustrated below.

The Scientist's Toolkit: Essential Research Reagents and Materials

The experimental data underpinning logD prediction models are generated using specific, well-established laboratory techniques and reagents. The following table details the key components of the "wet lab" toolkit that forms the empirical foundation for these in silico tools.

Table 2: Key Research Reagents and Materials for Experimental logD7.4 Determination

| Item Name | Function / Role in logD Determination |
|---|---|
| n-Octanol | Serves as the organic phase in the shake-flask method, simulating the lipid environment of cell membranes [10]. |
| Buffer Solution (pH 7.4) | Maintains the aqueous phase at a consistent physiological pH during the distribution experiment [10]. |
| High-Performance Liquid Chromatography (HPLC) System | Used in chromatographic methods to measure retention time, which correlates with lipophilicity and can be used to estimate logD7.4 [10]. |
| Potentiometric Titrator | Automates the titration process in potentiometric approaches, used for logD determination of ionizable compounds [10]. |
| Shake-Flask Apparatus | Standard equipment for the classic shake-flask method, allowing for the direct measurement of compound distribution between octanol and buffer phases [10]. |
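The arithmetic behind a shake-flask measurement is simple: at equilibrium, logD7.4 = log10(C_octanol / C_buffer), where both concentrations are measured in the same units. The sketch below also shows one common variant in which only the buffer phase is assayed before and after shaking, assuming that all compound lost from the buffer has moved into octanol (no adsorption, degradation, or precipitation). Function names and numbers are illustrative.

```python
import math

def logd_from_phase_concentrations(c_octanol, c_buffer):
    """logD = log10(C_octanol / C_buffer) at equilibrium; units cancel."""
    if c_octanol <= 0 or c_buffer <= 0:
        raise ValueError("both concentrations must be positive")
    return math.log10(c_octanol / c_buffer)

def logd_from_aqueous_depletion(c_buffer_initial, c_buffer_final, v_buffer, v_octanol):
    """Only the buffer phase is assayed; the amount lost from the buffer is
    assumed to have partitioned entirely into the octanol phase."""
    c_octanol = (c_buffer_initial - c_buffer_final) * v_buffer / v_octanol
    return logd_from_phase_concentrations(c_octanol, c_buffer_final)

# Illustrative numbers only:
print(logd_from_phase_concentrations(c_octanol=50.0, c_buffer=0.5))  # ratio 100
print(logd_from_aqueous_depletion(10.0, 1.0, v_buffer=1.0, v_octanol=1.0))
```

The phase-volume ratio matters in the depletion variant: for very lipophilic compounds, a larger buffer-to-octanol volume ratio keeps enough compound in the aqueous phase to quantify reliably.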