AI-Accelerated and Holistic Strategies: A Modern Guide to Combined LB-SB Virtual Screening Workflows

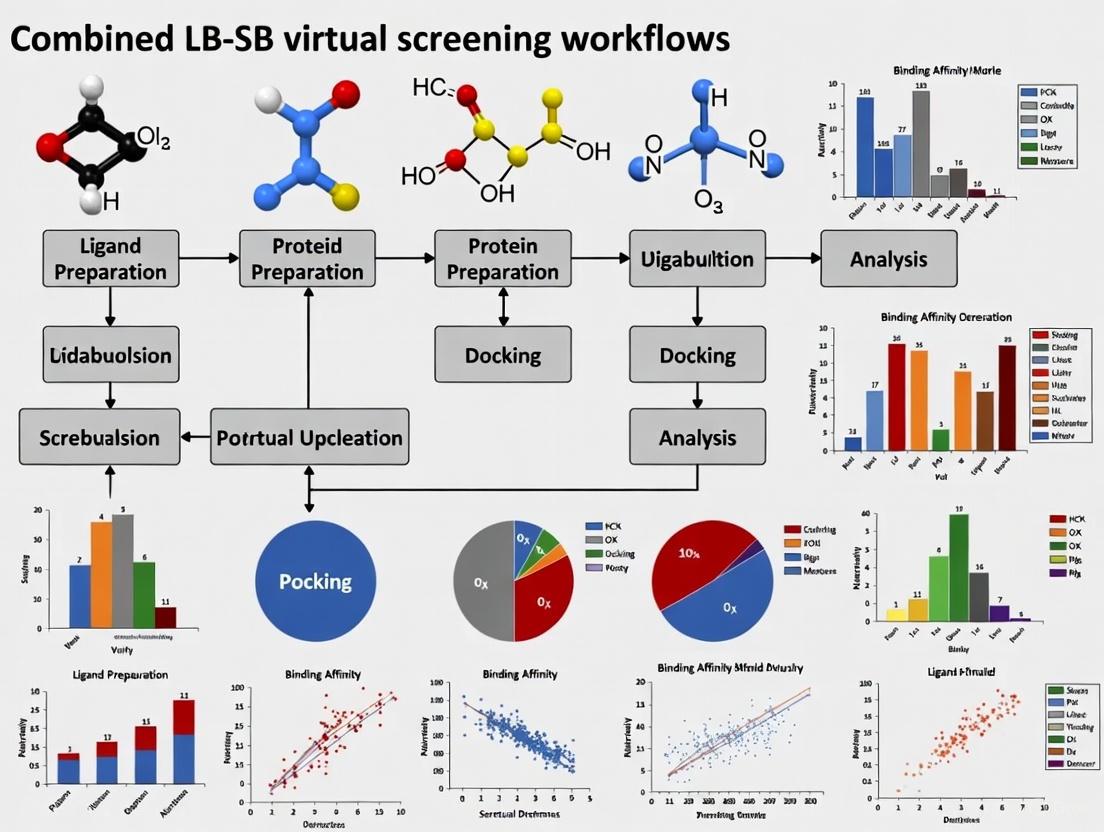

Abstract

This article provides a comprehensive overview of combined ligand-based (LB) and structure-based (SB) virtual screening (VS) workflows, a cornerstone of modern computational drug discovery. It explores the foundational principles that make these hybrid approaches successful and details the main strategic frameworks: sequential, parallel, and hybrid. The content delivers practical guidance on overcoming common pitfalls, such as accounting for protein flexibility and protonation states, and highlights advanced optimization techniques, including the integration of machine learning and consensus scoring. Finally, it examines current trends in validation, the rise of AI-accelerated platforms for screening ultra-large libraries, and comparative analyses that demonstrate the superior performance of integrated methods over standalone approaches in identifying novel bioactive compounds.

Why Combine Forces? The Synergistic Principles of LB and SB Virtual Screening

Virtual Screening (VS) is a cornerstone of modern computer-aided drug design (CADD), enabling researchers to efficiently identify biologically active molecules from vast chemical libraries by leveraging computational models instead of, or prior to, experimental testing [1]. This approach dramatically reduces the time, cost, and experimental effort required in drug discovery campaigns. VS methodologies are broadly classified into two fundamental pillars: Ligand-Based Virtual Screening (LBVS) and Structure-Based Virtual Screening (SBVS) [2] [3] [4]. LBVS relies on the structural information and physicochemical properties of known active ligands, operating under the principle that chemically similar molecules are likely to exhibit similar biological activities. In contrast, SBVS requires the three-dimensional (3D) structure of the target protein and predicts biological activity by evaluating the molecular interactions between a small molecule and its target, typically through molecular docking [2] [1]. These approaches are not mutually exclusive; rather, they are highly complementary. Continued efforts have been made to combine them to mitigate their individual limitations and leverage their synergistic potential, a practice that has been further empowered by the integration of machine learning (ML) techniques [3] [4]. This application note delineates the core concepts, methodologies, and protocols for both LBVS and SBVS, framing them within the context of developing combined workflows for more effective virtual screening.

Core Concepts and Comparative Analysis

Ligand-Based Virtual Screening (LBVS)

LBVS is employed when the 3D structure of the biological target is unknown or unavailable. It utilizes the collective information from known active compounds to identify new hits [1] [5].

- Fundamental Principle: The "similarity-property principle" posits that molecules with similar structures are likely to have similar properties or biological activities [3] [1]. This principle underpins all LBVS methods.

- Key Techniques:

- Molecular Descriptors and Fingerprints: Molecules are encoded into numerical representations, such as 2D topological fingerprints (e.g., Extended Connectivity Fingerprints - ECFP) or 3D shape and electrostatics, to enable quantitative similarity calculations [2] [4]. The Tanimoto coefficient is a commonly used metric for comparing these fingerprints [6] [1].

- Pharmacophore Modeling: A pharmacophore represents the essential steric and electronic features necessary for a molecule to interact with a specific biological target. LBVS pharmacophore models are derived from the alignment and analysis of active ligands [2] [7].

- Quantitative Structure-Activity Relationship (QSAR): This approach uses statistical models to correlate numerical descriptors of a set of compounds with their known biological activity, creating a predictive model for new compounds [3] [7].

- Machine Learning Advancements: Modern LBVS leverages deep learning and graph neural networks (GNNs) [3] [5]. For instance, integrating expert-crafted molecular descriptors with GNN-learned representations has been shown to enhance model performance and data efficiency in virtual screening [5].
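As a small illustration of the fingerprint comparison described above, the following sketch computes the Tanimoto coefficient for two fingerprints represented as sets of on-bit indices. The bit indices are hypothetical; in practice they would come from a cheminformatics toolkit, and the handling of two empty fingerprints is a convention chosen here.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 0.0  # convention chosen for this sketch when both fingerprints are empty
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


# Hypothetical on-bit indices for a query ligand and a library compound.
query = {1, 4, 9, 15, 22}
candidate = {1, 4, 9, 30}

print(tanimoto(query, candidate))  # 3 shared bits / 6 bits in the union = 0.5
```

A similarity search would rank library compounds by this value against one or more reference actives and keep those above a project-specific threshold.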

Structure-Based Virtual Screening (SBVS)

SBVS is the method of choice when a reliable 3D structure of the target protein (e.g., from X-ray crystallography, cryo-EM, or predictive models like AlphaFold) is available [8] [1].

- Fundamental Principle: SBVS predicts the binding mode and affinity of a small molecule within a target's binding site by evaluating their physicochemical complementarity and interaction energy [2] [9].

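- Key Technique: Molecular Docking: This is the most widely used SBVS technique and involves two main steps: sampling candidate ligand poses (conformations and orientations) within the binding site, and ranking those poses with a scoring function.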

- Scoring Functions and Machine Learning: Traditional scoring functions, which are often based on force fields, empirical data, or knowledge-based potentials, have known limitations in accuracy [2] [10]. Machine-learning scoring functions (ML-SFs) such as RF-Score-VS and CNN-Score, trained on protein-ligand complexes, have demonstrated substantial improvements in virtual screening performance and binding affinity prediction over classical functions [9] [10]. Rescoring docking poses with ML-SFs is now an established strategy to improve hit rates [9].

Comparative Analysis: Strengths and Limitations

The table below summarizes the core characteristics, advantages, and disadvantages of LBVS and SBVS, highlighting their complementary nature.

Table 1: Comparative analysis of Ligand-Based and Structure-Based Virtual Screening methods.

| Aspect | Ligand-Based Virtual Screening (LBVS) | Structure-Based Virtual Screening (SBVS) |
|---|---|---|
| Required Information | Known active ligands [1] | 3D structure of the target protein [1] |
| Core Principle | Molecular similarity / Similarity-Property Principle [1] | Structural and chemical complementarity [2] |
| Typical Methods | 2D/3D similarity, QSAR, Pharmacophore models [2] [7] | Molecular docking, Structure-based pharmacophores [2] [7] |
| Key Advantages | Fast, can screen millions of compounds quickly [1]; No protein structure required [5]; Excellent for scaffold hopping within known chemotypes | Provides atomic-level interaction insights [4]; Can identify novel, diverse scaffolds [1]; Better enrichment for targets with good structures [4] |
| Main Limitations | Bias towards known chemotypes, limited novelty [3] [1]; Susceptible to "activity cliffs" [1]; No information on binding mode | Computationally expensive [3]; Performance depends on scoring function accuracy [2] [10]; Challenging to account for full protein flexibility [2] |

Workflow Integration and Combined Strategies

The intrinsic flaws and complementary nature of LBVS and SBVS have motivated the development of integrated workflows, which can be classified into three main strategies: sequential, parallel, and hybrid [2] [3] [7].

Table 2: Strategies for combining LBVS and SBVS approaches.

| Combination Strategy | Description | Use Case |
|---|---|---|
| Sequential | A multi-step funnel where rapid LBVS methods (e.g., similarity search, QSAR) pre-filter a large library, and the reduced subset is analyzed with more computationally demanding SBVS (e.g., docking) [2] [3] [4]. | Optimizing the trade-off between computational cost and model complexity. Ideal for screening ultra-large libraries. |
| Parallel | LBVS and SBVS are run independently on the same compound library. Results are combined post-screening via consensus scoring or rank fusion techniques [2] [3]. | Increases robustness and hit rate by mitigating the limitations of each individual method. |
| Hybrid | LB and SB information are merged at a methodological level into a unified framework [2] [3]. Examples include interaction fingerprints that encode both ligand substructure and protein residue information [6]. | Leverages synergistic effects for more stable and accurate predictions. |

The following diagram illustrates the logical relationship and workflow between these combination strategies.

Experimental Protocols

Protocol 1: LBVS using Quantitative Structure-Activity Relationship (QSAR)

This protocol outlines the steps for building a QSAR model to predict compound activity [7] [1].

- Data Curation and Preparation: Collect a set of compounds with reliably measured biological activity (e.g., IC50, Ki). The activity values are typically converted to pIC50/pKi (-log10 of the molar concentration) for modeling. This set should be divided into training and test sets, ideally using a scaffold-based split to evaluate the model's ability to generalize to new chemotypes [5].

- Molecular Descriptor Calculation: Calculate numerical descriptors (e.g., topological, geometrical, or quantum chemical) for all compounds in the data set using software like the BioChemical Library (BCL) [5] or other cheminformatics toolkits.

- Model Building and Validation:

- Train a machine learning model (e.g., Random Forest, Support Vector Machine, or Neural Network) on the training set using the descriptors as features and the biological activity as the target variable.

- Validate the model's predictive performance on the held-out test set using metrics such as Root-Mean-Square Error (RMSE) and Pearson correlation coefficient for regression, or AUC-ROC for classification tasks [10].

- Virtual Screening: Apply the validated model to screen a virtual compound library. Compounds predicted to have high activity are prioritized for further experimental testing or analysis in a subsequent SBVS workflow.
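Two of the numerical steps in this protocol, the activity conversion and the regression metrics, can be sketched in plain Python. This is a minimal illustration rather than a full QSAR pipeline: the function names and the example values are hypothetical, and a real workflow would typically rely on a cheminformatics and machine-learning toolkit.

```python
import math


def pic50_from_nm(ic50_nm):
    """Convert an IC50 given in nanomolar to pIC50 = -log10(IC50 in mol/L)."""
    return -math.log10(ic50_nm * 1e-9)


def rmse(y_true, y_pred):
    """Root-mean-square error between measured and predicted activities."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def pearson_r(x, y):
    """Pearson correlation coefficient between two equally long sequences."""
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    norm_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    norm_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (norm_x * norm_y)


# Hypothetical test-set values: measured IC50 (nM) and model-predicted pIC50.
measured_pic50 = [pic50_from_nm(v) for v in (100.0, 10.0, 1000.0, 50.0)]
predicted_pic50 = [6.8, 7.9, 6.3, 7.1]

print(round(rmse(measured_pic50, predicted_pic50), 3))
print(round(pearson_r(measured_pic50, predicted_pic50), 3))
```

Reporting both metrics on the held-out test set helps separate absolute error (RMSE) from ranking quality (correlation), which matters most when the model is used to prioritize compounds.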

Protocol 2: SBVS using Molecular Docking and Machine-Learning Rescoring

This protocol details a structure-based screening workflow, enhanced by ML-based rescoring, as benchmarked in recent studies [9].

- Protein Structure Preparation:

- Obtain the 3D structure of the target (e.g., from PDB, or via AlphaFold prediction). For PDB structures, remove crystallographic waters, ions, and redundant chains.

- Add hydrogen atoms, assign protonation states to key residues (e.g., Asp, Glu, His) at physiological pH, and perform energy minimization to relieve steric clashes. Tools like OpenEye's "Make Receptor" can be used [9].

- Ligand Library Preparation:

- Obtain the structures of compounds to be screened (e.g., from an in-house database or a commercial library like Enamine REAL).

- Generate plausible 3D conformers for each ligand and assign correct bond orders and protonation states. Tools like Omega can be used for this step [9].

- Molecular Docking:

- Define the binding site coordinates, typically based on the location of a co-crystallized ligand or a known active site.

- Perform docking simulations using a program such as AutoDock Vina, FRED, or PLANTS to generate multiple binding poses for each ligand [9].

- Retain a ranked list of compounds based on the docking score (e.g., Vina score) for initial analysis.

- Machine-Learning Rescoring:

- Extract the top-N poses (e.g., 100) generated by the docking program.

- Rescore these poses using a pretrained ML scoring function such as RF-Score-VS or CNN-Score [9] [10].

- Re-rank the compounds based on the ML-SF scores. Studies have shown this can significantly improve early enrichment, increasing the hit rate in the top 0.1-1% of the screened library [9] [10].
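Early enrichment after rescoring is often quantified with an enrichment factor (EF). The sketch below uses a toy library with hypothetical docking and ML scores to compare the EF of the two rankings; it assumes that lower docking scores and higher ML scores are better, which should be checked against the conventions of the tools actually used.

```python
def enrichment_factor(ranked_labels, fraction):
    """EF at a given fraction of a ranked list (best first); labels are 1 (active) or 0 (decoy)."""
    n_total = len(ranked_labels)
    n_top = max(1, int(n_total * fraction))
    actives_total = sum(ranked_labels)
    if actives_total == 0:
        raise ValueError("the ranked list contains no actives")
    actives_top = sum(ranked_labels[:n_top])
    return (actives_top / n_top) / (actives_total / n_total)


# Hypothetical benchmark: (compound id, docking score, ML score, is_active).
compounds = [
    ("c1", -9.1, 0.20, 0), ("c2", -8.7, 0.90, 1), ("c3", -8.5, 0.35, 0),
    ("c4", -8.0, 0.80, 1), ("c5", -7.9, 0.10, 0), ("c6", -7.5, 0.40, 0),
    ("c7", -7.2, 0.30, 0), ("c8", -7.0, 0.25, 0), ("c9", -6.8, 0.15, 0),
    ("c10", -6.1, 0.05, 0),
]

# Assumption: more negative docking scores are better; higher ML scores are better.
by_docking = [c[3] for c in sorted(compounds, key=lambda c: c[1])]
by_ml = [c[3] for c in sorted(compounds, key=lambda c: c[2], reverse=True)]

ef_docking = enrichment_factor(by_docking, 0.2)
ef_ml = enrichment_factor(by_ml, 0.2)
print(ef_docking, ef_ml)  # 2.5 for the docking ranking, 5.0 after rescoring
```

On real benchmark sets the same calculation is usually reported at small fractions such as 1%, where differences between scoring functions are most relevant for hit selection.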

The Scientist's Toolkit: Essential Research Reagents and Materials

The table below lists key software tools, databases, and resources essential for executing LBVS and SBVS protocols.

Table 3: Key research reagents and computational tools for virtual screening.

| Tool / Resource | Type | Function in Workflow |
|---|---|---|
| AutoDock Vina [9] [10] | Docking Software | Widely-used tool for molecular docking and pose generation in SBVS. |
| PLANTS [9] | Docking Software | Docking tool noted for good performance in benchmarking studies, particularly when combined with ML rescoring. |
| RF-Score-VS / CNN-Score [9] [10] | ML Scoring Function | Pretrained machine-learning models used to rescore docking poses, significantly improving virtual screening enrichment. |
| BioChemical Library (BCL) [5] | Cheminformatics | Used to generate expert-crafted molecular descriptors for QSAR and other LBVS models. |
| DEKOIS [9] [10] | Benchmarking Set | A public database containing benchmark sets for various protein targets, including known actives and carefully selected decoys, used to evaluate VS performance. |
| Protein Data Bank (PDB) [8] [9] | Database | Primary repository for experimentally determined 3D structures of proteins and nucleic acids, serving as the starting point for most SBVS campaigns. |
| ChEMBL / BindingDB [6] [9] | Database | Public databases containing curated bioactivity data for drug-like molecules, essential for building LBVS models and benchmarking. |

Virtual screening (VS) has become a cornerstone of modern drug discovery, offering a computational approach to identify novel bioactive molecules from extensive chemical libraries. The two primary methodologies, ligand-based (LB) and structure-based (SB) virtual screening, each possess a distinct spectrum of strengths and weaknesses. This application note details how their strategic combination into integrated LB-SB workflows creates a synergistic framework that mitigates the individual limitations of each method. We provide a quantitative analysis of performance gains, detailed experimental protocols for implementation, and visualizations of key workflows. The evidence demonstrates that such combined approaches significantly enhance hit rates, improve the robustness of screening campaigns, and increase the probability of identifying high-quality, novel chemotypes for therapeutic development.

In silico virtual screening is hierarchically applied in the drug discovery pipeline to enrich chemical libraries with compounds likely to be active against a therapeutic target [11]. Ligand-Based Virtual Screening (LBVS) operates on the principle of molecular similarity, using known active (and sometimes inactive) compounds to identify new candidates through molecular descriptors, pharmacophore models, or shape-based comparisons [2]. Its major strength is that it does not require 3D structural information of the target. Conversely, Structure-Based Virtual Screening (SBVS) exploits the 3D atomic structure of the target, typically using molecular docking to predict how a small molecule fits and interacts within a binding site [2] [11].

The impetus for combination stems from their complementary natures. A major shortcoming of LBVS is its inherent bias toward the chemical scaffold of the reference template, which can limit chemotype novelty and lead to overfitting [2]. SBVS, while powerful, is often challenged by the need to account for protein flexibility, the treatment of bound water molecules, and the accurate prediction of binding affinity by scoring functions [2]. Furthermore, the performance of both methods exhibits a strong target dependency, making a priori selection of the optimal single method difficult [12]. Integrated LB-SB strategies have emerged to exploit available information on both the ligand and the target holistically, thereby reinforcing their mutual complementarity and palliating their individual weaknesses [2].

Quantitative Comparison of Virtual Screening Methodologies

The table below summarizes the core characteristics, strengths, and weaknesses of individual and combined VS approaches, providing a foundational understanding of their synergistic potential.

Table 1: The Strengths and Weaknesses Spectrum of Virtual Screening Approaches

| Methodology | Core Principle | Key Strengths | Inherent Limitations |
|---|---|---|---|
| Ligand-Based (LB) | Molecular similarity to known actives [2] | No target structure needed; Computationally fast; Excellent when many actives are known [11] | Bias to known chemotypes; Limited novelty; Requires quality active ligand data [2] |
| Structure-Based (SB) | Complementarity to target's 3D structure [2] | Can identify novel scaffolds; Provides structural insights for optimization [11] | Requires a high-quality 3D structure; Handling of flexibility & solvation; Scoring function inaccuracies [2] |
| Combined (LB+SB) | Holistic use of ligand and target information [2] | Mitigates individual limitations; Higher hit rates & scaffold diversity; More robust performance [2] [12] | Increased complexity in workflow design; Potential for error propagation if not carefully validated |

Empirical evidence from large-scale benchmarking studies supports the combination of methods. A notable iterative screening contest for inhibitors of tyrosine-protein kinase Yes illustrates this quantitatively. In its second iteration, which assayed nearly 2,000 compounds, the hit rate for potent inhibitors (IC₅₀ < 10 μmol L⁻¹) was approximately 0.5% (10 hits/1991 compounds) across all methods [12]. The most successful method achieved a hit rate of 6.6% (4 hits/61 compounds), a roughly 13-fold enrichment over this background, which shows how far a well-chosen strategy can outperform the average method [12].
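The enrichment quoted above follows directly from the reported counts; the short calculation below reproduces it. Only the hit and compound counts are taken from the text, and the variable names are illustrative.

```python
# Counts reported for the second iteration of the screening contest [12].
hits_all, tested_all = 10, 1991    # all methods combined
hits_best, tested_best = 4, 61     # most successful method

background_rate = hits_all / tested_all
best_rate = hits_best / tested_best
fold_enrichment = best_rate / background_rate

print(f"background hit rate: {background_rate:.2%}")   # 0.50%
print(f"best-method hit rate: {best_rate:.2%}")         # 6.56%
print(f"fold enrichment: {fold_enrichment:.2f}")        # 13.06
```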

Integrated Workflow Strategies and Experimental Protocols

Combined LB-SB strategies can be implemented in three primary configurations: sequential, parallel, and hybrid, each with distinct applications and advantages [2].

Workflow Classification and Visualization

The following diagram illustrates the logical flow and decision points for the three primary combined workflow strategies.

Detailed Experimental Protocols

Protocol 1: Sequential LB-to-SB Screening for Kinase Inhibitors

This protocol is designed to efficiently identify novel kinase inhibitors by leveraging the speed of LB methods followed by the precision of SB methods [2] [12].

LB Step: Pharmacophore-Based Screening

- Objective: Rapidly reduce library size from millions to thousands of compounds.

- Procedure:

  a. Model Generation: Construct a 3D pharmacophore model using a co-crystallized ligand from a homologous kinase structure (e.g., Src for Yes kinase). Define features like hydrogen bond donors/acceptors, hydrophobic regions, and aromatic rings.

  b. Library Preparation: Prepare the virtual library (e.g., 2.4 million compounds) using software like LigPrep [11] or OMEGA [11] to generate 3D conformers, correct ionization states (pH 7.4 ± 0.5), and generate tautomers.

  c. Screening: Screen the prepared library against the pharmacophore model using software such as Catalyst or Phase. Select the top 20,000-50,000 compounds that match all critical chemical features.

SB Step: Molecular Docking

- Objective: Refine the LB-pre-filtered set to a few hundred high-priority hits.

- Procedure:

  a. Protein Preparation: Obtain the target structure (experimental or homology model). Use protein preparation tools in Maestro or MOE to add hydrogen atoms, optimize side-chain rotamers, and assign partial charges.

  b. Binding Site Definition: Define the docking grid centered on the native ligand's binding site.

  c. Docking & Scoring: Dock the pre-filtered compound set using a standard algorithm (e.g., Glide, GOLD). Rank compounds based on the docking score and visual inspection of binding poses, focusing on key interactions like hinge-binding in kinases. Select the top 100-500 compounds for experimental testing.
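The binding site definition step can be made concrete with a small geometric sketch: given the heavy-atom coordinates of the native ligand, it derives a box center and edge lengths of the kind that many docking programs accept as input. The coordinates and the 4 Å padding are assumptions for illustration, and the box is centered on the midpoint of the ligand's bounding box rather than on its centroid.

```python
def docking_box(ligand_coords, padding=4.0):
    """Return (center, size) of an axis-aligned box around the ligand, in Å.

    padding is added on every side of the ligand's bounding box; the default
    of 4.0 Å is an illustrative choice, not a recommended value.
    """
    xs, ys, zs = zip(*ligand_coords)
    mins = (min(xs), min(ys), min(zs))
    maxs = (max(xs), max(ys), max(zs))
    center = tuple((lo + hi) / 2 for lo, hi in zip(mins, maxs))
    size = tuple((hi - lo) + 2 * padding for lo, hi in zip(mins, maxs))
    return center, size


# Hypothetical heavy-atom coordinates of a co-crystallized ligand.
ligand_coords = [(10.0, 5.0, -2.0), (14.0, 7.0, 0.0), (12.0, 9.0, 2.0)]

center, size = docking_box(ligand_coords)
print(center)  # (12.0, 7.0, 0.0)
print(size)    # (12.0, 12.0, 12.0)
```

Larger padding gives the search algorithm more room to place bulkier library compounds, at the cost of a larger search space.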

Protocol 2: Parallel Screening with Consensus Ranking for a Dual-Target Inhibitor

This protocol was successfully applied to identify novel dual-target inhibitors of BRD4 and STAT3 for kidney cancer therapy, maximizing the chances of success by running methods independently [13].

Parallel Execution:

- LB Arm: Conduct a similarity search using multiple known active compounds for BRD4 and STAT3 as references. Use 2D fingerprint-based methods (e.g., ECFP4) and 3D shape similarity tools (e.g., ROCS). Generate a ranked list from each method and combine them using data fusion techniques like rank voting.

- SB Arm: Perform molecular docking against the crystal structures of both BRD4 and STAT3. Prioritize compounds that score well against both targets simultaneously.

Consensus and Selection:

- Intersection Analysis: Identify compounds that appear in the top ranks of both the LB and SB results lists. This consensus approach is highly effective at reducing false positives.

- ADMET Filtering: Apply predictive filters for drug-likeness and pharmacokinetic properties (e.g., using QikProp [11] or SwissADME [11]) to the consensus hits to prioritize compounds with a higher probability of downstream success.

- Experimental Validation: Select 50-200 final compounds for purchase and testing in primary inhibition assays.
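The consensus step can be sketched with two simple operations on ranked lists: a mean-rank fusion, used here as one plain form of rank-based data fusion, and a top-k intersection. The compound identifiers are hypothetical, and the penalty rank assigned to compounds missing from a list is an assumption of this sketch rather than part of the published protocol.

```python
def mean_rank_fusion(*rankings):
    """Fuse ranked lists (best first) by average rank.

    A compound missing from a list receives the penalty rank len(list) + 1.
    Ties in the fused score are broken alphabetically by identifier.
    """
    compounds = set().union(*rankings)
    fused = {}
    for cid in compounds:
        ranks = [
            ranking.index(cid) + 1 if cid in ranking else len(ranking) + 1
            for ranking in rankings
        ]
        fused[cid] = sum(ranks) / len(ranks)
    return sorted(fused, key=lambda cid: (fused[cid], cid))


def top_k_intersection(k, *rankings):
    """Compounds that appear in the top k of every ranked list."""
    return set.intersection(*(set(ranking[:k]) for ranking in rankings))


# Hypothetical ranked outputs of the LB arm and the SB arm.
lb_ranking = ["A", "B", "C", "D", "E"]
sb_ranking = ["C", "A", "E", "B", "F"]

print(mean_rank_fusion(lb_ranking, sb_ranking))       # ['A', 'C', 'B', 'E', 'D', 'F']
print(top_k_intersection(3, lb_ranking, sb_ranking))  # the set containing 'A' and 'C'
```

The intersection is the stricter of the two: it discards compounds favored by only one arm, whereas mean-rank fusion still lets a compound with one excellent and one moderate rank reach the top of the list.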

Successful implementation of combined VS workflows relies on a suite of software tools and data resources.

Table 2: Key Research Reagent Solutions for Combined LB-SB Workflows

| Category | Tool/Resource | Primary Function | Application in Workflow |
|---|---|---|---|
| Commercial Software Suites | Maestro (Schrödinger) [11] | Integrated platform for VS | Unified environment for protein prep (Protein Prep Wizard), docking (Glide), and LB tools. |
| | Flare (Cresset) [11] | Structure-based design and analysis | Analyze electrostatic potentials, protein-ligand interactions, and pharmacophores. |
| Open-Source Cheminformatics | RDKit [11] | Cheminformatics toolkit | Generate molecular descriptors, perform similarity searching, and handle data curation. |
| | MolVS [11] | Molecule standardization | Standardize structures, remove duplicates, and neutralize charges in compound libraries. |
| 3D Conformer Generation | OMEGA (OpenEye) [11] | Rapid 3D conformer generation | Generate multiple, low-energy 3D conformations for each compound for LBVS and docking. |
| | ConfGen (Schrödinger) [11] | High-quality conformer generation | Generate accurate bioactive conformers for pharmacophore modeling and database searching. |
| Critical Databases | Protein Data Bank (PDB) [11] | Repository for 3D protein structures | Source experimental structures for SBVS and for constructing structure-based pharmacophores. |
| | ChEMBL / BindingDB [11] | Databases of bioactive molecules | Source known active and inactive compounds for building LBVS models and validation sets. |
| | ZINC [14] | Library of commercially available compounds | Source purchasable compounds for virtual screening. |

The strategic integration of ligand-based and structure-based virtual screening methods represents a paradigm shift in computational drug discovery. By moving beyond the limitations of individual approaches, combined LB-SB workflows leverage a broader information spectrum to achieve higher hit rates, identify novel and diverse chemotypes, and deliver more robust and reliable results. The sequential, parallel, and hybrid frameworks provide flexible blueprints that can be tailored to specific project needs, available data, and target characteristics. As both computational power and algorithmic sophistication continue to advance, these synergistic strategies are poised to become the standard for efficient and effective hit identification, accelerating the discovery of new therapeutic agents.

The pursuit of novel therapeutic agents increasingly relies on computational methods to navigate the vastness of chemical space. Within this domain, the molecular similarity principle—the concept that structurally similar molecules tend to exhibit similar biological activities—forms a fundamental cornerstone of ligand-based (LB) drug design [15]. When integrated with molecular docking, a key structure-based (SB) technique that predicts how small molecules bind to a protein target, these approaches form a powerful partnership that enhances the effectiveness of virtual screening (VS) campaigns [16] [2]. This partnership is operationalized through hierarchical virtual screening (HLVS) protocols, where multiple computational filters are applied sequentially to efficiently distill large compound libraries into a manageable number of high-probability hits for experimental testing [16]. The complementary nature of these methods allows researchers to leverage the strengths of each: molecular similarity searches efficiently exploit known bioactive compounds to find new chemotypes, while molecular docking provides an atomic-level, structure-based rationale for binding, helping to prioritize compounds that form favorable interactions with the target [2] [17]. This review details the practical application of these combined workflows, providing structured protocols, illustrative case studies, and key reagent solutions for implementation in drug discovery research.

Core Concepts and Definitions

The Molecular Similarity Principle

The molecular similarity principle is a conceptual foundation that enables the prediction of a compound's properties based on its resemblance to molecules with known characteristics [15]. Its application, however, is inherently context-dependent; the definition of "similarity" changes based on the molecular features most relevant to the target property or biological activity [18] [15]. These features can be encoded as molecular descriptors, which are mathematical representations of a molecule's structure and properties [18]. The choice of descriptor directly influences the outcome of a similarity search and its ability to identify compounds with the desired activity.

- 2D Similarity: Based on molecular connectivity, often represented by structural fingerprints (e.g., binary vectors indicating the presence or absence of specific substructures). These methods are computationally efficient and powerful for finding close analogs but can be limited in their ability to "hop" to novel scaffolds [16] [19].

- 3D Shape Similarity: Focuses on the three-dimensional volume and topography of a molecule. Methods like Ultrafast Shape Recognition (USR) describe shape using distributions of atomic distances, enabling the identification of functionally similar molecules with different 2D structures, a process known as scaffold hopping [19].

- Pharmacophore Similarity: A pharmacophore model abstracts the essential steric and electronic features necessary for a molecule to interact with a biological target. Comparing molecules based on their pharmacophore patterns focuses on the spatial arrangement of key features like hydrogen bond donors/acceptors and hydrophobic regions [15] [17].
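To show how shape can be compared through distributions of atomic distances, the sketch below implements a USR-style descriptor: distances from four reference points (the centroid, the atom closest to it, the atom farthest from it, and the atom farthest from that atom), each summarized by three moments, giving 12 numbers per molecule. The coordinates are hypothetical, and the use of unnormalized central moments is a simplification; published implementations differ in how they normalize the higher moments.

```python
import math


def _moments(values):
    """Mean and second and third central moments of a list of distances."""
    n = len(values)
    mean = sum(values) / n
    second = sum((v - mean) ** 2 for v in values) / n
    third = sum((v - mean) ** 3 for v in values) / n
    return [mean, second, third]


def usr_descriptor(coords):
    """12-element USR-style shape descriptor from 3D atomic coordinates."""
    n = len(coords)
    centroid = tuple(sum(c[i] for c in coords) / n for i in range(3))
    d_centroid = [math.dist(c, centroid) for c in coords]
    closest = coords[d_centroid.index(min(d_centroid))]
    farthest = coords[d_centroid.index(max(d_centroid))]
    d_farthest = [math.dist(c, farthest) for c in coords]
    far_from_farthest = coords[d_farthest.index(max(d_farthest))]

    descriptor = []
    for ref in (centroid, closest, farthest, far_from_farthest):
        descriptor += _moments([math.dist(c, ref) for c in coords])
    return descriptor


def usr_similarity(desc_a, desc_b):
    """Similarity in (0, 1]: inverse of one plus the mean absolute descriptor difference."""
    diff = sum(abs(a - b) for a, b in zip(desc_a, desc_b)) / len(desc_a)
    return 1.0 / (1.0 + diff)


# Hypothetical coordinates (Å): an elongated molecule and a compact one.
mol_a = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (2.2, 1.2, 0.0), (3.6, 1.1, 0.4), (4.1, 2.3, -0.5)]
mol_b = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0), (1.0, 1.0, 1.0)]

print(round(usr_similarity(usr_descriptor(mol_a), usr_descriptor(mol_b)), 3))
```

Because the descriptor depends only on interatomic distances, it does not change when a molecule is translated or rotated, so no alignment step is needed before comparison.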

Molecular Docking

Molecular docking is a structure-based technique that predicts the preferred orientation (binding pose) of a small molecule (ligand) when bound to a macromolecular target (receptor) [20] [21]. The process involves two core components:

- Search Algorithm: Systematically samples possible ligand conformations and orientations within the defined binding site of the protein. Common strategies include systematic conformational searches, stochastic methods (e.g., Genetic Algorithms), and fragment-based approaches [20].

- Scoring Function: A mathematical function used to evaluate and rank the generated poses by predicting the binding affinity. These functions can be force field-based (calculating physical energy terms), empirical (using fitted parameters from known complexes), knowledge-based (derived from statistical analyses of complexes), or consensus (combining multiple scoring schemes) [20] [21].

A significant challenge in docking is accurately modeling the inherent flexibility of the protein receptor and the critical role of structured water molecules in the binding site, which can mediate key ligand-protein interactions [2] [21].

Practical Application Notes and Protocols

Hierarchical Virtual Screening: A Standard Workflow

A typical HLVS protocol applies computational filters in a sequential manner, moving from fast, coarse-grained methods to more rigorous, resource-intensive techniques. This funnel-like approach optimally balances computational cost with screening accuracy [16] [2]. The standard workflow is illustrated below.

Diagram 1: Hierarchical Virtual Screening (HLVS) workflow. This funnel illustrates the sequential application of filters to progressively reduce a large compound library to a manageable number of experimental hits [16] [2] [17].

Protocol 1: LB-to-SB Hierarchical Screening for Novel BACE1 Inhibitors

This protocol is adapted from a study that successfully discovered novel BACE1 inhibitors for Alzheimer's disease research [17].

Objective: To identify novel, brain-penetrant small-molecule inhibitors of BACE1.

Materials: Pre-prepared and filtered database (e.g., NCI, Asinex, Specs); software for pharmacophore modeling (e.g., MOE), molecular docking (e.g., AutoDock Vina, GOLD), and ADMET prediction.

Step-by-Step Procedure:

- Database Curation:

- Obtain commercial or in-house compound databases.

- Filter compounds using Lipinski's Rule of Five and other relevant drug-likeness criteria (e.g., using the "Lipinski Druglike Test" in MOE) to favor compounds likely to be orally bioavailable. This typically reduces the library size by 60-80% [17].

- Generate a representative set of low-energy 3D conformations for each molecule (e.g., up to 500 conformers per molecule with a 4.5 kcal/mol strain energy cutoff).
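The drug-likeness filter in the curation step can be sketched as follows. The sketch assumes the four properties have already been computed by a cheminformatics toolkit and stored under the hypothetical keys shown; the compounds and their values are invented for illustration, and tolerating one violation is a common convention that can be tightened through `max_violations`.

```python
def lipinski_violations(props):
    """Count Rule-of-Five violations from precomputed molecular properties."""
    checks = [
        props["mol_weight"] > 500.0,      # molecular weight above 500 Da
        props["logp"] > 5.0,              # calculated logP above 5
        props["h_bond_donors"] > 5,       # more than 5 hydrogen-bond donors
        props["h_bond_acceptors"] > 10,   # more than 10 hydrogen-bond acceptors
    ]
    return sum(checks)


def passes_rule_of_five(props, max_violations=1):
    """True if the compound has at most max_violations Rule-of-Five violations."""
    return lipinski_violations(props) <= max_violations


# Hypothetical library entries with precomputed properties.
library = {
    "cmpd_a": {"mol_weight": 342.4, "logp": 2.1, "h_bond_donors": 2, "h_bond_acceptors": 5},
    "cmpd_b": {"mol_weight": 612.7, "logp": 5.8, "h_bond_donors": 3, "h_bond_acceptors": 9},
    "cmpd_c": {"mol_weight": 521.0, "logp": 4.2, "h_bond_donors": 1, "h_bond_acceptors": 7},
}

kept = [cid for cid, props in library.items() if passes_rule_of_five(props)]
print(kept)  # ['cmpd_a', 'cmpd_c']
```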

Ligand-Based Pharmacophore Screening:

- Model Development: Construct a ligand-based pharmacophore model using a set of known, potent BACE1 inhibitors with diverse scaffolds. Identify common spatial features critical for activity (e.g., hydrogen bond acceptors/donors, hydrophobic regions, aromatic rings).

- Validation: Validate the model's ability to distinguish known active compounds from decoys using a test set from databases like DUD-E.

- Screening: Screen the curated database against the validated pharmacophore model. Retrieve the top-ranking compounds that match the essential features. This step typically reduces the library to 1,000-10,000 compounds [17].

Structure-Based Docking:

- Protein Preparation: Obtain the 3D structure of BACE1 (e.g., PDB ID: 2WF1). Prepare the protein by adding hydrogen atoms, assigning partial charges, and optimizing the side-chain orientations of key binding site residues (e.g., the catalytic aspartates Asp32 and Asp228) at a mildly acidic pH (e.g., 6.0) to reflect the endosomal environment.

- Docking Execution: Perform molecular docking of the compounds that passed the pharmacophore filter. Use a standard docking program (e.g., AutoDock Vina) to generate and score multiple binding poses for each ligand.

- Pose Analysis: Visually inspect the top-scoring poses to ensure they form key interactions with the catalytic dyad and other important residues (e.g., Tyr71, Thr72, Gln73 in the flap region).

Blood-Brain Barrier (BBB) Penetration Filter:

- Apply an in silico filter to predict the ability of the top-ranked docked compounds to cross the BBB. Use predictive models based on polar surface area, log P, and other physicochemical properties.

- Select 20-50 compounds that pass this filter for subsequent experimental testing.

Protocol 2: Integrated Similarity-Docking for Allosteric PI5P4K2C Inhibitors

This protocol demonstrates a hybrid approach combining similarity searching and bioisosteric replacement with docking, as used to find novel allosteric inhibitors [22].

Objective: To discover novel allosteric inhibitors of PI5P4K2C lipid kinase using a known inhibitor (DVF) as a starting point.

Materials: Known allosteric inhibitor (DVF); open-access platforms (SwissSimilarity, SwissBioisosteres); molecular docking software; molecular dynamics (MD) simulation software (e.g., GROMACS).

Step-by-Step Procedure:

- Similarity-Based Analog Search:

- Use the known allosteric inhibitor DVF as a query molecule in a similarity search against compound databases (e.g., ZINC, ChEMBL) using a web platform like SwissSimilarity.

- Employ both 2D fingerprint-based similarity (e.g., Tanimoto coefficient) and 3D shape-based similarity (e.g., USR) to find analogs. Prioritize compounds that maintain the core pyrrolo[3,2-d]pyrimidine scaffold but incorporate diverse substituents.
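
To make the 3D shape comparison concrete, the sketch below follows the general USR idea: describe a conformer by the moments of its atomic distance distributions to four reference points, then compare two 12-number descriptors. It is a simplified illustration, not the USR-VS implementation. The choice of mean, variance, and third central moment is an assumption (published implementations scale the higher moments differently), and the coordinates are made up.

```python
import math


def _moments(values):
    """Mean, variance, and third central moment of a list of distances."""
    n = len(values)
    mean = sum(values) / n
    var = sum((v - mean) ** 2 for v in values) / n
    third = sum((v - mean) ** 3 for v in values) / n
    return [mean, var, third]


def usr_descriptor(coords):
    """12-element shape descriptor from 3D atomic coordinates."""
    n = len(coords)
    ctd = tuple(sum(c[i] for c in coords) / n for i in range(3))  # centroid
    d_ctd = [math.dist(c, ctd) for c in coords]
    cst = coords[d_ctd.index(min(d_ctd))]  # atom closest to the centroid
    fct = coords[d_ctd.index(max(d_ctd))]  # atom farthest from the centroid
    d_fct = [math.dist(c, fct) for c in coords]
    ftf = coords[d_fct.index(max(d_fct))]  # atom farthest from fct
    descriptor = []
    for ref in (ctd, cst, fct, ftf):
        descriptor.extend(_moments([math.dist(c, ref) for c in coords]))
    return descriptor


def usr_similarity(desc_a, desc_b):
    """Similarity in (0, 1]: inverse of 1 plus the mean absolute difference."""
    diff = sum(abs(a - b) for a, b in zip(desc_a, desc_b))
    return 1.0 / (1.0 + diff / len(desc_a))


# Hypothetical conformers: a query, a translated copy, and a stretched shape.
query = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (2.1, 1.2, 0.0),
         (3.4, 1.0, 0.8), (4.0, 2.3, 1.1)]
shifted = [(x + 10.0, y - 5.0, z + 3.0) for x, y, z in query]
stretched = [(2.0 * x, y, z) for x, y, z in query]

sim_shifted = usr_similarity(usr_descriptor(query), usr_descriptor(shifted))
sim_stretched = usr_similarity(usr_descriptor(query), usr_descriptor(stretched))
```

Because the descriptor is built only from internal distances, it needs no alignment step, which is why translating the molecule leaves the similarity at essentially 1.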

Bioisosteric Replacement:

Targeted Docking to the Allosteric Site:

- Prepare the protein structure (PDB ID: 7QPN), focusing on the defined allosteric pocket (residues: Asp161, Asn165, Leu182, Phe272, Asp332, Thr335, etc.).

- Define the search space for docking explicitly around this allosteric site, not the orthosteric ATP-binding site.

- Dock the similarity-derived compounds and bioisosteric analogs. Score and rank them based on their predicted binding affinity and ability to form key interactions observed in the DVF complex (e.g., hydrogen bonds with Asn165 and Asp332).
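
Restricting the search space to the allosteric pocket amounts to building a docking box around the atoms of the pocket residues. The sketch below derives a box center and size from a list of coordinates and prints them using the `center_x`/`size_x` style keys that AutoDock Vina reads from its configuration file. The coordinates and the 4 Å padding are hypothetical and are not extracted from PDB entry 7QPN.

```python
def docking_box(pocket_coords, padding=4.0):
    """Axis-aligned box enclosing the pocket atoms, enlarged by `padding` on each side."""
    axes = list(zip(*pocket_coords))  # ([x...], [y...], [z...])
    center = tuple((max(a) + min(a)) / 2 for a in axes)
    size = tuple((max(a) - min(a)) + 2 * padding for a in axes)
    return center, size


def vina_box_lines(center, size):
    """Format the box as key = value lines for a Vina-style configuration file."""
    keys = ("x", "y", "z")
    lines = [f"center_{k} = {c:.3f}" for k, c in zip(keys, center)]
    lines += [f"size_{k} = {s:.3f}" for k, s in zip(keys, size)]
    return lines


# Hypothetical coordinates standing in for atoms of the pocket residues.
pocket_atoms = [(12.1, 4.0, -3.2), (15.8, 6.5, -1.0), (10.4, 9.1, 0.6), (14.0, 7.7, -4.4)]
center, size = docking_box(pocket_atoms)
config_lines = vina_box_lines(center, size)
```

Deriving the box from the pocket residues rather than from the whole protein keeps the orthosteric ATP site out of the search, which is the point of the step above.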

Binding Stability Assessment with MD Simulations:

- Subject the top-ranked docked complexes to all-atom MD simulations (e.g., for 50-100 ns) to assess the stability of the ligand binding.

- Calculate the binding free energy using methods like MM-PBSA/MM-GBSA to obtain a more reliable ranking of the final hits compared to standard docking scores [22].

Essential Research Reagent Solutions

The following table summarizes key computational tools and resources that form the essential "reagent solutions" for implementing the combined similarity-docking protocols described above.

Table 1: Key Research Reagent Solutions for Combined LB-SB Workflows

| Tool/Resource Name | Type | Primary Function in Workflow | Access / Example |
|---|---|---|---|
| Molecular Databases | Data | Source of compounds for virtual screening. | ZINC, ChEMBL, NCI, commercial libraries (Asinex, Specs) [17] |
| MOE (Molecular Operating Environment) | Software Suite | Integrated platform for pharmacophore modeling, database curation, and molecular docking. | Commercial software from Chemical Computing Group [17] |
| AutoDock Vina | Software | Widely used, open-source program for molecular docking; balances speed and accuracy. | Freely available from http://vina.scripps.edu/ [20] |
| USR (Ultrafast Shape Recognition) | Algorithm/Web Tool | Alignment-free 3D shape similarity method for extremely fast virtual screening and scaffold hopping. | Web implementation available (USR-VS) [19] |
| SwissSimilarity / SwissBioisostere | Web Platforms | 2D and 3D similarity searching (SwissSimilarity) and bioisosteric replacement (SwissBioisostere). | Freely accessible web tools [22] |
| GROMACS | Software | High-performance molecular dynamics package for simulating ligand-protein complex stability. | Open-source software [22] |
| DUD-E (Database of Useful Decoys: Enhanced) | Data | Benchmarking set of known actives and decoys for validating virtual screening methods. | http://dude.docking.org/ [17] |

Data Presentation: Success Stories and Method Comparisons

The efficacy of combining molecular similarity with docking is demonstrated by numerous successful applications across diverse therapeutic targets. The table below summarizes key examples from the literature.

Table 2: Successful Applications of Combined LB-SB Hierarchical Virtual Screening

| Drug Target | Reported Activity of Best Hit | Hierarchical VS (HLVS) Methods Used | Reference |
|---|---|---|---|
| B-Raf V600E | IC50 = 0.3 µM | SHAFTS 3D ligand similarity + Molecular Docking | [16] |
| Serotonin Transporter | Ki = 1.5 nM | 2D fingerprints, ADMET filtering, 3D pharmacophore + Docking | [16] |
| SUMO specific protease 2 | IC50 = 3.7 µM | Shape similarity, electrostatic matching + Docking | [16] |
| BACE1 | 13 novel hit compounds identified | Structure- & Ligand-based Pharmacophore + Docking + BBB filter | [17] |
| PI5P4K2C (Allosteric) | Superior binding energy vs. reference | Similarity search, Bioisosteres + Docking + MD/MM-GBSA | [22] |
| HDAC8 | IC50 = 2.7 nM | Pharmacophore modeling + ADMET filtering + Docking | [2] |

To select the appropriate technique, researchers must understand the strengths and weaknesses of different similarity methods. The following table provides a comparative overview.

Table 3: Comparison of Key Molecular Similarity Methods

| Method Type | Example Techniques | Advantages | Disadvantages |
|---|---|---|---|
| 2D Similarity | Structural Fingerprints (e.g., ECFP) | Fast, simple, highly effective for finding close analogs. | Limited scaffold hopping capability; no 3D structural insights. [19] |
| 3D Shape Similarity | USR, ROCS | Enables scaffold hopping; strong correlation with biological activity. | Can be conformationally dependent; alignment-based methods can be slower. [19] |
| Pharmacophore | LB/SB Pharmacophore Models | Captures essential interaction features; can be derived from ligands or protein structure. | Model quality depends on input data; may oversimplify interactions. [15] [17] |

The partnership between the molecular similarity principle and molecular docking, as formalized in hierarchical virtual screening workflows, is a validated strategy in modern computational drug discovery. It merges the knowledge-derived power of LB methods with the mechanistic insight of SB approaches, so that each compensates for the other's blind spots. As similarity assessment and docking algorithms continue to advance, particularly in machine learning, the handling of protein flexibility, and the accuracy of scoring functions, the efficiency and success rate of these integrated protocols should increase further. The application notes and protocols provided here give researchers a roadmap for implementing these strategies and for accelerating the identification and optimization of novel lead compounds against increasingly challenging therapeutic targets.

When to Deploy a Combined Workflow

Virtual screening (VS) is a cornerstone of modern drug discovery, providing a time- and cost-effective method for identifying promising hit compounds from vast chemical libraries. The two primary computational strategies are Ligand-Based Virtual Screening (LBVS), which leverages the structural and physicochemical properties of known active ligands, and Structure-Based Virtual Screening (SBVS), which utilizes the three-dimensional structure of the target protein, most commonly through molecular docking [23] [3]. While each approach is powerful individually, they possess complementary strengths and weaknesses. The strategic integration of LBVS and SBVS into a combined workflow can mitigate their individual limitations, leading to higher confidence in results and a greater probability of identifying novel, active chemotypes [23] [4].

This application note provides a structured framework for researchers to determine when and how to deploy a combined LB-SB virtual screening workflow. We summarize the key decision criteria, present detailed experimental protocols for the three main hybrid strategies, and illustrate their application with a recent case study from the CACHE competition.

Strategic Decision Framework

The decision to employ a combined workflow, and the selection of the specific strategy, depends on the available data and the project's goals. The following table outlines the key decision criteria.

Table 1: Criteria for Selecting a Combined LB-SB Virtual Screening Workflow

| Criterion | Scenario Favoring a Combined Workflow | Recommended Strategy |
|---|---|---|
| Available Target Structure | High-quality experimental (X-ray, cryo-EM) or reliable predicted (e.g., AlphaFold2) structure is available. | Sequential; Parallel; Hybrid |
| Available Active Ligands | One or more known active ligands are available for the target. | Sequential; Parallel; Hybrid |
| Library Size | Screening an ultra-large library (>1 million compounds). | Sequential (LBVS pre-filtering) |
| Primary Goal: Hit Diversity | Seeking novel scaffolds to avoid intellectual property constraints or explore new chemical space. | Sequential; Parallel |
| Primary Goal: Hit Confidence | Prioritizing a smaller set of high-confidence candidates for experimental testing. | Parallel (Consensus); Hybrid |
| Computational Resources | Limited resources for computationally intensive SBVS on large libraries. | Sequential |
| Project Stage | Early discovery: library enrichment. Late discovery: lead optimization. | Sequential/Parallel (Early); Hybrid (Late) |

Combined Workflow Protocols

Based on the decision framework, three principal strategies can be deployed: sequential, parallel, and hybrid. The following protocols detail their implementation.

Protocol 1: The Sequential Workflow

The sequential approach is a funnel-based strategy that applies LBVS and SBVS in consecutive steps to progressively filter a large compound library [23] [3]. This is the most computationally efficient strategy for screening ultra-large chemical spaces.

Workflow Diagram:

Detailed Procedure:

- Input: A large compound library (e.g., Enamine REAL, ZINC).

- LBVS Pre-filtering:

- Objective: Rapidly reduce the library size to a manageable number of compounds (e.g., 1-5% of the original) for more costly SBVS.

- Methods:

- Pharmacophore Screening: Use a pharmacophore model derived from known active ligands or a target-ligand complex to search for compounds matching key interaction features [23] [4].

- Similarity Search: Perform 2D or 3D similarity searches (e.g., using ROCS, eSim) using one or more known active compounds as a reference [4].

- Machine Learning QSAR: Apply a pre-trained QSAR model to predict and prioritize compounds with high predicted activity [3].

- Output: A reduced library of 10,000-50,000 compounds.

- SBVS Refinement:

- Objective: Evaluate the pre-filtered compounds using the target structure to validate binding mode and affinity.

- Methods:

- Molecular Docking: Dock the reduced library into the target's binding site using software such as AutoDock Vina, Glide, or GOLD.

- Pose Analysis: Manually inspect the top-ranked docking poses for key ligand-protein interactions (e.g., hydrogen bonds, hydrophobic contacts, pi-stacking).

- Output: A final, high-confidence hit list of 50-500 compounds for experimental testing.
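
The funnel logic of this protocol can be sketched in a few lines: rank everything with a cheap ligand-based score, keep a small fraction, and spend the expensive structure-based scoring only on that shortlist. The function names, the mock docking function, and the toy library are assumptions made for illustration; in practice the two scoring callables would wrap real LBVS and docking tools.

```python
def sequential_funnel(library, cheap_score, expensive_score,
                      keep_fraction=0.05, n_final=3):
    """Two-stage funnel: cheap LB-style ranking, then costly SB-style rescoring."""
    # Stage 1: rank by the cheap score (higher = more similar to known actives).
    ranked = sorted(library, key=cheap_score, reverse=True)
    n_keep = max(1, round(len(ranked) * keep_fraction))
    shortlist = ranked[:n_keep]
    # Stage 2: the expensive score is evaluated once per shortlisted compound
    # (lower = better, as with most docking scores).
    rescored = sorted(shortlist, key=expensive_score)
    return rescored[:n_final]


# Toy library of 100 compounds with made-up similarity and docking values.
library = [{"id": i, "similarity": i / 100, "dock": -(i % 7)} for i in range(100)]

docking_calls = []


def mock_docking(compound):
    """Stand-in for a docking run; records which compounds were actually docked."""
    docking_calls.append(compound["id"])
    return compound["dock"]


hits = sequential_funnel(library, lambda c: c["similarity"], mock_docking)
hit_ids = [c["id"] for c in hits]
```

In this toy run only 5 of the 100 compounds reach the docking stage, which is where the computational saving comes from. The trade-off is that any compound discarded in stage 1 can never be recovered in stage 2.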

Protocol 2: The Parallel Workflow

In the parallel strategy, LBVS and SBVS are run independently on the same compound library. The results are then fused to create a unified ranking, which helps balance the biases inherent in each method [3] [4].

Workflow Diagram:

Detailed Procedure:

- Input: A compound library of moderate to large size.

- Independent Screening:

- Run LBVS (e.g., using a QSAR model or similarity search) to generate a ranked list.

- Run SBVS (e.g., molecular docking) in parallel to generate a separate ranked list.

- Data Fusion and Consensus Scoring:

- Objective: Combine the two ranked lists into a single, more robust consensus list.

- Methods:

- Rank Sum/Average: Normalize the ranks from each method and calculate the average or sum. Compounds with the best average rank are prioritized [3].

- Multiplicative Method: Multiply the normalized scores from LBVS and SBVS. This approach strongly favors compounds that rank highly in both methods.

- Parallel Selection: Simply select the top N compounds from each list without fusion, maximizing the chance of finding actives at the cost of a larger hit list [4].

- Output: A final consensus hit list for experimental testing.
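
The three fusion options listed above can be sketched as follows. This is a minimal illustration, assuming that LBVS scores are similarities (higher is better) and SBVS scores are docking energies (lower is better). The min-max normalization used for the multiplicative variant is one choice among several, and the compound identifiers and scores are made up.

```python
def ranks(scores, higher_is_better=True):
    """Map compound id -> rank, with 1 as the best rank."""
    ordered = sorted(scores, key=scores.get, reverse=higher_is_better)
    return {cid: i + 1 for i, cid in enumerate(ordered)}


def rank_average(lb_scores, sb_scores):
    """Order compounds by the mean of their LBVS and SBVS ranks."""
    lb_r = ranks(lb_scores, higher_is_better=True)
    sb_r = ranks(sb_scores, higher_is_better=False)
    fused = {cid: (lb_r[cid] + sb_r[cid]) / 2 for cid in lb_r.keys() & sb_r.keys()}
    return sorted(fused, key=lambda cid: (fused[cid], cid))


def min_max(scores, higher_is_better=True):
    """Rescale scores to [0, 1] so that 1 is always the best value."""
    lo, hi = min(scores.values()), max(scores.values())
    span = (hi - lo) or 1.0
    norm = {cid: (s - lo) / span for cid, s in scores.items()}
    return norm if higher_is_better else {cid: 1.0 - v for cid, v in norm.items()}


def multiplicative(lb_scores, sb_scores):
    """Order compounds by the product of their normalized scores."""
    lb_n = min_max(lb_scores, higher_is_better=True)
    sb_n = min_max(sb_scores, higher_is_better=False)
    fused = {cid: lb_n[cid] * sb_n[cid] for cid in lb_n.keys() & sb_n.keys()}
    return sorted(fused, key=lambda cid: (-fused[cid], cid))


def parallel_selection(lb_scores, sb_scores, n):
    """Union of the top-n compounds from each method, with no score fusion."""
    top_lb = sorted(lb_scores, key=lb_scores.get, reverse=True)[:n]
    top_sb = sorted(sb_scores, key=sb_scores.get)[:n]
    return set(top_lb) | set(top_sb)


# Hypothetical scores for four compounds.
lb = {"A": 0.9, "B": 0.7, "C": 0.4, "D": 0.2}      # similarity to a known active
sb = {"A": -7.0, "B": -9.5, "C": -6.0, "D": -8.0}  # docking score, kcal/mol

by_rank = rank_average(lb, sb)
by_product = multiplicative(lb, sb)
selected = parallel_selection(lb, sb, n=2)
```

In this toy case compound B leads both fused lists because it is strong in both methods, while compound D appears only through parallel selection: it docks well but looks dissimilar to the reference.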

Protocol 3: The Hybrid Workflow

The hybrid strategy integrates LB and SB information into a single, unified computational framework. This approach aims to leverage synergistic effects and is particularly powerful for lead optimization [3].

Detailed Procedure:

- Interaction-Based Methods: These methods use patterns of ligand-target interactions to guide screening.

- Procedure: Develop a machine learning scoring function trained on both protein-ligand interaction fingerprints (IFPs) and ligand descriptors. This model can then predict binding affinity based on a holistic view of the binding event [3].

- Docking-Based Methods: These methods incorporate ligand-based information directly into the docking process.

- Procedure: A known active ligand is docked first. Subsequent compounds are then docked and scored based on a combination of the docking score and their 3D similarity to the reference ligand's pose (pharmacophore overlap, shape similarity) [23].
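
A docking-based hybrid score of the kind described in the last step can be sketched as a weighted sum of a normalized docking score and a pose-similarity term. The linear normalization, the -12 to 0 kcal/mol range, and the equal weighting are all assumptions made for illustration; the cited methods may combine the terms differently.

```python
def hybrid_score(docking_score, pose_similarity, weight=0.5,
                 dock_best=-12.0, dock_worst=0.0):
    """Blend a docking score with 3D similarity to the reference ligand's pose.

    docking_score: kcal/mol-like value, more negative is better.
    pose_similarity: value in [0, 1], e.g. shape or pharmacophore overlap.
    Returns a value in [0, 1]; higher is better.
    """
    # Map the docking score onto [0, 1] and clamp values outside the assumed range.
    frac = (dock_worst - docking_score) / (dock_worst - dock_best)
    dock_norm = min(1.0, max(0.0, frac))
    return weight * dock_norm + (1.0 - weight) * pose_similarity


# Hypothetical compounds: (docking score, similarity to the reference pose).
candidates = {"cpd_a": (-9.0, 0.2), "cpd_b": (-7.5, 0.9)}
scored = {name: hybrid_score(dock, sim) for name, (dock, sim) in candidates.items()}
ranking = sorted(scored, key=scored.get, reverse=True)
```

Here `cpd_a` has the better docking score, but `cpd_b` ranks first once its agreement with the reference binding mode is taken into account. The `weight` parameter controls how far the ligand-based term is allowed to override the docking score.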

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Software and Resources for Combined LB-SB Workflows

| Category | Tool/Resource | Function in Workflow |
|---|---|---|
| LBVS | ROCS (OpenEye) | Rapid 3D shape and pharmacophore similarity screening [4]. |
| LBVS | eSim/QuanSA (Optibrium) | 3D ligand-based similarity and quantitative affinity prediction [4]. |
| LBVS | InfiniSee (BioSolveIT) | Screens ultra-large, synthetically accessible chemical spaces via pharmacophoric similarity [4]. |
| SBVS | AutoDock Vina, Glide | Molecular docking programs for binding pose prediction and scoring. |
| SBVS | Free Energy Perturbation (FEP) | High-accuracy, computationally demanding binding affinity prediction for lead optimization [4]. |
| Hybrid & ML | 3d-qsar.com Web Portal | Provides web apps for building 3D-QSAR (CoMFA) and structure-based COMBINE models [24]. |
| Hybrid & ML | PIGNet, other DL models | Deep learning models that predict protein-ligand interactions using physics-informed features [3]. |
| Chemical Libraries | Enamine REAL, ZINC | Sources of commercially available compounds for virtual screening. |

Case Study: Application in the CACHE Challenge #1

The CACHE (Critical Assessment of Computational Hit-finding Experiments) competition provides a real-world benchmark for virtual screening strategies. In Challenge #1, participants were tasked with finding binders for the LRRK2-WDR domain, a target with a known apo structure but no known ligands [3].

Summary of Strategies and Outcomes: A review of the 23 participating teams revealed that a combined approach was prevalent among successful entrants. While all teams used molecular docking, either for direct screening or for prioritizing compounds, they frequently employed sequential workflows. Specifically, teams used various LBVS-like filters (e.g., for drug-likeness, undesirable functional groups) to process the ultra-large library (36 billion compounds) before applying the more computationally intensive docking. The sequential strategy of pre-filtering followed by docking was effective in this challenging scenario with an ultra-large library and a novel target [3].

Combined ligand- and structure-based virtual screening workflows represent a powerful paradigm in modern drug discovery. The sequential workflow offers computational efficiency for navigating ultra-large chemical spaces. The parallel workflow with consensus scoring provides higher confidence in hit selection by balancing the strengths of both approaches. The hybrid workflow, though more complex to implement, offers the deepest integration and is highly valuable for lead optimization. By carefully considering the available data, project goals, and computational resources outlined in this document, researchers can strategically deploy these combined protocols to enhance the efficiency and success of their virtual screening campaigns.

Blueprint for Success: Sequential, Parallel, and Hybrid Workflow Strategies

The identification of novel bioactive molecules through Virtual Screening (VS) is a cornerstone of modern drug discovery [2]. VS methodologies are broadly categorized as Ligand-Based Virtual Screening (LBVS), which relies on the known physicochemical or structural properties of active ligands, and Structure-Based Virtual Screening (SBVS), which leverages the three-dimensional structure of the biological target [2] [25]. While each approach has its respective strengths, their complementary nature has motivated the development of hybrid strategies [2]. Among these, the sequential funnel, which applies rapid LBVS methods for pre-filtering before more computationally intensive SBVS refinement, has emerged as a powerful and efficient workflow to enhance hit rates and optimize resource allocation in early-stage drug discovery [2]. This protocol provides a detailed application note for implementing such a sequential LBVS-to-SBVS pipeline.

The sequential funnel approach is designed to efficiently process large chemical libraries by applying fast, coarse-grained filters first, followed by more sophisticated, fine-grained analysis on a smaller, pre-enriched subset of compounds [2]. The typical workflow consists of two major phases:

- LBVS Pre-filtering: Initial screening of an ultra-large chemical library using fast LBVS methods to select a subset of compounds that exhibit molecular similarity to known actives or comply with predefined pharmacophore or property-based rules.

- SBVS Refinement: Detailed evaluation of the LBVS hits using molecular docking and advanced scoring methods to predict binding poses and affinities within the target's binding site, culminating in a final, prioritized list of candidates for experimental testing.

The following diagram illustrates the logical flow and decision points within this sequential funnel.

Key Methodologies and Experimental Protocols

Phase 1: LBVS Pre-filtering Protocols

The initial phase aims to reduce the chemical search space from billions of compounds to a manageable number for SBVS.

3.1.1 Property-Based Filtering

This protocol removes compounds with undesirable properties early in the workflow [26] [27].

- Objective: To apply rapid, computationally inexpensive filters for identifying and removing compounds with poor drug-likeness or potential reactivity.

- Procedure:

- Library Preparation: Obtain the virtual library in a suitable format (e.g., SDF, SMILES). Precompute key molecular descriptors such as molecular weight (MW), calculated octanol-water partition coefficient (CLogP), and counts of hydrogen bond donors (HBD) and acceptors (HBA) [26].

- Apply Rule of Five: Filter the library to exclude compounds that violate more than one of the following criteria [26]:

- MW ≤ 500 Da

- CLogP ≤ 5

- HBD ≤ 5

- HBA ≤ 10

- Filter Undesirable Moieties: Screen the library against a filter for Pan-Assay Interference Compounds (PAINS) and other potentially reactive functional groups (e.g., Michael acceptors, aldehydes) to minimize false positives in subsequent experimental assays [26].

- Software Tools: Scripting with cheminformatics toolkits (e.g., RDKit, Open Babel [27]); commercial platforms like Schrödinger's Canvas.
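
The Rule of Five step can be sketched as a violation counter applied to precomputed descriptors. The example assumes Lipinski's conventional inclusive cutoffs (a value equal to the limit is not a violation) and tolerates at most one violation, as in the procedure above. The descriptor values are made up, and in practice they would come from a toolkit such as RDKit or Open Babel.

```python
def ro5_violations(mw, clogp, hbd, hba):
    """Count Rule of Five violations for one compound."""
    return sum([mw > 500, clogp > 5, hbd > 5, hba > 10])


def passes_ro5(descriptors, max_violations=1):
    """Keep a compound if it breaks no more than `max_violations` criteria."""
    return ro5_violations(**descriptors) <= max_violations


# Hypothetical library with precomputed descriptors.
library = {
    "drug_like": {"mw": 342.4, "clogp": 2.8, "hbd": 2, "hba": 5},
    "one_violation": {"mw": 512.6, "clogp": 4.1, "hbd": 3, "hba": 8},
    "two_violations": {"mw": 640.8, "clogp": 6.3, "hbd": 4, "hba": 9},
}
kept = [name for name, desc in library.items() if passes_ro5(desc)]
```

Counting violations rather than requiring every criterion to pass matches the original formulation of the rule, which flags compounds that break more than one criterion.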

3.1.2 Molecular Similarity and Pharmacophore Screening

This protocol selects compounds that are structurally or functionally similar to known active molecules [2] [28].

- Objective: To enrich the library with compounds that have a high two-dimensional (2D) or three-dimensional (3D) similarity to a known reference ligand or that match a critical 3D pharmacophore model.

- Procedure:

- Reference Selection: Identify one or more known active ligands with confirmed potency against the target.

- Generate Pharmacophore Model (Optional): For structure-based pharmacophore generation, use a protein-ligand complex structure (e.g., from PDB). Software like LigandScout can identify key interaction features (e.g., H-bond donors/acceptors, hydrophobic regions, ionic interactions) to create a 3D query model [28].

- Screen Library: Screen the property-filtered library using either:

- 2D Fingerprint Similarity: Calculate Tanimoto coefficients using circular fingerprints such as ECFP4 (the counterpart of RDKit's Morgan fingerprint with radius 2). Retain compounds above a defined similarity threshold (e.g., >0.6) [29].

- 3D Pharmacophore Search: Screen the library against the pharmacophore model, requiring compounds to match all or most critical features [2] [28].

- Software Tools: LigandScout [28], ROCS, Schrödinger's Phase.
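
The 2D similarity screen reduces to computing a Tanimoto coefficient between bit sets and applying a threshold. In the sketch below, fingerprints are represented as Python sets of "on" bit indices. This is a simplification for illustration: a real workflow would generate ECFP4/Morgan fingerprints with a cheminformatics toolkit, and the bit indices shown are made up.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient: shared on-bits divided by the union of on-bits."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def similarity_screen(reference_fp, library_fps, threshold=0.6):
    """Return (compound id, similarity) pairs above the threshold, best first."""
    scored = ((cid, tanimoto(reference_fp, fp)) for cid, fp in library_fps.items())
    hits = [(cid, sim) for cid, sim in scored if sim > threshold]
    return sorted(hits, key=lambda pair: pair[1], reverse=True)


# Hypothetical fingerprints as sets of on-bit indices.
reference = {1, 2, 3, 4, 5}
library_fps = {
    "analog": {1, 2, 3, 4, 6},   # 4 shared bits, 6 in the union
    "distant": {1, 9, 10},       # 1 shared bit, 7 in the union
    "identical": {1, 2, 3, 4, 5},
}
hits = similarity_screen(reference, library_fps)
```

The threshold sets the balance between enrichment and novelty: a high cutoff keeps close analogs, while a lower one admits more diverse chemotypes at the cost of more false positives.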

Table 1: Key LBVS Pre-filtering Techniques and Parameters

| Technique | Core Objective | Key Parameters/Metrics | Common Tools |
|---|---|---|---|
| Property Filtering | Remove compounds with poor drug-likeness or reactive groups | Molecular Weight, CLogP, HBD, HBA, PAINS filters | RDKit, Open Babel, Pipeline Pilot [26] [27] |
| 2D Similarity Search | Find structurally analogous compounds to known actives | Tanimoto Coefficient (ECFP4/Morgan fingerprints) | Canvas, RDKit, CDK [2] [29] |
| 3D Pharmacophore Search | Find compounds matching essential 3D interaction features | Fit Value, matching of H-bond, hydrophobic, ionic features | LigandScout, ROCS, Phase [2] [28] |

Phase 2: SBVS Refinement Protocols

The second phase involves a detailed structural evaluation of the pre-filtered compound library.

3.2.1 Molecular Docking and Pose Prediction

This protocol predicts how each small molecule binds to the target protein [25] [27].

- Objective: To predict the binding conformation (pose) and provide a preliminary rank ordering of the LBVS hits within the target's binding site.

- Procedure:

- Protein Preparation:

- Obtain the 3D structure of the target protein (from PDB or homology modeling).

- Add hydrogen atoms and assign the protonation states of key residues (e.g., His, Asp, Glu) using tools like PROPKA or the Protein Preparation Wizard [25].

- Optimize the hydrogen-bonding network and perform a restrained energy minimization to relieve steric clashes.

- Ligand Preparation: Prepare the LBVS hits by generating likely tautomers and protonation states at a physiological pH range (e.g., 7.0 ± 2.0). Assign partial charges and minimize 3D geometries [27].

- Grid Generation: Define the docking search space by creating a grid box centered on the binding site of interest.

- Docking Execution: Dock each prepared ligand into the defined binding site using a program like AutoDock Vina or Glide. Generate multiple poses per ligand and score them using the docking program's native scoring function [30] [27].

- Software Tools: AutoDock Vina [27], Glide [30], GOLD, DOCK.

3.2.2 Advanced Rescoring with Free Energy Calculations

This protocol applies more accurate, physics-based methods to refine the ranking of top docking hits [30].

- Objective: To achieve a more quantitative and reliable prediction of binding affinities for the top-ranked docked compounds, improving the prioritization of leads.

- Procedure:

- Pose Selection: From the docking output, select the top-ranking poses for a few hundred to a few thousand compounds.

- System Setup: For each protein-ligand complex, embed the system in an explicit solvent box, add ions to neutralize the system, and ensure all atoms have appropriate force field parameters.

- Free Energy Calculation: Run Absolute Binding Free Energy Perturbation (ABFEP) simulations using molecular dynamics. This method calculates the free energy difference between the bound and unbound states of the ligand, providing a more rigorous estimate of binding affinity than a docking score [30].

- Ranking and Analysis: Rank the final compounds based on the calculated binding free energy (ΔG). Analyze the key interactions stabilizing the top-ranked complexes.

- Software Tools: Schrödinger's FEP+ [30], GROMACS with free energy plugins, AMBER.
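
To compare calculated binding free energies with experimental dissociation constants, the standard relation ΔG = RT ln(Kd) is useful, with Kd expressed relative to a 1 M standard state. The helper names and the example value below are illustrative assumptions; the sketch only converts between the two quantities and does not perform any free energy calculation.

```python
import math

R_KCAL = 0.0019872041  # gas constant in kcal/(mol*K)


def dg_to_kd(dg_kcal_per_mol, temperature_k=298.15):
    """Convert a binding free energy (kcal/mol) to a dissociation constant (M)."""
    return math.exp(dg_kcal_per_mol / (R_KCAL * temperature_k))


def kd_to_dg(kd_molar, temperature_k=298.15):
    """Convert a dissociation constant (M) to a binding free energy (kcal/mol)."""
    return R_KCAL * temperature_k * math.log(kd_molar)


# Hypothetical predicted binding free energy of -10 kcal/mol.
kd_estimate = dg_to_kd(-10.0)

# Free energy change corresponding to a tenfold change in Kd at 298.15 K.
dg_per_tenfold = R_KCAL * 298.15 * math.log(10.0)
```

At room temperature a tenfold change in Kd corresponds to roughly 1.4 kcal/mol, so an error of that size in the calculated ΔG already shifts the predicted affinity by an order of magnitude. This is one reason to reserve rigorous free energy methods for the final ranking.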

Table 2: Key SBVS Refinement Techniques and Parameters

| Technique | Core Objective | Key Parameters/Metrics | Common Tools |
|---|---|---|---|
| Molecular Docking | Predict binding pose and provide initial affinity ranking | Docking Score, GlideScore, number of poses, interaction analysis | AutoDock Vina, Glide, GOLD [30] [25] [27] |
| Absolute Binding Free Energy Perturbation (ABFEP) | Accurately calculate binding free energy for diverse chemotypes | Predicted ΔG (kcal/mol), correlation with experimental IC50/Kd | Schrödinger's FEP+, GROMACS, AMBER [30] |
| Molecular Mechanics with Generalized Born and Surface Area Solvation (MM-GBSA) | Estimate binding free energy from docking poses post-hoc | Calculated ΔG (MM-GBSA), energy component decomposition | Schrödinger's Prime, AMBER [28] |

Successful execution of a sequential VS funnel requires a combination of software, hardware, and data resources.

Table 3: Key Research Reagent Solutions for Sequential VS

| Item Name | Function/Application in the Workflow | Specific Examples & Notes |
|---|---|---|
| Virtual Compound Libraries | Source of purchasable or synthesizable compounds for screening. | ZINC database [28] [27], Enamine REAL [30]; billions of compounds available. |
| Protein Data Bank (PDB) | Primary source for 3D protein structures used in SBVS and structure-based pharmacophore modeling. | RCSB PDB (e.g., PDB ID: 4BJX for Brd4) [28]. |
| Cheminformatics Toolkits | Fundamental for library formatting, descriptor calculation, and property filtering. | RDKit, Open Babel [27]; used for SMILES/SDF processing and filter application. |
| LBVS Software | Performs molecular similarity calculations and pharmacophore-based screening. | LigandScout [28], ROCS, Schrödinger's Canvas. |
| Molecular Docking Suite | Predicts protein-ligand binding modes and provides initial scoring. | AutoDock Vina [27], Glide [30], GOLD. |
| Free Energy Calculation Software | Provides high-accuracy binding affinity predictions for lead prioritization. | Schrödinger's FEP+ [30], AMBER, GROMACS. |
| High-Performance Computing (HPC) | Provides the computational power necessary for docking large libraries and running FEP simulations. | Local computer clusters or cloud computing services (e.g., AWS, Azure). |

Application Case Study: Identifying Novel Neuroblastoma Inhibitors

A study aimed at discovering natural inhibitors for treating human neuroblastoma provides a compelling example of the sequential funnel in action [28].

- LBVS Pre-filtering: Researchers first generated a structure-based pharmacophore model from the Brd4 protein in complex with a known inhibitor (PDB: 4BJX). This model, comprising features like hydrogen bond donors/acceptors and hydrophobic regions, was used to screen a library of natural compounds from the ZINC database, identifying 136 initial hits [28].

- SBVS Refinement: The 136 pharmacophore hits were then subjected to molecular docking to evaluate their binding modes and affinities within the Brd4 active site. This step refined the list to a smaller set of compounds with favorable docking scores and interaction profiles [28].

- Post-SBVS Filtering: The top docking hits were further filtered using in silico ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) profiling to assess drug-likeness and potential toxicity [28].

- Advanced Modeling and Final Selection: The stability of the resulting complexes was confirmed through Molecular Dynamics (MD) simulations, and their binding free energies were calculated using MM-GBSA. This integrated process culminated in the identification of four promising natural lead compounds, such as ZINC2509501 and ZINC4104882, for further experimental investigation [28].

The sequential funnel strategy that couples LBVS pre-filtering with SBVS refinement is a robust and efficient paradigm for modern virtual screening. By using the speed of LBVS methods to reduce the chemical space, and the structural precision of SBVS for detailed evaluation, this workflow improves the odds of identifying high-quality, experimentally validated hits while conserving computational and wet-lab resources [2] [30]. The protocols and toolkit provided here offer a practical guide for researchers to implement this approach in their drug discovery campaigns.

Parallel Power: Running LBVS and SBVS Independently and Merging Results

Virtual screening (VS) stands as a cornerstone of modern computational drug discovery, providing a powerful and cost-effective means to identify promising lead compounds from extensive chemical libraries [31]. The two primary methodologies, Ligand-Based Virtual Screening (LBVS) and Structure-Based Virtual Screening (SBVS), offer distinct and complementary advantages. LBVS leverages known active compounds to search for structurally or pharmacophorically similar molecules, while SBVS utilizes the three-dimensional structure of a target protein to dock and score potential ligands [32]. Individually, each method has inherent limitations; SBVS can struggle with accurate binding affinity prediction, and LBVS is constrained by the chemical space defined by known actives [33] [34].

This application note details a robust protocol that harnesses parallel power by executing LBVS and SBVS as independent, simultaneous processes and subsequently merging their results. This hybrid approach mitigates the risk of bias inherent in sequential workflows and increases the probability of identifying diverse, novel hit compounds by tapping into the unique strengths of each method [6]. Framed within broader research on combined workflows, this document provides a detailed, actionable guide for researchers and drug development professionals to implement this strategy, complete with methodologies, validation data, and essential resource information.

Key Concepts and Rationale

Ligand-Based Virtual Screening (LBVS)

LBVS operates on the principle that molecules with similar structures are likely to have similar biological activities. It is the method of choice when the 3D structure of the target protein is unknown but a set of active ligands is available [32]. Key techniques include:

- Molecular Similarity Searching: Uses molecular fingerprints, such as Extended Connectivity Fingerprints (ECFP), and similarity coefficients like the Tanimoto coefficient (TAN), to find compounds similar to a known reference [32].

- Pharmacophore Modeling: Identifies compounds that share critical chemical features (e.g., hydrogen bond donors/acceptors, hydrophobic regions) necessary for binding.

Advanced deep learning methods, such as Enhanced Siamese Multi-Layer Perceptrons, have been developed to improve similarity searching performance, particularly for structurally heterogeneous classes of molecules [32].

Structure-Based Virtual Screening (SBVS)

SBVS requires a known 3D structure of the target protein, typically from X-ray crystallography or homology modeling. Its core components are:

- Sampling: Generating multiple plausible conformations and orientations (poses) of a ligand within the target's binding site.

- Scoring: Evaluating each pose using a scoring function to estimate binding affinity. These functions can be physics-based (estimating interaction energies), empirical, knowledge-based, or increasingly, powered by machine learning [31] [34].

Leading-edge SBVS platforms, such as RosettaVS, incorporate receptor flexibility and advanced force fields to improve docking accuracy and virtual screening performance [34].

The Hybrid Approach: Parallel Execution and Merging

Sequential VS workflows (e.g., LBVS followed by SBVS) may prematurely exclude promising compounds that are outside the similarity scope of known actives or are challenging for docking algorithms to score correctly. The parallel independent strategy overcomes this by:

- Maximizing Diversity: LBVS and SBVS explore different regions of the vast chemical space simultaneously.

- Mitigating Method-Specific Weaknesses: Compounds missed by one method may be captured by the other.

- Enhancing Confidence: Hits identified by both methods concurrently can be prioritized with greater confidence.

The final, critical step is a structured merging of the two independent result lists. This can be achieved through heterogeneously weighted scoring, which assigns different weights to the LBVS and SBVS scores, or by using a hybrid ranking method based on binding mode similarity to a reference ligand [33] [35].

Experimental Protocol

The following protocol provides a step-by-step guide for conducting a parallel LBVS-SBVS campaign.

The entire process, from preparation to hit selection, is visualized in the following workflow diagram.

Workflow for Parallel LBVS-SBVS

Step 1: Input Preparation

For LBVS:

For SBVS:

- Prepare Protein Structure: Obtain a high-resolution 3D structure (e.g., from PDB). Conduct necessary preprocessing: remove water molecules, add hydrogen atoms, assign partial charges, and define protonation states.

- Define the Binding Site: Delineate the spatial coordinates of the binding site, often based on the location of a co-crystallized native ligand.

Compound Library:

- Select a Database: Choose a screening library (e.g., ZINC, SPECS, an in-house collection) [36] [34]. Pre-filter for drug-like properties (e.g., using Lipinski's Rule of Five).

- Prepare Ligands: Convert library compounds into 3D structures and generate plausible tautomers and protonation states at physiological pH.
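
As a rough illustration of the drug-likeness pre-filter, the sketch below applies Lipinski's Rule of Five to properties that are assumed to have been computed beforehand (for example with a cheminformatics toolkit). The `Compound` record, the example values, and the choice to tolerate one violation are assumptions made for this example, not part of the protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Compound:
    """Hypothetical record of precomputed properties for one library entry."""
    name: str
    mol_weight: float   # molecular weight in Da
    logp: float         # calculated octanol-water partition coefficient
    h_donors: int       # hydrogen bond donors
    h_acceptors: int    # hydrogen bond acceptors


def ro5_violations(c: Compound) -> int:
    """Count how many of the four Rule of Five criteria are violated."""
    return sum([
        c.mol_weight > 500,
        c.logp > 5,
        c.h_donors > 5,
        c.h_acceptors > 10,
    ])


def drug_like(library, max_violations=1):
    """Keep compounds with at most `max_violations` violations (assumed cutoff)."""
    return [c for c in library if ro5_violations(c) <= max_violations]


library = [
    Compound("cpd_A", 320.4, 2.1, 2, 5),   # no violations
    Compound("cpd_B", 612.7, 6.3, 1, 8),   # weight and logP violations
    Compound("cpd_C", 540.0, 3.0, 3, 9),   # weight violation only
]
kept = [c.name for c in drug_like(library)]
print(kept)
```

Setting `max_violations=0` gives a stricter filter; which cutoff is appropriate depends on the target class and the library.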

Step 2: Parallel Virtual Screening Execution

LBVS Protocol (using similarity searching):

- Calculate Similarity: For each compound in the library, compute its molecular fingerprint and calculate the similarity (e.g., Tanimoto coefficient) to the reference active set.

- Rank by Similarity: Rank the entire library based on the similarity scores. The top-ranking compounds (e.g., top 1-5%) proceed to the merging stage [32].
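
The similarity search can be sketched as follows. Fingerprints are represented here as sets of "on" bit indices, and each library compound is scored by its maximum Tanimoto coefficient to any reference active; the max-fusion rule, the toy fingerprints, and the function names are assumptions for illustration only.

```python
def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def rank_by_similarity(library_fps, reference_fps, top_fraction=0.05):
    """Score each compound by its best match to the reference set, keep the top fraction."""
    scored = {
        name: max(tanimoto(fp, ref) for ref in reference_fps)
        for name, fp in library_fps.items()
    }
    ranked = sorted(scored.items(), key=lambda item: item[1], reverse=True)
    n_keep = max(1, round(len(ranked) * top_fraction))
    return ranked[:n_keep]


# Toy fingerprints; real ones would come from a fingerprinting tool.
reference_fps = [{1, 4, 7, 9}, {2, 4, 8}]
library_fps = {
    "cpd_A": {1, 4, 7},
    "cpd_B": {3, 5, 6},
    "cpd_C": {2, 4, 8},
    "cpd_D": {1, 2},
}
top_hits = rank_by_similarity(library_fps, reference_fps, top_fraction=0.5)
print(top_hits)
```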

SBVS Protocol (using molecular docking):

- Perform Docking: Use a docking program such as AutoDock Vina, RosettaVS, or Gnina to generate and score poses for each compound in the library against the prepared protein structure [31] [34].

- Rank by Docking Score: Rank the entire library based on the calculated docking scores (e.g., predicted binding affinity). The top-ranking compounds (e.g., top 1-5%) proceed to the merging stage.

Step 3: Merging and Triaging Results

- Compile Hit Lists: Create two independent lists: the top hits from the LBVS run and the top hits from the SBVS run.

- Apply a Merging Strategy:

- Heterogeneously Weighted Rank Sum: Normalize the scores from both methods and calculate a combined score. For example:

`Combined_Score = (w_lbvs * Normalized_Similarity_Score) + (w_sbvs * Normalized_Docking_Score)`

where `w_lbvs` and `w_sbvs` are weights that can be adjusted based on confidence in each method [35].

- Interaction Fingerprint (IFP) Similarity: For SBVS hits, generate protein-ligand interaction fingerprints (IFPs) and compute their similarity to the IFP of a known reference ligand crystal structure. This hybrid method prioritizes docked compounds that not only have a good energy score but also recapitulate key binding interactions [33] [6].

- Select Final Hits: Based on the merged ranking, select a manageable number of compounds (e.g., 50-500) for subsequent experimental validation.
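
The weighted merging in Step 3 could be implemented along the following lines. This is a sketch under several assumptions that the protocol does not specify: scores are min-max normalized, docking scores are energies for which more negative is better (so they are negated before normalization), and a compound absent from one hit list contributes zero for that method.

```python
def min_max(values):
    """Scale a dict of scores to the range 0..1 (all zeros if every score is equal)."""
    lo, hi = min(values.values()), max(values.values())
    span = hi - lo
    return {k: (v - lo) / span if span else 0.0 for k, v in values.items()}


def merge_rankings(similarity, docking, w_lbvs=0.5, w_sbvs=0.5):
    """Combine LBVS similarity and SBVS docking scores into one ranked list."""
    norm_sim = min_max(similarity)
    # Negate docking scores so that a higher normalized value means a better pose.
    norm_dock = min_max({k: -v for k, v in docking.items()})
    names = set(norm_sim) | set(norm_dock)
    combined = {
        n: w_lbvs * norm_sim.get(n, 0.0) + w_sbvs * norm_dock.get(n, 0.0)
        for n in names
    }
    return sorted(combined.items(), key=lambda item: item[1], reverse=True)


# Toy hit lists: "A" appears only in the LBVS list, "D" only in the SBVS list.
similarity = {"A": 0.9, "B": 0.5, "C": 0.3}
docking = {"B": -10.0, "C": -8.0, "D": -6.0}
merged = merge_rankings(similarity, docking)
print(merged)
```

Raising `w_sbvs` relative to `w_lbvs` shifts the ranking toward docking, which may be reasonable when a high-quality crystal structure is available and few reference actives are known.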

Performance and Validation

Retrospective studies demonstrate the effectiveness of hybrid VS approaches. The following table compares the screening performance of a hybrid method, the Fragmented Interaction Fingerprint (FIFI), with standalone LBVS and SBVS on six diverse biological targets. The hybrid model achieves the highest AUC on five of the six targets; the exception is KOR, where LBVS with ECFP4 performs best.

Table 1: Retrospective Virtual Screening Performance of FIFI, a Hybrid Method [6]

| Target | Abbreviation | LBVS (ECFP4) | SBVS (Docking) | Hybrid (FIFI+ML) |
|---|---|---|---|---|
| Beta-2 Adrenergic Receptor | ADRB2 | 0.75 | 0.80 | 0.89 |
| Caspase-1 | Casp1 | 0.69 | 0.77 | 0.85 |
| Kappa Opioid Receptor | KOR | 0.95 | 0.65 | 0.83 |
| Lysosomal Alpha-Glucosidase | LAG | 0.71 | 0.79 | 0.87 |
| MAP Kinase ERK2 | MAPK1 | 0.73 | 0.78 | 0.86 |
| Cellular Tumor Antigen p53 | p53 | 0.70 | 0.75 | 0.84 |

Values represent the area under the receiver operating characteristic curve (AUC) for each method, where 1.0 is a perfect classifier.
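
For readers unfamiliar with the metric, the ROC AUC can be computed directly from its pairwise definition: the probability that a randomly chosen active is scored above a randomly chosen inactive. The sketch below uses made-up scores and labels; it illustrates the metric only and is not the evaluation code used in the cited study.

```python
def roc_auc(scores, labels):
    """ROC AUC as the fraction of active/inactive pairs ranked correctly (ties count 0.5)."""
    actives = [s for s, y in zip(scores, labels) if y == 1]
    inactives = [s for s, y in zip(scores, labels) if y == 0]
    if not actives or not inactives:
        raise ValueError("need at least one active and one inactive compound")
    wins = sum(
        1.0 if a > d else 0.5 if a == d else 0.0
        for a in actives
        for d in inactives
    )
    return wins / (len(actives) * len(inactives))


# Toy screening result: higher score means predicted more likely to be active.
scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.1]
labels = [1, 0, 1, 1, 0, 0]
auc = roc_auc(scores, labels)
print(round(auc, 3))
```

The pairwise form is quadratic in the number of compounds, so a rank-based implementation would be preferable for large benchmark sets.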

Furthermore, advanced SBVS tools have proven capable of identifying potent hits from ultra-large libraries in a time-efficient manner. The table below highlights a successful application of the RosettaVS platform.

Table 2: Success Metrics of an AI-Accelerated SBVS Platform (RosettaVS) on Two Unrelated Targets [34]

| Target | Library Size Screened | Screening Time | Experimentally Validated Hits | Hit Rate | Binding Affinity (μM) |
|---|---|---|---|---|---|
| KLHDC2 | Multi-billion | < 7 days | 7 | 14% | Single-digit |
| NaV1.7 | Multi-billion | < 7 days | 4 | 44% | Single-digit |

The Scientist's Toolkit

Implementing a parallel VS campaign requires a suite of software tools and databases. The following table lists the essential resources.

Table 3: Essential Research Reagent Solutions for Parallel VS

| Tool/Resource | Type | Primary Function | Key Feature |
|---|---|---|---|
| FLAP | Software | Ligand-Based VS | Performs molecular similarity and pharmacophore screening using Molecular Interaction Fields (MIFs) [36]. |
| Siamese MLP | Software/Algorithm | Ligand-Based VS | Deep learning model for improved similarity searching, especially with structurally heterogeneous molecules [32]. |
| AutoDock Vina | Software | Structure-Based VS | Widely used, open-source molecular docking program [31] [34]. |
| RosettaVS | Software | Structure-Based VS | High-performance, physics-based docking platform with receptor flexibility modeling [34]. |
| Gnina | Software | Structure-Based VS | Docking software that uses convolutional neural networks as a scoring function [31]. |
| Schrödinger Virtual Screening Web Service | Platform | Integrated VS | Cloud-based service for screening billion-compound libraries using physics-based and machine learning methods [37]. |
| PLIP | Software/Tool | Hybrid VS Analysis | Generates protein-ligand interaction fingerprints for binding mode analysis and rescoring [6]. |
| FIFI | Method/Descriptor | Hybrid VS | Fragmented Interaction Fingerprint for combining ligand and structure information in machine learning models [6]. |
| PDBbind | Database | General VS | Curated database of protein-ligand complexes with binding affinity data for method training and validation [31] [6]. |
| ChEMBL | Database | Ligand-Based VS | Database of bioactive molecules with drug-like properties, a key source for known active compounds [6]. |

Decision Framework for Merging Results

The final step of merging the independent LBVS and SBVS results is critical. The strategy can be adapted based on the project's goals and the quality of available information. The following decision diagram outlines the selection process for the most appropriate merging technique.

Merging Strategy Decision Guide

The parallel execution of LBVS and SBVS, followed by a strategic merging of results, constitutes a powerful and robust protocol for hit identification in drug discovery. This approach leverages the complementary strengths of both methods to maximize the exploration of chemical space and increase the likelihood of finding diverse and novel lead compounds. By providing detailed protocols, performance benchmarks, and a clear decision framework, this application note equips researchers with the knowledge to implement this efficient hybrid strategy, thereby accelerating the early stages of drug development.

Virtual screening (VS) is a cornerstone of modern drug discovery, leveraging computational power to identify promising drug candidates from vast chemical libraries. The two primary methodologies, Ligand-Based (LB) and Structure-Based (SB) virtual screening, have traditionally been applied independently of one another. LB methods exploit the structural and physicochemical properties of known active ligands to screen for similar compounds, operating under the molecular similarity principle. In contrast, SB methods, such as molecular docking, utilize the three-dimensional structure of the biological target to predict ligand binding [2].

While both have proven successful, their complementary strengths and weaknesses have stimulated the development of hybrid strategies. A true hybrid integration, as explored in this protocol, moves beyond simple sequential or parallel use of methods. It involves the methodological fusion of LB and SB data into a unified computational model, creating a holistic framework that leverages all available information to enhance the success rate of drug discovery projects, particularly in challenging areas like G Protein-Coupled Receptor (GPCR) drug discovery [2] [38].

Workflow Strategies for Hybrid LB-SB Integration

Different computational schemes can be employed to combine LB and SB methods, generally falling into three main categories as defined by Drwal and Griffith [2]. The table below summarizes and compares these core strategies.

Table 1: Core Strategies for Combining LB and SB Virtual Screening

| Strategy | Description | Advantages | Limitations |
|---|---|---|---|
| Sequential | LB and SB methods are applied in consecutive steps, typically using faster LB methods for pre-filtering before more computationally expensive SB analysis. | Optimizes trade-off between computational cost and method complexity; practical for screening very large libraries. | Does not exploit all available information simultaneously; retains some individual limitations of each method. |
| Parallel | LB and SB methods are run independently, and their results are combined at the end, for instance, by merging rank-ordered lists of candidates. | Can increase performance and robustness over single-method approaches. | Performance can be sensitive to the choice of template ligand and reference protein structure. |
| True Hybrid | LB and SB data are fused at the methodological level, creating a unified model that uses both data types concurrently for prediction. | Leverages synergistic information from ligands and targets; can overcome individual method limitations for a more holistic assessment. | Increased methodological complexity; requires careful tuning and validation. |
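
The practical difference between the sequential and parallel strategies in the table can be seen in a small sketch. The scores are invented, `dock_fn` stands in for a real docking call, and lower docking scores are assumed to be better; none of these names come from the cited work.

```python
def top_n(scores, n, higher_is_better=True):
    """Return the names of the n best-scoring compounds."""
    ranked = sorted(scores, key=scores.get, reverse=higher_is_better)
    return ranked[:n]


def sequential(lb_scores, dock_fn, n_prefilter, n_final):
    """LB pre-filter first, then dock only the survivors."""
    survivors = top_n(lb_scores, n_prefilter)
    docked = {name: dock_fn(name) for name in survivors}
    return top_n(docked, n_final, higher_is_better=False)


def parallel(lb_scores, dock_fn, n_each):
    """Run both methods on the full library, then merge the two top lists."""
    lb_hits = top_n(lb_scores, n_each)
    sb_all = {name: dock_fn(name) for name in lb_scores}
    sb_hits = top_n(sb_all, n_each, higher_is_better=False)
    consensus = [name for name in lb_hits if name in sb_hits]
    return {"consensus": consensus, "union": sorted(set(lb_hits) | set(sb_hits))}


# Toy data: "D" docks best but looks dissimilar to the known actives.
lb_scores = {"A": 0.9, "B": 0.7, "C": 0.4, "D": 0.2}
dock_table = {"A": -7.0, "B": -9.5, "C": -6.0, "D": -10.5}

seq_hits = sequential(lb_scores, dock_table.get, n_prefilter=2, n_final=1)
par_hits = parallel(lb_scores, dock_table.get, n_each=2)
print(seq_hits, par_hits)
```

In this example the sequential route never docks compound `D`, whereas the parallel route retains it in the union list, at the cost of docking the whole library.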

The following diagram illustrates the logical flow and decision points for implementing these strategies within a hybrid screening workflow.

Application Notes: A Hybrid Protocol with Transfer Learning for Opioid Receptors

The following section details a specific implementation of a true hybrid LB-SB protocol, developed to predict ligand bioactivity for opioid receptors (ORs), a class of GPCRs. This protocol is particularly innovative as it integrates LB and SB molecular descriptors within a transfer learning framework, effectively addressing the challenge of limited training data for individual OR subtypes [38].

Experimental Protocol

Objective: To build a robust predictive model for ligand bioactivity at individual opioid receptor subtypes (δ, μ, and κ) by integrating LB and SB descriptors using a transfer learning approach.

Step 1: Data Collection and Curation

- Bioactivity Data: Retrieve known active (agonists/antagonists) and inactive ligands for each OR subtype (DOR, MOR, KOR) from the IUPHAR/BPS Guide to Pharmacology and ChEMBL database.

- Inactive Ligands: Defined as those with reported potency (Ki, IC50, or EC50) weaker than 10 μM, i.e., values above 10 μM [38].

- Data Filtering: Remove large linear peptides and compounds with conflicting bioactivity reports. Merge individual datasets to create a combined OR subfamily dataset for pretraining.
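
The curation logic in Step 1 might look like the following sketch. It assumes potency values have already been converted to μM, treats any value at or below 10 μM as active (the protocol only defines the inactive side of the threshold), and does not cover peptide removal. The record layout and function names are hypothetical.

```python
INACTIVE_THRESHOLD_UM = 10.0  # potency weaker than 10 uM is labeled inactive


def curate(records):
    """Label each (ligand, receptor) pair and drop pairs with conflicting reports."""
    labels = {}
    for ligand, receptor, potency_um in records:
        is_active = potency_um <= INACTIVE_THRESHOLD_UM
        labels.setdefault((ligand, receptor), set()).add(is_active)
    # A pair reported as both active and inactive is treated as conflicting.
    return {
        key: next(iter(seen))
        for key, seen in labels.items()
        if len(seen) == 1
    }


def merge_subfamily(curated):
    """Pool all subtype-specific entries into one combined pretraining set."""
    return [
        (ligand, receptor, active)
        for (ligand, receptor), active in curated.items()
    ]


# Toy records: (ligand, receptor subtype, potency in uM).
records = [
    ("lig1", "MOR", 0.05),
    ("lig1", "MOR", 0.2),
    ("lig2", "MOR", 50.0),
    ("lig3", "KOR", 2.0),
    ("lig3", "KOR", 35.0),   # conflicts with the previous report
    ("lig3", "DOR", 120.0),
]
curated = curate(records)
pretraining_set = merge_subfamily(curated)
print(pretraining_set)
```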

Step 2: Calculation of Molecular Descriptors

- Ligand-Based (LB) Descriptors: Using RDKit, calculate 43 molecular descriptors for each ligand. These include basic physicochemical properties, atom counts, and topological indices [38].

- Structure-Based (SB) Descriptors:

- Obtain 3D structures of ligand-OR complexes from the Protein Data Bank (PDB).

- Prepare protein structures using standard tools (e.g., Schrödinger's protein preparation workflow).

- For each complex, use a cavity-based pharmacophore tool (e.g., iChem Volsite) to derive pharmacophoric features from the binding site.

- For each ligand, generate an ensemble of up to 200 3D conformers, accounting for probable tautomeric forms and protonation states at pH 7.4.

- Calculate the similarity of each ligand conformer to each of the 25 cavity-derived pharmacophores (e.g., using Shaper2).

- For each ligand and each structure, derive three SB features: the maximum similarity score, the average score, and the 75th percentile of the score distribution. This results in a 75-dimensional SB feature vector (25 structures × 3 statistics) per ligand [38].
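
The aggregation of conformer-level similarity scores into the 75-dimensional SB vector can be sketched as below. The similarity values themselves are assumed to come from an external pharmacophore-matching tool; the linear-interpolation percentile is one common convention and may differ from the one used in the original study.

```python
from statistics import mean


def percentile(values, q):
    """Linear-interpolation percentile (q in 0..100) of a non-empty list."""
    ordered = sorted(values)
    pos = (len(ordered) - 1) * q / 100
    lower = int(pos)
    upper = min(lower + 1, len(ordered) - 1)
    return ordered[lower] + (ordered[upper] - ordered[lower]) * (pos - lower)


def sb_feature_vector(conformer_scores):
    """Build [max, mean, 75th percentile] per pharmacophore for one ligand.

    conformer_scores[i] holds the ligand's per-conformer similarity
    scores against cavity-derived pharmacophore i.
    """
    features = []
    for scores in conformer_scores:
        features.extend([max(scores), mean(scores), percentile(scores, 75)])
    return features


# Toy input: 25 pharmacophores, 4 conformers each (real ensembles hold up to 200).
toy_scores = [[0.2, 0.4, 0.6, 0.8] for _ in range(25)]
vector = sb_feature_vector(toy_scores)
print(len(vector))
```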

Step 3: Neural Network Model Building and Transfer Learning

- Architecture: Implement two distinct neural network models:

- A Dense Neural Network (DNN) that takes the concatenated LB and SB feature vectors as input.

- A Graph Convolutional Network (GCN) that operates on the molecular graph structure.

- Transfer Learning Scheme:

- Pretraining: Train the model in a supervised manner on the large, combined OR subfamily dataset using both LB and SB descriptors. This allows the model to learn general features of ligand-OR interactions.

- Fine-Tuning: Take the pretrained model and subsequently fine-tune its parameters on the smaller, target-specific dataset (e.g., only MOR ligands). This step specializes the model for the specific OR subtype [38].
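
The pretrain-then-fine-tune scheme can be illustrated with a deliberately simple stand-in. The sketch below swaps the DNN and GCN for a plain logistic regression trained by full-batch gradient descent, and uses invented two-feature data in place of the concatenated LB and SB descriptors; learning rates and epoch counts are arbitrary. It shows only the mechanics of warm-starting from pretrained weights.

```python
import math


def predict(weights, x):
    """Logistic output for features x; weights[0] is the bias term."""
    z = weights[0] + sum(w * xi for w, xi in zip(weights[1:], x))
    return 1.0 / (1.0 + math.exp(-z))


def log_loss(weights, data):
    """Mean binary cross-entropy over (features, label) pairs."""
    eps = 1e-12
    total = 0.0
    for x, y in data:
        p = predict(weights, x)
        total += y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps)
    return -total / len(data)


def train(weights, data, lr, epochs):
    """Full-batch gradient descent starting from the given weights."""
    weights = list(weights)
    for _ in range(epochs):
        grad = [0.0] * len(weights)
        for x, y in data:
            err = predict(weights, x) - y
            grad[0] += err
            for i, xi in enumerate(x, start=1):
                grad[i] += err * xi
        weights = [w - lr * g / len(data) for w, g in zip(weights, grad)]
    return weights


# Toy stand-ins for the combined subfamily set and one subtype-specific set.
combined = [
    ([0.9, 0.8], 1), ([0.8, 0.6], 1), ([0.7, 0.9], 1), ([0.4, 0.7], 1),
    ([0.2, 0.3], 0), ([0.1, 0.4], 0), ([0.3, 0.1], 0), ([0.6, 0.2], 0),
]
target = [([0.6, 0.2], 1), ([0.5, 0.3], 1), ([0.2, 0.1], 0), ([0.3, 0.2], 0)]

# Pretraining: learn general trends from the pooled data.
pretrained = train([0.0, 0.0, 0.0], combined, lr=0.5, epochs=200)
# Fine-tuning: continue from the pretrained weights with a smaller step size.
fine_tuned = train(pretrained, target, lr=0.1, epochs=50)

print(log_loss(pretrained, target), log_loss(fine_tuned, target))
```

With a neural network the same pattern applies, typically with the option of freezing early layers during fine-tuning; that choice is not specified here.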

Table 2: Key Research Reagents and Computational Tools for Hybrid LB-SB Integration

| Item Name | Type | Function in Protocol | Source/Reference |

|---|---|---|---|

| IUPHAR/BPS Guide to Pharmacology | Database | Source of curated bioactive ligand data for target receptors. | [38] |

| ChEMBL Database | Database | Source of bioactivity data for inactive ligands and potent actives for testing. | [38] |