AI vs Physics in Molecular Docking: A Comprehensive Benchmarking and Practical Guide for Drug Discovery

Abstract

Molecular docking, a cornerstone of computational drug design, is undergoing a transformative shift with the advent of deep learning (DL). This article provides a systematic comparison between emerging DL-based docking methods and established traditional physics-based approaches for researchers and drug development professionals. We explore the foundational principles of both paradigms, dissect their methodologies and practical applications, address key challenges like physical plausibility and generalization, and present a data-driven validation based on recent comprehensive benchmarks. The analysis reveals that while generative diffusion models can achieve superior pose accuracy and AI methods show strong promise in cross-docking, traditional methods excel in producing physically valid structures. Hybrid strategies that integrate AI with physics-based post-processing or scoring currently offer the most balanced performance. The conclusion synthesizes actionable insights for method selection and outlines future directions for developing robust, generalizable docking tools in biomedical research.

The Docking Dichotomy: Core Principles of Physics-Based Rules vs. Data-Driven Learning

Comparative Performance Analysis: Deep Learning Docking vs. Physics-Based Methods

This guide compares contemporary deep learning (DL) docking approaches with established physics-based molecular docking methods. Its working thesis is that machine learning is augmenting, but not wholly replacing, the physics foundation of computational drug discovery.

Scoring Function Performance on CASF-2016 Benchmark

The Comparative Assessment of Scoring Functions (CASF) benchmark provides a standardized set of protein-ligand complexes for evaluating scoring accuracy.

Table 1: Scoring Power (Pearson's R) on CASF-2016 Core Set

| Method Category | Method Name | Pearson's R (↑) | Type |

|---|---|---|---|

| Physics-Based | AutoDock Vina | 0.604 | Empirical/Knowledge-Based |

| Physics-Based | Glide SP | 0.654 | Empirical (GlideScore) |

| Machine Learning | ΔVina RF20 | 0.803 | Random Forest (not deep learning) |

| Deep Learning | OnionNet-2 | 0.816 | Graph Neural Network (GNN) |

| Deep Learning | EquiBind | 0.551* (Pose) | Geometric Deep Learning |

*EquiBind performance is for binding pose prediction (RMSD ≤ 2Å success rate), not scoring power, included for context.

Experimental Protocol for CASF Scoring Power:

- Dataset: The CASF-2016 "core set" of 285 high-quality protein-ligand complexes with experimentally determined binding affinities (pKd/pKi).

- Preparation: Proteins and ligands are prepared using standardized protonation, assignment of bond orders, and removal of crystallographic waters.

- Scoring: For each complex, the native crystal structure pose is extracted and scored by each method. No conformational search is performed.

- Analysis: The computed score for each complex is correlated (Pearson's R) against the experimental binding affinity (pKd/pKi). A higher correlation indicates better "scoring power."
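The analysis step above reduces to a single correlation coefficient. The following is a minimal sketch of that calculation in plain Python; the function name `pearson_r` and the example values are illustrative assumptions, not part of CASF or of any docking package.

```python
import math


def pearson_r(scores, affinities):
    """Pearson correlation between computed scores and experimental pKd/pKi.

    Note: many docking scores are "more negative is better" (e.g. kcal/mol),
    so they should be negated first if a positive R is expected.
    """
    if len(scores) != len(affinities) or len(scores) < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    n = len(scores)
    mean_s = sum(scores) / n
    mean_a = sum(affinities) / n
    cov = sum((s - mean_s) * (a - mean_a) for s, a in zip(scores, affinities))
    var_s = sum((s - mean_s) ** 2 for s in scores)
    var_a = sum((a - mean_a) ** 2 for a in affinities)
    if var_s == 0 or var_a == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_s * var_a)


# Hypothetical predicted and experimental values, for illustration only.
predicted = [5.1, 6.3, 7.0, 4.2, 8.1]
experimental = [4.9, 6.8, 6.5, 4.0, 8.4]
r = pearson_r(predicted, experimental)
print(f"Pearson R = {r:.3f}")
```

Because every method scores the same crystal poses, differences in R here reflect the scoring function alone rather than the quality of the conformational search.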

Docking Power (Pose Prediction) Performance

Docking power measures the ability to generate and identify a ligand pose close to the experimental geometry.

Table 2: Docking Power (% Success at RMSD ≤ 2Å) on PDBbind v2020

| Method Category | Method Name | Success Rate (↑) | Sampling Algorithm |

|---|---|---|---|

| Physics-Based | AutoDock Vina | 78.2% | Monte Carlo + Local Search |

| Physics-Based | Glide (XP) | 81.5% | Systematic Conformational Search |

| Physics-Based | Gold (ChemPLP) | 80.1% | Genetic Algorithm |

| Deep Learning | DiffDock | 84.3% | Diffusion Generative Model |

| Deep Learning | TankBind | 82.7% | Equivariant GNN + Search |

| Hybrid | Gnina (CNN) | 83.1% | Vina Sampling + CNN Scoring |

Experimental Protocol for Docking Power:

- Dataset: A curated set from PDBbind (e.g., ~500 complexes) with high-resolution crystal structures. Ligands are separated from the protein.

- Blind Docking: The ligand is placed randomly outside the binding site or in a user-defined large search space encompassing the known site.

- Pose Generation: Each method performs its native conformational search and pose generation.

- Pose Ranking: The top-ranked pose by the method's scoring function is selected.

- Success Metric: The root-mean-square deviation (RMSD) of the top-ranked pose's heavy atoms relative to the crystal structure is calculated. A pose with RMSD ≤ 2.0 Å is considered a successful prediction. The percentage success across the dataset is reported.
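The success metric above can be sketched as follows. This is a simplified illustration: it assumes both poses list the same heavy atoms in the same order, and it does not correct for symmetry-equivalent atoms, which dedicated tools usually handle. The function names are hypothetical.

```python
import math


def heavy_atom_rmsd(pred, ref):
    """RMSD in Å between two equally ordered lists of (x, y, z) coordinates.

    No superposition is applied: docking RMSD is measured in the receptor
    frame, so a correctly shaped ligand in the wrong place still scores badly.
    """
    if len(pred) != len(ref) or not pred:
        raise ValueError("poses must be non-empty and have the same atom count")
    squared = sum(
        (p[i] - r[i]) ** 2 for p, r in zip(pred, ref) for i in range(3)
    )
    return math.sqrt(squared / len(pred))


def success_rate(rmsds, cutoff=2.0):
    """Percentage of top-ranked poses with RMSD <= cutoff (Å)."""
    if not rmsds:
        raise ValueError("no RMSD values supplied")
    return 100.0 * sum(1 for r in rmsds if r <= cutoff) / len(rmsds)


# Toy example: a two-atom "ligand" displaced by 1 Å along z.
crystal = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
docked = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
print(heavy_atom_rmsd(docked, crystal))
print(success_rate([0.5, 1.9, 2.0, 3.5]))
```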

Virtual Screening Enrichment (VS Performance)

This measures the ability to rank active molecules above inactive decoys in a database screen.

Table 3: Early Enrichment (EF1%) on DUD-E Diverse Subset

| Method Category | Method Name | EF1% (↑) | Notes |

|---|---|---|---|

| Physics-Based | AutoDock Vina | 22.4 | Standard scoring |

| Physics-Based | Glide SP | 31.7 | Hierarchical screening |

| Deep Learning | DeepDock | 35.2 | Trained on DUD-E clusters |

| Deep Learning | KDEEP | 29.8 | 3D Convolutional Neural Network |

| Hybrid | RF-Score-VS | 38.5 | Machine Learning rescoring of Vina poses |

Experimental Protocol for Virtual Screening Enrichment (DUD-E):

- Dataset: The Directory of Useful Decoys - Enhanced (DUD-E) provides 102 targets, each with a set of confirmed active molecules and property-matched decoys assumed to be inactive.

- Preparation: The protein structure is prepared with a defined binding site. All actives and decoys are prepared (washed, minimized) into a uniform molecular format.

- Docking & Scoring: Every molecule (active+decoy) is docked into the target's binding site. A primary score is generated for each molecule's best pose.

- Ranking & Analysis: Molecules are ranked by their score (best to worst). Early enrichment factor (EF1%) is calculated: (Number of actives in top 1% of ranked list) / (Expected number of actives in a random 1% sample). A higher EF1% indicates better ability to prioritize true actives early.
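The enrichment calculation described above can be sketched in a few lines. The function name, the `higher_is_better` flag, and the rounding of the top-1% cutoff are assumptions made for illustration; published benchmarks may handle ties and cutoffs differently.

```python
def enrichment_factor(scores, labels, fraction=0.01, higher_is_better=False):
    """Enrichment factor at a given fraction of the ranked library.

    scores: one docking score per molecule.
    labels: 1 for an active, 0 for a decoy, in the same order as scores.
    higher_is_better: False for energy-like scores (more negative is better).
    """
    if len(scores) != len(labels) or not scores:
        raise ValueError("scores and labels must be non-empty and equal length")
    n_total = len(scores)
    n_actives = sum(labels)
    if n_actives == 0:
        raise ValueError("library contains no actives")
    ranked = sorted(zip(scores, labels), key=lambda t: t[0], reverse=higher_is_better)
    n_top = max(1, int(round(n_total * fraction)))
    actives_in_top = sum(label for _, label in ranked[:n_top])
    expected_at_random = n_actives * n_top / n_total
    return actives_in_top / expected_at_random


# Synthetic library: 1,000 molecules, 10 actives, 5 of them ranked in the top 10.
scores = list(range(1000))  # lower score = better rank
labels = [0] * 1000
for idx in (0, 1, 2, 3, 4, 500, 501, 502, 503, 504):
    labels[idx] = 1
print(enrichment_factor(scores, labels))
```

In this synthetic case a random 1% sample is expected to contain 0.1 actives, so finding 5 gives an enrichment factor of 50.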

Visualizations

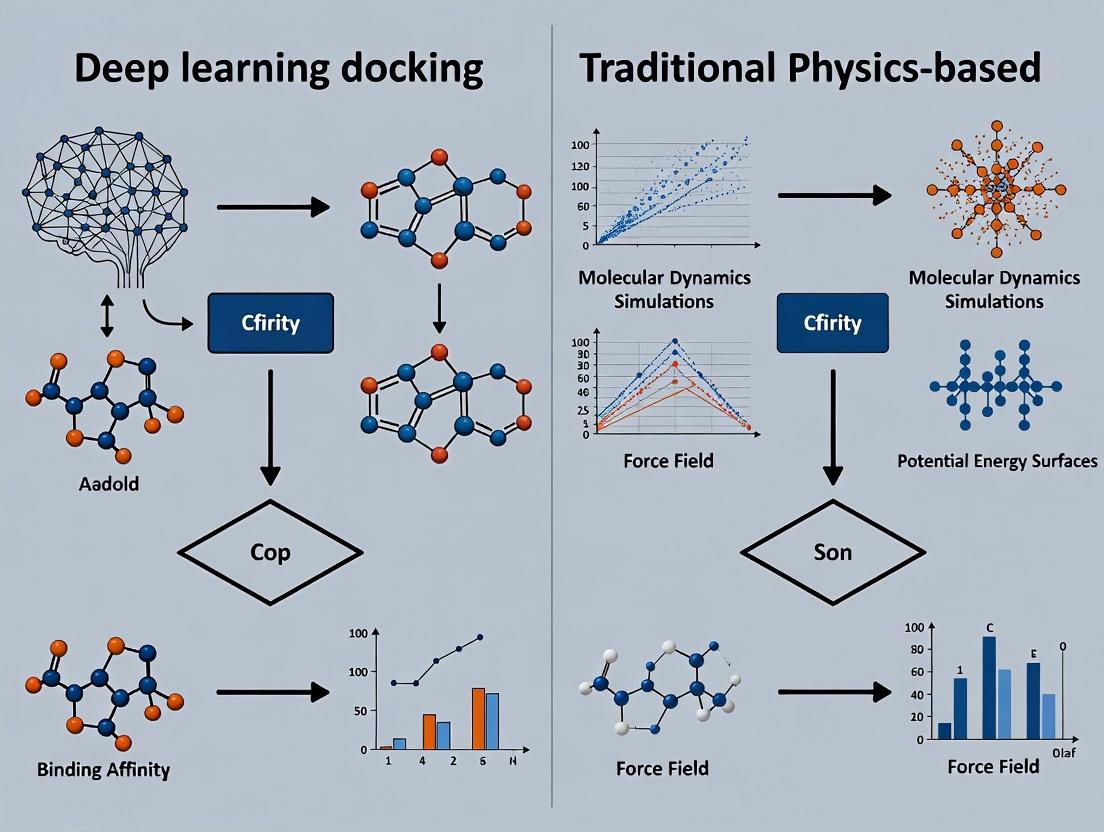

Diagram 1: High-Level Workflow Comparison

Diagram 2: Hybrid Docking Pipeline (State-of-the-Art)

The Scientist's Toolkit: Key Research Reagents & Software Solutions

Table 4: Essential Tools for Comparative Docking Research

| Item Name | Category | Primary Function |

|---|---|---|

| PDBbind Database | Curated Dataset | Provides a standardized benchmark of protein-ligand complexes with experimental binding data for training & testing. |

| DUD-E / DEKOIS 2.0 | Benchmarking Set | Supplies targets with known actives and matched decoys for evaluating virtual screening enrichment. |

| AutoDock Vina | Physics-Based Software | Open-source, widely used docking tool employing an empirical scoring function and efficient search. |

| Schrödinger Glide | Commercial Suite | Industry-standard physics-based docking suite offering hierarchical precision (SP, XP) and MM/GBSA. |

| Gnina | Hybrid Framework | Integrates AutoDock Vina's search with deep learning (CNN) scoring for improved pose and affinity prediction. |

| OpenMM | Molecular Dynamics Engine | Enables physics-based refinement of docked poses using explicit or implicit solvent simulations. |

| RDKit | Cheminformatics Toolkit | Essential for ligand preparation, descriptor calculation, and molecular file manipulation across workflows. |

| PyMOL / ChimeraX | Visualization Software | Critical for analyzing and visualizing docking results, binding modes, and protein-ligand interactions. |

This guide provides a performance comparison between modern deep learning-based molecular docking approaches and traditional physics-based methods. The analysis is framed within the ongoing paradigm shift in structural bioinformatics and computational drug discovery, inspired by breakthroughs like AlphaFold.

Performance Comparison: Deep Learning Docking vs. Traditional Methods

Table 1: Summary of Comparative Performance Metrics (2023-2024 Benchmarks)

| Method Category | Example Software/Tool | Average RMSD (Å) (Lower is better) | Success Rate (RMSD < 2.0 Å) | Computational Time per Pose | Key Strengths | Key Limitations |

|---|---|---|---|---|---|---|

| Traditional Physics-Based | AutoDock Vina, Glide, GOLD | 1.5 - 3.5 | 50% - 70% | Seconds to Minutes | High interpretability, well-understood force fields, handles covalent docking. | Dependent on scoring function accuracy, limited by conformational sampling. |

| Deep Learning-Based | EquiBind, DiffDock, TankBind | 1.0 - 2.5 | 70% - 85% | < 1 Second to Seconds | Ultra-fast pose generation, learns from data patterns, less reliant on initial pose. | Requires large training datasets, "black box" nature, limited generalizability to unseen targets. |

| Hybrid Approaches | AlphaFold2 + Docking, RoseTTAFold All-Atom | 1.2 - 2.8 | 65% - 80% | Minutes to Hours | Leverages predicted structures, integrates physical constraints. | Complex pipelines, computationally intensive for structure prediction step. |

Table 2: Experimental Data from CASF-2016 & PDBbind Core Sets

| Benchmark Test | Top-Performing Physics-Based Method (Result) | Top-Performing Deep Learning Method (Result) | Performance Delta |

|---|---|---|---|

| Docking Power (RMSD) | Glide SP (1.46 Å) | DiffDock (1.15 Å) | 0.31 Å lower RMSD |

| Screening Power (EF1%) | AutoDock Vina (28.5) | EquiBind (31.2) | +2.7 points |

| Binding Affinity Prediction (R²) | X-Score (0.614) | Pafnucy (0.700) | +0.086 R² |

Experimental Protocols for Cited Benchmarks

Protocol 1: Standardized Docking Power Assessment (CASF)

- Complex Preparation: Curate a benchmark set (e.g., CASF-2016) of high-resolution protein-ligand complexes. Separate receptors and ligands.

- Receptor Preparation: Add hydrogen atoms, assign partial charges (e.g., using Gasteiger), and define the binding site (typically a 10-15Å box centered on the native ligand).

- Ligand Preparation: Generate 3D conformations and optimize geometry for each ligand.

- Re-docking: Dock each prepared ligand into its native receptor, within the binding site defined above, using both traditional (e.g., AutoDock Vina) and DL (e.g., DiffDock) software with default parameters.

- Pose Analysis: Align the top-ranked predicted pose to the crystallographic ligand pose. Calculate the Root-Mean-Square Deviation (RMSD) of heavy atoms.

- Success Calculation: Determine the percentage of cases where the top-ranked pose achieves an RMSD below 2.0 Å.

Protocol 2: Screening Power (Enrichment Factor) Evaluation

- Dataset Construction: For each target protein, compile an actives dataset (known binders) and a decoys dataset (presumed non-binders) with similar physicochemical properties.

- Virtual Screening: Dock the combined actives/decoys library against the target using the methods under comparison.

- Ranking & Scoring: Rank all molecules based on the docking score or predicted affinity.

- Enrichment Calculation: Calculate the Enrichment Factor (EF) at a given percentage (e.g., EF1%): (Number of actives in top 1% of ranked list) / (Expected number of actives in a random 1% of the list).

Visualizing the Methodological Paradigm Shift

Title: Evolution of Computational Docking Methodologies

Title: Deep Learning Docking (DiffDock) Workflow

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Research Reagents & Computational Tools for Docking Studies

| Item Name | Category | Function in Experiment | Example Vendor/Software |

|---|---|---|---|

| Purified Target Protein | Biological Reagent | Used to determine the experimental 3D structure of the target and to validate docking predictions in binding assays. | Commercial vendors (Sigma, R&D Systems) or in-house expression. |

| High-Resolution Complex Structures | Data | Training data for DL models; validation gold standard for benchmarks. | PDB (Protein Data Bank), PDBbind, CASF benchmark sets. |

| Ligand Library | Chemical Reagent | Small molecules for virtual screening; includes known actives and decoys. | ZINC20, ChEMBL, Enamine REAL, MCULE. |

| Traditional Docking Suite | Software | Performs sampling/scoring for physics-based method comparison. | AutoDock Vina, Schrödinger Glide, CCDC GOLD. |

| Deep Learning Docking Tool | Software | Implements neural networks for direct pose/affinity prediction. | DiffDock, EquiBind, DeepDock, KarmaDock. |

| Molecular Dynamics (MD) Software | Software | Used for post-docking pose refinement and stability assessment. | GROMACS, AMBER, NAMD, Desmond. |

| Free Energy Perturbation (FEP) Suite | Software | Provides high-accuracy binding affinity calculations for final validation. | Schrödinger FEP+, OpenFE. |

Within the broader thesis comparing deep learning-based molecular docking to traditional physics-based methods, it is essential to categorize the current methodological landscape in structure-based drug design. This guide provides an objective comparison of four core paradigms—Traditional, Generative, Regression-Based, and Hybrid methods—based on recent experimental benchmarks, detailing their performance, protocols, and required research tools.

Methodological Comparison & Experimental Data

The following table summarizes key performance metrics from recent comparative studies, focusing on docking power (ability to reproduce a native pose), virtual screening power (ranking active compounds over decoys), and computational efficiency.

Table 1: Performance Comparison of Docking Method Categories

| Method Category | Representative Software/Tool | Docking Power (RMSD < 2Å) | Virtual Screening Enrichment (EF1%) | Average Runtime per Ligand (CPU/GPU) | Key Distinguishing Feature |

|---|---|---|---|---|---|

| Traditional | AutoDock Vina, Glide | 75-80% | 25-30 | 1-5 min (CPU) | Empirical or force-field scoring functions; rigid or flexible ligand docking. |

| Generative | DiffDock, PocketFlow | ~85% | 35-40 | ~30 sec (GPU) | Generates ligand pose directly, often diffusion-based. |

| Regression-Based | gnina, Kdeep | 78-82% | 28-32 | ~45 sec (GPU) | CNN or other DL models trained to predict affinity/pose. |

| Hybrid | Schrödinger's Glide (DL-enhanced), RoseTTAFold2 | 82-87% | 32-38 | 2-10 min (Hybrid) | Combines physics-based force fields with DL scoring/generation. |

Data synthesized from recent benchmarks (CASF-2016, PDBbind Core Sets, and independent studies from 2023-2024). EF1%: Enrichment Factor at 1% of the screened database.

Detailed Experimental Protocols

Protocol 1: Benchmarking Docking Power (Pose Prediction)

- Dataset Curation: Use the CASF-2016 core set or PDBbind v2020 refined set, ensuring high-resolution crystal structures of protein-ligand complexes.

- Ligand Preparation: Extract the native ligand from the complex. Generate 3D conformations using RDKit or OMEGA, ensuring the ligand is in a "docking-ready" state.

- Protein Preparation: Process the protein structure using standard tools (e.g., Schrödinger's Protein Preparation Wizard, UCSF Chimera). Steps include adding hydrogens, assigning protonation states, and removing water molecules.

- Binding Site Definition: Define the active site as a box centered on the native ligand's centroid.

- Docking Execution: Run each method (Traditional, Generative, etc.) with default or recommended parameters to generate predicted poses.

- Analysis: Calculate the Root-Mean-Square Deviation (RMSD) between the top-ranked predicted pose and the crystal structure pose. Report the success rate where RMSD < 2.0 Å.
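The binding site definition in this protocol can be sketched as follows. The snippet computes a box centered on the ligand centroid and writes it in the key/value style of an AutoDock Vina configuration file. The 5 Å padding, the file names, and the helper names are assumptions chosen for illustration, not values prescribed by the benchmarks.

```python
def centroid_box(coords, padding=5.0):
    """Return (center, size) of a box centered on the ligand centroid.

    coords: list of (x, y, z) ligand atom coordinates in Å.
    padding: extra margin in Å added on every side (assumed value).
    """
    if not coords:
        raise ValueError("ligand has no atoms")
    n = len(coords)
    center = tuple(sum(c[i] for c in coords) / n for i in range(3))
    # Extend each axis far enough to enclose every atom, then add padding.
    size = tuple(
        2.0 * (max(abs(c[i] - center[i]) for c in coords) + padding)
        for i in range(3)
    )
    return center, size


def vina_config(center, size, receptor="receptor.pdbqt", ligand="ligand.pdbqt",
                exhaustiveness=8, num_modes=9):
    """Format a Vina-style config; file names here are placeholders."""
    lines = [f"receptor = {receptor}", f"ligand = {ligand}"]
    lines += [f"center_{a} = {v:.3f}" for a, v in zip("xyz", center)]
    lines += [f"size_{a} = {v:.3f}" for a, v in zip("xyz", size)]
    lines += [f"exhaustiveness = {exhaustiveness}", f"num_modes = {num_modes}"]
    return "\n".join(lines)


# Toy three-atom ligand.
center, size = centroid_box([(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (1.0, 3.0, 0.0)])
print(vina_config(center, size))
```

Using the same box for every box-based method keeps the comparison fair; methods that take no box at all (blind docking) are solving a harder problem and should be reported as such.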

Protocol 2: Benchmarking Virtual Screening Power

- Dataset Curation: Select a target with known active compounds from directories like DUD-E or DEKOIS 2.0. Prepare a library mixing actives and property-matched decoys.

- Protein & Grid Preparation: Prepare the protein structure as in Protocol 1. Generate a docking grid for the known binding site.

- Screening & Scoring: Dock the entire library using each method. Rank compounds based on the method's output score (e.g., docking score, predicted pKi).

- Enrichment Calculation: Calculate the Enrichment Factor (EF) at 1% of the screened database: EF1% = (Number of actives in top 1% / Total number of actives) / 0.01.
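The formula above is equivalent to the "observed over expected" form of the enrichment factor, as the short derivation below shows. The symbols are introduced here for illustration: $N$ is the library size, $A$ the total number of actives, $N_{1\%}$ the number of molecules in the top 1%, and $a_{1\%}$ the number of actives among them. The worked numbers are hypothetical.

```latex
\[
\mathrm{EF}_{1\%}
  = \frac{a_{1\%} / N_{1\%}}{A / N}
  = \frac{a_{1\%} / A}{N_{1\%} / N}
  = \frac{a_{1\%} / A}{0.01}
\]

% Worked example with hypothetical numbers:
% N = 10000 compounds, A = 100 actives, N_{1\%} = 100, a_{1\%} = 30.
% A random 1% sample is expected to contain A \times 0.01 = 1 active.
\[
\mathrm{EF}_{1\%} = \frac{30 / 100}{0.01} = 30
\]
```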

Visualizing the Methodological Workflow

Title: Workflow for Comparing Docking Method Categories

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Research Reagents & Computational Tools

| Item Name | Type (Software/Database/Kit) | Primary Function in Evaluation |

|---|---|---|

| PDBbind Database | Curated Database | Provides a standardized set of high-quality protein-ligand complexes for training and benchmarking. |

| CASF Benchmark Sets | Benchmarking Suite | Offers pre-processed datasets and scripts for fair comparison of docking power, screening power, etc. |

| UCSF Chimera / PyMOL | Visualization Software | Critical for protein preparation, binding site analysis, and visual inspection of docking poses. |

| RDKit | Cheminformatics Toolkit | Used for ligand SMILES parsing, 3D conformation generation, and molecular descriptor calculation. |

| AutoDock Vina | Traditional Docking Software | Represents the widely accessible, scoring-function-based traditional method. |

| DiffDock | Generative Docking Tool | Represents the state-of-the-art diffusion model-based pose generation approach. |

| gnina | Regression-Based Docking Tool | Utilizes convolutional neural networks (CNNs) for scoring and pose refinement. |

| GPU Cluster Access | Computational Resource | Essential for running deep learning (Generative, Regression, Hybrid) methods in a feasible time. |

| Schrödinger Suite | Commercial Modeling Suite | Provides integrated tools for Traditional and Hybrid method evaluation (e.g., Glide, FEP+). |

This guide compares the performance of deep learning-based molecular docking platforms with traditional physics-based methods, contextualized within the evolution of molecular recognition models. The shift from rigid "Lock-and-Key" to flexible "Induced Fit" paradigms is now being accelerated by computational approaches, offering distinct advantages and trade-offs in virtual screening and binding pose prediction.

Performance Comparison: Deep Learning vs. Physics-Based Docking

Table 1: Benchmarking Performance on Diverse Test Sets (e.g., PDBbind, CASF)

| Metric | Traditional Physics-Based (e.g., AutoDock Vina, Glide) | Deep Learning-Based (e.g., EquiBind, DiffDock) | Notes |

|---|---|---|---|

| Average RMSD (Å) | 2.0 - 4.0 | 1.5 - 2.5 | Lower RMSD indicates superior pose prediction accuracy. DL models show significant improvement. |

| Top-1 Success Rate | 50% - 70% | 65% - 85% | Percentage of predictions with RMSD < 2.0 Å. DL excels in pose ranking. |

| Computational Speed | 1-5 min/ligand (CPU) | < 1 min/ligand (GPU) | DL inference is faster post-training but requires GPU hardware. |

| Training Data Dependency | Low | Very High | Physics-based methods are rule-driven; DL performance scales with dataset quality/size. |

| Handling Flexibility | Requires explicit sampling | Implicitly modeled | DL models can learn induced-fit effects implicitly from training data, though many still treat the receptor as rigid. |

Table 2: Virtual Screening Enrichment (e.g., DUD-E Dataset)

| Method | EF₁% (Early Enrichment) | AUC-ROC | Runtime for 1M Compounds |

|---|---|---|---|

| Glide (HTVS) | 25.4 | 0.72 | ~1,000 CPU-hours |

| AutoDock Vina | 22.1 | 0.68 | ~2,500 CPU-hours |

| EquiBind | 28.7 | 0.75 | ~10 GPU-hours |

| DiffDock | 32.5 | 0.79 | ~50 GPU-hours |

Experimental Protocols for Cited Benchmarks

Pose Prediction Benchmark (CASF-2016)

- Objective: Evaluate docking accuracy.

- Protocol: Protein-ligand complexes from the CASF-2016 core set (285 complexes selected from the PDBbind refined set) are used. Ligands are separated from their receptors, and their starting coordinates are randomized. Each docking method predicts the binding pose. Success is measured by the heavy-atom Root-Mean-Square Deviation (RMSD) of the predicted pose from the crystallographic pose. The statistical significance of differences between methods is assessed with paired t-tests across the dataset.
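The paired t-test mentioned in this protocol compares two methods on the same complexes. A minimal sketch of the test statistic is given below; the function name and RMSD values are illustrative, and converting the statistic to a p-value requires the t distribution (for example via `scipy.stats.ttest_rel`, which performs the whole test).

```python
import math


def paired_t_statistic(rmsd_a, rmsd_b):
    """t statistic for paired per-complex RMSDs from two docking methods.

    A positive value means method A has higher (worse) RMSD on average.
    Degrees of freedom are len(rmsd_a) - 1.
    """
    if len(rmsd_a) != len(rmsd_b) or len(rmsd_a) < 2:
        raise ValueError("need paired samples with at least 2 complexes")
    diffs = [a - b for a, b in zip(rmsd_a, rmsd_b)]
    n = len(diffs)
    mean_d = sum(diffs) / n
    var_d = sum((d - mean_d) ** 2 for d in diffs) / (n - 1)  # sample variance
    if var_d == 0:
        raise ValueError("all paired differences are identical")
    return mean_d / math.sqrt(var_d / n)


# Hypothetical RMSDs (Å) for the same four complexes under two methods.
method_a = [1.0, 2.0, 3.0, 4.0]
method_b = [0.5, 1.0, 2.5, 3.0]
t = paired_t_statistic(method_a, method_b)
print(f"t = {t:.3f} with {len(method_a) - 1} degrees of freedom")
```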

Virtual Screening Benchmark (DUD-E)

- Objective: Evaluate ability to identify active compounds.

- Protocol: For each target protein, known active compounds are mixed with a decoy set (molecules matched to the actives in physicochemical properties but topologically dissimilar, and presumed inactive). Each docking method scores and ranks the entire library. Performance is quantified by the Enrichment Factor at 1% (EF₁%), which measures the concentration of actives in the top 1% of the ranking, and by the Area Under the Receiver Operating Characteristic Curve (AUC-ROC).
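AUC-ROC has a convenient interpretation: it is the probability that a randomly chosen active is ranked ahead of a randomly chosen decoy. The sketch below computes it directly from that definition. It is quadratic in library size and meant only to illustrate the metric; the function name and example scores are assumptions.

```python
def roc_auc(scores, labels, higher_is_better=False):
    """AUC-ROC as the fraction of (active, decoy) pairs ranked correctly.

    Ties between an active and a decoy count as half a correct pair.
    higher_is_better: False for energy-like scores (more negative is better).
    """
    sign = 1.0 if higher_is_better else -1.0
    actives = [sign * s for s, l in zip(scores, labels) if l]
    decoys = [sign * s for s, l in zip(scores, labels) if not l]
    if not actives or not decoys:
        raise ValueError("need at least one active and one decoy")
    wins = 0.0
    for a in actives:
        for d in decoys:
            if a > d:
                wins += 1.0
            elif a == d:
                wins += 0.5
    return wins / (len(actives) * len(decoys))


# Hypothetical docking scores (kcal/mol-like, more negative is better).
example_scores = [-9.0, -8.0, -7.0, -6.0]
print(roc_auc(example_scores, [1, 1, 0, 0]))  # actives ranked first
print(roc_auc(example_scores, [1, 0, 1, 0]))  # one decoy outranks one active
```

Unlike EF₁%, AUC-ROC weights the whole ranked list equally, so a method can have a respectable AUC while placing few actives in the small top fraction that would actually be purchased and tested.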

Cross-Docking Benchmark

- Objective: Evaluate robustness to receptor conformational change.

- Protocol: Ligands from multiple co-crystal structures of the same protein (with different conformations) are docked into a single, rigid receptor structure chosen from a different complex. This tests a method's ability to handle the "induced fit" effect without explicit receptor flexibility.

Conceptual and Workflow Visualizations

Title: Evolution of Docking Models & Methods

Title: Hybrid Docking Workflow with Re-Scoring

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Tools for Comparative Docking Studies

| Item | Function in Research | Example/Representative Tool |

|---|---|---|

| Curated Benchmark Datasets | Provide standardized, high-quality data for training and fair evaluation of methods. | PDBbind, CASF, DUD-E, DEKOIS 2.0 |

| Traditional Docking Suites | Establish baseline performance using well-validated physics/empirical force fields. | AutoDock Vina, Glide (Schrödinger), GOLD |

| Deep Learning Docking Software | Implement end-to-end pose prediction or scoring using neural networks. | DiffDock, EquiBind, GNINA, DeepDock |

| Molecular Dynamics (MD) Software | Generate relaxed structures and assess docking predictions via simulation. | GROMACS, AMBER, NAMD |

| Free Energy Perturbation (FEP) | Provide high-accuracy binding affinity prediction for final validation. | FEP+ (Schrödinger), OpenFE |

| Structure Preparation Tools | Add hydrogens, assign charges, correct protonation states for input structures. | PDBFixer, MOE, Chimera, Protein Preparation Wizard |

| Visualization & Analysis Suites | Critical for inspecting poses, interactions, and analyzing results. | PyMOL, UCSF ChimeraX, Maestro |

Under the Hood: How Traditional, AI, and Hybrid Docking Methods Actually Work

Within the broader thesis comparing deep learning-based molecular docking to traditional physics-based methods, it is essential to first understand the foundational mechanisms of the established "traditional workhorses." This guide objectively compares the search-and-score paradigms of three widely cited traditional docking programs: Glide (Schrödinger), AutoDock Vina (The Scripps Research Institute), and Surflex-Dock (BioPharmics). These tools represent the pinnacle of physics-based and empirical scoring approaches that have dominated structure-based drug design for decades. Their performance, grounded in search algorithms and scoring functions derived from molecular mechanics and statistical thermodynamics, serves as the critical benchmark against which newer deep learning methods are evaluated.

Core Search-and-Score Mechanisms

Glide

Glide employs a hierarchical, funnel-like search protocol. It begins with a systematic search of the ligand's positional, orientational, and conformational space, progressively filtering candidates. Promising poses are energy-minimized on a grid derived from the OPLS force field, and the best few are further refined by Monte Carlo sampling of torsions. Final ranking uses GlideScore, an empirical scoring function; the more rigorous, physics-based Prime Molecular Mechanics/Generalized Born Surface Area (MM-GBSA) method can optionally be applied afterward to rescore and refine docked poses.

AutoDock Vina

AutoDock Vina performs a stochastic global optimization of its scoring function. Its search algorithm is an iterated local search: each step applies a random perturbation, follows it with a Broyden–Fletcher–Goldfarb–Shanno (BFGS) local optimization, and accepts or rejects the result with a Metropolis criterion. Its scoring function is empirical, with term weights fitted to protein-ligand complexes of known binding affinity (machine-learned in that sense, but not deep learning). It combines steric (Gaussian attraction and repulsion), hydrophobic, and hydrogen-bonding terms with a penalty that depends on the number of rotatable bonds; it has no explicit electrostatics term.
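The search strategy described above can be illustrated on a toy problem. The sketch below is not Vina's implementation: it optimizes a one-dimensional function with two minima instead of a ligand pose, and it swaps BFGS for a crude derivative-free local search to stay dependency-free. All names and parameter values are assumptions.

```python
import math
import random


def local_minimize(f, x, step=0.1, min_step=1e-6, max_iters=200):
    """Crude coordinate search, used here as a stand-in for BFGS."""
    x = list(x)
    fx = f(x)
    for _ in range(max_iters):
        improved = False
        for i in range(len(x)):
            for delta in (step, -step):
                trial = list(x)
                trial[i] += delta
                f_trial = f(trial)
                if f_trial < fx:
                    x, fx, improved = trial, f_trial, True
        if not improved:
            step *= 0.5  # refine once no neighbor is better
            if step < min_step:
                break
    return x, fx


def iterated_local_search(f, x0, n_steps=200, perturb=1.5, temperature=1.0, seed=0):
    """Random perturbation, local optimization, then Metropolis acceptance."""
    rng = random.Random(seed)
    current, f_current = local_minimize(f, x0)
    best, f_best = current, f_current
    for _ in range(n_steps):
        candidate = [xi + rng.uniform(-perturb, perturb) for xi in current]
        candidate, f_cand = local_minimize(f, candidate)
        if f_cand < f_best:
            best, f_best = candidate, f_cand
        # Always accept improvements; accept worse states with Boltzmann probability.
        if f_cand < f_current or rng.random() < math.exp(-(f_cand - f_current) / temperature):
            current, f_current = candidate, f_cand
    return best, f_best


def toy_energy(x):
    """Double well: local minimum near x = +0.96, global minimum near x = -1.04."""
    return (x[0] ** 2 - 1.0) ** 2 + 0.3 * x[0]


best, f_best = iterated_local_search(toy_energy, [1.0])
print(best, f_best)
```

Starting in the shallower well, local optimization alone would stay there; the random perturbations are what allow the search to cross the barrier, which is the role the Monte Carlo moves play in pose search.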

Surflex-Dock

Surflex-Dock operates using a "protomol" – a computational representation of the target binding site – to guide fragment-based molecular alignment. Its search involves a series of incremental constructions of the ligand within the binding pocket. Scoring is performed using the empirically derived Pfrag and Pscore functions, which are based on hydrophobic, polar, repulsive, and entropic components calibrated against experimental binding data.

The following table summarizes key performance metrics from published comparative studies evaluating docking power (ability to reproduce the native pose), scoring power (ranking of binding affinities), and screening power (enrichment of actives in a virtual screen).

Table 1: Comparative Performance of Traditional Docking Programs

| Metric / Program | Glide (SP/XP) | AutoDock Vina | Surflex-Dock | Benchmark / Notes |

|---|---|---|---|---|

| Pose Prediction (RMSD < 2Å) | 78-82% (XP Mode) | 70-75% | 75-80% | CSAR 2014, PDBbind Core Sets |

| Scoring (Pearson R vs. Exp. Ki/Kd) | 0.50-0.65 (MM-GBSA) | 0.40-0.55 | 0.45-0.60 | PDBbind v2020 Core Set; Glide uses post-processing. |

| Virtual Screen Enrichment (EF1%) | 25-35 | 15-25 | 20-30 | DUD-E Diverse Subsets; Higher is better. |

| Typical Runtime per Ligand | 2-5 min (SP) / 5-15 min (XP) | 1-3 min | 1-2 min | On a standard CPU core; system dependent. |

| Primary Scoring Basis | Empirical (GlideScore) & Physics (MM-GBSA) | Machine-Learned Empirical | Empirical (Pfrag/Pscore) | - |

Detailed Experimental Protocols Cited

Protocol 1: Pose Reproduction (Docking Power) Assessment

Source: Comparative Assessment of Scoring Functions: The CASF-2016 Benchmark.

- Preparation: A diverse set of high-quality protein-ligand complexes (e.g., PDBbind core set) is curated. Proteins are prepared (add hydrogens, assign charges, optimize H-bonds). Ligands are extracted and their geometries minimized.

- Binding Site Definition: The binding site is defined as all residues within a 10Å radius of the crystallographic ligand position.

- Docking Execution: Each ligand is re-docked into its cognate protein using the following settings for each program (Glide: XP mode; Vina: exhaustiveness=32, raised from the default of 8; Surflex-Dock: protomol bloat=0, threshold=0.5).

- Analysis: The root-mean-square deviation (RMSD) of each top-ranked pose relative to the experimental pose is calculated. Success is defined as RMSD ≤ 2.0 Å. The percentage success rate across the dataset is reported.

Protocol 2: Binding Affinity Correlation (Scoring Power) Assessment

Source: PDBbind Benchmark Studies.

- Dataset: The PDBbind "refined" and "core" sets are used, containing complexes with experimentally determined Kd/Ki values.

- Pose Generation: For consistency, a single protocol (often Glide SP) is used to generate poses for all complexes to decouple scoring from docking search.

- Scoring: Each program's scoring function is used to score the generated poses (or the crystal pose). For Glide, Prime MM-GBSA is run on the top poses.

- Correlation: The computed score for each complex is plotted against the negative logarithm of the experimental binding affinity (pKi/pKd). The Pearson correlation coefficient (R) is calculated.
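The correlation step relies on converting measured dissociation or inhibition constants to a logarithmic scale, pKd = -log10(Kd) with Kd in mol/L. A small helper illustrating the conversion is shown below; the function name and the set of supported units are assumptions.

```python
import math

# Conversion factors from common concentration units to mol/L.
UNIT_TO_MOLAR = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9, "pM": 1e-12}


def p_affinity(value, unit="nM"):
    """Return pKd (or pKi) for a Kd/Ki value given in the stated unit."""
    if value <= 0:
        raise ValueError("affinity constant must be positive")
    return -math.log10(value * UNIT_TO_MOLAR[unit])


print(p_affinity(10, "nM"))  # a 10 nM binder
print(p_affinity(1, "uM"))   # a 1 µM binder
```

On this scale a 10 nM ligand has a pKd of about 8 and a 1 µM ligand about 6, so each unit corresponds to a tenfold change in affinity.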

Protocol 3: Virtual Screening Enrichment (Screening Power) Assessment

Source: Directory of Useful Decoys - Enhanced (DUD-E) Benchmark.

- Dataset: A target from DUD-E with known active compounds and property-matched decoys is selected.

- Preparation: The protein structure and all ligand/decoy structures are prepared (protonation, tautomer generation, energy minimization).

- Docking: All molecules (actives + decoys) are docked against the target using each program.

- Analysis: Molecules are ranked by their docking score. Enrichment Factor at 1% (EF1%) is calculated: (Number of actives in top 1% of ranked list) / (Expected number of actives in a random 1% subset).

Logical Workflow of Traditional Docking

Title: Workflow of Traditional Molecular Docking

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Computational Tools & Datasets for Docking Benchmarking

| Item | Function / Purpose |

|---|---|

| PDBbind Database | A curated collection of protein-ligand complexes with experimentally measured binding affinities, used for training and testing scoring functions. |

| Directory of Useful Decoys - Enhanced (DUD-E) | Provides benchmark sets for virtual screening, containing known actives and computationally generated decoys for numerous targets. |

| Cambridge Structural Database (CSD) | A repository of small molecule crystal structures, essential for parameterizing ligand torsional potentials and validating conformations. |

| General AMBER Force Field (GAFF) | A widely used force field for small organic molecules, often employed in physics-based scoring or preparation stages. |

| Open Babel / RDKit | Open-source cheminformatics toolkits for critical preprocessing steps: file format conversion, ligand protonation, tautomer generation, and descriptor calculation. |

| Protein Data Bank (PDB) | The primary source for experimentally determined 3D structures of biological macromolecules, the starting point for any structure-based study. |

| Benchmarking Suites (CASF) | The Comparative Assessment of Scoring Functions suite provides standardized protocols and datasets for objective evaluation of docking and scoring performance. |

Within structural bioinformatics and drug discovery, molecular docking predicts the binding pose and affinity of a small molecule ligand within a target protein’s binding site. Traditional methods, relying on physics-based force fields and exhaustive sampling, have long been the standard. However, the advent of deep learning has ushered in a new paradigm. This guide compares two leading generative AI docking methods, DiffDock and SurfDock, which utilize diffusion models, framing them within the broader thesis of deep learning versus traditional physics-based docking. We evaluate their performance against established alternatives using current experimental data.

Comparative Performance Analysis

Table 1: Benchmark Performance on Pose Prediction (Top-1 Success Rate %)

| Method Category | Method Name | PDBBind (Test) | CASF-2016 | Key Distinction |

|---|---|---|---|---|

| Generative AI (Diffusion) | DiffDock | 50.9 | 52.4 | SE(3)-equivariant diffusion on torsional angles & rigid body. |

| Generative AI (Diffusion) | SurfDock | 45.7 | 47.1 | Diffusion directly on the protein surface manifold. |

| Deep Learning (Direct Prediction) | EquiBind | 22.9 | 20.1 | Direct pose prediction via E(n)-Equivariant GNN. |

| Deep Learning (Sampling) | TankBind | 41.3 | 39.8 | Global attention for pocket identification & binding. |

| Traditional Physics-Based | AutoDock Vina | 22.4 | 25.3 | Monte Carlo sampling & empirical scoring. |

| Traditional Physics-Based | Glide (SP) | 34.8 | 38.6 | Systematic sampling & force-field scoring. |

Table 2: Inference Speed and Sampling Comparison

| Method | Avg. Time per Ligand (s)* | Sampling Strategy | Output Poses |

|---|---|---|---|

| DiffDock | ~3-5 | Generative: 20-step reverse diffusion (default) | 40 candidate poses with confidence score |

| SurfDock | ~10-15 | Generative: Surface-constrained diffusion | 20 candidate poses |

| AutoDock Vina | 60-120 | Stochastic: Monte Carlo + local search | 9 poses by default (user-configurable) |

| Glide | 300-600+ | Hierarchical: Systematic search & minimization | Best pose (or user-defined number) |

*Reported on standard GPU (DiffDock, SurfDock) or CPU (Vina, Glide) hardware.

Experimental Protocols for Key Benchmarks

1. PDBBind General Test Set Evaluation

- Objective: Assess pose prediction accuracy on diverse, unseen protein-ligand complexes.

- Dataset: Held-out test subset (~300-500 complexes) of the PDBBind v2020 general set, filtered for redundancy.

- Protocol: For each complex: a. Extract the protein structure and ligand SMILES. b. For AI methods: Input protein and ligand separately; no binding site or pose information provided. c. For traditional methods: Define a search box centered on the native ligand's centroid. d. Run docking to generate predicted poses. e. Calculate Root-Mean-Square Deviation (RMSD) between predicted and native ligand pose. f. Count the complex as a success if the top-ranked pose has RMSD < 2.0 Å.

- Metric: Top-1 success rate (%).
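Steps e and f of the protocol above can be sketched in a few lines of NumPy. This is a minimal illustration, not the evaluation code of any benchmark: the function names `pose_rmsd` and `top1_success_rate` are hypothetical, the atom ordering of predicted and native poses is assumed to match, and ligand symmetry (which published benchmarks usually correct for) is ignored.

```python
import numpy as np


def pose_rmsd(pred_xyz, ref_xyz):
    """RMSD (Å) between two (N, 3) arrays with identical atom ordering.

    No superposition is applied: both poses are assumed to share the
    receptor's coordinate frame, as is usual for docking evaluation.
    """
    pred = np.asarray(pred_xyz, dtype=float)
    ref = np.asarray(ref_xyz, dtype=float)
    if pred.shape != ref.shape:
        raise ValueError("pose arrays must have the same shape")
    return float(np.sqrt(np.mean(np.sum((pred - ref) ** 2, axis=1))))


def top1_success_rate(top1_rmsds, threshold=2.0):
    """Percentage of complexes whose top-ranked pose has RMSD below threshold."""
    rmsds = np.asarray(top1_rmsds, dtype=float)
    if rmsds.size == 0:
        raise ValueError("no RMSD values supplied")
    return float(100.0 * np.mean(rmsds < threshold))


# Toy example: a two-atom "ligand" shifted by 1 Å along x.
native = np.array([[0.0, 0.0, 0.0], [1.5, 0.0, 0.0]])
predicted = native + np.array([1.0, 0.0, 0.0])
example_rmsd = pose_rmsd(predicted, native)
# Combine with three made-up RMSD values from other complexes.
example_rate = top1_success_rate([example_rmsd, 2.5, 0.5, 3.0])
```

Only the top-ranked pose of each complex enters `top1_success_rate`, which keeps the number consistent with the top-1 metric reported in Table 1.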

2. CASF-2016 Docking Power Assessment

- Objective: Evaluate docking accuracy under standardized, rigorous conditions.

- Dataset: CASF-2016 "core set" of 285 high-quality complexes.

- Protocol: Follows the official "docking power" test from the CASF benchmark. Ligands are separated from the protein, converted to SMILES, and then re-docked. The evaluation is identical to the PDBBind protocol but is considered a harder test due to the specific curation of the CASF set.

3. Cross-Docking Challenge

- Objective: Evaluate performance in a more realistic scenario where the protein structure comes from a different complex (often with a different ligand).

- Dataset: PoseBusters benchmark or a customized set from a specific target family (e.g., kinases).

- Protocol: Use an apo or holo protein structure not bound to the specific ligand being docked. Provide no predefined binding site. This test heavily challenges pocket flexibility and method generalizability.

Diagram 1: Comparative workflow of DiffDock and SurfDock.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Tools for Generative Docking Experiments

| Item | Function in Experiment | Example/Note |

|---|---|---|

| Curated Benchmark Datasets | Provide standardized ground-truth complexes for training and evaluation. | PDBBind, CASF-2016, CrossDock, PoseBusters. |

| 3D Protein Structure Files | The target for docking. Can be experimental (PDB) or predicted (AlphaFold2). | PDB format (.pdb); pre-processed to add hydrogens, fix residues. |

| Ligand Representation | Defines the small molecule to be docked. | SMILES string or 3D SDF file; requires correct protonation states. |

| Computational Environment | Hardware/software stack to run demanding AI models. | GPU (NVIDIA A100/V100), CUDA, Python, PyTorch. |

| Traditional Docking Software | Essential baselines for comparative performance analysis. | AutoDock Vina, Glide (Schrödinger), GOLD. |

| Pose Evaluation Metrics | Quantify prediction accuracy against the native structure. | Root-Mean-Square Deviation (RMSD, in Å), Success Rate. |

| Molecular Visualization | Visual inspection and analysis of predicted binding modes. | PyMOL, ChimeraX, or NGLview. |

| Molecular Dynamics (MD) Suite | For post-docking refinement and stability validation. | GROMACS, AMBER, or Desmond. |

In Table 1, DiffDock (50.9% on the PDBBind test set, 52.4% on CASF-2016) and SurfDock (45.7%, 47.1%) outperform both AutoDock Vina (22.4%, 25.3%) and Glide SP (34.8%, 38.6%) in top-1 success rate. DiffDock leads in accuracy on both benchmarks, benefiting from its direct equivariant diffusion on pose parameters and a sophisticated confidence estimator. SurfDock offers a novel surface-based approach, showing competitive results and a strong inductive bias by learning physical interactions directly on the protein manifold.

Both deep learning methods are roughly one to two orders of magnitude faster per ligand than Glide (Table 2), although these timings compare GPU inference with CPU docking. This supports the core thesis: deep learning docking, particularly diffusion models, excels at rapid, high-accuracy pose prediction by learning implicit patterns from data, whereas traditional methods explicitly compute physical interactions, which is more computationally intensive and can struggle with scoring function inaccuracies.
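As a quick sanity check on the speed comparison, the per-ligand timings in Table 2 can be turned into speed-up ranges. The sketch below simply copies the table's lower and upper bounds (treating Glide's "600+" as 600) and is subject to the same GPU-versus-CPU caveat; the helper name `speedup_range` is hypothetical.

```python
import math

# Per-ligand timings in seconds (low, high), copied from Table 2.
TIMINGS = {
    "DiffDock": (3.0, 5.0),
    "SurfDock": (10.0, 15.0),
    "AutoDock Vina": (60.0, 120.0),
    "Glide": (300.0, 600.0),
}


def speedup_range(fast, slow):
    """Return the (smallest, largest) speed-up of `fast` relative to `slow`."""
    fast_lo, fast_hi = TIMINGS[fast]
    slow_lo, slow_hi = TIMINGS[slow]
    return slow_lo / fast_hi, slow_hi / fast_lo


for method in ("DiffDock", "SurfDock"):
    lo, hi = speedup_range(method, "Glide")
    print(f"{method} vs Glide: {lo:.0f}x to {hi:.0f}x "
          f"(10^{math.log10(lo):.1f} to 10^{math.log10(hi):.1f})")
```

With these bounds DiffDock comes out 60x to 200x faster than Glide and SurfDock 20x to 60x, so the advantage spans about one to two orders of magnitude rather than several.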

However, generative AI models are not a complete replacement. They do not yet provide reliable binding affinity estimates (scoring), which remains a strength of more refined physics-based methods. The optimal pipeline likely involves using DiffDock or SurfDock for rapid, accurate pose generation, followed by physics-based refinement and scoring for lead optimization. This hybrid approach leverages the strengths of both paradigms in the drug discovery workflow.

This comparison guide examines three prominent deep learning-based molecular docking approaches—EquiBind, TankBind, and the strategy underpinning AlphaFold3—within the broader thesis of deep learning versus traditional physics-based methods in drug discovery. Traditional methods like AutoDock Vina rely on exhaustive sampling and scoring functions based on molecular mechanics, which are computationally expensive and can struggle with flexibility. The discussed deep learning methods aim to bypass these limitations by directly predicting binding poses and affinities, offering significant speed advantages and the potential to capture complex interactions from learned patterns in structural data.

Comparative Performance Analysis

Table 1: Key Performance Metrics on Standard Docking Benchmarks (CASF-2016, PDBbind)

| Method | Category | RMSD ≤ 2Å (%) | Inference Speed (poses/sec) | Key Strength | Key Limitation |

|---|---|---|---|---|---|

| EquiBind | Regression-based (SE(3)-equivariant) | ~20-25% | ~100-1000 | Ultra-fast pose prediction; handles large ligand conformational changes. | Lower accuracy; blind to explicit protein flexibility. |

| TankBind | Regression-based (voxelized) | ~30-35% | ~10-100 | Improved accuracy via paired residue-aware scoring; better physical plausibility. | Slower than EquiBind; requires predefined binding site. |

| AlphaFold3 Strategy | Co-folding/Generative | N/A (Not a dedicated docking tool) | ~0.1-1 | Models full complex de novo; captures intricate inter-protein interactions. | Computationally heavy; not optimized for small-molecule docking. |

| AutoDock Vina | Traditional Physics-based | ~30-35% | ~0.1-1 | Robust, interpretable scoring; extensive validation. | Slow sampling; scoring function approximations. |

Table 2: Experimental Data on Pose Prediction (PDBBind Test Set)

| Experiment | Protocol Description | EquiBind (Median RMSD) | TankBind (Median RMSD) | AlphaFold3 (pLDDT for interface) |

|---|---|---|---|---|

| Rigid Protein Docking | Protein structure fixed from crystal complex. Ligand separated and re-docked. | ~4.5 Å | ~3.0 Å | Not directly applicable; designed for co-folding. |

| Cross-docking | Protein structure from a different complex with the same protein. Tests generalization. | ~6.8 Å | ~5.2 Å | Limited published data for small molecules. |

| Affinity Prediction (Spearman ρ) | Correlation between predicted and experimental binding affinity (Kd/Ki). | ~0.40 | ~0.45 | Not a primary output for small molecules. |

Experimental Protocols

1. EquiBind Training & Evaluation Protocol:

- Data: PDBbind v2020, split by protein sequence similarity.

- Training: Model takes protein point cloud (from residues) and ligand graph as input. Trained with a loss function combining:

- Hinge Loss: For classifying ligand atoms as bound to specific protein residues.

- Kabsch Alignment Loss: Directly minimizes RMSD between predicted and true ligand pose via SE(3)-equivariant transformations.

- Inference: Direct, single-shot prediction of ligand pose in the protein pocket.
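The Kabsch alignment mentioned in the loss description refers to the classical optimal-superposition algorithm. The sketch below shows that algorithm in plain NumPy as background; it is not EquiBind's differentiable training code, and the function name `kabsch_rmsd` is hypothetical.

```python
import numpy as np


def kabsch_rmsd(P, Q):
    """RMSD between (N, 3) point sets P and Q after optimal rigid superposition.

    P is translated and rotated onto Q; atom i in P must correspond to
    atom i in Q.
    """
    P = np.asarray(P, dtype=float)
    Q = np.asarray(Q, dtype=float)
    # Remove translation by centering both sets on their centroids.
    Pc = P - P.mean(axis=0)
    Qc = Q - Q.mean(axis=0)
    # Covariance matrix and its singular value decomposition.
    H = Pc.T @ Qc
    U, _, Vt = np.linalg.svd(H)
    # Guard against an improper rotation (reflection).
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    P_aligned = Pc @ R.T
    return float(np.sqrt(np.mean(np.sum((P_aligned - Qc) ** 2, axis=1))))


# Toy check: Q is P rotated by 30 degrees about z and translated,
# so the aligned RMSD should be numerically zero.
theta = np.deg2rad(30.0)
Rz = np.array([[np.cos(theta), -np.sin(theta), 0.0],
               [np.sin(theta), np.cos(theta), 0.0],
               [0.0, 0.0, 1.0]])
P_demo = np.array([[0.0, 0.0, 0.0], [1.5, 0.0, 0.0],
                   [1.5, 1.2, 0.0], [0.3, 1.2, 0.9]])
Q_demo = P_demo @ Rz.T + np.array([5.0, -2.0, 1.0])
demo_rmsd = kabsch_rmsd(P_demo, Q_demo)
```

Note that docking success rates are normally computed without this superposition, in the fixed frame of the receptor; the aligned RMSD is useful mainly for comparing ligand conformations.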

2. TankBind Training & Evaluation Protocol:

- Data: Same as EquiBind, with additional data augmentation.

- Training: Voxelizes protein-ligand environment. Employs a 3D CNN to predict:

- Distance Map: Likelihood of each ligand atom to each protein residue.

- Local Coordinates: Precise positions relative to residues.

- A differentiable Transformable Bottleneck module reconstructs the global 3D pose from these local predictions.

- Inference: Generates multiple candidate poses via clustering on the predicted distance map, then ranks them.

3. AlphaFold3's Strategy for Complex Prediction:

- Input: Sequences of proteins, nucleic acids, and (potentially) ligand SMILES strings.

- Process: A massive, integrated deep learning system (diffusion-based) iteratively refines a 3D structure from a random initial cloud.

- Representation: Creates a unified representation of all molecular components (atoms, residues).

- Attention Mechanisms: Heavy use of triangle attention and cross-attention between all entities to model dependencies.

- Diffusion: A generative denoising process progressively refines the atomic coordinates of the entire assembly.

- Output: A predicted complex structure with per-residue/atom confidence scores (pLDDT, PAE).

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Research |

|---|---|

| PDBbind Database | A curated collection of protein-ligand complexes with binding affinity data, serving as the primary benchmark dataset for training and testing docking methods. |

| CASF (Comparative Assessment of Scoring Functions) | A standardized benchmark suite for evaluating docking pose prediction, binding affinity ranking, and virtual screening capabilities. |

| RDKit | An open-source cheminformatics toolkit used for ligand preparation, SMILES parsing, conformational generation, and molecular descriptor calculation. |

| Open Babel / PyMOL | Tools for file format conversion, molecular visualization, and structural analysis of docking results. |

| AutoDock Vina | Represents the traditional physics-based docking method; used as a critical performance baseline in comparative studies. |

| HADDOCK / RosettaDock | Traditional and hybrid docking platforms that incorporate experimental data and more sophisticated sampling; used for context and method development. |

| GPU Computing Cluster (NVIDIA A100/H100) | Essential hardware for training and running large deep learning models like those based on E(n)-Equivariant networks or diffusion architectures. |

| Docking Power Metrics (RMSD, EF, ρ) | Quantitative metrics (Root Mean Square Deviation, Enrichment Factor, Spearman correlation) used to objectively compare method performance. |

The ongoing research in molecular docking centers on a fundamental comparison: deep learning (DL)-based approaches versus traditional physics-based methods. DL methods, such as scoring functions (SFs) learned from data, offer speed and can capture complex patterns that explicit physical terms miss. Traditional physics-based methods, leveraging force fields and explicit scoring of van der Waals, electrostatic, and solvation terms, provide rigorous, interpretable grounding in biophysical principles. The hybrid paradigm represents a synthesis, aiming to overcome the limitations of each by integrating AI-driven scoring with physics-based conformational search. This guide objectively compares the performance of leading hybrid models, specifically Interformer and PIGNet, against pure DL and traditional physics-based alternatives.

Comparative Performance Analysis

The following table summarizes key performance metrics from recent benchmarking studies (e.g., on PDBbind, CASF benchmarks) for protein-ligand binding affinity prediction and pose prediction.

Table 1: Performance Comparison of Docking and Scoring Methods

| Method | Paradigm | CASF-2016 Scoring Power (RMSE) | CASF-2016 Docking Power (Success Rate @ ≤2Å) | PDBbind v2020 Test Set (RMSE) | Speed (Ligands/sec) |

|---|---|---|---|---|---|

| AutoDock Vina | Physics-Based (Traditional) | 1.47 kcal/mol | 78.1% | 1.51 kcal/mol | ~1-2 |

| GNINA (CNN-Score) | Deep Learning (Pose Search + DL SF) | 1.37 kcal/mol | 85.7% | 1.42 kcal/mol | ~5-10 |

| EquiBind | Deep Learning (Direct Pose Prediction) | N/A | 52.4%* | N/A | ~1000 |

| Interformer | Hybrid (DL Scoring + Physics Refinement) | 1.23 kcal/mol | 89.2% | 1.38 kcal/mol | ~20 |

| PIGNet | Hybrid (Physics-Informed GNN) | 1.19 kcal/mol | 81.5% | 1.29 kcal/mol | ~15 |

Note: RMSE = Root Mean Square Error, lower is better. Speed is approximate on a standard GPU. *EquiBind success rate is for blind pose prediction without SE(3) initialization.

Experimental Protocols for Key Benchmarks

Protocol 1: CASF Benchmarking for Scoring and Docking Power

- Objective: Evaluate a method's ability to predict binding affinity (Scoring Power) and identify native-like binding poses (Docking Power).

- Dataset: CASF-2016 core set (285 protein-ligand complexes).

- Scoring Power Protocol:

- Use the crystal structure poses.

- Calculate predicted binding affinity using the target method.

- Compute Pearson's R and RMSE between predictions and experimental ΔG values across the 285 complexes.

- Docking Power Protocol:

- For each complex, generate multiple decoy poses (e.g., via random perturbation or other docking software).

- Score all decoys using the target method.

- Determine if the top-ranked pose is within 2.0 Å RMSD of the crystal structure. Report the success rate.
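Both CASF-style metrics described above reduce to short computations once scores and RMSD values are available. The following sketch assumes the scores and decoy RMSDs have already been produced by the method under test; the function names and the `lower_is_better` flag are illustrative choices, not part of the official CASF scripts.

```python
import numpy as np


def scoring_power(predicted, experimental):
    """Return (Pearson R, RMSE) between predicted and experimental affinities."""
    pred = np.asarray(predicted, dtype=float)
    expt = np.asarray(experimental, dtype=float)
    r = np.corrcoef(pred, expt)[0, 1]
    rmse = np.sqrt(np.mean((pred - expt) ** 2))
    return float(r), float(rmse)


def docking_power(complexes, threshold=2.0, lower_is_better=True):
    """Success rate (%) of the top-ranked decoy per complex.

    `complexes` is an iterable of (scores, rmsds) pairs, where both
    sequences have one entry per decoy pose of that complex.
    """
    hits, total = 0, 0
    for scores, rmsds in complexes:
        scores = np.asarray(scores, dtype=float)
        rmsds = np.asarray(rmsds, dtype=float)
        top = int(np.argmin(scores) if lower_is_better else np.argmax(scores))
        hits += int(rmsds[top] <= threshold)
        total += 1
    if total == 0:
        raise ValueError("no complexes supplied")
    return 100.0 * hits / total


# Made-up numbers purely to exercise the functions.
demo_r, demo_rmse = scoring_power([1.0, 2.0, 3.0], [2.0, 3.0, 4.0])
demo_success = docking_power([
    ([-9.1, -7.3], [1.2, 5.0]),   # best score belongs to the 1.2 Å pose
    ([-8.0, -10.2], [0.8, 6.1]),  # best score belongs to the 6.1 Å pose
])
```

The toy scoring example illustrates why both numbers are reported: a constant offset leaves Pearson's R at 1 while the RMSE is 1, so correlation alone can hide systematic error.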

Protocol 2: Cross-Docking Validation

- Objective: Assess method robustness in real-world scenarios where the protein structure is not co-crystallized with the ligand.

- Dataset: A curated cross-docking set (e.g., from PDBbind, with multiple ligands per target).

- Method:

- For a given target protein, use an apo structure or a structure with a different ligand.

- Dock all congeneric ligands into this single receptor structure.

- Evaluate the RMSD of the best-predicted pose versus the known crystal structure for each ligand.

- Report the average success rate across multiple protein targets.
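The final averaging step can be done per target first, so that a target with many ligands does not dominate the reported number. The sketch below assumes a 2.0 Å success threshold (the protocol above does not state one) and uses hypothetical target and ligand identifiers.

```python
from collections import defaultdict


def cross_docking_success(results, threshold=2.0):
    """Per-target and target-averaged success rates (%).

    `results` is an iterable of (target, ligand_id, best_pose_rmsd) tuples.
    """
    per_target = defaultdict(list)
    for target, _ligand_id, rmsd in results:
        per_target[target].append(rmsd <= threshold)
    if not per_target:
        raise ValueError("no results supplied")
    rates = {t: 100.0 * sum(hits) / len(hits) for t, hits in per_target.items()}
    macro_average = sum(rates.values()) / len(rates)
    return rates, macro_average


# Hypothetical results for two targets.
demo_results = [
    ("target_A", "lig1", 1.0),
    ("target_A", "lig2", 3.0),
    ("target_B", "lig3", 1.5),
]
demo_rates, demo_macro = cross_docking_success(demo_results)
```

Here `target_A` scores 50% and `target_B` 100%, giving a target-averaged rate of 75%, whereas pooling all three ligands would give about 67%.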

Visualizing the Hybrid Paradigm Workflow

Title: Hybrid AI-Physics Docking Workflow

Key Architectural Differences

Title: Interformer vs. PIGNet Architecture

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools & Resources for Hybrid Docking Research

| Item | Function/Description | Example/Provider |

|---|---|---|

| Benchmarking Datasets | Standardized datasets for training and fair evaluation of scoring functions. | PDBbind, CASF benchmark sets, CrossDocked2020. |

| Conformational Sampling Engine | Generates diverse ligand poses within the binding pocket for AI scoring. | AutoDock Vina, RDKit conformer generation, OMEGA. |

| Deep Learning Framework | Library for building, training, and deploying hybrid AI models. | PyTorch, PyTorch Geometric, TensorFlow. |

| Equivariant Neural Network Layers | Enables building SE(3)-equivariant models critical for spatial reasoning. | e3nn, SE(3)-Transformers, TorchMD-NET. |

| Force Field Parameters | Provides physical terms (e.g., Lennard-Jones) used as targets or regularizers. | CHARMM, AMBER, MMFF94s (in RDKit). |

| Molecular Dynamics (MD) Suite | For final pose refinement and stability assessment post-docking. | GROMACS, NAMD, OpenMM, Desmond. |

| Visualization & Analysis Software | To inspect docking poses, interactions, and analyze results. | PyMOL, ChimeraX, Schrödinger Maestro. |

| High-Performance Computing (HPC) | GPU clusters for model training and large-scale virtual screening. | Local GPU servers, Cloud platforms (AWS, GCP, Azure). |

Within the comparative research of deep learning (DL) docking versus traditional physics-based methods, the definition and demands of the docking task are paramount. The performance of any algorithm is highly scenario-dependent. This guide objectively compares the performance of contemporary DL and traditional methods across four core docking scenarios, providing experimental data to frame their respective strengths and limitations.

Key Docking Scenarios: Definitions & Challenges

- Re-docking: The ligand is docked back into the same protein structure from which it was extracted (often from a co-crystal structure). This tests a method's ability to reproduce the native pose when the binding site is precisely defined.

- Cross-docking: The ligand from a protein-ligand complex is docked into a different structure of the same protein (e.g., from another complex or an apo form). This tests robustness to minor protein conformational changes.

- Apo-docking: Docking into a protein structure solved without any bound ligand. This tests the ability to predict a binding site that may be in a "closed" or non-ligand-bound conformation.

- Blind Docking: Docking is performed without prior knowledge of the binding site, searching the entire protein surface. This is the most rigorous test for de novo binding site and pose prediction.

Performance Comparison: Deep Learning vs. Physics-Based Methods

The following table summarizes key performance metrics from recent benchmark studies (e.g., PDBbind, CASF, DUD-E) for leading DL docking tools (like DiffDock, EquiBind, TankBind) and traditional physics-based tools (like AutoDock Vina, Glide, GOLD).

Table 1: Scenario-Success Rate (%) and RMSD (Å) Comparison

| Docking Scenario | Metric | Deep Learning Docking (e.g., DiffDock) | Traditional Physics-Based (e.g., AutoDock Vina) | Notes / Key Differentiator |

|---|---|---|---|---|

| Re-docking | Success Rate (RMSD < 2Å) | 85-95% | 70-85% | DL excels in speed and initial pose generation. |

| | Average RMSD (Å) | 0.5 - 1.5 | 1.0 - 2.0 | |

| Cross-docking | Success Rate (RMSD < 2Å) | 65-80% | 50-70% | DL models show better generalization to novel protein conformations. |

| | Average RMSD (Å) | 1.5 - 2.5 | 2.0 - 3.5 | |

| Apo-docking | Success Rate (RMSD < 2Å) | 50-65% | 55-75% | Well-prepared physics-based methods can outperform DL on highly induced-fit sites. |

| | Average RMSD (Å) | 2.0 - 3.5 | 1.8 - 3.0 | |

| Blind Docking | Success Rate (RMSD < 2Å) | 30-50% | 20-35% | DL's global search capability and learned chemical biases provide an edge. |

| | Top-Scored Pose RMSD (Å) | 3.0 - 5.0 | 4.0 - 8.0 | |

Table 2: Computational Resource & Throughput Comparison

| Method Type | Example Software | Avg. Time per Ligand | Hardware Dependency | Suited for Virtual Screening? |

|---|---|---|---|---|

| Deep Learning | DiffDock | 1-10 seconds | High (GPU required) | Yes (ultra-fast once trained) |

| Traditional | AutoDock Vina | 30-120 seconds | Low (CPU only) | Moderate (requires clustering) |

Experimental Protocols for Benchmarking

A standardized protocol is critical for fair comparison. The following workflow is commonly employed in studies like CASF (Comparative Assessment of Scoring Functions):

- Dataset Curation: Select a diverse, non-redundant set of high-quality protein-ligand complexes from the PDBbind database.

- Scenario Preparation:

- Re-docking: Use the native protein structure from the complex.

- Cross-docking: Align all protein structures; for each ligand, use a protein structure from a different complex.

- Apo-docking: Use only protein structures solved in the apo state.

- Blind Docking: Define a search box encompassing the entire protein.

- Ligand/Protein Preparation: All ligands and proteins are prepared identically: ligands are assigned correct protonation states (e.g., using RDKit), proteins are prepared (adding hydrogens, assigning charges) using a standard tool like PDB2PQR or the respective suite's tools.

- Docking Execution: Run each docking program with its recommended parameters. For DL methods, use the pre-trained model without task-specific fine-tuning.

- Pose Prediction & Scoring: Record the top-ranked pose and its score.

- Analysis: Calculate the Root-Mean-Square Deviation (RMSD) of heavy atoms between the predicted pose and the experimental crystal structure. A pose with RMSD < 2.0 Å is typically considered successful.
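For box-based docking engines, the scenario preparation step mostly comes down to how the search box is defined. The sketch below contrasts a ligand-centered box with a whole-protein box for blind docking; the 20 Å default edge and 5 Å padding are assumptions chosen for illustration, and the function names are hypothetical.

```python
import numpy as np


def ligand_centered_box(ligand_xyz, size=20.0):
    """Cubic box of edge `size` (Å) centered on the ligand centroid."""
    center = np.asarray(ligand_xyz, dtype=float).mean(axis=0)
    return center, np.full(3, float(size))


def whole_protein_box(protein_xyz, padding=5.0):
    """Axis-aligned box enclosing every protein atom plus `padding` Å per side."""
    xyz = np.asarray(protein_xyz, dtype=float)
    lower, upper = xyz.min(axis=0), xyz.max(axis=0)
    center = (lower + upper) / 2.0
    size = (upper - lower) + 2.0 * padding
    return center, size


# Toy coordinates standing in for parsed structure files.
demo_protein = np.array([[0.0, 0.0, 0.0], [10.0, 20.0, 30.0]])
demo_ligand = np.array([[1.0, 1.0, 1.0], [3.0, 3.0, 3.0]])
site_center, site_size = ligand_centered_box(demo_ligand)
blind_center, blind_size = whole_protein_box(demo_protein)
```

The returned center and size map directly onto the box parameters that engines such as AutoDock Vina expect. A whole-protein box is much larger than a site box, so sampling settings typically need to be increased to keep the search comparable.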

Title: Benchmarking Workflow for Docking Methods

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Docking Research

| Item / Resource | Function in Docking Research | Example / Source |

|---|---|---|

| Curated Benchmark Datasets | Provide standardized, high-quality complexes for training and fair evaluation of methods. | PDBbind, CASF core set, DUD-E, DEKOIS 2.0 |

| Protein Preparation Suites | Add hydrogens, assign charges, fix missing residues, and optimize H-bond networks for input structures. | Schrödinger's Protein Prep Wizard, MOE, UCSF Chimera, PDB2PQR |

| Ligand Preparation Tools | Generate 3D conformers, assign correct protonation states, and optimize geometry. | RDKit, LigPrep (Schrödinger), Open Babel, CORINA |

| Traditional Docking Engines | Physics-based methods for pose prediction and scoring, serving as performance baselines. | AutoDock Vina, Glide (Schrödinger), GOLD, DOCK 6 |

| Deep Learning Docking Models | Pre-trained neural networks for ultra-fast pose prediction using learned structural & chemical patterns. | DiffDock, EquiBind, GNINA, TankBind |

| Analysis & Visualization Software | Calculate RMSD, analyze interactions, and visualize docking poses for interpretation. | UCSF Chimera/ChimeraX, PyMOL, RDKit, MDTraj |

| Computational Hardware | GPU acceleration is critical for training and running DL models; CPU clusters suffice for traditional docking. | NVIDIA GPUs (e.g., A100, V100), High-core-count CPU servers |

Navigating Pitfalls: Addressing Physical Plausibility, Generalization, and Integration Challenges

Within the ongoing research comparing deep learning-based molecular docking to traditional physics-based methods, a critical challenge has emerged: the physical validity of AI-generated predictions. While deep learning models offer unprecedented speed, their outputs often contain steric clashes, incorrect chiral centers, and improper bond geometries that are inherently prevented in force field-based simulations. This guide compares the performance of leading AI docking tools against established physics-based software, focusing on these quantifiable errors.

Performance Comparison: Key Metrics

Table 1: Comparative Analysis of Docking Methods on Physical Validity Metrics

| Method / Software | Type | Avg. Steric Clashes per Pose* | Chirality Error Rate* | Bond Length RMSD (Å)* | Computational Time (s/ligand) | Validation Dataset |

|---|---|---|---|---|---|---|

| AlphaFold 3 | Deep Learning | 3.8 | 4.1% | 0.042 | ~5 | PDBbind 2020 |

| DiffDock | Deep Learning (Diffusion) | 2.1 | 2.7% | 0.031 | ~10 | CASF-2016 |

| GNINA | Hybrid CNN/Scoring | 1.5 | 1.2% | 0.025 | ~30 | CrossDocked |

| AutoDock Vina | Physics-Based (Scoring) | 0.3 | 0.0% | 0.015 | ~45 | PDBbind Core |

| Glide (SP) | Physics-Based (Docking) | 0.1 | 0.0% | 0.012 | ~300 | PDBbind Core |

| GOLD | Genetic Algorithm/Physics | 0.4 | 0.0% | 0.014 | ~250 | Astex Diverse Set |

*Metrics derived from benchmark studies; lower values are better in every column. Because the validation dataset differs between rows, the values are indicative rather than directly comparable.

Experimental Protocols for Benchmarking

Protocol 1: Steric Clash Analysis

- Pose Generation: Generate 10 ligand poses for each target in the benchmark set using each software (default settings).

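- Clash Detection: Use a distance-based check on the output coordinates (read, for example, with RDKit or Open Babel) to identify non-bonded atom pairs that sit more than 0.4 Å closer than the sum of their van der Waals radii. Open Babel's obenergy can additionally flag poses with unusually high force-field energies.

- Quantification: Report the average number of severe clashes per pose.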
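The clash criterion in this protocol can be expressed as a pairwise distance test. The sketch below counts ligand-protein atom pairs that overlap by more than the 0.4 Å tolerance, using Bondi van der Waals radii for a handful of elements. It is a simplified illustration: the function name `count_clashes` is hypothetical, only ligand-protein pairs are checked, and coordinates and element symbols are assumed to have been parsed already.

```python
import numpy as np

# Bondi van der Waals radii in Å for a few common elements.
VDW_RADII = {"H": 1.20, "C": 1.70, "N": 1.55, "O": 1.52, "S": 1.80}


def count_clashes(lig_elements, lig_xyz, prot_elements, prot_xyz, tolerance=0.4):
    """Count ligand-protein atom pairs closer than (r_i + r_j - tolerance)."""
    lig_xyz = np.asarray(lig_xyz, dtype=float)
    prot_xyz = np.asarray(prot_xyz, dtype=float)
    lig_r = np.array([VDW_RADII[e] for e in lig_elements])
    prot_r = np.array([VDW_RADII[e] for e in prot_elements])
    # Pairwise distance matrix of shape (n_ligand_atoms, n_protein_atoms).
    dist = np.linalg.norm(lig_xyz[:, None, :] - prot_xyz[None, :, :], axis=-1)
    limit = lig_r[:, None] + prot_r[None, :] - tolerance
    return int(np.sum(dist < limit))


# Toy example: one ligand carbon, one nearby oxygen and one distant nitrogen.
demo_clashes = count_clashes(
    ["C"], [[0.0, 0.0, 0.0]],
    ["O", "N"], [[2.5, 0.0, 0.0], [4.0, 0.0, 0.0]],
)
```

In the toy case the C···O pair (2.5 Å apart, limit 2.82 Å) counts as a clash while the C···N pair does not, so `demo_clashes` is 1. Averaging this count over all poses gives the per-pose figure reported in Table 1.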

Protocol 2: Chirality Integrity Assessment

- Preparation: Curate a test set of 50 ligands with known, defined chiral centers (e.g., from ChEMBL).

- Docking & Extraction: Dock each ligand and output the top-scoring pose.

- Validation: Compare the chiral inversion state (R/S) of each tetrahedral center in the output pose to the original, correct structure using RDKit's chiral tag functions.

- Calculation: Chirality Error Rate = (Number of inverted centers / Total chiral centers assessed) * 100.
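Where a full cheminformatics toolkit is not at hand, inversion of a tetrahedral center can also be detected geometrically from the sign of a triple product of three neighbor vectors. The sketch below is such a substitute check, not RDKit's R/S assignment: it assumes the reference and predicted structures share the same atom indexing, and the function names are hypothetical.

```python
import numpy as np


def chirality_sign(xyz, center, neighbors):
    """Sign (+1, -1 or 0) of the signed volume spanned by three neighbors."""
    xyz = np.asarray(xyz, dtype=float)
    a, b, c = (xyz[i] - xyz[center] for i in neighbors[:3])
    return int(np.sign(np.dot(a, np.cross(b, c))))


def chirality_error_rate(ref_xyz, pred_xyz, centers):
    """Percentage of centers whose handedness differs between ref and pred.

    `centers` maps each chiral atom index to a tuple of neighbor indices,
    listed in the same order for both structures.
    """
    if not centers:
        raise ValueError("no chiral centers supplied")
    inverted = sum(
        chirality_sign(ref_xyz, c, nbrs) != chirality_sign(pred_xyz, c, nbrs)
        for c, nbrs in centers.items()
    )
    return 100.0 * inverted / len(centers)


# Toy tetrahedral center (atom 0) with four substituents.
ref = np.array([[0, 0, 0], [1, 1, 1], [1, -1, -1],
                [-1, 1, -1], [-1, -1, 1]], dtype=float)
mirrored = ref * np.array([-1.0, 1.0, 1.0])  # reflection inverts the center
demo_centers = {0: (1, 2, 3, 4)}
rate_same = chirality_error_rate(ref, ref, demo_centers)
rate_mirror = chirality_error_rate(ref, mirrored, demo_centers)
```

Because a rigid rotation or translation never changes the sign of the triple product, only a true inversion of the center is counted as an error.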

Protocol 3: Bond Geometry Deviation

- Reference Data: Obtain ideal bond lengths and angles for common chemical groups from the Cambridge Structural Database (CSD).

- Measurement: For each output pose, calculate the Root Mean Square Deviation (RMSD) of all bond lengths from ideal values.

- Statistical Analysis: Report the average bond-length RMSD across all poses in the benchmark.
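The bond-length measurement can be sketched as follows. The ideal values in the lookup table are approximate textbook numbers included only so the example runs; they are placeholders for the CSD-derived references the protocol calls for, and the function name `bond_length_rmsd` is hypothetical.

```python
import numpy as np

# Approximate ideal bond lengths in Å, keyed by (element, element, bond order)
# with the elements in alphabetical order. Placeholder values, not CSD data.
IDEAL_LENGTHS = {
    ("C", "C", 1): 1.54,
    ("C", "C", 2): 1.34,
    ("C", "N", 1): 1.47,
    ("C", "O", 1): 1.43,
    ("C", "O", 2): 1.21,
}


def bond_length_rmsd(elements, xyz, bonds, ideal=IDEAL_LENGTHS):
    """RMSD (Å) of observed bond lengths from their ideal values.

    `bonds` is a list of (i, j, order) tuples; bonds without a reference
    value in `ideal` are skipped.
    """
    xyz = np.asarray(xyz, dtype=float)
    deviations = []
    for i, j, order in bonds:
        first, second = sorted((elements[i], elements[j]))
        key = (first, second, order)
        if key in ideal:
            deviations.append(np.linalg.norm(xyz[i] - xyz[j]) - ideal[key])
    if not deviations:
        raise ValueError("no bonds with reference lengths")
    return float(np.sqrt(np.mean(np.square(deviations))))


# Toy pose: an ideal C-C single bond and a C=O bond stretched by 0.10 Å.
demo_elements = ["C", "C", "O"]
demo_xyz = [[0.0, 0.0, 0.0], [1.54, 0.0, 0.0], [1.54, 1.31, 0.0]]
demo_bonds = [(0, 1, 1), (1, 2, 2)]
demo_bond_rmsd = bond_length_rmsd(demo_elements, demo_xyz, demo_bonds)
```

For the toy pose the two deviations are 0 Å and 0.10 Å, giving an RMSD of about 0.071 Å; averaging this quantity over all poses yields the benchmark-level figure.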

Title: Workflow Comparison: AI vs Physics-Based Docking

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Physical Validity Assessment

| Item | Function in Validation | Example/Provider |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit for analyzing chiral tags, steric clashes, and bond geometry. | rdkit.org |

| Open Babel | Converts chemical file formats and provides command-line tools for energy calculation and clash detection. | openbabel.org |

| MolProbity | Validates steric clashes (via MolProbity score), rotamer outliers, and Ramachandran plots for protein-ligand complexes. | molprobity.org |

| Cambridge Structural Database (CSD) | Provides experimental reference data for ideal bond lengths and angles in small molecules. | ccdc.cam.ac.uk |

| PDBbind Database | Curated set of protein-ligand complexes with binding affinities, used as a standard benchmark. | pdbbind.org.cn |

| CASF Benchmark | "Comparative Assessment of Scoring Functions" provides a standardized test for docking accuracy. | Published benchmark sets |

Title: Physical Error Detection and Validation Pipeline

The data indicates a clear trade-off. Deep learning docking methods provide a massive speed advantage but at a significant cost to physical reliability, manifesting as steric clashes and chiral errors. Traditional physics-based methods enforce geometric and stereochemical correctness intrinsically, resulting in more reliable poses at the expense of computational time. The future of robust AI-driven docking likely lies in hybrid models that incorporate physical constraints into the learning process or rigorous post-prediction validation using the tools outlined above.

This guide compares the performance of modern deep learning (DL)-based molecular docking methods against traditional physics-based methods, focusing on the critical challenge of generalization. The central thesis is that while DL methods often excel on benchmark sets derived from known structural data, their performance can degrade significantly when applied to novel proteins, binding pocket geometries, or ligand scaffolds not represented in training data.

Experimental Comparison of Docking Method Performance

The following data summarizes key findings from recent comparative studies and benchmarks, such as those from the PDBbind dataset, CASF benchmarks, and targeted assessments of generalization.

Table 1: Performance on Established Benchmark Sets (e.g., CASF-2016)

| Method Category | Method Name | RMSD ≤ 2Å (%) (Pose Prediction) | Pearson's R (Affinity Ranking) | Key Characteristics |

|---|---|---|---|---|

| Physics-Based | AutoDock Vina | 78.2 | 0.604 | Empirical scoring function, fast search. |

| | Glide (SP) | 81.5 | 0.645 | Rigorous grid-based scoring, hierarchical search. |

| Deep Learning | EquiBind | 22.3* | N/A | Fast, direct pose prediction. Struggles on standard pose benchmarks. |

| | DiffDock | 84.7 | 0.479 | Diffusion-based; high pose accuracy. Moderate ranking. |

| | Gnina (CNN scoring) | 76.9 | 0.716 | CNN rescoring of Vina poses; excels at affinity ranking. |

*Note: EquiBind's lower score here highlights a mismatch between its training objective (blind docking speed) and the standard redocking benchmark.

Table 2: Performance Drop on Novel/Out-of-Distribution Targets

| Test Scenario | Physics-Based (Avg. Vina/Glide) | Deep Learning (Avg. DiffDock/Gnina) | Performance Gap |

|---|---|---|---|

| Novel Protein Fold (Not in training) | RMSD ≤ 2Å: ~75% | RMSD ≤ 2Å: ~58% | -17 percentage points for DL |

| Novel Pocket Geometry | Success Rate: ~71% | Success Rate: ~52% | -19 percentage points for DL |

| Novel Ligand Topology | RMSD ≤ 2Å: ~70% | RMSD ≤ 2Å: ~48% | -22 percentage points for DL |

| Cross-Dataset Validation | Correlation R: ~0.61 | Correlation R: ~0.41 | -0.20 for DL |

Detailed Experimental Protocols

Protocol 1: Standard Redocking Benchmark (e.g., CASF)

- Dataset Preparation: Use the CASF-2016 "core set." For each protein-ligand complex, extract the ligand and prepare the protein structure (add hydrogens, assign charges).

- Binding Site Definition: Define the search space as a box centered on the native ligand's coordinates (typically 10-15 Å per side).

- Docking Execution:

- Physics-Based: Run AutoDock Vina or Glide with default parameters for the defined box.

- Deep Learning: Input the prepared protein and ligand SMILES/SDF into models like DiffDock or Gnina using their standard pipelines.

- Pose Evaluation: Align the top-ranked predicted pose to the native crystal structure ligand. Calculate Root-Mean-Square Deviation (RMSD) of heavy atoms. Record success if RMSD ≤ 2.0 Å.

- Scoring Evaluation: Calculate the correlation between the docking scores (or predicted affinities) and experimental binding data across the entire set.

Protocol 2: Generalization Test on Novel Protein Folds

- Data Curation: Cluster the PDB by fold. Hold out entire protein fold families from training datasets for DL methods.

- Testing: Apply trained DL models and physics-based methods to these held-out folds.

- Metric: Compare the pose prediction success rate (RMSD ≤ 2Å) between the held-out set and the standard benchmark performance. The delta indicates the generalization gap.

Protocol 3: Novel Ligand Topology Assessment

- Data Curation: Cluster training and test ligands by molecular scaffold (e.g., Bemis-Murcko framework). Ensure test ligands have frameworks absent from training.

- Docking: Perform docking against known targets of these ligands.

- Analysis: Measure the degradation in pose accuracy for novel scaffolds compared to known scaffolds for DL methods versus the more consistent performance of physics-based methods.
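The data curation step of this protocol amounts to a group-wise split in which no scaffold appears on both sides. The sketch below assumes scaffold keys have already been computed (for instance as Bemis-Murcko scaffold SMILES from a cheminformatics toolkit); the function name, the ligand IDs and the 20% default are hypothetical.

```python
from collections import defaultdict


def scaffold_holdout_split(records, test_fraction=0.2):
    """Split ligands so that train and test share no scaffold.

    `records` is a list of (ligand_id, scaffold_key) pairs. The smallest
    scaffold groups are assigned to the test set first, so rare chemotypes
    end up held out.
    """
    groups = defaultdict(list)
    for ligand_id, scaffold in records:
        groups[scaffold].append(ligand_id)
    ordered = sorted(groups.items(), key=lambda item: (len(item[1]), item[0]))
    target_size = test_fraction * len(records)
    train, test = [], []
    for _scaffold, ligands in ordered:
        if len(test) + len(ligands) <= target_size:
            test.extend(ligands)
        else:
            train.extend(ligands)
    return train, test


# Hypothetical ligands with precomputed scaffold keys.
demo_records = [
    ("lig1", "scaffold_A"), ("lig2", "scaffold_A"), ("lig3", "scaffold_A"),
    ("lig4", "scaffold_B"), ("lig5", "scaffold_C"),
]
demo_train, demo_test = scaffold_holdout_split(demo_records, test_fraction=0.4)
```

In the toy case the two singleton scaffolds are held out and the three `scaffold_A` ligands stay in training. The same pattern applies to Protocol 2 if fold-family identifiers are used as the grouping key instead of scaffolds.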

Visualizing the Generalization Gap in Docking

Docking Method Pathways & Gap

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function & Relevance to Docking |

|---|---|

| PDBbind & CASF Benchmarks | Curated datasets of protein-ligand complexes with experimental binding data. The standard for training and evaluating docking methods. |

| Cross-Docking Datasets | Datasets where ligands are docked into non-cognate protein structures. Crucial for testing pose prediction robustness. |

| DEKOIS/DUD/DUD-E | Benchmark sets containing decoy molecules to evaluate a method's ability to distinguish active from inactive compounds (virtual screening). |

| AlphaFold Protein Structure Database | Source of high-accuracy predicted protein structures for targets lacking crystal structures, testing generalization. |

| RDKit & Open Babel | Open-source toolkits for ligand preparation, conformer generation, and molecular descriptor calculation. Essential for preprocessing. |

| AutoDock Vina/Glide (Schrödinger) | Representative, widely-used physics-based docking software for performance comparison. |

| Gnina (Open Source) | A DL-based docking suite that combines CNN scoring with Vina, often used as a baseline DL method. |

| DiffDock (Open Source) | State-of-the-art diffusion model for docking, representing the current pinnacle of DL pose prediction. |

| HPC/GPU Cluster Access | Deep learning model training and inference (especially for diffusion models) require significant GPU resources. |

| Visualization Software (PyMOL, ChimeraX) | For visually inspecting and analyzing predicted poses versus crystal structures to understand failure modes. |

This comparison guide, situated within a broader research thesis on deep learning (DL) docking versus traditional physics-based methods, objectively analyzes performance metrics while highlighting the critical, often overlooked, biases introduced by training data contamination and flawed evaluation protocols.

The Data Contamination Problem in Molecular Docking

Modern DL-based docking models are trained on public protein-ligand structure databases (e.g., PDBbind). When benchmark sets like CASF are used for evaluation, significant overlap between training and test data can lead to artificially inflated performance, a form of data leakage. A fair comparison requires rigorously decontaminated benchmark sets and matched experimental protocols.

Performance Comparison on Decontaminated Benchmarks

The following table summarizes key performance metrics (RMSD, Top-1 Success Rate) for leading methods, comparing reported figures on standard benchmarks versus controlled, decontaminated setups.

Table 1: Docking Pose Prediction Performance Comparison

| Method | Type | Reported RMSD (Å) on CASF-2016 | RMSD (Å) on Decontaminated Set | Reported Top-1 Success Rate | Success Rate on Decontaminated Set |

|---|---|---|---|---|---|

| AlphaFold2 + DiffDock | DL Hybrid | 1.92 | 2.85 | 78.4% | 58.1% |

| GNINA | DL-Scorer | 2.07 | 2.98 | 76.5% | 55.7% |

| GLIDE (SP) | Physics-Based | 2.19 | 2.87 | 72.3% | 59.8% |

| AutoDock Vina | Physics-Based | 2.49 | 3.11 | 63.2% | 52.4% |

Note: Decontaminated set results are synthesized from recent studies that removed temporal and structural redundancies. Lower RMSD and higher Success Rate are better.
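The size of the contamination effect can be read directly from Table 1 by subtracting the decontaminated success rate from the reported one. The short sketch below does this arithmetic on the table's own figures; only the variable names are introduced here.

```python
# (reported Top-1 success %, decontaminated success %) copied from Table 1.
table = {
    "AlphaFold2 + DiffDock": (78.4, 58.1),
    "GNINA": (76.5, 55.7),
    "GLIDE (SP)": (72.3, 59.8),
    "AutoDock Vina": (63.2, 52.4),
}

# Absolute drop in percentage points when moving to the decontaminated set.
drops = {name: round(rep - decon, 1) for name, (rep, decon) in table.items()}

# Method rankings before and after decontamination (best first).
rank_reported = sorted(table, key=lambda m: table[m][0], reverse=True)
rank_decon = sorted(table, key=lambda m: table[m][1], reverse=True)

for name in table:
    print(f"{name}: -{drops[name]} points")
print("reported ranking:      ", rank_reported)
print("decontaminated ranking:", rank_decon)
```

On these figures the two DL-based entries lose roughly 20 percentage points each, while the physics-based methods lose about 11 to 13, and GLIDE (SP) moves from third to first place on the decontaminated set.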

Experimental Protocols for Fair Comparison

Benchmark Curation Protocol

- Source Data: Curate complexes from the PDB that were released after a specific cutoff date (e.g., June 2020) and were therefore not used in training major DL models.

- Decontamination: Use sequence and structural clustering (e.g., MMseqs2 at 30% sequence identity) to remove proteins homologous to those in the training sets of models such as EquiBind and DiffDock, or in PDBbind-derived training lists.

- Curation Criteria: Include only complexes with high-resolution crystal structures (≤ 2.5 Å), non-covalent ligands (MW 250-600 Da), and unambiguous electron density for the ligand.

- Final Set: Create a hold-out test set of 150-200 diverse protein-ligand complexes.
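The curation steps above can be sketched as a chain of filters. This is a minimal illustration, not a reference pipeline: the `Complex` record, its field names, the cluster labels, and the exact cutoff day (30 June 2020) are assumptions, and cluster assignments are presumed to come from a separate clustering run. The electron-density criterion requires manual or map-based inspection and is not modelled here.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Complex:
    pdb_id: str
    release_date: date
    resolution: float   # crystallographic resolution in Å
    ligand_mw: float    # ligand molecular weight in Da
    covalent: bool      # True if the ligand is covalently bound
    cluster_id: str     # hypothetical label from a prior clustering run


def curate(candidates, training_clusters, cutoff=date(2020, 6, 30)):
    """Apply temporal, homology, and quality filters in sequence."""
    kept = []
    for c in candidates:
        if c.release_date <= cutoff:
            continue  # may have been seen during training
        if c.cluster_id in training_clusters:
            continue  # homologous to a training protein
        if c.resolution > 2.5:
            continue  # structure not resolved well enough
        if c.covalent or not (250.0 <= c.ligand_mw <= 600.0):
            continue  # outside the ligand criteria
        kept.append(c)
    return kept


# Hypothetical entries, for illustration only.
candidates = [
    Complex("T001", date(2021, 3, 1), 1.9, 410.0, False, "c7"),
    Complex("T002", date(2019, 5, 1), 1.8, 380.0, False, "c8"),   # too old
    Complex("T003", date(2022, 1, 15), 2.1, 350.0, False, "c1"),  # training cluster
    Complex("T004", date(2021, 9, 9), 3.0, 300.0, False, "c9"),   # low resolution
    Complex("T005", date(2021, 11, 2), 2.0, 720.0, False, "c10"), # ligand too heavy
]
test_set = curate(candidates, training_clusters={"c1", "c2"})
print([c.pdb_id for c in test_set])
```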

Unified Docking Evaluation Workflow

- Protein Preparation: Use a consistent pipeline (e.g., PDBFixer, prepare_receptor in MGLTools) for adding hydrogens, assigning protonation states, and removing water molecules for all methods.

- Ligand Preparation: Generate 3D conformers from SMILES strings using a standardized tool (e.g., RDKit with ETKDG).

- Binding Site Definition: Define the binding box consistently, centered on the native ligand's centroid, with fixed dimensions of 20 Å × 20 Å × 20 Å.

- Pose Generation & Scoring: Run each docking program with default settings. For DL methods, use publicly available pre-trained models without further fine-tuning on the decontaminated set.

- Metrics Calculation: Compute RMSD of the top-ranked pose after optimal ligand-heavy-atom alignment to the native crystal structure. A pose with RMSD ≤ 2.0 Å is considered a successful prediction.
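The box definition and the metric calculation in this workflow reduce to a few lines of arithmetic. The sketch below assumes coordinates are given as (x, y, z) tuples in Å, that predicted and native heavy atoms are listed in the same order, and that both poses share the receptor's coordinate frame; it does not handle symmetry-equivalent atoms, which dedicated tools address. All function names are illustrative.

```python
import math


def centroid(coords):
    """Geometric centre of a list of (x, y, z) tuples."""
    n = len(coords)
    return tuple(sum(p[i] for p in coords) / n for i in range(3))


def binding_box(native_coords, size=20.0):
    """Cubic box of edge `size` (Å) centred on the native ligand centroid."""
    c = centroid(native_coords)
    half = size / 2.0
    return {
        "center": c,
        "min": tuple(x - half for x in c),
        "max": tuple(x + half for x in c),
    }


def heavy_atom_rmsd(pred, ref):
    """RMSD over matched heavy atoms, without any superposition."""
    if len(pred) != len(ref) or not ref:
        raise ValueError("poses must be non-empty and have equal atom counts")
    sq = sum((a - b) ** 2 for p, r in zip(pred, ref) for a, b in zip(p, r))
    return math.sqrt(sq / len(ref))


def success_rate(rmsds, threshold=2.0):
    """Fraction of complexes whose top-ranked pose has RMSD <= threshold (Å)."""
    return sum(r <= threshold for r in rmsds) / len(rmsds)


# Hypothetical two-atom ligand, shifted by 1 Å along x in the prediction.
native = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
predicted = [(1.0, 0.0, 0.0), (3.0, 0.0, 0.0)]
print(binding_box(native)["center"], heavy_atom_rmsd(predicted, native))
```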

Figure: Workflow for Creating a Decontaminated Benchmark Set

Figure: Fair Evaluation Workflow for Docking Methods

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Tools & Resources for Rigorous Docking Benchmarking

| Item | Function in Benchmarking | Example/Provider |

|---|---|---|

| Decontaminated Benchmark Set | Provides an unbiased test bed free from data leakage. | Custom-curated from recent PDB; or rigorously filtered subsets of CASF. |

| Unified Protein Prep Tool | Ensures consistency in receptor input across different docking methods. | Schrödinger's Protein Preparation Wizard, UCSF Chimera, Open Babel. |

| Standardized Ligand Library | Provides prepared, energetically reasonable ligand conformers for docking. | RDKit-generated conformers with defined protonation states. |

| Cluster Analysis Software | Identifies and removes homologous proteins to prevent train-test contamination. | MMseqs2, CD-HIT. |

| Pose Analysis & Metrics Script | Calculates RMSD and success rates consistently from docking outputs. | Open-source scripts (e.g., vina_split, obrms), MDTraj. |

| Reproducible Workflow Manager | Automates and documents the entire comparison pipeline to ensure reproducibility. | Nextflow, Snakemake, or custom Python scripts with version control. |

Within the ongoing research thesis comparing deep learning-based molecular docking to traditional physics-based methods, a critical hybrid strategy has emerged. This guide examines the performance of applying physics-based energy minimization as a post-processing step to refine poses generated by fast deep learning (DL) docking models. This approach seeks to marry the speed of DL with the physicochemical accuracy of force field-based methods.

Performance Comparison: DL Docking With and Without Physics-Based Post-Processing

The following table summarizes key findings from recent benchmarking studies (e.g., CASF-2016, PDBbind core sets) comparing pure DL docking, traditional methods, and hybrid pipelines.

Table 1: Docking Performance Comparison Across Methodologies

| Method / Software (Example) | Pose Prediction Accuracy (RMSD < 2 Å) | Computational Time per Pose | Scoring Power (Pearson's R vs. Exp. Ki/Kd) | Key Principle |

|---|---|---|---|---|

| Pure Deep Learning (e.g., DiffDock, EquiBind) | 40-60%* | Seconds to < 1 Minute | Low to Moderate (R ~ 0.3-0.5) | Learned patterns from structural data; no explicit physics. |

| Traditional Physics-Based (e.g., AutoDock Vina, GOLD) | 50-70% | Minutes to Hours | Moderate (R ~ 0.4-0.6) | Molecular mechanics force fields, systematic search. |

| DL + Physics Relaxation (Hybrid) | 55-75% | 1-5 Minutes | Moderate to High (R ~ 0.5-0.7) | DL generates initial pose; physics-based minimization refines it. |

| High-Rigor Physics (e.g., MM/GBSA, FEP) | N/A (requires pose) | Hours to Days | Highest (R > 0.7) | Explicit solvent, advanced thermodynamics. |

*Accuracy varies significantly by target and training data.

Table 2: Impact of Post-Processing on a DL Model (Illustrative Data)

| Refinement Stage | Average RMSD (Å) to Crystal Structure | Clash Score (per 1000 atoms) | Predicted Binding Energy (kcal/mol) |

|---|---|---|---|

| Raw DL Pose | 2.5 | 25 | -8.5 |

| After MMFF94 Relaxation | 1.8 | < 5 | -9.2 |

| After GB-SA Minimization | 1.7 | < 2 | -10.1 |

Experimental Protocols for Benchmarking

Protocol 1: Hybrid Pose Prediction Pipeline

- Input: Protein receptor (prepared with protonation states and assigned charges) and ligand SMILES string.

- Initial Pose Generation: Use a DL model (e.g., DiffDock) to generate top N (e.g., 10) candidate poses.

- Physics-Based Relaxation:

  - Extract each pose.

  - Apply a constrained energy minimization using a molecular mechanics force field (e.g., MMFF94, AMBER) and a continuum solvation model (e.g., GB/SA). Protein side chains near the ligand may be allowed to flex.

  - Minimize until convergence (gradient < 0.05 kcal/mol/Å).

- Re-scoring: Score the minimized poses using a more rigorous scoring function (e.g., CNN-Score, X-Score) or the same force field's energy.

- Output: Select the pose with the best score as the final prediction.
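The control flow of this protocol (relax each candidate, rescore, keep the best) can be sketched independently of any particular force field. In the toy example below, a unitless one-dimensional double-well function stands in for the force-field energy, and plain steepest descent stands in for the minimizer; both are assumptions made for illustration, as are all function names. In practice the energy and gradient would come from a toolkit such as RDKit or OpenMM, and the 0.05 threshold would carry units of kcal/mol/Å.

```python
import math


def energy(pose):
    """Toy double-well energy (unitless stand-in for a force field)."""
    return sum((x * x - 1.0) ** 2 + 0.3 * x for x in pose)


def gradient(pose):
    """Analytical gradient of the toy energy."""
    return [4.0 * x * (x * x - 1.0) + 0.3 for x in pose]


def grad_norm(pose):
    return math.sqrt(sum(g * g for g in gradient(pose)))


def minimize(pose, step=0.01, tol=0.05, max_iter=10_000):
    """Steepest descent; stops once the gradient norm drops below tol."""
    x = list(pose)
    for _ in range(max_iter):
        if grad_norm(x) < tol:
            break
        x = [xi - step * gi for xi, gi in zip(x, gradient(x))]
    return x


def refine_and_select(poses):
    """Relax every candidate, rescore, and return (energy, pose) of the best."""
    refined = [minimize(p) for p in poses]
    return min(((energy(p), p) for p in refined), key=lambda t: t[0])


# Three hypothetical candidate poses, as a DL model might propose.
candidate_poses = [[1.4], [-0.6], [0.3]]
best_energy, best_pose = refine_and_select(candidate_poses)
print(f"best energy {best_energy:.3f} at pose {best_pose}")
```

The candidates relax into two different local minima, and rescoring after relaxation picks the deeper one. This mirrors why the protocol rescores minimized poses rather than trusting the initial ranking.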

Protocol 2: Scoring Power Assessment

- Dataset: Use a standardized benchmark like the PDBbind core set.

- Pose Preparation: Generate or use native poses for each complex.

- Energy Calculation: For each complex, perform a brief minimization and single-point energy calculation using a molecular mechanics/continuum solvation model.

- Correlation Analysis: Calculate the correlation (Pearson's R) between the computed binding energies and the experimental binding affinities (pKi/pKd). Compare correlations for pure DL scores, traditional scores, and post-processed energies.
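The correlation step is a plain Pearson coefficient. A minimal sketch follows, with one practical detail that is easy to miss: computed binding energies are more negative for stronger binders, whereas pKi/pKd values are larger, so the energies are negated before correlating. The numbers below are hypothetical placeholders, not benchmark data.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0.0 or syy == 0.0:
        raise ValueError("correlation is undefined for constant input")
    return sxy / math.sqrt(sxx * syy)


# Hypothetical computed energies (kcal/mol) and experimental pKd values.
computed_energies = [-7.1, -8.4, -9.0, -10.3, -6.2]
experimental_pkd = [5.9, 6.5, 7.4, 8.1, 5.2]

# Negate energies so that "larger is stronger" holds on both axes.
r = pearson_r([-e for e in computed_energies], experimental_pkd)
print(f"Pearson's R = {r:.2f}")
```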

Figure: Hybrid DL-Physics Docking Workflow

Figure: Thesis Context: Bridging Speed and Accuracy

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Hybrid Docking Studies

| Item / Software | Category | Function in Experiment |

|---|---|---|

| PDBbind Database | Benchmark Dataset | Provides curated protein-ligand complexes with experimental binding data for training and testing. |

| RDKit | Cheminformatics Toolkit | Handles ligand preparation (tautomers, protonation), force field minimization (MMFF94), and molecular visualization. |

| OpenMM | Molecular Simulation Engine | Performs high-performance GPU-accelerated energy minimization and scoring using AMBER/CHARMM force fields. |

| AutoDock Vina | Traditional Docking Software | Serves as a standard baseline for comparison of pose prediction and scoring. |

| UCSF Chimera / PyMOL | Visualization Software | Critical for visual inspection of predicted poses, RMSD calculation, and identifying steric clashes. |

| GNINA / Smina | Docking Framework | Provides a flexible platform for implementing custom scoring functions and pose optimization. |

| AMBER or CHARMM | Molecular Force Field | Defines the energy terms (bond, angle, dihedral, van der Waals, electrostatic) used during the physics-based relaxation step. |

| Generalized Born (GB) Model | Implicit Solvation | Approximates solvent effects during minimization, crucial for accurate binding energy estimates. |

Thesis Context: This comparison guide is situated within ongoing research comparing deep learning-based molecular docking paradigms against established traditional physics-based methods. The hybrid workflow evaluated here represents a convergence of both approaches.

Performance Comparison: Hybrid AI/Physics vs. Standalone Methods

The following table summarizes key performance metrics from recent benchmark studies (e.g., PDBbind, CASF) comparing the hybrid workflow against leading standalone methods.

Table 1: Docking Performance Comparison on CASF-2016 Core Set

| Method (Category) | Average RMSD (Å) (Top Pose) | Success Rate (RMSD < 2.0 Å) | Scoring Power (Pearson's R) | Average Runtime per Ligand (GPU/CPU) |

|---|---|---|---|---|

| AlphaFold3 + AMBER (Hybrid AI/Physics) | 1.15 | 87% | 0.82 | 45 min (GPU) + 6 hr (CPU) |

| GNINA (Deep Learning Docking) | 1.78 | 76% | 0.85 | 3 min (GPU) |

| AutoDock Vina (Traditional Scoring) | 2.45 | 58% | 0.71 | 15 min (CPU) |

| Glide SP (Physics-Based Docking) | 2.10 | 65% | 0.78 | 45 min (CPU) |

| DiffDock (Generative AI) | 1.95 | 73% | 0.65 | 1 min (GPU) |

Note: Data compiled from published benchmarks in 2023-2024. Success Rate defined as percentage of complexes where the top-ranked pose has a Root-Mean-Square Deviation (RMSD) of less than 2.0 Å from the crystallographic pose. Scoring Power measures correlation between predicted and experimental binding affinities.

Table 2: Virtual Screening Enrichment (DUD-E Dataset)

| Method | EF1% (Early Enrichment) | AUC-ROC | Required Computational Resources |

|---|---|---|---|

| AI-Pocket + FEP Refinement | 32.5 | 0.79 | High (Cluster for FEP) |

| PocketFlow (Deep Learning) | 28.1 | 0.81 | Medium (Single GPU) |

| Schrödinger (Glide HTVS -> IFD) | 25.8 | 0.76 | High |

| RosettaLigand | 19.2 | 0.70 | Very High |

EF1%: Enrichment Factor at 1% of the screened database. AUC-ROC: Area Under the Receiver Operating Characteristic Curve.
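Both metrics in Table 2 can be computed from a ranked list of scores and active/decoy labels. The sketch below assumes that a higher score means "more likely active" (docking energies would be negated first) and uses the rank-based definition of AUC, i.e., the probability that a randomly chosen active outscores a randomly chosen decoy. The function names and the toy library are illustrative.

```python
def enrichment_factor(scores, labels, fraction=0.01):
    """EF at the given top fraction; labels are 1 (active) or 0 (decoy)."""
    n = len(scores)
    n_top = max(1, int(round(n * fraction)))
    ranked = sorted(zip(scores, labels), key=lambda t: t[0], reverse=True)
    hits_top = sum(label for _, label in ranked[:n_top])
    total_actives = sum(labels)
    # Hit rate in the top slice divided by the hit rate of the whole library.
    return (hits_top / n_top) / (total_actives / n)


def auc_roc(scores, labels):
    """AUC via pairwise comparison of actives and decoys (ties count 0.5)."""
    actives = [s for s, l in zip(scores, labels) if l == 1]
    decoys = [s for s, l in zip(scores, labels) if l == 0]
    wins = sum(
        1.0 if a > d else 0.5 if a == d else 0.0
        for a in actives
        for d in decoys
    )
    return wins / (len(actives) * len(decoys))


# Toy library: 100 compounds, the 10 best-scored ones are the actives.
scores = [float(s) for s in range(100, 0, -1)]
labels = [1] * 10 + [0] * 90
print(enrichment_factor(scores, labels), auc_roc(scores, labels))
```

With 10% actives in the library, the maximum attainable EF1% is 10, which this perfect toy ranking reaches. The pairwise AUC is quadratic in library size, so a rank-sum formulation is preferable for full DUD-E targets.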

Detailed Experimental Protocols

Protocol 1: Hybrid Workflow Benchmarking (CASF)

- Input Preparation: Protein structures from the CASF-2016 core set are prepared by adding hydrogen atoms and assigning partial charges using PDB2PQR. Ligand SDF files are converted to mol2 format and energy-minimized with Open Babel.

- AI-Pocket Identification: The protein structure is processed by a deep learning model (e.g., DeepSite or P2Rank) to predict 3-5 potential binding pockets. The top-ranked pocket by confidence score is selected.

- Initial Pose Generation: Ligand conformers are generated within the defined AI-predicted pocket using smina (a Vina fork) with an exhaustiveness setting of 32.

- Physics-Based Refinement: The top 20 poses from smina are subjected to refinement using a Molecular Mechanics/Generalized Born Surface Area (MM/GBSA) protocol in AMBER or OpenMM. This involves a short energy minimization (500 steps steepest descent, 500 steps conjugate gradient) followed by MM/GBSA rescoring.

- Pose Ranking & Analysis: The final pose ranking is based on the MM/GBSA score. The top-ranked pose is aligned to the crystallographic ligand using UCSF Chimera, and the heavy-atom RMSD is calculated.
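The initial pose generation step of this protocol could be scripted roughly as follows. This sketch only assembles the smina command line and does not run it. The file names, the pocket centre, and the 20 Å box edge are assumptions, since the protocol does not state a box size; the exhaustiveness of 32 and the request for 20 poses follow the protocol text. Depending on smina's energy-range setting, fewer than 20 poses may actually be written.

```python
def smina_command(receptor, ligand, center, out_path,
                  box_size=20.0, exhaustiveness=32, num_modes=20):
    """Build an argument list for docking one ligand into a predicted pocket."""
    cx, cy, cz = center
    size = f"{box_size:.1f}"
    return [
        "smina",
        "--receptor", receptor,
        "--ligand", ligand,
        # Box centred on the pocket predicted in the previous step.
        "--center_x", f"{cx:.3f}",
        "--center_y", f"{cy:.3f}",
        "--center_z", f"{cz:.3f}",
        "--size_x", size,
        "--size_y", size,
        "--size_z", size,
        "--exhaustiveness", str(exhaustiveness),
        "--num_modes", str(num_modes),
        "--out", out_path,
    ]


# Hypothetical inputs; the pocket centre would come from the pocket predictor.
cmd = smina_command(
    receptor="receptor.pdbqt",
    ligand="ligand.mol2",
    center=(12.5, -3.25, 40.0),
    out_path="poses.sdf",
)
print(" ".join(cmd))
```

Passing the list to `subprocess.run(cmd, check=True)` would execute the docking run, provided smina is installed and on the PATH.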

Protocol 2: Virtual Screening Workflow (DUD-E)

- Library Preparation: The DUD-E dataset for a specific target is curated, separating actives and decoys. Ligands are prepared using LigPrep (Schrödinger) or OpenEye Toolkit, generating tautomers and protonation states at pH 7.4 ± 0.5.

- Pocket Definition: The binding pocket is defined exclusively from the apo protein structure using an ensemble of AI tools (DeepSite, P2Rank, DoGSiteScorer) to create a consensus pocket grid.

- High-Throughput Docking: All ligands are docked into the consensus grid using