Advancing Drug Discovery: A Practical Guide to Consensus Scoring and Rigorous Docking Validation


Abstract

Molecular docking is a cornerstone of structure-based drug discovery, yet its predictive accuracy and reliability are often hampered by the limitations of individual scoring functions and search algorithms. This article provides a comprehensive guide for researchers and drug development professionals on implementing robust consensus scoring and validation protocols. We begin by establishing the foundational principles of docking and the compelling rationale for consensus approaches to mitigate individual method biases. The guide then details methodological strategies for constructing effective consensus workflows, including program selection, data normalization, and algorithm implementation. To address common pitfalls, we present a troubleshooting framework focused on ensuring physical plausibility, improving generalization, and optimizing hybrid strategies that integrate traditional and deep learning methods. Finally, we systematically review benchmarking standards, comparative performance analyses, and real-world applications, culminating in actionable best practices. This integrated approach aims to enhance the reproducibility, accuracy, and translational impact of virtual screening in biomedical research.

The 'Why' of Consensus: Core Principles and Imperatives for Reliable Docking

The Central Role of Molecular Docking in Accelerating Drug Discovery

Technical Support Center: Troubleshooting Docking & Consensus Scoring Experiments

This support center provides targeted guidance for researchers conducting molecular docking and validation studies, framed within best practices for robust consensus scoring protocols.

Frequently Asked Questions (FAQs)

Q1: My docking poses show excellent binding scores but poor biological activity in validation assays. What could be wrong? A: This is a classic sign of scoring function bias or pose inaccuracy. First, verify that the ligand's protonation and tautomeric states are appropriate for the assay pH. Second, ensure the receptor conformation, especially the side chains in the binding pocket, is suitable for docking: a ligand-bound (holo) structure is usually preferable, while apo structures and homology models (even those built from a close homolog) may need pocket refinement first. Third, avoid relying on a single scoring function; apply consensus scoring across multiple, diverse functions (e.g., empirical, force-field, knowledge-based) to filter out false positives. Finally, re-dock known active ligands (co-crystallized if available) to confirm that the protocol reproduces native poses (RMSD < 2.0 Å is a common threshold).

Q2: How do I choose the right search algorithm and scoring function for a novel target with no known ligands? A: For targets without precedent, a phased approach is recommended. Begin with a high-throughput search algorithm (e.g., Vina's Monte Carlo-based search, GLIDE SP) to explore the conformational space broadly. For refinement, use a more precise algorithm (e.g., GOLD's genetic algorithm, GLIDE XP). Employ consensus scoring from the outset using at least three functions with different theoretical bases. Crucially, run a retrospective docking study to calculate enrichment factors and validate the chosen combination before proceeding with virtual screening. Because a truly novel target has no actives of its own, use actives and property-matched decoys (e.g., from DUD-E or DEKOIS) for the closest homologous target as a proxy, and treat the resulting enrichment as indicative only.

Q3: What are the critical steps for preparing a protein structure from the PDB for docking? A: A meticulous preparation protocol is essential:

- Structure Selection: Prefer high-resolution (<2.2 Å) structures with minimal missing loops in the binding site. Consider the biological oligomerization state.

- Pre-processing: Remove all water molecules except those mediating crucial, conserved interactions. Add missing hydrogen atoms and assign protonation states (e.g., for HIS, ASP, GLU) using tools like `PDB2PQR` or `Epik`, targeting a physiological pH of 7.4 ± 0.5.

- Energy Minimization: Apply restrained minimization (with restraints on heavy atoms) to relieve steric clashes introduced during hydrogen addition, using a force field like AMBER or CHARMM. This step should be minimal to preserve the experimental conformation.

Q4: How can I validate my consensus scoring protocol before a large-scale virtual screen? A: Conduct a comprehensive docking validation study. The key metrics are summarized in the table below:

Table 1: Key Metrics for Docking Protocol Validation

| Metric | Calculation | Target Threshold | Purpose |

|---|---|---|---|

| Pose Reproduction RMSD | RMSD between docked and co-crystallized ligand pose. | ≤ 2.0 Å | Tests algorithmic search accuracy. |

| Enrichment Factor (EF₁%) | (Hit rate in top 1%) / (Hit rate in random selection). | > 10 (Higher is better) | Gauges scoring function's ability to prioritize actives. |

| Area Under the ROC Curve (AUC) | Area under the Receiver Operating Characteristic curve. | 0.7 - 1.0 (0.5 is random) | Overall discriminative power between actives and decoys. |

| Consensus Hit Rate | % of known actives recovered by consensus vs. individual functions. | Significantly higher than single functions | Validates the consensus approach. |

Experimental Protocol: Standard Workflow for Consensus Scoring Validation

- Curation of Test Set: Compile a dataset of 20-30 known active compounds and 1000+ property-matched decoys (sources: DUD-E, DEKOIS 2.0).

- System Preparation: Prepare the target protein and all ligands using a standardized, reproducible protocol (see Q3).

- Docking Execution: Dock every compound using at least two different search algorithms.

- Multi-Function Scoring: Score all generated poses using 3-5 distinct scoring functions (e.g., X-Score, ChemPLP, ChemScore, AutoDock Vina, DSX).

- Consensus Application: Apply a consensus method: Rank-by-Rank (average rank across functions) or Rank-by-Vote (number of times a compound appears in top N%).

- Performance Analysis: Calculate EF, AUC, and early enrichment metrics. Compare consensus performance to each standalone function.
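The two consensus methods named in the workflow above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function names (`ranks`, `rank_by_rank`, `rank_by_vote`), the compound labels, and the score values are all hypothetical, and each scoring function is assumed to have scored the same set of compounds.

```python
import math
from typing import Dict, List


def ranks(scores: Dict[str, float], lower_is_better: bool = True) -> Dict[str, float]:
    """Rank compounds 1..N (1 = best); tied scores share their average rank."""
    ordered = sorted(scores, key=scores.get, reverse=not lower_is_better)
    result: Dict[str, float] = {}
    i = 0
    while i < len(ordered):
        j = i
        # Extend j over the run of compounds tied with ordered[i].
        while j + 1 < len(ordered) and scores[ordered[j + 1]] == scores[ordered[i]]:
            j += 1
        average = (i + j) / 2 + 1
        for name in ordered[i:j + 1]:
            result[name] = average
        i = j + 1
    return result


def rank_by_rank(per_function: List[Dict[str, float]]) -> Dict[str, float]:
    """Average rank of each compound across scoring functions (lower = better)."""
    return {c: sum(r[c] for r in per_function) / len(per_function)
            for c in per_function[0]}


def rank_by_vote(per_function: List[Dict[str, float]], top_fraction: float = 0.05) -> Dict[str, int]:
    """Number of scoring functions that place each compound in their top fraction."""
    cutoff = max(1, math.ceil(top_fraction * len(per_function[0])))
    return {c: sum(1 for r in per_function if r[c] <= cutoff)
            for c in per_function[0]}


# Hypothetical scores: Vina energies (lower is better), ChemPLP fitness (higher is better).
vina = {"A": -9.5, "B": -7.2, "C": -6.0, "D": -5.0}
chemplp = {"A": 70.0, "B": 95.0, "C": 60.0, "D": 55.0}
all_ranks = [ranks(vina), ranks(chemplp, lower_is_better=False)]

print(rank_by_rank(all_ranks))                     # average rank per compound
print(rank_by_vote(all_ranks, top_fraction=0.5))   # votes per compound
```

Converting every function to ranks first sidesteps the different units and sign conventions of the raw scores, which is why the direction of each score has to be stated explicitly.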

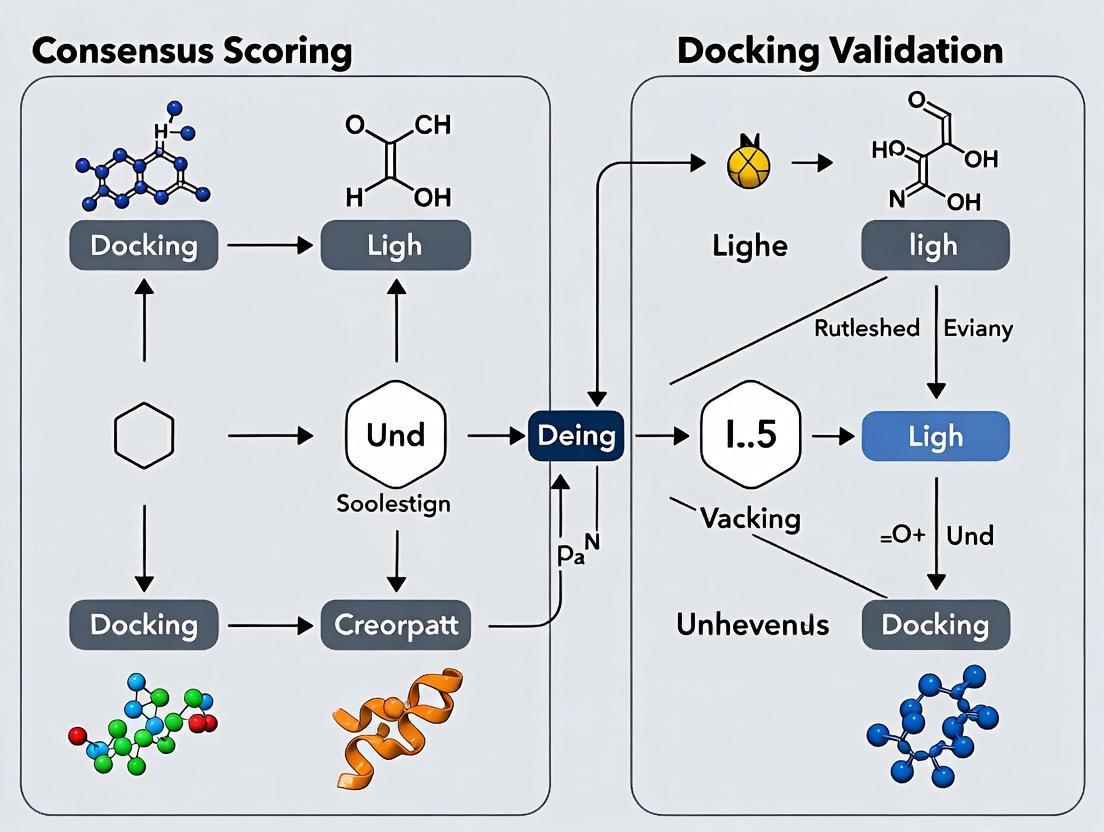

Visualization of Workflows

Diagram 1: Consensus Scoring Validation Workflow

Diagram 2: Molecular Docking & Scoring Decision Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Docking & Validation Studies

| Item / Resource | Function / Purpose | Example/Tool |

|---|---|---|

| Curated Benchmark Datasets | Provide validated sets of active ligands and matched decoys for method validation. | DUD-E, DEKOIS 2.0, LIT-PCBA |

| Protein Preparation Suite | Processes PDB files: adds H, assigns charges, fixes residues, minimizes. | Schrödinger Protein Prep Wizard, MOE QuickPrep, UCSF Chimera |

| Ligand Preparation Tool | Generates 3D conformers, assigns correct protonation/tautomer states. | OpenBabel, LigPrep (Schrödinger), CORINA |

| Docking Software | Performs the conformational search and pose optimization. | AutoDock Vina, GOLD, GLIDE (Schrödinger), rDock |

| Diverse Scoring Functions | Evaluate and rank poses using different physicochemical models. | X-Score, RF-Score, Vinardo, PLANTS ChemPLP |

| Consensus Scoring Script | Implements rank-by-rank, rank-by-vote, or weighted schemes. | Custom Python/R scripts, COCONS (Open Source) |

| Visualization & Analysis | Visualizes poses, analyzes interactions, calculates RMSD. | PyMOL, UCSF Chimera, Biovia Discovery Studio |

| High-Performance Computing (HPC) | Enables large-scale virtual screening and ensemble docking. | Local clusters, Cloud computing (AWS, Azure), GPU acceleration |

FAQs and Troubleshooting Guides

Q1: My virtual screening campaign returned a high-scoring compound from a single software, but it showed no activity in the lab assay. What could be the primary reason? A1: This is a classic symptom of algorithmic bias or a scoring function's specific parameterization. No single scoring function can perfectly model the complex physicochemical realities of protein-ligand binding. The high score may reflect an excellent fit for the function's internal biases (e.g., favoring certain interaction types) rather than true binding affinity. Consensus scoring is recommended to mitigate this.

Q2: How can I determine if my docking results are statistically significant and not just random chance? A2: You must employ a robust validation protocol. Key steps include:

- Re-docking: Successfully re-dock the native crystal structure ligand to its binding site (RMSD < 2.0 Å).

- Decoy Set Generation: Use a database of known non-binders or property-matched decoys (e.g., from DUD-E or DEKOIS).

- Enrichment Analysis: Dock the actives and decoys, then calculate the enrichment factor (EF) and plot the Receiver Operating Characteristic (ROC) curve. Poor early enrichment (EF1% < 5-10) or a low Area Under the Curve (AUC < 0.7) suggests the docking protocol has limited discriminatory power for your target.

Q3: I am getting vastly different top hits from two different docking programs (e.g., AutoDock Vina vs. Glide). Which one should I trust? A3: Do not trust one over the other arbitrarily. This discrepancy highlights the core challenge of single-algorithm dependence. The best practice is to use both sets of results to inform a consensus approach. Proceed with compounds that rank highly across multiple algorithms or have complementary favorable interactions predicted by the different programs.

Q4: What are the critical experimental controls for validating a computational hit from a docking study? A4: A minimal validation workflow includes:

- Dose-Response: Confirm activity with a full concentration curve (IC50/EC50).

- Counter-Screening: Test against related and unrelated targets to establish selectivity.

- Orthogonal Assay: Use a different assay technology (e.g., SPR for binding affinity, cellular reporter assay) to confirm the mechanism.

- Analogue Testing: Test structurally similar compounds; a meaningful Structure-Activity Relationship (SAR) supports the predicted binding mode.

Q5: My search function (conformer search, pose sampling) seems to miss plausible binding poses. How can I improve sampling? A5: This indicates insufficient sampling coverage. Remedies include:

- Increase Exhaustiveness: Raise the search parameters well above their defaults (e.g., `num_modes=100`, `exhaustiveness=100` in Vina).

- Use Different Search Algorithms: Combine programs whose search strategies differ (e.g., a genetic algorithm as in GOLD and Monte Carlo with local optimization as in Vina).

- Employ Enhanced Sampling: For MD-based docking, use techniques like replica exchange.

- Define a Larger Search Space: If the binding site is uncertain, use a larger grid box, but beware of increased false positives.
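As one way to make these sampling settings reproducible, the sketch below writes an AutoDock Vina configuration file from Python. Vina reads `key = value` options from a file passed with `--config`; the helper name `vina_config`, the file names, and the numeric values are illustrative assumptions, and suitable exhaustiveness and box sizes should be tuned per target.

```python
from typing import Sequence


def vina_config(receptor: str, ligand: str,
                center: Sequence[float], size: Sequence[float],
                exhaustiveness: int = 32, num_modes: int = 20,
                out: str = "docked.pdbqt") -> str:
    """Return the text of a Vina config file for one receptor-ligand pair."""
    cx, cy, cz = center
    sx, sy, sz = size
    lines = [
        f"receptor = {receptor}",
        f"ligand = {ligand}",
        f"center_x = {cx}",
        f"center_y = {cy}",
        f"center_z = {cz}",
        f"size_x = {sx}",   # box edge lengths in Å
        f"size_y = {sy}",
        f"size_z = {sz}",
        f"exhaustiveness = {exhaustiveness}",  # higher = more thorough search
        f"num_modes = {num_modes}",            # maximum number of poses reported
        f"out = {out}",
    ]
    return "\n".join(lines) + "\n"


# Hypothetical inputs; write the result to disk and run: vina --config conf.txt
config_text = vina_config("receptor.pdbqt", "ligand.pdbqt",
                          center=(10.0, 12.5, -3.0), size=(24, 24, 24),
                          exhaustiveness=64)
print(config_text)
```

Keeping the settings in a generated file means every compound in a screen is docked with identical parameters, and the file itself documents the run.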

Experimental Protocols

Protocol 1: Validation via Enrichment Study Using a Benchmarking Set

- Prepare the Protein Structure: Remove water and cofactors (except critical ones), add hydrogens, and assign partial charges using the docking software's recommended method.

- Prepare Ligand/Decoy Database: Download or generate a validated benchmark set (e.g., from DUD-E). Prepare ligands in 3D, optimizing for low-energy conformers and correct tautomers.

- Define the Binding Site: Center the grid box on the centroid of the co-crystallized ligand, with edge lengths of at least 20 × 20 × 20 Å.

- Perform Docking: Dock all active ligands and decoys using identical parameters.

- Analyze Results: Rank all compounds by their docking score. Calculate the Enrichment Factor at 1% (EF1%) and plot the ROC curve. The Area Under the ROC Curve (AUC) provides a single metric for performance.
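The analysis step above can be sketched as follows. This is a minimal pure-Python illustration under stated assumptions: the function names are hypothetical, labels are 1 for actives and 0 for decoys, and the AUC is computed by direct pairwise comparison, which is fine for benchmark-sized sets but slow for very large libraries.

```python
import math
from typing import Sequence


def enrichment_factor(scores: Sequence[float], labels: Sequence[int],
                      fraction: float = 0.01, lower_is_better: bool = True) -> float:
    """EF = (hit rate in the top fraction) / (hit rate in the whole set)."""
    n = len(scores)
    order = sorted(range(n), key=lambda i: scores[i], reverse=not lower_is_better)
    n_top = max(1, math.ceil(fraction * n))
    hits_top = sum(labels[i] for i in order[:n_top])
    return (hits_top / n_top) / (sum(labels) / n)


def roc_auc(scores: Sequence[float], labels: Sequence[int],
            lower_is_better: bool = True) -> float:
    """Probability that a random active outscores a random decoy (ties count 0.5)."""
    actives = [s for s, l in zip(scores, labels) if l]
    decoys = [s for s, l in zip(scores, labels) if not l]
    wins = 0.0
    for a in actives:
        for d in decoys:
            if a == d:
                wins += 0.5
            elif (a < d) == lower_is_better:
                wins += 1.0
    return wins / (len(actives) * len(decoys))


# Hypothetical docking scores (kcal/mol) for 2 actives and 8 decoys.
scores = [-10, -9, -8, -7, -6, -5, -4, -3, -2, -1]
labels = [1, 0, 1, 0, 0, 0, 0, 0, 0, 0]
print(enrichment_factor(scores, labels, fraction=0.2))
print(roc_auc(scores, labels))
```

With small active sets the top 1% may hold only a handful of compounds, so EF1% is noisy; reporting it alongside the AUC gives a more balanced picture.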

Protocol 2: Consensus Scoring Methodology

- Select Diverse Algorithms: Choose at least two docking programs with different scoring function philosophies (e.g., force-field based, empirical, knowledge-based).

- Standardized Docking: Dock the same compound library against the same prepared protein structure using each program. Use comparable, thorough search parameters.

- Normalize Scores: Convert raw scores from each program to a common scale, such as a percentile rank or a Z-score, within that program's result set.

- Apply Consensus Rule: Select hits based on a pre-defined rule (e.g., compounds that appear in the top 5% of at least two independent rankings, or the average of normalized ranks is in the top 10%).

Data Presentation

Table 1: Comparison of Single vs. Consensus Scoring Performance on DUD-E Benchmark

| Target Class | Single Algorithm (AutoDock Vina) AUC | Single Algorithm (Glide SP) AUC | Consensus (Rank Sum) AUC | % AUC Improvement of Consensus over AutoDock Vina |

|---|---|---|---|---|

| Kinase (EGFR) | 0.72 | 0.78 | 0.85 | 18% |

| GPCR (A2A) | 0.65 | 0.81 | 0.87 | 34% |

| Protease (Thrombin) | 0.69 | 0.75 | 0.82 | 19% |

| Nuclear Receptor (ERα) | 0.81 | 0.77 | 0.88 | 9% |

Table 2: Essential Research Reagent Solutions

| Item | Function in Docking/Validation |

|---|---|

| Protein Data Bank (PDB) Structure | High-resolution experimental (X-ray, Cryo-EM) structure of the target, essential for defining the binding site and for re-docking validation. |

| Benchmark Set (e.g., DUD-E, DEKOIS) | Curated sets of known active ligands and property-matched decoy molecules, required for objective assessment of scoring function enrichment. |

| Docking Software Suite (e.g., AutoDock Vina, Glide, GOLD) | Provides the algorithms for pose sampling (search) and scoring. Using multiple suites is critical for consensus. |

| Molecular Visualization Software (e.g., PyMOL, ChimeraX) | For structure preparation, visualization of docking poses, and analysis of protein-ligand interactions. |

| Assay Kits for Orthogonal Validation (e.g., Fluorescence Polarization, SPR Chips) | Essential experimental tools to move from in silico hits to confirmed bioactive compounds in biochemical or biophysical assays. |

Visualizations

Title: Consensus Scoring Workflow Diagram

Title: Single Algorithm Bias Leading to Failure

Technical Support & Troubleshooting Center

FAQs and Troubleshooting Guides

Q1: During re-docking, my ligand does not return to the crystallographic pose. The RMSD is >2.0 Å. What are the primary causes? A: High re-docking RMSD typically indicates issues with:

- Incorrect Protonation/Tautomer State: The ligand's charged state in the crystal structure may differ from your input file.

- Incomplete Protein Preparation: Missing residues, incorrect histidine protonation, or misplaced sidechains near the binding site can obstruct docking.

- Inappropriate Docking Parameters: The search algorithm's exhaustiveness or grid box size/center may be suboptimal.

- Force Field Incompatibility: Mismatch between the force field used for protein/ligand parameterization and the scoring function.

Q2: In cross-docking, performance is highly variable across different protein structures of the same target. How can I stabilize results? A: This highlights conformational diversity. Implement these steps:

- Structure Clustering: Use RMSD on binding site alpha carbons to cluster multiple available crystal structures. Perform cross-docking within and between clusters.

- Protein Ensemble Docking: Dock the ligand against multiple representative structures and consensus-score the results.

- Side-Chain Flexibility: Employ protocols that allow key binding site side chains (e.g., tyrosine, lysine) to be rotamerically flexible during docking.

Q3: In blind docking, the predicted binding site is incorrect. How can I improve binding site identification? A:

- Use Binding Site Prediction Tools: Run complementary tools like fpocket, DeepSite, or MetaPocket 2.0 before docking to define probable regions.

- Expand the Grid: Ensure your global search grid amply covers the entire protein surface, especially at protein-protein interfaces or allosteric pockets.

- Consensus Scoring: Use multiple, distinct scoring functions (e.g., empirical, force field-based, knowledge-based) to rank poses. True binders often score well across different functions.

Q4: My docking scores do not correlate with experimental binding affinities (IC50/Ki). What validation steps should I check? A: Poor correlation often stems from effects that standard docking protocols neglect or approximate crudely:

- Ligand Strain Energy: High-scoring poses may be geometrically strained. Always calculate the strain/energy penalty relative to the ligand's global minimum.

- Solvation/Entropy Effects: Standard docking scores often poorly estimate desolvation and entropic terms. Consider post-docking MM/GBSA or MM/PBSA calculations.

- Active/Decoy Validation: Ensure your docking protocol can reliably distinguish known actives from decoys (enrichment in early recovery). Use a benchmark set like DUD-E or DEKOIS 2.0.

Experimental Protocols for Docking Validation

Protocol 1: Standard Re-docking and Cross-Docking Validation

Objective: To assess a docking protocol's ability to reproduce known poses.

- Data Curation: For a target, compile a set of protein-ligand complexes from the PDB. Ensure resolution is <2.5 Å.

- Protein Preparation: (a) Add missing hydrogens. (b) Assign protonation states at pH 7.4 using tools like PropKa. (c) Optimize hydrogen bonding networks.

- Ligand Preparation: Extract the co-crystallized ligand. Generate correct 3D coordinates and assign charges matching the force field (e.g., Gasteiger for empirical scoring).

- Re-docking: Define a grid box centered on the native ligand. Execute docking. Calculate the RMSD between the top-ranked pose and the crystal pose.

- Cross-docking: Use each protein structure to dock all ligands from the other complexes. Record the success rate (RMSD < 2.0 Å).
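The RMSD and success-rate calculations in the last two steps can be sketched as below. The sketch assumes that both poses list the same heavy atoms in the same order and share the receptor's coordinate frame (so no superposition is applied), and it ignores ligand symmetry; dedicated tools handle symmetry-equivalent atoms, which matters for ligands such as those with flipped phenyl rings. Function names and coordinates are hypothetical.

```python
import math
from typing import Sequence, Tuple

Coord = Tuple[float, float, float]


def rmsd(pose_a: Sequence[Coord], pose_b: Sequence[Coord]) -> float:
    """In-place heavy-atom RMSD between two poses with identical atom ordering."""
    if not pose_a or len(pose_a) != len(pose_b):
        raise ValueError("poses must be non-empty and contain the same atoms")
    squared = sum((xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
                  for (xa, ya, za), (xb, yb, zb) in zip(pose_a, pose_b))
    return math.sqrt(squared / len(pose_a))


def success_rate(rmsds: Sequence[float], threshold: float = 2.0) -> float:
    """Fraction of docking runs whose RMSD falls below the threshold (in Å)."""
    return sum(1 for r in rmsds if r < threshold) / len(rmsds)


# Hypothetical two-atom example: the docked pose is shifted 1 Å along z.
crystal = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
docked = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
print(rmsd(crystal, docked))
print(success_rate([0.8, 1.9, 3.2, 2.0]))
```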

Protocol 2: Consensus Scoring for Virtual Screening

Objective: To improve hit rates by combining multiple scoring functions.

- Docking Execution: Dock a library (containing known actives and decoys) against the target using a single, robust search algorithm (e.g., Vina, Glide SP).

- Multiple Scoring: Re-score the generated pose library (e.g., top 20 poses per ligand) with 3-5 methodologically diverse scoring functions (e.g., empirical, force-field-based, knowledge-based).

- Score Normalization: Normalize raw scores from each function to a common scale (e.g., Z-score) across the ligand set.

- Consensus Methods: Apply consensus rules:

- Rank-by-Rank: Rank ligands by their average rank across all functions.

- Rank-by-Sum: Rank ligands by the sum of their normalized scores.

- Validation: Plot the enrichment curve and calculate the EF1% (Enrichment Factor at 1% of screened database) for single and consensus methods.

Data Presentation

Table 1: Typical Docking Task Parameters and Validation Metrics

| Docking Task | Primary Goal | Key Performance Metric | Acceptable Threshold | Key Parameter to Adjust |

|---|---|---|---|---|

| Re-docking | Protocol validation | RMSD to X-ray pose | ≤ 2.0 Å | Search exhaustiveness, box center |

| Cross-docking | Handling receptor flexibility | Success Rate (RMSD < 2.0 Å) | Varies by target | Protein structure selection, side-chain flexibility |

| Virtual Screening | Hit identification | EF1% (Enrichment Factor) | > 10-20 | Scoring function, consensus method |

| Blind Docking | Binding site discovery | Correct Site Identification | N/A | Grid box size, binding site prediction prior |

Table 2: Comparison of Common Scoring Functions for Consensus Strategies

| Scoring Function | Type (Empirical, FF, Knowledge) | Strengths | Weaknesses | Role in Consensus |
|---|---|---|---|---|
| X-Score | Empirical (H-bond, hydrophobic, and van der Waals terms) | Good affinity prediction | Sensitive to small structural changes | Provides a classical empirical baseline |
| AutoDock Vina | Empirical (with knowledge-based elements) | Fast, good pose accuracy | Scores tend to grow with ligand size; weak affinity correlation | Default for initial pose generation |
| MM/GBSA | Force Field-Based (Post-dock) | Accounts for solvation via implicit solvent | Computationally expensive; entropy usually omitted or approximated | Refines final ranking of top poses |
| PLP | Empirical (Piecewise Linear Potential) | Robust across diverse systems | Less accurate for absolute affinity | Useful as a diverse second opinion |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Docking/Validation |

|---|---|

| PDB Protein Structure | The 3D atomic starting model for the receptor. Must be curated for missing atoms/loops. |

| Ligand Structure File (SDF/MOL2) | 3D representation of the small molecule, requiring correct protonation, tautomer, and charge states. |

| Force Field Parameters | Set of equations and constants (e.g., AMBER, CHARMM, OPLS) defining atomic interactions for energy calculation. |

| Scoring Function Library | Collection of algorithms (Vina, Glide, ChemPLP, etc.) to evaluate and rank protein-ligand poses. |

| Benchmark Dataset (e.g., DUD-E) | Curated sets of known active molecules and property-matched decoys for method validation and training. |

| Structure Preparation Suite | Software (e.g., MOE, Maestro, UCSF Chimera) to add H's, assign charges, and optimize protein/ligand structures. |

| Consensus Scoring Script | Custom or published script to normalize and aggregate scores from multiple docking runs. |

Visualizations

Title: Docking Task Workflow and Validation Path

Title: Consensus Scoring Methodology Flow

Building Your Consensus Engine: Strategies, Workflows, and Implementation

Troubleshooting Guides & FAQs

Q1: My prepared protein structure contains unexpected gaps or missing loops in the binding site region. What steps should I take? A: This is often due to poor electron density in the original crystallographic data. First, consult the PDB file's REMARK 465 and REMARK 470 records, which list the residues and the atoms, respectively, that were not observed in the experiment. For homology models, check the alignment quality. Solutions include:

- Use a loop modeling tool (e.g., ModLoop, Rosetta loop modeling) to rebuild missing segments.

- If the gap is small (<4 residues), consider using a restraint during energy minimization to gently regularize the region without distorting the known structure.

- As a last resort for non-critical loops, remove the incomplete residues but document this alteration meticulously, as it can affect docking results.

Q2: How do I resolve clashes or unrealistic bond lengths after adding missing hydrogen atoms and assigning protonation states? A: Clashes often indicate incorrect protonation or tautomeric states for key residues (e.g., HIS, ASP, GLU) or a need for structural refinement.

- Re-check Protonation: Use a tool like H++ or PropKa at the specific pH of your experiment. For buried residues, the pKa can shift significantly.

- Perform Constrained Energy Minimization: Use a force field (e.g., AMBER, CHARMM) with restraints on heavy atom positions to relax the added hydrogens and relieve clashes while preserving the experimental backbone conformation. 2000 steps of steepest descent followed by 2000 steps of conjugate gradient is a typical starting protocol.

- Validate: Ensure minimized structure retains its native hydrogen-bonding network.

Q3: The binding site is not well-defined or is unknown for my target. What are the best practices for binding site prediction? A: Use a consensus approach from multiple computational methods:

- Run Multiple Predictors: Use at least three different algorithms: one geometry-based (e.g., FPocket), one energy-based (e.g., GRID), and one template-based (e.g., using COACH or a literature search for homologous proteins).

- Generate a Consensus Site: Overlap the top predicted pockets from each method. The region with the highest overlap is your strongest candidate.

- Validate with Known Data: If any experimental data exists (e.g., a mutagenesis study showing a critical residue), use it to filter or rank predictions. The final site should be a contiguous cluster of residues with mixed physicochemical properties.

Q4: My docking scores show poor correlation with experimental binding affinities (IC50/Ki) after a seemingly correct preparation. What pre-docking issues could be the cause? A: When the docking settings themselves look sound, the cause is often a preparation artifact:

- Systematic Error in Ligand Preparation: Ensure all ligands were prepared with identical parameters (tautomer, ionization, 3D conformation generation). A single mis-protonated ligand can skew validation statistics.

- Inappropriate Binding Site Definition: A site that is too large introduces noise; a site that is too small restricts plausible poses. Re-evaluate site dimensions using the bounding box of all cocrystallized ligands from a relevant benchmark set.

- Unaccounted Protein Flexibility: If your validation set includes ligands inducing sidechain movements, a single rigid receptor will fail. Consider generating multiple receptor conformations (e.g., from MD snapshots or alternative crystal structures).

Experimental Protocols for Cited Key Experiments

Protocol 1: Consensus Binding Site Prediction and Definition

- Objective: To define a robust binding pocket for docking when no co-crystal ligand is available.

- Method:

- Input the prepared protein structure (PDB format).

- Run three site prediction tools: FPocket (command: `fpocket -f target.pdb`), DoGSiteScorer (via the ProteinsPlus web server), and MetaPocket.

- Collect the top 3 predicted pockets from each tool based on score/size.

- Superpose all predictions in molecular visualization software (e.g., PyMOL).

- Define the consensus site as all residues with any atom within 6.5 Å of the centroids of the overlapping predicted pockets.

- Output the defined site as a grid box centered on the geometric center of the consensus residues, with dimensions extending 8-10 Å beyond the extreme coordinates of those residues.
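The final step, turning the consensus residues into a grid box, can be sketched as follows. The helper name `grid_box` and the coordinates are hypothetical; the sketch assumes the input is a list of atom coordinates (in Å) for the consensus residues, centers the box on their geometric center, and sizes each edge so the box extends at least `padding` Å beyond the extreme coordinate on both sides.

```python
from typing import Sequence, Tuple

Coord = Tuple[float, float, float]


def grid_box(coords: Sequence[Coord], padding: float = 8.0) -> Tuple[Coord, Coord]:
    """Return (center, size) of a box around the given atoms.

    center: mean position of the atoms (their geometric center).
    size:   edge lengths such that the box reaches `padding` Å past the
            farthest atom along each axis, measured from the center.
    """
    if not coords:
        raise ValueError("no coordinates supplied")
    axes = list(zip(*coords))  # ([x...], [y...], [z...])
    center = tuple(sum(axis) / len(axis) for axis in axes)
    size = tuple(
        2.0 * (max(max(axis) - c, c - min(axis)) + padding)
        for axis, c in zip(axes, center)
    )
    return center, size


# Hypothetical consensus-site atoms.
site_atoms = [(0.0, 0.0, 0.0), (10.0, 4.0, 2.0)]
center, size = grid_box(site_atoms, padding=8.0)
print(center, size)
```

Because the geometric center need not coincide with the midpoint of the extreme coordinates, the edge length is derived from the larger of the two center-to-extreme distances on each axis.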

Protocol 2: Preparation and Validation of a Receptor Structure from a Crystal Structure

- Objective: To generate a biophysically realistic, minimized receptor structure ready for docking.

- Method:

- Retrieve & Clean: Download the PDB file. Remove all non-protein entities (water, ions, ligands) except crucial cofactors. For residues with alternate conformations, keep the highest-occupancy conformer.

- Add Missing Atoms: Use `pdb4amber` or `PDB2PQR` to add missing heavy atoms in incomplete residues (e.g., truncated side chains). For missing loops >4 residues, consider homology modeling.

- Assign Protonation States: Use PropKa 3.0 (web server) to calculate pKa values of titratable residues and infer their protonation states at the target pH (e.g., 7.4). Manually set states for HIS (HID, HIE, HIP), ASP, GLU, etc., accordingly.

- Add Hydrogens & Minimize: Load the structure into UCSF Chimera or Schrödinger Maestro. Add hydrogens and assign partial charges (AMBER ff14SB). Run restrained minimization (2000 steps SD, 2000 steps CG) with restraints on protein heavy atoms (force constant 10 kcal/mol·Å²) to relieve clashes.

- Validate: Check Ramachandran plot (≥95% in favored regions), clash score, and bond length/angle deviations from ideal values using MolProbity.

Data Presentation

Table 1: Comparison of Binding Site Prediction Tools

| Tool Name | Algorithm Type | Input Required | Key Output Metric | Typical Runtime (CPU) | Best For |

|---|---|---|---|---|---|

| FPocket | Geometry & Voronoi | Protein Structure (.pdb) | Pocket Score (PS), Druggability Score | 1-5 minutes | Rapid, unbiased pocket detection |

| DoGSiteScorer | Difference of Gaussians | Protein Structure (.pdb) | Drug Score (volume, hydrophobicity) | 2-10 minutes | Detailed subpocket analysis |

| MetaPocket 2.0 | Consensus (8 methods) | Protein Structure (.pdb) | Consensus Score | 5-15 minutes | Improving prediction reliability |

| GRID | Interaction Energy Probes | Protein Structure (.pdb) | Interaction Energy (kcal/mol) | 10-30 minutes | Identifying energetic "hot spots" |

Table 2: Impact of Protonation State on Docking Validation Metrics (Example Dataset)

| Residue | State Assumed | Correlation (R²) to Experimental Ki | Mean Docking Score (kcal/mol) | RMSD of Top Pose (Å) |

|---|---|---|---|---|

| HIS-12 | HID (δ-protonated) | 0.72 | -8.5 | 1.2 |

| HIS-12 | HIE (ε-protonated) | 0.65 | -7.9 | 1.8 |

| HIS-12 | HIP (doubly protonated) | 0.41 | -10.2 | 2.5 |

| GLU-87 | Deprotonated (default) | 0.80 | -9.1 | 1.1 |

| GLU-87 | Protonated | 0.35 | -6.8 | 3.4 |

Visualizations

Workflow: System Preparation and Site Definition

Research Context: Pre-Docking Steps Feed Validation Thesis

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Specific Tool/Software | Function in Pre-Docking |

|---|---|---|

| Structure Preparation Suite | UCSF Chimera, Schrödinger Protein Prep Wizard | Graphical environment for adding hydrogens, assigning charges, filling missing loops, and performing energy minimization. |

| Protonation State Calculator | PropKa 3.0, H++ Server | Predicts pKa shifts for protein residues to determine correct protonation/tautomeric states at a given pH. |

| Molecular Force Field | AMBER ff14SB, CHARMM36 | Provides parameters for partial atomic charges and bond energies, essential for energy minimization. |

| Binding Site Predictor | FPocket, DoGSiteScorer, MetaPocket | Identifies potential ligand binding cavities on a protein surface using geometric or energetic algorithms. |

| Visualization & Analysis | PyMOL, VMD | Critical for visualizing and comparing predicted binding sites, inspecting minimized structures, and defining grid boxes. |

| Scripting Framework | Python (with MDAnalysis, Biopython) | Automates repetitive preparation and analysis tasks, ensuring reproducibility and consistency across datasets. |

Technical Support Center: Troubleshooting Guides & FAQs

FAQ: Score Integration & Experimental Validation

Q1: During consensus scoring, my normalized values show extreme compression (e.g., all values between 0.99-1.00). What is the cause and how do I fix it? A: This is typically caused by applying Min-Max normalization to a dataset containing severe outliers. The outlier's extreme value becomes the new max, compressing the distribution of all other data points.

- Solution: Switch to a robust scaling method. We recommend Quantile Normalization or Z-score normalization with outlier clipping.

- Protocol: For Z-score with clipping:

- Calculate the mean (µ) and standard deviation (σ) of your raw score set.

- Define clipping boundaries (e.g., µ ± 3σ).

- Clip any raw score outside these boundaries to the boundary values.

- Apply Min-Max normalization to the clipped dataset to bring it to your target range (e.g., [0,1]).
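The clipping protocol above can be sketched in pure Python as follows. The function name and the score values are hypothetical, and the population standard deviation is an arbitrary choice for the example. Note that the outlier itself inflates σ, so clipping at µ ± 3σ only partly relieves the compression; bounds based on the median and IQR are a more robust variant.

```python
import statistics
from typing import List, Sequence


def clip_then_minmax(scores: Sequence[float], n_sigma: float = 3.0) -> List[float]:
    """Clip scores to mean ± n_sigma * SD, then Min-Max scale them to [0, 1]."""
    mu = statistics.mean(scores)
    sigma = statistics.pstdev(scores)
    lower, upper = mu - n_sigma * sigma, mu + n_sigma * sigma
    clipped = [min(max(s, lower), upper) for s in scores]
    lo, hi = min(clipped), max(clipped)
    if hi == lo:  # all scores identical: nothing to scale
        return [0.0 for _ in clipped]
    return [(s - lo) / (hi - lo) for s in clipped]


# Hypothetical raw scores: 19 ordinary values and one severe outlier (-60).
raw = [-7.0] * 10 + [-8.0] * 9 + [-60.0]
normalized = clip_then_minmax(raw)
print(min(normalized), max(normalized), normalized[10])
```

The output keeps the numeric direction of the input, so for energy-like scores where more negative is better, use `1 - x` afterwards if a higher-is-better scale is needed.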

Q2: After converting multiple docking scores (Glide SP, GOLD, AutoDock Vina) to a unified metric, the consensus rank contradicts known experimental binding affinities. How should I validate my normalization pipeline? A: This indicates a potential flaw in the normalization strategy or weight assignment. Implement the following validation protocol.

- Validation Protocol: Retrospective Benchmarking

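- Curate a Validation Set: Assemble a dataset of protein-ligand complexes with reliably measured experimental Ki/Kd values, spanning both potent and weak binders. Decoys have no measured affinity, so reserve them for enrichment analysis rather than this correlation check.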

- Pre-Normalization Correlation Check: Calculate Spearman's Rank Correlation Coefficient (ρ) between each raw scoring function's ranks and the experimental affinity ranks. Record as Baseline ρ.

- Apply Normalization: Convert all raw scores using your chosen technique (e.g., Min-Max, Z-score).

- Generate Consensus Score: Use a simple mean or weighted sum of normalized scores.

- Post-Normalization Correlation Check: Calculate ρ between the consensus rank and the experimental affinity rank.

- Compare: Successful normalization should yield a consensus ρ significantly higher than the best individual Baseline ρ. If not, iterate on techniques (e.g., try Percentile Rank) or consensus weights.
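The correlation checks in this protocol can be sketched without external libraries as below. The helper names, the pKd values, and the docking scores are hypothetical. Docking energies are negated before the comparison so that a higher value means a better predicted binder, matching the direction of pKd.

```python
import math
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Ascending ranks starting at 1; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rho: Pearson correlation of the rank-transformed data.

    Raises ZeroDivisionError if either input is constant.
    """
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = math.sqrt(sum((a - mx) ** 2 for a in rx))
    sy = math.sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy)


# Hypothetical data: experimental pKd and two sets of docking scores.
pkd = [9.0, 7.5, 6.0, 5.0]
vina = [-9.5, -7.2, -6.0, -5.0]
glide = [-8.0, -10.5, -6.5, -5.8]

baseline_vina = spearman([-s for s in vina], pkd)
baseline_glide = spearman([-s for s in glide], pkd)
print(baseline_vina, baseline_glide)
```

The same `spearman` call applied to the consensus score gives the post-normalization value to compare against these baselines.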

Q3: I need to combine a probabilistic score (e.g., p-value from a machine learning model) with an energy-based score (e.g., binding free energy in kcal/mol). Which normalization technique is most appropriate? A: Probabilistic and energy-based scores have fundamentally different distributions. Percentile Rank (Rank Normalization) is the most suitable technique here, as it translates both distributions onto a uniform 0-100 scale based on relative standing, making them comparable.

- Protocol for Percentile Rank:

- For each scoring function separately, sort all compounds in ascending order (worst to best). For p-values, lower is typically better; for binding energy, more negative is better.

- Assign a rank r from 1 to N.

- Calculate Percentile Rank for each compound: (r / N) * 100. Alternatively, for a unified 0-1 scale: (r - 1) / (N - 1).

- The resulting values for both metrics are now on a comparable scale where a higher value indicates a better rank.
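The percentile-rank protocol can be illustrated as follows. The function name `percentile_rank`, the `higher_is_better` flag, and the example values are assumptions for demonstration; ties are broken by input order here, whereas a production pipeline might prefer average ranks.

```python
def percentile_rank(values, higher_is_better):
    """Map raw values to (0, 100]; the best compound receives 100."""
    n = len(values)
    # Sort worst -> best so that the best compound receives rank N.
    order = sorted(range(n), key=lambda i: values[i], reverse=not higher_is_better)
    pct = [0.0] * n
    for rank, idx in enumerate(order, start=1):
        pct[idx] = rank / n * 100
    return pct

# Hypothetical data for four compounds; lower is better for both metrics.
binding_energy = [-9.5, -7.2, -6.0, -5.0]   # kcal/mol
p_value = [0.20, 0.01, 0.50, 0.05]

pct_energy = percentile_rank(binding_energy, higher_is_better=False)
pct_pvalue = percentile_rank(p_value, higher_is_better=False)
consensus = [(a + b) / 2 for a, b in zip(pct_energy, pct_pvalue)]
print(pct_energy, pct_pvalue, consensus)
```

Because only relative standing is kept, the two metrics contribute equally to the mean regardless of their original units or spread.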

Data Presentation: Comparison of Common Normalization Techniques

Table 1: Key Characteristics of Score Normalization Techniques

| Technique | Formula | Range | Robust to Outliers? | Best For |

|---|---|---|---|---|

| Min-Max | ( X' = \frac{X - X_{min}}{X_{max} - X_{min}} ) | [0, 1] | No | Bounded, outlier-free scores. |

| Z-score (Standardization) | ( X' = \frac{X - \mu}{\sigma} ) | (-∞, +∞) | Moderate | Scores approximating a normal distribution. |

| Robust Scaling | ( X' = \frac{X - median}{IQR} ) | (-∞, +∞) | Yes | Scores with significant outliers. |

| Percentile Rank | ( Rank\% = \frac{r}{N} \times 100 ) | [0, 100] | Yes | Combining scores of different types/distributions. |

| Decimal Scaling | ( X' = \frac{X}{10^j} ) | (-1, 1) | No | Simple reduction of absolute scale. |

Table 2: Example Normalization Output for Docking Scores (Hypothetical Data)

| Compound | Raw Vina (kcal/mol) | Raw Glide SP | Norm. Vina (Min-Max) | Norm. Glide SP (Z-score) | Consensus (Mean) |

|---|---|---|---|---|---|

| Ligand A | -9.5 | -8.0 | 1.000 | 0.144 | 0.734 |

| Ligand B | -7.2 | -10.5 | 0.489 | 1.346 | 0.744 |

| Ligand C | -6.0 | -6.5 | 0.222 | -0.577 | 0.186 |

| Ligand D | -5.0 | -5.8 | 0.000 | -0.913 | 0.000 |

| Worst / Best | -5.0 / -9.5 | -5.8 / -10.5 | - | - | - |

| Mean / SD | -6.93 / 1.94 | -7.70 / 2.08 | - | - | - |

Note: Both scores are sign-inverted during normalization so that a higher normalized value corresponds to a better (more negative) raw score. Vina is normalized via Min-Max. Glide SP is shown as a Z-score (using the sample SD) and is then Min-Max scaled to 0-1 before averaging, so the Consensus column is the mean of the two 0-1 values (for Glide SP: 0.468, 1.000, 0.149, 0.000) rather than of the Z-score column directly. This shows how normalization enables cross-metric averaging.

Experimental Protocols

Protocol: Implementing a Robust Consensus Docking Workflow with Normalization

Objective: To integrate scores from three distinct docking programs into a single, reliable consensus ranking.

Materials: Docking software suite (e.g., AutoDock Vina, Glide, GOLD), a curated library of ligands, a prepared protein target structure, and a scripting environment (Python/R).

Methodology:

- Docking Execution: Dock all ligands against the target using each of the three programs. Record the primary output score for each (e.g., Vina score, Glide SP score, GOLD ChemScore).

- Data Collection & Alignment: Compile results into a table with columns: LigandID, VinaScore, GlideScore, GoldScore.

- Outlier Inspection: Generate box plots for each score set to visually identify outliers.

- Normalization:

- If outliers are minimal, apply Min-Max normalization per column to range [0,1].

- If outliers are present, apply Percentile Rank normalization per column.

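- Consensus Calculation: First orient every normalized column so that a higher value means a better score (for energy-like scores where more negative is better, use 1 − X' after Min-Max, or assign the highest percentile to the most negative score). Then, for each ligand, calculate the arithmetic mean of its three normalized scores. This is its Consensus Score.

- Ranking: Rank all ligands by Consensus Score in descending order, so that the ligand with the highest consensus value is ranked first.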

- Validation: Compare the top-ranked consensus ligands against known actives from literature or a withheld test set. Calculate enrichment factors (EF1%, EF10%) to quantify performance.
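The enrichment factor used in the validation step can be computed with a short helper. This sketch assumes consensus scores oriented so that higher is better and a boolean active/decoy label per ligand; the function name and the synthetic 100-compound library are illustrative only.

```python
def enrichment_factor(scores, is_active, fraction):
    """EF = (hit rate in the top fraction) / (hit rate in the whole library)."""
    n = len(scores)
    n_top = max(1, round(fraction * n))
    ranked = sorted(zip(scores, is_active), key=lambda pair: pair[0], reverse=True)
    hits_top = sum(1 for _, active in ranked[:n_top] if active)
    total_actives = sum(1 for active in is_active if active)
    if total_actives == 0:
        raise ValueError("EF is undefined without any actives")
    return (hits_top / n_top) / (total_actives / n)

# Synthetic library: 100 ligands, 10 actives, 5 of which rank in the top 5.
consensus = [100 - i for i in range(100)]
is_active = [i < 5 or 50 <= i < 55 for i in range(100)]

ef1 = enrichment_factor(consensus, is_active, 0.01)
ef10 = enrichment_factor(consensus, is_active, 0.10)
print(ef1, ef10)
```

An EF of 1 corresponds to random selection; the maximum attainable value at a given fraction is limited by the proportion of actives in the library, which is worth keeping in mind when comparing EF values across benchmarks.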

Mandatory Visualization

Normalization & Consensus Scoring Workflow

Validation Pathway for Consensus Docking

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Docking Validation & Consensus Studies

| Item | Function in Context | Example/Note |

|---|---|---|

| Curated Benchmark Dataset | Provides ground truth with known active/inactive compounds for validation. | Directory of Useful Decoys (DUD-E), PDBbind core set. |

| Scripting Language (Python/R) | Automates the normalization, consensus calculation, and statistical analysis pipeline. | Use pandas & scikit-learn in Python; tidyverse in R. |

| Statistical Analysis Library | Calculates performance metrics to validate the consensus method. | scikit-learn (metrics), SciPy (stats) in Python. |

| Visualization Toolkit | Creates plots to inspect score distributions and outliers pre/post-normalization. | matplotlib, seaborn in Python; ggplot2 in R. |

| Docking Software Suite | Generates the heterogeneous raw scores that require normalization. | Commercial: Schrodinger Suite, MOE. Free: AutoDock Vina. |

| Normalization Software/Code | Implements the mathematical transformation of scores. | Custom scripts or built-in functions in data science libraries. |

Technical Support Center: Troubleshooting & FAQs

This technical support center is designed to assist researchers implementing consensus scoring methods within the context of docking validation and virtual screening research. The guidance is framed by best practices for robust consensus scoring as a key component of computational drug discovery.

Frequently Asked Questions (FAQs)

Q1: My consensus scoring results show no improvement over my best single scoring function. What could be wrong? A: This is a common issue. First, verify that your individual scoring functions are sufficiently diverse. If they are highly correlated (e.g., Pearson r > 0.8), consensus will offer little benefit. Calculate correlation matrices between scores for your benchmark actives/decoys. Consider incorporating functions from different mathematical families (e.g., force-field-based, empirical, knowledge-based). Secondly, check your implementation of the consensus logic. A simple error in rank averaging can negate expected performance gains.

Q2: When implementing exponential ranking, how do I choose the optimal scaling factor (λ)? A: The scaling factor (λ) controls the penalty for poor ranks. A value that is too high over-penalizes, making the consensus overly reliant on a few top functions. A value too low makes it behave like simple averaging. You must empirically determine λ using a validation set separate from your final test set. Perform a parameter sweep (e.g., λ from 0.1 to 2.0 in 0.1 increments) and select the value that maximizes your chosen metric (e.g., Enrichment Factor at 1% or AUC-ROC) on the validation set.

Q3: How should I handle missing scores for a particular compound from one of the scoring functions? A: Do not simply omit the compound or the function. Best practice is to implement a robust imputation strategy. For rank-based methods, one approach is to assign the worst possible rank from that function to the compound with the missing value. Document this handling clearly. An alternative is to use the average rank of the compound from other functions, but this can reduce diversity. Consistency in handling missing data across training and application sets is critical.

Q4: My exponential ranking consensus is computationally expensive on large virtual libraries. How can I optimize it?

A: The exponential sum calculation for each compound is the bottleneck. Pre-compute the exponential term e^{-λr} for all possible ranks r (from 1 to N, where N is library size) and store them in a lookup array. This replaces the expensive per-compound exponentiation with a simple array access. Furthermore, ensure your ranking is performed using efficient, vectorized operations (e.g., using NumPy's argsort in Python) rather than nested loops.

Q5: How do I validate that my consensus algorithm is statistically superior to a single scorer? A: You must perform statistical significance testing. For metrics like AUC-ROC, use DeLong's test to compare the AUC of the consensus method against the AUC of the best individual scorer; a p-value < 0.05 is typically required. For early enrichment metrics (EF1%), use bootstrapping: repeatedly resample your benchmark set (with replacement), calculate the metric for both methods on each resample, and record the paired difference. If the 95% confidence interval of that difference excludes zero, the improvement is significant at the 5% level. Avoid running a t-test across the bootstrap replicates, because its p-value shrinks with the number of resamples rather than with the amount of data.

Experimental Protocols for Key Validation Experiments

Protocol 1: Benchmarking Individual Scoring Function Diversity Objective: To assess the complementarity of scoring functions prior to consensus building.

- Input: A standardized benchmark dataset (e.g., DUD-E, DEKOIS 2.0) containing known active compounds and property-matched decoys.

- Docking: Dock all compounds using a consistent docking engine and protocol.

- Scoring: Score the poses for each compound with M different scoring functions (S1, S2, ..., SM).

- Analysis: For each scoring function, calculate the AUC-ROC and EF1% for ranking actives over decoys. Generate an M x M correlation matrix (Pearson's r) of the raw scores or ranks across all compounds.

- Success Criteria: Selected functions should show variable performance profiles (some high AUC, some high EF) and inter-correlation coefficients mostly below 0.7-0.8.
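The correlation analysis in this protocol can be sketched as a pairwise scan that flags redundant scoring functions. The function names, the 0.8 threshold default, and the three toy score columns (labelled S1 to S3 after the protocol's notation) are assumptions for illustration.

```python
from itertools import combinations
from statistics import mean

def pearson_r(x, y):
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)

def redundant_pairs(score_table, threshold=0.8):
    """Return (name_a, name_b, r) for every pair whose |r| exceeds the threshold."""
    flagged = []
    for a, b in combinations(score_table, 2):
        r = pearson_r(score_table[a], score_table[b])
        if abs(r) > threshold:
            flagged.append((a, b, round(r, 3)))
    return flagged

# Toy scores for five compounds from three hypothetical scoring functions.
score_table = {
    "S1": [1.0, 2.0, 3.0, 4.0, 5.0],
    "S2": [2.0, 4.0, 6.0, 8.0, 10.5],   # nearly a rescaled copy of S1
    "S3": [3.0, 1.0, 4.0, 1.0, 5.0],
}
flagged = redundant_pairs(score_table)
print(flagged)
```

A flagged pair suggests that one of the two functions adds little independent information; passing ranks instead of raw scores to the same helper yields the rank-based variant mentioned in the analysis step.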

Protocol 2: Implementing and Tuning Exponential Ranking Consensus Objective: To construct and optimize an exponential ranking consensus score.

- Rank Assignment: For each scoring function i, rank all N compounds from best (rank=1) to worst (rank=N) based on the score (e.g., lowest docking energy is best).

- Consensus Score Calculation: For each compound j, compute the consensus score C_j using: C_j = Σ_{i=1}^{M} e^{-λ * r_{ij}}, where r_{ij} is the rank of compound j by function i, and λ is the scaling factor.

- Final Ranking: Rank all compounds based on C_j (highest value is best).

- Parameter Tuning: Using a dedicated validation set, repeat steps 1-3 for a range of λ values. Plot λ versus the target validation metric (e.g., EF1%) to identify the optimum.
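A minimal sketch of Protocol 2 follows, using the formula C_j = Σ e^{-λ r_ij} with a precomputed lookup table for the exponential terms. It assumes that lower scores are better for every function; the helper names, the toy scores, and the use of "actives in the top k" as the tuning metric are illustrative assumptions (EF1% or AUC-ROC on a real validation set would take its place).

```python
import math

def ranks_from_scores(scores):
    """Rank 1 = best; assumes a lower (more negative) score is better."""
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    ranks = [0] * len(scores)
    for rank, idx in enumerate(order, start=1):
        ranks[idx] = rank
    return ranks

def exponential_consensus(score_columns, lam):
    n = len(score_columns[0])
    # Lookup table: exp(-lam * r) for every possible rank r (index 0 unused).
    lookup = [0.0] + [math.exp(-lam * r) for r in range(1, n + 1)]
    consensus = [0.0] * n
    for column in score_columns:
        for j, rank in enumerate(ranks_from_scores(column)):
            consensus[j] += lookup[rank]
    return consensus

def actives_in_top_k(consensus, is_active, k):
    top = sorted(range(len(consensus)), key=lambda j: consensus[j], reverse=True)[:k]
    return sum(1 for j in top if is_active[j])

def sweep_lambda(score_columns, is_active, lambdas, k):
    """Return the lambda maximizing the validation metric (first one on ties)."""
    return max(lambdas, key=lambda lam: actives_in_top_k(
        exponential_consensus(score_columns, lam), is_active, k))

# Toy validation set: six compounds scored by three hypothetical functions.
score_columns = [
    [-9.0, -8.0, -7.0, -6.0, -5.0, -4.0],
    [-5.0, -9.0, -8.0, -4.0, -7.0, -6.0],
    [-8.5, -9.5, -6.0, -7.0, -5.5, -5.0],
]
is_active = [True, True, False, False, False, False]
lambdas = [round(0.1 * i, 1) for i in range(1, 21)]

consensus = exponential_consensus(score_columns, lam=0.5)
best_lambda = sweep_lambda(score_columns, is_active, lambdas, k=2)
print([round(c, 3) for c in consensus], best_lambda)
```

With raw ranks in the exponent, the contribution of a compound ranked beyond a few multiples of 1/λ is effectively zero, so on very large libraries many low-ranked compounds end up with near-identical consensus values; this is usually acceptable because only the top of the list is carried forward.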

Protocol 3: Statistical Validation of Consensus Improvement Objective: To rigorously test if the consensus method outperforms the baseline.

- Performance Measurement: On the independent test set, calculate the primary metric (e.g., AUC-ROC) for the consensus model (with tuned λ) and for the top 3 individual scoring functions.

- Statistical Testing:

- For AUC-ROC: Apply DeLong's test for the comparison of two correlated ROC curves (consensus vs. best individual).

- For Early Enrichment: Perform a bootstrapping procedure (e.g., 5000 iterations). On each iteration, randomly sample compounds from the test set (with replacement), recalculate EF1% for both methods, and record the difference.

- Result Interpretation: Compute the 95% confidence interval for the performance difference from bootstrap. If the entire interval is above zero, the improvement is statistically significant at the 5% level.
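The bootstrap branch of this protocol can be sketched as below. The sketch assumes scores oriented so that higher is better, uses a percentile confidence interval, and runs on synthetic data; the function names, the 5% fraction (chosen so the toy set of 200 compounds has a meaningful top slice), and the reduced iteration count are illustrative assumptions.

```python
import random

def enrichment_factor(scores, labels, fraction):
    n = len(scores)
    n_top = max(1, round(fraction * n))
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits = sum(1 for _, active in ranked[:n_top] if active)
    return (hits / n_top) / (sum(labels) / n)

def bootstrap_ef_difference(cons, base, labels, fraction, n_boot=5000, seed=0):
    """95% percentile CI of EF(consensus) - EF(baseline) over paired resamples."""
    rng = random.Random(seed)
    n = len(labels)
    diffs = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        lab = [labels[i] for i in idx]
        if not any(lab):  # EF is undefined if a resample contains no actives
            continue
        diffs.append(enrichment_factor([cons[i] for i in idx], lab, fraction)
                     - enrichment_factor([base[i] for i in idx], lab, fraction))
    diffs.sort()
    lower = diffs[int(0.025 * len(diffs))]
    upper = diffs[int(0.975 * len(diffs)) - 1]
    return lower, upper

# Synthetic test set: 200 compounds, the first 20 are actives.
labels = [i < 20 for i in range(200)]
consensus_scores = [1.0 if active else 0.0 for active in labels]  # idealized ranking
baseline_scores = [(i * 37) % 200 for i in range(200)]            # scrambled ranking

ci_low, ci_high = bootstrap_ef_difference(
    consensus_scores, baseline_scores, labels, fraction=0.05, n_boot=1000)
print(round(ci_low, 2), round(ci_high, 2))
```

The same compound indices are used for both methods within each resample, which keeps the comparison paired; an interval lying entirely above zero corresponds to the significance criterion stated in the interpretation step.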

Data Presentation: Comparative Performance of Consensus Methods

Table 1: Performance of Individual vs. Consensus Scoring on a DEKOIS 2.0 Benchmark Target

| Scoring Method | AUC-ROC | EF at 1% | EF at 5% | Mean Rank of Actives |

|---|---|---|---|---|

| X-Score (Empirical) | 0.72 | 12.5 | 28.1 | 145.3 |

| ChemPLP (Empirical) | 0.68 | 18.7 | 31.4 | 132.8 |

| GoldScore (Force-Field) | 0.75 | 15.6 | 30.9 | 121.5 |

| Simple Rank Averaging | 0.78 | 19.8 | 34.2 | 98.7 |

| Exponential Ranking (λ=0.5) | 0.81 | 25.4 | 38.9 | 76.2 |

Table 2: Inter-Correlation (Pearson r) of Scoring Function Ranks

| | X-Score | ChemPLP | GoldScore | PLANTop |

|---|---|---|---|---|

| X-Score | 1.00 | 0.65 | 0.72 | 0.58 |

| ChemPLP | 0.65 | 1.00 | 0.61 | 0.69 |

| GoldScore | 0.72 | 0.61 | 1.00 | 0.55 |

| PLANTop | 0.58 | 0.69 | 0.55 | 1.00 |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Consensus Scoring Research |

|---|---|

| Standardized Benchmark Sets (e.g., DUD-E, DEKOIS) | Provides validated datasets of known actives and matched decoys for controlled performance evaluation and method comparison. |

| Docking Software Suite (AutoDock Vina, GOLD, Glide) | Generates the initial poses and raw scores from multiple, algorithmically distinct scoring functions. |

| Scripting Environment (Python/R with NumPy/pandas) | Essential for implementing custom consensus algorithms, data wrangling, statistical analysis, and automation of workflows. |

| Statistical Libraries (scikit-learn, pROC in R) | Provides tested implementations for calculating performance metrics (AUC, EF) and performing significance tests (DeLong's test, bootstrapping). |

| Visualization Tools (Matplotlib, Seaborn, Graphviz) | Used to create publication-quality plots of ROC curves, enrichment plots, correlation matrices, and algorithmic workflow diagrams. |

Workflow and Algorithm Visualizations

Title: Consensus Scoring Implementation Workflow

Title: Exponential Ranking Algorithm Logic

Troubleshooting Guides & FAQs

Q1: My top-scoring docking pose is not conserved across different scoring functions. How should I proceed? A: This is a classic sign of pose instability. Do not rely on a single score. Implement the following protocol:

- Re-dock: Perform re-docking runs (e.g., 5-10 independent runs) with different random seeds to check for consistency.

- Consensus Pose Clustering: Cluster all generated poses (from all scoring functions and re-docks) using RMSD (typically 2.0 Å cutoff). The largest cluster represents the most conserved pose.

- Cross-Score Validation: Check the rank of this conserved pose within each individual scoring function's list. A robust pose should have a respectable rank (e.g., top 5-10) in multiple functions, not just one.

Q2: How do I validate a docking pose when no experimental structure (co-crystal) of my ligand-target complex exists? A: In the absence of a gold-standard experimental pose, employ a multi-tiered validation strategy:

- Internal Consistency Checks:

- Pose Conservation: As in Q1.

- Sensitivity to Parameters: Slight variations in binding site definition or grid box size should not radically alter the top conserved pose.

- External Predictive Checks:

- Interaction Fingerprint Consistency: Compare the interaction fingerprint (hydrogen bonds, hydrophobic contacts) of your pose to known active ligands for the same target.

- MD Simulation Stability: Subject the top conserved pose(s) to short (50-100 ns) molecular dynamics simulations. A stable pose will maintain key interactions and a low ligand heavy-atom RMSD, alongside a converged protein backbone RMSD.

- Prospective Experimental Validation: Design compounds based on the pose's key interactions and test them via activity assays.

Q3: What are the minimum validation checks required before reporting a docked pose as a reliable prediction for publication? A: The table below summarizes the minimum validation protocol.

| Check Category | Specific Test | Acceptance Criteria | Purpose |

|---|---|---|---|

| Pose Reproduction | Re-dock a known co-crystallized ligand. | Heavy-atom RMSD ≤ 2.0 Å. | Verifies docking protocol setup. |

| Pose Conservation | Consensus across ≥3 distinct scoring functions. | Pose must appear in the top cluster. | Identifies scoring function artifacts. |

| Sensitivity | Vary grid box center/size by ±2-3 Å. | Top conserved pose remains stable. | Ensures prediction is not grid-dependent. |

| Interaction Sanity | Visual inspection & interaction analysis. | Key known catalytic/anchoring interactions are present. | Assesses biochemical plausibility. |

Q4: My conserved docking pose suggests a novel binding mode. How can I rule out it being an artifact? A: A novel pose requires stringent artifact detection:

- Check for Forced Clashes: Ensure the pose isn't stabilized by unrealistic, severe clashes with the protein.

- Solvent Analysis: If the pose places a polar group in a hydrophobic environment without good reason, it is suspect.

- Compare to Inactive Decoys: Dock a set of known inactive molecules. If your novel pose is frequently generated for these decoys, it may be a nonspecific pose favored by the algorithm.

- Free Energy Perturbation (FEP): If resources allow, use FEP or MM/PBSA to calculate relative binding free energies compared to a known binding mode. This provides a physics-based assessment.

Experimental Protocols

Protocol 1: Consensus Pose Clustering and Validation Workflow

Objective: To identify the most reproducible docking pose using multiple scoring functions and cluster analysis.

- Preparation: Prepare protein and ligand files using standard docking software protocols (e.g., protonation, assignment of bond orders).

- Multi-Function Docking: Using a single docking algorithm (e.g., Vina, Glide, GOLD), generate a large ensemble of poses (e.g., 50-100 per run). Do not post-process with the default scorer.

- Re-Scoring: Extract all unique poses from Step 2. Score each pose using at least three chemically diverse scoring functions (e.g., one force-field based, one empirical, one knowledge-based).

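- Clustering: Perform pairwise all-vs-all RMSD calculation on all poses. Use a clustering algorithm that operates on a pairwise distance matrix (e.g., hierarchical clustering) with an RMSD cutoff of 2.0 Å. k-means is not suitable here because it requires feature vectors and a preset number of clusters rather than a distance cutoff.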

- Consensus Identification: Identify the cluster with the highest number of poses. The centroid of this cluster is the Consensus Pose.

- Validation: For each scoring function, note the rank of the Consensus Pose. A reliable result shows the Consensus Pose ranked highly by multiple functions.
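The clustering and consensus-identification steps can be illustrated with a simplified sketch. Note that it swaps in greedy leader clustering for the hierarchical clustering named in the protocol, purely to stay dependency-free, and it takes the cluster medoid as the representative pose. It assumes all poses share the same receptor frame and atom ordering (so no superposition is needed) and ignores ligand symmetry; the coordinates are toy values.

```python
import math

def rmsd(pose_a, pose_b):
    """In-place RMSD between two poses with identical atom ordering."""
    sq = sum((xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
             for (xa, ya, za), (xb, yb, zb) in zip(pose_a, pose_b))
    return math.sqrt(sq / len(pose_a))

def leader_cluster(poses, cutoff=2.0):
    """Assign each pose to the first cluster whose leader lies within the cutoff."""
    clusters = []  # lists of pose indices; the first index is the leader
    for i, pose in enumerate(poses):
        for members in clusters:
            if rmsd(pose, poses[members[0]]) <= cutoff:
                members.append(i)
                break
        else:
            clusters.append([i])
    return sorted(clusters, key=len, reverse=True)

def medoid(poses, members):
    """Cluster member with the smallest summed RMSD to all other members."""
    return min(members, key=lambda i: sum(rmsd(poses[i], poses[j]) for j in members))

# Toy two-atom poses (coordinates in Å); the last one is a distant outlier.
poses = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(0.5, 0.0, 0.0), (1.5, 0.0, 0.0)],
    [(0.0, 0.3, 0.0), (1.0, 0.3, 0.0)],
    [(10.0, 0.0, 0.0), (11.0, 0.0, 0.0)],
]
clusters = leader_cluster(poses, cutoff=2.0)
consensus_pose = medoid(poses, clusters[0])
print(clusters, consensus_pose)
```

Leader clustering depends on input order, so for production use a distance-matrix method such as hierarchical clustering is the more defensible choice; the medoid step carries over unchanged.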

Protocol 2: Molecular Dynamics Stability Check for a Docked Pose

Objective: To assess the stability of a docked pose over simulated time.

- System Preparation: Place the protein-ligand complex in a solvation box (e.g., TIP3P water). Add ions to neutralize the system's charge.

- Energy Minimization: Perform 5,000 steps of steepest descent minimization to remove steric clashes.

- Equilibration:

- NVT Ensemble: Heat the system to 300 K over 100 ps while restraining protein and ligand heavy atoms.

- NPT Ensemble: Achieve 1 atm pressure over 200-500 ps with restraints on heavy atoms.

- Production Run: Run an unrestrained simulation for a minimum of 50 ns (100+ ns preferred). Use a 2 fs timestep.

- Analysis:

- Calculate the protein backbone RMSD relative to the starting structure. Convergence indicates stable protein.

- Calculate the ligand heavy-atom RMSD relative to its starting docked pose. A stable pose will show a plateau, typically below 2.0-3.0 Å.

- Analyze the persistence of key protein-ligand interactions (hydrogen bonds, salt bridges) over the simulation time.

Diagrams

Diagram 1: Consensus Docking & Validation Workflow

Diagram 2: Multi-Tiered Pose Validation Logic

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Reagent | Category | Function in Pose Validation |

|---|---|---|

| AutoDock Vina / GNINA | Docking Software | Generates the initial ensemble of ligand poses within the binding site. |

| Schrödinger Glide / GOLD | Commercial Docking Suite | Provides alternative, robust docking engines and diverse scoring functions for consensus. |

| RDKit | Cheminformatics Library | Used for handling molecular files, calculating RMSD, and generating interaction fingerprints. |

| MM/PBSA or MM/GBSA Scripts | Free Energy Calculation | Estimates binding free energy from MD trajectories to complement docking scores. |

| AMBER / GROMACS / OpenMM | Molecular Dynamics Engine | Performs stability simulations to test the temporal robustness of the docked pose. |

| PyMOL / ChimeraX | Visualization Software | Critical for visual inspection of poses, interactions, and identifying clashes. |

| PoseBusters / D3R Tools | Validation Suite | Automated tools to check for steric clashes, geometry violations, and other pose artifacts. |

| Known Active/Inactive Ligand Set | Chemical Compounds | Provides a benchmark for interaction pattern comparison and decoy docking studies. |

Overcoming Real-World Hurdles: Ensuring Physical Plausibility and Robust Generalization

Technical Support & Troubleshooting Center

Troubleshooting Guides

Guide 1: Resolving Steric Clashes in Docking Poses

- Problem: Poses show unrealistic atomic overlap, indicated by high positive VDW energy terms.

- Root Cause: Inadequate sampling, poor scoring function parameterization for repulsion, or incorrect protonation states.

- Solution Steps:

- Apply a post-docking minimization using a force field (e.g., MMFF94) to relax clashes.

- Check and correct ligand and receptor protonation states at physiological pH.

- Increase the number of docking runs and implement a consensus scoring filter (see Table 1).

- Manually inspect top poses for obvious atomic overlaps; consider them unreliable.

Guide 2: Correcting Unrealistic Bond Geometry

- Problem: Ligand poses exhibit distorted bond lengths, angles, or torsions outside standard biochemical limits.

- Root Cause: Docking algorithm constraints, improper initial ligand structure preparation, or lack of conformational energy penalty in scoring.

- Solution Steps:

- Pre-process all ligands with a geometry optimization and energy minimization step before docking.

- Use docking software that includes internal ligand strain energy in its scoring function.

- Implement a post-docking validation step that calculates RMSD of bond parameters to ideal values (see Experimental Protocol 1).

Frequently Asked Questions (FAQs)

Q1: What is an acceptable steric clash tolerance in a docking pose for it to be considered "physically plausible"? A: There is no single threshold, but poses should be scrutinized if any non-bonded atom pair distance is less than 80% of the sum of their Van der Waals radii. Systematic validation against known structures is key (see Table 2).
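As an illustration of the 80% criterion, the following sketch flags ligand-protein atom pairs that sit closer than a tolerance times the sum of their Van der Waals radii. The radii are the commonly used Bondi values; the atom representation, function name, and coordinates are assumptions for demonstration, and a brute-force pairwise loop like this is only practical for a single pose and a trimmed binding site.

```python
import math

# Bondi Van der Waals radii in Å for a few common elements.
VDW_RADII = {"H": 1.20, "C": 1.70, "N": 1.55, "O": 1.52, "S": 1.80}

def find_clashes(ligand_atoms, protein_atoms, tolerance=0.8):
    """Return (ligand_index, protein_index, distance) for each clashing pair."""
    clashes = []
    for i, (elem_l, xyz_l) in enumerate(ligand_atoms):
        for j, (elem_p, xyz_p) in enumerate(protein_atoms):
            distance = math.dist(xyz_l, xyz_p)
            limit = tolerance * (VDW_RADII[elem_l] + VDW_RADII[elem_p])
            if distance < limit:
                clashes.append((i, j, round(distance, 2)))
    return clashes

# Toy example: one ligand carbon near a protein carbon and a protein oxygen.
ligand_atoms = [("C", (0.0, 0.0, 0.0))]
protein_atoms = [("C", (2.5, 0.0, 0.0)), ("O", (3.5, 0.0, 0.0))]
clashes = find_clashes(ligand_atoms, protein_atoms)
print(clashes)
```

Here the carbon-carbon pair at 2.5 Å is flagged because the limit is 0.8 × 3.40 = 2.72 Å, while the carbon-oxygen pair at 3.5 Å is not.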

Q2: How can I automatically filter out poses with bad bond geometry in a high-throughput virtual screen? A: Develop a script to calculate deviations from ideal bond lengths and angles (from sources like the Cambridge Structural Database). Poses with deviations exceeding 4 standard deviations from the mean for multiple bonds should be flagged or discarded.
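A filtering script of this kind might look like the sketch below. The reference means and standard deviations are illustrative placeholders, not values taken from the Cambridge Structural Database, and the bond-type labels and function name are hypothetical; measured bond lengths are assumed to come from an upstream toolkit.

```python
# Placeholder reference geometry: bond type -> (mean length in Å, standard deviation).
# These numbers are illustrative only; substitute statistics from a curated source.
REFERENCE = {
    "C-C": (1.53, 0.02),
    "C-N": (1.47, 0.02),
    "C=O": (1.22, 0.02),
}

def flag_pose(bonds, n_sigma=4.0, min_outliers=2):
    """Flag a pose when at least `min_outliers` bonds deviate by more than n_sigma."""
    outliers = []
    for bond_type, length in bonds:
        ref_mean, ref_sd = REFERENCE[bond_type]
        if abs(length - ref_mean) / ref_sd > n_sigma:
            outliers.append((bond_type, length))
    return len(outliers) >= min_outliers, outliers

# Hypothetical measured bond lengths for one docked pose.
pose_bonds = [("C-C", 1.54), ("C-C", 1.70), ("C=O", 1.40), ("C-N", 1.46)]
flagged, outliers = flag_pose(pose_bonds)
print(flagged, outliers)
```

Requiring at least two outliers mirrors the "multiple bonds" wording in the answer; a stricter screen could set `min_outliers=1`.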

Q3: Why does a pose with a minor steric clash sometimes score better (more favorably) than a clash-free pose? A: Scoring functions are compromises. A pose may form one extra strong hydrogen bond that overscores the penalty from a minor clash. This underscores the necessity of consensus scoring and visual inspection in validation protocols.

Q4: Which is more critical to address first: steric clashes or bond geometry errors? A: Address steric clashes first, as they represent severe, high-energy violations. Subsequent energy minimization will often correct minor bond geometry distortions.

Data Presentation

Table 1: Consensus Scoring Filters for Implausible Pose Rejection

| Validation Metric | Threshold for Warning | Threshold for Rejection | Tool/Method for Calculation |

|---|---|---|---|

| Steric Clash Score (VDW repulsion >0 kcal/mol) | > 5 clashes | > 10 severe clashes | OpenMM energy evaluation, UCSF Chimera Find Clashes/Contacts |

| Max Bond Length Deviation | > 0.10 Å | > 0.20 Å | RDKit (Compare to CCDC norms) |

| Max Bond Angle Deviation | > 15.0° | > 25.0° | RDKit (Compare to CCDC norms) |

| Internal Strain Energy | > 15 kcal/mol | > 25 kcal/mol | Force field minimization (MMFF94, GAFF) |

Table 2: Prevalence of Steric Clashes in Unvalidated Docking Poses (Hypothetical Benchmark)

| Docking Program | % of Top-10 Poses with Severe Clashes* | % of Poses with Bond Length Outliers | Recommended Correction Protocol |

|---|---|---|---|

| Program A | 22% | 8% | Protocol 1 (Minimization) |

| Program B | 15% | 12% | Protocol 2 (Re-docking with constraints) |

| Program C | 31% | 5% | Protocol 1 & 3 (Consensus filter) |

*Severe clash defined as distance < 0.75 × sum of VDW radii. Bond length outlier defined as deviation > 4σ from ideal.

Experimental Protocols

Experimental Protocol 1: Post-Docking Pose Geometry Validation

- Pose Extraction: Isolate the docked ligand conformation from the receptor complex.

- Geometry Analysis: Use RDKit's AllChem.MMFFGetMoleculeForceField() or Open Babel's force field tools to calculate strain energy. Use RDKit to measure all bond lengths and angles.

- Reference Data: Compare measured values to ideal geometry dictionaries (e.g., RDKit's chemical dictionary, CCDC statistical data).

- Scoring: Flag poses where >5% of bonds or angles exceed 3 standard deviations from reference means. Calculate a composite "Geometry Deviance Score."

Experimental Protocol 2: Consensus Scoring Workflow for Clash Correction

- Dock ligand against target using 2-3 distinct docking algorithms (e.g., Glide, AutoDock Vina, rDock).

- Minimize top N poses from each using a standard force field (e.g., MMFF94 in vacuum).

- Score minimized poses with 3-4 disparate scoring functions (e.g., X-Score, NNScore, ChemScore).

- Rank poses by average normalized score across all functions.

- Filter by applying the thresholds defined in Table 1.

- Visually Inspect the top 5 consensus-ranked, filtered poses.

Mandatory Visualization

Diagram Title: Pose Validation and Correction Workflow

Diagram Title: Logic for Atomic Steric Clash Detection

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Pose Validation |

|---|---|

| RDKit | Open-source cheminformatics toolkit; used for ligand preparation, basic geometry analysis, and calculating deviations from ideal bond parameters. |

| UCSF Chimera / PyMOL | Visualization software; critical for manual inspection of poses, identifying clashes, and assessing binding mode plausibility. |

| OpenMM | High-performance toolkit for molecular simulation; used for running force field-based minimization on poses to relieve clashes and strain. |

| Cambridge Structural Database (CSD) | Database of experimental small-molecule crystal structures; provides statistically derived "ideal" bond lengths and angles for comparison. |

| Consensus Scoring Scripts (Custom) | In-house or literature scripts that normalize and combine scores from multiple docking/scoring programs to improve pose selection reliability. |

| Force Field Parameters (GAFF/MMFF94) | Parameter sets for describing molecular mechanics energies; essential for post-docking minimization and strain energy calculation. |

Troubleshooting Guides & FAQs

Q1: My consensus scoring function performs well on benchmark datasets but fails dramatically on a novel protein target. What could be the primary cause and how can I diagnose it?

A: This is the core manifestation of the generalization gap. The likely cause is dataset bias in your training/validation sets. Common biases include over-representation of certain protein families (e.g., kinases, GPCRs), limited chemical space in the ligand sets, or similarity between benchmark decoys and actives. To diagnose:

- Perform a t-SNE or UMAP analysis of the protein descriptors (e.g., from ProtFP or pocket descriptors like fpocket) of your benchmark set versus the novel target. Visual clustering will reveal if the novel target is an outlier.

- Calculate the Tanimoto similarity between the ligands in your benchmark and your novel test compounds. Low average similarity indicates a chemical space gap.

- Check if your model relies on specific pocket features (e.g., a deep hydrophobic cavity) that are absent or different in the novel pocket.
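The chemical-space check in the second diagnostic step can be sketched with plain Python sets standing in for fingerprints. The sketch assumes each fingerprint is the set of its "on" bit indices, generated elsewhere by a cheminformatics toolkit, and it summarizes the gap as the mean nearest-neighbour similarity, which is one reasonable reading of "average similarity"; all names and bit sets are illustrative.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0

def mean_nearest_neighbour_similarity(novel_fps, benchmark_fps):
    """Average, over novel compounds, of the best similarity to any benchmark ligand."""
    best = [max(tanimoto(fp, ref) for ref in benchmark_fps) for fp in novel_fps]
    return sum(best) / len(best)

# Toy fingerprints (sets of on-bit indices).
benchmark_fps = [{1, 2, 3, 4}, {2, 3, 5}]
novel_fps = [{1, 2, 3}, {7, 8, 9}]

gap_score = mean_nearest_neighbour_similarity(novel_fps, benchmark_fps)
print(gap_score)
```

A low value indicates that the novel compounds have no close analogues in the benchmark, which is the chemical-space gap the diagnosis is looking for.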

Protocol for t-SNE-Based Bias Diagnosis:

- Input: Protein sequence or pocket feature vectors for all training/validation proteins and the novel target.

- Tools: Use scikit-learn (TSNE class). Standardize features first.

- Method: Reduce features to 2D via t-SNE (perplexity=30, 1000 iterations). Plot with training/validation vs. novel target points colored differently.

- Interpretation: If the novel target point lies far from all training clusters, your model has not learned generalized rules for this region of protein space.

Q2: During docking validation, my RMSD values are good for re-docking (pose prediction) but enrichment in virtual screening is poor for novel pockets. How should I adjust my protocol?

A: Good re-docking RMSD validates the docking engine's ability to reproduce a known pose within a known pocket, which does not guarantee it can rank novel ligands correctly in a novel pocket. This indicates a scoring function limitation.

- Immediate Action: Implement consensus scoring across multiple, diverse scoring functions (e.g., Vina, PLP, ChemScore, X-Score). Use a metric like Rank-by-Vote or Rank-by-Best.

- Refinement: Move beyond simple consensus. Use machine learning-based rescoring trained on a broad, diverse set of protein-ligand complexes, explicitly including diverse pocket topologies. Ensure your negative dataset includes decoys that are challenging for physics-based functions.

Protocol for Robust Consensus Virtual Screening:

- Dock your library against the novel pocket using 2-3 different docking engines (e.g., AutoDock Vina, Glide SP, rDock).

- For each docked pose, calculate scores from 4-5 distinct scoring functions.

- For each ligand, retain its best pose from each engine.

- Rank ligands by Consensus Rank: (Rank_Score1 + Rank_Score2 + ... + Rank_ScoreN) / N.

- Evaluate enrichment (EF1% or AUC-ROC) against known actives/decoys for the novel target.

Q3: What are the best practices for creating a validation set that truly tests generalization to novel proteins and pockets?

A: The key is temporal, sequential, or structural hold-out.

- Temporal Split: Use proteins/ligands discovered after a certain date for testing. This simulates real-world application.

- Protein-Family Hold-Out: Remove an entire protein family (e.g., all Cytochrome P450s) from training and use it for testing.

- Binding-Pocket Clustering Hold-Out: Cluster binding pockets by geometry and physicochemical properties. Hold out entire clusters for testing.

- Avoid Random Split: A random split at the complex level leaks information because similar proteins/ligands appear in both sets, inflating performance.

Protocol for Pocket-Clustered Hold-Out Validation:

- For all proteins in your dataset, compute pocket descriptors (e.g., using fpocket or P2Rank): volume, hydrophobicity, charge, residue composition.

- Cluster pockets using hierarchical clustering or DBSCAN based on these descriptors.

- Identify distinct clusters. Assign entire clusters to either training or test sets, ensuring no cluster is represented in both.

- Train your model on the training clusters and evaluate its performance on the held-out clusters.
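The cluster-assignment step of this protocol can be sketched as a group-aware split. It assumes cluster labels have already been produced by the preceding clustering step; the protein identifiers, the function name, and the 30% test fraction are hypothetical.

```python
import random
from collections import defaultdict

def cluster_holdout_split(cluster_of, test_fraction=0.2, seed=0):
    """Assign whole pocket clusters to train or test so no cluster spans both."""
    groups = defaultdict(list)
    for protein, cluster_id in cluster_of.items():
        groups[cluster_id].append(protein)
    cluster_ids = sorted(groups)
    random.Random(seed).shuffle(cluster_ids)
    target = test_fraction * len(cluster_of)
    train, test = [], []
    for cluster_id in cluster_ids:
        # Fill the test set cluster by cluster until it reaches the target size.
        (test if len(test) < target else train).extend(groups[cluster_id])
    return train, test

# Hypothetical protein -> pocket-cluster assignments.
cluster_of = {
    "prot_A": 0, "prot_B": 0, "prot_C": 1, "prot_D": 2, "prot_E": 2,
    "prot_F": 3, "prot_G": 3, "prot_H": 3, "prot_I": 4, "prot_J": 4,
}
train, test = cluster_holdout_split(cluster_of, test_fraction=0.3)
print(sorted(train), sorted(test))
```

Because clusters are moved as units, the realized test fraction can overshoot the target when a large cluster is drawn last; that imbalance is the price of avoiding leakage between the two sets.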

Table 1: Performance Degradation of Scoring Functions on Novel Targets

| Scoring Function / Method | Benchmark Set AUC-ROC | Novel Protein Family (Held-Out) AUC-ROC | Performance Drop (%) |

|---|---|---|---|

| AutoDock Vina (Default) | 0.78 ± 0.05 | 0.61 ± 0.08 | -21.8% |

| Glide SP | 0.82 ± 0.04 | 0.65 ± 0.09 | -20.7% |

| RF-Score (PDBBind Trained) | 0.85 ± 0.03 | 0.70 ± 0.07 | -17.6% |

| Consensus (Vina+Glide+NN) | 0.87 ± 0.03 | 0.75 ± 0.06 | -13.8% |

| GraphNN-Based Model* | 0.89 ± 0.02 | 0.81 ± 0.05 | -9.0% |

*Trained with explicit pocket-cluster hold-out validation.

Table 2: Impact of Validation Strategy on Reported Generalization Performance

| Validation Strategy | Reported Enrichment Factor at 1% (EF1%) | Estimated Real-World EF1% on Novel Target | Bias Type |

|---|---|---|---|

| Random Split (Ligand-Level) | 25.5 | 8-12 | Optimistic Bias |

| Protein-Family Hold-Out | 15.2 | 10-14 | Moderate |

| Temporal Hold-Out (>2020) | 12.8 | 11-13 | Realistic |

| Pocket-Cluster Hold-Out | 14.1 | 13-15 | Robust |

Visualizations

Title: Pocket-Cluster Hold-Out Validation Workflow

Title: Causes & Solution of Generalization Gap

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Generalization Research

| Item / Reagent | Function & Relevance to Generalization |

|---|---|

| Diverse Benchmark Sets (e.g., PDBbind refined, CSAR NRC-HiQ, DEKOIS 2.0) | Provides a broad foundation for training and testing. Must be used with hold-out strategies, not random splits. |

| Pocket Detection Software (fpocket, P2Rank, SiteMap) | Generates quantitative descriptors of binding sites, enabling pocket-level clustering and hold-out validation. |

| Multiple Docking Engines (AutoDock Vina, rDock, Glide, GOLD) | Essential for generating poses and initial scores for consensus methods, mitigating individual algorithm bias. |

| Diverse Scoring Functions (Vina, PLP, ChemScore, Machine-Learning Scores) | The basis for consensus scoring. Diversity in function form (empirical, force-field, knowledge-based) is critical. |

| Stratified Split Scripts (Custom Python/R) | Code to implement temporal, protein-family, or pocket-cluster based dataset splitting, preventing data leakage. |

| ML Rescoring Framework (e.g., with scikit-learn, DeepChem) | To build metascoring models that learn from multiple data sources and improve transfer to novel pockets. |

| Structured External Test Set (e.g., Novel Target from ChEMBL) | A completely independent set of protein-ligand data, published after model development, for the final reality check. |

Technical Support Center

Troubleshooting Guides & FAQs

FAQ 1: Poor Cross-Docking Poses Despite Using Flexible Side-Chains

- Q: I am performing cross-docking (docking into a holo structure that was solved with a different ligand) and my ligand RMSD is consistently high (> 5 Å), even after allowing key side-chains to be flexible. What could be wrong?

- A: High RMSD in cross-docking often indicates inadequate handling of backbone movement. Side-chain flexibility alone cannot compensate for larger conformational changes. Implement a multi-structure docking strategy. Create an ensemble of receptor structures from:

- Multiple holo structures from the PDB (if available).

- Snapshots from a molecular dynamics (MD) simulation of the apo protein.

- Generated conformations using normal mode analysis (NMA).

- Protocol: Use MGLTools to prepare protein and ligand files. Dock your ligand against each structure in the ensemble using AutoDock Vina or GNINA. Use consensus scoring (see Table 2) to evaluate poses across the ensemble.

- Visual Check: Superimpose your top-scored pose with the experimental holo structure of that specific ligand (if available) in PyMOL to identify clashing regions.

FAQ 2: Handling Apo-Structure Cavity Collapse in Docking

- Q: When docking into an apo crystal structure, the binding site appears "closed" or collapsed, and the ligand cannot fit. How can I address this?

- A: Apo structures often represent a "closed" state. You must induce an "open" conformation prior to docking.

- Protocol - Induced Fit Docking (IFD) Lite:

- Preparation: Prepare your apo protein and ligand using standard protocols (add hydrogens, assign charges).

- Initial Rigid Docking: Perform a quick, rigid docking of a minimized ligand pose into the apo site. Use a larger search space to accommodate the ligand roughly.

- Protein Refinement: Use the top 5-10 rigid poses as references. Run a short restrained MD simulation or side-chain minimization in which the protein backbone is restrained while binding-site residues are free to move. Apply the restraints to C-alpha atoms outside a 10 Å radius of the ligand.

- Final Flexible Docking: Redock the ligand into the refined protein structures from step 3 with flexible side-chains enabled.
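
The restraint selection in the refinement step can be sketched as below: pick the C-alpha atoms that lie farther than 10 Å from every ligand atom. This is an illustrative helper only; the function name and the toy coordinates are hypothetical, and in practice the coordinates would come from your structure files and the resulting indices would be passed to your MD or minimization engine's restraint definition.

```python
import math

def restrained_ca_indices(ca_coords, ligand_coords, cutoff=10.0):
    """Indices of C-alpha atoms farther than `cutoff` (in Å) from every ligand atom."""
    restrained = []
    for i, ca in enumerate(ca_coords):
        nearest = min(math.dist(ca, atom) for atom in ligand_coords)
        if nearest > cutoff:
            restrained.append(i)
    return restrained

# Toy coordinates in Å, for illustration only.
ligand_xyz = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0)]
ca_xyz = [(3.0, 0.0, 0.0), (9.0, 0.0, 0.0),
          (12.0, 0.0, 0.0), (0.0, 15.0, 0.0)]
restrained = restrained_ca_indices(ca_xyz, ligand_xyz)
```

Atoms 0 and 1 sit within 10 Å of the ligand and stay free; atoms 2 and 3 fall outside the radius and receive restraints.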

FAQ 3: Inconsistent Results Between Different Docking Software in Flexible Docking

- Q: I get a good, low-RMSD pose from Software A, but Software B fails entirely when using the same flexible residues. How do I validate the correct pose?

- A: This highlights the need for consensus scoring and validation beyond software scoring functions. Do not rely on a single program's output score.

- Protocol for Consensus Evaluation:

- Run the docking experiment on at least two distinct docking engines (e.g., AutoDock Vina, Glide, GOLD).

- Cluster the top 20 poses from each software by 3D similarity (RMSD < 2.0 Å).

- Compare the cluster representatives across programs with a symmetry-aware RMSD tool such as DockRMSD, and compute multiple independent metrics for each representative (see Table 2).

- The correct pose is often the one that ranks reasonably well across multiple, diverse metrics, not just the top score in one program.
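
The clustering step in this protocol can be sketched with a simple greedy scheme. The code below assumes that all poses share one receptor coordinate frame and one atom ordering, that the input list is sorted best score first, and that no symmetry correction is needed; these are simplifications, and the function names are illustrative.

```python
import math

def rmsd(pose_a, pose_b):
    """Heavy-atom RMSD between two poses with identical atom ordering."""
    sq = sum(math.dist(a, b) ** 2 for a, b in zip(pose_a, pose_b))
    return math.sqrt(sq / len(pose_a))

def greedy_cluster(poses, cutoff=2.0):
    """Group poses; the first index in each cluster is its representative."""
    clusters = []
    for i, pose in enumerate(poses):
        for members in clusters:
            # Join the first cluster whose representative is within the cutoff.
            if rmsd(pose, poses[members[0]]) < cutoff:
                members.append(i)
                break
        else:
            clusters.append([i])
    return clusters

# Toy two-atom poses (coordinates in Å), sorted best score first.
toy_poses = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(0.5, 0.0, 0.0), (1.5, 0.0, 0.0)],
    [(5.0, 0.0, 0.0), (6.0, 0.0, 0.0)],
    [(5.0, 1.0, 0.0), (6.0, 1.0, 0.0)],
]
pose_clusters = greedy_cluster(toy_poses)
```

Pooling the top poses from every engine before clustering shows directly which binding modes are found by more than one program.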

Experimental Protocols

Protocol 1: Generating a Receptor Ensemble for Cross-Docking

- Data Curation: From the PDB, download all high-resolution (<2.5Å) structures of your target protein, both apo and holo.

- Alignment & Clustering: Superimpose all structures on a reference (e.g., the apo structure) using C-alpha atoms. Cluster the aligned binding site residues (e.g., within 8Å of any cocrystallized ligand) by RMSD using a clustering algorithm (e.g., hierarchical, k-means). A threshold of 1.5Å C-alpha RMSD is typical.

- Selection: Select one representative structure from each major conformational cluster.

- Preparation: Prepare each representative for docking (add hydrogens, optimize protonation states, assign partial charges).

- Docking & Consensus: Dock the ligand of interest into each prepared ensemble member. Apply consensus scoring to rank final poses.
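
One way to implement the selection step is to take the medoid of each cluster, that is, the member with the smallest summed RMSD to its cluster mates. The sketch below assumes a precomputed pairwise binding-site RMSD matrix and cluster labels from the preceding clustering step; the function name and the toy values are hypothetical.

```python
def cluster_medoids(rmsd_matrix, labels):
    """Map each cluster label to the index of its medoid structure."""
    members = {}
    for idx, lab in enumerate(labels):
        members.setdefault(lab, []).append(idx)
    medoids = {}
    for lab, idxs in members.items():
        # Medoid: lowest total RMSD to the other members of the same cluster.
        medoids[lab] = min(
            idxs, key=lambda i: sum(rmsd_matrix[i][j] for j in idxs))
    return medoids

# Toy pairwise C-alpha RMSD matrix (Å) for four structures.
toy_matrix = [
    [0.0, 0.4, 1.0, 3.0],
    [0.4, 0.0, 0.5, 3.1],
    [1.0, 0.5, 0.0, 2.9],
    [3.0, 3.1, 2.9, 0.0],
]
toy_labels = [0, 0, 0, 1]  # e.g., from a 1.5 Å cut of a hierarchical tree
representatives = cluster_medoids(toy_matrix, toy_labels)
```

A medoid is always an actual experimental structure, so it can be prepared and docked directly, unlike an averaged coordinate set.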

Protocol 2: Validating Docking Poses Using Independent Metrics

- Pose Generation: Generate N (e.g., 50) docking poses for your ligand.

- Metric Calculation: For each pose, calculate the following metrics:

- Software-Specific Score: The docking program's internal score (e.g., Vina score).

- MM/GBSA: Perform a brief energy minimization and calculate binding free energy using Molecular Mechanics/Generalized Born Surface Area.

- Ligand RMSD: If a known reference pose exists (e.g., from crystal structure).

- Interaction Fingerprint (IFP) Similarity: Compare the pattern of hydrophobic contacts, H-bonds, and salt bridges to a known active ligand.

- Rank Aggregation: Normalize each metric (e.g., 0-1 scale) and calculate a composite consensus score, such as a weighted sum or the rank-by-vote method.
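
The rank-aggregation step can be sketched in two variants: a weighted sum of min-max normalized metrics, and a rank-by-vote count. The metric names, toy values, equal weights, and the `top_k` cutoff are assumptions chosen for illustration; they should be tuned on a benchmark set for your own system.

```python
def min_max(values, higher_is_better):
    """Scale to 0-1 so that 1 is always the best value."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.5] * len(values)
    scaled = [(v - lo) / (hi - lo) for v in values]
    return scaled if higher_is_better else [1.0 - s for s in scaled]

def weighted_consensus(metrics, higher_is_better, weights):
    """Weighted mean of normalized metrics, one value per pose."""
    n = len(next(iter(metrics.values())))
    total_w = sum(weights[m] for m in metrics)
    combined = [0.0] * n
    for name, vals in metrics.items():
        for i, s in enumerate(min_max(vals, higher_is_better[name])):
            combined[i] += weights[name] * s / total_w
    return combined

def rank_by_vote(metrics, higher_is_better, top_k=2):
    """Each metric votes for its top_k poses; return vote counts per pose."""
    n = len(next(iter(metrics.values())))
    votes = [0] * n
    for name, vals in metrics.items():
        order = sorted(range(n), key=lambda i: vals[i],
                       reverse=higher_is_better[name])
        for i in order[:top_k]:
            votes[i] += 1
    return votes

# Toy values for four poses, for illustration only.
metrics = {"vina": [-9.1, -8.7, -7.2, -8.9],
           "mmgbsa": [-45.0, -52.0, -30.0, -41.0],
           "ifp": [0.70, 0.80, 0.30, 0.60]}
direction = {"vina": False, "mmgbsa": False, "ifp": True}
weights = {"vina": 1.0, "mmgbsa": 1.0, "ifp": 1.0}
consensus = weighted_consensus(metrics, direction, weights)
votes = rank_by_vote(metrics, direction)
```

In this toy case the two schemes disagree on the top pose (the weighted sum favors pose 1, the vote favors pose 0), which is a useful signal that the poses deserve closer inspection.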

Data Presentation

Table 1: Comparison of Flexibility Treatment Methods in Docking

| Method | Best For | Computational Cost | Key Software/Tools | Major Limitation |

|---|---|---|---|---|

| Rigid Receptor | High-affinity ligands, stable sites | Low | AutoDock Vina, DOCK6 | Cannot model induced fit. |

| Flexible Side-Chains | Side-chain rotameric changes | Medium | AutoDock FR, GOLD (flexible sidechains) | Misses backbone shifts. |

| Ensemble Docking | Cross-docking, known multiple states | Medium-High | Schrödinger (IFD), UCSF DOCK | Quality depends on ensemble representativeness. |

| Full MD Relaxation | Apo-structure docking, cryptic sites | Very High | AMBER, GROMACS, NAMD | Extremely computationally intensive. |

Table 2: Consensus Scoring Metrics for Pose Validation

| Metric | Calculation Method | Target Value (Indicates Good Pose) | Tools for Calculation |

|---|---|---|---|

| Docking Score | Native scoring function of the software. | Lower (more negative) is better. | Native to docking software. |

| MM/GBSA ΔG | Post-docking energy minimization & calculation. | More negative is better, e.g., < -40 kcal/mol; thresholds vary by system. | AMBER, GROMACS, Schrödinger Prime. |

| Ligand RMSD | Heavy-atom RMSD from experimental pose. | < 2.0 Å (acceptable). < 1.0 Å (excellent). | PyMOL, RDKit, UCSF Chimera. |

| Interaction Fingerprint (IFP) Similarity | Tanimoto coefficient vs. reference ligand IFP. | > 0.7 (high similarity). | RDKit, Schrödinger Canvas. |

| Contact Surface Area | Buried surface area upon binding. | Consistent with known active ligands. | PyMOL, NACCESS. |
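
The IFP similarity row in Table 2 relies on the Tanimoto coefficient, which for set-style fingerprints is the size of the intersection divided by the size of the union. The sketch below represents each fingerprint as a set of (residue, interaction type) pairs; the residue names are hypothetical, and returning 0.0 for two empty fingerprints is a convention assumed here.

```python
def tanimoto(ifp_a, ifp_b):
    """Tanimoto coefficient between two interaction fingerprints given as sets."""
    a, b = set(ifp_a), set(ifp_b)
    if not a and not b:
        return 0.0  # assumed convention: no interactions means no evidence
    return len(a & b) / len(a | b)

# Hypothetical fingerprints: (residue, interaction type) pairs.
reference_ifp = {("ASP86", "hbond"), ("PHE80", "hydrophobic"),
                 ("LYS33", "salt_bridge"), ("LEU134", "hydrophobic")}
pose_ifp = {("ASP86", "hbond"), ("PHE80", "hydrophobic"),
            ("LEU134", "hydrophobic"), ("GLU81", "hbond")}
similarity = tanimoto(reference_ifp, pose_ifp)
```

Here three of five distinct interactions are shared, giving 0.6, which falls below the 0.7 threshold listed in the table.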

Visualizations

Title: Workflow for Consensus Scoring in Docking Validation

Title: Creating a Receptor Ensemble for Cross-Docking

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Flexibility-Optimized Docking |

|---|---|

| Molecular Dynamics Software (AMBER, GROMACS) | Generates realistic, dynamic conformational ensembles of apo or holo proteins for ensemble docking. |

| Normal Mode Analysis Tool (ElNémo, iMODS) | Predicts collective, low-energy backbone motions to generate 'open' conformations from 'closed' apo structures. |

| Docking Suite with Side-Chain Flexibility (AutoDock FR, GOLD) | Allows specified receptor side-chains to sample rotameric states during the docking simulation. |

| Consensus Scoring Scripts (Custom Python/R) | Aggregates and normalizes scores from multiple docking programs and metrics to rank poses robustly. |

| Interaction Fingerprint Library (RDKit, Canvas) | Quantifies and compares ligand-protein interaction patterns to distinguish native-like poses. |

| MM/GBSA Rescoring Module (gmx_MMPBSA, Prime) | Performs post-docking refinement and more rigorous binding free energy estimation on multiple poses. |

FAQs & Troubleshooting

Q1: During the hybrid optimization workflow, my deep learning model generates plausible poses, but the subsequent traditional refinement (e.g., with Molecular Dynamics) frequently distorts the ligand into unrealistic conformations. What could be the cause?

A: This is often a force field parameterization issue. The classical refinement stage relies on pre-defined atom types and charges. If your ligand contains novel scaffolds or uncommon functional groups, these parameters may be missing or inaccurate.

- Troubleshooting Steps:

- Parameter Assignment: Use tools like antechamber (from AmberTools) or the MATCH utility to generate missing parameters. Always visually inspect the assigned atom types.

- Partial Charge Validation: Compare the electrostatic potential (ESP) surface of the ligand from a QM calculation (e.g., Gaussian, ORCA) with the ESP surface generated using the assigned force field charges. Significant discrepancies indicate a problem.

- Restrained Refinement: Implement positional or dihedral angle restraints on the core of the ligand during the initial steps of refinement to maintain the DL-predicted pose while allowing peripheral groups to relax.

Q2: How do I resolve consensus scoring conflicts when the deep learning pose scorer ranks one pose highest, but the MM/GBSA (Molecular Mechanics/Generalized Born Surface Area) refinement suggests a different pose is more stable?

A: This conflict is central to validation. A systematic protocol is required.

- Experimental Protocol for Consensus Resolution:

- Cluster Poses: Cluster the top-N outputs from the DL model (e.g., using RMSD).

- Multi-Method Scoring: Subject each cluster representative to identical MM/GBSA, MM/PBSA, and SIE (Solvated Interaction Energy) calculations using the same solvent model and interior dielectric constant.

- Statistical Analysis: Perform linear regression or Kendall's Tau correlation analysis between the scores from different methods. The table below summarizes expected outcomes:

| Scoring Conflict Scenario | Likely Interpretation | Recommended Action |

|---|---|---|

| DL score & MM/GBSA agree, but other methods disagree. | DL model may be tuned to similar physics as the force field. | Prioritize the MM/GBSA-validated pose. Seek experimental validation. |

| MM/GBSA & SIE agree, but DL score disagrees. | Potential bias in DL training data or model overfitting. | Investigate the chemical space of the discordant ligand vs. training set. Trust the consensus of physical methods. |

| No consensus across any 3 methods. | System is highly sensitive or scoring is inadequate. | Proceed to alchemical free energy perturbation (FEP) calculations if resources allow, or flag for experimental priority. |
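
The rank-correlation check in the statistical analysis step can be sketched with a plain implementation of Kendall's tau-a (concordant minus discordant pairs, divided by the number of pairs, with ties counted as neither). The score values below are toy numbers; the DL score is negated on the assumption that it is "higher is better" while the energy terms are "lower is better".

```python
from itertools import combinations

def kendall_tau_a(x, y):
    """Kendall's tau-a between two equally long score lists."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two lists of equal length >= 2")
    concordant = discordant = 0
    for i, j in combinations(range(len(x)), 2):
        s = (x[i] - x[j]) * (y[i] - y[j])
        if s > 0:
            concordant += 1
        elif s < 0:
            discordant += 1
    n_pairs = len(x) * (len(x) - 1) // 2
    return (concordant - discordant) / n_pairs

# Toy scores for four cluster representatives, for illustration only.
mmgbsa = [-52.0, -45.0, -41.0, -30.0]
sie = [-9.8, -9.1, -9.3, -7.0]
dl_score = [0.41, 0.88, 0.35, 0.90]
neg_dl = [-s for s in dl_score]  # align direction: lower is better

tau_physics = kendall_tau_a(mmgbsa, sie)
tau_dl = kendall_tau_a(mmgbsa, neg_dl)
```

A high tau between the physics-based methods combined with a low or negative tau against the DL score corresponds to the second scenario in the table above.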

Q3: My docking validation against a benchmark set shows excellent RMSD metrics after deep learning pose prediction but poor correlation in subsequent binding affinity (ΔG) prediction after refinement. Why?

A: High pose accuracy (low RMSD) does not guarantee accurate ΔG prediction. The refinement stage is critical for ΔG. Common pitfalls are entropy and solvent treatment.

- Troubleshooting Protocol:

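- Entropy Estimation: Check whether your MM/GBSA protocol includes an entropy term from normal mode analysis or the quasi-harmonic approximation. Omitting it biases absolute ΔG estimates. Because the entropy term is itself noisy, confirm on your benchmark set that including it actually improves the ΔG correlation.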

- Solvent Model Consistency: Use the same ionic strength and dielectric constant settings across all calculated complexes. Inconsistency here introduces noise.

- Trajectory Stability: Before extracting snapshots for ΔG calculation, verify the refined pose is stable in a production MD run. Calculate the backbone RMSD of the binding site over time; a drift >2.0 Å indicates an unstable refinement.
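
The stability check can be automated once the binding-site RMSD time series is available. The sketch below defines drift as the mean RMSD over the last few frames minus the mean over the first few; this definition, the window size, and the toy series are assumptions, since the text specifies only the 2.0 Å threshold.

```python
def rmsd_drift(rmsd_series, window=5):
    """Mean RMSD of the last `window` frames minus that of the first `window`."""
    if len(rmsd_series) < 2 * window:
        raise ValueError("series too short for the chosen window")
    first = sum(rmsd_series[:window]) / window
    last = sum(rmsd_series[-window:]) / window
    return last - first

def is_unstable(rmsd_series, threshold=2.0, window=5):
    """Flag a refinement whose binding-site RMSD drifts beyond the threshold (Å)."""
    return rmsd_drift(rmsd_series, window) > threshold

# Toy RMSD series in Å, one value per saved frame.
stable_run = [0.8, 0.9, 1.0, 0.9, 1.1, 1.0, 1.2, 1.1, 1.0, 1.1]
drifting_run = [0.8, 1.0, 1.2, 1.5, 2.0, 2.8, 3.2, 3.5, 3.7, 3.9]
flags = (is_unstable(stable_run), is_unstable(drifting_run))
```

Averaging over a window rather than comparing single frames keeps thermal fluctuations from triggering the flag.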