Advanced Strategies for Molecular Docking with Homology-Modeled Protein Structures

This article provides a comprehensive guide for researchers and drug development professionals on performing successful molecular docking studies using homology-modeled protein targets.


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on performing successful molecular docking studies using homology-modeled protein targets. With experimental protein structures unavailable for many drug targets, homology modeling has become indispensable. The article covers foundational principles, detailing the interplay between template selection, model quality, and docking algorithm physics. It presents a step-by-step methodological workflow for preparing models and executing docking simulations with tools like AutoDock Vina and DOCK. Crucially, it addresses common pitfalls and optimization strategies for handling the inherent flexibility and potential inaccuracies of modeled structures. Finally, it outlines rigorous validation protocols and comparative analysis techniques to assess docking reliability, empowering scientists to confidently integrate computational predictions into their structure-based drug discovery pipelines.

Understanding the Foundation: From Sequence to Docking-Ready Homology Models

Technical Support Center: Troubleshooting Homology Modeling & Docking

Frequently Asked Questions (FAQs)

Q1: My homology model has poor stereochemical quality despite a good template sequence alignment. What are the most common causes? A: This is often due to errors in loop modeling or side-chain packing in regions with low template similarity. First, verify your alignment in these variable regions and consider using multiple templates. Use explicit loop modeling protocols with longer sampling times. For side chains, ensure you are using a robust rotamer library and consider a combined scoring function (knowledge-based + physics-based) for refinement.

Q2: After docking into a homology model, I get unrealistic binding poses with ligands buried in the protein core, not in the active site. How can I fix this? A: This typically indicates inaccuracies in the binding pocket geometry or over-reliance on docking grid center coordinates. Define your docking search space using the predicted positions of key catalytic or binding-residue side chains inferred from the template, not just a geometric center. Perform induced-fit docking or a quick MD relaxation of the binding site residues, with restraints on the protein backbone, before the final docking run.
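As a minimal sketch of the search-space advice in Q2, the snippet below derives a docking box from the coordinates of key binding-site atoms and prints it as AutoDock Vina-style `center_*` / `size_*` configuration lines. The coordinates, the 5 Å padding, and the 15 Å minimum edge length are illustrative assumptions, not values from this guide; in practice the atoms would be read from your model.

```python
import numpy as np

def docking_box(site_atoms, padding=5.0, min_size=15.0):
    """Axis-aligned box around the given atoms; returns (center, size) in Å.

    padding and min_size are assumed defaults, chosen only for illustration.
    """
    coords = np.asarray(site_atoms, dtype=float)
    lo, hi = coords.min(axis=0), coords.max(axis=0)
    center = (lo + hi) / 2.0
    size = np.maximum(hi - lo + 2.0 * padding, min_size)
    return center, size

def vina_box_lines(center, size):
    """Format the box as key = value lines for a Vina configuration file."""
    keys = ("center_x", "center_y", "center_z", "size_x", "size_y", "size_z")
    values = list(center) + list(size)
    return [f"{key} = {value:.3f}" for key, value in zip(keys, values)]

# Hypothetical side-chain atom positions of three key pocket residues (Å).
site_atoms = [(10.0, 12.0, 8.0), (14.0, 9.0, 11.0), (12.0, 15.0, 6.0)]
center, size = docking_box(site_atoms)
box_lines = vina_box_lines(center, size)
print("\n".join(box_lines))
```

Anchoring the box on residue side chains rather than a single hand-picked point keeps the search space tied to the part of the model that carries the template's binding-site information.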

Q3: How do I choose the best template when sequence identity is only 30-50%, a range that borders the "twilight zone" (usually placed at roughly 20-35% identity) for homology modeling? A: Do not rely on sequence identity alone. Prioritize templates based on:

- Query Coverage: Favor templates covering the entire target, especially the functional domains.

- Resolution & R-free: Choose the highest-resolution crystal structure available (<2.2 Å is ideal) and check that its R-free is reasonable for that resolution.

- Ligand Presence: A template co-crystallized with a relevant ligand (even a small molecule) provides critical binding site information.

- Overall Fold Assessment: Use structure summary and validation resources (like PDBsum) to check the template's quality.

Q4: My virtual screening against a homology model yields an extremely high computational hit rate that experimental testing does not confirm, suggesting many false positives. What went wrong? A: This often points to an overly open or lipophilic binding pocket in the model, which attracts too many promiscuous binders. Apply strict cavity definition filters during docking. Post-docking, use consensus scoring from at least three different scoring functions. Implement a pharmacophore model based on conserved interactions in the template family to filter poses.

Q5: Should I use my homology model for molecular dynamics (MD) simulations, and what are the key precautions? A: Yes, but with caution. Homology models require careful equilibration. Always perform a multi-step minimization and equilibration protocol, with strong positional restraints on the protein backbone initially, gradually releasing them. Run replicates. Pay close attention to the stability of loop regions and the binding site geometry throughout the simulation.

Troubleshooting Guides

Issue: Low Confidence in Modeled Binding Site Residues

- Symptoms: Docking results are inconsistent, key interacting residues are in unlikely rotamer conformations.

- Diagnostic Steps:

- Run model quality assessment tools specifically on the binding site (e.g., using MolProbity clash score, QMEANDisCo local score).

- Compare the backbone dihedral angles (Ramachandran plot) of binding site residues to the template.

- Resolution Protocol:

- Perform in silico mutagenesis to align binding site residues with the template if the sequence differs, using SCWRL4 or similar.

- Run a short, constrained MD simulation (50-100 ns) with restraints on the protein backbone outside the binding site to allow side-chain relaxation.

- Use the resulting ensemble of structures for ensemble docking.

Issue: Template Selection Ambiguity for a Novel Target

- Symptoms: Multiple templates with similar sequence identity but different conformations (e.g., open vs. closed state).

- Diagnostic Steps: Perform a phylogenetic analysis to understand evolutionary distance. Check literature for known conformational states relevant to your drug mechanism.

- Resolution Protocol:

- Build models using all plausible templates.

- Conduct a consensus active site analysis to identify structurally conserved residues.

- Dock a known reference ligand (if any) into all models.

- Select the model where the reference ligand docks with a pose that best recapitulates the template's native interactions.

Table 1: Expected Model Accuracy vs. Template Sequence Identity

| Template-Target Sequence Identity | Expected RMSD (Å) of Core Backbone | Recommended Use in Drug Discovery |

|---|---|---|

| >50% | <1.5 Å | High-confidence docking, SBDD |

| 30% - 50% | 1.5 - 3.0 Å | Careful docking, ensemble methods |

| <30% | >3.0 Å | Low confidence; avoid for docking |

Table 2: Performance of Different Model Refinement Protocols

| Refinement Protocol | Typical Δ in GDT-HA* | Computational Cost | Best For |

|---|---|---|---|

| Molecular Dynamics (Explicit Solvent) | 2.0 - 5.0 | Very High | Final model optimization |

| Moderate MD (Implicit Solvent) | 1.0 - 3.0 | High | Binding site relaxation |

| Side-chain Repacking & Minimization | 0.5 - 2.0 | Low | Initial correction after modeling |

*GDT-HA: Global Distance Test-High Accuracy; Δ represents potential improvement.

Experimental Protocols

Protocol 1: Building a Restrained Homology Model for Docking

- Target-Template Alignment: Use HMMER or PSI-BLAST to identify templates. Perform multiple sequence alignment with ClustalOmega or MUSCLE, manually curate loop regions.

- Model Building: Use MODELLER or SWISS-MODEL. Generate 50 models. Apply symmetry restraints if the target is a homo-oligomer.

- Initial Refinement: Subject all models to a brief energy minimization in GROMACS or Rosetta (500 steps steepest descent) with restraints on Cα atoms.

- Model Selection: Rank models using QMEAN, MolProbity, and PROSA-web. Select the top 5.

- Binding Site Refinement: For the top 5 models, run a fast (5 ns) implicit solvent MD simulation focusing on the binding site region (10 Å around the catalytic residue).

Protocol 2: Ensemble Docking into a Homology Model

- Ensemble Generation: From Protocol 1, take the refined top 5 models. Optionally, add snapshots from the binding site MD trajectory (cluster to 10 representative structures).

- Binding Site Preparation: For each structure in the ensemble, prepare the protein (add hydrogens, assign charges) using PDB2PQR or the Protein Preparation Wizard (Schrödinger).

- Grid Generation: Define the docking grid centered on the geometric center of the conserved residues from your alignment. Use a large enough box size (e.g., 25 Å) to account for model uncertainty.

- Consensus Docking: Perform docking with AutoDock Vina or GLIDE against each grid. Use a consensus scoring scheme: rank compounds by their average score across all ensemble members, penalizing poses with high score variance.
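The consensus scheme in the last step can be sketched as follows. This is one possible reading, not a prescribed formula: each compound's mean docking score across the ensemble is penalized by its standard deviation (used here instead of the raw variance so the penalty stays in score units), scaled by a hypothetical `variance_weight`. Compound names and scores are made up for illustration.

```python
import numpy as np

def ensemble_consensus(scores, variance_weight=0.5):
    """Rank compounds by mean ensemble score plus a spread penalty.

    scores: dict mapping compound -> docking scores (kcal/mol, lower is
    better), one per ensemble member. variance_weight is an assumed tuning
    parameter, not a value from the protocol.
    """
    ranked = []
    for name, values in scores.items():
        arr = np.asarray(values, dtype=float)
        penalized = arr.mean() + variance_weight * arr.std()
        ranked.append((name, float(penalized)))
    # Lower (more negative) penalized score ranks first.
    return sorted(ranked, key=lambda item: item[1])

# Hypothetical scores against a three-member ensemble.
scores = {
    "cmpd_A": [-9.0, -9.0, -9.0],   # consistent binder
    "cmpd_B": [-12.0, -6.0, -9.0],  # same mean, large spread
    "cmpd_C": [-7.0, -7.0, -7.0],
}
ranking = ensemble_consensus(scores)
for name, value in ranking:
    print(f"{name}: {value:.2f}")
```

With these numbers, cmpd_A and cmpd_B share the same mean score, but cmpd_B drops below cmpd_A because its score depends strongly on which ensemble member it is docked into.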

Visualization

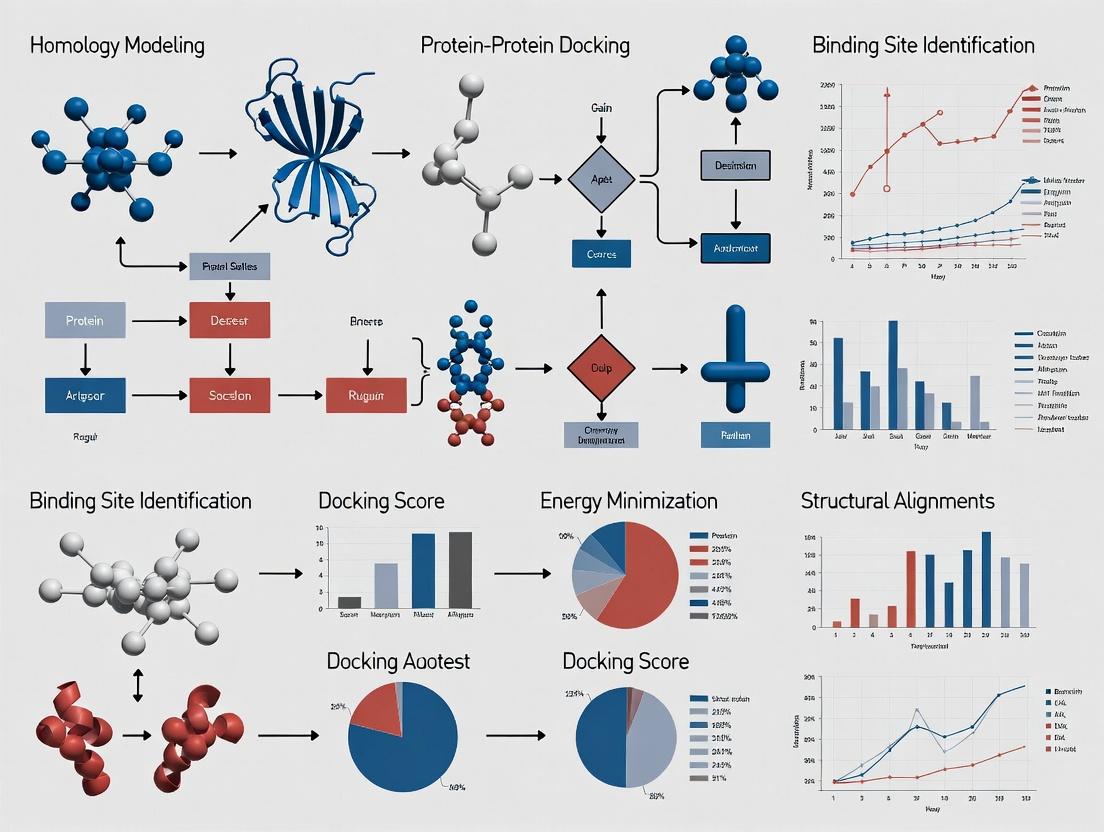

Title: Homology Modeling to Docking Workflow

Title: Docking Troubleshooting Decision Tree

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Homology Modeling & Docking

| Tool/Solution Name | Primary Function | Key Consideration for Homology Models |

|---|---|---|

| MODELLER / SWISS-MODEL | Core homology model generation. | Use multiple templates and loop refinement options. |

| RosettaCM | Integrative modeling, especially useful for low-homology targets. | Computationally intensive but can yield superior models. |

| GROMACS / AMBER | Molecular Dynamics for model refinement and stability assessment. | Requires careful parameterization and extended equilibration. |

| AutoDock Vina / GLIDE | Molecular docking into prepared protein structures. | Use softened potentials or larger search boxes for model ambiguity. |

| QMEAN / MolProbity | Model quality assessment (global and local). | Critical for selecting the most physically plausible model. |

| PyMOL / ChimeraX | Visualization and analysis of models, alignments, and docking poses. | Essential for manual inspection of binding site geometry. |

| Consensus Scoring Scripts | Combine scores from multiple docking runs or scoring functions. | Mitigates bias from any single function's limitations. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During template selection, my target sequence shows high homology (>50%) to multiple templates. Which one should I choose, and why does my model fail validation despite high sequence identity?

A: High sequence identity does not guarantee a suitable template for your specific research question. Follow this decision protocol:

- Prioritize Functional Relevance: Select the template co-crystallized with a ligand or under experimental conditions (e.g., pH) most similar to your study, even if its sequence identity is 2-3% lower.

- Check for Global vs. Local Issues: Use the decision matrix below to diagnose. Model failures often stem from incorrect alignment in active site or flexible loop regions, not from the overall fold.

- Actionable Step: Realign using a method sensitive to local features (e.g., HHsearch) and manually inspect the alignment over your residue of interest (e.g., the binding pocket).

Table 1: Template Selection Decision Matrix

| Criterion | Optimal Choice | Risk if Ignored | Tool for Evaluation |

|---|---|---|---|

| Sequence Identity | >30% for core docking | Increased backbone errors | BLAST, HHblits |

| Resolution (Å) | <2.0 Å | Poor side-chain packing | PDB header |

| Ligand Presence | Co-crystallized with similar ligand | Incorrect binding site conformation | PDBsum |

| Coverage | Covers >90% of target length | Model contains large gaps | Alignment viewer |

Q2: After building the model, the active site geometry is distorted, leading to failed docking poses. How can I refine this region specifically?

A: This is a common issue in homology modeling for docking. Implement a protocol for local active site refinement:

- Extract the active site: Isolate residues within 10Å of your catalytic center or putative binding site.

- Apply stronger restraints: In your modeling software (e.g., MODELLER, RosettaCM), increase restraint weights on the backbone atoms of the active site to preserve template geometry.

- Perform targeted loop modeling: Use a dedicated protocol (e.g., Rosetta loopmodel, MODELLER loop refinement with DOPE assessment) only on the misfolded loops surrounding the pocket.

- Validate with geometry checkers: Post-refinement, analyze only the active site with MolProbity to ensure Ramachandran outliers and steric clashes are resolved.

Q3: During model evaluation, different metrics (DOPE, MolProbity, QMEAN) give conflicting results. Which metrics are most critical for downstream docking studies?

A: For docking applications, prioritize metrics that correlate with binding site accuracy over global fold metrics. Use this tiered validation protocol:

Table 2: Tiered Model Evaluation Protocol for Docking

| Tier | Metric Category | Target Value for Docking | Rationale |

|---|---|---|---|

| Tier 1 (Critical) | Stereochemical Quality (MolProbity) | Clashscore < 10, Ramachandran Outliers < 2% | Ensures physically plausible side chains for ligand interaction. |

| Tier 2 (Essential) | Local Geometry (3D) | DOPE score per-residue low in binding site | Direct indicator of binding region stability. |

| Tier 3 (Contextual) | Global Fold (QMEAN, GA341) | QMEAN Z-score > -4.0 | Confirms overall fold is correct; poor scores can indicate template misalignment. |

| Tier 4 (Functional) | Sequence-Structure Compatibility (Verify3D) | ≥80% of residues have 3D-1D score ≥ 0.2 | Ensures the model's environment is compatible with its sequence. |

Q4: My final model has a good global RMSD to the template but poor ligand docking scores compared to a crystal structure control. What specific steps can I take to improve the model's utility for virtual screening?

A: This indicates accurate backbone but inaccurate side-chain conformations (rotamers) in the binding pocket. Implement a binding site rotamer optimization protocol:

- Fix the backbone of the binding site residues based on your best template.

- Use a rotamer-based side-chain packing tool (e.g., SCWRL4, Rosetta fixbb) to systematically sample and optimize side-chain conformations.

- Score with a docking-specific function: Minimize the energy of the binding site using a simplified version of your intended docking score function (e.g., Vinardo, ChemPLP).

- Validate by re-docking a known native ligand (if any) and monitor the improvement in RMSD of the docked pose.

Experimental Protocols

Protocol: Template Selection and Alignment for Docking-Ready Models Objective: Generate a target-template alignment optimized for binding site accuracy.

- Input: Target sequence, PDB database.

- Primary Search: Execute PSI-BLAST with an E-value cutoff of 0.001 over 3 iterations against the PDB.

- Profile-Profile Alignment: For top hits (>30% identity), perform a profile-based alignment using HHsearch. Manually inspect the alignment in the binding site region using Jalview.

- Template Prioritization: Rank templates by a composite score: (0.4 * Sequence Identity) + (0.3 * (1/Resolution)) + (0.3 * Binding Site Coverage). Select the top 3 templates.

- Output: A curated multiple sequence alignment file (.aln) for model building.
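The composite score from the Template Prioritization step can be sketched as below. The protocol does not state the scale of each term, so this example assumes sequence identity and binding site coverage are fractions between 0 and 1 and resolution is in Å; the template names and values are hypothetical.

```python
def template_score(identity, resolution, site_coverage):
    """Composite score: 0.4*identity + 0.3*(1/resolution) + 0.3*coverage.

    Assumed units: identity and site_coverage as fractions (0-1),
    resolution in Å. Other scalings would change the relative weights.
    """
    return 0.4 * identity + 0.3 * (1.0 / resolution) + 0.3 * site_coverage

# Hypothetical candidates: (identity, resolution in Å, binding site coverage).
templates = {
    "tmpl_1": (0.60, 2.0, 1.00),
    "tmpl_2": (0.45, 1.5, 0.90),
    "tmpl_3": (0.35, 3.0, 0.70),
}

composite = {name: template_score(*values) for name, values in templates.items()}
top_templates = sorted(composite, key=composite.get, reverse=True)[:3]
for name in top_templates:
    print(f"{name}: {composite[name]:.2f}")
```

Note that with identity expressed as a percentage instead of a fraction, the identity term would dominate the other two, so the scale should be fixed before comparing templates.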

Protocol: Model Building with MODELLER for Docking Studies Objective: Build a model with emphasis on binding site geometry.

- Software: MODELLER v10.4.

- Script Modification: In the MODELLER Python script, increase the special_restraints weight for residues within 8 Å of the template's ligand or catalytic site to 5.0.

- Generation: Build 100 models using the automodel class.

- Initial Scoring: Rank models by the MODELLER objective function and the DOPE assessment score.

- Output: Top 10 models for rigorous evaluation.

Visualizations

Four-Step Template Modeling Workflow

Model Evaluation & Troubleshooting Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Toolkit for Template-Based Modeling & Docking

| Tool / Reagent | Category | Primary Function | Example / Source |

|---|---|---|---|

| MODELLER | Software Suite | Integrates all four steps: alignment, model building, loop modeling, scoring. | https://salilab.org/modeller/ |

| RosettaCM | Software Suite | Robust comparative modeling with integrative loop and domain modeling. | Rosetta Commons |

| Swiss-Model | Web Server | Fully automated, user-friendly pipeline for standard homology modeling. | https://swissmodel.expasy.org/ |

| MolProbity | Validation Server | Comprehensive structure validation for stereochemistry and clashes. | http://molprobity.biochem.duke.edu/ |

| UCSF Chimera | Visualization | Interactive visualization for alignment inspection, model analysis, and figure generation. | https://www.cgl.ucsf.edu/chimera/ |

| PDB Database | Data Repository | Source for all experimental protein structure templates. | https://www.rcsb.org/ |

| HH-suite | Search/Alignment | Sensitive profile-based methods (HHblits, HHsearch) for remote homology detection. | https://github.com/soedinglab/hh-suite |

| SCWRL4 | Software Tool | Fast and accurate side-chain conformation prediction for final model refinement. | http://dunbrack.fccc.edu/scwrl4/ |

Within strategies for molecular docking with homology-modeled protein structures, the reliability of docking outcomes is intrinsically linked to the quality of the initial protein model. This technical support center focuses on the critical evaluation of model quality through specific metrics, with emphasis on how template selection (specifically its sequence identity to the target and its structural coverage) impacts downstream virtual screening and drug discovery efforts.

Troubleshooting Guides & FAQs

Q1: Compounds docked into my homology model show poor experimental affinity despite favorable docking scores. What could be wrong?

A: This discrepancy often originates in the model's structural quality, not the docking algorithm itself.

- Primary Check: Verify your model's Global Distance Test (GDT) score and Root Mean Square Deviation (RMSD) of the binding site residues. A high global GDT can mask local errors in the active site.

- Actionable Protocol:

- Align your homology model to the experimental structure (if available) or a high-quality reference using PyMOL or UCSF Chimera.

- Calculate the RMSD specifically for the residues within 5Å of the expected binding pocket.

- If the local RMSD > 2.0 Å, the binding site geometry is likely unreliable. Re-model using a different template with higher identity in that region or consider loop modeling techniques.
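The local RMSD check above can be sketched with NumPy alone. This is an illustrative approximation: it selects atoms (for example Cα atoms) within 5 Å of a pocket center point in the reference structure and superimposes only those atoms with the Kabsch algorithm. The coordinates and the pocket center are synthetic, and the model and reference are assumed to list matching atoms in the same order.

```python
import numpy as np

def kabsch_rmsd(mobile, reference):
    """RMSD (Å) between two (N, 3) coordinate sets after optimal superposition."""
    P = np.asarray(mobile, dtype=float)
    Q = np.asarray(reference, dtype=float)
    Pc = P - P.mean(axis=0)
    Qc = Q - Q.mean(axis=0)
    U, _, Vt = np.linalg.svd(Pc.T @ Qc)
    # Correct for a possible reflection so the result is a proper rotation.
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    diff = Pc @ R.T - Qc
    return float(np.sqrt((diff ** 2).sum(axis=1).mean()))

def local_rmsd(model, reference, pocket_center, cutoff=5.0):
    """RMSD over atoms whose reference position lies within cutoff of the pocket."""
    model = np.asarray(model, dtype=float)
    reference = np.asarray(reference, dtype=float)
    dist = np.linalg.norm(reference - np.asarray(pocket_center, dtype=float), axis=1)
    mask = dist <= cutoff
    if mask.sum() < 3:
        raise ValueError("need at least three atoms near the pocket")
    return kabsch_rmsd(model[mask], reference[mask])

# Synthetic example: four pocket atoms plus one distant atom that is misplaced
# in the model; the model is also rotated and translated as a whole.
reference = np.array([[0, 0, 0], [3, 0, 0], [0, 3, 0], [0, 0, 3], [20, 0, 0]], dtype=float)
perturbed = reference.copy()
perturbed[4] = [24.0, 0.0, 0.0]
rot_z = np.array([[0, -1, 0], [1, 0, 0], [0, 0, 1]], dtype=float)
model = perturbed @ rot_z.T + np.array([5.0, -2.0, 1.0])

global_value = kabsch_rmsd(model, reference)
local_value = local_rmsd(model, reference, pocket_center=(1.0, 1.0, 1.0))
print(f"global RMSD: {global_value:.2f} Å, local RMSD: {local_value:.2f} Å")
```

Here the error sits outside the pocket, so the global value is inflated while the local value is essentially zero; the reverse situation (good global, poor local) is the one that signals an unreliable binding site.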

Q2: How do I interpret Template Identity and Coverage metrics when selecting a template for homology modeling?

A: These are pre-modeling indicators of potential quality.

- Template Identity: The percentage of identical amino acids between the target and template sequences. Higher identity generally yields more accurate models.

- Template Coverage: The percentage of the target sequence length that can be aligned to the template. Low coverage indicates large missing loops or domains.

- Decision Guide: Prefer templates with >30% identity for docking studies. For coverage, aim for >90%. If coverage is low (e.g., 70%), anticipate that unmodeled loops may border the binding site and require specialized modeling.

Q3: What are the key quantitative metrics to validate a homology model before proceeding to docking?

A: Post-modeling, use a combination of stereochemical and statistical potential checks. The following table summarizes the key metrics and their ideal thresholds:

Table 1: Key Model Quality Validation Metrics

| Metric | Tool Example | Ideal Threshold | Indicates |

|---|---|---|---|

| Ramachandran Favored | MolProbity, PROCHECK | >95% | Stereochemical quality of backbone dihedral angles. |

| Rotamer Outliers | MolProbity | <1% | Proper side-chain conformations. |

| Clashscore | MolProbity | <10 | Number of serious atomic steric overlaps (≥0.4 Å) per 1000 atoms. |

| Cβ Deviations | MolProbity | 0 outliers (deviation >0.25 Å) | Distorted geometry around Cα, often from an incompatible backbone and side-chain fit. |

| DOPE/Z-Score | MODELLER | Negative (Lower is better) | Statistical potential of mean force; overall model fitness. |

| Local Quality Estimate | QMEANDisCo | >0.7 per residue | Per-residue model reliability, critical for binding sites. |

Q4: I have multiple templates with varying identity and coverage. How do I design an experiment to choose the best for docking?

A: Implement a comparative modeling and validation pipeline.

Experimental Protocol: Comparative Template Assessment

- Template Selection: From your database (e.g., PDB), select 3-5 templates covering a range of identities (e.g., 25%, 40%, 60%) to your target.

- Model Building: Use a standard tool like MODELLER or SWISS-MODEL to generate one homology model per template, using the same parameters.

- Model Validation: For each model, run the validation suite in MolProbity and calculate the QMEANDisCo score. Compile results in a table.

- Binding Site Analysis: Superimpose the models and visually inspect the geometry of the predicted catalytic/binding residues.

- Decision Point: The optimal model is not always from the highest-identity template. Choose the model with the best combination of high local quality scores in the binding site, good stereochemistry (Ramachandran, Clashscore), and high overall coverage.

Table 2: Example Results from a Comparative Template Experiment

| Template PDB | Identity | Coverage | Model GDT (est.) | Clashscore | Binding Site Local QMEAN |

|---|---|---|---|---|---|

| 1A0B | 65% | 98% | 0.88 | 5.2 | 0.85 |

| 2X4F | 42% | 95% | 0.79 | 8.7 | 0.80 |

| 3KJ9 | 28% | 78% | 0.65 | 15.3 | 0.55 |

In this example, 1A0B is the clear choice. 2X4F may be a contender if 1A0B is unavailable, but 3KJ9's low coverage and poor local score disqualify it for docking.

Workflow & Relationship Diagrams

Title: Homology Model Quality Assessment Workflow for Docking

Title: Thesis: How Model Quality Factors Impact Docking Results

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Homology Modeling & Validation

| Item | Function & Purpose |

|---|---|

| SWISS-MODEL Server | Fully automated, web-based homology modeling pipeline. Ideal for quick model generation and initial quality estimates. |

| MODELLER Software | A highly flexible scripting platform for comparative modeling, allowing fine-grained control over the modeling process. |

| MolProbity Web Service | Integrative validation server providing Ramachandran, clashscore, rotamer, and Cβ deviation analysis. |

| UCSF Chimera / PyMOL | Molecular visualization software critical for visualizing model-template alignment, binding site geometry, and validation outliers. |

| PDB (Protein Data Bank) | Primary repository of experimentally determined 3D structures used as templates for homology modeling. |

| MMseqs2 / HMMER | Sensitive sequence search and alignment tools for identifying distant homologs as potential templates. |

| QMEANDisCo Server | Provides global and local (per-residue) quality estimates based on consensus methods, highlighting unreliable regions. |

| RosettaCM | An advanced, fragment-integrated comparative modeling suite for challenging targets with low template identity. |

Troubleshooting Guide & FAQs

Frequently Asked Questions

Q1: My docking poses with a homology model show good shape complementarity but are consistently ranked poorly by the scoring function. What could be the cause? A: This is a common issue when docking against homology models. The scoring function heavily depends on the precise geometry of the receptor's binding site. Inaccuracies in side-chain packing or loop modeling within the model can create artificial steric clashes or incorrect distances for optimal non-covalent interactions. The scoring function penalizes these, even if the overall pose seems correct. Focus on refining the binding site region of your model through loop modeling and side-chain rotamer optimization before docking.

Q2: How do I decide which scoring function to use for virtual screening on a novel homology model target? A: There is no single best function. The performance is target-dependent. The recommended protocol is to conduct a small-scale validation test. If you have known active and inactive compounds for your target (even a few), dock them against your model using multiple functions (e.g., Vina, Glide SP/XP, ChemPLP). Evaluate which function best separates actives from inactives. In the absence of known actives, use consensus scoring—selecting poses ranked highly by multiple, chemically diverse functions—to increase confidence.

Q3: Why does a small change in a ligand's torsion angle lead to a dramatic drop in the computed binding score? A: Scoring functions are highly sensitive to the geometry of non-covalent interactions. A change of a few degrees can break a critical hydrogen bond, moving the donor-acceptor distance outside the optimal range (typically ~2.5-3.2 Å) or misaligning dipoles. Similarly, it can disrupt favorable pi-stacking or cation-pi interactions. The functions use steep potential wells for these terms, so minor deviations result in large energy penalties, reflecting the precise nature of molecular recognition.

Q4: During ensemble docking with multiple homology model conformations, how should I interpret and combine the results? A: Docking against an ensemble accounts for model uncertainty and flexibility. Score normalization across different receptor conformations is crucial. First, dock your ligand library against each model conformation separately. Then, for each ligand, use the best score across all conformations (pose-based consensus) or calculate the average score. This approach identifies ligands that can bind favorably to at least one plausible state of the model. Present results as a ranked list based on this combined metric.
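One way to make scores comparable across receptor conformations before combining them is a per-conformation z-score, sketched below. The choice of z-scores is an assumption for illustration (the answer above does not prescribe a normalization), and the score matrix is invented.

```python
import numpy as np

def combine_ensemble_scores(score_matrix):
    """Normalize scores per conformation, then combine per ligand.

    score_matrix: rows = ligands, columns = receptor conformations,
    lower is better. Returns (best z-score, mean z-score) per ligand.
    """
    scores = np.asarray(score_matrix, dtype=float)
    mean = scores.mean(axis=0)
    std = scores.std(axis=0)
    std[std == 0] = 1.0  # avoid division by zero for constant columns
    z = (scores - mean) / std
    return z.min(axis=1), z.mean(axis=1)

# Hypothetical scores for three ligands against two model conformations.
raw = [[-10.0, -6.0],
       [-8.0, -8.0],
       [-6.0, -7.0]]
best_z, mean_z = combine_ensemble_scores(raw)
order_by_mean = [int(i) for i in np.argsort(mean_z)]
print("best z per ligand:", np.round(best_z, 2))
print("ligand order by mean z:", order_by_mean)
```

The best-score column rewards a ligand that fits any one plausible state, while the mean column favors ligands that score well across the whole ensemble; which to report depends on how much you trust each conformation.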

Q5: The hydrophobic contribution in my scoring function seems counterintuitive—sometimes burying a hydrophobic group lowers the score. Why? A: Most modern scoring functions evaluate hydrophobic interactions via contact terms or surface area burial, not simple "more is better." The issue may be desolvation penalty. If a hydrophobic group is not fully buried and remains partially exposed to solvent, it loses favorable van der Waals contacts with water without gaining sufficient protein contacts, resulting in a net energy cost. The scoring function is telling you the placement is suboptimal—the group may need to be more completely buried or positioned in a tighter hydrophobic pocket.

Experimental Protocols

Protocol 1: Validation of Docking Poses from a Homology Model Using Known Ligands

- Objective: To assess the predictive accuracy of a docking protocol by reproducing known ligand binding modes.

- Method:

- Preparation: Prepare your homology model and a known co-crystallized ligand from a related structure using standard software (e.g., UCSF Chimera, Schrödinger Maestro). Add hydrogens, assign partial charges, and define receptor grids.

- Docking: Perform flexible-ligand docking of the known ligand into your model. Use a high exhaustiveness setting to ensure thorough sampling.

- Analysis: Calculate the Root-Mean-Square Deviation (RMSD) between the top-ranked docked pose and the ligand's original conformation. A successful prediction typically has a heavy-atom RMSD < 2.0 Å.

- Scoring Function Audit: Manually inspect the top poses. Verify that key hydrogen bonds, salt bridges, and hydrophobic contacts predicted by the scoring function align with expected interactions from the related structure.

Protocol 2: Consensus Scoring to Prioritize Hits from Virtual Screening

- Objective: To improve the reliability of virtual screening hits by combining multiple scoring functions.

- Method:

- Docking Campaign: Dock your compound library against the prepared homology model using at least three distinct scoring functions (e.g., one empirical, one force-field-based, one knowledge-based).

- Rank Normalization: For each scoring function, rank all compounds from best (1) to worst (N).

- Consensus Calculation: For each compound, calculate its average rank across all functions. Re-sort the list based on this average rank.

- Hit Selection: Prioritize compounds with the lowest (best) average ranks. Optionally, apply a filter to select only compounds that appear in the top 10% of at least two individual lists.
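The rank-averaging steps above can be sketched in plain Python. The function names, compound IDs, and scores are hypothetical, scores are assumed to be "lower is better" for every function, and ties are not handled specially. The demo uses a top fraction of 25% only because the example library has four compounds.

```python
import math

def rank_compounds(scores):
    """Map each compound to its rank (1 = best) for one scoring function."""
    ordered = sorted(scores, key=scores.get)
    return {name: rank for rank, name in enumerate(ordered, start=1)}

def consensus_hits(score_tables, top_fraction=0.10, min_lists=2):
    """Average ranks across scoring functions and apply the top-fraction filter."""
    ranks = {fn: rank_compounds(table) for fn, table in score_tables.items()}
    compounds = list(next(iter(score_tables.values())))
    top_n = max(1, math.ceil(top_fraction * len(compounds)))
    average = {c: sum(r[c] for r in ranks.values()) / len(ranks) for c in compounds}
    ordered = sorted(compounds, key=average.get)
    hits = [c for c in ordered
            if sum(r[c] <= top_n for r in ranks.values()) >= min_lists]
    return ordered, average, hits

# Hypothetical scores from three scoring functions (lower is better).
score_tables = {
    "empirical": {"c1": -9.1, "c2": -8.0, "c3": -7.2, "c4": -6.5},
    "force_field": {"c1": -40.0, "c2": -55.0, "c3": -30.0, "c4": -35.0},
    "knowledge": {"c1": -120.0, "c2": -110.0, "c3": -90.0, "c4": -100.0},
}
ordered, average, hits = consensus_hits(score_tables, top_fraction=0.25)
print("consensus order:", ordered)
print("filtered hits:", hits)
```

Working with ranks rather than raw scores sidesteps the fact that different scoring functions report values on unrelated scales.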

Data Presentation: Common Non-Covalent Interaction Parameters in Scoring Functions

Table 1: Typical Geometric and Energetic Parameters for Key Non-Covalent Interactions

| Interaction Type | Optimal Distance (Å) | Optimal Angle (°) | Typical Energy Contribution (kcal/mol) | Functional Form in Scoring |

|---|---|---|---|---|

| Hydrogen Bond | Donor-Acceptor: 2.5-3.2 | D-H...A: ~180 | -1 to -5 (strong) | 12-10 Lennard-Jones, Angular term |

| Salt Bridge | Between charged groups: <4.0 | N/A | -3 to -6 | Coulombic electrostatics with distance-dependent dielectric |

| Van der Waals | Sum of vdW radii | N/A | -0.1 to -0.2 per contact | 6-12 Lennard-Jones potential |

| Pi-Pi Stacking | Aromatic ring centroids: 3.5-4.5 | Parallel or T-shaped | -0.5 to -2 | Special planar interaction terms |

| Cation-Pi | Cation to ring centroid: 3.0-4.5 | Cation over ring face | -2 to -5 | Combination of electrostatic and vdW terms |

| Hydrophobic | <4.0 from nonpolar atoms | N/A | ~-0.03 per Ų buried | Surface Area (SA) burial model |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Docking with Homology Models

| Item | Function in Research |

|---|---|

| Homology Modeling Suite (e.g., MODELLER, SWISS-MODEL) | Generates the initial 3D protein structure from the target sequence using a related template structure. |

| Loop Modeling Tool (e.g., Rosetta, FREAD) | Refines uncertain loop regions in the model, which are often near binding sites and critical for accurate docking. |

| Side-Chain Prediction Software (e.g., SCWRL4, ROSETTA) | Optimizes the rotameric states of amino acid side chains to minimize steric clashes and optimize packing. |

| Molecular Dynamics (MD) Simulation Package (e.g., GROMACS, AMBER) | Generates an ensemble of flexible receptor conformations for ensemble docking, capturing backbone and side-chain dynamics. |

| Docking Software with Multiple Functions (e.g., AutoDock Vina, Schrödinger Glide, GOLD) | Performs the ligand sampling and scoring, offering different algorithmic approaches to evaluate non-covalent interactions. |

| Consensus Scoring Script (e.g., Custom Python/R Script) | Combines results from multiple docking runs to improve hit identification and reduce scoring function bias. |

| Visualization & Analysis Software (e.g., PyMOL, UCSF Chimera) | Used for manual inspection of poses, auditing scoring function predictions, and analyzing interaction networks. |

Visualizations

Troubleshooting Guides & FAQs

Q1: Why do my ligands consistently dock to an unrealistic, solvent-exposed location on my homology model instead of the predicted binding pocket? A: This is often due to an overestimation of pocket hydrophobicity or incorrect side-chain rotamers in the model, which creates a falsely favorable spot. First, recalculate and visualize the electrostatic potential surface of your model using tools like PyMOL or ChimeraX. Compare it to a known high-resolution structure of a close homolog. Manually inspect the side-chain packing in the true binding site; problematic residues may need optimization with a tool like SCWRL4 or Rosetta fixbb before redocking.

Q2: After docking into a homology model, my pose rankings show no correlation with experimental activity. What's wrong? A: The geometry of the modeled active site may be too distorted for reliable scoring. Implement a two-step verification: 1) Perform a control docking of a known native ligand (or a close analog) from a co-crystal structure of the template. If this fails to reproduce the correct pose, your model's "dockability" is low. 2) Use a consensus scoring approach across multiple docking programs (AutoDock Vina, Glide, GOLD) to identify consistently ranked poses, as outlier rankings often arise from model artifacts.

Q3: How can I assess the local backbone reliability of my modeled binding site before investing in large-scale virtual screening? A: Utilize local model quality estimation tools. Run your model through the QMEANDisCo server or use ModFOLDclust2. These provide per-residue confidence scores. Focus on the binding site residues: a cluster of low scores (e.g., below 0.6) indicates a problematic region. A practical protocol is to generate an ensemble of models (e.g., 5-10) and only proceed with docking if the backbone atoms of key binding residues (e.g., catalytic triads, binding motifs) are consistent across the ensemble (Cα RMSD < 1.5 Å).

Q4: My homology model has a large, flexible loop near the binding site that is poorly aligned in the template. How should I handle it for docking? A: Indiscriminate docking into a flexible, poorly modeled loop region will generate false positives. Implement a loop modeling and clustering protocol:

- Excise and remodel the loop using RosettaCM or MODELLER's loop refinement.

- Generate multiple loop conformations (e.g., 100).

- Cluster the conformations based on loop backbone RMSD.

- Select representative models from the top 3-5 clusters for docking.

- Perform docking against each representative and compare results. Ligands that only dock well to one rare conformation are less reliable.

Q5: What are the definitive signs that a homology model is simply not suitable for docking-based studies? A: Red flags that critically compromise model "dockability" include:

- Sequence identity to the best template is below 30% for the binding site region.

- Key functional residues (e.g., catalytic residues, binding motifs) are misaligned or missing.

- Steric clashes exist in the core of the binding pocket that cannot be relieved by side-chain optimization.

- Consensus evaluation scores (like GA341 score in MODELLER < 0.7, or MolProbity clashscore > 30) indicate globally poor model quality.

Experimental Protocols

Protocol 1: Binding Site Geometry Validation via Native Ligand Docking

- Objective: To test the ability of a homology model to recapitulate a known binding mode.

- Materials: Homology model, template co-crystal structure with native ligand, docking software (e.g., AutoDock Vina), molecular visualization software.

- Method:

- Prepare the homology model and the template structure: add hydrogens, assign charges (e.g., using Gasteiger charges).

- Extract the native ligand from the template co-crystal structure.

- Define a docking grid/box. For the homology model, center it on the predicted binding site. For the template, center it on the crystallographic pose of the ligand.

- Perform docking of the native ligand into both the homology model and its template structure using identical parameters.

- Measure the Root-Mean-Square Deviation (RMSD) of the top-ranked docked pose from the crystallographic pose.

- Success Criteria: A pose RMSD ≤ 2.0 Å in the template control confirms the docking protocol. An RMSD ≤ 3.0 Å in the homology model suggests acceptable binding site geometry.
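The RMSD measurement and the success criteria of Protocol 1 could be scripted roughly as follows. This sketch assumes each pose is available as a mapping from atom name to coordinates (matching by name guards against atom reordering during file conversion), that both poses are in the same coordinate frame, and that ligand symmetry is ignored. The function names and returned messages are illustrative only.

```python
import math

def pose_rmsd(docked, crystal):
    """Heavy-atom RMSD (Å) between two poses given as {atom_name: (x, y, z)}.

    Atoms are matched by name; symmetry-equivalent atoms are NOT handled.
    """
    shared = sorted(set(docked) & set(crystal))
    if not shared:
        raise ValueError("poses share no atom names")
    squared = sum(
        (d - c) ** 2
        for name in shared
        for d, c in zip(docked[name], crystal[name])
    )
    return math.sqrt(squared / len(shared))

def assess_native_docking(rmsd_template, rmsd_model,
                          template_cutoff=2.0, model_cutoff=3.0):
    """Apply the Protocol 1 criteria to the two control RMSD values."""
    if rmsd_template > template_cutoff:
        return "docking protocol not validated (template control failed)"
    if rmsd_model <= model_cutoff:
        return "acceptable binding site geometry"
    return "binding site geometry questionable"

crystal = {"C1": (0.0, 0.0, 0.0), "N1": (1.5, 0.0, 0.0)}
docked = {"N1": (1.5, 1.0, 0.0), "C1": (0.0, 1.0, 0.0)}
print(pose_rmsd(docked, crystal))
```

Checking the template control first matters: a poor RMSD in the homology model is only informative once the same protocol has been shown to work on the experimental structure.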

Protocol 2: Ensemble Docking to Account for Binding Site Flexibility

- Objective: To improve docking outcomes by accommodating structural uncertainty in the model.

- Materials: Main homology model, loop-modeled variants, or MD simulation snapshots.

- Method:

- Generate the Ensemble: Create multiple receptor structures. This can be done via:

- Sampling alternative side-chain rotamers (using SCWRL4).

- Modeling flexible loops in distinct conformations (using MODELLER).

- Running short, restrained molecular dynamics (MD) simulations and clustering snapshots.

- Prepare Structures: Prepare all ensemble members identically for docking (add charges, etc.).

- Perform Docking: Dock the ligand library against each ensemble member using the same grid center but potentially larger dimensions.

- Analyze Results: Consolidate results. Rank ligands by their best score across the ensemble or by their average score. Analyze pose consistency across the ensemble.

- Note: This protocol is computationally intensive but crucial for models with mobile binding site elements.
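The "Analyze Results" step of Protocol 2 can be illustrated with a small consolidation routine. The sketch below assumes docking scores have already been parsed into a nested dictionary (ligand, then ensemble member, then score in kcal/mol, where more negative is better); the function name `consolidate` and the ligand and receptor labels are hypothetical.

```python
from statistics import mean

def consolidate(scores, by="best"):
    """Rank ligands by their best or mean score across ensemble members.

    scores: {ligand: {receptor_id: docking_score}}, lower score = better.
    """
    if by not in ("best", "mean"):
        raise ValueError("by must be 'best' or 'mean'")
    rows = []
    for ligand, per_receptor in scores.items():
        best_receptor = min(per_receptor, key=per_receptor.get)
        rows.append({
            "ligand": ligand,
            "best": per_receptor[best_receptor],
            "mean": mean(list(per_receptor.values())),
            "best_receptor": best_receptor,
        })
    return sorted(rows, key=lambda row: row[by])

# Illustrative scores only.
scores = {
    "lig1": {"model_1": -6.0, "model_2": -9.5},
    "lig2": {"model_1": -8.0, "model_2": -8.2},
}
for row in consolidate(scores, by="mean"):
    print(row)
```

In this toy input, ranking by best score and ranking by mean score disagree, which is exactly the situation where pose consistency across the ensemble deserves a closer look.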

Data Presentation

Table 1: Correlation Between Model Quality Metrics and Docking Success Rate

| Model Quality Metric | Threshold for "Dockable" Model | Impact on Virtual Screening (VS) Performance |

|---|---|---|

| Global Model Score (QMEAN) | > -4.0 | High score correlates with better enrichment in VS. |

| Binding Site Cα RMSD (vs. Native) | < 1.5 Å | Directly determines ability to reproduce native ligand pose (RMSD < 2.5 Å). |

| MolProbity Clashscore | < 20 | Lower clashscores reduce false favorable docking pockets. |

| Sequence Identity in Binding Site | > 40% | Higher identity dramatically increases probability of successful docking. |

| Per-Residue Confidence (pLDDT) in Site | Average > 70 | Ensures local backbone reliability for scoring function accuracy. |

Table 2: Troubleshooting Summary: Problematic Features vs. Remedial Actions

| Problematic Feature in Model | Symptom During Docking | Recommended Remedial Action |

|---|---|---|

| Overpacked Hydrophobic Cave | Ligands dock to non-physiological, deep hydrophobic spots. | Remodel side-chains with constraints; solvate model and re-calc. surface. |

| Mis-oriented Hydrogen Bond Donor/Acceptor | Loss of critical polar interaction; incorrect pose ranking. | Manual rotamer adjustment or use of H-bond network prediction tools. |

| Poorly Modeled Flexible Loop | Inconsistent poses; high score variance for similar ligands. | Ensemble docking with multiple loop conformations (see Protocol 2). |

| Global Backbone Distortion in Site | Native control docking fails (RMSD > 3.5 Å). | Consider alternative template or refine with rigid-body MSA. |

Visualization

Title: Workflow for Docking with Homology Models

Title: Model Features Directly Impact Docking Outcome

The Scientist's Toolkit: Research Reagent Solutions

| Item/Reagent | Function in Docking with Homology Models |

|---|---|

| MODELLER | Software for homology modeling; generates 3D coordinates from alignments. |

| SWISS-MODEL Server | Automated web-based homology modeling pipeline for quick initial models. |

| PyMOL/ChimeraX | Molecular visualization software for analyzing model quality, surface properties, and docking poses. |

| AutoDock Vina | Widely used, open-source docking program for pose prediction and scoring. |

| Rosetta Software Suite | For advanced model refinement (RosettaRelax), loop modeling, and ensemble generation. |

| SCWRL4 | Algorithm for accurate side-chain conformation prediction and optimization. |

| QMEANDisCo Server | Online tool for local model quality estimation, crucial for binding site assessment. |

| MolProbity | Service for structure validation, identifying steric clashes, and rotamer outliers. |

| PDBbind Database | Curated database of protein-ligand complexes for native ligand docking controls. |

| ZINC20 Database | Public library of commercially available compounds for virtual screening. |

A Practical Workflow: Preparing Models and Executing Docking Simulations

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My docking results are nonsensical, with ligands buried in non-physiological pockets or showing extreme energies. What went wrong in the model preparation step? A: This is often due to incorrect protonation states of key residues or missing critical hydrogens. Histidine tautomerization (HID, HIE, HIP) is a frequent culprit. Use a rigorous pKa prediction tool (e.g., PROPKA) to determine the correct protonation states at your target pH (typically 7.4). Re-run the hydrogen addition and charge assignment with these parameters.
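To see why a predicted pKa matters for histidine assignment, the Henderson-Hasselbalch relation can be evaluated directly. The sketch below is an illustration under simple assumptions: the pKa values are made up, the 0.5 threshold is an arbitrary choice, and the helper names are hypothetical. It does not replace a pKa predictor such as PROPKA; it only shows how a predicted pKa translates into a protonated fraction at the target pH.

```python
def fraction_protonated(pka, ph=7.4):
    """Fraction of a titratable group in its protonated form (Henderson-Hasselbalch)."""
    return 1.0 / (1.0 + 10.0 ** (ph - pka))

def suggest_histidine_state(pka, ph=7.4, threshold=0.5):
    """Suggest HIP (doubly protonated) or a neutral tautomer for a histidine.

    The HID versus HIE choice is not made here; it depends on the local
    hydrogen-bond network and should be checked by inspection.
    """
    return "HIP" if fraction_protonated(pka, ph) >= threshold else "HID/HIE"

# Hypothetical predicted pKa values, for illustration only.
predicted_pka = {"HIS57": 6.5, "HIS102": 8.0}
for residue, pka in predicted_pka.items():
    print(residue, round(fraction_protonated(pka), 3), suggest_histidine_state(pka))
```

A histidine whose pKa is shifted above the target pH by its environment is mostly protonated, which is why a default neutral assignment can silently remove a key charged interaction.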

Q2: After adding hydrogens and charges, the homology model shows severe steric clashes or distorted geometry. How should I proceed? A: Homology models, especially in loop regions, often contain local strain. Before docking, perform a restrained energy minimization. This relaxes the structure while keeping it close to the original model. Use a force field (e.g., AMBER, CHARMM) with restraints on the protein backbone heavy atoms (RMSD restraint of 0.3-0.5 Å). This resolves clashes without altering the overall fold.

Q3: How do I handle ambiguous side-chain rotamers in my model, particularly for surface residues not in the active site? A: For non-critical residues, use a fast side-chain optimization algorithm (e.g., SCWRL4, FASPR). For active site residues, a more careful approach is needed. Utilize a conformational search using molecular mechanics (MM) or short molecular dynamics (MD) simulations with implicit solvent, keeping the backbone fixed. Select the lowest energy rotamer consistent with known catalytic mechanisms or ligand binding data.

Q4: Which force field and charge set should I use for preparing a homology model for docking with AutoDock Vina or similar software? A: Consistency is key. Docking programs have internal scoring functions. For preparation, use a standard molecular mechanics force field.

- For general preparation/minimization: AMBER ff14SB or CHARMM36.

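- For assigning Gasteiger charges (the usual choice when writing AutoDock-style PDBQT files): Use tools in Open Babel or MGLTools. Note that AutoDock Vina's default scoring function does not use partial charges, so the charge model matters mainly for AutoDock4-type scoring and for any minimization or rescoring step.

- For assigning AM1-BCC charges (often more accurate for ligands): Use Antechamber (from AMBER) or OpenEye toolkits. See Table 1 below for a comparison.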

Table 1: Comparison of Common Charge Assignment Methods for Docking Preparation

| Charge Method | Computational Speed | Typical Use Case | Recommended For |

|---|---|---|---|

| Gasteiger | Very Fast | High-throughput screening, large ligand libraries | Protein & ligand in AutoDock Vina/Znk |

| AM1-BCC | Moderate | Accurate ligand charge derivation, lead optimization | Ligand parameterization for more rigorous docking |

| RESP (HF/6-31G*) | Slow | Benchmarking, QM-derived accuracy for key complexes | Small, critical ligand sets in validated studies |

Q5: The prepared model has gaps or missing atoms in incomplete loops. Can I still use it for docking? A: It depends on the loop's location. If it's far from (>15 Å) the binding site, you may proceed. If it's near the site, you must model the loop. Use a dedicated loop modeling tool (e.g., ModLoop, Rosetta loop modeling, or the loop refinement protocol in your homology modeling software). Follow this protocol:

Experimental Protocol: Loop Refinement for Binding Site Integrity

- Identify: Isolate the incomplete loop region (residues with missing atoms).

- Sample: Generate 100-500 decoy conformations using a kinematic closure (KIC) or MD-based algorithm.

- Score: Rank decoys using a composite score (e.g., Rosetta's full-atom energy, DOPE score in MODELLER).

- Select & Minimize: Choose the top 5-10 lowest-energy models. Perform a restrained minimization of the selected loop with the surrounding protein (5 Å shell) to relieve clashes.

- Validate: Check the refined loop's geometry with MolProbity. Ensure it does not occlude the intended binding pocket.

Q6: How do I validate that my prepared model is "docking-ready"? A: Perform a post-preparation validation suite. Compare the prepared model to the initial model using RMSD (should be < 2.0 Å overall backbone). Specifically check:

- Steric Quality: Ramachandran plot (≥90% in favored regions via MolProbity).

- Charge Distribution: Visualize electrostatic surface (e.g., with PyMOL/APBS) for a physically plausible pattern.

- Active Site Integrity: Confirm catalytic residues are properly oriented and protonated.

Diagram Title: Workflow for Protein Model Preparation for Docking

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software Tools for Model Preparation

| Tool / Resource | Primary Function | Key Application in Preparation |

|---|---|---|

| PDB2PQR / PROPKA | Adds hydrogens, assigns protonation states based on pKa prediction. | Determining correct His, Asp, Glu, Lys states at physiological pH. |

| MGLTools (AutoDock Tools) | Prepares PDBQT files, assigns Gasteiger charges, merges non-polar hydrogens. | Standardized input generation for AutoDock Vina/Znk. |

| AmberTools (tleap, antechamber) | Force field parameterization, charge assignment (AM1-BCC, RESP), system building. | Creating high-quality parameters for ligands and restrained minimization. |

| SCWRL4 / FASPR | Fast and accurate side-chain conformation prediction. | Optimizing rotamers for non-active site residues. |

| Rosetta (relax protocol) | All-atom refinement and side-chain packing. | High-resolution optimization of loops and binding site residues. |

| UCSF Chimera / PyMOL | Visualization, structure analysis, and model manipulation. | Visual validation of charges, protonation, and steric fit. |

| MolProbity | All-atom structure validation server. | Final check of stereochemistry, clashes, and rotamer quality. |

Technical Support & Troubleshooting

Q1: After modeling my protein, the active site predicted by different servers (e.g., CASTp, COACH) shows significant variation. How do I define a reliable binding site for grid generation?

A: Discrepancy is common in homology models due to loop flexibility and side-chain packing errors. Follow this protocol:

- Consensus Analysis: Use at least three prediction tools (e.g., CASTp, SiteHound, MetaPocket 2.0). Identify residues present in ≥70% of predictions.

- Template Alignment: Superimpose your model with the template structure(s) used for modeling. If the template has a known ligand (e.g., from PDB: 1XYZ), transfer that binding site footprint.

- Literature Mining: Use PDBsum to analyze known binding sites in homologous proteins (≥40% sequence identity).

- Define the Site: Use the union of residues from consensus and template alignment. Treat residues from literature as validation.
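The consensus and union steps above can be expressed as a short script. This is a sketch under assumptions: each tool's output has already been reduced to a list of residue labels, and the tool names, residue labels, and function names below are placeholders rather than real server output.

```python
from collections import Counter

def consensus_residues(predictions, min_fraction=0.7):
    """Residues predicted by at least min_fraction of the tools.

    predictions: {tool_name: iterable of residue labels such as "ASP189"}.
    """
    n_tools = len(predictions)
    counts = Counter(
        residue
        for residues in predictions.values()
        for residue in set(residues)
    )
    return sorted(r for r, c in counts.items() if c / n_tools >= min_fraction)

def define_site(predictions, template_footprint, min_fraction=0.7):
    """Union of consensus residues and the footprint transferred from the template."""
    return sorted(set(consensus_residues(predictions, min_fraction)) | set(template_footprint))

# Placeholder predictions from three hypothetical tools.
predictions = {
    "tool_a": ["ASP189", "SER195", "HIS57"],
    "tool_b": ["ASP189", "SER195"],
    "tool_c": ["ASP189", "SER195", "GLY216"],
}
print(define_site(predictions, template_footprint=["HIS57", "SER195"]))
```

One practical consequence of the 70% rule: with exactly three tools, two out of three is only about 67%, so a residue must be found by all three to count as consensus. Adding a fourth or fifth predictor makes the threshold less strict.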

| Tool | Type | Key Output | Recommended Threshold |

|---|---|---|---|

| CASTp 3.0 | Geometry-based | Pockets, volume, area | Top 3 pockets by volume |

| COACH | Template-based | Ligand binding residues | Confidence score >0.7 |

| DeepSite | Deep Learning | Binding propensity grid | Probability >0.8 |

Q2: When generating a grid box around my defined site, what dimensions and center should I use to ensure it captures relevant pharmacophore space without being computationally prohibitive?

A: The optimal grid balances coverage and efficiency. Use this quantitative guide:

| Parameter | Recommended Value | Rationale & Adjustment Rule |

|---|---|---|

| Box Center | Centroid of residues defining the binding site. | Avoid using a single atom; the centroid captures the site's geometric center. |

| Box Dimensions | Start at 20Å x 20Å x 20Å. | For most drug-like ligands (<500 Da). |

| Dimension Adjustment | Increase by 1.5x if the native ligand (from template) is not fully enclosed. | Check using "Grid Box Validation" workflow below. |

| Grid Point Spacing | 0.375 Å to 0.5 Å. | Higher resolution (0.375Å) for precise scoring; 0.5Å for initial screening. |

Experimental Protocol: Grid Box Validation

- Dock the cognate ligand (from the highest-identity template) into the generated grid.

- Calculate the RMSD between the docked pose and the ligand's original coordinates (transferred from the template).

- Success Criteria: RMSD < 2.0 Å. If RMSD > 2.0 Å, systematically increase the grid dimensions in 2 Å increments and re-dock until the criterion is met.
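The box-center and box-dimension rules from the Q2 table can be turned into a small helper that also emits the search-space lines of an AutoDock Vina configuration file. This is a sketch: the sizing rule (extent of the site atoms plus padding, never below 20 Å per side) and the `padding` default are assumptions made for illustration, and the function names are hypothetical. The keys `center_x`, `size_x`, and `exhaustiveness` are standard Vina configuration options.

```python
def box_from_site(coords, padding=5.0, min_size=20.0):
    """Return (center, size) of a docking box around binding-site atoms.

    center: centroid of the supplied (x, y, z) coordinates.
    size:   per-axis extent plus padding on both sides, at least min_size (Å).
    """
    if not coords:
        raise ValueError("no coordinates supplied")
    n = len(coords)
    center = tuple(sum(c[i] for c in coords) / n for i in range(3))
    size = tuple(
        max(min_size, max(c[i] for c in coords) - min(c[i] for c in coords) + 2 * padding)
        for i in range(3)
    )
    return center, size

def vina_config(center, size, exhaustiveness=8):
    """Format the search-space section of a Vina config file as text."""
    lines = [f"center_{axis} = {value:.3f}" for axis, value in zip("xyz", center)]
    lines += [f"size_{axis} = {value:.1f}" for axis, value in zip("xyz", size)]
    lines.append(f"exhaustiveness = {exhaustiveness}")
    return "\n".join(lines)

# Toy site atoms; real input would be the atoms of the residues defining the site.
site_atoms = [(0.0, 0.0, 0.0), (10.0, 0.0, 0.0), (5.0, 30.0, 0.0)]
center, size = box_from_site(site_atoms)
print(vina_config(center, size))
```

Keeping the generated text alongside the residue list used to compute the centroid also covers most of what is needed to report grid parameters reproducibly.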

Q3: My homology model has a poorly defined, flexible loop near the suspected binding site. Should I include it in the grid, and how?

A: Flexible loops can lead to false positives/negatives. Implement a two-stage strategy:

- Stage 1 (Rigid): Generate a grid with the loop excluded. Perform initial docking to identify top candidate ligands.

- Stage 2 (Induced-Fit): For the top 10-20 ligands, use a flexible loop protocol (e.g., in RosettaFlex or Schrödinger's Prime) to refine the model, then re-dock into a new grid that includes the refined loop.

Q4: For a blind docking search on a model with no known site, what are the optimal global grid parameters?

A: Use an ensemble grid strategy to cover the protein surface efficiently.

| Strategy | Grid Center | Grid Dimensions | Use-Case |

|---|---|---|---|

| Single Global Box | Protein centroid. | Encompass entire protein. | Small proteins (<250 residues). |

| Multiple Sub-grids | Centers of largest 3 pockets from CASTp. | 25Å x 25Å x 25Å each. | Larger proteins, to prioritize likely pockets. |

Frequently Asked Questions (FAQs)

Q: Can I use the grid parameters from my template's crystal structure directly on my model? A: Not directly. While a good starting point, the binding cavity volume can differ by 10-15% in models. Always calculate the centroid based on your model's aligned residues and validate (see Q2 Protocol).

Q: Which software is most tolerant to the structural imperfections of a homology model during grid generation? A: AutoDock-GPU and LeDock are generally robust. For more advanced models, Schrödinger's Glide allows protein flexibility scaling during grid generation. See toolkit below.

Q: How do I report grid parameters for reproducibility? A: Always report: Software & Version, Box Center (x, y, z), Box Dimensions (Å), Grid Spacing (Å), and the list of residues used to define the center.

Experimental Workflow: From Model to Grid

Title: Workflow for Defining and Validating a Docking Grid on a Homology Model

The Scientist's Toolkit: Research Reagent Solutions

| Item / Software | Function in Binding Site/Grid Workflow | Key Consideration for Models |

|---|---|---|

| UCSF ChimeraX | Visualization, structural alignment, and centroid calculation. | Essential for visually inspecting model quality and superposing templates. |

| AutoDockTools | Generation of grid parameter files (GPF) for AutoDock4/AutoDock-GPU and definition of the search box for AutoDock Vina. | Robust to minor steric clashes; widely used benchmark. |

| Schrödinger (Glide) | High-throughput grid generation with protein flexibility options. | "Scaled van der Waals radii" setting can soften potential from modeling errors. |

| PyMOL (with APBS) | Electrostatic potential surface calculation and visualization. | Critical for defining grids in charged binding sites (e.g., kinases). |

| MetaPocket 2.0 | Consensus binding site prediction server. | Integrates 8 methods; improves reliability on models. |

| PDBsum | Database of ligand binding sites in known structures. | Source for template-based site definition. |

| REFINED | Web server for model refinement focused on binding sites. | Can improve local geometry before grid generation. |

Troubleshooting Guide & FAQs

Q1: During conformational sampling, my ligand exhibits unrealistic ring puckering or strained geometries. How can I resolve this? A: This is often caused by improper initial geometry or inadequate sampling parameters. Use the following protocol:

- Initial Optimization: Always perform a preliminary geometry optimization using quantum mechanical methods (e.g., HF/6-31G*) or a reliable force field (MMFF94) before conformational search.

- Sampling Method: For flexible rings, use a systematic torsional search or molecular dynamics (MD)-based sampling (e.g., 100-500ps in implicit solvent) instead of only stochastic methods.

- Restraints: Apply ring conformational restraints if the ligand's crystallographic data is available.

Q2: How do I determine the correct protonation and tautomeric state for my ligand at physiological pH (7.4) when docking into a homology model with uncertain electrostatic environment? A: The uncertainty of the model's binding site necessitates a multi-state docking approach.

- Protocol:

- Generate potential protonation/tautomeric states using tools like Epik, MOE, or ChemAxon Calculator Plugins at pH 7.4 ± 2.0 (range: 5.4 - 9.4).

- Perform a quick docking of all states (low precision) into your homology model.

- Also, dock all states into a high-resolution reference crystal structure of a related protein, if available.

- Compare the consensus poses and rankings across both models. States that perform well consistently are more reliable.

- Select the top 2-3 states for final, high-precision docking.

Q3: What is the impact of partial charge assignment methods on docking accuracy into homology models, and which should I choose? A: Homology models often have imprecise electrostatics, making charge choice critical. See Table 1 for a quantitative summary from recent benchmarks.

Table 1: Impact of Ligand Charge Assignment Methods on Docking to Homology Models

| Charge Method | Basis | Computational Cost | Typical Use Case for Homology Models | Reported RMSD Impact* |

|---|---|---|---|---|

| Gasteiger-Marsili | Empirical | Very Low | Initial high-throughput screening, very large libraries | Higher variability (± 2.0 Å) |

| MMFF94 | Force Field | Low | Standard protocol for organic molecules; good balance | Moderate reliability (± 1.5 Å) |

| AM1-BCC | Semi-Empirical QM | Medium | Recommended for final docking poses; better polarity | Improved accuracy (± 1.2 Å) |

| RESP (HF/6-31G*) | Ab Initio QM | High | Gold standard for key lead compounds; small libraries | Best theoretical accuracy (± 1.0 Å) |

*Reported RMSD (Root Mean Square Deviation) impact range relative to crystal ligand pose in benchmark studies.

Q4: I have a metal-coordinating ligand. How should I handle its charges and geometry? A: Standard force fields often fail. Follow this protocol:

- Geometry Optimization: Optimize the ligand-metal complex (if metal is from the protein) using DFT (e.g., B3LYP/6-31G*) with appropriate basis set for the metal.

- Charge Assignment: Use quantum mechanically derived charges (e.g., CHELPG, Merz-Kollman) for the ligand atoms involved in coordination.

- Docking Consideration: Treat coordinating bonds as potentially flexible constraints during docking if the metal ion is present in the protein model.

Q5: After preparing multiple conformers and states, my docking library is too large. How do I filter it? A: Apply a hierarchical filtering protocol:

- Energy Filter: Remove conformers with high steric strain (> 10 kcal/mol from MMFF94).

- Cluster by RMSD: Cluster remaining conformers using a 1.0-1.5 Å RMSD cutoff and select the centroid of each major cluster.

- State Priority: For each unique ligand, keep a maximum of the lowest-energy protonation state and the top 2-3 highest-population tautomers.

Experimental Protocols

Protocol 1: Comprehensive Ligand State Preparation for Homology Model Docking

- Objective: Generate an ensemble of ligand conformations and protonation/tautomeric states suitable for docking into a homology model with uncertain active site electrostatics.

- Software: Schrödinger Suite (LigPrep, Epik, ConfGen), Open Babel, RDKit, Gaussian.

- Steps:

- Input & Desalting: Provide ligand in SMILES or 2D/3D SDF format. Remove counterions and salts.

- Tautomer/Protomer Generation: Use Epik at pH 7.4 ± 2.0 to generate likely states. Set energy window to 10-20 kcal/mol for maximum coverage.

- Conformational Sampling: For each state, generate conformers using a mixed method:

- Systematic Rotamer Search: For molecules with fewer than 10 rotatable bonds.

- Monte Carlo Multiple Minimum (MCMM): For molecules with 10 or more rotatable bonds. Generate 1000-5000 conformations per state.

- Geometry Optimization & Minimization: Optimize all generated conformers using the OPLS4 or MMFF94 force field with implicit solvent (GBSA).

- Charge Assignment: Assign partial charges using the AM1-BCC method for the final library. For critical ligands, compute RESP charges via Gaussian (HF/6-31G* optimization & population analysis).

- Output: Produce a multi-conformer, multi-state library in Maestro (.maegz) or MDL SDF format.

Protocol 2: Benchmarking Ligand Preparation Protocols

- Objective: Validate your ligand preparation protocol by redocking ligands to their native crystal structures and to homology models.

- Steps:

- Curate a test set of 50-100 protein-ligand complexes from PDB.

- For each ligand, prepare it using your protocol (Protocol 1), generating an ensemble.

- Dock the ensemble back into the native crystal structure and calculate RMSD to the crystal pose.

- Create homology models for the same targets (using related templates).

- Dock the prepared ligand ensembles into the homology models.

- Compare the success rates (RMSD < 2.0 Å) between native and model structures to quantify preparation impact.

Workflow Diagram

Title: Ligand Prep Workflow for Homology Model Docking

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software & Tools for Ligand Preparation

| Item (Software/Tool) | Category | Primary Function in Ligand Prep |

|---|---|---|

| Schrödinger LigPrep/Epik | Commercial Suite | Integrated pipeline for generating 3D structures, tautomers, protonation states, and conformers. |

| Open Babel | Open-Source Tool | Format conversion, hydrogen addition/removal, basic conformer generation and charge assignment. |

| RDKit | Open-Source Cheminfo | Python-based toolkit for molecule manipulation, descriptor calculation, and rule-based conformer generation. |

| Omega (OpenEye) | Commercial Conformer Generator | High-speed, rule-based conformer generation for large libraries. |

| Gaussian/GAMESS | Quantum Chemistry Software | Ab initio geometry optimization and electrostatic potential calculation for deriving high-accuracy charges (RESP). |

| Antechamber (AmberTools) | Utility | Assigns AM1-BCC charges and converts between molecular file formats, often used for preparing ligands for MD. |

| MOE (Molecular Operating Environment) | Commercial Suite | Comprehensive ligand preparation, including protonation, conformational search, and charge assignment (MMFF94, etc.). |

| CYP450 Metabolism Prediction Modules | Specialized Plugin | Predicts likely sites of metabolism to guide protonation/tautomer state consideration for specific targets. |

Troubleshooting Guides & FAQs for Molecular Docking Experiments

FAQ 1: In my homology modeled receptor, DOCK systematically fails to find any poses with a favorable score. What could be the primary issue?

Answer: This is a frequent challenge when docking to homology models. The primary cause is often an improperly defined binding site due to inaccuracies in loop modeling or side-chain packing. Systematic search algorithms like DOCK are highly sensitive to the precise geometric definition of the search grid. A small deviation in the active site conformation can cause the grid to be misaligned, resulting in no favorable poses. First, verify your binding site definition using a known co-crystallized ligand from your template structure. Second, consider using a softer scoring potential or expanding the grid dimensions by 5-10 Å to account for model uncertainty.

FAQ 2: AutoDock Vina yields highly variable results (different top poses) across consecutive runs on the same homology model. Is this normal, and how should I interpret the output?

Answer: Yes, this is expected behavior due to Vina's stochastic search method (Monte Carlo). Variability indicates that the energy landscape of your homology model's binding site may be relatively flat or have multiple shallow minima. Best practice is to perform multiple runs (e.g., 20-50) with different random seeds and analyze the clustering of output poses. Consistent clustering around a similar pose conformation, despite the variability, increases confidence in the prediction. Use the --exhaustiveness parameter (increase to 32 or higher) to improve search depth and reproducibility.
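A multi-seed campaign like the one recommended above is easy to script. The sketch below only builds the command lines; it assumes a `vina` executable on the PATH and uses hypothetical file names. The options shown (`--receptor`, `--ligand`, `--config`, `--exhaustiveness`, `--seed`, `--out`) are standard AutoDock Vina command-line options.

```python
def vina_commands(receptor, ligand, config, n_runs=20,
                  exhaustiveness=32, first_seed=1):
    """Build one Vina command (as an argument list) per random seed."""
    commands = []
    for seed in range(first_seed, first_seed + n_runs):
        commands.append([
            "vina",
            "--receptor", receptor,
            "--ligand", ligand,
            "--config", config,          # search-space center and size
            "--exhaustiveness", str(exhaustiveness),
            "--seed", str(seed),         # explicit seed makes each run repeatable
            "--out", f"run_seed{seed}.pdbqt",
        ])
    return commands

# Hypothetical input files.
commands = vina_commands("model.pdbqt", "ligand.pdbqt", "box.txt")
print(" ".join(commands[0]))
```

Each argument list can be passed to `subprocess.run(cmd, check=True)`. Recording the seed in the output file name keeps every pose traceable to the run that produced it, which simplifies the later clustering analysis.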

FAQ 3: When validating my docking protocol on a crystal structure, both algorithms work well. But on my homology model, the predicted binding mode is radically different. How should I proceed?

Answer: This discrepancy highlights the intrinsic uncertainty of docking to modeled structures. Implement a consensus docking strategy:

- Perform docking with both DOCK (systematic) and Vina (stochastic).

- Use an ensemble of the top homology models (not just a single model).

- Apply a post-docking scoring or re-scoring step using a different, knowledge-based function.

The consensus pose that appears across multiple models and/or algorithms is more likely to be reliable than any single top-scoring result.

Quantitative Data Comparison

Table 1: Algorithmic Comparison for Homology Model Docking

| Feature | DOCK (Systematic) | AutoDock Vina (Stochastic) |

|---|---|---|

| Search Method | Anchor-and-grow, systematic sampling | Monte Carlo with local gradient optimization |

| Speed (Typical Ligand) | Slower (minutes to hours) | Faster (seconds to minutes) |

| Determinism | Fully deterministic (same output for same input) | Non-deterministic (output varies per run) |

| Handling of Model Uncertainty | Low; requires precise grid definition | Moderate; stochastic search can sample imperfect pockets |

| Key Parameter for Homology Models | Grid spacing and box size | exhaustiveness and search space center/box |

| Optimal Use Case | Well-defined, rigid binding sites from high-quality models | Flexible search in potentially inaccurate or soft binding sites |

Experimental Protocol for Consensus Docking on Homology Models

Protocol: Validated Docking Workflow for Modeled Protein Structures

- Model Preparation: Refine the homology model's binding site loops and side chains using a tool like SCWRL4 or MODELLER's loop refinement.

- Binding Site Definition:

- Use the CASTp server or a known ligand from the template to define the pocket.

- For DOCK: Generate grids using the grid program with a box extending 8-10 Å beyond the defined site. Use a grid spacing of 0.3 Å.

- For Vina: Define the center (x, y, z) and box size (size_x, size_y, size_z) from the same coordinates.

- Ligand Preparation: Generate 3D conformers and assign charges (e.g., using Open Babel or MOE). Convert to PDBQT format for Vina or mol2 for DOCK.

- Parallel Docking Execution:

- Run DOCK with the standard anchor-and-grow parameters.

- Run AutoDock Vina with an exhaustiveness value of 32, performing at least 20 independent runs.

- Post-Processing & Consensus Analysis:

- Cluster all output poses (from both programs) using an RMSD cutoff of 2.0 Å (e.g., using clusterrmsd in DOCK or SciPy).

- Rank clusters by both average docking score and population size.

- Visually inspect the top 3 consensus poses within the model's binding site context.
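The clustering and ranking steps of the consensus analysis can be sketched with a simple greedy (leader) clustering in plain Python. Several assumptions apply: all poses share the same atom order and coordinate frame, the scores have been put on a comparable scale beforehand (raw DOCK and Vina scores are not directly comparable, so a per-program rank or z-score is one option), and the function names are hypothetical. This stands in for, and is not equivalent to, a hierarchical clustering from SciPy.

```python
import math
from statistics import mean

def rmsd(a, b):
    """RMSD (Å) between two poses given as equal-length lists of (x, y, z)."""
    squared = sum((x - y) ** 2 for p, q in zip(a, b) for x, y in zip(p, q))
    return math.sqrt(squared / len(a))

def cluster_poses(poses, cutoff=2.0):
    """Greedy clustering: best-scored poses become cluster leaders first."""
    clusters = []
    for pose in sorted(poses, key=lambda p: p["score"]):
        for cluster in clusters:
            if rmsd(pose["coords"], cluster[0]["coords"]) <= cutoff:
                cluster.append(pose)
                break
        else:
            clusters.append([pose])  # no leader close enough: start a new cluster
    return clusters

def rank_clusters(clusters):
    """Rank clusters by population (descending), then by mean score (ascending)."""
    summary = [
        {
            "leader": c[0],
            "size": len(c),
            "mean_score": mean(p["score"] for p in c),
            "programs": sorted({p["program"] for p in c}),
        }
        for c in clusters
    ]
    return sorted(summary, key=lambda s: (-s["size"], s["mean_score"]))

# Toy poses with normalized scores (lower = better).
poses = [
    {"program": "dock", "score": -1.2, "coords": [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]},
    {"program": "vina", "score": -1.0, "coords": [(0.5, 0.0, 0.0), (1.5, 0.0, 0.0)]},
    {"program": "vina", "score": -1.5, "coords": [(10.0, 0.0, 0.0), (11.0, 0.0, 0.0)]},
]
for entry in rank_clusters(cluster_poses(poses)):
    print(entry["size"], entry["programs"], round(entry["mean_score"], 2))
```

Listing the programs that contribute to each cluster makes it immediately visible whether a pose is a true cross-algorithm consensus or is supported by only one method.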

Visualization: Algorithm Workflow & Selection Logic

Title: Algorithm Selection Logic for Modeled Structures

Title: Consensus Docking Experimental Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Resources for Docking to Homology Models

| Item | Function & Relevance |

|---|---|

| Homology Modeling Suite (e.g., MODELLER, SWISS-MODEL) | Generates the initial 3D protein model from the template structure; critical first step. |

| Model Refinement Tool (e.g., GalaxyRefine, Rosetta) | Improves side-chain packing and loop regions of the model, directly impacting docking accuracy. |

| Protein Preparation Software (e.g., Chimera, MOE, Maestro) | Adds hydrogens, assigns charges, and optimizes H-bond networks for the model prior to docking. |

| Ligand Preparation Tool (e.g., Open Babel, LigPrep) | Generates correct 3D conformations, tautomers, and protonation states for small molecule ligands. |

| Grid Generation Utility (DOCK grid, AutoDockTools) | Defines the 3D search space for the docking algorithm; crucial parameter for homology models. |

| Pose Clustering & Analysis Scripts (e.g., in-house Python/R) | Post-processes multiple docking outputs to identify consensus poses and analyze variability. |

| Visualization Platform (e.g., PyMOL, UCSF ChimeraX) | Enables critical visual inspection of predicted poses within the context of the modeled binding site. |

Troubleshooting Guides & FAQs

Exhaustiveness

Q1: My docking results show poor ligand pose reproducibility. How can I improve consistency? A: Low reproducibility is often due to insufficient exhaustiveness. This parameter controls the number of Monte Carlo runs performed. For homology models, which have higher uncertainty, a higher value is required. Increase the exhaustiveness value to at least 16-24 for initial screens and 32-64 for final pose prediction. This allows for more thorough sampling of the conformational space.

Q2: Is there a quantitative guideline for setting exhaustiveness relative to the binding site size? A: Yes. While the binding site volume is a key factor, a practical guideline based on recent benchmarks is summarized below:

| Binding Site Volume (ų) | Recommended Exhaustiveness | Expected Computation Time Increase* |

|---|---|---|

| < 500 | 8 - 16 | 1x (Baseline) |

| 500 - 1000 | 16 - 32 | 2x - 4x |

| > 1000 | 32 - 64 | 4x - 8x |

*Time increase relative to an exhaustiveness of 8.
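The guideline table can be encoded as a small lookup helper. This is a minimal sketch: the function name `recommended_exhaustiveness` is hypothetical, and assigning the boundary volumes (exactly 500 or 1000 ų) to the middle band is an assumption, since the table does not say which side they fall on.

```python
from typing import Tuple


def recommended_exhaustiveness(volume_A3: float) -> Tuple[int, int]:
    """Return the (low, high) exhaustiveness range from the guideline table.

    volume_A3 is the binding site volume in cubic angstroms.
    """
    if volume_A3 <= 0:
        raise ValueError("binding site volume must be positive")
    if volume_A3 < 500:
        return (8, 16)
    if volume_A3 <= 1000:  # assumption: boundaries belong to the middle band
        return (16, 32)
    return (32, 64)


low, high = recommended_exhaustiveness(750.0)
print(f"Suggested exhaustiveness: {low}-{high}")
```

The returned range is only a starting point; the protocol that follows is still needed to confirm where reproducibility plateaus for a given model.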

Protocol for Determining Optimal Exhaustiveness:

- Prepare your homology model and ligand using standard preparation tools (e.g., AutoDockTools from the MGLTools suite, or Schrödinger's Protein Preparation Wizard).

- Define a docking grid box that fully encompasses the predicted binding site.

- Perform a series of docking runs on a known reference ligand (if available) with exhaustiveness values: 8, 16, 32, 64.

- For each run, repeat the docking 5-10 times with different random seeds.

- Calculate the Root-Mean-Square Deviation (RMSD) between the top-ranked poses from each repeat. Lower inter-run RMSD indicates higher reproducibility.

- Plot the mean top-pose RMSD (from a reference crystal pose) and the reproducibility RMSD against exhaustiveness. The optimal value is where both metrics plateau.
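The two metrics in the last steps of this protocol can be computed with a few lines of Python. The sketch below assumes each top-ranked pose has already been parsed into a list of heavy-atom `(x, y, z)` tuples with identical atom ordering, and that all poses share the receptor's coordinate frame, so no superposition is applied. It does not correct for symmetry-equivalent atoms. All function names are illustrative.

```python
import math
from itertools import combinations


def rmsd(pose_a, pose_b):
    """RMSD between two poses given as equal-length lists of (x, y, z) tuples."""
    if len(pose_a) != len(pose_b) or not pose_a:
        raise ValueError("poses must be non-empty and have the same atom count")
    squared = sum(math.dist(p, q) ** 2 for p, q in zip(pose_a, pose_b))
    return math.sqrt(squared / len(pose_a))


def reproducibility_rmsd(top_poses):
    """Mean pairwise RMSD across the top-ranked poses of repeated runs."""
    pairs = list(combinations(top_poses, 2))
    if not pairs:
        raise ValueError("need at least two repeats")
    return sum(rmsd(a, b) for a, b in pairs) / len(pairs)


def mean_rmsd_to_reference(top_poses, reference):
    """Mean RMSD of each repeat's top pose from a reference pose."""
    return sum(rmsd(p, reference) for p in top_poses) / len(top_poses)


# Toy data: three repeats of a two-atom "ligand" (coordinates are made up).
repeats = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(0.0, 0.0, 2.0), (1.0, 0.0, 2.0)],
]
print(round(reproducibility_rmsd(repeats), 3))
print(round(mean_rmsd_to_reference(repeats, repeats[0]), 3))
```

Evaluating both functions once per exhaustiveness value (8, 16, 32, 64) gives the two curves to plot.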

Flexibility

Q3: How should I handle side-chain flexibility in a homology-modeled binding site? A: Incorporate selective flexibility for key residues. Identify residues within 5-6 Å of the docked ligand that are predicted to have high B-factors or are in flexible loops from your model validation. You can treat these side chains as flexible during docking using methods like:

- Specified Flexible Residues: In tools like AutoDockFR or Vina, you can define side chains to be treated as flexible torsions.

- Ensemble Docking: Generate multiple conformations of the receptor (e.g., from molecular dynamics snapshots of the model) and dock against each.
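Both options can be driven from the same helper when AutoDock Vina is the docking engine. The sketch below only assembles the argument list and does not run anything. It uses Vina's command-line options `--receptor`, `--flex`, `--ligand`, `--center_*`, `--size_*`, `--exhaustiveness`, `--seed`, and `--out`. The function name, file names, and box values are hypothetical, and the rigid and flexible receptor PDBQT files are assumed to have been prepared beforehand.

```python
def vina_command(receptor, ligand, center, size, out,
                 flex=None, exhaustiveness=32, seed=None):
    """Assemble an AutoDock Vina argument list (nothing is executed here)."""
    cmd = ["vina", "--receptor", receptor, "--ligand", ligand]
    if flex is not None:
        # PDBQT file holding the side chains to be treated as flexible
        cmd += ["--flex", flex]
    for axis, c, s in zip("xyz", center, size):
        cmd += [f"--center_{axis}", str(c), f"--size_{axis}", str(s)]
    cmd += ["--exhaustiveness", str(exhaustiveness), "--out", out]
    if seed is not None:
        cmd += ["--seed", str(seed)]
    return cmd


# Ensemble docking: one command per receptor conformation (hypothetical files).
conformations = ["model_conf1.pdbqt", "model_conf2.pdbqt"]
for i, rec in enumerate(conformations, start=1):
    cmd = vina_command(rec, "ligand.pdbqt", (10.0, 12.5, -3.0), (22, 22, 22),
                       out=f"docked_conf{i}.pdbqt", seed=1)
    print(" ".join(cmd))
```

Each list can be passed to `subprocess.run(cmd, check=True)` once the input files exist. For selective flexibility, pass the rigid part as `receptor` and the flexible side chains as `flex`.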

Q4: What is the recommended protocol for identifying which residues to set as flexible? A:

- Run a short molecular dynamics (MD) simulation (50-100 ns) of the solvated homology model.

- Analyze the simulation trajectory and calculate the Root Mean Square Fluctuation (RMSF) for each residue.

- Cluster the frames from the MD trajectory to obtain 5-10 representative receptor conformations.

- Perform ensemble docking against this set of conformations. Residues that show significant conformational variation across the ensemble and are near the binding site are prime candidates for explicit flexibility.

- Alternatively, use computational alanine scanning or computational mutagenesis tools to predict hotspot residues whose flexibility impacts binding.
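The RMSF step and the proximity criterion can be combined into a simple selection routine. This is a minimal sketch under several assumptions: the trajectory frames are already aligned to a common reference, each residue is represented by a single site (for example, its side-chain centroid), and proximity is measured to one ligand center point rather than to every ligand atom. The 1.5 Å RMSF cutoff is an arbitrary illustrative value, and the residue labels are made up.

```python
import math


def _mean_position(frames, i):
    """Average position of site i over all frames."""
    n = len(frames)
    return tuple(sum(f[i][k] for f in frames) / n for k in range(3))


def rmsf(frames):
    """Per-site RMSF; frames[t][i] is the (x, y, z) of site i in frame t."""
    values = []
    for i in range(len(frames[0])):
        mean = _mean_position(frames, i)
        msd = sum(math.dist(f[i], mean) ** 2 for f in frames) / len(frames)
        values.append(math.sqrt(msd))
    return values


def flexible_candidates(frames, residue_ids, ligand_center,
                        rmsf_cutoff=1.5, dist_cutoff=6.0):
    """Residues that are both mobile (RMSF) and close to the ligand center."""
    values = rmsf(frames)
    picked = []
    for i, res in enumerate(residue_ids):
        near = math.dist(_mean_position(frames, i), ligand_center) <= dist_cutoff
        if near and values[i] >= rmsf_cutoff:
            picked.append((res, round(values[i], 2)))
    return picked


# Toy trajectory: two frames, three residue sites (coordinates are made up).
frames = [
    [(0.0, 0.0, 0.0), (5.0, 0.0, 0.0), (20.0, 0.0, 0.0)],
    [(2.0, 0.0, 0.0), (5.0, 0.0, 0.0), (24.0, 0.0, 0.0)],
]
print(flexible_candidates(frames, ["A:101", "A:102", "A:250"],
                          ligand_center=(1.0, 0.0, 0.0), rmsf_cutoff=0.5))
```

In practice the per-residue coordinates would come from a trajectory analysis library. The pure-Python form here is only meant to make the selection logic explicit.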

Handling of Co-factors/Ions

Q5: My homology model includes a critical catalytic metal ion (e.g., Zn²⁺). How do I parameterize it for docking? A: Metal ions require special force field parameters. The protocol involves:

- Retain the ion in the structure. Do not remove it.

- Assign correct charges and parameters. Use specialized tools to generate parameters:

- MCPB.py (Metal Center Parameter Builder): Generates metal-center parameters for AMBER/GAFF force fields. These can also be converted for use with AutoDock/AutoDock Vina.

- Manual Parameterization: Define the ion's charge (e.g., +2 for Zn²⁺) and create a parameter file specifying its van der Waals radius and well depth. Consult the CHARMM or AMBER parameter database for standard values.

- Include the ion in the grid map generation so the scoring function accounts for its presence and interaction with the ligand.

Q6: An essential co-factor (e.g., NAD, HEM) was present in the template but is missing in my model. How do I reintroduce it? A:

- Structural Alignment: Superimpose your homology model onto the template structure that contains the co-factor.

- Co-factor Transfer: Extract the coordinates of the co-factor (and any coordinating residues) from the template and align them onto your model.

- Geometry Optimization: Perform a constrained energy minimization of the co-factor and its immediate protein environment within your model to relieve any steric clashes introduced during transfer, keeping the overall protein backbone restrained.

- Parameterization: Ensure you have the correct force field parameters and partial charges for the co-factor. Resources such as the PRODRG2 server or the R.E.D.D.B. database can be used to obtain these.

Docking Workflow for Homology Models

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Docking with Homology Models |

|---|---|

| Homology Modeling Suite (e.g., MODELLER, SWISS-MODEL) | Generates the initial 3D protein structure from a related template. The foundation for all subsequent steps. |

| Structure Validation Tool (e.g., MolProbity, PROCHECK) | Evaluates the stereochemical quality of the model to identify problematic regions (e.g., Ramachandran outliers, clashes) before docking. |

| Force Field Parameter Database (e.g., R.E.D.D.B., AMBER parameter DB) | Provides accurate partial charges and van der Waals parameters for non-standard residues, metal ions, and co-factors essential for scoring. |

| Molecular Dynamics Software (e.g., GROMACS, NAMD) | Used to sample the flexibility and generate an ensemble of conformations for the homology model, crucial for assessing dynamics. |

| Docking Software with Flexibility Support (e.g., AutoDockFR, Vina) | The core engine that performs the ligand sampling and scoring, preferably with options for side-chain or backbone flexibility. |

| Pose Clustering & Analysis Scripts (e.g., RDKit, MDAnalysis) | Custom or community scripts to analyze docking outputs, calculate RMSD, cluster poses, and visualize results. |

| High-Performance Computing (HPC) Cluster Access | Essential for running exhaustive docking searches, ensemble docking, and any preceding MD simulations within a practical timeframe. |

Navigating Challenges: Troubleshooting Docking Failures and Refining Strategies

Troubleshooting Guides & FAQs

FAQ 1: Why does my virtual screen against a homology model yield a high hit rate in silico, but the compounds show no activity in functional assays?

- Likely Cause: Scoring bias favoring compounds that complement errors in the modeled binding pocket, rather than the true native structure.

- Diagnosis: Perform a control docking run into the native template structure (if available). Compare the score distributions and ligand poses between the model and the template. A significant score improvement in the model suggests the scoring function is exploiting cavity artifacts.

- Solution: Re-score all top hits using a more rigorous, consensus scoring protocol or molecular mechanics-based methods (e.g., MM/PBSA). Prioritize compounds whose poses are conserved between the model and the template structure.

FAQ 2: My docking results show all top hits clustering in one pose, but it seems chemically unreasonable. What went wrong?

- Likely Cause: Poor conformational sampling due to incorrect side-chain rotamer placement in the binding site, creating a steric or electrostatic artifact that traps the docking algorithm.

- Diagnosis: Visually inspect the binding site of your homology model. Compare key side-chain orientations with those in the template and related structures. Run a short molecular dynamics (MD) simulation or side-chain repacking to assess the stability of the suspect residues.

- Solution: Generate an ensemble of homology models (using different loop modeling protocols or backbone perturbations) and dock into the ensemble. Use clustering analysis across models to identify poses that are robust despite local structural variations.

FAQ 3: How can I determine if failed experiments are due to poor sampling or an inherent scoring bias?

- Diagnosis: Conduct a "reverse decoy" test. Dock known active ligands and known inactive/decoy molecules into your model.

- Protocol:

- Curate a small test set of 5-10 known binders and 50-100 property-matched decoys.

- Perform docking with extensive conformational sampling (e.g., high exhaustiveness in Vina-type software).

- Analyze the Receiver Operating Characteristic (ROC) curve and Enrichment Factors (EFs).

- Interpretation: Poor early enrichment (EF1%) suggests scoring cannot distinguish actives. Good enrichment but poor pose prediction suggests sampling is inadequate to find the correct binding mode.
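The enrichment metrics used in this test are straightforward to compute from a list of docking scores and activity labels. The sketch below assumes lower (more negative) scores are better, as in Vina-type software, and labels of 1 for known binders and 0 for decoys. The enrichment factor is the active rate in the top-ranked fraction divided by the active rate in the whole set. The AUC is computed as the probability that a randomly chosen active outscores a randomly chosen decoy. Function names and the toy scores are illustrative.

```python
def enrichment_factor(scores, labels, fraction=0.01):
    """EF at the given fraction of the ranked list (lower score = better)."""
    n, n_actives = len(scores), sum(labels)
    if n == 0 or n_actives == 0:
        raise ValueError("need at least one compound and one active")
    n_top = max(1, round(fraction * n))  # at least one compound in the top slice
    ranked = sorted(zip(scores, labels), key=lambda t: t[0])
    hits = sum(label for _, label in ranked[:n_top])
    return (hits / n_top) / (n_actives / n)


def roc_auc(scores, labels):
    """AUC as P(active scores better than decoy); ties count as 0.5."""
    actives = [s for s, l in zip(scores, labels) if l == 1]
    decoys = [s for s, l in zip(scores, labels) if l == 0]
    if not actives or not decoys:
        raise ValueError("need both actives and decoys")
    wins = sum(1.0 if a < d else 0.5 if a == d else 0.0
               for a in actives for d in decoys)
    return wins / (len(actives) * len(decoys))


# Toy screen: 2 actives among 10 compounds (scores are made up).
scores = [-10.0, -9.0, -8.0, -7.0, -6.0, -5.0, -4.0, -3.0, -2.0, -1.0]
labels = [1, 0, 1, 0, 0, 0, 0, 0, 0, 0]
print(enrichment_factor(scores, labels, fraction=0.2), roc_auc(scores, labels))
```

With a test set as small as the one suggested above (5-10 binders, 50-100 decoys), the top 1% contains only one or two compounds, so EF1% is coarse and best read alongside the AUC.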

FAQ 4: The top-ranked compounds from docking are all chemically similar and have poor drug-like properties. Is this a bias?

- Likely Cause: Yes. This is often a combination of scoring bias (e.g., over-penalizing certain functional groups common in drugs) and dataset bias in your screening library.

- Solution: Apply property-based filters (e.g., Lipinski's Rule of Five, PAINS filters) before docking to pre-process the library. Post-docking, use a diversity-based selection from the top-scoring cluster representatives, not just the absolute top scores.
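Both parts of this solution reduce to simple bookkeeping once molecular descriptors and cluster assignments are available. The sketch below assumes the descriptors (molecular weight, logP, hydrogen-bond donors and acceptors) have been computed elsewhere with a cheminformatics toolkit, and that each hit already carries a cluster label. Allowing at most one Rule of Five violation is a common convention, exposed here as an adjustable parameter. The function names and compound data are hypothetical.

```python
def lipinski_violations(mw, logp, hbd, hba):
    """Count violations of Lipinski's Rule of Five."""
    return sum([mw > 500, logp > 5, hbd > 5, hba > 10])


def passes_rule_of_five(mw, logp, hbd, hba, max_violations=1):
    """True if the compound has no more than max_violations violations."""
    return lipinski_violations(mw, logp, hbd, hba) <= max_violations


def best_per_cluster(hits):
    """Pick the best-scoring compound from each cluster (lower score = better).

    hits is an iterable of (compound_id, cluster_id, score) tuples.
    Returns compound ids ordered from best to worst score.
    """
    best = {}
    for compound_id, cluster_id, score in hits:
        if cluster_id not in best or score < best[cluster_id][1]:
            best[cluster_id] = (compound_id, score)
    return [cid for cid, _ in sorted(best.values(), key=lambda t: t[1])]


# Pre-docking filter on made-up descriptor values.
print(passes_rule_of_five(mw=350.4, logp=2.1, hbd=2, hba=5))
print(passes_rule_of_five(mw=720.9, logp=6.3, hbd=2, hba=8))

# Post-docking diversity selection on made-up hits.
hits = [("c1", "A", -9.5), ("c2", "A", -9.1), ("c3", "B", -8.7)]
print(best_per_cluster(hits))
```

Selecting one representative per cluster keeps a single chemotype from dominating the final list, even when its members occupy most of the top scores.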

Experimental Protocol for Diagnosing Sampling & Scoring Issues

Protocol: Ensemble Docking and Consensus Scoring for Homology Models

- Model Generation Ensemble: Generate 5-10 alternative homology models using different software (e.g., MODELLER, Rosetta, SWISS-MODEL) or by sampling different loop conformations.

- Preparation: Prepare all protein models and ligands uniformly (same protonation states, force field parameters).

- Docking Execution: Dock the entire ligand library into each model structure using a standard protocol. Use a high sampling parameter (e.g., exhaustiveness=32 in AutoDock Vina).

- Pose Clustering: Pool all poses from all models. Cluster poses by ligand structural similarity and binding pose (RMSD < 2.0 Å).

- Consensus Scoring: Apply 2-3 distinct scoring functions (e.g., one empirical, one knowledge-based, one force-field based) to the top representative poses from each major cluster.

- Hit Prioritization: Rank compounds based on a consensus score (e.g., average rank across scorers) and the frequency of the pose appearing across the model ensemble.
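The consensus step can be illustrated with rank averaging, one of several possible consensus schemes. The sketch below assumes every scoring function has been applied to the same compounds and that lower scores are better for each of them (scores where higher is better would need to be negated first). Ties within a scorer are broken alphabetically by compound id, which is an arbitrary choice. The scorer names and values are made up.

```python
def consensus_rank(score_table):
    """Average rank per compound across scoring functions.

    score_table maps scorer name -> {compound_id: score}, lower = better.
    Returns a list of (compound_id, mean_rank), best first.
    """
    # Only compounds scored by every function can be ranked consistently.
    compounds = set.intersection(*(set(s) for s in score_table.values()))
    totals = {c: 0.0 for c in compounds}
    for scores in score_table.values():
        ordered = sorted(compounds, key=lambda c: (scores[c], c))
        for rank, c in enumerate(ordered, start=1):
            totals[c] += rank
    n = len(score_table)
    return sorted(((c, totals[c] / n) for c in compounds),
                  key=lambda t: (t[1], t[0]))


table = {
    "empirical":   {"a": -9.0, "b": -8.0, "c": -7.0},
    "knowledge":   {"a": -50.0, "b": -60.0, "c": -40.0},
    "force_field": {"a": -30.0, "b": -20.0, "c": -10.0},
}
for compound, mean_rank in consensus_rank(table):
    print(compound, round(mean_rank, 2))
```

Working with ranks rather than raw scores avoids having to put scoring functions with different units and ranges on a common scale. The frequency with which a pose recurs across the model ensemble can then be used as a second sorting key.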

Table 1: Diagnostic Test Results for a Sample Homology Model Docking Run

| Diagnostic Test | Metric | Value (Observed) | Target Threshold | Interpretation |

|---|---|---|---|---|

| Reverse Decoy | EF1% (Early Enrichment) | 8.5 | >10 | Marginal early enrichment. |

| Reverse Decoy | AUC-ROC (Area Under Curve) | 0.72 | >0.7 | Acceptable overall discrimination. |

| Template Comparison | Pose RMSD (Model vs. Template) | 4.2 Å | <2.0 Å | Poor pose conservation; model artifact likely. |

| Scoring Bias Check | Score Improvement (Model vs. Template) | +3.5 kcal/mol | ~0 kcal/mol | Strong bias, exploiting model errors. |

| Clustering Diversity | # of Unique Poses (Top 100 hits) | 3 | >10 | Extremely poor sampling diversity. |

Table 2: Key Research Reagent Solutions

| Item | Function in Diagnosis/Experiment |

|---|---|

| Homology Modeling Suite (e.g., MODELLER, RosettaCM) | Generates the initial 3D protein structure from the target sequence using a known template. |

| Molecular Docking Software (e.g., AutoDock Vina, Glide, rDock) | Computationally predicts the binding pose and affinity of small molecules to the protein model. |

| Decoy Dataset Generator (e.g., DUD-E, DEKOIS) | Provides property-matched inactive molecules to validate scoring function enrichment. |

| Consensus Scoring Script/Tool (e.g., VinaCarb, SIEVE-Score) | Combines results from multiple scoring functions to reduce individual method bias. |

| Molecular Dynamics Software (e.g., GROMACS, NAMD) | Assesses local stability of the homology model's binding site and refines docked poses. |

| Chemical Filtering Library (e.g., RDKit, Open Babel, with pan-assay interference compounds (PAINS) filters) | Removes compounds with undesirable or promiscuous chemical motifs prior to docking. |

Troubleshooting Guides & FAQs

Q1: During docking with my homology model, the ligand consistently fails to make key interactions known from mutagenesis studies. The binding site region has a poorly modeled loop. What are my first steps?

A: This is a classic symptom of local structural ambiguity. First, assess the model's quality in that region. Check the per-residue confidence score (e.g., pLDDT from AlphaFold2) for the problematic loop and binding site. If scores are low (<70), consider these actions:

- Use flexible loop remodeling: Employ tools like Rosetta relax or MODELLER's loop modeling to generate an ensemble of alternative loop conformations.